Abstract

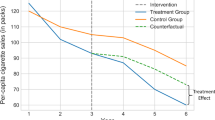

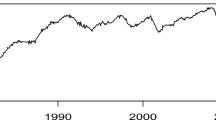

In this paper, we consider composite quantile estimation for the partial functional linear regression model with errors from a short-range dependent and strictly stationary linear process. Functional principal component analysis is used to estimate the slope function and the functional predictor. Under some regularity conditions, we obtain the optimal convergence rate of the estimator of the slope function and the asymptotic normality of the estimator of the parameter vector. Simulation studies demonstrate that the proposed estimation method is robust and works much better than the least-squares-based method when there are outliers in the dataset or when the autoregressive errors follow a heavy-tailed distribution. Finally, we apply the proposed methodology to electricity consumption data.
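
A minimal sketch of the composite quantile estimator, under stated assumptions: once the functional term is replaced by the scores on the first m estimated eigenfunctions, the model is linear in the scalar covariates and the scores, so minimizing the composite check loss (a common slope and one intercept \(b_k\) per quantile level) is a linear program. The LP formulation, the levels \(\tau_k=k/(K+1)\), the simulated data and the names `check_loss`, `cqr_objective` and `cqr_fit` are our own illustration, not the authors' implementation; the solver is SciPy's `linprog` with `method="highs"`.

```python
import numpy as np
from scipy.optimize import linprog


def check_loss(u, tau):
    """Check loss rho_tau(u) = u * (tau - I(u < 0)) for an array u."""
    return u * (tau - (u < 0).astype(float))


def cqr_objective(D, y, taus, theta, b):
    """Composite objective: sum over k and i of rho_{tau_k}(y_i - b_k - D_i^T theta)."""
    resid = y - D @ theta
    return sum(check_loss(resid - bk, tk).sum() for bk, tk in zip(b, taus))


def cqr_fit(D, y, taus):
    """Minimise the composite objective exactly as a linear program.

    Each residual y_i - b_k - D_i^T theta is split as u_plus - u_minus with
    u_plus, u_minus >= 0, so rho_tau equals tau * u_plus + (1 - tau) * u_minus.
    Variable order: theta (p), b (K), u_plus (n*K), u_minus (n*K).
    """
    n, p = D.shape
    taus = np.asarray(taus, dtype=float)
    K = taus.size
    nK = n * K
    c = np.concatenate([np.zeros(p + K), np.repeat(taus, n), np.repeat(1.0 - taus, n)])
    A = np.zeros((nK, p + K + 2 * nK))
    eye = np.eye(n)
    for k in range(K):
        rows = slice(k * n, (k + 1) * n)
        A[rows, :p] = D                              # common slope theta
        A[rows, p + k] = 1.0                         # level-specific intercept b_k
        start = p + K + k * n
        A[rows, start:start + n] = eye               # u_plus for level k
        A[rows, start + nK:start + nK + n] = -eye    # u_minus for level k
    bounds = [(None, None)] * (p + K) + [(0, None)] * (2 * nK)
    res = linprog(c, A_eq=A, b_eq=np.tile(y, K), bounds=bounds, method="highs")
    if not res.success:
        raise RuntimeError(res.message)
    return res.x[:p], res.x[p:p + K]


# Illustration with simulated data (all values hypothetical).
rng = np.random.default_rng(1)
n = 200
z = rng.normal(size=n)                  # scalar covariate
u1 = rng.normal(size=n)                 # stand-in for one FPCA score column
y = 1.0 * z + 0.5 * u1 + rng.standard_t(df=2, size=n)   # heavy-tailed errors
taus = np.arange(1, 6) / 6.0            # tau_k = k / (K + 1) with K = 5
D = np.column_stack([z, u1])
theta_hat, b_hat = cqr_fit(D, y, taus)
```

The dense constraint matrix has nK rows, so for large samples a sparse matrix or a dedicated quantile regression solver would be the more practical choice.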

References

Aneiros-Pérez G, Vieu P (2008) Nonparametric time series prediction: a semi-functional partial linear modeling. J Multivar Anal 99(5):834–857

Aneiros-Pérez G, Raña P, Vieu P, Vilar J (2018) Bootstrap in semi-functional partial linear regression under dependence. Test 27(3):659–679

Beran J, Liu H (2016) Estimation of eigenvalues, eigenvectors and scores in FDA models with dependent errors. J Multivar Anal 147:218–233

Bosq D (2012) Linear processes in function spaces: theory and applications. Springer, New York

Cai T, Hall P (2006) Prediction in functional linear regression. Ann Stat 34(5):2159–2179

Cardot H, Crambes C, Sarda P (2005) Quantile regression when the covariates are functions. J Nonparametr Stat 17(7):841–856

Chen K, Müller HG (2012) Conditional quantile analysis when covariates are functions, with application to growth data. J R Stat Soc Ser B 74(1):67–89

Hall P, Hooker G (2016) Truncated linear models for functional data. J R Stat Soc Ser B 78(3):637–653

Hall P, Horowitz JL (2007) Methodology and convergence rates for functional linear regression. Ann Stat 35(1):70–91

Horváth L, Kokoszka P (2012) Inference for functional data with applications. Springer, New York

Imaizumi M, Kato K (2018) PCA-based estimation for functional linear regression with functional responses. J Multivar Anal 163:15–36

Jiang X, Jiang J, Song X (2012) Oracle model selection for nonlinear models based on weighted composite quantile regression. Stat Sin 22:1479–1506

Kato K (2012) Estimation in functional linear quantile regression. Ann Stat 40(6):3108–3136

Knight K (1998) Limiting distributions for \(L_1\) regression estimators under general conditions. Ann Stat 26(2):755–770

Kong D, Xue K, Yao F, Zhang H (2016) Partially functional linear regression in high dimensions. Biometrika 103(1):147–159

Lovric M (2011) International encyclopedia of statistical science. Springer, New York

Lu Y, Du J, Sun Z (2014) Functional partially linear quantile regression model. Metrika 77(2):317–332

Ma HQ, Bai Y, Zhu ZY (2016) Dynamic single-index model for functional data. Sci China Math 59(12):2561–2584

Shin H (2009) Partial functional linear regression. J Stat Plan Inference 139(10):3405–3418

Tang QG, Cheng LS (2014) Partial functional linear quantile regression. Sci China Math 57(12):2589–2608

Tang Y, Song X, Zhu Z (2015) Variable selection via composite quantile regression with dependent errors. Stat Neerl 69(1):1–20

Wu WB (2007) M-estimation of linear models with dependent errors. Ann Stat 35(2):495–521

Yao F, Müller HG, Wang JL (2005) Functional linear regression analysis for longitudinal data. Ann Stat 33(6):2873–2903

Yu D, Kong L, Mizera I (2016a) Partial functional linear quantile regression for neuroimaging data analysis. Neurocomputing 195(26):74–87

Yu P, Zhang Z, Du J (2016b) A test of linearity in partial functional linear regression. Metrika 79(8):953–969

Yu P, Zhu Z, Zhang Z (2018) Robust exponential squared loss-based estimation in semi-functional linear regression models. Comput Stat. https://doi.org/10.1007/s00180-018-0810-2

Yuan M, Cai T (2010) A reproducing kernel Hilbert space approach to functional linear regression. Ann Stat 38(6):3412–3444

Zhang L, Wang HJ, Zhu Z (2017) Composite change point estimation for bent line quantile regression. Ann Inst Stat Math 69(1):145–168

Zhou J, Chen Z, Peng Q (2016) Polynomial spline estimation for partial functional linear regression models. Comput Stat 31(3):1107–1129

Zou H, Yuan M (2008) Composite quantile regression and the oracle model selection theory. Ann Stat 36(3):1108–1126

Acknowledgements

This work was supported by the National Natural Science Foundation of China (Grant Nos. 11671096, 11690013, 11731011, 11771032).

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Proofs of the Theorems

Proof of Theorem 1

Let \(\delta _n=n^{-\frac{2b-1}{2(a+2b)}}\), \(\varvec{S}_n=\delta _n^{-1}(\varvec{\hat{\alpha }}-\varvec{\alpha }_0)\), \(\varvec{V}_n=\delta _n^{-1}(\varvec{\hat{\gamma }}-\varvec{\gamma }_0)\), \(W_{nk}=\delta _n^{-1}(\hat{b}_k-b_{0k})\), \(\varvec{W}_n=(W_{n1},\ldots , W_{nK})^T\), \(r_i=\int _{0}^{1}\beta _0(t)X_i(t)dt-{\hat{\varvec{U}}}_i^T\varvec{\gamma }_0\), \(\mathcal {F}_n=\Big \{(\varvec{S}_n,\varvec{V}_n,\varvec{W}_n){:}\,\big \Vert (\varvec{S}_n^T,\varvec{V}_n^T, \varvec{W}_n^T)^T\big \Vert =L\Big \}\), where L is a large enough constant, \({\varLambda }_n=\text {diag}(\hat{\lambda }_1,\ldots , \hat{\lambda }_m)\), \(\varvec{{\varPi }}=E(\varvec{z}_i\varvec{z}_i^T)\), \(T_n=\Big \{\left( \varvec{z}_1,X_1(\cdot )\right) ,\ldots , \left( \varvec{z}_n,X_n(\cdot )\right) \Big \}\). We next show that, for any given \(\eta >0\), there exists a sufficiently large constant \(L=L_\eta \) such that

This implies that, with probability at least \(1-\eta \), there exist local minimizers \(\varvec{\hat{\alpha }}\) and \(\varvec{\hat{\gamma }}\) in the ball \(\Big \{(\varvec{S}_n,\varvec{V}_n,\varvec{W}_n){:}\,\big \Vert (\varvec{S}_n^T,\varvec{V}_n^T, \varvec{W}_n^T)^T\big \Vert \le L\Big \}\) such that \(\Vert \varvec{\hat{\alpha }}-\varvec{\alpha }_0\Vert =O_p(\delta _n)\) and \(\Vert \varvec{\hat{\gamma }}-\varvec{\gamma }_0\Vert =O_p(\delta _n)\), which is exactly what we need to show.
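
The estimated eigenvalues \(\hat{\lambda }_j\), eigenfunctions \(\hat{v}_j\) and scores \(\hat{\varvec{U}}_i\) that enter the proof come from the FPCA step. The sketch below assumes noise-free curves observed on a common equally spaced (midpoint) grid in [0, 1], centres them at the sample mean, and approximates the covariance operator and the score integrals by Riemann sums; the function name `fpca` and the simulated eigenstructure are hypothetical, chosen only to make the example checkable.

```python
import numpy as np


def fpca(X, m):
    """Empirical FPCA for curves observed on the midpoint grid t_l = (l + 0.5) / L.

    X : (n, L) array whose row i holds X_i(t_1), ..., X_i(t_L).
    m : truncation level (number of leading components kept).
    Returns lam (m,), V (L, m) and U (n, m): estimated eigenvalues, eigenfunctions
    evaluated on the grid, and scores U[i, j] ~ integral of (X_i - mean) * v_j.
    """
    n, L = X.shape
    h = 1.0 / L                               # quadrature weight per grid point
    Xc = X - X.mean(axis=0)                   # centre at the sample mean curve (assumption)
    K_hat = Xc.T @ Xc / n                     # empirical covariance surface
    evals, evecs = np.linalg.eigh(h * K_hat)  # discretised covariance operator
    order = np.argsort(evals)[::-1][:m]       # keep the m largest eigenvalues
    lam = evals[order]
    V = evecs[:, order] / np.sqrt(h)          # rescale so that sum(h * v_j**2) == 1
    U = h * Xc @ V                            # Riemann sums for the scores
    return lam, V, U


# Simulated curves X_i(t) = sum_j xi_ij * sqrt(2) * sin(j * pi * t), Var(xi_ij) = j^{-2}.
rng = np.random.default_rng(0)
n, L, J = 500, 100, 5
t = (np.arange(L) + 0.5) / L
true_lam = 1.0 / np.arange(1, J + 1) ** 2
basis = np.sqrt(2.0) * np.sin(np.outer(np.arange(1, J + 1), np.pi * t))  # (J, L)
xi = rng.normal(size=(n, J)) * np.sqrt(true_lam)
X = xi @ basis
lam_hat, V_hat, U_hat = fpca(X, m=3)
Lambda_n = np.diag(lam_hat)                   # diag(lambda_hat_1, ..., lambda_hat_m)
```

Rescaling the eigenvectors by \(1/\sqrt{h}\) makes the discretised \(\hat{v}_j\) orthonormal in the Riemann-sum inner product, which mirrors the orthogonality of \(\{\hat{v}_j\}\) used in the argument.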

First, by \( \Vert v_j-\hat{v}_j\Vert ^2=O_p(n^{-1}j^2)\) (see, e.g., Shin 2009; Yu et al. 2016a), one has

For \(\text {A}_1\), conditions C1 and C2 and Hölder's inequality give

As for \(\text {A}_2\), due to

one has

Taking these together, we have

Let

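By the identity of Knight (1998), which for the check loss \(\rho _{\tau }(u)=u\{\tau -I(u<0)\}\) reads \(\rho _{\tau }(u-v)-\rho _{\tau }(u)=-v\{\tau -I(u<0)\}+\int _{0}^{v}\{I(u\le s)-I(u\le 0)\}\,ds\) for all real u and v,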

we have

Then we can write \(P_n(\varvec{S}_n,\varvec{V}_n,\varvec{W}_n)\) as follows:

where

Note that, by conditions C2 and C4, respectively, one has \(\Vert {\varLambda }_n\Vert =O(1)\), \(\Vert \varvec{{\varPi }}\Vert =O(1)\), and \(\lim _{n\rightarrow \infty }E\varvec{B}_n^T\varvec{V}_n=0\), \(E\big \{(\varvec{B}_n^T\varvec{V}_n)^2\big \}=\varvec{V}_n^TE(\varvec{B}_n\varvec{B}_n^T)\varvec{V}_n=O(\Vert \varvec{V}_n\Vert ^2)\). Hence, by Chebyshev's inequality, \(\varvec{B}_n^T\varvec{V}_n=O_p(\Vert \varvec{V}_n\Vert )\). Similarly, using condition C4, we get \(\varvec{A}_n^T\varvec{S}_n=O_p(\Vert \varvec{S}_n\Vert )\). Combining this with Eq. (19) leads to

Invoking condition C9, a simple calculation yields

Similarly,

Hence

Then, we can obtain

Taking these together, we obtain that \(P_n(\varvec{S}_n,\varvec{V}_n,\varvec{W}_n)\) is dominated by the positive quadratic term \(n\delta _n^2\sum _{k=1}^{K}f(b_{0k})\left( W^2_{nk}+\varvec{V}_n^T{\varLambda }_n\varvec{V}_n+\varvec{S}_n^T{\varPi }\varvec{S}_n\right) \) as long as L is large enough. Hence, Eq. (15) holds, and there exists a local minimizer \(\hat{\varvec{\gamma }}\) such that

Observe that

Invoking Eq. (16), condition C2, the orthogonality of \(\{\hat{v}_j\}\) and \( \Vert v_j-\hat{v}_j\Vert ^2=O_p(n^{-1}j^2)\), one has

Then, combining Eqs. (23)–(25), we can complete the proof of Theorem 1. \(\square \)
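
Knight's identity, used in the proof of Theorem 1 to expand the CQR objective, can be written for the check loss as \(\rho _{\tau }(u-v)-\rho _{\tau }(u)=-v\{\tau -I(u<0)\}+\int _{0}^{v}\{I(u\le s)-I(u\le 0)\}\,ds\). The short numerical check below evaluates both sides at random points, approximating the integral by a midpoint Riemann sum; it is only a sanity check of the identity, not part of the proof, and the helper names are our own.

```python
import numpy as np


def check_loss(u, tau):
    """Check loss rho_tau(u) = u * (tau - I(u < 0)) for a scalar u."""
    return u * (tau - float(u < 0))


def knight_rhs(u, v, tau, N=200_000):
    """Right-hand side of Knight's identity; the integral is a midpoint Riemann sum."""
    psi = tau - float(u < 0)                  # score psi_tau(u) = tau - I(u < 0)
    h = v / N                                 # signed step, so v < 0 is handled too
    s = (np.arange(N) + 0.5) * h              # midpoints of the cells between 0 and v
    integrand = (u <= s).astype(float) - float(u <= 0)
    return -v * psi + integrand.sum() * h


rng = np.random.default_rng(2)
errors = []
for _ in range(200):
    u, v = rng.uniform(-3, 3, size=2)
    tau = rng.uniform(0.05, 0.95)
    lhs = check_loss(u - v, tau) - check_loss(u, tau)
    errors.append(abs(lhs - knight_rhs(u, v, tau)))
max_error = max(errors)
```

The integrand is a step function, so the midpoint sum is exact except on the single cell containing \(s=u\); the discrepancy is therefore at most \(|v|/N\).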

Proof of Theorem 2

According to Theorem 1, as \(n\rightarrow \infty \), with probability tending to 1, \( Q_n(\varvec{\alpha }, \varvec{\gamma }, \varvec{b})\) attains its minimum at \((\hat{\varvec{\alpha }},\hat{\varvec{\gamma }},\hat{\varvec{b}})\). Then we have the following score equations

where \(\psi _{\tau _k}(u)=\rho '_{\tau _k}(u)=\tau _k-{I}(u<0)\) is the score function. By Eqs. (26) and (27), we have

Further, we can write Eq. (28) as

where

Invoking Taylor expansion and Theorem 1, a simple calculation yields

By direct calculation of the mean and variance, we can show, as in Jiang et al. (2012), that \(B^{(k)}_{n2}=o_p(\delta _n).\) Thus, we have

Similarly, we have

Let \({\varPhi }_n=\frac{1}{n}\sum _{i=1}^{n}\hat{\varvec{U}}_i\hat{\varvec{U}}_i^T\), \({\varPsi }_n=\frac{1}{n}\sum _{i=1}^{n}\hat{\varvec{U}}_i\varvec{z}_i^T\), \({\varUpsilon }_n=\frac{1}{n}\sum _{i=1}^{n}\hat{\varvec{U}}_i\left[ I(e_i<b_{0k}+r_i)-\tau _k\right] \). By Eq. (32), we have

Substituting Eq. (33) into Eq. (31), we obtain

Note that

According to Eqs. (34)–(36), it is easy to show that

where \(\widetilde{\varvec{z}}_i={\varvec{z}}_i-{{\varPsi }}_n^T{\varPhi }_n^{-1}\hat{\varvec{U}}_i\). According to Lemma 1 in Yu et al. (2016b) and condition C6, as \({n \rightarrow \infty }\), one has

Note that

Since the random errors come from a stationary process, the correlation between \(e_i\) and \(e_j\) depends only on \(|i-j|\); hence \(E(\eta _i\eta _{i+s})=E(\eta _1\eta _{1+s})\). Invoking conditions C6–C7, we have

By the central limit theorem, we have

where \(\varvec{{\varSigma }}_{\text {CQR}}=\frac{\sum _{k,k'=1}^{K}\min (\tau _k,\tau _{k'})\left( 1-\max (\tau _k,\tau _{k'})\right) }{\left( \sum _{k=1}^{K}f({b_{0k}})\right) ^2}\varvec{{\varSigma }}^{-1}\varvec{{\varXi }}\varvec{{\varSigma }}^{-1}\). This completes the proof of Theorem 2. \(\square \)
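
The scalar multiplying \(\varvec{{\varSigma }}^{-1}\varvec{{\varXi }}\varvec{{\varSigma }}^{-1}\) in \(\varvec{{\varSigma }}_{\text {CQR}}\) depends only on the quantile levels and on the error density at \(b_{0k}\), which we take to be the \(\tau _k\)-quantile of the error distribution. A minimal sketch that evaluates this scalar, assuming equally spaced levels \(\tau _k=k/(K+1)\) and, purely for illustration, standard normal or standard Cauchy errors; it does not compute \(\varvec{{\varSigma }}\) or \(\varvec{{\varXi }}\), which depend on the covariates and on the dependence structure of the errors. The helper names are hypothetical.

```python
import numpy as np
from scipy.stats import cauchy, norm


def cqr_variance_factor(taus, dens):
    """sum_{k,k'} min(tau_k, tau_k')(1 - max(tau_k, tau_k')) / (sum_k f(b_0k))^2."""
    taus = np.asarray(taus, dtype=float)
    lo = np.minimum.outer(taus, taus)
    hi = np.maximum.outer(taus, taus)
    return np.sum(lo * (1.0 - hi)) / np.sum(dens) ** 2


def factor_for(dist, K):
    """Scalar factor for a scipy.stats distribution and K equally spaced levels."""
    taus = np.arange(1, K + 1) / (K + 1)   # tau_k = k / (K + 1) (assumption)
    b0 = dist.ppf(taus)                    # b_0k: tau_k-quantile of the error law
    return cqr_variance_factor(taus, dist.pdf(b0))


factor_normal_1 = factor_for(norm, 1)      # K = 1 is median regression
factor_normal_19 = factor_for(norm, 19)
factor_cauchy_19 = factor_for(cauchy, 19)  # finite, although Cauchy errors have no variance
```

For normal errors the scalar equals \(\pi /2\) when K = 1 and decreases towards \(\pi /3\) as K grows, in line with the efficiency \(3/\pi \approx 0.955\) relative to least squares reported by Zou and Yuan (2008) for independent errors; for Cauchy errors it stays finite even though least squares has no finite variance.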

About this article

Cite this article

Yu, P., Li, T., Zhu, Z. et al. Composite quantile estimation in partial functional linear regression model with dependent errors. Metrika 82, 633–656 (2019). https://doi.org/10.1007/s00184-018-0699-3

DOI: https://doi.org/10.1007/s00184-018-0699-3

Keywords

- Composite quantile estimation

- Functional principal component analysis

- Functional linear regression model

- Short-range dependence

- Strictly stationary