Abstract

In this paper, we solve a system of fractional differential equations with a fractional derivative involving the Mittag-Leffler kernel by using spectral methods. We use the Chebyshev polynomials as a basis and obtain the required operational matrix of the fractional integral using the Clenshaw–Curtis formula. Applying the operational matrix yields a system of linear algebraic equations, and the approximate solution is computed by solving this system. The regularity of the solution is investigated and a convergence analysis is provided. Numerical examples show the effectiveness and efficiency of the method.

1 Introduction

Despite the long history of fractional calculus in mathematics, a large number of real-world applications have appeared mainly during the last decades. Fractional calculus has become so widespread that it now appears in almost every branch of science and engineering, and many books have been written on the subject (see, for example, Refs. [1–4] and the references therein). The growing use of fractional calculus has increased the variety of questions and resulted in various basic definitions of the fractional integral and derivative. We recall that the Riemann–Liouville definition entails physically unacceptable initial conditions [1]; conversely, for the Liouville–Caputo fractional derivative, the initial conditions are expressed in terms of integer-order derivatives having direct physical significance [1, 5]. A few years ago, Caputo and Fabrizio [6] opened the following subject of debate within the mathematical community: is it possible to describe all nonlocal phenomena with the same basic kernels, namely the power kernel involved in the definition of the Riemann–Liouville derivative and a few other basic fractional derivatives? If we analyze, step by step, the way Caputo introduced his classical fractional derivative [5], we realize that in the last step he generalized the classical integral to the fractional Riemann–Liouville integral (see [5] for more details). Almost 50 years later, he asked the mathematical community how the Gamma function enters the description of real phenomena, and why only some of the existing fractional operators are required by experiments [6]. Shortly afterwards, Nieto and Losada found, by using the Laplace transform, the integral associated with the so-called Caputo–Fabrizio fractional derivative [7].

Also, regarding the extension of the Liouville–Caputo derivative reported recently in [8, 9], a new fractional-order integral and derivative involving the Mittag-Leffler function with nonlocal property was suggested in [10]. This concept has been tested successfully in many fields, including chaotic behavior, epidemiology, thermal science, hydrology, mechanical engineering and biology [11–23].

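The dynamics of many applied physical or biological problems can be modeled by a system of fractional differential equations (FDEs) (see, for example, [24] for a relaxation system). A system of Mittag-Leffler non-singular FDEs can be described by

\[{_{\quad0 }^{ABC}D^{\alpha}_{t}}\mathbf{y}(t)=A\mathbf{y}(t)+\mathbf{f}(t),\qquad \mathbf{y}(0)=\mathbf{y}_{0},\qquad t\in[0,T], \tag{1}\]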

where A is a constant matrix of dimension \(\nu\times\nu\), \(\nu\in \mathcal{N}\) is the dimension of the system, \(\mathbf{f}:\mathcal{R} \rightarrow\mathcal{R}^{\nu}\) is a known vector-valued function, \({_{\quad0 }^{ABC}D^{\alpha}_{t}}\mathbf{y}(t)\) is the fractional derivative involving the Mittag-Leffler function (also known as the AB type [10]) and \(\mathbf{y}: \mathcal{R} \rightarrow \mathcal{R}^{\nu}\) is the unknown function. Recently, it was observed that system (1) models the suspension concentration distribution in turbulent flows more successfully than other models [25].

The conditions for the existence and uniqueness of the solution to the exponential non-singular system can be found in [26]. The consistency condition

is one of them. This condition also appears to be important for system (1) with Mittag-Leffler non-singular kernels and, as mentioned in [27], the initial condition should be considered carefully. This imposes some restrictions on system (1). However, owing to the important dynamics of the solutions of system (1), it is significant to solve system (1) analytically or numerically [28]. Because the topic is new, there are few articles on this subject. We found only a linear piecewise polynomial basis method for solving this system numerically [29]. Solving this system with a Chebyshev polynomial basis has not been studied yet.

Spectral methods using Chebyshev polynomials are well known for ordinary and partial differential equations [30–33]. For smooth problems in simple geometries, they offer exponential rates of convergence, also called spectral accuracy. An important advantage of these methods over finite-difference methods is that computing the coefficients of the approximation completely determines the solution at any point of the desired interval. This motivates the numerical solution of system (1) using operational matrix spectral methods based on Chebyshev polynomials.

The discrete orthogonality property of the Chebyshev polynomials is an advantage over other orthogonal polynomials such as the Legendre polynomials. Also, the zeros of the Chebyshev polynomials are known analytically. These properties lead to the Clenshaw–Curtis formula, which makes integration easy. We use this formula to obtain the operational matrix of the fractional integration.

The aim of this paper is to obtain an efficient numerical method for solving system (1) using the operational matrix based on Chebyshev polynomials. For this purpose, we obtain the operational matrix approximation of the fractional integral operator. We transform system (1) into a system of weakly singular integral equations and then, using the operational matrix, obtain a system of linear algebraic equations. Solving this algebraic system gives the numerical approximation. We investigate the existence and convergence of the numerical solution. To this end, we also study the regularity of the exact solutions.

The structure of this paper is as follows. In Sect. 2, we review the new definition of the fractional calculus, related results, and the Chebyshev polynomials. In Sect. 3, we review the approximation of multi-variable functions in terms of the shifted Chebyshev polynomials and obtain the operational matrix approximation of the fractional integral operator. In Sect. 4, we propose a spectral method based on the operational matrix for solving systems of Mittag-Leffler non-singular FDEs. In Sect. 5, we establish the regularity of the solutions. In Sect. 6, we give a convergence analysis of the proposed method without discretization. In Sect. 7, we obtain the convergence results for the discretized version. Finally, in Sect. 8, we provide some numerical examples to show the efficiency of the introduced method, and we present a comparison between the solutions of the AB type FDEs and the Liouville–Caputo type FDEs.

2 Definitions and preliminaries

In this section, we first recall some basic definitions and results related to the Mittag-Leffler function [34]. Then we recall some basic definitions and results related to the new non-singular fractional derivative and integral formulas [10].

2.1 The Mittag-Leffler function

The Mittag-Leffler function is the cornerstone of fractional calculus. Several books and excellent papers [34–37] describe the importance of these types of operators. The concept of Mittag-Leffler calculus was introduced in [10] and the integral associated to the non-singular fractional operator with Mittag-Leffler kernel was found by using the Laplace transform [38].

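Throughout the paper, the symbol \(E_{\alpha}\) denotes the one-parameter Mittag-Leffler function [39] defined by

\[E_{\alpha}(z)=\sum_{k=0}^{\infty}\frac{z^{k}}{\Gamma(\alpha k+1)}.\]

The two-parameter Mittag-Leffler function is defined as

\[E_{\alpha,\beta}(z)=\sum_{k=0}^{\infty}\frac{z^{k}}{\Gamma(\alpha k+\beta)}.\]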

Here, the notation Γ denotes the gamma function. An interesting book containing the history, applications and the effect of the gamma functions on the progress in mathematics and the progress in describing the real phenomena can be found in [40].
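The series definitions can be evaluated directly for moderate arguments. The following is a minimal sketch, not the authors' implementation: the function name `mittag_leffler` and the truncation parameter `terms` are illustrative assumptions, and a plain truncated series loses accuracy for large \(|z|\) because of cancellation.

```python
import math


def mittag_leffler(z, alpha, beta=1.0, terms=100):
    """Truncated series for E_{alpha,beta}(z) = sum_k z^k / Gamma(alpha*k + beta).

    Illustrative helper (hypothetical name); suitable only for moderate |z|.
    """
    total = 0.0
    for k in range(terms):
        arg = alpha * k + beta
        if arg > 170.0:
            # math.gamma overflows a double for arguments beyond about 171,
            # and the remaining terms are negligible for moderate |z|.
            break
        total += z ** k / math.gamma(arg)
    return total


# Two classical special cases serve as sanity checks:
# E_{1,1}(z) = exp(z) and E_{2,1}(-z^2) = cos(z).
check_exp = mittag_leffler(1.0, 1.0)
check_cos = mittag_leffler(-(0.5 ** 2), 2.0)
```

Setting `beta=1.0` recovers the one-parameter function \(E_{\alpha}\).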

Theorem 2.1

([35])

Let \(\rho, \mu, \upsilon, \omega\in\mathcal{C}\) (\(\operatorname {Re}(\rho), \operatorname{Re}(\mu), \operatorname{Re}(\upsilon)>0\)). Then

2.2 The non-singular fractional derivative and integral involving Mittag-Leffler kernel

We use a Sobolev space defined by

to define the fractional derivative as follows.

Definition 2.2

For \(f\in{\mathrm{H}}^{1}[t_{0},t_{f}]\) and \(0< \alpha<1\), the (left) fractional derivative involving the Mittag-Leffler kernel in the Liouville–Caputo sense is defined by [10]

where \(B(\alpha)\) is a normalization function obeying \(B(0)=B(1)=1\).

The associated fractional integral is also defined by [10]

The fractional integral of \((t-t_{0})^{\beta}\) (\(\beta>-1\)) for \(\alpha >0\) is

and

The Newton–Leibniz formula for this fractional derivative and integral is obtained in [38, 41].
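For orientation, the operators of [10] are usually written in the literature as follows. This is the commonly quoted form, stated here as an assumption; the notation and numbering of the displayed equations in this paper may differ. Here \({}_{t_{0}}I^{\alpha}_{t}\) denotes the Riemann–Liouville fractional integral.

```latex
\begin{align*}
{}^{ABC}_{\;\;t_{0}}D^{\alpha}_{t} f(t)
  &= \frac{B(\alpha)}{1-\alpha}\int_{t_{0}}^{t} f'(s)\,
     E_{\alpha}\!\left(-\frac{\alpha}{1-\alpha}(t-s)^{\alpha}\right)ds, \\
{}^{AB}_{\;t_{0}}I^{\alpha}_{t} f(t)
  &= \frac{1-\alpha}{B(\alpha)}\,f(t)
     + \frac{\alpha}{B(\alpha)}\;{}_{t_{0}}I^{\alpha}_{t} f(t), \\
{}_{t_{0}}I^{\alpha}_{t} f(t)
  &= \frac{1}{\Gamma(\alpha)}\int_{t_{0}}^{t}(t-s)^{\alpha-1} f(s)\,ds, \\
{}_{t_{0}}I^{\alpha}_{t}\,(t-t_{0})^{\beta}
  &= \frac{\Gamma(\beta+1)}{\Gamma(\beta+\alpha+1)}\,(t-t_{0})^{\beta+\alpha},
     \qquad \beta>-1.
\end{align*}
```

Combining the second and fourth lines gives the AB integral of a power function as a sum of a term in \((t-t_{0})^{\beta}\) and a term in \((t-t_{0})^{\beta+\alpha}\).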

Proposition 2.3

For \(0<\alpha<1\), we have [38]

From Theorem 2.1, the fractional derivative of a monomial \(t^{\beta}\) (\(\beta>0\)) is

2.3 Chebyshev polynomials

Here, we review some basic definitions and results related to the Chebyshev polynomials [30, 42].

Definition 2.4

Let \(x=\cos(\theta)\). Then the Chebyshev polynomial \(T_{n}(x)\), \(n\in \mathbb{N}\cup\{0\}\), over the interval \([-1,1]\), is defined by the relation

\[T_{n}(x)=\cos(n\theta).\]

The Chebyshev polynomials are orthogonal with respect to the weight function \(w(x)=\frac{1}{\sqrt{1-x^{2}}}\) and the corresponding inner product is

The well-known recursive formula

\[T_{n+1}(x)=2xT_{n}(x)-T_{n-1}(x),\qquad n\geq1,\]

with \(T_{0}(x)=1\) and \(T_{1}(x)=x\) is important for numerical computing of these polynomials, whereas we may use

to compute Chebyshev polynomials in analysis. Since the range of interest of the problem (1) is \([0,T]\), we can define the shifted Chebyshev polynomials \(T^{*}_{n}(x)\) by

\[T^{*}_{n}(x)=T_{n}\left(\frac{2}{T}x-1\right),\]

with corresponding weight function \(w^{*}(x)=w(\frac{2}{T}x-1)\). Using \(T_{n}(2x-1)=T_{2n}(\sqrt{x})\) (see [30], Sect. 1.3) we could compute the shifted Chebyshev polynomials by

The discrete orthogonality of Chebyshev polynomials leads to the Clenshaw–Curtis formula:

where \(x_{k}\) for \(k=1,\ldots, N+1\) are zeros of \(T_{N+1}(x)\). Therefore, we have

Also, the norm of \(T^{*}_{n}(x)\),

will be of importance later.
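The recurrence, the shift to \([0,T]\), and the discrete orthogonality at the zeros of \(T_{N+1}\) can be checked numerically. The sketch below is illustrative only; the function names are assumptions and do not come from the paper.

```python
import math


def chebyshev_T(n, x):
    """Evaluate T_n(x) on [-1, 1] with the three-term recurrence."""
    t_prev, t_curr = 1.0, x
    if n == 0:
        return t_prev
    for _ in range(n - 1):
        t_prev, t_curr = t_curr, 2.0 * x * t_curr - t_prev
    return t_curr


def shifted_chebyshev_T(n, t, T=1.0):
    """Evaluate the shifted polynomial T*_n(t) = T_n(2t/T - 1) on [0, T]."""
    return chebyshev_T(n, 2.0 * t / T - 1.0)


def chebyshev_zeros(N):
    """Zeros of T_{N+1}: x_k = cos((2k - 1) pi / (2N + 2)), k = 1, ..., N+1."""
    return [math.cos((2 * k - 1) * math.pi / (2 * N + 2)) for k in range(1, N + 2)]


def discrete_inner(i, j, N):
    """Sum of T_i(x_k) T_j(x_k) over the zeros x_k of T_{N+1} (i, j <= N)."""
    return sum(chebyshev_T(i, x) * chebyshev_T(j, x) for x in chebyshev_zeros(N))


# For i, j <= N the sum is 0 if i != j, (N + 1)/2 if i = j != 0, and N + 1 if i = j = 0.
off_diagonal = discrete_inner(2, 3, 6)
diagonal = discrete_inner(3, 3, 6)
```

The recurrence is the numerically preferred way to evaluate \(T_{n}\); the trigonometric form \(\cos(n\arccos x)\) is used here only as a cross-check.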

3 Function approximation

A function \(f(t)\) defined over the interval \([0,T]\), may be expanded as

where \({p}_{N}:C[0,T]\mapsto\pi_{N}\) (\(N\in\mathcal{N}\)), is an orthogonal projection, \(\pi_{N}\) is the space of polynomials with degree not exceeding N, C and Ψ are the matrices of size \((N+1)\times1\)

and

The following result for Dini–Lipschitz continuous functions guarantees the convergence of the approximation by Chebyshev polynomials.

Theorem 3.1

([30] (Theorem 5.7))

Let \(g\in\mathbb{C}[0,T]\) and g satisfy the Dini–Lipschitz condition, i.e.,

where ω is the modulus of continuity. Then \(\|g-p_{n}g\|_{\infty }\rightarrow0 \) as \(n\rightarrow\infty\).
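A small experiment illustrates this convergence for a function that is continuous but not smooth at the origin. The sketch below is an assumption-laden illustration: it uses the discrete coefficients computed at the zeros of \(T_{N+1}\) (an interpolant) as a stand-in for the orthogonal projection \(p_{N}\), and all function names are hypothetical.

```python
import math


def shifted_cheb_coeffs(f, N, T=1.0):
    """Coefficients c_0..c_N of the degree-N interpolant of f on [0, T],
    using the zeros of T_{N+1} mapped from [-1, 1] to [0, T]."""
    thetas = [(2 * k - 1) * math.pi / (2 * N + 2) for k in range(1, N + 2)]
    values = [f(T * (math.cos(th) + 1.0) / 2.0) for th in thetas]
    coeffs = []
    for r in range(N + 1):
        s = sum(v * math.cos(r * th) for v, th in zip(values, thetas))
        coeffs.append((1.0 if r == 0 else 2.0) * s / (N + 1))
    return coeffs


def eval_shifted_cheb(coeffs, t, T=1.0):
    """Evaluate sum_r c_r T*_r(t) through T_r(cos(theta)) = cos(r * theta)."""
    x = max(-1.0, min(1.0, 2.0 * t / T - 1.0))  # guard acos against rounding
    theta = math.acos(x)
    return sum(c * math.cos(r * theta) for r, c in enumerate(coeffs))


def max_error(f, N, T=1.0, samples=201):
    """Maximum error of the approximation on a uniform grid over [0, T]."""
    c = shifted_cheb_coeffs(f, N, T)
    grid = [T * i / (samples - 1) for i in range(samples)]
    return max(abs(f(t) - eval_shifted_cheb(c, t, T)) for t in grid)


# sqrt(t) is Dini-Lipschitz continuous on [0, 1] but has an unbounded derivative at 0.
errors = {N: max_error(math.sqrt, N) for N in (8, 16, 32)}
```

For smooth functions the error decays much faster; for \(\sqrt{t}\) the decay is only algebraic, which is the situation relevant to solutions containing powers \(t^{\alpha}\).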

Theorem 3.2

Let \(0<\alpha<1\) and \(N,M\in\mathbb{N}\). Then

where \(P_{\alpha,M}=(p_{n,r})\) is the operational matrix of dimension \(N\times N\) and its elements can be computed using

for \(r=1, \ldots, N\), and

where

for \(n=1, \ldots, N\) and \(r=0, \ldots, N\).

Proof

Taking the fractional integral on both sides of (11), we get

where

for \(n>0\), and

For \(n=0\), it can easily be checked that

Now, applying (15) to \(f(x)=x^{n-k+\alpha}\), we obtain

By substituting the coefficients of \(T^{*}_{r}(x)\) from (19) into (17) and (18) we obtain the desired result. □

Remark 3.3

For \(f\in C[-1,1]\), the maximum error of the Clenshaw–Curtis formula is less than \(4\|f-p_{N}f\|_{\infty}\) [43]. Hence, the Clenshaw–Curtis formula for \(x^{n-k+\alpha}\) in the proof of Theorem 3.2 is convergent and we conclude that

where \(P_{\alpha}=\lim_{M\rightarrow\infty} P_{\alpha,M}\).
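The construction behind the operational matrix can be sketched in code: expand each shifted Chebyshev polynomial in monomials, integrate term by term with \(I^{\alpha}t^{k}=\frac{\Gamma(k+1)}{\Gamma(k+1+\alpha)}t^{k+\alpha}\), and project the result back onto the Chebyshev basis with a discrete formula on \(M+1\) nodes. The following is a simplified illustration under these assumptions, not the closed-form entries of Theorem 3.2; the function names are hypothetical, \(I^{\alpha}\) is taken to be the Riemann–Liouville integral, and the monomial expansion becomes ill-conditioned for large N.

```python
import math


def shifted_cheb_monomial_coeffs(N, T=1.0):
    """Rows a[n][k] with T*_n(t) = sum_k a[n][k] t^k, built from the recurrence
    T*_{n+1}(t) = 2 (2t/T - 1) T*_n(t) - T*_{n-1}(t)."""
    rows = [[1.0] + [0.0] * N]
    if N >= 1:
        rows.append([-1.0, 2.0 / T] + [0.0] * (N - 1))
    for n in range(1, N):
        prev, curr = rows[n - 1], rows[n]
        nxt = [0.0] * (N + 1)
        for k in range(N + 1):
            nxt[k] = -2.0 * curr[k] - prev[k]
            if k >= 1:
                nxt[k] += (4.0 / T) * curr[k - 1]
        rows.append(nxt)
    return rows


def rl_integral_of_poly(row, alpha, t):
    """Riemann-Liouville integral of sum_k row[k] t^k, applied term by term."""
    return sum(a * math.gamma(k + 1) / math.gamma(k + 1 + alpha) * t ** (k + alpha)
               for k, a in enumerate(row) if a != 0.0)


def operational_matrix(N, M, alpha, T=1.0):
    """(N+1) x (N+1) matrix P with I^alpha T*_n(t) ~ sum_r P[n][r] T*_r(t),
    using M + 1 Chebyshev nodes (M >= N) for the discrete projection."""
    rows = shifted_cheb_monomial_coeffs(N, T)
    thetas = [(2 * j - 1) * math.pi / (2 * M + 2) for j in range(1, M + 2)]
    nodes = [T * (math.cos(th) + 1.0) / 2.0 for th in thetas]
    P = []
    for n in range(N + 1):
        g = [rl_integral_of_poly(rows[n], alpha, t) for t in nodes]
        row = []
        for r in range(N + 1):
            s = sum(gj * math.cos(r * th) for gj, th in zip(g, thetas))
            row.append((1.0 if r == 0 else 2.0) * s / (M + 1))
        P.append(row)
    return P


# Sanity check with alpha = 1 (ordinary integration) on [0, 1]:
# the integral of T*_1(t) = 2t - 1 is t^2 - t = (T*_2(t) - T*_0(t)) / 8.
P_check = operational_matrix(3, 10, 1.0)
```

Increasing `M` beyond `N` plays the role of the limit \(M\rightarrow\infty\) in Remark 3.3: the projected coefficients approach the exact Chebyshev coefficients of \(I^{\alpha}T^{*}_{n}\).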

4 Constructing the method

Applying the fractional integral to both sides of system (1) and using (5), system (1) can be written in the following form:

Let \(E=\mathbf{I}-\frac{1-\alpha}{B(\alpha)}A\). Then, using the following lemma, one can guarantee the invertibility of E.

Lemma 4.1

Let A be a constant matrix, let \(0<\alpha<1\) be such that \(1-\frac {B(\alpha)}{\|A\|}<\alpha\), and let I denote the identity matrix. Then the matrix

is invertible.

Proof

The proof is a direct result of the geometric series theorem. □
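The step left implicit in the proof can be written out as follows. This is a short worked derivation, assuming \(B(\alpha)>0\), \(A\neq0\), and a submultiplicative matrix norm.

```latex
\begin{align*}
1-\frac{B(\alpha)}{\|A\|}<\alpha
  \;&\Longleftrightarrow\; (1-\alpha)\,\|A\| < B(\alpha)
  \;\Longleftrightarrow\; \left\|\frac{1-\alpha}{B(\alpha)}A\right\| < 1, \\
\left(\mathbf{I}-\frac{1-\alpha}{B(\alpha)}A\right)^{-1}
  &= \sum_{k=0}^{\infty}\left(\frac{1-\alpha}{B(\alpha)}A\right)^{k},
  \qquad
  \left\|\left(\mathbf{I}-\frac{1-\alpha}{B(\alpha)}A\right)^{-1}\right\|
  \leq \frac{1}{1-\frac{1-\alpha}{B(\alpha)}\|A\|}.
\end{align*}
```

The condition of the lemma is sufficient but not necessary: the matrix is invertible whenever \(\frac{B(\alpha)}{1-\alpha}\) is not an eigenvalue of A.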

Now, multiplying both sides of (21) by \(E^{-1}\), we obtain a system of weakly singular integral equations of the second kind of the form

In order to obtain a numerical method, we suppose

to be the approximate solution, \(\mathbf{f}(t)=F^{T}\Psi(t)\) and \(Y_{0}=[y_{0},0,\ldots,0]^{T}\). Substituting them into (22) and using the operational matrix of Theorem 3.2, we obtain

where

Solving the linear system (24), we obtain \(Y^{T}\), and finally we obtain the approximate solution using (23).

To solve (24), we can use the vectorization operator to obtain a system of linear algebraic equations in standard form. We denote by vec the vectorization of a matrix

We note that

\(I_{\nu\times\nu}\) is the identity matrix and the notation ⊗ is for the Kronecker product. By the vec notation, the system (24) can be transformed to the standard form

which can be solved with mathematical software such as MATLAB.
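The vectorization step relies on the standard identity \(\operatorname{vec}(AXB)=(B^{T}\otimes A)\operatorname{vec}(X)\), where vec stacks the columns of a matrix. The sketch below checks this identity on small integer matrices; it is a generic illustration with hypothetical helper names and does not reproduce the specific matrices of system (24).

```python
def matmul(A, B):
    """Product of two matrices stored as lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]


def transpose(A):
    return [list(col) for col in zip(*A)]


def kron(A, B):
    """Kronecker product: block (i, j) of the result is A[i][j] * B."""
    return [[a * b for a in row_a for b in row_b] for row_a in A for row_b in B]


def vec(A):
    """Stack the columns of A into a single list (column-major order)."""
    return [A[i][j] for j in range(len(A[0])) for i in range(len(A))]


def matvec(A, x):
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]


# Check vec(A X B) = (B^T kron A) vec(X) on a small example.
A = [[1, 2], [3, 4]]
X = [[5, 6, 7], [8, 9, 10]]
B = [[1, 0], [2, 1], [0, 3]]
lhs = vec(matmul(matmul(A, X), B))
rhs = matvec(kron(transpose(B), A), vec(X))
```

With this identity, a matrix equation of the form \(X-AXB=G\) becomes the ordinary linear system \((I-B^{T}\otimes A)\operatorname{vec}(X)=\operatorname{vec}(G)\), which any dense linear solver can handle.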

5 Regularity of the solution

For simplicity of notation and analysis, we write the system (22) as follows:

where

and

The system (25) is a system of second kind Volterra integral equations with weakly singular kernel. But the regularity of its solution is different due to the presence of the \(I^{\alpha} \mathbf {f}(t)\). Therefore, we introduce the space \(\mathcal{C}^{m,\lambda }(0,T]\), \(0< \lambda\), with \(m\in\mathbb{N}_{0}:=\mathbb{N}\cup\{0\} \). The set of all continuously differentiable functions \(g:(0,T]\mapsto \mathbb{R}\) is in \(\mathcal{C}^{m,\lambda}(0,T]\), if there exists \(g_{i}\in C[0,T]\), for \(i=0,\ldots,m\) and a real number \(c\in\mathbb {R}\) such that \(g=t^{\lambda}g_{0}(t)+c\) and

It is straightforward to show that the space \(\mathcal{C}^{m,\lambda }(0,T]\) equipped with the norm

is a Banach space. We note that, for \(0<\lambda_{1}<\lambda_{2}\leq1\), we have

Remark 5.1

We note that, for \(f\in\mathcal{C}^{m,\lambda}(0,T]\) there exists a positive constant \(c>0\) such that

and for \(f\in\mathcal{C}^{0,\lambda}(0,T]\) the norms \(\|f\|_{\infty }\) and \(\|f\|_{0,\lambda}\) are equivalent.

Lemma 5.2

Suppose \(0< \alpha\leq1\).

-

Let \(f \in\mathbb{C}^{m}[0,T]\), for \(m\in\mathbb{N}\). Then \(I^{\alpha}f\in\mathcal{C}^{m,\alpha}(0,T]\).

-

Let \(f \in\mathbb{C}^{m,\lambda}(0,T]\), for \(m\in\mathbb {N}\). Then \(I^{\alpha}f\in\mathcal{C}^{m,\alpha+\lambda }(0,T]\subset\mathcal{C}^{m,\min(\alpha,\lambda)}(0,T]\).

Proof

By integral substitution (\(\tau=tz\)), we obtain

where

It is obvious that \(g\in\mathbb{C}^{m}[0,T]\) if \(f\in\mathbb {C}^{m}[0,T]\) and \(g\in\mathbb{C}^{m,\alpha}[0,T]\) if \(f\in\mathbb{C}^{m,\alpha }[0,T]\), which completes the proof. □

Here, we note that the systems under investigation are of dimension \(\nu\geq1\), and we use the norm

with \(\mathbf{f}=[f_{1},\ldots,f_{\nu}]^{T}\in(\mathbb{C}^{m,\lambda }[0,T])^{\nu}\), \(\lambda>0\), and \(m\in\mathbb{N}_{0}\).

Theorem 5.3

Assume that \(\mathbf{f} \in(\mathbb{C}^{m}[0,T])^{\nu}\) or \(\mathbf {f} \in(\mathbb{C}^{m,\alpha}(0,T])^{\nu}\), for \(\alpha\in(0,1)\). Then the system (25) has a unique solution \(\mathbf{y}\in (\mathbb{C}^{m,\alpha}(0,T])^{\nu}\). Furthermore, \((I-\mathcal{T})^{-1}\) is a bounded operator.

Proof

By using Lemma 5.2, \(\tilde{\mathbf{f}}(t)\in (\mathbb {C}^{m,\alpha}(0,T])^{\nu}\). Consider a Picard iteration corresponding to the system (25)

with \(y_{0}=\tilde{f}\). The first iteration can be written in the form

and the second iteration can be written in the form

Using the variable transformation \(s=\tau+(t-\tau)z\), we obtain

where

Proceeding by this procedure and by an argument similar to [44], Chap. 6 (note that we have used \(1-\alpha\) instead of α), one can show that

where

and

Therefore, \((I-\mathcal{T})^{-1}\) is a bounded operator in \((\mathbb {C}^{m,\alpha}(0,T])^{\nu}\). Other parts of the proof are straightforward. □

6 Convergence analysis

The orthogonal projection \(\mathbf{p}_{N}:(C[0,T])^{\nu}\mapsto(\pi _{N})^{\nu}\) (\(m,N\in\mathcal{N}\)) can be defined by

where \({p}_{N}\) is defined by (13). The introduced method can be written in the form

where \(\widetilde{\mathbf{f}}_{N}=E^{-1}\mathbf{y}_{0}+\frac {1}{B(\alpha)}E^{-1} ((1-\alpha)\mathbf{f}(t)+\alpha I^{\alpha } \mathbf{p}_{N}\mathbf{f}(t) )\) and \(\mathbf{y}_{N}\in(\pi _{N})^{\nu}\).

It is well known that the operator \(I^{\alpha}\) is compact on \(\mathbb {C}[0,T]\) (see [45]) and hence is compact on \((\mathbb{C}^{m,\alpha}[0,T])^{\nu}\subset(\mathbb{C}[0,T])^{\nu }\). Briefly, consider a bounded sequence \((\mathbf{f}_{n})\), \(\mathbf {f}_{n}=[f_{n1},\ldots,f_{n\nu}]^{T}\) in \((\mathbb{C}^{m,\alpha }[0,T])^{\nu}\) where each of its elements is bounded on \(\mathbb {C}[0,T]\), using (26). By compactness of \(I^{\alpha}\) on \(\mathbb{C}[0,T]\), the sequence \(I^{\alpha}{f_{nj}}\) (\(j=1,\ldots,\nu\)) contains a convergent subsequence in \(\mathbb{C}[0,T]\). This subsequence is in the space \(\mathbb{C}^{m,\alpha}[0,T]\) since \(f_{nj}\in\mathbb{C}^{m,\alpha }[0,T]\) and converges to an element of \(\mathbb{C}^{m,\alpha}[0,T]\) since it is a compact space. That means \(I^{\alpha}\mathbf {f}_{n}=[I^{\alpha}f_{n1},\ldots,I^{\alpha}f_{n\nu}]^{T}\) contains a convergent subsequence in \((\mathbb{C}^{m,\alpha}[0,T])^{\nu}\). Therefore, \(I^{\alpha}\) is compact on \((\mathbb{C}^{m,\alpha }[0,T])^{\nu}\).

Lemma 6.1

Let \(\mathbf{f}\in(\mathbb{C}^{0,\alpha}[0,T])^{\nu}\), \(0<\alpha <1\), \(m\in\mathbb{N}_{0}\), then \(\|\mathbf{f}-\mathbf{p}_{N}\mathbf {f}\|_{\infty}\rightarrow0\) and \({\|\mathbf{f}-\mathbf{p}_{N}\mathbf {f}\|}_{0,\alpha,\nu}\rightarrow0\) as \(N\rightarrow\infty\).

Proof

Since \(t^{\alpha}\) satisfies the Dini–Lipschitz condition, each component of f satisfies the Dini–Lipschitz condition and, by Theorem 3.1, \(\|\mathbf{f}-\mathbf{p}_{N}\mathbf{f}\|_{\infty}\rightarrow 0\), as \(N\rightarrow\infty\). The second limit follows from the equivalence of the norms. □

Theorem 6.2

([46])

Let X be a Banach space, and let \(\{\mathbf{p}_{N}\}\) be a family of bounded projections on X with \(\mathbf{p}_{N}x\rightarrow x\), as \(N\rightarrow \infty\), for \(x\in X\). Let \(\mathbf{T}:X\mapsto X\) be compact. Then \(\|{\mathbf{T}}-\mathbf{p}_{N}{\mathbf{T}}\|\rightarrow0\), as \(N\rightarrow\infty\).

Setting \(X=(\mathbb{C}^{0,\alpha}[0,T])^{\nu}\), \(\mathbf {T}=\mathcal{T}\) and using Theorem 6.2, we have

as \(N\rightarrow\infty\). Here, the notation \(L((\mathbb{C}^{0,\alpha }[0,T])^{\nu})\) denotes the space of linear operators on \((\mathbb {C}^{0,\alpha}[0,T])^{\nu}\), and the operator norm is the induced norm. The operator \(\mathcal{T}\) is compact and, since compact linear operators are bounded (see [45]), it is also bounded. To obtain the convergence of the proposed method, we use the following theorem to show that the operator \((I-\mathbf{p}_{N}\mathcal {T})^{-1}\) exists and is bounded for all sufficiently large N.

Theorem 6.3

([46], Theorem 3.1.1 with \(\lambda=1\))

Assume \(\mathcal{T}:X\mapsto X\) is bounded, with X a Banach space, and assume \(I-\mathcal{T}:X\mapsto X\) to be a bijective operator. Further assume

Then, for all sufficiently large N, say \(N > N_{0}\), the operator \((I-p_{N}\mathcal{T})^{-1}\) exists as a bounded operator from X to X. Moreover, it is uniformly bounded:

Remark 6.4

By Theorem 6.3, the operator \((I-\mathbf{p}_{N}\mathcal {T})^{-1}\) exists for sufficiently large N. This fact guarantees the existence of a numerical method for sufficiently large N, since

by using (29).

Taking into account

we can write

Now, taking norms on both sides of Eq. (31), we obtain

Finally, we note that

where c is a constant number, and we can state the following theorem.

Theorem 6.5

Assume that \(\mathbf{f} \in(\mathbb{C}^{0,\alpha}(0,T])^{\nu}\), for \(\alpha\in(0,1)\). Then, for sufficiently large N, the approximated solution of system (1), say \(\mathbf{y}_{N}\), obtained by (23) and (24) exists and converges to the exact solution y. Furthermore, we have

where c is a constant number.

The result of Theorem 6.5 also holds with the norm \(\|\cdot\| _{\infty}\), by the equivalence of the norms \(\| \cdot\|_{0,\alpha,\nu}\) and \(\|\cdot\|_{\infty}\).

7 Existence and convergence results for discretized version

Often, we cannot compute the infinite series of \(P_{\alpha}\), and instead we use \(P_{\alpha,M}\), \(M\in\mathbb{N}\). We call the resulting method the discretized version, because the corresponding integral is discretized by the Clenshaw–Curtis formula. For \(\mathbf{y}_{N}(t)=Y^{T}\Psi(t)\), we can define \(\mathcal{T}_{M}y_{N}\) by

and we have

The numerical approximation by the discretized version can now be obtained by solving

where \(\mathbf{y}_{N,M}(t)=Y_{M}^{T}\Psi(t)\). According to Remark 3.3, we have

as \(M\rightarrow\infty\) on \(\mathbb{C}^{0,\lambda}\) with \(\lambda >0\). Hence, by Theorem 6.2,

as \(M\rightarrow\infty\) on Banach space \(\mathbb{C}^{0,\lambda}\). In order to show that \(I-\mathbf{p}_{N}\mathcal{T}_{M}\) is invertible, we note that

By the arguments of the previous section, \((I-\mathbf{p}_{N}\mathcal {T})\) is invertible for sufficiently large N. Also, \((I+(I-\mathbf {p}_{N}\mathcal{T})^{-1}(\mathbf{p}_{N}\mathcal{T}\mathbf{y}_{N}-\mathbf {p}_{N}\mathcal{T}_{M}))\) is invertible by the geometric series theorem for sufficiently large M, since \(\|(I-\mathbf{p}_{N}\mathcal {T})^{-1}(\mathbf{p}_{N}\mathcal{T}\mathbf{y}_{N}-\mathbf{p}_{N}\mathcal {T}_{M})\|_{\infty}\rightarrow0\) as \(M\rightarrow\infty\). Thus, we conclude that, for all sufficiently large M and N, say \(M>M_{0}\) and \(N>N_{0}\), the operator \(I-\mathbf{p}_{N}\mathcal{T}_{M}\) is invertible. This guarantees the existence of the numerical solution and we can write

In order to provide the convergence analysis, we note that

Therefore, it remains to provide the convergence for \(\|\mathbf {y}_{N,M}-\mathbf{y}_{N}\|_{\infty}\). Since

we conclude that, for sufficiently large M and N, we have

and hence

as \(M,N\rightarrow\infty\).

8 Numerical examples

To show the effectiveness and efficiency of the method some examples are presented for illustration. In the rest of the paper, we assume that \(B(\alpha)=1\), and we obtain the maximum error by

for \(i=1,\ldots,n\). Here, \(y_{iN}\) and \(y_{i}\) (\(i=1,\ldots,n\)) show the ith component of the approximate and the exact solution, respectively. In all the following numerical experiments, we set \(M=N\), unless otherwise stated.

Example 8.1

([29])

Let us consider the initial value problem described by (1) with \(A=0\), \(f(t)=t\) and \(y_{0}=0\). The exact solution of this system is

Table 1 shows the approximate and exact solutions at \(t=0,0.2,\ldots,1\) for different values of N. The table shows that, as N increases, the approximate solution converges to the exact solution.
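As a cross-check of this example, the solution can be written in closed form by applying the AB integral to \(f(t)=t\). The sketch below assumes the commonly used form \({}^{AB}I^{\alpha}f=\frac{1-\alpha}{B(\alpha)}f+\frac{\alpha}{B(\alpha)}I^{\alpha}f\) from the literature on [10] together with \(B(\alpha)=1\), which gives \(y(t)=(1-\alpha)t+\alpha\,t^{1+\alpha}/\Gamma(2+\alpha)\). The function name is hypothetical, and the expression is derived under these assumptions rather than copied from the paper.

```python
import math


def example_8_1_solution(t, alpha):
    """y(t) for A = 0, f(t) = t, y_0 = 0, B(alpha) = 1 (assumed closed form)."""
    return (1.0 - alpha) * t + alpha * t ** (1.0 + alpha) / math.gamma(2.0 + alpha)


# Evaluate on the grid used in Table 1 for a sample order alpha = 0.5.
grid = [0.2 * i for i in range(6)]
values = [example_8_1_solution(t, 0.5) for t in grid]
```

Two limiting cases give a quick plausibility check: for \(\alpha=1\) the formula reduces to the classical integral \(t^{2}/2\), and for \(\alpha\rightarrow0\) it reduces to \(y(t)=t\).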

Example 8.2

Consider the non-homogeneous system (1) with \(A=\mathbf{I}\), \(\mathbf{y}_{0}=[1,0,\ldots ,0]^{T}\), and

with exact solution \(\mathbf{y}_{od}(t)=[1,t,t^{2},\ldots,t^{n-1}]^{T}\), on \([0,1]\). Tables 2 and 3 show the maximum error for \(\alpha=0.5\) and \(\alpha=0.9\) and various N. As these tables show, with increasing N the maximum error decreases rapidly and the proposed method converges to the exact solution.

By Theorem 6.5, \(\|\mathbf{y}-\mathbf{y}_{N}\|_{\infty}\) is less than \(c(\|\mathbf{y}-\mathbf{p}_{N}\mathbf{y}\|_{\infty}+\| \mathbf{f}-\mathbf{p}_{N} \mathbf{f}\|_{\infty})\). We set \(c=e^{3}\) by experiment and plot the natural logarithm of both quantities versus N. Figs. 1 and 2 show that \(\|\mathbf{y}-\mathbf {y}_{N}\|_{\infty}\) and \(c(\|\mathbf{y}-\mathbf{p}_{N}\mathbf{y}\| _{\infty}+\|\mathbf{f}-\mathbf{p}_{N} \mathbf{f}\|_{\infty})\) behave similarly, which confirms the theoretical analysis. These numerical experiments were obtained by setting \(M=N+30\) and \(\alpha=0.9\). For the first component, the two functions f and y are polynomials and we expect the approximate solution to be exact up to the floating point error. Tables 2 and 3 confirm this fact.

Figure 1 The natural logarithm of \(\|y-y_{N}\|_{\infty}\) versus N, in Example 8.2

Figure 2 The natural logarithm of \(e^{3}(\|y-p_{N}y\|_{\infty}+\| f-p_{N} f\|_{\infty})\) versus N, in Example 8.2

Example 8.3

Consider the system (1), with

\(\mathbf{y}_{0}=[0,1]^{T}\), and \(\mathbf{f}(t)=\mathbf{f}_{1}+\mathbf {f}_{2}t\) with

We can obtain the exact solution using the Laplace transform as follows:

Table 4 shows the maximum error for \(\alpha=0.9\) and various N. Figures 3 and 4 show the numerical solution for various α on \([0,15]\). We observe that, as α approaches 0, the solution of this system approaches the algebraic linear system

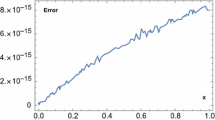

Figure 5 shows the error behavior of this example for \(\alpha =0.5\). Theoretically, we expect \(\|y-y_{N}\|_{\infty}\) to be less than \(c(\| y-p_{N}y\|_{\infty}+\|f-p_{N} f\|_{\infty})\) for some constant c. We observe a similar behavior for both components of the error with \(c=e^{0.5}\). This is in complete agreement with the error analysis.

Figure 3 Numerical solution of Example 8.3 for the first component with \(N=10\)

Figure 4 Numerical solution of Example 8.3 for the second component with \(N=10\)

Figure 5 The natural logarithm of \(e^{0.5}(\|y-p_{N}y\|_{\infty}+\| f-p_{N} f\|_{\infty})\) and \(\|y-y_{N}\|_{\infty}\) versus N, in Example 8.3

8.1 Application

Since dynamical systems with ordinary or fractional differential equations are abundant in natural phenomena, we expect that, like other types of FDEs, the AB type FDEs will also be successful in modeling natural phenomena. This was confirmed by the comparison in the previous section. Although the AB type derivative has been introduced only recently, many applications in this field can be found in the literature cited in the introduction. One of many examples of modeling natural phenomena using AB type FDEs is the amount of the drug lidocaine in the bloodstream and body tissue [47]. Ventricular arrhythmia, or irregular heartbeat, is treated clinically using the drug lidocaine. Let \(X(t)\) be the amount of lidocaine in the bloodstream and \(Y(t)\) be the amount of lidocaine in body tissue. Then the dynamics of the drug therapy obeys the following system:

This system is equivalent to the system of Example 8.3, with \(\mathbf{f}=[0,0]^{T}\), and was solved with ordinary and Liouville–Caputo fractional derivatives in the literature. The solution of this system with the AB type fractional derivative is illustrated in Figs. 6 and 7 for various values of α.

8.2 A comparison of the proposed method with other methods

Due to the novelty of the subject, there are few numerical methods available in the literature for solving the system (1). We found only a type of predictor–corrector method introduced in [29] for solving such systems numerically. We use the numerical values reported in that paper to compare our proposed method with it. Consider Example 4.1 of [29]. It corresponds to \(A=0\), and \(f(t)=0\), with the notations of this paper. As Table 5 shows, our method, even with small \(N=1, 2,3\), is considerably more accurate than the method of [29].

9 Conclusion

We used the Clenshaw–Curtis quadrature to obtain an operational matrix of the fractional integral based on Chebyshev polynomials. Then, by applying the fractional integral to both sides of the system of FDEs involving the Mittag-Leffler kernel, we obtained a system of second kind weakly singular integral equations involving the fractional integral of the non-homogeneous part. This system was transformed into a system of linear algebraic equations, which the vectorization operator brought into standard form. Solving it gave an approximate solution of the system of AB type FDEs. Numerical examples showed that the proposed method solves the system of FDEs effectively and accurately. The success of the new definition of the fractional derivative and integral involving the Mittag-Leffler kernel makes it important to develop numerical methods for nonlinear systems of FDEs and to implement numerical methods in other bases. Future studies with this new type of calculus should therefore collect more experimental data and compare the related results with those for the Liouville–Caputo and Riemann–Liouville derivatives. Also, the stability of the related fractional differential equations needs more investigation.

References

Podlubny, I.: Fractional Differential Equations: An Introduction to Fractional Derivatives, Fractional Differential Equations, to Methods of Their Solution and Some of Their Applications, vol. 198. Academic Press, San Diego (1998)

Hilfer, R.: Applications of Fractional Calculus in Physics. World Scientific, Singapore (2000)

Kilbas, A.A., Srivastava, H.M., Trujillo, J.J.: Theory and Applications of Fractional Differential Equations. Elsevier, Amsterdam (2006)

Baleanu, D.: Fractional Calculus: Models and Numerical Methods. World Scientific, Singapore (2012)

Caputo, M.: Linear models of dissipation whose Q is almost frequency independent II. Geophys. J. Int. 13, 529–539 (1967)

Caputo, M., Fabrizio, M.: A new definition of fractional derivative without singular kernel. Prog. Fract. Differ. Appl. 1, 1–13 (2015)

Losada, J., Nieto, J.J.: Properties of a new fractional derivative without singular kernel. Prog. Fract. Differ. Appl. 1, 87–92 (2015)

Luchko, Y., Yamamoto, M.: General time-fractional diffusion equation: some uniqueness and existence results for the initial-boundary-value problems. Fract. Calc. Appl. Anal. 19, 676–695 (2016)

Atanacković, T.M., Pilipović, S., Zorica, D.: Properties of the Caputo–Fabrizio fractional derivative and its distributional settings. Fract. Calc. Appl. Anal. 21, 29–44 (2018)

Atangana, A., Baleanu, D.: New fractional derivatives with nonlocal and non-singular kernel; theory and application to heat transfer model. Therm. Sci. 20, 763–769 (2016)

Atangana, A., Nieto, J.J.: Numerical solution for the model of RLC circuit via the fractional derivative without singular kernel. Adv. Mech. Eng. 7, 1–7 (2015)

Atangana, A., Koca, I.: Chaos in a simple nonlinear system with Atangana–Baleanu derivatives with fractional order. Chaos Solitons Fractals 89, 447–454 (2016)

Alkahtani, B.S.T.: Chua’s circuit model with Atangana–Baleanu derivative with fractional order. Chaos Solitons Fractals 89, 547–551 (2016)

Coronel-Escamilla, A., Gómez-Aguilar, J.F., Baleanu, D., Escobar-Jiménez, R.F., Olivares-Peregrino, V.H., Abundez-Pliego, A.: Formulation of Euler–Lagrange and Hamilton equations involving fractional operators with regular kernel. Adv. Differ. Equ. 2016, 283 (2016)

Gómez-Aguilar, J.F., Morales-Delgado, V.F., Taneco-Hernández, M.A., Baleanu, D., Escobar-Jiménez, R.F., Al Qurashi, M.M.: Analytical solutions of the electrical RLC circuit via Liouville–Caputo operators with local and non-local kernels. Entropy 18, 1–12 (2016)

Koca, I.: Analysis of rubella disease model with non-local and non-singular fractional derivatives. Int. J. Optim. Control 8, 17–25 (2018)

Gómez-Aguilar, J.: Irving–Mullineux oscillator via fractional derivatives with Mittag-Leffler kernel. Chaos Solitons Fractals 95, 179–186 (2017)

Gomez-Aguilar, J., Baleanu, D.: Schrödinger equation involving fractional operators with non-singular kernel. J. Electromagn. Waves Appl. 31, 752–761 (2017)

Tateishi, A.A., Ribeiro, H.V., Lenzi, E.K.: The role of fractional time-derivative operators on anomalous diffusion. Front. Phys. 5, 1–9 (2017)

Morales-Delgado, V., Gómez-Aguilar, J., Taneco-Hernández, M.: Analytical solutions for the motion of a charged particle in electric and magnetic fields via non-singular fractional derivatives. Eur. Phys. J. Plus 132, 527 (2017)

Gómez-Aguilar, J.F., Atangana, A.: Fractional derivatives with the power-law and the Mittag-Leffler kernel applied to the nonlinear Baggs–Freedman model. Fractal Fract. 2, 1–14 (2018)

Coronel-Escamilla, A., Gómez-Aguilar, J., Torres, L., Escobar-Jiménez, R.: A numerical solution for a variable-order reaction–diffusion model by using fractional derivatives with non-local and non-singular kernel. Phys. A, Stat. Mech. Appl. 491, 406–424 (2018)

Zuñiga-Aguilar, C., Gómez-Aguilar, J., Escobar-Jiménez, R., Romero-Ugalde, H.: Robust control for fractional variable-order chaotic systems with non-singular kernel. Eur. Phys. J. Plus 133, 1–13 (2018)

Gorenflo, R., Mainardi, F., Srivastava, H.M.: Special functions in fractional relaxation-oscillation and fractional diffusion-wave phenomena. In: Proceedings of the Eighth International Colloquium on Differential Equations, Plovdiv, 1997, pp. 195–202 (1998)

Kundu, S.: Suspension concentration distribution in turbulent flows: an analytical study using fractional advection-diffusion equation. In press

Abdeljawad, T.: Fractional operators with exponential kernels and a Lyapunov type inequality. Adv. Differ. Equ. 2017, 313 (2017)

Srivastava, H.M.: Remarks on some families of fractional-order differential equations. Integral Transforms Spec. Funct. 28, 560–564 (2017)

Gómez-Aguilar, J.F., López-López, M.G., Alvarado-Martínez, V.M., Baleanu, D., Khan, H.: Chaos in a cancer model via fractional derivatives with exponential decay and Mittag-Leffler law. Entropy 19, 681 (2017)

Djida, J., Atangana, A., Area, I.: Numerical computation of a fractional derivative with non-local and non-singular kernel. Math. Model. Nat. Phenom. 12, 4–13 (2017)

Mason, J.C., Handscomb, D.C.: Chebyshev Polynomials. CRC Press, Boca Raton (2002)

Dabiri, A., Butcher, E.A.: Numerical solution of multi-order fractional differential equations with multiple delays via spectral collocation methods. Appl. Math. Model. 56, 424–448 (2018)

Dabiri, A., Butcher, E.A.: Efficient modified Chebyshev differentiation matrices for fractional differential equations. Commun. Nonlinear Sci. Numer. Simul. 50, 284–310 (2017)

Dabiri, A., Butcher, E.A.: Stable fractional Chebyshev differentiation matrix for the numerical solution of multi-order fractional differential equations. Nonlinear Dyn. 90(1), 185–201 (2017)

Gorenflo, R., Kilbas, A.: Mittag-Leffler functions, related topics and applications

Srivastava, H.M.: Some families of Mittag-Leffler type functions and associated operators of fractional calculus (survey). TWMS J. Pure Appl. Math. 7, 123–145 (2016)

Tomovski, Ž., Hilfer, R., Srivastava, H.M.: Fractional and operational calculus with generalized fractional derivative operators and Mittag-Leffler type functions. Integral Transforms Spec. Funct. 21(11), 797–814 (2010)

Srivastava, H., Tomovski, Ž.: Fractional calculus with an integral operator containing a generalized Mittag-Leffler function in the kernel. Appl. Math. Comput. 211, 198–210 (2009)

Baleanu, D., Fernandez, A.: On some new properties of fractional derivatives with Mittag-Leffler kernel. Commun. Nonlinear Sci. Numer. Simul. 59, 444–462 (2018)

Mittag-Leffler, G.: Sur la représentation analytique d’une fonction monogène (cinquième note). Acta Math. 29, 101–181 (1905)

Artin, E.: The Gamma Function. Dover, Mineola (2015)

Abdeljawad, T., Baleanu, D.: Integration by parts and its applications of a new nonlocal fractional derivative with Mittag-Leffler nonsingular kernel. J. Nonlinear Sci. Appl. 29, 1098–1107 (2017)

Szegő, G.: Orthogonal Polynomials. Am. Math. Soc., Rhode Island (1939)

Trefethen, L.N.: Is Gauss quadrature better than Clenshaw–Curtis? SIAM Rev. 50, 67–87 (2008)

Brunner, H.: Collocation Methods for Volterra Integral and Related Functional Differential Equations. Cambridge University Press, Cambridge (2004)

Kress, R.: Linear Integral Equations, vol. 82. Springer, Berlin (2012)

Atkinson, K., Han, W.: Theoretical Numerical Analysis, vol. 39. Springer, Berlin (2005)

Khalil, H., Khan, R.A.: The use of Jacobi polynomials in the numerical solution of coupled system of fractional differential equations. Int. J. Comput. Math. 92, 1452–1472 (2015)

Acknowledgements

The second author would also like to thank Professor Hristov and Professor Juan J. Nieto for useful and valuable discussions regarding the fractional operators with non-singular kernels.

Availability of data and materials

Not applicable.

Funding

Not applicable.

Author information

Contributions

All the authors drafted the manuscript, and read and approved the final manuscript.

Ethics declarations

Competing interests

The authors declare that they have no competing interests.

Additional information

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Baleanu, D., Shiri, B., Srivastava, H.M. et al. A Chebyshev spectral method based on operational matrix for fractional differential equations involving non-singular Mittag-Leffler kernel. Adv Differ Equ 2018, 353 (2018). https://doi.org/10.1186/s13662-018-1822-5
