Abstract

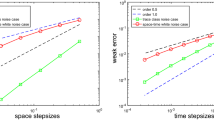

We prove a strong rate, respectively a weak rate, of order \({{\mathcal {O}}}(\tau )\) for a structure-preserving temporal discretization (with step size \(\tau \)) of the stochastic Allen–Cahn equation with additive, respectively multiplicative, colored noise in \(d=1,2,3\) dimensions. In the first setting, direct variational arguments exploit the one-sided Lipschitz property of the cubic nonlinearity to establish the first-order strong rate. The same property yields uniform bounds for the derivatives of the solution of the related Kolmogorov equation, and thereby leads to the weak rate \({{\mathcal {O}}}(\tau )\) in the presence of multiplicative noise. Hence, we obtain twice the rate of convergence known for the strong error in the presence of multiplicative noise.


1 Introduction

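We use time discretisation to approximate the stochastic Allen–Cahn equation

$$\begin{aligned} \textrm{d}u=\big (\Delta u-f(u)\big )\,\textrm{d}t+\Phi (u)\,\textrm{d}W,\qquad u(0)=u_0, \end{aligned}$$ (1.1)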

in \({\mathcal {Q}}_T:=(0,T)\times \mathbb {T}^d\), where \({\mathbb {T}}^d = \left( (-\pi , \pi )|_{\{ - \pi , \pi \}} \right) ^d\) (supplemented with periodic boundary conditions) with \(T>0\) and \(d=1,2,3\). The use of periodic boundary conditions is for ease of presentation only; see Remark 5.1, 4. The unknown \(u\) in (1.1) is defined on a given filtered probability space \((\Omega ,{\mathfrak {F}},({\mathfrak {F}}_t)_{t\ge 0},\mathbb {P})\), and \(u_0\) is a given initial datum. Here W denotes a cylindrical Wiener process and \(\Phi \) takes values in the space of Hilbert–Schmidt operators; see Sect. 2.1 for details.

The deterministic version of (1.1) is the well-known Allen–Cahn equation, a phase-field model that approximates the dynamics of a (material) interface by a diffuse interface; see e.g. [20] for a recent review of (deterministic) phase-field models. The related mathematical and physical conclusions are usually based on the underlying Helmholtz free energy functional \({{\mathcal {E}}}: W^{1,2}(\mathbb {T}^d) \rightarrow {{\mathbb {R}}}\), where

$$\begin{aligned} {{\mathcal {E}}}(u)=\int _{\mathbb {T}^d}\Big (\frac{1}{2}|\nabla u|^2+F(u)\Big )\, \textrm{d}x. \end{aligned}$$ (1.2)

Here, in particular, the latter energy part accounts for the interfacial/mixing energy, and is related to \(f(x) = x^3-x\) in (1.1) by \(F' = f\). As a consequence, the Allen–Cahn equation is the gradient flow of (1.2), i.e.,

$$\begin{aligned} \partial _tu=-D{{\mathcal {E}}}(u)=\Delta u-f(u), \end{aligned}$$ (1.3)

where \(D {{\mathcal {E}}}(u)\) denotes the Fréchet derivative of \({{\mathcal {E}}}\) at u: multiplication of (1.3) with \(D {{\mathcal {E}}}(u)\), integration in space, and the chain rule then lead to the energy identity

For \({{\mathcal {E}}}(u_0) < \infty \), this identity may serve in mathematical analysis to deduce (a priori) bounds for solutions in physically relevant norms—reflecting the fact that the Helmholtz energy \({{\mathcal {E}}}\) is the proper functional to explain the dynamics of (1.3).
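The computation behind the energy identity can be sketched as follows, assuming that (1.3) is the \(L^2_x\)-gradient flow \(\partial _tu=-D{{\mathcal {E}}}(u)\) and that \(u\) is smooth enough for the chain rule to apply. The integrated form in the second line is one common way of writing the identity and need not coincide in form with (1.4):

$$\begin{aligned} \frac{\textrm{d}}{\textrm{d}t}{{\mathcal {E}}}\bigl (u(t)\bigr )&=\bigl \langle D{{\mathcal {E}}}\bigl (u(t)\bigr ),\partial _tu(t)\bigr \rangle _{L^2_x}=-\Vert D{{\mathcal {E}}}\bigl (u(t)\bigr )\Vert _{L^2_x}^2,\\ {{\mathcal {E}}}\bigl (u(t)\bigr )+\int _0^t\Vert D{{\mathcal {E}}}\bigl (u(s)\bigr )\Vert _{L^2_x}^2\,\textrm{d}s&={{\mathcal {E}}}(u_0). \end{aligned}$$

In particular, \(t\mapsto {{\mathcal {E}}}(u(t))\) is non-increasing, which is the dissipation property a structure-preserving discretisation is meant to inherit.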

This energy-driven approach also serves as guidance to construct the numerical scheme (1.5) below, which properly addresses the specific nature of (1.3). Before doing so, we mention ‘general-purpose’ operator-splitting methods, where the nonlinearity in \(D{{\mathcal {E}}}(u)\) is treated explicitly in a time-marching context to avoid the use of nonlinear numerical solvers. These schemes, however, violate the dissipative energy law (1.4) on the discrete level; see also (1.6) below. As a consequence, a related stability/convergence analysis for splitting schemes usually only gives a priori bounds in non-physical norms, and its derivation is based on a discrete Gronwall-type estimate rather than on identity (1.4), which heavily affects discrete long-time stability. In simulations, this drawback can usually only be compensated by using (comparatively) much smaller step sizes than for the structure-preserving discretization below. We also mention that the usual application of model (1.3) in the sciences (e.g., multiphase flow in complicated, or even moving domains, [20, Ch. 2]) or in geometric PDEs (e.g., approximation of mean curvature flows, [19]) involves a small scaling factor \(\varepsilon >0\) under which realistic (diffuse) interface motion holds, which further worsens the inherent discrete instabilities of schemes that violate this structure; see also [20, Chs. 4 & 5] for a related further discussion.

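For these reasons we favor, as starting point for a temporal discretisation of (1.1), one which inherits the (gradient flow) structure of the original problem, as does the following implicit Euler discretisation for (1.3) governing iterates \(( u_m)_{m=0}^M\),

$$\begin{aligned} u_m-u_{m-1}=-\tau D{{\mathcal {E}}}(u_m)=\tau \big (\Delta u_m-f(u_m)\big ),\qquad 1\le m\le M, \end{aligned}$$ (1.5)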

on an equi-distant mesh \((t_m)_{m=0}^M \subset [0,T]\) of size \(0< \tau < 1\), and satisfying the discrete energy inequality (see [21])

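In this work, we derive weak rates of convergence for the time iteration scheme

$$\begin{aligned} u_m-u_{m-1}=\tau \big (\Delta u_m-f(u_m)\big )+\Phi (u_{m-1})\,\Delta _mW,\qquad 1\le m\le M, \end{aligned}$$ (1.7)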

with \(\Delta _mW=W(t_{m})-W(t_{m-1})\), to address the SPDE (1.1) of gradient type, generalising (1.5). Again, its construction is motivated by the demand to inherit properties in terms of the underlying energy \({{\mathcal {E}}}\), for which the concept of a variational strong solution for (1.1) is natural—see Definition 2.1 below—which satisfies the following energy identity

and therefore generalises property (1.4) for (1.3); see e.g. [22], where so-called strong variational solutions to stochastic equations of gradient-type are considered.
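To make the structure of such an implicit step concrete, the following is a minimal sketch and not the scheme analysed in this paper. It assumes \(d=1\), a second-order periodic finite-difference Laplacian, the double-well potential \(F(u)=\frac{1}{4}(u^2-1)^2\) (so that \(F'=f\)), and a plain Newton iteration for the nonlinear solve. The names `laplacian_periodic`, `energy` and `implicit_euler_step`, the grid size and the noise amplitude are hypothetical choices. With the noise increment set to zero the step is an implicit Euler step for the deterministic equation, and the recorded discrete energies are observed to be non-increasing.

```python
import numpy as np


def laplacian_periodic(n, h):
    """Dense second-order finite-difference Laplacian on a periodic 1D grid."""
    lap = -2.0 * np.eye(n) + np.eye(n, k=1) + np.eye(n, k=-1)
    lap[0, -1] = 1.0
    lap[-1, 0] = 1.0
    return lap / h**2


def f(u):
    """Cubic nonlinearity f(u) = u^3 - u."""
    return u**3 - u


def energy(u, lap, h):
    """Discrete energy h * sum(|grad u|^2 / 2 + F(u)), F(u) = (u^2 - 1)^2 / 4."""
    gradient_part = -0.5 * h * (u @ (lap @ u))
    potential_part = h * np.sum(0.25 * (u**2 - 1.0) ** 2)
    return gradient_part + potential_part


def implicit_euler_step(u_prev, noise_increment, tau, lap, tol=1e-12, max_iter=50):
    """Solve u - tau * (lap u - f(u)) = u_prev + noise_increment by Newton's method."""
    n = u_prev.size
    rhs = u_prev + noise_increment
    u = u_prev.copy()
    for _ in range(max_iter):
        residual = u - tau * (lap @ u - f(u)) - rhs
        if np.max(np.abs(residual)) < tol:
            break
        # Jacobian of the residual; it is positive definite for tau < 1.
        jacobian = np.eye(n) - tau * lap + tau * np.diag(3.0 * u**2 - 1.0)
        u = u - np.linalg.solve(jacobian, residual)
    return u


n, tau, steps = 64, 0.05, 40
h = 2.0 * np.pi / n
x = -np.pi + h * np.arange(n)
lap = laplacian_periodic(n, h)

# Deterministic run (zero noise increment): record the discrete energy.
u = 0.5 * np.cos(x) + 0.3 * np.sin(2.0 * x)
energies = [energy(u, lap, h)]
for _ in range(steps):
    u = implicit_euler_step(u, np.zeros(n), tau, lap)
    energies.append(energy(u, lap, h))

# One stochastic step with a hypothetical smooth additive noise increment.
rng = np.random.default_rng(0)
noise_increment = 0.1 * np.cos(x) * np.sqrt(tau) * rng.standard_normal()
u_noisy = implicit_euler_step(u, noise_increment, tau, lap)
```

The nonlinear solve is the price of the implicit treatment; the Jacobian is bounded below by \((1-\tau )\,\textrm{Id}\), which is one way to see why the restriction \(\tau <1\) is natural for this kind of step.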

A discretisation close to (1.7) for (1.1) has been studied in [23] in this spirit, and it was shown that the strong error, i.e., the expectation of the discrete \(L^\infty (0,T;L^2_x)\cap L^2(0,T;W^{1,2}_x)\) distance of discrete and continuous solution, is of order \(O(\sqrt{\tau })\). This is certainly optimal in general due to the low temporal regularity of the driving Wiener process in (1.1). Our goal in this work is to verify first order weak error estimates for (1.7); it turns out that their derivation crucially depends on the kind of noise, which we assume to be spatially smooth throughout:

(a) If \(\Phi (u) \equiv \Phi \) in (1.1) generates additive noise, we even verify the strong convergence rate \({{\mathcal {O}}}(\tau )\) for scheme (1.7) in Theorem 3.1 for \(d=1,2,3\). Its derivation focuses on the random PDE (3.2) for the transformation \(y(t) = u(t)- \Phi W(t)\), which is now differentiable in time (see Corollary 3.1), and on a simple use of the binomial formula to express \(f\bigl ( u(t)\bigr ) = f\bigl ( y(t) + \Phi W(t)\bigr )\) right below (3.2). No discrete stability for iterates of (1.7) is needed here, but the implicit use of the one-sided Lipschitz nonlinearity f, as well as its explicit form, are exploited to verify the strong error bounds in Sect. 3. This approach is motivated by [10].

(b) If \(\Phi (u)\) in (1.1) exerts multiplicative noise, we use the Kolmogorov equation (4.45) associated with (1.1), in combination with higher order discrete stability for iterates \((u_m)_{m=0}^M\) from scheme (1.7); cf. Lemma 4.4. For estimate (4.32) concerning the second derivative of the time-discrete solution in the case \(d=3\), we are forced to work under the assumption of an affine linear noise, see (N2) below, whereas for \(d=2\) the same argument even works for a general nonlinear noise, see (N1) below. For the weak error analysis, we conceptually borrow tools from [5, 7, 17]; see in particular Remark 5.1, 3., where the structural restrictions are detailed. As in [5, 17, 18], the error \({\mathbb {E}}[\varphi (u_m)-\varphi (u(t_m))]\) for a smooth function \(\varphi \) can be linked to the solution of the Kolmogorov equation by means of an application of Itô’s formula. Therefore we need a time-continuous interpolation of the discrete iterates \((u_m)_{m=0}^M\), which yields an \(({\mathfrak {F}}_t)\)-adapted process. Since we work with the fully implicit scheme (1.7), this is more complicated than in previous works [5, 17, 18]. A natural candidate for the interpolation is

$$\begin{aligned} u_\tau (t)=\frac{t_{m}-t}{\tau }u_{m-1}+{\mathcal {T}}_\tau \bigg (\frac{1}{\tau }\int _{t_{m-1}}^tu_{m-1}\, \textrm{d}s+\int _{t_{m-1}}^t\Phi (u_{m-1})\,\mathrm dW\bigg ) \end{aligned}$$

for \(t\in [ t_{m-1}, t_m]\), where the solution map \({\mathcal {T}}_\tau \) for (4.1) is the discrete nonlinear semigroup corresponding to \(D{\mathcal {E}}\), which we analyse in Sect. 4.1. We observe that the nonlinearity does not appear explicitly in the formula for \(u_\tau \). In previous works the linear discrete semigroup \({\mathcal {S}}_\tau =(\textrm{Id}-\tau \Delta )^{-1}\) is used instead and f is explicitly evaluated. It turns out that the nonlinear map \({\mathcal {T}}_\tau \) has nice properties similar to the linear case as a consequence of the one-sided Lipschitz property of f; see Lemma 4.1. In particular, \({\mathcal {T}}_\tau \) can be linearised around the identity with an error of order \(O(\tau )\) in various norms; see Corollary 4.2.

The goal of this paper is a weak error analysis of (1.7) as an example of an implementable scheme in a broad setting of applications, including multiphase flow dynamics in complicated domains, which inherits (long time) discrete stability even for scalings \(\varepsilon \ll 1\) to properly simulate diffuse phase field dynamics; see [19, 20], and also item 5. in Remark 3.1. In fact, there exist several other works on weak error analysis for different schemes to solve (1.1) in the literature, most of which apply to restricted data settings, such as domains for \(d=1\) being intervals \((a,b) \subset {{\mathbb {R}}}\), or related drift operators being generators of linear semigroups, for which spectral properties need to be available for actual computations; see items 3.–4. of Remark 3.1. In this context, admissible spatial meshes often need to be equidistant, which clearly limits the capacity of related schemes to simulate multiscale phase evolution via (1.1) for general data settings.

2 Mathematical framework

2.1 Probability setup

Let \((\Omega ,{\mathfrak {F}},({\mathfrak {F}}_t)_{t\ge 0},\mathbb {P})\) be a stochastic basis with a complete, right-continuous filtration. The process W is a cylindrical Wiener process, that is, \(W(t)=\sum _{k\ge 1}\beta _k(t) e_k\) with \((\beta _k)_{k\ge 1}\) being mutually independent real-valued standard Wiener processes relative to \(({\mathfrak {F}}_t)_{t\ge 0}\), and \((e_k)_{k\ge 1}\) a complete orthonormal system in a separable Hilbert space \(\mathfrak {U}\). Let us now give the precise definition of the diffusion coefficient \(\Phi \) taking values in the set of Hilbert–Schmidt operators \(L_2({\mathfrak {U}};{\mathbb {H}})\), where \({\mathbb {H}}\) can take the role of various Hilbert spaces such as \(L^2(\mathbb {T}^d)\), \(W^{1,2}(\mathbb {T}^d)\) and \(W^{2,2}(\mathbb {T}^d)\), for which we use the shorthand notations \(L^2_x, W^{1,2}_x\) and \(W^{2,2}_x\).
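As an illustration of the series representation, the following sketch samples a truncated increment \(\Phi \bigl (W(t_m)-W(t_{m-1})\bigr )\) on the level of coefficients. It assumes a hypothetical diagonal operator \(\Phi e_k=\lambda _kv_k\) with \(\lambda _k=k^{-2}\) and an orthonormal family \((v_k)\) in \({\mathbb {H}}\), and a truncation after `num_modes` terms; neither choice is taken from the text. For such a \(\Phi \), the mean of \(\Vert \Phi \Delta _mW\Vert _{{\mathbb {H}}}^2\) equals \(\tau \sum _k\lambda _k^2\), i.e. \(\tau \) times the squared Hilbert–Schmidt norm, which the sample average approximates.

```python
import numpy as np


def sample_noise_coefficients(lambdas, tau, num_samples, rng):
    """Coefficients lambda_k * (beta_k(t_m) - beta_k(t_{m-1})) of Phi applied to a Wiener increment."""
    # Independent Brownian increments over a step of length tau are N(0, tau).
    brownian_increments = np.sqrt(tau) * rng.standard_normal((num_samples, lambdas.size))
    return brownian_increments * lambdas


num_modes, tau, num_samples = 20, 0.01, 20000
lambdas = 1.0 / np.arange(1, num_modes + 1) ** 2  # hypothetical decay of Phi
rng = np.random.default_rng(1)

coefficients = sample_noise_coefficients(lambdas, tau, num_samples, rng)
# Parseval: squared norm of each sampled increment in an orthonormal basis.
squared_norms = np.sum(coefficients**2, axis=1)
sample_mean = squared_norms.mean()
exact_mean = tau * np.sum(lambdas**2)  # tau * ||Phi||_HS^2 for the truncated operator
```

The summability of \(\lambda _k^2\) is what makes the increment a genuine \({\mathbb {H}}\)-valued random variable; for \(\lambda _k\equiv 1\) the same sum would diverge as the truncation is removed.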

In the following we formulate two sets of assumptions regarding the (regularity of the) diffusion coefficient \(\Phi \), which will allow us to derive high moment bounds for higher derivatives of the solution of (1.1) in Sect. 2.2. In the first case (N1) below we consider a general nonlinear multiplicative noise. In the second case (N2) we assume that \(\Phi \) is an affine linear function of u. As we shall see below, assumption (N1) is always sufficient for our analysis in the case \(d=1,2\); see Remark 5.1, 3.

(N1)

(a) For \(z\in L^2(\mathbb {T}^d)\) let \(\Phi (z):\mathfrak {U}\rightarrow L^2(\mathbb {T}^d)\) be defined by \(\Phi (z)e_k=\mathfrak {g}_k(\cdot ,z(\cdot ))\), where \(\mathfrak {g}_k\in C^1(\mathbb {T}^d\times {\mathbb {R}})\) and

$$\begin{aligned} \begin{aligned} \sum _{k\ge 1}|\mathfrak {g}_k(x,\xi )|^2 \le c(1+|\xi |^2)&,\qquad \sum _{k\ge 1}|\nabla _{\xi } \mathfrak {g}_k(x,\xi )|^2\le c,\\ \sum _{k\ge 1}|\nabla _x \mathfrak {g}_k(x,\xi )|^2&\le c(1+|\xi |^2),\quad x\in \mathbb {T}^d,\,\xi \in {\mathbb {R}}. \end{aligned} \end{aligned}$$ (2.1)

Note that this implies

$$\begin{aligned} \Vert \Phi (u)-\Phi (v)\Vert _{L_2({\mathfrak {U}};L^2_x)}&\le \,c\Vert u-v\Vert _{L^2_x}\qquad \forall u,v\in L^2_x, \end{aligned}$$ (2.2)

$$\begin{aligned} \Vert \Phi (u)\Vert _{L_2({\mathfrak {U}};W^{1,2}_x)}&\le \,c\big (1+\Vert u\Vert _{W^{1,2}_x}\big )\qquad \forall u\in W^{1,2}_x, \end{aligned}$$ (2.3)

$$\begin{aligned} \Vert D\Phi (u)\Vert _{L_2({\mathfrak {U}};{\mathcal {L}}( L^{2}_x))}&\le \,c\qquad \forall u\in L^{2}_x. \end{aligned}$$ (2.4)

(b) We require \(\mathfrak {g}_k\in C^2(\mathbb {T}^d\times {\mathbb {R}})\), together with

$$\begin{aligned} \sum _{k\ge 1}|\nabla _x^2\mathfrak {g}_k(x,\xi )|^2 \le c(1+|\xi |^2),\qquad \sum _{k\ge 1}|\nabla _{x,\xi }\nabla _{\xi } \mathfrak {g}_k(x,\xi )|^2\le c, \end{aligned}$$ (2.5)

for \(x\in \mathbb {T}^d,\,\xi \in {\mathbb {R}}\) and sometimes also

$$\begin{aligned} \sum _{k\ge 1}|\nabla _{x}^3\mathfrak {g}_k(x,\xi )|^2 \le c(1+|\xi |^2), \qquad \sum _{k\ge 1}|\nabla _\xi \nabla _{x,\xi }^2 \mathfrak {g}_k(x,\xi )|^2 \le c. \end{aligned}$$ (2.6)

(N2)

We assume that \(\Phi (z)e_k=\alpha _k(\cdot )z(\cdot )+\beta _k(\cdot )\), where \(\alpha _k,\beta _k\in C^3(\mathbb {T}^d)\) and

$$\begin{aligned} \sum _{k\ge 1}\Vert \alpha _k\Vert _{C^3(\mathbb {T}^d)}+ \sum _{k\ge 1}\Vert \beta _k\Vert _{C^3(\mathbb {T}^d)}\le c. \end{aligned}$$ (2.7)

Note that (2.1) and (2.5) imply

whereas (2.1), (2.5) and (2.6) together yield

Assumption (2.1) allows us to define stochastic integrals. Given an \(({\mathfrak {F}}_t)\)-adapted process \(u\in L^2(\Omega ;C([0,T];L^2(\mathbb {T}^d)))\), the stochastic integral

is a well-defined process taking values in \(L^2(\mathbb {T}^d)\) (see [16] for a detailed construction). Moreover, we can multiply by test functions to obtain

Similarly, we can define stochastic integrals with values in \(W^{1,2}(\mathbb {T}^d)\) and \(W^{2,2}(\mathbb {T}^d)\) respectively if \(u\) belongs to the corresponding class.

2.2 The concept of solutions

In this section we give a precise definition of a solution to (1.1) and derive some of its basic properties.

Definition 2.1

Let \((\Omega ,\mathfrak {F},(\mathfrak {F}_t)_{t\ge 0},\mathbb {P})\) be a given stochastic basis with a complete right-continuous filtration and an \((\mathfrak {F}_t)\)-cylindrical Wiener process W. Suppose that \(\Phi \) satisfies (N1) (a) and that \(d=1,2,3\). Let \(u_0\) be an \(\mathfrak {F}_0\)-measurable random variable with values in \(L^2(\mathbb {T}^d)\). Then \(u\) is called a weak pathwise solution to (1.1) with the initial condition \(u_0\) provided

(a) the function \(u\) is \((\mathfrak {F}_t)\)-adapted and

$$\begin{aligned} u\in C([0,T];L^2(\mathbb {T}^d))\cap L^2(0,T; W^{1,2}(\mathbb {T}^d))\quad {\mathbb {P}}\text {-a.s.}, \end{aligned}$$

(b) the equation

$$\begin{aligned}&\int _{\mathbb {T}^d}u(t)\,\varphi \, \textrm{d}x-\int _{\mathbb {T}^d}u_0\,\varphi \, \textrm{d}x\\&\quad =-\int _0^t\int _{\mathbb {T}^d}f(u)\,\varphi \, \textrm{d}x\,\textrm{d}s-\int _0^t\int _{\mathbb {T}^d}\nabla u\cdot \nabla \varphi \, \textrm{d}x\,\textrm{d}s+\int _0^t\int _{\mathbb {T}^d}\Phi (u)\,\varphi \, \textrm{d}x\,\textrm{d}W \end{aligned}$$

holds \({\mathbb {P}}\)-a.s. for all \(\varphi \in W^{1,2}(\mathbb {T}^d)\) and all \(t\in [0,T]\).

The existence of a solution can be shown by the popular variational approach; see e.g. [25].

Theorem 2.1

Let \((\Omega ,\mathfrak {F},(\mathfrak {F}_t)_{t\ge 0},\mathbb {P})\) be a given stochastic basis with a complete right-continuous filtration and an \((\mathfrak {F}_t)\)-cylindrical Wiener process W. Suppose that \(\Phi \) satisfies (N1) (a) and that \(d=1,2,3\). Let \(u_0\) be an \(\mathfrak {F}_0\)-measurable random variable such that \(u_0\in L^q(\Omega ;L^2({\mathbb {T}}^d))\) for some \(q>2\). Then there exists a unique weak pathwise solution to (1.1) in the sense of Definition 2.1 with the initial condition \(u_0\).

Lemma 2.1

Suppose that \(\Phi \) satisfies (N1) (a) and let \(u\) be the weak pathwise solution to (1.1).

(a) Assume that \({\mathcal {E}}(u_0)\in L^{q}(\Omega )\) for some \(q\ge 1\). Then we have

$$\begin{aligned} {\mathbb {E}}\bigg [\bigg (\sup _{0\le s\le T}{\mathcal {E}}(u(s))+\int _0^T\Vert D{\mathcal {E}}(u(s))\Vert _{L^2_x}^2\,\textrm{d}s\bigg )^q\bigg ]&\le \,c\,{\mathbb {E}}\big [\big ({\mathcal {E}}(u_0)+1\big )^q\big ], \end{aligned}$$ (2.13)

where \(c=c(q,T,u_0)>0\).

(b) Assume that \({\mathcal {E}}(u_0)\in L^{1}(\Omega )\). Then we have for any \(\tau \in (0,T)\)

$$\begin{aligned} {\mathbb {E}}\bigg [\sup _{0\le s\le \tau }\Vert u(s)-u_0\Vert ^{2}_{L^{2}_x}+\int _0^\tau \Vert \nabla u(s)\Vert ^2_{L^2_x}\,\textrm{d}s\bigg ]&\le \,c\tau , \end{aligned}$$ (2.14)

where \(c=c(T,u_0)>0\) is independent of \(\tau >0\).

Proof

Part (a) is standard and similar results can be found in the literature (see, e.g., [23]). For the reader’s convenience we nevertheless give the details. Applying Itô’s formula to the function \(t\mapsto {\mathcal {E}}\bigl (u(t)\bigr )\) (this can be justified by truncating the function F and applying the Itô formula in Hilbert spaces from [16, Theorem 4.17]) yields

We have by (2.1)

Similarly, by the Burkholder–Davis–Gundy inequality,

For the second estimate we apply Itô’s formula to \(t\mapsto \frac{1}{2}\Vert {u}(t)-u_0\Vert _{L^2_x}^2\) and obtain similarly to (2.20)

We clearly have

using (2.13). Now (2.13) implies

Finally,

by (2.1) and (2.13). Arguing as above in the proof of (2.13) we have

using (2.13). Putting everything together shows (2.14). \(\square \)

The following lemma collects moment bounds (i.e., \(p\ge 2\)) in higher norms and is reminiscent of [23, Lemma 3.1].

Lemma 2.2

Suppose that \(\Phi \) satisfies (N1) (a) and let \(u\) be the weak pathwise solution to (1.1).

(a) Assume that \(u_0\in L^{p}(\Omega ,L^{p}(\mathbb {T}^d))\) for some \(p\ge 2\). Then we have

$$\begin{aligned} {\mathbb {E}}\bigg [\sup _{0\le s\le T}\Vert u(s)\Vert ^{p}_{L^{p}_x}+\int _0^T\Vert \nabla |u(s)|^{\frac{p}{2}}\Vert ^2_{L^2_x}\,\textrm{d}s\bigg ]&\le \,c\,{\mathbb {E}}\big [\Vert u_0\Vert ^{p}_{L^{p}_x}+1\big ]. \end{aligned}$$ (2.15)

(b) Assume that \(u_0\in L^{p}(\Omega ,W^{1,p}(\mathbb {T}^d))\) for some \(p>1\). Then we have

$$\begin{aligned} {\mathbb {E}}\bigg [\sup _{0\le s\le T}\Vert \nabla u(s)\Vert ^{p}_{L^{p}_x}+\int _0^T\Vert \nabla |\nabla u(s)|^{\frac{p}{2}}\Vert ^2_{L^2_x}\,\textrm{d}s\bigg ]&\le \,c\,{\mathbb {E}}\big [\Vert \nabla u_0\Vert ^{p}_{L^{p}_x}+1\big ]. \end{aligned}$$ (2.16)

(c) Assume that \(u_0\in L^{p}(\Omega ,W^{2,p}(\mathbb {T}^d))\cap L^{2p}(\Omega ,W^{1,p}(\mathbb {T}^d))\) and that \(\Phi \) additionally satisfies (N1) (b). Then we have

$$\begin{aligned} \begin{aligned} {\mathbb {E}}\bigg [\sup _{0\le s\le T}\Vert \nabla ^2u(s)\Vert ^{p}_{L^{p}_x}&+\int _0^T\Vert \nabla |\nabla ^2u(s)|^{\frac{p}{2}}\Vert ^2_{L^2_x}\,\textrm{d}s\bigg ]\\&\le \,c\,{\mathbb {E}}\big [\Vert \nabla ^2u_0\Vert ^{p}_{L^{p}_x}+\Vert \nabla u_0\Vert ^{2p}_{L^{2p}_x}+1\big ]. \end{aligned} \end{aligned}$$ (2.17)

Here \(c=c(T,\Phi ,p)>0\).

Proof

Ad (a). We apply Itô’s formula (see [16, Thm. 4.17]) to the functional \(t\mapsto \frac{1}{p}\Vert u(t)\Vert _{L^p_x}^p\) and obtain

We have

On account of (2.1) we obtain

Finally, we use the Burkholder–Davis–Gundy inequality, (2.1) and Young’s inequality to conclude

where Gronwall’s lemma comes again into play. Combining everything, choosing \(\kappa \) small enough and using Gronwall’s lemma yields the claim.

Ad (b). Differentiating (1.1) with respect to \(x_\gamma \), \(\gamma \in \{1,2,3\}\), yields

Applying Itô’s formula to \(t\mapsto \frac{1}{p}\Vert \partial _\gamma u(t)\Vert _{L^p_x}^p\) we obtain

We have

On account of (2.1) we obtain

Finally, we use the Burkholder–Davis–Gundy inequality, (2.1) and Young’s inequality to conclude

where Gronwall’s lemma comes again into play. Combining everything, choosing \(\kappa \) small enough and using Gronwall’s lemma yields the claim.

Ad (c). Differentiating (2.19) again yields

where \(\gamma _1,\gamma _2\in \{1,2,3\}\). The nonlinearity can be handled as in (b), but for the noise we obtain the terms

The first four of them can be estimated similarly to (b) using (2.5) but the last one requires more care (note that it disappears for affine linear noise). For the correction term we have

on account of (N1). Applying expectations and using part (b) (with the assumption \(u_0\in L^{2p}(\Omega ;W^{1,p}(\mathbb {T}^d))\)), the second term is bounded, whereas the first one can be handled by Gronwall’s lemma. The supremum of the corresponding stochastic integral

can be estimated by similar means. \(\square \)

2.3 The semigroup of the Laplace operator

In this subsection we recall some well-known facts concerning the Laplace operator and (the discretisation of) its semigroup. We denote by \({\mathcal {L}}({\mathbb {X}},{\mathbb {Y}})\) the space of bounded linear operators between two Banach spaces \({\mathbb {X}}\) and \({\mathbb {Y}}\) and write \({\mathcal {L}}({\mathbb {X}})\) for \({\mathcal {L}}({\mathbb {X}},{\mathbb {X}})\). It is classical that there is a basis of \(L^2(\mathbb {T}^d)\) consisting of eigenfunctions \((v_j)_{j\ge 1}\) of \({\mathcal {A}}=-\Delta \) with positive eigenvalues \((\nu _j)_{j\ge 1}\) such that \(\nu _j\rightarrow \infty \) as \(j\rightarrow \infty \). We can use that to define powers of \({\mathcal {A}}\) by setting

for functions \(u\) from

Similarly, we can define for \(r<0\) the space \(W^{r,2}(\mathbb {T}^d)\) as the closure of \(L^2\) with respect to the norm

It is classical that \(-{\mathcal {A}}=\Delta \) generates a strongly continuous semigroup \(({\mathcal {S}}(t))_{t\ge 0}\), and it is well-known that its discretisation \({\mathcal {S}}_{\tau }=(\textrm{Id}+\tau {\mathcal {A}})^{-1}\) satisfies

Note that (2.23) follows from \(\sup _t\Vert t{\mathcal {A}}{\mathcal {S}}(t)\Vert _{{\mathcal {L}}(L^2_x)}<\infty \), while (2.24) is a consequence of \(\textrm{Id}-{\mathcal {S}}_{\tau }=\tau {\mathcal {A}} {\mathcal {S}}_{\tau }\) and (2.22) with \(k=1\). Let us also mention the following estimate which controls the error between \({\mathcal {S}}(\tau )\) and \({\mathcal {S}}_{\tau }\)
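On the torus, the resolvent \({\mathcal {S}}_{\tau }\) acts diagonally on Fourier modes, which makes the identity \(\textrm{Id}-{\mathcal {S}}_{\tau }=\tau {\mathcal {A}} {\mathcal {S}}_{\tau }\) easy to check numerically. The sketch below assumes \(d=1\), a uniform grid on \((-\pi ,\pi )\) and a pseudo-spectral (FFT) realisation of \({\mathcal {A}}=-\Delta \); the function names and the test function are hypothetical. It also checks the elementary contraction property \(\Vert {\mathcal {S}}_{\tau }v\Vert _{L^2_x}\le \Vert v\Vert _{L^2_x}\), which follows from the multiplier bound \((1+\tau k^2)^{-1}\le 1\).

```python
import numpy as np


def wavenumbers(n):
    """Integer wavenumbers for n equispaced points on an interval of length 2*pi."""
    return np.fft.fftfreq(n, d=1.0 / n)


def apply_minus_laplacian(v):
    """Apply A = -Laplacian via the Fourier multiplier k^2."""
    k = wavenumbers(v.size)
    return np.real(np.fft.ifft(k**2 * np.fft.fft(v)))


def apply_resolvent(v, tau):
    """Apply S_tau = (Id + tau * A)^{-1} via the Fourier multiplier 1 / (1 + tau * k^2)."""
    k = wavenumbers(v.size)
    return np.real(np.fft.ifft(np.fft.fft(v) / (1.0 + tau * k**2)))


n, tau = 64, 0.1
x = -np.pi + 2.0 * np.pi * np.arange(n) / n
v = np.exp(np.sin(x))  # smooth periodic test function

s_v = apply_resolvent(v, tau)
# Defect of the identity (Id - S_tau) v = tau * A * S_tau v.
identity_defect = np.max(np.abs((v - s_v) - tau * apply_minus_laplacian(s_v)))
is_contraction = np.linalg.norm(s_v) <= np.linalg.norm(v)
```

One application of `apply_resolvent` corresponds to one implicit Euler step for the heat equation, which is why \({\mathcal {S}}_{\tau }\) appears as the linear building block of implicit time-stepping schemes.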

Finally, (2.25) easily implies

2.4 Malliavin calculus

We recall some basic facts from Malliavin calculus, see [26] for a thorough introduction. Given a cylindrical \(({\mathfrak {F}}_t)\)-Wiener process as in Sect. 2.1, a smooth real-valued function F defined on \({\mathfrak {U}}^n\) and smooth random variables with values in \(\psi _1,\ldots ,\psi _n\in L^2(0,T;{\mathfrak {U}})\) we set

This allows us to define \(\mathcal DF \) by \(\mathcal DF(t)f={\mathcal {D}}_t^{f}F\). With this definition it can be shown that \({\mathcal {D}}\) defines a closable operator on \(L^2(\Omega \times (0,T);{\mathfrak {U}})\). A natural domain for \({\mathcal {D}}\) is given by \({\mathbb {D}}^{1,2}\), which is the closure of the random variables (taking values in the set of smooth functions on \({\mathfrak {U}}^n\)) with respect to the norm

Note that \({\mathcal {D}}_{t} F=0\) for \(t\ge t'\) provided F is \(({\mathfrak {F}}_{t'})\)-adapted. We have a version of the chain rule: For \(\varphi \in C^1_b({\mathbb {R}})\) and \(F\in {\mathbb {D}}^{1,2}\) we have \(\varphi (F)\in {\mathbb {D}}^{1,2}\) together with the usual form \({\mathcal {D}} \varphi (F)=\varphi '(F){\mathcal {D}} F\).

Most important for us is the Malliavin integration by parts formula

It holds for all \(F\in {\mathbb {D}}^{1,2}\) and all adapted \(\psi \in L^2(\Omega \times (0,T);{\mathfrak {U}})\) with \({\mathbb {E}}\big [\int _0^T\int _0^T|{\mathcal {D}}_s\psi (t)|^2\,\mathrm ds\, \textrm{d}t\big ]<\infty \).

Similarly, we can define the Malliavin derivative of random variables taking values in \(L^2(\mathbb {T}^d)\). We denote by \({\mathbb {D}}^{1,2}(L^2(\mathbb {T}^d))\) the set of random variables \(G\in L^2(\Omega ;L^2(\mathbb {T}^d))\) with \(G=\sum _{j\ge 1} \alpha _j v_j\), where \((\alpha _j)\subset {\mathbb {D}}^{1,2}\) and \(\sum _{j\ge 1}\int _0^T|{\mathcal {D}}_s \alpha _j|^2\,\mathrm ds<\infty \). Here \((v_j)_{j\ge 1}\) denotes a basis of \(L^2(\mathbb {T}^d)\) consisting of eigenfunctions of \(-\Delta \). For \(G\in {\mathbb {D}}^{1,2}(L^2(\mathbb {T}^d))\) with \(G=\sum _{j\ge 1} \alpha _j v_j\) and \(f\in {\mathfrak {U}}\) we define

Again the chain rule holds: For \(U\in {\mathbb {D}}^{1,2}(L^2(\mathbb {T}^d))\) with \(U=\sum _{j\ge 1} \alpha _j v_j\) and \(\varphi \in C^1_b(L^2(\mathbb {T}^d))\) we have \(\varphi (U)\in {\mathbb {D}}^{1,2}(L^2(\mathbb {T}^d))\) with \({\mathcal {D}} \varphi (U)=\langle D\varphi (U),{\mathcal {D}} U\rangle _{L^2_x}\). Finally, for \(U\in {\mathbb {D}}^{1,2}(L^2(\mathbb {T}^d))\), \(u\in C^2_b(L^2(\mathbb {T}^d))\) and \(\psi \in L^2\bigl (\Omega \times (0,T);L_2({\mathfrak {U}};L^2(\mathbb {T}^d))\bigr )\) adapted we have

3 Strong first order convergence rate for additive noise

In [23], a strong convergence rate of order \({{\mathcal {O}}}(\sqrt{\tau })\) is shown for a time-implicit discretisation of (1.1) with multiplicative noise. In this section, this result is improved to \({{\mathcal {O}}}(\tau )\) in the presence of additive noise \(\Phi (u) \equiv \Phi \) in (1.1). For this purpose, we use the transform

$$\begin{aligned} y(t)=u(t)-\Phi W(t) \end{aligned}$$ (3.1)

to recast (1.1) into the form

where we employ the following calculation for the last identity,

Assuming sufficient regularity of \(\Phi \), Lemma 2.2 implies the following corollary concerning the regularity of y.

Corollary 3.1

Let \(u\) be the unique weak pathwise solution to (1.1).

(a) Assume that \(u_0\in L^p(\Omega ,W^{2,2}(\mathbb {T}^d))\) for some \(p\ge 2\) and \(\Phi \in L_2({\mathfrak {U}};W^{2,2}(\mathbb {T}^d))\). Then we have

$$\begin{aligned} {\mathbb {E}}\bigg [\sup _{0\le s\le T}\Vert \partial _ty(s)\Vert _{L^{2}_x}^p\bigg ]+ {\mathbb {E}}\bigg [\sup _{0\le s\le T}\Vert y(s)\Vert _{W^{2,2}_x}^p\bigg ]&\le c(p,T,\Phi ,u_0). \end{aligned}$$ (3.3)

(b) Assume that \(u_0\in L^2(\Omega ,W^{3,2}(\mathbb {T}^d))\) and \(\Phi \in L_2({\mathfrak {U}};W^{3,2}(\mathbb {T}^d))\). Then we have

$$\begin{aligned} {\mathbb {E}}\bigg [\sup _{0\le s\le T}\Vert \nabla \partial _t y(s)\Vert _{L^{2}_x}^2\bigg ]\le c(T,\Phi ,u_0). \end{aligned}$$ (3.4)

A reformulation corresponding to (3.2) was used in [10] to accomplish a corresponding goal, leading to an improved (local) rate of convergence for a discretisation of the 2D Navier–Stokes equation with additive noise (see [11] for the results in the multiplicative case). In comparison, the nonlinearity \(f= F'\) in (1.1) is again non-Lipschitz, but it is now one-sided Lipschitz, which here leads to a discretisation scheme with strong rate \({{\mathcal {O}}}(\tau )\).
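For \(f(x)=x^3-x\), the one-sided Lipschitz property referred to here is the inequality \(\bigl (f(a)-f(b)\bigr )(a-b)\ge -|a-b|^2\) for all \(a,b\in {{\mathbb {R}}}\); it follows from \((a^3-b^3)(a-b)=(a-b)^2(a^2+ab+b^2)\ge 0\). The following sketch checks the inequality on random samples and illustrates that no global Lipschitz bound can hold. The helper name `one_sided_gap` and the sampling range are hypothetical choices.

```python
import numpy as np


def f(x):
    """Cubic nonlinearity f(x) = x^3 - x."""
    return x**3 - x


def one_sided_gap(a, b):
    """(f(a) - f(b)) * (a - b) + (a - b)^2, which is nonnegative for all real a, b."""
    return (f(a) - f(b)) * (a - b) + (a - b) ** 2


rng = np.random.default_rng(2)
a = rng.uniform(-10.0, 10.0, size=100_000)
b = rng.uniform(-10.0, 10.0, size=100_000)
smallest_gap = one_sided_gap(a, b).min()

# Difference quotients over unit intervals grow with the base point,
# so f admits no global Lipschitz constant.
quotients = [abs(f(r + 1.0) - f(r)) for r in (1.0, 10.0, 100.0)]
```

The lower bound \(-|a-b|^2\) is what allows the cubic term to be absorbed in an error estimate when the scheme treats \(f\) implicitly, whereas the growing difference quotients show why a standard Lipschitz argument is not available.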

For its derivation, we define \(y^m:= u^m - \Phi W(t_m)\) for iterates \((u^m)_{m=1}^M\) from (1.7), which satisfy the following identity,

for \(m \ge 1\), and \(y^0 = u_0\). Here \(d_t\) denotes the discrete time derivative, that is \(d_t y^m=\tau ^{-1}(y^m-y^{m-1})\) for \(m\ge 1\). We make the following observations:

(1) An equivalent derivation of Eq. (3.5) is via an implicit Euler-based time discretisation for the random PDE (3.2). Equation (3.5) may be used instead of (1.7) as an alternative scheme to solve (1.1).

(2) In the strong error analysis for \((y^m)_{m=1}^M\) below, we prove order \({{\mathcal {O}}}(\tau )\); the proof rests on bounds for the time-derivative of the (weak variational) solution y from (3.2) in different norms, which are now available. Since \(y^m - y(t_m) = u^m - u(t_m)\), this result transfers to (1.7); see also Remark 3.1, item 4.
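For orientation, the relation satisfied by \(y^m\) can be derived as follows, under the assumption that, for additive noise, scheme (1.7) reads \(u^m-u^{m-1}=\tau \bigl (\Delta u^m-f(u^m)\bigr )+\Phi \Delta _mW\), i.e. an implicit Euler step with the noise increment added. The display is a sketch under this assumption and need not coincide in form with (3.5). Since \(\Phi \Delta _mW=\Phi W(t_m)-\Phi W(t_{m-1})\), subtracting the increment and inserting \(u^m=y^m+\Phi W(t_m)\) gives

$$\begin{aligned} d_ty^m=\frac{1}{\tau }\bigl (u^m-u^{m-1}-\Phi \Delta _mW\bigr )=\Delta \bigl (y^m+\Phi W(t_m)\bigr )-f\bigl (y^m+\Phi W(t_m)\bigr ). \end{aligned}$$

In this form no stochastic increment appears, which is consistent with observation (1): the same relation results from an implicit Euler step applied pathwise to a random PDE for \(y\).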

The following perturbation analysis evidences the role that the involved nonlinearity \(f = F'\) will play in the strong error analysis in Sect. 3.2.

3.1 A perturbation analysis for (3.5)

We start with a perturbation analysis, where we refer to \((y^m_i)_{m=1}^M\) as the solution of (3.5), with \(y^0_i =y_{0,i}\), for \(i \in \{1,2\}\). Subtracting both identities then leads to (\(e^m:= y^m_2-y^m_1\))

where \(d_t e^m:= \frac{1}{\tau } (e^m - e^{m-1})\). We write

with an obvious meaning of the terms \(\texttt {I}^m\), \(\texttt {II}^m\) and \(\texttt {III}^m\). When written in weak form, we test with \(e^m\), and treat resulting terms for \(\texttt {I}^m\) and \(\texttt {II}^m\) independently. For the first term \(\texttt {I}^m\) we use the following identity, which is based on the binomial formula (\(a,b \in {{\mathbb {R}}}\)),

With its help, we obtain that

Moreover, by the binomial formula and (3.7),

where we used that

by Young’s inequality. Using these calculations in the error identity, summing over the index \(m\), and taking into account that

we find

and the implicit version of Gronwall’s lemma settles the estimate

3.2 Error analysis for (3.5)

We integrate in (3.2) over \([t_{m-1},t_m]\) to get

and define

In order to apply the parts from Sect. 3.1, we write

for \(s\in [t_{m-1},t_m]\), where

For the error \(E^m:= y(t_m) - y^m\), on using \(\texttt {IV}^m(t_{m-1})\), we may now easily deduce an equation of the form (3.6), with terms similar to \(\texttt {I}^m, \texttt {II}^m\), and \(\texttt {III}^m\), and a non-vanishing right-hand side \(\int _{t_{m-1}}^{t_m} \texttt{rest}_{\texttt {IV}^m}(s)\, {\textrm{d}}s\) instead. When tested with \(E^m\), the first three terms may then be estimated as in Sect. 3.1, leading to inequality (3.8), such that we finally arrive at the following estimate, which holds \({{\mathbb {P}}}\)-a.s.:

Note that \( {{\mathbb {E}}}[\Vert \texttt {V}^m\Vert ^2_{L^2_x}] \le \tau ^2 t_m^3\); for the remaining term on the right-hand side, we treat the three terms \(\{ \texttt {rest}^{(i)}_{\texttt {IV}^m}\}_{i=1}^3\) separately:

where the first term is bounded by \(C \tau ^2\), thanks to (3.4), and Corollary 3.1. By Sobolev’s embedding, we estimate for \(\kappa >0\) arbitrary

where we also used interpolation of \(L^3_x\) between \(L^2_x\) and \(W^{1,2}_x\). Now we can absorb the last term into the left-hand side by choosing \(\kappa \) sufficiently small. By (3.10) and Corollary 3.1 we can estimate the first term by \(C \tau ^2\). The missing term \( {{\mathbb {E}}}\bigl [ \bigl \langle \int _{s}^{t_m} \partial _t y(\xi )\, {\textrm{d}}\xi , E^m\bigr \rangle _{L^2_x}\bigr ]\) in \(\texttt {rest}^{(1)}_{\texttt{IV}^m}(s)\) can be estimated via an analogous (even easier) chain of inequalities. We continue with

and use \({{\mathbb {E}}}[\Vert \Phi (W(s) - W(t_m))\Vert ^2_{L^\infty _x}\bigl \vert {{\mathcal {F}}}_s] \le C\tau \), which is a consequence of assumption (2.1), to further estimate

Also, since \(\big ({{\mathbb {E}}}[\Vert y(s) - y(t_m)\Vert _{L^2_x}^2]^3\big )^{1/3} \le C\tau ^2\) which easily follows from (3.10) and Corollary 3.1,

The estimate for \(\texttt {rest}^{(2)}_{\texttt {IV}^m}(s)\) is analogous to that for \(\texttt {rest}^{(3)}_{\texttt {IV}^m}(s)\) such that we conclude

Estimating the final term by \(C\tau ^2\) and applying the discrete Gronwall lemma, the above considerations lead to the following theorem.

Theorem 3.1

Let \((\Omega ,\mathfrak {F},(\mathfrak {F}_t)_{t\ge 0},\mathbb {P})\) be a given stochastic basis with a complete right-continuous filtration and an \((\mathfrak {F}_t)\)-cylindrical Wiener process W. Let \(T>0\) be fixed. Assume that \(u_0\in L^p(\Omega ,W^{2,2}(\mathbb {T}^d))\cap L^2(\Omega ,W^{3,2}(\mathbb {T}^d))\) for some \(p\ge 8\) and \(\Phi \in L_2({\mathfrak {U}};W^{3,2}(\mathbb {T}^d))\). Let u be the solution to (1.1), and \((u^m)_{m=1}^M\) be the solution to (1.7). Then we have the error estimate

By its proof, this result also applies to \((y^m)_{m=1}^M\) from (3.5), and y from (3.2).

Remark 3.1

1. Problem (1.1) usually involves a small-scale parameter \(\varepsilon >0\) to address the width of diffuse interfaces of adjacent material phases, whose resolution in terms of numerical scalings is crucial for accurate simulation; see [1]. In this work, we choose \(\varepsilon = 1\) to address non-Lipschitzness of f only, but expect a corresponding analysis as in [1] to go through for \(\varepsilon \ll 1\), avoiding a factor \(\exp (\frac{T}{\varepsilon })\) that would otherwise occur in a straightforward application of Gronwall’s lemma.

2. The proof of a strong rate \({{\mathcal {O}}}(\sqrt{\tau })\) for an implicit time discretisation (which slightly differs from (1.5)) in the case of (1.1) with multiplicative noise in [23] exploits that the scheme is a structure preserving discretisation, which therefore inherits the gradient structure of the problem and the related energy estimates. The crucial step in the error analysis then uses the weak monotonicity property of the cubic nonlinearity (see [23, (4.11)]) to avoid a truncation argument for the nonlinearity in the sense of [27] for the stochastic Navier–Stokes equation; see also [10].

3. The construction and analysis of numerical schemes in [1, 23] is based on the strong variational solution concept for (1.1). This is in contrast to other numerical works in the literature where the linear semigroup \({{\mathscr {S}}}:= \{ {{\mathscr {S}}}(t);\, t \ge 0\}\), for \({{\mathscr {S}}}(t) = e^{t\Delta }\), is used as building block to set up the mild solution concept for (1.1) with additive noise for \(d=1\) and \(\varepsilon =1\),

$$\begin{aligned} u(t) &= {{\mathscr {S}}}(t) u_0 + \int _0^t {{\mathscr {S}}}(t-s) f\bigl ( u(s)\bigr )\, {\textrm{d}}s \\ &\qquad + \int _0^t {{\mathscr {S}}}(t-s) \, {\textrm{d}}W(s) \qquad \forall \, t \ge 0. \end{aligned}$$ (3.16)

In this setting, the authors of [6, 8] prove strong rates of convergence for a splitting scheme, even addressing space-time white noise. Splitting schemes have a long tradition in evolutionary problems to avoid solving nonlinear PDEs for iterates, but cause structure violation of (1.1); in fact, the underlying stability bounds in [8, Prop. 3] and [6, Lemma 3.1] are not in the natural energy norm of the underlying problem (1.1) with gradient structure, and also require a Gronwall-type estimation, which reflects the smaller mesh sizes usually needed in ‘splitting-scheme based’ simulations at intermediate or large times \(T \gg 1\) to keep accuracy. In comparison, the (energy) structure-preserving schemes (1.5) and (3.5) are nonlinear, but the larger computational effort caused by Newton-type fast nonlinear solvers here usually goes along with larger admissible mesh sizes to attain the same accuracy.

4. In [6], the strong rate \({{\mathcal {O}}}(\tau )\) is shown for the scheme [6, (1.2)] in [6, Thm. 4.6]. A crucial step in their proof is also to employ a transformation, see [6, (2.4) and (2.7)], which is conceptually similar to (3.1), however in [6] on the level of mild solutions, using (3.16); in fact, the (corresponding) identity

$$\begin{aligned} u(t) = Y(t) + {\widetilde{W}}^{\Delta }(t) \end{aligned}$$ (3.17)

is used instead of SPDE (3.16). Eventually, only the random PDE

$$\begin{aligned} Y(t) = {{\mathscr {S}}}(t) u_0 + \int _0^t {{\mathscr {S}}}(t-s) f \bigl ( Y(s) + \widetilde{W}^{\Delta }(s)\bigr )\, {\textrm{d}}s \qquad \forall \, t \ge 0 \end{aligned}$$ (3.18)

is studied in the main part of the analysis in [6]. Here the last term in (3.17) is the ‘stochastic convolution process \(\widetilde{W}^{\Delta }:= \{\widetilde{W}^{\Delta }(t);\, t \ge 0\}\)’. From a practical point of view, however, the authors in [6] clearly mention the restricted applicability of their scheme, which requires the explicit knowledge of \({{\mathscr {S}}}\), and (an approximation) of \(\widetilde{W}^{\Delta }\): as such, its efficient use requires knowledge of its spectrum, which restricts its practical application to prototypic domains \({{\mathcal {O}}} \subset {{\mathbb {R}}}^d\) where eigenvalues of \(\Delta \) are explicitly known. On the other hand, the approach in [6] allows less regular noise compared to here. Corresponding restrictions hold for schemes that are studied in [2, 3, 14, 24], where again the construction rests on (3.18) and (3.17), and involves (the approximation of) \(\widetilde{W}^{\Delta }\).

5. To our knowledge, optimal strong convergence \({{\mathcal {O}}}(\tau )\) for a time discretization to solve (1.1) with additive trace-class noise was first established in [28]: here, again, the authors start with a mild solution for (1.1), and base their error analysis of (1.5) on its reformulation [28, (4.2)], and the known (smoothing) properties of the semigroup \({{\mathscr {S}}}_t = e^{t \Delta }\). The error analysis provided in this section rather exploits variational arguments: it is based on reformulation (3.5), as well as simple, explicit calculations for the specific f in Sect. 3.1, and may easily be generalized to weakly elliptic (e.g. non-selfadjoint) operators which do not generate a semigroup; see e.g. [20, Section 2.5] for the convected or degenerate Allen–Cahn equation as prominent examples in multiphase fluid flow models, or the anisotropic Allen–Cahn equation in [19, Section 8].
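The dependence of mild-solution based schemes on explicit knowledge of the spectrum of \(\Delta \), as discussed in the remark above, can be made concrete on the torus. The following is a minimal sketch, assuming \(d=1\) and a uniform grid on \((-\pi ,\pi )\): there \({{\mathscr {S}}}(t)=e^{t\Delta }\) acts diagonally on Fourier modes, multiplying the mode with wavenumber \(k\) by \(e^{-k^2t}\). The function name `heat_semigroup` and the grid parameters are hypothetical choices for illustration.

```python
import numpy as np

def heat_semigroup(u, t):
    """Apply S(t) = exp(t*Laplacian) to grid values u on the periodic interval (-pi, pi)."""
    n = u.shape[0]
    k = np.fft.fftfreq(n, d=1.0 / n)          # integer wavenumbers 0, 1, ..., -1
    multiplier = np.exp(-t * k**2)            # eigenvalues of the Laplacian are -k^2
    return np.real(np.fft.ifft(multiplier * np.fft.fft(u)))

n = 64
x = -np.pi + 2.0 * np.pi * np.arange(n) / n   # uniform periodic grid
u0 = np.cos(3.0 * x)                          # single Fourier mode with k = 3
u1 = heat_semigroup(u0, 0.1)                  # damped by exp(-9 * 0.1)
```

On a general domain no such diagonalisation is available in closed form, which is the practical restriction mentioned for the schemes built on (3.17) and (3.18).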

4 Preparations for the weak error analysis

4.1 The nonlinear semigroup

In this section we study properties of the discrete nonlinear semigroup \({\mathcal {T}}_\tau \) on \(L^2(\mathbb {T}^d)\), which is the solution operator to the equation

where \(\mathfrak {g}\in L^2(\mathbb {T}^d)\) is given. We start with some stability estimates. We can write (4.1) equivalently as

Due to the choice of the continuous interpolation \(u_\tau \) in (4.26) below, the weak error analysis in Sect. 5 heavily depends on stability estimates for \({\mathcal {T}}_\tau \), which we derive in the following lemma. Eventually, we estimate the distance of \({\mathcal {T}}_\tau \) to the identity and consider its Fréchet-derivatives. They are used for the same purpose due to the representation for \(u_\tau \) in (4.27).
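To make the operator \({\mathcal {T}}_\tau \) concrete, the following is a minimal sketch of how it could be evaluated numerically. It assumes that (4.1) reads \(v+\tau (-\Delta v+f(v))=\mathfrak {g}\) with \(f(v)=v^3-v\); this form is inferred from \(D{\mathcal {E}}(u)=-\Delta u+f(u)\) and is not quoted from the displayed equation. The spatial discretisation (periodic finite differences in \(d=1\)), the Newton iteration and all function names are illustrative assumptions, not part of the paper.

```python
import numpy as np

def periodic_laplacian(n, length=2.0 * np.pi):
    """Second-order finite-difference Laplacian with periodic boundary conditions."""
    h = length / n
    lap = -2.0 * np.eye(n) + np.eye(n, k=1) + np.eye(n, k=-1)
    lap[0, -1] = lap[-1, 0] = 1.0
    return lap / h**2

def f(v):
    return v**3 - v

def df(v):
    return 3.0 * v**2 - 1.0

def T_tau(g, tau, lap, tol=1e-12, max_iter=50):
    """Solve v + tau*(-lap v + f(v)) = g for v by Newton's method (assumed form of (4.1))."""
    v = g.copy()
    for _ in range(max_iter):
        residual = v + tau * (-lap @ v + f(v)) - g
        if np.max(np.abs(residual)) < tol:
            break
        jacobian = np.eye(g.size) - tau * lap + tau * np.diag(df(v))
        v = v - np.linalg.solve(jacobian, residual)
    return v

n, tau = 64, 0.1
x = -np.pi + 2.0 * np.pi * np.arange(n) / n
lap = periodic_laplacian(n)
g = 1.5 * np.cos(x)
v = T_tau(g, tau, lap)
residual_norm = np.max(np.abs(v + tau * (-lap @ v + f(v)) - g))
```

For \(\tau <1\) the Jacobian is symmetric positive definite because \(f'\ge -1\), so each Newton step is well defined. Testing the discrete equation with \(v\) gives the elementary bound \(\Vert v\Vert _{L^2}\le \Vert \mathfrak {g}\Vert _{L^2}/(1-\tau )\), which can be checked on the output.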

Lemma 4.1

Suppose that \(\tau <\frac{1}{2}\) and \(p\ge 2\). Then we have

for all functions \(\mathfrak {g}\) for which the quantities on the right-hand side are finite. Here \(c=c(p)>0\) is independent of \(\tau \) and \(\mathfrak {g}\).

Proof

Ad (4.3). Testing (4.1) by \(|v|^{p-2}v\) shows

with \(c(p)=\frac{4(p-1)}{p^2}\), where we used

The term on the right-hand side can be handled by Young’s inequality, while we have

This proves (4.3) for \(\tau <\frac{1}{2}\).

Ad (4.4). Similarly, applying \(\partial _i\) to (4.1), multiplying with \(|\partial _iv|^{p-2}\partial _iv\) (and arguing as in (4.6)), integrating in space and summing with respect to \(i\in \{1,2,3\}\) yields

The two last terms can be handled analogously to the proof of (4.3), such that (4.4) follows.

Ad (4.5). Now we apply \(\partial _i\partial _j\) to the equation and multiply with \(|\partial _i\partial _jv|^{p-2}\partial _i\partial _jv\). It remains to control the nonlinear term as the rest can be handled analogously to the estimates above. We obtain on the right-hand side

After integration over \(\mathbb {T}^d\) the first term can be absorbed, while the other two can be controlled using (4.3) and (4.4). \(\square \)

On using \({\mathcal {T}}_\tau :L^2(\mathbb {T}^d)\rightarrow W^{2,2}(\mathbb {T}^d)\), cf. Eq. (4.1), we can write

Using Eq. (4.7) and estimates (2.22)–(2.24) one can derive the following from Lemma 4.1.

Corollary 4.1

Under the assumptions of Lemma 4.1 we have

where \(c>0\) is independent of \(\tau \).

Writing now \(\textrm{Id}-{\mathcal {T}}_{\tau }=\textrm{Id}-{\mathcal {S}}_\tau +{\mathcal {S}}_\tau -{\mathcal {T}}_{\tau }\), and combining (4.8)–(4.10) with (2.24) we obtain the following result.

Corollary 4.2

Under the assumptions of Lemma 4.1 we have

where \(c>0\) is independent of \(\tau \).

Now we turn to the Fréchet derivative of \({\mathcal {T}}_\tau \) and prove estimates on \(L^p(\mathbb {T}^d)\), \(W^{1,2}(\mathbb {T}^d)\) and \(W^{2,2}(\mathbb {T}^d)\), respectively.

Lemma 4.2

Let \(\tau <\frac{1}{2}\) and \(p\ge 2\).

(a) For all \(\mathfrak {g}\in L^p(\mathbb {T}^d)\) we have

$$\begin{aligned} \Vert D{\mathcal {T}}_\tau (\mathfrak {g})\Vert _{{\mathcal {L}}(L^p_x)}\le 1 \end{aligned}$$ (4.14)

(b) For all \(\mathfrak {g}\in L^6(\mathbb {T}^d)\) we have

$$\begin{aligned} \Vert D{\mathcal {T}}_\tau (\mathfrak {g})\Vert _{{\mathcal {L}}(W^{1,2}_x)}\le \,c\Big (1+c\tau \Vert \mathfrak {g}\Vert _{L^{6}_x}^{4}\Big ). \end{aligned}$$ (4.15)

(c) For all \(\mathfrak {g}\in W^{2,2}(\mathbb {T}^d)\) we have

$$\begin{aligned} \Vert D{\mathcal {T}}_\tau (\mathfrak {g})\Vert _{{\mathcal {L}}(W^{2,2}_x)}\le \,c\Big (1+\tau \Vert \mathfrak {g}\Vert _{W^{2,2}_x}^{8}\Big ). \end{aligned}$$ (4.16)

Proof

Ad (a) By the inverse function theorem for Fréchet derivatives we have

The operator \(D{\mathcal {T}}^{-1}_\tau (\mathfrak {g})\) is coercive on \(L^2_x\) as

independently of \(\mathfrak {g}\). A similar argument applies in \(L^p(\mathbb {T}^d)\) for \(p>2\) since

Hence the claim follows.

Ad (b) We obtain

The last term does not have an obvious sign and needs to be estimated. We have

such that we conclude

Replacing \(\mathfrak {h}\) by \(D{\mathcal {T}}_\tau (\mathfrak {g})\mathfrak {h}\) (recall that \({\mathcal {T}}_\tau :L^2(\mathbb {T}^d)\rightarrow W^{2,2}(\mathbb {T}^d)\), cf. Eq. (4.1)) and using (4.14) shows

and the claim follows.

Ad (c) We have

where the first term can be estimated by

by interpolation and Sobolev’s embedding. We further conclude

and the second term in (4.18) has the lower bound

We conclude as in the proof of (4.15)

Replacing \(\mathfrak {h}\) by \(D{\mathcal {T}}_\tau (\mathfrak {g})\mathfrak {h}\) and using (4.15) shows

which yields the claim. \(\square \)
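The inverse function theorem argument used for Lemma 4.2 can be checked numerically in a discrete setting. The following is a minimal sketch under the same assumptions as before: (4.1) is taken to read \(v+\tau (-\Delta v+f(v))=\mathfrak {g}\), so that \(D{\mathcal {T}}_\tau (\mathfrak {g})=\bigl (\textrm{Id}-\tau \Delta +\tau f'({\mathcal {T}}_\tau \mathfrak {g})\bigr )^{-1}\). The periodic finite-difference grid in \(d=1\) and the names `T_tau`, `DT_tau` are hypothetical. The sketch compares the linear solve with a central difference quotient of \({\mathcal {T}}_\tau \).

```python
import numpy as np

def periodic_laplacian(n, length=2.0 * np.pi):
    """Second-order finite-difference Laplacian with periodic boundary conditions."""
    h = length / n
    lap = -2.0 * np.eye(n) + np.eye(n, k=1) + np.eye(n, k=-1)
    lap[0, -1] = lap[-1, 0] = 1.0
    return lap / h**2

def T_tau(g, tau, lap, tol=1e-12, max_iter=50):
    """Newton solve of v + tau*(-lap v + v^3 - v) = g (assumed form of (4.1))."""
    v = g.copy()
    for _ in range(max_iter):
        residual = v + tau * (-lap @ v + v**3 - v) - g
        if np.max(np.abs(residual)) < tol:
            break
        jacobian = np.eye(g.size) - tau * lap + tau * np.diag(3.0 * v**2 - 1.0)
        v = v - np.linalg.solve(jacobian, residual)
    return v

def DT_tau(g, h_dir, tau, lap):
    """Directional derivative of T_tau at g in direction h_dir via one linear solve."""
    v = T_tau(g, tau, lap)
    A = np.eye(g.size) - tau * lap + tau * np.diag(3.0 * v**2 - 1.0)
    return np.linalg.solve(A, h_dir)

n, tau, eps = 64, 0.1, 1e-4
x = -np.pi + 2.0 * np.pi * np.arange(n) / n
lap = periodic_laplacian(n)
g = 1.5 * np.cos(x)
h_dir = np.sin(2.0 * x) + 0.3

exact = DT_tau(g, h_dir, tau, lap)
# central difference quotient of T_tau as an independent check
fd = (T_tau(g + eps * h_dir, tau, lap) - T_tau(g - eps * h_dir, tau, lap)) / (2.0 * eps)
```

Since \(f'\ge -1\), the matrix `A` is bounded below by \((1-\tau )\,\textrm{Id}\), which yields the discrete operator-norm bound \(1/(1-\tau )\) in the Euclidean norm. This is only the elementary coercivity consequence in this finite-dimensional sketch, not a restatement of (4.14).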

Finally, we estimate the distance between \(D{\mathcal {T}}_\tau \) and the identity.

Lemma 4.3

Let \(\tau <\frac{1}{2}\).

(a) For all \(\mathfrak {h}\in W^{2,2}(\mathbb {T}^d)\) and \(\mathfrak {g}\in L^6(\mathbb {T}^d)\) we have

$$\begin{aligned} \Vert (D{\mathcal {T}}_\tau (\mathfrak {g})-{{\,\textrm{Id}\,}})\mathfrak {h}\Vert _{L^2_x}&\le \,c\tau \Vert \mathfrak {h}\Vert _{W^{2,2}_x}\Big (1+\Vert \mathfrak {g}\Vert _{L^{6}_x}^{2}\Big ). \end{aligned}$$ (4.19)

(b) For all \(\mathfrak {h}\in W^{4,2}(\mathbb {T}^d)\) and \(\mathfrak {g}\in W^{2,2}(\mathbb {T}^d)\) we have

$$\begin{aligned} \begin{aligned} \Vert (D{\mathcal {T}}_\tau (\mathfrak {g})-{{\,\textrm{Id}\,}})\mathfrak {h}\Vert _{W^{2,2}_x}&\le \,c\tau \Big (1+\Vert \mathfrak {g}\Vert _{W^{2,2}_x}^{8}\Big )\Vert \mathfrak {h}\Vert _{W^{4,2}_x}. \end{aligned} \end{aligned}$$ (4.20)

(c) For all \(\mathfrak {g}\in L^6(\mathbb {T}^d)\) we have

$$\begin{aligned} \Vert D^2{\mathcal {T}}_\tau (\mathfrak {g})\Vert _{{\mathcal {L}}(W^{1,2}_x\times W^{1,2}_x;L^2_x)}\le \,c\tau \Big (1+\Vert \mathfrak {g}\Vert _{L^{6}_x}\Big ). \end{aligned}$$ (4.21)

Proof

We rewrite formula (4.17) as

Now we estimate the term on the right-hand side by means of Lemma 4.2.

Ad (a). By (4.14) it follows that

Ad (b). Similarly to (a), (4.15) yields

using also (4.3) and (4.4). Employing also (4.15) and (4.4) an analogous chain gives

Using (4.5) we can finish the proof.

Ad (c). Now we turn to the second derivative which can be written as

Using (4.14) and (4.3) with \(p=6\) yields

for all \(\mathfrak {h}_1,\mathfrak {h}_2\in W^{1,2}_x\). Hence the claim follows. \(\square \)

4.2 Semi-discretisation in time

We consider an equidistant partition of [0, T] with mesh size \(\tau =T/M\) and set \(t_m=m\tau \). Let \(u_0\) be an \({\mathfrak {F}}_0\)-measurable random variable with values in \(W^{1,2}(\mathbb {T}^d)\). We aim at constructing iteratively a sequence of \({\mathfrak {F}}_{t_m}\)-measurable random variables \(u_{m}\) with values in \(W^{1,2}(\mathbb {T}^d)\) such that for every \(\varphi \in W^{1,2}(\mathbb {T}^d)\) it holds true \({\mathbb {P}}\)-a.s.

where \(\Delta _m W=W(t_m)-W(t_{m-1})\). The existence of a unique \(u_m\) (given \(u_{m-1}\) and \(\Delta _m W\)) solving (4.23) follows from its re-interpretation as a convex minimisation problem. Moreover, the discrete energy estimate

holds under the assumptions made in Lemma 4.4. In fact, we will study the stability of \(u_m\) in detail in Sect. 4.3 and derive more general (and higher order) estimates in Lemma 4.4.

For the weak error analysis in Sect. 5 it will turn out to be useful to write (4.23) as

where \({\mathcal {T}}_\tau \) is the discrete nonlinear semigroup corresponding to \(D{\mathcal {E}}\), which we analysed in Sect. 4.1 above. Note that, in contrast to previous works on weak error analysis, cf. [5, 7, 17], we treat the nonlinearity implicitly. Hence it is more complicated to define a time-continuous interpolant which coincides with \(u_m\) in \(t_m\) and is still progressively measurable. Note, however, that \({\mathcal {T}}_\tau \) features nice properties similar to those that we have seen in the previous subsection.
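The reformulation of the scheme through \({\mathcal {T}}_\tau \) suggests a simple time-stepping loop. The following is a minimal sketch, assuming the rewritten scheme has the form \(u_m={\mathcal {T}}_\tau \bigl (u_{m-1}+\Phi (u_{m-1})\Delta _mW\bigr )\) with \({\mathcal {T}}_\tau \) solving \(v+\tau (-\Delta v+f(v))=\mathfrak {g}\). These forms are inferred from the surrounding text, not quoted. The finite-rank multiplicative noise \(\Phi (u)e_k=\sigma \, u\cos (kx)/k^2\), the double-well potential \(F(u)=\tfrac{1}{4}(u^2-1)^2\) with \(F'=f\), the finite-difference grid in \(d=1\) and all names are hypothetical choices for illustration.

```python
import numpy as np

def periodic_laplacian(n, length=2.0 * np.pi):
    h = length / n
    lap = -2.0 * np.eye(n) + np.eye(n, k=1) + np.eye(n, k=-1)
    lap[0, -1] = lap[-1, 0] = 1.0
    return lap / h**2

def T_tau(g, tau, lap, tol=1e-12, max_iter=50):
    """Newton solve of v + tau*(-lap v + v^3 - v) = g (assumed form of (4.1))."""
    v = g.copy()
    for _ in range(max_iter):
        residual = v + tau * (-lap @ v + v**3 - v) - g
        if np.max(np.abs(residual)) < tol:
            break
        jacobian = np.eye(g.size) - tau * lap + tau * np.diag(3.0 * v**2 - 1.0)
        v = v - np.linalg.solve(jacobian, residual)
    return v

def energy(u, lap, h):
    """Discrete energy with the assumed potential F(u) = (u^2 - 1)^2 / 4."""
    return h * (-0.5 * u @ (lap @ u) + np.sum(0.25 * (u**2 - 1.0) ** 2))

def simulate(u0, tau, M, lap, x, sigma, rng, modes=4):
    """Iterate u_m = T_tau(u_{m-1} + Phi(u_{m-1}) Delta_m W) with hypothetical noise."""
    u = u0.copy()
    path = [u.copy()]
    for _ in range(M):
        xi = np.sqrt(tau) * rng.standard_normal(modes)   # Wiener increments
        increment = np.zeros_like(u)
        for k in range(1, modes + 1):
            # noise coefficient is evaluated at the old iterate (explicit in the noise)
            increment += sigma * u * np.cos(k * x) / k**2 * xi[k - 1]
        u = T_tau(u + increment, tau, lap)               # drift is treated implicitly
        path.append(u.copy())
    return np.array(path)

n, tau, M = 64, 0.05, 40
h = 2.0 * np.pi / n
x = -np.pi + h * np.arange(n)
lap = periodic_laplacian(n)
u0 = 0.5 * np.cos(x) + 0.1

noisy = simulate(u0, tau, M, lap, x, sigma=0.5, rng=np.random.default_rng(0))
quiet = simulate(u0, tau, M, lap, x, sigma=0.0, rng=np.random.default_rng(0))
quiet_energies = np.array([energy(u, lap, h) for u in quiet])
```

With `sigma=0.0` the loop reduces to the implicit Euler scheme for the deterministic gradient flow. For \(\tau <1\) each step minimises a strictly convex functional, so the discrete energy cannot increase, which gives a quick consistency check of the solver.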

Setting

we introduce the \(({\mathfrak {F}}_t)\)-adapted process

for \(t\in [t_{m-1},t_m]\), which coincides with \(u_{m-1}\) in \(t_{m-1}\) and with \(u_m\) in \(t_m\). In the following we linearise this formula around \(U_\tau \), which gives a part which is (given \(U_\tau \)) linear in \(u_{m-1}\) (similar to the method from [5, 7, 17]) plus an error term. The latter will turn out to be globally of order \(\tau \) as required. Applying Itô’s formula to the second term in (4.26) yields

for \(t\in [t_{m-1},t_m]\).

Finally, we derive some uniform estimates for \(U_\tau \) in terms of \(u_m\). By the definition of \(U_\tau \), the Burkholder–Davis–Gundy inequality, and estimates (4.3) and (2.1), we have for all \(q\ge 2\)

for \(t\in [t_{m-1},t_m)\). A similar argument applies when we replace \(L^2(\mathbb {T}^d)\) by \(W^{1,2}(\mathbb {T}^d)\) or \(W^{2,2}(\mathbb {T}^d)\) using this time (2.22) combined with (2.1) and (4.4) or (2.22), (2.5) and (4.5) respectively. We conclude

uniformly in \(\tau \) for \(q\ge 2\), \(t\in [t_{m-1},t_m]\) and \(k=0,1,2\). By the estimates (4.3) and (4.4) in Lemma 4.1, formula (4.26) thus yields

uniformly in \(\tau \) for \(q\ge 2\), \(t\in [t_{m-1},t_m]\) and \(k=0,1\). Similarly, (4.5) in Lemma 4.1 implies

Note that controlling the right-hand sides of (4.28)–(4.30) is not straightforward and will be done in the next subsection.

4.3 Estimates for the time-discrete solution

We now derive some uniform estimates for the solution of the time-discrete problem (4.23). These estimates involve the energy \({{\mathcal {E}}}(\cdot )\) from (1.2) as relevant Liapunov functional, reflecting that the discrete problem (4.27) inherits the relevant gradient flow property of the original problem (1.1).

Lemma 4.4

Let \((\Omega ,\mathfrak {F},(\mathfrak {F}_t)_{t\ge 0},\mathbb {P})\) be a given stochastic basis with a complete right-continuous filtration and an \((\mathfrak {F}_t)\)-cylindrical Wiener process W. Let \(T\equiv t_M >0\), assume that \({\mathcal {E}}(u_0)\in L^{2^q}(\Omega )\) for some \(q \in {{\mathbb {N}}}_0\). Choose \(\tau \le \frac{1}{4}\). The iterates \((u_m)_{m=1}^M\) from (4.23) satisfy the following estimates.

(a) Suppose that either (N1) (a) holds and that \(\Phi \) is bounded and summable in the sense that \(\sup _ {x,\xi }|\Phi (x,\xi )e_k|\le \,\mu _k\) with \(\sum _{k\ge 1}\mu _k<\infty \) or that assumption (N2) is in place. For all \(q\in {\mathbb {N}}_0\) there exists \(c=c(q,T,u_0)\), such that

$$\begin{aligned} {\mathbb {E}}\bigg [\max _{0\le m\le M} \bigl [{{\mathcal {E}}}(u_m)\bigr ]^{2^q} + \tau \sum _{m=1}^M {\bigl [{{\mathcal {E}}}(u_m)\bigr ]^{2^q-1}}\Vert D{{\mathcal {E}}}(u_m)\Vert ^2_{L^2_x} \bigg ]&\le \,c \, . \end{aligned}$$ (4.31)

(b) Assume that \(u_0\in L^{2^q}(\Omega ,W^{2,2}(\mathbb {T}^d))\), \({\mathcal {E}}(u_0)\in L^{2^{q+2}}(\Omega )\) for some \(q\in {\mathbb {N}}_0\) and that \(\Phi \) satisfies (N1) if \(d=1,2\), and (N2) if \(d=3\). Then we have

$$\begin{aligned} {\mathbb {E}}\bigg [\max _{1\le m\le M}\Vert u_m\Vert ^{2^q}_{W^{2,2}_x}+\tau \sum _{m=1}^M {\Vert u_m\Vert _{W^{2,2}_x}^{2^q-2}}\Vert \nabla ^3u_m\Vert ^2_{L^2_x}\bigg ]&\le \,c\, . \end{aligned}$$ (4.32)

(c) Assume that \(u_0\in L^{2^q}(\Omega ,W^{3,2}(\mathbb {T}^d))\cap L^{2^{q+2}}(\Omega ,W^{2,2}(\mathbb {T}^d))\), \({\mathcal {E}}(u_0)\in L^{2^{q+4}}(\Omega )\) for some \(q\in {\mathbb {N}}\) and that \(\Phi \) satisfies (N1) if \(d=1,2\), and (N2) if \(d=3\). Then we have

$$\begin{aligned} {\mathbb {E}}\bigg [\max _{1\le m\le M}\Vert u_m\Vert ^{2^q}_{W^{3,2}_x}+\tau \sum _{m=1}^M {\Vert u_m\Vert _{W^{3,2}_x}^{2^q-2}}\Vert \nabla ^4u_m\Vert ^2_{L^2_x}\bigg ]&\le \,c\, . \end{aligned}$$ (4.33)

Here \(c=c(q,T,u_0)>0\) is independent of \(\tau \).

Remark 4.1

Part (a) is an energy estimate for the solution \((u_m)_{m=1}^M\) from (4.23); in the context of phase-field models where the interface width \(\varepsilon >0\) enters, it avoids exponential growth with respect to \(\varepsilon ^{-1}\) in time, as does its continuous counterpart. Its derivation below is close to [1, Lemma 3.2], but here generalizes to noise of type (N2). The remaining parts (b) and (c) give higher norm estimates.

Proof of Lemma 4.4

Ad (a). Choose \(q=0\) first. We proceed in several steps: I. derives a first energy estimate, which creates a new term, which is then bounded independently in II. The extension of estimate (a) to \(q \ge 1\) then follows from a general inductive argument as in [1, Lemma 3.2].

I. (Formally) choose \(\varphi = D {{\mathcal {E}}}(u_m)\) in (4.23) and integrate to get

which we write as

where \(\texttt {I}^m=\texttt {I}^m_\texttt {A}+\texttt {I}^m_\texttt {B}\) with

The following estimate holds (see e.g. [21, estimate (35)])

whose short proof is recalled here for the reader’s convenience, and which is based on repeated use of binomial formulae: since

we have that

By coming back to (4.34), we then obtain after summation

To proceed we must control the stochastic term \({\mathcal {N}}_m:=\sum _{n=1}^m \texttt {II}^n\), where

It follows from the definition of \( {{\mathcal {E}}}(u_n)\) that

Corresponding terms are \({\mathcal {N}}_m^\texttt {A}\), \({\mathcal {N}}_m^\texttt {B}\), and \({\mathcal {N}}_m^\texttt {C}\), which we now bound independently.

\({{\textbf {I}}}_{{\textbf {1}}}\)) We have by Young’s inequality and Itô-isometry for \(\kappa >0\) arbitrary

using also (2.1) in the last step. The first term can be absorbed for \(\kappa \ll 1\) on the left-hand side of (4.36) and, since \(\big \Vert \nabla u_{n-1}\big \Vert _{ L^2_x}^2\le \,2{\mathcal {E}}(u_{n-1})\), the final term can be handled by (discrete) Gronwall’s lemma there.

\({{\textbf {I}}}_{{\textbf {2}}}\)) We split the next term as \({\mathcal {N}}_m^\texttt {B}:= {\mathcal {N}}_m^{\texttt {B},1} + {\mathcal {N}}_m^{\texttt {B},2}\), which is motivated by the algebraic identity

By generalized Young’s inequality we estimate (\(\kappa > 0\))

For \(\kappa \ll 1\) sufficiently small, the first term may be absorbed on the left-hand side of (4.36); for the second term, we note that

The latter part in the last inequality is part of \({{\mathcal {E}}}(u_n)\), which is why this term may now be bounded via discrete Gronwall inequality. For the final term we use estimates for the stochastic integral in UMD-Banach spaces, see [29]. For an UMD Banach space \((X;\Vert \cdot \Vert )\) and a separable Hilbert space \({\mathbb {H}}\) with orthonormal basis \((h_k)_{k\ge 1}\) we denote by \(\gamma ({\mathbb {H}},X)\) the space of \(\gamma \)-radonifying operators from \({\mathbb {H}}\rightarrow X\) with norm

where \((\gamma _k)_{k\ge 1}\) is a sequence of standard Gaussian random variables given on probability space \((\Omega ',{\mathfrak {F}}',{\mathbb {P}}')\) with expectation \({\mathbb {E}}'\). Note that this differs from the original probability space \((\Omega ,{\mathfrak {F}},{\mathbb {P}})\). However, in the case of Hilbert spaces the norm above coincides with the Hilbert–Schmidt operator norm. In particular, the additional randomness disappears. In our case, it will be removed by the following estimate and is only introduced to quote the estimate from [29]. We obtain using the assumption made on \(\Phi \)

Using now the estimate from [29] for \(X=L^4_x\) as well as (2.1) we obtain

To bound the term \({\mathcal {N}}_m^{\texttt {B},2}\), we distinguish the type of admissible noise:

\({{\textbf {I}}}_{{\textbf {2}}_{{\textbf {1}}}}\)) Let \(\Phi \) satisfy (N1) (a) and be bounded. Then there exists \(c\ge 0\) such that (\(\kappa >0\) arbitrary):

The first term will again be bounded via discrete Gronwall inequality, while the second term will be bounded below.

\({{\textbf {I}}}_{{\textbf {2}}_{{\textbf {2}}}}\)) Let \(\Phi \) satisfy (N2). We use a compatibility property of data \(\Phi \) and f from \(D{{\mathcal {E}}}\). For the derivation of the bound, it suffices to consider the case that all \(\beta _k \equiv 0\) in (N2) only. Now fix one \(k \in {{\mathbb {N}}}\). Then

After summation, and taking expectations, we first use Young’s inequality, and then use Itô-isometry as well as (2.7) (\(\kappa > 0\))

The leading term may now be absorbed on the left-hand side of (4.36), while the last term is the same as in (4.38), and will be bounded below. The estimation of \({{\mathbb {E}}}\bigl [ \max _{1\le m\le M}\sum _{n=1}^m \texttt {B}_n^2\bigr ]\) is immediate.

\({{\textbf {I}}}_{{\textbf {3}}}\)) Since \({\mathcal {N}}_m^\texttt {C}\) is a martingale we finally obtain by Burkholder–Davis–Gundy inequality, the expression \(D{\mathcal {E}}(u_{n-1})=-\Delta u_{n-1}+f(u_{n-1})\) and (2.1),

which can be handled by absorption (for \(\kappa \) small enough) and Gronwall’s lemma.

By inserting the estimates \({{\textbf {I}}}_{{\textbf {1}}}\))–\({{\textbf {I}}}_{{\textbf {3}}}\)) into (4.36) and choosing \(\kappa \ll 1\) small enough to allow absorption of terms, there exists \(c \equiv c(t_M)> 0\) such that

II. To bound the last term in (4.40) we choose \(\varphi = u_{m}- u_{m-1}\) in (4.23) to get

where the terms \(\texttt {I}^m_\texttt {A}\) and \(\texttt {I}^m_\texttt {B}\) are from (4.35). Hence, we conclude that

For \(\tau \le \frac{1}{4}\), and after summation over \(1 \le m \le M\),

By Itô-isometry, the last term is bounded by

Inserting this estimate into (4.40) settles the estimate (a) for \(q = 0\).

III. We may now proceed inductively to settle (a) for \(q \ge 1\), by multiplying (4.34) with \(\bigl [{{\mathcal {E}}}(u_{m})\bigr ]^{2^q-1}\) before summation; it is the implicit numerical treatment of drift terms in scheme (4.23) that generates new numerical diffusion terms which then control newly arising terms; see [1, Lemma 3.2] for details.

Ad (b). Before we come to the proof of (4.32) we need some preliminary estimates for lower order derivatives. We choose \(u_{m}\) as a test function to get

where, clearly,

By binomial formula, and iterating this estimate and applying Gronwall’s lemma proves

where

Since \(u_{m-1}\) is \({\mathfrak {F}}_{t_{m-1}}\)-measurable \({\mathscr {M}}_m^1\) is a (discrete) martingale such that, by Burkholder–Davis–Gundy inequality, (2.1) and Young’s inequality,

for any \(\kappa >0\). Furthermore, we have for arbitrary \(\kappa >0\)

due to Young’s inequality, Itô-isometry and (2.1). Absorbing the \(\kappa \)-terms and applying Gronwall’s lemma we conclude

Let now \(q\in {\mathbb {N}}\): we argue by induction as in (a) (first multiply (4.42) by \(\Vert u_n\Vert ^{2^{q}-2}_{L^2_x}\), then take expectations to get bounds for the terms involved; afterwards, use these bounds when \(\max \) is applied first before taking expectations; see [1, Lemma 3.2] for details) to get

By formally testing with \(-\Delta u_m\), using that

and controlling the stochastic integral with the help of (2.3) we obtain similarly

and again for \(q\in {\mathbb {N}}\), by multiplication with \(\Vert u_m\Vert _{W^{1,2}_x}^{2^{q}-2}\) before taking expectations,

Now we come to the proof of (4.32). We formally test the equation with \(\Delta ^2 u_m\). For the nonlinear term we obtain (\(\kappa >0\))

Here the second term can be absorbed for \(\kappa \ll 1\), whereas the first one (in expectation and summed form) can be controlled by (4.44) (with \(q=3\)). If \(d=3\) we use that \(\Phi \) is assumed to be affine linear in u, cf. assumption (N2). In this situation the stochastic terms can be estimated exactly as in the proof of (4.43). If \(d=1,2\) this problem can be overcome by Ladyzhenskaya’s inequality and (N1). In particular, we have by (2.8)

which is uniformly bounded by (4.32) with \(q=2\). This settles the proof of (4.32) for \(q=1\). An inductive argument may now be employed to complete the proof for (4.32) for \(q\in {\mathbb {N}}\).

Ad (c). The proof works along the same lines testing with \(\Delta ^3u_m\) and estimating the stochastic terms by means of (2.7). For the nonlinear term we obtain

The second term on the right-hand side can be absorbed provided we choose \(\kappa >0\) small enough. The first one (summed with respect to m) is bounded by (4.32). \(\square \)

4.4 The Kolmogorov equation

We set

where \(u(t,\mathfrak {h})\) is the solution to (1.1) with \(u_0=\mathfrak {h}\) and \(\varphi \in C_b^1(L^2_x)\). It is well-known that \({\mathscr {U}}\) solves

In the following we derive some estimates for \({\mathscr {U}}\). The proofs are only formal and can be made rigorous by considering a finite-dimensional Galerkin approximation of (1.1) (leading to a finite-dimensional Kolmogorov equation approximating (4.45)) and establishing estimates which are uniform with respect to the Galerkin parameter. Such a procedure is technical and tedious but standard in the literature. We refer to [12] and [16, Chapter 9] for a detailed analysis of Kolmogorov equations.

A crucial ingredient in our proof is the monotonicity of the leading term in \(f(u)=u^3-u\). It is currently an open problem to obtain similar results for semilinear equations without this property such as the 2D Navier–Stokes equations. Since we allow more regularity for the solution to (1.1) through more regular data when compared to previous papers, we only require estimates in \(L^2_x\) for the weak error analysis. For example, in [5, 7, 17] estimates for the derivatives of \({\mathscr {U}}\) in fractional Sobolev spaces (with differentiability strictly smaller than 1/2) are proved instead; moreover, the situation here is more complicated than that in [5, 17] due to the non-Lipschitz nonlinearity in (1.1). In [7] the Kolmogorov equation for (1.1) in 1D is considered (with additive space-time white noise), while we study (1.1) with smooth multiplicative noise in cases \(d=1,2,3\) in the following.

Lemma 4.5

Let \(\varphi \in C^1_b(L^p(\mathbb {T}^d))\) for some \(p\in (1,\infty )\) and suppose that (N1) (a) holds. Then we have for \(t\in (0,T)\) and \(\mathfrak {h}\in L^p(\mathbb {T}^d)\)

Proof

We proceed formally. A rigorous proof can be obtained as follows:

- We first prove the result for \(p=2\) by means of a Galerkin approximation and pass to the limit obtaining well-defined infinite-dimensional objects.

- In particular, we obtain a variational solution to (4.47) below to which we can apply Itô’s formula. This can be justified by truncating the function \(z\mapsto z^p\) and applying the Itô-formula in Hilbert spaces from [16, Theorem 4.17].

Differentiating \({\mathscr {U}}\) with respect to \(\mathfrak {h}\) in direction \(\mathfrak {g}\in L^{p'}(\mathbb {T}^d)\) with \(p':=p/(p-1)\) yields

where \(\eta ^{\mathfrak {h},\mathfrak {g}}\) solves

Since \(D\varphi \) is bounded we have

Applying Itô’s formula to (4.47) yields

Since \(f'\ge -1\), the second term on the right-hand side is clearly bounded by \(\int _0^t\Vert \eta ^{\mathfrak {h},\mathfrak {g}}(s)\Vert _{L^{p'}_x}^{p'}\, \textrm{d}s\), while the third one vanishes under the expectation. Using (N1), the same bound follows for the last term. We conclude

such that, by Gronwall’s lemma,

Plugging this into (4.48) and taking the supremum with respect to \(\mathfrak {g}\) proves the claim. \(\square \)

Note that it is easy to generalise the argument above to obtain

for all \(q\ge p'\). For that purpose it is sufficient to argue as before and estimate the stochastic integral by means of the Burkholder–Davis–Gundy inequality and (2.1). By a parabolic interpolation (4.50) (with \(p'=2\)) yields

for all \(q\ge 2\).

Lemma 4.6

Let \(\varphi \in C^2_b(L^2(\mathbb {T}^d))\) and suppose that (N1) holds. Then we have for \(t\in (0,T)\), \(\mathfrak {h},\mathfrak {g}\in L^2(\mathbb {T}^d)\) and \(\mathfrak {k}\in L^6(\mathbb {T}^d)\)

Proof

Differentiating (4.46) again with respect to \(\mathfrak {h}\) (in direction \(\mathfrak {k}\in L^2(\mathbb {T}^d)\)) gives

where \(\zeta ^{\mathfrak {h},\mathfrak {g},\mathfrak {k}}\) solves

with \(\zeta ^{\mathfrak {h},\mathfrak {g},\mathfrak {k}}(0)=0\), which can be seen from differentiating (4.47). Note that the second term in (4.52) can be estimated by means of (4.51). In order to estimate the first one, we apply Itô’s formula to (4.53) yielding

By Hölder’s inequality we can bound the last line by the second last one, whereas the stochastic integrals have zero expectation and thus can be ignored. By (2.1) we have

Clearly, it also holds that

The remaining two terms are more complicated. First of all, (2.5) yields

the expectation of which can be controlled by \(\Vert \mathfrak {g}\Vert _{L^2_x}\) and \(\Vert \mathfrak {k}\Vert _{L^6_x}\) using (4.50). The most critical term is

We can absorb the first term, whereas using the embedding \(L^{6/5}_x\hookrightarrow W^{-1,2}_x\) the second one is bounded by

Hence we obtain from (2.13) (with \(p=6\)) and (4.51)

We conclude for (4.54) that

which finishes the proof by bilinearity of \(D^2{\mathscr {U}}(t,\mathfrak {h})\). \(\square \)

Estimate (4.55) above can be strengthened to

for any \(q\ge 2\): By taking the power q/2 in (4.54), the only difference is that the stochastic integrals do not vanish and must be estimated. Using the Burkholder–Davis–Gundy inequality and (4.51) we obtain the upper bounds (assuming that \(q\ge 4\))

as well as

which can be handled by Gronwall’s lemma. By a parabolic interpolation we obtain (4.56) as well as

for any \(q\ge 2\).

Lemma 4.7

Let \(\varphi \in C^3_b(L^2(\mathbb {T}^d))\) and suppose that (N1) holds. Then we have for \(t\in (0,T)\) and \(\mathfrak {h}\in L^2(\mathbb {T}^d)\) and \(\mathfrak {k},\mathfrak {g},\mathfrak {l}\in L^6(\mathbb {T}^d)\)

Proof

Differentiating (4.52) again with respect to \(\mathfrak {h}\) (in direction \(\mathfrak {l}\in L^2(\mathbb {T}^d)\)) gives

where (by differentiating (4.53))

with \(\xi ^{\mathfrak {h},\mathfrak {g},\mathfrak {k},\mathfrak {l}}(0)=0\). The last three terms in (4.58) can be estimated by means of (4.51) and (4.56). So, our focus is on the first one. We apply now Itô’s formula and argue similarly to the proof of Lemmas 4.5 and 4.6. The correction terms can be handled as there using (2.1)–(2.6). For instance, we have

using (4.51) and (4.57). We clearly have

Let us now focus on the remaining terms arising from the nonlinearity being more critical. It holds

Estimating the \(W^{-1,2}_x\)-norm by the \(L^2_x\)-norm and applying Hölder’s inequality, the \(c(\kappa )\) terms are controlled (line by line) by the terms

Using (4.51) and (4.56) their expectations are bounded by the \(L^6_x\)-norms of \(\mathfrak {g},\mathfrak {k}\) and \(\mathfrak {l}\). \(\square \)

5 Weak first order convergence rate

This section is the heart of the paper and is dedicated to the proof of the following theorem, which establishes an optimal weak error rate for the time discretisation (4.23).

Theorem 5.1

Let \((\Omega ,\mathfrak {F},(\mathfrak {F}_t)_{t\ge 0},\mathbb {P})\) be a given stochastic basis with a complete right-continuous filtration and an \((\mathfrak {F}_t)\)-cylindrical Wiener process W. Suppose that \(u_0\in W^{3,2}(\mathbb {T}^d)\) and that \(\Phi \) satisfies (N1) if \(d=1,2\), and (N2) if \(d=3\). Let \(u\) be the unique pathwise solution to (1.1) in the sense of Definition 2.1 and let \((u_m)_{m=1}^M\) be the solution to (4.23). For any \(\varphi \in C^2_b(L^2(\mathbb {T}^d))\) we have

where \(c>0\) depends on \(\varphi ,u_0,T\) and \(\Phi \).

Remark 5.1

1. For \(d=1\) and additive white noise in (1.1), a related result has been obtained in [7, Theorem 3.3] for the implementable splitting scheme [7, (1.1)\(_2\)], using bounds for related iterates from [8, Proposition 3] in supremum norm, whose derivation used a Gronwall argument. The result is obtained under a compatibility condition for white noise and drift operator [7, Section 2.1.2] whose physical interpretation is not immediate, and crucially exploits the additive character of noise to accomplish the estimate right before [7, Section 2.1.2]. The key part of the weak error analysis then is based on the Kolmogorov equation [7, (2.11)], where the appearing \({{\mathscr {L}}}^{(\Delta t)}\) is the generator of the semigroup generated by the regularized problem [7, (2.7)] with solution \(X^{(\Delta t)}\), rather than for (1.1) as we do here; note that this modification also gives rise to a modified energy \({{\mathcal {E}}}^{(\Delta t)}\) for the scalar order parameter u, if compared to the Helmholtz free energy functional \({{\mathcal {E}}}\) in (1.2). In this work, we heavily profited from the tools given in the error analysis in [7], as well as [5, 17, 18].

2. The estimates for the solution of the Kolmogorov equation from Sect. 4.4 are somewhat weaker than those used for the weak error analysis in [5, 7, 17, 18] (see also [4, 13, 15] for related results). We compensate for this by assuming more regularity for the data (initial datum and noise), which results in the higher order estimates for the time-discrete solution from Sect. 4.3. Our analysis in the additive case in Sect. 3 is also based on higher spatial regularity of the diffusion coefficient.

3. The restriction to affine linear noise in Theorem 5.1 for \(d=3\) is only due to estimate (4.32) in Lemma 4.4, which is heavily used. Apart from that, the proof of Theorem 5.1 does not require this restriction. In particular, in the 2D case the result also holds for nonlinear noise.

4. A large part of the analysis in this paper extends directly to the Dirichlet case, at least if the underlying domain is sufficiently smooth. Only the results from Sect. 4.1 require some additional technical effort: as we are working with nonlinear test functions, it is no longer possible to justify the estimates from Lemma 4.1 by means of a Galerkin approximation (using eigenfunctions of the Laplace operator). Instead, one has to prove localised estimates by means of cut-off functions. In order to obtain global estimates one has to parametrise the boundary with local charts, change coordinates and reflect the transformed solution at the resulting flat boundary. The local estimates can then be applied to the reflected transformed solution. Such a procedure is tedious and technical but standard in the literature. A detailed presentation can be found, e.g., in [9, Section 4].

5. Large parts of the analysis of this section extend to the case of more general functions f in Eq. (1.1) with q-growth for some \(q\ge 2\). However, controlling the terms in (5.8) below requires that the leading part of f is exactly of the form \(f(z)=az^q\) with \(a>0\) and \(q\in {\mathbb {N}}\).

The remainder of this section is dedicated to the proof of Theorem 5.1, which is split into several subsections corresponding to the estimates of individual error terms.

We start by decomposing the error into several parts, which will be analysed in the subsequent subsections. Let \(u\) be the solution of (1.1) and \((u_m)_{m=1}^M\) be the solution to its time-discretisation (4.23), both with respect to the initial datum \(u_0=\mathfrak {h}\in L^2(\mathbb {T}^d)\). The error is

where \({\mathscr {U}}\) is the solution to the Kolmogorov Eq. (4.45) with initial condition \(\varphi \in C^2_b(L^2(\mathbb {T}^d))\). We decompose

Recalling the definition

we rewrite Eq. (4.27) as

We apply Itô’s formula (see [16, Thm. 4.17]) to \(\Psi (t,u_{\tau })\) to get for \(t\in [t_{m-1},t_m)\)

such that

Setting \(\Psi (t,\mathfrak {h})={\mathscr {U}}(T-t,\mathfrak {h})\) we obtain by (4.45)

We will estimate the terms \((\textrm{I})\)–\((\textrm{VI})\) in the following six subsections.
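
For orientation, the telescoping identity that typically underlies such a decomposition can be sketched as follows. The sketch assumes the usual representation \({\mathscr {U}}(t,\mathfrak {h})={\mathbb {E}}[\varphi (u(t;\mathfrak {h}))]\) of the solution to the Kolmogorov equation (so that \({\mathscr {U}}(0,\cdot )=\varphi \)), a uniform grid \(t_m=m\tau \) with \(t_M=T\), and a separate treatment of the first step; the sign convention and the grouping of terms used in the proof may differ.

\[
{\mathbb {E}}[\varphi (u_M)]-{\mathbb {E}}[\varphi (u(T))]
=\Big ({\mathbb {E}}\big [{\mathscr {U}}(T-\tau ,u_1)\big ]-{\mathscr {U}}(T,\mathfrak {h})\Big )
+\sum _{m=2}^{M}{\mathbb {E}}\big [{\mathscr {U}}(T-t_m,u_m)-{\mathscr {U}}(T-t_{m-1},u_{m-1})\big ].
\]

The sum collapses to \({\mathbb {E}}[{\mathscr {U}}(0,u_M)]-{\mathbb {E}}[{\mathscr {U}}(T-\tau ,u_1)]\). The first bracket corresponds to an initial error of the kind treated in Sect. 5.1, while each increment under the sum is expanded by Itô's formula on \([t_{m-1},t_m)\).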

5.1 The initial error \((\textrm{I})\)

Using \({\mathscr {U}}(T,\mathfrak {h})={\mathbb {E}}[\varphi (u(T))]={\mathbb {E}}[{\mathscr {U}}(T-\tau ,u(\tau ))]\) and Lemma 4.5 we have

where, by (4.27),

We estimate now the right-hand side term by term. Using (2.26) (with \(\beta =0\) and \(r=1\)) we obtain

using the regularity of \(\mathfrak {h}\). We further obtain

by (2.23) and (2.15). Furthermore, it holds by (4.19) and (2.24)

Hence we obtain by (4.28)

We write the stochastic term from (5.3) as

Itô-isometry yields

as a consequence of (2.1), (2.26) and (2.14). Similarly, we have

by (2.24). Furthermore,

due to (2.1), (2.22) and (2.14). Finally, by (4.19), (2.24), Itô-isometry, (4.28) and (2.1)

Finally, we have due to (4.21) and (4.28)

Hence we conclude

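As a reminder, the Itô isometry invoked for the stochastic terms in this subsection reads as follows in the Hilbert space setting of [16]. Here \(\Psi \) is a generic placeholder (not a symbol from the text) for a predictable integrand with values in \(L_2({\mathfrak {U}};L^2_x)\):

\[
{\mathbb {E}}\bigg \Vert \int _0^t\Psi (s)\,\textrm{d}W(s)\bigg \Vert _{L^2_x}^2
={\mathbb {E}}\int _0^t\Vert \Psi (s)\Vert _{L_2({\mathfrak {U}};L^2_x)}^2\,\textrm{d}s .
\]

Applied on an interval of length \(\tau \) with a uniformly bounded integrand, the right-hand side is of order \(\tau \).
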
5.2 The error in the linear part \((\textrm{II})\)

We use \({\mathcal {A}}{\mathcal {S}}_\tau ={\mathcal {S}}_\tau {\mathcal {A}}\) and \({\mathcal {A}}-{\mathcal {S}}_\tau {\mathcal {A}}=-\tau {\mathcal {S}}_\tau {\mathcal {A}}^2\) to get

We clearly have

using (2.23), Lemmas 4.5 and 4.4. Using Malliavin calculus, cf. Sect. 2.4, the term \((\textrm{II})_2\) can be decomposed into the sum of

taking into account (4.27), (2.27) and \({\mathcal {D}}_su_\tau ^\lambda =D{\mathcal {T}}_{\tau }(U_\tau )\Phi (u_{m-1})\) for \(t\in (t_{m-1},t_m)\) and \(s\in [t_{m-1},t]\). We obtain from (4.20), (4.28) and Lemmas 4.4 and 4.5

Moreover, it holds by (4.21) and Lemma 4.4

with the help of (2.1) and (4.30). Finally, we obtain by (4.14), (4.15), (4.16) and Lemma 4.6

such that, by (2.5) and (4.28),

using Lemma 4.4 in the last step. Plugging all together we have shown

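The two operator identities used at the beginning of this subsection can be checked directly. The following sketch assumes that \({\mathcal {S}}_\tau \) is a resolvent of the form \(({\textrm{id}}-\tau {\mathcal {A}})^{-1}\). This form is an assumption made here for illustration, chosen because it reproduces the sign in the stated identity; the definition of \({\mathcal {S}}_\tau \) given earlier in the paper is the authoritative one.

\[
({\textrm{id}}-\tau {\mathcal {A}}){\mathcal {S}}_\tau ={\textrm{id}}
\;\Longrightarrow \;
{\textrm{id}}-{\mathcal {S}}_\tau =-\tau {\mathcal {A}}{\mathcal {S}}_\tau =-\tau {\mathcal {S}}_\tau {\mathcal {A}}
\;\Longrightarrow \;
{\mathcal {A}}-{\mathcal {S}}_\tau {\mathcal {A}}=({\textrm{id}}-{\mathcal {S}}_\tau ){\mathcal {A}}=-\tau {\mathcal {S}}_\tau {\mathcal {A}}^2 .
\]

The middle equality uses that \({\mathcal {A}}\) commutes with its own resolvent. The identity trades the difference \({\mathcal {A}}-{\mathcal {S}}_\tau {\mathcal {A}}\) for an explicit factor \(\tau \) at the price of a second application of \({\mathcal {A}}\), which is presumably where the higher order estimates for the time-discrete solution enter.
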
5.3 The error in the non-linear part \((\textrm{III})\)

The non-linear part can be written as

where

due to (2.24) and Lemma 4.5. The expectation is bounded by \(c\tau \) on account of Lemma 4.4. Estimating \((\textrm{III})_2\) requires more effort. Introducing the notation \(f^j(v)=( f(v),v_j)_{L^2_x}\) with the orthonormal basis \((v_j)\subset L^2(\mathbb {T}^d)\) allows us to write

In order to proceed we apply Itô’s formula to \(f^j(u_{\tau })\) and recall the definition of \(u_\tau \) in (4.27). Using the orthonormal basis \((e_i)_{i\ge 1}\) of the Hilbert space \(\mathfrak {U}\) introduced in Sect. 2.1 we obtain

such that

where \(\partial _ju\) and Du are evaluated at \((T-s,u_{\tau })\). We have by Lemma 4.5, (4.21) and (4.14)

using also (4.3)–(4.5) in the last step. Finally, by (2.1),

on account of Lemma 4.4 and (4.29). In order to estimate \((\textrm{III})_2^2\) we rewrite

We obtain further with the help of (4.19)

using also Lemma 4.4, as well as (4.28) and (4.30). With the aid of the integration by parts rule for Malliavin derivatives, cf. Eq. (2.27), and using again \({\mathcal {D}}_\sigma u_\tau =D{\mathcal {T}}_{\tau }(U_\tau )\Phi (u_{m-1})\) for \(s\in (t_{m-1},t_m)\) and \(\sigma \in [t_{m-1},t]\) we rewrite

using also (4.14). We estimate further with the help of (2.1), Lemma 4.6 and (4.30)

using Lemma 4.4. By collecting the previous estimates we conclude that

5.4 The corrector error \((\textrm{IV})\)

Now we are concerned with \((\textrm{IV})\), the error that arises from the linearisation and does not occur in related papers dealing with linear semigroups. We call it the corrector error. We have by (4.21) and (4.28)

using Lemma 4.4 in the last step. This proves

5.5 The interpolation error \((\textrm{V})\)

In order to estimate \((\textrm{V})\) we differentiate (4.7) and obtain for \(f(z)=z^3-z\)

where

One easily checks that the first term disappears when integrating over \([t_{m-1},t_m]\) using that \(f(0)=0\). As far as the second term is concerned we write

The first term vanishes under the expectation and hence can be ignored, while the expectation of the (\(L^2_x\)-norm of the) second one can be controlled by \(\tau {\mathbb {E}}\big [\Vert u_{m-1}\Vert _{L^{2}_x}\Vert \Phi (u_{m-1})\Vert ^2_{L_2({\mathfrak {U}};W^{2,2}_x)}\big ]\) as a consequence of Itô-isometry. By (4.3) and (4.12)

Finally,

using (4.19) and (4.5). On account of Lemma 4.5, (4.28) and Lemma 4.4 we conclude

5.6 The error in the correction term \((\textrm{VI})\)

First of all we decompose

By Lemma 4.6, (2.1), (4.14) and (4.19) we obtain

Hence we obtain

using (4.28) and Lemma 4.4. The same idea can be used to estimate \((\textrm{VI})_3\). In order to estimate \((\textrm{VI})_1\) and \((\textrm{VI})_2\) we apply Itô’s formula to the functional

taking \({\mathbb {P}}\)-a.s. values in \(L^2(\mathbb {T}^d)\). We obtain from (4.27) for \(t\in [t_{m-1},t_m]\)

The last term leads us to

where we used (2.27) and \({\mathcal {D}}_su_\tau =D{\mathcal {T}}_{\tau }(U_\tau )\Phi (u_{m-1})\) for \(s\in [t_{m-1},t_m]\). Using Lemma 4.7 the term involving \(D^3{\mathscr {U}}\) can be estimated by

taking into account (4.14), (2.1) and Lemma 4.4. For the other term we obtain similarly by Lemma 4.6 the bound

The \(L^2_x\)-norm of the remaining terms in (5.10) can be estimated by the sum of

using boundedness of \(D\Phi \) and \(D^2\Phi \), cf. (2.1). By the properties of \({\mathcal {T}}_\tau \) from (4.14), (4.19) and (4.21) we bound these terms by

We conclude

using (4.28) and Lemma 4.4. The estimate for \((\textrm{VI})_2\) is analogous and we conclude

Data availability

Data sharing is not applicable to this article as no datasets were generated or analysed during the current study.

Notes

We denote by \(\nabla _x\) the derivative with respect to the first variable, i.e. the d-valued spatial variable, and by \(\nabla _\xi \) the derivative with respect to the second variable.

The focus in [28] is, however, on a finite-element based space-time discretisation.

This step can be made rigorous by working with difference quotients.

Note that \(D{\mathcal {T}}^{-1}_\tau = \textrm{id}+\tau {\mathcal {A}}+\tau f'(\cdot )\) is clearly invertible.

Though some care is required for Lemma 4.5.

References

Antonopoulou, D., Banas, L., Nürnberg, R., Prohl, A.: Numerical approximation of the stochastic Cahn–Hilliard equation near the sharp interface limit. Num. Math. 147, 505–551 (2021)

Becker, S., Gess, B., Jentzen, A., Kloeden, P.E.: Strong convergence rates for explicit space-time discrete numerical approximations of stochastic Allen–Cahn equations. Stoch. Partial Differ. Equ. Anal. Comput. 11, 211–268 (2023)

Becker, S., Jentzen, A.: Strong convergence rates for nonlinearity-truncated Euler-type approximations of stochastic Ginzburg–Landau equations. Stoch. Process. Appl. 129, 28–69 (2019)

Bréhier, C.-E.: Approximation of the invariant distribution for a class of ergodic SPDEs using an explicit tamed exponential Euler scheme. ESAIM: MAN 56, 151–175 (2022)

Bréhier, C.-E., Debussche, A.: Kolmogorov equations and weak order analysis for SPDEs with nonlinear diffusion coefficient. J. Math. Pures Appl. 119, 193–254 (2018)

Bréhier, C.-E., Cui, J., Hong, J.: Strong convergence rates of semidiscrete splitting approximations for the stochastic Allen–Cahn equation. IMA J. Numer. Anal. 39, 2096–2134 (2019)

Bréhier, C.-E., Goudenège, L.: Weak convergence rates of splitting schemes for the stochastic Allen–Cahn equation. BIT Num. Math. 60, 543–582 (2020)

Bréhier, C.-E., Goudenège, L.: Analysis of some splitting schemes for the stochastic Allen–Cahn equation. Discr. Cont. Dyn. Syst. B 24, 4169–4190 (2019)

Breit, D., Cianchi, A., Diening, L., Schwarzacher, S.: Global Schauder estimates for the \(p\)-Laplace system. Arch. Ration. Mech. Anal. 243, 201–255 (2022)

Breit, D., Prohl, A.: Numerical analysis of two-dimensional Navier–Stokes equations with additive stochastic forcing. IMA J. Numer. Anal. 43, 1391–1421 (2023)

Breit, D., Prohl, A.: Error analysis for 2D stochastic Navier–Stokes equations in bounded domains with Dirichlet data. Found. Comp. Math. (2023). https://doi.org/10.1007/s10208-023-09621-y

Cerrai, S.: Second Order PDE’s in Finite and Infinite Dimension. A probabilistic Approach. Lecture Notes in Mathematics, vol. 1762. Springer, Berlin (2001)

Cui, J., Hong, J.: Strong and weak convergence rates of a spatial approximation for stochastic partial differential equation with one-sided Lipschitz coefficient. SIAM J. Num. Anal. 57, 1815–1841 (2019)

Cui, J., Hong, J., Sun, L.: Strong convergence of full discretization for stochastic Cahn–Hilliard equation driven by additive noise. SIAM J. Numer. Anal. 59, 2866–2899 (2021)

Cui, J., Hong, J., Sun, L.: Weak convergence and invariant measure of a full discretization for parabolic SPDEs with non-globally Lipschitz coefficients. Stoch. Proc. Appl. 134, 55–93 (2021)

Da Prato, G., Zabczyk, J.: Stochastic Equations in Infinite Dimensions, Encyclopedia Math. Appl. Cambridge University Press, Cambridge (1992)

Debussche, A.: Weak approximation of stochastic partial differential equations: the nonlinear case. Math. Comp. 273, 89–117 (2011)

Debussche, A., Printems, J.: Weak order for the discretisation of the stochastic heat equation. Math. Comp. 266, 845–863 (2009)

Deckelnick, K., Dziuk, G., Elliott, C.M.: Computation of geometric partial differential equations and mean curvature flow. Acta Numer. 14, 139–232 (2005)

Du, Q., Feng, X.: The phase field method for geometric moving interfaces and their numerical approximations. Handb. Numer. Anal. Chapter 5 21, 425–508 (2020)