Abstract

In this article we discuss quantitative properties of convex integration solutions arising in problems modeling shape-memory materials. For a two-dimensional, geometrically linearized model case, the hexagonal-to-rhombic phase transformation, we prove the existence of convex integration solutions \(u\) with higher Sobolev regularity, i.e., there exists \(\theta _{0}>0\) such that \(\nabla u \in W^{s,p}_{loc}( \mathbb{R}^{2})\cap L^{\infty }(\mathbb{R}^{2})\) for \(s\in (0,1)\), \(p\in (1,\infty )\) with \(0< sp < \theta _{0}\). We also recall a construction which shows that in very specific situations with additional symmetry much better regularity properties hold.

1 Introduction

In this article we are concerned with the detailed analysis of certain convex integration solutions which arise in the modeling of solid-solid, diffusionless phase transformations in shape-memory materials. We seek to precisely analyze the regularity properties of these constructions in a simple, two-dimensional, geometrically linear model case.

Shape-memory materials undergo a solid-solid, diffusionless phase transition upon temperature change (see e.g. [6] and the references given there): in the high temperature phase, the austenite phase, the materials form very symmetric lattices. Upon cooling down the material, the symmetry of the lattice is reduced and the material transforms into the martensitic phase. Due to the loss of symmetry, there are different variants of martensite, which make these materials very flexible at low temperature and give rise to a variety of different microstructures. Mathematically, it has proven very successful to model this behavior variationally in a continuum framework as the following minimization problem [4]:

$$\begin{aligned} \mbox{minimize } \int_{\varOmega } W\bigl(\nabla y(x),\theta \bigr) \,dx. \end{aligned}$$(1)

Here \(\varOmega \subset \mathbb{R}^{n}\) is the reference configuration of the undeformed material. The mapping \(y:\varOmega \rightarrow \mathbb{R} ^{n}\) describes the deformation of the material with respect to the reference configuration. It is assumed to be of a suitable Sobolev regularity. The function \(W:\mathbb{R}^{n\times n} \times \mathbb{R} \rightarrow [0,\infty )\) denotes the energy density of a given deformation gradient \(M\in \mathbb{R}^{n \times n}\) at a certain temperature \(\theta \in \mathbb{R}\). Due to frame indifference, \(W\) is required to be invariant with respect to rotations, i.e.,

$$\begin{aligned} W(QM,\theta ) = W(M,\theta ) \quad\mbox{for all } Q\in \mathit{SO}(n),\ M\in \mathbb{R}^{n\times n}. \end{aligned}$$

Modeling the behavior of shape-memory materials, the energy density further reflects the physical properties of these materials. In particular, it is assumed that at high temperatures \(\theta > \theta _{c}\) the energy density \(W\) has a single minimum (modulo \(\mathit{SO}(n)\) symmetry), which (upon normalization) we may assume to be given by the \(\mathit{SO}(n)\) orbit of \(\alpha (\theta ) \mathit{Id}\), where \(\alpha : \mathbb{R} \rightarrow (0,\infty )\) with \(\alpha (\theta _{c})=1\) (cf. [3]). This is the (austenite) energy well at temperature \(\theta \). Upon lowering the temperature below a critical temperature \(\theta _{c}\), the function \(W\) displays a (discrete) multi-well behavior (modulo \(\mathit{SO}(n)\)): There exist finitely many matrices \(U_{1}(\theta ),\ldots ,U_{m}(\theta ) \in \mathbb{R}^{n\times n}_{+}\), \(m\in \mathbb{N}\), such that

The matrices \(U_{j}(\theta )\) represent the variants of martensite at temperature \(\theta <\theta _{c}\) and are referred to as the (martensite) energy wells. At the critical temperature \(\theta = \theta _{c}\) both the austenite and the martensite wells are energy minimizers.

In the sequel, we assume that \(\theta <\theta _{c}\) is fixed, so that only the variants of martensite are energy minimizers. We seek to study the quantitative behavior of minimizers for energies of the type (1). Here we make the following simplifications:

- (i)

Reduction to the \(m\)-well problem. Instead of studying the full variational problem (1), we only focus on exact minimizers. Restricting to the low temperature regime, this implies that we seek solutions to the differential inclusion

$$\begin{aligned} \nabla y \in \bigcup_{j=1}^{m} \mathit{SO}(n) U_{j}(\theta ), \end{aligned}$$(2) for some \(\theta < \theta _{c}\).

- (ii)

Small deformation gradient case, geometric linearization. We further modify (2) and assume that \(\nabla y\) is close to the identity. This allows us to linearize the problem around this constant value (cf. Chap. 11 in [6] and also [5] for a comparison of the linearized and the nonlinear theories). Instead of considering (2), we are thus led to the inclusion problem

$$\begin{aligned} e(\nabla u):=\frac{\nabla u + (\nabla u)^{T}}{2} \in \{e_{1},\dots ,e_{m}\}. \end{aligned}$$(3) The symmetrized gradient \(e(\nabla u)\) represents the infinitesimal displacement strain associated with the displacement \(u\), which is defined as \(u(x):=y(x)-x\). The symmetric matrices \(e_{1},\ldots ,e_{m} \in \mathbb{R}^{n\times n}\) are the exactly stress-free strains representing the variants of martensite. After rescaling, we may assume that they are of order one. While this procedure linearizes the geometry of the problem (by replacing the symmetry group \(\mathit{SO}(n)\) by an invariance with respect to the linear space \(\operatorname{Skew}(n)\)), the differential inclusion (3) preserves the inherent physical nonlinearity which arises from the multi-well structure of the problem. In order to ensure the validity of the geometric linearization assumption, in the sequel we will pay particular attention to deriving solutions whose displacement gradients are bounded and of order one, and whose skew parts are hence also of order one (we recall the normalization that our exactly stress-free strains are of order one). For more detailed comments on this, we refer to the discussion on the \(L^{\infty }\) bounds for \(\nabla u\) below (Q2), to (ii) in Sect. 1.3 and to Algorithm 30 in Sect. 3.2 and the discussion following it.

- (iii)

Reduction to two dimensions and the hexagonal-to-rhombic phase transformation. In the sequel, seeking to study a model case which is as simple as possible, we restrict to two dimensions and a specific two-dimensional phase transformation, the hexagonal-to-rhombic phase transformation (this is for instance used in studying materials such as \(\mbox{Mg}_{2}\mbox{Al}_{4} \mbox{Si}_{5}\mbox{O}_{18}\) or Mg-Cd alloys undergoing a (three-dimensional) hexagonal-to-orthorhombic transformation, cf. [11, 34], and also for closely related materials such as \(\mbox{Pb}_{3}(\mbox{VO}_{4})_{2}\), which undergo a (three-dimensional) hexagonal-to-monoclinic transformation, cf. [11, 40, 41]). From a microscopic point of view, the hexagonal-to-rhombic phase transformation occurs if a hexagonal atomic lattice is transformed into a rhombic atomic lattice. From a continuum point of view, we model it by the differential inclusion

$$ \begin{aligned} & u: \mathbb{R}^{2} \rightarrow \mathbb{R}^{2}, \\ &\frac{1}{2}\bigl(\nabla u + (\nabla u)^{T}\bigr) \in K \quad\mbox{a.e. in }\varOmega , \end{aligned} $$(4) where \(\varOmega \subset \mathbb{R}^{2}\) is a bounded Lipschitz domain and

$$ \begin{aligned} &K:=\bigl\{ e^{(1)}, e^{(2)}, e^{(3)}\bigr\} \quad\mbox{with} \\ &e^{(1)}:= \begin{pmatrix} 1 & 0 \\ 0& -1 \end{pmatrix} ,\quad e^{(2)}:= \frac{1}{2} \begin{pmatrix} -1 & \sqrt{3} \\ \sqrt{3}& 1 \end{pmatrix} ,\quad e^{(3)}:= \frac{1}{2} \begin{pmatrix} -1 & -\sqrt{3} \\ -\sqrt{3}& 1 \end{pmatrix} . \end{aligned} $$(5) We note that all the matrices in \(K\) are trace-free, which corresponds to the (infinitesimal) volume preservation of the transformation. Moreover, the set \(K\) is “large”: its convex hull is a two-dimensional set in the two-dimensional ambient space of two-by-two, symmetric, trace-free matrices (cf. Lemma 10).

In the sequel, we study the problem (4), (5) and investigate regularity properties of its solutions.
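The elementary properties of the wells in (5) can be checked numerically. The following is a minimal sketch, assuming NumPy is available; the identification of a symmetric, trace-free matrix with the point \((e_{11}, e_{12})\in \mathbb{R}^{2}\) and all function and variable names are our own choices for illustration. In these coordinates the three wells form an equilateral triangle centred at the origin.

```python
import numpy as np

SQRT3 = np.sqrt(3.0)

# The three wells e^(1), e^(2), e^(3) from (5).
WELLS = [
    np.array([[1.0, 0.0], [0.0, -1.0]]),
    0.5 * np.array([[-1.0, SQRT3], [SQRT3, 1.0]]),
    0.5 * np.array([[-1.0, -SQRT3], [-SQRT3, 1.0]]),
]


def strain_coords(e):
    """Map a symmetric, trace-free 2x2 matrix to the point (e_11, e_12)."""
    return np.array([e[0, 0], e[0, 1]])


# Traces of the wells (expected to vanish: volume preservation).
traces = np.array([np.trace(e) for e in WELLS])
# Barycenter of the wells (expected to be the zero matrix).
barycenter = sum(WELLS) / 3.0
# Wells as points in the (e_11, e_12)-plane.
points = np.array([strain_coords(e) for e in WELLS])
# Distances of the wells from the origin and from each other.
radii = np.linalg.norm(points, axis=1)
sides = np.array([np.linalg.norm(points[i] - points[j])
                  for i, j in [(0, 1), (0, 2), (1, 2)]])

print("traces:", traces)
print("barycenter:\n", barycenter)
print("distances from origin:", radii)
print("pairwise distances:", sides)
```

In particular, the boundary datum \(e(M)=0\) sits at the barycenter of the three wells.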

1.1 Main Result

The geometrically linearized hexagonal-to-rhombic phase transformation is a very flexible transformation, which allows for numerous exact solutions to the associated three-well problem (4) with different types of boundary data. Here the simplest possible solutions are so-called simple laminates, for which the strain is a one-dimensional function \(e(\nabla u)(x)=f(x\cdot n)\) for some vector \(n \in S^{1}\) and for which

i.e., \(e(\nabla u)\) only attains two values. The possible directions of these laminates, as given by the vector \(n \in S^{1}\), are (up to sign reversal) six discrete values which arise as the symmetrized rank-one directions between the energy wells: For each \(i_{1},i_{2} \in \{1,2,3\}\) with \(i_{1} \neq i_{2}\) there exists (up to sign reversal and exchange of the roles of \(a_{i_{1},i_{2}}\) and \(n_{i_{1},i_{2}}\) and renormalization) exactly one pair \((a_{i_{1},i_{2}},n_{i_{1},i_{2}}) \in (\mathbb{R}^{2} \setminus \{0\})\times S^{1}\) with the property that

$$\begin{aligned} e^{(i_{1})} - e^{(i_{2})} = \frac{1}{2} \bigl(a_{i_{1},i_{2}}\otimes n_{i_{1},i_{2}} + n_{i_{1},i_{2}}\otimes a_{i_{1},i_{2}}\bigr). \end{aligned}$$

The possible vectors are collected in Lemma 16.
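The symmetrized rank-one connections between the wells can also be computed directly. The sketch below assumes NumPy and uses the elementary fact that a symmetric, trace-free matrix \(D\) with eigenvalues \(\pm \mu \) and orthonormal eigenvectors \(v_{\pm }\) satisfies \(D = \mu \operatorname{sym}((v_{+}+v_{-})\otimes (v_{+}-v_{-}))\). The function names are hypothetical, and the normalization and sign conventions need not agree with those of Lemma 16.

```python
import numpy as np

SQRT3 = np.sqrt(3.0)

# The three wells from (5), indexed as in the text.
WELLS = {
    1: np.array([[1.0, 0.0], [0.0, -1.0]]),
    2: 0.5 * np.array([[-1.0, SQRT3], [SQRT3, 1.0]]),
    3: 0.5 * np.array([[-1.0, -SQRT3], [-SQRT3, 1.0]]),
}


def sym(A):
    """Symmetric part of a square matrix."""
    return 0.5 * (A + A.T)


def rank_one_decompositions(D):
    """Return two pairs (a, n), |n| = 1, with D = sym(a (x) n).

    D is assumed to be a nonzero symmetric, trace-free 2x2 matrix.
    """
    eigvals, eigvecs = np.linalg.eigh(D)  # eigenvalues in ascending order
    mu = eigvals[1]                       # positive eigenvalue
    v_minus, v_plus = eigvecs[:, 0], eigvecs[:, 1]
    pairs = []
    for sign in (1.0, -1.0):
        n = (v_plus - sign * v_minus) / np.sqrt(2.0)
        a = np.sqrt(2.0) * mu * (v_plus + sign * v_minus)
        pairs.append((a, n))
    return pairs


connections = {}
for i, j in [(1, 2), (1, 3), (2, 3)]:
    connections[(i, j)] = rank_one_decompositions(WELLS[i] - WELLS[j])
    for a, n in connections[(i, j)]:
        angle = np.degrees(np.arctan2(n[1], n[0])) % 180.0
        print(f"wells ({i},{j}): normal angle mod 180 = {angle:.1f} degrees")
```

Each pair of wells yields two admissible normals, which gives six laminate directions in total (up to sign).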

In addition to these “simple” constructions, there are further exact solutions to the three-well problem associated with the hexagonal-to-rhombic phase transformation, e.g., there are patterns involving all three variants as depicted in Figs. 24 and 25 in the Appendix.

In the sequel, we study solutions to the hexagonal-to-rhombic phase transformation with affine boundary conditions, i.e., we consider \(u\in W^{1,p}_{\text{loc}}(\mathbb{R}^{2})\) with \(p\in (2,\infty ]\) such that

$$\begin{aligned} \nabla u &= M \quad\mbox{a.e. in } \mathbb{R}^{2}\setminus \overline{\varOmega }, \\ e(\nabla u) &\in K \quad\mbox{a.e. in } \varOmega . \end{aligned}$$(6)

Here we investigate the rigidity/non-rigidity of the problem by asking whether it has non-affine solutions:

- (Q1)

Are there (non-affine) solutions to (6) with \(M \in \mathbb{R}^{2\times 2}\)?

Clearly, a necessary condition for this is that \(e(M)\in \operatorname{conv}(K)\). Using the method of convex integration, Müller and Šverák [43] (cf. also the Baire category arguments of [21, 22]) constructed multiple solutions to related differential inclusions, displaying the existence of a variety of solutions to the problem. Since these techniques are applicable to our set-up of the three-well problem, for any \(M\) with \(e(M)\in \operatorname{intconv}(K)\) there exists a non-affine solution to (6). We point out that in the setting of the geometrically linearized hexagonal-to-rhombic phase transformation, all convex hulls coincide, see Lemma 10, which allows us to work with convex instead of lamination, rank-one or quasiconvex hulls.

In general these convex integration solutions are however very “wild” in the sense that they do not possess very strong regularity properties (cf. [26]). As our inclusion (6) is motivated by a physical problem, a natural question addresses the relevance of this multitude of solutions:

- (Q2)

Are all the convex integration solutions physically relevant? Or are they only mathematical artifacts? Is there a mechanism distinguishing between the “only mathematical” and the “really physical” solutions?

Guided by the physical problem at hand and the literature on these problems, natural criteria to consider are surface energy constraints and surface energy regularizations of the minimization problem (1) (cf. for instance [9, 10, 14, 35, 36]). Here the presence of the higher order surface energy contributions gives rise to a length scale which is also reflected in physical experiments (e.g., branching structures are predicted), cf. also our follow-up work [50] for an energetic point of view on convex integration solutions. On the level of our differential inclusion, surface energy constraints translate into regularity constraints and lead to the question whether unphysical convex integration solutions have a natural regularity threshold. Here an immediate regularity property of solutions to (6) is that \(e(\nabla u)\in L^{\infty }( \mathbb{R}^{2})\). With slightly more care, it is also possible to obtain solutions with the property that \(u\in W^{1,\infty }_{\text{loc}}( \mathbb{R}^{2})\). In an abstract way this follows from the stability arguments from Chap. 3 in [33], but in this text we implement it by an explicit construction. In particular, this allows us to explicitly control the size of the skew part, see Proposition 34, thus justifying the use of the geometrically linearized theory of elasticity. However, prior to this work it was not known whether these solutions can enjoy more regularity, i.e., whether for instance there are convex integration solutions with \(\nabla u \in W^{s,p}(\varOmega )\) for some \(s>0\), \(p\geq 1\). Theorem 1 below shows that this is the case. In particular, there are exact solutions to the (geometrically linearized version of the) minimization problem (1) which enjoy better regularity properties. This makes convex integration solutions potentially also interesting from an energy minimization point of view for energies involving elastic and surface energy contributions (cf. the experimental work of Inamura [29] for first experimental results capturing rather wild microstructures which might be related to convex integration). We however emphasize that our findings do not answer the question (Q2) on the physical relevance of convex integration solutions. They should be viewed as a first attempt at approaching this question.

Motivated by these questions, in this article, we study the regularity of a specific convex integration construction and obtain higher Sobolev regularity properties for the resulting solutions:

Theorem 1

Let \(\varOmega \subset \mathbb{R}^{2}\) be a bounded Lipschitz domain. Let \(K\) be as in (5) and let \(M\in \mathbb{R}^{2\times 2}\) be such that \(e(M):= \frac{M+M^{T}}{2} \in \operatorname{intconv}(K)\). Then there exist a value \(\theta _{0}\in (0,1)\), depending only on \(\frac{\operatorname{dist}(e(M), \partial \operatorname{conv}(K))}{ \operatorname{dist}(e(M),K)}\), and a displacement \(u: \mathbb{R}^{2} \rightarrow \mathbb{R}^{2}\) with \(u\in W^{1,\infty }_{loc}(\mathbb{R}^{2})\) such that (6) holds and such that \(\nabla u\in W^{s,p}_{loc}(\mathbb{R}^{2})\cap L^{\infty }(\mathbb{R}^{2})\) for all \(s\in (0,1)\), \(p\in (1,\infty )\) with \(s p< \theta _{0}\).

Let us comment on this result: to the best of our knowledge it represents the first \(W^{s,p}\) higher regularity result for convex integration solutions arising in differential inclusions for shape-memory materials. In addition to providing a regularity result for the displacement gradient \(\nabla u\), we also show higher \(W^{s,p}\) regularity for the characteristic functions of the martensitic phases. Invoking results of Sickel [52], this also implies bounds on the dimension of the singular set of the characteristic functions (cf. Remark 6). This in turn can be interpreted as a measure of the “fractality” of the constructed solutions. In this context, we also point out that our solutions are “piecewise affine” in the sense of Sect. 4 in [33], i.e., at almost every point of the domain, the iterative convex integration solution turns into an exact solution after a finite number of steps (where the number of steps however depends on the respective point). This entails that although our solutions are expected to be “wild”, they are not as wild as solutions to other convex integration schemes, where it might be necessary to iterate infinitely often at each point in the domain.

The given quantitative dependences for \(\theta _{0}\) are certainly not optimal in the specific constants. While it is certainly possible to improve on these numeric values, a more interesting question deals with the expected qualitative dependences: Is it necessary that \(\theta _{0}\) depends on \(\frac{\operatorname{dist}(e(M), \partial \operatorname{conv}(K))}{\operatorname{dist}(e(M),K)}\)?

Since for \(M\in \mathbb{R}^{2\times 2}\) with \(e(M)\in \partial \operatorname{conv}(K)\) there are no non-affine solutions to (6), it is natural to expect that convex integration constructions deteriorate for matrices \(M\) with \(e(M)\) approaching the boundary of \(\operatorname{conv}(K)\). The precise dependence on the behavior towards the boundary however is less intuitive. In this context, it is interesting to note that the regularity threshold \(\theta _{0}>0\) does not depend on the distance to the boundary of \(\operatorname{conv}(K)\), but rather on the angle which is formed between the initial matrix \(e(M)\) and the boundary of \(\operatorname{conv}(K)\). This is in agreement with the intuition that the larger the angle is, the better the convex integration algorithm becomes, as it moves the values of the iterations which are used to construct the displacement \(u\) further into the interior of \(\operatorname{conv}(K)\). In the interior of \(\operatorname{conv}(K)\) it is possible to use larger length scales, which increases the regularity of solutions. Whether this dependence is necessary in the value of the product \(sp\) or whether the product \(sp\) should be independent of this and only the value of the corresponding norm should deteriorate with a smaller angle, is an interesting open question. In a follow-up work, [51], we introduce a different construction which provides a uniform lower bound on the attainable regularity. However, that construction is then no longer “piecewise affine”.
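The quantity \(\frac{\operatorname{dist}(e(M), \partial \operatorname{conv}(K))}{\operatorname{dist}(e(M),K)}\) on which \(\theta _{0}\) depends is easy to evaluate. The following sketch assumes NumPy and again identifies symmetric, trace-free matrices with points \((e_{11},e_{12})\); since the Frobenius norm is a fixed multiple of the Euclidean norm in these coordinates, the ratio is unaffected by this identification. The function name `distance_ratio` and the tolerance are hypothetical choices, and the boundary is understood relative to the plane of symmetric, trace-free matrices.

```python
import numpy as np

SQRT3 = np.sqrt(3.0)

# The wells from (5) in the coordinates (e_11, e_12).
VERTICES = np.array([[1.0, 0.0], [-0.5, SQRT3 / 2.0], [-0.5, -SQRT3 / 2.0]])


def distance_ratio(M, tol=1e-12):
    """dist(e(M), boundary of conv(K)) / dist(e(M), K) for e(M) in intconv(K)."""
    M = np.asarray(M, dtype=float)
    e = 0.5 * (M + M.T)  # the skew part of M plays no role
    if abs(np.trace(e)) > tol:
        raise ValueError("e(M) must be trace-free to lie in conv(K)")
    x = np.array([e[0, 0], e[0, 1]])

    # Signed distances to the three edge lines (positive towards the interior).
    edge_distances = []
    for i in range(3):
        p, q, r = VERTICES[i], VERTICES[(i + 1) % 3], VERTICES[(i + 2) % 3]
        t = q - p
        normal = np.array([-t[1], t[0]]) / np.linalg.norm(t)
        if normal @ (r - p) < 0:  # orient the normal towards the third vertex
            normal = -normal
        edge_distances.append(normal @ (x - p))

    dist_boundary = min(edge_distances)
    if dist_boundary <= tol:
        raise ValueError("e(M) must lie in the interior of conv(K)")
    dist_wells = np.linalg.norm(VERTICES - x, axis=1).min()
    return dist_boundary / dist_wells


print(distance_ratio(np.zeros((2, 2))))             # barycenter
print(distance_ratio([[0.5, 0.0], [0.0, -0.5]]))    # halfway towards e^(1)
print(distance_ratio([[-0.25, 0.0], [0.0, 0.25]]))  # towards an edge midpoint
```

In this sketch the first two boundary data, which lie on the segment from the barycenter to the well \(e^{(1)}\), give the same ratio, while the third, which moves towards the midpoint of an edge, gives a smaller one. This is consistent with the remark above that the relevant quantity behaves like an angle rather than like a distance.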

We remark that in the very special case of additional symmetries it is possible to construct much better solutions. An example is given in the appendix for the case \(M=0\) (cf. also [48] and [11]). For these boundary data and specific domain geometries we show that it is possible to construct a solution \(u\) of the associated differential inclusion such that \(e(\nabla u) \in K\) and \(e(\nabla u)\in BV\). The skew part of the displacement gradient however diverges (and is “unphysical” in this sense). For the hexagonal-to-rhombic phase transformation the boundary data \(M=0\), which correspond to \(e(M)\) lying exactly in the barycenter of the three energy wells, is the only example with such substantially improved regularity properties that we are aware of (cf. [19] for similar examples in the geometrically nonlinear setting). The high symmetry situation with the improved solutions is thus very non-generic in this sense and requires very strong symmetries. It is an important and challenging open question, whether it is possible to exploit further symmetries and thus to construct further solutions with these much better regularity properties.

1.2 Literature and Context

A fascinating problem in studying solid-solid, diffusionless phase transformations modeling shape-memory materials is the dichotomy between rigidity and non-rigidity. Since the work of Müller and Šverák [43], who adapted the convex integration method of Gromov [27, 28] and Nash-Kuiper [39, 44] to the situation of solid-solid phase transformations, and the work of Dacorogna and Marcellini [21, 22], it is known that under suitable conditions on the convex hulls of the energy wells, there is a very large set of possible minimizers to (1) (cf. also [53] and [33] for a comparison of these two methods). More precisely, the set of minimizers forms a residual set (in the Baire sense) in the associated function spaces. However, in general convex integration solutions are “wild”; they do not enjoy very good regularity properties. This has rigorously been proven for the case of the geometrically nonlinear two-well problem [25, 26], the geometrically nonlinear three-well problem in three dimensions (the “cubic-to-tetragonal phase transformation”) [17, 32] and (under additional assumptions) for the geometrically linear six-well problem (the “cubic-to-orthorhombic phase transformation”) [49]. In these works it has been shown that on the one hand convex integration solutions exist, if the displacement gradient is only assumed to be \(L^{\infty }\) regular. If on the other hand, the displacement gradient is \(BV\) regular (or a replacement of this), then solutions are very rigid and for most constant matrices \(M\) the analogue of (6) does not possess a solution.

Thus, in these specific examples convex integration solutions cannot exist at \(BV\) regularity for the displacement gradient; at this regularity solutions are rigid. At \(L^{\infty }\) regularity they are however flexible and a multitude of solutions exist. Similarly as in

- the related (though much more complicated) situation of the Onsager conjecture for Euler’s equations (cf. for instance [8, 24, 30, 53] and the references therein),

- the situation of orientation preserving Young measures (cf. [37]),

it is hence natural to adopt a more quantitative point of view and to ask whether there is a regularity threshold which distinguishes between the rigid and the flexible regime.

It is the purpose of this article to make a first, very modest step towards understanding this dichotomy by analyzing the \(W^{s,p}\) regularity of a (known) convex integration scheme in a model case which is as simple as possible.

1.3 Main Ideas

In our construction of solutions to the differential inclusion (6) we follow the ideas of Müller and Šverák [43] (in the version of [47]) and argue by an iterative convex integration algorithm. For the hexagonal-to-rhombic transformation this is particularly simple, since the laminar convex hull equals the convex hull of the wells and since all matrices in the convex hull are symmetrized rank-one-connected with the wells (cf. Lemma 10). As a consequence it is possible to construct piecewise affine solutions (in the language of [33], Chap. 4). This simplifies the convergence of the iterative construction drastically. It is one of the reasons for studying the hexagonal-to-rhombic phase transformation as a model problem.

Yet, in spite of the (relative) simplicity of obtaining convergence of the iterative construction to a solution of (6) and hence of showing existence, substantially more care is needed in addressing regularity. In this context we argue by an interpolation result (cf. Theorem 2 and Proposition 66): While our approximating displacements \(u_{k}:\mathbb{R}^{2} \rightarrow \mathbb{R}^{2}\) are such that the \(BV\) norms of the iterations increase (exponentially), the \(L^{1}\) norm of their difference decreases exponentially. If the threshold \(\theta _{0}>0\) is chosen appropriately, the \(W^{s,p}\) norm for \(0< sp< \theta _{0}\) is controlled by an interpolation of the \(BV\) and the \(L^{1}\) norms, which can be balanced to be uniformly bounded. This is based on the interpolation results from [13], which characterize Besov spaces as interpolation spaces of \(L^{p}\) and \(BV\) spaces, and is similar to the strategy used, for instance, in [18] or [53]. To derive the associated bounds, we have to make the iterative algorithm quantitative in several ways, which distinguishes it from the “usual” convex integration schemes:

- (i)

Tracking the error in strain space. In order to iterate the convex integration construction, it is crucial not to leave the interior of the convex hull of \(K\) in the iterative modification steps. In qualitative convex integration algorithms, it suffices to use errors which become arbitrarily small, and to invoke the openness of \(\operatorname{intconv}(K)\). As the admissible error in strain space is however coupled to the length scales of the convex integration constructions (cf. Lemma 21) and as these in turn are directly reflected in the solutions’ regularity properties, in our quantitative algorithm we have to keep track of the errors in strain space very carefully. Here we seek to maximize the possible length scales (and hence the aspect ratio of the building block constructions) without leaving \(\operatorname{intconv}(K)\) in each iteration step. This leads to the distinction of various possible cases (the “stagnant”, the “push-out”, the “parallel” and the “rotated” case, cf. Notation 25, Definition 29 and Algorithm 27). In these we quantitatively prescribe the admissible error according to the given geometry in strain space.

- (ii)

Controlling the skew part without destroying the structure of (i). Seeking to construct \(W^{1,\infty }\) solutions, we have to control the skew part of our construction. Due to the results of Kirchheim, it is known that this is generically possible (cf. [33], Chap. 3). However, in our quantitative construction, we cannot afford to arbitrarily change the direction of the rank-one connection which is chosen in the convex integration algorithms at an arbitrary iteration step. This would entail \(BV\) bounds which could not be compensated by the exponentially decreasing \(L^{1}\) bounds in the interpolation argument. Hence we have to devise a detailed description of controlling the skew part (cf. Algorithm 30).

- (iii)

Precise covering construction. In order to carry out our convex integration scheme we have to prescribe an iterative covering of our domain by constructions which successively modify a given gradient. As our construction in Lemma 21 relies on triangles, we have to ensure that there is a class of triangles which can be used for these purposes (cf. Sect. 4). Seeking to control both the \(L^{1}\) and the \(BV\) norms of the resulting convex integration solutions, we have to control competing requirements: On the one hand, we have to quantitatively control the overall perimeter (which can be viewed as a measure of the \(BV\) norm of \(\nabla u_{k}\)) of the covering at a given iteration step of the convex integration algorithm. This crucially depends on the specific case (“rotated” or “parallel”) in which we are. On the other hand, we have to ensure that a sufficiently large volume fraction of the underlying domain is covered by our building block constructions (which however costs surface energy) in order to obtain good \(L^{1}\) bounds. That it is possible to satisfy both requirements simultaneously is the content of Proposition 45.

For a further discussion of the quantitative aspects of our convex integration scheme and the differences with respect to more standard convex integration algorithms we refer to the discussion in Sect. 3.2 following the presentation of Algorithms 27 and 30.
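The balancing of exponentially growing \(BV\) bounds against exponentially decaying \(L^{1}\) bounds can be illustrated by a back-of-the-envelope computation. This is only a heuristic sketch: the rates \(a\in (0,1)\), \(b>1\) and the constant \(C\) are hypothetical placeholders and not the constants derived in this article. Suppose that the increments \(w_{k}:=\nabla u_{k+1}-\nabla u_{k}\) are uniformly bounded in \(L^{\infty }\) and satisfy

$$\begin{aligned} \|w_{k}\|_{L^{1}} \leq C a^{k}, \qquad \|w_{k}\|_{BV} \leq C b^{k}. \end{aligned}$$

Since \(\|w_{k}\|_{L^{p}}^{p} \leq \|w_{k}\|_{L^{\infty }}^{p-1} \|w_{k}\|_{L^{1}}\), an interpolation estimate of the form \(\|w\|_{W^{\theta ,q}} \leq C \|w\|_{L^{p}}^{1-\theta } \|w\|_{BV}^{\theta }\) with \(\frac{1}{q} = \frac{1-\theta }{p}+\theta \) would give

$$\begin{aligned} \|w_{k}\|_{W^{\theta ,q}} \leq C \bigl( a^{\frac{1-\theta }{p}} b^{\theta } \bigr)^{k}, \end{aligned}$$

which is summable in \(k\) if and only if \(a^{\frac{1-\theta }{p}} b^{\theta }<1\), i.e., if and only if

$$\begin{aligned} \frac{\theta p}{1-\theta } < L := \frac{\ln (1/a)}{\ln b}. \end{aligned}$$

Writing \(x:=\frac{\theta p}{1-\theta }\), we have \(\theta q = \frac{\theta p}{1-\theta +\theta p} = \frac{x}{1+x}\), so that, by monotonicity of \(x\mapsto \frac{x}{1+x}\), the condition is equivalent to

$$\begin{aligned} \theta q < \frac{L}{1+L} \in (0,1). \end{aligned}$$

Under these assumptions one thus obtains a threshold in \((0,1)\) for the product of the differentiability and the integrability exponent, which is the form of the condition \(sp<\theta _{0}\) in Theorem 1.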

1.4 Organization of the Article

The remainder of the article is organized as follows: After briefly collecting preliminary results in the next section (interpolation results, results on the convex hull of the hexagonal-to-rhombic phase transition), in Sect. 3 we begin by describing the convex integration scheme which we employ. Here we first recall the main ingredients of the qualitative scheme (Sect. 3.1) and then introduce our more quantitative algorithms in Sects. 3.2–3.3.2. As this algorithm crucially relies on the existence of an appropriate covering, we present an explicit construction of this in Sect. 4. Here we also address quantitative covering estimates for the perimeter and the volume. The ingredients from Sects. 3 and 4 are then combined in Sect. 5, where we prove Theorem 1 for a specific class of domains. In Sect. 6 we explain how this can be generalized to arbitrary Lipschitz domains. Finally, in the Appendix, we recall a very special symmetry based construction for a solution to (6) with \(M=0\) with much better regularity properties for \(e(\nabla u)\) but with unbounded skew part.

2 Preliminaries

In this section we collect preliminary results which will be relevant in the sequel. We begin by stating the interpolation results of [13] on which our \(W^{s,p}\) bounds rely. Next, in Sect. 2.2 we recall general facts on matrix space geometry and in particular apply this to the hexagonal-to-rhombic phase transformation and its convex hulls.

2.1 An Interpolation Inequality and Sickel’s Result

Seeking to show higher Sobolev regularity for convex integration solutions, we rely on the characterization of \(W^{s,p}\) Sobolev functions. Here we recall the following two results on an interpolation characterization [13] and on a geometric characterization of the regularity of characteristic functions [52]:

Theorem 2

(Interpolation with BV, [13])

We have the following interpolation results:

- (i)

Let \(p\in [2,\infty )\) and assume that \(\frac{1}{q} = \frac{1- \theta }{p} + \theta \) for some \(\theta \in (0,1)\). Then

$$\begin{aligned} \|u\|_{W^{\theta ,q}(\mathbb{R}^{n})} \leq C \|u\|_{L^{p}(\mathbb{R} ^{n})}^{1-\theta } \|u\|_{BV(\mathbb{R}^{n})}^{\theta } . \end{aligned}$$(7)

- (ii)

Let \(p\in (1,2]\) and let \(\frac{1}{q} = \frac{1-\theta }{p} + \theta \) for some \(\theta \in (0,1)\). Let further \((\theta _{1}, q_{1}) \in (0,1)\times (1,\infty )\) be such that

$$\begin{aligned} \frac{1}{q_{1}} &= \frac{1-\theta _{1}}{2} + \theta _{1}, \\ \bigl(\theta , q^{-1}\bigr) &= \tau (0,1-) + (1-\tau ) \bigl(\theta _{1}, q_{1}^{-1}\bigr), \end{aligned}$$ for some \(\tau \in (0,1)\), where \(1-\) denotes an arbitrary positive number slightly less than 1. Then,

$$\begin{aligned} \| u \|_{W^{\theta , q}(\mathbb{R}^{n} )} \leq C \bigl(\|u\|_{L^{1+}( \mathbb{R}^{n})}^{\frac{\tau }{1-\theta }} \|u\|_{L^{2}(\mathbb{R} ^{n})}^{1-\frac{\tau }{1-\theta }} \bigr)^{1-\theta } \|u \|_{BV( \mathbb{R}^{n})}^{\theta }, \end{aligned}$$(8) with \(1+ := (1-)^{-1}\).

Before proceeding to the proof of Theorem 2, we present an immediate corollary of it: For functions which are “essentially” characteristic functions we obtain the following unified result:

Corollary 3

Let \(u:\mathbb{R}^{n} \rightarrow \mathbb{R}^{n}\) be a function, such that

Then, for any \(p\in (1,\infty )\) we have that

where \(\frac{1}{q} = \frac{1-\theta }{p} + \theta \) and \(\theta \in (0,1)\).

In the sequel, we will mainly rely on Corollary 3, since in our applications (e.g. in Propositions 66, 69) we will mainly deal with functions which are “essentially” characteristic functions.
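To illustrate why functions which are “essentially” characteristic functions are convenient in interpolation estimates involving several Lebesgue norms, consider the model case \(u = c \chi _{A}\) with a constant \(c>0\) and a measurable set \(A\subset \mathbb{R}^{n}\) of finite measure (this specific choice is ours and only serves as an illustration). Then \(\|u\|_{L^{r}(\mathbb{R}^{n})} = c |A|^{1/r}\) for every \(r\in [1,\infty )\), so that for \(r^{-1} = \sigma p_{1}^{-1} + (1-\sigma ) p_{2}^{-1}\) with \(\sigma \in (0,1)\) we obtain

$$\begin{aligned} \|u\|_{L^{p_{1}}(\mathbb{R}^{n})}^{\sigma } \|u\|_{L^{p_{2}}(\mathbb{R}^{n})}^{1-\sigma } = c |A|^{\frac{\sigma }{p_{1}} + \frac{1-\sigma }{p_{2}}} = c |A|^{\frac{1}{r}} = \|u\|_{L^{r}(\mathbb{R}^{n})}. \end{aligned}$$

For general functions, Hölder's inequality only yields \(\|u\|_{L^{r}} \leq \|u\|_{L^{p_{1}}}^{\sigma } \|u\|_{L^{p_{2}}}^{1-\sigma }\). In the model case equality holds, so that a product of two Lebesgue norms can be replaced by a single one.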

Proof of Corollary 3

By virtue of Theorem 2 (i) and (7), it suffices to consider the regime \(p\in (1,2)\). In this case the statement follows from a combination of (8) and the fact that for functions satisfying (9) we have

for \(1< p_{1} \leq r \leq p_{2}\), \(r^{-1}= \sigma p_{1}^{-1} + (1- \sigma )p_{2}^{-1} \) and \(\sigma \in (0,1)\). We postpone a proof of (11) to the end of this proof, and observe first that it indeed suffices to show (11) to conclude the claim of (10). To this end, we note that the exponents in (8) obey the relation

This in turn is a consequence of the three identities

Here we note that \(\frac{\tau }{1-\theta }=1-\frac{(1-\tau )(1-\theta _{1})}{1-\theta }\in (0,1)\). Hence (11) (applied to \(r=p\), \(p_{1} = 1+\), \(p_{2} = 2\) and \(\sigma = \frac{\tau }{1-\theta }\)) together with (8) yields the claim of (10).

It thus remains to prove (11). To this end, we observe that for any \(r \in [1,\infty ]\)

where for abbreviation we have set \(C_{1}:=\|u\|_{L^{\infty }( \mathbb{R}^{n})}\). With this we infer

This concludes the argument. □
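The exponent bookkeeping behind the combination of (8) with an \(L^{p}\) interpolation between \(L^{1+}\) and \(L^{2}\) can be checked numerically. The following sketch is a plain Python illustration under stated assumptions: `kappa` stands for the number \(1-\) (so that \(1+ = 1/\)`kappa`), and the closed form for `tau` is our own rearrangement of the two conditions in Theorem 2 (ii), not a formula taken from the source. The function name is hypothetical.

```python
def interpolation_exponents(p, theta, kappa=0.999):
    """Exponents in Theorem 2 (ii) for p in (1/kappa, 2) and theta in (0, 1).

    kappa plays the role of '1-'. Returns a dict with 1/q, tau, theta_1,
    1/q_1 and sigma = tau / (1 - theta).
    """
    if not (0.0 < theta < 1.0 and 0.5 < kappa < 1.0 and 1.0 / kappa < p < 2.0):
        raise ValueError("need theta in (0,1), kappa in (1/2,1), 1/kappa < p < 2")
    inv_q = (1.0 - theta) / p + theta
    # Solve (theta, 1/q) = tau * (0, kappa) + (1 - tau) * (theta_1, 1/q_1)
    # together with 1/q_1 = (1 - theta_1)/2 + theta_1 for tau and theta_1.
    tau = (1.0 - theta) * (1.0 / p - 0.5) / (kappa - 0.5)
    theta_1 = theta / (1.0 - tau)
    inv_q1 = (1.0 - theta_1) / 2.0 + theta_1
    sigma = tau / (1.0 - theta)
    return {"inv_q": inv_q, "tau": tau, "theta_1": theta_1,
            "inv_q1": inv_q1, "sigma": sigma}


p, theta, kappa = 1.5, 0.3, 0.99
ex = interpolation_exponents(p, theta, kappa)
print(ex)
# Interpolation identity between L^{1+} and L^2 with weight sigma:
print(1.0 / p, ex["sigma"] * kappa + (1.0 - ex["sigma"]) / 2.0)
```

The last line compares \(\frac{1}{p}\) with \(\sigma \cdot (1-) + \frac{1-\sigma }{2}\) for \(\sigma = \frac{\tau }{1-\theta }\), which is the relation \(r^{-1}= \sigma p_{1}^{-1} + (1- \sigma )p_{2}^{-1}\) for \(r=p\), \(p_{1}=1+\) and \(p_{2}=2\).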

After this discussion, we come to the proof of Theorem 2:

Proof of Theorem 2

If \(p\geq 2\), the interpolation result is a special case of Theorem 1.4 in [13] (where in the notation of [13] we have chosen \(s=0\), \(t=\theta \)): Indeed, for \(\gamma < 1-\frac{1}{n}\) and \((s,p)\) satisfying \((s-1)p^{\ast } \frac{1}{n} = \gamma -1\) with \(p^{\ast }\) being the dual exponent of \(p\), the estimate in Theorem 1.4 from [13] reads

where

We note that in the setting of Theorem 2 the estimate (12) is applicable, as in the notation of [13] and with dimension \(n\) we have that \(\gamma := - \frac{p}{p-1}\frac{1}{n} + 1= 1- \frac{1}{n} - \frac{1}{p-1} \frac{1}{n}< 1- \frac{1}{n}\), which implies the validity of (7). The simplification from (12) to (7) is then a consequence of the facts that

- for \(s\notin \mathbb{Z}\) we have \(W^{s,p}(\mathbb{R}^{n}) = B_{p,p}^{s}(\mathbb{R}^{n})\) (cf. [7, 12]),

- and for \(p\geq 2\) the embedding \(L^{p}(\mathbb{R}^{n}) \hookrightarrow B_{p,p}^{0}(\mathbb{R}^{n})\) is valid (Theorem 2.41 in [2]).

This concludes the argument for (i).

To obtain (ii), we combine (i) with an additional interpolation inequality, which becomes necessary, as the inclusion \(L^{p}( \mathbb{R}^{n}) \hookrightarrow B_{p,p}^{0}(\mathbb{R}^{n})\) is no longer valid for \(p\in (1,2)\). Hence, we rely on the following interpolation estimate (cf. Lemma 3 in [7])

which is valid for \(-\infty < s_{0} <s_{1}<\infty \), \(0< q_{0},q_{1} \leq \infty \), \(0< p_{0},p_{1}\leq \infty \), \(0< \tau < 1\) with

Here the spaces \(\tilde{F}^{s}_{r,l}\) denote the (modified) Triebel-Lizorkin spaces from [7]. The main advantage of the estimate (13), which goes back to Oru [45], is that there are no conditions on the relations between \(l\), \(l_{0}\), \(l_{1}\) in this estimate. In particular, we can choose \(l_{0}=2\), \(l_{1} = p_{1}\) and \(l=r\). Using that

\(\tilde{F}^{s}_{r,2}(\mathbb{R}^{n}) = L^{s,r}(\mathbb{R}^{n})\) for \(s\in \mathbb{R}\), \(1< r<\infty \) and that for this range \(L^{0,r}( \mathbb{R}^{n})=L^{r}(\mathbb{R}^{n})\),

\(\tilde{F}^{s}_{r,r}(\mathbb{R}^{n})= W^{s,r}(\mathbb{R}^{n})\) for \(0< s<\infty \), \(s\notin \mathbb{Z}\), \(1\leq r <\infty \),

we can simplify (13) to yield

which is valid for \(0< s_{1} < \infty \), \(1< p_{0} < \infty \), \(1< p_{1} <\infty \) with

We apply (14) with \(p_{0}=1+\), \(s=\theta \), \(r=q\) and \((s_{1},p_{1})=(\theta _{1},q_{1})\) lying on the boundary of the interpolation region from (i) (cf. the blue region in Fig. 1), i.e.,

In particular, these equations uniquely determine \(\tau \in (0,1)\). Hence, we obtain

We conclude the proof of (ii) by noting that \((1-\tau )\theta _{1}= \theta \) and that

Fig. 1: For functions which are “essentially” characteristic functions in the sense that condition (9) of Corollary 3 holds we obtain the interpolation inequality (10), which is valid in the whole coloured region in the figure (green and blue). Here the blue region is already covered in Theorem 2(i). In order to also obtain the green region, we have to be able to simplify the statement of (8), which in general is only valid for functions which are “essentially” characteristic functions. In our application of Corollary 3 (cf. Propositions 66, 69), we will restrict to the region to the left of the line connecting \((\theta _{0},1)\) with \((0,0)\). Remark 5 shows that having a bound for the product of the right-hand side of (10) for a specific value \((\theta _{0},1)\) already allows one to deduce a bound for all exponents \((\tilde{\theta },q)\) on the associated line connecting \((\theta _{0},1)\) with \((0, \infty )\) (Color figure online)

□

As an alternative to the interpolation approach, a more geometric criterion for regularity is given by Sickel:

Theorem 4

(Sickel, [52])

Let \(\theta \in (0,1)\) and \(q\in [1,\infty )\) be arbitrary but fixed. Let \(E\subset \mathbb{R}^{n}\) be a bounded set satisfying

where

Then, \(\chi _{E}\in W^{\theta ,q}(\mathbb{R}^{n})\).

Although this theorem provides good geometric intuition and could have been used as an alternative means of proving Theorem 1, we do not pursue this further in the sequel, but postpone its discussion to future work.

Remark 5

We note that the estimate (15) in Theorem 4 yields a condition on the product \(\theta q>0\), while, at first sight, Theorem 2 and Corollary 3 pose a restriction on \(\theta \), \(q\) individually. As we are dealing with bounded (or even characteristic) functions, we observe, however, that it is also possible to obtain an analogous condition on the product \(\theta q\) in Theorem 2 and Corollary 3: Indeed, assume that \(u\in L^{\infty }(\mathbb{R}^{2})\) is such that for some \(\theta _{0} \in (0,1)\) the product

is bounded. Then, we claim that for

also the product

is bounded. To derive this, we first observe that the \(L^{\infty }\) bound for \(u\) allows us to infer that for any \(p\in (1,\infty )\)

As a consequence, we deduce that

Here we have made use of the specific choices of exponents from (17) and the boundedness of \(u\), which allowed us to invoke (18). This concludes the argument for the claim.

Thus, relying on the bound (19), we infer that given a bound on (16), we obtain that for all exponents \(q\), \(\tilde{\theta }\), \(p\) from (17)

Here we applied Theorem 2 (or Corollary 3), for which we noted that the respective exponents are admissible. On the one hand, this is the desired analogue of the condition from Theorem 4 and allows us to obtain a whole family of \(W^{\theta ,q}\) bounds for \(u\), where \(\theta q < \theta _{0}\). On the other hand, it shows that although \(p=1\) is not admissible in Theorem 2 and Corollary 3, for our purposes, it still suffices to consider the case \(p=1\) and to prove a control for (16), which then gives the full range of expected exponents in the form of the estimate (20).

Remark 6

(Fractal Packing Dimension)

Following Sickel [52], Proposition 3.3 (cf. also [31], Theorem 2.2) we remark that for a characteristic function its \(W^{s,p}\) regularity has direct consequences on the packing dimension (cf. [31, 42]), which we denote by \(\dim _{P}\), of its boundary: If for some set \(E \subset \mathbb{R} ^{n}\) its characteristic function \(\chi _{E}\) satisfies \(\chi _{E} \in W^{s,p}(\mathbb{R}^{n})\) for some \(s>0\) and \(1\leq p <\infty \), then

Here

\(B_{\epsilon }(x):=\{x'\in \mathbb{R}^{n}: |x-x'|\leq \epsilon \}\) and \(E^{c}\) denotes the complement of \(E\).

2.2 Matrix Space Geometry

Before discussing our convex integration scheme, we recall some basic notions and properties of the hexagonal-to-rhombic phase transformation, which we will use in the sequel.

We begin by introducing notation for the symmetric and antisymmetric parts of a matrix.

Definition 7

(Symmetric and Antisymmetric Parts)

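Let \(M\in \mathbb{R}^{n\times n}\). We denote the uniquely determined symmetric and antisymmetric parts of \(M\) by

$$\begin{aligned} e(M) := \frac{1}{2}\bigl(M+M^{T}\bigr), \qquad \omega (M) := \frac{1}{2}\bigl(M-M^{T}\bigr). \end{aligned}$$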
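As a small illustration of this decomposition, the following sketch computes \(e(M)\) and \(\omega (M)\) for a square matrix stored as nested lists, assuming the standard formulas \(\frac{1}{2}(M+M^{T})\) and \(\frac{1}{2}(M-M^{T})\). The function names are hypothetical and chosen only for this example.

```python
def transpose(M):
    """Transpose of a square matrix given as a list of rows."""
    n = len(M)
    return [[M[j][i] for j in range(n)] for i in range(n)]

def sym_part(M):
    """Symmetric part e(M) = (M + M^T) / 2."""
    Mt, n = transpose(M), len(M)
    return [[(M[i][j] + Mt[i][j]) / 2 for j in range(n)] for i in range(n)]

def skew_part(M):
    """Antisymmetric part omega(M) = (M - M^T) / 2."""
    Mt, n = transpose(M), len(M)
    return [[(M[i][j] - Mt[i][j]) / 2 for j in range(n)] for i in range(n)]

M = [[1.0, 4.0], [2.0, 3.0]]
e, w = sym_part(M), skew_part(M)
# The two parts add up to M again.
assert all(e[i][j] + w[i][j] == M[i][j] for i in range(2) for j in range(2))
```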

2.2.1 Lamination Convexity Notions

Relying on the notation from Definition 7, in the sequel we discuss the different notions of lamination convexity. Here we distinguish between the usual lamination convex hull (defined by successive rank-one iterations) and the symmetrized lamination convex hull (defined by successive symmetrized rank-one iterations):

Definition 8

(Lamination Convex Hull, Symmetrized Lamination Convex Hull)

We define the following notions of lamination convex hulls:

- (i)

Let \(U\subset \mathbb{R}^{n\times n}\). Then we set

$$\begin{aligned} \mathcal{L}^{0}(U) &:= U, \\ \mathcal{L}^{k}(U) &:= \bigl\{ M\in \mathbb{R}^{n\times n}: M= \lambda A+ (1- \lambda ) B \mbox{ with } A-B = a \otimes n, \lambda \in [0,1], \\ & \quad \quad A,B \in \mathcal{L}^{k-1}(U)\bigr\} , \quad k\geq 1, \\ U^{lc} &:= \bigcup_{k=0}^{\infty } \mathcal{L}^{k}(U). \end{aligned}$$

We refer to \(U^{lc}\) as the laminar convex hull of \(U\) and to \(\mathcal{L}^{k}(U)\) as the laminates of order at most \(k\).

- (ii)

Let \(U\subset \mathbb{R}^{n \times n}_{sym}\). Then we define

$$\begin{aligned} \mathcal{L}^{0}_{sym}(U) &:= U, \\ \mathcal{L}^{k}_{sym}(U) &:= \bigl\{ M\in \mathbb{R}^{n\times n}_{sym}: M= \lambda A+ (1-\lambda ) B \mbox{ with } A-B = a \odot n, \lambda \in [0,1], \\ & \quad \quad A,B \in \mathcal{L}^{k-1}_{sym}(U)\bigr\} , \quad k \geq 1, \\ U^{lc}_{sym} &:= \bigcup_{k=0}^{\infty } \mathcal{L}^{k}_{sym}(U). \end{aligned}$$

Here \(a\odot b:= \frac{1}{2}(a\otimes b + b\otimes a)\). We refer to \(U^{lc}_{sym}\) as the symmetrized laminar convex hull of \(U\) and to \(\mathcal{L}^{k}_{sym}(U)\) as the symmetrized laminates of order at most \(k\).

- (iii)

We denote the convex hull of a set \(U\subset \mathbb{R}^{m}\) by \(\operatorname{conv}(U)\).
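To make a single lamination step concrete, the following minimal sketch (restricted to \(2\times 2\) matrices, with hypothetical helper names) forms \(a\otimes n\) and \(a\odot n\), tests rank-one connectedness through the determinant of the difference, and builds a first-order laminate \(\lambda A + (1-\lambda )B\). It only illustrates the definitions above and is not the construction used later in the text.

```python
def outer(a, n):
    """Tensor product a (x) n as a 2x2 matrix."""
    return [[a[i] * n[j] for j in range(2)] for i in range(2)]

def sym_outer(a, n):
    """Symmetrized tensor product (a (x) n + n (x) a) / 2."""
    return [[(a[i] * n[j] + n[i] * a[j]) / 2 for j in range(2)] for i in range(2)]

def is_rank_one_connected(A, B, tol=1e-12):
    """In 2x2, A - B has rank one iff it is nonzero and has zero determinant."""
    D = [[A[i][j] - B[i][j] for j in range(2)] for i in range(2)]
    det = D[0][0] * D[1][1] - D[0][1] * D[1][0]
    nonzero = any(abs(D[i][j]) > tol for i in range(2) for j in range(2))
    return nonzero and abs(det) <= tol

def laminate(A, B, lam):
    """Convex combination lam * A + (1 - lam) * B."""
    return [[lam * A[i][j] + (1 - lam) * B[i][j] for j in range(2)] for i in range(2)]

A = outer((0.0, 2.0), (1.0, 0.0))   # A - B = a (x) n with B = 0
B = [[0.0, 0.0], [0.0, 0.0]]
if is_rank_one_connected(A, B):
    M = laminate(A, B, 0.25)        # an element of L^1({A, B})
```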

Remark 9

We note that if \(U \subset \mathbb{R}^{n\times n}\) or \(U\subset \mathbb{R}^{n\times n}_{sym}\) is (relatively) open, then \(U^{lc}\) or \(U^{lc}_{sym}\), respectively, is also (relatively) open.

Lemma 10

(Convex Hull = Laminar Convex Hull)

Let \(K\) be as in (5). Then

Moreover, each element \(e\in \operatorname{intconv}(K)\) is symmetrized rank-one connected with each element in \(K\).

Proof

The first point follows from an observation of Bhattacharya (cf. [6] and also Lemma 4 in [49]). The second point either follows from a direct calculation or by an application of Lemma 11 below. □

The following lemma establishes a relation between rank-one connectedness and symmetrized rank-one connectedness. It in particular shows that in two dimensions all symmetric trace-free matrices are pairwise symmetrized rank-one connected.

Lemma 11

(Rank-One vs Symmetrized Rank-One Connectedness)

Let \(e_{1},e_{2} \in \mathbb{R}^{n\times n}_{sym}\) with \(\operatorname{tr}(e_{1})=0=\operatorname{tr}(e_{2})\). Then the following statements are equivalent:

- (i)

There exist vectors \(a\in \mathbb{R}^{n}\setminus \{0\}, n \in S^{n-1}\) such that

$$\begin{aligned} e_{1}-e_{2} = a\odot n. \end{aligned}$$

- (ii)

There exist matrices \(M_{1},M_{2} \in \mathbb{R}^{n\times n}\) and vectors \(a\in \mathbb{R}^{n}\setminus \{0\}, n \in S^{n-1}\) such that

$$\begin{aligned} M_{1} - M_{2} &= a\otimes n, \\ e(M_{1}) &= e_{1}, e(M_{2}) = e_{2}. \end{aligned}$$

- (iii)

\(\operatorname{rank}(e_{1}-e_{2})\leq 2\).

Proof

We refer to [49], Lemma 9 for a proof of this statement. □

This lemma allows us to view symmetrized rank-one connectedness essentially as equivalent to rank-one connectedness.
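In two dimensions the symmetrized rank-one decomposition can be written down explicitly. The following sketch is our own illustration (not the proof from [49]): for a symmetric trace-free matrix \(E\) with eigenvalues \(\pm \mu \) and orthonormal eigenvectors \(v_{\pm }\), it uses the identity \(E=\mu (v_{+}-v_{-})\odot (v_{+}+v_{-})\) to produce \(a\), \(n\) with \(|n|=1\), \(a\cdot n=0\) and \(E=a\odot n\). The function names are hypothetical.

```python
import math

def sym_outer(a, n):
    """Symmetrized tensor product (a (x) n + n (x) a) / 2."""
    return [[(a[i] * n[j] + n[i] * a[j]) / 2 for j in range(2)] for i in range(2)]

def decompose_trace_free(alpha, beta):
    """Write E = [[alpha, beta], [beta, -alpha]] as a (.) n with |n| = 1, a . n = 0."""
    mu = math.hypot(alpha, beta)            # eigenvalues of E are +mu and -mu
    phi = 0.5 * math.atan2(beta, alpha)     # E = mu [[cos 2phi, sin 2phi], [sin 2phi, -cos 2phi]]
    v_plus = (math.cos(phi), math.sin(phi))     # eigenvector for +mu
    v_minus = (-math.sin(phi), math.cos(phi))   # eigenvector for -mu
    s = math.sqrt(2.0)
    n = ((v_plus[0] + v_minus[0]) / s, (v_plus[1] + v_minus[1]) / s)
    a = (s * mu * (v_plus[0] - v_minus[0]), s * mu * (v_plus[1] - v_minus[1]))
    return a, n

a, n = decompose_trace_free(1.0, 2.0)
E = sym_outer(a, n)   # reproduces [[1, 2], [2, -1]] up to rounding
```

Applied to the difference \(e_{1}-e_{2}\) of two symmetric trace-free matrices, this yields a symmetrized rank-one connection with \(|a| = 2\mu \) whenever \(e_{1}\neq e_{2}\).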

2.2.2 Skew Parts

We discuss some properties of the associated skew symmetric parts of rank-one connections which occur between points in \(\operatorname{intconv}(K)\). To this end, we introduce the following identification:

Notation 12

(Skew Symmetric Matrices)

As the two dimensional skew symmetric matrices are all of the form

we use the mapping \(S\mapsto \tilde{\omega }\) to identify \(\operatorname{Skew}(2)\) with ℝ. We define an ordering on \(\operatorname{Skew}(2)\) by the corresponding ordering on ℝ, i.e.,

if \(\tilde{\omega }_{1} \leq \tilde{\omega }_{2}\).

We begin by estimating the symmetric and skew-symmetric parts of a symmetrized rank-one connection:

Lemma 13

Let \(a \in \mathbb{R}^{2}, n \in S^{1}\) with \(a \cdot n=0\). Then

$$\begin{aligned} \|a\odot n\| = \frac{|a|}{2} = \bigl\| \omega (a\otimes n)\bigr\| . \end{aligned}$$

Here \(\|\cdot \|\) denotes the spectral norm, i.e., \(\|A\|:= \sup_{|e|=1}|A e|\), where \(|\cdot |\) denotes the \(\ell _{2}\) norm.

Proof

Since \(a \bot n\), we obtain that

As \(n\), \(\frac{1}{|a|}a\) forms an orthonormal basis, this shows that \(\|a \odot n\|=|a|/2\).

Similarly, we obtain that

and hence \(\| \omega (a \otimes n)\|=|a|/2\). □
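The two norm identities obtained in this proof can be checked numerically. The sketch below assumes that the spectral norm of a \(2\times 2\) matrix \(A\) is computed as the square root of the largest eigenvalue of \(A^{T}A\); the helper names and the sample vectors are illustrative assumptions.

```python
import math

def spectral_norm(A):
    """Spectral norm of a 2x2 matrix: sqrt of the largest eigenvalue of A^T A."""
    a, b, c, d = A[0][0], A[0][1], A[1][0], A[1][1]
    p, q, r = a * a + c * c, a * b + c * d, b * b + d * d   # entries of A^T A
    lam_max = 0.5 * (p + r) + math.sqrt(0.25 * (p - r) ** 2 + q * q)
    return math.sqrt(lam_max)

def sym_and_skew_of_outer(a, n):
    """Return (a (.) n, omega(a (x) n)) for vectors a, n in R^2."""
    sym = [[(a[i] * n[j] + n[i] * a[j]) / 2 for j in range(2)] for i in range(2)]
    skew = [[(a[i] * n[j] - n[i] * a[j]) / 2 for j in range(2)] for i in range(2)]
    return sym, skew

# Sample data: unit normal n and a orthogonal to n with |a| = 3.
t, length = 0.7, 3.0
n = (math.cos(t), math.sin(t))
a = (-length * math.sin(t), length * math.cos(t))
sym, skew = sym_and_skew_of_outer(a, n)
assert abs(spectral_norm(sym) - length / 2) < 1e-12
assert abs(spectral_norm(skew) - length / 2) < 1e-12
```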

Using the previous result, we can control the size of the skew part which occurs in rank-one connections with \(K\):

Lemma 14

For all matrices \(N\) with \(e(N)\in \operatorname{intconv}(K)\) and with \(N\) being rank-one connected with a matrix \(e^{(j)} \in K\) it holds

Proof

For each \(e\in \operatorname{intconv}(K)\) there are exactly two matrices \(M_{e,i}^{\pm }\) such that

Let \(e-e^{(i)}= \frac{1}{2}(a \otimes n + n \otimes a)\) for some \(a \in \mathbb{R}^{2}\setminus \{0\}, n \in S^{1}\). Then, \(\omega (M _{e,i}^{\pm })= \omega (M_{e,i}^{\pm }) - \omega (e^{(i)})\) is explicitly given by \(\pm \frac{1}{2} (a \otimes n -n \otimes a)\). Thus, Lemma 13 implies

since \(n \in S^{1}\) and \(a \cdot n =0\) by the trace-free condition. As \(\operatorname{conv}(K)\) is a compact set, \(e-e^{(i)}\) is uniformly bounded. Moreover, the diameter of \(\operatorname{conv}(K)\) is less than five, which yields the desired bound. □

In the interest of accessibility to a larger audience, the following subsections are phrased in standard matrix space formulation. It would have equally been possible to phrase these results in terms of conformal and anti-conformal coordinates, which would allow for a more concise formulation of some statements. For a work using these methods, see for instance [1].

2.2.3 Geometry of the Hexagonal-to-Rhombic Phase Transformation

In this subsection, we discuss the specific matrix space geometry of the hexagonal-to-rhombic phase transformation. To this end we decompose each symmetric, trace-free matrix into a component in the direction \(v_{1}\) and a component in the direction \(v_{2}\), which essentially corresponds to introducing conformal and anti-conformal coordinates.

With this notation we make the following observations:

Lemma 15

Let \(v_{1}, v_{2} \in \mathbb{R}^{2\times 2}_{sym}\) be as above, and \(\varphi \in \mathbb{R}\). Then

Furthermore, we have that

Proof

Using the trigonometric identities

an immediate computation shows the claim. □

In other words, Lemma 15 allows us to identify all lines in matrix space (through the origin) by their rotation angle. In particular, this gives a simple description of the possible rank-one connections between the energy wells (cf. also Fig. 2(a)). In our application to the hexagonal-to-rhombic phase transformation we have to take into account that non-trivial differences of the matrices \(e^{(1)}\), \(e^{(2)}\), \(e^{(3)}\) lie on the sphere of radius \(\sqrt{3}\) in matrix space (with respect to the spectral norm), which yields slightly different normalization factors for \(a\):

Fig. 2: The possible normals between the wells (cf. Lemma 16) (left) and the angles that arise in Lemma 18 (right). The figure on the left depicts the possible normals between the wells, the respective pairs \((a_{ij},n_{ij})\) are marked in the same color. We note that by symmetry it is also possible to pass from \((a,n)\) to \((-a,-n)\). After normalizing appropriately, symmetry further allows one to exchange the roles of \(a\), \(n\). The figure on the right depicts the normals which arise in the decomposition of differences \(e-e^{(i)}\) with \(e^{(i)}\in K\) and \(e\in C_{d}\) (cf. Lemma 18). The colors represent the well with which \(e\in C_{d}\) is connected: Red corresponds to the cone at \(e^{(1)}\), blue to the cone at \(e^{(2)}\) and black to the cone at \(e^{(3)}\). As \(C_{d}\) does not contain the full convex hull of \(K\) (cf. Fig. 3), only vectors which lie within the colored zones arise as possible normals (in particular, there is a gap between these vectors and the vectors which arise as decomposition of differences of the wells) (Color figure online)

Lemma 16

Let \(K\) be as in (5). Then we have that

with (up to rotation symmetry by an angle of \(\pi \))

Proof

This is a consequence of Lemma 15 and the form of the matrices in (5). □

With Lemma 15 at hand, we can also compute the possible (symmetrized) rank-one connections which occur between each well and any possible matrix in \(\operatorname{conv}(K)\):

Lemma 17

Let \(e^{(i)}\in K\) and let \(e\in \operatorname{conv}(K)\). Let \(\varphi \in (-\pi ,\pi ]\) denote the angle from the decomposition from Lemma 15 for the matrix \(\frac{e^{(i)}-e}{\|e^{(i)}-e\|}\), where \(\|\cdot \|\) denotes the spectral matrix norm. Then,

and

with

Proof

This is a direct consequence of Lemma 15 and of the fact that the set \(K\) forms an equilateral triangle in strain space. □
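The equilateral-triangle structure can be illustrated numerically. Since the explicit definition (5) of \(K\) is not reproduced in this section, the three matrices below are an assumed normalization of the wells, chosen to be symmetric, trace-free and consistent with the side length \(\sqrt{3}\) (in the spectral norm) quoted in the text; the helper names are likewise hypothetical.

```python
import math

SQRT3 = math.sqrt(3.0)

# ASSUMPTION: explicit form of the wells e^(1), e^(2), e^(3); see the lead-in.
WELLS = [
    [[1.0, 0.0], [0.0, -1.0]],
    [[-0.5, SQRT3 / 2], [SQRT3 / 2, 0.5]],
    [[-0.5, -SQRT3 / 2], [-SQRT3 / 2, 0.5]],
]

def spectral_norm(A):
    """Spectral norm of a 2x2 matrix: sqrt of the largest eigenvalue of A^T A."""
    a, b, c, d = A[0][0], A[0][1], A[1][0], A[1][1]
    p, q, r = a * a + c * c, a * b + c * d, b * b + d * d
    return math.sqrt(0.5 * (p + r) + math.sqrt(0.25 * (p - r) ** 2 + q * q))

def side_lengths(wells):
    """Spectral norms of the pairwise differences of the three wells."""
    pairs = ((0, 1), (1, 2), (0, 2))
    return [
        spectral_norm([[wells[i][r][c] - wells[j][r][c] for c in range(2)] for r in range(2)])
        for i, j in pairs
    ]

lengths = side_lengths(WELLS)   # three equal side lengths for this choice of wells
```

For this choice all three sides have the same length, so the diameter of \(\operatorname{conv}(K)\) equals the common side length.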

As an immediate consequence of Lemma 16 and Lemma 17, we infer the following result, which is graphically illustrated in Fig. 2(b):

Lemma 18

Let \(K\) be as in (5), let \(\tilde{C}_{d,j}:= \operatorname{conv}\{e^{(j)}, P_{d}, Q P_{d} Q^{T}, Q^{2} P_{d} (Q ^{2})^{T}\}\) with \(j\in \{1,2,3\}\), \(Q\) being a rotation by \(\frac{2\pi }{3}\) and

where \(d \in (0,\frac{1}{2})\). Further let \(C_{d}\) be the star-shaped domain given by their union depicted in Fig. 3:

Let \(\hat{e}, \bar{e} \in C_{d}\). Suppose that

with \(e^{(i_{1})}, e^{(i_{2})} \in K\) and \(e^{(i_{1})} \neq e^{(i_{2})}\). Define \(\alpha _{m_{1},m_{2}} \in (0,\pi )\) as

where

Then there exists a constant \(C=C(d)\in (0,\pi /4)\) such that

Proof

Arguing as for (21) by using the definition of the set \(C_{d}\), we infer that the angles \(\varphi \) that occur in the representation from Lemma 15 for \(\frac{e-e^{(i)}}{\|e-e ^{(i)}\|}\) with \(e\in C_{d}\) and \(e^{(i)}\in K\) satisfy

Here \(C(d)\in (0,\pi /3)\) is a constant, which depends only on \(d\). The associated symmetrized rank-one connection is determined by \(\varphi \) as stated in Lemma 17. Applied to the situation in Lemma 18 this implies that \(a_{i_{1}}\), \(n_{i_{1}}\), \(a _{i_{2}}\), \(n_{i_{2}}\) are expressed in terms of \(\varphi \) (as in Lemma 17). Since \(d>0\), the sectors parametrized by \(\varphi \) however do not overlap for \(i_{1}\neq i_{2}\). As a consequence of this and of the options in (22), only the claimed angles \(\alpha _{m_{1},m_{2}}\) occur. □

3 The Convex Integration Algorithm

In this section we present and analyze our convex integration algorithm (cf. Algorithms 27 and 30). Our discussion of this consists of four parts: First in Sect. 3.1 we introduce a replacement construction in which a displacement gradient can be modified (cf. Lemmas 19–23). Here we follow Otto’s Minneapolis lecture notes [47] and refer to this construction as a version of Conti’s construction (cf. [15, 20] (Appendix) but also [33]).

Next in Sect. 3.2 we explain how the Conti construction can be exploited to formulate the convex integration algorithm (cf. Algorithms 27 and 30). Here we deviate from the more common qualitative algorithms by precisely prescribing error estimates in strain space, by specifying a covering construction and by controlling the skew part quantitatively.

In Sect. 3.3 we analyze our algorithms and show that they are well-defined (cf. Proposition 31). We further provide a control on the skew part of the resulting construction (cf. Proposition 34).

Finally, in Sect. 3.4 we use Algorithms 27 and 30 to deduce the existence of solutions to the inclusion problem (6), cf. Proposition 36.

We remark that our version of the convex integration scheme is based on particular properties of our set of strains: For the hexagonal-to-rhombic phase transition the laminar convex hull equals the convex hull (cf. Lemma 10). Moreover, we can connect any matrix in \(\operatorname{intconv}(K)\) with the wells \(K\) (cf. Lemma 11). For a general inclusion problem this is no longer possible and hence more sophisticated arguments are necessary. In spite of the restricted applicability of the scheme, we have decided to focus on the hexagonal-to-rhombic phase transformation, as it yields one of the simplest instances of convex integration and illustrates the difficulties and ingredients which have to be dealt with in proving higher Sobolev regularity in the simplest possible set-up.

3.1 The Replacement Construction

In this section we describe the replacement construction that allows us to modify constant gradients by replacing them with an affine construction that preserves the boundary values. Moreover, the resulting new gradients are controlled (cf. Lemma 23).

We will make use of a construction by Otto [47], see also the video at [46], which is a variant of a construction by Conti [15]. We will recall the construction here in detail since it is not publicly available in printed form.

Lemma 19

(Variable Conti Construction)

Let \(\varOmega =(-1,1)^{2}\). Let \(\lambda \in (0,1)\). Define

Then there exists \(u: \mathbb{R}^{2}\rightarrow \mathbb{R}^{2}\) Lipschitz such that

In our applications we make use of a version of this construction with slightly different conventions:

Corollary 20

Let \(\varOmega =(-1,1)^{2}\) and \(\lambda \in (0,1)\). Then there exists \(u: \mathbb{R}^{2}\rightarrow \mathbb{R}^{2}\) Lipschitz such that

where

and the volumes of the level sets of \(\nabla u\) (total volume 4) satisfy

Proof of Corollary 20

We apply the construction of Lemma 19 with \(1-\lambda \) in place of \(\lambda \) and multiply the resulting function by \(\frac{1}{2 \lambda }\). We remark that the matrices of this corollary are the ones given on p. 56 of [47]. □

Proof of Lemma 19

In order to construct the function \(u\), we prescribe the value of \(u\) at the points \((0,\pm \lambda )\), \(( \pm \lambda , 0)\) for \(\lambda \in (0,1)\) to be determined and then consider linear interpolations. This then yields a piecewise affine Lipschitz map. It remains to verify that all matrices are as claimed in the lemma (cf. also Fig. 4).

Fig. 4: The level sets of \(\nabla u\) in Conti’s construction of Lemma 19 for \(\lambda =\frac{1}{2}\)

We start with the ansatz given in Fig. 5. The value of \(u\) at the points \((\pm \lambda ,0)\), \((0,\pm \lambda )\) is chosen in such a way that linear interpolation in the triangles on the sides of the square in Fig. 4 yields \(\nabla u \in \{M _{0},M_{4}\}\), i.e.,

By linear interpolation on the inner diamond we hence obtain that

Choosing \(\lambda =\frac{1}{2}\), we thus obtain \(\nabla u = M_{1}\). It remains to check the value of \(\nabla u\) on the triangles which interpolate between the sides of the inner diamond and the corners of the outer square. By symmetry it suffices to consider the lower left triangle. Using again linear interpolation, there \(\nabla u\) has to satisfy

Setting \(\lambda = 1/2\) this equals

which is the matrix \(M_{2}\) from Lemma 19.

□
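The proof above repeatedly uses that an affine map on a triangle is determined by its values at the three vertices. As a generic illustration (the concrete vertex values of the construction are not reproduced here, so the data below are hypothetical), the following sketch recovers the constant gradient of such an interpolation.

```python
def affine_gradient(points, values):
    """Gradient G (2x2) of the affine map u with u(points[k]) = values[k], k = 0, 1, 2.

    With edge matrix E = [p1 - p0 | p2 - p0] and D = [u1 - u0 | u2 - u0]
    the gradient is G = D E^{-1}.
    """
    (x0, y0), (x1, y1), (x2, y2) = points
    e11, e12, e21, e22 = x1 - x0, x2 - x0, y1 - y0, y2 - y0
    det = e11 * e22 - e12 * e21
    if det == 0:
        raise ValueError("degenerate triangle")
    i11, i12, i21, i22 = e22 / det, -e12 / det, -e21 / det, e11 / det   # E^{-1}
    d11, d12 = values[1][0] - values[0][0], values[2][0] - values[0][0]
    d21, d22 = values[1][1] - values[0][1], values[2][1] - values[0][1]
    return [[d11 * i11 + d12 * i21, d11 * i12 + d12 * i22],
            [d21 * i11 + d22 * i21, d21 * i12 + d22 * i22]]

# Hypothetical data: u(x) = G x with G = [[1, 2], [3, 4]] sampled on a reference triangle.
G = affine_gradient([(0, 0), (1, 0), (0, 1)], [(0, 0), (1, 3), (2, 4)])
```

Applying such a helper to each triangle of a triangulation gives the level sets of the gradient of the piecewise affine map.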

Using this construction as a basic building block, the following lemma allows us to replace a general matrix \(M \in \mathbb{R}^{2\times 2}\) and to restrict the replacement matrices to an \(\epsilon \)-neighborhood of a rank-one line passing through \(M\).

Lemma 21

(Deformed Conti Construction, p. 57 of [47])

Let \(M\), \(M_{0}\), \(M_{1}\) be given matrices such that

Then, for every \(\epsilon >0\) there exist matrices \(\tilde{M}_{1}\), \(\tilde{M}_{2}\), \(\tilde{M}_{3}\), \(\tilde{M}_{4}\) with

a rectangle \(\varOmega \subset \mathbb{R}^{2}\) of aspect ratio \(\delta =\frac{\epsilon }{20 |a|}\) and a Lipschitz map \(u: \mathbb{R} ^{2}\rightarrow \mathbb{R}^{2}\) such that

Furthermore, the level sets of \(\nabla u\) are given by the union of at most 16 triangles.

Proof

Mapping \(u \mapsto u - Mx\), it suffices to consider the case \(M=0\). Furthermore, rotating the rectangular domain by \(x \mapsto Qx, Q \in \mathit{SO}(2)\) and scaling \(u \mapsto \frac{1}{|a|} u\), we may assume that \(n= (1,0)\) and \(a=(0,-1)\). Hence,

where \(\lambda =\frac{1}{4}\) and \(-\frac{\lambda }{1-\lambda ^{2}}= - \frac{4}{15}=\frac{1}{5} (4) + \frac{4}{5}(-\frac{4}{3})\). Applying the construction of Corollary 20 (with \(\lambda = \frac{1}{4}\) instead) rescaled by \(\frac{2}{\lambda }\), we obtain a Lipschitz function \(v : \mathbb{R}^{2} \rightarrow \mathbb{R}^{2}\), which vanishes outside the rectangle \(\tilde{\varOmega }=(-1,1)^{2}\) and satisfies

However, the values of \(\nabla v\) as given in (23) in Corollary 20 are not yet in an \(\epsilon \)-neighborhood of \(\{M,M_{1},M_{2}\}\). Hence, we consider the following change of coordinates and the following modified displacement:

We remark that this transforms the domain \(\tilde{\varOmega }=(-1,1)^{2}\) into the domain \((-1,1)\times (-\delta ,\delta )\) and moreover note that this scaling preserves volume fractions. Rewriting \(\nabla _{x} v(x)\) into \(\nabla _{y} u\) yields

which in particular leaves \(M_{0}\) invariant. Letting \(\delta \) be sufficiently small, we thus obtain the desired \(\epsilon \)-closeness. Undoing the initial rescaling with \(|a|\) leads to the precise requirement

This implies the claimed ratio for \(\varOmega \) by noting that \(|\nabla _{x} v| \leq 20\). □
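The arithmetic in this proof can be verified with exact fractions. The sketch below checks the convex-combination identity for \(\lambda =\frac{1}{4}\) and evaluates the aspect ratio \(\delta =\frac{\epsilon }{20|a|}\) from the statement of Lemma 21; the function name and the sample values of \(\epsilon \), \(|a|\) are illustrative assumptions.

```python
from fractions import Fraction

lam = Fraction(1, 4)
lhs = -lam / (1 - lam ** 2)
rhs = Fraction(1, 5) * 4 + Fraction(4, 5) * Fraction(-4, 3)
assert lhs == rhs == Fraction(-4, 15)

def aspect_ratio(eps, a_norm):
    """Side ratio delta = eps / (20 |a|) of the rectangle in Lemma 21."""
    return eps / (20 * a_norm)

# Sample values (assumptions): eps = 1/10 and |a| = 2.
delta = aspect_ratio(Fraction(1, 10), 2)
```

Smaller errors \(\epsilon \) or larger jumps \(|a|\) thus force thinner rectangles.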

Remark 22

We remark that both the side ratio \(\delta \) as well as the error \(\epsilon \) remain unchanged under rescalings of the form \(\mu u(\frac{x}{ \mu })\) (as this leaves the gradient invariant).

We now show how to apply Lemma 21 to the setting of symmetric matrices in our three-well problem (5):

Lemma 23

(Application to the three-well-problem, p. 60 ff. of [47])

Suppose that \(M \in \mathbb{R}^{2\times 2}\) with

and let \(\epsilon _{0}\leq \frac{\operatorname{dist}(e(M), \partial \operatorname{conv}(K))}{100}\). Let \(e^{(i)}\) with \(i\in \{1,2,3\}\) be such that

Then, for every \(0<\epsilon <\epsilon _{0}\) there exist a Lipschitz function \(u:\mathbb{R}^{2}\rightarrow \mathbb{R}^{2}\), a rectangular domain \(\varOmega \) (with ratio \(1:\delta \) and \(\delta =\frac{\epsilon }{20|e(M)-e^{(i)}|}\)), and matrices \(\tilde{M_{0}}, \dots , \tilde{M_{4}}\) with symmetric parts \(e^{(i)}\), and \(\tilde{e}_{1}, \dots ,\tilde{e}_{4} \in \operatorname{intconv}(K)\), such that

Remark 24

We point out that the strain \(e^{(i)}\) chosen in (26) is not required to be the one closest to \(e(M)\) if the distance to \(e^{(i)}\) is not much larger than the distance to the closest well. This avoids changing the wells constantly, if a matrix has a symmetrized part very close to the middle between two wells. This becomes important in our quantitative analysis in Sects. 4 and 5 since the “rotated” case behaves considerably worse than the “parallel” case.

Proof

Let \(M\) and \(e^{(i)}\) be given. Since \(e^{(1)}\), \(e^{(2)}\), \(e^{(3)}\) are arranged in an equilateral triangle with side lengths \(\sqrt{3}\) (with respect to the spectral norm) and as (26) holds, there exists \(\tilde{e}_{1} \in \operatorname{intconv}(K)\) such that

Next let \(S:=\omega (M) \in \operatorname{Skew}(2)\) and let \(\tilde{S} \in \operatorname{Skew}(2)\) to be determined. Then we obtain

Since we are in two dimensions, any two symmetric, trace-free matrices are symmetrized rank-one connected (cf. Lemma 11). Thus, there exist vectors \(a\in \mathbb{R}^{2}\setminus \{0\}\), \(n\in S^{1}\) such that

Furthermore, as \(\operatorname{tr}(e^{(i)})= \operatorname{tr}( \tilde{e}_{1})\), \(a\) and \(n\) are orthogonal. Choosing

we thus obtain that the matrices

are rank-one connected (with difference \(a \otimes n\) or \(n \otimes a\), respectively) and

We may hence apply the construction of Lemma 21 with \(M\), \(M_{0}\), \(M_{1}\) as defined above. Noting that \(\|e(M)-e^{(i)} \|= \frac{|a|}{2} \) (cf. Lemma 13), Lemma 21 implies the statement on the side ratio for \(\varOmega \). Finally, we note that the \(\epsilon \)-closeness of the matrices \(\tilde{M}_{1}, \ldots , \tilde{M}_{4}\) also implies that their symmetric parts are \(\epsilon \)-close. □

Notation 25

In the preceding Lemma 23 the matrices \(\tilde{M} _{0}, \ldots , \tilde{M}_{4}\) obey the same (convexity) relations as the ones in Lemma 21, where for the matrices \(M_{0}\) and \(M_{1}\) we insert the ones from (29), cf. Figs. 6 and 7. The error estimates in (24) thus

motivate us to refer to the matrix \(\tilde{M}_{4}\) as stagnant (with respect to the replaced matrix \(M\)).

The matrices \(\tilde{M}_{1}\), \(\tilde{M}_{2}\), \(\tilde{M}_{3}\) will also be called pushed-out matrices (with the factors \(\frac{4}{3}\) and \(\frac{16}{15}\) respectively), since by construction

$$ \frac{4}{3} \bigl\vert e(M)-e^{(i)} \bigr\vert -\epsilon \leq \bigl\vert e( \tilde{M}_{1})-e^{(i)} \bigr\vert \leq \frac{4}{3} \bigl\vert e(M)-e^{(i)} \bigr\vert + \epsilon , $$

and similarly for the other matrices.

In order to emphasize the dependence on \(M\), we also use the notation

Although the matrices \(\tilde{M}_{0},\dots ,\tilde{M}_{4}\) also depend on the choice of \(e^{(i)}\), in the sequel we will often suppress this additional dependence for convenience as the reference well will be clear in most of our applications.

Fig. 7: Relative positions of the symmetric part of the matrices inside the convex hull. Along the dashed rank-one line, the ordering of the matrices here is the same as in Fig. 6

We refer to the construction of Lemma 23 as the \((\epsilon , \delta )\) Conti construction with respect to \(M\), \(e^{(i)}\). If some of the parameters of this are self-evident from the context, we also occasionally omit them in the sequel.

We emphasize that in our construction in Lemma 23, we have the choice between two different solutions, which differ in the sign of their skew symmetric component and thus in the choice of the corresponding rank-one connection (cf. (28)). This freedom of choice is a central ingredient in the control over the skew symmetric part of the iterated constructions. We summarize this observation in the following corollary.

Corollary 26

Let \(M\), \(e^{(i)}\), \(\epsilon _{0}\), \(\epsilon \) be as in Lemma 23, and \(a\), \(n\) as given in (27) in the proof of Lemma 23. Then there exist two Lipschitz functions \(u_{+}, u_{-}: \mathbb{R}^{2}\rightarrow \mathbb{R}^{2}\) such that on the set where \(e(\nabla u_{\pm }) =e^{(i)}\)

Furthermore, up to an error of size \(\epsilon \) the skew parts on the other level sets are given by

Proof

From (29) we read off the skew symmetric parts of \(M_{0}\), \(M_{1}\). The skew symmetric part of \(M_{2}:= \frac{1}{5}M_{0} + \frac{4}{5}M_{1}\) is a consequence of that. The result then follows from Lemma 21. □
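The convex relation \(M_{2}= \frac{1}{5}M_{0} + \frac{4}{5}M_{1}\) also explains the push-out factors. In the following sketch we measure positions along the rank-one line in multiples of \(|e(M)-e^{(i)}|\), starting from the well; it is our reading (an assumption) that the factors \(\frac{4}{3}\) and \(\frac{16}{15}\) from Notation 25 refer to \(\tilde{M}_{1}\) and \(\tilde{M}_{2}\), with \(\tilde{M}_{0}\) sitting at the well itself.

```python
from fractions import Fraction

def position_of_M2(pos_M0, pos_M1):
    """Position of M_2 = (1/5) M_0 + (4/5) M_1 along the rank-one line."""
    return Fraction(1, 5) * pos_M0 + Fraction(4, 5) * pos_M1

# Normalized positions (up to the error epsilon): the well at 0, M at 1, M_1 at 4/3.
pos_M0, pos_M1 = Fraction(0), Fraction(4, 3)
pos_M2 = position_of_M2(pos_M0, pos_M1)
assert pos_M2 == Fraction(16, 15)
```

Because the relation is linear, the same weights apply to the skew symmetric parts.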

3.2 The Convex Integration Algorithm

In this subsection we formulate our convex integration algorithm. It consists of two parts, Algorithms 27 and 30. The first part (Algorithm 27) determines the symmetric part of the iterated displacement vector field, while the second part (Algorithm 30) deals with the choice of the “correct” skew component.

After formulating the algorithms, we prove their well-definedness (i.e. show that it is indeed possible to iterate this construction as claimed).

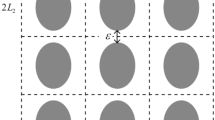

In the whole section we assume that the domain \(\varOmega \) and the matrix \(M\) in (6) fit together in the sense that \(\varOmega = Q_{ \beta }[0,1]^{2}\), where \(Q_{\beta }\) is the rotation of the Conti construction from Lemma 21 for \(M\) (and the closest energy well \(e^{(i)}\)). We emphasize here the rotation angle \(\beta \), which will become important in our analysis. These “special” domains will play the role of the essential building blocks of the construction of convex integration solutions in general Lipschitz domains (cf. Sect. 6).

We define our convex integration scheme:

Algorithm 27

(Quantitative Convex Integration Algorithm, I)

We consider the following construction:

- Step 0:

State space and data.

- (a)

State space. Our state space is given by

$$\begin{aligned} SP_{j}:=\bigl(j,u_{j},\{\varOmega _{j,k}\}_{k\in \{1,\dots ,J_{j}\}}, e_{j} ^{(p)},\epsilon _{j}, \delta _{j}\bigr). \end{aligned}$$(30)

Here \(j \in \mathbb{N}\) and \(u_{j}: \varOmega \rightarrow \mathbb{R}^{2}\) is a piecewise affine function. The sets

$$\begin{aligned} \varOmega _{j,k}\subset \varOmega \cap \{\nabla u_{j}=\mathrm{const} \}\cap \bigl\{ e(\nabla u_{j})\notin K\bigr\} \end{aligned}$$

are closed triangles, which form a (up to null sets) disjoint, finite partition of the level sets of \(\nabla u_{j}\), for which \(e(\nabla u _{j}) \notin K\). Let \(\varOmega _{j}:= \bigcup_{k=1}^{J_{j}} \varOmega _{j,k}\) denote the set on which \(e(\nabla u_{j})\) is not yet in one of the energy wells.

The function

$$\begin{aligned} e^{(p)}_{j}:\varOmega \rightarrow K \end{aligned}$$

is constant on each of the sets \(\varOmega _{j,k}\). It essentially keeps track of the well closest to \(e(\nabla u_{j}|_{\varOmega _{j,k}})\) for each \(j\), \(k\).

The functions

$$\begin{aligned} \epsilon _{j}, \delta _{j}: \varOmega \rightarrow \mathbb{R}, \end{aligned}$$

are constant on each set \(\varOmega _{j,k}\) and vanish in \(\varOmega \setminus \varOmega _{j}\). They correspond to the error and side ratio in the Conti construction which is to be applied in \(\varOmega _{j,k}\). The functions \(\epsilon _{j}\), \(\delta _{j}\) are coupled by the relation

$$\begin{aligned} \delta _{j} = \frac{\epsilon _{j}}{10^{2} d_{K}}, \mbox{ where } d_{K}:= \operatorname{dist}\bigl(e(M),K\bigr). \end{aligned}$$

Hence, in the following (update) steps, we will mainly focus on \(\epsilon _{j}\) and assume that \(\delta _{j}\) is modified accordingly.

- (b)

Data. Let \(M\in \mathbb{R}^{2\times 2}\) with \(e(M)\in \operatorname{intconv}(K)\). Let \(\varOmega = Q_{\beta }[0,1]^{2}\) with \(Q_{\beta }\) denoting the rotation associated with \(M\) (cf. explanations above). Further set

$$\begin{aligned} d_{0} &:= \operatorname{dist}\bigl(e(M), \partial \operatorname{conv}(K)\bigr), \\ \epsilon _{0} &:= \min \biggl\{ \frac{d_{0}}{100}, \frac{1}{1600} \biggr\} , \qquad \delta _{0} := \frac{\epsilon _{0}}{10^{2} d_{K}}. \end{aligned}$$

- Step 1:

Initialization, definition of \(SP_{1}\). We consider the data from Step 0 (b) and in addition define

$$\begin{aligned} u_{0}(x) &=Mx- \omega (M)x, \\ e^{(p)}_{0} &=\operatorname{argmin}\operatorname{dist} { \bigl(e(M), K\bigr)}. \end{aligned}$$

In the case of non-uniqueness in the above minimization problem, we arbitrarily choose any of the possible options.

Possibly dividing \(\delta _{0}\) by a factor up to 100, we may assume that \(K_{0,0}:=\delta _{0}^{-1}\in \mathbb{N}\). We cover \(\varOmega =Q _{\beta }[0,1]^{2}\) by \(K_{0,0}\) many (translated) up to null-sets disjoint \((\epsilon _{0},\delta _{0})\) Conti constructions with respect to \(\nabla u_{0}\) and \(e^{(p)}_{0}\) (cf. Notation 25). We denote these sets by \(R_{0,1}^{1},\dots , R_{0,K_{0,0}}^{1}\). We remark that \(\varOmega = \bigcup_{l=1}^{K_{0,0}} R_{0,l}^{1}\) is possible with (up to null sets) disjoint choices of \(R_{0,l}^{1}\), \(l\in \{1,\dots ,K_{0,0}\}\), as by definition of the domain \(\varOmega \) the sets \(R_{0,l}^{1}\), \(l\in \{1,\dots ,K_{0,0}\}\), are parallel to one of the sides of \(\varOmega \) and as \(\delta _{0}^{-1} \in \mathbb{N}\). We apply Step 2(b) on these sets. As a consequence we obtain \(SP_{1}\).
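The adjustment of \(\delta _{0}\) and the resulting covering can be sketched as follows. This is an illustration under assumptions: the helper names `integer_strip_count` and `strips` are hypothetical, the covering is described in the coordinates of the unrotated square \([0,1]^{2}\), and only the intervals in the direction transversal to the long side of the rectangles are recorded.

```python
import math


def integer_strip_count(delta0: float) -> tuple[int, float]:
    """Shrink delta0 so that its inverse is a natural number.

    Returns (K00, delta) with delta = 1 / K00 <= delta0.
    """
    if not 0.0 < delta0 <= 1.0:
        raise ValueError("delta0 is assumed to lie in (0, 1]")
    k00 = math.ceil(1.0 / delta0)  # smallest integer >= 1 / delta0
    return k00, 1.0 / k00


def strips(k00: int) -> list[tuple[float, float]]:
    """Intervals [l / K00, (l + 1) / K00], l = 0, ..., K00 - 1, tiling [0, 1]."""
    return [(l / k00, (l + 1) / k00) for l in range(k00)]
```

In this sketch \(\delta _{0}\) is divided by the factor \(\delta _{0} K_{0,0} < 1+\delta _{0}\leq 2\), which is well within the factor of 100 allowed above.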

- Step 2:

Update. Let \(SP_{j}\) be given. Let \(M_{j,k}:=\nabla u _{j}|_{\varOmega _{j,k}}\) for some \(k\in \{1,\dots , J_{j}\}\). We explain how to update \(u_{j}\) and \(\epsilon _{j}\), \(\delta _{j}\) on \(\varOmega _{j,k}\).

We seek to apply the construction of Lemma 23 with \(\epsilon _{j,k}:=\epsilon _{j}|_{\varOmega _{j,k}}\), \(\delta _{j,k}:=\delta _{j}|_{\varOmega _{j,k}}\) and

$$\begin{aligned} e^{(p)}_{j,k}, \ M_{j,k} \end{aligned} \tag{31}$$ in a part of \(\varOmega _{j,k}\). To this end, we cover the domain \(\varOmega _{j,k}\) by a union of finitely many (up to null sets) disjoint triangles and rectangles. The rectangles are chosen as translated and rescaled versions of the domains in the \((\epsilon _{j,k}, \delta _{j,k})\) Conti construction with respect to the matrices from (31). We denote these rectangles by \(R_{j,l}^{k}\), \(l\in \{1,\dots ,K_{j,k}\}\), for some \(K_{j,k}\in \mathbb{N}\) and require that they cover at least a fixed volume fraction \(v_{0}>0\) of the overall volume of \(\varOmega _{j,k}\) (which is always possible, cf. Sect. 4 for our precise covering algorithm).

We define new sets \(\tilde{\varOmega }_{j+1,l}^{k}\), \(l\in \{1,\dots , \tilde{K}_{j,k}\}\): These are given by the triangles which are in \(\varOmega _{j,k}\setminus \bigcup_{l=1}^{K_{j,k}}R_{j,l}^{k}\) and by the triangles which form the level sets of the deformed Conti constructions on the rectangles \(R_{j,l}^{k}\).

- (a)

For \(x\in \varOmega _{j,k}\setminus \bigcup_{l=1}^{K _{j,k}}R_{j,l}^{k}\) we define

$$\begin{aligned} u_{j+1}(x) &:=u_{j}(x), \\ \epsilon _{j+1}(x) &:= \epsilon _{j}(x) \quad \bigl(\mbox{and hence } \delta _{j+1}(x):= \delta _{j}(x)\bigr), \\ e^{(p)}_{j+1}(x) &:= e^{(p)}_{j}(x). \end{aligned}$$Further we set \(\varOmega _{j+1,l}^{k}:=\tilde{\varOmega }_{j+1,l}^{k}\). Carrying this out for all \(k\in \{1,\dots ,J_{j}\}\) hence yields a collection of triangles

$$ \bigl\{ \varOmega _{j+1,l}^{k}\bigr\} _{k\in \{1,\dots ,J_{j}\},l\in \{1,\dots ,K_{j,k} \}} $$covering \(\varOmega _{j}\setminus \bigcup_{l=1}^{K_{j,k}}R_{j,l} ^{k}\).

- (b)

In the sets \(R_{j,l}^{k}\) we apply the Conti construction with the matrices from (31). In this application we choose the skew part according to Algorithm 30. With \(\tilde{\varOmega }_{j+1,l}^{k} \subset \bigcup_{k=1}^{J_{j}} \bigcup_{l=1}^{K_{j,k}} R_{j,l}^{k}\) as defined in Step 2 (a), we define \(u_{j+1}|_{\tilde{\varOmega }_{j+1,l}^{k}}\) as the function from the corresponding Conti construction. More precisely, in each of the rectangles \(R_{j,l}^{k}\) the matrix \(M_{j,k}\) has been replaced by the matrices

$$\begin{aligned} \tilde{M}_{0}(M_{j,k}), \dots , \tilde{M}_{4}(M_{j,k}), \end{aligned}$$with \(e(\tilde{M}_{0}(M_{j,k})) = e^{(p)}_{j,k}\). For each \(x\in \tilde{\varOmega }_{j+1,l}^{k}\) with \(\tilde{\varOmega }_{j+1,l}^{k}\) as above, we define

$$\begin{aligned} \epsilon _{j+1}(x):= \begin{cases} \epsilon _{0} &\mbox{for } \nabla u_{j+1}|_{\tilde{\varOmega }_{j+1,l}^{k}} \in \{\tilde{M}_{1}(M_{j,k}),\dots ,\tilde{M}_{3}(M_{j,k})\}, \\ \epsilon _{j}(x)/2 &\mbox{for } \nabla u_{j+1}|_{\tilde{\varOmega }_{j+1,l}^{k}} = \tilde{M}_{4}(M_{j,k}), \\ 0 &\mbox{for } \nabla u_{j+1}|_{\tilde{\varOmega }_{j+1,l}^{k}} = \tilde{M}_{0}(M_{j,k}). \end{cases} \end{aligned}$$ For the definition of \(\delta _{j+1}\) we recall its coupling with \(\epsilon _{j+1}\). We further set

$$\begin{aligned} e^{(p)}_{j+1}(x) := \begin{cases} \operatorname*{argmin} _{i\in \{1,2,3\}} \bigl\{ \bigl|e(\nabla u_{j+1}|_{\tilde{\varOmega }_{j+1,l}^{k}}) - e^{(i)}\bigr| \bigr\} &\mbox{for } \nabla u_{j+1}|_{\tilde{\varOmega }_{j+1,l}^{k}} \in \{ \tilde{M}_{1}(M_{j,k}),\dots ,\tilde{M}_{3}(M_{j,k})\}, \\ e^{(p)}_{j}(x) &\mbox{for } \nabla u_{j+1}|_{\tilde{\varOmega }_{j+1,l}^{k}} = \tilde{M}_{4}(M_{j,k}), \\ e^{(p)}_{j}(x) &\mbox{for } \nabla u_{j+1}|_{\tilde{\varOmega }_{j+1,l}^{k}} = \tilde{M}_{0}(M_{j,k}). \end{cases} \end{aligned}$$ Here we choose an arbitrary possible minimizer if there is non-uniqueness. Finally, we possibly split each of the sets \(\tilde{\varOmega }_{j+1,l}^{k} \subset \bigcup_{l=1}^{K_{j,k}}R_{j,l}^{k}\) into at most four smaller triangles (cf. Sect. 4.2) and add them to the collection \(\{\varOmega _{j+1,l} ^{k}\}_{k\in \{1,\dots , J_{j}\}, l \in \{1,\dots , K_{j,k}\}}\). Upon relabeling this yields a new collection \(\{\varOmega _{j+1,k}\}_{k\in \{1, \dots , J_{j+1}\}}\).

As a result of Steps 2 (a) and (b) we obtain \(SP_{j+1}\).
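The update rule for the error parameter in Step 2 (b) can be summarized in a short sketch. The encoding of the five matrices \(\tilde{M}_{0}(M_{j,k}),\dots ,\tilde{M}_{4}(M_{j,k})\) by an integer index `i` and the function names are assumptions made purely for illustration.

```python
def update_epsilon(eps_j: float, eps0: float, i: int) -> float:
    """Value of epsilon_{j+1} on a level set where the new gradient is M~_i.

    i = 0       : the strain has reached a well, no further error
    i = 1, 2, 3 : the error is reset to the initial value eps0
    i = 4       : the error is halved
    """
    if i == 0:
        return 0.0
    if i in (1, 2, 3):
        return eps0
    if i == 4:
        return eps_j / 2.0
    raise ValueError("the index i is assumed to lie in {0, ..., 4}")


def coupled_delta(eps: float, d_K: float) -> float:
    """Side ratio delta = epsilon / (10^2 * d_K), with d_K > 0 assumed given."""
    return eps / (10**2 * d_K)
```

In particular, repeated passage through the case \(i=4\) yields \(\epsilon _{j}, \epsilon _{j}/2, \epsilon _{j}/4, \dots \), while any of the cases \(i\in \{1,2,3\}\) resets the value to \(\epsilon _{0}\).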


While this algorithm prescribes the symmetric part of the iteration, we complement it with an algorithm which defines the choice of the skew part. Here the main objectives are to keep the resulting skew parts uniformly bounded (which is necessary if we seek to obtain bounded solutions to (6)) and simultaneously to ensure the choice of the “right” rank-one direction (cf. Sect. 5, Lemma 63). The rank-one direction has to be chosen “correctly” in the sense that the successive Conti constructions are not rotated too much with respect to one another (which corresponds to the “parallel” case, cf. Definition 29).

In order to make this precise, we introduce two definitions: the first (Definition 28) allows us to introduce an “ordering” on the triangles in \(\{\varOmega _{j,k}\}_{k\in \{1,\dots ,J_{j}\}}\) for different values of \(j\in \mathbb{N}\). With this at hand, we then define the notions of being parallel or rotated (cf. Definition 29).

Definition 28

Let \(D\in \{\varOmega _{j,k}\}_{k\in \{1,\dots ,J_{j}\}}\) for \(j\geq 1\). Then a triangle \(\hat{D} \subset D\) is a descendant of \(D\) of order \(l\), if \(\hat{D}\in \{\varOmega _{j+l,k}\}_{k\in \{1,\dots ,J_{j+l} \}}\) is (part of) a level set of \(\nabla u_{j+l}\) and is obtained from \(D\) by an \(l\)-fold application of the update step of Algorithm 27 (where we specify the covering to be the one described in Sect. 4). The set of descendants of \(D\) of order \(l\) is denoted by \(\mathcal{D}_{l}(D)\). We define \(\mathcal{D}(D):=\bigcup_{l=1}^{\infty }\mathcal{D}_{l}(D)\).

A triangle \(\bar{D} \in \{\varOmega _{j,k}\}_{k\in \{1,\dots ,J_{j}\}}\) is a predecessor of order \(l\) of \(D\), if \(D\in \mathcal{D}_{l}( \bar{D})\). We then write \(\bar{D}\in \mathcal{P}_{l}(D)\) and also use the notation \(\mathcal{P}(D)\) for the set of all predecessors of \(D\).

With this we define the parallel and the rotated cases:

Definition 29

Let \(e^{(p)}_{j,k}\) be as in Algorithm 27. Let \(D\in \{\varOmega _{j,k}\}_{k\in \{1,\dots ,J_{j}\}}\) for \(j\geq 1\). Let \(j_{0}\neq 0\) be the smallest index for which \(\mathcal{P}_{j_{0}}(D) \ni \bar{D} \neq D\) (i.e. \(\mathcal{P}_{j_{0}}(D)\) was the last triangle in Algorithm 27 to which Step 2 (b) was applied instead of Step 2 (a)). Then, if for a.e. \(x\in D\)

$$\begin{aligned} e^{(p)}_{j}(x) = e^{(p)}_{j-j_{0}}(x), \end{aligned} \tag{32}$$

we say that in step \(j\) the triangle \(D\) is in the parallel case. If there is no possible confusion, we also just refer to \(D\) as in the parallel case.

If for a.e. \(x \in D\)

$$\begin{aligned} e^{(p)}_{j}(x) \neq e^{(p)}_{j-j_{0}}(x), \end{aligned} \tag{33}$$

we say that in step \(j\) the triangle \(D\) is in the rotated case. If there is no possible confusion, we also just refer to \(D\) as in the rotated case.

Let us comment on this definition: Intuitively, its objective is to describe whether successive Conti constructions can be chosen as essentially parallel or whether they are necessarily substantially rotated with respect to each other (hence, these notions will also play a crucial role in Sect. 4, where we construct our precise covering).

More precisely, let \(SP_{j}\) be as in Algorithm 27 and let \(j\), \(j_{0}\), \(D\), \(\bar{D}\) be as in Definition 29. Then, at the iteration step \(j-j_{0}\) the triangle \(\bar{D}\) was a subset of one of the Conti rectangles \(R_{j-j_{0},l}^{k}\). Thus, \(u_{j-j_{0}}\) is modified according to the Conti construction with respect to \(\nabla u_{j-j_{0}}|_{\bar{D}}\), \(e_{j-j_{0}}^{(p)}|_{\bar{D}}\) in this domain. In particular, the difference of the matrices \(e(\nabla u_{j-j_{0}}|_{\bar{D}})\), \(e_{j-j_{0}}^{(p)}|_{\bar{D}}\) determines a direction \(e\) in strain space (up to a choice of the skew direction, cf. Corollary 26, this is directly related to the orientation of the Conti rectangle \(R_{j-j_{0},l}^{k}\)). By virtue of Corollary 20 all of the new matrices \(e(\tilde{M}_{0}(\nabla u_{j-j_{0}}|_{\bar{D}})),\dots \), \(e( \tilde{M}_{4}(\nabla u_{j-j_{0}}|_{\bar{D}}))\) essentially lie on the line \(e\) in strain space. Hence the direction which is determined by the difference of \(e^{(p)}_{j-j_{0}+1}|_{D}\) and \(e(\nabla u_{j-j_{0}+1}|_{D})\) is still essentially parallel to the direction \(e\) (in strain space).

As by definition (we are now in Step 2(a) of Algorithm 27) the values of \(e^{(p)}_{j-j _{0} + l}|_{D}\) and of \(\nabla u_{j-j_{0} +l}|_{D}\) do not change further until \(l=j_{0}\) is reached, the requirement in (32) implies that the direction \(e\) spanned by \(e(\nabla u_{j-j_{0}}|_{\bar{D}})\), \(e^{(p)}_{j-j_{0}}|_{\bar{D}}\) and the one spanned by \(e(\nabla u_{j}|_{D})\), \(e^{(p)}_{j}|_{D}\) are essentially parallel (cf. Lemma 37 and Remark 38 for the precise statements). If we choose the correct skew directions in Step 2(b) of Algorithm 27, we can hence ensure that the successive Conti constructions are essentially parallel, if (32) is satisfied.

We remark that for this argument to hold and for it to yield new, significant information, it was necessary in Definition 29 to disregard the steps in which Step 2(a) was active, i.e., those with \(\mathcal{P}_{l}(D) = \{D\}\), as during these there are no changes.
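The determination of the index \(j_{0}\) from Definition 29 amounts to walking up the chain of predecessors and skipping all steps in which Step 2(a) was active. The following sketch illustrates this under assumptions: the class `Triangle`, its fields `parent` and `from_conti`, and the function name are hypothetical bookkeeping devices that do not appear in the construction itself.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(eq=False)
class Triangle:
    """A triangle at some iteration step (hypothetical bookkeeping record)."""
    parent: Optional["Triangle"]  # the triangle it stems from one step earlier
    from_conti: bool              # True if Step 2(b) produced it from its parent


def last_modified_predecessor(D: Triangle) -> Optional[tuple[int, Triangle]]:
    """Return (j0, D_bar), where j0 is the smallest order with P_{j0}(D) != D.

    Steps created by Step 2(a) leave the triangle unchanged and are skipped.
    Returns None if no Conti construction was ever applied along the chain.
    """
    j0 = 0
    node = D
    while node.parent is not None:
        j0 += 1
        if node.from_conti:
            return j0, node.parent
        node = node.parent
    return None
```

For a chain in which a Conti construction is followed by two applications of Step 2(a), the sketch returns \(j_{0}=3\) together with the triangle to which the Conti construction was applied.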

If (33) holds, then the directions of the successive Conti constructions are necessarily substantially rotated with respect to each other (cf. Lemma 39 for the precise bounds). In this case we cannot substantially improve the situation to being more parallel by choosing the skew part appropriately in Corollary 26. Thus, in the sequel, we will exploit these instances as possibilities to control the size of the skew part and to use this, if necessary, to change the sign of the skew direction. The precise formulation of this is the content of Algorithm 30.

Algorithm 30

(Quantitative Convex Integration Algorithm, II)

Let \(\varOmega \), \(u_{j}:\varOmega \rightarrow \mathbb{R}^{2}\) and \(SP_{j}\) for \(j\geq 1\) be as in Algorithm 27. We further consider

$$\begin{aligned} \omega _{j}: \varOmega \rightarrow \mathbb{R}. \end{aligned}$$

This function will be defined to be piecewise constant on \(\varOmega \) and to be constant on each triangle \(\varOmega _{j,k}\). It will define the skew part of \(\nabla u_{j}\) on \(\varOmega _{j,k}\), i.e.,

- Step 1:

Initialization. Let \(M\) be as in Step 1 in Algorithm 27. Then we define

$$\begin{aligned} \omega _{0}(x) = 0 \mbox{ for a.e. } x \in \varOmega . \end{aligned}$$In the initialization step of Algorithm 27 we choose \(\omega _{1}\) arbitrarily.

- Step 2:

Update. Let \(j\in \mathbb{N}, j\geq 1\). Let \(\omega _{j}\) and \(\varOmega _{j,k}\) be given. Suppose that \(\tilde{\varOmega }_{j+1,l} ^{k}\) with \(\tilde{\varOmega }_{j+1,l}^{k}\in \mathcal{D}_{1}(\varOmega _{j,k})\) is constructed from \(\varOmega _{j,k}\) by our covering argument (cf. Step 2 in Algorithm 27). Then we define \(\omega _{j+1}\) as follows:

- (a)

If \(\tilde{\varOmega }_{j+1,l}^{k}\) is not part of one of the Conti constructions in the covering, then we set

$$\begin{aligned} \omega _{j+1}|_{\tilde{\varOmega }_{j+1,l}^{k}} = \omega _{j}|_{\varOmega _{j,k}}. \end{aligned}$$

- (b)

If \(\tilde{\varOmega }_{j+1,l}^{k}\) is part of one of the Conti constructions in the covering, then by Algorithm 27 we seek to apply the construction of Lemma 23 with scale \(\epsilon _{j}|_{\varOmega _{j,k}}\) and \(e^{(p)}_{j}|_{\varOmega _{j,k}}\), \(\nabla u_{j}|_{\varOmega _{j,k}}\). Thus, by Corollary 26 we have two possible choices for the skew part of \(\nabla u_{j+1}\). These are determined by their sign. To define the sign, let \(j_{0}\in \mathbb{N}\) be the smallest integer such that \(D:=\mathcal{P}_{j_{0}}(\varOmega _{j,k}) \neq \varOmega _{j,k}\). We then choose the sign of the new skew direction \(\omega _{j+1}|_{\tilde{\varOmega }_{j+1,l}^{k}}\) (and hence determine the whole corresponding skew part) according to

$$\begin{aligned} &\operatorname{sgn}(\omega _{j+1}|_{\tilde{\varOmega }_{j+1,l}^{k}} - \omega _{j}|_{\tilde{\varOmega }_{j+1,l}^{k}}) \\ &\quad := \left\{ \textstyle\begin{array}{l@{\quad}l} \operatorname{sgn}(\omega _{j}|_{\varOmega _{j,k}}- \omega _{j-j_{0}}|_{ \varOmega _{j,k}}) & \mbox{if } e^{(p)}_{j}|_{\varOmega _{j,l}^{k}} = e^{(p)} _{j-j_{0}}|_{D}, \\ -1 & \mbox{if } e^{(p)}_{j}|_{\varOmega _{j,l}^{k}} \neq e^{(p)}_{j-j _{0}}|_{D} \wedge \omega _{j}|_{\varOmega _{j,k}}\geq 0, \\ 1 & \mbox{if } e^{(p)}_{j}|_{\varOmega _{j,l}^{k}} \neq e^{(p)}_{j-j _{0}}|_{D} \wedge \omega _{j}|_{\varOmega _{j,k}}< 0. \end{array}\displaystyle \right. \end{aligned}$$

After having carried out the relabeling step, in which we pass from \(\tilde{\varOmega }_{j+1,l}^{k}\) to \(\varOmega _{j+1,l}\), the function \(\omega _{j+1}\) is constant on each of the triangles in \(\varOmega _{j+1,l}\). Together with Algorithm 27 this completes the construction of \(\nabla u_{j+1}\).
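The sign selection in Step 2 (b) can be written as a small decision function. This is only a sketch: the skew parts are represented by real numbers, the comparison of the wells \(e^{(p)}_{j}\) and \(e^{(p)}_{j-j_{0}}\) is passed in as a Boolean, and the names `sgn` and `skew_sign` are chosen here for illustration.

```python
def sgn(x: float) -> int:
    """Sign function with values in {-1, 0, 1}."""
    return (x > 0) - (x < 0)


def skew_sign(omega_j: float, omega_prev: float, same_well: bool) -> int:
    """Sign of omega_{j+1} - omega_j on a new level set.

    omega_j    : current skew part on Omega_{j,k}
    omega_prev : skew part omega_{j-j0} on the same set
    same_well  : True if e^(p)_j coincides with e^(p)_{j-j0} on the predecessor
    """
    if same_well:
        # keep the sign of the previous change of the skew part
        # (the value 0 would only occur if omega_j == omega_prev,
        # a case which the text does not discuss)
        return sgn(omega_j - omega_prev)
    # otherwise push the skew part back towards zero
    return -1 if omega_j >= 0 else 1
```

In the first case the previous direction of change is continued, while in the two remaining cases the sign is chosen opposite to that of \(\omega _{j}\), which is what allows the size of the skew part to be controlled.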
