Abstract

We develop a globalized Proximal Newton method for composite and possibly non-convex minimization problems in Hilbert spaces. Additionally, we impose less restrictive assumptions on the composite objective functional considering differentiability and convexity than in existing theory. As far as differentiability of the smooth part of the objective function is concerned, we introduce the notion of second order semi-smoothness and discuss why it constitutes an adequate framework for our Proximal Newton method. However, both global convergence as well as local acceleration still pertain to hold in our scenario. Eventually, the convergence properties of our algorithm are displayed by solving a toy model problem in function space.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

Subject of this work is to generalize the idea of Proximal Newton methods for composite objective functions to a Hilbert space setting, aiming for the efficient solution of non-convex, non-smooth variational problems. The optimization problem reads

where \(f: X \rightarrow \mathbb {R}\) is assumed to be smooth in some adequate sense and \(g: X \rightarrow \mathbb {R}\) is possibly not. The domain of both f and g is given by a subset of an arbitrary Hilbert space X.

Originally, Fukushima and Mine introduced the Proximal Gradient method in the Euclidean \(\mathbb {R}^n\) for optimization problems of the above form, cf. [8]. More specifically, this early version of the Proximal Gradient method constitutes a special case of a procedure studied by Tseng and Yun, cf. [27]. Further research showed that variously defined line search techniques lead to global convergence of the algorithm even under appropriate inexactness conditions for the solutions of the subproblem for step computation, cf. for example [3, 7, 9, 15, 22, 24]. Additionally, local acceleration results have been achieved by utilizing second order information of the smooth part close to optimal solutions of the original minimization problem.

Obviously, further assumptions on the form of the composite objective functional open the door to more specific adaptions of the solution algorithm. For example in [6, 17, 25], the authors assume convexity and self-concordance of the smooth part f in order to employ damped Proximal Newton methods. Alternatively, reformulations of the original minimization problem can be useful. As a consequence, methods which have been proven to work for other problem classes can also be applied in our case. For example in [4, 5, 18] fixed point algorithms were employed or consider [1] for a reformulation of (1) as a constrained problem.

A different point of view onto this class of problems was taken by Milzarek and Ulbrich in [20]. For \(g(x) :=\lambda \bigl \Vert x \bigl \Vert _{1}\) with \(\lambda > 0\), they considered a semi-smooth Newton method with filter globalization which Milzarek later on generalized to work also for arbitrary convex functions for g, cf. [19].

Recently, Kanzow and Lechner discussed a globalized, inexact and possibly non-convex Proximal Newton-type method in Euclidean space \(\mathbb {R}^n\), cf. [13]. There, the algorithm resorted to Proximal Gradient steps in the case of insufficient descent together with a line-search procedure in order to achieve global convergence and cope with lacking convexity of the objective functional.

The work of Lee and Saunders [16] gives an instructive overview of a generic version of the Proximal Newton method as well as several convergence results. Our contributions beyond [16] can be summarized as follows: Most obviously, we generalize the Euclidean space setting to a Hilbert space one. Additionally, in [16] only elliptic bilinear forms for the second order model are considered and the non-smooth part g is required to be convex. We use a more general framework of convexity assumptions for the composite objective function F. Furthermore, we do not demand second order differentiability with Lipschitz-continuous second order derivative of the smooth part f but instead settle for adequate semi-smoothness assumptions. We replace the simple line-search approach for globalization with a more sophisticated proximal arc-search method which additionally softens the convexity assumptions on the objective functional. Eventually, we establish a more refined version of the global convergence proof and also give a dual interpretation for the stopping criterion of the algorithm. To our knowledge, also the notion of second order semi-smoothness for f is yet to appear in literature. On the other hand, our work here covers neither inexact nor Proximal Quasi-Newton methods.

An important practical aspect of splitting methods, such as Proximal Newton, is that the non-smooth part g of the composite objective functional F yields a proximity operator \(\mathrm {prox}_g\) that can be evaluated easily. This is, for example, the case, if g and also the employed scalar product have diagonal structure. Then the solution of the subproblem within the proximity operator can be computed cheaply in a componentwise fashion. In function space problems, in particular if Sobolev spaces are involved, it is known that instead of a diagonal structure, a multi-level structure should be used in order to reflect the topology of the function space properly. Diagonal proximal operators would suffer from mesh-dependent condition numbers. In our numerical computations we therefore employ non-smooth multi-grid techniques to compute the Proximal Newton steps, in particular Truncated Non-smooth Newton Multigrid Methods, cf. [10].

Let us first specify the setting in which we will discuss the convergence properties of Proximal Newton methods in a real Hilbert space \((X,\langle \cdot ,\cdot \rangle _X)\) with corresponding norm \(\Vert v\Vert _X=\sqrt{\langle v,v\rangle _X}\) and dual space \(X^*\). The Hilbert space structure of X also gives us access to the Riesz-Isomorphism \({\mathcal {R}} : X \rightarrow X^*\), defined by \(\mathcal Rx=\langle x,\cdot \rangle _X\), which satisfies \(\bigl \Vert \mathcal {R} x \bigl \Vert _{X^*} = \bigl \Vert x \bigl \Vert _{X}\) for every \(x\in X\). Since \({\mathcal {R}}\) is non-trivial in general, we will not identify X and \(X^*\).

We will assume the smooth part of our objective functional \(f:X \rightarrow \mathbb {R}\) to be continuously differentiable with Lipschitz-continuous derivative \(f':X\rightarrow X^*\), i.e., we can find some constant \(L_f > 0\) such that for every \(x,y \in X\) the estimate

holds.

Next we will specify our assumptions on the second order model for f. In what follows, we will notationally identify the linear operators \(H_x \in \mathcal {L}(X,X^*)\) with the corresponding symmetric bilinear form \(H_x : X \times X\rightarrow \mathbb {R}\), and write \((H_x v)(w)=H_x(v,w)\), using the abbreviation \(H_x(v)^2=H_x(v,v)\). We will assume uniform boundedness of \(H_x\) along the sequence \((x_k)\) of iterates:

In addition, along the sequence of iterates \(x_k\) we assume a uniform bound of the form

For \(\kappa _1> 0\) estimate (3) represents ellipticity of \(H_x\) with constant \(\kappa _1\). When considering exact (and smooth) Proximal Newton methods, where \(H_x\) is given by the second-order derivative of f at some point \(x \in X\), (3) is equivalent to \(\kappa _1\)-strong convexity of f. In the case \(\kappa _1> 0\) we may also define an energy-norm and write:

For most of the paper we may choose \(H_x\) freely in the above framework. For fast local convergence, however, we will impose a semi-smoothness assumption, cf. (15). Semi-smooth Newton methods in function space have been discussed, for example, in [12, 23, 28, 29]. Furthermore, in order to guarantee transition of our globalization scheme to fast local convergence, we suppose f to suffice the notion of second order semi-smoothness (cf. Sect. 5) which generalizes second order differentiability in our setting and the definition of which slightly differs from semi-smoothness of \(f'\) in (15).

We assume that the non-smooth part g is lower semi-continuous and satisfies a bound of the form

for all \(x,y \in X\) and all \(s \in [0,1]\) for some \(\kappa _2\in \mathbb {R}\). For \(\kappa _2> 0\) estimate (4) represents \(\kappa _2\)-strong convexity of g. It is known that \(\kappa _2\)-strong convexity of g implies that g is bounded from below, its level-sets \(L_\alpha g\) bounded for all \(\alpha \in \mathbb {R}\) and their diameter shrinks to 0, if \(\alpha \rightarrow \inf _{x\in X} g\). In the case of \(\kappa _2< 0\), g is allowed to be non-convex in a limited way.

The theory behind Proximal Newton methods and the respective convergence properties evolves around the convexity estimates stated in (3) and (4). We will assign particular importance to the interplay of the convexity properties of f and g, i.e., the sum \(\kappa _1 + \kappa _2\) will continue to play an important part over the course of the present treatise.

Let us now shortly outline the structure of our work: In Sect. 3 we will consider undamped update steps computed as the solution of an adequately formulated subproblem. These can also be represented using (scaled) proximal mappings the definition and key properties of which we shortly address. Afterwards, local superlinear convergence of the Proximal Newton method is shown. In Sect. 4 we present a modification of the aforementioned subproblem in order to damp update steps and globalize the Proximal Newton method. This enables the proof of optimality of all limit points of the sequence of iterates generated by our method. Section 5 concerns the introduction of second order semi-smoothness for f and showcases how it helps to verify the admissibility of both full and damped update steps sufficiently close to optimal solutions in Sect. 6. This in turn enables local fast convergence of our globalized method. In Sect. 7 the performance of our algorithm is substantiated by numerical results.

As a start, we want to introduce the definition of undamped update steps and investigate the behavior of the ensuing Proximal Newton method close to optimal solutions of problem (1).

2 General dual proximal mappings

We compute a full step for the Proximal Newton method at a current iterate \(x \in X\) by solving the subproblem

In this section \(H_x\) denotes a general bilinear form, as introduced above. If a minimizer exists, we determine the next iterate via \(x_+ :=x + \varDelta x\). We will consider this update scheme and investigate its convergence properties close to optimal solutions, and in particular fast local convergence if \(H_x\) is adequately chosen as a so-called Newton derivative from \(\partial _N f'(x)\), also known as the generalized differential \(\partial ^* f'(x)\) in the sense of Chapter 3.2 in [29].

Proposition 1

If \(\kappa _1+\kappa _2>0\), then (5) admits a unique solution.

Proof

By assumption, the functional to be minimized is lower semi-continuous, and \(\kappa _1+\kappa _2>0\) implies that it is strictly convex as well as radially unbounded. Since X is a Hilbert space a minimizer exists and is unique. \(\square\)

Remark 1

Let us shortly elaborate on both constants \(\kappa _1\) and \(\kappa _2\) as well as the assumption \(\kappa _1 + \kappa _2> 0\). While \(\kappa _2\) is a global convexity constant for g, \(\kappa _1\) is a purely local quantity which differs from iterate to iterate together with the corresponding second order bilinear form \(H_{x_k}\). This has two immediate consequences: On the one hand, ellipticity of the second order bilinear forms can locally compensate for non-convexity of g and on the other hand (global) convexity of g enables us to locally use non-elliptic \(H_x\) even close to optimal solutions of our minimization problem. Comparing these convexity assumptions to similar works on the topic, we recognize that the authors in both [16] and [13] require ellipticity of their \(\nabla ^2 f (x_*)\) in addition to convexity of g. In contrast, our \((\kappa _1,\kappa _2)\)-formalism from above suitably quantifies the contribution to convexity of both f and g.

For the following discussion we keep the assumption \(\kappa _1+\kappa _2>0\). To introduce an adequate definition of a proximal mapping in Hilbert space we reformulate (5) directly for the updated iterate \(x_+\) via

In the literature existence of a continuous inverse \(H_x^{-1} : X^* \rightarrow X\) is frequently assumed, giving rise to a mapping \(H_x^{-1}f': X \rightarrow X\). Then (6) can be rearranged to

In [16], this form of the updated iterate is considered and the notion of a proximal mapping is introduced by

such that there (7) takes the form \(x_+ =\mathrm {prox}_g^{H_x} \big (x - H_x^{-1}f'(x)\big )\).

However, in this work we want to follow a different, more direct approach towards proximal mappings which allows us to use the structure of the dual space \(X^*\) more accurately and dispense with an invertibility assumption on \(H_x\). In [25] (scaled) proximal mappings are introduced for \(X=\mathbb {R}^n\) according to

Observing that \(x^T\) represents a dual element in \(\mathbb {R}^n\) here, we generalize this notion to the setting of Hilbert spaces and consider

obtaining a mapping from the dual space back to the primal space.

With this definition in mind, (6) can directly be rewritten as

Our notion allows us to dispense with the use of the inverse \(H_x^{-1}\), which would require in addition \(\kappa _1>0\). We will refer to (8) as the direct or dual formulation of scaled proximal mappings.

First order conditions for the minimization problem posed in (9) yield the equation

in the dual space \(X^*\) for some (Frechét-)subderivative \(\eta \in \partial _F g (x_+)\) (if g is convex, \(\partial _F g\) coincides with the convex subdifferential \(\partial g\), cf. [14]). As we rearrange this identity, one could formally write:

If \(H_x\) is additionally invertible, this is equivalent to

which once again substantiates the interpretation of proximal-type methods as forward-backward splitting algorithms. Note that in particular the subdifferential of g is evaluated at the updated point \(x_+\).

We can shift convexity properties of the respective parts of the composite objective functional by inserting adequate bilinear form terms. However, this procedure does not affect the sequence of iterates generated by the update formula from above:

Lemma 1

Let \(q:X \rightarrow \mathbb {R}\) be a continuous quadratic function and denote its second derivative (which is independent of x) by \(Q :=q''(x) : X \rightarrow X^*\). Consider the modified (but obviously equivalent) minimization problem

Then, the update steps computed via (9) are identical for both problems (1) and (10) if we choose \({{\tilde{H}}}_x = H_x - Q\) as the corresponding bilinear form.

Remark 2

If we choose \(q(x) :=\frac{\kappa }{2} \bigl \Vert x \bigl \Vert _{X}^2\) for some \(\kappa \in \mathbb {R}\), the modified quantities \({{\tilde{H}}}_x\) and \({{\tilde{g}}}\) suffice estimates (3) and (4) for \({\tilde{\kappa }}_1 = \kappa _1 - \kappa\) and \({\tilde{\kappa }}_2 = \kappa _2 + \kappa\). In particular, \(\kappa _1 + \kappa _2 = {\tilde{\kappa }}_1 + {\tilde{\kappa }}_2\) remains unchanged and \({{\tilde{g}}}\) is \((\kappa + \kappa _2)\)-strongly convex for \(\kappa > -\kappa _2\).

Proof

The only claim which is not apparent is the identity of update steps. To this end, we consider the fundamental definition of the update step for problem (10) at some \(x \in X\) given by

and consequently for \(q(y) = \frac{1}{2} Q (y)^2 + ly + c\) and \(c \in \mathbb {R}\) constant

which directly shows the asserted identity of update steps. \(\square\)

Remark 3

If the bilinear form for update step computation is chosen as \(H_x \in \partial _N f'(x)\) and thereby as \({{\tilde{H}}}_x \in \partial _N {{\tilde{f}}}'(x)\) in the modified case, we have \({{\tilde{H}}}_x = H_x - Q\), automatically.

3 Regularity and fast local convergence

The representation of the updated iterate as the image of a scaled proximal mapping in (9) will turn out to be very useful in what follows which is why we dedicate the next two propositions to the properties of scaled proximal mappings in our scenario. The first proposition generalizes the assertions of the so called second prox theorem, cf. e.g. [2], to our notion of proximal mappings.

Proposition 2

Let H and g satisfy the assumptions (3) and (4) with \(\kappa _1 + \kappa _2> 0\). Then for any \(\varphi \in X^*\) the image of the corresponding proximal mapping \(u :=\mathcal {P}_g^H(\varphi )\) satisfies the estimate

for all \(\xi \in X\).

Proof

The proof of the estimate above is an easy consequence of the characterization of the convex subdifferential of \(g_H :=g +\frac{1}{2} H(\cdot , \cdot )\) and (4). First order conditions of the minimization problem in (8) yield

where \(\partial\) denotes the convex subdifferential since in particular \(g_H\) is convex due to the positivity of the sum \(\kappa _1 + \kappa _2\). This inclusion directly implies the estimate

for arbitrary \(y \in X\) which is equivalent to

As pointed out before, now we want to take advantage of the convexity assumptions on g according to (4). To this end, we insert \(y = y(s) :=s\xi + (1-s)u\) above for \(s \in ]0,1]\) and use (4) on the right-hand side. This yields

where we now divide by \(s \ne 0\) and subsequently evaluate the limit of s to zero. This procedure provides us with the asserted estimate for \(\xi\), \(\varphi\) and u as specified above. \(\square\)

The inequality from Proposition 2 can be used in order to prove several useful continuity results for general scaled proximal mappings in Hilbert spaces. However, for our purposes it suffices to assert and verify the following result, which generalizes non-expansivity of proximal mappings in Euclidean space to our setting. It plays a similar role as boundedness of the inverse of the derivative in Newton’s method.

Corollary 1

(Regularity of the Prox-Mapping) Let H and g satisfy the assumptions (3) and (4) with \(\kappa _1 + \kappa _2> 0\). Then, for all \(\varphi _1,\varphi _2 \in X^*\) the following Lipschitz-estimate holds:

Proof

Let us choose H and \(\varphi _1,\varphi _2\) as stated above. According to Proposition 2, the first order conditions for the respective minimization problems yield the inequalities

since we can choose \(\xi :=u_2\) or \(\xi :=u_1\) respectively. Now, we add (12) and (13) which yields

As we rearrange this inequality we obtain

and eventually assumption (3) on H yields the assertion of the proposition. \(\square\)

Even though the above continuity result for proximal mappings will turn out to be an important tool for the proof of local acceleration of the Proximal Newton method, we still have to deduce some crucial properties of the full update step \(\varDelta x\). These will help us to characterize optimal solutions of (1) as fixed points of the method and then verify local acceleration afterwards.

Lemma 2

The undamped update steps computed via (5) are descent directions of the composite objective functional, i.e., the following estimate holds:

Proof

Since f is assumed to be continuously differentiable and g suffices the estimate (4), we can deduce the following bound on the composite objective functional:

Let us now deduce an estimate for the term in brackets on the right-hand side of (14). To this end, we remember the proximal mapping representation of updated iterates in (9) and consider the corresponding estimate from Proposition 2 for \(\xi :=x\) which is given by

or equivalently

which we insert into (14) and directly obtain the asserted inequality. Note that over the course of this section we assume the positivity of the sum \(\kappa _1+\kappa _2\) which indeed implies from above that \(\varDelta x\) is a descent direction. \(\square\)

As mentioned beforehand, this directly enables a more insightful characterization of optimal solutions of the composite minimization problem.

Proposition 3

Consider f continuously differentiable with Lipschitz derivative as well as \(H \in \mathcal {L}(X,X^*)\) which satisfies (3) with \(\kappa _1 + \kappa _2> 0\) and \(\kappa _2\) from (4) for g. Then, the search direction \(\varDelta x_*\) according to (5) is zero at every local minimizer \(x_* \in X\) of problem (1). In particular, we obtain the fixed point equation

Proof

If \(x_*\) is a local minimizer, \(F(x_*+s\varDelta x) \ge F(x_*)\) for sufficiently small \(s>0\). By Lemma 2 this implies \(\varDelta x=0\). \(\square\)

Having in mind these properties of update steps and optimal solutions in addition to the continuity result for scaled proximal mappings from Proposition 1, we can now prove the local acceleration result for our Proximal Newton method with undamped steps near optimal solutions.

For the following we require \(f'\) to be semi-smooth near an optimal solution \(x_*\) of our problem (1) with respect to \(H_x\), i.e., the following approximation property holds:

As pointed out before, adequate definitions of \(H_x\) can be given via the Newton derivative \(H_x \in \partial _N f'(x)\) for Lipschitz-continuous operators in finite dimension as well as for corresponding superposition operators, cf. Chapter 3.2 in [29].

Theorem 1

(Fast Local Convergence) Suppose that \(x_* \in X\) is an optimal solution of problem (1). Consider two consecutive iterates \(x, x_+ \in X\) which have been generated by the update scheme from above and are close to \(x^*\). Furthermore, suppose that (15) holds for \(H_x\) in addition to the assumptions from the introductory section with \(\kappa _1 + \kappa _2> 0\). Then we obtain:

Proof

Consider the proximal mapping representations deduced above for both the updated iterate \(x_+\) in (9) and for the optimal solution \(x_*\) in Proposition 3 via

Next, we directly take advantage of these identities together with the continuity result for scaled proximal mappings from Proposition 1 in order to deduce the estimate

where in the last step also the semi-smoothness of \(f'\) played a crucial role. This directly verifies the asserted local acceleration result. \(\square\)

In particular, this implies local superlinear convergence of our Proximal Newton method if we can additionally verify global convergence to an optimal solution. Note that even for the local acceleration result, ellipticity of \(H_x \in \partial _N f'(x)\) does not necessarily have to be demanded. Also here, all that matters is strong convexity of the composite functional. This might be surprising since what actually accelerates the method is the second order information on the (possibly non-convex) but differentiable part f with semi-smooth derivative \(f'\). As a consequence, this means that the (strong) convexity of g can not only contribute to the well-definedness of update steps as solutions of (5) but also to the local acceleration of our algorithm.

The main reason for this generalization of the local acceleration result is our slightly generalized notion of proximal mappings. In particular, we did not deduce (firm) non-expansivity in the scaled norm as for example in [16] but also there took advantage of the strong convexity of the composite objective functional in the form of assumptions (3) and (4) with \(\kappa _1 + \kappa _2> 0\).

Note that for the above results to hold it was crucial that the current iterate x is already close to an optimal solution of problem (1) which is why over the course of the next section we want to address one possibility to globalize our Proximal Newton method. We will see that eventually we will be in the position to use undamped update steps for the computation of iterates and thereby benefit from the local acceleration result in Theorem 1.

4 Globalization via an additional norm term

Let us consider the following modification of (5) and define the damped update step at a current iterate x as a minimizer of the following modified model functional:

As a consequence, we define

Here \(\omega >0\) is an algorithmic parameter that can be used to achieve global convergence. Setting \({{\tilde{H}}} :=H_x+\omega {\mathcal {R}}\) with the Riesz-isomorphism \({\mathcal {R}}:X \rightarrow X^*\) we observe that (16) is of the form (5) with \({\tilde{\kappa }}_1 = \kappa _1+\omega\), so that the existence and regularity results of the previous sections apply.

The updated iterate then takes the form \(x_+ (\omega ) :=x + \varDelta x (\omega )\). Apparently, the update step in (16) is well defined if \(\omega + \kappa _1 + \kappa _2> 0\). Consequently, for what follows, we only consider \(\omega > -(\kappa _1 + \kappa _2)\) in order to guarantee unique solvability of the update step subproblem. The full update steps from (5) are here damped along a curve in X which is parametrized by the regularization parameter \(\omega \in ]-(\kappa _1 + \kappa _2),\infty [\).

However, note that here the Hilbert space structure of X is also important for the strong convexity of functions of the form \(g +\frac{\omega }{2}\bigl \Vert \cdot \bigl \Vert _{X}^2\) with g as in (4) for arbitrary \(\kappa _2 \in \mathbb {R}\). In a general Banach space setting, we can not assume additional norm terms to compensate disadvantageous convexity assumptions, cf. [2], Remark 5.18].

Let us now take a look at how we can rearrange the subproblem for finding an updated iterate by using the scalar product \(\langle \cdot ,\cdot \rangle _X\) as well as the Riesz-Isomorphism \({\mathcal {R}}\):

Note that \(H_x + \omega \mathcal {R}: X \times X \rightarrow \mathbb {R}\) satisfies (3) with constant \((\kappa _1 + \omega )\) such that the combination of g and \(H_x + \omega \mathcal {R}\) still suffices the requirements for the results from Proposition 2 for all \(\omega > -(\kappa _1 + \kappa _2)\). Additionally, the results of Lemma 1 apparently also hold in the globalized case.

The formulation of updated iterates via the above scaled proximal mapping enables us to establish some helpful properties of the damped update steps \(\varDelta x(\omega )\).

Proposition 4

Under the assumptions (3) for \(H_x\) and (4) for g the inequality

holds for the update step \(\varDelta x(\omega )\) as defined in (16) and arbitrary \(-(\kappa _1 + \kappa _2)< \omega < \infty.\)

Proof

The proof here follows along the same lines as the derivation of the auxiliary estimate for the bracket term in the proof of Lemma 2. Due to the structure of the update formula in (17) we can take advantage of the estimate from Proposition 2 with \(\varphi = (H_x + \omega \mathcal {R}) x - f'(x)\), \(H = H_x + \omega \mathcal {R}\) and \(\xi = x\) which yields \(u = \mathcal {P}_{g}^H (x) = x_+\) and thereby

This inequality is equivalent to the asserted estimate. \(\square\)

With the above estimate for damped update steps at hand, let us now formulate a criterion for sufficient decrease which will help us to verify a global convergence result of our Proximal Newton method. We call a value of the regularization parameter \(\omega > -(\kappa _1 + \kappa _2)\) admissible for sufficient decrease if the inequality

for some prescribed \(\gamma \in ]0,1[\) is satisfied. We may interpret \(\lambda _\omega (\varDelta x(\omega ))\) as a predicted decrease and rewrite the condition (18) as follows:

This is the classical ratio of actual decrease and predicted decrease which is often used for trust-region algorithms. Before now trying to verify that the descent criterion in (18) is fulfilled for sufficiently large values of \(\omega\), we note that the assertion in Proposition 4 implies the insightful estimate

which yields that once the criterion is satisfied, update steps unequal to zero provide real descent in the composite objective function F according to

Let us now take a look at the existence of sufficiently large values of the regularization parameter \(\omega\). Here, the Lipschitz-continuity of \(f'\) comes into play for the first time.

Lemma 3

For f, \(H_x\) and g as above the criterion for sufficient descent introduced via (18) is satisfied for \(\gamma \in ]0,1[\) if \(\omega\) satisfies

Proof

By our lower bound on \(\omega\) and (19) we obtain:

The Lipschitz-continuity of \(f'\) directly yields the estimate

from where we immediately obtain an estimate for the descent in the composite objective functional via

This estimate is equivalent to (18) and thereby concludes the proof of the assertion. \(\square\)

Additionally, for global convergence, it turns out that we have to guarantee that

A simple way to achieve this is to impose the following restriction:

for some prescribed upper bound \({\overline{M}}\). Due to (19) this can be achieved for a sufficiently large choice of \(\omega _k\). All in all, this results in the following algorithm:

Now that we have formulated the algorithm and can be sure that we can always damp update steps sufficiently such that they yield descent according to (18), we will verify the stationarity of limit points of the sequence of iterates generated by Algorithm 1. To this end, we will first prove that the norm of the corresponding update steps converges to zero along the sequence of iterates.

Lemma 4

Let \((x_k) \subset X\) be the sequence generated by the Proximal Newton method globalized via (16) for admissible values of the regularization parameter \(\omega _k\) starting at any \(x_0 \in \mathrm {dom}g\). Then either \(F(x_k) \rightarrow -\infty\) or \(\lambda _{\omega _k}(\varDelta x_k(\omega _k))\) and \(\bigl \Vert \varDelta x_k(\omega _k) \bigl \Vert _{X}\) converge to zero for \(k \rightarrow \infty\).

Proof

By (20) the sequence \(F(x_k)\) is monotonically decreasing. Thus, either \(F(x_k) \rightarrow -\infty\) or \(F(x_k) \rightarrow {\underline{F}}\) for some \({\underline{F}}\in \mathbb {R}\) and thus in particular \(F(x_{k})-F(x_{k+1})\rightarrow 0\). Since \(\gamma > 0\), also \(\lambda _{\omega _k}(\varDelta x(\omega ))\rightarrow 0\). Since, by assumption, \(\omega _k +\kappa _1 + \kappa _2> 0\) this implies \(\bigl \Vert \varDelta x_k(\omega _k) \bigl \Vert _{X} \rightarrow 0\).\(\square\)

If we take a look at the optimality conditions for the step computation in (16) at \(x_+(\omega )\), we obtain

with the Frechét-subdifferential of \(g_\omega ^{H_x}:X\rightarrow \mathbb {R}, y \mapsto g(y) + \frac{1}{2} H_x(y)^2 + \frac{\omega }{2}\bigl \Vert y \bigl \Vert _{X}^2\) on the right-hand side. This directly yields the existence of some \(\eta \in \partial _F g(x_+(\omega ))\) such that

This implies the estimate:

Thus, by Lemma 4 and

we obtain

as long as \(L_f< \infty\) exists, \(\bigl \Vert H_{x_k} \bigl \Vert _{\mathcal {L}(X,X^*)} \le M\) is bounded, and \(\omega _k\) is bounded. The latter can be guaranteed via Lemma 3 if the “appropriate increase” of \(\omega _k\) is done by no more than a fixed factor \(\rho >1\).

Remark 4

With some additional technical effort, the assumption on Lipschitz-continuity of \(f'\) could be relaxed to a uniform continuity assumption.

Observe that we can indeed interpret \(\bigl \Vert \varDelta x_k(\omega _k) \bigl \Vert _{X} \le \varepsilon\) as a condition for the optimality of our the subsequent iterate up to some prescribed accuracy. However, small step norms \(\bigl \Vert \varDelta x_k(\omega _k) \bigl \Vert _{X}\) can also occur due to very large values of the damping parameter \(\omega _k\) as a consequence of which the algorithm would stop even though the sequence of iterates is not even close to an optimal solution of the problem. In order to rule out this inconvenient case, we consider the scaled version \((1+\omega _k)\bigl \Vert \varDelta x_k(\omega _k) \bigl \Vert _{X}\) as the stopping criterion in Algorithm 1.

Now we are in the position to discuss subsequential convergence of our algorithm to a stationary point. In the following, we will assume throughout that \(F(x_k)\) is bounded from below. We start with the case of convergence in norm:

Theorem 2

Under the assumptions explained in the introductory section, all accumulation points \({\bar{x}}\) (in norm) of the sequence of iterates \((x_k)\) generated by the Proximal Newton method globalized via (16) are stationary points of problem (1).

Proof

Let us consider a modified version of our minimization problem as in (10) in Lemma 1 and choose \(q(x) = \frac{1}{2} Q(x)^2\) for \(Q: X \times X \rightarrow \mathbb {R}\) such that \({{\tilde{g}}} = g + q\) is (strongly) convex on its domain.

This is always possible by (4). According to Lemma 1, the sequence of iterates remains unchanged and step computation takes the form

with first order optimality conditions

where \(\partial {{\tilde{g}}} (x_{k+1})\) denotes the convex subdifferential of \({{\tilde{g}}}\) at \(x_{k+1}\). Consequently, we know that there exists some \({\tilde{\eta }}_k \in \partial {{\tilde{g}}} (x_{k+1})\) such that

with the remainder term on the right-hand side given by

holds. As before, the remainder term \({{\tilde{r}}}_{x_k}\big (\varDelta x_k (\omega _k)\big ) = r_{x_k}\big (\varDelta x_k (\omega _k)\big )\) tends to zero for \(k \rightarrow \infty\), i.e., we have \({\tilde{\eta }} :=\lim _{k \rightarrow \infty } {\tilde{\eta }}_k = -f'({\bar{x}}) + Q {\bar{x}}\). The definition of the convex subdifferential \(\partial {{\tilde{g}}}\) together with the lower semi-continuity of \({{\tilde{g}}}\) directly yields

for any \(u \in X\) which proves the inclusion \({\tilde{\eta }} \in \partial {{\tilde{g}}}({\bar{x}})\). The evaluation of the latter limit expression can easily be retraced by splitting

In particular, we recognize \({\tilde{\eta }} \in \partial {{\tilde{g}}}({\bar{x}})\) as \(-f'({\bar{x}}) + Q {\bar{x}} \in \partial {{\tilde{g}}}({\bar{x}})\) and equivalently \(-f'({\bar{x}}) \in \partial _F g({\bar{x}})\) for the Frechét-subdifferential \(\partial _F\). This implies \(0 \in \partial _F F ({\bar{x}})\), i.e., the stationarity of our limit point \({\bar{x}}\). \(\square\)

Also note that in general the above global convergence result does not rely on the strong convexity of the composite objective function F but yields stationarity of limit points also in the non-convex case of \(\kappa _1 + \kappa _2< 0\) and \(\omega _k > -(\kappa _1 + \kappa _2)\) chosen adequately. In particular, this ensures that also independent of strong convexity assumptions near optimal solutions, the algorithm approaches the optimal solution and can then benefit from additional convexity at later iterations.

While bounded sequences in finite dimensional spaces always have convergent subsequences, we can only expect weak subsequential convergence in general Hilbert spaces in this case. As one consequence, existence of minimizers of nonconvex functions on Hilbert spaces can usually only be established in the presence of some compactness. On this count we note that in (23) even weak convergence of \(x_k \rightharpoonup {\bar{x}}\) would be sufficient. Unfortunately, in the latter case we cannot evaluate \(f'(x_k) \rightarrow f'({\bar{x}})\).

In order to extend our proof to this situation, we require some more structure for both of the parts of our composite objective functional. To this end, we remember the following well-known definition of compact operators:

Definition 1

A linear operator \(K:X \rightarrow Y\) between two normed vector spaces X and Y is called compact if one of the following equivalent statements holds:

-

1.

The image of the unit ball of X is relatively compact in Y (, i.e., its closure is compact).

-

2.

For any bounded sequence \((x_n)_{n \in \mathbb {N}} \subset X\) the image sequence \((Kx_n)_{n \in \mathbb {N}} \subset Y\) contains a strongly convergence subsequence \(\big (x_{n_k} \big )_{k \in \mathbb {N}} \subset X\).

With this notion at hand, we can formulate the following global convergence theorem:

Theorem 3

Let f be of the form \(f(x)= {\hat{f}}(x) + {\check{f}}(Kx)\) where K is a compact operator. Additionally, assume that \(g + {\hat{f}}\) is convex and weakly lower semi-continuous in a neighborhood of stationary points of (1). Then weak convergence of the sequence of iterates \(x_k \rightharpoonup {\bar{x}}\) suffices for \({\bar{x}}\) to be a stationary point of (1).

If F is strictly convex and radially unbounded, the whole sequence \(x_k\) converges weakly to the unique minimizer \(x_*\) of F. If F is \(\kappa\)-strongly convex, with \(\kappa > 0\), then \(x_k \rightarrow x_*\) in norm.

Proof

We can employ the same proof as above replacing g by \(g+{\hat{f}}\) and using that \({{\tilde{f}}}'(Kx_k) \rightarrow {\check{f}}'(K{\bar{x}})\) in norm, by compactness. This then shows finally

i.e., \(\eta = -{\check{f}}'(K{\bar{x}})K \in \partial (g+{\hat{f}})({\bar{x}}) = \partial _F g({\bar{x}})+\{{\hat{f}}'({\bar{x}})\}\) which in particular implies

This again constitutes \(0 \in \partial _F F({\bar{x}})\) and thereby the stationarity of the weak limit point \({\bar{x}}\).

Let us now consider the second assertion: F being strictly convex as well as radially unbounded yields that problem (1) has a unique solution \(x_*\). Additionally, we know that our sequence of iterates is bounded as a consequence of which we can select a weakly convergent subsequence. The first assertion of the theorem then implies that the limit of each subsequence we choose is a stationary point of problem (1), and thus by convexity to the unique optimal solution \(x_*\). A standard argument then shows that the whole sequence converges to \(x_*\) weakly.

If F is \(\kappa\)-strongly convex, then as discussed below (4) the diameter the level sets \(L_{F(x_k)}\) tends to 0 as \(k\rightarrow \infty\), since \(F(x_k)\rightarrow F(x_*)\). This implies \(\Vert x_k-x_*\Vert _X \rightarrow 0\). \(\square\)

5 Second order semi-smoothness

In order to be able to benefit from the local acceleration result in Theorem 1, we have to ensure that under the assumptions on F stated in Sect. 1 eventually also full steps are admissible for sufficient descent according to our criterion formulated in (18). To this end, we want to introduce a new notion of differentiability, which we call second order semi-smoothness, and investigate how it interacts with our Proximal Newton method.

For the smooth part f of our composite objective function F we define a second order semi-smoothness property at some \(x_* \in \mathrm {dom} f\) by

for any \(\xi \in X\). This will be precisely the assumption that we need to conclude transition to fast local convergence in the following section.

We give a general definition for operators. Denote by \(L^{(2)}(X,Y)\) the normed space of bounded vector valued bilinear forms \(X\times X \rightarrow Y\), equipped with usual norm:

Definition 2

Let X, Y be normed linear spaces and let \(D\subset X\) be a neighborhood of \(x_*\). Consider a continuously differentiable operator \(T : D \rightarrow Y\), and a bounded mapping

We call T second order semi-smooth at \(x_* \in X\) with respect to \(T''\), if the following estimate holds:

Since \(T''\) is evaluated at \(x_*+\xi\), the choice of \(T''\) is far from unique. Twice continuously differentiable operators apparently are second order semi-smooth:

Proposition 5

Assume that T is twice continuously differentiable at \(x_*\). Then T is second order semi-smooth at \(x_*\) with respect to the ordinary second derivative \(T''\).

Proof

This follows by a simple computation:

Both terms in square brackets are \(o(\bigl \Vert \xi \bigl \Vert _{X}^2)\). The first by Fréchet differentiability of T, the second by continuity of \(T''(x)\). \(\square\)

It is an obvious remark that the sum of two second order semi-smooth functions is second order semi-smooth again with linear and quadratic terms defined via sums. Furthermore, the following chain rule can be shown:

Theorem 4

Suppose that \(S:D_S\rightarrow Y\) and \(T:D_T\rightarrow Z\) with \(S(D_S)\subset D_T\) are second order semi-smooth at \(x_* \in D_S\) and \(y_*=S(x_*)\) with respect to \(S''\) and \(T''\), respectively. Then \(T\circ S\) is second order semi-smooth with respect to \((T\circ S)''\), defined as follows:

Proof

We introduce the notations \(y_*=S(x_*)\), \(x=x_*+\xi\), \(y=S(x)\), and \(\eta = y-y_*\). With these prerequisites we can, as usual for chain rules, split the remainder term:

We will show that each of the expressions (25)–(28) is \(o(\bigl \Vert \xi \bigl \Vert _{X}^2)\). For (25) this follows from second order semi-smoothness of T, while second order semi-smoothness of S implies the desired result for (26). Continuity of \(T'\) and boundedness of \(S''\) yield that (27) is \(o(\bigl \Vert \xi \bigl \Vert _{X}^2)\). Finally, (28) can be reformulated via the third binomial formula:

By continuous differentiablity of S (which is a prerequisite of second order semi-smoothness by our definition) we estimate:

which finally yields the desired result. \(\square\)

Remark 5

In the case \(T'(y_*)=0\), we observe from (26) that S only needs to be continuously differentiable and we may set \(S''=0\).

Second order semi-smoothness of T and semi-smoothness of \(T'\) as in (15) are closely related but are not equivalent in general. Even in the case of \(T''(x) :=\partial _N T'(x)\) we cannot conclude one condition from the other, e.g. via the fundamental theorem of calculus, because of the lack of continuity of \(\partial _N T'\).

Let us shortly give a both simple and illustrative example: Consider the function

which is continuously differentiable with \(h'(x) = x\big [3x\sin \big (\frac{1}{x}\big ) - \cos \big ( \frac{1}{x} \big )\big ]\), \(x \ne 0\), and \(h'(0) = 0\). The cubic asymptotics of h suggest that \(T''(x) \equiv 0\) is a possible definition for second order semi-smoothness of h at \(x_* = 0\) as above. Apparently, we obtain for \(x\in \mathbb {R}\) and \(\delta x = x - x_* = x\):

i.e., that h is indeed second order semi-smooth at \(x_* = 0\) with respect to \(T''\). On the other hand, we have

which implies that \(h'\) is indeed not semi-smooth at \(x_* = 0\) with respect to the same \(T''\), cf. (15). However, in many cases of practical interest, both conditions can be shown to hold.

For instance, the function \(\phi (x)= \max \{0,x\}^2\) is second order semi-smooth at the point \(x=0\) with respect to

as well as twice Fréchet differentiable (and thus also second-order semi-smooth, cf. Proposition 5) at any other point \(x\ne 0\) with the same \(\phi ''(\xi )\). By standard techniques we can lift this property to superposition operators on \(L_p\)-spaces for appropriate p.

For convenience, we recapitulate the following lemma, which is a slight generalization of a standard result on continuity of superposition operators.

Lemma 5

Let \(\varOmega\) a measurable subset of \(\mathbb {R}^d\), and \(\psi : \mathbb {R}\times \varOmega \rightarrow \mathbb {R}\). For each measurable function \(x:\varOmega \rightarrow \mathbb {R}\) assume that the function \(\varPsi (x)\), defined by \(\varPsi (x)(t) = \psi (x(t),t)\) is measurable. Let \(x_* \in L_p(\varOmega ,\mathbb {R})\) be given. Then the following assertion holds:

If \(\psi\) is continuous with respect to x at \((x_*(t),t)\) for almost all \(t\in \varOmega\), and \(\varPsi\) maps \(L_p(\varOmega ,\mathbb {R})\) into \(L_s(\varOmega ,\mathbb {R})\) for \(1 \le p,s < \infty\), then \(\varPsi\) is continuous at \(x_*\) in the norm topology.

Proof

cf. e.g. [23, Lemma 3.1]. \(\square\)

The standard text book result requires \(\psi\) to be a Caratheodory function, and thus in particular continuous in x for all \(t\in \varOmega\). This assumption, is slightly weakened here to the almost everywhere sense. It is known, for example, that pointwise limits and suprema of Caratheodory functions yield superposition operators that map measurable functions to measurable functions. The mapping \(\phi ''\) as defined above is an example. Importantly, this result is not true for the case \(p<s=\infty\).

Proposition 6

Consider a real function \(\phi :\mathbb {R}\rightarrow \mathbb {R}\) with globally Lipschitz-continuous derivative \(\phi ' : \mathbb {R}\rightarrow \mathbb {R}\), which is second order semi-smooth with respect to a bounded function \(\phi '' : \mathbb {R}\rightarrow \mathbb {R}\). Let \(\varOmega \subset \mathbb {R}^d\) be a set of finite measure and assume that the composition \(\phi ''\circ u\) is measurable for any measurable function \(u : \varOmega \rightarrow \mathbb {R}\). Let \(p>2\). Then for each \(x\in L_p(\varOmega )\) the superposition operator \(\varPhi : L_p(\varOmega ) \rightarrow L_1(\varOmega )\) is second order semi-smooth with respect to \(\varPhi ''(x)\in L_2(L_p(\varOmega ),L_1(\varOmega ))\) defined by \(\varPhi ''(x)(\xi _1,\xi _2)(\omega )=\phi ''(x(\omega ))\xi _1(\omega )\xi _2(\omega )\) almost everywhere.

Proof

Consider a representative of \(x\in L_p(\varOmega )\) and the function

which is defined for \(t\ne 0\) and \(r_x(\omega ,t):=0\) for \(t=0\). By Lipschitz-continuity of \(\phi '\) and boundedness of \(\phi ''\) we observe that \(r_x\) is bounded uniformly on \(\varOmega \times \mathbb {R}\). Thus, the superposition operator \(R_x : L_p(\varOmega ) \rightarrow L_s(\varOmega )\) : \(R_x(\xi )(\omega )=r_x(\omega ,\xi (\omega ))\) is well defined for any \(1 \le s\le \infty\). By second order semi-smoothness \(r_x(\omega ,\cdot )\) is continuous at \(t=0\) for almost all \(\omega \in \varOmega\). Hence, by Lemma 5\(R_x\) is continuous as an operator at \(\xi =0\) for any \(s<\infty\). By the Hölder inequality with \(1/s+2/p=1\) we conclude the desired estimate:

\(\square\)

Unsurprisingly and in analogy to the theory of semi-smooth superposition operators, there is a norm gap in the sense that Proposition 6 is false for \(p=2\). This is closely related to the so call two-norm discrepancy (cf. e.g. [26]).

As in the above example, \(\phi ''(\xi )\) has a discontinuity at \(\xi =0\), so we cannot expect that \(\varPhi ''\) is a continuous mapping on a given open set. However, we can show the following result:

Proposition 7

Let \(p>2\) and \(x_* \in L_p(\varOmega )\) be fixed. Assume that function \((\omega ,t) \rightarrow \phi ''(x_*(\omega )+t)\) is continuous in t for almost all \(\omega \in \varOmega\). Then the mapping \(\varPhi '' : L_p(\varOmega ) \rightarrow L^{(2)}(L_p(\varOmega ),L_1(\varOmega ))\) is continuous at \(x_*\).

Proof

We apply Lemma 5 to the superposition operator \({\tilde{\varPhi }}''(x)(\omega ):=\phi ''(x(\omega ))\), which maps \(L_p(\varOmega )\rightarrow L_s(\varOmega )\) and the use the Hölder inequality to conclude:

\(\square\)

In our example \(\phi (x)=\max \{0,x\}^2\) fulfills the hypothesis of this theorem at \(x_*\in L_p(\varOmega )\), if \(x_*(\omega )=0\) only on a set of measure 0 in \(\varOmega\). This kind of regularity assumption can also be found frequently in the literature on semi-smooth Newton methods (cf. e.g. [11]).

6 Transition to fast local convergence

Let us now turn our attention back to our Proximal Newton method and consider the admissibility of undamped update steps near optimal solutions of problem (1). Both the semi-smoothness of \(f'\) from (15) and the second order semi-smoothness of f from (24) will contribute a crucial part to the proof of this result. Additionally, the local acceleration result from Theorem 1 will play an important role.

However, an algorithm that tests in every iterate, whether the undamped Newton step is acceptable is likely to compute many unnecessary trial iterates during the early phase of globalization. Thus, it is of interest, whether damped Newton steps are acceptable as well close to the solution.

In order to establish the corresponding proposition of admissibility we will first have to investigate the relation between damped and undamped steps more closely.

Lemma 6

Let \(H_x\) be a bilinear form as in (3) and assume that g suffices (4) where \(\kappa _1 + \kappa _2> 0\) holds and \(x \in X\) is arbitrary. Then the damped update step \(\varDelta x(\omega )\) from (16) and the undamped update step \(\varDelta x\) from (5) satisfy the estimates

for any \(\omega \ge 0\).

Proof

The above set of estimates can all be deduced from adequate proximal representations of the respective update steps. We can characterize the undamped step via \(\varDelta x = x_+ - x\) where the updated iterate is given by

Now, consider the corresponding inequality from Proposition 2 for \(\varphi = H_x (x)- f'(x)\), \(H = H_x\) and \(\xi :=x_+(\omega )\) given by

which can be rearranged to a more useful form via

For the damped update step we want to consider a different form than in (17) and attribute the additional norm term \(\frac{\omega }{2}\bigl \Vert \cdot \bigl \Vert _{X}^2\) to the lower argument function g. This results in the proximal representation

The deduction of the respective inequality induced by the first order conditions of the proximal subproblem will turn out to be slightly more complicated. We use \(H = H_x\) and \(\varphi = H_x (x) + \omega {\mathcal {R}} x - f'(x)\) together with \(\xi = x_+\) in Proposition 2. Note here that the lower argument function \(g +\frac{\omega }{2}\bigl \Vert \cdot \bigl \Vert _{X}^2\) satisfies (4) with constant \(\kappa _2+ \omega\). Thus, we obtain

We bring the Riesz-term \(\omega \mathcal {R} \big (x , \varDelta x - \varDelta x(\omega ) \big )\) to the right-hand side of (34) and recognize

which results in

This inequality will be of importance once more later on. For now, we estimate the term

such that (35) takes the form

Now, we add (33) and (36) which yields

Here we can use assumption (3) on \(H_x\) and rearrange the resulting estimate to

This is exactly the first asserted inequality (31) if we divide by \(\bigl \Vert \varDelta x - \varDelta x(\omega ) \bigl \Vert _{X}\) which we can assume to be non-zero without loss of generality. From here, we can directly deduce the second part of (32) since we can take advantage of (31) by

The first part of (32) on the other hand requires some more consideration. We start at (35) but now take another route and directly add it to (33) which yields

and thereby

as we use (3) for \(H_x\). All prefactors in (37) are positive due to our assumptions such that the first part of (32) follows. This completes the proof. \(\square\)

The equivalence result for damped and undamped update steps in the form of (32) enables the proof of the following Corollary which will turn out to be useful for the admissibility of damped steps close to optimal solutions.

Corollary 2

Close to an optimal solution \(x_*\) of (1) we can find constants \(c_1 , c_2 > 0\) such that the following estimates hold:

Proof

For the deduction of both asserted inequalities, we will take advantage of the local superlinear convergence stated in Theorem 1, i.e., \(\bigl \Vert x_+ - x_* \bigl \Vert _{X} = o\big ( \bigl \Vert x-x_* \bigl \Vert _{X} \big )\) in the limit of \(x \rightarrow x_*\). Consequently, we can write

for some function \(\psi :[0,\infty [ \rightarrow [0,\infty [\) with \(\psi (t) \rightarrow 0\) for \(t \rightarrow 0\). With this helpful representation at hand, we estimate

By the definition of \(\psi\) above, this directly implies the first asserted inequality. We can deduce the second one similarly quickly via

We can assume \(\psi \big ( \bigl \Vert x-x_* \bigl \Vert _{X} \big ) < 1\) close to the optimal solution \(x_*\) and thereby deduce

with the additional help of (32). Taking into account that \(\omega\) remains bounded completes the proof of the second asserted inequality. \(\square\)

Now we are in the position to prove the admissibility of both undamped and damped steps close to optimal solutions of the composite minimization problem (1). We will see that undamped steps will generally be admissible whereas for the admissibility of damped steps we will have to assume an additional property of the second order model bilinear forms \(H_x\).

Proposition 8

Let \(x_* \in X\) be an optimal solution of (1) and let \(H_x \in \partial _N f'(x)\) suffice (3) as well as g suffice (4) with \(\kappa _1 + \kappa _2> 0\) in a neighborhood of \(x_*\). Additionally, suppose that (24) holds for f as well as (15) holds for \(f'\) at \(x_*\).

Steps as in (16) for any \(\omega \ge 0\) are admissible for sufficient descent according to (18) for any \(\gamma < 1\) if the second order bilinear forms \(H_x\) satisfy a bound of the form

In particular:

-

1.

full steps \(\varDelta x\) as defined in (5) are eventually admissible.

-

2.

if the mapping \(x \mapsto H_x\) is continuous at \(x=x_*\), then eventually all steps are admissible.

Proof

Let us take a look at the descent in the composite objective function F when performing an update step and see which estimates we can deduce with the help of the assumptions and results preceding this proposition.

We will denote the update by \(\varDelta x (\omega )\) or \(x_+(\omega ) = x + \varDelta x (\omega )\) respectively for some arbitrary \(\omega \ge 0\) such that the notation comprises both the damped and undamped case for the update step. Now, we write

and estimate the descent in the smooth part of the objective function \(f(x+\varDelta x(\omega )) - f(x)\). By telescoping we obtain the following identity:

In the last step we used second order semi-smoothness of f and semi-smoothness of \(f'\) at \(x_*\).

We observe that the only critical term is

We conclude

by Corollary 2 and then directly deduce

Now, we have to consider an estimate for the critical term \(\rho\) defined as above. We can define a prefactor function \(\gamma : X \times [0,\infty [ \rightarrow \mathbb {R}\) for the admissibility criterion (18) by

which should be larger than some \({\tilde{\gamma }} \in ]0,1[\). We may assume that the numerator of the latter expression is non-positive, otherwise this inequality is trivially fulfilled. Thus, by decreasing the positive denominator via (19) we obtain that for any \(\varepsilon >0\) there is a neigbourhood of \(x^*\), such that for any iterate x in this neighbourhood

where the latter \(\varepsilon\)-term arises from \(o(\Vert \varDelta x(\omega )\Vert _X^2)/\Vert \varDelta x(\omega )\Vert _X^2\) and can be chosen arbitrarily small for \(\Vert \varDelta x(\omega )\Vert _X \rightarrow 0\) which holds by the estimate

The \(\rho\)-term then vanishes by assumption (39), which is implied by i) or ii) in the following way:

\(\square\)

The seemingly paradoxical behavior that full Newton steps yield a better model approximation than damped Newton steps comes from the fact that \(f'\) is not Fréchet differentiable in general. The only prerequisite that we can take advantage of is (24) at fixed \(x_*\).

The continuity assumption ii) on \(H_x\) can be verified for superposition operators via Proposition 7, it holds, for example, for \(\max (0,t)^2\), if \(x_*(\omega )=0\) only on a set of zero measure.

7 Numerical results

We consider the following problem on \(\varOmega = [0,1]^2\subset {\mathbb {R}}^2\): Find \(u \in H^1_0(\varOmega ,{\mathbb {R}})\) that minimizes the composite objective functional F defined via

with parameters \(c > 0\) and \(\alpha ,\beta \in \mathbb {R}\) as well as a force field \(\rho : \varOmega \rightarrow \mathbb {R}\). The norm \(\bigl \Vert \cdot \bigl \Vert _{\mathbb {R}^2}\) denotes the Euclidean 2-norm on \(\mathbb {R}^2\). In the sense of the theory of the preceding sections we can identify the smooth part of F as \(f:H^1_0(\varOmega ,{\mathbb {R}}) \rightarrow \mathbb {R}\) given by

We have to note here that f technically does not satisfy the assumptions made on the smooth part of the composite objective functional specified above in the case \(\alpha \ne 0\) due to the lack of semi-smoothness of the corresponding squared max-term. The use of the derivative \(\nabla u\) instead of function values u creates a norm-gap which cannot be, as usual, compensated by Sobolev-embeddings and hinders the proof of semi-smoothness of the respective superposition operator. However, we think that slightly going beyond the framework of theoretical results for numerical investigations can be instructive.

For our implementation of the solution algorithm we chose the force field \(\rho\) to be constant on its domain and equal to some so called load-factor \({\tilde{\rho }} > 0\) which we will from now on refer to as simply \(\rho\). Consequently, the non-smooth part of the objective functional g only consists of the scaled integral over the absolute value term which apparently also satisfies the specifications made on g before. Note that the underlying Hilbert space is given by \(X = H^1_0(\varOmega ,{\mathbb {R}})\) which also determines the norm choice for regularization of the subproblem.

In the following we will dive deeper into the specifics of our implementation of the algorithm: In order to differentiate the smooth part of the composite objective functional and create a second order model of it around some current iterate, we take advantage of the automatic differentiation software package adol-C, cf. [30]. With the second order model at hand we can then consider subproblem (16) which has to be solved in order to obtain a candidate for the update of the current iterate. For the latter endeavor we employ a so called Truncated Non-smooth Newton Multigrid Method with a direct linear solver. We can summarize this method as a mixture of exact, non-smooth Gauß-Seidel steps for each component and global truncated Newton steps enhanced with a line-search procedure. The scheme is analytically proven to converge for convex and coercitive problems; for a more detailed description of the algorithm and its convergence properties consider [10].

However, the most delicate issue concerning the implementation of our algorithm and its application to the problem described above is the choice of the regularization parameter \(\omega \ge 0\) along the sequence of iterates \((x_k)\subset X\). For now, we want to confine ourselves to displaying the convergence properties of the class of Proximal Newton methods in the scenario presented above and not attach too much value to algorithmic technicalities. As a consequence, we took the rather heuristic approach of simply doubling \(\omega\) in the case that the sufficient descent criterion (18) (for \(\gamma = \frac{1}{2}\)) is not satisfied by the current update step candidate and on the other hand multiplying \(\omega\) by \(\big (\frac{1}{2}\big )^{n}\) where \(n \in \mathbb {N}\) denotes the number of consecutive accepted update steps. The latter feature ensures that local fast convergence is recognized by the algorithm and the regularization parameter quickly decreases once the iterates come close to the minimizer. For the superlinear convergence demonstrated in Theorem 1 to arise, undamped update steps have to be conducted, i.e., the regularization parameter has to be zero and not merely sufficiently small. For this reason we set \(\omega = 0\) once it reaches a threshold value \(\omega _0\) following the procedure described beforehand. On the contrary, if a full update step is not accepted by the sufficient descent criterion, we set \(\omega = \omega _0\) and from there on proceed as usual.

Even though the choice of \(\omega\) considered here is rather heuristic and not problem-specific at all, it stands in perfect conformity with the theory established over the course of the previous sections and also successfully displays the global convergence and local acceleration of our Proximal Newton method for the model problem of minimizing (41) over \(H^1_0(\varOmega ,\mathbb {R})\). Moreover, we added a threshold value for the descent considering the modified quadratic model \(\lambda _\omega \big (\varDelta x (\omega )\big )\) as a stopping criterion for our algorithm, i.e., the computation stops as soon as we have \(|\lambda _\omega \big (\varDelta x (\omega )\big )| < 10^{-14}\) for an admissible step \(\varDelta x (\omega )\).

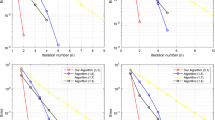

Figure 1a constitutes a logarithmic plot of correction norms \(\bigl \Vert \varDelta x_k (\omega _k) \bigl \Vert _{H^1(\varOmega )}\) for constant values of \(c = 80\), \(\beta = 40\) and \(\rho = -100\) while \(\alpha\) is increased from 0 to 240 in equidistant steps of 40. Quite predictably from the structure of the functional, increasing values of \(\alpha\) make the minimization problem more and more difficult to solve for our method but eventually the local superlinear convergence is evident also for larger values of \(\alpha\). Figure 1b shows the corresponding values of the regularization parameter \(\omega\) which were used along the accepted steps on the way to the minimizer.

Apart from those considerations, it is always very insightful to compare the performance of our algorithm with other existing methods for similar problems to the one introduced in (41). To this end, we considered two alternatives: Firstly, we used a simple Proximal Gradient procedure with \(H^1\)-regularization by ignoring the second order bilinear form \(H_x\) in the update step subproblem (16) and secondly, we took advantage of acceleration strategies for such Proximal Gradient methods by implementing the FISTA-algorithm as presented in [21]. In Fig. 2a and b, the norms of update steps are plotted for both variants for solving the same problem as above, i.e., \(c = 80\), \(\beta = 40\) and \(\rho = -100\) while \(\alpha\) we increase in equidistant steps of 40 from 0 to 160. We recognize a clear difference in performance in the transition both from Proximal Gradient to FISTA and from FISTA to Proximal Newton across all \(\alpha\)-variations of the considered toy problem. Even in the rather mild case of \(\alpha = 0\) Proximal Gradient takes \(N = 5326\) and FISTA takes \(N = 2498\) iterations to reach the minimizer. Note that in this case we only used four uniform grid refinements due to the very high computational effort of the simulations which does not diminish the qualitative significance of our observations.

Furthermore, Table 1 displays the total number of iterations required in order to reach the minimizer of (1) considering different grid sizes for the discretization of the objective function for the values of the prefactor \(\alpha\) investigated beforehand. In the case \(\alpha > 0\) we observe some moderate increase in iteration numbers, which is attributed to the presence of a norm-gap in the corresponding term.

8 Conclusion

Now that we have sufficiently displayed the global and local convergence properties of our Proximal Newton method, it is time to both reflect on what we have achieved here as well as discuss some possible improvements on the algorithm and its implementation which are a topic of future research:

We have developed a globally convergent and locally accelerated Proximal Newton method in a Hilbert space setting which demands neither second order differentiability of the smooth part nor convexity of either part of the composite objective function. Concerning differentiability, we have introduced the notion of second order semi-smoothness. Concerning non-convexity, our theoretical framework uses quantified information on lacking convexity instead of simply resorting to a different first order update scheme in the non-convex case. The globalization scheme takes advantage of a proximal arc search procedure and thereby establishes stationarity of all limit points of the sequence of iterates. Additional convexity close to optimal solutions of the original problem leads to local acceleration of our method which in particular does not rely on strong convexity of the smooth part, but only on the strong convexity of the composite functional thanks to a well-thought definition of proximal mappings within the theoretical framework. The application of our method to actual function space problems is enabled by using an efficient solver for the step computation subproblem, the Truncated Non-smooth Newton Multigrid Method. We have displayed global convergence and local acceleration of our algorithm by considering a toy model problem in function space.

As we have already mentioned beforehand, the choice of the regularization parameter we employed here is rather heuristic and not problem-specific at all. This issue can be addressed by using an estimate for the residual term of the quadratic model established in subproblem (16), as seen in [31] for adaptive affine conjugate Newton methods where non-convex but smooth minimization problems for nonlinear elastomechanics have been thoroughly investigated. The idea behind the procedure is to evaluate actual residual terms for formerly computed correction candidates and then use them as a regularization parameter for the computation of the next update step candidate.

Another focal concern of our future work is taking into account inexactness in the computation of update steps. Inexact solutions of subproblem (16) are then required to at least satisfy certain inexactness criteria which still give access to similar global and local convergence properties of the ensuing algorithm as the exact version discussed throughout the present treatise.

Additionally, these inexactness criteria should be sufficiently simple to evaluate since they have to be considered within every iteration of solving the subproblem for update step computation. However, the discussion of inexact Proximal Newton methods then opens up the possibility of considering more challenging real-world applications like energetic formulations of finite strain plasticity.

Data availability statement

The datasets generated and analysed during the current study are not publicly available due the fact that they constitute an excerpt of research in progress but are available from the corresponding author on reasonable request.

References

Argyriou, A., Micchelli, C.A., Pontil, M., Shen, L., Xu, Y.: Efficient first order methods for linear composite regularizers. Preprint (2011)

Beck, A.: First-order methods in optimization. Soc. Indus. Appl. Math. (2017). https://doi.org/10.1137/1.9781611974997

Byrd, R.H., Nocedal, J., Oztoprak, F.: An inexact successive quadratic approximation method for l-1 regularized optimization. Math. Program. 157(2), 375–396 (2015). https://doi.org/10.1007/s10107-015-0941-y

Chen, D.Q., Zhou, Y., Song, L.J.: Fixed point algorithm based on adapted metric method for convex minimization problem with application to image deblurring. Adv. Comput. Math. 42(6), 1287–1310 (2016). https://doi.org/10.1007/s10444-016-9462-3

Chen, P., Huang, J., Zhang, X.: A primal-dual fixed point algorithm for minimization of the sum of three convex separable functions. Fixed Point Theory Appl. 2016(1) (2016). https://doi.org/10.1186/s13663-016-0543-2

Dinh, Q.T., Kyrillidis, A., Cevher, V.: A proximal newton framework for composite minimization: Graph learning without cholesky decompositions and matrix inversions. Presented at the (2013)

Fountoulakis, K., Tappenden, R.: A flexible coordinate descent method. Comput. Optim. Appl. 70(2), 351–394 (2018). https://doi.org/10.1007/s10589-018-9984-3

Fukushima, M., Mine, H.: A generalized proximal point algorithm for certain non-convex minimization problems. Int. J. Syst. Sci. 12(8), 989–1000 (1981). https://doi.org/10.1080/00207728108963798

Ghanbari, H., Scheinberg, K.: Proximal quasi-newton methods for regularized convex optimization with linear and accelerated sublinear convergence rates. Comput. Optim. Appl. 69(3), 597–627 (2017). https://doi.org/10.1007/s10589-017-9964-z

Gräser, C., Sander, O.: Truncated nonsmooth newton multigrid methods for block-separable minimization problems. IMA J. Numer. Anal. 39(1), 454–481 (2018). https://doi.org/10.1093/imanum/dry073

Hintermüller, M., Ulbrich, M.: A mesh-independence result for semismooth Newton methods. Math. Program. 101(1, Ser. B), 151–184 (2004). https://doi.org/10.1007/s10107-004-0540-9

Hintermüller, M., Ito, K., Kunisch, K.: The primal-dual active set strategy as a semismooth newton method. SIAM J. Optim. 13(3), 865–888 (2002). https://doi.org/10.1137/s1052623401383558

Kanzow, C., Lechner, T.: Globalized inexact proximal newton-type methods for nonconvex composite functions. Comput. Optim. Appl. (2020). https://doi.org/10.1007/s10589-020-00243-6

Kruger, A.Y.: On fréchet subdifferentials. J. Math. Sci. 116(3), 3325–3358 (2003). https://doi.org/10.1023/a:1023673105317

Lee, C.-P., Wright, S.J.: Inexact successive quadratic approximation for regularized optimization. Comput. Optim. Appl. 72(3), 641–674 (2019). https://doi.org/10.1007/s10589-019-00059-z

Lee, J.D., Sun, Y., Saunders, M.A.: Proximal newton-type methods for minimizing composite functions. SIAM J. Optim. 24(3), 1420–1443 (2014). https://doi.org/10.1137/130921428

Li, J., Andersen, M.S., Vandenberghe, L.: Inexact proximal newton methods for self-concordant functions. Math. Methods Oper. Res. 85(1), 19–41 (2016). https://doi.org/10.1007/s00186-016-0566-9

Li, Q., Shen, L., Xu, Y., Zhang, N.: Multi-step fixed-point proximity algorithms for solving a class of optimization problems arising from image processing. Adv. Comput. Math. 41(2), 387–422 (2014). https://doi.org/10.1007/s10444-014-9363-2

Milzarek, A.: Numerical methods and second order theory for nonsmooth problems. Ph.D. thesis, TU München (2016)

Milzarek, A., Ulbrich, M.: A semismooth newton method with multidimensional filter globalization for \(l_1\)-optimization. SIAM J. Optim. 24(1), 298–333 (2014). https://doi.org/10.1137/120892167

Scheinberg, K., Goldfarb, D., Bai, X.: Fast first-order methods for composite convex optimization with backtracking. Found. Comput. Math. 14(3), 389–417 (2014). https://doi.org/10.1007/s10208-014-9189-9

Scheinberg, K., Tang, X.: Practical inexact proximal quasi-newton method with global complexity analysis. Math. Program. 160(1–2), 495–529 (2016). https://doi.org/10.1007/s10107-016-0997-3

Schiela, A.: A simplified approach to semismooth Newton methods in function space. SIAM J. Optim. 19(3), 1417–1432 (2008). https://doi.org/10.1137/060674375

Stella, L., Themelis, A., Patrinos, P.: Forward–backward quasi-newton methods for nonsmooth optimization problems. Comput. Optim. Appl. 67(3), 443–487 (2017). https://doi.org/10.1007/s10589-017-9912-y

Tran-Dinh, Q., Li, Y.H., Cevher, V.: Composite convex minimization involving self-concordant-like cost functions. In: Advances in Intelligent Systems and Computing, pp. 155–168. Springer International Publishing (2015). https://doi.org/10.1007/978-3-319-18161-5_14

Tröltzsch, F.: In: Optimal Control of Partial Differential Equations. In: Graduate Studies in Mathematics, vol. 112. Theory, Methods and Applications, Translated from the 2005 German original by Jügen Sprek. American Mathematical Society, Providence, RI (2010). https://doi.org/10.1090/gsm/112

Tseng, P., Yun, S.: A coordinate gradient descent method for nonsmooth separable minimization. Math. Program. 117(1–2), 387–423 (2007). https://doi.org/10.1007/s10107-007-0170-0

Ulbrich, M.: Nonsmooth newton-like methods for variational inequalities and constrained optimization problems in function spaces. Habilitation thesis (2002)

Ulbrich, M.: Semismooth Newton methods for variational inequalities and constrained optimization problems in function spaces. Soc. Indus. Appl. Math. (2011). https://doi.org/10.1137/1.9781611970692

Walther, A., Griewank, A.: Getting started with ADOL-c. In: Combinatorial Scientific Computing, pp. 181–202. Chapman and Hall/CRC (2012). https://doi.org/10.1201/b11644-8

Weiser, M., Deuflhard, P., Erdmann, B.: Affine conjugate adaptive newton methods for nonlinear elastomechanics. Optim. Methods Softw. 22(3), 413–431 (2007). https://doi.org/10.1080/10556780600605129

Funding

Open Access funding enabled and organized by Projekt DEAL. This work was funded by the DFG SPP 1962: Non-smooth and Complementarity-based Distributed Parameter Systems – Simulation and Hierarchical Optimization; Project number: SCHI 1379/6-1

Author information

Authors and Affiliations

Corresponding author

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Pötzl, B., Schiela, A. & Jaap, P. Second order semi-smooth Proximal Newton methods in Hilbert spaces. Comput Optim Appl 82, 465–498 (2022). https://doi.org/10.1007/s10589-022-00369-9

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s10589-022-00369-9