Abstract

We present a benchmark study of Optical Flow (OpF) methods for fluid mechanics applications. It aims to assess the performance of three OpF methods, each combined or not with the Liu and Shen (JFM 614:253–291, 2008) algorithm: Lucas and Kanade (in: Proceedings of the 7th international joint conference on artificial intelligence, 1981), Horn and Schunck (Artif Intell 17:185–203, 1981) and Farnebäck (Two-frame motion estimation based on polynomial expansion, in: Bigun, Gustavsson (eds) Image analysis, Springer, 2003). The performance of the OpF methods is evaluated exclusively as the difference between the values returned by the methods and the ground truth (reference values), and is benchmarked for deformation-dominated, rotation-dominated and uniform flows. For each flow type, relative and absolute errors are computed for different tracer displacements, noise levels, particle image sizes (in pixels), image bit depths and particle concentrations. The accuracy of the OpF methods appears to be mainly affected by the magnitude of velocity gradients and convective accelerations, and not by the relative preponderance of rotational and deformation components. The inner region of the Poiseuille flow and the saddle point of the Rankine vortex combined with uniform flow pose significant difficulties to all methods. The Lucas-Kanade/Liu-Shen combination performs best for all flow types, image conditions and image bit depths. The Farnebäck/Liu-Shen combination performs similarly well, but only for image bit depths of 10 bits or higher. These OpF methods maintain high performance for tracer sizes and concentrations outside the optimal PIV range. Horn-Schunck is the worst-performing method, owing to its high sensitivity to variations in particle concentration and particle size. These results can be used to plan new particle-based velocimetry experiments or to retrieve further information from existing PIV databases.

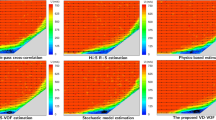

Graphical abstract

References

Adam P, Burg B, Zavidovique B (1986) Dynamic programming for region based pattern recognition. In: ICASSP ’86. IEEE International conference on acoustics, speech, and signal processing. 11: 2075–2078. https://doi.org/10.1109/ICASSP.1986.1168952

Allaoui R, Mouane HH, Asrih Z, Mars S, El Hajjouji I, El Mourabit A (2017) FPGA-based implementation of optical flow algorithm. In: 2017 international conference on electrical and information technologies (ICEIT), pp 1–5, https://doi.org/10.1109/EITech.2017.8255246

Anandan P (1989) A computational framework and an algorithm for the measurement of visual motion. Int J Comput Vis 2(3):283–310. https://doi.org/10.1007/BF00158167

Aubert G, Deriche R, Kornprobst P (1999) Computing optical flow via variational techniques. SIAM J Appl Math 60(1):156–182. https://doi.org/10.1137/S0036139998340170

Barron JL, Fleet DJ, Beauchemin SS (1994) Performance of optical flow techniques. Int J Comput Vis 12(1):43–77. https://doi.org/10.1007/BF01420984

Bung DB, Valero D (2016) Optical flow estimation in aerated flows. J Hydraul Res 54(5):575–580. https://doi.org/10.1080/00221686.2016.1173600

Burt PJ (1984) The pyramid as a structure for efficient computation. Springer, Berlin, Heidelberg, pp 6–35. https://doi.org/10.1007/978-3-642-51590-3_2

Burt P, Adelson E (1983) The Laplacian pyramid as a compact image code. IEEE Trans Commun 31(4):532–540. https://doi.org/10.1109/TCOM.1983.1095851

Cai S, Mémin E, Dérian P, Xu C (2017) Motion estimation under location uncertainty for turbulent fluid flows. Exp Fluids 59(1):8. https://doi.org/10.1007/s00348-017-2458-z

Carlier J, Wieneke B (2005) Report 1 on production and diffusion of fluid mechanics images and data. FLUID project deliverable 1.2. European Project 'Fluid image analysis and description' (FLUID). http://fluid.irisa.fr/data-eng.htm

Cassisa C, Simoens S, Prinet V, Shao L (2011) Subgrid scale formulation of optical flow for the study of turbulent flow. Exp Fluids 51(6):1739–1754. https://doi.org/10.1007/s00348-011-1180-5

Champagnat F, Plyer A, Le Besnerais G, Leclaire B, Davoust S, Le Sant Y (2011) Fast and accurate PIV computation using highly parallel iterative correlation maximization. Exp Fluids 50(4):1169. https://doi.org/10.1007/s00348-011-1054-x

Chantas G, Gkamas T, Nikou C (2014) Variational-Bayes optical flow. J Math Imag Vis 50(3):199–213. https://doi.org/10.1007/s10851-014-0494-3

Corpetti T, Heitz D, Arroyo G, Mémin E, Santa-Cruz A (2006) Fluid experimental flow estimation based on an optical-flow scheme. Exp Fluids 40(1):80–97. https://doi.org/10.1007/s00348-005-0048-y

Dérian P, Héas P, Herzet C, Mémin E (2013) Wavelets and optical flow motion estimation. Numer Math A J Chin Univ 6:116–137

Dosovitskiy A, Fischer P, Ilg E, Häusser P, Hazirbas C, Golkov V, Smagt Pvd, Cremers D, Brox T (2015) FlowNet: Learning optical flow with convolutional networks. In: 2015 IEEE international conference on computer vision (ICCV), pp 2758–2766, https://doi.org/10.1109/ICCV.2015.316

Farnebäck G (2003) Two-frame motion estimation based on polynomial expansion. In: Bigun J, Gustavsson T (eds) Image analysis. Springer, Berlin, Heidelberg, pp 363–370. https://doi.org/10.1007/3-540-45103-X_50

Fleet DJ, Jepson AD (1990) Computation of component image velocity from local phase information. Int J Comput Vis 5(1):77–104. https://doi.org/10.1007/BF00056772

Frost G, Pitt B, Freeman J, Mendes L, et al (2021) Aparapi v3.0.0: a parallel API Java open-source framework for GPGPU. https://git.qoto.org/aparapi/aparapi/-/releases/v3.0.0, accessed 29 September 2021

Głomb G, Świrniak G (2019) A hybrid method for velocity field of fluid flow estimation based on optical flow. In: Lehmann P, Osten W, Jr AAG (eds) Optical measurement systems for industrial inspection XI, international society for optics and photonics, SPIE. 11056: 969–980. https://doi.org/10.1117/12.2525711

Haggui O, Tadonki C, Sayadi F, Ouni B (2019) Efficient GPU implementation of Lucas-Kanade through OpenACC. In: Proceedings of the 14th international joint conference on computer vision, imaging and computer graphics theory and applications: volume 5: VISAPP, INSTICC, SciTePress, pp 768–775, https://doi.org/10.5220/0007272107680775

Heeger DJ (1988) Optical flow using spatiotemporal filters. Int J Comput Vis 1(4):279–302. https://doi.org/10.1007/BF00133568

Heitz D, Héas P, Mémin E, Carlier J (2008) Dynamic consistent correlation-variational approach for robust optical flow estimation. Exp Fluids 45(4):595–608. https://doi.org/10.1007/s00348-008-0567-4

Heitz D, Mémin E, Schnörr C (2010) Variational fluid flow measurements from image sequences: synopsis and perspectives. Exp Fluids 48(3):369–393. https://doi.org/10.1007/s00348-009-0778-3

Horn BK, Schunck BG (1981) Determining optical flow. Artif Intell 17(1):185–203. https://doi.org/10.1016/0004-3702(81)90024-2

Kähler CJ, Astarita T, Vlachos PP, Sakakibara J, Hain R, Discetti S, La Foy R, Cierpka C (2016) Main results of the 4th international PIV challenge. Exp Fluids 57(6):97. https://doi.org/10.1007/s00348-016-2173-1

Kajo I, Malik AS, Kamel N (2016) An evaluation of optical flow algorithms for crowd analytics in surveillance system. In: 2016 6th International conference on intelligent and advanced systems (ICIAS), pp 1–6, https://doi.org/10.1109/ICIAS.2016.7824064

Keane RD, Adrian RJ (1992) Theory of cross-correlation analysis of PIV images. Appl Sci Res 49(3):191–215. https://doi.org/10.1007/BF00384623

Kondermann D, Abraham S, Brostow G, Förstner W, Gehrig S, Imiya A, Jähne B, Klose F, Magnor M, Mayer H, Mester R, Pajdla T, Reulke R, Zimmer H (2012) On performance analysis of optical flow algorithms. In: Dellaert F, Frahm JM, Pollefeys M, Leal-Taixé L, Rosenhahn B (eds) Outdoor and large-scale real-world scene analysis. Springer, Berlin, Heidelberg, pp 329–355. https://doi.org/10.1007/978-3-642-34091-8_15

Le Besnerais G, Champagnat F (2005) Dense optical flow by iterative local window registration. In: IEEE international conference on image processing 2005. 1: I–137, https://doi.org/10.1109/ICIP.2005.1529706

Lecordier B, Westerweel J (2004) The EUROPIV synthetic image generator (S.I.G.). In: Stanislas M, Westerweel J, Kompenhans J (eds) Particle image velocimetry: recent improvements. Springer, Berlin, Heidelberg, pp 145–161. https://doi.org/10.1007/978-3-642-18795-7_11

Liu T (2017) OpenOpticalFlow: an open source program for extraction of velocity fields from flow visualization images. J Open Res Softw 5(1):29. https://doi.org/10.5334/jors.168

Liu T, Salazar D (2021) OpenOpticalFlow_PIV: an open source program integrating optical flow method with cross-correlation method for particle image velocimetry. J Open Res Softw 9(1):3. https://doi.org/10.5334/jors.326

Liu T, Shen L (2008) Fluid flow and optical flow. J Fluid Mech 614:253–291. https://doi.org/10.1017/S0022112008003273

Liu T, Merat A, Makhmalbaf MHM, Fajardo C, Merati P (2015) Comparison between optical flow and cross-correlation methods for extraction of velocity fields from particle images. Exp Fluids 56(8):166. https://doi.org/10.1007/s00348-015-2036-1

Liu T, Salazar DM, Fagehi H, Ghazwani H, Montefort J, Merati P (2020) Hybrid optical-flow-cross-correlation method for particle image velocimetry. J Fluids Eng 142(5):054501. https://doi.org/10.1115/1.4045572

Lucas BD, Kanade T (1981) An iterative image registration technique with an application to stereo vision. In: Proceedings of the 7th international joint conference on artificial intelligence - Volume 2, Morgan Kaufmann Publishers Inc., San Francisco, CA, USA, IJCAI’81, pp 674–679, https://dl.acm.org/doi/10.5555/1623264.1623280

Lyasheva S, Rakhmankulov R, Shleymovich M (2020) Frame interpolation in video stream using optical flow methods. J Phys Conf Ser 1488:012024. https://doi.org/10.1088/1742-6596/1488/1/012024

Mémin E, Pérez P (2002) Hierarchical estimation and segmentation of dense motion fields. Int J Comput Vis 46(2):129–155. https://doi.org/10.1023/A:1013539930159

Mendes L, Bernardino A, Ferreira RML (2020) piv-image-generator: an image generating software package for planar PIV and optical flow benchmarking. https://doi.org/10.1016/j.softx.2020.100537. https://www.sciencedirect.com/science/article/pii/S2352711020300339

Mendes L, Ricardo A, Ferreira RML (2019) A customizable open-source PIV software platform. In: Proceedings of Hydrosensoft 2019 - international symposium and exhibition on hydro-environment sensors and software

Ohta Y, Kanade T (1985) Stereo by intra- and inter-scanline search using dynamic programming. IEEE Trans Pattern Anal Mach Intell PAMI 7(2):139–154. https://doi.org/10.1109/TPAMI.1985.4767639

Okamoto K, Nishio S, Saga T, Kobayashi T (2000) Standard images for particle-image velocimetry. Meas Sci Technol 11(6):685–691. https://doi.org/10.1088/0957-0233/11/6/311

Papadakis N, Mémin E (2008) A variational technique for time consistent tracking of curves and motion. J Math Imag Vis 31(1):81–103. https://doi.org/10.1007/s10851-008-0069-2

Papenberg N, Bruhn A, Brox T, Didas S, Weickert J (2006) Highly accurate optic flow computation with theoretically justified warping. Int J Comput Vis 67(2):141–158. https://doi.org/10.1007/s11263-005-3960-y

Papenberg N, Bruhn A, Brox T, Weickert J (2003) Numerical justification for multi-resolution optical flow computation. In: International workshop on computer vision and image analysis (IWCVIA), IWCVIA03. 26: 7–12

Plyer A, Le Besnerais G, Champagnat F (2016) Massively parallel Lucas Kanade optical flow for real-time video processing applications. J Real-Time Image Proc 11(4):713–730. https://doi.org/10.1007/s11554-014-0423-0

Prasad AK, Adrian RJ, Landreth CC, Offutt PW (1992) Effect of resolution on the speed and accuracy of particle image velocimetry interrogation. Exp Fluids 13(2):105–116. https://doi.org/10.1007/BF00218156

Quénot GM, Pakleza J, Kowalewski TA (1998) Particle image velocimetry with optical flow. Exp Fluids 25(3):177–189. https://doi.org/10.1007/s003480050222

Raffel M, Willert C, Wereley S, Kompenhans J (2007) Particle image velocimetry: a practical guide. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-72308-0

Ren Z, Yan J, Ni B, Liu B, Yang X, Zha H (2017) Unsupervised deep learning for optical flow estimation. Proceedings of the AAAI conference on artificial intelligence 31(1), https://ojs.aaai.org/index.php/AAAI/article/view/10723

Roth S, Black MJ (2007) On the spatial statistics of optical flow. Int J Comput Vis 74(1):33–50. https://doi.org/10.1007/s11263-006-0016-x

Ruhnau P, Schnörr C (2007) Optical Stokes flow estimation: an imaging-based control approach. Exp Fluids 42(1):61–78. https://doi.org/10.1007/s00348-006-0220-z

Ruhnau P, Kohlberger T, Schnörr C, Nobach H (2005) Variational optical flow estimation for particle image velocimetry. Exp Fluids 38(1):21–32. https://doi.org/10.1007/s00348-004-0880-5

Schmidt BE, Sutton JA (2019) High-resolution velocimetry from tracer particle fields using a wavelet-based optical flow method. Exp Fluids 60(3):37. https://doi.org/10.1007/s00348-019-2685-6

Seong JH, Song MS, Nunez D, Manera A, Kim ES (2019) Velocity refinement of PIV using global optical flow. Exp Fluids 60(11):174. https://doi.org/10.1007/s00348-019-2820-4

Stanislas M, Okamoto K, Kähler CJ, Westerweel J (2005) Main results of the second international PIV challenge. Exp Fluids 39(2):170–191. https://doi.org/10.1007/s00348-005-0951-2

Stanislas M, Okamoto K, Kähler CJ, Westerweel J, Scarano F (2008) Main results of the third international PIV challenge. Exp Fluids 45(1):27–71. https://doi.org/10.1007/s00348-008-0462-z

Sun J, Quevedo FJ, Bollt E (2018) Bayesian optical flow with uncertainty quantification. Inverse Prob 34(10):105008. https://doi.org/10.1088/1361-6420/aad7cc

Tomasi C, Kanade T (1991) Detection and tracking of point features. Tech. Rep. CMU-CS-91-132, Carnegie Mellon University

Tu Z, Xie W, Zhang D, Poppe R, Veltkamp RC, Li B, Yuan J (2019) A survey of variational and CNN-based optical flow techniques. Signal Process Image Commun 72:9–24. https://doi.org/10.1016/j.image.2018.12.002

Wang B, Cai Z, Shen L, Liu T (2015) An analysis of physics-based optical flow. J Comput Appl Math 276:62–80. https://doi.org/10.1016/j.cam.2014.08.020

Weickert J, Bruhn A, Brox T, Papenberg N (2006) A survey on variational optic flow methods for small displacements. Springer, Berlin, Heidelberg, pp 103–136. https://doi.org/10.1007/978-3-540-34767-5_5

Westerweel J (1993) Digital particle image velocimetry: Theory and application. PhD thesis, Mechanical Maritime and Materials Engineering, Delft

Westerweel J (1997) Fundamentals of digital particle image velocimetry. Meas Sci Technol 8(12):1379–1392. https://doi.org/10.1088/0957-0233/8/12/002

Wills J, Agarwal S, Belongie S (2006) A feature-based approach for dense segmentation and estimation of large disparity motion. Int J Comput Vis 68(2):125–143. https://doi.org/10.1007/s11263-006-6660-3

Yang Z, Johnson M (2017) Hybrid particle image velocimetry with the combination of cross-correlation and optical flow method. J Vis 20(3):625–638. https://doi.org/10.1007/s12650-017-0417-7

Acknowledgements

This research was partially supported by Portuguese and European funds, within the COMPETE 2020 and PORL-FEDER programs, through project PTDC/CTA-OHR/29360/2017 RiverCure and by the Ph.D. Grant SFRH/BD/137967/2018 from the Portuguese Foundation for Science and Technology (FCT).

Author information

Contributions

All authors contributed to the study conception and design. Material preparation, data collection and analysis were performed by LPNM. The successive versions of the manuscript were written by LPNM and RMLF. RMLF and AJMB commented on the versions of the manuscript. All authors read and approved the final manuscript.

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Appendix

1.1 PIV and optical flow methods

This subsection places PIV, in a loose sense, in context with OpF. Figure 12 presents a brief comparison between JPIV v21.08 (Java), OpenPIV v0.23.6 (Python) and QuickLab PIV v0.5.7 (our reference implementation). The figure shows that all three PIV implementations perform better for particle concentrations of \(N_i \ge 6\) (higher reliability) and for particle spot diameters of 2.0 or 3.0 px (higher precision); OpenPIV also copes well with 6.0 px particle spots. None of the implementations copes well with 1.0 px particle spots. These PIV implementations match the theoretically expected behavior of PIV methods (see Raffel et al. 2007).
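To make the PIV side of the comparison concrete, the sketch below estimates the displacement of one interrogation area from the peak of an FFT-based cross-correlation, refined with a three-point Gaussian sub-pixel fit. It is a generic textbook scheme written for illustration, not the code of JPIV, OpenPIV or QuickLab PIV. The particle renderer, the e^-2 spot-diameter convention, the 32 px window (chosen only to keep the toy example robust) and all function names are assumptions.

```python
import numpy as np


def render_particles(shape, xs, ys, diameter=3.0):
    """Sum of Gaussian spots; 'diameter' is treated as the e^-2 spot width (assumption)."""
    yy, xx = np.mgrid[0:shape[0], 0:shape[1]].astype(float)
    sigma = diameter / 4.0
    img = np.zeros(shape)
    for x0, y0 in zip(xs, ys):
        img += np.exp(-((xx - x0) ** 2 + (yy - y0) ** 2) / (2.0 * sigma**2))
    return img


def peak_offset(cm, c0, cp):
    """Three-point Gaussian sub-pixel fit; parabolic fallback if a value is not positive."""
    if min(cm, c0, cp) > 0:
        cm, c0, cp = np.log(cm), np.log(c0), np.log(cp)
    denom = 2.0 * cm - 4.0 * c0 + 2.0 * cp
    return 0.0 if denom == 0 else (cm - cp) / denom


def ia_displacement(ia_a, ia_b):
    """(dx, dy) of the pattern in ia_b relative to ia_a, from the cross-correlation peak."""
    a = ia_a - ia_a.mean()
    b = ia_b - ia_b.mean()
    # Circular cross-correlation R(d) = sum_x a(x) b(x + d), zero lag moved to the centre
    corr = np.fft.fftshift(np.real(np.fft.ifft2(np.conj(np.fft.fft2(a)) * np.fft.fft2(b))))
    ny, nx = corr.shape
    py, px = np.unravel_index(np.argmax(corr), corr.shape)
    # Neighbours wrap around because the correlation is circular
    dy = py - ny // 2 + peak_offset(corr[(py - 1) % ny, px], corr[py, px], corr[(py + 1) % ny, px])
    dx = px - nx // 2 + peak_offset(corr[py, (px - 1) % nx], corr[py, px], corr[py, (px + 1) % nx])
    return dx, dy


# Usage: random particles displaced by (1.5, -0.7) px, one 32 x 32 px window cut from both frames
rng = np.random.default_rng(0)
xs, ys = rng.uniform(0, 48, 60), rng.uniform(0, 48, 60)
frame_a = render_particles((48, 48), xs, ys)
frame_b = render_particles((48, 48), xs + 1.5, ys - 0.7)
dx, dy = ia_displacement(frame_a[8:40, 8:40], frame_b[8:40, 8:40])
print(f"dx = {dx:.2f} px, dy = {dy:.2f} px")
```

The mean is subtracted before correlating so that the peak reflects particle matches rather than the image background; the parabolic fallback only guards the logarithm when a neighbouring correlation value is not positive.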

OpF methods are compared with PIV by dividing all images into interrogation areas of 16 px by 16 px, as employed by PIV software, rather than by considering all the individual OpF vectors. A SIG absolute error and a SIG relative error are thus computed for each interrogation area from the optical flow vectors contained in it. Only at the final step are the errors averaged over all interrogation areas, mimicking the error computation process used for the PIV accuracy evaluation.
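A minimal sketch of this IA-based error evaluation follows. It assumes that the OpF vectors inside each 16 px × 16 px interrogation area are averaged into one vector and compared with the IA-averaged ground truth, and it reports plain (linear) absolute and relative errors; the conversion to the dB-scaled SIG errors defined in Sect. 3 is not reproduced. The function names are hypothetical.

```python
import numpy as np


def block_mean(field, ia=16):
    """Average an (H, W) field over non-overlapping ia x ia interrogation areas."""
    hb, wb = field.shape[0] // ia, field.shape[1] // ia
    trimmed = field[: hb * ia, : wb * ia]          # drop incomplete border IAs
    return trimmed.reshape(hb, ia, wb, ia).mean(axis=(1, 3))


def ia_errors(u, v, u_ref, v_ref, ia=16, eps=1e-12):
    """Mean absolute (px) and relative errors over IAs, one vector per IA (assumption)."""
    ub, vb = block_mean(u, ia), block_mean(v, ia)
    urb, vrb = block_mean(u_ref, ia), block_mean(v_ref, ia)
    abs_err = np.hypot(ub - urb, vb - vrb)                     # px, one value per IA
    rel_err = abs_err / np.maximum(np.hypot(urb, vrb), eps)    # dimensionless
    # Errors are averaged over all IAs only at the final step
    return abs_err.mean(), rel_err.mean()


# Usage: dense estimate with a uniform 0.1 px bias in u over a 64 x 64 px image
u_ref, v_ref = np.full((64, 64), 1.0), np.zeros((64, 64))
abs_e, rel_e = ia_errors(u_ref + 0.1, v_ref, u_ref, v_ref)
print(f"absolute error = {abs_e:.3f} px, relative error = {rel_e:.3f}")
```

The `reshape(hb, ia, wb, ia)` trick groups each IA's pixels onto axes 1 and 3, so a single `mean` yields one value per interrogation area without explicit loops.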

Figure 13 shows that the combined dense Lucas-Kanade/Liu-Shen method performs well compared with our PIV reference implementation, in terms of both SIG absolute and relative errors. A few parameter combinations work marginally better with our PIV reference implementation, particularly for the uniform flow with displacements of 4.0 px (\(\mathscr {E}=-0.16\) dBpx and \(\mathscr {E}_R=-0.16\) dB). These exceptions are of little practical relevance. Conversely, these results do not imply that the tested OpF method is superior to state-of-the-art PIV solutions: our PIV implementation is a classic one and will likely not perform on par with current state-of-the-art solutions.

The comparison in Fig. 13 confirms that the combined dense Lucas-Kanade/Liu-Shen method is valid for PIV processing of 8-bit images. With an image bit depth of 10 bits, the Farnebäck/Liu-Shen combination also becomes usable. The comparison in Fig. 14 confirms that this method also performs well compared with our PIV reference implementation, in terms of both SIG absolute and relative errors. The only notable exceptions, relevant in number of occurrences rather than in error magnitude, occur for the uniform flow with displacements of 4.0 px, where \(\mathscr {E}=-0.28\) dBpx and \(\mathscr {E}_R=-0.28\) dB. From these results, we conclude that the Farnebäck/Liu-Shen combination is suitable for processing images with 10- or 12-bit depths. Our PIV implementation shows no accuracy improvement for bit depths above 8 bits.
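Bit depth enters these comparisons through intensity quantization. The short sketch below, an illustration rather than the image pipeline used in the study, quantizes a normalized image to n bits and checks that the worst-case rounding error stays within half a gray level, i.e. 0.5/(2^n - 1) of full scale, for the 8-, 10- and 12-bit cases discussed here. `quantize` is a hypothetical helper.

```python
import numpy as np


def quantize(img, bits):
    """Round an image with values in [0, 1] to 2**bits gray levels (bits <= 16)."""
    levels = 2**bits - 1
    return np.round(np.clip(img, 0.0, 1.0) * levels).astype(np.uint16)


rng = np.random.default_rng(1)
img = rng.uniform(0.0, 1.0, (32, 32))
for bits in (8, 10, 12):
    levels = 2**bits - 1
    # Worst-case rounding error, expressed as a fraction of full scale
    max_err = np.abs(quantize(img, bits) / levels - img).max()
    print(f"{bits}-bit: {levels + 1} levels, max error {max_err:.2e} "
          f"(bound {0.5 / levels:.2e})")
```

Each extra 2 bits reduces the quantization step by a factor of 4, which is the intensity resolution available to gradient- and polynomial-based estimators.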

1.2 Pyramidal versus non-pyramidal optical flow and bit-depths

Table 5 details the effects of pyramidal processing on the base algorithms at the various image bit depths, as a function of the maximum velocity considered, expressed as a fraction of the interrogation-area width (0.05 IA, 0.10 IA, 0.25 IA). It forms the basis for the discussion of pyramidal effects on accuracy. The values in this table are averages of the SIG errors (both absolute and relative), as described in Sect. 3.
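With the 16 px interrogation areas used in this appendix, the maximum displacements expressed as fractions of the IA width convert to the 0.8 px, 1.6 px and 4.0 px values used in the discussion. Here \(f\) is the IA fraction and \(W_{\mathrm{IA}}\) the IA width (our notation):

```latex
d_{\max} = f \, W_{\mathrm{IA}}, \qquad W_{\mathrm{IA}} = 16~\mathrm{px}: \qquad
0.05 \times 16 = 0.8~\mathrm{px}, \quad
0.10 \times 16 = 1.6~\mathrm{px}, \quad
0.25 \times 16 = 4.0~\mathrm{px}
```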

Table 5 shows that the base version of Farnebäck performs similarly to its two-level pyramidal version, while Horn-Schunck shows a small improvement with its two-level pyramidal version for the larger displacements of 4.0 px. In contrast, Lucas-Kanade benefits significantly from the two-level pyramidal variant for the larger displacements. The error distributions shown in Fig. 16 clarify the overall benefits of the pyramidal implementation. When comparing Lucas-Kanade with its own two-level pyramidal version, we find that the pyramidal version tracks 4.0 px displacements (0.25 IA width) much better than the non-pyramidal version (see Table 5 and Fig. 15).
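The coarse-to-fine idea behind the two-level pyramidal variants can be sketched as follows. The flow is first estimated on half-resolution images, where a 4.0 px displacement shrinks to 2.0 px. It is then upsampled, doubled and refined at full resolution by warping the second image. This is a generic dense Lucas-Kanade sketch under stated assumptions (9 px box window, binomial pre-filter, nearest-neighbour flow upsampling, a smooth synthetic plane-wave texture as input). It is not the implementation benchmarked in the paper, and all names are hypothetical. Setting `levels=1` gives the non-pyramidal variant.

```python
import numpy as np


def smooth(img, k):
    """Separable filtering with the symmetric 1-D kernel k and edge padding."""
    r, (h, w) = len(k) // 2, img.shape
    p = np.pad(img, r, mode="edge")
    out = sum(k[i] * p[i:i + h, :] for i in range(len(k)))        # vertical pass
    return sum(k[i] * out[:, i:i + w] for i in range(len(k)))     # horizontal pass


def warp(img, u, v):
    """Bilinearly sample img at (x + u, y + v), clamping at the borders."""
    h, w = img.shape
    yy, xx = np.mgrid[0:h, 0:w].astype(float)
    x, y = np.clip(xx + u, 0, w - 1.001), np.clip(yy + v, 0, h - 1.001)
    x0, y0 = np.floor(x).astype(int), np.floor(y).astype(int)
    fx, fy = x - x0, y - y0
    return ((1 - fx) * (1 - fy) * img[y0, x0] + fx * (1 - fy) * img[y0, x0 + 1]
            + (1 - fx) * fy * img[y0 + 1, x0] + fx * fy * img[y0 + 1, x0 + 1])


def lk_step(i1, i2, u, v, win):
    """One warped Lucas-Kanade update solving the 2x2 windowed least-squares system."""
    i2w = warp(i2, u, v)
    gy, gx = np.gradient(0.5 * (i1 + i2w))
    gt = i2w - i1
    box = np.ones(win) / win
    sxx, sxy, syy = smooth(gx * gx, box), smooth(gx * gy, box), smooth(gy * gy, box)
    sxt, syt = smooth(gx * gt, box), smooth(gy * gt, box)
    det = sxx * syy - sxy**2
    det = np.where(np.abs(det) < 1e-9, np.inf, det)   # no update where ill-conditioned
    return u + (sxy * syt - syy * sxt) / det, v + (sxy * sxt - sxx * syt) / det


def lucas_kanade(i1, i2, levels=2, iters=5, win=9):
    binomial = np.array([1.0, 4.0, 6.0, 4.0, 1.0]) / 16.0
    pyr1, pyr2 = [i1], [i2]
    for _ in range(levels - 1):                       # blur, then decimate by 2
        pyr1.append(smooth(pyr1[-1], binomial)[::2, ::2])
        pyr2.append(smooth(pyr2[-1], binomial)[::2, ::2])
    u = np.zeros_like(pyr1[-1])
    v = np.zeros_like(pyr1[-1])
    for lvl in range(levels - 1, -1, -1):             # coarse to fine
        h, w = pyr1[lvl].shape
        if u.shape != (h, w):                         # upsample and double the flow
            u = 2.0 * np.repeat(np.repeat(u, 2, 0), 2, 1)[:h, :w]
            v = 2.0 * np.repeat(np.repeat(v, 2, 0), 2, 1)[:h, :w]
        for _ in range(iters):
            u, v = lk_step(pyr1[lvl], pyr2[lvl], u, v, win)
    return u, v


def texture(x, y, seed=0):
    """Smooth synthetic pattern: 12 plane waves with 12-32 px wavelengths."""
    rng = np.random.default_rng(seed)
    img = np.zeros_like(x)
    for _ in range(12):
        lam, th, ph = rng.uniform(12, 32), rng.uniform(0, np.pi), rng.uniform(0, 2 * np.pi)
        img += np.sin(2 * np.pi * (x * np.cos(th) + y * np.sin(th)) / lam + ph)
    return img


yy, xx = np.mgrid[0:64, 0:64].astype(float)
frame1 = texture(xx, yy)
frame2 = texture(xx - 4.0, yy - 1.0)        # pattern moved by (4.0, 1.0) px
u, v = lucas_kanade(frame1, frame2, levels=2)
core = (slice(16, 48), slice(16, 48))       # ignore border effects
print(f"median u = {np.median(u[core]):.2f} px, median v = {np.median(v[core]):.2f} px")
```

Doubling the upsampled flow is the key design step: a displacement measured in coarse pixels spans twice as many fine pixels, so the fine level only has to resolve a small residual within the linear range of the gradient-based update.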

Table 6 breaks the benefits down by flow type. The dense Lucas-Kanade pyramidal version is especially beneficial for the uniform flow, followed by the parabolic flow, whereas the Rankine vortex, the Rankine vortex with superimposed uniform flow and the flow with a stagnation point are only marginally improved. For smaller displacements, such as 1.6 px (0.10 IA width) and 0.8 px (0.05 IA width), there is almost no measurable loss of accuracy. The only exception is a small error increase for particles with 1.0 px diameter spots, a particle number concentration per volume of 1 and 15 dB WGIN (last entry of Table 6), where the thresholds of 1.0 dB for \(\mathscr {E}_R\) and 1.2 dBpx for \(\mathscr {E}\) are slightly exceeded.
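The threshold logic used to decide whether a pyramidal variant changes accuracy relevantly can be written as a small helper. This sketch assumes that the compared quantities are dB-scaled SIG errors for which lower is better, so that a positive difference (base minus variant) counts as an improvement. The thresholds are the 1.2 dBpx (absolute) and 1.0 dB (relative) values quoted in the text; the function name and the numbers in the usage lines are hypothetical and are not values from Tables 5 to 9.

```python
ABS_THRESHOLD_DBPX = 1.2   # SIG absolute error threshold quoted in the text
REL_THRESHOLD_DB = 1.0     # SIG relative error threshold quoted in the text


def classify_change(base_db, variant_db, threshold):
    """Label the change of a dB-scaled error between a base method and a variant.

    Assumption: a lower dB error is better, so (base - variant) > 0 is an improvement.
    """
    delta = base_db - variant_db
    if delta > threshold:
        return "improvement"
    if delta < -threshold:
        return "loss"
    return "within threshold"


# Hypothetical error values (dB), for illustration only
print(classify_change(-10.0, -12.5, ABS_THRESHOLD_DBPX))   # improvement
print(classify_change(-20.0, -19.5, REL_THRESHOLD_DB))     # within threshold
print(classify_change(-15.0, -13.7, ABS_THRESHOLD_DBPX))   # loss
```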

Figure 15 shows that the pyramidal version improves accuracy even at the lowest image bit depth considered (8 bits), where the errors are substantially reduced. Moving to 10- or 12-bit image depths does not improve the accuracy of this method further (see Table 6).

Referring again to Table 5, now for Farnebäck versus two-level pyramidal Farnebäck, the accuracy benefits occur only for a 4.0 px maximum displacement. The non-pyramidal Farnebäck method already tracks 4.0 px displacements better than the other methods, though not as well as its pyramidal version.

Table 7 shows the pyramidal results for Farnebäck in more detail. The benefits occur specifically at 4.0 px maximum displacement for the uniform flow at all bit depths, and for the Poiseuille flow at 10- and 12-bit depths. These are the only cases where the 1.2 dBpx SIG absolute error improvement or the 1.0 dB SIG relative error improvement thresholds are exceeded, resulting in slightly improved accuracy. For the tested bit depths, no accuracy losses exceeding these thresholds (\(> 1.2\) dBpx SIG absolute error or \(> 1.0\) dB SIG relative error) occur for any of the displacements (0.8 px, 1.6 px or 4.0 px). Figure 16 shows the accuracy improvement achieved for 12-bit images, which is similar to that for 10-bit images; both give better results than 8-bit images (see Table 7). For 8-bit images and 4.0 px displacements the gains are smaller, but this is not related to the pyramidal processing, since the non-pyramidal version also performs much worse at 8 bits than at higher bit depths. The accuracy loss therefore lies in the base Farnebäck method, which does not perform as well at 8-bit depth.

For Horn-Schunck versus two-level pyramidal Horn-Schunck, Table 5 shows a reduction in the average errors accompanied by an increase in their standard deviation. Still, despite spreading the errors over a larger range, the pyramidal version yields relevant accuracy improvements for 4.0 px displacements (see Fig. 17).

Table 8 further details the results for Horn-Schunck. For 4.0 px maximum displacements, the improvement occurs especially for the uniform flow; the Poiseuille flow is also improved, and the Rankine vortex, the Rankine vortex with superimposed uniform flow and the flow with a stagnation point are slightly improved. The pyramidal version causes no accuracy reduction for most displacements, except under one specific set of conditions: 8-bit depth, parabolic flow, 1.6 px maximum displacement, 3.0 px particle diameter, a particle volume concentration of 12 and 0 dB WGIN. There, a slight accuracy loss occurs exclusively in the SIG absolute error, whose change exceeds the 1.2 dBpx threshold. The SIG relative error for the same parameter combination remains within the 1.0 dB threshold, meaning that the error is not significant compared with the vector magnitude (see Table 8). Moving to 10- or 12-bit image depths does not improve the accuracy of this method.

The Lagrange multiplier parameter of Horn-Schunck must be selected carefully for each pyramid level, as it strongly influences the results. Its appropriate value also depends significantly on the particle volume concentration and the bit depth.
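To show where this parameter enters, here is a minimal sketch of the classic Horn-Schunck iteration. The regularization weight `alpha` (the parameter referred to above as the Lagrange multiplier) balances the brightness-constancy term against flow smoothness. It is a single-level textbook version with the original 3 × 3 neighbour-averaging weights (1/6 on edge neighbours, 1/12 on diagonals), run on a smooth synthetic texture with a small uniform displacement. It is not the study's implementation, and the test image, `alpha` and the iteration count are arbitrary assumptions. A pyramidal version would call it once per level with a level-specific `alpha`.

```python
import numpy as np


def neighbour_mean(f):
    """Horn-Schunck local average: weight 1/6 on edge neighbours, 1/12 on diagonals."""
    p = np.pad(f, 1, mode="edge")
    sides = p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:]
    diags = p[:-2, :-2] + p[:-2, 2:] + p[2:, :-2] + p[2:, 2:]
    return sides / 6.0 + diags / 12.0


def horn_schunck(i1, i2, alpha=1.0, iters=300):
    """Jacobi-type Horn-Schunck iterations; alpha weights the smoothness term."""
    gy, gx = np.gradient(0.5 * (i1 + i2))   # spatial derivatives of the mean image
    gt = i2 - i1                            # temporal derivative (unit time step)
    u = np.zeros_like(i1)
    v = np.zeros_like(i1)
    for _ in range(iters):
        ub, vb = neighbour_mean(u), neighbour_mean(v)
        common = (gx * ub + gy * vb + gt) / (alpha**2 + gx**2 + gy**2)
        u, v = ub - gx * common, vb - gy * common
    return u, v


def texture(x, y, seed=0):
    """Smooth synthetic pattern: 12 plane waves with 12-32 px wavelengths."""
    rng = np.random.default_rng(seed)
    img = np.zeros_like(x)
    for _ in range(12):
        lam, th, ph = rng.uniform(12, 32), rng.uniform(0, np.pi), rng.uniform(0, 2 * np.pi)
        img += np.sin(2 * np.pi * (x * np.cos(th) + y * np.sin(th)) / lam + ph)
    return img


yy, xx = np.mgrid[0:64, 0:64].astype(float)
u, v = horn_schunck(texture(xx, yy), texture(xx - 0.3, yy - 0.2), alpha=1.0)
core = (slice(16, 48), slice(16, 48))       # ignore border effects
print(f"median u = {np.median(u[core]):.3f} px, median v = {np.median(v[core]):.3f} px")
```

Because `alpha**2` sits next to the squared image gradients in the denominator, its suitable magnitude scales with image contrast, which is consistent with the dependence on particle concentration and bit depth noted above.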

Combining Liu-Shen optical flow with each of the base algorithms shows the same trend for non-pyramidal versus two-level pyramidal processing (details in Table 9). With two-level pyramidal processing, Lucas-Kanade/Liu-Shen shows accuracy improvements similar to those of the base Lucas-Kanade (Fig. 15). For Farnebäck/Liu-Shen, the non-pyramidal versus two-level pyramidal behavior closely follows that of the base Farnebäck (Fig. 16). Finally, the behavior of the pyramidal and non-pyramidal Horn-Schunck/Liu-Shen versions is again quite similar to that of the base Horn-Schunck algorithm (Fig. 17).

About this article

Cite this article

Mendes, L.P.N., Ricardo, A.M.C., Bernardino, A.J.M. et al. A comparative study of optical flow methods for fluid mechanics. Exp Fluids 63, 7 (2022). https://doi.org/10.1007/s00348-021-03357-7

DOI: https://doi.org/10.1007/s00348-021-03357-7