Abstract

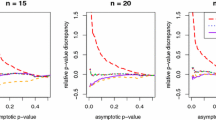

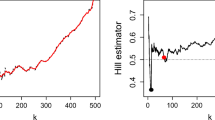

This paper applies extreme value theory to closing price data of the Dow-Jones industrial index and to Danish fire insurance claims data. The generalized extreme value (GEV) distribution is used to model the real data, and the hypothesis testing problem for the shape parameter of the GEV distribution is investigated using a new test statistic, the \(L_q\)-likelihood ratio (\(L_q\)R) statistic. The \(L_q\)R statistic can be treated as a generalized form of the classical likelihood ratio (LR) statistic. We show that the asymptotic behavior of the proposed statistic is characterized by the degree of distortion \(q\). For small and moderate sample sizes, the \(L_q\)R statistic remains usable when \(q\) is properly chosen. In simulation studies, the proposed statistic not only exhibits the stated asymptotic properties but also outperforms the classical LR statistic when the sample size is moderate or even small. The power of the test based on the new statistic is also estimated by Monte Carlo simulation. Finally, the fitted models are checked by graphical diagnostics.

References

Attouch H (1985) Variational convergence for functions and operators. Pitman, London

Bali TG (2003) An extreme value approach to estimating volatility and value at risk. J Bus 76(1):83–108

Barmi H, Dykstra R (1999) Likelihood ratio test against a set of inequality constraints. J Nonparametr Stat 11(1):233–250

Beisel CJ, Rokyta DR, Wichman HA, Joyce P (2007) Testing the extreme value domain of attraction for distributions of beneficial fitness effects. Genetics 176(4):2441–2449

Billingsley P (1968) Convergence of probability measures. Wiley, New York

Brazauskas V, Kleefeld A (2009) Robust and efficient fitting of the generalized Pareto distribution with actuarial applications in view. Insur Math Econ 45(3):424–435

Burkhardt TW, Györgyi G, Moloney NR, Rácz Z (2007) Extreme statistics for time series: distribution of the maximum relative to the initial value. Phys Rev E 76(4):041119

Coles S (2001) An introduction to statistical modeling of extreme values. Springer, London

Cox DR, Hinkley DV (1974) Theoretical statistics. Chapman & Hall, London

Efron B, Tibshirani RJ (1993) An introduction to the bootstrap. Chapman & Hall, New York

Embrechts P, Klüppelberg C, Mikosch T (1997) Modelling extremal events for insurance and finance. Springer, New York

Ferrari D, Yang Y (2007) Estimation of tail probability via the maximum Lq-likelihood method. Technical report 659, School of statistics, University of Minnesota

Ferrari D, Paterlini S (2009) The maximum Lq-likelihood method: an application to extreme quantile estimation in finance. Methodol Comput Appl Probab 11(1):3–19

Ferrari D, Yang Y (2010) Maximum Lq-likelihood estimation. Ann Stat 38(2):753–783

Ferrari D, La Vecchia D (2012) On robust estimation via pseudo-additive information. Biometrika 99(1):238–244

Fisher RA, Tippett LHC (1928) Limiting forms of the frequency distributions of the largest or smallest member of a sample. Proc Camb Philos Soc 24(2):180

Givens GH, Hoeting JA (2005) Computational statistics. Wiley, New Jersey

Gnedenko BV (1943) Sur la distribution limite du terme maximum d’une série aléatoire. Ann Math 44:423

Gomes MI, Teresa AM (1986) Inference in a multivariate generalized extreme value model: asymptotic properties of two test statistics. Scand J Stat 13(4):291–300

Hosking JRM (1984) Testing whether the shape parameter is zero in the generalized extreme value distribution. Biometrika 71(2):367–374

Huang C, Lin JG, Ren YY (2012) Statistical inferences for generalized Pareto distribution based on interior penalty function algorithm and Bootstrap methods and applications in analyzing stock data. Comput Econ 39(2):173–193

Hüsler J, Li D (2006) On testing extreme value conditions. Extremes 9(1):69–86

Koopman SJ, Shephard N, Creal D (2009) Testing the assumptions behind importance sampling. J Econom 149(1):2–11

Lin J, Huang C, Zhuang Q, Zhu L (2010) Estimating generalized state density of near-extreme events and its applications in analyzing stock data. Insur Math Econ 47(1):13–20

Liu X (2007) Likelihood ratio test for and against nonlinear inequality constraints. Metrika 65(1):93–108

Marimoutou V, Raggad B, Trabelsi A (2009) Extreme value theory and value at risk: application to oil market. Energy Econ 31(4):519–530

McNeil AJ, Frey R, Embrechts P (2005) Quantitative risk management: concepts, techniques and tools. Princeton University Press, Princeton

Mohtadi H, Murshid AP (2009) Risk of catastrophic terrorism: an extreme value approach. J Appl Econ 24(4):537–559

Park HW, Sohn W (2006) Parameter estimation of the generalized extreme value distribution for structural health monitoring. Probab Eng Mech 21(4):366–376

Prakasa Rao BLS (1975) Tightness of probability measures generated by stochastic processes on metric spaces. Bull Inst Math Acad Sin 3(2):353–367

Roberts SJ (2000) Extreme value statistics for novelty detection in biomedical signal processing. IEEE Proc Sci Technol Meas 47(6):363–367

Shapiro A (1988) Towards a unified theory of inequality constrained testing in multivariate analysis. Int Stat Rev 56(1):49–62

Smith RL (1985) Maximum likelihood estimation in a class of non-regular cases. Biometrika 72(1):67–90

Smith RL (2001) Extreme value statistics in meteorology and environment. Environmental statistics. http://www.stat.unc.edu/postscript/rs/envstat/env.html

Stephens MA (1976) Asymptotic results for goodness-of-fit statistics with unknown parameters. Ann Stat 4(2):357–369

Stephens MA (1977) Goodness-of-fit for the extreme value distribution. Biometrika 64(3):583–588

Thompson ML, Reynolds J, Cox LH, Guttorp P, Sampson PD (2001) A review of statistical methods for the meteorological adjustment of tropospheric ozone. Atmos Environ 35(3):617–630

von Mises R (1936) La distribution de la plus grande de n valeurs. Reprinted (1954) in selected papers vol II, American Mathematical Society, Providence, RI, pp 271–294

Wang JZ, Cooke P, Li S (1996) Determination of domains of attraction based on a sequence of maxima. Aust J Stat 38(2):173–181

Wilks SS (1938) The large-sample distribution of the likelihood ratio for testing composite hypotheses. Ann Math Stat 9(1):60–62

Additional information

This project was supported by NSFC 11171065, NSFJS BK2011058 and SSFC 12BTJ015.

Appendices

Appendix A: Definitions and Lemmas

First of all, some definitions are presented below.

- \(l(\varvec{\theta };x)=\log [g(x;\varvec{\theta })], \dot{l}(\varvec{\theta };x)=\frac{\partial }{\partial \varvec{\theta }}l(\varvec{\theta };x), l^{(2)}(\varvec{\theta };x)=\frac{\partial ^2}{\partial \varvec{\theta }\partial \varvec{\theta }^T}l(\varvec{\theta };x)\);

- \(l_{q_n}(\varvec{\theta };x)=l_{q_n}[g(x;\varvec{\theta })], \dot{l}_{q_n}(\varvec{\theta };x)=\frac{\partial }{\partial \varvec{\theta }}l_{q_n}(\varvec{\theta };x), l^{(2)}_{q_n}(\varvec{\theta };x)=\frac{\partial ^2}{\partial \varvec{\theta }\partial \varvec{\theta }^T}l_{q_n}(\varvec{\theta };x)\);

- \(K_n(\varvec{\theta })=E_{\varvec{\theta }_0}[\dot{l}_{q_n}(\varvec{\theta };x)\dot{l}_{q_n}(\varvec{\theta };x)^T], J_n(\varvec{\theta })=E_{\varvec{\theta }_0}[l^{(2)}_{q_n}(\varvec{\theta };x)]\), where \(E_{\varvec{\theta }_0}[\cdot ]\) denotes the expectation with respect to the true p.d.f. \(g(x;\varvec{\theta }_0)\);

- \({\varvec{\theta }}=(\xi ,\varvec{\phi }^T)^T, \varvec{\phi }=(\mu ,\sigma )^T, \widehat{\varvec{\theta }}_n= (\widehat{\xi },\widehat{\varvec{\phi }}^T)^T\) is the \(\text{ ML}_\mathrm{q}\text{ E}\) over the whole parameter space \(\varvec{\Theta }\) and \(\bar{\varvec{\theta }}= (\xi _0,\bar{\varvec{\phi }}^T)^T\) is the \(\text{ ML}_\mathrm{q}\text{ E}\) over \(\varvec{\Theta }_0\) for the hypothesis tests (2) and (4);

- \(\Delta \varvec{\theta }=\widehat{\varvec{\theta }}_n-\bar{\varvec{\theta }}=(\widehat{\xi }-\xi _0,\widehat{\varvec{\phi }}^T-\bar{\varvec{\phi }}^T)^T \doteq (\Delta \widehat{\xi },\Delta \bar{\varvec{\phi }}^T)^T\);

- \(\dot{L}_{q_n}(\varvec{\theta })=(\dot{L}_{q_{n}}^1(\varvec{\theta }),\dot{L}_{q_{n}}^2(\varvec{\theta })^T)^T\), where \(\dot{L}_{q_{n}}^1(\varvec{\theta })=\frac{\partial }{\partial \xi }L_{q_n}(\varvec{\theta }), \dot{L}_{q_{n}}^2(\varvec{\theta })=\frac{\partial }{\partial \varvec{\phi }}L_{q_n}(\varvec{\theta })\);

- $$\begin{aligned} L_{q_n}^{(2)}(\varvec{\theta })=\left(\begin{array}{l l}L_{q_n11}(\varvec{\theta })&L_{q_n12}(\varvec{\theta })\\ L_{q_n21}(\varvec{\theta })&L_{q_n22}(\varvec{\theta })\end{array} \right), \end{aligned}$$

  where \(L_{q_n11}(\varvec{\theta })=\frac{\partial ^2}{\partial \xi ^2}L_{q_n}(\varvec{\theta }), L_{q_n12}(\varvec{\theta })=L_{q_n21}(\varvec{\theta })=\frac{\partial ^2}{\partial \xi \partial \varvec{\phi }}L_{q_n}(\varvec{\theta }), L_{q_n22}(\varvec{\theta })=\frac{\partial ^2}{\partial \varvec{\phi }\partial \varvec{\phi }^T}L_{q_n}(\varvec{\theta })\);

- $$\begin{aligned}{}[L_{q_n}^{(2)}(\varvec{\theta })]^{-1}=\left(\begin{array}{l l}L_{q_n}^{11}(\varvec{\theta })&L_{q_n}^{12}(\varvec{\theta })\\ L_{q_n}^{21}(\varvec{\theta })&L_{q_n}^{22}(\varvec{\theta })\end{array} \right), \end{aligned}$$

  where \([L_{q_n}^{(2)}(\varvec{\theta })]^{-1}\) is partitioned in the same way as \(L_{q_n}^{(2)}(\varvec{\theta })\);

- \(h(\varvec{\theta })=\xi -\xi _0, \dot{h}(\varvec{\theta })=(1,0,0)^T, h^{(2)}(\varvec{\theta })=\varvec{0}_{3\times 3}\);

- \(P=I^{-1}(\varvec{\theta }_0)-I^{-1}(\varvec{\theta }_0)\dot{h}(\varvec{\theta }_0)(\dot{h}^T(\varvec{\theta }_0) I^{-1}(\varvec{\theta }_0)\dot{h}(\varvec{\theta }_0))^{-1}\dot{h}^T(\varvec{\theta }_0)I^{-1}(\varvec{\theta }_0)\);

- Let \(M_n(\varvec{\theta })=2[L_{q_n}(\bar{\varvec{\theta }})-L_{q_n}({\varvec{\theta }})]\) and \(D_n(\varvec{\beta })=M_n(n^{-1/2}\varvec{\beta }+\varvec{\theta }_0)\), where \(\varvec{\beta }=n^{1/2}(\varvec{\theta }-\varvec{\theta }_0)\);

- \(D(\varvec{\beta })=(\varvec{Z}-\varvec{\beta })^TI(\varvec{\theta }_0)(\varvec{Z}-\varvec{\beta })-\varvec{Z}^T(I-PI(\varvec{\theta }_0))^TI(\varvec{\theta }_0)(I-PI(\varvec{\theta }_0))\varvec{Z}\), where \(\varvec{Z}\sim N(\varvec{0},I^{-1}(\varvec{\theta }_0))\) and \(I\) is the \(3\times 3\) identity matrix;

- \(S_n=\{\varvec{\beta }: h(n^{-1/2}\varvec{\beta }+\varvec{\theta }_0)\ge 0\}, S_0=\{\varvec{\beta }: \dot{h}^T(\varvec{\theta }_0)\varvec{\beta }\ge 0\}\).
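To make the quantities \(l_{q_n}\) and \(L_{q_n}\) concrete, the following is a minimal sketch. It assumes the \(L_q\) function of Ferrari and Yang (2010), \(L_q(u)=(u^{1-q}-1)/(1-q)\) for \(q\ne 1\) and \(\log u\) for \(q=1\), together with the usual GEV density with shape \(\xi \), location \(\mu \) and scale \(\sigma \). Neither formula is written out in this appendix, and the function names are illustrative only.

```python
import math


def lq(u, q):
    """L_q function: (u**(1 - q) - 1) / (1 - q) for q != 1, log(u) for q == 1."""
    if u <= 0:
        raise ValueError("u must be positive")
    if abs(1.0 - q) < 1e-12:
        return math.log(u)
    return (u ** (1.0 - q) - 1.0) / (1.0 - q)


def gev_pdf(x, xi, mu, sigma):
    """GEV density; xi == 0 is treated as the Gumbel limit."""
    z = (x - mu) / sigma
    if abs(xi) < 1e-12:
        return math.exp(-z - math.exp(-z)) / sigma
    t = 1.0 + xi * z
    if t <= 0:
        return 0.0  # x lies outside the support
    return t ** (-1.0 / xi - 1.0) * math.exp(-t ** (-1.0 / xi)) / sigma


def lq_likelihood(sample, xi, mu, sigma, q):
    """Sum of L_q(g(x; theta)) over the sample (assumed form of L_q-likelihood)."""
    total = 0.0
    for x in sample:
        dens = gev_pdf(x, xi, mu, sigma)
        if dens <= 0:
            return float("-inf")  # parameter value incompatible with the data
        total += lq(dens, q)
    return total


if __name__ == "__main__":
    data = [0.3, 1.2, -0.4, 2.5, 0.9]  # made-up observations
    for q in (1.0, 0.95, 0.9):
        print(q, lq_likelihood(data, 0.1, 0.0, 1.0, q))
```

With \(q=1\) the sum reduces to the ordinary log-likelihood, which is why the \(L_q\)R statistic can be read as a generalization of the LR statistic.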

Lemma 5.1

If \(\varvec{\theta }_n^*\) is the value such that \(E_{\varvec{\theta }_0}[\dot{l}_{q_n}(\varvec{\theta }_n^*;x)]=0\), then we have

Proof

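Let \(A(\varvec{\theta })=E_{\varvec{\theta }_0}[\dot{l}_{q_n}(\varvec{\theta };x)]\). By the definition of \(\varvec{\theta }_n^*\), we have \(A(\varvec{\theta }_n^*)=0\). By Taylor’s theorem,

$$\begin{aligned} 0=A(\varvec{\theta }_n^*)=A(\varvec{\theta }_0)+\triangledown _{\varvec{\theta }}A(\breve{\varvec{\theta }})(\varvec{\theta }_n^*-\varvec{\theta }_0), \end{aligned}$$(15)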

where \(\breve{\varvec{\theta }}=\alpha \varvec{\theta }_n^*+(1-\alpha )\varvec{\theta }_0, 0\le \alpha \le 1\). We now prove that \(A(\varvec{\theta }_0)=\mathcal O (1-q_n)\) and that the eigenvalues of \(\triangledown _{\varvec{\theta }}A(\breve{\varvec{\theta }})\) are bounded away from zero.

Denote \(B(y,\varvec{\theta }_0)=E_{\varvec{\theta }_0}[\dot{l}_{1-y}(\varvec{\theta }_0;x)]\), so that \(B(1-q_n,\varvec{\theta }_0)=A(\varvec{\theta }_0)\). Substituting the expression of \(\dot{l}_{1-y}(\varvec{\theta };x)\) into \(B(y,\varvec{\theta }_0)\), we have

where \(\Vert \cdot \Vert \) denotes the \(l_2\) norm. The last inequality in (16) holds by the Cauchy–Schwarz inequality. As the eigenvalues of the Fisher information matrix \(E_{\varvec{\theta }_0}[\dot{l}(\varvec{\theta }_0;x)\dot{l}(\varvec{\theta }_0;x)^T]\) are bounded, \(\{E_{\varvec{\theta }_0}[\Vert \dot{l}(\varvec{\theta }_0;x)\Vert ^2]\}^{\frac{1}{2}}\) is also bounded. By substituting the expression of \(g(\varvec{\theta }_0;x)\) into \(E_{\varvec{\theta }_0} [\Vert g^{y}(\varvec{\theta }_0;x)\Vert ^2]\), it can be verified that

Thus \(B(y,\varvec{\theta }_0)\) is bounded for any given \(0\le y\le 1\). By Taylor’s theorem, expanding at \(y=0\) gives

where \(B(0,\varvec{\theta }_0)=0\) because the expected score vanishes at the true parameter. As \(B(y,\varvec{\theta }_0)\) is bounded,

Similar to the argument below (16), by substituting \(g(\varvec{\theta }_0;x)\) into \(E_{\varvec{\theta }_0} [\Vert \log [g(\varvec{\theta }_0;x)]\Vert ^2]\), we obtain that \(\triangledown _yB(0,\varvec{\theta }_0)\) is bounded. Therefore \(B(y,\varvec{\theta }_0)=\mathcal O (y)\); in other words,

Next we consider the term \(\triangledown _{\varvec{\theta }}A(\breve{\varvec{\theta }})\) on the right-hand side of (15). It can be derived that

According to the definition of \(\breve{\varvec{\theta }}\) and the fact \(\lim \nolimits _{n\rightarrow \infty }\varvec{\theta }_n^*=\varvec{\theta }_0\), we have \(\breve{\varvec{\theta }}\rightarrow \varvec{\theta }_0\) as \(n\rightarrow \infty \). As \(J_n({\varvec{\theta }})\) is continuous at \(\varvec{\theta }_0\), for any given \(\epsilon _1>0\) there exists an integer \(N_1>0\) such that \(\Vert J_n(\breve{\varvec{\theta }})-J_n({\varvec{\theta }}_0)\Vert <\epsilon _1\) for all \(n\ge N_1\). Now consider the expression of \(J_n({\varvec{\theta }}_0)\). By substituting the expression of \(g(\varvec{\theta }_0;x)\) into \(J_n({\varvec{\theta }}_0)\), it can be derived that

Denote \(H_1(y)=E_{\varvec{\theta }_0}[l^{(2)}({\varvec{\theta }}_0;x)g^{y}(\varvec{\theta }_0;x)]\) and \(H_2(y)=E_{\varvec{\theta }_0}[\dot{l}({\varvec{\theta }}_0;x)\dot{l}^T({\varvec{\theta }}_0;x)g^{y}(\varvec{\theta }_0;x)]\). As \(H_1(y)\) and \(H_2(y)\) are both continuous at \(y=0\), for any given \(\epsilon _2>0\) there exists an integer \(N_2>0\) such that \(\Vert H_1(1-q_n)-H_1(0)\Vert <\epsilon _2\) and \(\Vert H_2(1-q_n)-H_2(0)\Vert <\epsilon _2\) for all \(n\ge N_2\), where \(-H_1(0)\) and \(H_2(0)\) both equal the Fisher information matrix \(I(\varvec{\theta }_0)\) of \(G(x;\varvec{\theta }_0)\).

Without loss of generality, suppose that there exists an integer \(N_3>0\) such that \(1-q_n<\frac{1}{6}\) for all \(n\ge N_3\). Setting \(\epsilon _1=\frac{1}{6}\Vert H_1(0)\Vert , \epsilon _2=\frac{1}{7}\Vert H_1(0)\Vert \), there exists an integer \(N_0>\max \{N_1, N_2, N_3\}\) such that, for \(n\ge N_0\),

As a result, the eigenvalues of \(\triangledown _{\varvec{\theta }}A(\breve{\varvec{\theta }})\) are bounded away from zero.

Rearranging (15) gives

From the properties of \(A(\varvec{\theta }_0)\) and \(\triangledown _{\varvec{\theta }}A(\breve{\varvec{\theta }})\) derived above, it follows that

which establishes the lemma. \(\square \)
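The claim \(B(y,\varvec{\theta }_0)=\mathcal O (y)\) used in this proof can be checked numerically in a simple special case. The sketch below is an illustration, not part of the proof: it assumes the Gumbel member of the GEV family (\(\xi =0, \mu =0, \sigma =1\)), looks only at the scale component of the score, uses the identity \(\dot{l}_{1-y}=g^{y}\dot{l}\) that follows from the Ferrari and Yang (2010) form of \(L_q\), and approximates the expectation with a plain trapezoid rule on a truncated range. All function names are hypothetical.

```python
import math


def gumbel_pdf(z):
    """Standard Gumbel density (GEV with xi = 0, mu = 0, sigma = 1)."""
    return math.exp(-z - math.exp(-z))


def scale_score(z):
    """Derivative of the Gumbel log-density with respect to sigma, at sigma = 1."""
    return z - z * math.exp(-z) - 1.0


def expected_lq_score(y, lower=-8.0, upper=40.0, steps=48000):
    """Trapezoid approximation of B(y) = E[g(X)**y * score(X)] under the true model."""
    width = (upper - lower) / steps
    total = 0.0
    for k in range(steps + 1):
        z = lower + k * width
        value = gumbel_pdf(z) ** (1.0 + y) * scale_score(z)
        total += value if 0 < k < steps else 0.5 * value
    return total * width


if __name__ == "__main__":
    for y in (0.0, 0.01, 0.02, 0.04):
        print(y, expected_lq_score(y))
```

In this special case the value at \(y=0\) is numerically zero and the other values shrink roughly in proportion to \(y\), which is the behavior the lemma relies on.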

Lemma 5.2

Suppose \(1-q_n\sim cn^{-\alpha }\) for some positive constant \(c\). If \(\alpha \ge \frac{1}{2}\), we have

Proof

By Taylor’s theorem, we derive the Taylor’s series expansion of \(L_{q_n}(\varvec{\theta })\) at \(\varvec{\theta }=\bar{\varvec{\theta }}\),

where \(R_n=\frac{1}{2}\Delta \varvec{\theta }^TL_{q_n}^{(3)}(\breve{\varvec{\theta }})\Delta \varvec{\theta }, \breve{\varvec{\theta }}=\alpha \widehat{\varvec{\theta }}_n+(1-\alpha )\bar{\varvec{\theta }}, 0\le \alpha \le 1.\) By Lemma 5.1, it can be easily obtained that

Ferrari and Yang (2010) showed that \(\sqrt{n}(\widehat{\varvec{\theta }}_n-\varvec{\theta }_n^*)=\mathcal O _p(1)\) and \(\frac{1}{n}L_{q_n}^{(3)}(\breve{\varvec{\theta }})=\mathcal O _p(1)\). Hence, as \(1-q_n\sim cn^{-\alpha }\) with \(\alpha \ge \frac{1}{2}\), we have \(R_n=\mathcal O _p(1)\).

Since \(\dot{L}_{q_n}(\widehat{\varvec{\theta }}_n)=0\), by substituting the definitions of \(\dot{L}_{q_n}(\varvec{\theta })\) and \(L^{(2)}_{q_n}(\varvec{\theta })\), we can derive that

As \(\dot{L}^2_{q_n}(\bar{\varvec{\theta }})=0\), we have \(0=-L_{q_n21}(\bar{\varvec{\theta }})\Delta \widehat{\xi }-L_{q_n22}(\bar{\varvec{\theta }})\Delta \bar{\varvec{\phi }} +\mathcal O _p(1)\). As \(J_n(\varvec{\theta })\) is continuous at \(\varvec{\theta }=\varvec{\theta }_0\), similar to the argument in Lemma 5.1, it can be derived that \(J_n(\bar{\varvec{\theta }})=\mathcal O (1)\). Then the fact \(L_{q_n}^{(2)}(\bar{\varvec{\theta }})=\mathcal O _p(n)\) follows. After some rearrangement, we have

The second result of the lemma follows. Meanwhile, we also have

Then we have

This establishes the lemma. \(\square \)
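Lemma 5.2 works with the blocks of the partitioned matrix \(L_{q_n}^{(2)}\), and Appendix B combines it with the identity \(L_{q_n}^{11}=[L_{q_n11}-L_{q_n12}L^{-1}_{q_n22}L_{q_n21}]^{-1}\) for the top-left block of the inverse. The sketch below checks that identity in exact rational arithmetic. The \(3\times 3\) matrix is a made-up stand-in, not a Hessian from the paper, and the helper names are illustrative.

```python
from fractions import Fraction


def inverse(matrix):
    """Gauss-Jordan inverse of a square matrix using exact fractions."""
    n = len(matrix)
    aug = [
        [Fraction(v) for v in row] + [Fraction(int(i == j)) for j in range(n)]
        for i, row in enumerate(matrix)
    ]
    for col in range(n):
        pivot = next(r for r in range(col, n) if aug[r][col] != 0)
        aug[col], aug[pivot] = aug[pivot], aug[col]
        pivot_value = aug[col][col]
        aug[col] = [v / pivot_value for v in aug[col]]
        for r in range(n):
            if r != col and aug[r][col] != 0:
                factor = aug[r][col]
                aug[r] = [a - factor * b for a, b in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]


def top_left_of_inverse_via_schur(matrix):
    """[A11 - A12 A22^{-1} A21]^{-1} for a matrix partitioned as 1 + (n - 1)."""
    a11 = Fraction(matrix[0][0])
    a12 = [Fraction(v) for v in matrix[0][1:]]
    a21 = [Fraction(row[0]) for row in matrix[1:]]
    a22_inv = inverse([row[1:] for row in matrix[1:]])
    quad = sum(
        a12[i] * a22_inv[i][j] * a21[j]
        for i in range(len(a12))
        for j in range(len(a21))
    )
    return 1 / (a11 - quad)


if __name__ == "__main__":
    example = [[4, 1, 2], [1, 3, 0], [2, 0, 5]]  # hypothetical symmetric matrix
    print(inverse(example)[0][0], top_left_of_inverse_via_schur(example))
```

Both printed numbers agree, as the partitioned-inverse identity requires.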

Lemma 5.3

As \(1-q_n\sim cn^{-\alpha }\), where \(c>0, \alpha >\frac{1}{2}\), for the hypothesis test (4), suppose that \(\varvec{\theta }_0\in \varvec{\Theta }_0\), we have

Proof

Denote \(\varvec{\psi }=(\varvec{\theta }^T,\lambda )^T, \bar{\varvec{\psi }}=(\bar{\varvec{\theta }}^T,\bar{\lambda })^T, \varvec{\psi }_0=(\varvec{\theta }_0^T,\lambda _0)^T\) and \(T_{q_n}(\varvec{\psi })=L_{q_n}(\varvec{\theta })+\lambda h(\varvec{\theta })\). By Taylor’s theorem, we derive the Taylor’s series expansion of \(T_{q_n}(\varvec{\psi })\) at \(\varvec{\psi }=\varvec{\psi }_0\),

where \(R_n=(\bar{\varvec{\psi }}-\varvec{\psi }_0)^TT^{(3)}_{q_n}(\breve{\varvec{\psi }})(\bar{\varvec{\psi }}-\varvec{\psi }_0), \breve{\varvec{\psi }}=\alpha \bar{\varvec{\psi }}+(1-\alpha )\varvec{\psi }_0, 0\le \alpha \le 1.\) According to the definition of \(h(\varvec{\theta })\) and the fact \(\bar{\varvec{\psi }}-\varvec{\psi }_0=\mathcal O _p(n^{-1/2}), n^{-1}T^{(3)}_{q_n}(\breve{\varvec{\psi }})=\mathcal O _p(1)\), we have \(R_n=\mathcal O _p(1)\).

Since \(\lambda _0=0, h({\varvec{\theta }}_0)=0\) and \(\dot{T}_{q_n}(\bar{\varvec{\psi }})=0\), rearranging (31) gives

where \(P=I^{-1}(\varvec{\theta }_0)-I^{-1}(\varvec{\theta }_0)\dot{h}(\varvec{\theta }_0)(\dot{h}^T(\varvec{\theta }_0) I^{-1}(\varvec{\theta }_0)\dot{h}(\varvec{\theta }_0))^{-1}\dot{h}^T(\varvec{\theta }_0)I^{-1}(\varvec{\theta }_0)\). The last equality follows from matrix algebra and the fact \(-n^{-1}L_{q_n}^{(2)}({\varvec{\theta }}_0)=I(\varvec{\theta }_0)+o_p(1)\). As a result, we can derive that

Thus the desired result follows. \(\square \)
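The matrix \(P\) appearing in this proof has a simple structure when \(\dot{h}(\varvec{\theta }_0)=(1,0,0)^T\). The following sketch computes \(P\) for a made-up symmetric matrix standing in for \(I(\varvec{\theta }_0)\) (an assumption, not a Fisher information matrix from the paper) and shows that the row and column belonging to \(\xi \) vanish, while the remaining block equals the inverse of the \((\mu ,\sigma )\) block of the stand-in matrix. Function names are illustrative.

```python
from fractions import Fraction


def inverse_3x3(m):
    """Exact inverse of a 3x3 matrix via the adjugate formula."""
    (a, b, c), (d, e, f), (g, h, i) = [[Fraction(v) for v in row] for row in m]
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    adj = [
        [e * i - f * h, c * h - b * i, b * f - c * e],
        [f * g - d * i, a * i - c * g, c * d - a * f],
        [d * h - e * g, b * g - a * h, a * e - b * d],
    ]
    return [[v / det for v in row] for row in adj]


def constrained_projection(info):
    """P = I^{-1} - I^{-1} h (h^T I^{-1} h)^{-1} h^T I^{-1} with h = (1, 0, 0)^T."""
    inv = inverse_3x3(info)
    scale = inv[0][0]  # h^T I^{-1} h
    # Column 0 of inv is I^{-1} h and row 0 of inv is h^T I^{-1}.
    return [
        [inv[r][c] - inv[r][0] * inv[0][c] / scale for c in range(3)]
        for r in range(3)
    ]


if __name__ == "__main__":
    info = [[4, 1, 2], [1, 3, 0], [2, 0, 5]]  # hypothetical information matrix
    for row in constrained_projection(info):
        print([str(v) for v in row])
```

For this example the first row and column of \(P\) are zero and the lower-right block is \(\mathrm{diag}(1/3, 1/5)\), the inverse of the lower-right block of the stand-in matrix.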

Lemma 5.4

As \(1-q_n\sim cn^{-\alpha }\), where \(c>0, \alpha >\frac{1}{2}\), for any fixed \(\varvec{\beta }, D_n(\varvec{\beta })\) converges to \(D(\varvec{\beta })\) in distribution, that is,

Proof

First we consider the sequence of functions \(M_n(\varvec{\theta })\) instead of \(D_n(\varvec{\beta })\).

By taking Taylor’s series expansion of \(L_{q_n}({\varvec{\theta }})\) at \(\varvec{\theta }=\widehat{\varvec{\theta }}_n\), it is known that,

By taking Taylor’s series expansion of \(L_{q_n}(\bar{\varvec{\theta }})\) at \(\varvec{\theta }=\widehat{\varvec{\theta }}_n\) and applying Lemma 5.3, we have

where \(I\) is the \(3\times 3\) identity matrix. Using the facts that \(J_n(\widehat{\varvec{\theta }}_n)\rightarrow -I(\varvec{\theta }_0), K_n(\widehat{\varvec{\theta }}_n)\rightarrow I(\varvec{\theta }_0)\) as \(n\rightarrow \infty \) and \(\sqrt{n}(J_n^{-1}(\widehat{\varvec{\theta }}_n)K_n(\widehat{\varvec{\theta }}_n)J_n^{-1}(\widehat{\varvec{\theta }}_n))^{-1/2} (\widehat{\varvec{\theta }}_n-\varvec{\theta }_0)\mathop {\longrightarrow }\limits ^{d}N(\varvec{0},I)\) (see Ferrari and Yang 2010), appealing to Theorem 5.1 of Billingsley (1968) and combining (36)–(38) proves Lemma 5.4. \(\square \)

Lemma 5.5

As \(1-q_n\sim cn^{-\alpha }\), where \(c>0, \alpha >\frac{1}{2}\), the stochastic processes \(\{D_n(\varvec{\beta }), \varvec{\beta }\in \mathcal D \}\) converge in distribution to \(\{D(\varvec{\beta }),\varvec{\beta }\in \mathcal D \}\), that is,

Furthermore, the test statistic for (4), \(L_{q_n}R=-D_n(\widetilde{\varvec{\beta }}_n)\), converges in distribution to \(-D(\widetilde{\varvec{\beta }})\), where \(\widetilde{\varvec{\beta }}_n\) and \(\widetilde{\varvec{\beta }}\) are the optimal solutions under \(S_n\) and \(S_0\) respectively.

Proof

According to the theory on the convergence of stochastic processes (see Prakasa Rao 1975), \(\{D_n(\varvec{\beta }),\varvec{\beta }\in \mathcal D \}\) converges in distribution to \(\{D(\varvec{\beta }),\varvec{\beta }\in \mathcal D \}\) if and only if the following two conditions are satisfied:

(1) Any finite dimensional distribution of the process \(\{D_n(\varvec{\beta }),\varvec{\beta }\in \mathcal D \}\) converges weakly to the corresponding finite dimensional distribution of \(\{D(\varvec{\beta }),\varvec{\beta }\in \mathcal D \}\);

(2) For any \(\epsilon >0\) it holds that

$$\begin{aligned} \lim _{n\rightarrow \infty }\sup _{h\rightarrow 0}P\left\{ \sup _{\Vert \varvec{\beta }^{(1)}-\varvec{\beta }^{(2)}\Vert \le h}|D_n(\varvec{\beta }^{(1)})- D_n(\varvec{\beta }^{(2)})|>\epsilon , \varvec{\beta }^{(1)},\varvec{\beta }^{(2)}\in \mathcal D \right\} =0. \nonumber \\ \end{aligned}$$(40)

We now check the two conditions. For condition (1), by the Cramér-Wold theorem, it suffices to show that for any \(\alpha _1,\ldots ,\alpha _r\in \mathbb{R }\) and any \(\varvec{\beta }^{(1)},\ldots ,\varvec{\beta }^{(r)}\in \mathcal D \), we have

This convergence result follows in the same way as Lemma 5.4; the details are omitted here.

Next we consider condition (2). For \(\varvec{\beta }^{(1)},\varvec{\beta }^{(2)}\) in \(\mathcal D \), we have

By Taylor’s Theorem, for \(i=1,2\), it can be obtained that

As a result, when \(n\rightarrow \infty \) and \(\Vert \varvec{\beta }^{(1)}-\varvec{\beta }^{(2)}\Vert \rightarrow 0\), for any given \(\epsilon >0\), we can derive that

and then the following result holds with probability approaching one

As \(\mathcal D \) is compact and \(n^{-1/2}\dot{L}^T_{q_n}({\varvec{\theta }}_0)=\mathcal O _p(1)\), we have

Since the two conditions are satisfied, \(\{D_n(\varvec{\beta }),\varvec{\beta }\in \mathcal D \}\) converges in distribution to \(\{D(\varvec{\beta }),\varvec{\beta }\in \mathcal D \}\). Then, by arguments similar to those used for Theorem 4(1) of Liu (2007), which rely on the continuous mapping theorem (see Billingsley 1968), the test statistic for (4), \(L_{q_n}R=-D_n(\widetilde{\varvec{\beta }}_n)\), converges in distribution to \(-D(\widetilde{\varvec{\beta }})\), where \(\widetilde{\varvec{\beta }}_n\) and \(\widetilde{\varvec{\beta }}\) are the optimal solutions under \(S_n\) and \(S_0\) respectively. This proves Lemma 5.5. \(\square \)

Appendix B: Proofs of Theorems

1.1 Proof of Theorem 2.1

By Taylor’s theorem, we derive the Taylor’s series expansion of \(L_{q_n}(\varvec{\theta })\) at \(\varvec{\theta }=\bar{\varvec{\theta }}\), then the test statistic \(L_{q_n}\)R can be written as

where \(R_n=\frac{1}{3}n^{-\frac{1}{2}}\sum _i^3\sum _j^3\sum _k^3n^{-1}L_{q_ni,j,k}(\breve{\varvec{\theta }}) \sqrt{n}\Delta \varvec{\theta }_i\sqrt{n}\Delta \varvec{\theta }_j\sqrt{n}\Delta \varvec{\theta }_k.\) As \(n^{-1}L_{q_ni,j,k}(\breve{\varvec{\theta }})\) is bounded and \(\sqrt{n}\Delta \varvec{\theta }=\mathcal O _p(1)\), we have \(R_n=\mathcal O _p(n^{-\frac{1}{2}})\). By Lemma 5.2 and the partitioned matrix result \(L_{q_n}^{11}(\bar{\varvec{\theta }})=[L_{q_n11}(\bar{\varvec{\theta }})-L_{q_n12}(\bar{\varvec{\theta }})L^{-1}_{q_n22}(\bar{\varvec{\theta }})L_{q_n21}(\bar{\varvec{\theta }})]^{-1}\), (47) can be written as

According to the asymptotic normality of the \(\text{ ML}_\mathrm{q}\text{ E}\) shown in Ferrari and Yang (2010),

where \(V_n(\varvec{\theta })=J_n^{-1}(\varvec{\theta })K_n(\varvec{\theta })J_n^{-1}(\varvec{\theta })\). When the null hypothesis is true, by Lemma 5.1 and the continuity of \(K_n(\varvec{\theta }),J_n(\varvec{\theta })\) at \(\varvec{\theta }\), we have \(V_n(\varvec{\theta }_n^*)\rightarrow I^{-1}({\varvec{\theta }}_0)\) as \(n\rightarrow \infty \). Furthermore, we have

where \(\xi ^*\) is the first component of \(\varvec{\theta }_n^*\). As the null hypothesis of (2) is true, then \(\bar{\varvec{\theta }}\) converges to the true parameter \(\varvec{\theta }_0\) in probability. Thus (48) can be written as

If \(\alpha >\frac{1}{2}\), we have \([I^{11}({\varvec{\theta }}_0)]^{\frac{1}{2}}\sqrt{n}(\widehat{\xi }-\xi _0+\xi _0-\xi ^*) =[I^{11}({\varvec{\theta }}_0)]^{\frac{1}{2}}\sqrt{n}\Delta \widehat{\xi }\). Combining (50) and (51), it follows that \(L_{q_n}\)R is asymptotically \(\chi ^2\)-distributed with one degree of freedom.

If \(\alpha =\frac{1}{2}\), we have \([I^{11}({\varvec{\theta }}_0)]^{\frac{1}{2}}\sqrt{n}(\widehat{\xi }-\xi _0+\xi _0-\xi ^*) =[I^{11}({\varvec{\theta }}_0)]^{\frac{1}{2}}\sqrt{n}\Delta \widehat{\xi }+[I^{11}({\varvec{\theta }}_0)]^{\frac{1}{2}}\sqrt{n}(\xi _0-\xi ^*)\), where \([I^{11}({\varvec{\theta }}_0)]^{\frac{1}{2}}\sqrt{n}\Delta \widehat{\xi }\mathop {\longrightarrow }\limits ^{d}N(0,1)\) as \(n\rightarrow \infty \) and \([I^{11}({\varvec{\theta }}_0)]^{\frac{1}{2}}\sqrt{n}(\xi _0-\xi ^*)=\mathcal O (1)\). As a result, if \(\lim _{n\rightarrow \infty }n(\xi _0-\xi ^*)^2I^{11}({\varvec{\theta }}_0)\ne 0\), the test statistic \(L_{q_n}\)R is asymptotically noncentral \(\chi ^2\)-distributed with one degree of freedom and noncentrality parameter \(\delta =\lim _{n\rightarrow \infty }n(\xi _0-\xi ^*)^2I^{11}({\varvec{\theta }}_0)\).

If \(\alpha <\frac{1}{2}\), it can be derived that \([I^{11}({\varvec{\theta }}_0)]^{\frac{1}{2}}\sqrt{n}(\widehat{\xi }-\xi _0+\xi _0-\xi ^*)=\mathcal O _p(n^{\frac{1}{2}-\alpha })\), therefore the statistic \(L_{q_n}\)R diverges as \(n\rightarrow \infty \). Thus the desired result is obtained.
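The three regimes in this proof are governed by the size of \(\sqrt{n}(1-q_n)\), since Lemma 5.1 ties the bias \(\xi _0-\xi ^*\) to the order of \(1-q_n\). A minimal sketch of that scaling follows, assuming the exact schedule \(q_n=1-cn^{-\alpha }\) (the text only requires \(1-q_n\sim cn^{-\alpha }\)); the function names and the constants used are illustrative.

```python
import math


def distortion(n, c, alpha):
    """Distortion parameter q_n = 1 - c * n**(-alpha) (assumed exact schedule)."""
    return 1.0 - c * n ** (-alpha)


def scaled_gap(n, c, alpha):
    """sqrt(n) * (1 - q_n), which equals c * n**(1/2 - alpha) for this schedule."""
    return math.sqrt(n) * (1.0 - distortion(n, c, alpha))


if __name__ == "__main__":
    for alpha in (1.0, 0.5, 0.25):
        values = [scaled_gap(n, 2.0, alpha) for n in (10**2, 10**4, 10**6)]
        print(alpha, values)
```

For \(\alpha >\frac{1}{2}\) the scaled gap vanishes, for \(\alpha =\frac{1}{2}\) it stays at \(c\), and for \(\alpha <\frac{1}{2}\) it grows without bound, matching the central, noncentral and divergent cases above.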

1.2 Proof of Theorem 2.2

As in the proof of Theorem 2.1, expanding \(L_{q_n}(\varvec{\theta })\) in a Taylor’s series at \(\varvec{\theta }=\bar{\varvec{\theta }}\), the test statistic \(L_{q_n}R\) can be written as

where \(R_n=\frac{1}{3}n^{-\frac{1}{2}}\sum _i^3\sum _j^3\sum _k^3n^{-1}L_{q_ni,j,k}(\breve{\varvec{\theta }}) \sqrt{n}\Delta \varvec{\theta }_i\sqrt{n}\Delta \varvec{\theta }_j\sqrt{n}\Delta \varvec{\theta }_k, \breve{\varvec{\theta }}=\alpha \widehat{\varvec{\theta }}_n+(1-\alpha )\bar{\varvec{\theta }}, 0\le \alpha \le 1.\) As the sequence of alternatives \(H_n: \xi =\xi _0+h/\sqrt{n}\) is true, we have \(\sqrt{n}(\bar{\varvec{\theta }}-{\varvec{\theta }}_0)=\mathcal O _p(1)\). Consequently, \(n^{-1}L_{q_ni,j,k}(\breve{\varvec{\theta }})=\mathcal O _p(1), \sqrt{n}(\widehat{\varvec{\theta }}-\bar{\varvec{\theta }})=\mathcal O _p(1)\) and then \(R_n=\mathcal O _p(n^{-\frac{1}{2}})\). By Lemma 5.2 and the partitioned matrix result, the same result for (52) can also be obtained

According to the asymptotic normality of the \(\text{ ML}_\mathrm{q}\text{ E}\), when the alternatives are true, we have

As the alternatives are true, then \(\bar{\varvec{\theta }}\) converges to the true parameter \(\varvec{\theta }_0\) in probability. (53) can be written as

where \(\eta _n\mathop {\longrightarrow }\limits ^{d}N(0,I^{11}(\varvec{\theta }_0))\) as \(n\rightarrow \infty \). According to the definition of the noncentral \(\chi ^2\) distribution, when the alternatives are true, the proposed test statistic \(L_{q_n}R\) is asymptotically noncentral \(\chi ^2\)-distributed with one degree of freedom and noncentrality parameter \(\delta =h^2[I^{11}({\varvec{\theta }}_0)]^{-1}\). Thus the desired result is obtained.
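The noncentral limit translates directly into an approximate local power. The sketch below assumes only the standard representation of a noncentral \(\chi ^2_1\) variable as \((Z+\sqrt{\delta })^2\) with \(Z\sim N(0,1)\) and plugs in \(\delta =h^2[I^{11}({\varvec{\theta }}_0)]^{-1}\) from the statement above. The value passed for \(I^{11}({\varvec{\theta }}_0)\) is hypothetical, as are the function names.

```python
import math

# 95% quantile of the chi-square distribution with one degree of freedom.
CHI2_1_CRIT_95 = 3.841458820694124


def normal_cdf(x):
    """Standard normal distribution function."""
    return 0.5 * math.erfc(-x / math.sqrt(2.0))


def noncentral_chi2_1_sf(c, delta):
    """P(W > c) for W = (Z + sqrt(delta))**2 with Z ~ N(0, 1)."""
    root_c, shift = math.sqrt(c), math.sqrt(delta)
    return normal_cdf(shift - root_c) + normal_cdf(-shift - root_c)


def local_power(h, i11, crit=CHI2_1_CRIT_95):
    """Approximate power against xi = xi_0 + h / sqrt(n), with delta = h**2 / i11."""
    return noncentral_chi2_1_sf(crit, h * h / i11)


if __name__ == "__main__":
    for h in (0.0, 1.0, 2.0, 3.0):
        print(h, local_power(h, i11=1.0))  # i11 = 1.0 is a made-up value
```

At \(h=0\) the function returns the nominal level, and the power increases with \(|h|\).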

1.3 Proof of Theorem 2.3

In Lemma 5.5, we have pointed out that, for the hypothesis test (4),

where \(\widetilde{\varvec{\beta }}_n\) and \(\widetilde{\varvec{\beta }}\) are the optimal solutions under \(S_n\) and \(S_0\) respectively. As a result, we discuss the distribution of \(-D(\widetilde{\varvec{\beta }})\) instead. According to the definition of \(D(\varvec{\beta })\), we have

From the definition of \(\bar{\chi }^2\) distribution given by Shapiro (1988), we have

and the polar cone of \(S_0\) is given by

According to the definition of the polar cone and the equation (3.5) in Shapiro (1988), \(A_1\) can be written as

where \(X=(\dot{h}^T(\varvec{\theta }_0)I^{-1}(\varvec{\theta }_0)\dot{h}(\varvec{\theta }_0))^{-1}\dot{h}^T(\varvec{\theta }_0)\varvec{Z}, Q(\varvec{\theta }_0)=\dot{h}^T(\varvec{\theta }_0)I^{-1}(\varvec{\theta }_0)\dot{h}(\varvec{\theta }_0)\). According to the behavior of \(\varvec{Z}\), we have the result \(X\sim N(0,Q^{-1}(\varvec{\theta }_0))\). \(A_3\) can be written as

Now we show that \(A_2=A_3\). Since \(P=I^{-1}(\varvec{\theta }_0)-I^{-1}(\varvec{\theta }_0)\dot{h}(\varvec{\theta }_0)(\dot{h}^T(\varvec{\theta }_0) I^{-1}(\varvec{\theta }_0)\dot{h}(\varvec{\theta }_0))^{-1}\dot{h}^T(\varvec{\theta }_0)I^{-1}(\varvec{\theta }_0)\), we have

Thus, we can derive that, together with (57) and (60),

Consequently, \(-D(\widetilde{\varvec{\beta }})\sim \bar{\chi }^2(Q^{-1}(\varvec{\theta }_0),(\mathbb{R }^+)^0)\), where \((\mathbb{R }^+)^0\) is the polar cone of \(\mathbb{R }^+\). In other words,

where \(\chi ^2_0\) is a degenerate distribution with all its probability mass at zero and \(\chi ^2_1\) is a \(\chi ^2\) distribution with one degree of freedom. According to Shapiro (1988) and Barmi and Dykstra (1999), the exact forms of the weights here are as follows:

where \(X\sim N(0,Q^{-1}(\varvec{\theta }_0))\). Since \(X\) is a centered normal variable, \(w_0=w_1=\frac{1}{2}\). As a result, the asymptotic distribution of the test statistic \(L_{q_n}\)R under the null hypothesis of (4) is \(\frac{1}{2}\chi ^2_0+\frac{1}{2}\chi ^2_1\).
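In practice the mixture \(\frac{1}{2}\chi ^2_0+\frac{1}{2}\chi ^2_1\) changes both the p-value and the critical value relative to a plain \(\chi ^2_1\) reference. A minimal sketch, assuming only the identity \(P(\chi ^2_1>x)=\mathrm{erfc}(\sqrt{x/2})\); the function names and the bisection bounds are illustrative choices.

```python
import math


def chi2_1_sf(x):
    """Upper tail probability of the chi-square distribution with one d.f."""
    return math.erfc(math.sqrt(x / 2.0))


def chibar_pvalue(stat):
    """p-value under the mixture 0.5 * chi2_0 + 0.5 * chi2_1."""
    if stat <= 0.0:
        return 1.0  # every outcome is at least as large as zero
    return 0.5 * chi2_1_sf(stat)


def chibar_critical_value(level, tol=1e-10):
    """Critical value c with P(statistic > c) = level, for 0 < level < 0.5."""
    if not 0.0 < level < 0.5:
        raise ValueError("level must lie strictly between 0 and 0.5")
    lo, hi = 0.0, 100.0
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if 0.5 * chi2_1_sf(mid) > level:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


if __name__ == "__main__":
    print(chibar_critical_value(0.05))
    print(chibar_pvalue(3.0))
```

At the 5% level the critical value is the 90% quantile of \(\chi ^2_1\), which is smaller than the usual 95% quantile used for the two-sided test.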

Cite this article

Huang, C., Lin, JG. & Ren, YY. Testing for the shape parameter of generalized extreme value distribution based on the \(L_q\)-likelihood ratio statistic. Metrika 76, 641–671 (2013). https://doi.org/10.1007/s00184-012-0409-5

DOI: https://doi.org/10.1007/s00184-012-0409-5