Abstract

We investigate the emergence of a collective periodic behavior in a frustrated network of interacting diffusions. Particles are divided into two communities depending on their mutual couplings. On the one hand, both intra-population interactions are positive; each particle wants to conform to the average position of the particles in its own community. On the other hand, inter-population interactions have different signs: the particles of one population want to conform to the average position of the particles of the other community, while the particles in the latter want to do the opposite. We show that this system features the phenomenon of noise-induced periodicity: in the infinite volume limit, in a certain range of interaction strengths, although the system has no periodic behavior in the zero-noise limit, a moderate amount of noise may generate an attractive periodic law.


1 Introduction

Robust periodic behaviors are frequently encountered in life sciences and are indeed one of the most commonly observed self-organized dynamics. For instance, spontaneous brain activity exhibits rhythmic oscillations called alpha and beta waves [14]. From a theoretical standpoint, the mechanism driving the emergence of periodic behaviors in such systems is poorly understood. For example, neurons neither have any tendency to behave periodically on their own, nor are subject to any periodic forcing; nevertheless, they organize to produce a regular motion perceived at the macroscopic scale [28]. Various models of large families of interacting particles showing self-sustained oscillations have been proposed; we refer the reader to [1, 2, 4, 5, 7, 9, 10, 11, 13, 15, 16, 19, 20], where possible mechanisms leading to a rhythmic behavior are discussed and many related references are given.

Here we mention two mechanisms of interest to us that can induce or enhance periodic behaviors in stochastic systems with many degrees of freedom. The first one is noise. The role of the noise is twofold: on the one hand, it can lead to oscillatory laws in systems of nonlinear diffusions whose deterministic counterparts do not display any periodic behavior [24, 25]; on the other hand, it can facilitate the transition from an equilibrium solution to macroscopic self-organized oscillations [6, 18, 27].

The second mechanism is the topology of the interaction network. It has been recently pointed out in [8, 13, 26] that a specific network structure may favor the emergence of collective rhythms. In particular, in [8, 26], the large volume dynamics of a two-population generalization of the mean field Ising model is considered. The system is shown to undergo a transition from a disordered phase, where the magnetizations of both populations fluctuate around zero, to a phase in which they both display a macroscopic regular rhythm. Such a transition is driven by inter- and intra-population interactions of different strengths and signs leading to dynamical frustration.

In the present paper we combine the two mechanisms described above and we design a toy model of frustratedly interacting diffusions that shows noise-induced periodicity, in the sense that periodic oscillations appear for an intermediate amount of noise. The peculiar feature of the model under consideration is that the structure of the interaction network depends on the noise in that it is the noise that switches on the interaction terms, thus leading to periodic dynamics.

2 Description of the model and outline of the results

Let us consider a system of N diffusive particles on \({\mathbb {R}}\). We divide the N particles into two disjoint communities of sizes \(N_1\) and \(N_2\) respectively and we denote by \(I_1\) (resp. \(I_2\)) the set of sites belonging to the first (resp. second) community. In this setting, we indicate with \(\left( x^{(N)}_{j}(t)\right) _{j=1, \dots , N_{1}}\) the positions at time t of the particles of population \(I_1\) and with \(\left( y^{(N)}_{j}(t)\right) _{j=1, \dots , N_{2}}\) the positions at time t of the particles of population \(I_2\), so that

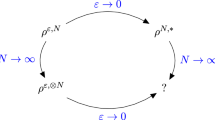

represents the state of the whole system at time t. The basic feature of our model is that the strength of the interaction between particles depends on the community they belong to: \(\theta _{11}\) and \(\theta _{22}\) tune the interaction between sites of the same community, whereas \(\theta _{12}\) and \(\theta _{21}\) control the coupling strength between particles of different groups. In fact, we construct the network of interacting diffusions visualized in Fig. 1.

A schematic representation of the interaction network. Particles are divided into two communities, \(I_1\) and \(I_2\). Ignoring inter-population interactions, each community \(I_i\) taken alone is a mean field system with interaction strength \(\theta _{ii}\) (\(i=1 \text{ or } 2\)). When we couple the two communities, population \(I_1\) (resp. \(I_2\)) influences the dynamics of population \(I_2\) (resp. \(I_1\)) through the average position of its particles with strength \(\theta _{21}\) (resp. \(\theta _{12}\))

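A crucial feature for the system to show periodic behavior is frustration of the network, i.e. the inter-community interactions must have opposite signs. Now we introduce the microscopic dynamics we are interested in. Let

$$\begin{aligned} m^{(N)}_1(t) := \frac{1}{N_1}\sum _{j=1}^{N_1} x^{(N)}_j(t) \quad \text{ and } \quad m^{(N)}_2(t) := \frac{1}{N_2}\sum _{j=1}^{N_2} y^{(N)}_j(t) \end{aligned}$$

be the empirical means of the positions of the particles in populations \(I_1\) and \(I_2\), respectively, at time t. Moreover, denote by \(\alpha := \frac{N_1}{N}\) the fraction of sites belonging to the first group. Then, omitting time dependence for notational convenience, the interacting particle system we are going to study reads as

$$\begin{aligned} dx^{(N)}_j&= \left[ -\left( x^{(N)}_j\right) ^3+x^{(N)}_j-\alpha \theta _{11}\left( x^{(N)}_j-m^{(N)}_1\right) -(1-\alpha )\theta _{12}\left( x^{(N)}_j-m^{(N)}_2\right) \right] dt+\sigma dw_j, \quad j=1,\dots ,N_1, \\ dy^{(N)}_j&= \left[ -\left( y^{(N)}_j\right) ^3+y^{(N)}_j-\alpha \theta _{21}\left( y^{(N)}_j-m^{(N)}_1\right) -(1-\alpha )\theta _{22}\left( y^{(N)}_j-m^{(N)}_2\right) \right] dt+\sigma dw_{N_1+j}, \quad j=1,\dots ,N_2, \end{aligned}\tag{2.1}$$

where \(\left( w_j(t); t \ge 0\right) _{j=1,\dots ,N}\) are N independent copies of a standard Brownian motion. Since the diffusion coefficient is the same for each coordinate, the single parameter \(\sigma \ge 0\) tunes the amount of noise in the system.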

Remark 2.1

Existence and uniqueness of a strong solution to (2.1) can be established via the Khasminskii criterion [17, 21]: by taking the norm-like function

one obtains an inequality of the form \({\mathcal {L}}V\left( {\textbf{z}}^{(N)}\right) \le k \left[ 1 + V\left( {\textbf{z}}^{(N)}\right) \right] \), for some \(k>0\), with \({\mathcal {L}}\) the infinitesimal generator of diffusion (2.1).

Notice that in system (2.1) the two groups of particles interact only through their empirical means. This makes our model mean field and, in particular, when \(\theta _{11}=\theta _{22}=\theta _{12}=\theta _{21}=\theta > 0\), the system of equations (2.1) reduces to the mean field interacting diffusions considered in [12]. In a general setting, all the interaction parameters can be either positive or negative allowing both cooperative/conformist and uncooperative/anti-conformist interactions. In the present paper, we focus on the case \(\theta _{11}>0\), \(\theta _{22}>0\) and \(\theta _{12} \theta _{21}<0\). Moreover, without loss of generality, we make the specific choice \(\theta _{12}>0\) and \(\theta _{21}<0\), which means that particles in \(I_1\) tend to conform to the average particle position of community \(I_2\), whereas particles in \(I_2\) prefer to differ from the average particle position of community \(I_1\) (see Eq. (2.1)).

Numerical simulations of system (2.1) with large N show that \(m^{(N)}_1(t)\) and \(m^{(N)}_2(t)\) display an oscillatory behavior in appropriate regions of the parameter space (see Sect. 3). This led us to investigate the thermodynamic limit of our system of interacting diffusions, that is, the limit when the number of particles goes to infinity. It is known that solutions of SDEs like (2.1) cannot have a time-periodic law, as these solutions are either positive recurrent, null recurrent or transient; see [24, 25] and references therein. However, the mean field interaction in (2.1) has a peculiar feature. When the interaction is of this type, at any time t, the empirical average of the particle positions in (2.1) is expected to converge, as the number of particles goes to infinity, to a limit given by the solution of a nonlinear SDE. Nonlinear SDEs are SDEs whose coefficients depend on the law of the solution itself and, in contrast with systems like (2.1), they might have solutions with periodic law, see [25]. Therefore, the oscillations in the trajectories of \(m^{(N)}_1(t)\) and \(m^{(N)}_2(t)\) shown by simulations can be theoretically explained via the thermodynamic limit of the system.

We outline here the main results presented in the sequel. We follow an approach similar to the one adopted in [6].

1. In Sect. 4.1 we prove that, starting from i.i.d. initial conditions, independence propagates in time when taking the infinite volume limit. In particular, as N grows large, the time evolution of a pair of representative particles, one for each population, is described by the limiting dynamics

   $$\begin{aligned} dx&= \left[ -x^3+x-\alpha \theta _{11}\left( x-{\mathbb {E}}[x]\right) -(1-\alpha )\theta _{12}\left( x-{\mathbb {E}}[y]\right) \right] dt+\sigma dw_1 \\ dy&= \left[ -y^3+y-\alpha \theta _{21}\left( y-{\mathbb {E}}[x]\right) -(1-\alpha )\theta _{22}\left( y-{\mathbb {E}}[y]\right) \right] dt+\sigma dw_2, \end{aligned}\tag{2.2}$$

   where the notation \({\mathbb {E}}\) stands for the expectation with respect to the probability measure \( Q(t;\cdot )=\text {Law}(x(t),y(t))\), for every \(t \in [0,T]\), and \((w_1(t); 0 \le t \le T)\) and \((w_2(t); 0 \le t \le T)\) are two independent standard Brownian motions. In particular, we show that, for all \(T > 0\) and for all \(t\in [0,T]\), any random vector of the form \(\left( x^{(N)}_{i_1}(t),\dots ,x^{(N)}_{i_{k_1}}(t), y^{(N)}_{j_1}(t),\dots , y^{(N)}_{j_{k_2}}(t)\right) \) converges in distribution, as N goes to infinity, to a vector \(\left( x_1(t), \dots , x_{k_1}(t), y_1(t), \dots , y_{k_2}(t)\right) \), whose entries are independent random variables such that \(x_i(t)\) (\(i=1, \dots , k_1\)) are copies of the solution to the first equation in (2.2) and \(y_i(t)\) (\(i=1, \dots , k_2\)) are copies of the solution to the second equation in (2.2). This is usually referred to as the phenomenon of the propagation of chaos. See [3] for a proof in a general framework of weakly interacting diffusions with jumps. Notice that our model is a two-population version of the model in Section 4 in [3], as here there are no jumps and the drift term in (2.2) satisfies their Assumption 3.

2. Being nonlinear, system (2.2) is a good candidate for having a solution with periodic law. It is however very hard to gain insight into its long-time behavior or to find periodic solutions as the problem is infinite dimensional, due to the presence of nonlinearity and noise. As a first step, in Sect. 4.2 we study the limiting system (2.2) in the absence of noise and, in particular, we argue that oscillatory behaviors are not observed when \(\sigma =0\). This remains true for small values of \(\sigma >0\) in some parameter regimes. See Sect. 3 for details.

3. In Sects. 4.3 and 4.4 we tackle system (2.2) with noise. We show that, in the presence of an appropriate amount of noise, the limiting positions of representative particles of the two populations evolve approximately as a pair of independent Gaussian processes (small-noise Gaussian approximation). This reduces the problem to a finite dimensional one, since we provide the explicit (deterministic) equations for the mean and variance of those processes. The dynamical system describing the time evolution of means and variances has a Hopf bifurcation and, as a consequence, in a certain range of the noise intensity, it has a limit cycle as a long-time attractor, implying that the laws of the previously mentioned Gaussian processes are periodic. Thus, the small-noise Gaussian approximation gives a good qualitative description of the emergence of the self-sustained oscillations observed for system (2.1) (see Sect. 3).

Trajectories of \(\left( m_1^{(N)}(t),m_2^{(N)}(t)\right) \) obtained with numerical simulations of system (2.1), in the absence of noise (first column), in the presence of an intermediate amount of noise (second column) and of a high-intensity noise (third column). In all cases, we considered \(10^6\) iterations with a time-step \(dt=0.005\), 1000 particles, \(\alpha = 0.5\), \(\theta _{11}=\theta _{22}=8\). From top to bottom: \(A-1<B<A+2\), in particular, \(A=2\) and \(B=2.5\); \(B=A+2\), in particular, \(A=2\) and \(B=4\); \(B>A+2\), in particular, \(A=2\) and \(B=7\).

We see that, during a time interval of the same length (namely, \(10^6\) iterations), when the intensity of the noise is below a certain threshold (first column, \(\sigma =0\) in all the three panels) no periodic behavior arises in any of the three considered cases and the system ends up in one of the stable equilibria. On the contrary, when the intensity of the noise is large (third column, \(\sigma =5\) in all the three panels), the zero-mean Brownian disturbance dominates and the trajectories resemble random excursions around the origin. Whenever the amount of noise is intermediate (second column, from top to bottom: \(\sigma =0.5\), \(\sigma =0.1\) and \(\sigma =0.6\)), self-sustained oscillations appear; for further details about this scenario see Fig. 3

Analysis of the period of the trajectories of \(\left( m_1^{(N)}(t),m_2^{(N)}(t)\right) \) obtained via numerical simulations of system (2.1), in the presence of an intermediate amount of noise. In all cases, we considered \(10^6\) iterations with a time-step \(dt=0.005\), 1000 particles, \(\alpha = 0.5\), \(\theta _{11}=\theta _{22}=8\). From top to bottom: \(A-1<B<A+2\), in particular, \(A=2\) and \(B=2.5\), with \(\sigma =0.5\); \(B=A+2\), in particular, \(A=2\) and \(B=4\), with \(\sigma =0.1\); \(B>A+2\), in particular, \(A=2\) and \(B=7\), with \(\sigma =0.6\).

In the first column, we plotted the relevant spectral region of the averaged modulus of the discrete Fourier transform \(P\left( \nu \right) \) of \(m^{(N)}_2\) against the frequencies \(\nu \). For these figures we employed the Fourier function of Mathematica applied to a trajectory of \(m^{(N)}_2\) over \(10^{6}\) steps and averaged the obtained spectrum over \(M=50\) simulations. The average periods in the three cases were obtained as the reciprocals of the frequencies highlighted by the red peaks. In the second column, we plotted the time evolution of \(m^{(N)}_2\). The third column shows a trajectory of \(\left( m^{(N)}_1(t),m^{(N)}_2(t)\right) \). There, red dashed horizontal lines mark the Poincaré sections we employed for the computation of the average period

Intuitively, the mechanism behind the emergence of periodicity in our system is similar to the one in [8] and can be described as follows. Imagine starting with two independent communities, that is, particles evolve according to system (2.1) with \(\theta _{12}=\theta _{21}=0\). When the intra-population interaction strengths \(\theta _{11}\) and \(\theta _{22}\) are large enough, each population tends to its own rest state, which one may guess to be (close to) one of the minima of the double-well potential \(V(x)=\frac{x^4}{4}-\frac{x^2}{2}\) (see [12]). The key aspect, which we believe makes the model under consideration interesting, is that linking the two populations together within an interaction network with \(\theta _{12}\theta _{21}<0\) is not enough for periodic behaviors to appear. Dynamical frustration and, in turn, oscillations arise only when the noise intensity is large enough, as the interaction terms in system (2.1) are switched on by the noise. Indeed, when \(\sigma =0\) and all the particles in the same population share the same initial condition, the system is attracted to an equilibrium point where \(x_j^{(N)}=y_k^{(N)}=m_1^{(N)}=m_2^{(N)}\) for \(j=1, \dots , N_1\) and \(k=1, \dots , N_2\) (see Fig. 2) and, thus, the interaction terms vanish. It follows that the zero-noise dynamics does not display any periodic behavior. On the contrary, if \(\sigma \) is positive and sufficiently large, particles do not get stuck at equilibrium points, as diffusion is enhanced, and the interaction terms start playing a role, generating dynamical frustration. The two populations now form a frustrated pair of systems where the rest state of the first is not compatible with the rest position of the second. As a consequence, the dynamics does not settle down to a fixed equilibrium and keeps oscillating. Therefore, the noise is responsible for the emergence of a stable rhythm (see Sect. 4). This feature is the hallmark of the phenomenon of noise-induced periodicity.

3 Noise-induced periodicity: numerical study

In this section, we present numerical simulations of the finite-size system (2.1), aimed at providing evidence of the phenomenon of noise-induced periodicity.

In the setting introduced in Sect. 2, we ran several simulations of (2.1) for different choices of \(\sigma \) and several values of the interaction strengths. In all cases, we performed simulations with \(10^{6}\) iterations with time-step \(dt = 0.005\) for a system of 1000 particles equally divided between the two populations (\(\alpha =0.5\)). All particles in the same population were given the same initial condition. We fixed \(\theta _{11}=\theta _{22}=8\) and let \(A:=\left( 1-\alpha \right) \theta _{12}>0\) and \(B:=-\alpha \theta _{21}>0\) vary. The results are displayed in Figs. 2, 3 and Table 1, which also report the specific values we employed for A, B and \(\sigma \). The choices of the parameters are discussed in more detail in Sect. 4, as they correspond to different regimes of the limiting noiseless dynamics (i.e., system (2.2) with \(\sigma =0\)), namely, \(A-1<B<A+2\), \(B=A+2\) and \(B>A+2\).
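The simulation setup just described can be sketched with an Euler-Maruyama scheme. The snippet below is a minimal illustration, not the code used for the figures: it assumes that the finite-size drift has the same form as the limiting drift in (2.2) with the expectations replaced by the empirical means, and the function name `simulate_means`, its arguments and the default initial position 0.8 (the value quoted in Sect. 4.3) are our own choices.

```python
import numpy as np


def simulate_means(n_steps, dt, n_particles, alpha, theta11, theta22, A, B,
                   sigma, x0=0.8, y0=0.8, seed=0):
    """Euler-Maruyama sketch of the two-population system.

    Assumption: the drift is the one of (2.2) with E[x], E[y] replaced by the
    empirical means m1, m2. Returns the trajectories of (m1, m2).
    """
    rng = np.random.default_rng(seed)
    n1 = int(round(alpha * n_particles))
    n2 = n_particles - n1
    # Recover the couplings from A = (1 - alpha) * theta12, B = -alpha * theta21.
    theta12 = A / (1.0 - alpha)
    theta21 = -B / alpha
    # All particles in the same population share the same initial condition.
    x = np.full(n1, x0, dtype=float)
    y = np.full(n2, y0, dtype=float)
    m1 = np.empty(n_steps + 1)
    m2 = np.empty(n_steps + 1)
    m1[0], m2[0] = x.mean(), y.mean()
    sqrt_dt = np.sqrt(dt)
    for k in range(n_steps):
        mx, my = m1[k], m2[k]
        drift_x = (-x**3 + x - alpha * theta11 * (x - mx)
                   - (1.0 - alpha) * theta12 * (x - my))
        drift_y = (-y**3 + y - alpha * theta21 * (y - mx)
                   - (1.0 - alpha) * theta22 * (y - my))
        # Independent Gaussian increments for every particle.
        x = x + drift_x * dt + sigma * sqrt_dt * rng.standard_normal(n1)
        y = y + drift_y * dt + sigma * sqrt_dt * rng.standard_normal(n2)
        m1[k + 1], m2[k + 1] = x.mean(), y.mean()
    return m1, m2
```

For instance, `simulate_means(10**6, 0.005, 1000, 0.5, 8.0, 8.0, 2.0, 2.5, 0.5)` corresponds to the intermediate-noise setting of the regime \(A-1<B<A+2\); a pure Python loop of this length is slow, so a shorter run is advisable for a first check.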

We observe the following:

1. If \(\sigma =0\), the system is attracted to a fixed point (see the first column of Fig. 2). Numerical evidence supports the idea that, in the regimes \(A-1< B < A+2\) and \(B > A+2\), this behavior persists for small \(\sigma >0\).

2. When the intensity of the noise is tuned to an intermediate range of values, an oscillatory behavior is observed in the \(\left( m^{(N)}_{1}, m^{(N)}_{2}\right) \) plane throughout the duration of the simulation, suggesting the presence of a periodic law (see the second column of Fig. 2). Thus, our model seems to exhibit noise-induced periodicity. This phenomenon, which to the best of our knowledge lacks a full theoretical comprehension, can be loosely described in the following terms: an intermediate amount of noise may create/stabilize some attractors and destabilize others. In our case it seems that the noise destabilizes (some of the) fixed points and generates a stable rhythmic behavior of the empirical averages of the particle positions of the two communities. We would like to mention that, in the regime \(B=A+2\), an arbitrarily small value of \(\sigma >0\) seems to be sufficient to induce periodicity.

3. Letting \(\sigma \gg 1\) completely alters the dynamics, which essentially becomes a Brownian motion (see the third column of Fig. 2).

In Fig. 3 and Table 1 the oscillatory behavior emerging in system (2.1) is analyzed further. We computed the average return time of the system to the Poincaré section \(\left\{ m^{(N)}_2=0, m^{(N)}_1>0\right\} \) and its standard deviation, in the various regimes. These are reported in the third column of Table 1. The Poincaré section is plotted as a red line in Fig. 3. In addition, we computed the discrete Fourier transform, averaged over \(M=50\) simulations, for the average particle position of the second population, \(m^{(N)}_2\). From the peak of the Fourier transform we recovered the period of the trajectory of \(m^{(N)}_2(t)\). The average period and its standard deviation are reported in the fourth column of Table 1 for different values of the parameters.
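The two period estimates described above can be sketched as follows. This is only an illustration under our own assumptions: the spectra in the text were computed with the Fourier function of Mathematica, whereas here we use NumPy's FFT; the helper names `return_times` and `fft_period` are hypothetical; crossing times are located by linear interpolation between samples. On noisy trajectories, repeated crossings close to the section may produce spurious short return times, which this sketch does not filter out.

```python
import numpy as np


def return_times(m1, m2, dt):
    """Times between successive crossings of the section {m2 = 0, m1 > 0}."""
    m1 = np.asarray(m1, dtype=float)
    m2 = np.asarray(m2, dtype=float)
    side = m2 >= 0.0
    # Indices k such that m2 changes sign between samples k and k + 1
    # while the first coordinate is positive.
    idx = np.where((side[:-1] != side[1:]) & (m1[:-1] > 0.0))[0]
    # Linear interpolation of the crossing time inside each step.
    frac = m2[idx] / (m2[idx] - m2[idx + 1])
    t_cross = (idx + frac) * dt
    return np.diff(t_cross)


def fft_period(m2, dt):
    """Period associated with the highest peak of the modulus of the DFT."""
    m2 = np.asarray(m2, dtype=float)
    spectrum = np.abs(np.fft.rfft(m2 - m2.mean()))
    freqs = np.fft.rfftfreq(m2.size, d=dt)
    k = 1 + np.argmax(spectrum[1:])  # skip the zero-frequency bin
    return 1.0 / freqs[k]
```

Given trajectories `m1`, `m2` sampled with step `dt`, the mean and standard deviation of `return_times(m1, m2, dt)` give the first estimate of the period, while averaging the spectrum over several independent runs before locating the peak mirrors the second one.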

4 Propagation of chaos and small-noise approximation

In this section we give our main results. We begin with a propagation of chaos statement, which yields the macroscopic description (2.2) of our system. Then, we analyze the noiseless version of the macroscopic dynamics and we show the absence of limit cycles as attractors. Finally, in a small-noise regime, we derive a Gaussian approximation of the infinite volume evolution (2.2) that displays an oscillatory behavior.

4.1 Propagation of chaos

Propagation of chaos states that, as \(N\rightarrow \infty \), the evolution of each particle remains independent of the evolution of any finite subset of the others. This is consistent with the fact that individual units interact only through the empirical means of the two populations, over which the influence of a finite number of particles becomes negligible when taking the infinite volume limit. In our case the limiting evolution of a pair of representative particles, one for each population, is the process \(((x(t),y(t)), 0 \le t \le T)\) described by the stochastic differential equation (2.2).

Under the assumptions \({\mathbb {E}}[x(0)]<\infty \) and \({\mathbb {E}}[y(0)]<\infty \), it is easy to prove that system (2.2) has a unique strong solution (see Theorem A.1 in Appendix A). Moreover, by a coupling argument, we obtain the following theorem.

Theorem 4.1

Fix \(T>0\). Let \(\Big (\left( x^{(N)}_{1}(t),\dots ,x^{(N)}_{N_1}(t), y^{(N)}_{1}(t),\dots ,y^{(N)}_{N_2}(t)\right) , 0 \le t \le T \Big )\) be the solution to Eq. (2.1) with an initial condition satisfying the following requirements:

- the collection \(\left( x^{(N)}_{1}(0), \dots , x^{(N)}_{N_1}(0), y^{(N)}_{1}(0), \dots , y^{(N)}_{N_2}(0)\right) \) is a family of independent random variables.

- the random variables \(\left( x^{(N)}_{1}(0), \dots , x^{(N)}_{N_1}(0)\right) \) (resp. \(\left( y^{(N)}_{1}(0), \dots , y^{(N)}_{N_2}(0)\right) \)) are identically distributed with law \(\lambda _x\) (resp. \(\lambda _y\)). We assume that \(\lambda _x\) and \(\lambda _y\) have finite second moment.

- the random variables \(x^{(N)}_{j}(0)\) and \(y^{(N)}_{k}(0)\) are independent of the Brownian motions \(\left( w_{i}(t), 0 \le t \le T\right) _{i=1,\dots ,N}\) for all \(j=1,\dots , N_1\) and \(k=1,\dots ,N_2\).

Moreover, let \(\left( \left( x_{1}(t), \dots , x_{N_1}(t), y_{1}(t), \dots , y_{N_2}(t)\right) , 0 \le t \le T \right) \) be the process whose entries are independent and such that \((x_j(t), 0 \le t \le T)_{j=1, \dots , N_1}\) (resp. \((y_k(t), 0 \le t \le T)_{k=1, \dots , N_2}\)) are copies of the solution to the first (resp. second) equation in (2.2), with the same initial conditions and the same Brownian motions used to define system (2.1). Here, “the same” means component-wise equality.

Define the index sets \({\mathcal {I}}=\{i_1,\ldots ,i_{k_1}\}\subseteq \{1,\ldots ,N_1\}\), with \(|{\mathcal {I}}|=k_1\), and \({\mathcal {J}}=\{j_1,\ldots ,j_{k_2}\}\subseteq \{1,\ldots ,N_2\}\), with \(|{\mathcal {J}}|=k_2\). Then, we have

$$\begin{aligned} \lim _{N\rightarrow \infty } {\mathbb {E}}\left[ \sup _{t\in [0,T]}\left| {\textbf{z}}^{(N)}_{k_1,k_2}(t)-{\textbf{z}}_{k_1,k_2}(t)\right| \right] =0, \end{aligned}$$

with \(\left| {\textbf{z}}\right| \) the \(\ell ^1\)-norm of a vector \({\textbf{z}}\), \({\textbf{z}}^{(N)}_{k_1,k_2}(t) = \left( x^{(N)}_{i_1}(t), \dots , x^{(N)}_{i_{k_1}}(t), y^{(N)}_{j_1}(t), \dots , y^{(N)}_{j_{k_2}}(t)\right) \) and \({\textbf{z}}_{k_1,k_2}(t) =\left( x_{1}(t),\dots ,x_{k_1}(t), y_{1}(t),\dots ,y_{k_2}(t)\right) \).

The proof of Theorem 4.1 is postponed to Appendix B. Recall that the convergence in Theorem 4.1 implies, for \(t \in [0,T]\), convergence in distribution of any finite-dimensional vector \({\textbf{z}}^{(N)}_{k_1,k_2}(t)\) to \({\textbf{z}}_{k_1,k_2}(t)\).

4.2 Analysis of the zero-noise dynamics

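In this section we consider system (2.2) with \(\sigma =0\). Notice that, in the zero-noise version of (2.2) with deterministic initial conditions, the solution is deterministic, so that \(x={\mathbb {E}}[x]\) and \(y={\mathbb {E}}[y]\), and the terms \(\alpha \theta _{11}\left( x- {\mathbb {E}}[x]\right) \) and \((1-\alpha )\theta _{22}\left( y-{\mathbb {E}}[y]\right) \) are both zero. Thus, setting

$$\begin{aligned} A := (1-\alpha )\theta _{12} \quad \text{ and } \quad B := -\alpha \theta _{21}, \end{aligned}\tag{4.1}$$

system (2.2) reduces to

$$\begin{aligned} {\dot{x}}&= -x^3+x-A\left( x-y\right) \\ {\dot{y}}&= -y^3+y+B\left( y-x\right) . \end{aligned}\tag{4.2}$$

At this point, we make the following assumption and focus on the case

- (H) \(A>1\) and \(B>A-1\).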

The reason for this choice is that in this parameter regime one can obtain an analytic characterization of the phase portrait of system (4.2), still displaying a rich variety of cases. The central concern in the subsequent sections will be the investigation of the conditions under which noise-induced periodicity occurs.

To this end, we studied the location and the nature of the fixed points of system (4.2) by varying A and B under the regime given by hypothesis (H) and checked that no local bifurcation generating limit cycles occurs. Unfortunately, the global analysis of the system turns out to be very involved and we are able to exclude the existence of limit cycles only on the basis of numerical evidence (see Fig. 4).

System (4.2) admits the following equilibria:

- The fixed points \(\left( 0,0\right) \) and \(\pm \left( 1,1\right) \) are present for any value of A and B. However, their nature changes depending on the parameters. More specifically,

  - when \(A-1<B<A+2\), \(\left( 0,0\right) \) is an unstable node and \(\pm \left( 1,1\right) \) are stable nodes.

  - when \(B=A+2\), \(\left( 0,0\right) \) is an unstable node and \(\pm \left( 1,1\right) \) have a neutral and a stable direction.

  - when \(B>A+2\), \(\left( 0,0\right) \) is an unstable node and \(\pm \left( 1,1\right) \) are saddle points.

- Depending on the values of A and B, there may be two additional equilibria. In particular, three situations may arise:

  - when \(A-1<B<A+2\), there exists \(\beta >0\) such that the points \(\pm \left( x,\beta x\right) \) are fixed points for (4.2), with \(0<x<1\) and \(\beta <1\). That is, system (4.2) has five equilibria: \(\left( 0,0\right) \), \(\pm \left( 1,1\right) \) and \(\pm \left( x,\beta x\right) \), symmetrically located in the first and the third quadrants. The fixed points \(\pm \left( x,\beta x\right) \) are saddle points.

  - when \(B=A+2\), no other fixed points are present apart from \(\left( 0,0\right) \) and \(\pm \left( 1,1\right) \).

  - when \(B>A+2\), there exists \(\beta >0\) such that \(\pm \left( x,\beta x\right) \) are fixed points for (4.2), with \(x>1\) and \(\beta >1 \). That is, system (4.2) has five equilibria: \(\left( 0,0\right) \), \(\pm \left( 1,1\right) \) and \(\pm \left( x,\beta x\right) \), symmetrically located in the first and the third quadrants. The fixed points \(\pm \left( x,\beta x\right) \) are stable (nodes or spirals, depending on the parameter values; see Fig. 4).

Phase portraits of system (4.2) for diverse values of A and B. (a) Case \(A-1<B<A+2\) with \(A=2\) and \(B=2.5\). Fixed points: \(\left( 0,0\right) \) is an unstable node, \(\pm \left( 1,1\right) \) are stable nodes and \(\pm \left( 0.78,0.63\right) \) (numerically obtained coordinates) are saddle points. (b) Case \(B=A+2\) with \(A=2\) and \(B=4\). Fixed points: \(\left( 0,0\right) \) is an unstable node and \(\pm \left( 1,1\right) \) have a negative and a zero eigenvalue. (c) Case \(B>A+2\) with \(A=2\) and \(B=7\). Fixed points: \(\left( 0,0\right) \) is an unstable node, \(\pm \left( 1,1\right) \) are saddle points and \(\pm \left( 1.24, 1.58\right) \) (numerically obtained coordinates) are stable spirals. Red dots mark the equilibria. Streamline colors correspond to the magnitude of the vector field scaled to [0, 1] (relative magnitude). A detailed analysis of the nature of the fixed points in the three regimes can be found in Appendix C

The depicted scenarios are summarized in Table 2. We refer the reader to Appendix C for a detailed proof. In Fig. 4, we display numerically obtained phase portraits for specific values of the parameters in the three cases \(A-1<B<A+2\), \(B=A+2\) and \(B>A+2\). In all these cases, numerical investigations strongly corroborate the absence of limit cycles for system (4.2).
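The classification of the equilibria can be cross-checked numerically through the eigenvalues of the Jacobian. The sketch below assumes that the noiseless system has the form \({\dot{x}}=-x^3+x-A(x-y)\), \({\dot{y}}=-y^3+y+B(y-x)\), which is what (2.2) gives for \(\sigma =0\) with \(A=(1-\alpha )\theta _{12}\) and \(B=-\alpha \theta _{21}\) as in Sect. 3. The function names are ours, and the Newton refinement is just one convenient way to sharpen the numerically obtained coordinates quoted for Fig. 4.

```python
import numpy as np


def vector_field(z, A, B):
    """Right-hand side of the assumed noiseless planar system."""
    x, y = z
    return np.array([-x**3 + x - A * (x - y), -y**3 + y + B * (y - x)])


def jacobian(x, y, A, B):
    """Jacobian matrix of the vector field at the point (x, y)."""
    return np.array([[1.0 - 3.0 * x**2 - A, A],
                     [-B, 1.0 - 3.0 * y**2 + B]])


def refine_equilibrium(z0, A, B, n_iter=50):
    """Newton iterations started from an approximate equilibrium z0."""
    z = np.array(z0, dtype=float)
    for _ in range(n_iter):
        step = np.linalg.solve(jacobian(z[0], z[1], A, B),
                               vector_field(z, A, B))
        z = z - step
    return z


def classify(x, y, A, B, tol=1e-9):
    """Linear classification of an equilibrium from the Jacobian spectrum."""
    eig = np.linalg.eigvals(jacobian(x, y, A, B))
    re, im = eig.real, eig.imag
    if np.any(np.abs(re) < tol):
        return "non-hyperbolic"  # a zero real part: linearization inconclusive
    if re[0] * re[1] < 0:
        return "saddle"
    kind = "spiral" if np.any(np.abs(im) > tol) else "node"
    return ("stable " if re[0] < 0 else "unstable ") + kind
```

For example, `classify(*refine_equilibrium((1.24, 1.58), 2.0, 7.0), 2.0, 7.0)` classifies the additional equilibrium of the regime \(B>A+2\) for the parameter values used in Fig. 4.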

We remark that the main results of this paper, given in Sects. 4.1 and 4.4, hold for all \(A,\, B >0\), as one can see from the proofs in the Appendices. Furthermore, qualitatively analogous behaviors were numerically observed in the case \(0<A\le 1\), \(B>0\), when extra fixed points for system (4.2) may exist.

4.3 The Fokker–Planck equation

The long-time behavior of the law of the solution to system (2.2) may be investigated by considering the corresponding Fokker–Planck equation, which reads

$$\begin{aligned} \partial _t q_1&= \frac{\sigma ^2}{2}\, \partial ^2_{z} q_1 - \partial _z \left\{ \left[ -z^3+z-\alpha \theta _{11}\left( z-\langle z,q_1 \rangle \right) -(1-\alpha )\theta _{12}\left( z-\langle z,q_2 \rangle \right) \right] q_1\right\} \\ \partial _t q_2&= \frac{\sigma ^2}{2}\, \partial ^2_{z} q_2 - \partial _z \left\{ \left[ -z^3+z-\alpha \theta _{21}\left( z-\langle z,q_1 \rangle \right) -(1-\alpha )\theta _{22}\left( z-\langle z,q_2 \rangle \right) \right] q_2\right\} , \end{aligned}\tag{4.3}$$

where time and space dependencies have been left implicit for simplicity of notation. Here \(\langle z,q_i \rangle := \int z q_i(z;t) dz\), with \(i=1,2\). The regularizing effect of the second-order partial derivatives guarantees that, for \(t \in (0,T]\), the laws of x(t) and y(t) have respective densities \(q_1(\cdot ;t)\) and \(q_2(\cdot ;t)\) solving (4.3). By using the finite element method [23], we performed numerical simulations of system (4.3) starting from the initial distributions \(q_1(z;0)=q_2(z;0)=\delta _{0.8}(z)\). These initial conditions correspond to what we did in Sect. 3, where we initialized the particles of both groups at \(z=0.8\) in the simulations of the microscopic system. We observed that \(q_1\) and \(q_2\) both assume a bell shape during the simulation, while the average positions of the two populations, \(\langle z, q_i\rangle \) (\(i=1,2\)), computed numerically, display an oscillatory behavior. We show the results of these simulations in Fig. 5. The above considerations justify the idea of the Gaussian approximation for system (2.2) that will be analyzed in the following section.

Temporal evolution of the average positions \(\langle z, q_1\rangle \) and \(\langle z, q_2\rangle \) of the two populations in the thermodynamic limit. Parameter values: \(A=2\) and \(B=2.5\); the other regimes are analogous. The insets show the densities \(q_1\) (orange) and \(q_2\) (blue) at some times during the simulation

4.4 Small-noise approximation

In this section we derive a small-noise approximation of system (2.2). In particular, motivated by what we observed in Sect. 4.3, we build a pair of independent Gaussian processes \(\left( \left( {\tilde{x}}(t), {\tilde{y}}(t)\right) , 0 \le t \le T \right) \) that closely follows \(((x(t),y(t)), 0 \le t \le T)\), solution to (2.2), when the noise is small. Although such an approximation holds rigorously only in the limit of vanishing noise, numerical simulations suggest that it remains valid beyond the assumption \(\sigma \ll 1\) and that it explains the qualitative behavior of system (2.1) shown in Sect. 3. We give the precise statement of our result below, whereas the proof is postponed to Appendix D. Here we remark that it is possible to take \(({\tilde{x}}(t), 0 \le t \le T)\) independent of \(({\tilde{y}}(t), 0 \le t \le T)\) because of the specific form of the equations in (2.2), which do not contain mixed terms (i.e. of the type \(x^n\,y^m\)).

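The first step towards the Gaussian approximation of (2.2) is the derivation of the equations of the moments of x(t) and y(t) in system (2.2). Since the approximation will be given by a pair of independent processes, we can avoid computing mixed moments (see Appendix D). By applying Itô’s rule to system (2.2), we can obtain the SDEs solved by \(x^p(t)\) and \(y^p(t)\) for any \(p \ge 1\). This yields

$$\begin{aligned} d\left( x^p\right)&= \left[ -p\,x^{p+2}+p\,x^{p}-p\,\alpha \theta _{11}\left( x^{p}-x^{p-1}{\mathbb {E}}[x]\right) -p\,(1-\alpha )\theta _{12}\left( x^{p}-x^{p-1}{\mathbb {E}}[y]\right) +\frac{\sigma ^2}{2}\,p(p-1)\,x^{p-2}\right] dt+\sigma p\,x^{p-1}dw_1 \\ d\left( y^p\right)&= \left[ -p\,y^{p+2}+p\,y^{p}-p\,\alpha \theta _{21}\left( y^{p}-y^{p-1}{\mathbb {E}}[x]\right) -p\,(1-\alpha )\theta _{22}\left( y^{p}-y^{p-1}{\mathbb {E}}[y]\right) +\frac{\sigma ^2}{2}\,p(p-1)\,y^{p-2}\right] dt+\sigma p\,y^{p-1}dw_2. \end{aligned}\tag{4.4}$$

Let \(m_{p}^{x}(t)={\mathbb {E}}[x^p(t)]\) and \(m_{p}^{y}(t)={\mathbb {E}}[y^p(t)]\) be the p-th moments of the variables x(t) and y(t) solving system (2.2), respectively. Taking the expectation in (4.4), we obtain

$$\begin{aligned} \frac{d m_{p}^{x}}{dt}&= -p\,m_{p+2}^{x}+p\,m_{p}^{x}-p\,\alpha \theta _{11}\left( m_{p}^{x}-m_{p-1}^{x}m_{1}^{x}\right) -p\,(1-\alpha )\theta _{12}\left( m_{p}^{x}-m_{p-1}^{x}m_{1}^{y}\right) +\frac{\sigma ^2}{2}\,p(p-1)\,m_{p-2}^{x} \\ \frac{d m_{p}^{y}}{dt}&= -p\,m_{p+2}^{y}+p\,m_{p}^{y}-p\,\alpha \theta _{21}\left( m_{p}^{y}-m_{p-1}^{y}m_{1}^{x}\right) -p\,(1-\alpha )\theta _{22}\left( m_{p}^{y}-m_{p-1}^{y}m_{1}^{y}\right) +\frac{\sigma ^2}{2}\,p(p-1)\,m_{p-2}^{y}, \end{aligned}\tag{4.5}$$

with the convention \(m_{0}^{x}=m_{0}^{y}=1\) (for \(p=1\) the last term vanishes).

Since the p-th moments in (4.5) depend on the \((p+2)\)-th moments, the system is infinite dimensional, and hence hardly tractable, unless higher-order moments of x(t) and y(t) are functions of the first two moments. The latter would be the case if x(t) and y(t) were Gaussian processes. In general, the processes x(t) and y(t) are neither Gaussian nor independent; however, we prove in Appendix D that it is possible to build a Gauss-Markov process \(\left( \left( {\tilde{x}}(t), {\tilde{y}}(t)\right) , 0 \le t \le T \right) \), with independent components, which stays close to \(\left( \left( x(t), y(t)\right) , 0 \le t \le T \right) \) when the noise size is small. We have the following theorem.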

Theorem 4.2

Fix \(T>0\). Let \(\left( \left( x(t), y(t)\right) , 0 \le t \le T \right) \) solve Eq. (2.2) with deterministic initial conditions \(x(0) = x_0\) and \(y(0) = y_0\). There exists a Gaussian Markov process \(\left( \left( {\tilde{x}}(t), {\tilde{y}}(t)\right) , 0 \le t \le T \right) \) with \({\tilde{x}}(0)=x_0\) and \({\tilde{y}}(0)=y_0\) satisfying the properties:

1. The first two moments of \({{\tilde{x}}}(t)\) and \({{\tilde{y}}}(t)\) satisfy the respective equations in (4.5) for \(p=1,2\).

2. There exists a constant \(C_T>0\), depending on T, such that, for every \(\sigma >0\), it holds

$$\begin{aligned} {\mathbb {E}}\left[ \sup _{t\in [0,T]}\left\{ \left| x(t) -\tilde{x}(t)\right| +\left| y(t) -{{\tilde{y}}}(t)\right| \right\} \right] \le C_T \sigma ^2. \end{aligned}$$

This means that the processes \(\left( {\tilde{x}}(t), 0 \le t \le T \right) \) and \(\left( {\tilde{y}}(t), 0 \le t \le T\right) \) are simultaneously close to the solutions of (2.2), with an error of order \(\sigma ^2\).

Time evolution of the mean \(m_2\) and the variance \(v_2\) according to the dynamical system (4.6). In all cases, we considered \(10^6\) iterations with a time-step \(dt=0.005\), \(\alpha = 0.5\), \(\theta _{11}=\theta _{22}=8\). From top to bottom: \(A-1<B<A+2\), in particular, \(A=2\) and \(B=2.5\), with \(\sigma =0.5\); \(B=A+2\), in particular, \(A=2\) and \(B=4\), with \(\sigma =0.1\); \(B>A+2\), in particular, \(A=2\) and \(B=7\), with \(\sigma =0.6\)

Projected dynamics of system (4.6) in the \(\left( m_1,\, m_2\right) \) plane. In all cases, we considered \(10^6\) iterations with a time-step \(dt=0.005\), \(\alpha = 0.5\), \(\theta _{11}=\theta _{22}=8\). From top to bottom: \(A-1<B<A+2\), in particular, \(A=2\) and \(B=2.5\); \(B=A+2\), in particular, \(A=2\) and \(B=4\); \(B>A+2\), in particular, \(A=2\) and \(B=7\). In the first column we plot the trajectories of the system in the zero-noise case (\(\sigma =0\)), in the second column we consider an intermediate intensity for the noise (\(\sigma =0.5\), 0.1 and 0.6 respectively) and in the third one we set \(\sigma =5\)

Since \({{\tilde{x}}}(t)\) and \({{\tilde{y}}}(t)\) are Gaussian, their higher-order moments are polynomial functions of the first two moments. In particular, the laws of \({{\tilde{x}}}(t)\) and \({{\tilde{y}}}(t)\) are completely determined by the dynamics of the respective mean and variance. Thus, rather than studying the infinite dimensional system (4.5), it suffices to analyze the subsystem describing the time evolution of the mean and the variance of each approximating process. We will show in Appendix D that such a system is

where \(m_1(t)\) (resp. \(m_2(t)\)) is the expectation of \({\tilde{x}}(t)\) (resp. \({\tilde{y}}(t)\)) and \(v_1(t)\) (resp. \(v_2(t)\)) is the variance of \({\tilde{x}}(t)\) (resp. \({\tilde{y}}(t)\)). As before, we have set \(A{:}{=}\left( 1-\alpha \right) \theta _{12}\) and \(B{:}{=}-\alpha \theta _{21}\).

For the values of \(\theta _{11}\), \(\theta _{22}\), A and B considered in this paper (i.e., \(\theta _{11}=\theta _{22}=8\) and A and B as reported in Table 1), the dynamical system (4.6) features a subcritical Hopf bifurcation [22] at the equilibrium \((m_1,m_2,v_1,v_2)=\left( 0,0,{{\tilde{v}}}_1,{{\tilde{v}}}_2\right) \), for a critical value \(\sigma _c=\sigma _c(\theta _{11}, \theta _{22}, A,B)\) of the noise size, as reported in Appendix E. In other words, when the noise intensity decreases to cross the threshold value \(\sigma _c\), the fixed point \(\left( 0,0,{{\tilde{v}}}_1,\tilde{v}_2\right) \) changes its nature from stable to unstable and, at the same time, a stable limit cycle appears. Thus, in an intermediate range of noise size, system (4.6) displays stable rhythmic oscillations that disappear for \(\sigma =0\). Indeed, when \(\sigma =0\), \(v_1=v_2=0\) is a fixed point of the subsystem formed by the third and fourth equations in (4.6). As a consequence, the zero-noise limit of the first two equations in (4.6) reduces to the noiseless version of system (2.2), which does not display any oscillatory behavior.

Simulations of system (4.6), with values of A and B as in Table 1 and Fig. 2, gave the results shown in Figs. 6 and 7, where rhythmic oscillations for intermediate values of noise were detected.
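A simulation of this kind can be sketched in a few lines. The block below is an illustration only: the right-hand side is our reading of system (4.6), inferred from the Gaussian moment identities and the factor \({{\tilde{\psi }}}\) used in Appendix D, and the explicit Euler scheme and the names `rhs` and `euler` are our own choices (the text specifies only the time step \(dt=0.005\) and the number of iterations).

```python
def rhs(state, A, B, sigma, alpha=0.5, th11=8.0, th22=8.0):
    """Assumed right-hand side of the mean/variance system (4.6).

    state = (m1, m2, v1, v2): means and variances of the two
    approximating Gaussian processes. The form is a reconstruction,
    not a quotation of the paper's display.
    """
    m1, m2, v1, v2 = state
    c1 = 1.0 - alpha * th11 - A            # linear rate in the v1 equation
    c2 = 1.0 - (1.0 - alpha) * th22 + B    # linear rate in the v2 equation
    dm1 = m1 - m1**3 - 3.0 * m1 * v1 - A * (m1 - m2)
    dm2 = m2 - m2**3 - 3.0 * m2 * v2 + B * (m2 - m1)
    dv1 = 2.0 * v1 * (c1 - 3.0 * m1**2 - 3.0 * v1) + sigma**2
    dv2 = 2.0 * v2 * (c2 - 3.0 * m2**2 - 3.0 * v2) + sigma**2
    return (dm1, dm2, dv1, dv2)


def euler(state, A, B, sigma, steps, dt=0.005, **params):
    """Explicit Euler integration; returns the list of visited states."""
    path = [tuple(state)]
    for _ in range(steps):
        current = path[-1]
        drift = rhs(current, A, B, sigma, **params)
        path.append(tuple(s + dt * d for s, d in zip(current, drift)))
    return path


if __name__ == "__main__":
    # Noiseless run for A = 2, B = 2.5, started near the stable node (1, 1):
    # with sigma = 0 and zero initial variances, v1 and v2 stay at zero.
    path = euler((1.2, 1.1, 0.0, 0.0), A=2.0, B=2.5, sigma=0.0, steps=4000)
    print(path[-1])
```

To explore the regimes discussed in the text, one would call `euler` with the values of A, B and \(\sigma \) listed in the figure captions and plot the resulting `path`, for instance \(m_2\) and \(v_2\) against time, or the projection on the \(\left( m_1, m_2\right) \) plane.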

Our analysis shows that the behavior of system (2.1) for different noise sizes is well described, at least qualitatively, by the Gaussian approximation (4.6).

References

Aleandri, M., Minelli, I.G.: Opinion dynamics with Lotka–Volterra type interactions. Electron. J. Probab. 24, 1–31 (2019)

Aleandri, M., Minelli, I.G.: Delay-induced periodic behaviour in competitive populations. J. Stat. Phys. 185(6), 1–21 (2021)

Andreis, L., Dai Pra, P., Fischer, M.: McKean–Vlasov limit for interacting systems with simultaneous jumps. Stoch. Anal. Appl. 36(6), 960–995 (2018)

Andreis, L., Tovazzi, D.: Coexistence of stable limit cycles in a generalized Curie–Weiss model with dissipation. J. Stat. Phys. 173(1), 163–181 (2018)

Cerf, R., Dai Pra, P., Formentin, M., Tovazzi, D.: Rhythmic behavior of an Ising model with dissipation at low temperature. ALEA 18, 439–467 (2021)

Collet, F., Dai Pra, P., Formentin, M.: Collective periodicity in mean-field models of cooperative behavior. NoDEA 22(5), 1461–1482 (2015)

Collet, F., Formentin, M.: Effects of local fields in a dissipative Curie–Weiss model: Bautin bifurcation and large self-sustained oscillations. J. Stat. Phys. 176(2), 478–491 (2019)

Collet, F., Formentin, M., Tovazzi, D.: Rhythmic behavior in a two-population mean field Ising model. Phys. Rev. E 94(4), 042139 (2016)

Dai Pra, P., Fischer, M., Regoli, D.: A Curie–Weiss model with dissipation. J. Stat. Phys. 152(1), 37–53 (2013)

Dai Pra, P., Formentin, M., Pelino, G.: Oscillatory behavior in a model of non-Markovian mean field interacting spins. J. Stat. Phys. 179, 690–712 (2021)

Dai Pra, P., Giacomin, G., Regoli, D.: Noise-induced periodicity: some stochastic models for complex biological systems. In: Mathematical Models and Methods for Planet Earth, pp. 25–35. Springer, Cham (2014)

Dawson, D.A.: Critical dynamics and fluctuations for a mean-field model of cooperative behavior. J. Stat. Phys. 31(1), 29–85 (1983)

Ditlevsen, S., Löcherbach, E.: Multi-class oscillating systems of interacting neurons. Stoch. Process. Appl. 127(6), 1840–1869 (2015)

Ermentrout, G.B., Terman, D.H.: Mathematical Foundations of Neuroscience. Springer, New York (2010)

Fernández, R., Fontes, L.R., Neves, E.J.: Density-profile processes describing biological signaling networks: almost sure convergence to deterministic trajectories. J. Stat. Phys. 136(5), 875–901 (2009)

Giacomin, G., Poquet, C.: Noise, interaction, nonlinear dynamics and the origin of rhythmic behaviors. Braz. J. Prob. Stat. 29(2), 460–493 (2015)

Khasminskii, R.: Stochastic Stability of Differential Equations. Springer, Berlin (2012)

Lindner, B., García-Ojalvo, J., Neiman, A., Schimansky-Geier, L.: Effects of noise in excitable systems. Phys. Rep. 392(6), 321–424 (2004)

Luçon, E., Poquet, C.: Emergence of oscillatory behaviors for excitable systems with noise and mean-field interaction: a slow–fast dynamics approach. Commun. Math. Phys. 373, 907–969 (2020)

Luçon, E., Poquet, C.: Periodicity induced by noise and interaction in the kinetic mean-field FitzHugh–Nagumo model. Ann. Appl. Probab. 31(2), 561–593 (2021)

Meyn, S.P., Tweedie, R.L.: Stability of Markovian processes III: Foster–Lyapunov criteria for continuous-time processes. Adv. Appl. Prob. 25, 518–548 (1993)

Perko, L.: Differential Equations and Dynamical Systems. Springer, New York (2001)

Quarteroni, A.: Numerical Models for Differential Problems. Springer, New York (2017)

Scheutzow, M.: Noise can create periodic behavior and stabilize nonlinear diffusions. Stoch. Process. Appl. 20(2), 323–331 (1985)

Scheutzow, M.: Some examples of nonlinear diffusion processes having a time-periodic law. Ann. Probab. 13(2), 379–384 (1985)

Touboul, J.: The hipster effect: when anticonformists all look the same. Discrete Contin. Dyn. Syst. Ser. B 24(8), 4379–4415 (2019)

Touboul, J., Hermann, G., Faugeras, O.: Noise-induced behaviors in neural mean field dynamics. SIAM J. Appl. Dyn. Syst. 11(1), 49–81 (2012)

Wallace, E., Benayoun, M., van Drongelen, W., Cowan, J.D.: Emergent oscillations in networks of stochastic spiking neurons. PLoS ONE 6(5), e14804 (2011)

Acknowledgements

We thank Paolo Dai Pra and Daniele Tovazzi for useful discussions, comments and suggestions. MF thanks Serena Bonvicini, who partially studied the model numerically in her master's thesis. EM acknowledges financial support from Progetto Dottorati - Fondazione Cassa di Risparmio di Padova e Rovigo. LA, FC and MF did part of this work during a stay at the Institut Henri Poincaré - Centre Emile Borel on the occasion of the trimester “Stochastic Dynamics Out of Equilibrium”. They thank this institution for hospitality and support.

Funding

Open access funding provided by Università degli Studi di Padova within the CRUI-CARE Agreement.

Author information

Contributions

LA, FC and MF proposed the model. EM performed numerical simulations and prepared the figures. The authors contributed equally to the other parts of the research project. All the authors contributed to writing the manuscript.

Ethics declarations

Conflict of interest

The authors declare no conflict of interest.

Appendices

Here we detail the proofs of the statements in the main text.

Well-posedness of system (2.2)

Theorem A.1

Fix \(T>0\). For any initial condition \(\left( x(0), y(0)\right) = (\xi _x, \xi _y)\), with \(\xi _x\), \(\xi _y\) real random variables having finite first moment and being independent of the Brownian motions \((w_i(t), 0 \le t \le T)_{i=1,2}\), system (2.2) has a unique strong solution.

Proof

We follow the argument in [12], based on a Picard iteration. We define recursively two sequences of stochastic processes \(\left( x_n(t), 0 \le t \le T\right) \) and \(\left( y_n(t), 0 \le t \le T\right) \), indexed by \(n\ge 1\), via their Itô differentials

all with the same initial condition \((x_n(0),y_n(0))=(\xi _x,\xi _y)\). By subtracting two subsequent sets of equations, written in integral form, for every \(t \in [0,T]\), we obtain

where we have employed the identity \(a^3 -b^3 = \left( a-b\right) (a^2 + b^2 + ab)\) and we have set \(f_n(s){:}{=}x^2_{n+1}(s)+x^2_n(s)+x_{n+1}(s) x_n(s)\) and \(g_n(s){:}{=}y^2_{n+1}(s)+y^2_n(s)+y_{n+1}(s) y_n(s)\). Observe that \(f_n(t), g_n(t) \ge 0\) for all \(t \in [0,T]\).

Eq. (A.1) and Eq. (A.2) are of the form \(\varphi (t) = \int _0^t \varphi (s)H(s)ds + \int _0^t Q(s)ds\), where \(\varphi (t)\) is given by \(x_{n+1}(t)-x_n(t) \) and \(y_{n+1}(t)-y_n(t)\), respectively. An equation of this form forces \(\varphi (0) = 0\), consistently with the fact that \(x_n(0)=\xi _x\) and \(y_n(0)=\xi _y\) for all \(n\ge 1\) by assumption, and its solution can be explicitly written as \(\varphi (t) = \int _{0}^t Q(s) e^{\int _{s}^{t} H(r) dr} ds\). Therefore, for \(t \in [0,T]\), the solutions of Eq. (A.1) and Eq. (A.2) are

We now get into the core of the proof.

Step 1: auxiliary property. We will show that, for every \(T>0\), the sequences \(\left( {\mathbb {E}}\left[ x_n(t)\right] , 0 \le t \le T\right) _{n \ge 1}\) and \(\left( {\mathbb {E}}\left[ y_n(t)\right] , 0 \le t \le T\right) _{n\ge 1}\) are Cauchy sequences in the space \({\mathcal {C}}\left( [0,T]\right) \), equipped with the supremum norm

As a consequence, since \(({\mathcal {C}}([0,T]), d)\) is a complete metric space, we will obtain convergence to elements \((m_x(t), 0 \le t \le T), (m_y(t), 0 \le t \le T) \in {\mathcal {C}}\left( [0,T]\right) \).

We take the absolute value and the expectation in both Eq. (A.3) and Eq. (A.4). If we denote by \(\phi _n(t) {:}{=}\sup _{s \in [0,t]} {\mathbb {E}}\left[ \left| x_{n+1}(s)-x_n(s) \right| \right] \) and \(\psi _n(t) {:}{=}\sup _{s\in [0,t]}{\mathbb {E}}\left[ \left| y_{n+1}(s)-y_n(s) \right| \right] \), from Eq. (A.3) we obtain

for some positive constants \({{\tilde{C}}}_t\) and \({{\tilde{D}}}_t\). From Eq. (A.4) we get an analogous inequality for \(\psi _{n}(t)\). The inequalities are valid for all \(t \in [0,T]\). Iteratively employing inequality (A.5) gives

for suitable positive constants \(C_T\) and \(D_T\). Similarly we bound \(\psi _n(T)\). Hence, \(\phi _n(T)\) and \(\psi _n(T)\) go to zero as \(n\rightarrow +\infty \). Thus, it follows that \(\left( {\mathbb {E}}\left[ x_n(t)\right] , 0 \le t\right. \left. \le T\right) _{n\ge 1}\) and \(\left( {\mathbb {E}}\left[ y_n(t)\right] , 0 \le t \le T\right) _{n\ge 1}\) are Cauchy sequences in \({\mathcal {C}}\left( [0,T]\right) \) and converge to the continuous limits \((m_x(t), 0 \le t \le T)\) and \((m_y(t), 0 \le t \le T)\), respectively.

Step 2: existence of the solution to (2.2). Consider the following system of stochastic differential equations

with initial condition \((x(0),y(0))=(\xi _x,\xi _y)\). Since the functions \(m_x\) and \(m_y\) are bounded on \([0,T]\), existence and uniqueness of a strong solution for (A.6) follow from Khasminskii’s test with norm-like function \(V(x,y)=\frac{x^4}{4}+\frac{x^2}{2}+\frac{y^4}{4}+\frac{y^2}{2}\). See [17, 21].

Let \(((x(t),y(t)), 0 \le t \le T)\) be the unique strong solution for (A.6). We construct the differences

and

in \({\mathcal {C}}\left( [0,T]\right) \). Therefore, since we have already shown that

and

we have that \({\mathbb {E}}\left[ x(t)\right] = m_x(t)\) and \({\mathbb {E}}\left[ y(t)\right] = m_y(t)\) for all \(t \in [0,T]\). Hence, system (A.6) coincides with system (2.2) and its solution \(\left( (x(t), y(t)), 0 \le t \le T \right) \) provides a solution for (2.2).

Step 3: uniqueness of the solution to (2.2). Let \(((u(t),v(t)), 0 \le t \le T)\) be another solution to (2.2). We write the integral equations for \(x(t)-u(t)\) and \(y(t)-v(t)\) and we use them to get estimates for \(\phi (t)=\vert {\mathbb {E}}[x(t)-u(t)]\vert \) and \(\psi (t)=\vert {\mathbb {E}}[y(t)-v(t)]\vert \). By mimicking the computations above, we obtain

for suitable positive constants \({\hat{C}}_{T}\) and \({\hat{D}}_{T}\). Summing up the two previous inequalities and using Gronwall’s lemma yields \(\phi (t)+\psi (t) \le 0\) for all \(t \in [0,T]\). This shows that \({\mathbb {E}}[x(t)]={\mathbb {E}}[u(t)]\) and \({\mathbb {E}}[y(t)]={\mathbb {E}}[v(t)]\) for all \(t \in [0,T]\). Thus, \(((x(t),y(t)), 0 \le t \le T)\) and \(((u(t),v(t)), 0 \le t \le T)\) are both solutions to (A.6) with the same pair \((m_x,m_y)\) and the same initial condition. It follows that \((x(t),y(t))=(u(t),v(t))\) for all \(t \in [0,T]\). \(\square \)

Proof of Theorem 4.1

The proof of Theorem 4.1 is standard and it relies on a coupling method [3, 6]. We want to prove (4.1). Without loss of generality, we take \({\mathcal {I}}=\{1,\ldots , k_1\}\) and \({\mathcal {J}}=\{1,\ldots , k_2\}\). Since

to conclude it suffices to show that each of the \(k_1+k_2\) terms goes to zero in the limit \(N\rightarrow \infty \). In the sequel we will consider only the term \({\mathbb {E}}\left[ \sup _{t\in [0,T]} \left| x^{(N)}_1(t)-x_1(t)\right| \right] \); the other terms can be dealt with similarly. We only sketch our computations, as they use the same techniques as the proof of Theorem A.1 in Appendix A. Since the processes \(\left( x_1^{(N)}(t), 0 \le t \le T\right) \) and \((x_1(t), 0 \le t \le T)\) start from the same position, we obtain

where we have employed the identity \(a^3-b^3 = (a-b)(a^2 +b^2+ ab)\) and we have set \(f(s) {:}{=}\left( x^{(N)}_1(s)\right) ^2+x_1^2(s) +x^{(N)}_1(s) x_1(s)\), and where

Observe that Eq. (B.1) is of the form \(\varphi (t) = \int _{0}^t \varphi (s)H(s) ds + \int _{0}^t Q(s) ds\), with \(\varphi (t)=x^{(N)}_1(t)-x_1(t)\). Since \(\varphi (0)=0\), because \(x^{(N)}_1(0)=x_1(0)\) by assumption, the solution of the latter equation is \(\varphi (t) = \int _{0}^t Q(s) e^{\int _{s}^{t} H(r) dr} ds\). Therefore, for every \(t \in [0,T]\), we can estimate

for some positive constant \( C_{T}\). At this point, taking the supremum and the expectation of both sides of the previous inequality, for \({\tilde{t}} \in [0,T]\), we obtain

We need an upper bound for \({\mathbb {E}}\left[ \left| \mu (s)\right| \right] \). We have

and, by adding and subtracting \(\frac{1}{N_1}\sum _{i=1}^{N_1}x_i(s)\) (resp. \(\frac{1}{N_2}\sum _{i=1}^{N_2}y_i(s)\)) inside the first (resp. second) absolute value, we get

Since the limiting variables \((x_i(t))_{i=1,\dots ,N_1}\) and \((y_i(t))_{i=1,\dots ,N_2}\) are i.i.d. families and have uniformly bounded second moments for all \(t \in [0,T]\) (due to the well-posedness of system (2.2)), the standard CLT ensures that there exists a positive constant \(K_T\) such that, uniformly for all \(s \in [0,T]\), it holds
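The mechanism behind a bound of this type can be sketched with an elementary second-moment estimate. The constant \({\bar{K}}_T\) below is introduced here for illustration and need not coincide with \(K_T\). For i.i.d. square-integrable variables, Jensen's inequality and independence give

$$\begin{aligned} {\mathbb {E}}\left[ \left| \frac{1}{N_1}\sum _{i=1}^{N_1}x_i(s)-{\mathbb {E}}\left[ x_1(s)\right] \right| \right] \le \left( {\mathbb {E}}\left[ \left( \frac{1}{N_1}\sum _{i=1}^{N_1}\left( x_i(s)-{\mathbb {E}}\left[ x_1(s)\right] \right) \right) ^2\right] \right) ^{1/2} = \left( \frac{\text{ Var }\left[ x_1(s)\right] }{N_1}\right) ^{1/2} \le \frac{{\bar{K}}_T}{\sqrt{N_1}}, \end{aligned}$$

where \({\bar{K}}_T^2 {:}{=}\sup _{s\in [0,T]}\text{ Var }\left[ x_1(s)\right] \) is finite by the uniform bound on the second moments. The same estimate holds for the family \((y_i(s))_{i=1,\dots ,N_2}\). Assuming \(N_1=\alpha N\) and \(N_2=(1-\alpha )N\), both bounds are of order \(N^{-1/2}\), uniformly in \(s \in [0,T]\).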

Moreover we have

and

These last terms are in fact independent of the index i due to the symmetry of the system which, in turn, is due to the choice of the initial conditions and the mean field assumption. Thus, recalling that \(\alpha =\frac{N_1}{N}\), we obtain

By employing Eq. (B.3) in Eq. (B.2), it is easily seen that there exists a constant D, depending on T and on the parameters \(\alpha , \theta _{11}, \theta _{22}, \theta _{12}\), and \(\theta _{21}\), such that

Similarly, we obtain also

If we define

by summing up the inequalities (B.4) and (B.5), we obtain

An application of Gronwall’s lemma leads to the conclusion. Indeed, we get the inequality \(g(T) \le \frac{2De^{2DT}}{\sqrt{N}}\), whose right-hand side goes to zero as \(N \rightarrow +\infty \).

Equilibria of the noiseless dynamics

We consider system (4.2) and study the nature of its fixed points, depending on the values of the parameters A and B. Throughout this analysis, we use the basic theory of dynamical systems, as found for instance in [22]. Moreover, unless otherwise specified, we assume for the moment \(A>1\) and \(B>0\).

1. The fixed points \(\left( 0,0\right) \) and \(\pm \left( 1,1\right) \) are present for any values of A and B.

(a) The linearized system around the origin has the eigenvalues

$$\begin{aligned} \lambda _1=1 \text{ and } \lambda _2=1-A+B=1-\gamma , \end{aligned}$$

where \(\gamma {:}{=}A-B\). Thus \(\left( 0,0\right) \) is a saddle when \(\gamma > 1\), it has an unstable and a neutral direction for \(\gamma = 1\) and it is an unstable node otherwise.

(b) The eigenvalues of the linearized system around \(\pm \left( 1,1\right) \) are

$$\begin{aligned} \lambda _1=-2 \text{ and } \lambda _2=-2-A+B=-2-\gamma . \end{aligned}$$

As a consequence, \(\pm \left( 1,1\right) \) are stable nodes for \(\gamma > -2\). They have a neutral and a stable direction when \(\gamma =-2\) and they are saddle points otherwise.

In conclusion: i) for \(\gamma <-2\), \(\left( 0,0\right) \) is unstable and \(\pm \left( 1,1\right) \) are saddle points; ii) when \(-2<\gamma <1\), \(\left( 0,0\right) \) is unstable and \(\pm \left( 1,1\right) \) are stable nodes; iii) for \(\gamma > 1\), \(\left( 0,0\right) \) is a saddle point and \(\pm \left( 1,1\right) \) are stable nodes.

2. Depending on the values of the parameters A and B, there might be two additional equilibria. We search for equilibria of the form \((x,\beta x)\) with \(\beta \ne 0\), \(x\ne 0\). Notice that all the possible equilibria except for (0, 0) have such a form. Substituting \(y=\beta x\) in the first equation of (4.2), we get

$$\begin{aligned} \bar{x}_{\beta }=\pm \left( \sqrt{1-A\left( 1-\beta \right) },\,\beta \sqrt{1-A\left( 1-\beta \right) }\right) , \end{aligned}$$ (C.1)

subject to the condition

$$\begin{aligned} \beta >\frac{A-1}{A}. \end{aligned}$$ (C.2)

Notice that no \(\beta <0\) fulfills (C.2), since we have assumed \(A>1\). Therefore, system (4.2) can possibly have extra fixed points of the form \(\left( x, \beta x\right) \) only if they lie in the first and the third quadrant. The second equation in (4.2) leads to the fixed point equation

$$\begin{aligned} \beta = f\left( \beta \right) \quad \text { with } \quad f\left( \beta \right) := \sqrt{\frac{1-B\frac{1-\beta }{\beta }}{1-A\left( 1-\beta \right) }}. \end{aligned}$$ (C.3)

Observe that, for \(\beta = 1\), we recover the equilibria \(\pm \left( 1,1\right) \). Therefore, fixed points of the type \({\bar{x}}_{\beta }\) may exist if condition (C.2) is satisfied and Eq. (C.3) has real solutions, that is if

$$\begin{aligned} \beta >\max \left\{ \frac{A-1}{A}, \frac{B}{1+B}\right\} , \end{aligned}$$ (C.4)

which is equivalent to

$$\begin{aligned} {\left\{ \begin{array}{ll} \beta>\frac{A-1}{A} = \frac{B}{1+B}, &{} \text {if } B=A-1\\ \beta>\frac{A-1}{A}, &{} \text {if } B<A-1\\ \beta>\frac{B}{1+B}, &{} \text {if } B>A-1. \end{array}\right. } \end{aligned}$$

Therefore, we have the following.

- If \(B=A-1\), Eq. (C.3) becomes \(\beta = \frac{1}{\sqrt{\beta }}\), whose unique solution is \(\beta =1\). In this case, \(\gamma = 1\), so \(\pm \left( 1,1\right) \) are stable nodes and \(\left( 0,0\right) \) has a zero eigenvalue; thus it is not a hyperbolic fixed point and the linearization cannot give information about the phase portrait close to it. The dynamical system (4.2) can be rewritten as

$$\begin{aligned} {\dot{x}}&= -x^{3} - x\left( A-1\right) +A y\nonumber \\ {\dot{y}}&= -y^{3} + x -A\left( x-y\right) . \end{aligned}$$ (C.5)

Observe that the linear terms in both the components of the vector field in Eq. (C.5) are positive above the line \(y=\frac{A-1}{A}x\) and negative below it. Thus, away from this line, the third-order terms can be neglected close to the equilibrium and the linearization gives an accurate sketch of the phase portrait of the system locally. Along the line \(y=\frac{A-1}{A}x\), which is the eigendirection of the zero eigenvalue, only the third-order terms count and we get \({\dot{x}}<0\), \({\dot{y}}<0\) in the first quadrant and \({\dot{x}}>0\), \({\dot{y}}>0\) in the third one. Overall, the eigendirection of the zero eigenvalue is locally stable.

- If \(B<A-1\), due to condition (C.4), we expect to find solutions to Eq. (C.3) only for \(\beta >\frac{A-1}{A}\). Observe that \(f\left( \beta \right) \) diverges to positive infinity as \(\beta \) approaches \(\frac{A-1}{A}\) from the right and it tends to zero as \(\beta \) grows to infinity. Moreover, \(\frac{\partial f}{\partial \beta }\left( \beta \right) = 0\) when

$$\begin{aligned} \beta _\pm = \frac{A B \pm \sqrt{A B\left( B-(A-1)\right) }}{A\left( 1+B\right) }, \end{aligned}$$ (C.6)

which are complex for \(B<A-1\). Therefore, \(f\left( \beta \right) \) has no critical points and is strictly decreasing. Thus, its graph cannot have more than one intersection with the line \(y=\beta \) and this unique intersection must be at \(\beta =1\). Overall, when \(B<A-1\), no fixed points of the type \(\left( x, \beta x\right) \) are present, except for \(\pm \left( 1,1\right) \). In this setting, we already established that \(\left( 0,0\right) \) is a saddle point and \(\pm \left( 1,1\right) \) are stable nodes.

- If \(B>A-1\), we have already pointed out that \(\left( 0,0\right) \) is unstable, while the nature of \(\pm \left( 1,1\right) \) can change according to \(\gamma \) being less than or greater than \(-2\). From condition (C.4), it follows that we have to look for solutions to Eq. (C.3) for \(\beta > \frac{B}{B+1}\).

Observe that \(f\left( \frac{B}{1+B}\right) = 0\) and \(\lim _{\beta \rightarrow +\infty }f\left( \beta \right) = 0\). The points \(\beta _\pm \), given in (C.6), where \(\frac{\partial f}{\partial \beta }\left( \beta \right) = 0\), are real and distinct in this case. Moreover, \(\beta _{-}<\frac{B}{1+B}\). Hence, in the admissible range the function \(f\left( \beta \right) \) has a single critical point (a maximum) at \(\beta =\beta _{+} > \frac{B}{1+B}\), so it may cross the line \(y=\beta \) once, twice or never. In particular, since we know the solution \(\beta =1\) to be always present, the intersections might coincide (\(\beta =1\) itself) or be distinct (\(\beta =1\) and a second intersection, for \(\beta \) greater or less than 1).

We distinguish three subcases.

\(\bullet \) The intersection at \(\beta =1\) is the unique solution to Eq. (C.3) if the graphs of \(y=\beta \) and \(y=f\left( \beta \right) \) are tangent at that point, i.e., if \(\frac{\partial f}{\partial \beta }\left( 1\right) =1\). This holds only if \(B=A+2\). In this case, the analysis of the linearized system tells us that \(\pm \left( 1,1\right) \) have a negative and a zero eigenvalue and to check stability one has to take into account higher-order terms.

We study only the point \(\left( 1,1\right) \), the analysis of \(-(1,1)\) being similar. To make our computations easier, we translate the vector field so that the fixed point \(\left( 1,1\right) \) is shifted to \(\left( 0,0\right) \). We make the change of variables \({\hat{x}}=x-1\) and \({\hat{y}}=y-1\). In the new coordinates \(({\hat{x}},{\hat{y}})\), system (4.2) becomes

$$\begin{aligned} \dot{{\hat{x}}}&= - \left( A+2\right) {\hat{x}}+A {\hat{y}} -3{\hat{x}}^{2}-{\hat{x}}^{3}\nonumber \\ \dot{{\hat{y}}}&= - \left( A+2\right) {\hat{x}}+A {\hat{y}} -3{\hat{y}}^{2}-{\hat{y}}^{3}. \end{aligned}$$ (C.7)

The line \({\hat{y}}=\frac{A+2}{A}{\hat{x}}\), along which the first-order terms in (C.7) vanish and which is the eigendirection of the zero eigenvalue, always lies above the line \({\hat{y}}={\hat{x}}\), which is the eigendirection of the non-zero eigenvalue. The first-order terms in Eq. (C.7) are positive above the line \({\hat{y}}=\frac{A+2}{A}{\hat{x}}\) and negative below it. So, away from this line, higher-order terms can be neglected close to the origin, whereas the second-order terms become non-negligible as soon as we are along that line, where it is immediate to see that the vector field points downward-left.

\(\bullet \) If \(\frac{\partial f}{\partial \beta }\left( 1\right) <1\), i.e., if \(B<A+2\), two intersections are present, one at \(\beta =1\) and one at \(\beta =\beta _{\times }(A,B) < 1\). The smaller solution to Eq. (C.3) gives rise to two extra fixed points of the type (C.1), with both coordinates smaller than 1 in absolute value.

\(\bullet \) If \(\frac{\partial f}{\partial \beta }\left( 1\right) >1\), i.e., \(B>A+2\), in addition to the intersection at \(\beta =1\), we have a second intersection at \(\beta =\beta _{\times }(A,B)>1\) and we get two fixed points of the type (C.1), with both coordinates greater than 1 in absolute value.

In what follows we restrict to the case \(B>A-1\) and we examine in more detail what happens in the three cases that we considered in the main text and that are shown in Fig. 4. The point (0, 0) is an unstable fixed point in all the scenarios. We will give information on the other equilibria.

Case 1. If \(A=2\), \(B=2.5\), Eq. (C.3) has the solutions \(\beta = 1\) and \(\beta = \beta _{\times }(A,B) < 1\), numerically obtained. From Eq. (C.1), we obtain respectively the fixed points \(\pm {\bar{x}}_{1} = \pm \left( 1,1\right) \) and \(\pm {\bar{x}}_{\beta _{\times }}=\pm \left( 0.78,0.63\right) \). The eigenvalues of the linearized system around \(\pm {\bar{x}}_{1}\) are both real and negative, implying that the points are stable nodes. The fixed points \(\pm {\bar{x}}_{\beta _{\times }}\) turn out to be saddle points. The phase portrait numerically obtained for this first case is shown in Fig. 4a.

Case 2. If \(A=2\), \(B=4\), Eq. (C.3) has the unique solution \(\beta = 1\), so the only fixed points, apart from \(\left( 0,0\right) \), are \(\pm {\bar{x}}_1\). We refer the reader to the analysis above, which holds for any \(A>1\), \(B=A+2\), and to Fig. 4b.

Case 3. If \(A=2\), \(B=7\), Eq. (C.3) has two solutions: \(\beta =1\) and \(\beta =\beta _{\times }(A,B) >1\). The fixed points \(\pm {{\bar{x}}}_{1}\) can be easily seen to be saddle points, while the fixed points \(\pm {{\bar{x}}}_{\beta _{\times }}=\pm \left( 1.24, 1.58\right) \) have complex conjugate eigenvalues with negative real part, thus they are stable spirals. The phase portrait in this case is shown in Fig. 4c.
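The numerical values quoted in Cases 1 and 3 can be reproduced with a short script. The sketch below is ours and rests on two assumptions. First, the noiseless vector field is taken to be \({\dot{x}}=-x^3+x-A(x-y)\), \({\dot{y}}=-y^3+y+B(y-x)\), the form consistent with (C.5) and (C.7). Second, the second intersection \(\beta _{\times }\) is computed as the positive root of the cubic \(A\beta ^3+\beta ^2+\beta -B\), obtained by squaring Eq. (C.3), clearing denominators and factoring out the known root \(\beta =1\). The function names are hypothetical.

```python
import cmath
import math


def beta_cross(A, B, lo=1e-9, hi=10.0, tol=1e-12):
    """Positive root of g(b) = A*b**3 + b**2 + b - B, found by bisection.

    g is increasing for b > 0 with g(0) = -B < 0, so the root is unique
    (the bracket [lo, hi] is assumed to contain it).
    """
    def g(b):
        return A * b**3 + b**2 + b - B

    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if g(mid) < 0.0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def extra_fixed_point(A, B):
    """Fixed point (x, beta*x) of type (C.1) with beta = beta_cross(A, B)."""
    b = beta_cross(A, B)
    x = math.sqrt(1.0 - A * (1.0 - b))  # requires condition (C.2)
    return x, b * x


def jacobian_eigenvalues(x, y, A, B):
    """Eigenvalues of the Jacobian of the assumed noiseless field at (x, y)."""
    a = 1.0 - 3.0 * x * x - A   # d(x')/dx
    d = 1.0 - 3.0 * y * y + B   # d(y')/dy
    tr, det = a + d, a * d + A * B
    root = cmath.sqrt(tr * tr - 4.0 * det)
    return (tr + root) / 2.0, (tr - root) / 2.0


if __name__ == "__main__":
    for A, B in [(2.0, 2.5), (2.0, 7.0)]:
        x, y = extra_fixed_point(A, B)
        print(A, B, round(x, 2), round(y, 2), jacobian_eigenvalues(x, y, A, B))
```

A pair of real eigenvalues with opposite signs indicates a saddle, while a complex conjugate pair with negative real part indicates a stable spiral. Evaluating `jacobian_eigenvalues(1.0, 1.0, A, B)` recovers the eigenvalues \(-2\) and \(-2-\gamma \) of item 1(b).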

Proof of Theorem 4.2

The proof of Theorem 4.2 follows the strategy used in [6], where an analogous result for a one population system of mean field interacting particles with dissipation is given.

Recall that \(((x(t),y(t)), 0 \le t \le T)\) is the unique solution to system (2.2). If \(Z\sim N(\mu ,v)\) is a Gaussian random variable with mean \(\mu \) and variance v, we have that \({\mathbb {E}}(Z^3)= \mu ^3 + 3 \mu v\) and \({\mathbb {E}}(Z^4)= \mu ^4 + 6 \mu ^2 v+3 v^2\). Therefore, plugging these identities into Eq. (4.5), we get differential equations for the mean and variance of two processes having the same first and second moments as x(t) and y(t), but with Gaussian-like higher-order moments. This is how we obtained system (4.6). Therefore, part 1 of Theorem 4.2 is true by construction.
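The closure step can be sketched explicitly for the first population. We assume (the form of (4.5) is inferred from the definitions of A and B and from the factor \({{\tilde{\psi }}}\) appearing later in this proof, so it should be checked against (4.5)) that the first two moment equations read

$$\begin{aligned} \frac{d}{dt}m_1^x&= m_1^x-m_3^x-A\left( m_1^x-m_1^y\right) ,\\ \frac{d}{dt}m_2^x&= 2\left[ \left( 1-\alpha \theta _{11}-A\right) m_2^x-m_4^x+\left( \alpha \theta _{11}\,m_1^x+A\,m_1^y\right) m_1^x\right] +\sigma ^2. \end{aligned}$$

Writing \(m_1^x=m_1\), \(m_1^y=m_2\), \(m_2^x=m_1^2+v_1\) and inserting the Gaussian identities \(m_3^x=m_1^3+3m_1v_1\), \(m_4^x=m_1^4+6m_1^2v_1+3v_1^2\), the relation \({\dot{v}}_1=\frac{d}{dt}m_2^x-2m_1{\dot{m}}_1\) yields, after cancellation of the terms not containing \(v_1\),

$$\begin{aligned} {\dot{m}}_1&= m_1-m_1^3-3m_1v_1-A\left( m_1-m_2\right) ,\\ {\dot{v}}_1&= 2v_1\left( 1-\alpha \theta _{11}-A-3m_1^2-3v_1\right) +\sigma ^2. \end{aligned}$$

Under the same assumption, the equations for the second population follow by exchanging the roles of the two populations and replacing \((A,\alpha \theta _{11})\) with \((-B,(1-\alpha )\theta _{22})\):

$$\begin{aligned} {\dot{m}}_2&= m_2-m_2^3-3m_2v_2+B\left( m_2-m_1\right) ,\\ {\dot{v}}_2&= 2v_2\left( 1-(1-\alpha )\theta _{22}+B-3m_2^2-3v_2\right) +\sigma ^2. \end{aligned}$$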

We turn to the second part of the statement. Notice that system (4.6) has a unique global solution, since the four-dimensional vector field is continuous in each variable and has continuous partial derivatives at each point.

Let \(((m_1(t),m_2(t),v_1(t),v_2(t)), t \ge 0)\) be the unique solution to (4.6), with initial conditions \(m_1(0)=x(0)\), \(m_2(0)=y(0)\), \(v_1(0)=v_2(0)=0\), and set \(V_i(t) {:}{=}\sigma ^{-2} \, v_i(t)\) (\(i=1,2\)). The first step of the proof is to define two centered Gaussian processes, \((\xi _1(t),0 \le t \le T)\) and \((\xi _2(t), 0 \le t \le T)\), so that \({\mathbb {E}}[\xi ^2_i(t)]=V_i(t)\) for all \(t \in [0,T]\) (\(i=1,2\)). We consider the process \((\xi _1(t), 0 \le t \le T)\) first. If we write its differential as that of a generic Itô process, i.e. \(d\xi _1(t) = \psi (t)dt + \phi (t) \, dw_1(t)\), with \(\phi \), \(\psi \) suitable functions and \((w_1(t), 0 \le t \le T)\) a standard Brownian motion, by Itô’s formula we get

In turn, we obtain

and we can impose \(\phi (t)=1\) and \(\xi _1(t)\psi (t) =\xi ^2_1(t) {{\tilde{\psi }}}(t)\), where \({{\tilde{\psi }}}(t)\) is a deterministic factor such that \({\mathbb {E}}[\xi ^2_1(t)]\) satisfies the equation for \(V_1(t)\), obtained from Eq. (4.6). Namely, we must require that \({{\tilde{\psi }}}(t) = -3 \sigma ^2 V_1(t) -3 \left( m_1(t)\right) ^2+1-\alpha \theta _{11}-A\). With straightforward modifications, we also obtain a differential characterization for the process \((\xi _2(t), 0 \le t \le T)\). Putting everything together, we get the following system of SDEs

The processes \((\xi _i(t), 0 \le t \le T)_{i=1,2}\) are both Gaussian Markov processes, with zero mean and such that \(\text{ Var }\left[ \xi _i(t)\right] = V_i(t)\) for all \(t \in [0,T]\) (\(i=1,2\)). Moreover, they are well-defined, since we have uniqueness of the solution for system (4.6).
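As a consistency check for the first component, take \(\phi (t)=1\) and \(\psi (t)={{\tilde{\psi }}}(t)\,\xi _1(t)\), so that \(d\xi _1(t)={{\tilde{\psi }}}(t)\,\xi _1(t)\,dt+dw_1(t)\) with \(\xi _1(0)=0\). Since \({{\tilde{\psi }}}\) is deterministic, this linear equation has the explicit solution \(\xi _1(t)=\int _0^t e^{\int _s^t{{\tilde{\psi }}}(r)dr}\,dw_1(s)\), which is a centered Gaussian process. Moreover, Itô's formula and an expectation (the stochastic integral being a martingale) give

$$\begin{aligned} d\left( \xi _1^2(t)\right)&= \left( 2{{\tilde{\psi }}}(t)\,\xi _1^2(t)+1\right) dt+2\xi _1(t)\,dw_1(t),\\ {\dot{V}}_1(t)&= 2{{\tilde{\psi }}}(t)\,V_1(t)+1,\qquad V_1(0)=0. \end{aligned}$$

Multiplying by \(\sigma ^2\) and using \(v_1=\sigma ^2V_1\) together with the expression of \({{\tilde{\psi }}}\), one recovers \({\dot{v}}_1=2v_1\left( 1-\alpha \theta _{11}-A-3m_1^2-3v_1\right) +\sigma ^2\), that is, an equation for the variance of \(\sigma \,\xi _1(t)\) driven only by \(m_1\) and \(v_1\).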

Now we define two new processes:

which can be easily seen to be Markovian and Gaussian. Moreover, their respective means \(m_1(t)\), \(m_2(t)\) and their respective variances \(v_1(t)=\sigma ^2 \, V_1(t)\), \(v_2(t)=\sigma ^2 \, V_2(t)\) satisfy Eq. (4.6) by construction. As a consequence, the processes \(({\tilde{x}}(t), 0 \le t \le T)\) and \(({\tilde{y}}(t), 0 \le t \le T)\) have first and second moments satisfying Eq. (4.5).

To conclude the proof we need to upper bound the right-hand side of the following inequality

By using together Eq. (D.1), Eq. (D.2) and Eq. (4.6), we obtain

and, analogously,

At this point we follow the very same steps we used before in Appendices A and B. We have

with \(f_1(s) = x^2(s)+{\tilde{x}}^2(s)+x(s){{\tilde{x}}}(s)\). Equation (D.4) is of the form \(\varphi (t) = \int _0^t\varphi (s) H(s) ds +\int _0^t Q(s) ds\), with \(\varphi (t)=x(t)-{\tilde{x}}(t)\). As \(\varphi (0)=0\), the solution to Eq. (D.4) is given by \(\varphi (t) = \int _0^t Q(s) e^{\int _s^t H(r)dr} ds\), where

and

Hence, we have

and, therefore,

for some \({\tilde{C}}_T>0\). In the last inequality we have set \({\tilde{Q}}(s)=\sigma ^{-2}Q(s)\) and used the fact that \({\tilde{Q}}\), being a polynomial function of a Gauss-Markov process, has an \(L^1\)-norm that is bounded locally in time.
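The representation \(\varphi (t) = \int _0^t Q(s) e^{\int _s^t H(r)dr} ds\) used in this argument is the variation-of-constants formula for \(\varphi '(t) = H(t)\varphi (t) + Q(t)\), \(\varphi (0)=0\). The short Python sketch below checks it numerically for deterministic toy choices of \(H\) and \(Q\); these two functions are hypothetical placeholders, not the (random) coefficients appearing in Eq. (D.4).

```python
import math


def H(s):
    # Hypothetical coefficient, chosen only for illustration.
    return -(1.0 + s * s)


def Q(s):
    # Hypothetical forcing term, chosen only for illustration.
    return math.cos(s)


def trapezoid(f, a, b, n):
    """Composite trapezoidal rule for the integral of f over [a, b]."""
    if a == b:
        return 0.0
    h = (b - a) / n
    total = 0.5 * (f(a) + f(b))
    for k in range(1, n):
        total += f(a + k * h)
    return total * h


def phi_formula(t, n=200):
    """phi(t) = int_0^t Q(s) * exp(int_s^t H(r) dr) ds, by nested quadrature."""
    def integrand(s):
        return Q(s) * math.exp(trapezoid(H, s, t, n))
    return trapezoid(integrand, 0.0, t, n)


def phi_ode(t, n=2000):
    """Classical RK4 for phi' = H*phi + Q with phi(0) = 0."""
    h = t / n
    phi, s = 0.0, 0.0
    for _ in range(n):
        k1 = H(s) * phi + Q(s)
        k2 = H(s + h / 2) * (phi + h / 2 * k1) + Q(s + h / 2)
        k3 = H(s + h / 2) * (phi + h / 2 * k2) + Q(s + h / 2)
        k4 = H(s + h) * (phi + h * k3) + Q(s + h)
        phi += h / 6 * (k1 + 2 * k2 + 2 * k3 + k4)
        s += h
    return phi


if __name__ == "__main__":
    t = 1.0
    print("formula :", phi_formula(t))
    print("ODE     :", phi_ode(t))
```

Both routines approximate the same function, so their outputs should coincide up to quadrature error. In the proof the identity is applied pathwise, with \(H\) and \(Q\) depending on the trajectories of \(x\) and \({\tilde{x}}\).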

An analogous estimate holds for the second term in the right-hand side of (D.3), so that

for a suitable positive constant \({\tilde{D}}_T\). Combining Eqs. (D.3), (D.5) and (D.6) concludes the proof of part 2 of Theorem 4.2.

Subcritical Hopf bifurcation

We consider the dynamical system (4.6) with \(\theta _{11}=\theta _{22}=8\) and with \(A\) and \(B\) chosen in the regime given by assumption (H). We study the nature of the equilibrium point \((m_1,m_2,v_1,v_2)=\left( 0,0,{{\tilde{v}}}_1,{{\tilde{v}}}_2\right) \), where

as the noise intensity \(\sigma >0\) varies. The following analysis reveals the presence of a subcritical Hopf bifurcation at the point \((m_1,m_2,v_1,v_2)=\left( 0,0,{{\tilde{v}}}_1,{{\tilde{v}}}_2\right) \) in all three parameter regimes examined in the main text (see Table 1 and Fig. 4). Recall that a Hopf bifurcation occurs when a stable periodic orbit arises from an equilibrium point as the latter loses stability at some critical value of the parameter. Subcritical means that, as in the present case, such a transition happens when moving the parameter from larger to smaller values. A Hopf bifurcation can be detected by checking whether a pair of complex conjugate eigenvalues of the system linearized around the equilibrium crosses the imaginary axis as the parameter changes; see Theorem 2, Chapter 4.4 in [22]. We briefly analyze our case.

Fig. 8 Real parts (blue) and absolute values of the imaginary parts (orange) of the eigenvalues \(\lambda _1\), \(\lambda _2\) of the Jacobian matrix \(J(\sigma )\) as functions of \(\sigma \), with: (a) \(A=2\), \(B=2.5\), \(\sigma \) ranging from 1.4 to 1.9 with step 0.05; (b) \(A=2\), \(B=4\), \(\sigma \) ranging from 1.75 to 2.25 with step 0.05; (c) \(A=2\), \(B=7\), \(\sigma \) ranging from 2.2 to 2.7 with step 0.05

The Jacobian matrix relative to system (4.6) at \(\left( 0,0,{{\tilde{v}}}_1, {{\tilde{v}}}_2\right) \) reads

and its eigenvalues are

The eigenvalues \(\lambda _3\) and \(\lambda _4\) are negative for all \(\sigma >0\). The eigenvalues \(\lambda _1\) and \(\lambda _2\) are complex conjugate when (a) \(A=2\) and \(B=2.5\), (b) \(A=2\) and \(B=4\), (c) \(A=2\) and \(B=7\), and we checked numerically that their real parts cross zero with negative derivative at the respective critical values (a) \(\sigma _c\simeq 1.65\), (b) \(\sigma _c\simeq 2\), (c) \(\sigma _c\simeq 2.45\) (see Fig. 8). This evidence confirms the presence of a subcritical Hopf bifurcation in the regimes examined in the main text.
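A numerical check of this kind can be organized as in the following Python sketch: scan the parameter, locate the value at which the real part of the leading complex pair changes sign, and estimate the slope of the real part there. The matrix family `toy_jacobian` is a hypothetical \(2\times 2\) example with a known crossing at \(\sigma =2\); it is not the Jacobian \(J(\sigma )\) of system (4.6), and the helper names are ours.

```python
import cmath


def eigenvalues_2x2(M):
    """Eigenvalues of a real 2x2 matrix from its trace and determinant."""
    (a, b), (c, d) = M
    tr = a + d
    det = a * d - b * c
    disc = cmath.sqrt(tr * tr - 4.0 * det)
    return (tr + disc) / 2.0, (tr - disc) / 2.0


def toy_jacobian(sigma):
    # Hypothetical family (NOT the Jacobian of (4.6)): eigenvalues mu +/- i*omega,
    # with mu(sigma) = 1 - sigma^2/4 vanishing at sigma = 2.
    mu = 1.0 - 0.25 * sigma ** 2
    omega = 1.5
    return ((mu, -omega), (omega, mu))


def leading_real_part(jac, sigma):
    """Largest real part among the eigenvalues of jac(sigma)."""
    return max(ev.real for ev in eigenvalues_2x2(jac(sigma)))


def find_crossing(jac, lo, hi, tol=1e-10):
    """Bisection for the parameter value where the leading real part vanishes."""
    f_lo = leading_real_part(jac, lo)
    f_hi = leading_real_part(jac, hi)
    if f_lo * f_hi > 0:
        raise ValueError("no sign change of the real part on [lo, hi]")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        f_mid = leading_real_part(jac, mid)
        if f_lo * f_mid <= 0:
            hi = mid
        else:
            lo, f_lo = mid, f_mid
    return 0.5 * (lo + hi)


def crossing_slope(jac, sigma, h=1e-6):
    """Central finite difference of the leading real part with respect to sigma."""
    return (leading_real_part(jac, sigma + h) - leading_real_part(jac, sigma - h)) / (2 * h)


if __name__ == "__main__":
    sigma_c = find_crossing(toy_jacobian, 1.75, 2.25)
    print("critical sigma :", sigma_c)
    print("slope of Re    :", crossing_slope(toy_jacobian, sigma_c))
    print("eigenvalues    :", eigenvalues_2x2(toy_jacobian(sigma_c)))
```

For a larger system one would replace `eigenvalues_2x2` with a general eigenvalue routine and restrict attention to the complex conjugate pair; a negative slope together with a nonzero imaginary part at the crossing corresponds to the situation described above.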

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Marini, E., Andreis, L., Collet, F. et al. Noise-induced periodicity in a frustrated network of interacting diffusions. Nonlinear Differ. Equ. Appl. 30, 34 (2023). https://doi.org/10.1007/s00030-022-00839-3

DOI: https://doi.org/10.1007/s00030-022-00839-3

Keywords

- Collective rhythmic behavior

- Interacting diffusions

- Mean field interaction

- Frustrated dynamics

- Markov processes

- Propagation of chaos

- Gaussian approximation