Abstract

In this work, we study the stability regions of linear multistep or multiderivative multistep methods for initial value problems by using techniques that are straightforward to implement in modern computer algebra systems. In many applications, one is interested in (i) checking whether a given subset of the complex plane (e.g., a sector, disk, or parabola) is included in the stability region of the numerical method, (ii) finding the largest subset of a certain shape contained in the stability region of a given method, or (iii) finding the numerical method in a parametric family of multistep methods whose stability region contains the largest subset of a given shape. First, we describe a simple procedure to exactly calculate the stability angle α in the definition of A(α)-stability by representing the root locus curve of the multistep method as an implicit algebraic curve. As an illustration, we consider two finite families of implicit multistep methods. We exactly compute the stability angles for the k-step BDF methods (3 ≤ k ≤ 6) and discover that the values of tan(α) are surprisingly simple algebraic numbers of degree 2, 2, 4, and 2, respectively. In contrast, the corresponding values of tan(α) for the k-step second-derivative multistep methods of Enright (3 ≤ k ≤ 7) are much more complicated; the smallest algebraic degree here is 22. Next, we determine the exact value of the stability radius in the BDF family for each 3 ≤ k ≤ 6, that is, the radius of the largest disk in the left half of the complex plane, symmetric with respect to the real axis, touching the imaginary axis and lying in the stability region of the corresponding method. These radii turn out to be algebraic numbers of degree 2, 3, 5, and 5, respectively. Finally, we demonstrate how some Schur–Cohn-type theorems of recursive nature and not relying on the root locus curve method can be used to exactly solve some optimization problems within infinite parametric families of multistep methods. 
As an example, we choose a two-parameter family of implicit-explicit (IMEX) methods: we identify the unique method having the largest stability angle in the family, then we find the unique method in the same family whose stability region contains the largest parabola.


1 Introduction

In the stability theory of one-step or multistep methods for initial value problems, one is often interested in various geometric properties of the stability region \({\mathcal {S}}\subset {\mathbb {C}}\) of the method. In this work, we study the shape of the stability region of linear multistep methods (LMMs) or multiderivative multistep methods (also known as generalized LMMs) as follows.

Suppose we are given

- a) a stability region \({\mathcal {S}}\) or

- b) a family of stability regions \({\mathcal {S}}_{\beta }\) parametrized by some \(\beta \in \mathbb {R}^{d}\)

and a family of subsets of \({\mathbb {C}}\), denoted by \({\mathfrak {F}}\). Due to their relevance in applications, we will consider the following three classes:

- \({\mathfrak {F}}={\mathfrak {F}}^{\text { sect}}_{\alpha }\) is the family of infinite sectors in the left half of \({\mathbb {C}}\), with vertex at the origin, symmetric about the negative real axis, and parametrized by the sector angle α ∈ (0,π/2).

- \({\mathfrak {F}}={\mathfrak {F}}^{\text { disk}}_{r}\) is the family of disks in the left half of \({\mathbb {C}}\), symmetric with respect to the real axis, touching the imaginary axis, and parametrized by the disk radius r > 0.

- \({\mathfrak {F}}={\mathfrak {F}}^{\text { para}}_{m}\) is the family of parabolas in the left half of \({\mathbb {C}}\), symmetric with respect to the real axis, touching the imaginary axis, and parametrized by some m > 0.

Our goal is to find the set \(H\in {\mathfrak {F}}\) with the largest parameter (α, r, or m) such that

- \(H\subset {\mathcal {S}}\) in case a;

- \(H\subset {\mathcal {S}}_{\beta _{\text {opt}}}\) for some stability region in the family in case b, but \(H\not \subset {\mathcal {S}}_{\beta }\) for \(\beta \ne \beta _{\text {opt}}\).

We will present some tools to handle these shape optimization questions and, as an illustration, exactly solve some of them by using Mathematica version 11 in the BDF (backward differentiation formula) and Enright families (as LMMs and multiderivative multistep methods, respectively), and in an infinite family of IMEX methods with d = 2 parameters.

1.1 Motivation and main results

When solving stiff ordinary differential equations, one desirable property of the numerical method is A-stability: a method is A-stable if the closed left half-plane \(\{z\in {\mathbb {C}} : \text {Re}(z)\le 0\}\) belongs to \({\mathcal {S}}\). Many useful methods are not A-stable; still, their stability region \({\mathcal {S}}\) contains a sufficiently large infinite sector in the left half-plane with vertex at the origin and symmetric about the negative real axis. This leads to the notion of A(α)-stability: a method is A(α)-stable with some 0 < α < π/2 if

$$\{z\in {\mathbb {C}}\setminus \{0\} : |\arg (-z)|\le \alpha \}\subset {\mathcal {S}}, \tag{1}$$

where the argument of a non-zero complex number satisfies −π < arg ≤ π. The largest 0 < α < π/2 such that (1) holds is referred to as the stability angle of the method [17]. Various other stability concepts (such as A(0)-stability, \(A_{0}\)-stability, \(\overset {\circ }{\text {A}}\)-stability, stiff stability, or asymptotic A(α)-stability) have also been defined, and theorems have been devised to test whether a given multistep method is stable in one of the above senses (see, for example, [3, 9, 11, 20–23, 26, 34, 40]). There are various techniques to test A(α)-stability for a given α value. In [3], for example, the sector on the left-hand side of (1) is decomposed into an infinite union of disks, and a bijection between each disk and the left half-plane is established via fractional linear transformations to employ a Routh–Hurwitz-type criterion. Another way of studying A(α)-stability is to consider the root locus curve (RLC) of the multistep method [17]. Based on the RLC and some theorems from complex analysis, [38] presents a criterion for a LMM to be A(α)-stable for a given α; the stability angle is then obtained as the solution of an optimization problem involving Chebyshev polynomials. The procedure in [38] is formulated only for LMMs but not for multiderivative multistep methods.

The first goal of the present work is to describe an elementary approach to exactly determine the stability angle of a LMM or multiderivative multistep method: by eliminating the complex exponential function from the RLC and using a tangency condition, a system of polynomial equations in two variables is set up whose solution yields the stability angle. This process is easily implemented in computer algebra systems. As an illustration, we consider two finite families: the BDF methods [13, 17, 38] as LMMs and the second-derivative multistep methods of Enright [6, 10, 17]. With \(\alpha _{k}^{\text {BDF}}\) denoting the stability angle of the k-step BDF method for 3 ≤ k ≤ 6, we show that \(\tan \left (\alpha _{k}^{\text {BDF}}\right )\) is an unexpectedly simple algebraic number, having degree 2 for k ∈{3,4,6} and degree 4 for k = 5 (see Table 1). For the k-step Enright methods with 3 ≤ k ≤ 7, the corresponding constants \(\tan \left (\alpha _{k}^{\text {Enr}}\right )\) (with approximate values listed in Table 2) are much more complicated algebraic numbers of increasing degree (starting with 22). As far as we know, exact values α ∈ (0,π/2) for the stability angles of multistep methods were not presented earlier in the literature.

Remark 1.1

The k-step BDF methods for k ∈{1,2} are A-stable. For k ≥ 7 they are not zero-stable [7, 8, 16], therefore not interesting from a practical point of view.

Remark 1.2

In [38, Table 1], one finds some approximate values for the BDF stability angles; however, some of these values are not correct. The k = 3 value is wrong because the polynomial \(R_{3}\) is not computed properly (a factor 2 is missing, the correct form would have been \(R_{3}(x)=-12(x-1)^{2}(4x-1)\)). The approximate values for k = 4 and k = 5 given in [38, Table 1] are correct (up to the given precision). The value for k = 6 is again incorrect because an error was committed in the minimization process. If the optimization in [38, Section 3] is carried out exactly with the correct \(R_{j}\) polynomials, we recover the stability angle values in our Table 1. The errors in [38, Table 1] propagated in the literature (see, for example, [33, p. 242]). As a consequence, some works that appeared in the current millennium also contain the erroneous angles. In [17, Chapter V.2, (2.7)], the correct approximate values are presented.

Remark 1.3

At the time of writing this document, we learned (through personal communication) that [1] also contains the exact stability angles for the BDF methods with 3 ≤ k ≤ 6 steps: although they use a different technique to derive the results and the arcsin function to express the final constants, the values given in [1] and our Table 1 are the same. Notice, however, that the stability angle for k = 5 given in [1] has a slightly more complicated structure than the value in our Table 1.

Remark 1.4

The k-step Enright methods are A-stable again for k ∈{1,2} (see [17]) and unstable for k ≥ 8. More precisely, [11] proves that these methods are not \(A_{0}\)-stable for k ≥ 8; hence, they cannot be stiffly stable either (see [23, Theorem 3]) (cf. [22, 26]). However, in [17, Chapter V.3, p. 276, Exercise 2], the stiff instability of the Enright formulae for k ≥ 8 is still mentioned as an open problem.

The stability radius of a multistep method is the largest number r > 0 such that the inclusion

$$\{z\in {\mathbb {C}} : |z+r|\le r\}\subset {\mathcal {S}}$$

holds. The stability radius plays an important role when analyzing the boundedness properties of multistep methods. For example, it has been proved [42, Theorem 3.1] that this radius is the largest step-size coefficient for linear boundedness of a LMM satisfying some natural assumptions.

Remark 1.5

For LMMs (and for more general methods as well), various other step-size coefficients have been introduced in the context of linear or non-linear problems. These coefficients govern the largest allowable step-size guaranteeing certain monotonicity or boundedness properties of the LMM, including the TVD and SSP properties [15]. These properties are relevant, for example, in the time integration of method-of-lines semi-discretizations of hyperbolic conservation laws [19, 35, 41].

Remark 1.6

In [31], the largest inscribed and smallest circumscribed (semi)disks are computed for certain one-step methods.

The second goal of the present work is to compute the stability radius for some multistep methods. We will achieve this by using again the algebraic form of the RLCs. Table 3 contains the exact values in the BDF family for 3 ≤ k ≤ 6.

The RLC, as the graph of a \([0,2\pi ]\to \mathbb {C}\) function (or a union of such functions for generalized LMMs), yields information about the boundary of the stability region, \(\partial {\mathcal {S}}\). It is known, however, that in general the RLC does not coincide with \(\partial {\mathcal {S}}\) (see Fig. 3). This does not pose a problem when a fixed multistep method is considered—one can evaluate the roots of the characteristic polynomial at finitely many test points sampled from different components of \({\mathbb {C}}\) determined by the RLC to see which component belongs to \({\mathcal {S}}\) and which one to \({\mathbb {C}}\setminus {\mathcal {S}}\). But when working with parametric families of multistep methods, the precise identification of the stability region boundaries or components can become challenging with the RLC method. One can overcome this difficulty for example by invoking a reduction process, the Schur–Cohn reduction, formulated in, e.g., [37]. Instead of using auxiliary fractional linear transformations and applying Routh–Hurwitz-type criteria [28, 36] as mentioned above, these Schur–Cohn-type theorems in [37] are directly tailored to the context of multistep methods to locate the roots of the characteristic polynomials with respect to the unit disk.


The third goal of the present work is to demonstrate the effectiveness of the Schur–Cohn reduction when we solve two optimization case studies in a family of implicit-explicit (IMEX) multistep methods taken from [18]. On the one hand, we find the method in the IMEX family that has the largest stability angle, that is, the method whose stability region contains the largest sector (see our Theorem 5.3). On the other hand, we illustrate the versatility of the reduction technique by also finding the method whose stability region contains the largest parabola (see Theorem 6.1); the inclusion of a parabola-shaped region in \({\mathcal {S}}\) is relevant when studying semi-discretizations of certain partial differential equations (PDEs) of advection-reaction-diffusion type [5, 18, 28]. The chosen IMEX family is described by two real parameters, and the corresponding characteristic polynomial is cubic. The Schur–Cohn reduction process recursively decreases the degree of the characteristic polynomial, so instead of analyzing the roots of high-degree polynomials, we finally need to check polynomial inequalities in the parameters present in the coefficients of the original polynomial. Besides the two real parameters, two complex variables are involved in our calculations—the non-trivial interplay between these six real variables determines the optimum in both cases. We emphasize that we solve the optimization problem exactly, and RLCs are not relied on in the rigorous part of the proofs (only when setting up conjectures about the optimal values).

Remark 1.7

The Schur–Cohn reduction is also used in [25] to explore certain properties of a discrete parametric family of multistep methods. Conditions for disk or segment inclusions in the stability regions of a two-parameter family of multistep methods are formulated in [39]. Optimality questions about the size and shape of the stability regions of one-step or multistep methods are investigated in detail in [27]. Properties of optimal stability polynomials and stability region optimization in parametric families of one-step methods are discussed, for example, in [29, 30].

1.2 Structure of the paper

In Section 2.1, we introduce some notation. In Sections 2.2–2.3, we review the Schur–Cohn reduction and the definition of the stability region of a multistep method. In Sections 2.4–2.5, the definition of the root locus curve is recalled in two special cases: for linear multistep methods and for second-derivative multistep methods. Here, we consider the BDF and Enright families as concrete examples.

Regarding the new results, a simple algebraic technique is described in Section 3.1 to exactly compute the stability angle of a linear multistep or multiderivative multistep method. Stability angles for the BDF and Enright families are tabulated in Sections 3.2–3.3. In Section 4, we exactly compute the stability radii in the BDF family by using the same approach. In Section 5, we first describe a two-parameter family of IMEX multistep methods, in which we determine the unique method with the largest stability angle, then, in Section 6, the unique method whose stability region contains the largest parabola. The techniques in Sections 5–6 do not rely on root locus curves but use the Schur–Cohn reduction instead; the full proofs are deferred to Appendices A and B.

2 Preliminaries

2.1 Notation

The set of natural numbers {0,1,…} is denoted by \(\mathbb {N}\). For \(z\in \mathbb {C}\), Re(z), Im(z), and \(\overline {z}\) denote the real and imaginary parts and the conjugate of z, respectively, and i is the imaginary unit. The boundary of a (possibly unbounded) set \(H\subset \mathbb {C}\) is \(\partial H\subset \mathbb {C}\). When describing certain algebraic numbers of higher degree, a polynomial \({\sum }_{j=0}^{n} a_{j} x^{j}\) with \(a_{j}\in \mathbb {Z}\), \(a_{n}\ne 0\) and n ≥ 3 will be represented simply by its coefficient list \(\{a_{n},a_{n-1},\ldots ,a_{0}\}\). For a polynomial \(Q(z)={\sum }_{j=0}^{n}a_{j} z^{j}\) with \(0\le n \in \mathbb {N}\), \(a_{j}\in \mathbb {C}\) (0 ≤ j ≤ n), and \(a_{n}\ne 0\), we denote its degree, leading coefficient and constant coefficient by \(\deg Q=n\), \(\mathfrak {l c} Q=a_{n}\), and \(\mathfrak {c c} Q=a_{0}\). The acronyms RLC and LMM stand for root locus curve and linear multistep method, respectively.

2.2 The Schur–Cohn reduction

In the rest of this section, we assume that Q is a univariate polynomial with deg Q ≥ 1, and follow the terminology of [37]—we have explicitly added the deg Q ≥ 1 condition, being implicit in [37]. We say that:

- Q is a Schur polynomial, \(Q\in \mathbf {Sch}\), if its roots lie in the open unit disk.

- Q is a von Neumann polynomial, \(Q\in \mathbf {vN}\), if its roots lie in the closed unit disk.

- Q is a simple von Neumann polynomial, \(Q\in \mathbf {svN}\), if \(Q\in \mathbf {vN}\) and roots with modulus 1 are simple.

Remark 2.1

The class Sch is referred to as strongly stable polynomials in [4, p. 345].

Remark 2.2

The property Q ∈svN is often expressed by saying that Q satisfies the root condition.

The reduced polynomial of \(Q(z)={\sum }_{j=0}^{n}a_{j} z^{j}\) is defined as

$$Q^{\mathbf {r}}(z):=\frac{Q^{*}(0)\,Q(z)-Q(0)\,Q^{*}(z)}{z}\quad \text{with}\quad Q^{*}(z):=\sum _{j=0}^{n}\overline {a_{n-j}}\,z^{j},$$

so we have \(\deg Q^{\mathbf {r}}\le (\deg Q)-1\). When this reduction process is iterated, we write \(Q^{\mathbf {rr}}\) for \((Q^{\mathbf {r}})^{\mathbf {r}}\), for example. The following theorems from [37] use the notion of the reduced polynomial and the derivative to formulate necessary and sufficient conditions for a polynomial to be in the above classes. In all three theorems below, it is assumed that \(\mathfrak {l c} Q \ne 0 \ne \mathfrak {c c} Q\) and \(\deg Q\ge 2\).

Theorem 2.3

\(Q\in \mathbf {Sch} \Leftrightarrow (|\mathfrak {l c} Q|>|\mathfrak {c c} Q| \text { and } Q^{\mathbf {r}}\in \mathbf {Sch})\) .

Theorem 2.4

\(Q\in \mathbf {vN} \Leftrightarrow \text {either } (|\mathfrak {l c} Q|>|\mathfrak {c c} Q| \text { and } Q^{\mathbf {r}}\in \mathbf {vN}) \text { or } (Q^{\mathbf {r}}\equiv 0 \text { and } Q^{\prime }\in \mathbf {vN})\) .

Theorem 2.5

\(Q\in \mathbf {svN} \Leftrightarrow \text { either } (|\mathfrak {l c} Q|>|\mathfrak {c c} Q| \text { and } Q^{\mathbf {r}}\in \mathbf {svN}) \text { or } (Q^{\mathbf {r}}\equiv 0 \text { and } Q^{\prime }\in \mathbf {Sch})\) .

Remark 2.6

Let us consider the following example when applying the theorems above, e.g., Theorem 2.4. For any λ > 0, we set \(Q_{\lambda }(z):=z^{2}+\lambda i z+1\). Then the roots of \(Q_{\lambda }\) satisfy \(|z_{1}(\lambda )|<1<|z_{2}(\lambda )|\), so \(Q_{\lambda }\notin \mathbf {vN}\), and \(Q_{\lambda }^{\mathbf {r}}=2\lambda i\). This shows that it can happen that the degree of the original polynomial is > 1, but its reduced polynomial is a non-zero constant, so the relation \(Q^{\mathbf {r}}\in \mathbf {vN}\) is undefined. In these cases, when \(Q^{\mathbf {r}}\) is a non-zero constant, notice that neither \(|Q^{\mathbf {r}}|<1\) nor \(|Q^{\mathbf {r}}|=1\) nor \(|Q^{\mathbf {r}}|>1\) can help us in general to determine whether \(Q\in \mathbf {vN}\) or not (of course, the other condition \(|\mathfrak {l c} Q|>|\mathfrak {c c} Q|\) is violated now) (cf. the sentence above [37, Theorem 5.1]).
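The recursive structure of Theorem 2.3 can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the reduced polynomial is taken in the common form \(Q^{\mathbf {r}}(z)=(Q^{*}(0)Q(z)-Q(0)Q^{*}(z))/z\) with \(Q^{*}(z)={\sum }_{j}\overline {a_{n-j}}z^{j}\), which reproduces \(Q_{\lambda }^{\mathbf {r}}=2\lambda i\) from Remark 2.6; the function names `reduced` and `is_schur` are hypothetical, and exact comparisons are used, so inputs should be integers, rationals, or well-scaled floats.

```python
def reduced(q):
    """Coefficients [b_0, ..., b_m] of the reduced polynomial of Q(z) = sum_j q[j] z^j.

    Assumed definition: Q^r(z) = (Q*(0) Q(z) - Q(0) Q*(z)) / z,
    where Q*(z) = sum_j conj(q[n-j]) z^j.
    """
    n = len(q) - 1
    star = [q[n - j].conjugate() for j in range(n + 1)]
    num = [star[0] * q[j] - q[0] * star[j] for j in range(n + 1)]
    r = num[1:]                      # num[0] is zero, so dividing by z drops it
    while len(r) > 1 and r[-1] == 0:  # strip vanishing leading coefficients
        r.pop()
    return r


def is_schur(q):
    """Recursive test following Theorem 2.3: are all roots in the open unit disk?"""
    q = list(q)
    if len(q) < 2 or q[-1] == 0:
        raise ValueError("need deg Q >= 1 with a non-zero leading coefficient")
    while len(q) > 2:
        if abs(q[-1]) <= abs(q[0]):  # |lc Q| > |cc Q| is violated
            return False
        q = reduced(q)               # degree drops by exactly one here
    return abs(q[1]) > abs(q[0])     # degree 1: the root is -q[0]/q[1]


# Example of Remark 2.6 with lambda = 1: the reduced polynomial is the constant 2i.
print(reduced([1, 1j, 1]))
# BDF2 characteristic polynomial (3 - 2 mu) z^2 - 4 z + 1 at mu = -1 and mu = 1.
print(is_schur([1, -4, 5]), is_schur([1, -4, 1]))
```

Coefficients are listed from the constant term upward. The classes vN and svN would need the additional branch \(Q^{\mathbf {r}}\equiv 0\) of Theorems 2.4 and 2.5, which is not covered by this sketch.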

2.3 The stability region of a multistep method

Stability properties of a broad class of numerical methods (including Runge–Kutta methods, linear multistep methods, or multiderivative multistep methods) for solving initial value problems of the form

can be analyzed by studying the stability region of the method. When an s-stage k-step method (s ≥ 1, k ≥ 1 fixed positive integers; for k = 1 we have a one-step method, while for k ≥ 2 a multistep method) with constant step size h > 0 is applied to the linear test equation \(y^{\prime }=\lambda y\) (\(\lambda \in {\mathbb {C}}\) fixed, \(y(t_{0})=y_{0}\) given), the method yields a numerical solution \((y_{n})_{n\in \mathbb {N}}\) that approximates the exact solution y at time \(t_{n}:=t_{0}+nh\) and satisfies a recurrence relation of the form [27]

The characteristic polynomial associated with the method takes the form

With Φ(⋅,μ) abbreviating the polynomial ζ↦Φ(ζ,μ), the stability region of the method is defined as

Remark 2.7

Some other variations of the above definition of the stability region of a multistep method have also been proposed in the literature (see, e.g., [24]). In [4, p. 344], the “open stability region” is defined as the set

(see also [44, p. 348], [12, p. 452], or [33]). In, e.g., [17, 32], the stability region of the method (3) is defined as

that is, essentially, Φ(⋅,μ) ∈svN. In [27, Formula (2.5)], the stability region is given by

with \(\overline {\mathbb {C}}\) denoting the extended complex plane.

We can regroup the terms in (4) as \({\Phi }(\zeta ,\mu )={\sum }_{\ell =0}^{k} C_{\ell }(\mu )\zeta ^{\ell }\) with some suitable polynomials \(C_{\ell }\). The inequality condition in (3) implies that the leading coefficient \(C_{k}\) does not vanish identically; it may happen, however, that for some exceptional μ values the leading coefficient is zero:

$${\mathcal {E}}:=\{\mu \in {\mathbb {C}} : C_{k}(\mu )=0\}.$$

For example, for the implicit Euler (IE) method \({\Phi }(\zeta ,\mu )=\rho (\zeta )-\mu \sigma (\zeta )=(1-\mu )\zeta -1\) with \(\rho (\zeta ):=\zeta -1\) and \(\sigma (\zeta ):=\zeta \), so \(\mathcal {E}=\{1\}\). For the 2-step BDF method (BDF2), \({\Phi }(\zeta ,\mu )=(3-2\mu )\zeta ^{2}-4\zeta +1\); hence \({\mathcal {E}}=\{3/2\}\). If definition (6) (or (7)) is interpreted formally, we have for the IE method that \({\mathcal {E}}=\{1\}\subset {\mathcal {S}}\) (because (6) is satisfied vacuously). Similarly, for the BDF2 method, \({\mathcal {E}}=\{3/2\}\subset {\mathcal {S}}\) (because then the unique root of \({\Phi }(\zeta ,3/2)=0\) is ζ = 1/4).

However, elements of \({\mathcal {E}}\) or \({\mathcal {E}}\cap {\mathcal {S}}\) can be problematic.

- (i) For \(\mu \in {\mathcal {E}}\), the order of the recursion (3) decreases; thus, in general, the starting values \(y_{0},y_{1},\ldots ,y_{k-1}\) of the numerical method cannot be chosen arbitrarily.

- (ii) Some exceptional values \(\mu \in {\mathcal {E}}\cap {\mathcal {S}}\) can be surrounded by points of instability of the method; this is the case, for example, for both the IE and BDF2 methods. When the step size h > 0 is chosen in a way that \(\mu \in {\mathcal {E}}\cap {\mathcal {S}}\) is such an isolated value, the recursion (3) generated by the numerical method becomes practically useless (it quickly “blows up” for arbitrarily small perturbations of h).

- (iii) RLCs are often used to identify the boundary \(\partial {\mathcal {S}}\) of the stability region (see Sections 2.4–2.5 below). In [27, Definition (2.21)], the RLC is given by

$${\Gamma}:=\{ \mu\in \overline{{\mathbb{C}}} : \exists \zeta\in{\mathbb{C}}\text{ with } |\zeta|=1 \text{ and } {\Phi}(\zeta,\mu)=0\}.$$

It can happen that \(\partial {\mathcal {S}}\) is a proper subset of the corresponding RLC (see, for example, our Fig. 3), but in [27, Corollary 2.6] it is shown that for a numerical method satisfying Property C (see [27, Formula (2.9)] or [17, Definition 4.7]), the RLC coincides with \(\partial {\mathcal {S}}\). According to [17, Section V.4], all one-step methods have Property C, so the IE method has it as well. And indeed, applying [27, Proposition 2.7] to the IE method we now have that ρ and σ have no common root and ρ/σ is univalent on the set \(\{ z\in \overline {\mathbb {C}} : |z-1|>1\}\), so Q(μ) = 1/(1 − μ) has Property C. Thus for the IE method \(\partial {\mathcal {S}}={\Gamma }\). As we have seen above, \(1\in {\mathcal {E}}\cap {\mathcal {S}}\), so \(1\in \partial {\mathcal {S}}\). On the other hand, \({\Phi }(\zeta ,1)=\rho (\zeta )-\sigma (\zeta )=-1\), so \(1\notin {\Gamma }=\partial {\mathcal {S}}\). This apparent contradiction seems to indicate that the authors of [27] interpreted definition (7) intuitively: a root ζ = ∞ is tacitly introduced as soon as the leading coefficient \(C_{k}(\mu )\) becomes zero. So [27, Corollary 2.6], for example, actually relies on definition (5) rather than on definition (7) (or (6)).
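Item (ii) can be checked numerically for the BDF2 method with the characteristic polynomial \({\Phi }(\zeta ,\mu )=(3-2\mu )\zeta ^{2}-4\zeta +1\) quoted above. The following sketch (the helper name `bdf2_char_roots` is hypothetical) shows that the single root at the exceptional value μ = 3/2 is 1/4, while any small perturbation of μ produces a root of very large modulus.

```python
import cmath


def bdf2_char_roots(mu):
    """Roots in zeta of Phi(zeta, mu) = (3 - 2 mu) zeta^2 - 4 zeta + 1 (BDF2)."""
    lead = 3 - 2 * mu
    if lead == 0:                    # exceptional value: the degree drops to 1
        return [0.25]
    disc = cmath.sqrt(16 - 4 * lead)
    return [(4 + disc) / (2 * lead), (4 - disc) / (2 * lead)]


# At mu = 3/2 the only root lies inside the unit disk; nearby mu values
# have a second root whose modulus is roughly 4 / |3 - 2 mu|.
for mu in (1.5, 1.5 + 1e-6, 1.5 - 1e-6, 1.5 + 1e-6j):
    print(mu, max(abs(z) for z in bdf2_char_roots(mu)))
```

The quadratic formula is used directly here for brevity; it is accurate enough to exhibit the large root, although the small root suffers from cancellation for μ close to 3/2.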

The problem of vanishing leading coefficient is implicitly avoided in [33, p. 66], or in [40], because they impose a requirement on “all the roots \(r_{s}\) (s = 1,…,k).” Definition (5) above with the non-vanishing leading coefficient essentially appears, for example, in [41, Section 2.1] (where it is formulated for LMMs, that is, for s = 1 in (3)), or in [42, Section 2].

Notice that, with the theorems cited in our Section 2.2, one can directly investigate the stability region of a numerical method, without constructing the corresponding RLC or without analyzing the relation between \(\partial {\mathcal {S}}\) and the RLC (see Sections 5–6 below).

Finally, we remark that the above considerations also play an important role, e.g., in control theory [2, Chapter 1], where a “degree invariance” (i.e., “no degree loss”) condition is incorporated in the Boundary Crossing Theorem. Bhattacharyya et al. [2, Chapter 1] also recall several stability results for polynomials, e.g., the Routh–Hurwitz, Jury, or the recursive Schur(–Cohn) stability tests.

2.4 The RLC of a LMM

A linear multistep method for (2) has the form

where \(f_{m}:=f(t_{m},y_{m})\), and the numbers \(\alpha _{j}\in \mathbb {R}\) and \(\beta _{j}\in \mathbb {R}\) (j = 0,…,k) are the suitably chosen method coefficients with \(\alpha _{k}\ne 0\). The method is implicit if \(\beta _{k}\ne 0\). By setting

the associated characteristic polynomial (4) becomes

One way to study the stability region (5), or its boundary \(\partial {\mathcal {S}}\) in the complex plane, is to depict the RLC corresponding to the method [17]: observe that \(P_{1}\) is linear in μ, so \(P_{1}(\zeta ,\mu )=0\) implies μ = ρ(ζ)/σ(ζ) (for σ(ζ) ≠ 0). The RLC is then the image of the parametric curve

$$[0,2\pi ]\ni \vartheta \mapsto \mu (\vartheta ):=\frac{\rho (e^{i\vartheta })}{\sigma (e^{i\vartheta })}. \tag{10}$$

2.4.1 RLCs for the BDF methods

Each member of the BDF family is a special case of (8). The k-step BDF method (having order k) is given by

where ∇ denotes the backward difference operator \(\nabla y_{n+1}:=y_{n+1}-y_{n}\), and \(\nabla ^{j}y_{n+1}:=\nabla ^{j-1}y_{n+1}-\nabla ^{j-1}y_{n}\) (for j > 1). It is known [17] that the corresponding RLC is

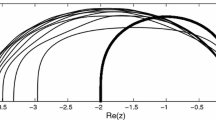

Figures 1, 2, and 3 show the RLCs for some BDF methods.
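A quick numerical impression of these curves can be obtained by sampling. The sketch below assumes the standard representation \(\mu (\vartheta )={\sum }_{j=1}^{k}\frac {1}{j}(1-e^{-i\vartheta })^{j}\) of the BDF root locus curve found in the literature, and it estimates the stability angle by brute force as the smallest angle, measured from the negative real axis, of the sampled curve points in the open left half-plane. The function names are hypothetical, and the result is only an approximation, not the exact algebraic value.

```python
import cmath
import math


def bdf_rlc(k, theta):
    """Point of the (assumed) k-step BDF root locus curve at parameter theta."""
    w = 1 - cmath.exp(-1j * theta)
    return sum(w**j / j for j in range(1, k + 1))


def approx_stability_angle_deg(k, samples=20000):
    """Brute-force estimate of the stability angle in degrees (90 if A-stable)."""
    best = math.pi / 2
    for n in range(1, samples):
        mu = bdf_rlc(k, math.pi * n / samples)  # the curve is symmetric about the real axis
        if mu.real < 0:
            best = min(best, math.atan2(abs(mu.imag), -mu.real))
    return math.degrees(best)


for k in range(1, 7):
    print(k, approx_stability_angle_deg(k))
```

The estimates can be compared with the approximate values in [17, Chapter V.2, (2.7)]. The sampling approach presumes that, for 1 ≤ k ≤ 6, the unstable part of the left half-plane is bounded by the curve, which is consistent with the absence of self-intersections noted for these methods.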

Fig. 2: RLC for the unstable 7-step BDF method in red (left) and a close-up near the origin (right). For comparison, the curves from Fig. 1 are also superimposed as dashed gray curves.

Fig. 3: The black curve in the left figure shows the boundary \(\partial {\mathcal {S}}\) of the stability region of the (unstable) 7-step BDF method; \(\partial {\mathcal {S}}\) is non-differentiable at one point. The stability region is the unbounded outer component. The red curve segment near the origin is not part of \(\partial {\mathcal {S}}\); it is a subset only of the RLC as displayed in Fig. 2. The small brown rectangle in the center is shown in detail in the right figure. The red curve in the right figure is again the RLC. The 6 black dots depict the set of μ values such that \(P_{1}(\cdot ,\mu )\) in (9) has multiple roots (there are no other \(\mu \in \mathbb {C}\) parameters with this property for k = 7). The polynomial \(P_{1}(\cdot ,\mu )\) has 1, 2, and 3 roots outside the unit disk for μ values in the dark brown, light brown and orange regions, respectively; \(P_{1}(\cdot ,\mu )\) cannot have 4 or more roots outside the unit disk. Each of the three self-intersections of the RLC in this figure (as well as the self-intersection of the RLC seen only in the left figure) corresponds to a μ value for which \(P_{1}(\cdot ,\mu )\) has two distinct roots with modulus 1. Exactly computing, for example, the unique value of \(\mu ^{\ddagger }\approx -2.68886\cdot 10^{-6}+0.275988i\) in the open upper half-plane where the RLC crosses itself was a non-trivial task: it took Mathematica 86 minutes to explicitly determine the coefficients of the integer polynomial defining \(\mu ^{\ddagger }\) and having degree 30. The RLCs for the k-step BDF methods with 1 ≤ k ≤ 6 do not have any self-intersections; other singularities may occur, see Fig. 6.

2.5 The RLC of a multiderivative multistep method

A second-derivative multistep method is more general than (8) and can be written as

where \(g_{n}:=g(t_{n},y_{n})\) with \(g(t,y):=\partial _{1}f(t,y)+\partial _{2}f(t,y)\cdot f(t,y)\), and the method is determined by the coefficients \(\alpha _{j}\), \(\beta _{j}\), and \(\gamma _{j}\), see [17]. Now the associated characteristic polynomial (4) becomes

This time we have two RLCs:

where \(\mu _{1,2}\) are the two solutions of \(P_{2}(e^{i\vartheta },\mu )=0\). For any choice of the method coefficients \(\alpha _{j}\), \(\beta _{j}\), and \(\gamma _{j}\), one can construct \(\mu _{1,2}\) explicitly, since \(P_{2}\) is only quadratic in μ.

2.5.1 RLCs for the Enright methods

The Enright methods are special cases of (12), and for k ≥ 1 they are defined [17] as

where

with the usual extension of the binomial coefficients. From (14) one obtains the RLCs of the Enright methods, see Figs. 4 and 5. The order of the k-step Enright method is k + 2.

Fig. 5: RLCs for the unstable 8-step Enright method in red. The stability region \({\mathcal {S}}\) is not connected, \({\mathbb {C}}\setminus {\mathcal {S}}\) is the annulus-like region. For comparison, the curves from Fig. 4 are displayed as dashed gray curves.

3 Optimal sector inclusions

3.1 The RLC in implicit algebraic form

Computing the stability angle of a method with stability region \({\mathcal {S}}\) is equivalent to finding the slope of the unique line L that passes through the origin, touches \(\partial {\mathcal {S}}\) at some point in the open upper left half-plane such that \(\partial {\mathcal {S}}\) lies on the right-hand side of L (viewed from the origin) in this quadrant. This last requirement is necessary since \(\partial {\mathcal {S}}\cap L\) can consist of more points, even in the open upper left half-plane (see Fig. 7).

Assume now that \(\partial {\mathcal {S}}\) can be represented by the RLC of the method (cf. Remark 2.7). As we have seen, the RLC is the image of the function μ(⋅) in (10) for LMMs, or the union of the images of the functions \(\mu _{1,2}(\cdot )\) in (13) for second-derivative multistep methods. The function μ is given as a simple ratio, but to get the explicit forms of \(\mu _{1,2}\), one should solve a quadratic equation. As the value of k gets larger, these explicit formulae for \(\mu _{1,2}\) corresponding to a k-step second-derivative multistep method become more and more complicated. Moreover, obtaining explicit and practically useful parametrized formulae for the RLCs associated with multistep methods based on higher-than-second-order derivatives would be almost impossible.

To avoid these difficulties, we now describe a more general and effective technique which reduces the determination of the stability angles to the solution of a suitable system of polynomial equations. Let us consider the equation \({\Phi }(e^{i\vartheta },\mu )=0\) (see (4)). By using the well-known Weierstrass substitution [43, pp. 382–383]

$$t:=\tan (\vartheta /2),\quad \text{that is,}\quad \vartheta =2\arctan (t)\quad (t\in \mathbb {R}),$$

we have \(e^{i\vartheta }=(i-t)/(i+t)\); so instead of solving \({\Phi }(e^{i\vartheta },\mu )=0\) for μ, we can solve

$${\Phi }\left (\frac{i-t}{i+t},\mu \right )=0 \tag{15}$$

without trigonometric functions. Notice that originally we have 𝜗 ∈ [0,2π] in \(e^{i\vartheta }\), or equivalently, 𝜗 ∈ (−π,π], but π is not in the range of the function 2arctan; therefore, we define

$$M_{-1}:=\{\mu \in {\mathbb {C}} : {\Phi }(-1,\mu )=0\}$$

to restore the missing μ value(s) due to the reparametrization. Then, clearly, (15) can be brought to the form Q(t,μ)/R(t) = 0 with some (complex) polynomials Q and R. By writing μ = a + bi (\(a, b \in \mathbb {R}\)), we get that there exist two real polynomials \(Q_{\text {re}}:\mathbb {R}^{3}\to \mathbb {R}\) and \(Q_{\text {im}}:\mathbb {R}^{3}\to \mathbb {R}\) such that the solutions of Q(t,μ) = 0 for any fixed \(t\in \mathbb {R}\) are obtained as the solutions of the system

$$Q_{\text {re}}(t,a,b)=0,\quad Q_{\text {im}}(t,a,b)=0. \tag{16}$$

Now, we eliminate t by taking the resultant [14] of \(Q_{\text{re}}\) and \(Q_{\text{im}}\) with respect to this parameter, and get that there exists a real polynomial \(F:\mathbb {R}^{2}\to \mathbb {R}\) such that if (16) holds for some \(t\in \mathbb {R}\), then F(a,b) = 0. Hence, after identifying \(\mathbb {C}\) with \(\mathbb {R}^{2}\), we see that the RLC can be represented as the implicit algebraic curve \(C\cup M_{-1}\) with \(C:=\{ (a,b)\in \mathbb {R}^{2} : F(a,b)=0\}\). Assuming that the set \(M_{-1}\) is finite (it has at most two elements in the case of the BDF and Enright methods we are interested in), we ignore this component and focus only on C. Suppose now that a line L passes through the origin and touches C in the open upper left half-plane at some \((a_{0},b_{0})\) with \(a_{0} < 0 < b_{0}\). By assuming that C can be represented locally as the graph of an implicit function near \((a_{0},b_{0})\in C\), we easily get, by differentiating a ↦ F(a,b(a)) and using that the slope of L is \(b_{0}/a_{0}\), that \((a_{0},b_{0})\) satisfies

$$ F(a_{0},b_{0})=0, \quad a_{0}\,\partial_{a} F(a_{0},b_{0}) + b_{0}\,\partial_{b} F(a_{0},b_{0}) = 0, \quad a_{0}<0<b_{0}. \tag{17} $$
By taking again the resultant of the first two polynomial equations, one of the variables, say b0, is eliminated. The resulting univariate polynomial yields in the general case finitely many possible a0 values to choose from. With α denoting the angle (in radians) between L and the negative half of the real axis, we get that tan(α) = −b0/a0. To select the appropriate solution (a0,b0) (and hence the appropriate tangent line L), we verify in the concrete case that \((a_{0},b_{0})\in \partial {\mathcal {S}}\subset \mathbb {C}=\mathbb {R}^{2}\), and determine whether \(\partial {\mathcal {S}}\) lies on the right-hand side of L. The appropriately chosen α angle then yields the desired stability angle.

3.2 Results for the BDF methods

The simplest non-trivial case illustrating the steps in Section 3.1 is the determination of the stability angle for the 3-step BDF method. Formula (11) with k = 3 yields the following trigonometric parametrization of the RLC in \(\mathbb {R}^{2}\) after a simplification:

After eliminating the trigonometric functions, (15) can be written as

Then Qre and Qim in (16) become

We eliminate t from this system and obtain

Now, b is eliminated from the first two equations of (17), and we get that the possible choices for a0 are the negative real roots of

yielding the unique value \(a_{0} = -405/5324\). Substituting this \(a_{0}\) into (17), we get the unique value \(b_{0}={987 \sqrt {35}}/{5324}\); hence, \(\tan (\alpha )=-b_{0}/a_{0}=({329 \sqrt {7/5}})/{27}\) is the only possible value for the tangent of the stability angle. Finally, we verify that the corresponding tangent line L passing through the origin has no other intersection point with \(\partial {\mathcal {S}}\) in the open upper left quadrant, and \(\partial {\mathcal {S}}\) lies on the right-hand side of L.
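The exact value above can be cross-checked numerically without any elimination. The following is only a sketch: it assumes that formula (11) agrees with the standard BDF root locus curve \(\mu(\vartheta)=\sum_{j=1}^{k}\frac{1}{j}(1-e^{-i\vartheta})^{j}\), and it replaces the resultant computation by a brute-force scan of the curve. The helper names `bdf_rlc` and `tan_stability_angle` are hypothetical. Since the sector must avoid every RLC point in the open upper left quadrant, the smallest ratio −b/a over such points approximates tan(α).

```python
import cmath
import math

def bdf_rlc(k, theta):
    """Standard BDF root locus curve; assumed to agree with formula (11)."""
    w = 1 - cmath.exp(-1j * theta)
    return sum(w**j / j for j in range(1, k + 1))

def tan_stability_angle(k, samples=200_000):
    """Smallest slope -b/a over sampled RLC points with a < 0 < b."""
    best = math.inf
    for n in range(1, samples):
        mu = bdf_rlc(k, math.pi * n / samples)
        a, b = mu.real, mu.imag
        if a < 0 < b:
            best = min(best, -b / a)
    return best

tan_alpha_3 = tan_stability_angle(3)
exact_3 = 329 * math.sqrt(7 / 5) / 27      # closed form stated in the text
alpha_3_degrees = math.degrees(math.atan(tan_alpha_3))
print(tan_alpha_3, exact_3, alpha_3_degrees)
```

The scan only confirms the closed form to the sampling accuracy; it does not replace the exact algebraic argument.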

Remark 3.1

The above RLC for the 3-step BDF method can also be parametrized as

Here, \(M_{-1}=\{(20/3, 0)\}\subset \mathbb {R}^{2}\), corresponding to the t → ±∞ limiting value of the parametrization.
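The role of \(M_{-1}\) can be illustrated numerically. The sketch below assumes the standard BDF root locus formula \(\mu=\sum_{j=1}^{3}\frac{1}{j}(1-\zeta^{-1})^{j}\) with \(\zeta=e^{i\vartheta}\) (taken to agree with (11)) and inserts \(\zeta=(i-t)/(i+t)\); the resulting rational function of t is one possible parametrization consistent with the substitution, not necessarily the exact formula of the remark. The function names are hypothetical.

```python
import cmath
import math

def bdf3_rlc_trig(theta):
    """3-step BDF root locus curve in the angle variable (assumed form of (11))."""
    w = 1 - cmath.exp(-1j * theta)
    return w + w**2 / 2 + w**3 / 3

def bdf3_rlc_rational(t):
    """Same curve after zeta = (i - t)/(i + t); no trigonometric functions."""
    zeta = (1j - t) / (1j + t)
    w = 1 - 1 / zeta
    return w + w**2 / 2 + w**3 / 3

# Both parametrizations agree whenever t = tan(theta / 2).
max_gap = max(
    abs(bdf3_rlc_trig(theta) - bdf3_rlc_rational(math.tan(theta / 2)))
    for theta in (0.3, 1.0, 2.5, -2.0)
)

# theta = pi is never reached by a finite t; it is recovered as t -> infinity.
limit_point = bdf3_rlc_rational(1e9)
print(max_gap, limit_point)
```

For large |t| the computed point approaches 20/3 on the real axis, which is the single element of \(M_{-1}\) quoted above.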

The remaining stability angle values for 4 ≤ k ≤ 6 can be computed analogously, so Table 1 shows only the final exact results.

Remark 3.2

For 3 ≤ k ≤ 6, the BDF stability region includes an interval of the imaginary axis containing the origin if and only if k = 5 or k = 6. For k = 5 and k = 6, the two intervals are

and

respectively.

Remark 3.3

The boundary curve of the stability region of the 6-step BDF method contains two cusp singularities (see Fig. 6 and compare with Fig. 3). No other \(\partial {\mathcal {S}}\) curve has this type of degeneracy in the BDF family for 1 ≤ k ≤ 5 or k = 7. Since the cusp points for k = 6 are not part of \({\mathcal {S}}\), the stability region in this case is neither closed nor open.

Fig. 6 Cusp singularities of \(\partial {\mathcal {S}}\) for the 6-step BDF method, denoted by red dots in the left figure. The singularities are located at \(\mu _{\pm }:=\frac {7}{120}\pm i \frac {21 \sqrt {3}}{40}\approx 0.0583\pm 0.9093 i\). For each such μ value, \(P_{1}(\cdot ,\mu )\) in (9) has a double root with modulus equal to 1. Therefore, \(\mu _{\pm }\in \partial {\mathcal {S}}\setminus {\mathcal {S}}\); hence, this \({\mathcal {S}}\) is not closed. The right figure depicts the 6 roots of \(P_{1}(\cdot ,\mu _{+})\), and the double root is located at \(\frac {1}{2} \left (1+i \sqrt {3}\right )\) (note that \(\mu _{+}\in \mathbb {C}\setminus \mathbb {R}\), so these roots are not symmetric with respect to the real axis)
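The location of the cusp can be checked with a few lines of arithmetic. The sketch assumes the standard BDF root locus curve \(\mu(\vartheta)=\sum_{j=1}^{k}\frac{1}{j}(1-e^{-i\vartheta})^{j}\) (taken to agree with (11)); the function names are hypothetical. At \(\vartheta=\pi/3\) we have \(1-e^{-i\vartheta}=e^{i\pi/3}\), a sixth root of unity, so for k = 6 the geometric sum in \(\mu'(\vartheta)\) vanishes, which is consistent with a cusp at \(\mu_{+}\) and with the double root at \(e^{i\pi/3}=\frac{1}{2}(1+i\sqrt{3})\).

```python
import cmath
import math

def bdf_rlc(k, theta):
    """Standard BDF root locus curve; assumed to agree with formula (11)."""
    w = 1 - cmath.exp(-1j * theta)
    return sum(w**j / j for j in range(1, k + 1))

def bdf_rlc_derivative(k, theta):
    """d(mu)/d(theta) = (dw/dtheta) * sum_{j=1..k} w^(j-1), with w = 1 - exp(-i*theta)."""
    w = 1 - cmath.exp(-1j * theta)
    dw = 1j * cmath.exp(-1j * theta)
    return dw * sum(w**(j - 1) for j in range(1, k + 1))

theta_cusp = math.pi / 3
mu_plus = bdf_rlc(6, theta_cusp)
mu_claimed = complex(7 / 120, 21 * math.sqrt(3) / 40)

speed_k6 = abs(bdf_rlc_derivative(6, theta_cusp))  # vanishes: the curve stalls here
speed_k5 = abs(bdf_rlc_derivative(5, theta_cusp))  # does not vanish for k = 5
print(mu_plus, mu_claimed, speed_k6, speed_k5)
```

A vanishing derivative is only a necessary sign of a cusp; the figure and the double-root statement above supply the rest.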

3.3 Results for the Enright methods

By applying the algorithm described in Section 3.1, we can exactly determine the stability angles for the Enright methods (see Table 2). However, since the \({c_{k}^{\text {Enr}}}\) values are much more complicated algebraic numbers than the corresponding \({c_{k}^{\text {BDF}}}\) constants in Table 1, Table 2 contains only numerical approximations to the exact stability angles.

Remark 3.4

By rounding the values of \(\alpha _{k}^{\text {Enr}}\) given in Table 2 to two decimal places, we recover the approximate values of these stability angles in [17, Chapter V.3, Table 3.1].

It turns out that \(c_{3}^{\text {Enr}}\) is an algebraic number of degree 22, being the unique positive root of the following even polynomial with coefficients

Remark 3.5

Besides the stability angle, there are other measures of stability for A(α)-stable methods. One of these characteristics is the stiff stability abscissa, being the smallest constant D > 0 such that \(\{ z\in \mathbb {C} : \text {Re}(z)\le -D\}\subset {\mathcal {S}}\). For example, for the 3-step Enright method, Table 3.1 in [17, Chapter V.3] contains the approximate value D ≈ 0.103. By using our implicit representation of \(\partial {\mathcal {S}}\), it is straightforward to determine the exact value of D ≈ 0.10341810907195; it is an algebraic number of degree 12, and the total number of digits in the coefficients of its defining integer polynomial is 529.

As for the k = 4 case, the algebraic degree of \(c_{4}^{\text {Enr}}\) is 28. The constants \(c_{5}^{\text {Enr}}\), \(c_{6}^{\text {Enr}}\), and \(c_{7}^{\text {Enr}}\) can be given as roots of increasingly involved integer polynomials, so we do not reproduce these polynomials here. During the computations in the k = 7 case, for example, we had to manipulate intermediate polynomials of degree a few hundred, or polynomials with a total number of coefficient digits of approximately 470000. The final defining polynomial for \(c_{7}^{\text {Enr}}\) takes ≈ 175000 characters to describe in Mathematica.

Remark 3.6

Let us consider the Enright stability region corresponding to k = 7. As remarked earlier, there are exactly two lines that pass through the origin and are locally tangent to the boundary curve at some point in the open upper left half-plane (see Fig. 7). Within the BDF family for 1 ≤ k ≤ 6 or in the Enright family for 1 ≤ k ≤ 7, this phenomenon occurs only in the present case.

Fig. 7 Part of the boundary of the stability region of the 7-step Enright method near the origin (solid black curve) together with the two (dashed red and black) lines that pass through the origin and are locally tangent to the boundary curve at some point in the open upper left half-plane. Due to the scaling, the dashed black line is seen only in the larger plot window on the right. The stability angle \(\alpha _{7}^{\text {Enr}}\approx 37.6^{\circ }\) of the method is determined by the dashed black line; the red line has additional intersection points with the boundary curve. The angle between the dashed red line and the negative half of the real axis has also been computed exactly; its approximate value is 89.9999527°

4 Optimal disk inclusions

As for the largest inscribed disk in the stability region \({\mathcal {S}}\), we again expect, similarly to Section 3.1, that \(\partial {\mathcal {S}}\) (or the RLC) and the optimal disk possess a common tangent line (with point of tangency different from the origin). By using

- the implicit algebraic form F(a,b) = 0 of the RLC,
- the implicit equation \((a + r)^{2} + b^{2} - r^{2} = 0\) for the boundary of the inscribed disk, and
- the condition for a common tangent line

$$ -\frac{\partial_{a} F(a,b)}{\partial_{b} F(a,b)} = - \frac{\partial_{a} \left( (a+r)^{2}+b^{2}-r^{2}\right)}{\partial_{b} \left( (a+r)^{2}+b^{2}-r^{2}\right)}, $$

we obtain a system of 3 polynomial equations in 3 unknowns (a,b,r). By taking resultants and successively eliminating the variables (a,b), we obtain a univariate polynomial in r whose positive root yields the optimal value of the stability radius. The exact optimal stability radii \(r_{k}^{\text { BDF}}\) for the k-step BDF methods (3 ≤ k ≤ 6) are found in Table 3 (see also Fig. 8). The degree of the algebraic number \(r_{k}^{\text { BDF}}\) is 2, 3, 5, and 5 for 3 ≤ k ≤ 6, respectively.

Remark 4.1

It is quite surprising that the algebraic numbers listed in Table 3 have such a low degree for the following reasons. For the 3-step BDF method, the univariate polynomial in r mentioned above has degree 28, but it can be split into several factors of lower degree and has a unique positive root \(r_{3}^{\text {BDF}}\approx 7.0497\). For the 4-step BDF method, the corresponding r-polynomial has degree 52 and a unique positive root ≈ 2.7272. The r-polynomial for the 5-step BDF method has degree 88 and a unique positive root ≈ 1.3579. Finally, the r-polynomial for the 6-step BDF method has degree 128 and a unique positive root ≈ 0.5599.
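The approximate radii in Remark 4.1 can be compared with a direct scan of the root locus curve. This is only a numerical sketch, not the resultant-based computation described above: it assumes the standard BDF root locus curve \(\mu(\vartheta)=\sum_{j=1}^{k}\frac{1}{j}(1-e^{-i\vartheta})^{j}\) (taken to agree with (11)), and the helper names are hypothetical. A point a + bi with a < 0 lies outside the open disk |z + r| < r exactly when \(r\le (a^{2}+b^{2})/(-2a)\), so the smallest such quotient along the curve approximates the stability radius.

```python
import cmath
import math

def bdf_rlc(k, theta):
    """Standard BDF root locus curve; assumed to agree with formula (11)."""
    w = 1 - cmath.exp(-1j * theta)
    return sum(w**j / j for j in range(1, k + 1))

def stability_radius(k, samples=100_000):
    """Largest r such that no sampled RLC point lies inside |z + r| < r."""
    best = math.inf
    # The curve is symmetric about the real axis, so theta in (0, pi) suffices.
    for n in range(1, samples):
        mu = bdf_rlc(k, math.pi * n / samples)
        if mu.real < 0:
            best = min(best, abs(mu) ** 2 / (-2 * mu.real))
    return best

radius_3 = stability_radius(3)
print(radius_3)  # to be compared with the value quoted for k = 3 in Remark 4.1
```

Calling `stability_radius(k)` for k = 4, 5, 6 gives numbers that can be compared with the remaining values in the remark in the same way.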

Fig. 8 The largest inscribed disk |z + r| ≤ r (with red boundary) in the stability region of the k-step BDF method for k = 4 (left) and k = 6 (right), see Table 3

5 Optimal stability angle in a family of multistep methods

In [18], ODEs of the form u′(t) = F(u(t)) + G(u(t)), u(0) = u0 are considered, with F and G representing non-stiff and stiff parts of the equation, respectively. To solve these equations numerically, the authors construct several implicit-explicit (IMEX) LMMs and thoroughly analyze them from the viewpoint of numerical monotonicity, boundedness, and stability. Their analysis involves finding optimal methods with respect to various criteria in certain families.

Here, we take their simplest case study from [18, Section 3.2.1], a 2nd-order, 3-step explicit method augmented by an implicit method (note that the time step is now denoted by Δt instead of h, and we changed their notation from bj to βj):

The values of β2 := − 3β0 − 2β1 + 3 and \(\beta _{3}:=2 \beta _{0}+\beta _{1}-\frac {3}{2}\) are determined from the order conditions, so (18) becomes a 2-parameter family of methods, with real parameters β1 and β0. The three figures in [18, Figure 1] then depict the A(α)-stability angles, the “damping factors,” and the “absolute error constants,” respectively, of members of the family (18). In what follows, we do not consider these last two categories but focus only on the leftmost figure in [18, Figure 1]—as the authors conclude in [18, Section 3.2.1], a method with large stability angle does not necessarily have a good damping factor or a small error constant, and vice versa; the different optimization criteria are often conflicting. In other words, our goal in this section is to find the IMEX method in the family (18) with the largest stability angle.

To begin the A(α)-stability investigation, the authors of [18] define the usual linear test functions \(F(u):=\hat {\lambda } u\) and G(u) := λu. They then assume that \({\Delta } t\cdot \hat {\lambda }=i\eta \) and Δt ⋅ λ = ξ with \(\eta \in \mathbb {R}\) and \(\mathbb {R}\ni \xi \le 0\): this choice is relevant “for example, for advection-diffusion equations if central finite differences or spectral approximations are used in space.” These assumptions lead to the following characteristic polynomial of the IMEX multistep family (see [18, (2.4)–(2.7)]):

To create the leftmost figure in [18, Figure 1] approximately indicating the optimal stability angle within the family, the authors use (19) to construct the RLCs and study these curves “for ξ → −∞” to estimate the stability angles (see Footnote 1 in the Notes).

In the rest of this section, we confirm their numerical findings, but we solve the optimization problem rigorously and exactly. We have selected family (18) because the final result—the optimal stability angle—has a particularly simple form (see our Theorem 5.3 below), and, at the same time, our straightforward approach based on the theorems cited in Section 2.2 is readily illustrated. We emphasize that our analysis avoids the construction of the RLCs: as we have seen (for example, in Fig. 3), they may have complicated self-intersections, and it is often not obvious a priori whether a particular segment of the RLC coincides with the stability region boundary or not.

5.1 Summary of the main steps and results

By rearranging (19) and inserting the values of β2 and β3 given below (18), we define

where \(\zeta \in \mathbb {C}\), \((\beta _{1}, \beta _{0})\in \mathbb {R}^{2}\), ξ ≤ 0 and \(\eta \in \mathbb {R}\). Our goal is to find the parameters (β1,β0) such that the stability region

contains the infinite sector

with the largest m > 0 in the definition of A(α)-stability. In other words, we are to find (β1,β0) such that

holds with the largest possible m > 0. Note that for convenience we have identified \(\mathbb {C}\) with \(\mathbb {R}^{2}\); hence, stability regions in this section are subsets of \(\mathbb {R}^{2}\).

As a first step, Lemma 5.1 below yields a necessary condition for the inclusion (22). In its proof—presented in Appendix A.1—we use the argument proposed in [18] and consider the ξ →−∞, η = 0 limiting values. At this point, it is convenient to recall the notion of \(\overset {\circ }{\text {A}}\)-stability [17, Chapter V.2]: a method is \(\overset {\circ }{\text {A}}\)-stable, if its stability region includes the non-positive reals \(\{\xi \in \mathbb {R} : \xi \le 0\}\). Clearly,

Lemma 5.1

Let us define

Then a method of the form (18) is not \(\overset {\circ }{\text {A}}\)-stable for (β1,β0)∉W.

As a consequence, from now on, we can assume (β1,β0) ∈ W (see Fig. 9). Note that the orientation of the axes in Fig. 9 and in the leftmost figure in [18, Figure 1] is the same: the β1-axis is horizontal, while the β0-axis is vertical. Lemma 5.1 thus also proves that the wedge-like object in the parameter space in the leftmost figure in [18, Figure 1] is indeed a perfect (infinite) wedge given by W.

Remark 5.2

The assumption (β1,β0) ∈ W implies β0 > 0, so due to ξ ≤ 0, the leading coefficient of (20), 1 − β0ξ, cannot vanish (cf. Remark 2.7).

Then in Appendix A.2 we prove the main result of Section 5.

Theorem 5.3

Suppose that (β1,β0) ∈ W. Then the largest m > 0 such that (22) holds is \(m\equiv m_{\text{opt}}:=1/2\).

In the proof, we show that finding the optimal (β1,β0) ∈ W is equivalent to finding the largest positive real root of a suitable polynomial in m with coefficients depending on β1 and β0. We verify that this optimal root is located at \(m_{\text{opt}}\), corresponding to the unique method with \((\beta_{1},\beta_{0}) = W_{\text{opt}} := (3/8,3/4) \in W\) and represented as a red dot in the parameter space in Fig. 9. The black curve in the left half-plane in Fig. 12 is the boundary of the optimal stability region, and the dashed red lines bound the largest inscribed infinite sector \({\mathcal {A}}_{1/2}\): the optimal stability angle satisfies \(\tan(\alpha) = m_{\text{opt}}\). As a conclusion, the highest value in the scale adjacent to the leftmost figure in [18, Figure 1] should be exactly α = arctan(1/2) ≈ 0.463648, that is, α ≈ 26.5651°.
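The value m = 1/2 can be made plausible with a small numerical experiment on the ξ → −∞ limit. The sketch rests on an assumption: the limiting polynomial along the ray η = −mξ is taken to be \(\beta_{0}\zeta^{3}+\beta_{1}\zeta^{2}+\beta_{2}\zeta+\beta_{3}-\frac{3}{2}im\zeta^{2}\), reconstructed from the Case II formula in Appendix A.1 and the definition of MLHS in Appendix A.2 (the overall sign convention is immaterial for root moduli). The roots are found by a plain Durand–Kerner iteration rather than by the Schur–Cohn-type argument of the proof, and all function names are hypothetical.

```python
def polynomial_roots(coeffs, iterations=300):
    """Durand-Kerner iteration; coeffs are ordered from the leading term down."""
    degree = len(coeffs) - 1
    monic = [c / coeffs[0] for c in coeffs]

    def evaluate(z):
        value = 0j
        for c in monic:
            value = value * z + c
        return value

    roots = [(0.4 + 0.9j) ** n for n in range(degree)]
    for _ in range(iterations):
        updated = []
        for i, z in enumerate(roots):
            denominator = 1 + 0j
            for j, other in enumerate(roots):
                if j != i:
                    denominator *= z - other
            updated.append(z - evaluate(z) / denominator)
        roots = updated
    return roots

def limit_coefficients(beta1, beta0, m):
    """Assumed limiting polynomial along eta = -m*xi (reconstruction, see lead-in)."""
    beta2 = -3 * beta0 - 2 * beta1 + 3
    beta3 = 2 * beta0 + beta1 - 1.5
    return [beta0, beta1 - 1.5j * m, beta2, beta3]

def max_root_modulus(beta1, beta0, m):
    return max(abs(z) for z in polynomial_roots(limit_coefficients(beta1, beta0, m)))

for m in (0.4, 0.5, 0.6):
    print(m, max_root_modulus(3 / 8, 3 / 4, m))
```

Under this assumption the limiting polynomial at (β1,β0) = (3/8,3/4) and m = 1/2 is proportional to \(2\zeta^{3}+(1-2i)\zeta^{2}+1\), which vanishes at ζ = i, so a root sits exactly on the unit circle; the scan shows it leaving the unit disk for slightly larger m.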

Remark 5.4

Unlike in Section 6 (see Remark B.2), the boundary of the optimal sector \({\mathcal {A}}_{1/2}\) does not touch (or intersect) the boundary of the optimal stability region \({\mathcal {S}}_{3/8, 3/4}\) in the open left half-plane.

Remark 5.5

In [18, Section 3.2.1, (3.4)–(3.5)], the stability angles for two particular schemes from the family (18) are also approximated. For the IMEX-Shu(3,2) scheme

they obtain αShu ≈ 0.06, and for the IMEX-SG(3,2) scheme

they get αSG ≈ 0.38. Our technique easily yields the exact values

and

6 Optimal parabola inclusion in a family of multistep methods

In the previous section, we demonstrated how one can find the optimal sector in a family of stability regions of multistep methods. Here, we show that the same algebraic approach allows us to replace the sector with more general shapes: we use again the multistep family (18) as a test example and determine the optimal stability region that contains the largest parabola. The motivation for considering the shape of a parabola comes from [18] (“for advection-diffusion equations, stability within a parabola [see Footnote 2 in the Notes] can be more relevant than for a wedge”), or from [5, Sections 3–4] (where linearly implicit Runge–Kutta methods are developed for the numerical integration of semidiscrete equations originating from spatial discretizations of PDEs of advection-reaction-diffusion type).

With \(P_{\beta _{1},\beta _{0}}\) and \({\mathcal {S}}_{\beta _{1},\beta _{0}}\) defined in (20)–(21), we are now looking for the largest possible m > 0 such that the stability region of a suitable member of the family (18) contains the parabola

that is, the inclusion

holds. Clearly, we need \(\overset {\circ }{\text {A}}\)-stability again to have (25) with some m > 0, so from now on, by Lemma 5.1, we can assume that (β1,β0) ∈ W (see Fig. 9).

In Appendix B.1, we apply a simple geometric argument: we first formulate the RLCs for the members of the multistep family as implicit curves \(\{(\xi , \eta )\in \mathbb {R}^{2} : F_{\beta _{1}, \beta _{0}}(\xi ,\eta )=0\}\), then invoke the notion of discriminant [14] to construct a polynomial in m (and depending on the parameters β1 and β0) whose suitable root can yield the optimal value \(\widetilde {m}_{\text {opt}}\) in (25). The simple observation is the same as the one used in Section 3.1 (or in Section 4): the optimal inscribed object (now a parabola) touches the boundary of the optimal stability region.

Based on this technique and by using Mathematica, we conjecture that the parameter values β1 = 1/5 and β0 = 37/40 give \(\widetilde {m}_{\text {opt}}=6/5\). In Appendix B.2, we use a uniqueness argument to rigorously prove this conjecture. We emphasize that, similarly to Appendix A.2, no RLCs are involved in this uniqueness proof; the RLCs are used only as auxiliary objects to conjecture the optimum. Given the complexity of intermediate calculations, it is again surprising that the final result \(\widetilde {m}_{\text {opt}}\) is a simple rational number. In summary, we have the following theorem.

Theorem 6.1

Suppose that (β1,β0) ∈ W. Then the largest m > 0 such that (25) holds is \(m\equiv \widetilde {m}_{\text {opt}}:=6/5\).

Remark 6.2

The authors of [18] observe that “for the methods considered in this paper, a large angle α will correspond to a large β” (with α and β interpreted in our Footnotes 1 and 2). According to our results, the optimal (β1,β0) parameter pairs (3/8,3/4) and (1/5,37/40)—determining the stability regions with the largest inscribed sector and parabola, respectively—do not coincide, although they are both located on the right boundary of W in Fig. 9 (see also Remark B.1).

Notes

1. When the stability angle α of a method is defined in [18, Sections 2.3 and 3.2.1], notice that we should require that the sector

$$ \xi\le 0, \quad |\eta/\xi|\le \tan(\alpha)\quad \text{ with angle } \alpha\le\pi/2 $$

be included in the stability region in the (ξ,η)-plane (with the ξ = 0 and α = π/2 cases interpreted appropriately). In other words, arctan(α) in [18] is to be replaced by tan(α); otherwise, the sector would not “open wide enough” and A-stability would not be recovered in the α → π/2− limit. See also Footnote 2.

2. Similarly to Footnote 1, an analogous typo is present in [18, Section 2.3] when the notion of “stability within a parabola” is defined. There we should have again tan instead of arctan, that is,

$$ \xi\le 0, \quad |\eta^{2}/\xi|\le \tan(\beta)\quad \text{ with some angle } 0< \beta\le\pi/2. $$

References

1. Akrivis, G., Katsoprinakis, E.: Maximum angles of A(𝜗)-stability of backward difference formulae, submitted for publication

2. Bhattacharyya, S.P., Chapellat, H., Keel, L.H.: Robust Control: the Parametric Approach. Prentice Hall PTR (1995)

3. Bickart, T.A., Jury, E.I.: Arithmetic tests for A-stability, A[α]-stability, and stiff-stability. BIT 18(1), 9–21 (1978)

4. Butcher, J.C.: Numerical Methods for Ordinary Differential Equations. Wiley, Chichester (2008)

5. Calvo, M.P., de Frutos, J., Novo, J.: Linearly implicit Runge–Kutta methods for advection-reaction-diffusion equations. Appl. Numer. Math. 37(4), 535–549 (2001)

6. Chakravarti, P.C., Kamel, M.S.: Stiffly stable second derivative multistep methods with higher order and improved stability regions. BIT 23(1), 75–83 (1983)

7. Creedon, D.M., Miller, J.J.H.: The stability properties of q-step backward difference schemes. BIT 15(3), 244–249 (1975)

8. Cryer, C.W.: On the instability of high order backward-difference multistep methods. BIT 12, 17–25 (1972)

9. Cryer, C.W.: A new class of highly-stable methods: A0-stable methods. BIT 13, 153–159 (1973)

10. Enright, W.H.: Second derivative multistep methods for stiff ordinary differential equations. SIAM J. Numer. Anal. 11(2), 321–331 (1974)

11. Friedli, A., Jeltsch, R.: An algebraic test for A0-stability. BIT 18(4), 402–414 (1978)

12. Gautschi, W.: Numerical Analysis. Springer Science+Business Media, New York (2012)

13. Gear, C.W.: Numerical Initial Value Problems in Ordinary Differential Equations. Prentice-Hall, Englewood Cliffs (1971)

14. Gelfand, I.M., Kapranov, M.M., Zelevinsky, A.V.: Discriminants, Resultants, and Multidimensional Determinants. Birkhäuser, Boston (1994)

15. Gottlieb, S., Ketcheson, D., Shu, C.-W.: Strong Stability Preserving Runge–Kutta and Multistep Time Discretizations. World Scientific Publishing Co., Hackensack (2011)

16. Hairer, E., Wanner, G.: On the instability of the BDF formulas. SIAM J. Numer. Anal. 20(6), 1206–1209 (1983)

17. Hairer, E., Wanner, G.: Solving Ordinary Differential Equations II. Stiff and Differential-Algebraic Problems. Springer, Berlin (2002)

18. Hundsdorfer, W., Ruuth, S.J.: IMEX extensions of linear multistep methods with general monotonicity and boundedness properties. J. Comput. Phys. 225(2), 2016–2042 (2007)

19. Hundsdorfer, W., Mozartova, A., Spijker, M.N.: Stepsize restrictions for boundedness and monotonicity of multistep methods. J. Sci. Comput. 50(2), 265–286 (2012)

20. Jeltsch, R.: A necessary condition for A-stability of multistep multiderivative methods. Math. Comp. 30(136), 739–746 (1976)

21. Jeltsch, R.: Note on A-stability of multistep multiderivative methods. BIT 16(1), 74–78 (1976)

22. Jeltsch, R.: Stiff stability and its relation to A0- and A(0)-stability. SIAM J. Numer. Anal. 13(1), 8–17 (1976)

23. Jeltsch, R.: Stiff stability of multistep multiderivative methods. SIAM J. Numer. Anal. 14(4), 760–772 (1977)

24. Jeltsch, R.: On the stability regions of multistep multiderivative methods. In: Numerical treatment of differential equations (Proc. Conf., Math. Forschungsinst., Oberwolfach, 1976), Lecture Notes in Math, vol. 631, pp. 63–80. Springer, Berlin (1978)

25. Jeltsch, R., Kratz, L.: On the stability properties of Brown’s multistep multiderivative methods. Numer. Math. 30(1), 25–38 (1978)

26. Jeltsch, R.: Corrigendum: stiff stability of multistep multiderivative methods. SIAM J. Numer. Anal. 16(2), 339–345 (1979)

27. Jeltsch, R., Nevanlinna, O.: Stability and accuracy of time discretizations for initial value problems. Numer. Math. 40(2), 245–296 (1982)

28. Jeltsch, R.: Stability of time discretization, Hurwitz determinants and order stars. In: Stability theory (Ascona, 1995), Internat. Ser. Numer. Math., vol. 121, pp. 191–204. Birkhäuser, Basel (1996)

29. Jeltsch, R., Torrilhon, M.: Essentially optimal explicit Runge–Kutta methods with application to hyperbolic-parabolic equations. Numer. Math. 106(2), 303–334 (2007)

30. Ketcheson, D.I., Ahmadia, A.J.: Optimal stability polynomials for numerical integration of initial value problems. Commun. Appl. Math. Comput. Sci. 7(2), 247–271 (2012)

31. Ketcheson, D.I., Kocsis, T.A., Lóczi, L.: On the absolute stability regions corresponding to partial sums of the exponential function. IMA J. Numer. Anal. 35(3), 1426–1455 (2015)

32. Kirlinger, G.: Linear multistep methods applied to stiff initial value problems: a survey. Math. Comput. Modelling 40(11–12), 1181–1192 (2004)

33. Lambert, J.D.: Computational Methods in Ordinary Differential Equations. Wiley, London (1973)

34. Liniger, W.: A criterion for A-stability of linear multistep integration formulae. Computing 3, 280–285 (1968)

35. Lóczi, L.: Exact optimal values of step-size coefficients for boundedness of linear multistep methods. Numer. Algor. 77(4), 1093–1116 (2018)

36. Marden, M.: Geometry of Polynomials, 2nd edn. Mathematical Surveys, No. 3. American Mathematical Society, Providence (1966)

37. Miller, J.J.H.: On the location of zeros of certain classes of polynomials with applications to numerical analysis. J. Inst. Math. Appl. 8, 397–406 (1971)

38. Nørsett, S.P.: A criterion for A(α)-stability of linear multistep methods. BIT 9, 259–263 (1969)

39. de Oliveira, P., Patrício, F.: On fitting stability regions of second-derivative multi-step methods. Comm. Appl. Numer. Methods 8(6), 351–360 (1992)

40. Sanz-Serna, J.M.: Some aspects of the boundary locus method. BIT 20(1), 97–101 (1980)

41. Spijker, M.N.: The existence of stepsize-coefficients for boundedness of linear multistep methods. Appl. Numer. Math. 63, 45–57 (2013)

42. Spijker, M.N.: Stability and boundedness in the numerical solution of initial value problems. Math. Comp. 86(308), 2777–2798 (2017)

43. Spivak, M.: Calculus. Cambridge University Press, Cambridge (2006)

44. Süli, E., Mayers, D.F.: An Introduction to Numerical Analysis. Cambridge University Press, Cambridge (2003)

Acknowledgment

Open access funding provided by Eötvös Loránd University (ELTE). The author would like to thank the anonymous referees for their suggestions, which have improved the presentation of the material.


The project is supported by the Hungarian Government and co-financed by the European Social Fund, EFOP-3.6.3-VEKOP-16-2017-00001: Talent Management in Autonomous Vehicle Control Technologies.

Appendices

Appendix A

A.1 Proof of Lemma 5.1

Proof

Let us fix some \((\beta _{1}, \beta _{0})\in \mathbb {R}^{2}\). For ξ < 0, \(P_{\beta _{1},\beta _{0}}(\zeta ,\xi ,0)=0\) is equivalent to LHS(ζ) = RHS(ζ) with

and \(\text{RHS}_{\xi}(\zeta) := (\zeta^{3} - 3\zeta^{2}/4 - 1/4)/\xi\). Clearly, if |ξ| is large enough, the coefficients of the RHS polynomial can be arbitrarily close to 0. So by the fact that the roots of a polynomial are continuous functions of its coefficients, we get that “the roots \(\zeta_{j}\) of LHS(ζ) = RHS(ζ) can be made arbitrarily close to those of LHS(ζ) = 0 by choosing |ξ| large.” To make the previous “statement” precise, we distinguish two cases according to whether the leading coefficient of LHS vanishes or not: for β0 = 0, the LHS polynomial has at most two roots, whereas the difference LHS − RHS has three.

- Case I: β0 ≠ 0. By the above statement we easily see that if \(\text {LHS}_{\beta _{1},\beta _{0}}(\cdot )\notin \mathbf {vN}\), then \(P_{\beta _{1},\beta _{0}}(\cdot ,\xi ,0)\notin \mathbf {svN}\) for |ξ| large enough. We now show that

  $$ (\beta_{1},\beta_{0})\notin W \implies \text{LHS}_{\beta_{1},\beta_{0}}(\cdot)\notin \mathbf{vN}. \tag{26} $$

  So let us suppose in the rest of Case I that (β1,β0) ∉ W and β0 ≠ 0.

  - Case Ia. First, we check the case when \(\mathfrak {c c} \text {LHS}_{\beta _{1},\beta _{0}}(\cdot )=0\). Then

    $$ \text{LHS}_{\beta_{1},\beta_{0}}(\zeta)=\zeta/4 \left[\left( 2 \beta_{1}-3\right) \zeta^{2}-4 \beta_{1} \zeta +2 \beta_{1}-3\right], $$

    and, since now 2β1 − 3 ≠ 0, we can apply Theorem 2.4 to the above polynomial in [⋯]: due to \([\cdots]^{\mathbf{r}}\equiv 0\) we have that [⋯] ∈ vN if and only if ζ ↦ [⋯]′ = 2(2β1 − 3)ζ − 4β1 ∈ vN. But we directly see that this last linear polynomial ∉ vN, because (β1,β0) ∉ W and \(\mathfrak {c c} \text {LHS}_{\beta _{1},\beta _{0}}(\cdot )=0\) imply β1 > 3/4.

  - Case Ib. The conditions \(\mathfrak {c c} \text {LHS}_{\beta _{1},\beta _{0}}(\cdot )\ne 0 \ne \beta _{0}\) mean that we can apply Theorem 2.4 to \(\text {LHS}_{\beta _{1},\beta _{0}}(\cdot )\). It is easy to verify that \(\left (\text {LHS}_{\beta _{1},\beta _{0}}(\cdot )\right )^{\mathbf {r}}\) does not vanish identically, so \( \text {LHS}_{\beta _{1},\beta _{0}}(\cdot )\in \mathbf {vN} \) if and only if

    $$ \left|\mathfrak{l c} \text{LHS}_{\beta_{1},\beta_{0}}(\cdot)\right| > \left|\mathfrak{c c} \text{LHS}_{\beta_{1},\beta_{0}}(\cdot)\right| \text{ and } \left( \text{LHS}_{\beta_{1},\beta_{0}}(\cdot)\right)^{\mathbf{r}} \in\mathbf{vN}. \tag{27} $$

    We show in Cases Ib1 and Ib2 below that (27) never occurs. First, we observe that the inequality constraint in (27) yields that \(\mathfrak {l c} \left (\text {LHS}_{\beta _{1},\beta _{0}}(\cdot )\right )^{\mathbf {r}} \ne 0\).

    - Case Ib1. If \(\mathfrak {c c} \left (\text {LHS}_{\beta _{1},\beta _{0}}(\cdot )\right )^{\mathbf {r}} = 0\), then the polynomial \(\left (\text {LHS}_{\beta _{1},\beta _{0}}(\zeta )\right )^{\mathbf {r}}\) has exactly two roots: ζ1 = 0 and

      $$ \zeta_{2}=2-\frac{3}{2 \left( 6 \beta_{0}+2 \beta_{1}-3\right)}+\frac{3}{2 \left( 2 \beta_{0}+2 \beta_{1}-3\right)}. $$

      One directly checks that \(\mathfrak {c c} \left (\text {LHS}_{\beta _{1},\beta _{0}}(\cdot )\right )^{\mathbf {r}} = 0\) and (β1,β0) ∉ W imply |ζ2| > 1.

    - Case Ib2. If \(\mathfrak {c c} \left (\text {LHS}_{\beta _{1},\beta _{0}}(\cdot )\right )^{\mathbf {r}} \ne 0\), we apply Theorem 2.4 to get that the quadratic polynomial \(\left (\text {LHS}_{\beta _{1},\beta _{0}}(\cdot )\right )^{\mathbf {r}}\in \mathbf {vN}\) if and only if either Case Ib2α or Ib2β below occurs.

      - Case Ib2α: when \(\left (\text {LHS}_{\beta _{1},\beta _{0}}(\cdot )\right )^{\mathbf {rr}}\equiv 0\) and \(\left [\left (\text {LHS}_{\beta _{1},\beta _{0}}(\cdot )\right )^{\mathbf {r}}\right ]'\in \mathbf {vN}\). In this case, however, the unique root of the polynomial \(\left [\left (\text {LHS}_{\beta _{1},\beta _{0}}(\cdot )\right )^{\mathbf {r}}\right ]'\),

        $$ \zeta_{1}=1-\frac{3}{4 \left( 6 \beta_{0}+2 \beta_{1}-3\right)}+\frac{3}{4 \left( 2 \beta_{0}+2 \beta_{1}-3\right)}, $$

        has absolute value > 1.

      - Case Ib2β: when \(\left |\mathfrak {l c} \left (\text {LHS}_{\beta _{1},\beta _{0}}(\cdot )\right )^{\mathbf {r}}\right |> \left |\mathfrak {c c} \left (\text {LHS}_{\beta _{1},\beta _{0}}(\cdot )\right )^{\mathbf {r}}\right |\) and \(\left (\text {LHS}_{\beta _{1},\beta _{0}}(\cdot )\right )^{\mathbf {rr}} \in \mathbf {vN}\). But then the unique root of \(\left (\text {LHS}_{\beta _{1},\beta _{0}}(\cdot )\right )^{\mathbf {rr}}\) is

        $$ \zeta_{1}=1-\frac{3 \left( 2 \beta_{0}+2 \beta_{1}-3\right)}{24 {\beta_{0}^{2}}+32 \beta_{1} \beta_{0}-36 \beta_{0}+8 {\beta_{1}^{2}}-18 \beta_{1}+9}, $$

        for which we again have |ζ1| > 1, completing Case I.

- Case II: β0 = 0. Then

  $$ P_{\beta_{1}, 0}(\zeta,\xi,0)= \zeta^{3}-\zeta^{2} \left( \beta_{1} \xi +\frac{3}{4}\right)-\left( 3-2 \beta_{1}\right) \xi \zeta - \left( \beta_{1} \xi -\frac{3 \xi }{2}+\frac{1}{4}\right), $$

  and the leading coefficient of this cubic polynomial is 1. For each fixed \(\beta _{1}\in \mathbb {R}\) we see that at least one of its coefficients is unbounded as ξ → −∞, so (by Vieta’s formulae) at least one of its roots ζ(ξ) is unbounded as ξ → −∞. Hence, \((-\infty ,0)\times \{0\} \subset {\mathcal {S}}_{\beta _{1}, 0}\) cannot hold.

□
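
The Vieta argument in Case II can be illustrated numerically. The following is only a sketch in plain Python, not part of the proof: the helper name `vieta_lower_bound` and the sample values of β1 and ξ are chosen here for illustration. For a monic cubic ζ³ + a₂ζ² + a₁ζ + a₀ whose roots all have modulus at most R, Vieta's formulae give |a₂| ≤ 3R, |a₁| ≤ 3R² and |a₀| ≤ R³, so the largest root modulus is bounded from below by the quantity computed in the sketch.

```python
def vieta_lower_bound(beta1: float, xi: float) -> float:
    """Lower bound for the largest root modulus of P_{beta1,0}(zeta, xi, 0).

    The cubic is zeta^3 + a2*zeta^2 + a1*zeta + a0 with the coefficients
    read off from the displayed formula in Case II.
    """
    a2 = -(beta1 * xi + 0.75)
    a1 = -(3.0 - 2.0 * beta1) * xi
    a0 = -(beta1 * xi - 1.5 * xi + 0.25)
    # If all roots satisfy |zeta| <= R, then |a2| <= 3R, |a1| <= 3R^2, |a0| <= R^3.
    return max(abs(a2) / 3.0, (abs(a1) / 3.0) ** 0.5, abs(a0) ** (1.0 / 3.0))


# Sample parameter values, chosen only for illustration.
for beta1 in (0.0, 0.75, 1.5):
    bounds = [vieta_lower_bound(beta1, xi) for xi in (-1e1, -1e2, -1e3, -1e4)]
    print(beta1, bounds)
```

For each sampled β1 the bound grows without limit as ξ → −∞, which is the behaviour the proof relies on.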

A.2 Proof of Theorem 5.3

Proof

In the proof, we suppose m > 0 and, due to Lemma 5.1, that (β1,β0) ∈ W.


Step 1. Let us apply the same ideas as in Section A.1 but along the ray η = −mξ. For ξ < 0, we consider the roots of \(P_{\beta _{1},\beta _{0}}(\cdot ,\xi , -m\xi )\) and get that

$$ \text{MLHS}_{\beta_{1},\beta_{0}, m}(\cdot)\notin \mathbf{vN} \implies P_{\beta_{1},\beta_{0}}(\cdot,\xi, -m\xi)\notin \mathbf{svN} $$for some |ξ| large enough, where the corresponding “modified left-hand side” is defined as

$$ \text{MLHS}_{\beta_{1},\beta_{0}, m}(\zeta):=\text{LHS}_{\beta_{1},\beta_{0}}(\zeta)-\frac{3}{2} i m \zeta^{2}, $$and we have also taken into account that \(\mathfrak {l c} \text {MLHS}_{\beta _{1},\beta _{0}, m}(\cdot )=\beta _{0} \ne 0\). (The corresponding “modified right-hand side” would be the same RHSξ(ζ) as in Section A.1.) Hence, if the inclusion (22) holds with some m > 0, then \(\text {MLHS}_{\beta _{1},\beta _{0}, m}(\cdot )\in \mathbf {vN}\).


Step 2. In this step, we derive a necessary condition for \(\text {MLHS}_{\beta _{1},\beta _{0}, m}(\cdot )\in \mathbf {vN}\). First, one simply checks via Theorem 2.4 that \(\mathfrak {c c} \text {MLHS}_{\beta _{1},\beta _{0}, m}(\cdot ) = 0\), \(\text {MLHS}_{\beta _{1},\beta _{0}, m}(\cdot )\in \mathbf {vN}\) and m > 0 cannot be simultaneously true. So we can suppose

$$\mathfrak{l c} \text{MLHS}_{\beta_{1},\beta_{0}, m}(\cdot) \ne 0 \ne \mathfrak{c c} \text{MLHS}_{\beta_{1},\beta_{0}, m}(\cdot).$$We check that \(\left (\text {MLHS}_{\beta _{1},\beta _{0}, m}(\cdot )\right )^{\mathbf {r}}\) does not vanish identically, and that

$$\left|\mathfrak{l c} \text{MLHS}_{\beta_{1},\beta_{0}, m}(\cdot)\right| > \left| \mathfrak{c c} \text{MLHS}_{\beta_{1},\beta_{0}, m}(\cdot)\right|.$$Then by Theorem 2.4 we have that

$$ \text{MLHS}_{\beta_{1},\beta_{0}, m}(\cdot)\in \mathbf{vN} \Longleftrightarrow \left( \text{MLHS}_{\beta_{1},\beta_{0}, m}(\cdot)\right)^{\mathbf{r}} \in \mathbf{vN}. $$Now we see that

$$ \mathfrak{l c} \left( \text{MLHS}_{\beta_{1},\beta_{0}, m}(\cdot)\right)^{\mathbf{r}} \ne 0 \ne \mathfrak{c c} \left( \text{MLHS}_{\beta_{1},\beta_{0}, m}(\cdot)\right)^{\mathbf{r}}, $$and \(\left (\text {MLHS}_{\beta _{1},\beta _{0}, m}(\cdot )\right )^{\mathbf {rr}}\) does not vanish identically. Thus, Theorem 2.4 yields that

$$\left( \text{MLHS}_{\beta_{1},\beta_{0}, m}(\cdot)\right)^{\mathbf{r}} \in \mathbf{vN}$$if and only if

$$ \left|\mathfrak{l c} \left( \text{MLHS}_{\beta_{1},\beta_{0}, m}(\cdot)\right)^{\mathbf{r}}\right| > \left| \mathfrak{c c} \left( \text{MLHS}_{\beta_{1},\beta_{0}, m}(\cdot)\right)^{\mathbf{r}}\right| \tag{28} $$
and

$$ \left( \text{MLHS}_{\beta_{1},\beta_{0}, m}(\cdot)\right)^{\mathbf{rr}} \in \mathbf{vN}. \tag{29} $$
Clearly, \(\deg \left (\text {MLHS}_{\beta _{1},\beta _{0}, m}(\cdot )\right )^{\mathbf {rr}}\le 1\), and we directly confirm that (28) implies that the degree is exactly 1. From this, we obtain that (28) and (29) hold if and only if (28) and

$$ \left| 1+i m+\frac{2 i m \beta_{0}}{4 \beta_{0}+2 \beta_{1}-3}-\frac{3(1+i m) \left[ \beta_{0} \left( 6 m^{2}+2\right)+\left( 2 \beta_{1}-3\right) \left( m^{2}+1\right)\right]}{24 {\beta_{0}^{2}}+4 \beta_{0} \left( 8 \beta_{1}+3 m^{2}-9\right)+\left( 2 \beta_{1}-3\right) \left( 4 \beta_{1}+3 m^{2}-3\right)}\right| \leq 1 \tag{30} $$
hold. In particular, (28) guarantees that the denominators appearing in (30) are non-zero; hence, from now on, we can restrict the parameters to (β1,β0) ∈ W ∖ L with

$$ L:=\left\{(\beta_{1},\beta_{0}) \in\mathbb{R}^{2}: \beta_{0}= \frac{3-2 \beta_{1}}{4}\right\}, \tag{31} $$
being the left edge of the wedge W (see Fig. 10).

By defining

$$ \begin{aligned} C_{4} &:= -9 \left( 4 \beta_{0}+2 \beta_{1}-3\right)^{2}, \\ C_{2} &:= 2 \Big[ 864 {\beta_{0}^{4}}+864 \left( 2 \beta_{1}-3\right) {\beta_{0}^{3}}+288 \left( 4 {\beta_{1}^{2}}-13 \beta_{1}+10\right) {\beta_{0}^{2}} \\ &\qquad +4 \left( 80 {\beta_{1}^{3}} - 420 {\beta_{1}^{2}} + 684 \beta_{1} - 351\right) \beta_{0}+\left( 3-2 \beta_{1}\right)^{2} \left( 8 {\beta_{1}^{2}}-36 \beta_{1}+27\right)\Big], \\ C_{0} &:= -3 \left( 4 \beta_{0}+2 \beta_{1}-3\right)^{2} \left( 8 \beta_{0}+8 \beta_{1}-9\right) \end{aligned} $$
and

$$ Q_{\beta_{1},\beta_{0}}(m):=C_{4} m^{4}+C_{2} m^{2}+C_{0}, $$it is easily verified after some factorization and simplification that

$$ (28) \text{ and } (30) \Longleftrightarrow (28) \text{ and } Q_{\beta_{1},\beta_{0}}(m)\ge 0. $$In particular, \(\text {MLHS}_{\beta _{1},\beta _{0}, m}(\cdot )\in \mathbf {vN}\) implies \(Q_{\beta _{1},\beta _{0}}(m)\ge 0\).


Step 3. We see that C4 < 0 and C0 ≥ 0 for (β1,β0) ∈ W ∖ L; hence, we can denote the largest real root of the polynomial \(Q_{\beta _{1},\beta _{0}}(\cdot )\) by m∗(β1,β0) ∈ [0, +∞). Consequently, if \(\text {MLHS}_{\beta _{1},\beta _{0}, m}(\cdot )\in \mathbf {vN}\), then m ≤ m∗(β1,β0). We now conjecture (by using Mathematica’s Maximize command, for example) that

$$ m^{*}(\beta_{1},\beta_{0})\le \frac{1}{2}\quad\text{for}\quad (\beta_{1},\beta_{0})\in W\setminus L, \tag{32} $$
and m∗(β1,β0) = 1/2 occurs precisely for (β1,β0) = (3/8, 3/4) (see Fig. 11). With this conjectured optimal m∗ value, we can prove (32) and the uniqueness property in an elementary way.

By introducing the shifted variable M := m − 1/2, we rewrite \(Q_{\beta _{1},\beta _{0}}(m)\) as

$$ \sum\limits_{j=0}^{4} \widehat{C}_{j}(\beta_{1},\beta_{0}) M^{j}. \tag{33} $$
Then we check that

$$ (\beta_{1},\beta_{0})\in W\setminus L \implies \widehat{C}_{j}(\beta_{1},\beta_{0})<0 \text{ for } 1\le j\le 4. $$Moreover, we have

$$ \begin{array}{@{}rcl@{}} \widehat{C}_{0}(\beta_{1},\beta_{0})&\equiv& 6912 {\beta_{0}^{4}}+768 \left( 18 \beta_{1}-35\right) {\beta_{0}^{3}}\\ &&+48 \left( 192 {\beta_{1}^{2}}-880 \beta_{1}+813\right) {\beta_{0}^{2}}\\ &&+40 \left( 64 {\beta_{1}^{3}}-528 {\beta_{1}^{2}}+1062 \beta_{1}-621\right) \beta_{0}\\ &&+\left( 3-2 \beta_{1}\right)^{2} \left( 64 {\beta_{1}^{2}}-672 \beta_{1}+639\right), \end{array} $$
$$ (\beta_{1},\beta_{0})\in W\setminus L \implies \widehat{C}_{0}(\beta_{1},\beta_{0})\le 0 $$
and

$$ \left[(\beta_{1},\beta_{0})\in W\setminus L \text{\ and \ } \widehat{C}_{0}(\beta_{1},\beta_{0})=0\right] \Longleftrightarrow (\beta_{1},\beta_{0})=(3/8,3/4). $$On the one hand, these mean that (33) is negative for M > 0 and (β1,β0) ∈ W ∖ L. On the other hand, for M = 0 the polynomial (33) is zero if and only if (β1,β0) = (3/8, 3/4).

Therefore, we have proved that if (22) holds with some m > 0, then m ≤ 1/2, and if m = 1/2 is possible at all, then (β1,β0) = (3/8, 3/4).
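
Parts of Step 3 can be re-checked symbolically. The sketch below uses Python with SymPy instead of Mathematica, and all variable names are chosen here for illustration. It builds \(Q_{\beta_{1},\beta_{0}}(m)\) from the displayed C4, C2, C0, shifts m = M + 1/2, and compares the constant coefficient with the displayed \(\widehat{C}_{0}\). The comparison assumes that the coefficients in (33) are normalized by the positive factor 16, since the displayed \(\widehat{C}_{0}\) matches 16·Q(1/2). Finally, it computes the largest real root of Q at (β1,β0) = (3/8, 3/4). The sign conditions on W ∖ L are not checked, because the wedge W is defined elsewhere in the paper.

```python
import sympy as sp

b1, b0, m, M = sp.symbols("beta1 beta0 m M", real=True)

# Coefficients of Q(m) = C4*m^4 + C2*m^2 + C0, copied from the displayed formulas.
C4 = -9 * (4 * b0 + 2 * b1 - 3) ** 2
C2 = 2 * (
    864 * b0**4
    + 864 * (2 * b1 - 3) * b0**3
    + 288 * (4 * b1**2 - 13 * b1 + 10) * b0**2
    + 4 * (80 * b1**3 - 420 * b1**2 + 684 * b1 - 351) * b0
    + (3 - 2 * b1) ** 2 * (8 * b1**2 - 36 * b1 + 27)
)
C0 = -3 * (4 * b0 + 2 * b1 - 3) ** 2 * (8 * b0 + 8 * b1 - 9)
Q = C4 * m**4 + C2 * m**2 + C0

# Shift m = M + 1/2; the last entry of all_coeffs() is the coefficient of M^0.
shifted = sp.Poly(sp.expand(Q.subs(m, M + sp.Rational(1, 2))), M)
const_coeff = shifted.all_coeffs()[-1]

# Displayed C0-hat, copied from the text.
C0_hat = (
    6912 * b0**4
    + 768 * (18 * b1 - 35) * b0**3
    + 48 * (192 * b1**2 - 880 * b1 + 813) * b0**2
    + 40 * (64 * b1**3 - 528 * b1**2 + 1062 * b1 - 621) * b0
    + (3 - 2 * b1) ** 2 * (64 * b1**2 - 672 * b1 + 639)
)

# Assumption: (33) is scaled by 16, so 16*Q(1/2) should equal the displayed C0-hat.
scale_check = sp.expand(16 * const_coeff - C0_hat)

# Largest real root of Q at the candidate optimum (beta1, beta0) = (3/8, 3/4).
opt = {b1: sp.Rational(3, 8), b0: sp.Rational(3, 4)}
m_star = max(sp.real_roots(sp.Poly(Q.subs(opt), m)))

print(scale_check, sp.factor(Q.subs(opt)), m_star)
```

At the candidate optimum C0 vanishes, so Q reduces to a multiple of m²(1 − 4m²) and its largest real root is 1/2, in agreement with (32).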


Step 4. In this final step we show that m = 1/2 in (22) can be achieved, by showing that \({\mathcal {A}}_{1/2}\subset {\mathcal {S}}_{{3}/{8}, {3}/{4}}\), that is,

$$ (\xi,\eta)\in{\mathcal{A}}_{1/2}\implies P_{3/8,3/4}(\cdot,\xi, \eta) \in \mathbf{svN}. \tag{34} $$
Let us fix such a pair (ξ,η). One sees that

$$ \left|\mathfrak{l c} P_{3/8,3/4}(\cdot,\xi, \eta)\right| > \left|\mathfrak{c c} P_{3/8,3/4}(\cdot,\xi, \eta)\right|, $$
and in the case \(\mathfrak {c c} P_{3/8,3/4}(\cdot ,\xi , \eta ) = 0\), (34) is easily verified to hold. Otherwise, if \(\mathfrak {c c} P_{3/8,3/4}(\cdot ,\xi , \eta ) \ne 0\), we check that \(\left (P_{3/8,3/4}(\cdot ,\xi ,\eta )\right )^{\mathbf {r}}\) does not vanish identically; so by Theorem 2.5, we have that \(P_{3/8,3/4}(\cdot ,\xi ,\eta )\in \mathbf {svN}\) if and only if

$$ \left( P_{3/8,3/4}(\cdot,\xi, \eta)\right)^{\mathbf{r}}\in \mathbf{svN}. \tag{35} $$
We have that \(\mathfrak {l c} \left (P_{3/8,3/4}(\cdot ,\xi , \eta )\right )^{\mathbf {r}}\ne 0\). Moreover, \(\mathfrak {c c} \left (P_{3/8,3/4}(\cdot ,\xi , \eta )\right )^{\mathbf {r}}= 0\) for ξ = −2 or ξ = −2/3, in which cases (35) holds. So we can suppose from now on that \(\mathfrak {c c} \left (P_{3/8,3/4}(\cdot ,\xi , \eta )\right )^{\mathbf {r}}\ne 0\). Then one proves that

$$ \mathfrak{l c} \left( P_{3/8,3/4}(\cdot,\xi, \eta)\right)^{\mathbf{rr}}= -\frac{81 \eta^{2} \xi^{2}}{256}-\frac{27 \eta^{2} \xi }{64}-\frac{9 \eta^{2}}{64}+\frac{81 \xi^{4}}{512}-\frac{783 \xi^{3}}{512}+\frac{441 \xi^{2}}{128}-\frac{423 \xi }{128}+\frac{27}{32}\ne 0, $$
so \(\deg \left (P_{3/8,3/4}(\cdot ,\xi ,\eta )\right )^{\mathbf {rr}} = 1\). Hence, by using Theorem 2.5 again, we get that (35) holds if and only if

$$ \left|\mathfrak{l c} \left( P_{3/8,3/4}(\cdot,\xi, \eta)\right)^{\mathbf{r}}\right| > \left|\mathfrak{c c} \left( P_{3/8,3/4}(\cdot,\xi, \eta)\right)^{\mathbf{r}}\right| \text{ and } \left( P_{3/8,3/4}(\cdot,\xi, \eta)\right)^{\mathbf{rr}}\in \mathbf{svN} $$
hold. Finally, we check that these last two conditions are satisfied for every pair \((\xi ,\eta )\in {\mathcal {A}}_{1/2}\) not excluded earlier during the case separations.

□

Remark A.1

By defining

and applying Theorem 2.5, it is straightforward to show (cf. Step 4 in the above proof) that

see Fig. 12 and cf. Remark B.1.

Fig. 10 These figures show the stability region \({\mathcal {S}}_{3/4, 3/8}\) corresponding to the method with (β1,β0) = (3/4,3/8) (i.e., the vertex of the wedge in Fig. 9). Such methods with (β1,β0) ∈ W ∩ L (see (31)) are \(\overset {\circ }{\text {A}}\)-stable, but \({\mathcal {A}}_{m}\subset {\mathcal {S}}_{\beta _{1},\beta _{0}}\) (see (22)) does not hold for any m > 0.

Fig. 11 The function m∗ defined in Step 3 in Appendix A.2. Its maximum value is located at (β1,β0,m∗) = (3/8,3/4,1/2).

Fig. 12 The implicit curve \(\{(\xi , \eta )\in \mathbb {R}^{2} : F_{\text {opt}}(\xi ,\eta )=0\}\) (see Remark A.1), being the boundary of the optimal stability region \({\mathcal {S}}_{3/8, 3/4}\) in the left half-plane, is shown in black in the left figure, with a close-up in the right figure. The dashed red lines represent the boundary of the largest infinite sector \({\mathcal {A}}_{1/2}\) that can be included in the stability region.

Appendix B

B.1 Locating the candidate optimum for Theorem 6.1

For a given (β1,β0) ∈ W pair, we can represent the RLC of the corresponding multistep method of the family (18) as an implicit curve of the form

$$ F_{\beta_{1},\beta_{0}}(\xi,\eta)=0 \tag{36} $$

by using the transformations in Section 3.1 as follows. First, we perform the substitution \(\zeta \mapsto \frac {i-t}{i+t}\) in the polynomial (20), then eliminate \(t\in \mathbb {R}\) by taking the resultant of the real and imaginary parts of \(P_{\beta _{1},\beta _{0}}\left (\frac {i-t}{i+t},\xi ,\eta \right )\). The resulting polynomial can be factored to get \( 2^{34}\cdot 9 \cdot \left (1-\beta _{0} \xi \right )^{6}\cdot F_{\beta _{1}, \beta _{0}}(\xi ,\eta )\); the normalization \(F_{\beta _{1}, \beta _{0}}(0, 1)=9\) has been used to make this polynomial \(F_{\beta _{1}, \beta _{0}}\) unique. The term \(\left (1-\beta _{0} \xi \right )^{6}\) (cf. the leading coefficient of \(P_{\beta _{1},\beta _{0}}(\cdot ,\xi ,\eta )\)) does not vanish here because ξ ≤ 0 and (β1,β0) ∈ W; hence, (36) is obtained. We are not going to display the polynomial \(F_{\beta _{1}, \beta _{0}}(\xi ,\eta )\) explicitly: it contains 82 terms in its expanded form and its degree in the variables/parameters (ξ,η,β1,β0) is (6, 4, 4, 4).
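
The elimination step described above can be sketched in Python with SymPy (the computations in the paper were carried out in Mathematica). Because the polynomial (20) is not reproduced in this appendix, the sketch uses the characteristic polynomial of the 2-step BDF method as a stand-in; this choice and all variable names are assumptions made for illustration only.

```python
import sympy as sp

t, xi, eta = sp.symbols("t xi eta", real=True)
zeta = sp.Symbol("zeta")
mu = xi + sp.I * eta  # point of the complex plane, mu = xi + i*eta

# Stand-in polynomial rho(zeta) - mu*sigma(zeta) of BDF2 (NOT the polynomial (20)).
P = sp.Rational(3, 2) * zeta**2 - 2 * zeta + sp.Rational(1, 2) - mu * zeta**2

# Substitute zeta = (i - t)/(i + t) and clear the denominator (i + t)^deg.
coeffs = sp.Poly(P, zeta).all_coeffs()  # leading coefficient first
deg = len(coeffs) - 1
numer = sp.expand(
    sum(c * (sp.I - t) ** (deg - j) * (sp.I + t) ** j for j, c in enumerate(coeffs))
)

# Split into real and imaginary parts (t, xi, eta are declared real).
re_part, im_part = (sp.expand(part) for part in numer.as_real_imag())

# Eliminate t: the resultant vanishes wherever both parts share a root in t.
F = sp.factor(sp.resultant(re_part, im_part, t))
print(F)
```

As a sanity check, ζ = i (that is, t = 1) gives μ = ρ(i)/σ(i) = 1 + 2i for BDF2, so the point (ξ,η) = (1, 2) lies on the root locus curve and the resultant vanishes there.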

Now supposing that the RLC (36) describes the boundary of the stability region of the multistep method determined by the given pair (β1,β0), it is reasonable to expect that, say, the upper branch of the largest parabola inscribed in \({\mathcal {S}}_{\beta _{1},\beta _{0}}\), \( \{(\xi , \eta )\in \mathbb {R}^{2} : \xi < 0, \eta >0, \eta ^{2}=-m \xi \}\), touches the RLC (36) at some finite point. In this case, the polynomial

has a multiple root there (it is indeed a polynomial, because in our situation \(F_{\beta _{1}, \beta _{0}}(\xi ,\eta )\) contains only even powers of η, namely \(\eta ^{2}\) and \(\eta ^{4}\)). Moreover, we now have

where \(\widetilde {Q}_{\beta _{1},\beta _{0}, m}(\cdot )\) is a quartic polynomial. The existence of a multiple root of \(\widetilde {Q}_{\beta _{1},\beta _{0}, m}(\cdot )\) implies that the discriminant of this polynomial (with respect to ξ), denoted by \(\widetilde {\Delta }_{\beta _{1},\beta _{0}}(m)\), vanishes. Mathematica yields that

where the “⋯” symbols contain 57 and 228 terms, respectively. We see that the factor 9β0 + 4β1 − 6 above is always positive in W. In this way, we can determine the parameter m of the largest parabola within the region bounded by the RLC for any fixed (β1,β0) ∈ W.
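
The tangency-by-discriminant idea can be tried on a toy curve. The sketch below (Python with SymPy) replaces \(F_{\beta _{1}, \beta _{0}}\) by a hypothetical stand-in, the unit circle centered at (−2, 0), which also contains only an even power of η. The curve and all names are assumptions for illustration; they are unrelated to the family (18).

```python
import sympy as sp

xi, m = sp.symbols("xi m", real=True)
eta2 = sp.Symbol("eta2", real=True)  # stands for eta**2

# Hypothetical stand-in for F(xi, eta): circle of radius 1 centered at (-2, 0).
F = (xi + 2) ** 2 + eta2 - 1

# Restrict to the parabola eta^2 = -m*xi and take the discriminant in xi.
Q_tilde = sp.expand(F.subs(eta2, -m * xi))
disc = sp.discriminant(Q_tilde, xi)
candidates = sp.solve(sp.Eq(disc, 0), m)


def touches_in_left_half_plane(m_val):
    """True if the double root has xi < 0 and a positive eta^2 = -m*xi."""
    double_root = sp.solve(sp.diff(Q_tilde.subs(m, m_val), xi), xi)[0]
    return bool(double_root < 0) and bool(-m_val * double_root > 0)


tangent = [c for c in candidates if touches_in_left_half_plane(c)]
print(disc, candidates, tangent)
```

The discriminant has two roots in m, but only one of them corresponds to a contact point with ξ < 0 and η² > 0. In the same way, the roots of \(\widetilde {\Delta }_{\beta _{1},\beta _{0}}(\cdot )\) have to be screened before reading off the parabola parameter.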

Remark B.1

By setting (β1,β0) = (3/8, 3/4) for example (corresponding to the “sector-optimal” method in Section A.2), we have that the RLC in (36) is identical to 3/16 ⋅ Fopt(ξ,η) in Remark A.1, implying that the RLC in the left half-plane ξ ≤ 0 represents the boundary of the stability region \({\mathcal {S}}_{3/8, 3/4}\). Now, \(\widetilde {\Delta }_{3/8, 3/4}(m)\) can be written as

from which we can prove that the largest parabola \({\mathcal {P}_{m}}\) contained in \({\mathcal {S}}_{3/8, 3/4}\) has m ≈ 1.11226 (being the unique positive root of the polynomial {36, 1362, 343, −2116}).
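
The quoted value of m can be reproduced with a few lines of plain Python. The sketch assumes that the coefficient list {36, 1362, 343, −2116} is ordered with the leading coefficient first, i.e., that it denotes 36m³ + 1362m² + 343m − 2116; the function names are chosen here for illustration. All coefficients except the last are positive, so the cubic is increasing for m > 0 and has exactly one positive root, which bisection locates.

```python
def cubic(m: float) -> float:
    # Assumed ordering: leading coefficient first.
    return 36 * m**3 + 1362 * m**2 + 343 * m - 2116


def positive_root(lo: float = 0.0, hi: float = 2.0, tol: float = 1e-12) -> float:
    """Bisection on [lo, hi]; cubic(lo) < 0 < cubic(hi) is required."""
    assert cubic(lo) < 0 < cubic(hi)
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if cubic(mid) < 0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


m_parabola = positive_root()
print(round(m_parabola, 5))
```

With the opposite (ascending) ordering of the coefficients, the positive root would be below 1, so the quoted value supports the ordering assumed here.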

By studying the positive roots of \(\widetilde {\Delta }_{\beta _{1},\beta _{0}}(\cdot )\) as (β1,β0) varies within W, we can conjecture that the value of m in (25) cannot be greater than 6/5 for the family (18). Moreover, m = 6/5 occurs only for β1 = 1/5 and β0 = 37/40, and in this case the RLC and the upper parabola branch touch each other at \((\xi ,\eta )=(-10/7, 2\sqrt {3/7})\). Since the polynomial \(\widetilde {\Delta }_{\beta _{1},\beta _{0}}(m)\) is much more complicated than the corresponding polynomial \(Q_{\beta _{1},\beta _{0}}(m)\) in Appendix A.2, this time Mathematica could not confirm in a reasonable amount of computing time that the value m = 6/5 is indeed the optimal one.
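
As a quick consistency check of the quoted data, the stated contact point lies on the upper parabola branch \(\eta ^{2}=-m \xi \) of Appendix B.1 with m = 6/5, since

$$ \eta^{2}=\left(2\sqrt{3/7}\right)^{2}=\frac{12}{7}=-\frac{6}{5}\cdot\left(-\frac{10}{7}\right)=-m\xi . $$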

B.2 Proof of optimality in Theorem 6.1