Abstract

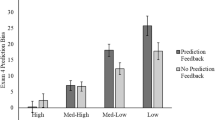

Accurately judging one’s own performance in the classroom is challenging: most students tend to be overconfident and overestimate their actual performance. The current work draws on the metacognition and decision-making literatures to examine how metacognition can be improved in the classroom. Using historical data from several semesters of an upper-level undergraduate course (N = 127), we analyzed students’ judgments of their performance and their actual performance on two exams. Students were instructed on the concept of overconfidence, received feedback on exams, and were given incentives for accurate calibration. Results were consistent with the “unskilled and unaware” effect (Kruger & Dunning, 1999): lower-performing students initially displayed overconfidence, whereas the highest-performing students initially displayed underconfidence. Importantly, students changed both their judgments and their performance, such that metacognitive accuracy improved significantly from the first exam to the second. In a second study, we examined two additional semesters of the same course used in Study 1 (N = 90). In one of these semesters feedback was not provided, allowing us to test whether feedback improves both metacognitive judgments and performance. Performance improved significantly and overconfidence decreased on Exam 2, but only for students who received feedback about their performance and judgments. We postulate that feedback may be an important component in improving metacognitive judgments.

Notes

Because this was a historical data set spanning five semesters (Fall 2009 to Fall 2011) and the classes were not taught with the objective of analyzing course performance and judgments, gender is the only demographic variable available; the class rosters provided to instructors do not include more detailed demographic information.

Because of the historical nature of the data set, item-by-item responses and some individual-level data separating the exams’ multiple-choice content from other content are incomplete and are therefore not presented in the table.

A range of −2 to 2 was chosen because it corresponded to the range of calibration that received the largest incentive.
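As a rough illustration of how such a calibration range might be applied, the sketch below assumes calibration is the signed difference between a student's judged score and actual score, in the same units as the exam score. That formula, the function names, and the example values are assumptions for illustration only; these notes do not specify them.

```python
def calibration(judged_score: float, actual_score: float) -> float:
    """Signed calibration (assumed definition): judged minus actual.

    Positive values indicate overconfidence, negative values underconfidence.
    """
    return judged_score - actual_score


def in_accurate_range(judged_score: float, actual_score: float,
                      lower: float = -2.0, upper: float = 2.0) -> bool:
    """True if calibration falls within the -2 to 2 range mentioned in the note."""
    return lower <= calibration(judged_score, actual_score) <= upper


# Hypothetical students (not data from the study).
overconfident = calibration(85, 78)    # judged higher than actual
underconfident = calibration(70, 75)   # judged lower than actual
accurate = in_accurate_range(80, 79)   # difference of 1 is inside the range
```

Under this assumed definition, the sign of the difference separates overconfidence from underconfidence, and the range check marks judgments that would count as accurate.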

References

Bol, L., Hacker, D. J., O’Shea, P., & Allen, D. (2005). The influence of overt practice, achievement level, and explanatory style on calibration accuracy and performance. The Journal of Experimental Education, 73(4), 269–290.

Brenner, L. A., Koehler, D. J., Liberman, V., & Tversky, A. (1996). Overconfidence in probability and frequency judgments: a critical examination. Organizational Behavior and Human Decision Processes, 65, 212–219.

Cao, L., & Nietfeld, J. L. (2007). College students’ metacognitive awareness of difficulties in learning the class content does not automatically lead to adjustment of study strategies. Australian Journal of Educational and Developmental Psychology, 7, 31–46.

Delhomme, P. (1991). Comparing one’s driving with others’: assessment of abilities and frequency of offences: evidence for a superior conformity of self-bias? Accident Analysis and Prevention, 23(6), 493–508.

Dunlosky, J., & Rawson, K. A. (2012). Overconfidence produces underachievement: inaccurate self evaluations undermine students’ learning and retention. Learning and Instruction, 22(4), 271–280.

Dunning, D., Johnson, K., Ehrlinger, J., & Kruger, J. (2003). Why people fail to recognize their own incompetence. Current Directions in Psychological Science, 12(3), 83–87.

Finn, B., & Metcalfe, J. (2007). The role of memory for past test in the underconfidence with practice effect. Journal of Experimental Psychology: Learning, Memory, and Cognition, 33(1), 238–244.

Fischhoff, B., Slovic, P., & Lichtenstein, S. (1977). Knowing with certainty: the appropriateness of extreme confidence. Journal of Experimental Psychology: Human Perception and Performance, 3(4), 552–564.

Goodman-Delahunty, J., Granhag, P. A., Hartwig, M., & Loftus, E. F. (2010). Insightful or wishful: Lawyers’ ability to predict case outcomes. Psychology, Public Policy, and Law, 16(2), 133–157.

Hacker, D. J., Bol, L., Horgan, D. D., & Rakow, E. A. (2000). Test predictions and performance in a classroom context. Journal of Educational Psychology, 92(1), 160–170.

Hacker, D. J., Bol, L., & Bahbahani, K. (2008a). Explaining calibration accuracy in classroom contexts: the effects of incentives, reflection, and explanatory style. Metacognition and Learning, 3, 101–121.

Hacker, D. J., Bol, L., & Keener, M. C. (2008b). Metacognition in education: a focus on calibration. In J. Dunlosky & R. Bjork (Eds.), Handbook of memory and metacognition (pp. 429–455). Mahwah: Lawrence Erlbaum Associates.

Hartwig, M. K., & Dunlosky, J. (2014). The contribution of judgment scale to the unskilled-and-unaware phenomenon: How evaluating others can exaggerate over- (and under-) confidence. Memory & Cognition, 42, 164–173.

Huff, J. D., & Nietfeld, J. L. (2009). Using strategy instruction and confidence judgments to improve metacognitive monitoring. Metacognition and Learning, 4(2), 161–176.

Juslin, P., Winman, A., & Olsson, H. (2000). Naive empiricism and dogmatism in confidence research: a critical examination of the hard-easy effect. Psychological Review, 107(2), 384–396.

Keren, G. (1987). Facing uncertainty in the game of bridge: a calibration study. Organizational Behavior and Human Decision Processes, 39, 98–114.

Keren, G. (1991). Calibration and probability judgments: conceptual and methodological issues. Acta Psychologica, 77(3), 217–273.

Koellinger, P., Minniti, M., & Schade, C. (2007). ‘I think I can, I think I can’: overconfidence and entrepreneurial behavior. Journal of Economic Psychology, 28(4), 502–527.

Koriat, A., & Goldsmith, M. (1996). Monitoring and control processes in the strategic regulation of memory accuracy. Psychological Review, 103(3), 490–517.

Kruger, J., & Dunning, D. (1999). Unskilled and unaware of it: how difficulties in recognizing one’s own incompetence lead to inflated self-assessments. Journal of Personality and Social Psychology, 77(6), 1121–1134.

Lichtenstein, S., & Fischhoff, B. (1980). Training for calibration. Organizational Behavior and Human Performance, 26(2), 149–171.

Lichtenstein, S., Fischhoff, B., & Phillips, L. D. (1982). Calibration of probabilities: The state of the art to 1980. In D. Kahneman, P. Slovic, & A. Tversky (Eds.), Judgment under uncertainty: Heuristics and biases (pp. 306–334). New York: Cambridge University Press.

Ludwig, S., & Nafziger, J. (2011). Beliefs about overconfidence. Theory and Decision, 70(4), 475–500.

Maki, R. H., Shields, M., Wheeler, A. E., & Zacchilli, T. L. (2005). Individual differences in absolute and relative metacomprehension accuracy. Journal of Educational Psychology, 97(4), 723–731.

Maki, R. H., Willmon, C., & Pietan, A. (2009). Basis of metamemory judgments for text with multiple-choice, essay, and recall tests. Applied Cognitive Psychology, 23(2), 204–222.

McDaniel, M. A., Anderson, J. L., Derbish, M. H., & Morrisette, N. (2007). Testing the testing effect in the classroom. European Journal of Cognitive Psychology, 19, 494–513.

Metcalfe, J. (2009). Metacognitive judgments and control of study. Current Directions in Psychological Science, 18(3), 159–163.

Metcalfe, J., & Finn, B. (2008). Evidence that judgments of learning are causally related to study choice. Psychonomic Bulletin & Review, 15(1), 174–179.

Miller, T. M., & Geraci, L. (2011a). Training metacognition in the classroom: the influence of incentives and feedback on exam predictions. Metacognition and Learning, 6(3), 303–314.

Miller, T. M., & Geraci, L. (2011b). Unskilled but aware: reinterpreting overconfidence in low-performing students. Journal of Experimental Psychology: Learning, Memory, and Cognition, 37(2), 502–506.

Nietfeld, J. L., & Schraw, G. (2002). The effect of knowledge and strategy training on monitoring accuracy. The Journal of Educational Research, 95(3), 131–142.

Nietfeld, J. L., Cao, L., & Osborne, J. W. (2005). Metacognitive monitoring accuracy and student performance in the postsecondary classroom. The Journal of Experimental Education, 74(1), 7–28.

Rawson, K. A., & Dunlosky, J. (2007). Improving students’ self-evaluation of learning for key concepts in textbook materials. European Journal of Cognitive Psychology, 19, 559–579.

Renner, C. H., & Renner, M. J. (2001). But I thought I knew that: using confidence estimation as a debiasing technique to improve classroom performance. Applied Cognitive Psychology, 15(1), 23–32.

Schraw, G., Potenza, M. T., & Nebelsick-Gullet, L. (1993). Constraints on the calibration of performance. Contemporary Educational Psychology, 18(4), 445–463.

Schraw, G., Kuch, F., & Gutierrez, A. P. (2013). Measure for measure: calibrating ten commonly used calibration scores. Learning and Instruction, 24, 48–57.

Veenman, M. V. J., Van Hout-Wolters, B. H. A., & Afflerbach, P. (2006). Metacognition and learning: conceptual and methodological considerations. Metacognition and Learning, 1(1), 3–14.

About this article

Cite this article

Callender, A.A., Franco-Watkins, A.M. & Roberts, A.S. Improving metacognition in the classroom through instruction, training, and feedback. Metacognition Learning 11, 215–235 (2016). https://doi.org/10.1007/s11409-015-9142-6

DOI: https://doi.org/10.1007/s11409-015-9142-6