Abstract

Let \(X_1,X_2, \ldots \) be independent random uniform points in a bounded domain \(A \subset \mathbb {R}^d\) with smooth boundary. Define the coverage threshold \(R_n\) to be the smallest r such that A is covered by the balls of radius r centred on \(X_1,\ldots ,X_n\). We obtain the limiting distribution of \(R_n\) and also a strong law of large numbers for \(R_n\) in the large-n limit. For example, suppose A has volume 1 and perimeter \(|\partial A|\). If \(d=3\) then \(\mathbb {P}[n\pi R_n^3 - \log n - 2 \log (\log n) \le x]\) converges to \(\exp (-2^{-4}\pi ^{5/3} |\partial A| e^{-2 x/3})\) and \((n \pi R_n^3)/(\log n) \rightarrow 1\) almost surely, and if \(d=2\) then \(\mathbb {P}[n \pi R_n^2 - \log n - \log (\log n) \le x]\) converges to \(\exp (- e^{-x}- |\partial A|\pi ^{-1/2} e^{-x/2})\). We give similar results for general d, and also for the case where A is a polytope. We also generalize to allow for multiple coverage. The analysis relies on classical results by Hall and by Janson, along with a careful treatment of boundary effects. For the strong laws of large numbers, we can relax the requirement that the underlying density on A be uniform.


1 Introduction

This paper is primarily concerned with the following random coverage problem. Given a specified compact region A in a d-dimensional Euclidean space, what is the probability that A is fully covered by a union of Euclidean balls of radius r centred on n points placed independently uniformly at random in A, in the large-n limit with \(r =r(n)\) becoming small in an appropriate manner?

This is a very natural type of question with a long history; see for example [3, 7, 11, 12, 13, 16, 20]. Potential applications include wireless communications [3, 18], ballistics [13], genomics [2], statistics [8], immunology [20], stochastic optimization [23], and topological data analysis [4, 9].

In an alternative version of this question, one considers a smaller compact region \(B \subset A^o\) (\(A^o\) denotes the interior of A), and asks whether B (rather than all of A) is covered. This version is simpler because boundary effects are avoided. This alternative version of our question was answered independently in the 1980s by Hall [12] and Janson [16]. Another way to avoid boundary effects would be to consider coverage of a smooth manifold such as a sphere (as in [20]), and this was also addressed in [16].

However, the original question does not appear to have been addressed systematically until now (at least, not when \(d>1\)). Janson [16, p. 108] makes some remarks about the case where \(A= [0,1]^d\) and one uses balls of the \(\ell _\infty \) norm, but does not consider more general classes of A or Euclidean balls.

This question seems well worth addressing. In many of the application areas, it is natural to consider the influence only of the random points placed within the region A, rather than also of hypothetical points placed outside A; an example is the problem of statistical set estimation, which we shall discuss below.

We shall express our results in terms of the coverage threshold \(R_n\), which we define to be the smallest radius of balls, centred on a set \(\mathscr {X}_n\) of n independent uniform random points in A, required to cover A. Note that \(R_n\) is equal to the Hausdorff distance between the sets \(\mathscr {X}_n\) and A. More generally, for \(k \in \mathbb {N}\) the k-coverage threshold \(R_{n,k}\) is the smallest radius required to cover A k times. These thresholds are random variables, because the locations of the centres are random. We investigate their probabilistic behaviour as n becomes large.

We shall determine the limiting behaviour of \(\mathbb {P}[R_{n,k} \le r_n]\) for any fixed k and any sequence of numbers \((r_n)\), for the case where A is smoothly bounded (for general \(d \ge 2\)) or where A is a polytope (for \(d=2 \) or \(d= 3\)). We also obtain similar results for a high-intensity Poisson sample in A, which may be more relevant in some applications, as argued in [12].

We also derive strong laws of large numbers showing that \(nR_{n,k}^d/\log n\) converges almost surely to a finite positive limit, and establishing the value of the limit. These strong laws carry over to more general cases where k may vary with n, and the distribution of points may be non-uniform. We give results of this type for A smoothly bounded, or for A a convex polytope.

We emphasise that in all of these results, the limiting behaviour depends on the geometry of \(\partial A\), the topological boundary of A. For example, we shall show that when \(d=3\) and the points are uniformly distributed over a polyhedron, the limiting behaviour of \(R_n\) is determined by the angle of the sharpest edge if this angle is less than \(\pi /2\). If this angle exceeds \(\pi /2\) then the location in A furthest from the sample \(\mathscr {X}_n\) is asymptotically uniformly distributed over \(\partial A\), but if this angle is less than \(\pi /2\) the location in A furthest from \(\mathscr {X}_n\) is asymptotically uniformly distributed over the union of those edges which are sharpest, i.e. those edges which achieve the minimum subtended angle.

We restrict attention here to coverage by Euclidean balls of equal radius. The work of [12, 16] allowed for generalizations such as other shapes or variable radii, in their versions of our problem. We do not attempt to address these generalizations here; this may be possible in principle, but it would considerably complicate the formulation of the results.

One application lies in statistical set estimation. One may wish to estimate the set A from the sample \(\mathscr {X}_n\). One possible estimator in the literature is the union of balls of radius \(r_n\) centred on the points of \(\mathscr {X}_n\), for some suitable sequence \((r_n)_{n \ge 1}\) decreasing to zero. In particular, when estimating the perimeter of A one may well wish to take \(r_n\) large enough so that these balls fully cover A, that is, \(r_n \ge R_n\). For further discussion see Cuevas and Rodríguez-Casal [8].

We briefly discuss some related concepts. One of these is the maximal spacing of the sample \(\mathscr {X}_n\). This is defined to be the volume of the largest Euclidean ball that can be fitted into the set \(A {\setminus } \mathscr {X}_n\) (the reason for the terminology becomes apparent from considering the case with \(d=1\)). More generally, the maximal k-spacing of the sample is defined to be the volume of the largest Euclidean ball that can be fitted inside the set A while containing fewer than k points of \(\mathscr {X}_n\).

The maximal spacing also has a long history; see for example [1, 10, 14, 16]. As described in [1], there are many statistical applications. Essentially, it differs from the coverage threshold \(R_{n}\) only because of boundary effects (we shall elaborate on this in Sect. 2), but these effects are often important in determining the asymptotic behaviour of the threshold.
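For intuition, the boundary effect is easy to see in the one-dimensional case \(A=[0,1]\), where both quantities have elementary closed forms. The following Python sketch is an illustration only (the function names are ours and do not come from the paper). An interior gap of length g between consecutive sample points is covered once \(r \ge g/2\), whereas a gap adjacent to an endpoint of A needs \(r \ge g\), because no sample points lie outside A.

```python
import math

def coverage_threshold_1d(points):
    """Coverage threshold R_n for A = [0, 1]: smallest r such that the
    intervals [x - r, x + r], x in points, cover [0, 1]."""
    xs = sorted(points)
    # Interior gaps are covered from both sides, so half the gap suffices.
    interior = max(((b - a) / 2 for a, b in zip(xs, xs[1:])), default=0.0)
    # Gaps at the two ends of A are covered from one side only.
    return max(xs[0], 1.0 - xs[-1], interior)

def maximal_spacing_1d(points):
    """Length of the longest interval inside [0, 1] that avoids all points."""
    xs = [0.0] + sorted(points) + [1.0]
    return max(b - a for a, b in zip(xs, xs[1:]))

# Hypothetical sample chosen so that the largest gap touches the boundary.
pts = [0.3, 0.4]
r_n = coverage_threshold_1d(pts)            # driven by the end gap [0.4, 1]
half_spacing = maximal_spacing_1d(pts) / 2  # what interior coverage alone needs
```

In this example the coverage threshold is twice the half-spacing, which is the kind of discrepancy that the boundary analysis has to account for.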

Another interpretation of the coverage threshold is via Voronoi cells. Calka and Chenavier [6] have considered, among other things, extremes of circumscribed radii of a Poisson–Voronoi tessellation on all of \(\mathbb {R}^d\) (the circumscribed radius of a cell is the radius of the smallest ball centred on the nucleus that contains the cell). To get a finite maximum they consider the maximum restricted to those cells having non-empty intersection with some bounded window \(A \subset \mathbb {R}^d\). This construction avoids dealing with delicate boundary effects, and the limit distribution, for large intensity, is determined in [6] using results from [16].

It seems at least as natural to consider Voronoi cells with respect to the Poisson sample restricted to A. A little thought (similar to arguments given in [6]) shows that the largest circumradius of the Voronoi cells inside A (i.e., the intersections with A of the Voronoi cells), with respect to the sample \(\mathscr {X}_n\), is equal to \(R_n\), and likewise for a Poisson sample in A; thus, our results add to those given in [6].

A somewhat related topic is the convex hull of the random sample \(\mathscr {X}_n\). For \(d=2\) with A convex, the limiting behaviour of the Hausdorff distance from this convex hull to A is obtained in [5]. The limiting behaviour of the Hausdorff distance from \(\mathscr {X}_n\) itself to A (which is our \(R_n\)) is not the same as for the convex hull.

2 Definitions and notation

Throughout this paper, we work within the following mathematical framework. Let \(d \in \mathbb {N}\). Suppose we have the following ingredients:

- A compact, Riemann measurable set \(A \subset \mathbb {R}^d\) (Riemann measurability of a bounded set in \(\mathbb {R}^d\) amounts to its boundary having zero Lebesgue measure).
- A Borel probability measure \(\mu \) on A with probability density function f.
- A specified set \(B \subset A\) (possibly \(B=A\)).
- On a common probability space \((\mathbb {S},{{\mathscr {F}}},\mathbb {P})\), a sequence \(X_1,X_2,\ldots \) of independent identically distributed random d-vectors with common probability distribution \(\mu \), and also a unit rate Poisson counting process \((Z_t,t\ge 0)\), independent of \((X_1,X_2,\ldots )\) (so \(Z_t\) is Poisson distributed with mean t for each \(t >0\)).

For \(n \in \mathbb {N}\), \(t >0\), let \(\mathscr {X}_n:= \{X_1,\ldots ,X_n\}\), and let \({{\mathscr {P}}}_t:= \{X_1,\ldots ,X_{Z_t}\}\). These are the point processes that concern us here. Observe that \({{\mathscr {P}}}_t\) is a Poisson point process in \(\mathbb {R}^d \) with intensity measure \(t \mu \) (see e.g. [19]).

For \(x \in \mathbb {R}^d\) and \(r>0\) set \(B(x,r):= \{y \in \mathbb {R}^d:\Vert y-x\Vert \le r\}\) where \(\Vert \cdot \Vert \) denotes the Euclidean norm. (We write \(B_{(d)} (x,r)\) for this if we wish to emphasise the dimension.) For \(r>0\), let \(A^{(r)}:=\{ x \in A: B(x,r) \subset A^o\}\), the ‘r-interior’ of A.

Also, define the set \(A^{[r]}\) to be the interior of the union of all hypercubes of the form \(\prod _{i=1}^d[n_ir,(n_i+1)r] \), with \(n_1,\ldots ,n_d \in \mathbb {Z}\), that are contained in \(A^o\) (the set \(A^{[r]}\) resembles \(A^{(r)}\) but is guaranteed to be Riemann measurable).

For any point set \(\mathscr {X}\subset \mathbb {R}^d\) and any \(D \subset \mathbb {R}^d\) we write \(\mathscr {X}(D)\) for the number of points of \(\mathscr {X}\) in D, and we use below the convention \(\inf \{\} := +\infty \).

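Given \(n, k \in \mathbb {N}\), and \(t \in (0,\infty )\), define the k-coverage thresholds \(R_{n,k}\) and \(R'_{t,k}\) by

\[ R_{n,k} := \inf \{ r>0: \mathscr {X}_n(B(x,r)) \ge k ~~ \forall x \in B \}; \qquad R'_{t,k} := \inf \{ r>0: {{\mathscr {P}}}_t(B(x,r)) \ge k ~~ \forall x \in B \}, \tag{2.1} \]

and define also the interior k-coverage thresholds

\[ {\tilde{R}}_{n,k} := \inf \{ r>0: \mathscr {X}_n(B(x,r)) \ge k ~~ \forall x \in B \cap A^{(r)} \}; \qquad {\tilde{R}}_{Z_t,k} := \inf \{ r>0: {{\mathscr {P}}}_t(B(x,r)) \ge k ~~ \forall x \in B \cap A^{(r)} \}. \]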

Set \(R_n := R_{n,1}\), \(R'_t:= R'_{t,1}\), and \({\tilde{R}}_n := {\tilde{R}}_{n,1}\). Then \(R_n\) is the coverage threshold. Observe that \(R_n = \inf \{ r >0: B \subset \cup _{i=1}^n B(X_i,r) \}\). In the case \(B=A\), this agrees with our earlier definition of \(R_n\).
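To make the definition concrete, here is a minimal Python sketch that approximates \(R_{n,k}\) for \(B=A=[0,1]^2\) by replacing B with a finite grid of target points. This is our own illustration under stated assumptions: the grid resolution, sample size and function names are arbitrary choices, and because the grid is a subset of B the computed value is only a lower bound on the true threshold. For a finite target set, the smallest r giving k-fold coverage is the largest, over targets, of the distance to the k-th nearest sample point.

```python
import math
import random

def k_coverage_threshold(points, targets, k=1):
    """Smallest r such that every target has at least k points within distance r.

    Returns +infinity when fewer than k points are available, matching the
    convention inf{} = +infinity.
    """
    if k > len(points):
        return math.inf
    worst = 0.0
    for x in targets:
        dists = sorted(math.dist(x, p) for p in points)
        worst = max(worst, dists[k - 1])  # distance to the k-th nearest point
    return worst

def unit_square_grid(m):
    """(m + 1)^2 grid points of [0, 1]^2, boundary included."""
    return [(i / m, j / m) for i in range(m + 1) for j in range(m + 1)]

rng = random.Random(0)  # fixed seed so the run is reproducible
sample = [(rng.random(), rng.random()) for _ in range(200)]
grid = unit_square_grid(40)
r1 = k_coverage_threshold(sample, grid, k=1)  # grid approximation to R_{200,1}
r2 = k_coverage_threshold(sample, grid, k=2)  # grid approximation to R_{200,2}
```

Including the boundary grid points matters here: dropping them would approximate coverage of a set strictly inside A rather than of A itself.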

We are chiefly interested in the asymptotic behaviour of \(R_n\) for large n. More generally, we consider \(R_{n,k}\) where k may vary with n. We are especially interested in the case with \(B=A\).

Observe that \(\tilde{R}_{n,k} \) is the smallest r such that \( B \cap A^{(r)} \) is covered k times by the balls of radius r centred on the points of \(\mathscr {X}_n\). It can be seen that when \(B=A\), the maximal k-spacing of the sample \(\mathscr {X}_n\) (defined earlier) is equal to \(\theta _d {\tilde{R}}_{n,k}^d\), where \(\theta _d := \pi ^{d/2}/\varGamma (1 + d/2)\), the volume of the unit ball in \(\mathbb {R}^d\).
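The constant \(\theta _d\) recurs throughout, so a small numerical helper may be convenient. The sketch below (the function name is ours) evaluates the formula \(\pi ^{d/2}/\varGamma (1 + d/2)\) directly and can be checked against the familiar values \(\theta _1 = 2\), \(\theta _2 = \pi \) and \(\theta _3 = 4\pi /3\).

```python
import math

def unit_ball_volume(d):
    """Volume theta_d of the unit Euclidean ball in R^d."""
    return math.pi ** (d / 2) / math.gamma(1 + d / 2)

# Volume of a ball of radius r in R^d, as used for the maximal spacing.
def ball_volume(d, r):
    return unit_ball_volume(d) * r ** d
```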

We use the Poissonized k-coverage threshold \(R'_{t,k}\), and the interior k-coverage thresholds \({\tilde{R}}_{n,k}\) and \({\tilde{R}}_{Z_t,k}\), mainly as stepping stones towards deriving results for \(R_{n,k}\) and \({\tilde{R}}_{n,k}\) respectively, but they are also of interest in their own right. Indeed, some of the literature [3, 12, 13, 18] is concerned more with \(R'_t\) than with \(R_n\), and we have already mentioned the literature on the maximal spacing.

We now give some further notation used throughout. For \(D \subset \mathbb {R}^d\), let \({\overline{D}}\) and \(D^o\) denote the closure of D and interior of D, respectively. Let |D| denote the Lebesgue measure (volume) of D, and \(|\partial D|\) the perimeter of D, i.e. the \((d-1)\)-dimensional Hausdorff measure of \(\partial D\), when these are defined. Write \(\log \log t\) for \(\log (\log t)\), \(t >1\). Let o denote the origin in \(\mathbb {R}^d\). Set \(\mathbb {H}:= \mathbb {R}^{d-1}\times [0,\infty )\) and \(\partial \mathbb {H}:= \mathbb {R}^{d-1}\times \{0\}\).

Given two sets \(\mathscr {X},{{\mathscr {Y}}}\subset \mathbb {R}^d\), we set \( \mathscr {X}\triangle {{\mathscr {Y}}}:= (\mathscr {X}{\setminus } {{\mathscr {Y}}}) \cup ({{\mathscr {Y}}}{\setminus } \mathscr {X})\), the symmetric difference between \(\mathscr {X}\) and \({{\mathscr {Y}}}\). Also, we write \(\mathscr {X}\oplus {{\mathscr {Y}}}\) for the set \(\{x+y: x \in \mathscr {X}, y \in {{\mathscr {Y}}}\}\). Given also \(x \in \mathbb {R}^d\) we write \(x+{{\mathscr {Y}}}\) for \(\{x\} \oplus {{\mathscr {Y}}}\).

Given \(x,y \in \mathbb {R}^d\), we denote by [x, y] the line segment from x to y, that is, the convex hull of the set \(\{x,y\}\). We write \(a \wedge b\) (respectively \(a \vee b\)) for the minimum (resp. maximum) of any two numbers \(a,b \in \mathbb {R}\).

Given \(m \in \mathbb {N}\) and functions \(f: \mathbb {N}\cap [m,\infty ) \rightarrow \mathbb {R}\) and \(g: \mathbb {N}\cap [m,\infty ) \rightarrow (0,\infty )\), we write \(f(n) = O(g(n))\) as \(n \rightarrow \infty \) if \(\limsup _{n \rightarrow \infty }|f(n)|/g(n) < \infty \). We write \(f(n)= o(g(n))\) as \(n \rightarrow \infty \) if \(\lim _{n \rightarrow \infty } f(n)/g(n) =0\). We write \(f(n)= \varTheta (g(n))\) as \(n \rightarrow \infty \) if both \(f(n)= O(g(n))\) and \(g(n)= O(f(n))\) (and \(f > 0\)). Given \(s >0\) and functions \(f: (0,s) \rightarrow \mathbb {R}\) and \(g:(0,s) \rightarrow (0,\infty )\), we write \(f(r) = O(g(r))\) as \(r \downarrow 0\), or \(g(r) = \varOmega (f(r))\) as \( r \downarrow 0\), if \(\limsup _{r \downarrow 0} |f(r) |/g(r) < \infty \). We write \(f(r)= o(g(r))\) as \(r \downarrow 0\) if \(\lim _{r \downarrow 0 } f(r)/g(r) =0\), and \(f(r) \sim g(r)\) as \(r \downarrow 0\) if this limit is 1.

3 Convergence in distribution

The main results of this section are concerned with weak convergence for \(R_{n,k}\) (defined at (2.1)) as \(n \rightarrow \infty \) with k fixed, in cases where f is uniform on A and \(B=A\).

Our first result concerns the case where A has a smooth boundary in the following sense. We say that A has \(C^2\) boundary if for each \(x \in \partial A\) there exists a neighbourhood U of x and a real-valued function f that is defined on an open set in \(\mathbb {R}^{d-1}\) and twice continuously differentiable, such that \(\partial A \cap U\), after a rotation, is the graph of the function f.

Given \(d \in \mathbb {N}\), define the constant

Note that \(c_1=c_2=1\), and \(c_3 =3 \pi ^2/32\). Moreover, using Stirling’s formula one can show that \(c_d^{1/d} \sim e (\pi /(2d))^{1/2}\) as \(d \rightarrow \infty \). Given also \(k \in \mathbb {N}\), for \(d \ge 2\) set

By some tedious algebra, one can simplify this to

with \(c_{d,k} = c_{d,1}(1-1/d)^{k-1}/(k-1)!\) for \(k >1\). Note that \(c_{2,k} = 2^{1-k} \pi ^{-1/2}/(k-1)! \) and \(c_{3,k}= 2^{k-5} 3^{1-k} \pi ^{5/3}/(k-1)!\), and \(c_{d,1}^{1/d} \sim e/(2d)^{1/2}\) as \(d \rightarrow \infty \).
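As a quick numerical sanity check (an illustration, not part of the argument), the two closed forms quoted above for \(d=2\) and \(d=3\) can be compared with the general relation \(c_{d,k} = c_{d,1}(1-1/d)^{k-1}/(k-1)!\). The function names below are ours.

```python
import math

def c_2k(k):
    """Closed form quoted for d = 2."""
    return 2.0 ** (1 - k) * math.pi ** -0.5 / math.factorial(k - 1)

def c_3k(k):
    """Closed form quoted for d = 3."""
    return 2.0 ** (k - 5) * 3.0 ** (1 - k) * math.pi ** (5 / 3) / math.factorial(k - 1)

def c_dk_from_c_d1(c_d1, d, k):
    """General relation c_{d,k} = c_{d,1} (1 - 1/d)^(k-1) / (k-1)!."""
    return c_d1 * (1 - 1 / d) ** (k - 1) / math.factorial(k - 1)

# Largest relative discrepancy between the closed forms and the relation.
max_rel_err = max(
    abs(closed(k) / c_dk_from_c_d1(closed(1), d, k) - 1.0)
    for d, closed in ((2, c_2k), (3, c_3k))
    for k in range(1, 8)
)
```

The values at \(k=1\), namely \(\pi ^{-1/2}\) and \(2^{-4}\pi ^{5/3}\), are the boundary constants that appear in the two examples of the Abstract.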

In all limiting statements in the sequel, n takes values in \(\mathbb {N}\) while t takes values in \((0,\infty )\).

Theorem 3.1

Suppose that \(d\ge 2\) and \(f=f_0\textbf{1}_A\), where \(A \subset \mathbb {R}^d\) is compact, and has \(C^2\) boundary, and \(f_0 := |A|^{-1}\). Assume \(B=A\). Let \(k \in \mathbb {N}\), \(\zeta \in \mathbb {R}\).

Then

When \(d=2, k=1 \) the exponent in (3.3) has two terms. This is because the location in A furthest from the sample \(\mathscr {X}_n\) might lie either in the interior of A, or on the boundary.

When \(d \ge 3\) or \(k \ge 2\), the exponent of (3.3) has only one term, because the location in A with furthest k-th nearest point of \(\mathscr {X}_n\) is located, with probability tending to 1, on the boundary of A, and likewise for \({{\mathscr {P}}}_t\). Increasing either d or k makes it more likely that this location lies on the boundary, and the exceptional nature of the limit in the case \((d,k)=(2,1)\) reflects this.
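The two-term structure for \((d,k)=(2,1)\) can be explored numerically using the special case stated in the Abstract, where A has volume 1 and the limit of \(\mathbb {P}[n \pi R_n^2 - \log n - \log (\log n) \le x]\) is \(\exp (- e^{-x}- |\partial A|\pi ^{-1/2} e^{-x/2})\). The sketch below is only an illustration of that formula; the function names and the choice of example domain are ours.

```python
import math

def limit_cdf_d2(x, perimeter):
    """Limiting CDF from the Abstract for d = 2, k = 1, |A| = 1."""
    interior_term = math.exp(-x)
    boundary_term = perimeter * math.pi ** -0.5 * math.exp(-x / 2)
    return math.exp(-interior_term - boundary_term)

def boundary_to_interior_ratio(x, perimeter):
    """Ratio of the boundary term to the interior term in the exponent."""
    return perimeter * math.pi ** -0.5 * math.exp(x / 2)

# A disc of unit area has radius pi^(-1/2), hence perimeter 2 * sqrt(pi),
# so its boundary term simplifies to 2 * exp(-x / 2).
disc_perimeter = 2 * math.sqrt(math.pi)
value_at_zero = limit_cdf_d2(0.0, disc_perimeter)
```

The ratio grows like \(e^{x/2}\), so in the upper tail of the limit law the boundary term dominates, while for very negative x the interior term does.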

We also consider the case where A is a polytope, only for \(d=2\) or \(d=3\). All polytopes in this paper are assumed to be bounded, connected, and finite (i.e. have finitely many faces). We do not require the polytope to be convex here.

Theorem 3.2

Suppose \(d=2\), \(B=A\), and \(f = f_0 \textbf{1}_A\), where \(f_0 : = |A|^{-1}\). Assume that A is compact and polygonal.

Let \(|\partial A|\) denote the length of the boundary of A. Let \(k \in \mathbb {N}\), \(\zeta \in \mathbb {R}\). Then

One might seek to extend Theorem 3.2 to a more general class of sets A including both polygons (covered by Theorem 3.2) and sets with \(C^2\) boundary (covered by Theorem 3.1). One could take sets A having piecewise \(C^2\) boundary, with the extra condition that the corners of A are not too pointy, in the sense that for each corner q, there exists a triangle with vertex at q that is contained in A. We would expect that it is possible to extend the result to this more general class.

When \(d=3\) and A is polyhedral, there are several cases to consider, depending on the value of the angle \(\alpha _1\) subtended by the ‘sharpest edge’ of \(\partial A\). The angle subtended by an edge e is defined as follows. Denote the two faces meeting at e by \(F_1,F_2\). Let p be a point in the interior of the edge, and for \(i=1,2\) let \(\ell _i\) be a line segment starting from p that lies within \(F_i\) and is perpendicular to the edge e. Let \(\theta \) denote the angle between the line segments \(\ell _1\) and \(\ell _2\) (so \(0< \theta < \pi \)). The angle subtended by the edge e is \(\theta \) if there is a neighbourhood U of p such that \(A \cap U\) is convex, and is \(2 \pi - \theta \) if there is no such neighbourhood of p.

If \(\alpha _1 < \pi /2\) then the location in A furthest from the sample \(\mathscr {X}_n\) is likely to be on a 1-dimensional edge of \(\partial A\), while if \(\alpha _1 > \pi /2\) the furthest location from the sample is likely to be on a 2-dimensional face of \(\partial A\), in the limit \(n \rightarrow \infty \). If \(\alpha _1 = \pi /2\) (for example, for a cube), both of these possibilities have non-vanishing probability in the limit.

Since there are several cases to consider, to make the statement of the result more compact we put it in terms of \(\mathbb {P}[R_{n,k} \le r_n]\) for a sequence of constants \((r_n)\).

Theorem 3.3

Suppose \(d=3\), A is polyhedral, \(B=A\) and \(f = f_0 \textbf{1}_A\), where \(f_0 := |A|^{-1}\). Denote the 1-dimensional edges of A by \(e_1,\ldots ,e_\kappa \). For each \(i \in \{1,\ldots , \kappa \}\), let \(\alpha _i\) denote the angle that A subtends at edge \(e_i\) (with \(0< \alpha _i < 2 \pi \)), and write \(|e_i|\) for the length of \(e_i\). Assume the edges are listed in order so that \(\alpha _1 \le \alpha _2 \le \cdots \le \alpha _\kappa \). Let \(|\partial _1 A|\) denote the total area (i.e., 2-dimensional Hausdorff measure) of all faces of A, and let \(|\partial _2 A| \) denote the total length of those edges \(e_i\) for which \(\alpha _i= \alpha _1\). Let \(\beta \in \mathbb {R}\) and \(k \in \mathbb {N}\). Let \((r_t)_{t >0}\) be a family of real numbers satisfying (as \(t \rightarrow \infty \))

Then

We now give a result in general d for \({\tilde{R}}_{n,k}\), and for \(R_{n,k}\) in the case with \({\overline{B}} \subset A^o\) (now we no longer require \(B=A\)). These cases are simpler because boundary effects are avoided. In fact, the result stated below has some overlap with already known results; it is convenient to state it here too for comparison with the results just given, and because we shall be using it to prove those results.

Proposition 3.4

Suppose A is compact with \( |A| > 0\) and \(f = f_0 \textbf{1}_A\), where \(f_0 := |A|^{-1}\), and \(B \subset A\) is Riemann measurable. Let \(k \in \mathbb {N}\) and \(\beta \in \mathbb {R}\). Then

If moreover B is closed with \(B \subset A^o\), then

The case \(k=1\) of the second equality of (3.7) can be found in [6]. It provides a stronger asymptotic result than the one in [18]. A similar statement to the case \(k=1\) of (3.6) can be found in [17].

Remark 3.5

Let \(k \in \mathbb {N}\). The definition (2.1) of the coverage threshold \(R_{n,k}\) suggests we think of the number and (random) locations of points as being given, and consider the smallest radius of balls around those points needed to cover B k times.

Alternatively, as in [16], one may think of the radius of the balls as being given, and consider the smallest number of balls (with locations generated sequentially at random) needed to cover B k times. That is, given \(r>0\), define the random variable

\[ N(r,k) := \inf \{ n \in \mathbb {N}: \mathscr {X}_n(B(x,r)) \ge k ~~ \forall x \in B \}, \]

and note that \(N(r,k) \le n\) if and only if \(R_{n,k} \le r\). In the setting of Theorem 3.1, 3.2 or 3.3, one may obtain a limiting distribution for N(r, k) (suitably scaled and centred) as \(r \downarrow 0\) by using those results together with the following (we write \({\mathop {\longrightarrow }\limits ^{{{\mathscr {D}}}}}\) for convergence in distribution):

Proposition 3.6

Let \(k \in \mathbb {N}\). Suppose Z is a random variable with a continuous cumulative distribution function, and \(a, b, c, c' \in \mathbb {R}\) with \(a>0, b >0\) are such that \( a n R_{n,k}^d - b \log n -c \log \log n {- c' } {\mathop {\longrightarrow }\limits ^{{{\mathscr {D}}}}}Z \) as \(n \rightarrow \infty \). Then as \(r \downarrow 0\),

For example, using Theorem 3.1, and applying Proposition 3.6 with \(a = \theta _d f_0/2\), \(b= (d-1)/d\), \(c = d+ k -3 +1/d\), and \(c' = ((d-1)/d) \log f_0\), we obtain the following:

Corollary 3.7

Suppose that \(d\ge 2\) and \(f=f_0\textbf{1}_A\), where \(A \subset \mathbb {R}^d\) is compact, and has \(C^2\) boundary, and \(f_0 := |A|^{-1}\). Assume \(B=A\). Let \(k \in \mathbb {N}\), \(\zeta \in \mathbb {R}\). Then

4 Strong laws of large numbers

The results in this section provide strong laws of large numbers (SLLNs) for \(R_{n}\). For these results we relax the condition that f be uniform on A. We give strong laws for \(R_n\) when \(A=B\) and A is either smoothly bounded or a polytope. Also for general A we give strong laws for \(R_n\) when \({\overline{B}} \subset A^o\), and for \({\tilde{R}}_n\) for general B.

More generally, we consider \(R_{n,k}\), allowing k to vary with n. Throughout this section, assume we are given a constant \(\beta \in [0,\infty ]\) and a sequence \(k: \mathbb {N}\rightarrow \mathbb {N}\) with

We make use of the following notation throughout:

Observe that \(-H(\cdot )\) is unimodal with a maximum value of 0 at \(t=1\). Given \(a \in [0,\infty )\), we define the function \(\hat{H}_a: [0,\infty ) \rightarrow [a,\infty )\) by

\[ y = \hat{H}_a(x) \iff y H(a/y) = x ~~ \textrm{and} ~~ y \ge a, \]

with \(\hat{H}_0(0):=0\). Note that \(\hat{H}_a(x)\) is increasing in x, and that \(\hat{H}_0(x)=x\) and \(\hat{H}_a(0)=a\).

Throughout this paper, the phrase ‘almost surely’ or ‘a.s.’ means ‘except on a set of \(\mathbb {P}\)-measure zero’. We write \(f|_A\) for the restriction of f to A. If \(f_0=0,\) \( b>0\) we interpret \(b/f_0\) as \(+\infty \) in the following limiting statements, and likewise for \(f_1\).

Theorem 4.1

Suppose that \(d \ge 2\) and \(A \subset \mathbb {R}^d\) is compact with \(C^2\) boundary, and that \(f|_A\) is continuous at x for all \(x \in \partial A\). Assume also that \(B=A\) and (4.1) holds. Then as \(n \rightarrow \infty \), almost surely

In particular, if \(k \in \mathbb {N}\) is a constant, then as \(n \rightarrow \infty \), almost surely

We now consider the case where A is a polytope. Assume the polytope A is compact and finite, that is, has finitely many faces. Let \(\varPhi (A)\) denote the set of all faces of A (of all dimensions). Given a face \(\varphi \in \varPhi (A)\), denote the dimension of this face by \(D(\varphi )\). Then \(0 \le D(\varphi ) \le d-1\), and \(\varphi \) is a \(D(\varphi )\)-dimensional polytope embedded in \(\mathbb {R}^d\). Set \(f_\varphi := \inf _{x \in \varphi }f(x)\), let \(\varphi ^o\) denote the relative interior of \(\varphi \), and set \(\partial \varphi := \varphi {\setminus } \varphi ^o\). Then there is a cone \({{\mathscr {K}}}_\varphi \) in \(\mathbb {R}^d\) such that every \(x \in \varphi ^o\) has a neighbourhood \(U_x\) such that \(A \cap U_x = (x+ {{\mathscr {K}}}_\varphi ) \cap U_x\). Define the angular volume \(\rho _\varphi \) of \(\varphi \) to be the d-dimensional Lebesgue measure of \({{\mathscr {K}}}_\varphi \cap B(o,1)\).

For example, if \(D(\varphi )=d-1\) then \(\rho _\varphi = \theta _d/2\). If \(D(\varphi ) = 0\) then \(\varphi = \{v\}\) for some vertex \(v \in \partial A\), and \(\rho _\varphi \) equals the volume of \(B(v,r) \cap A\), divided by \(r^d\), for all sufficiently small r. If \(d=2\), \(D(\varphi )=0\) and \(\omega _\varphi \) denotes the angle subtended by A at the vertex \(\varphi \), then \(\rho _{\varphi } = \omega _\varphi /2\). If \(d=3\) and \(D(\varphi )=1\), and \(\alpha _\varphi \) denotes the angle subtended by A at the edge \(\varphi \) (which is either the angle between the two boundary planes of A meeting at \(\varphi \), or \(2 \pi \) minus this angle), then \(\rho _{\varphi } = 2 \alpha _\varphi /3\).
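The three examples above can be cross-checked against each other: a vertex in \(d=2\) or an edge in \(d=3\) at which A subtends the flat angle \(\pi \) looks locally like a half-space, so its angular volume should equal \(\theta _d/2\). The following sketch (function names are ours) encodes the stated formulas and performs that check.

```python
import math

def unit_ball_volume(d):
    """Volume theta_d of the unit Euclidean ball in R^d."""
    return math.pi ** (d / 2) / math.gamma(1 + d / 2)

def rho_facet(d):
    """Angular volume of a (d-1)-dimensional face: half the unit ball."""
    return unit_ball_volume(d) / 2

def rho_vertex_2d(omega):
    """Angular volume of a polygon vertex with interior angle omega."""
    return omega / 2  # area of a unit-radius sector of angle omega

def rho_edge_3d(alpha):
    """Angular volume of a polyhedron edge with subtended angle alpha."""
    return 2 * alpha / 3  # fraction alpha / (2 pi) of the unit ball volume 4 pi / 3

# Edges of a cube subtend the angle pi / 2.
rho_cube_edge = rho_edge_3d(math.pi / 2)
```

For a cube edge this gives \(\pi /3\), one quarter of the unit ball volume, as expected for a right-angled wedge.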

Our result for A a polytope includes a condition that the polytope be convex; we conjecture that this condition is not needed. We include connectivity in the definition of a polytope, so for \(d=1\) a polytope is defined to be an interval.

Theorem 4.2

Suppose A is a convex compact finite polytope in \(\mathbb {R}^d\). Assume that \(f|_A\) is continuous at x for all \(x \in \partial A\), and set \(B=A\). Assume \(k(\cdot )\) satisfies (4.1). Then, almost surely,

In the next three results, we spell out some special cases of Theorem 4.2.

Corollary 4.3

Suppose that \(d=2\), A is a convex polygon with \(B=A \), and \(f|_A\) is continuous at x for all \(x \in \partial A\). Let V denote the set of vertices of A, and for \(v \in V\) let \(\omega _v\) denote the angle subtended by A at vertex v. Assume (4.1) with \(\beta < \infty \). Then, almost surely,

In particular, for any constant \(k \in \mathbb {N}\), \( \lim _{n \rightarrow \infty } \left( \frac{ n \pi R_{n,k}^2}{\log n}\right) = \max \left( \frac{1}{f_0}, \frac{1}{ f_1} \right) = \frac{1}{f_0} . \)

Corollary 4.4

Suppose \(d=3\) (so \(\theta _d = 4\pi /3\)), A is a convex polyhedron with \(B=A \), and \(f|_A\) is continuous at x for all \(x \in \partial A\). Let V denote the set of vertices of A, and E the set of edges of A. For \(e\in E\), let \(\alpha _e\) denote the angle subtended by A at edge e, and \(f_e\) the infimum of f over e. For \(v \in V\) let \(\rho _v\) denote the angular volume of vertex v. Suppose (4.1) holds with \(\beta < \infty \). Then, almost surely,

In particular, if \(\beta =0\) the above limit comes to \(\max \left( \frac{3}{ 4 \pi f_0}, \frac{1}{\pi f_1}, \max _{e \in E} \left( \frac{1 }{2 \alpha _e f_e } \right) \right) \).
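The \(\beta =0\) expression above is straightforward to evaluate for a concrete polyhedron. The sketch below is an illustration under our own conventions: the function name and the representation of edges as (angle, density infimum) pairs are assumptions made for the example. For the unit cube with uniform density \(f \equiv 1\), every edge subtends the angle \(\pi /2\), so the face and edge terms coincide.

```python
import math

def limit_beta0_polyhedron(f0, f1, edges):
    """Evaluate max(3/(4 pi f0), 1/(pi f1), max_e 1/(2 alpha_e f_e)).

    edges is an iterable of (alpha_e, f_e) pairs, one per edge.
    """
    interior_term = 3 / (4 * math.pi * f0)
    face_term = 1 / (math.pi * f1)
    edge_term = max(1 / (2 * alpha * f_e) for alpha, f_e in edges)
    return max(interior_term, face_term, edge_term)

# Unit cube with uniform density 1: twelve edges, each with angle pi / 2.
cube_edges = [(math.pi / 2, 1.0)] * 12
cube_limit = limit_beta0_polyhedron(1.0, 1.0, cube_edges)
```

A polyhedron with a sharper edge, that is with some \(\alpha _e < \pi /2\), would make the edge term strictly the largest of the three under uniform density.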

Corollary 4.5

Suppose \(A=B = [0,1]^d\), and \(f|_A\) is continuous at x for all \(x \in \partial A\). For \(1 \le j \le d\) let \(\partial _j\) denote the union of all \((d-j)\)-dimensional faces of A, and let \(f_j\) denote the infimum of f over \(\partial _j\). Assume (4.1) with \(\beta < \infty \). Then

It is perhaps worth spelling out what the preceding results mean in the special case where \(\beta =0\) (for example, if k(n) is a constant) and also \(\mu \) is the uniform distribution on A (i.e. \(f(x) \equiv f_0\) on A). In this case, the right hand side of (4.6) comes to \((2-2/d)/f_0\), while the right hand side of (4.8) comes to \(f_0^{-1}\max (1/ \theta _d, \max _{\varphi \in \varPhi (A)} \frac{D(\varphi )}{(d \rho _\varphi )})\). The limit in (4.9) comes to \(1/(\pi f_0)\), while the limit in Corollary 4.4 comes to \(f_0^{-1} \max [ 1/\pi , \max _e (1 /(2 \alpha _e))]\), and the right hand side of (4.10) comes to \(2^{d-1}/(\theta _d d)\).

Remark 4.6

The notion of coverage threshold is analogous to that of connectivity threshold in the theory of random geometric graphs [21]. Our results show that the threshold for full coverage by the balls \(B(X_i,r)\), is asymptotically twice the threshold for the union of these balls to be connected, if \(A^o\) is connected, at least when A has a smooth boundary or A is a convex polytope. This can be seen from comparison of Theorem 4.1 above with [21, Theorem 13.7], and comparison of Corollary 4.5 above with [22, Theorem 2.5].

Remark 4.7

We compare our results with [8]. In the setting of our Theorem 4.1, [8, Theorem 3] and [8, Remark 1] give an upper bound on \(\limsup _{n \rightarrow \infty }(n \theta _d R_n^d/\log n)\) of \(\max (f_0^{-1}, 2f_1^{-1})\) in probability or \(\max (2f^{-1}_0, 4f_1^{-1})\) almost surely. In the setting of our Theorem 4.2, they give an upper bound of \(f_0^{-1} \vee \max _{\varphi } (\theta _d/(f_\varphi \rho _\varphi ))\) in probability, or twice this almost surely. Our (4.6) and (4.8) improve significantly on those results.

Remark 4.8

In Theorem 4.2 we do not consider the case where A is a non-convex polytope. To generalize the proof of Lemma 6.12 to non-convex polytopes for general d would seem to require considerably more work.

Our final result is a law of large numbers for \({\tilde{R}}_{n,k}\), no longer requiring \(B=A\). In the case where B is contained in the interior of A, this easily yields a law of large numbers for the k-coverage threshold \(R_{n,k}\). Recall from Sect. 2 that \(\mu \) denotes the probability measure on A with density f.

Proposition 4.9

Suppose that either (i) B is compact and Riemann measurable with \(\mu (B) >0\), and \(B \subset A^o\), and f is continuous on A; or (ii) \(B=A\). Assume (4.1) holds. Then, almost surely,

In particular, if \(k \in \mathbb {N}\) is a constant then

In Case (i), all of the above almost sure limiting statements hold for \(R_{n,k(n)}\) as well as for \({\tilde{R}}_{n,k(n)}\).

Proposition 4.9 has some overlap with known results; the uniform case with \(A=B = [0,1]^d\) and \(f \equiv 1\) on A is covered by [10, Theorem 1]. Taking \(\textbf{C}\) of that paper to be the class of Euclidean balls centred on the origin, we see that the quantity denoted \(M_{1,m}\) in [10] equals \(\tilde{R}_n\). In [10, Example 3] it is stated that the Euclidean balls satisfy the conditions of [10, Theorem 3]. See also [17]. Note also that [15] has a result similar to the case of Proposition 4.9 where \(d=2\), \(A=B= [0,1]^2\) and f is uniform over A.

5 Strategy of proofs

We first give an overview of the strategy for the proofs, in Sect. 6, of the strong laws of large numbers that were stated in Sect. 4.

For \(n \in \mathbb {N}\) and \(p \in [0,1]\) let \(\textrm{Bin}(n,p)\) denote a binomial random variable with parameters n, p. Recall that \(H(\cdot )\) was defined at (4.3), and \(Z_t\) is a Poisson(t) variable for \(t>0\). The proofs in Sect. 6 rely heavily on the following lemma.

Lemma 5.1

(Chernoff bounds) Suppose \(n \in \mathbb {N}\), \(p \in (0,1)\), \(t >0\) and \(0< k < n\).

- (a) If \(k \ge np\) then \(\mathbb {P}[ \textrm{Bin}(n,p) \ge k ] \le \exp \left( - np H(k/(np) ) \right) \).
- (b) If \(k \le np\) then \(\mathbb {P}[ \textrm{Bin}(n,p) \le k ] \le \exp \left( - np H(k/(np)) \right) \).
- (c) If \(k \ge e^2 np\) then \(\mathbb {P}[ \textrm{Bin}(n,p) \ge k ] \le \exp \left( - (k/2) \log (k/(np))\right) \le e^{-k}\).
- (d) If \(k < t\) then \(\mathbb {P}[Z_t \le k ] \le \exp (- t H(k/t))\).
- (e) If \(k \in \mathbb {N}\) then \(\mathbb {P}[Z_t = k ] \ge (2 \pi k)^{-1/2}e^{-1/(12k)}\exp (- t H(k/t))\).

Proof

See e.g. [21, Lemmas 1.1, 1.2 and 1.3]. \(\square \)
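As a sanity check on Lemma 5.1, the following minimal Python sketch compares exact binomial and Poisson probabilities with the stated bounds at a few parameter values. It assumes that the function H of (4.3), which is not restated in this section, has the standard form \(H(0)=1\) and \(H(a) = 1-a+a\log a\) for \(a>0\); all function names are illustrative and do not come from the paper.

```python
import math


def H(a: float) -> float:
    # Assumed form of H from (4.3): H(0) = 1, H(a) = 1 - a + a*log(a) for a > 0.
    return 1.0 if a == 0 else 1.0 - a + a * math.log(a)


def chernoff(mean: float, k: float) -> float:
    # Common right-hand side exp(-mean * H(k / mean)) of parts (a), (b) and (d).
    return math.exp(-mean * H(k / mean))


def binom_pmf(n: int, p: float, j: int) -> float:
    return math.comb(n, j) * p**j * (1.0 - p) ** (n - j)


def binom_upper(n: int, p: float, k: int) -> float:
    # Exact P[Bin(n, p) >= k].
    return sum(binom_pmf(n, p, j) for j in range(k, n + 1))


def binom_lower(n: int, p: float, k: int) -> float:
    # Exact P[Bin(n, p) <= k].
    return sum(binom_pmf(n, p, j) for j in range(k + 1))


def poisson_pmf(t: float, k: int) -> float:
    # Exact P[Z_t = k], computed on the log scale for stability.
    return math.exp(-t + k * math.log(t) - math.lgamma(k + 1))


def poisson_lower(t: float, k: int) -> float:
    # Exact P[Z_t <= k].
    return sum(poisson_pmf(t, j) for j in range(k + 1))


def part_e_lower_bound(t: float, k: int) -> float:
    # Right-hand side of part (e).
    return (2 * math.pi * k) ** -0.5 * math.exp(-1 / (12 * k)) * chernoff(t, k)


if __name__ == "__main__":
    n, p, t = 200, 0.05, 10.0  # illustrative values with n * p = t = 10
    print("(a)", binom_upper(n, p, 20), "<=", chernoff(n * p, 20))
    print("(b)", binom_lower(n, p, 4), "<=", chernoff(n * p, 4))
    print("(d)", poisson_lower(t, 4), "<=", chernoff(t, 4))
    print("(e)", poisson_pmf(t, 4), ">=", part_e_lower_bound(t, 4))
```

Part (e) is nearly sharp because it is Stirling's formula in disguise, which is why it can be paired with the upper bounds (b) and (d) to identify the exponential rate in the heuristics of this section.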

Recall that \(R_{n,k}\) is defined at (2.1), that we assume \((k(n))_{n \ge 1}\) satisfies (4.1) for some \(\beta \in [0,\infty ]\), and that \(\hat{H}_\beta (x)\) is defined to be the \( y \ge \beta \) such that \(y H(\beta /y) =x\), where \(H(\cdot )\) was defined at (4.3).

If \(f \equiv f_0\) on A for a constant \(f_0\), and \(r>0\), then for \(x \in A^{(r)}\), \(\mathscr {X}_n(B(x,r))\) is binomial with mean \(n f_0 \theta _d r^d =: M\), say. Hence if \(M > k(n)\), then parts (b) and (e) of Lemma 5.1 suggest that we should have

\[ \mathbb {P}[ \mathscr {X}_n(B(x,r)) < k(n) ] \approx \exp \left( - M H( k(n)/M ) \right) . \]

If \(\beta < \infty \), and we choose \(r=r_n\) so that \(M = a \log n\) with \(a = \hat{H}_\beta (1)\) this probability approximates to \(\exp ( - a (\log n) H( (\beta \log n) / (a \log n)))\), which comes to \(n^{-1}\). Since we can find \(n^{1+o(1)}\) disjoint balls of radius \(r_n\) where this might happen, this suggests \(a = \hat{H}_\beta (1)\) is the critical value of \(a := M/\log n\), below which the interior region \(A^{(r_n)}\) is not covered (k(n) times) with high probability, and above which it is covered. We can then improve the ‘with high probability’ statement to an ‘almost surely for large enough n’ statement using Lemma 6.1. If f is continuous but not constant on A, the value of \(f_0\) defined at (4.2) still determines the critical choice of a. If \(\beta = \infty \) instead, taking \(M= a' k(n)\) the critical value of \(a'\) is now 1. These considerations lead to Proposition 4.9.
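The critical constant \(\hat{H}_\beta (1)\) in this heuristic can be computed numerically. The sketch below is illustrative only: it assumes the standard form \(H(a) = 1-a+a\log a\) (with \(H(0)=1\)) for the function defined at (4.3), and solves \(y H(\beta /y) = x\) for \(y \ge \beta \) by bisection, which is legitimate because under that assumption \(y \mapsto yH(\beta /y)\) is nondecreasing on \([\beta ,\infty )\). The name `hat_H` is hypothetical.

```python
import math


def H(a: float) -> float:
    # Assumed form of H from (4.3): H(0) = 1, H(a) = 1 - a + a*log(a) for a > 0.
    return 1.0 if a == 0 else 1.0 - a + a * math.log(a)


def hat_H(beta: float, x: float, tol: float = 1e-12) -> float:
    """Return the y >= beta with y * H(beta / y) = x, for x > 0, by bisection."""

    def g(y: float) -> float:
        return y * H(beta / y)

    lo, hi = beta, max(beta, 1.0)
    while g(hi) < x:  # grow the bracket until it contains the root
        hi *= 2.0
    while hi - lo > tol * max(1.0, hi):
        mid = 0.5 * (lo + hi)
        if g(mid) < x:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


if __name__ == "__main__":
    beta, n = 1.0, 10**6
    a = hat_H(beta, 1.0)
    # With M = a * log(n) and k(n) ~ beta * log(n), the exponent equals log(n).
    prob = math.exp(-a * math.log(n) * H(beta / a))
    print("hat_H_beta(1) =", a, " heuristic probability * n =", prob * n)
```

With \(\beta = 0\) the equation reduces to \(y = x\), so \(\hat{H}_0(x) = x\) under the same assumption on H.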

Now consider the boundary region \(A {\setminus } A^{(r)}\), in the case where \(\partial A\) is smooth. We can argue similarly to before, except that for \(x \in \partial A\) the approximate mean of \(\mathscr {X}_n(B(x,r))\) changes to \(nf_1 \theta _d r^d/2 =: M'\). If \(\beta < \infty \), and we now choose \(r = r_n\) so that \(M' = a' \log n\) with \(a' = \hat{H}_\beta (1-1/d)\), then \(\mathbb {P}[\mathscr {X}_n(B(x,r_n) ) < k(n) ] \approx n^{-(1-1/d)}\). Since we can find \(n^{1-1/d + o(1)}\) disjoint balls of radius \(r_n\) centred in \(A {\setminus } A^{(r_n)}\), this suggests \(a' := \hat{H}_\beta (1-1/d)\) is the critical choice of \(a'\) for covering \(A {\setminus } A^{(r_n)}\).
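To compare the interior and boundary heuristics concretely, the following sketch computes the leading constants c in \(r_n^d \approx c (\log n)/n\) for the simplest case \(\beta = 0\) with \(f_0 = f_1 = 1\). It assumes \(\hat{H}_0(x) = x\) (which holds if H has the standard form \(1-a+a\log a\)) and the usual formula \(\theta _d = \pi ^{d/2}/\Gamma (d/2+1)\) for the volume of the unit ball; the function names are illustrative.

```python
import math


def theta(d: int) -> float:
    # Volume of the unit ball in R^d.
    return math.pi ** (d / 2) / math.gamma(d / 2 + 1)


def interior_constant(d: int, f0: float = 1.0) -> float:
    # Solves n * f0 * theta_d * r^d = log(n), i.e. M = hat_H_0(1) * log(n).
    return 1.0 / (theta(d) * f0)


def boundary_constant(d: int, f1: float = 1.0) -> float:
    # Solves n * f1 * theta_d * r^d / 2 = (1 - 1/d) * log(n).
    return 2.0 * (1.0 - 1.0 / d) / (theta(d) * f1)


if __name__ == "__main__":
    for d in (2, 3, 4):
        print(d, interior_constant(d), boundary_constant(d))
```

Under these assumptions the two constants coincide when \(d=2\), while for \(d \ge 3\) the boundary constant is larger by the factor \(2(1-1/d)\). For \(d=3\) the boundary constant is \(1/\pi \), which matches the scaling \(n \pi R_n^3/\log n \rightarrow 1\) quoted in the abstract.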

For polytopal A we consider covering the regions near to each of the lower dimensional faces of \(\partial A\) in an analogous way; the dimension of a face affects both the \(\mu \)-content of a ball centred on that face, and the number of disjoint balls that can be packed into the region near the face.

Next, we describe the strategy for the proof, in Sect. 7, of the weak convergence results that were stated in Sect. 3.

First, we shall provide a general ‘De-Poissonization’ lemma (Lemma 7.1), as a result of which for each of the Theorems in Sect. 3 it suffices to prove the results for a Poisson process rather than a binomial point process (i.e., for \(R'_{t,k}\) rather than for \(R_{n,k}\)).

Next, we shall provide a general lemma (Lemma 7.2) giving the limiting probability of covering (k times, with k now fixed) a bounded region of \(\mathbb {R}^d\) by a spherical Poisson Boolean model (SPBM) on the whole of \(\mathbb {R}^d\), in the limit of high intensity and small balls. This is based on results from [16] (or [12] if \(k=1\)). Applying Lemma 7.2 for an SPBM with all balls of the same radius yields a proof of Proposition 3.4.

Next, we shall consider the SPBM with balls of equal radius \(r_t\), centred on a homogeneous Poisson process of intensity \(t f_0 \) in a d-dimensional half-space. In the large-t limit, with \(r_t\) shrinking in an appropriate way, in Lemma 7.4 we determine the limiting probability that a given bounded set within the surface of the half-space is covered, by applying Lemma 7.2 in \(d-1\) dimensions, now with balls of varying radii. Moreover we will show that a region in the half-space within distance \(r_t\) of that set is covered with the same limiting probability.

We can then complete the proof of Theorem 3.2 by applying Lemma 7.4 to determine the limiting probability that the region near the boundaries of a polygonal set A is covered, and Proposition 3.4 to determine the limiting probability that the interior region is covered, along with a separate argument to show the regions near the corners of polygonal A are covered with high probability.

For Theorem 3.3 we again use Proposition 3.4 to determine the limiting probability that the interior region is covered, and Lemma 7.4 to determine the limiting probability that the region near the faces (but not the edges) of polyhedral A is covered. To deal with the region near the edges of A, we also require a separate lemma (Lemma 7.10) determining the limiting probability that a bounded region near the edge of an infinite wedge-shaped region in \(\mathbb {R}^3\) is covered by an SPBM restricted to that wedge-shaped region.

The proof of Theorem 3.1 requires further ideas. Let \(\gamma \in (1/(2d),1/d)\). We shall work simultaneously on two length scales, namely the radius \(r_t\) satisfying (7.15) (and hence satisfying \(r_t = \varTheta (((\log t)/t)^{1/d})\)), and a coarser length-scale given by \(t^{-\gamma }\). If \(d=2\), we approximate to \(\partial A\) by a polygon of side-length that is \(\varTheta (t^{-\gamma })\). We approximate to \({{\mathscr {P}}}_t\) by a Poisson process inside the polygon, and can determine the asymptotic probability of complete coverage of this approximating polygon by considering a lower-dimensional Boolean model on each of the edges. In fact we shall line up these edges by means of rigid motions, into a line segment embedded in the plane; in the limit we obtain the limiting probability of covering this line segment with balls centred on a Poisson process in the half-space to one side of this line segment, which we know about by Lemma 7.4. By separate estimates we can show that the error terms either from the corners of the polygon, or from the approximation of Poisson processes, are negligible in the large-t limit.
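The separation between the two length scales can be seen numerically. The sketch below is only an illustration: it takes the constant in \(r_t = \varTheta (((\log t)/t)^{1/d})\) to be 1, which is an assumption (the precise \(r_t\) is fixed by (7.15)), and the function names are hypothetical.

```python
import math


def fine_scale(t: float, d: int) -> float:
    # Stand-in for r_t, with the Theta-constant assumed equal to 1.
    return (math.log(t) / t) ** (1.0 / d)


def coarse_scale(t: float, gamma: float) -> float:
    # Side-length scale t^{-gamma} of the approximating polygon.
    return t ** (-gamma)


def scale_ratio(t: float, d: int, gamma: float) -> float:
    # Ratio coarse / fine, after checking gamma lies in (1/(2d), 1/d).
    if not (1.0 / (2 * d) < gamma < 1.0 / d):
        raise ValueError("gamma must lie in (1/(2d), 1/d)")
    return coarse_scale(t, gamma) / fine_scale(t, d)


if __name__ == "__main__":
    d, gamma = 2, 0.4
    for t in (1e6, 1e9, 1e12):
        print(t, coarse_scale(t, gamma), fine_scale(t, d), scale_ratio(t, d, gamma))
```

Because \(\gamma < 1/d\), the ratio \(t^{-\gamma }/r_t\) tends to infinity, so each edge of the approximating polygon is long compared with the ball radius even though both scales tend to zero.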

For \(d \ge 3\) we would like to approximate to A by a polyhedral set \(A_t\) obtained by taking its surface to be a triangulation of the surface of A with side-lengths \(\varTheta (t^{-\gamma })\). However, two obstacles to this strategy present themselves.

The first obstacle is that in 3 or more dimensions, it is harder to be globally explicit about the set \(A_t\) and the set difference \(A \triangle A_t\). We deal with this by triangulating \(\partial A\) locally rather than globally; we break \(\partial A\) into finitely many pieces, each of which lies within a single chart within which \(\partial A\), after a rotation, can be expressed as the graph of a \(C^2\) function on a region U in \(\mathbb {R}^{d-1}\). Then we triangulate U (in the sense of tessellating it into simplices) explicitly and use this to determine an explicit local triangulation (in the sense of approximating the curved surface by a union of \((d-1)\)-dimensional simplices) of \(\partial A\).

The second obstacle is that the simplices in the triangulation cannot in general be reassembled into a \((d-1)\)-dimensional cube. To get around this, we shall pick \(\gamma ' \in (\gamma ,1/d)\) and subdivide these simplices into smaller \((d-1)\)-dimensional cubes of side \(t^{-\gamma '}\); we can reassemble these smaller \((d-1)\)-dimensional cubes into a cube in \(\mathbb {R}^{d-1}\), and control the boundary region near the boundaries of the smaller \((d-1)\)-dimensional cubes, or near the boundary of the simplices, by separate estimates.

6 Proof of strong laws of large numbers

In this section we prove the results stated in Sect. 4. Throughout this section we are assuming we are given a constant \(\beta \in [0,\infty ]\) and a sequence \((k(n))_{n \in \mathbb {N}}\) satisfying (4.1). Recall that \(\mu \) denotes the distribution of \(X_1\), and this has a density f with support A, and that \(B \subset A\) is fixed, and \(R_{n,k}\) is defined at (2.1).

We shall repeatedly use the following lemma. It is based on what in [21] was called the ‘subsequence trick’. This result says that if an array of random variables \(U_{n,k}\) is monotone in n and k, and \(U_{n,k(n)}\), properly scaled, converges in probability to a constant at rate \(n^{-\varepsilon }\), one may be able to improve this to almost sure convergence.

Lemma 6.1

(Subsequence trick) Suppose \((U_{n,k},(n,k) \in \mathbb {N}\times \mathbb {N})\) is an array of random variables on a common probability space such that \(U_{n,k} \) is nonincreasing in n and nondecreasing in k, that is, \(U_{n+1,k} \le U_{n,k} \le U_{n,k+1} \) almost surely, for all \((n,k) \in \mathbb {N}\times \mathbb {N}\). Let \(\beta \in [0,\infty ), \varepsilon>0, c >0\), and suppose \((k(n),n \in \mathbb {N})\) is an \(\mathbb {N}\)-valued sequence such that \(k(n)/\log n \rightarrow \beta \) as \(n \rightarrow \infty \).

(a) Suppose \( \mathbb {P}[n U_{n, \lfloor (\beta + \varepsilon ) \log n \rfloor } > \log n] \le c n^{-\varepsilon }, \) for all but finitely many n. Then \(\mathbb {P}[ \limsup _{n \rightarrow \infty } n U_{n,k(n)} /\log n \le 1] =1\).

(b) Suppose \(\varepsilon < \beta \) and \( \mathbb {P}[n U_{n, \lceil (\beta - \varepsilon ) \log n \rceil } \le \log n] \le c n^{-\varepsilon }, \) for all but finitely many n. Then \(\mathbb {P}[ \liminf _{n \rightarrow \infty } n U_{n,k(n)} /\log n \ge 1] =1\).

Proof

(a) For each n set \(k'(n) := \lfloor (\beta + \varepsilon ) \log n \rfloor \). Pick \(K \in \mathbb {N}\) with \(K \varepsilon >1\). Then by our assumption, we have for all large enough n that \(\mathbb {P}[n^K U_{n^K, k'(n^K)} > \log (n^K) ]\le c n^{-K \varepsilon }\), which is summable in n. Therefore by the Borel-Cantelli lemma, there exists a random but almost surely finite N such that for all \(n \ge N\) we have

and also \(k(m) \le (\beta + \varepsilon /2) \log m\) for all \(m \ge N^K\), and moreover \((\beta + \varepsilon /2) \log ((n+1)^K) \le (\beta + \varepsilon ) \log (n^K) \) for all \(n \ge N\). Now for \(m \in \mathbb {N}\) with \(m \ge N^K\), choose \(n \in \mathbb {N}\) such that \(n^K \le m < (n+1)^K\). Then

so \(k(m) \le k'(n^K)\). Since \(U_{m,k}\) is nonincreasing in m and nondecreasing in k,

which gives us the result asserted.

(b) The proof is similar to that of (a), and is omitted. \(\square \)
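The mechanics of the subsequence trick can be illustrated with a short sketch. For each m it finds the block index n with \(n^K \le m < (n+1)^K\) used in the proof of part (a), and the factor \(((n+1)/n)^K\) by which passing from the subsequence \((n^K)\) to a general m can inflate the bound; this factor tends to 1. The function names are illustrative and not taken from the paper.

```python
def block_index(m: int, K: int) -> int:
    """Return the largest integer n with n**K <= m (requires m >= 1, K >= 1)."""
    n = int(round(m ** (1.0 / K)))
    # Correct any floating-point error using exact integer arithmetic.
    while n**K > m:
        n -= 1
    while (n + 1) ** K <= m:
        n += 1
    return n


def sandwich_factor(m: int, K: int) -> float:
    # Ratio (n+1)^K / n^K for the block containing m; tends to 1 as m grows.
    n = block_index(m, K)
    return ((n + 1) / n) ** K


def smallest_K(eps: float) -> int:
    # Smallest integer K with K * eps > 1, so that n^{-K * eps} is summable.
    return int(1.0 // eps) + 1


if __name__ == "__main__":
    K = smallest_K(0.3)
    for m in (10**3, 10**6, 10**9):
        print(m, block_index(m, K), sandwich_factor(m, K))
```

The choice \(K \varepsilon > 1\) is what makes the probabilities along the subsequence summable, so that the Borel-Cantelli lemma applies; monotonicity of \(U_{n,k}\) in n and k then fills in the values of m between consecutive powers.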

6.1 General lower and upper bounds

In this subsection we present asymptotic lower and upper bounds on \(R_{n,k(n)}\), not requiring any extra assumptions on A and B. In fact, A here can be any metric space endowed with a probability measure \(\mu \), and B can be any subset of A. The definition of \(R_{n,k}\) at (2.1) carries over in an obvious way to this general setting.

Later, we shall derive the results stated in Sect. 4 by applying the results of this subsection to the different regions within A (namely interior, boundary, and lower-dimensional faces).
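For intuition about the quantity being bounded, the following Monte Carlo sketch approximates \(R_{n,k}\) in the special case \(A=B=[0,1]^2\) with uniform \(\mu \). It evaluates, at each point of a finite grid, the distance to the k-th nearest sample point and takes the maximum. The grid discretisation, the seed and the function names are assumptions made for illustration; since only finitely many locations are checked, the value returned can only underestimate the true threshold.

```python
import math
import random


def sample_points(n: int, seed: int = 0) -> list:
    # n independent uniform points in the unit square.
    rng = random.Random(seed)
    return [(rng.random(), rng.random()) for _ in range(n)]


def coverage_threshold(points: list, k: int = 1, grid: int = 20) -> float:
    """Grid approximation to the k-coverage threshold of [0,1]^2.

    Returns the smallest r such that every grid point lies within distance r
    of at least k of the given points (requires len(points) >= k).
    """
    worst = 0.0
    for i in range(grid + 1):
        for j in range(grid + 1):
            x, y = i / grid, j / grid
            dists = sorted(math.hypot(x - px, y - py) for px, py in points)
            worst = max(worst, dists[k - 1])
    return worst


if __name__ == "__main__":
    pts = sample_points(400)
    for n in (100, 200, 400):
        r = coverage_threshold(pts[:n])
        print(n, r, n * math.pi * r * r / math.log(n))
```

Using nested samples `pts[:n]` mirrors the coupling over n in the definition of \(R_{n,k}\), so the computed thresholds are nonincreasing in n and nondecreasing in k, which is the monotonicity required by Lemma 6.1.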

Given \(r>0, a>0\), define the ‘packing number’ \( \nu (B,r,a) \) to be the largest number m such that there exists a collection of m disjoint closed balls of radius r centred on points of B, each with \(\mu \)-measure at most a. The proof of the following lemma implements, for a general metric space, the strategy outlined in Sect. 5.
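A simple way to see how the packing number scales is to count grid-centred balls in the unit cube \([0,1]^d\) with uniform \(\mu \), which is an assumed special case rather than the general setting of this subsection. Balls centred anywhere in the cube have \(\mu \)-measure at most \(\theta _d r^d\), and balls centred on a face have \(\mu \)-measure at most \(\theta _d r^d/2\), so the two counts below are lower bounds for the corresponding packing numbers. The function names are illustrative.

```python
import math


def grid_packing_counts(r: float, d: int = 2) -> tuple:
    """Count disjoint closed balls of radius r centred on a grid in [0,1]^d.

    Returns (count with centres anywhere in the cube,
             count with centres on the face where the last coordinate is 0).
    """
    spacing = 2.0 * r * (1.0 + 1e-9)  # strictly more than 2r, so closed balls are disjoint
    per_axis = int(math.floor(1.0 / spacing)) + 1
    return per_axis**d, per_axis ** (d - 1)


def growth_exponent(count_small: int, count_large: int, r_small: float, r_large: float) -> float:
    # Estimate b in count ~ r^{-b} from two radii r_small < r_large.
    return math.log(count_small / count_large) / math.log(r_large / r_small)


if __name__ == "__main__":
    full_1, face_1 = grid_packing_counts(0.01)
    full_2, face_2 = grid_packing_counts(0.001)
    print("interior exponent:", growth_exponent(full_2, full_1, 0.001, 0.01))
    print("face exponent:", growth_exponent(face_2, face_1, 0.001, 0.01))
```

The estimated exponents correspond to \(b=d\) for centres in the interior and \(b=d-1\) for centres on a face, which are the values of b used when Lemma 6.2 is applied to the interior and boundary regions.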

Lemma 6.2

(General lower bound) Let \(a >0, b \ge 0\). Suppose \(\nu (B,r,a r^d) = \varOmega (r^{-b})\) as \(r \downarrow 0\). Then, almost surely, if \(\beta = \infty \) then \(\liminf _{n \rightarrow \infty } \left( n R_{n,k(n)}^d/k(n) \right) \ge 1/a\). If \(\beta < \infty \) then \(\liminf _{n \rightarrow \infty } \left( n R_{n,k(n)}^d/\log n \right) \ge a^{-1} \hat{H}_\beta (b/d)\), almost surely.

Proof

First suppose \(\beta = \infty \). Let \(u\in (0,1/a )\). Set \(r_n := \left( uk(n)/n \right) ^{1/d}\), \(n \in \mathbb {N}\). By (4.1), \(r_n \rightarrow 0\) as \(n \rightarrow \infty \). Then, given n sufficiently large, we have \(\nu (B,r_n,ar_n^d ) >0\) so we can find \(x \in B\) such that \( \mu (B(x,r_n)) \le a r_n^d\), and hence \( n \mu (B(x,r_n)) \le a uk(n) . \) If \(k(n) \le e^2 n \mu (B(x,r_n))\) (and hence \(n \mu (B(x,r_n)) \ge e^{-2} k(n)\)), then since \(\mathscr {X}_n(B(x,r_n))\) is binomial with parameters n and \(\mu (B(x,r_n))\), by Lemma 5.1(a) we have that

while if \(k (n) > e^2 n \mu (B(x,r_n))\) then by Lemma 5.1(c), \(\mathbb {P}[ R_{n,k(n)} \le r_n] \le e^{-k(n)}\). Therefore \(\mathbb {P}[ R_{n,k(n)} \le r_n]\) is summable in n because we assume here that \(k(n)/\log n \rightarrow \infty \) as \(n \rightarrow \infty \). Thus by the Borel-Cantelli lemma, almost surely \(R_{n,k(n)} > r_n\) for all but finitely many n, and hence \( \liminf n R_{n,k(n)}^d /k(n) \ge u\). This gives the result for \(\beta = \infty \).

Now suppose instead that \(\beta < \infty \) and \(b=0\), so that \(\hat{H}_\beta (b/d) = \beta \). Assume that \(\beta >0\) (otherwise the result is trivial). Let \(\beta ' \in (0, \beta )\). Let \(\delta > 0\) with \(\beta ' < \beta - \delta \) and with \( \beta ' H \left( \frac{ \beta - \delta }{\beta ' } \right) > \delta . \) For \(n \in \mathbb {N}\), set \(r_n := ( (\beta ' \log n)/ (a n) )^{1/d}\) and set \(k'(n)= \lceil (\beta - \delta ) \log n \rceil \). By assumption \(\nu (B,r_n,a r_n^d) = \varOmega (1)\), so for all n large enough, we can (and do) choose \(x_n \in B\) such that \( n \mu (B(x_{n},r_n)) \le n a r_n^d = \beta ' \log n. \) Then by a simple coupling, and Lemma 5.1(a),

Hence \(\mathbb {P}[ n R_{n,k'(n)}^d \le (\beta '/a) \log n ] = \mathbb {P}[ R_{n,k'(n)} \le r_n] \le n^{-\delta }\). Then by the subsequence trick (Lemma 6.1(b)), we may deduce that \( \liminf ( n R_{n,k(n)}^d /\log n) \ge \beta '/a , \) almost surely, which gives us the result for this case.

Now suppose instead that \(\beta < \infty \) and \(b > 0\). Let \(u\in ( 0, a^{-1} \hat{H}_\beta (b/d))\), so that \(ua H(\beta /(ua)) < b/d\). Choose \(\varepsilon >0\) such that \((1+ \varepsilon ) ua H(\beta /(ua)) < (b/d)-9 \varepsilon \).

For each \(n \in \mathbb {N}\) set \(r_n= (u(\log n)/n)^{1/d}\). Let \(m_n := \nu (B,r_n,a r_n^d)\), and choose \(x_{n,1},\ldots ,\) \(x_{n,m_n} \in B\) such that the balls \(B(x_{n,1},r_n),\ldots ,B(x_{n,m_n},r_n)\) are pairwise disjoint and each have \(\mu \)-measure at most \(ar_n^d\). Set \(\lambda (n):= n+ n^{3/4}\). For \(1 \le i \le m_n\), if \(k(n) > 1\) then by a simple coupling, and Lemma 5.1(e),

Now \(\lambda (n)r_n^d/\log n \rightarrow u\) so by (4.1), \((k(n)-1)/(\lambda (n) a r_n^d) \rightarrow \beta /(ua)\) as \(n \rightarrow \infty \). Thus by the continuity of \(H(\cdot )\), provided n is large enough and \(k(n) > 1\), for \(1 \le i \le m_{n}\),

If \(k(n)=1\) for infinitely many n, then \(\beta =0\) and (6.1) still holds for large enough n.

By (6.1) and our choice of \(\varepsilon \), there is a constant \(c >0 \) such that for all large enough n and all \(i \in \{1,\ldots ,m_n\}\) we have

Hence, setting \(E_n:= \cap _{i=1}^{m_n} \{ {{\mathscr {P}}}_{\lambda (n)}(B(x_{n,i},r_n)) \ge k(n) \}\), for all large enough n we have

By assumption \(m_n = \nu (B,r_n , a r_n^d) = \varOmega (r_n^{-b})\) so that for large enough n we have \(m_n \ge n^{(b/d) - \varepsilon }\), and therefore \(\mathbb {P}[E_n]\) is summable in n.

By Lemma 5.1(d), and Taylor expansion of H(x) about \(x=1\) (see the print version of [21, Lemma 1.4] for details; there may be a typo in the electronic version), for all n large enough \( \mathbb {P}[Z_{\lambda (n)} < n] \le \exp ( - \frac{1}{9} n^{1/2}), \) which is summable in n. Since \(R_{m,k}\) is nonincreasing in m, by the union bound

which is summable in n by the preceding estimates. Therefore by the Borel-Cantelli lemma,

so the result follows for this case too. \(\square \)
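The Poissonization estimate \(\mathbb {P}[Z_{\lambda (n)} < n] \le \exp (-\frac{1}{9} n^{1/2})\) used in the last case of the proof can be checked numerically against the bound of Lemma 5.1(d). The sketch below assumes the standard form \(H(a) = 1-a+a\log a\) for the function defined at (4.3), and compares the exponent \(\lambda (n) H(n/\lambda (n))\) with \(n^{1/2}/9\) at a few values of n; it is a spot check rather than a proof.

```python
import math


def H(a: float) -> float:
    # Assumed form of H from (4.3): H(0) = 1, H(a) = 1 - a + a*log(a) for a > 0.
    return 1.0 if a == 0 else 1.0 - a + a * math.log(a)


def poissonization_exponent(n: int) -> float:
    # Exponent in Lemma 5.1(d) with t = lambda(n) = n + n^{3/4} and k = n.
    lam = n + n**0.75
    return lam * H(n / lam)


if __name__ == "__main__":
    for n in (10, 10**3, 10**6):
        print(n, poissonization_exponent(n), math.sqrt(n) / 9.0)
```

A Taylor expansion of H about 1 gives \(\lambda (n) H(n/\lambda (n)) \approx n^{1/2}/2\) for large n under this assumption, so the constant 1/9 leaves a comfortable margin.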

Given \(r>0\), and \(D \subset A\), define the ‘covering number’

We need a complementary upper bound to go with the preceding asymptotic lower bound on \(R_{n,k(n)}\). For this, we shall require a condition on the ‘covering number’ that is roughly dual to the condition on ‘packing number’ used in Lemma 6.2. Also, instead of stating the lemma in terms of \(R_{n,k}\) directly, it is more convenient to state it in terms of the ‘fully covered’ region \(F_{n,k,r}\), defined for \(n,k \in \mathbb {N}\) and \(r>0\) by

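We can characterise the event \(\{{\tilde{R}}_{n,k} \le r\} \) in terms of the set \(F_{n,k,r}\) as follows:

\[ {\tilde{R}}_{n,k} \le r \quad \text{if and only if} \quad (B \cap A^{(r)}) \subset F_{n,k,r}. \tag{6.4} \]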

Indeed, the ‘if’ part of this statement is clear from (2.3). For the ‘only if’ part, note that if there exists \(x \in (B \cap A^{(r)}) {\setminus } F_{n,k,r}\), then there exists \(s >r\) with \(x \in B \cap A^{(s)} {\setminus } F_{n,k,s}\). Then for all \(s' <s\) we have \(x \in B \cap A^{(s')} {\setminus } F_{n,k,s}\), and therefore \({\tilde{R}}_{n,k} \ge s > r\).

Lemma 6.3

(General upper bound) Suppose \(r_0, a, b \in (0,\infty )\), and a family of sets \(A_r \subset A,\) defined for \(0< r < r_0\), are such that for all \(r \in (0,r_0)\), \(x \in A_r\) and \(s \in (0,r)\) we have \(\mu (B(x,s)) \ge a s^d\), and moreover \(\kappa (A_r,r) = O(r^{-b})\) as \(r \downarrow 0\).

If \(\beta = \infty \) then let \(u> 1/a\) and set \(r_n = (uk(n)/n)^{1/d}\), \( n \in \mathbb {N}\). Then with probability one, \(A_{r_n} \subset F_{n,k(n),r_n}\) for all large enough n.

If \(\beta < \infty \), let \(u>a^{-1} \hat{H}_\beta (b/d)\) and set \(r_n = (u(\log n)/n)^{1/d}\). Then there exists \(\varepsilon >0\) such that \( \mathbb {P}[\{A_{r_n} \subset F_{n,\lfloor (\beta + \varepsilon ) \log n\rfloor ,r_n} \}^c ] = O(n^{-\varepsilon }) \) as \(n \rightarrow \infty \).

Proof

Let \(\varepsilon \in (0,1)\); if \(\beta = \infty \), assume \(a (1-\varepsilon )^d u> 1+ \varepsilon \). If \(\beta < \infty \), assume

This can be achieved because \(a uH (\beta /(a u)) > b/d\) in this case. Set \(m_n = \kappa (A_{r_n},\varepsilon r_n)\). Then \(m_n= O(r_n^{-b}) = O(n^{b/d})\) (in either case). Let \(x_{n,1},\ldots ,x_{n,m_n} \in A_{r_n}\) with \(A_{r_n} \subset \cup _{i=1}^{m_n} B(x_{n,i}, \varepsilon r_n)\). Then for \(1 \le i \le m_n\), if \(\mathscr {X}_n(B(x_{n,i}, (1-\varepsilon )r_n)) \ge k(n)\) then \(B(x_{n,i},\varepsilon r_n) \subset F_{n,k(n),r_n}\). Therefore

Suppose \(\beta = \infty \). Then for \(1 \le i \le m_n\),

so that by (6.5), the union bound, and Lemma 5.1(b),

This is summable in n, since \(m_n = O(n^{b/d})\) and \(k(n)/\log n \rightarrow \infty \). Therefore by the first Borel-Cantelli lemma, we obtain the desired conclusion for this case.

Now suppose instead that \(\beta < \infty \). Then

Therefore setting \(k'(n) := \lfloor (\beta + \varepsilon ) \log n \rfloor \), by (6.5) we have

which yields the desired conclusion for this case. \(\square \)

6.2 Proof of Proposition 4.9

Throughout this subsection we assume that either: (i) B is compact and Riemann measurable with \(\mu (B)>0\) and \(B \subset A^o\), or (ii) \(B=A\). We do not (yet) assume f is continuous on A. Recall from (4.2) that \(f_0: = \mathrm{ess~inf}_{x \in B} f(x)\).

We shall prove Proposition 4.9 by applying (6.4) and Lemma 6.3 to derive an upper bound on \({\tilde{R}}_{n,k(n)}\) (Lemma 6.6), and Lemma 6.2 to derive a lower bound (Lemma 6.5). For the lower bound we also require the following lemma (recall that \(\nu (B,r,a)\) was defined just before Lemma 6.2).

Lemma 6.4

Let \(\alpha > f_0\). Then \(\liminf _{r \downarrow 0} r^d \nu (B,r, \alpha \theta _d r^d) > 0\).

Proof

Let \(\kappa = 3^{-d} \theta _d\). Note that \(0< \kappa <1\). Set

By the Riemann measurability assumption, \(\mathrm{ess~inf}_{B^{(\varepsilon )}} (f) \downarrow f_0\) as \(\varepsilon \downarrow 0\). Therefore we can and do choose \(\delta >0\) with \(\mu (B^{(\delta )} ) >0\) and with \(\mathrm{ess~inf}_{B^{(\delta )}}(f) < \alpha '\).

Note that \(f_0< \alpha ' < \alpha \). Let \(x_0 \in B^{(\delta )}\) with \(f(x_0) < \alpha '\) and \(x_0\) a Lebesgue point of f. Then take \(r_0 \in (0,\delta )\) such that \(\mu (B(x_0,r_0)) < \alpha ' \theta _d r_0^d\).

For \(r>0\) sufficiently small, we can and do take a collection of disjoint balls \(B(x_{r,1},r),\ldots ,B(x_{r, \sigma '(r)},r)\), all contained in \(B(x_0,r_0)\), with \(\sigma '(r) > (\kappa /2) (r_0/r)^d\). Indeed, we can take \((1+ o(1))\theta _d r_0^d (3r)^{-d}\) [as \(r \downarrow 0\)] disjoint cubes of side 3r inside \(B(x_0,r_0)\) and can take a closed ball of radius r inside the interior of each of these cubes.

Given small r, write \(B_i\) for \(B(x_{r,i},r)\) and \(B_0\) for \(B(x_0,r_0)\). Suppose fewer than half of the balls \(B_i, 1 \le i \le \sigma '(r)\) satisfy \(\mu (B_i) \le \alpha \theta _d r^d\). Then more than half of them satisfy \(\mu (B_i) > \alpha \theta _d r^d\). Let D denote the union of the latter collection of balls. Denoting volume by \(|\cdot |\), we have \(\mu (D) \ge \alpha |D|\) and \(\mu (B_0 {\setminus } D) \ge f_0 |B_0 {\setminus } D|\), and

Then

which contradicts our original assumption about \(r_0\). Therefore at least half of the balls \(B_i, 1 \le i \le \sigma '(r)\) satisfy \(\mu (B_i) \le \alpha \theta _d r^d\). Thus \(\nu (B,r,\alpha \theta _dr^d) \ge \sigma '(r)/2\). \(\square \)

Lemma 6.5

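It is the case that, almost surely,

\[ \liminf _{n \rightarrow \infty } \left( n \theta _d {\tilde{R}}_{n,k(n)}^d / k(n) \right) \ge 1/f_0 \quad \text{if } \beta = \infty ; \tag{6.6} \]

\[ \liminf _{n \rightarrow \infty } \left( n \theta _d {\tilde{R}}_{n,k(n)}^d / \log n \right) \ge \hat{H}_\beta (1)/f_0 \quad \text{if } \beta < \infty . \tag{6.7} \]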

Proof

Let \(\alpha '> \alpha > f_0\). Set \(r_n := (k(n)/(n \theta _d \alpha '))^{1/d}\) if \(\beta = \infty \), and set \(r_n:= (\hat{H}_\beta (1) (\log n)/(n \theta _d \alpha '))^{1/d}\) if \(\beta < \infty \).

Assume for now that B is compact with \(B \subset A^o\), so that there exists \(\delta >0\) such that \(B \subset A^{(\delta )}\). Then, even if f is not continuous on A, we can find \(x_0 \in B\) with \(f(x_0) < \alpha \), such that \(x_0\) is a Lebesgue point of f. Then for all small enough \(r>0\) we have \(\mu (B(x_0,r)) < \alpha \theta _d r^d\), so that \(\nu (B,r,\alpha \theta _d r^d) = \varOmega (1)\) as \(r \downarrow 0\).

If \(\beta = \infty \), then by Lemma 6.2 (taking \(b=0\)), \(\liminf _{n \rightarrow \infty } nR_{n,k(n)}^d/k(n) \ge (\theta _d \alpha )^{-1}\), almost surely. Hence for all large enough n we have \(R_{n,k(n)} > r_n\); provided n is also large enough so that \(r_n < \delta \) we also have \({\tilde{R}}_{n,k(n)} > r_n\), and (6.6) follows.

Suppose instead that \(\beta < \infty \). Then by Lemma 6.4, \(\nu (B,r, \alpha \theta _d r^{d}) = \varOmega (r^{-d})\) as \(r \downarrow 0\). Hence by Lemma 6.2, almost surely \(\liminf _{n \rightarrow \infty } \left( n R_{n,k(n)}^d /\log n \right) \ge (\alpha \theta _d)^{-1} \hat{H}_\beta (1)\), and hence for large enough n we have \(R_{n,k(n)} > r_n \) and also \({\tilde{R}}_{n,k(n)} > r_n \), which yields (6.7).

Finally, suppose instead that \(B=A\). Then by using e.g. [21, Lemma 11.12] we can find compact, Riemann measurable \(B' \subset A^o\) with \(\mu (B') >0\) and \(\mathrm{ess~inf}_{x \in B'} f(x) < \alpha \). Define \(S_{n,k}\) to be the smallest \(r \ge 0\) such that every point in \(B'\) is covered at least k times by balls of radius r centred on points of \(\mathscr {X}_n\). By the argument already given we have almost surely for all large enough n that \(S_{n,k(n)} > r_n \) and also \(B' \subset A^{(r_n)}\). For such n, there exists \(x \in B' \subset B \cap A^{(r_n)}\) with \(\mathscr {X}_n(B(x,r_n)) < k(n)\), and hence by (6.4), \({\tilde{R}}_{n,k(n)} > r_n\), which gives us (6.6) and (6.7) in this case too. \(\square \)

Now and for the rest of this subsection, we do assume in case (i) (with \(B \subset A^o\)) that f is continuous on A.

Lemma 6.6

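Suppose that \(f_0 >0\). Then almost surely

\[ \limsup _{n \rightarrow \infty } \left( n \theta _d {\tilde{R}}_{n,k(n)}^d / k(n) \right) \le 1/f_0 \quad \text{if } \beta = \infty ; \tag{6.8} \]

\[ \limsup _{n \rightarrow \infty } \left( n \theta _d {\tilde{R}}_{n,k(n)}^d / \log n \right) \le \hat{H}_\beta (1)/f_0 \quad \text{if } \beta < \infty . \tag{6.9} \]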

Proof

We shall apply Lemma 6.3, here taking \(A_r = B \cap A^{(r)}\). To start, we claim that

This follows from the definition (4.2) of \(f_0\) when \(B=A\). In the other case (with \(B \subset A^o\)) it follows from (4.2) and the assumed continuity of f on A.

Suppose \(f_0 < \infty \) and let \(\delta \in (0,f_0)\). It is easy to see that \(\kappa (B \cap A^{(r)},r) = O(r^{-d})\) as \(r \downarrow 0\). Together with (6.10), this shows that the hypotheses of Lemma 6.3 (taking \(A_r = B \cap A^{(r)}\)) apply with \(a= \theta _d(f_0- \delta )\) and \(b= d\). Hence by (6.4) and Lemma 6.3, if \(\beta = \infty \) then for any \( u > (\theta _d(f_0-\delta ))^{-1}\), we have almost surely for large enough n that \({\tilde{R}}_{n,k(n)} \le (u k(n)/n)^{1/d}\), and (6.8) follows.

If \(\beta < \infty \), then by (6.4) and Lemma 6.3, given \(u > \hat{H}_\beta (1)/ (\theta _d (f_0-\delta )) \), there exists \(\varepsilon >0\) such that, setting \(k'(n):= \lfloor (\beta + \varepsilon ) \log n \rfloor \) and \(r_n:= (u (\log n)/n)^{1/d}\), we have

Therefore by Lemma 6.1, which is applicable since \({\tilde{R}}_{n,k}^d/u\) is nonincreasing in n and nondecreasing in k, we obtain that \( \limsup ( n {\tilde{R}}_{n,k(n)}^d /\log n) \le u\), almost surely. Since \(u > \hat{H}_\beta (1)/(\theta _d ( f_0 - \delta ))\) and \(\delta \in (0,f_0)\) are arbitrary, we therefore obtain (6.9). \(\square \)

Proof of Proposition 4.9

Under either hypothesis ((i) or (ii)), it is immediate from Lemmas 6.5 and 6.6 that (4.11) holds if \(\beta = \infty \) and (4.12) holds if \(\beta < \infty \).

It follows that almost surely \({\tilde{R}}_{n,k(n)} \rightarrow 0\) as \(n \rightarrow \infty \), and therefore if we are in Case (i) (with \(B \subset A^o\)) we have \({\tilde{R}}_{n,k(n)} = R_{n,k(n)}\) for all large enough n. Therefore in this case (4.11) (if \(\beta =\infty \)) or (4.12) (if \(\beta < \infty )\) still holds with \({\tilde{R}}_{n,k(n)}\) replaced by \(R_{n,k(n)}\). \(\square \)

6.3 Proof of Theorem 4.1

In this section and again later on, we shall use certain results from [21], which rely on an alternative characterization of A having a \(C^2\) boundary, given in the next lemma. Recall that \(S \subset \mathbb {R}^d\) is called a \((d-1)\)-dimensional submanifold of \(\mathbb {R}^d\) if there exists a collection of pairs \((U_i,\phi _i)\), where \(\{U_i\}\) is a collection of open sets in \(\mathbb {R}^d\) whose union contains S, and \(\phi _i\) is a \(C^2\) diffeomorphism of \(U_i\) onto an open set in \(\mathbb {R}^d\) with the property that \(\phi _i(U_i \cap S) = \phi _i(U_i) \cap (\mathbb {R}^{d-1} \times \{0\})\). The pairs \((U_i,\phi _i)\) are called charts. We shall sometimes also refer to the sets \(U_i\) as charts here.

Lemma 6.7

Suppose \(A \subset \mathbb {R}^d\) has a \(C^2\) boundary. Then \(\partial A\) is a \((d-1)\)-dimensional \(C^2\) submanifold of \(\mathbb {R}^d\).

Proof

Let \(x \in \partial A\). Let U be an open neighbourhood of x, \(V \subset \mathbb {R}^{d-1} \) an open set, and \(\rho \) a rotation on \(\mathbb {R}^d\) about x such that \( \rho ( \partial A \cap U) = \{(w,f(w)): w \in V\}\), and moreover \(\rho (U) \subset V \times \mathbb {R}\). Then for \((w,z) \in U\) (with \(w \in V \) and \(z \in \mathbb {R}\)), take \(\psi (w,z) = (w,z-f(w))\). Then \(\psi \circ \rho \) is a \(C^2\) diffeomorphism from U to \(\psi \circ \rho (U)\), with the property that \(\psi \circ \rho (U \cap \partial A) = \psi \circ \rho (U) \cap (\mathbb {R}^{d-1} \times \{0\})\), as required. \(\square \)

Remark 6.8

The converse to Lemma 6.7 also holds: if \(\partial A\) is a \((d-1)\)-dimensional submanifold of \(\mathbb {R}^d\) then A has a \(C^2\) boundary in the sense that we have defined it. The proof of this implication is more involved, and not needed in the sequel, so we omit the argument.

We shall use the following lemma here and again later on.

Lemma 6.9

Suppose \(A \subset \mathbb {R}^d\) is compact, and has \(C^2\) boundary. Given \(\varepsilon >0\), there exists \(\delta >0\) such that for all \(x \in A\) and \(s \in (0,\delta )\), we have \(|B(x,s) \cap A| > (1- \varepsilon ) \theta _d s^d/2\).

Proof

Immediate from applying first Lemma 6.7, and then [21, Lemma 5.7]. \(\square \)

Recall that \(F_{n,k,r}\) was defined at (6.3). We introduce a new variable \(R_{n,k,1}\), which is the smallest radius r of balls required to cover k times the boundary region \(A {\setminus } A^{(r)} \):

\[ R_{n,k,1} := \inf \{ r > 0 : A {\setminus } A^{(r)} \subset F_{n,k,r} \}. \tag{6.11} \]

Loosely speaking, the 1 in the subscript refers to the fact that this boundary region is in some sense \((d-1)\)-dimensional. For all n, k, we claim that

\[ R_{n,k} = \max ({\tilde{R}}_{n,k},R_{n,k,1}). \tag{6.12} \]

Indeed, if \(r > R_{n,k}\) then \(A \subset F_{n,k,r}\) so that \(A^{(r)} \subset F_{n,k,r}\) and \(A {\setminus } A^{(r)} \subset F_{n,k,r}\), and hence \(r \ge \max ({\tilde{R}}_{n,k},R_{n,k,1})\); hence, \(R_{n,k} \ge \max ({\tilde{R}}_{n,k},R_{n,k,1})\). For an inequality the other way, suppose \(r > \max ({\tilde{R}}_{n,k},R_{n,k,1})\); then by (2.3), there exists \(r' <r\) with \(A^{(r')} \subset F_{n,k,r'}\), and hence also \(A^{(r)} \subset F_{n,k,r}\). Moreover by (6.11) there exists \(s < r\) with \(A {\setminus } A^{(s)} \subset F_{n,k,s}\). Now suppose \(x \in A^{(s)} {\setminus } A^{(r)}\). Let \(z \in \partial A\) with \(\Vert z-x\Vert = \,\textrm{dist}(x,\partial A) \in (s,r]\). Let \(y \in [x,z]\) with \(\Vert y-z\Vert = s\). Then \(y \in A {\setminus } A^{(s)}\), so that \(y \in F_{n,k,s}\), and also \(\Vert x-y\Vert \le r-s\). This implies that \(x \in F_{n,k,r}\). Therefore \(A^{(s)} {\setminus } A^{(r)} \subset F_{n,k,r}\). Combined with the earlier set inclusions this shows that \(A \subset F_{n,k,r}\) and hence \(R_{n,k} \le r\). Thus \(R_{n,k} \le \max ({\tilde{R}}_{n,k},R_{n,k,1})\), and hence (6.12) as claimed.

Recall that we are assuming that \(k(n)/\log n \rightarrow \beta \in [0,\infty ]\) and \(k(n) /n \rightarrow 0\), as \(n \rightarrow \infty \), and \(f_1 := \inf _{\partial A} f\). We shall derive Theorem 4.1 using the next two lemmas.

Lemma 6.10

Suppose the assumptions of Theorem 4.1 apply. Then

Proof

Let \(\varepsilon >0\). By [21, Lemma 5.8], for each \(r >0 \) we can and do take \(\ell _r \in \mathbb {N}\cup \{0\}\) and points \(y_{r,1},\ldots ,y_{r,\ell _r} \in \partial A\) such that the balls \(B(y_{r,i},r), 1 \le i \le \ell _r\), are disjoint and each satisfy \(\mu (B(y_{r,i}, r)) \le (f_1+\varepsilon ) \theta _d r^d/2\), with \(\liminf _{r \downarrow 0} (r^{d-1} \ell _r) >0\). In other words, \( \liminf _{r \downarrow 0} r^{d-1} \nu (B,r,(f_1+\varepsilon )\theta _d r^d/2) >0\).

Hence, if \(\beta = \infty \) then by Lemma 6.2 we have that \(\liminf _{n \rightarrow \infty } \left( n R_{n,k(n)}^d/k(n) \right) \ge 2/(\theta _d (f_1+ \varepsilon ))\), almost surely, and this yields (6.13).

Now suppose \(\beta < \infty \); also we are assuming \(d \ge 2\). By taking \(a= (f_1+ \varepsilon ) \theta _d/2\) in Lemma 6.2, we obtain that, almost surely,

and hence (6.14). \(\square \)

Lemma 6.11

Under the assumptions of Theorem 4.1,

Proof

We shall apply Lemma 6.3, here taking \(A_r = A {\setminus } A^{(r)}\). Observe that by (6.11), event \(\{ A {\setminus } A^{(r)} \subset F_{n,k,r} \}\) implies \(R_{n,k,1} \le r\), for all \(r>0\), \(n, k \in \mathbb {N}\).

We claim that

\[ \kappa (A {\setminus } A^{(r)},r) = O(r^{1-d}) \quad \text{as } r \downarrow 0. \tag{6.17} \]

To see this, let \(r>0\), and let \(x_1,\ldots ,x_m \in \partial A\) with \(\partial A \subset \cup _{i=1}^m B(x_i,r)\), and with \(m = \kappa (\partial A,r)\). Then \(A {\setminus } A^{(r)} \subset \cup _{i=1}^{m} B(x_{i}, 2r)\). Setting \(c := \kappa (B(o,4),1)\), we can cover each ball \(B(x_i,2r)\) by c balls of radius r/2, and therefore can cover \(A {\setminus } A^{(r)}\) by cm balls of radius r/2, denoted \(B_1,\ldots , B_{cm}\) say. Set \( {{\mathscr {I}}}:= \{i \in \{1,\ldots ,cm\}: (A{\setminus } A^{(r)}) \cap B_i \ne \varnothing \}\). For each \(i \in {{\mathscr {I}}}\), select a point \(y_i \in (A {\setminus } A^{(r)}) \cap B_i\). Then \(A {\setminus } A^{(r)} \subset \cup _{i \in {{\mathscr {I}}}} B(y_i,r) \), and hence \(\kappa (A {\setminus } A^{(r)},r) \le c \kappa ( \partial A,r)\). By [21, Lemma 5.4], \(\kappa (\partial A,r) = O(r^{1-d})\), and (6.17) follows.

Let \(\varepsilon _1 \in (0,1)\). Since we assume \(f|_A\) is continuous at x for all \(x \in \partial A\), there exists \(\delta >0\) such that \(f(x) > (1 - \varepsilon _1)f_1\) for all \(x \in A\) distant less than \(\delta \) from \(\partial A\). Then, under the hypotheses of Theorem 4.1, by Lemma 6.9, there is a further constant \(\delta ' \in (0,\delta /2)\) such that for all \(r \in (0, \delta ')\) and all \(x \in A {\setminus } A^{(r)}\), \(s \in (0,r]\) we have \( \mu (B(x,s)) \ge (1- \varepsilon _1 )^{2} f_1 (\theta _d/2) s^d. \)

Therefore taking \(A_r = A {\setminus } A^{(r)}\), the hypotheses of Lemma 6.3 hold with \(a = (1-\varepsilon _1)^2 f_1 \theta _d/2\) and \(b= d-1\).

Thus if \(\beta = \infty \), then taking \(r_n = (u k(n)/n)^{1/d}\) with \(u > 2 (1-\varepsilon _1)^{-2}/(f_1 \theta _d)\), by Lemma 6.3 we have almost surely that for all n large enough, \(A {\setminus } A^{(r_n)} \subset F_{n,k(n),r_n}\) and hence \(R_{n,k(n),1} \le r_n\). That is, \(n R_{n,k(n),1}^d/k(n) \le u\), and (6.15) follows.

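If \(\beta < \infty \), take \(u > (2 (1-\varepsilon _1)^{-2}/(f_1 \theta _d)) \hat{H}_\beta (1-1/d)\). By Lemma 6.3, if we set \(r_n= (u (\log n)/n)^{1/d}\), then there exists \(\varepsilon >0\) such that if we take \(k'(n)= \lfloor (\beta + \varepsilon ) \log n \rfloor \), then

\[ \mathbb {P}[R_{n,k'(n),1} > r_n] \le \mathbb {P}[ \{ A {\setminus } A^{(r_n)} \subset F_{n,k'(n),r_n} \}^c ] = O(n^{-\varepsilon }). \]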

Also \(R_{n,k,1}\) is nonincreasing in n and nondecreasing in k, so by Lemma 6.1, we have almost surely that \(\limsup _{n \rightarrow \infty } n R^d_{n,k(n),1} /\log n \le u\), and hence (6.16). \(\square \)

Proof of Theorem 4.1

By (6.12), Proposition 4.9 and Lemma 6.11,

almost surely. Moreover by (6.12), Proposition 4.9 and Lemma 6.10,

almost surely, and the result follows. \(\square \)

6.4 Polytopes: Proof of Theorem 4.2

Throughout this subsection we assume, as in Theorem 4.2, that A is a compact convex finite polytope in \(\mathbb {R}^d\). We also assume that \(B=A\), and \(f|_A\) is continuous at x for all \(x \in \partial A\), and (4.1) holds for some \(\beta \in [0,\infty ]\).

Given any \(x \in \mathbb {R}^d\) and nonempty \(S \subset \mathbb {R}^d\) we set \(\,\textrm{dist}(x,S):= \inf _{y \in S} \Vert x-y\Vert \).

Lemma 6.12

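Suppose \(\varphi , \varphi '\) are faces of A with \(D(\varphi )>0\) and \(D(\varphi ') = d-1\), and with \(\varphi {\setminus } \varphi ' \ne \varnothing \). Then \(\varphi ^o \cap \varphi ' = \varnothing \), and \(K(\varphi ,\varphi ') < \infty \), where we set

\[ K(\varphi ,\varphi ') := \sup _{x \in \varphi ^o} \frac{\,\textrm{dist}(x, \partial \varphi )}{\,\textrm{dist}(x, \varphi ')}. \tag{6.18} \]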

Proof

If \(\varphi \cap \varphi ' = \varnothing \) then \(K(\varphi ,\varphi ') < \infty \) by an easy compactness argument, so assume \(\varphi \cap \varphi ' \ne \varnothing \). Without loss of generality we may then assume \(o \in \varphi \cap \varphi '\).

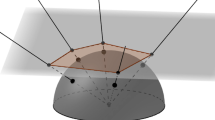

If \(d=3\), A is convex, \(D(\varphi )= D(\varphi ')=2\), \(D(\varphi \cap \varphi ') = 1\), and moreover \(\varphi , \varphi '\) are rectangular with angle \(\alpha \) between them, where \(0< \alpha < \pi \), then \(K(\varphi ,\varphi ') = \sec \alpha \), as illustrated in Fig. 1. However, generalizing to all d takes some care.

Illustration of Lemma 6.12 in \(d=3\) with \(D(\varphi ) = D(\varphi ')= 2\). The dot represents a point in \(\varphi ^o\)

Let \(\langle \varphi \rangle \), respectively \(\langle \varphi ' \rangle \), be the linear subspace of \(\mathbb {R}^d\) generated by \(\varphi \), respectively by \(\varphi '\). Set \(\psi := \langle \varphi \rangle \cap \langle \varphi ' \rangle \).

Since we assume A is convex, \(A \cap \langle \varphi ' \rangle = \varphi '\), and \(\langle \varphi ' \rangle \) is a supporting hyperplane of A.

We claim that \(\varphi \cap \langle \varphi ' \rangle \subset \partial \varphi \). Indeed, let \(z \in \varphi \cap \langle \varphi '\rangle \) and \(y \in \varphi {\setminus } \varphi ' \). Then \(y \in \varphi {\setminus } \langle \varphi ' \rangle \), and for all \(\varepsilon >0\) the vector \(y + (1+ \varepsilon ) (z- y) \) lies in the affine hull of \(\varphi \) but not in A, since it is on the wrong side of the supporting hyperplane \(\langle \varphi ' \rangle \), and therefore not in \(\varphi \). This shows that \(z \in \partial \varphi \), and hence the claim.

Since \(\varphi \cap \psi \subset \varphi \cap \langle \varphi ' \rangle \), it follows from the preceding claim that \(\varphi \cap \psi \subset \partial \varphi \).

Now let \(x \in \varphi ^o\). Then \(x \in \langle \varphi \rangle {\setminus } \psi \). Let \(\pi _\psi (x)\) denote the point in \(\psi \) closest to x, and set \(a:= \Vert x - \pi _\psi (x) \Vert = \,\textrm{dist}(x, \psi )\). Then \(a >0\).

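Set \(w:= a^{-1}(x - \pi _\psi (x))\). Then \(\Vert w \Vert =1,\) \( w \in \langle \varphi \rangle \) and \(w \perp \psi \) (i.e., the Euclidean inner product of w and z is zero for all \(z \in \psi \)), so \(\,\textrm{dist}(w,\langle \varphi ' \rangle ) \ge \delta \), where we set

\[ \delta := \inf \{ \,\textrm{dist}(y, \langle \varphi ' \rangle ) : y \in \langle \varphi \rangle , \ \Vert y \Vert = 1, \ y \perp \psi \}. \]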

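If \(y \in \langle \varphi \rangle {\setminus } \psi \) then \(y \notin \langle \varphi ' \rangle \) so \(\,\textrm{dist}( y, \langle \varphi ' \rangle ) >0\). Therefore \(\delta \) is the infimum of a continuous, strictly positive function defined on a non-empty compact set of vectors y, and hence \(0< \delta < \infty \). Thus for \(x \in \varphi ^o\), with w, a as given above, we have

\[ \,\textrm{dist}(x, \varphi ') \ge \,\textrm{dist}(x, \langle \varphi ' \rangle ) = a \,\textrm{dist}(w, \langle \varphi ' \rangle ) \ge \delta a = \delta \,\textrm{dist}(x, \psi ). \tag{6.19} \]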

If \(\pi _\psi (x) \notin \varphi \), then there is a point in \([x, \pi _\psi (x)] \cap \partial \varphi \), while if \(\pi _\psi (x) \in \varphi \), then \(\pi _\psi (x) \in \partial \varphi \). Either way \(\,\textrm{dist}(x,\psi ) \ge \,\textrm{dist}(x, \partial \varphi )\), and hence by (6.19), \(\,\textrm{dist}(x,\varphi ') \ge \delta \,\textrm{dist}(x, \partial \varphi )\). Therefore \(K(\varphi ,\varphi ')\le \delta ^{-1} < \infty \) as required. \(\square \)

Recall that we are assuming (4.1). Also, recall that for each face \(\varphi \) of A we denote the angular volume of A at \(\varphi \) by \(\rho _{\varphi }\), and set \(f_\varphi := \inf _{\varphi } f(\cdot )\).

Lemma 6.13

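Let \(\varphi \) be a face of A. Then, almost surely:

\[ \liminf _{n \rightarrow \infty } \left( n R_{n,k(n)}^d/k(n) \right) \ge \frac{1}{f_\varphi \rho _\varphi } \quad \text{if } \beta = \infty ; \tag{6.20} \]

\[ \liminf _{n \rightarrow \infty } \left( n R_{n,k(n)}^d/\log n \right) \ge \frac{\hat{H}_\beta (D(\varphi )/d)}{f_\varphi \rho _\varphi } \quad \text{if } \beta < \infty . \tag{6.21} \]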

Proof

Let \(a > f_{\varphi }\). Take \(x_0 \in \varphi \) such that \(f (x_0) <a\). If \(D(\varphi ) >0\), assume also that \(x_0 \in \varphi ^o\). By the assumed continuity of f at \(x_0\), for all small enough \(r >0\) we have \(\mu (B(x_0,r)) \le a \rho _\varphi r^d\), so that \(\nu (B,r,a \rho _\varphi r^d) = \varOmega (1)\) as \(r \downarrow 0\). Hence by Lemma 6.2 (taking \(b=0\)), if \(\beta = \infty \) then almost surely \(\liminf _{n \rightarrow \infty } n R_{n,k(n)}^d/k(n) \ge 1/(a \rho _\varphi )\), and (6.20) follows. Also, if \(\beta < \infty \) and \(D(\varphi ) =0\), then by Lemma 6.2 (again with \(b=0\)), almost surely \(\liminf _{n \rightarrow \infty } (n R_{n,k(n)}^d/\log n) \ge \hat{H}_\beta (0)/(a \rho _\varphi )\), and hence (6.21) in this case.

Now suppose \(\beta < \infty \) and \(D(\varphi )>0\). Take \(\delta >0 \) such that \(f(x) < a\) for all \(x \in B(x_0, 2 \delta ) \cap A\), and such that moreover \(B(x_0,2 \delta ) \cap A = B(x_0,2 \delta ) \cap (x_0+ {{\mathscr {K}}}_\varphi )\) (the cone \({{\mathscr {K}}}_\varphi \) was defined in Sect. 4). Then for all \(x \in B(x_0,\delta ) \cap \varphi \) and all \(r \in (0,\delta )\), we have \(\mu (B(x,r)) \le a \rho _\varphi r^d\).

There is a constant \(c >0\) such that for small enough \(r >0\) we can find at least \(cr^{-D(\varphi )}\) points \(x_i \in B(x_0,\delta ) \cap \varphi \) that are all at a distance more than 2r from each other, and therefore \(\nu (B, r,a \rho _\varphi r^d ) = \varOmega ( r^{-D(\varphi )})\) as \(r \downarrow 0 \). Thus by Lemma 6.2 we have

\[ \liminf _{n \rightarrow \infty } \left( n R_{n,k(n)}^d/\log n \right) \ge \frac{\hat{H}_\beta (D(\varphi )/d)}{a \rho _\varphi }, \]

almost surely, and (6.21) follows. \(\square \)

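We now define a sequence of positive constants \(K_1,K_2,\ldots \) depending on A as follows. With \(K(\varphi ,\varphi ') \) defined at (6.18), set

\[ K_A := \max \{ K(\varphi ,\varphi ') : \varphi , \varphi ' \in \varPhi (A), \ D(\varphi ) >0, \ D(\varphi ') = d-1, \ \varphi {\setminus } \varphi ' \ne \varnothing \}, \tag{6.22} \]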

which is finite by Lemma 6.12. Then for \(j=1,2,\ldots \) set \(K_j := j(K_A+1)^{j-1}\). Then \(K_1=1\) and for each \(j \ge 1 \) we have \(K_{j+1} \ge (K_A+1)(K_j+1)\).
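The stated recursion can be checked directly from the closed form. The short verification below uses only that \(K_A \ge 0\), so that \(K_A + 1 \ge 1\):

\[ (K_A+1)(K_j+1) = j(K_A+1)^{j} + (K_A+1) \le j(K_A+1)^{j} + (K_A+1)^{j} = (j+1)(K_A+1)^{j} = K_{j+1}, \]

where the inequality holds because \((K_A+1)^j \ge K_A+1\) for all \(j \ge 1\). For instance, if \(K_A = 1\) (a hypothetical value chosen only for illustration), then \(K_1=1\), \(K_2=4\) and \(K_3=12\), and indeed \(4 \ge 2 \cdot 2\) and \(12 \ge 2 \cdot 5\).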

For each face \(\varphi \) of A and each \(r >0\), define the sets \(\varphi _{r} : = \cup _{x \in \varphi } B(x,r) \cap A\), and also \((\partial \varphi )_{r} := \cup _{x \in \partial \varphi } B(x,r) \cap A\) (so if \(D(\varphi )=0\) then \((\partial \varphi )_{r} = \partial \varphi = \varnothing \)). With \(\varphi \) and r as above, define for each \(n,k \in \mathbb {N}\) the event \(G_{n,k,r,\varphi }\) as follows:

If \(D(\varphi ) = d-j\) with \(1 \le j \le d\), let \(G_{n,k,r,\varphi } := \{ (\varphi _{K_j r} {\setminus } (\partial \varphi )_{K_{j+1} r}) {\setminus } F_{n,k,r} \ne \varnothing \}\), the event that there exists \(x \in \varphi _{K_j r} {\setminus } (\partial \varphi )_{K_{j+1} r}\) such that \(\mathscr {X}_n ( B(x,r)) < k\).

Let \(R_{n,k,1}\) be the smallest radius r of balls required to cover k times the boundary region \(A {\setminus } A^{(r)} \), as defined at (6.11).

Lemma 6.14

Given \(r>0\) and \(n, k \in \mathbb {N}\), \( \{R_{n,k,1} > r\} \subset \cup _{\varphi \in \varPhi (A)} G_{n,k,r,\varphi }. \)

Proof

Suppose \(R_{n,k,1} > r\). Then we can and do choose a point \(x \in (A {\setminus } A^{(r)} ) {\setminus } F_{n,k,r}\), and a face \(\varphi _1 \in \varPhi (A) \) with \(D(\varphi _1) = d-1\), and with \(x \in (\varphi _1)_{r} = (\varphi _1)_{K_1 r}\).

If \(x \notin (\partial \varphi _1)_{K_2 r}\) then \(G_{n,k,r,\varphi _1}\) occurs. Otherwise, we can and do choose \(\varphi _2 \in \varPhi (A)\) with \(D(\varphi _2) = d-2\) and \(x \in (\varphi _2)_{K_2 r}\).

If \(x \notin (\partial \varphi _2)_{K_3 r}\) then \(G_{n,k,r,\varphi _2}\) occurs. Otherwise, we can choose \(\varphi _3 \in \varPhi (A)\) with \(D(\varphi _3) = d-3\) and \(x \in (\varphi _3)_{K_3 r}\).

Continuing in this way, we obtain a terminating sequence of faces \(\varphi _1 \supset \varphi _2 \supset \cdots \supset \varphi _m\), with \(m \le d\), such that for \(j =1,2,\ldots ,m\) we have \(D(\varphi _j) = d-j\) and \(x \in (\varphi _j)_{K_j r}\), and \(x \notin (\partial \varphi _m)_{K_{m+1} r}\) (the sequence must terminate because if \(D(\varphi )=0\) then \((\partial \varphi )_s =\varnothing \) for all \(s>0\) by definition). But then \(G_{n,k,r,\varphi _m}\) occurs, completing the proof. \(\square \)

Lemma 6.15

Let \(r>0\) and \(j \in \mathbb {N}\). Suppose \(\varphi \in \varPhi (A)\) and \(x \in \varphi _{K_{j} r} {\setminus } (\partial \varphi )_{K_{j+1} r}\). Then for all \(\varphi ' \in \varPhi (A)\) with \(D(\varphi ') = d-1\) and \(\varphi {\setminus } \varphi ' \ne \varnothing \), we have \(\,\textrm{dist}(x, \varphi ') \ge r\).

Proof

Suppose some such \(\varphi '\) exists with \(\,\textrm{dist}(x,\varphi ') < r\). Then there exist points \(z \in \varphi \) and \(z' \in \varphi '\) with \(\Vert z-x\Vert \le K_j r\), and \(\Vert z'-x\Vert < r\), so that by the triangle inequality \(\Vert z'-z\Vert < (K_j+1)r\).

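By (6.18) and (6.22), this implies that \(\,\textrm{dist}(z, \partial \varphi ) < K_A(K_j+1) r\). On the other hand, we also have that

\[ \,\textrm{dist}(z, \partial \varphi ) \ge \,\textrm{dist}(x, \partial \varphi ) - \Vert z - x \Vert \ge K_{j+1} r - K_j r, \]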

and combining these inequalities shows that \(K_{j+1} - K_j < K_A(K_j+1)\), that is, \(K_{j+1}< K_j(K_A+1) +K_A < (K_j+1)(K_A+1)\). However, we earlier defined the sequence \((K_j)\) in such a way that \(K_{j+1} \ge (K_j+1)(K_A+1)\), so we have a contradiction. \(\square \)

Lemma 6.16

Let \(\varphi \) be a face of A. If \(\beta = \infty \) then let \(u > 1/(f_{\varphi } \rho _\varphi )\). If \(\beta < \infty \), let \(u > \hat{H}_\beta (D(\varphi )/d) / (f_{\varphi } \rho _\varphi ) \). For each \(n \in \mathbb {N}\), set \(r_n = (u k(n)/n )^{1/d}\) if \(\beta = \infty \), and set \(r_n = (u (\log n)/n )^{1/d}\) if \(\beta < \infty \). Then:

(i) if \(\beta = \infty \) then a.s. the events \(G_{n,k(n),r_n,\varphi }\) occur for only finitely many n;

(ii) if \(\beta < \infty \) then there exists \(\varepsilon >0\) such that, setting \(k'(n):= \lfloor (\beta + \varepsilon ) \log n\rfloor \), we have that \(\mathbb {P}[G_{n,k'(n),r_n,\varphi }] = O(n^{- \varepsilon })\) as \(n \rightarrow \infty \).

Proof

Set \(j = d-D(\varphi )\). We shall apply Lemma 6.3, now taking \(A_r = \varphi _{K_j r} {\setminus } (\partial \varphi )_{K_{j+1} r}\). With this choice of \(A_r\), observe first that \(\kappa (A_r,r) = O(r^{-D(\varphi )})\) as \(r \downarrow 0\).

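Choose \(\delta \in (0,1)\) small enough that \(u > 1/(f_\varphi \rho _\varphi (1-\delta ))\) if \(\beta = \infty \), and that \(u > \hat{H}_\beta (D(\varphi )/d)/(f_\varphi \rho _\varphi (1-\delta ))\) if \(\beta < \infty \); such a \(\delta \) exists because u strictly exceeds the corresponding bound in the statement of the lemma.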

By Lemma 6.15, for all small enough r, and for all \(x \in \varphi _{K_j r} {\setminus } (\partial \varphi )_{K_{j+1} r}\), and all \(s \in (0,r]\), the ball B(x, s) does not intersect any of the faces of dimension \(d-1\), other than those which meet at \(\varphi \) (i.e., which contain \(\varphi \)). Also \(f(y) \ge (1- \delta ) f_\varphi \) for all \(y \in A \) sufficiently close to \(\varphi \). Also we are assuming A is convex, so \({{\mathscr {K}}}_\varphi \) is a convex cone. Hence

\[ \mu (B(x,s)) \ge (1-\delta ) f_\varphi \rho _\varphi s^d. \]

Therefore we can apply Lemma 6.3, now with \(A_r = \varphi _{K_j r} {\setminus } (\partial \varphi )_{K_{j+1} r}\), taking \(a = (1-\delta ) f_\varphi \rho _\varphi \) and \(b = D(\varphi )\).

If \(\beta = \infty \), taking \(u > 1/(f_\varphi \rho _\varphi (1-\delta ))\) and \(r_n = (u k(n)/n)^{1/d}\), by Lemma 6.3 we have with probability 1 that \( \varphi _{K_j r_n} {\setminus } (\partial \varphi )_{K_{j+1} r_n} \subset F_{n,k(n),r_n}\) for all large enough n, which gives part (i).

If \(\beta < \infty \), taking \(u > \hat{H}_\beta (D(\varphi )/d)/(f_\varphi \rho _\varphi (1-\delta ))\), and setting \(r_n = (u (\log n)/n)^{1/d}\), we have from Lemma 6.3 that there exists \(\varepsilon >0 \) such that setting \(k'(n) := \lfloor (\beta + \varepsilon ) \log n \rfloor \), we have \(\mathbb {P}[ \{ \varphi _{K_j r_n} {\setminus } (\partial \varphi )_{K_{j+1} r_n} \subset F_{n,k'(n),r_n} \}^c] = O(n^{-\varepsilon })\), which gives part (ii). \(\square \)

Proof of Theorem 4.2

Suppose \(\beta = \infty \). Let \(u > \max \left( \frac{1}{\theta _d f_0}, \max _{\varphi \in \varPhi (A)} \frac{1}{f_\varphi \rho _\varphi } \right) \). Setting \(r_n = (u k(n)/n)^{1/d}\), we have from Lemma 6.16 that \(G_{n,k(n),r_n,\varphi }\) occurs only finitely often, a.s., for each \(\varphi \in \varPhi (A)\). Hence by Lemma 6.14, \(R_{n,k(n),1} \le r_n\) for all large enough n, a.s. Hence, almost surely \( \limsup _{n \rightarrow \infty } \left( n R_{n,k(n),1}^d/k(n) \right) \le u. \)