Abstract

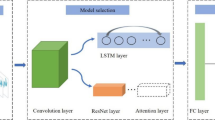

This paper describes a novel gait pattern recognition method for lower limb exoskeletons based on Long Short-Term Memory (LSTM) and Convolutional Neural Network (CNN). The Inertial Measurement Unit (IMU) installed on the exoskeleton collects motion information, which serves as the input to the LSTM-CNN model. Five common gait patterns are considered: walking, going up stairs, going down stairs, sitting down, and standing up. In the LSTM-CNN model, the LSTM layer processes the temporal sequences and the CNN layer extracts features. To optimize the proposed deep neural network structure, several hyperparameter selection experiments were carried out. In addition, to verify the superiority of the proposed method, it is compared with several common methods such as LSTM, CNN, and Support Vector Machine (SVM). The results show that the average recognition accuracy reaches 97.78%, indicating good recognition performance. Finally, gait pattern switching experiments show that the proposed method can identify a change of gait pattern promptly, demonstrating good real-time performance.
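To make the LSTM-CNN data flow concrete, the following is a minimal, untrained forward-pass sketch in plain Python. It is not the authors' implementation: it assumes the LSTM's hidden-state sequence feeds a 1-D convolution over time, followed by ReLU, global max pooling, and a dense softmax layer over the five gait patterns. The class name `LSTMCNNSketch`, the six IMU channels, the 20-sample window, and all layer sizes are hypothetical, and the weights are random, so the predicted label is meaningless until the model is trained.

```python
import math
import random

# The five gait patterns listed in the abstract; this label order is an assumption.
GAIT_PATTERNS = ["walking", "going up stairs", "going down stairs",
                 "sitting down", "standing up"]


def sigmoid(x):
    # Numerically stable logistic function.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def rand_matrix(rows, cols, rng, scale=0.1):
    """Small uniform random weights (placeholder for trained parameters)."""
    return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]


def matvec(m, v):
    return [sum(w * x for w, x in zip(row, v)) for row in m]


class LSTMCNNSketch:
    """Hypothetical untrained LSTM -> 1-D CNN -> dense softmax classifier."""

    def __init__(self, n_channels, hidden=8, n_filters=4, kernel=3,
                 n_classes=len(GAIT_PATTERNS), seed=0):
        rng = random.Random(seed)
        self.hidden, self.kernel = hidden, kernel
        # One weight matrix per gate (input i, forget f, candidate g, output o),
        # applied to the concatenation [x_t, h_{t-1}]; biases start at zero.
        self.W = {gate: rand_matrix(hidden, n_channels + hidden, rng) for gate in "ifgo"}
        self.b = {gate: [0.0] * hidden for gate in "ifgo"}
        # Each convolution filter spans `kernel` consecutive LSTM outputs.
        self.filters = [rand_matrix(kernel, hidden, rng) for _ in range(n_filters)]
        self.dense = rand_matrix(n_classes, n_filters, rng)

    def lstm(self, window):
        """Run a standard LSTM cell over a window of IMU samples (T x channels)."""
        h, c = [0.0] * self.hidden, [0.0] * self.hidden
        outputs = []
        for x_t in window:
            z = list(x_t) + h
            pre = {gate: [wx + bias for wx, bias in zip(matvec(self.W[gate], z), self.b[gate])]
                   for gate in "ifgo"}
            i = [sigmoid(v) for v in pre["i"]]
            f = [sigmoid(v) for v in pre["f"]]
            cand = [math.tanh(v) for v in pre["g"]]
            o = [sigmoid(v) for v in pre["o"]]
            c = [fv * cv + iv * gv for fv, cv, iv, gv in zip(f, c, i, cand)]
            h = [ov * math.tanh(cv) for ov, cv in zip(o, c)]
            outputs.append(h)
        return outputs  # T x hidden

    def conv_features(self, seq):
        """Valid 1-D convolution over time, ReLU, then global max pooling per filter."""
        if len(seq) < self.kernel:
            raise ValueError("window must contain at least `kernel` time steps")
        feats = []
        for filt in self.filters:
            responses = [
                max(0.0, sum(w * x
                             for k in range(self.kernel)
                             for w, x in zip(filt[k], seq[start + k])))
                for start in range(len(seq) - self.kernel + 1)
            ]
            feats.append(max(responses))
        return feats

    def predict_proba(self, window):
        """Softmax probabilities over the gait patterns for one IMU window."""
        logits = matvec(self.dense, self.conv_features(self.lstm(window)))
        m = max(logits)
        exps = [math.exp(v - m) for v in logits]
        total = sum(exps)
        return [e / total for e in exps]

    def predict(self, window):
        probs = self.predict_proba(window)
        return GAIT_PATTERNS[probs.index(max(probs))]


if __name__ == "__main__":
    rng = random.Random(1)
    # Hypothetical window: 20 time steps x 6 IMU channels (e.g. 3-axis accel + gyro).
    window = [[rng.gauss(0.0, 1.0) for _ in range(6)] for _ in range(20)]
    model = LSTMCNNSketch(n_channels=6)
    print(model.predict(window), [round(p, 3) for p in model.predict_proba(window)])
```

In a real system the weights would be learned from labeled IMU windows with a deep learning framework; the sketch only shows how a window of shape (time steps × channels) passes through the LSTM, the convolution, and the classifier. Sliding such a window over the incoming IMU stream is one way to obtain the continuous predictions needed to detect gait pattern switching.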

Acknowledgements

The authors acknowledge the support of Pre-research Project 020202 in the field of manned spaceflight.

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

This work was supported by the Pre-research Project in the field of manned spaceflight (Project Number 020202), China.

About this article

Cite this article

Chen, Cf., Du, Zj., He, L. et al. A Novel Gait Pattern Recognition Method Based on LSTM-CNN for Lower Limb Exoskeleton. J Bionic Eng 18, 1059–1072 (2021). https://doi.org/10.1007/s42235-021-00083-y

DOI: https://doi.org/10.1007/s42235-021-00083-y