Abstract

We review the development of numerical evolution codes for general relativity based upon the characteristic initial value problem. Progress is traced from the early stage of 1D feasibility studies to current 3D black hole codes that run forever. A prime application of characteristic evolution is Cauchy-characteristic matching, which is also reviewed.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

I review here the status of numerical relativity based upon characteristic evolution. In the spirit of the name “Living Reviews” I encourage readers to provide me with feedback at jeff@einstein.phyast.pitt.edu. This will help me update the review to include overlooked material. I also invite authored contributions on items of relevance that I may have omitted because of lack of expertise. Occasionally, I will point out where such contributions would be valuable and would make this review more useful than I could achieve by myself.

We are entering an era in which Einstein’s equations can effectively be considered solved at the local level. Several groups, as reported in these Reviews, have developed 3D codes which are stable and accurate in some sufficiently local setting. Global solutions are another matter. In particular, there is no single code in existence today which purports to be capable of computing the waveform of gravitational radiation emanating from the inspiral and merger of two black holes, the premier problem in classical relativity. Just as several coordinate patches are necessary to describe a space-time with nontrivial topology, the most effective attack on the binary black hole problem is likely to involve patching together regions of space-time handled by different codes.

Most work in numerical relativity is based upon the Cauchy “3 + 1” formalism [108], with the gravitational radiation extracted by perturbative Cauchy methods which introduce an artificial Schwarzschild background [1, 3, 2, 5]. These wave extraction methods have not been tested in a fully nonlinear 3D setting. Another approach specifically tailored to study radiation can be based upon the characteristic initial value problem. In the 1960’s, Bondi [22, 23] and Penrose [79] pioneered the use of null hypersurfaces to study radiation. This new approach has flourished in general relativity. The standard description of the “plus” and “cross” polarization modes of gravitational radiation is in terms of the real and imaginary parts of the Bondi news function at future null infinity (\({{\mathcal I}^+}\)).

From a computational standpoint, the major drawback of the characteristic approach arises from the formation of caustics in the light rays generating the null hypersurfaces. In the most ambitious scheme proposed at the theoretical level such caustics would be treated “head-on” as part of the dynamical problem [98]. This is a profoundly attractive idea. Only a few structural stable caustics can arise in numerical evolution, and their geometrical properties are well enough understood to model their singular behavior numerically [44]. However, a computational implementation of this approach has not been achieved. It seems to be a great idea that is ahead of its time.

In the typical setting for a characteristic initial value problem, the domain of dependence of a single nonsingular null hypersurface is empty. In order to obtain a nontrivial evolution problem, the null hypersurface must either be completed to a caustic-crossover region where it pinches off, or an additional boundary must be introduced. So far, the only caustics that have been successfully evolved numerically in general relativity are pure point caustics (the complete null cone problem). When spherical symmetry is not present, it turns out that the stability conditions near the vertex of a nonsingular light cone place a strong restriction on the allowed time step [65]. Point caustics in general relativity have been successfully handled this way for axisymmetric space-times [52], but the computational demands for 3D evolution would be prohibitive using current generation supercomputers. This is unfortunate because, away from the caustics, the characteristic evolution offers myriad computational and geometrical advantages.

As a result, at least in the near future, the computational application of characteristic evolution is likely to be restricted to some mixed form, in which boundary conditions are also set on a non-singular but incomplete initial null hypersurface and on a second nonsingular hypersurface (or perhaps several), which together with the initial null hypersurface present a nontrivial domain of dependence. This second hypersurface may itself be either (i) null, (ii) timelike or (iii) spacelike. These possibilities give rise to the (i) the double null problem, (ii) the nullcone-worldtube problem or (iii) the Cauchy-characteristic matching (CCM) problem.

In CCM, it is possible to choose the matching interface between the Cauchy and characteristic regions to be a null hypersurface, but it is more practical to match across a timelike worldtube. CCM combines the advantages of characteristic evolution in treating the outer radiation zone in spherical coordinates which are naturally adapted to the topology of the worldtube with the advantages of Cauchy evolution in Cartesian coordinates in the region where spherical coordinates would break down.

In this review, we trace the development of characteristic algorithms from model 1D problems to a 3D code for black holes that attains the Holy Grail of numerical relativity, as specified 12 years ago by Shapiro and Teukolsky [91]. And we trace the development of CCM from early feasibility studies through current attempts to treat the binary black hole problem.

2 The Characteristic Initial Value Problem

Characteristics have traditionally played an important role in the analysis of hyperbolic partial differential equations. However, the use of characteristic hypersurfaces to supply the foliation underlying an evolution scheme has been mainly restricted to relativity. This is perhaps natural because in curved space-time there is no longer the preferred Cauchy foliation provided by the Euclidean 3-spaces allowed in Galilean or special relativity. The method of shooting along characteristics is a standard technique in many areas of computational physics, but evolution based upon characteristic hypersurfaces is quite uniquely limited to relativity.

Bondi’s initial use of null coordinates to describe radiation fields [22] was followed by a rapid development of other null formalisms. These were distinguished either as metric based approaches, as developed for axisymmetry by Bondi, Metzner and van den Burg [23] and generalized by Sachs [87], or as null tetrad approaches in which the Bianchi identities appear as part of the set of equations, as developed by Newman and Penrose [76].

At the outset, null formalisms were applied to construct asymptotic solutions at null infinity by means of 1/r expansions. Soon afterwards, Penrose devised the conformal compactification of null infinity \({\mathcal I}\) (“scri”), thereby reducing to geometry the asymptotic description of the physical properties of radiative space-times, most notably the Bondi mass and news function [79]. The characteristic initial value problem rapidly became an important tool for the clarification of fundamental conceptual issues regarding gravitational radiation and its energy content. It laid bare and geometricized the far field “radiation zone” of the gravitational field.

The initial focus on asymptotic solutions clarified the kinematic properties of radiation fields but could not supply the waveform from a specific source. It was soon realized that instead of carrying out a 1/r expansion, one could reformulate the approach in terms of the integration of ordinary differential equations along the characteristics (null rays). The integration constants supplied on some inner boundary then determined the specific waveforms obtained at infinity. In the double-null initial value problem of Sachs [88], the integration constants are supplied at the intersection of outgoing and ingoing null hypersurfaces. In the worldtube-nullcone formalism, the sources inside a worldtube were represented by integration constants on the worldtube [99]. These early formalisms have gone through much subsequent revamping. Some have been reformulated to fit the changing styles of modern differential geometry. Some have been reformulated in preparation for implementation as computational algorithms. See the articles in [37] for a representative sample. Rather than including here a review of the extensive literature on characteristic formalisms in general relativity, I will concentrate here on those approaches which have been (or are in the process of being) implemented as computational evolution schemes. I also regret omission of the topic of well-posedness of the underlying boundary value problems. This topic has obvious relevance to the success of numerical simulations but would require a separate Living Review to do it justice.

All characteristic evolution schemes share the same skeletal form. The fundamental ingredient is a foliation by null hypersurfaces u = const which are generated by a 2-dimensional set of null rays, labeled by coordinates xA, with a coordinate λ varying along the rays. In (u, λ, xA) null coordinates, the main set of Einstein equations take the schematic form

and

Here F represents a set of hypersurface variables; G, a set of evolution variables; and HF and HG are nonlinear hypersurface operators, i.e. they operate locally on the values of F and G intrinsic to a single null hypersurface. In addition to these main equations, there is a subset of four Einstein equations which are satisfied by virtue of the Bianchi identities, provided that they are satisfied on a hypersurface transverse to the characteristics. These equations have the physical interpretation as conservation laws. Mathematically they are analogous to the constraint equations of the canonical formalism. But they are not elliptic, since they are imposed upon null or timelike hypersurfaces, rather than spacelike.

3 Characteristic Evolution Codes

Computational implementation of characteristic evolution may be based upon different versions of the formalism (i.e. metric or tetrad) and different versions of the initial value problem (i.e. double null or worldtube-nullcone). The performance and computational requirements of the resulting evolution codes can vary drastically with the particular choice. However, all characteristic evolution codes have certain common advantages:

-

(i)

There are no elliptic constraint equations. This eliminates the need for time consuming iterative methods to enforce constraints or to otherwise test the challenge of constraint free evolution.

-

(ii)

No second time derivatives appear so that the number of basic variables is half the number for the corresponding version of the Cauchy problem.

-

(iii)

The main Einstein equations form a system of coupled ordinary differential equations with respect to the parameter λ varying along the characteristics. This allows construction of an evolution algorithm in terms of a simple march along the characteristics.

-

(iv)

In problems with isolated sources, the radiation zone can be compactified into a finite grid boundary using Penrose’s conformal technique. Because the Penrose boundary is a null hypersurface, no extraneous outgoing radiation condition or other artificial boundary condition is required.

-

(v)

The grid domain is exactly the region in which waves propagate, which is ideally efficient for radiation studies. Since each null hypersurface of the foliation extends to infinity, the radiation is calculated immediately (in retarded time) with no need to propagate it across the grid.

-

(vi)

In black hole space-times, a large redshift at null infinity relative to internal sources is an indication of the formation of an event horizon and can be used to limit the evolution to the exterior region of space-time.

Characteristic schemes also share as a common disadvantage the necessity either to deal with caustics or to avoid them altogether. The scheme to tackle the caustics head on by including their development as part of the evolution is perhaps a great idea still ahead of its time, one that should not be forgotten. There are only a handful of structurally stable caustics, and they have well known algebraic properties. This makes it possible to model their singular structure in terms of Pade approximants. The structural stability of the singularities should in principle make this possible, and algorithms to evolve the elementary caustics have been proposed [35, 96]. In the axisymmetric case, cusps and folds are the only stable caustics, and they have already been identified in the horizon formation occurring in simulations of head-on collisions of black holes and in the temporarily toroidal horizons occurring in collapse of rotating matter [74, 92].

3.1 One+One Dimensional Codes

It is now often said that the solution of the general ordinary differential equation is essentially known, in light of the success of computational algorithms and present day computing power. Perhaps this is an overstatement because investigating singular behavior is still an art. But, in the same vein, it is fair to say that the general system of hyperbolic partial differential equations in one spatial dimension is a solved problem. At least, it seems to be true in general relativity.

One of the earliest characteristic evolution codes was constructed by Corkill and Stewart [35, 95] to treat space-times with two Killing vectors. The grid was based upon a double null coordinate system, with the null hypersurfaces intersecting in the surfaces spanned by the Killing vectors. This allowed simulation of colliding plane waves (as well as the Schwarzschild solution). They were able to evolve the Khan-Penrose [70] collision of impulsive (δ-function curvature) plane waves to within a few numerical zones from the singularity that forms after the collision, with extremely close agreement with the analytic results. Their simulations of collisions with more general waveforms, for which exact solutions are not known, have provided input to the theoretical understanding of singularity formation in this problem.

Most of the 1+1 dimensional applications of characteristic methods have been for spherically symmetric systems. Here matter must be included in order to make the system non-Schwarzschild. Except for some trivial applications to evolve dust cosmologies by characteristic evolution, matter has been represented by a massless Klein-Gordon field. This allows simulation of radiation effects in the simple context of spherical symmetry. Highly interesting results have been found this way.

On the analytic side, working in a characteristic initial value formulation based upon outgoing null cones, Christodoulou made a penetrating study of the existence and uniqueness of solutions to this problem. [29, 28, 31, 30] He showed that weak initial data evolve to Minkowski space asymptotically in time, but that sufficiently strong data form a horizon, with nonzero Bondi mass. In the latter case, he showed that the geometry is asymptotically Schwarzschild in the approach to I+ (future time infinity) from outside the horizon, thus establishing a rigorous version of the no-hair theorem. What this analytic tour-de-force did not reveal was the remarkable critical behavior in the transition between these two regimes, which was discovered by Choptuik [26, 27] using computational simulation.

A characteristic evolution algorithm for this problem centers about the evolution scheme for the scalar field, which constitutes the only dynamical field. Given the scalar field, all gravitational quantities can be determined by integration along the characteristics of the null foliation. But this is a coupled problem, since the scalar wave equation involves the curved space metric. It provides a good illustration of how null algorithms lead to a hierarchy of equations which can be integrated along the characteristics to effectively decouple the hypersurface and dynamical variables.

In a Bondi coordinate system based upon outgoing null hypersurfaces u = const, the metric is

Smoothness at r = 0 allows the coordinate conditions

The field equations consist of the wave equation □Φ = 0 for the scalar field and two hypersurface equations for the metric functions:

The wave equation can be expressed in the form

where g = rΦ and □(2) is the D’Alembertian associated with the two dimensional submanifold spanned by the ingoing and outgoing null geodesics. Initial null data for evolution consists of Φ(u0, r) at initial retarded time u0.

Because any two dimensional geometry is conformally flat, the surface integral of □(2)g over a null parallelogram Σ gives exactly the same result as in a flat 2-space, and leads to an integral identity upon which a simple evolution algorithm can be based [54]. Let the vertices of the null parallelogram be labeled by N, E, S and W corresponding, respectively, to their relative locations North, East, South and West in the 2-space. Upon integration of (7), curvature introduces an area integral correction to the flat space null parallelogram relation between the values of g at the vertices:

This identity, in one form or another, lies behind all of the null evolution algorithms that have been applied to this system. The prime distinction between the different algorithms is whether they are based upon double null coordinates or Bondi coordinates as in Eq. (3). When a double null coordinate system is adopted, the points N, E, S and W can be located in each computational cell at grid points, so that evaluation of the left hand side of Eq. (8) requires no interpolation. As a result, in flat space, where the right hand side of Eq. (8) vanishes, it is possible to formulate an exact evolution algorithm. In curved space, of course, there is truncation error arising from the approximation of the integral by evaluating the integrand at the center of Σ.

The identity (8) gives rise to the following explicit marching algorithm. Let the null parallelogram lie at some fixed θ and ϕ and span adjacent retarded time levels u0 and u0 + Δu. Imagine for now that the points N, E, S and W lie on the spatial grid, with rN − rW = rE − rS = Δr. If g has been determined on the initial cone u0, which contains the points E and S, and radially outward from the origin to the point W on the next cone u0 + Δu, then Eq. (8) determines g at the next radial grid point N in terms of an integral over Σ. The integrand can be approximated to second order, i.e. to O(ΔrΔu), by evaluating it at the center of Σ. To this same accuracy, the value of g at the center equals its average between the points E and W, at which g has already been determined. Similarly, the value of (V/r),r at the center of Σ can be approximated to second order in terms of values of V at points where it can be determined by integrating the hypersurface equations (5) and (6) radially outward from r = 0.

After carrying out this procedure to evaluate g at the point N, the procedure can then be iterated to determine g at the next radially outward grid point on the u0 + Δu level. Upon completing this radial march to null infinity, in terms of a compactified radial coordinate such as x = r/(1 + r), the field g is then evaluated on the next null cone at u0 + 2Δu, beginning at the vertex where smoothness gives the startup condition that g(u, 0) = 0.

In the compactified Bondi formalism, the vertices N, E, S and W of the null parallelogram Σ cannot be chosen to lie exactly on the grid because, even in Minkowski space, the velocity of light in terms of a compactified radial coordinate x is not constant. As a consequence, the fields g, β and V at the vertices of Σ are approximated to second order accuracy by interpolating between grid points. However, cancellations arise between these four interpolations so that Eq.(8) is satisfied to fourth order accuracy. The net result is that the finite difference version of (8) steps g radially outward one zone with an error of fourth order in grid size, O((Δu)2(Δx)2). In addition, the smoothness conditions (4) can be incorporated into the startup for the numerical integrations for V and β to insure no loss of accuracy in starting up the march at r = 0. The resulting global error in g, after evolving a finite retarded time, is then O(ΔuΔx), after compounding errors from 1/(ΔuΔx) number of zones.

Because of the explicit nature of this algorithm, its stability requires an analogue of the Courant-Friedrichs-Lewy (CFL) condition that the physical domain of dependence be contained in the numerical domain of dependence. In the present spherically symmetric case, this condition requires that the ratio of the time step to radial step be limited by (V/r)Δu ≤ 2Δr, where Δr = Δ[x/(1 − x)]. This condition can be built into the code using the value V/r = e2H, corresponding to the maximum of V/r at ℐ+. The strongest restriction on the time step then arises just before the formation of a horizon, where V/r → ∞ at ℐ+, This infinite redshift provides a mechanism for locating the true event horizon “on the fly” and restricting the evolution to the exterior space-time. Points near ℐ+ must be dropped in order to evolve across the horizon in this gauge.

Such algorithms have been applied to many interesting problems. A characteristic algorithm based upon double null coordinates was used by Goldwirth and Piran in a study of cosmic censorship [46]. Their early study lacked the sensitivity of adaptive mesh refinement which later enabled Choptuik to discover the critical phenomena appearing in this problem. The most accurate global treatment of this problem using a Cauchy code has been achieved by Marsa and Choptuik [73] using ingoing Eddington-Finklestein coordinates. This use of a null based time slicing enabled them to avoid problems with the singularity by excising the black hole exterior and to construct a 1D code that runs forever.

Gómez and Winicour [54] constructed a characteristic code for this problem based upon the compactified Bondi formalism outlined above. Studies with the code revealed interesting high amplitude behavior under the rescaling Φ → aΦ. As a → ∞, the red shift creates an effective boundary layer at \({{\mathcal I}^+}\) which causes the Bondi mass MB and the scalar field monopole moment Q to be related by \({M_B} \sim \pi |Q|/\sqrt 2\), rather than the quadratic relation of the weak field limit [54]. This can also be established analytically so that the high amplitude limit provides a check on the code’s ability to handle strongly nonlinear fields. In the small amplitude case, this work incorrectly reported that the radiation tails from black hole formation had an exponential decay characteristic of quasinormal modes rather than the polynomial 1/t or 1/t2 falloff expected from Price’s [82] work on perturbations of Schwarzschild black holes. In hindsight, the error here was not having confidence to run the code sufficiently long to see the proper late time behavior.

Gundlach, Price and Pullin [57, 58] subsequently reexamined the issue of power law tails using a double null code similar to that developed by Goldwirth and Piran. Their numerical simulations verified the existence of power law tails in the full nonlinear case, thus establishing consistency with analytic perturbative theory. They also found normal mode ringing at intermediate time, again with properties in accord with the perturbative results. The consistency they found between perturbation theory and numerical evolution of the nonlinear system is very reassuring. There is a region of space-time where the results of linearized theory are remarkably reliable even though highly nonlinear behavior is taking place elsewhere. These results have led to a methodology that has application beyond the confines of spherically symmetric problems, most notably in the “close approximation” for the binary black hole problem [83]. Power law tails and quasinormal ringing were also confirmed using Cauchy evolution [73].

The study of the radiation tail decay was subsequently extended by Gómez, Schmidt and Winicour [53] using a code based upon the nullcone-worldtube version of the Bondi formalism. They showed that the Newman-Penrose constant [78] for the scalar field is the factor which determines the exponent of the power law (not the static monopole moment as often stated). When this constant is non-zero, the tail decays as 1/t, as opposed to the 1/t2 decay for the vanishing case. (There are also ln t/tn corrections in addition to the exponentially decaying contributions of the quasinormal modes). This code was also used to study the instability of a topological kink in the configuration of the scalar field [12]. The kink instability provides the simplest example of the turning point instability [64, 94] which underlies gravitational collapse of static equilibria.

Hamadé and Stewart [61] have applied a double null code to the problem of critical phenomena. In order to obtain the accuracy necessary to confirm Choptuik’s results they developed the first example of a characteristic grid with adaptive mesh refinement (AMR). They did this with both the standard Berger and Oliger algorithm and their own simplified version. These different versions of AMR gave indistinguishable numerical results. Their simulations of critical collapse of a scalar field agree with Choptuik’s values for the universal parameters governing mass scaling and display the echoing associated with discrete self-similarity. Hamadé, Horne and Stewart [60] extended this study to the spherical collapse of an axion/dilaton system and found in this case that the self-similarity was a continuous symmetry of the critical solution.

The Southampton group has also constructed a 1+1 dimensional characteristic code for space-times with cylindrical symmetry [34, 40]. Their motivation was not to produce a stand-alone code for the study of cylindrically symmetric relativity but rather to use it as a test case for combining Cauchy and characteristic codes into a global scheme. Their work will be discussed later in this review under Cauchycharacteristic matching.

3.2 Two-D Codes

One dimensional characteristic codes enjoy a very special simplicity due to the two preferred sets (ingoing and outgoing) of characteristic null hypersurfaces. This eliminates a source of gauge freedom that otherwise exists in either two or three dimensional characteristic codes. However, the manner in which characteristics of a hyperbolic system determine domains of dependence and lead to propagation equations for shock waves is exactly the same as in the one dimensional case. This makes it desirable for the purpose of numerical evolution to enforce propagation along characteristics as extensively as possible. In basing a Cauchy algorithm upon shooting along characteristics, the infinity of characteristic rays (technically, bicharacteristics) at each point leads to an arbitrariness which, for a practical numerical scheme, makes it necessary either to average the propagation equations over the sphere of characteristic directions or to select out some preferred finite subset of propagation equations. The latter approach has been successfully applied by Butler [25] to the Cauchy evolution of 2-dimensional fluid flow but there seems to have been very little follow-up along these lines.

The formal ideas behind the construction of two or three dimensional characteristic codes are the same, although there are some additional technical complications in three dimensions associated with a nonsingular choice of angular coordinates for the null rays. Historically, most characteristic work graduated first from 1D to 2D because of the available computing power.

3.3 The Bondi Problem

The first characteristic code based upon the original Bondi equations for a twist-free axisymmetric space-time was constructed by Isaacson, Welling and Winicour [66]. The space-time was foliated by a family of null cones, complete with point vertices at which regularity conditions were imposed. The code accurately integrated the hypersurface and evolution equations out to compactified null infinity. This allowed studies of the Bondi mass and radiation flux on the initial null cone, but it could not be used as a practical evolution code because of an instability at the vertices of the null cones.

To a group uninitiated at the time to the pitfalls of numerical work, these instabilities came as a rude shock and led to a retreat to the simpler problem of axisymmetric scalar waves propagating in Minkowski space, with the metric

in outgoing null cone coordinates. A null cone code for this problem was constructed using an algorithm based upon Eq.(8), with the angular part of the flat space Laplacian replacing the curvature terms in the integrand on the right hand side. This simple setting allowed the instability to be traced to a subtle violation of the CFL condition near the vertices of the cones. In terms of grid spacings Δxα, the CFL condition in this coordinate system take the explicit form

where the coefficient K, which is of order 1, depends on the particular startup procedure adopted for the outward integration. Far from the vertex, this condition (10) on the time step Δu is quantitatively similar to the CFL condition for a standard Cauchy evolution algorithm in spherical coordinates. But the condition (10) is strongest near the vertex of the cone where (at the equator θ = π/2) it implies that

This is in contrast to the analogous requirement

for stable Cauchy evolution near the origin of a spherical coordinate system. The extra power of Δθ is the price that must be paid near the vertex for the simplicity of a characteristic code. Nevertheless, the enforcement of this condition still allowed efficient global simulation of axisymmetric scalar waves. Global studies of backscattering, radiative tail decay and solitons have been carried out for nonlinear axisymmetric waves [66], but 3-dimensional simulations extending to the vertices of the cones would be impractical on current machines.

Aware now of the subtleties of the CFL condition near the vertices, the Pittsburgh group returned to the Bondi problem, i.e. evolve the metric [23]

by means of the three hypersurface equations

and the evolution equation

The beauty of the Bondi set of equations is that they form a clean hierarchy. Given 7 on an initial null hypersurface, the equations can be integrated radially to determine β, U, V and γ, u on the hypersurface (in that order) in terms of integration constants determined by boundary or smoothness conditions. The initial data γ is unconstrained except by smoothness conditions. Because γ represents a spin-2 field, it must be O(sin2 θ) near the poles of the spherical coordinates and must consist of l ≥ 2 spin-2 multipoles.

In the computational implementation of this system [52], the null hypersurfaces were chosen to be complete null cones with nonsingular vertices, which (for simplicity) trace out a geodesic worldline r = 0. The smoothness conditions at the vertices were formulated by referring back to local Minkowski coordinates. The computational algorithm was designed to preserve these smoothness conditions.

The vertices of the cones did not turn out to be the chief source of difficulty. A null parallelogram marching algorithm, similar to that used in the scalar case, gave rise to an instability that sprang up throughout the grid. In order to reveal the source of the instability, physical considerations suggested looking at the linearized version of the Bondi equations, since they must be related to the wave equation. If this relationship were sufficiently simple, then the scalar wave algorithm could be used as a guide in stabilizing the evolution of γ. A scheme for relating γ to solutions Φ of the wave equation had been formulated in the original paper by Bondi, Metzner and van den Burgh [23]. However, in that scheme, the relationship of the scalar wave to γ was nonlocal in the angular directions and not useful for the stability analysis.

A local relationship between γ and solutions of the wave equation was found [52]. This provided a test bed for the null evolution algorithm similar to the Cauchy test bed provided by Teukolsky waves [100]. More critically, it allowed a simple von Neumann linear stability analysis of the finite difference equations, which revealed that the evolution would be unstable if the metric quantity U was evaluated on the grid. For a stable algorithm, the grid points for U must be staggered between the grid points for γ, β and V. This unexpected feature emphasizes the value of linear stability analysis in formulating stable finite difference approximations.

These considerations led to an axisymmetric code for the global Bondi problem which ran stably, subject to a CFL condition, throughout the regime in which caustics and horizons did not form. Stability in this regime was verified experimentally by running arbitrary initial data until it radiated away to \({{\mathcal I}^+}\). Also, new exact solutions as well as the linearized null solutions were used to perform extensive convergence tests that established second order accuracy. The code generated a large complement of highly accurate numerical solutions for the class of asymptotically flat, axisymmetric vacuum space-times, a class for which no analytic solutions are known. All results of numerical evolutions in this regime were consistent with the theorem of Christodoulou and Klainerman [32] that weak initial data evolve asymptotically to Minkowski space at late time.

An additional global check on accuracy was performed using Bondi’s formula relating mass loss to the time integral of the square of the news function. The Bondi mass loss formula is not one of the equations used in the evolution algorithm but follows from those equations as a consequence of a global integration of the Bianchi identities. Thus it not only furnishes a valuable tool for physical interpretation, but it also provides a very important calibration of numerical accuracy and consistency.

An interesting feature of the evolution arises in regard to compactification. By construction, the u-direction is timelike at the origin where it coincides with the worldline traced out by the vertices of the outgoing null cones. But even for weak fields, the u-direction generically becomes spacelike at large distances along an outgoing ray. Geometrically, this reflects the property that \({\mathcal I}\) is itself a null hypersurface so that all internal directions are spacelike, except for the null generator. For a flat space time, the u-direction picked out at the origin leads to a null evolution direction at \({\mathcal I}\), but this direction becomes spacelike under a slight deviation from spherical symmetry. Thus the evolution generically becomes “superluminal” near \({\mathcal I}\). Remarkably, there are no adverse numerical effects. This fortuitous property apparently arises from the natural way that causality is built into the marching algorithm so that no additional resort to numerical techniques, such as “causal differencing” [4], is necessary.

3.3.1 The Conformal-Null Tetrad Approach

J. Stewart has also implemented a characteristic evolution code which handles the Bondi problem by a null tetrad, as opposed to metric, formalism [97]. The geometrical algorithm underlying the evolution scheme, as outlined in [98, 44], is H. Friedrich’s [43] set of conformal-null equations. This casts the Einstein equations for a compactified space-time into a first order system of partial differential equations. The set of variables include the metric, the connection, and the curvature, as in a Newman-Penrose formalism, but in addition it includes the conformal factor (necessary for compactification of \({\mathcal I}\)) and its first derivatives. As a result, without assuming any symmetry, there are more than 7 times as many variables as in a metric based null scheme, and the corresponding equations do not decompose into as clean a hierarchy. This disadvantage, compared to the metric approach, is balanced by several advantages: (1) The equations form a symmetric hyperbolic system so that standard theorems can be used to establish that the system is well-posed. (2) Standard evolution algorithms can be invoked to ensure numerical stability. (3) The extra variables associated with the curvature tensor are not completely excess baggage, since they supply essential physical information. (4) The regularization necessary to treat \({\mathcal I}\) is built-in as part of the formalism so that no special numerical regularization techniques are necessary as in the metric case. (This last advantage is somewhat offset by the necessity of having to locate \({\mathcal I}\) by tracking the zeroes of the conformal factor.)

The code was intended to study gravitational waves emanating from an axisymmetric star. Since only the vacuum equations are evolved, the outgoing radiation from the star is represented in terms of data (Ψ4 in Newman-Penrose notation) on an ingoing null cone (an advanced time null hypersurface), which forms the inner boundary of the evolved domain. The inner boundary data is supplemented by Schwarzschild data on a initial outgoing null cone, which models an initially quiescent state of an isolated star. This provides the necessary data for a double-null initial value problem. The evolution would normally break down where the ingoing null hypersurface develops caustics. But by choosing a scenario in which a black hole is formed, it is possible to evolve the entire region exterior to the horizon. An obvious test bed is the Schwarzschild space-time for which a numerically satisfactory evolution was achieved (convergence tests were not reported).

Physically interesting results were obtained by choosing data corresponding to an outgoing quadrupole pulse of radiation with various waveforms. By increasing the initial amplitude of the data Ψ4, it was possible to evolve into a regime where the energy loss due to radiation was large enough to drive the total Bondi mass negative. Although such data is too grossly exaggerated to be consistent with an astrophysically realistic source, the formation of a negative mass is an impressive test of the robustness of the code.

3.3.2 Twisting Axisymmetry

The Southampton group, as part of its long range goal of combining Cauchy and characteristic evolution, has begun development of a code which extends the Bondi problem to full axisymmetry [38]. By dropping the requirement that the Killing direction ϕ be twist-free, they are able to include rotational effects, including radiation in the “cross” polarization mode (only the “plus” mode is allowed by twist-free axisymmetry).

They have completed the preliminary theoretical work [39]. The null equations and variables have been recast into a suitably regularized form to allow compactification of null infinity. Regularization at the vertices or caustics of the null hypersurfaces is not necessary, since they anticipate matching to an interior Cauchy evolution across a finite worldtube. The Southampton program offers promise of some highly interesting results which I hope will be reported in a not too future version of this review.

3.4 The Bondi Mass

Numerical calculations of asymptotic quantities such as the Bondi mass must overcome severe technical difficulties arising from the necessity to pick off nonleading terms in an asymptotic expansion about infinity. For example, in an asymptotically inertial frame (called a Bondi frame at \({{\mathcal I}^+}\)), the mass aspect \({\mathcal M}(u{.}\theta.\phi)\) must be picked off from the asymptotic expansion of Bondi’s metric quantity V, (see Eq. (16)) of the form \(V = r - 2{\mathcal M} + O(1/r)\). This is similar to the experimental task of determining the mass of an object by measuring its far field. What makes the job much more difficult is that, in most computational setups, (the global null problem or the Cauchy-characteristic matching problem), the gauge choice does not correspond to a Bondi frame at \({{\mathcal I}^+}\). One must deal with an arbitrary foliation of \({{\mathcal I}^+}\) into retarded time slices which are determined by the details of the interior geometry. As a result, V has the more complicated asymptotic behavior (given in the axisymmetric case)

where L, H and K are gauge dependent functions of (u, θ) which would vanish in a Bondi frame [99, 66]. The calculation of the Bondi mass requires regularization of this expression by numerical techniques so that the coefficient \({\mathcal M}\) can be picked off. The task is now similar to the experimental determination of the mass of an object by using non-inertial instruments in a far zone which contains O(1/r) radiation fields. But it can be done!

It was accomplished in Stewart’s code by reexpressing the formula for the Bondi mass in terms of the well-behaved fields of the conformal formalism [97]. In the Pittsburgh code, it is accomplished by re-expressing the Bondi mass in terms of renormalized metric variables which regularize all calculations at \({{\mathcal I}^+}\) and make them second order accurate [47]. The calculation of the Bondi news function (which provides the waveforms of both polarization modes) is an easier numerical task than the Bondi mass. It has also been implemented in both of these codes, thus allowing the important check of the Bondi mass loss formula.

Mainstream astrophysics is couched in Newtonian concepts, some of which have no well defined extension to general relativity. In order to provide a sound basis for relativistic astrophysics, it is crucial to develop general relativistic concepts which have well defined and useful Newtonian limits. Mass and radiation flux are most fundamental in this regard. The results of characteristic codes show that the energy of a radiating system can be evaluated rigorously and accurately according to the rules for asymptotically flat space-times, while avoiding the deficiencies that plagued the “pre-numerical” era of relativity: (i) the use of coordinate dependent concepts such as gravitational energy-momentum pseudotensors; (ii) a rather loose notion of asymptotic flatness, particularly for radiative space-times; (iii) the appearance of divergent integrals; and (iv) the use of approximation formalisms, such as weak field or slow motion expansions, whose errors have not been rigorously estimated.

The above codes have extended the role of the Bondi mass from that of a geometrical construct in the theory of gravitational radiation to that of a highly accurate computational tool. The Bondi mass loss formula provides an important global check on the preservation of the Bianchi identities. The mass loss rates themselves have important astrophysical significance. The numerical results demonstrate that computational approaches, rigorously based upon the geometrical definition of mass in general relativity, can be used to calculate radiation losses in highly nonlinear processes where perturbation calculations would not be meaningful.

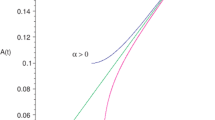

Numerical calculation of the Bondi mass has been used to explore both the Newtonian and the strong field limits of general relativity [47]. For a quasi-Newtonian system of radiating dust, the numerical calculation joins smoothly on to a post-Newtonian expansion of the energy in powers of 1/c, beginning with the Newtonian mass and mechanical energy as the leading terms. This comparison with perturbation theory has been carried out to O(1/c7), at which stage the computed Bondi mass peels away from the post-Newtonian expansion. It remains strictly positive, in contrast to the truncated post-Newtonian behavior which leads to negative values.

Another subtle numerical problem associated with the Bondi mass stems from its role as one component of the total energy-momentum 4-vector, whose calculation requires identification of the translation subgroup of the Bondi-Metzner-Sachs group [86]. This introduces boost freedom into the problem. Identifying the translation subgroup is tantamount to knowing the conformal transformation to a conformal Bondi frame [99] in which the time slices of I have unit sphere geometry. An important feature providing flexibility to both Stewart’s code and the Pittsburgh code is that the coordinates are adopted to simplify the description of the interior sources. This results in an arbitrary foliation of \({\mathcal I}\). The determination of the conformal factor which relates the 2-metric hab of a slice of \({\mathcal I}\) to the unit sphere metric is an elliptic problem equivalent to solving the second order partial differential equation for the conformal transformation of Gaussian curvature. In the axisymmetric case, the PDE reduces to an ODE with respect to the angle θ, and is straightforward to solve [47]. The integration constants determine the boost freedom along the axis of symmetry.

The non-axisymmetric case is more complicated. Stewart [97] proposes a promising approach based upon the dyad decomposition

The desired conformal transformation is obtained by first relating hab conformally to the flat metric of the complex plane. Denoting the complex coordinate of the plane by ζ, this relationship can be expressed as dζ = ef madxA. The conformal factor f can then be determined from the integrability condition

This is equivalent to the classic Beltrami equation for finding isothermal coordinates. It would appear to be a more effective scheme than tackling the second order PDE directly, but numerical implementation has not yet been carried out.

3.5 Three-D Characteristic Evolution

The Binary Black Hole Grand Challenge has fostered striking progress in developing a 3D characteristic code. At the outset of the Grand Challenge, the Pittsburgh group had just completed calibration of their axisymmetric characteristic code. Now, this has not only been extended to a full 3D code which calculates waveforms at infinity [20, 14], it has also been supplied with a horizon finder to successfully move distorted black holes [51, 49] on a computational grid. This has been accomplished with unlimited long term stability and demonstrated second order accuracy, in the harshest nonlinear physical regimes corresponding to radiation powers of a galactic rest mass per second, and with the harshest gauge conditions, corresponding to superluminal coordinate rotation.

The waveforms are initially calculated in arbitrary coordinates determined by the “3+1” gauge conditions on an inner worldtube. An important feature for the binary black hole problem is that these coordinates can be rigidly rotating, so that the evolution near infinity is highly superluminal, without affecting code performance. The waveforms are converted to the standard “plus and cross” inertial polarization modes by numerically carrying out the transformation to an inertial frame at infinity.

3.5.1 The eth Module

Spherical coordinates and spherical harmonics are standard analytic tools in the description of radiation, but, in computational work, spherical coordinates have mainly been used in axisymmetric systems, where polar singularities may be regularized by standard tricks. In the absence of symmetry, these techniques do not generalize and would be especially prohibitive to develop for tensor fields. A crucial ingredient of the 3D characteristic code is a module which allows use of spherical coordinates by implementing a computational version of the Newman-Penrose eth formalism [77]. The eth module covers the sphere with two overlapping stereographic coordinate grids (North and South). It provides everywhere regular, second order accurate, finite difference expressions for tensor fields on the sphere and their covariant derivatives [50].

3.5.2 Computer Algebra Scripts

Although the eth calculus both simplifies the equations and avoids spurious coordinate singularities, there is a large proliferation of angular derivatives of vector and tensor fields in reexpressing Einstein’s equations in eth form. MAPLE scripts have been developed which greatly facilitate reliable coding of the curvature and other tensors entering the problem. The translation of a formula from tensor to eth formalism is ideal for computer algebra: It is straightforward and algorithmic — but lengthy.

3.5.3 Code Tests

The code ran stably in all regimes of radiating, single black hole space-times, including extremely nonlinear systems with the Bondi news as large as N = 400 (in dimensionless geometric units). This means that the code can cope with an enormous power output N2c5/G ≈ 1060W in conventional units. This exceeds the power that would be produced if, in 1 second, the whole Galaxy were converted to gravitational radiation.

Code tests verified second order accuracy of the 3D code in an extensive number of testbeds:

-

Linearized waves on a Minkowski background in null cone coordinates

-

Boost and rotation symmetric solutions

-

Schwarzschild in rotating coordinates

-

Polarization symmetry of nonlinear twist-free axisymmetric waveforms

-

Robinson-Trautman waveforms from perturbed Schwarzschild black holes.

The simulations of nonlinear Robinson-Trautman space-times showed gross qualitative differences with perturbative waveforms once radiative mass losses rose above 3% of the initial energy.

3.5.4 Nonlinear Scattering Off a Schwarzschild Black Hole

The chief physical application of the code has been to the nonlinear version of the classic problem of scattering off a Schwarzschild black hole, first solved perturbatively by Price [82]. Here the inner worldtube for the initial value problem consists of the ingoing r = 2m surface (the past horizon), where Schwarzschild data is prescribed. The nonlinear problem of a gravitational wave scattering off a Schwarzschild black hole is then posed in terms of data on an outgoing null cone consisting of an incoming pulse with compact support.

The news function for this problem was studied as a function of incoming pulse amplitude. Here the computational eth formalism smoothly handles the complicated time dependent transformation between the non-inertial computational frame at \({{\mathcal I}^+}\) and the inertial (Bondi) frame necessary to obtain the standard “plus” and “cross” polarization modes. In the perturbative regime, the news corresponds to the backscattering of the incoming pulse off the effective Schwarzschild potential. However, for higher amplitudes the waveform behaves quite differently. Not only is its amplitude greater, but it also reveals the presence of extra oscillations. In the very high amplitude case, the mass of the system is dominated by the incoming pulse, which essentially backscatters off itself in a nonlinear way.

3.5.5 Moving Black Holes

The 3-D characteristic code was extended to handle evolution based upon a foliation by ingoing null hypersurfaces [49]. This code incorporates a null hypersurface version of an apparent horizon finder, which is used to excise black hole interiors from the computation. The code accurately evolves and tracks moving, distorted, radiating black holes. Test cases include moving a boosted Schwarzschild black hole across a 3D grid. A black hole wobbling relative to an orbiting characteristic grid has been evolved and tracked for over 10,000M, corresponding to about 200 orbits, with absolutely no sign of instability. These results can be viewed online. [56]. The surface area of distorted black holes is calculated and shown to approach the equilibrium value of the final Schwarzschild black hole which is built into the boundary conditions.

The code excises the singular region and evolves black holes forever with second order accuracy. It has attained the Holy Grail of numerical relativity as originally specified by Teukolsky and Shapiro. [91]

This exceptional performance opens a promising new approach to handle the inner boundary condition for Cauchy evolution of black holes by the matching methods reviewed below.

4 CCM

4.1 Computational Boundaries

Boundary conditions are both the most important and the most difficult part of a theoretical treatment of most physical systems. Usually, that’s where all the physics is. And, in computational approaches, that’s usually where all the agony is. Computational boundaries for hyperbolic systems pose special difficulties. Even with an analytic form of the correct physical boundary condition in hand, there are seemingly infinitely more unstable numerical implementations than stable ones. In general, a well posed problem places more boundary requirements on the finite difference equations than on the corresponding partial differential equations. Furthermore, the methods of linear stability analysis are often more unwieldy to apply to the boundary than to the interior evolution algorithm.

The von Neumann stability analysis of the interior algorithm linearizes the equations, while assuming a uniform infinite grid, and checks that the discrete Fourier modes do not grow exponentially. There is an additional stability condition that a boundary introduces into this analysis. Consider the one dimensional case. Normally the mode ekx, with k real, is not included in the von Neumann analysis. However, if there is a boundary to the right on the x-axis, one can legitimately prescribe such a mode (with k > 0) as initial data so that its stability must be checked. In the case of an additional boundary to the left, the Ryaben’kii-Godunov theory allows separate investigation of the interior stability and the stability of each individual boundary [93].

The correct physical formulation of any asymptotically flat, radiative Cauchy problem requires boundary conditions at infinity. These conditions ensure not only that the total energy and the energy loss by radiation are both finite, but also ensure the proper 1/r asymptotic falloff of the radiation fields. However, when treating radiative systems computationally, an outer boundary must be established artificially at some large but finite distance in the wave zone, i.e. many wavelengths from the source. Imposing an accurate radiation boundary condition at a finite distance is a difficult task even in the case of a simple radiative system evolving on a fixed geometric background. The problem is exacerbated when dealing with Einstein’s equation.

Nowhere is the boundary problem more acute than in the computation of gravitational radiation produced by black holes. The numerical study of a black hole space-time by means of a pure Cauchy evolution involves both inner and outer grid boundaries. The inner boundary is necessary to avoid the topological complications and singularities introduced by a black hole. For multiple black holes, the inner boundary consists of disjoint pieces. W. Unruh (see [102]) initially suggested what is now the accepted strategy for Cauchy evolution of black holes. An inner boundary located at (or near) an apparent horizon is used to excise the interior region. Below, we discuss an alternative version of this strategy, based upon matching to a characteristic evolution in the inner region.

First, consider the more universal outer boundary problem, in which Cauchy-characteristic matching has a natural application. In the Cauchy treatment of such a system, the outer grid boundary is located at some finite distance, normally many wavelengths from the source. Attempts to use compactified Cauchy hypersurfaces which extend to spatial infinity have failed because the phase of short wavelength radiation varies rapidly in spatial directions [67]. Characteristic evolution avoids this problem by approaching infinity along phase fronts.

Assuming that the system is nonlinear and not amenable to an exact solution, a finite outer boundary condition must necessarily introduce spurious physical effects into a Cauchy evolution. The domain of dependence of the initial Cauchy data in the region spanned by the computational grid shrinks in time along ingoing characteristics, unless data on a worldtube traced out by the outer grid boundary is included as part of the problem. In order to maintain a causally sensible evolution, this worldtube data must correctly substitute for the missing Cauchy data which would have been supplied if the Cauchy hypersurface had extended to infinity. In a scattering problem, this missing exterior Cauchy data might, for instance, correspond to an incoming pulse initially outside the outer boundary. In a problem where the initial radiation fields are confined to a compact region inside the boundary, this missing Cauchy data is easy to state when dealing with a constraint free field, such as a scalar field Φ where the Cauchy data outside the boundary would be Φ = Φ,t = 0. However, the determination of Cauchy data for general relativity is a global elliptic constraint problem so that there is no well defined scheme to confine it to a compact region. Furthermore, even if the data were known on a complete initial hypersurface extending to infinity, it would be a formidable nonlinear problem to correctly represent it by counterfeit data on the outer boundary.

Instead, in practice, some artificial boundary condition (ABC), such as an outgoing radiation condition, is imposed upon the boundary in an attempt to approximate the proper data for the exterior region. This ABC may cause partial reflection of an outgoing wave back into the system [71, 67, 63, 84], which contaminates the accuracy of the interior evolution and the calculation of the radiated waveform. Furthermore, nonlinear waves intrinsically backscatter, which makes it incorrect to try to entirely eliminate incoming radiation from the outer region. The errors introduced by these problems are of an analytic origin, essentially independent of computational discretization. In general, a systematic reduction of this error can only be achieved by simultaneously refining the discretization and moving the computational boundary to larger and larger radii. This is computationally very expensive, especially for three-dimensional simulations.

A traditional outer boundary condition for the wave equation is the Sommerfeld condition. For a 3D scalar field this takes the form g,t + g,r = 0, where g = rΦ. This condition is exact for a linear wave with spherically symmetric data and boundary. In that case, the exact solution is g = f1(t − r) + f2(t + r) and the Sommerfeld condition eliminates the incoming wave f2.

Much work has been done on formulating boundary conditions, both exact and approximate, for linear problems in situations that are not spherically symmetric and in which the Sommerfeld condition would be inaccurate. These boundary conditions are given various names in the literature, e.g. absorbing or non-reflecting. A variety of successful implementations of ABC’s have been reported for linear problems. See the recent articles [45, 84, 105, 85, 18] for a general discussion of ABC’s.

Local ABC’s have been extensively applied to linear problems with varying success [71, 42, 13, 104, 63, 21, 68]. Some of these conditions are local approximations to exact integral representations of the solution in the exterior of the computational domain [42], while others are based on approximating the dispersion relation of the so-called one-way wave equations [71, 104]. Higdon [63] showed that this last approach is essentially equivalent to specifying a finite number of angles of incidence for which the ABC’s yield perfect transmission. Local ABC’s have also been derived for the linear wave equation by considering the asymptotic behavior of outgoing solutions [13], which generalizes the Sommerfeld outgoing radiation condition. Although such ABC’s are relatively simple to implement and have a low computational cost, their final accuracy is often limited because the assumptions made about the behavior of the waves are rarely met in practice [45, 105]. In general, systematic improvement can only be achieved with local conditions by moving the computational boundary to a larger radius, which is computationally expensive — especially for three-dimensional simulations.

The disadvantages of local ABC’s have led some workers to consider the exact nonlocal boundary conditions based on integral representations of the infinite domain problem [103, 45, 105]. Even for problems where the Green’s function is known and easily computed, such approaches were initially dismissed as impractical [42]; however, the rapid increase in computer power has made it possible to implement exact nonlocal ABC’s even for the 3D linear wave equation [36]. If properly implemented, this kind of method can yield numerical solutions which converge to the exact infinite domain problem in the continuum limit, keeping the artificial boundary at a fixed distance. However, due to nonlocality, the computational cost per time step usually grows at a higher power of grid size (O(N4) per time step in three dimensions) than in a local approach [45, 36, 105], which in multidimensional problems may be very demanding even for today’s supercomputers.

The extension of ABC’s to nonlinear problems is much more difficult. The problem is normally treated by linearizing the region between the outer boundary and infinity, using either local or nonlocal linear ABC’s [105, 85]. The neglect of the nonlinear terms in this region introduces an unavoidable error at the analytic level. But even larger errors are typically introduced in prescribing the outer boundary data. This is a subtle global problem because the correct boundary data must correspond to the continuity of fields, and their normal derivatives have to be extended across the boundary into the linearized exterior. This is a clear requirement for any consistent boundary algorithm, since discontinuities in the field or its derivatives would otherwise act as spurious sheet source on the boundary, thereby contaminating both the interior and the exterior evolutions. But the fields and their normal derivatives constitute an overdetermined set of data for the linearized exterior problem. So it is necessary to solve a global linearized problem, not just an exterior one, in order to find the proper data. The designation “exact ABC” is given to an ABC for a nonlinear system whose only error is due to linearization of the exterior. An exact ABC requires the use of global techniques, such as the difference potential method, to eliminate back reflection at the boundary [105].

To date there have been only a few applications of ABC’s to strongly nonlinear problems [45]. Thompson [101] generalized a previous nonlinear ABC of Hedstrom [62] to treat 1D and 2D problems in gas dynamics. These boundary conditions performed poorly in some situations because of their difficulty in adequately modeling the field outside the computational domain [101, 45]. Hagstrom and Hariharan [59] have overcome these difficulties in 1D gas dynamics by a clever use of Riemann invariants. They proposed a heuristic generalization of their local ABC to 3D, but this has not yet been implemented.

In order to reduce the level of approximation at the analytical level, an artificial boundary for an nonlinear problem must be placed sufficiently far from the strong-field region. This sharply increases the computational cost in multidimensional simulations [42]. There seems to be no numerical method which converges (as the discretization is refined) to the infinite domain exact solution of a strongly nonlinear wave problem in multidimensions, while keeping the artificial boundary fixed.

Cauchy-characteristic matching is a strategy that eliminates this nonlinear source of error. In CCM, Cauchy and characteristic evolution algorithms are pasted together in the neighborhood of a worldtube to form a global evolution algorithm. The characteristic algorithm provides an outer boundary condition for the interior Cauchy evolution, while the Cauchy algorithm supplies an inner boundary condition for the characteristic evolution. The matching worldtube provides the geometric framework necessary to relate the two evolutions. The Cauchy foliation of the space-time cuts the worldtube into spherical slices. The characteristic evolution is based upon the outgoing null hypersurfaces emanating from these slices, with the evolution proceeding from one hypersurface to the next by the outward radial march described earlier. There is no need to truncate space-time at a finite distance from the sources, since compactification of the radial null coordinate makes it possible to cover the whole space-time with a finite computational grid. In this way, the true waveform may be directly computed by a finite difference algorithm. Although characteristic evolution has limitations in regions where caustics develop, it proves to be both accurate and computationally efficient in the treatment of exterior regions.

CCM evolves a mixed spacelike-null initial value problem in which Cauchy data is given in a spacelike region bounded by a spherical boundary \({\mathcal S}\) and characteristic data is given on a null hypersurface emanating from \({\mathcal S}\). The general idea is not entirely new. An early mathematical investigation combining space-like and characteristic hypersurfaces appears in [41]. The three chief ingredients for computational implementation are: (i) a Cauchy evolution module, (ii) a characteristic evolution module and (iii) a module for matching the Cauchy and characteristic regions across an interface. The interface is the timelike worldtube which is traced out by the flow of \({\mathcal S}\) along the worldlines of the Cauchy evolution, as determined by the choice of lapse and shift. Matching provides the exchange of data across the worldtube to allow evolution without any further boundary conditions, as would be necessary in either a purely Cauchy or a purely characteristic evolution.

CCM may be formulated as a purely analytic approach, but its advantages are paramount in the solution of nonlinear problems where analytic solutions would be impossible. One of the first applications of CCM was a hybrid numerical-analytical version, initiated by Anderson and Hobill for the 1D wave equation [7] (see below). There the characteristic region was restricted to the far field where it could be handled analytically by a linear approximation.

The full potential of CCM lies in a purely numerical treatment of nonlinear systems where its error converges to zero in the continuum limit of infinite grid resolution [15, 16, 33]. For high accuracy, CCM is also by far the most efficient method. For small target target error ε, it has been shown that the relative amount of computation required for CCM (Accm) compared to that required for a pure Cauchy calculation (Ac) goes to zero, ACCM/Ac → O as ε → O [20, 19]. An important factor here is the use of a compactified characteristic evolution, so that the whole space-time is represented on a finite grid. From a numerical point of view this means that the only error made in a calculation of the radiation waveform at infinity is the controlled error due to the finite discretization. Accuracy of a Cauchy algorithm which uses an ABC requires a large grid domain in order to avoid error from nonlinear effects in the exterior. The computational demands of matching are small because the interface problem involves one less dimension than the evolution problem. Because characteristic evolution algorithms are more efficient than Cauchy algorithms, the efficiency can be further enhanced by making the matching radius as small as possible consistent with avoiding caustics.

At present, the purely computational version of CCM is exclusively the tool of general relativists who are used to dealing with novel coordinate systems. A discussion of its potential appears in [15]. Only recently [33, 34, 40, 17] has its practicability been carefully explored. Research on this topic is currently being stimulated and guided by the requirements of the Binary Black Hole Grand Challenge Alliance. CCM is one of the strategies being pursued to provide the boundary conditions and determine the radiation waveforms for the binary black hole problem. But I anticipate that its use will eventually spread throughout computational physics because of its inherent advantages in dealing with hyperbolic systems, particularly in 3-dimensional problems where efficiency is necessary. A detailed study of the stability and accuracy of CCM for linear and non-linear wave equations has been presented in Ref. [18], illustrating its potential for a wide range of problems.

4.2 Perturbative CCM

In numerous purely analytic applications outside of general relativity, matching techniques have successfully cured pathologies in perturbative expansions [75]. Matching is a strategy for obtaining a global solution by patching together solutions obtained using different coordinate systems for different regions. By adopting each coordinate system to a length scale appropriate to its domain, a globally convergent perturbation expansion is sometimes possible in cases where a single coordinate system would fail. Burke showed that matching could be used to eliminate some of the divergences arising in perturbative calculations of gravitational radiation [24]. Kates and Kegles further showed that the use of a null coordinate system for the exterior region is essential in the perturbation expansions of curved space radiation fields [69]. They investigated the perturbative description of a scalar field on a Schwarzschild background, in which case the asymptotic behavior of the Schwarzschild light cones differs drastically from that of the artificial Minkowski light cones used in the perturbative expansions based upon the flat space Green function. Use of the Minkowski light cones leads to nonuniformities in the expansion of the radiation fields which are eliminated by the use of exterior coordinates based upon the true light cones. Kates, Anderson, Kegles and Madonna extended this work to the fully general relativistic case and reached the same conclusion [10].

Anderson later applied this approach to the slow motion approximation of a binary system and obtained a derivation of the radiation reaction effect on the orbital period which avoided some objections concerning earlier approaches [6]. The use of the true light cones was also essential in formulating as a mathematical theorem that the Bondi news function satisfies the Einstein quadrupole formula to leading order in a Newtonian limit [107]. Although questions of mathematical consistency still remain in the perturbative treatment of gravitational radiation, it is clear that the use of characteristic methods pushes these problems to a higher perturbative order. Characteristic techniques are used in present day codes for the Zerilli equation [72].

4.3 Analytic-Numerical Matching for Waves

One of the first computational applications of null coordinates in a matching scheme was a hybrid numerical-analytical version, initiated by Anderson and Hobill for the test problem of 1D scalar waves [7, 8, 9]. They were motivated by the circumstances that, although the nonlinear near field cannot be treated analytically in a general relativistic radiation problem, the far field can be handled by a perturbative expansion. Their strategy was to match an inner numerical solution to an approximate outer analytic solution of the problem.

The initial conditions for the exterior solution were fixed by the requirement that the interior sources be stationary prior to some fixed time, As a result, the exterior analytic solution is causal in the sense that it is stationary in the past of some null cone. This effectively introduces a condition that eliminates extraneous incoming radiation from the system in a physically plausible way and determines the exterior solution uniquely. Their strategy was to introduce an overlap region between the numerical interior and the analytic exterior. In the overlap, the numerical solution is matched to the causal analytic solution, resulting in an evolution that is everywhere causally meaningful.

This is the physically correct approach to a system which is stationary prior to a fixed time but is nontrivial to generalize, say, to the problem of radiation from an orbiting binary. Anderson and Hobill first tackled the 1D model problem of an oscillator coupled to a spherically symmetric, flat space, massless scalar field. The numerical results were in excellent agreement with the exact analytic solution (which could be obtained globally for this problem).

They extended the model to include spherical scalar waves propagating in a spherically symmetric curved background space-time. This introduces backscattering which obscures the concept of a purely outgoing wave. Also, no exact solution exists to this problem so that an approximation method is necessary to determine the exterior analytic solution. The approximation was based upon an expansion in terms of a scale parameter that controls the amount of backscatter. In the 0th approximation, the scale parameter vanishes and the problem reduces to the flat space case which can be solved exactly. The flat space Green function is then used to generate higher order corrections.

This is a standard perturbative approach, but a key ingredient of the scheme is that the wave equation is solved in retarded null coordinates (u, r) for the curved space metric, so that causality is built into the Green function at each order of approximation. The transformation from null coordinates (u, r) to Cauchy coordinates (t, r) is known analytically for this problem. This allows simple matching between the null and Cauchy solutions at the boundary of the Cauchy grid. Their scheme is efficient and leads to consistent results in the region that the numerical and analytic solutions overlap. It is capable of handling both strong fields and fast motions.

Later, a global, characteristic, numerical study of the self-gravitating version of this problem confirmed that the use of the true null cones is essential in getting the correct radiated waveform [55]. For quasi-periodic radiation, the phase of the waveform is particular sensitive to the truncation of the outer region at a finite boundary. Although a perturbative estimate would indicate an O(M/R) error, this error accumulates over many cycles to produce an error of order π in the phase.

Anderson and Hobill proposed that their method be extended to general relativity by matching a numerical solution to an analytic 1/r expansion in null coordinates. However, the only analytic-numerical matching schemes that have been implemented in general relativity have been based upon perturbations of a Schwarzschild background using the standard Schwarzschild time slicing [1, 3, 2, 5]. It would be interesting to compare results with an analytic-numeric matching scheme based upon the true null cones. However the original proposal by Anderson and Hobill has not been carried out.

4.4 Numerical Matching for 1D Gravitational Systems

The first numerical implementations of CCM were 1D feasibility studies. These model problems provided a controlled situation for the development of CCM, in which either exact or independent numerical solutions were known. The following studies showed that CCM worked like a charm in a variety of 1D applications — the matched evolutions were essentially transparent to the presence of the interface.

4.4.1 Cylindrical Matching

The Southampton group chose cylindrically symmetric systems as their model problem for developing matching techniques. It provides a good testbed, although the infinite extent of a cylindrically symmetric source is unphysical. In preliminary work, they showed how CCM could be consistently carried out for a scalar wave evolving in Minkowski space-time but expressed in a nontrivial cylindrical coordinate system [33].

They then tackled the gravitational problem. In their first paper in a series, they set up the machinery necessary for investigating cylindrically symmetric vacuum space-times [34]. Although the problem involves only one spatial dimension, there are two independent modes of polarization. The Cauchy metric was treated in the Jordan-Ehlers-Kompaneets canonical form, using coordinates (t, r, ϕ, z) adapted to the (ϕ, z) cylindrical symmetry. The advantage here is that u = t − r is then a null coordinate which can be used for the characteristic evolution. They successfully recast the equations in a suitably regularized form for the compactification of \({{\mathcal I}^+}\) in terms of the coordinate \(y = \sqrt {1/r}\). The simple analytic relationship between Cauchy coordinates (t, r) and characteristic coordinates (u, y) facilitates the translation between Cauchy and characteristic variables on the matching worldtube, given by r = const.

The next paper in this series implements the scheme as a numerical code [40]. The interior Cauchy evolution is carried out using an unconstrained leap frog scheme. It is notable that they report no problems with instability, which have arisen in other attempts at unconstrained leapfrog evolution in general relativity. The characteristic evolution also uses a leap frog scheme for the evolution between retarded time levels u, while numerically integrating the hypersurface equations outward along the characteristics.

The matching interface is located at points common to both the Cauchy and characteristic grids. In order to update these points by Cauchy evolution, it is necessary to obtain field values at the Cauchy “guard” points which lie outside the worldtube in the characteristic region. These values are obtained by interpolation from characteristic grid points (lying on three levels of null hypersurfaces in order to ensure second order accuracy). Similarly, the boundary data for starting up the characteristic integration is obtained by interpolation from Cauchy grid values inside the worldtube.

The code was tested using exact solutions for cylindrical waves, which come in from \({{\mathcal I}^-}\), pass through the symmetry axis and expand out to \({{\mathcal I}^+}\). The numerical errors are oscillatory with low growth rate, and second order convergence was confirmed. Of special importance, little numerical noise was introduced by the interface.

Comparisons of CCM were made with Cauchy evolutions using a standard outgoing radiation boundary condition [80]. At high amplitudes the standard condition developed a large error very quickly and was competitive only for weak waves with a large outer boundary. In contrast, the matching code performed well even with a small matching radius.