Abstract

The Laplace–Beltrami operator, a fundamental object associated with Riemannian manifolds, encodes all intrinsic geometry of the manifold and has many desirable properties. Recently, we proposed the point integral method (PIM), a novel numerical method for discretizing the Laplace–Beltrami operator on point clouds (Li et al. in Commun Comput Phys 22(1):228–258, 2017). In this paper, we analyze the convergence of PIM for the Poisson equation with Neumann boundary conditions on submanifolds that are isometrically embedded in Euclidean spaces.

1 Background

Partial differential equations (PDEs) on manifolds arise in a wide variety of applications. In many problems, including material science [10, 20], fluid flow [22, 25], and biology and biophysics [2, 3, 21, 37], one needs to study physical processes such as diffusion and convection on curved surfaces, which leads to different kinds of PDEs on surfaces. Numerical methods for solving PDEs on surfaces have been developed over several decades, including the surface finite element method [19], the level set method [9, 48], the grid-based particle method [31, 32] and the closest point method [35, 43].

Recently, manifold models have attracted increasing attention in data analysis and image processing [4, 11, 13, 23, 26, 29, 30, 36, 40, 41, 42, 47]. In a manifold model, data or images are represented as a point cloud, that is, a collection of points embedded in a high-dimensional Euclidean space. One fundamental assumption in the manifold model is that the point cloud samples a smooth manifold. Information about the manifold is therefore very useful for understanding the data or images. PDEs on the manifold, particularly the Laplace–Beltrami equation, encode intrinsic information about the manifold and thus help reveal the underlying structures in the data or images. To extract the information encoded in these PDEs, we need to solve them on the unstructured point cloud. Because the point cloud is embedded in a high-dimensional space, the traditional methods for PDEs on 2D surfaces do not apply.

In the past few years, efforts have been devoted to developing alternative numerical methods to discretize differential operators on point clouds. Liang and Zhao [34] proposed to discretize differential operators on point clouds via local least-squares approximations of the manifold. Their method achieves high-order accuracy and is flexible because no mesh is required. In principle, it can be applied to manifolds of arbitrary dimension and co-dimension, with or without boundary. However, if the dimension of the manifold is high, the method may be unstable because a high-order polynomial is used to fit the data. Lai et al. [28] later proposed the local mesh method to approximate differential operators on point clouds. The method uses the K nearest neighbors to construct a local mesh around each point, which is easier to construct than a global mesh. Based on the local mesh, it is straightforward to discretize differential operators and compute integrals. When the dimension of the manifold is high, however, even the construction of a local mesh is difficult. Although proofs are lacking, both the moving least-squares and the local mesh-based methods achieve high-order accuracy. In principle, the accuracy of the moving least-squares method is arbitrarily high. The accuracy of the local mesh method is second order because the local mesh approximation to the manifold has at most second-order accuracy.

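In [33], we proposed the point integral method (PIM), a novel numerical method for solving the Poisson equation on point clouds. The main idea of the point integral method is to approximate the Poisson equation via the following integral approximation, referred to as (1) below:

$$\begin{aligned} -\int _{\mathcal {M}} {\varDelta }_{\mathcal {M}} u(\mathbf {y})\bar{R}_t(\mathbf {x},\mathbf {y})\mathrm {d}\mu _\mathbf {y}\approx \frac{1}{t}\int _{\mathcal {M}} R_t(\mathbf {x},\mathbf {y})(u(\mathbf {x})-u(\mathbf {y}))\mathrm {d}\mu _\mathbf {y}-2\int _{\partial {\mathcal {M}}} \bar{R}_t(\mathbf {x},\mathbf {y})\frac{\partial u}{\partial \mathbf {n}}(\mathbf {y})\mathrm {d}\tau _\mathbf {y}, \end{aligned}$$

where \(\mathbf {n}\) is the outward normal of \(\partial {\mathcal {M}}\), \({\mathcal {M}}\) is a smooth k-dimensional manifold embedded in \(\mathbb {R}^d\), and \(\partial {\mathcal {M}}\) is the boundary of \({\mathcal {M}}\). \(R_t(\mathbf {x},\mathbf {y})\) and \(\bar{R}_t(\mathbf {x},\mathbf {y})\) are kernel functions given as follows:

$$\begin{aligned} R_t(\mathbf {x},\mathbf {y})=C_tR\left( \frac{|\mathbf {x}-\mathbf {y}|^2}{4t}\right) ,\quad \bar{R}_t(\mathbf {x},\mathbf {y})=C_t\bar{R}\left( \frac{|\mathbf {x}-\mathbf {y}|^2}{4t}\right) , \end{aligned}$$

where \(C_t = \frac{1}{(4\pi t)^{k/2}}\) is the normalizing factor. Here \(R\in C^2(\mathbb {R}^+) \) is a positive function that is integrable over \([0,+\infty )\), and

$$\begin{aligned} \bar{R}(r)=\int _r^{+\infty }R(s)\mathrm {d}s. \end{aligned}$$
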
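\({\varDelta }_\mathcal {M}=\text{ div }(\nabla )\) is the Laplace–Beltrami operator (LBO) on \(\mathcal {M}\). Let \({\varPhi }: {\varOmega }\subset \mathbb {R}^k\rightarrow \mathcal {M}\subset \mathbb {R}^d\) be a local parametrization of \(\mathcal {M}\) and \(\theta \in {\varOmega }\). For any differentiable function \(f:\mathcal {M}\rightarrow \mathbb {R}\), define the gradient on the manifold

$$\begin{aligned} \nabla f({\varPhi }(\theta )) = \sum _{i,j=1}^k g^{ij}(\theta )\frac{\partial {\varPhi }}{\partial \theta _i}(\theta )\frac{\partial f({\varPhi }(\theta ))}{\partial \theta _j}, \end{aligned}$$

and for a vector field \(F:{\mathcal {M}}\rightarrow T_\mathbf {x}{\mathcal {M}}\) on \({\mathcal {M}}\), where \(T_\mathbf {x}{\mathcal {M}}\) is the tangent space of \({\mathcal {M}}\) at \(\mathbf {x}\in {\mathcal {M}}\), the divergence is defined as

$$\begin{aligned} \text{ div }(F) = \frac{1}{\sqrt{\det G}}\sum _{l=1}^d\sum _{i,j=1}^k\frac{\partial }{\partial \theta _i}\left( \sqrt{\det G}\, g^{ij}F^l({\varPhi }(\theta ))\frac{\partial {\varPhi }^l}{\partial \theta _j}\right) , \end{aligned}$$

where \((g^{ij})_{i,j=1,\ldots ,k}=G^{-1}\), \(\det G\) is the determinant of the matrix G and \(G(\theta )=(g_{ij})_{i,j=1,\ldots ,k}\) is the first fundamental form, which is defined by

$$\begin{aligned} g_{ij}(\theta ) = \sum _{l=1}^d\frac{\partial {\varPhi }^l}{\partial \theta _i}(\theta )\frac{\partial {\varPhi }^l}{\partial \theta _j}(\theta ),\quad i,j=1,\ldots ,k, \end{aligned}$$

and \((F^1(\mathbf {x}),\ldots ,F^d(\mathbf {x}))^t\) is the representation of F in the embedding coordinates.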

Using the integral approximation, we transfer the LBO to an integral operator. The integral operator is easily discretized on point clouds with a proper quadrature rule, because it contains no differential operators. This is the essential component of PIM. Similar integral approximations are also used in nonlocal diffusion and peridynamic models [1, 15, 16, 17, 49].

PIM is also related to the graph Laplacian, a discrete object that is associated with a graph and reveals many properties of graphs [12]. As observed in [5, 24, 27, 44], the graph Laplacian with Gaussian weights approximates the LBO well when the vertices of the graph are assumed to be a sample of the underlying manifold. When no boundary is present, Belkin and Niyogi [6] showed that the spectra of the graph Laplacian with Gaussian weights converge to that of \({\varDelta }_{\mathcal {M}}\). Dealing with the boundary remains an unresolved issue. In fact, near the boundary, the graph Laplacian is dominated by the first-order derivative and thus fails to be a true Laplacian [7, 27]. Recently, Singer and Wu [45] showed the spectral convergence of the graph Laplacian in the presence of the Neumann boundary. The convergence analysis in both [6] and [45] is based on the connection between the graph Laplacian and the heat operator. Therefore, Gaussian weights are essential.

The main contribution of this paper is that, for the Poisson equation with Neumann boundary condition, we prove that the numerical solution computed by PIM converges to the exact solution in the \(H^1\) norm as the density of the sample points tends to infinity. Unlike the methods used for the graph Laplacian, we do not relate the integral operator to the heat kernel. Instead, we use a strategy that is standard in numerical analysis to prove convergence.

It is well known that convergence is an easy consequence of consistency and stability. We show that PIM is stable by proving that PIM preserves the coercivity of the original Laplace–Beltrami operator. Together with the truncation error estimate, this gives the convergence of PIM.

The rest of this paper is organized as follows. In Sect. 2, we describe the point integral method for the Poisson equation with Neumann boundary condition. The convergence result is stated in Sect. 3. The structure of the proof is shown in Sect. 4. The main body of the proof is presented in Sects. 5, 6 and 7. Finally, the conclusions and discussion of future work are provided in Sect. 8.

2 Point integral method

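In this paper, we consider the Poisson equation on a smooth, compact k-dimensional submanifold \(\mathcal {M}\) in \(\mathbb {R}^d,\; d\ge k\), with the Neumann boundary condition (problem (6)):

$$\begin{aligned} \left\{ \begin{array}{rl} -{\varDelta }_{\mathcal {M}} u(\mathbf {x}) = f(\mathbf {x}), &{} \mathbf {x}\in {\mathcal {M}}, \\ \frac{\partial u}{\partial \mathbf {n}}(\mathbf {x}) = g(\mathbf {x}), &{} \mathbf {x}\in \partial {\mathcal {M}}. \end{array}\right. \end{aligned}$$
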
The manifold \({\mathcal {M}}\) is sampled with a set of sample points P and a subset \(S\subset P\) sampling the boundary of \({\mathcal {M}}\). The points are listed in a fixed order \(P=(\mathbf{p }_1, \ldots , \mathbf{p }_n)\) where \(\mathbf{p }_i \in \mathbb {R}^d, 1\le i\le n\) and without loss of generality, let \(S=(\mathbf{p }_1, \ldots , \mathbf{p }_m)\subset P\) with \(m<n\).

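In addition, we assume that we are given two vectors: \(\mathbf {V}= (V_1, \ldots , V_n)^t\), where \(V_i\) is a volume weight of \(\mathbf{p }_i\) in \({\mathcal {M}}\), and \(\mathbf {A}= (A_1, \ldots , A_m)^t\), where \(A_i\) is an area weight of \(\mathbf{p }_i\) in \(\partial {\mathcal {M}}\), such that, for any \(f\in C^1({\mathcal {M}})\) and \(g\in C^1(\partial {\mathcal {M}})\),

$$\begin{aligned} \int _{\mathcal {M}} f(\mathbf {x})\mathrm{d}\mu _\mathbf {x}\approx \sum _{i=1}^n f(\mathbf{p }_i)V_i,\quad \int _{\partial {\mathcal {M}}} g(\mathbf {x})\mathrm{d}\tau _\mathbf {x}\approx \sum _{i=1}^m g(\mathbf{p }_i)A_i. \end{aligned}$$
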
Here \(\mathrm{d}\mu _\mathbf {x}\) and \(\mathrm{d}\tau _\mathbf {x}\) are the volume form of \({\mathcal {M}}\) and \(\partial {\mathcal {M}}\), respectively.
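As a small illustration of the role of the volume weights, the following sketch (not taken from the paper) samples the unit circle in \(\mathbb {R}^2\), so that \(k=1\) and \(d=2\), at equally spaced points and assigns equal weights \(V_i=2\pi /n\). The test function \(f(\mathbf {x})=x_1^2\), the sample size and the helper name `quadrature` are illustrative assumptions.

```python
import numpy as np


def quadrature(values, weights):
    """Approximate an integral over the manifold by sum_i f(p_i) * V_i."""
    return float(np.dot(values, weights))


# Hypothetical point cloud: n equally spaced samples of the unit circle in R^2.
n = 200
theta = 2.0 * np.pi * np.arange(n) / n
P = np.column_stack([np.cos(theta), np.sin(theta)])

# Equal volume weights; they sum to the length of the circle, 2*pi.
V = np.full(n, 2.0 * np.pi / n)

# f(x) = x_1^2 has exact integral pi over the unit circle.
approx = quadrature(P[:, 0] ** 2, V)
error = abs(approx - np.pi)
print(f"approx = {approx:.12f}, error = {error:.2e}")
```

For an unstructured point cloud the weights would have to be estimated, for example from a local mesh or from the sampling density; the equal weights above are only valid because the samples are equally spaced.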

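Using the integral approximation (1), the Poisson equation is approximated by the integral equation

$$\begin{aligned} \frac{1}{t}\int _{\mathcal {M}} R_t(\mathbf {x},\mathbf {y})(u(\mathbf {x})-u(\mathbf {y}))\mathrm {d}\mu _\mathbf {y}-2\int _{\partial {\mathcal {M}}} \bar{R}_t(\mathbf {x},\mathbf {y})g(\mathbf {y})\mathrm {d}\tau _\mathbf {y}=\int _{\mathcal {M}} \bar{R}_t(\mathbf {x},\mathbf {y})f(\mathbf {y})\mathrm {d}\mu _\mathbf {y}. \end{aligned}$$

This integral equation contains no differential operators. Therefore, it is easy to discretize on the point cloud with the weight vectors \(\mathbf {V}\) and \(\mathbf {A}\):

$$\begin{aligned} \frac{1}{t}\sum _{j=1}^n R_t(\mathbf{p }_i,\mathbf{p }_j)(u_i-u_j)V_j-2\sum _{j=1}^m \bar{R}_t(\mathbf{p }_i,\mathbf{p }_j)g(\mathbf{p }_j)A_j=\sum _{j=1}^n \bar{R}_t(\mathbf{p }_i,\mathbf{p }_j)f(\mathbf{p }_j)V_j,\quad i=1,\ldots ,n. \end{aligned}$$
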
The solution \(\mathbf{u } = (u_1, \ldots , u_n)^t\) to the above linear system provides an approximation of the solution to problem (6).

3 Main results

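The main contribution of this paper is the establishment of convergence results for the point integral method for solving problem (6). To simplify the notation and make the proof concise, in the analysis we consider the homogeneous Neumann boundary condition, i.e., problem (9):

$$\begin{aligned} \left\{ \begin{array}{rl} -{\varDelta }_{\mathcal {M}} u(\mathbf {x}) = f(\mathbf {x}), &{} \mathbf {x}\in {\mathcal {M}}, \\ \frac{\partial u}{\partial \mathbf {n}}(\mathbf {x}) = 0, &{} \mathbf {x}\in \partial {\mathcal {M}}. \end{array}\right. \end{aligned}$$
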
The analysis can be easily generalized to nonhomogeneous boundary conditions.

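The corresponding numerical scheme (10) is

$$\begin{aligned} \frac{1}{t}\sum _{j=1}^n R_t(\mathbf{p }_i,\mathbf{p }_j)(u_i-u_j)V_j=\sum _{j=1}^n \bar{R}_t(\mathbf{p }_i,\mathbf{p }_j)f_jV_j,\quad i=1,\ldots ,n, \end{aligned}$$
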
where \(f_j=f(\mathbf{p }_j)\).
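The homogeneous scheme can be sketched in a few lines of NumPy. The sketch below is an illustration under stated assumptions, not the authors' implementation: it assumes the scheme has the form \(\frac{1}{t}\sum _j R_t(\mathbf{p }_i,\mathbf{p }_j)(u_i-u_j)V_j=\sum _j \bar{R}_t(\mathbf{p }_i,\mathbf{p }_j)f_jV_j\), it assumes a raised-cosine profile for R, and it uses the interval \([0,1]\) (so \(k=d=1\)) with midpoint samples and \(u=\cos (\pi x)\) as a hypothetical test problem. Because constants lie in the null space of the discrete operator, the constraint \(\sum _i u_iV_i=0\) is imposed with a Lagrange multiplier; the function name `pim_neumann` is also hypothetical.

```python
import numpy as np


def R(r):
    """Assumed kernel profile: raised cosine supported on [0, 1]."""
    return np.where(r <= 1.0, 0.5 * (1.0 + np.cos(np.pi * r)), 0.0)


def R_bar(r):
    """Tail integral of R: integral of R(s) ds from r to infinity."""
    return np.where(
        r <= 1.0, 0.5 * (1.0 - r) - np.sin(np.pi * r) / (2.0 * np.pi), 0.0
    )


def pim_neumann(P, V, f, t, k):
    """Solve the assumed homogeneous-Neumann PIM system on a point cloud.

    P: (n, d) sample points, V: (n,) volume weights, f: (n,) right-hand side.
    Returns the discrete solution with sum_i u_i V_i = 0 and the matrix L.
    """
    n = P.shape[0]
    C_t = (4.0 * np.pi * t) ** (-k / 2.0)
    sq_dist = ((P[:, None, :] - P[None, :, :]) ** 2).sum(axis=-1)
    Rt = C_t * R(sq_dist / (4.0 * t))
    Rbar_t = C_t * R_bar(sq_dist / (4.0 * t))

    # (L u)_i = (1/t) * sum_j R_t(p_i, p_j) (u_i - u_j) V_j
    W = Rt * V[None, :]
    L = (np.diag(W.sum(axis=1)) - W) / t
    b = Rbar_t @ (f * V)

    # L annihilates constants, so pin the weighted mean with a multiplier.
    A = np.zeros((n + 1, n + 1))
    A[:n, :n] = L
    A[:n, n] = 1.0
    A[n, :n] = V
    sol = np.linalg.solve(A, np.append(b, 0.0))
    return sol[:n], L


# Hypothetical test problem: -u'' = f on [0, 1], u'(0) = u'(1) = 0.
n, t = 1000, 2.5e-4
x = (np.arange(n) + 0.5) / n          # midpoint samples
P = x[:, None]
V = np.full(n, 1.0 / n)
u_exact = np.cos(np.pi * x)           # has zero mean on [0, 1]
f = np.pi ** 2 * u_exact

u, L = pim_neumann(P, V, f, t, k=1)
max_err = float(np.max(np.abs(u - u_exact)))
print(f"max error = {max_err:.3e}")
```

The dense distance matrix keeps the sketch short; since the kernel is compactly supported, a practical implementation would more likely use a neighbor search and a sparse matrix.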

Before proving the convergence of the point integral method, we need to clarify the meaning of convergence of the point cloud \((P,\mathbf {V})\) to the manifold \({\mathcal {M}}\). In this paper, we consider convergence in the sense that \(h(P,\mathbf {V},{\mathcal {M}})\rightarrow 0\), where \(h(P,\mathbf {V},{\mathcal {M}})\) is the integral accuracy index defined as follows.

Definition 1

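(Integral accuracy index) For the point cloud \((P,\mathbf {V})\) that samples the manifold \({\mathcal {M}}\), the integral accuracy index \(h(P,\mathbf {V},{\mathcal {M}})\) is defined as

$$\begin{aligned} h(P,\mathbf {V},{\mathcal {M}}) = \sup _{f\in C^1({\mathcal {M}})}\frac{\left| \int _{\mathcal {M}} f(\mathbf {y})\mathrm {d}\mu _\mathbf {y}-\sum _{i=1}^n f(\mathbf{p }_i)V_i\right| }{|\text {supp}(f)|\,\Vert f\Vert _{C^1({\mathcal {M}})}}, \end{aligned}$$
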
where \(\Vert f\Vert _{C^1({\mathcal {M}})} = \Vert f\Vert _\infty +\Vert \nabla f\Vert _\infty \) and \(|\text {supp}(f)|\) is the volume of the support of f.

Using the definition of the integral accuracy index, the point cloud \((P,\mathbf {V})\) converges to the manifold \({\mathcal {M}}\) if \(h(P,\mathbf {V},{\mathcal {M}})\rightarrow 0\). In the convergence analysis, we assume that \(h(P,\mathbf {V},{\mathcal {M}})\) is small enough.

Remark 1

In some sense, \(h(P,\mathbf {V},{\mathcal {M}})\) is a measure of the point cloud density.

1. If the volume weight \(\mathbf {V}\) comes from a mesh, one obtains the integral accuracy index \(h(P,\mathbf {V},{\mathcal {M}})=O(\rho )\), provided that \(\rho \) is the size of the elements in the mesh and the angle between the normal space of an element and the normal space of \({\mathcal {M}}\) at the vertices of the element is of order \(\rho ^{1/2}\) [46].

2. If the point cloud is sampled from some distribution, then by the central limit theorem, \(h(P,\mathbf {V},{\mathcal {M}})\sim O(1/\sqrt{n})\), where n is the number of points in P.
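The \(O(1/\sqrt{n})\) behavior for random samples can be observed in a simple experiment. The sketch below is only an illustration: it uses the interval \([0,1]\) as the manifold, uniform random samples with equal weights \(V_i=1/n\), and the single test function \(f(x)=x^2\), whereas the index h itself is a supremum over all \(C^1\) functions. The function name, sample sizes, number of trials and seed are hypothetical choices.

```python
import numpy as np

rng = np.random.default_rng(0)


def mean_quadrature_error(n, trials=200):
    """Average |sum_i f(p_i) V_i - integral of f| over independent samples."""
    errors = []
    for _ in range(trials):
        p = rng.random(n)                 # uniform samples of [0, 1]
        V = np.full(n, 1.0 / n)           # equal volume weights
        approx = np.dot(p ** 2, V)        # f(x) = x^2, exact integral 1/3
        errors.append(abs(approx - 1.0 / 3.0))
    return float(np.mean(errors))


err_small = mean_quadrature_error(100)
err_large = mean_quadrature_error(10_000)

# A 100-fold increase in n should reduce the error by roughly a factor of 10.
ratio = err_small / err_large
print(f"n=100: {err_small:.2e}, n=10000: {err_large:.2e}, ratio = {ratio:.1f}")
```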

Remark 2

To consider the nonhomogeneous Neumann boundary condition or the Dirichlet boundary condition, we also have to assume that \(h(S,\mathbf {A},\partial {\mathcal {M}})\rightarrow 0\), where S is the point set that samples the boundary \(\partial {\mathcal {M}}\) and \(\mathbf {A}\) is the corresponding area weight on the boundary \(\partial {\mathcal {M}}\).

To obtain the convergence, we also need some assumptions on the regularity of the submanifold \({\mathcal {M}}\) and the integral kernel function R.

Assumption 1

1. Smoothness of the manifold: \({\mathcal {M}}, \partial {\mathcal {M}}\) are both compact and \(C^\infty \) smooth k-dimensional submanifolds isometrically embedded in a Euclidean space \(\mathbb {R}^d\).

2. Assumptions on the kernel function R(r):

(a) Smoothness: \(\frac{\mathrm {d}^2 }{\mathrm {d}r^2}R(r)\) is bounded, i.e., there exists a constant C such that \(\left| \frac{\mathrm {d}^2 }{\mathrm {d}r^2}R(r)\right| \le C, \quad \forall r\ge 0\);

(b) Nonnegativity: \(R(r)\ge 0\) for any \(r\ge 0\);

(c) Compact support: \(R(r) = 0\) for all \(r >1\);

(d) Nondegeneracy: \(\exists \delta _0>0\) so that \(R(r)\ge \delta _0\) for \(0\le r\le \frac{1}{2}\).

Remark 3

The assumptions on the kernel function are very mild. Almost all the smoothed delta functions in the literature satisfy these conditions. One frequently used choice is given by the \(\cos \) function:

The compact support assumption can be relaxed to exponential decay, as for the Gaussian kernel. In the nondegeneracy assumption, 1/2 may be replaced by a positive number \(\theta _0\) with \(0<\theta _0<1\). Similar assumptions on the kernel function are also used in the analysis of the nonlocal diffusion problem [18].
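The four kernel assumptions are easy to check numerically for a concrete profile. The sketch below assumes the raised-cosine profile \(R(r)=\frac{1}{2}(1+\cos (\pi r))\) for \(0\le r\le 1\) and \(R(r)=0\) for \(r>1\); this specific formula is an assumption made for illustration, and the grid and variable names are hypothetical.

```python
import numpy as np


def R(r):
    """Assumed profile: R(r) = (1 + cos(pi r)) / 2 on [0, 1], zero for r > 1."""
    r = np.asarray(r, dtype=float)
    return np.where(r <= 1.0, 0.5 * (1.0 + np.cos(np.pi * r)), 0.0)


def R_second_derivative(r):
    """Second derivative of the assumed profile away from the kink at r = 1."""
    r = np.asarray(r, dtype=float)
    return np.where(r <= 1.0, -0.5 * np.pi ** 2 * np.cos(np.pi * r), 0.0)


r = np.linspace(0.0, 2.0, 2001)

# (a) Smoothness: the second derivative is bounded (by pi^2 / 2 here).
second_derivative_bound = float(np.abs(R_second_derivative(r)).max())

# (b) Nonnegativity.
nonnegative = bool(np.all(R(r) >= 0.0))

# (c) Compact support: R vanishes for r > 1.
compact_support = bool(np.all(R(r[r > 1.0]) == 0.0))

# (d) Nondegeneracy: a lower bound delta_0 on [0, 1/2].
delta_0 = float(R(r[r <= 0.5]).min())

print(second_derivative_bound, nonnegative, compact_support, delta_0)
```

For this profile the first derivative vanishes at \(r=1\), so the function is \(C^1\) across the edge of the support and only the second derivative jumps there, which is compatible with the boundedness required in (a).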

Remark 4

For simplicity, R is assumed to be compactly supported. After some mild modifications of the proof, the same convergence results also hold for any kernel function that decays exponentially, like the Gaussian kernel \(G_t(\mathbf {x}, \mathbf {y}) = C_t\exp \left( -\frac{|\mathbf {x}-\mathbf {y}|^2}{4t}\right) \). In fact, for any \(s\ge 1\) and any \(\epsilon >0\), the \(H^s\) mass of the Gaussian kernel over the domain \({\varOmega }=\{ \mathbf {y}\in {\mathcal {M}}| |\mathbf {x}-\mathbf {y}|^2\ge t^{1-\epsilon }\}\) decays faster than any polynomial in t as t goes to 0, because \(G_t(\mathbf {x}, \mathbf {y})\le C_t\exp \left( -t^{-\epsilon }/4\right) \) on \({\varOmega }\); i.e., \(\lim _{t\rightarrow 0} \frac{\Vert G_t(\mathbf {x}, \mathbf {y})\Vert _{H^s({\varOmega })}}{t^\alpha } = 0\) for any \(\alpha \). In this way, we can bound any influence of the integral outside a compact support.

All the analysis in this paper is conducted under Assumption 1 and under the assumption that \(h(P,\mathbf {V},{\mathcal {M}})\) and t are small enough. One upper bound of t, \(T_0\), is given by \(15\sqrt{2T_0}=\delta \) with \(\delta =\rho \sigma /20\), \(\rho =0.1\), where \(\sigma \) is the minimum of the reaches defined in Proposition 1. In the theorems and the proofs, we do not restate these assumptions.

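The solution of the point integral method is a vector \(\mathbf{u }\), while the solution of problem (9) is a function defined on \({\mathcal {M}}\). To make these two solutions comparable, for any solution \(\mathbf{u }= (u_1, \ldots , u_n)^t\) to problem (10), we construct a function on \({\mathcal {M}}\)

$$\begin{aligned} I_{\mathbf{f }}(\mathbf{u })(\mathbf {x}) = \frac{\sum _{j=1}^n R_t(\mathbf {x},\mathbf{p }_j)u_jV_j+t\sum _{j=1}^n \bar{R}_t(\mathbf {x},\mathbf{p }_j)f_jV_j}{\sum _{j=1}^n R_t(\mathbf {x},\mathbf{p }_j)V_j}. \end{aligned}$$
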
It is easy to verify that \(I_{\mathbf{f }}(\mathbf{u })\) interpolates \(\mathbf{u }\) at the sample points P, i.e., \(I_{\mathbf{f }}(\mathbf{u })(\mathbf{p }_j) = u_j\) for any \(\mathbf{p }_j\in P\). The following theorem guarantees the convergence of the point integral method.

Theorem 1

Let u be the solution to problem (9) with \(f\in C^1({\mathcal {M}})\) and the vector \(\mathbf{u }\) be the solution to problem (10). Then, there exist constants C and \(T_0\) depending only on \({\mathcal {M}}\), such that for any \(t\le T_0\)

where \(h(P, \mathbf{V },{\mathcal {M}})\) is the integral accuracy index.

4 Structure of the proof

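To simplify the notation, we introduce an integral operator,

$$\begin{aligned} L_t u(\mathbf {x}) = \frac{1}{t}\int _{\mathcal {M}} R_t(\mathbf {x},\mathbf {y})(u(\mathbf {x})-u(\mathbf {y}))\mathrm {d}\mu _\mathbf {y}. \end{aligned}$$
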
Roughly speaking, the proof of convergence consists of an estimate of the truncation error \(L_t(u-I_{\mathbf{f }}(\mathbf{u }))\) and the stability of the integral operator \(L_t\). Here \(u(\mathbf {x})\) is the solution of problem (9) and \(\mathbf{u }\) is the solution of problem (10).

First, we have the following theorem regarding the stability of the operator \(L_t\).

Theorem 2

Let \(u(\mathbf {x})\) solve the integral equation

where \(r\in H^1({\mathcal {M}})\) with \(\int _{\mathcal {M}}r(\mathbf {x})\mathrm {d}\mu _\mathbf {x}=0\). There exist constants \(C>0, T_0>0\) independent of t, such that

provided that \(t\le T_0\).

To apply the stability result, we need \(L^2\) estimates of \(L_t(u-I_{\mathbf{f }}(\mathbf{u }))\) and \(\nabla L_t(u-I_{\mathbf{f }}(\mathbf{u }))\). These truncation errors are analyzed in the following two theorems by splitting the truncation error \(L_t(u-I_{\mathbf{f }}(\mathbf{u }))\)

where \(u_t\) is the solution of the integral equation

For the second term, we have

Theorem 3

Let \(u_t(\mathbf {x})\) be the solution of problem (14) and \(\mathbf{u }\) be the solution of problem (10). If \(f\in C^1({\mathcal {M}})\), then there exist constants \(C, T_0\) depending only on \({\mathcal {M}}\), so that

as long as \(t\le T_0\) and \(\frac{h(P,\mathbf {V},{\mathcal {M}})}{\sqrt{t}}\le T_0\), where \(h(P,\mathbf {V},{\mathcal {M}})\) is the integral accuracy index in Definition 1.

In the analysis, we found that the error term \(L_t(u-u_t)\) has boundary layer structure. In the interior region, it is \(O(\sqrt{t})\) and in a layer adjacent to the boundary with width \(O(\sqrt{t})\), the error is O(1).
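The boundary layer structure can be observed numerically. The sketch below is an illustration under stated assumptions: it takes \(L_t u(\mathbf {x})=\frac{1}{t}\int _{\mathcal {M}}R_t(\mathbf {x},\mathbf {y})(u(\mathbf {x})-u(\mathbf {y}))\mathrm {d}\mu _\mathbf {y}\), a raised-cosine profile for R, the interval \([0,1]\) as the manifold and \(u=\cos (\pi x)\) with \(f=\pi ^2\cos (\pi x)\), and it evaluates the residual \(L_tu-\int \bar{R}_tf\) by the midpoint rule. All names and parameter values are hypothetical.

```python
import numpy as np


def R(r):
    """Assumed kernel profile: raised cosine supported on [0, 1]."""
    return np.where(r <= 1.0, 0.5 * (1.0 + np.cos(np.pi * r)), 0.0)


def R_bar(r):
    """Tail integral of R: integral of R(s) ds from r to infinity."""
    return np.where(
        r <= 1.0, 0.5 * (1.0 - r) - np.sin(np.pi * r) / (2.0 * np.pi), 0.0
    )


# Hypothetical setting: M = [0, 1], u = cos(pi x) with u'(0) = u'(1) = 0.
n, t = 1000, 1e-3
x = (np.arange(n) + 0.5) / n
V = np.full(n, 1.0 / n)
u = np.cos(np.pi * x)
f = np.pi ** 2 * u                     # f = -u''

C_t = (4.0 * np.pi * t) ** -0.5        # normalizing factor for k = 1
sq_dist = (x[:, None] - x[None, :]) ** 2
Rt = C_t * R(sq_dist / (4.0 * t))
Rbar_t = C_t * R_bar(sq_dist / (4.0 * t))

# Residual of the exact solution in the integral equation.
lhs = (Rt * (u[:, None] - u[None, :])) @ V / t
rhs = Rbar_t @ (f * V)
residual = lhs - rhs

# The kernel has support radius 2 * sqrt(t); split the points accordingly.
width = 2.0 * np.sqrt(t)
dist_to_boundary = np.minimum(x, 1.0 - x)
layer_max = float(np.abs(residual[dist_to_boundary < width]).max())
interior_max = float(np.abs(residual[dist_to_boundary > 1.5 * width]).max())
print(f"layer: {layer_max:.3f}, interior: {interior_max:.2e}")
```

In this flat one-dimensional example the interior residual is even smaller than \(O(\sqrt{t})\) because odd-order terms cancel by symmetry, while the residual inside the layer stays of order one as t decreases.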

Theorem 4

Let \(u(\mathbf {x})\) be the solution of the problem (9) and \(u_t(\mathbf {x})\) be the solution of the corresponding integral equation (14). Let

and

where \(\mathbf {n}(\mathbf {y})=(n^1(\mathbf {y}),\ldots ,n^d(\mathbf {y}))\) is the outward normal vector of \(\partial {\mathcal {M}}\) at \(\mathbf {y}\), and \(\nabla ^j\) is the jth component of the gradient \(\nabla \).

If \(u\in H^3({\mathcal {M}})\), then there exist constants \(C, T_0\) depending only on \({\mathcal {M}}\) and \(p(\mathbf {x})\), so that,

as long as \(t\le T_0\).

To utilize the boundary layer structure, we need a stability result specifically for the boundary term.

Theorem 5

Let \(u(\mathbf {x})\) solve the integral equation

where \(|{\mathcal {M}}|=\int _{\mathcal {M}}\mathrm {d}\mu _\mathbf {x}\) and

Then, there exist constants \(C>0, T_0>0\) independent of t, such that

as long as \(t\le T_0\).

Theorem 1 is an easy corollary of Theorems 2, 3, 4 and 5. Theorems 2 and 3 imply that

which proves Theorem 1.

In the rest of the paper, we prove Theorems 2, 3, 4 and 5, respectively.

5 Error analysis of the integral approximation (Theorem 4)

In this section, we need to introduce a special parametrization of the manifold \({\mathcal {M}}\). This parametrization is based on the following proposition. First, we define the reaches of a manifold following the definition in [38]. Consider a compact Riemannian submanifold \({\mathcal {M}}\) of a Euclidean space \(\mathbb {R}^d\). We can define the set

where \(d(x,{\mathcal {M}})=\inf _{y\in {\mathcal {M}}}|x-y|\). The closure of \(G({\mathcal {M}})\), denoted as \(\bar{G}({\mathcal {M}})\), is called the medial axis of \({\mathcal {M}}\). For any \(p\in {\mathcal {M}}\), the reach at p is defined as the distance of p to the medial axis, i.e.,

Proposition 1

Assume both \({\mathcal {M}}\) and \(\partial {\mathcal {M}}\) are compact and \(C^2\) smooth. \(\sigma \) is the minimum of the reaches of \({\mathcal {M}}\) and \(\partial {\mathcal {M}}\), i.e.,

For any point \(\mathbf {x}\in {\mathcal {M}}\), there is a neighborhood \(U\subset {\mathcal {M}}\) of \(\mathbf {x}\), so that there is a parametrization \({\varPhi }: {\varOmega }\subset \mathbb {R}^k \rightarrow U\) satisfying the following conditions. For any \(\rho \le 0.1\),

(i) \({\varOmega }\) is convex and contains at least half of the ball \(B_{{\varPhi }^{-1}(\mathbf {x})}(\frac{\rho }{5} \sigma )\), i.e., \(vol({\varOmega }\cap B_{{\varPhi }^{-1}(\mathbf {x})}(\frac{\rho }{5} \sigma )) > \frac{1}{2}(\frac{\rho }{5}\sigma )^k w_k\) where \(w_k\) is the volume of the unit ball in \(\mathbb {R}^k\);

(ii) \(B_{\mathbf {x}}(\frac{\rho }{10} \sigma ) \cap {\mathcal {M}}\subset U\);

(iii) The determinant of the Jacobian of \({\varPhi }\) is bounded: \((1-2\rho )^k \le |D{\varPhi }| \le (1+2\rho )^k\) over \({\varOmega }\);

(iv) For any points \(\mathbf {y}, \mathbf {z}\in U\), \(1-2\rho \le \frac{|\mathbf {y}-\mathbf {z}|}{\left| {\varPhi }^{-1}(\mathbf {y}) - {\varPhi }^{-1}(\mathbf {z})\right| } \le 1+3\rho \).

This proposition basically says there exists a local parametrization of small distortion if \(({\mathcal {M}}, \partial {\mathcal {M}})\) satisfies certain smoothness, and moreover, the parameter domain is convex and big enough. The proof of this proposition can be found in “Appendix A.” Next, we introduce a special parametrization of the manifold \({\mathcal {M}}\).

Let \(\rho =0.1\), \(\sigma \) be the minimum of the reaches of \({\mathcal {M}}\) and \(\partial {\mathcal {M}}\) and \(\delta =\rho \sigma /20\). For any \(\mathbf {x}\in {\mathcal {M}}\), denote

and we assume t is small enough such that \(2\sqrt{t}\le \delta \).

Since the manifold \(\mathcal {M}\) is compact, there exists a \(\delta \)-net, \(\mathcal {N}_\delta =\{ \mathbf {q}_i\in \mathcal {M},\;i=1,\ldots ,N\}\), such that

and there exists a partition of \({\mathcal {M}}\), \(\{\mathcal {O}_i, \;i=1,\ldots ,N\}\), such that \(\mathcal {O}_i\cap \mathcal {O}_j=\emptyset ,\; i\ne j\) and

Using Proposition 1, there exist parametrizations \({\varPhi }_i: {\varOmega }_i\subset \mathbb {R}^k \rightarrow U_i\subset \mathcal {M},\; i=1,\ldots , N\), such that

1. (Convexity) \(B_{\mathbf {q}_i}^{2\delta }\subset U_i\) and \({\varOmega }_i\) is convex;

2. (Smoothness) \({\varPhi }_i\in C^3({\varOmega }_i)\);

3. (Locally small deformation) For any points \(\theta _1, \theta _2\in {\varOmega }_i\),

$$\begin{aligned} \frac{1}{2}\left| \theta _1-\theta _2\right| \le \left\| {\varPhi }_i(\theta _1)-{\varPhi }_i(\theta _2)\right\| \le 2\left| \theta _1-\theta _2\right| . \end{aligned}$$

Using the partition, \(\{\mathcal {O}_i, \;i=1,\ldots ,N\}\), for any \(\mathbf {y}\in {\mathcal {M}}\), there exists unique \(J(\mathbf {y})\in \{1,\ldots ,N\}\), such that

Moreover, using the condition, \(2\sqrt{t}\le \delta \), we have \(\mathcal {M}_{\mathbf {y}}^t \subset B_{\mathbf {q}_{J(\mathbf {y})}}^{2\delta }\subset U_{J(\mathbf {y})}\). Then, \({\varPhi }_{J(\mathbf {y})}^{-1}(\mathbf {x})\) and \({\varPhi }_{J(\mathbf {y})}^{-1}(\mathbf {y})\) are both well defined for any \(\mathbf {x}\in \mathcal {M}_{\mathbf {y}}^t\).

Now, we define an auxiliary function, \(\eta (\mathbf {x},\mathbf {y})\) for any \(\mathbf {y}\in {\mathcal {M}},\;\mathbf {x}\in \mathcal {M}_{\mathbf {y}}^t\). Let

where \(\alpha (\mathbf {x},\mathbf {y})={\varPhi }^{-1}_{J(\mathbf {y})}(\mathbf {y})\) and \(\partial \) is the gradient operator in the parameter space, i.e.,

Now, we state the proof of Theorem 4.

Proof

Let \(r(\mathbf {x})=-(L_t u-L_tu_t)\) be the residual, then we have

Here, we use the fact that

and

where the last equality comes from:

Here, \({\varPhi }^i,\; i=1,\ldots , d\), is the ith component of the parameterization function \({\varPhi }\) and the parameterization function \({\varPhi }={\varPhi }_{J(\mathbf {y})}\), \(J(\mathbf {y})\) is the index function given in (20). In the rest of the proof, without introducing any confusion, we always drop the subscript of the parameterization function.

First, we split the residual \(r(\mathbf {x})\) into four terms

where

where \(\nabla ^i,\; i=1,\ldots ,d\), is the ith component of the gradient \(\nabla \), \(\eta ^i, \; i=1,\ldots ,d\) is the ith component of \(\eta (\mathbf {x},\mathbf {y})\) defined in (21). To simplify the notation, we drop the variable \((\mathbf {x},\mathbf {y})\) in the function \(\eta (\mathbf {x},\mathbf {y})\).

Next, we prove the theorem by estimating the above four terms one by one. First, we consider \(r_1\). Let

we have

and

Using the Newton–Leibniz formula, we get

Here, \(\alpha =\alpha (\mathbf {x},\mathbf {y})={\varPhi }_{J(\mathbf {y})}^{-1}(\mathbf {y})\), \(\xi =\xi (\mathbf {x},\mathbf {y})={\varPhi }_{J(\mathbf {y})}^{-1}(\mathbf {x})-{\varPhi }_{J(\mathbf {y})}^{-1}(\mathbf {y})\). In the above derivation, we need the convexity of the parameter domain to make sure that all the integrals are well defined.

Using the above equality and the smoothness of the parameterization functions, it is easy to show that

where we use the fact that \(J(\mathbf {y})=i,\; \mathbf {y}\in \mathcal {O}_i\) and

Let \(\mathbf {z}_i={\varPhi }_i(\alpha +s \xi ),\; 0\le s\le 1\), then for any \(\mathbf {y}\in \mathcal {O}_i\subset B_{\mathbf {q}_i}^\delta \) and \(\mathbf {x}\in {\mathcal {M}}_{\mathbf {y}}^t\),

We can assume that t is small enough such that \(8\sqrt{t}\le \delta \), then we have

After a change of variables, we obtain

This estimate gives

Now, we turn to estimate the gradient of \(r_1\).

where \(\nabla _\mathbf {x}\) is the gradient in \({\mathcal {M}}\) with respect to \(\mathbf {x}\).

Using the same techniques as in the calculation of \(\Vert r_1(\mathbf {x})\Vert _{L^2({\mathcal {M}})}\), we find that the first term on the right-hand side can be bounded as follows

The estimate of the second term is more involved. First, we have

Using the Newton–Leibniz formula again, we have

Then, the gradient of \(\mathrm{d}(\mathbf {x},\mathbf {y})\) has the following representation,

For \(d_1\), we have

which means that

For \(d_2\), we have

This formula tells us that

Using the same arguments as in the calculation of \(\Vert r_1\Vert _{L^2({\mathcal {M}})}\), we have

Combining (24) and (25), we have

For \(r_2\), first, notice that

Then, we have

Thus, we get

Then, we have the following bound for \(r_2\),

Similarly, we have

\(r_3\) is relatively easy to estimate by using the well known Gauss formula.

where \(\tilde{I}_{bd}=\int _{\partial \mathcal {M}}n^{j}\eta ^{i}(\nabla ^{i} \nabla ^{j}u(\mathbf {y})) \bar{R}_t(\mathbf {x}, \mathbf {y})\mathrm {d}\tau _\mathbf {y}\).

Now, we turn to bound the last term \(r_4\). Notice that

where \(\det G\) is the determinant of G and \(G=(g_{ij})_{i,j=1,\ldots ,k}\). Here, we use the fact that

Moreover, we have

where the first equalities hold because \(\partial _{i'}\xi ^l = -\delta _{i'}^l\). Then we have

Here, we use the equalities (30), (31), \(\eta ^i = \xi ^{l} \partial _{i'}{\varPhi }^l\) and the definition of \(\text{ div }\),

where X is a smooth tangent vector field on \({\mathcal {M}}\) and \((X^1,\ldots ,X^d)^t\) is its representation in embedding coordinates.

Hence,

Then, it is easy to get that

By combining (23), (26), (27), (28), (29), (33), (34), we know that

Using the definition of \(I_{bd}\) and \(\tilde{I}_{bd}\), we obtain

Using the definition of \(\eta (\mathbf {x},\mathbf {y})\), it is easy to check that

which implies that

The theorem is proved by putting (35), (36), (37), (38) together.

6 Error analysis of the discretization (Theorem 3)

In this section, we estimate the discretization error introduced by approximating the integrals in (14), that is, we prove Theorem 3. To simplify the notation, we introduce an intermediate operator defined as follows,

If \(u_{t,h}=I_{\mathbf {f}}(\mathbf {u})\) with \(\mathbf {u}\) satisfying equation (10), one can verify that the following equation is satisfied,

We introduce a discrete operator \(\mathcal {L}: \mathbb {R}^n\rightarrow \mathbb {R}^n\) where \(n=|P|\). For any \(\mathbf{u }=(u_1,\ldots ,u_n)^t\), denote

For this operator, we have the following important theorem.

Theorem 6

Under Assumption 1, there exist constants \(C>0,\, C_0>0\), \(T_0\) independent of t so that for any \(\mathbf{u } = (u_1, \ldots , u_n)^t \in \mathbb {R}^n\) with \(\sum _{i=1}^n u_iV_i = 0\), \(t\le T_0\) and sufficiently small \(\frac{h(P,\mathbf {V},{\mathcal {M}})}{\sqrt{t}}\)

where \(\left<\mathbf{u }, \mathbf{v }\right>_{\mathbf {V}} = \sum _{i=1}^n u_iv_iV_i\) for any \(\mathbf{u } = (u_1, \ldots , u_n), \mathbf{v } =(v_1, \ldots , v_n)\).

The proof of the above theorem is deferred to “Appendix D.”
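The mechanism behind this kind of coercivity estimate is a symmetry identity for the weighted inner product, which the following sketch checks numerically. It assumes that the discrete operator has the form \((\mathcal {L}\mathbf{u })_i=\frac{1}{t}\sum _j R_t(\mathbf{p }_i,\mathbf{p }_j)(u_i-u_j)V_j\), in which case \(\left<\mathbf{u }, \mathcal {L}\mathbf{u }\right>_{\mathbf {V}}=\frac{1}{2t}\sum _{i,j}R_t(\mathbf{p }_i,\mathbf{p }_j)(u_i-u_j)^2V_iV_j\ge 0\). The point cloud (random samples of the unit circle), the raised-cosine profile and all names are hypothetical choices.

```python
import numpy as np


def R(r):
    """Assumed kernel profile: raised cosine supported on [0, 1]."""
    return np.where(r <= 1.0, 0.5 * (1.0 + np.cos(np.pi * r)), 0.0)


def inner_V(a, b, V):
    """Weighted inner product <a, b>_V = sum_i a_i b_i V_i."""
    return float(np.sum(a * b * V))


rng = np.random.default_rng(1)

# Hypothetical point cloud: random samples of the unit circle (k = 1, d = 2).
n, t = 300, 0.01
theta = np.sort(rng.uniform(0.0, 2.0 * np.pi, n))
P = np.column_stack([np.cos(theta), np.sin(theta)])
V = np.full(n, 2.0 * np.pi / n)

# Random vector with weighted mean zero, as required in the theorem.
u = rng.standard_normal(n)
u -= np.dot(u, V) / V.sum()

C_t = (4.0 * np.pi * t) ** -0.5
sq_dist = ((P[:, None, :] - P[None, :, :]) ** 2).sum(axis=-1)
Rt = C_t * R(sq_dist / (4.0 * t))

# Assumed discrete operator: (L u)_i = (1/t) sum_j R_t(p_i, p_j)(u_i - u_j) V_j.
diff = u[:, None] - u[None, :]
Lu = (Rt * diff) @ V / t

energy = inner_V(u, Lu, V)
double_sum = 0.5 / t * float(np.sum(Rt * diff ** 2 * np.outer(V, V)))
print(f"<u, Lu>_V = {energy:.6f}, symmetric form = {double_sum:.6f}")
```

The identity only shows nonnegativity; the lower bound in terms of \(\left<\mathbf{u }, \mathbf{u }\right>_{\mathbf {V}}\) is the substantive part of the theorem and is not reproduced by this check.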

It has an easy corollary which gives an a priori estimate of \(\mathbf {u}=(u_1, \ldots , u_n)^t\) solving the discrete problem (10).

Lemma 1

Suppose \(\mathbf {u}=(u_1, \ldots , u_n)^t\) with \(\sum _i u_i V_i = 0\) solves the problem (10) and \(\mathbf{f }=(f(\mathbf{p }_1), \ldots , f(\mathbf{p }_n))^t\) for \(f\in C(\mathcal {M})\),

there exists a constant \(C>0\) such that

provided t and \(\frac{h(P,\mathbf {V},{\mathcal {M}})}{\sqrt{t}}\) are small enough.

Proof

From Theorem 6, we have

This proves the lemma.

We are now ready to prove Theorem 3.

Proof of Theorem 3

Denote

where \(\mathbf {u}=(u_1, \ldots , u_n)^t\) with \(\sum _{i=1}^n u_i V_i = 0\) solves the problem (10), \( f_j = f(\mathbf{p }_j)\) and \(w_{t,h}(\mathbf {x})=\sum _{j=1}^nR_t(\mathbf {x},\mathbf{p }_j)V_j\). For convenience, we set

and thus \(u_{t,h} = a_{t, h} +c_{t, h}\).

In the proof, to simplify the notation, we denote \(h=h(P,\mathbf {V},{\mathcal {M}})\) and \(n=|P|\).

First we upper bound \(\Vert L_t(u_{t,h}) - L_{t, h}(u_{t, h})\Vert _{L^2({\mathcal {M}})}\). For \(c_{t, h}\), we have

For \(a_{t, h}\), we have

Let

We have \(|A|<\frac{Ch}{t^{1/2}}\) for some constant C independent of t. In addition, notice that \(A\ne 0\) only when \(|\mathbf {x}-\mathbf{p }_i|^2\le 16t \), which implies

Then, we have

Combining Eqs. (45), (46) and Lemma 1,

Assembling the parts together, we have the following upper bound.

At the same time, since \(u_t\) and \(u_{t,h}\) solve equations (14) and (40), respectively, we have

The complete \(L^2\) estimate follows from Eqs. (47) and (48).

The estimate of the gradient, \(\Vert \nabla (L_t(u_{t}) - L_{t, h}(u_{t, h}))\Vert _{L^2({\mathcal {M}})}\), can be obtained similarly. The details can be found in “Appendix E.”

7 Stability analysis (Theorems 2 and 5)

To prove Theorems 2 and 5, we need the following two theorems regarding the coercivity of the operator \(L_t\).

Theorem 7

For any function \(u\in L^2(\mathcal {M})\), there exists a constant \(C>0\) independent of t and u, such that

where \(\left<f, g\right>_{\mathcal {M}} = \int _{\mathcal {M}} f(\mathbf {x})g(\mathbf {x})\mathrm {d}\mu _\mathbf {x}\) for any \(f, g\in L^2(\mathcal {M})\), and

and \(w_t(\mathbf {x}) = C_t\int _{\mathcal {M}}R\left( \frac{|\mathbf {x}-\mathbf {y}|^2}{4t}\right) \mathrm {d}\mu _\mathbf {y}\).

Theorem 8

Assume both \({\mathcal {M}}\) and \(\partial {\mathcal {M}}\) are \(C^\infty \). There exists a constant \(C>0\) independent of t so that for any function \(u\in L^2({\mathcal {M}})\) with \(\int _{\mathcal {M}}u(\mathbf {x})\mathrm {d}\mu _\mathbf {x}= 0\) and for any sufficiently small t

Theorem 7 is a direct corollary of the following two lemmas.

Lemma 2

For any function \(u\in L^2(\mathcal {M})\), there exists a constant \(C>0\) independent of t and u, such that

where v is the same as defined in (50).

Lemma 3

If t is small enough, then for any function \(u\in L^2(\mathcal {M})\), there exists a constant \(C>0\) independent of t and u, such that

The proofs of the above two lemmas are given in “Appendix B” and “C.” Once we have Lemmas 2 and 3, Theorem 7 becomes obvious by noticing that:

Now, we turn to the proof of Theorem 8.

Proof of Theorem 8

By Theorem 7 and the Poincaré inequality, there exists a constant \(C>0\), such that

where \(\bar{v}=\frac{1}{|\mathcal {M}|}\int _\mathcal {M} v(\mathbf {x})\mathrm {d}\mu _\mathbf {x}\) and

At the same time, we have

where the second equality comes from \(\int _{{\mathcal {M}}} u(\mathbf {x})\mathrm {d}\mu _\mathbf {x}= 0\). This enables us to upper bound the \(L_2\) norm of v as follows. For t sufficiently small,

Let \(\delta =\frac{w_{\min }}{2w_{\max }+w_{\min }}\) where \(w_{\min }=\min _\mathbf {x}w_t(\mathbf {x})\) and \(w_{\max }=\max _\mathbf {x}w_t(\mathbf {x})\). If u is smooth and close to its smoothed version v, in particular,

then the theorem is proved.

Now, consider the case where (52) does not hold. Note that we now have

Then, we have

This completes the proof for the theorem.

7.1 Proof of Theorem 2

With Theorems 7 and 8, the proof of Theorem 2 is straightforward.

Proof of Theorem 2

Using Theorem 8, we have

To show the last inequality, we use the fact that

This inequality (53) implies that

Now, we turn to estimate \(\Vert \nabla u\Vert _{L^2({\mathcal {M}})}\). Notice that we have the following expression for u, since u satisfies the integral equation (14).

where

By Theorem 7, we have

This completes the proof.

7.2 Proof of Theorem 5

The proof of Theorem 5 is more involved.

Proof

First, we denote

where \(|{\mathcal {M}}|=\int _{\mathcal {M}}\mathrm {d}\mu _\mathbf {y}\).

The key point of the proof is to show that

First, notice that

Then, it is sufficient to show that

Direct calculation gives that

where \(\bar{\bar{R}}_t(\mathbf {x}, \mathbf {y})=C_t\bar{\bar{R}}\left( \frac{\Vert \mathbf {x}-\mathbf {y}\Vert ^2}{4t}\right) \) and \(\bar{\bar{R}}(r)=\int _{r}^{\infty }\bar{R}(s)\mathrm {d}s\). This implies that

On the other hand, using the Gauss integral formula, we have

Here, \(T_\mathbf {x}\) is the projection operator onto the tangent space at \(\mathbf {x}\). To get the first equality, we use the fact that \(\nabla \bar{\bar{R}}_t(\mathbf {x}, \mathbf {y})\) belongs to the tangent space at \(\mathbf {x}\), so that \(\mathbf {b}(\mathbf {y})\cdot \nabla \bar{\bar{R}}_t(\mathbf {x}, \mathbf {y})=T_\mathbf {x}(\mathbf {b}(\mathbf {y}))\cdot \nabla \bar{\bar{R}}_t(\mathbf {x}, \mathbf {y})\) and \(\mathbf {n}(\mathbf {x})\cdot T_\mathbf {x}(\mathbf {b}(\mathbf {y}))=\mathbf {n}(\mathbf {x})\cdot \mathbf {b}(\mathbf {y})\), where \(\mathbf {n}(\mathbf {x})\) is the outward normal of \(\partial {\mathcal {M}}\) at \(\mathbf {x}\in \partial {\mathcal {M}}\).

For the first term, we have

We can also bound the second term on the right-hand side of (57). By using the assumption that \({\mathcal {M}}\in C^\infty \), we have

where the constant C depends on the curvature of the manifold \({\mathcal {M}}\).

Then, we have

Then, inequality (55) follows from (56), (57), (58) and (59). Now, using Theorem 8, we have

Note that \(r(\mathbf {x})=\int _{\partial {\mathcal {M}}}(\mathbf {x}-\mathbf {y})\cdot \mathbf {b}(\mathbf {y})\bar{R}_t(\mathbf {x},\mathbf {y}) \mathrm {d}\tau _\mathbf {y}\). Direct calculation gives

The integral equation \(L_t u=r-\bar{r}\) gives that

where

By Theorem 7, we have

which proves the theorem.
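The kernel \(\bar{\bar{R}}\) used in the proof above is a tail integral of \(\bar{R}\). The following is a minimal numerical sketch of that construction, not part of the analysis. It assumes that \(\bar{R}(r)=\int _r^{\infty }R(s)\mathrm {d}s\) and uses a hypothetical compactly supported \(C^2\) kernel \(R(r)=(1-r)^3\) on [0, 1], for which the closed forms \(\bar{R}(r)=(1-r)^4/4\) and \(\bar{\bar{R}}(r)=(1-r)^5/20\) are available for comparison. The function names are illustrative only.

```python
import numpy as np

def R(r):
    """Hypothetical C^2 kernel supported on [0, 1]: R(r) = (1 - r)^3 for r < 1."""
    r = np.asarray(r, dtype=float)
    return np.where(r < 1.0, (1.0 - r) ** 3, 0.0)

def tail_integral(f, r, upper=1.0, n=2001):
    """Trapezoidal approximation of int_r^upper f(s) ds; f is assumed to vanish beyond `upper`."""
    if r >= upper:
        return 0.0
    s = np.linspace(r, upper, n)
    vals = f(s)
    ds = s[1] - s[0]
    return float(ds * (vals.sum() - 0.5 * (vals[0] + vals[-1])))

def R_bar(r):
    """Assumed definition: R_bar(r) = int_r^infinity R(s) ds."""
    return tail_integral(R, r)

def R_bar_bar(r):
    """R_bar_bar(r) = int_r^infinity R_bar(s) ds, as in the proof of Theorem 5."""
    # np.vectorize lets the scalar function R_bar be sampled on a grid.
    return tail_integral(np.vectorize(R_bar), r, n=201)

if __name__ == "__main__":
    r = 0.3
    print(R_bar(r), (1.0 - r) ** 4 / 4.0)
    print(R_bar_bar(r), (1.0 - r) ** 5 / 20.0)
```

Each tail integral inherits the compact support of R and gains one order of smoothness, which is why the repeated integration is convenient in the estimates.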

8 Discussion and future work

We have proven the convergence of the point integral method for Poisson equations on submanifolds that are isometrically embedded in Euclidean spaces. Our analysis shows that the convergence rate of PIM is \(h^{1/4}\) in the \(H^1\) norm. However, our experimental results in [33] show that the empirical convergence rate is approximately linear. We believe that the error bounds in some parts of our analysis could be improved.
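To make the comparison between a proven rate and an observed rate concrete, the sketch below estimates an empirical convergence order as the least-squares slope of log(error) against log(h). The error values are synthetic, generated from exact power laws purely for illustration. They are not results from [33] or from this paper, and the function name is hypothetical.

```python
import numpy as np

def observed_order(h, err):
    """Least-squares slope of log(err) versus log(h), i.e. p in err ~ C * h**p."""
    slope, _intercept = np.polyfit(np.log(h), np.log(err), 1)
    return float(slope)

if __name__ == "__main__":
    h = np.array([0.1, 0.05, 0.025, 0.0125])
    err_quarter = 2.0 * h ** 0.25  # synthetic errors following an h^(1/4) law
    err_linear = 2.0 * h           # synthetic errors following a linear law
    print(observed_order(h, err_quarter))
    print(observed_order(h, err_linear))
```

In practice h would be the integral accuracy index of a sequence of refined point clouds and err the measured error of the PIM solution on each.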

On the other hand, the quadrature rule used in the point integral method is of low accuracy. If more information is available, such as a local mesh or a local hypersurface, a high-order quadrature rule could be used to improve the accuracy of the point integral method.
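The low-order quadrature referred to here replaces an integral over \({\mathcal {M}}\) by a weighted sum over the sample points, for example \(w_t(\mathbf {x})\approx C_t\sum _{i}R\left( \frac{|\mathbf {x}-\mathbf{p }_i|^2}{4t}\right) V_i\). The sketch below evaluates this sum on a uniformly sampled unit circle. The kernel \(R(r)=(1-r)^3\) on [0, 1] and the normalization \(C_t=(4\pi t)^{-k/2}\) are assumptions made for illustration, and all function names are hypothetical.

```python
import numpy as np

def R(r):
    """Hypothetical C^2 kernel supported on [0, 1]."""
    r = np.asarray(r, dtype=float)
    return np.where(r < 1.0, (1.0 - r) ** 3, 0.0)

def w_th(x, points, volumes, t, k):
    """Discrete weight C_t * sum_i R(|x - p_i|^2 / (4t)) * V_i."""
    C_t = (4.0 * np.pi * t) ** (-k / 2.0)  # assumed normalization factor
    sq_dist = np.sum((points - x) ** 2, axis=1)
    return float(C_t * np.sum(R(sq_dist / (4.0 * t)) * volumes))

def circle_cloud(n):
    """n equispaced points on the unit circle with equal volume weights 2*pi/n."""
    theta = 2.0 * np.pi * np.arange(n) / n
    points = np.column_stack([np.cos(theta), np.sin(theta)])
    volumes = np.full(n, 2.0 * np.pi / n)
    return points, volumes

if __name__ == "__main__":
    x = np.array([np.cos(1.0), np.sin(1.0)])
    for n in (200, 2000, 20000):
        P, V = circle_cloud(n)
        print(n, w_th(x, P, V, t=0.01, k=1))
```

The printed values settle as n grows, which is the behavior the integral accuracy index h is meant to quantify. A higher-order rule would change only the weights \(V_i\), not the structure of the sum.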

Based on the convergence result in this paper, we can show that the spectra of the graph Laplacian with proper normalization converge to the spectra of \({\varDelta }_{\mathcal {M}}\) with the Neumann boundary condition. Moreover, we can obtain an estimate of the rate of spectral convergence. The point integral method also applies to the Poisson equation with the Dirichlet boundary condition, and its convergence can be shown in that case as well. These results will be reported in subsequent papers.

Another interesting problem is generalizing PIM to solve other types of PDEs. PIM can handle elliptic equations with anisotropic or discontinuous coefficients. The generalization to the diffusion equation seems to be natural. If the equation is dominated by the convection term, it may need more careful study.

References

Andreu, F., Mazon, J.M., Rossi, J.D., Toledo, J.: Nonlocal Diffusion Problems. Mathematical Surveys and Monographs, vol. 165, AMS, Providence (2010)

Barreira, R., Elliott, C., Madzvamuse, A.: Modelling and simulations of multi-component lipid membranes and open membranes via diffuse interface approaches. J. Math. Biol. 56, 347–371 (2008)

Barreira, R., Elliott, C., Madzvamuse, A.: The surface finite element method for pattern formation on evolving biological surfaces. J. Math. Biol. 63, 1095–1119 (2011)

Belkin, M., Niyogi, P.: Laplacian eigenmaps for dimensionality reduction and data representation. Neural Comput. 15(6), 1373–1396 (2003)

Belkin, M., Niyogi, P.: Towards a theoretical foundation for Laplacian-based manifold methods. In: COLT, pp. 486–500 (2005)

Belkin, M., Niyogi, P.: Convergence of Laplacian eigenmaps. Short version NIPS 2008 (preprint) (2008)

Belkin, M., Que, Q., Wang, Y., Zhou, X.: Toward understanding complex spaces: graph Laplacians on manifolds with singularities and boundaries. In: COLT, pp. 36.1–36.26 (2012)

Belkin, M., Sun, J., Wang, Y.: Constructing Laplace operator from point clouds in \(\mathbb {R}^d\). In: SODA’09: Proceedings of the Nineteenth Annual ACM–SIAM Symposium on Discrete Algorithms, pp. 1031–1040. Society for Industrial and Applied Mathematics, Philadelphia (2009)

Bertalmio, M., Cheng, L.-T., Osher, S., Sapiro, G.: Variational problems and partial differential equations on implicit surfaces. J. Comput. Phys. 174(2), 759–780 (2001)

Cahn, J.W., Fife, P., Penrose, O.: A phase-field model for diffusion-induced grain-boundary motion. Ann. Stat. 36(2), 555–586 (2008)

Choi, P.T., Lam, K.C., Lui, L.M.: Flash: fast landmark aligned spherical harmonic parameterization for genus-0 closed brain surfaces. SIAM J. Imaging Sci. 8, 67–94 (2015)

Chung, F.R.K.: Spectral Graph Theory. American Mathematical Society, Providence (1997)

Coifman, R.R., Lafon, S., Lee, A.B., Maggioni, M., Warner, F., Zucker, S.: Geometric diffusions as a tool for harmonic analysis and structure definition of data: diffusion maps. In: Proceedings of the National Academy of Sciences, pp. 7426–7431 (2005)

Dey, T.K., Sun, J., Wang, Y.: Approximating cycles in a shortest basis of the first homology group from point data. Inverse Probl. 27(12), 124004 (2011)

Du, Q., Gunzburger, M., Lehoucq, R.B., Zhou, K.: Analysis and approximation of nonlocal diffusion problems with volume constraints. SIAM Rev. 54, 667–696 (2012)

Du, Q., Gunzburger, M., Lehoucq, R.B., Zhou, K.: A nonlocal vector calculus, nonlocal volume-constrained problems, and nonlocal balance laws. Math. Models Methods Appl. Sci. 23, 493–540 (2013)

Du, Q., Ju, L., Tian, L., Zhou, K.: A posteriori error analysis of finite element method for linear nonlocal diffusion and peridynamic models. Math. Comp. 82, 1889–1922 (2013)

Du, Q., Li, T., Zhao, X.: A convergent adaptive finite element algorithm for nonlocal diffusion and peridynamic models. SIAM J. Numer. Anal. 51, 1211–1234 (2013)

Dziuk, G., Elliott, C.M.: Finite element methods for surface PDEs. Acta Numer. 22, 289–396 (2013)

Eilks, C., Elliott, C.M.: Numerical simulation of dealloying by surface dissolution via the evolving surface finite element method. J. Comput. Phys. 227, 9727–9741 (2008)

Elliott, C.M., Stinner, B.: Modeling and computation of two phase geometric biomembranes using surface finite elements. J. Comput. Phys. 229, 6585–6612 (2010)

Ganesan, S., Tobiska, L.: A coupled arbitrary Lagrangian Eulerian and Lagrangian method for computation of free-surface flows with insoluble surfactants. J. Comput. Phys. 228, 2859–2873 (2009)

Gu, X., Wang, Y., Chan, T.F., Thompson, P.M., Yau, S.-T.: Genus zero surface conformal mapping and its application to brain surface mapping. IEEE TMI 23, 949–958 (2004)

Hein, M., Audibert, J.-Y., von Luxburg, U.: From graphs to manifolds—weak and strong pointwise consistency of graph Laplacians. In: Proceedings of the 18th Annual Conference on Learning Theory, COLT’05, pp. 470–485. Springer, Berlin (2005)

James, A.J., Lowengrub, J.: A surfactant-conserving volume-of-fluid method for interfacial flows with insoluble surfactant. J. Comput. Phys. 201, 685–722 (2004)

Kao, C.-Y., Lai, R., Osting, B.: Maximization of Laplace–Beltrami eigenvalues on closed Riemannian surfaces. ESAIM: Control Optim. Calc. Var. 23, 685–720 (2017)

Lafon, S.: Diffusion Maps and Geodesic Harmonics. Ph.D. Thesis (2004)

Lai, R., Liang, J., Zhao, H.: A local mesh method for solving PDEs on point clouds. Inverse Probl. Imaging 7, 737–755 (2013)

Lai, R., Wen, Z., Yin, W., Gu, X., Lui, L.: Folding-free global conformal mapping for genus-0 surfaces by harmonic energy minimization. J. Sci. Comput. 58, 705–725 (2014)

Lai, R., Zhao, H.: Multi-scale non-rigid point cloud registration using robust sliced-Wasserstein distance via Laplace–Beltrami eigenmap. SIAM J. Imaging Sci. (to appear) (2014). arXiv:1406.3758

Leung, S., Lowengrub, J., Zhao, H.: A grid based particle method for solving partial differential equations on evolving surfaces and modeling high order geometrical motion. J. Comput. Phys. 230(7), 2540–2561 (2011)

Leung, S., Zhao, H.: A grid based particle method for moving interface problems. J. Comput. Phys. 228(8), 2993–3024 (2009)

Li, Z., Shi, Z., Sun, J.: Point integral method for solving Poisson-type equations on manifolds from point clouds with convergence guarantees. Commun. Comput. Phys. 22(1), 228–258 (2017)

Liang, J., Zhao, H.: Solving partial differential equations on point clouds. SIAM J. Sci. Comput. 35, 1461–1486 (2013)

Macdonald, C., Ruuth, S.: The implicit closest point method for the numerical solution of partial differential equations on surfaces. SIAM J. Sci. Comput. 31(6), 4330–4350 (2009)

Meng, T.W., Choi, P.T., Lui, L.M.: Tempo: feature-endowed Teichmuller extremal mappings of point clouds. SIAM J. Imaging Sci. 9, 1582–1618 (2016)

Neilson, M.P., Mackenzie, J.A., Webb, S.D., Insall, R.H.: Modelling cell movement and chemotaxis using pseudopod-based feedback. SIAM J. Sci. Comput. 33, 1035–1057 (2011)

Niyogi, P., Smale, S., Weinberger, S.: Finding the homology of submanifolds with high confidence from random samples. Discret. Comput. Geom. 39, 419–441 (2008)

Niyogi, P., Smale, S., Weinberger, S.: Finding the homology of submanifolds with high confidence from random samples. Discret. Comput. Geom. 39(1–3), 419–441 (2008)

Osher, S., Shi, Z., Zhu, W.: Low Dimensional Manifold Model for Image Processing. Technical report, UCLA, CAM-report 16-04 (2016)

Peyré, G.: Manifold models for signals and images. Comput. Vis. Image Underst. 113, 248–260 (2009)

Reuter, M., Wolter, F.E., Peinecke, N.: Laplace–Beltrami spectra as ‘shape-DNA’ of surfaces and solids. Comput. Aided Des. 38, 342–366 (2006)

Ruuth, S., Merriman, B.: A simple embedding method for solving partial differential equations on surfaces. J. Comput. Phys. 227(3), 1943–1961 (2008)

Singer, A.: From graph to manifold Laplacian: the convergence rate. Appl. Comput. Harmonic Anal. 21(1), 128–134 (2006)

Singer, A., Wu, H.-T.: Spectral Convergence of the Connection Laplacian from Random Samples. arXiv:1306.1587

Wardetzky, M.: Discrete Differential Operators on Polyhedral Surfaces—Convergence and Approximation. Ph.D. Thesis (2006)

Wong, T.W., Lui, L.M., Gu, X., Thompson, P., Chan, T., Yau, S.-T.: Instrinic Feature Extraction and Hippocampal Surface Registration Using Harmonic Eigenmap. Technical Report, UCLA CAM Report 11-65 (2011)

Xu, J., Zhao, H.: An Eulerian formulation for solving partial differential equations along a moving interface. J. Sci. Comput. 19, 573–594 (2003)

Zhou, K., Du, Q.: Mathematical and numerical analysis of linear peridynamic models with nonlocal boundary conditions. SIAM J. Numer. Anal. 48, 1759–1780 (2010)

Appendices

Proof of Proposition 1

To prove the proposition, we first cite a few results from Riemannian geometry on isometric embeddings. For a submanifold \({\mathcal {M}}\) embedded in \(\mathbb {R}^d\), let \(d_{\mathcal {M}}: {\mathcal {M}}\times {\mathcal {M}}\rightarrow \mathbb {R}\) be the geodesic distance on \({\mathcal {M}}\), and \(T_\mathbf {x}{\mathcal {M}}\) and \(N_\mathbf {x}{\mathcal {M}}\) be the tangent space and the normal space of \({\mathcal {M}}\) at point \(\mathbf {x}\in {\mathcal {M}}\), respectively.

Lemma 4

(e.g., [14]) Assume \({\mathcal {M}}\) is a submanifold isometrically embedded in \(\mathbb {R}^d\) with reach \(\sigma >0\). For any two \(\mathbf {x}, \mathbf {y}\) on \({\mathcal {M}}\) with \(|\mathbf {x}-\mathbf {y}| \le \sigma /2\),

Lemma 5

(e.g., [8]) Assume \({\mathcal {M}}\) is a submanifold isometrically embedded in \(\mathbb {R}^d\) with reach \(\sigma >0\). For any two \(\mathbf {x}, \mathbf {y}\) on \({\mathcal {M}}\) with \(|\mathbf {x}-\mathbf {y}| \le \sigma /2\),

Lemma 6

(e.g., [39]) Assume \({\mathcal {M}}\) is a submanifold isometrically embedded in \(\mathbb {R}^d\) with reach \(\sigma >0\). Let N be any local normal vector field around a point \(\mathbf {x}\in {\mathcal {M}}\). Then, for any tangent vector \(Y\in T_\mathbf {x}{\mathcal {M}}\)

where D and \(\langle \cdot , \cdot \rangle \) are the standard connection and the standard inner product in \(\mathbb {R}^d\).

In what follows, assume the hypotheses on \({\mathcal {M}}\) and \(\partial {\mathcal {M}}\) in Proposition 1 hold. We prove the following two lemmas which bound the distortion of certain parametrization, which are used to build the parametrization stated in Proposition 1.

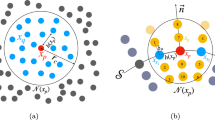

For a point \(\mathbf {x}\in {\mathcal {M}}\), let \(U_\rho = B_\mathbf {x}(\rho \sigma )\cap {\mathcal {M}}\) with \(\rho \le 0.2\). We define the following projection map \({\varPsi }: U_\rho \rightarrow T_\mathbf {x}{\mathcal {M}}= \mathbb {R}^k \) as the restriction to \(U_\rho \) of the projection of \(\mathbb {R}^d\) onto \(T_\mathbf {x}{\mathcal {M}}\). It is easy to verify that \({\varPsi }\) is one-to-one. Then, \({\varPhi }= {\varPsi }^{-1}: {\varPsi }(U_\rho ) \rightarrow U_\rho \) is a parametrization of \(U_\rho \) (see Fig. 1). We have the following lemma, which bounds the distortion of this parametrization.

Lemma 7

For any point \(\mathbf {y}\in {\varPsi }(U_\rho )\) and any \(Y\in T_\mathbf {y}(T_\mathbf {x}{\mathcal {M}})\) for any \(\rho \le 0.2\),

Proof

We have \({\varPhi }(\mathbf {y}) = \mathbf {y}- l_T(\mathbf {y}) N_T(\mathbf {y})\) where \(N_T(\mathbf {y}) \perp T_\mathbf {x}{\mathcal {M}}\) for any \(\mathbf {y}\) and \(l_T(\mathbf {y}) = |\mathbf {y}- {\varPhi }(\mathbf {y})|\). So \(D_Y N_T(\mathbf {y}) \perp T_\mathbf {x}{\mathcal {M}}\) for any \(\mathbf {y}\) and any \(Y\in T_\mathbf {y}(T_\mathbf {x}{\mathcal {M}})\). Since \(D_Y {\varPhi }= Y - N_T \left( D_Y l_T\right) - l_T \left( D_Y N_T\right) \), the projection of \(D_Y {\varPhi }\) to \(T_\mathbf {x}{\mathcal {M}}\) is Y. At the same time, \(D_Y{\varPhi }\) lies in \(T_{{\varPhi }(\mathbf {y})}{\mathcal {M}}\). Since \(|\mathbf {x}- {{\varPhi }(\mathbf {y})}|\le \rho \sigma \), from Lemma 5, \(\cos \angle \left( T_\mathbf {x}{\mathcal {M}}, T_{{\varPhi }(\mathbf {y})} {\mathcal {M}}\right) \ge 1- 2\rho ^2\), so the projection onto \(T_\mathbf {x}{\mathcal {M}}\) shrinks the length of \(D_Y{\varPhi }\) by at most the factor \(1-2\rho ^2\). This proves the lemma.
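As an illustration of this parametrization, the sketch below works on the unit sphere in \(\mathbb {R}^3\) (reach \(\sigma = 1\)) with \(\mathbf {x}\) at the north pole, where \({\varPsi }\) drops the last coordinate and \({\varPhi }\) lifts a point of the tangent plane back to the sphere. It samples the singular values of \(D{\varPhi }\) and compares them with the two-sided bound \(|Y|\le |D_Y{\varPhi }|\le \frac{1}{1-2\rho ^2}|Y|\) that is quoted from Lemma 7 in the proof of Lemma 8. The sphere, the sampling scheme and the function names are assumptions chosen for illustration, and the check is a sanity test rather than a proof.

```python
import numpy as np

X0 = np.array([0.0, 0.0, 1.0])  # base point x: north pole of the unit sphere

def Psi(z):
    """Orthogonal projection onto the tangent plane at the north pole."""
    return z[:2]

def Phi(y):
    """Inverse of Psi on the upper hemisphere."""
    return np.array([y[0], y[1], np.sqrt(1.0 - y @ y)])

def jacobian_Phi(y):
    """3 x 2 Jacobian of Phi at y."""
    s = np.sqrt(1.0 - y @ y)
    return np.array([[1.0, 0.0], [0.0, 1.0], [-y[0] / s, -y[1] / s]])

def distortion_range(rho, n_samples=1000, seed=0):
    """Smallest and largest singular value of D(Phi) over samples with |x - Phi(y)| <= rho."""
    rng = np.random.default_rng(seed)
    lo, hi = np.inf, 0.0
    for _ in range(n_samples):
        y = rng.uniform(-rho, rho, size=2)
        if np.linalg.norm(Phi(y) - X0) > rho:
            continue  # outside U_rho, skip this sample
        sv = np.linalg.svd(jacobian_Phi(y), compute_uv=False)
        lo, hi = min(lo, float(sv.min())), max(hi, float(sv.max()))
    return lo, hi

if __name__ == "__main__":
    rho = 0.2
    print(distortion_range(rho), 1.0 / (1.0 - 2.0 * rho ** 2))
```

On the sphere the singular values are exactly 1 and \(1/\sqrt{1-|\mathbf {y}|^2}\), so the bound holds with room to spare for \(\rho \le 0.2\).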

To ensure the convexity of the parameter domain \({\varOmega }\) in Proposition 1, we need a different parametrization for the points near the boundary. For a point \(\mathbf {x}\in \partial {\mathcal {M}}\), let \(U_\rho = B_\mathbf {x}(\rho \sigma ) \cap {\mathcal {M}}\) with \(\rho \le 0.1\). We construct a map \(\tilde{{\varPsi }}: U_\rho \rightarrow T_\mathbf {x}\partial {\mathcal {M}}\times \mathbb {R}= \mathbb {R}^k\) as follows. For any point \(\mathbf {z}\in U_\rho \), let \(\bar{\mathbf {z}}\) be the closest point on \(\partial {\mathcal {M}}\) to \(\mathbf {z}\). Such \(\bar{\mathbf {z}}\) is unique. Let \(\mathbf{n }\) be the outward normal of \(\partial {\mathcal {M}}\) at \(\bar{\mathbf {z}}\). The projection P of \(\mathbb {R}^d\) onto \(T_{\bar{\mathbf {z}}} {\mathcal {M}}\) maps \(\mathbf {z}\) to a point on the line \(\ell \) passing through \(\bar{\mathbf {z}}\) with the direction \(\mathbf{n }\). In fact, P projects \(N_{\bar{\mathbf {z}}} \partial {\mathcal {M}}\) onto the line \(\ell \). If we let \( V_{\rho _1} = N_{\bar{\mathbf {z}}} \partial {\mathcal {M}}\cap B_{\bar{\mathbf {z}}}(\rho _1 \sigma ) \cap {\mathcal {M}}\) with \(\rho _1 \le 0.2\), then P maps \( V_{\rho _1}\) to the line \(\ell \) in a one-to-one manner. Let \(y^k = - (P(\mathbf {z}) - \bar{\mathbf {z}}) \cdot \mathbf{n }\). Think of \(\partial {\mathcal {M}}\) as a submanifold. It is isometrically embedded in \(\mathbb {R}^d\), as is \({\mathcal {M}}\). As \(|\bar{\mathbf {z}} - \mathbf {x}| \le 2|\mathbf {x}- \mathbf {z}| \le 2\rho \sigma \), we apply Lemma 7 by replacing \({\mathcal {M}}\) with \(\partial {\mathcal {M}}\) and obtain the map \({\varPsi }\) that maps \(\bar{\mathbf {z}}\) onto \(T_\mathbf {x}\partial {\mathcal {M}}\). Define \(\tilde{{\varPsi }}(\mathbf {z}) = ({\varPsi }(\bar{\mathbf {z}}), y^k)\). Since both \(P|_{V_{\rho _1}}\) and \({\varPsi }\) are one-to-one, so is \(\tilde{{\varPsi }}\). Then \(\tilde{{\varPhi }} = \tilde{{\varPsi }}^{-1}: \tilde{{\varPsi }}(U_\rho ) \rightarrow U_\rho \) is a parametrization of \(U_\rho \) (see Fig. 2). We have the following lemma, which bounds the distortion of this parametrization \(\tilde{{\varPhi }}\).

Lemma 8

For any point \((\mathbf {y}, y^k) \in \tilde{{\varPsi }}(U_\rho )\) with \(\rho \le 0.1\) and any tangent vector Y at \((\mathbf {y}, y^k)\),

Proof

Let \(\bar{\mathbf {y}} = {\varPhi }(\mathbf {y}) - y^k \mathbf{n }\). We have \(\tilde{{\varPhi }}(\mathbf {y}, y^k) = {\varPhi }(\mathbf {y}) - y^k \mathbf{n }({\varPhi }(\mathbf {y})) - l_T(\bar{\mathbf {y}}) N_T(\bar{\mathbf {y}})\) where \(N_T(\bar{\mathbf {y}}) \perp T_{{\varPhi }(\mathbf {y})} {\mathcal {M}}\). See Fig. 2. We have

Using a strategy similar to the proof of Lemma 7, we consider the projection of \(D_Y\tilde{{\varPhi }}(\mathbf {y}, y^k)\) to the space \(T_{{\varPhi }(\mathbf {y})}{\mathcal {M}}\), to which it is almost parallel. Denote this projection map by P. We bound \(P(D_Y\tilde{{\varPhi }}(\mathbf {y}, y^k))\). Let \(Y = (Y^1, \ldots , Y^k)\), \(Y_1 = (Y^1, \ldots , Y^{k-1}, 0)\) and \(Y_2=(0, \ldots , 0, Y^k)\). We have \(D_Y\tilde{{\varPhi }}(\mathbf {y}, y^k) = D_{Y_1}\tilde{{\varPhi }}(\mathbf {y}, y^k) + D_{Y_2}\tilde{{\varPhi }}(\mathbf {y}, y^k)\). First consider each term involved in \(D_{Y_1}\tilde{{\varPhi }}(\mathbf {y}, y^k)\).

(i) \(D_{Y_1}{\varPhi }(\mathbf {y})\) is a vector in \(T_{{\varPhi }(\mathbf {y})}\partial {\mathcal {M}}\), thus \(P(D_{Y_1}{\varPhi }(\mathbf {y})) = D_{Y_1}{\varPhi }(\mathbf {y})\). In addition, from Lemma 7, \(|Y_1|\le \left| D_{Y_1}{\varPhi }(\mathbf {y})\right| \le \frac{1}{1-2\rho ^2}|Y_1|\).

(ii) \(D_{Y_1}\mathbf{n }(\mathbf {y}, y^k) = D_{D_{Y_1}{\varPhi }} \mathbf{n }({\varPhi }(\mathbf {y}))\). First note that \(\mathbf{n }\cdot D_{D_{Y_1}{\varPhi }} \mathbf{n }= 0\). Second, from Lemma 6, we have that the projection of \(D_{D_{Y_1}{\varPhi }} \mathbf{n }\) to the space \(T_{{\varPhi }(\mathbf {y})}\partial {\mathcal {M}}\) is upper bounded by \(\frac{1}{\sigma }\left| D_{Y_1}{\varPhi }\right| \). Since \(|y^k| <\rho \sigma \), \(|P( y^k D_{Y_1}\mathbf{n })| \le \frac{\rho }{1-2\rho ^2}|Y_1|\).

(iii) Consider \(D_{Y_1}N_T(\mathbf {y}, y^k)\). We have \(N_T \perp T_{{\varPhi }(\mathbf {y})}{\mathcal {M}}\). Let \(e_1, \ldots , e_k\) be the orthonormal basis of \(T_{{\varPhi }(\mathbf {y})}{\mathcal {M}}\) so that \(D_{e_i} N_T \cdot e_j = 0\) for \(i\ne j\). Locally extend \(e_1, \ldots , e_k\) to be an orthonormal basis of \(T{\mathcal {M}}\) in a neighborhood of \({\varPhi }(\mathbf {y})\). We have for any \(e_i\)

$$\begin{aligned} \left| D_{Y_1}N_T(\mathbf {y}, y^k) \cdot e_i \right|= & {} \left| D_{D_{Y_1}{\varPhi }} N_T(\bar{\mathbf {y}})\cdot e_i\right| \\= & {} \left| D_{\left( D_{Y_1}{\varPhi }\cdot e_i\right) e_i} N_T(\bar{\mathbf {y}})\cdot e_i\right| \\\le & {} \frac{1}{\sigma }|D_{Y_1}{\varPhi }\cdot e_i|, \end{aligned}$$

where the last inequality is due to Lemma 6. Moreover, one can verify that \(l_T(\bar{\mathbf {y}}) \le \frac{\rho ^2\sigma }{2}\), which leads to

$$\begin{aligned} \left| P(l_T D_{Y_1}N_T)\right| \le \frac{\rho ^2}{2}|D_{Y_1}{\varPhi }| \le \frac{\rho ^2}{2(1-2\rho ^2)}|Y_1|. \end{aligned}$$

(iv) It is obvious that \(\mathbf{n }D_{Y_1}y^k=P(N_T D_{Y_1}l_T) = 0\).

Next, consider each term involved in \(D_{Y_2}\tilde{{\varPhi }}(\mathbf {y}, y^k)\).

(i) \(\mathbf{n }D_{Y_2}y^k= Y^k \mathbf{n }\), which lies in \(T_{{\varPhi }(\mathbf {y})} {\mathcal {M}}\). Moreover, \(\mathbf{n }\perp D_{Y_1}{\varPhi }(\mathbf {y})\).

(ii) As \(N_T(\mathbf {y}, y^k)\) remains perpendicular to \(T_{{\varPhi }(\mathbf {y})}{\mathcal {M}}\) if we only vary \(y^k\), we have

$$\begin{aligned} P(D_{Y_2}N_T(\mathbf {y}, y^k)) = 0. \end{aligned}$$

(iii) For the remaining terms, we have \(D_{Y_2}{\varPhi }(\mathbf {y}) = y^kD_{Y_2}\mathbf{n }= P(N_T D_{Y_2}l_T) = 0\).

On the other hand, \(D_Y\tilde{{\varPhi }}(\mathbf {y}, y^k)\) lies in the tangent space \(T_{\tilde{{\varPhi }}(\mathbf {y}, y^k)}{\mathcal {M}}\), and

Putting everything together, we have

This proves the lemma.

Now, we are ready to prove Proposition 1.

Proof of Proposition 1

First consider the case where \(\mathrm{d}(\mathbf {x}, \partial {\mathcal {M}}) > \frac{\rho }{2}\sigma \). Set \(U' = B_\mathbf {x}(\frac{\rho }{2} \sigma ) \cap {\mathcal {M}}\), and parametrize \(U'\) using map \({\varPhi }: {\varPsi }(U') \rightarrow U'\). Since for any \(\mathbf {y}\in \partial U'\), \(|\mathbf {x}-\mathbf {y}| = \frac{\rho }{2} \sigma \), from Lemma 7, we have that \(B_{{\varPhi }^{-1}(\mathbf {x})}(\frac{\rho }{2(1+\rho )}\sigma )\) is contained in \({\varPsi }(U')\). Set \({\varOmega }= B_{{\varPhi }^{-1}(\mathbf {x})}(\frac{\rho \sigma }{2(1+\rho )})\) and \(U = {\varPhi }({\varOmega })\). This shows the parametrization \({\varPhi }: {\varOmega }\rightarrow U\) satisfies the condition (i). By Lemma 7 and Lemma 4, it is easy to verify that \({\varPhi }\) satisfies the other three conditions.

Next, consider the case where \(\mathrm{d}(\mathbf {x}, \partial {\mathcal {M}}) \le \frac{\rho }{2}\sigma \). Let \(\bar{\mathbf {x}}\) be the closest point on \(\partial {\mathcal {M}}\) to \(\mathbf {x}\). Set \(U' = B_{\bar{\mathbf {x}}}(\rho \sigma )\cap {\mathcal {M}}\) and parametrize \(U'\) using map \(\tilde{{\varPhi }}: \tilde{{\varPsi }}(U')\rightarrow U'\). By Lemma 8, \(\tilde{{\varPsi }}(U')\) contains half of the ball \(B_{\tilde{{\varPhi }}^{-1}(\bar{\mathbf {x}})}(\frac{\rho \sigma }{1+2\rho })\). Let \({\varOmega }\) be that half ball and \(U = \tilde{{\varPhi }}({\varOmega })\). It is easy to verify that the parametrization \(\tilde{{\varPhi }}: {\varOmega }\rightarrow U\) satisfies the condition (iii) and (iv). To see (i), note that \(|\mathbf {x}- \bar{\mathbf {x}}| \le \frac{\rho }{2}\sigma \). From Lemma 8 and Lemma 4, \(\left| \tilde{{\varPsi }}(\mathbf {x}) - \tilde{{\varPsi }}(\bar{\mathbf {x}})\right| \le (1+2\rho )(1+2\rho ^2)|\mathbf {x}- \bar{\mathbf {x}}|\). We have that \({\varOmega }\) contains at least half of the ball centered at \({{\varPhi }}^{-1}(\mathbf {x})\) with radius \((\frac{\rho }{1+2\rho } - \frac{\rho (1+2\rho )(1+2\rho ^2)}{2})\sigma \ge \frac{\rho }{5}\sigma \). This shows that \(\tilde{{\varPhi }}\) satisfies the condition (i). Similarly, the condition (ii) follows from (i) as \(\tilde{{\varPhi }}\) has bounded distortion (Lemma 8) and geodesic distance is bounded by Euclidean distance (Lemma 4).

Proof of Lemma 2

Proof

We start with the evaluation of the \(x^i\) component of \(\nabla v\).

where we set

Think of \(\nabla ^i x^j\) as the i, j entry of the matrix \([\nabla ^i x^j]\) and we have

This shows that the matrix \([\nabla ^i x^j]\) is idempotent. At the same time, \([\nabla ^i x^j]\) is symmetric, which implies that the eigenvalues of \(\nabla \mathbf {x}\) are either 1 or 0. Then, we have the following upper bounds. There exists a constant C depending only on the maximum of R and \(R'\) so that

There exists a constant C independent of t so that

Since R has a compact support, only when \(|\mathbf {y}-\mathbf {y}'|^2 < 16t\) and \(|\mathbf {x}- \frac{\mathbf {y}+\mathbf {y}'}{2}|^2 < 4t\) is \(K^i(\mathbf {x}, \mathbf {y}, \mathbf {y}'; t) \ne 0\). Thus from the assumption on R, we have

We can upper bound the norm of \(\nabla v\) as follows:

Finally, we have

This proves the lemma.
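The step in the proof above that uses the matrix \([\nabla ^i x^j]\) rests on the observation that, for an embedded submanifold, this matrix is the orthogonal projection onto the tangent space. The sketch below checks symmetry, idempotency and the resulting eigenvalues for the simplest case, a point on the unit sphere in \(\mathbb {R}^3\), where the projection is \(I-\mathbf {n}\mathbf {n}^T\). The sphere example and the function name are assumptions made for illustration.

```python
import numpy as np

def tangent_projection(normal):
    """Orthogonal projection I - n n^T onto the tangent space of a hypersurface with unit normal n."""
    n = np.asarray(normal, dtype=float)
    n = n / np.linalg.norm(n)
    return np.eye(n.size) - np.outer(n, n)

if __name__ == "__main__":
    x = np.array([1.0, 2.0, 2.0]) / 3.0  # point on the unit sphere; the normal at x is x itself
    P = tangent_projection(x)
    print(np.allclose(P @ P, P))   # idempotent
    print(np.allclose(P, P.T))     # symmetric
    print(np.linalg.eigvalsh(P))   # eigenvalues, in ascending order
```

A symmetric idempotent matrix has eigenvalues in {0, 1}, with the number of ones equal to the dimension k of the manifold. Applying it to a vector therefore never increases its length, which is the property used in the upper bounds.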

Proof of Lemma 3

Based on the partition and the parametrization of the manifold \({\mathcal {M}}\) introduced in Sect. 5, we have

For any \(\mathbf {x}\in \mathcal {O}_i\) and \(\mathbf {y}\in B_{\mathbf {q}_i}^{2\delta }\), let

Clearly, \(\mathbf {z}_0=\mathbf {x},\;\mathbf {z}_{16}=\mathbf {y}\). Since \({\varOmega }_i\) is convex, we have \({\varPhi }_i^{-1}(\mathbf {z}_j)\in {\varOmega }_i,\;j=0,\ldots ,16\). Then, using the locally small deformation property of the parametrization, we obtain

Now, we are ready to estimate the integrals in (62).

For any \(\mathbf {y}\in \mathcal {M}_\mathbf {x}^t\),

which implies that

Now, we have

where \(\theta _\mathbf {x}={\varPhi }_i^{-1}(\mathbf {x}),\; \theta _\mathbf {y}={\varPhi }_i^{-1}(\mathbf {y})\).

Let

It is easy to show that \({\varPhi }_i(\theta _{\mathbf {z}_j})=\mathbf {z}_j \in B_{\mathbf {q}_i}^{2\delta },\; j=0,\ldots ,16\) by using the facts that for any \(\mathbf {y}\in \mathcal {M}_\mathbf {x}^t\)

and \(\mathbf {x}\in B_{\mathbf {q}_i}^{\delta }\) and \(15\sqrt{2t}\le \delta \). Then we have

By changing variable, we obtain

Finally, we can prove the lemma as follows.

Proof of Theorem 6

First, we introduce a smooth function \(u_t\) that approximates \(\mathbf{u }\) at the samples P.

where \(w_{t,h}(\mathbf {x})=C_t\sum _{i=1}^nR\left( \frac{|\mathbf {x}-\mathbf{p }_i|^2}{4t}\right) V_i\). We have the following lemma about the function \(w_{t, h}\).

Lemma 9

Assume that the submanifold \({\mathcal {M}}\) and \(\partial {\mathcal {M}}\) are \(C^2\) smooth and that t and \(\frac{h(P,\mathbf {V},{\mathcal {M}})}{t^{1/2}}\) are sufficiently small. There exist constants \(C_1, C_2\) and C such that

Proof

Using the definition of \(h(P,\mathbf {V},{\mathcal {M}})\),

which shows the bounds on \(w_{t, h}(\mathbf {x})\). Next, we show the bound on the gradient.

Now, we are ready to give the proof of Theorem 6.

Proof

In the definition of \(u_t\) and \(w_{t, h}\) in (69), replace t with \(t'=t/18\). We have

Denote

and then notice only when \(|\mathbf{p }_i-\mathbf{p }_j|^2\le 36t'\) is \(A \ne 0\). For \(|\mathbf{p }_i-\mathbf{p }_j|^2\le 36t'\), we have

Combining the above two inequalities and using Lemma 9, we obtain

We now lower bound the RHS of the above equation.

Let \(q(\mathbf {x}) = \frac{C_t'}{w_{t',h}(\mathbf {x})}{R\left( \frac{|\mathbf {x}-\mathbf{p }_j|^2}{4t'}\right) }\). There exists a constant C so that \(|q(\mathbf {x})|\le CC_t' \) and

Then, using the definition of the integral accuracy index, there exists a constant C

Thus, we have

where the first equality is due to \(\sum _{i=1}^n u_iV_i = 0\). Denote

and then \(|A|\le \frac{Ch}{t^{1/2}}\). At the same time, notice that only when \(|\mathbf{p }_i-\mathbf{p }_l|^2 <16t'\) is \(A\ne 0\). Thus, we have

and

Now combining Eqs. (70), (71) and (72), we have for small t

Here, we use the fact that \(t=18t'\) hence

Let \(\delta =\frac{w_{\min }}{2w_{\max }+w_{\min }}\) with \(w_{\min }=\min _\mathbf {x}w_{t,h}(\mathbf {x})\) and \(w_{\max }=\max _\mathbf {x}w_{t,h}(\mathbf {x})\). If

we have completed the proof. Otherwise, we have

This enables us to prove the theorem as follows.

Estimation of \(\Vert \nabla L_t(u_{t}-u_{t, h})\Vert _{L^2({\mathcal {M}})}\)

In this section, we upper bound \(\Vert \nabla L_t(u_{t}-u_{t, h})\Vert _{L^2({\mathcal {M}})}\). Recall that \(u_t\) satisfies the integral equation (14) and

where \(\mathbf {u}=(u_1, \ldots , u_n)^t\) with \(\sum _{i=1}^n u_i V_i = 0\) solves the problem (10), \( f_j = f(\mathbf{p }_j)\) and \(w_{t,h}(\mathbf {x})=\sum _{j=1}^nR_t(\mathbf {x},\mathbf{p }_j)V_j\).

\(\Vert \nabla L_t(u_{t}-u_{t, h})\Vert _{L^2({\mathcal {M}})}\) is split into two terms,

The second term is easy to bound.

The first term is further split by defining

To simplify the notation, we denote \(h=h(P,\mathbf {V},{\mathcal {M}})\) and \(n=|P|\). Consider \(\Vert \nabla (L_ta_{t, h} - L_{t, h}a_{t, h})\Vert _{L_2}\).

where \(R_{2t}(\mathbf {x},\mathbf{p }_j)=C_tR\left( \frac{|\mathbf {x}-\mathbf{p }_j|^2}{8t}\right) \). Here, we use the assumption that \(R(s)>\delta _0\) for all \(0\le s\le 1/2\).

Let

We have \(|B|<\frac{Ch}{t^{1/2}}\) for some constant C independent of t. In addition, notice that only when \(|\mathbf {x}-\mathbf {x}_i|^2\le 16t \) is \(B\ne 0\), which implies

Then, we have

Combining Eqs. (74), (75) and (76), we have

Using a similar argument, we obtain

and thus

Then, the estimation is completed by putting (73) and (77) together.

Acknowledgements

Research supported by NSFC Grant 11371220 and 11671005.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Shi, Z., Sun, J. Convergence of the point integral method for Laplace–Beltrami equation on point cloud. Res Math Sci 4, 22 (2017). https://doi.org/10.1186/s40687-017-0111-3
