Abstract

Purpose

The aim of this study is to present the development of a new technique to obtain 3D models using photogrammetry by a mobile device and free software, as a method for making digital facial impressions of patients with maxillofacial defects for the final purpose of 3D printing of facial prostheses.

Methods

With the use of a mobile device, free software and a photo capture protocol, 2D captures of the anatomy of a patient with a facial defect were transformed into a 3D model. The resultant digital models were evaluated for visual and technical integrity. The technical process and resultant models were described and analyzed for technical and clinical usability.

Results

Generating 3D models to make digital face impressions was possible by the use of photogrammetry with photos taken by a mobile device. The facial anatomy of the patient was reproduced by a *.3dp and a *.stl file with no major irregularities. 3D printing was possible.

Conclusions

An alternative method for capturing facial anatomy is possible using a mobile device for the purpose of obtaining and designing 3D models for facial rehabilitation. Further studies should be conducted to compare 3D modeling among different techniques and systems.

Clinical implication

Free software and low cost equipment could be a feasible solution to obtain 3D models for making digital face impressions for maxillofacial prostheses, improving access for clinical centers for which high cost technology is not a priority acquisition.

Background

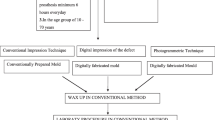

Facial mutilation and defects could derive from cancer, tumors, trauma, infections, congenital or acquired deformation and affect quality of life due to the impact on essential functions such as communication, breathing, feeding and aesthetics [1–5]. Rehabilitation of these patients is possible with adhesive-retained facial prosthetics, implant supported facial prosthetics and plastic surgery [2, 6–12]. Although some aesthetic results can be achieved by plastic surgery [13, 14], frequently this requires multiple attempts which are time consuming and costly [15]. In most cases worldwide, defects of external facial anatomy are primarily treated by prostheses [16, 17]. Still, for the realization of a prosthesis, a highly trained and skilled specialist is required to sculpt a form mimicking the lost anatomy, and to handle the time-consuming technical fabrication process.

To make a facial prosthesis, an impression is required to record the anatomic area of the defect. Some impression materials register the details of defects and the surrounding anatomy with high accuracy and precision [18–21], but present other difficulties and limitations [22, 23]. Some challenges are related to the technical sensitivity of the material, working time and setting time. Training and experience are needed to handle the materials, especially when working near the airway, and the procedure frequently requires the assistance of a second professional. In cases of large facial defects, the whole face must be covered, which can be claustrophobic for the patient. In addition, the weight of the materials and the use of cannulas to keep the airway free during the procedure with the mouth open can deform the residual facial tissues, causing distortion in the impression [22]. The economic cost of using large amounts of impression material is also a concern. A further limitation of conventional facial impressions is that they provide no information about the result of the final rehabilitation, because they only register details of the defect and surrounding tissues.

To address these difficulties of conventional facial impressions, some authors reported [24, 25] clinical cases using Computerized Tomography (CT-Scan) [26, 27], Magnetic Resonance Imaging (MRI) [27, 28], Laser impressions [27, 29–32] and 3D photography [33, 34] to record extra-oral digital impressions. Digital impressions are also used to print working models [34], design prostheses digitally by mirroring from a healthy side [36], digitally capture structures from a healthy donor patient [37], design and prototype templates of the final prostheses, or design a prototype model of the flask where the silicone is directly packed [31, 38]. These reports represent a viable way to rehabilitate patients in less time, with more effectiveness, improved accuracy and less effort by the patient and the professional. However, the use of such technologies can entail even higher costs for software, hardware or other equipment. Different authors have sought low cost alternatives for working with these impressions [38], but there is still no consensus or widely accepted concept.

Among all the possible methods for 3D surface imaging and data acquisition, 3D photogrammetry is attractive for its capacity to obtain 3D models from 2D pictures, its capture and processing speed, the absence of radiation for the patient, good results and non-complex training [39–41]. 3D photography is performed by a method called photogrammetry, which emerged from radiolocation, multilateration and radiometry and has been used since the mid-19th century in the space, aeronautics, geology, meteorology, geography, tourism and entertainment industries. More recently, applications in general medicine have been reported. Photogrammetry allows “Structure from Motion” (SFM), where the software examines common features in each image and constructs a 3D form from overlapping features, using a complex algorithm that minimizes the sum of errors over the coordinates and relative displacements of the reference points. This minimization is known as “bundle adjustment” and is often performed using the Levenberg-Marquardt algorithm. Photogrammetry can be used in a stereophotogrammetry technique, where all captures are made simultaneously by different cameras at different heights and angles relative to the object/subject, or in a monoscopic technique, where only one camera is used to make sequential captures at different heights and angles relative to the object/subject [39–41]. This industry has developed different products and systems that simplify clinical application and obtain increasingly better results. On the other hand, this technology demands high costs for hardware, software and infrastructure and may not be affordable for many centers worldwide.
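To make the idea of “minimizing the sum of errors” more concrete, the following minimal Python sketch computes the quantity that a bundle adjustment typically minimizes: the sum of squared differences between where a feature was observed in each photo and where the current 3D estimate projects to. It is only an illustration under simplifying assumptions (an ideal pinhole camera that rotates only about the vertical axis); the function names, the dictionary layout and the numeric values are hypothetical and do not describe the internals of any particular photogrammetry software. A real pipeline would hand this cost to an optimizer such as Levenberg-Marquardt and adjust points and cameras together.

```python
import math

def project(point, camera):
    """Project a 3D point (x, y, z) to pixel offsets with an ideal pinhole camera.

    `camera` is a hypothetical dict with a position, a yaw angle (radians,
    rotation about the vertical y axis) and a focal length in pixels.
    """
    x = point[0] - camera["position"][0]
    y = point[1] - camera["position"][1]
    z = point[2] - camera["position"][2]
    # Rotate the offset into the camera frame (yaw only, for simplicity).
    c, s = math.cos(camera["yaw"]), math.sin(camera["yaw"])
    xc = c * x - s * z
    zc = s * x + c * z
    f = camera["focal_px"]
    return (f * xc / zc, f * y / zc)

def reprojection_cost(points, cameras, observations):
    """Sum of squared pixel errors over all (camera index, point index) pairs."""
    total = 0.0
    for (cam_idx, pt_idx), (u_obs, v_obs) in observations.items():
        u, v = project(points[pt_idx], cameras[cam_idx])
        total += (u - u_obs) ** 2 + (v - v_obs) ** 2
    return total

# Illustrative values only: two camera poses and two facial landmarks (metres).
cameras = [
    {"position": (0.0, 0.0, -1.0), "yaw": 0.0, "focal_px": 1000.0},
    {"position": (-0.5, 0.0, -1.0), "yaw": 0.3, "focal_px": 1000.0},
]
true_points = [(0.01, 0.02, 0.0), (-0.03, 0.05, 0.1)]
observations = {
    (ci, pi): project(p, cam)
    for ci, cam in enumerate(cameras)
    for pi, p in enumerate(true_points)
}

# A wrong guess for the first landmark gives a positive cost;
# the optimizer's job is to drive this value toward zero.
guess = [(0.02, 0.02, 0.0), true_points[1]]
print(reprojection_cost(guess, cameras, observations))
```

The cost is zero only when every estimated point projects exactly onto its observed position in every photo, which is why well-overlapping captures of the same features matter for the reconstruction.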

Alternatives to expensive photogrammetry are free software packages that can be used on computers, tablets and other mobile devices to generate 3D models from 2D pictures by similar methods (Autodesk 123D Catch®, California, US) [42, 43]. Initially, the target of this software was entertainment and other non-medical use. Recently, Mahmoud [42] used this free software for medical education and Koban [43] used it for evaluation and analysis in plastic surgery planning on a plastic mannequin. To the authors’ best knowledge, the use of monoscopic photogrammetry to facilitate the fabrication of facial prostheses in humans, by adapting this low cost technology into a clinical solution, has not been published. The possibility of decreasing the cost of fabrication of facial prostheses with the use of mobile devices and free software warrants investigation for the benefit of most parts of the world.

The incorporation of technology into the fabrication process of facial prostheses has the potential to transform the rehabilitation from a time-consuming, artistically driven process to a reconstructive biotechnology procedure [24]. One of the methods for surface data acquisition and 3D modeling is 3D photography (photogrammetry), which has been used in medical sciences since 1951 [44–46]. In recent years, techniques and methods have been improving to the benefit of the surgical and prosthetic team [47, 48]. Technical validation and evaluation of sophisticated photogrammetry systems have reported beneficial applications in facial prosthetic treatment [49–57]. 3D photography has been a practical solution in clinical practice compared with other methods of obtaining 3D models (MRI, CT-Scan & Laser) [26–37, 58–64]. Still, this technology requires substantial investment in infrastructure, hardware and software for clinical practice [65, 66]. For this reason, some authors have pursued low cost processes for fabrication of facial prosthetics [38], with the use of free software and the monoscopic photogrammetry technique with mobile devices [42, 43].

Methods

Patient selection

One subject, who attended the Maxillofacial Prosthetic Clinic of the Universidade Paulista in São Paulo for prosthetic rehabilitation, was selected after being advised about the ethical aspects of the research and freely agreeing to participate. Informed consent was obtained from the patient.

Data acquisition

Subject and operator positioning

The subject was positioned in a 45 cm-high chair in an upright seated position, with 1 meter of floor space between the chair and the position of the operator and 0° – 180° of lateral clearance, where 90° was the primary area of interest to capture. Floor clearance allowed sufficient room for the operator to move around the subject during the capture process. An adjustable-height (30 cm to 50 cm) chair with wheels for mobility was used by the operator. Earrings, hats, glasses or other accessories that could interfere with the area of capture were removed from the subject prior to photo capture. The subject was instructed to remain still in order to eliminate swaying and to maintain the head in an orthostatic position with the Frankfurt plane parallel to the floor. If swaying of the head was detected after the instruction not to move had been given, a head support was placed between the head and a wall. The subject was also instructed to maintain a neutral facial expression, with jaw and lips closed without force (maximal intercuspal occlusion); to wear his intraoral removable prostheses to support the facial tissues; and to blink between photographs, returning to the same eye position. Visual color contrast between the background and the colors of the skin and hair of the subject was established. A clinical measurement of the inter-alar nasal distance was registered for later scale verification.

Lighting

Sufficient lighting in the room was ensured so that the ambient light allowed clear images to be taken without flash and without underexposure or overexposure. The room lights were switched on, the window blinds and curtains were opened, and the orientation of the ambient light was considered to avoid shadows on the area of interest throughout the capture process. Irregular lighting, such as strong back-lighting and direct, intense light on the subject, was avoided. Objects with strongly reflective or shiny surfaces were removed from the camera’s field of view during the photo capture process.

Mobile device and application

A 5 GHz Wi-Fi internet connection was used. A free photogrammetry application (Autodesk 123D Catch®, California, US) was downloaded to a mobile device (Samsung Galaxy Note 4®, Seoul, South Korea) through the Android® Google Playstore® (California, US). A free 123D Catch® account was created. All automatic features of the mobile device were enabled as needed by the application for the data acquisition process. Features of the mobile device are outlined in Table 1. The 123D Catch® PC version was also downloaded to a Windows PC (Dell Inspiron 1525 Dual Core).

Photo capture

The photogrammetry application was opened on the mobile device and a new capture was selected by pressing the “+” button in the upper right corner. A planned sequence of 15 conventional 2D photos was taken, always with the area of interest at the center of the picture and with the operator holding the mobile device 30 cm from his eyes, raised to his eye height. Photo captures were taken at three different heights. The first height (H1) was the standing height of the operator (1.75 m), with the mobile device 1.50 m above the floor (Figure 1a). The second height (H2) was with the operator seated on the moveable chair at its maximum adjustable height (50 cm), with the mobile device 1.25 m above the floor (Figure 1b). The third height (H3) was with the operator seated on the same chair at its lowest adjustable height (30 cm), with the mobile device 1 m above the floor (Figure 1c). The same positions were repeated at each height, with captures taken at 0°, 45°, 90°, 135° and 180°, considering 0° as the subject’s right side, 90° as the midline of the face and 180° as the subject’s left side (Fig. 2). All photo captures were perpendicular to the primary area of interest. The operator took the first picture at H1-0°, at a distance of one meter from the subject. The complete sequence was H1-0°, H1-45°, H1-90°, H1-135°, H1-180°, H2-180°, H2-135°, H2-90°, H2-45°, H2-0°, H3-0°, H3-45°, H3-90°, H3-135° and H3-180°, completing the 15 photo captures (Fig. 3). For photo capture, the “autofocus” was used at the center of the area of interest, avoiding blurry photographs. The “position-in-space-recognition gadget” of the application was used to guide the position of photo captures and to register the total number of photos recorded in the process (Fig. 4).
Following the photo capture, the operator reviewed the integrity of each picture, verifying that there were no illumination irregularities, blurry images, incomplete parts of the face of the subject or any other evident errors in the picture that would compromise data processing. After the good quality of the photo captures was confirmed, the subject was released from his static position and the “check” button was pressed to upload the pictures for processing.
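The capture order described above can be written down as a short Python sketch, which may be useful as a printable checklist for the operator. The heights, angles and one-meter distance are taken from the protocol; the function name, the dictionary keys and the floor coordinate convention (subject at the origin, 0° along the positive x axis) are assumptions made only for this illustration. Capture names omit the degree sign, so H1-0° appears as "H1-0".

```python
import math

# Values taken from the protocol text.
HEIGHTS_M = {"H1": 1.50, "H2": 1.25, "H3": 1.00}  # device height above the floor
ANGLES_DEG = [0, 45, 90, 135, 180]  # 0 = subject's right, 90 = facial midline
DISTANCE_M = 1.0  # camera-to-subject distance

def capture_sequence():
    """Return the 15 planned captures in the order listed in the protocol."""
    plan = []
    for row, (label, height) in enumerate(HEIGHTS_M.items()):
        # H2 runs in reverse (180 -> 0), giving the back-and-forth order
        # H1: 0..180, H2: 180..0, H3: 0..180 listed in the text.
        angles = ANGLES_DEG if row % 2 == 0 else list(reversed(ANGLES_DEG))
        for angle in angles:
            theta = math.radians(angle)
            plan.append({
                "name": f"{label}-{angle}",
                "height_m": height,
                # Camera floor position on a semicircle around the subject;
                # this axis convention is an assumption for illustration.
                "x_m": round(DISTANCE_M * math.cos(theta), 3),
                "y_m": round(DISTANCE_M * math.sin(theta), 3),
            })
    return plan

for step, capture in enumerate(capture_sequence(), start=1):
    print(step, capture["name"], capture["height_m"], capture["x_m"], capture["y_m"])
```

Reversing the angle order at the middle height means each height starts on the side where the previous one ended.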

Fig. 1 a. Simulation of Height 1, where the operator stands and holds the mobile device 30 cm from his head, 1.5 m from the floor and 1 meter from the patient. b. Simulation of Height 2, where the operator sits on the wheeled chair at its higher setting and holds the mobile device 30 cm from his head, 1.25 m from the floor and 1 meter from the patient. c. Simulation of Height 3, where the operator sits on the wheeled chair at its lower setting and holds the mobile device 30 cm from his head, 1 m from the floor and 1 meter from the patient.

Photo capture review and 3D processing

When all 15 photo captures had been taken, the “check” button in the upper right corner of the application was pressed and the captures were shown in the viewer to be reviewed and approved by pressing the “check” button again. The application automatically started to upload the captures to the 123D Catch® servers for processing. Once processing had finished, the digital model was reviewed on the mobile device to verify its integrity.

All photo captures taken by the mobile device were downloaded from the 123D Catch® website and meshed with the 123D Catch® PC version at the maximum meshing quality. A *.3Dp file and a *.stl file were obtained. The *.3Dp file was opened and reviewed in the 123D Catch® PC version for primary analysis, and the *.stl file was opened and edited in Autodesk Meshmixer® (California, US). Editing in Meshmixer® was limited to repositioning the model in space (x-y-z axis transform tool) into an upright position, deleting triangles beyond the face and re-scaling the model to the inter-alar nasal distance that had been clinically registered. The model was observed through 360° and from all x-y-z axis angles for descriptive analysis, and the model of the face of the patient was printed in Duraform Polyamide C15 degraded material on a Sinterstation HiQ by Selective Laser Sintering (SLS) (3D Systems, Rock Hill, SC, USA).
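The re-scaling step amounts to one uniform scale factor: the clinically measured distance divided by the same distance measured on the digital model. The Python sketch below illustrates that arithmetic on a list of vertex coordinates. In this study the scaling was done interactively in Meshmixer®, so the function name, the landmark indices and the 35 mm value are hypothetical and serve only as an example.

```python
import math

def rescale_to_reference(vertices, idx_a, idx_b, clinical_distance_mm):
    """Uniformly scale vertices so two landmarks end up a known distance apart.

    vertices: list of (x, y, z) tuples in arbitrary model units.
    idx_a, idx_b: indices of the two landmark vertices (for example, the
        left and right alar points); choosing them is left to the user.
    clinical_distance_mm: the distance measured on the patient.
    Returns (scale_factor, scaled_vertices).
    """
    model_distance = math.dist(vertices[idx_a], vertices[idx_b])
    if model_distance == 0:
        raise ValueError("landmarks coincide; cannot derive a scale factor")
    factor = clinical_distance_mm / model_distance
    scaled = [(x * factor, y * factor, z * factor) for x, y, z in vertices]
    return factor, scaled

# Hypothetical example: the landmarks are 2.0 model units apart and the
# clinical measurement is assumed to be 35 mm (illustrative value only).
model = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
factor, scaled_model = rescale_to_reference(model, 0, 1, 35.0)
print(factor, math.dist(scaled_model[0], scaled_model[1]))
```

Because the scale is uniform, every other distance on the model is multiplied by the same factor, so an error in the reference measurement propagates proportionally to the whole model.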

Results

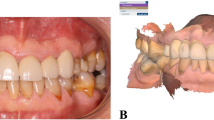

With the use of the 123D Catch® mobile device application and the described photo capture protocol, fifteen two-dimensional color photo captures were obtained in *.jpeg file format. The sizes of the photo captures, set automatically according to the mobile device camera features, varied from 4710 kb to 5931 kb with an average size of 5118 kb. Review of the captured photos before processing showed that all captures were compatible with the protocol (Fig. 5). Review of the created digital model on the mobile device before downloading found no major irregularities which could interrupt the process (Fig. 6).

The digital model and photo captures were downloaded from the Autodesk webpage. Photo captures were re-processed in high quality with the 123D Catch® PC version (Fig. 7). The combined use of the 123D Catch® mobile device application and PC version created high quality *.3Dp and *.stl files from the 15 individual 2D photographs, with file sizes of 5 kb and 39,918 kb respectively (Fig. 8a, b).

By the use of Meshmixer®, it was possible to manually eliminate the triangles beyond the head, to reposition in space and to scale the digital model. This final manipulated digital model obtained appropriately represented the shape and proportions of the original face of the patient, leading to a printed polyamide model which also showed similarity of representation; although, some minor irregularities were detectable in the surface of eyebrows, hair and lateral sides of the patient (Figs. 8b and 9).

Discussion

This study aimed to develop a technique to obtain 3D models by mobile device photogrammetry and free software as a method for making facial impressions of patients with facial defects for the final purpose of 3D printing of facial prostheses. For this purpose, a patient who voluntarily agreed to participate in the study underwent the proposed protocol and methods. Captures were taken with 123D Catch® on a mobile device, with a controlled sequence, illumination and position of the operator and patient.

The rationale for using a cellphone to make photo captures through the 123D Catch® application was that all modern mobile devices have an integrated accelerometer and gyroscope sensor, which the application uses automatically to guide the operator’s position in 3D during the photo capture sequence. In addition, mobile devices on today’s market are equipped with faster processors, fast network connections, high quality cameras and added features (Table 1), at a reasonable cost to the consumer as a personal tool rather than as clinical equipment. Monoscopic photogrammetry has been used with different kinds of cameras such as SLR, prosumer, point and shoot, mobile devices and others, principally for non-medical reasons [67], but also recently for medical purposes [42, 43].

Developers of 123D Catch® published some general indications for the photo capture process through their web tutorials. Based on these, the present study designed a standardized, user-friendly photo capture sequence that satisfies both the requirements of 123D Catch® and the clinical needs of maxillofacial rehabilitation. The most important considerations are sequence and orientation of capture, illumination, subject and operator positioning and clinical measurement of a stable reference on the subject. This free photogrammetry application recognizes patterns between captures that have more than 50 % of overlap between each capture [67]. For this reason, it was decided to use a sequence with 45° intervals between captures at each height, which gave acceptable results in the meshing process. If the illumination pattern differs between captures, if the subject does not keep still during captures, or if photos are taken randomly or arbitrarily, the algorithm may be unable to match the overlapping captures, and the model will show defects that affect its viability. It is for this reason that the flash is not used, and rotating the patient on his own axis is not recommended. Flash will generate its own pattern in each capture, and if the patient is rotated on his own axis during capture, the illumination pattern over the patient and background will differ among captures and will be unreadable by the software [67]. The ideal is to complete multiple captures, as stereophotogrammetry does, while maintaining the position of the patient during the complete sequence of photo captures, one by one, with consistent conditions of ample indirect ambient light. The position of the operator is equally important to allow capture of the entire area of interest without losing detail from too great a distance, or producing shadows by being too close to the patient.
One meter of distance between the subject and the camera is compatible with the aforementioned technical requirements. Distance and position are important in the capture protocol, but absolute exactness is not critical since the application still recognizes patterns with consistent light reflection [67]. Currently, no information is available about the tolerance of acceptable variance in photo capture, or how this might impact the meshing process. Since there are no objective protocols for evaluating the model, the clinician must subjectively judge whether the model is usable. The time-consuming process of photo capture is prone to some irregularities [43]. In this workflow, the 3D position of the reproduced anatomy is a very good starting point for sculpting. Possible errors and small texture details are of little importance because the digital model of the prosthesis will serve to produce a prototype that will be duplicated in wax for final handwork to obtain a sculpture with finishing details, texture and adaptation to the patient. This is why small digital discrepancies on the surface will not affect the final result of the definitive prosthesis. Current technology, including the expensive stereophotogrammetry systems, does not have enough imaging detail to reproduce skin texture, expression lines of the patient or similar features, so hand finishing of the sculpture remains mandatory. A clinical measurement is needed for registration because 123D Catch® generates a reduced-scale model; this is not unexpected since the application was meant for entertainment and desktop 3D printing. Subsequently, scaling is required and a reliable, stable distance must be used. In our subject, the inter-alar distance of the nose was used. In other patients who have both eyes, the inter-canthal or inter-pupillary distance could be used to ensure a stable measurement.
A small ruler or fiducial markers fixed on the patient could also be used for registration and scaling purposes.

Once the models were obtained (*.3Dp & *.stl), the *.3Dp models showed good appearance in color and proportions of the subject in the 123D Catch® mobile device app and PC version. The *.3Dp file can be used only by this application but can be exported to other file types such as *.obj or *.stl. Alternatively, multiple file types can be downloaded directly from the web, as was done in this study. Reviewing this file in the PC version provides the colored model, which can be helpful to show to the patient and for explanation and education about the anatomy and planning. It also provides an indication of the quality of the meshing. If substantial errors were found in this step, they were more evident in the *.stl version. In the PC version of 123D Catch®, it is possible to press the “print” button, which opens the *.stl in Meshmixer®, or to open the *.stl file directly from Meshmixer®, as was done in the present study. Once opened, the model needed to be set upright, repositioned and rescaled according to the clinical measurement previously recorded. It was then edited to eliminate all the background and body parts of the model that lie beyond the area of interest for capture.

In the present study, the models generated by the mobile device were not used directly for 3D printing. Instead, the captures made by the mobile device were meshed with the PC version of 123D Catch®. The resulting models showed better surface quality on virtual inspection and were therefore selected for printing. Further studies should be conducted to better evaluate the accuracy of the respective virtual models. The PC version of 123D Catch® has an option to re-mesh the model with higher quality than the application as originally configured for mobile devices. The application was not originally created for medical purposes, but rather for more simplified CAD designs; complex organic shapes of anatomical models represent a heavier burden for mobile applications, and would run more slowly on smartphones [67].

This *.stl file showed a very acceptable replica of the anatomy of the patient. Once it was re-scaled and printed it showed that it subjectively met the needs for facial prosthetic fabrication, but further studies are needed to evaluate the precision and accuracy of this process.

While not part of the objective of this study, once an *.stl of the patient that sufficiently recreates the anatomy is acquired through this process, digital prosthesis design is possible. This can be done in Meshmixer® by manipulating the healthy side of the patient: selecting, isolating, duplicating, mirroring, transforming, editing and sculpting until the prosthesis model is adequately adapted. The virtually designed prosthesis model would then need to be extruded to provide a volume from the surface data, producing the final prosthesis design for printing.
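The mirroring step in that design workflow is geometrically simple, and a minimal Python sketch may help readers who want to reproduce it outside an interactive editor. It reflects a triangle mesh across a plane perpendicular to the x axis and reverses the vertex order of each triangle so that surface normals keep pointing outward. The function name and the assumption that the mid-sagittal plane lies at x = 0 after repositioning are illustrative only; this is not a description of how Meshmixer® implements its mirror tool.

```python
def mirror_mesh(vertices, triangles, plane_x=0.0):
    """Reflect a triangle mesh across the plane x = plane_x.

    vertices: list of (x, y, z) tuples.
    triangles: list of (a, b, c) vertex-index tuples.
    Returns (mirrored_vertices, mirrored_triangles).
    """
    mirrored_vertices = [(2.0 * plane_x - x, y, z) for x, y, z in vertices]
    # A reflection flips orientation, so swap two indices in every triangle
    # to keep the normals pointing to the outside of the surface.
    mirrored_triangles = [(a, c, b) for a, b, c in triangles]
    return mirrored_vertices, mirrored_triangles

# Hypothetical patch from the healthy side, lying at positive x.
healthy_vertices = [(1.0, 0.0, 0.0), (2.0, 0.0, 0.0), (1.0, 1.0, 0.0)]
healthy_triangles = [(0, 1, 2)]
defect_vertices, defect_triangles = mirror_mesh(healthy_vertices, healthy_triangles)
print(defect_vertices, defect_triangles)
```

The mirrored patch is only a starting shape; as described above, it still has to be transformed, edited and sculpted to fit the defect margins.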

Mahmoud et al. demonstrated that three-dimensional printing of human anatomic pathology specimens is achievable with 123D Catch® and recognized that advances in 3D printing technology may bring further improvement [42]. Koban et al., comparing Vectra® and 123D Catch® on a plastic mannequin head labeled with landmarks, found no significant difference (p > 0.05) between manual tape measurements and digital distances from 123D Catch® and Vectra®. They also describe that sufficient results for the 3D reconstruction with 123D Catch® are possible with 16, 12 and 9 photo captures, but with higher deviations in lateral units than in central units. They further found that 123D Catch® needed 10 minutes on average to capture and compute 3D models (5 times more than Vectra) [43]. The present study obtained similar results in the lateral views of our models, with more irregularities than in the primary area of interest (center of the face). This phenomenon could be associated with less intersection of overlapping triangles in those areas which are not the primary area of interest to be captured. Time was not measured as a variable in our study, but we found that during the automatic uploading, meshing and downloading process, the operator’s attention could be dedicated to other tasks.

Because the process does not print the final adapted prosthesis, some small errors in the surface of the model are acceptable: the wax replica of the prototyped prosthesis will be finished by hand chairside, which eliminates any “stair-stepping” from printing, ensures appropriate adaptation to the skin surface and applies a naturalistic surface texture. While finishing work in the clinic and laboratory is still required, this protocol provides a very helpful advancement in the macro-sculpture of the prosthesis, which can be tested and adapted as needed directly on the patient.

Prolonged capture time with multiple pictures is prone to errors [43] and it is for this reason that standardizing a photo capture protocol for data capture and processing is essential. A standardized photo capture protocol will simplify the process of capture-to-print-prototyping (CPP). 123D Catch® computed models suggest good accuracy of the 3D reconstruction for a standard mannequin model [43] and so is demonstrated in this study for a maxillofacial prosthetic patient.

Conclusion

It was possible to generate 3D models as digital face impressions with the use of monoscopic photogrammetry and photos taken by a mobile device. Free software and low-cost equipment are a feasible alternative for capturing patient facial anatomy for the purpose of generating physical working models, designing templates for facial prostheses, improving communication with patients before and during treatment and improving access to digital clinical solutions for clinical centers that do not have high cost technology allowances in their budget. Further studies are needed to evaluate quality variables of these models. Clinical data capture protocols like the one described in this report must be validated clinically to optimize the process of data acquisition.

References

Jankielewicz I et al. Prótesis Buco-maxilo-facial. Quintessence: Barcelona; 2003.

Alvarez A et al. Procederes básicos de laboratorio en prótesis bucomaxilofacial 2da ed. La Habana: Editorial CIMEQ; 2008.

Mello M, Piras J, Takimoto R, Cervantes O, Abraão M, Dib L. Facial reconstruction with a bone-anchored prosthesis following destructive cancer surgery. Oncol Lett. 2012;4(4):682–4.

Karakoca S et al. Quality of life of patients with implant-retained maxillofacial prostheses: A prospective and retrospective study. J Prosthet Dent. 2013;109:44–52.

Pekkan G, Tuna SH, Oghan F. Extraoral prostheses using extraoral implants. Int J Oral Maxillofac Surg. 2011;40:378–83.

Machado L et al. Intra and Extraoral Prostheses Retained by Zygoma Implants Following Resection of the Upper Lip and Nose. J Prosthodont. 2015;24:172–7.

Ashab Yamin MR, Mozafari N, Mozafari M, Razi Z. Reconstructive Surgery of Extensive Face and Neck Burn Scars Using Tissue Expanders. World J Plast Surg. 2015;4(1):40–9.

Zhang R. Ear reconstruction: from reconstructive to cosmetic. Zhonghua Er Bi Yan Hou Tou Jing Wai Ke Za Zhi. 2015;50(3):187–91.

Kang SS et al. Rib Cartilage Assessment Relative to the Healthy Ear in Young Children with Microtia Guiding Operative Timing. Chin Med J. 2015;128(16):2209–14.

Liu T, Hu J, Zhou X, Zhang Q. Expansion method in secondary total ear reconstruction for undesirable reconstructed ear. Ann Plast Surg. 2014;73(S1):S49–52.

Thiele OC et al. The current state of facial prosthetics - A multicenter analysis. J Craniomaxillofac Surg. 2015;15:130–4.

Ariani N et al. Current state of craniofacial prosthetic rehabilitation. Int J Prosthodont. 2013;26(1):57.

Hickey A, Salter M. Prosthodontic and psychological factors in treating patients with congenital and craniofacial defects. J Prosthet Dent. 2006;95:392–6.

Vidyasankari N, Dinesh R, Sharma N, Yogesh S. Rehabilitation of a Total Maxillectomy Patient by Three Different Methods. J Clin Diagn Res. 2014;8(10):12–4.

Negahdari R et al. Rehabilitation of a Partial Nasal Defect with Facial Prosthesis: A Case Report. J Dent Res Dent Clin Dent Prospects. 2014;8(4):256–9.

Granström G. Craniofacial osseointegration. Oral Dis. 2007;13:261–9.

Martins M et al. Extraoral Implants in the Rehabilitation of Craniofacial Defects: Implant and Prosthesis Survival Rates and Peri-Implant Soft Tissue Evaluation. J Oral Maxillofac Surg. 2012;70:1551–7.

Kusum CK et al. A Simple Technique to Fabricate a Facial Moulage with a Prefabricated Acrylic Stock Tray: A Clinical Innovation. J Indian Prosthodont Soc. 2014;14(S1):341–4.

Alsiyabi AS, Minsley GE. Facial moulage fabrication using a two-stage poly (vinyl siloxane) impression. J Prosthodont. 2006;15(3):195–7.

Moergeli Jr JR. A technique for making a facial moulage. J Prosthet Dent. 1987;57(2):253.

Taicher S, Sela M, Tubiana I, Peled I. A technique for making a facial moulage under general anesthesia. J Prosthet Dent. 1983;50(5):677–80.

Lemon JC, Okay DJ, Powers JM, Martin JW, Chambers MS. Facial moulage: the effect of a retarder on compressive strength and working and setting times of irreversible hydrocolloid impression material. J Prosthet Dent. 2003;90(3):276–81.

Pattanaik S, Wadkar A. Rehabilitation of a Patient with an Intra Oral Prosthesis and an Extra Oral Orbital Prosthesis Retained with Magnets. J Indian Prosthodont Soc. 2012;12(1):45–50.

Davis BK. The role of technology in facial prosthetics. Curr Opin Otolaryngol Head Neck Surg. 2010;18(4):332–40.

Grant GT. Digital capture, design, and manufacturing of a facial prosthesis: Clinical report on a pediatric patient. J Prosthet Dent. 2015;114(1):138–41.

Jiao T et al. Design and Fabrication of Auricular Prostheses by CAD/CAM System. Int J Prosthodont. 2004;17:460–3.

Coward T et al. A Comparison of Prosthetic Ear Models Created from Data Captured by Computerized Tomography, Magnetic Resonance Imaging, and Laser Scanning. Int J Prosthodont. 2007;20:275–85.

Coward T et al. Fabrication of a Wax Ear by Rapid-Process Modeling Using Stereolithography. Int J Prosthodont. 1999;12:20–7.

Yoshioka F et al. Fabrication of an Orbital Prosthesis Using a Non-contact Three-Dimensional Digitizer and Rapid-Prototyping System. J Prosthodont. 2010;19:598–600.

Cheah CM et al. Integration of Laser Surface Digitizing with CAD/CAM Techniques for Developing Facial Prostheses. Part 1: Design and Fabrication of Prosthesis Replicas. Int J Prosthodont. 2003;16:435–41.

Cheah CM et al. Integration of Laser Surface Digitizing with CAD/CAM Techniques for Developing Facial Prostheses. Part 2: Development of Molding Techniques for Casting Prosthetic Parts. Int J Prosthodont. 2003;16:543–8.

Tsuji M et al. Fabrication of a Maxillofacial Prosthesis Using a Computer-Aided Design and Manufacturing System. J Prosthodont. 2004;13:179–83.

Sabol J et al. Digital Image Capture and Rapid Prototyping. J Prosthodont. 2011;20:310–4.

Kimoto K, Garrett NR. Evaluation of a 3D digital photographic imaging system of the human face. J Oral Rehabil. 2007;34:201–5.

Chen LH et al. A CAD/CAM Technique for Fabricating Facial Prostheses: A Preliminary Report. Int J Prosthodont. 1997;10:467–72.

Feng Z et al. Computer-assisted technique for the design and manufacture of realistic facial prostheses. Br J Oral Maxillofac Surg. 2010;48:105–9.

Ciocca L et al. Rehabilitation of the Nose Using CAD/CAM and Rapid Prototyping Technology After Ablative Surgery of Squamous Cell Carcinoma: A Pilot Clinical Report. Int J Oral Maxillofac Implants. 2010;25:808–12.

He Y, Xue GH, Fu JZ. Fabrication of low cost soft tissue prostheses with the desktop 3D printer. Sci Rep. 2014;27:1–4.

Feng ZH et al. Virtual Transplantation in Designing a Facial Prosthesis for Extensive Maxillofacial Defects that Cross the Facial Midline Using Computer-Assisted Technology. Int J Prosthodont. 2010;23:513–20.

Heike CL et al. 3D digital stereophotogrammetry: a practical guide to facial image acquisition. Head Face Med. 2010;6:18.

Runte C et al. Optical Data Acquisition for Computer-Assisted Design of Facial Prostheses. Int J Prosthodont. 2002;15:129–32.

Mahmoud A, Bennett M. Introducing 3-Dimensional Printing of a Human Anatomic Pathology Specimen: Potential Benefits for Undergraduate and Postgraduate Education and Anatomic Pathology Practice. Arch Pathol Lab Med. 2015;139(8):1048–51.

Koban KC et al. 3D-imaging and analysis for plastic surgery by smartphone and tablet: an alternative to professional systems? Handchir Mikrochir Plast Chir. 2014;46(2):97–104.

Nyquist V, Tham P. Method of measuring volume movements of impressions, model and prosthetic base materials in a photogrammetric way. Acta Odontol Scand. 1951;9:111.

Savara BS. Application of photogrammetry for quantitative study of tooth and face morphology. Am J Phys Anthropol. 1965;23:427.

Adams LP, Wilding JC. A photogrammetric method for monitoring changes in the residual alveolar ridge form. J Oral Rehabil. 1985;12:443–50.

Fernández-Riveiro P et al. Angular photogrammetric analysis of the soft tissue facial profile. Eur J Orthod. 2003;25:393–9.

Charles W et al. Imaging of maxillofacial trauma: Evolutions and emerging revolutions. Oral Surg Oral Med Oral Pathol Oral Radiol Endod. 2005;100:S75–96.

Artopoulos A, Buytaert J, Dirckx J, Coward T. Comparison of the accuracy of digital stereophotogrammetry and projection moire profilometry for three-dimensional imaging of the face. Int J Oral Maxillofac Surg. 2014;43:654–62.

Winder RJ. Technical validation of the Di3D stereophotogrammetry surface imaging system. Br J Oral Maxillofac Surg. 2008;46:33–7.

Wong JY et al. Validity and reliability of 3D craniofacial anthropometric measurements. Cleft Palate Craniofac J. 2008;45(3):233.

Plooij JM et al. Evaluation of reproducibility and reliability of 3D soft tissue analysis using 3D stereophotogrammetry. Int J Oral Maxillofac Surg. 2009;38:267–73.

Kochel J et al. 3D Soft Tissue Analysis – Part 1: Sagittal Parameters. J Orofac Orthop. 2010;71:40–52.

Kochel J et al. 3D Soft Tissue Analysis – Part 2: Vertical Parameters. J Orofac Orthop. 2010;71:207–20.

Menezes M et al. Accuracy and Reproducibility of a 3-Dimensional Stereophotogrammetric Imaging System. J Oral Maxillofac Surg. 2010;68:2129–35.

Verhoeven TJ et al. Three dimensional evaluation of facial asymmetry after mandibular reconstruction: validation of a new method using stereophotogrammetry. Int J Oral Maxillofac Surg. 2013;42(1):19–25.

Dindaroğlu F, Kutlu P, Duran GS, Görgülü S, Aslan E. Accuracy and reliability of 3D stereophotogrammetry: A comparison to direct anthropometry and 2D photogrammetry. Angle Orthod. 2015;12 [Epub ahead of print].

Wu G, Bi Y, Zhou B, et al. Computer-aided design and rapid manufacture of an orbital prosthesis. Int J Prosthodont. 2009;22:293.

Ciocca L, Fantini M, Marchetti C, et al. Immediate facial rehabilitation in cancer patients using CAD/CAM and rapid prototyping technology: a pilot study. Support Care Cancer. 2009. doi:10.1007/s000520-009-0676-5 [Epub ahead of print].

Ciocca L, Scotti R. CAD–CAM generated ear cast by means of a laser scanner and rapid prototyping machine. J Prosthet Dent. 2004;92:591–5.

Rudman K, Hoekzema C, Rhee J. Computer-assisted innovations in craniofacial surgery. Facial Plast Surg. 2011;27(4):358–65.

Turgut G, Sacak B, Kiran K, Bas L. Use of rapid prototyping in prosthetic auricular restoration. J Craniofac Surg. 2009;20:321–5.

Ciocca L. CAD–CAM construction of a provisional nasal prosthesis after ablative tumor surgery of the nose: a pilot case report. Eur J Cancer Care (Engl). 2009;18:97–101.

Guofeng W et al. Computer-Aided Design and Rapid Manufacture of an Orbital Prosthesis. Int J Prosthodont. 2009;22:293–5.

Tzou CH et al. Comparison of three-dimensional surface-imaging systems. Journal of Plastic, Reconstructive & Aesthetic Surgery. 2014. http://dx.doi.org/10.1016/j.bjps.2014.01.003.

Menezes M, Sforza C. Three-dimensional face morphometry. Dental Press. 2010;15(1):13–5.

Autodesk. 123D Catch Tutorials [Internet]. USA: 123D Catch Tutorials; 2015. Available from: http://www.123Dapp.com/howto/catch.

Acknowledgements

The authors acknowledge the close collaboration of all the professionals of the “Centro Tecnológico Da Informação Renato Archer” who collaborated in the design and printing for this project: Amanda Amorin, Paulo Inforçatti, Marcelo Oliveira, Dr. Augusto Oliveira, Ana Cláudia Matzenbacher, and the wider team. We also thank Dra. Crystianne Signiemartin and Dr. Joaquim Piras De Oliveira, who guide the maxillofacial rehabilitation needs of our patient.

Funding

No grant was required for this work. It was supported by the authors' own resources, and the printed model was donated by the Centro Tecnológico Renato Archer as a research partnership.

Ethical approval

This article does not report clinical procedures performed on a human, but it does contain the image of a human participant. Only photographs of him were taken, after he was fully informed about the clinical implications of our study. He agreed voluntarily to participate.

Informed consent

Formal consent was obtained from the patient to use his image and to submit and publish this article.

Competing interests

The authors are not affiliated with Autodesk. Rodrigo Salazar-Gamarra, Rosemary Seelaus, Jorge Vicente Lopes da Silva, Airton Moreira da Silva, and Luciano Lauria Dib each declare that they have no conflict of interest.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The Creative Commons Public Domain Dedication waiver (http://creativecommons.org/publicdomain/zero/1.0/) applies to the data made available in this article, unless otherwise stated.

About this article

Cite this article

Salazar-Gamarra, R., Seelaus, R., da Silva, J.V.L. et al. Monoscopic photogrammetry to obtain 3D models by a mobile device: a method for making facial prostheses. J of Otolaryngol - Head & Neck Surg 45, 33 (2016). https://doi.org/10.1186/s40463-016-0145-3

DOI: https://doi.org/10.1186/s40463-016-0145-3