Abstract

Animal behavioural responses to the environment ultimately affect their survival. Monitoring animal fine-scale behaviour may improve understanding of animal functional response to the environment and provide an important indicator of the welfare of both wild and domesticated species. In this study, we illustrate the application of collar-attached acceleration sensors for investigating reindeer fine-scale behaviour. Using data from 19 reindeer, we tested the supervised machine learning algorithms Random forests, Support vector machines, and hidden Markov models to classify reindeer behaviour into seven classes: grazing, browsing low from shrubs or browsing high from trees, inactivity, walking, trotting, and other behaviours. We implemented leave-one-subject-out cross-validation to assess generalizable results on new individuals. Our main results illustrated that hidden Markov models were able to classify collar-attached accelerometer data into all our pre-defined behaviours of reindeer with reasonable accuracy while Random forests and Support vector machines were biased towards dominant classes. Random forests using 5-s windows had the highest overall accuracy (85%), while hidden Markov models were able to best predict individual behaviours and handle rare behaviours such as trotting and browsing high. We conclude that hidden Markov models provide a useful tool to remotely monitor reindeer and potentially other large herbivore species behaviour. These methods will allow us to quantify fine-scale behavioural processes in relation to environmental events.

Introduction

Monitoring animal behaviour enables a better understanding of animal behavioural ecology in an evolutionary context e.g., inter- and intra-specific interactions such as competition and population dynamics [1]. Investigation of fine-scale animal behaviour can improve the understanding of animals’ functional response to the environment [2] and provide an important indicator of animal welfare [3]. Initial responses to stressors related to changes in animal management or environment are often behavioural and can provide the first indications of stress or impaired health of an individual [4, 5]. Tri-axial acceleration sensors have been frequently used to study fine-scale animal behaviours in both wild [6, 7] and domesticated species [8, 9]. As an example, many studies have successfully been able to classify foraging behaviour in a range of species such as harbour seals (Phoca vitulina), arctic ground squirrels (Urocitellus parryii) and roe deer (Capreolus capreolus) [10,11,12]. However, each sensor type needs validation to confirm and quantify its capacity to accurately classify specific species behaviours.

Reindeer and caribou (Rangifer tarandus) are a key species inhabiting the circumpolar north [13]. With the rapid and extreme climate and environmental change under way in the arctic and subarctic regions, there is a need to understand how this affects reindeer behaviour. Reindeer are ruminants of an intermediate, opportunistic feeding type [14], mainly feeding on fresh herbal plants and graminoids and to some degree browsing shrubs and trees in summer, and adapted to feed mainly on ground and arboreal lichens in winter [15]. Knowledge of reindeer fine-scale grazing could, for example, reveal reindeer's ability to suppress the increased growth of woody taxa in the arctic [16, 17] and their ability to search for lichens [15]. Domesticated reindeer in free-roaming systems provide an excellent opportunity to validate accelerometers for the Rangifer taxon by aligning specific behaviours with the accelerometer data. Acceleration sensors have previously been used on reindeer and caribou for estimation of activity patterns [18,19,20], but have not yet been validated for prediction of fine-scale foraging behaviour.

The acceleration (in m/s² or G-forces (g)) measured by a sensor in three dimensions (X, Y and Z) [Reviewed by: 21] may be separated into static and dynamic acceleration [22, 23]. Animal body orientation may be registered using the static acceleration caused by gravitational force acting on the accelerometers [24, 25]. Removing the gravitational component reveals the dynamic acceleration. This makes it possible to identify patterns in the acceleration waveform that correspond to an observed behaviour [26, 27].

Supervised machine learning (ML) algorithms are effective ways to classify features of animal acceleration data into pre-defined behavioural categories [28,29,30,31,32]. To train and validate such algorithms, movement data is collected using acceleration sensors on the animal at the same time as the animal behaviour is recorded through direct observation [33, 34], or with a camera [35]. Animal behaviour is classified into different behavioural categories and then accelerometer data is annotated with the recorded behavioural categories [36, 37]. The raw acceleration annotated with the corresponding behaviour is normally pre-processed (using running means [38, 39] or low- and high pass filters [22, 40]) to reduce noise or to separate static and dynamic acceleration. Then the data is segmented into windows, followed by extraction of characteristics of acceleration data (features), selection of features, and modelling [41]. The features and their corresponding classified behaviours are used to train the models, which learn to distinguish between the classified behaviours given the differences in the acceleration data [41]. Once a model is trained, it can be used on new data (e.g., new individuals) to quantify different behaviours performed by the animal. This enables fine-scale behavioural studies over long time periods under conditions where direct observations are difficult due to constraints such as visibility or geographic scale [20, 21, 42].

Generally, the performance of behaviour classification relies on a fixed placement and orientation of the sensors [43, 44]. This can be achieved using harnesses, halters, or glue-on tags [37, 45,46,47]. However, often sensors are attached to a collar around the neck of the animal [8, 48], and then it is likely that the sensor will change its position and orientation relative to the animal’s body orientation. This may cause significant errors and reduced recognition rate [49]. The variability caused by displacement of the sensors may be accounted for by using robust features derived from the raw sensor output. For example, the net acceleration computed from all three axes (the Euclidean norm of the acceleration vector) is less sensitive to changes in sensor orientation or placement [50, 51]. Alternatively, information about angles around X (roll) and Y (pitch) using a gyroscope or magnetometer can be used to correct the accelerometer sensor displacements using rotation matrices [52, 53]. Such correction has to our knowledge seldom been applied on data collected with collar-attached sensors.

We equipped reindeer with collar-attached accelerometers and registered their behaviour using video cameras to find the best model predicting reindeer foraging behaviour, such as grazing on ground lichens and browsing on shrubs and arboreal lichens in trees. The main objective of our study was to develop and validate a method for classifying foraging behaviour of reindeer using tri-axial acceleration sensors. We evaluated Random forests (RF), Support vector machines (SVM) and hidden Markov models (HMM) to find the best model to classify acceleration data into pre-defined behavioural categories.

Methods

Study area, animals and management

In this study, we simultaneously collected video and acceleration data from a total of 19 semi-domesticated female reindeer in Ståkke and Sirges Sami reindeer herding communities in northern Sweden. Initially, ten individuals per herding community were randomly selected from two groups of 40 animals being supplementary fed in a feeding experiment conducted in each community. In Sirges, the ten reindeer (nine-month-old) were kept in a 300 m² enclosure from 27 February to 2 March 2020 (Fig. 1). In Ståkke, the ten reindeer (two two-year-old and eight nine-month-old) were kept in a 150 m² enclosure from 4 to 9 March 2020; one of the nine-month-old individuals was difficult to capture for collar fitting and was excluded from sensor attachment. In both enclosures, reindeer lichens (Cladonia rangiferina and Cladonia arbuscula) and commercially available pelleted reindeer feed (Renfor nära ®, Lantmännen, Sweden) were dug down under the snow to encourage natural grazing and digging behaviour. In addition, small trees covered with arboreal lichens (Bryoria fuscescens) were placed in the enclosures to encourage browsing behaviour.

Video recordings

Three cameras (Axis Communications, 2025-LE Network Camera) were used and placed to cover the whole enclosure and enable video recordings of the animals from different angles. The reindeer were video recorded from 6 AM until 6 PM. In total, we generated 50 h daytime video (15 frames per second) on each individual.

Accelerometer data

We used a three-axial accelerometer (Axy-4; 9 × 15 × 2 mm; 0.7 g) including a temperature sensor [54] positioned on the ventral-right side of the neck attached to a GPS-collar (Pellego) [55], with a total weight of 330 g. See Additional file 2: Fig. A1, illustrating the attachment and directions of the accelerometer. We chose to configure the accelerometers to a sampling rate of 10 Hz with 8-bit resolution at ± 8 g. The sampling rate of temperature was set to 0.2 Hz. At these settings, the sensor could store four months of data. The sampling rate was chosen according to Nyquist’s criterion, i.e., that the sampling rate should be at least twice the highest frequency component of the signal [56, 57]. Reindeer activities, like those of other large herbivores, were expected to involve frequencies of up to 5 Hz [58, 59]. Thus, a sampling frequency of at least 10 Hz was required to detect motions. The same computer was used for calibration and time synchronization of the accelerometer internal clock. All accelerometers were shaken in front of all cameras prior to attachment to acquire a reference for time synchronization [60]. Collars were fitted to mimic the conditions of monitoring free-ranging reindeer, where the collar size needs to allow for growth of the neck, as these reindeer were still growing. Acceleration data was retrieved using Axy Manager Version 1.8.3.0 [60].

Behavioural observations

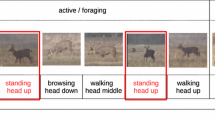

An ethogram was created after consulting the reindeer herders about typical reindeer behaviours and two hours of behavioural observations in the enclosures (Table 1). In total, 39 h of acceleration data from 19 individuals were annotated into 17 behavioural categories. On average, two hours of annotations were performed for each individual from the first day of video recordings. Behaviours were first annotated into the main categories: browsing high, browsing low, grazing, digging, lying, standing, moving, agonistic behaviour, scratching head against tree, other and missing data. If a behaviour did not fit the listed behaviours or if an animal expressed more than one of the listed behaviours at the same time, we annotated it as “other”. The latter occurred on a few occasions when one reindeer was digging and grazing at the same time. The total number of recorded behaviours for each individual is presented in Additional file 1: Table A2. Video recordings were annotated using BORIS Version 7.9.8 [61]. Reindeer have a polycyclic activity pattern with all typical behaviours occurring in bursts both day and night [62, 63] throughout the year [64]. Thus, we expect the daytime recordings to cover the most common behaviours occurring at night.

Behaviours used in model training

Closely related behaviours with high similarity in acceleration waveforms were merged before model training i.e., grazing behaviour included grazing, grazing from a hole, and grazing while walking, and inactivity included sleeping, ruminating, standing, and resting. Running was merged with trotting due to low occurrence. To quantify reindeer foraging behaviour, a total of seven remaining behavioural categories were used for model training: grazing, browsing low, browsing high, inactivity, walking, trotting, and other behaviours. Behaviours such as walking in rough terrain were not observed in the video recordings and were therefore not included in the training and validation.

Analyses of accelerometer data

Raw acceleration data (X, Y and Z) were first smoothed using a running mean of five seconds, thereby separating most of the static acceleration (gravitational component of acceleration) from the dynamic acceleration [e.g., 12, 23, 27]. The GPS device (175 g) acted as a counterweight to avoid unwanted collar rotations around the neck. However, some rotation still occurred, and accelerometers on free-ranging reindeer will also be prone to unwanted rotations. From the estimated static acceleration, the angles around X (roll) and Y (pitch) were calculated [65; Table 2] to estimate accelerometer orientation. To validate our estimated angles, our filtering method and equations for pitch and roll were compared with true angles derived from a dataset collected with IMU sensors (accelerometer and gyroscope; Byström, unpublished data). To adjust for the position of the accelerometer when a collar had rotated, a rotation matrix around the X-axis was calculated to transform the sensor’s measurements into fixed measures based on the estimated angle (α) around the X-axis (Table 2). The \(\ell^{2}\)-norm of raw accelerometer axes was calculated to obtain an orientation-independent index of acceleration magnitude [49, 66].
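The pre-processing chain described above can be illustrated with a minimal Python sketch. It is not the authors' R implementation: the centred running mean, the tilt formulas in `roll_pitch` and the sign convention of the rotation are textbook assumptions, since the equations of Table 2 are not reproduced in this section, and all function names are hypothetical.

```python
import math

def running_mean(values, window):
    """Centred running mean; the window shrinks at the edges of the series."""
    half = window // 2
    out = []
    for i in range(len(values)):
        lo, hi = max(0, i - half), min(len(values), i + half + 1)
        out.append(sum(values[lo:hi]) / (hi - lo))
    return out

def split_static_dynamic(raw, fs=10, seconds=5):
    """Static = running mean of one raw axis; dynamic = raw minus static."""
    static = running_mean(raw, fs * seconds)
    dynamic = [r - s for r, s in zip(raw, static)]
    return static, dynamic

def roll_pitch(sx, sy, sz):
    """Assumed tilt formulas (radians) from static acceleration; Table 2 may differ."""
    roll = math.atan2(sy, sz)                    # angle around X
    pitch = math.atan2(-sx, math.hypot(sy, sz))  # angle around Y
    return roll, pitch

def rotate_about_x(x, y, z, alpha):
    """Apply a rotation matrix about the X-axis by angle alpha (radians).
    The sign convention is an assumption and depends on the axis definitions."""
    c, s = math.cos(alpha), math.sin(alpha)
    return x, c * y - s * z, s * y + c * z

def l2_norm(x, y, z):
    """Orientation-independent magnitude of the acceleration vector."""
    return math.sqrt(x * x + y * y + z * z)

# A sensor rolled by 0.3 rad sees gravity (1 g) tilted in the Y-Z plane.
tilt = 0.3
gravity = (0.0, math.sin(tilt), math.cos(tilt))
roll, pitch = roll_pitch(*gravity)
levelled = rotate_about_x(*gravity, roll)  # approximately (0, 0, 1)
```

Under these assumptions, rotating by the estimated roll angle maps the tilted gravity vector back onto the Z-axis, while the \(\ell^{2}\)-norm is unchanged by the rotation.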

Acceleration data were then segmented into 2-, 3-, and 5-s windows using fixed-size non-overlapping sliding windows [67]. As a result, behaviours occurring during short timespans (< 2 s) were dropped from the data after segmentation. See Additional file 1: Table A3–A5, for final number of windows for each behaviour and individual when using 2-, 3-, and 5-s windows, respectively. Summary statistics were calculated from each segment of data resulting in 50 features. To avoid overfitting and computational load, we removed highly correlated features and used 12 features for model training and validation: m_roll, IQR_roll, mean_sX, sd_sX, mean_dX, max_dX, m_Y, IQR_Y, min_sY, max_sY, sd_dZ and mean_dZ, with m_roll being the combination of median (m, Table 2B) and Roll (roll, Table 2A) and so forth. To further decrease predictor variables to avoid overfitting, we selected the most influential variables for classification using forward feature selection for each window size implemented with the “CAST”-package [68]. Distribution of annotated data using three features (mean_sX, IQR_Y, and sd_dZ) and 2 s windows are shown in Additional file 2: Fig. A2. All data processing and analyses were performed using R version 4.0.3 [69] and RStudio version 1.3.1093 [70]. In this study, time-domain metrics were considered.
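As an illustration of the segmentation and feature-extraction step, the following Python sketch splits one acceleration series into fixed-size non-overlapping windows and computes a few time-domain summary statistics per window. The function names and the particular statistics shown are assumptions for illustration; the full set of 50 features and the R code used in the study are not given in this section, and quartile definitions can differ between software packages.

```python
import statistics

def segment(samples, fs=10, seconds=2):
    """Split a series into consecutive non-overlapping windows; drop the short tail."""
    size = fs * seconds
    return [samples[i:i + size] for i in range(0, len(samples) - size + 1, size)]

def iqr(values):
    """Interquartile range (Python's default 'exclusive' quartile method)."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    return q3 - q1

def window_features(window):
    """Time-domain summary statistics for one window of one axis."""
    return {
        "mean": statistics.fmean(window),
        "median": statistics.median(window),
        "sd": statistics.stdev(window),
        "min": min(window),
        "max": max(window),
        "IQR": iqr(window),
    }

# 4.5 s of synthetic data at 10 Hz gives two complete 2-s windows.
signal = [float(i) for i in range(45)]
windows = segment(signal, fs=10, seconds=2)
features = [window_features(w) for w in windows]
```

In a labelled dataset, each window would additionally carry the annotated behaviour, and windows would be built per individual so that no window spans two animals.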

Random forests

Random forests (RF) is a classification method that combines an ensemble of classification trees [71, 72]. Each classification tree defines decision rules to partition the dataset into subsamples with similar properties. An RF randomly selects observations and features to build multiple classification trees from a dataset. The predictions of the individual trees are aggregated (by majority vote for classification) to give an overall classification decision [71]. This aggregation reduces overfitting. In addition, RF is robust with respect to noise [71]. We initially constructed 500 trees using the “randomForest”-package [73], and used the “caret”-package [74] to tune the number of variables chosen at each iteration.

Support vector machines

Support vector machines (SVM) is a supervised machine learning algorithm which finds optimal separating hyperplanes (decision boundaries) that separate the data points into the different classes. We implemented multiclass SVM using the “caret”-package [74] and a radial kernel function using the “kernlab”-package [75]. Tuning was performed using the “caret”-package [74] to find the optimal regularization parameter C and the kernel's smoothing parameter gamma.
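To make the role of the smoothing parameter concrete, the sketch below evaluates a radial (Gaussian) kernel for two feature vectors, assuming the common form \(k(u, v) = \exp(-\gamma \lVert u - v \rVert^{2})\). The function name is hypothetical, and the exact parameterisation can differ between libraries.

```python
import math

def rbf_kernel(u, v, gamma):
    """Radial kernel, assuming k(u, v) = exp(-gamma * ||u - v||^2)."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(u, v))
    return math.exp(-gamma * sq_dist)

# Two hypothetical feature vectors one unit apart.
near = rbf_kernel([0.0, 0.0], [1.0, 0.0], gamma=0.2)
far = rbf_kernel([0.0, 0.0], [1.0, 0.0], gamma=2.0)
```

A larger gamma makes the similarity decay faster with distance, giving a more flexible decision boundary, while C controls how strongly misclassified training points are penalised.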

Hidden Markov models

A hidden Markov model (HMM) is a stochastic time-series model involving an observable state-dependent process and an underlying, unobservable state process. The goal is to learn about the hidden states (in our case behaviours) by observing the state-dependent process [acceleration metrics; 26]. The hidden states are assumed to follow a first-order Markov chain, and the probability distribution of an observation at time \(t\) is assumed to depend only on the state at time \(t\), independently of all other observations and states [76, 77]. Thus, unlike RF and SVM, an HMM takes into account the serial dependence between observed behaviours.

To fit an HMM, we need to specify the number of states and the form of the observation distributions. In our case, the number of states is the number of pre-specified behaviours, and we used state-dependent multivariate normal distributions for the observations. The transition probability matrix of the Markov chain and the parameters of the observation distributions can then be estimated by maximum likelihood, using the Forward Algorithm to evaluate the likelihood efficiently. Given a fitted HMM, the Viterbi algorithm can be used to reconstruct the most likely states (behaviours) corresponding to the observations. See for example Leos-Barajas et al. [26].
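The decoding step can be sketched in Python as follows. This is a simplified illustration rather than the model fitted in the study: it uses a single feature with univariate normal state-dependent distributions instead of multivariate ones, and the two states, the transition probabilities and the emission parameters are made-up values.

```python
import math

def log_normal_pdf(x, mean, sd):
    """Log density of a univariate normal distribution."""
    return -0.5 * math.log(2 * math.pi * sd * sd) - (x - mean) ** 2 / (2 * sd * sd)

def viterbi(obs, states, log_start, log_trans, emission_params):
    """Most likely state sequence for an HMM with 1-D Gaussian emissions."""
    # best[t][s]: log-probability of the best path that ends in state s at time t
    best = [{s: log_start[s] + log_normal_pdf(obs[0], *emission_params[s]) for s in states}]
    back = []
    for x in obs[1:]:
        scores, pointers = {}, {}
        for s in states:
            prev = max(states, key=lambda p: best[-1][p] + log_trans[p][s])
            pointers[s] = prev
            scores[s] = (best[-1][prev] + log_trans[prev][s]
                         + log_normal_pdf(x, *emission_params[s]))
        best.append(scores)
        back.append(pointers)
    # Trace back from the most likely final state.
    path = [max(states, key=lambda s: best[-1][s])]
    for pointers in reversed(back):
        path.append(pointers[path[-1]])
    return path[::-1]

# Hypothetical two-state example: (mean, sd) of one feature per behaviour.
states = ["inactivity", "walking"]
log_start = {s: math.log(0.5) for s in states}
log_trans = {
    "inactivity": {"inactivity": math.log(0.9), "walking": math.log(0.1)},
    "walking": {"inactivity": math.log(0.1), "walking": math.log(0.9)},
}
emission_params = {"inactivity": (0.02, 0.05), "walking": (0.50, 0.20)}
obs = [0.01, 0.03, 0.02, 0.60, 0.45, 0.55]
path = viterbi(obs, states, log_start, log_trans, emission_params)
```

Working in log-probabilities avoids numerical underflow on long sequences. The high self-transition probabilities encode the serial dependence between consecutive windows that RF and SVM ignore.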

Training and validation

To account for variability among individuals used in the training and to make generic predictions on new (unseen) individuals, we used leave-one-subject-out cross-validation for model evaluation. This ensured that no individual occurred in both the training and validation datasets within the same iteration. Thus, data from one individual was left out in each fold and used as a test set. This was repeated until data from all individuals were classified. To retain the naturally unbalanced behaviours across individuals, the dataset was not balanced across individuals, and we used an unequal number of behavioural classes from each individual. Confusion matrices [\({n}_{ij}\)] were used to summarize model performances, where \({n}_{ij}\) denotes the number of observations belonging to ground-truth behaviour \(i\) that were predicted by the model to be behaviour \(j\) (see calculations in Additional file 1: Table A1). We computed behaviour-specific sensitivity, precision and accuracy and overall accuracy across behaviours. Cross-validation was implemented using the “CAST”-package [68] for RF and SVM.
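The evaluation scheme can be sketched in Python as below. The fold construction follows the description above; the metric definitions are the standard one-vs-rest formulas and are an assumption here, because the calculations of Table A1 are not reproduced in this section. All names are hypothetical.

```python
def leave_one_subject_out(subject_ids):
    """Yield (held_out, train_idx, test_idx), one fold per individual."""
    for held_out in sorted(set(subject_ids)):
        train = [i for i, s in enumerate(subject_ids) if s != held_out]
        test = [i for i, s in enumerate(subject_ids) if s == held_out]
        yield held_out, train, test

def confusion_matrix(truth, predicted, classes):
    """n[i][j]: observations of true behaviour i predicted as behaviour j."""
    n = {i: {j: 0 for j in classes} for i in classes}
    for t, p in zip(truth, predicted):
        n[t][p] += 1
    return n

def class_metrics(n, cls):
    """One-vs-rest sensitivity, precision and accuracy for one behaviour."""
    total = sum(sum(row.values()) for row in n.values())
    tp = n[cls][cls]
    fn = sum(n[cls].values()) - tp
    fp = sum(n[i][cls] for i in n) - tp
    tn = total - tp - fn - fp
    return {
        "sensitivity": tp / (tp + fn) if tp + fn else float("nan"),
        "precision": tp / (tp + fp) if tp + fp else float("nan"),
        "accuracy": (tp + tn) / total,
    }

def overall_accuracy(n):
    """Share of all observations on the diagonal of the confusion matrix."""
    total = sum(sum(row.values()) for row in n.values())
    return sum(n[c][c] for c in n) / total

# Toy data: five windows from three individuals, then pooled predictions.
folds = list(leave_one_subject_out(["a", "a", "b", "c", "c"]))
classes = ["grazing", "walking", "inactivity"]
truth = ["grazing", "grazing", "grazing", "walking", "walking", "inactivity"]
predicted = ["grazing", "grazing", "walking", "walking", "walking", "inactivity"]
n = confusion_matrix(truth, predicted, classes)
grazing = class_metrics(n, "grazing")
```

In practice, a model would be trained on `train` and applied to `test` within each fold, and the predictions from all folds pooled before the confusion matrix is computed.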

Results

Model development and evaluation

Random forests was tuned for the optimal number of variables chosen at each iteration (2 s windows: mtry = 2, 3 s windows: mtry = 3, and 5 s windows: mtry = 4) and the number of trees was set to 50 (ntrees = 50, Additional file 2: Fig. A3). Forward feature selection using RF to find the most important variables out of twelve resulted in eight predictor variables used for 2 s windows (mean_sX, sd_dZ, IQR_Y, IQR_roll, max_dX, min_sY, sd_sX and m_Y), nine predictor variables used for 3 s windows (mean_sX, sd_dZ, IQR_Y, IQR_roll, max_dX, min_sY, sd_sX, mean_dX and m_Y), and seven predictor variables used for 5 s windows (mean_sX, sd_dZ, IQR_Y, IQR_roll, max_dX, sd_sX and min_sY). These subsets of variables were later used for SVM and HMM. For RF, overall accuracy was 100% (Kappa = 1) in the training datasets for all window sizes (2 s, 3 s and 5 s). Model overfitting was checked for RF by reducing the number of trees, and the result did not differ (qualitatively) with as few as 50 trees. Regions of tuning parameter gamma (\(\gamma\)) and optimal regularization parameter C (default values for C were 0.25, 0.5, 1, 2, 4) passed from training were used for SVM (2 s windows: \(\gamma\) = 0.225, C = 1, 3 s windows: \(\gamma\) = 0.217, C = 1, and 5 s windows: \(\gamma\) = 0.308, C = 0.5). Further tuning of hyperparameters for SVM provided better overall accuracy but increased bias towards dominant classes, and the tuned models were not able to classify undersampled behaviours. Similarly, bias towards dominant classes increased with increased window size (Table 3). In the training dataset for SVM, overall accuracy and Kappa were 86% and 79% for 2 s windows, 89% and 83% for 3 s windows and 88% and 81% for 5 s windows, respectively. Training set accuracy and Kappa for HMM were 82% and 73% for 2 s windows, 82% and 73% for 3 s windows and 80% and 68% for 5 s windows, respectively.
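For readers unfamiliar with the Kappa statistic reported alongside accuracy, the following Python sketch computes it from a confusion matrix, assuming that Kappa here refers to Cohen's kappa (agreement corrected for chance). The function name and the toy matrices are illustrative only.

```python
def cohens_kappa(n):
    """Cohen's kappa from a confusion matrix n[true][predicted].
    Undefined (division by zero) when expected agreement equals 1."""
    classes = list(n)
    total = sum(n[i][j] for i in classes for j in classes)
    observed = sum(n[c][c] for c in classes) / total
    expected = sum(
        sum(n[c].values()) * sum(n[i][c] for i in classes) for c in classes
    ) / (total * total)
    return (observed - expected) / (1 - expected)

# A classifier that always predicts the dominant class: 90% accuracy, kappa 0.
majority_only = {
    "grazing": {"grazing": 9, "trotting": 0},
    "trotting": {"grazing": 1, "trotting": 0},
}
# A classifier that is always right: kappa 1.
perfect = {
    "grazing": {"grazing": 5, "trotting": 0},
    "trotting": {"grazing": 0, "trotting": 5},
}
kappa_majority = cohens_kappa(majority_only)
kappa_perfect = cohens_kappa(perfect)
```

The first toy matrix shows why accuracy alone can be misleading with unbalanced classes: a model biased entirely towards the dominant behaviour reaches high accuracy while its kappa is zero.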

Model performance

Grazing (accuracy ≥ 88%) and inactivity (accuracy ≥ 90%) were easily identified by all three models. However, for browsing low, the models did not discriminate the behaviours to the same extent (Table 3). Most confusion was found between browsing low and browsing high for all models. For the three models applied, the highest overall accuracy (85%) was found for RF using 5 s windows (Table 3). Increasing window size (from 2 to 5 s window segmentation) improved overall accuracy of RF and SVM whereas overall accuracy of HMM increased with smaller window size. The best performing HMM (using 2 s windows) had better performance across all individual behaviours and was able to classify browsing high (Table 3). Similarly, HMM classified trotting better than the best performing RF and SVM (Table 3). Confusion matrices and F1-scores are provided in Additional file 1 (RF: Table A6, SVM: Table A7, HMM: Table A8, F1-scores: Table A9).

Discussion

We illustrate application of collar-attached acceleration sensors to quantify reindeer fine-scale behaviour. Using data from 19 reindeer, we tested the supervised machine learning algorithms RF, SVM, and HMM to find the best model classifying reindeer behaviour. Overall, HMM performed best in predicting individual and rare behaviours, while RF and SVM were biased towards dominant classes and less able to handle rare behaviours such as trotting and browsing high.

Predicting grazing, accuracy varied between 88% (HMM) and 93% (RF and SVM). Our results were similar to Alvarenga et al. [78] and Barwick et al. [79] classifying five sheep behaviours using halter and collar-attached accelerometers, respectively. Other studies have reported prediction accuracy and sensitivity for feeding behaviours from 77% to 96% and 75% to 100%, respectively [29, 80,81,82]. In these studies, sheep and cow behaviour were classified and the predictive performance tended to increase with a lower number of behavioural classes included in the models [29, 80,81,82]. Similarly, Turner et al. [83] found a reduction in overall accuracy when more behavioural classes of sheep behaviour were included in the models using RF, SVM and Deep learning techniques. For example, RF performed best using three behavioural classes with an overall accuracy of 83%, whereas overall accuracy dropped to a maximum of 72.4% for a RF model when using nine classes. Support vector machines achieved 77% overall accuracy using three behavioural classes but dropped to 58% when using nine behavioural classes [83]. In our models, seven behavioural classes were used of which feeding was separated into three subgroups (grazing and browsing high or low). Our results also indicated that when behaviour was classified as browsing high, this was generally correct, but that actual browsing high behaviour was often classified as browsing low (see confusion matrices in Additional file 1, Table A6–A8). This most likely depended on the two behaviours only being separated by the change in angle of the head. The accelerometer was attached to the neck, and the change of the neck angle when browsing high or low might not have been large enough to separate the acceleration pattern for the two behaviours. 
Thus, there was not a clear difference in acceleration between the two behaviours and the sensitivity for browsing high was lower than for other behaviours, only reaching 79% (HMM, 2 s windows), 28% (RF, 3 s windows) and 14% (SVM, 2 s windows). In our dataset, browsing high was also a relatively rare behaviour only expressed by a few individuals. More annotations on rare behaviours could have further improved our classification accuracy, at least for those with distinct feature characteristics. For example, when visualizing the distribution of three statistical features (Additional file 2: Fig. A2) for our seven behavioural classes, browsing high appears to form a distinct cluster. Nevertheless, RF and SVM failed to predict browsing high.

Annotating behavioural data to the accelerometer data is time-consuming, so a common challenge is to produce a dataset with a sufficient number of observations for each behaviour across individuals. Datasets with too few ground-truth observations are prone to overfitting. In addition, without enough individuals, it may not be possible to generate generalizable results on new individuals. To overcome this problem, overlapping windows may be used when segmenting the data. Data are then shared across successive time windows, usually with 50% overlap between two adjacent windows [84]. Using 50% overlap compared to no overlap may increase classification performance significantly [85]. Bersch et al. [67] compared classification performance in human activity recognition data using 0%, 25%, 50%, 75%, and 90% overlap and found a higher accuracy with increased overlap compared to no overlap. Riaboff et al. [86] found that the best prediction performance of cow behaviour was achieved when using 90% overlap. However, there is a risk of information leakage resulting in over-optimistic results when data is shared across adjacent windows [87]. With our large dataset (90,052 labelled samples for one-second windows for seven behavioural categories), overlapping windows were not necessary for segmentation. Information leakage could therefore be avoided in our model training.
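The trade-off between the number of windows and shared samples can be made concrete with a short Python sketch. The helper names are hypothetical, and the numbers refer to a toy series of 100 samples, not to the study data.

```python
def window_starts(n_samples, size, overlap=0.0):
    """Start indices of fixed-size windows; overlap is the shared fraction in [0, 1)."""
    step = max(1, round(size * (1 - overlap)))
    return list(range(0, n_samples - size + 1, step))

def shared_samples(size, overlap):
    """Number of samples that two adjacent windows have in common."""
    step = max(1, round(size * (1 - overlap)))
    return max(0, size - step)

# 100 samples (10 s at 10 Hz) cut into 2-s windows of 20 samples.
no_overlap = window_starts(100, size=20, overlap=0.0)
half_overlap = window_starts(100, size=20, overlap=0.5)
```

With 50% overlap the same series yields almost twice as many windows, but each window shares half of its samples with its neighbour. If adjacent windows end up on different sides of a train/validation split, those shared samples are a source of the information leakage discussed above.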

It is important that an animal’s movement pattern is observed over an optimal time window to be able to identify different behaviours. Hence, window size may have a significant impact on the prediction results [67]. In our evaluation of window size on model performance, we found that RF and SVM had slightly higher performance accuracies at longer window sizes, while HMM had higher performance at shorter window sizes (Table 3). Hidden Markov models consider the serial dependence between behaviours and will most likely gain more information from using shorter time windows. Thus, optimal window size depends on the model selected to classify behaviour.

We had one collar that clearly rotated around the neck of the animal. If collar rotations are not accounted for, this may result in significant errors when using collar-attached acceleration sensors [49]. One way of dealing with collar rotations is to use orientation-independent features such as the total magnitude of all axes [49]. There may be a risk of decreased recognition rates due to loss of dimensionality when using orientation-independent features [43], but Kamminga et al. [49] and Barker et al. [50] used orientation-independent features and found that feeding behaviour of goat and cow was predicted with an accuracy of 83% and 86%, respectively. Alternatively, rotation matrices can be used for correcting sensor rotations [52]. To reduce the effect of collar rotations, we transformed the data along the X-axis using rotation matrices, enabling better discrimination between the behaviours. Thus, the total magnitude of axes was removed to avoid over-fitting. Using more individuals with rotating collars and combining magnetometers and/or gyroscopes to provide the true angles would provide further insight into the impact of using rotation matrices on model performance. Increasing the number of sensor outputs, however, shortens battery life and capacity of the sensor.

Cross-validation is necessary to evaluate the reliability of a model [88, 89]. In many studies, cross-validation is performed using random K-fold cross-validation [e.g., 29, 35], or leave-one-out cross-validation [90] when data is randomly split across individuals into training and validation data. Using these methods, it is likely that observations from all individuals are present in each fold and the model is both trained and validated on all individuals. Random K-fold cross-validation may be suitable for models that will be used to monitor the same group of individuals again. However, this tends to show over-optimistic results and may not assess a generic performance on new individuals [89]. Other studies have used a single random split of training and validation data [59, 91]. Model performance in these studies with experimental settings when animals are fenced is high (overall accuracy ranging from 95 to 98%), but may not be generalizable and good enough to be used on data from new individuals.

It may be challenging to label behaviours on enough individuals to capture the individual variation in a population, especially in wild and free-ranging species. In our study, we strived for a model that would be applicable when reindeer are free-ranging and able to predict behaviour of new individuals. Therefore, leave-one-ID-out cross-validation was used. In other words, we made sure that observations from one individual were never present in both training and validation data. Leave-one-ID-out cross-validation may increase the variance of the results and significantly reduce prediction accuracy by 40 percent due to the individual variation [89, 92]. However, it will evaluate the generic performance of the models as it includes data from unseen objects, compared to traditional K-fold cross-validation [89, 93]. To our knowledge, this is rarely implemented for classification models on animal-borne accelerometer sensors but has been used for behaviour classification of e.g., meerkat (Suricata suricatta), sheep and cattle [80, 94, 95]. A reason for this could be the time-consuming work of compiling a large enough dataset to perform leave-one-ID-out cross-validation as in Riaboff et al. [96].

Many machine learning algorithms are sensitive to unbalanced classes decreasing overall performance [97,98,99]. Therefore, stratified cross-validation to handle unbalanced datasets is sometimes used [35, 100] and is recommended by Riaboff et al. [101]. In our data, behaviours were unbalanced because some activities were not equally frequently performed across individuals. However, to retain the naturally unbalanced frequencies, behaviours were not stratified. Hidden Markov models had lower overall performance compared to RF and SVM but were able to better predict rare classes and thus under-sampled behaviours (browsing high and trotting). Compared to Smith et al. [94] implementing leave-one-subject out cross-validation using SVM for activity classification of six cow behaviours, our F-scores were higher (Additional file 1, Table A9). Other techniques during data collection could be considered, such as active learning to deal with naturally unbalanced datasets [102]. It is also possible to use a refined systematic approach during the labelling process to obtain more balanced datasets, by collecting just enough observations for each class to avoid under- and oversampling.

Our methods enabled generation of detailed classification of activity data from collar-attached acceleration sensors. Being able to document reindeer fine-scale foraging patterns has a wide range of applications. For example, in management of reindeer, information on how reindeer behaviour is affected by management actions, extreme weather events, human presence, changes in habitat structure and land fragmentation is essential. As an example, supplementary feeding has become more common due to competing land use and climate change [103, 104]. Supplementary feeding might be beneficial in the short term but might risk the reindeer’s future ability to search for natural fodder such as ground lichens under the snow, especially under extreme conditions. Warm and wet weather in winter increases icing on the ground and in the snow, restricting access to ground lichens [105]. Such conditions may test the reindeer’s skills in searching for, finding, and digging for lichens under the snow. In addition, from a climate change perspective with increasing shrubification of the arctic tundra, our method could be vital to quantify reindeer foraging intensity on shrubs and trees and its ability to suppress this vegetative greening [17, 106].

In conclusion, remote classification of fine-scale foraging behaviours from accelerometer data provides a means to answer a wide range of questions related to animal behaviour, physiology, and ecology. Our results demonstrate that behaviours can be distinguished from isolated sequences of accelerometer data by applying time-domain features at a sampling frequency of 10 Hz. Hidden Markov models were best able to predict behaviours from naturally unbalanced data and thus provide a useful tool to remotely monitor reindeer behaviour and to quantify how foraging behaviour of reindeer is affected by winter feeding.
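As a rough illustration of what time-domain features on isolated sequences can look like, the Python sketch below cuts a 10 Hz tri-axial signal into non-overlapping windows and summarises each axis. It is a sketch under stated assumptions rather than the feature set used in this study: the 5-s window is one of the window lengths mentioned in the abstract, while the choice of mean, standard deviation, minimum and maximum, the non-overlapping windows, and the name `window_features` are all assumptions made for illustration.

```python
from statistics import mean, pstdev

SAMPLING_HZ = 10      # sampling frequency stated in the text
WINDOW_SECONDS = 5    # assumed window length for this example


def window_features(samples, hz=SAMPLING_HZ, seconds=WINDOW_SECONDS):
    """Summarise (x, y, z) samples per non-overlapping window.

    Returns one dict of features per complete window; a trailing
    incomplete window is dropped.
    """
    size = hz * seconds
    features = []
    for start in range(0, len(samples) - size + 1, size):
        window = samples[start:start + size]
        row = {}
        # zip(*window) regroups the samples into one tuple of values per axis.
        for axis_name, values in zip("xyz", zip(*window)):
            row[f"{axis_name}_mean"] = mean(values)
            row[f"{axis_name}_sd"] = pstdev(values)
            row[f"{axis_name}_min"] = min(values)
            row[f"{axis_name}_max"] = max(values)
        features.append(row)
    return features


# Made-up signal: 12 s of data with a constant x and y and an alternating z axis.
toy_signal = [(0.0, 1.0, float(i % 2)) for i in range(120)]
toy_rows = window_features(toy_signal)
print(len(toy_rows), "windows of", SAMPLING_HZ * WINDOW_SECONDS, "samples each")
```

Each resulting row can then be passed to a classifier as one observation. Whether windows should overlap, and which summary statistics are most informative, are choices that depend on the behaviours of interest.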

Availability of data and materials

Data are available from the Dryad Digital Repository: https://doi.org/10.5061/dryad.8sf7m0cs7.

References

Oliveira RF, Bshary R. Expanding the concept of social behavior to interspecific interactions. Ethology. 2021;127:758–73.

Mysterud A, Ims RA. Functional responses in habitat use: availability influences relative use in trade-off situations. Ecology. 1998;79:1435–41.

Weary DM, Huzzey JM, von Keyserlingk MA. Board-invited review: Using behavior to predict and identify ill health in animals. J Anim Sci. 2009;87:770–7.

Tuomainen U, Candolin U. Behavioural responses to human-induced environmental change. Biol Rev. 2011;86:640–57.

Sepúlveda-Varas P, Huzzey JM, Weary DM, von Keyserlingk MAG. Behaviour, illness and management during the periparturient period in dairy cows. Anim Prod Sci. 2013;53:988–99.

Savoca MS, Czapanskiy MF, Kahane-Rapport SR, Gough WT, Fahlbusch JA, Bierlich KC, et al. Baleen whale prey consumption based on high-resolution foraging measurements. Nature. 2021;599:85–90.

Wilson AM, Lowe JC, Roskilly K, Hudson PE, Golabek KA, McNutt JW. Locomotion dynamics of hunting in wild cheetahs. Nature. 2013;498:185–9.

Ladha C, Hammerla N, Hughes E, Olivier P, Ploetz T. Dog's life: wearable activity recognition for dogs. In: Proceedings of the 2013 ACM International Joint Conference on Pervasive and Ubiquitous Computing; 2013. pp 415–418.

Watanabe N, Sakanoue S, Kawamura K, Kozakai T. Development of an automatic classification system for eating, ruminating and resting behavior of cattle using an accelerometer. Grassl Sci. 2008;54:231–7.

Williams CT, Wilsterman K, Zhang V, Moore J, Barnes BM, Buck CL. The secret life of ground squirrels: accelerometry reveals sex-dependent plasticity in above-ground activity. R Soc Open Sci. 2016;3:160404.

Ydesen KS, Wisniewska DM, Hansen JD, Beedholm K, Johnson M, Madsen PT. What a jerk: prey engulfment revealed by high-rate, super-cranial accelerometry on a harbour seal (Phoca vitulina). J Exp Biol. 2014;217:2239–43.

Kröschel M, Reineking B, Werwie F, Wildi F, Storch I. Remote monitoring of vigilance behavior in large herbivores using acceleration data. Anim Biotelemetry. 2017;5:1–15.

Jernsletten J-LL, Klokov K. Sustainable reindeer husbandry. University of Tromsø: Centre for Saami Studies; 2002.

Hofmann RR. Evolutionary steps of ecophysiological adaptation and diversification of ruminants: a comparative view of their digestive system. Oecologia. 1989;78:443–57.

Trudell J, White RG. The effect of forage structure and availability on food-intake, biting rate, bite size and daily eating time of reindeer. J Appl Ecol. 1981;18:63–81.

Macias-Fauria M, Forbes BC, Zetterberg P, Kumpula T. Eurasian Arctic greening reveals teleconnections and the potential for structurally novel ecosystems. Nat Clim Change. 2012;2:613–8.

Skarin A, Verdonen M, Kumpula T, Macias-Fauria M, Alam M, Kerby J, et al. Reindeer use of low Arctic tundra correlates with landscape structure. Environ Res Lett. 2020;15:115012.

Mosser AA, Avgar T, Brown GS, Walker CS, Fryxell JM. Towards an energetic landscape: broad-scale accelerometry in woodland caribou. J Anim Ecol. 2014;83:916–22.

Raponi M, Beresford DV, Schaefer JA, Thompson ID, Wiebe PA, Rodgers AR, et al. Biting flies and activity of caribou in the boreal forest. J Wildlife Manage. 2018;82:833–9.

Van Oort BEH, Tyler NJC, Storeheier PV, Stokkan K-A. The performance and validation of a data logger for long-term determination of activity in free-ranging reindeer, Rangifer tarandus L. Appl Anim Behav Sci. 2004;89:299–308.

Brown DD, Kays R, Wikelski M, Wilson R, Klimley A. Observing the unwatchable through acceleration logging of animal behavior. Anim Biotelemetry. 2013;1:1–16.

Sato K, Mitani Y, Cameron MF, Siniff DB, Naito Y. Factors affecting stroking patterns and body angle in diving Weddell seals under natural conditions. J Exp Biol. 2003;206:1461–70.

Veltink PH, Bussmann HJ, De Vries W, Martens WJ, Van Lummel RC. Detection of static and dynamic activities using uniaxial accelerometers. IEEE Trans Neural Syst Rehabil Eng. 1996;4:375–85.

Yoda K, Naito Y, Sato K, Takahashi A, Nishikawa J, Ropert-Coudert Y, et al. A new technique for monitoring the behaviour of free-ranging Adelie penguins. J Exp Biol. 2001;204:685–90.

Laich AG, Wilson RP, Quintana F, Shepard EL. Identification of imperial cormorant Phalacrocorax atriceps behaviour using accelerometers. Endanger Species Res. 2008;10:29–37.

Leos-Barajas V, Photopoulou T, Langrock R, Patterson TA, Watanabe YY, Murgatroyd M, et al. Analysis of animal accelerometer data using hidden Markov models. Methods Ecol Evol. 2017;8:161–73.

Shepard EL, Wilson RP, Quintana F, Laich AG, Liebsch N, Albareda DA, et al. Identification of animal movement patterns using tri-axial accelerometry. Endanger Species Res. 2008;10:47–60.

Le Roux SP, Marais J, Wolhuter R, Niesler T. Animal-borne behaviour classification for sheep (Dohne Merino) and Rhinoceros (Ceratotherium simum and Diceros bicornis). Anim Biotelemetry. 2017;5:1–13.

Diosdado JAV, Barker ZE, Hodges HR, Amory JR, Croft DP, Bell NJ, et al. Classification of behaviour in housed dairy cows using an accelerometer-based activity monitoring system. Anim Biotelemetry. 2015;3:1–14.

Soltis J, Wilson RP, Douglas-Hamilton I, Vollrath F, King LE, Savage A. Accelerometers in collars identify behavioral states in captive African elephants Loxodonta africana. Endanger Species Res. 2012;18:255–63.

Painter MS, Blanco JA, Malkemper EP, Anderson C, Sweeney DC, Hewgley CW, et al. Use of bio-loggers to characterize red fox behavior with implications for studies of magnetic alignment responses in free-roaming animals. Anim Biotelemetry. 2016;4:1–19.

Brewster LR, Dale JJ, Guttridge TL, Gruber SH, Hansell AC, Elliott M, et al. Development and application of a machine learning algorithm for classification of elasmobranch behaviour from accelerometry data. Mar Biol. 2018;165:1–19.

Ryan MA, Whisson DA, Holland GJ, Arnould JP. Activity patterns of free-ranging koalas (Phascolarctos cinereus) revealed by accelerometry. PLoS ONE. 2013;8:e80366.

Yu H, Deng J, Nathan R, Kroschel M, Pekarsky S, Li G, et al. An evaluation of machine learning classifiers for next-generation, continuous-ethogram smart trackers. Mov Ecol. 2021;9:1–14.

Mansbridge N, Mitsch J, Bollard N, Ellis K, Miguel-Pacheco GG, Dottorini T, et al. Feature selection and comparison of machine learning algorithms in classification of grazing and rumination behaviour in sheep. Sensors. 2018;18:2–16.

McClune DW, Marks NJ, Wilson RP, Houghton JD, Montgomery IW, McGowan NE, et al. Tri-axial accelerometers quantify behaviour in the Eurasian badger (Meles meles): towards an automated interpretation of field data. Anim Biotelemetry. 2014;2:1–6.

Nathan R, Spiegel O, Fortmann-Roe S, Harel R, Wikelski M, Getz WM. Using tri-axial acceleration data to identify behavioral modes of free-ranging animals: general concepts and tools illustrated for griffon vultures. J Exp Biol. 2012;215:986–96.

Wilson RP, White CR, Quintana F, Halsey LG, Liebsch N, Martin GR, et al. Moving towards acceleration for estimates of activity-specific metabolic rate in free-living animals: the case of the cormorant. J Anim Ecol. 2006;75:1081–90.

Shepard EL, Wilson RP, Halsey LG, Quintana F, Laich AG, Gleiss AC, et al. Derivation of body motion via appropriate smoothing of acceleration data. Aquat Biol. 2008;4:235–41.

Kokubun N, Kim JH, Shin HC, Naito Y, Takahashi A. Penguin head movement detected using small accelerometers: a proxy of prey encounter rate. J Exp Biol. 2011;214:3760–7.

Bulling A, Blanke U, Schiele B. A tutorial on human activity recognition using body-worn inertial sensors. ACM Comput Surv. 2014;46:1–33.

Scheibe KM, Schleusner T, Berger A, Eichhorn K, Langbein J, Dal Zotto L, et al. ETHOSYS(R)—new system for recording and analysis of behaviour of free-ranging domestic animals and wildlife. Appl Anim Behav Sci. 1998;55:195–211.

Lau SL. Comparison of orientation-independent-based-independent-based movement recognition system using classification algorithms. In: 2013 IEEE Symposium on Wireless Technology & Applications (ISWTA); 2013. pp 322–326.

Byon Y-J, Liang S. Real-time transportation mode detection using smartphones and artificial neural networks: performance comparisons between smartphones and conventional global positioning system sensors. J Intell Transp Syst. 2014;18:264–72.

Jeanniard-du-Dot T, Guinet C, Arnould JPY, Speakman JR, Trites AW, Goldbogen J. Accelerometers can measure total and activity-specific energy expenditures in free-ranging marine mammals only if linked to time-activity budgets. Funct Ecol. 2016;31:377–86.

Nielsen PP. Automatic registration of grazing behaviour in dairy cows using 3D activity loggers. Appl Anim Behav Sci. 2013;148:179–84.

Kölzsch A, Neefjes M, Barkway J, Müskens GJDM, van Langevelde F, de Boer WF, et al. Neckband or backpack? Differences in tag design and their effects on GPS/accelerometer tracking results in large waterbirds. Anim Biotelemetry. 2016;4:1–14.

Studd EK, Landry-Cuerrier M, Menzies AK, Boutin S, McAdam AG, Lane JE, et al. Behavioral classification of low-frequency acceleration and temperature data from a free-ranging small mammal. Ecol Evol. 2019;9:619–30.

Kamminga JW, Le DV, Meijers JP, Bisby H, Meratnia N, Havinga PJ. Robust sensor-orientation-independent feature selection for animal activity recognition on collar tags. Proc ACM Interact Mob Wearable Ubiquitous Technol. 2018;2:1–27.

Barker ZE, Vazquez Diosdado JA, Codling EA, Bell NJ, Hodges HR, Croft DP, et al. Use of novel sensors combining local positioning and acceleration to measure feeding behavior differences associated with lameness in dairy cattle. J Dairy Sci. 2018;101:6310–21.

Williams HJ, Holton MD, Shepard ELC, Largey N, Norman B, Ryan PG, et al. Identification of animal movement patterns using tri-axial magnetometry. Mov Ecol. 2017;5:1–14.

Florentino-Liano B, O'Mahony N, Artes-Rodriguez A. Human activity recognition using inertial sensors with invariance to sensor orientation. In: 2012 3rd International Workshop on Cognitive Information Processing (CIP); 2012. pp 1–6.

Hemminki S, Nurmi P, Tarkoma S. Gravity and linear acceleration estimation on mobile devices. In: Proceedings of the 11th International Conference on Mobile and Ubiquitous Systems: Computing, Networking and Services; 2014. pp 50–59.

Technosmart. Axy-4: Micro Accelerometer datalogger for tracking free-moving animals; 2018. https://www.technosmart.eu.

Followit. Pellego; 2018. https://www.followit.se/livestock/reindeer.

Nyquist H. Certain topics in telegraph transmission theory. Trans AIEE. 1928;47:617–44.

Oppenheim AV, Willsky AL, Nawab SH. Signals and systems. 2nd ed. New Jersey: Prentice-Hall; 1997.

Benaissa S, Tuyttens FAM, Plets D, Cattrysse H, Martens L, Vandaele L, et al. Classification of ingestive-related cow behaviours using RumiWatch halter and neck-mounted accelerometers. Appl Anim Behav Sci. 2019;211:9–16.

Walton E, Casey C, Mitsch J, Vazquez-Diosdado JA, Yan J, Dottorini T, et al. Evaluation of sampling frequency, window size and sensor position for classification of sheep behaviour. R Soc Open Sci. 2018;5:171442.

Technosmart. Axy Manager; 2020. https://www.technosmart.eu.

Friard O, Gamba M. BORIS: a free, versatile open-source event-logging software for video/audio coding and live observations. Methods Ecol Evol. 2016;7:1325–30.

Eriksson L-O, Källqvist M-L, Mossing T. Seasonal development of circadian and short-term activity in captive reindeer Rangifer tarandus L. Oecologia. 1981;48:64–70.

Colman JE, Pedersen C, Hjermann DO, Holand O, Moe SR, Reimers E. Twenty-four-hour feeding and lying patterns of wild reindeer Rangifer tarandus tarandus in summer. Can J Zool. 2001;79:2168–75.

van Oort BE, Tyler NJ, Gerkema MP, Folkow L, Stokkan KA. Where clocks are redundant: weak circadian mechanisms in reindeer living under polar photic conditions. Sci Nat. 2007;94:183–94.

Salhuana L. Tilt sensing using linear accelerometers. Freescale Semiconductor. 2012:1–22.

Riaboff L, Poggi S, Madouasse A, Couvreur S, Aubin S, Bedere N, et al. Development of a methodological framework for a robust prediction of the main behaviours of dairy cows using a combination of machine learning algorithms on accelerometer data. Comput Electron Agric. 2020;169:1–16.

Bersch SD, Azzi D, Khusainov R, Achumba IE, Ries J. Sensor data acquisition and processing parameters for human activity classification. Sensors. 2014;14:4239–70.

Meyer H. CAST: 'caret' applications for spatial-temporal models; 2020. https://CRAN.R-project.org/package=CAST.

R Core Team. R: A language and environment for statistical computing. R Foundation for Statistical Computing, Vienna, Austria; 2020. http://www.r-project.org/.

RStudio Team. RStudio: Integrated development for R. RStudio, Inc., Boston. 2020. http://www.rstudio.com/.

Breiman L. Random forests. Mach Learn. 2001;45:5–32.

Hastie T, Tibshirani R, Friedman J. The elements of statistical learning: data mining, inference, and prediction. 2nd ed. New York: Springer Series in Statistics; 2009.

Liaw A, Wiener M. Classification and regression by randomForest. R News. 2002;2:18–22.

Kuhn M. caret: Classification and regression training; 2021. https://CRAN.R-project.org/package=caret.

Karatzoglou A, Smola A, Hornik K, Zeileis A. kernlab: an S4 package for kernel methods in R. J Stat Softw. 2004;11:1–20.

Grewal JK, Krzywinski M, Altman NS. Markov models: Markov chains. Nat Methods. 2019;16:663–4.

McClintock BT, Langrock R, Gimenez O, Cam E, Borchers DL, Glennie R, et al. Uncovering ecological state dynamics with hidden Markov models. Ecol Lett. 2020;23:1878–903.

Alvarenga FAP, Borges I, Palkovič L, Rodina J, Oddy VH, Dobos RC. Using a three-axis accelerometer to identify and classify sheep behaviour at pasture. Appl Anim Behav Sci. 2016;181:91–9.

Barwick J, Lamb DW, Dobos R, Welch M, Schneider D, Trotter M. Identifying sheep activity from tri-axial acceleration signals using a moving window classification model. Remote Sens. 2020;12:3–13.

Benaissa S, Tuyttens FAM, Plets D, de Pessemier T, Trogh J, Tanghe E, et al. On the use of on-cow accelerometers for the classification of behaviours in dairy barns. Res Vet Sci. 2019;125:425–33.

Martiskainen P, Jarvinen M, Skon JP, Tiirikainen J, Kolehmainen M, Mononen J. Cow behaviour pattern recognition using a three-dimensional accelerometer and support vector machines. Appl Anim Behav Sci. 2009;119:32–8.

Fogarty ES, Swain DL, Cronin GM, Moraes LE, Trotter M. Behaviour classification of extensively grazed sheep using machine learning. Comput Electron Agric. 2020;169:105175.

Turner KE, Thompson A, Harris I, Ferguson M, Sohel F. Deep learning based classification of sheep behaviour from accelerometer data with imbalance. Inf Process Agric. 2022. https://doi.org/10.1016/j.inpa.2022.04.001.

Marais J, Le Roux SP, Wolhuter R, Niesler T. Automatic classification of sheep behaviour using 3-axis accelerometer data. In: Proceedings of the twenty-fifth annual symposium of the Pattern Recognition Association of South Africa (PRASA); 2014. pp 97–102.

Pappa L, P. K, Georgoulas G, Stylios C. Multichannel symbolic aggregate approximation intelligent icons: Appl Act Recogn. 2020. pp 505–512.

Riaboff L, Aubin S, Bédère N, Couvreur S, Madouasse A, Goumand E, et al. Considering pre-processing of accelerometer signal recorded with sensor fixed on dairy cows is a way to improve the classification of behaviours. 2019. pp 121–127.

Kamminga JW, Janssen LM, Meratnia N, Havinga PJM. Horsing around: a dataset comprising horse movement. Data. 2019;4:131.

Berrar D. Cross-validation. Encyclopedia of bioinformatics and computational biology. Elsevier; 2018.

Gholamiangonabadi D, Kiselov N, Grolinger K. Deep neural networks for human activity recognition with wearable sensors: leave-one-subject-out cross-validation for model selection. IEEE Access. 2020;8:133982–94.

Grunewalder S, Broekhuis F, Macdonald DW, Wilson AM, McNutt JW, Shawe-Taylor J, et al. Movement activity based classification of animal behaviour with an application to data from cheetah (Acinonyx jubatus). PLoS ONE. 2012;7:e49120.

Robert B, White BJ, Renter DG, Larson RL. Evaluation of three-dimensional accelerometers to monitor and classify behavior patterns in cattle. Comput Electron Agric. 2009;67:80–4.

Tapia EM, Intille SS, Haskell W, Larson K, Wright J, King A, et al. Real-time recognition of physical activities and their intensities using wireless accelerometers and a heart rate monitor. In: 2007 11th IEEE International Symposium on Wearable Computers; 2007. pp 37–40.

Heinz EA, Kunze KS, Sulistyo S, Junker H, Lukowicz P, Tröster G. Experimental evaluation of variations in primary features used for accelerometric context recognition. In: European Symposium on Ambient Intelligence; 2003. pp 252–263.

Smith D, Little B, Greenwood PI, Valencia P, Rahman A, Ingham A, et al. A study of sensor derived features in cattle behaviour classification models. In: 2015 IEEE Sensors; 2015. pp 1–4.

Chakravarty P, Cozzi G, Dejnabadi H, Leziart PA, Manser M, Ozgul A, et al. Seek and learn: automated identification of microevents in animal behaviour using envelopes of acceleration data and machine learning. Methods Ecol Evol. 2020;11:1639–51.

Riaboff L, Aubin S, Bedere N, Couvreur S, Madouasse A, Goumand E, et al. Evaluation of pre-processing methods for the prediction of cattle behaviour from accelerometer data. Comput Electron Agric. 2019;165:104961.

Ganganwar V. An overview of classification algorithms for imbalanced datasets. Int J Emerg Technol Adv Eng. 2012;2:42–7.

Yap BW, Rani KA, Rahman HAA, Fong S, Khairudin Z, Abdullah NN. An application of oversampling, undersampling, bagging and boosting in handling imbalanced datasets. In: Proceedings of the First International Conference on Advanced Data and Information Engineering (DaEng-2013); 2014. pp 13–22.

Santos MS, Soares JP, Abreu PH, Araujo H, Santos J. Cross-validation for imbalanced datasets: avoiding overoptimistic and overfitting approaches [research frontier]. IEEE Comput Intell Mag. 2018;13:59–76.

Chakravarty P, Cozzi G, Ozgul A, Aminian K. A novel biomechanical approach for animal behaviour recognition using accelerometers. Methods Ecol Evol. 2019;10:802–14.

Riaboff L, Shalloo L, Smeaton AF, Couvreur S, Madouasse A, Keane MT. Predicting livestock behaviour using accelerometers: a systematic review of processing techniques for ruminant behaviour prediction from raw accelerometer data. Comput Electron Agric. 2022;192:1–22.

Aggarwal U, Popescu A, Hudelot C. Active learning for imbalanced datasets. In: Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision; 2020. pp 1428–1437.

Persson A-M. Status of supplementary feeding of reindeer in Sweden and its consequences. Management of Fish and Wildlife Populations. Umeå: Swedish University of Agricultural Sciences; 2018.

Turunen MT, Rasmus S, Bavay M, Ruosteenoja K, Heiskanen J. Coping with difficult weather and snow conditions: reindeer herders’ views on climate change impacts and coping strategies. Clim Risk Manag. 2016;11:15–36.

Vuojala-Magga T, Turunen M, Ryyppo T, Tennberg M. Resonance strategies of Sami reindeer herders in northernmost Finland during climatically extreme years. Arctic. 2011;64:227–41.

Te Beest M, Sitters J, Ménard CB, Olofsson J. Reindeer grazing increases summer albedo by reducing shrub abundance in Arctic tundra. Environ Res Lett. 2016;11:125013.

Acknowledgements

We thank all involved reindeer herders in Ståkke and Sirges reindeer herding communities for helping us and allowing us to work with their reindeer. We also thank Måns Karlsson at Stockholm University for valuable advice and feedback on the statistical methodology. This study was funded by the FORMAS research council, decision no. FR-2018/0010.

Funding

Open access funding provided by Swedish University of Agricultural Sciences. The study was supported by grants from FORMAS (AS).

Author information

Contributions

HR and AS conceived and designed the experiments. HR analysed the data with input from MA and PGB. HR wrote the manuscript with input from all authors. AS received grants for the experiment. All authors approved the final version of the manuscript.

Ethics declarations

Ethics approval and consent to participate

All applicable institutional and/or national guidelines for the care and use of animals were followed. The methods used in this study comply with the current laws of Sweden. We obtained ethical permissions from the Umeå Board for Laboratory animals (Dnr A 40–2019 and Dnr 5.2.18–13750/2019).

Consent for publication

Not applicable.

Competing interests

The authors declare that they have no conflict of interest.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary Information

Additional file 1. Contains supplementary tables including equations for performance statistics, number of recorded behaviours for each individual, confusion matrices, and F1-scores.

Additional file 2. Contains supplementary figures including illustrations of sensor attachment, data distribution using three statistical features, and out-of-bag error of random forests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/. The Creative Commons Public Domain Dedication waiver (http://creativecommons.org/publicdomain/zero/1.0/) applies to the data made available in this article, unless otherwise stated in a credit line to the data.

About this article

Cite this article

Rautiainen, H., Alam, M., Blackwell, P.G. et al. Identification of reindeer fine-scale foraging behaviour using tri-axial accelerometer data. Mov Ecol 10, 40 (2022). https://doi.org/10.1186/s40462-022-00339-0

DOI: https://doi.org/10.1186/s40462-022-00339-0