Abstract

In this paper, statistical-model generalizations of independent low-rank matrix analysis (ILRMA) are proposed for achieving high-quality blind source separation (BSS). BSS is a crucial problem in realizing many audio applications, where the audio sources must be separated using only the observed mixture signal. Many algorithms for solving BSS have been proposed, especially in the history of independent component analysis and nonnegative matrix factorization. In particular, ILRMA can achieve the highest separation performance for music or speech mixtures, where ILRMA assumes both independence between sources and the low-rankness of time-frequency structure in each source. In this paper, we propose two extensions of the source distribution assumed in ILRMA. We introduce a heavy-tailed property by replacing the conventional Gaussian source distribution with a generalized Gaussian or Student’s t distribution. Convergence-guaranteed efficient algorithms are derived for the proposed methods, and the relationship between the generalized Gaussian and Student’s t distributions in the source model estimation is revealed. By experimental evaluation, the validity of the heavy-tailed generalizations of ILRMA is confirmed.

Similar content being viewed by others

1 Introduction

Blind source separation (BSS) is a technique for separating individual sources from an observed multichannel mixture without knowing the mixing system, such as the spatial locations of the sensors or sources, in advance. In particular, BSS for multichannel audio signals have been well studied so far. This problem can be divided into two situations: underdetermined (number of microphones < number of sources) and (over-)determined (number of microphones ≥ number of sources) cases. In the underdetermined case, the mixing system of the sources has to be estimated using several assumptions. For example, sparseness-assumption-based methods are popular and reliable approaches [1–3]. In contrast, the determined BSS methods often estimate the inverse system of a mixing process, and high-quality separation can be achieved compared with the underdetermined BSS methods. In this paper, we only focus on the determined BSS problem.

The most popular and successful algorithm for solving determined BSS problem is independent component analysis (ICA) [4], which assumes statistical independence between the sources and estimates a demixing matrix (the inverse system of the mixing process). For a mixture of audio signals, because the sources are mixed by convolution owing to the room reverberation, ICA is often applied to the time-frequency signals (spectrograms) of the observed signal, which are obtained by a short-time Fourier transform (STFT). Frequency-domain ICA (FDICA) [5–8] independently applies ICA to the complex-valued time-series signals in each frequency bin and estimates a frequency-wise demixing matrix. Then, the estimated components in each frequency must be aligned over all frequency bins so that the components of the same source are grouped. This postprocessing of FDICA is the so-called permutation problem [6, 9–11], and several criteria have been used to solve this ambiguity of the signal permutation.

Independent vector analysis (IVA) [12–14] is a sophisticated algorithm that can simultaneously estimate the frequency-wise demixing matrix and solve the permutation problem using only one objective function. IVA assumes higher-order dependences (co-occurrence among the frequency bins) of each source by employing a spherical generative model of the source frequency vector, thus avoiding the permutation problem. The original IVA employs the spherical multivariate Laplace distribution as the source model (hereafter referred to as Laplace IVA). To improve the statistical model flexibility and source separation performance, Laplace IVA has been extended by replacing its source model with a spherical generalized Gaussian distribution [15] (GGD, also known as an exponential power distribution) in many papers [16–20] (hereafter referred to as GGD-IVA), or with a Gaussian distribution having a time-varying variance [21] (hereafter referred to as time-varying Gaussian IVA). Note that the GGD includes the Laplace and Gaussian distributions as special cases.

As another means of audio source modeling and separation, nonnegative matrix factorization (NMF) [22, 23] has been a very common approach during the last decade. NMF is a nonnegative-parts-based low-rank decomposition of an observed nonnegative data matrix that is typically a power or amplitude spectrogram. The decomposed nonnegative parts (bases and activations) can be used for source separation by clustering the parts into each source [24–28]. Also, NMF can be statistically interpreted as a parameter estimation based on a generative model of data, and the distribution of the model defines the objective function (divergence) in NMF. For example, it was revealed that NMF based on Itakura–Saito divergence (IS-NMF) assumes an isotropic complex Gaussian distribution independently defined in each time-frequency slot [29], where the variance of each Gaussian distribution can fluctuate depending on time and frequency. For multichannel audio signals, spatial modeling of the mixing system was introduced into the simple NMF, which is called multichannel NMF (MNMF) [30–32], to solve the BSS problem. MNMF estimates the spatial mixing system, whereas ICA-based BSS techniques optimize the demixing matrix, which yields a more stable and efficient algorithm than MNMF.

Motivated by this issue, a new BSS algorithm called independent low-rank matrix analysis (ILRMA) [33–35] has been proposedFootnote 1. In this method, IS-NMF-based low-rank source modeling is introduced into the source model of IVA, namely, a low-rank time-frequency structure (co-occurrence among the time-frequency slots) is estimated for each source by NMF, and the frequency-wise demixing matrix is optimized taking the NMF source model into account without causing the permutation problem. Since the vector source model in time-varying Gaussian IVA can be interpreted as NMF with a single spectral basis, ILRMA is a natural extension of IVA, where ILRMA utilizes an arbitrary number of bases in the source model. Also, ILRMA can be considered as a dual problem of MNMF (mixing) because ILRMA estimates the demixing matrix, i.e., the inverse of the mixing system (MNMF model), using the low-rank source modeling with NMF.

In this paper, to increase the model flexibility and improve the source separation accuracy, we generalize the source model in ILRMA from the isotropic complex Gaussian distribution of IS-NMF to more heavy-tailed distributions. An important extension is to employ the isotropic complex GGD because it has been reported that GGD-IVA can achieve a better separation result in many papers [17, 19, 20]. As another possible generalization, the isotropic complex Student’s t distribution can also be employed in ILRMA. Student’s t distribution includes the Cauchy and Gaussian distributions as special cases and has been used to model audio sources [36, 37]. For use in NMF-based modeling, Cauchy NMF [38], Student’s t NMF (t-NMF) [39], and its multichannel extension (t-MNMF) [40] have been proposed. The motivation of employing Student’s t distribution is that the Cauchy and Gaussian distributions are a part of the α-stable distribution family [41], which has a stable property of a random variable, namely, a linear combination of two independent random variables generated from the same distribution family also has the same distribution up to location and scale parameters. This property is desirable for NMF-based audio source modeling because it justifies the nonnegative linear decomposition of complex-valued signals [42]. For instance, multichannel BSS based on an α-stable distribution was recently proposed [43] to benefit from this advantage. However, analytical maximum likelihood (ML) estimation with an α-stable distribution is still an open problem because its probability density function (p.d.f.) cannot be represented in a closed form except for several cases. Therefore, instead of employing the α-stable distribution family, we adopt Student’s t distribution as the source model in ILRMA, which partly corresponds to the α-stable distribution and has the stable property. The relationship among the conventional methods and the proposed ILRMA is depicted in Fig. 1. As shown in this figure, the proposed ILRMA based on the GGD (GGD-ILRMA) and that based on Student’s t distribution (t-ILRMA) can be interpreted as a new extension of conventional IVA or ILRMA as well as a computationally efficient solution to the dual problem of MNMF.

Relationship among conventional methods based on Gaussian, Student’s t, and generalized Gaussian distributions. NMF-based low-rank audio source modeling is extended to a multichannel signal, resulting in MNMF, which estimates the mixing system for solving the BSS problem. ILRMA can be considered as a dual problem of MNMF because it estimates the demixing system using the NMF source model

Note that this work extends our preliminary work on t-ILRMA in [44] by developing a new extension, GGD-ILRMA, and providing additional discussion that explains the theoretical relationship between GGD- and t-ILRMA. Also, the experimental results have been updated with new datasets and conditions for more difficult situations in BSS.

The rest of this paper is organized as follows. Section 2 describes the conventional algorithms including IVA and ILRMA, which are the basis for the proposed GGD- and t-ILRMA described in Section 3. Section 4 reports the validation of the proposed methods by conducting BSS experiments with music and speech sources. Finally, Section 5 concludes this paper.

2 Conventional method

2.1 Formulation

Let \(\tilde {s}_{n}(\tau)\), \(\tilde {x}_{m}(\tau)\), and \(\tilde {y}_{n}(\tau)\) be the source, observed (mixture), and estimated (separated) time-domain signals, respectively, where n=1,⋯,N and m=1,⋯,M are the integral indexes of the sources and channels (microphones), respectively. Also, τ is the integral index of the discrete time. The source signal \(\tilde {s}_{n}(\tau)\) is unknown, and only the observed signal \(\tilde {x}_{m}(\tau)\) can be obtained by using the synchronized multiple microphones. The estimated signal \(\tilde {y}_{n}(\tau)\) is the output data of BSS algorithm. In this paper, these time-domain signals are transformed into the time-frequency domain to treat the convolutive mixture with the room reverberation. The complex-valued time-frequency components of \(\tilde {s}_{n}(\tau)\), \(\tilde {x}_{m}(\tau)\), and \(\tilde {y}_{n}(\tau)\) can be obtained via STFT and are respectively denoted as follows:

where i=1,⋯,I and j=1,⋯,J are the integral indexes of the frequency bins and time frames, respectively, and T denotes a transpose. We also denote the spectrograms (time-frequency matrices) of the source, observed, and estimated signals as \(\boldsymbol {S}_{n}\ {\in \mathbb {C}^{I{\times }J}}\), \(\boldsymbol {X}_{m}\ {\in \mathbb {C}^{I{\times }J}}\), and \(\boldsymbol {Y}_{n}\ {\in \mathbb {C}^{I{\times }J}}\), whose elements are sij,n, xij,m, and yij,n, respectively. In FDICA, IVA, and ILRMA, the following mixing system is assumed:

where \(\boldsymbol {A}_{i}=(\boldsymbol {a}_{i,1}~\cdots ~\boldsymbol {a}_{i,n}~\cdots ~\boldsymbol {a}_{i,N})\ {\in \mathbb {C}^{M{\times }N}}\) is a frequency-wise mixing matrix and ai,n = (ai,n1,⋯,ai,nm,⋯,ai,nM)Tis the steering vector for the nth source, which represents the acoustic transfer functions from the nth source to each of the microphones (m=1,⋯,M). The assumed mixing system (4) is called a linear time-invariant mixture or rank-1 spatial model [45] because the spatial covariance of each source image (multichannel observation of each source signal) is restricted to a rank-1 matrix in this system [34]. If the mixing system is determined, namely, M=N, and A i is a non-singular matrix for all i, we can define the frequency-wise demixing matrix \(\boldsymbol {W}_{i}=(\boldsymbol {w}_{i,1} \cdots \boldsymbol {w}_{i,n} \cdots \boldsymbol {w}_{i,N})^{\mathrm {H}}=\boldsymbol {A}_{i}^{-1}\) that recovers the source signal, and the estimated signal y ij is obtained as

where wi,n is the demixing filter for the nth source and H denotes a Hermitian transpose. The goal of BSS based on FDICA, IVA, or ILRMA is to estimate W i and obtain y ij from only the observations x ij by assuming statistical independence between sij,n and \(s_{ij,n'}\phantom {\dot {i}\!}\), where n′≠n. In this paper, we only focus on BSS with the determined situation M=N. For the overdetermined situation M>N, principal component analysis is often applied to x ij for dimensionality reduction so that M=N [46].

2.2 IVA

IVA [12–14] is an elegant solution of the permutation problem [6, 9–11], which considers not the frequency-wise component xij,m but the vector of all frequency components, \(\bar {\boldsymbol {x}}_{j,m}=(x_{1j,m},~\cdots ~x_{Ij,m})^{\mathrm {T}}\ {\in \mathbb {C}^{I{\times }1}}\), as an independent variable as shown in Fig. 2. Thus, in IVA, ICA is applied to the time-series vectors \(\bar {\boldsymbol {x}}_{1,m}~\cdots ~\bar {\boldsymbol {x}}_{J,m}\) while assuming the spherical I-dimensional non-Gaussian distribution \(p(\bar {\boldsymbol {s}})\approx p(\bar {\boldsymbol {y}})\). For example, the generative model in GGD-IVA [16–18, 20] is represented as

where ∥·∥2 denotes the L2 norm and β>0 is the shape parameter of GGD. Laplace IVA [12–14] corresponds to β=1. Since the probability of (6) only depends on the norm of \(\bar {\boldsymbol {y}}_{j,n}\) (spherical property), the components in the vector \(\bar {\boldsymbol {y}}_{j,n}\) have higher-order dependence. Therefore, frequency components that have similar activations, such as a fundamental frequency and its harmonic components, will be merged as one source avoiding the permutation problem.

By assuming the independence between the source vectors, the objective function (negative log-likelihood function of the observed signal) in IVA can be obtained as

where \(G(\bar {\boldsymbol {y}}_{j,n}) = -\log p(\bar {\boldsymbol {y}}_{j,n})\) is called a contrast function and detW i denotes the determinant of a matrix W i . Note that the separated signal yij,n in \(\bar {\boldsymbol {y}}_{j,n}\) includes the variable W i as \(y_{ij,n}=\boldsymbol {w}_{i,n}^{\mathrm {H}}\boldsymbol {x}_{ij}\).

As another generative model of source signals, an isotropic complex Gaussian distribution with time-varying variance can be utilized in IVA [21], which is represented as

where rj,n is the time-varying variance shared over all frequency bins. Similar to (6), (8) also has a spherical property. Note that even though (8) consists of Gaussian distributions, its marginal distribution over j becomes a super-Gaussian distribution because the variance can fluctuate depending on j [17].

Regarding the optimization of W i , a fast and stable optimization algorithm called iterative projection (IP), which is based on a majorization-minimization (MM) algorithm [47], has been derived for ICA [48], Laplace IVA [49], GGD-IVA [17], and time-varying Gaussian IVA [21]. IP can achieve better convergence than classical gradient-based algorithms.

2.3 ILRMA based on Gaussian distribution

2.3.1 Generative model

ILRMA [33–35] is a method unifying IVA and IS-NMF, namely, we assume both statistical independence between sources and the low-rankness of the time-frequency structure in each source. Similar to ICA or IVA, we must assume a non-Gaussian distribution as the generative model of source signals to solve the BSS problem. In ILRMA, the following distribution is assumed for the spectrogram of each source:

where tik,n≥0 and vkj,n≥0 are the nonnegative basis and activation elements (NMF variables) of \(\boldsymbol {T}_{n}\in \mathbb {R}_{\geq 0}^{I{\times }K}\) (basis matrix) and \(\boldsymbol {V}_{n}\in \mathbb {R}_{\geq 0}^{K{\times }J}\) (activation matrix), respectively, k=1,⋯,K is the integral index of the basis, and K is the number of NMF bases (spectral patterns). Also, rij,n≥0 is a sourcewise time-frequency-varying variance that corresponds to the low-rank source model. Therefore, the nonnegative matrix T n V n represents the rank-K model spectrogram of the nth source as |Y n |.2≈T n V n , where |·|.q for matrices denotes the element-wise absolute and qth-power operations. Because of the fluctuation of the variance rij,n of the time and frequency, the marginal distribution of the generative model (9) over j becomes a super-Gaussian distribution, which can be used for independence-based BSS.

The local distribution p(yij,n) is circularly symmetric in the complex plane, and the probability only depends on the power |yij,n|2. For this reason, the variance rij,n corresponds to the expectation value of the power spectrum |yij,n|2, namely, rij,n=E[|yij,n|2]. In addition, if we assume that the source spectrogram yij,n consists of K components cij,nk, namely, \(y_{ij,n} = {\sum \nolimits }_{k} c_{ij,nk}\), the generative model of cij,nk also becomes the complex Gaussian distribution because of the stable property as follows:

Note that the variances in p(yij,n) and p(cij,nk) are \(r_{ij,n}={\sum \nolimits }_{k} t_{ik,n}v_{kj,n}\) and tik,nvkj,n, respectively, and they correspond to the expectation values of |yij,n|2 and |cij,nk|2 as rij,n=E[|yij,k|2] and tik,nvkj,n=E[|cij,nk|2], respectively. Even if \(y_{ij,n}={\sum \nolimits }_{k} c_{ij,nk}\), the additivity of the power spectra does not hold (\(|y_{ij,n}|^{2}\neq {\sum \nolimits }_{k} |c_{ij,nk}|^{2}\)) because of the phase cancelation. However, (9) and (11) mean that the additivity of expectations tik,nvkj,n=E[|cij,nk|2] is satisfied as \(r_{ij,n}={\sum \nolimits }_{k} t_{ik,n}v_{kj,n}\) because of the stable property in Gaussian distribution. Therefore, the generative model (9) theoretically justifies to linearly decompose the power spectrogram |yij,n|2 into K nonnegative parts tik,nvkj,n. This advantage was extended to a more general domain in [42] using an α-stable distribution, which is a distribution family ensuring the stable property. When α=2, α-stable distribution is equal to Gaussian distribution (9) and the additivity of power spectra holds in the expectation sense. When α=1, α-stable distribution converges to Cauchy distribution, which ensures the additivity of amplitude spectra in the expectation sense [38].

2.3.2 Objective function and update rules

The objective function of ILRMA is the negative log-likelihood function of the observed signal x ij and can be obtained from (9) by assuming independence between all sources as

where X={X1,⋯,X M } and Y={Y1,⋯,Y N } are the set of the observed and estimated signals and the independence between sources, \(p(\mathsf {Y}) = \prod _{n} p(\boldsymbol {Y}_{n})\), is assumed. The first and third terms in (13) correspond to the objective function in time-varying Gaussian IVA [21], and the second and third terms correspond to the objective function in IS-NMF [29]. The task of the ILRMA algorithm is to minimize the objective function \({\mathcal {L}}\) w.r.t. T n , V n , and W i .

For the optimization of the demixing matrix W i , similar to IVA, IP can be used for minimizing \(\mathcal {L}\). The update rules based on IP are expressed as follows:

where e n denotes the N×1 unit vector with the nth element equal to unity. By iterating these algorithms, the demixing matrix W i is updated so that the objective function (14) decreases. Note that IP does not include any step-size parameter in its update rules. Regarding the NMF variables T n and V n , the following convergence-guaranteed update rules based on the MM algorithm have been derived [50]:

From the above, the objective function can be efficiently optimized by iterating the update rules (16)–(22). However, a scale ambiguity exists between W i and rij,n because both of them can determine the scale of the separated signal yij,n. Therefore, W i or rij,n has a risk of diverging during the optimization. To avoid this problem, the following normalization should be applied at each iteration:

where λ n is an arbitrary sourcewise normalization coefficient such as the sourcewise average power \(\lambda _{n} = \left [ (IJ)^{-1} {\sum \nolimits }_{i,j} |y_{ij,n}|^{2} \right ]^{\frac {1}{2}}\). These normalizations do not change the value of the objective function (13). The scale of the separated signal yij,n can be restored by applying the following back-projection technique [51] after the optimization:

where \(\hat {\boldsymbol {y}}_{ij,n}=(\hat {y}_{ij,n1}~\cdots ~\hat {y}_{ij,nM})^{\mathrm {T}}\) is a separated source image whose scale is fitted to the observed signals at each microphone and ∘ denotes the Hadamard product (entrywise multiplication). The detailed implementation can be found in [52].

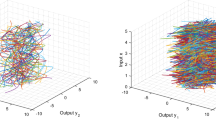

Figure 3 shows the separation principle of ILRMA. When the original sources have a low-rank spectrogram |S n |.2, the spectrogram of their mixture, |X m |.2, should be more complicated, where the rank of |X m |.2 should be greater than that of |S n |.2. On the basis of this assumption, in ILRMA, the low-rank constraint for each estimated spectrogram |Y n |.2 is introduced by employing NMF. The demixing matrix W i is estimated so that the spectrogram of the estimated signal |Y n |.2 becomes a low-rank matrix modeled by T n V n , whose rank is at most K. The estimation of W i , T n , and V n can consistently be carried out by minimizing (13) in a fully blind manner. ILRMA is theoretically equivalent to conventional MNMF only when the rank-1 spatial model (4) is assumed, which yields a stable and computationally efficient algorithm for ILRMA. This issue has been well discussed in [34, 35].

3 Proposed generalization of ILRMA

3.1 Motivation and strategy

The conventional ILRMA described in Section 2.3 is based on the isotropic complex Gaussian distribution (9) with a time-frequency-varying variance rij,n. For independence-based BSS, non-Gaussianity of the source signals is required for the separation, and the model (9) relies on only the fluctuation of the variance rij,n. If the variance rij,n is a constant value for all i and j, the model (9) becomes completely Gaussian and the independence-based BSS collapses because the ICA algorithm cannot distinguish multiple Gaussian sources. Therefore, it is worth generalizing the distribution in ILRMA to a more flexible non-Gaussian source model. In fact, several approaches based on a non-Gaussian distribution with a time-frequency-varying parameter, such as t-NMF, have been proposed, and it has been reported that NMF audio source modeling based on a non-Gaussian distribution provides better separation performance [39]. From the IVA side, the source distribution has also been generalized by employing the GGD in many studies [16–20], which gave more accurate BSS results.

For the reasons mentioned above, in this section, we propose two generalizations of the source distribution (generative model) in ILRMA using heavy-tailed distributions: the isotropic complex GGD and the isotropic complex Student’s t distribution. The former is a natural extension of the conventional generative model (9) and has often been used for the generalization of Laplace IVA or time-varying Gaussian IVA as GGD-IVA. The GGD has a shape parameter that controls the super- or sub-Gaussianity. In particular, the GGD includes Laplace and Gaussian distributions as special cases. Since most audio sources follow super-Gaussian distributions, in this paper, we only focus on GGD-ILRMA with a super-Gaussian region.

The latter generalization was inspired by a recently developed framework [42] that ensures the stable property of complex-valued random variables, i.e., audio modeling based on an α-stable distribution. In this model, similar to IS-NMF (11), the decomposition of a complex-valued spectrogram into several nonnegative parts is theoretically justified by the stable property of this distribution family. Student’s t distribution has a degree-of-freedom parameter that determines the shape of the distribution and its super-Gaussianity. Similar to the GGD, Student’s t distribution includes Cauchy and Gaussian distributions as special cases, which are also special cases of the α-stable distribution. Therefore, NMF source modeling (decomposition of complex-valued spectrogram Y n ) in t-ILRMA is partially justified when the Gaussian or Cauchy distribution is assumed, which is theoretically preferable for audio signal processing.

In addition, we introduce a new domain parameter for NMF modeling in GGD- and t-ILRMA because the generative model of a spectrogram strongly depends on the data domain, such as the selection of the amplitude- or power-domain spectrogram to be used. By controlling both the generative model and the modeling domain of data, we can find a suitable statistical assumption for the audio BSS problem.

3.2 ILRMA based on GGD

3.2.1 Generative model and objective function in GGD-ILRMA

In GGD-ILRMA, we assume the isotropic complex GGD as the source generative model, which is independently defined in each time-frequency slot as follows:

where σij,n is the time-frequency-varying scale parameter, Γ(·) is a gamma function, and p is the domain parameter in the NMF modeling. The distribution (28) is depicted in Fig. 4a. The p.d.f. becomes identical to (9) when β=2. For β=1, (28) corresponds to the complex Laplace distribution. Similar to (9), the probability of (28) only depends on |yij,n|, and the phase of yij,n is uniformly distributed. From (28), the objective function in GGD-ILRMA can be obtained as follows by assuming independence between sources:

It is obvious that GGD-ILRMA (30) coincides with the conventional ILRMA (13) when β=p=2.

3.2.2 Derivation of update rules for GGD-ILRMA

First, we derive the iterative update rules for obtaining W i that optimizes (30). Since it is difficult to directly calculate the partial derivative of (30) w.r.t. wi,n, we use an MM algorithm, i.e., we minimize the majorization function (upper-bound function) instead of the original objective function. This approach can indirectly minimize the original function (30). Unlike the conventional ILRMA (13), GGD-ILRMA (30) includes the term \(|y_{ij,n}|^{\beta } = \left |\boldsymbol {w}_{i,n}^{\mathrm {H}}\boldsymbol {x}_{ij}\right |^{\beta }\). If we bound this term by |yij,n|2, the MM-algorithm-based efficient optimization, IP, can be used for GGD-ILRMA because the objective function becomes identical to the conventional ILRMA (13) w.r.t. wi,n. To achieve this, we use the following inequality:

to design a majorization function of (30), where γij,n>0 is an auxiliary variable and the equality of (31) holds if and only if

Note that the inequality (31) holds only for 0<β<2, and the other values of β are beyond the scope of this paper. By applying (31) to (30), the majorization function of (30) can be designed as

where \({\mathcal {C}}_{1}\) includes the constant terms that do not depend on wi,n. Since (33) has the same form as the conventional ILRMA (14) w.r.t. wi,n, we can apply IP to the majorization function (33). The update rules for wi,n are derived as (34) with (32) and (17)–(19), where (34) coincides with (16) when β=p=2.

Next, we derive the update rules for T n and V n . They can also be derived by designing a majorization function and applying the MM algorithm. Since the term \(\left ({\sum \nolimits }_{k} t_{ik,n}v_{kj,n}\right)^{-\frac {\beta }{p}}\) in (30) is always convex for all values of β>0 and p>0, we can bound this term using Jensen’s inequality as

where δij,nk>0 is an auxiliary variable that satisfies \({\sum \nolimits }_{k} \delta _{ij,nk}=1\). Also, the term \(\log {\sum \nolimits }_{k} t_{ik,n}v_{kj,n}\) in (30) can be bounded by the tangent-line inequality as

where εij,n>0 is an auxiliary variable. The equalities of (35) and (36) hold if and only if

respectively. By applying (35) and (36) to (30), the majorization function of (30) can be designed as

where \({\mathcal {C}}_{2}\) includes the constant terms that do not depend on tik,n or vkj,n. By setting the partial derivative of (39) w.r.t. tik,n to zero, we have

The solution of this equation is

Then, we can obtain the following update rule for tik,n by substituting (37) and (38) into (40):

Similar to (41), we can obtain the update rules for vkj,n as

These algorithms can be interpreted as NMF based on the GGD (hereafter called GGD-NMF). Since the derivations of the update rules are based on the MM algorithm, they ensure the monotonic decrease in the objective function in each iteration.

3.3 ILRMA based on Student’s t distribution

3.3.1 Generative model and objective function in t-ILRMA

In t-ILRMA, the isotropic complex Student’s t distribution is independently assumed in each time-frequency slot as the following source generative model:

where ν>0 is the degree-of-freedom parameter that controls the super-Gaussianity of Student’s t distribution and σij,n is defined as (29). The distribution (43) is depicted in Fig. 4b. Similar to (28), this p.d.f. also becomes identical to (9) when ν→∞, and the probability of (28) does not depend on the phase of yij,n. For ν=1, (43) corresponds to the complex Cauchy distribution. The objective function of t-ILRMA can be obtained from (43) as

When ν → ∞ and p = 2, (44) coincides with (13).

3.3.2 Derivation of update rules for t-ILRMA

Similarly in Section 3.2.2, we first derive the iterative update rules for W i that optimizes (44) using the MM algorithm. In the case of t-ILRMA, the objective function (44) includes the term \(|y_{ij,n}|^{2}= \left |\boldsymbol {w}_{i,n}^{\mathrm {H}}\boldsymbol {x}_{ij}\right |^{2}\) inside of the logarithm function. Therefore, we bound this term by a linear function of |yij,n|2 using the following tangent-line inequality:

where ζij,n>0 is an auxiliary variable and the equality of (45) holds if and only if

By applying (45) to (44), the majorization function of (44) can be designed as

where \({\mathcal {C}}_{3}\) includes the constant terms that do not depend on wi,n. Since (48) has the same form as the conventional ILRMA (14) w.r.t. wi,n, we can apply IP to the majorization function (48). The update rules for wi,n are derived as (49) with (46) and (17)–(19), where (49) coincides with (16) when ν→∞ and p=2.

Next, we derive the update rules for T n and V n in the same manner as for GGD-NMF. Since the term \(\left ({\sum \nolimits }_{k} t_{ik,n}v_{kj,n}\right)^{-\frac {2}{p}}\) in (47) is always convex for any value of p, we can bound this term in the same manner as (35), i.e.,

where ηij,nk>0 is an auxiliary variable that satisfies \({\sum \nolimits }_{k} \eta _{ij,nk}=1\). The equality of (50) holds if and only if

Also, the term \(\log {\sum \nolimits }_{k} t_{ik,n}v_{kj,n}\) in (47) can be bounded by (36). By applying (50) and (36) to (47), we can design a further majorization function of (47) as follows:

where \({\mathcal {C}}_{4}\) includes the constant terms that do not depend on tik,n or vkj,n. By setting the partial derivative of (52) w.r.t. tik,n to zero, we have

The solution of this equation is obtained as

Then, we can obtain the following update rule for tik,n by substituting (51) and (38) into (54):

where

Similar to (55), we can obtain the update rules for vkj,n as

These update rules are similar to those in t-NMF [39], but they include the new domain parameter p. Similar to GGD-ILRMA, all the derivations of the update rules are based on the MM algorithm, thus ensuring their theoretical convergence.

3.4 Relationship between GGD- and t-ILRMA

The update rules for tik,n and vkj,n (the GGD- and t-NMF parts in GGD- and t-ILRMA, respectively) have an interesting relationship. To clarify this issue, we here interpret these two NMF models in relation to the IS-NMF used in the original ILRMA. In GGD- and t-NMF, we introduced a new parameter p that determines the signal domain of the low-rank modeling, whereas IS-NMF is typically applied to the observed power spectrogram (p=2) [29]. To fill the gap in the formulation between IS-NMF and GGD- or t-NMF, we use the following generalized version of the update rules for IS-NMF:

where b is a new exponent parameter. Note that (58) and (59) with b=0.5 were originally derived on the basis of the MM algorithm [50], then the update rules with b=1 were derived using the majorization-equalization (ME) algorithm [53]. Recently, we have proven that (58) and (59) with any value of b in the range (0,1] can be interpreted as valid update rules of IS-NMF, which are obtained by applying the parametric ME algorithm to the objective function in IS-NMF, and can be used for IS-NMF or ILRMA without losing the theoretical convergence [54]. This parameter b controls the optimization speed of the NMF variables tik,n and vkj,n, and b=1 provides the fastest convergence in IS-NMF.

For GGD-NMF, (41) can be reformulated as

where

The update rule of GGD-NMF (60) corresponds to that of IS-NMF (58) by assuming the observed signal as (61), which is the “geometric mean” of the data |yij,n| and the low-rank model σij,n with a ratio of β/p to 1−(β/p). In contrast, for t-NMF, (55) can also be rewritten as

As mentioned in [39], the update rule of t-NMF (62) corresponds to that of IS-NMF (58) by assuming the observed signal to be (63), which is the “harmonic mean” of |yij,n|2 and \(\sigma _{ij,n}^{2}\) with a ratio of ν to two. The same reformulation can be found for the variable vkj,n.

These facts mean that both NMF algorithms approximate the virtual observation zij,n by the low-rank model σij,n in the ISNMF sense. Since zij,n consists of the geometric or harmonic mean of the real observation |yij,n| and the current low-rank model σij,n, low-rankness of the estimated (updated) model σij,n tends to be more emphasized compared with the ISNMF decomposition using only the observation |yij,n|. In other words, the geometric or harmonic mean in zij,n prevents σij,n from an overfitting to |yij,n| by ignoring sparse outliers in |yij,n|, which enhances the low-rank decomposition. In (61) or (63), the shape parameter β or ν controls the intensity of such low-rank enhancement in NMF decomposition. However, intriguingly, the domain parameter p also affects the estimation of the low-rank model σij,n. In GGD-NMF (61), by setting p<β, the geometric mean corresponds to the point externally dividing |yij,n| and σij,n, which mitigates the intensity of the low-rank enhancement mentioned above. Also, in t-NMF, p<2 causes the same behavior because the term \(\sigma _{ij,n}^{p-2}\) exists in (63), where the inverse of \(\sigma _{ij,n}^{2-p}\) (2−p>0) mitigates the low-rankness.

In summary, as shown in Table 1, smaller β and ν, which correspond to the sparse signal model, can inject the low-rank nature in GGD- and t-ILRMA, whereas a smaller p mitigates the property; the optimal balance among them will be discussed later on the basis of experimental evaluations. For ILRMA-based BSS, we can expect that such low-rank enhancement in NMF leads to the more accurate estimation of W i . This is because the estimation of the low-rank model σij,n becomes robust against outliers in the separated signal |yij,n|, and we can correctly capture the inherent spectral parts in the time-frequency structure of each source.

In addition, it is worth mentioning that the exponent value of the NMF update rules, b, is also important for ILRMA. It has been experimentally revealed that a smaller value of b is preferable for achieving better separation performance, although the optimized speed of rij,n becomes slow. This may be to avoid trapping at a poor local minimum in the early and middle stages of the iteration in ILRMA because the optimization balance between W i and rij,n is significant for converging toward a better solution. In GGD- or t-ILRMA, the exponent value in (60) or (62) is defined as p/(β+p) or p/(p+2), respectively. These values become small when p is small and β is large, which may result in a better separation result.

4 Results and discussion

To evaluate our proposed algorithms, we conducted some BSS experiments using music and speech mixtures. We first compared various conventional methods using observed signals in the case of two sources and two microphones. Then, we compared the conventional and proposed ILRMA in a more difficult situation with three sources and three microphones.

4.1 Dataset

We artificially produced monaural dry music sources of the four melody parts depicted in Fig. 5 using a YAMAHA MU-1000 PCM-based MIDI tone synthesizer, where several musical instruments were chosen to play these melody parts as shown in Table 2 [55]. The sources were selected to construct typical combinations of instruments with different melody parts (because the sources that simultaneously play the same melody are rare), where only the six combinations, Music 1–Music 6, were adopted for the sake of avoiding combinatorial explosion. For the speech signals, we used the monaural dry speech sources from the source separation task in SiSEC2011 [56] whose data names are dev1_female4 and dev1_male4 [57]. The detailed conditions of these speech signals are described in [56, 57].

4.2 BSS experiment with two sources

4.2.1 Conditions

In this experiment, we compared the seven methods shown in Fig. 1, namely, Laplace IVA (optimized by IP) [49], GGD-IVA (optimized by IP) [17], MNMF (based on a multivariate complex Gaussian distribution) [32], t-MNMF [40], ILRMA (based on a complex Gaussian distribution with a time-frequency-varying variance) [34], GGD-ILRMA, and t-ILRMA. The dry sources used in this experiment are shown in Table 3. To simulate a reverberant mixture, the mixture signals were produced by convoluting the impulse response E2A, which was obtained from the RWCP database [58], with two spatial arrangements, E2A_1 and E2A_2. The recording conditions of the impulse responses in E2A_1 and E2A_2 are depicted in Fig. 6. The other conditions are shown in Table 4. As the evaluation score, we used the improvement of the signal-to-distortion ratio (SDR) [59], which indicates the overall separation quality.

4.2.2 Results using fixed parameters

Figures 7, 8, 9, and 10 show examples of the average SDR improvements for Music 1, Music 4, Speech 2, and Speech 4, respectively, with the E2A_1 spatial arrangement. Ten trials with different random seeds were performed for all the methods. Note that conventional ILRMA and GGD-ILRMA with β=p=2 are the same method. Also, for GGD-IVA and t-MNMF, the results are shown for the best parameter settings β and ν, as described in the caption of each figure. Similar to the E2A_1 results, we show examples of results for Music 2, Music 3, Speech 1, and Speech 3 with the E2A_2 spatial arrangement in Figs. 11, 12, 13, and 14, respectively. Table 5 indicates the overall average results for all music and speech signals with E2A_1 and E2A_2, respectively, with the best parameter settings. From these results, we can confirm that the conventional and proposed ILRMA mostly outperform the other methods and that there are several settings of p and β or ν that outperform the conventional ILRMA based on the Gaussian distribution. In particular, the proposed methods with p=1.0 or p=0.5 often outperform the same methods with other values of p. However, regarding the parameters β and ν, smaller values produce poor separation results except for t-ILRMA in Fig. 8 (Music 4). This is because the NMF source model with the heavy-tailed distribution excessively enhances the low-rankness in the early stage of the iterative optimization, which can cause the serious problem of the sourcewise NMF model incorrectly capturing the spectrogram of the mixture signal by ignoring the important components for discriminating the sources, and the estimated signals become a distorted mixture signal and an artificial residue.

4.2.3 Results using parameter tempering

To solve the problem described in Section 4.2.2, we applied a tempering approach to the parameters in GGD- or t-ILRMA. The detailed tempering process is shown in Fig. 15. In the first half of the optimization, we perform GGD-ILRMA with β=2 and p=1. Then, the NMF source model T n V n is retrained using a temporary estimated signal. After that, ILRMA with the desired distribution (desired parameters pT and βT or νT) is performed using the pretrained W i , T n , and V n . The intermediate NMF process is based on the same parameters (pT and βT or νT) as the subsequent ILRMA in the second half of the optimization. This can be considered as a binary tempering approach that avoids overfitting of the source model to the mixture signal. Note that we also attempted a more precise tempering approach involving continuously changing the parameters in every iteration, but the binary tempering approach shown in Fig. 15 achieved the most accurate and stablest results. The reason why we started from not p=2 but p=1 is that a small exponent value, p/(β+p) in (41) and (42) or p/(p+2) in (55) and (57), in the NMF update rules provides better separation as revealed in [54], where the exponent value monotonically decreases as a value of p decreases. Indeed, the results in Section 4.2.2 showed outstanding performance for p=1 rather than p=2.

Figures 16, 17, 18, 19, 20, 21, 22, and 23 show examples of results with the proposed tempering approach, where the signals correspond to Figs. 7, 8, 9, 10, 11, 12, 13, and 14 with parameter tempering, and Table 6 shows the overall average results for all the signals. The results show that the parameter tempering improves the separation, particularly in ILRMA with heavy-tailed source models. Also, it further improves the results obtained using fixed values of the parameters. In total, the proposed generalization of ILRMA can achieve approximately 1.2 dB improvement in the SDR compared with the conventional ILRMA with the Gaussian model, which is a significant gain in BSS tasks with two sources.

4.2.4 Performance for various signal lengths

In BSS framework, the length of observed signal is important to achieve the better separation performance. This is because the accuracy of statistical estimation decreases when the number of time frames J is insufficient [60, 61]. In the extreme case, the demixing matrix W i cannot be updated by IP when J=1 because the rank of Ui,n in (16), Gi,n in (34), or Hi,n in (49) becomes unity. However, it is not clarified whether the heavy-tailed source distribution provides more robust statistical estimation for fewer time frames or not. Thus, in this subsection, we experimentally compare the separation performance of ILRMA, GGD-ILRMA, and t-ILRMA for the observed signals with fewer and more time frames.

To simulate the short and long source signals, we utilized the dry sources described in Table 3. As the short dry sources, the music and speech signals were trimmed only to the former half, and their signal lengths were 2.5 s (music) and 5.0 s (speech), respectively. In contrast, the long music and speech signals were produced by repeating the entire length of the dry sources twice, namely, the signal lengths of the long music and speech dry sources become 10.0 s (music) and 20.0 s (speech), respectively. These dry sources were convoluted with E2A_1 to produce the observed mixture signal with two sources, where the combinations of dry sources were the same as those described in Table 3. The other experimental conditions were the same as those in Section 4.2.2.

Figures 24 and 25 show the results of Music 1 for shorter and longer signals, respectively. Also, Table 7 shows the overall average results for all the signals. By comparing these figures and Fig. 7 (the results of Music 1 with the original length), we can confirm that the separation performance of all the methods improves in proportion to the number of time frames J. Similarly to ILRMA, GGD- and t-ILRMA also suffer from the degradation of separation performance depending on the decrease of J regardless of the heavy tail property.

4.3 BSS experiment with three sources

To emphasize the advantage of the proposed methods, we investigated a more difficult situation with three sources. In this experiment, for the sake of simplicity, we only compared the conventional ILRMA and the proposed GGD- and t-ILRMA. The used dry sources are shown in Table 8, which were convoluted with the impulse response depicted in Fig. 26. The other conditions were the same as those in Section 4.2.

Table 9 shows the overall average results of each method. Similar to the previous results, the proposed methods outperform the conventional ILRMA, and the tempering approach slightly improves the quality of separation compared with GGD- or t-ILRMA with fixed parameters.

4.4 Comparison of computational times

To demonstrate the optimization efficiency of ILRMA, we compared the computational times of Laplace IVA, GGD-IVA, MNMF, t-MNMF, ILRMA, GGD-ILRMA, and t-ILRMA. The update calculation for the NMF parameters in each algorithm was almost the same, but the estimation of the spatial parameter (W i for ILRMA-based methods and the spatial covariance for MNMF-based methods) was different. Although ILRMA-based methods require one inverse of W i Ui,n for each i and n, MNMF-based methods require J inverses and two eigenvalue decompositions of the M×M matrix. Table 10 shows relative computational times normalized by that of Laplace IVA based on IP [49], where the conditions are the same as in Table 4 and we used MATLAB 9.2 (64-bit) with an AMD Ryzen 7 1800X (8 cores and 3.6 GHz) CPU. From this table, we can confirm that the computational time of ILRMA-based methods is not significantly larger than that of IVA, whereas that of MNMF-based methods is significantly larger.

5 Conclusions

In this paper, we proposed two generalizations of the source distribution assumed in ILRMA that introduce a heavy-tailed property by using the GGD and Student’s t distribution. The GGD can be considered as a natural extension of the conventional Gaussian source model, and Student’s t distribution partially satisfies the stable property of complex-valued random variables, which is desirable for NMF-based low-rank decomposition. We derived efficient optimization algorithms for GGD- and t-ILRMA, which ensure a monotonic decrease in the objective function and provide faster computation than existing MNMF-based BSS methods. Also, we revealed an interesting relationship between GGD- and t-NMF: GGD-NMF is equivalent to IS-NMF upon assuming the geometric mean of the data and the low-rank model as an observation, whereas t-NMF corresponds to the same algorithm with the harmonic mean of the data and the low-rank model as previously mentioned. These properties lead to more accurate parameter estimation in an ILRMA-based BSS framework, resulting in higher separation accuracy than the conventional ILRMA with the Gaussian source distribution. From the experiments, it is confirmed that the proposed generalized ILRMA improves the separation accuracy, especially for the music mixture signals. However, the improvement for speech mixture signals is still limited. This is because typical speech sources do not have an apparent low-rank time-frequency structure, and NMF-based source model in ILRMA cannot capture the precise spectral structures in speech sources even if the source model is generalized by the heavy-tailed distributions. The better modeling for speech sources remains as a future work.

Abbreviations

- BSS:

-

Blind source separation

- FDICA:

-

Frequency-domain independent component analysis

- GGD:

-

Generalized Gaussian distribution

- GGD-ILRMA:

-

Independent low-rank matrix analysis based on generalized Gaussian distribution

- GGD-IVA:

-

Independent vector analysis based on spherical multivariate generalized Gaussian distribution

- ICA:

-

Independent component analysis

- ILRMA:

-

Independent low-rank matrix analysis

- IP:

-

Iterative projection

- IS-NMF:

-

Nonnegative matrix factorization based on Itakura–Saito divergence

- IVA:

-

Independent vector analysis

- Laplace IVA:

-

Independent vector analysis based on spherical multivariate Laplace distribution

- MM:

-

Majorization-minimization

- MNMF:

-

Multichannel nonnegative matrix factorization

- NMF:

-

Nonnegative matrix factorization

- p.d.f.:

-

Probability density function

- SDR:

-

Signal-to-distortion ratio STFT: Short-time Fourier transform

- t-ILRMA:

-

Independent low-rank matrix analysis based on Student’s t distribution

- t-MNMF:

-

Multichannel nonnegative matrix factorization based on Student’s t distribution

- t-NMF:

-

Nonnegative matrix factorization based on Student’s t distribution

- Time-varying Gaussian IVA:

-

Independent vector analysis based on Gaussian distribution having a time-varying variance

References

P Bofill, M Zibulevsky, Underdetermined blind source separation using sparse representations. Signal Process.81(11), 2353–2362 (2001).

S Araki, H Sawada, R Mukai, S Makino, Underdetermined blind sparse source separation for arbitrarily arranged multiple sensors. Signal Process.87(8), 1833–1847 (2007).

L Zhen, D Peng, Z Yi, Y Xiang, P Chen, Underdetermined blind source separation using sparse coding. IEEE Trans. Neural Netw. Learn. Syst.28(12), 3102–3108 (2017).

P Comon, Independent component analysis, a new concept?Signal Process.36(3), 287–314 (1994).

P Smaragdis, Blind separation of convolved mixtures in the frequency domain. Neurocomputing. 22(1), 21–34 (1998).

S Kurita, H Saruwatari, S Kajita, K Takeda, F Itakura, in Proc. IEEE Int. Conf. Acoust., Speech, Signal Process. Evaluation of blind signal separation method using directivity pattern under reverberant conditions, (2000), pp. 3140–3143.

H Sawada, R Mukai, S Araki, S Makino, in Proc. IEEE Int. Conf. Acoust., Speech, Signal Process. Convolutive blind source separation for more than two sources in the frequency domain, (2004), pp. 885–888.

H Saruwatari, T Kawamura, T Nishikawa, A Lee, K Shikano, Blind source separation based on a fast-convergence algorithm combining ICA and beamforming. IEEE Trans. Audio Speech Lang. Process.14(2), 666–678 (2006).

N Murata, S Ikeda, A Ziehe, An approach to blind source separation based on temporal structure of speech signals. Neurocomputing. 41(1–4), 1–24 (2001).

H Sawada, R Mukai, S Araki, S Makino, A robust and precise method for solving the permutation problem of frequency-domain blind source separation. IEEE Trans. Speech Audio Process.12(5), 530–538 (2004).

H Sawada, S Araki, S Makino, in Proc. IEEE Int. Symp. Circuits Syst. Measuring Dependence of Bin-wise Separated Signals for Permutation Alignment in Frequency-Domain BSS, (2007), pp. 3247–3250.

A Hiroe, in Proc. Int. Conf. Independent Compon. Anal. Blind Source Separation. Solution of permutation problem in frequency domain ICA using multivariate probability density functions, (2006), pp. 601–608.

T Kim, T Eltoft, T-W Lee, in Proc. Int. Conf. Independent Compon. Anal. Blind Source Separation. Independent vector analysis: an extension of ICA to multivariate components, (2006), pp. 165–172.

T Kim, HT Attias, S-Y Lee, T-W Lee, Blind source separation exploiting higher-order frequency dependencies. IEEE Trans. Audio Speech Lang. Process.15(1), 70–79 (2007).

G Box, G Tiao, Bayesian Inference in Statistical Analysis (Addison Wesley, Reading, Mass, 1973).

T Itahashi, K Matsuoka, Stability of independent vector analysis. Signal Process.92(8), 1809–1820 (2012).

N Ono, in Proc. Asia-Pacific Signal and Info. Process. Assoc. Annual Summit and Conf. Auxiliary-function-based independent vector analysis with power of vector-norm type weighting functions, (2012).

M Anderson, GS Fu, R Phlypo, T Adalı, in Proc. IEEE Int. Conf. Acoust., Speech, Signal Process. Independent vector analysis, the Kotz distribution, and performance bounds, (2013), pp. 3243–3247.

Y Liang, J Harris, SM Naqvi, G Chen, JA Chambers, Independent vector analysis with a generalized multivariate Gaussian source prior for frequency domain blind source separation. Signal Process.105:, 175–184 (2014).

Z Boukouvalas, GS Fu, T Adalı, in Proc. Annual Conf. Info. Sci. and Syst. An efficient multivariate generalized Gaussian distribution estimator: application to IVA, (2015).

T Ono, N Ono, S Sagayama, in Proc. IEEE Int. Conf. Acoust., Speech, Signal Process. User-guided independent vector analysis with source activity tuning, (2012), pp. 2417–2420.

DD Lee, HS Seung, Learning the parts of objects by non-negative matrix factorization. Nature. 401(6755), 788–791 (1999).

DD Lee, HS Seung, in Proc. Neural Info. Process. Syst. Algorithms for non-negative matrix factorization, (2000), pp. 556–562.

T Virtanen, Monaural sound source separation by nonnegative matrix factorization with temporal continuity and sparseness criteria. IEEE Trans. Audio, Speech, Lang. Process.15(3), 1066–1074 (2007).

P Smaragdis, B Raj, M Shashanka, in Proc. Int. Conf. Independent Compon. Anal. Signal Separation. Supervised and semi-supervised separation of sounds from single-channel mixtures, (2007), pp. 414–421.

A Ozerov, C Févotte, M Charbit, in Proc. IEEE Workshop Applicat. Signal Process. Audio Acoust. Factorial scaled hidden Markov model for polyphonic audio representation and source separation, (2009), pp. 121–124.

D Kitamura, H Saruwatari, K Yagi, K Shikano, Y Takahashi, K Kondo, Music signal separation based on supervised nonnegative matrix factorization with orthogonality and maximum-divergence penalties. IEICE Trans. Fundam. Electron. Commun. Comput. Sci.E97-A(5), 1113–1118 (2014).

D Kitamura, H Saruwatari, H Kameoka, Y Takahashi, K Kondo, S Nakamura, Multichannel signal separation combining directional clustering and nonnegative matrix factorization with spectrogram restoration. IEEE/ACM Trans. Audio, Speech, Lang. Process.23(4), 654–669 (2015).

C Févotte, N Bertin, J-L Durrieu, Nonnegative matrix factorization with the Itakura–Saito divergence. With application to music analysis. Neural Comput.21(3), 793–830 (2009).

A Ozerov, C Févotte, Multichannel nonnegative matrix factorization in convolutive mixtures for audio source separation. IEEE Trans. Audio, Speech, Lang. Process.18(3), 550–563 (2010).

H Kameoka, T Yoshioka, M Hamamura, JL Roux, K Kashino, in Proc. Int. Conf. Latent Variable Anal. Signal Separation. Statistical model of speech signals based on composite autoregressive system with application to blind source separation, (2010), pp. 245–253.

H Sawada, H Kameoka, S Araki, N Ueda, Multichannel extensions of non-negative matrix factorization with complex-valued data. IEEE Trans. Audio, Speech, Lang. Process.21(5), 971–982 (2013).

D Kitamura, N Ono, H Sawada, H Kameoka, H Saruwatari, in Proc. IEEE Int. Conf. Acoust., Speech, Signal Process. Efficient multichannel nonnegative matrix factorization exploiting rank-1 spatial model, (2015), pp. 276–280.

D Kitamura, N Ono, H Sawada, H Kameoka, H Saruwatari, Determined blind source separation unifying independent vector analysis and nonnegative matrix factorization. IEEE/ACM Trans. Audio, Speech, Lang. Process.24(9), 1626–1641 (2016).

D Kitamura, N Ono, H Sawada, H Kameoka, H Saruwatari, in Audio Source Separation, ed. by S Makino. Determined blind source separation with independent low-rank matrix analysis (SpringerCham, 2018), pp. 125–155. https://link.springer.com/chapter/10.1007%2F978-3-319-73031-8_6#citeas.

C Févotte, SJ Godsill, A Bayesian approach for blind separation of sparse sources. IEEE Trans. Audio, Speech, Lang. Process.14(6), 2174–2188 (2006).

S Leglaive, R Badeau, G Richard, in Proc. Eur. Signal Process. Conf. Semi-blind Student’s t source separation for multichannel audio convolutive mixtures, (2017).

A Liutkus, D FitzGerald, R Badeau, in Proc. IEEE Workshop Appl. Signal Process. Audio Acoust. Cauchy nonnegative matrix factorization, (2015).

K Yoshii, K Itoyama, M Goto, in Proc. IEEE Int. Conf. Acoust., Speech, Signal Process. Student’s t nonnegative matrix factorization and positive semidefinite tensor factorization for single-channel audio source separation, (2016), pp. 51–55.

K Kitamura, Y Bando, K Itoyama, K Yoshii, in Proc. Int. Workshop Acoust. Signal Enh. Student’s t multichannel nonnegative matrix factorization for blind source separation, (2016).

G Samorodnitsky, MS Taqqu, Stable Non-Gaussian Random Processes: Stochastic Models with Infinite Variance (Chapman & Hall/CRC Press, Florida, 1994).

A Liutkus, R Badeau, in Proc. IEEE Int. Conf. Acoust., Speech, Signal Process. Generalized Wiener filtering with fractional power spectrograms, (2015), pp. 266–270.

S Leglaive, U Simsekli, A Liutkus, R Badeau, G Richard, in Proc. IEEE Int. Conf. Acoust., Speech, Signal Process. Alpha-stable multichannel audio source separation, (2017), pp. 576–580.

S Mogami, D Kitamura, Y Mitsui, N Takamune, H Saruwatari, N Ono, in Proc. IEEE Int. Workshop Mach. Learn. Signal Process. Independent low-rank matrix analysis based on complex Student’s t-distribution for blind audio source separation, (2017).

NQK Duong, E Vincent, R Gribonval, Under-determined reverberant audio source separation using a full-rank spatial covariance model. IEEE Trans. Audio Speech Lang. Process.18(7), 1830–1840 (2010).

D Kitamura, N Ono, H Sawada, H Kameoka, H Saruwatari, in Proc. Eur. Signal Process. Conf. Relaxation of rank-1 spatial constraint in overdetermined blind source separation, (2015), pp. 1271–1275.

DR Hunter, K Lange, Quantile regression via an MM algorithm. J. Comput. Graph. Stat.9(1), 60–77 (2000).

N Ono, S Miyabe, in Proc. Int. Conf. Latent Variable Anal. Signal Separation. Auxiliary-function-based independent component analysis for super-Gaussian sources, (2010), pp. 165–172.

N Ono, in Proc. IEEE Workshop Appl. Signal Process. Audio Acoust. Stable and fast update rules for independent vector analysis based on auxiliary function technique, (2011), pp. 189–192.

M Nakano, H Kameoka, JL Roux, Y Kitano, N Ono, S Sagayama, in Proc. IEEE Int. Workshop Mach. Learn. Signal Process. Convergence-guaranteed multiplicative algorithms for nonnegative matrix factorization with beta-divergence, (2010), pp. 283–288.

N Murata, S Ikeda, A Ziehe, An approach to blind source separation based on temporal structure of speech signals. Neurocomputing. 41(1–4), 1–24 (2001).

D Kitamura, Algorithms for Independent Low-rank Matrix Analysis.http://d-kitamura.net/pdf/misc/AlgorithmsForIndependentLowRankMatrixAnalysis.pdf. Accessed 27 Apr 2018.

C Févotte, J Idier, Algorithms for nonnegative matrix factorization with the β-divergence. Neural Comput.23(9), 2421–2456 (2011).

Y Mitsui, D Kitamura, N Takamune, H Saruwatari, Y Takahashi, K Kondo, in Proc. IEEE Int. Workshop Comput. Adv. Multi-Sensor Adaptive Process. Independent low-rank matrix analysis based on parametric majorization-equalization algorithm, (2017), pp. 98–102.

D Kitamura, Open Dataset: songKitamura. http://d-kitamura.net/en/dataset_en.htm. Accessed 27 Apr 2018.

S Araki, F Nesta, E Vincent, Koldovsky, Ź, G Nolte, A Ziehe, A Benichoux, in Proc. Int. Conf. Latent Variable Anal. Signal Separation. The 2011 signal separation evaluation campaign (SiSEC2011):-audio source separation, (2012), pp. 414–422.

Third Community-based Signal Separation Evaluation Campaign (SiSEC 2011). http://sisec2011.wiki.irisa.fr. Accessed 27 Apr 2018.

S Nakamura, K Hiyane, F Asano, T Nishiura, T Yamada, in Proc. Int. Conf. Lang. Res. Eval. Acoustical sound database in real environments for sound scene understanding and hands-free speech recognition, (2000), pp. 965–968.

E Vincent, R Gribonval, C Févotte, Performance measurement in blind audio source separation. IEEE Trans. Audio, Speech, Lang. Process.14(4), 1462–1469 (2006).

S Araki, R Mukai, S Makino, T Nishikawa, H Saruwatari, The fundamental limitation of frequency domain blind source separation for convolutive mixtures of speech. IEEE Trans. Speech and Audio Process.11(2), 109–116 (2003).

D Kitamura, N Ono, H Saruwatari, in Proc. Eur. Signal Process. Conf. Experimental analysis of optimal window length for independent low-rank matrix analysis, (2017), pp. 1210–1214.

Acknowledgements

The authors would like to thank the anonymous reviewers for their valuable comments and suggestions that helped improve the quality of this manuscript.

Funding

This work was partly supported by the ImPACT Program of Council for Science, SECOM Science and Technology Foundation, and JSPS KAKENHI Grant Numbers JP16H01735, JP17H06101, and JP17H06572.

Availability of data and materials

Not available online. Please contact author for data requests.

Author information

Authors and Affiliations

Contributions

All authors have contributed equally. All authors have read and approved the final manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare that they have no competing interests.

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Kitamura, D., Mogami, S., Mitsui, Y. et al. Generalized independent low-rank matrix analysis using heavy-tailed distributions for blind source separation. EURASIP J. Adv. Signal Process. 2018, 28 (2018). https://doi.org/10.1186/s13634-018-0549-5

Received:

Accepted:

Published:

DOI: https://doi.org/10.1186/s13634-018-0549-5