Abstract

Background

We completed a scoping review on the barriers and facilitators to use of systematic reviews by health care managers and policy makers, including consideration of format and content, to develop recommendations for systematic review authors and to inform research efforts to develop and test formats for systematic reviews that may optimise their uptake.

Methods

We used the Arksey and O’Malley approach for our scoping review. Electronic databases (e.g., MEDLINE, EMBASE, PsycInfo) were searched from inception until September 2014. Any study that identified barriers or facilitators (including format and content features) to uptake of systematic reviews by health care managers and policy makers/analysts was eligible for inclusion. Two reviewers independently screened the literature results and abstracted data from the relevant studies. The identified barriers and facilitators were charted using a barriers and facilitators taxonomy for implementing clinical practice guidelines by clinicians.

Results

We identified useful information for authors of systematic reviews to inform their preparation of reviews including providing one-page summaries with key messages, tailored to the relevant audience. Moreover, partnerships between researchers and policy makers/managers to facilitate the conduct and use of systematic reviews should be considered to enhance relevance of reviews and thereby influence uptake.

Conclusions

Systematic review authors can consider our results when publishing their systematic reviews. These strategies should be rigorously evaluated to determine impact on use of reviews in decision-making.


Background

Knowledge syntheses are comprehensive and reproducible evidence reviews that summarise all relevant studies on a question [1]. They can include traditional systematic reviews and scoping reviews, amongst others. Knowledge translation (KT) that focuses on the results of individual studies may be misleading because of bias in their conduct or random variation in their findings [2]. Knowledge syntheses should be considered the foundational unit of KT, as they interpret the results of individual studies within the context of the totality of evidence and are less susceptible to bias than single studies [3]. Knowledge syntheses, such as systematic reviews, provide the evidence base for implementation vehicles, such as patient decision aids, clinical decision aids, clinical practice guidelines, and policy [3]. For example, our research team conducts knowledge syntheses for the nationally funded Drug Safety and Effectiveness Network to answer questions posed by provincial and national policy makers [4, 5].

There have been several models or classifications of evidence (or knowledge) use [6–13]. Larsen described conceptual and behavioural (instrumental) knowledge use [7]. Conceptual knowledge use refers to using knowledge to change the way users think about issues. Instrumental knowledge use refers to changes in action as a result of knowledge use. Dunn categorised knowledge use according to whether it is undertaken by an individual or by a collective [8]. Weiss also described several frameworks for knowledge use, including the problem-solving model, which she described as the direct application of the results of a study to a decision [9]. She further described a political model, in which research is used as “ammunition” [9]. Beyer and Trice labelled this type of knowledge use as symbolic, which they added to Larsen’s framework [10]. Symbolic use involves the use of research as a political or persuasive tool. Estabrooks described a similar framework for knowledge use including direct, indirect, and persuasive research utilisation, where these terms are analogous to instrumental, conceptual, and symbolic knowledge use, respectively [11].

We find it useful to consider conceptual, instrumental, and persuasive knowledge use [6, 12]. Conceptual use of knowledge implies changes in knowledge, understanding, or attitudes; research could change thinking and inform decision-making without changing behaviour. Instrumental knowledge use is the concrete application of knowledge and describes changes in behaviour [6]. For example, evidence can be translated into a usable form, such as a care pathway, guideline, or policy, and used in making a specific decision. Persuasive knowledge use, also called strategic or symbolic knowledge use, refers to research being used as a political or persuasive tool. It relates to the use of knowledge to attain specific power or profit goals (i.e. knowledge as ammunition) [6, 12].

Use of evidence by policy makers and managers can include any of these approaches. Oliver and colleagues have argued that the concept of knowledge use is further complicated in the policy context because research evidence is just one form of knowledge that informs decision-making. They also suggest that researchers need to understand what influences and constitutes policy to better understand what evidence is required and how it can be used [13].

Despite advances in the conduct and reporting of systematic reviews and recognition of their importance in health care decision-making, current evidence suggests that they are infrequently used by health care managers and policy makers [14, 15]. Failure of health systems to optimally use high-quality research evidence results in inefficiencies, reduced quantity and quality of life, and lost productivity [16, 17]. As just one example of this issue, glucose self-testing by older patients with diabetes who use oral hypoglycemic agents has been identified as unnecessary and potentially harmful to patients in systematic reviews [18]. However, financial reimbursement for glucose test strips for these patients continues in many countries, costing just one province in Canada $40 million per year [19].

We previously conducted a knowledge synthesis [20] to identify interventions to encourage use of systematic reviews by health policy makers and health care managers and identified four articles. Three of these articles described one study in which five systematic reviews were mailed to public health officials, followed by surveys [21–23]. The authors found that 23 to 63% of survey respondents reported using the systematic reviews to inform policy-making decisions. The fourth study was a randomised trial of tailored messages combined with access to a registry of systematic reviews, which showed a significant effect on policies made by health departments in the area of healthy body weight promotion [24]. More recent systematic reviews [25, 26] identified no additional studies assessing interventions to increase uptake of systematic reviews by health care managers and policy makers.

Given that systematic reviews are less susceptible to bias than a single primary study or the opinions of experts, it is not clear why they are not used routinely in decision-making. Two systematic reviews of barriers and facilitators to the use of systematic reviews by any type of decision-maker (e.g. clinicians, patients, managers) identified many factors that contribute to the paucity of use, including lack of relevance of the questions the reviews address, lack of contextualisation of findings, unwieldy size of the report, and poor presentation format; these factors can be considered intrinsic to the systematic review [25, 27]. The format of systematic reviews has been identified as a key factor influencing their use by policy makers and managers [28]. While attention has been paid to enhancing the quality of systematic reviews, relatively little has been paid to their format. For example, health care managers and policy makers would benefit from reviews that highlight information relevant to their decisions, including contextual factors affecting local applicability and information about costs [27]. Moreover, because reporting of systematic reviews tends to focus on methodological rigour rather than context, reviews often do not provide crucial information for decision-makers. Other barriers to use of reviews by health care managers and policy makers include factors extrinsic to the review, such as lack of access to systematic reviews, lack of time to seek and acquire them, and lack of skills to appraise and apply the evidence [27, 29].

Surveys and interviews with policy makers and managers have identified the importance of increasing the usability of systematic reviews in decision-making [30, 31]. Understanding how to make systematic reviews more usable requires consideration of barriers and facilitators to their use, as well as of their format and content. As such, we completed a scoping review on the barriers and facilitators to use of systematic reviews by health care managers and policy makers, including consideration of format and content, to develop recommendations for systematic review authors and to inform research efforts to develop and test formats for systematic reviews that may optimise uptake. This project arose directly from our decision-maker partners, for whom we conduct knowledge syntheses. This review is part of a multi-phase project to develop and test a format for systematic reviews that optimises their use.

Methods

We conducted a scoping review [32] using guidance from the Joanna Briggs Methods Manual for Scoping Reviews [33]. A protocol was prepared and revised using input from our key stakeholders. Although the PRISMA Statement has not been modified for scoping reviews, we used it to guide reporting [34].

Data sources and search

We searched the following electronic databases from inception until week 3 of September 2014: MEDLINE, EMBASE, PsycInfo, The Cochrane Database of Systematic Reviews, Cochrane Central Register of Controlled Trials, CINAHL, and LISA (Library and Information Science Abstracts). The literature searches for the previous reviews [20, 26] were peer-reviewed by an information scientist and modified as necessary. The full literature search for MEDLINE is available in Additional file 1, and the other database searches are available from the corresponding author upon request. The search strategy was not limited by study design or language of dissemination. The grey literature was searched using Google after identifying key websites (such as websites of funding agencies and health care provider organisations in Canada, the USA, and the UK that fund or conduct systematic reviews). We supplemented the literature search by scanning references of included articles and relevant, published systematic reviews [20, 25–27, 29]. We also conducted a forward citation search in the Web of Science whereby we used the included studies to identify other potentially relevant studies. The results of the literature search were imported into Synthesi.SR [35], which was used for screening by the review team.

Study selection: inclusion criteria

Eligible studies included health care managers (defined as individuals in a managerial or supervisory role in a health care organisation with management and supervisory mandates, including public health officials) or policy makers/analysts (defined as non-elected individuals at some level of government, who may have some responsibility for analysing data and making recommendations to others and may include regional, provincial, or federal representatives) as participants. The focus of the review was on policy/management decision-making; however, clinical decision-making articles were included if policy decision-making was also mentioned and these data could be abstracted. The policy articles often considered clinical decision-making as well, given that it is often a downstream consideration. For example, if policy makers felt that clinicians would not implement the evidence, this would be eligible for inclusion. Studies that identified barriers or facilitators (including format and content features) to uptake of systematic reviews by health care managers and policy makers/analysts were eligible for inclusion. All study designs, including qualitative or quantitative methodologies, were eligible where there was a description of the barriers or facilitators to use of evidence from systematic reviews by the relevant end-user groups. Specifically, we included systematic reviews, experimental (randomised controlled trials, quasi-randomised controlled trials, non-randomised controlled clinical trials), quasi-experimental (controlled before-after studies, interrupted time series), observational (cohort, case-control, cross-sectional), and qualitative studies. If more than one publication described a single study presenting the same data, we included the most recent. Studies conducted in any setting or country and those published in any language were eligible for inclusion.

Study selection: screening

To ensure reliability, a calibration exercise was conducted with the reviewers prior to commencing screening. Using the eligibility criteria, all reviewers independently screened a random sample of 10% of citations from the search. Screening began only when percent agreement across the review team exceeded 90%. A similar calibration exercise was completed prior to screening full-text articles for inclusion. Subsequently, two reviewers independently screened titles and abstracts, and then full-text articles, for inclusion. Conflicts were resolved through discussion.
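The calibration rule above can be made concrete with a short calculation. The Python sketch below is illustrative only: it assumes each reviewer records a binary include/exclude decision per citation, and it summarises agreement across the team as the mean of pairwise percent agreement, which is one plausible reading of "percent agreement across the review team" rather than the exact calculation used. All function names, the reviewer labels, and the fixed random seed are hypothetical.

```python
import itertools
import random


def draw_calibration_sample(citation_ids, fraction=0.10, seed=2014):
    """Draw a simple random sample of citations for the calibration round.

    The 10% fraction follows the text; the fixed seed is a hypothetical
    choice that simply makes the sample reproducible.
    """
    population = list(citation_ids)
    k = max(1, round(len(population) * fraction))
    return random.Random(seed).sample(population, k)


def percent_agreement(decisions_a, decisions_b):
    """Percentage of citations on which two reviewers made the same decision."""
    if decisions_a.keys() != decisions_b.keys():
        raise ValueError("both reviewers must screen the same citations")
    matches = sum(decisions_a[c] == decisions_b[c] for c in decisions_a)
    return 100.0 * matches / len(decisions_a)


def team_agreement(decisions_by_reviewer):
    """Mean pairwise percent agreement across every pair of reviewers (assumed summary)."""
    pairs = itertools.combinations(decisions_by_reviewer.values(), 2)
    scores = [percent_agreement(a, b) for a, b in pairs]
    return sum(scores) / len(scores)


def ready_to_screen(decisions_by_reviewer, threshold=90.0):
    """Screening begins only once team agreement exceeds the threshold."""
    return team_agreement(decisions_by_reviewer) > threshold


if __name__ == "__main__":
    # Hypothetical calibration decisions for four citations.
    decisions = {
        "reviewer_1": {"c1": "include", "c2": "exclude", "c3": "exclude", "c4": "exclude"},
        "reviewer_2": {"c1": "include", "c2": "exclude", "c3": "exclude", "c4": "exclude"},
        "reviewer_3": {"c1": "include", "c2": "exclude", "c3": "include", "c4": "exclude"},
    }
    print(round(team_agreement(decisions), 1))  # 83.3
    print(ready_to_screen(decisions))           # False
```

With three reviewers who disagree on one of four citations, pairwise agreement is 100%, 75%, and 75%, so the team mean of about 83.3% falls short of the 90% threshold and screening would not yet begin. Percent agreement does not correct for agreement expected by chance; a chance-corrected statistic such as Cohen's kappa could be substituted if that were preferred.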

Data abstraction

Two reviewers independently reviewed each full-text article and extracted relevant data. Data were extracted on study design, participants, country, barriers, and facilitators to use of the systematic review. Differences in abstraction were resolved by discussion. We did not assess risk of bias of individual studies because our aim was to map the evidence, as is consistent with the proposed scoping review methodology [32, 33].

Data charting and collation

The barriers and facilitators were charted using a taxonomy of barriers and facilitators to implementation of clinical practice guidelines by clinicians [36]. This taxonomy was expanded to include attributes of the systematic review, specifically its format and content, and was reviewed by a health care manager to ensure face validity. The taxonomy was based on a systematic review of barriers and facilitators to evidence use by clinicians as no similar review was available explicitly for policy makers and managers at the time we completed our review. We shared the taxonomy with our decision-maker partners to assess for face validity and no additional categories were identified. Two reviewers reviewed each article and identified the unit of text relevant to each of these factors using a coding scheme they developed. Qualitative analysis was conducted using NVivo 10 [37]. Codes were aggregated by themes, centred on whether the barriers/facilitators influenced participants’ attitudes towards; knowledge of; skills in seeking, appraising or using; or use of systematic reviews in decision-making. Discrepancies in coding were discussed by the team to achieve consensus.
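The charting step amounts to grouping each coded unit of text by theme and by whether it acts as a barrier or a facilitator, then recording which studies support each code; this is the structure summarised in the barrier and facilitator tables. The analysis itself was done in NVivo 10, so the Python sketch below does not reproduce that workflow. It is a hypothetical illustration that assumes each coded unit carries a study citation number, one of the four themes used in the Results, a direction, and a code label. The class and function names are invented for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass

# Themes used to organise the Results; format and content features could be
# added as further categories if the expanded taxonomy were charted the same way.
THEMES = ("attitudes", "knowledge", "skills", "behaviour")
DIRECTIONS = ("barrier", "facilitator")


@dataclass(frozen=True)
class CodedUnit:
    """One unit of text coded by a reviewer (hypothetical structure)."""
    study_ref: int   # citation number of the included study
    theme: str       # one of THEMES
    direction: str   # one of DIRECTIONS
    code: str        # label from the reviewers' coding scheme


def chart(units):
    """Group coded units as (theme, direction) -> code -> sorted study citations."""
    cells = defaultdict(lambda: defaultdict(set))
    for unit in units:
        if unit.theme not in THEMES:
            raise ValueError(f"unknown theme: {unit.theme!r}")
        if unit.direction not in DIRECTIONS:
            raise ValueError(f"unknown direction: {unit.direction!r}")
        # A set means several coded passages from one study are listed once.
        cells[(unit.theme, unit.direction)][unit.code].add(unit.study_ref)
    return {
        cell: {code: sorted(refs) for code, refs in codes.items()}
        for cell, codes in cells.items()
    }


if __name__ == "__main__":
    units = [
        CodedUnit(44, "skills", "barrier", "lack of skills to find or appraise reviews"),
        CodedUnit(47, "skills", "barrier", "lack of skills to find or appraise reviews"),
        CodedUnit(52, "skills", "barrier", "lack of skills to find or appraise reviews"),
        CodedUnit(52, "skills", "facilitator", "training in basic searching"),
    ]
    for cell, codes in chart(units).items():
        print(cell, codes)
```

Using a set per code keeps each study listed once even when it contributes several coded passages; the example rows mirror citations reported under Skills in the Results.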

Consultation

Team members (including representatives of health care managers and policy makers/analysts from the Ontario Ministry of Health and Long-Term Care) were consulted at various stages of the scoping review to provide input on the search, data abstraction, and interpretation of the results.

Results

Literature search

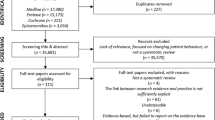

A total of 6635 titles and abstracts and 201 full-text articles were assessed for eligibility. Of these, 19 studies reported in 21 publications fulfilled the eligibility criteria and were included [21–24, 30, 31, 38–52]. The reasons for excluding full-text articles are provided in Fig. 1.

Characteristics of the included articles

All but one of the studies was published after 2000. Authors of the papers were commonly from Canada, Australia, and the UK. Nineteen of the publications were qualitative studies, one was a quantitative study describing the impact of an intervention to facilitate use of systematic reviews, and one was a systematic review. A description of included studies is provided in Table 1.

Barriers to use of systematic reviews

Barriers to the use of systematic reviews are presented in Table 2, and results for each included study are presented in Additional file 2.

Attitudes

Factors limiting the use of systematic reviews through an affective component were considered barriers affecting attitudes towards the use of systematic reviews [36]. Lack of agreement with the usefulness of systematic reviews in general and lack of agreement with the results of specific systematic reviews were identified as such barriers [21, 44, 50]. With regard to the former, participants believed that systematic reviews may challenge their autonomy in decision-making because the evidence in the review would dictate their decisions, and this was perceived to be a barrier to their use. Lack of outcome expectancy was also a barrier, whereby participants believed that decisions based on systematic reviews would not lead to the desired outcome because they did not believe the causal inference implied by the results of the review [45]. Lack of motivation to change, or resistance to change, was a barrier to use of systematic reviews [51]. Barriers related to lack of agreement with the results of specific systematic reviews included participants’ lack of agreement with the interpretation of the evidence, lack of confidence in the review authors, or belief that the results of the review were not valid [21].

Knowledge

Factors limiting adherence through a cognitive component were considered knowledge barriers to use of systematic reviews [36]. For example, participants reported that lack of awareness of, or lack of familiarity with, systematic reviews limited their use. Particular challenges related to this factor included the tremendous volume of information that participants needed to keep abreast of in their areas, lack of knowledge of how to access relevant systematic reviews, and lack of awareness of the importance of systematic reviews [24, 43, 44].

Skills

Factors limiting adherence to systematic review evidence through a lack of ability were considered barriers related to skills [36]. Participants reported the lack of skills to find, assess, interpret, or use systematic reviews in decision-making [44, 47, 52]. Additional elements included the lack of ability to reconcile patient preferences with recommendations.

Behaviour

Several behavioural barriers to use of systematic reviews were reported, and these focused on factors external to the review, including patient and clinician factors. For example, patient and clinician resistance to implementing the evidence outlined in a systematic review may make policy makers and managers reluctant to use that evidence. Participants also reported factors intrinsic to the systematic review as barriers to its use, including contradictory results from different systematic reviews, difficulty accessing reviews, and, in particular, difficulty identifying their key messages quickly when they are needed for decision-making [39, 44, 47]. Extrinsic or environmental barriers were also identified, including lack of time and organisational constraints that prevent the individual from implementing the review. In one of the included intervention studies, lack of time and lack of availability of relevant systematic reviews were identified as barriers to use in decision-making by public health officials [21, 42, 44].

Facilitators to use of systematic reviews

Facilitators to the use of systematic reviews are presented in Table 3.

Attitudes

Participants identified that agreement with the usefulness of systematic reviews, belief in their relevance, and belief in their applicability to policy facilitated their use. Participants perceived systematic reviews to be useful if they had confidence in the review authors [47, 52]. Enthusiasm and motivation to change were also facilitators of use; in particular, participants perceived that providing important and relevant reviews to policy makers at key points in decision-making would encourage their further use [23, 47, 51].

Knowledge

Familiarity with or awareness of systematic reviews was a potential facilitator of their use, in particular knowledge of their importance relative to single primary studies [31].

Skills

Participants reported that skills in seeking, appraising, and interpreting systematic reviews facilitated their use [22, 23, 30]. For example, training in basic searching skills was identified as a facilitator [52].

Behaviour

Extrinsic factors that were perceived to facilitate use included creating collaborations between policy makers and researchers whereby researchers could provide systematic reviews of relevance to policy makers in a timely fashion and facilitate their interpretation [23, 24, 42, 43]. This approach reflects a change in culture for researchers and policy makers/managers. In one of the intervention studies that were included, resources to implement the research and availability of the systematic review were identified as facilitators to using them in decision-making by public health officials [21].

Format features to facilitate use of systematic reviews

Several recommendations from policy makers and health care managers regarding formatting of systematic reviews to enhance uptake were identified (Table 4). Many participants suggested a one-page summary of the review including clear “take home” messages written in plain language, the publication date of the review, and sponsoring logos [47, 49]. Some participants recommended that the summary include sections on relevance, impact, and applicability for decision-makers [45, 47, 52]. They also recommended that the report for the full review should use a liberal amount of white space with bullet points (avoiding dense text) and simple tables (less than one page in length) and consider tailored versions with targeted key messages for relevant audiences [24]. Another suggestion was to frame the title of the systematic review as a question [47].

Content features to facilitate use of systematic reviews

A commonly requested feature amongst the studies was to frame the evidence in terms of policy application, including implications for implementation and potential outcomes (Table 5) [43, 47, 49]. Participants suggested that methods details be minimised to focus on the critical elements and that the bulk of the report focus on the results and their interpretation [43, 44, 47]. Ways to make the quality of included studies easy for users to interpret, such as providing a graphical summary, were suggested [44, 47, 52]. Participants also asked that a consistent approach be used to report effect sizes of interventions throughout the review report.

Conclusions

We identified several determinants of the use of systematic reviews by policy makers and managers, including factors influencing attitudes, knowledge, skills, and behaviours. For authors of systematic reviews, some of these factors are potentially modifiable and may increase use of systematic reviews, including features affecting format and content. From a format perspective, review authors can consider providing a one-page summary with key messages, including the importance of the topic, key results, and implications for decision-makers. This summary should be clearly written and concise. Similarly, the report for the full review should use white space, avoid dense text, and try to limit tables to one page. With regard to content, the methods should be concise, and the results should provide an easy-to-interpret summary of the risk of bias of individual studies, keeping in mind that the audience may have limited skills in appraising the evidence and limited time to do so. Review authors should consider using a graphical display of the risk of bias, such as the figure advocated by the Cochrane Collaboration [53]. The discussion should address the relevance of the results to decision-makers and the factors important for contextualising the evidence. To make knowledge more useful to the local context, commissioners of reviews, such as policy makers, frequently undertake processes to contextualise evidence [54], and if researchers can provide guidance on this, it may facilitate their efforts. For example, if information is available on how the evidence might be useful in resource-constrained versus higher-income settings, it should be provided in the systematic review.
It was also suggested that messages be tailored to different audiences, reflecting their needs. For example, the summary and report sent to policy makers would differ from those sent to health care managers. Journal editors and publishers should also consider these formatting suggestions as a way to enhance use of reviews. Of particular importance is the topic addressed by the systematic review, as several studies raised the concern that topics were often not perceived to be relevant by policy makers and managers. As such, it was suggested that reviews be conducted through partnerships between researchers and decision-makers to ensure that the questions the reviews tackle are relevant to the decision-makers. This approach requires a change in organisational culture within health care and research, although there are numerous examples of successful partnerships like these [4, 5, 55, 56].

Several factors extrinsic to the review were perceived to influence its use, including lack of motivation to use systematic reviews, lack of awareness, and lack of skills to seek, appraise, and interpret them. These challenges could also be addressed by developing partnerships between researchers and decision-makers, such as a train-the-trainer approach whereby systematic reviewers work with decision-makers to build capacity within their organisation to conduct reviews or interpret their results. If researchers can provide useful systematic reviews in a timely fashion and illustrate how they can be used to inform decision-making, this could provide motivation for continued use. These partnerships could also support strategies to increase awareness of reviews. Participants also raised the concern that using a systematic review to guide decision-making led to a perceived lack of autonomy in decision-making. Clinicians have raised this concern for many years in relation to evidence-based health care, reflecting a perception that using evidence implies a “cookbook” approach to decision-making [57]. It reflects a misunderstanding of the appropriate use of evidence, as the practice of evidence-based health care requires integration of evidence, expertise, and values and circumstances [58].

Our results are consistent with systematic reviews of barriers [29] and facilitators [27] to use of systematic reviews by any decision-maker. These same authors also recently published a systematic review of interventions to increase use of systematic reviews [25]. Oliver and colleagues also published a review of barriers and facilitators to use of evidence by policy makers; however, their review was not limited to use of systematic reviews [59]. Search dates for all of these systematic reviews were between 2010 and 2012, while ours was extended to 2014. We found an additional six to nine studies not included in these reviews. Moreover, our review focused on factors (and categorised them) including format and content of the review. Our intent is to use the results of this review to inform the development of a template for providing results of systematic reviews to decision-makers and as such, we wanted to extend the findings of other reviews to include both intrinsic and extrinsic factors. Because of this focus, we did not include use of other types of research evidence beyond systematic reviews (e.g. results of single studies). Of note, we identified no additional studies reporting interventions to increase use of systematic reviews.

There are several limitations to our scoping review. First, we chose a scoping review design because we wanted to map the literature to inform future research on formatting systematic reviews and to provide guidance for authors of systematic reviews; as such, we did not assess the risk of bias of individual studies [32]. Second, most of the included studies were small qualitative studies, and thus their results may not be generalisable. However, studies from a broad range of countries were included, and the results were consistent across studies and with previous reviews. Third, the literature search on this topic is limited by poor indexing of primary studies in this area. To overcome this, we supplemented our comprehensive database search with a grey literature search.

This review represents the first phase of a multi-phase project that is being conducted in partnership with decision-makers from four provinces in Canada. The next phases include completing a survey of perceptions of barriers and facilitators to use of systematic reviews by policy makers and health care managers in these provinces; integrating the survey and review results to develop a format for systematic reviews and testing its usability through heuristic and individual usability testing; and conducting a randomised trial comparing a traditional systematic review format with the new format on the ability of health care managers and policy makers to understand the evidence in the review and apply it to a relevant health care decision-making scenario. We have done similar work to create a format for clinicians and found that it influences their ability to apply the evidence from a systematic review to a clinical scenario [20, 60–64].

In summary, we identified common themes across a variety of studies that explored factors influencing use of systematic reviews by policy makers and managers. Useful information has been identified for authors of systematic reviews to inform their preparation of reviews, including providing one-page summaries with key messages tailored to the relevant audience. Moreover, partnerships between researchers and policy makers/managers to facilitate the conduct and use of systematic reviews should be considered to enhance the relevance of reviews and thereby influence uptake. Finally, these strategies should be rigorously evaluated to determine their impact on the use of reviews in decision-making.

References

Cook DJ, Mulrow CD, Haynes RB. Systematic reviews: synthesis of best evidence for clinical decisions. Ann Intern Med. 1997;126(5):376–80.

Antman EM, Lau J, Kupelnick B, Mosteller F, Chalmers TC. A comparison of results of meta-analyses of randomized control trials and recommendations of clinical experts. Treatments for myocardial infarction. JAMA. 1992;268(2):240–8.

Grimshaw JM, Santesso N, Cumpston M, Mayhew A, McGowan J. Knowledge for knowledge translation: the role of the Cochrane Collaboration. J Contin Educ Health Prof. 2006;26(1):55–62. doi:10.1002/chp.51.

Tricco AC, Soobiah C, Blondal E, Veroniki AA, Khan PA, Vafaei A, et al. Comparative safety of serotonin (5-HT3) receptor antagonists in patients undergoing surgery: a systematic review and network meta-analysis. BMC Med. 2015;13:142. doi:10.1186/s12916-015-0379-3.

Tricco AC, Soobiah C, Blondal E, Veroniki AA, Khan PA, Vafaei A, et al. Comparative efficacy of serotonin (5-HT3) receptor antagonists in patients undergoing surgery: a systematic review and network meta-analysis. BMC Med. 2015;13:136. doi:10.1186/s12916-015-0371-y.

Graham ID, Logan J, Harrison MB, Straus SE, Tetroe J, Caswell W, et al. Lost in knowledge translation: time for a map? J Contin Educ Health Prof. 2006;26(1):13–24. doi:10.1002/chp.47.

Larsen JK. Knowledge utilization. What is it? Knowledge: Creation, Diffusion, Utilization. 1980;1:421–42.

Dunn WN. Measuring knowledge use. Knowledge: Creation, diffusion, utilization. 1983;5:120–33.

Weiss CH. The many meanings of research utilization. Public Adm Rev. 1979;39(5):426–31.

Beyer JM, Trice HM. The utilization process: a conceptual framework and synthesis of empirical findings. Adm Sci Q. 1982;27:591–622.

Estabrooks CA. The conceptual structure of research utilization. Res Nurs Health. 1999;22:203–16.

Straus S, Tetroe J, Graham I. Knowledge translation in health care. Oxford: Wiley Blackwell, BMJ Books; 2013.

Oliver K, Lorenc T, Innvaer S. New directions in evidence-based policy research: a critical analysis of the literature. Health Res Policy Syst. 2014;12:34. doi:10.1186/1478-4505-12-34.

Lavis JN, Ross SE, Hurley JE, Hohenadel JM, Stoddart GL, Woodward CA, et al. Examining the role of health services research in public policymaking. Milbank Q. 2002;80(1):125–54.

Oxman AD, Lavis JN, Fretheim A. Use of evidence in WHO recommendations. Lancet. 2007;369(9576):1883–9. doi:10.1016/s0140-6736(07)60675-8.

Grol R. Successes and failures in the implementation of evidence-based guidelines for clinical practice. Med Care. 2001;39(8 Suppl 2):II46–54.

Madon T, Hofman KJ, Kupfer L, Glass RI. Public health. Implementation science. Science. 2007;318(5857):1728–9. doi:10.1126/science.1150009.

Canadian Agency for Drugs and Technologies in Health. Systematic review of use of blood glucose test strips for the management of diabetes mellitus. 2009. https://www.cadth.ca/media/pdf/BGTS_SR_Report_of_Clinical_Outcomes.pdf. Accessed 10 Jan 2016.

Ontario Drug Policy Research Network. Self-monitoring of blood glucose: patterns, costs and potential cost reduction associated with reduced testing. 2009. http://www.odprn.ca/research/self-monitoring-of-blood-glucose/. Accessed 10 Jan 2016.

Perrier L, Mrklas K, Lavis JN, Straus SE. Interventions encouraging the use of systematic reviews by health policymakers and managers: a systematic review. Implement Sci. 2011;6:43. doi:10.1186/1748-5908-6-43.

Ciliska D, Hayward S, Dobbins M, Brunton G, Underwood J. Transferring public-health nursing research to health-system planning: assessing the relevance and accessibility of systematic reviews. Can J Nurs Res. 1999;31(1):23–36.

Dobbins M, Cockerill R, Barnsley J. Factors affecting the utilization of systematic reviews. A study of public health decision makers. Int J Technol Assess Health Care. 2001;17(2):203–14.

Dobbins M, Cockerill R, Barnsley J, Ciliska D. Factors of the innovation, organization, environment, and individual that predict the influence five systematic reviews had on public health decisions. Int J Technol Assess Health Care. 2001;17(4):467–78.

Dobbins M, Hanna SE, Ciliska D, Manske S, Cameron R, Mercer SL, et al. A randomized controlled trial evaluating the impact of knowledge translation and exchange strategies. Implement Sci. 2009;4:61. doi:10.1186/1748-5908-4-61.

Wallace J, Byrne C, Clarke M. Improving the uptake of systematic reviews: a systematic review of intervention effectiveness and relevance. BMJ Open. 2014;4(10). doi:10.1136/bmjopen-2014-005834.

Murthy L, Shepperd S, Clarke MJ, Garner SE, Lavis JN, Perrier L, et al. Interventions to improve the use of systematic reviews in decision-making by health system managers, policy makers and clinicians. Cochrane Database Syst Rev. 2012;9:CD009401. doi:10.1002/14651858.CD009401.pub2.

Wallace J, Byrne C, Clarke M. Making evidence more wanted: a systematic review of facilitators to enhance the uptake of evidence from systematic reviews and meta-analyses. Int J Evid Based Healthc. 2012;10(4):338–46. doi:10.1111/j.1744-1609.2012.00288.x.

Ellen ME, Leon G, Bouchard G, Ouimet M, Grimshaw JM, Lavis JN. Barriers, facilitators and views about next steps to implementing supports for evidence-informed decision-making in health systems: a qualitative study. Implement Sci. 2014;9:179. doi:10.1186/s13012-014-0179-8.

Wallace J, Nwosu B, Clarke M. Barriers to the uptake of evidence from systematic reviews and meta-analyses: a systematic review of decision makers’ perceptions. BMJ Open. 2012;2(5). doi:10.1136/bmjopen-2012-001220.

Dobbins M, DeCorby K, Twiddy T. A knowledge transfer strategy for public health decision makers. Worldviews Evid Based Nurs. 2004;1(2):120–8. doi:10.1111/j.1741-6787.2004.t01-1-04009.x.

Dobbins M, Thomas H, O’Brien MA, Duggan M. Use of systematic reviews in the development of new provincial public health policies in Ontario. Int J Technol Assess Health Care. 2004;20(4):399–404.

Arksey H, O’Malley L. Scoping studies: towards a methodological framework. Int J Soc Res Methodol. 2005;8(1):19–32. doi:10.1080/1364557032000119616.

Peters MD, Godfrey CM, Khalil H, McInerney P, Parker D, Soares CB. Guidance for conducting systematic scoping reviews. Int J Evid Based Healthc. 2015. doi:10.1097/xeb.0000000000000050.

Moher D, Liberati A, Tetzlaff J, Altman DG. Preferred reporting items for systematic reviews and meta-analyses: the PRISMA statement. BMJ. 2009;339:b2535. doi:10.1136/bmj.b2535.

Synthesi.SR. Toronto, Ontario, Canada: KT Program, Li Ka Shing Knowledge Institute of St. Michael’s Hospital; 2014.

Cabana MD, Rand CS, Powe NR, Wu AW, Wilson MH, Abboud PA, et al. Why don’t physicians follow clinical practice guidelines? A framework for improvement. JAMA. 1999;282(15):1458–65.

NVivo qualitative data analysis software; QSR International Pty Ltd. Version 10, 2012.

Albert MA, Fretheim A, Maiga D. Factors influencing the utilization of research findings by health policy-makers in a developing country: the selection of Mali’s essential medicines. Health Res Policy Syst. 2007;5:2. doi:10.1186/1478-4505-5-2.

Armstrong R, Pettman T, Burford B, Doyle J, Waters E. Tracking and understanding the utility of Cochrane reviews for public health decision-making. J Public Health (Oxf). 2012;34(2):309–13. doi:10.1093/pubmed/fds038.

Atack L, Gignac P, Anderson M. Getting the right information to the table: using technology to support evidence-based decision making. Healthc Manage Forum. 2010;23(4):164–8.

Campbell D, Donald B, Moore G, Frew D. Evidence check: knowledge brokering to commission research reviews for policy. Evid Policy. 2011;7(1):97–107. doi:10.1332/174426411X553034.

Campbell DM, Redman S, Jorm L, Cooke M, Zwi AB, Rychetnik L. Increasing the use of evidence in health policy: practice and views of policy makers and researchers. Aust New Zealand Health Policy. 2009;6:21. doi:10.1186/1743-8462-6-21.

Dobbins M, Jack S, Thomas H, Kothari A. Public health decision-makers’ informational needs and preferences for receiving research evidence. Worldviews Evid Based Nurs. 2007;4(3):156–63. doi:10.1111/j.1741-6787.2007.00089.x.

Jewell CJ, Bero LA. “Developing good taste in evidence”: facilitators of and hindrances to evidence-informed health policymaking in state government. Milbank Q. 2008;86(2):177–208. doi:10.1111/j.1468-0009.2008.00519.x.

Lavis J, Davies H, Oxman A, Denis JL, Golden-Biddle K, Ferlie E. Towards systematic reviews that inform health care management and policy-making. J Health Serv Res Policy. 2005;10 Suppl 1:35–48. doi:10.1258/1355819054308549.

Ritter A. How do drug policy makers access research evidence? Int J Drug Policy. 2009;20(1):70–5. doi:10.1016/j.drugpo.2007.11.017.

Rosenbaum SE, Glenton C, Wiysonge CS, Abalos E, Mignini L, Young T, et al. Evidence summaries tailored to health policy-makers in low- and middle-income countries. Bull World Health Organ. 2011;89(1):54–61. doi:10.2471/blt.10.075481.

Shepperd S, Adams R, Hill A, Garner S, Dopson S. Challenges to using evidence from systematic reviews to stop ineffective practice: an interview study. J Health Serv Res Policy. 2013;18(3):160–6. doi:10.1177/1355819613480142.

Suter E, Armitage GD. Use of a knowledge synthesis by decision makers and planners to facilitate system level integration in a large Canadian provincial health authority. Int J Integr Care. 2011;11:e011.

Vogel JP, Oxman AD, Glenton C, Rosenbaum S, Lewin S, Gulmezoglu AM, et al. Policymakers’ and other stakeholders’ perceptions of key considerations for health system decisions and the presentation of evidence to inform those considerations: an international survey. Health Res Policy Syst. 2013;11:19. doi:10.1186/1478-4505-11-19.

Yousefi-Nooraie R, Rashidian A, Nedjat S, Majdzadeh R, Mortaz-Hedjri S, Etemadi A, et al. Promoting development and use of systematic reviews in a developing country. J Eval Clin Pract. 2009;15(6):1029–34. doi:10.1111/j.1365-2753.2009.01184.x.

Packer C, Hyde C. Does providing timely access to and advice on existing reviews of research influence health authority purchasing? Public Health Med. 2000;2(1):20–4.

Higgins JP, Altman DG, Gotzsche PC, Juni P, Moher D, Oxman AD, et al. The Cochrane Collaboration’s tool for assessing risk of bias in randomised trials. BMJ. 2011;343:d5928. doi:10.1136/bmj.d5928.

Wye L, Brangan E, Cameron A, Gabbay J, Klein J, Pope C. Health services and delivery research. Knowledge exchange in health-care commissioning: case studies of the use of commercial, not-for-profit and public sector agencies, 2011–14. Southampton: NIHR Journals Library; 2015.

Uneke CJ, Ndukwe CD, Ezeoha AA, Uro-Chukwu HC, Ezeonu CT. Implementation of a health policy advisory committee as a knowledge translation platform: the Nigeria experience. Int J Health Policy Manag. 2015;4(3):161–8. doi:10.15171/ijhpm.2015.21.

Lavis JN, Panisset U. EVIPNet Africa’s first series of policy briefs to support evidence-informed policymaking. Int J Technol Assess Health Care. 2010;26(2):229–32. doi:10.1017/S0266462310000206.

Straus SE, McAlister FA. Evidence-based medicine: a commentary on common criticisms. CMAJ. 2000;163(7):837–41.

Straus SE, Richardson WS, Glasziou P, Haynes RB. Evidence-based medicine: how to practice and teach EBM. 4th ed. London: Churchill Livingstone; 2010.

Oliver K, Innvaer S, Lorenc T, Woodman J, Thomas J. A systematic review of barriers to and facilitators of the use of evidence by policymakers. BMC Health Serv Res. 2014;14:2.

Perrier L, Persaud N, Thorpe KE, Straus SE. Using a systematic review in clinical decision making: a pilot parallel, randomized controlled trial. Implement Sci. 2015;10:118. doi:10.1186/s13012-015-0303-4.

Perrier L, Kealey MR, Straus SE. A usability study of two formats of a shortened systematic review for clinicians. BMJ Open. 2014;4(12):e005919. doi:10.1136/bmjopen-2014-005919.

Perrier L, Kealey MR, Straus SE. An iterative evaluation of two shortened systematic review formats for clinicians: a focus group study. J Am Med Inform Assoc. 2014;21(e2):e341–6. doi:10.1136/amiajnl-2014-002660.

Perrier L, Persaud N, Ko A, Kastner M, Grimshaw J, McKibbon KA, et al. Development of two shortened systematic review formats for clinicians. Implement Sci. 2013;8:68. doi:10.1186/1748-5908-8-68.

Perrier L, Mrklas K, Shepperd S, Dobbins M, McKibbon KA, Straus SE. Interventions encouraging the use of systematic reviews in clinical decision-making: a systematic review. J Gen Intern Med. 2011;26(4):419–26. doi:10.1007/s11606-010-1506-7.

Acknowledgements

ACT is funded by a CIHR-DSEN New Investigator Award, and SES is funded by a Tier 1 Canada Research Chair. The authors thank Kelly Mrklas for screening some of the citations for inclusion, Dr. Monika Kastner for providing feedback on the pilot test, Becky Skidmore for peer reviewing and updating our search strategy, Ana Guzman for formatting the paper, and Alissa Epworth for obtaining the full-text articles.

Additional information

Competing interests

SES is an associate editor of Implementation Science but was not involved with the peer review process or decision to publish this paper. The rest of the authors have no competing interests to declare.

Authors’ contributions

ACT conceived the study, screened citations and full-text articles, analysed and interpreted the data, and wrote sections of the manuscript. RC and ST coordinated the study, screened the citations and full-text articles, abstracted the data, developed the qualitative analysis, cleaned, coded and analysed the data, and edited the manuscript. SM, SES, and MK screened the citations and full-text articles, abstracted the data, and edited the manuscript. BH, MO, MH, and SS conceived the study and conceptualised and edited the manuscript. LP conceived the study, developed the literature search, and conceptualised and edited the manuscript. SES conceived the study, analysed and interpreted the data, and wrote and edited the manuscript. All authors read and approved the final manuscript.

Additional files

Additional file 1:

Literature search strategy used for MEDLINE; additional search strategies are available from the authors.

Additional file 2:

Barriers and facilitators to use of systematic reviews, categorised by study.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The Creative Commons Public Domain Dedication waiver (http://creativecommons.org/publicdomain/zero/1.0/) applies to the data made available in this article, unless otherwise stated.

About this article

Cite this article

Tricco, A.C., Cardoso, R., Thomas, S.M. et al. Barriers and facilitators to uptake of systematic reviews by policy makers and health care managers: a scoping review. Implementation Sci 11, 4 (2016). https://doi.org/10.1186/s13012-016-0370-1

DOI: https://doi.org/10.1186/s13012-016-0370-1