Abstract

Background

Many clinical trials conducted by academic organizations are not published, or are not published completely. Following the US Food and Drug Administration Amendments Act of 2007, “The Final Rule” (compliance date April 18, 2017) and a National Institutes of Health policy clarified and expanded trial registration and results reporting requirements. We sought to identify policies, procedures, and resources to support trial registration and reporting at academic organizations.

Methods

We conducted an online survey from November 21, 2016 to March 1, 2017, before organizations were expected to comply with The Final Rule. We included active Protocol Registration and Results System (PRS) accounts classified by ClinicalTrials.gov as a “University/Organization” in the USA. PRS administrators manage information on ClinicalTrials.gov. We invited one PRS administrator to complete the survey for each organization account, which was the unit of analysis.

Results

Eligible organization accounts (N = 783) included 47,701 records (i.e., studies) in August 2016. Participating organizations (366/783; 47%) included 40,351/47,701 (85%) records. Compared with other organizations, Clinical and Translational Science Award (CTSA) holders, cancer centers, and large organizations were more likely to participate. A minority of accounts have a registration policy (156/366; 43%) or a results reporting policy (129/366; 35%). Of those with policies, 15/140 (11%) and 49/140 (35%) reported that trials must be registered before institutional review board approval is granted or before beginning enrollment, respectively. Few organizations use computer software to monitor compliance (68/366; 19%). One organization had penalized an investigator for non-compliance. Among the 287/366 (78%) accounts reporting that they allocate staff to fulfill ClinicalTrials.gov registration and reporting requirements, the median number of full-time equivalent staff is 0.08 (interquartile range = 0.02–0.25). Because of non-response and social desirability, this could be a “best case” scenario.

Conclusions

Before the compliance date for The Final Rule, some academic organizations had policies and resources that facilitate clinical trial registration and reporting. Most organizations appear to be unprepared to meet the new requirements. Organizations could enact the following: adopt policies that require trial registration and reporting, allocate resources (e.g., staff, software) to support registration and reporting, and ensure there are consequences for investigators who do not follow standards for clinical research.


Background

Clinical trials provide evidence about the safety and effectiveness of interventions (Table 1). They underpin health policy and regulation, and they inform patient and provider healthcare decision-making. Because many trials are not published [1,2,3,4,5,6], and because many published reports do not include all of the information needed to understand trial methods [7,8,9,10] and results [11,12,13,14,15,16,17], decisions based on published evidence alone may not lead to the best balance of benefits and harms for patients [18,19,20,21].

To help participants enroll in trials, improve access to information, and reduce bias, authors have long proposed registering all trials prospectively [22,23,24,25,26,27]. The Food and Drug Administration Modernization Act of 1997 led to the creation of ClinicalTrials.gov, a publicly accessible database that launched in 2000 and is maintained by the National Library of Medicine (NLM) [28]. In 2004, the International Committee of Medical Journal Editors (ICMJE) announced that trials initiated from 2005 would have to be registered to be considered for publication [29, 30]. Title VIII of the Food and Drug Administration Amendments Act of 2007 (FDAAA) [31] required that certain trials of drugs, biologics, and medical devices be registered and that results for trials of approved products be posted on ClinicalTrials.gov. The FDAAA also authorized the Food and Drug Administration (FDA) to issue fines for non-compliance, currently up to $11,569 per trial per day [32]. “The Final Rule,” which took effect on January 18, 2017, clarified and expanded requirements for registration and reporting (Box 1) [33, 34]; organizations were expected to be in compliance by April 18, 2017. In a complementary policy, the National Institutes of Health (NIH) issued broader requirements that apply to all trials funded by the NIH, including early trials and trials of behavioral interventions [35, 36].

There is little evidence about how academic organizations support trial registration and reporting, but some evidence suggests that they are unprepared to meet these requirements. For example, academic organizations have performed worse than industry in registering trials prospectively [37, 38] and reporting results [39,40,41,42,43,44,45,46].

Methods

Between November 21, 2016 and March 1, 2017, we conducted an online survey of academic organizations in the USA. We surveyed administrators who are responsible for maintaining organization accounts on ClinicalTrials.gov. For each eligible ClinicalTrials.gov account, we asked one administrator to describe the policies and procedures and the available resources to support trial registration and reporting at their organization (Box 2).

Identifying eligible PRS accounts

The online system used to enter information in the ClinicalTrials.gov database is called the Protocol Registration and Results System (PRS). Each study registered on ClinicalTrials.gov is associated with a “record” of that study, and each record is assigned to one PRS organization account. A record may or may not include study results. A single organization, such as a university or health system, might register trials using one or many accounts. For example, “Yale University” is one account; by comparison, “Harvard Medical School” and “Harvard School of Dental Medicine” are each separate accounts.

We used the PRS account as the unit of analysis because accounts related to the same organization often represent schools or departments that have separate policies and procedures related to trial registration and reporting. Furthermore, we are not aware of a reliable method to associate individual accounts with organizations. For example, the “Johns Hopkins University” account includes mostly records from the Johns Hopkins University School of Medicine. Investigators at Johns Hopkins University also register trials using the accounts “Johns Hopkins Bloomberg School of Public Health,” “Johns Hopkins Children’s Hospital,” and “Sidney Kimmel Comprehensive Cancer Center.” Schools and hospitals related to Johns Hopkins University have distinct policies, faculties, administrative staff, and institutional review boards (IRBs).

We included all “active” accounts categorized by ClinicalTrials.gov as a “University/Organization” in the USA. We received a spreadsheet from the NLM with the number of records in each eligible account on August 4, 2016, and we received PRS administrator contact information from the NLM on September 28, 2016 and December 12, 2016.

Survey design

We developed a draft survey based on investigators’ content knowledge and evidence from studies that were known to us at the time. We organized questions into three domains: (1) organization characteristics, (2) registration and results policies and practices, and (3) staff and resources. We also invited participants to describe any compliance efforts that our questions did not cover. We then piloted the survey among 14 members of the National Clinical Trials Registration and Results Reporting Taskforce. The final survey used skip logic so that participants saw only those questions that were relevant based on their previous answers. Responses were saved automatically, and participants could return to the survey at any time; this allowed participants to discuss their answers with organizational colleagues before submitting. We conducted the survey using Qualtrics software (www.qualtrics.com/); a copy is available as a Word document (Additional file 1) and on the Qualtrics website (http://bit.ly/2tCSqyl).

Participant recruitment

One or more persons, called “PRS administrators” by ClinicalTrials.gov, may add or modify records in each account. Some PRS administrators are employed specifically to work on ClinicalTrials.gov, but many PRS administrators have little or no time budgeted by their organizations to work on ClinicalTrials.gov.

For each eligible account, we created a unique internet address (URL) which we emailed in an invitation letter to one administrator. For accounts with more than one administrator, we first contacted all administrators and asked them to select the appropriate administrator to complete this survey; then, we sent the survey to the nominated administrator. If an administrator did not complete the survey, EMW sent at least two reminders from his university email account after approximately 2 weeks and 4 weeks. For accounts with multiple administrators, we emailed all administrators if the designated administrator did not respond after two reminders. We instructed administrators associated with multiple accounts to complete a separate survey for each account.

Participants indicated their consent by continuing past the opening page and by completing the survey.

Analyses

To analyze the results, we first excluded accounts that did not complete three required questions asking whether they had (1) a registration policy, (2) a results reporting policy, and (3) computer software to manage their records. We then calculated descriptive statistics using SPSS 24. For categorical data, we calculated the number and proportion of accounts that selected each response. For continuous data, we calculated the mean and standard deviation (SD) or the median and interquartile range (IQR), depending on the distribution of responses.
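The descriptive analyses were run in SPSS 24. As a minimal sketch of equivalent calculations, the Python below computes the count and proportion of accounts selecting each response to a categorical question, and the median and IQR for a continuous one. The function names and example inputs are hypothetical, not the authors' code or data. Quartiles use `statistics.quantiles` with its default `method="exclusive"`; other software may define percentiles differently, so IQRs from small samples can differ slightly.

```python
import statistics
from collections import Counter


def proportions(responses):
    """Map each categorical response to (count, whole-number percent of all responses)."""
    counts = Counter(responses)
    total = len(responses)
    # Python's round() rounds exact halves to the nearest even number.
    return {answer: (n, round(100 * n / total)) for answer, n in counts.items()}


def median_iqr(values):
    """Return (median, first quartile, third quartile) for a continuous item."""
    q1, _, q3 = statistics.quantiles(values, n=4)  # default method="exclusive"
    return statistics.median(values), q1, q3


# Hypothetical example: 366 answers to "Does your account have a registration policy?"
answers = ["yes"] * 156 + ["no"] * 210
print(proportions(answers))  # {'yes': (156, 43), 'no': (210, 57)}

# Hypothetical full-time equivalent (FTE) values for accounts that allocate staff
print(median_iqr([0.01, 0.02, 0.05, 0.1, 0.25, 0.5, 1.0]))
```

Reporting a median and IQR rather than a mean and SD is the usual choice when, as with staff FTE, a few large values would otherwise dominate the summary.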

We conducted subgroup analyses to determine whether organization characteristics might be related to policies and resources. We compared:

1. Accounts affiliated with a Clinical and Translational Science Award (CTSA) versus other accounts
2. Accounts affiliated with a cancer center versus other accounts
3. Accounts with < 20 records, 20–99 records, and ≥ 100 records
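The three size subgroups amount to a simple binning rule. The Python sketch below assigns each account to a band and tabulates how many accounts in each band report a policy. The function names and the seven example accounts are hypothetical; only the cut points (< 20, 20–99, and ≥ 100 records) come from the analysis plan.

```python
from collections import defaultdict


def size_band(n_records):
    """Return the size subgroup for an account with n_records records."""
    if n_records < 20:
        return "<20"
    if n_records < 100:
        return "20-99"
    return ">=100"


def policy_by_band(accounts):
    """Tabulate (accounts with policy, accounts, percent) for each size band.

    accounts: iterable of (n_records, has_policy) pairs.
    """
    tally = defaultdict(lambda: [0, 0])  # band -> [with policy, total]
    for n_records, has_policy in accounts:
        counts = tally[size_band(n_records)]
        counts[1] += 1
        if has_policy:
            counts[0] += 1
    return {band: (k, n, round(100 * k / n)) for band, (k, n) in tally.items()}


# Hypothetical accounts: (number of records, has a registration policy)
example = [(3, False), (7, False), (15, True), (45, True), (60, False), (250, True), (1563, True)]
print(policy_by_band(example))
# {'<20': (1, 3, 33), '20-99': (1, 2, 50), '>=100': (2, 2, 100)}
```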

We conducted a sensitivity analysis to determine whether the results might be sensitive to non-response bias by comparing accounts that responded before the effective date for The Final Rule (January 18, 2017) with accounts that responded on or after The Final Rule took effect.
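A minimal sketch of the early-versus-late split, assuming each response is stored as a (completion date, has policy) pair: responses dated before January 18, 2017 count as early and those on or after that date as late, matching the comparison described above. The data structure, function names, and example responses are hypothetical.

```python
from datetime import date

# Effective date of The Final Rule, as given in the text
FINAL_RULE_EFFECTIVE = date(2017, 1, 18)


def split_by_effective_date(responses):
    """Split (completion_date, has_policy) pairs into early and late responders."""
    early = [r for r in responses if r[0] < FINAL_RULE_EFFECTIVE]
    late = [r for r in responses if r[0] >= FINAL_RULE_EFFECTIVE]
    return early, late


def percent_with_policy(group):
    """Whole-number percent of responses reporting a policy (None for an empty group)."""
    if not group:
        return None
    return round(100 * sum(1 for _, has_policy in group if has_policy) / len(group))


# Hypothetical responses
responses = [
    (date(2016, 11, 21), True),
    (date(2016, 12, 5), False),
    (date(2017, 1, 17), True),
    (date(2017, 1, 18), False),  # on the effective date, so counted as late
    (date(2017, 3, 1), True),
]
early, late = split_by_effective_date(responses)
print(len(early), percent_with_policy(early))  # 3 67
print(len(late), percent_with_policy(late))    # 2 50
```

Comparing early and late responders is an indirect check: late responders are treated as a rough proxy for non-responders, so a small late group limits what the comparison can detect.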

Results

Characteristics of eligible accounts

We identified 783 eligible accounts (Additional file 2), which had 47,701 records by August 2016. The median number of records per account was 7 (IQR = 3–36), ranging from 1 (two accounts) to 1563 (mean = 61, standard deviation (SD) = 155). A minority of accounts are responsible for most records; 113/783 (14%) accounts had ≥ 100 records by August 2016, and these accounts were responsible for 38,311/47,701 (80%) records.
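The summary figures in this paragraph follow directly from the reported counts. As a quick arithmetic check in Python, using only numbers stated in the paragraph, the mean number of records per account, the share of accounts with ≥ 100 records, and the share of records those accounts hold are:

```python
total_accounts, total_records = 783, 47_701
large_accounts, large_records = 113, 38_311  # accounts with >= 100 records


def pct(part, whole):
    """Percentage of whole represented by part."""
    return 100 * part / whole


mean_records = total_records / total_accounts
print(f"mean records per account: {mean_records:.0f}")                            # 61
print(f"accounts with >= 100 records: {pct(large_accounts, total_accounts):.0f}%")  # 14%
print(f"records held by those accounts: {pct(large_records, total_records):.0f}%")  # 80%
```

The gap between the mean (61) and the median (7) reflects the same skew: a few very large accounts pull the mean upward.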

The median number of administrators per account was 1 (IQR = 1–3), and one organization had 182 registered administrators.

Survey participation

Of 783 eligible accounts, we found no contact details for 16 (2%) and attempted to contact 767 (98%). In four cases (< 1%), we were unable to identify a usable email address. Of eligible accounts, 10/783 (1%) emailed us to decline, 306/783 (39%) did not participate in the survey, and 81/783 (10%) did not provide sufficient information to be included in the analysis (Fig. 1). Two accounts reported that they had multiple policies related to the same account; we asked them to complete questions about their account characteristics but not to complete questions about their specific policies and resources.

Included accounts were responsible for 40,351/47,701 (85%) records registered by eligible accounts. We received a partial (43) or complete (323) survey for 366/783 (47%) eligible accounts (Additional file 3).
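The participation outcomes reported in the two paragraphs above are mutually exclusive, so together they should account for every eligible account. The Python sketch below checks that the reported counts sum to 783 and that partial and complete surveys sum to the 366 included accounts; the outcome labels are paraphrased from the text.

```python
ELIGIBLE = 783

# Mutually exclusive outcomes for eligible accounts, as reported in the text
flow = {
    "no contact details found": 16,
    "no usable email address": 4,
    "declined by email": 10,
    "did not participate": 306,
    "insufficient information for analysis": 81,
    "included in analysis": 366,
}
partial, complete = 43, 323

assert sum(flow.values()) == ELIGIBLE
assert ELIGIBLE - flow["no contact details found"] == 767  # accounts we attempted to contact
assert partial + complete == flow["included in analysis"]

for outcome, n in flow.items():
    print(f"{outcome}: {n}/{ELIGIBLE} ({100 * n / ELIGIBLE:.1f}%)")
```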

The first account completed the survey on November 21, 2016, and the last account completed the survey on March 1, 2017; 31/366 (8%) accounts completed the survey after January 17, 2017. Because of skip logic and because some accounts did not answer all possible questions, accounts answered between 6 and 42 questions (median 19, IQR 17–29).

Policies and practices

Of 366 accounts, 156 (43%) reported that they have a registration policy and 129 (35%) have a results reporting policy (Table 2). Policies came into effect between 2000 and 2016 (median = 2013, IQR 2010–2015; mode = 2016).

Among those accounts with policies, most policies require registration of trials applicable under FDAAA (118/140; 84%), and about half require registration of trials funded by the NIH (72/140; 51%) (Additional file 4). Policies include different requirements for time of registration (Table 3); most require that trials be registered before IRB approval is granted (15/140; 11%), before enrollment begins (49/140; 35%), or within 21 days of beginning enrollment (31/140; 22%). A minority of policies address handling trials associated with investigators joining (57/156; 37%) and leaving organizations (38/156; 24%).

Responsibility for registering trials is most often assigned to principal investigators (72/129; 56%). Responsibility for monitoring whether results are reported on time is most often assigned to administrators (68/115; 59%) and principal investigators (54/115; 47%).

Some policies allow organizations to penalize investigators who fail to register trials (27/115; 23%) or fail to report results (21/114; 18%). One account (< 1%) reported that their organization had penalized an investigator for non-compliance.

Resources

Few accounts use computer software to manage their records (68/366; 19%). Of those that use computer software, two use the application programming interface (API) to connect with ClinicalTrials.gov (Table 3).

Among the 287/366 (78%) accounts that allocate staff to fulfill ClinicalTrials.gov registration and reporting requirements, the median number of full-time equivalent (FTE) staff is 0.08 (IQR = 0.02–0.25). Among the staff who support ClinicalTrials.gov registration and reporting requirements, the most highly educated staff member more often has a graduate degree (232/311; 75%) than a bachelor’s degree (68/311; 22%) or a high school diploma (11/311; 3%). At the time of this survey, 34/338 (10%) accounts planned to hire more staff, 217/338 (64%) did not, and 87/338 (26%) did not know. Among accounts affiliated with a CTSA, 24/109 (22%) receive support for ClinicalTrials.gov compliance from the CTSA.

Staff perform various roles, including educating investigators individually (151/342; 44%) and in groups (61/342; 18%), entering data for principal investigators (174/342; 51%), maintaining educational websites (57/342; 17%), notifying investigators about problems (241/342; 70%), assisting with analysis (58/342; 17%), responding to questions (241/342; 70%), and reviewing problem records (262/342; 77%).

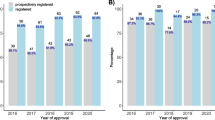

Subgroup analyses

Registration and reporting policies are more common among the following accounts: (1) those with many records, (2) those affiliated with CTSAs, and (3) those affiliated with cancer centers (Table 4). For example, most cancer centers have a registration policy (61/97; 63%) and a reporting policy (52/97; 54%); a minority of other accounts have a registration policy (94/267; 35%) or a reporting policy (77/267; 29%).

Non-response bias

We found direct and indirect evidence of non-response bias, which suggests that our results might overestimate the amount of support available at academic organizations. For example, one administrator who declined to participate replied that their organization “does not have any central staff managing clinicaltrials.gov and does not utilize an institutional account.”

Account size was related to survey participation, and many participating accounts were large (Table 5). Among invited accounts with < 20 records, 171/532 (32%) participated; by comparison, 98/113 (87%) accounts with ≥ 100 records participated.

Participation might have been related to organization resources. Nearly all CTSAs (62/64; 97%) and most National Cancer Institute (NCI) cancer centers (55/69; 80%) participated in the survey (Table 5), including 48 accounts affiliated with both a cancer center and a CTSA. Furthermore, some included accounts were related; for example, 107 accounts were affiliated with one of the 62 CTSAs.

In a sensitivity analysis (Additional file 5), we found no clear differences in policies and computer software when comparing early and late responders. Most participants completed the survey before the effective date, so late responders included only 31/366 (8%) accounts.

Discussion

Summary of findings

To our knowledge, this is the largest and most comprehensive survey of organizations that register and report clinical trials on ClinicalTrials.gov. Nearly half of eligible accounts participated (366/783; 47%), and participating accounts were responsible for the overwhelming majority of records registered by academic organizations in the USA (40,351/47,701; 85%). We found that some organizations were prepared to meet trial registration and reporting requirements before The Final Rule took effect, but there is wide variation in practice. Most organizations do not have policies for trial registration and reporting. Most existing policies are consistent with FDAAA; however, most are not consistent with the ICMJE registration policy. Nearly half of existing policies do not require registration of all NIH-funded trials, though organizations could adapt their policies in response to the new NIH requirements. Few policies include penalties for investigators who do not register or report their trials. Although some organizations use computer software to monitor trial registration and reporting, only two have systems that connect directly with ClinicalTrials.gov (i.e., using the API). Most staff who support trial registration and reporting have other responsibilities, and most organizations do not plan to hire more staff to support trial registration and reporting.

Implications

Our results suggest that most organizations assign responsibility for trial registration and reporting to individual investigators and provide little oversight. Previous studies indicate that senior investigators often delegate this responsibility to their junior colleagues [47].

To our knowledge, the FDA has never assessed a civil monetary penalty for failing to register or report a trial, and the NIH has never penalized an organization for failing to meet their requirements. The ICMJE policy is not applied uniformly [48], and many published trials are still not registered prospectively and completely [37, 49,50,51,52]. Organizations may be more likely to comply with these requirements if they are held accountable for doing so by journals, FDA, and funders (see, e.g., http://www.who.int/ictrp/results/jointstatement/en).

Improving research transparency in the long term will require changes in norms and culture. Organizations could take four immediate steps to improve trial registration and reporting. First, organizations could offer education to help investigators understand these requirements. Second, organizations could implement policies and procedures to support trial registration and reporting. For example, organizations could require that investigators answer questions on IRB applications to identify clinical trials that require registration. Organizations could also require that investigators provide trial registration numbers before allowing trials to commence. Third, organizations could identify trials that do not meet trial registration and reporting requirements and help individual investigators bring those trials into compliance. Notably, software could provide automatic reminders when trial information needs to be updated [53] or when results will be due, and software could help organizations identify problems that require attention from leaders. Prospective reminders would allow administrators and investigators to update information before they become non-compliant with reporting requirements. Finally, organizations could ensure there are consequences for investigators who fail to meet trial registration and reporting requirements. For example, organizations could stop enrollment in ongoing trials or stop investigators from obtaining new grants [54].
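As a minimal sketch of the prospective reminders described above, the Python below flags trials whose registration or results are overdue or approaching their deadline. It assumes a registration deadline of 21 days after the first participant is enrolled, a results deadline of about one year (approximated as 365 days) after the primary completion date, and a hypothetical 60-day warning window. These values, the `Trial` fields, and the function names are illustrative assumptions, not the ClinicalTrials.gov data model or a complete statement of the legal requirements, which include exceptions and extensions.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional

# Assumed deadlines for illustration only; verify against the regulation and local policy.
REGISTRATION_WINDOW = timedelta(days=21)  # after first participant enrolled
RESULTS_WINDOW = timedelta(days=365)      # approximates one year after primary completion
WARNING_WINDOW = timedelta(days=60)       # hypothetical lead time for reminders


@dataclass
class Trial:
    # Hypothetical fields tracked by an organization's own monitoring software
    trial_id: str
    enrollment_start: date
    registered_on: Optional[date] = None
    primary_completion: Optional[date] = None
    results_posted_on: Optional[date] = None


def reminders(trial: Trial, today: date) -> List[str]:
    """Return reminder messages for overdue or upcoming registration and results."""
    messages = []
    registration_due = trial.enrollment_start + REGISTRATION_WINDOW
    if trial.registered_on is None:
        if today > registration_due:
            messages.append(f"{trial.trial_id}: registration overdue since {registration_due}")
        else:
            messages.append(f"{trial.trial_id}: register by {registration_due}")
    if trial.primary_completion is not None and trial.results_posted_on is None:
        results_due = trial.primary_completion + RESULTS_WINDOW
        if today > results_due:
            messages.append(f"{trial.trial_id}: results overdue since {results_due}")
        elif today >= results_due - WARNING_WINDOW:
            messages.append(f"{trial.trial_id}: results due by {results_due}")
    return messages


today = date(2017, 5, 15)
trials = [
    Trial("HYPOTHETICAL-001", date(2016, 1, 4), registered_on=date(2016, 1, 15),
          primary_completion=date(2016, 6, 30)),
    Trial("HYPOTHETICAL-002", date(2017, 3, 1)),
]
for t in trials:
    for message in reminders(t, today):
        print(message)
# HYPOTHETICAL-001: results due by 2017-06-30
# HYPOTHETICAL-002: registration overdue since 2017-03-22
```

This sketch works only from dates an organization already holds locally; it does not connect to ClinicalTrials.gov, which, as reported above, only two responding accounts did.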

Limitations

Although we sent multiple reminders and gave participants months to respond, our results might be influenced by non-response and social desirability. However, such biases would lead us to overestimate support for trial registration and reporting. Participating accounts conduct more trials than non-participating accounts, and they appear to be more likely to have policies and resources to support transparency.

Because we analyzed results by account, our results are not directly comparable with studies that grouped trials using the data fields “funder” [39, 40, 43], “sponsor” [41, 44], “collaborator” [41], or “affiliation” [42]. We analyzed results by account because (1) the account should usually represent the “responsible party,” which is the person or organization legally responsible for fulfilling trial registration and reporting requirements, and (2) we were not aware of another method to identify all trials, or even all accounts, associated with each organization.

We could not always determine which trials were associated with specific organizations, and organizations might not know which accounts their investigators use. Organizations could work with ClinicalTrials.gov to identify non-working email addresses, update administrators’ contact information, assign and identify an administrator responsible for overseeing each account, and create a one-to-one relationship between each account and organization. For example, ClinicalTrials.gov could identify multiple accounts managed by administrators at the same organization and help organizations move information into a single account. Organizations would need to prepare before centralizing their records; centralized administration could reduce trial registration and reporting if administrators lack the time, training, and resources to manage these tasks effectively.

We requested information from one administrator at each organization, and administrators might have been unaware of policies and practices that affect other parts of their organizations (e.g., IRBs, grant management). Finally, some organizations were misclassified on ClinicalTrials.gov (e.g., non-US organizations); we do not know how many organizations were inadvertently included or excluded because of misclassification.

Future research

Further research is needed to determine how to support trial registration and reporting at different types of organizations. Some large organizations register several trials each week, while other organizations register a few trials each year. For small organizations, hiring staff to support trial registration and reporting could be prohibitively expensive. Further qualitative research could explore how different types of organizations are responding to these requirements.

Future surveys could examine predictors of compliance with trial registration and reporting requirements. Although there are important variations in policy and practice, additional quantitative analyses would have little immediate value because most organizations have low compliance [37,38,39,40,41,42,43,44,45]. Instead, detailed case studies might be most useful for identifying best practices. For example, Duke Medicine developed a centralized approach [55], and the US Department of Veterans Affairs (VA) described multiple efforts to support transparency, including an “internal web-based portal system” [54]. The National Clinical Trials Registration and Results Reporting Taskforce is a network of administrators who meet monthly by teleconference, share resources (e.g., educational materials), and provide informal peer education. As industry appears to be doing better than academia [37, 39,40,41,42,43,44], it might be useful for academic organizations to understand the methods industry uses to monitor and report compliance (see, e.g., [56]).

We surveyed organizations after the publication of The Final Rule, and most accounts completed the survey before The Final Rule took effect, several months before the compliance date [34]. Our results should be considered a “baseline” for future studies investigating whether organizations adopt new policies and procedures, and whether they allocate new resources, to fulfill registration and reporting requirements. The federal government estimates compliance costs for organizations will be $70,287,277 per year [34]. This survey, and future updates, could be used to improve estimates of the costs of compliance.

Conclusions

To support clinical trial registration and results reporting, organizations should strongly consider adopting appropriate policies, allocating resources to implement those policies, and ensuring there are consequences for investigators who do not register and report the results of their research.

Abbreviations

- API: Application programming interface
- CTSA: Clinical and Translational Science Award
- FDA: US Food and Drug Administration
- FDAAA: Food and Drug Administration Amendments Act of 2007
- HHS: Health and Human Services
- ICMJE: International Committee of Medical Journal Editors
- IRB: Institutional review board
- NCI: National Cancer Institute
- NIH: National Institutes of Health
- NLM: National Library of Medicine
- PRS: Protocol Registration and Results System

References

Ross JS, Mulvey GK, Hines EM, Nissen SE, Krumholz HM. Trial publication after registration in ClinicalTrials.Gov: a cross-sectional analysis. PLoS Med. 2009;6(9):e1000144. Epub 2009/11/11

Dwan K, Gamble C, Williamson PR, Kirkham JJ, Reporting Bias Group. Systematic review of the empirical evidence of study publication bias and outcome reporting bias – an updated review. PLoS One. 2013;8(7):e66844.

Song F, Parekh S, Hooper L, Loke YK, Ryder J, Sutton AJ, et al. Dissemination and publication of research findings: an updated review of related biases. Health Technol Assess. 2010;14(8):iii, ix-xi, 1–193. Epub 2010/02/26

Cruz ML, Xu J, Kashoki M, Lurie P. Publication and Reporting of the Results of Postmarket Studies for Drugs Required by the US Food and Drug Administration, 2009 to 2013. JAMA Intern Med. 2017;177(8):1207–10.

Jones CW, Handler L, Crowell KE, Keil LG, Weaver MA, Platts-Mills TF. Non-publication of large randomized clinical trials: cross sectional analysis. BMJ. 2013;347:f6104.

Pica N, Bourgeois F. Discontinuation and nonpublication of randomized clinical trials conducted in children. Pediatrics. 2016;138(3).

Turner L, Shamseer L, Altman DG, Weeks L, Peters J, Kober T, et al. Consolidated Standards of Reporting Trials (CONSORT) and the completeness of reporting of randomised controlled trials (RCTs) published in medical journals. Cochrane Database Syst Rev. 2012;11:MR000030.

Wieseler B, Kerekes MF, Vervoelgyi V, McGauran N, Kaiser T. Impact of document type on reporting quality of clinical drug trials: a comparison of registry reports, clinical study reports, and journal publications. BMJ. 2012;344:d8141.

Dechartres A, Trinquart L, Atal I, Moher D, Dickersin K, Boutron I, et al. Evolution of poor reporting and inadequate methods over time in 20 920 randomised controlled trials included in Cochrane reviews: research on research study. BMJ. 2017;357:j2490.

Glasziou P, Meats E, Heneghan C, Shepperd S. What is missing from descriptions of treatment in trials and reviews? BMJ. 2008;336(7659):1472–4.

Dwan K, Altman DG, Cresswell L, Blundell M, Gamble CL, Williamson PR. Comparison of protocols and registry entries to published reports for randomised controlled trials. Cochrane Database Syst Rev. 2011;1:MR000031.

Hart B, Lundh A, Bero L. Effect of reporting bias on meta-analyses of drug trials: reanalysis of meta-analyses. BMJ. 2012;344:d7202.

Rising K, Bacchetti P, Bero L. Reporting bias in drug trials submitted to the Food and Drug Administration: review of publication and presentation. PLoS Med. 2008;5(11):e217.

Baudard M, Yavchitz A, Ravaud P, Perrodeau E, Boutron I. Impact of searching clinical trial registries in systematic reviews of pharmaceutical treatments: methodological systematic review and reanalysis of meta-analyses. BMJ. 2017;356:j448.

Jones CW, Keil LG, Holland WC, Caughey MC, Platts-Mills TF. Comparison of registered and published outcomes in randomized controlled trials: a systematic review. BMC Med. 2015;13:282.

Pranic S, Marusic A. Changes to registration elements and results in a cohort of ClinicalTrials.gov trials were not reflected in published articles. J Clin Epidemiol. 2016;70:26–37.

Tang E, Ravaud P, Riveros C, Perrodeau E, Dechartres A. Comparison of serious adverse events posted at ClinicalTrials.gov and published in corresponding journal articles. BMC Med. 2015;13:189.

Chalmers I. Underreporting research is scientific misconduct. JAMA. 1990;263(10):1405–8.

Chan AW, Song F, Vickers A, Jefferson T, Dickersin K, Gotzsche PC, et al. Increasing value and reducing waste: addressing inaccessible research. Lancet. 2014;383(9913):257–66.

Glasziou P, Altman DG, Bossuyt P, Boutron I, Clarke M, Julious S, et al. Reducing waste from incomplete or unusable reports of biomedical research. Lancet. 2014;383(9913):267–76.

Maund E, Tendal B, Hrobjartsson A, Jorgensen KJ, Lundh A, Schroll J, et al. Benefits and harms in clinical trials of duloxetine for treatment of major depressive disorder: comparison of clinical study reports, trial registries, and publications. BMJ. 2014;348:g3510.

Simes R. Publication bias: the case for an international registry of clinical trials. J Clin Oncol. 1986;4(10):1529–41.

Meinert CL. Toward prospective registration of clinical trials. Control Clin Trials. 1988;9(1):1–5.

Chalmers TC. Randomize the first patient! N Engl J Med. 1977;296(2):107.

Levine J, Guy W, Cleary PA. Therapeutic trials of psychopharmacologic agents: 1968–1972. In: McMahon FG, editor. Principles and techniques of human research and therapeutics. VIII. Mt. Kisco: Futura Publishing Co; 1974. p. 31–51.

Dickersin K, Rennie D. The evolution of trial registries and their use to assess the clinical trial enterprise. JAMA. 2012;307(17):1861–4.

Dickersin K, Rennie D. Registering clinical trials. JAMA. 2003;290(4):516–23.

Zarin DA, Tse T, Williams RJ, Califf RM, Ide NC. The ClinicalTrials.gov results database—update and key issues. N Engl J Med. 2011;364(9):852–60. Epub 2011/03/04

De Angelis CD, Drazen JM, Frizelle FA, Haug C, Hoey J, Horton R, et al. Is this clinical trial fully registered? A statement from the International Committee of Medical Journal Editors. JAMA. 2005;293(23):2927–9.

DeAngelis CD, Drazen JM, Frizelle FA, Haug C, Hoey J, Horton R, et al. Clinical trial registration: a statement from the International Committee of Medical Journal Editors. JAMA. 2004;292(11):1363–4.

121 Stat. 823. Food and Drug Administration Amendments Act (FDAAA) of 2007. Public Law 110–85.

45 CFR 102.3. Adjustment of civil monetary penalties for inflation. Federal Register.

Zarin DA, Tse T, Williams RJ, Carr S. Trial reporting in ClinicalTrials.gov — The Final Rule. N Engl J Med. 2016;375:1998.

42 CFR 11. Clinical trials registration and results information submission; Final rule. Federal Register.

Hudson KL, Lauer MS, Collins FS. Toward a new era of trust and transparency in clinical trials. JAMA. 2016;316(13):1353–4.

NOT-OD-16-149. National Institutes of Health. NIH policy on dissemination of NIH-funded clinical trial information. Federal Register.

Zarin DA, Tse T, Williams RJ, Rajakannan T. Update on trial registration 11 years after the ICMJE policy was established. N Engl J Med. 2017;376(4):383–91.

Law MR, Kawasumi Y, Morgan SG. Despite law, fewer than one in eight completed studies of drugs and biologics are reported on time on ClinicalTrials.gov. Health Aff (Millwood). 2011;30(12):2338–45.

Prayle AP, Hurley MN, Smyth AR. Compliance with mandatory reporting of clinical trial results on ClinicalTrials.gov: cross sectional study. BMJ. 2012;344:d7373.

Ross JS, Tse T, Zarin DA, Xu H, Zhou L, Krumholz HM. Publication of NIH funded trials registered in ClinicalTrials.gov: cross sectional analysis. BMJ. 2012;344:d7292.

Piller C. Law ignored, patients at risk. STAT December 15, 2015. https://www.statnews.com/2015/12/13/clinical-trials-investigation/. Accessed 24 Oct 2017.

Chen R, Desai NR, Ross JS, Zhang W, Chau KH, Wayda B, et al. Publication and reporting of clinical trial results: cross sectional analysis across academic medical centers. BMJ. 2016;352:i637.

Anderson ML, Chiswell K, Peterson ED, Tasneem A, Topping J, Califf RM. Compliance with results reporting at ClinicalTrials.gov. N Engl J Med. 2015;372(11):1031–9.

Zarin DA, Tse T, Ross JS. Trial-results reporting and academic medical centers. N Engl J Med. 2015;372(24):2371–2.

TranspariMED. Medical Research Ethics at Top UK Universities: Performance, Policies and Future Plans. 2017. https://docs.wixstatic.com/ugd/01f35d_15c506da05e4463ca8bd70c2b45bb359.pdf. Accessed 21 Aug 2017.

Piller C, Bronshtein T. Faced with public pressure, research institutions step up reporting of clinical trial results. STAT January 9, 2018. https://www.statnews.com/2018/01/09/clinical-trials-reporting-nih/. Accessed 13 Feb 2018.

Weber WE, Merino JG, Loder E. Trial registration 10 years on. BMJ. 2015;351:h3572.

Dal-Re R, Ross JS, Marusic A. Compliance with prospective trial registration guidance remained low in high-impact journals and has implications for primary end point reporting. J Clin Epidemiol. 2016;75:100–7.

Scott A, Rucklidge JJ, Mulder RT. Is mandatory prospective trial registration working to prevent publication of unregistered trials and selective outcome reporting? An observational study of five psychiatry journals that mandate prospective clinical trial registration. PLoS One. 2015;10(8):e0133718.

Cybulski L, Mayo-Wilson E, Grant S. Improving transparency and reproducibility through registration: the status of intervention trials published in clinical psychology journals. J Consult Clin Psychol. 2016; https://doi.org/10.1037/ccp0000115.

Viergever RF, Karam G, Reis A, Ghersi D. The quality of registration of clinical trials: still a problem. PLoS One. 2014;9(1):e84727.

Huic M, Marusic M, Marusic A. Completeness and changes in registered data and reporting bias of randomized controlled trials in ICMJE journals after trial registration policy. PLoS One. 2011;6(9):e25258.

Maruani A, Boutron I, Baron G, Ravaud P. Impact of sending email reminders of the legal requirement for posting results on ClinicalTrials.gov: cohort embedded pragmatic randomized controlled trial. BMJ. 2014;349:g5579.

Huang GD, Altemose JK, O'Leary TJ. Public access to clinical trials: lessons from an organizational implementation of policy. Contemp Clin Trials. 2017;57:87–9.

O'Reilly EK, Hassell NJ, Snyder DC, Natoli S, Liu I, Rimmler J, et al. ClinicalTrials.gov reporting: strategies for success at an academic health center. Clin Transl Sci. 2015;8(1):48–51.

Evoniuk G, Mansi B, DeCastro B, Sykes J. Impact of study outcome on submission and acceptance metrics for peer reviewed medical journals: six year retrospective review of all completed GlaxoSmithKline human drug research studies. BMJ. 2017;357:j1726.

Taichman DB, Sahni P, Pinborg A, Peiperl L, Laine C, James A, et al. Data sharing statements for clinical trials: a requirement of the International Committee of Medical Journal Editors. JAMA. 2017;317:2491–2.

ICH. Guideline for good clinical practice. International Conference on Harmonisation of Technical Requirements for Registration of Pharmaceuticals for Human Use (ICH). Geneva: ICH Expert Working Group; 1996.

45 CFR 46.102(g). Protection of Human Subjects. Code of Federal Regulations.

21 CFR 56.102. Institutional Review Boards. Code of Federal Regulations.

Acknowledgements

We thank the National Clinical Trials Registration and Results Reporting Taskforce for feedback throughout this study, including members of the taskforce who pilot-tested the draft survey: Deborah Barnard, Jennifer Swanton Brown, Debora Dowlin, Elizabeth M. Gendel, Cassandra Greene, Cindy Han, Elizabeth Jach, Kristin Kolsch, Linda Mendelson, Patricia Mendoza, Michelle Morgan, Sheila Noone, Elizabeth Piantadosi, and Lauren Robertson. In designing the survey, we referred to smaller surveys of PRS administrators conducted by the National Clinical Trials Registration and Results Reporting Taskforce in 2010 and 2014, neither of which was published. We thank colleagues at the National Library of Medicine (NLM) for comments on the study design, including Stacey Arnold, Kevin Fain, and Rebecca Williams. Annice Bergeris (NLM) identified eligible PRS accounts and provided email addresses to contact PRS administrators. From the Food and Drug Administration (FDA), we thank Peter Lurie, York Tomita, and Frank Weichold for their support of the study and for suggestions for future research. Vikram Madan (Johns Hopkins University Bloomberg School of Public Health) calculated the number of questions that survey participants completed.

Contributors

The National Clinical Trials Registration and Results Reporting Taskforce Survey Subcommittee includes (in alphabetical order): Nidhi Atri (Johns Hopkins University School of Medicine), Hila Bernstein (Harvard Catalyst | The Harvard Clinical and Translational Science Center), Yolanda P. Davis (University of Miami), Keren Dunn (Cedars-Sinai Medical Center), Carrie Dykes (University of Rochester), James Heyward (Johns Hopkins Bloomberg School of Public Health), M. E. Blair Holbein (UT Southwestern Medical Center), Anthony Keyes (Johns Hopkins University School of Medicine), Evan Mayo-Wilson (Johns Hopkins Bloomberg School of Public Health), Jesse Reynolds (Yale School of Public Health), Leah Silbert (Cedars-Sinai Medical Center), Niem-Tzu (Rebecca) Chen (Rutgers, The State University of New Jersey), Sarah White (Partners HealthCare), and Diane Lehman Wilson (University of Michigan Medical School).

Funding

JH was supported by a Johns Hopkins Center of Excellence in Regulatory Science and Innovation (JH-CERSI) grant from the US Food and Drug Administration (U01 FD004977-01; Caleb Alexander, PI). NA and AK were supported by a Johns Hopkins Institute for Clinical and Translational Research (JH-ICTR) grant from the National Center for Research Resources and the National Center for Advancing Translational Sciences (UL1TR001079; Daniel E. Ford, PI). EMW was supported by JH-CERSI and JH-ICTR. JR and AO were supported by the Yale Center for Analytical Sciences (YCAS) and the Yale Center for Clinical Investigation (YCCI). Harvard Catalyst | The Harvard Clinical and Translational Science Center (UL1 TR001102) supports monthly teleconferences for the National Clinical Trials Registration and Results Reporting Taskforce.

The funders were not involved in the design or conduct of the study, manuscript preparation, or the decision to submit the manuscript for publication; its contents are solely the responsibility of the authors and do not necessarily represent the official views of HHS or FDA.

Availability of data and materials

The statistical code for generating these results is available from the authors. We did not obtain consent to identify participants; the corresponding author will share individual-level data for research in which participants will not be identified publicly.

Author information

Contributions

JH, AK, and EMW conceived and designed the study, wrote the study protocol, and obtained institutional review board (IRB) approval. Regarding data acquisition, NA, JH, AK, and EMW drafted the survey; all authors and members of the National Clinical Trials Registration and Results Reporting Taskforce Survey Subcommittee provided comments about the content and wording of the survey. JH and EMW distributed the survey. EMW responded to questions from participants. Regarding analysis and interpretation of data, EMW drafted the table shells with NA, JH, AK, AO, and JR. AO and JR analyzed the data. All authors contributed to interpreting the results. EMW wrote the first draft of the manuscript. All authors reviewed, provided critical revisions, and approved the final manuscript for publication. Evan Mayo-Wilson is the guarantor.

Ethics declarations

Ethics approval

The Johns Hopkins School of Public Health Institutional Review Board (FWA#0000287) determined that the study was not human subjects research (00007429).

Competing interests

AK, JR, and SW completed the survey on behalf of their organizations.

AK chairs the National Clinical Trials Registration and Results Reporting Taskforce Survey Subcommittee. AK and SW co-chair the National Clinical Trials Registration and Results Reporting Taskforce. AK and NA are Protocol Registration and Results System (PRS) administrators for the “Johns Hopkins University” account. JR and AO are PRS administrators for the “Yale University” account. When we conducted this study, SW was a PRS administrator for the “Brigham and Women’s Hospital,” “Massachusetts General Hospital,” and “McLean Hospital” accounts. SW is currently the Executive Director of the Multi-Regional Clinical Trials Center of Brigham and Women’s Hospital and Harvard.

Additional files

Additional file 1:

Survey instrument. (DOCX 500 kb)

Additional file 2:

Eligible accounts. (DOCX 452 kb)

Additional file 3:

Participating accounts. (DOCX 476 kb)

Additional file 4:

Additional survey results. (DOCX 440 kb)

Additional file 5:

Sensitivity analysis. (DOCX 436 kb)

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The Creative Commons Public Domain Dedication waiver (http://creativecommons.org/publicdomain/zero/1.0/) applies to the data made available in this article, unless otherwise stated.

About this article

Cite this article

Mayo-Wilson, E., Heyward, J., Keyes, A. et al. Clinical trial registration and reporting: a survey of academic organizations in the United States. BMC Med 16, 60 (2018). https://doi.org/10.1186/s12916-018-1042-6

DOI: https://doi.org/10.1186/s12916-018-1042-6