Abstract

Background

Audit and feedback to physicians is a commonly used quality improvement strategy, but its optimal design is unknown. This trial tested the effects of a theory-informed worksheet to facilitate goal setting and action planning, appended to feedback reports on chronic disease management, compared to feedback reports provided without these worksheets.

Methods

A two-arm pragmatic cluster randomized trial was conducted, with allocation at the level of primary care clinics. Participants were family physicians who contributed data from their electronic medical records. The ‘usual feedback’ arm received feedback every six months for two years regarding the proportion of their patients meeting quality targets for diabetes and/or ischemic heart disease. The intervention arm received these same reports plus a worksheet designed to facilitate goal setting and action plan development in response to the feedback reports. Blood pressure (BP) and low-density lipoprotein cholesterol (LDL) values were compared after two years as the primary outcomes. Process outcomes measured the proportion of guideline-recommended actions (e.g., testing and prescribing) conducted within the appropriate timeframe. Intention-to-treat analysis was performed.

Results

Outcomes were similar across groups at baseline. Final analysis included 20 physicians from seven clinics and 1,832 patients in the intervention arm (15% loss to follow up) and 29 physicians from seven clinics and 2,223 patients in the usual feedback arm (10% loss to follow up). Ten of 20 physicians completed the worksheet at least once during the study. Mean BP was 128/72 in the feedback plus worksheet arm and 128/73 in the feedback alone arm, while LDL was 2.1 and 2.0, respectively. Thus, no significant differences were observed across groups in the primary outcomes, but mean haemoglobin A1c was lower in the feedback plus worksheet arm (7.2% versus 7.4%, p<0.001). Improvements in both arms were noted over time for one-half of the process outcomes.

Discussion

Appending a theory-informed goal setting and action planning worksheet to an externally produced audit and feedback intervention did not lead to improvements in patient outcomes. The results may be explained in part by passive dissemination of the worksheet leading to inadequate engagement with the intervention.

Trial registration

ClinicalTrials.gov NCT00996645


Background

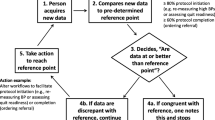

Audit and feedback is often the foundation of quality improvement (QI) projects aiming to close the gap between ideal and actual practice. Audit and feedback is known to improve quality of care, but there is variability in the magnitude of effect observed[1]. This variability may be attributed to the nature of the targeted behavior, the context, and the characteristics of the recipient, as well as to the design of the audit and feedback intervention itself[2]. The Cochrane review of audit and feedback found that feedback is more effective when sent more than once, delivered by a supervisor or senior colleague in both verbal and written formats, and when it includes both explicit targets and an action plan[3]. However, these conclusions are based on indirect comparisons from meta-regressions and are thus less reliable than those that would be generated directly from head-to-head trials of different approaches to providing audit and feedback. Further, there is little information to guide operationalization of these factors[4]. For example, few audit and feedback trials explicitly describe goal setting or action planning as part of the intervention, and those trials that did appeared to deliver this component of the intervention in various ways[5, 6]. Although action planning is a familiar activity in clinical practice, the plan can more effectively lead to behavior change if it includes two key elements: an ‘if’ statement (specifying contextual factors that will trigger the action) and a ‘then’ statement (specifying precisely the action to be taken)[7]. In the context of feedback and goals, implementation intention-based action plans could increase goal-directed behaviors, possibly by increasing both self-efficacy (confidence in one’s ability to perform an action effectively) and goal-commitment (degree to which the person is determined to achieve the goal)[8].

For patients with diabetes, audit and feedback is known to modestly improve processes of care, as well as blood pressure (BP) and glycemic control[9, 10]. In this trial, we aimed to build on the extant knowledge regarding audit and feedback by asking not whether it can improve care, but whether it could be modified to be more effective to improve processes of care related to patients with chronic disease, including diabetes and ischemic heart disease (IHD). Our hypothesis was that feedback reports delivered to family physicians regarding care patterns for patients with chronic disease would draw attention to a discrepancy between actual and desired quality of care (e.g., fewer patients than expected with BP recently measured) and that a worksheet accompanying the feedback to facilitate goal-setting and action-planning would increase the likelihood that family physicians would act to improve quality of care (e.g., identify and more aggressively treat patients with BP above target).

Methods

Study design

This was a two-arm, pragmatic cluster randomized trial conducted in primary care. To reduce the risk of contamination, randomization was at the level of the primary care clinic. Each physician in the intervention clinics received feedback accompanied by a goal-setting and action-planning worksheet, while each physician in the clinics allocated to usual care received feedback unaccompanied by the worksheet. Feedback reports addressed guideline-based quality indicators for patients in their practice with diabetes and/or IHD. Such patients are at elevated risk of cardiovascular events, especially if they have a history of both conditions[11], and guidelines recommend similar processes of care as well as control of BP and cholesterol to reduce this risk[12, 13]. The trial was pragmatic in that it sought to determine if the intervention could be effective under usual circumstances: the goal-setting and action-planning worksheet was designed to be readily scalable and was delivered with minimal supports; the usual feedback arm was not standardized with respect to co-interventions; patient-level outcomes were assessed from databases with unobtrusive measurement of compliance; and analysis was by intention-to-treat[14]. The protocol has been previously published[15] and is summarized below. This study received approval from the Research Ethics Office at Sunnybrook Health Sciences Centre (271–2006) and was registered at ClinicalTrials.gov (NCT00996645).

Setting

In the province of Ontario, patients with chronic conditions such as diabetes and stable ischemic heart disease are generally managed in primary care, mostly by family physicians. There is no co-pay for doctor visits or laboratory tests for Ontarians, but medications are covered by the provincial drug plan only for the elderly and those on social assistance. The majority of primary care providers in the province work in groups and are paid by a mix of capitation and fee for service.

Participants and data collection

Participants were family physicians throughout Ontario who signed data-sharing agreements with the Electronic Medical Record Administrative data Linked Database (EMRALD), held at the Institute for Clinical Evaluative Sciences (ICES). EMRALD has developed mechanisms to extract, securely transfer, and de-identify the electronic medical record (EMR) data for analysis at ICES, maintaining strict standards for confidentiality[16]. Family physicians were originally invited to participate in EMRALD through convenience sampling of EMR users, and all EMRALD contributors consented to this study. Physicians with less than one year of experience using their EMR or with fewer than 100 active adult patients enrolled in their practice were excluded. Included patients were over age 18 at the start of the trial, were enrolled with their family physician throughout the study, and had diabetes and/or IHD. Only patients with one or more visits at least one year prior to the trial were included, to ensure that enough data existed in the EMR to assess quality of care and that providers were not audited for transient or new patients. EMRALD has validated algorithms to identify patients with diabetes and IHD, which do not require special data input by physicians[17, 18].

Allocation

Practices were allocated using minimization (conducted by the study analyst using the free software, MINIM[19]) to achieve balance on baseline values of the primary outcomes and on the number of eligible patients in each cluster[3]. Using the baseline data for each cluster, these variables were classified as high or low using the median value as the cut-point. After recruitment was completed, practices were allocated simultaneously to ensure low risk of bias related to allocation concealment, as per Cochrane Effective Practice and Organization of Care Group criteria[20].
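The allocation logic described above can be illustrated with a minimal sketch. It assumes a simplified, deterministic form of minimization (ties are broken by arm size and then by arm order), whereas minimization software typically adds a random element; it is not the MINIM program's implementation. The function names, factor names, and the four example clusters are hypothetical.

```python
from collections import defaultdict
from statistics import median


def high_low(values):
    """Classify each value as 'high' or 'low' using the median as the cut-point."""
    cut = median(values)
    return ["high" if v > cut else "low" for v in values]


def minimize_allocation(clusters, factors, arms=("worksheet", "usual")):
    """Allocate clusters one at a time to the arm that minimizes marginal imbalance.

    Each cluster is a dict with an 'id' key and one 'high'/'low' level per factor.
    This is a deterministic illustration, not a reproduction of any specific tool.
    """
    # counts[arm][(factor, level)] = clusters already in that arm with that level
    counts = {arm: defaultdict(int) for arm in arms}
    arm_sizes = {arm: 0 for arm in arms}
    allocation = {}
    for cluster in clusters:
        # Score an arm by how many of its clusters share this cluster's levels
        scores = {
            arm: sum(counts[arm][(f, cluster[f])] for f in factors)
            for arm in arms
        }
        # Lowest score wins; ties go to the smaller arm, then to the first arm listed
        best = min(arms, key=lambda a: (scores[a], arm_sizes[a]))
        allocation[cluster["id"]] = best
        arm_sizes[best] += 1
        for f in factors:
            counts[best][(f, cluster[f])] += 1
    return allocation


# Hypothetical clusters, already classified as high/low on two balancing factors
example_clusters = [
    {"id": "A", "baseline_bp": "high", "n_patients": "high"},
    {"id": "B", "baseline_bp": "high", "n_patients": "low"},
    {"id": "C", "baseline_bp": "low", "n_patients": "high"},
    {"id": "D", "baseline_bp": "low", "n_patients": "low"},
]
example_allocation = minimize_allocation(
    example_clusters, factors=("baseline_bp", "n_patients")
)
print(example_allocation)
```

In this toy example each arm ends up with one 'high' and one 'low' cluster on both factors, which is the kind of marginal balance minimization is intended to produce.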

Intervention

The intervention was developed through an iterative process and piloted with family physicians, as described previously[15]. Each physician received a package (Table 1) by courier every six months for two years featuring feedback reports describing the aggregate percentage of patients with diabetes and/or IHD meeting quality targets, along with explanatory documents and self-reflection surveys to be completed for continuing medical education (CME) credits. For each disease condition, the report fit on one page and, for every quality target, the aggregate performance achieved by the participating physician was compared to the score achieved by the top 10% of participating physician performers[21]. See Additional file 1 for prototype feedback reports. The frequency was limited to twice yearly for two years due to the limited capacity of the research team, and the reports included only aggregate data (no patient-specific information) due to the potential privacy risks of sending patient-specific information.

Physicians randomized to the feedback plus worksheet arm also received a one-page worksheet appended to the feedback along with the standard CME survey. The worksheet was designed to facilitate goal-setting and implementation intention-based action-plans, using the ‘if’ and ‘then’ formulation explained above[8]. See Additional file 2 for a prototype of the worksheet. Participants were asked to submit the worksheet along with the CME surveys in order to process their CME credits.

Prior to the second cycle of feedback reports, the College of Family Physicians of Canada implemented the use of standardized forms to earn continuing medical education credits for practice audits. Therefore, the CME surveys changed during the trial. The original surveys asked participants what they learned about diabetes and IHD care, whether they intended to change practice, and what potential barriers to change they foresaw. The revised surveys asked participants to ‘make a decision about your practice,’ to declare ‘what will you have to do to integrate these decisions,’ and to continue to reassess the practice change and make further plans to improve. Given the nature of the intervention, blinding of physicians was not possible, but they were not aware of the exact nature of the intervention being tested.

Outcomes

Outcomes were monitored using validated processes to analyze data collected from participants’ EMRs. There were two patient/disease-level primary outcomes and one professional practice/process-level primary outcome. The patient-level primary outcomes were the patients’ most recent low-density lipoprotein cholesterol (LDL) and systolic BP values, if tested within 24 or 12 months, respectively. The process-level primary outcome was a composite process score indicating whether patients received prescriptions and tests in accordance with relevant guidelines[22–27]. Patients received a composite process score with a maximum of six, as outlined in Table 2, which we multiplied by 100 to report the score as a percentage.

Secondary outcomes were chosen because they were thought to reflect the targets of action by family physicians receiving the feedback. Each of the items in the composite process score was assessed, plus glycemic control (haemoglobin A1c level, HbA1c), the proportion meeting targets recommended in guidelines for LDL (<2 mmol/L) and BP (<130/80 mmHg in diabetes and <140/90 mmHg in IHD), and prescription rates for insulin and beta blockers. While not every patient requires each prescription or investigation, we anticipated balance between groups in the proportion of eligible patients. Therefore, increases in aggregate proportions of processes performed indicate general intensification of treatment for patients with these conditions.

Analysis

Primary analysis was performed on an intention-to-treat basis, using patient level variables, combining patients with diabetes and/or IHD. Because the intervention was directed to the physician, final analysis was limited to patients with diabetes and/or IHD who were enrolled in their physician’s practice throughout the trial. We used linear mixed models (SAS MIXED procedure) for continuous variables to estimate the mean difference in each outcome between arms, together with their 95% confidence interval (CI). For dichotomous variables, we used generalized estimating equation models (SAS GENMOD procedure) and estimated relative risk and the 95% confidence interval using log-binomial regression[28]. We used generalized linear models to examine random variation for each outcome at both physician and practice levels (SAS GLIMMIX procedure). The clustering of patients within physicians was accounted for using random effect models and if the practice-level was also significant we included both levels when assessing outcomes. We ran a model for each outcome with and without adjustment for baseline values of the dependent variable. The adjustment for baseline values of the dependent variable was carried out by specifying the pre-intervention measure of the outcome as a covariate. We planned this approach a priori because although there were thousands of patients, we were not confident that allocation of only 14 clinics would result in adequate balance at baseline[3, 29].

Planned sub-group analyses were performed on patients with only IHD, only diabetes, or both, to assess the same outcome variables. We hypothesized that patients with only IHD would have lower quality of care scores at baseline and greater potential for improvement during the intervention, because locally IHD has received relatively less attention with respect to QI initiatives and because identifying patients in the EMR with IHD is more difficult than identifying patients with diabetes due to the lack of relevant laboratory results or specific medications. A planned per-protocol analysis assessed whether full completion of the intervention worksheet resulted in improved outcomes in general and for the specific clinical topics in participants’ goal statements. All analyses were carried out using the SAS Version 9.2 statistical program (SAS Institute, Cary, NC, USA).

The number of participating practices and eligible physicians providing data to EMRALD determined the sample size. With 54 physicians from 14 practices initially providing data and consenting to this trial and a presumed intra-cluster correlation of 0.05, we estimated 80% power to find an absolute difference in LDL of 0.32 mmol/L and in systolic BP of 7 mmHg, based on pilot data[15].
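To illustrate why clustering limits power even with thousands of patients, the sketch below applies the standard design effect formula, 1 + (m − 1) × ICC, where m is the mean cluster size. It assumes equal cluster sizes and uses the 4,617 patients and 14 clinics reported at baseline in the Results; it does not reproduce the authors' power calculation, and the function names are illustrative only.

```python
def design_effect(mean_cluster_size, icc):
    """Variance inflation due to clustering, assuming equal cluster sizes."""
    return 1 + (mean_cluster_size - 1) * icc


def effective_sample_size(n_patients, n_clusters, icc):
    """Number of independent observations the clustered sample is roughly worth."""
    mean_cluster_size = n_patients / n_clusters
    return n_patients / design_effect(mean_cluster_size, icc)


# Illustrative values: 4,617 patients in 14 clinics, presumed ICC of 0.05
n_eff = effective_sample_size(4617, 14, 0.05)
print(f"Design effect: {design_effect(4617 / 14, 0.05):.1f}")  # about 17.4
print(f"Effective sample size: {n_eff:.0f}")  # about 265
```

Under these assumptions the sample behaves like roughly 265 independent patients, and any ICC larger than presumed would shrink that number further.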

Results

Cluster and patient flow are described in Figure 1. Just prior to allocation, one physician stopped practicing. Thus, the study began with 4,617 patients cared for by 53 physicians from 14 clinics. At baseline (August 2010), 22 physicians cared for 2,157 patients with diabetes and/or IHD in the feedback plus worksheet arm and 31 physicians cared for 2,460 patients in the usual feedback arm. During the two-year trial, two physicians from each arm were lost to follow up. Of the 562 patients lost to follow up, 175 belonged to these four physicians, and 166 changed physicians during the study. Average age of patients lost to follow up was 68 years and 44% were female, reflecting the underlying distribution of those allocated.
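The follow-up figures can be cross-checked with simple arithmetic. The sketch below assumes the final arm-level patient counts reported in the abstract (1,832 and 2,223) alongside the baseline counts given above; the function name is illustrative only.

```python
def loss_to_follow_up(baseline_n, final_n):
    """Return the number lost and the percentage lost relative to baseline."""
    lost = baseline_n - final_n
    return lost, 100 * lost / baseline_n


# Baseline counts from this section; final counts as reported in the abstract
arms = {
    "feedback plus worksheet": (2157, 1832),
    "usual feedback": (2460, 2223),
}

total_lost = 0
for arm, (baseline_n, final_n) in arms.items():
    lost, pct = loss_to_follow_up(baseline_n, final_n)
    total_lost += lost
    print(f"{arm}: {lost} lost ({pct:.1f}%)")

print(f"Total lost: {total_lost}")  # 562, matching the figure in the text
```

The arm-level losses (about 15% and 10%) sum to the 562 patients reported as lost to follow up.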

Comparability at baseline

Cluster and participant characteristics are described in Table 3 and Table 4, respectively. The median number of included patients per clinic was 234 (interquartile range (IQR) 186 – 508) and the median per physician was 81 (IQR, 46 – 111). One-half of the practices (7/14, 50%) were located in urban settings. About one-half of the physicians were female (25/53, 47%). Physicians had been in practice for a median of 19 years (IQR, 9 – 24) and had been using their EMR for a median of 7 years (IQR 6 – 7). Intervention physicians were more likely to be male, to have more years of experience, and to be located in rural settings. They also tended to have smaller practices overall but with more eligible patients. Patients averaged 65.6 years and 44.4% were female. Most included patients (3,435, 74.4%) had diabetes, the remainder had IHD (25.6%); one-eighth (12.4%) had both diabetes and IHD. The mean weight of included patients was 89 kilograms (standard deviation [SD] = 26, 501 missing). Mean BP was 130/74 (systolic SD = 17, diastolic SD = 11, 467 missing), with 55.1% achieving target, and mean LDL was 2.31 (SD = 0.9, 674 missing), with 67.7% achieving target. The mean process composite score was 72 (SD = 26). Values for these variables and other process measures were similar across groups, except for a greater proportion of patients in the feedback plus worksheet arm than in the feedback alone arm with a recent BP test (85% versus 74%) and HbA1c test (79% versus 69%).

Intention-to-treat analyses

There were 4,055 patients cared for by 49 physicians from 14 clinics in the final analysis. The primary and secondary outcomes at the end of the trial are summarized in Table 5. No clinically or statistically significant differences were observed across groups in the primary outcomes in either the adjusted or unadjusted models. Mean systolic BP was 128 in both arms (adjusted mean difference = -0.05; 95% CI -2.11, 2.02) and diastolic BP was 72 in the feedback plus worksheet arm and 73 in the feedback alone arm (adjusted mean difference = -0.72; 95% CI -2.18, 0.75). LDL was 2.1 in the feedback plus worksheet arm and 2.0 in the feedback alone arm (adjusted mean difference = 0.04; 95% CI -0.02, 0.10). The mean composite score was 72 in the feedback plus worksheet arm and 70 in the feedback alone arm (adjusted mean difference = 1.76; 95% CI -1.4, 4.9). Mean HbA1c in patients with diabetes was lower in the feedback plus worksheet arm (7.2% versus 7.4%; adjusted mean difference -0.2; 95% CI -0.3, -0.1). A greater proportion of patients in the feedback plus worksheet arm had their BP tested within six months (81% versus 69%, adjusted relative risk [aRR] = 1.2; 95% CI 1.1, 1.3) and more achieved target BP (53% versus 46%, aRR 1.2; 95% CI 1.0 – 1.3). No other outcomes were significantly different across the arms.
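As a rough plausibility check on the relative risks above, the crude ratio of the two reported proportions can be computed directly. This sketch ignores clustering within physicians and the adjustment for baseline values, so it only approximates the model-based aRR; the function name is illustrative only.

```python
def crude_relative_risk(p_intervention, p_comparison):
    """Unadjusted ratio of two proportions (no clustering or baseline adjustment)."""
    return p_intervention / p_comparison


# Proportions reported in the text for the two arms
rr_bp_tested = crude_relative_risk(0.81, 0.69)  # BP tested within six months
rr_bp_target = crude_relative_risk(0.53, 0.46)  # BP at target

print(f"BP tested within six months: {rr_bp_tested:.2f}")  # 1.17
print(f"BP at target: {rr_bp_target:.2f}")  # 1.15
```

Both crude ratios round to 1.2, in line with the adjusted estimates reported in the text.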

After adjusting for variation at the level of the physicians, there was no further significant variation at the level of the clinic so that all models included a random variable only for the physician. Patients with no recent values for BP or LDL did not differ across arms with respect to sex, or proportion with diabetes and/or IHD. Those with missing values for BP were also similar with respect to age, but patients with missing values for LDL from the feedback plus worksheet arm tended to be older than those in the feedback alone arm (68 years versus 63 years; p = 0.001).

LDL values and diastolic BP decreased slightly in both study arms over time, while systolic BP decreased only in the feedback plus worksheet arm (Additional file 3: Table S6). HbA1c also decreased in the feedback plus worksheet arm, but increased slightly in the feedback only arm. The proportion of patients with BP and LDL at target increased in both arms during the study period. The proportion with an LDL test within 12 months and the proportion with a statin prescribed also improved over time in both arms, but the proportion with BP measured within 6 months decreased in both arms and the proportion prescribed an anti-hypertensive (beta blocker or angiotensin-modifying agent) did not change over time except for a small increase in angiotensin-modifying agents in the feedback alone arm. Insulin prescribing increased in both arms over time, but testing of HbA1c and fasting blood glucose decreased.

Results of the planned sub-group analyses for patients with only diabetes, only IHD, or both conditions are described in Additional file 3: Table S7, Table S8, Table S9. The results indicate that patients with IHD are treated more aggressively than patients with only diabetes and that patients with both conditions are treated most aggressively. Mean BP and LDL values were lowest and the composite process score was highest among patients with diabetes and IHD.

Per-protocol analyses

Fewer than one-half of physicians in the intervention arm completed the worksheet (10/22, 45%); of those, one-half responded only once. Restricting analysis for the primary outcomes to the 10 physicians who completed the intervention worksheet indicated non-statistically significant improvement in the feedback plus worksheet arm for systolic BP (126 versus 128, adjusted mean difference -0.8; 95% CI -3.1, 1.5) and for the composite score (73 versus 70, adjusted mean difference 2.6; 95% CI -2.0, 7.2), but slightly higher LDL (2.1 versus 2.0, adjusted mean difference 0.1; 95% CI 0.0, 0.2). The most common goals set by participants in the worksheet for their patients with diabetes were for achievement of target BP (i.e., increase the proportion of patients meeting BP target, three times) and increasing testing rates of urinary albumin-to-creatinine ratio (three times). The most common goals for IHD were for achievement of target LDL (three times) and increasing prescription rates of ASA (five times). Patients belonging to the 10 participants who completed the worksheets more often achieved BP targets, but the effect was not statistically significant (52% versus 46%, aRR = 1.11; 95% CI 0.97, 1.27). Testing for urinary albumin was similar between arms (66% versus 65%, aRR = 1.00; 95% CI 0.86, 1.17), as was achievement of LDL targets (48% versus 50%, aRR = 0.99; 95% CI 0.90, 1.09). Prescribing rates of ASA were higher among participants who completed the worksheets, but the model-based difference was not statistically significant, as baseline rates of ASA prescribing were also higher in this group (67% versus 57%, aRR = 0.99; 95% CI 0.95, 1.04).

Discussion

We found no difference in the primary outcomes of BP and cholesterol levels and no difference in the composite process score when providers were given feedback plus a goal-setting and action-planning worksheet compared to feedback alone. While it was a secondary outcome and should be interpreted cautiously, the intervention did result in improved glycemic control to a similar extent as many other complex QI strategies for diabetes[30]. We also observed that BP and cholesterol improved in both arms, as well as one-half of the process outcomes, which emphasizes the importance of controlled studies when testing strategies aiming to improve quality of care.

It remains plausible that completing a goal-setting and action-planning exercise could enhance the effectiveness of feedback[1]. Unfortunately, only 10 out of 22 physicians in the intervention arm completed the intervention worksheet and only five of these completed it more than once. Poor compliance (with minimal supports) limited our ability to test the effects of action planning in this pragmatic trial. One-half of the goals set by active participants were behavioral (what will I do) and one-half were outcome-oriented (what would I like to happen as the result of what I do). For action plans to be most effective, they must very specifically relate to behavioral goals, not outcome goals[31]. Thus, it would seem that one-half of participants who completed the worksheet did so ineffectively, and there is a need to explore how to make action-planning activities more salient and usable. More active, practice-based supports may be needed to implement the development of goals and action plans. For instance, one randomized controlled trial found that feedback reports plus structured peer interactions in which goals and action plans for improvement were discussed were more effective than feedback alone[32]. A recent Canadian cross-sectional study showed that data management support for patient identification and recall plus assistance by allied health providers with standardized testing and prescribing was associated with improved quality in primary care[33].

To result in behavior change, those receiving feedback must be dissatisfied with their performance, meet a threshold level of self-efficacy for improvement, and be committed to the goal[7, 34]. In our separately reported embedded qualitative evaluation[35], we found that self-efficacy was low, as many practices lacked the necessary QI infrastructure to take action. For example, no one was responsible for searching the EMR to identify patients who may require reassessment. Our qualitative work also found that participants were not highly committed to achieving the targets described in the feedback reports. There was uncertainty regarding the impact on patient outcomes of achieving targets perceived to be aggressive. Many expressed concern that practice-level QI efforts would be at odds with their attempts to achieve patient-centered care. It would appear that although Canadian family physicians generally agree with and accept guideline-based best-practice targets for diabetes and IHD[36], achievement of best-practice targets for chronic disease management was not perceived as urgent compared to other tasks, especially given the relatively high quality of care already achieved. Yet, even if mean performance was acceptable, many patients stand to benefit from improved processes of care. In such settings, to increase goal commitment it may be necessary to first address limited self-efficacy by providing more active supports for QI[37], as there is evidence that self-efficacy influences goal commitment[34]. Recognizing that feedback alone is sometimes not enough to change provider behaviors, further improvements have been sought by pairing feedback with intensive co-interventions, such as academic detailing[38] or practice facilitation[39]. In the Cochrane review, pairing educational outreach with audit and feedback was found to increase desired professional behavior[3]. However, these intensive interventions are costly and more cost-efficient approaches may exist.

The particular nature of the feedback intervention used in this study may have played an important role in the poor uptake of the intervention worksheet. Qualitative work conducted in the Veterans Affairs health system in the USA also indicates that high performing healthcare organizations tend to deliver feedback with more actionable information[40]. It is possible that the participants did not feel that achieving higher scores on their feedback was achievable because only aggregate data were provided[41]. It is also possible that concerns regarding data validity allowed participants to resolve any cognitive dissonance arising from the results provided in the feedback without needing to commit resources to improvement[42]. For instance, the explanatory notes accompanying the reports described that if relevant tests were conducted by specialists but were not received into the EMR in a standardized format they would not be included in the feedback. In addition, the presence of multiple competing priorities is known to mitigate achievement of particular guideline recommendations[43], and this may be highly appropriate for patients with significant symptoms from concomitant illness (e.g., severe depression, cancer) or reduced life expectancy. The Cochrane review indicated that feedback was less effective when targeting many indicators reflecting chronic disease management than when targeting a specific behavior, and feedback intervention theory suggests that feedback should direct attention to a specific task in order to most reliably change behavior[44]. It has also been observed that feedback may be more effective when participants choose standards[5], and when presented by senior colleagues[3], whereas externally generated feedback focusing on a multitude of guideline-based best practices was used in this study. 
Therefore, the feedback may have been more salient—and the intervention worksheet may have had greater impact—if it focused on quality indicators chosen by participants to be high priority, and delivered by carefully selected opinion leaders[45] with clear and readily achievable tasks for improved scores.

Some limitations in this study warrant further discussion. The lack of a pure control group limits our ability to comment on the impact of this particular audit and feedback intervention on quality of care. However, our approach was necessary because participants expected something in return for contributing data. Furthermore, feedback is known to work for these outcomes[10] such that our interest was in determining whether a simple enhancement could increase feedback effectiveness. Although we used minimization to achieve baseline balance successfully for the primary outcomes, differences in cluster-level characteristics remained. In addition, the study analyst had access to the allocation list; this non-blinding could theoretically create bias, but the same validated algorithms were used to assess outcomes for each study group. We also acknowledge the potential for measurement bias as investigations were counted only if results were available in the EMR and tests performed by specialists may be missed. For outcomes related to investigations and treatments, data in EMRALD compare well with (and often out-perform) administrative databases[46]. While the trial should balance reasons for misclassification or missing data, we observed that those without a recent LDL test tended to be older in the intervention arm. We took a pragmatic approach to intervention delivery, limiting the number of reminders and supports to mimic expected conditions if the intervention were to be widely implemented. This may explain the limited completion rate of the worksheet and raises a question about the role of pragmatic health services trials when evaluating ‘new’ interventions. In this case, more data regarding how to support implementation would have been useful prior to embarking on a trial with this type of design. 
We did embed a qualitative evaluation to explore this, but participants focused on the usefulness of the particular audit and feedback intervention used in this trial rather than the goal-setting and action-planning worksheets[35]. It is also important to note that the secondary, per-protocol analyses are at risk of bias in favour of the intervention.

In addition, a number of factors may have limited our ability in this study to find differences between intervention arms. First, while this study did include thousands of patients, they were clustered within only 14 clinics and the intra-cluster correlations for disease-level primary outcomes were larger than expected from pilot data. Second, physicians voluntarily provide data to EMRALD and many participating clinics were involved in other QI interventions. Thus, these clinics may be more innovative and may also be achieving a higher level of evidence-based care than most other primary care providers, potentially decreasing both generalizability and the likelihood of finding an effect in this study. Furthermore, we observed that the composite process score was highest among patients with both diabetes and IHD, indicating that participating providers appropriately intensified monitoring and management in patients at greatest risk and suggesting the possibility that further gains may be limited by a ceiling effect. Third, risk for type II error is exacerbated in trials comparing similar interventions (i.e., head-to-head trials) where anticipated effect sizes are not expected to be large. Finally, differences between groups may also have been more difficult to identify if goal-setting and action-planning aspects of the intervention were duplicated by the revised CME surveys mandated by the College of Family Physicians. During the trial, 52% of physicians (14/27) in the usual feedback group and 54% (12/22) in the feedback plus worksheet arm completed and returned at least one CME survey. Unlike the first iteration of CME surveys, which asked participants ‘what have you learned,’ the revised surveys explicitly asked participants to make a decision about their practice and to identify how to integrate the decision into practice. 
Though less specific or directive than the intervention worksheets, these questions may have similarly prompted participants to set goals and develop action plans.
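
To illustrate the first limitation, the following minimal sketch shows how clustering can shrink the information carried by a large patient sample. It uses the standard design effect for equal-sized clusters, 1 + (m - 1) × ICC, with the patient and clinic counts reported in the abstract. The ICC values are hypothetical and chosen only for illustration; they are not the intra-cluster correlations estimated in this trial, and the function names are ours. Clinic sizes in the trial were not equal, so the numbers are rough at best.

```python
# Illustrative sketch only: the ICC values below are hypothetical,
# not the intra-cluster correlations estimated in this trial.


def design_effect(mean_cluster_size: float, icc: float) -> float:
    """Standard design effect for equal-sized clusters: 1 + (m - 1) * ICC."""
    return 1.0 + (mean_cluster_size - 1.0) * icc


def effective_sample_size(n_patients: int, n_clusters: int, icc: float) -> float:
    """Approximate number of independent observations the clustered sample is worth."""
    mean_cluster_size = n_patients / n_clusters
    return n_patients / design_effect(mean_cluster_size, icc)


# Counts as reported in the abstract: 1,832 + 2,223 patients in 14 clinics.
N_PATIENTS = 1832 + 2223
N_CLINICS = 14

for icc in (0.01, 0.05, 0.10):  # hypothetical ICCs, for illustration only
    ess = effective_sample_size(N_PATIENTS, N_CLINICS, icc)
    print(f"ICC={icc:.2f}: effective sample size ~ {ess:.0f}")
```

Under these assumptions, even a modest ICC reduces the effective sample size to a fraction of the enrolled patients, which is consistent with the concern that larger-than-expected intra-cluster correlations limited power.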

In conclusion, we found no effect of adding a theory-informed goal-setting and action-planning worksheet to an audit and feedback intervention. Unfortunately, passive dissemination of this worksheet led to inadequate engagement with the intervention. In the context of primary care practices with minimal QI infrastructure, CME credit alone may not be enough incentive to encourage engagement in goal-setting or action planning activities. To maximize the impact of audit and feedback and to ensure that QI in primary care is prioritized, relevant stakeholders, including professional colleges, associations, and health system payers, should consider the need for further supports to carry out practice-based QI.

References

Ivers N, Jamtvedt G, Flottorp S, Young JM, Odgaard-Jensen J, French SD, O’Brien MA, Johansen M, Grimshaw J, Oxman AD: Audit and feedback: effects on professional practice and healthcare outcomes. Cochrane Database Syst Rev. 2012, 6: CD000259.

Ilgen DR, Fisher CD, Taylor MS: Consequences of individual feedback on behavior in organizations. J Appl Psychol. 1979, 64 (4): 349-371.

Ivers NM, Halperin IJ, Barnsley J, Grimshaw JM, Shah BR, Tu K, Upshur R, Zwarenstein M: Allocation techniques for balance at baseline in cluster randomized trials: a methodological review. Trials. 2012, 13: 120. 10.1186/1745-6215-13-120.

Foy R, Eccles MP, Jamtvedt G, Young J, Grimshaw JM, Baker R: What do we know about how to do audit and feedback? Pitfalls in applying evidence from a systematic review. BMC Health Serv Res. 2005, 5: 50. 10.1186/1472-6963-5-50.

Anonymous: Medical audit in general practice: I: Effects on doctors’ clinical behavior for common childhood conditions. North of England study of standards and performance in general practice. BMJ. 1992, 304 (6840): 1480-1484.

Gardner B, Whittington C, McAteer J, Eccles MP, Michie S: Using theory to synthesise evidence from behavior change interventions: the example of audit and feedback. Soc Sci Med. 2010, 70 (10): 1618-1625. 10.1016/j.socscimed.2010.01.039.

Gollwitzer PM, Fujita K, Oettingen G: Planning and the implementation of goals. Handbook of self-regulation: research, theory, and applications. Edited by: Baumeister RF, Vohs KD. 2004, New York: Guilford Press, 211-228.

Sniehotta FF: Towards a theory of intentional behavior change: plans, planning, and self-regulation. Br J Health Psychol. 2009, 14 (Pt 2): 261-273.

Guldberg TL, Lauritzen T, Kristensen JK, Vedsted P: The effect of feedback to general practitioners on quality of care for people with type 2 diabetes. A systematic review of the literature. BMC Fam Pract. 2009, 10: 30. 10.1186/1471-2296-10-30.

Hermans MP, Elisaf M, Michel G, Muls E, Nobels F, Vandenberghe H, Brotons C, for the OPTIMISE (OPtimal Type 2 dIabetes Management Including benchmarking and Standard trEatment) International Steering Committee: Benchmarking is associated with improved quality of care in type 2 diabetes: the OPTIMISE randomized, controlled trial. Diabetes Care. 2013, 36 (11): 3388-3395. 10.2337/dc12-1853.

Haffner SM, Lehto S, Ronnemaa T, Pyorala K, Laakso M: Mortality from coronary heart disease in subjects with type 2 diabetes and in nondiabetic subjects with and without prior myocardial infarction. N Engl J Med. 1998, 339 (4): 229-234. 10.1056/NEJM199807233390404.

Anderson TJ, Gregoire J, Hegele RA, Couture P, Mancini GB, McPherson R, Francis GA, Poirier P, Lau DC, Grover S, Genest J, Carpentier AC, Dufour R, Gupta M, Ward R, Leiter LA, Lonn E, Ng DS, Pearson GJ, Yates GM, Stone JA, Ur E: 2012 update of the Canadian cardiovascular society guidelines for the diagnosis and treatment of dyslipidemia for the prevention of cardiovascular disease in the adult. Can J Cardiol. 2013, 29 (2): 151-167. 10.1016/j.cjca.2012.11.032.

Hackam DG, Quinn RR, Ravani P, Rabi DM, Dasgupta K, Daskalopoulou SS, Khan NA, Herman RJ, Bacon SL, Cloutier L, Dawes M, Rabkin SW, Gilbert RE, Ruzicka M, McKay DW, Campbell TS, Grover S, Honos G, Schiffrin EL, Bolli P, Wilson TW, Feldman RD, Lindsay P, Hill MD, Gelfer M, Burns KD, Vallee M, Prasad GV, Lebel M, McLean D: The 2013 Canadian hypertension education program recommendations for blood pressure measurement, diagnosis, assessment of risk, prevention, and treatment of hypertension. Can J Cardiol. 2013, 29 (5): 528-542. 10.1016/j.cjca.2013.01.005.

Thorpe KE, Zwarenstein M, Oxman AD, Treweek S, Furberg CD, Altman DG, Tunis S, Bergel E, Harvey I, Magid DJ, Chalkidou K: A pragmatic-explanatory continuum indicator summary (PRECIS): a tool to help trial designers. CMAJ. 2009, 180 (10): E47-57. 10.1503/cmaj.090523.

Ivers NM, Tu K, Francis J, Barnsley J, Shah B, Upshur R, Kiss A, Grimshaw JM, Zwarenstein M: Feedback GAP: study protocol for a cluster-randomized trial of goal setting and action plans to increase the effectiveness of audit and feedback interventions in primary care. Implement Sci. 2010, 5: 98. 10.1186/1748-5908-5-98.

Privacy code: Protecting personal health information at ICES. 2008, Institute for Clinical Evaluative Sciences, http://www.ices.on.ca/file/ICES%20Privacy%20Code%20Version%204.pdf

Ivers N, Pylypenko B, Tu K: Identifying patients with ischemic heart disease in an electronic medical record. J Prim Care Community Health. 2011, 2 (1): 49-53. 10.1177/2150131910382251.

Tu K, Manuel D, Lam K, Kavanagh D, Mitiku TF, Guo H: Diabetics can be identified in an electronic medical record using laboratory tests and prescriptions. J Clin Epidemiol. 2011, 64 (4): 431-435. 10.1016/j.jclinepi.2010.04.007.

Minim: allocation by minimisation in clinical trials: 2013, Stephen Evans, Patrick Royston and Simon Day, http://www-users.york.ac.uk/~mb55/guide/minim.htm

Effective Practice and Organisation of Care (EPOC): EPOC Resources for review authors. 2013, Oslo: Norwegian Knowledge Centre for the Health Services, Available at: http://epocoslo.cochrane.org/epoc-specific-resources-review-authors

Kiefe CI, Allison JJ, Williams OD, Person SD, Weaver MT, Weissman NW: Improving quality improvement using achievable benchmarks for physician feedback: a randomized controlled trial. JAMA. 2001, 285 (22): 2871-2879. 10.1001/jama.285.22.2871.

Bhattacharyya OK, Estey EA, Cheng AY, Canadian Diabetes Association 2008: Update on the Canadian diabetes association 2008 clinical practice guidelines. Can Fam Physician. 2009, 55 (1): 39-43.

Campbell N, Kwong MM, Canadian Hypertension Education Program: 2010 Canadian hypertension education program recommendations: an annual update. Can Fam Physician. 2010, 56 (7): 649-653.

Holbrook A, Thabane L, Keshavjee K, Dolovich L, Bernstein B, Chan D, Troyan S, Foster G, Gerstein H, COMPETE II Investigators: Individualized electronic decision support and reminders to improve diabetes care in the community: COMPETE II randomized trial. CMAJ. 2009, 181 (1–2): 37-44.

National Collaborating Centre for Chronic Conditions: Type 2 diabetes: national clinical guideline for management in primary and secondary care (update). 2008, London: Royal College of Physicians, http://guidance.nice.org.uk/CG66

Cooper A, Skinner J, Nherera L, Feder G, Ritchie G, Kathoria M, Turnbull N, Shaw G, MacDermott K, Minhas R, Packham C, Squires H, Thomson D, Timmis A, Walsh J, Williams H, White A: Clinical guidelines and evidence review for post myocardial infarction: secondary prevention in primary and secondary care for patients following a myocardial infarction. 2007, London: National Collaborating Centre for Primary Care and Royal College of General Practitioners.

Genest J, McPherson R, Frohlich J, Anderson T, Campbell N, Carpentier A, Couture P, Dufour R, Fodor G, Francis GA, Grover S, Gupta M, Hegele RA, Lau DC, Leiter L, Lewis GF, Lonn E, Mancini GB, Ng D, Pearson GJ, Sniderman A, Stone JA, Ur E: 2009 Canadian cardiovascular society/Canadian guidelines for the diagnosis and treatment of dyslipidemia and prevention of cardiovascular disease in the adult - 2009 recommendations. Can J Cardiol. 2009, 25 (10): 567-579. 10.1016/S0828-282X(09)70715-9.

McNutt LA, Wu C, Xue X, Hafner JP: Estimating the relative risk in cohort studies and clinical trials of common outcomes. Am J Epidemiol. 2003, 157 (10): 940-943. 10.1093/aje/kwg074.

Glynn RJ, Brookhart MA, Stedman M, Avorn J, Solomon DH: Design of cluster-randomized trials of quality improvement interventions aimed at medical care providers. Med Care. 2007, 45 (10 Suppl 2): S38-43.

Tricco AC, Ivers NM, Grimshaw JM, Moher D, Turner L, Galipeau J, Halperin I, Vachon B, Ramsay T, Manns B, Tonelli M, Shojania K: Effectiveness of quality improvement strategies on the management of diabetes: a systematic review and meta-analysis. Lancet. 2012, 379 (9833): 2252-2261. 10.1016/S0140-6736(12)60480-2.

Michie S, Richardson M, Johnston M, Abraham C, Francis J, Hardeman W, Eccles MP, Cane J, Wood CE: The behavior change technique taxonomy (v1) of 93 hierarchically clustered techniques: building an international consensus for the reporting of behavior change interventions. Ann Behav Med. 2013, 46 (1): 81-95. 10.1007/s12160-013-9486-6.

Verstappen WH, van der Weijden T, Dubois WI, Smeele I, Hermsen J, Tan FE, Grol RP: Improving test ordering in primary care: the added value of a small-group quality improvement strategy compared with classic feedback only. Ann Fam Med. 2004, 2 (6): 569-575. 10.1370/afm.244.

Beaulieu MD, Haggerty J, Tousignant P, Barnsley J, Hogg W, Geneau R, Hudon E, Duplain R, Denis JL, Bonin L, Del Grande C, Dragieva N: Characteristics of primary care practices associated with high quality of care. CMAJ. 2013, 185 (12): E590-6. 10.1503/cmaj.121802.

Locke EA, Latham GP: Building a practically useful theory of goal setting and task motivation. A 35-year odyssey. Am Psychol. 2002, 57 (9): 705-717.

Ivers N, Barnsley J, Upshur R, Shah B, Tu K, Grimshaw J, Zwarenstein M: ‘My job is one patient at a time’: perceived discordance between population-level quality targets and patient-centered care inhibits quality improvement. Can Fam Physician. 2013, (in press)

Burge FI, Bower K, Putnam W, Cox JL: Quality indicators for cardiovascular primary care. Can J Cardiol. 2007, 23 (5): 383-388. 10.1016/S0828-282X(07)70772-9.

Hysong SJ, Simpson K, Pietz K, SoRelle R, Broussard Smitham K, Petersen LA: Financial incentives and physician commitment to guideline-recommended hypertension management. Am J Manag Care. 2012, 18 (10): e378-91.

O’Brien MA, Rogers S, Jamtvedt G, Oxman AD, Odgaard-Jensen J, Kristoffersen DT, Forsetlund L, Bainbridge D, Freemantle N, Davis DA, Haynes RB, Harvey EL: Educational outreach visits: effects on professional practice and health care outcomes. Cochrane Database Syst Rev. 2007, 4 (4): CD000409.

Baskerville NB, Liddy C, Hogg W: Systematic review and meta-analysis of practice facilitation within primary care settings. Ann Fam Med. 2012, 10 (1): 63-74. 10.1370/afm.1312.

Hysong SJ, Best RG, Pugh JA: Audit and feedback and clinical practice guideline adherence: making feedback actionable. Implement Sci. 2006, 1: 9. 10.1186/1748-5908-1-9.

Maroney BP, Buckley MR: Does research in performance appraisal influence the practice of performance appraisal. Public Pers Manage. 1992, 21 (2): 185.

van der Veer SN, de Keizer NF, Ravelli AC, Tenkink S, Jager KJ: Improving quality of care. A systematic review on how medical registries provide information feedback to health care providers. Int J Med Inform. 2010, 79 (5): 305-323. 10.1016/j.ijmedinf.2010.01.011.

Presseau J, Francis JJ, Campbell NC, Sniehotta FF: Goal conflict, goal facilitation, and health professionals’ provision of physical activity advice in primary care: an exploratory prospective study. Implement Sci. 2011, 6: 73. 10.1186/1748-5908-6-73.

Kluger AN, DeNisi A: The effects of feedback interventions on performance: a historical review, a meta-analysis, and a preliminary feedback intervention theory. Psychol Bull. 1996, 119 (2): 254-284.

Flodgren G, Parmelli E, Doumit G, Gattellari M, O’Brien MA, Grimshaw J, Eccles MP: Local opinion leaders: effects on professional practice and health care outcomes. Cochrane Database Syst Rev. 2011, 8 (8): CD000125.

Tu K, Mitiku T, Ivers N, Guo H, Lu H, Jaakkimainen L, Kavanagh D, Lee D, Tu J: How comprehensive is EMR data? Evaluation of an Electronic Medical Record Administrative data Linked Database (EMRALD). Am J Manag Care. 2013, (in press)

Acknowledgements

We would like to thank the physicians participating to date in EMRALD. The conduct and analysis of this trial were supported by a grant from the Canadian Institutes of Health Research (CIHR; funding reference number 111218). The development of the intervention and the embedded qualitative study were supported by a team grant from CIHR, Knowledge Translation Improved Clinical Effectiveness Behavioral Research Group (KT-ICEBeRG). This study is supported by ICES, which is funded by an annual grant from the Ontario Ministry of Health and Long Term Care (MOHLTC). The opinions, results, and conclusions reported in this article are those of the authors and are independent from the funding sources. No endorsement by ICES or the Ontario MOHLTC is intended or should be inferred. NMI is supported by fellowship awards from CIHR and from the University of Toronto. REU is supported by a Canada Research Chair in Primary Care Research. JMG is supported by a Canada Research Chair in Health Knowledge Transfer and Uptake.

Additional information

Competing interests

The authors declare that they have no competing interests.

Authors’ contributions

NI, JG, KT, and MZ conceived the idea. NI, JY, and RM conducted the analyses. NI prepared the manuscript. All authors made substantial contributions to the research design, critically edited the manuscript, and read and approved the final version.

Electronic supplementary material

13012_2013_717_MOESM2_ESM.docx

Additional file 2: Goal-setting and Action-plan Worksheet for Intervention Arm. Prototype of the intervention tested in the trial. (DOCX 14 KB)

13012_2013_717_MOESM3_ESM.docx

Additional file 3: Supplementary Tables. Change from baseline (Table S6) and sub-group analyses (Tables S7, S8, S9). (DOCX 105 KB)

About this article

Cite this article

Ivers, N.M., Tu, K., Young, J. et al. Feedback GAP: pragmatic, cluster-randomized trial of goal setting and action plans to increase the effectiveness of audit and feedback interventions in primary care. Implementation Sci 8, 142 (2013). https://doi.org/10.1186/1748-5908-8-142

DOI: https://doi.org/10.1186/1748-5908-8-142