Abstract

In this article, the global robust exponential synchronization of reaction-diffusion BAM recurrent fuzzy neural networks (FNNs) with infinite distributed delays on time scales is investigated. By applying a Lyapunov functional and inequality techniques, some sufficient criteria are established to guarantee the global robust exponential synchronization of these networks. One example is given to illustrate the effectiveness of our results.

1 Introduction

The study of artificial neural networks has attracted much attention because of their potential applications in signal processing, image processing, pattern classification, quadratic optimization, associative memory, moving object speed detection, etc. Many kinds of neural network models have been proposed. One important class is the bidirectional associative memory (BAM) neural network, first introduced by Kosko [1–3]. It is a special class of recurrent neural networks that can store bipolar vector pairs. The BAM neural network is composed of neurons arranged in two layers, the X-layer and the Y-layer. The neurons in one layer are fully interconnected to the neurons in the other layer. Through iterations of forward and backward information flows between the two layers, it performs a two-way associative search for stored bipolar vector pairs and generalizes the single-layer auto-associative Hebbian correlation to a two-layer pattern-matched heteroassociative circuit. Therefore, this class of networks has good application prospects in fields such as pattern recognition, signal and image processing, and artificial intelligence [4]. In general, artificial neural networks exhibit complex dynamical behaviors such as stability, synchronization, periodic or almost periodic solutions, invariant sets and attractors, and so forth; see [5–27] and the references cited therein. Therefore, the analysis of the dynamical behaviors of neural networks is a necessary step in their practical design. As one of the best-known neural network models, the BAM model has attracted much attention over the past two decades [28–48] since it was proposed by Kosko.
The dynamical behaviors such as uniqueness, global asymptotic stability, exponential stability and invariant sets and attractors of the equilibrium point or periodic solutions were investigated for BAM neural networks with different types of time delays (see [28–44, 48]).

Synchronization has attracted much attention since it was proposed by Carroll et al. [49, 50]. The principle of drive-response synchronization is as follows: the drive system sends a signal through a channel to the response system, which uses this signal to synchronize itself with the drive system. That is, the response system is influenced by the behavior of the drive system, but the drive system is independent of the response system. In recent years, many results on the synchronization of time-delayed neural networks have been reported in the literature [5, 6, 8–15, 27, 36, 49, 50].

As is well known, both in biological and man-made neural networks, strictly speaking, diffusion effects cannot be avoided when electrons are moving in asymmetric electromagnetic fields, so we must consider that the activations vary in space as well as in time. Many researchers have studied the dynamical properties of continuous time reaction-diffusion neural networks (see, for example, [8, 11, 17, 18, 24, 25, 27, 32, 48]).

However, in the mathematical modeling of real-world problems, we encounter other difficulties such as complexity, uncertainty and vagueness. Fuzzy theory is regarded as a more suitable setting for taking vagueness into account. Based on traditional cellular neural networks (CNNs), T Yang and LB Yang proposed fuzzy CNNs (FCNNs) [23], which integrate fuzzy logic into the structure of traditional CNNs and maintain local connectedness among cells. Unlike previous CNN structures, FCNNs have fuzzy logic between their template input and/or output in addition to the sum-of-products operation. FCNNs are a very useful paradigm for image processing and pattern recognition problems. Therefore, it is necessary to consider the effects of both fuzzy logic and delays on the dynamical behaviors of neural networks. To the best of our knowledge, few authors have considered the synchronization of reaction-diffusion recurrent fuzzy neural networks with delays and Dirichlet boundary conditions on time scales, which is a challenging and important problem in theory and application. Therefore, in this paper, we investigate the global robust exponential synchronization of delayed reaction-diffusion BAM recurrent fuzzy neural networks (FNNs) on time scales as follows:

subject to the following initial conditions

and Dirichlet boundary conditions

where ; . is a time scale and is unbounded and . is constant time delay. and is a bounded compact set with smooth boundary ∂ Ω in space . , . and are the state of the i th neurons and the j th neurons at time t and in space x, respectively. and are constant input vectors. The smooth functions and correspond to the transmission diffusion operators along with the i th neurons and the j th neurons, respectively. , , , , , , , , , , , , , , , , , , are constants. and denote the rate with which the i th neurons and j th neurons will reset their potential to the resting state in isolation when disconnected from the network and external inputs, respectively. , , , , , , , , , , , , , , denote the connection weights. () and () denote the activation function of the j th neurons of Y-layer on the i th neurons of X-layer and the i th neurons of X-layer on the j th neurons of Y-layer at time t and in space x, respectively. () denotes the fuzzy activation function of the j th neurons on the i th neurons inside of X-layer. () denotes the fuzzy activation function of the i th neurons on the j th neurons inside of Y-layer. () denotes the bias of the j th neurons on the i th neurons inside of X-layer. () denotes the bias of the i th neurons on the j th neurons inside of Y-layer. ⋀, ⋁ denote the fuzzy AND and fuzzy OR operations, respectively. , are rd-continuous with respect to and continuous with respect to .
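The fuzzy AND (⋀) and fuzzy OR (⋁) operations can be made concrete with a small sketch. It assumes the usual convention of the fuzzy cellular neural network literature [23], in which ⋀ and ⋁ over the index j are read as the minimum and maximum of the weighted terms. The function names and the random test data are hypothetical and are not taken from the paper. The sketch also checks numerically a standard estimate of the kind used for fuzzy terms, namely that the difference of two fuzzy AND (or OR) expressions is bounded by the weighted sum of the differences of their arguments.

```python
import random

def fuzzy_and(weights, values):
    """Fuzzy AND (assumed convention): minimum over j of weights[j] * values[j]."""
    return min(w * v for w, v in zip(weights, values))

def fuzzy_or(weights, values):
    """Fuzzy OR (assumed convention): maximum over j of weights[j] * values[j]."""
    return max(w * v for w, v in zip(weights, values))

def estimate_holds(weights, u, v, op, tol=1e-12):
    """Check |op(w, u) - op(w, v)| <= sum_j |w_j| * |u_j - v_j| up to rounding."""
    lhs = abs(op(weights, u) - op(weights, v))
    rhs = sum(abs(w) * abs(a - b) for w, a, b in zip(weights, u, v))
    return lhs <= rhs + tol

def random_check(trials=1000, n=4, seed=0):
    """Test the estimate on random weights and activation values (illustrative data)."""
    rng = random.Random(seed)
    for _ in range(trials):
        w = [rng.uniform(-2.0, 2.0) for _ in range(n)]
        u = [rng.uniform(-1.0, 1.0) for _ in range(n)]
        v = [rng.uniform(-1.0, 1.0) for _ in range(n)]
        if not (estimate_holds(w, u, v, fuzzy_and) and estimate_holds(w, u, v, fuzzy_or)):
            return False
    return True

if __name__ == "__main__":
    print(fuzzy_and([1.0, 2.0], [0.5, 0.1]), fuzzy_or([1.0, 2.0], [0.5, 0.1]))
    print(random_check())
```

Here `values` stands for the activation outputs of the neurons in the other layer. A random check of this kind is only a sanity test and does not replace the proof of the estimate.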

In order to investigate the global robust exponential synchronization for system (1.1)-(1.3), the quantities , , , , , , , , , , , and may be considered as intervals as follows: , , , , , , , , , , , , , .

Take the time scale $\mathbb{T}=\mathbb{R}$ (the set of real numbers); then system (1.1)-(1.3) reduces to the following continuous case (1.4)-(1.6):

subject to the following initial conditions

and Dirichlet boundary conditions

Take the time scale $\mathbb{T}=\mathbb{Z}$ (the set of integers); then system (1.1)-(1.3) reduces to the following discrete case (1.7)-(1.9):

subject to the following initial conditions

and Dirichlet boundary conditions

where , τ is a positive integer, , , .

If we choose $\mathbb{T}=\mathbb{R}$, then $\sigma(t)=t$, $\mu(t)=0$. In this case, system (1.1)-(1.3) is the continuous reaction-diffusion BAM recurrent FNNs (1.4)-(1.6). If $\mathbb{T}=\mathbb{Z}$, then $\sigma(t)=t+1$, $\mu(t)=1$, and system (1.1)-(1.3) is the discrete difference reaction-diffusion BAM recurrent FNNs (1.7)-(1.9). In this paper, we study the global robust exponential synchronization of reaction-diffusion BAM recurrent FNNs (1.1)-(1.3), which unifies the continuous case and the discrete difference case. Moreover, system (1.1)-(1.3) is a good model for handling many problems such as predator-prey forecasting or the optimization of goods output.

The rest of this paper is organized as follows. In Section 2, some notations and basic theorems or lemmas on time scales are given. In Section 3, the main results on global robust exponential synchronization are obtained by constructing an appropriate Lyapunov functional and applying inequality techniques. In Section 4, one example is given to illustrate the effectiveness of our results.

2 Preliminaries

In this section, we first recall some basic definitions and lemmas on time scales which are used in what follows.

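Let $\mathbb{T}$ be a nonempty closed subset (time scale) of ℝ. The forward and backward jump operators $\sigma,\rho:\mathbb{T}\to\mathbb{T}$ and the graininess $\mu:\mathbb{T}\to\mathbb{R}^{+}$ are defined, respectively, by

$$\sigma(t)=\inf\{s\in\mathbb{T}: s>t\},\qquad \rho(t)=\sup\{s\in\mathbb{T}: s<t\},\qquad \mu(t)=\sigma(t)-t.$$

A point $t\in\mathbb{T}$ is called left-dense if $t>\inf\mathbb{T}$ and $\rho(t)=t$, left-scattered if $\rho(t)<t$, right-dense if $t<\sup\mathbb{T}$ and $\sigma(t)=t$, and right-scattered if $\sigma(t)>t$. If $\mathbb{T}$ has a left-scattered maximum m, then $\mathbb{T}^{k}=\mathbb{T}\setminus\{m\}$, otherwise $\mathbb{T}^{k}=\mathbb{T}$. If $\mathbb{T}$ has a right-scattered minimum m, then $\mathbb{T}_{k}=\mathbb{T}\setminus\{m\}$, otherwise $\mathbb{T}_{k}=\mathbb{T}$.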
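As a minimal sketch of these definitions, the jump operators and the graininess can be computed directly on a finite time scale stored as a sorted list. The function names and the sample time scale are hypothetical. A finite list contains only isolated points, so dense points, as they occur in ℝ, cannot be represented this way. The sketch uses the usual conventions that the forward jump of the maximum and the backward jump of the minimum return the point itself.

```python
from bisect import bisect_left, bisect_right

def sigma(ts, t):
    """Forward jump: smallest point of ts strictly greater than t (t itself at the maximum)."""
    i = bisect_right(ts, t)
    return ts[i] if i < len(ts) else t

def rho(ts, t):
    """Backward jump: largest point of ts strictly smaller than t (t itself at the minimum)."""
    i = bisect_left(ts, t)
    return ts[i - 1] if i > 0 else t

def mu(ts, t):
    """Graininess: distance from t to its forward jump."""
    return sigma(ts, t) - t

if __name__ == "__main__":
    ts = [0, 1, 2, 4, 7]  # a sorted finite time scale (illustrative data)
    for t in ts:
        print(t, sigma(ts, t), rho(ts, t), mu(ts, t))
```

On the integers every point is both left-scattered and right-scattered and the graininess is identically 1, which is the discrete case considered in this paper.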

Definition 2.1 ([51])

A function $f:\mathbb{T}\to\mathbb{R}$ is called regulated provided its right-hand side limits exist (finite) at all right-dense points in $\mathbb{T}$ and its left-hand side limits exist (finite) at all left-dense points in $\mathbb{T}$.

Definition 2.2 ([51])

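A function $f:\mathbb{T}\to\mathbb{R}$ is called rd-continuous provided it is continuous at right-dense points in $\mathbb{T}$ and its left-hand side limits exist (finite) at left-dense points in $\mathbb{T}$. The set of rd-continuous functions $f:\mathbb{T}\to\mathbb{R}$ will be denoted by $C_{rd}=C_{rd}(\mathbb{T})=C_{rd}(\mathbb{T},\mathbb{R})$.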

Definition 2.3 ([51])

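Assume $f:\mathbb{T}\to\mathbb{R}$ and $t\in\mathbb{T}^{k}$. Then we define $f^{\Delta}(t)$ to be the number (if it exists) with the property that given any $\varepsilon>0$ there exists a neighborhood U of t (i.e., $U=(t-\delta,t+\delta)\cap\mathbb{T}$ for some $\delta>0$) such that

$$\bigl|[f(\sigma(t))-f(s)]-f^{\Delta}(t)[\sigma(t)-s]\bigr|\le\varepsilon|\sigma(t)-s|$$

for all $s\in U$. We call $f^{\Delta}(t)$ the delta (or Hilger) derivative of f at t. The set of functions $f:\mathbb{T}\to\mathbb{R}$ that are differentiable and whose derivative is rd-continuous is denoted by $C^{1}_{rd}=C^{1}_{rd}(\mathbb{T})=C^{1}_{rd}(\mathbb{T},\mathbb{R})$.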

If f is continuous, then f is rd-continuous. If f is rd-continuous, then f is regulated. If f is delta differentiable at t, then f is continuous at t.
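To make the delta derivative concrete, recall the standard fact that at a right-scattered point t at which f is continuous, the delta derivative is the difference quotient (f(σ(t)) − f(t))/μ(t). The sketch below evaluates this quotient. The function name is hypothetical, and the forward jump σ(t) is passed in by the caller instead of being computed from a time scale.

```python
def delta_derivative(f, t, sigma_t):
    """Delta derivative of f at a right-scattered point t, given sigma_t = sigma(t)."""
    mu_t = sigma_t - t
    if mu_t <= 0:
        # At a right-dense point the delta derivative is an ordinary limit,
        # which this difference quotient cannot evaluate.
        raise ValueError("t must be right-scattered (sigma(t) > t)")
    return (f(sigma_t) - f(t)) / mu_t

if __name__ == "__main__":
    square = lambda t: t * t
    # On the integers, sigma(t) = t + 1, so the delta derivative is the forward difference.
    print(delta_derivative(square, 3, 4))
    # On the time scale with step 0.5, sigma(t) = t + 0.5.
    print(delta_derivative(square, 1.0, 1.5))
```

For f(t) = t² the quotient equals t + σ(t), which tends to the ordinary derivative 2t as the graininess shrinks to zero. This is the sense in which the time scale setting covers both the differential and the difference case.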

Lemma 2.1 ([51])

Let f be regulated. Then there exists a function F which is delta differentiable with region of differentiation D such that $F^{\Delta}(t)=f(t)$ for all $t\in D$.

Definition 2.4 ([51])

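Assume that $f:\mathbb{T}\to\mathbb{R}$ is a regulated function. Any function F as in Lemma 2.1 is called a Δ-antiderivative of f. We define the indefinite integral of a regulated function f by

$$\int f(t)\,\Delta t=F(t)+C,$$

where C is an arbitrary constant and F is a Δ-antiderivative of f. We define the Cauchy integral by $\int_{a}^{b}f(s)\,\Delta s=F(b)-F(a)$ for all $a,b\in\mathbb{T}$.

A function $F:\mathbb{T}\to\mathbb{R}$ is called an antiderivative of $f:\mathbb{T}\to\mathbb{R}$ provided $F^{\Delta}(t)=f(t)$ for all $t\in\mathbb{T}^{k}$.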

Lemma 2.2 ([51])

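If $a,b\in\mathbb{T}$, $\alpha,\beta\in\mathbb{R}$ and $f,g\in C(\mathbb{T},\mathbb{R})$, then

(i) $\int_{a}^{b}[\alpha f(t)+\beta g(t)]\,\Delta t=\alpha\int_{a}^{b}f(t)\,\Delta t+\beta\int_{a}^{b}g(t)\,\Delta t$,

(ii) if $f(t)\ge 0$ for all $a\le t<b$, then $\int_{a}^{b}f(t)\,\Delta t\ge 0$,

(iii) if $|f(t)|\le g(t)$ on $[a,b):=\{t\in\mathbb{T}: a\le t<b\}$, then $\bigl|\int_{a}^{b}f(t)\,\Delta t\bigr|\le\int_{a}^{b}g(t)\,\Delta t$.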

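A function $p:\mathbb{T}\to\mathbb{R}$ is called regressive if $1+\mu(t)p(t)\neq 0$ for all $t\in\mathbb{T}^{k}$. The set of all regressive and rd-continuous functions will be denoted by $\mathcal{R}=\mathcal{R}(\mathbb{T},\mathbb{R})$. We define the set of all positively regressive elements of ℛ by $\mathcal{R}^{+}=\{p\in\mathcal{R}: 1+\mu(t)p(t)>0 \text{ for all } t\in\mathbb{T}\}$. If p is a regressive function, then the generalized exponential function $e_{p}$ is defined by $e_{p}(t,s)=\exp\bigl\{\int_{s}^{t}\xi_{\mu(\tau)}(p(\tau))\,\Delta\tau\bigr\}$ for all $s,t\in\mathbb{T}$, with the cylinder transformation

$$\xi_{h}(z)=\begin{cases}\dfrac{\operatorname{Log}(1+hz)}{h}, & h\neq 0,\\ z, & h=0.\end{cases}$$

Let $p,q:\mathbb{T}\to\mathbb{R}$ be two regressive functions. We define

$$p\oplus q=p+q+\mu pq,\qquad \ominus p=-\frac{p}{1+\mu p},\qquad p\ominus q=p\oplus(\ominus q).$$

If $p\in\mathcal{R}^{+}$, then $\ominus p\in\mathcal{R}^{+}$.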
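A small numerical sketch may help fix ideas about the generalized exponential function. It assumes the uniform time scale with constant step h (the multiples of h) and a constant regressive p, for which the exponential reduces to the closed form e_p(t, s) = (1 + hp)^((t − s)/h). The function names are hypothetical, and the code covers only this special case and not a general time scale.

```python
import math

def exp_hz(p, h, s, t):
    """e_p(t, s) on the time scale of multiples of h, for constant p and s <= t."""
    if 1.0 + h * p == 0.0:
        raise ValueError("p is not regressive for this step size")
    n = round((t - s) / h)  # number of jumps from s to t
    return (1.0 + h * p) ** n

def ominus(p, h):
    """Circle-minus of a constant p on a time scale with constant graininess h."""
    return -p / (1.0 + h * p)

if __name__ == "__main__":
    p, h = 0.5, 1.0
    print(exp_hz(p, h, 0, 3))                 # three jumps on the integers
    print(exp_hz(ominus(p, h), h, 0, 3))      # reciprocal of the value above
    print(exp_hz(p, 1e-4, 0, 1), math.exp(p)) # small steps approach the usual exponential
```

The last line illustrates that the ordinary exponential is recovered as the graininess tends to zero, while on the integers the same definition gives a product of factors 1 + p.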

The generalized exponential function has the following properties.

Lemma 2.3 ([51])

Assume that are two regressive functions, then

-

(i)

;

-

(ii)

;

-

(iii)

;

-

(iv)

;

-

(v)

;

-

(vi)

for all ;

-

(vii)

.

Lemma 2.4 ([51])

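Assume that $f,g:\mathbb{T}\to\mathbb{R}$ are delta differentiable at $t\in\mathbb{T}^{k}$. Then

$$(fg)^{\Delta}(t)=f^{\Delta}(t)g(t)+f(\sigma(t))g^{\Delta}(t)=f(t)g^{\Delta}(t)+f^{\Delta}(t)g(\sigma(t)).$$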

Lemma 2.5 ([52])

For each , let N be a neighborhood of t. Then, for , define to mean that, given , there exists a right neighborhood of t such that

where . If t is right-scattered and is continuous at t, this reduces to .

Next, we introduce the Banach space which is suitable for system (1.1)-(1.3).

Let be an open bounded domain in with smooth boundary ∂ Ω. Let be the set consisting of all the vector function which is rd-continuous with respect to and continuous with respect to . For every and , we define the set . Then is a Banach space with the norm , where . Let consist of all functions which map into and is rd-continuous with respect to and continuous with respect to . For every and , we define the set . Then is a Banach space equipped with the norm , where , , .

In order to achieve the global robust exponential synchronization, the following system (2.1)-(2.3) is the controlled slave system corresponding to the master system (1.1)-(1.3):

subject to the following initial conditions

and Dirichlet boundary conditions

where () and () are error functions. () is a constant error weighting coefficient. , , , .

From (1.1)-(1.3) and (2.1)-(2.3), we obtain the error system (2.4)-(2.6) as follows:

subject to the following initial conditions

and Dirichlet boundary conditions

The following definition is significant to study the global robust exponential synchronization of coupled neural networks (1.1)-(1.3) and (2.1)-(2.3).

Definition 2.5 Let and be the solution vectors of system (1.1)-(1.3) and its controlled slave system (2.1)-(2.3), respectively. is the error vector. Then the coupled systems (1.1)-(1.3) and (2.1)-(2.3) are said to be globally exponentially synchronized if there exists a controlled input vector and a positive constant and such that

where α is called the degree of exponential synchronization on time scales.
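The notion of exponential synchronization can be illustrated with a toy drive-response pair. The sketch below is not the system studied in this paper: it is a hypothetical scalar model without diffusion, fuzzy terms or delays, written on the uniform time scale with step h, and all parameter values are illustrative assumptions. The response system receives the feedback control −k(y − x). Since tanh is 1-Lipschitz, the error satisfies |y − x| ≤ (1 − hα)^n |y(0) − x(0)| after n steps, with α = a + k − b, provided h(a + k) ≤ 1. The factor (1 − hα)^n is the generalized exponential e_{−α}(nh, 0) on this time scale.

```python
import math

def simulate(a=1.0, b=0.5, k=1.0, h=0.1, steps=50, x0=0.3, y0=-1.2, inp=0.2):
    """Iterate a toy drive system x and a controlled response system y with step h.

    Drive:    x_next = x + h * (-a*x + b*tanh(x) + inp)
    Response: y_next = y + h * (-a*y + b*tanh(y) + inp - k*(y - x))
    """
    xs, ys = [x0], [y0]
    for _ in range(steps):
        x, y = xs[-1], ys[-1]
        xs.append(x + h * (-a * x + b * math.tanh(x) + inp))
        ys.append(y + h * (-a * y + b * math.tanh(y) + inp - k * (y - x)))
    return xs, ys

def error_bound(alpha, h, n, e0):
    """Bound (1 - h*alpha)**n * |e0| on the synchronization error after n steps."""
    return (1.0 - h * alpha) ** n * abs(e0)

if __name__ == "__main__":
    xs, ys = simulate()
    alpha = 1.0 + 1.0 - 0.5  # a + k - b for the default parameters
    for n in (0, 10, 25, 50):
        print(n, abs(ys[n] - xs[n]), error_bound(alpha, 0.1, n, ys[0] - xs[0]))
```

The drive system evolves independently of the response system, while the response system uses the drive signal x through the control term. This mirrors the drive-response principle in a setting simple enough to verify by hand.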

3 Main results

In this section, we will consider the global robust exponential synchronization of coupled systems (1.1)-(1.3) and (2.1)-(2.3). At first, we need to introduce some useful lemmas.

Lemma 3.1 ([53])

Let Ω be a cube () and assume that is a real-valued function belonging to which vanishes on the boundary ∂ Ω of Ω, i.e., . Then

Lemma 3.2 ([23])

Suppose that and are the solutions to systems (1.1)-(1.3) and (2.1)-(2.3), respectively, then

Throughout this paper, we always assume that:

(H1) The neurons activation , , and are Lipschitz continuous, that is, there exist positive constants , , and such that , , , for any , ; .

(H2) The delay kernels (; ) are real-valued non-negative rd-continuous functions and satisfy the following conditions:

and there exist constants , such that

(H3) The following conditions are always satisfied:

Theorem 3.1 Assume that (H1)-(H3) hold. Then the controlled slave system (2.1)-(2.3) is globally robustly exponentially synchronous with the master system (1.1)-(1.3).

Proof Calculating the delta derivative of () and () along the solution of (2.1), we can obtain

and

Employing Green’s formula [17], Dirichlet boundary condition (2.6) and Lemma 3.1, we have

and

By applying Lemma 3.2, (3.1)-(3.4), conditions (H1)-(H3) and the Hölder inequality, and noting the robustness of parameter intervals, we get

where , , .

Similar to the arguments of (3.5), we obtain

where , , .

If the first inequality of condition (H3) holds, there exists one positive number (may be sufficiently small) such that

Now we consider the functions

where , . From (3.7) we achieve and is continuous for . Moreover, as , thereby there exist constants such that and for . Choosing , obviously , we have, for ,

Similar to the above arguments of (3.7)-(3.9), we can always choose such that for ,

Thus, taking , we derive, for ; ,

and

Take the Lyapunov functional as follows:

where

Calculating along (2.1) associated with (3.5) and noting that if and only if (that is, is increasing with respect to z if and only if ), we have

By applying (3.6), we can similarly calculate along (2.1) as follows:

From (3.11)-(3.15), we get

Note that (3.16) means that the Lyapunov functional is monotone decreasing with respect to . Therefore, in the light of (3.13) we get, for ,

which implies that

Obviously, . According to Definition 2.5, we conclude that the controlled slave system (2.1)-(2.3) is globally robustly exponentially synchronous with the master system (1.1)-(1.3) on the time scale . The proof is complete. □

When the time scale $\mathbb{T}=\mathbb{R}$ or $\mathbb{T}=\mathbb{Z}$, we obtain the following two important corollaries.

Corollary 3.1 Assume that the following (H4)-(H6) hold. Then the master system (1.4)-(1.6) and its controlled slave system are globally robustly exponentially synchronous.

(H4) The neurons activation , , and are Lipschitz continuous, that is, there exist positive constants , , and such that , , , for any , ; .

(H5) The delay kernels (; ) are real-valued non-negative continuous functions and satisfy the following conditions:

and there exist constants , such that

(H6) The following conditions are always satisfied:

Corollary 3.2 Assume that the following (H7)-(H9) hold. Then the master system (1.7)-(1.9) and its controlled slave system are globally robustly exponentially synchronous.

(H7) The neurons activation , , and are Lipschitz continuous, that is, there exist positive constants , , and such that , , , for any , ; .

(H8) The delay kernels (; ) are real-valued non-negative rd-continuous functions and satisfy the following conditions:

and there exist constants , such that

(H9) The following conditions are always satisfied:

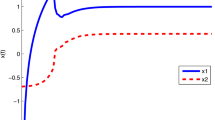

4 Illustrative example

Consider the following reaction-diffusion BAM recurrent FNNs on time scales:

subject to the following initial conditions

and Dirichlet boundary conditions

where , (), (), , , . and are the constant input vectors. and are the constant bias vectors. Obviously, , , and satisfy the Lipschitz condition with . Let , ,

Take the controlled input vector , here . By a simple calculation, we have

Thus, conditions (H1)-(H3) are satisfied. It follows from Theorem 3.1 that the master system (4.1)-(4.3) and its controlled slave system are globally robustly exponentially synchronized.

Author’s contributions

The author read and approved the final manuscript.

References

Kosko B: Adaptive bidirectional associative memories. Appl. Opt. 1987, 26(23):4947-4960. 10.1364/AO.26.004947

Kosko B: Bidirectional associative memories. IEEE Trans. Syst. Man Cybern. 1988, 18(1):49-60. 10.1109/21.87054

Kosko B: Neural Networks and Fuzzy Systems: A Dynamical Systems Approach to Machine Intelligence. Prentice Hall, New York; 1992.

Mathai G, Upadhyaya BR: Performance analysis and application of the bidirectional associative memory to industrial spectral signatures. Neural Netw. 1989, 1: 33-37.

Yu WW, Cao JD: Adaptive synchronization and lag synchronization of uncertain dynamical system with time delay based on parameter identification. Physica A 2007, 375: 467-482. 10.1016/j.physa.2006.09.020

Tang Y, Fang JA: Robust synchronization in an array of fuzzy delayed cellular neural networks with stochastically hybrid coupling. Neurocomputing 2009, 72: 3253-3262. 10.1016/j.neucom.2009.02.010

Yuan K, Cao JD: Exponential stability and periodic solutions of fuzzy cellular neural networks with time-varying delays. Neurocomputing 2006, 69: 1619-1627. 10.1016/j.neucom.2005.05.011

Wang K, Teng ZD, Jiang HJ: Adaptive synchronization in an array of linearly coupled neural networks with reaction-diffusion terms and time delays. Commun. Nonlinear Sci. Numer. Simul. 2012, 17: 3866-3875. 10.1016/j.cnsns.2012.02.020

Sheng L, Yang HZ: Exponential synchronization of a class of neural networks with mixed time-varying delays and impulsive effects. Neurocomputing 2008, 71: 3666-3674. 10.1016/j.neucom.2008.03.004

Yan P, Lv T: Exponential synchronization of fuzzy cellular neural networks with mixed delays and general boundary conditions. Commun. Nonlinear Sci. Numer. Simul. 2012, 17: 1003-1011. 10.1016/j.cnsns.2011.07.013

Gan QT: Exponential synchronization of stochastic Cohen-Grossberg neural networks with mixed time-varying delays and reaction-diffusion via periodically intermittent control. Neural Netw. 2012, 31: 12-21.

Zhang YJ, Xu SY: Robust global synchronization of complex networks with neutral-type delayed nodes. Appl. Math. Comput. 2010, 216: 768-778. 10.1016/j.amc.2010.01.075

Luo M, Xu J: Suppression of collective synchronization in a system of neural groups with washout-filter-aided feedback. Neural Netw. 2011, 24: 538-543. 10.1016/j.neunet.2011.02.008

Yang XS, Huang CX, Zhu QX: Synchronization of switched neural networks with mixed delays via impulsive control. Chaos Solitons Fractals 2011, 44: 817-826. 10.1016/j.chaos.2011.06.006

Li T, Fei SM, Zhu Q, Cong S: Exponential synchronization of chaotic neural networks with mixed delays. Neurocomputing 2008, 71: 3005-3019. 10.1016/j.neucom.2007.12.029

Du BZ, James L: Stability analysis of static recurrent neural networks using delay-partitioning and projection. Neural Netw. 2009, 22: 343-347. 10.1016/j.neunet.2009.03.005

Liu PC, Yi FQ, Guo Q, Yang J, Wu W: Analysis on global exponential robust stability of reaction-diffusion neural networks with S-type distributed delays. Physica D 2008, 237: 475-485. 10.1016/j.physd.2007.09.014

Li YK, Zhao KH: Robust stability of delayed reaction-diffusion recurrent neural networks with Dirichlet boundary conditions on time scales. Neurocomputing 2011, 74: 1632-1637. 10.1016/j.neucom.2011.01.006

Gilli M: Stability of cellular neural networks with nonpositive templates and nonmonotonic output functions. IEEE Trans. Circuits Syst. I 1994, 41: 518-528.

Gopalsamy K, He XZ: Stability in asymmetric Hopfield nets with transmission delays. Physica D 1994, 76: 344-358. 10.1016/0167-2789(94)90043-4

Zeng Z, Wang J: Improved conditions for global exponential stability of recurrent neural networks with time-varying delays. Chaos Solitons Fractals 2006, 23(3):623-635.

Shao YF: Exponential stability of periodic neural networks with impulsive effects and time-varying delays. Appl. Math. Comput. 2011, 217: 6893-6899. 10.1016/j.amc.2011.01.068

Yang T, Yang LB: The global stability of fuzzy cellular neural networks. IEEE Trans. Circuits Syst. I 1996, 43: 880-883. 10.1109/81.538999

Zhao KH, Li YK: Existence and global exponential stability of equilibrium solution to reaction-diffusion recurrent neural networks on time scales. Discrete Dyn. Nat. Soc. 2010, 2010: Article ID 624619

Li YK, Zhao KH, Ye Y: Stability of reaction-diffusion recurrent neural networks with distributed delays and Neumann boundary conditions on time scales. Neural Process. Lett. 2012, 36: 217-234. 10.1007/s11063-012-9232-2

Zhao KH, Wang LWJ, Liu JQ: Global robust attractive and invariant sets of fuzzy neural networks with delays and impulses. J. Appl. Math. 2013, 2013: Article ID 935491

Zhao KH: Globally exponential synchronization of diffusion recurrent FNNs with time-delays and impulses on time scales. WSEAS Trans. Math. 2014, 13: 224-235.

Zhang JY, Yang YR: Global stability analysis of bidirectional associative memory neural networks with time delay. Int. J. Circuit Theory Appl. 2001, 29(2):185-196. 10.1002/cta.144

Zhao H: Global stability of bidirectional associative memory neural networks with distributed delays. Phys. Lett. A 2002, 297: 182-190. 10.1016/S0375-9601(02)00434-6

Liu B, Huang L: Global exponential stability of BAM neural networks with recent-history distributed delays and impulse. Neurocomputing 2006, 69(16-18):2090-2096. 10.1016/j.neucom.2005.09.014

Song QK, Cao JD: Global exponential stability of bidirectional associative memory neural networks with distributed delays. J. Comput. Appl. Math. 2007, 202: 266-279. 10.1016/j.cam.2006.02.031

Song QK, Cao JD: Global exponential stability and existence of periodic solutions in BAM networks with delays and reaction-diffusion terms. Chaos Solitons Fractals 2005, 23(2):421-430. 10.1016/j.chaos.2004.04.011

Cao JD, Wang L: Exponential stability and periodic oscillatory solution in BAM networks with delays. IEEE Trans. Neural Netw. 2002, 13(2):457-463. 10.1109/72.991431

Wu XL, Zhang JH, Guan XP, Meng H: Delay-dependent asymptotic stability of BAM neural networks with time delay. Kybernetes 2010, 39(8):1313-1321. 10.1108/03684921011063600

Zhu QX, Li XD, Yang XS: Exponential stability for stochastic reaction-diffusion BAM neural networks with time-varying and distributed delays. Appl. Math. Comput. 2011, 217: 6078-6091. 10.1016/j.amc.2010.12.077

Ge JH, Xu J: Synchronization and synchronized periodic solution in a simplified five-neuron BAM neural network with delays. Neurocomputing 2011, 74: 993-999. 10.1016/j.neucom.2010.11.017

Zhang ZQ, Yang Y, Huang YS: Global exponential stability of interval general BAM neural networks with reaction-diffusion terms and multiple time-varying delays. Neural Netw. 2011, 24: 457-465. 10.1016/j.neunet.2011.02.003

Ding W, Wang LS: Almost periodic attractors for Cohen-Grossberg-type BAM neural networks with variable coefficients and distributed delays. J. Math. Anal. Appl. 2011, 373: 322-342. 10.1016/j.jmaa.2010.06.055

Li YK, Chen XR, Zhao L: Stability and existence of periodic solutions to delayed Cohen-Grossberg BAM neural networks with impulses on time scales. Neurocomputing 2009, 72: 1621-1630. 10.1016/j.neucom.2008.08.010

Li YK, Gao S: Global exponential stability for impulsive BAM neural networks with distributed delays on time scales. Neural Process. Lett. 2010, 31(1):65-91. 10.1007/s11063-009-9127-z

Li YK: Global exponential stability of BAM neural networks with delays and impulses. Chaos Solitons Fractals 2005, 24(1):279-285. 10.1016/j.chaos.2004.09.027

Cao JD, Wan Y: Matrix measure strategies for stability and synchronization of inertial BAM neural network with time delays. Neural Netw. 2014, 53: 165-172.

Du YH, Zhong SM, Zhou N: Global asymptotic stability of Markovian jumping stochastic Cohen-Grossberg BAM neural networks with discrete and distributed time-varying delays. Appl. Math. Comput. 2014, 243: 624-636.

Jian JG, Wang BX: Stability analysis in Lagrange sense for a class of BAM neural networks of neutral type with multiple time-varying delays. Neurocomputing 2015. 10.1016/j.neucom.2014.07.041

Berezansky L, Braverman E, Idels L: New global exponential stability criteria for nonlinear delay differential systems with applications to BAM neural networks. Appl. Math. Comput. 2014, 243: 899-910.

Li YK, Yang L, Wu WQ: Anti-periodic solution for impulsive BAM neural networks with time-varying leakage delays on time scales. Neurocomputing 2015. 10.1016/j.neucom.2014.08.020

Zhang AC, Qiu JL, She JH: Existence and global exponential stability of periodic solution for high-order discrete-time BAM neural networks. Neural Netw. 2014, 50: 98-109.

Quan ZY, Huang LH, Yu SH, Zhang ZQ: Novel LMI-based condition on global asymptotic stability for BAM neural networks with reaction-diffusion terms and distributed delays. Neurocomputing 2014, 136: 213-223.

Carroll TL, Pecora LM: Cascading synchronized chaotic systems. Phys. D, Nonlinear Phenom. 1993, 67(1-3):126-140. 10.1016/0167-2789(93)90201-B

Carroll TL, Heagy J, Pecora LM: Synchronization and desynchronization in pulse coupled relaxation oscillators. Phys. Lett. A 1994, 186(3):225-229. 10.1016/0375-9601(94)90343-3

Bohner M, Peterson A: Dynamic Equations on Time Scales: An Introduction with Applications. Birkhäuser, Boston; 2001.

Lakshmikantham V, Vatsala AS: Hybrid systems on time scales. J. Comput. Appl. Math. 2002, 141: 227-235. 10.1016/S0377-0427(01)00448-4

Lu JG: Global exponential stability and periodicity of reaction-diffusion delayed recurrent neural networks with Dirichlet boundary conditions. Chaos Solitons Fractals 2008, 35(1):116-125. 10.1016/j.chaos.2007.05.002

Acknowledgements

The author would like to thank the anonymous referees for their useful and valuable suggestions. This work is supported by the National Natural Sciences Foundation of the People's Republic of China under Grant (No. 11161025; No. 11326101) and the Yunnan Province natural scientific research fund project (No. 2011FZ058).

Competing interests

The author declares to have no competing interests.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (https://creativecommons.org/licenses/by/4.0), which permits use, duplication, adaptation, distribution, and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Zhao, K. Global robust exponential synchronization of BAM recurrent FNNs with infinite distributed delays and diffusion terms on time scales. Adv Differ Equ 2014, 317 (2014). https://doi.org/10.1186/1687-1847-2014-317

DOI: https://doi.org/10.1186/1687-1847-2014-317