Abstract

We present a new set of parton distribution functions (PDFs) based on a fully global dataset and machine learning techniques: NNPDF4.0. We expand the NNPDF3.1 determination with 44 new datasets, mostly from the LHC. We derive a novel methodology through hyperparameter optimization, leading to an efficient fitting algorithm built upon stochastic gradient descent. We use NNLO QCD calculations and account for NLO electroweak corrections and nuclear uncertainties. Theoretical improvements in the PDF description include a systematic implementation of positivity constraints and integrability of sum rules. We validate our methodology by means of closure tests and “future tests” (i.e. tests of backward and forward data compatibility), and assess its stability, specifically upon changes of PDF parametrization basis. We study the internal compatibility of our dataset, and investigate the dependence of results both upon the choice of input dataset and of fitting methodology. We perform a first study of the phenomenological implications of NNPDF4.0 on representative LHC processes. The software framework used to produce NNPDF4.0 is made available as an open-source package together with documentation and examples.

1 Introduction

It is now an accepted fact that frontier high-energy physics at colliders requires percent-level accuracy both in theory and experiment [1]. On the theoretical side, the two main obstacles to achieving this are missing higher order corrections in perturbative computations [2], and uncertainties in parton distribution functions (PDFs) [3, 4]. The main aim of this paper is to show how percent-level accuracy might be achieved for PDFs.

The most recent set of PDFs determined by NNPDF, NNPDF3.1 [5], was the first to extensively include LHC data, and was able to reach 3–5% precision in the PDF uncertainties. It was based on NNPDF3.x fitting methodology, the first to be validated by means of closure tests, thereby ensuring that this precision was matched by a comparable accuracy.

The NNPDF4.0 PDF set presented here is a major step forward in three significant aspects: (i) the systematic inclusion of an extensive set of LHC Run I data at 7 and 8 TeV and, for the first time, of LHC Run II data at \(\sqrt{s}=13\) TeV and of several new processes not considered before for PDF determinations; (ii) the deployment of state-of-the-art machine learning algorithms which result in a methodology that is considerably faster and leads to more precise PDFs; (iii) the validation of these PDF uncertainties both in the data and in the extrapolation regions using closure and future tests.

All in all, the main accomplishment of this new PDF set is to go one step further in achieving the main goal that motivated the NNPDF methodology in the first place [6], namely, to reduce sources of bias in PDF determination. The use of a wider dataset reduces sources of bias that might be related to the dominance of a particular process. The use of a machine learned methodology reduces sources of bias related to methodological choices, that are now mostly made through an automated procedure. Finally, the extensive set of validation tools explicitly checks the absence of bias: in fact, “future tests”, to be discussed below, can expose the historical bias that was present in previous PDF determinations.

The NNPDF4.0 global analysis includes 44 new datasets in comparison with NNPDF3.1. These involve a number of new LHC measurements of processes already present in NNPDF3.1, but also data from several new processes, whose impact on PDFs has been the object of dedicated studies. Specifically, the new processes are direct photon production (studied in Ref. [7]), single-top production (studied in Ref. [8]), dijets (studied in Ref. [9]), W+jet (studied in Ref. [10]), and deep-inelastic jet production. A significant consequence of this extension of the dataset is that now the PDFs are largely controlled by LHC data: unlike in the past, a DIS-only PDF determination leads to much larger uncertainties and visibly different results.

NNPDF4.0 is the first PDF determination based on a methodology that is selected automatically rather than through manual iterations and human experience. All aspects of the neural network PDF parametrization and optimization (such as neural net architecture, learning rates or minimization algorithm) are selected through a hyperparameter optimization procedure [11], an automated scan of the space of models that selects the optimal methodology. A quality control method is used in order to make sure that the optimization does not produce a methodology that leads to overfitted PDFs. This is done through K-folding [6], checking iteratively the effectiveness of any given methodology on sets of data excluded in turn from the fit. All this is made possible by a speedup of the NNPDF fitting code, which is now able to fit an individual replica about twenty times faster, thanks mostly to the use of stochastic gradient descent methods provided by the TensorFlow library, rather than through the genetic algorithm minimization used previously, along with various technical improvements to be discussed below [11,12,13].
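The K-folding idea can be illustrated with a toy sketch. Everything in it is hypothetical and chosen for brevity: the polynomial model, the single `degree` hyperparameter, and the function names are stand-ins, not the NNPDF code. In the actual procedure the folds are groups of datasets rather than individual points, and the scan runs over neural network settings. The sketch only shows the principle that each candidate methodology is scored on data excluded from the fit.

```python
import numpy as np

def kfold_loss(x, y, degree, n_folds=4):
    """Mean squared error on held-out folds for a polynomial of given degree."""
    indices = np.arange(len(x))
    folds = np.array_split(indices, n_folds)
    losses = []
    for held_out in folds:
        # Fit on everything except the held-out fold.
        train = np.setdiff1d(indices, held_out)
        coeffs = np.polyfit(x[train], y[train], degree)
        # Score only on the points that were not seen in the fit.
        pred = np.polyval(coeffs, x[held_out])
        losses.append(np.mean((pred - y[held_out]) ** 2))
    return float(np.mean(losses))

def select_degree(x, y, candidates=(1, 2, 3, 5)):
    """Scan the (toy) hyperparameter space and keep the best held-out score."""
    scores = {d: kfold_loss(x, y, d) for d in candidates}
    return min(scores, key=scores.get), scores

# Toy data: a quadratic law with Gaussian noise, shuffled so that folds
# are spread over the whole x range.
rng = np.random.default_rng(0)
x = rng.permutation(np.linspace(0.0, 1.0, 40))
y = 1.0 - 2.0 * x + 3.0 * x**2 + rng.normal(0.0, 0.05, size=x.size)
best_degree, scores = select_degree(x, y)
```

A model that is too rigid is penalized because it cannot describe the held-out points, while a model that overfits is penalized because it follows the noise of the training points.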

The widening of the dataset (with fixed methodology), and especially the methodological improvements (with fixed dataset) lead to a reduction of PDF uncertainties, so their combination brings us close to percent precision. This demands a careful validation of these uncertainties, which is achieved by means of two classes of tests.

The first is closure tests, already introduced in NNPDF3.0 [14], which here are considerably extended and systematized, thanks to the much greater fitting efficiency. These consist of fitting PDFs to pseudo-data generated assuming a certain underlying true PDF, and comparing the result of the fit to the known true PDF by means of suitable statistical estimators. The closure test verifies that PDF uncertainties are faithful, specifically in comparison to the data used to fit them. The second is future tests [15]: these compare the results obtained fitting PDFs to a subset of the data, which covers a small kinematic region compared to the full dataset. For example, PDFs are fitted to a pre-HERA dataset, and the result is compared to LHC data. The future test verifies that PDF uncertainties are faithful when extrapolated outside the region of the data used to fit them.
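The logic of a closure test can be sketched on a toy problem. The linear "true" law, the noise level, the straight-line fit and the pull estimator below are all assumptions made for illustration; the actual tests use a true PDF, the full fitting methodology and the estimators discussed in Sect. 6.

```python
import numpy as np

def truth(x):
    # Hypothetical "true" underlying law used to generate pseudo-data.
    return 0.5 + 1.5 * x

def closure_test(n_points=30, sigma=0.1, n_replicas=200, seed=1):
    rng = np.random.default_rng(seed)
    x = np.linspace(0.0, 1.0, n_points)
    # Pseudo-data: the known truth plus one realization of the noise.
    data = truth(x) + rng.normal(0.0, sigma, n_points)
    fits = np.empty((n_replicas, n_points))
    for k in range(n_replicas):
        # Each replica fluctuates the pseudo-data again and is then fitted.
        replica = data + rng.normal(0.0, sigma, n_points)
        coeffs = np.polyfit(x, replica, 1)
        fits[k] = np.polyval(coeffs, x)
    central = fits.mean(axis=0)
    error = fits.std(axis=0, ddof=1)
    # Distance between fit and truth in units of the fitted uncertainty.
    pull = (central - truth(x)) / error
    return x, central, error, pull

x, central, error, pull = closure_test()
```

If the uncertainties are faithful, pulls of order one are expected; pulls systematically much larger than one would signal underestimated uncertainties.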

As a further test of methodological reliability, we study the robustness of results upon methodological variations, and in particular we show that PDFs are stable upon changes of the parametrization basis (i.e. the particular linear combination of PDFs that is parametrized by neural nets), thereby confirming that results are parametrization-independent.

NNPDF4.0 PDFs also include a number of improvements at all stages of the PDF determination procedure. The most relevant ones are the following:

- While the main PDF determination is performed with NNLO QCD (with further sets provided at NLO and LO), NLO electroweak (EW) and mixed QCD-EW corrections are implemented for all LHC processes using recent dedicated tools [16] and assessed both for phenomenology and in the determination of the input dataset to be used for PDF fitting.

- Whenever heavy nuclear or deuteron targets are involved, nuclear effects are accounted for as theoretical uncertainties using the methodology of Refs. [17,18,19], and the results of the nNNPDF2.0 nuclear PDF determination [20].

- Strict positivity of \(\overline{\mathrm{MS}}\) PDFs is implemented following the results of Ref. [21].

- Finiteness of non-singlet baryon number, i.e., integrability of all non-singlet PDF first moments, is enforced. This specifically implies finiteness of the Gottfried sum [22] \(U-D\) and of the strangeness sum \(U+D-2 S\), where \(U\), \(D\) and \(S\) denote respectively the first moment of the sum of quark and antiquark PDFs for up, down and strange quarks.

- The selection of a consistent dataset is based on an objective two-stage procedure. Potentially problematic datasets are identified on the basis of either poor compatibility with the global dataset, or indications of instability of their experimental covariance matrix. These datasets are then subjected in turn to a dedicated fit in which the failed dataset is given a large weight, and then accepted or rejected depending on the outcome.
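For concreteness, the first moments entering the integrability item above can be written out explicitly. The notation below is a sketch consistent with the definitions given there; the scale dependence is left implicit.

\[
U=\int_0^1 dx\,\left[u(x)+\bar{u}(x)\right],\qquad
D=\int_0^1 dx\,\left[d(x)+\bar{d}(x)\right],\qquad
S=\int_0^1 dx\,\left[s(x)+\bar{s}(x)\right].
\]

Each of \(U\), \(D\) and \(S\) may diverge individually because of the small-\(x\) growth of the integrand. The constraint is that the non-singlet combinations \(U-D\) and \(U+D-2S\) be finite, which requires that \(x\) times the corresponding integrand vanish as \(x\rightarrow 0\).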

The main missing features of the current PDF determination, which are left for future work, are the inclusion of theory uncertainties (specifically missing higher order corrections), which could be done using the methods of Refs. [23, 24], and the full inclusion of EW and mixed QCD-EW corrections directly at the fitting level, which will be possible using the tools of Ref. [16].

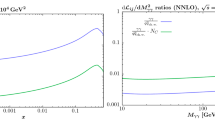

The NNPDF4.0 PDF set is released at LO, NLO and NNLO QCD, for a variety of values of \(\alpha _s\). The default PDF sets are provided in the FONLL variable-flavor number scheme [25] with maximum number of flavors \(n_f=5\), and an independently parametrized charm PDF. PDF sets with different maximum number of flavors and with a perturbatively generated charm PDF are also made available, along with PDF sets determined using reduced datasets, which may be useful for specific applications. The main sets are delivered in the following formats: a Monte Carlo representation with 1000 replicas; a Hessian set with 50 eigenvectors obtained from the Monte Carlo set via the MC2Hessian algorithm [26, 27]; and a compressed set of 100 Monte Carlo replicas, obtained from the original 1000 through the Compressor algorithm [28] as implemented in the new Python code of Ref. [29]. The final NNPDF4.0 NNLO PDFs are shown in Fig. 1 both at a low (\(Q=3.2\) GeV) and a high (\(Q=100\) GeV) scale.
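For a Monte Carlo representation, the central value and uncertainty of any quantity are obtained as statistics over the replicas. A minimal sketch follows, assuming that the quantity has already been evaluated replica by replica into an array; the function names are hypothetical and the use of the unbiased estimator (`ddof=1`) is an assumption of this sketch.

```python
import numpy as np

def mc_central_and_uncertainty(replica_values):
    """Mean and standard deviation over replicas (first array axis)."""
    values = np.asarray(replica_values, dtype=float)
    central = values.mean(axis=0)
    sigma = values.std(axis=0, ddof=1)
    return central, sigma

def mc_interval_68(replica_values):
    """Central 68% interval over replicas, useful for non-Gaussian cases."""
    values = np.asarray(replica_values, dtype=float)
    lower, upper = np.percentile(values, [16.0, 84.0], axis=0)
    return lower, upper

# Two replicas of a quantity evaluated at two points (illustrative numbers).
replicas = [[1.0, 2.0], [3.0, 2.0]]
central, sigma = mc_central_and_uncertainty(replicas)
lower, upper = mc_interval_68(replicas)
```

The same functions apply unchanged to the 1000-replica and to the compressed 100-replica sets, since only the length of the first axis differs. A Hessian set requires a different prescription, which is not shown here.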

More importantly, the full NNPDF software framework is released as an open source package [30]. This includes the full dataset; the methodology hyperoptimization; the PDF parametrization and optimization; the computation of physical processes; the set of validation tools; and the suite of visualization tools. The code and the corresponding documentation are discussed in a companion paper [31].

The structure of this paper is the following. First, in Sect. 2 we present the input experimental data and the associated theoretical calculations that will be used in our analysis, with emphasis on the new datasets added in comparison to NNPDF3.1. Then in Sect. 3 we discuss the fitting methodology, in particular the parametrization of PDFs in terms of neural networks, their training, and the algorithmic determination of their hyperparameters. The procedure adopted to select the NNPDF4.0 baseline dataset is described in Sect. 4. The main result of this work, the NNPDF4.0 determination of parton distributions, is presented in Sect. 5, where we also compare with previous NNPDF releases and with other PDF sets. The closure test and future test used to validate the methodology are described in Sect. 6.

Subsequently, we assess the dependence of our PDFs on the dataset in Sect. 7, where we study the impact of new data in comparison with NNPDF3.1, and verify the impact of individual processes by studying PDF determinations in which data corresponding to individual classes of processes are removed in turn. Also, we present PDFs determined by adding specific datasets, such as the EMC charm structure function, the NOMAD neutrino dimuon structure functions, and the HERA DIS jet data. Then in Sect. 8 we assess the dependence of PDFs on the methodology and verify the robustness of our results, by comparing with PDFs obtained using the previous NNPDF3.1 methodology and by studying the impact of new positivity and integrability constraints, checking the independence of results of the choice of PDF parametrization, discussing the impact of independently parametrizing the charm PDF, and studying the role of nuclear corrections. We then present a first assessment of the implications of NNPDF4.0 for LHC phenomenology in Sect. 9, by computing PDF luminosities, fiducial cross-sections, and differential distributions for representative processes. Finally, in Sect. 10 we list the NNPDF4.0 grid files that are made available through the LHAPDF interface [32] and provide a summary and outlook.

A brief overview of the NNPDF fitting code is presented in App. A, while a more extensive description is provided by the companion publication [31]. In App. B we compare the NNPDF4.0 dataset to that adopted in other PDF determinations.

2 Experimental and theoretical input

We present the NNPDF4.0 dataset in detail. After a general overview, we examine each of the processes for which new measurements are considered in NNPDF4.0, we present the details of the measurements, and, for each dataset, we describe how the corresponding theoretical predictions are obtained. In NNPDF4.0, theoretical predictions for data taken on nuclear targets are supplemented by nuclear corrections, which are specifically discussed in a dedicated section. Experimental statistical and systematic uncertainties are treated as in previous NNPDF determinations: see in particular Sect. 2.4.2 of Ref. [14] for a detailed discussion.

The global dataset presented in this section is the basis for the final NNPDF4.0 dataset, which will be selected from it by applying criteria based on testing for dataset consistency and compatibility, and for perturbative stability upon the inclusion of electroweak corrections. The selection of the final dataset will be discussed in Sect. 4 below.

2.1 Overview of the NNPDF4.0 dataset

The NNPDF4.0 dataset includes essentially all the data already included in NNPDF3.1, the only exceptions being a few datasets that are replaced by a more recent final version, and single-inclusive jet datasets which are now partly replaced by dijet data, as we discuss below. All the new datasets that were not included in NNPDF3.1 are more extensively discussed in Sect. 2.2. For all those already included in NNPDF3.1 we refer to Sect. 2 of Ref. [5] for a detailed discussion. Nevertheless we give a summary below.

The NNPDF3.1 dataset included data for lepton-nucleon, neutrino-nucleus, proton-nucleus and proton-(anti)proton scattering processes. The bulk of it consisted of deep inelastic scattering (DIS) measurements: these included fixed-target neutral current (NC) structure function data from NMC [33, 34], SLAC [35] and BCDMS [36], fixed-target inclusive and dimuon charged current (CC) cross-section data from CHORUS [37] and NuTeV [38, 39], and collider NC and CC cross-section data from the HERA legacy combination [40]. The combined H1 and ZEUS measurement of the charm cross-section [41] and the separate H1 [42] and ZEUS [43] measurements of the bottom cross-section were also included, both to be replaced by more recent data as we discuss below. The charm structure function measured by the EMC experiment [44] was also studied in a variant fit, in which its constraining power on the intrinsic component of the charm PDF was explicitly assessed, and the same will be done here.

In addition to the DIS measurements, the NNPDF3.1 dataset included fixed-target DY data from the Fermilab E605 [45] and E866 [46, 47] experiments, inclusive gauge boson production [48,49,50,51] and single-inclusive jet production [52] cross-section data from the Tevatron. A sizable amount of LHC data were also included, specifically: inclusive gauge boson production data from ATLAS [53,54,55,56], CMS [57,58,59,60] and LHCb [61,62,63,64]; Z-boson transverse momentum production data from ATLAS [65] and CMS [66]; and top pair production total and differential cross-section data from ATLAS [67,68,69] and CMS [70,71,72]. Single-inclusive jet production data from ATLAS [73,74,75] and CMS [76, 77] were also included. These will be partly replaced by dijet data as we discuss below. For the determination of NLO PDFs, W production measurements in association with a charm jet from CMS [78] were also included. Most of these LHC measurements were performed at \(\sqrt{s}=7\) TeV [53,54,55,56,57,58,59, 61,62,63, 67, 70, 73, 75, 76, 78]; two single-inclusive jet measurements were performed at \(\sqrt{s}=2.76\) TeV [74, 77]; two gauge boson production measurements [60, 64], the Z-boson transverse momentum measurements [65, 66] and some top pair production measurements [67, 69, 70, 72] were performed at \(\sqrt{s}=8\) TeV; and two top pair total cross-section measurements [68, 71] were performed at \(\sqrt{s}=13\) TeV.

The NNPDF4.0 dataset builds upon NNPDF3.1, by adding various new datasets to it. These comprise, on the one hand, a variety of new LHC measurements for processes already present in NNPDF3.1 and, on the other hand, data corresponding to new processes. New datasets for existing LHC processes are added for electroweak boson production, both inclusive and in association with charm, single-inclusive jet production, and top pair production. The new processes are gauge boson with jets, single top production, inclusive isolated photon production, and dijet production.

For inclusive electroweak boson production we consider: at \(\sqrt{s}=7\) TeV, the ATLAS W and Z distributions [54] in the central and forward rapidity regions (only the subset corresponding to the central region was included in NNPDF3.1); at \(\sqrt{s}=8\) TeV, the ATLAS Z double- and triple-differential distributions [79, 80], the ATLAS W differential distribution [81] and the LHCb W differential distribution [82]; at \(\sqrt{s}=13\) TeV, the ATLAS W and Z total cross-section [83] and the LHCb Z differential distributions [84]. For electroweak gauge boson production with charm, we consider the ATLAS [85] and CMS [86] differential distributions at \(\sqrt{s}=7\) TeV and \(\sqrt{s}=8\) TeV, respectively. Given that the corresponding NNLO QCD corrections are not available in a format suitable for inclusion in a fit [87], these two datasets are included only in the determination of NLO PDFs.

For single-inclusive jet production we consider the ATLAS [88] and CMS [89] double differential cross-sections at \(\sqrt{s}=8\) TeV. For top pair production we consider: at \(\sqrt{s}=5.02\) TeV, the CMS total cross-section [90]; at \(\sqrt{s}=8\) TeV, the ATLAS differential distributions [91] and the CMS double differential distributions [92], both of which are measured in the dilepton final state; at \(\sqrt{s}=13\) TeV, the CMS differential distributions measured in the lepton+jets [93] and in the dilepton [94] final states. For W-boson production with jets we consider the ATLAS differential distributions at \(\sqrt{s}=8\) TeV [95]. For single top production, we consider only measurements in the t-channel, specifically: at \(\sqrt{s}=7\) TeV, the ATLAS top to antitop total cross-section ratio, with the corresponding differential distributions [96] and the CMS combined top and antitop total cross-sections [97]; at \(\sqrt{s}=8\) TeV, the ATLAS [98] and CMS [99] top to antitop total cross-section ratios and the ATLAS differential distributions [98]; at \(\sqrt{s}=13\) TeV the ATLAS [100] and CMS [101] top to antitop cross-section ratios. For inclusive isolated photon production we consider the ATLAS differential cross-sections at \(\sqrt{s}=8\) TeV [102] and at \(\sqrt{s}=13\) TeV [103]. For dijet production we consider, at \(\sqrt{s}=7\) TeV, the ATLAS [88] and CMS [76] double differential distributions and, at \(\sqrt{s}=8\) TeV, the CMS triple differential distributions [89].

Additional LHC measurements at \(\sqrt{s}=13\) TeV for processes relevant to PDF determination are in principle available: specifically, the ATLAS [104] and CMS [105] Z transverse momentum distributions; the CMS W+jets distributions [106]; the ATLAS [107] and CMS [108] single-inclusive jet distributions; and the ATLAS [109] and LHCb [110] top pair distributions. We do not include these measurements because either they are first analyses based on a still reduced luminosity sample, or because they do not come with complete information on experimental uncertainties, or because NNLO QCD corrections are not yet available.

The non-LHC dataset is also expanded in NNPDF4.0. For DIS, we now also consider the dimuon to inclusive cross-section ratio measured by the NOMAD experiment [111], though only in a variant determination, see Sect. 7.3.4. We also consider a selection of differential cross-sections for single-inclusive and dijet production in DIS measured by ZEUS [112,113,114] and H1-HeraII [115, 116], again only in a variant determination that will be discussed in Sect. 7.3.5. For fixed-target DY, we include the recent measurement for the proton-deuteron to proton-proton differential cross-section ratio performed by the E906/SeaQuest experiment [117].

The theoretical treatment of the data already included in NNPDF3.1 is the same in all respects as in that analysis, to which we refer for details. The general NNPDF3.1 settings will in fact be adopted throughout, with specific aspects relevant for the new data to be discussed in Sect. 2.2 below. Fast interpolation grids, accurate to NLO in perturbative QCD, are produced in the APFELgrid format [118]; APFEL [119] and various fixed-order Monte Carlo event generators [120,121,122,123,124,125,126] (possibly interfaced to APPLgrid [127] or FastNLO [128,129,130] with MCgrid [131, 132] or aMCfast [133]) are utilized for the computation of DIS and non-DIS observables, respectively. The charm PDF is parametrized by default and the FONLL general-mass variable flavor number scheme [25, 134, 135] is utilized to compute DIS structure functions.

Except for DIS and for DIS jets, for which we also make use of NNLO fast interpolation grids, NNLO QCD corrections to matrix elements are implemented by multiplying the NLO predictions by a K-factor. This is defined as the bin-by-bin ratio of the NNLO to NLO prediction computed with a pre-defined NNLO PDF set (see Sect. 2.3 in [14] for details). For all of the fixed-target DY data and for all of the new LHC datasets considered in NNPDF4.0, this PDF set is NNPDF3.1_nnlo_as_0118 [5]; for the Tevatron and LHC datasets already included in NNPDF3.1, we used the same PDF sets specified in Sect. 2.1 of [5]. For these datasets the PDF dependence of the K-factors is generally smaller than all the other relevant uncertainties, as explicitly shown in [5]. We have checked this explicitly by recomputing the K-factors for all of the inclusive gauge boson production measurements, for both fixed-target and collider experiments, and for all of the top-quark pair production measurements with the baseline NNPDF4.0 set, and then repeating the NNLO PDF determination. The ensuing PDFs turn out to be statistically equivalent to the NNPDF4.0 baseline. The values of all physical parameters are the same as in NNPDF3.1.
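The K-factor approximation described above amounts to simple bin-by-bin arithmetic. The following sketch uses hypothetical function names and illustrative numbers; it assumes that the NLO and NNLO predictions with the pre-defined PDF set are already available as arrays with one entry per bin.

```python
import numpy as np

def k_factor(nnlo_fixed_pdf, nlo_fixed_pdf):
    """Bin-by-bin ratio of NNLO to NLO predictions, both computed
    with the same pre-defined PDF set."""
    nnlo = np.asarray(nnlo_fixed_pdf, dtype=float)
    nlo = np.asarray(nlo_fixed_pdf, dtype=float)
    return nnlo / nlo

def approximate_nnlo(nlo_fit_pdf, k):
    """NLO prediction obtained with the PDF being fitted, rescaled by K."""
    return np.asarray(nlo_fit_pdf, dtype=float) * np.asarray(k, dtype=float)

# Illustrative two-bin example (numbers are made up).
k = k_factor([10.5, 21.0], [10.0, 20.0])
prediction = approximate_nnlo([9.0, 22.0], k)
```

The approximation is good to the extent that the ratio depends weakly on the PDF set used to compute it, which is the property checked in the text by recomputing the K-factors with the NNPDF4.0 baseline.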

The NNPDF4.0 dataset is thus a superset of NNPDF3.1 with the following exceptions. First, in the NNPDF4.0 baseline the single-inclusive jet data are replaced by their dijet counterparts (though the single-inclusive jet data will be considered in a variant NNPDF4.0 determination, see Sect. 7.3.3 below). Furthermore, a number of small alterations is made to the original set of NNPDF3.1 data, or to their theoretical treatment, as we now discuss.

In terms of data, the total cross-section results from Ref. [68] are no longer used, as they are replaced by the more recent measurement [136] based on the full Run II luminosity, to be discussed in Sect. 2.2.6 below. For the differential distributions measured by ATLAS at \(\sqrt{s}=8\) TeV in the lepton+jets final state [69] only one distribution out of the four available was included in NNPDF3.1 while all of them are included in NNPDF4.0, because the correlations between distributions have become available meanwhile. The single-inclusive jet measurements from ATLAS [74] and CMS [77] at \(\sqrt{s}=2.76\) TeV and from ATLAS [53] at \(\sqrt{s}=7\) TeV are no longer included in NNPDF4.0 because NNLO QCD corrections, which are provided with the optimal scale choice of Ref. [137], are not available for these measurements. For the same reason the CDF single-inclusive jet data [52] are also not included. These datasets were already removed in intermediate updates of the NNPDF3.1 determination [8, 10] or in subsequent studies [19, 23, 24, 138].

In terms of theoretical treatment the changes are the following. For DIS we correct a bug in the APFEL computation of the NLO CC structure functions, that mostly affects the large-x region; and we re-analyze the NuTeV dimuon cross-section data by including the NNLO charm-quark massive corrections [139, 140], as explained in [10], and by updating the value of the branching ratio of charmed hadrons into muons to the PDG value [141], as explained in [18]. For fixed-target DY, we include the NNLO QCD corrections for the E866 measurement [47] of the proton-deuteron to proton-proton cross-section ratio: these corrections had been inadvertently overlooked in NNPDF3.1. For gauge boson production at the Tevatron, we correct a small bug affecting the CDF Z rapidity distribution [48], whereby the last two bins had not been merged consistently with the updated measurement. For jets, we update the theoretical treatment of the single-inclusive jet measurements at \(\sqrt{s}=7\) TeV [75, 76], in that NLO and NNLO theoretical predictions are now computed with factorization and renormalization scales equal to the optimal scale choice advocated in Ref. [137], namely, the scalar sum of the transverse momenta of all partons in the event, see Ref. [9].

To assess the impact of these changes in dataset and theoretical treatment, we will consider a variant of NNPDF3.1 in which all of these changes, but not the replacement of single-inclusive jets with dijets, are taken into account. This determination will be referred to as NNPDF3.1-like henceforth. It will be used to carry out various methodological tests in Sects. 3 and 6. The NNPDF3.1-like determination contains 4092 data points for a NNLO fit.

The data included in NNPDF4.0 are summarized in Tables 1, 2, 3, 4 and 5, respectively for DIS, DIS jets, fixed-target DY, collider inclusive gauge boson production and other LHC processes. For each process we indicate the name of the dataset used throughout this paper, the corresponding reference, the number of data points in the NLO/NNLO fits before (and after) kinematic cuts (see Sect. 4), the kinematic coverage in the relevant variables after cuts, and the codes used to compute the corresponding predictions. Datasets not previously considered in NNPDF3.1 are indicated with an asterisk. Datasets not included in the baseline determination are indicated in brackets.

The total number of data points included in the default PDF determination is 4426 at NLO and 4618 at NNLO, to be compared to 4295 at NLO and 4285 at NNLO in NNPDF3.1 and to 4092 (at NNLO) in NNPDF3.1-like fits presented here. A comparison between the datasets considered in NNPDF4.0 and the datasets included in NNPDF3.1 and in other recent PDF determinations, namely ABMP16 [142], CT18 [143] and MSHT20 [144], is presented in App. B, see Tables 33, 34, 35, 36, 37 and 38.

The kinematic coverage in the \((x,Q^2)\) plane of the NNPDF4.0 dataset entering the default NNLO fit is displayed in Fig. 2. For hadronic data, kinematic variables are determined using LO kinematics. Whenever an observable is integrated over rapidity, the center of the integration range is used to compute the values of x. The data points corresponding to datasets that are new in NNPDF4.0 are indicated with a black edge.
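As an illustration of LO kinematics, for Drell–Yan-like production of a final state with invariant mass \(M\) and rapidity \(y\) the parton momentum fractions are \(x_{1,2}=(M/\sqrt{s})\,e^{\pm y}\). The sketch below implements only this standard relation; the function name is hypothetical, and the mapping actually used for each process in Fig. 2 is not specified here and differs for other final states.

```python
import math

def lo_drell_yan_x(mass, rapidity, sqrt_s):
    """LO momentum fractions for a final state of given invariant mass
    and rapidity; mass and sqrt_s must be in the same units."""
    ratio = mass / sqrt_s
    x1 = ratio * math.exp(rapidity)
    x2 = ratio * math.exp(-rapidity)
    return x1, x2

# Z-peak production at central rapidity at 13 TeV (energies in GeV).
x1, x2 = lo_drell_yan_x(91.2, 0.0, 13000.0)

# A forward configuration probes one small and one large x.
x1_fwd, x2_fwd = lo_drell_yan_x(91.2, 3.0, 13000.0)
```

The product \(x_1 x_2 s = M^2\) is independent of rapidity, so changing \(y\) moves the two points along a line of fixed \(Q^2\) in the \((x,Q^2)\) plane.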

The complete information on experimental uncertainties, including the breakdown into different sources of systematic uncertainties and their correlations, is taken into account whenever available from the corresponding publications or from the HEPData repository [150]. No decorrelation models are used, except when explicitly recommended by the collaboration. This is the case of the single-inclusive jet cross-section measurement performed by ATLAS at \(\sqrt{s}=8\) TeV [88]. Decorrelation models [9, 151,152,153,154] were studied for the ATLAS jet measurements at \(\sqrt{s}=7\) TeV [75] and for the ATLAS top pair measurements at \(\sqrt{s}=8\) TeV [69]. However these are not considered in our default determination, but only in variant fits, see Sect. 8.7.
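A breakdown into sources of uncertainty is commonly turned into a covariance matrix by adding uncorrelated uncertainties in quadrature on the diagonal and summing outer products of the correlated sources. The sketch below makes simplifying assumptions that are not taken from the text: all systematic sources are treated as additive and fully correlated across bins, and the treatment of multiplicative uncertainties described in Ref. [14] is not reproduced. Function names are hypothetical.

```python
import numpy as np

def covariance(stat, sys):
    """Covariance from uncorrelated errors `stat` (n_points) and
    correlated sources `sys` (n_points x n_sources), all absolute."""
    stat = np.asarray(stat, dtype=float)
    sys = np.asarray(sys, dtype=float)
    # Diagonal uncorrelated part plus sum over sources of outer products.
    return np.diag(stat**2) + sys @ sys.T

def chi2(data, theory, cov):
    """Standard chi-squared with a full covariance matrix."""
    residual = np.asarray(data, dtype=float) - np.asarray(theory, dtype=float)
    # Solve the linear system instead of inverting the matrix explicitly.
    return float(residual @ np.linalg.solve(cov, residual))

# Two points, unit statistical errors, one fully correlated source.
cov = covariance([1.0, 1.0], [[1.0], [1.0]])
value = chi2([1.0, -1.0], [0.0, 0.0], cov)
```

A decorrelation model, in this language, replaces a single column of `sys` by several columns that reproduce the same diagonal but smaller off-diagonal entries.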

2.2 New data in NNPDF4.0

We now discuss in detail the new datasets considered in NNPDF4.0. These are indicated with an asterisk in Tables 1, 2, 3, 4 and 5. The data are presented by process, with the processes already considered in NNPDF3.1 addressed first.

2.2.1 Deep-inelastic scattering

We include the combined H1 and ZEUS measurements of reduced electron-proton NC DIS cross-sections for the production of open charm and bottom quarks [145]. These measurements extend the previous combination of open charm production cross-sections [41] and supersede the separate H1 [42] and ZEUS [43] datasets for the structure function \(F_2^b\) that were included in NNPDF3.1. As for the other DIS measurements included in the NNPDF4.0 dataset, they are analyzed in the FONLL scheme [25, 134, 135] within fixed order perturbative accuracy (i.e. not including resummation).

We also consider the measurements of the ratio \(\mathcal {R}_{\mu \mu }\) of dimuon to inclusive neutrino-nucleus CC DIS cross-sections performed by the NOMAD experiment [111]. These measurements are presented alternatively as a function of the neutrino beam energy \(E_\nu \), of the momentum fraction x, or of the final state invariant mass W. Because experimental correlations are not provided among the three distributions, they cannot be included in the fit at the same time. We therefore select only one of them, namely the measurement as a function of the neutrino beam energy, the only variable among the three that is directly measured by the experiment. This choice is based on the previous study [10], carried out in the context of a variant of the NNPDF3.1 determination, in which it was shown that the three distributions have a similar impact in the fit.

The treatment of this dataset in NNPDF4.0 closely follows Ref. [10]. Specifically we incorporate the recently computed NNLO charm-quark massive corrections [139, 140] by means of a K-factor (see Sect. 2.2.2 in [10]). The NOMAD data are not included in our default determination; however, we assess their impact on the NNLO PDFs by means of Bayesian reweighting [155, 156]. This choice is dictated by the fact that the observable is integrated over Q and x (see e.g. Eq. (2.1) in Ref. [10]), which complicates the generation of fast interpolation tables in the APFELgrid format.
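Bayesian reweighting assigns to each Monte Carlo replica a weight determined by its \(\chi^2\) to the new data, so that no refit is needed. A minimal sketch of the weight formula of Refs. [155, 156], \(w_k\propto(\chi^2_k)^{(n-1)/2}e^{-\chi^2_k/2}\) for \(n\) new data points, is given below together with the effective number of replicas. The function names are hypothetical, and the \(\chi^2_k\) values are assumed to be computed elsewhere and to be strictly positive.

```python
import numpy as np

def reweight(chi2, n_data):
    """Replica weights, normalized so that they sum to the number of replicas."""
    chi2 = np.asarray(chi2, dtype=float)
    # Work with logarithms to avoid overflow or underflow for large chi2.
    log_w = 0.5 * (n_data - 1) * np.log(chi2) - 0.5 * chi2
    log_w -= log_w.max()
    w = np.exp(log_w)
    return w * len(w) / w.sum()

def effective_replicas(weights):
    """Shannon-entropy estimate of the number of effective replicas."""
    w = np.asarray(weights, dtype=float)
    n = len(w)
    nonzero = w > 0  # replicas with zero weight do not contribute
    return float(np.exp(np.sum(w[nonzero] * np.log(n / w[nonzero])) / n))

def reweighted_mean(values, weights):
    """Weighted average over replicas of any replica-by-replica quantity."""
    w = np.asarray(weights, dtype=float)
    v = np.asarray(values, dtype=float)
    return np.tensordot(w, v, axes=(0, 0)) / w.sum()

# Four replicas that describe ten new data points equally well.
w_equal = reweight([10.0, 10.0, 10.0, 10.0], n_data=10)
n_eff = effective_replicas(w_equal)
```

When the effective number of replicas becomes much smaller than the original one, the new data are too constraining for reweighting to be reliable and a dedicated fit is preferable.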

2.2.2 Jet production in deep-inelastic scattering

We consider a selection of DIS single-inclusive jet (1j) and dijet production (2j) cross-sections measured by ZEUS [112,113,114] in the high-Q (HQ) region and by H1-HeraII [115, 116] in the HQ and low-Q (LQ) regions. Specifically we consider cross-sections double differential in \(Q^2\) and in the transverse momentum of the jet or of the jet pair, listed in Table 2. Experimental correlations between single-inclusive jet and dijet measurements, which are available only for H1, are taken into account. These allow us to include single-inclusive jet and dijet datasets simultaneously. Additional available measurements, in particular from H1-HeraI [157, 158], are left for future studies. Likewise, variants of the H1-HeraII measurements [115, 116], in which cross-sections are normalized to the inclusive NC cross-section integrated over the width of each \(Q^2\) bin, are not yet considered. These normalized cross-sections might benefit from cancellations of systematic uncertainties and uncertainty correlation with HERA inclusive DIS measurements.

Theoretical predictions for the ZEUS and H1-HeraII datasets are obtained using fast interpolation grids precomputed with NNLOjet. These incorporate the recently determined NNLO QCD corrections [159]. Multiplicative hadronization correction factors, as provided in the experimental analyses, are included throughout. Because this theoretical input has become available only very recently, the ZEUS and H1-HeraII datasets are not included in our default determination, but only in a variant NNLO set by means of Bayesian reweighting, see Sect. 7.3.5.

2.2.3 Fixed-target Drell–Yan production

We consider the new measurement recently performed by the SeaQuest experiment at Fermilab [117] for Drell–Yan production of muon pairs. Like the previous NuSea measurement [47], which was included in the NNPDF3.1 dataset, the SeaQuest experiment measures the ratio of the scattering cross-section of a proton beam off a deuterium target to the cross-section off a proton target. The measurement is double differential in the momentum fractions of the struck partons. The SeaQuest data extend the NuSea data to larger values of x, \(0.15\lesssim x \lesssim 0.40\), with the aim of constraining the antiquark asymmetry in this region [47]. Theoretical predictions are computed by taking into account acceptance corrections, according to Eq. (10) in Ref. [117]. Fast interpolation tables accurate to NLO are generated with APFEL; these are then supplemented with an NNLO K-factor computed with a version of Vrap [160] that we modified to account for the isoscalarity of the deuteron target. Nuclear effects are taken into account by means of the procedure discussed in Ref. [19] and further summarized in Sect. 2.3.

2.2.4 Inclusive collider electroweak gauge boson production

The new datasets we consider for inclusive W and Z boson production and decay are from the ATLAS and LHCb experiments.

We include the ATLAS measurements of the W and Z differential cross-section at \(\sqrt{s}=7\) TeV [54] in the central and forward rapidity regions. As mentioned above, these data were already included in NNPDF3.1, but only the subset corresponding to the central region. The measurements cover, respectively, the pseudo-rapidity range \(|\eta _\ell |<2.5\) (for W bosons) and the rapidity range of the lepton pair \(|y_{\ell \ell }|<3.6\) (for the Z boson). In the latter case, the invariant mass of the lepton pair is \(46\le m_{\ell \ell }\le 150\) GeV. The measurements correspond to an integrated luminosity of 4.6 \(\hbox {fb}^{-1}\). We consider the combination of measurements in the electron and muon decays.

We consider the ATLAS measurements of the double and triple differential DY lepton pair production cross-section at \(\sqrt{s}=8\) TeV [79, 80]. The differential variables are the invariant mass and rapidity of the lepton pair, \(m_{\ell \ell }\) and \(y_{\ell \ell }\), and, in addition to these for the latter case, the cosine of the Collins-Soper angle \(\cos \theta ^*\). The measurements cover two separate invariant mass ranges, respectively \(116\le m_{\ell \ell }\le 1500\) GeV and \(46\le m_{\ell \ell }\le 200\) GeV, in the same central rapidity range \(|y_{\ell \ell }|<2.4\). The same data sample, corresponding to an integrated luminosity of 20.2 \(\hbox {fb}^{-1}\), is used in the two cases, which therefore overlap in the interval \(116\le m_{\ell \ell }\le 200\) GeV. The two analyses are consistent in this region; however, because the one in [79] is optimized for high invariant masses, we remove the overlapping bins from the dataset in [80]. In both cases we consider the measurements in which the electron and muon decay channels have been combined; for the triple differential distribution, we consider the measurement integrated over \(\cos \theta ^*\) in order to reduce sensitivity to the value of the Weinberg angle \(\sin ^2\theta _W\).

We include the ATLAS measurement of the W production cross-section and decay at \(\sqrt{s}=8\) TeV [81]. The data are differential in the pseudo-rapidity of the decay muon \(\eta _\mu \), which is accessed in the central pseudo-rapidity range \(|\eta _\mu |<2.4\) by analyzing a data sample corresponding to an integrated luminosity of 20.2 \(\hbox {fb}^{-1}\). As for the companion ATLAS measurement at \(\sqrt{s}=7\) TeV [54], we consider the separate \(W^+\) and \(W^-\) differential distributions rather than their asymmetry.

We consider the ATLAS measurement of the total W and Z cross-section and decay into leptons at \(\sqrt{s}=13\) TeV [83]. The measurement corresponds to an integrated luminosity of 81 \(\hbox {pb}^{-1}\).

We include the LHCb measurement of the W cross-section at \(\sqrt{s}=8\) TeV [82]. The data are differential in the pseudo-rapidity of the decay electron \(\eta _e\), which is accessed in the forward range \(2.00<|\eta _e|<4.25\). The data sample corresponds to an integrated luminosity of 2 \(\hbox {fb}^{-1}\). In this case, we cannot consider the separate \(W^+\) and \(W^-\) differential distributions, because we find that the correlated experimental uncertainties lead to a covariance matrix that is not positive definite. Therefore, in this case we make use of the asymmetry measurement, which is not affected by this problem since most of the correlations cancel out.
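A check of this kind can be sketched as follows. The example assumes a covariance matrix assembled from uncorrelated statistical uncertainties and from systematic uncertainties with a published correlation matrix, and tests positive definiteness by attempting a Cholesky factorization. The construction and the function names are illustrative assumptions and do not reproduce the actual LHCb uncertainty breakdown.

```python
import math

def build_covariance(stat, sys, corr):
    """cov_ij = delta_ij * stat_i^2 + corr_ij * sys_i * sys_j."""
    n = len(stat)
    return [
        [corr[i][j] * sys[i] * sys[j] + (stat[i] ** 2 if i == j else 0.0)
         for j in range(n)]
        for i in range(n)
    ]

def is_positive_definite(matrix):
    """True if the Cholesky factorization of a symmetric matrix succeeds."""
    n = len(matrix)
    lower = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            partial = sum(lower[i][k] * lower[j][k] for k in range(j))
            if i == j:
                pivot = matrix[i][i] - partial
                if pivot <= 0.0:
                    return False  # non-positive pivot: not positive definite
                lower[i][j] = math.sqrt(pivot)
            else:
                lower[i][j] = (matrix[i][j] - partial) / lower[j][j]
    return True
```

A matrix that fails this test cannot be inverted into a well-defined \(\chi ^2\), which is why an observable with a better-behaved covariance matrix is preferable in such cases.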

Finally, we include the LHCb measurement of the Z cross-section at \(\sqrt{s}=13\) TeV [84]. The data are differential in the Z boson rapidity \(y_Z\), with \(2.00<|y_Z|<4.50\), and cover the Z-peak lepton pair invariant mass range \(60\le m_{\ell \ell }\le 120\) GeV. The data sample corresponds to an integrated luminosity of 294 \(\hbox {pb}^{-1}\). We include separately the datasets in the dimuon and dielectron decay channels.

These datasets, specifically from ATLAS, are particularly precise, with systematic uncertainties of the order of percent or less and even smaller statistical uncertainties. They are dominated by the luminosity uncertainty, which is of the order of 1.9-2.1% (1.2-3.9%) for ATLAS (LHCb) respectively at \(\sqrt{s}=8\) TeV and at \(\sqrt{s}=13\) TeV.

Theoretical predictions are computed at NLO with MCFM (v6.8) [120,121,122] and are benchmarked against those obtained with mg5_aMC (v3.1) [124, 125]. The NNLO K-factor is computed with FEWZ (v3.1) [161,162,163] for all the datasets except those of [80, 81], for which DYNNLO [164, 165] is used instead. We benchmarked these calculations against MCFM (v9.0) [166], and found the relative differences between the computations to be negligible in comparison to the data uncertainties. The renormalization and factorization scales are set equal to the mass of the gauge boson, for total cross-sections and for cross-sections differential in rapidity or pseudorapidity variables, or to the central value of the corresponding invariant mass bin, for cross-sections that are also differential in the invariant mass of the lepton pair.
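The K-factor approach used here and for the other processes can be summarized by a short sketch. It assumes that the K-factor is defined bin by bin as the ratio of the NNLO to the NLO prediction, both evaluated with a fixed reference PDF set, and that it then multiplies the NLO prediction obtained from the fast interpolation table. The function names and the choice of reference PDF are assumptions made for illustration.

```python
def k_factors(nnlo_reference, nlo_reference):
    """Bin-by-bin ratio of NNLO to NLO predictions for a reference PDF set."""
    return [nnlo / nlo for nnlo, nlo in zip(nnlo_reference, nlo_reference)]

def apply_k_factors(nlo_prediction, kfac):
    """Approximate NNLO prediction: NLO result rescaled by the K-factor."""
    return [k * sigma for k, sigma in zip(kfac, nlo_prediction)]
```

The approximation relies on the K-factor depending only weakly on the PDFs, so that it can be computed once and reused as the PDFs change during the fit.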

2.2.5 Gauge boson production with additional jets

On top of inclusive gauge boson production, we consider more exclusive measurements in which a W boson is produced in association with \(N_\mathrm{jets}\) jets of light quarks, or with a single jet of charm quarks.

Specifically, we include the ATLAS data for W production with \(N_\mathrm{jets}\ge 1\) [95] at \(\sqrt{s}=8\) TeV. The measurement corresponds to an integrated luminosity of 20.2 \(\hbox {fb}^{-1}\). We select the distribution differential in the transverse momentum of the W boson, \(p_T^W\), which covers the range \(0\le p_T^W\le 800\) GeV. Theoretical predictions are determined as in the ATLAS study of [167]: at NLO, fast interpolation grids are generated with MCFM; at NNLO, QCD corrections are implemented by means of K-factors determined with the \(N_\mathrm{jetti}\) program [168, 169]. The factorization and renormalization scales are set equal to the mass of the W boson.

We further include the ATLAS [85] and CMS [86] data for production of W with a charm jet, at \(\sqrt{s}=7\) TeV and \(\sqrt{s}=13\) TeV, respectively. The two measurements correspond to integrated luminosities of 4.6 \(\hbox {fb}^{-1}\) and 35.7 \(\hbox {fb}^{-1}\). In both cases, we utilize the cross-sections differential in the pseudo-rapidity of the decay lepton \(\eta _\ell \), which is accessed in the range \(|\eta _\ell |<2.5\) for ATLAS and \(|\eta _\ell |<2.4\) for CMS. In the case of ATLAS, separate distributions for the production of positively and negatively charged bosons are provided; in the case of CMS, only the distribution for the sum of the two is available. Theoretical predictions are computed at NLO with MCFM; NNLO QCD corrections have been computed very recently [87], although in a format that does not allow for their ready implementation. These datasets are therefore not included in the determination of NNLO PDFs. The factorization and renormalization scales are set equal to the mass of the W boson.

All the measurements discussed in this section have been included in a PDF determination, in a specific study based on NNPDF3.1 [10].

2.2.6 Top pair production

We consider several new datasets for top pair production at the LHC. At \(\sqrt{s}=8\) TeV, we include the ATLAS normalized differential cross-section [91] and the CMS normalized double differential cross-section [92], both of which are measured in the dilepton channel. Companion measurements in the lepton+jets channel [69, 72] were already part of NNPDF3.1. These measurements correspond respectively to an integrated luminosity of 20.2 \(\hbox {fb}^{-1}\) and 19.7 \(\hbox {fb}^{-1}\). At \(\sqrt{s}=13\) TeV, we include the ATLAS total cross-section [136] and the CMS absolute differential distributions in the lepton+jets channel [93] and in the dilepton channel [94]. The ATLAS measurement is based on the full Run II sample, corresponding to an integrated luminosity of 139 \(\hbox {fb}^{-1}\), and replaces the corresponding measurement, determined from a partial luminosity [68], included in NNPDF3.1; the CMS measurements are for an integrated luminosity of 35.8 \(\hbox {fb}^{-1}\).

Various differential distributions are available for each of these measurements. Because correlations between different distributions are not available, only one distribution at a time can be included. Rapidity distributions are generally affected by small higher order corrections [170], hence we chose the rapidity of the top quark, when available, as our preferred observable, and otherwise, the rapidity of the top pair. Specifically, we select the distributions differential in the rapidity of the top pair in the case of [91], the double-differential distribution in the rapidity of the top quark and the invariant mass of the top pair in the case of [92] and in the rapidity of the top quark in the case of [93, 94]. We have explicitly verified that the choice of any other distributions does not alter the results. The kinematic coverage of the distributions that we included is shown in Table 5.

Theoretical predictions are computed at NLO with mg5_aMC (v2.6.6) [125]; NNLO QCD corrections are determined from publicly available FastNLO tables [171, 172] for differential distributions and from top++ [173] for the total cross-section. The renormalization and factorization scales are set as in NNPDF3.1, see Sect. 2.7 in [5] for details.

2.2.7 Single-inclusive and dijet production

In NNPDF4.0, following the study of Ref. [9], we consider both single-inclusive jets (as in previous NNPDF determinations) and dijets, which have several desirable theoretical features [137].

For single-inclusive jet production, we include the ATLAS [88] and CMS [89] measurements at \(\sqrt{s}=8\) TeV. They correspond to integrated luminosities of 20.2 \(\hbox {fb}^{-1}\) and 19.7 \(\hbox {fb}^{-1}\), respectively. In both cases the measurements are provided for the cross-section differential in the transverse momentum, \(p_T^\mathrm{jet}\), and in the rapidity, \(y^\mathrm{jet}\), of the jet. The data cover the range \(70\ \mathrm{GeV}\le p_T^\mathrm{jet}\le 2.5\) TeV and \(|y^\mathrm{jet}|\le 3.0\). Theoretical predictions are computed at NLO with NLOJet++ (v4.1.3) [126] and benchmarked against the independent computation presented in [174]. NNLO QCD corrections are incorporated by means of the K-factor computed in the same publication. The factorization and renormalization scales are set equal to the optimal scale choice recommended in Ref. [137], namely, the scalar sum of the transverse momenta of all partons in the event.

For dijet production we consider the ATLAS [148] and CMS [76] measurements at \(\sqrt{s}=7\) TeV and the CMS measurement [149] at \(\sqrt{s}=8\) TeV. They correspond to integrated luminosities of 4.5 \(\hbox {fb}^{-1}\) (at 7 TeV) and of 19.7 \(\hbox {fb}^{-1}\) (at 8 TeV). For ATLAS, the cross-section is double differential in the dijet invariant mass \(m_{jj}\) and in the absolute difference of the rapidities of the two jets \(y^*\). The corresponding ranges are \(260\ \mathrm{GeV}\le m_{jj}\le 4.27\) TeV and \(0.0\le y^* \le 3.0\). For CMS, the cross-section is double differential in \(m_{jj}\) and in the maximum absolute rapidity of the two jets \(|y_\mathrm{max}|\) (at 7 TeV) and triple differential in the average transverse momentum of the jet pair \(p_{T,\mathrm{avg}}\), the dijet boost \(y_b\), and \(y^*\) (at 8 TeV). The corresponding ranges are \(133\ \mathrm{GeV}\le p_{T,\mathrm{avg}}\le 1.78\) TeV and \(0.0\le y_b,y^*\le 3.0\). As in the case of single-inclusive jets, theoretical predictions are computed at NLO with NLOJet++ and are benchmarked against the independent computation of Ref. [174]. This computation is also used to determine the NNLO QCD corrections, implemented as K-factors. The renormalization and factorization scales used in the computation are set to the invariant mass of the dijet system, again following the recommendation of Ref. [137].

Single-inclusive jet and dijet observables cannot be simultaneously included because full knowledge of the experimental correlations between them is not available. The selection of the optimal set of jet observables will be performed and discussed in Sect. 4, in the context of the final dataset selection.

2.2.8 Inclusive isolated-photon production

Isolated photon production was not included in previous NNPDF releases and is included in NNPDF4.0 for the first time. We specifically consider the ATLAS measurements at \(\sqrt{s}=8\) TeV [102] and \(\sqrt{s}=13\) TeV [175]. They correspond to integrated luminosities of 20.2 \(\hbox {fb}^{-1}\) and 3.2 \(\hbox {fb}^{-1}\), respectively. The measurements are provided for the cross-section differential in the photon transverse energy \(E_T^\gamma \) in different bins of the photon pseudorapidity \(\eta _\gamma \). The accessed ranges are, in both cases, \(E_T^\gamma <1500\) GeV and \(|\eta _\gamma |<2.37\). Theoretical predictions are computed at NLO with MCFM and benchmarked against the independent computation presented in [176]. The smooth cone isolation criterion [177] is adopted accordingly, with the parameter values determined in [178]. NNLO QCD corrections are incorporated by means of the K-factors computed in [176]; K-factors are also used to incorporate corrections due to resummation of the electroweak Sudakov logarithms at leading-logarithmic accuracy, according to the procedure presented in [179, 180]. The factorization and renormalization scales are set equal to the central value of \(E_T^\gamma \) for each bin. The impact of the measurements presented above on a PDF determination was studied in [7] in the context of a variant of the NNPDF3.1 fit. These data were found to be generally well described, except in the most forward rapidity region, and to have a mild impact on the gluon PDF at intermediate values of x.

2.2.9 Single top production

Another process included for the first time in an NNPDF release is t-channel single top production. We consider the ATLAS [96, 98, 100] and CMS [97, 99, 101] measurements at \(\sqrt{s}=7\), 8 and 13 TeV that correspond, for ATLAS (CMS), to integrated luminosities of 4.59, 20.2 and 3.2 \(\hbox {fb}^{-1}\) (2.73, 19.7 and 2.2 \(\hbox {fb}^{-1}\)), respectively. In the case of ATLAS, we consider the ratio of the top to antitop inclusive cross-sections at 7 and 13 TeV and the distributions differential in the top or antitop rapidity \(y_{t,\bar{t}}\) at 7 and 8 TeV normalized to the corresponding total cross-section. The rapidity ranges are \(|y_{t,\bar{t}}|<3.0\) and \(|y_{t,\bar{t}}|<2.2\) at \(\sqrt{s}=7\) and 8 TeV, respectively. In the case of CMS, we consider the sum of the top and antitop inclusive cross-sections at 7 TeV and the ratio of the top to antitop inclusive cross-sections at 8 and 13 TeV. Theoretical predictions are computed in the five-flavor scheme. At NLO the calculation is performed with mg5_aMC (v2.6.6) [125]; NNLO corrections are incorporated by means of the K-factors determined in [181, 182]. The renormalization and factorization scales are set equal to the top mass.

The measurements presented above were extensively studied in the context of a variant of the NNPDF3.1 fit in [8]. The choice of observables included for PDF determinations is based on the results of that reference. In particular, distributions differential in the transverse momentum of the top quark or antiquark are also provided by the experimental collaborations. However, their inclusion would result in a double counting, given that experimental correlations across uncertainties for different distributions are not provided. In [8] these measurements were found to have a mild impact on the up and down PDFs at \(x\gtrsim 0.1\).

Single top t-channel production is in principle also sensitive to the theoretical details of the matching schemes and, in the five-flavor scheme, to the bottom PDF. Here we determine the bottom PDF using perturbative matching conditions, but it could in principle be parametrized independently, like the charm PDF. However, while this may become relevant in the future, it does not seem necessary at present given the precision and kinematic coverage of the existing data.

2.3 Treatment of nuclear corrections

The NNPDF4.0 dataset, like its predecessors, includes a significant amount of data involving deuterium or heavy nuclear targets, both for deep inelastic and hadronic processes. These are summarized in Table 6, where we also report the corresponding reference, the number of data points in the NLO and NNLO baseline fits, and the species of the nuclear target. Overall, 1416 and 1417 data points come from nuclear measurements in the NLO and NNLO fits respectively, which amount to about 30% of the full dataset. All of these datasets but SeaQuest [117] were already included in the previous NNPDF3.1 determination [5].

The inclusion of nuclear data in a fit of proton PDFs requires accounting for changes in the PDFs induced by the nuclear medium. The impact of such changes was studied by us in [14, 183] and found to be subdominant in comparison to the PDF uncertainty at that time. Specifically, it was shown (see Sect. 4.11 in [5]) that, upon removing data with nuclear targets from the dataset, the precision of up, down and strange quark and anti-quark PDFs deteriorated by an amount larger than the size of the effect of the nuclear corrections estimated on the basis of models. Nuclear corrections were consequently not included in the NNPDF3.1 determination.

In NNPDF4.0 we revisit this state of affairs, motivated by the significant reduction of the PDF uncertainty in comparison to NNPDF3.1, which suggests that nuclear effects can no longer be neglected. We now account for nuclear effects by viewing them as a theoretical uncertainty. The way this is determined and included follows the methodology developed in [18, 19], to which we refer for details. The basic idea is to determine the uncertainty from the difference between the values of observables computed with the proton and nuclear PDFs, with each different determination of the nuclear PDF taken as an independent nuisance parameter. These differences can then be used to compute a theoretical covariance matrix, which is added to the experimental covariance matrix.
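The construction of such a covariance matrix can be sketched as follows. The example assumes that each nuisance parameter contributes a shift vector, given by the difference between the prediction obtained with one nuclear PDF determination and the prediction obtained with the proton PDF, and that the covariance matrix is the average of the outer products of these shifts. The normalization and the function names are assumptions; the precise prescription is the one of Refs. [18, 19].

```python
def nuclear_covariance(proton_prediction, nuclear_predictions):
    """S_ij = (1/N) * sum_n shift_i^(n) * shift_j^(n).

    proton_prediction   : predictions for each data point with the proton PDF
    nuclear_predictions : one list of predictions per nuclear PDF determination
    """
    n_nuisance = len(nuclear_predictions)
    n_data = len(proton_prediction)
    shifts = [
        [t_nucl - t_prot for t_nucl, t_prot in zip(row, proton_prediction)]
        for row in nuclear_predictions
    ]
    return [
        [sum(s[i] * s[j] for s in shifts) / n_nuisance for j in range(n_data)]
        for i in range(n_data)
    ]

def total_covariance(experimental, theoretical):
    """Element-wise sum of experimental and theoretical covariance matrices."""
    return [
        [c + s for c, s in zip(row_c, row_s)]
        for row_c, row_s in zip(experimental, theoretical)
    ]
```

Because the same shifts affect all data points taken on a given target, the resulting matrix is in general not diagonal and correlates the nuclear uncertainty across data points.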

In order to apply this methodology an underlying set of nuclear PDFs is needed for the computation of the shifts. Heavy nuclear and deuteron corrections are treated separately because of the substantial difference in the observed size and expected origin of the nuclear effects. For heavier nuclei (Fe, Cu and Pb targets) we will use the nNNPDF2.0 nuclear PDFs [20]. For deuterium, we use the self-consistent procedure described in [19], whereby the proton and deuteron PDFs are determined simultaneously, each including the uncertainties on the other. This procedure thus requires in turn the use of a PDF determination without deuterium corrections in order to initiate the self-consistent iteration. Here we will apply it by starting with the NNPDF4.0 determination itself. The deuterium PDF determined by this procedure will be described in Sect. 8.6 below.

While nuclear effects will be included as an extra uncertainty in the default NNPDF4.0 determination, we will also discuss for comparison PDFs obtained by neglecting nuclear effects altogether, or by using the nuclear corrections computed as discussed above as a correction to the data and not just as an additional uncertainty, again following the methodology of Refs. [18, 19]. These alternative treatments of nuclear effects will be compared and discussed in Sect. 8.6 below and provide the motivation for including nuclear uncertainties without a correction in the default PDF determination.

3 Fitting methodology

As discussed in the introduction, NNPDF4.0 is the first PDF set to be based on a methodology fully selected through a machine learning algorithm. This means that, whereas the basic structure of the NNPDF4.0 methodology is the same as in previous NNPDF releases, specifically the use of a Monte Carlo representation of PDF uncertainties and correlations, and the use of neural networks as basic interpolating functions [5, 14], all the details of the implementation, such as the choice of neural network architecture and the minimization algorithm, are now selected through an automated hyperoptimization procedure. This is possible thanks to an extensive rewriting and reorganization of the NNPDF framework. Furthermore, some theory constraints built into the PDF parametrization are implemented for the first time in NNPDF4.0. Also for the first time we consider PDF determinations performed with different choices of parametrization basis.

In Sect. 3.1 we start by discussing the PDF parametrization and choice of basis and the way they implement theoretical constraints. In Sect. 3.2 we then present the new NNPDF fitting framework, which is the basis of the hyperoptimization procedure. The hyperoptimization in turn is discussed in Sect. 3.3, along with its output, which defines the baseline NNPDF4.0 methodology. We conclude in Sect. 3.4 with quantitative benchmarks assessing both the efficiency and speed of this final methodology compared to the methodology used for NNPDF3.1.

3.1 PDF parametrization and theoretical constraints

We now turn to the general structure of the PDF parametrization, and the theory constraints that are imposed upon it: specifically sum rules, positivity and integrability.

3.1.1 Parametrization bases

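A PDF analysis requires a choice of basis, namely a set of linearly independent PDF flavor combinations that are parametrized at the input evolution scale \(Q_0\). In the NNPDF approach, this corresponds to choosing the PDF combinations whose values are the outputs of a neural network. Optimal results should in principle be independent of this specific choice of basis. Previous NNPDF releases adopted the so-called evolution basis, in which the basis PDFs are chosen as the singlet quark \(\Sigma \) and gluon g that mix upon QCD evolution, and valence \(V_i\) and nonsinglet sea \(T_i\) combinations that are eigenstates of evolution, namely

\[
\begin{aligned}
\Sigma &= u+\bar{u}+d+\bar{d}+s+\bar{s}+2c, \\
T_3 &= \left( u+\bar{u}\right) -\left( d+\bar{d}\right) , \\
T_8 &= \left( u+\bar{u}+d+\bar{d}\right) -2\left( s+\bar{s}\right) , \\
V &= \left( u-\bar{u}\right) +\left( d-\bar{d}\right) +\left( s-\bar{s}\right) , \\
V_3 &= \left( u-\bar{u}\right) -\left( d-\bar{d}\right) , \\
V_8 &= \left( u-\bar{u}+d-\bar{d}\right) -2\left( s-\bar{s}\right) .
\end{aligned}
\tag{3.1}
\]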

In NNPDF3.1, this set of linearly independent flavor combinations was supplemented by an independently parametrized total charm PDF \(c+\bar{c}\), with the charm asymmetry \(c-\bar{c}\) assumed to vanish at scale \(Q_0\). Here we will instead supplement the basis Eq. (3.1) with a further nonsinglet combination, namely

\[
T_{15} = \left( u+\bar{u}+d+\bar{d}+s+\bar{s}\right) -3\left( c+\bar{c}\right) ,
\tag{3.2}
\]

still assuming \(c-\bar{c}=0\) at the parametrization scale. At NNLO a small charm asymmetry is then generated by perturbative evolution. The union of Eqs. (3.1, 3.2) will be referred to as the evolution basis henceforth.

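We will also consider PDF determinations carried out in the flavor basis, in which the PDFs that are parametrized are

\[
\tilde{f}_k = \{ u,\, \bar{u},\, d,\, \bar{d},\, s,\, \bar{s},\, c,\, g \} ,
\tag{3.3}
\]

related to their evolution basis counterparts

\[
f_k = \{ V,\, V_3,\, V_8,\, T_3,\, T_8,\, T_{15},\, \Sigma ,\, g \}
\tag{3.4}
\]

through Eqs. (3.1, 3.2).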

The evolution and flavor bases each have advantages and disadvantages.

For instance, if one chooses a factorization scheme in which PDFs are non-negative [21], positivity is easier to implement in the flavor basis. On the other hand, the integrability of the valence distributions \(V,V_3,V_8\), as required by the valence sum rules, is simpler in the evolution basis.

In this work, we take the evolution basis as our standard choice, however we will explicitly check basis independence, by verifying that equivalent results are obtained in the data region if the flavor basis is adopted instead, see Sect. 8.4 below.

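The output of the neural network is supplemented by a preprocessing factor and by normalization constants. The relation between the PDFs and the neural network output is

\[
x f_k\left( x,Q_0;{\varvec{\theta }}\right) = A_k\, x^{1-\alpha _k}(1-x)^{\beta _k}\, \mathrm{NN}_k(x;{\varvec{\theta }}),
\tag{3.5}
\]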

where k runs over the elements of the PDF basis, \(\mathrm{NN}_k(x;{\varvec{\theta }})\) is the k-th output of a neural network, and \({\varvec{\theta }}\) collectively indicates the full set of neural network parameters. The preprocessing function has the purpose of speeding up the training of the neural net. In order to make sure that it does not bias the result, the exponents \(\alpha _k\) and \(\beta _k\) are varied in a range that is determined iteratively in a self-consistent manner as described in [14], supplemented by a further integrability constraint, to be discussed in Sect. 3.1.4. The independence of the results from the choice of preprocessing ranges has been recently validated in Ref. [184], where it is shown that the results obtained here can also be obtained by a suitable rescaling of the neural network input that avoids preprocessing altogether. The normalization constants \(A_k\) are constrained by the valence and momentum sum rules, also to be discussed below, in Sect. 3.1.2.
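The structure of this parametrization can be illustrated with a minimal sketch. It assumes the form \(x f_k = A_k\, x^{1-\alpha _k}(1-x)^{\beta _k}\,\mathrm{NN}_k(x)\), which is consistent with the statement that the small-x preprocessing is removed for \(\alpha _k=1\). The function `toy_network` is a hypothetical stand-in for one neural network output and is not the NNPDF4.0 architecture.

```python
import math

def toy_network(x):
    """Hypothetical stand-in for one neural-network output NN_k(x; theta)."""
    return 1.0 + 0.5 * math.tanh(math.log(x))

def make_pdf(norm, alpha, beta, network):
    """Return the function x -> x*f_k(x) at the parametrization scale."""
    def xf(x):
        small_x = x ** (1.0 - alpha)   # preprocessing governing x -> 0
        large_x = (1.0 - x) ** beta    # preprocessing governing x -> 1
        return norm * small_x * large_x * network(x)
    return xf

# Evolution-basis style PDF with small-x preprocessing.
x_valence = make_pdf(norm=1.0, alpha=0.5, beta=3.0, network=toy_network)
# Flavor-basis style PDF: alpha = 1 removes the small-x factor.
x_flavor = make_pdf(norm=1.0, alpha=1.0, beta=3.0, network=toy_network)
```

With a positive \(\beta _k\) the PDF vanishes at \(x=1\) regardless of the network output, while the small-x behavior is shared between the prefactor and the network.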

When using the flavor basis, the small-x preprocessing is removed from Eq. (3.5), i.e. \(\alpha _k=1\) for all k. This is because standard Regge theory arguments (see e.g. [185]) imply that the singlet and nonsinglet have a different small x behavior, and in particular the nonsinglet has a finite first moment, while the singlet first moment diverges. This means that the small-x behavior of flavor-basis PDFs is the linear combination of a leading singlet small-x growth and a subleading nonsinglet power behavior characterized by a different exponent. Hence, factoring out a common preprocessing exponent is not advantageous in this case.

3.1.2 Sum rules

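Irrespective of the choice of fitting basis, PDFs should satisfy both the momentum sum rule

\[
\int _0^1 dx\, x\left( g(x,Q)+\Sigma (x,Q)\right) = 1,
\tag{3.6}
\]

and the three valence sum rules,

\[
\int _0^1 dx\, \left( u(x,Q)-\bar{u}(x,Q)\right) = 2, \quad
\int _0^1 dx\, \left( d(x,Q)-\bar{d}(x,Q)\right) = 1, \quad
\int _0^1 dx\, \left( s(x,Q)-\bar{s}(x,Q)\right) = 0,
\tag{3.7}
\]

for all values of Q. Provided these sum rules are imposed at the initial parametrization scale, \(Q_0\), perturbative QCD ensures that they will hold for any other value \(Q\ne Q_0\). When transformed to the evolution basis, Eq. (3.1), the valence sum rules read

\[
\int _0^1 dx\, V(x,Q) = \int _0^1 dx\, V_8(x,Q) = 3, \quad
\int _0^1 dx\, V_3(x,Q) = 1.
\tag{3.8}
\]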

We have then four equations that fix four of the normalization constants \(A_k\), namely \(A_V\), \(A_{V_8}\),\(A_{V_3}\) and \(A_g\).

In the present analysis we always impose the sum rules in the evolution basis. This means that when performing a fit in the flavor basis, we express the evolution basis PDFs \(f_k\) Eq. (3.4) in terms of the flavor basis PDFs \(\tilde{f}_{k}\) Eq. (3.3) through a transformation matrix \(R_{kk'}\):

\[
f_k = \sum _{k'} R_{kk'}\, \tilde{f}_{k'},
\tag{3.9}
\]

and then impose Eqs. (3.6, 3.8).
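The rotation between the two bases can be written explicitly. The sketch below assumes the standard definitions of the evolution-basis combinations in terms of quark and antiquark PDFs, with \(c=\bar{c}\) so that \(c+\bar{c}=2c\), and the ordering of the two bases shown in the comments. Both the ordering and the variable names are illustrative choices.

```python
# Flavor basis ordering:    u, ubar, d, dbar, s, sbar, c, g
# Evolution basis ordering: V, V3, V8, T3, T8, T15, Sigma, g
ROTATION = [
    [1, -1,  1, -1,  1, -1,  0, 0],  # V     = u_v + d_v + s_v
    [1, -1, -1,  1,  0,  0,  0, 0],  # V3    = u_v - d_v
    [1, -1,  1, -1, -2,  2,  0, 0],  # V8    = u_v + d_v - 2 s_v
    [1,  1, -1, -1,  0,  0,  0, 0],  # T3    = u^+ - d^+
    [1,  1,  1,  1, -2, -2,  0, 0],  # T8    = u^+ + d^+ - 2 s^+
    [1,  1,  1,  1,  1,  1, -6, 0],  # T15   = u^+ + d^+ + s^+ - 3 (2c)
    [1,  1,  1,  1,  1,  1,  2, 0],  # Sigma = u^+ + d^+ + s^+ + 2c
    [0,  0,  0,  0,  0,  0,  0, 1],  # g
]

def to_evolution(flavor_values):
    """Apply f_k = sum_k' R_kk' ftilde_k' at a fixed value of x and Q."""
    return [
        sum(r * f for r, f in zip(row, flavor_values))
        for row in ROTATION
    ]
```

Since the rotation is linear and independent of x, integrals of the evolution-basis PDFs follow directly from integrals of the flavor-basis ones, which is what allows the sum rules to be imposed in the evolution basis in either kind of fit.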

The integrals in Eqs. (3.6, 3.8) are evaluated between \(x_\mathrm{min}=10^{-9}\) and \(x_\mathrm{max}=1\) each time the neural network parameters \({\varvec{\theta }}\) are modified by the minimization algorithm, using an adaptive strategy that achieves a relative precision of \(\mathcal {O}\left( 10^{-5}\right) \) across the whole range of x.
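How the sum rules fix the normalization constants can be sketched numerically. The example below uses a fixed-step Simpson rule in \(\ln x\) rather than the adaptive strategy mentioned above, and toy functional forms for the unnormalized PDFs; the function names and the number of integration steps are assumptions.

```python
import math

X_MIN, X_MAX = 1e-9, 1.0

def integrate(func, x_min=X_MIN, x_max=X_MAX, n_steps=4000):
    """Composite Simpson rule in t = ln(x); n_steps must be even."""
    t_lo, t_hi = math.log(x_min), math.log(x_max)
    h = (t_hi - t_lo) / n_steps
    total = 0.0
    for i in range(n_steps + 1):
        x = min(math.exp(t_lo + i * h), x_max)  # guard against rounding above 1
        if i in (0, n_steps):
            weight = 1
        else:
            weight = 4 if i % 2 else 2
        total += weight * func(x) * x           # dx = x dt
    return total * h / 3.0

def sum_rule_normalisations(xg, xsigma, xv, xv3, xv8):
    """Normalizations A_g, A_V, A_V3, A_V8 from unnormalized x*f(x) shapes."""
    a_v = 3.0 / integrate(lambda x: xv(x) / x)
    a_v8 = 3.0 / integrate(lambda x: xv8(x) / x)
    a_v3 = 1.0 / integrate(lambda x: xv3(x) / x)
    # Momentum sum rule: A_g * int(xg) + int(xSigma) = 1.
    a_g = (1.0 - integrate(xsigma)) / integrate(xg)
    return {"g": a_g, "V": a_v, "V3": a_v3, "V8": a_v8}
```

The logarithmic change of variable distributes the integration points evenly over the nine decades in x, which matters because the valence integrands are singular, though integrable, as x tends to zero.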

3.1.3 Positivity of PDFs and physical observables

Hadron-level cross-sections are non-negative quantities, because they are probability distributions. However, PDFs beyond LO are not probabilities, and thus they may be negative. The reason is that, beyond LO, PDFs include a collinear subtraction which is necessary in order for the partonic cross-sections to be finite. Whether they remain positive or not then depends on the form of the subtraction, i.e. on the factorization scheme. Consequently, in previous NNPDF determinations, in order to exclude unphysical PDFs, we imposed positivity of a number of cross-sections, by means of Lagrange multipliers which penalize PDF configurations leading to negative physical observables. Specifically, we imposed positivity of the \(F_2^u\), \(F_2^d\), \(F_2^s\), and \(F_{L}\) structure functions and of the flavor-diagonal Drell–Yan rapidity distributions \(\sigma _{\mathrm{DY},u\bar{u}}\), \(\sigma _{\mathrm{DY},d\bar{d}}\), \(\sigma _{\mathrm{DY},s\bar{s}}\). However, since this set of positivity observables is not exhaustive, in some extreme kinematic regions physical observables (e.g. very high-mass \(W'\) production) could still become negative within uncertainties.

It was recently shown in Ref. [21] that PDFs for individual quark flavors and the gluon in the \(\overline{\mathrm{MS}}\) factorization scheme are non-negative. We thus now also impose this positivity condition along with the constraint of positivity of physical cross-sections discussed above. Indeed, note that the positivity of \(\overline{\mathrm{MS}}\) PDFs is neither necessary nor sufficient in order to ensure cross-section positivity [21]: they are independent (though of course related) constraints that limit the space of acceptable PDFs.

We impose positivity of the gluon and of the up, down and strange quark and antiquark PDFs. The charm PDF is also positive in the \(n_f=3\) scheme, but it need not be positive in the \(n_f=4\) scheme because perturbative matching conditions neglect the quark mass and this generally spoils positivity for a massive quark PDF [21]. We do, however, add a positivity constraint for the charm structure function \(F_2^c\), similar to the ones for other structure functions of individual flavors. Note that this constraint was not included in NNPDF3.1, though it was included in a more recent study based on the NNPDF3.1 dataset and methodology [10], where it was found to have a significant impact on the strange PDF.

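In the same manner as for the cross-sections, PDF positivity is implemented by means of Lagrange multipliers. Specifically, for each flavor basis PDF \(\tilde{f}_{k}\) Eq. (3.3), one adds a contribution to the total cost function used for the neural network training given by

\[
\chi ^2_\mathrm{tot} \rightarrow \chi ^2_\mathrm{tot} + \sum _{k=1}^{8} \Lambda _k \sum _{i=1}^{n_i} \mathrm{Elu}_\alpha \left( -\tilde{f}_k\left( x_i,Q^2\right) \right) ,
\tag{3.10}
\]

with \(Q^2 = 5\, \text {GeV}^2\) and with the \(n_i\) values \(x_i\) given by 10 points logarithmically spaced between \(5\cdot 10^{-7}\) and \(10^{-1}\) and 10 points linearly spaced between 0.1 and 0.9. The Elu function is given by

\[
\mathrm{Elu}_\alpha (t) =
\begin{cases}
t & \text {if } t>0, \\
\alpha \left( e^{t}-1\right) & \text {if } t\le 0,
\end{cases}
\tag{3.11}
\]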

with the parameter \(\alpha =10^{-7}\). Eq. (3.10) indicates that negative PDFs receive a penalty which is proportional both to the corresponding Lagrange multipliers \(\Lambda _k\) and to the absolute magnitude of the PDF itself, and therefore these configurations will be strongly disfavored during the minimization. The Lagrange multiplier increases exponentially during the minimization, with a maximum value \(\Lambda _k^\mathrm{max}\) attained when the maximum training length is reached. We choose \(\Lambda _k^\mathrm{max}=10^{10} \) for the three Drell–Yan observables, and \(\Lambda _k^\mathrm{max}=10^6 \) for all the other positivity observables. These values are chosen in such a way that the constraint is enforced with sufficient accuracy in all cases. The starting values of the Lagrange multipliers and the maximum training length instead are determined as part of the hyperoptimization procedure described in Sect. 3.3 below.
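The positivity penalty can be sketched as follows. The grid of points and the value of \(\alpha \) are those quoted in the text, while the specific interpolation used for the exponential growth of the Lagrange multiplier, and all function names, are assumptions made for illustration.

```python
import math

def elu(t, alpha=1e-7):
    """Elu function: linear for positive arguments, saturating for negative ones."""
    return t if t > 0.0 else alpha * (math.exp(t) - 1.0)

def positivity_grid(n_points=10):
    """10 log-spaced points in [5e-7, 1e-1] plus 10 linearly spaced in [0.1, 0.9]."""
    lo, hi = 5e-7, 1e-1
    log_pts = [lo * (hi / lo) ** (i / (n_points - 1)) for i in range(n_points)]
    lin_pts = [0.1 + 0.8 * i / (n_points - 1) for i in range(n_points)]
    return log_pts + lin_pts

def multiplier(step, max_steps, lam_init, lam_max):
    """Assumed exponential growth from lam_init to lam_max over the training."""
    return lam_init * (lam_max / lam_init) ** (step / max_steps)

def positivity_penalty(pdf, lam, grid):
    """Contribution of one flavor-basis PDF to the cost function."""
    return lam * sum(elu(-pdf(x)) for x in grid)
```

For a negative PDF the argument of the Elu function is positive, so the penalty grows linearly with the size of the violation; for a positive PDF the contribution saturates at a negligible value of order \(\alpha \) per point.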

When performing fits in the evolution basis, this PDF positivity constraint is applied after performing the inverse transformation to Eq. (3.9) in order to express the flavor basis PDFs \(\tilde{f}_{k}\) Eq. (3.3) in terms of their evolution basis counterparts \(f_{k}\).

3.1.4 PDF integrability

The small-x behavior of the PDFs is constrained by integrability requirements. First, the gluon and singlet PDFs must satisfy the momentum sum rule, Eq. (3.6), which implies that

while the valence sum rules, Eq. (3.8), constrain the small-x behavior of the valence distributions,

Furthermore, as mentioned, standard Regge theory arguments suggest that the first moments of the non-singlet combinations \(T_3\) and \(T_8\) are also finite, so for instance the Gottfried sum (which is proportional to the first moment of \(T_3\)) is finite. This implies that also for these two combinations one has

To ensure that these integrability requirements are satisfied, first of all we constrain the range of the small-x preprocessing exponents \(\alpha _k\) of Eq. (3.5). We supplement the iterative determination of the exponents described in Ref. [14] with the constraints \(\alpha _k <2\) for the singlet and gluon and \(\alpha _k <1\) for the nonsinglet combinations \(xV,\,xV_3,\, xV_8,\, xT_3\) and \(xT_8\). Indeed, if the preprocessing exponent were to violate these bounds, the neural net \(\mathrm{NN}(x; {\varvec{\theta }})\) in Eq. (3.5) would have to compensate for this behavior in order for integrability to hold. Preprocessing would then slow the minimization down rather than speed it up. Note that, in the flavor basis, the small-x preprocessing exponents are absent, so this requirement only applies to the evolution basis.
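The origin of these two bounds can be seen from a short power-counting argument. As a sketch, assume that at small x the parametrization behaves as \(x f_k(x) \sim x^{1-\alpha _k}\), with the neural network tending to a constant (this functional form is an assumption here, since Eq. (3.5) is not reproduced in this section). Then

\[
\int _0 dx\, x f_k(x) \sim \int _0 dx\, x^{1-\alpha _k}< \infty \;\Longleftrightarrow \; \alpha _k<2 , \qquad \int _0 dx\, f_k(x) \sim \int _0 dx\, x^{-\alpha _k} < \infty \;\Longleftrightarrow \; \alpha _k<1 .
\]

The first condition is the one relevant for the momentum integral of the singlet and gluon, while the second is the one relevant for the first moments of the nonsinglet combinations.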

We observe that while Eq. (3.12) always turns out to be satisfied automatically when fitting to the experimental data, the additional constraints Eqs. (3.13) and (3.14) can sometimes be violated by the fit, and thus must be imposed. This is also achieved through Lagrange multipliers. We include in the total cost function additional contributions of the form

where \(f_k= T_3, T_8\) in the evolution basis while \(f_k=V,V_3,V_8,T_3,T_8\) in the flavor basis. The points \(\{ x_\mathrm{int}^{(i)}\}\) are a set of values in the small x region, \(Q^2_i\) is a suitable reference scale, and, as in the case of positivity, the Lagrange multipliers \(\Lambda _k^{(\mathrm{int})}\) grow exponentially during the minimization, with a maximum value \(\Lambda _k^{(\mathrm{int})}=100\) attained at maximum training length. We choose \(Q_i^2=5\) GeV\(^2\) and in the evolution basis \(n_i=1\) and \(x_\mathrm{int}^{(1)} = 10^{-9}\), while in the flavor basis \(n_i=3\) and \(x_\mathrm{int}^{(i)}=10^{-9},\,10^{-8},\,10^{-7}\). As for the positivity multiplier, the starting values of the Lagrange multipliers (as well as the maximum training length) are hyperoptimization parameters.
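The following sketch illustrates how such an integrability term might act in practice. The quadratic dependence on \(x f_k\) is an assumption made for illustration only, as are the function names and the toy power-law PDF; only the small-x points and the maximum multiplier value of 100 are taken from the text.

```python
# Small-x points quoted in the text for each basis
X_INT = {
    "evolution": [1e-9],
    "flavor": [1e-9, 1e-8, 1e-7],
}

def toy_xf(x, alpha):
    """Toy small-x behavior x*f(x) = x**(1 - alpha) (hypothetical, for illustration)."""
    return x ** (1.0 - alpha)

def integrability_penalty(xf_values, lam):
    """ASSUMPTION: quadratic penalty lam * sum_i [x_i f(x_i)]^2 on the small-x points."""
    return lam * sum(v * v for v in xf_values)

# A distribution with alpha < 1 has x*f -> 0 at small x and is barely penalized,
# while one with alpha > 1 has x*f growing at small x and is penalized strongly.
integrable = integrability_penalty([toy_xf(x, 0.5) for x in X_INT["flavor"]], lam=100.0)
divergent = integrability_penalty([toy_xf(x, 1.2) for x in X_INT["flavor"]], lam=100.0)
```

Any penalty that vanishes when \(x f_k\) vanishes at the chosen points would serve the same illustrative purpose.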

Finally, we introduce a post-selection criterion, in order to discard replicas that fail to satisfy integrability and retain a large value at small x despite the Lagrange multiplier. It turns out that imposing

is enough to preserve integrability for all replicas. This is due to the fact that the function xf(x) at its maximum is of order one, so the condition Eq. (3.16) ensures that at small x it is decreasing. When determining PDF replicas, we have explicitly checked a posteriori that the numerical computation of the first moment yields a finite result for all PDF replicas.

3.2 Fitting framework

The machine learning approach to PDF determination that we will discuss shortly has been made possible by a complete restructuring of the NNPDF fitting framework. Further motivations for this are the need to deal with a particularly large dataset, and the goal of releasing the NNPDF code as open source, which imposes stringent requirements of quality and accessibility. The code was written in the Python programming language and has been documented and tested thoroughly. The original developments of our new fitting framework were presented in Ref. [11]. The main differences between the NNPDF3.1 and NNPDF4.0 codes are summarized in Table 7.

3.2.1 General structure

A schematic representation of the NNPDF4.0 fitting framework is displayed in Fig. 3. The fit requires three main inputs, which are managed by the NNPDF framework as discussed in Ref. [31]: first, theoretical calculations of physical processes, which are encoded in precomputed tables (FK-tables, see below) possibly supplemented by QCD and EW K-factors. Second, experimental data provided in a common format, including fully correlated uncertainties encoded in a covariance matrix (possibly also including theoretical uncertainties). Third, hyperparameter settings that determine the particular fitting methodology adopted, determined through a hyperoptimization procedure as discussed below. The neural network optimization algorithm, with settings determined by the hyperparameters, finds the best fit of predictions to data by minimizing a figure of merit whose computation is illustrated in Fig. 4. Following a post-fit selection, where outliers with insufficient quality are discarded, the final PDFs are stored in LHAPDF grid format so that they are readily available for use.

Fig. 3 Diagrammatic representation of the NNPDF fitting framework. The blue box contains the minimization of the \(\chi ^2\) figure of merit, whose computation is illustrated in Fig. 4

3.2.2 Evaluation of cross-sections and cost function

Figure 4 illustrates the structure of the part of the NNPDF4.0 fitting code that evaluates the physical observables in terms of the input PDFs and then computes the associated figure of merit to be used for the fitting. This is at the core of the minimization procedure, indicated by a blue box in Fig. 3. Starting from a matrix of momentum fraction x values, \(\{x_n^{(k)}\}\), the code first evaluates the neural network and the preprocessing factors to construct unnormalized PDFs, which are then normalized according to Eqs. (3.6) and (3.8) in order to produce the PDFs at the input scale,

where j, n, and k label the PDF flavor, the experimental dataset, and the node in the corresponding x-grid respectively. These PDFs are those listed in Eqs. (3.3) and (3.4) in the evolution and flavor bases respectively, and are related to the neural network output by Eq. (3.5).

The input scale PDFs are convoluted with partonic scattering cross-sections (including perturbative QCD evolution); these are encoded in precomputed grids called FK-tables (see Refs. [118, 189]) resulting in the corresponding physical observables \(\{\mathcal {O}_n\}\). Observables are split into a training and a validation set and cost functions \(\chi ^2_\mathrm{tr}\) and \(\chi ^2_\mathrm{val}\) are computed for each set. The \(\chi ^2\) is defined as in previous NNPDF determinations, and in particular it uses the \(t_0\) method [190] for the computation of multiplicative uncertainties.
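The chain from input-scale PDFs to the training and validation cost functions can be sketched as a pair of tensor contractions followed by a quadratic form. The array layouts below (one flavor index and one x index per PDF, one or two such pairs per FK-table) and all function names are assumptions made for illustration; the \(t_0\) prescription for the covariance matrix is not implemented, and the matrix is simply taken as given.

```python
import numpy as np

def dis_observables(fk, pdf):
    """Single-PDF observables: fk (n_data, n_flavors, n_x), pdf (n_flavors, n_x)."""
    return np.einsum("njk,jk->n", fk, pdf)

def hadronic_observables(fk, pdf):
    """Two-PDF observables: fk (n_data, n_flavors, n_x, n_flavors, n_x)."""
    return np.einsum("njkml,jk,ml->n", fk, pdf, pdf)

def chi2(theory, data, covmat):
    """chi2 = (D - T)^T C^{-1} (D - T), solved without forming the inverse."""
    r = data - theory
    return float(r @ np.linalg.solve(covmat, r))

def split_chi2(theory, data, covmat, training_mask):
    """Training and validation chi2 on the corresponding sub-blocks of the covariance."""
    tr = np.asarray(training_mask, dtype=bool)
    chi2_tr = chi2(theory[tr], data[tr], covmat[np.ix_(tr, tr)])
    chi2_val = chi2(theory[~tr], data[~tr], covmat[np.ix_(~tr, ~tr)])
    return chi2_tr, chi2_val
```

Because every step is a linear or bilinear contraction, the whole map from PDF values to \(\chi ^2\) is differentiable, which is what makes it usable inside a gradient-based optimizer.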

Note that each block in Fig. 4 is fully independent, so that its settings can be modified or the whole block can be replaced as required. This characterizes the modular structure of the code. For instance, the block “Neural Net” implements by default the neural network which after hyperoptimization has the architecture displayed in Fig. 11, but it could be replaced by any other parametrization, even by a quantum circuit [191] based on the QIBO library [192]. Similarly, the \(\chi ^2\) with \(t_0\) uncertainties could be replaced by any other cost function.

3.2.3 Optimization strategy

Previous NNPDF determinations used stochastic algorithms for the training of neural networks, and in particular in NNPDF3.1 nodal genetic algorithms were used. Stochastic minimization algorithms are less prone to end up trapped in local minima, but are generally less efficient than deterministic minimization techniques, such as backpropagation combined with stochastic gradient descent (SGD). In the approach adopted here [11], the optimizer is just another modular component of the code, to be chosen through a hyperoptimization as we discuss shortly. The algorithms that we consider are SGD algorithms implemented in the TensorFlow [193] package. Restricting to gradient descent algorithms ensures greater efficiency, while the use of hyperoptimization guards against the risk of missing the true minimum or overfitting. The TensorFlow library provides automatic differentiation capabilities, which enable the use of arbitrarily complex network architectures without having to provide analytical expressions for their gradients. However, the whole convolution between input PDFs and FK-tables, indicated in Fig. 4 between brackets, needs to be provided to the optimization library in order to use gradient-based algorithms. The specific SGD optimizer and its settings are determined via the hyperoptimization procedure described in Sect. 3.3. In comparison to the genetic algorithms used in previous NNPDF releases, the hyperoptimized SGD-based optimizers improve both replica stability and computational efficiency, as we demonstrate in Sect. 3.4 below.

3.2.4 Stopping criterion and post-fit selection

As in previous NNPDF releases, a cross-validation method is used in order to avoid overfitting, which could lead the neural networks to learn noise (such as statistical fluctuations) in the data, rather than the underlying law. This is done through the patience algorithm shown diagrammatically in Fig. 5. This algorithm is based on the look-back cross-validation stopping method [14], whereby the optimal length of the fit is determined by the absolute minimum of \(\chi ^2_\mathrm{val}\) evaluated over a sufficiently large number of iterations of the minimizer. Specifically, the stopping algorithm keeps track of the training step with the lowest \(\chi ^2_\mathrm{val}\), and as soon as this value does not improve for a given number of steps (set equal to a percentage of the maximum number of training epochs), the fit is finalized.

There are three main differences between the stopping criterion used in NNPDF4.0 and that of its predecessor used for NNPDF3.1. First, the patience parameter is hyperoptimized, while previously it was set to infinity, i.e., the values of \(\chi ^2_\mathrm{val}\) were monitored until the maximum number of iterations was reached. Second, the percentage of data that enters the training set has been increased to 75% for all datasets. This is motivated by the observation that the current dataset is so wide that even with just 25% of the data used for validation, overlearning does not occur in practice. In fact, even with the previous NNPDF3.0 dataset it was observed in the framework of closure testing in Ref. [14] that larger training fractions lead to essentially equivalent results. The faithfulness of results found with this training fraction will be confirmed by closure test studies in Sect. 6 below. Third, the stopping algorithm now also tracks the positivity requirement, so that a fit cannot stop if the positivity condition is not satisfied. This is done by verifying that the penalty term of Eq. (3.10) remains below the threshold value \(10^{-6}\) (numerically zero). In NNPDF3.1, by contrast, replicas that did not fulfil positivity could be generated and had to be discarded a posteriori.
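The interplay between patience and the positivity check can be sketched as follows. This is a simplified illustration operating on precomputed per-epoch histories, not the actual training loop; the function name and the choice that an epoch can only be recorded as the best one if it passes positivity are assumptions.

```python
def patience_stopping(val_chi2, positivity_penalty, max_epochs,
                      patience_fraction, threshold=1e-6):
    """Return (best_epoch, stop_epoch) for given validation and positivity histories.

    patience is expressed as a fraction of max_epochs, as described in the text.
    ASSUMPTION: only epochs passing the positivity threshold can become the best one.
    """
    patience = int(patience_fraction * max_epochs)
    n_epochs = min(max_epochs, len(val_chi2))
    best_epoch, best_val = None, float("inf")
    for epoch in range(n_epochs):
        passes = positivity_penalty[epoch] < threshold
        if passes and val_chi2[epoch] < best_val:
            # New best validation chi2 at a point that satisfies positivity
            best_epoch, best_val = epoch, val_chi2[epoch]
        elif best_epoch is not None and epoch - best_epoch >= patience:
            # No improvement for `patience` epochs: finalize the fit
            return best_epoch, epoch
    return best_epoch, n_epochs - 1

# Example: the minimum at epoch 2 is kept and the fit stops two epochs later
val = [5.0, 4.0, 3.0, 3.5, 3.6, 3.7, 3.8, 3.9]
best, stop = patience_stopping(val, [0.0] * 8, max_epochs=8, patience_fraction=0.25)
```

Setting the patience fraction to one reproduces the earlier behavior in which the validation \(\chi ^2\) is monitored up to the maximum number of iterations.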

Once the optimal stopping point for a given fit has been identified, the same post-fit quality checks that were imposed in NNPDF3.1 are still enforced. Specifically, we remove replicas with too large \(\chi ^2\) values or with too large arc-lengths: in both cases, defined as replicas outside the \(4\sigma \) interval of their distribution. The post-fit selection algorithm also removes replicas that do not satisfy either the positivity or the integrability conditions. Imposing positivity and integrability constraints through post-fit selection has the consequence of making the fit results independent of the way the constraints are imposed: for instance, a looser constraint will simply have the effect of increasing the number of replicas that are discarded.
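A minimal sketch of such a post-fit filter is given below. It assumes that "too large" means lying more than four standard deviations above the mean of the replica distribution, and that positivity and integrability are available as per-replica boolean flags; the function name and signature are hypothetical.

```python
import numpy as np

def postfit_mask(chi2, arclength, passes_positivity, passes_integrability,
                 n_sigma=4.0):
    """Boolean mask of replicas that survive the post-fit selection."""
    chi2 = np.asarray(chi2, dtype=float)
    arclength = np.asarray(arclength, dtype=float)

    def not_too_large(values):
        # ASSUMPTION: one-sided cut at mean + n_sigma * standard deviation
        return values <= values.mean() + n_sigma * values.std()

    return (not_too_large(chi2)
            & not_too_large(arclength)
            & np.asarray(passes_positivity, dtype=bool)
            & np.asarray(passes_integrability, dtype=bool))

# Example: 100 replicas, one chi2 outlier and one positivity failure
chi2_values = np.array([1.0] * 99 + [50.0])
arc_values = np.linspace(1.0, 2.0, 100)
positivity_flags = np.array([False] + [True] * 99)
keep = postfit_mask(chi2_values, arc_values, positivity_flags, np.ones(100, dtype=bool))
```

In this example the mask keeps 98 of the 100 replicas, discarding the one that fails positivity and the one whose \(\chi ^2\) lies far above the rest.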

It is interesting to note that while previously on average around 30% of the fitted replicas were discarded upon applying these criteria, in NNPDF4.0 this fraction has been reduced to around 1%. This improvement is largely the result of the improved handling of these constraints during the fit as well as of the higher stability of the new SGD-based optimization strategy, which results in smoother PDFs with fewer outliers.

3.3 Hyperparameter optimization

Hyperoptimization is at the heart of the construction of the NNPDF4.0 methodology. In brief, hyperoptimization selects the methodology, just as gradient descent selects the values of the weights and thresholds of the neural net. The k-folding method, to be discussed below, ensures that the selected methodology neither overfits nor underfits, just as cross-validation does for neural network training.

Indeed, the optimization procedure (neural network training) described in Sect. 3.2 requires as input a number of methodological choices, such as the neural network architecture, the learning rate, and the specific SGD variant to be used. We can view these choices as the set of hyperparameters that defines a specific fitting strategy. While in many ML studies (including previous NNPDF determinations) these hyperparameters are determined by trial and error, here we implement an automated algorithmic procedure to scan the space of hyperparameters and determine the optimal configuration according to a figure of merit.

In this work, the implementation of the hyperparameter scan is based on the hyperopt library [194], which uses a Bayesian optimization algorithm [195] to identify the best configuration.

In order to visualize a typical output of a hyperparameter scan, we show in Fig. 6 the result of a scan based on 1500 independent configurations. We display the hyperoptimization loss function L (figure of merit), to be defined below, for a representative subset of hyperparameters: the depth of the network, the algorithm for the initialization of the network weights, the learning rate and the SGD optimizer variant. The smaller the value of the loss function L, the better this specific point is in the hyperparameter space. The full list of hyperparameters is given in Table 9. Note that here we only display the outcome of hyperparameter configurations that satisfy the post-fit selection cuts. The shape of the reconstructed probability distributions provides an indication of the stability of the results, with a wider distribution corresponding to a higher stability with respect to this specific hyperparameter.