Abstract

We study the problem of optimal transport in tropical geometry and define the Wasserstein-p distances in the continuous metric measure space setting of the tropical projective torus. We specify the tropical metric, a combinatorial metric that has been used to study the tropical geometric space of phylogenetic trees, as the ground metric and study the cases of \(p=1,2\) in detail. The case of \(p=1\) gives an efficient computation of the infinitely many geodesics on the tropical projective torus, while the case of \(p=2\) gives a form for Fréchet means and a general inner product structure. Our results also provide theoretical foundations for geometric insight and a statistical framework in a tropical geometric setting. We construct explicit algorithms for the computation of the tropical Wasserstein-1 and 2 distances and prove their convergence. Our results provide the first study of the Wasserstein distances and optimal transport in tropical geometry. Several numerical examples are provided.

1 Introduction

In algebraic geometry, the geometry of zero sets of systems of polynomials, known as algebraic varieties, is studied using commutative algebra. Tropical geometry is a variant of this field in which the polynomials are defined over the tropical algebra: the tropical sum of two elements is their maximum and the tropical product is their usual sum. Mathematical objects such as functions and curves evaluated under the tropical algebra are piecewise linear structures, and tropical varieties are polyhedral complexes. Tropical geometry is an important tool for the study of classical algebraic varieties due to many theoretical correspondences between the two settings. In addition, tropical geometry offers computational tractability and efficiency, as well as connections to other applied sciences. For example, it has been used in optimization theory [41], dynamic programming in computer science [30], and economics and game theory [26]. An application of tropical geometry that has gained much interest is the tropical geometric representation of the space of phylogenetic trees; in particular, there has recently been active work on using tropical geometry as a data analytic tool for sets of phylogenetic trees [33, 37, 45, 50]. In this paper, we study the tropical projective torus, which is the ambient space of phylogenetic trees, and build upon it to provide a set of tools for statistical, probabilistic, and geometric studies using optimal transport theory.

Optimal transport theory arises from a question posed in economics, specifically in the allocation of resources: how to optimize the modes of transport when resources must be displaced geographically. Its mathematical formulation was established in the 18th century and has been well studied since, resulting in strong connections and mutual implications between the domains of dynamical systems and geometry. It has also provided important results in applied and computational fields such as computer science. Important concepts arising from optimal transport are the Wasserstein distances, which are metrics on probability distributions. Intuitively, they measure the effort required to recover the probability mass of one distribution by an efficient reconfiguration of the other. As such, Wasserstein distances extend the scope of optimal transport theory to probability theory. They have also been exploited beyond these realms to solve concrete problems in inferential statistics, for example in Panaretos and Zemel [39]. Establishing Wasserstein distances in tropical geometric settings therefore makes a vast body of existing results in these related fields applicable to statistical inference and data analysis in applied tropical geometric settings, by providing a setting for the study of probability measures and distributions. It also provides an alternative mechanism to study geometric aspects of tropical objects and spaces.

Connecting algebraic theory to optimal transport theory is a new direction of research, with very recent contributions involving algebraic geometry and algebraic topology. In Çelik et al [7], the Wasserstein distance between a probability distribution and an algebraic variety is minimized via transportation polytopes. In topological data analysis, where algebraic topology is leveraged to reduce the dimensionality of complex data spaces and extract shape features within the data, optimal transport theory has improved computational efficiency [20] and has also been used to study geometric aspects of algebraic topological invariants [10]. A transportation problem distinct from the optimal transport setting has previously been considered in tropical geometry by Richter-Gebert et al [41]. Our work in this paper presents the first connection between tropical geometry and optimal transport theory. Specifically, we consider an infinite metric measure space in a continuous tropical geometric setting endowed with a combinatorial ground metric. Numerical computation of optimal transport with various ground metrics has recently been studied in the continuous setting and shown to be efficient [5, 25]. Additionally, studying the optimal transport problem provides a computational framework for the space of probability densities, which also encodes the geometry of the sample space [21, 35, 36, 48]. In solving the optimal transport problem, we thus define tropical Wasserstein distances and provide algorithms for computing them. Collectively, these results offer tools for probabilistic, statistical, and geometric inference in a tropical geometric setting, which may then be translated to other applications where tropical geometry plays an important computational and interpretive role.

The remainder of this paper is organized as follows. Section 2 gives an overview of tropical geometry and the tropical projective torus as our ground space of interest. We present and review properties of the tropical metric, which endows this space with a metric structure; we also give some variational forms for the tropical metric. Section 3 overviews the problem of optimal transport and the role of the Wasserstein distances in this framework. We then define the tropical Wasserstein-p distance, with the tropical metric as the ground metric and the tropical projective torus as the ground space; we also give variational forms of the tropical Wasserstein distance. We study the specific cases of \(p=1\) and 2: the \(p=1\) case gives a method for computing all infinitely many tropical geodesics, while in the case of \(p=2\), the Wasserstein metric is amenable to statistical analysis by providing an inner product structure on probability measures on the tropical projective torus. Section 4 gives algorithms to explicitly compute the tropical Wasserstein-p distances, while Sect. 5 presents the results of several numerical experiments implementing our proposed algorithms. We close the paper with a discussion in Sect. 6 on future research stemming from the work presented in this paper.

2 Tropical geometry, the tropical projective torus, and the tropical metric

In this section, we give the basics of tropical geometry that are relevant for our work. We then present the tropical projective torus as our ground space of interest, and the tropical metric as the ground metric on this space. We also give alternative versions of the metric in terms of variational forms. This is the metric with respect to which we will define the tropical optimal transport problem and the tropical Wasserstein-p distances.

2.1 Essentials of tropical geometry: tropical algebra

Tropical geometry may be seen as a subdiscipline of algebraic geometry. In the latter, the zero sets of systems of polynomial equations are studied using algebraic methods; in the former, these polynomials are defined via the tropical semiring \(({{\mathbb {R}}}\cup \{-\infty \}, \boxplus , \odot )\), where addition of two elements is given by their maximum and multiplication is given by their sum:

\[ a \boxplus b = \max\{a, b\}, \qquad a \odot b = a + b. \]

Notice that tropical subtraction is not defined, since the maximum operation has no inverse; the structure is therefore a semiring rather than a ring, with additive identity \(-\infty\) and multiplicative identity 0. Both operations of the semiring are commutative and associative, and multiplication distributes over addition. Tropicalization refers to replacing classical arithmetic operations with their tropical counterparts. Using these operations, lines, polynomials, and more general mathematical constructions can be built, resulting in “skeletal” piecewise linear structures.
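
A minimal sketch of the two tropical operations, using Python floats with `-inf` as the tropical additive identity. The helper `trop_poly` is a hypothetical name introduced here; it evaluates a univariate tropical polynomial, and the result is a maximum of affine functions of x, i.e. the piecewise linear structure described above.

```python
from functools import reduce

NEG_INF = float("-inf")  # tropical additive identity: max(-inf, a) == a

def trop_add(a: float, b: float) -> float:
    """Tropical sum a ⊞ b: the maximum of the two elements."""
    return max(a, b)

def trop_mul(a: float, b: float) -> float:
    """Tropical product a ⊙ b: the classical sum (identity element 0)."""
    return a + b

def trop_poly(coeffs: list[float], x: float) -> float:
    """Evaluate c_0 ⊞ (c_1 ⊙ x) ⊞ (c_2 ⊙ x^2) ⊞ ..., where the tropical power
    x^k is the k-fold tropical product of x, i.e. k * x classically."""
    terms = [trop_mul(c, k * x) for k, c in enumerate(coeffs)]
    return reduce(trop_add, terms, NEG_INF)

# With coefficients (0, 1, 0) this is max(0, 1 + x, 2x): piecewise linear in x.
for x in (-2.0, 0.0, 0.5, 3.0):
    print(x, trop_poly([0.0, 1.0, 0.0], x))
```

The breakpoints of this function (here x = -1 and x = 1) are where two affine pieces tie; loci of this kind are what tropical varieties describe.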

2.2 The tropical projective torus

Tropical geometry naturally gives rise to polyhedral structures. The interplay between algebraic geometry and polyhedral geometry results in new interpretations of important concepts which form the building blocks for the study of tropical geometry.

An important example is the reinterpretation of a fundamental object in computational algebraic geometry, the Gröbner basis. A Gröbner basis is a particular generating set of an ideal in a polynomial ring over a field; computing Gröbner bases is one of the main approaches to solving systems of polynomial equations, which is a central problem in algebraic geometry. Reinterpreting Gröbner bases using valuations (functions on a field that give a notion of the size of its elements) gives rise to Gröbner complexes. Gröbner complexes lead to universal Gröbner bases, which are analogues of tropical bases; see Maclagan and Sturmfels [30] for full details of this construction. The Gröbner complex is thus a fundamental object in tropical geometry; it is a polyhedral complex constructed for a homogeneous ideal in the polynomial ring \(K[x_0, x_1, \ldots , x_n]\) over a field K. The ambient space of a Gröbner complex is the tropical projective torus, denoted by \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\). In this paper, we consider the tropical projective torus as our ground space of interest.

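The tropical projective torus is the quotient space that identifies vectors differing from each other by tropical scalar multiplication (or classical addition). It is generated by the following equivalence relation \(\sim \) on \({{\mathbb {R}}}^{n+1}\):

\[ (a + x_1, a + x_2, \ldots , a + x_{n+1}) \sim (x_1, x_2, \ldots , x_{n+1}) \quad \text{for all } a \in \mathbb{R}, \]

that is, \(x \sim y\) if and only if \(x - y \in \mathbb{R}\mathbf{1}\), where \(\mathbf{1} = (1, \ldots , 1)\).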

Mathematically, \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\) is constructed in the same manner as the complex torus: regarding \({\mathbb {C}}^{n+1}\) as a real vector space and taking a full-rank lattice \(\varLambda \subset {\mathbb {C}}^{n+1}\), the complex torus is the quotient \({\mathbb {C}}^{n+1}/\varLambda \). For \(x\in {{\mathbb {R}}}^{n+1}\), let \({\bar{x}}\) be its image in \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\). The tropical projective torus identifies with \({{\mathbb {R}}}^n\) by taking representatives of the equivalence classes whose last coordinate is zero:

\[ \mathbb{R}^{n+1}/\mathbb{R}\mathbf{1} \to \mathbb{R}^n, \qquad \bar{x} \mapsto (x_1 - x_{n+1}, \ldots , x_n - x_{n+1}). \tag{1} \]

We denote an element in \({{\mathbb {R}}}^{n+1}\) by x, an element in \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\) by \({{\bar{x}}}\), and an element in \({{\mathbb {R}}}^n\) by \(\mathbf{{x}}= (x_1 - x_{n+1},\, \ldots ,\, x_n - x_{n+1})\)—which is the image of \({\bar{x}}\) in \({{\mathbb {R}}}^n\).
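
The identification with \({{\mathbb {R}}}^n\) amounts to subtracting the last coordinate from every coordinate and dropping it. A minimal sketch; the function names `to_rn` and `same_class` are our own, and `same_class` assumes both inputs have the same length.

```python
def to_rn(x: list[float]) -> list[float]:
    """Image of x in R^n: the representative of its class in R^{n+1}/R1
    with last coordinate 0, with that 0 dropped."""
    last = x[-1]
    return [xi - last for xi in x[:-1]]

def same_class(x: list[float], y: list[float], tol: float = 1e-12) -> bool:
    """x ~ y iff x - y is a multiple of the all-ones vector 1,
    i.e. iff both have the same image in R^n."""
    return all(abs(a - b) <= tol for a, b in zip(to_rn(x), to_rn(y)))

x = [3.0, 1.0, 2.0]
y = [xi + 5.0 for xi in x]  # tropical scalar multiplication by 5
print(to_rn(x), to_rn(y), same_class(x, y))
```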

The Space of Phylogenetic Trees. One important practical example that arises in the tropical projective torus is the space of phylogenetic trees, \({\mathcal {T}}_N\) (where N is the fixed number of leaves in a tree). Speyer and Sturmfels [44] identify an equivalence between the space of all phylogenetic trees and a tropical geometric space via a homeomorphism [28, 30, 33]. The space of phylogenetic trees is contained within the tropical projective torus. In other words, the tropical projective torus is also the ambient space of phylogenetic trees. Although the space of phylogenetic trees is a proper subset of the tropical projective torus, it possesses a very complex structure that is not yet well understood. In particular, it is connected and possesses a polyhedral structure, but is not convex [28, 33]. Additionally, trees are defined by a specific combinatorial condition, which makes the precise characterization of the space of phylogenetic trees and establishing its boundary within the tropical projective torus difficult. The dimension of tree space also is lower than the tropical projective torus: its dimension grows linearly in the number of leaves in a tree, while for the tropical projective torus, the dimension grows quadratically.
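
To make the "ambient space" statement concrete, here is a small sketch that assumes the common encoding of a tree with N leaves by its vector of pairwise leaf-to-leaf path lengths, one coordinate per pair of leaves. Under this assumption the vector lives in \(\mathbb{R}^{\binom{N}{2}}\), so \(n+1 = \binom{N}{2}\) grows quadratically in N. The tree, its edge lengths, and the helper `leaf_distance` are hypothetical illustrations. The final check is the classical four-point condition that every tree metric satisfies; it is one instance of the combinatorial condition that singles out trees inside the torus.

```python
import itertools

# Hypothetical unrooted 4-leaf tree with split AB|CD: each leaf has a pendant
# edge, and a single internal edge separates {A, B} from {C, D}.
PENDANT = {"A": 1.0, "B": 2.0, "C": 1.5, "D": 0.5}
INTERNAL = 3.0
SIDE = {"A": 0, "B": 0, "C": 1, "D": 1}

def leaf_distance(u: str, v: str) -> float:
    """Length of the path between leaves u and v in the tree above."""
    d = PENDANT[u] + PENDANT[v]
    return d + INTERNAL if SIDE[u] != SIDE[v] else d

# One coordinate per unordered pair of leaves: C(4, 2) = 6 coordinates.
pairs = list(itertools.combinations(sorted(PENDANT), 2))
tree_vector = [leaf_distance(u, v) for u, v in pairs]
print(pairs)
print(tree_vector)

# Four-point condition: of the three pairings of {A, B, C, D}, the two
# largest sums coincide, as they must for a tree metric.
dist = dict(zip(pairs, tree_vector))
sums = sorted([dist["A", "B"] + dist["C", "D"],
               dist["A", "C"] + dist["B", "D"],
               dist["A", "D"] + dist["B", "C"]])
print(sums)
```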

2.3 The tropical metric

The tropical projective torus \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\) becomes a metric space when endowed with a generalized Hilbert projective metric function [1, 9], which is a combinatorial metric that is tropical in nature. It has been referred to as the tropical metric in recent literature [28, 33]. Our work here is based on the ambient tree space given by the tropical projective torus endowed with the tropical metric.

Definition 1

For a point \(x \in {{\mathbb {R}}}^{n+1}\), denote its coordinates by \(x_1, x_2, \ldots , x_{n+1}\) and its representation in the tropical projective torus \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\) by \({\bar{x}}\). The tropical metric on \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\) is given by

\[ d_{\mathrm{tr}}(\bar{x}, \bar{y}) = \max_{1 \le i \le n+1} (x_i - y_i) - \min_{1 \le i \le n+1} (x_i - y_i). \]

When considering the representatives of the equivalence classes as in (1), the tropical metric takes the following form in \({{\mathbb {R}}}^n\): for \({\bar{x}}, {\bar{y}} \in {{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\) with images \(\mathbf{{x}}, \mathbf{{y}}\in {{\mathbb {R}}}^n\),

\[ d_{\mathrm{tr}}(\bar{x}, \bar{y}) = \max\Big( \max_{1 \le i \le n} (\mathbf{x}_i - \mathbf{y}_i),\, 0 \Big) - \min\Big( \min_{1 \le i \le n} (\mathbf{x}_i - \mathbf{y}_i),\, 0 \Big). \]

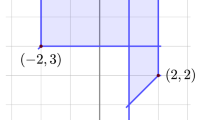

Figure 1 illustrates the relationship: \({{\mathbb {R}}}^{n+1}\) maps onto \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\) via the equivalence relation \(\sim \), and \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\) is in turn identified with \({{\mathbb {R}}}^{n}\). The metric \(d_{\mathrm {tr}}\) is defined on \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\) and has a representation in \({{\mathbb {R}}}^{n}\), under which the identification of \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\) with \({{\mathbb {R}}}^{n}\) is an isometry. Again, recall the notation that an element in \({{\mathbb {R}}}^{n+1}\) is denoted by x, an element in \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\) is denoted by \({{\bar{x}}}\), and an element in \({{\mathbb {R}}}^n\) is denoted by \(\mathbf{{x}}= (x_1 - x_{n+1}, \ldots , x_n - x_{n+1})\).
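
The two expressions for the tropical metric, one on coordinates in \({{\mathbb {R}}}^{n+1}\) and one on the images in \({{\mathbb {R}}}^n\), can be compared directly. This is a minimal sketch; the helpers `d_tr` and `d_tr_rn` are our own names.

```python
def d_tr(x: list[float], y: list[float]) -> float:
    """Tropical metric from coordinates in R^{n+1}:
    max_i (x_i - y_i) - min_i (x_i - y_i)."""
    diffs = [a - b for a, b in zip(x, y)]
    return max(diffs) - min(diffs)

def d_tr_rn(xb: list[float], yb: list[float]) -> float:
    """Same metric on the images in R^n: the dropped last coordinate
    contributes a difference of 0."""
    diffs = [a - b for a, b in zip(xb, yb)] + [0.0]
    return max(diffs) - min(diffs)

x, y = [0.0, 3.0, 1.0], [2.0, 0.0, 5.0]
xb = [xi - x[-1] for xi in x[:-1]]   # image of x-bar in R^n
yb = [yi - y[-1] for yi in y[:-1]]   # image of y-bar in R^n
print(d_tr(x, y), d_tr_rn(xb, yb))   # both forms agree
```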

Lemma 1

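On \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\), we have the following alternate expression for the tropical metric:

\[ d_{\mathrm{tr}}(\bar{x}, \bar{y}) = \max_{1 \le i < j \le n+1} \big| (x_i - y_i) - (x_j - y_j) \big|. \]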

Proposition 1

[33, Proposition 17] \(d_{\mathrm {tr}}(\cdot ,\cdot )\) is a well-defined metric function on \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\).
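
Lemma 1 and the metric properties in Proposition 1 can be spot-checked on random points. This is only a sanity check of a sketch implementation, not a proof. Note that on raw \({{\mathbb {R}}}^{n+1}\) coordinates the same formula is only a pseudometric: it vanishes on distinct representatives of one equivalence class.

```python
import itertools
import random

def d_tr(x, y):
    """Tropical metric: max_i (x_i - y_i) - min_i (x_i - y_i)."""
    diffs = [a - b for a, b in zip(x, y)]
    return max(diffs) - min(diffs)

def d_tr_pairwise(x, y):
    """Lemma 1 form: max over pairs i < j of |(x_i - y_i) - (x_j - y_j)|."""
    diffs = [a - b for a, b in zip(x, y)]
    return max(abs(di - dj) for di, dj in itertools.combinations(diffs, 2))

random.seed(0)
pts = [[random.uniform(-5, 5) for _ in range(4)] for _ in range(10)]
for x, y, z in itertools.product(pts, repeat=3):
    assert abs(d_tr(x, y) - d_tr_pairwise(x, y)) < 1e-9     # Lemma 1
    assert abs(d_tr(x, y) - d_tr(y, x)) < 1e-9              # symmetry
    assert d_tr(x, z) <= d_tr(x, y) + d_tr(y, z) + 1e-9     # triangle inequality
# Distinct representatives of one class are at distance 0.
assert d_tr([1.0, 2.0, 3.0], [2.0, 3.0, 4.0]) == 0.0
print("all checks passed")
```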

2.4 Variational forms of the tropical metric

It turns out that the tropical metric may be considered in terms of unknown functions and corresponding differential equations, which provides an alternative formulation for the tropical metric in terms of a variational form. Variational forms are useful in computational studies, since numerically, it is often easier to find solutions to variational problems rather than differential equations. As we will see further on, this turns out to be an important advantage in explicit computations of the tropical Wasserstein distances and associated results.

Notation. We use the \(+\) and − superscript notation as follows:

Proposition 2

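For \({\bar{x}}, {\bar{y}}\in {{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\), we have

\[ d_{\mathrm{tr}}(\bar{x}, \bar{y}) = \inf \Big\{ \int_0^1 L_{\mathrm{tr}}(\mathbf{v}(t))\, dt \;:\; \mathbf{z}(0) = \mathbf{x},\ \mathbf{z}(1) = \mathbf{y},\ \mathbf{v}(t) = \frac{d\mathbf{z}}{dt}(t) \Big\}, \tag{2} \]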

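where \(\mathbf{v,z}: [0,1] \rightarrow {{\mathbb {R}}}^{n}\) and we define the tropical Lagrangian \(L_{\mathrm {tr}}(\cdot )\) as the tropical norm of \({\mathbf {a}} \in {{\mathbb {R}}}^{n}\) as follows:

\[ L_{\mathrm{tr}}(\mathbf{a}) = \Vert \mathbf{a} \Vert_{\mathrm{tr}} = \max(a_1, \ldots , a_n, 0) - \min(a_1, \ldots , a_n, 0). \]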

Proof

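Let \(D = \{\mathbf{{x}}_{i}-\mathbf{{y}}_{i}\mid 1\le i\le n\} \cup \{0\}\). By Definition 1, \(d_{\mathrm {tr}}({\bar{x}}, {\bar{y}}) = \max (D) - \min (D)\), which is the largest absolute difference between two elements of \(D\). Hence

\[ d_{\mathrm{tr}}(\bar{x}, \bar{y}) = \max \Big\{ \max_{1 \le i \le n} |\mathbf{x}_i - \mathbf{y}_i|,\ \max_{1 \le i, j \le n} \big| (\mathbf{x}_i - \mathbf{y}_i) - (\mathbf{x}_j - \mathbf{y}_j) \big| \Big\}. \tag{4} \]

First, let \(\mathbf{z}(t) = t\cdot \mathbf{{y}}+ (1-t) \cdot \mathbf{{x}}\); then \(\mathbf{{v}}(t)\) is the constant vector \(\mathbf{{y}}- \mathbf{{x}}\), and the integral \(\int _{0}^{1}{\Vert {\mathbf {v}}(t) \Vert _{\mathrm {tr}} dt}\) becomes \(L_{\mathrm {tr}}(\mathbf{{y}}- \mathbf{{x}}) = d_{\mathrm {tr}}({\bar{x}},{\bar{y}})\). Second, in order to show that

\[ \int_0^1 L_{\mathrm{tr}}(\mathbf{v}(t))\, dt \ge d_{\mathrm{tr}}(\bar{x}, \bar{y}) \quad \text{for every such } \mathbf{z}, \]

it suffices, by (4), to show that the integral is always no less than any of \(|\mathbf{{y}}_{i} - \mathbf{{x}}_{i}|\) and \(\left| \left( \mathbf{{y}}_{i} - \mathbf{{x}}_{i}\right) - \left( \mathbf{{y}}_{j} - \mathbf{{x}}_{j}\right) \right| \), where \(1\le i,j\le n\).

For \(1\le i\le n\), by definition of \(L_{\mathrm {tr}}\) we have

\[ L_{\mathrm{tr}}(\mathbf{v}(t)) \ge |\mathbf{v}(t)_i|. \]

Now consider the function \(f_{i}: [0,1] \rightarrow {{\mathbb {R}}}\) given by \(f_{i}(t) = {\mathbf {z}}(t)_{i}\). Then \(\displaystyle \mathbf{{v}}(t)_{i} = \frac{df_{i}}{dt}(t)\), which gives

\[ \int_0^1 |\mathbf{v}(t)_i|\, dt \ge \Big| \int_0^1 \frac{df_i}{dt}(t)\, dt \Big| = |f_i(1) - f_i(0)| = |\mathbf{y}_i - \mathbf{x}_i| \]

and

\[ \int_0^1 L_{\mathrm{tr}}(\mathbf{v}(t))\, dt \ge \int_0^1 |\mathbf{v}(t)_i|\, dt \ge |\mathbf{y}_i - \mathbf{x}_i|. \]

Similarly, for any \(1\le i,j\le n\), by definition of \(L_{\mathrm {tr}}\), we have \(L_{\mathrm{tr}}(\mathbf{v}(t)) \ge |\mathbf{v}(t)_i - \mathbf{v}(t)_j|\), and applying the same argument to \(f_i - f_j\) gives

\[ \int_0^1 L_{\mathrm{tr}}(\mathbf{v}(t))\, dt \ge \big| (\mathbf{y}_i - \mathbf{x}_i) - (\mathbf{y}_j - \mathbf{x}_j) \big|. \]

By (4), we get

\[ \int_0^1 L_{\mathrm{tr}}(\mathbf{v}(t))\, dt \ge d_{\mathrm{tr}}(\bar{x}, \bar{y}). \]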

\(\square \)
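
Proposition 2 can be probed numerically by discretizing a path and summing \(L_{\mathrm {tr}}\) of finite-difference velocities. The sketch below (plain Python; `l_tr` and `path_length` are hypothetical helpers) compares the straight segment, which attains \(d_{\mathrm {tr}}\), with one particular detour that is strictly longer. Not every curved path is longer, since tropical geodesics are far from unique; the detour here was chosen to overshoot in the first coordinate.

```python
def l_tr(a):
    """Tropical norm on R^n: max(a_1, ..., a_n, 0) - min(a_1, ..., a_n, 0)."""
    return max(max(a), 0.0) - min(min(a), 0.0)

def path_length(path, ts):
    """Approximate ∫_0^1 L_tr(v(t)) dt for a path sampled at times ts, using
    the finite-difference velocity on each subinterval."""
    total = 0.0
    for k in range(len(ts) - 1):
        dt = ts[k + 1] - ts[k]
        v = [(b - a) / dt for a, b in zip(path[k], path[k + 1])]
        total += l_tr(v) * dt
    return total

x, y = [0.0, 0.0], [1.0, -2.0]        # points of R^n with n = 2
m = 100
ts = [k / m for k in range(m + 1)]
straight = [[(1 - t) * xi + t * yi for xi, yi in zip(x, y)] for t in ts]
# Same endpoints; the first coordinate overshoots via the bump 4t(1 - t).
detour = [[p[0] + 4 * t * (1 - t), p[1]] for p, t in zip(straight, ts)]
print(path_length(straight, ts), path_length(detour, ts))   # ≈ 3.0 and ≈ 3.62
```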

Example 1

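When \(n=2\), the tropical Lagrangian of \(\mathbf{a} = (a_1, a_2)\) reads

\[ L_{\mathrm{tr}}(a_1, a_2) = \begin{cases} \max(|a_1|, |a_2|) & \text{if } a_1 a_2 \ge 0, \\ |a_1| + |a_2| & \text{if } a_1 a_2 < 0. \end{cases} \]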
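
For \(n=2\) the tropical norm splits into two cases: the larger magnitude when both components have the same sign, and the sum of magnitudes otherwise. The sketch below (function names are ours) cross-checks this case form against the general expression \(\max(a_1,\ldots ,a_n,0) - \min(a_1,\ldots ,a_n,0)\). Geometrically, the unit ball of this norm in \({{\mathbb {R}}}^2\) is the hexagon with vertices \(\pm (1,0)\), \(\pm (0,1)\), \(\pm (1,1)\).

```python
import random

def l_tr(a):
    """General tropical norm: max(a_1, ..., a_n, 0) - min(a_1, ..., a_n, 0)."""
    return max(max(a), 0.0) - min(min(a), 0.0)

def l_tr_n2(a1, a2):
    """Case form for n = 2."""
    if a1 * a2 >= 0:               # same sign (or a zero): larger magnitude
        return max(abs(a1), abs(a2))
    return abs(a1) + abs(a2)       # opposite signs: magnitudes add

random.seed(1)
for _ in range(1000):
    a1, a2 = random.uniform(-3, 3), random.uniform(-3, 3)
    assert abs(l_tr([a1, a2]) - l_tr_n2(a1, a2)) < 1e-12
print("case formula agrees with the general tropical norm")
```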

The above variational form (2) of \(d_{\mathrm {tr}}(\cdot ,\cdot )\) may be further generalized as follows.

Corollary 1

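For \({\bar{x}}, {\bar{y}}\in {{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\), let \(L_{\mathrm {tr}}\) be the same as in Proposition 2. For \(p>1\), we have

\[ d_{\mathrm{tr}}(\bar{x}, \bar{y})^p = \inf \Big\{ \int_0^1 L_{\mathrm{tr}}(\mathbf{v}(t))^p\, dt \;:\; \mathbf{z}(0) = \mathbf{x},\ \mathbf{z}(1) = \mathbf{y},\ \mathbf{v}(t) = \frac{d\mathbf{z}}{dt}(t) \Big\}. \]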

Proof

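When \(\mathbf{z}(t) = t\cdot \mathbf{{y}}+(1-t)\cdot \mathbf{{x}}\), \(\mathbf{{v}}(t)\) is still the constant \(\mathbf{{y}}- \mathbf{{x}}\), so the integral equals \(L_{\mathrm{tr}}(\mathbf{y} - \mathbf{x})^p = d_{\mathrm{tr}}(\bar{x}, \bar{y})^p\) and the equality still holds. In addition, by the Hölder inequality,

\[ \int_0^1 L_{\mathrm{tr}}(\mathbf{v}(t))\, dt \le \Big( \int_0^1 L_{\mathrm{tr}}(\mathbf{v}(t))^p\, dt \Big)^{1/p}. \]

Hence for any \(\mathbf{z}:[0,1]\rightarrow {{\mathbb {R}}}^{n}\) with \(\mathbf{z}(0) = \mathbf{x}\), \(\mathbf{z}(1) = \mathbf{y}\), and \(\mathbf{v}(t) = \frac{d\mathbf{z}}{dt}\),

\[ \int_0^1 L_{\mathrm{tr}}(\mathbf{v}(t))^p\, dt \ge \Big( \int_0^1 L_{\mathrm{tr}}(\mathbf{v}(t))\, dt \Big)^p \ge d_{\mathrm{tr}}(\bar{x}, \bar{y})^p, \]

where the last inequality follows from Proposition 2, with \(d_{\mathrm {tr}}\) as in Definition 1. \(\square \)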
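
For Corollary 1, the Hölder inequality is tight exactly when \(L_{\mathrm {tr}}(\mathbf{v}(t))\) is constant, so among paths of the same tropical length the constant-speed one minimizes the p-energy. The sketch below (hypothetical helper `p_energy`, with p = 2) traverses the same segment once uniformly and once with position \(s(t) = t^2\) along it. Both have tropical length 3, but only the uniform one has energy \(3^2 = 9\); the warped one gives approximately 12.

```python
def l_tr(a):
    """Tropical norm: max(a_1, ..., a_n, 0) - min(a_1, ..., a_n, 0)."""
    return max(max(a), 0.0) - min(min(a), 0.0)

def p_energy(path, ts, p):
    """Approximate ∫_0^1 L_tr(v(t))^p dt using finite-difference velocities."""
    total = 0.0
    for k in range(len(ts) - 1):
        dt = ts[k + 1] - ts[k]
        v = [(b - a) / dt for a, b in zip(path[k], path[k + 1])]
        total += l_tr(v) ** p * dt
    return total

x, y, p, m = [0.0, 0.0], [1.0, -2.0], 2, 200
ts = [k / m for k in range(m + 1)]
d = l_tr([b - a for a, b in zip(x, y)])   # d_tr between the endpoints (= 3)
uniform = [[(1 - t) * a + t * b for a, b in zip(x, y)] for t in ts]
# Same segment, same tropical length, but traversed with position s(t) = t^2.
warped = [[(1 - t * t) * a + t * t * b for a, b in zip(x, y)] for t in ts]
print(d ** p, p_energy(uniform, ts, p), p_energy(warped, ts, p))
```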

3 Optimal transport and the tropical Wasserstein-p distances

We now give a brief background on and a description of the problem of optimal transport; we also formally present the setting of the optimal transport problem specific to our work.

The question underlying the theory of optimal transport can be posed in a very basic and intuitive manner as follows: What is the most efficient way to move a given pile of dirt from one location to another? The total volume of dirt must be preserved, but the pile may change shape during transportation and arrive at its destination in a different form. This problem has been recast mathematically in various formulations under various assumptions. There is a vast literature on historical as well as technical aspects of the optimal transport problem; see, for example, Ambrosio and Gigli [2] and Villani [47, 48] for detailed discussions.

3.1 Optimal transport and probability

Adapting the intuitive description of the optimal transport problem above to a more mathematically formal setting, we may view the pile of dirt as a probability measure to be transported over a space—or alternatively, one probability distribution to be transformed into another—which gives us a probabilistic and statistical perspective on the problem.

A key factor in solving the optimal transport problem is the cost function, which gives the cost of moving the pile of dirt, or of transporting the probability measure. Mathematically, this is generally a function of two variables (an origin or “start” location and a destination or “end” location) taking values in the nonnegative real line, and it may take into account any number of factors. In the simplest case, when the cost of moving the dirt from its origin to its destination is just the distance between them, the solution to the optimal transport problem yields the Wasserstein-1 distance; taking the cost to be the pth power of the distance yields the pth power of the Wasserstein-p distance. Intuitively, the Wasserstein distance gives the minimum cost of transforming one probability distribution into another. In the first case, this minimum cost is the “amount of dirt” to be transported multiplied by the mean distance it must be moved; for probability distributions, which have total mass 1, it is therefore simply the mean distance. More precisely, the Wasserstein distance is a distance function for probability distributions defined on a given metric space, referred to as the ground space, whose metric is referred to as the ground metric; these concepts are formalized in Definition 3. The Wasserstein distance is thus a useful tool for comparing distributions.

Specific Setting. In our work, the ground space is the tropical projective torus and the ground metric is the tropical metric. We consider the set of all probability measures on the tropical projective torus, which exist and are well-defined [33], as a space. This work defines and constructs Wasserstein distances as a metric on these probability measures associated with the tropical projective torus. Figure 2 provides a conceptual illustration of the relationship between the ground space, equipped with a ground metric, and the Wasserstein space of probability measures over the ground space, equipped with the Wasserstein distance.

Illustrative figure of the relationship between the ground space and the Wasserstein space of probability measures. Here, the plane below depicts the tropical projective torus \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\), which is the ground space; it is equipped with the tropical metric. This space admits well-defined probability measures [33]. Collecting these probability measures as a separate space yields the space of probability measures on \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\), depicted in this figure as the manifold above. This space can be equipped with a particular metric, the Wasserstein distance, which therefore measures distances between probability measures on the tropical projective torus. In this illustrative figure, we also show the space of phylogenetic trees with N leaves, \({\mathcal {T}}_N\), as a figurative proper non-convex subset of the tropical projective torus \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\). The probability measures associated with this specific subset of \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\) form a subset of the Wasserstein space of probability measures depicted above, which is also non-convex (see Remark 3).

Wasserstein Distances as Metrics Between Probability Distributions. Although other metrics for probability distributions exist in the literature on mathematical statistics, the Wasserstein distance possesses desirable computational and intuitive properties. To illustrate a few such properties, let us consider random variables X, Y defined on \({{\mathbb {R}}}^d\) distributed as \(X \sim P\) and \(Y \sim Q\) with densities p and q, respectively. Three commonly-used measures for distances between P and Q are total variation, \(\frac{1}{2}\int |p-q|\); Hellinger, \(\sqrt{\int (\sqrt{p} - \sqrt{q})^2}\); and \(L_2\), \(\int (p-q)^2\).

When comparing a discrete distribution with a continuous one, these distances can be uninformative. Let P be uniform on [0, 1], and let Q be uniform on the \(n+1\) points \(\{0,\, 1/n,\, 2/n,\, \ldots ,\, 1\}\). Since P has a density and Q is concentrated on finitely many points, the two distributions are mutually singular, so their total variation distance is 1, the largest value it can take, even though the distributions are intuitively very close. By contrast, the Wasserstein distance is at most 1/n.
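
On the real line, \(W_1(P, Q) = \int |F_P(t) - F_Q(t)|\,dt\), where \(F_P, F_Q\) are the cumulative distribution functions; this standard one-dimensional identity gives a quick numerical check of the example above. Both CDFs are 0 below 0 and 1 above 1, so integrating over [0, 1] suffices. A minimal sketch using a midpoint rule; the function name and step count are our own choices.

```python
import math

def w1_uniform_vs_grid(n: int, steps: int = 100_000) -> float:
    """W_1 between P = Uniform[0, 1] and Q = uniform on the n + 1 atoms
    {0, 1/n, ..., 1}, computed as ∫_0^1 |F_P(t) - F_Q(t)| dt (midpoint rule)."""
    h = 1.0 / steps
    total = 0.0
    for k in range(steps):
        t = (k + 0.5) * h
        f_p = t                                   # CDF of Uniform[0, 1]
        f_q = (math.floor(n * t) + 1) / (n + 1)   # fraction of atoms <= t
        total += abs(f_p - f_q) * h
    return total

# P has a density and Q is purely atomic, so they are mutually singular and
# their total variation distance equals 1 for every n.
for n in (4, 16, 64):
    print(n, round(w1_uniform_vs_grid(n), 5), "<=", 1 / n)
```

The computed values stay below 1/n and shrink as n grows, while the total variation distance remains stuck at 1.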

These distances also do not take into account the underlying geometry of the space on which the distributions are defined. Consider the three densities \(p_1\), \(p_2\) and \(p_3\) shown in Fig. 3. We have

\[ \mathrm{TV}(p_1, p_2) = \mathrm{TV}(p_1, p_3) = \mathrm{TV}(p_2, p_3), \]

and similar results hold for the Hellinger and \(L_2\) distances. Intuitively, however, we would like to think of \(p_1\) and \(p_2\) as more similar, and hence closer to each other than either is to \(p_3\). The Wasserstein distance is able to make this distinction.
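
To illustrate the geometric point without the exact densities of Fig. 3, the sketch below uses hypothetical stand-ins: unit-width uniform densities starting at 0, 2, and 10. All pairs have disjoint supports, so every total variation distance equals 1, while \(W_1\), computed through the one-dimensional quantile formula \(W_1 = \int_0^1 |F^{-1}(u) - G^{-1}(u)|\,du\), equals the shift between them (2, 10, and 8).

```python
import itertools
from functools import partial

def quantile_uniform(a: float, u: float) -> float:
    """Quantile function of Uniform[a, a + 1]."""
    return a + u

def w1_quantiles(qf, qg, steps: int = 10_000) -> float:
    """W_1 on the real line as ∫_0^1 |F^{-1}(u) - G^{-1}(u)| du (midpoint rule)."""
    h = 1.0 / steps
    return sum(abs(qf((k + 0.5) * h) - qg((k + 0.5) * h)) * h for k in range(steps))

def tv_unit_uniforms(a: float, b: float) -> float:
    """Total variation between Uniform[a, a+1] and Uniform[b, b+1]:
    half the L1 distance of the densities, i.e. 1 minus their overlap."""
    return 1.0 - max(0.0, 1.0 - abs(a - b))

# Hypothetical stand-ins for Fig. 3: p1 and p2 close together, p3 far away.
left = {"p1": 0.0, "p2": 2.0, "p3": 10.0}
for s, t in itertools.combinations(left, 2):
    w1 = w1_quantiles(partial(quantile_uniform, left[s]),
                      partial(quantile_uniform, left[t]))
    print(s, t, "TV =", tv_unit_uniforms(left[s], left[t]), "W1 =", round(w1, 6))
```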

In computing a distance between distributions, we arrive at some measure of their similarity or dissimilarity, but the total variation, Hellinger, \(L_2\), and other distances do not provide any information on how or why the distributions are qualitatively different. Perhaps the most helpful property of the Wasserstein distance is that, in addition to a measure of distance between the distributions, we also obtain a map that describes how P morphs into Q. This map is known as a transport plan.

In addition to the illustrative examples discussed above, there are other desirable computational and statistical properties of the Wasserstein distance, such as stability to small perturbations and a well-behaved and intuitive Wasserstein Fréchet mean. Further details and more complete discussions on statistical aspects of the Wasserstein distance can be found in Panaretos and Zemel [38], Wasserman [49].

Aside from statistical aspects, the Wasserstein distances also have analytic advantages, depending on the context. For instance, their intimate connection to optimal transport problems makes them natural tools in these and other settings with foundations in partial differential equations.

Three example densities \(p_1\), \(p_2\), \(p_3\). This figure appears in Wasserman [49]. The total variation, Hellinger, and \(L_2\) distances between these three densities are the same, while the Wasserstein distance between \(p_1\) and \(p_2\) is smaller than that between either \(p_1\) or \(p_2\) and \(p_3\)

Wasserstein Distances and Phylogenetic Trees. Wasserstein distances have been previously studied in the context of phylogenetic trees. A single tree itself may be treated as a metric space, for instance, by considering genetic distances which measure distances between pairs of sequences on a single tree; the metric here is defined within the tree itself. When considering a single tree, the context is a finite metric space. Wasserstein distances have been defined and studied in these contexts, such as in Evans and Matsen [12], where probability distributions giving rise to individual trees are compared. Kloeckner [18] studies geometric properties of measures on equidistant trees (i.e., rooted trees with equal distances from the root to all leaves) using Wasserstein distances. For finite spaces, Sommerfeld and Munk [43] conduct statistical inference studies for empirical Wasserstein metrics computed from datasets. Very recently, Le et al [22] studied the sliced formulation of optimal transport (developed to alleviate computational and statistical drawbacks of optimal transport theory) on tree metrics. Sato et al [42] furthermore propose an extremely fast algorithm that solves the optimal transport problem to compute Wasserstein distances on a tree with one million nodes in less than one second. The settings of these works all differ from the study of Wasserstein distances on the space of phylogenetic trees.

In the context of tree spaces, other probability-based distances between trees have also been proposed [13, 14]. These are related, but are nevertheless strictly different from the notion of distances between probability measures over tree space. The contributions of these works are classical measures between probability distributions on genetic sequences that make up trees, which then induce probabilistic distances between trees, including Hellinger distances and Kullback–Leibler divergences. Kullback–Leibler divergences measure the difference in terms of information gain between models of statistical inference [19]. Outside the scope of interest of this paper, other tree spaces have also been proposed that are not probability-based; an example of a combinatorial construction based on posets that turns out to be related to tree-reconstruction using Markov processes is the edge-product space [15, 34].

3.2 Formalizing the optimal transport problem and defining the Wasserstein-p distances

Monge [32] is largely recognized to have provided the first mathematical formalization of the optimal transport problem described above, while the subsequent probabilistic reinterpretation by Kantorovich [17] led to a fundamental computational breakthrough that seeded the development of linear optimization. As such, the mathematical optimal transport problem is often referred to as the Monge–Kantorovich transport problem and presented in the setting of measure theory. We now give an overview of this presentation.

Definition 2

Let \(\varOmega \) and \(\varOmega '\) be separable metric spaces that are Radon spaces (that is, any probability measure on each space is a Radon measure). Let \(c: \varOmega \times \varOmega ' \rightarrow [0, \infty ]\) be a Borel-measurable cost function. For \(\rho ^0 \in {\mathscr {P}}(\varOmega )\) and \(\rho ^1 \in {\mathscr {P}}(\varOmega ')\), where \({\mathscr {P}}(\cdot )\) denotes the collection of probability measures on the respective spaces, the Monge–Kantorovich transport problem is to find a probability measure \(\pi \in \varPi (\rho ^0, \rho ^1)\) on \(\varOmega \times \varOmega '\) at which the infimum

\[ \inf_{\gamma \in \varPi(\rho^0, \rho^1)} \int_{\varOmega \times \varOmega'} c(x, y)\, \mathrm{d}\gamma(x, y) \]

is achieved. Here, \(\varPi (\rho ^0, \rho ^1)\) denotes the collection of all probability measures on \(\varOmega \times \varOmega '\) with marginal measures \(\rho ^0\) on \(\varOmega \) and \(\rho ^1\) on \(\varOmega '\).

When the cost function is lower semi-continuous, and given that \(\varOmega \) and \(\varOmega '\) are Radon spaces, \(\varPi (\rho ^0, \rho ^1)\) is tight, and therefore a solution to the Monge–Kantorovich transport problem always exists under these conditions (e.g., [3]). From this formulation, the Wasserstein-p distance may be defined as follows.

Definition 3

Let \((\varOmega ,d)\) be a separable metric Radon space. Let \(p \ge 1\) and \({\mathscr {P}}_p(\varOmega )\) be the collection of all probability measures \(\mu \) on \(\varOmega \) such that \(\mu \) has finite pth moment for some \(\mathbf{{x}}_0 \in \varOmega \); i.e., \(\displaystyle \int _{\varOmega } d(\mathbf{{x}}, \mathbf{{x}}_0)^p \mathrm {d}\mu (\mathbf{{x}}) < +\infty \). The Wasserstein-p distance between probability measures \(\rho ^0, \rho ^1 \in {\mathscr {P}}_p(\varOmega )\) is given by

\[ W_p(\rho^0, \rho^1) = \Big( \inf_{\pi \in \varPi(\rho^0, \rho^1)} \int_{\varOmega \times \varOmega} d(\mathbf{x}, \mathbf{y})^p \, \mathrm{d}\pi(\mathbf{x}, \mathbf{y}) \Big)^{1/p}, \]

where, as before, \(\varPi (\rho ^0, \rho ^1)\) is the collection of all probability measures on \(\varOmega \times \varOmega \) with marginal measures \(\rho ^0\) and \(\rho ^1\) on the respective copies of \(\varOmega \). Equivalently, we have

\[ W_p(\rho^0, \rho^1) = \Big( \inf_{X \sim \rho^0,\, Y \sim \rho^1} \mathbb{E}\big[ d(X, Y)^p \big] \Big)^{1/p}, \]

where \({\mathbb {E}}[\cdot ]\) denotes the expectation, and the infimum is taken over all joint distributions of random variables X and Y with respective marginals \(\rho ^0\) and \(\rho ^1\). The metric d is referred to as the ground metric, and a measure \(\pi \in \varPi (\rho ^0, \rho ^1)\) is known as a transport plan.
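
For discrete measures the infimum in Definition 3 is a finite linear program. When both measures put mass 1/m on m points each, the Birkhoff–von Neumann theorem implies that an optimal coupling can be taken to be a permutation, so for very small m one can simply enumerate permutations. The sketch below does this with the tropical metric as the ground metric. It is a brute-force illustration only, not the algorithms given later in the paper, and `wasserstein_p_uniform` is a hypothetical name. Points are given by coordinates in \({{\mathbb {R}}}^{n+1}\); since \(d_{\mathrm {tr}}\) ignores shifts by multiples of \(\mathbf{1}\), any representative of each class may be used.

```python
import itertools

def d_tr(x, y):
    """Tropical metric on R^{n+1}/R1 from any representatives x, y."""
    diffs = [a - b for a, b in zip(x, y)]
    return max(diffs) - min(diffs)

def wasserstein_p_uniform(xs, ys, p=1.0, ground=d_tr):
    """W_p between (1/m) Σ_i δ_{x_i} and (1/m) Σ_j δ_{y_j} (equal sample sizes).
    With uniform weights an optimal coupling can be chosen to be a permutation
    (Birkhoff–von Neumann), so enumerating permutations is exact, but it costs
    O(m!) and is only usable for very small m."""
    m = len(xs)
    if m != len(ys):
        raise ValueError("this sketch requires samples of equal size")
    best = min(
        sum(ground(xs[i], ys[s[i]]) ** p for i in range(m)) / m
        for s in itertools.permutations(range(m))
    )
    return best ** (1.0 / p)

xs = [[0.0, 0.0, 0.0], [1.0, 2.0, 0.0], [0.0, 3.0, 1.0]]
ys = [[c + 7.0 for c in x] for x in xs]   # same classes: each point shifted by 7·1
zs = [[0.0, 1.0, 0.0], [2.0, 2.0, 0.0], [0.0, 0.0, 4.0]]
print(wasserstein_p_uniform(xs, ys), wasserstein_p_uniform(xs, zs, p=2))
```

Shifting every point by a multiple of \(\mathbf{1}\) leaves each class unchanged, so the first distance is 0. Beyond a handful of points, an assignment or linear-programming solver would replace the enumeration.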

The transport plan \(\pi (\mathbf{{x}},\mathbf{{y}})\) describes a way to move the measure \(\rho ^0\) into \(\rho ^1\): it specifies how much mass is moved from location \(\mathbf{{x}}\) to location \(\mathbf{{y}}\), and in general many different plans are possible. Since the total mass moved out of a region around \(\mathbf{{x}}\) must equal \(\rho ^0(\mathbf{{x}})\mathrm {d}\mathbf{{x}}\) and the total mass moved into a region around \(\mathbf{{y}}\) must equal \(\rho ^1(\mathbf{{y}})\mathrm {d}\mathbf{{y}}\), we have the following restrictions on a transport plan:

\[ \int \pi(\mathbf{x}, \mathbf{y})\, \mathrm{d}\mathbf{y} = \rho^0(\mathbf{x}), \qquad \int \pi(\mathbf{x}, \mathbf{y})\, \mathrm{d}\mathbf{x} = \rho^1(\mathbf{y}). \]

In other words, \(\pi \) is a joint probability distribution with marginals \(\rho ^0\) and \(\rho ^1\). The total infinitesimal mass which moves from \(\mathbf{{x}}\) to \(\mathbf{{y}}\) is therefore \(\pi (\mathbf{{x}},\mathbf{{y}}) \mathrm {d}\mathbf{{x}}\mathrm {d}\mathbf{{y}}\), and the cost of moving this amount of mass from \(\mathbf{{x}}\) to \(\mathbf{{y}}\) is \(c(\mathbf{{x}},\mathbf{{y}})\pi (\mathbf{{x}},\mathbf{{y}})\mathrm {d}\mathbf{{x}}\mathrm {d}\mathbf{{y}}\). The total cost is then

\[ C = \int\!\!\int c(\mathbf{x}, \mathbf{y})\, \pi(\mathbf{x}, \mathbf{y})\, \mathrm{d}\mathbf{x}\, \mathrm{d}\mathbf{y}. \]

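An optimal transport plan is a \(\pi \) that achieves the minimal value of C:

\[ C^* = \inf_{\pi \in \varPi(\rho^0, \rho^1)} \int\!\!\int c(\mathbf{x}, \mathbf{y})\, \pi(\mathbf{x}, \mathbf{y})\, \mathrm{d}\mathbf{x}\, \mathrm{d}\mathbf{y}. \]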

If the cost of a move \(c(\mathbf{{x}},\mathbf{{y}})\) is simply the distance \(d(\mathbf{{x}},\mathbf{{y}})\) between the two points, then the optimal cost \(C^*\) is exactly the Wasserstein-1 distance, \(W_1\); more generally, taking \(c = d^p\) gives \(C^* = W_p^p\).
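
The discrete analogue of the marginal constraints and total cost above can be written out directly: a plan is a nonnegative matrix whose row sums are the masses of \(\rho ^0\) and whose column sums are the masses of \(\rho ^1\). The sketch below (hypothetical helper `plan_cost`, one-dimensional toy example) checks these constraints and evaluates C for two different plans. With cost equal to distance both plans cost 1, which is the Wasserstein-1 distance of this example, so optimal plans need not be unique; with squared distance as the cost, only the monotone plan is optimal.

```python
def plan_cost(pi, a, b, cost, tol=1e-9):
    """Check the discrete constraints on the plan pi (nonnegative entries,
    row sums a, column sums b) and return its total cost Σ_ij cost[i][j] * pi[i][j]."""
    rows, cols = len(a), len(b)
    if any(pi[i][j] < -tol for i in range(rows) for j in range(cols)):
        raise ValueError("a transport plan must be nonnegative")
    for i in range(rows):
        if abs(sum(pi[i]) - a[i]) > tol:
            raise ValueError(f"row {i} does not match the first marginal")
    for j in range(cols):
        if abs(sum(pi[i][j] for i in range(rows)) - b[j]) > tol:
            raise ValueError(f"column {j} does not match the second marginal")
    return sum(cost[i][j] * pi[i][j] for i in range(rows) for j in range(cols))

# Mass 1/2 at positions 0 and 1 is moved to mass 1/2 at positions 1 and 2.
a, b = [0.5, 0.5], [0.5, 0.5]
cost = [[abs(s - t) for t in (1.0, 2.0)] for s in (0.0, 1.0)]   # c = distance
cost_sq = [[c ** 2 for c in row] for row in cost]                # c = distance^2
monotone = [[0.5, 0.0], [0.0, 0.5]]   # 0 -> 1, 1 -> 2
crossing = [[0.0, 0.5], [0.5, 0.0]]   # 0 -> 2, 1 -> 1
print(plan_cost(monotone, a, b, cost), plan_cost(crossing, a, b, cost))
print(plan_cost(monotone, a, b, cost_sq), plan_cost(crossing, a, b, cost_sq))
```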

Remark 1

In the particular case where \(p=1\), the Wasserstein-1 distance is also referred to as the Kantorovich–Rubinstein distance, and as the earth mover’s distance (EMD) in the computer science literature.

Remark 2

The Wasserstein distances satisfy all conditions for a formal definition of a metric (e.g., [48]). If the condition of finite pth moment is relaxed, the Wasserstein distances may technically be infinite, and therefore not a metric in the strict sense.

Remark 3

For any \(p \ge 1\), if \((\varOmega , d)\) is a complete and separable metric space, then so too is \(({\mathscr {P}}_p(\varOmega ), W_p)\) (e.g., [48]). Other geometric properties of the ground space, including compactness, convexity, and non-convexity, are likewise reflected in its associated Wasserstein space. An adaptation of the Brunn–Minkowski theorem [6, 31], which relates volumes of compact convex sets, and of its generalization to non-convex sets by Lyusternik [29], also exists for comparing ground and Wasserstein spaces [48]. The geometric implication of these results is that compact, non-convex subsets of the ground space with respect to the ground metric correspond to non-convex subsets in the Wasserstein space of probability measures (with generalized Ricci curvature bounds) over the ground space with respect to the Wasserstein distance.

In the applied setting of our work, where the space of phylogenetic trees is a non-convex subset of the tropical projective torus, the implication is that the corresponding space of probability measures associated with the space of phylogenetic trees is also non-convex with respect to the Wasserstein distances. (Compactness of tree space can be established by fixing an upper bound on the height of trees.) This provides a geometric compatibility between the space of phylogenetic trees equipped with the tropical metric and its associated space of probability measures equipped with Wasserstein distances. See Fig. 2 for an illustrative description of this relationship.

3.3 A time-dependent cost function: formulating a Hamiltonian

In formulating the above variational forms of the tropical metric (2) and (5), the use of the variable t is not a coincidence: it purposely alludes to a dependence upon time. In the setting of Wasserstein distances and their relation to the optimal transport problem, where the ground metric itself serves as the cost function, a time-dependent ground metric intuitively corresponds to a cost function in which time is a cost factor.

Considering time dependence allows for a rich and alternate formulation of the optimal transport problem, which extends to the continuous displacement of measures—precisely the setting of the tropical metric on the tropical projective torus as a continuous metric measure space. However, there are certain instances where continuous displacement problems turn out to be equivalent to steady-state, time-independent problems with an alternate formulation that favors computational efficiency: this occurs when the Lagrangian L is homogeneous of degree 1 and convex.

Lemma 2

The tropical Lagrangian \(L_{\mathrm {tr}}\) defined in (3) is convex on \({{\mathbb {R}}}^n\). More specifically, for \({\mathbf {a}},{\mathbf {b}}\in {{\mathbb {R}}}^{n}\) and \(0\le w\le 1\), we have
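$$\begin{aligned} L_{\mathrm {tr}}\big (w{\mathbf {a}}+(1-w){\mathbf {b}}\big ) \le w\,L_{\mathrm {tr}}({\mathbf {a}}) + (1-w)\,L_{\mathrm {tr}}({\mathbf {b}}). \end{aligned}$$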
Proof

By definition,

So either there exist \(1\le j,k\le n\) such that

or there exists \(1\le j\le n\) such that

Note that

We also have

Hence Lemma 2 holds in either case. \(\square \)

Remark 4

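Note that, for \(p\ge 1\), convexity of \(L_{\mathrm {tr}}\) also implies convexity of \(\frac{1}{p}L_{\mathrm {tr}}^{p}\), since \(L_{\mathrm {tr}}\) is nonnegative and \(s\mapsto \frac{1}{p}s^{p}\) is convex and nondecreasing on \([0,\infty )\).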

The convexity of the tropical Lagrangian \(L_{\mathrm {tr}}\) then allows for the formulation of the Hamiltonian [48, Example 7.5] for \({\mathbf {b}} \in {{\mathbb {R}}}^{n}\) as follows:
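$$\begin{aligned} H({\mathbf {b}}) = \sup _{{\mathbf {a}}\in {{\mathbb {R}}}^{n}} \left\{ \sum _{i=1}^{n} b_{i} a_{i} - \frac{1}{p}L_{\mathrm {tr}}({\mathbf {a}})^{p} \right\} . \end{aligned}$$(7)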
We now explicitly compute the value of the Hamiltonian (7), which will provide concise formulations with regard to the tropical Wasserstein-p distances. For convenience, and identifying \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\) with \({{\mathbb {R}}}^n\), for \({\mathbf {b}} \in {{\mathbb {R}}}^{n}\) we define

In other words, \(\zeta ({\mathbf {b}})\) is the larger of the absolute value of the sum of all positive \(b_{i}\) and the absolute value of the sum of all negative \(b_{i}\). In particular, \(\zeta ({\mathbf {b}})=0\) if and only if \({\mathbf {b}}={\mathbf {0}}\).
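As a minimal numerical illustration, the Python sketch below computes \(\zeta \) and checks, on random samples, two facts used around Lemma 2 and Proposition 3. It assumes, consistently with the proof of Proposition 3, that the tropical Lagrangian on \({{\mathbb {R}}}^n\) is \(L_{\mathrm {tr}}({\mathbf {a}}) = \max _i(a_i,0) - \min _i(a_i,0)\); the function names `tropical_lagrangian` and `zeta` are our own. The checks are the bound \(\sum _i b_i a_i \le \zeta ({\mathbf {b}})\,L_{\mathrm {tr}}({\mathbf {a}})\), which drives part (i) of the proof of Proposition 3, and the convexity inequality of Lemma 2.

```python
import numpy as np

def tropical_lagrangian(a):
    """Assumed form of (3): L_tr(a) = max(a_1, ..., a_n, 0) - min(a_1, ..., a_n, 0)."""
    a = np.asarray(a, dtype=float)
    return max(a.max(), 0.0) - min(a.min(), 0.0)

def zeta(b):
    """Larger of |sum of the positive b_i| and |sum of the negative b_i|."""
    b = np.asarray(b, dtype=float)
    return max(b[b > 0].sum(), -b[b < 0].sum())

rng = np.random.default_rng(0)
for _ in range(1000):
    a, b, c = rng.normal(size=(3, 4))
    w = rng.uniform()
    # Bound used in the proof of Proposition 3: <b, a> <= zeta(b) * L_tr(a).
    assert b @ a <= zeta(b) * tropical_lagrangian(a) + 1e-12
    # Convexity of L_tr (Lemma 2), checked at a random convex combination.
    lhs = tropical_lagrangian(w * a + (1 - w) * c)
    assert lhs <= w * tropical_lagrangian(a) + (1 - w) * tropical_lagrangian(c) + 1e-12

print(zeta([0.5, -2.0, 1.0]))  # 2.0
```

A random check of this kind does not prove anything; it is only a quick guard against sign or indexing mistakes when implementing the formulas.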

Example 2

When \(n=2\), we have \({\mathbf {b}} = (b_1, b_2)\) and

Proposition 3

The value of \(H({\mathbf {b}})\) is:

(i) 0 when \({\mathbf {b}}={\mathbf {0}}\), or \(\zeta ({\mathbf {b}})\le 1\) and \(p=1\);

(ii) \(\infty \) when \({\mathbf {b}}\ne {\mathbf {0}}\) and \(p<1\), or \(\zeta ({\mathbf {b}})>1\) and \(p=1\);

(iii) \(\displaystyle \frac{p-1}{p}\zeta ({\mathbf {b}})^{\frac{p}{p-1}}\) when \({\mathbf {b}}\ne {\mathbf {0}}\) and \(p>1\).

Proof

(i) When \({\mathbf {b}}={\mathbf {0}}\), \(\sum _{i=1}^{n}{b_{i} a_{i}}\) is always zero, and \(L_{\mathrm {tr}}({\mathbf {a}})\ge 0\), so \(H({\mathbf {b}})\le 0\). However, when \({\mathbf {a}}={\mathbf {0}}\), the right-hand side of (7) is zero, so \(H({\mathbf {0}})=0\). When \(\zeta ({\mathbf {b}})\le 1\) and \(p=1\), let

$$\begin{aligned} u := \max _{1\le i\le n}(a_{i},0) \ge 0 ~~~\text{ and }~~~ v := \min _{1\le i\le n}(a_{i},0) \le 0. \end{aligned}$$Then we have

$$\begin{aligned} \sum _{i=1}^{n}{b_{i} a_{i}}&= \sum _{b_{i}>0}{b_{i} a_{i}} + \sum _{b_{i}<0}{b_{i} a_{i}} \\&\le \sum _{b_{i}>0}{b_{i} u} + \sum _{b_{i}<0}{b_{i} v} \\&\le \zeta ({\mathbf {b}})u + \zeta ({\mathbf {b}})(-v) \\&= \zeta ({\mathbf {b}})(u-v)\le u-v. \end{aligned}$$Since \(L_{\mathrm {tr}}({\mathbf {a}})=u-v\), this shows that \(\sum _{i=1}^{n}b_{i}a_{i}-L_{\mathrm {tr}}({\mathbf {a}})\le 0\) for every \({\mathbf {a}}\), so \(H({\mathbf {b}})\le 0\); the value 0 is attained at \({\mathbf {a}}={\mathbf {0}}\). So \(H({\mathbf {b}})=0\).

(ii) Now assume that \({\mathbf {b}}\ne {\mathbf {0}}\), so that \(\zeta ({\mathbf {b}})>0\). We may choose a nonempty \(S\subset \{1,2,\ldots ,n\}\), consisting either of all indices j with \(b_{j}>0\) or of all indices j with \(b_{j}<0\), such that

$$\begin{aligned} \zeta ({\mathbf {b}}) = \left| \sum _{j\in S}{b_{j}} \right| . \end{aligned}$$For any \(N>0\) and each \(1\le i\le n\), we let

$$\begin{aligned} a_{i} = {\left\{ \begin{array}{ll} \displaystyle \frac{b_{i}}{|b_{i}|}\cdot N, &{}\text { if } i\in S; \\ 0, &{}\text { if } i\notin S. \end{array}\right. } \end{aligned}$$Then \(\sum _{i=1}^{n}{b_{i} a_{i}} = \zeta ({\mathbf {b}})\cdot N\) and the set \(\{a_{i}\mid 1\le i\le n\}\cup \{0\}\) is either \(\{0, N\}\) or \(\{0, -N\}\), so \(L_{\mathrm {tr}}({\mathbf {a}})\) is \(N - 0\) or \(0 - (-N)\), which is N. Since \(\zeta ({\mathbf {b}})>0\), when \(p<1\), or \(\zeta ({\mathbf {b}})>1\) and \(p=1\), we have

$$\begin{aligned} \lim \limits _{N\rightarrow \infty }{\left( \zeta ({\mathbf {b}})N - \frac{1}{p}N^{p}\right) } = \infty . \end{aligned}$$So \(H({\mathbf {b}})=\infty \).

(iii) We denote u, v as in (i) above. Then

$$\begin{aligned} H({\mathbf {b}}) \le \zeta ({\mathbf {b}})(u-v) - \frac{1}{p}(u-v)^{p}. \end{aligned}$$Let \(s:=u-v\ge 0\). We need to find the maximum of \(\zeta ({\mathbf {b}})s-\frac{1}{p}s^{p}\) when \(s\ge 0\). The derivative of this function of s is

$$\begin{aligned} \zeta ({\mathbf {b}}) - s^{p-1}. \end{aligned}$$Hence the function is increasing when \(0\le s\le \zeta ({\mathbf {b}})^{\frac{1}{p-1}}\), and it is decreasing when \(s\ge \zeta ({\mathbf {b}})^{\frac{1}{p-1}}\). So the maximum is attained when \(s=\zeta ({\mathbf {b}})^{\frac{1}{p-1}}\), thus

$$\begin{aligned} H({\mathbf {b}})\le \zeta ({\mathbf {b}})\cdot \zeta ({\mathbf {b}})^{\frac{1}{p-1}} - \frac{1}{p}\zeta ({\mathbf {b}})^{\frac{p}{p-1}} = \frac{p-1}{p}\zeta ({\mathbf {b}})^{\frac{p}{p-1}}. \end{aligned}$$Finally, as in (ii), we may choose nonempty \(S\subset \{1,2,\ldots ,n\}\) such that

$$\begin{aligned} \zeta ({\mathbf {b}}) = \left| \sum _{j\in S}{b_{j}} \right| , \end{aligned}$$and the equality holds when

$$\begin{aligned} a_{i} = {\left\{ \begin{array}{ll} \displaystyle \frac{b_{i}}{|b_{i}|}\cdot \zeta ({\mathbf {b}})^{\frac{1}{p-1}}, &{}\text { if } i\in S; \\ 0, &{}\text { if } i\notin S. \end{array}\right. } \end{aligned}$$(9)

\(\square \)
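The closed form in Proposition 3 and the maximizer (9) can be checked numerically. The following Python sketch uses the same assumed form of \(L_{\mathrm {tr}}\) as in the proof above and hypothetical helper names. It evaluates \(H({\mathbf {b}})\), builds the candidate maximizer from an index set S of all positive or all negative coordinates, and confirms that random choices of \({\mathbf {a}}\) never exceed the closed-form value.

```python
import numpy as np

def tropical_lagrangian(a):
    """Assumed form of (3): max(a_i, 0) - min(a_i, 0)."""
    a = np.asarray(a, dtype=float)
    return max(a.max(), 0.0) - min(a.min(), 0.0)

def zeta_and_support(b):
    """Return zeta(b) and a boolean mask S (all positive or all negative b_i) attaining it."""
    b = np.asarray(b, dtype=float)
    pos, neg = b[b > 0].sum(), -b[b < 0].sum()
    return (pos, b > 0) if pos >= neg else (neg, b < 0)

def hamiltonian(b, p):
    """Closed form of H(b) from Proposition 3."""
    z, _ = zeta_and_support(b)
    if z == 0.0:
        return 0.0
    if p < 1 or (p == 1 and z > 1):
        return np.inf
    if p == 1:
        return 0.0
    return (p - 1) / p * z ** (p / (p - 1))

def eta(b, p):
    """Maximizer (9) for p > 1: sign(b_i) * zeta(b)^(1/(p-1)) on S, zero elsewhere."""
    b = np.asarray(b, dtype=float)
    z, S = zeta_and_support(b)
    return np.where(S, np.sign(b) * z ** (1.0 / (p - 1)), 0.0)

b, p = np.array([0.5, -2.0, 1.0]), 2.0
a_star = eta(b, p)
value = b @ a_star - tropical_lagrangian(a_star) ** p / p
print(value, hamiltonian(b, p))  # 2.0 2.0

# No random a should beat the closed-form supremum.
rng = np.random.default_rng(1)
for _ in range(1000):
    a = rng.normal(scale=3.0, size=3)
    assert b @ a - tropical_lagrangian(a) ** p / p <= hamiltonian(b, p) + 1e-12
```

For \({\mathbf {b}}=(0.5,-2,1)\) and \(p=2\), \(\zeta ({\mathbf {b}})=2\), so \(H({\mathbf {b}})=\frac{1}{2}\cdot 2^2=2\), which is attained at \({\mathbf {a}}=(0,-2,0)\).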

For notational convenience, we also define \(\eta :{\mathbb {R}}^n\rightarrow {\mathbb {R}}^n\), where \(\eta ({\mathbf {b}})=(\eta ({\mathbf {b}})_i)_{i=1}^n\), with

That is, \(\eta ({\mathbf {b}})_i\) is defined by (9).

The Tropical Wasserstein-p Distances. We consider the tropical projective torus as a probability space [33] with finite pth moment as follows:

Within the optimal transport framework discussed above and as in Definition 3, the tropical Wasserstein-p distance is given as follows:
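$$\begin{aligned} {\tilde{W}}^{\mathrm {tr}}_p(\rho ^0, \rho ^1) = \left( \inf _{\pi \in \varPi (\rho ^0, \rho ^1)} \int _{{\mathbb {R}}^n \times {\mathbb {R}}^n} \Vert \mathbf{{x}}-\mathbf{{y}}\Vert _{\mathrm {tr}}^p \, \mathrm {d}\pi (\mathbf{{x}},\mathbf{{y}}) \right) ^{1/p}, \end{aligned}$$(10)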
where the infimum is taken over the set \(\varPi (\rho ^0, \rho ^1)\) of all joint distributions (transport plans) \(\pi \) with marginals \(\rho ^0\) and \(\rho ^1\). Here, the distance \({\tilde{W}}^{\mathrm {tr}}_p\) depends on the choice of p in the linear programming formulation (10). The following alternative gives an equivalent definition of the tropical Wasserstein-p distances.
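For discrete measures, the linear programming formulation (10) can be solved directly. The sketch below is an illustration under our own assumptions: measures are finite weighted point sets written in \({{\mathbb {R}}}^{n+1}\) coordinates, the ground cost is the tropical metric \(\max _i(x_i-y_i)-\min _i(x_i-y_i)\) raised to the power p, and the LP is solved with SciPy's `linprog` (HiGHS backend). The helper names are hypothetical.

```python
import numpy as np
from scipy.optimize import linprog

def tropical_distance(x, y):
    """Tropical metric on R^{n+1}/R1: max_i (x_i - y_i) - min_i (x_i - y_i)."""
    diff = np.asarray(x, float) - np.asarray(y, float)
    return diff.max() - diff.min()

def discrete_tropical_wasserstein(X, mu, Y, nu, p=1):
    """Kantorovich LP between sum_i mu_i delta_{X_i} and sum_j nu_j delta_{Y_j}."""
    m, n = len(mu), len(nu)
    cost = np.array([[tropical_distance(x, y) ** p for y in Y] for x in X])
    # The plan is flattened row-major: entry (i, j) sits at index i * n + j.
    A_eq = np.zeros((m + n, m * n))
    for i in range(m):
        A_eq[i, i * n:(i + 1) * n] = 1.0   # row sums equal mu_i
    for j in range(n):
        A_eq[m + j, j::n] = 1.0            # column sums equal nu_j
    b_eq = np.concatenate([mu, nu])
    res = linprog(cost.ravel(), A_eq=A_eq, b_eq=b_eq, bounds=(0, None), method="highs")
    return max(res.fun, 0.0) ** (1.0 / p), res.x.reshape(m, n)

X = [(0, 0, 0), (0, 2, 0)]
Y = [(0, 1, 3)]
w1, plan = discrete_tropical_wasserstein(X, [0.5, 0.5], Y, [1.0], p=1)
print(round(w1, 6))  # 3.5 = 0.5 * 3 + 0.5 * 4
```

Because this LP has one variable per pair of support points, it scales poorly with the number of points; the minimal flux formulation of Proposition 4 below is far more economical in this respect.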

Definition 4

(Tropical Wasserstein-p distance) The tropical Wasserstein-p distance is given by

such that the following dynamical constraints (continuity equations) hold:

Here \(\Vert \cdot \Vert _{\mathrm {tr}}\) is the tropical norm, \(\rho ^0\), \(\rho ^1\in {\mathscr {P}}_p({\mathbb {R}}^n)\), \(\nabla \), \(\nabla \cdot \) are gradient and divergence operators in \({\mathbb {R}}^n\), and the infimum is taken over all continuous density functions \(\rho :[0,1]\times {\mathbb {R}}^n\rightarrow {\mathbb {R}}\), and Borel vector fields \({\mathbf {v}}:[0,1]\times {\mathbb {R}}^n \rightarrow {\mathbb {R}}^n\).

Here, the formulation (4) given by the pair (11a) and (11b) is known as the Benamou–Brenier formula, due to Benamou and Brenier [4]. As discussed in Chapter 8 of Villani [47], when the cost c satisfies suitable conditions, the linear programming formulation \({\tilde{W}}_p^{\text {tr}}\) is equivalent to the dynamical formulation \(W_p^{\text {tr}}\). In this work, we focus on the dynamical formulation (4) with \(p=1,2\) because of its concrete implications for computations on the tropical projective torus.

3.4 The tropical Wasserstein-1 distance

We first study the case \(p=1\). In this case, it turns out that the tropical Wasserstein-1 distance \(W_1^{\mathrm {tr}}\) may be recast as the following minimization problem.

Proposition 4

(Minimal Flux Formulation) By identifying \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\) with \({{\mathbb {R}}}^n\) as discussed in Sect. 2.3, the tropical Wasserstein-1 distance satisfies
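$$\begin{aligned} W_1^{\mathrm {tr}}(\rho ^0, \rho ^1) = \inf _{{\mathbf {m}}} \left\{ \int _{{\mathbb {R}}^n} \Vert {\mathbf {m}}(\mathbf{{x}})\Vert _{\mathrm {tr}} \, \mathrm {d}\mathbf{{x}} ~:~ \rho ^1(\mathbf{{x}}) - \rho ^0(\mathbf{{x}}) + \nabla \cdot {\mathbf {m}}(\mathbf{{x}}) = 0 \right\} , \end{aligned}$$(12)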
where the infimum is taken over all Borel flux functions \({\mathbf {m}} :{\mathbb {R}}^n\rightarrow {\mathbb {R}}^n\).

Proof

This minimal flux formulation follows from a standard result in optimal transport theory. By Jensen’s inequality, the minimum in (4) is attained by a time-independent solution. Denote

Then

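By choosing \(\rho (t,\mathbf{{x}})=(1-t)\rho ^0(\mathbf{{x}})+t\rho ^1(\mathbf{{x}})\) together with a time-independent flux \({\mathbf {m}}\), the continuity equation reduces to \(\rho ^1(\mathbf{{x}})-\rho ^0(\mathbf{{x}})+\nabla \cdot {\mathbf {m}}(\mathbf{{x}})=0\), and we obtain the minimizer of the above minimization problem. \(\square \)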

Concretely, \({\mathbf {m}}(\mathbf{{x}})\) is the flux vector field: it assigns to each point of the domain a vector that determines how much of the mass (measure) should be moved through that point, and in which direction.
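In the simplest case \(n=1\), where \({{\mathbb {R}}}^2/{{\mathbb {R}}}{\mathbf{1}}\) is identified with \({\mathbb {R}}\) and (assuming the form of \(L_{\mathrm {tr}}\) used in the proof of Proposition 3) the tropical norm reduces to \(|\cdot |\), the constraint in Proposition 4 determines the flux uniquely: \({\mathbf {m}}(x)=\int _{-\infty }^{x}(\rho ^0-\rho ^1)\). The Python sketch below is a hypothetical illustration on a uniform grid with spacing `dx` and zero flux at both ends; it computes this flux from cell masses and the resulting distance.

```python
import numpy as np

def minimal_flux_1d(q0, q1, dx):
    """Flux on the half-points i+1/2 and the resulting W_1 for the n = 1 case."""
    q0, q1 = np.asarray(q0, float), np.asarray(q1, float)
    # m_{i+1/2} = sum_{j <= i} (q0_j - q1_j): net mass crossing edge (i, i+1) rightwards.
    m = np.cumsum(q0 - q1)[:-1]
    # Each unit of mass crossing an edge travels a distance dx.
    return m, np.abs(m).sum() * dx

N, dx = 10, 0.1
q0 = np.zeros(N); q0[1] = 1.0
q1 = np.zeros(N); q1[6] = 1.0
m, w1 = minimal_flux_1d(q0, q1, dx)

# Discrete form of rho^1 - rho^0 + div m = 0, written with masses and zero boundary flux.
div = np.diff(np.concatenate(([0.0], m, [0.0])))
assert np.allclose(div, q0 - q1)
print(w1)  # 0.5
```

In higher dimensions the constraint no longer determines \({\mathbf {m}}\), which is why an optimization algorithm is needed in Sect. 4.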

The reformulation of the tropical Wasserstein-1 distance given in Proposition 4 has significant computational benefits compared with the linear programming formulation of Definition 3 [25]. Notably, the number of optimization variables is much smaller when solving a discrete approximation; additionally, the formulation in Proposition 4 has the structure of an \(L_1\)-type minimization problem, a well-studied class for which fast and simple algorithms exist (see references in Li et al. [25]). We reap these benefits in formulating explicit algorithms to compute the tropical Wasserstein-p distances for \(p=1,2\) in Sect. 4.

Geodesics on the Tropical Projective Torus. Geodesics on the tropical projective torus are not unique [28, 33]. In particular, between any two given points in \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\), there are infinitely many geodesics. The following result gives the explicit connection between geodesics on the tropical projective torus and the minimizer of the tropical Wasserstein-1 distance.

Proposition 5

(Minimizer of the Tropical Wasserstein-1 distance) The minimizer of the tropical Wasserstein-1 distance is given by the following pair:

Proof

The minimizer of tropical Wasserstein-1 distance may be derived as follows. Define a Lagrange multiplier \(\varPhi :{{\mathbb {R}}}^n \rightarrow {\mathbb {R}}\) for the equality constraint of (12), and consider the saddle point problem

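Notice that L is convex in \({\mathbf {m}}\) and concave (indeed linear) in \(\varPhi \). Thus, the saddle point \(({\mathbf {m}}, \varPhi )\) satisfies \(\delta _{{\mathbf {m}}}L({\mathbf {m}},\varPhi )=0\) and \(\delta _\varPhi L({\mathbf {m}},\varPhi )=0\), where, since the tropical norm is not differentiable everywhere, the first condition is understood in the sense of subdifferentials. This corresponds to the equation pair (13). \(\square \)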

Remark 5

We notice that the first equation in (13) represents the tropical Eikonal equation

The tropical Eikonal equation describes the movement of each particle along the infinitely many geodesics under the tropical metric from \(\rho ^0\) to \(\rho ^1\). This behavior is explored and demonstrated numerically in the experiments of Sect. 5.

Proposition 6

Between any two points of \({{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\), the set of all (infinitely many) tropical geodesics is contained in a classical convex polytope.

Proof

For any point \({\bar{c}}\) on a tropical geodesic connecting \({\bar{a}}, {\bar{b}} \in {{\mathbb {R}}}^{n+1}/{{\mathbb {R}}}{\mathbf{1}}\), by the definition of geodesics, we have
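$$\begin{aligned} d_{\mathrm {tr}}({\bar{a}}, {\bar{c}}) + d_{\mathrm {tr}}({\bar{c}}, {\bar{b}}) = d_{\mathrm {tr}}({\bar{a}}, {\bar{b}}). \end{aligned}$$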
So \({\bar{c}}\) belongs to a tropical ellipse with foci \({\bar{a}},{\bar{b}}\). By Proposition 26 of Lin and Yoshida [27], the set of all points on tropical geodesics is a classical convex polytope. \(\square \)
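The defining condition of a point on a tropical geodesic, namely that the distances from \({\bar{a}}\) to \({\bar{c}}\) and from \({\bar{c}}\) to \({\bar{b}}\) add up to the distance from \({\bar{a}}\) to \({\bar{b}}\), is easy to test numerically. The Python sketch below uses hypothetical helper names, writes points in \({{\mathbb {R}}}^{3}\) coordinates of \({{\mathbb {R}}}^{3}/{{\mathbb {R}}}{\mathbf{1}}\), and takes the tropical metric to be \(\max _i(x_i-y_i)-\min _i(x_i-y_i)\). It shows that points off the straight segment can also lie on tropical geodesics, illustrating their non-uniqueness.

```python
import numpy as np

def d_tr(x, y):
    """Tropical metric: max_i (x_i - y_i) - min_i (x_i - y_i)."""
    diff = np.asarray(x, float) - np.asarray(y, float)
    return diff.max() - diff.min()

def on_tropical_geodesic(a, b, c, tol=1e-12):
    """True if c lies on some tropical geodesic from a to b."""
    return abs(d_tr(a, c) + d_tr(c, b) - d_tr(a, b)) <= tol

a, b = np.array([0.0, 0.0, 0.0]), np.array([0.0, 1.0, 3.0])
print(on_tropical_geodesic(a, b, (a + b) / 2))                 # True: midpoint of the segment
print(on_tropical_geodesic(a, b, np.array([0.0, 1.0, 1.0])))   # True: off the segment
print(on_tropical_geodesic(a, b, np.array([0.0, 2.0, 2.0])))   # False: 2 + 2 > 3
```

Sampling many candidate points with this test traces out the convex polytope described in Proposition 6.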

3.5 The tropical Wasserstein-2 distance

We now consider the case where \(p=2\). Here we refer to (9) using the notation \(\eta ({\mathbf {b}})\).

Proposition 7

(Minimizer of the Tropical Wasserstein-2 Distance) The minimizer of the tropical Wasserstein-2 distance \(({\mathbf {v}}(t,\mathbf{{x}}), \rho (t,\mathbf{{x}}))\) satisfies

where \(\eta :{\mathbb {R}}^n\rightarrow {\mathbb {R}}^n\) is given by

where S is as in (8). Also,

In particular, if \(\rho (t,\mathbf{{x}})>0\), then

Proof

The minimizer path for the tropical Wasserstein-2 distance is derived as follows. For \(p=2\), denote \({\mathbf {m}}(t,\mathbf{{x}}):=\rho (t,\mathbf{{x}}) v(t,\mathbf{{x}})\) where

Then the variational problem (4) can be reformulated as

Notice that the variational problem (15) is convex in \(({\mathbf {m}},\rho )\). Again, denoting the Lagrange multiplier by \(\varPhi :[0,1]\times {{\mathbb {R}}}^n \rightarrow {\mathbb {R}}\), we can reformulate (15) as a saddle point problem.

Thus the saddle point \(({\mathbf {m}},\rho , \varPhi )\) satisfies the system \(\delta _{{\mathbf {m}}} L =0\), \(\delta _\rho L \ge 0\), \(\delta _\varPhi L =0\), i.e.,

Following Proposition 3, we obtain the minimizer of the system (14). \(\square \)

4 Algorithms: solving the optimal transport problem

In this section, we design algorithms for solving the optimal transport problems that give rise to the tropical Wasserstein-p distances and geodesics. Our approach is mainly based on the G-Prox primal-dual hybrid gradient (G-Prox PDHG) algorithm [16], which is a modified version of Chambolle–Pock primal-dual algorithms [8, 40].

We now provide a brief overview of the algorithm; see Chambolle and Pock [8], Jacobs et al [16], Pock and Chambolle [40] for further details. The classical primal-dual hybrid gradient algorithms convert the following minimization problem
$$\begin{aligned} \min _{X} \; f(KX) + g(X) \end{aligned}$$
into the following saddle point problem
$$\begin{aligned} \min _{X} \max _{Y} \; \langle KX, Y\rangle + g(X) - f^*(Y), \end{aligned}$$
where f and g are convex functions, \(f^*\) is the convex conjugate of f, and K is a continuous linear operator. For each iteration, the algorithm performs gradient descent on the primal variable X and gradient ascent on the dual variable Y as follows:

where suitable norms need to be considered in the update.

For the tropical Wasserstein-1 and Wasserstein-2 distances, we apply the algorithm in (16) to (12) and (15) by setting \(Y = \varPhi \) and specifying

In this paper, we use a version of the G-Prox PDHG algorithm that applies the \(H^1\) norm in the dual variable Y update and uses the \(L^2\) norm in the primal variable X update. This choice of norms gives us more stable and faster convergence of the algorithm than the standard PDHG algorithm [8].

4.1 Computing the tropical Wasserstein-1 distances

We consider here \(p=1\). We first present the spatial discretization to compute the general Wasserstein-1 distance.

Consider a uniform lattice graph \(G=(V, E)\) with spacing \(\varDelta \mathbf{{x}}\) to discretize the spatial domain, where V is the vertex set \(V=\{1,2,\ldots , N\},\) and E is the edge set. Here \({\mathbf {i}}=(i_1, \ldots , i_d)\in V\) represents a point in \({\mathbb {R}}^d\). Consider a discrete probability set supported on all vertices:
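$$\begin{aligned} {\mathcal {P}}(G) = \left\{ (q_{\mathbf {i}})_{{\mathbf {i}}=1}^{N} ~:~ \sum _{{\mathbf {i}}=1}^{N} q_{\mathbf {i}} = 1, ~ q_{\mathbf {i}} \ge 0 \right\} , \end{aligned}$$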
where \(q_{\mathbf {i}}\) is the probability mass at node \({\mathbf {i}}\), i.e., \(q_{\mathbf {i}}=\int _{C_{\mathbf {i}}} \rho (\mathbf{{x}})\mathrm {d}\mathbf{{x}}\), and \(C_{\mathbf {i}}\) is the cube centered at \({\mathbf {i}}\) with side length \(\varDelta \mathbf{{x}}\). Thus, \(\rho ^0(\mathbf{{x}})\) and \(\rho ^1(\mathbf{{x}})\) are approximated by \(q^0=(q^0_{\mathbf {i}} )_{{\mathbf {i}}=1}^N\) and \(q^1= (q^1_{\mathbf {i}} )_{{\mathbf {i}}=1}^N\).
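To make this discretization concrete, the sketch below approximates \(q_{\mathbf {i}}=\int _{C_{\mathbf {i}}}\rho \) by the midpoint rule on an \(N\times N\) lattice of \([0,1]^2\) and renormalizes the result so that it lies in \({\mathcal {P}}(G)\). The square density, its width 0.2, and the grid size are hypothetical choices loosely modeled on the densities of Sect. 5; the helper names are our own.

```python
import numpy as np

def discretize_density(rho, N):
    """Midpoint-rule approximation q_i ~ rho(x_i) * dx^2 on an N x N lattice of [0,1]^2."""
    dx = 1.0 / N
    centers = (np.arange(N) + 0.5) * dx          # cell centers of the lattice
    X, Y = np.meshgrid(centers, centers, indexing="ij")
    q = rho(X, Y) * dx**2
    return q / q.sum()                           # renormalize so that q lies in P(G)

def square(cx, cy, width):
    """Unnormalized indicator density of an axis-aligned square."""
    return lambda X, Y: ((np.abs(X - cx) <= width / 2)
                         & (np.abs(Y - cy) <= width / 2)).astype(float)

# Hypothetical initial density: a square of width 0.2 centered at (1/3, 1/3).
q0 = discretize_density(square(1 / 3, 1 / 3, 0.2), 32)
print(q0.shape, np.count_nonzero(q0))
```

Renormalizing compensates for the quadrature error of the midpoint rule, so that \(q^0\) and \(q^1\) have exactly equal total mass, which the divergence constraint requires.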

We use two steps to compute the Wasserstein-1 distance on \({\mathcal {P}}(G)\). We first define a flux on a lattice. Denote the flux matrix as \({\mathbf {m}}=({\mathbf {m}}_{{\mathbf {i}}+\frac{1}{2}})_{{\mathbf {i}}=1}^N\in {\mathbb {R}}^{N\times d}\), where each component \({\mathbf {m}}_{{\mathbf {i}}+\frac{1}{2}}\) is a row vector in \({\mathbb {R}}^d\), i.e.,

where \(e_v=(0,\ldots , \varDelta \mathbf{{x}},\ldots , 0)^\intercal \), with \(\varDelta \mathbf{{x}}\) in the vth entry. In other words, if we denote \({\mathbf {i}}=(i_1, \ldots , i_d)\in {\mathbb {R}}^d\) and \({\mathbf {m}}(\mathbf{{x}})=({\mathbf {m}}^1(\mathbf{{x}}), \ldots , {\mathbf {m}}^d(\mathbf{{x}}))\), then

We impose a zero-flux boundary condition: if a point \({\mathbf {i}}+\frac{1}{2}e_v\) lies outside the domain of interest \(\varOmega \), we set \({\mathbf {m}}_{{\mathbf {i}}+\frac{1}{2}e_v}=0\). Based on such a flux \({\mathbf {m}}\), we define a discrete divergence operator \(\text {div}_G({\mathbf {m}}):=(\text {div}_G({\mathbf {m}})_{\mathbf {i}})_{{\mathbf {i}}=1}^N\), where

We next introduce the discrete cost functional

This gives rise to the following optimization problem in the tropical setting

for \({\mathbf {i}} =1,\ldots , N; v =1,\ldots , d.\)

We solve (17) by studying its saddle point structure. Denoting the Lagrange multiplier of (17) as \(\varPhi =(\varPhi _{\mathbf {i}})_{{\mathbf {i}}=1}^N\), we obtain

Saddle point problems such as (18) are well suited to the first-order primal-dual hybrid gradient (PDHG) algorithm. Implementing the G-Prox PDHG algorithm gives the following iteration steps:

where the quantities h, \(\tau \) are two small step sizes, and

These steps alternate a gradient ascent in the dual variable \(\varPhi \), and a gradient descent in the primal variable \({\mathbf {m}}\).

It turns out that iteration (19) can be solved by simple explicit formulae. Since the unknown variables \({\mathbf {m}}\), \(\varPhi \) are component-wise separable in this problem, each of its components \({\mathbf {m}}_{{\mathbf {i}}+\frac{1}{2}}\), \(\varPhi _{{\mathbf {i}}}\) can be independently obtained by solving (19). First, notice that

where \(\nabla _G\varPhi ^k_{{\mathbf {i}}+\frac{1}{2}}:=\frac{1}{\varDelta \mathbf{{x}}}(\varPhi ^k_{{\mathbf {i}}+e_v}-\varPhi _{{\mathbf {i}}}^k)_{v=1}^d\). The first iteration in (19) has an explicit solution, which is:

where the shrink operator is the proximal map of the tropical norm \(\Vert \cdot \Vert _{\mathrm {tr}}\), a generalization of the classical soft-thresholding operator. Its exact formulation is given further on in Proposition 8.

Second, consider

Thus the second iteration in (19) becomes

where \(\varDelta _G=\text {div}_G \cdot \nabla _G\) is the discrete Laplacian operator.

We are now ready to state our algorithm.

Remark 6

The relative error at iteration k is given by \(\displaystyle \frac{|\Vert {\mathbf {m}}^k\Vert _\text {tr}-\Vert {\mathbf {m}}^{k-1}\Vert _\text {tr}|}{\Vert {\mathbf {m}}^{k-1}\Vert _\text {tr}}\).
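The structure of iteration (19) can be illustrated in the one-dimensional case, where (assuming \(L_{\mathrm {tr}}\) as in the proof of Proposition 3) the tropical norm is \(|\cdot |\) and \(\text {shrink}_{\text {tr}}\) reduces to classical soft thresholding. The sketch below is a plain (unpreconditioned) Chambolle–Pock PDHG iteration with Euclidean norms in both variables, not the G-Prox variant with the \(H^1\) dual norm used in this paper. The step sizes `tau` and `sigma`, the fixed iteration count, and all function names are hypothetical. The operator `div_op` acts on cell masses, so the constraint reads \(K{\mathbf {m}}=q^0-q^1\).

```python
import numpy as np

def div_op(m):
    """Discrete divergence on masses: (K m)_i = m_{i+1/2} - m_{i-1/2}, zero flux at both ends."""
    return np.diff(np.concatenate(([0.0], m, [0.0])))

def div_adjoint(phi):
    """Adjoint K^T of div_op: (K^T phi)_{i+1/2} = phi_i - phi_{i+1}."""
    return phi[:-1] - phi[1:]

def soft_threshold(y, t):
    """Proximal map of t*|.|, i.e. the one-dimensional shrink."""
    return np.sign(y) * np.maximum(np.abs(y) - t, 0.0)

def pdhg_w1_1d(q0, q1, dx, tau=0.45, sigma=0.45, iters=20000):
    """Plain PDHG for min_m sum_j dx*|m_j| subject to K m = q0 - q1."""
    b = np.asarray(q0, float) - np.asarray(q1, float)
    m = np.zeros(len(b) - 1)   # primal: fluxes on the N-1 interior edges
    phi = np.zeros(len(b))     # dual: one Lagrange multiplier per node
    for _ in range(iters):
        # Primal descent step followed by the shrink (prox of the weighted |.|).
        m_new = soft_threshold(m - tau * div_adjoint(phi), tau * dx)
        # Over-relaxation, then dual ascent on the constraint residual.
        m_bar = 2.0 * m_new - m
        phi = phi + sigma * (div_op(m_bar) - b)
        m = m_new
    return m, np.abs(m).sum() * dx

N, dx = 10, 0.1
q0 = np.zeros(N); q0[1] = 1.0
q1 = np.zeros(N); q1[6] = 1.0
m, w1 = pdhg_w1_1d(q0, q1, dx)
print(np.round(m, 4), round(w1, 4))
```

The step sizes satisfy the standard PDHG condition \(\tau \sigma \Vert K\Vert _2^2\le 0.45^2\cdot 4<1\). For two point masses five cells apart, the iterates approach the exact flux given by cumulative sums, and the returned value approaches 0.5. A stopping rule such as the relative error of Remark 6 could replace the fixed iteration count.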

In the algorithm, we require the shrink operator with respect to the tropical metric, \(\text {shrink}_{\text {tr}}\), which is given in the following result.

Proposition 8

Let \(h>0\) and \(b_{1}\ge b_{2}\ge \cdots \ge b_{k}\ge 0 > b_{k+1} \ge \cdots \ge b_{n}\). We denote

and

Suppose

and

We let

and

Then

is the following unique point \(\mathbf{{x}}\in {{\mathbb {R}}}^{n}\), where

Proof

Note that by definition of \(t_{1}, t_{2}\), they are bounded by all of \(u_{i}\) with \(i\le j_{1}\) and all of \(v_{i}\) with \(i\le j_{2}\), respectively. In addition, we have

and

Now we claim that

Notice that (21) implies that

We also have that (22) implies that

Hence, the right-hand side of (23)

So our claim is proved.

Since \(h>0\) is a constant, we can multiply the objective function in (20) by 2h. Now, this new function is greater than or equal to

The global minimum of the last quadratic polynomial is attained exactly at the point \(\mathbf{{x}}\) in Proposition 8, so we have a lower bound for the new objective function, which is given when \({\mathbf {a}}=\mathbf{{x}}\). Finally, we note that the equality of (23) is attained at \(\mathbf{{x}}\), so this value is actually attained by \({\mathbf {a}}=\mathbf{{x}}\). \(\square \)

Example 3

When \(n=2\), given \((b_{1},b_{2})\in {{\mathbb {R}}}^{2}\), suppose \(x_{1} = f_{1}(b_{1},b_{2})\) and \(x_{2} = f_{2}(b_{1},b_{2})\), then the shrink operator is given as follows.

Remark 7

Proposition 8 provides an algorithm to compute the shrink. Suppose we have \(h>0\) and \({\mathbf {a}}_0, {\mathbf {b}}\in {{\mathbb {R}}}^{n}\) and we would like to find

Note that

Letting \({\mathbf {b}}' = {\mathbf {b}} + \frac{{\mathbf {a}}_0}{h}\), the optimization problem becomes the one in Proposition 8 for \({\mathbf {b}}'\) and h, after sorting the coordinates of \({\mathbf {b}}'\).
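A simple way to evaluate a tropical shrink numerically, offered as an alternative sketch rather than the case analysis of Proposition 8, uses Moreau's decomposition. Assuming \(L_{\mathrm {tr}}({\mathbf {a}})=\max _i(a_i,0)-\min _i(a_i,0)\) as in the proof of Proposition 3, parts (i) and (ii) of that proposition with \(p=1\) show that the convex conjugate of \(L_{\mathrm {tr}}\) is the indicator of \(\{\zeta \le 1\}\), so \(\zeta \) is the dual norm. Hence \(\mathrm {argmin}_{{\mathbf {a}}}\, h\,L_{\mathrm {tr}}({\mathbf {a}})+\frac{1}{2}\Vert {\mathbf {a}}-{\mathbf {y}}\Vert ^2 = {\mathbf {y}}-\mathrm {Proj}_{\{\zeta \le h\}}({\mathbf {y}})\), and the projection splits into the positive and the negative coordinates, each projected onto a capped simplex. We assume this normalization of the shrink, which may differ from (20) by a rescaling of h; the function names are hypothetical.

```python
import numpy as np

def tropical_norm(a):
    """Assumed tropical norm on R^n: max(a_i, 0) - min(a_i, 0)."""
    a = np.asarray(a, float)
    return max(a.max(), 0.0) - min(a.min(), 0.0)

def project_capped_simplex(y, r):
    """Euclidean projection of y >= 0 onto {x >= 0, sum(x) <= r}."""
    if y.sum() <= r:
        return y.copy()
    u = np.sort(y)[::-1]                      # sort-based simplex projection
    css = np.cumsum(u)
    k = np.nonzero(u - (css - r) / np.arange(1, len(u) + 1) > 0)[0][-1]
    theta = (css[k] - r) / (k + 1)
    return np.maximum(y - theta, 0.0)

def tropical_shrink(y, h):
    """argmin_a h*||a||_tr + 0.5*||a - y||^2 = y - Proj_{zeta <= h}(y)."""
    y = np.asarray(y, float)
    proj = np.zeros_like(y)
    pos, neg = y > 0, y < 0
    # The projection keeps signs, so positive and negative parts decouple.
    proj[pos] = project_capped_simplex(y[pos], h)
    proj[neg] = -project_capped_simplex(-y[neg], h)
    return y - proj

print(tropical_shrink([3.0, 3.0], 1.0))   # [2.5 2.5]
print(tropical_shrink([3.0, -3.0], 1.0))  # [ 2. -2.]
```

As with classical soft thresholding, the shrink returns \({\mathbf {0}}\) exactly when \(\zeta ({\mathbf {y}})\le h\); for instance, \({\mathbf {y}}=(0.3,-0.2)\) with \(h=1\) is mapped to the origin.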

4.2 Computing the tropical Wasserstein-2 distances

We now present an algorithm to compute the tropical Wasserstein-2 distance in the tropical projective torus \({{\mathbb {R}}}^3/{{\mathbb {R}}}{\mathbf{1}}\) identified with \({\mathbb {R}}^2\). Consider the same uniform lattice graph on a domain \(\varOmega \subset {\mathbb {R}}^2\) as in the case for the tropical Wasserstein-1 distance. Define the following matrices

where the time interval is discretized uniformly with \(N_t\) points, and \(N_x\) is the number of vertices of the uniform lattice graph. Here we assume Neumann boundary conditions for \(\varvec{\rho }\): \(\displaystyle \frac{\partial \rho }{\partial \hat{{\mathbf {n}}}} = 0\) on \(\partial \varOmega \), where \(\hat{{\mathbf {n}}}\) is the outward unit normal vector. Given the initial and terminal densities \(\rho _0\) and \(\rho _1\), the boundary conditions for \(\rho \) at \(t=0\) and \(t=1\) are

Define \(\varDelta t := \frac{1}{N_t}\). We discretize the minimization problem (15) as follows:

where

and

In \({\mathbb {R}}^2\), using (3), we can calculate the tropical norm of the flux function \({\mathbf {m}}\) by considering six cases, determined by the relative order of the two components \(\{{\mathbf {m}}_{{\mathbf {i}}+\frac{1}{2}e_v}\}^2_{v=1}\) and 0. The tropical norm of \({\mathbf {m}}\) is given as follows:

Let \(\varPhi =(\varPhi ^n_{{\mathbf {i}}})_{{\mathbf {i}}=1}^{N_x}{}_{n=1}^{N_t}\) here be the Lagrange multiplier which satisfies the Neumann boundary condition on the boundary of the domain. The minimization problem (24) can be reformulated as a saddle point problem.

Again, we implement G-Prox PDHG to solve the problem as follows:

where h, \(\tau \) are two small step sizes and

From (26), each component \({\mathbf {m}}^n_{{\mathbf {i}}+\frac{1}{2}}\), \(\varvec{\rho }^n_{{\mathbf {i}}}\), and \(\varPhi ^n_{{\mathbf {i}}}\) can be obtained. From the first iteration,

We calculate the minimizer by differentiating the objective with respect to \(\varvec{\rho }^n_{{\mathbf {i}}}\). The minimizer \(\varvec{\rho }^{k+1}\) is a positive root of the following cubic polynomial:

Thus, we can calculate the root by using a cubic solver.

where \(\text {root}^+(a,b,c)\) denotes a positive real root of the cubic equation \(x^3 + a x^2 + b x + c = 0\).
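A cubic solver for \(\text {root}^+\) can be sketched with NumPy's `np.roots`, which returns all (possibly complex) roots of a polynomial from its coefficients. The helper name, the tolerance for discarding complex roots, and the rule of returning the largest positive real root when there is more than one are our own assumptions; the text above only requires a positive root.

```python
import numpy as np

def positive_cubic_root(a, b, c, imag_tol=1e-9):
    """Hypothetical root^+(a, b, c): largest positive real root of x^3 + a x^2 + b x + c."""
    roots = np.roots([1.0, a, b, c])
    real = roots[np.abs(roots.imag) < imag_tol].real   # keep (numerically) real roots
    positive = real[real > 0]
    if positive.size == 0:
        raise ValueError("cubic has no positive real root")
    return positive.max()

# (x - 2)(x^2 + 1) = x^3 - 2x^2 + x - 2 has a single real root, x = 2.
print(positive_cubic_root(-2.0, 1.0, -2.0))
```

Since this root is computed independently at every grid point and time step, a vectorized closed-form (Cardano) solver may be preferable in practice; `np.roots` is used here only for clarity.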

We can reformulate the second iteration as follows:

Differentiating the objective with respect to \({\mathbf {m}}^n_{{\mathbf {i}}+\frac{1}{2}}\), we obtain the following expression:

Solving this expression gives an explicit solution for \(({\mathbf {m}}^n_{{\mathbf {i}}+\frac{1}{2}})^{k+1}\):

Let \(\mu =\tau /(\varvec{\rho }^n_{{\mathbf {i}}})^{k+1}\) and \(c=(c_1,c_2)\) be

The function \({\varvec{F}}(c,\mu )\) is then given as follows:

Similarly, we get an explicit formula of \(\varPhi ^{k+1}\) from the third iteration.

for \({\mathbf {i}}=1,\ldots ,N_x\) and \(n=1,\ldots ,N_t\). Here, \(\varDelta _{t,G} = \partial _{tt} + \varDelta _G\) is the discrete Laplacian operator over time and space.

Now, define

Then the relative error at iteration k is calculated as \(\displaystyle \frac{|E^k-E^{k-1}|}{|E^{k-1}|}\).

We are now ready to present our algorithm to compute the tropical Wasserstein-2 metric.

4.3 Convergence

Our proposed primal-dual algorithms for the tropical Wasserstein-1 and tropical Wasserstein-2 distances converge to their respective minimizers as given by Propositions 5 and 7.

Theorem 1

(i) Consider the G-Prox PDHG algorithm to compute the tropical Wasserstein-1 distance. Suppose that

$$\begin{aligned} \sqrt{\tau \mu }\Vert (-\varDelta _G)^{-\frac{1}{2}}\mathrm {div}_G\Vert _2<1. \end{aligned}$$Then \(({\mathbf {m}}^{k}, \varPhi ^{k})\) defined by (19) converges weakly to \(({\mathbf {m}}^*,\varPhi ^*)\).

(ii) Consider the G-Prox PDHG algorithm to compute the tropical Wasserstein-2 distance. Suppose that

$$\begin{aligned} \sqrt{\tau \mu }\Vert (-\varDelta _{t,G})^{-\frac{1}{2}}\mathrm {div}_{t,G}\Vert _2<1. \end{aligned}$$Then \(({\mathbf {m}}^{k}, \varvec{\rho }^k, \varPhi ^{k})\) defined by (26) converges weakly to \(({\mathbf {m}}^*,\varvec{\rho }^*,\varPhi ^*)\).

Proof

The proof follows that of Theorem 1 in Pock and Chambolle [40]; we verify that its conditions hold here. In the case of (i), we write the Lagrangian L as

where \(g({\mathbf {m}})=\Vert {\mathbf {m}}\Vert _{\mathrm {tr}}\), \(K=\text {div}_G\), and \(f^*(\varPhi )=\sum _{{\mathbf {i}}}\varPhi _{{\mathbf {i}}} (q_{{\mathbf {i}}}^0-q_{{\mathbf {i}}}^1)\). Observe that g, \(f^*\) are convex functions and K is a linear operator. Then there exists a saddle point \(({\mathbf {m}}^*,\varPhi ^*)\). Notice that the preconditioning norm for \(\varPhi \) is \(\varSigma :=\mu (-\varDelta _G)^{-1}\) and the preconditioning norm for \({\mathbf {m}}\) is \(T:=\tau \cdot \mathrm {Id}\) where \(\mathrm {Id}\) is an identity operator. Thus, the algorithm converges when \(\Vert \varSigma ^{\frac{1}{2}}KT^{\frac{1}{2}}\Vert _2^2<1\). This is our condition \(\sqrt{\tau \mu }\Vert (-\varDelta _G)^{-\frac{1}{2}}\mathrm {div}_G\Vert _2<1\), which finishes the proof. A similar argument holds for (ii). \(\square \)
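The role of the \(H^1\) preconditioning in this condition can be seen numerically. Assuming that the discrete gradient is the negative adjoint of the discrete divergence (which holds for the forward differences \(\nabla _G\) above paired with the matching backward-difference divergence), we have \(-\varDelta _G=\text {div}_G\,\text {div}_G^{\top }\), so \((-\varDelta _G)^{-\frac{1}{2}}\text {div}_G\), with the pseudo-inverse taken on mean-zero functions, has operator norm 1. The step-size condition then reduces to \(\tau \mu <1\), independently of \(\varDelta \mathbf{{x}}\), whereas the unpreconditioned condition involves \(\Vert \text {div}_G\Vert _2\sim 2/\varDelta \mathbf{{x}}\). The one-dimensional Python sketch below, with hypothetical function names, illustrates this.

```python
import numpy as np

def divergence_matrix(N, dx):
    """div_G on N nodes of a 1-D lattice with N-1 interior edges and zero boundary flux."""
    K = np.zeros((N, N - 1))
    for j in range(N - 1):
        K[j, j] = 1.0 / dx        # positive flux on edge (j, j+1) leaves node j ...
        K[j + 1, j] = -1.0 / dx   # ... and enters node j+1
    return K

def preconditioned_norm(N, dx):
    """||(-Delta_G)^{-1/2} div_G||_2 with -Delta_G = K K^T and a pseudo-inverse on constants."""
    K = divergence_matrix(N, dx)
    w, V = np.linalg.eigh(K @ K.T)
    keep = w > 1e-10 * w.max()            # drop the zero eigenvalue (constant functions)
    inv_sqrt = np.zeros_like(w)
    inv_sqrt[keep] = 1.0 / np.sqrt(w[keep])
    M = (V * inv_sqrt) @ V.T @ K
    return np.linalg.norm(M, 2)

for N in (8, 32, 128):
    dx = 1.0 / N
    plain = np.linalg.norm(divergence_matrix(N, dx), 2)
    print(N, round(plain, 2), round(preconditioned_norm(N, dx), 6))
```

The plain norm grows roughly like \(2/\varDelta \mathbf{{x}}\) as the grid is refined, while the preconditioned norm stays at 1, which is one way to understand why the G-Prox step sizes need not shrink with the mesh.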

5 Numerical experiments

In this section, we present the results of numerical experiments solving the tropical optimal transport problem for three different pairs of initial densities, using our proposed G-Prox primal-dual methods for the \(L^1\) (Wasserstein-1) and \(L^2\) (Wasserstein-2) problems. For each experiment, we give the minimizers of both the \(L^1\) and \(L^2\) tropical optimal transport problems.

Experiment 1. We consider a two-dimensional problem on \(\varOmega = [0,1]\times [0,1]\). The initial densities \(\rho _0\) and \(\rho _1\) are squares of the same size centered at \((\frac{1}{3},\frac{1}{3})\) and \((\frac{2}{3},\frac{2}{3})\), respectively. In this experiment, the parameters are

Figure 4 shows the minimizer m(x) of the tropical Wasserstein-1 distance and Fig. 5 shows the minimizer \(\rho (t,x)\) of the tropical Wasserstein-2 distance.

Experiment 1: \(L^2\) tropical optimal transport. The six figures show the geodesics of \(L^2\) tropical optimal transport from \(t=0\) to \(t=1\). The initial densities are the same as in Fig. 4

Experiment 2. Similar to Experiment 1, we consider a two-dimensional problem on \(\varOmega = [0,1]\times [0,1]\). The initial densities \(\rho _0\) and \(\rho _1\) are squares of the same size centered at \((\frac{1}{3},\frac{2}{3})\) and \((\frac{2}{3},\frac{1}{3})\), respectively. The same parameters are used as in Experiment 1. Together with Experiment 1, Experiment 2 shows that the minimizers of tropical optimal transport follow different geodesics depending on the positions of the initial densities. See Fig. 6 for the \(L^1\) result and Fig. 7 for the \(L^2\) result.

Experiment 2: \(L^2\) tropical optimal transport. The figures show the geodesics of \(L^2\) tropical optimal transport between two initial densities from \(t=0\) to \(t=1\). The initial densities are the same as in Fig. 6

Experiment 3. We again consider a two-dimensional problem on \(\varOmega = [0,1]\times [0,1]\). The initial density \(\rho _0\) at time 0 is a square centered at (0.5, 0.5) with width 0.2. The density \(\rho _1\) at time 1 consists of four squares of the same size centered at (0.2, 0.2), (0.2, 0.8), (0.8, 0.2) and (0.8, 0.8) with width 0.1. The same parameters are used as in Experiment 1. See Fig. 8 for the \(L^1\) result and Fig. 9 for the \(L^2\) result; in both, the geodesics of the minimizers depend on the direction in which the densities travel, consistent with Experiments 1 and 2.

Experiment 3: \(L^1\) tropical optimal transport. a, b show the initial densities \(\rho _0\) and \(\rho _1\), while c shows the geodesics of the \(L^1\) tropical optimal transport between \(\rho _0\) and \(\rho _1\). This experiment shows patterns of geodesics similar to those in Experiment 1 and Experiment 2

Experiment 3: \(L^2\) tropical optimal transport. The six figures show the geodesics of \(L^2\) tropical optimal transport from \(t=0\) to \(t=1\). The initial densities are the same as in Fig. 8

Software. Software to implement the numerical experiments presented in this paper is publicly available and located on the TropicalOT GitHub repository at https://github.com/antheamonod/TropicalOT.

6 Discussion

In this paper, we connected optimal transport theory—specifically, dynamic optimal transport—with tropical geometry. In particular, we explicitly formulated geodesics for the tropical Wasserstein-p distances over the tropical projective torus. The tropical projective torus is the ambient space of the polyhedral Gröbner complex of a homogeneous ideal in a polynomial ring \(K[x_0, x_1, \ldots , x_n]\) over a field K—a foundational object in tropical geometry. It is also the ambient space of the space of phylogenetic trees.

We constructed and implemented primal-dual algorithms to compute tropical Wasserstein-1 and 2 geodesics on the tropical projective torus. These results provide a framework for identifying all of the infinitely many geodesic paths between points in this space, which leads to a better understanding of paths on the ambient space that contains important structures in tropical geometry, in theory as well as in practice and applications. In addition, the Wasserstein-2 distance possesses an important structure for statistical inference, since it provides the form for Fréchet means on the tropical projective torus, as well as a general inner product structure.

Our research lays the foundation for further connections between optimal transport and tropical geometry. Our work provides tools to study aspects such as geometry and statistics on the tropical projective torus. A current work in progress is to characterize and solve the optimal transport problem on the subset of the tropical projective torus corresponding to the space of phylogenetic trees with 5 leaves, \({\mathcal {T}}_5\). This space is a union of 5!! = 15 polyhedral cones in the tropical projective torus, each of dimension 2. The main challenge in this study is the polyhedral structure of tree space (as discussed in Sect. 2.2), and in particular, how to handle the intersections of the cones: weaker forms of the divergence and gradient operators are required to traverse the cones. The present work solves the problem within a single cone, where the shrink operator already has six cases (see Table 1); we expect the characterization of the shrink operator to be combinatorially more complicated on all 15 cones of \({\mathcal {T}}_5\).

From the perspective of optimal transport, the combinatorial structure of the tropical metric poses several interesting challenges. For example, the partial differential equations derived in Sect. 3 are defined piecewise: in a two-dimensional sample space, six equations characterize the optimal transport geodesics. In the general case, there are interesting regularity issues to be studied further. The theory of optimal transport and the study of the associated density manifolds provide a natural basis for constructing heat equations with respect to the tropical metric. This offers a potential route to defining non-uniform probability distributions on the tropical projective torus. Classically, the solution to the heat equation gives rise to the Gaussian distribution, so a solution to the tropical heat equation is a candidate for a tropical Gaussian distribution on the tropical projective torus [11, 46]. The dynamic formulation of optimal transport with the tropical ground metric introduced in this paper also provides a foundation for studying displacement convexity and the Ricci curvature tensor on the tropical projective torus. In forthcoming work, we will study such questions further by applying the relevant work of Li [23, 24], which also addresses geometric and probabilistic questions in the context of optimal transport theory.

References

Akian, M., Gaubert, S., Niţică, V., Singer, I.: Best approximation in max-plus semimodules. Linear Algebra and its Applications 435(12), 3261–3296 (2011). https://doi.org/10.1016/j.laa.2011.06.009

Ambrosio, L., Gigli, N.: A User’s Guide to Optimal Transport, pp. 1–155. Springer Berlin Heidelberg, Berlin, Heidelberg (2013). https://doi.org/10.1007/978-3-642-32160-3_1

Ambrosio, L., Gigli, N., Savaré, G.: Gradient Flows: In Metric Spaces and in the Space of Probability Measures. Springer Science & Business Media, New York (2008)

Benamou, J.D., Brenier, Y.: A computational fluid mechanics solution to the Monge-Kantorovich mass transfer problem. Numerische Mathematik 84(3), 375–393 (2000). https://doi.org/10.1007/s002110050002

Benamou, J.-D., Carlier, G., Hatchi, R.: A numerical solution to Monge’s problem with a Finsler distance as cost. ESAIM: M2AN 52(6), 2133–2148 (2018). https://doi.org/10.1051/m2an/2016077

Brunn, H.: Ueber Ovale und Eiflächen. Inaugural dissertation, F. Straub, Munich (1887)

Çelik, T.Ö., Jamneshan, A., Montúfar, G., Sturmfels, B., Venturello, L.: Optimal Transport to a Variety. In: International Conference on Mathematical Aspects of Computer and Information Sciences, pp. 364–381. Springer (2019)

Chambolle, A., Pock, T.: A First-Order Primal-Dual Algorithm for Convex Problems with Applications to Imaging. J. Math. Imaging Vis. 40(1), 120–145 (2011)

Cohen, G., Gaubert, S., Quadrat, J.P.: Duality and Separation Theorems in Idempotent Semimodules. Linear Algebra and its Applications 379, 395–422 (2004). Special Issue on the Tenth ILAS Conference (Auburn, 2002). https://doi.org/10.1016/j.laa.2003.08.010

Divol, V., Lacombe, T.: Understanding the topology and the geometry of the persistence diagram space via optimal partial transport (2019). arXiv:1901.03048

El Maazouz, Y., Tran, N.M.: Statistics of Gaussians on local fields and their tropicalizations (2019). arXiv:1909.00559

Evans, S.N., Matsen, F.A.: The phylogenetic Kantorovich–Rubinstein metric for environmental sequence samples. Journal of the Royal Statistical Society: Series B (Statistical Methodology) 74(3), 569–592 (2012). https://doi.org/10.1111/j.1467-9868.2011.01018.x

Garba, M.K., Nye, T.M.W., Boys, R.J.: Probabilistic Distances Between Trees. Systematic Biology 67(2), 320–327 (2017). https://doi.org/10.1093/sysbio/syx080

Garba, M.K., Nye, T.M., Lueg, J., Huckemann, S.F.: Information geometry for phylogenetic trees (2020). arXiv:2003.13004

Gill, J., Linusson, S., Moulton, V., Steel, M.: A regular decomposition of the edge-product space of phylogenetic trees. Advances in Applied Mathematics 41(2), 158–176 (2008). https://doi.org/10.1016/j.aam.2006.07.007

Jacobs, M., Léger, F., Li, W., Osher, S.: Solving Large-Scale Optimization Problems with a Convergence Rate Independent of Grid Size. SIAM J Numer Anal 57(3), 1100–1123 (2019). https://doi.org/10.1137/18M118640X

Kantorovich, L.V.: On the translocation of masses. J Math Sci 133(4), 1381–1382 (2006)

Kloeckner, B.R.: A geometric study of Wasserstein spaces: Ultrametrics. Mathematika 61(1), 162–178 (2015). https://doi.org/10.1112/S0025579314000059

Kullback, S., Leibler, R.A.: On information and sufficiency. The Annals of Mathematical Statistics 22(1), 79–86 (1951). https://projecteuclid.org/euclid.aoms/1177729694

Lacombe, T., Cuturi, M., Oudot, S.: Large scale computation of means and clusters for persistence diagrams using optimal transport. In: Advances in Neural Information Processing Systems, pp 9770–9780 (2018)

Lafferty, J.D.: The Density Manifold and Configuration Space Quantization. Transactions of the American Mathematical Society 305(2), 699–741 (1988). http://www.jstor.org/stable/2000885

Le, T., Yamada, M., Fukumizu, K., Cuturi, M.: Tree-Sliced Variants of Wasserstein Distances. In: Wallach, H., Larochelle, H., Beygelzimer, A., d’Alché-Buc, F., Fox, E., Garnett, R. (eds.) Advances in Neural Information Processing Systems 32, pp. 12304–12315. Curran Associates, Inc. (2019). http://papers.nips.cc/paper/9396-tree-sliced-variants-of-wasserstein-distances.pdf

Li, W.: Transport Information Geometry I: Riemannian Calculus on Probability Simplex (2018). arXiv:1803.06360

Li, W.: Diffusion hypercontractivity via generalized density manifold (2019). arXiv:1907.12546

Li, W., Ryu, E.K., Osher, S., Yin, W., Gangbo, W.: A Parallel Method for Earth Mover’s Distance. J. Sci. Comput. 75(1), 182–197 (2018). https://doi.org/10.1007/s10915-017-0529-1

Lin, B., Tran, N.M.: Two-player incentive compatible outcome functions are affine maximizers. Linear Algebra and its Applications 578, 133–152 (2019). https://doi.org/10.1016/j.laa.2019.04.027

Lin, B., Yoshida, R.: Tropical Fermat-Weber Points. SIAM J. Discrete Math. 32(2), 1229–1245 (2018). https://doi.org/10.1137/16M1071122

Lin, B., Sturmfels, B., Tang, X., Yoshida, R.: Convexity in Tree Spaces. SIAM J. Discrete Math. 31(3), 2015–2038 (2017). https://doi.org/10.1137/16M1079841

Lyusternik, L.: Die Brunn-Minkowskische Ungleichung für beliebige messbare Mengen. C. R. (Dokl.) Acad. Sci. URSS, n. Ser. 3, 55–58 (1935)

Maclagan, D., Sturmfels, B.: Introduction to Tropical Geometry (Graduate Studies in Mathematics). American Mathematical Society (2015)

Minkowski, H.: Geometrie der Zahlen (2 vols.). Teubner, Leipzig (1896, 1910)

Monge, G.: Mémoire sur la théorie des déblais et des remblais. Histoire de l’Académie royale des sciences de Paris (1781)

Monod, A., Lin, B., Kang, Q., Yoshida, R.: Tropical Geometry of Phylogenetic Tree Space: A Statistical Perspective (2018). arXiv:1805.12400

Moulton, V., Steel, M.: Peeling phylogenetic ‘oranges’. Advances in Applied Mathematics 33(4), 710–727 (2004). https://doi.org/10.1016/j.aam.2004.03.003

Otto, F.: The Geometry of Dissipative Evolution Equations: The Porous Medium Equation. Commun. Partial Differ. Equ. 26(1–2), 101–174 (2001). https://doi.org/10.1081/PDE-100002243

Otto, F., Villani, C.: Generalization of an Inequality by Talagrand and Links with the Logarithmic Sobolev Inequality. Journal of Functional Analysis 173(2), 361–400 (2000). https://doi.org/10.1006/jfan.1999.3557

Page, R., Yoshida, R., Zhang, L.: Tropical principal component analysis on the space of phylogenetic trees. Bioinformatics (2020). https://doi.org/10.1093/bioinformatics/btaa564

Panaretos, V.M., Zemel, Y.: Statistical Aspects of Wasserstein Distances. Annu. Rev. Stat. Appl. 6(1), 405–431 (2019). https://doi.org/10.1146/annurev-statistics-030718-104938

Panaretos, V.M., Zemel, Y.: An Invitation to Statistics in Wasserstein Space. Springer Nature, New York (2020)

Pock, T., Chambolle, A.: Diagonal Preconditioning for First Order Primal-Dual Algorithms in Convex Optimization. In: 2011 International Conference on Computer Vision, pp 1762–1769 (2011)

Richter-Gebert, J., Sturmfels, B., Theobald, T.: First steps in tropical geometry. Contemp. Math. 377, 289–318 (2005)

Sato, R., Yamada, M., Kashima, H.: Fast Unbalanced Optimal Transport on Tree (2020). arXiv:2006.02703

Sommerfeld, M., Munk, A.: Inference for empirical Wasserstein distances on finite spaces. Journal of the Royal Statistical Society: Series B (Statistical Methodology) 80(1), 219–238 (2018). https://doi.org/10.1111/rssb.12236

Speyer, D., Sturmfels, B.: The Tropical Grassmannian. Adv. Geom. 4(3), (2004). https://doi.org/10.1515/advg.2004.023

Tang, X., Wang, H., Yoshida, R.: Tropical Support Vector Machine and its Applications to Phylogenomics (2020). arXiv:2003.00677

Tran, N.M.: Tropical Gaussians: A Brief Survey (2018). arXiv:1808.10843

Villani, C.: Topics in Optimal Transportation. Graduate Studies in Mathematics, vol. 58. American Mathematical Society (2003)

Villani, C.: Optimal Transport: Old and New, vol. 338. Springer Science & Business Media, New York (2008)

Wasserman, L.: Lecture notes on Statistical Methods for Machine Learning (2019)

Yoshida, R., Zhang, L., Zhang, X.: Tropical principal component analysis and its application to phylogenetics. Bull. Math. Biol. 81(2), 568–597 (2019)

Acknowledgements

The authors wish to thank Marzieh Eidi, Théo Lacombe, Victor Panaretos, Ronen Talmon, and Yoav Zemel for helpful discussions, with special thanks extended to Emil Saucan. A.M. wishes to acknowledge the Max Planck Institute for Mathematics in the Sciences for hosting her visit in Leipzig in July 2018, which inspired this work.

Funding

W.L. and W.L. are supported by the Air Force Office of Scientific Research under grant number AFOSR MURI FA9550-18-1-0502.

Ethics declarations

Conflicts of interest

The authors declare that they have no conflict of interest.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Lee, W., Li, W., Lin, B. et al. Tropical optimal transport and Wasserstein distances. Info. Geo. 5, 247–287 (2022). https://doi.org/10.1007/s41884-021-00046-6

DOI: https://doi.org/10.1007/s41884-021-00046-6