Abstract

This study assessed the accuracy of a low-cost marker-based motion capture system built on smartphone devices for estimating the spatiotemporal behavior of human gait, using the commercial OptiTrack system as the reference. Three test subjects were equipped with passive retroreflective markers and recorded at gait velocities of 1.50, 1.90, and 2.30 \(m\bullet {s}^{-1}\) while kinematic data and videos were collected. The smartphone motion capture system closely tracked the x-y trajectories and the joint relative angles of the hip, knee, and ankle (θ1, θ2, and θ3, respectively) measured by the OptiTrack system. For the joint relative angles of the hip and knee (θ1 and θ2), the average goodness-of-FIT exceeded 88.93% and the average normalized root mean square error (nRMSE) remained below 2.71% in all tests performed. The joint relative angle of the ankle (θ3, average FIT: 71.04% and nRMSE: 4.26%) was estimated less accurately because of the low capture rate of the smartphone system and the higher relative velocity of the lower extremities of the test subjects, which generated noise in the calculation of the x-y trajectories. This decrease in accuracy has been reported in other studies. Both motion capture systems also experienced marker data loss at the hip, highlighting the need to improve the spatial distribution of the optical devices. The OptiTrack system demonstrated better optical redundancy but still required improvements. The smartphone system, with its inherent limitations in optical redundancy and spatial distribution, can be enhanced by incorporating multiple cameras for a three-dimensional view. Despite these limitations, the low-cost smartphone system showed good performance with small errors relative to the commercial system, making it a cost-effective option with potential for further development. The rapid advancement of smartphone technology and its accessibility make it an attractive choice for motion capture applications.

Introduction

In recent years, optical motion capture systems (MOCAPs) have been used mainly for the digital animation of characters in the film and video game industries. This technology has also made rapid inroads in the field of human motion analysis and measurement because it provides accurate and reliable spatiotemporal measurements, versatile testing schemes, high spatial measurement resolution, and relative ease of implementation [1,2,3,4]. MOCAPs employ a single camera (2-D measurement) or multiple cameras (3-D measurement) together with sophisticated computer algorithms, which allow for a detailed assessment of human activities [5,6,7,8,9]. In the case of human gait, compared with traditional methods such as wearable sensors or force plates, optical systems offer non-invasive tracking, allowing for a more natural and unconstrained physical motion analysis [10,11,12]. This non-intrusive approach is particularly beneficial in the analysis of the dynamic interaction of human gait with civil structures [13,14,15,16,17,18], where it is important to capture and analyze motion patterns without disrupting the natural gait of the test subjects (TSs) as they cross the structures.

MOCAP systems achieve millimeter-level accuracy in tracking the position and orientation of body segments owing to advanced algorithms and calibration techniques, enabling a detailed understanding of the kinematics and kinetics of human gait. This level of accuracy is important for capturing subtle movements and biomechanical parameters during gait analysis. Accurate measurements are essential for detecting abnormalities, assessing rehabilitation progress, and designing customized interventions. By providing highly reliable data, MOCAP systems offer researchers and clinicians valuable tools for studying and evaluating human gait dynamics. Numerous MOCAP technologies have been developed and are widely used today [19,20,21,22]. Marker-based systems, often considered the gold-standard method for quantifying human activities, involve attaching retroreflective markers to specific body landmarks so that their positions can be tracked precisely. These markers reflect incident light back toward the cameras, thereby enabling accurate position and orientation calculations [23,24,25]. Markerless systems, in contrast, use computer algorithms to identify and track anatomical features without the need for markers. These markerless approaches leverage pattern recognition and machine-learning techniques to analyze captured video data and estimate joint positions and movements [9, 19, 21, 26]. Examples of popular marker-based systems used for the assessment of human gait include Vicon [15, 27,28,29], CODA [15, 18, 30, 31], and OptiTrack [32,33,34,35,36,37,38,39], whereas markerless systems such as Kinect [24, 40,41,42,43] and OpenPose [19, 44,45,46,47,48,49] have gained prominence in recent years.

MOCAP systems are often very expensive [27]. These systems are frequently tailored for specific applications and offer advanced features and capabilities. However, their high cost makes them inaccessible in many research and clinical settings, thereby limiting their widespread adoption and implementation. To address this problem, there is an increasing need to develop low-cost optical technologies that provide accurate motion capture capabilities while remaining affordable. With cost-effective and reliable solutions, researchers and clinicians can access motion analysis tools and promote their integration into various fields of human gait analysis [11, 12, 19, 41, 42]. Smartphones are promising devices for building MOCAP systems. They are equipped with high-resolution cameras, substantial computational power, and portability, making them attractive platforms for implementing motion capture technologies [50,51,52,53]. In addition, the wide availability of smartphones allows researchers to develop low-cost solutions that combine built-in cameras with computer vision algorithms to capture and analyze human gait. The versatility of smartphones allows for real-time data processing [54,55,56], immediate feedback [51, 57, 58], and seamless integration with other applications for data visualization and analysis [52, 53, 57, 59].

Although continuous advancements in smartphone technology have enhanced their potential as MOCAP devices, the evaluation of the accuracy, performance, and cost-effectiveness of motion capture with this type of low-cost device against gold-standard methods, such as marker-based systems, for assessing human gait is still in its early stages. This study compared the x-y trajectories of sagittal-plane gait measured using two marker-based MOCAP systems: a custom low-cost MOCAP system using a smartphone device and the commercial OptiTrack system. To evaluate human gait with the low-cost MOCAP system, the cameras of Huawei Ascend G7 smartphones were integrated into a marker-based system and used to evaluate three TSs (65.00 ± 8.00 kg and 1.65 ± 0.10 m) in the sagittal plane at three walking speeds (1.50, 1.90, and 2.30 \(m\bullet {s}^{-1}\)) on a treadmill. The results were compared with those obtained using the OptiTrack system. The remainder of this paper is organized as follows. Section "Experimental Methods" provides details of the experimental methods. In Section "Results", the results obtained for both MOCAP systems are presented and evaluated. Finally, Section "Discussion" presents a discussion of the results.

Experimental Methods

Investigation General Design

This investigation assessed the spatiotemporal behavior of human gait using two MOCAP systems: a marker-based OptiTrack system and a low-cost smartphone system. Three TSs were selected for the gait tests and their general anthropometric information was recorded. The TSs were first equipped with passive retroreflective markers. They then walked on the treadmill for at least 2.0 minutes to adapt to the testing environment. Subsequently, the TSs performed gait tests for 3.0 minutes while body kinematics and smartphone videos were collected by both MOCAP systems. The data collected during the gait tests were used to compare the tracking performance of the OptiTrack system and of marker-based motion capture with a smartphone camera for assessing gait kinematics.

Test Subjects (TSs)

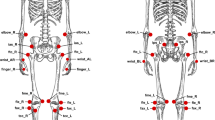

Three TSs (2 female, 1 male, 24.6 ± 5.5 years, 172.3 ± 11.2 cm, and 71.6 ± 9.6 kg) volunteered for this investigation. Basic anthropometric information on the TSs was obtained by physical measurement with a tape measure to provide reference data for similar investigations. The anthropometric measurements of the TSs are shown in Table 1 according to the scheme shown in Fig. 1. All TSs had been free of injuries for at least one month, had a body mass index (BMI) of less than 25.5 (normal weight level), and were in the 20–30-year age range.

Assembly, Acquisition, and Calibration Setup

Assembly setup

The experimental setup, shown in Fig. 2, was developed in the Laboratory of Seismic engineering and Structural at Universidad del Valle, Cali, Colombia. It consists of a SOLE F65 treadmill (belt length and width 1.40 × 0.55 m, 60 Hz belt speed update frequency, and 0.5–10 \(m\bullet {s}^{-1}\) speed range) on which the three TSs in this study walked. A rigid frame, set up at a horizontal distance of 2.25 m parallel to the sagittal plane of the treadmill, supported the six interconnected 3-D motion capture devices with their acquisition integrated circuit (OptiTrack system) and a Huawei Ascend G7 smartphone system with a 13-megapixel main camera. Video and motion capture data were recorded with both MOCAP systems. Each optical system transferred its information to a laptop, which processed the data captured during the human gait tests. A flash of light and an audible beep were used at the beginning and end of each human gait test, respectively, to synchronize the data from both systems.

Pinhole camera model calibration

The pinhole camera model is based on the model proposed by J. Bouguet [60], as presented in equation (1), where \({Depth}_{u}\) corresponds to the approximate depth to the sagittal plane, \(({x}_{u},{y}_{u})\) are the undistorted pixel coordinates, and \(({f}_{x},{f}_{y},{c}_{x},{c}_{y})\) are the intrinsic parameters of the camera. This model requires camera calibration to calculate the intrinsic and extrinsic parameters of the smartphone camera. The extrinsic parameters represent the location of the camera in the 3-D scene, and the intrinsic parameters represent the optical center and focal length of the camera. World points are transformed into camera coordinates using the extrinsic parameters, and camera coordinates are mapped onto the image plane using the intrinsic parameters. A checkerboard calibration pattern of 9 squares along the x-axis and 13 squares along the y-axis, with a square size of 7.0 cm, was used as the reference object for calibration. In this study, 66 images of the checkerboard, taken at the highest resolution in randomly varied positions close to the sagittal plane of the gait zone, were selected to estimate the pinhole camera model parameters of the smartphone camera, with a mean reprojection error per image of less than 0.25 pixels.

The intrinsic and extrinsic pinhole camera model parameters of the smartphone camera were calculated using the MATLAB function estimateCameraParameters, which takes images of the checkerboard at different positions as input. The intrinsic parameters are presented in Table 2.
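As an illustration of how the intrinsic parameters map pixels to positions in the gait plane, the following minimal Python sketch back-projects an undistorted pixel onto a plane at a known depth using the standard pinhole relation. It is a sketch under stated assumptions, not the processing code used in this study: the function name `pixel_to_plane` and the intrinsic values are hypothetical (they are not the values of Table 2), and the depth is assumed to equal the 2.25 m frame-to-treadmill distance.

```python
def pixel_to_plane(x_u, y_u, depth, fx, fy, cx, cy):
    """Back-project an undistorted pixel onto a plane at a known depth.

    Standard pinhole relation: metric offset = (pixel - optical center) * depth / focal length.
    The image y-axis usually points downward, so the sign of y may need flipping.
    """
    x = (x_u - cx) * depth / fx
    y = (y_u - cy) * depth / fy
    return x, y


# Hypothetical intrinsics in pixels (NOT the calibrated values from Table 2).
fx, fy, cx, cy = 3000.0, 3000.0, 2080.0, 1560.0
depth = 2.25  # metres, assumed camera-to-sagittal-plane distance

# A marker seen 300 px right of and 150 px above the optical center.
x, y = pixel_to_plane(cx + 300.0, cy - 150.0, depth, fx, fy, cx, cy)
print(x, y)
```

With these assumed values, one pixel corresponds to depth / fx = 0.75 mm in the gait plane, which gives a sense of the spatial resolution available for locating a 12.0 mm marker.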

Data Collection Procedures

Initially, the MOCAP systems were calibrated using the procedure outlined in Section "Assembly, Acquisition, and Calibration Setup" for the low-cost smartphone system and the procedure recommended by the manufacturer for OptiTrack. Nine passive retroreflective adhesive markers with a diameter of 12.0 mm were attached to specific joints of the human body, as shown in Fig. 3. Prior to each gait test, the TSs adapted to the gait velocity by walking on the treadmill for two minutes. Tests were conducted at three velocities: 1.50, 1.90, and 2.30 \(m\bullet {s}^{-1}\), chosen as representative of slow, normal, and fast human gait [61]. Data from both marker-based MOCAP systems were collected simultaneously during all human gait tests and used for the assessment in this investigation. The mean sample rate of the smartphone camera was determined to be 29.85 ± 0.89 fps, and an average capture rate of 100.0 fps was reported for the OptiTrack system.
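One way to obtain a mean ± standard deviation sample rate such as the one reported for the smartphone camera is to take the reciprocal of the interval between consecutive frame timestamps. The sketch below assumes that per-frame timestamps are available; the function name `frame_rate_stats` and the timestamps are hypothetical, and the text does not state how the reported value was actually computed.

```python
import statistics


def frame_rate_stats(timestamps_s):
    """Return (mean, sample standard deviation) of the instantaneous frame rate in fps."""
    rates = [1.0 / (b - a) for a, b in zip(timestamps_s, timestamps_s[1:])]
    return statistics.mean(rates), statistics.stdev(rates)


# Hypothetical frame timestamps in seconds with slightly irregular spacing.
stamps = [0.000, 0.033, 0.067, 0.100, 0.134]
mean_fps, std_fps = frame_rate_stats(stamps)
print(round(mean_fps, 2), round(std_fps, 2))
```

A non-zero standard deviation indicates variable frame timing, which is one reason to resample both systems onto a common uniform time base before comparing them.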

Marker-based OptiTrack and Low-Cost Smartphone System Data Processing

Gait kinematics were acquired using a middle-body sagittal model (human middle body, composed of seven rigid body segments defined by nine passive retroreflective markers), and the output was processed using custom algorithms in MATLAB to extract the joint coordinates. The anatomical joints were labelled as the head, shoulder, elbow, wrist, hip, knee, ankle, heel, and metatarsus, as shown in Fig. 3. Whereas the OptiTrack system tracks and computes gait kinematics using its own hardware and software, the low-cost MOCAP system tracks each of the nine passive retroreflective markers in each video frame. The spatial position of each marker in the gait plane was determined using the calibration parameters and the custom algorithms developed in MATLAB. The x-y positions of the nine reflective markers of the TSs were acquired and processed for the full duration of every test. When a measurement contained incorrect or empty data owing to non-redundant capture or an occluded marker (Figure , right), the gaps were filled using a combination of splines and estimates from previous data. The biomechanical results of marker-based motion capture were compared based on their potential relevance for acquiring kinematic data for human gait [6, 7, 13, 24, 25, 48]. Only the right side of the body was used for the assessment because it was closest to the sagittal view of the MOCAP systems. In addition, the gait records are long enough to support statistical analysis of the relative performance of both MOCAP systems in subsequent studies, positioning these records as reference data.
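The gap-filling step can be sketched as follows. This is a simplified illustration rather than the authors' MATLAB routine: linear interpolation is used here as a plain stand-in for the spline fit, trailing or leading gaps are filled by holding the nearest valid sample (a crude form of estimating from previous data), and the function name `fill_gaps` and the sample track are hypothetical.

```python
def fill_gaps(values):
    """Fill None entries of a marker coordinate track.

    Interior gaps: linear interpolation between the neighbouring valid samples
    (a simple stand-in for a spline). Edge gaps: hold the nearest valid sample.
    """
    filled = list(values)
    known = [i for i, v in enumerate(values) if v is not None]
    if not known:
        raise ValueError("no valid samples to interpolate from")
    for i, v in enumerate(values):
        if v is not None:
            continue
        prev = max((k for k in known if k < i), default=None)
        nxt = min((k for k in known if k > i), default=None)
        if prev is None:
            filled[i] = values[nxt]
        elif nxt is None:
            filled[i] = values[prev]
        else:
            w = (i - prev) / (nxt - prev)
            filled[i] = values[prev] + w * (values[nxt] - values[prev])
    return filled


# Hypothetical x-coordinate track (metres) with an occluded marker in three frames.
track = [0.10, 0.12, None, None, 0.18, None]
print(fill_gaps(track))
```

Interpolation of this kind is only reasonable for short gaps; long occlusions, such as those reported at the hip, leave too little information for any interpolant to recover the true trajectory.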

Motion Capture Systems Performance Evaluation

The tracking accuracy of the low-cost smartphone system relative to the gold-standard system (OptiTrack) was evaluated using the Normalized Root Mean Square Error (nRMSE) and goodness-of-FIT (FIT) coefficients, as described in equations (2) and (3), for the assessed joint angles, which were derived from the passive retroreflective markers across all temporal assessments and all TSs. The data acquired by both systems were resampled at 100.0 Hz for comparison.

where \({{\text{y}}}_{{\text{OptiTrack}}}\) and \({\widehat{{\text{y}}}}_{{\text{Smartphone}}}\) are the measurement signals (x-y trajectory) obtained with the OptiTrack and low-cost smartphone systems, respectively, \(x\) is the normalization factor, equal to the difference between the maximum and minimum values of the reference signal over the required range, and \(n\) is the number of data points.
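The comparison procedure can be illustrated with a short Python sketch that resamples a signal onto a uniform time base and then computes both coefficients. The definitions used here are assumptions: nRMSE is taken as the root mean square error divided by the range of the reference signal, and FIT follows the common normalized-error convention 100·(1 − ‖y − ŷ‖ / ‖y − mean(y)‖). The function names and sample values are hypothetical, and linear interpolation is assumed for resampling.

```python
import math


def resample(t, y, rate_hz):
    """Linearly interpolate samples (t, y) onto a uniform grid at rate_hz."""
    n = int(math.floor((t[-1] - t[0]) * rate_hz + 1e-9)) + 1
    out_t = [t[0] + k / rate_hz for k in range(n)]
    out_y, j = [], 0
    for tk in out_t:
        # Advance to the input interval [t[j], t[j+1]] that contains tk.
        while j < len(t) - 2 and t[j + 1] < tk:
            j += 1
        w = (tk - t[j]) / (t[j + 1] - t[j])
        out_y.append(y[j] + w * (y[j + 1] - y[j]))
    return out_t, out_y


def nrmse(y_ref, y_est):
    """RMSE normalized by the range of the reference signal, in percent."""
    n = len(y_ref)
    rmse = math.sqrt(sum((a - b) ** 2 for a, b in zip(y_ref, y_est)) / n)
    return 100.0 * rmse / (max(y_ref) - min(y_ref))


def fit(y_ref, y_est):
    """Goodness of fit in percent (assumed normalized-error definition)."""
    mean_ref = sum(y_ref) / len(y_ref)
    num = math.sqrt(sum((a - b) ** 2 for a, b in zip(y_ref, y_est)))
    den = math.sqrt(sum((a - mean_ref) ** 2 for a in y_ref))
    return 100.0 * (1.0 - num / den)


# Hypothetical joint-angle samples in degrees on a shared time base.
y_opti = [0.0, 10.0, 20.0, 10.0, 0.0]
y_phone = [1.0, 9.0, 21.0, 9.0, 1.0]
print(nrmse(y_opti, y_phone), fit(y_opti, y_phone))
```

In this toy example every sample differs by one degree over a 20-degree range, so the nRMSE is 5%. Normalizing by the range makes the error comparable across joints with different ranges of motion.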

Results

The x-y positions of the head, shoulder, elbow, wrist, hip, knee, ankle, heel, and metatarsus were acquired according to the arrangement of the retroreflective markers in Fig. 4. The low-cost smartphone system achieved acceptable spatiotemporal tracking compared with the commercial OptiTrack system, as shown in Figure. In this figure, three representative gait cycles at a velocity of 1.90 \(m\bullet {s}^{-1}\) are shown for illustrative purposes, and all other gait cycle data are included in the shaded bands. Likewise, the hip, knee, and ankle relative angles in the sagittal plane calculated from the low-cost smartphone system data show joint extensions with minimal differences from those calculated from the OptiTrack system data at gait velocities of 1.50, 1.90 and 2.30 \(m\bullet {s}^{-1}\), as shown in Fig. 5. The relative angles were selected for this comparative study because of their major contribution to the mechanical energy expenditure during gait, which is relevant to human gait models [62]. In these figures, the blue area is the size of the retroreflective marker calculated by the low-cost smartphone system, which for the x-y positions covers the trajectory determined by the OptiTrack system, evidencing the high tracking accuracy of this low-cost marker-based system.
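A relative joint angle can be obtained from three marker positions in the sagittal plane as the difference between the orientations of the two adjoining segments. The sketch below illustrates this for the knee. The sign convention, the zero reference (straight limb), and the function names are assumptions, since the exact definitions of θ1, θ2, and θ3 are given graphically rather than in the text; the marker coordinates are hypothetical.

```python
import math


def segment_angle(p_from, p_to):
    """Orientation of the segment p_from -> p_to in degrees, measured from the +x axis."""
    return math.degrees(math.atan2(p_to[1] - p_from[1], p_to[0] - p_from[0]))


def relative_angle(proximal, joint, distal):
    """Signed angle at `joint` between the proximal and distal segments.

    Returns 0 for a straight limb; the result is wrapped to [-180, 180).
    """
    d = segment_angle(joint, distal) - segment_angle(proximal, joint)
    return (d + 180.0) % 360.0 - 180.0


# Hypothetical marker positions in metres (x forward, y up).
hip, knee = (0.0, 1.0), (0.0, 0.5)
straight = relative_angle(hip, knee, (0.0, 0.0))   # ankle directly below the knee
flexed = relative_angle(hip, knee, (-0.5, 0.5))    # shank horizontal, pointing backward
print(straight, flexed)
```

Because the angle depends on three marker centroids, noise in any one of them propagates directly into the result, which is consistent with the ankle angle being the most sensitive to marker deformation.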

Relative angles calculated for the hip (θ1, left column), knee (θ2, middle column), and ankle (θ3, right column) with the OptiTrack (red) and low-cost smartphone (blue) systems at gait velocities of 1.50 (top), 1.90 (middle), and 2.30 \({\text{m}}\bullet {{\text{s}}}^{-1}\) (bottom). The blue area is the size of the retroreflective marker calculated by the low-cost smartphone system

The nRMSE and FIT for the assessed joint relative angles, across all temporal assessments and all TSs for the low-cost smartphone system and OptiTrack system are reported in Table 3 at 1.50, 1.90 and 2.30 \(m\bullet {s}^{-1}\).

Discussion

The relative hip (\({\theta }_{1}\)) and knee (\({\theta }_{2}\)) angles calculated from the OptiTrack and low-cost smartphone system data demonstrate a high level of accuracy in estimating human gait kinematics across all tested velocities. The goodness-of-FIT averages above 88.93%, with an average nRMSE below 2.71%. This accuracy surpasses the rates reported in previous studies for gait velocities between 2.5 and 3.4 \(m\bullet {s}^{-1}\) [63,64,65,66], as illustrated in Fig. 6. Considering the resources required to post-process the data collected with the MOCAP systems, described in Section "Marker-based OptiTrack and Low-Cost Smartphone System Data Processing", and the results in Section "Results", the low-cost smartphone system was more cost-effective than OptiTrack because of its simple calibration requirement and open-access algorithms. However, for the ankle angle (θ3), a lower performance was obtained, with an average goodness-of-FIT of 72.07% and nRMSE of 2.86%. This decrease in accuracy was due to the speed of the angular movement of the ankle and the low capture rate of the low-cost smartphone system (≈30.0 fps) compared with the OptiTrack system (≈100 fps). These factors deformed the marker in the acquired frame, propagating error into the calculation of the marker centroid, as shown in Figure (right). This reduces the accuracy of the estimated ankle angle (θ3) and increases the noise in the calculated data; this situation has been reported in other studies [67]. In addition, the maximum peaks of the relative angles evaluated by both MOCAP systems were determined for all TSs and velocities in this study, as shown in Table 4. These analyses showed differences between the maximum relative angles calculated by the two systems of less than 3.40% for speeds of 1.50-1.90 \(m\bullet {s}^{-1}\) and of less than 12.20% for the speed of 2.30 \(m\bullet {s}^{-1}\), demonstrating the robustness and accuracy of the low-cost smartphone system for calculating kinematics at typical gait speeds.
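The peak comparison can be expressed as a percent difference between the maxima of the two angle series. The sketch below assumes the difference is normalized by the reference (OptiTrack) peak, which the text does not state explicitly; the function name and the angle values are hypothetical and are not taken from Table 4.

```python
def peak_difference_percent(theta_ref, theta_est):
    """Percent difference between the maxima of two angle series, relative to the reference peak."""
    peak_ref = max(theta_ref)
    peak_est = max(theta_est)
    return 100.0 * abs(peak_est - peak_ref) / abs(peak_ref)


# Hypothetical knee-angle samples in degrees over one gait cycle.
optitrack = [5.0, 32.0, 60.0, 41.0, 8.0]
smartphone = [6.0, 30.5, 58.5, 42.0, 7.0]
diff = peak_difference_percent(optitrack, smartphone)
print(diff)
```

A peak-based measure is sensitive to single noisy samples, so it complements rather than replaces the nRMSE and FIT coefficients, which summarize agreement over the whole signal.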

Despite the optical redundancy demonstrated by the OptiTrack system (3D view) in the experimental setup (Fig. 2), there is a clear need to improve the spatial distribution of the optical devices around the TS to prevent the loss of kinematic data, even when only data in the sagittal plane of the gait are required. In contrast, the low-cost smartphone system uses a single optical device (2D view) and exhibits inherent limitations in optical redundancy and spatial distribution, which can be overcome by combining the views from multiple cameras to approach a three-dimensional view, as demonstrated in other studies [45, 53, 67,68,69]. Nevertheless, based on the results obtained, the MOCAP system with smartphones achieves good performance with small errors relative to the commercial OptiTrack system, positioning it as a low-cost motion capture system with potential for further development given the rapid advancement of smartphone technical specifications and their accessibility to the general population.

Limitations and Future Work

Overall, the results and discussion show that the low-cost smartphone system used as a MOCAP system exhibits a difference of less than 3.40% in the maximum peak relative angles of the joints in the sagittal plane during treadmill gait compared with the commercial OptiTrack system for velocities less than or equal to 1.90 \({\text{m}}\bullet {{\text{s}}}^{-1}\). However, because of the low capture rate of the smartphone device and its lack of inherent redundancy, markers that moved at a higher relative speed during the gait cycles were deformed during optical capture, which generated noise in the estimation of the x-y trajectories, as shown in Figure (right), and consequently in the calculation of the relative angle of the ankle (θ3). To overcome these limitations, smartphones with higher capture rates and a greater number of strategically placed devices can be used to provide optical redundancy, thereby enabling more precise and reliable estimations. The accuracy and cost-effectiveness of this low-cost smartphone-based motion capture system are expected to improve further owing to the increasing technical specifications of this technology and its easy accessibility. The findings of this study support smartphone-based motion capture as a precise, reliable, and cost-effective technology for the study and assessment of human gait. In addition, because these marker-based systems rely on paid software (e.g., MATLAB) for some of the data processing, further work is needed to implement these processing steps in free software (e.g., Python) in a form that practitioners can use without coding. In this regard, the integration of real-time analysis is expected to provide an important tool for professionals and researchers, which can be achieved using recent tools focused on real-time pose estimation [58].

Data Availability

Informed consent was obtained from all study participants according to the guidelines of the ethics committee of the Universidad del Valle. The raw and time-normalized marker-based kinematic data from the OptiTrack and smartphone systems, as well as the test videos, are available from Mendeley Data at https://doi.org/10.17632/hncgbssmdb.1

References

Moeslund TB, Granum E (2001) A survey of computer vision-based human motion capture. Comput Vis Image Underst. 81(3):231–268. https://doi.org/10.1006/cviu.2000.0897

Guerra-filho GB (2005) Optical motion capture: Theory and implementation. J. Theor Appl Informatics 12:61–89

Sharma S, Verma S, Kumar M, Sharma L (2019) Use of Motion Capture in 3D Animation: Motion Capture Systems, Challenges, and Recent Trends. In: Proc. Int. Conf. Mach. Learn. Big Data, Cloud Parallel Comput. Trends, Prespectives Prospect. Com, pp. 289–294, https://doi.org/10.1109/COMITCon.2019.8862448

Bilesan A et al (2018) Marker-based motion tracking using Microsoft Kinect. IFAC-PapersOnLine 51(22):399–404. https://doi.org/10.1016/j.ifacol.2018.11.575

Gavrila DM (1999) Human Motion Recognition. 73(1):82–98

Tsun MTK, Lau BT, Jo HS, Lau SL (2015) A human orientation tracking system using Template Matching and active Infrared marker. 2015 Int. Conf Smart Sensors Appl ICSSA 2015:116–121. https://doi.org/10.1109/ICSSA.2015.7322522

Moeslund TB, Hilton A, Krüger V (2006) A survey of advances in vision-based human motion capture and analysis. Comput Vis Image Underst 104(2-3):90–126 https://doi.org/10.1016/j.cviu.2006.08.002

Schacter DS, Donnici M, Nuger E, Mackay M, Benhabib B (2014) A multi-camera active-vision system for deformable-object-motion capture. J Intell Robot Syst Theory Appl 75(3–4):413–441. https://doi.org/10.1007/s10846-013-9961-0

Cheung KM, Baker S, Kanade T (2005) Shape-from-silhouette across time part II: Applications to human modeling and markerless motion tracking. Int J Comput Vis 63(3):225–245. https://doi.org/10.1007/s11263-005-6879-4

Connie T, Aderinola TB, Ong TS, Goh MKO, Erfianto B, Purnama B (2022) Pose-Based Gait Analysis for Diagnosis of Parkinson’s Disease. Algorithms 15(12). https://doi.org/10.3390/a15120474.

Qiu S et al (2022) Sensor network oriented human motion capture via wearable intelligent system. Int J Intell Syst 37(2):1646–1673. https://doi.org/10.1002/int.22689

Zhang P, Li Y, Zhuang Y, Kuang J, Niu X, Chen R (2023) Multi-level information fusion with motion constraints: Key to achieve high-precision gait analysis using low-cost inertial sensors. Inf Fusion 89:603–618. https://doi.org/10.1016/j.inffus.2022.09.009

Zheng F, Shao L, Racic V, Brownjohn J (2016) Measuring human-induced vibrations of civil engineering structures via vision-based motion tracking. Meas J Int Meas Confed 83:44–56. https://doi.org/10.1016/j.measurement.2016.01.015

Bocian M, Macdonald JHG, Burn JF, Redmill D (2015) Experimental identification of the behaviour of and lateral forces from freely-walking pedestrians on laterally oscillating structures in a virtual reality environment. Eng Struct 105:62–76. https://doi.org/10.1016/j.engstruct.2015.09.043

Racic V, Pavic A, Brownjohn JMW (2009) Experimental identification and analytical modelling of human walking forces Literature review. J Sound Vib 326(1–2):1–49. https://doi.org/10.1016/j.jsv.2009.04.020

Racic V, Pavic A, Brownjohn JMW (2013) Modern facilities for experimental measurement of dynamic loads induced by humans: A literature review. Shock Vib 20(1):53–67. https://doi.org/10.3233/SAV-2012-0727

Carroll SP, Owen JS, Hussein MFM (2013) Reproduction of lateral ground reaction forces from visual marker data and analysis of balance response while walking on a laterally oscillating deck. Eng Struct. 49:1034–1047. https://doi.org/10.1016/j.engstruct.2012.12.028

Racic V, Brownjohn JMW, Pavic A (2010) Reproduction and application of human bouncing and jumping forces from visual marker data. J Sound Vib. 329(16):3397–3416. https://doi.org/10.1016/j.jsv.2010.02.021

Van Hooren B, Pecasse N, Meijer K, Essers JMN (2023) The accuracy of markerless motion capture combined with computer vision techniques for measuring running kinematics. Scand J Med Sci Sport 966–978. https://doi.org/10.1111/sms.14319

Menolotto M, Komaris DS, Tedesco S, O’flynn B, Walsh M (2020) Motion capture technology in industrial applications A systematic review. Sensors (Switzerland) 20(19):1–25 https://doi.org/10.3390/s20195687.

Zago M, Luzzago M, Marangoni T, De Cecco M, Tarabini M, Galli M (2020) 3D Tracking of Human Motion Using Visual Skeletonization and Stereoscopic Vision. Front Bioeng Biotechnol 8:1–11. https://doi.org/10.3389/fbioe.2020.00181

Wang L, Li Y, Xiong F, Zhang W (2021) Gait recognition using optical motion capture: A decision fusion based method. Sensors 21(10):1–17. https://doi.org/10.3390/s21103496

Vox JP et al (2021) An evaluation of motion trackers with virtual reality sensor technology in comparison to a marker-based motion capture system based on joint angles for ergonomic risk assessment. Sensors 21(9):1–32. https://doi.org/10.3390/s21093145

Guess TM, Bliss R, Hall JB, Kiselica AM (2022) Comparison of Azure Kinect overground gait spatiotemporal parameters to marker based optical motion capture. Gait Posture 96:130–136. https://doi.org/10.1016/j.gaitpost.2022.05.021

Kanko RM, Laende EK, Davis EM, Selbie WS, Deluzio KJ (2021) Concurrent assessment of gait kinematics using marker-based and markerless motion capture. J Biomech 127:110665. https://doi.org/10.1016/j.jbiomech.2021.110665

Mündermann L, Corazza S, Andriacchi TP (2006) The evolution of methods for the capture of human movement leading to markerless motion capture for biomechanical applications. J. Neuroeng Rehabil. 3:1–11. https://doi.org/10.1186/1743-0003-3-6

Choo CZY, Chow JY, Komar J (2022) Validation of the Perception Neuron system for full-body motion capture. PLoS One 17:1–18. https://doi.org/10.1371/journal.pone.0262730

Abhayasinghe N, Murray I, Bidabadi SS (2019) Validation of thigh angle estimation using inertial measurement unit data against optical motion capture systems. Sensors (Switzerland) 19(3) https://doi.org/10.3390/s19030596

Windolf M, Götzen N, Morlock M (2008) Systematic accuracy and precision analysis of video motion capturing systems-exemplified on the Vicon-460 system. J Biomech 41(12):2776–2780. https://doi.org/10.1016/j.jbiomech.2008.06.024

Mella DA, Brevis W, Higham JE, Racic V, Susmel L (2019) Image-based tracking technique assessment and application to a fluid–structure interaction experiment. Proc Inst Mech Eng Part C J Mech Eng Sci 233(16):5724–5734 https://doi.org/10.1177/0954406219853852

Yeo SS, Park GY (2020) Accuracy Verification of Spatio-Temporal and. Sensors 20:1343

Cannavo A, Prattico FG, Bruno A, Lamberti F (2023) AR-MoCap: Using Augmented Reality to Support Motion Capture Acting. Proc. - 2023 IEEE Conf. Virtual Real. 3D User Interfaces, VR 2023, pp. 318–327. https://doi.org/10.1109/VR55154.2023.00047.

Žlajpah L, Petrič T (2023) Kinematic calibration for collaborative robots on a mobile platform using motion capture system. Robot Comput Integr Manuf 79:102446. https://doi.org/10.1016/j.rcim.2022.102446

Wu Y, Tao K, Chen Q, Tian Y, Sun L (2022) A Comprehensive Analysis of the Validity and Reliability of the Perception Neuron Studio for Upper-Body Motion Capture. Sensors 22(18) https://doi.org/10.3390/s22186954

Rosa-Clot M, Tina GM (2020) Tracking Systems. Float. PV Plants, 79–87. https://doi.org/10.1016/B978-0-12-817061-8.00007-5

Schepelmann A, Gerdts S (2022) Characterization of infrared optical motion tracking system in NASA's simulated lunar operations (SLOPE) laboratory (No. E-20035)

Bilesan A, Komizunai S, Tsujita T, Konno A (2021) Improved 3D Human Motion Capture Using Kinect Skeleton and Depth Sensor. J Robot Mechatronics 33(6):1408–1422 https://doi.org/10.20965/jrm.2021.p1408

Furtado JS, Liu HH, Lai G, Lacheray H, Desouza-Coelho J (2019) Comparative analysis of optitrack motion capture systems. In: Advances in Motion Sensing and Control for Robotic Applications: Selected Papers from the Symposium on Mechatronics, Robotics, and Control (SMRC’18)-CSME International Congress 2018, May 27-30, 2018 Toronto, Canada. Springer International Publishing, pp 15–31

Feng M et al (2019) Measurements of cervical range of motion using an optical motion capture system: Repeatability and validity. Exp Ther Med 4193–4202, https://doi.org/10.3892/etm.2019.8105

Ortiz AR, Blachowski B, Holobut P, Franco JM, Marulanda J, Thomson P (2017) Modeling and Measurement of a Pedestrian’s Center-of-Mass Trajectory. 2:159–167 https://doi.org/10.1007/978-3-319-54777-0

Franco JM, Caicedo JM, Marulanda J, Sutton M, Thomson P (2019) RGB-D-DIC technique for low-cost 3D displacement fields measurements. Eng Struct 197. https://doi.org/10.1016/j.engstruct.2019.109457

Franco JM, Mayag BM, Marulanda J, Thomson P (2017) Static and dynamic displacement measurements of structural elements using low cost RGB-D cameras. Eng Struct 153:97–105. https://doi.org/10.1016/j.engstruct.2017.10.018

Steinebach T, Grosse EH, Glock CH, Wakula J, Lunin A (2020) Accuracy evaluation of two markerless motion capture systems for measurement of upper extremities: Kinect V2 and Captiv. Hum Factors Ergon Manuf 30(4):291–302. https://doi.org/10.1002/hfm.20840

Lannan N, Zhou L, Fan G (2022) A Multiview Depth-based Motion Capture Benchmark Dataset for Human Motion Denoising and Enhancement Research. IEEE Comput Soc Conf Comput Vis Pattern Recognit Work 2022:426–435. https://doi.org/10.1109/CVPRW56347.2022.00058

Nakano N et al (2020) Evaluation of 3D Markerless Motion Capture Accuracy Using OpenPose with Multiple Video Cameras. Front Sport Act Living 2:1–9. https://doi.org/10.3389/fspor.2020.00050

Wai CY, Ngali MZB (2021) The biomechanics analysis: development of biomechanics analysis algorithm with openpose motion capture system. Research Progress in Mechanical and Manufacturing Engineering 2(2):658–668

Solichah U, Purnomo MH, Yuniarno EM (2020) Marker-less Motion Capture Based on Openpose Model Using Triangulation. In: Proc. - 2020 Int. Semin. Intell. Technol. Its Appl. Humanification Reliab. Intell. Syst. ISITIA 2020, pp 217–222. https://doi.org/10.1109/ISITIA49792.2020.9163662

Takeda I, Yamada A, Onodera H (2021) Artificial Intelligence-Assisted motion capture for medical applications: a comparative study between markerless and passive marker motion capture. Comput Methods Biomech Biomed Engin 24(8):864–873. https://doi.org/10.1080/10255842.2020.1856372

Gan S, Zhang X, Zhuge S, Ning C, Zhong L, Li Y (2023) A Multi-View Vision System for Astronaut Postural Reconstruction with Self-Calibration. Aerospace 10(3):298. https://doi.org/10.3390/aerospace10030298

Huang L, Liu G (2022) Functional motion detection based on artificial intelligence. J Supercomput 78(3):4290–4329. https://doi.org/10.1007/s11227-021-04037-3

Mrad Y, Elloumi Y, Akil M, Bedoui MH (2022) A Fast and Accurate Method for Glaucoma Screening from Smartphone-Captured Fundus Images. Irbm 43(4):279–289. https://doi.org/10.1016/j.irbm.2021.06.004

Fajrianti ED et al (2022) Application of Augmented Intelligence Technology with Human Body Tracking for Human Anatomy Education. Int J Inf Educ Technol 12(6):476–484. https://doi.org/10.18178/ijiet.2022.12.6.1644

Uhlrich SD et al (2022) OpenCap: 3D human movement dynamics from smartphone videos. bioRxiv, p 2022.07.07.499061

Yoo M et al (2022) Motion Estimation and Hand Gesture Recognition-Based Human–UAV Interaction Approach in Real Time. Sensors 22(7). https://doi.org/10.3390/s22072513

Tang S et al (2022) A smartphone-integrated optical sensing platform based on Lycium ruthenicum derived carbon dots for real-time detection of Ag+. Sci Total Environ 825:153913. https://doi.org/10.1016/j.scitotenv.2022.153913

Martinez-Alpiste I, Golcarenarenji G, Wang Q, Alcaraz-Calero JM (2022) Smartphone-based real-time object recognition architecture for portable and constrained systems. J Real-Time Image Process 19(1):103–115. https://doi.org/10.1007/s11554-021-01164-1

Wang N, Zhao X, Zhao P, Zhang Y, Zou Z, Ou J (2019) Automatic damage detection of historic masonry buildings based on mobile deep learning. Autom Constr 103:53–66. https://doi.org/10.1016/j.autcon.2019.03.003

Kane GA, Lopes G, Saunders JL, Mathis A, Mathis MW (2020) Real-time, low-latency closed-loop feedback using markerless posture tracking. Elife 9:1–29. https://doi.org/10.7554/ELIFE.61909

Thio SK, Bae SW, Park SY (2022) Lab on a smartphone (LOS): A smartphone-integrated, plasmonic-enhanced optoelectrowetting (OEW) platform for on-chip water quality monitoring through LAMP assays. Sensors Actuators B Chem 358:131543. https://doi.org/10.1016/j.snb.2022.131543

Bouguet JY (2012) Camera calibration toolbox for matlab. http://www.vision.caltech.edu/bouguetj/calib_doc/index.html

Ricciardelli F, Mafrici M, Ingólfsson ET (2014) Lateral Pedestrian-Induced Vibrations of Footbridges: Characteristics of Walking Forces. J Bridg Eng 19(9). https://doi.org/10.1061/(asce)be.1943-5592.0000597

Ren L, Jones RK, Howard D (2007) Predictive modelling of human walking over a complete gait cycle. J Biomech 40(7):1567–1574. https://doi.org/10.1016/j.jbiomech.2006.07.017

Okahisa T et al (2023) Between-day reliability and minimum detectable change of the Conventional Gait Model 2 and Plug-in Gait Model during running. Gait Posture 100:171–178 https://doi.org/10.1016/j.gaitpost.2022.12.006

Bramah C, Preece SJ, Gill N, Herrington L (2021) The between-day repeatability, standard error of measurement and minimal detectable change for discrete kinematic parameters during treadmill running. Gait Posture 85:211–216. https://doi.org/10.1016/j.gaitpost.2020.12.032

Noehren B, Manal K, Davis I (2010) Improving between-day kinematic reliability using a marker placement device. J Orthop Res 28(11):1405–1410. https://doi.org/10.1002/jor.21172

Yongli He RL (2020) Ac ce d M us pt. 2D Mater 0–6. https://doi.org/10.1088/2053-1583/abe778

Needham L et al (2021) The accuracy of several pose estimation methods for 3D joint centre localisation. Sci Rep 11(1):1–11. https://doi.org/10.1038/s41598-021-00212-x

Pagnon D, Domalain M, Reveret L (2022) Pose2Sim: An open-source Python package for multiview markerless kinematics. J Open Source Softw 7(77):4362 https://doi.org/10.21105/joss.04362

Slembrouck M, Luong H, Gerlo J, Schütte K, Van Cauwelaert D, De Clercq D, ... Philips W (2020) Multiview 3D markerless human pose estimation from openpose skeletons. In: Advanced Concepts for Intelligent Vision Systems: 20th International Conference, ACIVS 2020, Auckland, New Zealand, February 10–14, 2020, Proceedings 20. Springer International Publishing, pp 166–178

Acknowledgments

This work is part of the research project "Real-time hybrid simulations: a reliable, fast, and economical alternative for the evaluation of resilient structures," under the program "Emerging technologies for the mitigation of seismic risk in civil infrastructure," CT 463-2020. The authors would like to express their gratitude to the Universidad del Valle and the Ministerio de Ciencia, Tecnología e Innovación (Minciencias), which funded the project.

Funding

Open Access funding provided by Colombia Consortium.

Author information

Contributions

All authors contributed to this research and discussed the results and reviews during all phases of the research paper. All authors read and accepted the published version of the research paper.

Ethics declarations

Competing Interest

The authors declare that they have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this paper.

Institutional Review Board

This study was approved by the ethics committee of the Universidad del Valle (Acta de Aval No.011-2023); in addition, all participants signed an informed consent form before the measurements.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Castillo, B., Riascos, C., Franco, J.M. et al. Assessing Spatiotemporal Behavior of Human Gait: A Comparative Study Between Low-Cost Smartphone-Based Mocap and OptiTrack Systems. Exp Tech (2024). https://doi.org/10.1007/s40799-024-00716-x

DOI: https://doi.org/10.1007/s40799-024-00716-x