Abstract

Brain tumor segmentation is one of the most challenging problems in medical image analysis. Its goal is to generate an accurate delineation of brain tumor regions. In recent years, deep learning methods have shown promising performance in solving various computer vision problems, such as image classification, object detection and semantic segmentation. A number of deep learning based methods have been applied to brain tumor segmentation and achieved promising results. Considering the remarkable breakthroughs made by state-of-the-art technologies, we provide a comprehensive survey of recently developed deep learning based brain tumor segmentation techniques. More than 150 scientific papers are selected and discussed in this survey, extensively covering technical aspects such as network architecture design, segmentation under imbalanced conditions, and multi-modality processes. We also provide insightful discussions for future development directions.

Introduction

Medical image analysis is widely used in basic medical research and clinical treatment, e.g. computer-aided diagnosis [34], medical robots [126] and image-based applications [84]. It provides useful guidance for medical professionals to understand diseases and investigate clinical challenges in order to improve health-care quality. Among the various tasks in medical image analysis, brain tumor segmentation has attracted sustained attention in the research community (illustrated in Fig. 1a). Despite the tireless efforts of researchers, accurate brain tumor segmentation remains an open problem, owing to challenges such as location uncertainty, morphological uncertainty, low contrast imaging, annotation bias and data imbalance. Encouraged by the promising performance of deep learning, a number of deep learning based methods have been applied to brain tumor segmentation to extract feature representations automatically and achieve accurate and stable performance, as illustrated in Fig. 1b.

Fig. 1 Growth of scientific attention on deep learning based brain tumor segmentation. a Keyword frequency map in MICCAI from 2018 to 2020. The size of each keyword is proportional to its frequency. We observe that ‘brain’, ‘tumor’, ‘segmentation’ and ‘deep learning’ have drawn large research interest in the community. b The blue line represents the number of deep learning based solutions in the Multimodal Brain Tumor Segmentation Challenge (BraTS) in each year. The red line represents the Top-1 whole tumor Dice score on the test set in each year. Since 2012 (green dashed line), researchers have shifted their interest to deep learning based segmentation methods because of their powerful feature learning ability and the performance gains they bring. Best viewed in color

Glioma is one of the most common primary brain tumors and stems from glial cells. The World Health Organization (WHO) grades glioma into four levels based on microscopic images and tumor behaviors [92]. Grades I and II are Low-Grade Gliomas (LGGs), which are close to benign and grow slowly. Grades III and IV are High-Grade Gliomas (HGGs), which are cancerous and aggressive. Magnetic Resonance Imaging (MRI) is one of the most common imaging methods used before and after surgery, providing fundamental information for the treatment plan.

Image segmentation plays an active role in glioma diagnosis and treatment. For example, an accurate glioma segmentation mask may help surgery planning and postoperative observation, and improve the survival rate [6, 7, 94]. To quantify the outcome of image segmentation, we define the task of brain tumor segmentation as follows: given an input image from one or multiple image modalities (e.g. multiple MRI sequences), the system aims to automatically segment the tumor area from the normal tissues by classifying each voxel or pixel of the input data into a pre-set tumor region category. Finally, the system returns the segmentation map of the corresponding input. Figure 2 shows one exemplar HGG case with a multi-modality MRI as input and the corresponding ground truth segmentation map.
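The task definition above can be illustrated with a minimal sketch in plain Python. It assumes a network has already produced one score per class for every voxel; the label names and the score values are made up for illustration and are not taken from any dataset or method in this survey.

```python
# Toy illustration: per-voxel class scores -> segmentation map via argmax.
# LABELS and the scores below are hypothetical, chosen only for illustration.
LABELS = ["background", "edema", "enhancing tumor", "necrotic/non-enhancing core"]


def segment(scores):
    """Assign each voxel the index of its highest-scoring class."""
    return [max(range(len(s)), key=lambda c: s[c]) for s in scores]


# Three voxels, four class scores each (hypothetical network outputs).
scores = [
    [0.90, 0.05, 0.03, 0.02],
    [0.10, 0.70, 0.15, 0.05],
    [0.05, 0.15, 0.20, 0.60],
]

seg_map = segment(scores)
print([LABELS[i] for i in seg_map])
```

In a real system the list of voxels would be a 2D slice or 3D volume, but the per-voxel decision rule stays the same.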

Fig. 2 Exemplar input dataset with different MRI modalities and the corresponding ground truth segmentation map. Each frame represents a unique MRI modality. The last frame on the right is the ground truth with the corresponding manual segmentation annotation. Different colors represent different tumor sub-regions, i.e., gadolinium (GD) enhancing tumor (green), peritumoral edema (yellow) and necrotic and non-enhancing tumor core (NCR/NET) (red)

Difference from previous surveys

A number of notable brain tumor segmentation surveys have been published in the last few years. We present recent relevant surveys with details and highlights in Table 1. Among them, the survey papers closest to ours are those by Ghaffari et al. [44], Biratu et al. [11] and Magadza et al. [93]. The authors in [11, 44, 93] covered a majority of solutions from the BraTS2012 to BraTS2018 challenges, but lacked an analysis based on methodology categories and highlights. Two recent surveys by Kapoor et al. [71] and Hameurlaine et al. [51] also focused on the overview of classic brain tumor segmentation methods. However, both lacked technical analysis and discussion of deep learning based segmentation methods. A survey of early state-of-the-art brain tumor segmentation methods before 2013 was presented in [49], where most of the proposals combined conventional machine learning models with hand-crafted features. Liu et al. [86] reported a survey on MRI based brain tumor segmentation in 2014, which does not include deep learning based methods either. Nalepa et al. [98] analyzed the technical details and impacts of different kinds of data augmentation methods applied to brain tumor segmentation, while ours focuses on the technical analysis of deep learning based brain tumor segmentation methods.

There are a number of representative survey papers on similar topics published in recent years. Litjens et al. [84] summarized recent medical image analysis applications with deep learning techniques. This survey gave a broad study of medical image analysis, including several state-of-the-art deep learning based brain tumor segmentation methods before 2017. Bernal et al. [9] reported a review focusing on the use of deep convolutional neural networks for brain image analysis. This review only highlights the application of deep convolutional neural networks; other important learning strategies, such as segmentation under imbalanced conditions and learning from multiple modalities, were not mentioned. Akkus et al. [2] presented a survey on deep learning for brain MRI segmentation. Recently, Esteva et al. [40] presented a survey on deep learning for health-care applications, summarizing how deep learning and generalized methods promote health-care applications. For a broader view of object detection and semantic segmentation, a survey was recently published in [87], discussing the implications for both tasks.

Narrowly speaking, the term “deep learning” refers to the use of deep neural network models with stacked functional layers [48]. Neural networks are able to learn high dimensional hierarchical features and approximate any continuous function [83, 135]. Considering the achievements and recent advances of deep neural networks, several surveys have reported on the developed deep learning techniques, such as [50, 77].

Scope of this survey

Fig. 3 Challenges in segmentation of brain glioma tumors. a Glioma tumor exemplars with various sizes and locations inside the brain. b, c Statistical information of the training set in the Multimodal Brain Tumor Segmentation Challenge 2017 (BraTS2017). The left hand side of b shows the FLAIR and T2 intensity projection, and the right hand side shows the T1ce and T1 intensity projection. c Pie charts of the training data with labels, where the top chart shows the HGG labels and the bottom chart shows the LGG labels. Both region imbalance and label imbalance are evident. Best viewed in color

In this survey, we have collected and summarized the research studies reported in over one hundred scientific papers. We have examined major journals in the scientific community such as Medical Image Analysis and IEEE Transactions on Medical Imaging. We also evaluated the proceedings of major conferences, such as ISBI, MICCAI, IPMI, MIDL, CVPR, ECCV and ICCV, to capture frontier medical imaging research outcomes. We reviewed annual challenges and their related competition entries, such as the Multimodal Brain Tumor Segmentation Challenge (BraTS). In addition, some preprint versions of established methods are also included as a source of information.

The goal of this survey is to present a comprehensive technical review of deep learning based brain tumor segmentation methods, organized by architectural categories and strategy comparisons. We wish to explore how different architectures affect the segmentation performance of deep neural networks and how different learning strategies can be further improved for various challenges in brain tumor segmentation. We cover diverse high-level aspects, including effective architecture design, segmentation under imbalance and multi-modality processing. The taxonomy of this survey (Fig. 5) is designed to help the reader understand the technical similarities and differences between segmentation methods. The proposed taxonomy may also enable the reader to identify open challenges and future research directions.

We first present the background information of deep learning based brain tumor segmentation in the “Background” section. The rest of this survey is organized as follows. In the “Designing effective segmentation networks” section, we review the design paradigm of effective segmentation modules and network architectures. In the “Segmentation under imbalanced condition” section, we categorize, explore and compare the solutions for tackling the data imbalance issue, which is a long-standing problem in brain tumor segmentation. As multi-modality provides promising solutions towards accurate brain tumor segmentation, we finally review the methods of utilizing multi-modality information in the “Utilizing multi modality information” section. We conclude this paper in the “Conclusion” section. We also maintain a regularly updated project page to accommodate the updates related to this survey (Footnote 1).

Background

Research challenges

Despite the significant progress made in brain tumor segmentation, state-of-the-art deep learning methods still face several unsolved challenges. The challenges associated with brain tumor segmentation can be categorized as follows:

1. Location uncertainty: Glioma develops from glial cells, which surround nerve cells. Due to the wide spatial distribution of glial cells, either High-Grade Glioma (HGG) or Low-Grade Glioma (LGG) may appear at any location inside the brain.

2. Morphological uncertainty: Unlike a rigid object, the morphology, e.g. shape and size, of different brain tumors varies with large uncertainty. As the external layer of a brain tumor, edema tissues show different fluid structures, which provide barely any prior information for describing the tumor’s shape. The sub-regions of a tumor may also vary in shape and size.

3. Low contrast: High resolution and high contrast images are expected to contain diverse image information [88]. Due to the image projection and tomography process, MRI images may be of low quality and low contrast. The boundary between biological tissues tends to be blurred and hard to detect. Cells near the boundary are hard to classify, which makes precise segmentation more difficult to achieve.

4. Annotation bias: Manual annotation highly depends on individual experience, which can introduce an annotation bias during data labeling. As shown in Fig. 3a, some annotations tend to connect all the small regions together, while other annotations label individual voxels precisely. Annotation biases have a large impact on the segmentation algorithm during the learning process [28].

5. Imbalanced issue: As shown in Fig. 3b, c, the number of voxels is imbalanced across different tumor regions. For example, the necrotic/non-enhancing tumor core (NCR/NET) region is much smaller than the other two regions. This imbalance affects data-driven learning algorithms, as the extracted features may be dominated by large tumor regions [15].
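To make the imbalance issue concrete, the sketch below counts how often each label occurs in a flattened label map. The label coding (0 to 3) and the toy counts are hypothetical and do not reproduce the BraTS statistics shown in Fig. 3.

```python
from collections import Counter


def label_fractions(labels):
    """Return the fraction of voxels carrying each label."""
    counts = Counter(labels)
    total = len(labels)
    return {lab: counts[lab] / total for lab in sorted(counts)}


# Hypothetical flattened label map:
# 0 = background, 1 = edema, 2 = enhancing tumor, 3 = necrotic/non-enhancing core
toy_labels = [0] * 90 + [1] * 6 + [2] * 3 + [3] * 1

fractions = label_fractions(toy_labels)
print(fractions)
```

Even in this toy map the smallest region covers a tiny share of the voxels, so a loss that treats every voxel equally is dominated by the background and the large regions.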

Progress in the past decades

Representative research milestones of brain tumor segmentation are shown in Fig. 4. In the late 1990s, researchers such as Zhu et al. [158] started to use a Hopfield Neural Network with active contours to extract the tumor boundary and dilate the tumor region. However, training a neural network was highly constrained by limited computational resources and technical support. From the late 1990s to the early 2010s, most brain tumor segmentation methods focused on traditional machine learning algorithms with hand-crafted features, such as expert systems with multi-spectral histograms [32], segmentation with templates [72, 108], graphical models with intensity histograms [33, 133], and tumor boundary detection from a latent atlas [95]. These early works pioneered the use of machine learning in solving brain tumor segmentation problems. However, early attempts have significant shortcomings. First, most of the early works only focused on the segmentation of the whole tumor region, that is, the segmentation result has only one category. Compared with recent brain tumor segmentation algorithms, early works are formulated under strong conditions and rely on unrealistic assumptions. Second, manually designed feature engineering is constrained by prior knowledge, which cannot be fully generalized. Last but not least, early research works fail to address challenges such as appearance uncertainty and data imbalance.

With the revolutionary breakthrough of deep learning technology [74], researchers began to focus on using deep neural networks to solve various practical problems. Pioneering works from Zikic et al. [160], Havaei et al. [52] and Pereira et al. [106] designed customized deep convolutional neural networks (DCNNs) to achieve accurate brain tumor segmentation. With the breakthroughs brought by the Fully Convolutional Network (FCN) [90] and U-Net [111], later innovations [59, 147] in brain tumor segmentation focused on building fully convolutional encoder-decoder networks without fully connected layers to achieve end-to-end tumor segmentation.

A long-standing challenge in brain tumor segmentation is data imbalance. To deal effectively with the imbalance problem, researchers have tried different solutions, such as network cascades and ensembles [64, 67, 130], multi-task learning [97, 150], and customized loss functions [120]. Another solution is to fully utilize information from multiple modalities. Recent research has focused on modality fusion [142] and dealing with missing modalities [152].

Based on the evolution, we generally categorize the existing deep learning based brain tumor segmentation methods into three categories, i.e., methods with effective architectures, methods for dealing with imbalanced condition and methods of utilizing multi-modality information. Figure 5 shows a taxonomy of the research work in deep learning based brain tumor segmentation.

Related problems

There are a number of unsolved problems that relate to brain tumor segmentation. Brain tissue segmentation, or anatomical brain segmentation, aims to label each unit with a unique brain tissue class. This task assumes that the brain image does not contain any tumor tissue or other anomalies [12, 102]. The goal of white matter lesion segmentation is to segment the white matter lesion from the normal tissue. In this task, the white matter lesion does not contain sub-regions such as tumor cores, so segmentation may be achieved through binary classification methods. Tumor detection aims to detect abnormal tumors or lesions and report the predicted class of each tissue. Generally, this task has a bounding box as the detection result and a label as the classification result [37, 38, 46]. It is worth mentioning that some research methods in brain tumor segmentation only return a single label segmentation mask or the center point of the tumor core without sub-region segmentation. In our paper, we focus on tumor segmentation with sub-region level semantic segmentation as the main topic. Disorder classification extracts pre-defined features from brain scan images and then classifies the feature representations into graded disorders such as High-Grade Gliomas (HGGs) vs Low-Grade Gliomas (LGGs), Mild Cognitive Impairment (MCI) [122], Alzheimer’s Disease (AD) [121] and Schizophrenia [107]. Survival prediction identifies tumors’ patterns and activities [136] in order to predict the survival rate as a supplement to clinical diagnosis [16]. Both disorder classification and survival prediction can be regarded as downstream tasks that build on the tumor segmentation outcomes.

Contributions of this survey

A large number of deep learning based brain tumor segmentation methods have been published with promising results. Our paper, as a platform, provides a comprehensive and critical survey of state-of-the-art brain tumor segmentation methods. We anticipate that this survey supplies useful guidelines and coherent technical insights to academia and industry. The major contributions of this survey can be summarized as follows:

1. We present a comprehensive review to categorize and outline deep learning based brain tumor segmentation methods with a structured taxonomy of various important technical innovation perspectives.

2. We present the reader with a summary of the research progress of deep learning based brain tumor segmentation with detailed background information and system comparisons (e.g. Tables 1, 5).

3. We carefully and extensively compare existing methods based on results from publicly accessible challenges and datasets (e.g. Tables 2, 3, 4), with critical summaries and insightful discussions.

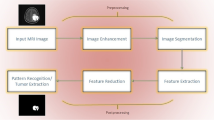

Designing effective segmentation networks

Compared with complex feature engineering pipelines, recent deep learning methods mainly rely on designing effective deep neural networks to automatically extract high-dimensional discriminative features. Designing effective modules and network architectures has become one of the most important factors for achieving accurate segmentation performance. In this section, we review two important design guidelines for deep learning based brain tumor segmentation: designing effective modules and designing network architectures.

There are mainly two principles to follow when designing effective modules. One is to learn high level semantics and localize targets precisely, through enlargement of the receptive field [81, 91, 144], attention mechanisms [60, 131, 150], feature fusion updates [85, 154] and other forms. The other is to reduce the number of network parameters and speed up training and inference, thereby saving computational time and resources [4, 14, 22, 29, 105, 117].

The design of the network architecture is mainly reflected in the transition from a single-channel network to a multi-channel network, from a network with fully connected layers to a fully convolutional network, and from a simple network to a deep cascaded network. The purpose is to deepen the network, enhance its feature learning ability and achieve more precise segmentation. In the following, we divide these methods into categories and review them comprehensively. A systematic comparison between various network architectures and modules is shown in Fig. 6.

Fig. 6 Structural comparison between representative methods based on designing effective network modules and architectures. From top-left to bottom-right: (a1) CNN in [160], (b1) CNN with (b2) residual convolution module [19], (c1) CNN with (c2) full resolution residual unit [66], (d1) CNN with (d2) dense connection module [114], (e1) CNN with (e2) residual dilation block [91], (f1) CNN with (f2) atrous convolution feature pyramid module [155], (g1) FCN with (g2) multi-fiber unit [22], (h1) FCN with (h2) reversible block [14] and (i1) FCN with (i2) modality fusion module [153]. Best viewed in color

Designing specialized modules

Modules for higher accuracy

Numerous methods for brain tumor segmentation focus on designing effective modules inside neural networks, aiming to stabilize training and learn informative, discriminative features for accurate segmentation. Early design attempts followed the pattern of well-known networks such as AlexNet [74] and gradually increased the network depth by stacking convolutional blocks. Early research works such as [39, 110, 147] stacked several blocks composed of convolutional layers with a large kernel size (typically greater than 5), pooling layers and activation layers. Blocks with a large convolution kernel can capture details, but at the cost of a large number of parameters to be trained. Other research works such as [106, 160] followed the pattern of VGG [119] and built convolutional layers with a small kernel (typically 3) as the basic block. Further research work such as [52] stacked hybrid blocks with a combination of different kernel sizes, where large kernels tend to find global features (such as tumor location and size) with a large receptive field and small kernels tend to capture local features (such as boundary and texture) with a small receptive field. As stacking two \(3\times 3\) convolutional layers yields the same receptive field as a single \(5\times 5\) layer while requiring fewer parameters, most recent tumor segmentation works constructed basic network blocks by stacking \(3\times 3\) layers, and started to extend to volumetric reconstruction in MRI with \(3\times 3\times 3\) kernels [18, 62].
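The comparison between two stacked \(3\times 3\) layers and one \(5\times 5\) layer can be checked with a few lines of arithmetic. The sketch below assumes stride-1, undilated 2D convolutions with the same number of input and output channels and ignores bias terms; the channel count of 64 is an arbitrary illustrative choice.

```python
def stacked_receptive_field(kernel_sizes):
    """Receptive field of stride-1, undilated convolutions applied in sequence."""
    rf = 1
    for k in kernel_sizes:
        rf += k - 1  # each layer widens the field by (k - 1)
    return rf


def conv2d_weights(kernel_size, channels):
    """Weights of one 2D conv layer with `channels` inputs and outputs (no bias)."""
    return kernel_size * kernel_size * channels * channels


channels = 64  # hypothetical channel count

two_3x3 = 2 * conv2d_weights(3, channels)
one_5x5 = conv2d_weights(5, channels)

print(stacked_receptive_field([3, 3]), stacked_receptive_field([5]))
print(two_3x3, one_5x5)
```

Under these assumptions both designs see a 5-pixel-wide window, while the stacked version uses 18C² weights instead of 25C² for C channels.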

As the number of stacked layers increases, the network becomes deeper, which can cause gradient explosion and vanishing during training. In order to stabilize training and reach higher segmentation accuracy, early brain tumor segmentation methods such as [21] and [19] followed ResNet [53] and introduced residual connections into module design. A residual connection helps solve the problem of gradient vanishing and explosion by adding the input of a convolution module to its output, which avoids degradation and leads to faster convergence with better accuracy. Residual connections have since become one of the standard operations for designing modules and complex network architectures. In subsequent works [45, 114, 132, 156], the authors followed DenseNet [57] and expanded residual connections to dense connections. Although the dense connection design appears more conducive to gradient back-propagation, its densely connected structure can multiply memory usage during network training.
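The difference between residual and dense connections can be sketched on plain lists of numbers. This is a toy illustration, not the design of any cited method: `transform` and the entries of `transforms` stand in for convolution modules and are hypothetical placeholders.

```python
def residual_block(x, transform):
    """Residual connection: add the module input to the module output."""
    fx = transform(x)
    return [xi + fi for xi, fi in zip(x, fx)]


def dense_block(x, transforms):
    """Dense connection: each module sees the input and all earlier outputs,
    and its own output is concatenated onto that growing feature list."""
    features = list(x)
    for t in transforms:
        features = features + t(features)
    return features


x = [1.0, 2.0]

# If the module contributes nothing, the residual block is an identity mapping.
identity_out = residual_block(x, lambda v: [0.0] * len(v))

# Two placeholder modules that each emit one new feature (the sum of their input).
dense_out = dense_block(x, [lambda v: [sum(v)], lambda v: [sum(v)]])

print(identity_out, dense_out)
```

The dense block keeps every earlier feature alive, which is why its feature list (and hence memory use) grows with each module, whereas the residual block keeps the feature size fixed.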

By stacking convolution modules and using residual connections inside and outside modules, neural networks can be made deeper and features can be learnt with higher dimensions. However, this process may sacrifice spatial resolution: the resolution of high dimensional feature maps is much smaller than that of the original data. In order to preserve the spatial resolution of features whilst still expanding the receptive field, [81, 91, 144] replaced the standard convolution layer with the dilated convolution layer [139]. Dilated convolution brings several benefits. First, it enlarges the receptive field without introducing additional parameters. Larger receptive fields are helpful for segmenting large-area targets, such as edema. Second, dilated convolution avoids the loss of spatial resolution. Thus, the position of the object to be segmented can be accurately localized in the original input space. However, the problem of incorrect localization and segmentation of small structures remains to be solved. In response, [30] proposed a multi-scale dilated convolution, or atrous spatial pyramid pooling, module that captures the semantic context describing subtle details of the object.
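As a minimal sketch of how dilation enlarges the receptive field without adding parameters, the 1D example below spaces the kernel taps `dilation` samples apart. It uses a 'valid' convolution without padding for simplicity, so the output is shorter than the input; practical networks usually pad to keep the resolution. The signal and kernel values are arbitrary.

```python
def effective_kernel_size(kernel_size, dilation):
    """Extent of the input window covered by a dilated kernel."""
    return (kernel_size - 1) * dilation + 1


def dilated_conv1d(signal, kernel, dilation=1):
    """'Valid' 1D cross-correlation with taps spaced `dilation` samples apart."""
    span = effective_kernel_size(len(kernel), dilation)
    out = []
    for start in range(len(signal) - span + 1):
        out.append(sum(w * signal[start + j * dilation] for j, w in enumerate(kernel)))
    return out


signal = [1, 2, 3, 4, 5, 6, 7]
kernel = [1, 1, 1]  # three weights in both cases

print(dilated_conv1d(signal, kernel, dilation=1))  # each output covers 3 samples
print(dilated_conv1d(signal, kernel, dilation=2))  # each output covers 5 samples
```

The kernel has three weights in both calls; only the spacing between taps changes, which is what widens the window from 3 to 5 samples.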

Modules for efficient computation

Designing and stacking complex modules helps learn high-dimensional discriminative features and achieve precise segmentation, but it requires substantial computational resources and long training and inference time. In response to this demand, many works have adopted lightweight ideas in module design. At similar accuracy, lightweight modules require fewer computing resources and shorter training and inference time. [3] is one of the earliest research works aiming at speeding up brain tumor segmentation. The authors of [3] reordered the input data (a data sample rotated by 6 degrees) so that samples with high visual similarity are placed closer together in memory, in an attempt to speed up I/O communication. Instead of managing the input data, [29] chose to build a U-Net variant with fewer down-sampling channels to reduce the computational cost.

The above-mentioned works used fewer computational resources, but lost information and decreased segmentation accuracy. Inspired by the reversible residual network [47], [14] introduced reversible blocks into U-Net, where each layer’s activations can be recomputed from the following layer’s activations during the backward pass. Thus, no additional memory is needed to store intermediate activations, which reduces the memory cost. [105] further extended reversible blocks by introducing Mobile Reversible Convolution Blocks (MBConvBlock), as used in MobileNetV2 [112] and EfficientNet [125]. In addition to the reversible computation design, MBConvBlock replaced standard convolutions with depthwise separable convolutions. A depthwise separable convolution first filters each input channel separately using a depthwise convolution and then merges the resulting feature maps using \(1\times 1\times 1\) pointwise convolutions, which further reduces the number of parameters compared with a standard convolution. Later research works, including 3DESPNet [101] and DMFNet [22], further extended this idea with dilated convolutions, requiring fewer computational resources while preserving most of the spatial resolution.
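The two ideas in this paragraph can be sketched in a few lines. The first part assumes the additive coupling commonly used in reversible residual networks (split the features into two halves and update each half from the other); the functions `f` and `g` are hypothetical stand-ins for convolution sub-modules. The second part compares weight counts of a standard and a depthwise separable 3D convolution, ignoring bias terms and using arbitrary channel counts. Neither part is the exact implementation of any cited method.

```python
def rev_forward(x1, x2, f, g):
    """Additive coupling: y1 = x1 + f(x2), y2 = x2 + g(y1)."""
    y1 = [a + b for a, b in zip(x1, f(x2))]
    y2 = [a + b for a, b in zip(x2, g(y1))]
    return y1, y2


def rev_inverse(y1, y2, f, g):
    """Recover the block inputs from its outputs, so inputs need not be stored."""
    x2 = [a - b for a, b in zip(y2, g(y1))]
    x1 = [a - b for a, b in zip(y1, f(x2))]
    return x1, x2


def standard_conv3d_weights(k, c_in, c_out):
    """Weights of a standard k x k x k convolution (no bias)."""
    return k ** 3 * c_in * c_out


def separable_conv3d_weights(k, c_in, c_out):
    """Depthwise k x k x k filter per input channel plus 1x1x1 pointwise mixing."""
    return k ** 3 * c_in + c_in * c_out


# Placeholder sub-modules (hypothetical; real ones would be convolutions).
f = lambda v: [2 * t for t in v]
g = lambda v: [t + 1 for t in v]

y1, y2 = rev_forward([1, 2], [3, 4], f, g)
x1, x2 = rev_inverse(y1, y2, f, g)
print(x1, x2)

print(standard_conv3d_weights(3, 32, 64), separable_conv3d_weights(3, 32, 64))
```

Because the inputs can be reconstructed exactly from the outputs, the backward pass can recompute activations instead of caching them, trading extra computation for lower memory use.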

Designing effective architectures

A major factor behind the success of deep neural networks in various fields is the effort invested in designing intelligent and effective network architectures. We divide most deep learning based brain tumor segmentation networks into single/multiple path networks and encoder–decoder networks according to the characteristics of their structures. Single and multiple path networks are used to extract features and classify the center pixels of the input patch. Encoder–decoder networks are designed in an end-to-end fashion, that is, the encoder extracts deep features from part of or the entire image, and the decoder then conducts feature-to-segmentation mapping. In the following subsections, we conduct a systematic analysis and comparison of various architecture designs.

Multi-path architecture

Here we refer to a network path as a flow of data processing (Fig. 7). Many research works, e.g. [106, 128, 160], use single path networks for their computational efficiency. Compared with single path networks, multi-path networks can extract different features from pathways of different scales. The extracted features are combined (added or concatenated) for further processing. A common interpretation is that a large scale path (a path with a large kernel or input) allows the network to learn global features, whereas a small scale path (a path with a small kernel or input) allows the network to learn local features. As discussed in the previous section, global features tend to provide global information such as tumor location, size and shape, while local features provide descriptive details such as tumor texture and boundary.

The work of Havaei et al. [52] is one of the early multi-path network based solutions. The authors reported a novel two-pathway structure that learns local tumor information as well as global context. The local pathway uses a \(7\times 7\) convolution kernel and the global pathway uses a \(13\times 13\) convolution kernel. In order to utilize CNN architectures, the authors designed several variant architectures that concatenate CNN outputs. Castillo et al. [19] used a three-pathway CNN to segment brain tumors. Different from [52], which used kernels of different scales, [19] feeds each path with patches of a different size, e.g. patches with low (\(15\times 15\)), medium (\(17\times 17\)) and normal (\(27\times 27\)) resolutions. Thus, each path can learn specific features at a different spatial resolution. Inspired by [52], Akil et al. [1] extended the network structure with overlapping patch prediction methods, where the center of the target patch is associated with the neighboring overlapping patches.

Instead of building multi-path networks with kernels of different sizes, other research works attempt to learn local-to-global information from the input directly. For example, Kamnitsas et al. [69] presented a dual pathway network that takes patches of different sizes as input: a normal resolution input of size \(25 \times 25 \times 25\) and a low resolution input of size \(19 \times 19 \times 19\). Different from [19], the authors in [69] applied small convolution kernels of size \(3 \times 3 \times 3\) on both pathways. Later research works by Zhao et al. [90] also designed a multi-scale CNN with a large scale path with an input size of \(48 \times 48\), a middle scale path with an input size of \(18 \times 18\) and a small scale path with an input size of \(12 \times 12\).
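A simple way to picture the multi-scale input idea is to cut patches of different sizes around the same center pixel, one per pathway. The sketch below does only this cropping step on a toy 2D image; the image, the center and the patch sizes (3 and 5) are hypothetical, and any resampling that a particular method applies to its low resolution pathway is not modeled.

```python
def center_patch(image, row, col, size):
    """Return the size x size patch centred on (row, col); `size` must be odd."""
    half = size // 2
    if size % 2 == 0:
        raise ValueError("size must be odd")
    if row - half < 0 or col - half < 0:
        raise ValueError("patch exceeds image bounds")
    if row + half >= len(image) or col + half >= len(image[0]):
        raise ValueError("patch exceeds image bounds")
    return [r[col - half: col + half + 1] for r in image[row - half: row + half + 1]]


# Toy 9 x 9 image whose pixel value encodes its position (row * 9 + col).
image = [[r * 9 + c for c in range(9)] for r in range(9)]

small = center_patch(image, 4, 4, 3)  # local context around the centre pixel
large = center_patch(image, 4, 4, 5)  # wider context around the same pixel

print(small)
print(len(large), len(large[0]))
```

Both patches share the same center pixel, so features extracted from them describe the same location at different amounts of context.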

Encoder–decoder architecture

The input of a single or multiple path network for brain tumor segmentation is a patch or a certain area of the image, and the output is the classification outcome of the patch or of the central pixel of the input. It is very challenging to learn an accurate mapping from the patch level to the category label. First of all, the segmentation performance of single and multiple path networks is easily affected by the size and quality of the input patch. A small input patch holds incomplete spatial information, while a large patch requires more computational resources. Secondly, the feature-to-label mapping is mostly conducted by the last fully connected layer. A simple fully connected layer cannot fully represent the feature space, whereas complicated fully connected layers may overload the computer’s memory. Last but not least, this feature-to-label mapping is not end-to-end, which significantly increases the optimization cost. To tackle these problems, recent research works have started to use fully convolutional network (FCN) [90] and U-Net [111] based encoder–decoder networks, which establish an end-to-end mapping from the input image to the output segmentation map and further improve segmentation performance.

Jesson et al. [62] extended standard FCN by using a multi-scale loss function. One limitation of FCN is that FCN does not explicitly model the contexts in the label domain. In [62], the FCN variant minimized the multi-scale loss by combining higher and lower resolution feature maps to model the contexts in both image and label domains. In [116], researchers proposed a boundary aware fully convolutional neural network, including two branches for up-sampling. The boundary detection branch aims to learn and model boundary information of the whole tumor as a binary classification problem. The region detection branch learns to detect and classify sub-region classes of the tumor. The outputs from the two branches are concatenated and fed to a block of two convolutional layers with a softmax classification layer.

One important variant of FCN is U-Net [111]. U-Net consists of a contracting path to capture features and a symmetric expanding path that enables precise localization. One advantage of U-Net over the traditional FCN is the skip connections between the contracting and the expanding paths. The skip connections pass feature maps from the contracting path to the expanding path and concatenate the feature maps from the two paths directly. The higher-resolution features passed through the skip connections help the layers in the expanding path recover details. Several research works have been proposed for brain tumor segmentation based on U-Net. For example, Brosch et al. [13] used a fully convolutional network with skip connections to segment multiple sclerosis lesions. Isensee et al. [58] reported a modified U-Net for brain tumor segmentation, where the authors used a dice loss function and extensive data augmentation to successfully avoid over-fitting. In [35], the authors used zero padding to keep the identical output dimension for all the convolutional layers in both down-sampling and up-sampling paths. Chang et al. [21] reported a fully convolutional neural network with residual connections. Similar to the skip connection, the residual connection allows both low- and high-level feature maps to contribute towards the final segmentation.
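The concatenation performed by a skip connection can be illustrated with a minimal NumPy sketch. It is not the U-Net implementation from [111]: the helper names (`downsample`, `upsample`, `skip_connect`) are hypothetical, and average pooling and nearest-neighbour repetition stand in for the learned convolutional layers.

```python
import numpy as np

def downsample(x):
    """Halve spatial resolution with 2x2 average pooling; x has shape (C, H, W)."""
    c, h, w = x.shape
    return x.reshape(c, h // 2, 2, w // 2, 2).mean(axis=(2, 4))

def upsample(x):
    """Double spatial resolution by nearest-neighbour repetition."""
    return x.repeat(2, axis=1).repeat(2, axis=2)

def skip_connect(encoder_feat, decoder_feat):
    """Concatenate same-resolution encoder and decoder maps along the channel axis."""
    assert encoder_feat.shape[1:] == decoder_feat.shape[1:]
    return np.concatenate([encoder_feat, decoder_feat], axis=0)

rng = np.random.default_rng(0)
encoder_feat = rng.normal(size=(8, 16, 16))   # contracting-path features
bottleneck = downsample(encoder_feat)          # coarser features, shape (8, 8, 8)
decoder_feat = upsample(bottleneck)            # expanding-path features, shape (8, 16, 16)
merged = skip_connect(encoder_feat, decoder_feat)
print(merged.shape)  # (16, 16, 16)
```

The first eight channels of `merged` are the untouched encoder features, so the fine detail lost by pooling remains available to the next decoder layer.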

In order to extract information from the original volumetric data, Milletari et al. [96] introduced a modified 3D version of U-Net, called V-Net, with a customized dice coefficient loss function. Beers et al. [8] introduced 3D U-Nets based on sequential tasks, which use the entire tumor ground truth as an auxiliary channel to detect enhancing tumors and tumor cores. In the post-processing stage, the authors employed two additional U-Nets that serve to enhance prediction for better classification outcomes. The input patches consist of seven channels: four anatomical MR and three label maps corresponding to the entire tumor, enhancing tumor, and tumor core.

Summary

In this section, we review and compare the work focused on module and network architecture design in brain tumor segmentation. Table 2 shows the results generated by methods focused on module and network architecture design in brain tumor segmentation. We draw key information from these research works and list it below.

1. By designing custom modules, the accuracy and speed of the network can be improved.

2. A customized architecture can help the network learn features at different scales, which is one of the most important steps to achieve accurate brain tumor segmentation.

3. The design of modules and networks heavily relies on human experience. In the future, we anticipate the application of network architecture search for searching effective brain tumor segmentation architectures [5, 73, 157, 159].

4. Most of the existing network architecture designs do not incorporate domain knowledge about brain tumors, such as modeling degree information and physically inspired morphological information within the tumor segmentation network.

The structure of cascaded convolutional networks for brain tumor segmentation, modified from the original structure reported in [130]. WNet, TNet and ENet are used for segmenting the whole tumor, tumor core and enhancing tumor core, respectively

Segmentation under imbalanced condition

One of the long-standing challenges for brain tumor segmentation is the data imbalance issue. As shown in Fig. 3c, imbalance is mainly reflected in the number of pixels in the sub-regions of the brain tumor. In addition, there is also an imbalance issue in patient samples, that is, the number of HGG cases is much larger than that of LGG cases. At the same time, labeling biases introduced by manual experts can also be treated as a special form of data imbalance (different experts have different standards, resulting in imbalanced labeling results). Data imbalance has a significant effect on learning algorithms, especially deep networks. The main manifestation is that learning models trained with imbalanced data tend to learn more about the dominant groups (e.g. to learn the morphology of the edema area, and to learn HGG instead of LGG patients) [36, 65, 120].

Numerous works have presented many improvement strategies to address data imbalance. According to core components of these strategies, we divide the existing methods into three categories: multi-network driven, multi-task driven and custom loss function driven approaches.

Multi-network driven approaches

Even if complex modules and architectures have been designed to ensure the learning of high-dimensional discriminative features, a single network often suffers from the problem of data imbalance. Inspired by the methods such as multi-expert systems, people have started to construct complex network systems to effectively deal with data imbalance and achieved promising segmentation performance. Common multi-network systems can be divided into network cascade and network ensemble, according to data flows shared between multiple networks.

Network cascade

The definition of network cascade is that, in a serially connected network, the output of an upstream network is passed to the downstream network as input. This topology simulates the coarse-to-fine strategy, that is, the upstream network extracts rough information or features, and the downstream network subdivides the input and achieves a fine-grained segmentation.

The earliest work adopting the cascade strategy was undertaken by Wang et al. [130] (Fig. 9). In their work, the authors proposed to connect three networks in series. First, WNet segments the Whole Tumor and passes the result to TNet, which segments the Tumor Core. Finally, the segmentation result of TNet is handed over to ENet for the segmentation of the Enhancing Tumor. This design is inspired by the attributes of the tumor sub-regions, where it is assumed that the Whole Tumor contains the Tumor Core, which in turn contains the Enhancing Tumor. Therefore, the segmentation output of the upstream network is the Region-of-Interest (RoI) of the downstream network. The advantage of this practice is that it avoids the interference caused by imbalanced data. The introduction of anisotropic convolution and the manually cropped input effectively reduces the number of network parameters. But there are two disadvantages. First, the segmentation quality of the downstream network is heavily dependent on the performance of the upstream network. Second, only the upstream segmentation result is considered as the input, so the downstream network cannot use other image areas as auxiliary information, which is not conducive to other tasks such as tumor location detection. Similarly, Hua et al. [56] also proposed a network cascade based on the physical inclusion characteristics of tumor. Unlike Wang et al. [130], Hua et al. [56] replaced the cascade unit with a V-Net, which is suitable for 3D segmentation, to improve performance. Fang et al. [43] trained two upstream networks at the same time, one for Flair and one for T1ce, according to the different characteristics highlighted by each modality. The results of the two upstream networks are passed to the downstream network for final segmentation. Jia et al. [63] replaced upstream and downstream networks with HRNet [123] to learn feature maps with higher spatial resolutions.
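The data flow of such a cascade can be sketched in a few lines of NumPy. This is only an illustration of the RoI hand-over, not the method of [130]: the three stages are hypothetical intensity thresholds standing in for WNet, TNet and ENet, the image is 2D, and the helper names `bounding_box` and `cascade` are assumptions.

```python
import numpy as np

def bounding_box(mask):
    """Return slices covering the non-zero region of a 2D binary mask."""
    rows = np.flatnonzero(mask.any(axis=1))
    cols = np.flatnonzero(mask.any(axis=0))
    return slice(rows[0], rows[-1] + 1), slice(cols[0], cols[-1] + 1)

def cascade(image, stages):
    """Run stages in series; each stage only sees the RoI found by the previous one."""
    roi = (slice(0, image.shape[0]), slice(0, image.shape[1]))
    masks = []
    for segment in stages:
        full = np.zeros(image.shape, dtype=bool)
        full[roi] = segment(image[roi])
        masks.append(full)
        if not full.any():      # an empty upstream result leaves nothing downstream
            break
        roi = bounding_box(full)
    return masks

# Hypothetical stand-ins for WNet, TNet and ENet: simple intensity thresholds.
wnet = lambda x: x > 0.2
tnet = lambda x: x > 0.5
enet = lambda x: x > 0.8

image = np.zeros((8, 8))
image[2:7, 2:7] = 0.3   # whole tumor
image[3:6, 3:6] = 0.6   # tumor core
image[4, 4] = 0.9       # enhancing tumor
whole, core, enhancing = cascade(image, [wnet, tnet, enet])
print(whole.sum(), core.sum(), enhancing.sum())  # 25 9 1
```

The early `break` makes the first disadvantage visible: if an upstream stage returns an empty mask, no downstream stage is run at all.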

Combining 3D networks for cascading can bring better segmentation performance, but the combination of multiple 3D networks requires a large number of parameters and high computational resources. In response to this, Li [79] proposed a cascading model that mixes 2D and 3D networks. The 2D networks learn from multi-view slices of a volume to obtain the segmentation mask of the whole tumor. Then, the whole tumor mask and the original 3D volume are fed to the downstream 3D U-Net. The downstream network handles tumor core and enhancing tumor together for fine segmentation. Li et al. [80] also adopted a similar method by connecting multiple U-Nets in series for coarse-to-fine segmentation. The segmentation results at each stage are associated with different loss functions. Vu et al. [129] further introduced dense connections between the upstream and downstream networks to enhance feature expression. The two-stage cascaded U-Net designed by Jiang et al. [64] has been further enhanced at the output end. In addition to the single network architecture, they also tried two different segmentation modules (interpolation and deconvolution) at the output end.

In addition to cascaded coarse-to-fine segmentation, there are other attempts to introduce other auxiliary functions. Liu designed a novel strategy in [89] to pass the segmentation result of the upstream network to the downstream network. The downstream network reconstructs the original input image according to the segmentation result of the upstream network. The loss of the recovery network is also back-propagated to the upstream segmentation network, in order to help the upstream network to outline the tumor area. Cirillo et al. [31] introduced adversarial training to tumor segmentation. The generator network constitutes the upstream network, and the discriminator network is used as the downstream network to determine whether a segmentation map is from ground truth or not. Chen et al. [23] introduced left and right symmetry characteristics of the brain to the system. The added left and right similarity masks at the connection of the upstream and downstream networks can improve the robustness of network segmentation.

Network ensemble

One main drawback of using a single deep neural network is that its performance is heavily influenced by the hyper-parameter choices. This reflects the limited generalization capability of a single deep neural network. A cascaded network aggregates multiple networks’ outputs in a coarse-to-fine strategy. However, the downstream networks’ performance heavily relies on the upstream network, which still limits the capability of a cascaded system. In order to achieve a more robust and more generalized tumor segmentation, the segmentation outputs of multiple high-variance networks can be aggregated, which is known as network ensemble. Network ensemble enlarges the hypothesis space of the parameters to be trained by aggregating multiple networks and avoids falling into local optima caused by data imbalance.

Early research works presented in multi-path network (“Multi-path architecture” section) such as Castillo et al. [19], Kamnitsas et al. [68, 69] can be regarded as a simplified form of network ensemble, where each path can be treated as a sub-network. The features extracted by the sub-network are then ensembled and processed for the final segmentation. In this section, we pay more attention to explicit ensemble of segmentation results from multiple sub-networks, rather than implicit ensemble of the features extracted by sub-paths.

Ensembles of multiple models and architectures (EMMA) [67] is one of the earliest well-structured works using ensemble deep neural networks for brain tumor segmentation. EMMA ensembles segmentation results from DeepMedic [68], FCN [90] and U-Net [111] and associates the final segmentation with the highest confidence score. Kao et al. [70] ensembled 26 neural networks for tumor segmentation and survival prediction, and introduced a brain parcellation atlas to produce location prior information for tumor segmentation. Lachinov et al. [75] ensembled two U-Net variants [59, 97] and a cascaded U-Net [76]. The final ensemble result outperforms each single network by 1–2%.

The structure of multi-task networks for brain tumor segmentation. Image courtesy of [97]. The shared encoder learns generalized feature representation and the reconstruction decoder performs multi-task as regularization

Instead of feeding sub-networks with the same input, Zhao et al. [146] averaged an ensemble of three 2D FCNs, where each FCN takes slices from a different view as input. Similarly, Sundaresan et al. [124] averaged an ensemble of four 2D FCNs, where each FCN is designed for segmenting a specific tumor region. Chen et al. [24] used a DeconvNet [100] to generate a primary segmentation probability map, and another multi-scale convolutional label evaluation net to evaluate the previously generated segmentation maps. False positives can be reduced using both the probability map and the original input image. Hu et al. [55] ensembled a 3D cascaded U-Net with a multi-modality fusion structure. The proposed two-level U-Net in [55] aims to outline the boundary of tumors, and the patch-based deep network associates tumor voxels with predicted labels.

Ensembling can be regarded as a boosting strategy for improving final segmentation results by aggregating results from multiple homogeneous networks. The winner of BraTS2018 [97] ensembled 10 models, which further boosted the performance by 1% in dice score compared with the best single network segmentation. Similar benefits brought by ensembling can be observed in Silva et al. [118] as well. The BraTS2019 winner [64] also adopted an ensemble strategy where the final result is generated by ensembling 12 models, which slightly improves the result (around 0.6−1%) compared with the best single model’s performance.
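One common way to aggregate segmentation results is to average the per-class probability maps of the member networks and take the most likely class per pixel. The sketch below assumes this averaging rule; the cited works may aggregate differently (for example by confidence or voting), and the function name `ensemble_average` and the toy probabilities are hypothetical.

```python
import numpy as np

def ensemble_average(prob_maps):
    """Average per-model class probabilities, then pick the most likely class.

    prob_maps: array of shape (n_models, n_classes, H, W).
    """
    mean_prob = np.mean(prob_maps, axis=0)
    return mean_prob.argmax(axis=0), mean_prob

# Three hypothetical models scoring a 1x2 image with two classes.
prob_maps = np.array([
    [[[0.9, 0.4]], [[0.1, 0.6]]],   # model A
    [[[0.8, 0.7]], [[0.2, 0.3]]],   # model B
    [[[0.7, 0.3]], [[0.3, 0.7]]],   # model C
])
labels, mean_prob = ensemble_average(prob_maps)
print(labels)  # [[0 1]]
```

Model B on its own assigns class 0 to the second pixel, while the averaged prediction follows the other two models, which is the kind of single-model error an ensemble is meant to smooth out.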

Multi-task driven approaches

Most of the work described above performs only single-task learning, that is, a network is designed and optimized for precise brain tumor segmentation only. The disadvantage of single-task learning is that the training target of a single task may ignore potentially useful information in related tasks, which may improve the performance of tumor segmentation. Therefore, in recent years, many research works have started from the perspective of multi-task learning, introducing auxiliary tasks on the basis of precise segmentation of brain tumors. The main setting of multi-task learning is a low-level feature representation that can be shared among multiple tasks. There are two advantages of the shared representation. The first is that tasks share the learnt domain-related information with each other through shallow shared representations, so as to promote learning and to enhance the ability to obtain updated information. The second is mutual restraint. When multi-task learning performs gradient back-propagation, it takes into account the feedback of multiple tasks. Since different tasks may have different noise patterns, a model that learns multiple tasks at the same time will learn a more general representation, which reduces the risk of over-fitting and increases the generalization ability of the system.

Early attempts such as [115, 149] adopt the idea of multi-task learning and split the brain tumor segmentation task into three different sub-region segmentation tasks, i.e. segmenting whole tumor, tumor core and enhancing tumor individually. In [149], the authors incorporated three sub-region segmentation tasks into an end-to-end holistic network, and exploited the underlying relevance among the three sub-region segmentation tasks. In [115], the authors designed three different loss functions, corresponding to the segmentation loss of whole tumor, tumor core and enhancing tumor. In addition, more recent works introduce auxiliary tasks different from image segmentation. The features learnt from other tasks support accurate segmentation. In [116], the authors additionally introduce a boundary localization task. The features extracted by the shared encoder are not only suitable for tumor segmentation, but also for tumor boundary localization. Precise boundary localization can assist in minimizing the search space and defining precise boundaries during tumor segmentation. [99] introduced the idea of first detecting and then segmenting, that is, detecting the location of tumors, and then performing precise tumor segmentation.

Another commonly used auxiliary task is to reconstruct the input data, that is, the encoded feature representation can be restored to the original input using an auxiliary decoder. [97] is the first method to introduce reconstruction as an auxiliary task to brain tumor segmentation. [134] introduced two auxiliary tasks, reconstruction and enhancement, to further enhance the ability of feature representation. [61] introduced three auxiliary tasks, including reconstruction, edge segmentation and patch comparison. These works regard the auxiliary task as a regularization of the main brain tumor segmentation task. Most multi-task designs use a shared encoder to extract features and independent decoders to process different tasks. From the perspective of parameter update, the role of the auxiliary task is to further regularize the shared encoder’s parameters. Different from L1 or L2 regularization, which explicitly regularize the number and magnitude of parameters, the auxiliary task shares a low-level sub-space with the main task. During training, the auxiliary task helps the network to train in a direction that simultaneously optimizes the auxiliary task and the main segmentation task, which reduces the search space of the parameters and makes the extracted features more generalized for accurate segmentation [17, 41, 113, 143].
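The regularizing role of a reconstruction task can be written as a weighted sum of the main and auxiliary losses. The following sketch assumes a binary segmentation target, a mean-squared reconstruction error and a hypothetical weight `aux_weight`; none of these choices is taken from the cited works.

```python
import numpy as np

def binary_cross_entropy(pred, target, eps=1e-7):
    """Mean binary cross-entropy between predicted probabilities and 0/1 labels."""
    pred = np.clip(pred, eps, 1.0 - eps)
    return float(-np.mean(target * np.log(pred) + (1 - target) * np.log(1 - pred)))

def mse(recon, image):
    """Mean squared error between the reconstructed and the original input."""
    return float(np.mean((recon - image) ** 2))

def multi_task_loss(seg_pred, seg_target, recon, image, aux_weight=0.1):
    """Main segmentation loss plus a weighted auxiliary reconstruction loss."""
    return binary_cross_entropy(seg_pred, seg_target) + aux_weight * mse(recon, image)

seg_target = np.array([1.0, 0.0])
seg_pred = np.array([0.9, 0.1])      # output of the segmentation decoder
image = np.zeros(4)
recon = image + 0.5                  # output of the auxiliary decoder
loss = multi_task_loss(seg_pred, seg_target, recon, image)
```

Because both terms are back-propagated through the same encoder, a poor reconstruction raises the total loss even when the segmentation term is small. Setting `aux_weight` to zero recovers single-task training.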

Customized loss function driven approaches

During network training on an imbalanced dataset, the gradient is likely to be dominated by the over-represented samples. Therefore, a number of works propose a custom loss function to regulate gradients during the training of a brain tumor segmentation model. A custom loss function aims to reduce the weights of the easy-to-classify samples in the loss, whilst increasing the weights of the hard samples, so that the model focuses more on the under-represented samples, reducing the impact of the gradient bias generated while learning from imbalanced datasets.
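One well-known loss that down-weights easy samples in this way is the focal loss, which scales the cross-entropy of each sample by \((1 - p_t)^{\gamma}\), where \(p_t\) is the predicted probability of the true class. The sketch below is a minimal NumPy version; the value `gamma=2.0` and the two example probabilities are assumptions chosen for illustration.

```python
import numpy as np

def focal_loss(prob_true_class, gamma=2.0, eps=1e-7):
    """Per-sample focal loss: -(1 - p_t)^gamma * log(p_t)."""
    p = np.clip(prob_true_class, eps, 1.0)
    return -((1.0 - p) ** gamma) * np.log(p)

p_t = np.array([0.95, 0.30])   # an easy sample and a hard sample
ce = -np.log(p_t)              # plain cross-entropy for comparison
fl = focal_loss(p_t)
weights = fl / ce              # effective down-weighting factor (1 - p_t)^gamma
```

With these inputs the easy sample keeps 0.25% of its cross-entropy while the hard sample keeps 49%, so the gradient is driven mostly by the hard sample. With `gamma=0` the loss reduces to plain cross-entropy.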

The illustration of cross-modality feature learning framework. Image courtesy from [141]

Early research works tend to use standard loss functions, e.g. categorical cross-entropy [106], cross-entropy [127], and dice loss [20]. [109] is the first attempt to customise the loss function. In [109], the authors enhance the loss function to give more weight to the edge pixels, which significantly improves segmentation accuracy at tumor boundaries. Experimental results show that the weighted loss function for edge pixels helps to improve the segmentation dice score by \(2-4\%\). Later on, [116] proposed a customised cross-entropy loss for boundary pixels while using an auxiliary task that includes boundary localization. In [89], the reconstruction task is adopted as regularization, so the loss function aims at improving pixel-wise reconstruction accuracy. In [85], the space loss function was designed to ensure that the learnt features keep as much spatial information as possible. [99] further used a focal loss to deal with imbalance issues. [58] used a multi-class dice loss, that is, the smaller the proportion of the category, the higher the error weight during back-propagation. In [62], a multi-scale loss function was added to perform deep supervision on the features of different scales at each stage of the encoder, helping the network to learn features at multiple resolutions that are more conducive to object segmentation. In [43], from the perspective of modality, two types of losses were designed for T1ce and Flair respectively. [29] proposed a weighted combination of the dice loss, the edge loss and the mask loss. The result shows that the combined losses can improve dice performance by about \(2\%\). [104] also proposed a combination loss set, which includes the categorical cross-entropy and the soft dice loss.
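A combination of soft dice loss and categorical cross-entropy can be sketched as follows. This is a generic formulation, not the exact loss of any cited work: the per-class averaging, the smoothing constant `eps` and the weights `dice_weight` and `ce_weight` are assumptions.

```python
import numpy as np

def soft_dice_loss(probs, onehot, eps=1e-6):
    """1 - mean per-class soft Dice; inputs have shape (n_classes, n_pixels)."""
    intersection = np.sum(probs * onehot, axis=1)
    denom = np.sum(probs, axis=1) + np.sum(onehot, axis=1)
    dice = (2.0 * intersection + eps) / (denom + eps)
    return float(1.0 - dice.mean())

def categorical_cross_entropy(probs, onehot, eps=1e-7):
    """Mean over pixels of -log(probability assigned to the true class)."""
    log_probs = np.log(np.clip(probs, eps, 1.0))
    return float(-np.mean(np.sum(onehot * log_probs, axis=0)))

def combined_loss(probs, onehot, dice_weight=1.0, ce_weight=1.0):
    """Weighted sum of the soft Dice loss and the categorical cross-entropy."""
    return (dice_weight * soft_dice_loss(probs, onehot)
            + ce_weight * categorical_cross_entropy(probs, onehot))

# Four pixels, two classes: three background pixels and one tumor pixel.
onehot = np.array([[1, 1, 1, 0], [0, 0, 0, 1]], dtype=float)
all_background = np.array([[1, 1, 1, 1], [0, 0, 0, 0]], dtype=float)
dice_when_tumor_missed = soft_dice_loss(all_background, onehot)
```

Predicting background everywhere is correct on three of the four pixels, yet the dice loss stays above 0.5, because each class contributes equally to the mean regardless of how many pixels it covers.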

Summary

Table 3 shows the results generated by methods focused on dealing with data imbalance in brain tumor segmentation. From the above comparison, we can make several interesting observations.

1. From the perspective of the network, the strategy to solve the imbalance problem is mainly to combine the output of multiple networks. Commonly used combination methods include network cascade and network ensemble. But these strategies all depend on the performance of each network. The consumption of computing resources also increases proportionally to the number of network candidates.

2. From the perspective of the task, the strategy to solve the imbalance problem is to set up auxiliary tasks that regularize the networks, so that the networks can make full use of the existing data and learn more generalized features that are beneficial to the auxiliary tasks as well as the segmentation task.

3. From the perspective of the loss function, the strategy to solve the imbalance problem is to use a custom loss function or an auxiliary loss function. By weighting the hard samples, the networks are regulated to pay more attention to the under-represented data.

Utilizing multi modality information

Multi-modality imaging has played a key role in medical image analysis and applications. Different MRI modalities emphasize different tissues. Effectively utilizing multi-modality information is one of the key factors in MRI-based brain tumor segmentation. According to the completeness of the available modalities, we divide multi-modality brain tumor segmentation into two scenarios: leveraging information based on multiple modalities, and processing limited information with missing modalities.

The structure of the modality-aware feature embedding module. Image courtesy of [142]

Learning with multiple modalities

In this paper, we follow the BraTS competition standard, that is, a complete multi-modality set means that the input data modalities include, but are not limited to, T1, T1ce, T2, and Flair. In order to use multi-modality information effectively, existing works focus on how this information is learnt. The designed learning methods can be classified into three categories based on their purposes: Learning to Rank, Learning to Pair and Learning to Fuse.

Learning to Rank Modalities In multi-modality processing, the available data modalities are sorted by their relevance to the learning task, so that the network can focus on learning the modalities with high relevance. This can also be referred to as modality-task modeling. Early work from [110] can be treated as a basic form of learning to rank. In [110], the authors assigned each modality to its own CNN, and the features extracted by each CNN are independent of each other. The loss returned by the final classifier is similar to a scoring of the input data, and the segmentation is undertaken according to the score. A similar processing method was used in [142]. For each of the two modalities, two independent networks were used for modeling relationship matching, and the parameters of each network are influenced by different supervision losses. [141] extracted features of different embedding modalities (as shown in Fig. 11), and modeled the relationship between the modalities and the segmentation of different tumor sub-regions, so that the data of different modalities were weighted and sorted corresponding to individual tasks.

The structure of the modality correlation module. Image courtesy of [153]

Learning to Pair Modalities Learning to rank modalities refers to the sorting of the modality-task relation for a certain segmentation task. Another commonly used modeling is the modality-modality pairing, which selects the best combination from multi-modality data to achieve precise segmentation. [82] is one of the early works to model the modality-modality relationship. The authors paired every two modalities and sent all the pairing combinations to the downstream network. [141] further strengthens the modality-modality pairing relationship through the cross-modal feature transition module and the modal pairing module. In the cross-modality feature transition module, the authors converted the input and output from one modality’s data to the concatenation of a modality pair. In the cross-modality feature fusion module, the authors converted the single-modality feature learning to the single-modality-pair feature learning, which predicts the segmentation masks of each single-modality-pair.
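Pairing every two modalities is an enumeration of unordered pairs, which can be sketched with the Python standard library. The modality names follow the BraTS set used in this survey; the variable names are hypothetical.

```python
from itertools import combinations

MODALITIES = ["T1", "T1ce", "T2", "Flair"]

# Every unordered pair of distinct modalities.
pairs = list(combinations(MODALITIES, 2))
for first, second in pairs:
    print(first, "+", second)
print(len(pairs))  # 6
```

With n modalities there are n(n-1)/2 such pairs, so the number of inputs to the downstream network grows quadratically with the number of modalities.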

Learning to Fuse Modalities More recent works focus on learning to fuse multiple modalities. Different from modality ranking and pairing, modality fusion fuses the features from each modality for accurate segmentation. The early fusion methods are relatively simple and usually concatenate or add the features learned from different modalities. In [110], the authors used 4 networks to extract features from each modality and concatenated the extracted modality-aware features. The concatenated features are sent to a Random Forest to classify the central pixel of the input patch. In [43], features from T1ce and Flair were added and sent to the downstream network for entire tumor segmentation. Similarly, in [141], modality-aware feature extraction is performed and the features are sent to the downstream network for further learning. These two fusion methods do not introduce additional parameters and are very simple and efficient. This holds even in [141], where the fused features come from the more complex cross-modal feature pairing and single-modal feature pairing modules. In addition, there are other works such as [82, 127] that used additional convolutional modules to combine and learn features from different modalities so as to accomplish modality fusion.

Although concatenation and addition are widely used, these two fusion methods do not change the semantics of the learned features and cannot highlight or suppress features. To tackle this problem, many research works in recent years have adopted attention mechanisms to strengthen the learnt features. [60, 85, 131, 154] used a spatial and channel attention based fusion module. The proposed attention mechanism highlights useful features and suppresses redundant features, resulting in accurate segmentation.
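The difference between parameter-free fusion and attention-based fusion can be sketched in NumPy. The attention part is a simplified channel-only gate in the style of squeeze-and-excitation, not the spatial and channel modules of the cited works, and the random matrices `w1` and `w2` are hypothetical stand-ins for weights that would be learned.

```python
import numpy as np

def fuse_concat(features):
    """Stack modality features along the channel axis: M x (C, H, W) -> (M*C, H, W)."""
    return np.concatenate(features, axis=0)

def fuse_add(features):
    """Element-wise sum of modality features: M x (C, H, W) -> (C, H, W)."""
    return np.sum(features, axis=0)

def channel_attention(x, w1, w2):
    """Reweight each channel of x by a gate in (0, 1) computed from its global average."""
    squeezed = x.mean(axis=(1, 2))                   # (C,) global average per channel
    hidden = np.maximum(w1 @ squeezed, 0.0)          # ReLU bottleneck
    gates = 1.0 / (1.0 + np.exp(-(w2 @ hidden)))     # sigmoid gate per channel
    return x * gates[:, None, None], gates

rng = np.random.default_rng(0)
features = [rng.normal(size=(4, 8, 8)) for _ in range(4)]   # one map per modality
fused_cat = fuse_concat(features)                            # shape (16, 8, 8)
fused_add = fuse_add(features)                               # shape (4, 8, 8)

w1 = 0.1 * rng.normal(size=(4, 16))    # placeholder for learned weights
w2 = 0.1 * rng.normal(size=(16, 4))    # placeholder for learned weights
attended, gates = channel_attention(fused_cat, w1, w2)
```

Concatenation and addition need no parameters, whereas the gate requires `w1` and `w2`, which is the extra cost attention pays for being able to suppress or highlight individual channels.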

Dealing with missing modalities

The modality learning methods mentioned above work on a complete multi-modality set. For example, in BraTS, we obtain the data of four modalities: T1, T1ce, T2, and Flair. However, in actual application scenarios, it is very difficult to obtain complete and high-quality multi-modality datasets, which is referred to as the missing modality scenario. Yu et al. [137] is one of the earliest works targeting learning under missing modalities. The authors assumed that T1 is the only available modality and used generative adversarial networks to generate the missing modalities. Specifically, they used the existing T1 modality as input to generate the Flair modality. The generated Flair data is sent, together with the original T1 data, to the downstream segmentation network. [151, 153] learnt the implicit relationship between modalities and examined all possible missing scenarios. The results show that multi-modality information has an important influence on accurate segmentation. In [138], an intensity correction algorithm was proposed for different scenarios of single-modality input. In this framework, the intensity query and correction of the data of multiple modalities makes it easier to distinguish the tumor and non-tumor regions in the synthetic data.
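The set of possible missing scenarios for four modalities can be enumerated directly. In the sketch below, zero-filling the absent channels is only an assumed placeholder for how a missing modality might be presented to a network; it is not the method of the cited works, and the helper names are hypothetical.

```python
from itertools import combinations
import numpy as np

MODALITIES = ("T1", "T1ce", "T2", "Flair")

def availability_scenarios(modalities):
    """All non-empty subsets of modalities that could be available at test time."""
    return [set(c) for r in range(1, len(modalities) + 1)
            for c in combinations(modalities, r)]

def mask_missing(volume, available, modalities=MODALITIES):
    """Zero-fill the channels of modalities that are not available (an assumption)."""
    keep = np.array([m in available for m in modalities])
    return volume * keep[:, None, None]

scenarios = availability_scenarios(MODALITIES)
print(len(scenarios))  # 15

volume = np.ones((4, 2, 2))                  # one 2x2 slice per modality
t1_only = mask_missing(volume, {"T1"})       # the T1-only scenario
```

Of the 15 scenarios, one is the complete set and the remaining 14 each lack at least one modality.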

Summary

Table 4 shows the results generated by methods focused on learning with multi-modality in deep learning based brain tumor segmentation. We can collect several common observations in utilizing the information from multiple modalities.

1. For task-modality modeling, learning to rank modalities can help the network choose the most relevant and conducive modality for accurate segmentation. Most of the research works model the ranking implicitly while learning the modality-aware features.

2. For modality-modality modeling, learning to pair modalities can help the network find the most suitable modality combination for segmentation. However, existing pairing works enumerate modality pairs through exhaustive combination, which requires large computing resources.

3. The fusion of multi-modality information can improve the expressive ability and generalization of features. Existing fusion methods have their own advantages and disadvantages. Addition or concatenation does not introduce additional parameters, but lacks the physical expression of features. Using a small network, an attention module can optimize feature expression, but introduces additional parameters and computational cost.

4. Missing modalities are one of the most common scenarios in clinical imaging. Existing works focus on the perspective of generation, using existing modality data to generate missing modalities. However, the performance and quality of the generator model heavily relies on the quality of the existing modality data.

Future trends

Although deep learning based brain tumor segmentation methods have achieved satisfying performance, there are challenges remaining to be solved. In this section, we briefly discuss several open issues and also point out potential directions for possible future works.

Segmentation with less supervision

Most existing research methods are fully supervised, relying on complete datasets with precisely annotated segmentation masks. However, it is very challenging to obtain segmentation masks without annotation bias, and annotation is time-consuming and labor-intensive. Recently, research attempts such as [78] evaluate self-supervised representations for brain tumor segmentation. In the future, brain tumor segmentation methods are expected to be powered by self-, weakly and semi-supervised training with fewer labels.

Neural architecture search based segmentation

As we discussed in Sect. “Designing effective segmentation networks”, the design of modules and networks heavily relies on human experience. In the future, we anticipate the combination of domain knowledge (e.g. tumor degree, tumor morphology) with neural architecture search algorithms for searching effective brain tumor segmentation networks.

Protect patient’s privacy

Current methods heavily mine the data, especially for downstream tasks such as survival prediction, where brain tumor segmentation is learnt together with patient statistics. In the future, privacy-preserving learning frameworks are expected to be explored, aiming at protecting patients’ privacy [78].

Conclusion

Applying various deep learning methods to brain tumor segmentation is a challenging task. Automated brain tumor segmentation benefits in several aspects from the powerful feature learning ability of deep learning techniques. In this paper, we have investigated relevant deep learning based brain tumor segmentation methods and presented a comprehensive survey. We structurally categorized and summarized the deep learning based brain tumor segmentation methods. We have widely investigated this task and discussed several key aspects such as the methods’ pros and cons, design motivation and performance evaluation.

References

Akil M, Saouli R, Kachouri R et al (2020) Fully automatic brain tumor segmentation with deep learning-based selective attention using overlapping patches and multi-class weighted cross-entropy. Med Image Anal 63:101692

Akkus Z, Galimzianova A, Hoogi A, Rubin DL, Erickson BJ (2017) Deep learning for brain MRI segmentation: state of the art and future directions. J Digit Imag 30(4):449–459

Andermatt S, Pezold S, Cattin P (2016) Multi-dimensional gated recurrent units for the segmentation of biomedical 3d-data. In: Deep learning and data labeling for medical applications, pp. 142–151. Springer

Andermatt S, Pezold S, Cattin P (2017) Multi-dimensional gated recurrent units for brain tumor segmentation. In: International MICCAI BraTS Challenge. Pre-Conference Proceedings, pp. 15–19

Bae W, Lee S, Lee Y, Park B, Chung M, Jung KH (2019) Resource optimized neural architecture search for 3d medical image segmentation. In: International Conference on Medical Image Computing and Computer-Assisted Intervention, pp. 228–236. Springer

Baid U, Ghodasara S, Mohan S, Bilello M, Calabrese E, Colak E, Farahani K, Kalpathy-Cramer J, Kitamura FC, Pati S et al (2021) The rsna-asnr-miccai brats 2021 benchmark on brain tumor segmentation and radiogenomic classification. arXiv preprint arXiv:2107.02314

Bakas S, Akbari H, Sotiras A, Bilello M, Rozycki M, Kirby JS, Freymann JB, Farahani K, Davatzikos C (2017) Advancing the cancer genome atlas glioma mri collections with expert segmentation labels and radiomic features. Sci Data 4(1):1–13

Beers A, Chang K, Brown J, Sartor E, Mammen C, Gerstner E, Rosen B, Kalpathy-Cramer J (2017) Sequential 3d u-nets for biologically-informed brain tumor segmentation. arXiv preprint arXiv:1709.02967

Bernal J, Kushibar K, Asfaw DS, Valverde S, Oliver A, Martí R, Lladó X (2018) Deep convolutional neural networks for brain image analysis on magnetic resonance imaging: a review. Artif Intell Med

Bernal J, Kushibar K, Asfaw DS, Valverde S, Oliver A, Martí R, Lladó X (2019) Deep convolutional neural networks for brain image analysis on magnetic resonance imaging: a review. Artif Intell Med 95:64–81

Biratu ES, Schwenker F, Ayano YM, Debelee TG (2021) A survey of brain tumor segmentation and classification algorithms. J Imag 7(9):179

de Brebisson A, Montana G (2015) Deep neural networks for anatomical brain segmentation. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops, pp. 20–28

Brosch T, Tang LY, Yoo Y, Li DK, Traboulsee A, Tam R (2016) Deep 3d convolutional encoder networks with shortcuts for multiscale feature integration applied to multiple sclerosis lesion segmentation. IEEE Trans Med Imag 35(5):1229–1239

Brügger R, Baumgartner CF, Konukoglu E (2019) A partially reversible u-net for memory-efficient volumetric image segmentation. In: International conference on medical image computing and computer-assisted intervention, pp. 429–437. Springer

Bulo SR, Neuhold G, Kontschieder P (2017) Loss max-pooling for semantic image segmentation. In: 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pp. 7082–7091. IEEE

van der Burgh HK, Schmidt R, Westeneng HJ, de Reus MA, van den Berg LH, van den Heuvel MP (2017) Deep learning predictions of survival based on mri in amyotrophic lateral sclerosis. NeuroImage Clin 13:361–369

Caruana R (1997) Multitask learning. Mach Learn 28(1):41–75

Casamitjana A, Puch S, Aduriz A, Sayrol E, Vilaplana V (2016) 3d convolutional networks for brain tumor segmentation. Proceedings of the MICCAI Challenge on Multimodal Brain Tumor Image Segmentation (BRATS) pp. 65–68

Castillo LS, Daza LA, Rivera LC, Arbeláez P (2017) Volumetric multimodality neural network for brain tumor segmentation. In: 13th international conference on medical information processing and analysis, vol. 10572, p. 105720E. International Society for Optics and Photonics

Catà M, Casamitjana Díaz A, Sanchez Muriana I, Combalia M, Vilaplana Besler V (2017) Masked v-net: an approach to brain tumor segmentation. In: 2017 International MICCAI BraTS Challenge. Pre-conference proceedings, pp. 42–49

Chang PD (2016) Fully convolutional deep residual neural networks for brain tumor segmentation. In: International workshop on Brainlesion: Glioma, multiple sclerosis, stroke and traumatic brain injuries, pp. 108–118. Springer

Chen C, Liu X, Ding M, Zheng J, Li J (2019) 3d dilated multi-fiber network for real-time brain tumor segmentation in mri. In: International Conference on Medical Image Computing and Computer-Assisted Intervention, pp. 184–192. Springer

Chen H, Qin Z, Ding Y, Tian L, Qin Z (2020) Brain tumor segmentation with deep convolutional symmetric neural network. Neurocomputing 392:305–313

Chen L, Bentley P, Rueckert D (2017) Fully automatic acute ischemic lesion segmentation in dwi using convolutional neural networks. NeuroImage Clin 15:633–643

Chen S, Ding C, Liu M (2019) Dual-force convolutional neural networks for accurate brain tumor segmentation. Pattern Recognit 88:90–100

Chen S, Ding C, Zhou C (2017) Brain tumor segmentation with label distribution learning and multi-level feature representation. 2017 International MICCAI BraTS Challenge

Chen W, Liu B, Peng S, Sun J, Qiao X (2018) S3d-unet: separable 3d u-net for brain tumor segmentation. In: International MICCAI Brainlesion Workshop, pp. 358–368. Springer

Chen Y, Joo J (2021) Understanding and mitigating annotation bias in facial expression recognition. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 14980–14991

Cheng X, Jiang Z, Sun Q, Zhang J (2019) Memory-efficient cascade 3d u-net for brain tumor segmentation. In: International MICCAI Brainlesion Workshop, pp. 242–253. Springer

Choudhury AR, Vanguri R, Jambawalikar SR, Kumar P (2018) Segmentation of brain tumors using deeplabv3+. In: International MICCAI Brainlesion Workshop, pp. 154–167. Springer

Cirillo MD, Abramian D, Eklund A (2020) Vox2vox: 3d-gan for brain tumour segmentation. arXiv preprint arXiv:2003.13653

Clark MC, Hall LO, Goldgof DB, Velthuizen R, Murtagh FR, Silbiger MS (1998) Automatic tumor segmentation using knowledge-based techniques. IEEE Trans Med Imag 17(2):187–201

Corso JJ, Sharon E, Dube S, El-Saden S, Sinha U, Yuille A (2008) Efficient multilevel brain tumor segmentation with integrated bayesian model classification. IEEE Trans Med Imag 27(5):629–640

Doi K (2007) Computer-aided diagnosis in medical imaging: historical review, current status and future potential. Comput Med Imag Grap 31(4–5):198–211

Dong H, Yang G, Liu F, Mo Y, Guo Y (2017) Automatic brain tumor detection and segmentation using u-net based fully convolutional networks. In: annual conference on medical image understanding and analysis, pp. 506–517. Springer

Dong Q, Gong S, Zhu X (2018) Imbalanced deep learning by minority class incremental rectification. IEEE Trans Pattern Anal Mach Intell 41(6):1367–1381

Dou Q, Chen H, Yu L, Shi L, Wang D, Mok VC, Heng PA (2015) Automatic cerebral microbleeds detection from mr images via independent subspace analysis based hierarchical features. In: Engineering in Medicine and Biology Society (EMBC), 2015 37th Annual International Conference of the IEEE, pp. 7933–7936. IEEE

Dou Q, Chen H, Yu L, Zhao L, Qin J, Wang D, Mok VC, Shi L, Heng PA (2016) Automatic detection of cerebral microbleeds from mr images via 3d convolutional neural networks. IEEE Trans Med Imag 35(5):1182–1195

Dvořák P, Menze B (2015) Local structure prediction with convolutional neural networks for multimodal brain tumor segmentation. In: International MICCAI workshop on medical computer vision, pp. 59–71. Springer

Esteva A, Robicquet A, Ramsundar B, Kuleshov V, DePristo M, Chou K, Cui C, Corrado G, Thrun S, Dean J (2019) A guide to deep learning in healthcare. Nature Med 25(1):24–29

Evgeniou T, Pontil M (2004) Regularized multi-task learning. In: Proceedings of the tenth ACM SIGKDD international conference on Knowledge discovery and data mining, pp. 109–117

Fang F, Yao Y, Zhou T, Xie G, Lu J (2021) Self-supervised multi-modal hybrid fusion network for brain tumor segmentation. IEEE J Biomed Health Inform. https://doi.org/10.1109/JBHI.2021.3109301

Fang L, He H (2018) Three pathways u-net for brain tumor segmentation. In: Pre-conference proceedings of the 7th medical image computing and computer-assisted interventions (MICCAI) BraTS Challenge, vol. 2018, pp. 119–126

Ghaffari M, Sowmya A, Oliver R (2019) Automated brain tumor segmentation using multimodal brain scans: a survey based on models submitted to the brats 2012–2018 challenges. IEEE Rev Biomed Eng 13:156–168

Ghaffari M, Sowmya A, Oliver R (2020) Brain tumour segmentation using cascaded 3d densely-connected u-net

Ghafoorian M, Karssemeijer N, Heskes T, Bergkamp M, Wissink J, Obels J, Keizer K, de Leeuw FE, van Ginneken B, Marchiori E et al (2017) Deep multi-scale location-aware 3d convolutional neural networks for automated detection of lacunes of presumed vascular origin. NeuroImage Clin 14:391–399

Gomez AN, Ren M, Urtasun R, Grosse RB (2017) The reversible residual network: Backpropagation without storing activations. In: Proceedings of the 31st International Conference on Neural Information Processing Systems, pp. 2211–2221

Goodfellow I, Bengio Y, Courville A (2016) Deep learning

Gordillo N, Montseny E, Sobrevilla P (2013) State of the art survey on mri brain tumor segmentation. Magn Reson Imag 31(8):1426–1438

Gu J, Wang Z, Kuen J, Ma L, Shahroudy A, Shuai B, Liu T, Wang X, Wang G, Cai J et al (2018) Recent advances in convolutional neural networks. Pattern Recognit 77:354–377

Hameurlaine M, Moussaoui A (2019) Survey of brain tumor segmentation techniques on magnetic resonance imaging. Nano Biomed Eng 11(2):178–191

Havaei M, Davy A, Warde-Farley D, Biard A, Courville A, Bengio Y, Pal C, Jodoin PM, Larochelle H (2017) Brain tumor segmentation with deep neural networks. Med Image Anal 35:18–31

He K, Zhang X, Ren S, Sun J (2016) Deep residual learning for image recognition. In: Proceedings of the IEEE conference on computer vision and pattern recognition, pp. 770–778

Henry T, Carre A, Lerousseau M, Estienne T, Robert C, Paragios N, Deutsch E (2020) Brain tumor segmentation with self-ensembled, deeply-supervised 3d u-net neural networks: a brats 2020 challenge solution. arXiv preprint arXiv:2011.01045

Hu Y, Xia Y (2017) 3d deep neural network-based brain tumor segmentation using multimodality magnetic resonance sequences. In: International MICCAI Brainlesion Workshop, pp. 423–434. Springer

Hua R, Huo Q, Gao Y, Sun Y, Shi F (2018) Multimodal brain tumor segmentation using cascaded v-nets. In: International MICCAI Brainlesion Workshop, pp. 49–60. Springer

Huang G, Liu Z, Van Der Maaten L, Weinberger KQ (2017) Densely connected convolutional networks. In: Proceedings of the IEEE conference on computer vision and pattern recognition, pp. 4700–4708

Isensee F, Kickingereder P, Wick W, Bendszus M, Maier-Hein KH (2017) Brain tumor segmentation and radiomics survival prediction: Contribution to the brats 2017 challenge. In: International MICCAI Brainlesion Workshop, pp. 287–297. Springer

Isensee F, Kickingereder P, Wick W, Bendszus M, Maier-Hein KH (2018) No new-net. In: International MICCAI Brainlesion Workshop, pp. 234–244. Springer

Islam M, Vibashan V, Jose VJM, Wijethilake N, Utkarsh U, Ren H (2019) Brain tumor segmentation and survival prediction using 3d attention unet. In: International MICCAI Brainlesion Workshop, pp. 262–272. Springer

Iwasawa J, Hirano Y, Sugawara Y (2020) Label-efficient multi-task segmentation using contrastive learning. arXiv preprint arXiv:2009.11160

Jesson A, Arbel T (2017) Brain tumor segmentation using a 3d fcn with multi-scale loss. In: International MICCAI Brainlesion Workshop, pp. 392–402. Springer

Jia H, Cai W, Huang H, Xia Y (2020) H2nf-net for brain tumor segmentation using multimodal mr imaging: 2nd place solution to brats challenge 2020 segmentation task. In: BrainLes@ MICCAI (2)

Jiang Z, Ding C, Liu M, Tao D (2019) Two-stage cascaded u-net: 1st place solution to brats challenge 2019 segmentation task. In: International MICCAI Brainlesion Workshop, pp. 231–241. Springer

Johnson JM, Khoshgoftaar TM (2019) Survey on deep learning with class imbalance. J Big Data 6(1):1–54

Jungo A, McKinley R, Meier R, Knecht U, Vera L, Pérez-Beteta J, Molina-García D, Pérez-García VM, Wiest R, Reyes M (2017) Towards uncertainty-assisted brain tumor segmentation and survival prediction. In: International MICCAI Brainlesion Workshop, pp. 474–485. Springer

Kamnitsas K, Bai W, Ferrante E, McDonagh S, Sinclair M, Pawlowski N, Rajchl M, Lee M, Kainz B, Rueckert D et al (2017) Ensembles of multiple models and architectures for robust brain tumour segmentation. In: International MICCAI brainlesion workshop, pp. 450–462. Springer

Kamnitsas K, Ferrante E, Parisot S, Ledig C, Nori AV, Criminisi A, Rueckert D, Glocker B (2016) Deepmedic for brain tumor segmentation. In: International workshop on Brainlesion: Glioma, multiple sclerosis, stroke and traumatic brain injuries, pp. 138–149. Springer

Kamnitsas K, Ledig C, Newcombe VF, Simpson JP, Kane AD, Menon DK, Rueckert D, Glocker B (2017) Efficient multi-scale 3d cnn with fully connected crf for accurate brain lesion segmentation. Med Image Anal 36:61–78

Kao PY, Ngo T, Zhang A, Chen JW, Manjunath B (2018) Brain tumor segmentation and tractographic feature extraction from structural mr images for overall survival prediction. In: International MICCAI Brainlesion Workshop, pp. 128–141. Springer

Kapoor L, Thakur S (2017) A survey on brain tumor detection using image processing techniques. In: 2017 7th international conference on cloud computing, data science & engineering-confluence, pp. 582–585. IEEE

Kaus M, Warfield SK, Nabavi A, Chatzidakis E, Black PM, Jolesz FA, Kikinis R (1999) Segmentation of meningiomas and low grade gliomas in mri. In: International conference on medical image computing and computer-assisted intervention, pp. 1–10. Springer

Kim S, Kim I, Lim S, Baek W, Kim C, Cho H, Yoon B, Kim T (2019) Scalable neural architecture search for 3d medical image segmentation. In: International Conference on Medical Image Computing and Computer-Assisted Intervention, pp. 220–228. Springer

Krizhevsky A, Sutskever I, Hinton GE (2012) Imagenet classification with deep convolutional neural networks. Adv Neural Inf Process Syst 25:1097–1105

Lachinov D, Shipunova E, Turlapov V (2019) Knowledge distillation for brain tumor segmentation. In: International MICCAI Brainlesion Workshop, pp. 324–332. Springer

Lachinov D, Vasiliev E, Turlapov V (2018) Glioma segmentation with cascaded unet. In: International MICCAI Brainlesion Workshop, pp. 189–198. Springer

LeCun Y, Bengio Y, Hinton G (2015) Deep learning. Nature 521(7553):436