Abstract

Online education platforms play an increasingly important role in mediating the success of individuals’ careers. Therefore, while building overlying content recommendation services, it becomes essential to guarantee that learners are provided with equal recommended learning opportunities, according to the platform principles, context, and pedagogy. Though the importance of ensuring equality of learning opportunities has been well investigated in traditional institutions, how this equality can be operationalized in online learning ecosystems through recommender systems is still under-explored. In this paper, we shape a blueprint of the decisions and processes to be considered in the context of equality of recommended learning opportunities, based on principles that need to be empirically validated (no evaluation with live learners has been performed). To this end, we first provide a formalization of educational principles that model recommendations’ learning properties, and a novel fairness metric that combines them to monitor the equality of recommended learning opportunities among learners. Then, we envision a scenario wherein an educational platform should be arranged in such a way that the generated recommendations meet each principle to a certain degree for all learners, subject to their individual preferences. Under this view, we explore the learning opportunities provided by recommender systems in a course platform, uncovering systematic inequalities. To reduce this effect, we propose a novel post-processing approach that balances personalization and equality of recommended opportunities. Experiments show that our approach leads to higher equality, with a negligible loss in personalization. This paper provides a theoretical foundation for future studies of learners’ preferences and limits concerning the equality of recommended learning opportunities.

Introduction

Learning experience selection by learners is at the heart of curriculum development and, consequently, is vital to shaping individuals’ knowledge and competencies (Talla, 2012; Druzhinina et al., 2018). The term learning experience generally refers to interactions in courses, programs, or other situations where learning takes place, including traditional and non-traditional settings (Girvan, 2018). Notable examples of the latter, with an impact on individual experiences, are online course platforms, such as Coursera and Udemy. The proliferation of initiatives and the increasing adoption of these platforms call for automated mechanisms to support learning experience selection by learners, tailored to the platform’s principles, context, pedagogy, and needs (Rieckmann, 2018).

One aspect receiving special attention to support the learning experience selection on these online platforms is the ranking of courses deemed of relevance to individual learners. As a result, recommender systems are being deployed to suggest courses that accommodate learners’ interests and needs (Kulkarni et al., 2020). These recommended courses can be envisioned as learning opportunities being offered for the attention of a learner. Though optimizing recommendations according to learners’ interests has been seen for years as the ultimate goal in the context of educational recommender systems, important principles (i.e., properties the platform aims to pursue, such as the validity, learnability, quality, and affordability of the recommended courses; see Footnote 1) and the extent to which they are equally met across learners should be considered to shape online learning opportunities (Talla, 2012; Druzhinina et al., 2018). Recommendations thus need to meet a trade-off between the interests of learners and the principles of the platform, providing learners with a well-rounded range of learning experiences (Abdollahpouri et al., 2020).

Recommender capabilities represent a fundamental component of artificial intelligence systems in education. For this reason, ensuring equality among learners according to the recommended educational opportunities is essential, as the suggested courses may translate to educational gains and losses for the learners. By extension, education significantly influences individuals’ life chances in the job market, and these opportunities should not be undermined by arbitrary decisions provided by a recommender system. Meyer (2016) has revealed how equal learning opportunities, equal learning outcomes, and equal job opportunities relate to each other and emphasized the indispensable, but at the same time potentially dangerous, need for equal learning opportunities. The demand for equal learning opportunities alone can lead to (i) attributing unequal learning outcomes that could have been avoided to unequal talent and effort, (ii) justifying social inequalities by claiming that all measures were taken to realize equal learning opportunities, and (iii) limiting efforts to merely realizing equal educational opportunities. These aspects have been investigated in traditional educational settings worldwide, such as in China (Golley and Kong, 2018), Germany (Buchholz et al., 2016), Japan (Fujihara & Ishida, 2016), Korea (Byun & Park, 2017), Spain (Fernández-Mellizo & Martínez-García, 2017), and the United States (Shields et al., 2017).

Thanks to extensive empirical analyses, these studies have identified several variables that may lead to unequal educational opportunities, two of which are the gap in up-to-date competencies required by the job market and the considerable costs of access to education. Operationalizing these principles and the consequent notion of equal learning opportunities in online ecosystems via recommenders is still under-explored. These systems learn patterns from data containing inherent biases, which end up being amplified in the recommendations based on such data (Boratto et al., 2019). Some learners might thus receive unequal opportunities based on the principles pursued by the targeted educational ecosystem. Figure 1 shows an example of this phenomenon (see Footnote 2). Hence, it is imperative to mitigate inequalities while retaining personalization.

Fig. 1 Example of Inequality in Recommended Learning Opportunities. We consider two learners, ui and uj (top row). The middle row shows that the learners interacted with similar resources in terms of quality (star: high; square: low), validity (light-blue: old; dark-blue: fresh), and affordability level (1: low; 2: mid; 3: high). However, if we consider the ranked lists provided by a collaborative algorithm to those two learners (bottom row), ui’s recommendation list consists of mostly fresh, high-quality, and affordable resources, while uj’s recommendations focus on obsolete, low-quality, and expensive resources.

In this paper, we propose the concept of equality of recommended learning opportunities in personalized recommendations. To investigate how this concept applies to the online learning ecosystem, we envision a scenario wherein the educational platform should guarantee that a set of learning principles are met for all the learners, to a certain degree, when generating recommendations according to the learners’ interests. We assume that those principles can be operationalized in terms of properties held by a list of recommendations. Therefore, the ideal recommender system would (i) achieve higher consistency between the principles pursued by the platform and those measured in the recommendations, (ii) retain the consistency across different learner populations, while (iii) honouring individual interests. Under this scenario, we characterize the recommendations proposed to learners in a real-world online course platform as a function of seven principles derived from knowledge and curriculum literature, as reported in “Problem Formulation”. Ten existing recommender algorithms, whose details are presented in “Recommendation Algorithms and Protocols”, are evaluated. Our exploratory analysis sheds light on systematic inequalities against learners based on the properties of the suggested courses. The results of our study thus motivate us to devise a novel post-processing approach that balances equality and personalization in recommendations. Specifically, the core assumption is that the notion of equality might be enhanced by balancing out desirable properties of course recommendations, and that this objective can be achieved by re-ranking the courses originally suggested (and optimized for personalization) by the recommender system, such that the lists recommended to learners meet desirable principles of course recommendations equally across learners. The contribution of the work presented in this paper is four-fold:

1. Operational: we define principles that model learning opportunity properties, and we combine them in a fairness metric that monitors the equality of recommended learning opportunities across learners.

2. Social: we provide observations and insights on learning opportunities in recommendations, using a dataset that includes more than 40K learners and 30K courses.

3. Technical: we propose a post-processing recommendation approach that aims to balance personalization and equality of learning opportunities to enable optimization of different combinations of the principles of equality.

4. Ethical: we evaluate our approach on a real-world publicly available dataset, and we show how it may lead to higher equality of recommended learning opportunities among learners, with a negligible loss in personalization.

Our study represents a step toward understanding how equality principles can be operationalized and combined in a formal notion of equal opportunities in educational recommendations. This paper shapes a blueprint of the decisions and processes to be recommended, based on principles that need to be empirically validated (no evaluation with live learners has been performed), and serves as a theoretical foundation for future studies of learners’ concepts of fairness, preferences, or limits concerning the equality of recommended learning opportunities. For instance, this paper can be used to create examples of what questions to ask as part of interviews with learners, what scenarios to explore to elicit their concepts of fairness, and how to process data in the platform to monitor and ensure the equality principles.

The remainder of this paper is structured as follows. Section “Related Work” presents related work. Section “Problem Formulation” introduces the proposed principles and notion of equality, and “Exploratory Analysis” presents the exploratory analysis. Then, “Optimizing for Equality of Learning Opportunities” describes and evaluates our approach for mitigating inequality of recommended opportunities. Finally, “Conclusions” provides concluding remarks and discusses future research directions.

Related Work

This research lies at the intersection of Artificial Intelligence in Education (AIED), Recommender Systems (RecSys), and Fairness, Accountability, Transparency, Explainability (FATE).

Educational Recommender Systems in the Artificial Intelligence Context

The advances in the area of computing technologies have facilitated the implementation of artificial intelligence applications in educational settings, improving teaching, learning, and decision making (Pinkwart, 2016). Learners’ behavioral patterns have been analyzed to make inferences, judgments, or predictions, serving for personalized guidance or feedback to students, teachers, or policymakers, for example, as proposed by Mao (2019) and Ren et al. (2019).

Our study in this paper treats recommender capabilities as a crucial component of AIED systems (Khanal et al., 2019). Given the increase in resources available in online course platforms and learning management systems, designing personalized recommendations has become a key challenge. This challenge, thereby, motivates research carried out by the AIED, Educational Data Mining (EDM), and Learning Analytics and Knowledge (LAK) communities. The most common objective in prior work is to suggest resources or peers in a given course. For instance, Lin et al. (2020) proposed a deep attention-based model to recommend resources based on learners’ online behaviors. This work outperformed state-of-the-art baselines in terms of accuracy (i.e., the extent to which the recommended items are among those included in the test set for that learner, meaning that the recommender system predicts well the future interests of the learner). Similarly, Wang et al. (2019) introduced a recommendation algorithm for textbooks, showing that adding adaptivity significantly increases engagement.

Beyond recommending learning resources, Eagle et al. (2018) designed an algorithm for individualized help messages. Further, the work demonstrates that the needs of learners for a lesson can be effectively predicted from their behavior in prior lessons. Mi and Faltings (2017) and Chen and Demmans (2020) showed the important role of personalization while modeling forum discussion recommendations. Both works illustrated the presented algorithms’ effectiveness in predicting learners’ preferences. Additionally, Chau et al. (2018) assisted instructors with recommendations on the most relevant material to teach. Reciprocal recommendations have been investigated by Labarthe et al. (2016) and Potts et al. (2018). These works proposed two recommendation approaches for personalized contact lists. Their experiments uncovered that learners are more likely to engage in courses if they received peer recommendations. Finally, other tasks dealt with the accuracy of recommender systems in matching learners and job offers (Jacobsen and Spanakis, 2019).

Course recommendations have recently received attention due to the increasing number of initiatives carried out online. For instance, Pardos and Jiang (2020) generated course recommendations that are novel and unexpected, but still relevant to learners’ interests. Their results revealed that providing services optimized for serendipity allows learners to explore resources without a strong bias towards the learner’s (past) experience. Furthermore, Morsomme and Alferez (2019) found that students consider recommendations for courses at other departments very helpful. In the university context, Esteban et al. (2018) and Boxuan et al. (2020) described two hybrid methods for discovering the most relevant criteria that affect the course recommendation for university learners. Their respective results confirmed that the overall rating that a learner gives to a course is the most reliable information source. However, when it is complemented with other criteria about the courses, the recommendation accuracy increases. Polyzou et al. (2019) captured the sequential relationships across courses to devise a course recommender system, which outperforms other collaborative filtering and baseline approaches.

Extensive research work has been devoted to mastery learning in intelligent tutoring systems, which select educational resources for learners based on knowledge tracing. For instance, Thaker et al. (2020) automatically identified the most relevant textbooks to be recommended by incorporating learners’ knowledge states. Chanaa and Faddouli (2020) proposed a model that predicts learners’ needs for recommendation using dynamic graph-based knowledge tracing. By learning feature information and topology representation related to learners, their model achieved a competitive accuracy of more than 80%. To avoid the mismatch between learners and learning resources, Dai et al. (2016) introduced a recommender system for suggesting learning resources, with a domain knowledge structure to connect learners’ skills and learning resources. They showed that the accuracy is higher when texts related to the concerned domain knowledge are involved. In Chan et al. (2006), the authors conclude that ready-to-hand access creates the potential for a new phase in the evolution of technology-enhanced learning (TEL). This phase is defined as “seamless learning spaces” and is marked by a succession of learning experiences across different scenarios. Further, it arises from the availability of one (or more) device(s) per student (“one-to-one”). One-to-one TEL holds the potential to “cross the chasm” from early adopters conducting isolated design studies to adoption-based research and extensive implementation. Finally, Ai et al. (2019) designed an exercise recommender that considers exercise-concept mappings while tracing learners’ knowledge. This recommender led to a better performance than the heuristic policy of maximizing learners’ knowledge level.

Our contribution differs from prior work in three major ways. First, current approaches have been mostly optimized for learners’ preference prediction, given their ratings, performance, grades, or enrolments. Conversely, our approach aims to balance how learning opportunities vary, based on high-level properties directly measurable on the ranked lists (e.g., familiarity and learnability of the recommended courses), going beyond the accuracy in predicting the future learner’s preferences only. Second, even though several recommender systems integrated beyond-accuracy aspects, such as learnability and serendipity, combining them with other aspects and decoupling them from the underlying recommendation strategy appears impractical. By contrast, our post-processing mechanism can be applied to the output of any recommender system to arrange recommendations that meet a range of properties. Third, controlling how much the generated recommendations are equally consistent across learners has been rarely investigated. Hence, we introduce and operationalize a novel fairness metric that monitors equality among learners concerning the targeted educational principles.

Fairness in Artificial Intelligence for Education

Characterizing and counteracting potential pitfalls of data-driven educational interventions is receiving increasing attention from the research community. Educational applications of artificial intelligence are not immune to the risks observed in other domains. Moreover, the design of systems may often be driven more by profit than by actual educational impact, with serious potential risks (e.g., algorithmic biases, invasion of privacy, or negative social impacts) outweighing any benefits (Shum, 2018; Bulger, 2016; Williamson, 2017).

Responding to these concerns may be critical to determine the fairness of AIED systems and to shape how the ethics for human learning are more broadly defined. However, only a few works have focused on ethics in AIED, where the increasing use of learning analytics and artificial intelligence raises unique context-specific challenges (Ocumpaugh et al., 2014). For instance, while most existing fairness auditing and de-biasing methods require access to sensitive demographic information (e.g., age, race, gender) at an individual level, such information is often unavailable to AIED practitioners (Holstein et al., 2019a). Also, it becomes challenging to define fair outcomes in contexts where a system results in disparate outcomes across subpopulations, such as learners having lower or higher prior knowledge (Hansen and Reich, 2015). Although the community has been interested in the ethical dimensions of data-driven educational systems (Drachsler et al., 2015; Sclater & Bailey, 2015; Tsai & Gasevic, 2017), the focus has often been on policies.

Despite this widespread attention, fairness has been rarely discussed from a more practical and technical perspective (Holmes et al., 2019; Holstein & Doroudi, 2019; Mayfield et al., 2019; Porayska-Pomsta & Rajendran, 2019). Given that designing methods for addressing unfairness challenges can be highly context-dependent (Holstein et al., 2019b; Green & Hu, 2018; Selbst et al., 2019), the education research community has started to explore what fairness, accountability, transparency, and ethics look like in technology-supported education specifically. For instance, Yu et al. (2020) found that combining the profile and material data sources does not fully neutralize biases, and it still leads to high rates of underestimation among disadvantaged groups for learners’ success prediction. Similarly, Doroudi and Brunskill (2019) showed that knowledge tracing algorithms are susceptible to unfairness, but that knowledge tracing with the additive factor may be fairer. Hu and Rangwala (2020) focused on individually fair models for identifying students at risk of underperforming. The work shows how to effectively mitigate bias in models and make the models useful in aiding all learners. Conversely, Abdi et al. (2020) investigated the impact of complementing educational recommender systems with transparent justifications for their recommendations. They observed a positive effect on engagement and perceived effectiveness, but also an increased sense of unfairness due to learners not agreeing with how their competency is modeled. Such appraisal is key to enhancing our understanding of fairness, building on knowledge gleaned from AIED research.

However, to the best of our knowledge, controlling equality in educational recommender systems has been so far under-explored. Consequently, we investigated how fairness and ethical aspects have been treated by the general-purpose RecSys community (Barocas et al., 2017; Ramos et al., 2020), analyzing whether and how the resulting treatments can be tailored to recommender systems in education. Fairness across end-users deals with ensuring that users who belong to different protected classes (group-based) or are similar at the individual level (individual-based) receive recommendations with the same quality. Group-based fairness requires that the protected groups are treated similarly to the advantaged groups or the population as a whole. For instance, Zhu et al. (2018) designed an approach that identifies and removes from tensors all gender information about users. This approach leads to fairer recommendations (i.e., with a smaller difference in the quality of the recommendations received by user groups) regardless of the user’s group membership. Rastegarpanah et al. (2019) generated artificial data to balance group representations in the training set and minimize the difference between groups in terms of mean squared error. Similarly, Yao and Huang (2017) proposed metrics related to population imbalance (i.e., a class of users characterized by a sensitive attribute being the minority) and observation bias (i.e., a class of users who produced fewer ratings than their counterpart). Under a similar scenario, Beutel et al. (2019) built a pairwise regularization that penalizes the model if its ability to predict which item was clicked is better for one group than the other. These works showed that operationalizing their metrics in the recommender’s objective function results in fairer recommendations.

Group-fairness may be, unfortunately, inadequate as a notion of fairness, given that there exist circumstances wherein group fairness is maintained but, from an individual point of view, the outcome is blatantly unfair. Hence, our study cares about learners as individuals, not as belonging to a class based on a certain sensitive attribute. This condition also fits with educational scenarios where sensitive demographic attributes (e.g., age, race, gender) at an individual level are unavailable to learning analytics practitioners. Examples of individual fairness notions proposed by the RecSys community (Biega et al., 2018; Lahoti et al., 2019a; Singh and Joachims, 2019) imply that similar users should have similar outcomes. Their definition of fairness states that any two individuals similar concerning a particular task should be treated likewise, assuming that a similarity metric between individuals exists. For instance, in a health-related recommender system, two patients with a similar pathology should receive recommendations of the same quality.

Our study generalizes the original definition of individual fairness and applies it to the educational context. Specifically, we aim to provide all learners, indistinctly, with recommended learning opportunities that are equally consistent with the targeted principles. We do not rely on any notion of similarity across pairs of learners based on how the targeted principles were met in the past. Compared to our definition, the other existing ones could even emphasize existing inequalities (e.g., two learners who similarly experienced less learnable recommended courses in the past could end up receiving low learnable courses more and more, though the recommender would have been fair under the original individual fairness notion). On the other hand, achieving the fairness goal indistinctly for all learners, as per our definition, can be more challenging since the demographic and behavioral (e.g., in terms of preferences) similarity between learners can vary significantly.

Problem Formulation

In this section, we formalize recommendation concepts, educational principles, and metrics that respectively monitor consistency and equality of recommended learning opportunities among learners, according to our definition of fairness, as explained earlier.

Preliminaries

Given a set of learners U and a set of educational resources I, we assume that learners expressed their interest in a subset of resources in I. The feedback collected from learner-resource interactions can be abstracted to a set of pairs (u, i), implicitly obtained from user activity, or triplets (u, i, rating), explicitly provided by learners, denoted in short by \(R_{u,i}\). We denote the learner-resource feedback matrix by \(R \in \mathbb{R}^{M \times N}\), with M = |U| learners and N = |I| resources, where \(R_{u,i} > 0\) indicates that learner u interacted with resource i, and \(R_{u,i} = 0\) otherwise. Furthermore, we denote the set of resources that a learner u ∈ U interacted with by \(I_u = \{i \in I : R_{u,i} > 0\}\).
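As an illustration of this notation, the following minimal Python sketch builds a toy feedback matrix and derives the set of resources a learner interacted with. The matrix values and the function name `interacted` are hypothetical and serve only as an example; they are not taken from the dataset used in this paper.

```python
import numpy as np

# Hypothetical toy feedback matrix R with M = 3 learners and N = 4 resources.
# R[u, i] > 0 means learner u interacted with resource i; 0 means no interaction.
R = np.array([
    [5.0, 0.0, 3.0, 0.0],
    [0.0, 0.0, 4.0, 1.0],
    [0.0, 2.0, 0.0, 0.0],
])


def interacted(R, u):
    """Return I_u = {i : R[u, i] > 0} as a set of resource indices."""
    return {int(i) for i in np.flatnonzero(R[u] > 0)}


I_0 = interacted(R, 0)  # resources learner 0 interacted with
```

With implicit feedback, the same structure applies with binary entries in place of ratings.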

We assume that each resource i ∈ I is represented by an m-dimensional feature vector \(F_i = (f_{i,1}, \dots, f_{i,m})\) over a set of features \(F = \{F_1, F_2, \dots, F_m\}\). Each dimension \(F_j\) can be viewed as a set of values or labels describing a feature of a resource i, with \(f_{i,j} \in F_j\) for j = 1,…,m. In our experiments, we considered five features, i.e., instructional level (discrete), resource category (discrete), last update timestamp (discrete datetime), number of enrolled learners (continuous), and price (continuous). Furthermore, we assume that each resource i ∈ I is composed of a set of assets \(L_i\). Each asset \(l_{i,j} \in L_i\) has a type \(t_{i,j} \in T\). In our study, we considered T = {Video, Article, Ebook, Podcast}, due to their popularity and their availability in the public datasets.

We assume that a recommender estimates relevance for unobserved entries in R for a given learner and uses them to rank resources. It can be abstracted as learning \(\widetilde{R}_{u,i} \in [0,1]\), which represents the predicted relevance of resource i for learner u. Given a certain learner u, resources i ∈ I ∖ \(I_u\) are ranked by decreasing \(\widetilde{R}_{u,i}\), and the top-k resources, with \(k \in \mathbb{N}\) and k > 0, are recommended. Finally, we denote the set of k resources recommended to learner u by \(\tilde{I}_u\).
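The ranking step can be sketched as follows. This is a minimal example under stated assumptions: the predicted relevance matrix `R_tilde` is taken as given (any recommender could have produced it), the toy values are hypothetical, and the function name `recommend_top_k` is ours.

```python
import numpy as np


def recommend_top_k(R, R_tilde, u, k):
    """Rank resources in I minus I_u by decreasing predicted relevance
    and return the indices of the top-k ones."""
    if k <= 0:
        raise ValueError("k must be a positive integer")
    unseen = np.flatnonzero(R[u] == 0)  # resources learner u has not interacted with
    order = np.argsort(-R_tilde[u, unseen], kind="stable")  # decreasing relevance
    return [int(i) for i in unseen[order[:k]]]


# Hypothetical toy data: one learner, four resources.
R = np.array([[5.0, 0.0, 3.0, 0.0]])        # observed feedback
R_tilde = np.array([[0.9, 0.2, 0.8, 0.7]])  # predicted relevance in [0, 1]

top_2 = recommend_top_k(R, R_tilde, u=0, k=2)
```

Resources 0 and 2 are excluded even though their predicted relevance is high, because the learner has already interacted with them.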

Modeling Recommended Learning Opportunity through Principles

Given that the recommendation capabilities are a relevant part of AIED systems, investigating whether educational recommender systems are fair and how they can be made a vehicle for making our educational systems fairer is essential. Capturing, formalizing, and operationalizing notions of equality can shape our understanding of the extent to which the educational offerings available to learners provide them with equal opportunities and how recommender systems influence the normal course of educational business. To this end, defining the variables to be equalized constitutes a natural pre-requisite.

Organizing learning opportunities in classroom settings has been traditionally a responsibility of instructional designers or teachers. To this end, they rely on a range of principles coming from the curriculum design field, including significance, self-sufficiency, validity, interest, utility, learnability, feasibility (Talla, 2012; Druzhinina et al., 2018). Hence, our study assumes that the notion of equality needs to consider these principles derived from the instructional design beliefs as those to be equalized in recommendations, given their real-world validity for learners’ educational experiences from the instructional perspective. However, we do not argue that this approach and the consequent principles are the only ones as they strongly depend on the educational context and the outcomes of the fairness auditing processes in the target context. Our principle modelling aims to serve as a starting point for researchers, to guide them in what questions, scenarios, and data to explore, while addressing the questions related to fairness. Therefore, we argue that our study offers a blueprint for the decisions and processes needed.

Human inspection of curriculum-design-based principles is usually based on textual guidelines, and the translation into numerical indicators, when available, is dependent on the specificity of the educational context. Given the unique characteristics of the online educational context and the constraints that platforms introduce on the collection of learners’ data, we assume that the principles are based on data that would typically be available in a platform in which an educational recommender system would be embedded. For this reason, not all the principles and not all the guidelines can be directly operationalized (see Footnote 3). One of the core assumptions of our approach is then that it should be possible to define those principles in terms of properties held by a list of recommendations. Specifically, we envision a scenario wherein only a representative subset of principles are embedded in the recommender system’s logic. The educational platform is thus empowered with the capability of controlling the extent to which the list of courses recommended to learners meets each principle. While the high-level conception of the selected principles is assumed to be relevant, their operationalization into the recommender’s logic is dependent on the platform, turning to simplified implementations in some cases. While we provide formulations that are as general as possible, we will ensure that our approach can be extended or adapted to any principle.

Formally, we consider a set C of functions \(c_{\tilde{I}_{u}}(\cdot) : I^{k} \to [0,1]\). Each function receives a set of k resources \(I^k\) and returns a value indicating how much the set of resources meets that principle. The higher the value, the higher the extent to which the principle is met. Specifically, we consider the following seven principles, whose mathematical formulation is provided in Appendix A.
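To make the interface of these functions concrete, the sketch below treats each principle as a callable that maps a list of recommended resources to a score in [0, 1], and evaluates a set of such callables on one list. The placeholder principle `share_free` and the `PRICE` table are hypothetical and purely illustrative; they are not one of the seven principles formalized in Appendix A.

```python
from typing import Callable, Dict, Sequence

# A principle maps a list of k recommended resource ids to a score in [0, 1].
Principle = Callable[[Sequence[int]], float]


def evaluate_principles(principles: Dict[str, Principle],
                        recommended: Sequence[int]) -> Dict[str, float]:
    """Apply every principle function to one recommended list."""
    scores = {}
    for name, c in principles.items():
        value = c(recommended)
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"principle {name!r} returned {value}, outside [0, 1]")
        scores[name] = value
    return scores


# Hypothetical placeholder data and principle, for illustration only.
PRICE = {0: 0.0, 1: 19.99, 2: 0.0, 3: 49.99}


def share_free(recommended: Sequence[int]) -> float:
    """Fraction of recommended resources that are free of charge."""
    return sum(PRICE[i] == 0.0 for i in recommended) / len(recommended)


scores = evaluate_principles({"share_free": share_free}, [0, 1, 2, 3])
```

Keeping the principles behind a common signature is one way to let a platform add, remove, or reweight them without touching the underlying recommender.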

Definition 1 (Familiarity)

Familiarity is defined as whether the learner is familiar with the recommended content, as measured by whether the relative frequency of the course categories in a recommended set is proportional to that in the courses the learner took.

Familiarity is at the heart of learner-centered education. Learners might be more comfortable if the subject matter is meaningful to them, and it is assumed that it becomes meaningful if they are familiar with that subject. Xie and Joo (2009) supported this observation through descriptive and statistical analysis, uncovering that familiarity was correlated with content-searching behavior. Similarly, Qiu and Lo (2017) showed that participants were behaviourally and cognitively more engaged in tasks with familiar topics and had a more positive affective response to them.

Our study models familiarity using the category of the resources in a recommended list, encoded into a pre-defined taxonomy. If the relative frequency of the course categories in a recommended set is proportional to that in the courses the learner took, we assume that the familiarity is high (a value of 1). Conversely, the minimum familiarity of 0 is achieved when the recommender suggests resources that run opposite to the learner’s most familiar categories. This principle is related to the concept of calibrated recommendations, which aim to reflect the various interests of a user in the recommended list with their appropriate proportions (Steck, 2018) (Footnote 4).
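The calibration idea described above can be illustrated with a small sketch. It scores familiarity as the complement of the total variation distance between the category distribution of the learner’s past courses and that of the recommended list. This distance is an assumption chosen for illustration and is not the formulation given in Appendix A; the function names and the example categories are hypothetical.

```python
from collections import Counter


def category_distribution(categories):
    """Relative frequency of each category in a list of course categories."""
    counts = Counter(categories)
    total = sum(counts.values())
    return {cat: n / total for cat, n in counts.items()}


def familiarity(profile_categories, recommended_categories):
    """Illustrative familiarity score in [0, 1] (assumed formula).

    Returns 1.0 when the recommended categories follow the same proportions
    as the learner's past courses, and 0.0 when the two lists share no
    category at all.
    """
    if not profile_categories or not recommended_categories:
        raise ValueError("both lists must be non-empty")
    p = category_distribution(profile_categories)
    q = category_distribution(recommended_categories)
    # Total variation distance: half the L1 distance between distributions.
    tvd = 0.5 * sum(abs(p.get(c, 0.0) - q.get(c, 0.0)) for c in set(p) | set(q))
    return 1.0 - tvd


# Hypothetical learner who took two math courses and one art course.
profile = ["math", "math", "art"]
print(familiarity(profile, ["math", "math", "art"]))  # proportional list
print(familiarity(profile, ["music", "music", "music"]))  # unrelated list
```

Under this assumed formula, a list that mirrors the learner’s past proportions scores highest, while a list drawn entirely from categories the learner never took scores lowest.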

Definition 2 (Validity)

Validity is defined as whether the course is likely to be up-to-date and not obsolete, as measured by when content was last updated. A subject is assumed to be more valid if it has been newly updated.

Controlling the validity of the learning content is one of the major axes of education, since learners should not encounter information in a course that is no longer valid. One way of maintaining the validity of the course content is to continuously update it, either with more recent content or with new versions of the same content (e.g., adapted based on the learners’ feedback). This practice also shows learners that the course is alive. Curriculum-design experts usually seek to follow current trends and carefully consider the validity of a curriculum (Druzhinina et al., 2018); otherwise, the opportunity becomes obsolete. Similarly, Bulathwela et al. (2019) highlighted that content freshness is one of the main factors shaping content validity. Hence, we assume that validity should be taken into account in the recommendations offered.

Our scenario operationalizes the validity principle by controlling that learners are presented with recommended courses that have been recently or frequently updated. Values close to 0 imply that the recommended list includes courses that have not been updated for a long time, while values close to 1 are achieved by recommended courses with recent updates (Footnote 5).

Definition 3 (Learnability)

Learnability is defined as whether the recommended courses present an opportunity that is coherent with the learners’ ability, with the learnability measured as whether the set of courses varies in terms of instructional level.

Learnability is generally associated with the ease, efficiency, and effectiveness with which learners can perform a knowledge acquisition activity. Our study assumes that the subject matter to be recommended should be within the knowledge schema of the learners. The literature indicated that learnability impacts learner motivation to learn (Conaway and Zorn-Arnold, 2016), prompting us to monitor this principle in the recommended lists.

In our scenario, this concept is operationalized to ensure that courses of diverse instructional levels are presented, maximizing the possibility that learners can find an opportunity coherent with their abilities. Note that our study is not learner-centric, i.e., no record of student learning, performance, or exam grades is made and, therefore, student knowledge is not tracked. The factors tracked to measure learnability are the instructional levels of the courses attended by and recommended to learners. Compared to knowledge-tracking methods, our operationalization might appear to be an overly simple way of modelling the zone of proximal development, i.e., the zone between the actual level of development of the learner and the next level attainable through the use of mediating tools and/or collaboration, defined by Vygotsky (1978). However, current online course platforms impose constraints that should be met. Specifically, data on mid-term quizzes and final exams are often not recorded internally, and this leaves the implementation of traditional knowledge-tracking techniques in these platforms as an open challenge. Hence, we rely on course recommendations that cover different instructional levels. Learnability values close to 0 imply inequality among levels, while values close to 1 indicate a high balance across levels.
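One way to picture a balance score over instructional levels is the normalized Shannon entropy of the levels in a recommended list, sketched below. The choice of entropy is an assumption made for illustration, not the formulation of Appendix A, and the level names are hypothetical.

```python
import math
from collections import Counter


def level_balance(levels, all_levels):
    """Normalized Shannon entropy of instructional levels (assumed formula).

    levels: instructional level of each recommended course.
    all_levels: every level that exists on the platform.
    Returns a value near 0 when a single level dominates the list and 1 when
    all platform levels are equally represented.
    """
    if not levels:
        raise ValueError("levels must be non-empty")
    if len(all_levels) < 2:
        raise ValueError("need at least two possible levels")
    counts = Counter(levels)
    total = len(levels)
    entropy = sum(-(n / total) * math.log(n / total) for n in counts.values())
    return entropy / math.log(len(all_levels))


# Hypothetical level taxonomy.
LEVELS = ["beginner", "intermediate", "expert"]
print(level_balance(["beginner"] * 6, LEVELS))
print(level_balance(["beginner", "intermediate", "expert"] * 2, LEVELS))
```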

Definition 4 (Variety)

Variety is defined as whether the recommendation takes into account that learners are different and learn in different ways based on their interests and ability, as measured by the degree to which the recommended courses present a mix of different asset types.

Providing course material in a variety of formats represents a primary objective. For instance, by studying the online course design and teaching practices of award-winning teachers, Kumar et al. (2019) uncovered that including video, audio, reading, and interactive content made courses more engaging and appreciated by learners during their learning sessions, though no explicit mention of the effectiveness of variety on learning gains has been made. In another study, Papathoma et al. (2020) highlighted how this variety of formats increases the accessibility of a course, given that learners may struggle with a particular medium (e.g., due to a reading barrier such as dyslexia or a video barrier such as hearing or attention problem). Therefore, monitoring whether a course provides learners with a large variety of content formats is crucial.

Our operationalization of variety assumes that varied asset types may be provided to help learners comprehend the subject from various perspectives. Hence, variety values close to 0 mean that the learning opportunities are focused on only one asset type, while types greatly vary for values close to 1.

Definition 5 (Quality)

Quality is defined as the perceived appreciation of the recommended resources by the learners, as measured by the ratings that the learners assign to resources after interacting with them.

Student evaluation of teaching quality plays a prominent role in assessing current teaching experiences. Teaching evaluation helps to promote a better learning experience for learners and provides information to future learners when deciding whether to attend a course. However, defining quality in online learning is challenging because there is no real consensus on its true meaning. Consequently, quality is evaluated differently depending on the organization in charge of measuring it. For instance, Darwin (2017) showed that student ratings are perceived as a valuable, though fragile, source of intelligence about the effectiveness of curriculum design, teaching practices, and assessment strategies. On the other hand, Gómez-Rey et al. (2016) observed that learners considered other core variables in defining quality in online programs, such as the ability to transfer, knowledge acquisition, learner satisfaction, and course design.

Our study operationalizes quality by leveraging the learners’ ratings. Rating values close to 0 mean that the learning opportunities are of low quality (i.e., they have received a low rating from other learners), while values close to 1 are measured for high-quality recommended opportunities. Though some studies demonstrated that learners’ ratings do not often correlate with other measures of quality (e.g., learning outcomes), this design choice makes it possible to meet the current constraints in data gathering in large-scale educational platforms and allows us also to maintain this principle as general as possible.

Definition 6 (Manageability)

Manageability is defined as whether the online classes are large or small, as measured by the number of learners enrolled in the recommended courses, with small classes considered more manageable.

Organizational aspects are critical for shaping learners’ experiences. In this context, class size differences may influence academic interactions between students and their professors and peers. For instance, with a large number of learners, the instructor may have to work harder to combat student passivity and encourage participation, as learners feel an increasing sense of anonymity. This point is confirmed by the study of Beattie and Thiele (2016), which uncovered that the likelihood of academic interactions about course material and assignments with professors was diminished in larger classes, as was the probability of talking to peers about ideas from classes. Similarly, Lowenthal et al. (2019) revealed that learners and instructors see online courses with fewer enrollments as better for student learning and faculty satisfaction.

Our study embeds the notion of manageability, associating it with the size of the course class where the recommended opportunities take place. This principle is relevant to offer opportunities in smaller and controlled classes. Hence, manageability values close to 0 mean that the learning opportunities include very large classes, while values close to 1 refer to small classes (Footnote 6).

Definition 7 (Affordability)

Affordability is defined as the cost of accessing the recommended opportunities, as measured by the enrolment fees of the suggested courses, with less expensive courses having higher affordability value.

Dealing with the increasing costs of education is critical, given that many learners need access to far more affordable, high-quality education opportunities, including tuition-free course options. For instance, Mohapatra and Mohanty (2017) emphasized that the affordability of the offering is one of the prime predictors of the learners’ perception, while Joyner et al. (2016) uncovered that providing more affordable courses has led, in some contexts, to a learner population that is more intrinsically motivated to learn, more experienced, and more professionally diverse. Institutions and platforms are thus under increasing pressure to provide more affordable learning without sacrificing optimal learning outcomes. For this reason, we monitor the affordability principle in the recommendations generated in the online platform.

Our notion of affordability aims to control the degree of economic accessibility for the recommended opportunities, measured by their enrolment fees. Specifically, we consider how much the learning opportunities cover a range of fees. A value close to 0 means that the learning opportunities are expensive, while a value close to 1 corresponds to free-of-charge opportunities.

Though each of the principles has relevance for students’ educational experiences from the instructional design perspective, the set of principles could be expanded. Additionally, the proposed set is not meant to be the only right set. Furthermore, it should be noted that massive online course platforms are often designed for profitability and large coverage; a business plan is needed, and making a profit must be considered one of the primary goals. Thus, the question of how to integrate business and educational principles remains an open one. This question deserves a broader and specific discussion, going beyond a closer inspection of the technicalities. Our study in this paper assumes that the educational platform aims to impact the learners positively. Furthermore, several principles might have been left out that are relevant for certain educational scenarios or specific platforms (e.g., the time of day a course is offered). This observation challenges the assumption that there is a one-size-fits-all set of principles to be equalized in educational recommender systems. Another point to mention is that the considered principles assume a top-down approach and seem to leave learners little autonomy in their decision-making about the courses they take. However, this is not fully the case, given that recommendations are meant as a suggestion to learners, and learners are the ones who make the final decision on the courses to attend. In addition, the principles to be considered and the importance to give to each of them could be tailored to each learner individually, through ad-hoc user interfaces integrated into the course platform. The protocols and interfaces required to favor customization at the individual learner level go beyond the scope of this paper, although our approach might be adapted.

Equality of Recommended Learning Opportunity

To formalize the equality of recommended learning opportunities, we first need to define how much the list recommended to each learner meets the principles targeted by the educational platform. In this paper, we propose to operationalize the concept of consistency across principles as the similarity between (i) the degree to which all principles are met in the recommended list and (ii) the degree of importance for the principles targeted by the educational platform. The higher the similarity, the higher the extent to which the principles are met. We operationalize this metric locally on each ranked list so that it is possible to optimize it on a pre-computed recommended list through a post-processing function (see “The Post-Processing Approach Proposed”). For the ranked list of recommended courses Ĩu to a learner u, we assume the platform aims to ensure a targeted degree pu(m) for each principle m ∈ C for each learner. In other words, pu(m) defines the extent to which the platform seeks to meet that principle m. The higher the pu(m) score is, the more important principle m is for the platform. The main motivation behind this term is that principles might have different importance, and the term we are defining here allows us to model the degree to which each principle should be met.

Once a recommender computes the top-k resources Ĩu to be suggested to learner u ∈ U, we need to define the extent to which each principle m ∈ C is met in Ĩu. To this end, we denote the degree to which the principle m is met in the recommended list Ĩu as \(q_{\Tilde {I}_{u}}(m)\). The way this score is obtained depends on the principle under consideration and how it has been operationalized (see Section 3.2 for a textual description of each principle m and Appendix A for the mathematical formulation of each principle m to obtain c). For instance, given a recommended list Ĩu and assuming that m is the principle of affordability, the score c represents the extent to which the courses in Ĩu are affordable (the more affordable the recommended courses are, the higher the c score is). This score is needed to monitor the gap between the degree qu(m) to which the principle m is met in the recommended courses and the targeted degree pu(m) expected by the platform (defined in (1)). The score qu(m) is defined as follows:

where the value corresponding to each principle \(q_{\Tilde {I}_{u}}(m)\) is computed by applying the formulas formalized in Appendix A.

Once we have formulated the degree qu(m) to which the principle m is met in the recommended courses and the targeted degree pu(m) expected by the platform, we need to define how to measure the gap between these two degrees across principles. This is of fundamental importance to assess how far the recommender system is from achieving the targeted degree pursued by the platform. Specifically, for the ranked list of a learner u, the principles targeted by the educational platform are met if the values in pu (targeted degree of the platform) and \(q_{\Tilde {I}_{u}}\) (degree achieved in the recommended list) are aligned with each other. To assess the extent to which the principles’ goals targeted by the educational platform are met (i.e., the two vectors are consistent with each other), we compare the vectors pu and \(q_{\Tilde {I}_{u}}\), measuring the distance between the two. We define the notion of Consistency between (i) target principles and (ii) the extent to which the principles are achieved in recommendations, as the complement of the Manhattan (M) distance (Footnote 7), a symmetric and bounded distance measure. The higher the distance is, the lower the consistency score for the target principles is. Computing this consistency score Consistency(u|w) for all learners allows us to compare the extent to which recommendations are equally consistent across all learners, i.e., whether the notion of equality of recommended learning opportunities defined in our paper is met. The consistency for each learner and for the entire learners’ population is formulated as follows:

where w is a vector of size |C|; the element wi is the weight assigned to the principle i, between 0 and 1. Consistency is 1 if pu (targeted degree of the platform) and \(q_{\Tilde {I}_{u}}\) (degree achieved in the recommended list) are perfectly balanced, meaning that the principles pursued by the educational platform are met. Conversely, the lowest Consistency of 0 is achieved when pu assigns value 0 to every principle to which \(q_{\Tilde {I}_{u}}\) assigns value 1 (or vice versa), so that the distributions are completely unbalanced. In the latter situation, the recommender suggests resources opposite to the educational platform’s goals. Given that principles are context-sensitive, our notion of consistency can accommodate different target degrees of principles for each learner or each time period. For instance, concerning familiarity, different learners might have a different propensity for familiar content, and the same learner may, at different times, have distinct preferences. The above formulation allows modeling these circumstances.
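To make the description concrete, the sketch below computes a per-learner consistency as the complement of a weighted Manhattan distance, and a population-level consistency as the mean over learners. Two points are assumptions rather than statements from the text: the distance is normalized by the sum of the weights, so that the score stays in [0, 1], and the population score is a plain average. Under these assumptions the per-learner score reads \(1 - \sum _{i} w_{i} |p_{u}(i) - q_{\Tilde {I}_{u}}(i)| / \sum _{i} w_{i}\). Function names are hypothetical.

```python
def consistency(target, achieved, weights):
    """Complement of a weighted, normalized Manhattan distance (assumed form).

    target:   targeted degree p_u(m) of each principle, each in [0, 1].
    achieved: degree q(m) measured in the recommended list, each in [0, 1].
    weights:  weight w_i of each principle, each in [0, 1].
    """
    if not (len(target) == len(achieved) == len(weights)):
        raise ValueError("vectors must have the same length")
    total_weight = sum(weights)
    if total_weight <= 0:
        raise ValueError("at least one weight must be positive")
    distance = sum(w * abs(p - q) for p, q, w in zip(target, achieved, weights))
    # Normalizing by the total weight keeps the result within [0, 1].
    return 1.0 - distance / total_weight


def population_consistency(targets, achieved_lists, weights):
    """Mean consistency over learners (averaging is an assumption)."""
    scores = [consistency(p, q, weights) for p, q in zip(targets, achieved_lists)]
    return sum(scores) / len(scores)


# Two principles, both fully targeted and equally weighted.
print(consistency([1.0, 1.0], [0.5, 1.0], [1.0, 1.0]))
```

The two extremes described above are reproduced: identical vectors give a consistency of 1, and vectors that disagree completely on every principle give 0.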

Given the notion of consistency, we can formalize the notion of Equality across consistencies as the complement of the Gini index (Footnote 8) over the consistencies across learners. The Gini index ranges between 0 and 1, with higher values representing distributions with high inequality. It is used as:

where a value of 0 represents the largest inequality across consistencies (each consistency being the extent to which the degree of the principles targeted by the platform and the degree of the principles achieved by the recommender system are the same), and a value of 1 means that the recommender system is perfectly equal across learners in terms of consistency. Differently from Lahoti et al. (2019b) and Biega et al. (2018), we count it as a positive effect when learners achieve high consistency in recommendations, regardless of the consistency in their past interactions. Thus, the ideal recommender system would be the one that (i) achieves the highest consistency between the principles pursued by the platform and those measured in the recommendations, (ii) keeps it equal over the learners’ population, and (iii) retains the individual interests of learners.
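A minimal sketch of this equality score follows, using the standard rank-based expression of the Gini index over sorted values. Function names are hypothetical. Note that, for a finite population of n learners, this sample Gini index reaches at most (n − 1)/n, so the equality score only approaches 0 as the population grows.

```python
def gini(values):
    """Gini index of a list of non-negative values."""
    n = len(values)
    if n == 0:
        raise ValueError("values must be non-empty")
    total = sum(values)
    if total == 0:
        return 0.0  # all values are zero, hence identical
    ordered = sorted(values)
    # Rank-based formula for sorted values x_1 <= ... <= x_n:
    # G = sum_i (2i - n - 1) * x_i / (n * sum_i x_i)
    weighted = sum((2 * i - n - 1) * x for i, x in enumerate(ordered, start=1))
    return weighted / (n * total)


def equality(consistencies):
    """Complement of the Gini index over per-learner consistencies."""
    return 1.0 - gini(consistencies)


print(equality([0.8, 0.8, 0.8]))  # identical consistencies
print(equality([0.2, 0.9]))       # uneven consistencies
```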

It should be noted that our notion of equality is defined as providing the same consistency on principles to all learners, without leveraging any information on learners’ sensitive features, e.g., gender. The targeted degree for each principle for each student is assumed to be set by or known by the educational platform. Our approach enables a platform to set the same targeted degree for all students or to apply student-specific targeted degrees, set based on the learners’ previous preferences or elicited from learners. To focus better on the core contribution of this paper, “Exploratory Analysis” will investigate whether all the principles can be maximized for all learners, leaving student-specific targeted degrees as part of a human-centered study (Footnote 9). Furthermore, the reliance on stakeholders empowered with decision-making capabilities to configure the platform with the considered principles and their different targeted degrees represents an essential element towards implementing our notion of equality. This primary responsibility of stakeholders is in addition to all the others involved in the educational ecosystem (e.g., selecting the preferred system, deciding the recommendation strategy, and defining the visual interface).

Exploratory Analysis

To illustrate the trade-off between learners’ interests and the considered principles and further emphasize the value of our analytical modeling, we characterize the learning opportunities proposed by ten algorithms to learners of a real-world educational dataset as a function of the proposed principles.

Data

We analyze data from the educational context, exploring the role of the proposed principles in recommendations. We remark that the experimentation is challenging because there are very few large-scale educational datasets coming from this specific field of online education. To the best of our knowledge, COCO (Dessì et al., 2018) is the widest educational dataset with all the attributes required to model the proposed principles and with enough data to assess performance significantly. Collected from an online course platform, it includes 43,045 courses and 4,123,127 learners who gave 6,564,870 ratings. Other educational datasets proposed by Feng et al. (2019), Zhang et al. (2019), and Qiu et al. (2016) generally include (learner,course,rating) triplets only, as needed in traditional recommendation scenarios.

Recommendation Algorithms and Protocols

We considered ten methods and investigated the recommendations they generated. Two of them are baseline recommenders, and the other eight are state-of-the-art algorithms, chosen due to their performance, wide adoption, and core applicability in learning contexts (Kulkarni et al., 2020). These algorithms are:

- Non-Personalized: Random and TopPopular.
- Neighbor-based: UserKNN and ItemKNN (Sarwar et al., 2001).
- Matrix-Factorized: GMF (He et al., 2017) and NeuMF (He et al., 2017).
- Graph: P3-Alpha (Cooper et al., 2014) and RP3-Beta (Paudel et al., 2016).
- Content: ItemKNN-CB (Lops et al., 2011).
- Hybrid: CoupledCF (Zhang et al., 2018).

Based on hyperparameter tuning, UserKNN and ItemKNN relied on the cosine metric and 100 neighbors. GMF and NeuMF used 10 factors and were trained on 4 negative samples per positive instance. This means that, for each observed user-item interaction, we added to the training set four user-item pairs where the selected item has never been observed for that user in the dataset. P3-Alpha was executed with an alpha of 0.8 and 200 neighbors, while RP3-Beta adopted an alpha of 0.6, a beta of 0.3, and 200 neighbors. ItemKNN-CB mapped course descriptions to Term Frequency-Inverse Document Frequency (TF-IDF) features. The TF-IDF features of the courses in the user’s profile were averaged, and their cosine similarity with the TF-IDF features of other courses was used during ranking. CoupledCF embedded user-item associations, the user tendency to interact with each category of courses, and the category of the course in the current user-item pair.

To be as close as possible to a real scenario, we used a fixed-timestamp split (Campos et al., 2014). The basic idea is to choose a single timestamp that represents the moment in which test learners are on the platform waiting for recommendations. Their past corresponds to the training set, and the performance is evaluated with data coming from their future. In this work, we select the splitting timestamp 2017-06-08, which maximizes the number of learners involved in the evaluation, by setting two constraints: the training set must keep at least 4 ratings per user, and the test set must contain at least 1 rating. This split led to 43,021 learners, 24,321 courses, and 529,857 interactions (Fig. 2). Normalized Discounted Cumulative Gain (NDCG) (Footnote 10) is used as an effectiveness metric. As a measure of relevance for NDCG, the binarized (u, i) tuples formalized in “Problem Formulation” were used (Footnote 11).
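The fixed-timestamp protocol can be sketched as follows. The tuple layout, the function names, and the rule that an interaction occurring exactly at the split time goes to the test set are assumptions made for illustration; the example rows are invented.

```python
from collections import defaultdict
from datetime import datetime


def fixed_timestamp_split(interactions, split_time, min_train=4, min_test=1):
    """Split (learner, course, rating, timestamp) tuples at one timestamp.

    Interactions before split_time form the training set, the others the
    test set. Only learners with at least min_train training interactions
    and at least min_test test interactions are kept.
    """
    train, test = defaultdict(list), defaultdict(list)
    for row in interactions:
        learner, timestamp = row[0], row[3]
        (train if timestamp < split_time else test)[learner].append(row)
    kept = [u for u in train
            if len(train[u]) >= min_train and len(test.get(u, [])) >= min_test]
    return {u: train[u] for u in kept}, {u: test[u] for u in kept}


def best_split_time(interactions, candidates):
    """Candidate timestamp that keeps the largest number of learners."""
    return max(candidates,
               key=lambda t: len(fixed_timestamp_split(interactions, t)[0]))


# Invented example: u1 qualifies (4 past ratings, 1 future), u2 does not.
rows = [("u1", f"c{i}", 5, datetime(2017, 1, i + 1)) for i in range(4)]
rows.append(("u1", "c9", 4, datetime(2017, 7, 1)))
rows += [("u2", "c1", 3, datetime(2017, 2, 1)),
         ("u2", "c2", 4, datetime(2017, 8, 1))]
train, test = fixed_timestamp_split(rows, datetime(2017, 6, 8))
print(sorted(train))
```

Scanning candidate timestamps with `best_split_time` mirrors the idea of choosing the split that maximizes the number of learners involved in the evaluation.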

Data Statistics. Characteristics of the real-world dataset relevant to the learning opportunity principles proposed by this paper: course popularity, rating values, last update timestamp, thematic category, instructional level, asset types (V:Video; A:Article; E:Ebook; P:Podcast), prices, number of enrolments per course, and average rating per course. Subfigure captions specify the feature and the interested principle as < Feature >:< principle >

Real-World Observations

We characterize how the proposed principles were met in the lists of courses suggested by the algorithms considered. Student-specific targeted weights for each principle would be elicited through user groups, surveys, or implicit preferences observed in the collected data. However, due to the absence of this form of feedback in COCO and given that the preference of each learner derived from historical data might have been biased by the recommender system itself, we consider a scenario where the educational platform aims to maximize all the targeted principles, i.e., the maximum targeted degree \(p_{u}(m) = 1\) for every principle m ∈ C and every learner u ∈ U. To this end, we give the same maximum weight to all the principles, i.e., \(w_{i} = 1\) for every principle i. While this assumption comes with some limitations described in “Limitations”, given that each learner does not always prefer maximum familiarity, for example, such a setup allows us to quantify the extent to which each principle is met. We leave experiments on learner-specific weights elicited through interviews or surveys as future work. Three research questions drove our analysis:

- RQ4.3.1 Does a relation exist between consistency and equality?
- RQ4.3.2 To what extent do principles impact consistency and equality?
- RQ4.3.3 Are consistency and equality affected by the learners’ past behavior?

Equality Analysis (RQ4.3.1)

In this subsection, to answer the first research question, we explore whether a relation between consistency and equality exists and, if this is the case, which type of relation exists. Answering this question can allow us to uncover a link between a metric that requires knowledge about the whole learner population (i.e., equality) and a metric that can be directly optimized on a single ranked list (consistency), making it possible to apply a non-NP-Hard re-ranking procedure to increase equality in our task. To this end, we provided recommendations to all learners, suggesting to each learner k = 10 courses; then we measured consistency across the whole learners’ population, i.e., how much the principles were met in the recommendations of learners (4), and equality, i.e., how similar were the consistencies across learners (5). Furthermore, to assess the extent to which the recommender system is accurate (i.e., predicts well the future interests of the learners), we also computed the accuracy of the recommender system in terms of Normalized Discounted Cumulative Gain (NDCG). Table 1 reports the consistency, equality, and accuracy of the ten recommender systems considered. A higher value indicates that a recommender better drives consistency, equality, and/or accuracy respectively. A first observation from Table 1 is the following:

Though the observation above holds under our setting, the values associated with the equality of the recommender systems and the mean consistency values associated with each principle do not reveal much about how consistency estimates are equal across individual learners. Therefore, we plot consistencies across learners for each algorithm, sorted by increasing values (Fig. 3a). It can be observed that ItemKNN-CB and CoupledCF are equally consistent across learners. This result might depend on the fact that, in the presence of principles related to the course content, the content-based and hybrid methods may, incidentally, increase those principles and lead to higher consistency. In other words, their equality could be biased by the fact that they capitalize on input information that is related to some principles.

Consistency over the Entire Population. On the left plot, lines represent the consistency distribution over learners, sorted in increasing order. On the center plot, each error bar includes mean (dot), std deviation (black solid line), and min-max values (colored thick line). The right plot highlights the direct relation between consistency and equality

While it may happen that certain principles are optimized by a traditional recommendation algorithm involuntarily, it is generally impractical to arrange the internal logic of an algorithm a priori to all the principles targeted. Figure 3b plots the consistency error bars for each algorithm, with mean, standard deviation, minimum, and maximum values. We observe that there is a link between the magnitude of the mean and the standard deviation. More precisely, the higher the mean consistency guaranteed by the algorithm, the lower the standard deviation across consistency values is (Fig. 3c). Hence, we can draw a subsequent observation:

Uncovering a link between a metric that requires knowledge about the whole learner population (i.e., Equality in (5)) and a metric that can be directly optimized on a single ranked list (i.e., Consistency in (4)) makes it possible to apply a non-NP-Hard re-ranking procedure to solve our task. This suggests that we should investigate the interplay between (i) the average consistency across principles and (ii) the consistency achieved for each principle individually when a given learner and algorithm are considered.

We can conclude that a relation between consistency and equality exists in all the recommender systems considered. The relation is direct, i.e., the higher the consistency is, the higher the equality is, meaning that recommender systems that achieve higher consistency also tend to equalize it across learners. The strength of this relation depends on the recommender system.

Individual Principle Analysis (RQ4.3.2)

Next, to answer the second research question, we investigate the extent to which the considered principles impact consistency and equality and whether this impact differs across principles. An exploration of this perspective can inform us on the extent to which each principle is met and, by extension, provide helpful insights for the approach we will develop to increase equality. For the sake of readability and conciseness, we do not further consider the Random algorithm in the analysis. Figure 4 reports, for each recommender, the mean, standard deviation, minimum, and maximum values of each principle. For instance, the CoupledCF plot shows that the familiarity score has a mean of 0.80, a standard deviation of ± 0.05, and spans the whole range (min: 0.00; max: 1.00). The first observations can be made for the TopPopular algorithm, whose results reveal that popular courses are mostly fresh (high validity) and have high quality. However, the consistency of these two principles comes at the price of low familiarity, learnability, variety, and affordability. Considering algorithms that capitalize on course metadata (CoupledCF and ItemKNN-CB), similar patterns can be observed across principles, except on variety and quality. For variety and quality, embedding user-item interactions in CoupledCF can reduce the min-max gap. Hence, we can avoid situations where a few learners have very high or very low values. Other algorithms achieved a more stable consistency.

To assess whether certain algorithms favor or hurt a given principle, Fig. 5 reports for each principle how it varies over algorithms. We observe that familiarity and affordability suffer from high deviations, while more stable values were measured for other principles over algorithms. We conjecture that the stability observed on quality comes from the highly unbalanced rating value distribution. Indirectly, this effect could come from the fact that learners tend to evaluate courses with high ratings when they decide to rate them. Figure 5 also confirmed this intuition. On principles like affordability, manageability, and learnability, the considered algorithms got lower values.

To further confirm the role of each principle over consistency, we looked at the correlation between the consistency achieved for a given principle and the consistency achieved by including all the principles. In Fig. 6, we report the results for each principle and algorithm pair. Values higher than 0 are expected when the consistency at the principle level is directly related to the high consistency achieved when all the principles are considered. Hence, the overall consistency is more likely to be met when that specific principle is met. Values lower than 0 result in the opposite behavior. No relation is found when the value is close to 0. This property allows us to make another observation:

Principle-Consistency Relation. Heatmap of correlations between the consistency for a given principle and the consistency for the whole list of principles, over different algorithms. Each value ranges in [−1, +1], and for each principle and algorithm, the Spearman correlation is computed over a distribution of (principle value, user consistency) pairs
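To make the correlation analysis concrete, the following is a minimal sketch of how a Spearman coefficient could be computed for one principle and one algorithm from (principle value, user consistency) pairs. The function names and the toy values are hypothetical and only illustrate the computation; they are not taken from the experiments.

```python
from statistics import mean

def average_ranks(values):
    """Return 1-based ranks; tied values share the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the block while the next value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared = (start + end) / 2 + 1  # average 1-based rank of the tied block
        for pos in range(start, end + 1):
            ranks[order[pos]] = shared
        start = end + 1
    return ranks

def pearson(x, y):
    """Pearson correlation of two equally long sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0  # assumption: a constant input is reported as "no relation"
    return cov / (var_x * var_y) ** 0.5

def spearman(x, y):
    """Spearman correlation: Pearson correlation computed on the ranks."""
    return pearson(average_ranks(x), average_ranks(y))

# Hypothetical toy data: one (principle value, user consistency) pair per learner.
familiarity = [0.2, 0.5, 0.7, 0.9]
user_consistency = [0.3, 0.4, 0.8, 0.9]
rho = spearman(familiarity, user_consistency)
```

In this toy case both sequences are ordered identically, so the coefficient sits at the positive end of the range; repeating the computation for every principle and algorithm pair would fill one cell of the heatmap each time.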

We can conclude that the extent to which the principles impact consistency and equality depends on the recommender system and the principle considered. In general, certain principles (e.g., familiarity, learnability, and affordability) appear as the principles with the highest impact on consistency and equality, across all recommender systems. Those principles might be the ones that will be impacted the most by an approach that increases equality.

Past Interaction Analysis (RQ4.3.3)

The last research question in this section explores the extent to which consistency and equality are affected by the learners’ past behavior. Most of the observations seen so far are based on the fact that the observed consistency values are averaged over learners. However, it is interesting to ask whether, for two learners with similar past interactions concerning the considered principles, we should expect a similar consistency. In other words, we ask whether similar learners get similar consistency. In our setting, we assume that two learners are similar when they have similar consistency in their past interactions. Therefore, we computed the consistency metric defined in (3) by substituting the vector \(q_{\Tilde {I}_{u}}\) (i.e., the extent to which principles are met in the recommendations Ĩu) with the vector \(q_{I_{u}}\) (i.e., the extent to which principles are met in the list of courses Iu previously attended by the learner), so that we can quantify how much the targeted principles were met in the set of past interactions of each learner.

To this end, for all the possible pairs of learners, u1 and u2, we computed the difference of consistency in their profiles and in their recommendations. Figure 7 depicts the resulting pairs, ordered by increasing difference of consistency in their profiles. It can be observed that, except for the graph-based P3Alpha and RP3Alpha, a higher similarity of consistency between the profiles results in a higher similarity of consistency over the recommendations. Figure 8 also shows, for each principle and algorithm, the best and worst consistency across learners, according to the above definition. It is confirmed that familiarity, learnability, variety, and affordability play a key role in overall consistency.
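The pairwise comparison described above can be sketched as follows. This is an illustrative example only: it assumes the per-learner consistency values for profiles and for recommendations have already been computed with metric (3), and the dictionaries and learner identifiers are hypothetical.

```python
from itertools import combinations

def pairwise_consistency_gaps(profile_consistency, rec_consistency):
    """For every unordered pair of learners, return the tuple
    (gap in profile consistency, gap in recommendation consistency),
    sorted by increasing profile gap."""
    pairs = []
    for u1, u2 in combinations(sorted(profile_consistency), 2):
        profile_gap = abs(profile_consistency[u1] - profile_consistency[u2])
        rec_gap = abs(rec_consistency[u1] - rec_consistency[u2])
        pairs.append((profile_gap, rec_gap))
    return sorted(pairs)

# Hypothetical consistency values in [0, 1] for three learners.
profile = {"a": 0.75, "b": 0.5, "c": 0.25}
recommended = {"a": 0.5, "b": 0.5, "c": 0.125}
gaps = pairwise_consistency_gaps(profile, recommended)
```

Plotting the second element of each tuple against the first would give a view comparable to the one discussed for Fig. 7, under the stated assumptions.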

We can conclude that consistency and equality are affected by the prior courses the learner has attended in the platform. Learners whose former courses already achieve high consistency tend to receive recommendations that are consistent too. Increasing equality might require adjusting the lists recommended to learners that suffer from a low consistency even in their profile.

Optimizing for Equality of Learning Opportunities

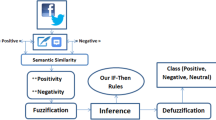

With the observations made so far, we conjecture that re-ranking each list of recommendations to maximize the considered principles will lead to higher consistency, and, consequently, to higher equality. Therefore, in this section, we describe, evaluate, and discuss the approach proposed in this paper to favor consistency of the principles (Section 3.2) in recommendations (Fig. 9).

Support Framework. First, given interactions and metadata, a recommendation algorithm computes a user-item relevance matrix. Then, given a user-item relevance matrix, a list of principle functions, a principle weight strategy, and a list of principle targets, our approach returns a user-item relevance matrix that meets the input principles. Finally, a ranking step, given the adjusted user-item relevance, outputs the recommended list

The Post-Processing Approach Proposed

To meet the principles pursued by the platform for each learner and to optimize for equality of opportunities across learners, we introduce a recommendation procedure that seeks to maximize the consistency formalized in (3).

Given that it is generally hard to build the equity-enhancing mechanisms into the main recommendation algorithm, we propose to re-arrange the recommended lists returned by a recommender system, a common practice known as re-ranking (Potey & Sinha, 2017). On the one hand, this strategy might be limited in its impact, since reordering a small set of recommendations might have a less profound effect than building equality into the recommendation selected by the recommender system from the extensive pool of possibilities. On the other hand, it has several advantages, such as that it can be applied to the output of any recommender system and can be easily extended to include any novel principle. Therefore, we assume that the notion of equal recommended learning opportunities might be enhanced by trying to balance out desirable properties of course recommendations, and that re-ranking the courses originally suggested (and optimized for personalization) by the recommender system can be a feasible strategy for achieving this objective. The re-ranking should operate such that the modified lists recommended to learners meet desirable principles of course recommendations equally across learners.

For each learner u ∈ U, our goal is to determine an optimal set \(\mathcal I^{\ast }\) of k courses to be recommended to u, so that the principles pursued by the platform are met while preserving accuracy (i.e., the extent to which the recommended items are among those included in the test set for that learner, meaning that the recommender system predicts well the future interests of the learner). To this end, we capitalize on a maximum marginal relevance (Carbonell & Goldstein, 1998) approach, with (3) as the support metric. In other words, we aim to find the set of courses \(\mathcal I^{\ast }\) to recommend to the learner u such that those courses have high relevance for the learner u (\(\widetilde R_{ui}\): the relevance predicted by the recommender on the course i for learner u) and their addition to the recommended list brings the highest increase in the consistency level across the principles (\(Consistency(p_{u},{q}_{\mathcal I}| w)\): the extent to which the degrees pu for all principles targeted by the platform and the actual degrees \({q}_{\mathcal I}\) to which these principles are met by the recommended list agree with each other). Let us consider an example where we aim to recommend k = 10 courses to a given learner u. For each position p of the ranking, for each course, we compute the weighted sum between (i) the relevance of that course for the learner u and (ii) the consistency the recommended list to u would achieve if we include that course in the list of recommendations. The weight λ assigned to the consistency term allows us to define how important the consistency is with respect to the relevance of that course for the learner (i.e., the degree to which that course meets the individual interests of that learner). Once we compute this weighted score for all courses, we find the course that achieves the highest weighted score, and we add it to the recommendations to u at position p. The same procedure is repeated for the other positions up to k.

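The set \(\mathcal I^{\ast }\) is obtained by solving the following optimization problem:

\[
\mathcal I^{\ast } = \underset{\mathcal I \subset I,\ |\mathcal I| = k}{\operatorname{argmax}} \ (1 - \lambda ) \sum _{i \in \mathcal I} \widetilde R_{ui} + \lambda \ Consistency(p_{u},{q}_{\mathcal I}| w)
\]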

where \({q}_{\mathcal I}\) is q when the top-k list includes items \(\mathcal I\), and λ ∈ [0,1] is a parameter that expresses the trade-off between accuracy and learning opportunity consistency. With λ = 0, we obtain the output of the recommender, not taking consistency optimization into account. Conversely, with λ = 1, the output of the recommender is discarded, and we focus only on maximizing consistency.

This greedy approach yields an ordered list of resources, and the resulting list at each step is (1 − 1/e) optimal among the lists of equal size. The proof of the optimality of the proposed approach is provided in Appendix B. This property fits with the real world, where learners may initially see only the first k recommendations, and the remaining items may become visible after scrolling. Our approach also allows controlling more than one learning opportunity principle in the ranked lists, with no constraints on the size of C.
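The greedy, position-by-position procedure described above can be sketched as follows. This is a minimal illustration under explicit assumptions, not the reference implementation: the `consistency` function is a placeholder standing in for metric (3) (here, one minus the weighted mean absolute gap between targets and achieved degrees), the list-level degrees \({q}_{\mathcal I}\) are assumed to be per-principle means over the listed courses, relevance scores are assumed to lie in [0, 1], and all names and toy values are hypothetical.

```python
def consistency(targets, achieved, weights):
    """Placeholder for metric (3), an assumption: 1 minus the weighted mean
    absolute gap between target degrees p_u and achieved degrees q_I."""
    total = sum(weights)
    gap = sum(w * abs(p - q) for p, q, w in zip(targets, achieved, weights))
    return 1.0 - gap / total

def list_principles(course_ids, course_principles):
    """Assumed aggregation: q_I is the per-principle mean over the listed courses."""
    n_principles = len(next(iter(course_principles.values())))
    return [
        sum(course_principles[c][j] for c in course_ids) / len(course_ids)
        for j in range(n_principles)
    ]

def greedy_rerank(relevance, course_principles, targets, weights, k, lam):
    """Fill positions 1..k; at each position add the course maximizing
    (1 - lam) * relevance + lam * consistency of the extended list."""
    selected = []
    candidates = set(relevance)
    while candidates and len(selected) < k:
        def score(course):
            q = list_principles(selected + [course], course_principles)
            return (1 - lam) * relevance[course] + lam * consistency(targets, q, weights)
        best = max(sorted(candidates), key=score)  # sorted() makes ties deterministic
        selected.append(best)
        candidates.remove(best)
    return selected

# Hypothetical toy catalog with two principles, both targeted at degree 1.0.
relevance = {"c1": 0.9, "c2": 0.8, "c3": 0.1}
course_principles = {"c1": [0.0, 0.0], "c2": [0.5, 0.5], "c3": [1.0, 1.0]}
targets, weights = [1.0, 1.0], [1.0, 1.0]

by_relevance = greedy_rerank(relevance, course_principles, targets, weights, k=2, lam=0.0)
by_consistency = greedy_rerank(relevance, course_principles, targets, weights, k=2, lam=1.0)
balanced = greedy_rerank(relevance, course_principles, targets, weights, k=2, lam=0.5)
```

With λ = 0 the sketch reproduces the relevance ordering, with λ = 1 it ignores relevance, and intermediate values mix the two, which mirrors the role of λ described in the text.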

Evaluation Scenario and Experimental Results

In this section, we assess the impact of controlling consistency and equality of learning opportunities across learners after applying our procedure to pursue the platform’s principles (i.e., maximizing all the principle indicators). It is important to note that we considered the same setup described for the exploratory analysis, including the same datasets (“Data”), protocols (“Recommendation Algorithms and Protocols”), and metrics (“Problem Formulation”), to answer four key research questions:

- RQ5.2.1 Which weight setup achieved the best accuracy-equality trade-off?
- RQ5.2.2 Which principles have experienced the largest gain in consistency?
- RQ5.2.3 What is the influence of the original relevance score distribution?
- RQ5.2.4 How do the recommended lists differ, before and after our approach?

Influence of Weight Setup (RQ5.2.1)

In this subsection, to answer the first research question, we explore the extent to which each principle weight setup meets the accuracy-equality trade-off. Given that the consistency achieved with the originally recommended lists is different across principles and learners, different weight setups might lead to distinct levels of the mentioned trade-off. Finding the weight setup that results in the best trade-off is therefore of primary importance to increase equality. We ran experiments to assess (i) the influence of our procedure and the weight-based strategy on accuracy, consistency, and equality, and (ii) the relation between a loss in accuracy and a gain in consistency and equality while applying our procedure. To this end, we envisioned three approaches of principle weight assignment:

- Glob assigns the same weight to all the principles, for all users. This method does not account for the level of consistency that the list recommended to a given user already achieves, and it treats all the principles equally.
- User assigns, to a principle, a weight proportional to the consistency gap for that principle with respect to the target of the platform, computed during the exploratory analysis. The consistency gap for a principle is obtained by averaging the individual consistency gaps across users.
- Pers, given a user, assigns the weight for a principle by considering only their (individual) consistency gap for that principle. Thus, different weights are used across the user population.
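The three weight assignment strategies can be illustrated with the sketch below. It assumes that the per-user, per-principle consistency gaps are already available as non-negative numbers and that weights are normalized to sum to one; both the normalization and the function names are assumptions made for illustration.

```python
def normalize(values):
    """Scale non-negative values to sum to 1; fall back to uniform if all are 0."""
    total = sum(values)
    if total == 0:
        return [1.0 / len(values)] * len(values)
    return [v / total for v in values]

def glob_weights(gaps):
    """Glob: the same weight for every principle and every user."""
    n = len(next(iter(gaps.values())))
    return {u: [1.0 / n] * n for u in gaps}

def user_weights(gaps):
    """User: weights proportional to the gap averaged across users,
    shared by the whole population."""
    n = len(next(iter(gaps.values())))
    mean_gap = [sum(g[j] for g in gaps.values()) / len(gaps) for j in range(n)]
    shared = normalize(mean_gap)
    return {u: list(shared) for u in gaps}

def pers_weights(gaps):
    """Pers: weights proportional to each user's own gaps."""
    return {u: normalize(g) for u, g in gaps.items()}

# Hypothetical gaps for two users over two principles.
gaps = {"u1": [0.75, 0.25], "u2": [0.25, 0.25]}
w_glob = glob_weights(gaps)
w_user = user_weights(gaps)
w_pers = pers_weights(gaps)
```

In this toy case, Glob gives both principles equal weight, User emphasizes the first principle for everyone because its average gap is larger, and Pers emphasizes it only for the user who actually shows the larger gap.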

For each model, we ran an instance of our re-ranking procedure for each weight assignment strategy, assigning to λ a value in {0.01, 0.25, 0.50, 0.75, 0.99}.

The results related to NDCG, consistency, and equality are shown in Fig. 10. Specifically, the top-row plots on NDCG show that ItemKNN and ItemKNN-CB experienced the largest loss in NDCG at increasing λ. The rest of the algorithms showed a more stable pattern on NDCG, even though their absolute NDCG value is significantly lower than the one achieved by ItemKNN and ItemKNN-CB. Regarding the weight assignment strategy, we did not observe a significant difference in NDCG for the same algorithm over the three strategies. On the other hand, the weight assignment strategy has a notable role in consistency and equality (middle and bottom rows). Specifically, the User and Pers weight setups made it possible to achieve higher consistency and equality than Glob. We can also observe that all the algorithms brought the same degree of improvement in consistency while varying λ.

Interestingly, by looking at equality scores, two patterns of improvement were observed. Specifically, the algorithms from the graph-based, content-based, and hybrid families showed a larger improvement at each value of λ than the other families. The following observation can be drawn:

To have a more detailed picture, we analyzed the connection between a loss in NDCG and a gain in consistency and equality. This aspect plays a key role in a real-world context. While it is the responsibility of scientists to bring forth the discussion about metrics, and possibly to design algorithms to optimize them by tuning parameters, it is ultimately up to the stakeholders (e.g., teachers, instructional designers, platform owners), depending on the targeted educational domain, to select the trade-offs most suitable for their context. Therefore, this aspect would support a decision regarding the value of λ to set up to achieve the desired trade-off. Figure 11 plots the gain of consistency (top row) and equality (bottom row) resulting from the degree of NDCG loss. It should be noted that the gain in consistency (equality) is computed with respect to the original consistency (equality) at λ = 0.01. We observe that consistency and equality within the same weight strategy show the same behavior with respect to the loss in NDCG. This observation confirms the results of our exploratory analysis, where consistency and equality were directly proportional.
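As an illustration of how such a loss-versus-gain view could support the choice of λ, the sketch below derives NDCG loss and consistency and equality gains relative to the λ = 0.01 baseline, and then selects a value of λ under a stakeholder-defined NDCG loss budget. The use of absolute differences, the selection rule, and all numbers are assumptions for illustration; the numbers are hypothetical and are not results from the experiments.

```python
def tradeoff_curve(results, baseline_lambda=0.01):
    """results maps lambda -> {"ndcg": ..., "consistency": ..., "equality": ...}.
    Returns one point per lambda with absolute loss and gains with respect
    to the baseline (absolute differences are an assumption)."""
    base = results[baseline_lambda]
    curve = []
    for lam in sorted(results):
        r = results[lam]
        curve.append({
            "lambda": lam,
            "ndcg_loss": base["ndcg"] - r["ndcg"],
            "consistency_gain": r["consistency"] - base["consistency"],
            "equality_gain": r["equality"] - base["equality"],
        })
    return curve

def pick_lambda(curve, max_ndcg_loss):
    """Hypothetical selection rule: among the points whose NDCG loss stays
    within the budget, return the lambda with the largest consistency gain."""
    feasible = [p for p in curve if p["ndcg_loss"] <= max_ndcg_loss]
    if not feasible:
        return None
    return max(feasible, key=lambda p: p["consistency_gain"])["lambda"]

# Hypothetical measurements for one algorithm and one weight strategy.
results = {
    0.01: {"ndcg": 0.5, "consistency": 0.5, "equality": 0.5},
    0.50: {"ndcg": 0.4375, "consistency": 0.75, "equality": 0.625},
    0.99: {"ndcg": 0.25, "consistency": 0.875, "equality": 0.75},
}
curve = tradeoff_curve(results)
chosen = pick_lambda(curve, max_ndcg_loss=0.1)
```

A stricter or looser budget would shift the selected λ, which is the kind of decision the text leaves to the stakeholders of the platform.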

We can conclude that the principles’ weight setup has a high impact on the accuracy-equality trade-off. Using user-based weights that represent the average of the individual consistency gaps across learners, or individual weights that are personalized for each user, leads to higher equality of recommended learning opportunities.

Influence on Each Principle (RQ5.2.2)

In this subsection, we answer the second research question, aimed at exploring which principles have experienced the largest gain in consistency with our approach. For instance, this aspect is important to understand whether our approach favors the principles that already have high consistency or those that suffer from low consistency. To this end, we ran experiments to assess (i) which principles show the largest improvement thanks to the proposed approach, and (ii) what the impact of the weighting strategy is on the consistency of each principle. To answer these questions, for each model, we ran an instance of our re-ranking procedure for each weighting strategy, varying λ ∈ {0.01, 0.25, 0.50, 0.75, 0.99}. Then, we computed the consistency of each principle achieved by an algorithm, at a given λ, with a given weighting.