Abstract

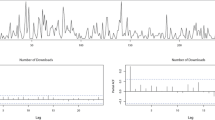

Motivated by the need for modeling and inference in high-order integer-valued threshold time series, this paper introduces a pth-order two-regime self-excited threshold integer-valued autoregressive (SETINAR(2, p)) model. Basic probabilistic and statistical properties of the model are discussed. Parameter estimation is addressed by means of conditional least squares and conditional maximum likelihood methods. The asymptotic properties of the estimators, including the estimator of the threshold parameter, are obtained. A method to test the nonlinearity of the underlying process is provided. Simulation studies are conducted to assess the performance of the proposed methods. Finally, an application to the number of people suffering from meningococcal disease in Germany is provided.

References

Al-Osh, M.A., Alzaid, A.A.: First-order integer-valued autoregressive (INAR(1)) process. J. Time Ser. Anal. 8, 261–275 (1987)

Billingsley, P.: Statistical Inference for Markov Processes. The University of Chicago Press, Chicago (1961)

Chen, C.W.S., Lee, S.: Bayesian causality test for integer-valued time series models with applications to climate and crime data. J. R. Stat. Soc. Ser. C 66, 797–814 (2017)

Davis, R.A., Fokianos, K., Holan, S.H., Joe, H., Livsey, J., Lund, R., Pipiras, V., Ravishanker, N.: Count time series: a methodological review. J. Am. Stat. Assoc. 116, 1533–1547 (2021)

Doukhan, P., Latour, A., Oraichi, D.: A simple integer-valued bilinear time series model. Adv. Appl. Probab. 38, 559–578 (2006)

Du, J.G., Li, Y.: The integer-valued autoregressive (INAR(\(p\))) model. J. Time Ser. Anal. 12, 129–142 (1991)

Franke, J., Seligmann, T.: Conditional Maximum Likelihood Estimates for INAR(1) Processes and their Application to Modeling Epileptic Seizure Counts. In: Subba Rao, T. (ed.) Developments in Time Series Analysis. pp. 310–330 (1993)

Ferland, R., Latour, A., Oraichi, D.: Integer-valued GARCH process. J. Time Ser. Anal. 27, 923–942 (2006)

Fokianos, K., Rahbek, A., Tjøstheim, D.: Poisson autoregression. J. Am. Stat. Assoc. 104, 1430–1439 (2009)

Hall, A., Scotto, M.G., Cruz, J.P.: Extremes of integer-valued moving average sequences. TEST 19, 359–374 (2010)

Jung, R.C., Kukuk, M., Liesenfeld, R.: Time series of count data: modelling and estimation and diagnostics. Comput. Stat. Data Anal. 51, 2350–2364 (2006)

Klimko, L.A., Nelson, P.I.: On conditional least squares estimation for stochastic processes. Ann. Stat. 6, 629–642 (1978)

Latour, A.: Existence and stochastic structure of a non-negative integer-valued autoregressive process. J. Time Ser. Anal. 19, 439–455 (1998)

Li, H., Yang, K., Wang, D.: Quasi-likelihood inference for self-exciting threshold integer-valued autoregressive processes. Comput. Stat. 32, 1597–1620 (2017)

Li, H., Yang, K., Zhao, S., Wang, D.: First-order random coefficients integer-valued threshold autoregressive processes. AStA Adv. Stat. Anal. 102, 305–331 (2018)

Liu, M., Li, Q., Zhu, F.: Self-excited hysteretic negative binomial autoregression. AStA Adv. Stat. Anal. 104, 385–415 (2020)

Monteiro, M., Scotto, M.G., Pereira, I.: Integer-valued self-exciting threshold autoregressive processes. Commun. Stat. Theory Methods 140, 2717–2737 (2012)

Möller, T.A., Silva, M.E., Weiß, C.H., Scotto, M.G., Pereira, I.: Self-exciting threshold binomial autoregressive processes. AStA Adv. Stat. Anal. 100, 369–400 (2016)

Pedeli, X., Davison, A.C., Fokianos, K.: Likelihood estimation for the INAR(\(p\)) model by saddlepoint approximation. J. Am. Stat. Assoc. 110, 1229–1238 (2015)

Scotto, M.G., Weiß, C.H., Gouveia, S.: Thinning-based models in the analysis of integer-valued time series: a review. Stat. Model. 15, 590–618 (2015)

Thyregod, P., Carstensen, J., Madsen, H.: Integer valued autoregressive models for tipping bucket rainfall measurements. Environmetrics 10, 395–411 (1999)

Tong, H., Lim, K.S.: Threshold autoregression, limit cycles and cyclical data. J. R. Stat. Soc. Ser. B 42, 245–292 (1980)

Wang, Z.K.: Stochastic Process. Scientific Press, Beijing (1982)

Wang, C., Liu, H., Yao, J., Davis, R.A., Li, W.K.: Self-excited threshold Poisson autoregression. J. Am. Stat. Assoc. 109, 776–787 (2014)

Wang, X., Wang, D., Yang, K., Xu, D.: Estimation and testing for the integer-valued threshold autoregressive models based on negative binomial thinning. Commun. Stat. Simul. Comput. 50, 1622–1644 (2021)

Weiß, C.H.: Thinning operations for modeling time series of counts: a survey. AStA Adv. Stat. Anal. 92, 319–343 (2008)

Yang, K., Li, H., Wang, D.: Estimation of parameters in the self-exciting threshold autoregressive processes for nonlinear time series of counts. Appl. Math. Model. 57, 226–247 (2018)

Yang, K., Wang, D., Jia, B., Li, H.: An integer-valued threshold autoregressive process based on negative binomial thinning. Stat. Pap. 59, 1131–1160 (2018)

Yang, K., Li, H., Wang, D., Zhang, C.: Random coefficients integer-valued threshold autoregressive processes driven by logistic regression. AStA Adv. Stat. Anal. 105, 533–557 (2021)

Yang, K., Yu, X., Zhang, Q., Dong, X.: On MCMC sampling in self-exciting integer-valued threshold time series models. Comput. Stat. Data Anal. (2022). https://doi.org/10.1016/j.csda.2021.107410

Acknowledgements

This work is supported by the National Natural Science Foundation of China (No. 11901053), the Natural Science Foundation of Jilin Province (Nos. 20220101038JC, 20210101149JC), and the Scientific Research Project of Jilin Provincial Department of Education (No. JJKH20220671KJ).

Ethics declarations

Conflict of interest

On behalf of all authors, the corresponding author states that there is no conflict of interest.

8 Appendix

We first introduce some notation that will be used in the following proofs. Let \({\varvec{A}}= {\left( a_{ij} \right) }_{m \times n} \) be an \(m \times n\) matrix. We write \({\varvec{A}} \ge {\varvec{0}}\) if \(a_{ij} \ge 0\) for all \(1 \le i \le m\) and \(1 \le j \le n\). Similarly, we write \({\varvec{A}} < \infty \) if \(a_{ij} < \infty \) for all \(1\le i \le m\) and \(1 \le j \le n\). Let \({\varvec{X}}\) and \({\varvec{Y}}\) be two \(n \times 1\) column vectors. We write \({\varvec{X}} \ge {\varvec{Y}}\) if \({\varvec{X}} - {\varvec{Y}} \ge {\varvec{0}}\).

Proof of Theorem 2.1

Let \(L^2:= \{ X|E(X^2) < + \infty \}\). Equipped with the inner product \(( X,Y) = E(XY)\), \(L^2\) is a Hilbert space.

Let us introduce a sequence \(\{ X_t^{(n)}\}_{n \in \mathbb {Z}}\),

where \(\phi _{t,i}: = \alpha _{1,i} I_{t-d,1} + \alpha _{2,i}I_{t-d,2}\), \(i = 1,2, \ldots , p\), \(1 \le d \le p\), \(I_{t-d,1}:= I\{ W_{t,d} \le r \}\), \(I_{t-d,2}:= I\{ W_{t,d} > r \}\), \(W_{t,d} \in L^2\), and \(\textrm{Cov}( X_s^{(n)}, Z_t ) = 0\) for any \(n\) and \(s < t\).
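For orientation, a recursion consistent with the definitions above, and with the facts used later in the proof that \(X_t^{(n)} = 0\) for \(n < 0\) and \(E{\varvec{U}}_{t - n,k}^{(0)} = {\varvec{\mu }}_z\), can be written as follows. This is a sketch modeled on the construction in Latour [13]; it is an assumption about the form of (8.1), not a quotation of it.

```latex
X_t^{(n)} =
\begin{cases}
0, & n < 0, \\
Z_t, & n = 0, \\
\sum_{i=1}^{p} \phi_{t,i} \circ X_{t-i}^{(n-i)} + Z_t, & n > 0.
\end{cases}
```

Under this form, the lag-\(i\) term on the right-hand side carries the index \(n - i\), which matches the components of the vector \({\varvec{X}}_t^{(n)}\) used in Step 1.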

Step 1: Existence.

(A1) \(X_t^{(n)} \in {L^2}\).

Let \({{\varvec{X}}_t^{(n)} = {( {X_t^{(n)},X_{t - 1}^{(n - 1)}, \ldots ,X_{t - p + 1}^{(n - p + 1)}} )}^{\textsf {T}}}\), \({\varvec{Z}}_t = ( Z_t,0, \ldots ,0 )^{\textsf {T}}\). Denote by \({\varvec{\varSigma }}_z = E({\varvec{Z}}_t {\varvec{Z}}_t^{\textsf {T}} )\), \({\varvec{\mu }} _{z} = {( {\lambda ,0, \ldots ,0} )^{\textsf {T}} }\), where \(E({Z_t}) = \lambda \). Denote by \({\varvec{I}}_j=\textrm{diag}(I_{t - d,j},\ldots ,I_{t - d,j})_{p\times p}\) (\(j=1,2\)) a \(p\)-dimensional diagonal matrix, and further denote

Then, we have \({\varvec{X}}_t^{(n)} = ({\varvec{A}}_1 {\varvec{I}}_1 + {\varvec{A}}_2 {\varvec{I}}_2 ) \circ {\varvec{X}}_{t - 1}^{(n - 1)} + {\varvec{Z}}_t\). With this representation, we have

where

Repeating the above recurrence n times, we can show that \(E\left| {X_t^{(n)}}\right| ^2 < \infty \), which implies \(X_t^{(n)} \in {L^2}\).

(A2) \(X_t^{(n)}\) is a Cauchy sequence.

Let \(U(n,t,k) = \left| {X_t^{(n)} - X_t^{(n - k)}} \right| \), \(k = 1,2, \ldots \). Noting that \(U(n,t,k) \ge 0\), we have

In addition, we consider the vector

It is easy to see that \( E{\varvec{U}}_{t,k}^{(n)} \le {\varvec{A}}_{\max }E{\varvec{U}}_{t - 1,k}^{(n - 1)} \le \cdots \le {\varvec{A}}_{\max }^n E{\varvec{U}}_{t - n,k}^{(0)} = {\varvec{A}}_{\max }^n {\varvec{\mu }} _{z}. \) Since the eigenvalues of \({\varvec{A}}_{\max }\) all lie inside the unit circle, we conclude that

In a similar way, we have

where \(\beta _i={\alpha _{\max ,i}}\left( {1 - {\alpha _{\max ,i}}} \right) \) and

Since the eigenvalues of \({\varvec{A}}_{\max }\) all lie inside the unit circle, the second term of the above formula converges to 0. The first term is bounded above by

where \(S( n) = \sum _{j = 0}^{n - 1} {{\varvec{1}}^{\textsf {T}}{\varvec{A}}_{\max }^{n - j - 1}{\varvec{1}}{\varvec{A}}_{\max }^j ({\varvec{A}}_{\max }^j)^{\textsf {T}}}\), \({\varvec{1}} = {\left( {1, \ldots ,1} \right) ^{\textsf {T}} }\). According to Lemma 3.1 in Latour [13], the sequence S(n) converges to 0. Therefore, \(\{X_t^{(n)}\}\) is a Cauchy sequence. Thus, there exists a random variable \(X_t\) in \(L^2\) such that \({X_t} = \mathop {\lim }\limits _{n \rightarrow \infty } X_t^{(n)}\).
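The contraction used in (A2) can be checked numerically. The sketch below assumes that \({\varvec{A}}_{\max }\) is the \(p \times p\) companion matrix with first row \((\alpha _{\max ,1}, \ldots ,\alpha _{\max ,p})\) and ones on the subdiagonal; this is the usual form for INAR(p)-type recursions, but it is an assumption here. The function names and parameter values are hypothetical. The code iterates \({\varvec{v}} \mapsto {\varvec{A}}_{\max }{\varvec{v}}\) starting from \({\varvec{\mu }}_z\) and reports the largest entry, which should decay geometrically when all eigenvalues lie inside the unit circle (for nonnegative coefficients this holds when \(\sum _i \alpha _{\max ,i} < 1\)).

```python
def companion(alpha_max):
    """Companion matrix: first row alpha_max, ones on the subdiagonal.

    This is an assumed form of A_max, not taken from the source.
    """
    p = len(alpha_max)
    rows = [list(alpha_max)]
    for i in range(1, p):
        rows.append([1.0 if j == i - 1 else 0.0 for j in range(p)])
    return rows


def matvec(a, v):
    """Plain matrix-vector product for lists of lists."""
    return [sum(aij * vj for aij, vj in zip(row, v)) for row in a]


def iterate_bound(alpha_max, lam, n):
    """Largest entry of A_max^n mu_z, where mu_z = (lam, 0, ..., 0)^T."""
    a = companion(alpha_max)
    v = [lam] + [0.0] * (len(alpha_max) - 1)
    for _ in range(n):
        v = matvec(a, v)
    return max(v)


# Hypothetical coefficients with sum 0.6 < 1, so the bound should shrink.
for n in (1, 10, 50, 200):
    print(n, iterate_bound([0.3, 0.2, 0.1], 2.0, n))
```

Replacing the coefficients by values whose sum exceeds 1 makes the printed bound grow instead, which illustrates why the eigenvalue condition is needed for the Cauchy argument.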

(A3) \(\left\{ {X_t}\right\} \) satisfies the SETINAR(2, p) equation.

Let \(W_{t,d}\) be \(X_{t-d}\) (\(1 \le d \le p\)) and substitute it into (8.1). Then, we have

\(X_t^{(n)}\buildrel {{L_2}} \over \longrightarrow {X_t}\), where \(\buildrel {{L_2}} \over \longrightarrow \) denotes convergence in \({L^2}\). Thus,

Note that the \({L^2}\) convergence of \(X_t^{(n)}\) to \(X_t\) implies \(\mathop {\lim }\limits _{n \rightarrow \infty } \textrm{Cov}( {X_s^{(n)},{Z_t}} ) = \textrm{Cov}\left( {{X_s},{Z_t}} \right) \). Since \(\textrm{Cov}\left( {X_s^{(n)},{Z_t}} \right) = 0\) for every \(n\), it follows that \(\textrm{Cov}\left( {{X_s},{Z_t}} \right) = 0\) for \(s < t\). Therefore, the process \(\left\{ {X_t} \right\} \) satisfies the SETINAR(2, p) equation.
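To make the limiting recursion concrete, the following sketch simulates a path from a SETINAR(2, p) process. It assumes binomial thinning and Poisson(\(\lambda \)) innovations, which is consistent with condition (C1) and the function \(h\) appearing later in this appendix; the function names, the zero start-up values, the burn-in length and all parameter values are hypothetical choices for illustration.

```python
import math
import random


def poisson(rng, lam):
    """Draw a Poisson(lam) variate by Knuth's multiplication method."""
    limit, k, prod = math.exp(-lam), 0, rng.random()
    while prod > limit:
        k += 1
        prod *= rng.random()
    return k


def thin(rng, alpha, x):
    """Binomial thinning alpha o x: a sum of x independent Bernoulli(alpha) draws."""
    return sum(1 for _ in range(x) if rng.random() < alpha)


def simulate_setinar(n, alpha1, alpha2, lam, r, d, seed=0, burn_in=200):
    """Simulate n observations of a SETINAR(2, p) path (illustrative sketch)."""
    rng = random.Random(seed)
    p = len(alpha1)
    x = [0] * p  # arbitrary start-up values, discarded with the burn-in
    for _ in range(n + burn_in):
        t = len(x)
        # The regime is selected by X_{t-d}: regime 1 if X_{t-d} <= r, else regime 2.
        alpha = alpha1 if x[t - d] <= r else alpha2
        survivors = sum(thin(rng, alpha[i], x[t - 1 - i]) for i in range(p))
        x.append(survivors + poisson(rng, lam))
    return x[-n:]


# Hypothetical parameter values with p = 2, threshold r = 2 and delay d = 1.
path = simulate_setinar(500, alpha1=[0.2, 0.1], alpha2=[0.4, 0.2], lam=1.5, r=2, d=1)
print(path[:20], sum(path) / len(path))
```

Because each regime has coefficients summing to less than 1, the simulated path stays bounded in mean, in line with the existence argument above.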

Step 2: Uniqueness.

Suppose there is another process \(\left\{ {X_t^ * } \right\} \) such that \(X_t^{(n)}\buildrel {{L_2}} \over \longrightarrow {X_t^ * }\). According to the Hölder inequality, we have

Therefore, \(E\left| {{X_t} - X_t^*} \right| =0\), which implies \({X_t} = X_t^*\) a.s.
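The bound behind this conclusion can be written out as follows. This is a sketch that assumes the standard combination of the triangle inequality with the Hölder (Cauchy–Schwarz) inequality, and it may differ in form from the original display.

```latex
E\left| X_t - X_t^{*} \right|
\le E\left| X_t - X_t^{(n)} \right| + E\left| X_t^{(n)} - X_t^{*} \right|
\le \left( E\left| X_t - X_t^{(n)} \right|^2 \right)^{1/2}
  + \left( E\left| X_t^{(n)} - X_t^{*} \right|^2 \right)^{1/2}
\longrightarrow 0, \quad n \to \infty .
```

Both terms on the right vanish because \(X_t^{(n)}\) converges in \(L^2\) to \(X_t\) and to \(X_t^*\).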

Step 3: Strict stationarity.

Since \(X_t^{(n)} = 0\) for \(n < 0\), solving (8.1) recursively shows that there exists a function \({F}\left( \cdot \right) \), not depending on \(t\), such that

where \(\mathop = \limits ^d \) denotes equality in distribution. Therefore, the distribution of \(X_t^{(n)}\) depends only on \(n\), not on \(t\). Hence, for any \(n\), \(T\) and \(k\), \(( {X_0^{(n)},X_1^{(n)},\ldots ,X_T^{(n)}} )\) and \(( {X_k^{(n)},X_{k + 1}^{(n)}, \ldots ,X_{k + T}^{(n)}} )\) are identically distributed. Since \(X_t^{(n)}\buildrel {{L_2}} \over \longrightarrow {X_t}\), we also have \(X_t^{(n)}\buildrel {{P}} \over \longrightarrow {X_t}\). Thus, for any real numbers \(a_0, \ldots ,a_T\), we have \(\sum \nolimits _{i = 0}^T {{a_i}X_{t + i}^{(n)}} \buildrel P \over \longrightarrow \sum \nolimits _{i = 0}^T {{a_i}{X_{t + i}}} \). By the Cramér–Wold device, we have

Since \(\left( {X_0^{(n)},X_1^{(n)}, \ldots ,X_T^{(n)}} \right) \mathop = \limits ^d \left( {X_k^{(n)},X_{k + 1}^{(n)}, \ldots ,X_{k + T}^{(n)}} \right) \) for every \(k\), \(\left( {{X_0},{X_1}, \ldots ,{X_T}} \right) \) and \(\left( {{X_k},{X_{k + 1}}, \ldots ,{X_{k + T}}} \right) \) have the same distribution, which implies that \(\left\{ {X_t} \right\} \) is a strictly stationary process.

Step 4: Ergodicity.

Let \(\left\{ {Y(t)} \right\} \) denote the collection of all counting series of \({\phi _{t,1}} \circ {X_{t - 1}} + {\phi _{t,2}} \circ {X_{t - 2}} + \cdots + {\phi _{t,p}} \circ {X_{t - p}}\) in (2.1); then \(\left\{ {Y(t)} \right\} \) is a time series. Let \(\sigma (X)\) be the \(\sigma \)-field generated by the random variable \(X\). According to (2.1), we obtain \( \sigma ({X_t},{X_{t + 1}}, \ldots ) \subset \sigma ({Z_t},Y(t),{Z_{t + 1}},Y(t + 1), \ldots ) \) and \( \bigcap \limits _{t = 1}^{ + \infty } {\sigma ({X_t},{X_{t + 1}}, \ldots )} \subset \bigcap \limits _{t = 1}^{ + \infty } {\sigma ({Z_t},Y(t),{Z_{t + 1}},Y(t + 1), \ldots )}. \) Because \(\left\{ {\left( {{Z_t},Y(t)} \right) } \right\} \) is an independent sequence, Kolmogorov’s 0–1 law implies that for any event \(A \in \bigcap \limits _{t = 1}^{ + \infty } {\sigma ({Z_t},Y(t),{Z_{t + 1}},Y(t + 1), \ldots )}\), we have \(P(A) = 0\) or \(P(A) = 1\), so the tail \(\sigma \)-field of \(\left\{ {X_t} \right\} \) contains only sets of measure 0 or 1. It then follows from Wang [23] that \(\left\{ {X_t} \right\} \) is ergodic. \(\square \)

Proof of Theorem 3.2

Let \(h_t ({\varvec{\phi }})= -B_t ({\varvec{\phi }})\). The proof will be done in three steps.

Step 1: We show that \(E(B_t ({\varvec{\phi }}))\) is continuous in \({\varvec{\phi }}\), so that \(E (h_t ({\varvec{\phi }}))\) is also continuous in \({\varvec{\phi }}\). For any \({\varvec{\phi }} \in \Theta \times \mathbb {N}_{0}\), let \(V _\eta ( {\varvec{\phi }} )= B ( {{\varvec{\phi }}, \eta } )\) be an open ball centered at \({\varvec{\phi }}\) with radius \(\eta \) (\(\eta < 1\)). We go on to show the following property:

To see this, observe that

where \(\lambda _{\max }=\max (\lambda ,\lambda ^{'})\), \(C=\max (\lambda _{\max },1)\). Then,

Step 2: We prove that \(E_{{\varvec{\phi }}_0}\left[ h_t ({\varvec{\phi }})- h_t ({\varvec{\phi }}_0)\right] < 0 \), or equivalently, \(E_{{\varvec{\phi }}_0}\left[ B_t ({\varvec{\phi }})-B_t ({\varvec{\phi }}_0)\right] > 0 \) for any \({\varvec{\phi }}\ne {\varvec{\phi }}_0 \). Denote by \(\nabla g({\varvec{\phi }}_0,{\varvec{\phi }},X_{t-1},\ldots ,X_{t-p}):=g( {{\varvec{\phi }}_0,X_{t-1},\ldots ,X_{t-p}})- g( {{\varvec{\phi }},X_{t-1},\ldots ,X_{t-p}})\). Since the true value of \({\varvec{\phi }}\) is \({\varvec{\phi }}_0\), we have

where \( \mathbb {I}= E_{{\varvec{\phi }}_0} ( \nabla g({\varvec{\phi }}_0,{\varvec{\phi }},X_{t-1},\ldots ,X_{t-p}))^2 > 0 ~ (\text{ by } \text{ Assumption } \text{(A2) }) \), and

Thus, we have \(E_{{\varvec{\phi }}_0}[B_t ({\varvec{\phi }})- B_t ({\varvec{\phi }}_0)] > 0 \).

Step 3: We prove that \( \hat{{\varvec{\phi }}}_{CLS} \in V\) holds for an arbitrary (small) open neighborhood \(V\) of \( {{\varvec{\phi }}}_{0}\), which means that \( \hat{{\varvec{\phi }}}_{CLS}\) is a consistent estimator of \({\varvec{\phi }}_0\). The proof follows arguments similar to those given in Yang et al. [28], so we omit the details. \(\square \)
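As an illustration of the estimator whose consistency is established above, the sketch below computes a conditional least squares fit. It rests on two assumptions that are not spelled out in this appendix: that \(B_t({\varvec{\phi }})\) is the squared one-step prediction error \((X_t - g)^2\) with \(g = \sum _{i=1}^p (\alpha _{1,i} I_{t-d,1} + \alpha _{2,i} I_{t-d,2}) X_{t-i} + \lambda \), and that the threshold is found by a grid search over candidate values. The function names `solve_linear`, `cls_fit` and `cls_with_threshold` are hypothetical.

```python
def solve_linear(a, b):
    """Solve a x = b by Gauss-Jordan elimination; return None if a is singular."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda i: abs(m[i][col]))
        if abs(m[pivot][col]) < 1e-12:
            return None
        m[col], m[pivot] = m[pivot], m[col]
        for i in range(n):
            if i != col:
                f = m[i][col] / m[col][col]
                m[i] = [u - f * v for u, v in zip(m[i], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def cls_fit(x, p, d, r):
    """For fixed (d, r), regress X_t on regime-split lags plus an intercept.

    Returns (sse, [alpha_1,1..alpha_1,p, alpha_2,1..alpha_2,p, lambda]),
    or None when one regime is empty and the design is singular.
    """
    rows, ys = [], []
    for t in range(p, len(x)):
        lags = [x[t - i] for i in range(1, p + 1)]
        low = 1.0 if x[t - d] <= r else 0.0
        rows.append([low * v for v in lags] + [(1.0 - low) * v for v in lags] + [1.0])
        ys.append(x[t])
    k = 2 * p + 1
    xtx = [[sum(row[i] * row[j] for row in rows) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * y for row, y in zip(rows, ys)) for i in range(k)]
    beta = solve_linear(xtx, xty)
    if beta is None:
        return None
    sse = sum((y - sum(b * v for b, v in zip(beta, row))) ** 2
              for row, y in zip(rows, ys))
    return sse, beta


def cls_with_threshold(x, p, d, candidates):
    """Grid search over candidate thresholds; keep the smallest residual sum of squares."""
    best = None
    for r in candidates:
        fit = cls_fit(x, p, d, r)
        if fit is not None and (best is None or fit[0] < best[0]):
            best = (fit[0], r, fit[1])
    return best
```

For a fixed threshold the criterion is quadratic in the remaining parameters, so the inner step reduces to ordinary least squares; only the threshold requires a search. A call such as `cls_with_threshold(counts, p=2, d=1, candidates=range(1, 10))` on an observed count series returns the minimized criterion, the selected threshold and the coefficient estimates.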

Proof of Theorems 3.4 and 3.5

As discussed in Franke and Seligmann [7], we only need to check that conditions (C1)–(C6) hold, which imply that the regularity conditions of Theorems 2.1 and 2.2 in Billingsley [2] are satisfied.

(C1) The set \(\left\{ {m:~P\left( {{Z_t} = m} \right) = f(m,\lambda ) = {{{\lambda ^m}} \over {m!}}{e^{ - \lambda }} > 0} \right\} \) does not depend on \(\lambda \);

(C2) \(E\left[ {Z_t^3} \right] = {\lambda ^3} + 3{\lambda ^2} + \lambda < \infty \);

(C3) \(P\left( {{Z_t} = m} \right) \) is three times continuously differentiable with respect to \(\lambda \);

(C4) For any \(\lambda ^{'} \in B\), where B is an open subset of \(\mathbb {R}_+\), there exists a neighborhood U of \(\lambda ^{'}\) such that:

1. \({\sum _{k = 0}^\infty {\mathop {\sup }_{\lambda \in U} f(k,\lambda )} < \infty }\),

2. \({\sum _{k = 0}^\infty {\mathop {\sup }_{\lambda \in U} \left| {{{\partial f(k,\lambda )} \over {\partial \lambda }}} \right| } < \infty }\),

3. \({\sum _{k = 0}^\infty {\mathop {\sup }_{\lambda \in U} \left| {{{{\partial ^2}f(k,\lambda )} \over {\partial {\lambda ^2}}}} \right| } < \infty }\);

(C5) For any \(\lambda ^{'}\in B\), there exist a neighborhood \(U\) of \(\lambda ^{'}\) and sequences \({\psi _1}(n) = \textrm{const}_1 \cdot n\), \({\psi _{11}}(n) = \textrm{const}_2 \cdot {n^2}\) and \({\psi _{111}}(n) = \textrm{const}_3 \cdot {n^3}\), with suitable constants \(\textrm{const}_1\), \(\textrm{const}_2\), \(\textrm{const}_3\) and \(n \ge 0\), such that for all \(\lambda \in U\) and all \(m \le n\) with nonvanishing \(f(m,\lambda ) = P\left( {{Z_t} = m} \right) \),

(i) \(\left| {{{\partial f(m,\lambda )} \over {\partial \lambda }}} \right| \le {\psi _1}(n)f(m,\lambda )\), \(\left| {{{{\partial ^2}f(m,\lambda )} \over {\partial {\lambda ^2}}}} \right| \le {\psi _{11}}(n)f(m,\lambda )\), \(\left| {{{{\partial ^3}f(m,\lambda )} \over {\partial {\lambda ^3}}}} \right| \le {\psi _{111}}(n)f(m,\lambda )\),

and with respect to the stationary distribution of the process \(\{ {X_t} \}\),

(ii) \(E[ {\psi _1^3( {{X_1}} )}] < \infty \), \(E[ {{X_1}{\psi _{11}}( {{X_2}} )}] < \infty \), \(E[ {{\psi _1}( {{X_1}} ){\psi _{11}}( {{X_2}} )}] < \infty \), \(E[ {{\psi _{111}}( {{X_1}} )}] < \infty \).

(C6) Let \({\varvec{I}}\left( {\varvec{\theta }} \right) = {\left( {{\sigma _{ij}}} \right) _{\left( {2p + 1} \right) \times \left( {2p + 1} \right) }}\) denote the Fisher information matrix, i.e.,

where \(P(X_t,\ldots ,X_{t-p};{\varvec{\theta }}):=P(X_t =x_t|X_{t-1}=x_{t-1}, \ldots ,X_{t-p}=x_{t - p})\) denotes the transition probability given in (2.2), \(\theta _i\) denotes the ith component of \({\varvec{\theta }}\), and \({\varvec{I}}( {\varvec{\theta }} )\) is nonsingular.

Franke and Seligmann [7] have proved that conditions (C1)–(C4) hold. Therefore, for the SETINAR(2, p) model it only remains to verify conditions (C5) and (C6). Statement (i) in (C5) follows directly from the properties of the Poisson distribution. Condition (C2) implies \(E X_t^3<\infty \) with respect to the stationary distribution of \(\{ {X_t} \}\). Therefore, statement (ii) in (C5) also holds.
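The two Poisson facts used here can be checked numerically. The sketch below verifies the third moment in (C2) by a truncated series, and the first bound in statement (i) of (C5) using the identity \(\partial f(m,\lambda )/\partial \lambda = f(m,\lambda )(m/\lambda - 1)\). The linear bound `psi1` with constant \(1/\lambda _{\text {low}} + 1\), where \(\lambda _{\text {low}}\) is a lower bound for \(\lambda \) on the neighborhood \(U\), is a hypothetical choice that works for \(n \ge 1\); the function names are likewise illustrative.

```python
import math


def pois_pmf(m, lam):
    """Poisson probability f(m, lam) = lam^m e^{-lam} / m!."""
    return math.exp(m * math.log(lam) - lam - math.lgamma(m + 1))


def third_moment(lam, terms=200):
    """Truncated series for E[Z^3]; the omitted tail is negligible for moderate lam."""
    return sum(m ** 3 * pois_pmf(m, lam) for m in range(terms))


def d_pmf(m, lam):
    """Exact derivative of f(m, lam) with respect to lam: f(m, lam) * (m / lam - 1)."""
    return pois_pmf(m, lam) * (m / lam - 1.0)


def psi1(n, lam_low):
    """Hypothetical linear bound const * n with const = 1 / lam_low + 1, for n >= 1."""
    return (1.0 / lam_low + 1.0) * n


lam = 2.0
# Compare the series with the closed form lam^3 + 3 lam^2 + lam from (C2).
print(third_moment(lam), lam ** 3 + 3 * lam ** 2 + lam)

# Check |df/dlam| <= psi1(n) f(m, lam) for all m <= n, with lam >= lam_low = 1.
n = 10
print(all(abs(d_pmf(m, lam)) <= psi1(n, 1.0) * pois_pmf(m, lam) for m in range(n + 1)))
```

The bound follows from \(|m/\lambda - 1| \le n/\lambda _{\text {low}} + 1 \le (1/\lambda _{\text {low}} + 1)\,n\) for \(m \le n\) and \(n \ge 1\); the higher-order bounds can be checked in the same way.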

We now prove that condition (C6) holds. To this end, we first check that the following statements are all true.

(S1) \(E{\left| {{\partial \over {\partial {\alpha _{j,i}}}}\log P(X_t,\ldots ,X_{t-p};{\varvec{\theta }})} \right| ^2} < \infty \), \(j = 1,2\); \(\quad i = 1,2, \ldots p\);

(S2) \(E{\left| {{\partial \over {\partial \lambda }}\log P(X_t,\ldots ,X_{t-p};{\varvec{\theta }})} \right| ^2} < \infty \);

(S3) \(E\left| {{\partial \over {\partial {\alpha _{j,i}}}}\log P(X_t,\ldots ,X_{t-p};{\varvec{\theta }}){\partial \over {\partial \lambda }}\log P(X_t,\ldots ,X_{t-p};{\varvec{\theta }})} \right| < \infty \), \(j = 1,2\); \( i = 1,2, \ldots p\);

(S4) \(E\left| {{\partial \over {\partial {\alpha _{j,i}}}}\log P(X_t,\ldots ,X_{t-p};{\varvec{\theta }}){\partial \over {\partial {\alpha _{j,k}}}}\log P(X_t,\ldots ,X_{t-p};{\varvec{\theta }})} \right| < \infty \), \(j = 1,2\), \(i,k \in \{1,\ldots p\}\);

(S5) \(E\left| {{\partial \over {\partial {\alpha _{1,i}}}}\log P(X_t,\ldots ,X_{t-p};{\varvec{\theta }}){\partial \over {\partial {\alpha _{2,k}}}}\log P(X_t,\ldots ,X_{t-p};{\varvec{\theta }})} \right| < \infty \), \(i,k \in \{1,\ldots p\}\).

We shall first prove statement (S1). Recall that

where \(h( {{\varvec{\alpha }}_{j},{x_{t - 1},\ldots ,x_{t-p}},{{\varvec{i}}}} ) = \prod _{k = 1}^p {\alpha _{j,k}^{{i_k}}{{\left( {1 - {\alpha _{j,k}}} \right) }^{{x_{t - k}} - {i_k}}}} \), \({\varvec{i}}=(i_1,\ldots ,i_p)\), \(j = 1,2\). Thus, for \(j = 1,2\) and \(k=1,2,\ldots ,p\), we have

yielding

Together with (8.2), we have

where \(\alpha _{\max ,k}:=\max \{\alpha _{1,k},\alpha _{2,k}\}\), and \(\alpha _{\min ,k}:=\min \{\alpha _{1,k},\alpha _{2,k}\}\), \(k=1,2,\ldots ,p\). Therefore, we have

for some suitable constant C.

Next, we will prove statement (S2). By (C5), we know that \(\left| {{{\partial f(m,\lambda )} \over {\partial \lambda }}} \right| \le {\psi _1}(n)f(m,\lambda )\) holds for \(m \le n\), which means that

This implies \( E{\left| {{\partial \over {\partial \lambda }}\log P(X_t,\ldots ,X_{t-p};{\varvec{\theta }})} \right| ^2}< E\psi _1^2\left( {{X_1}} \right) < \infty . \)

Lastly, by (8.3), (8.4) and (C5), we can conclude that statements (S3), (S4) and (S5) hold. Therefore, the Fisher information matrix \({\varvec{I}}\left( {\varvec{\theta }} \right) \) is well defined. Finally, some elementary but tedious calculations show that (C6) is satisfied too. \(\square \)

Rights and permissions

Springer Nature or its licensor holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Yang, K., Li, A., Li, H. et al. High-Order Self-excited Threshold Integer-Valued Autoregressive Model: Estimation and Testing. Commun. Math. Stat. (2023). https://doi.org/10.1007/s40304-022-00325-3

DOI: https://doi.org/10.1007/s40304-022-00325-3