Abstract

In this paper, we study the optimization problem (P) of minimizing a convex function over a constraint set with nonconvex constraint functions. We do this by giving new characterizations of Robinson’s constraint qualification, which reduces to the combination of generalized Slater’s condition and generalized sharpened nondegeneracy condition for nonconvex programming problems with nearly convex feasible sets at a reference point. Next, using a version of the strong CHIP, we present a constraint qualification which is necessary for optimality of the problem (P). Finally, using new characterizations of Robinson’s constraint qualification, we give necessary and sufficient conditions for optimality of the problem (P).

1 Introduction

Consider the following optimization problem:

$$\begin{aligned} \min f(x) \quad \text{subject to} \quad x\in C\cap K, \end{aligned}\tag{P}$$

where

$$\begin{aligned} K:=\{x\in \mathbb{R}^n \, : \, -g(x)\in S\}, \end{aligned}$$

S is a non-empty closed convex cone in \(\mathbb {R}^m,\) \(f:\mathbb {R}^n\longrightarrow \mathbb {R}\) is a convex function, \(g:\mathbb {R}^n\longrightarrow \mathbb {R}^m\) is a Fréchet differentiable function that is not assumed to be S-convex, and C is a non-empty closed convex subset of \(\mathbb {R}^n\) such that \(C \cap K \ne \emptyset .\) The problem (P) is referred to as a convex optimization problem whenever g is S-convex [3, 7, 17]. The programming problem (P) covers a broad class of nonconvex and nonlinear programming problems, including convex programming problems in the standard form (see [3]) and convex optimization problems with quasi-convex constraint functions (note that in the latter case, the constraint set K is convex).

Constraint qualifications play a crucial role in the study of convex optimization problems and guarantee necessary and sufficient conditions for optimality. Over the years, various constraint qualifications have been employed to study convex optimization problems in the literature [3, 7, 13, 15, 17]. In particular, Slater’s condition [10] was used to obtain the so-called Karush–Kuhn–Tucker conditions, which are necessary and sufficient for optimality. Unfortunately, the characterization of optimality by the Karush–Kuhn–Tucker conditions may fail under Slater’s condition whenever g is not S-convex. Recently, Slater’s condition together with the nondegeneracy condition [11] has been shown to guarantee that the Karush–Kuhn–Tucker conditions are necessary and sufficient for optimality of the problem (P), where K is a convex set and \(C:=\mathbb {R}^n\) [6, 8, 11, 12].

In this paper, we study the problem (P), where the constraint set K is nearly convex at a reference point [8, 9, 16], but is not necessarily convex. We first present new characterizations of Robinson’s constraint qualification, which reduces to the combination of generalized Slater’s condition and generalized sharpened nondegeneracy condition for nonconvex programming problems with nearly convex feasible sets at a reference point. Also, using a version of the strong CHIP, we give a constraint qualification which is necessary for optimality of the problem (P). Finally, we present necessary and sufficient conditions for optimality of the problem (P), extending known results in the finite dimensional case (see [4, 8, 11] and other references therein).

The paper has the following structure. In Sect. 2, we provide some definitions and elementary results related to generalized convexity. We also give several constraint qualifications that are used to study optimality of the optimization problem (P). New characterizations of Robinson’s constraint qualification are presented in Sect. 3 whenever the constraint set K is nearly convex at a reference point. In Sect. 4, using new characterizations of Robinson’s constraint qualification, we give necessary and sufficient conditions for optimality of the problem (P). Several examples are given to illustrate our results.

2 Preliminaries

Throughout the paper, we assume that \(\mathbb {R}^{n}\) is the Euclidean space with the inner product \(\langle \cdot ,\cdot \rangle \) and the induced norm \(\Vert \cdot \Vert \). Now, we consider the following optimization problem:

$$\begin{aligned} \min f(x) \quad \text{subject to} \quad x\in C\cap K, \end{aligned}\tag{2.1}$$

where \(f:\mathbb {R}^n\longrightarrow \mathbb {R}\) is a real-valued (continuous) convex function, the constraint set K is defined by

$$\begin{aligned} K:=\{x\in \mathbb{R}^n \, : \, -g(x)\in S\}, \end{aligned}\tag{2.2}$$

S is a non-empty closed convex cone in \(\mathbb {R}^m,\) C is a non-empty closed convex subset of \(\mathbb {R}^n\) such that \(C \cap K \ne \emptyset ,\) and \(g:\mathbb {R}^n\longrightarrow \mathbb {R}^m\) is a Fréchet differentiable function that is not assumed to be S-convex. Note that by continuity of g and closedness of S, K is closed.

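We recall from [14, 19] the following definitions. A function \(h:\mathbb {R}^n \longrightarrow \mathbb {R}^m\) is called S-convex, if

$$\begin{aligned} t h(x)+(1-t)h(y)-h(tx+(1-t)y)\in S, \quad \forall \ x, y\in \mathbb{R}^n, \ \forall \ t\in [0,1], \end{aligned}$$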

where S is a non-empty closed convex cone in \(\mathbb {R}^m.\)

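For a function \(h: \mathbb {R}^n \longrightarrow \mathbb {R},\) the subdifferential of h at a point \({\bar{x}} \in \mathbb {R}^n,\) \(\partial h({\bar{x}}),\) is defined by

$$\begin{aligned} \partial h({\bar{x}}):=\{\xi \in \mathbb{R}^n \, : \, h(x)-h({\bar{x}})\ge \langle \xi , x-{\bar{x}}\rangle , \ \forall \ x\in \mathbb{R}^n\}. \end{aligned}$$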

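A function \(h: \mathbb {R}^n \longrightarrow \mathbb {R}\) is said to be Fréchet differentiable at a point \({\bar{x}} \in \mathbb {R}^n,\) if there exists \(x^* \in \mathbb {R}^n\) such that

$$\begin{aligned} \lim _{x\rightarrow {\bar{x}}}\frac{h(x)-h({\bar{x}})-\langle x^*, x-{\bar{x}}\rangle }{\Vert x-{\bar{x}}\Vert }=0. \end{aligned}$$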

In this case, \(x^*\) is called the Fréchet derivative of h at the point \({\bar{x}}\) and denoted by \(\nabla h({\bar{x}}) := x^*.\)

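We define the negative and positive polar cones of S by

$$\begin{aligned} S^\circ :=\{\lambda \in \mathbb{R}^m \, : \, \langle \lambda , y\rangle \le 0, \ \forall \ y\in S\} \end{aligned}$$

and

$$\begin{aligned} S^{+}:=\{\lambda \in \mathbb{R}^m \, : \, \langle \lambda , y\rangle \ge 0, \ \forall \ y\in S\}, \end{aligned}\tag{2.4}$$

respectively. We also define the normal cone to a convex set \( E\subseteq \mathbb {R}^{n}\) at a point \(x \in E\) by

$$\begin{aligned} N_E(x):=\{u\in \mathbb{R}^n \, : \, \langle u, y-x\rangle \le 0, \ \forall \ y\in E\}. \end{aligned}$$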

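It is easy to see that \(N_E(x) =(E-x)^{\circ }.\) Moreover, for a subset \(\mathcal {B}\) of \(S^\circ \backslash \{0\},\) define \( \, \mathrm{pos}(\mathcal {B})\) by

$$\begin{aligned} \mathrm{pos}(\mathcal{B}):=\Big \{\sum _{i=1}^p t_i\lambda _i \, : \, p\in \mathcal{N}, \ t_i\ge 0, \ \lambda _i\in \mathcal{B}, \ i=1,2,\ldots ,p\Big \}. \end{aligned}\tag{2.6}$$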

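For a subset W of \(\mathbb {R}^n,\) we denote by \(\mathrm{cl}\, W,\) \(\mathrm{bd}(W)\) and \(\mathrm{int} W\) the closure, boundary and interior of W, respectively. The nonnegative orthant of \(\mathbb {R}^m\) is denoted by \(\mathbb {R}^m_{+}\) and is defined by

$$\begin{aligned} \mathbb{R}^m_{+}:=\{(x_1,x_2,\ldots ,x_m)\in \mathbb{R}^m \, : \, x_i\ge 0, \ i=1,2,\ldots ,m\}. \end{aligned}$$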

We now give a generalization of Mangasarian–Fromovitz’s constraint qualification [1, 2, 14], nondegeneracy condition [9, 11], sharpened nondegeneracy condition [9] and Slater’s condition [1, 2].

Definition 2.1

Let S be a closed convex cone in \(\mathbb {R}^m\) with non-empty interior. Let K be given as in (2.2), \(\mathcal {B}\) be given as in (2.6), and let \(\bar{x} \in K.\)

- (i) We say that K satisfies generalized Mangasarian–Fromovitz’s constraint qualification (GMFCQ) at \({\bar{x}}\in K\) if there exists \(0 \ne v\in \mathbb {R}^n\) with \(\langle \nabla g({\bar{x}})^T \lambda , v \rangle <0,\) whenever \(\lambda \in \mathcal {B}\) such that \(\langle \lambda , g(\bar{x}) \rangle = 0.\)

- (ii) We say that K satisfies generalized nondegeneracy condition (GNC) at \({\bar{x}}\in K\) if

  $$\begin{aligned} \nabla g({\bar{x}})^T \lambda \not =0, \ \text{ whenever }\ \lambda \in \mathcal {B} \ \text{ such } \text{ that } \ \langle \lambda , g(\bar{x}) \rangle = 0. \end{aligned}$$

  If generalized nondegeneracy condition holds at every point \(x\in K,\) we say that K satisfies generalized nondegeneracy condition.

- (iii) We say that K satisfies generalized sharpened nondegeneracy condition (GSNC) at \(\bar{x} \in K\) if

  $$\begin{aligned} \langle \nabla g({\bar{x}})^T \lambda , u-{\bar{x}} \rangle \not =0, \ \text{ for } \text{ some } \ u \in K, \ \text{ whenever } \ \lambda \in \mathcal {B} \ \text{ such } \text{ that } \ \langle \lambda , g({\bar{x}}) \rangle = 0. \end{aligned}$$

  If generalized sharpened nondegeneracy condition holds at every point \(x\in K,\) we say that K satisfies generalized sharpened nondegeneracy condition.

- (iv) Let C be a non-empty closed convex subset of \(\mathbb {R}^n\) such that \(C \cap K \ne \emptyset .\) The set \(\Omega :=C \cap K\) is said to satisfy generalized Slater’s condition (GSC) if there exists \(x_0 \in C\) such that \(-g(x_0) \in \mathrm{int} S.\)

Remark 2.2

It should be noted that generalized sharpened nondegeneracy condition implies generalized nondegeneracy condition, but the converse is not true (see [9, Example 3.1]). However, generalized nondegeneracy condition together with generalized Slater’s condition implies generalized sharpened nondegeneracy condition (see Corollary 2.4).

Proposition 2.3

Let \(K:=\{x\in \mathbb {R}^n\, : \, -g(x)\in S\}\) be given as in (2.2), where \(S\subseteq \mathbb {R}^m\) is a closed convex cone with non-empty interior. Let C be a non-empty closed convex subset of \(\mathbb {R}^n\) such that \(C \cap K \ne \emptyset ,\) and let \({\bar{x}} \in C \cap K.\) Assume that generalized Slater’s condition holds. Then the following assertions are equivalent:

- (i) \(\nabla g({\bar{x}})^T\lambda \not =0,\) whenever \(\lambda \in S^\circ \setminus \{0\}\) such that \(\langle \lambda , g({\bar{x}})\rangle =0.\)

- (ii) \(\langle \nabla g({\bar{x}})^T\lambda , y-{\bar{x}} \rangle \not =0\) for some \(y \in K,\) whenever \(\lambda \in S^\circ \setminus \{0\}\) such that \(\langle \lambda , g({\bar{x}})\rangle =0.\)

Proof

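\(\mathrm{(i)}\Longrightarrow \mathrm{(ii)}.\) Let (i) hold. Assume if possible that (ii) does not hold. Then there exists \(\lambda \in S^\circ \setminus \{0\}\) satisfying \(\langle \lambda , g({\bar{x}})\rangle =0\) such that

$$\begin{aligned} \langle \nabla g({\bar{x}})^T\lambda , y-{\bar{x}} \rangle =0, \ \forall \ y\in K. \end{aligned}\tag{2.7}$$

Since generalized Slater’s condition holds, there exists \(x_0 \in C\) such that \(-g(x_0) \in \mathrm{int} S.\) Thus, by continuity of g at \(x_0,\) there exists \(r >0\) such that \(-g(x_0 + r u) \in {S}\) for all \(u \in {\mathbb {B}},\) where \({\mathbb {B}}\) is defined by

$$\begin{aligned} {\mathbb {B}}:=\{u\in \mathbb{R}^n \, : \, \Vert u\Vert \le 1\}. \end{aligned}$$

This implies that \(x_0 + r u \in K\) for all \(u \in {\mathbb {B}}.\) In view of (2.7), one has

$$\begin{aligned} \langle \nabla g({\bar{x}})^T\lambda , x_0+ru-{\bar{x}} \rangle =0, \ \forall \ u\in {\mathbb {B}}. \end{aligned}\tag{2.8}$$

Putting \(u=0\) in (2.8), we conclude that \(\langle \nabla g(\bar{x})^T\lambda , x_0 -{\bar{x}} \rangle =0.\) This together with (2.8) implies that

$$\begin{aligned} \langle \nabla g({\bar{x}})^T\lambda , u \rangle =0, \ \forall \ u\in {\mathbb {B}}. \end{aligned}$$

This guarantees that \(\nabla g({\bar{x}})^T\lambda =0\) with \(\lambda \in S^\circ \setminus \{0\}\) such that \(\langle \lambda , g({\bar{x}})\rangle =0,\) which contradicts (i). Thus, (ii) holds.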

Clearly (ii) implies (i) without the validity of generalized Slater’s condition. \(\square \)

Corollary 2.4

Let \(K:=\{x\in \mathbb {R}^n\, : \, -g(x)\in S\}\) be given as in (2.2), where \(S\subseteq \mathbb {R}^m\) is a closed convex cone with non-empty interior. Let C be a non-empty closed convex subset of \(\mathbb {R}^n\) such that \(C \cap K \ne \emptyset ,\) and let \({\bar{x}} \in C \cap K.\) Assume that generalized Slater’s condition holds. Then the following assertions are equivalent:

- (i) Generalized nondegeneracy condition holds at \(\bar{x}.\)

- (ii) Generalized sharpened nondegeneracy condition holds at \(\bar{x}.\)

Proof

This is an immediate consequence of Proposition 2.3. \(\square \)

Definition 2.5

(Robinson’s constraint qualification [1]). Let S be a closed convex cone in \(\mathbb {R}^m\) with non-empty interior and \(K :=\{x \in \mathbb {R}^n : -g(x) \in S \}\) be given as in (2.2). Let C be a non-empty closed convex subset of \(\mathbb {R}^n\) such that \(C \cap K \ne \emptyset ,\) and let \(\Omega := C \cap K\) and \({\bar{x}}\in \Omega .\) We say that the set \(\Omega \) satisfies Robinson’s constraint qualification (RCQ) at the point \({\bar{x}}\) if

$$\begin{aligned} 0\in \mathrm{int}\big \{g({\bar{x}})+\nabla g({\bar{x}})(C-{\bar{x}})+S\big \}. \end{aligned}$$

It is worth noting that in the case \(C:=\mathbb {R}^n,\) Robinson’s constraint qualification is equivalent to Mangasarian–Fromovitz’s constraint qualification (see the proof of Theorem 3.5).

In the following, we recall the notion of the strong conical hull intersection property (the strong CHIP) (see [5]).

Definition 2.6

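Let \(C_1, C_2,\ldots , C_m\) be closed convex sets in \(\mathbb {R}^n\) and let \(x \in \bigcap _{j=1}^{m} C_j.\) Then, the collection \(\{C_1, C_2,\ldots , C_m\}\) is said to have the strong CHIP at x if

$$\begin{aligned} \Big (\bigcap _{j=1}^{m} C_j-x\Big )^{\circ }=\sum _{j=1}^{m}(C_j-x)^{\circ }. \end{aligned}$$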

The collection \(\{C_1, C_2,\ldots , C_m\}\) is said to have the strong CHIP if it has the strong CHIP at each \(x \in \bigcap _{j=1}^{m} C_j.\)
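To make Definition 2.6 concrete, the following Python sketch looks at a standard textbook situation that is not taken from this paper: two closed unit discs in \(\mathbb {R}^2\) that touch only at the origin. The helper names (`in_polar`, `disc_samples`) and the sampling of boundary points are illustrative assumptions, and polar-cone membership is only tested on finitely many points. The point is that \((C_1\cap C_2-x)^\circ \) is all of \(\mathbb {R}^2\) at \(x=(0,0),\) while the sum of the two individual normal cones is only the horizontal axis, so the strong CHIP fails at x.

```python
import math

def in_polar(u, points, tol=1e-12):
    """Sampled test of <u, y> <= 0 for every y in `points` (points of C - x)."""
    return all(sum(ui * yi for ui, yi in zip(u, y)) <= tol for y in points)

def disc_samples(center, n=720):
    """Boundary points of the closed unit disc with the given center."""
    cx, cy = center
    return [(cx + math.cos(2 * math.pi * k / n), cy + math.sin(2 * math.pi * k / n))
            for k in range(n)]

# Two unit discs touching only at x = (0, 0), so C1 ∩ C2 = {(0, 0)}.
c1 = disc_samples((-1.0, 0.0))
c2 = disc_samples((1.0, 0.0))
intersection = [(0.0, 0.0)]

u = (0.0, 1.0)
# u lies in the polar of (C1 ∩ C2 - x), which is the whole plane here.
u_in_polar_of_intersection = in_polar(u, intersection)
# The normal cones at x are the rays spanned by (1, 0) and (-1, 0) respectively,
# so every element of their sum has second coordinate 0 and u is not in the sum.
e1_in_n1 = in_polar((1.0, 0.0), c1)
minus_e1_in_n2 = in_polar((-1.0, 0.0), c2)
u_in_n1 = in_polar(u, c1)
u_in_n2 = in_polar(u, c2)
```

Replacing the discs by two closed half-planes through the origin would give equality of the two sides, which is the situation the definition is designed to capture.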

Definition 2.7

[8, 9, 16] Let E be a non-empty subset of \( \mathbb {R}^{n}\). The set E is called nearly convex at a point \( x\in E\) if, for each \(z \in E,\) there exists a sequence \( \{ \beta _{m} \}_{m \in \mathcal {N}}\subset (0,+\infty )\) with \( \beta _{m}\longrightarrow 0^{+}\) such that

$$\begin{aligned} x+\beta _m(z-x)\in E \ \text{ for } \text{ all } \text{ sufficiently } \text{ large } \ m\in \mathcal{N}, \end{aligned}$$

where \(\mathcal {N}\) is the set of natural numbers.

The set E is said to be nearly convex if it is nearly convex at each point \(x \in E.\) It is easy to see that if E is convex, then E is nearly convex (for more details and illustrative examples related to the near convexity, see [8, 9, 16]).
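The definition can be probed numerically. The sketch below uses a hypothetical set that does not appear in the paper, \(E=\{0\}\cup \{2^{-k} : k=0,1,2,\ldots \},\) together with the sequence \(\beta _m=2^{-m}.\) The function names are assumptions made for illustration, and a finite probe can only suggest, not prove, near convexity. Exact rational arithmetic is used so that membership tests are not affected by rounding.

```python
from fractions import Fraction

def nearly_convex_probe(member, x, z, betas):
    """Return the betas b in `betas` for which x + b*(z - x) lies in the set."""
    return [b for b in betas if member(x + b * (z - x))]

def in_E(p):
    """Hypothetical set E = {0} together with all 2^-k, k = 0, 1, 2, ..."""
    if p == 0:
        return True
    if p < 0 or p > 1:
        return False
    # p = 2^-k exactly when 1/p is an integer that is a power of two.
    q = 1 / Fraction(p)
    return q.denominator == 1 and (q.numerator & (q.numerator - 1)) == 0

betas = [Fraction(1, 2 ** m) for m in range(1, 21)]   # beta_m -> 0+

# At x = 0 with z = 1/8, every probe point 2^-m * z stays in E.
hits_at_0 = nearly_convex_probe(in_E, Fraction(0), Fraction(1, 8), betas)
# At x = 1 with z = 0, the points 1 - 2^-m leave E as soon as m >= 2.
hits_at_1 = nearly_convex_probe(in_E, Fraction(1), Fraction(0), betas)
```

In this illustration E behaves like a nearly convex set at the point 0 but not at the point 1, so it is nearly convex at a reference point without being nearly convex, and in particular without being convex.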

3 Characterizing Robinson’s constraint qualification

In this section, we first characterize the near convexity of the constraint set K, which is given by (2.2). Next, whenever the constraint set K is nearly convex at a reference point, we present new characterizations of Robinson’s constraint qualification that will be used for optimality of the problem (P). We start with the following lemma, which plays a crucial role in proving our main results. This lemma gives us characterizations of near convexity.

Lemma 3.1

Let \(K:=\{x\in \mathbb {R}^n\, : \, -g(x)\in S\}\) be given as in (2.2), where \(S\subseteq \mathbb {R}^m\) is a non-empty closed convex cone. Consider the following assertions:

- (i) K is convex.

- (ii) K is nearly convex.

- (iii) K is nearly convex at each boundary point.

- (iv) For each \(x \in \text{ bd }\,(K)\) and each \(\lambda \in S^\circ \setminus \{0\}\) with \(\langle \lambda , g(x)\rangle =0,\) one has

  $$\begin{aligned} \langle \nabla g(x)^T \lambda , u-x\rangle \ge 0, \ \forall \ u \in K. \end{aligned}\tag{3.10}$$

Then, (i)\(\Longrightarrow \)(ii)\(\Longrightarrow \)(iii) \(\Longrightarrow \) (iv).

Moreover, if we assume that S has a non-empty interior and there exists a subset \(\mathcal {B}\) of \(S^\circ \backslash \{0\}\) such that \(\mathrm{pos}(\mathcal {B})= S^\circ ,\) and moreover, generalized sharpened nondegeneracy condition (GSNC) holds at each point \(x \in \text{ bd }\,(K),\) then (iv)\(\Longrightarrow \)(i).

Proof

Clearly, (i) \(\Longrightarrow \) (ii) \(\Longrightarrow \) (iii).

(iii)\(\Longrightarrow \)(iv). Suppose that (iii) holds. Assume if possible that there exist \(x \in \text{ bd }\,(K),\) \(u\in K\) and \(\lambda \in S^\circ \setminus \{0\}\) with \(\langle \lambda , g(x)\rangle =0\) such that \(\langle \nabla g(x)^T\lambda , u-x\rangle <0.\) Then, by differentiability of g at x, we conclude that for every sequence \(\{t_n\}_{n\ge 1}\) of positive real numbers with \(t_n \downarrow 0,\) we have \(\langle \lambda , g(x+t_n(u-x))-g(x)\rangle <0\) for all sufficiently large n. Taking into account that \(\langle \lambda , g(x)\rangle =0,\) we obtain \(\langle \lambda , g(x+t_n(u-x))\rangle <0\) for all sufficiently large n. Noting that \(\lambda \in S^\circ \setminus \{0\},\) the latter inequality implies that \(-g(x+t_n(u-x))\not \in S\) for all sufficiently large n. So, \(x+t_n(u-x)\not \in K\) for all sufficiently large n and for every sequence \(\{t_n\}_{n\ge 1}\) of positive real numbers with \(t_n \downarrow 0.\) This is a contradiction, because \(u \in K\) and, by (iii), K is nearly convex at x (see Definition 2.7). Therefore, (iv) holds.

(iv)\(\Longrightarrow \)(i). Suppose that S has a non-empty interior and there exists a subset \(\mathcal {B}\) of \(S^\circ \backslash \{0\}\) such that \(\mathrm{pos}(\mathcal {B})= S^\circ ,\) and moreover, generalized sharpened nondegeneracy condition holds at each point \(x \in \text{ bd }\,(K).\) Now, we assume that (iv) holds, and \({\bar{x}} \in \text{ bd }\,(K)\) is arbitrary. We first prove that there exists \({\bar{\lambda }} \in S^\circ \backslash \{0\}\) with \(\langle {\bar{\lambda }}, g({\bar{x}})\rangle =0.\) Indeed, since \({\bar{x}}\in \mathrm{bd}(K),\) by continuity of g at \({\bar{x}},\) one has \(-g(\bar{x})\in \mathrm{bd}(S).\) So, \(\{-g({\bar{x}})\}\cap \mathrm{int}S=\emptyset .\) Then, by the convex separation theorem, there exists \({\bar{\lambda }} \in \mathbb {R}^m\backslash \{0\}\) such that \(\langle {\bar{\lambda }}, -g(\bar{x})\rangle \ge \langle {\bar{\lambda }},y\rangle \) for all \(y\in S.\) Since S is a closed cone and \(-g({\bar{x}}) \in S,\) it follows that \(\langle {\bar{\lambda }},y\rangle \le 0\) for all \(y\in S\) and \(\langle {\bar{\lambda }}, g({\bar{x}})\rangle = 0.\) Consequently, we conclude that \({\bar{\lambda }} \in S^\circ \backslash \{0\}\) and \(\langle {\bar{\lambda }}, g({\bar{x}})\rangle =0.\) Since \(\mathrm{pos}(\mathcal {B})= S^\circ ,\) in view of (2.6), we deduce that \( \bar{\lambda }=\sum \nolimits _{i=1}^p t_i{\bar{\lambda }}_i\) for some \(p\in \mathcal {N},\) \(t_i>0,\) and \({\bar{\lambda }}_i\in \mathcal {B},\) \(i=1,2,\ldots ,p.\) Note that \(\langle {\bar{\lambda }}_i, g({\bar{x}})\rangle \ge 0\) for all \(i=1,2,\ldots ,p,\) because \({\bar{\lambda }}_i \in S^\circ \) \((i=1,2,\ldots ,p).\) Since \(\langle {\bar{\lambda }}, g({\bar{x}})\rangle =0\) and \(t_i >0\) \((i=1,2,\ldots ,p),\) it follows that \(\langle {\bar{\lambda }}_i, g({\bar{x}})\rangle =0\) for all \(i=1,2,\ldots ,p.\) Thus, by the hypothesis (iv), we have

$$\begin{aligned} \langle \nabla g({\bar{x}})^T {\bar{\lambda }}_i, u-{\bar{x}}\rangle \ge 0, \ \forall \ u\in K, \ i=1,2,\ldots ,p. \end{aligned}\tag{3.11}$$

On the other hand, since \({\bar{\lambda }}_i \in \mathcal {B}\) and \(\langle {\bar{\lambda }}_i, g({\bar{x}})\rangle =0\) \((i=1,2,\ldots ,p),\) in view of the assumption that generalized sharpened nondegeneracy condition holds at each point \(x \in \text{ bd }\,(K)\) (and hence, at \({\bar{x}}),\) we have, for each \(i=1,2,\ldots ,p,\) that there exists \(u \in K\) such that \(\langle \nabla g({\bar{x}})^T {\bar{\lambda }}_i, u - {\bar{x}} \rangle \not =0.\) This implies that \(\nabla g({\bar{x}})^T{\bar{\lambda }}_i\not =0\) for all \(i=1,2,\ldots ,p.\) This together with (3.11) implies that there exists a supporting hyperplane for K at each boundary point \({\bar{x}}\) of K. Combining this with the closedness of K, by [18, Theorem 1.3.3], we conclude that K is a convex set. Therefore, (i) holds.\(\square \)

Corollary 3.2

Let \(K:=\{x\in \mathbb {R}^n\, : \, -g(x)\in S\}\) be given as in (2.2), where \(S\subseteq \mathbb {R}^m\) is a non-empty closed convex cone. Let \({\bar{x}} \in K\) be arbitrary. If K is nearly convex at \({\bar{x}},\) then for each \(\lambda \in S^\circ \setminus \{0\}\) with \(\langle \lambda , g({\bar{x}})\rangle =0,\) one has

$$\begin{aligned} \langle \nabla g({\bar{x}})^T \lambda , u-{\bar{x}}\rangle \ge 0, \ \forall \ u\in K. \end{aligned}$$

Proof

The proof is the same as that of the implication (iii) \(\Longrightarrow \) (iv) in Lemma 3.1, with x replaced by \({\bar{x}}.\) \(\square \)

Lemma 3.3

Let D be a closed subset of \(\mathbb {R}^n.\) Then D is convex if and only if D is nearly convex.

Proof

Suppose that D is convex. Then it is clear that D is nearly convex. Conversely, let D be nearly convex. Assume if possible that D is not convex. Then there exist \(x, y \in D\) and \(z_0 := x + t_0(y - x)\) with \(0< t_0 < 1\) such that \(z_0 \notin D.\) Put

$$\begin{aligned} t_1:=\sup \{t\in [0,t_0] \, : \, x+t(y-x)\in D\}. \end{aligned}$$

Since D is closed, it follows that \(z_1 := x + t_1 (y - x) \in D.\) Hence, \(t_1 < t_0,\) because \(z_0 \notin D.\) Since D is nearly convex at \(z_1,\) there exists a sequence \(\{\beta _m\}_{m \ge 1} \subset (0, +\infty )\) with \(\beta _m \longrightarrow 0^+\) such that \(z_1 + \beta _m (y - z_1) \in D\) for all sufficiently large m. For \(m \in \mathcal {N}\) sufficiently large, we have

$$\begin{aligned} t_1< t_1+\beta _m(1-t_1) < t_0. \end{aligned}\tag{3.13}$$

But \(x + (t_1 + \beta _m (1 - t_1)) (y - x) = z_1 + \beta _m (y - z_1) \in D.\) Therefore, in view of (3.13) we conclude that

$$\begin{aligned} t_1+\beta _m(1-t_1)\in \{t\in [0,t_0] \, : \, x+t(y-x)\in D\}, \end{aligned}$$

which together with (3.13) contradicts the definition of \(t_1.\) Thus, D is convex. \(\square \)

The following example shows that condition (3.10) alone does not guarantee the near convexity of K at each boundary point.

Example 3.4

Let \(g(x):=x^3(x+1)^2\) for all \(x \in \mathbb {R}\) and \(S:=\mathbb {R}_-.\) Thus, \(K:=\{x\in \mathbb {R}: -g(x)\in S\}= \{-1\} \cup [0, +\infty ).\) We see that \(S^\circ =\mathbb {R}_+\) and \(\text{ bd }\,(K) = \{-1, 0\}.\) Since \(g(0) = g(-1) = 0,\) it follows that \(\langle \lambda , g({\bar{x}}) \rangle = 0\) for each \(\lambda \in S^\circ \) and each \({\bar{x}} \in \text{ bd }\,(K).\) Moreover, \(\langle \nabla g({\bar{x}})^T\lambda , u - {\bar{x}} \rangle =0\) for all \(u \in K,\) because \(\nabla g({\bar{x}}) = 0\) for each \({\bar{x}} \in \text{ bd }\,(K).\) Hence, condition (3.10) is satisfied. However, K is not nearly convex at each boundary point, because for \({\bar{x}} =0 \in \text{ bd }\,{K},\) \(u = -1 \in K\) and every sequence \(\{t_n\}_{n\ge 1}\) of positive real numbers with \(t_n \downarrow 0,\) we have \({\bar{x}} + t_n(u - {\bar{x}}) \notin K\) for all sufficiently large n, i.e., K is not nearly convex at \({\bar{x}} = 0 \in \text{ bd }\,(K).\)
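The claims of Example 3.4 can be checked with a few lines of Python. This is only a numerical sanity check under the data stated in the example (\(g(x)=x^3(x+1)^2\) and \(S=\mathbb {R}_-\)); the function names and the choice \(t_n=2^{-n}\) are illustrative assumptions.

```python
def g(x):
    return x**3 * (x + 1)**2

def dg(x):
    # d/dx [x^3 (x+1)^2] = 3x^2 (x+1)^2 + 2x^3 (x+1)
    return 3 * x**2 * (x + 1)**2 + 2 * x**3 * (x + 1)

def in_K(x):
    # S = R_-, so -g(x) in S means g(x) >= 0.
    return g(x) >= 0

boundary = [-1, 0]
# Both derivatives vanish, so the inequality in (3.10) holds with equality.
gradients = [dg(x) for x in boundary]

# Points x_bar + t_n (u - x_bar) = -t_n on the segment from x_bar = 0 towards u = -1.
x_bar, u = 0, -1
ts = [2.0 ** (-n) for n in range(1, 30)]
segment_in_K = [in_K(x_bar + t * (u - x_bar)) for t in ts]
```

Every entry of `segment_in_K` is `False`, which matches the statement that K is not nearly convex at \({\bar{x}}=0\) even though condition (3.10) holds.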

We now present new characterizations of Robinson’s constraint qualification whenever the constraint set K is nearly convex at some reference point, but is not necessarily convex.

Theorem 3.5

Let \(K:=\{x\in \mathbb {R}^n\, : \, -g(x)\in S\}\) be given as in (2.2), where \(S\subseteq \mathbb {R}^m\) is a closed convex cone with non-empty interior. Let C be a non-empty closed convex subset of \(\mathbb {R}^n\) such that \(C \cap K \ne \emptyset ,\) and let \({\bar{x}}\in C\cap K.\) Then the following assertions are equivalent:

- (i) For each \(\lambda \in S^\circ \backslash \{0\}\) with \(\langle \lambda , g({\bar{x}})\rangle =0,\) one has

  $$\begin{aligned} \langle \nabla g({\bar{x}})^T\lambda , v-{\bar{x}} \rangle >0\ \, \text{ for } \text{ some }\ v\in C. \end{aligned}$$

- (ii) Robinson’s constraint qualification holds at \({\bar{x}}.\)

Hence, if one of assertions (i) and (ii) holds, then generalized Slater’s condition holds.

Furthermore, if we assume that K is nearly convex at \({\bar{x}}\) and there exists a subset \(\mathcal {B}\) of \(S^\circ \backslash \{0\}\) such that \(\mathrm{pos}(\mathcal {B})= S^\circ ,\) and moreover, generalized Slater’s condition holds, then (i) (and hence (ii)) is equivalent to the following assertions:

- (iii) Generalized nondegeneracy condition holds at \(\bar{x}.\)

- (iv) Generalized sharpened nondegeneracy condition holds at \(\bar{x}\).

Proof

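\(\mathrm{(i)}\Longrightarrow \mathrm{(ii)}.\) Suppose that (i) holds. Assume on the contrary that Robinson’s constraint qualification does not hold at \({\bar{x}},\) that is,

$$\begin{aligned} 0\notin \mathrm{int}\big \{g({\bar{x}})+\nabla g({\bar{x}})(C-{\bar{x}})+S\big \}. \end{aligned}$$

So, by the convex separation theorem, there exists \({\bar{\lambda }}\in \mathbb {R}^m\backslash \{0\}\) such that

$$\begin{aligned} \langle {\bar{\lambda }}, g({\bar{x}})+\nabla g({\bar{x}})(v-{\bar{x}})+y\rangle \le 0, \ \forall \ v\in C, \ \forall \ y\in S. \end{aligned}$$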

This implies that \({\bar{\lambda }} \in S^\circ \backslash \{0\},\) \(\langle {\bar{\lambda }}, g({\bar{x}})\rangle =0,\) and \( \langle \nabla g({\bar{x}})^T {\bar{\lambda }}, v-{\bar{x}} \rangle \le 0\) for all \(v\in C,\) which contradicts the validity of (i). Therefore, the implication \(\mathrm{(i)}\Longrightarrow \mathrm{(ii)}\) is justified.

\(\mathrm{(ii)}\Longrightarrow \mathrm{(i)}.\) Suppose that Robinson’s constraint qualification is satisfied at \({\bar{x}}.\) Let \(\lambda \in S^\circ \backslash \{0\}\) with \(\langle \lambda , g(\bar{x})\rangle =0.\) We show that there exists \(v\in C\) such that \(\langle \nabla g({\bar{x}})^T\lambda , v-{\bar{x}} \rangle >0.\) Since Robinson’s constraint qualification holds at \({\bar{x}}\) and \(\mathrm{int }S\not =\emptyset ,\) by [1, p. 71], there exists \(v\in C\) such that

$$\begin{aligned} -g({\bar{x}})-\nabla g({\bar{x}})(v-{\bar{x}})\in \mathrm{int} S. \end{aligned}\tag{3.4}$$

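We claim that \(\langle \lambda , g({\bar{x}})+\nabla g({\bar{x}})(v-\bar{x})\rangle >0.\) Indeed, we assume on the contrary that

$$\begin{aligned} \langle \lambda , g({\bar{x}})+\nabla g({\bar{x}})(v-{\bar{x}})\rangle \le 0. \end{aligned}\tag{3.5}$$

Since \(\lambda \in S^\circ ,\) we have \(\langle \lambda , y\rangle \le 0\) for all \(y\in S,\) and hence, by (3.4), \(\langle \lambda , g(\bar{x})+\nabla g({\bar{x}})(v-{\bar{x}})\rangle \ge 0.\) This together with (3.5) implies that

$$\begin{aligned} \langle \lambda , g({\bar{x}})+\nabla g({\bar{x}})(v-{\bar{x}})\rangle = 0. \end{aligned}$$

This implies that the linear function \(y\longmapsto \langle \lambda , y\rangle \) attains its maximum over S at the point \(-g({\bar{x}})-\nabla g({\bar{x}})(v-{\bar{x}}),\) which lies in \(\mathrm{int} S\) by (3.4), that is,

$$\begin{aligned} \langle \lambda , y\rangle \le 0=\langle \lambda , -g({\bar{x}})-\nabla g({\bar{x}})(v-{\bar{x}})\rangle , \ \forall \ y\in S. \end{aligned}$$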

So, by the Fermat rule, \(\lambda =0,\) which is a contradiction. Hence, \(\langle \lambda , g({\bar{x}})+\nabla g({\bar{x}})(v-{\bar{x}})\rangle >0.\) On the other hand, \(\langle \lambda , g({\bar{x}})\rangle =0.\) Thus, \(\langle \lambda , \nabla g({\bar{x}})(v-{\bar{x}})\rangle >0\) for some \(v \in C.\) The latter inequality shows that (i) holds.

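Moreover, if (ii) holds, then it follows from (3.4) and the differentiability of g at \({\bar{x}}\) that, for \(0<t<1,\)

$$\begin{aligned} -g({\bar{x}}+t(v-{\bar{x}}))=(1-t)\big (-g({\bar{x}})\big )+t\Big (-g({\bar{x}})-\nabla g({\bar{x}})(v-{\bar{x}})+\frac{o(t)}{t}\Big ), \end{aligned}$$

where \(o(t)/t\longrightarrow 0\) as \(t\longrightarrow 0^+.\) Since \(-g({\bar{x}})\in S\) and S is a convex cone, for some \(0< t < 1\) sufficiently small, we have

$$\begin{aligned} -g({\bar{x}}+t(v-{\bar{x}}))\in \mathrm{int} S. \end{aligned}$$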

Put \(x_0:= {\bar{x}}+t(v-{\bar{x}})\in C.\) Then \(-g(x_0)\in \mathrm{int}S,\) i.e., generalized Slater’s condition holds.

Now, we assume that K is nearly convex at \({\bar{x}}\) and there exists a subset \(\mathcal {B}\) of \(S^\circ \backslash \{0\}\) such that \(\mathrm{pos}(\mathcal {B})= S^\circ ,\) and moreover, generalized Slater’s condition holds, i.e., there exists \(x_0\in C\) such that \(-g(x_0) \in \mathrm{int} S.\)

\(\mathrm{(i)}\Longrightarrow \mathrm{(iii)}.\) Clearly, this implication holds.

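\(\mathrm{(iii)}\Longrightarrow \mathrm{(i)}.\) Suppose that (iii) holds. Assume if possible that (i) does not hold. Then there exists \({\bar{\lambda }}\in S^\circ \backslash \{0\}\) with \( \langle {\bar{\lambda }}, g({\bar{x}})\rangle =0\) such that

$$\begin{aligned} \langle \nabla g({\bar{x}})^T{\bar{\lambda }}, v-{\bar{x}}\rangle \le 0, \ \forall \ v\in C. \end{aligned}\tag{3.6}$$

Since, by the hypothesis, \(\mathrm{pos}(\mathcal {B})= S^\circ \) and \({\bar{\lambda }} \in S^\circ \setminus \{0\},\) we conclude that

$$\begin{aligned} {\bar{\lambda }}=\sum _{i=1}^p t_i{\bar{\lambda }}_i \end{aligned}$$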

for some \(p\in \mathcal {N},\) \(t_i>0,\) and \({\bar{\lambda }}_i\in \mathcal {B},\) \(i=1,2,\ldots ,p.\) So, it follows from \(\langle {\bar{\lambda }}, g(\bar{x})\rangle =0\) and \(\langle {\bar{\lambda }}_i, g({\bar{x}})\rangle \ge 0\) \((i=1,2,\ldots ,p)\) that \(\langle {\bar{\lambda }}_i, g({\bar{x}})\rangle =0\) for all \(i=1,2,\ldots ,p.\) Since, by the hypothesis, there exists \(x_0\in C\) such that \(-g(x_0)\in \mathrm{int} S\) and g is continuous at \(x_0,\) it follows that there exists \(r>0\) such that \(-g(x_0+ru)\in S\) for all \(u\in {\mathbb {B}},\) where

$$\begin{aligned} {\mathbb {B}}:=\{u\in \mathbb{R}^n \, : \, \Vert u\Vert \le 1\}. \end{aligned}$$

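Thus, \(x_0 + ru \in K\) for all \(u \in {\mathbb {B}}.\) So, since by the hypothesis K is nearly convex at \({\bar{x}},\) we conclude from Corollary 3.2 that

$$\begin{aligned} \langle \nabla g({\bar{x}})^T{\bar{\lambda }}_i, x_0+ru-{\bar{x}}\rangle \ge 0, \ \forall \ u\in {\mathbb {B}}, \ i=1,2,\ldots ,p. \end{aligned}\tag{3.8}$$

In particular, for \(u =0 \in {\mathbb {B}},\) one has

$$\begin{aligned} \langle \nabla g({\bar{x}})^T{\bar{\lambda }}_i, x_0-{\bar{x}}\rangle \ge 0, \ i=1,2,\ldots ,p. \end{aligned}\tag{3.9}$$

This together with (3.6) and the fact that \(x_0 \in C\) implies that

$$\begin{aligned} 0\le \sum _{i=1}^p t_i\langle \nabla g({\bar{x}})^T{\bar{\lambda }}_i, x_0-{\bar{x}}\rangle =\langle \nabla g({\bar{x}})^T{\bar{\lambda }}, x_0-{\bar{x}}\rangle \le 0. \end{aligned}\tag{3.10}$$

Since \(t_i>0\) for all \(i=1,2,\ldots ,p,\) it follows from (3.9) and (3.10) that \(\langle \nabla g({\bar{x}})^T {\bar{\lambda }}_i , x_0-\bar{x}\rangle =0\) for all \(i=1,2,\ldots ,p.\) So, it follows from (3.8) that

$$\begin{aligned} \langle \nabla g({\bar{x}})^T{\bar{\lambda }}_i, u\rangle \ge 0, \ \forall \ u\in {\mathbb {B}}, \ i=1,2,\ldots ,p, \end{aligned}$$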

which implies that \(\nabla g({\bar{x}})^T {\bar{\lambda }}_i=0\) for all \(i=1,2,\ldots ,p.\) This contradicts the validity of (iii), because \({\bar{\lambda }}_i\in \mathcal {B}\) such that \(\langle {\bar{\lambda }}_i, g({\bar{x}})\rangle =0,\) \(i=1,2,\ldots ,p.\) Hence, the implication \(\mathrm{(iii)}\Longrightarrow \mathrm{(i)}\) is justified.

\(\mathrm{(iii)} \Longleftrightarrow \mathrm{(iv)}.\) This follows from Corollary 2.4.\(\square \)

By the following example, we illustrate Theorem 3.5.

Example 3.6

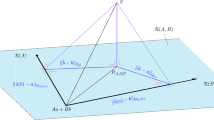

Let \(K:=\{x\in \mathbb {R}^2\, : \, -g(x)\in S\},\) where

$$\begin{aligned} S:=\mathbb{R}_-\times \mathbb{R}_+ \end{aligned}$$

and \(g(x):=\big (g_1(x), g_2(x)\big )\) with \(g_1(x):= x_1^3-x_2\) and \(g_2(x):=-x_1^2+x_2\) for all \(x:=(x_1,x_2)\in \mathbb {R}^2.\) Let \(C:=\mathbb {R}^2_+.\) Clearly, C is a closed convex subset of \(\mathbb {R}^2.\) We see that

$$\begin{aligned} S^\circ =\mathbb{R}_+\times \mathbb{R}_-, \end{aligned}$$

\(\mathcal {B} =\{(1, 0), (0, -1)\}\) satisfies \(\mathrm{pos}(\mathcal {B})= S^\circ ,\) and \(-g(\frac{1}{2}, \frac{1}{16})=(-\frac{1}{16}, \frac{3}{16})\in \mathrm{int}S,\) i.e., generalized Slater’s condition holds (note that \((\frac{1}{2}, \frac{1}{16}) \in C).\) Let \({\bar{x}} :=(1, 1) \in C \cap K.\) It is easy to check that \(g({\bar{x}}) = 0,\) \(\langle \lambda , g({\bar{x}}) \rangle = 0\) for all \(\lambda \in \mathcal {B},\) and moreover, \(\nabla g({\bar{x}})^T \lambda \ne 0\) whenever \(\lambda \in \mathcal {B}\) such that \(\langle \lambda , g({\bar{x}}) \rangle = 0.\) So, generalized nondegeneracy condition holds at \({\bar{x}}.\) It is not difficult to show that K is not nearly convex at \({\bar{x}},\) and that Robinson’s constraint qualification is invalid at \({\bar{x}},\) because \(g({\bar{x}}) + \nabla g(\bar{x})(C - {\bar{x}}) + S = \mathbb {R}_-\times \mathbb {R}.\) Therefore,

$$\begin{aligned} 0\notin \mathrm{int}\big \{g({\bar{x}})+\nabla g({\bar{x}})(C-{\bar{x}})+S\big \}. \end{aligned}$$
Thus, in the absence of the near convexity of K at \({\bar{x}},\) the validity of both generalized Slater’s condition and generalized nondegeneracy condition at \({\bar{x}}\) does not guarantee the validity of Robinson’s constraint qualification at \({\bar{x}}\in C \cap K.\)
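The two hypotheses that Example 3.6 asserts to hold, generalized nondegeneracy at \({\bar{x}}=(1,1)\) and generalized Slater’s condition at \((\frac{1}{2},\frac{1}{16}),\) can be checked numerically. The Python sketch below does only that; it does not test near convexity or Robinson’s constraint qualification. It assumes \(S=\mathbb {R}_-\times \mathbb {R}_+,\) which is inferred here from \(\mathcal {B}\) and the stated Slater point, and the function names are illustrative.

```python
def g(x1, x2):
    return (x1**3 - x2, -x1**2 + x2)

def jac_T_times(x1, x2, lam):
    """Return grad g(x)^T lam, where row j of the Jacobian is the gradient of g_j."""
    rows = ((3 * x1**2, -1.0), (-2 * x1, 1.0))
    return tuple(sum(lam[j] * rows[j][i] for j in range(2)) for i in range(2))

B = [(1.0, 0.0), (0.0, -1.0)]
x_bar = (1.0, 1.0)

# g(x_bar) = (0, 0), so every lam in B satisfies <lam, g(x_bar)> = 0.
g_bar = g(*x_bar)
active = [lam for lam in B
          if lam[0] * g_bar[0] + lam[1] * g_bar[1] == 0]

# Generalized nondegeneracy: grad g(x_bar)^T lam must be nonzero for each active lam.
gnc_vectors = [jac_T_times(*x_bar, lam) for lam in active]
gnc_holds = all(any(c != 0 for c in v) for v in gnc_vectors)

# Slater point from the example; int S is assumed to be {y : y1 < 0 < y2}.
y = tuple(-c for c in g(0.5, 0.0625))
slater_holds = y[0] < 0 < y[1]
```

The computed value of `y` agrees with \((-\frac{1}{16}, \frac{3}{16})\) as stated in the example.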

Remark 3.7

In view of the proof of Theorem 3.5, we see that the implications (i)\(\Longrightarrow \)(iii) and (iii) \(\Longleftrightarrow \) (iv) do not require the near convexity of K at the point \({\bar{x}}.\) However, even in the case where S is a convex polyhedral cone, the near convexity of K at \({\bar{x}}\) is essential for the validity of the implication (iii)\(\Longrightarrow \)(ii) (see Example 3.6). Also, it may happen that \(\nabla g(x)^T\lambda \not =0\) whenever \(x\in K\) and \(\lambda \in \mathcal {B}\) with \(\langle \lambda , g(x)\rangle =0\) for some \(\mathcal {B}\subset S^\circ \backslash \{0\}\) such that \(\mathrm{pos}(\mathcal {B})= S^\circ ,\) while there is no \(x_0\in \mathbb {R}^n\) such that \(-g(x_0)\in \mathrm{int} S;\) for example, let \(S:=\mathbb {R}^2_+,\) \(C:=\mathbb {R}^2,\) \( \mathcal {B}:=\{(-1,0), (0,-1)\}\) and \(g(x_1,x_2):=(x_1-x_2, x_2-x_1)\) for all \(x_1, x_2 \in \mathbb {R}.\) \(\square \)
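The closing example of Remark 3.7 is simple enough to verify directly. The Python sketch below uses the data of the remark (\(S=\mathbb {R}^2_+,\) \(\mathcal {B}=\{(-1,0),(0,-1)\},\) \(g(x_1,x_2)=(x_1-x_2, x_2-x_1)\)); the integer grid is an arbitrary choice for illustration, and the real argument is the identity \(g_1+g_2=0.\)

```python
def g(x1, x2):
    return (x1 - x2, x2 - x1)

# The Jacobian of g is constant: rows (1, -1) and (-1, 1).
JAC = ((1, -1), (-1, 1))
B = [(-1, 0), (0, -1)]

# grad g(x)^T lam for each generator lam in B; both are nonzero at every x.
images = [tuple(sum(lam[j] * JAC[j][i] for j in range(2)) for i in range(2))
          for lam in B]

# -g(x0) in int S = {y : y1 > 0, y2 > 0} would need g1(x0) < 0 and g2(x0) < 0,
# which is impossible because g1 + g2 = 0 identically. Spot-check on a grid.
grid = [(a, b) for a in range(-5, 6) for b in range(-5, 6)]
sums_vanish = all(sum(g(a, b)) == 0 for a, b in grid)
slater_points = [(a, b) for a, b in grid if g(a, b)[0] < 0 and g(a, b)[1] < 0]
```

The list `slater_points` is empty, in line with the remark that the nondegeneracy property alone does not produce a Slater point.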

4 Necessary and sufficient conditions for optimality

In this section, using new characterizations of Robinson’s constraint qualification (Theorem 3.5), we present necessary and sufficient conditions for optimality of the problem (P). We first give the notion of the generalized sharpened strong conical hull intersection property, which was introduced in [4] for the case where the constraint functions \(g_j,\) \(j=1,2,\ldots ,m,\) are continuously Fréchet differentiable, and the constraint set K is convex. We now give this notion for the case where g is a Fréchet differentiable function, and the constraint set \(K:=\{x \in \mathbb {R}^n : - g(x) \in S \}\) is nearly convex at some reference point, but is not necessarily convex.

Definition 4.1

Let \(K:=\{x \in \mathbb {R}^n: - g(x) \in S\}\) be given as in (2.2), and let C be a non-empty closed convex subset of \(\mathbb {R}^n\) such that \(C \cap K \ne \emptyset .\) We say that the pair \(\{C, K\}\) has the “generalized sharpened strong conical hull intersection property” (G-S strong CHIP in short) at a point \({\bar{x}}\in C\cap K,\) if

$$\begin{aligned} (C\cap K-{\bar{x}})^\circ =\{\nabla g({\bar{x}})^T\lambda \, : \, \lambda \in S^+, \ \langle \lambda , g({\bar{x}})\rangle =0\}+(C-{\bar{x}})^\circ , \end{aligned}$$

where \(S^{+}\) is defined by (2.4). The pair \(\{C, K\}\) is said to have the generalized sharpened strong CHIP if it has the generalized sharpened strong CHIP at each point \(x\in C\cap K.\)

In the following, we give an example of a pair \(\{C, K\},\) where K is nearly convex at some point \({\bar{x}} \in C \cap K\) but not convex, having the G-S strong CHIP at \({\bar{x}}.\)

Example 4.2

Let \(g_{j}:\mathbb {R}^{2}\longrightarrow \mathbb {R}\) be defined by

and let \(S:=\mathbb {R}_{+}^2.\)

It is easy to see that g is a differentiable function which is not S-convex, and

So, K is not convex. Let \(C:=\mathbb {R}^2_{+},\) and \({\bar{x}}:=(0,0) \in C \cap K.\) Therefore, K is nearly convex at \({\bar{x}},\) \((C \cap K-{\bar{x}})^{\circ }=\mathbb {R}^{2}_{-}\) and \((C-\bar{x})^{\circ }=\mathbb {R}^{2}_{-}.\) One can easily show that

Then

i.e., the pair \(\{C, K\}\) has the G-S strong CHIP at \({\bar{x}}.\) Also, if \(C:=\mathbb {R}_{-}\times \mathbb {R}_{+},\) then \(C \subset K,\) and hence \(C \cap K = C.\) Therefore, \((C \cap K - \bar{x})^\circ = (C - {\bar{x}})^\circ .\) Then the pair \(\{C, K\}\) has the G-S strong CHIP at \({\bar{x}}.\)

In the sequel, for each convex function \(f: \mathbb {R}^n \longrightarrow \mathbb {R},\) we denote by \((P_f)\) the following optimization problem:

$$\begin{aligned} \min f(x) \quad \text{subject to} \quad x\in C\cap K, \end{aligned}$$

where \(K:=\{x\in \mathbb {R}^n\, : \, -g(x)\in S\}\) is defined by (2.2), S is a non-empty closed convex cone in \(\mathbb {R}^m,\) and C is a non-empty closed convex subset of \(\mathbb {R}^n\) such that \(C \cap K \ne \emptyset .\)

We now show that the G-S strong CHIP is necessary for optimality of the problem \((P_{f})\) whenever the constraint set K is nearly convex at some point \({\bar{x}} \in C \cap K.\)

Theorem 4.2

Let \(K:=\{ x \in \mathbb {R}^n : -g(x) \in S \}\) be given as in (2.2), and let \({\bar{x}}\in C\cap K.\) Consider the following assertions:

- (i) For each convex function \(f:\mathbb {R}^n \longrightarrow \mathbb {R}\) that attains its global minimum at \({\bar{x}}\) over \(C\cap K,\) we have

  $$\begin{aligned} 0\in \partial f({\bar{x}})+\{\nabla g({\bar{x}})^T\lambda : \lambda \in S^+, \ \langle \lambda , g({\bar{x}})\rangle =0\}+(C-{\bar{x}})^\circ . \end{aligned}\tag{4.22}$$

- (ii) The pair \(\{C, K\}\) has the G-S strong CHIP at \({\bar{x}}.\)

If K is nearly convex at \({\bar{x}},\) then (i)\(\Longrightarrow \)(ii). Moreover, if K is convex, then (ii)\(\Longrightarrow \)(i).

It should be noted that since K is closed, in view of Lemma 3.3, K is convex if and only if K is nearly convex.

Proof

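\(\mathrm{(i)} \Longrightarrow \mathrm{(ii)}.\) Assume that K is nearly convex at \({\bar{x}}\) and (i) holds. Let \(u\in (C\cap K-\bar{x})^\circ \) be arbitrary. Then \(\langle u, y - {\bar{x}} \rangle \le 0\) for all \( y \in C \cap K,\) and so \(\langle -u, y \rangle \ge \langle -u, {\bar{x}} \rangle \) for all \(y \in C \cap K.\) Therefore, \({\bar{x}}\) is a global minimizer over \(C \cap K\) of the convex function \(f:\mathbb {R}^n \longrightarrow \mathbb {R}\) defined by \(f(y):= \langle -u, y \rangle \) for all \(y \in \mathbb {R}^n.\) Thus, by the hypothesis (i),

$$\begin{aligned} 0\in \partial f({\bar{x}})+\{\nabla g({\bar{x}})^T\lambda : \lambda \in S^+, \ \langle \lambda , g({\bar{x}})\rangle =0\}+(C-{\bar{x}})^\circ . \end{aligned}$$

(Note that \(\partial f(y) = \{\nabla f(y)\} = \{-u\}\) for all \(y \in \mathbb {R}^n,\) and so \(\partial f({\bar{x}}) = \{-u\}.\)) Hence,

$$\begin{aligned} u\in \{\nabla g({\bar{x}})^T\lambda : \lambda \in S^+, \ \langle \lambda , g({\bar{x}})\rangle =0\}+(C-{\bar{x}})^\circ . \end{aligned}$$

This shows that

$$\begin{aligned} (C\cap K-{\bar{x}})^\circ \subseteq \{\nabla g({\bar{x}})^T\lambda : \lambda \in S^+, \ \langle \lambda , g({\bar{x}})\rangle =0\}+(C-{\bar{x}})^\circ . \end{aligned}$$(4.23)

Conversely, let

$$\begin{aligned} u\in \{\nabla g({\bar{x}})^T\lambda : \lambda \in S^+, \ \langle \lambda , g({\bar{x}})\rangle =0\}+(C-{\bar{x}})^\circ \end{aligned}$$

be arbitrary. Then there exist \(\lambda \in S^+\) with \(\langle \lambda , g({\bar{x}})\rangle =0\) and \(v\in (C-{\bar{x}})^\circ \) such that \(u=\nabla g({\bar{x}})^T\lambda + v.\) On the other hand, since K is nearly convex at \({\bar{x}}\) by hypothesis, Definition 2.7 shows that for each \(y \in C \cap K\) there exists a sequence \(\{t_n\}_{n\ge 1}\) of positive real numbers with \(t_n \longrightarrow 0^+\) such that \({\bar{x}}+t_n(y-{\bar{x}})\in K\) for all sufficiently large n. This implies that \(-g({\bar{x}}+t_n(y-{\bar{x}}))\in S,\) and hence, because \(\lambda \in S^{+},\) that \(\langle \lambda , g({\bar{x}}+t_n(y-{\bar{x}}))\rangle \le 0\) for all sufficiently large n. This together with \(\langle \lambda , g({\bar{x}}) \rangle = 0\) and the fact that g is differentiable at \({\bar{x}}\) implies that

$$\begin{aligned} \langle \nabla g({\bar{x}})^T\lambda , y-{\bar{x}}\rangle =\lim _{n\rightarrow \infty }\frac{\langle \lambda , g({\bar{x}}+t_n(y-{\bar{x}}))\rangle -\langle \lambda , g({\bar{x}})\rangle }{t_n}\le 0 \quad \text{for all } y\in C\cap K. \end{aligned}$$(4.24)

Now, since \(v \in (C - {\bar{x}})^\circ ,\) we obtain from (4.24) that

$$\begin{aligned} \langle u, y-{\bar{x}}\rangle =\langle \nabla g({\bar{x}})^T\lambda , y-{\bar{x}}\rangle +\langle v, y-{\bar{x}}\rangle \le 0 \quad \text{for all } y\in C\cap K. \end{aligned}$$

Therefore, \(u\in (C\cap K-{\bar{x}})^\circ ,\) and consequently,

$$\begin{aligned} \{\nabla g({\bar{x}})^T\lambda : \lambda \in S^+, \ \langle \lambda , g({\bar{x}})\rangle =0\}+(C-{\bar{x}})^\circ \subseteq (C\cap K-{\bar{x}})^\circ . \end{aligned}$$(4.25)

We conclude from (4.23) and (4.25) that the pair \(\{C, K\}\) has the G-S strong CHIP at \({\bar{x}},\) i.e., (ii) holds.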

\(\mathrm{(ii)} \Longrightarrow \mathrm{(i)}.\) Suppose that K is convex and (ii) holds. Let \(f:\mathbb {R}^n \longrightarrow \mathbb {R}\) be any convex function that attains its global minimum over \(C\cap K\) at \({\bar{x}}.\) Then, due to the convexity of \(C \cap K\) and using Moreau–Rockafellar’s theorem, we get

$$\begin{aligned} 0\in \partial f({\bar{x}})+(C\cap K-{\bar{x}})^\circ . \end{aligned}$$

This together with (4.21) implies that (4.22) holds, i.e., (i) is justified. \(\square \)
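The limiting step behind (4.24) can be checked numerically on a small instance. The following is a minimal sketch, not part of the paper's argument: it assumes a toy set \(K=\{x\in \mathbb {R}^2 : x_1\ge 0,\ x_2\ge 0,\ 1-x_1x_2\le 0\}\) (the constraint function \(1-x_1x_2\) is borrowed from \(g_4\) in Example 4.8), the point \({\bar{x}}=(1,1),\) the multiplier \(\lambda =1\) and three hand-picked points y. All function names are hypothetical. It evaluates the difference quotients \(\lambda \,(g_4({\bar{x}}+t(y-{\bar{x}}))-g_4({\bar{x}}))/t\) along \(t\longrightarrow 0^+\) and compares them with \(\langle \nabla g_4({\bar{x}})\lambda , y-{\bar{x}}\rangle .\)

```python
# Toy data (assumption, not from the paper's proof):
# K = {x in R^2 : x1 >= 0, x2 >= 0, g4(x) <= 0} with g4(x) = 1 - x1*x2.

def g4(x):
    """Constraint function g4(x) = 1 - x1*x2; x is feasible when g4(x) <= 0."""
    return 1.0 - x[0] * x[1]

def grad_g4(x):
    """Gradient of g4 at x."""
    return (-x[1], -x[0])

def in_K(x, tol=1e-12):
    """Membership test for the toy set K (small tolerance for rounding)."""
    return x[0] >= 0.0 and x[1] >= 0.0 and g4(x) <= tol

def difference_quotients(x_bar, y, lam, steps):
    """Return lam * (g4(x_bar + t*(y - x_bar)) - g4(x_bar)) / t for each t."""
    d = (y[0] - x_bar[0], y[1] - x_bar[1])
    quotients = []
    for t in steps:
        p = (x_bar[0] + t * d[0], x_bar[1] + t * d[1])
        if not in_K(p):
            raise ValueError("point on the segment left K")
        quotients.append(lam * (g4(p) - g4(x_bar)) / t)
    return quotients

x_bar = (1.0, 1.0)   # g4(x_bar) = 0, so the constraint is active
lam = 1.0            # nonnegative multiplier with lam * g4(x_bar) = 0
steps = [10.0 ** (-k) for k in range(1, 7)]

results = {}
for y in [(2.0, 1.0), (1.0, 3.0), (4.0, 0.5)]:
    d = (y[0] - x_bar[0], y[1] - x_bar[1])
    grad = grad_g4(x_bar)
    limit = lam * (grad[0] * d[0] + grad[1] * d[1])
    quotients = difference_quotients(x_bar, y, lam, steps)
    results[y] = (quotients, limit)
    print(y, quotients[-1], limit)
```

In this instance every quotient is nonpositive because the points \({\bar{x}}+t(y-{\bar{x}})\) stay in K, so their limit, the directional derivative, is nonpositive as well; this is the only place where near convexity enters the argument.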

Definition 4.3

(KKT conditions and stationary points). Consider the problem \((P_{f}),\) and let \({\bar{x}}\in C\cap K.\) We say that \({\bar{x}}\) is a stationary point of the problem \((P_{f})\) if

$$\begin{aligned} 0\in \partial f({\bar{x}})-\nabla g({\bar{x}})^T\lambda +(C-{\bar{x}})^\circ \quad \text{for some } \lambda \in (S+g({\bar{x}}))^\circ . \end{aligned}$$(4.26)

If the function \(f:\mathbb {R}^n \longrightarrow \mathbb {R}\) is Fréchet differentiable, \(C:=\mathbb {R}^n\) and \(S:=\mathbb {R}_{+}^m,\) then (4.26) is called the Karush–Kuhn–Tucker conditions (KKT conditions), and \(\lambda \) in (4.26) is called a Lagrange multiplier at \({\bar{x}}.\)

We now present necessary and sufficient conditions for optimality of the problem \((P_{f}).\)

Theorem 4.4

Consider the problem \((P_{f}),\) and let \({\bar{x}}\in C\cap K.\) Assume that S has a non-empty interior, that there exists a subset \(\mathcal {B}\) of \(S^\circ \backslash \{0\}\) such that \(\mathrm{pos}(\mathcal {B})= S^\circ ,\) and that the generalized sharpened nondegeneracy condition holds at \({\bar{x}}.\) Furthermore, suppose that the generalized Slater’s condition holds. Consider the following assertions:

(i) \({\bar{x}}\) is a stationary point of the problem \((P_{f}).\)

(ii) \({\bar{x}}\) is a global minimizer of the problem \((P_{f})\) over \(C \cap K.\)

If K is nearly convex at \({\bar{x}},\) then (i)\(\Longrightarrow \)(ii). Moreover, if K is convex, then (ii)\(\Longrightarrow \)(i).

Proof

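\(\mathrm{(i)} \Longrightarrow \mathrm{(ii)}.\) Assume that K is nearly convex at \({\bar{x}},\) and \({\bar{x}}\) is a stationary point of the problem \((P_{f}).\) Then, by Definition 4.3, there exists \(\lambda \in (S+g({\bar{x}}))^\circ \) such that

$$\begin{aligned} 0\in \partial f({\bar{x}})-\nabla g({\bar{x}})^T\lambda +(C-{\bar{x}})^\circ . \end{aligned}$$

Since \(\lambda \in (S+g({\bar{x}}))^\circ ,\) \(-g({\bar{x}}) \in S\) and S is a cone, we conclude that \(-\lambda \in S^+\) and \(\langle \lambda , g({\bar{x}})\rangle =0,\) and hence,

$$\begin{aligned} 0\in \partial f({\bar{x}})+\{\nabla g({\bar{x}})^T\lambda : \lambda \in S^+, \ \langle \lambda , g({\bar{x}})\rangle =0\}+(C-{\bar{x}})^\circ \subseteq \partial f({\bar{x}})+(C\cap K-{\bar{x}})^\circ . \end{aligned}$$(4.27)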

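Now, using the near convexity of K at \({\bar{x}},\) we show that the latter inclusion holds. To this end, let \(w \in \{\nabla g({\bar{x}})^T \lambda : \lambda \in S^+, \ \langle \lambda , g({\bar{x}})\rangle =0\}+(C-{\bar{x}})^ \circ \) be arbitrary. Then there exist \(\lambda \in S^+\) with \(\langle \lambda , g({\bar{x}}) \rangle =0\) and \(v \in (C - {\bar{x}})^\circ \) such that \(w= \nabla g({\bar{x}})^T \lambda + v.\) Now, let \(y \in C \cap K\) be arbitrary. Since K is nearly convex at \({\bar{x}},\) it follows from Definition 2.7 that there exists a sequence \( \{\beta _{m} \}_{m \in \mathbb {N}} \subset (0,+\infty )\) with \( \beta _{m}\longrightarrow 0^{+}\) such that

$$\begin{aligned} {\bar{x}}+\beta _m(y-{\bar{x}})\in K \quad \text{for all sufficiently large } m. \end{aligned}$$

This implies that

$$\begin{aligned} -g({\bar{x}}+\beta _m(y-{\bar{x}}))\in S \quad \text{for all sufficiently large } m. \end{aligned}$$

Since \(\lambda \in S^+,\) we get

$$\begin{aligned} \langle \lambda , g({\bar{x}}+\beta _m(y-{\bar{x}}))\rangle \le 0 \quad \text{for all sufficiently large } m. \end{aligned}$$(4.28)

Since g is differentiable at \({\bar{x}},\) \(\langle \lambda , g({\bar{x}}) \rangle =0\) and \(v \in (C - {\bar{x}})^\circ ,\) we conclude from (4.28) that

$$\begin{aligned} \langle w, y-{\bar{x}}\rangle =\lim _{m\rightarrow \infty }\frac{\langle \lambda , g({\bar{x}}+\beta _m(y-{\bar{x}}))\rangle -\langle \lambda , g({\bar{x}})\rangle }{\beta _m}+\langle v, y-{\bar{x}}\rangle \le 0, \end{aligned}$$

and hence, \(w \in (C \cap K - {\bar{x}})^\circ .\)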

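Now, using (4.27), we get

$$\begin{aligned} 0\in \partial f({\bar{x}})+(C\cap K-{\bar{x}})^\circ . \end{aligned}$$

This implies that there exist \(u \in \partial f({\bar{x}})\) and \(v \in (C \cap K - {\bar{x}})^\circ \) such that \(u + v =0.\) Since \(u \in \partial f({\bar{x}}),\) it follows that

$$\begin{aligned} f(x)-f({\bar{x}})\ge \langle u, x-{\bar{x}}\rangle \quad \text{for all } x\in \mathbb {R}^n. \end{aligned}$$

Thus,

$$\begin{aligned} f(x)-f({\bar{x}})\ge -\langle v, x-{\bar{x}}\rangle \quad \text{for all } x\in \mathbb {R}^n. \end{aligned}$$

This together with the fact that \(v \in (C \cap K - {\bar{x}})^\circ \) implies that

$$\begin{aligned} f(x)-f({\bar{x}})\ge -\langle v, x-{\bar{x}}\rangle \ge 0 \quad \text{for all } x\in C\cap K. \end{aligned}$$

So, \(f({\bar{x}}) \le f(x)\) for all \(x \in C \cap K.\)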

Therefore, \({\bar{x}}\) is a global minimizer of the problem \((P_{f})\) over \(C \cap K,\) i.e., (ii) holds.

(ii)\(\Longrightarrow \)(i). Suppose that K is convex. We first show that the pair \(\{C, K\}\) has the G-S strong CHIP at \({\bar{x}}.\) Since K is convex, it is nearly convex at \({\bar{x}}.\) Moreover, by the hypotheses, S has a non-empty interior, there exists a subset \(\mathcal {B}\) of \(S^\circ \backslash \{0\}\) such that \(\mathrm{pos}(\mathcal {B})= S^\circ ,\) the generalized Slater’s condition holds, and the generalized sharpened nondegeneracy condition holds at \({\bar{x}}.\) Hence, by Theorem 3.5 (the implication (iv)\(\Longrightarrow \)(ii)), Robinson’s constraint qualification holds at \({\bar{x}}\in D:= C\cap K.\) Now, let the function \(G:\mathbb {R}^n\longrightarrow \mathbb {R}^m\times \mathbb {R}^n\) be defined by \(G(x):=(-g(x), x)\) for all \(x\in \mathbb {R}^n.\) Clearly, one has \(D=\{x\in \mathbb {R}^n : G(x)\in S\times C\}.\) Since Robinson’s constraint qualification holds at \({\bar{x}}\in D,\) we conclude from [1, Lemma 2.100] that

Thus, it follows from [14, Corollaries 1.15 & 3.9] that

Since C and K are convex sets and S is a convex cone, we conclude that \(N_D({\bar{x}})=(C\cap K-{\bar{x}})^\circ ,\) \(N_C({\bar{x}})=(C-{\bar{x}})^\circ \) and

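Therefore, we deduce from (4.29) that the pair \(\{C, K\}\) has the G-S strong CHIP at \({\bar{x}}.\) Now, suppose that \({\bar{x}}\) is a global minimizer of the problem \((P_{f})\) over \(C \cap K.\) Then, by Theorem 4.2 (the implication (ii)\(\Longrightarrow \)(i)),

$$\begin{aligned} 0\in \partial f({\bar{x}})+\{\nabla g({\bar{x}})^T\lambda : \lambda \in S^+, \ \langle \lambda , g({\bar{x}})\rangle =0\}+(C-{\bar{x}})^\circ . \end{aligned}$$

This implies that there exists \(\lambda \in S^+\) such that \(\langle \lambda , g({\bar{x}})\rangle =0\) and

$$\begin{aligned} 0\in \partial f({\bar{x}})+\nabla g({\bar{x}})^T\lambda +(C-{\bar{x}})^\circ . \end{aligned}$$

Since S is a closed cone, we conclude that \(-\lambda \in \big (S+g({\bar{x}})\big )^\circ .\) So, with \(\mu :=-\lambda \in (S + g({\bar{x}}))^\circ ,\) we have

$$\begin{aligned} 0\in \partial f({\bar{x}})-\nabla g({\bar{x}})^T\mu +(C-{\bar{x}})^\circ . \end{aligned}$$

Hence, in view of Definition 4.3, \({\bar{x}}\) is a stationary point of the problem \((P_{f}),\) which completes the proof. \(\square \)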

Corollary 4.5

Consider the problem \((P_{f}),\) and let \({\bar{x}}\in C\cap K.\) Assume that S has a non-empty interior and there exists a subset \(\mathcal {B}\) of \(S^\circ \backslash \{0\}\) such that \(\mathrm{pos}(\mathcal {B})= S^\circ ,\) and moreover, generalized nondegeneracy condition holds at \({\bar{x}}.\) Furthermore, suppose that generalized Slater’s condition holds. Consider the following assertions:

(i) \({\bar{x}}\) is a stationary point of the problem \((P_{f}).\)

(ii) \({\bar{x}}\) is a global minimizer of the problem \((P_{f})\) over \(C \cap K.\)

If K is nearly convex at \({\bar{x}},\) then (i)\(\Longrightarrow \)(ii). Moreover, if K is convex, then (ii)\(\Longrightarrow \)(i).

Proof

Since the generalized nondegeneracy condition holds at \({\bar{x}}\) and the generalized Slater’s condition holds, Corollary 2.4 (the implication (i)\(\Longrightarrow \)(ii)) shows that the generalized sharpened nondegeneracy condition holds at \({\bar{x}}.\) Now, the result follows from Theorem 4.4. \(\square \)

Corollary 4.6

Consider the problem \((P_{f}),\) and let \({\bar{x}}\in C\cap K.\) Assume that S has a non-empty interior and there exists a subset \(\mathcal {B}\) of \(S^\circ \backslash \{0\}\) such that \(\mathrm{pos}(\mathcal {B})= S^\circ ,\) and moreover, Robinson’s constraint qualification holds at \({\bar{x}}.\) Consider the following assertions:

(i) \({\bar{x}}\) is a stationary point of the problem \((P_{f}).\)

(ii) \({\bar{x}}\) is a global minimizer of the problem \((P_{f})\) over \(C \cap K.\)

If K is nearly convex at \({\bar{x}},\) then (i)\(\Longrightarrow \)(ii). Moreover, if K is convex, then (ii)\(\Longrightarrow \)(i).

Proof

Since Robinson’s constraint qualification holds at \( {\bar{x}},\) it follows from the hypotheses and Theorem 3.5 (the implication (ii)\(\Longrightarrow \)(iii)) that the generalized nondegeneracy condition holds at \({\bar{x}}\) and that the generalized Slater’s condition holds. Now, the result follows from Corollary 4.5. \(\square \)

We now illustrate Theorem 4.4 and its corollaries by the following examples.

Example 4.7

Let \(f:\mathbb {R}^2 \longrightarrow \mathbb {R}\) be defined by \(f(x):= -2x_1 + \frac{1}{2}x_1^2 + \frac{1}{2}x_2^2\) for all \(x:=(x_1, x_2) \in \mathbb {R}^2.\) Let \(C:=\mathbb {R}^2,\) \(S:=\mathbb {R}^3_+,\) \(g_1(x):= 1-x_1,\) \(g_2(x):=1-x_2,\) \(g_3(x):=x_2 - x_1\) and \(g(x):=(g_1(x),g_2(x), g_3(x))\) for all \(x:=(x_1, x_2) \in \mathbb {R}^2.\)

Clearly, f is a convex function on \(\mathbb {R}^2.\) We have \(K:=\{x\in \mathbb {R}^2 : -g(x)\in S\}=\{x\in \mathbb {R}^2 : x_1 \ge x_2 \ge 1\}.\) Suppose that \({\bar{x}}:=(2, 1) \in \mathbb {R}^2.\) It is clear that \(-g({\bar{x}}) = (1, 0, 1) \in S,\) and so, \({\bar{x}} \in K = C \cap K.\) One can easily see that K is nearly convex at \({\bar{x}}\) (in fact, K is closed and convex), the function g is Fréchet differentiable and \(S^\circ =-S.\) Let \(x_0 :=(2, \frac{3}{2}) \in C.\) It is easy to see that \(-g(x_0) = (1, \frac{1}{2}, \frac{1}{2}) \in \mathrm{int}S,\) i.e., generalized Slater’s condition holds.

Also, for \(\mathcal {B}:=\{(-1,0,0), (0,-1,0), (0, 0, -1)\}\) we have \(\mathrm{pos}(\mathcal {B})= S^\circ .\) Take any \(x \in K \) and any \(\lambda :=(\lambda _1, \lambda _2, \lambda _3) \in \mathcal {B}\) such that \(0=\langle \lambda , g(x)\rangle =\sum \limits _{i=1}^3\lambda _ig_i(x),\) then it holds that

and

Thus, generalized nondegeneracy condition holds at \({\bar{x}},\) and hence, in view of Theorem 3.5, generalized sharpened nondegeneracy condition and Robinson’s constraint qualification hold at \({\bar{x}}.\) Therefore, all hypotheses of Theorem 4.4, Corollary 4.5 and Corollary 4.6 hold. Now, put \(\lambda :=(0, -1, 0).\) Then \(\lambda \in (S + g({\bar{x}}))^\circ ,\) and

Hence, in view of Definition 4.3, \({\bar{x}}\) is a stationary point of the problem \((P_{f}).\) Thus, by Theorem 4.4 (and also, by its corollaries), \({\bar{x}}\) is a global minimizer of the problem \((P_{f})\) over \(C \cap K.\)
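The computations in Example 4.7 can be double-checked numerically. The sketch below is only an illustration under stated assumptions: it takes the stationarity condition for \(C=\mathbb {R}^2\) in the form \(\nabla f({\bar{x}})-\nabla g({\bar{x}})^T\lambda =0\) with \(\lambda \in (S+g({\bar{x}}))^\circ \) (the sign convention suggested by the proof of Theorem 4.4), it uses the fact that for \(S=\mathbb {R}^3_+\) and feasible \({\bar{x}}\) this polar cone consists of the vectors \(\lambda \le 0\) with \(\langle \lambda , g({\bar{x}})\rangle =0,\) and it compares \(f({\bar{x}})\) with the values of f on a finite grid of feasible points, which is a sanity check and not a proof. All helper names are hypothetical.

```python
# Data of Example 4.7: f, g = (g1, g2, g3), S = R^3_+, C = R^2.

def f(x):
    return -2.0 * x[0] + 0.5 * x[0] ** 2 + 0.5 * x[1] ** 2

def grad_f(x):
    return (-2.0 + x[0], x[1])

def g(x):
    return (1.0 - x[0], 1.0 - x[1], x[1] - x[0])

# Rows are the (constant) gradients of g1, g2, g3.
JAC_G = ((-1.0, 0.0), (0.0, -1.0), (-1.0, 1.0))

def in_K(x):
    """x is in K exactly when -g(x) lies in S = R^3_+."""
    return all(gi <= 0.0 for gi in g(x))

def in_polar(lam, x):
    """Test lam in (S + g(x))° for S = R^3_+ and feasible x (assumed form)."""
    pairing = sum(l * gi for l, gi in zip(lam, g(x)))
    return all(l <= 0.0 for l in lam) and pairing == 0.0

def stationarity_residual(x, lam):
    """grad f(x) - J_g(x)^T lam; the zero vector means x is stationary (C = R^2)."""
    gf = grad_f(x)
    return tuple(
        gf[j] - sum(lam[i] * JAC_G[i][j] for i in range(3)) for j in range(2)
    )

x_bar = (2.0, 1.0)
x_slater = (2.0, 1.5)
lam = (0.0, -1.0, 0.0)

feasible = in_K(x_bar)
slater_ok = all(-gi > 0.0 for gi in g(x_slater))
polar_ok = in_polar(lam, x_bar)
residual = stationarity_residual(x_bar, lam)

# Grid of points with x1 >= x2 >= 1, i.e. points of K.
grid_min = min(
    f((1.0 + 0.05 * i, 1.0 + 0.05 * j))
    for i in range(81)
    for j in range(i + 1)
)

print(feasible, slater_ok, polar_ok, residual, f(x_bar), grid_min)
```

On this data the residual vanishes and no grid point has a smaller objective value than \({\bar{x}},\) in line with the conclusion of the example.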

Example 4.8

Let \(f:\mathbb {R}^3 \longrightarrow \mathbb {R}\) be defined by \(f(x):= \frac{1}{2} x_1^2 - 3x_1 + 2x_2 + x_3\) for all \(x:=(x_1, x_2, x_3) \in \mathbb {R}^3.\) Let \(C:=\mathbb {R}^3,\) \(S:=K_3\times \mathbb {R}^3_+\) with \(K_3:=\big \{(x_1, x_2, x_3)\in \mathbb {R}^3 : x_3\ge \sqrt{x_1^2+x_2^2}\big \},\) \(g_i(x):=-a_{i1}x_1-a_{i2}x_2-a_{i3}x_3-b_i,\) where \(a_{ij}, b_i\in \mathbb {R}\) \((i,j=1,2,3),\) \(g_4(x):= 1-x_1x_2,\) \(g_5(x):=-x_1,\) \(g_6(x):=-x_2,\) \(g(x):=\big (g_1(x),...,g_6(x)\big )\) and

It is clear that f is a convex function on \(\mathbb {R}^3.\) One has \(K:=\{x\in \mathbb {R}^3 : -g(x)\in S\}=\{x\in \mathbb {R}^3 : Ax+b\in K_3,\ x_1x_2\ge 1,\ x_1\ge 0, \ x_2\ge 0\}.\) Clearly, g is not S-convex. Now, let \(x^0 =(x_1^0, x_2^0, x_3^0) :=(1, 2, 1)\in \mathbb {R}^3 = C.\) Then, \(A x^0+b\in \mathrm{int} K_3,\) \(x_1^0>0,\) \(x_2^0>0\) and \(x_1^0 x_2^0>1.\) We see that \(-g(x^0)\in \mathrm{int}S,\) and so generalized Slater’s condition holds. Let \({\bar{x}} :=(1, 1, 1).\) One can easily see that \(A {\bar{x}} + b \in \mathrm{int}K_3,\) and since \({\bar{x}}_1 > 0,\) \({\bar{x}}_2 > 0,\) \({\bar{x}}_1 {\bar{x}}_2 \ge 1,\) it follows that \({\bar{x}} \in K = C \cap K.\) Although g is not S-convex, K is nearly convex at \({\bar{x}};\) in fact, K is convex, being the intersection of the convex sets \(\{x\in \mathbb {R}^3 : Ax+b\in K_3\}\) and \(\{x\in \mathbb {R}^3 : x_1\ge 0,\ x_2\ge 0,\ x_1x_2\ge 1\}.\) We have \(S^\circ =-S.\) Also, for \(\mathcal {B}:=\{(\lambda _1,\lambda _2,\lambda _3)\in \mathbb {R}^3 : \lambda _1^2+\lambda _2^2=1,\, \lambda _3=-1\}\times \{-e_1, -e_2,- e_3\},\) where \(e_1=(1,0,0),\) \(e_2=(0,1,0)\) and \(e_3=(0,0,1),\) one has \(\mathrm{pos}(\mathcal {B})= S^\circ .\) Take any \(x \in K\) and any \(\lambda :=(\lambda _1,...,\lambda _6) \in \mathcal {B}\) such that \(0=\langle \lambda , g(x)\rangle =\sum \limits _{i=1}^6\lambda _ig_i(x).\) Thus it holds that \(\lambda _1^2+\lambda _2^2=1,\) \(\lambda _3=\lambda _4=-1,\) \(\lambda _5=\lambda _6=0,\) \(x_1x_2-1=0,\) \(x_1>0,\) \(x_2>0,\)

and

Therefore, the generalized nondegeneracy condition holds at \({\bar{x}},\) and hence, by near convexity of K at \({\bar{x}},\) it follows from Theorem 3.5 that the generalized sharpened nondegeneracy condition and Robinson’s constraint qualification hold at \({\bar{x}}.\) Thus, the hypotheses of Theorem 4.4 and of Corollaries 4.5 and 4.6 that are needed for the implication (i)\(\Longrightarrow \)(ii) are satisfied. Now, let \(\lambda :=(0, -1, -1, -1, 0, 0).\) It is easy to show that \(\lambda \in (S + g({\bar{x}}))^\circ ,\) and

Hence, in view of Definition 4.3, \({\bar{x}}\) is a stationary point of the problem \((P_{f}).\) Thus, by Theorem 4.4 (the implication (i)\(\Longrightarrow \)(ii)) (and also, by its corollaries), \({\bar{x}}\) is a global minimizer of the problem \((P_{f})\) over \(C \cap K.\)

It is worth noting that the pair \(\{C, K \}\) has the G-S strong CHIP at \({\bar{x}}.\)
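The claim in Example 4.8 that g is not S-convex can be seen from the component \(g_4\) alone. The sketch below assumes the usual definition of S-convexity, under which, for the product cone \(S=K_3\times \mathbb {R}^3_+,\) the component \(g_4(x)=1-x_1x_2\) would have to be convex in the ordinary sense. It exhibits one pair of points, chosen by hand, at which the midpoint convexity inequality for \(g_4\) fails. The variable names are hypothetical, and the third coordinate is omitted because \(g_4\) does not depend on \(x_3.\)

```python
def g4(x):
    """Fourth constraint component of Example 4.8: g4(x) = 1 - x1*x2."""
    return 1.0 - x[0] * x[1]

# Two points and their midpoint (first two coordinates only).
a = (1.0, 1.0)
b = (-1.0, -1.0)
mid = ((a[0] + b[0]) / 2.0, (a[1] + b[1]) / 2.0)

# For a convex function, g4(mid) <= (g4(a) + g4(b)) / 2 would have to hold.
convexity_gap = g4(mid) - 0.5 * (g4(a) + g4(b))

print("g4(a) =", g4(a), "g4(b) =", g4(b), "g4(mid) =", g4(mid))
print("midpoint inequality violated:", convexity_gap > 0.0)
```

A positive gap means that the midpoint inequality is violated, so \(g_4\) is not convex on \(\mathbb {R}^2\) and therefore g cannot be S-convex under the assumed definition.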

References

1. Bonnans, J.F.; Shapiro, A.: Perturbation Analysis of Optimization Problems. Springer, New York (2000)

2. Borwein, J.M.; Lewis, A.S.: Convex Analysis and Nonlinear Optimization: Theory and Examples. Springer, New York (2006)

3. Boyd, S.; Vandenberghe, L.: Convex Optimization. Cambridge University Press, Cambridge (2004)

4. Chieu, N.H.; Jeyakumar, V.; Li, G.; Mohebi, H.: Constraint qualifications for convex optimization without convexity of constraints: new connections and applications to best approximation. Eur. J. Oper. Res. 265, 19–25 (2018)

5. Deutsch, F.; Li, W.; Ward, J.D.: Best approximation from the intersection of a closed convex set and a polyhedron in Hilbert space, weak Slater conditions, and the strong conical hull intersection property. SIAM J. Optim. 10, 252–268 (1999)

6. Dutta, J.; Lalitha, C.S.: Optimality conditions in convex optimization revisited. Optim. Lett. 7, 221–229 (2013)

7. Hiriart-Urruty, J.B.; Lemaréchal, C.: Convex Analysis and Minimization Algorithms I. Grundlehren der mathematischen Wissenschaften. Springer, Berlin (1993)

8. Ho, Q.: Necessary and sufficient KKT optimality conditions in non-convex optimization. Optim. Lett. 11, 41–46 (2017)

9. Jeyakumar, V.; Mohebi, H.: Characterizing best approximation from a convex set without convex representation. J. Approx. Theory 239, 113–127 (2019)

10. Jeyakumar, V.; Mohebi, H.: A global approach to nonlinearly constrained best approximation. Numer. Funct. Anal. Optim. 26(2), 205–227 (2005)

11. Lasserre, J.-B.: On convex optimization without convex representation. Optim. Lett. 5, 549–556 (2011)

12. Martinez-Legaz, J.-E.: Optimality conditions for pseudoconvex minimization over convex sets defined by tangentially convex constraints. Optim. Lett. 9, 1017–1023 (2015)

13. Mordukhovich, B.S.: Variational Analysis and Applications. Springer, New York (2018)

14. Mordukhovich, B.S.: Variational Analysis and Generalized Differentiation, I: Basic Theory. Springer, Berlin (2006)

15. Mordukhovich, B.S.; Nghia, T.T.A.: Non-smooth cone-constrained optimization with applications to semi-infinite programming. Math. Oper. Res. 39, 301–324 (2014)

16. Moffat, S.M.; Moursi, W.M.; Wang, X.: Nearly convex sets: fine properties and domains or ranges of subdifferentials of convex functions. Math. Program. Ser. A 160, 193–223 (2016)

17. Rockafellar, R.T.: Convex Analysis. Princeton University Press, Princeton (1970)

18. Schneider, R.: Convex Bodies: The Brunn–Minkowski Theory. Cambridge University Press, Cambridge (1994)

19. Zalinescu, C.: Convex Analysis in General Vector Spaces. World Scientific, London (2002)

Acknowledgements

The authors are very grateful to the two anonymous referees for their useful suggestions and criticism regarding an earlier version of this paper. The comments of the referees were very useful and they helped us to improve the paper significantly (the proof of Lemma 3.3 has been given by one of the anonymous referees). The second author was partially supported by Mahani Mathematical Research Center, Shahid Bahonar University of Kerman, Iran [Grant No. 97/3267].

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Ghafari, N., Mohebi, H. Optimality conditions for nonconvex problems over nearly convex feasible sets. Arab. J. Math. 10, 395–408 (2021). https://doi.org/10.1007/s40065-021-00315-3
