Abstract

Motivated by data on co-authorships in scientific publications, we analyze a team formation process that generalizes matching models and network formation models, allowing for overlapping teams of heterogeneous size. We apply different notions of stability: myopic team-wise stability, which extends to our setup the concept of pair-wise stability, coalitional stability, where agents are perfectly rational and able to coordinate, and stochastic stability, where agents are myopic and errors occur with vanishing probability. We find that, in many cases, coalitional stability in no way refines myopic team-wise stability, while stochastically stable states are feasible states that maximize the overall number of activities performed by teams.


1 Introduction

In this paper we propose a theoretical analysis of the process by which teams form to perform tasks, with the aim of shedding light on a broad variety of real-world activities. Our model generalizes matching models and network formation models.

We consider a finite set of agents, with heterogeneous constraints on time, who can choose among a finite set of teams and allocate their time to perform tasks. Teams of different sizes are allowed and can be formed only if they satisfy exogenous constraints, called technology. For instance, agents may form a team only if they are neighbors in an exogenously given social network, or if they match complementary exogenous skills, or if they have common communication tools. If time constraints are satisfied for each agent, a configuration of teams is feasible and called a state. Each state provides a specific payoff to each agent. As we discuss in Sect. 4.4, this setup generalizes matching models and network formation models.

We find that, under the assumption of non-satiation with respect to teams for every agent, an extension of the simple notion of pair-wise stability [25] to this setup, which we call myopic team-wise stability, does not have strong predictive power on the stable states of the model; in particular, feasible states that are maximal with respect to set inclusion are myopically team-wise stable. Therefore, we compare two possible refinements. The first, following the same approach as [24], is given by stochastic stability: In the presence of extremely rare errors that can create or dissolve teams, and with agents that adapt myopically to the current state of the system, we find that the stochastically stable states are those that actually maximize the overall number of teams. The second is a generalization of coalitional stability for cooperative games [14]: its predictive capability will turn out to be heavily dependent on the assumptions on payoff functions. Moreover, when all projects are equivalent for every agent in terms of costs (resources employed) and benefits (payoffs earned), we find that the states that satisfy this form of coalitional stability are exactly the same as those that are myopically team-wise stable. Therefore, this latter refinement, which is much more demanding in terms of agents’ rationality, proves to have no greater predictive power than myopic team-wise stability in a stark but significant case.

The paper is structured as follows. In Sect. 2 we consider its relation to the extant literature. In Sect. 3 we motivate the contribution by considering an empirical analysis to which our model can be applied. In Sect. 4 we present all the aspects of the model, without any definition of stability. In Sect. 5 we introduce and discuss the weak notion of myopic team-wise stability, which is then refined with the tools of stochastic stability in Sect. 6, and with a concept of coalitional stability in Sect. 7. Section 8 lists possible extensions of our study, and some additional discussion and results are in the Appendices.

2 Literature

In the real world, activities are often performed by people in teams, so that the constraints each agent has to take into consideration in her decision depend on the choices, and hence the constraints, of others. For instance, if Alice wants to allocate a couple of hours on Saturday morning to playing tennis, but all her friends have already fully allocated time on Saturday morning to other activities, then Alice’s desire to play tennis will remain unsatisfied. This simple example shows the existence of indirect externalities that must be taken into account in every individual decision when activities are performed in teams.

The same happens for team formation and co-authorships in academic research. People work simultaneously on different projects, often participating in different teams which, in turn, may also work simultaneously on several projects. Each researcher allocates her time to each team and each project and, hence, every team that is formed may generate negative (or positive) direct externalities for non-members, due for instance to the reduction of effort that a researcher puts into each single project when she undertakes a new project (as further described in Sect. 3 and modeled in Sect. 4.5). Since size and composition of teams are important drivers of performance, this team formation process is not only studied in the literature about the academic profession (e.g., by [32]) but also in the literature on R&D and entrepreneurial activity related to co-foundation of firms [4, 45, 47].

This paper starts with the pair-wise stability notion defined by [25] and extends it to team-wise stability, whereby tasks can be performed by groups of more than two individuals and of different sizes. To refine this myopic equilibrium concept with stochastic stability we use the same approach as [24] while extending their model, which is a special case of the one presented here. Moreover, we contribute to this stream of literature by incorporating constraints on agents’ capacity to perform tasks and to coordinate with others in the spirit of [46] and [1], although both works consider a purely noncooperative framework, in contrast with our approach, which is more cooperative.

As far as matching models are concerned, the most recent papers that study multi-matching environments with more specific results are Pycia [39] and Pycia and Yenmez [40], concentrating on the presence of externalities, and Hatfield et al. [18], with the focus on the effects of agents’ specific preferences. With respect to network formation models, we generalize pair-wise stability (see [25]) and strong stability [26] to a more general setting of resource-constrained team formation. The constraint imposed on our agents by a fixed time resource has been modeled in network formation models, e.g., by Staudigl and Weidenholzer [46]. On the other hand, the constraints imposed by the technology can be related to many aspects introduced in the network formation literature: Constraints may be due to homophily (see [7]), because only similar agents may be able to form a team together; or they may be related to an exogenous network of opportunities, because only linked agents have the opportunity to match (on this, we are aware only of [13]); or they may be imposed by complementary exogenous skills that need to be matched together (see, e.g., [8]); or they may be a constraint in the number of links agents can form (e.g., see [6]).

Stochastic stability (for which the references are discussed in Sect. 6) has been applied to networks (first by [24]). More recently Klaus et al. [29] used stochastic stability as a predictive tool for roommate markets. In Boncinelli and Pin [2], best shot games played in exogenous networks are analyzed, and stochastically stable states are proven to be the states with the maximum number of contributing agents if the error structure is such that contributing agents are much more likely to be hit by perturbations.

The stochastic stability analysis carried out in this paper generalizes the results in Boncinelli and Pin [3], where an application to marriage markets is considered, distinguishing between the case of a link-error model, where mistakes directly hit links, and the case of an agent-error model, where mistakes hit agents’ decisions and only indirectly links. In this setting, stochastic stability proves ineffective for refinement purposes in the link-error model, where all maximal matchings are stochastically stable, while it proves effective in the agent-error model, where all and only matchings that maximize the number of pairs formed are stochastically stable.

Our concept of coalitional stability stems from concepts of cooperative game theory, and particularly from the literature on coalition formation (see, e.g., [5, 15, 22, 30, 31, 43, 44]) and clubs (see, e.g., [11, 37]). We provide further references and undertake an in-depth discussion on this in Sect. 7.

3 Empirical Motivation

We consider the American Physical Society dataset (APS), which comprises publications spanning several decades in virtually all fields of physics and also contains information about their references and their authors.Footnote 1 This allows building a co-authorship network where a link between two authors is established if and only if they are both authors of a paper and, in addition, it allows the computation of the citations received by every paper (and, hence, by each author).

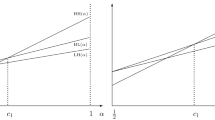

To analyze the data at an individual level, we focus on authors that have a lengthy and consistent career of at least 25 years, thus restricting the sample to around 24,000 authors.Footnote 2 This analysis shows that, on the one hand, throughout their career researchers tend to participate in a stable (or slightly increasing) number of projects together with an increasing number of collaborators (Fig. 1 top and bottom-left). This suggests that researchers behave as if they always gain by entering new projects, even if they are already participating in many of them. (This is in line with the assumptions of our model and, specifically, with Assumption v1 introduced in Sect. 4.2.)

On the other hand, as shown in Fig. 1 bottom-right (and Fig. 7 in “Appendix A”), the trends of papers produced and citations received—when normalized for the number of authors—seem to suggest that researchers tend to dedicate less energy and time to these projects and also their quality—as proxied by citations—decreases. This is also captured by our model in terms of negative externalities: When an agent takes part in a project, this imposes a negative externality on agents that are her collaborators in other projects. In the application outlined in Sect. 4.5, researchers face the tension between the benefits accrued when authoring multiple projects with multiple co-authors and the communication and coordination costs that increase with team size.

Fig. 1 Trends in individual careers. Boxplots (a.k.a. letter-value plots, see [21]) where the median is the centerline and the quartiles Q2 and Q3 are the rectangles below and above it, respectively. Each successive level outward contains 50% of the remaining data. The average is denoted by a solid blue line. A weighted paper is counted as \(\frac{1}{\text {n authors}}\).

The analysis at an aggregate level also seems to confirm that inefficiencies may be caused by congestion and overproduction of papers. The aggregate production of papers has significantly increased, together with the sheer number of authors participating in the profession (Fig. 8 in “Appendix A”). However, Fig. 2 shows that the fraction of papers without citations has increased even though the median number of citations received by papers has remained stable over time and the number of papers that got cited at least once has increased at the same rate as the number of citations produced overall. Our model offers a possible explanation for how these types of aggregate-level inefficiencies may arise as a consequence of individual-level behavior and non-internalized externalities (Sect. 4.5).

4 Model

4.1 The Team Formation Model

We take into consideration a finite set N of n agents. Each agent i has an endowment \(w_i \in {\mathbb {N}}_+\) of a time resource. We denote by \(\mathbf {w} \in {\mathbb {N}}_+^n\) the vector of endowments of all agents.Footnote 3 A team is a vector \(\mathbf {t} \in {\mathbb {N}}^n\), \(\mathbf {t} \le \mathbf {w}\), with \(t_i\) indicating the amount of time employed by agent i in a joint task. We denote by T the set of teams.

Let A be a finite set of activities (or tasks). A project \(p = (a,\mathbf {t})\) is an activity \(a \in A\) carried out by a team \(\mathbf {t} \in T\). We use set \(P \subseteq A \times T\) to collect all projects \(p = (a,\mathbf {t})\) such that team \(\mathbf {t}\) is able to accomplish activity a. We can think of P as representing the technology, since it indicates, for every possible task, which combinations of inputs allow the task to be completed.Footnote 4 It will simplify the following exposition to introduce, with a slight abuse of notation, the auxiliary function \(n(p)=n(a,\mathbf {t}) \equiv \{ i \in N: t_i > 0 \}\), which gives us the set of agents that put some positive amount of time (possibly different among agents) into project p. Another notation we will use is \(h(p)=h(a,\mathbf {t}) \equiv \sum _{i \in N} t_i \), which indicates the total amount of time (e.g., hours) employed on aggregate by the agents in project p.

In the following discussion we will often use teams and projects as synonyms, but some clarification is necessary. A project \(p=(a,\mathbf {t})\) characterizes an activity a performed by a team \(\mathbf {t}\), where \(\mathbf {t}\) specifies not only the members of the team (who are in the set n(p)) but also how much time each of them devotes to the project. A collection of projects, i.e., of activities and teams, where each activity is performed at most once by any given team is called a state. We note that while every project p can occur only once in a state, because every activity a can be executed only once by the same team, the same team \(\mathbf {t}\) can occur in different projects, if this is allowed by the technology P, i.e., if there are at least two projects \((a,\mathbf {t}),(b,\mathbf {t}) \in P\), with \(a \ne b\).

A state is denoted by \(x \subseteq P\). We use \(\mathbf {e}(x) = \sum _{ (a,\mathbf {t}) \in x} \mathbf {t}\) to indicate the vector collecting the overall amount of resources employed in state x, agent by agent. We say that x is feasible if \(\mathbf {e}(x) \le \mathbf {w}\), and we denote by \(X \subseteq {\mathcal {P}}(P)\) the collection of subsets of P containing all feasible states. We also introduce function \(\ell (x)=|x|\) that simply counts the number of projects that are completed in state x.
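
The definitions of \(n(p)\), \(h(p)\), \(\mathbf {e}(x)\) and feasibility translate directly into code. The following is a minimal Python sketch, not part of the model itself: the function names (`members`, `hours`, `employed`, `is_feasible`) and the three-agent data at the bottom are hypothetical and chosen only for illustration.

```python
from typing import FrozenSet, Tuple

# A team is a tuple of per-agent time contributions; a project pairs an
# activity label with a team. All names below are illustrative assumptions.
Team = Tuple[int, ...]
Project = Tuple[str, Team]
State = FrozenSet[Project]


def members(project: Project) -> set:
    """n(p): indices of the agents who put positive time into the project."""
    _, team = project
    return {i for i, t_i in enumerate(team) if t_i > 0}


def hours(project: Project) -> int:
    """h(p): total time employed in the project, summed over all agents."""
    _, team = project
    return sum(team)


def employed(state: State, n_agents: int) -> Tuple[int, ...]:
    """e(x): time used by each agent across all projects in the state."""
    totals = [0] * n_agents
    for _, team in state:
        for i, t_i in enumerate(team):
            totals[i] += t_i
    return tuple(totals)


def is_feasible(state: State, w: Tuple[int, ...]) -> bool:
    """A state is feasible when e(x) <= w holds component-wise."""
    return all(e_i <= w_i for e_i, w_i in zip(employed(state, len(w)), w))


# Hypothetical three-agent illustration (not taken from the paper).
w = (2, 1, 1)
p1: Project = ("a", (1, 1, 0))
p2: Project = ("b", (1, 0, 1))
p3: Project = ("c", (0, 1, 1))
x = frozenset({p1, p2})
```

In this illustration the state `x` uses exactly the endowment `w`, so it is feasible, while adding `p3` would exceed the endowments of the second and third agents.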

Finally, we introduce utilities that agents earn depending on the state they are in. For every \(i \in N\), and for every \(x \in X\), we denote by \(u_i(x)\) the utility gained by agent i in state x.

Given these elements, it is possible to define a team formation model with the quadruple \((N,\mathbf {w},P,\mathbf {u})\). The primitives are the set N of agents involved, their constraints \(\mathbf {w}\), the set P of projects allowed by technology, and agents’ utilities \(\mathbf {u}\). Given N, \(\mathbf {w}\) and P, it is possible to derive the set X of all feasible states, which is a partially ordered set with respect to set inclusion.

4.2 Assumptions

In deriving our results, we employ the following restrictions on the possible structure of teams (first three) and on utilities (second group of three). We explicitly refer to each of these assumptions whenever it is used. We note that some of them are refinements of one another, while others are incompatible.

Assumption t1

In every \((a,\mathbf {t}) \in P\), we have for every \(i \in N\) that either \(t_i= 0\) or \(t_i=1\).

Assumption t1 states that the time allocated to each feasible project by every agent is always 0 or 1, or simply (up to a normalization of time) that the time allocated to each feasible project by its participants is a constant of the model which is homogeneous across projects for every agent.

In contrast, the next two are assumptions that exogenously fix the number of members in each team. We will discuss them in more detail in Sect. 4.4 where we will see how our model is a generalization of other common theoretical setups.

Assumption s1

There is a \(k \in {\mathbb {N}}_+\), such that for every \(p \in P\), we have that \(|n(p)|=k\).

The following Assumption s2 is a refinement of Assumption s1, where k is fixed to be equal to 2.

Assumption s2

For every \(p \in P\), we have that \(|n(p)|=2\).

We now present some assumptions that specify how agents gain utilities by performing activities in teams.

Assumption v1

For every \(x, x' \in X\), with \(x'\ne x\) and \(x' = x \cup \{p\}\), and for every \(i \in N\) such that \(i \in n(p)\), we have that \(u_i (x') > u_i (x)\).

Assumption v1 is the only one that is needed for our main result. It states that the marginal utility in forming a team, for each of its members, is always positive, independently of all other teams in place. We note that this assumption allows for a large variety of externalities that a project may have on the utility of non-members of that team, or on the fact that the same team could bring different marginal effects to its members, depending on the state.

In particular, this is in line with the assumptions of the model in [1], where the benefit of an agent from participating in a project always increases if she gets involved in it. Moreover, this assumption is consistent with the behavior of researchers that we observe in the APS dataset discussed in Sect. 3.

An additional possible restriction is to impose that the aggregate utility of each project is constant across projects (normalized to 1).

Assumption v2

For each \(x \in X\), \(\sum _{i \in N} u_i (x) = |x|\).

Finally, we will also consider a more restrictive assumption that asks for linearity in team membership, thus making the marginal value of each team, for each of its members, independent of the state.

Assumption v3

For each state \(x \in X\), and any agent \(i \in N\), we have that \(u_i (x) = v \cdot |\{p \in x: i \in n(p)\}|\), with \(v \in {\mathbb {R}}^+\).

The last two assumptions convey different ideas on the assignment of utilities: While Assumption v2 imposes that the aggregate marginal value of each team is 1, Assumption v3 says that the payoff earned by each agent i is merely given by the number of projects in which i participates. We note that the two assumptions are compatible only if Assumption s1 holds as well, in which case we have \(v=\frac{1}{k}\).
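
A short check of this compatibility claim, spelled out as a sketch: under Assumption v3, summing payoffs over agents and exchanging the order of counting gives

\[
\sum _{i \in N} u_i (x) = v \sum _{i \in N} |\{p \in x: i \in n(p)\}| = v \sum _{p \in x} |n(p)| .
\]

If Assumption s1 holds, the right-hand side equals \(v \, k \, |x|\), and Assumption v2 requires it to equal \(|x|\), hence \(v=\frac{1}{k}\). Conversely, applying Assumption v2 to a single-project state \(x = \{p\}\), which is feasible because every team satisfies \(\mathbf {t} \le \mathbf {w}\), gives \(v \, |n(p)| = 1\) for every \(p \in P\), so all teams must have the same size \(\frac{1}{v}\).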

4.3 Maximal States

We observe that X is a partially ordered set under inclusion. This is because, for any two states x and \(x'\) belonging to X, we can have that either x is included in \(x'\), or \(x'\) is included in x, or no set inclusion relationship can be established between them. However, as the empty state \(x_0\) is included in any other state, it is the only minimal state (or the least state) and, given two states x and \(x'\), the set of those states that are included in both is always nonempty. On the other hand, as there is a threshold \(\mathbf {w}\) on the overall available resources, there may not always be a common superset for any two states. In general there will be many maximal states, i.e., states above which it is not possible to include other teams, because otherwise the threshold would be exceeded.

We denote by \({\mathcal {M}}\) the set of maximal states, \({\mathcal {M}} = \{x \in X : x \subseteq x' \text { and } x \ne x' \Rightarrow x' \notin X \}\). We denote by \({\mathcal {L}}\) the set of states with maximum number of completed projects, \({\mathcal {L}} = \{x \in X : |x| \ge |x'|, \text { for all } x' \in X\}\). We observe that \({\mathcal {L}} \subseteq {\mathcal {M}}\). In fact, if \(x \in X\) and \(x \notin {\mathcal {M}}\), then there exists a feasible state that can be obtained from x by adding some project, and x cannot maximize the number of projects. In contrast, there exist in general maximal states that do not maximize the number of projects, as the following example shows.Footnote 5

Example 1

(Maximal states and maximum number of projects)

Consider the case in which \(N=\{i,j,k,m\}\), \(\mathbf {w}=(2,2,2,2)\), \(A = \{a,b\}\), and \(P = \{ (a,(1,1,0,0)), (b,(1,1,0,0)), (a,(0,1,1,0)), (b,(0,1,1,0)), (a,(0,0,1,1)), (b,(0,0,1,1)) \}\). This is a situation in which there are four agents with two units of time each, there are two activities to be performed, and each activity requires that either \(\{i,j\}\), or \(\{j,k\}\), or \(\{k,m\}\) must be involved, with one unit of time each. We note that Assumptions t1 and s2 hold. Figure 3 illustrates the partial order on set X resulting from the above assumptions: An arrow from a state x to another state y indicates that we can pass from x to y by adding a single project.Footnote 6 There are three maximal states, but only one of them maximizes the number of projects. \(\square \)

Fig. 3 The partially ordered set X of all feasible states for the team formation model of Example 1. In this graphical representation, where we have not considered differences in activities and focus only on their number, there are three maximal states, which are those underlined in black
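
The sets \(X\), \({\mathcal {M}}\) and \({\mathcal {L}}\) of Example 1 are small enough to enumerate by brute force. The sketch below is only an illustrative check under our own encoding: the team labels `"ij"`, `"jk"`, `"km"` and the helper names `feasible` and `profile` are assumptions, not notation from the model.

```python
from itertools import combinations

# Example 1: agents (i, j, k, m), each with two units of time.
w = (2, 2, 2, 2)
teams = {"ij": (1, 1, 0, 0), "jk": (0, 1, 1, 0), "km": (0, 0, 1, 1)}
P = [(a, name) for name in teams for a in ("a", "b")]


def feasible(state):
    """Check e(x) <= w for a collection of (activity, team name) projects."""
    used = [0] * len(w)
    for _, name in state:
        for idx, t in enumerate(teams[name]):
            used[idx] += t
    return all(u <= cap for u, cap in zip(used, w))


# All feasible states: subsets of P that respect every endowment.
X = [frozenset(c) for r in range(len(P) + 1)
     for c in combinations(P, r) if feasible(c)]

# Maximal states: no single project can be added without losing feasibility.
# Every subset of a feasible state is feasible, so one-project extensions suffice.
maximal = [x for x in X
           if not any(feasible(x | {p}) for p in P if p not in x)]

# States with the maximum number of projects.
top = max(len(x) for x in X)
largest = [x for x in X if len(x) == top]


def profile(state):
    """Number of projects per team, ignoring which activity is performed."""
    return tuple(sum(1 for _, name in state if name == t) for t in teams)


maximal_profiles = {profile(x) for x in maximal}
print(len(X), len(maximal), sorted(maximal_profiles), top, len(largest))
```

When activities are not distinguished, the maximal states collapse to three profiles, in line with the three maximal states of the example, and a single state attains the maximum of four projects.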

4.4 Why this Generalization?

The theoretical setup we have introduced encompasses different matching models with non-transferable utility that have been developed in the literature. We acknowledge that some of the existing models might be stretched to deal with most of the cases analyzed within our setup. However, we claim that our model is a natural and simple container for all these models, and we find a value in its capability to adapt easily so as to take into consideration specific cases. In the following we will illustrate this capability.

Cooperative games with non-transferable utility are obtained in our setup if we specify that each agent can belong to one coalition only, and that no externalities are allowed. In order to deal with matching, as done in [3], we simply need to add Assumption s2, so that only teams of size two are allowed to be formed. Marriage—that is bipartite matching—can be obtained through adequate constraints on the technology; after dividing the set of agents between males and females, only heterosexual pairs are allowed in P, and additional constraints can also be considered. Figure 4 provides an example.

If, instead, we relax the upper bound on \(\mathbf {w}\), still following Assumption s2, then any state can be considered as a network that satisfies the constraints imposed by \(\mathbf {w}\) (concerning the maximum degree of nodes), and the connections made available by technology P (representing the exogenous network of opportunities). This is illustrated in the following example.

Example 2

(Co-authorships) A popular and seminal model in the economic literature on networks is the “Co-author model” of Jackson and Wolinsky [25] and later extended by [41]:Footnote 7 Agents are researchers and links are pair-wise collaborations on scientific projects, which are costly but provide payoffs that depend endogenously on the negative externalities given by each collaboration to the other co-authors of an author.

We can include in our setup a payoff function with costs and negative externalities of a project \(p=(a,\mathbf {t})\) on the members of other teams that are formed by the members of \(\mathbf {t}\), as in the original model, and with Assumption s2. However, our model allows for more generality and also for more realistic time constraintsFootnote 8 that can be imposed on the available (multi-)matchings. First of all, (i) some agents may work alone, but even three or more agents can set up a team together and produce a paper, as happens in the profession. Then, (ii) with regard to constraints, there could be an exogenous network G of acquaintances, so that a group of co-authors is possible only if they are mutually connected in G. Or, (iii) the researchers could have exogenous complementary skills, and only projects involving agents with enough diversity could be successful. Aspects such as the three listed above, and even others, could all be modeled by some technology P. \(\square \)

So, what is the added value of our setup with respect to existing ones, in terms of representation of real-world phenomena? To provide an answer through an example, let us stick to the co-authorship model of Example 2. Imagine that agents i, j and k set up a project p together, so that \(|n( p )|=3\). This could be represented in the original co-authorship model of Jackson and Wolinsky [25] that allows only for couples, by saying that i is linked to j and k, and j and k are also linked together. We observe that the link between i and j would have a negative externality on each neighbor of these agents, including k. However, in the general setup and in reality, the fact that i and j are in a three-agent collaboration has a positive externality on the third agent k, and a negative externality on the others. Formally, this could be done in the original network formation model by specifying, for each link between two agents, and for any other agent, the sign of the externality of that link on the third agent. It is clear that this would seriously complicate the notation and that only a more general framework such as the one we use can overcome such difficulties.

We conclude this section with a stylized but fairly general application that shows how the possibilities and the competing incentives of some environments cannot be dealt with using the standard models of matching and network formation.

This model provides a possible mechanism for explaining some of the inefficiencies that we observe in the APS dataset discussed in Sect. 3, where researchers seem to congest the overall activity because they do not internalize the negative externalities that they have on each other.

4.5 The Publishing Model

We present here an extended example providing the general idea of the model. We continue to adopt an intuition related to the insights presented in Sect. 3 and to the daily experience of everyone in the academic profession, but it is clear that it can easily be extended to R&D between firms that are competing in a market, as in the model of [16]. Consider a world where there are n homogeneous scientific authors, each trying to form teams of collaborators and each with a common time constraint w. They all have two goals: a good output in terms of publications (on which they compete with colleagues), but also the objective of doing good research that can provide advancements in the field. Each author maximizes in each project both the probability of being published and the probability of authoring a good idea. We assume no constraint on the multi-matching technology P, except for the fact that agents cannot work alone: \(p \in P\) if and only if \(|n(p)| \ge 2\).

Here we assume that a project p has a strictly positive divulgative fitness (i.e., an expected popularity) that we call \(\phi (p)\). The divulgative fitness of a paper may depend on the amount of work that is put into the paper by its members. This fitness can clearly also be related to heterogeneous exogenous factors.

Accordingly, a paper’s probability of being published has the multinomial form:Footnote 9
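
Reading “multinomial” in the usual discrete-choice sense, with each project weighted by its divulgative fitness (an assumption about the exact functional form, which uses only the symbols defined above), the probability that project \(p\) in state \(x\) is published can be sketched as:

\[
\frac{\phi (p)}{\sum _{q \in x} \phi (q)}
\]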

When a new team is formed there are clear negative externalities (increasing in the divulgative fitness of the new project) for all the agents that are not members of the new team, because their probabilities of being published decrease.

In a related but not necessarily collinear way, we assume that each project has a strictly positive probability of providing a good idea which is \(P_{good} (p) \). This probability is reasonably increasing in effort, but there may be communication and coordination costs which make it decrease in the size, in terms of members, of the team. Or, there could be positive externalities from the aggregate quality of all the scientific production as a whole. In general, the whole environment of x can provide both positive and negative externalities, with network effects like those described in the connection model and in the co-authorship model of [25].

To provide a simplified functional form, which maintains the general idea, we assume that each author i receives a payoff in a generic state x that is:
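
A form consistent with the description that follows, in which the publication utility is not shared while the benefit of a good idea is split among team members, is sketched here; the fitness-share term for the publication probability and the equal split of \(V\) are assumptions:

\[
u_i (x) = \sum _{p \in x: i \in n(p)} \left( U \, \frac{\phi (p)}{\sum _{q \in x} \phi (q)} + V \, \frac{P_{good} (p)}{|n(p)|} \right)
\]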

where U and V are positive numbers, homogeneous for all agents,Footnote 10 and \(P_{good} (p)\) does not depend on other existing projects in x. We observe that, while the utility U coming from a publication is not affected by the number of authors (what matters is to have a publication in the curriculum vitae), the benefits V deriving from a good idea must be shared among the participants (consider, for instance, the earnings that come from a patented idea).

This utility is in line with what is observed in Sect. 3. On the one hand, authors benefit from taking part in many projects and from having multiple collaborators to accommodate and meet the time constraint while, on the other hand, they do not take into account the externalities which may cause a reduction in the effort spent on each project and in its quality.

For this simplified model it is not difficult to prove that Assumption v1 is satisfied (while the other assumptions are not).Footnote 11 That is because, for an agent i, if we call \(\Phi \equiv \sum _{q \in x: i \in n(q)} \phi (q)\), the marginal utility of being a member of a new project \(p'\) is:
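
Assuming the payoff adds, over the projects of agent \(i\), the term \(U\) times the project's share of total fitness and the term \(V \, P_{good}(p)/|n(p)|\) (a reconstruction consistent with the two terms discussed next, not a verbatim formula), the marginal utility in a nonempty state \(x\) can be written as:

\[
u_i (x \cup \{p'\}) - u_i (x) = U \left( \frac{\Phi + \phi (p')}{\sum _{q \in x} \phi (q) + \phi (p')} - \frac{\Phi }{\sum _{q \in x} \phi (q)} \right) + V \, \frac{P_{good} (p')}{|n(p')|}
\]

Writing \(S = \sum _{q \in x} \phi (q)\), the bracket equals \(\frac{\phi (p') \, (S - \Phi )}{S \, (S + \phi (p'))}\), and \(\Phi \le S\) because \(\Phi \) sums over a subset of the projects in \(x\).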

The first term is nonnegative, and it is null only if that agent was already a member of each existing team. The second term is strictly positive by definition.

5 Myopic Team-Wise Stability

The first equilibrium notion that we provide—called myopic team-wise stability—is a direct generalization of the concept of pair-wise stability from Jackson and Wolinsky [25] within the literature on network formation games. The original notion only considers activities performed in pairs while the present extension allows for activities performed by groups of different sizes.Footnote 12

5.1 Myopically Team-Wise Stable States

The following definition formalizes the notion:

Definition 1

A state x is myopically team-wise stable [MTS] if

(i) for any project \(p \in x\), and for any agent \(i \in n(p)\), we have that \(u_i (x) \ge u_i (x \backslash \{ p \}) \);

(ii) there exists no project \(p \in P\) such that \(x \cup \{ p \} \in X\) and, for any agent \(i \in n(p)\), \(u_i (x \cup \{ p \}) > u_i (x)\). \(\square \)

In words, a state x is myopically team-wise stable, if (i) there is no agent that would be better off by deleting a project she belongs to; and (ii) there is no project that could be added to state x, without hitting the constraints of its members, and which would make them all strictly better off.Footnote 13 With some abuse of notation, we also denote by MTS the set of states that are myopically team-wise stable.

The reason why we call it myopic is that it considers only deviations of one single step in the partially ordered set X.Footnote 14 Note also that, even if a dynamic is implicit in the definition, this concept of equilibrium is a static one.

The following lemma is the first building block of our results.

Lemma 1

Take a team formation model satisfying Assumption v1. We have that \(MTS = {\mathcal {M}}\).

Proof

If a state is maximal it is not possible to add a project, and any deletion would damage the members of the removed project (by Assumption v1). Therefore, that state is MTS.

Suppose instead that a state is not maximal. Then it is possible to add a project, which adds a positive marginal amount to the utility of all its members (by Assumption v1), and thus that state is not myopically team-wise stable. \(\square \)
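
Lemma 1 can be checked numerically on the technology of Example 1. The sketch below assumes a payoff in the style of Assumption v3 with \(v=\frac{1}{2}\) (which satisfies Assumption v1); the encoding of teams and the helper names `is_mts` and `is_maximal` are our own illustrative choices, not part of the model.

```python
from itertools import combinations

# Technology of Example 1: agents (i, j, k, m) with two units of time each.
w = (2, 2, 2, 2)
teams = {"ij": (1, 1, 0, 0), "jk": (0, 1, 1, 0), "km": (0, 0, 1, 1)}
P = [(a, name) for name in teams for a in ("a", "b")]
N = range(len(w))


def feasible(state):
    used = [sum(teams[name][i] for _, name in state) for i in N]
    return all(u_i <= cap for u_i, cap in zip(used, w))


def members(p):
    """n(p): agents with positive time in project p."""
    return [i for i in N if teams[p[1]][i] > 0]


def u(i, state, v=0.5):
    """Assumed v3-style payoff: v times the number of projects i belongs to."""
    return v * sum(1 for p in state if i in members(p))


def is_mts(x):
    # (i) no member is better off after deleting a project she belongs to
    no_drop = all(u(i, x) >= u(i, x - {p}) for p in x for i in members(p))
    # (ii) no feasible addition makes all of its members strictly better off
    no_add = not any(
        feasible(x | {p}) and all(u(i, x | {p}) > u(i, x) for i in members(p))
        for p in P if p not in x
    )
    return no_drop and no_add


def is_maximal(x):
    return not any(feasible(x | {p}) for p in P if p not in x)


X = [frozenset(c) for r in range(len(P) + 1)
     for c in combinations(P, r) if feasible(c)]
mts = {x for x in X if is_mts(x)}
maximal = {x for x in X if is_maximal(x)}
print(mts == maximal, len(mts))
```

With this payoff the two sets coincide, as the lemma states; replacing `u` with a payoff that violates Assumption v1 is a simple way to see the equality fail.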

5.2 Direct and Indirect Externalities

Our specification allows for externalities between the agents, or for a non-trivial structure of preferences of the agents toward the other team members. All the inefficiencies arising because positive and negative externalities are not endogenized by the agents would give rise to a comparison between stable and efficient outcomes that would be very similar to the one extensively analyzed in network formation models (see [23]).

However, even if utilities from states had a simple structure, e.g., as in the case of the one imposed by Assumption v3, numerous indirect effects would arise from the constraints imposed by the technology P, and by the vector \(\mathbf {w}\) of endowments, as will be clear from the following examples.

First of all, consider again the case illustrated in Example 1 and Fig. 3. Because of the constraints imposed by the technology, agents j and k clearly have a negative externality on the other two agents when they form a team together: by forming a team on an activity they reduce the available teams for agents i and m.

Example 3

Consider a team formation model with four agents: i, j, k and m, all with an endowment of 3 units of time. Agent i can form a team with j only, if one of the two puts in 1 unit and the other 2 units of time. The same holds for the couple formed by k and m. Agents j and k, on the other hand, can form a team together by investing only one unit of time each. As illustrated graphically in Fig. 5, this team formation model has three myopically team-wise stable sets: I, II and III.Footnote 15 The payoff of a project for an agent is always \(\frac{1}{2}\). Therefore, this example does not satisfy Assumption t1, but satisfies Assumptions s2 and v3.

In this case, even if all the teams have the same payoff for each of their members, there are indirect preferences for some of the agents. In particular, if agents j and k could choose ex ante their teams they would rather form teams together, because this would allow them to form up to 3 teams, while forming a team with i or m (respectively) would bind them to myopically team-wise stable sets where they can form at most two teams. We will formalize in Sect. 7 the idea of “choosing ex ante” and “would rather”.

Fig. 5. MTS states of Example 3

Example 4

As the model is specified, it is not possible to have indirect externalities of the following form: a team is feasible if and only if another team is not, and possibly also the other way round. As an example consider Case I in Fig. 6, where we may want to express a condition by which the team (i, j) can be active only if the team (k, m) is not active. Another example could be one in which the same subset of agents cannot simultaneously work on more than one project, even if many are independently feasible. It is possible, however, to model a similar situation by including fictitious agents, each with a dummy utility function and a limited endowment of time, who must be included as members in the teams that we want to be mutually exclusive. We will not develop all the formal definitions of this approach, but we maintain the simple example given in Fig. 6: As illustrated in Case II, it is possible to add a fictitious agent h with \(w_{h}=1\), such that the possible teams are now (i, j, h) and (k, m, h). In this way the original two teams become mutually exclusive. Adding such fictitious agents affects neither the analysis nor the results and does not require any further assumption.

Fig. 6. The two cases discussed in Example 4
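The fictitious-agent construction of Example 4 can be sketched in a few lines of Python. The unit time costs and the unit endowments of agents i, j, k and m are assumptions made here for illustration; only \(w_{h}=1\) comes from the example.

```python
def feasible(state, projects, endowment):
    """Check e(x) <= w: no agent is asked for more time than she has."""
    used = {}
    for p in state:
        for agent, t in projects[p].items():
            used[agent] = used.get(agent, 0) + t
    return all(t <= endowment[agent] for agent, t in used.items())


# Case I: two independent teams, which can be active at the same time.
endowment_1 = {"i": 1, "j": 1, "k": 1, "m": 1}  # assumed endowments
projects_1 = {"ij": {"i": 1, "j": 1}, "km": {"k": 1, "m": 1}}

# Case II: a fictitious agent h with w_h = 1 is a member of both teams.
endowment_2 = dict(endowment_1, h=1)
projects_2 = {
    "ijh": {"i": 1, "j": 1, "h": 1},
    "kmh": {"k": 1, "m": 1, "h": 1},
}

both_case_1 = feasible({"ij", "km"}, projects_1, endowment_1)
both_case_2 = feasible({"ijh", "kmh"}, projects_2, endowment_2)
```

Here `both_case_1` is true and `both_case_2` is false, while each of the two teams remains feasible on its own in Case II: the single unit of time of h is what makes them mutually exclusive.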

6 Stochastic Stability

To refine MTS we consider an unperturbed dynamics where absorbing states are myopically team-wise stable states, and we then insert vanishing perturbations with the aim of refining our prediction by means of stochastic stability.

6.1 Unperturbed Dynamics and Preliminary Results

In order to deal with stochastic stability, we need to introduce an underlying dynamics which describes the probabilistic passage from state to state, and then to add perturbations. In particular, we work with discrete time and we indicate it with \(s = 0,1,\ldots \). We denote the state of the system at time s with \(x^s\). At time s a single project \(p \in P\) is drawn, with every project in P having positive probability of being drawn.Footnote 16

One remark is worth making at this point. Extensive heterogeneity is allowed between the probabilities of different projects being drawn; for instance, it might be reasonable to assume that better projects (in some sense) are more likely to be selected and then implemented. As long as every feasible project has a positive, even tiny, probability of being drawn all our results remain valid.

The drawn project p will actually be formed if \(x \cup \{p\}\) is feasible, i.e., \(\mathbf {e}(x \cup \{p\}) \le \mathbf {w}\). Otherwise, such a team is not formed, since its creation is not possible due to resource constraints. In any case, state \(x^{s+1}\) is reached. We refer to this dynamic process as myopic team-wise dynamics.

This process defines a Markov chain (X, D), where X is the state space and D the transition matrix, with \(D_{xx'}\) denoting the probability of moving from state x to state \(x'\). We recall some concepts and results in Markov chain theory, following Young [49]. Given any two states \(x,x' \in X\), state \(x'\) is said to be accessible from state x if there exists a sequence of states starting from x and reaching \(x'\) such that the system can move with positive probability from each state to the next state. A set \({\mathcal {E}}\) of states is called a recurrent set (or ergodic class) when each state in \({\mathcal {E}}\) is accessible from any other state in \({\mathcal {E}}\), and no state outside of \({\mathcal {E}}\) is accessible from any state in \({\mathcal {E}}\). By extension, a state \(x \in {\mathcal {E}}\) is also called recurrent. Let \({\mathcal {R}}\) denote the set of all recurrent states of (X, D). If a recurrent set is made of a single element, \({\mathcal {E}} = \{x\}\), such a state is called absorbing. Equivalently, x is absorbing when \(D_{x x}=1\). Let \({\mathcal {A}}\) denote the set of all absorbing states of (X, D). Clearly, an absorbing state is recurrent, hence \({\mathcal {A}} \subseteq {\mathcal {R}}\).

The following proposition proves that there are no recurrent states other than absorbing states, and provides a characterization of absorbing states as maximal states, and thus as myopically team-wise stable states.

Proposition 1

Take a team formation model satisfying Assumption v1 and a myopic team-wise dynamics. We have that \({\mathcal {A}} = {\mathcal {R}} = {\mathcal {M}} = MTS\).

Proof

From the definitions of \({\mathcal {R}}\) and \({\mathcal {A}}\), we know that \({\mathcal {A}} \subseteq {\mathcal {R}}\). Moreover, from Lemma 1, we have that \({\mathcal {M}} = MTS\).

Take \(x \notin {\mathcal {M}}\). An additional project can be formed, and once formed, state x will never be visited again in the future. This shows, by contraposition, that \({\mathcal {R}} \subseteq {\mathcal {M}}\) and \({\mathcal {A}} \subseteq {\mathcal {M}}\).

Finally, consider any state \(x \in {\mathcal {M}}\). Since existing projects never disappear because of Assumption v1, and no new project is feasible starting from x, we can conclude that x is absorbing. Therefore, \({\mathcal {M}} \subseteq {\mathcal {A}}\). \(\square \)
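The unperturbed dynamics behind Proposition 1 can be simulated directly. The sketch below is a minimal illustration under stated assumptions: the toy model is hypothetical, projects are drawn uniformly (the text only requires positive probabilities), the process starts from the empty state, and, in line with Assumption v1, existing projects are never dissolved.

```python
import random

# Hypothetical toy model, chosen for illustration only.
ENDOWMENT = {"i": 1, "j": 2, "k": 2, "m": 1}
PROJECTS = {
    "ij": {"i": 1, "j": 1},
    "jk1": {"j": 1, "k": 1},
    "jk2": {"j": 1, "k": 1},
    "km": {"k": 1, "m": 1},
}


def feasible(x):
    """e(x) <= w for every agent."""
    return all(
        sum(PROJECTS[p].get(a, 0) for p in x) <= w for a, w in ENDOWMENT.items()
    )


def is_maximal(x):
    """No project outside x can be added while keeping the state feasible."""
    return not any(feasible(x | {p}) for p in PROJECTS if p not in x)


def step(x, rng):
    """One period: a project is drawn and forms only if the result is feasible."""
    p = rng.choice(sorted(PROJECTS))  # uniform draw: an assumption of this sketch
    y = x | {p}
    return y if feasible(y) else x


def run(rng, periods=1000):
    """Start from the empty state and iterate the unperturbed dynamics."""
    x = frozenset()
    for _ in range(periods):
        x = step(x, rng)
    return x


# Final states reached from the empty state under different random seeds.
FINAL_STATES = {run(random.Random(seed)) for seed in range(20)}
```

With this many periods every run should have settled in a maximal state, and once there no draw can change it. Which maximal state is reached depends on the order of the draws.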

6.2 Perturbed Dynamics and Stochastically Stable States

We are ready to introduce perturbations in the unperturbed dynamics considered in the previous subsection, and then use the techniques developed by Foster and Young [12], Young [48], Kandori et al. [27]. Basically, we suppose that with a tiny probability active projects may accidentally dissolve, and possibly (but not necessarily) non-existing projects can be formed if feasible. By so doing, the dynamic system under consideration becomes ergodic, and from known results it follows that there exists a unique probability distribution among states that is stationary and describes the limiting behavior of the Markov chain as time goes to infinity, irrespective of the initial state. Then we consider the limit of this stationary distribution as the amount of perturbation decreases to zero. Those states that are visited with positive probability in this limiting stationary distribution are called stochastically stable.

We invite the reader who is interested in a formal exposition of perturbed Markov chain theory to consult Young [48] and Ellison [10], while in the following we simply make use of the resistance function \(r\), where \(r(x,x')\) indicates the minimal number of perturbations required to move the system from x to \(x'\) in one unit of time. If \(r(x,x') = 0\) then the system moves from x to \(x'\) with positive probability in the unperturbed dynamics, i.e., \(D_{xx'}>0\), while \(r(x,x') = \infty \) is interpreted as impossibility of moving from x to \(x'\) in one unit of time even when perturbations are allowed.

We rely on the techniques and results illustrated in Foster and Young [12], Young [48] and Young [49], as they provide a relatively easy way to identify which states are stochastically stable.Footnote 17 More precisely, we restrict our attention to absorbing states (since there are no other recurrent states by virtue of Proposition 1), and for any pair \((x,x')\) of absorbing states we define \(r^*(x,x')\) as the minimum sum of the resistances between states over any path starting in x and ending in \(x'\).Footnote 18 Then, for any absorbing state x, we define an x-tree as a tree having root at x and all absorbing states as nodes. The resistance of an x-tree is defined as the sum of the \(r^*\) resistances of its edges. Finally, the stochastic potential of x is said to be the minimum resistance over all trees rooted at x.

It is proven in [12] that a state x is stochastically stable if and only if x has minimum stochastic potential in the set of absorbing states. Intuitively, stochastic stability selects those states that are easiest to reach from other states, with “easiest” interpreted as requiring the fewest mutations (as measured by the stochastic potential).

We now introduce two alternative perturbation schemes in the unperturbed dynamics considered in the previous subsection. The two perturbation schemes lead us to the same results. The first one is called a uniform perturbation scheme, and it is such that at every time s each project \(p \in P\) is independently subject to an error with probability \(\epsilon \); such an error makes the project disappear if it exists, and be formed if it does not exist and \(x^s \cup \{p\} \in X\). The second perturbation scheme is such that only existing projects can be hit by a perturbation, so that each existing project independently disappears with probability \(\epsilon \). We refer to this modeling of errors as a uniform destructive perturbation scheme.Footnote 19 It is easy to check that the perturbed system is irreducible and aperiodic: From any state \(x \in X\), every existing project may dissolve by means of perturbations, and then one project per period may form, leading the system to any state \(x'\). Every project can form in the unperturbed dynamics, thanks to Assumption v1, and hence perturbations creating new projects (which are allowed only in the uniform perturbation scheme) do not play any significant role. Aperiodicity is ensured since there are no recurrent states other than absorbing states (see Proposition 1).

We denote by SS the set of stochastically stable states. The following proposition identifies stochastically stable states as the states with the maximum number of existing projects.

Proposition 2

Take a team formation model satisfying Assumption v1, a myopic team-wise dynamics and either a uniform destructive perturbation scheme or a uniform perturbation scheme. Then, \(SS ={\mathcal {L}}\), i.e., the set of states with the maximum number of existing projects.

Proof

Consider any two absorbing states \(x, x' \in X\). In order to move from x to \(x'\) it is necessary for all projects that exist at x and do not exist at \(x'\) to be stopped, and this can only occur by means of \(\ell (x) - \ell (x \cap x')\) perturbations, both in the uniform destructive perturbation scheme and in the uniform perturbation scheme. In contrast, projects that exist at \(x'\) and do not exist at x can form in the unperturbed dynamics, thanks to Assumption v1. Therefore:

\[ r^*(x,x') = \ell (x) - \ell (x \cap x'). \qquad (1) \]

We are ready to prove \(SS \subseteq {\mathcal {L}}\). We proceed by contradiction. Suppose \(x \notin {\mathcal {L}}\). Then we can find an \(x'\) such that \(\ell (x') > \ell (x)\). Take any x-tree and consider the path from \(x'\) to x, say \((x', x_1, \ldots , x_i, \ldots , x_k, x)\). By (1), the sum of resistances over this path is \(\ell (x') - \ell (x' \cap x_1) + \ell (x_1) - \ell (x_1 \cap x_2) + \ldots + \ell (x_{k-1}) - \ell (x_{k-1} \cap x_k) + \ell (x_k) - \ell (x_k \cap x)\). We now consider the \(x'\)-tree obtained from the x-tree by reversing the path from \(x'\) to x. Again by (1), the sum of resistances over this reversed path is \(\ell (x) - \ell (x \cap x_k) + \ell (x_k) - \ell (x_k \cap x_{k-1}) + \ldots + \ell (x_2) - \ell (x_2 \cap x_1) + \ell (x_1) - \ell (x_1 \cap x')\). Taking the difference between the above sums of resistances over the two paths, we obtain that the \(x'\)-tree has a resistance which is equal to the resistance of the x-tree \(+ \ell (x) - \ell (x')\). Since \(\ell (x') > \ell (x)\), we can conclude that for any x-tree we can find an \(x'\)-tree with a lower overall resistance, and hence the stochastic potential of \(x'\) is lower than the stochastic potential of x. Therefore, x cannot be stochastically stable.

We now prove \({\mathcal {L}} \subseteq SS\). Since at least one stochastically stable state must exist, and we have just seen that no state outside of \({\mathcal {L}}\) is stochastically stable, we can therefore conclude that there exists an \(x \in {\mathcal {L}}\) that is stochastically stable. Consider any other \(x' \in {\mathcal {L}}\). Following exactly the same reasoning as above we obtain that the stochastic potential of \(x'\) must be the same as the stochastic potential of x. Therefore, \(x'\) is stochastically stable as well. \(\square \)
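The tree argument in the proof can be reproduced numerically on a small instance. The Python sketch below is an illustration under stated assumptions: the toy model is hypothetical, the resistance between two absorbing states is taken to be the perturbation count \(\ell (x) - \ell (x \cap x')\) used in the proof, and trees are enumerated by brute force, which is viable only for a handful of absorbing states.

```python
from itertools import combinations, product

# Hypothetical toy model, chosen for illustration only.
ENDOWMENT = {"i": 1, "j": 2, "k": 2, "m": 1}
PROJECTS = {
    "ij": {"i": 1, "j": 1},
    "jk1": {"j": 1, "k": 1},
    "jk2": {"j": 1, "k": 1},
    "km": {"k": 1, "m": 1},
}


def feasible(x):
    return all(
        sum(PROJECTS[p].get(a, 0) for p in x) <= w for a, w in ENDOWMENT.items()
    )


STATES = [
    frozenset(c)
    for r in range(len(PROJECTS) + 1)
    for c in combinations(sorted(PROJECTS), r)
    if feasible(c)
]
# Absorbing states of the unperturbed dynamics are the maximal states.
ABSORBING = [
    x for x in STATES
    if not any(feasible(x | {p}) for p in PROJECTS if p not in x)
]


def resistance(x, y):
    """Errors needed to leave x for y: projects of x that are absent from y."""
    return len(x) - len(x & y)


def reaches_root(start, succ, root):
    """Follow successor links from start; fail if a cycle is met before root."""
    seen, x = set(), start
    while x != root:
        if x in seen:
            return False
        seen.add(x)
        x = succ[x]
    return True


def stochastic_potential(root):
    """Minimum total resistance over all trees rooted at `root`."""
    others = [x for x in ABSORBING if x != root]
    best = None
    # Each non-root state picks one successor; keep only genuine trees.
    for choice in product(ABSORBING, repeat=len(others)):
        succ = dict(zip(others, choice))
        if any(s == t for s, t in succ.items()):
            continue
        if not all(reaches_root(s, succ, root) for s in others):
            continue
        cost = sum(resistance(s, t) for s, t in succ.items())
        best = cost if best is None else min(best, cost)
    return best


POTENTIAL = {x: stochastic_potential(x) for x in ABSORBING}
SS = {x for x, v in POTENTIAL.items() if v == min(POTENTIAL.values())}
LARGEST = {x for x in STATES if len(x) == max(len(s) for s in STATES)}
```

In this instance the two absorbing states with three projects have stochastic potential 2, the absorbing state with two projects has stochastic potential 3, and `SS` coincides with `LARGEST`, in line with Proposition 2.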

Proposition 2 is our main result. It provides a very precise characterization of stochastically stable states for every team formation model that satisfies the general Assumption v1, under both the uniform and the uniform destructive perturbation schemes that we have considered.

To aid intuitive comprehension, we provide the following discussion. In the representation of all the states in X as a partially ordered set, with the empty state at the top and maximal states at the bottom, an error can be seen as a step upward, while the adapting best response of agents (under Assumption v1) can be seen as a step downward. Consider Example 1 as represented in Fig. 3. In this case, the bottom state is SS, because it would need at least two errors before the system can move in the unperturbed dynamics to another MTS state. To move away from the other two MTS states, on the other hand, only one error is required.

In line with one of the main results by [24], which is that stochastic stability selects the complete network among the many network structures obtained as pair-wise equilibria, in our framework this is obtained directly by applying Proposition 2: If the technology allows it, the complete network is the one that maximizes the number of projects realized.

Clearly, the fact that SS states maximize the number of teams in the state does not tell us anything about efficiency, because the utility function could have a structure that highly rewards agents in states with few teams.

Actually, in the APS dataset discussed in Sect. 3 the evidence seems to suggest that researchers tend to accept every new project, but this causes inefficiencies because of negative externalities and congestion. However, under Assumption v2, we can prove the following corollary.

Corollary 1

Given a team formation model, under Assumption v2, every stochastically stable state is Pareto efficient.

Proof

By Proposition 2 a stochastically stable state x maximizes the number of projects \(\ell (x)\). By Assumption v2 we have \( \ell (x) = \sum _{i \in N} u_i ( x ) \), so that x also maximizes the aggregate utility to agents. Thus, there is no other state that can provide a higher utility for some agent, without damaging any other agent. \(\square \)

6.3 Descriptive Value of Stochastic Stability

In this section we have shown that in a team formation model stochastically stable states coincide with the maximal states with the maximum number of projects. This result rests not only on Assumption v1 and a large class of perturbation schemes, but also on individual behavior that is boundedly rational. In particular, no agent will ever exit from existing projects in order to free up time and start other projects, despite the fact that this may increase her utility. Given this circumstance, one may then query the descriptive value of stochastic stability. An answer clearly depends on the specific case under consideration. We limit ourselves to the following observations. Even if agents have sufficient cognitive skills to recognize the possibility of an increase in utility, there are at least two kinds of reasons that might prevent them from pursuing it. First, coordination issues: in order to carry out a utility enhancement, an agent has to quit projects with some teams and simultaneously start other projects with new teams, and this involves coordinating the actions of several agents. Second, switching costs: these costs may be due to legal obligations (consider for instance divorce costs in marriage) or to learning how to operate in new teams.

Nevertheless, in the following section we analyze a refinement of myopic team-wise stability based on strong rationality and absence of coordination and switching costs.

In “Appendix B” we consider a variant of the team formation model where agents are endowed with unlimited cognitive and coordination skills, but face switching costs when leaving an existing project. We show that when switching costs are high enough, stochastically stable states are exactly those states having the maximum number of existing projects.

7 Coalitional Stability

In this section we introduce a concept of stability—coalitional stability—which is strongly based on coordination opportunities and rationality of agents. Then we compare it with myopic team-wise stability and stochastic stability, providing a class of situations where coalitional stability has no refining power.

7.1 Coalitionally Stable States

We consider the following definition:

Definition 2

A state x is coalitionally stable [CS] (or coalition proof) if there exists no subset \(C \subseteq N\) such that

-

(i)

there exists a set of projects \(y \subseteq x\) such that \(\forall ~ p \in y\), \(\exists ~ i \in C\) such that \(i \in n(p)\);

-

(ii)

there exists a set of projects z such that \(z \cap x = \emptyset \) and, \(\forall ~ p \in z\), if \(j \in n(p)\) then \(j \in C\);

-

(iii)

\( (x \backslash y ) \cup z \in X\) and for any agent \(i \in C\) we have that \(u_i ( (x \backslash y ) \cup z ) > u_i (x)\). \(\square \)

In words, a state x is coalitionally stable if there is no coalition that can (i) erase a set of projects, each of which contains at least one agent of the coalition,Footnote 20 (ii) form a set of other projects, where all the members of each are also members of the coalition, and (iii) make all the members of the coalition strictly better off in the resulting state.Footnote 21 We denote by CS the set of states that are coalitionally stable.

It is evident that this definition allows for a profound rationality by the agents: They can identify and coordinate a deviation through a long path in the partially ordered set X.

Notice, in particular, that in CS deviations by larger coalitions are allowed, so the equilibrium requirement is more binding than under MTS, hence \(CS \subseteq MTS\). Whether this inclusion can become an equality is discussed more in depth in Proposition 3.Footnote 22 This definition of CS allows agents to maintain some of their existing teams with other agents outside of the coalition, and in this sense it is a generalization of bilateral deviations defined in a network formation setting by Goyal and Vega-Redondo [17]. The literature on clubs (e.g., [37] and [11]) focuses on deviations where all clubs are deleted when members are both outside and inside the coalition. Note finally that we are not providing a general result of existence of CS states, for which we may need a more general concept of stability such as the one provided in more specific settings by Herings et al. [19, 20] and Mauleon et al. [31]. However, we will focus on situations in which we can compare CS states with SS states, so that a simpler definition suffices.Footnote 23 In “Appendix B” we provide more general definitions, which integrate this approach with that of stochastic stability.

7.2 A Comparison Between Notions of Stability

In the representation of all the states in X as a partially ordered set, the deviation of a coalition can be seen as a path that moves first upward and then downward. Consider as an example the whole set of states of Example 1 and Fig. 3. Suppose that in this case the utility that agents j and k receive from being together is always greater than the utility they receive from being, respectively, with i and m. Then, the bottom state that maximizes the number of projects would not be CS: j and k could coordinate to delete all the existing projects and start two projects together, moving to the state on the extreme right. Therefore, in general, SS and CS are not necessarily related concepts, and the two sets can have an empty intersection. This can also clearly be seen if we look back at Example 3 and the related Fig. 5. I and II are stochastically stable, because they maximize the number of teams at four, while II and III are coalitionally stable, because I can be broken by the coalition \(\{ j,k\}\).

There are cases in which SS and CS states can be a subset of one another, and given the flexibility of the utility function \(\mathbf {u}\), a setup can always be provided, even under Assumption v1, such that any desired subset of states would always be chosen by the grand coalition N.

On the other hand, there are many other cases, based on simple and general utility functions, where the CS states do not provide a clear or apparently improving refinement upon the MTS states. As an example, consider that in general CS states may not even be Pareto efficient, because agents adhere to deviating coalitions only if their marginal profit from doing so is strictly positive: Thus, it could be that a Pareto improving deviation is feasible only through a coalition whose members will not all be strictly better off. An instance of this sort is provided by Example 1, under Assumption v3: the top right maximal state of Fig. 3, with only agents j and k forming teams, is a CS state, even if it is Pareto dominated by the bottom left state (with all agents taking part in two teams each).

Here below we present a result for a case (which actually encompasses the previous example) where CS states coincide with MTS states, so that they provide no refinement at all with respect to the myopic and boundedly rational concept of myopic team-wise stability.

Proposition 3

Given a team formation model, under Assumptions t1 and v3, we have that \(CS = MTS\).

Proof

Since larger coalitions are allowed to deviate under CS, we always have that \(CS \subseteq MTS\). We focus on showing that \(MTS \subseteq CS\). Let us suppose this is not the case; then, there is a myopically team-wise stable state x which is not coalitionally stable. From the definition, this means that in x there is a coalition C that can erase a set y of projects and start a set z of new projects.

As agents in C need to strictly increase their utility (i.e., the number of teams to which they belong, from Assumption v3), for each of the agents there is at least one unit of free time in x; formally we have that \(e_i (x) < w_i\) for each \(i \in C\).

As all the projects give strictly positive payoffs, then z is nonempty.

But then, as each team in z is formed by all and only agents from C, and by Assumption t1 it would cost one unit of time to each of its members, each project \(p \in z\) could be started already in x, and \(x \cup \{p \} \in X \), i.e., it would be feasible. We have reached a contradiction with the hypothesis that x is a myopically team-wise stable state. \(\square \)
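Definition 2 and the comparison between CS, MTS and SS can be explored by brute force on a small instance. The Python sketch below is only an illustration: the toy model and both payoff functions are assumptions made here. The first payoff counts projects with unit time costs, in the spirit of Assumptions t1 and v3 as they are used in the proof above; the second is a hypothetical variant in which a project between j and k is worth twice as much to its members.

```python
from itertools import chain, combinations

# Hypothetical toy model, chosen for illustration only.
ENDOWMENT = {"i": 1, "j": 2, "k": 2, "m": 1}
PROJECTS = {
    "ij": {"i": 1, "j": 1},
    "jk1": {"j": 1, "k": 1},
    "jk2": {"j": 1, "k": 1},
    "km": {"k": 1, "m": 1},
}
AGENTS = sorted(ENDOWMENT)


def subsets(items):
    items = list(items)
    return chain.from_iterable(combinations(items, r) for r in range(len(items) + 1))


def feasible(x):
    return all(
        sum(PROJECTS[p].get(a, 0) for p in x) <= w for a, w in ENDOWMENT.items()
    )


STATES = [frozenset(c) for c in subsets(sorted(PROJECTS)) if feasible(c)]
MAXIMAL = {
    x for x in STATES
    if not any(feasible(x | {p}) for p in PROJECTS if p not in x)
}
LARGEST = {x for x in STATES if len(x) == max(len(s) for s in STATES)}


def count_utility(agent, x):
    """Payoff equal to the number of projects the agent belongs to."""
    return sum(1 for p in x if agent in PROJECTS[p])


def jk_utility(agent, x):
    """Hypothetical variant: a jk project is worth 2 to its members, others 1."""
    return sum(2 if p.startswith("jk") else 1 for p in x if agent in PROJECTS[p])


def is_cs(x, utility):
    """True if no coalition has a profitable deviation as in Definition 2."""
    for coalition in subsets(AGENTS):
        C = set(coalition)
        if not C:
            continue
        # (i) projects the coalition may erase: at least one member in C
        removable = [p for p in x if C & set(PROJECTS[p])]
        # (ii) projects the coalition may start: all members in C
        addable = [p for p in PROJECTS if p not in x and set(PROJECTS[p]) <= C]
        for y in subsets(removable):
            for z in subsets(addable):
                new = (x - set(y)) | set(z)
                # (iii) feasible and strictly better for every member of C
                if feasible(new) and all(utility(a, new) > utility(a, x) for a in C):
                    return False
    return True


CS_COUNT = {x for x in STATES if is_cs(x, count_utility)}
CS_JK = {x for x in STATES if is_cs(x, jk_utility)}
```

With the counting payoff, `CS_COUNT` equals `MAXIMAL`, so coalitional stability does not refine the maximal states. With the variant payoff, `CS_JK` contains only the state in which j and k run both of their joint projects, which is disjoint from `LARGEST`, the states with the maximum number of projects.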

8 Conclusions and Future Research

In this paper we have provided a model which describes how teams of individuals arise in order to perform activities, investing amounts of a scarce resource (typically time) to conduct the activities. The kinds of interaction that can be modeled in the proposed framework are many and widespread in economic and social spheres. Unfortunately, in a context like this the complexity of analysis can increase very rapidly, so that predictions become very hard to make. Nevertheless, we introduce and discuss alternative notions of stability (myopic team-wise stability, stochastic stability and coalitional stability) and we are able to provide results that are rather clear-cut (especially for stochastic stability).

Future work can highlight the relevance of the model for specific applications. The setting that we have provided is sufficiently general and flexible to accommodate many different sets of assumptions, and this allows a proper fine-tuning of the model. The examples throughout the paper illustrate its applicative potential. In “Appendix B”, a variant of the model is presented where we add switching costs, which can be considered as a realistic feature for several applications. A promising direction for research could explore its potential applicability to the job market, where the result that stochastic stability selects states with the highest number of projects has an interesting interpretation in terms of unemployment reduction.

On a purely theoretical ground, we provide here below three possible lines along which research may lead to interesting advancements.

The first question is related to the concept of coalitional stability that we define in Sect. 7. We are able to provide examples of non-existence of coalitionally stable states, but also (as in Proposition 3) to prove their existence under specific assumptions. We partly address this question in “Appendix C”, where we define a wider set of farsightedly stable states, whose existence is always granted. However, we conjecture that the existence of simple coalitionally stable states holds also for more general assumptions than those of Proposition 3.

The second question is related to welfare issues and follows from the discussion in Sects. 6 and 7: under which conditions on the utility function are SS states and CS states Pareto efficient, or do they maximize the objective function of some social planner? Clearly a simple case is the one in which the objective function is monotonic in the number of teams, so that SS states are optimal, or the case in which the utility function is monotonic for all agents in the objective function, so that CS states would be optimal, because even the grand coalition made of all the N agents is better off in those states. But how much of the two simple statements above can be generalized in order to have non-trivial results?

The third question follows from the previous one but has a mechanism design approach. Suppose that, through incentives, a planner can slightly change the utility function, not to obtain the trivial forms discussed above but something approaching that. Alternatively, the planner could have the possibility of modifying the technology, at least to the point at which feasibility depends on the structure of connections among agents: Only agents that are close (in some sense) can work together, and the planner can adopt policies to affect who is close to whom. What are the sufficient conditions that would allow the planner to make SS or CS states Pareto efficient, or make them approach maxima of some objective function?

The last two questions could also have empirical applications and, possibly, policy implications if applied to contexts like academic research and organization of researchers’ communities, as exemplified by our motivational description of the APS dataset in Sect. 3. In such environments, there are many possible objective functions that a social planner can aim at, and choosing one would be the first step to start thinking about an ideal mechanism.

Data Availability

The data used in this paper, specifically in Sect. 3, can be requested free of charge from the American Physical Society at the following link: https://journals.aps.org/datasets. Further information about how they are used in this paper is in “Appendix A”.

Change history

18 July 2022

Missing Open Access funding information has been added in the Funding Note.

22 March 2022

A Correction to this paper has been published: https://doi.org/10.1007/s13235-022-00442-2

Notes

The dataset consists of around 300,000 authors and 570,000 papers published from the beginning of the twentieth century until 2015. To avoid the scarcity of data before the 1950s, here we only focus on the most consistent part of this dataset, thus limiting our attention to papers published from 1960 and, consequently, to authors whose career started after 1960 as well.

The career length of an author is determined by the years passed from her first to her last publication (recorded in APS). Her cohort is the first year of publication. The subsample selected consists of authors whose cohort is from 1960 to 1990 and whose median career length is around 32 years. These authors are consistently present over time in the dataset, since the median author has a publication recorded every 2 years. Additional information is in “Appendix A”.

We denote by \({\mathbb {N}}\) the set of nonnegative integers and by \({\mathbb {N}}_+\) the set of positive integers.

A complementary interpretation of the technology P is based on the agents’ preferences. From this point of view, P allows only for those teams in which no member would rather stay alone than participate in the project. In the literature on matching this condition is called individual rationality and it is also used in decentralized matching models [42]. We observe, however, that interpreting P as individual rationality asks for a different model when combining mistakes with exit costs (see “Appendix B”): In such a case, a project that is formed by mistake persists over time due to the costs for exiting, even if some agent would prefer to stay alone. Finally, we point out that, when allowed, both interpretations for the technology can co-exist: a project is technologically feasible if such a team is able to perform the activity and, at the same time, every agent is willing to do so.

These definitions generalize those in [3], and the following example is analogous to the introductory example in that paper.

In order to provide a simplified figure, we have summarized in a single node the states that are the same in any respect apart from the labels of activities.

It can be reasonable to assume (at least in certain contexts) that everyone likes to be in as many projects as possible, and hence that Assumption v1 is satisfied. With this interpretation, costs arise indirectly as opportunity costs due to the constraint given by available time.

We set up the model as if only one paper is published, but this can be easily relaxed as long as the number of published papers is fixed and independent of the aggregate fitness.

In a context of industrial organization, with R&D between firms, U could be the aggregate value of a fixed market, on which firms compete for shares, while V could be the expected value of further markets that new products could open.

It is possible to provide more complicated payoff functions, which are nonlinear or for which \(P_{good} (p)\) is state dependent, and which also satisfy Assumption v1. A simple but reasonable first step of generalization is by assuming the existence of nonnegative net externalities on \(P_{good} (p)\) that come from a member of n(p) that participates in other projects. This would bring our model closer to the connection model than to the co-authorship model. Indeed, the negative externalities of the co-authorship model are an indirect way to take into account the scarcity of time that researchers face in their activity; the reason for such externalities is removed in our setting, where agents are explicitly given time endowments.

We require a strict Pareto improvement for the members of a project that is going to be formed in order to conclude that a state is not myopically team-wise stable, while a weak Pareto improvement is usually considered sufficient. We remark that our choice—which in principle yields a weaker equilibrium concept—makes no actual difference if Assumption v1 is satisfied.

We use here the same simplifying representation that we have employed in Fig. 3 and briefly discussed in footnote 6.

See Newton ([34], Section 7.2) for a recent survey of evolutionary models.

It is worth noting that here absorbing states refer to those of the corresponding unperturbed dynamics, \({\mathcal {A}}\), while no state is absorbing in the perturbed dynamics.

For our result we do not require the probabilities to be equal, and every team \(\mathbf {t}\) could have any state- and time-dependent probability \(\eta (p,x,s,\epsilon )\), depending also on some positive real number \(\epsilon \). We merely require that all such probabilities converge to zero with the same order as \(\epsilon \) goes to 0.

Here, by deviating and leaving a team, an agent does not allow a subset of that coalition to form, if this subset includes some non-members of the coalition.

In contrast to what happens for myopic team-wise stability (see footnote 13), the choice to require a strict Pareto improvement for the agents of a blocking coalition—instead of asking for a weak Pareto improvement—can enlarge the set of coalitionally stable states even when Assumption v1 is satisfied. However, this difference ceases to exist when we introduce costs to exit from existing projects (see “Appendix B”).

The concept of coalitional stability is analogous to the core in cooperative game theory, in a context of coalition formation. To define the core one needs to explicitly define the agents’ strategies, which is possible but not useful for our analysis.

Some clarification is needed. The definition of farsighted coalitionally stable states, as proposed by Herings et al. [19, 20] in a context where agents are farsighted players who evaluate the desirability of a deviation in terms of its future consequences (see also [9] and [33]), can easily be generalized to our context, and we do so in “Appendix C”. The advantage of that definition is that a farsighted coalitionally stable state always exists, while existence is not guaranteed for simple coalitionally stable states, as we define them. Accordingly, the farsighted coalitionally stable states are a superset of the coalitionally stable states. However, our point is that in many contexts coalitional stability has too little predictive power, in the sense that the coalitionally stable states are too numerous or can be anything. We are not concerned here with the fact that in other contexts coalitional stability can be so restrictive that no state satisfies it.

The details of this probabilistic selection are not important for the results as long as every pair y, z is chosen with positive probability.

References

Baumann L (2021) A model of weighted network formation. Theor Econ 16(1):1–23

Boncinelli L, Pin P (2012) Stochastic stability in the best shot network game. Games Econom Behav 75(2):538–554

Boncinelli L, Pin P (2018) The stochastic stability of decentralized matching on a graph. Games Econom Behav 108:239–244

Breschi S, Lissoni F (2001) Knowledge spillovers and local innovation systems: a critical survey. Ind Corp Chang 10(4):975–1005

Chalkiadakis G, Elkind E, Markakis E, Polukarov M, Jennings NR (2010) Cooperative games with overlapping coalitions. J Artif Intell Res 39:179–216

Cui Z, Weidenholzer S (2021) Lock-in through passive connections. J Econ Theory 192:105187

Currarini S, Jackson M, Pin P (2009) An economic model of friendship: homophily, minorities and segregation. Econometrica 77(4):1003–1045

Currarini S, Matheson J, Vega-Redondo F (2016) A simple model of homophily in social networks. Eur Econ Rev 90:18–39

Dutta B, Ghosal S, Ray D (2005) Farsighted network formation. J Econ Theory 122(2):143–164

Ellison G (2000) Basins of attraction, long-run stochastic stability, and the speed of step-by-step evolution. Rev Econ Stud 67:17–45

Faias M, Luque J (2017) Endogenous formation of security exchanges. Econ Theor 64(2):331–355

Foster D, Young HP (1990) Stochastic evolutionary game dynamics. Theor Popul Biol 38:219–232

Franz S, Marsili M, Pin P (2010) Observed choices and underlying opportunities. Sci Cult 76:471–476

Gillies D (1959) Solutions to general non–zero–sum games. In Tucker A, Luce R (Eds.), Contributions to the theory of games, Vol. 4. Princeton University Press

Gomes A, Jehiel P (2005) Dynamic processes of social and economic interactions: on the persistence of inefficiencies. J Polit Econ 113(3):626–667

Goyal S, Joshi S (2003) Networks of collaboration in oligopoly. Games Econom Behav 43(1):57–85

Goyal S, Vega-Redondo F (2007) Structural holes in social networks. J Econ Theory 137:460–492

Hatfield JW, Kojima F, Narita Y (2014) Many-to-many matching with max-min preferences. Discret Appl Math 179:235–240

Herings P-J, Mauleon A, Vannetelbosch V (2009) Farsightedly stable networks. Games Econom Behav 67:526–541

Herings P-J, Mauleon A, Vannetelbosch V (2010) Coalition formation among farsighted agents. Games 1(3):286–298

Hofmann H, Wickham H, Kafadar K (2017) Letter-value plots: boxplots for large data. J Comput Graph Stat 26(3):469–477

Hyndman K, Ray D (2007) Coalition formation with binding agreements. Rev Econ Stud 74(4):1125–1147

Jackson M (2005) A survey of models of network formation: stability and efficiency. In: Demange G, Wooders M (eds) Group formation in economics; networks, clubs and coalitions. Cambridge University Press, Cambridge

Jackson M, Watts A (2002) The evolution of social and economic networks. J Econ Theory 106(2):265–296

Jackson M, Wolinsky A (1996) A strategic model of social and economic networks. J Econ Theory 71:44–74

Jackson MO, Van den Nouweland A (2005) Strongly stable networks. Games Econom Behav 51(2):420–444

Kandori M, Mailath GJ, Rob R (1993) Learning, mutation and long run equilibria in games. Econometrica 61:29–56

Kirchsteiger G, Mantovani M, Mauleon A, Vannetelbosch V (2016) Limited farsightedness in network formation. J Econ Behav Org 128:97–120

Klaus B, Klijn F, Walzl M (2010) Stochastic stability for roommate markets. J Econ Theory 145(6):2218–2240

Konishi H, Ray D (2003) Coalition formation as a dynamic process. J Econ Theory 110(1):1–41

Mauleon A, Roehl N, Vannetelbosch V (2018) Constitutions and groups. Games Econom Behav 107:135–152

Milojević S (2014) Principles of scientific research team formation and evolution. Proc Natl Acad Sci 111(11):3984–3989

Navarro N (2013) Expected fair allocation in farsighted network formation. Soc Choice Welfare, pp 1–22

Newton J (2018) Evolutionary game theory: a renaissance. Games 9(2):31

Newton J (2021) Conventions under heterogeneous behavioural rules. Rev Econ Stud 88(4):2094–2118

Page F, Wooders M, Kamat S (2005) Networks and farsighted stability. J Econ Theory 120(2):257–269

Pauly M (1970) Cores and clubs. Public Choice 9(1):53–65

Peski M (2010) Generalized risk-dominance and asymmetric dynamics. J Econ Theory 145(1):216–248

Pycia M (2012) Stability and preference alignment in matching and coalition formation. Econometrica 80(1):323–362

Pycia M, Yenmez MB (2019) Matching with externalities

Rêgo LC, dos Santos AM (2019) Co-authorship model with link strength. Eur J Oper Res 272(2):587–594

Roth AE, Vate JHV (1990) Random paths to stability in two-sided matching. Econometrica 58(6):1475–1480

Sawa R (2014) Coalitional stochastic stability in games, networks and markets. Games Econom Behav 88:90–111

Sawa R (2019) Stochastic stability under logit choice in coalitional bargaining problems. Games Econom Behav 113:633–650

Shah SK, Agarwal R, Echambadi R (2019) Jewels in the crown: exploring the motivations and team building processes of employee entrepreneurs. Strateg Manag J 40(9):1417–1452

Staudigl M, Weidenholzer S (2014) Constrained interactions and social coordination. J Econ Theory 152:41–63

Stuart T, Sorenson O (2008) Strategic networks and entrepreneurial ventures. Strateg Entrep J 1:211–227

Young HP (1993) The evolution of conventions. Econometrica 61:57–84

Young HP (1998) Individual strategy and social structure. Princeton University Press, Princeton

Funding

Open access funding provided by Università degli Studi di Siena within the CRUI-CARE Agreement.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

We thank the following for helpful comments: Andrea Galeotti, Sanjeev Goyal, Matthew Jackson, Shachar Kariv, Brian Rogers, Fernando Vega-Redondo, Simon Weidenholzer, Leeat Yariv and seminar participants at the ASSET 2012 Meeting in Cyprus, Berkeley, Caltech, Essex University, European University Institute, LUISS University in Rome, University of Siena and Stanford University. We also thank Matteo Chinazzi for his help with the data. We acknowledge funding from the Italian Ministry of Education “Progetti di Rilevante Interesse Nazionale” (PRIN) grants 2015592CTH and 2017ELHNNJ.

The original online version of this article was revised: The affiliation for the last author has been corrected.

This article is part of the topical collection “Dynamic Games and Social Networks” edited by Ennio Bilancini, Leonardo Boncinelli, Paolo Pin and Simon Weidenholzer.

Appendices

Appendix A: Additional Information on APS Data

In this section we provide some figures that help to describe the data analyzed in Sect. 3. To analyze individual careers, we focus on authors who have a long and consistent career of at least 25 years and whose first year of publication, called the author’s cohort, is after 1960 (Figs. 7, 8). Figure 9 contains information about this subsample of authors. In particular, it shows the distribution of the number of authors per cohort (left), the distribution of authors’ career lengths (right), and the distribution of authors’ active years, where a year is considered active for an author if she has published at least one paper in that year. Consequently, the number of an author’s active years is the count of such years for that author. The figure shows that this subsample consists of authors who are well spread in terms of cohort year (the first cohorts, 1960–1965, are obviously less numerous, but afterward the number of authors in each cohort remains fairly constant), and that the median author has a career of 32 years and 15 active years (meaning about one paper recorded every 2 years).
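The author-level quantities defined above (cohort, career length and active years) can be computed from a list of publication records. The following is a minimal sketch and not the procedure actually applied to the APS data: the record layout `(author_id, year)`, the function names, and the reading of "after 1960" as including the 1960 cohort are all assumptions made for illustration.

```python
from collections import defaultdict


def career_metrics(records):
    """Compute cohort, career length and active years per author.

    `records` is an iterable of (author_id, publication_year) pairs;
    this layout is a hypothetical one chosen for the example.
    """
    years_by_author = defaultdict(set)
    for author, year in records:
        years_by_author[author].add(year)

    metrics = {}
    for author, years in years_by_author.items():
        cohort = min(years)  # first year of publication
        metrics[author] = {
            "cohort": cohort,
            # years elapsed from first to last publication
            "career_length": max(years) - cohort,
            # distinct years with at least one paper
            "active_years": len(years),
        }
    return metrics


def select_subsample(metrics, min_career=25, min_cohort=1960):
    """Keep authors with a long career and a cohort from `min_cohort` on.

    Including the 1960 cohort itself is an assumption.
    """
    return {
        author: m
        for author, m in metrics.items()
        if m["career_length"] >= min_career and m["cohort"] >= min_cohort
    }


# Toy records, invented for illustration only.
records = [
    ("a", 1962), ("a", 1962), ("a", 1975), ("a", 1994),
    ("b", 1958), ("b", 1990),
    ("c", 1970), ("c", 1980),
]
subsample = select_subsample(career_metrics(records))
```

In this toy input only author `a` is retained: author `b` has a cohort before 1960 and author `c` has a career shorter than 25 years.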

Figure 10 (left) contains the distribution of citations received by papers. With the help of a logarithmic scale on the y-axis, it shows that while most papers (up to the 75th percentile) receive fewer than 20 citations in total, the top 1% of papers receive more than 100 citations. In Fig. 10 (right), the distribution of citation age (that is, the number of years between the publication of the cited paper and that of the citing paper) shows that most of a paper’s citations accumulate in the first 5–7 years after its publication.
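The citation statistics just described can be illustrated with a short sketch. It assumes a hypothetical data layout (a mapping from paper to publication year and a list of `(citing, cited)` pairs) and hypothetical function names; it only shows how total citations, the share of papers below a citation threshold, and citation age could be derived from such data.

```python
from collections import Counter


def citation_counts(papers, citations):
    """Total citations received by each paper, including uncited ones."""
    received = Counter(cited for _, cited in citations)
    return {paper: received.get(paper, 0) for paper in papers}


def share_below(counts, threshold):
    """Fraction of papers receiving fewer than `threshold` citations."""
    return sum(1 for c in counts.values() if c < threshold) / len(counts)


def citation_ages(pub_year, citations):
    """Years between the cited paper's and the citing paper's publication."""
    return [pub_year[citing] - pub_year[cited] for citing, cited in citations]


# Toy data, invented for illustration only.
pub_year = {"p1": 1970, "p2": 1972, "p3": 1975, "p4": 1980}
citations = [("p2", "p1"), ("p3", "p1"), ("p4", "p1"), ("p4", "p3")]

counts = citation_counts(pub_year, citations)
ages = citation_ages(pub_year, citations)
```

Papers with no incoming citations are kept with a count of zero, so that they enter the denominator when computing the share of papers with few citations.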

Lastly, in Fig. 11 (left) we plot the increasing trend over the years of the fraction of papers that receive few citations (that is, fewer than 5 citations). In Fig. 11 (right) we show that, over time, the number of isolated authors has diminished and that the number of connected components has remained stable, or has slightly decreased, even though the number of authors participating in the profession, that is, the number of nodes in the co-authorship network, has increased drastically (see Fig. 8).
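The network quantities mentioned above (nodes, isolated authors and connected components of the co-authorship network) can be obtained with a union-find pass over the list of papers. The sketch below is an illustration under stated assumptions: the input layout (one list of author identifiers per paper) and the function name are hypothetical, and isolated authors are counted as components of size one, which may differ from the convention used for Fig. 11.

```python
def network_summary(papers):
    """Summarize the co-authorship network induced by `papers`.

    `papers` is an iterable of author-id collections, one per paper.
    Two authors are linked if they share at least one paper.
    """
    parent = {}

    def find(a):
        # Register unseen authors as their own root, then climb to the root.
        parent.setdefault(a, a)
        while parent[a] != a:
            parent[a] = parent[parent[a]]  # path halving
            a = parent[a]
        return a

    linked = set()  # authors with at least one co-author
    for authors in papers:
        authors = sorted(set(authors))
        if not authors:
            continue
        root = find(authors[0])
        for other in authors[1:]:
            parent[find(other)] = root  # merge the two components
        if len(authors) > 1:
            linked.update(authors)

    components = {find(a) for a in list(parent)}
    return {
        "nodes": len(parent),
        "isolated": len(parent) - len(linked),
        # Assumption: singletons count as components.
        "components": len(components),
    }


# Toy input, invented for illustration only.
papers = [["a", "b"], ["b", "c"], ["d"], ["e", "f"], ["d"]]
summary = network_summary(papers)
```

Here author `d` only publishes alone and is therefore isolated, while the remaining authors form the two components `{a, b, c}` and `{e, f}`.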

Appendix B: Robustness of Stochastic Stability with Respect to Switching Costs

In this appendix we endow agents with the same degree of rationality and coordinating abilities that we have used in Sect. 7 for coalitional stability. However, we introduce switching costs to exit from existing projects, showing that if such costs are sufficiently high then coalitional stability coincides with myopic team-wise stability. Moreover, we consider an unperturbed dynamics based on coalitional stability, and we reproduce the results of Proposition 1. Finally, we show that the introduction of uniform perturbations as in Sect. 6 yields the same predictions as Proposition 2, i.e., stochastically stable states are maximal states.

The following definition provides an adjusted notion of coalitional stability.

Definition A

A state x is coalitionally stable [CS] (or coalition proof) if there exists no subset \(C \subseteq N\) such that

(i) there exists a set of projects \(y \subseteq x\) such that \(\forall ~ p \in y\), \(\exists ~ i \in C\) such that \(i \in n(p)\);

(ii) there exists a set of projects z such that \(z \cap x = \emptyset \) and, \(\forall ~ p \in z\), if \(j \in n(p)\) then \(j \in C\);

(iii)