Abstract

A unified treatment of all currently available cumulant-based indexes of multivariate skewness and kurtosis is provided here, expressing them in terms of the third and fourth-order cumulant vectors respectively. Such a treatment helps reveal many subtle features and inter-connections among the existing indexes as well as some deficiencies, which are hitherto unknown. Computational formulae for obtaining these measures are provided for spherical and elliptically-symmetric, as well as skew-symmetric families of multivariate distributions, yielding several new results and a systematic exposition of many known results.


1 Introduction

Using the standard normal distribution as the yardstick, statisticians have defined notions of skewness (asymmetry) and kurtosis (peakedness) in the univariate case. Since all the odd central moments (when they exist) are zero for a symmetric distribution on the real line, a first attempt at measuring asymmetry is to ask how different the 3rd central moment is from zero, although in principle one could use any other odd central moment, or even a combination of them. Several alternative indexes of asymmetry, based on the mode or the quantiles, are also available; see for instance Arnold and Groeneveld (1995), Averous and Meste (1997) and Ekström and Jammalamadaka (2012) for recent work and review. Similarly for kurtosis, taking again the standard normal distribution as the yardstick, a coefficient of kurtosis has been developed using the 4th central moment.

When dealing with multivariate distributions, the notions of symmetry, and the measurement of skewness and of kurtosis, are not uniquely defined. For example, the mode-based approach in Arnold and Groeneveld (1995), although very popular, does not seem extendable to the multivariate case. Also, cumulant-based measures of skewness and kurtosis must be interpreted with care, especially kurtosis when the distribution has multiple peaks.

Our focus in this paper is on multivariate distributions and one may remark that the two fundamental papers by Rao (1948a, b) take us back to the early days of such multivariate analysis. A good starting point is the monograph by Fang et al. (2017) and a more recent review article by Serfling (2004). We consider symmetry of a d-dimensional random vector X around a given point, which we will assume, without loss of generality, to be the origin. Such an X is said to be spherically symmetric or rotationally symmetric if, for all (d × d) orthogonal matrices A, X has the same distribution as AX. One may generalize this to elliptical or ellipsoidal symmetry in an obvious way. A more common and practical notion of symmetry in multi-dimensions is the reflective or antipodal symmetry, and we say X has this property if it has the same distribution as −X. For measuring departures from such symmetry, various notions of skewness have been proposed by different authors, and the list includes Mardia (1970), Malkovich and Afifi (1973), Isogai (1982), Srivastava (1984), Song (2001) and Móri et al. (1994); see also Sumikawa et al. (2013) for an extension of Mardia’s multivariate skewness index to the high-dimensional case. In a broad discussion and analysis of multivariate cumulants, their properties and their use in inference, Jammalamadaka et al. (2006) proposed using the full vectors of third and fourth order cumulants as vectorial measures of multivariate skewness and kurtosis respectively. Such measures based on the cumulant vectors were further discussed by Balakrishnan et al. (2007) and Kollo (2008). A systematic treatment of asymptotic distributions of skewness and kurtosis indexes can be found in Baringhaus and Henze (1991a, 1991b, 1992), and Klar (2002).

In this paper, one of our primary goals is to look at the seemingly disparate cumulant-based definitions of skewness and kurtosis which have been proposed in the literature, and to assess and relate them from a unified perspective in terms of the cumulant vectors discussed in Jammalamadaka et al. (2006). Such a unified treatment helps reveal several relationships and features of the many existing proposals. For example it will be shown: (i) that the skewness measures of Mardia (1970) and Malkovich and Afifi (1973) can be equivalent; (ii) that the skewness vectors of Balakrishnan et al. (2007) and Móri et al. (1994) are proportional to each other; (iii) that the vectorial measure of Kollo (2008) can be a null vector even for some asymmetric distributions; and (iv) that the index of Srivastava (1984) is not affine invariant.

In Section 4, we also introduce alternative measures of skewness and kurtosis based only on the distinct cumulants, and evaluate their performance with some examples in Section 7.

Another significant contribution of the paper is to provide clear and easy formulae for computing cumulants up to the fourth order for spherically symmetric, elliptically symmetric, and skew elliptical families, along with several specific examples. These comprehensive and mostly new results allow for a straightforward computation of all the indexes of skewness and kurtosis discussed here for several important multivariate distributions.

The analysis presented here is based on the cumulant vectors of the third and fourth order, defined below. In our derivations we utilize an elegant and powerful tool, the so-called T-derivative, which we now describe. Let \(\boldsymbol {\lambda }=(\lambda _{1},\dots ,\lambda _{d})^{\top }\) be a d-dimensional vector of constants and let \(\boldsymbol {{\phi }}(\boldsymbol {\lambda })=(\phi _{1} (\boldsymbol {\lambda }),\dots ,\phi _{m}(\boldsymbol {\lambda }))^{\top }\) denote an m-dimensional vector valued function (\(m\in \mathbb {N}\)), which is differentiable in all its arguments. The Jacobian matrix of \(\boldsymbol {\phi }\) is defined by

Then the operator \(D_{\boldsymbol {\lambda }}^{\otimes }\), which we refer to as the T-derivative, is defined as

where the symbol ⊗ here and everywhere else in the paper, denotes the Kronecker (tensor) product. Assuming ϕ is k times differentiable, the k-th T-derivative is given by

which is a vector of length \(m d^{k}\) containing all possible partial derivatives of entries of \(\boldsymbol {\phi }(\boldsymbol {\lambda })\) according to the tensor product \(\left (\frac {\partial }{\partial \lambda _{1}},\dots ,\frac {\partial }{\partial \lambda _{d}}\right )^{\top \otimes k}\). Refer to Jammalamadaka et al. (2006) for further details, properties, examples, and applications of the operator \(D_{\boldsymbol {\lambda }}^{\otimes }\) (see also Terdik 2002).

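We note that if \(\phi _{\mathbf {X}}(\boldsymbol {\lambda })\) denotes the characteristic function of a d-dimensional random vector X, then the operator \(D^{\otimes k}_{\boldsymbol {\lambda }}\) applied to \(\phi _{\mathbf {X}}\) and to \(\log \phi _{\mathbf {X}}\), evaluated at \(\boldsymbol {\lambda }=0\) and multiplied by \((-i)^{k}\), provides the vector of moments of order k and the vector of cumulants of order k, respectively.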
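As a small worked illustration (a sketch only, restricted to a scalar-valued function, \(m=1\), in dimension \(d=2\), so that the ordering of the mixed partial derivatives is immaterial), the first two T-derivatives stack the partial derivatives as follows:

$$ D_{\boldsymbol{\lambda}}^{\otimes}\phi=\left( \frac{\partial\phi}{\partial\lambda_{1}},\frac{\partial\phi}{\partial\lambda_{2}}\right)^{\top},\qquad D_{\boldsymbol{\lambda}}^{\otimes2}\phi=\left( \frac{\partial^{2}\phi}{\partial\lambda_{1}^{2}},\frac{\partial^{2}\phi}{\partial\lambda_{1}\partial\lambda_{2}},\frac{\partial^{2}\phi}{\partial\lambda_{2}\partial\lambda_{1}},\frac{\partial^{2}\phi}{\partial\lambda_{2}^{2}}\right)^{\top}. $$

For the bivariate standard normal characteristic function, \(\log\phi(\boldsymbol{\lambda})=-\frac{1}{2}\left( \lambda_{1}^{2}+\lambda_{2}^{2}\right) \), this gives, using the usual factor \((-i)^{2}\) for second-order cumulants,

$$ D_{\boldsymbol{\lambda}}^{\otimes2}\log\phi=\left( -1,0,0,-1\right)^{\top},\qquad \left( -i\right)^{2}D_{\boldsymbol{\lambda}}^{\otimes2}\log\phi\Big|_{\boldsymbol{\lambda}=0}=\left( 1,0,0,1\right)^{\top}=\operatorname*{vec}\mathbf{I}_{2}, $$

which is the vectorized identity covariance matrix, as expected.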

One may also refer to MacRae (1974) for a similar definition of a matrix derivative using the tensor product and a differential operator; indeed, the T-derivative we obtain by vectorizing the transposed Jacobian (1.1), can be seen as closely related to that. While these results can also be obtained by using tensor-calculus, as is done in McCullagh (1987) and Speed (1990), our approach is more straightforward and simpler, requiring only the knowledge of calculus of several variables. We believe that using only the tensor products of vectors leads to an intuitive and natural way to deal with higher order moments and cumulants for multivariate distributions, as it will be demonstrated in the paper. Another comprehensive reference on matrix derivatives is the book by Mathai (1997).

The paper is organized as follows: Sections 2 and 3 introduce respectively the skewness and kurtosis vectors, and treat several existing measures of skewness and kurtosis based on these cumulant vectors. Section 4 discusses a linear transformation on the skewness and kurtosis vectors, which helps remove from them redundant/duplicate information, and proposes skewness and kurtosis indexes based on distinct elements of the corresponding vectors. Sections 5 and 6 provide computational formulae for the skewness and kurtosis vectors for spherical, elliptical and asymmetric/skew multivariate distributions. Section 7 provides some examples while Section 8 provides some final considerations. To improve readability of the paper, more technical details and proofs are placed in an Appendix. A word about the notations: bold uppercase letters are used for random vectors and matrices while bold lowercase letters denote their specific values.

2 Multivariate Skewness

In this section and the next one, it will be shown that all cumulant-based measures of skewness and kurtosis that appear in the literature can be expressed in terms of the third and fourth cumulant vectors respectively. Also, several hitherto unnoticed relationships between different indexes will be brought out.

Let X be a d-dimensional random vector whose first four moments exist. We will denote EX = μ, with a positive-definite variance-covariance matrix VarX = Σ. Consider the standardized vector

$$ \mathbf{Y}=\boldsymbol{\Sigma}^{-1/2}\left( \mathbf{X}-\boldsymbol{\mu}\right) $$

with zero means and identity matrix for its variance-covariance. A complete picture of the skewness is contained in the “skewness vector” of X or Y, defined as \( \boldsymbol {\kappa }_{3}=\underline {\operatorname *{Cum}}_{3}\left (\mathbf {Y}\right ). \) Since the third order cumulants are the same as the third order central moments, we may write

$$ \boldsymbol{\kappa}_{3}=\operatorname*{E}\mathbf{Y}^{\otimes3}. $$

Note that for a d-dimensional vector \(\mathbf {Y}=(Y_{1},\dots ,Y_{d})^{\top }\), \(\boldsymbol {\kappa }_{3}\) has length \(d^{3}\) and it contains all terms of the form \(\operatorname *{E}{Y_{r}^{3}}\), \(\operatorname *{E} {Y_{r}^{2}}Y_{s}\), \(\operatorname *{E}Y_{r}Y_{s}Y_{t}\) for \(1\le r,s,t\le d\). Among these \(d^{3}\) elements, only d(d + 1)(d + 2)/6 are distinct, and in Section 4 we discuss a linear transformation which allows one to get the distinct elements of \(\boldsymbol {\kappa }_{3}\). In the examples below, we denote the unit matrix of dimension k by \(\mathbf {I}_{k}\), and the k-vector of ones by \(\mathbf {1}_{k}\).
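The layout of the skewness vector can be made concrete with a small exact computation. The sketch below is only an illustration: the two-point marginal law and the helper names `kron` and `kappa3` are hypothetical choices, picked so that the vector is already standardized and the expectation of the triple Kronecker product can be evaluated with exact fractions.

```python
from fractions import Fraction
from itertools import product


def kron(x, y):
    """Kronecker product of two vectors given as lists."""
    return [a * b for a in x for b in y]


def kappa3(dist):
    """E[Y (x) Y (x) Y] for a discrete law given as (probability, point) pairs."""
    d = len(dist[0][1])
    out = [Fraction(0)] * d**3
    for p, y in dist:
        out = [o + p * v for o, v in zip(out, kron(kron(y, y), y))]
    return out


# Hypothetical toy law: each coordinate equals 2 w.p. 1/5 and -1/2 w.p. 4/5
# (mean 0, variance 1, third moment 3/2); the coordinates are independent,
# so Y has zero mean and identity covariance.
marginal = [(Fraction(1, 5), Fraction(2)), (Fraction(4, 5), Fraction(-1, 2))]
dist = [(p1 * p2, [v1, v2]) for (p1, v1), (p2, v2) in product(marginal, repeat=2)]

k3 = kappa3(dist)  # entries ordered as (r, s, t) with t running fastest
# Number of distinct index triples up to permutation, d(d+1)(d+2)/6 for d = 2
n_distinct = len({tuple(sorted(idx)) for idx in product(range(2), repeat=3)})
print(k3, n_distinct)
```

With independent coordinates only the two pure third moments survive, which is why most entries of `k3` vanish in this toy case.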

The following 6 examples reveal several relationships among various indexes of skewness which appear in the literature, and their connection to the third-order cumulant vector κ3, which can actually be seen as the common denominator.

Example 1.

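Mardia (1970) suggested the square norm of the vector \(\boldsymbol {\kappa }_{3}\) as a measure of departure from symmetry, viz.

$$ \left\Vert \boldsymbol{\kappa}_{3}\right\Vert^{2}, $$

which is denoted by \(\beta _{1,d}\). If \(\mathbf {Y}_{1}\) and \(\mathbf {Y}_{2}\) are two independent copies of Y, then we may write

$$ \beta_{1,d}=\operatorname*{E}\left( \mathbf{Y}_{1}^{\top}\mathbf{Y}_{2}\right)^{3}. $$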
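The equivalence between the squared norm of the skewness vector and the expectation of the cubed inner product of two independent copies can be checked exactly on a small discrete law. This is a minimal sketch; the two-point marginal distribution is a hypothetical choice with zero mean and unit variance, not an example from the paper.

```python
from fractions import Fraction
from itertools import product


def kron(x, y):
    """Kronecker product of two vectors given as lists."""
    return [a * b for a in x for b in y]


# Hypothetical standardized law: independent coordinates, each equal to
# 2 w.p. 1/5 and -1/2 w.p. 4/5 (mean 0, variance 1, third moment 3/2).
marginal = [(Fraction(1, 5), Fraction(2)), (Fraction(4, 5), Fraction(-1, 2))]
dist = [(p1 * p2, [v1, v2]) for (p1, v1), (p2, v2) in product(marginal, repeat=2)]
d = 2

# Skewness vector E[Y (x) Y (x) Y] and its squared norm
k3 = [Fraction(0)] * d**3
for p, y in dist:
    k3 = [k + p * v for k, v in zip(k3, kron(kron(y, y), y))]
beta_norm = sum(v * v for v in k3)

# Same index from two independent copies: E (Y1' Y2)^3
beta_copies = sum(
    p1 * p2 * sum(a * b for a, b in zip(y1, y2)) ** 3
    for (p1, y1), (p2, y2) in product(dist, repeat=2)
)
print(beta_norm, beta_copies)
```

Both routes give the same value because the inner product of two triple Kronecker products equals the cube of the inner product of the underlying vectors.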

Example 2.

Móri et al. (1994), after observing that

define a “skewness vector”

Note that \(\left (\operatorname *{vec}\mathbf {I}_{d}\right )^{\top } \otimes \mathbf {I}_{d}\) is a matrix of dimension \(d\times d^{3}\) which contains d unit values per row whereas all the others are 0; as a consequence, this measure does not take into account the contribution of cumulants of the type \(\operatorname *{E}\left (Y_{r}Y_{s}Y_{t}\right ) \), where (r,s,t) are all different.
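The structure of this matrix can be inspected directly. The sketch below assumes, as the comparison with Kollo's vector in Example 3 indicates, that the Móri et al. vector is this matrix applied to the third-moment vector, which coincides with the expectation of \(\left (\mathbf {Y}^{\top }\mathbf {Y}\right )\mathbf {Y}\). The three-point law is hypothetical and is not standardized, since the Kronecker identity being checked holds for any vector.

```python
from fractions import Fraction


def kron(x, y):
    """Kronecker product of two vectors given as lists."""
    return [a * b for a in x for b in y]


def mori_matrix(d):
    """(vec I_d)^T (x) I_d as a list of d rows of length d^3."""
    vec_eye = [1 if r == s else 0 for r in range(d) for s in range(d)]
    # Entry (a, j*d + t) of the Kronecker product is vec_eye[j] * [a == t]
    return [
        [vec_eye[j] * (1 if a == t else 0) for j in range(d * d) for t in range(d)]
        for a in range(d)
    ]


# Hypothetical three-point law in R^2 with dependent coordinates
dist = [(Fraction(1, 2), [1, 0]), (Fraction(1, 4), [0, 2]), (Fraction(1, 4), [-1, 1])]
d = 2

third_moment = [Fraction(0)] * d**3
for p, y in dist:
    third_moment = [m + p * v for m, v in zip(third_moment, kron(kron(y, y), y))]

M = mori_matrix(d)
s_matrix = [sum(a * m for a, m in zip(row, third_moment)) for row in M]
s_direct = [sum(p * sum(v * v for v in y) * y[a] for p, y in dist) for a in range(d)]
ones_per_row = [sum(row) for row in M]
print(s_matrix, s_direct, ones_per_row)
```

Each row of `M` has exactly d ones, located at the positions of the entries with index pattern (r, r, a), so mixed terms with three different indices are never picked up.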

Example 3.

Kollo (2008), noting the fact that not all third-order mixed moments appear in s(Y), proposes an alternate skewness vector \(b\left (\mathbf {Y}\right ) \) which can again be expressed in terms of κ3 as follows:

Comparing \(b\left (\mathbf {Y}\right ) \) in Eq. 2.4 to s(Y) in Eq. 2.3, we see that the difference between the two expressions comes from the fact that \(b\left (\mathbf {Y}\right ) \) has the term \(\mathbf {1}_{d^{2}}^{\top }\), compared to \(\left (\operatorname *{vec} \mathbf {I}_{d}\right )^{\top }\) in s(Y). This results in the Kollo measure summing the elements of d consecutive groups of size \(d^{2}\) in \(\boldsymbol {\kappa }_{3}\).

Following this line of reasoning, note that if d = 2 and \(\boldsymbol {\kappa }_{3} =\left [ 1,-1,-1,1,-1,1,1,-1\right ] \), then \(b\left (\mathbf {Y} \right ) =0\) even though the distribution is asymmetric, so that \(b\left (\mathbf {Y}\right ) \) fails as a measure of skewness in such cases. Section 7 gives specific examples where this actually happens.
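The cancellation in this d = 2 case is easy to reproduce. The sketch below takes the vector quoted in the text, checks that it has the permutation symmetry a third-order cumulant vector must have, and applies \(\mathbf {1}_{d^{2}}^{\top }\otimes \mathbf {I}_{d}\) entry by entry; the variable names are illustrative only.

```python
from itertools import permutations, product

d = 2
kappa3 = [1, -1, -1, 1, -1, 1, 1, -1]  # vector quoted in the text


def entry(idx):
    """Entry of kappa3 with index (r, s, t), t running fastest."""
    r, s, t = idx
    return kappa3[(r * d + s) * d + t]


# A cumulant vector is invariant under permutations of (r, s, t)
is_symmetric = all(
    entry(idx) == entry(p)
    for idx in product(range(d), repeat=3)
    for p in permutations(idx)
)

# (1_{d^2}^T (x) I_d) kappa3: component a adds the entries at positions j*d + a
b = [sum(kappa3[j * d + a] for j in range(d * d)) for a in range(d)]

# Thanks to the symmetry, summing consecutive blocks of size d^2 gives the same vector
b_blocks = [sum(kappa3[a * d * d:(a + 1) * d * d]) for a in range(d)]

total_skewness = sum(v * v for v in kappa3)  # squared norm of kappa3
print(is_symmetric, b, b_blocks, total_skewness)
```

The vector `b` vanishes while the squared norm of the same cumulant vector is 8, which is the sense in which the summed measure can miss asymmetry.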

Example 4.

Malkovich and Afifi (1973) (see also Balakrishnan and Scarpa 2012) consider the following approach to measuring skewness: let \(\mathbb {S}_{d-1}\) be the (d − 1) dimensional unit sphere in \(\mathbb {R}^{d}\). First, for \(\mathbf {u}\in \mathbb {S}_{d-1}\), note that

Malkovich–Afifi define their measure as

Consider

where \(\cos \limits \left (\mathbf {a},\mathbf {b} \right )\) indicates the cosine of the angle between the vectors a and b; next note that

and \( \sup _{\mathbf {u}}\cos \limits \left (\mathbf {u}^{\top \otimes 3}, \boldsymbol {\kappa }_{3}\right ) \) equals 1 when there exists a \(\mathbf {u}_{0}\) such that \(\mathbf {u}_{0}^{\top \otimes 3} =\boldsymbol {\kappa }_{3}/\left \Vert \boldsymbol {\kappa }_{3}\right \Vert \). This can happen only when the normed vector \(\boldsymbol {\kappa }_{3}/\left \Vert \boldsymbol {\kappa }_{3}\right \Vert \) has the same form as \(\mathbf {u}^{\top \otimes 3}\). It follows that

Example 5.

Balakrishnan et al. (2007) discuss a multivariate extension of Malkovich–Afifi measure. Denoting \({\Omega }\left (d \mathbf {u}\right ) \) as the normalized Lebesgue element of surface area on \(\mathbb {S}_{d-1}\), they suggest

and we see that this extension is a matrix multiple of the skewness vector \(\boldsymbol {\kappa }_{3}\). Indeed, one can use Theorem 3.3 of Fang et al. (2017) to show that this matrix multiple reduces to

Therefore T defined in Eq. 2.6 becomes a scalar multiple, \(3/\left [ d\left (d+2\right ) \right ] \) times \(s\left (\mathbf {Y} \right ) \) defined in Eq. 2.3 by Móri et al. (1994). In particular when d = 3, we have \(\mathbf {T}=\frac {1}{5}s\left (\mathbf {Y}\right ) \). It follows that, as in Móri et al. (1994), the vector T does not take into account the contribution of cumulants of the type \(\operatorname *{E}\left (Y_{r}Y_{s}Y_{t}\right ) \), where r,s,t are all different.
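The scalar factor can be traced coordinate by coordinate. The following is a sketch under two assumptions: that T is the integral of \(\mathbf {u}\left (\mathbf {u}^{\top \otimes 3}\boldsymbol {\kappa }_{3}\right ) \) over the unit sphere, as described in Remark 1, and that the fourth moments of a vector U uniform on \(\mathbb {S}_{d-1}\) take their standard form. Writing \(\kappa _{bce}=\operatorname *{E}Y_{b}Y_{c}Y_{e}\) and \(\delta \) for the Kronecker delta,

$$ \operatorname*{E}U_{a}U_{b}U_{c}U_{e}=\frac{\delta_{ab}\delta_{ce}+\delta_{ac}\delta_{be}+\delta_{ae}\delta_{bc}}{d\left( d+2\right) }, $$

so that the a-th coordinate of T is

$$ T_{a}=\sum_{b,c,e}\operatorname*{E}\left( U_{a}U_{b}U_{c}U_{e}\right) \kappa_{bce}=\frac{1}{d\left( d+2\right) }\left( \sum_{c}\kappa_{acc}+\sum_{b}\kappa_{bab}+\sum_{b}\kappa_{bba}\right) =\frac{3}{d\left( d+2\right) }\sum_{c}\kappa_{cca}. $$

The last step uses the symmetry of \(\kappa _{bce}\) in its indices, and \(\sum _{c}\kappa _{cca}=\operatorname *{E}\left (\mathbf {Y}^{\top }\mathbf {Y}\right ) Y_{a}\) is the a-th coordinate of \(s\left (\mathbf {Y}\right ) \).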

Example 6.

Srivastava (1984). If X is a d-dimensional random vector with variance matrix Σ, then consider \(\boldsymbol {\Gamma }^{\top }\boldsymbol {\Sigma } \boldsymbol {\Gamma }=\operatorname *{Diag}\left (\lambda _{1},\ldots ,\lambda _{d}\right ) =\boldsymbol {D_{\lambda }}\), with orthogonal matrix \(\boldsymbol {\Gamma }\). The skewness measure defined by Srivastava can be written as

where \(\widetilde {\mathbf {Y}}=\boldsymbol {D_{\lambda }}^{-1}\boldsymbol {\Gamma }^{\top }\left (\mathbf {X} -\operatorname *{E}\mathbf {X}\right ) \). We have \( \widetilde {\mathbf {Y}}=\boldsymbol {D_{\lambda }}^{-1}\boldsymbol {\Gamma }^{\top }\boldsymbol {\Sigma } ^{1/2}\mathbf {Y}=\boldsymbol {D_{\lambda }}^{-1/2}\boldsymbol {\Gamma }^{\top }\mathbf {Y}, \) and the ith coordinate of \(\widetilde {\mathbf {Y}}\) is \(\widetilde {Y} _{i}=\mathbf {e}_{i}^{\top }\widetilde {\mathbf {Y}},\) where \(\mathbf {e}_{i}\) is the ith coordinate unit vector. Since \(\operatorname *{E}\widetilde {\mathbf {Y}}=0\) and \(\operatorname *{Var}\left (\widetilde {\mathbf {Y}}\right ) =\boldsymbol {D_{\lambda }}^{-1}\), Srivastava's measure can be re-expressed as

and

One notices that \(\mathbf {e}_{i}^{\top \otimes 3}\) is a unit axis vector in the Euclidean space \(\mathbb {R}^{d^{3}}\), so that the measure \({b_{1}^{2}}\left (\mathbf {Y}\right ) \) is the squared norm of the projection of \(\boldsymbol {\kappa }_{3}\) onto a subspace of \(\mathbb {R}^{d^{3}}\), and it does not contain all the information contained in the vector \(\boldsymbol {\kappa }_{3}\). Note that this index is not affine invariant, and neither is the corresponding kurtosis index.

3 Multivariate Kurtosis

The kurtosis of Y is measured by the 4th order cumulant vector denoted by κ4, and is computed as

where K2,2 denotes the commutator matrix (1.2) (see Appendix 1 for details). Note that κ4 turns out to be the zero vector for multivariate Gaussian distributions, and may be used as the “standard”.

This kurtosis vector \(\boldsymbol {\kappa }_{4}\) forms the basis for all multivariate measures of kurtosis proposed in the literature, as our next 6 examples demonstrate. For instance, one may define its square norm as a scalar index of kurtosis, called the total kurtosis, defined by

$$ \kappa_{4}=\left\Vert \boldsymbol{\kappa}_{4}\right\Vert^{2}, $$

which is itself one such measure. We now connect the kurtosis vector \(\boldsymbol {\kappa }_{4}\) and the total kurtosis \(\kappa _{4}\) to various other indexes discussed in the literature.

Example 7.

Mardia (1970), defined an index of kurtosis as \( \beta _{2,d}=\operatorname *{E}\left (\mathbf {Y}^{\top }\mathbf {Y}\right )^{2}. \) Note that

and this is related to the kurtosis vector κ4 as follows:

In particular for the standard Gaussian vector Y, we have κ4 = 0, so that \(\beta _{2,d}=\operatorname *{E}\left (\mathbf {Y}^{\top }\mathbf {Y}\right )^{2}=d\left (d+2\right ) \). As a consequence, for such a Y we have the equation

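Note that \(\operatorname *{vec}\mathbf {I}_{d^{2}}\) contains only \(d^{2}\) ones and hence Mardia’s measure does not take into account all the entries of \(\boldsymbol {\kappa }_{4}\); it includes only some of the entries of \(\operatorname *{E}\mathbf {Y}^{\otimes 4}\), namely those of the form \(\operatorname *{E}Y_{r}^{2}Y_{s}^{2}\), \(1\le r,s\le d\).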

Example 8.

Koziol (1989) considered the following index of kurtosis. Let \(\widetilde {\mathbf {Y}}\) be an independent copy of Y, then

is the next higher degree analogue of Mardia’s skewness index β1,d. Specifically

where β2,d is Mardia (1970) index of kurtosis.
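Both indexes can be evaluated exactly on a toy example. The sketch below assumes the standard expression of fourth cumulants of a standardized vector (fourth moment minus the three pairings of Kronecker deltas) and takes Koziol's index to be the expectation of the fourth power of the inner product of two independent copies, as described above; the two-point marginal law is hypothetical.

```python
from fractions import Fraction
from itertools import product

# Hypothetical standardized law: independent coordinates, each equal to
# 2 w.p. 1/5 and -1/2 w.p. 4/5 (mean 0, variance 1).
marginal = [(Fraction(1, 5), Fraction(2)), (Fraction(4, 5), Fraction(-1, 2))]
dist = [(p1 * p2, [v1, v2]) for (p1, v1), (p2, v2) in product(marginal, repeat=2)]
d = 2


def delta(a, b):
    return 1 if a == b else 0


def moment4(r, s, t, u):
    """E Y_r Y_s Y_t Y_u."""
    return sum(p * y[r] * y[s] * y[t] * y[u] for p, y in dist)


def kappa4(r, s, t, u):
    """Fourth cumulant of a standardized vector (assumed standard formula)."""
    pairings = delta(r, s) * delta(t, u) + delta(r, t) * delta(s, u) + delta(r, u) * delta(s, t)
    return moment4(r, s, t, u) - pairings


# Mardia's kurtosis, directly and through the entries selected by vec I_{d^2}
beta2 = sum(p * sum(v * v for v in y) ** 2 for p, y in dist)
selected = sum(kappa4(r, s, r, s) for r in range(d) for s in range(d))

# Koziol-type index: squared norm of E Y^{(x)4} versus two independent copies
koziol_norm = sum(moment4(*idx) ** 2 for idx in product(range(d), repeat=4))
koziol_copies = sum(
    p1 * p2 * sum(a * b for a, b in zip(y1, y2)) ** 4
    for (p1, y1), (p2, y2) in product(dist, repeat=2)
)
print(beta2, selected + d * (d + 2), koziol_norm, koziol_copies)
```

In this example Mardia's index exceeds its Gaussian value d(d + 2) = 8 by exactly the sum of the selected cumulants.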

Example 9.

Móri et al. (1994) define kurtosis of Y as

Then \( \operatorname *{vec}K\left (\mathbf {Y}\right ) =\left (\mathbf {I}_{d^{2} }\otimes \left (\operatorname *{vec}\mathbf {I}_{d}\right )^{\top }\right ) \operatorname *{E}\mathbf {Y}^{\otimes 4}-\left (d+2\right ) \operatorname *{vec}\mathbf {I}_{d} \) which can be expressed in terms of κ4 as \( \operatorname *{vec}K\left (\mathbf {Y}\right ) =\left (\mathbf {I}_{d^{2} }\otimes \left (\operatorname *{vec}\mathbf {I}_{d}\right )^{\top }\right ) \boldsymbol {\kappa }_{4}\).

As in the case of their skewness measure, this measure does not take into account the contribution of cumulants of the type \(\operatorname *{E}\left (Y_{r}Y_{s}Y_{t}Y_{u}\right ) \) where r,s,t,u are all different.

Example 10.

Malkovich and Afifi (1973). Similar to the discussion regarding skewness, a measure proposed by Malkovich and Afifi simply provides a different derivation of the total kurtosis in the form

because of the fact that

Remark 1.

It may be noted that the idea used in our (2.6), namely integrating \(\mathbf {u}\left (\mathbf {u}^{\top \otimes 4}\boldsymbol {\kappa }_{Y}\right ) \) over the unit sphere, will not work for the kurtosis since it can be verified that this will result in a zero vector.

Example 11.

Kollo (2008) introduces the kurtosis matrix \(\mathbf {B}\left (\mathbf {Y} \right ) \) as

Then \( \operatorname *{vec}\mathbf {B}\left (\mathbf {Y}\right ) =\operatorname *{E} \left ({\sum }_{j=1}^{d}Y_{j}\right )^{2}\left (\mathbf {Y}\otimes \mathbf {Y}\right ) , \) which can be written as

4 Alternative Measures Based on Distinct Elements of the Cumulant Vectors

The skewness vector \(\boldsymbol {\kappa }_{3}\) and the kurtosis vector \(\boldsymbol {\kappa }_{4}\) contain \(d^{3}\) and \(d^{4}\) elements respectively, which are not all distinct. Just as the covariance matrix of a d-dimensional vector contains only d(d + 1)/2 distinct elements, a simple computation shows that \(\boldsymbol {\kappa }_{3}\) contains d(d + 1)(d + 2)/6 distinct elements, while \(\boldsymbol {\kappa }_{4}\) contains d(d + 1)(d + 2)(d + 3)/24 distinct elements.
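These counts are the numbers of index multisets of size 3 and 4 drawn from d labels, which can be confirmed by direct enumeration. The helper names in this sketch are illustrative.

```python
from fractions import Fraction
from itertools import combinations_with_replacement


def n_distinct(order, d):
    """Number of distinct entries of a symmetric cumulant vector of the given order."""
    return sum(1 for _ in combinations_with_replacement(range(d), order))


def share_distinct(order, d):
    """Fraction of the d**order entries that are distinct."""
    return Fraction(n_distinct(order, d), d**order)


# (third-order count, fourth-order count) for a few dimensions
table = {d: (n_distinct(3, d), n_distinct(4, d)) for d in (2, 3, 5, 10)}
print(table)
print(share_distinct(3, 2), float(share_distinct(4, 10)))
```

For d = 2 half of the entries of the skewness vector are distinct, and the share falls quickly as d grows.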

Similar to the fact that there are many applications as well as measures which consider only the distinct elements of a covariance matrix, it is quite sensible and reasonable to follow this approach and define skewness and kurtosis measures based on just the distinct elements of the corresponding cumulant vectors. For example, in estimating the “total skewness” index \(\left \Vert \boldsymbol {\kappa }_{3}\right \Vert ^{2}\) discussed in Mardia (1970), one may use the “elimination matrix” since the terms in the summation are symmetric like in a covariance matrix.

Selection of the distinct elements from the vectors \(\boldsymbol {\kappa }_{3}\) and \(\boldsymbol {\kappa }_{4}\) can be accomplished via linear transformations. This approach can be traced back to Magnus and Neudecker (1980), who introduce two transformation matrices, L and D, which consist of zeros and ones. For an arbitrary \(n\times n\) matrix A, L eliminates from vec(A) the supra-diagonal elements of A, while D performs the reverse transformation for a symmetric A.

In the case of a covariance matrix Σ of a vector X, the elimination matrix L above is a matrix acting on vec(Σ) and in the approach defined in this paper it holds that \(\operatorname {vec}(\boldsymbol {\Sigma })= \underline {\operatorname *{Cum}}_{2}\left (\mathbf {X},\mathbf {X}\right ) \), i.e. the distinct elements of vec(Σ) correspond to the distinct elements of a tensor product X ⊗X. In this way the elimination matrix L can be generalized to tensor products of higher orders in a simple way.

We shall use elimination matrices \(\mathbf {G}\left (3,d\right ) \) and \(\mathbf {G}\left (4,d\right ) \), for shortening the skewness and kurtosis vectors respectively and keeping just the distinct entries in them. See Meijer (2005) for details where the notations \(\mathbf {T}_{3}{{~}^{+}}\) and \(\mathbf {T}_{4}{{~}^{+}}\) are used respectively, which are actually the Moore–Penrose inverses of triplication and quadruplication matrices. This gives cumulant vectors of distinct elements as

\(\boldsymbol {\kappa }_{3,D}=\mathbf {G} \left (3,d \right ) \boldsymbol {\kappa }_{3}\) and \(\boldsymbol {\kappa }_{4,D}=\mathbf {G} \left (4,d \right ) \boldsymbol {\kappa }_{4}\).
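A simple way to see what such a reduction does is to build a zero-one matrix that keeps one representative per index multiset. The sketch below is an assumption-laden stand-in: it keeps the entries whose index is non-decreasing, which agrees with any elimination matrix on a symmetric vector but need not coincide entry by entry with the matrices of Meijer (2005). The name `selection_matrix` is hypothetical.

```python
from itertools import product


def selection_matrix(order, d):
    """0-1 matrix keeping the entries of a d**order vector with non-decreasing index."""
    columns = list(product(range(d), repeat=order))
    keep = [idx for idx in columns if list(idx) == sorted(idx)]
    return [[1 if col == row_idx else 0 for col in columns] for row_idx in keep]


d = 2
kappa3 = [1, -1, -1, 1, -1, 1, 1, -1]  # the symmetric vector from Example 3
G = selection_matrix(3, d)
kappa3_distinct = [sum(g * v for g, v in zip(row, kappa3)) for row in G]

norm_full = sum(v * v for v in kappa3)
norm_distinct = sum(v * v for v in kappa3_distinct)
print(kappa3_distinct, norm_full, norm_distinct)
```

The reduced vector keeps the entries with indices (1,1,1), (1,1,2), (1,2,2) and (2,2,2), so its squared norm counts each distinct cumulant once rather than once per permutation.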

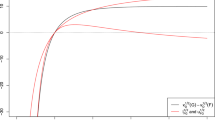

In such a case, the distinct element vector has dimension \(\dim \left (\boldsymbol {\kappa }_{3,D}\right ) =d\left (d+1\right ) \left (d+2\right ) /6\). For instance, when d = 2, \(\dim \left (\boldsymbol {\kappa } _{3,D}\right ) \) is 50% of \(\dim \left (\boldsymbol {\kappa }_{3}\right ) \), and in general the share of distinct elements is \(\left (1+1/d\right ) \left (1+2/d\right ) /6\), which decreases with d and approaches 1/6 for large d. Similarly, the fraction of distinct elements in \(\boldsymbol {\kappa }_{4,D}\) relative to \(\boldsymbol {\kappa }_{4}\) approaches 1/24 for large d, a significant reduction.

Following the discussion of this section one could define indexes of total skewness and total kurtosis exploiting the square norms of the skewness and kurtosis vectors containing only the distinct elements, i.e.

In Section 7 some numerical evidence about the performance of these indexes, which eliminate duplication of information, will be given.

5 Multivariate Symmetric Distributions

Two important classes of symmetric distributions are the spherically symmetric distributions and the elliptically symmetric distributions. In this section, we discuss them in turn and derive their cumulants. Some related results and discussion may be found in Fang et al. (2017).

5.1 Multivariate Spherically Symmetric Distributions

A d-vector \(\mathbf {W}=(W_{1}, {\dots } , W_{d})^{\top }\) has a spherically symmetric distribution if that distribution is invariant under the group of rotations in \(\mathbb {R}^{d}\). This is equivalent to saying that W has the stochastic representation

$$ \mathbf{W}=R\mathbf{U}, \tag{5.1} $$

where R is a nonnegative random variable, \(\mathbf {U}=(U_{1}, {\dots } , U_{d})^{\top }\) is uniform on the sphere \(\mathbb {S}_{d-1}\), and R and U are independent (see e.g. Fang et al., 2017, Theorem 2.5). The moments of the components of W, when they exist, can be expressed in terms of a one-dimensional integral (Fang et al., 2017, Theorem 2.8, p. 34), and the characteristic function has the form

where g is called the “characteristic generator” and R is the generating variate, with say, a generating distribution F. The relationship between the distribution F of R and g is given through the characteristic function of the uniform distribution on the sphere (see Fang et al., 2017, p. 30).
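The stochastic representation also gives a direct way to simulate spherically symmetric vectors. The sketch below is illustrative only: it obtains U by normalizing a standard Gaussian vector, and uses a hypothetical generating variate with R squared chi-square distributed with d degrees of freedom, so that W is standard normal and its component variance E(R²)/d equals 1.

```python
import math
import random


def sample_spherical(d, draw_radius, rng):
    """Draw W = R * U with U uniform on the unit sphere and R independent of U."""
    z = [rng.gauss(0.0, 1.0) for _ in range(d)]
    norm = math.sqrt(sum(v * v for v in z))
    u = [v / norm for v in z]  # a normalized Gaussian vector is uniform on the sphere
    r = draw_radius(rng)
    return [r * v for v in u], u


def chi_radius(d):
    """Hypothetical generating variate: R^2 ~ chi-square(d) = Gamma(d/2, scale 2)."""
    return lambda rng: math.sqrt(rng.gammavariate(d / 2.0, 2.0))


rng = random.Random(0)
d, n = 3, 20000
draws = [sample_spherical(d, chi_radius(d), rng) for _ in range(n)]

max_norm_error = max(abs(math.sqrt(sum(v * v for v in u)) - 1.0) for _, u in draws)
second_moment = sum(w[0] ** 2 for w, _ in draws) / n  # estimates E(R^2)/d = 1
print(max_norm_error, second_moment)
```

Swapping `chi_radius` for another nonnegative sampler changes the generating distribution while leaving the direction U untouched.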

The marginal distributions of any such spherically or elliptically symmetric distributions have zero skewness, and the same kurtosis value given by the “kurtosis parameter” of the form (see Muirhead, 2009, p. 41)

The next lemma provides the moments of the uniform distribution on \(\mathbb {S}_{d-1}\), and is proved in the Appendix.

Lemma 1.

Let U be uniform on the sphere \(\mathbb {S}_{d-1}\). Then

1. For odd-order moments,

$$\operatorname*{E}\mathbf{U}^{\otimes\left( 2k+1\right) }=0,\qquad \underline{\operatorname*{Cum}}_{2k+1}\left( \mathbf{U}\right) =0,$$

while for even-order moments

$$ \operatorname*{E} {\displaystyle\prod\limits_{i=1}^{d}} U_{i}^{2k_{i}}=\frac{1}{\left( d/2\right)_{k}} {\displaystyle\prod\limits_{i=1}^{d}} \frac{\left( 2k_{i}\right) !}{2^{2k_{i}}k_{i}!}, \tag{5.4} $$

where \(k=\sum k_{i}\) and \(\left( a\right)_{k}=a\left( a+1\right) \cdots \left( a+k-1\right) \) denotes the rising factorial. For T-products we have

$$ \operatorname*{E}\mathbf{U}^{\otimes4}=\frac{1}{d\left( d+2\right) }\left( 3\mathbf{e}\left( d^{3},d\right) +\mathbf{e}\left( d^{4}\right) \right) , \tag{5.5} $$

where the zero-one vectors \(\mathbf {e}\left (d^{3},d\right ) \) and \(\mathbf {e}\left (d^{4}\right ) \) are given by Eqs. 1.8 and 1.9 respectively; moreover, the sum of the entries is

$$ \sum\operatorname*{E}\mathbf{U}^{\otimes4}=\frac{3d}{d+2}. $$

2. The odd moments of the modulus are

$$ \operatorname*{E} {\displaystyle\prod\limits_{i=1}^{d}} \left\vert U_{i}\right\vert^{k_{i}}=\sqrt{\frac{1}{\pi^{d_{1}}}}\frac {1}{G_{k}} {\displaystyle\prod\limits_{i=1}^{d_{1}}} {\Gamma}\left( \left( k_{i}+1\right) /2\right) , \tag{5.6} $$

where \(k=\sum k_{i}\) (see Eq. 6.3 for \(G_{k}\)) and \(d_{1}\) is the number of nonzero \(k_{i}\); in particular

$$ \operatorname*{E}\left\vert U_{i}\right\vert^{2k+1}=\sqrt{\frac{1}{\pi}} \frac{k!}{G_{2k+1}}. $$

For T-products we have

$$ \operatorname{E}\left\vert \mathbf{U}\right\vert^{\otimes2}=\frac{1} {d}\operatorname*{vec}\mathbf{I}_{d}+\frac{1}{\pi}\frac{1}{G_{2} }\left( \boldsymbol{1}_{d^{2}}-\operatorname*{vec}\mathbf{I} _{d}\right) $$

and

$$ \operatorname{E}\left\vert \mathbf{U}\right\vert^{\otimes3}=\sqrt{\frac {1}{\pi}}\frac{1}{G_{3}}\left( \boldsymbol{1}_{d^{3}}\left( 3\right) +\frac{1}{2}\boldsymbol{1}_{d^{3}}\left( 1,2\right) +\frac{1}{\pi }\boldsymbol{1}_{d^{3}}\left( 1,1,1\right) \right) , $$

where the zero-one vectors are given in Eq. 1.10, and

$$ \begin{array}{@{}rcl@{}} \operatorname{E}\left\vert \mathbf{U}\right\vert^{\otimes4} & =&\frac {3}{d\left( d+2\right) }\boldsymbol{1}_{d^{4}}\left( 4\right) +\frac {1}{4G_{4}}\boldsymbol{1}_{d^{4}}\left( 2,2\right) \\ && +\frac{1}{\pi}\frac{1}{G_{4}}\left( \boldsymbol{1}_{d^{4}}\left( 1,3\right) +\sqrt{\frac{1}{\pi}}\boldsymbol{1}_{d^{4}}\left( 2,1,1\right) +\frac{1}{\pi}\boldsymbol{1}_{d^{4}}\left( 1,1,1,1\right) \right) \end{array} $$

where the zero-one vectors are given in Eq. 1.11.
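The even-moment formula (5.4) and the stated sum of the entries of \(\operatorname *{E}\mathbf {U}^{\otimes 4}\) can be cross-checked with exact arithmetic. In this sketch the function names are illustrative, and mixed moments containing an odd power of some coordinate are set to zero, which follows from the sign symmetry of the uniform distribution on the sphere.

```python
from fractions import Fraction
from itertools import product
from math import factorial


def rising(a, k):
    """Rising factorial (a)_k = a (a+1) ... (a+k-1)."""
    out = Fraction(1)
    for j in range(k):
        out *= a + j
    return out


def sphere_moment(powers, d):
    """E prod_i U_i^{powers[i]} for U uniform on the unit sphere in R^d, via Eq. 5.4."""
    if any(p % 2 for p in powers):
        return Fraction(0)  # odd power in some coordinate: zero by sign symmetry
    ks = [p // 2 for p in powers]
    value = 1 / rising(Fraction(d, 2), sum(ks))
    for k in ks:
        value *= Fraction(factorial(2 * k), 2 ** (2 * k) * factorial(k))
    return value


def fourth_moment_vector(d):
    """All d^4 entries of E U^{(x)4}, indexed by (r, s, t, u)."""
    return [
        sphere_moment([idx.count(i) for i in range(d)], d)
        for idx in product(range(d), repeat=4)
    ]


sums = {d: sum(fourth_moment_vector(d)) for d in (2, 3, 4)}
print(sums)
print(sphere_moment([2, 0, 0], 3), sphere_moment([4, 0, 0], 3), sphere_moment([2, 2, 0], 3))
```

The printed single moments reproduce the familiar values 1/d, 3/[d(d + 2)] and 1/[d(d + 2)] for d = 3.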

Remark 2.

Observe that there are only three distinct elements of the third order moments, and four distinct elements of the fourth order moments!

The next lemma provides the cumulants of W (proof in the Appendix).

Lemma 2.

Let W be spherically symmetric with the representation W = RU and characteristic generator g having second derivative at 0; then \( \underline {\operatorname *{Cum}}_{1}\) \(\left (\mathbf {W}\right )= \underline {\operatorname *{Cum}}_{3}\left (\mathbf {W}\right ) =0\), \( \underline {\operatorname *{Cum}}_{2}\left (\mathbf {W}\right ) =-2g^{\prime }\left (0\right ) \operatorname *{vec}\mathbf {I}_{d}, \) and

In terms of kurtosis parameter κ0, we have \(\kappa _{4}=3d(d+2){\kappa _{0}^{2}}\), \(\kappa _{4,D}={\kappa _{0}^{2}}(9d+d(d-1)/2)\) and Mardia’s β2,d = d(d + 2)(κ0 + 1).
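These three expressions can be verified by brute force. The sketch assumes that, for a standardized spherical or elliptical vector, each fourth cumulant equals the kurtosis parameter times the sum of the three index pairings (a standard form, stated here as an assumption because the display of Lemma 2 is not reproduced); the value chosen for the kurtosis parameter is hypothetical.

```python
from fractions import Fraction
from itertools import product


def delta(a, b):
    return 1 if a == b else 0


def elliptical_kappa4(d, kappa0):
    """Assumed form: kappa0 times the three index pairings, for a standardized vector."""
    k4 = {}
    for r, s, t, u in product(range(d), repeat=4):
        pairings = delta(r, s) * delta(t, u) + delta(r, t) * delta(s, u) + delta(r, u) * delta(s, t)
        k4[(r, s, t, u)] = kappa0 * pairings
    return k4


def indexes(d, kappa0):
    k4 = elliptical_kappa4(d, kappa0)
    total = sum(v * v for v in k4.values())  # squared norm of kappa_4
    distinct = sum(v * v for idx, v in k4.items() if list(idx) == sorted(idx))
    mardia = sum(k4[(r, s, r, s)] for r in range(d) for s in range(d)) + d * (d + 2)
    return total, distinct, mardia


kappa0 = Fraction(2, 5)  # hypothetical kurtosis parameter
results = {d: indexes(d, kappa0) for d in (2, 3, 4)}
for d, (total, distinct, mardia) in results.items():
    print(d, total, distinct, mardia)
```

The enumeration counts d entries equal to three times the kurtosis parameter and 3d(d − 1) entries equal to the parameter itself, which is where the factor 3d(d + 2) comes from.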

From the representation of W given in Eq. 5.1, it is easy to see that \( \underline {\operatorname *{Cum}}_{2\ell +1}\left (\mathbf {W}\right ) =0,\quad \ell =0,1,\ldots , \) while the second and fourth order cumulants are calculated directly using Lemmas 1 and 2 above, so that we have the following

Theorem 1.

If W is spherically distributed with the representation W = RU and \(\operatorname {E}(R^{4})<\infty \), then \( \underline {\operatorname *{Cum}}_{2\ell +1}\left (\mathbf {W}\right ) = 0\), \( \underline {\operatorname *{Cum}}_{2}\left (\mathbf {W}\right ) =\frac {\operatorname *{E}R^{2}}{d}\operatorname *{vec}\mathbf {I}_{d} \) and

In terms of these moments, the kurtosis parameter becomes

5.2 Multivariate Elliptically Symmetric Distributions

A d-vector X has an elliptically symmetric distribution if it has the representation

$$ \mathbf{X}=\boldsymbol{\mu}+\boldsymbol{\Sigma}^{1/2}\mathbf{W}, $$

where \(\boldsymbol {\mu }\in \mathbb {R}^{d}\), Σ is a variance-covariance matrix and W is spherically distributed. Hence the cumulants of X are constant multiples of the cumulants of W, except for the mean, i.e.

from which one gets \( \underline {\operatorname *{Cum}}_{1}\left (\mathbf {X}\right ) =\boldsymbol {\mu }\), \(\underline {\operatorname *{Cum}}_{2\ell +1}\left (\mathbf {X}\right ) =0\), ℓ = 1,… and

Moments of elliptically symmetric distributions have also been discussed by Berkane and Bentler (1986). As a special case, we now discuss

5.2.1 Multivariate t-Distribution

The multivariate t-distribution is spherically symmetric (see Example 2.5 in Fang et al., 2017, p. 32). Consult also the monograph by Kotz and Nadarajah (2004) for further details. Let

$$ \mathbf{W}=\sqrt{m}\,\frac{\mathbf{Z}}{S}, $$

where \(\mathbf {Z}\in \mathcal {N}_{d}\left (0,\mathbf {I}_{d}\right ) \) is standard normal, and \(S^{2}\) is \(\chi ^{2}\) distributed with m degrees of freedom, independent of Z. Then \(\mathbf {W}\in Mt_{d}\left (m,0,\mathbf {I}_{d}\right ) \), and we have

where \(R^{\ast }=\sqrt {m}\left \Vert \mathbf {Z}\right \Vert /S\), and \(R^{\ast 2}/d\) has an F-distribution with d and m degrees of freedom. Let \(\boldsymbol {\mu }\in \mathbb {R}^{d}\), let A be a d × d matrix, and let X = μ + A⊤W; then \(\mathbf {X}\in Mt_{d}\left (m,\boldsymbol {\mu ,}\boldsymbol {\Sigma }\right ) \), where Σ = A⊤A, hence X is an elliptically symmetric random vector. Since the characteristic function is quite complicated (see Fang et al., 2017, Section 3.3.6, p. 85), we utilize the stochastic representation given in Eq. 5.7 for deriving the kurtosis (note that the skewness is zero). The proof of the next lemma is in the Appendix.

Lemma 3.

Let W be a multivariate t-vector with dimension d and degrees of freedom m, with m > 4; then EW = 0, \( \underline {\operatorname *{Cum}}_{2}\left (\mathbf {W}\right ) =\frac {m}{m-2}\operatorname *{vec}\mathbf {I}_{d}\) and \(\underline {\operatorname *{Cum} }_{3}\left (\mathbf {W}\right ) =0\). From Theorem 1, the kurtosis parameter in Eq. 5.3 becomes \(\kappa _{0}=\frac {2}{m-4}\), and the total kurtosis is \(\kappa _{4}=3d\left (d+2\right ) {\kappa _{0}^{2}}\) (cf. the expression following Lemma 2). Moreover, if \(\mathbf {X}\in Mt_{d}\left (m,\boldsymbol {\mu },\boldsymbol {\Sigma }\right ) \), where Σ = A⊤A, then EX = μ,

and kurtosis parameter κ0 and kurtosis κ4 are the same as for W.
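The value of the kurtosis parameter can be retraced from the moments of the generating variate. The following sketch assumes the usual moment form \(\kappa _{0}=\frac {d\operatorname *{E}R^{4}}{\left (d+2\right ) \left (\operatorname *{E}R^{2}\right )^{2}}-1\), which is what one obtains by comparing the marginal fourth moment \(3\operatorname *{E}R^{4}/\left [ d\left (d+2\right ) \right ] \) with three times the squared marginal variance \(\left (\operatorname *{E}R^{2}/d\right )^{2}\), together with the standard first two moments of an F-distribution with d and m degrees of freedom:

$$ \operatorname*{E}R^{\ast2}=\frac{dm}{m-2},\qquad \operatorname*{E}R^{\ast4}=d^{2}\,\frac{m^{2}\left( d+2\right) }{d\left( m-2\right) \left( m-4\right) }=\frac{d\left( d+2\right) m^{2}}{\left( m-2\right) \left( m-4\right) }, $$

so that

$$ \kappa_{0}=\frac{d}{d+2}\cdot\frac{d\left( d+2\right) m^{2}}{\left( m-2\right) \left( m-4\right) }\cdot\frac{\left( m-2\right)^{2}}{d^{2}m^{2}}-1=\frac{m-2}{m-4}-1=\frac{2}{m-4}. $$

The dimension d cancels, so the kurtosis parameter depends only on the degrees of freedom, and the condition m > 4 is exactly what keeps the fourth moment finite.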

6 Multivariate Skew Distributions

Starting with Azzalini and Dalla Valle (1996), who suggest methods for obtaining multivariate skew-normal distributions, several authors discuss different approaches for obtaining asymmetric multivariate distributions by skewing a spherically or an elliptically symmetric distribution. We mention here Branco and Dey (2001), who extend the work in Azzalini and Dalla Valle (1996) to multivariate skew-elliptical distributions; Arnold and Beaver (2002), who use a conditioning approach on a d-dimensional random vector to an elliptically contoured distribution (although this conditioning is not strictly necessary); Sahu et al. (2003), who use transformation and conditioning techniques; Dey and Liu (2005), who discuss an approach based on linear constraints; and Genton and Loperfido (2005), who introduce a general class of multivariate skew-elliptical distributions, the so-called multivariate generalized skew-elliptical (GSE) distribution. See also Genton (2004) and the references therein.

Here we provide a systematic treatment of several skew-multivariate distributions by providing general formulae for cumulant vectors up to the fourth order, which are needed in deriving the corresponding skewness and kurtosis measures discussed in Sections 2 and 3.

6.1 Multivariate Skew Spherical Distributions

Let \(\mathbf {Z}=\left [ \mathbf {Z}_{1}^{\top },\mathbf {Z}_{2}^{\top }\right ]^{\top }\) be spherically symmetric distributed in dimension (m + d). Define the canonical fundamental skew-spherical (CFSS) distribution (Arellano-Valle and Genton 2005, Prop. 3.3) by

where the modulus is taken element-wise, Δ is the d × m skewness matrix, and R is the generating variate. A simple construction of Δ is given by \(\boldsymbol {\Delta }=\boldsymbol {\Lambda }\left (\mathbf {I}_{m}+\boldsymbol {\Lambda }^{\top }\boldsymbol {\Lambda }\right )^{-1/2}\) with some real matrix Λ of dimension d × m. If Z = RU, then \(\mathbf {Z}_{1}=p_{1}R\mathbf {U}_{1}\in \mathbb {R}^{m}\) and \(\mathbf {Z}_{2}=p_{2}R\mathbf {U}_{2}\in \mathbb {R}^{d}\), where \({p_{1}^{2}}\) is Beta\(\left (m/2,d/2\right ) \) and \({p_{2}^{2}}=1-{p_{1}^{2}}\) is Beta\(\left (d/2,m/2\right ) \). The variables R, \({p_{1}^{2}}\), \(\mathbf {U}_{1}\) and \(\mathbf {U}_{2}\) are independent by Theorem 2.6 in Fang et al. (2017). Since Z has dimension m + d, we have EZ = 0 and \(\operatorname *{Cov}\left (\mathbf {Z}\right ) =\operatorname *{E}R^{2}\,\mathbf {I}_{m+d}/\left (m+d\right ) . \) Introduce the function

and,

written as \(G_{k}\) for short. We may express the joint moments of \(p_{1}\), \(p_{2}\) as

In particular \(\operatorname *{E}p_{1}=G_{1,0}\left (m,d\right ) \) and \(\operatorname *{E}p_{1}p_{2}=G_{1,1}\left (m,d\right ) \). The cumulants of X can be obtained from these moments. Using Eq. 6.4 above and Lemma 1 for the moments of \(p_{k}\) and \(\left \vert \mathbf {U}_{j}\right \vert \), we obtain the following result (see the Appendix for the proof).

Theorem 2.

Let the d-vector X have a CFSS distribution as defined in Eq. 6.1 and denote by \(\mathbf {V}_{2}=\left (\mathbf {I}-\boldsymbol {\Delta {\Delta }}^{\top }\right )^{1/2}\mathbf {Z}_{2}\) for ease of notation, with

The moments of X, assuming \(\operatorname {E}(R^{4})<\infty \), are given by:

where \(\boldsymbol {\Delta }_{1}=\left (\mathbf {I}_{d}-\boldsymbol {\Delta {\Delta }}^{\top }\right )^{1/2}\), with the commutators as given in Eqs. 1.1 and 1.2. The cumulants of X are obtained by using the relations in Section 1.2.

Remark 3.

It can be seen that the cumulants depend on Δ, and the moments of the generating variate R. For instance

where

6.2 Multivariate Skew-t Distribution

This distribution goes back to Azzalini and Capitanio (2003); see also Kim and Mallick (2003) for a derivation of moments up to the fourth order and of moments of quadratic forms of the multivariate skew t-distribution. Let X be a d-dimensional vector having a multivariate skew-normal distribution, \(SN_{d}\left (0,\boldsymbol {\Omega },\boldsymbol {\alpha }\right )\) (see Section 6.3 below for details), and let \(S^{2}\) be a random variable, independent of X, which follows a \(\chi ^{2}\) distribution with m degrees of freedom. Then the random vector

$$ \mathbf{V}=\boldsymbol{\mu}+\frac{\sqrt{m}}{S}\mathbf{X} $$

has a multivariate skew t-distribution denoted by \(St_{d}\left (\boldsymbol {\mu },\boldsymbol {\Omega },\boldsymbol {\alpha },m\right ) \). The cumulants of V up to the fourth order are given in the next theorem, with a detailed proof in the Appendix.
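The construction of V can be explored numerically. The following is a minimal sketch rather than the authors' code: it assumes the usual Azzalini and Dalla Valle parametrization \(\boldsymbol{\delta}=\boldsymbol{\Omega}\boldsymbol{\alpha}/\sqrt{1+\boldsymbol{\alpha}^{\top}\boldsymbol{\Omega}\boldsymbol{\alpha}}\), draws the skew-normal vector by the sign-flip (hidden truncation) method, and compares the sample mean with \(\boldsymbol{\mu}+\operatorname{E}(\sqrt{m}/S)\sqrt{2/\pi}\,\boldsymbol{\delta}\), using the standard chi-square moment \(\operatorname{E}(\sqrt{m}/S)=\sqrt{m/2}\,\Gamma((m-1)/2)/\Gamma(m/2)\). All function names are hypothetical.

```python
import math
import numpy as np

def delta_from_alpha(Omega, alpha):
    # Assumed parametrization: delta = Omega alpha / sqrt(1 + alpha' Omega alpha)
    return Omega @ alpha / math.sqrt(1.0 + alpha @ Omega @ alpha)

def sample_skew_normal(Omega, alpha, n, rng):
    """Draw n rows from SN_d(0, Omega, alpha) by the sign-flip method."""
    d = len(alpha)
    delta = delta_from_alpha(Omega, alpha)
    # Joint covariance of (X0, X): Var(X0) = 1, Cov(X0, X) = delta, Cov(X) = Omega
    cov = np.block([[np.ones((1, 1)), delta[None, :]],
                    [delta[:, None], Omega]])
    Y = rng.multivariate_normal(np.zeros(d + 1), cov, size=n)
    sign = np.where(Y[:, :1] > 0, 1.0, -1.0)  # keep X if X0 > 0, else use -X
    return sign * Y[:, 1:]

def sample_skew_t(mu, Omega, alpha, m, n, rng):
    """V = mu + sqrt(m) X / S with S^2 ~ chi-square(m), independent of X."""
    X = sample_skew_normal(Omega, alpha, n, rng)
    S = np.sqrt(rng.chisquare(m, size=n))
    return mu + math.sqrt(m) * X / S[:, None]

def skew_t_mean(mu, Omega, alpha, m):
    """Mean of V for m > 1, using E[sqrt(m)/S] of a chi-square variate."""
    e_sm = math.sqrt(m / 2.0) * math.exp(
        math.lgamma((m - 1) / 2.0) - math.lgamma(m / 2.0))
    return mu + e_sm * math.sqrt(2.0 / math.pi) * delta_from_alpha(Omega, alpha)

rng = np.random.default_rng(1)
mu, Omega, alpha = np.zeros(2), np.eye(2), np.array([2.0, -1.0])
V = sample_skew_t(mu, Omega, alpha, m=10, n=200_000, rng=rng)
print(V.mean(axis=0), skew_t_mean(mu, Omega, alpha, m=10))
```

The two printed vectors should agree up to Monte Carlo error; the same samples can be used to estimate higher-order cumulants for comparison with Theorem 3.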

Theorem 3.

Let \(\mathbf {V}\in St_{d}\left (\boldsymbol {\mu },\boldsymbol {\Omega },\boldsymbol {\alpha },m\right ) \) with m > 4, then its first four cumulants are:

From Theorem 3 the skewness and kurtosis vectors are \(\boldsymbol {\kappa }_{3}=\left (\boldsymbol {\Sigma }_{\mathbf {V} }^{-1/2}\right )^{\otimes 3}\) \(\underline {\operatorname *{Cum}}_{3}\left (\mathbf {V}\right ) ,\) and \(\boldsymbol {\kappa }_{4}=\left (\boldsymbol {\Sigma }_{\mathbf {V}}^{-1/2}\right )^{\otimes 4} \underline {\operatorname *{Cum}}_{4}\left (\mathbf {V}\right ) \), where

6.3 Multivariate Skew-Normal Distribution

Consider the multivariate skew-normal distribution introduced by Azzalini and Dalla Valle (1996), whose marginal densities are scalar skew-normals. A d-dimensional random vector X is said to have a multivariate skew-normal distribution, \(\text {SN}_{d}\left (\boldsymbol {\mu },\boldsymbol {\Omega },\boldsymbol {\alpha }\right ) \) with shape parameter α if it has the density function

where \(\varphi \left (\mathbf {X};\boldsymbol {\mu },\boldsymbol {\Omega }\right ) \) is the d-dimensional normal density with mean μ and correlation matrix Ω; here φ and Φ denote the univariate standard normal density and the cdf. The cumulant function of \(\text {SN}_{d}\left (0,\boldsymbol {\Omega },\boldsymbol {\alpha }\right ) \) is given by

where

Note that the cumulants of order higher than 2 do not depend on Ω but only on δ. Here we use the approach discussed in this paper to get explicit expressions for the cumulants of the multivariate SN distribution. See also Genton et al. (2001) and Kollo et al. (2018) for moments of SN and its quadratic forms. The proof of the next Lemma is similar to that of Lemma 5 below, and is omitted.

Lemma 4.

The cumulants of the multivariate skew-normal distribution, \(\text {SN}_{d}\left (\boldsymbol {\mu },\boldsymbol {\Omega },\boldsymbol {\alpha }\right ) \) are the following:

while for k = 3,4…, \( \underline {\operatorname *{Cum}}_{k}\left (\mathbf {X}\right ) =c_{k}\boldsymbol {\delta }^{\otimes k}. \) In particular

From this Lemma one observes that \(\boldsymbol {\delta }=\sqrt {\pi /2} \boldsymbol {\mu }_{\mathbf {X}}\), from which \(\underline {\operatorname *{Cum} }_{3}\) \(\left (\mathbf {X}\right ) =\left (2-\frac {\pi }{2}\right ) \boldsymbol {\mu }_{\mathbf {X}}^{\otimes 3}\). Hence Mardia’s skewness measure becomes

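and the kurtosis measure \( \beta _{2,d}=\left (2\pi -6\right )\left (\operatorname *{vec} \mathbf {I}_{d^{2}}\right )^{\top }\boldsymbol {\mu }_{\mathbf {X}}^{\otimes 4}+d(d+2), \) where the factor \(2\pi -6\) is \(\left (\pi /2\right )^{2}\) times the coefficient \(4\frac {2}{\pi }-6\left (\frac {2}{\pi }\right )^{2}\) of \(\boldsymbol {\delta }^{\otimes 4}\) in the fourth cumulant.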
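The constants appearing here can be checked with a few lines of arithmetic. The sketch below assumes that \(c_{k}\) is the kth cumulant of \(\left\vert Z\right\vert\) for a standard normal Z, which is consistent with the relation \(\underline{\operatorname{Cum}}_{3}(\mathbf{X})=(2-\frac{\pi}{2})\boldsymbol{\mu}_{\mathbf{X}}^{\otimes 3}\) above and with the expression for \(\boldsymbol{\kappa}_{X,4}\) used in the proof of Theorem 3; the function name is hypothetical.

```python
import math

def cumulants_from_moments(m1, m2, m3, m4):
    """Univariate moment-to-cumulant conversion up to order four."""
    k1 = m1
    k2 = m2 - m1**2
    k3 = m3 - 3 * m1 * m2 + 2 * m1**3
    k4 = m4 - 4 * m1 * m3 - 3 * m2**2 + 12 * m1**2 * m2 - 6 * m1**4
    return k1, k2, k3, k4

# Raw moments of |Z|, Z standard normal: E|Z|^k = 2^(k/2) Gamma((k+1)/2) / sqrt(pi)
a = math.sqrt(2.0 / math.pi)
k1, k2, c3, c4 = cumulants_from_moments(a, 1.0, 2.0 * a, 3.0)

# Rescale from delta to mu_X = sqrt(2/pi) delta, i.e. delta = sqrt(pi/2) mu_X
third_factor = c3 * (math.pi / 2.0) ** 1.5   # factor of mu_X^{(x)3}
fourth_factor = c4 * (math.pi / 2.0) ** 2    # factor of mu_X^{(x)4}
print(c3, c4, third_factor, fourth_factor)
```

Under this assumption the third-order factor reproduces \(2-\pi/2\), and the fourth-order factor works out to \(2\pi-6\).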

6.4 Canonical Fundamental Skew-Normal (CFUSN) Distribution

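Arellano-Valle and Genton (2005) introduced the CFUSN distribution (cf. their Proposition 2.3) to include all existing definitions of SN distributions. The marginal stochastic representation of X with distribution \(\text {CFUSN}_{d,m}\left (\boldsymbol {\Delta }\right ) \) is given by

$$ \mathbf{X}=\boldsymbol{\Delta}\left\vert \mathbf{Z}_{1}\right\vert +\left( \mathbf{I}_{d}-\boldsymbol{\Delta\Delta}^{\top}\right)^{1/2}\mathbf{Z}_{2}, $$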

where Δ is the d × m skewness matrix such that \(\left \Vert \boldsymbol {\Delta }\underline {a}\right \Vert <1\) for all \(\left \Vert \underline {a}\right \Vert =1\), and \(\mathbf {Z}_{1}\in \mathcal {N}\left (0,\mathbf {I}_{m}\right ) \) and \(\mathbf {Z}_{2} \in \mathcal {N}\left (0,\mathbf {I}_{d}\right ) \) are independent (Arellano-Valle and Genton, 2005, Proposition 2.2). A simple construction of Δ is \(\boldsymbol {\Delta }=\boldsymbol {\Lambda }\left (\mathbf {I}_{m}+\boldsymbol {\Lambda }^{\top }\boldsymbol {\Lambda }\right )^{-1/2}\) with some real matrix Λ of dimension d × m. The \(\text {CFUSN}_{d,m}\left (\boldsymbol {\mu },\boldsymbol {\Sigma },\boldsymbol {\Delta }\right ) \) distribution can be defined via the linear transformation \(\boldsymbol {\mu }+\boldsymbol {\Sigma }^{1/2}\mathbf {X}\). We then have the following.

The cumulant function of a \(\text {CFUSN}_{d,m}\left (0,\boldsymbol {\Sigma },\boldsymbol {\Delta }\right ) \) is

where \(\Phi _{m}\) denotes the standard normal distribution function of dimension m. Note again that the cumulants of order higher than 2 do not depend on Σ but just on Δ. The proof of the next Lemma is given in the Appendix.

Lemma 5.

The cumulants of \(CFUSN_{d,m}\left (0,\boldsymbol {\Sigma },\boldsymbol {\Delta }\right ) \) are given by

and for k = 3,4…

where \(\mathbf {e}_{k}\) denotes the kth unit vector in \(\mathbb {R}^{m} \). Expressions for \(c_{k}\) are provided in Lemma 4.
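The construction \(\boldsymbol{\Delta}=\boldsymbol{\Lambda}(\mathbf{I}_{m}+\boldsymbol{\Lambda}^{\top}\boldsymbol{\Lambda})^{-1/2}\) and the stochastic representation of this section lend themselves to a quick simulation. The sketch below is illustrative only: the matrix `Lam` is an arbitrary choice, the helper names are hypothetical, and the checks rely on \(\operatorname{E}\left\vert \mathbf{Z}_{1}\right\vert =\sqrt{2/\pi}\,\mathbf{1}_{m}\), which gives \(\operatorname{E}\mathbf{X}=\sqrt{2/\pi}\,\boldsymbol{\Delta}\mathbf{1}_{m}\) and \(\operatorname{Cov}(\mathbf{X})=\mathbf{I}_{d}-\frac{2}{\pi}\boldsymbol{\Delta\Delta}^{\top}\).

```python
import numpy as np

def sym_power(M, p):
    """M^p for a symmetric positive semi-definite matrix, via eigendecomposition."""
    w, V = np.linalg.eigh(M)
    w = np.clip(w, 0.0, None)  # guard against tiny negative round-off
    return (V * w**p) @ V.T

def skewness_matrix(Lam):
    """Delta = Lam (I_m + Lam' Lam)^(-1/2); its spectral norm is below 1."""
    m = Lam.shape[1]
    return Lam @ sym_power(np.eye(m) + Lam.T @ Lam, -0.5)

def sample_cfusn(Delta, n, rng):
    """Rows are draws of X = Delta |Z1| + (I - Delta Delta')^(1/2) Z2."""
    d, m = Delta.shape
    Z1 = rng.standard_normal((n, m))
    Z2 = rng.standard_normal((n, d))
    root = sym_power(np.eye(d) - Delta @ Delta.T, 0.5)
    return np.abs(Z1) @ Delta.T + Z2 @ root

rng = np.random.default_rng(0)
Lam = np.array([[1.0, 0.0], [2.0, -1.0]])   # arbitrary illustrative choice
Delta = skewness_matrix(Lam)
X = sample_cfusn(Delta, 200_000, rng)

mean_theory = np.sqrt(2.0 / np.pi) * Delta @ np.ones(Delta.shape[1])
cov_theory = np.eye(2) - (2.0 / np.pi) * Delta @ Delta.T
print(np.linalg.norm(Delta, 2), X.mean(axis=0), mean_theory)
```

Sample estimates of the third and fourth cumulant vectors from `X` can then be compared with the closed forms of Lemma 5.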

7 Some Illustrative Examples

Figure 1 provides the contour plots of the density of a \(\text {CFUSN}_{2,2}(\underline {0},\boldsymbol {I}_{2},\boldsymbol {\Delta })\) for selected choices of the generating Λ as described under each figure.

Table 1 reports the values of skewness and kurtosis indexes computed with the help of Lemma 5. These results have been further verified by simulations. The index of Malkovich and Afifi and that of Balakrishnan et al. are not reported as they are equivalent to Mardia and MSR measures respectively.

Among the skewness indexes, note that Kollo’s vector measure is not able to capture the presence of skewness (although it is quite strong) in case b). As far as the kurtosis indexes are concerned, note that the total kurtosis κ4 and κ4,D are quite effective in showing differences among the four cases.

Figure 2 reports the contour plots of the density of \(\text {St}_{2}(\underline {0},\boldsymbol {I}_{2},\boldsymbol {\alpha },10)\) for some choices of α as given under the figure. Table 2 reports the corresponding values of skewness and kurtosis measures.
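A simulation check of such index values might be organised as in the following sketch. It is not the code used for the tables: the function names are hypothetical and the data are an arbitrary skewed sample. It estimates the skewness vector as the average of \(\mathbf{y}^{\otimes 3}\) over standardized observations, and verifies two algebraic identities for the sample versions: the squared norm of that vector equals Mardia's skewness statistic \(n^{-2}\sum_{i,j}(\mathbf{y}_{i}^{\top}\mathbf{y}_{j})^{3}\), and \((\operatorname{vec}\mathbf{I}_{d^{2}})^{\top}\) applied to the average of \(\mathbf{y}^{\otimes 4}\) equals Mardia's kurtosis statistic \(n^{-1}\sum_{i}(\mathbf{y}_{i}^{\top}\mathbf{y}_{i})^{2}\).

```python
import numpy as np

def standardize(X):
    """Centre the rows of X and whiten them with the symmetric inverse square root."""
    Xc = X - X.mean(axis=0)
    S = Xc.T @ Xc / len(X)
    w, V = np.linalg.eigh(S)
    return Xc @ ((V / np.sqrt(w)) @ V.T)

def moment_vector(Y, order):
    """Average of the Kronecker power y^{(x) order} over the rows of Y (order 3 or 4)."""
    spec = {3: 'ni,nj,nk->ijk', 4: 'ni,nj,nk,nl->ijkl'}[order]
    return np.einsum(spec, *([Y] * order)).reshape(-1) / len(Y)

def mardia_skewness(Y):
    return np.mean((Y @ Y.T) ** 3)

def mardia_kurtosis(Y):
    return np.mean(np.sum(Y**2, axis=1) ** 2)

rng = np.random.default_rng(2)
d = 3
Y = standardize(rng.exponential(size=(400, d)))   # arbitrary skewed sample

kappa3_hat = moment_vector(Y, 3)                  # third moment = third cumulant here
vec_I = np.eye(d * d).reshape(-1)                 # vec of the d^2 x d^2 identity
print(kappa3_hat @ kappa3_hat, mardia_skewness(Y))
print(vec_I @ moment_vector(Y, 4), mardia_kurtosis(Y))
```

Each printed pair should coincide up to floating-point error, since the identities hold exactly for the sample quantities.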

8 Concluding Remarks

In this paper we have taken a vectorial approach to express information about skewness and kurtosis of multivariate distributions. This can be achieved by applying a vector derivative operator, which we call the T-derivative, to the cumulant generating function. Although some of our methods may appear similar to existing results, we demonstrate that they lead to a direct and natural way of expressing higher order cumulants and moments in the multivariate case. This approach can also be easily extended to obtain moments and cumulants beyond the fourth order.

Our careful analysis of existing measures of skewness and kurtosis via the third and fourth order cumulant vectors reveals some hidden features and relationships among them.

Explicit formulae for cumulant vectors for several distributions have been obtained. Several results are new, such as those in Lemmas 1 and 3, while others complement and complete existing results available in the literature.

The availability of explicit formulae for κ3 and κ4 together with available computing formulae for commutators provides a systematic treatment of higher order moments and cumulants for general classes of symmetric and asymmetric multivariate distributions, which are needed in applications, estimation and testing.

References

Arellano-Valle, R B and Genton, M G (2005). On fundamental skew distributions. Journal of Multivariate Analysis 96, 1, 93–116.

Arnold, B C and Groeneveld, R A (1995). Measuring skewness with respect to the mode. The American Statistician 49, 1, 34–38.

Arnold, B C and Beaver, R J (2002). Skewed multivariate models related to hidden truncation and/or selective reporting. Test 11, 1, 7–54.

Averous, J and Meste, M (1997). Skewness for multivariate distributions: two approaches. The Annals of Statistics 25, 5, 1984–1997.

Azzalini, A and Dalla Valle, A (1996). The multivariate skew-normal distribution. Biometrika 83, 4, 715–726.

Azzalini, A and Capitanio, A (1999). Statistical applications of the multivariate skew normal distribution. Journal of the Royal Statistical Society: Series B (Statistical Methodology) 61, 3, 579–602.

Azzalini, A and Capitanio, A (2003). Distributions generated by perturbation of symmetry with emphasis on a multivariate skew t-distribution. Journal of the Royal Statistical Society: Series B (Statistical Methodology) 65, 2, 367–389.

Balakrishnan, N, Brito, M R and Quiroz, A J (2007). A vectorial notion of skewness and its use in testing for multivariate symmetry. Communications in Statistics. Theory and Methods 36, 9, 1757–1767.

Balakrishnan, N and Scarpa, B (2012). Multivariate measures of skewness for the skew-normal distribution. Journal of Multivariate Analysis 104, 1, 73–87.

Baringhaus, L (1991a). Testing for spherical symmetry of a multivariate distribution. The Annals of Statistics 19, 2, 899–917.

Baringhaus, L and Henze, N (1991b). Limit distributions for measures of multivariate skewness and kurtosis based on projections. Journal of Multivariate Analysis 38, 1, 51–69.

Baringhaus, L and Henze, N (1992). Limit distributions for Mardia’s measure of multivariate skewness. The Annals of Statistics 20, 4, 1889–1902.

Berkane, M and Bentler, P M (1986). Moments of elliptically distributed random variates. Statistics & Probability Letters 4, 6, 333–335.

Branco, M D and Dey, D K (2001). A general class of multivariate skew-elliptical distributions. Journal of Multivariate Analysis 79, 1, 99–113.

Dey, D K and Liu, J (2005). A new construction for skew multivariate distributions. Journal of Multivariate Analysis 95, 2, 323–344.

Ekström, M and Jammalamadaka, S R (2012). A general measure of skewness. Statistics & Probability Letters 82, 8, 1559–1568.

Fang, K T, Kotz, S and Ng, K W (2017). Symmetric Multivariate and Related Distributions. Chapman and Hall/CRC, London.

Genton, M G, He, L and Liu, X (2001). Moments of skew-normal random vectors and their quadratic forms. Statistics & Probability Letters 51, 4, 319–325.

Genton, MG (2004). Skew-elliptical distributions and their applications: a journey beyond normality. CRC Press, London.

Genton, M G and Loperfido, N M R (2005). Generalized skew-elliptical distributions and their quadratic forms. Annals of the Institute of Statistical Mathematics 57, 2, 389–401.

Graham, A (2018). Kronecker products and matrix calculus with applications. Courier Dover Publications, USA.

Isogai, T (1982). On a measure of multivariate skewness and a test for multivariate normality. Annals of the Institute of Statistical Mathematics 34, 1, 531–541.

Jammalamadaka, S R, Subba Rao, T and Terdik, G (2006). Higher order cumulants of random vectors and applications to statistical inference and time series. Sankhya (Ser A, Methodology) 68, 326–356.

Johnson, N, Kotz, S and Balakrishnan, N (1994). Continuous Univariate Distributions, 1, 2nd edn. Houghton Mifflin, Boston.

Kim, HJ and Mallick, BK (2003). Moments of random vectors with skew t distribution and their quadratic forms. Statistics & Probability Letters 63, 4, 417–423. https://doi.org/10.1016/S0167-7152(03)00121-4.

Klar, B (2002). A treatment of multivariate skewness, kurtosis, and related statistics. Journal of Multivariate Analysis 83, 1, 141–165.

Kollo, T (2008). Multivariate skewness and kurtosis measures with an application in ICA. Journal of Multivariate Analysis 99, 10, 2328–2338.

Kollo, T, Käärik, M and Selart, A (2018). Asymptotic normality of estimators for parameters of a multivariate skew-normal distribution. Communications in Statistics-Theory and Methods 47, 15, 3640–3655.

Kotz, S and Nadarajah, S (2004). Multivariate t-distributions and their applications. Cambridge University Press, Cambridge.

Koziol, JA (1989). A note on measures of multivariate kurtosis. Biometrical Journal 31, 5, 619–624. https://doi.org/10.1002/bimj.4710310517.

MacRae, E C (1974). Matrix derivatives with an application to an adaptive linear decision problem. The Annals of Statistics 2, 2, 337–346.

Magnus, JR and Neudecker, H (1980). The elimination matrix: Some lemmas and applications. SIAM Journal on Algebraic Discrete Methods 1, 4, 422–449. https://doi.org/10.1137/0601049.

Magnus, JR and Neudecker, H (1999). Matrix differential calculus with applications in statistics and econometrics. Wiley, Chichester, revised reprint of the 1988 original.

Malkovich, J F and Afifi, A A (1973). On tests for multivariate normality. Journal of the American Statistical Association 68, 341, 176–179.

Mardia, K V (1970). Measures of multivariate skewness and kurtosis with applications. Biometrika 57, 519–530.

Mathai, AM (1997). Jacobians of matrix transformations and functions of matrix arguments. World Scientific Publishing Company.

McCullagh, P (1987). Tensor methods in statistics. Monographs on Statistics and Applied Probability. Chapman & Hall, London.

Meijer, E (2005). Matrix algebra for higher order moments. Linear Algebra and its Applications 410, 112–134. https://doi.org/10.1016/j.laa.2005.02.040.

Móri, T F, Rohatgi, V K and Székely, G J (1994). On multivariate skewness and kurtosis. Theory of Probability & Its Applications 38, 3, 547–551.

Muirhead, RJ (2009). Aspects of multivariate statistical theory, 197. Wiley, New York.

Rao, CR (1948a). Tests of significance in multivariate analysis. Biometrika 35, 1/2, 58–79.

Rao, CR (1948b). The utilization of multiple measurements in problems of biological classification. Journal of the Royal Statistical Society Series B (Methodological) 10, 2, 159–203.

Sahu, S K, Dey, D K and Branco, M D (2003). A new class of multivariate skew distributions with applications to Bayesian regression models. Canadian Journal of Statistics 31, 2, 129–150.

Serfling, R J (2004). Multivariate symmetry and asymmetry. Encyclopedia of statistical sciences 8, 5338–5345.

Song, K S (2001). Rényi information, loglikelihood and an intrinsic distribution measure. Journal of Statistical Planning and Inference 93, 1-2, 51–69.

Speed, T P (1990). Invariant moments and cumulants. In: Coding theory and design theory, Part II, IMA Vol. Math. Appl., vol. 21, Springer, New York, pp. 319–335.

Srivastava, M S (1984). A measure of skewness and kurtosis and a graphical method for assessing multivariate normality. Statistics & Probability Letters 2, 5, 263–267.

Sumikawa, T, Koizumi, K and Seo, T (2013). Measures of multivariate skewness and kurtosis in high-dimensional framework. Hiroshima Statistical Research Group Technical Reports, Technical Report 13, 3, 22.

Terdik, Gy (2002). Higher order statistics and multivariate vector Hermite polynomials for nonlinear analysis of multidimensional time series. Teor Ver Matem Stat(Teor Imovirnost ta Matem Statyst) 66, 147–168.

Acknowledgments

The work of the third author is partially supported by the Project EFOP3.6.2-16-2017-00015 of the European Union and co-financed by the European Social Fund.

Funding

Open access funding provided by Università degli Studi di Trento within the CRUI-CARE Agreement.

Appendix

1.1 Commutation matrices

In order to define the skewness and kurtosis in terms of the moments, some preliminary discussion of what are known as “commutation matrices” is helpful. We use the notation 1 : d to denote \(1,2,\dots ,d\) and \(\mathbf {E}_{i,j}\) to represent the (d × d) elementary matrix, i.e. the matrix whose entries are all zero except for the (i,j)th entry, which is 1.

Note that the vector \(\operatorname *{vec}\mathbf {E}_{i,j}^{\intercal }=\operatorname *{vec}\mathbf {E}_{j,i}\) is the \(\left (\left (i-1\right ) d+j\right )^{th}\) unit vector, i = 1 : d, j = 1 : d, of the identity matrix \(\mathbf {I}_{d^{2}}\). We now define the matrix \(\mathbf {K}_{\left (1,2\right )}\) by stacking these column vectors \(\operatorname *{vec} \mathbf {E}_{i,j}^{\intercal }\) into a matrix according to the order defined by the operator vec, i.e. we follow the column-wise ordering of entries, \(\operatorname *{vec}\mathbf {E}_{1,1}^{\intercal }\) followed by \(\operatorname *{vec}\mathbf {E}_{2,1}^{\intercal }\) etc., to obtain a matrix of dimension \(d^{2}\times d^{2}\) (cf. Graham 2018). Then, for any \(\mathbf {a}_{1},\mathbf {a}_{2}\in \mathbb {R}^{d}\),

$$ \mathbf{K}_{\left( 1,2\right) }\left( \mathbf{a}_{1}\otimes\mathbf{a}_{2}\right) =\mathbf{a}_{2}\otimes\mathbf{a}_{1}, $$

which is the reason why it is called a commutation matrix (see e.g. Graham 2018, Magnus and Neudecker 1999). By interchanging neighboring elements of a tensor product, we can reach any permutation of them. For instance, if \(\mathfrak {p}=\left (i_{1},i_{2},i_{3},i_{4}\right ) \) is a permutation of the numbers 1,2,3,4, we can introduce the commutator matrix \(\mathbf {K} _{\mathfrak {p}}\) for changing the order of a tensor product, namely

In particular if we set \(\mathfrak {p}_{1}=\left (1,3,2,4\right ) \)

It is worth noting that \(\mathbf {K}_{\left (1,2\right )}\), and in general each commutator matrix, depends on the dimensions of the vectors under consideration. In our example, the dimension of \(\mathbf {K}_{\left (1,2\right )}\) is \(d^{2}\times d^{2}\), while the dimension of \(\mathbf {K}_{\mathfrak {p}_{1}}\) is \(d^{4}\times d^{4}\).
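The commutation matrix is easy to build and test numerically. The following sketch is an illustration with hypothetical function names: it constructs \(\mathbf{K}_{(1,2)}\) from the defining property \(\mathbf{K}\operatorname{vec}\mathbf{A}=\operatorname{vec}\mathbf{A}^{\intercal}\) and forms the commutator for \(\mathfrak{p}_{1}=(1,3,2,4)\) as \(\mathbf{I}_{d}\otimes\mathbf{K}_{(1,2)}\otimes\mathbf{I}_{d}\), which interchanges the two middle factors of a four-fold tensor product.

```python
import numpy as np

def commutation_matrix(d):
    """d^2 x d^2 matrix K with K @ vec(A) = vec(A.T) for any d x d matrix A."""
    K = np.zeros((d * d, d * d))
    for i in range(d):
        for j in range(d):
            # vec stacks columns: entry (i, j) of A sits at position j*d + i,
            # and must be moved to position i*d + j, where vec(A.T) holds it.
            K[i * d + j, j * d + i] = 1.0
    return K

def swap_middle(d):
    """Commutator for p1 = (1, 3, 2, 4): I_d (x) K (x) I_d, of size d^4 x d^4."""
    I = np.eye(d)
    return np.kron(I, np.kron(commutation_matrix(d), I))

rng = np.random.default_rng(0)
d = 3
a1, a2, a3, a4 = rng.standard_normal((4, d))
K = commutation_matrix(d)
Kp1 = swap_middle(d)

print(np.allclose(K @ np.kron(a1, a2), np.kron(a2, a1)))
print(K.shape, Kp1.shape)
```

Since \(\mathbf{K}_{(1,2)}\) is a symmetric permutation matrix, applying it twice returns the original tensor product.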

Commutator matrices are very useful for expressing the formulae for moments in terms of cumulants and vice versa, as shown in the next Section 1.2.

1.2 Conversion of moments and cumulants

Since we will be dealing with the cumulants of different variables, from now on, we will index the cumulants by the variable as well and write \(\boldsymbol {\kappa } _{X,k}=\underline {\operatorname *{Cum}}_{k}\left (\mathbf {X}\right ) \). In terms of the commutator matrices, we can write

where

while

where

For cumulants we have (see e.g. Jammalamadaka et al., 2006)

where the commutators are as defined in Eqs. 1.1 and 1.2.

1.3 Proofs

We start with the proof of Theorem 3, which is one of the central results of the paper. Proofs of the remaining results follow.

Proof of Theorem 3.

In order to derive the cumulants of V, set \(S_{m}=\frac {\sqrt {m}}{S}\) and let

Note that the cumulants of W are the same as those of V except for the mean, and that (cf. Johnson et al., 1994, p. 452)

where \(G_{k}(m)\) is as given in Eq. 6.3. We have

Consider first the computation of mean and variance.

with δ, as well as α, given in Eq. 6.5. From the formula for cumulants of products (see Terdik 2002) we need to compute:

where, from Lemma 4,

and

For m > 2, using the results above

We note that Sahu et al. (2003, p. 137) provide a different approach and expression for the second cumulant. Consider now the third order cumulant. Again, from the formula for cumulants of products (see Terdik 2002) we need to compute:

Notice that W is a scalar multiple of a vector valued variate, making the general formula for vector valued case somewhat simplified. Now, we consider separately the terms in Eq. 1.4: first those depending on X, namely

Here, as well as in the next set of calculations, we use Lemma 4 which expresses the cumulants of a multivariate skew normal variable in terms of its parameters. As a side result we have

since the third moment

cf. Genton et al. (2001) where the mean is zero. The next term in Eq. 1.4 contains

Plugging these results into Eq. 1.4, we obtain

The cumulants of \(S_{m}\) that are needed come from the expressions for cumulants in terms of moments and the moment formula (1.3). They are as follows

and

\(C_{1}\left (S_{m}\right )^{3}\) is simply the third power of the expected value. Finally we collect the coefficients in Eq. 1.6 of different terms. Starting with \(\mathbf {K}_{2,1}\left (\boldsymbol {\kappa }_{X,2} \otimes \boldsymbol {\kappa }_{X,1}\right ) \), we have

Then collecting the coefficients of \(\boldsymbol {\delta }^{\otimes 3}\) gives

Putting these results together, we obtain

For the fourth cumulant (k = 4), again expressing the cumulant of a product in terms of products of cumulants (see Terdik 2002), and writing \(C_{k}\left (S_{m}\right ) =\operatorname *{Cum}\nolimits _{k}\left (S_{m}\right ) \) for short, we have

Remark 4.

In the most general case, when one considers cumulants of products such as \(\operatorname *{Cum}\nolimits _{4}\left (X_{1}Y_{1},X_{2}Y_{2} ,X_{3}Y_{3},X_{4}Y_{4}\right ) \) in terms of products of cumulants, there are 2465 terms, corresponding to the indecomposable partitions with respect to the partition \(\mathcal {L}=\left \{ \left (1,2\right ) ,\left (3,4\right ) ,\left (5,6\right ) ,\left (7,8\right ) \right \} \) of 8 elements. In our case the expression simplifies because we consider four products of the same independent variables, \(\operatorname *{Cum}\nolimits _{4}\left (XY\right ) \). One can also check these formulae by expressing the 4th order cumulant in terms of expected values and using independence. In doing so, one should pay attention to the fact that the first order cumulants are not zero.
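The count of 2465 terms can be reproduced by brute force. In the sketch below (function names are hypothetical), the 8 elements are arranged in four rows, one per product \(X_{i}Y_{i}\), and a partition is taken to be indecomposable when its blocks link all four rows into a single connected piece, which is the usual definition.

```python
def set_partitions(items):
    """Yield every partition of the list `items` as a list of blocks."""
    if not items:
        yield []
        return
    first, rest = items[0], items[1:]
    for partition in set_partitions(rest):
        # place `first` into each existing block in turn ...
        for k in range(len(partition)):
            yield partition[:k] + [[first] + partition[k]] + partition[k + 1:]
        # ... or into a new block of its own
        yield [[first]] + partition

def is_indecomposable(partition, row_of):
    """True if the blocks connect all rows (union-find over row labels)."""
    parent = {r: r for r in set(row_of.values())}

    def find(r):
        while parent[r] != r:
            r = parent[r]
        return r

    for block in partition:
        root = find(row_of[block[0]])
        for element in block[1:]:
            parent[find(row_of[element])] = root
    return len({find(r) for r in parent}) == 1

def count_indecomposable(n_pairs):
    """Number of indecomposable partitions for the rows (1,2), (3,4), ..."""
    elements = list(range(1, 2 * n_pairs + 1))
    row_of = {e: (e - 1) // 2 for e in elements}
    return sum(is_indecomposable(p, row_of) for p in set_partitions(elements))

n_terms = count_indecomposable(4)
print(n_terms)
```

The same function with two rows gives the number of terms in the expansion of \(\operatorname{Cum}_{2}(X_{1}Y_{1},X_{2}Y_{2})\).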

The cumulants contained in this expression are considered in Lemma 4. The cumulants of X, except the second order one, are constant multiples of the tensor powers \(\boldsymbol {\delta }^{\otimes k}\), thus making the use of commutator matrices unnecessary. The products including \(\boldsymbol {\kappa }_{X,2}=\operatorname *{vec} \boldsymbol {\Sigma }-\frac {2}{\pi }\boldsymbol {\delta }^{\otimes 2}\) need to be considered

The term with \(\operatorname *{vec}\boldsymbol {\Omega }\) and \(\boldsymbol {\delta }^{\otimes 2}\) becomes

Calculations for kurtosis are similar to those that have been used for skewness. The expression (1.7) contains five groups of cumulants, which are as follows:

1. It follows from Section 1.2 that

$$ \boldsymbol{\kappa}_{X,4}+\mathbf{K}_{3,1}\left( \boldsymbol{\kappa} _{X,3}\otimes\boldsymbol{\kappa}_{X,1}\right) +\mathbf{K}_{2,2} \boldsymbol{\kappa}_{X,2}^{\otimes2}+\mathbf{K}_{2,1,1}\left( \boldsymbol{\kappa}_{X,2}\otimes\boldsymbol{\kappa}_{X,1}^{\otimes2}\right) +\boldsymbol{\kappa}_{X,1}^{\otimes4}=\operatorname*{E}\mathbf{X}^{\otimes4} $$

(cf. Genton et al., 2001). Observe that the coefficient of \(\boldsymbol {\delta }^{\otimes 4}\) is 0.

2. We have

$$ \begin{array}{@{}rcl@{}} & &4\boldsymbol{\kappa}_{X,4} + 3\mathbf{K}_{3,1} \left( \boldsymbol{\kappa} _{X,3}\otimes\boldsymbol{\kappa}_{X,1}\right) + 4\mathbf{K}_{2,2} \boldsymbol{\kappa}_{X,2}^{\otimes2}+2\mathbf{K}_{2,1,1}\left( \boldsymbol{\kappa}_{X,2}\otimes\boldsymbol{\kappa}_{X,1}^{\otimes2}\right) \\ & =&4\frac{2}{\pi}\boldsymbol{\delta}^{\otimes4}+4\mathbf{K}_{2,2}\left( \operatorname*{vec}\boldsymbol{\Sigma}\right)^{\otimes2}-2\frac{2}{\pi }\mathbf{K}_{2,1,1}\left( \operatorname*{vec}\boldsymbol{\Sigma} \otimes\boldsymbol{\delta}^{\otimes2}\right) \end{array} $$

and the coefficient of \(\boldsymbol {\delta }^{\otimes 4}\) is \(4\frac {2}{\pi }\).

3. We have

$$ \begin{array}{@{}rcl@{}} &&3\boldsymbol{\kappa}_{X,4}+3\mathbf{K}_{3,1}\left( \boldsymbol{\kappa} _{X,3}\otimes\boldsymbol{\kappa}_{X,1}\right) +2\mathbf{K}_{2,2} \boldsymbol{\kappa}_{X,2}^{\otimes2}+2\mathbf{K}_{2,1,1}\left( \boldsymbol{\kappa}_{X,2}\otimes\boldsymbol{\kappa}_{X,1}^{\otimes2}\right) \\&=&2\mathbf{K} _{2,2}\left( \operatorname*{vec}\boldsymbol{\Sigma}\right)^{\otimes 2} \end{array} $$

and the coefficient of \(\boldsymbol {\delta }^{\otimes 4}\) is 0.

4. We have

$$ \begin{array}{@{}rcl@{}} &&6\boldsymbol{\kappa}_{X,4}+3\mathbf{K}_{3,1}\left( \boldsymbol{\kappa} _{X,3}\otimes\boldsymbol{\kappa}_{X,1}\right) +4\mathbf{K}_{2,2} \boldsymbol{\kappa}_{X,2}^{\otimes2} \\ &=&12\frac{2}{\pi}\boldsymbol{\delta} ^{\otimes4}+4\mathbf{K}_{2,2}\left( \operatorname*{vec}\boldsymbol{\Sigma }\right)^{\otimes2}-4\frac{2}{\pi}\mathbf{K}_{2,1,1}\left( \operatorname*{vec}\boldsymbol{\Sigma}\otimes\boldsymbol{\delta}^{\otimes 2}\right) \end{array} $$

and the coefficient of \(\boldsymbol {\delta }^{\otimes 4}\) is \(12\frac {2}{\pi }\).

5. \(\boldsymbol {\kappa }_{X,4}=\left (-6\left (\frac {2}{\pi }\right )^{2}+4\frac {2}{\pi }\right ) \boldsymbol {\delta }^{\otimes 4}\).

Now we collect the coefficients for \(\boldsymbol {\delta }^{\otimes 4}\)

and for \(\mathbf {K}_{2,2}\left (\operatorname *{vec}\boldsymbol {\Sigma }\right )^{\otimes 2}\)

and for \(\mathbf {K}_{2,1,1}\left (\operatorname *{vec}\boldsymbol {\Sigma }\otimes \boldsymbol {\delta }^{\otimes 2}\right ) \)

Combining these five groups, one arrives at the proof of the Theorem. □

Proof of Lemma 1.

This Lemma can be obtained essentially from the results in Fang et al. (2017), by rearranging them into a vector form, and using our notations.

Writing \(d_{1}=\left (d^{3}-1\right ) /\left (d-1\right ) \), and defining the matrix \(\mathbf {I}\left (d^{3},d\right ) =\mathbf {I}_{d^{3}}\left (:,\left [ 1,1+d_{1},\ldots ,1+(d-1)d_{1}\right ] \right ) ,\) (i.e. d columns of the unit matrix \(\mathbf {I}_{d^{3}}\)), we will denote

Also let

Note that the number of ones in \(\mathbf {e}\left (d^{3},d\right ) \) and \(\mathbf {e}\left (d^{2},d\right ) \) are d and \(\left (d-1\right ) d\) respectively. Let \(\mathbf {I}_{d}=\left [ \mathbf {e}_{k}\right ]_{k=1:d}\), i.e. ek is a unit vector in \(\mathbb {R}^{d}\). The lemma follows with the following additional notations

and

□

Proof of Lemma 2.

Recall \(\phi _{\mathbf {W}}\left (\boldsymbol {\lambda }\right ) \) defined in Eq. 5.2. Applying \(D_{\boldsymbol {\lambda }}^{\otimes }\) to the cumulant generator \(\psi _{\mathbf {W}}\left (\boldsymbol {\lambda }\right ) =\log \phi _{\mathbf {W}}\left (\boldsymbol {\lambda }\right ), \) we have

Writing \(f\left (\boldsymbol {\lambda }^{\intercal }\boldsymbol {\lambda }\right ) =g^{\prime }\left (\boldsymbol {\lambda }^{\intercal }\boldsymbol {\lambda }\right ) /g\left (\boldsymbol {\lambda }^{\intercal }\boldsymbol {\lambda }\right ) \), we have

from which we obtain \(\underline {\operatorname *{Cum}}_{1}\left (\mathbf {W}\right ) =0\), \(\underline {\operatorname *{Cum}}_{2}\left (\mathbf {W}\right ) =-2g^{\prime }\left (0\right ) \operatorname *{vec} \mathbf {I}_{d},\) and \(\underline {\operatorname *{Cum}}_{3}\left (\mathbf {W} \right ) =0\). For \(\underline {\operatorname *{Cum}}_{4}\left (\mathbf {W}\right ) \) we have

Mardia’s measure can now be obtained by noting that

□

Proof of Lemma 3.

Since \(R^{*2}/d\) has an F-distribution with d and m degrees of freedom, we have for m > 4,

and using Theorem 1. One may use Fang et al. (2017, p. 88) to obtain formulae for the moments of components of W, and get

hence \( \operatorname *{Cum}\nolimits _{4}\left (W_{1}/\sigma _{W_{1}}\right ) =3\left (\frac {m-2}{m-4}-1\right ); \) Theorem 1 can be used to get κ0. □
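As a quick check of the last expression, consider the following sketch, which assumes that each component \(W_{1}\) is a univariate Student t variate with m degrees of freedom (a standard property of the multivariate t, used here as an assumption), so that \(\operatorname{E}W_{1}^{2}=m/(m-2)\) and \(\operatorname{E}W_{1}^{4}=3m^{2}/\left((m-2)(m-4)\right)\) for m > 4:

$$ \frac{\operatorname*{E}W_{1}^{4}}{\left( \operatorname*{E}W_{1}^{2}\right)^{2}}=\frac{3m^{2}}{(m-2)(m-4)}\cdot\frac{(m-2)^{2}}{m^{2}}=\frac{3(m-2)}{m-4},\qquad \operatorname*{Cum}\nolimits_{4}\left( W_{1}/\sigma_{W_{1}}\right) =\frac{3(m-2)}{m-4}-3=\frac{6}{m-4}. $$

This agrees with \(3\left(\frac{m-2}{m-4}-1\right)\), the familiar excess kurtosis of the t distribution.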

Proof of Theorem 2.

Recall Eq. 6.4 and Lemma 1 for the moments of \(p_{k}\) and \(\left \vert \mathbf {U} _{j}\right \vert \). Set \(\mathbf {V}_{1}=\boldsymbol {\Delta }\left \vert \mathbf {Z}_{1}\right \vert \) and \(\mathbf {V}_{2}=\left (\mathbf {I} -\boldsymbol {\Delta {\Delta }}^{\top }\right )^{1/2}\mathbf {Z}_{2}\) to simplify the notation. The commutation matrices are given in Eqs. 1.1 and 1.2.

where \(\operatorname {E}\mathbf {V}_{1}^{\otimes 3}=\boldsymbol {\Delta }^{\otimes 3}\operatorname {E}\left \vert \mathbf {Z}_{1}\right \vert ^{\otimes 3}=\operatorname {E}{p_{1}^{3}}\operatorname {E}R^{3}\boldsymbol {\Delta }^{\otimes 3}\operatorname {E} \left \vert \mathbf {U}_{1}\right \vert ^{\otimes 3}\) and

where \(\operatorname {E}\mathbf {V}_{1}^{\otimes 4} =\operatorname {E}p_{1} ^{4}\operatorname {E}R^{4}\boldsymbol {\Delta }^{\otimes 4}\operatorname {E} \left \vert \mathbf {U}_{1}\right \vert ^{\otimes 4}\) and

□

Proof of Lemma 5.

In the proof, the operator \(D_{\boldsymbol {\lambda }}^{\otimes }\) is used repeatedly; let CX denote the cumulant function of X and note that

put \(\mathbf {e}_{k}\) for the kth unit vector; since \(\Phi _{m}\) is the distribution function of the m-variate standard normal distribution, it is simply the product of univariate standard normal distribution functions; hence

and

The first two cumulants are obtained, by evaluating the derivatives at λ = 0. We have:

Consider next

to obtain

For the third order cumulant, we have

and

hence

Here \(\left [ \mathbf {e}_{k}^{\otimes 2}\right ]_{k=1:m}\) is the matrix with columns \(\mathbf {e}_{k}^{\otimes 2}\), of dimension \(m^{2}\times m\). Higher order cumulants come out as constant times \(\boldsymbol {\Delta }^{\otimes k}\operatorname *{vec}\left [ \mathbf {e}_{p}^{\otimes (k-1)}\right ]_{p=1:m}\), k = 3,4,….

Note that c3 and c4 coincide with those given in Appendix A.2 of Azzalini and Capitanio (1999), and we omit details about the other constants ck. □

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Jammalamadaka, S.R., Taufer, E. & Terdik, G.H. On Multivariate Skewness and Kurtosis. Sankhya A 83, 607–644 (2021). https://doi.org/10.1007/s13171-020-00211-6

DOI: https://doi.org/10.1007/s13171-020-00211-6

Keywords

- Multivariate skewness

- Multivariate kurtosis

- Cumulant vectors

- Interconnections between different measures

- Symmetric and skew multivariate distributions