Abstract

For continuous functions, midpoint convexity characterizes convex functions. By considering discrete versions of midpoint convexity, several types of discrete convexities of functions, including integral convexity, L\(^\natural\)-convexity and global/local discrete midpoint convexity, have been studied. We propose a new type of discrete midpoint convexity that lies between L\(^\natural\)-convexity and integral convexity and is independent of global/local discrete midpoint convexity. The new convexity, named DDM-convexity, has nice properties satisfied by L\(^\natural\)-convexity and global/local discrete midpoint convexity. DDM-convex functions are stable under scaling, satisfy the so-called parallelogram inequality and a proximity theorem with the same small proximity bound as that for L\(^{\natural }\)-convex functions. Several characterizations of DDM-convexity are given and algorithms for DDM-convex function minimization are developed. We also propose DDM-convexity in continuous variables and give proximity theorems on these functions.


1 Introduction

For a continuous function f defined on a convex set \(S \subseteq {\mathbb {R}}^n\), it was proved by Jensen [12] that midpoint convexity defined by
$$\begin{aligned} f(x)+f(y) \ge 2f\left( \frac{x+y}{2}\right) \qquad (\forall x,y \in S) \end{aligned}$$

is equivalent to the inequality defining convex functions
$$\begin{aligned} \lambda f(x)+(1-\lambda )f(y) \ge f(\lambda x+(1-\lambda )y) \qquad (\forall x,y \in S;\ \forall \lambda \in [0,1]). \end{aligned}$$

By capturing the concept of midpoint convexity, several types of ‘discrete’ midpoint convexities for functions defined on the integer lattice \({\mathbb {Z}}^n\) have been proposed.

A weak version of ‘discrete’ midpoint convexity is obtained by replacing \(f((x+y)/2)\) by the smallest value of a linear extension of f among the integer points neighboring \((x+y)/2\). More precisely, for any point \(x \in {\mathbb {R}}^n\), we consider its integer neighborhood
$$\begin{aligned} N(x) = \{ z \in {\mathbb {Z}}^n \mid |x_i - z_i| < 1 \ (i=1,\ldots ,n) \} \end{aligned}$$

and the set \(\Lambda (x)\) of all coefficients \((\lambda _z \mid z \in N(x))\) for convex combinations indexed by N(x). For a function \(f:{\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\), we define the local convex envelope \({\tilde{f}}\) of f by
$$\begin{aligned} {\tilde{f}}(x) = \min \left\{ \sum _{z \in N(x)} \lambda _z f(z) \;\Big |\; \sum _{z \in N(x)} \lambda _z z = x,\ (\lambda _z) \in \Lambda (x) \right\} \qquad (x \in {\mathbb {R}}^n). \end{aligned}$$

We say that f satisfies weak discrete midpoint convexity if the following inequality holds
$$\begin{aligned} f(x)+f(y) \ge 2{\tilde{f}}\left( \frac{x+y}{2}\right) \end{aligned} \tag{1.1}$$

for all \(x,y \in {\mathbb {Z}}^n\). On the other hand, f is said to be integrally convex [3] if \({\tilde{f}}\) is convex on \({\mathbb {R}}^n\). Characterizations of integral convexity by using weak discrete midpoint convexity have been discussed in [3, 17, 18]. The simplest characterization, Theorem A.1 in [18], says that f is integrally convex if and only if f satisfies (1.1) for all \(x,y \in \mathop {\mathrm{dom}}\limits f\) with \(\Vert x-y\Vert _{\infty } \ge 2\), where the effective domain \(\mathop {\mathrm{dom}}\limits f\) of f is defined by

$$\begin{aligned} \mathop {\mathrm{dom}}\limits f = \{ x \in {\mathbb {Z}}^n \mid f(x) < +\infty \}. \end{aligned}$$

The class of integrally convex functions establishes a general framework of discrete convex functions, including separable convex, L\(^\natural\)-convex, M\(^\natural\)-convex, L\(^\natural _2\)-convex, M\(^\natural _2\)-convex functions [21], BS-convex and UJ-convex functions [4], and globally/locally discrete midpoint convex functions [18]. The concept of integral convexity is used in formulating discrete fixed point theorems [8, 9, 29], designing algorithms for discrete systems of nonlinear equations [14, 28], and guaranteeing the existence of a pure strategy equilibrium in finite symmetric games [10].

A strong version of ‘discrete’ midpoint convexity is obtained by replacing \(f((x+y)/2)\) by the average of the values of f at two integer points obtained by rounding-up and rounding-down of all components of \((x+y)/2\). More precisely, for a function \(f:{\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\), we say that f satisfies discrete midpoint convexity if it has
$$\begin{aligned} f(x)+f(y) \ge f\left( \left\lceil \frac{x+y}{2}\right\rceil \right) +f\left( \left\lfloor \frac{x+y}{2}\right\rfloor \right) \end{aligned} \tag{1.2}$$

for all \(x,y \in {\mathbb {Z}}^n\), where \(\lceil \cdot \rceil\) and \(\lfloor \cdot \rfloor\) denote the integer vectors obtained by rounding up and rounding down all components of a given real vector, respectively. It is known that discrete midpoint convexity characterizes the class of L\(^\natural\)-convex functions [5, 20], which play important roles in both theoretical and practical aspects. L\(^\natural\)-convex functions are applied to several fields, including auction theory [15, 25], image processing [13], inventory theory [2, 27, 30] and scheduling [1]. Since discrete midpoint convexity (1.2) obviously implies weak discrete midpoint convexity (1.1), L\(^\natural\)-convex functions form a subclass of integrally convex functions.

Moriguchi et al. [18] classified discrete convex functions between L\(^\natural\)-convex and integrally convex functions in terms of discrete midpoint convexity with \(\ell _\infty\)-distance requirements, and proposed two new classes of discrete convex functions, namely, globally/locally discrete midpoint convex functions. A function \(f:{\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) is said to be globally discrete midpoint convex if (1.2) holds for any pair \((x,y) \in {\mathbb {Z}}^n \times {\mathbb {Z}}^n\) with \(\Vert x-y\Vert _\infty \ge 2\). A set \(S \subseteq {\mathbb {Z}}^n\) is called a discrete midpoint convex set if its indicator function \(\delta _S\) defined by
$$\begin{aligned} \delta _S(x) = {\left\{ \begin{array}{ll} 0 &{} (x \in S), \\ +\infty &{} (x \not \in S) \end{array}\right. } \end{aligned}$$

is globally discrete midpoint convex, that is, if
$$\begin{aligned} x,y \in S,\ \Vert x-y\Vert _\infty \ge 2 \ \Longrightarrow \ \left\lceil \frac{x+y}{2}\right\rceil ,\ \left\lfloor \frac{x+y}{2}\right\rfloor \in S. \end{aligned}$$

A function \(f:{\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) is said to be locally discrete midpoint convex if \(\mathop {\mathrm{dom}}\limits f\) is a discrete midpoint convex set and (1.2) holds for any pair \((x,y) \in {\mathbb {Z}}^n \times {\mathbb {Z}}^n\) with \(\Vert x-y\Vert _\infty = 2\). It is shown in [18] that the following inclusion relations among function classes hold:
$$\begin{aligned} \{ \text{L}^\natural \text{-convex} \} \subsetneq \{ \text{globally discrete midpoint convex} \} \subsetneq \{ \text{locally discrete midpoint convex} \} \subsetneq \{ \text{integrally convex} \}, \end{aligned}$$

and globally/locally discrete midpoint convex functions inherit nice features from L\(^\natural\)-convex functions, that is, for a globally/locally discrete midpoint convex f and a positive integer \(\alpha\),

-

the scaled function \(f^\alpha\) defined by \(f^\alpha (x) = f(\alpha x)\; (x \in {\mathbb {Z}}^n)\) belongs to the same class, that is, global/local discrete midpoint convexity is closed with respect to scaling operations,

-

a proximity theorem with the same proximity distance as that for L\(^\natural\)-convexity holds, that is, given an \(x^\alpha\) with \(f(x^\alpha ) \le f(x^\alpha + \alpha d)\) for all \(d \in \{-1,0,+1\}^n\), there exists a minimizer \(x^*\) of f with \(\Vert x^\alpha -x ^*\Vert _\infty \le n(\alpha -1)\),

-

when f has a minimizer, a steepest descent algorithm for the minimization of f is developed such that the number of local minimizations in the neighborhood of \(\ell _\infty\)-distance 2 (the 2-neighborhood minimizations) is bounded by the shortest \(\ell _\infty\)-distance from a given initial feasible point to a minimizer of f, and

-

when \(\mathop {\mathrm{dom}}\limits f\) is bounded and \(K_\infty\) denotes the \(\ell _\infty\)-size of \(\mathop {\mathrm{dom}}\limits f\), a scaling algorithm minimizing f with \(O(n \log _2 K_\infty )\) calls of the 2-neighborhood minimization is developed.

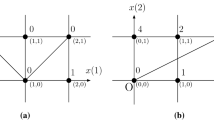

This paper, strongly motivated by [18], proposes a new type of discrete midpoint convexity between L\(^\natural\)-convexity and integral convexity, but it is independent of global/local discrete midpoint convexity with respect to inclusion relation. We name the new convexity directed discrete midpoint convexity (DDM-convexity) which forms the following classification
$$\begin{aligned} \{ \text{L}^\natural \text{-convex} \} \subsetneq \{ \text{DDM-convex} \} \subsetneq \{ \text{integrally convex} \}. \end{aligned}$$

The same features as mentioned above are satisfied by DDM-convexity. The merits of DDM-convexity relative to global/local discrete midpoint convexity are the following properties:

-

DDM-convexity is closed with respect to individual sign inversion of variables, that is, for a DDM-convex function f and \(\tau _i \in \{+1,-1\}\;(i=1,\ldots ,n)\), \(f(\tau _1 x_1,\ldots ,\tau _n x_n)\) is also DDM-convex (see Proposition 4 (3)). Neither L\(^\natural\)-convexity nor global nor local discrete midpoint convexity has this property, while integral convexity is closed with respect to individual sign inversion of variables.

-

For a quadratic function \(f(x) = x^\top Q x\) with a symmetric matrix \(Q = [q_{ij}]\), DDM-convexity is characterized by the diagonal dominance with nonnegative diagonals of Q:

$$\begin{aligned} q_{ii} \ge \sum _{j:j \ne i}|q_{ij}| \qquad (\forall i =1,\ldots ,n) \end{aligned}$$

(see Theorem 9). While L\(^\natural\)-convexity is characterized by the combination of diagonal dominance with nonnegative diagonals and nonpositivity of all off-diagonal components of Q, global/local discrete midpoint convexity is independent of the diagonal dominance with nonnegative diagonals.

-

A function \(g : {\mathbb {Z}}\rightarrow {\mathbb {R}}\cup \{+\infty \}\) is said to be discrete convex if \(g(t-1)+g(t+1) \ge 2g(t)\) for all \(t \in {\mathbb {Z}}\). For univariate discrete convex functions \(\xi _{i}, \varphi _{ij}, \psi _{ij} : {\mathbb {Z}}\rightarrow {\mathbb {R}}\cup \{+\infty \}\; (i=1,\ldots ,n; j \in \{1,\ldots ,n\} {\setminus } \{i\}\)), a 2-separable convex function [7] is defined as a function represented as

$$\begin{aligned} f(x)=\sum _{i=1}^n \xi _i(x_i)+ \sum _{i,j:j\not =i}\varphi _{ij} (x_i-x_j)+ \sum _{i,j:j\not =i} \psi _{ij}(x_i+x_j)\qquad (x\in {\mathbb {Z}}^n). \end{aligned}$$

The class of DDM-convex functions includes all 2-separable convex functions (see Theorem 4). It is known that if all \(\psi _{ij}\) are identically zero, then f is L\(^\natural\)-convex, whereas there exists a 2-separable convex function not contained in the class of globally/locally discrete midpoint convex functions.

-

A steepest descent algorithm for the minimization of DDM-convex functions requires only the 1-neighborhood minimization in contrast to the 2-neighborhood minimization (see Sect. 8.1).
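The matrix conditions quoted in the second item above can be checked mechanically. The following Python sketch is only an illustration: the function names `is_diagonally_dominant` and `has_nonpositive_off_diagonals` and the three sample matrices are assumptions made here, not part of the paper. It tests the diagonal-dominance condition stated for quadratic DDM-convex functions and the additional sign condition stated for L\(^\natural\)-convexity.

```python
from typing import Sequence


def is_diagonally_dominant(Q: Sequence[Sequence[float]]) -> bool:
    """Check q_ii >= sum_{j != i} |q_ij| for every row i.

    Since the right-hand side is nonnegative, this also forces q_ii >= 0.
    """
    n = len(Q)
    return all(
        Q[i][i] >= sum(abs(Q[i][j]) for j in range(n) if j != i)
        for i in range(n)
    )


def has_nonpositive_off_diagonals(Q: Sequence[Sequence[float]]) -> bool:
    """Check q_ij <= 0 for all i != j (the extra condition quoted for L-natural)."""
    n = len(Q)
    return all(Q[i][j] <= 0 for i in range(n) for j in range(n) if i != j)


# Hypothetical sample matrices (symmetric).
Q1 = [[2, 1], [1, 2]]     # dominant, but with a positive off-diagonal entry
Q2 = [[2, -1], [-1, 2]]   # dominant with nonpositive off-diagonal entries
Q3 = [[1, 2], [2, 1]]     # not dominant

for name, Q in (("Q1", Q1), ("Q2", Q2), ("Q3", Q3)):
    print(name, is_diagonally_dominant(Q), has_nonpositive_off_diagonals(Q))
```

Under the characterizations quoted above, `Q1` would give a DDM-convex quadratic that is not L\(^\natural\)-convex, `Q2` an L\(^\natural\)-convex one, and `Q3` a quadratic that is not DDM-convex.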

In the next section, we give the definition of DDM-convexity and basic properties of DDM-convex functions. In Sect. 3, we discuss a relationship between DDM-convexity and known discrete convexities. For globally/locally discrete midpoint convex functions, Moriguchi et al. [18] revealed a useful property, which is expressed by the so-called parallelogram inequality. We show that a similar parallelogram inequality holds for DDM-convex functions in Sect. 4. Sections 5 and 6 are devoted to characterizations and operations for DDM-convexity. We prove a proximity theorem for DDM-convex functions in Sect. 7, while in Sect. 8 we propose a steepest descent algorithm and a scaling algorithm for DDM-convex function minimization. In Sect. 9, we define DDM-convex functions in continuous variables and give proximity theorems for such functions.

2 Directed discrete midpoint convexity

We give the definition of directed discrete midpoint convexity and show its basic properties.

For an ordered pair (x, y) of \(x,y\in {\mathbb {Z}}^n\), we define \(\mu (x,y) \in {\mathbb {Z}}^n\) by
$$\begin{aligned} \mu (x,y)_i = {\left\{ \begin{array}{ll} \left\lceil \frac{x_i+y_i}{2}\right\rceil &{} (x_i \ge y_i), \\ \left\lfloor \frac{x_i+y_i}{2}\right\rfloor &{} (x_i < y_i) \end{array}\right. } \qquad (i=1,\ldots ,n). \end{aligned}$$

That is, each component \(\mu (x,y)_i\) of \(\mu (x,y)\) is defined by rounding up or rounding down \(\frac{x_i+y_i}{2}\) to the integer in the direction of \(x_i-y_i\). It is easy to show the next characterization of \(\mu (x,y)\) and \(\mu (y,x)\).

Proposition 1

For \(x,y,p,q\in {\mathbb {Z}}^n\), \(p=\mu (x,y)\) and \(q=\mu (y,x)\) hold if and only if the following conditions (a)\(\sim\)(c) hold:

-

(a)

\(p+q = x+y\),

-

(b)

\(\Vert p-q\Vert _\infty \le 1\), and

-

(c)

for each \(i=1,\dots ,n\), if \(x_i\ge y_i\), then \(p_i\ge q_i\); otherwise \(p_i\le q_i\).
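As a small illustration, \(\mu (\cdot ,\cdot )\) and conditions (a)–(c) of Proposition 1 can be written in a few lines of Python. This is a sketch under the reading of \(\mu\) described above (round \((x_i+y_i)/2\) up when \(x_i \ge y_i\) and down otherwise); the names `mu` and `satisfies_conditions` are hypothetical helpers introduced here.

```python
from typing import Sequence, Tuple


def mu(x: Sequence[int], y: Sequence[int]) -> Tuple[int, ...]:
    """Directed midpoint: round (x_i + y_i)/2 up if x_i >= y_i, down otherwise."""
    # For Python ints, -((-s) // 2) equals ceil(s / 2) and s // 2 equals floor(s / 2).
    return tuple(
        -((-(a + b)) // 2) if a >= b else (a + b) // 2
        for a, b in zip(x, y)
    )


def satisfies_conditions(x, y, p, q) -> bool:
    """Check conditions (a), (b), (c) of Proposition 1 for the pair (p, q)."""
    cond_a = all(pi + qi == xi + yi for xi, yi, pi, qi in zip(x, y, p, q))
    cond_b = all(abs(pi - qi) <= 1 for pi, qi in zip(p, q))
    cond_c = all(
        (pi >= qi) if xi >= yi else (pi <= qi)
        for xi, yi, pi, qi in zip(x, y, p, q)
    )
    return cond_a and cond_b and cond_c


x, y = (0, 0, 0), (2, 1, -1)
p, q = mu(x, y), mu(y, x)
print(p, q)                            # (1, 0, 0) (1, 1, -1)
print(satisfies_conditions(x, y, p, q))  # True
```

Swapping the roles of p and q in the last call breaks condition (c), which is what distinguishes \(\mu (x,y)\) from \(\mu (y,x)\).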

For every \(a,b\in {\mathbb {R}}^n\), let us denote the n-dimensional vector \((a_1b_1,\dots ,a_nb_n)\) by \(a\odot b\). The next proposition gives fundamental properties of \(\mu (\cdot ,\cdot )\).

Proposition 2

For every \(x,y,d\in {\mathbb {Z}}^n\), the following properties hold.

-

(1)

\(x+y=\mu (x,y)+\mu (y,x)\).

-

(2)

If \(x\ge y\), then \(\mu (x,y)=\lceil \frac{x+y}{2}\rceil\) and \(\mu (y,x)= \lfloor \frac{x+y}{2}\rfloor\).

-

(3)

If \(\Vert x-y\Vert _\infty \le 1\), then \(\mu (x,y)=x\) and \(\mu (y,x)=y\).

-

(4)

\(\mu (x+d,y+d)=\mu (x,y)+d\).

-

(5)

For any permutation \(\sigma\) of \((1,\dots ,n)\),

$$\begin{aligned} \mu ((x_{\sigma (1)},\dots ,x_{\sigma (n)}), (y_{\sigma (1)},\dots ,y_{\sigma (n)}))= (\mu (x,y)_{\sigma (1)},\dots ,\mu (x,y)_{\sigma (n)}). \end{aligned}$$

-

(6)

For any \(\tau \in \{+1,-1\}^n\), \(\mu (\tau \odot x,\tau \odot y)=\tau \odot \mu (x,y)\).

Proof

Properties (1)–(5) are obvious by the definition of \(\mu (\cdot ,\cdot )\). Let us show (6). If \(\tau _i=+1\),
$$\begin{aligned} \mu (\tau \odot x,\tau \odot y)_i = \mu (x,y)_i = \tau _i\, \mu (x,y)_i \end{aligned}$$

holds, and if \(\tau _i=-1\),
$$\begin{aligned} \mu (\tau \odot x,\tau \odot y)_i = {\left\{ \begin{array}{ll} \left\lceil \frac{-x_i-y_i}{2}\right\rceil &{} (-x_i \ge -y_i) \\ \left\lfloor \frac{-x_i-y_i}{2}\right\rfloor &{} (-x_i< -y_i) \end{array}\right. } = {\left\{ \begin{array}{ll} -\left\lfloor \frac{x_i+y_i}{2}\right\rfloor &{} (x_i \le y_i) \\ -\left\lceil \frac{x_i+y_i}{2}\right\rceil &{} (x_i > y_i) \end{array}\right. } = -\mu (x,y)_i = \tau _i\, \mu (x,y)_i \end{aligned}$$

holds. \(\square\)

By using the introduced \(\mu (\cdot ,\cdot )\), we propose new classes of functions and sets. We say that a function \(f:{\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) satisfies directed discrete midpoint convexity (DDM-convexity) or is a directed discrete midpoint convex function (DDM-convex function) if
$$\begin{aligned} f(x)+f(y) \ge f(\mu (x,y))+f(\mu (y,x)) \end{aligned} \tag{2.1}$$

for all \(x,y \in {\mathbb {Z}}^n\). We call \(S \subseteq {\mathbb {Z}}^n\) a directed discrete midpoint convex set (DDM-convex set) if its indicator function \(\delta _S\) is DDM-convex, that is, if
$$\begin{aligned} x,y \in S \ \Longrightarrow \ \mu (x,y),\ \mu (y,x) \in S \end{aligned}$$

holds.
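For small instances, inequality (2.1), read as \(f(x)+f(y) \ge f(\mu (x,y))+f(\mu (y,x))\), can be tested by exhaustive search. The Python sketch below is a hedged illustration, not an algorithm from this paper: `mu` and `is_ddm_convex_on_box` are hypothetical helpers, and the search is restricted to a finite box, so a positive answer only certifies (2.1) for pairs inside that box, whereas a negative answer is a genuine counterexample.

```python
from itertools import product
from typing import Callable, Sequence, Tuple


def mu(x: Sequence[int], y: Sequence[int]) -> Tuple[int, ...]:
    """Round (x_i + y_i)/2 up if x_i >= y_i, down otherwise."""
    return tuple(
        -((-(a + b)) // 2) if a >= b else (a + b) // 2
        for a, b in zip(x, y)
    )


def is_ddm_convex_on_box(f: Callable, lo: int, hi: int, n: int) -> bool:
    """Check f(x) + f(y) >= f(mu(x, y)) + f(mu(y, x)) for all x, y in [lo, hi]^n.

    mu(x, y) lies componentwise between x and y, so it never leaves the box.
    Comparisons are exact; integer-valued f avoids rounding issues.
    """
    points = list(product(range(lo, hi + 1), repeat=n))
    for x in points:
        for y in points:
            if f(x) + f(y) < f(mu(x, y)) + f(mu(y, x)):
                return False
    return True


def f_sum(x):
    """psi(x_1 + x_2) with psi(t) = t^2 (a convex function of x_1 + x_2)."""
    return (x[0] + x[1]) ** 2


def f_concave(x):
    """A concave function of x_1, expected to violate the inequality."""
    return -(x[0] ** 2)


print(is_ddm_convex_on_box(f_sum, -2, 2, 2))      # True
print(is_ddm_convex_on_box(f_concave, -2, 2, 2))  # False
```

The second function fails already for \(x=(0,0)\) and \(y=(2,0)\), where the left-hand side is \(-4\) and the right-hand side is \(-2\).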

The next propositions are direct consequences of Proposition 2 and the definition (2.1).

Proposition 3

The following statements hold:

-

(1)

Any function defined on \(\{0, 1\}^n\) is a DDM-convex function.

-

(2)

Any subset of \(\{0, 1\}^n\) is a DDM-convex set.

-

(3)

For a DDM-convex function f, its effective domain \(\mathop {\mathrm{dom}}\limits f\) and the set \(\mathop {\mathrm{argmin}}\limits f\) of minimizers of f are DDM-convex sets, where \(\mathop {\mathrm{argmin}}\limits f\) is defined by

$$\begin{aligned} \mathop {\mathrm{argmin}}\limits f = \{ x \in {\mathbb {Z}}^n \mid f(x) \le f(z)\; (\forall z \in {\mathbb {Z}}^n)\}. \end{aligned}$$

Proposition 4

Let \(f, f_1, f_2:{\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) be DDM-convex functions.

-

(1)

For any \(d\in {\mathbb {Z}}^n\), \(g(x)=f(x+d)\) is a DDM-convex function.

-

(2)

For any permutation \(\sigma\) of \((1, \dots , n)\), \(g(x)=f(x_{\sigma (1)}, \dots , x_{\sigma (n)})\) is a DDM-convex function.

-

(3)

For any \(\tau \in \{+1, -1\}^n\), \(g(x)=f(\tau \odot x)\) is a DDM-convex function.

-

(4)

For any \(a_1, a_2\ge 0\), \(g(x)=a_1f_1(x)+a_2f_2(x)\) is a DDM-convex function. In particular, the sum of DDM-convex functions is DDM-convex.

Proposition 5

Let \(S, S_1, S_2\subseteq {\mathbb {Z}}^n\) be DDM-convex sets.

-

(1)

For any \(d\in {\mathbb {Z}}^n\), \(T=\{x+d \mid x\in S\}\) is a DDM-convex set.

-

(2)

For any permutation \(\sigma\) of \((1, \dots , n)\), \(T=\{(x_{\sigma (1)},\dots ,x_{\sigma (n)}) \mid (x_1,\dots ,x_n) \in S \}\) is a DDM-convex set.

-

(3)

For any \(\tau \in \{+1, -1\}^n\), \(T=\{\tau \odot x \mid x\in S\}\) is a DDM-convex set.

-

(4)

\(T=S_1\cap S_2\) is a DDM-convex set.

3 Relationships with known discrete convexities

We discuss relationships between DDM-convexity and known discrete convexities, including integral convexity, L\(^\natural\)-convexity, global/local discrete midpoint convexity and 2-separable convexity.

As mentioned in Sect. 1, the class of integrally convex functions is characterized by weak discrete midpoint convexity (1.1). DDM-convexity (2.1) implies (1.1), because \(\mu (x,y)\) and \(\mu (y,x)\) belong to \(N((x+y)/2)\) and their average is \((x+y)/2\); hence any DDM-convex function is integrally convex. Therefore, DDM-convex functions inherit many properties of integrally convex functions. We recall one such property of integrally convex functions, and hence of DDM-convex functions: the box-barrier property.

Theorem 1

(Box-barrier property [17, Theorem 2.6]) Let \(f:{\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) be an integrally convex function, and let \(p\in ({\mathbb {Z}}\cup \{-\infty \})^n\) and \(q\in ({\mathbb {Z}}\cup \{+\infty \})^n\) with \(p\le q\). Define
$$\begin{aligned} S&= \{ x \in {\mathbb {Z}}^n \mid p_i< x_i < q_i \ (i=1,\ldots ,n) \}, \\ W_i^{+}&= \{ x \in {\mathbb {Z}}^n \mid x_i = q_i,\ p_j \le x_j \le q_j \ (j \ne i) \} \quad (i=1,\ldots ,n), \\ W_i^{-}&= \{ x \in {\mathbb {Z}}^n \mid x_i = p_i,\ p_j \le x_j \le q_j \ (j \ne i) \} \quad (i=1,\ldots ,n), \\ W&= \bigcup _{i=1}^n (W_i^{+} \cup W_i^{-}), \end{aligned}$$

and \({\hat{x}}\in S\cap \mathop {\mathrm{dom}}\limits f\). If \(f({\hat{x}})\le f(y)\) for all \(y\in W\), then \(f({\hat{x}})\le f(z)\) for all \(z\in {\mathbb {Z}}^n{\setminus } S\).

By setting \(p = {\hat{x}} - \varvec{1}\) and \(q = {\hat{x}} + \varvec{1}\) where \(\varvec{1}\) denotes the vector of all ones, box-barrier property implies the minimality criterion of integrally convex functions.

Theorem 2

([3, Proposition 3.1]; see also [21, Theorem 3.21]) Let \(f: {\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) be an integrally convex function and \({\hat{x}} \in \mathop {\mathrm{dom}}\limits f\). Then \({\hat{x}}\) is a minimizer of f if and only if \(f({\hat{x}}) \le f({\hat{x}} + d)\) for all \(d \in \{ -1, 0, +1 \}^{n}\).

As a special case of Theorem 2, we have the minimality criterion of DDM-convex functions.

Corollary 1

Let \(f: {\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) be a DDM-convex function and \({\hat{x}} \in \mathop {\mathrm{dom}}\limits f\). Then \({\hat{x}}\) is a minimizer of f if and only if \(f({\hat{x}}) \le f({\hat{x}} + d)\) for all \(d \in \{ -1, 0, +1 \}^{n}\).
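The criterion in Corollary 1 translates directly into a finite test over the \(3^n\) directions \(d \in \{-1,0,+1\}^n\). The following Python sketch is illustrative; `is_local_minimum` is a hypothetical helper, and the sample function is an assumption chosen here as a sum of a convex function of \(x_1+x_2\) and a convex function of \(x_1\).

```python
from itertools import product
from typing import Callable, Sequence


def is_local_minimum(f: Callable, x: Sequence[int]) -> bool:
    """Check f(x) <= f(x + d) for every d in {-1, 0, +1}^n."""
    return all(
        f(tuple(x)) <= f(tuple(xi + di for xi, di in zip(x, d)))
        for d in product((-1, 0, 1), repeat=len(x))
    )


def f(x):
    """Sample objective: (x_1 + x_2 - 1)^2 + x_1^2."""
    return (x[0] + x[1] - 1) ** 2 + x[0] ** 2


print(is_local_minimum(f, (0, 1)))  # True: f(0, 1) = 0 is the global minimum
print(is_local_minimum(f, (1, 0)))  # False: the direction d = (-1, +1) decreases f
```

For a DDM-convex function, Corollary 1 states that passing this local test is equivalent to global optimality; for a general function the test only certifies local optimality.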

We next discuss the relationship between L\(^\natural\)-convexity and DDM-convexity. L\(^\natural\)-convex functions are originally defined by translation-submodularity:
$$\begin{aligned} f(x)+f(y) \ge f((x-\alpha \varvec{1}) \vee y)+f(x \wedge (y+\alpha \varvec{1})) \end{aligned} \tag{3.1}$$

for all \(x,y \in {\mathbb {Z}}^n\) and nonnegative integer \(\alpha\), where \(p \vee q\) and \(p \wedge q\) denote the componentwise maximum and minimum of the vectors p and q, respectively. Translation-submodularity is a generalization of submodularity:
$$\begin{aligned} f(x)+f(y) \ge f(x \vee y)+f(x \wedge y) \qquad (\forall x,y \in {\mathbb {Z}}^n). \end{aligned} \tag{3.2}$$

L\(^\natural\)-convexity has several equivalent characterizations as below.

Theorem 3

([3, Corollary 5.2.2], [5, Theorem 3], [21, Theorem 7.7] ) For a function \(f:{\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\), the following properties are equivalent:

-

(1)

f is \(\text {L}^\natural\)-convex, that is, (3.1) holds for all \(x,y \in {\mathbb {Z}}^n\) and nonnegative integer \(\alpha\).

-

(2)

f satisfies discrete midpoint convexity (1.2) for all \(x,y \in {\mathbb {Z}}^n\).

-

(3)

f is integrally convex and submodular.

-

(4)

For every \(x, y\in {\mathbb {Z}}^n\) with \(x \not \ge y\) and \(A={{\,\mathrm{\mathrm {argmax}}\,}}_i\{y_i-x_i\}\),

$$\begin{aligned} f(x)+f(y)\ge f(x+\varvec{1}_A)+f(y-\varvec{1}_A), \end{aligned}$$
where the i-th component of \(\varvec{1}_A\) is 1 if \(i \in A\) and 0 otherwise.

Theorem 3 yields the next property.

Proposition 6

Any L\(^\natural\)-convex function is DDM-convex.

Proof

Let \(f : {\mathbb {Z}}^n\rightarrow {\mathbb {R}}\cup \{+\infty \}\) be an L\(^\natural\)-convex function. We arbitrarily fix \(x,y \in \mathop {\mathrm{dom}}\limits f\) and show that (2.1) holds for x and y. By Proposition 2 (3), (2.1) holds if \(\Vert x-y\Vert _\infty \le 1\). Suppose that \(m = \Vert x-y\Vert _\infty \ge 2\) and \(\Vert y-x\Vert _\infty =\max _i \{y_i-x_i\}\) (by exchanging the role of x and y if necessary). Let \(A = {{\,\mathrm{\mathrm {argmax}}\,}}_i\{y_i - x_i\}\), \(p=x+\varvec{1}_A\) and \(q=y-\varvec{1}_A\). The vectors p and q satisfy the properties (a) and (c) of Proposition 1 and \(\Vert p-q\Vert _\infty \le m\). Furthermore, by Theorem 3 (4), we have

$$\begin{aligned} f(x)+f(y) \ge f(p)+f(q). \end{aligned} \tag{3.3}$$

If \(\Vert p-q\Vert _\infty =m\), by applying the above process for the pair (q, p) again, we obtain p and q with \(\Vert p-q\Vert _\infty < m\) preserving (a), (c) of Proposition 1 and (3.3). By repeating this argument, we finally obtain p and q having (a)\(\sim\)(c) of Proposition 1 and (3.3), which means that (2.1) holds for x and y. \(\square\)

A set \(S \subseteq {\mathbb {Z}}^n\) is called an L\(^\natural\)-convex set if its indicator function \(\delta _S\) is L\(^\natural\)-convex.

Corollary 2

Any L\(^\natural\)-convex set is DDM-convex.

Example 1

([18, Remark 1]) The class of L\(^\natural\)-convex functions is a proper subclass of DDM-convex functions. For example,
$$\begin{aligned} S = \{(1, 0), (0, 1)\} \end{aligned}$$

is a DDM-convex set, but for \(x=(1, 0), y=(0, 1)\), \(\lceil \frac{x+y}{2}\rceil =(1, 1)\not \in S\) and \(\lfloor \frac{x+y}{2}\rfloor =(0, 0)\not \in S\), which means that S is not L\(^\natural\)-convex. \(\square\)

Example 2

([18, Remark 2]) A set \(S\subseteq {\mathbb {Z}}^n\) is said to be an L\(^\natural _2\)-convex set if it is the Minkowski sum of two L\(^\natural\)-convex sets. DDM-convexity and L\(^\natural _2\)-convexity are mutually independent. For example,

is a DDM-convex set, but is not L\(^\natural _2\)-convex. On the other hand,

$$\begin{aligned} S = \{(0, 0, 0, 0), (0, 1, 1, 0), (1, 1, 0, 0), (1, 2, 1, 0)\} \end{aligned}$$

is the Minkowski sum of two L\(^\natural\)-convex sets \(S_1 = \{(0, 0, 0, 0), (0, 1, 1, 0)\}\) and \(S_2 = \{(0, 0, 0, 0),(1, 1, 0, 0)\}\), but S is not DDM-convex because for \(x=(0, 0, 0, 0)\), \(y=(1, 2, 1, 0)\), \(\mu (x, y)=(0, 1, 0, 0)\not \in S\) and \(\mu (y, x)=(1, 1, 1, 0)\not \in S\). \(\square\)
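The second half of Example 2 can be replayed numerically. The sketch below (Python, with a hypothetical helper `mu` implementing the directed rounding of Sect. 2) forms the Minkowski sum of the two sets \(S_1\) and \(S_2\) given in the example and confirms that neither \(\mu (x,y)\) nor \(\mu (y,x)\) lies in it.

```python
def mu(x, y):
    """Round (x_i + y_i)/2 up if x_i >= y_i, down otherwise."""
    return tuple(
        -((-(a + b)) // 2) if a >= b else (a + b) // 2
        for a, b in zip(x, y)
    )


S1 = {(0, 0, 0, 0), (0, 1, 1, 0)}
S2 = {(0, 0, 0, 0), (1, 1, 0, 0)}

# Minkowski sum: all componentwise sums s + t with s in S1 and t in S2.
S = {tuple(a + b for a, b in zip(s, t)) for s in S1 for t in S2}

x, y = (0, 0, 0, 0), (1, 2, 1, 0)
print(sorted(S))
print(mu(x, y), mu(x, y) in S)  # (0, 1, 0, 0) False
print(mu(y, x), mu(y, x) in S)  # (1, 1, 1, 0) False
```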

We next discuss the independence between global/local discrete midpoint convexity and DDM-convexity by showing the independence between discrete midpoint convex sets and DDM-convex sets.

Example 3

It is easy to show that the set S defined by

is discrete midpoint convex, but S is not DDM-convex because for \(x=(0, 0, 0)\) and \(y=(2, 1, -1)\), we have \(\mu (x, y)=(1, 0, 0)\not \in S\) and \(\mu (y, x)=(1, 1, -1)\not \in S\).

On the other hand,

is DDM-convex. However, T is not discrete midpoint convex, and moreover, for any \((\tau _1, \tau _2, \tau _3)\in \{-1, +1\}^3\), the modified set
$$\begin{aligned} \tau \odot T = \{ \tau \odot x \mid x \in T \} \end{aligned}$$

is not discrete midpoint convex while it is DDM-convex by Proposition 5 (3). The reason is as follows. Since T is symmetric on the third component, we can assume \(\tau _3=+1\).

-

In the case where \(\tau = (\pm 1, +1, +1)\), for \(x=(0, 0, 0) = \tau \odot (0,0,0)\) and \(y=(\pm 2, 1, -1)=\tau \odot (2,1,-1)\), \(\lfloor (x+y)/2 \rfloor = (\pm 1, 0, -1)\not \in \tau \odot T\).

-

In the case where \(\tau = (\pm 1, -1, +1)\), for \(x = (0, 0, 0) = \tau \odot (0,0,0)\) and \(y = (\pm 2, -1, 1)=\tau \odot (2,1,1)\), \(\lceil (x+y)/2 \rceil = (\pm 1, 0, 1)\not \in \tau \odot T\). \(\square\)

We finally show that 2-separable convex functions are DDM-convex. Let \(\xi _{i}, \varphi _{ij}, \psi _{ij} : {\mathbb {Z}}\rightarrow {\mathbb {R}}\cup \{+\infty \}\; (i=1,\ldots ,n; j \in \{1,\ldots ,n\} {\setminus } \{i\}\)) be univariate discrete convex functions. A 2-separable convex function [7] is defined as a function represented as
$$\begin{aligned} f(x)=\sum _{i=1}^n \xi _i(x_i)+ \sum _{i,j:j\not =i}\varphi _{ij} (x_i-x_j)+ \sum _{i,j:j\not =i} \psi _{ij}(x_i+x_j)\qquad (x\in {\mathbb {Z}}^n). \end{aligned}$$

It is known that the function g defined by
$$\begin{aligned} g(x)=\sum _{i=1}^n \xi _i(x_i)+ \sum _{i,j:j\not =i}\varphi _{ij} (x_i-x_j)\qquad (x\in {\mathbb {Z}}^n) \end{aligned}$$

is L\(^\natural\)-convex [21, Proposition 7.9]. By Propositions 4 (4) and 6, it is enough to show that each term \(\psi _{ij}(x_i+x_j)\) is DDM-convex as a function of x in order to prove DDM-convexity of 2-separable convex functions.

Lemma 1

For a univariate discrete convex function \(\psi :{\mathbb {Z}}\rightarrow {\mathbb {R}}\cup \{+\infty \}\), \(f:{\mathbb {Z}}^n\rightarrow {\mathbb {R}}\cup \{+\infty \}\) defined by
$$\begin{aligned} f(x) = \psi (x_1+x_2) \qquad (x \in {\mathbb {Z}}^n) \end{aligned}$$

is DDM-convex.

Proof

For every \(x,y \in {\mathbb {Z}}^n\), we show that
$$\begin{aligned} \psi (x_1+x_2)+\psi (y_1+y_2) \ge \psi (\mu (x,y)_1+\mu (x,y)_2)+\psi (\mu (y,x)_1+\mu (y,x)_2). \end{aligned}$$

Suppose that \(\min \{x_1{+}x_2, y_1{+}y_2\}=x_1{+}x_2\) and \(\max \{x_1{+}x_2, y_1{+}y_2\}=y_1{+}y_2\) without loss of generality. By convexity of \(\psi\), for every \(a,b,p,q \in {\mathbb {Z}}\) such that (i) \(a+b=p+q\), (ii) \(a \le p \le b\) and (iii) \(a \le q \le b\), we have \(\psi (a)+\psi (b)\ge \psi (p)+\psi (q)\). Thus, it is enough to show that
$$\begin{aligned} (x_1+x_2)+(y_1+y_2) = (\mu (x,y)_1+\mu (x,y)_2)+(\mu (y,x)_1+\mu (y,x)_2), \end{aligned} \tag{3.6}$$

$$\begin{aligned} x_1+x_2 \le \mu (x,y)_1+\mu (x,y)_2 \le y_1+y_2, \end{aligned} \tag{3.7}$$

$$\begin{aligned} x_1+x_2 \le \mu (y,x)_1+\mu (y,x)_2 \le y_1+y_2. \end{aligned} \tag{3.8}$$

Obviously, (3.6) holds by
$$\begin{aligned} x+y=\mu (x,y)+\mu (y,x). \end{aligned}$$

To show (3.7) and (3.8) under \(x_1+x_2 \le y_1+y_2\), we consider the following three cases separately: Case 1: \(x_1\le y_1\) and \(x_2\le y_2\), Case 2: \(x_1>y_1\) and \(x_2<y_2\), Case 3: \(x_1<y_1\) and \(x_2>y_2\).

Case 1 (\(x_1\le y_1\) and \(x_2\le y_2\)). In this case, we have \(\mu (x, y)_1=\lfloor \frac{x_1+y_1}{2}\rfloor\), \(\mu (x, y)_2=\lfloor \frac{x_2+y_2}{2}\rfloor\), \(\mu (y, x)_1=\lceil \frac{x_1+y_1}{2}\rceil\) and \(\mu (y, x)_2=\lceil \frac{x_2+y_2}{2}\rceil\), which imply
$$\begin{aligned} x_1 \le \mu (x,y)_1 \le \mu (y,x)_1 \le y_1, \qquad x_2 \le \mu (x,y)_2 \le \mu (y,x)_2 \le y_2. \end{aligned}$$

Conditions (3.7) and (3.8) are direct consequences of the above inequalities.

Case 2 (\(x_1>y_1\) and \(x_2<y_2\)). In this case, under condition \(x_1+x_2 \le y_1+y_2\), \(c_1=x_1-y_1\) and \(c_2=y_2-x_2\) satisfy \(c_2\ge c_1>0\). By the following calculations:
$$\begin{aligned} \mu (x,y)_1&= \left\lceil \frac{x_1+y_1}{2}\right\rceil = x_1-\left\lfloor \frac{c_1}{2}\right\rfloor , \qquad \mu (x,y)_2 = \left\lfloor \frac{x_2+y_2}{2}\right\rfloor = x_2+\left\lfloor \frac{c_2}{2}\right\rfloor , \\ \mu (y,x)_1&= \left\lfloor \frac{x_1+y_1}{2}\right\rfloor = x_1-\left\lceil \frac{c_1}{2}\right\rceil , \qquad \mu (y,x)_2 = \left\lceil \frac{x_2+y_2}{2}\right\rceil = x_2+\left\lceil \frac{c_2}{2}\right\rceil , \end{aligned}$$

we have
$$\begin{aligned} \mu (x,y)_1+\mu (x,y)_2&= x_1+x_2+\left( \left\lfloor \frac{c_2}{2}\right\rfloor -\left\lfloor \frac{c_1}{2}\right\rfloor \right) = y_1+y_2-\left( \left\lceil \frac{c_2}{2}\right\rceil -\left\lceil \frac{c_1}{2}\right\rceil \right) , \\ \mu (y,x)_1+\mu (y,x)_2&= x_1+x_2+\left( \left\lceil \frac{c_2}{2}\right\rceil -\left\lceil \frac{c_1}{2}\right\rceil \right) = y_1+y_2-\left( \left\lfloor \frac{c_2}{2}\right\rfloor -\left\lfloor \frac{c_1}{2}\right\rfloor \right) . \end{aligned}$$

Conditions (3.7) and (3.8) follow from \(\left\lfloor \frac{c_2}{2}\right\rfloor -\left\lfloor \frac{c_1}{2}\right\rfloor \ge 0\) and \(\left\lceil \frac{c_2}{2}\right\rceil -\left\lceil \frac{c_1}{2}\right\rceil \ge 0\).

Case 3 (\(x_1<y_1\) and \(x_2>y_2\)). In this case, we can show (3.7) and (3.8) in the same way as Case 2. \(\square\)

By Proposition 4 (4), Proposition 6 and Lemma 1, we have the next property.

Theorem 4

Any 2-separable convex function is DDM-convex.

Hence, any 2-separable convex function is integrally convex.

4 Parallelogram inequality

Parallelogram inequality was originally proposed in [18] for globally/locally discrete midpoint convex functions. By borrowing arguments from [18], we show that DDM-convex sets/functions have similar properties.

For every pair \((x, y)\in {\mathbb {Z}}^n\times {\mathbb {Z}}^n\) with \(\Vert y-x\Vert _\infty =m\), we consider sets defined by
$$\begin{aligned} A_k = \{ i \mid y_i-x_i \ge k \}, \qquad B_k = \{ i \mid y_i-x_i \le -k \} \qquad (k=1,\ldots ,m), \end{aligned} \tag{4.1}$$

for which \(A_1\supseteq A_2\supseteq \cdots \supseteq A_m\), \(B_1\supseteq B_2\supseteq \cdots \supseteq B_m\), \(A_1\cap B_1=\emptyset\) and \(A_m\cup B_m\not =\emptyset\).
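The families \(\{A_k\}\) and \(\{B_k\}\) are easy to compute. The Python sketch below assumes the usual level-set reading \(A_k=\{i \mid y_i-x_i\ge k\}\) and \(B_k=\{i \mid y_i-x_i\le -k\}\), which is consistent with the nesting and disjointness properties just stated; the helper name `level_sets` is hypothetical, and indices are 0-based in the code. It also checks that the vectors \(\varvec{1}_{A_k}-\varvec{1}_{B_k}\) sum to \(y-x\).

```python
from typing import List, Sequence, Set, Tuple


def level_sets(x: Sequence[int], y: Sequence[int]) -> Tuple[List[Set[int]], List[Set[int]]]:
    """Return ([A_1..A_m], [B_1..B_m]) for m = ||y - x||_inf (0-based indices)."""
    diff = [b - a for a, b in zip(x, y)]
    m = max((abs(d) for d in diff), default=0)
    A = [{i for i, d in enumerate(diff) if d >= k} for k in range(1, m + 1)]
    B = [{i for i, d in enumerate(diff) if d <= -k} for k in range(1, m + 1)]
    return A, B


x, y = (0, 0, 0), (2, 1, -1)
A, B = level_sets(x, y)
print(A)  # [{0, 1}, {0}]
print(B)  # [{2}, set()]

# Each step vector 1_{A_k} - 1_{B_k}; together they decompose y - x.
steps = [
    tuple((i in Ak) - (i in Bk) for i in range(len(x)))
    for Ak, Bk in zip(A, B)
]
total = tuple(sum(col) for col in zip(*steps))
print(steps)   # [(1, 1, -1), (1, 0, 0)]
print(total)   # (2, 1, -1)
```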

We first show the following property of DDM-convex sets.

Theorem 5

Let \(S\subseteq {\mathbb {Z}}^n\) be a DDM-convex set, \(x, y\in S\) with \(\Vert y-x\Vert _\infty =m\), and \(J\subseteq \{1, 2, \dots , m\}\). If \(\{A_k\}\) and \(\{B_k\}\) are defined by (4.1) and \(d=\sum _{k\in J}(\varvec{1}_{A_k}-\varvec{1}_{B_k})\), we have \(x+d\in S\) and \(y-d\in S\).

To show this theorem, it is enough to verify
$$\begin{aligned} x+\sum _{k\in J}(\varvec{1}_{A_k}-\varvec{1}_{B_k}) \in S \qquad (\forall J \subseteq \{1,\ldots ,m\}). \end{aligned} \tag{4.2}$$

We first show that the decomposition \(\sum _{k=1}^m (\varvec{1}_{A_k} - \varvec{1}_{B_k})\) of \(y-x\) can be constructed by using the operation \(\mu (\cdot ,\cdot )\).

For every \(x\in {\mathbb {Z}}^n\), let us consider multiset D(x) of vectors by the following recursive formula:
$$\begin{aligned} D(x) = {\left\{ \begin{array}{ll} D(\mu (x,\varvec{0})) \cup D(\mu (\varvec{0},x)) &{} (\Vert x\Vert _\infty \ge 2), \\ \{x\} &{} (\Vert x\Vert _\infty = 1), \\ \emptyset &{} (x = \varvec{0}), \end{array}\right. } \end{aligned} \tag{4.3}$$

where \(\varvec{0}\) denotes the n-dimensional zero vector. We give several propositions.

Proposition 7

If a multiset \(\{d^k\in \{-1, 0, 1\}^n{\setminus }\{\varvec{0}\} \mid k=1, \dots , m\}\) satisfies
$$\begin{aligned} d^1_i \ge d^2_i \ge \cdots \ge d^m_i \ge 0 \quad \text{or} \quad d^1_i \le d^2_i \le \cdots \le d^m_i \le 0 \end{aligned} \tag{4.4}$$

for each \(i\in \{1, \dots , n\}\), then
$$\begin{aligned} D\left( \sum _{k=1}^m d^k\right) = \{ d^k \mid k=1,\ldots ,m \}. \end{aligned} \tag{4.5}$$

Proof

We prove the assertion by induction on m. The assertion obviously holds if \(m \le 1\).

Suppose that \(m=2\). By (4.4), we have

which, together with (4.3), imply the assertion.

Suppose that \(m \ge 3\). Let \(K=\{1, 2, \dots , m\}\), \(K^\mathrm {O}=\{k \in K \mid k \text{ is } \text{ odd }\}\) and \(K^\mathrm {E}=\{k \in K \mid k \text{ is } \text{ even }\}\). By induction hypothesis together with \(|K^\mathrm {O}|, |K^\mathrm {E}|<|K|\), we obtain

Furthermore, the claim below guarantees that

By combining (4.3), (4.6) and (4.7), we have

Claim

(i) \(\mu \left( \sum _{k\in K}d^k, \varvec{0}\right) =\sum _{k\in K^\mathrm {O}}d^k\), and (ii) \(\mu \left( \varvec{0}, \sum _{k\in K}d^k\right) =\sum _{k\in K^\mathrm {E}}d^k\).

Proof

We show (i) (and can show (ii) in the same way). Let us fix \(i \in \{1,\ldots ,n\}\). Assume that \(\left( \sum _{k\in K}d^k\right) _i=l > 0\). Since \(d^{1}_i=\cdots =d^{l}_i=1\) and \(d^{{l+1}}_i=\cdots =d^{m}_i=0\) by (4.4), we have

In the case where \(\left( \sum _{k\in K}d^k\right) _i=-l < 0\), we have \(d^{1}_i=\cdots =d^{l}_i=-1\) and \(d^{{l+1}}_i=\cdots =d^{m}_i=0\) by (4.4), and hence

If \(\left( \sum _{k\in K}d^k\right) _i=0\), by (4.4), we have \(d^{1}_i=\cdots =d^{m}_i=0\) and

Thus, (i) holds. (End of the proof of Claim). \(\square\)

Proposition 8

\(D(y-x)=\{\varvec{1}_{A_k}-\varvec{1}_{B_k} \mid k=1, \dots , m\}\).

Proof

By the construction (4.1), \(y-x=\sum _{k=1}^m(\varvec{1}_{A_k}-\varvec{1}_{B_k})\). By defining \(d^k=\varvec{1}_{A_k}-\varvec{1}_{B_k}\ (k=1, \dots , m)\), condition (4.4) of Proposition 7 holds. The assertion is an immediate consequence of (4.5). \(\square\)
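Proposition 8 can be illustrated numerically. The sketch below assumes that the recursion for D splits a vector z with \(\Vert z\Vert _\infty \ge 2\) into \(\mu (z,\varvec{0})\) and \(\mu (\varvec{0},z)\), returns \(\{z\}\) when \(\Vert z\Vert _\infty =1\), and returns the empty multiset for \(z=\varvec{0}\); it also assumes the level-set reading of \(A_k\) and \(B_k\). The helper names `mu`, `D` and `step_vectors` are hypothetical, and `collections.Counter` is used to represent multisets.

```python
from collections import Counter


def mu(x, y):
    """Round (x_i + y_i)/2 up if x_i >= y_i, down otherwise."""
    return tuple(
        -((-(a + b)) // 2) if a >= b else (a + b) // 2
        for a, b in zip(x, y)
    )


def D(z):
    """Multiset D(z), built by recursively halving z with mu (assumed recursion)."""
    norm = max((abs(v) for v in z), default=0)
    if norm == 0:
        return Counter()
    if norm == 1:
        return Counter([tuple(z)])
    zero = (0,) * len(z)
    return D(mu(z, zero)) + D(mu(zero, z))


def step_vectors(z):
    """Vectors 1_{A_k} - 1_{B_k} for k = 1..m, where z = y - x and m = ||z||_inf."""
    m = max((abs(v) for v in z), default=0)
    return [tuple((v >= k) - (v <= -k) for v in z) for k in range(1, m + 1)]


z = (3, -2, 0, 1)  # plays the role of y - x
print(sorted(D(z).elements()))
print(sorted(step_vectors(z)))
print(D(z) == Counter(step_vectors(z)))  # True
```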

By Proposition 8, (4.2) can be rewritten as
$$\begin{aligned} x+\sum _{d\in E} d \in S \qquad (\forall E \subseteq D(y-x)). \end{aligned} \tag{4.8}$$

Therefore, Theorem 5 can be shown by the following proposition.

Proposition 9

For a DDM-convex set S and \(x,y \in S\), (4.8) holds.

Proof

We prove (4.8) for x, y by induction on \(\Vert y-x\Vert _\infty\). If \(\Vert y-x\Vert _\infty \le 1\), (4.8) trivially holds. If \(\Vert y-x\Vert _\infty =2\), then \(D(y-x)=\{\mu (y{-}x, \varvec{0}), \ \mu (\varvec{0}, y{-}x)\}\). Since S is DDM-convex, we have

which guarantee that (4.8) holds.

Suppose that \(\Vert y-x\Vert _\infty \ge 3\), and (4.8) holds for every \(x'', y''\in S\) with \(\Vert x''-y''\Vert _\infty <\Vert x-y\Vert _\infty\). We fix \(E\subseteq D(y-x)\) arbitrarily. Let \(x'=\mu (x, y)\) and \(y'=\mu (y, x)\). Then we have \(y' - x = y - x' = \mu (y-x,\varvec{0})\). By DDM-convexity of S, \(x'\) and \(y'\) also belong to S. By Proposition 2 (4) and the assumption \(\Vert y-x\Vert _\infty \ge 3\), we have

By induction hypothesis, (4.8) holds for \((x, y')\) and \((x', y)\), and furthermore, by the equality \(D(y'-x)=D(y-x')=D(\mu (y-x, \varvec{0}))\), we have

We also have \(v-u = x'-x = \mu (\varvec{0}, y-x)\) and

which, together with the induction hypothesis, guarantee that (4.8) holds for (u, v). Moreover, by (4.3), \(D(y-x) = D(\mu (y-x, \varvec{0}))\cup D(\mu (\varvec{0}, y-x))\) includes E, and hence, \(D(v-u)=D(\mu (\varvec{0}, y-x))\) includes \(E{\setminus } D(\mu (y-x, \varvec{0}))\). Thus, we have

By (4.9) and (4.10), we obtain

which implies (4.8). \(\square\)

We denote by DDMC(k) and by DDMC(\(\ge\) \(k\)) the classes of functions \(f : {\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) that satisfy DDM-convexity (2.1) for all \(x,y \in {\mathbb {Z}}^n\) with \(\Vert x-y\Vert _\infty = k\) and for all \(x,y \in {\mathbb {Z}}^n\) with \(\Vert x-y\Vert _\infty \ge k\), respectively. Before presenting parallelogram inequality for DDM-convex functions, we give a useful property.

Theorem 6

Let \(f:{\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) be a function in DDMC(2) such that \(\mathop {\mathrm{dom}}\limits f\) is DDM-convex. For \(x\in \mathop {\mathrm{dom}}\limits f\) and \(y\in {\mathbb {Z}}^n\) with \(\Vert y-x\Vert _\infty =m\), and for any partition (I, J) of \(\{1, \dots , m\}\), we consider
$$\begin{aligned} d_1=\sum _{k\in I}(\varvec{1}_{A_k}-\varvec{1}_{B_k}), \qquad d_2=\sum _{k\in J}(\varvec{1}_{A_k}-\varvec{1}_{B_k}), \end{aligned}$$

where \(A_k, B_k\ (k=1, \dots , m)\) are the sets defined by (4.1). Then we have
$$\begin{aligned} f(x)+f(y) \ge f(x+d_1)+f(x+d_2). \end{aligned} \tag{4.11}$$

Proof

We note that \(y = x+d_1+d_2\). If \(y\not \in \mathop {\mathrm{dom}}\limits f\), by \(f(y)=+\infty\), (4.11) trivially holds. In the sequel, we assume that \(y \in \mathop {\mathrm{dom}}\limits f\). Let I be denoted by \(\{i_1,i_2\ldots ,i_{|I|}\}\) \((i_1< i_2< \cdots < i_{|I|})\) and J by \(\{j_1,j_2\ldots ,j_{|J|}\}\) \((j_1< j_2< \cdots < j_{|J|})\). For every \(k = 1,\ldots ,|I|\) and \(l = 1,\ldots ,|J|\), we denote \(d_1^k = \varvec{1}_{A_{i_k}}-\varvec{1}_{B_{i_k}}\) and, similarly, \(d_2^l = \varvec{1}_{A_{j_l}}-\varvec{1}_{B_{j_l}}\). For every \(k=0,1, \dots , |I|\) and for every \(l=0,1, \dots , |J|\), define
$$\begin{aligned} x(k,l) = x+\sum _{i=1}^{k} d_1^i+\sum _{j=1}^{l} d_2^j. \end{aligned}$$

By Theorem 5, for every k, l, we have \(x(k, l) \in \mathop {\mathrm{dom}}\limits f\). We note that (4.11) is equivalent to
$$\begin{aligned} f(x(0,0))+f(x(|I|,|J|)) \ge f(x(|I|,0))+f(x(0,|J|)). \end{aligned} \tag{4.12}$$

Fix \(k \in \{1,\ldots ,|I|\}\) and \(l \in \{1,\ldots , |J|\}\). According to whether \(i_k > j_l\) or \(i_k < j_l\), either

or

Thus, in the case where \(i_k > j_l\), we have

which implies \(\Vert d_1^k+d_2^l\Vert _\infty = 2\), \(\mu (d_1^k+d_2^l, \varvec{0})=\varvec{1}_{A_{j_l}}-\varvec{1}_{B_{j_l}}=d^l_2\) and \(\mu (\varvec{0}, d_1^k+d_2^l)=\varvec{1}_{A_{i_k}}-\varvec{1}_{B_{i_k}}=d^k_1\). Similarly, in the case where \(i_k < j_l\), we have \(\Vert d_1^k+d_2^l\Vert _\infty = 2\), \(\mu (d_1^k+d_2^l, \varvec{0})=d^k_1\), \(\mu (\varvec{0}, d_1^k+d_2^l)=d^l_2\). In both cases, since \(\Vert x(k, l)-x(k-1, l-1)\Vert _\infty =\Vert d_1^k+d_2^l\Vert _\infty =2\) and \(f \in\) DDMC(2), we obtain
$$\begin{aligned} f(x(k-1,l-1))+f(x(k,l)) \ge f(\mu (x(k-1,l-1),x(k,l)))+f(\mu (x(k,l),x(k-1,l-1))). \end{aligned} \tag{4.13}$$

On the other hand, the facts
$$\begin{aligned} \mu (x(k-1,l-1),x(k,l))&= x(k-1,l-1)+\mu (\varvec{0}, d_1^k+d_2^l), \\ \mu (x(k,l),x(k-1,l-1))&= x(k-1,l-1)+\mu (d_1^k+d_2^l, \varvec{0}), \end{aligned}$$

together with \(\{\mu (d_1^k+d_2^l, \varvec{0}),\mu (\varvec{0}, d_1^k+d_2^l)\} = \{d_1^k, d_2^l\}\) in both cases \(i_k > j_l\) and \(i_k < j_l\), show that the right-hand side of (4.13) is equal to \(f(x(k, l-1))+f(x(k-1, l))\). Therefore, we obtain

$$\begin{aligned} f(x(k-1,l-1))+f(x(k,l)) \ge f(x(k,l-1))+f(x(k-1,l)). \end{aligned}$$

By adding the above inequalities for (k, l) with \(1\le k\le |I|\) and \(1\le l\le |J|\), we obtain (4.12). We emphasize that all the terms that are canceled in this addition of inequalities are finite valued because \(x(k,l) \in \mathop {\mathrm{dom}}\limits f\) for all (k, l) with \(0\le k\le |I|\) and \(0\le l\le |J|\). \(\square\)

The next theorem is an immediate consequence of Theorem 6, because a DDM-convex function \(f:{\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) belongs to DDMC(2) and \(\mathop {\mathrm{dom}}\limits f\) is DDM-convex.

Theorem 7

Let \(f:{\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) be a DDM-convex function. For every \(x, y\in \mathop {\mathrm{dom}}\limits f\), let \(\{A_k \mid k=1,\ldots ,m\}\) and \(\{B_k \mid k=1,\ldots ,m\}\) be the families defined by (4.1), where \(m = \Vert y-x\Vert _\infty\). For any subset \(J \subseteq \{1,\ldots ,m\}\), let \(d = \sum _{k\in J}(\varvec{1}_{A_k}-\varvec{1}_{B_k})\). Then we have

$$\begin{aligned} f(x)+f(y)\ge f(x+d)+f(y-d). \end{aligned}$$(4.14)

We call the inequality (4.14) the parallelogram inequality of DDM-convex functions.
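The parallelogram inequality can be checked by brute force on small instances. The following is a minimal sketch, assuming that the families in (4.1) are \(A_k = \{i \mid y_i-x_i\ge k\}\) and \(B_k = \{i \mid y_i-x_i\le -k\}\); the function names and the test function `f` are hypothetical and chosen only for illustration.

```python
from itertools import combinations

def families(x, y):
    """Families A_k, B_k (k = 1..m), assuming A_k = {i : y_i - x_i >= k}
    and B_k = {i : y_i - x_i <= -k}, with m = ||y - x||_inf."""
    diff = [b - a for a, b in zip(x, y)]
    m = max(abs(t) for t in diff)
    A = [{i for i, t in enumerate(diff) if t >= k} for k in range(1, m + 1)]
    B = [{i for i, t in enumerate(diff) if t <= -k} for k in range(1, m + 1)]
    return A, B

def direction(A, B, J, n):
    """d = sum over k in J of (1_{A_k} - 1_{B_k}); J holds 1-based indices."""
    d = [0] * n
    for k in J:
        for i in A[k - 1]:
            d[i] += 1
        for i in B[k - 1]:
            d[i] -= 1
    return d

def parallelogram_holds(f, x, y):
    """Check f(x) + f(y) >= f(x + d) + f(y - d) for every subset J."""
    A, B = families(x, y)
    m, n = len(A), len(x)
    for r in range(m + 1):
        for J in combinations(range(1, m + 1), r):
            d = direction(A, B, J, n)
            x_plus = tuple(a + t for a, t in zip(x, d))
            y_minus = tuple(b - t for b, t in zip(y, d))
            if f(x) + f(y) < f(x_plus) + f(y_minus):
                return False
    return True

# Hypothetical 2-separable convex test function (integer-valued, so comparisons are exact).
f = lambda x: x[0] ** 2 + x[1] ** 2 + (x[0] - x[1]) ** 2 + (x[0] + x[1]) ** 2
```

For instance, `parallelogram_holds(f, (0, 0), (3, -2))` enumerates all eight subsets of \(\{1,2,3\}\).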

5 Characterizations

In this section, we give several equivalent conditions of DDM-convexity and a simple characterization of quadratic DDM-convex functions.

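For every pair \((x,y) \in {\mathbb {Z}}^n \times {\mathbb {Z}}^n\), we recall that the families \(\{A_k \mid k=1,\ldots ,m\}\) and \(\{B_k \mid k=1,\ldots ,m\}\) are defined by

$$\begin{aligned} A_k=\{i \mid y_i-x_i\ge k\}, \qquad B_k=\{i \mid y_i-x_i\le -k\} \qquad (k=1,\ldots ,m) \end{aligned}$$

in (4.1), where \(m = \Vert y-x\Vert _\infty\).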

Theorem 8

For a function \(f:{\mathbb {Z}}^n\rightarrow {\mathbb {R}}\cup \{+\infty \}\), the following properties are equivalent to each other.

(1) f is DDM-convex, i.e., \(f\in\) DDMC(\(\ge\) \(1\)).

(2) \(\mathop {\mathrm{dom}}\limits f\) is DDM-convex and \(f\in\) DDMC(2).

(3) f satisfies parallelogram inequalities for every \(x, y\in \mathop {\mathrm{dom}}\limits f\) and for any subset \(J \subseteq \{1,\ldots ,m\}\) where \(m = \Vert y-x\Vert _\infty\).

(4) For every \(x, y\in \mathop {\mathrm{dom}}\limits f\), we have

$$\begin{aligned} f(x)+f(y)\ge f(x+\varvec{1}_{A_m}-\varvec{1}_{B_m})+f(y-\varvec{1}_{A_m}+\varvec{1}_{B_m}), \end{aligned}$$(5.1)

where \(m = \Vert y-x\Vert _\infty\), and the sets \(A_m\) and \(B_m\) are defined by (4.1).

(5) For every \(x, y\in \mathop {\mathrm{dom}}\limits f\) and for any \(\alpha \in \{1, \dots , m\}\), by defining \(d=\sum _{k=1}^\alpha (\varvec{1}_{A_k}-\varvec{1}_{B_k})\), we have

$$\begin{aligned} f(x)+f(y)\ge f(x+d)+f(y-d), \end{aligned}$$

where \(m = \Vert y-x\Vert _\infty\), and the families \(\{A_k \mid k=1,\ldots ,m\}\) and \(\{B_k \mid k=1,\ldots ,m\}\) are defined by (4.1).

Proof

The implication \((1) \Rightarrow (2)\) is obvious and the implications from (1) to (3), (4) and (5) follow from Theorem 7. To prove the opposite implications, we show \(f \in\) DDMC(m) for every \(m\ge 1\) from (4), and show [\((5) \Rightarrow (4)\)], [\((3) \Rightarrow (4)\)] and [\((2) \Rightarrow (3)\)].

(4)\(\Rightarrow\)(1): Since \(f \in\) DDMC(1) always holds by Proposition 2, we assume \(m\ge 2\) and fix arbitrary \(x,y \in \mathop {\mathrm{dom}}\limits f\) with \(\Vert x-y\Vert _\infty =m\). Let \(p=x+\varvec{1}_{A_m}-\varvec{1}_{B_m}\) and \(q=y-\varvec{1}_{A_m}+\varvec{1}_{B_m}\) be the two points on the right-hand side of (5.1). The vectors p and q satisfy conditions (a) and (c) of Proposition 1, \(\Vert p-q\Vert _\infty <m\) and \(p,q \in \mathop {\mathrm{dom}}\limits f\). By repeatedly applying (5.1) to (p, q) until \(\Vert p-q\Vert _\infty \le 1\), the final p and q satisfy all conditions of Proposition 1, and hence, \(p=\mu (x, y)\) and \(q=\mu (y, x)\). This shows that \(f \in\) DDMC(m) holds.
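The repeated application of (5.1) in this argument can be traced computationally. The sketch below is illustrative only: it assumes that \(\mu (x,y)\) rounds each coordinate of \((x+y)/2\) toward x (consistent with the coordinate formulas used in the proof of Proposition 15) and that \(A_m\), \(B_m\) collect the coordinates where \(q_i-p_i\) equals \(+m\) and \(-m\), respectively. The names `mu` and `peel` are hypothetical.

```python
def mu(x, y):
    """Assumed directed midpoint: each coordinate of (x + y)/2 rounded toward x."""
    return tuple(a - (a - b) // 2 if a >= b else a + (b - a) // 2
                 for a, b in zip(x, y))

def peel(x, y):
    """Repeat p := p + 1_{A_m} - 1_{B_m}, q := q - 1_{A_m} + 1_{B_m},
    with m = ||q - p||_inf recomputed each round, until m <= 1."""
    p, q = list(x), list(y)
    while True:
        m = max(abs(b - a) for a, b in zip(p, q))
        if m <= 1:
            return tuple(p), tuple(q)
        for i in range(len(p)):
            gap = q[i] - p[i]
            if gap >= m:        # coordinate i belongs to A_m
                p[i] += 1
                q[i] -= 1
            elif gap <= -m:     # coordinate i belongs to B_m
                p[i] -= 1
                q[i] += 1
```

For \(x=(0,0,0)\) and \(y=(1,2,1)\), a single round yields \(p=(0,1,0)\) and \(q=(1,1,1)\), which agree with `mu(x, y)` and `mu(y, x)`.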

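(5)\(\Rightarrow\)(4): By setting \(\alpha = m-1\) in (5) and using \(y-x=\sum _{k=1}^{m}(\varvec{1}_{A_k}-\varvec{1}_{B_k})\), we obtain

$$\begin{aligned} f(x)+f(y)\ge f\Bigl (x+\sum _{k=1}^{m-1}(\varvec{1}_{A_k}-\varvec{1}_{B_k})\Bigr )+f\Bigl (y-\sum _{k=1}^{m-1}(\varvec{1}_{A_k}-\varvec{1}_{B_k})\Bigr ) = f(y-\varvec{1}_{A_m}+\varvec{1}_{B_m})+f(x+\varvec{1}_{A_m}-\varvec{1}_{B_m}), \end{aligned}$$

and hence, (4).

(3)\(\Rightarrow\)(4): By setting \(J=\{1, \dots , m-1\}\) in (3) and using \(y-x=\sum _{k=1}^{m}(\varvec{1}_{A_k}-\varvec{1}_{B_k})\), we obtain

$$\begin{aligned} f(x)+f(y)\ge f\Bigl (x+\sum _{k\in J}(\varvec{1}_{A_k}-\varvec{1}_{B_k})\Bigr )+f\Bigl (y-\sum _{k\in J}(\varvec{1}_{A_k}-\varvec{1}_{B_k})\Bigr ) = f(y-\varvec{1}_{A_m}+\varvec{1}_{B_m})+f(x+\varvec{1}_{A_m}-\varvec{1}_{B_m}), \end{aligned}$$

and hence, (4).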

(2)\(\Rightarrow\)(3): Property (2) and Theorem 6 imply (3). \(\square\)

Remark 1

Theorem 8 may be regarded as a generalization of Theorem 3.

Property (4) of Theorem 8 corresponds to (4) of Theorem 3. For an L\(^\natural\)-convex function f, the following inequalities

and

hold. These inequalities imply (5.1). However, for a DDM-convex function f, the two parts on \(A_m\) and \(B_m\) must be combined into the single inequality (5.1). This demonstrates the difference between L\(^\natural\)-convexity and DDM-convexity.

Property (5) of Theorem 8 corresponds to (1) of Theorem 3. In (5) of Theorem 8, two vectors \(x+d=x+\sum _{k=1}^\alpha (\varvec{1}_{A_k}-\varvec{1}_{B_k})\) and \(y-d=y-\sum _{k=1}^\alpha (\varvec{1}_{A_k}-\varvec{1}_{B_k})\) are expressed by

On the other hand, in (3.1), two vectors \((x-\alpha \varvec{1})\vee y\) and \(x\wedge (y+\alpha \varvec{1})\) can be rewritten as

In the same way as the relation between (4) of Theorem 8 and (4) of Theorem 3, two separate operations for L\(^\natural\)-convex functions must be executed simultaneously for DDM-convex functions. \(\square\)

For a quadratic function \(f(x) = x^\top Q x\;(x\in {\mathbb {Z}}^n)\) with a symmetric matrix \(Q = [q_{ij}]\), we show that f is DDM-convex if and only if Q is diagonally dominant with nonnegative diagonals:

$$\begin{aligned} q_{ii}\ge \sum _{j\ne i}|q_{ij}| \qquad (i=1,\ldots ,n). \end{aligned}$$(5.2)
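For each \(p\in {\mathbb {R}}\), let \(p^+=\max \{p, 0\}\) and \(p^-=\max \{-p, 0\}\). Note that \(|p|=p^{+} + p^{-}\). The quadratic function \(f(x)=x^\top Q x\) can be written as

$$\begin{aligned} f(x)=\sum _{i=1}^{n}\Bigl (q_{ii}-\sum _{j\ne i}|q_{ij}|\Bigr )x_i^2+\sum _{i<j}\bigl [q_{ij}^{+}(x_i+x_j)^2+q_{ij}^{-}(x_i-x_j)^2\bigr ]. \end{aligned}$$(5.3)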
In (5.3), the condition (5.2) of Q implies the nonnegativity of coefficients of \(x_i^2\). Thus, if Q is diagonally dominant with nonnegative diagonals, then f is 2-separable convex, and hence, DDM-convex by Theorem 4. By proving the opposite implication, we obtain the following property.

Theorem 9

For a quadratic function \(f(x) = x^\top Q x\; (x \in {\mathbb {Z}}^n)\) with a symmetric matrix \(Q = [q_{ij}]\), f is DDM-convex if and only if Q is diagonally dominant with nonnegative diagonals.
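The rewriting of \(x^\top Q x\) as a 2-separable convex function rests on the identity \(|q|(x_i^2+x_j^2)+2q x_i x_j = q^{+}(x_i+x_j)^2+q^{-}(x_i-x_j)^2\). The following sketch checks the resulting decomposition numerically; the function names and the sample matrix are hypothetical, and the code is an illustration under these assumptions rather than part of the proof.

```python
def is_diag_dominant(Q):
    """Diagonal dominance with nonnegative diagonals: q_ii >= sum_{j != i} |q_ij|."""
    n = len(Q)
    return all(Q[i][i] >= sum(abs(Q[i][j]) for j in range(n) if j != i)
               for i in range(n))

def quad(Q, x):
    """Direct evaluation of x^T Q x."""
    n = len(Q)
    return sum(Q[i][j] * x[i] * x[j] for i in range(n) for j in range(n))

def quad_two_separable(Q, x):
    """Evaluate x^T Q x via squares of x_i, x_i + x_j and x_i - x_j (Q symmetric)."""
    n = len(Q)
    total = 0
    for i in range(n):
        slack = Q[i][i] - sum(abs(Q[i][j]) for j in range(n) if j != i)
        total += slack * x[i] ** 2
    for i in range(n):
        for j in range(i + 1, n):
            pos, neg = max(Q[i][j], 0), max(-Q[i][j], 0)
            total += pos * (x[i] + x[j]) ** 2 + neg * (x[i] - x[j]) ** 2
    return total

# Hypothetical sample: symmetric and diagonally dominant with nonnegative diagonals.
Q = [[3, 1, -2],
     [1, 2, 0],
     [-2, 0, 2]]
```

When `is_diag_dominant(Q)` holds, every coefficient in `quad_two_separable` is nonnegative, so each summand is a convex function of \(x_i\), \(x_i+x_j\) or \(x_i-x_j\).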

Proof

It is enough to show that if f is DDM-convex, then Q is diagonally dominant with nonnegative diagonals. For each \(i\in \{1, \dots , n\}\), define \(z^i\in {\mathbb {Z}}^n\) by

By DDM-convexity of f, the inequality

must hold. Since \(\mu (z^i, \varvec{0})=z^i-\varvec{1}_i\) and \(\mu (\varvec{0}, z^i)=\varvec{1}_i\), we have

which implies

By \(Q^\top = Q\), we obtain the diagonal dominance with nonnegative diagonals of Q. \(\square\)
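A small numerical instance shows why convexity of \(x^\top Q x\) alone does not suffice. The sketch below assumes the DDM-convexity inequality in the form \(f(x)+f(y)\ge f(\mu (x,y))+f(\mu (y,x))\), with \(\mu (x,y)\) rounding \((x+y)/2\) coordinate-wise toward x; the matrices and the test points are hypothetical examples and are not the vectors \(z^i\) of the proof.

```python
def mu(x, y):
    """Assumed directed midpoint: each coordinate of (x + y)/2 rounded toward x."""
    return tuple(a - (a - b) // 2 if a >= b else a + (b - a) // 2
                 for a, b in zip(x, y))

def quad(Q, x):
    """Evaluate x^T Q x."""
    n = len(Q)
    return sum(Q[i][j] * x[i] * x[j] for i in range(n) for j in range(n))

def midpoint_gap(Q, x, y):
    """f(x) + f(y) - f(mu(x, y)) - f(mu(y, x)); must be >= 0 for DDM-convex f."""
    return quad(Q, x) + quad(Q, y) - quad(Q, mu(x, y)) - quad(Q, mu(y, x))

# Positive semidefinite (f = (x1 + x2 + x3)^2) but not diagonally dominant.
Q_bad = [[1, 1, 1], [1, 1, 1], [1, 1, 1]]
# Diagonally dominant with nonnegative diagonals.
Q_good = [[2, 1, 1], [1, 2, 1], [1, 1, 2]]

x, y = (2, -1, -1), (0, 0, 0)
gap_bad = midpoint_gap(Q_bad, x, y)
gap_good = midpoint_gap(Q_good, x, y)
```

Here `gap_bad` is negative, so the convex quadratic defined by `Q_bad` violates the inequality at this pair, while `gap_good` is nonnegative.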

The minimizers of DDM-convex functions are DDM-convex sets, while the minimizers of L\(^\natural\)-convex functions are L\(^\natural\)-convex sets. The class of L\(^\natural\)-convex functions has a characterization in terms of minimizers. For a function \(f : {\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) and \(p \in {\mathbb {R}}^n\), we denote by \(f-p\) the function given by

$$\begin{aligned} (f-p)(x)=f(x)-\sum _{i=1}^{n}p_i x_i \qquad (x\in {\mathbb {Z}}^n). \end{aligned}$$
Theorem 10

([21, 23]) Under some regularity condition, a function \(f : {\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) is L\(^\natural\)-convex if and only if \(\mathop {\mathrm{argmin}}\limits (f-p)\) is an L\(^\natural\)-convex set for every \(p \in {\mathbb {R}}^n\) with \(\inf (f-p) >-\infty\).

Unfortunately, the class of DDM-convex functions does not have a similar characterization.

Example 4

Let us consider the function \(f : {\mathbb {Z}}^3 \rightarrow {\mathbb {R}}\cup \{+\infty \}\) given by

The function f is not DDM-convex because

while \(\mathop {\mathrm{dom}}\limits f\) is a DDM-convex set (in fact, an L\(^\natural\)-convex set). Furthermore, \(\mathop {\mathrm{argmin}}\limits (f-p)\) is a DDM-convex set for every \(p \in {\mathbb {R}}^3\) as follows. There exists no \(p \in {\mathbb {R}}^3\) such that \(\{(0,0,0),(2,1,1)\} \subseteq \mathop {\mathrm{argmin}}\limits (f-p)\), because we have \(0 \le 1-p_1-p_2\) from \((f-p)(0,0,0) \le (f-p)(1,1,0)\), and \(2-p_1-p_2 \le 0\) from \((f-p)(2,1,1) \le (f-p)(1,0,1)\). For any \(p \in {\mathbb {R}}^3\) and for any \(x,y \in \mathop {\mathrm{argmin}}\limits (f-p)\), this fact implies that \(\Vert x-y\Vert _\infty \le 1\) must hold, and hence, \(\mathop {\mathrm{argmin}}\limits (f-p)\) is a DDM-convex set. We note that this example also shows that a similar characterization does not hold for the classes of globally/locally discrete midpoint convex functions. \(\square\)

6 Operations

We discuss several operations for discrete convex functions, including scaling operations [17, 18, 21], restriction, projection, direct sum and convolution operations [16, 21, 22].

6.1 Scaling operations

Scaling operations are useful techniques for designing efficient algorithms in discrete optimization. It is shown in [18] that global/local discrete midpoint convexity, including L\(^\natural\)-convexity, is closed under scaling operations. We show that DDM-convexity is also closed under scaling operations.

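Given a function \(f:{\mathbb {Z}}^n\rightarrow {\mathbb {R}}\cup \{+\infty \}\) and a positive integer \(\alpha\), the \(\alpha\)-scaling of f is the function \(f^\alpha\) defined by

$$\begin{aligned} f^\alpha (x)=f(\alpha x) \qquad (x\in {\mathbb {Z}}^n). \end{aligned}$$

We also define the \(\alpha\)-scaling \(S^\alpha\) of a set \(S \subseteq {\mathbb {Z}}^n\) by

$$\begin{aligned} S^\alpha =\{x\in {\mathbb {Z}}^n \mid \alpha x\in S\}. \end{aligned}$$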
Theorem 11

Given a DDM-convex function \(f:{\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) and a positive integer \(\alpha\), the scaled function \(f^\alpha :{\mathbb {Z}}^n\rightarrow {\mathbb {R}}\cup \{+\infty \}\) is also DDM-convex.

Proof

By the equivalence between (1) and (4) of Theorem 8, it is sufficient to show that

for every \(x, y\in {\mathbb {Z}}^n\) with \(\Vert x-y\Vert _\infty = m\) and for families \(\{A_k \mid k=1,\ldots ,m\}\) and \(\{B_k \mid k=1,\ldots ,m\}\) defined by (4.1). The above inequality is written as

For \(\alpha x\) and \(\alpha y\) with \(\Vert \alpha x-\alpha y\Vert _\infty =\alpha m\), by defining

we have \(\alpha y-\alpha x=\sum _{l=1}^{\alpha m}(\varvec{1}_{A_l^\alpha }-\varvec{1}_{B_l^\alpha })\) and

By (5) of Theorem 8 for

we have

that is, (6.1). \(\square\)

Corollary 3

For a DDM-convex set \(S\subseteq {\mathbb {Z}}^n\) and a positive integer \(\alpha\), the \(\alpha\)-scaled set \(S^\alpha\) is also DDM-convex.

6.2 Restrictions

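For a function \(f:{\mathbb {Z}}^{n+m}\rightarrow {\mathbb {R}}\cup \{+\infty \}\), the restriction of f on \({\mathbb {Z}}^n\) is the function g defined by

$$\begin{aligned} g(x)=f(x, \varvec{0}_m) \qquad (x\in {\mathbb {Z}}^n), \end{aligned}$$

where \(\varvec{0}_m\) denotes the zero vector in \({\mathbb {Z}}^m\). For a set \(S\subseteq {\mathbb {Z}}^{n+m}\), the restriction of S on \({\mathbb {Z}}^n\) is also defined by

$$\begin{aligned} \{x\in {\mathbb {Z}}^n \mid (x, \varvec{0}_m)\in S\}. \end{aligned}$$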
Obviously, the following properties hold.

Proposition 10

For a DDM-convex function, its restrictions are also DDM-convex.

Proposition 11

For a DDM-convex set, its restrictions are also DDM-convex.

6.3 Projections

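For a function \(f:{\mathbb {Z}}^{n+m}\rightarrow {\mathbb {R}}\cup \{+\infty \}\), the projection of f to \({\mathbb {Z}}^n\) is the function defined by

$$\begin{aligned} g(x)=\inf \{f(x, y) \mid y\in {\mathbb {Z}}^m\} \qquad (x\in {\mathbb {Z}}^n), \end{aligned}$$(6.2)

where we assume that \(g(x)>-\infty\) for all \(x \in {\mathbb {Z}}^n\). For a set \(S\subseteq {\mathbb {Z}}^{n+m}\), the projection of S to \({\mathbb {Z}}^n\) is also defined by

$$\begin{aligned} \{x\in {\mathbb {Z}}^n \mid (x, y)\in S \text{ for some } y\in {\mathbb {Z}}^m\}. \end{aligned}$$

In the same way as the proof for globally discrete midpoint convex functions in [16, Theorem 3.5], we can show the following property.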

Proposition 12

For a DDM-convex function, its projections are DDM-convex.

Proof

Let g be the projection defined by (6.2) of a DDM-convex function f. For every \(x^{(1)}, x^{(2)} \in \mathop {\mathrm{dom}}\limits g\) and every \(\varepsilon >0\), by the definition of the projection, there exist \(y^{(1)}, y^{(2)}\in {\mathbb {Z}}^m\) with \(g(x^{(i)})\ge f(x^{(i)}, y^{(i)})-\varepsilon\) for \(i=1, 2\). Thus, we have

By DDM-convexity of f and the definition of the projection, we have

for any \(\varepsilon >0\), which guarantees DDM-convexity of g. \(\square\)

Corollary 4

For a DDM-convex set, its projections are also DDM-convex.

6.4 Direct sums

For two functions \(f_1:{\mathbb {Z}}^{n_1}\rightarrow {\mathbb {R}}\cup \{+\infty \}\) and \(f_2:{\mathbb {Z}}^{n_2}\rightarrow {\mathbb {R}}\cup \{+\infty \}\), the direct sum of \(f_1\) and \(f_2\) is the function \(f_1\oplus f_2:{\mathbb {Z}}^{n_1+n_2}\rightarrow {\mathbb {R}}\cup \{+\infty \}\) defined by

$$\begin{aligned} (f_1\oplus f_2)(x, y)=f_1(x)+f_2(y) \qquad (x\in {\mathbb {Z}}^{n_1},\ y\in {\mathbb {Z}}^{n_2}). \end{aligned}$$

For two sets \(S_1\subseteq {\mathbb {Z}}^{n_1}\) and \(S_2\subseteq {\mathbb {Z}}^{n_2}\), the direct sum of \(S_1\) and \(S_2\) is defined by

$$\begin{aligned} S_1\oplus S_2=\{(x, y) \mid x\in S_1,\ y\in S_2\}. \end{aligned}$$

DDM-convexity is closed under direct sums, as shown below.

Proposition 13

For two DDM-convex functions \(f_1:{\mathbb {Z}}^{n_1}\rightarrow {\mathbb {R}}\cup \{+\infty \}\) and \(f_2:{\mathbb {Z}}^{n_2}\rightarrow {\mathbb {R}}\cup \{+\infty \}\), the direct sum \(f_1\oplus f_2\) is also DDM-convex.

Proof

For every \(x^{(1)}, y^{(1)}\in {\mathbb {Z}}^{n_1}\) and \(x^{(2)}, y^{(2)}\in {\mathbb {Z}}^{n_2}\), it follows from DDM-convexity of \(f_1\) and \(f_2\) that

By the following relations

and by (6.5) and (6.6), we obtain

which shows the DDM-convexity of \(f_1 \oplus f_2\). \(\square\)

Corollary 5

For two DDM-convex sets \(S_1\subseteq {\mathbb {Z}}^{n_1}\) and \(S_2\subseteq {\mathbb {Z}}^{n_2}\), \(S_1\oplus S_2\) is also DDM-convex.

6.5 Convolutions

For two functions \(f_1, f_2:{\mathbb {Z}}^{n}\rightarrow {\mathbb {R}}\cup \{+\infty \}\), the convolution \(f_1\square f_2\) is the function defined by

$$\begin{aligned} (f_1\square f_2)(x)=\inf \{f_1(y)+f_2(z) \mid y+z=x,\ y, z\in {\mathbb {Z}}^n\} \qquad (x\in {\mathbb {Z}}^n), \end{aligned}$$

where we assume \((f_1\square f_2)(x)>-\infty\) for every \(x \in {\mathbb {Z}}^n\). For two sets \(S_1, S_2\subseteq {\mathbb {Z}}^n\), the Minkowski sum \(S_1 + S_2\) defined by

$$\begin{aligned} S_1+S_2=\{y+z \mid y\in S_1,\ z\in S_2\} \end{aligned}$$

corresponds to the convolution of indicator functions \(\delta _{S_1}\) and \(\delta _{S_2}\). The next example shows that the Minkowski sum of two DDM-convex sets may not be DDM-convex, and hence, DDM-convexity is not closed under convolution.

Example 5

We borrow the example in [16, Example 4.2], which shows that L\(^\natural\)-convexity and global/local discrete midpoint convexity may not be closed under convolution.

Let \(S_1=\{(0, 0, 0), (1, 1, 0)\}\) and \(S_2=\{(0, 0, 0), (0, 1, 1)\}\), which are DDM-convex. The Minkowski sum of \(S_1\) and \(S_2\)

$$\begin{aligned} S_1+S_2=\{(0, 0, 0), (0, 1, 1), (1, 1, 0), (1, 2, 1)\} \end{aligned}$$

is not DDM-convex, because for \(x=(0, 0, 0)\) and \(y=(1, 2, 1)\), we have \(\mu (x, y)=(0, 1, 0)\not \in S_1+S_2\) and \(\mu (y, x)=(1, 1, 1)\not \in S_1+S_2\). \(\square\)
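Example 5 is small enough to be verified mechanically. The sketch below assumes that a set S is DDM-convex when \(\mu (x,y)\in S\) and \(\mu (y,x)\in S\) for all \(x,y\in S\), and that \(\mu (x,y)\) rounds \((x+y)/2\) coordinate-wise toward x; the helper names are hypothetical.

```python
from itertools import product

def mu(x, y):
    """Assumed directed midpoint: each coordinate of (x + y)/2 rounded toward x."""
    return tuple(a - (a - b) // 2 if a >= b else a + (b - a) // 2
                 for a, b in zip(x, y))

def minkowski_sum(S, T):
    """All sums s + t with s in S and t in T."""
    return {tuple(a + b for a, b in zip(s, t)) for s, t in product(S, T)}

def is_ddm_convex_set(S):
    """Assumed definition: S is closed under both directed midpoints."""
    return all(mu(x, y) in S and mu(y, x) in S for x in S for y in S)

S1 = {(0, 0, 0), (1, 1, 0)}
S2 = {(0, 0, 0), (0, 1, 1)}
S = minkowski_sum(S1, S2)
```

Under these assumptions, `is_ddm_convex_set` returns `True` for `S1` and `S2` and `False` for `S`.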

It is known that the convolution of an L\(^\natural\)-convex function and a separable convex function is also L\(^\natural\)-convex, where a separable convex function \(\varphi : {\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) is given by

$$\begin{aligned} \varphi (x)=\sum _{i=1}^{n}\varphi _i(x_i) \qquad (x\in {\mathbb {Z}}^n) \end{aligned}$$

for univariate discrete convex functions \(\varphi _i\ (i=1,\ldots ,n)\). By the same arguments as in [16, Proposition 4.7], this can be extended to DDM-convexity.

Proposition 14

The convolution of a DDM-convex function and a separable convex function is also DDM-convex.

Proof

Let \(f :{\mathbb {Z}}^n\rightarrow {\mathbb {R}}\cup \{+\infty \}\) be a DDM-convex function, \(\varphi :{\mathbb {Z}}^n\rightarrow {\mathbb {R}}\cup \{+\infty \}\) a separable convex function represented as \(\sum _{i=1}^n \varphi _i\) and let \(g=f\square \varphi\). For every \(x^{(1)}, x^{(2)}\in \mathop {\mathrm{dom}}\limits g\) and \(\varepsilon >0\), by the definition of convolutions, there exist \(y^{(i)}, z^{(i)}\; (i=1, 2)\) such that

It follows from DDM-convexity of f that

Let

By \(g = f\square \varphi\), we have

The claim, below, states that

for each \(i=1, \dots , n\). Thus, we have

By summing up (6.7), (6.8), (6.9), (6.10) and (6.12), we obtain

for any \(\varepsilon >0\), which guarantees DDM-convexity of g.

Claim

(6.11) holds.

Proof

Since \(\varphi _i\) is univariate discrete convex, for every \(a, b\in {\mathbb {Z}}\) with \(a\le b\) and for every \(p, q\in {\mathbb {Z}}\) such that (i) \(a+b=p+q\), (ii) \(a \le p \le b\) and (iii) \(a \le q \le b\), we have \(\varphi _i(a)+\varphi _i(b) \ge \varphi _i(p)+\varphi _i(q)\). Thus, it is enough to show the following relations:

Condition (6.13) follows from

and

To show (6.14) and (6.15), we consider the following cases: Case 1: \(x^{(1)}_i\ge x^{(2)}_i\) and \(y^{(1)}_i\ge y^{(2)}_i\), Case 2: \(x^{(1)}_i< x^{(2)}_i\) and \(y^{(1)}_i< y^{(2)}_i\), Case 3: \(x^{(1)}_i\ge x^{(2)}_i\) and \(y^{(1)}_i< y^{(2)}_i\), Case 4: \(x^{(1)}_i< x^{(2)}_i\) and \(y^{(1)}_i\ge y^{(2)}_i\).

Case 1 (\(x^{(1)}_i\ge x^{(2)}_i\) and \(y^{(1)}_i\ge y^{(2)}_i\)). In this case, we have

By substituting \(z_i^{(1)}{+}z_i^{(2)}=(x_i^{(1)}{+}x_i^{(2)})-(y_i^{(1)}{+}y_i^{(2)})\) into

we obtain

In the above inequalities, we round up and round down every term, to obtain

Thus, (6.14) and (6.15) are satisfied.

Case 2 (\(x^{(1)}_i< x^{(2)}_i\) and \(y^{(1)}_i< y^{(2)}_i\)). In the same way as Case 1, we can show (6.14) and (6.15).

Case 3 (\(x^{(1)}_i\ge x^{(2)}_i\) and \(y^{(1)}_i< y^{(2)}_i\)). In this case, we have

Moreover, we obtain

Case 4 (\(x^{(1)}_i< x^{(2)}_i\) and \(y^{(1)}_i\ge y^{(2)}_i\)). In the same way as Case 3, we can show (6.14) and (6.15). (End of the proof of Claim). \(\square\)

Corollary 6

The Minkowski sum of a DDM-convex set and an integral box is also DDM-convex, where an integral box is the set defined by \(\{ x \in {\mathbb {Z}}^n \mid a \le x \le b\}\) for some \(a \in ({\mathbb {Z}}\cup \{-\infty \})^n\) and \(b \in ({\mathbb {Z}}\cup \{+\infty \})^n\) with \(a \le b\).

7 Proximity theorems

For a function \(f:{\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) and a positive integer \(\alpha\), a proximity theorem estimates the distance between a given local minimizer \(x^\circ\) of the \(\alpha\)-scaled function \(f^\alpha\) and a minimizer \(x^*\) of f. For instance, the following proximity theorems for L\(^\natural\)-convex functions and globally/locally discrete midpoint convex functions are known.

Theorem 12

([11, 21]) Let \(f:{\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) be an L\(^\natural\)-convex function, \(\alpha\) be a positive integer and \(x^\circ \in \mathop {\mathrm{dom}}\limits f\). If \(f(x^\circ )\le f(x^\circ +\alpha d)\) for all \(d\in \{0, +1\}^n \cup \{0,-1\}^n\), then there exists \(x^*\in \mathop {\mathrm{argmin}}\limits f\) with \(\Vert x^\circ -x^*\Vert _\infty \le n(\alpha -1)\).

Theorem 13

([18]) Let \(f:{\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) be a globally/locally discrete midpoint convex function, \(\alpha\) be a positive integer and \(x^\circ \in \mathop {\mathrm{dom}}\limits f\). If \(f(x^\circ )\le f(x^\circ +\alpha d)\) for all \(d\in \{-1, 0, +1\}^n\), then there exists \(x^*\in \mathop {\mathrm{argmin}}\limits f\) with \(\Vert x^\circ -x^*\Vert _\infty \le n(\alpha -1)\).

In the same way as the arguments in [18], we can show the following proximity theorem for DDM-convex functions.

Theorem 14

Let \(f:{\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) be a DDM-convex function, \(\alpha\) be a positive integer and \(x^\circ \in \mathop {\mathrm{dom}}\limits f\). If \(f(x^\circ )\le f(x^\circ +\alpha d)\) for all \(d\in \{-1, 0, +1\}^n\), then there exists \(x^*\in \mathop {\mathrm{argmin}}\limits f\) with \(\Vert x^\circ -x^*\Vert _\infty \le n(\alpha -1)\).

We note that \(f^\alpha\) is also DDM-convex by Theorem 11 and \(x^\circ\) corresponds to a minimizer \(\varvec{0}\) of \(f^\alpha (y) = f(x^\circ +\alpha y)\) by Corollary 1. We emphasize that the bound \(n(\alpha -1)\) for DDM-convex functions is the same as that for L\(^\natural\)-convex functions and globally/locally discrete midpoint convex functions.
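The proximity bound can be observed on a small instance. The following sketch is an illustration under stated assumptions: the test function `f` (a 2-separable convex quadratic), the helper `scaled_local_min` and the search box are hypothetical choices. It finds a point \(x^\circ\) with \(f(x^\circ )\le f(x^\circ +\alpha d)\) for all \(d\in \{-1,0,+1\}^n\) and compares it with a brute-force minimizer.

```python
from itertools import product

def f(x):
    """Hypothetical 2-separable convex function with unique minimizer (3, 3)."""
    return (x[0] - 3) ** 2 + (x[1] - 3) ** 2 + (x[0] - x[1]) ** 2

def scaled_local_min(f, x, alpha):
    """Descend with steps alpha*d, d in {-1,0,1}^n, until no step improves f."""
    n = len(x)
    while True:
        candidates = (tuple(a + alpha * t for a, t in zip(x, d))
                      for d in product((-1, 0, 1), repeat=n))
        best = min(candidates, key=f)
        if f(best) >= f(x):
            return x
        x = best

n, alpha = 2, 2
x_circ = scaled_local_min(f, (0, 0), alpha)
# Brute-force minimizer over a box that is large enough for this example.
x_star = min(product(range(-2, 9), repeat=n), key=f)
dist = max(abs(a - b) for a, b in zip(x_circ, x_star))
```

In this instance the distance `dist` is 1, which is within the bound \(n(\alpha -1)=2\).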

To prove Theorem 14, we assume \(x^\circ = \varvec{0}\) without loss of generality. Let \(S=\{x\in {\mathbb {Z}}^n \mid \Vert x\Vert _\infty \le n(\alpha -1)\}\), \(W=\{x\in {\mathbb {Z}}^n \mid \Vert x\Vert _\infty =n(\alpha -1)+1\}\) and let \(\gamma =\min \{f(x) \mid x\in S\}\). We show that

$$\begin{aligned} f(y)\ge \gamma \qquad (y\in W). \end{aligned}$$
Then Theorem 1 (box-barrier property) implies that \(f(z)\ge \gamma\) for all \(z\in {\mathbb {Z}}^n\).

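Fix \(y =(y_1,\ldots ,y_n)\in W\), and let \(\Vert y\Vert _\infty =m\ (=n(\alpha -1)+1)\). By using

$$\begin{aligned} A_k=\{i \mid y_i\ge k\}, \qquad B_k=\{i \mid y_i\le -k\} \qquad (k=1,\ldots ,m), \end{aligned}$$

we can write y as

$$\begin{aligned} y=\sum _{k=1}^{m}(\varvec{1}_{A_k}-\varvec{1}_{B_k}), \end{aligned}$$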
where \(A_1\supseteq \cdots \supseteq A_m\), \(B_1\supseteq \cdots \supseteq B_m\), \(A_1\cap B_1=\emptyset\) and \(A_m\cup B_m\not =\emptyset\).

Lemma 2

There exists some \(k_0\in \{1, \dots , m-\alpha +1\}\) with \((A_{k_0}, B_{k_0})=(A_{k_0+j}, B_{k_0+j})\) for \(j=1, \ldots , \alpha -1\).

Proof

By \(A_m \cup B_m \ne \emptyset\), we may assume \(A_m\not =\emptyset\). Let \(s=|A_1|\) and \((a_k, b_k)=(|A_k|, |B_k|+s)\) for \(k=1, \dots , m\). Since \(A_1\supseteq \cdots \supseteq A_m \ne \emptyset\), \(B_1\supseteq \cdots \supseteq B_m\) and \(A_1\cap B_1=\emptyset\), we have \(n-s \ge |B_1|\), \(s= a_1\ge \cdots \ge a_m \ge 1\) and \(n \ge b_1 \ge \cdots \ge b_m\ge s\). Therefore, \((s, n) \ge (a_1, b_1) \ge \cdots \ge (a_m, b_m) \ge (1, s)\). Because \(m=n(\alpha -1)+1\) and the length of a strictly decreasing chain connecting (s, n) to (1, s) in \({\mathbb {Z}}^2\) is bounded by n, there exists a constant subsequence of length \(\ge \alpha\) in the sequence \(\{(a_k,b_k)\}_{k=1,\ldots ,m}\) by the pigeonhole principle. Hence the assertion holds. \(\square\)
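The index \(k_0\) of Lemma 2 can be found by a direct scan. The sketch below assumes the families \(A_k=\{i \mid y_i\ge k\}\) and \(B_k=\{i \mid y_i\le -k\}\) for a point y with \(\Vert y\Vert _\infty =m\); the function names are hypothetical, and indices inside the code are 0-based while the returned \(k_0\) is 1-based.

```python
def families_of(y):
    """Assumed families A_k = {i : y_i >= k}, B_k = {i : y_i <= -k}, k = 1..m."""
    m = max(abs(t) for t in y)
    A = [frozenset(i for i, t in enumerate(y) if t >= k) for k in range(1, m + 1)]
    B = [frozenset(i for i, t in enumerate(y) if t <= -k) for k in range(1, m + 1)]
    return A, B

def find_k0(y, alpha):
    """Smallest k0 in {1, ..., m - alpha + 1} with
    (A_k0, B_k0) = (A_{k0+j}, B_{k0+j}) for j = 1, ..., alpha - 1; None if absent."""
    A, B = families_of(y)
    m = len(A)
    for k0 in range(1, m - alpha + 2):
        if all((A[k0 - 1], B[k0 - 1]) == (A[k0 - 1 + j], B[k0 - 1 + j])
               for j in range(1, alpha)):
            return k0
    return None
```

For \(n=3\), \(\alpha =3\) and \(y=(7,-3,1)\), so that \(m=n(\alpha -1)+1=7\), the scan returns \(k_0=4\), since the pairs \((A_k,B_k)\) coincide for \(k=4,5,6\).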

By using \(k_0\) in Lemma 2, we define a subset J of \(\{1, \dots , m\}\) by \(J=\{k_0, \dots , k_0+\alpha -1\}\). By the parallelogram inequality (4.14) in Theorem 7 applied to \(\varvec{0}\) and y, where \(d_0=\varvec{1}_{A_{k_0}}-\varvec{1}_{B_{k_0}}\) and \(d = \sum _{j\in J}(\varvec{1}_{A_j}-\varvec{1}_{B_j})=\alpha d_0\), we obtain

$$\begin{aligned} f(\varvec{0})+f(y)\ge f(\alpha d_0)+f(y-\alpha d_0). \end{aligned}$$
By the assumption, we have \(f(\alpha d_0)\ge f(x^\circ ) = f(\varvec{0})\). We also have \(y - \alpha d_0 \in S\) because

By the definition of \(\gamma\), \(f(y - \alpha d_0)\ge \gamma\) must hold. Therefore,

8 Minimization algorithms

In this section, we propose two algorithms for DDM-convex function minimization.

8.1 The 1-neighborhood steepest descent algorithm

We first propose a variant of steepest descent algorithm for DDM-convex function minimization problem.

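Let \(f:{\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) be a DDM-convex function with \(\mathop {\mathrm{argmin}}\limits f\not =\emptyset\). We suppose that an initial point

$$\begin{aligned} x^{(0)}\in \mathop {\mathrm{dom}}\limits f \end{aligned}$$

is given. Let L denote the minimum \(\ell _\infty\)-distance between \(x^{(0)}\) and a minimizer of f, that is, L is defined by

$$\begin{aligned} L=\min \{\Vert x-x^{(0)}\Vert _\infty \mid x\in \mathop {\mathrm{argmin}}\limits f\}. \end{aligned}$$

For all \(k=0, 1, \dots , L\) we define sets \(S_k\) by

$$\begin{aligned} S_k=\{x\in {\mathbb {Z}}^n \mid \Vert x-x^{(0)}\Vert _\infty \le k\}. \end{aligned}$$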
The idea of our algorithm is to generate a sequence of minimizers in \(S_k\) for \(k=1,\ldots ,L\). The next proposition guarantees that consecutive minimizers can be chosen to be close to each other.

Proposition 15

For each \(k = 1,\ldots ,L\) and for any \(x^{(k-1)}\in \mathop {\mathrm{argmin}}\limits \{f(x) \mid x\in S_{k-1}\}\), there exists \(x^{(k)}\in \mathop {\mathrm{argmin}}\limits \{f(x) \mid x\in S_k \}\) with \(\Vert x^{(k)}-x^{(k-1)}\Vert _\infty \le 1\).

Proof

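If \(k=1\), the assertion is obvious. Suppose that \(k \ge 2\) and y is any point in \(S_k\). By (2.1) for \(x^{(k-1)}\) and y, we have

$$\begin{aligned} f(x^{(k-1)})+f(y)\ge f(\mu (x^{(k-1)}, y))+f(\mu (y, x^{(k-1)})). \end{aligned}$$(8.1)

Since \(x^{(k-1)}, y\in S_k\) and \(S_k\) is a DDM-convex set, we also have

$$\begin{aligned} \mu (x^{(k-1)}, y),\ \mu (y, x^{(k-1)})\in S_k. \end{aligned}$$(8.2)

Next, we show

$$\begin{aligned} \mu (x^{(k-1)}, y)\in S_{k-1}. \end{aligned}$$(8.3)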
To show this we arbitrarily fix \(i\in \{1, \dots , n\}\), and consider the two cases: Case 1: \(x^{(k-1)}_i-y_i=l\ (l\ge 1)\) and Case 2: \(x^{(k-1)}_i-y_i=-l\ (l\ge 1)\).

Case 1 (\(x^{(k-1)}_i-y_i=l\ (l\ge 1)\)). In this case, \(\mu (x^{(k-1)}, y)_i=x^{(k-1)}_i-\left\lfloor \frac{l}{2}\right\rfloor\) and \(\left\lfloor \frac{l}{2}\right\rfloor \le l-1\). Thus, we have

Case 2 (\(x^{(k-1)}_i-y_i=-l\ (l\ge 1)\)). In this case, \(\mu (x^{(k-1)}, y)_i=x^{(k-1)}_i+\left\lfloor \frac{l}{2}\right\rfloor\) and \(\left\lfloor \frac{l}{2}\right\rfloor \le l-1\). Thus, we have

By the above arguments, (8.3) holds.

Let \(y^*\) be a point y in \(\mathop {\mathrm{argmin}}\limits \{f(x) \mid x\in S_k\}\) minimizing \(\Vert y-x^{(k-1)}\Vert _\infty\). To prove \(\Vert y^*-x^{(k-1)}\Vert _\infty \le 1\) by contradiction, suppose that \(\Vert y^*-x^{(k-1)}\Vert _\infty \ge 2\), which yields \(\Vert y^*-x^{(k-1)}\Vert _\infty >\Vert \mu (y^*, x^{(k-1)})-x^{(k-1)}\Vert _\infty\). Since \(\mu (y^*, x^{(k-1)}) \in S_k\) by (8.2), this implies \(f(y^*)<f(\mu (y^*, x^{(k-1)}))\). Moreover, by (8.3), we have \(f(x^{(k-1)})\le f(\mu (x^{(k-1)}, y^*))\). These two inequalities contradict (8.1) for \(x^{(k-1)}\) and \(y^*\). Hence \(\Vert y^*-x^{(k-1)}\Vert _\infty \le 1\) must hold. \(\square\)

By Proposition 15, it seems natural to assume that we can find a minimizer of f within the 1-neighborhood \(N_1(x)\) of x defined by

$$\begin{aligned} N_1(x)=\{y\in {\mathbb {Z}}^n \mid \Vert y-x\Vert _\infty \le 1\}. \end{aligned}$$
With the use of a 1-neighborhood minimization oracle, which finds a point minimizing f in \(N_1(x)\) for any \(x \in \mathop {\mathrm{dom}}\limits f\), our algorithm can be described as below.

The 1-neighborhood steepest descent algorithm

- D0: Find \(x^{(0)} \in \mathop {\mathrm{dom}}\limits f\), and set \(k:= 1\).

- D1: Find \(x^{(k)}\) that minimizes f in \(N_{1}(x^{(k-1)})\).

- D2: If \(f(x^{(k)}) = f(x^{(k-1)})\), then output \(x^{(k-1)}\) and stop.

- D3: Set \(k := k+1\), and go to D1.
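Steps D0 to D3 can be sketched as follows. This is a minimal illustration, not an efficient implementation: the oracle is realized by brute force over the \(3^n\) points with \(\Vert y-x\Vert _\infty \le 1\), and the names `neighborhood_oracle` and `steepest_descent` as well as the test function are hypothetical.

```python
from itertools import product

def neighborhood_oracle(f, x):
    """Brute-force 1-neighborhood minimization oracle (3^n evaluations of f)."""
    candidates = (tuple(a + t for a, t in zip(x, d))
                  for d in product((-1, 0, 1), repeat=len(x)))
    return min(candidates, key=f)

def steepest_descent(f, x0, oracle=neighborhood_oracle):
    """Return (minimizer, number of oracle calls) following steps D0-D3."""
    prev, calls = tuple(x0), 0           # D0: initial point in dom f
    while True:
        cur = oracle(f, prev)            # D1: minimize f over N_1(prev)
        calls += 1
        if f(cur) == f(prev):            # D2: no improvement, prev is optimal
            return prev, calls
        prev = cur                       # D3: continue from the new point

# Hypothetical separable convex test function with minimizer (3, -2).
f = lambda x: (x[0] - 3) ** 2 + (x[1] + 2) ** 2
result, calls = steepest_descent(f, (0, 0))
```

Starting from \((0,0)\), the \(\ell _\infty\)-distance to the minimizer is 3, and the sketch stops after 4 oracle calls.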

Theorem 15

For a DDM-convex function \(f:{\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) with \(\mathop {\mathrm{argmin}}\limits f\not =\emptyset\), the 1-neighborhood steepest descent algorithm finds a minimizer of f exactly in \((L{+}1)\) iterations, that is, exactly in \((L{+}1)\) calls of the 1-neighborhood minimization oracles.

Proof

By Proposition 15, the sequence \(\{x^{(k)}\}\) generated by the 1-neighborhood steepest descent algorithm satisfies

Claim

If \(f(x^{(k)})=f(x^{(k-1)})\) at Step D2, then \(x^{(k-1)}\in \mathop {\mathrm{argmin}}\limits f\).

Proof

For any \(d\in \{+1, 0, -1\}^n\), \(x^{(k-1)}+d\) belongs to \(S_{k}\), and hence, \(f(x^{(k)})\le f(x^{(k-1)}+d)\) by (8.4). Therefore, if \(f(x^{(k)})=f(x^{(k-1)})\), then \(f(x^{(k-1)})\le f(x^{(k-1)}+d)\) for any d. Corollary 1 in Sect. 3 guarantees \(x^{(k-1)}\in \mathop {\mathrm{argmin}}\limits f\). (End of the proof of Claim).

By the definition of L, \(x^{(k)} \ne x^{(k-1)}\) if \(k \le L\), and \(x^{(L)}=x^{(L+1)}\). Therefore, our algorithm stops in \((L+1)\) iterations. \(\square\)

Remark 2

Theorem 15 says that the number of iterations of the 1-neighborhood steepest descent algorithm is determined by the \(\ell _\infty\)-distance between the initial point and the nearest minimizer. Similar facts are pointed out for L\(^\natural\)-convex function minimization [13, 24, 26] and globally/locally discrete midpoint convex function minimization [18]. \(\square\)

Remark 3

Let F(n) denote the number of function evaluations in the 1-neighborhood minimization oracle. Since any function defined on \(\{0,1\}^n\) is DDM-convex, the 1-neighborhood minimization problem is NP-hard. In most cases, a brute-force calculation seems to require \(F(n) = \Theta (3^n)\) evaluations, because \(|N_1(\cdot )| = 3^n\). Fortunately, for L\(^\natural\)-convex functions, F(n) is bounded by a polynomial in n. Another promising case is a fixed-parameter tractable case, that is, the case where there exists some parameter k such that the number of function evaluations F(n, k) is bounded by a polynomial p(n) in n times some function g(k) in k (see the next remark). \(\square\)

Remark 4

Let us consider the following problem:

where a symmetric matrix \(Q \in {\mathbb {R}}^{n \times n}\) is nonsingular and diagonally dominant with nonnegative diagonals, and \(c \in {\mathbb {R}}^n\). Since Q is nonsingular, the (convex) continuous relaxation problem has a unique minimizer \(-Q^{-1}c\). Furthermore, because the objective function is 2-separable convex, it follows from Theorem 18 in the next section that there exists an optimal solution in the box:

Therefore, the 1-neighborhood steepest descent algorithm with an initial point \(\lfloor -Q^{-1}c \rfloor\) finds an optimal solution in O(n) iterations. Furthermore, if \(Q = [q_{ij}]\) is \((2k+1)\)-diagonal, that is,

$$\begin{aligned} q_{ij}=0 \qquad \text{for all } i, j \text{ with } |i-j|>k, \end{aligned}$$
then \(F(n,k) = O(n 3^{k+1})\) as in [6]. \(\square\)

8.2 Scaling algorithm

In the same way as the scaling algorithm for minimization of globally/locally discrete midpoint convex functions in [18], the scaling property (Theorem 11) and the proximity theorem (Theorem 14) enable us to design a scaling algorithm for the minimization of DDM-convex functions with bounded effective domains.

Let \(f:{\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) be a DDM-convex function with bounded effective domain. We suppose that \(K_\infty =\max \{\Vert x-y\Vert _\infty \mid x, y\in \mathop {\mathrm{dom}}\limits f\} \; (K_\infty <+\infty )\) and an initial point \(x \in \mathop {\mathrm{dom}}\limits f\) are given. Our algorithm can be described as follows.

Scaling algorithm for DDM-convex functions

- S0: Let \(x \in \mathop {\mathrm{dom}}\limits f\) and \(\alpha := 2^{\lceil \log _{2} (K_{\infty }+1) \rceil }\).

- S1: Find a vector y that minimizes \(f^{\alpha }(y) = f(x{+}\alpha y)\) subject to \(\Vert y \Vert _{\infty } \le n\) (by the 1-neighborhood steepest descent algorithm), and set \(x:= x+ \alpha y\).

- S2: If \(\alpha = 1\), then stop (x is a minimizer of f).

- S3: Set \(\alpha :=\alpha /2\), and go to S1.
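Steps S0 to S3 can be sketched in the same style. The code below is an illustration under assumptions that are not part of the text: the oracle is brute force, the constraint \(\Vert y\Vert _\infty \le n\) in S1 is enforced by assigning \(+\infty\) outside the box, and the names `descend`, `scaling_minimize` and the test function are hypothetical.

```python
import math
from itertools import product

def descend(g, y):
    """1-neighborhood steepest descent with a brute-force oracle."""
    while True:
        candidates = (tuple(a + t for a, t in zip(y, d))
                      for d in product((-1, 0, 1), repeat=len(y)))
        best = min(candidates, key=g)
        if g(best) >= g(y):
            return y
        y = best

def scaling_minimize(f, x, k_inf):
    """Scaling algorithm S0-S3; k_inf is the l_inf-diameter K_inf of dom f."""
    n = len(x)
    # S0: 2^ceil(log2(K_inf + 1)) is the smallest power of two >= K_inf + 1.
    alpha = 1 << k_inf.bit_length()
    while True:
        def g(y, x=x, alpha=alpha):
            # S1: f^alpha(y) = f(x + alpha*y), restricted to ||y||_inf <= n.
            if max(abs(t) for t in y) > n:
                return math.inf
            return f(tuple(a + alpha * t for a, t in zip(x, y)))
        y = descend(g, (0,) * n)
        x = tuple(a + alpha * t for a, t in zip(x, y))
        if alpha == 1:                   # S2: x is a minimizer of f
            return x
        alpha //= 2                      # S3: halve the scale

def f(x):
    """Hypothetical separable convex function on the box [0, 7]^2."""
    if any(t < 0 or t > 7 for t in x):
        return math.inf
    return (x[0] - 5) ** 2 + (x[1] - 2) ** 2

x_min = scaling_minimize(f, (0, 0), 7)
```

With \(K_\infty =7\) the initial scale is \(\alpha =8\), and the scales 8, 4, 2, 1 are processed in turn.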

Theorem 16

For a DDM-convex function \(f:{\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) with bounded effective domain, the scaling algorithm finds a minimizer of f in \(O(n\log _2 K_\infty )\) calls of the 1-neighborhood minimization oracles.

Proof

The correctness of the algorithm can be shown by induction on \(\alpha\). If \(\alpha = 2^{\lceil \log _{2} (K_{\infty }+1) \rceil }\), then x is the unique point of \(\mathop {\mathrm{dom}}\limits f^\alpha\) because \(\alpha =2^{\lceil \log _2 (K_\infty +1)\rceil }>K_\infty\), that is, x is a minimizer of \(f^\alpha\). Let \(x^{2\alpha }\) denote the point x at the beginning of S1 for \(\alpha\) and assume that \(x^{2\alpha }\) is a minimizer of \(f^{2\alpha }\). The function \(f^\alpha (y)=f(x^{2\alpha }+\alpha y)\) is DDM-convex by Theorem 11. Let \(y^\alpha \in \mathop {\mathrm{argmin}}\limits \{f^\alpha (y) \mid \Vert y\Vert _\infty \le n\}\) and \(x^\alpha =x^{2\alpha }+\alpha y^\alpha\). Theorem 14 guarantees that \(x^\alpha\) is a minimizer of \(f^\alpha\) because \(x^{2\alpha } \in \mathop {\mathrm{argmin}}\limits f^{2\alpha }\). At the termination of the algorithm, we have \(\alpha =1\) and \(f^\alpha =f\). The output of the algorithm, which is computed by the 1-neighborhood steepest descent algorithm, satisfies the condition of Corollary 1, and hence, the output is indeed a minimizer of f.

The time complexity of the algorithm can be analyzed as follows: by Theorem 15, S1 terminates in O(n) calls of the 1-neighborhood minimization oracles in each iteration. The number of iterations is \(O(\log _2 K_\infty )\). Hence, the assertion holds. \(\square\)

9 DDM-convex functions in continuous variables

In [19], proximity theorems between L\(^\natural\)-convex functions and their continuous relaxations are proposed. We extend these results to DDM-convexity.

It is known that the continuous version of L\(^\natural\)-convexity can naturally be defined by using translation-submodularity (3.1). In this section, we define DDM-convexity in continuous variables in a different way. We call a continuous convex function \(F : {\mathbb {R}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) a directed discrete midpoint convex function in continuous variables (\({\mathbb {R}}\)-DDM-convex function) if for any positive integer \(\alpha\), the function \(f^{1/\alpha } : {\mathbb {Z}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) defined by

$$\begin{aligned} f^{1/\alpha }(x)=F(x/\alpha ) \qquad (x\in {\mathbb {Z}}^n) \end{aligned}$$(9.1)
is DDM-convex. We denote by f the DDM-convex function \(f^{1/1}\) which is nothing but the restriction of F to \({\mathbb {Z}}^n\).

An example of an \({\mathbb {R}}\)-DDM-convex function is a continuous 2-separable convex function F which is defined as

for univariate continuous convex functions \(\xi _{i}, \varphi _{ij}, \psi _{ij} : {\mathbb {R}}\rightarrow {\mathbb {R}}\cup \{+\infty \}\; (i=1,\ldots ,n; j \in \{1,\ldots ,n\} {\setminus } \{i\}\)), which can be seen as follows. The restriction f of F to \({\mathbb {Z}}^n\) is trivially a 2-separable convex function on \({\mathbb {Z}}^n\) defined by (3.4). Furthermore, the function \(F^{1/\alpha }:{\mathbb {R}}^n \rightarrow {\mathbb {R}}\cup \{+\infty \}\) defined by

$$\begin{aligned} F^{1/\alpha }(x)=F(x/\alpha ) \qquad (x\in {\mathbb {R}}^n) \end{aligned}$$
is also a continuous 2-separable convex function, and hence, the restriction \(f^{1/\alpha }\) of \(F^{1/\alpha }\) to \({\mathbb {Z}}^n\) is also a 2-separable convex function on \({\mathbb {Z}}^n\).

We have the following proximity theorems between an \({\mathbb {R}}\)-DDM-convex function F and its restriction f to \({\mathbb {Z}}^n\).

Theorem 17

Let \(F:{\mathbb {R}}^n\rightarrow {\mathbb {R}}\cup \{+\infty \}\) be an \({\mathbb {R}}\)-DDM-convex function. For each \(x^* \in \mathop {\mathrm{argmin}}\limits f\;(= \mathop {\mathrm{argmin}}\limits f^{1/1})\), there exists \({\overline{x}}\in \mathop {\mathrm{argmin}}\limits F\) with \(\Vert x^*-{\overline{x}}\Vert _\infty \le n\).
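The statement can be illustrated numerically. The sketch below assumes \(f^{1/\alpha }(x)=F(x/\alpha )\) for integer x and uses a hypothetical continuous 2-separable convex function F whose continuous minimizer \((0.4, 0.1)\) is known in closed form; the search boxes and all names are illustrative assumptions.

```python
from itertools import product

def F(x):
    """Hypothetical continuous 2-separable convex function; minimizer (0.4, 0.1)."""
    return (x[0] - 0.4) ** 2 + (x[0] - x[1] - 0.3) ** 2

def f_scaled(alpha):
    """Assumed scaling: f^{1/alpha}(x) = F(x / alpha) for integer vectors x."""
    return lambda x: F(tuple(t / alpha for t in x))

n = 2
x_bar = (0.4, 0.1)                                   # continuous minimizer of F
# Integer minimizer of f = f^{1/1}, by brute force over a small box.
x_star = min(product(range(-3, 4), repeat=n), key=f_scaled(1))
dist = max(abs(a - b) for a, b in zip(x_star, x_bar))
# Minimizer of f^{1/10} on the correspondingly refined grid.
x_fine = min(product(range(-30, 31), repeat=n), key=f_scaled(10))
```

In this instance the integer minimizer is \((0,0)\), at \(\ell _\infty\)-distance 0.4 from \({\overline{x}}\), well within the bound n = 2, and the refined minimizer divided by 10 coincides with \({\overline{x}}\).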

Proof

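Since f is DDM-convex, by Corollary 1 in Sect. 3, we have

$$\begin{aligned} f(x^*)\le f(x^*+d) \qquad (\forall d\in \{-1, 0, +1\}^n). \end{aligned}$$

Thus, for every integer \(\alpha \ge 2\), by \(f(x)=f^{1/\alpha }(\alpha x)\; (x\in {\mathbb {Z}}^n)\), we have

$$\begin{aligned} f^{1/\alpha }(\alpha x^*)\le f^{1/\alpha }(\alpha x^*+\alpha d) \qquad (\forall d\in \{-1, 0, +1\}^n). \end{aligned}$$

By Theorem 14 for \(f^{1/\alpha }\), there exists \(x^{1/\alpha } \in \mathop {\mathrm{argmin}}\limits f^{1/\alpha }\) with

$$\begin{aligned} \alpha x^*-n(\alpha -1)\varvec{1}\le x^{1/\alpha }\le \alpha x^*+n(\alpha -1)\varvec{1}. \end{aligned}$$(9.2)

By dividing all terms in (9.2) by \(\alpha\), we obtain

$$\begin{aligned} x^*-n\Bigl (1-\frac{1}{\alpha }\Bigr )\varvec{1}\le \frac{x^{1/\alpha }}{\alpha }\le x^*+n\Bigl (1-\frac{1}{\alpha }\Bigr )\varvec{1}. \end{aligned}$$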
Let \(B=\{x\in {\mathbb {R}}^n \mid x^*-n\varvec{1}\le x \le x^*+n\varvec{1}\}\). For each integer \(k\ge 1\), considering \(\alpha _k=2^k\) and \(x^{1/\alpha _k}\in \mathop {\mathrm{argmin}}\limits f^{1/\alpha _k}\), we have \(\frac{x^{1/\alpha _k}}{\alpha _k}\in B\). Since B is compact, there exists a subsequence \(\{\frac{x^{1/\alpha _{k_i}}}{\alpha _{k_i}}\}\) with

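\[ \lim _{i\rightarrow \infty } \frac{x^{1/\alpha _{k_i}}}{\alpha _{k_i}} = {\overline{x}} \in B. \]

Since F is continuous, we have

\[ \lim _{i\rightarrow \infty } F\left( \frac{x^{1/\alpha _{k_i}}}{\alpha _{k_i}}\right) = F({\overline{x}}). \]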

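Since \(\alpha _{k_{i+1}}\) is a multiple of \(\alpha _{k_i}\), the point \((\alpha _{k_{i+1}}/\alpha _{k_i})\,x^{1/\alpha _{k_i}}\) belongs to \(\mathop {\mathrm{dom}}\limits f^{1/\alpha _{k_{i+1}}}\) for each i by the definition (9.1). Hence we have \(F(\frac{x^{1/\alpha _{k_1}}}{\alpha _{k_1}}) \ge F(\frac{x^{1/\alpha _{k_2}}}{\alpha _{k_2}}) \ge \cdots \ge F(\frac{x^{1/\alpha _{k_i}}}{\alpha _{k_i}}) \ge \cdots\), which, together with \(x^{1/\alpha _{k_i}}\in \mathop {\mathrm{argmin}}\limits f^{1/\alpha _{k_i}}\) for all i, guarantees that

\[ F({\overline{x}}) \le F\left( \frac{x^{1/\alpha _{k_i}}}{\alpha _{k_i}}\right) \le F(y) \qquad \text{for every } i \text{ and every } y \in {\mathbb {R}}^n \text{ with } \alpha _{k_i} y \in {\mathbb {Z}}^n. \tag{9.3} \]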

We finally show \(F({\overline{x}})=\min F\), that is, \({\overline{x}} \in \mathop {\mathrm{argmin}}\limits F\). Suppose to the contrary that there exists \(x'\) with \(F(x') < F({\overline{x}})\). Let \(\varepsilon = F({\overline{x}})-F(x') > 0\). By the continuity of F, there exists \(\delta _{\varepsilon } > 0\) such that

\[ \Vert x'-y\Vert _\infty < \delta _{\varepsilon } \ \Longrightarrow \ |F(x')-F(y)| < \varepsilon \qquad (y \in {\mathbb {R}}^n). \tag{9.4} \]

Because there exist \(N\in \{k_i \mid i=1, 2, \dots \}\) and \(y \in {\mathbb {R}}^n\) such that \(2^N y \in {\mathbb {Z}}^n\) and \(\Vert x'-y\Vert _\infty <\delta _{\varepsilon }\), by (9.4), we have

\[ F(y) < F(x') + \varepsilon = F({\overline{x}}), \]

which contradicts (9.3). Therefore, \({\overline{x}}\) must be a minimizer of F. \(\square\)
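The objects used in this proof can be illustrated numerically. The following Python sketch takes a hypothetical separable convex quadratic \(F(x)=\sum _i (x_i-c_i)^2\) (the vector `c` and all helper names are assumptions made for illustration) and computes a minimizer \(x^{1/\alpha _k}\) of \(f^{1/\alpha _k}\) for \(\alpha _k = 2^k\). For this particular F a minimizer is obtained by coordinate-wise rounding of \(\alpha c\); this shortcut is specific to the example and is not the argument of the proof.

```python
from fractions import Fraction

c = (Fraction(1, 3), Fraction(5, 7))  # hypothetical unique minimizer of F
n = len(c)

def F(x):
    """Separable convex quadratic with minimizer c (illustrative choice)."""
    return sum((xi - ci) ** 2 for xi, ci in zip(x, c))

def argmin_scaled(alpha):
    """A minimizer of f^{1/alpha}(x) = F(x / alpha) over Z^n for this F."""
    return tuple(round(alpha * ci) for ci in c)

x_star = argmin_scaled(1)  # a minimizer of f = f^{1/1}

points = []
for k in range(1, 11):
    alpha = 2 ** k
    x_alpha = argmin_scaled(alpha)
    points.append(tuple(Fraction(xi, alpha) for xi in x_alpha))

# Distance of each scaled minimizer from x_star, and the values of F along the sequence.
dist_to_x_star = [max(abs(p - s) for p, s in zip(pt, x_star)) for pt in points]
values = [F(pt) for pt in points]

print(x_star, max(dist_to_x_star), float(values[-1]))
```

In this example every scaled minimizer \(x^{1/\alpha _k}/\alpha _k\) stays in the box B around \(x^*\), the values of F along the sequence are non-increasing because the dyadic grids are nested, and the sequence approaches the minimizer c of F.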

If F has a unique minimizer, the converse of Theorem 17 also holds.

Theorem 18

Let \(F:{\mathbb {R}}^n\rightarrow {\mathbb {R}}\cup \{+\infty \}\) be an \({\mathbb {R}}\)-DDM-convex function. If F has a unique minimizer \({\overline{x}}\), there exists \(x^*\in \mathop {\mathrm{argmin}}\limits f\) with \(\Vert x^*-{\overline{x}}\Vert _\infty \le n\).

Proof

Let \({\overline{x}}\) be the unique minimizer of F. If f has a minimizer \(x^*\), then \(\Vert x^*-{\overline{x}}\Vert _\infty \le n\) must hold by Theorem 17. Thus, it is enough to show that f has a minimizer.

Suppose to the contrary that f has no minimizer and let \(B=\{x\in {\mathbb {R}}^n \mid {\overline{x}}-n\varvec{1}\le x \le {\overline{x}}+n\varvec{1}\}\). Then, there exists \(y \in \mathop {\mathrm{dom}}\limits f {\setminus } B\) such that \(f(y) < f(x)\) for all \(x \in \mathop {\mathrm{dom}}\limits f \cap B\). Let \(\ell = \Vert y-{\overline{x}}\Vert _\infty\) and \(B' = \{x\in {\mathbb {R}}^n \mid {\overline{x}}-\ell \varvec{1}\le x \le {\overline{x}}+\ell \varvec{1}\}\). Note that \(\ell > n\) and \(B' \supset B\). Let us consider the restriction G of F to \(B'\) defined by

\[ G(x) = {\left\{ \begin{array}{ll} F(x) &{} (x \in B'), \\ +\infty &{} (x \not \in B'). \end{array}\right. } \]

Obviously, G is \({\mathbb {R}}\)-DDM-convex and \({\overline{x}}\) is the unique minimizer of G. In particular, the restriction g of G to \({\mathbb {Z}}^n\) is DDM-convex and has a minimizer z since \(B'\) is bounded. This point z does not belong to B since \(y \not \in B\) and \(f(y) < f(x)\) for all \(x \in \mathop {\mathrm{dom}}\limits f \cap B\). However, this contradicts Theorem 17 for G and g. \(\square\)

If F has a bounded effective domain, a similar statement holds. Let \({\overline{K}}_{\infty }=\sup \{\Vert x-y\Vert _\infty \mid x, y\in \mathop {\mathrm{dom}}\limits F\}\).

Theorem 19

Let \(F:{\mathbb {R}}^n\rightarrow {\mathbb {R}}\cup \{+\infty \}\) be an \({\mathbb {R}}\)-DDM-convex function. If \(\mathop {\mathrm{dom}}\limits F\) is bounded (i.e., \({\overline{K}}_{\infty } < \infty\)), for each \({\overline{x}} \in \mathop {\mathrm{argmin}}\limits F\), there exists \(x^*\in \mathop {\mathrm{argmin}}\limits f\) with \(\Vert x^*-{\overline{x}}\Vert _\infty \le n\).

Proof

If \(\mathop {\mathrm{dom}}\limits f=\mathop {\mathrm{argmin}}\limits f\), the assertion holds. In the sequel, we assume that \(\mathop {\mathrm{dom}}\limits f\not = \mathop {\mathrm{argmin}}\limits f\). We fix a minimizer \({\overline{x}}\) of F, arbitrarily. For a sufficiently small \(\varepsilon >0\), let us consider functions \(F_\varepsilon :{\mathbb {R}}^n\rightarrow {\mathbb {R}}\cup \{+\infty \}\) and \(f_\varepsilon :{\mathbb {Z}}^n\rightarrow {\mathbb {R}}\cup \{+\infty \}\) defined by

\[ F_\varepsilon (x) = F(x) + \varepsilon \sum _{i=1}^n (x_i-{\overline{x}}_i)^2 \quad (x \in {\mathbb {R}}^n), \qquad f_\varepsilon (x) = f(x) + \varepsilon \sum _{i=1}^n (x_i-{\overline{x}}_i)^2 \quad (x \in {\mathbb {Z}}^n). \]

The function \(F_\varepsilon\) has the unique minimizer \({\overline{x}}\) and satisfies the conditions of Theorem 18, by Proposition 4 (4), because \(f_\varepsilon ^{1/\alpha }\) defined by (9.1) for \(F_\varepsilon\) is the sum of \(f^{1/\alpha }\) and a separable convex function, both of which are DDM-convex. Thus, by Theorem 18 for \(F_\varepsilon\) and \(f_\varepsilon\), there exists \(x^{\varepsilon }\in \mathop {\mathrm{argmin}}\limits f_\varepsilon\) with \(\Vert x^{\varepsilon }-{\overline{x}}\Vert _\infty \le n\).

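Let \(\beta =\min \{f(x) \mid x\in \mathop {\mathrm{dom}}\limits f{\setminus } \mathop {\mathrm{argmin}}\limits f\}\), so that \(\beta > \min f\). Note that \(\beta\) is well-defined by boundedness of \(\mathop {\mathrm{dom}}\limits f\). We show that if \(\varepsilon <(\beta -\min f)/(n{\overline{K}}_{\infty }^2)\), then \(x^{\varepsilon } \in \mathop {\mathrm{argmin}}\limits f\). For any \(x\in \mathop {\mathrm{argmin}}\limits f\), by \(f_\varepsilon (x^{\varepsilon })\le f_\varepsilon (x)\), we have

\[ f(x^{\varepsilon }) - f(x) \le \varepsilon \sum _{i=1}^n \{(x_i-{\overline{x}}_i)^2-(x^{\varepsilon }_i-{\overline{x}}_i)^2\}. \]

As \(f(x^{\varepsilon })\ge f(x)\), \(\sum _{i=1}^n \{(x_i-{\overline{x}}_i)^2-(x^{\varepsilon }_i-{\overline{x}}_i)^2\} \ge 0\) must hold. Therefore, we obtain

\[ f(x^{\varepsilon }) - \min f \le \varepsilon \sum _{i=1}^n \{(x_i-{\overline{x}}_i)^2-(x^{\varepsilon }_i-{\overline{x}}_i)^2\} \le \varepsilon n {\overline{K}}_{\infty }^2 < \beta - \min f, \]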

which says \(x^{\varepsilon }\in \mathop {\mathrm{argmin}}\limits f\). \(\square\)

Remark 5

There is a convex function which is not \({\mathbb {R}}\)-DDM-convex. For example, for a positive definite matrix \(Q = \left[ {\begin{matrix} 5 &{} 2 \\ 2 &{} 1 \end{matrix}} \right]\), the function

\[ F(x) = x^{\top } Q x \qquad (x \in {\mathbb {R}}^2) \]

is convex, but the restriction f of F to \({\mathbb {Z}}^2\) is not DDM-convex by Theorem 9, and hence, F is not \({\mathbb {R}}\)-DDM-convex. \(\square\)
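The claim of this remark can also be checked directly against the defining inequality, without going through Theorem 9. The Python sketch below assumes the quadratic form \(F(x) = x^{\top } Q x\) and the definition of DDM-convexity from the earlier sections, with \(\mu (x,y)\) rounding each coordinate of \((x+y)/2\) toward x; the helper names are illustrative only.

```python
from itertools import product

Q = ((5, 2), (2, 1))

def f(x):
    """Restriction to Z^2 of the assumed form F(x) = x^T Q x."""
    return sum(Q[i][j] * x[i] * x[j] for i in range(2) for j in range(2))

def mu(x, y):
    """Assumed directed rounding: ceil toward x where x_i >= y_i, floor otherwise."""
    return tuple(
        (xi + yi + 1) // 2 if xi >= yi else (xi + yi) // 2
        for xi, yi in zip(x, y)
    )

# A symmetric 2x2 matrix is positive definite iff both leading principal minors are positive.
is_positive_definite = Q[0][0] > 0 and Q[0][0] * Q[1][1] - Q[0][1] * Q[1][0] > 0

# Collect all pairs in a small box that violate f(x) + f(y) >= f(mu(x,y)) + f(mu(y,x)).
box = list(product(range(-2, 3), repeat=2))
violations = [
    (x, y) for x in box for y in box
    if f(x) + f(y) < f(mu(x, y)) + f(mu(y, x))
]

x, y = (0, 0), (1, -2)
print(is_positive_definite, mu(x, y), mu(y, x), f(x) + f(y), f(mu(x, y)) + f(mu(y, x)))
```

For the pair \(x=(0,0)\), \(y=(1,-2)\) the rounded points are \((0,-1)\) and \((1,-1)\), and \(f(x)+f(y) = 1 < 3 = f(\mu (x,y)) + f(\mu (y,x))\), so the inequality fails even though Q is positive definite.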

Remark 6

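The convex extension of a DDM-convex function may not be \({\mathbb {R}}\)-DDM-convex. For example,

\[ S = \{(1,0,0),(0,1,0),(0,0,1)\} \]

is a DDM-convex set, and hence, its indicator function \(f = \delta _S\) is DDM-convex. We denote the convex hull of S by \({\overline{S}}\). Then the convex extension F of f is expressed by

\[ F(x) = {\left\{ \begin{array}{ll} 0 &{} (x \in {\overline{S}}), \\ +\infty &{} (x \not \in {\overline{S}}), \end{array}\right. } \]

and \(f^{1/2}\) is given by

\[ f^{1/2}(x) = {\left\{ \begin{array}{ll} 0 &{} (x \in T), \\ +\infty &{} (x \in {\mathbb {Z}}^3 {\setminus } T), \end{array}\right. } \]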

where \(T = \{(2,0,0),(1,1,0),(0,2,0),(0,1,1),(0,0,2),(1,0,1)\}\). The function \(f^{1/2}\) is not DDM-convex, because for \(x = (2,0,0)\) and \(y = (0,1,1)\), we have \(\mu (x,y) = (1,0,0) \not \in T\). \(\square\)
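The computation in this remark can be reproduced with a few lines of Python. The sketch assumes that S consists of the three unit vectors of \({\mathbb {Z}}^3\) (the set consistent with T above), so that \({\overline{S}}\) is the standard simplex, and that \(\mu (x,y)\) rounds each coordinate of \((x+y)/2\) toward x as in the definition of DDM-convexity; the function names are illustrative.

```python
from fractions import Fraction
from itertools import product

# Assumed S: the unit vectors of Z^3, whose convex hull is the standard simplex.
S = {(1, 0, 0), (0, 1, 0), (0, 0, 1)}

def in_hull_of_S(p):
    """Membership in the simplex {p >= 0, p_1 + p_2 + p_3 = 1}."""
    return all(c >= 0 for c in p) and sum(p) == 1

def f_half(x):
    """f^{1/2}(x) = F(x / 2): 0 if x / 2 lies in the hull of S, +infinity otherwise."""
    scaled = tuple(Fraction(xi, 2) for xi in x)
    return 0 if in_hull_of_S(scaled) else float("inf")

def mu(x, y):
    """Assumed directed rounding: ceil toward x where x_i >= y_i, floor otherwise."""
    return tuple(
        (xi + yi + 1) // 2 if xi >= yi else (xi + yi) // 2
        for xi, yi in zip(x, y)
    )

# Effective domain of f^{1/2}, searched in a box that contains it.
T = {x for x in product(range(-1, 4), repeat=3) if f_half(x) == 0}

x, y = (2, 0, 0), (0, 1, 1)
lhs = f_half(x) + f_half(y)
rhs = f_half(mu(x, y)) + f_half(mu(y, x))
print(sorted(T), mu(x, y), mu(y, x), lhs, rhs)
```

Both x and y lie in T, while \(\mu (x,y) = (1,0,0)\) and \(\mu (y,x) = (1,1,1)\) do not, so the left-hand side of the DDM inequality is 0 and the right-hand side is \(+\infty\).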

Notes

If \(\Vert x-y\Vert _{\infty } \le 1\), then (1.1) obviously holds.

Private communication with F. Tardella (2017).

References

Begen, M., Queyranne, M.: Appointment scheduling with discrete random durations. Math. Oper. Res. 36, 240–257 (2011)

Chen, X.: L\(^\natural\)-convexity and its applications in operations. Front. Eng. Manag. 4, 283–294 (2017)

Favati, P., Tardella, F.: Convexity in nonlinear integer programming. Ricerca Oper. 53, 3–44 (1990)

Fujishige, S.: Bisubmodular polyhedra, simplicial divisions, and discrete convexity. Discrete Optim. 12, 115–120 (2014)

Fujishige, S., Murota, K.: Notes on L-/M-convex functions and the separation theorems. Math. Program. 88, 129–146 (2000)

Gu, S., Cui, R., Peng, J.: Polynomial time solvable algorithms to a class of unconstrained and linearly constrained binary quadratic programming problems. Neurocomputing 198, 171–179 (2016)

Hirai, H.: L-extendable functions and a proximity scaling algorithm for minimum cost multiflow problem. Discrete Optim. 18, 1–37 (2015)

Iimura, T.: Discrete modeling of economic equilibrium problems. Pac. J. Optim. 6, 57–64 (2010)

Iimura, T., Murota, K., Tamura, A.: Discrete fixed point theorem reconsidered. J. Math. Econ. 41, 1030–1036 (2005)

Iimura, T., Watanabe, T.: Existence of a pure strategy equilibrium in finite symmetric games where payoff functions are integrally concave. Discrete Appl. Math. 166, 26–33 (2014)

Iwata, S., Shigeno, M.: Conjugate scaling algorithm for Fenchel-type duality in discrete convex optimization. SIAM J. Optim. 13, 204–211 (2002)

Jensen, J.L.W.V.: Om konvekse Funktioner og Uligheder imellem Middelværdier. Mathematica Scandinavica 16B, 49–68 (1905). Also: Sur les fonctions convexes et les inégalités entre les valeurs moyennes. Acta Mathematica 30, 175–193 (1906)

Kolmogorov, V., Shioura, A.: New algorithms for convex cost tension problem with application to computer vision. Discrete Optim. 6, 378–393 (2009)

van der Laan, G., Talman, D., Yang, Z.: Solving discrete systems of nonlinear equations. Eur. J. Oper. Res. 214, 493–500 (2011)

Lehmann, B., Lehmann, D., Nisan, N.: Combinatorial auctions with decreasing marginal utilities. Games Econ. Behav. 55, 270–296 (2006)

Moriguchi, S., Murota, K.: Projection and convolution operations for integrally convex functions. Discrete Appl. Math. 255, 283–298 (2019)

Moriguchi, S., Murota, K., Tamura, A., Tardella, F.: Scaling, proximity, and optimization of integrally convex functions. Math. Program. 175, 119–154 (2019)

Moriguchi, S., Murota, K., Tamura, A., Tardella, F.: Discrete midpoint convexity. Math. Oper. Res. 45, 99–128 (2020)

Moriguchi, S., Tsuchimura, N.: Discrete L-convex function minimization based on continuous relaxation. Pac. J. Optim. 5, 227–236 (2009)

Murota, K.: Discrete convex analysis. Math. Program. 83, 313–371 (1998)

Murota, K.: Discrete Convex Analysis. SIAM, Philadelphia (2003)

Murota, K.: A survey of fundamental operations on discrete convex functions of various kinds. to appear in Optimization Methods and Software (2020). https://doi.org/10.1080/10556788.2019.1692345

Murota, K., Shioura, A.: Extension of M-convexity and L-convexity to polyhedral convex functions. Adv. Appl. Math. 25, 352–427 (2000)

Murota, K., Shioura, A.: Exact bounds for steepest descent algorithms of L-convex function minimization. Oper. Res. Lett. 42, 361–366 (2014)

Murota, K., Shioura, A., Yang, Z.: Time bounds for iterative auctions: a unified approach by discrete convex analysis. Discrete Optim. 19, 36–62 (2016)

Shioura, A.: Algorithms for L-convex function minimization: connection between discrete convex analysis and other research areas. J. Oper. Res. Soc. Japan 60, 216–243 (2017)

Simchi-Levi, D., Chen, X., Bramel, J.: The Logic of Logistics: Theory, Algorithms, and Applications for Logistics Management, 3rd edn. Springer, New York (2014)

Yang, Z.: On the solutions of discrete nonlinear complementarity and related problems. Math. Oper. Res. 33, 976–990 (2008)

Yang, Z.: Discrete fixed point analysis and its applications. J. Fixed Point Theory Appl. 6, 351–371 (2009)

Zipkin, P.: On the structure of lost-sales inventory models. Oper. Res. 56, 937–944 (2008)

Acknowledgements

The notion of DDM-convexity was first proposed by Fabio Tardella at an informal meeting of Satoko Moriguchi, Kazuo Murota, Akihisa Tamura and Fabio Tardella in 2018. The authors wish to express their deep gratitude to Fabio Tardella. They also thank Kazuo Murota for discussion about the first manuscript. His comments were helpful to improve the paper. This work was supported by JSPS KAKENHI Grant Number JP16K00023.

About this article

Cite this article

Tamura, A., Tsurumi, K. Directed discrete midpoint convexity. Japan J. Indust. Appl. Math. 38, 1–37 (2021). https://doi.org/10.1007/s13160-020-00416-0

DOI: https://doi.org/10.1007/s13160-020-00416-0

Keywords

- Midpoint convexity

- Discrete midpoint convexity

- Integral convexity

- L\(^{\natural }\)-convexity

- Proximity theorem

- Scaling algorithm