Abstract

Artificial intelligence (AI) offers great potential in organizations. The path to achieving this potential will involve human-AI interworking, as has been confirmed by numerous studies. However, it remains to be explored which direction this interworking of human agents and AI-enabled systems ought to take. To date, research still lacks a holistic understanding of the entangled interworking that characterizes human-AI hybrids, so-called because they form when human agents and AI-enabled systems closely collaborate. To enhance such understanding, this paper presents a taxonomy of human-AI hybrids, developed by reviewing the current literature as well as a sample of 101 human-AI hybrids. Leveraging weak sociomateriality as justificatory knowledge, this study provides a deeper understanding of the entanglement between human agents and AI-enabled systems. Furthermore, a cluster analysis is performed to derive archetypes of human-AI hybrids, identifying ideal–typical occurrences of human-AI hybrids in practice. While the taxonomy creates a solid foundation for the understanding and analysis of human-AI hybrids, the archetypes illustrate the range of roles that AI-enabled systems can play in those interworking scenarios.

1 Introduction

Rapid advancements in the field of artificial intelligence (AI) have raised the level of expectations to the point at which some are even heralding AI as the next general-purpose technology (Goldfarb et al. 2019; Jöhnk et al. 2021). As AI-related technologies become ever more sophisticated, researchers and practitioners alike are identifying increasing numbers of AI use cases in the world of business (Bughin et al. 2018). At the same time, this growing potential of business applications has led to significant investments, which in turn have produced a large number of AI use cases (Dellermann et al. 2019a).

The conventional approach to AI use cases has been to treat humans and machines as substitutes that can replace one another in the performance of tasks (Daugherty and Wilson 2018; Raisch and Krakowski 2021). Recent studies, however, have revealed that this limited perspective on AI has had two unfortunate consequences: (1) a disproportionately large focus on automation and (2) a tendency to neglect the powerful interworking that occurs when humans and AI augment each other (Dellermann et al. 2019b; Rai et al. 2019; Seeber et al. 2020). It has, therefore, become a complicated matter to identify true value-generating use cases (Bughin et al. 2018), which is why many organizations still fail to realize value from using AI (Ransbotham et al. 2020). Researchers have since resorted to so-called human-in-the-loop and algorithm-in-the-loop approaches (Green and Chen 2019; Grønsund and Aanestad 2020). Both concepts advance AI application since they are predicated on the understanding that, in an ideal scenario, humans and algorithms work in mutual recognition of each other's merits to augment one another. So far, however, both concepts decidedly lack a differentiated view of the precise ways in which human agents and AI-enabled systems can complement one another when performing tasks as so-called human-AI hybrids (Rai et al. 2019).

While the insights of current studies into the interworking of humans and AI are undoubtedly detailed, they often focus on either a technical or a social perspective. To account for the multiple ways in which human-AI hybrids are entangled, an integrative perspective is required that focuses on the complementary interworking of human agents and AI-enabled systems (Jarrahi 2018). This rationale is shared by several experts at work in the field (Agrawal et al. 2018; Daugherty and Wilson 2018; Traumer et al. 2017), with Davenport and Ronanki (2018) providing the empirical evidence that companies can do better when they focus on augmenting rather than replacing human capabilities as they try to develop AI use cases. With this in mind, we ask the following research question:

How Can We Conceptualize the Collaborative Interworking of Human Agents and AI-Enabled Systems?

To address this question, we develop a taxonomy of human-AI hybrids. We use an iterative taxonomy development method (Kundisch et al. 2022; Nickerson et al. 2013) that allows us to analyze the complex interworking of human agents and AI-enabled systems (Oberländer et al. 2019; Nickerson et al. 2013). Moreover, we derive five archetypes of human-AI hybrids, each illustrating which roles AI-enabled systems can play in those collaborative interworking scenarios.

As a theory for analyzing (Gregor 2006), our taxonomy provides a holistic structure to the emerging field of human-AI hybrids. Using weak sociomateriality as justificatory knowledge (Jones 2014; Orlikowski 2007; Gregor and Jones 2007), we present AI-enabled systems and human agents as locally separate entities with distinct characteristics that globally intra-act in sociomaterial practices. In doing so, we also acknowledge the importance of both human and material agency in human-AI hybrids. Moreover, by deriving archetypes of human-AI hybrids, we shed light on overarching interworking patterns in human-AI hybrids. Finally, our study provides inspiration for practitioners to create and shape human-AI hybrids tapping their full potential.

2 Background

2.1 Artificial Intelligence

AI provides both new opportunities and notable challenges in its ability to learn, solve problems, and create objects (Benbya et al. 2021). Most recently, there has been a quantum leap in the advancement of AI-enabled technologies with previously unfathomable real-world applications, such as AI-powered diagnostic radiology tools (Paschen et al. 2020) or the AI-driven digital operating model of Ant Financial (Iansiti and Lakhani 2020). Although AI has already established itself in many organizations, there is still some debate on its definition. In agreement with Russell and Norvig (2016), we regard AI and its related technologies as an instrument that lets one automate and augment activities formerly associated with human intelligence, such as decision-making, problem-solving, and learning. Such activities are often referred to as cognitive functions. They range from basic and even subconscious functions, such as memory or perception, to highly specific functions, such as recognition or problem-solving (Hwang and Chen 2017). Looking at AI through the lens of cognitive functions provides a common understanding of the broad range of possibilities afforded by AI-enabled technologies (Corea 2019; Stohr and O’Rourke 2021). With that in mind, we will use this cognitive functions lens of AI to ensure that first, our perception of AI is in line with that of the wider IS research community, and that, second, this study is situated in the area where further research is most needed (Rai et al. 2019). That is, we understand AI as “the ability of a machine to perform cognitive functions that we associate with human minds, such as perceiving, learning, and interacting with the environment, problem-solving, decision-making, and even demonstrating creativity” (Rai et al. 2019, p. iii).

In this context, it is worth noting that the umbrella term AI is not a ready-made technology but rather an evolving research field (Russell and Norvig 2016). It constitutes a moving frontier of computing technologies that reference human intelligence (Berente et al. 2021). As such, AI itself cannot have capabilities or provide action possibilities. Only technologies related to AI (e.g., machine learning algorithms) can do so by functioning as an AI-enabled system within a technical subsystem (Chatterjee et al. 2021). Therefore, we agree with Rzepka and Berger (2018) that the term AI-enabled system is best understood and used as a subsumption of AI-enhanced (e.g., autonomous car navigation) as well as AI-based (e.g., natural language processing) systems. In the following, we will refer to AI-enabled systems whenever we discuss the interworking between human agents and a particular instantiation of AI.

It is of critical importance to acquire a broad understanding of both the strengths and limitations of AI-enabled systems before embarking on AI use cases (Davenport and Ronanki 2018). Among these limitations are a lack of empathy, intuition, or the ability to quickly adapt to unforeseen circumstances (Bughin et al. 2018), capabilities that are closely related to the intuitive actions of what Kahneman (2011) refers to as “System 1.” Not addressing these limitations properly can hamper the success of AI initiatives (Bughin et al. 2018). Combining the capabilities of human agents and AI-enabled systems could help overcome these limitations, because those intuitive actions related to “System 1” have been an important foundation of human development (Seeber et al. 2020; Kahneman 2011).

2.2 Human-AI Hybrids

To date, however, the entangled interworking of human agents and AI-enabled systems has neither been given a clear definition nor been dealt with comprehensively in the academic literature. Possible reasons for this are the vague terms in which AI has been discussed in a range of disciplines (Demlehner et al. 2021) and the fact that these discussions have been conducted in several related yet separate streams of research, such as IS, human–computer interaction (HCI), human–robot interaction, human–machine interaction, engineering, and management. Existing research predominantly focuses on structuring specific aspects of human-AI collaboration such as tasks and interactions (e.g., Dellermann et al. 2019a, 2019b; Traumer et al. 2017), specific use cases and the roles of human agents (e.g., Davenport et al. 2020; Maedche et al. 2019; Paschen et al. 2020; Rzepka and Berger 2018), or technical aspects of AI-enabled systems (e.g., Liew 2018; Østerlund et al. 2021). Yet, there is a lack of a holistic conceptualization of what constitutes the interworking of human agents and AI-enabled systems that mutually acknowledges the role of both. One of the most impactful commentaries on the interworking of human agents and AI-enabled systems in the realm of IS has been the editor's comment by Rai et al. (2019), which also features a description of their understanding of human-AI hybrids. The authors describe human-AI hybrids as dynamic combinations of individual competencies of humans and AI-enabled systems. In the interest of clarity, we aim to collect our insights under one shared generic label (“human-AI hybrids”), which we will use throughout this paper. We also adopt Rai et al.'s (2019) definition of human-AI hybrids as combinations of capabilities of human agents and AI-enabled systems.

Agreeing with Daugherty and Wilson (2018) and Rai et al. (2019), we note that certain aspects of tasks are likely to align well with the capabilities of AI-enabled systems, while others are likely to correspond better with those of human agents. By and large, human agents and AI-enabled systems perform different roles and interactions to facilitate collaborative work (Daugherty and Wilson 2018; Davenport and Ronanki 2018). We understand human-AI hybrids to constitute the symbiotic interworking of cognitive functions, some attributed to human agents, others to AI-enabled systems, and all working together to perform tasks. Combining the complementary strengths of AI-enabled systems and human agents can offer distinct benefits, including an increase in organizational knowledge as well as performance improvements (Fügener et al. 2021; Maedche et al. 2019; Sturm et al. 2021). We refer to Dellermann et al. (2019b) – who coined the term “hybrid intelligence” as the combination of human intelligence and AI – when we argue that a division of labor between human agents and AI-enabled systems is likely to emerge as the dominant way in which organizations will soon be using AI. Peeters et al. (2021) define “hybrid collective intelligence” as a quality that allows humans and AI-enabled systems to act more intelligently when they do so collectively. We support this view, as human-AI hybrids can use symbiotic interworking to elevate themselves to hybrid assemblages that have the potential to be greater than the sum of their parts.

At the same time, organizations also need to be cognizant of the risks that can arise for human agents when their increased exposure to AI-enabled systems is not managed well, such as conflicts in employee role identity (Strich et al. 2021). This unmanaged exposure can also decrease unique human knowledge, which in turn can hamper creativity (Fügener et al. 2021). Furthermore, there is the risk that AI-enabled systems may produce unfair results (Teodorescu et al. 2021). Managing or mitigating these potential risks requires a deep understanding of the interworking between human agents and AI-enabled systems (Teodorescu et al. 2021).

2.3 Sociomateriality

Human-AI hybrids refer to the dynamic pairing of the capabilities of AI-enabled systems with those of human agents. As a sociomaterial perspective helps conceptualize such complex relationships and facilitate a deeper understanding of the results of those pairings (Cecez-Kecmanovic et al. 2014), we used this perspective as justificatory knowledge for developing our taxonomy of human-AI hybrids. Sociomateriality proposes to focus on an integrated perspective of human agents and (technical) objects (Orlikowski and Scott 2008). Such an integrated, sociomaterial view collapses the boundaries between the social (e.g., humans) and the material (e.g., technologies). It facilitates insights into their relationships, interplay, and the various results thereof (Leonardi 2012; Cecez-Kecmanovic et al. 2014). Sociomateriality can be regarded as an umbrella term that incorporates several pre-existing theories, such as socio-technical systems, actor network, and practice theory (Leonardi 2013).

While the entangled view of the social and the material unifies IS research concerned with sociomateriality, there are different interpretations of their relationship. On the one hand, strong sociomateriality presumes that the social and material are inextricably entangled, meaning that "there is no social that is not also material, and no material that is not also social" (Orlikowski 2007, p. 1437). A strong sociomateriality lens would imply that social and material entities do not exist beforehand but only emerge through intra-actions (Cecez-Kecmanovic et al. 2014; Barad 2003). Weak sociomateriality, on the other hand, has its roots in the critical realist tradition, which is why it views the social and material as separate entities that can exist without one another (Leonardi 2013). Although (weak) sociomateriality provides an interesting perspective to study human-AI hybrids, research leveraging this perspective is scarce. However, there is agreement that our interpretation of the material and material agency will need to change with advancements in AI-enabled systems and their potential to act autonomously (Ågerfalk 2020; Johri 2022; van Rijmenam 2019).

In accordance with weak sociomateriality, we understand human agents and AI-enabled systems as sociomaterial actors in the sense of locally separate entities who globally intra-act in sociomaterial practices (Leonardi 2013; Niemimaa 2016). As a result, the weak sociomateriality perspective allows for examining not just specific preexisting attributes and capabilities of human agents and AI-enabled systems (local separability) but also new action possibilities available due to human-AI hybrids and their entangled interworking (sociomaterial practices). In sum, drawing from weak sociomateriality as justificatory knowledge provides the means to analyze the entangled nature of human-AI hybrids and its potential to transcend the boundaries of any single actor, be it social or material. We provide more detailed explanations together with concrete examples of how weak sociomateriality contributes to our taxonomy development process in Sect. 4.1.

3 Research Method

To address the question of how to conceptualize the collaborative interworking of human agents and AI-enabled systems, we chose a twofold approach: developing a taxonomy and deriving archetypes of human-AI hybrids based on an application of this taxonomy. We decided to do so, as the field of human-AI hybrids at the time lacked not only a foundational structure for sensemaking but also a comprehensive overview of the status quo of human-AI hybrid usage in real-world scenarios. To capture both existing research and real-world applications, we base our research on an extensive overview of relevant literature as well as a sample of 101 human-AI hybrids. More specifically, we first conducted a literature review on the collaboration of human agents and AI-enabled systems following the guidelines of Webster and Watson (2002) and vom Brocke et al. (2015). We applied a search string with three major elements to various databases: (“Human”) AND (“Artificial Intelligence” OR “AI”) AND (“Hybrid” OR “Collaboration”). After careful screening and applying forward–backward search, we arrived at a final set of 49 relevant papers that encompass current debates, theories, and taxonomies in the realm of human-AI hybrids. Subsequently, we added a fourth block to our search string to find practical applications: (“Use Case” OR “Case Study” OR “Pilot Project” OR “Application” OR “Prototype”). We applied this string to an expanded set of databases that included more application-oriented publications of the IEEE as well as several search engines. After careful screening and applying forward–backward search, we assembled the sample of 101 human-AI hybrids displayed in Table 1. “Appendix 1” (available online via http://link.springer.com) contains more details on the literature review and the sample compilation.

Frequently used synonymously with frameworks or typologies, taxonomies are empirically as well as conceptually derived groupings categorized in layers, dimensions, and characteristics (Nickerson et al. 2013). By delivering structure, taxonomies facilitate a deeper understanding of emerging research fields that are as yet little understood (Nickerson et al. 2013). This makes them particularly useful in the field of human-AI hybrids that is currently lacking a holistic understanding of what characterizes the collaborative interworking of human agents and AI-enabled systems when they augment one another.

Our purpose being to conceptualize the collaborative interworking of human agents and AI-enabled systems, we also derive archetypes of human-AI hybrids that illustrate overarching approaches to the interworking of human agents and AI-enabled systems to perform specific tasks. As a result, these archetypes facilitate deeper insights into the usage of human-AI hybrids.

In sum, our approach delivers a comprehensive conceptualization of human-AI hybrids that is based on both an extensive conceptual and empirical analysis. In the following, we explain in detail how we developed our taxonomy and our archetypes of human-AI hybrids.

3.1 Developing a Taxonomy of Human-AI Hybrids

To develop a taxonomy of human-AI hybrids, we adopted the taxonomy development method of Nickerson et al. (2013). While this method has set an excellent standard for the development of structure-giving taxonomies (Oberländer et al. 2019), we also agree with Kundisch et al. (2022) that it benefits from more detailed guidance in certain aspects of the development process. Thus, we include further methodological steps, as described by Kundisch et al. (2022), to create a more rigorous and robust taxonomy building process. Overall, our iterative taxonomy development method comprises thirteen steps. “Appendix 2” contains a detailed description of these steps alongside the influence of our justificatory knowledge as well as the modifications to the taxonomy in each iteration.

We set about this task by specifying the observed phenomenon as human-AI hybrids. Building on that, we defined our target user group as consisting of IS researchers and researchers from other fields related to the topic of human-AI hybrids (e.g., HCI, (cognitive) psychology) along with high- and mid-level decision-makers concerned with the use and integration of AI. With this in mind, we specified the purpose of our taxonomy as holistically understanding what characterizes the collaborative interworking of human agents and AI-enabled systems when they augment one another (Kundisch et al. 2022). We defined “the relevant properties of the collaborative interworking of human agents and AI-enabled systems” to be the meta-characteristic for developing our taxonomy. Throughout the entire development process, we ensured the compatibility of all characteristics and dimensions with the meta-characteristic, this being a relevant step for developing useful taxonomies (Nickerson et al. 2013). We then defined our objective as well as our subjective ending conditions.

To develop our taxonomy, we performed a total of four iterations, combining two conceptual-to-empirical (C2E) approaches with two empirical-to-conceptual (E2C) approaches (see Table 2). Thus, we developed our taxonomy based on combining deductive (conceptual) and inductive (empirical) approaches (Nickerson et al. 2013). We referred to weak sociomateriality as justificatory knowledge to inform each of these four iterations (Gregor and Jones 2007). Weak sociomateriality provided us with the means to structure, analyze, and understand how the entangled nature of human-AI hybrids can produce something greater than the sum of its individual components. In our first two iterations, we applied a C2E approach, the rationale being to inform our efforts with the knowledge produced by prior research. With this approach, we were able to integrate ideal–typical conceptual characteristics as discussed in the literature (Snow and Reck 2016). To extend our knowledge of human-AI hybrid use cases and render the taxonomy applicable to real-world scenarios, we took an E2C approach in iterations three and four (Nickerson et al. 2013). After each iteration, we revised our taxonomy (see Fig. A1). We terminated the process when all objective and subjective ending conditions were met (Nickerson et al. 2013).

Sociomaterial entanglement of human-AI hybrids (adapted from Niemimaa 2016)

To improve both the rigor of our development process and the applicability of our taxonomy, we performed an external evaluation after all of our objective and subjective ending conditions had been met (Szopinski et al. 2020). Here, we conducted eight semi-structured interviews with a select group of individuals who combined practical and academic expertise (for details, see “Appendix 3”). The interviews consisted of a brief introduction to the taxonomy and the topic at hand so that the ensuing interview could focus on the dimensions and characteristics of the taxonomy and its applications. Moreover, we evaluated the taxonomy based on a set of criteria (i.e., comprehensibility, completeness, robustness, and suitability for the real world in terms of the purpose of our taxonomy) for taxonomy evaluation (Kundisch et al. 2022). By way of an iterative approach, we performed one interview, analyzed and discussed the results of that interview, revised our taxonomy, and then prepared for the following interview accordingly (see “Appendix 3”). We terminated our external evaluation process when all our evaluation criteria were met, and no further revisions were necessary.

Lastly, we performed a final validation of the taxonomy, with three authors independently classifying thirteen randomly selected human-AI hybrids from our sample to determine the taxonomy's reliability. Based on these independent classifications, we calculated the quality of agreement by using the kappa coefficient of Fleiss (1971). This allowed us to measure the proportion of joint judgment between a fixed number of raters (see results in “Appendix 4”).
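To make the agreement measure concrete, the following is a minimal sketch of Fleiss' kappa for a single taxonomy dimension. The function name, the input layout (one list of rater labels per item), and the example ratings are assumptions made for illustration; they are not the ratings reported in “Appendix 4”.

```python
from collections import Counter


def fleiss_kappa(ratings, categories):
    """Fleiss' (1971) kappa for items that were each rated by the same number of raters.

    ratings: list of lists; ratings[i] holds the category each rater chose for item i.
    categories: all admissible category labels (here: characteristics of one dimension).
    """
    n_items = len(ratings)
    n_raters = len(ratings[0])
    if any(len(item) != n_raters for item in ratings):
        raise ValueError("every item needs the same number of ratings")

    # counts[i][c]: number of raters who assigned item i to category c
    counts = [Counter(item) for item in ratings]

    # Observed agreement: share of agreeing rater pairs per item, averaged over items
    per_item = [
        (sum(c[cat] ** 2 for cat in categories) - n_raters) / (n_raters * (n_raters - 1))
        for c in counts
    ]
    p_observed = sum(per_item) / n_items

    # Chance agreement, derived from the overall share of each category
    shares = [sum(c[cat] for c in counts) / (n_items * n_raters) for cat in categories]
    p_expected = sum(s ** 2 for s in shares)

    if p_expected == 1.0:
        # Kappa is undefined when only one category is ever used;
        # returning 1.0 is a convention chosen for this sketch.
        return 1.0
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical ratings: three raters, four items, one mutually exclusive dimension
example = [
    ["verifying", "verifying", "verifying"],
    ["facilitating", "facilitating", "verifying"],
    ["supplementing", "supplementing", "supplementing"],
    ["facilitating", "verifying", "verifying"],
]
kappa = fleiss_kappa(example, ["facilitating", "verifying", "supplementing"])
print(round(kappa, 3))
```

In a setting like the one described above, such a function would be called once per dimension with thirteen items and three raters each.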

3.2 Developing Archetypes of Human-AI Hybrids

To facilitate deeper insights on the status quo of human-AI hybrid usage and demonstrate the applicability of our taxonomy, we applied it to the complete sample of 101 human-AI hybrids that informed our taxonomy development process and was built based on an extensive review of academic and practice-oriented literature (see “Appendix 1”). First, one of our co-authors performed an initial classification based on the definitions of characteristics and dimensions from the preceding taxonomy development process (see Sect. 4) (Gimpel et al. 2018). We jointly reviewed and discussed the results of this classification to arrive at a consensual classification of all 101 human-AI hybrids. Then, we clustered our classified sample of human-AI hybrids to derive archetypes.

Cluster analysis and, more specifically, hierarchical clustering are common statistical methods in IS research used to group objects based on their similar characteristics (Hair et al. 2010). Hierarchical clustering offers the advantage of a better interpretation of results due to the visualization of clusters using dendrograms. To perform our cluster analysis, we chose Ward's (1963) agglomerative hierarchical clustering algorithm, as it minimizes the total within-cluster variance (Ferreira and Hitchcock 2009) and has produced reliable results in prior IS research studies (e.g., Janssen et al. 2020). As a distance measure, we chose Euclidean distance because it naturally fits Ward's linkage method (Rencher 2002) and has proven to perform reliably in practice (Nerbonne and Heeringa 1997). To facilitate a correct application of the distance measure, we dichotomized our classification so that each characteristic of a dimension is represented by a separate column that equals either 1 if the characteristic is observable in the analyzed human-AI hybrid or 0 if it is not (Gimpel et al. 2018). Further, we normalized all values in the respective columns so that the distance resulting from the classification of characteristics in one dimension can only assume values between 0 and 1, which avoids overrating dimensions with a high number of characteristics.
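The encoding and clustering steps described above might be sketched as follows. This is an illustration under stated assumptions, not the implementation used in the study: the scaling factors are one possible way to bound the per-dimension distance by 1, the taxonomy excerpt and the four toy hybrids are hypothetical, and the naive Ward routine stands in for whatever statistical software was actually used.

```python
import math

# Excerpt of the taxonomy: dimension -> characteristics (names from Sect. 4.2.1;
# the cognitive functions are a shortened, illustrative subset).
DIMENSIONS = {
    "interaction_human_to_ai": ["facilitating", "verifying", "supplementing"],
    "human_focus": ["sensemaking", "creativity", "compassion", "flexibility"],
    "human_cognitive_functions": ["perceiving", "reasoning", "creating", "empathizing"],
}
NON_EXCLUSIVE = {"human_cognitive_functions"}


def encode(hybrid):
    """Dichotomize one classified hybrid into 0/1 columns and scale each dimension
    so that it contributes at most 1 to the Euclidean distance (assumed scaling)."""
    row = []
    for dim, chars in DIMENSIONS.items():
        observed = set(hybrid[dim])
        # Largest unscaled distance within one dimension: sqrt(k) if any subset of
        # k characteristics may be observed, sqrt(2) if exactly one is observed.
        max_dist = math.sqrt(len(chars)) if dim in NON_EXCLUSIVE else math.sqrt(2)
        row.extend((1.0 if c in observed else 0.0) / max_dist for c in chars)
    return row


def ward_clusters(rows, n_clusters):
    """Naive agglomerative clustering: repeatedly merge the two clusters whose
    union increases the within-cluster sum of squares the least (Ward's criterion)."""
    clusters = [{"members": [i], "centroid": list(r)} for i, r in enumerate(rows)]
    while len(clusters) > n_clusters:
        best = None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                ca, cb = clusters[a], clusters[b]
                na, nb = len(ca["members"]), len(cb["members"])
                d2 = sum((x - y) ** 2 for x, y in zip(ca["centroid"], cb["centroid"]))
                cost = na * nb / (na + nb) * d2
                if best is None or cost < best[0]:
                    best = (cost, a, b)
        _, a, b = best
        ca, cb = clusters[a], clusters.pop(b)  # b > a, so index a stays valid
        na, nb = len(ca["members"]), len(cb["members"])
        ca["centroid"] = [
            (na * x + nb * y) / (na + nb) for x, y in zip(ca["centroid"], cb["centroid"])
        ]
        ca["members"] += cb["members"]
    return [sorted(c["members"]) for c in clusters]


# Hypothetical classified hybrids (not taken from the sample of 101)
hybrids = [
    {"interaction_human_to_ai": ["verifying"], "human_focus": ["sensemaking"],
     "human_cognitive_functions": ["perceiving", "reasoning"]},
    {"interaction_human_to_ai": ["verifying"], "human_focus": ["sensemaking"],
     "human_cognitive_functions": ["reasoning"]},
    {"interaction_human_to_ai": ["supplementing"], "human_focus": ["creativity"],
     "human_cognitive_functions": ["creating"]},
    {"interaction_human_to_ai": ["supplementing"], "human_focus": ["compassion"],
     "human_cognitive_functions": ["creating", "empathizing"]},
]
rows = [encode(h) for h in hybrids]
clusters = ward_clusters(rows, n_clusters=2)
print(clusters)
```

The pairwise search makes this routine cubic in the number of objects, which is acceptable for a sample of about one hundred objects; an established library implementation of Ward linkage would additionally provide the dendrogram.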

Cluster algorithms require input on the number of clusters to classify sets of data. To date, however, there is no consensus on which approach produces the ideal number of clusters (Wu 2012). Therefore, we calculated the ideal number of clusters based on various metrics, including but not limited to the Calinski-Harabasz index, the Davies-Bouldin index, the gap statistic, and the silhouette coefficient (Calinski and Harabasz 1974; Davies and Bouldin 1979; Rousseeuw 1987; Tibshirani et al. 2001). According to early indications, the ideal number of clusters appeared to lie between three and seven. Having analyzed the resulting dendrogram (see “Appendix 6”), we determined five to be the appropriate number of clusters in our case (Aldenderfer and Blashfield 1984).
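As an illustration of one of the metrics named above, the following sketch computes the mean silhouette coefficient (Rousseeuw 1987) for a given cluster assignment. The function name and the one-dimensional toy data are assumptions made for this example. In practice, one would compute the score for each candidate number of clusters (here, three to seven) and weigh it against the other indices and the dendrogram rather than rely on it alone.

```python
import math


def mean_silhouette(points, labels):
    """Mean silhouette coefficient of a clustering, using Euclidean distance.

    points: list of equally long numeric tuples or lists.
    labels: cluster label of each point, in the same order as points.
    """
    def dist(p, q):
        return math.sqrt(sum((x - y) ** 2 for x, y in zip(p, q)))

    cluster_ids = set(labels)
    if len(cluster_ids) < 2:
        raise ValueError("the silhouette coefficient needs at least two clusters")

    scores = []
    for i, p in enumerate(points):
        own = [dist(p, q) for j, q in enumerate(points) if labels[j] == labels[i] and j != i]
        if not own:
            scores.append(0.0)  # singleton cluster: silhouette is defined as 0
            continue
        a = sum(own) / len(own)  # mean distance to the other members of the own cluster
        b = min(                 # mean distance to the nearest other cluster
            sum(dist(p, q) for j, q in enumerate(points) if labels[j] == c)
            / sum(1 for lab in labels if lab == c)
            for c in cluster_ids
            if c != labels[i]
        )
        scores.append(0.0 if max(a, b) == 0 else (b - a) / max(a, b))
    return sum(scores) / len(scores)


# Toy data: two obvious groups on a line
points = [(0.0,), (1.0,), (10.0,), (11.0,)]
good = mean_silhouette(points, [0, 0, 1, 1])  # groups match the data
bad = mean_silhouette(points, [0, 1, 0, 1])   # groups cut across the data
print(good > bad)
```

Values close to 1 indicate compact, well-separated clusters, whereas negative values indicate that objects sit closer to another cluster than to their own.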

After clustering our data set, we studied each cluster for dominant taxonomy characteristics, then derived suitable archetypes of human-AI hybrids. Finally, we evaluated our archetypes with the Q-sort method (Nahm et al. 2002), a frequently used statistical tool to test the reliability and usefulness of taxonomies and archetypes (Carter et al. 2007). To perform Q-sort, Carter et al. (2007) recommend that two or more judges (P-set) with a clear understanding of the topic classify a set of items (Q-set) according to predefined criteria. In our case, two of our co-authors were as yet unfamiliar with the results of our agglomerative clustering. As they were therefore unbiased, we selected them to map the human-AI hybrids to the identified archetypes. Following the advice of Nahm et al. (2002), we measured the reliability of our archetypes with Cohen's (1960) kappa coefficient and the validity of our archetypes through hit-ratios (for results, see “Appendix 8”). In doing so, we were able to measure the proportion of joint judgment as well as the frequency of correctly assigned objects (Moore and Benbasat 1991; Nahm et al. 2002).
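The two Q-sort measures can be sketched as follows. The function names, the archetype labels "A1" and "A2", and the example assignments are hypothetical; the hit ratio is computed here simply as the share of objects a judge assigned to the archetype given by the clustering, which is one common reading of the measure.

```python
from collections import Counter


def cohen_kappa(judge_a, judge_b):
    """Cohen's (1960) kappa for two judges who each assigned every object to one category."""
    if len(judge_a) != len(judge_b) or not judge_a:
        raise ValueError("both judges must rate the same, non-empty set of objects")
    n = len(judge_a)
    observed = sum(a == b for a, b in zip(judge_a, judge_b)) / n
    freq_a, freq_b = Counter(judge_a), Counter(judge_b)
    # Agreement expected by chance, from each judge's marginal category frequencies
    expected = sum(freq_a[c] * freq_b[c] for c in set(judge_a) | set(judge_b)) / n ** 2
    if expected == 1.0:
        return 1.0  # both judges used a single identical category; convention for this sketch
    return (observed - expected) / (1 - expected)


def hit_ratio(assigned, reference):
    """Share of objects whose assigned archetype matches the reference archetype."""
    if len(assigned) != len(reference) or not reference:
        raise ValueError("assigned and reference must be non-empty and equally long")
    return sum(a == r for a, r in zip(assigned, reference)) / len(reference)


# Hypothetical example: archetypes from clustering versus two judges' assignments
from_clustering = ["A1", "A1", "A2", "A2"]
judge_1 = ["A1", "A1", "A2", "A2"]
judge_2 = ["A1", "A1", "A2", "A1"]

print(cohen_kappa(judge_1, judge_2))        # inter-judge reliability
print(hit_ratio(judge_2, from_clustering))  # validity for judge 2
```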

4 Taxonomy of Human-AI Hybrids

Here, we present the results of our taxonomy development process, including the insights generated from using weak sociomateriality as justificatory knowledge. We include a detailed description of the dimensions and characteristics across the three sociomaterial entities (sociomaterial practices, AI, and human) as of the final iteration.

4.1 Taxonomy Foundations: Sociomateriality

In line with the meta-characteristic, our taxonomy refers to the collaborative interworking of human agents and AI-enabled systems. Leveraging weak sociomateriality as justificatory knowledge, we understand both entities as locally separate sociomaterial actors. That is, we regard AI-enabled systems with their underlying technology and algorithms as “material”, whereas human agents are “social” entities. In line with weak sociomateriality, we acknowledge that both actors have specific attributes and capabilities. Consequently, these attributes and capabilities are reflected in different dimensions and characteristics of our taxonomy. At the same time, weak sociomateriality allows us to analyze how AI-enabled systems and human agents intra-act at a higher level to form sociomaterial practices (Leonardi 2013; Niemimaa 2016). Since either actor can initiate interactions, we view both human and material (non-human) agency as relevant aspects of a sociomateriality perspective on human-AI hybrids (Jones 2014; Oberländer et al. 2018; van Rijmenam 2019; Johri 2022). Moreover, this notion acknowledges that AI-enabled systems can assume responsibility for tasks, even when there are ambiguous requirements. They can act autonomously, complementing the human agent (Ågerfalk 2020).

Figure 1 illustrates how we depict the two sociomaterial actors (human agent, AI-enabled system) and their interworking. Drawing from weak sociomateriality, we organized our taxonomy in three distinct entities: the human (i.e., the “social”), the AI (i.e., the “material”), and sociomaterial practices.

For instance, an AI-enabled diagnosis system can autonomously monitor its environment and deliver predictions based on its observations (material agency). A human inspector can make sense of and verify those predictions to complete diagnosis and plan next steps accordingly (human agency). Collectively, the AI-enabled system and the human agent enact sociomaterial practices that can result in better outcomes, such as improved diagnosis or better plannability of maintenance activities.

Moreover, using weak sociomateriality as justificatory knowledge influenced both the structuring of our taxonomy’s dimensions (i.e., the organization of dimensions under the overarching sociomaterial entities) and our terminology. For instance, weak sociomateriality helped us understand that both the human and the AI require dimensions that reflect their specific capabilities (i.e., cognitive functions) and the interaction from one entity to the other (see “Appendix 2” for more details).

In summary, weak sociomateriality as justificatory knowledge provides us with the theoretical groundings to analyze human-AI hybrids in their local separability and their higher-level entanglement through sociomaterial practices (Kautz and Jensen 2013; Leonardi 2013). Thus, weak sociomateriality helped with structuring the collaborative interworking of human-AI hybrids.

4.2 Taxonomy

In line with our meta-characteristic, we developed a taxonomy that provides a clear structure to the field of human-AI hybrids. Our final taxonomy includes three layers: sociomaterial entities, dimensions, and characteristics. It comprises three distinct sociomaterial entities, the human (human agency), the AI (material agency), and sociomaterial practices, and nine dimensions, of which seven are mutually exclusive (ME) and two are non-exclusive (NE) (see Fig. 2). Although a taxonomy's characteristics are ideally mutually exclusive (Bailey 1994; Bowker and Star 1999; Nickerson et al. 2013), certain cases may justify deviations (Kundisch et al. 2022). We find that the dimensions human cognitive functions and AI cognitive functions justify non-exclusiveness. To restore mutual exclusiveness, we could introduce either an individual characteristic for each combination of cognitive functions or a binary dimension for each cognitive function. Either option, however, would lead to an excessive set of characteristics or dimensions. Such a taxonomy, in turn, would contradict Nickerson et al.'s (2013) conciseness criterion.
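To give a sense of scale for this argument, the following sketch counts the characteristics that a mutually exclusive version of the dimension human cognitive functions would need, using the nine functions named in Sect. 4.2.1. It assumes, for illustration only, that every human-AI hybrid exhibits at least one cognitive function; the validation helper is likewise a hypothetical convenience and not part of the taxonomy itself.

```python
from itertools import combinations

# The nine human cognitive functions named in Sect. 4.2.1
HUMAN_COGNITIVE_FUNCTIONS = [
    "perceiving", "reasoning", "predicting", "planning", "decision-making",
    "explaining", "interacting", "creating", "empathizing",
]


def is_valid_classification(selected, characteristics, mutually_exclusive):
    """Check the classification of one hybrid in one dimension.

    ME dimensions allow exactly one characteristic; NE dimensions allow one or more.
    """
    chosen = set(selected)
    if not chosen or not chosen <= set(characteristics):
        return False  # nothing selected, or an unknown characteristic
    return len(chosen) == 1 if mutually_exclusive else True


# One characteristic per non-empty combination of cognitive functions
n_combinations = sum(
    1
    for size in range(1, len(HUMAN_COGNITIVE_FUNCTIONS) + 1)
    for _ in combinations(HUMAN_COGNITIVE_FUNCTIONS, size)
)
print(n_combinations)  # 2**9 - 1 = 511
```

Under this assumption, a single non-exclusive dimension with nine characteristics would turn into 511 mutually exclusive characteristics, or alternatively into nine binary dimensions, which illustrates the conflict with conciseness.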

4.2.1 Human (Human Agency)

The sociomaterial actor human comprises three dimensions, each consisting of characteristics that concretize the cognitive functions, the interplay toward AI-enabled systems, and the focus of human agents in human-AI hybrids. These dimensions and characteristics reflect a human agent's specific attributes and capabilities (i.e., the “social”). The dimension human cognitive functions comprises the relevant cognitive functions that human agents can contribute to human-AI hybrids. Based on our analysis, we determine the key cognitive functions of human agents to be perceiving, reasoning, predicting, planning, decision-making, explaining, interacting, creating, and empathizing.

The interaction possibilities of human agents that are tailored to AI-enabled systems (interaction human to AI) are akin to the interaction possibilities in the other direction. They consist of facilitating, verifying, and supplementing. We discovered this to be the case in our interviews (see “Appendix 3”). Facilitating is about putting an AI-enabled system in a position where it can deliver a meaningful contribution to the accomplishment of a task. Facilitation can also happen when human input or modification is required to improve an AI-enabled system. Beyond that, human agents can verify the results of AI-enabled systems based on reactive or proactive oversight (Teodorescu et al. 2021). Meanwhile, supplementing AI-enabled systems with the actions of human agents happens in various forms, all of which depend on the context and requirements of the specific task.

Human focus denotes the primary purpose of human agents in human-AI hybrids. Whereas AI-enabled systems can also have a primary role of automation in human-AI hybrids, human agents' primary purpose in human-AI hybrids is augmentation. Therefore, we have further specified the augmentation purpose in this dimension. More specifically, this dimension indicates whether the augmenting purpose is sensemaking, creativity, compassion, or flexibility. Sensemaking, for instance, occurs in strategic thinking processes that require a certain understanding of the world beyond the specific decision context. While AI-enabled systems have already found their way into the enhancement of art creation processes, e.g., composing music or paintings based on large sets of training data, creativity itself is still driven by the competencies of human agents (Rai et al. 2019). Thus, when being a part of human-AI hybrids, human agents still focus on generating value with their creative capabilities. Other clear remits of human agents are compassionate activities, e.g., sensing and displaying emotions, or social and emotional intelligence in general. This brings us to the final element of this dimension, the flexibility of human agents that enables them to perform a broad range of activities without the need for extensive training data, a skill facilitated by the inherent versatility of human beings.

To take the medical diagnostics hybrid (Jussupow et al. 2021) as an example: We found that human agents (physicians) use the cognitive functions of reasoning, to draw conclusions based on the information of the AI-enabled system and their patient inspection, and decision-making, to decide upon the treatment. Moreover, we classified the interaction human to AI as verifying because the physician examines the patient and makes a diagnosis to verify the AI-enabled system's recommendation. Lastly, we classified the physicians' focus as sensemaking because they need to evaluate the AI-enabled system's diagnosis and use their own judgment to decide upon a treatment best suited to the individual patient, taking into account other factors such as allergies and life situations.

4.2.2 AI (Material Agency)

The sociomaterial actor AI comprises three dimensions, each consisting of characteristics that concretize the cognitive functions, the interplay toward human agents, and the focus of the AI-enabled system in human-AI hybrids. These dimensions and characteristics reflect an AI-enabled system's specific attributes and capabilities (i.e., the “material”). AI cognitive functions constitute their own dimension because an AI-enabled system contributes certain primary cognitive functions to human-AI hybrids. This understanding is consistent with current literature (e.g., Daugherty and Wilson 2018; Stohr and O’Rourke 2021) and observations made in multiple use cases (see “Appendix 1”). We identified perceiving, reasoning, predicting, planning, decision-making, interacting, and creating as relevant (current) cognitive functions of AI-enabled systems. It is worth noting that many cognitive functions are common to both human agents and AI-enabled systems (e.g., creating and decision-making). While this may seem counterintuitive at first glance, it accounts for the rapid advancements of AI-enabled systems and the far-reaching potential for collaboration that is associated with these advancements. At this point, only explaining and empathizing can still be regarded as cognitive functions that human agents predominantly possess. As AI-enabled systems remain limited in their emotional and social competencies, their use in scenarios that require such capabilities continues to be rather unattractive for organizations (Paschen et al. 2020; Sowa et al. 2021). At the same time, explainable AI (XAI) proposes to make a shift toward more transparent AI (Adadi and Berrada 2018). It aims to create a suite of methods and techniques to generate more explainable models that human users can understand, appropriately trust, and derive implications from while maintaining a high level of prediction performance (Adadi and Berrada 2018; Barredo Arrieta et al. 2020). 
Therefore, we understand XAI as enabling human “users to understand, appropriately trust, and effectively manage the emerging generation of AI systems” (Gunning and Aha 2019, p. 45). In this understanding, XAI is not actively explaining but rather providing transparency on its reasoning. However, with continuous advancements in the field of XAI, explaining may need to be added to the taxonomy as a cognitive function of AI-enabled systems in the future.

Interaction AI to human denotes the interaction possibilities of AI-enabled systems that are tailored to human agents. Here, facilitating refers to an AI-enabled system that makes a human agent's course of action possible. For instance, an AI-enabled system can guide human actions by providing indications or nudging (Heer 2019). An AI-enabled system can also put a human agent into a position to perform a specific task. More still, an AI-enabled system can also act as a control body, which is to say it can verify the results of human agents to detect wrongdoing or highlight potential requirements for change. This is also referred to as supervised reliance (Teodorescu et al. 2021). Supplementing occurs when the cognitive functions of an AI-enabled system are used to symbiotically complement the cognitive functions of human agents for them to perform tasks jointly.

AI focus denotes the overarching purpose of an AI-enabled system in a human-AI hybrid. This dimension indicates whether the primary purpose of an AI-enabled system is automation or augmentation. However, while the focus can be directed exclusively on automation or augmentation, there is a beneficial co-existence of both (Davenport and Ronanki 2018; Raisch and Krakowski 2021). With the technological advancements of AI-enabled systems in mind, it is worth mentioning that automation can extend into formerly human-dominated domains, such as creativity, as evidenced by Autodesk's generative design approach (Iansiti and Lakhani 2020). We agree with Harper (2019), however, that this rather represents an enhancement of the creativity of human agents, given that creative activities such as setting goals, formulating hypotheses, or determining decision criteria for algorithms remain within the ambit of human agents, at least for now (Brynjolfsson and Mitchell 2017; Corea 2019).

To take the Alibaba packing system (Zhang et al. 2021) as an example: We found that the AI-enabled system uses the cognitive functions of reasoning, to analyze the accuracy of picked orders, and planning, to plan the packing of transport boxes. Moreover, we classified the AI-enabled system's interaction with the human agent as facilitating because it supports the human employee in selecting transport boxes and enables a faster and less error-prone packaging process. Due to this focus on improving the procedural steps of the human agents, we classified the AI focus as augmentation.

4.2.3 Sociomaterial Practices

Sociomaterial practices comprise three dimensions, each consisting of characteristics that concretize the interworking in human-AI hybrids. We understand these as the aggregation of activities performed by human-AI hybrids, separated from the actors and objects involved (Faulkner and Runde 2012). Sociomaterial practices can be characterized by the form of interworking between human agents and AI-enabled systems, which can occur either in parallel or sequentially (Daugherty and Wilson 2018). More complex human-AI hybrids are characterized by a flexible form of interworking that allows for a switch between parallel and sequential work (Grønsund and Aanestad 2020).

Mode of interworking classifies whether the interaction between human agents and AI-enabled systems happens in a singular or continuous manner. A human agent and an AI-enabled system may only interact once during a process (e.g., the AI-enabled system predicts a value, and the human agent verifies this value), or it may happen several times (e.g., human agent and AI-enabled system continuously supplement one another with their respective cognitive functions). Such a continuous mode of interaction is present, for example, when a human worker assembling a product is continuously augmented by an AI-enabled system that provides instruction on the assembly process.

Learning plays a vital role in the context of human-AI hybrids and constitutes a critical success factor in the meaningful implementation of AI (Ransbotham et al. 2020). In this context, we understand learning as the accumulation of additional knowledge that leads to improved performance of the specific task. There are several degrees of learning for human-AI hybrids: none (there is no actual feedback loop), AI learning (the focus of learning rests primarily on the AI-enabled systems), human learning (the focus of learning rests primarily on the human agent), human and AI learn separately (human agents and AI-enabled systems learn independently of one another), and a so-called co-evolution. We agree with Dellermann et al. (2019b) in that we understand co-evolution as the achievement of superior results due to continuous improvement by way of learning from one another. Particularly in turbulent environments, AI-enabled systems can facilitate organizational learning and offer human agents greater freedom to learn in accordance with their personal preferences (Sturm et al. 2021).

To take the Atomwise hybrid (Agrawal et al. 2018) as an example: We classified the form of interworking as sequential because the AI-enabled prediction tool predicts the binding affinity of drugs and, in the subsequent step, the human agent recognizes possible side effects and evaluates the trade-off between targeting the disease and the potential side effects. Moreover, we classified the mode of interworking as continuous because the AI-enabled prediction tool repeatedly makes predictions for the binding affinity of new drugs. Lastly, the human agent learns by getting information about the binding and potentially new molecules and combining this knowledge with his expertise. The AI-enabled system does not currently use the feedback of the human agent to improve prediction.
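The three layers described above (sociomaterial entities, dimensions, characteristics) can be written down as a small data structure. The following Python sketch is an illustration only and not part of the study's method: the names `Dimension`, `TAXONOMY`, and `validate` are hypothetical, and the assumption that a mutually exclusive dimension takes exactly one characteristic while a non-exclusive dimension takes one or more is our reading of the ME/NE distinction. The sample classification restates the human-side classification of the medical diagnostics hybrid given in Sect. 4.2.1.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dimension:
    entity: str             # sociomaterial entity the dimension belongs to
    characteristics: tuple  # characteristics named in Sect. 4.2
    exclusive: bool         # True = mutually exclusive (ME), False = non-exclusive (NE)


AI_FUNCTIONS = ("perceiving", "reasoning", "predicting", "planning",
                "decision-making", "interacting", "creating")
HUMAN_FUNCTIONS = AI_FUNCTIONS + ("explaining", "empathizing")
INTERACTIONS = ("facilitating", "verifying", "supplementing")
PRACTICES = "sociomaterial practices"

TAXONOMY = {
    "human cognitive functions": Dimension("human", HUMAN_FUNCTIONS, False),
    "interaction human to AI": Dimension("human", INTERACTIONS, True),
    "human focus": Dimension(
        "human", ("sensemaking", "creativity", "compassion", "flexibility"), True),
    "AI cognitive functions": Dimension("AI", AI_FUNCTIONS, False),
    "interaction AI to human": Dimension("AI", INTERACTIONS, True),
    "AI focus": Dimension("AI", ("automation", "augmentation"), True),
    "form of interworking": Dimension(
        PRACTICES, ("parallel", "sequential", "flexible"), True),
    "mode of interworking": Dimension(PRACTICES, ("singular", "continuous"), True),
    "learning": Dimension(
        PRACTICES,
        ("none", "AI learning", "human learning",
         "human and AI learn separately", "co-evolution"),
        True),
}


def validate(classification):
    """Check a (possibly partial) classification; return a list of problems."""
    problems = []
    for name, chosen in classification.items():
        dim = TAXONOMY.get(name)
        if dim is None:
            problems.append(f"unknown dimension: {name}")
            continue
        unknown = sorted(set(chosen) - set(dim.characteristics))
        if unknown:
            problems.append(f"{name}: unknown characteristics {unknown}")
        if not chosen:
            problems.append(f"{name}: at least one characteristic is required")
        elif dim.exclusive and len(chosen) != 1:
            problems.append(f"{name}: mutually exclusive, expected exactly one")
    return problems


# Human-side classification of the medical diagnostics hybrid (Sect. 4.2.1).
physician = {
    "human cognitive functions": ["reasoning", "decision-making"],
    "interaction human to AI": ["verifying"],
    "human focus": ["sensemaking"],
}
print(validate(physician))  # an empty list means no problems were found
```

Keeping the two cognitive-function dimensions as sets rather than expanding them into one binary dimension per function mirrors the conciseness argument made in Sect. 4.2.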

5 Application of the Taxonomy of Human-AI Hybrids

Since our research aims at a comprehensive picture of how to conceptualize the collaborative interworking of human agents and AI-enabled systems, we not only want to provide a foundational structure for sensemaking but also capture the status quo of human-AI hybrid usage in real-world scenarios. For this purpose, we, first, include a detailed analysis of this status quo based on the application of our taxonomy to the complete sample of 101 human-AI hybrids used to create our taxonomy. Second, we derive archetypes of human-AI hybrids that illustrate ideal–typical occurrences and facilitate deeper insights into the usage of human-AI hybrids.

5.1 Status Quo Analysis

Based on our classification of human-AI hybrids, we analyzed the relative frequency of all characteristics to discover the current dissemination and use of human-AI hybrids (see Fig. 3). It is worth noting that 62 percent of human-AI hybrids are already using a continuous mode of interaction. Not only does this enable a closer relationship between the human agent and the AI-enabled system, but it also enhances the learning possibilities, something we will discuss in greater detail in the following section. It is also interesting to see that, in the learning dimension, only the characteristic AI learns is somewhat underrepresented. This, however, is probably rooted in the frequent target of human learning in human-AI hybrids, which also influences the human and AI learn separately and co-evolution characteristics. Another point of interest is that AI-enabled systems verify a human agent's output or decisions in only two percent of human-AI hybrids. Further research will have to clarify whether this is due to the technological shortcomings of AI-enabled systems or perhaps to their acceptance in organizations. For now, our findings merely reveal that AI-enabled systems focus on augmentation rather than automation in almost two-thirds of human-AI hybrids.

Meanwhile, we find that human agents supplement AI-enabled systems in almost three-quarters of human-AI hybrids. This lends credence to the widespread assumption that human agents are still needed to perform tasks in many scenarios. Our data analysis also reveals that the dominant cognitive functions of human agents greatly vary from those of the AI-enabled systems involved in human-AI hybrids. Whereas predicting, for instance, is a frequently used cognitive function of AI-enabled systems, its use is less common for human agents. When it comes to decision-making, however, the opposite is true, although the difference in the distribution is not quite as pronounced. We suspect that these differences arise because the inherent capabilities of human agents and AI-enabled systems offer varying benefits to human-AI hybrids. One need only look at the example of predicting, which often relies on analyzing enormous amounts of data, to appreciate that an AI-enabled system can be more efficient than a human agent. Decision-making, on the other hand, is still often a matter of trust and the consideration of complex interdependencies, which frequently means that the human agent will have the final word. However, AI-enabled systems are already capable of decision-making, which is reflected in their increasing albeit less established use as a decision-making tool.
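The relative frequencies discussed above are simple shares of classified hybrids per characteristic. The sketch below illustrates the computation under stated assumptions: the four-hybrid `SAMPLE` is invented for illustration and is not the study's sample of 101 hybrids, and `relative_frequencies` is a hypothetical helper. For a non-exclusive dimension, a hybrid can exhibit several characteristics, so the shares may sum to more than one.

```python
from collections import Counter


def relative_frequencies(sample, dimension):
    """Share of hybrids in `sample` that exhibit each characteristic of `dimension`."""
    counts = Counter()
    for hybrid in sample:
        # set() guards against counting a characteristic twice for one hybrid
        counts.update(set(hybrid[dimension]))
    return {characteristic: n / len(sample) for characteristic, n in counts.items()}


# Invented toy sample (NOT the study's data): four classified hybrids.
SAMPLE = [
    {"mode of interworking": ["continuous"],
     "AI cognitive functions": ["predicting", "reasoning"]},
    {"mode of interworking": ["continuous"],
     "AI cognitive functions": ["predicting"]},
    {"mode of interworking": ["singular"],
     "AI cognitive functions": ["perceiving", "predicting"]},
    {"mode of interworking": ["continuous"],
     "AI cognitive functions": ["planning"]},
]

mode_shares = relative_frequencies(SAMPLE, "mode of interworking")
function_shares = relative_frequencies(SAMPLE, "AI cognitive functions")
print(mode_shares)
print(function_shares)
```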

We extended our analysis to the correlation of characteristics in current human-AI hybrids to identify relevant dependencies (see “Appendix 5”). This analysis provides three distinct insights. First, a flexible form of interworking may require flexibility on the part of the human agent. Since humans can thrive when dealing with diverse challenges, a flexible approach to interworking gives them room to maneuver when they encounter obstacles or ambiguities. Moreover, a flexible interworking scenario correlates positively with the mode of continuous interaction. Since a flexible mode of interworking can result in switching between sequential and parallel work during the execution of a specific task, human agents and AI-enabled systems may need to interact several times in these instances. This is of particular interest since such continuous interaction is also positively correlated with a co-evolution of human agents and AI-enabled systems. Considering that co-evolution is a highly desirable characteristic of human-AI hybrids (Ransbotham et al. 2020), organizations stand to gain significant benefits by choosing a flexible form of interworking along with a continuous mode of interaction for their human-AI hybrids.

Second, we note a positive correlation between an AI-enabled system verifying the work of a human agent and AI learning. There is, then, an additional option for targeted learning by focusing attention on verification, and this goes well beyond the more conventional approach by which human agents verify the output of AI-enabled systems (which is also positively correlated to human learning). More specifically, the establishment of human-AI hybrids with verification interaction can be a valid option for knowledge transfer and training purposes.

Third, a human agent's focus on sensemaking is closely related to facilitating or verifying the AI-enabled system but not supplementing it. We attribute this to the fact that the act of facilitating and verifying requires the human also to evaluate and understand the output of an AI-enabled system.
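To make the notion of correlated characteristics concrete, each characteristic can be encoded as a binary indicator per hybrid and compared pairwise. The sketch below uses the phi coefficient, which equals the Pearson correlation of two binary variables. This choice is an assumption made for illustration; the correlation measure actually used in the study is documented in “Appendix 5” and may differ. The helper names and the toy sample are hypothetical.

```python
from math import sqrt


def indicator(sample, dimension, characteristic):
    """Binary vector: 1 if a hybrid exhibits the characteristic, else 0."""
    return [1 if characteristic in hybrid[dimension] else 0 for hybrid in sample]


def phi(x, y):
    """Phi coefficient of two equally long binary vectors (None if undefined)."""
    n = len(x)
    n11 = sum(a * b for a, b in zip(x, y))  # hybrids exhibiting both characteristics
    nx, ny = sum(x), sum(y)                 # marginal counts
    denominator = sqrt(nx * (n - nx) * ny * (n - ny))
    if denominator == 0:
        return None  # one indicator is constant, so no correlation is defined
    return (n * n11 - nx * ny) / denominator


# Invented toy sample (NOT the study's data).
SAMPLE = [
    {"form of interworking": ["flexible"], "mode of interworking": ["continuous"]},
    {"form of interworking": ["flexible"], "mode of interworking": ["continuous"]},
    {"form of interworking": ["sequential"], "mode of interworking": ["continuous"]},
    {"form of interworking": ["sequential"], "mode of interworking": ["singular"]},
]

flexible = indicator(SAMPLE, "form of interworking", "flexible")
continuous = indicator(SAMPLE, "mode of interworking", "continuous")
print(phi(flexible, continuous))  # positive value: the two tend to co-occur
```

A positive value indicates that two characteristics tend to appear together in the same hybrids, which is the kind of dependency reported above for a flexible form of interworking and a continuous mode of interaction.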

5.2 Archetypes

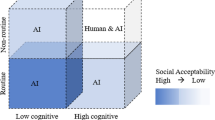

From our previous analysis, we infer that existing human-AI hybrids have diverse configurations across our taxonomy's nine dimensions. To better understand how we can conceptualize human-AI hybrids, we were interested in whether there were any overarching interworking patterns across the classified hybrids. For this purpose, we applied agglomerative cluster analysis and derived five archetypes to conceptualize prototypical human-AI hybrid usage scenarios: sequential automation (AI pre-worker), parallel automation (outsourcing AI), sequential augmentation (superpower-giving AI), sequential co-evolution (assembly line AI), and flexible co-evolution (collaborator AI). These archetypes provide a comprehensive picture of the possibilities available when human agents and AI-enabled systems engage in sociomaterial practices (see Fig. 4 and “Appendix 7” for classification percentages).

Since our research indicates that some human-AI hybrids demonstrate a closer sociomaterial entanglement than others (e.g., a continuous mode of interaction indicates closer entanglement of human agents and AI-enabled system than a singular mode of interaction), we will present our archetypes in ascending order, from the lowest to the highest perceived entanglement. In doing so, we also illustrate the potential of progressing current human-AI hybrids in such a way that they work well in a state of closer entanglement, the beneficial results of which include co-evolution.
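The general idea of agglomerative clustering, as used to derive the archetypes, can be illustrated with a minimal bottom-up procedure: every hybrid starts as its own cluster, and the two closest clusters are merged until the desired number remains. The sketch below is not the study's configuration. The Hamming distance on binary characteristic vectors, the average linkage, the column layout, and the toy data are all assumptions chosen to keep the example self-contained.

```python
from itertools import combinations


def hamming(a, b):
    """Number of positions in which two binary vectors differ."""
    return sum(x != y for x, y in zip(a, b))


def average_linkage(c1, c2, vectors):
    """Mean pairwise distance between the members of two clusters."""
    total = sum(hamming(vectors[i], vectors[j]) for i in c1 for j in c2)
    return total / (len(c1) * len(c2))


def agglomerate(vectors, k):
    """Merge the two closest clusters repeatedly until only k clusters remain."""
    clusters = [[i] for i in range(len(vectors))]
    while len(clusters) > k:
        a, b = min(
            combinations(range(len(clusters)), 2),
            key=lambda p: average_linkage(clusters[p[0]], clusters[p[1]], vectors),
        )
        clusters[a] = clusters[a] + clusters[b]  # a < b, so index a stays valid
        del clusters[b]
    return clusters


# Invented toy data (NOT the study's sample). Assumed column order:
# automation, augmentation, sequential, parallel, flexible, singular, continuous
VECTORS = [
    [1, 0, 1, 0, 0, 1, 0],  # automation, sequential, singular
    [1, 0, 1, 0, 0, 1, 0],  # automation, sequential, singular
    [0, 1, 0, 0, 1, 0, 1],  # augmentation, flexible, continuous
    [0, 1, 0, 0, 1, 0, 1],  # augmentation, flexible, continuous
    [0, 1, 1, 0, 0, 0, 1],  # augmentation, sequential, continuous
]

clusters = agglomerate(VECTORS, k=2)
print(clusters)
```

In this toy run, the two automation-focused hybrids end up in one cluster and the three augmentation-focused hybrids in the other, which loosely parallels how archetypes group hybrids with similar configurations.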

5.2.1 Archetype 1: Sequential Automation (AI Pre-Worker)

Archetype 1 is one of two archetypes in which the AI-enabled system focuses on automation. AI-enabled systems in this archetype are predominantly concerned with supplementing rather than facilitating human agents, which is why a large variety of AI cognitive functions comes into play, ranging from perceiving to creating. The form of interworking is strictly sequential, and the mode of interaction is singular. In this archetype, the role of human agents often goes beyond verifying AI-enabled systems. In doing so, they predominantly rely on the cognitive functions of reasoning and decision-making. Moreover, their focus is on sensemaking. Thus, we call this archetype AI pre-worker.

For example, Liew (2018) describes a case of using AI-enabled systems to automate detection and prediction in the domain of radiology. Based on perceiving and reasoning, such systems can generate an automated prediction that supports human agents in performing subsequent task steps. In doing so, these systems perform the function of an AI pre-worker. Human agents are not required until they get involved in verifying the results of the AI-enabled radiologic analysis systems, make a decision, and initiate the next steps in accordance with the completed diagnosis (Liew 2018).

5.2.2 Archetype 2: Parallel Automation (Outsourcing AI)

In our archetype 2, AI-enabled systems also focus primarily on automation. In contrast to archetype 1, however, interworking tends to take the shape of parallel execution of work and learning mostly happens separately. The mode of interaction in this archetype is continuous, allowing for a much closer entanglement of human agents and AI-enabled systems. Because the form of interworking is mostly parallel, archetype 2 human-AI hybrids often provide an opportunity to outsource certain parallel task steps to the AI-enabled system, which then performs a diverse set of cognitive functions while mainly supplementing human agents. Thus, we call this second archetype of human-AI hybrids outsourcing AI.

An example of this archetype is the AI-enabled call center system, as proposed by Kahn et al. (2020). This system offers human call center agents the possibility to outsource specific simple service requests to AI-enabled systems, such as providing answers to frequently asked questions. As a result, human agents can focus on more complex cases in which their sensemaking is required, thus supplementing the AI-enabled systems and the efficiency achieved by them.

5.2.3 Archetype 3: Sequential Augmentation (Superpower-Giving AI)

Contrary to the two preceding archetypes, the AI-enabled systems in archetype 3 focus on augmentation. This paradigm shift has significant implications for the nature of collaborative interworking between human agents and AI-enabled systems. First, AI-enabled systems in archetype 3 facilitate the work of human agents as often as they supplement it. The form of interworking is sequential, the mode of interaction singular. Learning mostly happens on the part of the human agent. As a result, the AI-enabled system acts as a facilitator and enabler. Relevant cognitive functions in this archetype are decision-making and interacting for the human agent, as opposed to reasoning and predicting for the AI-enabled system. We refer to human-AI hybrids in archetype 3 as superpower-giving AI.

An example of this archetype is the risk assessment use case in courts, as discussed by Green and Chen (2019). By performing the tasks of reasoning and predicting, an AI-enabled risk assessment system allows judges to make a data-based and, therefore, presumably fairer risk assessment. The authors show how such an approach helps to mitigate potential biases that can occur in risk assessment. They further point out that this form of augmentation, in contrast to an automated prediction, can fit the context of risk assessment in criminal justice systems. As a result, it benefits judges in that it enables them to focus their attention on the task of interpreting results, while defendants also benefit in that they experience the judge's treatment of their case to be less subjective.

5.2.4 Archetype 4: Sequential Co-Evolution (Assembly Line AI)

While archetype 4 human-AI hybrids also have an augmentation focus and a sequential form of interworking, they differ from archetype 3 human-AI hybrids in their mode of interaction. A continuous interaction between human agents and AI-enabled systems allows for co-evolution (i.e., both actors learn from each other). AI-enabled systems in archetype 4 mostly facilitate the tasks of human agents, but they also supplement them. Meanwhile, human agents are mainly concerned with supplementing AI-enabled systems. The continuous interaction puts the individual strengths of both sides in a successive sequence. AI-enabled systems predominantly perform cognitive functions, such as reasoning, predicting, and perceiving, whereas human agents concentrate on reasoning, decision-making, and interacting. Compared to archetypes 1 to 3, human agents in archetype 4 focus less on sensemaking, which is why creativity and flexibility play more prominent roles. Due to the combination of sequential and continuous interaction, we refer to archetype 4 human-AI hybrids as assembly line AI.

An example of this archetype is the hybrid ramp-up process in production, as presented by Doltsinis et al. (2018). In this case, a human operator and an AI-enabled ramp-up system work together continuously to reach a state of maximum production output. They perform a so-called guided exploration strategy in which the AI-enabled system continuously augments the human agent by formulating possible ramp-up policies, the execution of which is subject to the human agent's decision. Interestingly, this sequential co-evolution approach achieved a close to optimal behavior far more efficiently than a purely algorithmic approach (Doltsinis et al. 2018).

5.2.5 Archetype 5: Flexible Co-Evolution (Collaborator AI)

With its flexible form of interworking, archetype 5 marks the last augmentation-focused archetype. Since its mode of interaction is strictly continuous, it facilitates a co-evolution of human agents and AI-enabled systems. However, because interworking takes a flexible form, the direction of learning is also somewhat flexible. For instance, some cases in this archetype prioritize human learning, while others separate the learning of human agents from that of AI-enabled systems. The most relevant AI cognitive functions in archetype 5 are reasoning, perceiving, and predicting, whereas human agents mostly rely on reasoning and interacting. AI-enabled systems in archetype 5 facilitate the work of human agents as often as they supplement it. Human agents focus mainly on supplementing AI-enabled systems in this archetype. Because of this symbiotic collaboration, we refer to archetype 5 human-AI hybrids as the collaborator AI, not least because human agents are marked out by their flexibility to collaborate.

An example of this archetype is the smart augmented instruction system for mechanical assembly, as discussed by Lai et al. (2020). Here, a human assembly worker is paired with an AI-enabled assistance system that delivers real-time guidance and instruction through augmented reality. In the process, both continuously learn from each other, leading to a mutually beneficial co-evolution.

6 Discussion

6.1 Contribution

Even though first applications of human-AI hybrids have already made their way into organizations, research has yet to embrace a holistic perspective that acknowledges the contribution of both human agents and AI-enabled systems as separate entities with distinct characteristics that globally intra-act in human-AI hybrids (Rai et al. 2019). Our study addresses this issue by means of two methods widely recognized in IS research: taxonomy development and cluster analysis to develop archetypes. Drawing from weak sociomateriality as justificatory knowledge, our study contributes to the descriptive knowledge of human-AI hybrids and the ongoing discourse on human-AI collaboration (Benbya et al. 2021; Dwivedi et al. 2021; Raisch and Krakowski 2021; Seeber et al. 2020) by providing (1) a well-founded taxonomy of human-AI hybrids and (2) archetypes of human-AI hybrids. We understand both contributions together as a theory for analyzing that provides a solid foundation for future sensemaking and design research in the field of human-AI hybrids (Gregor 2006).

Our taxonomy of human-AI hybrids gives a clear structure to the collaborative interworking of human agents and AI-enabled systems. Using weak sociomateriality as justificatory knowledge, we present AI-enabled systems and human agents as locally separate entities with distinct characteristics that intra-act globally to form sociomaterial practices. Bearing this in mind during our taxonomy development process, we took an integrated perspective on the complementary interworking of human agents and AI-enabled systems. It follows that our taxonomy not only enables a well-founded classification of individual human-AI hybrids but also creates a better understanding of what constitutes human-AI hybrids. Based on such a theoretically founded and empirically validated understanding of human-AI hybrids, our study complements existing research that structures specific aspects of human-AI collaboration and hybrid intelligence such as tasks and interactions (Dellermann et al. 2019a, 2019b; Traumer et al. 2017).

Based on the classification and analysis of 101 human-AI hybrids, we present five archetypes of human-AI hybrids that outline the design opportunities that come with the collaborative interworking of human agents and AI-enabled systems. Each archetype represents a unique form of interworking and is illustrated by the analysis of an exemplary human-AI hybrid. Building on an extensive knowledge base, these archetypes offer insights into the prototypical implementation of human-AI hybrids in the real world. Our taxonomy of human-AI hybrids also makes it possible to indicate differences in how closely human-AI hybrids are entangled in these archetypes. Our analysis of relative frequencies and the correlation between different characteristics of human-AI hybrids reveals interesting dependencies, such as a link between the form of interworking and the flexibility requirement for human agents. Moreover, we find that an AI-enabled system’s focus is connected to the learning possibilities. With this, our work acknowledges the importance of both human and non-human agency, thus complementing existing research that rather focuses on specific use cases as well as on predominantly social (e.g., Davenport et al. 2020; Maedche et al. 2019; Paschen et al. 2020; Rzepka and Berger 2018) or technical aspects (e.g., Liew 2018; Østerlund et al. 2021). Our proposed archetypes also illustrate that both automation and augmentation can be important goals of human-AI hybrids. Based on both our taxonomy and the proposed archetypes, circumstances can be analyzed in which one or the other focus (automation or augmentation) is more favorable. That is, our work makes it possible to identify interdependencies between characteristics of human-AI hybrids that allow inferences about the focus. Therefore, our study provides a solid foundation on which to further explore the decision criteria for targeting an AI-enabled system toward automation or augmentation. In this way, our study also contributes to research focused on the discussion of automation versus augmentation (Benbya et al. 2021; Raisch and Krakowski 2021).

6.2 Theoretical Implications

Our work connects to and advances the discourse on human-AI collaboration as well as the future of work in IS research (Dellermann et al. 2019b; Maedche et al. 2019; Rai et al. 2019). By using weak sociomateriality as justificatory knowledge, we answer the calls of IS (Cecez-Kecmanovic et al. 2014; Sarker et al. 2019) and AI (Davenport et al. 2020; Paschen et al. 2020) research for a balanced consideration of human (human agency) and AI (material agency) in human-AI collaboration scenarios. Therefrom, our study offers two distinct theoretical implications.

First, to the best of our knowledge, our work is the first in which weak sociomateriality is leveraged to study human-AI hybrids, which is to say that it introduces a new perspective on human-AI collaboration. It is a balanced perspective that accounts for the respective attributes and capabilities of human agents and AI-enabled systems in equal measure. This balanced perspective is consistent with recent research on agentic IS artifacts illustrating that AI-enabled systems are no longer mere passive tools waiting to be used for repetitive tasks (Baird and Maruping 2021). Instead, AI-enabled systems have developed the ability to initiate actions and accept rights and responsibilities on behalf of humans and organizations (Ågerfalk 2020). Our study is appreciative of the fact that social and material agency are converging with progress in AI. In other words, our work avoids not only overemphasizing the human-centeredness that often hampers practice theory (Jones 2014) but also the harmful tendency to subjugate man to the machine (Cecez-Kecmanovic et al. 2014). In doing so, we also contrast with earlier sociomateriality studies where the social aspect fully controls the material one (e.g., Leonardi 2013). We use weak sociomateriality as justificatory knowledge in the specific context of human-AI hybrids to understand how human agents and AI-enabled systems become what they are in these use cases. Sociomateriality can help researchers to analyze and understand the high degree of interconnectedness that characterizes human-AI hybrids (Cecez-Kecmanovic et al. 2014). Moreover, this approach has two further benefits. It enables researchers to view human agents and AI-enabled systems as separate entities with distinct characteristics as well as potential interactions. At the same time, it allows us to appreciate them as a hybrid assemblage that has the potential to be greater than the sum of its parts (Leonardi 2013; Jones 2014). By understanding both aspects, we shed light on how human agents and AI-enabled systems could combine their strengths and achieve results that would be impossible if they acted separately.

Second, our study serves as a catalyst for broader theorizing on human-AI hybrids and the future of work in general. The clear structure provided in this study reveals avenues for more in-depth studies of the collaborative interworking in human-AI hybrids (Gregor 2006). In the long term, our taxonomy of human-AI hybrids offers a theoretically grounded and empirically validated foundation on which future research can build more advanced theories for explanation, design, and action concerning the collaborative interworking of human agents and AI-enabled systems (Gregor 2006). By applying our taxonomy to a sample of human-AI hybrids and deriving five archetypes, we have provided a starting point for a discussion on the future division of labor between human agents and AI-enabled systems (Seeber et al. 2020; Østerlund et al. 2021). In this context, our archetypes provide insights into how the roles of humans are likely to shift and how the capabilities and limits of AI-enabled systems are likely to contribute to this shift. The distinctive characteristics of each archetype (e.g., cognitive functions, interplay patterns, and foci) illustrate how this division of labor plays out in practice. We encourage fellow researchers to build on our analysis of current human-AI hybrids and to derive specific hypotheses from the foundational ideas in this paper that can be tested quantitatively.

6.3 Managerial Implications

Along with these theoretical implications, this study also has several practical ones. Our taxonomy makes it a straightforward task to classify the cognitive functions, foci, and interplay of human agents as well as those of AI-enabled systems, which puts decision-makers in a position to analyze and understand human-AI hybrids and their sensible use. Moreover, it lets decision-makers comprehend the characteristics that constitute specific human-AI hybrids and what these characteristics might entail.

Our archetypes of human-AI hybrids with their distinctive combinations of characteristics facilitate a deeper understanding of how human-AI hybrids work in concrete, practical terms. Thus, our archetypes can serve as a blueprint for practitioners to create human-AI hybrids and generate value for their respective tasks and processes. As organizations today still struggle to identify worthwhile use cases for AI-enabled systems, our archetypes offer guidance and inspiration for identifying potential applications. Practitioners can also recognize these archetypes in real-world scenarios. With this applicable understanding, our study can help them evaluate how current human-AI hybrids can be brought into closer entanglement, which may yield benefits such as co-evolution.

7 Conclusion

Our work is subject to certain limitations, which, if recognized as such, promise to provide opportunities for further research. First, although we have covered a significant number of human-AI hybrid use cases in our research, an analysis of additional, potentially even more advanced and complex use cases would allow fellow researchers to refine our taxonomy and improve our archetypes. As the deployment of human-AI hybrids is still in its infancy, more elaborate use cases are likely to emerge. Future research ought to consider relevant emergent cognitive functions along with focus points or interplay dynamics of human agents and AI-enabled systems. Meanwhile, the expected advancements in the field of human-AI hybrids might require certain modifications of, or indeed additions to, our proposed dimensions. Second, the development of a taxonomy requires a certain amount of generalization and simplification of complex issues. This necessarily limits our insights from the analysis of specific human-AI hybrids. An in-depth case study of specific human-AI hybrids, however, might provide the additional insights required to explain the more complex correlations and interdependencies of characteristics within those use cases. Third, our study focuses on human-AI hybrids, that is, the collaborative interworking of human agents and AI-enabled systems. However, there may be cases in which AI-enabled systems (or human agents) perform better when acting alone (Hemmer et al. 2021; Bansal et al. 2021). Future research could build upon our taxonomy to facilitate a deeper understanding of the characteristics of these cases. Finally, even though we have demonstrated the potential of our taxonomy to deepen the understanding of human-AI hybrids, the inherently descriptive nature of a taxonomy limits the amount of guidance it can give. We encourage future research to develop more prescriptive artifacts, such as decision support frameworks or design principles, for designing human-AI hybrids. Only then will it be possible to fully support practitioners in their endeavor to make the most purposeful use of human-AI hybrids.

In conclusion, despite its limitations, we believe that our research provides both foundation and stimulation for future research in the increasingly relevant field of human-AI hybrids. Thus, we hope that our research will be recognized as a starting point for the development of further guiding elements that may be required for the successful deployment of human-AI hybrids in practice and that it will provide fellow researchers with opportunities for continued work and discussion in this domain.

References

Adadi A, Berrada M (2018) Peeking inside the black-box: a survey on explainable artificial intelligence (XAI). IEEE Access 6:52138–52160. https://doi.org/10.1109/ACCESS.2018.2870052

Ågerfalk PJ (2020) Artificial intelligence as digital agency. Eur J Inf Syst 29(1):1–8. https://doi.org/10.1080/0960085X.2020.1721947

Agrawal A, Gans J, Goldfarb A (2018) Prediction machines: the simple economics of artificial intelligence. Harvard Business Review Press, Boston

Aldenderfer M, Blashfield R (1984) Cluster analysis. Sage, Thousand Oaks

Ansari F, Glawar R, Nemeth T (2019) PriMa: a prescriptive maintenance model for cyber-physical production systems. Int J Comput Integr Manuf 32(4–5):482–503. https://doi.org/10.1080/0951192X.2019.1571236

Bailey KD (1994) Typologies and taxonomies: an introduction to classification techniques. Sage, Thousand Oaks

Baird A, Maruping LM (2021) The next generation of research on IS use: a theoretical framework of delegation to and from agentic IS artifacts. MIS Q 45(1):315–341. https://doi.org/10.25300/MISQ/2021/15882

Bansal G, Wu T, Zhou J, Fok R, Nushi B, Kamar E, Ribeiro MT, Weld D (2021) Does the whole exceed its parts? The effect of AI explanations on complementary team performance. In: Proceedings of the 2021 CHI conference on human factors in computing systems, Yokohama

Barad K (2003) Posthumanist performativity: toward an understanding of how matter comes to matter. Signs J Women Cultur Soc 28(3):801–831. https://doi.org/10.1086/345321

Barredo Arrieta A, Díaz-Rodríguez N, Del Ser J, Bennetot A, Tabik S, Barbado A, Garcia S, Gil-Lopez S, Molina D, Benjamins R, Chatila R, Herrera F (2020) Explainable artificial intelligence (XAI): concepts, taxonomies, opportunities and challenges toward responsible AI. Inf Fusion 58:82–115. https://doi.org/10.1016/j.inffus.2019.12.012

Benbya H, Pachidi S, Jarvenpaa S (2021) Special issue editorial: artificial intelligence in organizations: implications for information systems research. J Assoc Inf Syst 22(2):281–303. https://doi.org/10.17705/1jais.00662

Berente N, Gu B, Recker J, Santhanam R (2021) Managing artificial intelligence. MIS Q 45(3):1433–1450. https://doi.org/10.25300/MISQ/2021/16274

Berger B, Adam M, Rühr A, Benlian A (2021) Watch me improve – algorithm aversion and demonstrating the ability to learn. Bus Inf Syst Eng 63(1):55–68. https://doi.org/10.1007/s12599-020-00678-5

Bowker GC, Star SL (1999) Sorting things out: classification and its consequences. MIT Press, Cambridge

Brynjolfsson E, Mitchell T (2017) What can machine learning do? Workforce implications. Science 358(6370):1530–1534. https://doi.org/10.1126/science.aap8062

Bughin J, Seong J, Manyika J, Chui M, Joshi R (2018) Notes from the AI frontier: insights from hundreds of use cases. https://www.mckinsey.com/featured-insights/artificial-intelligence/notes-from-the-ai-frontier-applications-and-value-of-deep-learning. Accessed 3 Jan 2022

Calinski T, Harabasz J (1974) A dendrite method for cluster analysis. Commun Stat Theor Meth 3(1):1–27. https://doi.org/10.1080/03610927408827101

Carter C, Kaufmann L, Michel A (2007) Behavioral supply management: a taxonomy of judgment and decision-making biases. Int J Phys Distrib Logist Manag 37(8):631–669. https://doi.org/10.1108/09600030710825694

Cecez-Kecmanovic D, Galliers R, Henfridsson O, Newell S, Vidgen R (2014) The sociomateriality of information systems: current status, future directions. MIS Q 38(3):809–830. https://doi.org/10.25300/MISQ/2014/38:3.3

Chatterjee S, Sarker S, Lee MJ, Xiao X, Elbanna A (2021) A possible conceptualization of the information systems (IS) artifact: a general systems theory perspective. Inf Syst J 31(4):550–578. https://doi.org/10.1111/isj.12320

Cohen J (1960) A coefficient of agreement for nominal scales. Educ Psychol Meas 20(1):37–46. https://doi.org/10.1177/001316446002000104

Corea F (2019) An introduction to data: everything you need to know about AI, big data and data science. Springer, Cham

Daugherty PR, Wilson HJ (2018) Human + machine: reimagining work in the age of AI. Harvard Business Review Press, Boston

Davenport TH (2018) The AI advantage: how to put the artificial intelligence revolution to work. MIT Press, Cambridge

Davenport TH, Kirby J (2015) Beyond automation. Harv Bus Rev 93(5):58–65

Davenport TH, Ronanki R (2018) Artificial intelligence for the real world. Harv Bus Rev 96(1):108–116

Davenport TH, Guha A, Grewal D, Bressgott T (2020) How artificial intelligence will change the future of marketing. J Acad Mark Sci 48(1):24–42. https://doi.org/10.1007/s11747-019-00696-0

Davies DL, Bouldin DW (1979) A cluster separation measure. IEEE Trans Pattern Anal Mach Intell 1(2):224–227. https://doi.org/10.1109/TPAMI.1979.4766909

Dellermann D, Ebel P, Söllner M, Leimeister JM (2019b) Hybrid intelligence. Bus Inf Syst Eng 61(5):637–643. https://doi.org/10.1007/s12599-019-00595-2

Dellermann D, Calma A, Lipusch N, Weber T, Weigel S, Ebel P (2019a) The future of human-AI collaboration: a taxonomy of design knowledge for hybrid intelligence systems. In: Proceedings of the 52nd Hawaii international conference on system sciences, Maui

Demlehner Q, Schoemer D, Laumer S (2021) How can artificial intelligence enhance car manufacturing? A Delphi study-based identification and assessment of general use cases. Int J Inf Manag 58:102317. https://doi.org/10.1016/j.ijinfomgt.2021.102317

Doltsinis S, Ferreira P, Lohse N (2018) A symbiotic human–machine learning approach for production ramp-up. IEEE Trans Hum-Mach Syst 48(3):229–240. https://doi.org/10.1109/THMS.2017.2717885

Dwivedi YK, Hughes L, Ismagilova E, Aarts G, Coombs C, Crick T, Williams MD (2021) Artificial intelligence (AI): multidisciplinary perspectives on emerging challenges, opportunities, and agenda for research, practice and policy. Int J Inf Manag 57:101994. https://doi.org/10.1016/j.ijinfomgt.2019.08.002

Faulkner P, Runde J (2012) On sociomateriality. In: Leonardi PM et al (eds) Materiality and organizing. Oxford University Press, Oxford, pp 49–66

Ferreira L, Hitchcock DB (2009) A comparison of hierarchical methods for clustering functional data. Commun Stat Simul Comput 38(9):1925–1949. https://doi.org/10.1080/03610910903168603

Fleiss J (1971) Measuring nominal scale agreement among many raters. Psychol Bull 76(5):378–382. https://doi.org/10.1037/h0031619

Fügener A, Grahl J, Gupta A, Ketter W (2021) Will humans-in-the-loop become borgs? Merits and pitfalls of working with AI. MIS Q 45(3):1527–1556. https://doi.org/10.25300/MISQ/2021/16553

Gimpel H, Rau D, Röglinger M (2018) Understanding FinTech start-ups – a taxonomy of consumer-oriented service offerings. Electron Mark 28(3):245–264. https://doi.org/10.1007/s12525-017-0275-0

Goldfarb A, Gans J, Agrawal A (2019) The economics of artificial intelligence: an agenda. University of Chicago Press, Chicago

Green B, Chen Y (2019) Disparate interactions. In: Proceedings of the conference on fairness, accountability, and transparency, Atlanta

Gregor S (2006) The nature of theory in information systems. MIS Q 30(3):611–642. https://doi.org/10.2307/25148742

Gregor S, Jones D (2007) The anatomy of a design theory. J Assoc Inf Syst 8(5):312–335. https://doi.org/10.17705/1jais.00129

Grønsund T, Aanestad M (2020) Augmenting the algorithm: emerging human-in-the-loop work configurations. J Strateg Inf Syst 29(2):101614. https://doi.org/10.1016/j.jsis.2020.101614

Gunning D, Aha D (2019) DARPA’s explainable artificial intelligence (XAI) program. AI Mag 40(2):44–58. https://doi.org/10.1609/aimag.v40i2.2850

Hair JF, Black WC, Babin BJ, Anderson RE (2010) Multivariate data analysis. Pearson, Harlow

Harper RHR (2019) The role of HCI in the age of AI. Int J Hum-Comput Interact 35(15):1331–1344. https://doi.org/10.1080/10447318.2019.1631527