Abstract

Artificial intelligence (AI) voice assistants possess significant market potential and offer diverse services through voice interaction. However, the influence of anthropomorphic features on consumers’ mind perception and continued use intention, particularly across various age groups, remains underexplored. To address this research gap, we draw on mind perception theory, the stimulus–organism–response model, and cognitive load theory to develop a research model. Using a sample of 303 survey responses, we evaluate the research model and hypotheses through partial least squares analysis. Findings reveal that anthropomorphic features help alleviate loneliness and enhance perceived usefulness. Additionally, the alleviation of loneliness and perceived usefulness contribute to consumers’ continued use intention and mediate the relationship between anthropomorphic features and continued use intention. Furthermore, the effect of anthropomorphic features on mind perception varies across age groups. This research enhances understanding of the influence of anthropomorphic features on consumers’ mind perception and continued use intention of AI voice assistants, providing valuable insights for product developers and marketers to enhance the consumer experience.

Introduction

The swift development of artificial intelligence (AI) and voice technology has led to the emergence of AI-powered voice assistants, exemplified by Apple’s Siri, Amazon’s Alexa, and Google’s Google Home, which have provided significant assistance to consumers in executing various tasks, including information searches, online shopping, and other simple tasks (McLean & Osei-Frimpong, 2019). These technologies have the potential to revolutionize the field of personal digital services (Hu et al., 2021). According to forecasts, the global AI voice assistant market is expected to reach $7.9 billion by 2024, and the growth of AI voice assistants is expected to reach 1000% from 2018 to 2023 (Hu et al., 2021; McLean & Osei-Frimpong, 2019). AI voice assistants, comprising both software and hardware components, possess the capability to engage with consumers and respond to their inquiries or commands (Mishra et al., 2022). In everyday interactions with consumers, AI voice assistants have emerged as a pivotal channel for providing information and support (Pelau et al., 2021). They can help consumers perform various tasks, such as message transmission, telephone calls, music playback, weather forecast checking, and answering general queries (Hu et al., 2021; Kim & Choudhury, 2021). Moreover, their capacity for personalized task execution through consumer preference and habit assimilation is noteworthy. In addition, due to the impact of COVID-19, people have had to keep a social distance from their relatives, friends, colleagues, and other social relations (Mishra et al., 2022), which aggravates loneliness. AI voice assistants have the potential to alleviate loneliness by providing companionship, playing music or videos, and engaging in conversations (Park et al., 2018), which can be particularly beneficial for older adults because voice interaction can lower the barriers for people with low technical literacy (Kim & Choudhury, 2021).

Despite the manifold advantages AI voice assistants offer and their positive market growth, scholars express concerns derived from historical market experiences concerning specific smart devices, such as smartwatches and smart glasses (Lee et al., 2021). These concerns center on the potential for AI voice assistants to remain in the initial adoption phase for a considerable period (Lee et al., 2021; Xie et al., 2023). Essentially, AI voice assistants may experience low continued use intention in the later stages of adoption. It has been acknowledged that while initial adoption marks a pivotal step in the diffusion of new technology, continued use exerts a more profound influence than initial adoption in terms of the long-term viability and sustainability of the technology (Bhattacherjee, 2001; Wang et al., 2019; Xie et al., 2023). To ensure the enduring survival and ultimate success of AI voice assistants, it becomes imperative to promote their continued use by consumers beyond the initial adoption (Lv et al., 2022; Pal et al., 2021). To address the low continuation rates of AI voice assistants, there is a need to examine the determinants that may boost consumers’ continued use intention. Prior research within the information systems discipline has largely centered on the initial adoption of AI voice assistants (Cheng et al., 2022; Liu & Tao, 2022; Mishra et al., 2022; Moussawi & Benbunan-Fich, 2021; Park et al., 2018; Pelau et al., 2021). However, initial adoption and continued use intention are two different concepts that are influenced by different factors; therefore, the results of previous studies on the factors influencing the initial adoption of AI voice assistants cannot be directly applied to continued use intention (Ding, 2019; Pal et al., 2021).
In addition, considering that continued use intention is a major predictor of continued use behavior (Tang & Zhang, 2020), and it is challenging to measure users’ continued use behavior through questionnaires (Xie et al., 2023), the present study endeavors to elucidate the antecedents of continued use intention among AI voice assistant consumers.

Previous research on the continued use intention of AI voice assistants has predominantly investigated key antecedents from various perspectives, including personal traits, personal motivation, artificial autonomy, and human–computer interaction. For example, Lee et al. (2021) revealed that personal innovativeness is positively associated with the continuation intention of AI assistants. Cheng and Jiang (2020) found that utilitarian, hedonic, technology, and social gratifications derived from chatbot use positively predicted consumers’ continuance intention. Hu et al. (2021) identified three types of artificial autonomy (sensing, thought, and action autonomy) as contributors to consumers’ continuance intention of AI assistants. Xie et al. (2023) investigated the influence mechanism of human–computer interaction and human–human interaction on AI assistant consumers’ continuance intention. However, in the field of human–AI interaction, a pivotal concept, anthropomorphism, remains underexplored in studies concerning the continued use intention of AI voice assistants. Anthropomorphism denotes the extent to which an entity possesses human-like characteristics (Cheng et al., 2022). It plays a vital role in the effectiveness of AI products and has greatly affected the consumer experience in the human–AI interaction process (Kim & Choudhury, 2021). AI products endowed with anthropomorphic features have the potential to reduce the psychological distance between humans and AI (Li & Sung, 2021), foster trust in AI (Cheng et al., 2022), facilitate the emergence of social presence (Munnukka et al., 2022), and promote individuals’ positive evaluations of AI (Li & Sung, 2021). Overall, anthropomorphism can have a positive impact on the consumer experience, and these good experiences may also influence consumers’ continued use intention (Hu et al., 2021; Lv et al., 2022; Xie et al., 2023).
Although some studies have pointed out that high anthropomorphism may trigger a sense of unease or even revulsion (Kim et al., 2022; Troshani et al., 2021; Yam et al., 2021), a more general consequence of anthropomorphism is a positive influence on interactions with anthropomorphized entities (Cheng et al., 2022; Li & Sung, 2021; Munnukka et al., 2022; Pelau et al., 2021). Previous research on the influence of anthropomorphism on consumer behavior has primarily centered on its effects on initial adoption (Moussawi & Benbunan-Fich, 2021; Pelau et al., 2021; Qiu & Benbasat, 2009) and purchase intention (Malhotra & Ramalingam, 2023; Schanke et al., 2021; Sharma et al., 2022). However, little research has explored the influence of anthropomorphism on the continued use intention of AI voice assistants (Mishra et al., 2022). Our study aims to examine the antecedents of the continued use intention of AI voice assistants from the novel perspective of anthropomorphism.

Moreover, most studies treat AI products’ anthropomorphism as a single variable (Malhotra & Ramalingam, 2023; Moussawi & Benbunan-Fich, 2021; Pelau et al., 2021; Qiu & Benbasat, 2009; Sharma et al., 2022), and few studies have divided anthropomorphic features into multiple dimensions to examine the impact of anthropomorphism on the continued use intention of AI voice assistants. Given that different anthropomorphic features may have varying degrees of impact on humanness perception (Fan et al., 2016; Go & Sundar, 2019), and these humanness perceptions may influence consumers’ continued use intention of AI products (Hu et al., 2021), this study further divides anthropomorphism into three dimensions and explores how these anthropomorphic features affect mind perception and continued use intention of AI voice assistants. Firstly, as a voice-based technology, the most essential feature of AI voice assistants is their voice cues, which refer to the extent to which the voice of the AI voice assistant sounds like a human (Fan et al., 2016). Secondly, message interactivity cues are also an important anthropomorphic feature, as they reflect the relevance of the AI voice assistant’s replies to the information provided by people, and they also provide the largest amount of information to consumers (Go & Sundar, 2019). Finally, emotional cues are a deep emotional capability of AI voice assistants that mimics human empathy and refers to the emotional response of AI voice assistants to people’s feelings and thoughts (Pelau et al., 2021). Since most of the AI voice assistants on the market do not have a specific anthropomorphic appearance (such as Apple’s HomePod, Amazon’s Alexa, and Google’s Google Home), visual cues are not taken into account. Therefore, our study proposes to divide anthropomorphic features into three dimensions: voice cues, message interactivity cues, and emotional cues, based on the distinctive characteristics of AI voice assistants.

According to the mind perception theory, individuals perceive non-human entities based on two main dimensions: warmth and competence (Hu et al., 2021). Warmth refers to the perception of friendliness, trustworthiness, and the degree of human-like characteristics, while competence pertains to the perception of capability, usefulness, and efficiency of the entity. In multiple disciplines, these two dimensions jointly contribute to a comprehensive understanding of how the attributes of non-human entities influence individual emotions, attributions, and behaviors by virtue of their human-like perceptions (Cuddy et al., 2008). In the field of information systems, while human-like perception has proven valuable for explaining individuals’ responses to AI products, prior research on AI products has mostly focused on a single dimension, either warmth or competence (Lee et al., 2020). Some studies have delved into factors tied to the warmth dimension of AI products, such as closeness and trust, because the social emotions people perceive during their interactions with AI are core determinants of the AI product experience (Lee et al., 2020; Mulcahy et al., 2022). Other studies focus on factors related to the AI products’ competence dimension, such as perceived usefulness, automation, mobility, performance expectancy, and functionality, because whether they are competent in task performance is germane to the perceived service quality and customer experience (Lu et al., 2019; Nikou, 2019; Park, 2020; Park et al., 2018; Shin et al., 2018; Yang et al., 2017). These two mind perception dimensions provide a suitable theoretical foundation for examining the impact of anthropomorphic features on the continued use intention of AI voice assistants because anthropomorphic features can easily lead individuals to perceive AI voice assistants as human-like, thereby shaping their subsequent responses to these AI voice assistants. 
However, there exists a dearth of research examining both the warmth and competence dimensions in the literature related to the continued use intention of AI voice assistants. Even though some studies have used both dimensions, few have defined them in more detail for specific research contexts (Hu et al., 2021). This study seeks to address this gap by investigating both dimensions simultaneously, with the alleviation of loneliness as an indicator of perceived warmth and perceived usefulness as an indicator of perceived competence. Therefore, we propose the first research question (RQ1): How do different anthropomorphic features affect mind perception and the continued use intention of AI voice assistants?

Furthermore, the existing literature fails to consider the potential influence of age differences on the continued use intention of AI voice assistants. Although Guo et al. (2016) examined the impact of age differences on the acceptance of mobile medical services, which partially narrowed the research gap, research on this issue remains limited. This study addresses the potential differences in the impact mechanism of anthropomorphic features on the continued use intention of AI voice assistants between older and younger consumer groups. For example, according to cognitive load theory (Ghasemaghaei et al., 2019; Hollender et al., 2010), older adults have difficulty engaging in tasks with high cognitive load due to reduced attentional resources and declining cognitive abilities. When using AI voice assistants, they may therefore have difficulty benefiting from message interactivity cues, which contain a large amount of information. By contrast, older adults may readily gain loneliness relief and perceived usefulness from voice cues and emotional cues because these cues impose less cognitive load. Accordingly, this study explores age differences and their effects on the influence mechanism of anthropomorphic features on the continued use intention of AI voice assistants, and we propose the second research question (RQ2): How do age differences affect the mechanism of anthropomorphic features on the continued use intention of AI voice assistants?

To address the above research questions, drawing on the literature on anthropomorphism in AI products, mind perception theory, and the stimulus-organism-response (S–O-R) model, we develop a research model to examine the effects of anthropomorphic features on consumers’ perception of AI voice assistants and their continued use intention by taking the anthropomorphic features as stimulus variables, the mind perceptions as organismic variables, and the continued use intention as a response variable. In addition, based on the literature on age difference and cognitive load theory, we incorporate the impact of age difference into our research model. We collected 303 valid samples with experience using AI voice assistants through the questionnaire method. The findings reveal that (1) voice cues, message interactivity cues, and emotional cues effectively mitigate consumers’ loneliness and exert a positive influence on their perceived usefulness; (2) alleviation of loneliness and perceived usefulness promote consumers’ continued use intention of AI voice assistants, and they mediate the relationship between the three anthropomorphic features and the continued use intention; (3) message interactivity cues containing substantial informational content exert a greater impact on alleviating loneliness and enhancing perceived usefulness for younger consumers compared to older ones; (4) voice cues possess a more pronounced effect on the alleviation of loneliness for older consumers than younger ones, whereas emotional cues have a stronger influence on perceived usefulness for older consumers than younger ones.

Our research has made significant contributions to the literature on AI voice assistants. Firstly, prior literature on AI voice assistants mainly focuses on initial adoption (Cheng et al., 2022; Liu & Tao, 2022; Mishra et al., 2022; Moussawi & Benbunan-Fich, 2021; Park et al., 2018; Pelau et al., 2021). Few studies have concentrated on the continued use intention of AI voice assistants (Ding, 2019; Pal et al., 2021). Our study adds to the literature on the continued use intention of AI voice assistants, promoting continued use intention by consumers beyond their initial adoption (Lv et al., 2022; Pal et al., 2021). Secondly, we extend existing research on the relationship between anthropomorphic features and the continued use intention of such assistants by classifying them into three dimensions and drawing conceptual distinctions between voice cues, message interactivity cues, and emotional cues. Thirdly, we apply mind perception theory to operationalize the alleviation of loneliness and perceived usefulness as two basic dimensions inherent in the perception of human-likeness in non-human entities and simultaneously investigate the psychological mechanisms underlying the impact of anthropomorphic features on the continued use intention of AI voice assistants. Lastly, our study examines the impact of age differences on the mechanism of continued use intention of AI voice assistants, which has received limited attention in prior studies. Our findings offer new insights for researchers to investigate consumers’ continued use intention of AI voice assistants and suggest ways for product developers to improve the functionality of such assistants from an anthropomorphic perspective. Additionally, the study highlights the need for marketers to adopt differentiated marketing strategies for consumers of different ages based on the identified aspects of anthropomorphism.

Literature review

Anthropomorphism in AI products

Anthropomorphism refers to human-like characteristics exhibited by non-human entities (Cheng et al., 2022). It plays an important role in the effectiveness of AI products such as AI voice assistants and has greatly affected the consumer experience in the human–computer interaction process (Kim & Choudhury, 2021), thus promoting consumers’ willingness to use them (Huang et al., 2021). For example, in the context of the service industry, AI applications’ anthropomorphism positively influences consumers’ trust in AI applications in the process of human–computer interaction (Cheng et al., 2022; Pentina et al., 2023; Troshani et al., 2021). For AI assistants with high anthropomorphism, the psychological distance between consumers and the assistants is smaller, and consumers tend to have a positive attitude toward them (Li & Sung, 2021).

Previous literature on anthropomorphism has either delved into the influence of a single dimension of anthropomorphism (Benlian et al., 2020; Yam et al., 2021) or defined anthropomorphism broadly as the degree to which AI products are humanlike (Delgosha & Hajiheydari, 2021; Mishra et al., 2022; Munnukka et al., 2022). However, few studies have simultaneously discussed the effects of different anthropomorphic features of AI voice assistants on user perception and user behavior. Based on the previous studies, this paper mainly divides anthropomorphic features into three dimensions: voice cues, message interactivity cues, and emotional cues.

Firstly, as a voice-based technology, the most essential feature of AI voice assistants is their voice cues, which refer to the extent to which the voice of the AI voice assistant sounds like a human, and AI voice assistants with friendlier and warmer voices may be more acceptable to users (Fan et al., 2016). Secondly, message interactivity cues reflect AI voice assistants’ interaction capabilities; they refer to the relevance of the AI voice assistant’s replies to the information provided by people, and they also provide the largest amount of information to consumers (Go & Sundar, 2019). Finally, emotional cues are a deep emotional capability of AI voice assistants that mimics human empathy and refers to the emotional response of AI voice assistants to people’s feelings and thoughts (Pelau et al., 2021). Since most of the AI voice assistants on the market do not have a specific anthropomorphic appearance (such as Apple’s HomePod, Amazon’s Alexa, and Google’s Google Home), visual cues are not taken into account.

Mind perception theory

Mind perception theory has been employed to elucidate how the attributes of non-human entities contribute to the formation of individual emotions, attributions, and behaviors by being perceived as human (Cuddy et al., 2008). This theory has discerned warmth and competence as two pivotal dimensions of human-like mind attribution in non-human entities (Gray & Wegner, 2010; Gray et al., 2007; Hu et al., 2021; Zhou et al., 2019). The dimension of warmth pertains to the extent to which individuals perceive caring, benevolence, and friendliness within non-human entities, while the dimension of competence relates to the degree to which individuals perceive intelligence, capability, efficacy, and efficiency in non-human entities (Aaker et al., 2010; Hu et al., 2021).

In the information systems discipline, some studies have concentrated on competence-related factors, including perceived usefulness, automation, mobility, performance expectancy, and functionality (Lu et al., 2019; Nikou, 2019; Park, 2020; Park et al., 2018; Shin et al., 2018; Yang et al., 2017). Other studies have delved into warmth-related factors, including trust and closeness (Lee et al., 2020; Mulcahy et al., 2019; Van Pinxteren et al., 2019). Although previous research has highlighted the capability and warmth dimensions of AI-related products (Hu et al., 2021), the role of AI voice assistants in alleviating loneliness has rarely been used as a warmth dimension to explain the impact of AI-related product features (Kim et al., 2019). The alleviation of loneliness brought about by the use of information technologies, such as social media and companion robots, has been proven to provide users with more social support, promote people’s well-being, and ultimately lead to consumers’ continued use intention of this information technology (Odekerken-Schröder et al., 2020). Therefore, the alleviation of loneliness brought by AI voice assistants can provide consumers with human-like warmth and intimacy, enabling consumers to maintain and strengthen trust and harmonious relationships with AI voice assistants (Han & Yang, 2018), which can be an essential variable of the warmth dimension. Furthermore, previous studies have also shown that the use of information technology can be effective in reducing perceived loneliness among older users (Ma et al., 2021), and AI voice assistants can also establish digital companionship with older adults to alleviate loneliness (Kim & Choudhury, 2021). Our study aims to investigate further whether there are differences between older and younger people in the effects of anthropomorphic features on perceived competence and perceived warmth of AI voice assistants.

In this paper, the variable of alleviation of loneliness is defined as the degree to which the loneliness perceived by individuals is relieved (Yang et al., 2021). Perceived usefulness refers to the degree to which a consumer feels that using the new technology (e.g., an AI voice assistant) is useful for supporting his/her activities as well as helping perform some specific tasks effectively (e.g., getting information and making an online order) (Ashfaq et al., 2020). Therefore, this paper takes the alleviation of loneliness as the main variable of the warmth dimension and perceived usefulness as the main variable of the competence dimension.

The S–O-R model

The S–O-R model posits that the stimulus variable (S) may be construed as an external environmental stimulus, exerting influence upon an individual’s cognition and perception, denoted as the organism variable (O). Subsequently, the organism variable (O) influences the individual’s behavioral response (R) through a sequence of internal psychological mechanisms (Mehrabian & Russell, 1974). The S–O-R model has found extensive application in exploring the impact of external stimuli on consumer behavior in the online business environment (Cho et al., 2018; Xu et al., 2020). In recent years, this theory has also been applied to the social media environment and the interaction between consumers and AI assistants (Moussawi & Benbunan-Fich, 2021; Xu et al., 2020; Zhou et al., 2023).

In accordance with this model, the stimulus functions as the primary driver behind alterations in individual cognitive processes. Various aspects of the external environment that people are exposed to, such as product design and product features, can be regarded as a stimulus that affects the organismic experience (Cho et al., 2018). Therefore, in our context, the three anthropomorphic features of voice cues, message interactivity cues, and emotional cues can be regarded as stimulus variables, representing AI voice assistants’ product features. The organism is related to individual cognition, bridging the gap between a stimulus and an individual’s behavioral response. In the context of consumer behavior, products’ design and features can influence consumers’ cognitive experience, such as satisfaction, pleasure, and perceived value after product use (Cho et al., 2018; Zhou et al., 2022; Zhu et al., 2020). Therefore, in our context, the alleviation of loneliness and perceived usefulness, two important cognitive variables, can be regarded as the organism variables, representing consumers’ cognitive experience after using AI voice assistants. The response refers to an individual’s behavioral response based on cognitive experience. The cognitive experience consumers form about a product can significantly influence subsequent behavioral responses, such as purchase intention, product attachment, and continued use intention (Cho et al., 2018; Xiang & Chae, 2022; Xiang et al., 2022; Zhou et al., 2022; Zhu et al., 2020). In addition, continued use intention is a major predictor of continued use behavior (Tang & Zhang, 2020), and many studies have used consumer behavioral intention as a response variable in S–O-R models (Hu et al., 2016; Li et al., 2023; Shao & Chen, 2021). Therefore, in our context, we regard the continued use intention of AI voice assistants as the response variable, which represents consumers’ behavioral response to perceived warmth and competence.
Therefore, the S–O-R model is an appropriate theoretical framework for our study.
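The mapping of constructs onto the S–O-R roles described above can be written down as a small directed structure. The following is a minimal sketch for illustration only: the construct names come from the text, while the function names and the list-of-tuples layout are assumptions made here, not part of the study's method.

```python
from itertools import product

# Construct names follow the text; the data layout is an illustrative assumption.
STIMULI = ["voice cues", "message interactivity cues", "emotional cues"]
ORGANISM = ["alleviation of loneliness", "perceived usefulness"]
RESPONSE = "continued use intention"


def build_paths():
    """Return the direct paths: every stimulus -> organism pair, then organism -> response."""
    stimulus_to_organism = list(product(STIMULI, ORGANISM))
    organism_to_response = [(o, RESPONSE) for o in ORGANISM]
    return stimulus_to_organism + organism_to_response


def mediated_chains():
    """Return each stimulus -> organism -> response chain implied by the S-O-R reading."""
    return [(s, o, RESPONSE) for s, o in product(STIMULI, ORGANISM)]


if __name__ == "__main__":
    for chain in mediated_chains():
        print(" -> ".join(chain))
```

Listing the chains this way makes explicit that each anthropomorphic feature can reach continued use intention through either organism variable.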

Age difference and cognitive load theory

Age-related cognitive and motor changes often present challenges for older adults when it comes to adopting new technologies, particularly in comparison to younger individuals (Charness & Boot, 2009). These challenges can primarily be attributed to diminished attentional resources and cognitive decline, which result in difficulties in filtering out irrelevant information and engaging in tasks with high cognitive load (Ghasemaghaei et al., 2019). Cognitive load theory, grounded in the limited working memory capacity of humans, postulates that when the cognitive demands of a task surpass this capacity, cognitive overload occurs (Hollender et al., 2010). The experience of cognitive overload in relation to new technologies varies across age groups. Notably, several empirical studies have identified a positive association between age and overload, as well as technostress (Saunders et al., 2017). Tu et al. (2005) propose several explanations for this positive relationship: older individuals tend to exhibit more rigid thinking patterns and greater resistance to change, and the decline in learning capacity with age results in higher levels of stress when engaging with information technologies compared to younger individuals. Furthermore, age-related deterioration in cognitive functions, such as processing speed, memory, reasoning, and dual-task performance, poses additional challenges in handling information technology–related cognitive loads (Saunders et al., 2017).

Hypothesis development

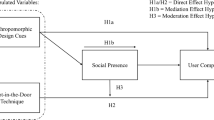

Although previous studies have explored the antecedents and mechanisms influencing the initial adoption of AI voice assistants (Cheng et al., 2022; Liu & Tao, 2022; Mishra et al., 2022; Pelau et al., 2021), very little literature has explored the mechanism influencing the continued use intention of AI voice assistants. In contrast to the perspectives of previous literature examining the continued use intention of AI voice assistants (personal traits, personal motivation, artificial autonomy, and human–computer interaction), we take anthropomorphism as a novel perspective to explore the mechanisms influencing the continued use intention of AI voice assistants. To connect anthropomorphic features, consumers’ mind perceptions, and continued use intention, we developed a research model based on the S–O-R model. First, in contrast to previous studies that have mostly considered anthropomorphism as a single-dimensional variable (Malhotra & Ramalingam, 2023; Pelau et al., 2021), we divide anthropomorphic features into three dimensions: voice cues, message interactivity cues, and emotional cues, which are regarded as stimulus variables. Second, drawing on mind perception theory, we incorporate both the alleviation of loneliness and perceived usefulness into our research model as organismic variables, rather than just one of them (Lee et al., 2020). Third, the continued use intention is regarded as a response variable. Finally, considering the differences in attentional and cognitive abilities between older and younger people (Ghasemaghaei et al., 2019), we incorporate the impact of age difference into our research model based on the literature on age difference and cognitive load theory. The research model is shown in Fig. 1.

Voice cues, alleviation of loneliness, and perceived usefulness

People prefer machines with anthropomorphic features (Go & Sundar, 2019), and voice cues are considered to be one of the most important anthropomorphic features (Fan et al., 2016). Through two motivational mechanisms, sociality motivation and effectance motivation, AI voice assistants with good voice cues provide a better interaction experience. On the one hand, a human-like voice can give consumers a sense of social connectedness and comfort (Epley et al., 2007; Fan et al., 2016), making them feel as if they are interacting with another person in real time, which can alleviate loneliness. On the other hand, a human-like voice can increase consumers’ sense of control and confidence during human–machine interactions (Epley et al., 2007; Fan et al., 2016), allowing them to feel that they can use the AI voice assistant to complete specific instructions efficiently and quickly, thus enhancing perceived usefulness. Hence, we propose the following hypotheses:

Hypothesis 1: Voice cues are positively correlated with the alleviation of loneliness (H1a) and perceived usefulness (H1b).

Message interactivity cues, alleviation of loneliness, and perceived usefulness

Message interactivity cues allow responses exchanged between AI voice assistants and people to be interconnected with each other, that is, in multiple rounds of conversation, a response is contingent upon the preceding message as well as those preceding it (Go & Sundar, 2019). Message interactivity cues can promote people’s sense of the social presence of AI voice assistants, that is, the sense of dialogue with people (Sundar et al., 2015). Message interactivity cues enhance the emotional intimacy and social connection felt by consumers (Go & Sundar, 2019), which can alleviate people’s loneliness. At the same time, this high sense of connection can lead to people’s positive evaluation of AI voice assistants and enhance their perceived usefulness. Hence, we propose the following hypotheses:

Hypothesis 2: Message interactivity cues are positively correlated with the alleviation of loneliness (H2a) and perceived usefulness (H2b).

Emotional cues, alleviation of loneliness, and perceived usefulness

Emotional cues are also regarded as a crucial anthropomorphic feature of AI voice assistants (de Kervenoael et al., 2020). This anthropomorphic feature enables AI voice assistants to attentively address consumers’ needs, engender positive experiences, enhance satisfaction, and foster trust and long-term relationship development (Pelau et al., 2021). As AI voice assistants can analyze and gather extensive data to cater to specific consumer needs, they can exhibit empathetic behavior during interactions (Wang, 2017), effectively mitigating feelings of loneliness. Moreover, this display of anthropomorphic empathy significantly improves the quality and efficiency of human–computer interactions, in contrast to the empathy-lacking AI devices that struggle to navigate complex situations and optimize interaction quality and efficiency (Pelau et al., 2021). Therefore, emotional cues also amplify perceived usefulness. Accordingly, we posit the following hypotheses:

Hypothesis 3: Emotional cues are positively correlated with the alleviation of loneliness (H3a) and perceived usefulness (H3b).

Alleviation of loneliness, perceived usefulness, and continued use intention

AI voice assistants can be used as a substitute for human beings to alleviate the loneliness caused by the lack of interpersonal relationships by interacting with people (Odekerken-Schröder et al., 2020). Previous studies have shown that establishing social relationships to relieve loneliness through the internet, smartphones, and other channels can increase the frequency of internet/smartphone use and even lead to addiction (Mahapatra, 2019). Similarly, due to the various anthropomorphic features of AI voice assistants, consumers experience a strong sense of social presence, which greatly alleviates their loneliness and can lead to emotional attachment and even addiction in the process of interacting with the AI voice assistant (Cao et al., 2020). We propose the following hypothesis:

Hypothesis 4: Alleviation of loneliness is positively correlated with the continued use intention of AI voice assistants.

The AI voice assistant can talk with consumers and help them regularly perform mundane tasks like setting up an alarm, scheduling meetings or appointments, and shopping (Mishra et al., 2022), which can make consumers feel that the AI voice assistant has high functional value (de Kervenoael et al., 2020). Many useful functions improve the perceived usefulness of AI voice assistants, provide convenience, reduce the cognitive load of consumers, and improve the continued use intention of AI voice assistants (Ashfaq et al., 2020; Mishra et al., 2022; Moussawi & Benbunan-Fich, 2021). We propose the following hypothesis:

Hypothesis 5: Perceived usefulness is positively correlated with the continued use intention of AI voice assistants.

Age differences

Previous studies have shown that there are significant differences in behavioral intention between different age groups (Guo et al., 2016). However, as an emerging field, research on AI voice assistants has rarely explored the impact of age differences on consumer use and continued use intention. In the context of our study, different anthropomorphic features impose different levels of cognitive load on older and younger consumers. For example, among the three anthropomorphic features, message interactivity cues impose more cognitive load on consumers because they provide the most information, and consumers need to spend more cognitive effort to process and understand it (Go & Sundar, 2019). Therefore, because of information overload, older consumers may find it more difficult than younger consumers to alleviate loneliness and perceive usefulness through message interactivity cues. Voice cues and emotional cues impose less cognitive load on consumers because agents with voice cues and emotional cues are socially attractive and can bring about more positive emotional interactions. In contrast, agents that do not possess these two anthropomorphic features generate negative social cues, and these cues impose additional cognitive load (Liew et al., 2020). Thus, older consumers may find it easier than younger consumers to alleviate loneliness and perceive usefulness through voice cues and emotional cues. We propose the following hypotheses:

Hypothesis 6: For older consumers, voice cues have a greater impact on the alleviation of loneliness (H6a) and perceived usefulness (H6b).

Hypothesis 7: For older consumers, emotional cues have a greater impact on the alleviation of loneliness (H7a) and perceived usefulness (H7b).

Hypothesis 8: For younger consumers, message interactivity cues have a greater impact on the alleviation of loneliness (H8a) and perceived usefulness (H8b).

Research method

Measurement

The research questionnaire in this study comprises two sections. The first section collects participants’ demographic information, including gender, age, education, monthly income, and prior experience with AI voice assistants. The second section contains the measurement items for the variables in the proposed research model. All measurement items have been adapted from well-established questionnaires and validated items from previous studies, and refined to fit the context of AI voice assistant usage. The questionnaire uses a five-point Likert scale on which respondents indicate their degree of agreement with each item, from 1 (strongly disagree) to 5 (strongly agree). The specific measurement items and reference sources of each variable are shown in Table 1.

Data collection procedures and participants

A survey-based approach was utilized for data collection. Firstly, the questionnaire was validated by a panel of five academic experts well-versed in the use of AI voice assistants, and, based on their comments, we modified some items to improve clarity and comprehensibility. Secondly, we used the modified questionnaire to pre-survey consumers with experience in using AI voice assistants, and 18 valid questionnaires were collected. These data were analyzed for reliability and validity, and the questionnaire was further revised based on the results of the analysis. Thirdly, we conducted a random questionnaire survey, mainly at universities and colleges, pension institutions, and communities in central Chinese cities, and we selected as participants consumers with experience in using AI voice assistants, including Apple’s Siri, Xiaomi’s Xiaoai Classmate, Alibaba’s Tmall Genie, and Baidu Duer. At the beginning of the questionnaire, a filter question, “Have you ever used an AI voice assistant?”, ensured that respondents had such experience. From May to July 2022, a total of 340 questionnaires were distributed. We carefully reviewed all responses and deleted 37 that were incomplete or showed aberrant patterns of identical answers (Li et al., 2021). Finally, 303 valid questionnaires were retained, an effective response rate of 89.11%. In addition, to encourage participation, we provided five types of gifts valued at 2–10 yuan, and each participant who completed the questionnaire could pick any of the gifts based on their preferences.

Similar to the study of Guo et al. (2016), 50 years old is used in this paper as the cut-off age to distinguish older consumers from younger consumers. The reasons are as follows: (1) in the 1980s, computer education was gradually popularized in China, so most people over 50 years old missed the opportunity to receive formal information technology education in schools, so the technical literacy of people over 50 years old is relatively low (Guo et al., 2016); (2) as of December 2021, internet consumers over 50 years old in China only account for 26.8% of the total consumers (CNNIC, 2022). Therefore, some studies worry whether this age group will adopt or continue to use the newly introduced technologies (Yu et al., 2009).

According to the participant statistics, 47.9% of the participants were male and 52.2% were female. A total of 48.8% of participants were below 50 years old and 51.2% were over 50 years old. The numbers of participants with junior high school, senior high school, and undergraduate or junior college education are relatively balanced, accounting for 23.4%, 29.0%, and 34.7%, respectively, while only 4.6% of participants hold a master’s degree or above. Most participants have a monthly income of 2001–4000 yuan, 4001–6000 yuan, or 6001–8000 yuan, accounting for 38.3%, 21.1%, and 29.4%, respectively. In the past 6 months, participants who used the AI voice assistant 1–2 times a week formed the largest group, at 40.9%; those who used it once every few months and once or twice a month accounted for 22.8% and 27.1%, respectively; and those who used it every day accounted for only 9.2%. The detailed sample characteristics are shown in Table 2.

Common method bias

All the data in this study were collected from a single source at a single point in time. As a result, common method bias (CMB) is a potential threat to the validity of our findings. We used three methods to assess the presence of CMB. First, we conducted Harman’s one-factor test on all measurement items. The principal components factor analysis showed that the items loaded on six distinct constructs with eigenvalues above the critical threshold of 1.0, collectively capturing 66.28% of the overall variance. The largest single factor accounted for 41.45% of the total variance, which is below the 50% threshold, showing that common method bias was not a serious concern (Podsakoff et al., 2003). Second, we carried out a full collinearity test to assess CMB in partial least squares structural equation modeling (PLS-SEM) (Kock & Lynn, 2012). This approach calculates the variance inflation factor (VIF) for all latent variables in the model. The VIFs for all latent variables were below the threshold of 3 (Aldossari & Sidorova, 2020). Finally, following Liang et al. (2007), we incorporated a common method factor into our model. After the introduction of the common method factor, only minor changes were observed in the coefficients of the measurement and structural models (Δχ2 = 73.106, Δdf = 23, Δχ2/Δdf = 0.072). Hence, we conclude that common method bias does not substantially affect the validity of this research.
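The collinearity check can be illustrated with a minimal sketch. It assumes the usual definition of the VIF, in which each latent variable is regressed on the others and VIF = 1 / (1 − R²). The function names and the R² values below are hypothetical and are not taken from the study.

```python
def vif_from_r2(r_squared: float) -> float:
    """VIF of a variable whose regression on the other variables yields R^2."""
    if not 0.0 <= r_squared < 1.0:
        raise ValueError("R^2 must lie in [0, 1)")
    return 1.0 / (1.0 - r_squared)


def max_r2_for_vif(threshold: float) -> float:
    """Largest R^2 that keeps the VIF at or below the given threshold."""
    return 1.0 - 1.0 / threshold


VIF_THRESHOLD = 3.0  # threshold quoted in the text

# Hypothetical R^2 values for illustration only (not data from the study).
example_r2 = {"construct_a": 0.35, "construct_b": 0.55, "construct_c": 0.70}

# True means the construct passes the collinearity check.
flags = {name: vif_from_r2(r2) < VIF_THRESHOLD for name, r2 in example_r2.items()}
```

Under this definition, a VIF threshold of 3 corresponds to a latent variable sharing at most about two thirds of its variance with the other latent variables.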

Data analysis and results

In our research, we employed structural equation modeling (SEM) with partial least squares (PLS) to evaluate simultaneously the measurement quality (the measurement model) and the interrelationships among constructs (the structural model). Additionally, we performed a multi-group analysis. PLS-SEM offers several advantages, including its suitability for smaller sample sizes, its capability for processing complex structural models, and the simultaneous treatment of both reflective and formative indicators (Benitez et al., 2020; Hair et al., 2019; Ringle et al., 2012). Our research model is complex, and the sample size is relatively modest. Therefore, we used SmartPLS 3.2.8 for the analysis, validating both the measurement model and the structural model.

Measurement model

First, a comprehensive statistical analysis of the sample data was conducted, and the results of this analysis are presented in Table 3. According to the analysis results, it is observed that the mean values for each variable within the model range between 3.79 and 4.132, while the standard deviation (SD) varies from 0.874 to 1.131. These findings suggest a high level of data concentration, minimal fluctuations, and a notable level of adaptability. Furthermore, the factor loading of each item surpasses the threshold of 0.7, ranging from 0.728 to 0.863. This substantiates the questionnaire items’ rationality (Hair et al., 2012).

Second, the measurement quality of the questionnaire necessitates an examination of both its reliability and validity. We used Cronbach’s α to assess items’ reliability; we used factor loadings, composite reliability (CR), and average variance extracted (AVE) to assess convergent validity; we compared the square root of AVE with the correlation between constructs to test discriminant validity (Wang et al., 2021). The test results are shown in Table 4. Table 4 reveals that Cronbach’s α of all measurement variables surpasses the recommended threshold of 0.7 (Hair et al., 2012), affirming the questionnaire’s high reliability. Furthermore, the factor loadings of each variable exceed the suggested threshold of 0.6, indicating strong associations between model variables and their respective structural constructs (Hair et al., 2012). The CR values in this model surpass the recommended threshold of 0.7, attesting to strong internal consistency among model variables. The observation variable set can explain the underlying model structure well (Hair et al., 2012). The AVE in this model exceeds the suggested threshold of 0.5, indicating that the observed variables in the model can explain each measurement dimension well (Hair et al., 2012). In this model, the square root of each variable’s AVE exceeds its correlation coefficient with other observation variables, signifying robust discriminant validity for each observation variable. These results indicate a high level of discrimination.
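To make these criteria concrete, the following is a minimal sketch of how Cronbach’s α, CR, AVE, and the square-root-of-AVE comparison are commonly computed. It uses the standard textbook formulas, not the SmartPLS implementation. All function names, item scores, loadings, and correlations are hypothetical; the loadings are merely chosen inside the 0.728 to 0.863 range reported above.

```python
import math
from statistics import variance


def cronbach_alpha(items):
    """items: list of item columns, each a list of respondent scores."""
    k = len(items)
    totals = [sum(scores) for scores in zip(*items)]  # per-respondent sum
    item_var_sum = sum(variance(col) for col in items)
    return k / (k - 1) * (1 - item_var_sum / variance(totals))


def composite_reliability(loadings):
    """CR from standardized loadings; error variance of an item is 1 - loading^2."""
    s = sum(loadings)
    error = sum(1 - l * l for l in loadings)
    return s * s / (s * s + error)


def average_variance_extracted(loadings):
    """AVE is the mean squared standardized loading."""
    return sum(l * l for l in loadings) / len(loadings)


def discriminant_validity_ok(ave, correlations):
    """True if sqrt(AVE) exceeds every correlation with the other constructs."""
    root = math.sqrt(ave)
    return all(root > abs(r) for r in correlations)


# Hypothetical 5-point Likert scores: 3 items, 5 respondents.
scores = [[4, 5, 3, 4, 2], [4, 4, 3, 5, 2], [5, 5, 3, 4, 1]]
alpha = cronbach_alpha(scores)

# Hypothetical loadings for one construct.
loadings = [0.728, 0.800, 0.863]
cr = composite_reliability(loadings)
ave = average_variance_extracted(loadings)

# Hypothetical correlations with two other constructs.
passes = discriminant_validity_ok(ave, [0.55, 0.62])
```

With these illustrative inputs, all three statistics clear the thresholds named in the text (0.7 for α and CR, 0.5 for AVE).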

Based on the aforementioned analysis, it can be concluded that the questionnaire’s design is well-founded and demonstrates strong reliability and validity. The design of the measurement model is both effective and rational, paving the way for further structural model fitting analysis.

Structural model

The structural model is estimated using the bootstrapping procedure with 5000 resamples (Hair Jr et al., 2016). The multicollinearity for each construct’s predictors is checked using VIF values, which are lower than 5 as recommended by Hair Jr et al. (2016). The proposed model explains 60.8% of the variance in continued use intention; voice cues, message interactivity cues, and emotional cues explain 48.4% of the variance in the alleviation of loneliness and 49.1% of the variance in perceived usefulness. This study reported an SRMR value of 0.057, which falls below the threshold of 0.08, thus meeting an acceptable standard (Cheng et al., 2022). To address the particular challenges of assessing PLS-SEM models, Tenenhaus et al. (2005) introduced a goodness-of-fit (GoF) index for overall model assessment, which has gained wide acceptance (Cheng et al., 2022; Xiao et al., 2020). The index is calculated as GoF = √(average AVE × average R²), and, in accordance with established criteria, a GoF value exceeding 0.25 indicates a well-fitting model. Our GoF value was 0.580, indicating a good model fit.
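As a worked illustration of the GoF formula, the sketch below combines the three R² values reported in this paragraph with the reported GoF of 0.580 to back out the average AVE that these figures would imply. It assumes that the average R² is taken over all three endogenous constructs; the function name is hypothetical, and the implied AVE is a derived quantity, not a value reported in the paper.

```python
import math


def goodness_of_fit(ave_values, r2_values):
    """GoF = sqrt(mean AVE * mean R^2), following Tenenhaus et al. (2005)."""
    mean_ave = sum(ave_values) / len(ave_values)
    mean_r2 = sum(r2_values) / len(r2_values)
    return math.sqrt(mean_ave * mean_r2)


# R^2 values reported in the text: continued use intention,
# alleviation of loneliness, perceived usefulness.
r2_reported = [0.608, 0.484, 0.491]
mean_r2 = sum(r2_reported) / len(r2_reported)

gof_reported = 0.580

# Average AVE implied by the reported GoF (assumption: all three R^2 averaged).
implied_mean_ave = gof_reported ** 2 / mean_r2

# Round trip: plugging the implied AVE back in reproduces the reported GoF.
gof_check = goodness_of_fit([implied_mean_ave], r2_reported)
```

Under that assumption the implied average AVE is roughly 0.64, which is consistent with the statement that every AVE exceeds 0.5.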

The hypotheses (H1a, H2a, and H3a) that voice cues, message interactivity cues, and emotional cues positively affect the alleviation of loneliness were supported, with path coefficients of 0.361 (t = 5.124), 0.264 (t = 3.673), and 0.218 (t = 3.118), respectively. The hypotheses (H1b, H2b, and H3b) that voice cues, message interactivity cues, and emotional cues positively affect perceived usefulness were supported, with path coefficients of 0.305 (t = 3.748), 0.360 (t = 5.136), and 0.187 (t = 2.530), respectively. The hypotheses (H4 and H5) that alleviation of loneliness and perceived usefulness positively affect continued use intention of AI voice assistants were supported, with path coefficients of 0.385 (t = 5.064) and 0.373 (t = 5.351), respectively. Figure 2 shows the analysis results, and Table 5 shows the hypothesis test results.
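For readers who want to relate the reported t-values to significance levels, the sketch below converts each one to a two-sided p-value. It assumes a standard normal approximation to the bootstrap t-statistic, which is a simplification and not necessarily how SmartPLS derives its p-values; the function name is hypothetical.

```python
import math


def two_sided_p(t_value: float) -> float:
    """Two-sided p-value under a standard normal approximation."""
    return math.erfc(abs(t_value) / math.sqrt(2))


# t-values as reported in the text for each supported hypothesis.
reported_t = {
    "H1a": 5.124, "H2a": 3.673, "H3a": 3.118,
    "H1b": 3.748, "H2b": 5.136, "H3b": 2.530,
    "H4": 5.064, "H5": 5.351,
}

p_values = {hypothesis: two_sided_p(t) for hypothesis, t in reported_t.items()}

# The weakest path (smallest t) is the one with the largest p-value.
weakest = max(p_values, key=p_values.get)
```

Under this approximation every path is significant at the 0.05 level, with H3b (emotional cues → perceived usefulness) being the weakest.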

Mediation effects

To examine whether alleviation of loneliness and perceived usefulness mediate the influences of anthropomorphic features on continued use intention, we employ a bootstrapping approach to test the significance of these two mediators. Following the methodology of Zhou et al. (2022), we utilize the PROCESS macro for SPSS 22 to conduct the bootstrapping test with 5000 samples. A mediating effect is considered significant when the 95% confidence interval does not include 0. The results presented in Table 6 indicate that all six mediating effects have 95% confidence intervals that exclude 0. Specifically, alleviation of loneliness mediates the relationships between each of the three anthropomorphic features (voice cues, message interactivity cues, and emotional cues) and continued use intention; perceived usefulness mediates the same three relationships.
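The logic of the bootstrapped mediation test can be sketched as follows. This is a simplified single-mediator illustration with a percentile interval on synthetic data, not the PROCESS macro and not the study’s data; all names, effect sizes, and the reduced number of resamples (1000 rather than 5000, to keep the example fast) are assumptions.

```python
import random


def _cross(u, v):
    """Sum of centered cross-products of two equally long sequences."""
    mu, mv = sum(u) / len(u), sum(v) / len(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v))


def indirect_effect(x, m, y):
    """a*b, where a is the slope of M on X and b the slope of Y on M given X."""
    s_xx, s_mm, s_xm = _cross(x, x), _cross(m, m), _cross(x, m)
    s_xy, s_my = _cross(x, y), _cross(m, y)
    a = s_xm / s_xx
    b = (s_xx * s_my - s_xm * s_xy) / (s_xx * s_mm - s_xm ** 2)
    return a * b


def bootstrap_ci(x, m, y, n_resamples=1000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for the indirect effect."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(n_resamples):
        idx = [rng.randrange(n) for _ in range(n)]  # resample respondents
        estimates.append(indirect_effect(
            [x[i] for i in idx], [m[i] for i in idx], [y[i] for i in idx]))
    estimates.sort()
    lower = estimates[int(alpha / 2 * n_resamples)]
    upper = estimates[int((1 - alpha / 2) * n_resamples) - 1]
    return lower, upper


# Synthetic data with a built-in indirect effect (illustration only).
rng = random.Random(42)
x = [rng.gauss(0, 1) for _ in range(300)]
m = [0.6 * xi + rng.gauss(0, 1) for xi in x]
y = [0.6 * mi + 0.2 * xi + rng.gauss(0, 1) for xi, mi in zip(x, m)]

ci_low, ci_high = bootstrap_ci(x, m, y)
mediation_significant = not (ci_low <= 0.0 <= ci_high)
```

The decision rule matches the one stated above: mediation is treated as significant when the interval excludes 0.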

Multiple group analysis

We used multiple group analysis in SmartPLS 3.2.8 (PLS-MGA) to test whether the path coefficients differ significantly between older and younger consumers (Mishra et al., 2022). The results show that age differences moderate four relationships: voice cues → alleviation of loneliness (βdiff = 0.263, t = 2.340), emotional cues → perceived usefulness (βdiff = 0.300, t = 2.285), message interactivity cues → alleviation of loneliness (βdiff = −0.405, t = 3.002), and message interactivity cues → perceived usefulness (βdiff = −0.439, t = 3.600), while the other path coefficients do not differ significantly between older and younger consumers. Therefore, H6a, H7b, H8a, and H8b are supported, while H6b and H7a are not supported. The PLS-MGA analysis results are shown in Table 7.
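As a rough plausibility check on these group differences, the sketch below backs out the standard error implied by each reported pair of βdiff and t, then forms an approximate 95% interval. It assumes that each reported t equals |βdiff| divided by its standard error and that a normal approximation applies; these are assumptions for illustration and may differ from the PLS-MGA procedure actually used. The function name is hypothetical.

```python
Z_95 = 1.96  # two-sided 95% critical value under a normal approximation

# (beta_diff, |t|) pairs as reported in the text.
reported = {
    "voice cues -> alleviation of loneliness": (0.263, 2.340),
    "emotional cues -> perceived usefulness": (0.300, 2.285),
    "message interactivity cues -> alleviation of loneliness": (-0.405, 3.002),
    "message interactivity cues -> perceived usefulness": (-0.439, 3.600),
}


def implied_interval(beta_diff, t_abs):
    """Implied standard error and approximate 95% interval for a difference."""
    se = abs(beta_diff) / t_abs
    return se, beta_diff - Z_95 * se, beta_diff + Z_95 * se


intervals = {path: implied_interval(*values) for path, values in reported.items()}

# A difference is treated as significant when its interval excludes zero.
excludes_zero = {path: not (lo <= 0.0 <= hi) for path, (se, lo, hi) in intervals.items()}
```

All four implied intervals exclude zero, which agrees with the four moderated relationships reported above.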

Discussion

Key findings

This study investigates the impacts of anthropomorphic features on consumers’ mind perception and continued use intention of AI voice assistants. Drawing upon the S–O–R model and mind perception theory, this study examines the role of three anthropomorphic features: voice cues, message interactivity cues, and emotional cues. Furthermore, based on cognitive load theory, the study explores the differential effects of these features on older and younger consumers. The findings support 12 hypotheses (H1a, H1b, H2a, H2b, H3a, H3b, H4, H5, H6a, H7b, H8a, and H8b) and reject two hypotheses (H6b and H7a), leading to the following conclusions:

Firstly, the study reveals that voice cues, message interactivity cues, and emotional cues effectively mitigate consumers’ loneliness, aligning with prior research findings (de Kervenoael et al., 2020; Fan et al., 2016; Go & Sundar, 2019). This implies that AI voice assistants possessing a heightened level of anthropomorphism can augment the sense of social presence and engage in interactions with consumers, providing companionship and addressing their social needs while alleviating loneliness. Furthermore, in comparison to message interactivity cues and emotional cues, voice cues exert a more substantial influence on alleviating loneliness, indicating their potential as a pivotal anthropomorphic feature in mitigating consumers’ sense of isolation.

Secondly, voice cues, message interactivity cues, and emotional cues exert a positive influence on perceived usefulness, aligning with prior research findings (Fan et al., 2016; Go & Sundar, 2019; Moussawi & Benbunan-Fich, 2021; Pelau et al., 2021). This discovery implies that AI voice assistants exhibiting a heightened degree of anthropomorphism can deliver more comprehensive and valuable information, thereby enhancing the efficiency and quality of human–computer interaction, ultimately bolstering users’ perceived usefulness. Notably, among the three anthropomorphic features, message interactivity cues exhibit a more pronounced impact on perceived usefulness, underscoring their pivotal role in augmenting consumers’ perceived usefulness.

Thirdly, the study finds that alleviation of loneliness and perceived usefulness promote consumers’ continued use intention of AI voice assistants, which is consistent with previous research findings (Ashfaq et al., 2020; Cao et al., 2020; Mahapatra, 2019; Mishra et al., 2022; Moussawi & Benbunan-Fich, 2021). Moreover, our study also finds that alleviation of loneliness and perceived usefulness mediate the relationship between the three anthropomorphic features and the continued use intention.

Finally, the study uncovers age differences in the impact of anthropomorphic features on consumers’ continued use intention of AI voice assistants. Because older adults have fewer attentional resources and greater cognitive decline than younger adults (Ghasemaghaei et al., 2019), they encounter more challenges when engaging in high cognitive load tasks. Our findings indicate that message interactivity cues containing substantial informational content exert a greater impact on alleviating loneliness and enhancing perceived usefulness for younger consumers than for older ones. Furthermore, we observe that voice cues have a more pronounced effect on the alleviation of loneliness for older consumers than for younger ones, whereas emotional cues have a stronger influence on perceived usefulness for older consumers than for younger ones. Notably, voice cues and emotional cues impose a lower cognitive load on consumers (Liew et al., 2020), enabling older consumers to better discern the positive effects of such cues compared to younger consumers. Although our findings do not support H6b and H7a, the coefficient for the effect of voice cues on perceived usefulness is higher for older consumers than for younger consumers, and the coefficient for the effect of emotional cues on the alleviation of loneliness is significant for older consumers but not for younger consumers.

In summary, this study provides valuable insights into the impact of anthropomorphic features on consumers’ mind perception and continued use intention of AI voice assistants, and it shows that this impact on mind perception and behavioral intention differs in several respects between older and younger consumers.

Theoretical implications

This study makes several theoretical contributions. Firstly, our study adds to the literature on the continued use intention of AI voice assistants. Prior literature on AI voice assistants mainly focuses on initial adoption (Cheng et al., 2022; Liu & Tao, 2022; Mishra et al., 2022; Moussawi & Benbunan-Fich, 2021; Park et al., 2018; Pelau et al., 2021), rather than on the continued use intention (Ding, 2019; Pal et al., 2021). By focusing on the continued use intention of AI voice assistants, our research contributes to the long-term viability and sustainability of this technology, promoting continued use intention by consumers beyond their initial adoption (Lv et al., 2022; Pal et al., 2021).

Secondly, we explore the critical antecedents of AI voice assistants from the novel perspective of anthropomorphism. Although previous research on the continued use intention of AI voice assistants has predominantly investigated key antecedents from various perspectives, including personal traits (Lee et al., 2021), personal motivation (Cheng & Jiang, 2020), artificial autonomy (Hu et al., 2021), and human–computer interaction (Xie et al., 2023), the role of anthropomorphism remains underexplored in studies concerning the continued use intention of AI voice assistants. Our study adds to the literature on the antecedents of AI voice assistants’ continued use intention by introducing a new perspective of anthropomorphism. Moreover, the paper offers a new framework for understanding the impact of anthropomorphic features of AI voice assistants by dividing anthropomorphic features into three distinct dimensions: voice cues, message interactivity cues, and emotional cues. This approach is in contrast to prior studies that have treated anthropomorphism as a single variable (Liu & Tao, 2022; Mishra et al., 2022; Pelau et al., 2021), or only focused on its impact on consumer perception without examining its effect on behavior (Go & Sundar, 2019). By identifying and investigating the impact of different anthropomorphic features on consumer behavior, this paper provides a valuable contribution to the existing literature.

Thirdly, we apply mind perception theory to operationalize the alleviation of loneliness and perceived usefulness as two basic dimensions inherent in the perception of human likeness in non-human entities and simultaneously investigate the psychological mechanisms underlying the impact of anthropomorphic features on the continued use intention of AI voice assistants. Previous research on AI voice assistants has mostly focused on a single dimension, either warmth or competence (Lee et al., 2020). By expanding these two dimensions to AI voice assistants, this paper offers new insights into how consumers perceive and interact with anthropomorphic technology.

Finally, given the differences in attentional and cognitive abilities between older and younger people (Ghasemaghaei et al., 2019), the impact of anthropomorphic features on individuals’ perceptions may vary according to age difference. Based on cognitive load theory, this study improves our understanding of the role of age differences in the continued use intention of AI voice assistants, an area that has received limited attention in prior research (Guo et al., 2016). Specifically, this study demonstrates that the effect of voice cues on the alleviation of loneliness is more pronounced in older consumers, the effect of emotional cues on perceived usefulness is more pronounced in older consumers, while the impact of message interactivity cues on loneliness alleviation and perceived usefulness is stronger in younger consumers. These findings highlight the importance of considering age differences when studying the impact of anthropomorphic features on consumers’ perceptions and behavior.

Practical implications

Practically, this study holds significant implications for AI voice assistant developers, marketers, and consumers. Product developers should recognize the potential of the three anthropomorphic features examined in this research to foster consumers’ continued use intention of AI voice assistants by alleviating loneliness and enhancing perceived usefulness. Consequently, developers should enhance the anthropomorphic features of AI voice assistants by refining voice cues, message interactivity cues, and emotional cues. Additionally, developers must prioritize the two fundamental consumer needs of alleviating loneliness and enhancing perceived usefulness to promote the continued use intention of AI voice assistants. Our findings show that voice cues have the most significant impact on the alleviation of loneliness, while message interactivity cues have the most significant impact on perceived usefulness. Thus, product developers can focus on enhancing certain aspects of anthropomorphic features based on consumers’ needs. Notably, the impact of anthropomorphic features on consumers’ perception varies across different age groups. Therefore, according to our results, for older consumers, developers should concentrate on elevating the anthropomorphic quality of voice cues and emotional cues, employing friendlier, warmer, and more trustworthy voices, along with fostering positive emotional interactions. For younger consumers, developers should ensure that AI voice assistants provide relevant replies, maintaining a high level of interaction and enabling consumers to engage in more profound and meaningful dialogues.

Marketers should emphasize the three important anthropomorphic features of AI voice assistants and highlight their usefulness and companionship functions. They should implement different marketing strategies for older and younger consumers to effectively reach their target audience. Specifically, marketers should emphasize the importance of AI voice assistants’ voice cues and emotional cues to older consumers, e.g., by stressing in advertisements that AI voice assistants have warm, friendly, and trustworthy voices with human-like emotional interactions, while emphasizing the importance of message interactivity cues to younger consumers, e.g., by stressing in advertisements that AI voice assistants have strong conversational interaction and language understanding.

For consumers, this study provides evidence that AI voice assistants can alleviate loneliness and help carry out various task commands. Therefore, both older and younger consumers who experience high levels of loneliness and a desire to communicate with others could consider using an AI voice assistant to meet their social needs and accomplish their daily tasks.

Limitations and future research directions

Although this study has made many contributions, it still has some limitations that need to be addressed in future research. Firstly, our research focuses on AI voice assistants without a screen, which means that the important dimension of visual cues in anthropomorphic features has not been considered in the research model. Future research can explore the impact of visual cues on consumer perception and behavioral intention. Secondly, this study only collected 303 valid questionnaires, which is a relatively small sample size. Since the use of AI voice assistants by older consumers requires certain physiological, economic, and educational foundations, the sample size of older consumers collected in this study is small. Therefore, future research should increase the sample size to obtain more reliable results. Thirdly, due to the limited functions of current AI voice assistants on the market, it is difficult to conduct empirical research on the impact of specific voice cues (such as different tones, intonations, and dialects) on consumer perception and behavioral intention. In the future, experimental research can be designed to investigate the impact of AI voice assistants with the aforementioned features. Finally, this paper focuses on the positive effects of anthropomorphic features; however, previous scholars have also shown that anthropomorphic features may have some negative effects (Kim et al., 2022; Troshani et al., 2021; Yam et al., 2021). Future research could include negative triggers caused by anthropomorphic features in the research model and explore the boundaries of the effectiveness of anthropomorphic features.

Data Availability

Data is available upon reasonable request.

References

Aaker, J., Vohs, K. D., & Mogilner, C. (2010). Nonprofits are seen as warm and for-profits as competent: Firm stereotypes matter. Journal of Consumer Research, 37(2), 224–237. https://doi.org/10.1086/651566

Aldossari, M. Q., & Sidorova, A. (2020). Consumer acceptance of Internet of Things (IoT): Smart home context. Journal of Computer Information Systems, 60(6), 507–517. https://doi.org/10.1080/08874417.2018.1543000

Ashfaq, M., Yun, J., Yu, S., & Loureiro, S. M. C. (2020). I, chatbot: Modeling the determinants of users’ satisfaction and continuance intention of AI-powered service agents. Telematics and Informatics, 54, 101473. https://doi.org/10.1016/j.tele.2020.101473

Benitez, J., Henseler, J., Castillo, A., & Schuberth, F. (2020). How to perform and report an impactful analysis using partial least squares: Guidelines for confirmatory and explanatory IS research. Information & Management, 57(2), 103168. https://doi.org/10.1016/j.im.2019.05.003

Benlian, A., Klumpe, J., & Hinz, O. (2020). Mitigating the intrusive effects of smart home assistants by using anthropomorphic design features: A multimethod investigation. Information Systems Journal, 30(6), 1010–1042. https://doi.org/10.1111/isj.12243

Bhattacherjee, A. (2001). Understanding information systems continuance: An expectation-confirmation model. MIS Quarterly, 25(3), 351–370. https://doi.org/10.2307/3250921

Cao, X., Gong, M., Yu, L., & Dai, B. (2020). Exploring the mechanism of social media addiction: An empirical study from WeChat users. Internet Research, 30(4), 1305–1328. https://doi.org/10.1108/INTR-08-2019-0347

Charness, N., & Boot, W. R. (2009). Aging and information technology use: Potential and barriers. Current Directions in Psychological Science, 18(5), 253–258. https://doi.org/10.1111/j.1467-8721.2009.01647.x

Cheng, Y., & Jiang, H. (2020). How do AI-driven chatbots impact user experience? Examining gratifications, perceived privacy risk, satisfaction, loyalty, and continued use. Journal of Broadcasting & Electronic Media, 64(4), 592–614. https://doi.org/10.1080/08838151.2020.1834296

Cheng, X., Zhang, X., Cohen, J., & Mou, J. (2022). Human vs AI: Understanding the impact of anthropomorphism on consumer response to chatbots from the perspective of trust and relationship norms. Information Processing & Management, 59(3), 102940. https://doi.org/10.1016/j.ipm.2022.102940

Cho, W.-C., Lee, K. Y., & Yang, S.-B. (2018). What makes you feel attached to smartwatches? The stimulus–organism–response (S–O–R) perspectives. Information Technology & People, 32(2), 319–343. https://doi.org/10.1108/ITP-05-2017-0152

CNNIC. (2022). The 49th statistical report on China’s internet development. Retrieved May 15, 2022, from http://www.cnnic.net.cn/hlwfzyj/hlwxzbg/

Cuddy, A. J., Fiske, S. T., & Glick, P. (2008). Warmth and competence as universal dimensions of social perception: The stereotype content model and the BIAS map. Advances in Experimental Social Psychology, 40, 61–149. https://doi.org/10.1016/S0065-2601(07)00002-0

de Kervenoael, R., Hasan, R., Schwob, A., & Goh, E. (2020). Leveraging human-robot interaction in hospitality services: Incorporating the role of perceived value, empathy, and information sharing into visitors’ intentions to use social robots. Tourism Management, 78, 104042. https://doi.org/10.1016/j.tourman.2019.104042

Delgosha, M. S., & Hajiheydari, N. (2021). How human users engage with consumer robots? A dual model of psychological ownership and trust to explain post-adoption behaviours. Computers in Human Behavior, 117, 106660. https://doi.org/10.1016/j.chb.2020.106660

Ding, Y. (2019). Looking forward: The role of hope in information system continuance. Computers in Human Behavior, 91, 127–137. https://doi.org/10.1016/j.chb.2018.09.002

Epley, N., Waytz, A., & Cacioppo, J. T. (2007). On seeing human: A three-factor theory of anthropomorphism. Psychological Review, 114(4), 864–886. https://doi.org/10.1037/0033-295X.114.4.864

Fan, A., Wu, L. L., & Mattila, A. S. (2016). Does anthropomorphism influence customers’ switching intentions in the self-service technology failure context? Journal of Services Marketing, 30(7), 713–723. https://doi.org/10.1108/JSM-07-2015-0225

Ghasemaghaei, M., Hassanein, K., & Benbasat, I. (2019). Assessing the design choices for online recommendation agents for older adults: Older does not always mean simpler information technology. MIS Quarterly, 43(1), 329–346. https://doi.org/10.25300/MISQ/2019/13947

Go, E., & Sundar, S. S. (2019). Humanizing chatbots: The effects of visual, identity and conversational cues on humanness perceptions. Computers in Human Behavior, 97, 304–316. https://doi.org/10.1016/j.chb.2019.01.020

Gray, K., & Wegner, D. M. (2010). Blaming God for our pain: Human suffering and the divine mind. Personality and Social Psychology Review, 14(1), 7–16. https://doi.org/10.1177/1088868309350299

Gray, H. M., Gray, K., & Wegner, D. M. (2007). Dimensions of mind perception. Science, 315(5812), 619. https://doi.org/10.1126/science.1134475

Guo, X., Zhang, X., & Sun, Y. (2016). The privacy–personalization paradox in mHealth services acceptance of different age groups. Electronic Commerce Research and Applications, 16, 55–65. https://doi.org/10.1016/j.elerap.2015.11.001

Hair, J. F., Sarstedt, M., Ringle, C. M., & Mena, J. A. (2012). An assessment of the use of partial least squares structural equation modeling in marketing research. Journal of the Academy of Marketing Science, 40(3), 414–433. https://doi.org/10.1007/s11747-011-0261-6

Hair, J. F., Jr., Hult, G. T. M., Ringle, C. M., & Sarstedt, M. (2016). A primer on partial least squares structural equation modeling (PLS-SEM). Sage Publications.

Hair, J. F., Risher, J. J., Sarstedt, M., & Ringle, C. M. (2019). When to use and how to report the results of PLS-SEM. European Business Review, 31(1), 2–24. https://doi.org/10.1108/EBR-11-2018-0203

Han, S., & Yang, H. (2018). Understanding adoption of intelligent personal assistants: A parasocial relationship perspective. Industrial Management & Data Systems, 118(3), 618–636. https://doi.org/10.1108/IMDS-05-2017-0214

Hollender, N., Hofmann, C., Deneke, M., & Schmitz, B. (2010). Integrating cognitive load theory and concepts of human–computer interaction. Computers in Human Behavior, 26(6), 1278–1288. https://doi.org/10.1016/j.chb.2010.05.031

Hu, X., Huang, Q., Zhong, X., Davison, R. M., & Zhao, D. (2016). The influence of peer characteristics and technical features of a social shopping website on a consumer’s purchase intention. International Journal of Information Management, 36(6), 1218–1230. https://doi.org/10.1016/j.ijinfomgt.2016.08.005

Hu, Q., Lu, Y., Pan, Z., Gong, Y., & Yang, Z. (2021). Can AI artifacts influence human cognition? The effects of artificial autonomy in intelligent personal assistants. International Journal of Information Management, 56, 102250. https://doi.org/10.1016/j.ijinfomgt.2020.102250

Huang, Y., Gursoy, D., Zhang, M., Nunkoo, R., & Shi, S. (2021). Interactivity in online chat: Conversational cues and visual cues in the service recovery process. International Journal of Information Management, 60, 102360. https://doi.org/10.1016/j.ijinfomgt.2021.102360

Kim, S., & Choudhury, A. (2021). Exploring older adults’ perception and use of smart speaker-based voice assistants: A longitudinal study. Computers in Human Behavior, 124, 106914. https://doi.org/10.1016/j.chb.2021.106914

Kim, A., Cho, M., Ahn, J., & Sung, Y. (2019). Effects of gender and relationship type on the response to artificial intelligence. Cyberpsychology, Behavior, and Social Networking, 22(4), 249–253. https://doi.org/10.1089/cyber.2018.0581

Kim, B., de Visser, E., & Phillips, E. (2022). Two uncanny valleys: Re-evaluating the uncanny valley across the full spectrum of real-world human-like robots. Computers in Human Behavior, 135, 107340. https://doi.org/10.1016/j.chb.2022.107340

Kock, N., & Lynn, G. (2012). Lateral collinearity and misleading results in variance-based SEM: An illustration and recommendations. Journal of the Association for Information Systems, 13(7). https://doi.org/10.17705/1jais.00302

Lee, S., Lee, N., & Sah, Y. J. (2020). Perceiving a mind in a chatbot: Effect of mind perception and social cues on co-presence, closeness, and intention to use. International Journal of Human-Computer Interaction, 36(10), 930–940. https://doi.org/10.1080/10447318.2019.1699748

Lee, K. Y., Sheehan, L., Lee, K., & Chang, Y. (2021). The continuation and recommendation intention of artificial intelligence-based voice assistant systems (AIVAS): The influence of personal traits. Internet Research, 31(5), 1899–1939. https://doi.org/10.1108/INTR-06-2020-0327

Li, X., & Sung, Y. (2021). Anthropomorphism brings us closer: The mediating role of psychological distance in user–AI assistant interactions. Computers in Human Behavior, 118, 106680. https://doi.org/10.1016/j.chb.2021.106680

Li, Q., Guo, X., Bai, X., & Xu, W. (2018). Investigating microblogging addiction tendency through the lens of uses and gratifications theory. Internet Research, 28(5), 1228–1252. https://doi.org/10.1108/IntR-03-2017-0092

Li, L., Lee, K. Y., Emokpae, E., & Yang, S.-B. (2021). What makes you continuously use chatbot services? Evidence from Chinese online travel agencies. Electronic Markets, 31(3), 575–599. https://doi.org/10.1007/s12525-020-00454-z

Li, X., Zhu, X., Lu, Y., Shi, D., & Deng, W. (2023). Understanding the continuous usage of mobile payment integrated into social media platform: The case of WeChat Pay. Electronic Commerce Research and Applications, 60, 101275. https://doi.org/10.1016/j.elerap.2023.101275

Liang, H., Saraf, N., Hu, Q., & Xue, Y. (2007). Assimilation of enterprise systems: The effect of institutional pressures and the mediating role of top management. MIS Quarterly, 31(1), 59–87. https://doi.org/10.2307/25148781

Liew, T. W., Tan, S.-M., Tan, T. M., & Kew, S. N. (2020). Does speaker’s voice enthusiasm affect social cue, cognitive load and transfer in multimedia learning? Information and Learning Sciences, 121(3/4), 117–135. https://doi.org/10.1108/ILS-11-2019-0124

Liu, K., & Tao, D. (2022). The roles of trust, personalization, loss of privacy, and anthropomorphism in public acceptance of smart healthcare services. Computers in Human Behavior, 127, 107026. https://doi.org/10.1016/j.chb.2021.107026

Lu, L., Cai, R., & Gursoy, D. (2019). Developing and validating a service robot integration willingness scale. International Journal of Hospitality Management, 80, 36–51. https://doi.org/10.1016/j.ijhm.2019.01.005

Lv, X., Yang, Y., Qin, D., Cao, X., & Xu, H. (2022). Artificial intelligence service recovery: The role of empathic response in hospitality customers’ continuous usage intention. Computers in Human Behavior, 126, 106993. https://doi.org/10.1016/j.chb.2021.106993

Ma, X., Zhang, X., Guo, X., Lai, K.-H., & Vogel, D. (2021). Examining the role of ICT usage in loneliness perception and mental health of the elderly in China. Technology in Society, 67, 101718. https://doi.org/10.1016/j.techsoc.2021.101718

Mahapatra, S. (2019). Smartphone addiction and associated consequences: Role of loneliness and self-regulation. Behaviour & Information Technology, 38(8), 833–844. https://doi.org/10.1080/0144929X.2018.1560499

Malhotra, G., & Ramalingam, M. (2023). Perceived anthropomorphism and purchase intention using artificial intelligence technology: Examining the moderated effect of trust. Journal of Enterprise Information Management. https://doi.org/10.1108/JEIM-09-2022-0316

McLean, G., & Osei-Frimpong, K. (2019). Hey Alexa… examine the variables influencing the use of artificial intelligent in-home voice assistants. Computers in Human Behavior, 99, 28–37. https://doi.org/10.1016/j.chb.2019.05.009

Mehrabian, A., & Russell, J. (1974). An approach to environmental psychology. The MIT Press.

Mishra, A., Shukla, A., & Sharma, S. K. (2022). Psychological determinants of users’ adoption and word-of-mouth recommendations of smart voice assistants. International Journal of Information Management, 67, 102413. https://doi.org/10.1016/j.ijinfomgt.2021.102413

Moussawi, S., & Benbunan-Fich, R. (2021). The effect of voice and humour on users’ perceptions of personal intelligent agents. Behaviour & Information Technology, 40(15), 1603–1626. https://doi.org/10.1080/0144929X.2020.1772368

Mulcahy, R., Letheren, K., McAndrew, R., Glavas, C., & Russell-Bennett, R. (2019). Are households ready to engage with smart home technology? Journal of Marketing Management, 35(15–16), 1370–1400. https://doi.org/10.1080/0267257X.2019.1680568

Mulcahy, R., Letheren, K., McAndrew, R., Glavas, C., & Russell-Bennett, R. (2022). Are households ready to engage with smart home technology? The Role of Smart Technologies in Decision Making (pp. 4–33). Routledge.

Munnukka, J., Talvitie-Lamberg, K., & Maity, D. (2022). Anthropomorphism and social presence in human–virtual service assistant interactions: The role of dialog length and attitudes. Computers in Human Behavior, 135, 107343. https://doi.org/10.1016/j.chb.2022.107343

Nikou, S. (2019). Factors driving the adoption of smart home technology: An empirical assessment. Telematics and Informatics, 45, 101283. https://doi.org/10.1016/j.tele.2019.101283

Odekerken-Schröder, G., Mele, C., Russo-Spena, T., Mahr, D., & Ruggiero, A. (2020). Mitigating loneliness with companion robots in the COVID-19 pandemic and beyond: An integrative framework and research agenda. Journal of Service Management, 31(6), 1149–1162. https://doi.org/10.1108/JOSM-05-2020-0148

Pal, D., Babakerkhell, M. D., & Zhang, X. (2021). Exploring the determinants of users’ continuance usage intention of smart voice assistants. IEEE Access, 9, 162259–162275. https://doi.org/10.1109/ACCESS.2021.3132399

Park, E. (2020). User acceptance of smart wearable devices: An expectation-confirmation model approach. Telematics and Informatics, 47, 101318. https://doi.org/10.1016/j.tele.2019.101318

Park, K., Kwak, C., Lee, J., & Ahn, J.-H. (2018). The effect of platform characteristics on the adoption of smart speakers: Empirical evidence in South Korea. Telematics and Informatics, 35(8), 2118–2132. https://doi.org/10.1016/j.tele.2018.07.013

Pelau, C., Dabija, D.-C., & Ene, I. (2021). What makes an AI device human-like? The role of interaction quality, empathy and perceived psychological anthropomorphic characteristics in the acceptance of artificial intelligence in the service industry. Computers in Human Behavior, 122, 106855. https://doi.org/10.1016/j.chb.2021.106855

Pentina, I., Hancock, T., & Xie, T. (2023). Exploring relationship development with social chatbots: A mixed-method study of Replika. Computers in Human Behavior, 140, 107600. https://doi.org/10.1016/j.chb.2022.107600

Podsakoff, P. M., MacKenzie, S. B., Lee, J.-Y., & Podsakoff, N. P. (2003). Common method biases in behavioral research: A critical review of the literature and recommended remedies. Journal of Applied Psychology, 88(5), 879–903. https://doi.org/10.1037/0021-9010.88.5.879

Qiu, L., & Benbasat, I. (2009). Evaluating anthropomorphic product recommendation agents: A social relationship perspective to designing information systems. Journal of Management Information Systems, 25(4), 145–182. https://doi.org/10.2753/MIS0742-1222250405