Abstract

In this paper, a new family of neural network (NN) operators is introduced. The idea is to consider a Durrmeyer-type version of the widely studied discrete NN operators by Costarelli and Spigler (Neural Netw 44:101–106, 2013). Such operators are constructed using special density functions generated from suitable sigmoidal functions, while the reconstruction coefficients are based on a convolution between a general kernel function \(\chi \) and the function being reconstructed, f. Here, we investigate their approximation capabilities, establishing both pointwise and uniform convergence theorems for continuous functions. We also provide quantitative estimates for the approximation order thanks to the use of the modulus of continuity of f; this turns out to be strongly influenced by the asymptotic behaviour of the sigmoidal function \(\sigma \). Our study also shows that the estimates we provide are, under suitable assumptions, the best possible. Finally, \(L^p\)-approximation is also established. At the end of the paper, examples of activation functions are discussed.


1 Introduction

In recent years, the scientific community has shown rapidly growing interest in neural network (NN) operators, driven by their wide-ranging applications, in particular in numerical analysis, artificial intelligence, and machine learning [15, 30–32, 35, 37, 38].

Anastassiou [3] was the first to establish NN approximations for continuous functions. He provided estimates for the rate of convergence of NN operators of the Cardaliaguet–Euvrard and squashing type [11], based on the modulus of continuity of the function being approximated, thereby deriving Jackson-type inequalities. The same author continued the investigation of the rate of convergence for univariate and multivariate NN operators activated by the hyperbolic tangent and by the logistic sigmoidal functions (see, e.g., [4]). NN operators activated by ramp functions were further examined in [8].

Afterward, in [19], the authors established a unified approach for a general class of sigmoidal functions and they introduced the following discrete NN operators

\[
F_n(f,x):=\frac{\displaystyle \sum _{k=\lceil na \rceil }^{\lfloor nb \rfloor } f\left( \frac{k}{n}\right) \phi _\sigma (nx-k)}{\displaystyle \sum _{k=\lceil na \rceil }^{\lfloor nb \rfloor } \phi _\sigma (nx-k)}, \quad x\in [a,b], \tag{I}
\]

for sufficiently large \(n \in \mathbb {N}^{+}\), where \(\phi _\sigma \) is a suitable linear combination of sigmoidal activation functions \(\sigma : \mathbb {R} \rightarrow \mathbb {R}\), and \(f: [a,b] \rightarrow \mathbb {R}\) is a bounded function. In the above definition, \(\lfloor \cdot \rfloor \) and \(\lceil \cdot \rceil \) denote the integer part and the ceiling of a given number, respectively.

In [20], replacing the sampling values f(k/n) in (I) with an average of f over an interval containing k/n, Costarelli and Spigler constructed the NN-type operators in the Kantorovich form, as follows

\[
(K_n f)(x):=\frac{\displaystyle \sum _{k=\lceil na \rceil }^{\lfloor nb \rfloor -1} \left[ n\int _{k/n}^{(k+1)/n} f(u)\,du\right] \phi _\sigma (nx-k)}{\displaystyle \sum _{k=\lceil na \rceil }^{\lfloor nb \rfloor -1} \phi _\sigma (nx-k)}, \quad x\in [a,b], \tag{II}
\]

for sufficiently large \( n \in \mathbb {N}^{+} \) such that \( \lceil na \rceil \le \lfloor {nb \rfloor } - 1 \). For more detailed insights into such operators, we refer to [13, 14, 36].

In practice, the passage from the operators \(F_n\) to \(K_n\) followed the same path as the evolution from the Bernstein polynomials to the Kantorovich polynomials.

Along the same lines, the natural next step is to introduce a Durrmeyer version of the NN-type operators, just as a Durrmeyer version of the Bernstein polynomials has been defined, extensively studied, and extended in the literature (see, e.g., [5, 6, 17, 23–27, 29]).

In this paper, motivated by the above considerations, we introduce the Durrmeyer-type NN operators, as follows

Here, the coefficients are obtained through a general convolution integral involving a kernel function \(\chi \) and the function being approximated, denoted by f. The operators in (III) generalize the Kantorovich NN operators, as we will show in Remark 8 below.

Here, we investigate the approximation capabilities of \(D_n^{\sigma ,\chi }\), establishing both pointwise and uniform convergence theorems for continuous functions (Sect. 4). We also provide quantitative estimates for the approximation order by means of the modulus of continuity \(\omega (f, \delta )\), \(\delta > 0\), of f; the order turns out to be strongly influenced by the asymptotic behaviour of the sigmoidal function \(\sigma \) (Sect. 5). Our study also shows that the estimates we provide are, under suitable assumptions, the best possible on the space of continuous functions on [a, b]. Finally, an \(L^p\) convergence theorem, \(1 \le p<+\infty \), is also established (Sect. 6). To prove this result, we exploit a norm inequality for the above operators and the density of the continuous functions in \(L^p\).

Several examples of sigmoidal activation functions are then presented (see, e.g., [9, 10, 33, 34]); we also show that the celebrated ReLU and RePU functions can be included in the results established by the present approach.

2 Preliminaries

Throughout the paper, we denote the integer part and the ceiling of a fixed real number by \(\lfloor {\cdot \rfloor }\) and \(\lceil {\cdot \rceil }\), respectively.

In line with the definition given in [22], we will assume that \(\sigma \) is a sigmoidal function, i.e., a non-decreasing measurable function such that

\[
\lim _{x\rightarrow -\infty }\sigma (x)=0 \quad \text {and} \quad \lim _{x\rightarrow +\infty }\sigma (x)=1.
\]

Let us consider a sigmoidal function \(\sigma :\mathbb {R}\rightarrow \mathbb {R}\) such that \(\sigma (x)-1/2\) is an odd function, \(\sigma \in C^{2}(\mathbb {R})\) is concave for \(x\ge 0\), and \(\sigma (x)={\mathcal {O}}\left( \left| x\right| ^{-\alpha }\right) \) as \(x\rightarrow -\infty \) for some \(\alpha >1\) (see, e.g., [19]).

Now, taking \(\phi _{\sigma }(x)=\frac{1}{2}\left[ \sigma (x+1)-\sigma (x-1)\right] \), \(x\in {\mathbb {R}}\), it is immediate that \(\phi _{\sigma }\) is an even function taking non-negative values, non-decreasing on \((-\infty ,0]\) and non-increasing on \([0,+\infty )\). In addition to that, \(\phi _{\sigma }\) satisfies a list of properties (see Lemmas 2.2, 2.4, 2.6 and 2.7 in [19] and Lemma 2.6 (I) in [20]), which can be summarized as follows.

Lemma 1

We have

(i) \(\displaystyle \sum _{k\in \mathbb {Z}}\phi _{\sigma }(x-k)=1\), for all \(x\in \mathbb {R}\);

(ii) \(1\ge \displaystyle \sum _{k=\lceil {na \rceil }}^{\lfloor {nb \rfloor }}\phi _{\sigma }(nx-k)\ge \phi _{\sigma }(1)>0\), for every \(n\in \mathbb {N}^{+}\) such that \(\lceil {na \rceil } \le \lfloor {nb \rfloor } \), and \(x\in [a,b]\);

(iii) \(\displaystyle \sum _{k=\lceil {na \rceil }}^{\lfloor {nb \rfloor }-1}\phi _{\sigma }(nx-k)\ge \phi _{\sigma }(2)>0\), for every \(n\in \mathbb {N}^{+}\) such that \(\lceil {na \rceil } \le \lfloor {nb \rfloor } - 1\), and \(x\in [a,b]\);

(iv) \(\phi _{\sigma }(x)={\mathcal {O}}\left( \left| x\right| ^{-\alpha }\right) \) as \(x\rightarrow \pm \infty \), and consequently \(\phi _{\sigma }\in L^{1}({\mathbb {R}})\); furthermore, we also have that \(\displaystyle \int _{{\mathbb {R}}}\phi _{\sigma }(t)dt=1\);

(v) for every \(\gamma >0\), we have \(\displaystyle \lim _{n\rightarrow +\infty }\sum _{\left| x-k\right| >\gamma n}\phi _{\sigma }(x-k)=0\), uniformly with respect to \(x\in \mathbb {R}\).
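
Properties (i) and (ii) can be checked numerically. The following Python sketch builds \(\phi _{\sigma }\) from the logistic function, which is one admissible choice of \(\sigma \), and evaluates the sums over a finite index window. The window half-width `K`, the sample points, and all helper names are illustrative assumptions rather than part of the theory.

```python
import math

def logistic(x):
    """Logistic sigmoid, written to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def phi(x, sigma=logistic):
    """Density phi_sigma(x) = [sigma(x + 1) - sigma(x - 1)] / 2."""
    return 0.5 * (sigma(x + 1.0) - sigma(x - 1.0))

def full_sum(x, K=60, sigma=logistic):
    """Sum of phi(x - k) over |k - round(x)| <= K, a finite proxy for k in Z."""
    c = round(x)
    return sum(phi(x - k, sigma) for k in range(c - K, c + K + 1))

def truncated_sum(x, n, a, b, sigma=logistic):
    """Sum of phi(n x - k) for k = ceil(n a), ..., floor(n b)."""
    lo, hi = math.ceil(n * a), math.floor(n * b)
    return sum(phi(n * x - k, sigma) for k in range(lo, hi + 1))

if __name__ == "__main__":
    print(full_sum(0.3))  # property (i): should be close to 1
    for x in (0.0, 0.25, 0.5, 1.0):
        s = truncated_sum(x, n=5, a=0.0, b=1.0)
        print(x, s, phi(1.0) <= s)  # property (ii): lower bound phi(1)
```

For the logistic function the tails of \(\phi _{\sigma }\) decay exponentially, so a moderate window already reproduces the full sum to machine precision; a slowly decaying \(\sigma \) would need a larger `K`.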

Remark 2

We observe that the theory of neural network operators also holds for sigmoidal activation functions \(\sigma \) that do not necessarily belong to \(C^{2}(\mathbb {R})\). In the latter case, we can assume that the corresponding \(\phi _{\sigma }\) satisfies the following conditions:

- \(\phi _{\sigma }(x)\) is non-decreasing for \(x<0\) and non-increasing for \(x\ge 0\);

- \(\phi _{\sigma }(1)>0\).

For more details on this matter one can check, e.g., [19].

We recall the notion of the discrete absolute moment of order \(\nu \ge 0\) of \(\phi _{\sigma }\), i.e.,

\[
M_{\nu }(\phi _{\sigma }):=\sup _{x\in \mathbb {R}}\sum _{k\in \mathbb {Z}}\phi _{\sigma }(x-k)\left| x-k\right| ^{\nu }.
\]

Given the aforementioned conditions on \(\sigma \), it follows that \(M_{\nu }(\phi _{\sigma })<+\infty ,\) \( 0\le \nu <\alpha -1\) (see, e.g., [16]). Now, we introduce the truncated discrete absolute moment of order \(\nu \ge 0\), as follows

This notion can also be used to express the sum in (ii) of Lemma 1, which can be reformulated as \(M_{\lceil {na \rceil }}^{\lfloor {nb \rfloor }}(\phi _{\sigma }, 0, x)\ge \phi _{\sigma }(1)>0\).

Furthermore, we recall the following lemmas that will be useful in the next sections. These lemmas serve as a formal representation of results established in [14].

Lemma 3

Let \(\sigma \) be a sigmoidal function satisfying condition (iv) of Lemma 1 with \(1<\alpha <2\). Then, there exist constants \(H\ge 1\), \(K>0\) such that

for \(n\in \mathbb {N}^{+}\).

Lemma 4

Let \(\sigma \) be a sigmoidal function satisfying condition (iv) of Lemma 1 with \(\alpha =2\). Then, there exist constants \(H\ge 1\), \(K>0\) such that

for \(n\in \mathbb {N}^{+}\) sufficiently large.

3 The Durrmeyer-type NN operators

From now on, we assume for simplicity that both a, b are integers, with \(a<b\).

Let \(\chi :\mathbb {R}\rightarrow [0,+\infty )\) be bounded and \(L^{1}\)-integrable on \(\mathbb {R}\), such that

and its discrete absolute moment of order 0 is finite, i.e.,

Moreover, for any \(\nu \ge 0\), let us define the continuous absolute moment of order \(\nu \) as follows

\[
{\tilde{M}}_{\nu }(\chi ):=\int _{\mathbb {R}}\chi (u)\left| u\right| ^{\nu }\,du.
\]

Now, we are able to introduce the following definition.

Definition 5

If \(f:[a,b]\rightarrow \mathbb {R}\) is bounded and \(L^{1}\)-integrable on [a, b], the Durrmeyer-type neural network (NN) operators associated with f with respect to \(\phi _{\sigma }\) and \(\chi \) are defined by

To show that the above operators are well-defined, we prove the following lemma.

Lemma 6

Under the above assumptions, the following inequality holds

\(x \in [a,b]\), \(n \in \mathbb {N}^+\) such that \(n \ge 1/(b-a)\).

Proof

Using the change of variable \(y=nt-k\), we can write what follows

\(x \in [a,b]\). Since \(\chi \) is non-negative, and the following inclusions hold

we immediately obtain

thanks to the use of (1) and Lemma 1 (iii). \(\square \)

Therefore, by Lemma 6, we immediately obtain that \(D_{n}^{\sigma ,\chi }\) is well-defined, e.g., for any function that is bounded on the interval [a, b]. Moreover, \(D_{n}^{\sigma ,\chi }\) is a positive linear operator on the space of bounded and \(L^{1}\)-integrable functions on [a, b].

Remark 7

It is worth noting that the operator \(D_{n}^{\sigma ,\chi }\) preserves constant functions, a property inherent in its definition. Specifically, \(D_{n}^{\sigma ,\chi }e_{0}=e_{0}\), where \(e_{0}:[a,b]\rightarrow \mathbb {R}\) and \(e_{0}(x)=1\).

Remark 8

We emphasize that the Durrmeyer-type NN operators generalize some other well-known families of NN operators. For instance, the Kantorovich-type NN operators (see, e.g., [20])

can be viewed as a special case of the Durrmeyer-type NN operators. In fact, for any bounded \(f\in L^1([a,b])\) and \(\chi (u)={\textbf{1}}_{[0,1]}(u)\), \(u\in {\mathbb {R}}\), where \({\textbf{1}}_{[0,1]}\) is the characteristic function on the set \([0,1]\subset {\mathbb {R}}\), we have

Thus, \(\left( D_{n}^{\sigma ,{\textbf{1}}_{[0,1]} }f\right) (x)=(K_n f)(x)\), for every \(x\in [a,b]\) and \(n\in \mathbb {N}^{+}\).

In Remark 14 below, we will provide further examples of kernel functions \(\chi \) suitable for defining \(D_n^{\sigma ,\chi }\).
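
The reduction to the Kantorovich case can also be observed numerically. The Python sketch below assumes the normalized form suggested by Remark 7 and Lemma 6, namely that \((D_{n}^{\sigma ,\chi }f)(x)\) is the ratio of \(\sum _{k=na}^{nb-1}\phi _{\sigma }(nx-k)\, n\int _{a}^{b}\chi (nt-k)f(t)\,dt\) to the same sum with \(f\equiv 1\). This form, the logistic choice of \(\sigma \), the midpoint quadrature with `m` nodes per unit cell, and all function names are assumptions made for illustration only.

```python
import math

def logistic(x):
    """Logistic sigmoid, written to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def phi(x):
    """Density phi_sigma generated by the logistic function."""
    return 0.5 * (logistic(x + 1.0) - logistic(x - 1.0))

def indicator01(u):
    """chi = characteristic function of [0, 1]."""
    return 1.0 if 0.0 <= u <= 1.0 else 0.0

def chi_integral(g, chi, n, k, a, b, m=20):
    """Midpoint rule for n * int_a^b chi(n t - k) g(t) dt, via u = n t - k.

    a and b must be integers, so the u-range [n a - k, n b - k] splits
    into unit cells, each sampled at m midpoints.
    """
    h = 1.0 / m
    total = 0.0
    for cell in range(n * a - k, n * b - k):
        for j in range(m):
            u = cell + (j + 0.5) * h
            total += chi(u) * g((u + k) / n)
    return total * h

def durrmeyer(f, x, n, a, b, chi=indicator01, m=20):
    """Assumed normalized form of D_n f at x (see the text above)."""
    num = den = 0.0
    for k in range(n * a, n * b):  # k = n a, ..., n b - 1
        w = phi(n * x - k)
        num += w * chi_integral(f, chi, n, k, a, b, m)
        den += w * chi_integral(lambda t: 1.0, chi, n, k, a, b, m)
    return num / den

def kantorovich_square(x, n, a, b):
    """K_n f at x for f(t) = t^2, using the exact cell means of t^2."""
    num = den = 0.0
    for k in range(n * a, n * b):
        w = phi(n * x - k)
        num += w * (k * k + k + 1.0 / 3.0) / (n * n)
        den += w
    return num / den

if __name__ == "__main__":
    f = lambda t: t * t
    for n in (5, 10, 20, 40):
        d = durrmeyer(f, 0.5, n, 0, 1)
        print(n, abs(d - kantorovich_square(0.5, n, 0, 1)), abs(d - 0.25))
```

With the indicator kernel the two columns printed for each `n` are the quadrature gap between the assumed \(D_n\) and \(K_n\), and the approximation error at \(x=1/2\); replacing `indicator01` by another non-negative integrable kernel changes only the coefficients.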

4 Pointwise and uniform convergence

If \(a<b\) and \(f\in C([a,b])\), we denote, as usual, the uniform norm on C([a, b]), by

\[
\left\| f\right\| _{\infty }:=\max _{x\in [a,b]}\left| f(x)\right| .
\]

Theorem 9

Let \(f:[a,b]\rightarrow \mathbb {R}\) be a bounded and \(L^{1}\)-integrable function. Then

\[
\lim _{n\rightarrow +\infty }\left( D_{n}^{\sigma ,\chi }f\right) (x_0)=f(x_0)
\]

at any point \(x_0\in [a,b]\) of continuity of f. Moreover, if \(f\in C([a,b])\), then

\[
\lim _{n\rightarrow +\infty }\left\| D_{n}^{\sigma ,\chi }f-f\right\| _{\infty }=0.
\]

Proof

Let \(x_0\in [a,b]\) be a point of continuity of f. Using Lemma 6, we obtain

Let now \(\varepsilon >0\) be arbitrarily chosen. By the continuity of f at \(x_0\), there exists \(\gamma >0\) such that \(|f(t)-f(x_0)|<\varepsilon \) for every \(t\in [a,b]\) with \(|t-x_0|\le \gamma \). At this point, we proceed to define

and

Hence, we can write

The first term can be further divided into

For \(t\in [a,b]\), such that \(\left| t-k/n\right| <\gamma /2\), and \(k\in S_{1}\), we have

therefore, it follows that \(\left| f(t)-f(x_{0})\right| <\varepsilon \). This implies

where, in the above computations, we used the change of variable \(nt-k=u\), and also condition (i) of Lemma 1.

Let us now estimate \(I_{1,2}\). By the boundedness of f, it follows that

with

since \(\chi \in L^{1}({\mathbb {R}})\). Then, using again (i) of Lemma 1, \(I_{1,2}\le 2\left\| f\right\| _{\infty }\varepsilon \), for sufficiently large n. By analogous reasoning, we obtain the following inequalities

From property v) of Lemma 1, there exists a sufficiently large n, such that \(I_{2}\le 2\left\| f\right\| _{\infty } \left\| \chi \right\| _{1} \varepsilon \).

In conclusion, setting \(M:=\left\| \chi \right\| _{1}+2\left\| f\right\| _{\infty }(1+\left\| \chi \right\| _{1})\), we have

for sufficiently large n, and by the arbitrariness of \(\varepsilon \), it follows that

therefore the first part of the statement is proved.

Finally, if \(f\in C([a,b])\) the second part of the theorem easily follows, as we can now use the parameter \(\gamma \) of the uniform continuity of f (in place of that of the continuity of f) for any arbitrary \(x\in [a,b]\). \(\square \)

5 Quantitative estimates

In this section, we investigate the rate at which the operators \(D_{n}^{\sigma ,\chi }\) approximate a given function.

To address this task, it is necessary to recall the notion of the modulus of continuity of a given function \(f\in C([a,b])\), defined as usual

\[
\omega (f,\delta ):=\sup \left\{ \left| f(x)-f(y)\right| :\ x,y\in [a,b],\ \left| x-y\right| \le \delta \right\} ,
\]

with \(\delta >0\). It is interesting to point out that the following well-known inequality

\[
\omega (f,\lambda \delta )\le (1+\lambda )\,\omega (f,\delta ) \tag{4}
\]

holds, with \(\lambda ,\delta >0\).
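
To make the modulus of continuity concrete, the following Python sketch approximates \(\omega (f,\delta )\), taken here in its usual form \(\sup \{|f(x)-f(y)|: x,y\in [a,b],\ |x-y|\le \delta \}\), on a uniform grid and checks the inequality \(\omega (f,\lambda \delta )\le (1+\lambda )\,\omega (f,\delta )\) for one sample function. The grid size `N` and the function name are hypothetical choices; a grid only yields a lower approximation of the true supremum.

```python
import math

def modulus_of_continuity(f, a, b, delta, N=2000):
    """Grid approximation of omega(f, delta) on [a, b] using N + 1 nodes."""
    h = (b - a) / N
    values = [f(a + i * h) for i in range(N + 1)]
    # Largest index shift s with s * h <= delta (small slack for rounding).
    max_shift = min(N, int(math.floor(delta / h + 1e-9)))
    best = 0.0
    for s in range(1, max_shift + 1):
        for i in range(N + 1 - s):
            best = max(best, abs(values[i + s] - values[i]))
    return best

if __name__ == "__main__":
    delta, lam = 0.1, 2.5
    w_delta = modulus_of_continuity(abs, -1.0, 1.0, delta)
    w_scaled = modulus_of_continuity(abs, -1.0, 1.0, lam * delta)
    print(w_delta, w_scaled, w_scaled <= (1.0 + lam) * w_delta)
```

For \(f(x)=|x|\) on \([-1,1]\) the modulus equals \(\delta \) for small \(\delta \), which is why this function is a natural test case for sharpness questions.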

Let \(x_{0}\in [a,b]\) be fixed. For the sake of clarity, we rewrite the inequality obtained in (3)

One can easily check that

where the previous estimate is a consequence of (4), with \(\lambda =\gamma (n)|t-x_0|\) and \(\delta =\frac{1}{\gamma (n)}\). Here, in accordance with the insights from [14], the function \(\gamma (n)\) is defined as

\[
\gamma (n):={\left\{ \begin{array}{ll} n^{\alpha -1}, &{} 1<\alpha <2,\\ \dfrac{n}{\log n}, &{} \alpha =2,\\ n, &{} \alpha >2. \end{array}\right. }
\]

Hence, it follows

Through the change of variable \(nt-k=u\), we have \(\displaystyle \int _{a}^{b}\chi (nt-k)dt\le \frac{\left\| \chi \right\| _{1}}{n}\). Therefore, we can write

assuming that \({\tilde{M}}_{1}(\chi )<+\infty \). Consequently, by i) of Lemma 1, we get

where the truncated absolute moment of order 1, under the assumptions on \(\sigma \) made in Lemma 3 and in Lemma 4, can be bounded by an increasing function of the variable n. Thus, for \(f\in C([a,b])\), we can immediately deduce the following estimates

\[
\left\| D_{n}^{\sigma ,\chi }f-f\right\| _{\infty }\le C\,\omega \left( f,\frac{1}{n^{\alpha -1}}\right) , \quad 1<\alpha <2, \tag{5}
\]

\[
\left\| D_{n}^{\sigma ,\chi }f-f\right\| _{\infty }\le C\,\omega \left( f,\frac{\log n}{n}\right) , \quad \alpha =2, \tag{6}
\]

\[
\left\| D_{n}^{\sigma ,\chi }f-f\right\| _{\infty }\le C\,\omega \left( f,\frac{1}{n}\right) , \quad \alpha >2, \tag{7}
\]

where each constant C appearing in these estimates is independent of n and f.

Now, we proceed to prove that these estimates are the best possible.

We focus only on the case \(\alpha =2\), employing an approach inspired by the recent paper [13]. The remaining cases follow by similar methodologies, and therefore, we omit discussing them here.

Throughout this section, the notation \(f(x) \sim g(x)\) as \(x\rightarrow a\), means that \(f(x)={\mathcal {O}}(g(x))\) as \(x\rightarrow a\), and \(g(x)={\mathcal {O}}(f(x))\) as \(x\rightarrow a\).

Now, if we define

it is easy to see that the corresponding \(\phi _{\sigma }\) satisfies

Then, consider the function \(f:[-1,1]\rightarrow \mathbb {R}\), \(f(x)=\left| x\right| \). It is immediate that, for \(\delta >0\) sufficiently small, we have

Thus, recalling that \(\phi _{\sigma }\) is even, we can write

Noting that

for sufficiently large \(n\in \mathbb {N}^{+}\), and referring to (8), it can be deduced that

and, hence

for sufficiently large \(n\in \mathbb {N}^{+}\). This estimate could be generalized to the case of an arbitrary sigmoidal function \(\sigma \) such that \(\phi _{\sigma }(x)\sim |x|^{-2}\), as \(x\rightarrow -\infty \), see, e.g., [13].

In conclusion, estimate (6) is the best possible in the case \(\alpha =2\).

By similar reasoning, we deduce that the estimates (5) and (7) are the best possible in the cases \(\alpha \in \left( 1,2\right) \) and \(\alpha >2\), respectively.

Therefore, we can summarize such properties in the following theorem.

Theorem 10

Consider a sigmoidal function \(\sigma :{\mathbb {R}}\rightarrow {\mathbb {R}}\) and \(\alpha > 1\), such that \(\phi _{\sigma }(x)\sim \left| x\right| ^{-\alpha }\) as \(x\rightarrow -\infty \), and let \(\chi :\mathbb {R}\rightarrow [0,\infty )\) be a bounded and \(L^{1}\)-integrable function on \(\mathbb {R}\). The Durrmeyer-type neural network operators, defined in (2), have the following properties:

(i) if \(\alpha \in (1,2)\), \({\mathcal {O}}\left( \omega \left( f,\,\frac{1}{n^{\alpha -1}}\right) \right) \), as \(n\rightarrow +\infty \), is the best order of uniform approximation over the class C([a, b]) in the approximation by the operators \(D_{n}^{\sigma ,\chi }\);

(ii) if \(\alpha =2\), \({\mathcal {O}}\left( \omega \left( f,\,\frac{\log n}{n}\right) \right) \), as \(n\rightarrow +\infty \), is the best order of uniform approximation over the class C([a, b]) in the approximation by the operators \(D_{n}^{\sigma ,\chi }\);

(iii) if \(\alpha >2\), \({\mathcal {O}}\left( \omega \left( f,\,\frac{1}{n}\right) \right) \), as \(n\rightarrow +\infty \), is the best order of uniform approximation over the class C([a, b]) in the approximation by the operators \(D_{n}^{\sigma ,\chi }\).

6 Convergence in Lebesgue spaces

Now, in order to obtain approximation results for not necessarily continuous functions, we work in the setting of Lebesgue spaces \(L^{p}([a,b])\), \(1\le p<+\infty \). Therefore, we want to exploit the integral form of the operator \(D_{n}^{\sigma ,\chi }\) to show that it also converges with respect to the \(L^{p}\)-norm.

As an easy consequence of Theorem 9, we can prove the following.

Theorem 11

For every \(f\in C([a,b])\) and \(1\le p<+\infty \), we have

\[
\lim _{n\rightarrow +\infty }\left\| D_{n}^{\sigma ,\chi }f-f\right\| _{p}=0,
\]

where \(\left\| \cdot \right\| _{p}\) denotes the usual \(L^{p}\)-norm.

Proof

We immediately have

\[
\left\| D_{n}^{\sigma ,\chi }f-f\right\| _{p}\le (b-a)^{1/p}\left\| D_{n}^{\sigma ,\chi }f-f\right\| _{\infty },
\]

from which the claim follows, for \(n\in \mathbb {N}^{+}\) sufficiently large, thanks to Theorem 9. \(\square \)

Subsequently, to prove the convergence of the family of Durrmeyer-type neural network operators in \(L^p\), it is necessary to establish the following inequality.

Theorem 12

For every \(f\in L^{p}(\left[ a,b\right] ) \), \(1\le p<+\infty \), we have

\[
\left\| D_{n}^{\sigma ,\chi }f\right\| _{p}\le \frac{\left\| \chi \right\| _{1}^{\frac{p-1}{p}}M_{0}(\chi )^{\frac{1}{p}}}{{\mathcal {K}}\,\phi _{\sigma }(2)}\left\| f\right\| _{p}.
\]

Proof

For every \(f\in L^{p}(\left[ a,b\right] ) \), \(1\le p<+\infty \), taking into account the convexity of the function \(x\mapsto \left| x\right| ^{p}\), and applying Jensen's inequality, we obtain

One can easily verify that

where the equality arises from (iv) of Lemma 1, which asserts that \(\displaystyle \int _{\mathbb {R}}\phi _{\sigma }(t)dt=1\). Taking this into consideration, we obtain

Applying Jensen's inequality once again, we deduce that

and now the proof is complete. \(\square \)

From Theorem 12, we deduce that each operator \(D_{n}^{\sigma ,\chi }\) maps the whole space \(L^{p}([a,b])\) into itself, and hence is well-defined on \(L^{p}([a,b])\).

Finally, by exploiting the density of C([a, b]) within \(L^{p}(\left[ a,b\right] ) \), \(1\le p<+\infty \), with respect to the norm \(\left\| \cdot \right\| _{p}\), we achieve the following convergence result.

Theorem 13

For every \(f\in L^{p}(\left[ a,b\right] ) \), \(1\le p<+\infty \), we have

\[
\lim _{n\rightarrow +\infty }\left\| D_{n}^{\sigma ,\chi }f-f\right\| _{p}=0.
\]

Proof

Let \(f \in L^{p}([a,b])\) and \(\varepsilon > 0\) be fixed. Since the space C([a, b]) is dense in \(L^{p}([a,b])\) with respect to the norm \(\Vert \cdot \Vert _p\), there exists \(g \in C([a,b])\) such that \(\Vert f - g\Vert _p < \left( \frac{\left\| \chi \right\| _{1}^{\frac{p-1}{p}}M_{0}(\chi )^{\frac{1}{p}}}{{{{\mathcal {K}}}}\,\phi _{\sigma }(2)}+1\right) ^{-1}\varepsilon /2\).

Then, using such a g and Theorem 12,

Finally, by Theorem 11,

for \(n \in \mathbb {N}^+\) sufficiently large. Since \(\varepsilon \) is arbitrary, the claim follows. \(\square \)

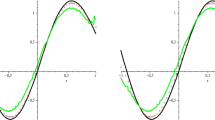

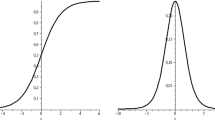

Remark 14

Examples of sigmoidal functions satisfying all the assumptions of the above theory can be easily provided. For instance, we can mention the well-known logistic function \(\sigma _{l}(x)=(1+e^{-x})^{-1}\), \(x\in {\mathbb {R}}\), and the hyperbolic tangent sigmoidal function \(\sigma _{h}(x)=\frac{1}{2}(\tanh x+1)\), \(x\in {\mathbb {R}}\) (see, e.g., [4, 7, 30]). An example of a non-smooth sigmoidal function is the ramp function \(\sigma _{R}(x)\) [8, 33, 34], defined by

\[
\sigma _{R}(x):={\left\{ \begin{array}{ll} 0, &{} x<-1/2,\\ x+1/2, &{} -1/2\le x\le 1/2,\\ 1, &{} x>1/2, \end{array}\right. }
\]

for which the corresponding function \(\phi _{\sigma _{R}}\) has compact support.

Additionally, in recent years the so-called rectified linear unit (ReLU) activation function has received a lot of attention due to its useful properties in training algorithms, so it is natural to ask whether the previous results can be extended to that case as well. In general, this question can be non-trivial, since the ReLU function is not a sigmoidal one.

We recall that the ReLU function is defined as \(\psi _{\text {ReLU}}(x)=(x)_{+}\), \(x\in {\mathbb {R}}\) (see, e.g., [12]), where the function \((x)_{+}:= \max \{x, 0\}\) denotes the positive part of \(x\in {\mathbb {R}}\).

Similarly, powers of the ReLU function, known as rectified power unit (RePU, [28]) functions \(\psi ^{k}_{\text {ReLU}}(x)=[(x)_{+}]^{k}\), \(x\in {\mathbb {R}}\), are also of interest in the theory of neural networks, for reasons similar to those given above.

However, it is not difficult to see that certain density functions \(\phi _{\sigma }\) generated by suitable \(\sigma \) can be viewed as finite linear combinations of ReLU or RePU functions, respectively (see [14]).
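
A concrete instance of this observation can be written down for the ramp function. Assuming the usual ramp \(\sigma _{R}\) (equal to 0 for \(x<-1/2\), to \(x+1/2\) on \([-1/2,1/2]\), and to 1 for \(x>1/2\)), one has \(\sigma _{R}(x)=(x+1/2)_{+}-(x-1/2)_{+}\), so that \(\phi _{\sigma _{R}}\) becomes a combination of four shifted ReLU functions supported on \([-3/2,3/2]\). The Python sketch below compares the two expressions; the function names are illustrative.

```python
def relu(x):
    """Positive part (x)_+ = max{x, 0}."""
    return max(x, 0.0)

def ramp(x):
    """Ramp sigmoid: 0 for x < -1/2, x + 1/2 on [-1/2, 1/2], 1 for x > 1/2."""
    if x < -0.5:
        return 0.0
    if x > 0.5:
        return 1.0
    return x + 0.5

def phi_ramp(x):
    """phi generated by the ramp: [ramp(x + 1) - ramp(x - 1)] / 2."""
    return 0.5 * (ramp(x + 1.0) - ramp(x - 1.0))

def phi_relu(x):
    """The same density written as a linear combination of four ReLU terms."""
    return 0.5 * (relu(x + 1.5) - relu(x + 0.5) - relu(x - 0.5) + relu(x - 1.5))

if __name__ == "__main__":
    for x in (-2.0, -1.0, -0.25, 0.0, 0.75, 1.5, 2.0):
        print(x, phi_ramp(x), phi_relu(x))
```

Both expressions vanish outside \([-3/2,3/2]\), in agreement with the compact support of \(\phi _{\sigma _{R}}\) noted above.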

Finally, to conclude the paper, it is important to provide examples of functions \(\chi \) that can be used in the definition of the Durrmeyer-type NN operators. This is quite easy, since the required assumptions on \(\chi \) are very mild. For instance, we can take as \(\chi \) the well-known central B-spline kernel of order n, expressed as

\[
M_{n}(x):=\frac{1}{(n-1)!}\sum _{i=0}^{n}(-1)^{i}\left( {\begin{array}{c}n\\ i\end{array}}\right) \left( \frac{n}{2}+x-i\right) _{+}^{n-1}, \quad x\in {\mathbb {R}}.
\]

Additionally, the Fejér kernel provides another suitable choice

\[
F(x):=\frac{1}{2}\,\text {sinc}^{2}\left( \frac{x}{2}\right) , \quad x\in {\mathbb {R}},
\]

with the sinc-function defined as

\[
\text {sinc}(x):={\left\{ \begin{array}{ll} \dfrac{\sin (\pi x)}{\pi x}, &{} x\ne 0,\\ 1, &{} x=0. \end{array}\right. }
\]

Further options can be explored in references such as [1, 2, 17, 18, 21].
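
For experimentation, the two kernels can be coded directly. The Python sketch below uses the standard closed forms of the central B-spline of a given order (at least 2) and of the Fejér kernel \(\frac{1}{2}\,\text {sinc}^{2}(x/2)\) with \(\text {sinc}(x)=\sin (\pi x)/(\pi x)\); these normalizations, the truncation parameter `K`, and the helper names are assumptions of the sketch. It also evaluates a truncated version of the sum \(\sum _{k}\chi (u-k)\), which enters the discrete absolute moment of order 0.

```python
import math

def bspline(order, x):
    """Central B-spline of the given order (order >= 2) evaluated at x."""
    total = 0.0
    for i in range(order + 1):
        base = max(order / 2.0 + x - i, 0.0)  # positive part
        total += (-1) ** i * math.comb(order, i) * base ** (order - 1)
    return total / math.factorial(order - 1)

def sinc(x):
    """Normalized sinc: sin(pi x) / (pi x), with value 1 at x = 0."""
    return 1.0 if x == 0.0 else math.sin(math.pi * x) / (math.pi * x)

def fejer(x):
    """Fejer kernel F(x) = sinc(x / 2)^2 / 2."""
    return 0.5 * sinc(x / 2.0) ** 2

def shifted_sum(chi, u, K=200):
    """Truncated sum of chi(u - k) over |k| <= K."""
    return sum(chi(u - k) for k in range(-K, K + 1))

if __name__ == "__main__":
    print(bspline(3, 0.0), fejer(0.0))
    print(shifted_sum(lambda t: bspline(3, t), 0.3, K=5))
    print(shifted_sum(fejer, 0.0))
```

The B-spline has compact support, so a very small `K` suffices there, whereas the Fejér kernel decays only quadratically and its truncated sum converges slowly in `K`.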

7 Final remarks and conclusions

In this paper, the theory of the Durrmeyer-type NN operators is introduced and studied for the approximation of functions of one variable. Since NN-type approximations typically involve multivariate data, as future work we aim to extend the above definition and results to the multivariate setting, following the same approach considered in [20].

Data availability

Not applicable.

References

[1] Allasia, G., Cavoretto, R., De Rossi, A.: A class of spline functions for landmark-based image registration. Math. Methods Appl. Sci. 35, 923–934 (2012)

[2] Allasia, G., Cavoretto, R., De Rossi, A.: Lobachevsky spline functions and interpolation to scattered data. Comput. Appl. Math. 32, 71–87 (2013)

[3] Anastassiou, G.A.: Rate of convergence of some neural network operators to the unit-univariate case. J. Math. Anal. Appl. 212, 237–262 (1997)

[4] Anastassiou, G.A.: Intelligent Systems: Approximation by Artificial Neural Networks, Intelligent Systems Reference Library, vol. 19. Springer, Berlin (2011)

[5] Bardaro, C., Faina, L., Mantellini, I.: Quantitative Voronovskaja formulae for generalized Durrmeyer sampling type series. Math. Nachr. 289(14–15), 1702–1720 (2016)

[6] Bardaro, C., Mantellini, I.: Asymptotic expansion of generalized Durrmeyer sampling type series. Jaen J. Approx. 6(2), 143–165 (2014)

[7] Baxhaku, B., Agrawal, P.N.: Neural network operators with hyperbolic tangent functions. Expert Syst. Appl. 226(15), 119996 (2023)

[8] Cao, F., Chen, Z.: The construction and approximation of a class of neural networks operators with ramp functions. J. Comput. Anal. Appl. 14(1), 101–112 (2012)

[9] Cao, F., Xie, T., Xu, Z.: The estimate for approximation error of neural networks: a constructive approach. Neurocomputing 71(4), 626–630 (2008)

[10] Cao, F., Zhang, R.: The errors of approximation for feed forward neural networks in the Lp metric. Math. Comput. Model. 49(7), 1563–1572 (2009)

[11] Cardaliaguet, P., Euvrard, G.: Approximation of a function and its derivative with a neural network. Neural Netw. 5(2), 207–220 (1992)

[12] Chen, H., Yu, D., Li, Z.: The construction and approximation of ReLU neural network operators. J. Funct. Spaces (2022)

[13] Coroianu, L., Costarelli, D.: Best approximation and inverse results for neural network operators, in print in: Results in Mathematics (2024)

[14] Coroianu, L., Costarelli, D., Kadak, U.: Quantitative estimates for neural network operators implied by the asymptotic behaviour of the sigmoidal activation functions. Mediterr. J. Math. 19, 211 (2022)

[15] Coroianu, L., Kadak, U.: Integrating multivariate fuzzy neural networks into fuzzy inference system for enhanced decision making. Fuzzy Sets Syst. 470, 108668 (2023)

[16] Costarelli, D., Natale, M., Vinti, G.: Convergence results for nonlinear sampling Kantorovich operators in modular spaces. Numer. Funct. Anal. Optim. 44(12), 1276–1299 (2023)

[17] Costarelli, D., Piconi, M., Vinti, G.: Quantitative estimates for Durrmeyer-sampling series in Orlicz spaces. Sampling Th. Signal Proc. Data Anal. 21(1), 3 (2023)

[18] Costarelli, D., Sambucini, A.R., Vinti, G.: Convergence in Orlicz spaces by means of the multivariate max-product neural network operators of the Kantorovich type and applications. Neural Comput. Appl. 31, 5069–5078 (2019)

[19] Costarelli, D., Spigler, R.: Approximation results for neural network operators activated by sigmoidal functions. Neural Netw. 44, 101–106 (2013)

[20] Costarelli, D., Spigler, R.: Convergence of a family of neural network operators of the Kantorovich type. J. Approx. Theory 185, 80–90 (2014)

[21] Cruz-Uribe, D., Hästö, P.: Extrapolation and interpolation in generalized Orlicz spaces. Trans. Am. Math. Soc. 370, 4323–4349 (2018)

[22] Cybenko, G.: Approximation by superpositions of a sigmoidal function. Math. Control Signals Syst. 2, 303–314 (1989)

[23] Derriennic, M.M.: Sur l’approximation de fonctions intégrables sur \([0,1]\) par des polynômes de Bernstein modifiés. J. Approx. Theory 31(4), 325–343 (1981)

[24] Durrmeyer, J.L.: Une formule d’inversion de la transformée de Laplace: applications à la théorie des moments, Thèse de 3e cycle, Université de Paris (1967)

[25] Gonska, H., Heilmann, M., Rasa, I.: Convergence of iterates of genuine and ultraspherical Durrmeyer operators to the limiting semigroup: \(c^2\)-estimates. J. Approx. Theory 160(1–2), 243–255 (2009)

[26] Gonska, H., Kacso, D., Rasa, I.: The genuine Bernstein–Durrmeyer operators revisited. Results Math. 62(3–4), 295–310 (2012)

[27] Gonska, H., Zhou, X.: A global inverse theorem on simultaneous approximation by Bernstein–Durrmeyer operators. J. Approx. Theory 67, 284–302 (1991)

[28] Gühring, I., Raslan, M.: Approximation rates for neural networks with encodable weights in smoothness spaces. Neural Netw. 134, 107–130 (2021)

[29] Heilmann, M., Rasa, I.: A nice representation for a link between Baskakov- and Szász–Mirakjan–Durrmeyer operators and their Kantorovich variants. Results Math. 74, 1–12 (2019)

[30] Kadak, U.: Multivariate neural network interpolation operators. J. Comput. Appl. Math. 414, 114426 (2022)

[31] Kainen, P.C., Kůrková, V., Vogt, A.: Approximative compactness of linear combinations of characteristic functions. J. Approx. Theory 257, 105435 (2020)

[32] Kůrková, V., Sanguineti, M.: Classification by sparse neural networks. IEEE Trans. Neural Netw. Learn. Syst. 30(9), 2746–2754 (2019)

[33] Qian, Y., Yu, D.: Rates of approximation by neural network interpolation operators. Appl. Math. Comput. 418, 126781 (2022)

[34] Qian, Y., Yu, D.: Neural network interpolation operators activated by smooth ramp functions. Anal. Appl. 20(4), 791–813 (2022)

[35] Schmidhuber, J.: Deep learning in neural networks: an overview. Neural Netw. 61, 85–117 (2015)

[36] Sharma, M., Singh, U.: Some density results by deep Kantorovich type neural network operators. J. Math. Anal. Appl. 533(2), 128009 (2024)

[37] Zhou, D.X.: Universality of deep convolutional neural networks. Appl. Comput. Harmonic Anal. 48(2), 787–794 (2020)

[38] Zoppoli, R., Sanguineti, M., Gnecco, G., Parisini, T.: Neural Approximations for Optimal Control and Decision, Communications and Control Engineering book series CCE. Springer, Cham (2020)

Acknowledgements

The second and the third authors are members of the Gruppo Nazionale per l’Analisi Matematica, la Probabilità e le loro Applicazioni (GNAMPA) of the Istituto Nazionale di Alta Matematica (INdAM), of the network RITA (Research ITalian network on Approximation), and of the UMI (Unione Matematica Italiana) group T.A.A. (Teoria dell’Approssimazione e Applicazioni).

Funding

The authors D. Costarelli and M. Natale have been partially supported within the 2024 GNAMPA-INdAM Project “Tecniche di approssimazione in spazi funzionali con applicazioni a problemi di diffusione", CUP E53C23001670001. Moreover, D. Costarelli has been partially supported within the projects: (1) 2023 GNAMPA-INdAM Project “Approssimazione costruttiva e astratta mediante operatori di tipo sampling e loro applicazioni" CUP E53C22001930001, (2) “National Innovation Ecosystem grant ECS00000041 - VITALITY", funded by the European Union - NextGenerationEU under the Italian Ministry of University and Research (MUR), and (3) PRIN 2022 PNRR: “RETINA: REmote sensing daTa INversion with multivariate functional modeling for essential climAte variables characterization", funded by the European Union under the Italian National Recovery and Resilience Plan (NRRP) of NextGenerationEU, under the MUR (Project Code: P20229SH29, CUP: J53D23015950001).


Ethics declarations

Conflict of interest

The author declares that he has no conflict of interest.


Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Coroianu, L., Costarelli, D., Natale, M. et al. The approximation capabilities of Durrmeyer-type neural network operators. J. Appl. Math. Comput. (2024). https://doi.org/10.1007/s12190-024-02146-9


DOI: https://doi.org/10.1007/s12190-024-02146-9

Keywords

- Durrmeyer-type neural network operators

- Order of approximation

- Modulus of continuity

- Quantitative estimates

- Lebesgue spaces