Abstract

Social affective touch is an important aspect of close relationships in humans. It has also been observed in many non-human primate species. Despite the high relevance of behaviours like embraces for personal wellbeing and mental health, they remain vastly under-investigated in psychology. This may be because psychology often relies on a limited repertoire of behavioural measurements such as error rates and reaction time measurements. These are, however, insufficient to capture the multidimensional complexity of highly interactive dyadic behaviours like embraces. Based on recent advances in computational ethology in animal models, the rapidly emerging field of human computational ethology utilizes an accessible repertoire of machine learning methods to track and quantify complex natural behaviours. We highlight how such techniques can be utilized to investigate social touch and which preliminary conditions, motor aspects and higher-level interactions need to be considered. Ultimately, integration of computational ethology with mobile neuroscience techniques such as ultraportable EEG systems will allow for an ecologically valid investigation of social affective touch in humans that will advance psychological research of emotions.

Introduction

Affective interpersonal touch is an important aspect of close relationships across different cultures (Sorokowska et al., 2021) and has been observed not only in humans, but also in non-human primate species (Jablonski, 2021). One of the most common forms of affective interpersonal touch is embracing (Ocklenburg et al., 2018). Embraces may have a positive impact on both physical and mental health. For example, a recent study in older adults found that a greater availability of embraces was associated with higher self-rated health (Rogers-Jarrell et al., 2021). Moreover, embraces have been associated with an attenuation of negative mood on days of interpersonal conflict (Murphy et al., 2018) or if individuals feel particularly lonely (Packheiser et al., 2021b). Furthermore, they have been implicated in buffering against infections (Cohen et al., 2015). Finally, romantic partner embraces have been implicated in the physiological stress response as they attenuate the release of the hormone cortisol following acute stress induction (Berretz et al., 2021). While the exact physiological mechanisms through which embraces reduce cortisol have not yet been examined in detail, the most pervasive explanation comes from the hypothesis that embraces lead to a release of the hormone oxytocin. Oxytocin has been demonstrated to inhibit the synthesis of adrenocorticotropic hormone in the pituitary due to its molecular resemblance to vasopressin, a hormone released during HPA-axis activation (Gimpl & Fahrenholz, 2001). Thus, an increase in oxytocin blood levels should ultimately attenuate the secretion of cortisol in the body.

Despite the high relevance of social touch for relationships and wellbeing (Yoshida & Funato, 2021), embraces remain under-investigated in psychology. A key reason for this is the fact that embraces are highly complex natural behaviours. Behavioural research in humans often relies on limited repertoires of measurements such as response frequencies, error rates and reaction time measurements (Brereton et al., 2022). This kind of experimental set-up is insufficient to investigate the complex behavioural richness of embraces that rely on pose and movements of several different body parts and are affected by a multitude of higher-level interactions with social, biological, and relational variables (see below). A way to mend this issue is a rapidly emerging new research area in behavioural neuroscience that has been termed human computational ethology (HCE; Mobbs et al., 2021).

What is human computational ethology?

HCE is based on recent advances in computational ethology in animal models, which have provided an accessible repertoire of machine learning methods to track and quantify natural behaviour. Briefly, freely moving animals are video recorded, and a convolutional neural network (CNN) is then used to analyse video frames and extract key point coordinates of pre-trained body parts. Widely used software packages for this purpose include LEAP (Pereira et al., 2019) and DeepLabCut (Mathis et al., 2018). Depending on scene complexity, behaviour can be recorded from multiple angles to avoid occlusion and to triangulate tracked body parts for post-hoc 3D reconstruction of poses, for example with the Anipose toolkit (Karashchuk et al., 2021). New techniques such as DANNCE even allow training CNNs directly on 3D video voxels (Dunn et al., 2021), thus learning geometric distances between body parts directly from raw data. The primary output of these video-tracking methods is a long time series of coordinates for each tracked body part. From here, simple kinematics can describe a myriad of useful features such as the relative distance and angles between body parts, e.g., to measure arm length or head orientation, as well as temporal dynamics such as velocity and acceleration, and total paths travelled by a specific body part.
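The kinematic step described above can be illustrated with a minimal Python sketch that derives distances, joint angles, speed, acceleration and path length from a key point time series. The function names, the 2D `(x, y)` tuple format and the `fps` parameter are illustrative assumptions; they are not part of LEAP, DeepLabCut or Anipose, whose output would first need to be exported into this form.

```python
import math

def distance(p, q):
    """Euclidean distance between two (x, y) key points."""
    return math.hypot(q[0] - p[0], q[1] - p[1])

def joint_angle(a, b, c):
    """Angle at key point b (in degrees) between segments b->a and b->c.
    Assumes a and c both differ from b (non-zero segment lengths)."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    dot = v1[0] * v2[0] + v1[1] * v2[1]
    norm = math.hypot(*v1) * math.hypot(*v2)
    # Clamp to [-1, 1] to guard against floating point overshoot.
    return math.degrees(math.acos(max(-1.0, min(1.0, dot / norm))))

def speeds(track, fps):
    """Frame-to-frame speed (coordinate units per second) of one key point."""
    return [distance(p, q) * fps for p, q in zip(track, track[1:])]

def accelerations(track, fps):
    """Frame-to-frame change in speed (units per second squared)."""
    v = speeds(track, fps)
    return [(b - a) * fps for a, b in zip(v, v[1:])]

def path_length(track):
    """Total path travelled by one key point over the recording."""
    return sum(distance(p, q) for p, q in zip(track, track[1:]))

# Hypothetical wrist trajectory sampled at 10 frames per second.
wrist = [(0, 0), (3, 4), (6, 8), (6, 8)]
print(speeds(wrist, fps=10), path_length(wrist))
```

In practice these features would be computed per body part and per individual, and smoothed before differentiation, since frame-wise differences amplify tracking jitter.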

In addition to feature extraction from raw key point data, supervised and unsupervised machine learning approaches are stacked onto the analysis pipeline to assign successive time points to specific behavioural motifs. Unsupervised classification methods such as hidden Markov models (HMM), recurrent neural networks (RNN) and variational autoencoders (VAE) have proven especially useful in identifying behavioural structure from spatiotemporal dynamics without previous training, thus sequencing behaviour into individual motifs or syllables (Calhoun et al., 2019; Luxem et al., 2020; Sun et al., 2021). Moreover, supervised machine learning methods such as support vector machines (SVM) can be trained directly to classify specific behaviours of interest, which are then detected from the stream of raw coordinates (Hsu & Yttri, 2019).

In animal research, methods in computational ethology have been successfully applied to investigate typical behaviour and motor development in rats (Dunn et al., 2021), to detect behavioural differences between transgenic mice (Luxem et al., 2020), and to investigate internal states of fruit flies during courtship (Calhoun et al., 2019). Ironically, although most of the methods developed in computational ethology are inspired by advances in computer vision with direct human use cases, and even pre-trained on human data, computational ethology has not yet reached comparable implementation in human neuroscience (Mobbs et al., 2021).

How can HCE improve the study of social affective touch?

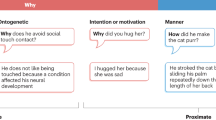

Non-scientific approaches (e.g., blog posts on entertainment websites) often classify embraces in categorical ways and differentiate several different types of embraces such as “the awkward men hug”, “the romantic hug”, “the side hug”, etc. However, in a HCE framework such categorical approaches are not optimal, as they over-simplify the richness of the behaviour. Specifically, there are two main problems. On the one hand, broad categorical labels are subjective and not necessarily mutually exclusive or collectively exhaustive. On the other hand, broad categories do not necessarily account for the spatiotemporal structure or the discrete components of the behaviour described (Anderson & Perona, 2014). HCE offers two main solutions for these problems. First, machine learning classifications are objective, based on the input data and network hyperparameters, and categories are defined statistically. Second, classifications can be traced back to raw data and even subdivided into lower-level motifs. The complexity of specific behaviour categories can thus be described by the structure of their components (Luxem et al., 2020).

Some preliminary considerations must be taken into account when investigating embraces from a HCE perspective. First, the number of involved individuals and their different roles in the embrace will be crucial to evaluate the social situation. Second, the pose and movement of the body parts of interest should be carefully considered to include all the relevant kinematic information. Additionally, the temporal dynamics make it possible to describe the precise timing and synchronicity of the embrace, as well as to decompose the structural components of the overall behaviour. Furthermore, higher-order interactions beyond the coordinated embrace of the involved partners should be considered, such as situational variables, affective context, and personality traits. These will be highlighted in the following sections to lay the groundwork for HCE research on social touch and affective behavioural neuroscience.

Simplifying the number of interaction partners

The most widely investigated form of embrace is the dyadic embrace. However, people sometimes also engage in self-hugging (Dreisoerner et al., 2021) or group hugging. Given that only a single published academic paper exists on these unusual forms of embraces, they certainly deserve investigation. In the context of HCE, these forms of embraces could affect technical requirements in different ways.

First, increasing scene complexity with a high number of individuals significantly complicates video data collection. Especially in high-proximity interactions with body contact and entangled poses, occlusion of body parts by other individuals will interrupt continuous tracking of each individual’s pose, thus literally occluding information. Figure 1 shows that no single camera perspective captures the total scene; rather, every additional view offers new, previously occluded information. A larger number of cameras is likely needed to record a group of people hugging from multiple angles, and the amount of additional data will increase in proportion to scene complexity. It may therefore be advisable to start with the dyadic embrace or a self-hug before tackling multiple individuals.

Figure 1: Sequence of a typical dyadic embrace (from left to right) recorded with a synchronized camera array (from top to bottom) covering a field of 360°. Note how each camera angle contains unique information occluded in other camera perspectives. All individuals shown here gave their permission to use this figure as part of this publication.

Second, multi-agent scenes in general pose an additional challenge for machine learning tracking algorithms. These need not only to track an increased number of body parts, but must also identify the multiple agents, assign each body part to the correct agent, and conserve that assignment over time. Although new advances continuously improve performance on such tasks (Lauer et al., 2021), this still represents an added challenge.
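The problem of conserving identities over time can be illustrated with a deliberately simple sketch: detections in a new frame are assigned to existing tracks so that the total displacement is minimal. This brute-force matching is an assumption made for illustration only and is not the method of Lauer et al. (2021). It assumes exactly one detection per tracked individual in every frame and only scales to small groups; the function name `match_identities` is hypothetical.

```python
from itertools import permutations
import math

def match_identities(prev_positions, new_detections):
    """Reorder new_detections so that index i continues track i.

    Tries every possible assignment and keeps the one with the smallest
    summed distance between each track's previous position and its
    assigned detection. Assumes equally many tracks and detections.
    """
    n = len(prev_positions)
    best_order, best_cost = None, math.inf
    for order in permutations(range(n)):
        cost = sum(
            math.dist(prev_positions[i], new_detections[j])
            for i, j in enumerate(order)
        )
        if cost < best_cost:
            best_order, best_cost = order, cost
    return [new_detections[j] for j in best_order]

# Hypothetical torso centroids of two people; detections arrive swapped.
previous = [(0.0, 0.0), (10.0, 0.0)]
detected = [(9.5, 0.2), (0.4, 0.1)]
print(match_identities(previous, detected))
```

During an embrace the centroids of both partners nearly coincide, which is exactly where such a distance-only heuristic would be expected to fail and where appearance cues or multi-view information become necessary.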

Lastly, in dyadic embraces or group hugs, the individuals’ behaviours are not independent of each other. In these synchronized interactions, individuals not only react and adjust their pose and movement to their interaction partner, but each can assume a different role, such as leader (initiator) or follower. These roles are evidently more difficult to track in larger groups, where embrace reactions propagate either in a sequence or through a more complex network. To this day, there is little to no data on how personality traits or demographic variables are associated with the initiation of social touch. While some studies show an increase in somatosensory responses in extroverted individuals (Schaefer et al., 2012), it is entirely unclear if similar personality domains are also associated with an increased likelihood to initiate social touch. For gender differences, there have been reports that men initiate social touch in the relationship more often at younger ages (Willis & Dodds, 1998). This imbalance seems to disappear at around 30 to 40 years of age, when both men and women in the relationship engage equally in social touch. A problem of such studies, however, is their reliance on self-reports, which might not accurately reflect reality due to, for example, recall biases. HCE could provide a suitable tool to tackle these issues, as objective parameters can be used to classify the initiator of the touching dyad.
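One conceivable objective parameter for classifying the initiator is the onset of arm movement: whoever starts moving first is labelled as the initiator. The sketch below assumes that a per-frame arm speed series is already available for both partners. The speed threshold, the `min_frames` criterion and the function names are hypothetical choices that would need empirical validation.

```python
def movement_onset(speed, threshold, min_frames=3):
    """Index of the first frame at which speed exceeds the threshold and
    stays above it for min_frames consecutive frames; None if never."""
    run = 0
    for i, s in enumerate(speed):
        run = run + 1 if s > threshold else 0
        if run == min_frames:
            return i - min_frames + 1
    return None

def classify_initiator(speed_a, speed_b, threshold, fps):
    """Return (label, latency in seconds) for a dyad.

    label is "A", "B" or "tie"; (None, None) is returned if either
    partner never shows a sustained movement onset.
    """
    onset_a = movement_onset(speed_a, threshold)
    onset_b = movement_onset(speed_b, threshold)
    if onset_a is None or onset_b is None:
        return None, None
    if onset_a == onset_b:
        return "tie", 0.0
    latency = abs(onset_a - onset_b) / fps
    return ("A" if onset_a < onset_b else "B"), latency

# Hypothetical arm speeds of partners A and B, recorded at 2 frames per second.
speed_a = [0, 0, 5, 6, 7, 8, 8]
speed_b = [0, 0, 0, 0, 5, 6, 7]
print(classify_initiator(speed_a, speed_b, threshold=1, fps=2))
```

The same onset latency could serve as a continuous measure of lead and follow dynamics rather than only as a binary label.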

Considering pose and movement of multiple body parts

Several different motor aspects of embraces are relevant within a HCE framework. Embraces can vary substantially regarding the position and spatial closeness of the involved individuals. Most people embrace while facing each other, but it is also possible to hug the side or the back of a person. Tracking the overall pose plays an important role in analysing embraces, especially since the synchronicity of body parts among individuals may differ between embraces, revealing specific characteristics. The main points of analysis would be the arms (are both arms used, or only one?) and the hands (where are they placed?), ideally from both interaction partners. Moreover, the upper body (do the involved individuals make chest and/or shoulder contact?), the lower body (do the involved individuals make leg contact, or is there a gap between their lower bodies?), and the head (do the individuals make head contact, e.g., by kissing or putting the head on the shoulder of the other person?) should be taken into account. Given the relevance of the questions above, it seems appropriate to track a complete pose of the entire body, rather than focusing on single body parts.

Incidentally, greater pressure in a hug has been suggested to indicate greater positive affection towards the hugged person (Forsell & Åström, 2012). Accordingly, the tightness of the embrace, or degree of chest compression, is a variable that may need to be measured with multiple cameras with a sidewise perspective and 3D tracking. This adds depth information to the individuals’ poses, which can then be compared with their relative back-to-back distance. Note that this feature can only be calculated by combining multiple views from figure 1, thus emphasizing the importance of 3D pose estimation. On top of visual information that can be used for classification, pressure devices can be worn by the participants during embraces to provide additional tactile input for the predictive model (Koshar & Knowles, 2020). Training the model on multimodal data should thus provide even more accurate predictions of the underlying emotionality of the embrace or the relationship between the touching dyad.
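As a rough illustration of how tightness might be derived from 3D poses, the sketch below compares the back-to-back distance of two individuals with the sum of their resting chest depths. The index itself, the choice of a mid-back key point and the assumption that resting chest depth is measured beforehand are all hypothetical; this is not an established or validated measure.

```python
import math

def compression_index(back_a, back_b, depth_a, depth_b):
    """Hypothetical tightness index for a frontal embrace.

    back_a, back_b: 3D (x, y, z) mid-back key points in metres.
    depth_a, depth_b: resting front-to-back chest depth in metres.

    Returns a value above 0 when the backs are closer together than the
    two resting chest depths allow (compression), roughly 0 when the
    chests just touch, and below 0 when a gap remains.
    """
    back_to_back = math.dist(back_a, back_b)
    return 1.0 - back_to_back / (depth_a + depth_b)

# Hypothetical frame: backs 0.45 m apart, each chest 0.25 m deep at rest.
print(compression_index((0.0, 0.0, 1.3), (0.45, 0.0, 1.3), 0.25, 0.25))
```

Such a camera-based estimate could then be compared against the readings of worn pressure devices to check whether it tracks the applied pressure at all.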

A whole-body plan will also allow the detection of individual differences that may otherwise have gone unaccounted for. While most embraces involve both arms and hands, most individuals prefer one arm over the other for the movement leading into an embrace (Turnbull et al., 1995). It has been shown that people on average show a significant bias to go into an embrace with the right arm first (Packheiser et al., 2019a), but this bias is modulated by affective parameters (see below). Whether the left arm or the right arm is leading the embrace is therefore an important aspect of embracing that should be assessed in a computational ethology approach to embracing. In addition to assessing right- or left-sidedness, accurate movement tracking would make it possible to quantify the exact rightward or leftward tilt or lateral flexion of the upper body, as well as the relative elevation of the leading shoulder and the elevation of the elbows, while tracking hip rotation and foot position at the same time. Furthermore, pose and location must be egocentrically aligned to the interaction partner to account for relative movement in joint action to one side.
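Lateral flexion and relative shoulder elevation can be sketched from four key points, as shown below. The sketch assumes that coordinates have already been transformed into a body-centred frontal plane in which x points towards the person's right and y points upwards; this frame, the key point names and the sign convention are illustrative assumptions.

```python
import math

def midpoint(p, q):
    """Midpoint of two (x, y) key points."""
    return ((p[0] + q[0]) / 2, (p[1] + q[1]) / 2)

def lateral_tilt_deg(l_shoulder, r_shoulder, l_hip, r_hip):
    """Signed trunk tilt relative to vertical, in degrees.

    Measured along the line from the hip midpoint to the shoulder
    midpoint. Positive values indicate a tilt towards the person's
    right (+x), negative values a tilt towards the left.
    """
    sx, sy = midpoint(l_shoulder, r_shoulder)
    hx, hy = midpoint(l_hip, r_hip)
    return math.degrees(math.atan2(sx - hx, sy - hy))

def shoulder_elevation_diff(l_shoulder, r_shoulder):
    """Height of the right shoulder minus height of the left shoulder."""
    return r_shoulder[1] - l_shoulder[1]

# Hypothetical upright pose with a slightly raised right shoulder.
print(lateral_tilt_deg((-0.2, 1.5), (0.2, 1.5), (-0.15, 1.0), (0.15, 1.0)))
print(shoulder_elevation_diff((-0.2, 1.5), (0.2, 1.55)))
```

Because the sign convention is tied to each person's own body frame, values from both partners remain comparable even though they face each other.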

The information on sidedness and rotation can also be associated with information on the initiating individual. Since embraces or kisses are usually mutual, the sidedness is determined by the initiator. If the initiator leads for example with the right arm, the partner of the dyad usually must do the same to successfully execute the embrace. If the underlying emotionality is predicted from the leading arm of the embrace, it becomes critical to correctly identify the initiator as the direction most likely reflects this person’s mental state.

Considering the timing and structure of embraces

The average embrace lasts about 3.17 seconds (Nagy, 2011), but there is substantial variability in the temporal dynamics of embraces, with some lasting several minutes. It has been shown that the duration of an embrace is associated with its affective value, with 1-second embraces being rated as less pleasant compared to 5-second or 10-second embraces (Dueren et al., 2021). Thus, it can be assumed that longer embraces are associated with a strongly positive emotional valence of the relationship between the involved individuals or a high emotional arousal of the situation. However, the relationship between duration of an embrace and emotional valence is unlikely to be linear. After a certain duration (e.g., several minutes), an embrace may start to feel awkward even between partners or close friends. Clearly, more empirical research is needed to elucidate this association.

Assessing the duration of the embrace is therefore a critical component of a HCE approach to embracing. Tracking body movement and pose over time makes it possible to analyse temporal dynamics at a higher resolution, using both the continuous embrace trajectories and discrete decompositions into distinct phases of the embrace. This moves far beyond manually recorded start and stop times.
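A minimal sketch of such a decomposition is given below. It assumes a per-frame distance between the two partners (for example, chest to chest) and a single embrace per recording, and it splits the recording into approach, hold and release phases using one contact threshold. The threshold, the phase labels and the function names are hypothetical; an unsupervised motif segmentation would replace this rule in a full HCE pipeline.

```python
def embrace_interval(distances, contact_threshold):
    """First and last frame index at which the inter-person distance is
    at or below the contact threshold; None if contact never occurs."""
    inside = [i for i, d in enumerate(distances) if d <= contact_threshold]
    if not inside:
        return None
    return inside[0], inside[-1]

def embrace_duration(distances, contact_threshold, fps):
    """Duration of the contact interval in seconds (0.0 if no contact)."""
    interval = embrace_interval(distances, contact_threshold)
    if interval is None:
        return 0.0
    start, end = interval
    return (end - start + 1) / fps

def label_phases(distances, contact_threshold):
    """Label every frame as 'approach', 'hold' or 'release'."""
    interval = embrace_interval(distances, contact_threshold)
    if interval is None:
        return ["no_contact"] * len(distances)
    start, end = interval
    labels = []
    for i in range(len(distances)):
        if i < start:
            labels.append("approach")
        elif i <= end:
            labels.append("hold")
        else:
            labels.append("release")
    return labels

# Hypothetical chest-to-chest distances (metres) at 2 frames per second.
d = [1.0, 0.6, 0.2, 0.1, 0.1, 0.2, 0.7, 1.1]
print(embrace_duration(d, contact_threshold=0.25, fps=2), label_phases(d, 0.25))
```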

Moreover, the temporal dynamics of individual trajectories of the above-mentioned body parts may be relevant information to classify embraces. For example, it could be assumed that in more emotional situations, a faster arm movement in the embracing trajectory towards the other person may be observed than in an emotionally neutral situation. In addition to measuring duration, speed, and latency of the overall embrace and its individual components, continuous recordings in computational ethology make it possible to investigate synchronicity between individuals. The window of analysis could also be extended to include the seconds prior to the initiation as well as those after the embrace. Furthermore, a recent study found that intimate social touch in particular is beneficial for mental well-being (Mohr et al., 2021). HCE could therefore also be used to identify the relationship between embracing partners, i.e., whether they are, for example, romantic partners or platonic friends.

Embraces can also occur together with one or more additional forms of nonverbal behaviour. Common co-occurrences include kissing on the lips, cheeks or the forehead, shoulder clapping, or patting the back. Different varieties of embraces could be analysed by isolating individual behavioural motifs, or units of organized movement, and describing the composition of each embrace on a whole-body level as a complex temporal sequence of behaviour.

Cradling and HCE

A behaviour that is closely related to embracing is the cradling of babies and young children. This lateralized human and non-human primate behaviour (Hopkins, 2004; Packheiser et al., 2019b) likely represents the most investigated instance of embraces, as it has been studied since the pioneering cross-species study by Lee Salk (Salk, 1960). Cradling seems to be independent of culture (Saling & Cooke, 1984) and is only mildly influenced by other forms of lateralization such as handedness (Packheiser et al., 2019b). Many studies have suggested that the cradling bias is an indicator of the mental or emotional state of the cradling adult (Malatesta, Marzoli, Rapino, & Tommasi, 2019b; Packheiser et al., 2020; Reissland et al., 2009). It has also been used as a cross-species marker for social pressure, attachment style and the attitude towards the cradled individual (Boulinguez-Ambroise et al., 2020; Hendriks et al., 2011; Malatesta et al., 2020; Malatesta, Marzoli, Morelli, Pivetti, & Tommasi, 2021a; Malatesta, Marzoli, Piccioni, & Tommasi, 2019a; Malatesta, Marzoli, Prete, & Tommasi, 2021b; Malatesta, Marzoli, Rapino, & Tommasi, 2019b; Morgan et al., 2019). Moreover, an atypical right-sided cradling bias is significantly increased in individuals diagnosed with autism spectrum disorders (Herdien et al., 2021). Finally, cradling side bias has been suggested to potentially serve as a predictor for the neural development of the cradled child (Malatesta, Marzoli, Prete, & Tommasi, 2021b; Vauclair, 2022). All these results are especially noteworthy since they could be observed based on a rather coarse distinction into left- vs. right-sided cradling biases in largely cross-sectional rather than longitudinal data.

Since cradling research suffers from many of the methodological problems that we outlined for embracing, the application of HCE could provide a suitable method to investigate the predictive value of the cradling bias more intricately for either part of the cradling dyad. Using additional information other than the side bias (e.g., holding style, duration, dynamics, or intensity), more trait or state variables associated with cradling could be assessed. In contrast to embracing, cradling is mostly determined by the cradling adult, as the infant only passively influences the cradling style (e.g., through its weight or height). Thus, there is likely less interdependence that needs to be disentangled in this form of dyadic behaviour than for embracing between two adults.

Considering dynamic interactions

To embrace, to be embraced, or both? As mentioned before, it is essential not only to track the entire body plan of each individual but also to estimate the relative pose of both interaction partners towards each other. For one thing, the embracing pose of partner A could indicate important characteristics, but only if interaction partner B is not 2 metres away. Similarly, two lead and follow dynamics that are identical in shape and speed would have completely different implications if the latency between the leading and the following movement were larger in one than in the other. Thus, the synchronicity, both temporal and spatial (see figure 2), or interaction between embracing individuals is key in a HCE framework of embraces.
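Temporal synchronicity between partners can be sketched as a lagged correlation between two movement signals, for instance the arm speed of partner A and of partner B. The following stdlib-only example searches for the lag at which the two series are most strongly correlated. The signal choice, the `max_lag` parameter and the function names are assumptions, and the sketch expects equally long series with `max_lag` clearly shorter than the recording.

```python
import math

def pearson(x, y):
    """Pearson correlation of two equally long sequences (0.0 if either
    sequence has no variance)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / math.sqrt(vx * vy)

def best_lag(a, b, max_lag):
    """Lag (in frames) at which series b correlates best with series a.

    A positive lag means b follows a; a negative lag means b leads a.
    Returns (lag, correlation at that lag).
    """
    best = (0, -math.inf)
    for lag in range(-max_lag, max_lag + 1):
        if lag >= 0:
            x, y = a[:len(a) - lag], b[lag:]
        else:
            x, y = a[-lag:], b[:len(b) + lag]
        r = pearson(x, y)
        if r > best[1]:
            best = (lag, r)
    return best

# Hypothetical arm speeds: partner B repeats partner A's profile two frames later.
a = [0, 1, 2, 3, 2, 1, 0, 0, 0, 0]
b = [0, 0, 0, 1, 2, 3, 2, 1, 0, 0]
lag, r = best_lag(a, b, max_lag=3)
print(lag, round(r, 3))
```

Dividing the resulting lag by the frame rate gives the latency in seconds, and the peak correlation provides a simple index of how closely the follower mirrors the leader.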

Figure 2: Sequence of a temporally synchronous embrace. Each panel represents a single frame at 1 Hz, for a 4-second-long embrace. Note how typical actions such as eye contact, approach, embrace, and squeeze are in synchrony between interaction partners. All individuals shown here gave their permission to use this figure as part of this publication.

Considering higher order interactions

Importantly, embraces are not solely defined by motor behaviour, but show higher-level interactions with several situational, affective and personality variables. While the basic motor behaviour could resemble or differ among different embraces, the psychological implications of the embrace could vary drastically between contexts.

The emotional valence of the relationship between the involved individuals is a highly important aspect of embracing. Embracing individuals often do have a prior relational history, but not always. Common constellations are embraces between romantic partners, between parents and children, between family members, and between friends. For most relationships that involve embracing, the emotional valence is likely to be positive, but there are situations in which embraces occur in emotionally neutral situations (such as hugging as a greeting between strangers who are introduced to each other in a common social context). Negative emotional valence can also occur (e.g., when family members or friends are estranged from each other, but still hug in a social context to maintain a façade).

Embraces also differ among contexts and can occur in emotionally positive situations (such as embracing the romantic partner to communicate affection), emotionally neutral situations (such as embracing someone briefly as a greeting) and emotionally negative situations (such as embracing someone to console them at a funeral). Importantly, it has been shown that affective state can affect the sidedness dimension of embracing, with a reduced rightward bias in emotional compared to neutral situations, a finding that has been linked to emotional lateralization in the brain (Packheiser et al., 2019a). Anecdotal evidence suggests that embraces in situations with higher emotional arousal may be associated with a longer duration of the hug and greater physical closeness of the individuals.

While social touch is a universal human behaviour, it is modulated by culture, with cultural conventions up- or down-regulating the average magnitude of social affective touching (Suvilehto et al., 2015). This is reflected by the finding that country of residence has a strong effect on the prevalence of social touch, with a higher diversity of social touch behaviour being observed among younger, female and liberal people and in warmer, less conservative and less religious countries (Sorokowska et al., 2021). Therefore, culture may be an important factor influencing embraces.

Not everyone enjoys social touch to the same extent. Personality is an important factor, with the extraversion and openness facets of the Big Five personality model showing a positive correlation with predisposition to social touch (Trotter et al., 2018), whereas the neuroticism domain has been negatively associated with a preference for social touch (Thiebaut et al., 2021).

Moreover, trauma can affect individual comfortable interpersonal distance, with individuals with anxiety and PTSD preferring to be farther away from other people than healthy controls (Haim-Nachum et al., 2021). Thus, personality factors and effects of trauma on social touch preferences need to be integrated as higher-level interaction factors in computational ethology models of embracing.

While some studies report gender differences in embracing, with male-male dyads being less likely to engage in embracing than female-female or male-female dyads (Packheiser et al., 2019a), other studies did not observe gender differences in hugging (Nagy, 2011). For non-binary individuals, we were unfortunately unable to identify published studies on embracing. In general, it is largely unclear whether gender has any causative influence on embracing or whether results reporting fewer embraces in men are caused by harmful stereotypes such as the “awkward men hug”. A recent study also reported a greater use of embodied strategies in females compared to males (Marzoli et al., 2017), which may be relevant for sex differences in social touch and should be investigated in future studies.

Further research is needed to clarify these questions. One solution would be to classify a myriad of subtypes of embraces represented in the data while conserving the hierarchical embrace category. This type of model would help investigate differences in behaviour and identify sets of features that can separate subtypes of embrace from one another, defining a decision boundary from situational, affective or personality variables. This would, in turn, allow the reverse prediction of the affective or situational components of an interaction from the observed embrace behaviour.

Solving interactions in HCE

In most machine learning applications, covariates and confounders are accounted for by training models on a balanced dataset as representative as possible for the target population. Learning from a high number of embraces between individuals differing in gender, height, age and personal relationship or social context will result in a model that generalizes complex embrace behaviour well among these different conditions. Similarly, to account for interactions between specific variables, one would only need to include this data into a common multivariate dataset. Fully connected layers in neural networks can then learn representations from a combination of multiple features (i.e., higher order interactions) rather than individual weights for each parameter, as in the classic multiple regression approach (i.e., main effects). For example, training a machine learning model on pose data from one individual during the embrace would learn to classify the basic motor behaviour of extending the arms, side bending the trunk and flexing the forearms, but would completely disregard the presence of the interaction partner. Including data from both individuals into the same dataset, as well as information on age and individual history, would generate a complete set of interaction features, thus accounting for relative behaviour between individuals, as well as moderating effects of age, gender, situational and affective valence, personality, or personal history.

A different approach to include higher-level interactions in HCE would be to include environmental information in the model in the form of a GLM-HMM. A recent study utilized a generalized linear model (GLM) to train an average ‘filter’ and convert time series data into probability estimates of a given outcome, conditional on specific states of a hidden Markov model (HMM) (Calhoun et al., 2019). Similarly, the type of embrace could be estimated from motor behaviour conditioned on probability states based on gender, age, or other personality variables.
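For orientation, one generic way to write down a GLM-HMM is sketched below. The notation is an assumption introduced here and is not necessarily the exact parameterization used by Calhoun et al. (2019): $y_t$ denotes the observed behaviour at time $t$ (e.g., an embrace subtype), $z_t$ the hidden state, $\mathbf{u}_t$ the vector of input features (e.g., partner kinematics or contextual covariates), and $\mathbf{w}_{k,c}$ and $\mathbf{v}_{i,j}$ the state-specific weight vectors ('filters').

```latex
% Emission model: a state-specific multinomial GLM maps inputs to behaviour
P(y_t = c \mid z_t = k, \mathbf{u}_t)
  = \frac{\exp\left(\mathbf{w}_{k,c}^{\top}\mathbf{u}_t\right)}
         {\sum_{c'} \exp\left(\mathbf{w}_{k,c'}^{\top}\mathbf{u}_t\right)}

% Transition model: switching between hidden states may also depend on inputs
P(z_{t+1} = j \mid z_t = i, \mathbf{u}_t)
  = \frac{\exp\left(\mathbf{v}_{i,j}^{\top}\mathbf{u}_t\right)}
         {\sum_{j'} \exp\left(\mathbf{v}_{i,j'}^{\top}\mathbf{u}_t\right)}
```

Under this formulation, the same motor input can lead to different predicted behaviour depending on the current hidden state, which is what allows contextual variables to act as moderators rather than as additional main effects.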

Conclusion

Social touch remains an under-researched area within psychology. We hope that the topics discussed here might be helpful in advancing empirical HCE studies on embracing. One critical step forward for HCE studies on embracing and other forms of social touch will be the integration of HCE data with data gathered using portable neuroscientific measurement devices. Recently, the first study using mobile EEG to assess electrophysiological brain responses during real-life embracing has been published (Packheiser et al., 2021a). Moreover, it has been suggested that measuring electrodermal activity is a useful tool to assess individual sensitivity to social touch (Nava et al., 2021). Furthermore, tactile sensors (Dvořák et al., 2021) and movement tracking sensors (Prince et al., 2021) can complement HCE approaches by providing information on some critical aspects of embraces that may not be fully assessed by analysing visual data, such as the pressure applied during a hug. Eventually, advances in HCE will lead to measurement accuracies matching those of such portable recording devices to quantify neural activity and behaviour. Ultimately, the integration of HCE with such mobile measurement devices will allow for an ecologically more valid investigation of social touch in affective behavioural neuroscience.

References

Anderson, D. J., & Perona, P. (2014). Toward a science of computational ethology. Neuron, 84(1), 18–31. https://doi.org/10.1016/j.neuron.2014.09.005

Berretz, G., Cebula, C., Wortelmann, B. M., Papadopoulou, P., Wolf, O. T., Ocklenburg, S., & Packheiser, J. (2021). Romantic partner embraces reduce cortisol release after acute stress induction in women but not in men. https://doi.org/10.31234/osf.io/32bde

Boulinguez-Ambroise, G., Pouydebat, E., Disarbois, É., & Meguerditchian, A. (2020). Human-like maternal left-cradling bias in monkeys is altered by social pressure. Scientific Reports, 10(1), 11036. https://doi.org/10.1038/s41598-020-68020-3

Brereton, J. E., Tuke, J., & Fernandez, E. J. (2022). A simulated comparison of behavioural observation sampling methods. Scientific Reports, 12(1), 3096. https://doi.org/10.1038/s41598-022-07169-5

Calhoun, A. J., Pillow, J. W., & Murthy, M. (2019). Unsupervised identification of the internal states that shape natural behavior. Nature Neuroscience, 22(12), 2040–2049. https://doi.org/10.1038/s41593-019-0533-x

Cohen, S., Janicki-Deverts, D., Turner, R. B., & Doyle, W. J. (2015). Does hugging provide stress-buffering social support? A study of susceptibility to upper respiratory infection and illness. Psychological Science, 26(2), 135–147. https://doi.org/10.1177/0956797614559284

Dreisoerner, A., Junker, N. M., Schlotz, W., Heimrich, J., Bloemeke, S., Ditzen, B., & van Dick, R. (2021). Self-soothing touch and being hugged reduce cortisol responses to stress: A randomized controlled trial on stress, physical touch, and social identity. Comprehensive Psychoneuroendocrinology, 8, 100091. https://doi.org/10.1016/j.cpnec.2021.100091

Dueren, A. L., Vafeiadou, A., Edgar, C., & Banissy, M. J. (2021). The influence of duration, arm crossing style, gender, and emotional closeness on hugging behaviour. Acta Psychologica, 221, 103441. https://doi.org/10.1016/j.actpsy.2021.103441

Dunn, T. W., Marshall, J. D., Severson, K. S., Aldarondo, D. E., Hildebrand, D. G. C., Chettih, S. N., Wang, W. L., Gellis, A. J., Carlson, D. E., Aronov, D., Freiwald, W. A., Wang, F., & Ölveczky, B. P. (2021). Geometric deep learning enables 3D kinematic profiling across species and environments. Nature Methods, 18(5), 564–573. https://doi.org/10.1038/s41592-021-01106-6

Dvořák, N., Chung, K., Mueller, K., & Ku, P.-C. (2021). Ultrathin Tactile Sensors with Directional Sensitivity and a High Spatial Resolution. Nano Letters, 21(19), 8304–8310. https://doi.org/10.1021/acs.nanolett.1c02837

Forsell, L. M., & Åström, J. A. (2012). Meanings of Hugging: From Greeting Behavior to Touching Implications. Comprehensive Psychology, 1, 02.17.21.CP.1.13. https://doi.org/10.2466/02.17.21.CP.1.13

Gimpl, G., & Fahrenholz, F. (2001). The oxytocin receptor system: Structure, function, and regulation. Physiological Reviews, 81(2), 629–683. https://doi.org/10.1152/physrev.2001.81.2.629

Haim-Nachum, S., Sopp, M. R., Michael, T., Shamay-Tsoory, S., & Levy-Gigi, E. (2021). No distance is too far between friends: Associations of comfortable interpersonal distance with PTSD and anxiety symptoms in Israeli firefighters. European Journal of Psychotraumatology, 12(1), 1899480. https://doi.org/10.1080/20008198.2021.1899480

Hendriks, A. W., van Rijswijk, M., & Omtzigt, D. (2011). Holding-side influences on infant's view of mother's face. Laterality, 16(6), 641–655. https://doi.org/10.1080/13576500903468904

Herdien, L., Malcolm-Smith, S., & Pileggi, L.-A. (2021). Leftward cradling bias in males and its relation to autistic traits and lateralised emotion processing. Brain and Cognition, 147, 105652. https://doi.org/10.1016/j.bandc.2020.105652

Hopkins, W. D. (2004). Laterality in Maternal Cradling and Infant Positional Biases: Implications for the Development and Evolution of Hand Preferences in Nonhuman Primates. International Journal of Primatology, 25(6), 1243–1265. https://doi.org/10.1023/B:IJOP.0000043961.89133.3d

Hsu, A. I., & Yttri, E. A. (2019). An Open Source Unsupervised Algorithm for Identification and Fast Prediction of Behaviors. https://doi.org/10.1101/770271

Jablonski, N. G. (2021). Social and affective touch in primates and its role in the evolution of social cohesion. Neuroscience, 464, 117–125. https://doi.org/10.1016/j.neuroscience.2020.11.024

Karashchuk, P., Rupp, K. L., Dickinson, E. S., Walling-Bell, S., Sanders, E., Azim, E., Brunton, B. W., & Tuthill, J. C. (2021). Anipose: A toolkit for robust markerless 3D pose estimation. Cell Reports, 36(13), 109730. https://doi.org/10.1016/j.celrep.2021.109730

Koshar, P., & Knowles, M. L. (2020). Do Hugs and Their Constituent Components Reduce Self-Reported Anxiety, Stress, and Negative Affect? Psi Chi Journal of Psychological Research, 25(2), 181–191. https://doi.org/10.24839/2325-7342.JN25.2.181

Lauer, J., Zhou, M., Ye, S., Menegas, W., Nath, T., Rahman, M. M., Di Santo, V., Soberanes, D., Feng, G., Murthy, V. N., Lauder, G., Dulac, C., Mathis, M. W., & Mathis, A. (2021). Multi-animal pose estimation and tracking with DeepLabCut. https://doi.org/10.1101/2021.04.30.442096

Luxem, K., Fuhrmann, F., Kürsch, J., Remy, S., & Bauer, P. (2020). Identifying Behavioral Structure from Deep Variational Embeddings of Animal Motion. https://doi.org/10.1101/2020.05.14.095430

Malatesta, G., Marzoli, D., Morelli, L., Pivetti, M., & Tommasi, L. (2021a). The Role of Ethnic Prejudice in the Modulation of Cradling Lateralization. Journal of Nonverbal Behavior, 45(2), 187–205. https://doi.org/10.1007/s10919-020-00346-y

Malatesta, G., Marzoli, D., Piccioni, C., & Tommasi, L. (2019a). The Relationship Between the Left-Cradling Bias and Attachment to Parents and Partner. Evolutionary Psychology: An International Journal of Evolutionary Approaches to Psychology and Behavior, 17(2), 1474704919848117. https://doi.org/10.1177/1474704919848117

Malatesta, G., Marzoli, D., Prete, G., & Tommasi, L. (2021b). Human Lateralization, Maternal Effects and Neurodevelopmental Disorders. Frontiers in Behavioral Neuroscience, 15, 668520. https://doi.org/10.3389/fnbeh.2021.668520

Malatesta, G., Marzoli, D., Rapino, M., & Tommasi, L. (2019b). The left-cradling bias and its relationship with empathy and depression. Scientific Reports, 9(1), 6141. https://doi.org/10.1038/s41598-019-42539-6

Malatesta, G., Marzoli, D., & Tommasi, L. (2020). Keep a Left Profile, Baby! The Left-Cradling Bias Is Associated with a Preference for Left-Facing Profiles of Human Babies. Symmetry, 12(6), 911. https://doi.org/10.3390/sym12060911

Marzoli, D., Lucafò, C., Rescigno, C., Mussini, E., Padulo, C., Prete, G., D'Anselmo, A., Malatesta, G., & Tommasi, L. (2017). Sex-specific effects of posture on the attribution of handedness to an imagined agent. Experimental Brain Research, 235(4), 1163–1171. https://doi.org/10.1007/s00221-017-4886-7

Mathis, A., Mamidanna, P., Cury, K. M., Abe, T., Murthy, V. N., Mathis, M. W., & Bethge, M. (2018). DeepLabCut: Markerless pose estimation of user-defined body parts with deep learning. Nature Neuroscience, 21(9), 1281–1289. https://doi.org/10.1038/s41593-018-0209-y

Mobbs, D., Wise, T., Suthana, N., Guzmán, N., Kriegeskorte, N., & Leibo, J. Z. (2021). Promises and challenges of human computational ethology. Neuron. Advance online publication. https://doi.org/10.1016/j.neuron.2021.05.021

von Mohr, M., Kirsch, L. P., & Fotopoulou, A. (2021). Social touch deprivation during COVID-19: Effects on psychological wellbeing and craving interpersonal touch. Royal Society Open Science, 8(9), 210287. https://doi.org/10.1098/rsos.210287

Morgan, B., Hunt, X., Sieratzki, J., Woll, B., & Tomlinson, M. (2019). Atypical maternal cradling laterality in an impoverished South African population. Laterality, 24(3), 320–341. https://doi.org/10.1080/1357650X.2018.1509077

Murphy, M. L. M., Janicki-Deverts, D., & Cohen, S. (2018). Receiving a hug is associated with the attenuation of negative mood that occurs on days with interpersonal conflict. PLoS ONE, 13(10), e0203522. https://doi.org/10.1371/journal.pone.0203522

Nagy, E. (2011). Sharing the moment: the duration of embraces in humans. Journal of Ethology, 29(2), 389–393. https://doi.org/10.1007/s10164-010-0260-y

Nava, E., Etzi, R., Gallace, A., & Macchi Cassia, V. (2021). Socially-relevant Visual Stimulation Modulates Physiological Response to Affective Touch in Human Infants. Neuroscience, 464, 59–66. https://doi.org/10.1016/j.neuroscience.2020.07.007

Ocklenburg, S., Packheiser, J., Schmitz, J., Rook, N., Güntürkün, O., Peterburs, J., & Grimshaw, G. M. (2018). Hugs and kisses - The role of motor preferences and emotional lateralization for hemispheric asymmetries in human social touch. Neuroscience and Biobehavioral Reviews, 95, 353–360. https://doi.org/10.1016/j.neubiorev.2018.10.007

Packheiser, J., Berretz, G., Rook, N., Bahr, C., Schockenhoff, L., Güntürkün, O., & Ocklenburg, S. (2021a). Investigating real-life emotions in romantic couples: A mobile EEG study. Scientific Reports, 11(1), 1142. https://doi.org/10.1038/s41598-020-80590-w

Packheiser, J., Malek, I. M., Reichart, J. S., Katona, L., Luhmann, M., & Ocklenburg, S. (2021b). The association of embracing with daily mood and general life satisfaction: An ecological momentary assessment study. https://doi.org/10.31234/osf.io/rxbcv

Packheiser, J., Rook, N., Dursun, Z., Mesenhöller, J., Wenglorz, A., Güntürkün, O., & Ocklenburg, S. (2019a). Embracing your emotions: Affective state impacts lateralisation of human embraces. Psychological Research, 83(1), 26–36. https://doi.org/10.1007/s00426-018-0985-8

Packheiser, J., Schmitz, J., Berretz, G., Papadatou-Pastou, M., & Ocklenburg, S. (2019b). Handedness and sex effects on lateral biases in human cradling: Three meta-analyses. Neuroscience and Biobehavioral Reviews, 104, 30–42. https://doi.org/10.1016/j.neubiorev.2019.06.035

Packheiser, J., Schmitz, J., Metzen, D., Reinke, P., Radtke, F., Friedrich, P., Güntürkün, O., Peterburs, J., & Ocklenburg, S. (2020). Asymmetries in social touch - motor and emotional biases on lateral preferences in embracing, cradling and kissing. Laterality, 25(3), 325–348. https://doi.org/10.1080/1357650X.2019.1690496

Pereira, T. D., Aldarondo, D. E., Willmore, L., Kislin, M., Wang, S. S.-H., Murthy, M., & Shaevitz, J. W. (2019). Fast animal pose estimation using deep neural networks. Nature Methods, 16(1), 117–125. https://doi.org/10.1038/s41592-018-0234-5

Prince, E. B., Ciptadi, A., Tao, Y., Rozga, A., Martin, K. B., Rehg, J., & Messinger, D. S. (2021). Continuous measurement of attachment behavior: A multimodal view of the strange situation procedure. Infant Behavior & Development, 63, 101565. https://doi.org/10.1016/j.infbeh.2021.101565

Reissland, N., Hopkins, B., Helms, P., & Williams, B. (2009). Maternal stress and depression and the lateralisation of infant cradling. Journal of Child Psychology and Psychiatry, and Allied Disciplines, 50(3), 263–269. https://doi.org/10.1111/j.1469-7610.2007.01791.x

Rogers-Jarrell, T., Eswaran, A., & Meisner, B. A. (2021). Extend an Embrace: The Availability of Hugs Is an Associate of Higher Self-Rated Health in Later Life. Research on Aging, 43(5-6), 227–236. https://doi.org/10.1177/0164027520958698

Saling, M. M., & Cooke, W.-L. (1984). Cradling and Transport of Infants by South African Mothers: A Cross-Cultural Study. Current Anthropology, 25(3), 333–335. https://doi.org/10.1086/203140

Salk, L. (1960). The effects of the normal heartbeat sound on the behaviour of the newborn infant: Implications for mental health. World Mental Health, 12, 168–175.

Schaefer, M., Heinze, H.-J., & Rotte, M. (2012). Touch and personality: Extraversion predicts somatosensory brain response. NeuroImage, 62(1), 432–438. https://doi.org/10.1016/j.neuroimage.2012.05.004

Sorokowska, A., Saluja, S., Sorokowski, P., Frąckowiak, T., Karwowski, M., Aavik, T., Akello, G., Alm, C., Amjad, N., Anjum, A., Asao, K., Atama, C. S., Atamtürk Duyar, D., Ayebare, R., Batres, C., Bendixen, M., Bensafia, A., Bizumic, B., Boussena, M., . . . Croy, I. (2021). Affective Interpersonal Touch in Close Relationships: A Cross-Cultural Perspective. Personality & Social Psychology Bulletin, 146167220988373. https://doi.org/10.1177/0146167220988373

Sun, R., Huang, C., Zhu, H., & Ma, L. (2021). Mask-aware photorealistic facial attribute manipulation. Computational Visual Media, 7(3), 363–374. https://doi.org/10.1007/s41095-021-0219-7

Suvilehto, J. T., Glerean, E., Dunbar, R. I. M., Hari, R., & Nummenmaa, L. (2015). Topography of social touching depends on emotional bonds between humans. Proceedings of the National Academy of Sciences of the United States of America, 112(45), 13811–13816. https://doi.org/10.1073/pnas.1519231112

Thiebaut, G., Méot, A., Witt, A., Prokop, P., & Bonin, P. (2021). "Touch Me If You Can!": Individual Differences in Disease Avoidance and Social Touch. Evolutionary Psychology: An International Journal of Evolutionary Approaches to Psychology and Behavior, 19(4), 14747049211056159. https://doi.org/10.1177/14747049211056159

Trotter, P., Belovol, E., McGlone, F., & Varlamov, A. (2018). Validation and psychometric properties of the Russian version of the Touch Experiences and Attitudes Questionnaire (TEAQ-37 Rus). PLoS ONE, 13(12), e0206905. https://doi.org/10.1371/journal.pone.0206905

Turnbull, O. H., Stein, L., & Lucas, M. D. (1995). Lateral Preferences in Adult Embracing: A Test of the “Hemispheric Asymmetry” Theory of Infant Cradling. The Journal of Genetic Psychology, 156(1), 17–21. https://doi.org/10.1080/00221325.1995.9914802

Vauclair, J. (2022). Maternal cradling bias: A marker of the nature of the mother-infant relationship. Infant Behavior & Development, 66, 101680. https://doi.org/10.1016/j.infbeh.2021.101680

Willis, F. N., & Dodds, R. A. (1998). Age, relationship, and touch initiation. The Journal of Social Psychology, 138(1), 115–123. https://doi.org/10.1080/00224549809600359

Yoshida, S., & Funato, H. (2021). Physical contact in parent-infant relationship and its effect on fostering a feeling of safety. iScience, 24(7), 102721. https://doi.org/10.1016/j.isci.2021.102721

Acknowledgements

We thank Petunia Reinke and Marius Fenkes for help with video recording and for their permission to use the resulting images in the figures of this publication; Hiroshi Matsui for introducing us to methods in computational ethology; and Onur Güntürkün for supporting the development of the multi-view camera system for 3D pose estimation (https://gitlab.ruhr-uni-bochum.de/ikn/syncflir).

Funding

Open Access funding enabled and organized by Projekt DEAL. This work was supported by the German Research Foundation (Deutsche Forschungsgemeinschaft, DFG) in the context of the Research Training Group “Situated Cognition” (project number GRK 2185/1). Julian Packheiser was supported by the German National Academy of Sciences Leopoldina (LPDS2021-05).

Author information

Contributions

Sebastian Ocklenburg had the idea for the article. Sebastian Ocklenburg and Guillermo Hidalgo Gadea performed the literature research and drafted the manuscript. All three authors critically revised the work.

Ethics declarations

Competing interests

The authors have no relevant financial or non-financial interests to disclose.

Ethics approval, Consent, Data, Materials and Code availability

This is a purely theoretical review article without any new data collection. Therefore, no ethics approval or consent was needed, and no data, materials, or code are available.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Ocklenburg, S., Packheiser, J. & Hidalgo-Gadea, G. Social touch in the age of computational ethology: Embracing as a multidimensional and complex behaviour. Curr Psychol 42, 18539–18548 (2023). https://doi.org/10.1007/s12144-022-03051-9

DOI: https://doi.org/10.1007/s12144-022-03051-9