Abstract

Ecologists have many ways to measure and monitor ecosystems, each of which can reveal details about the processes unfolding therein. Acoustic recording combined with machine learning methods for species detection can provide remote, automated monitoring of species richness and relative abundance. Such recordings also open a window into how species behave and compete for niche space in the sensory environment. These opportunities are associated with new challenges: the volume and velocity of such data require new approaches to species identification and visualization. Here we introduce a newly initiated acoustic monitoring network across the subtropical island of Okinawa, Japan, as part of the broader OKEON (Okinawa Environmental Observation Network) project. Our aim is to monitor the acoustic environment of Okinawa’s ecosystems and use these space–time data to better understand ecosystem dynamics. We present a pilot study based on recordings from five field sites conducted over a one-month period in the summer. Our results provide a proof of concept for automated species identification on Okinawa, and reveal patterns of biogenic vs. anthropogenic noise across the landscape. In particular, we found correlations between forest land cover and detection rates of two culturally important species in the island soundscape: the Okinawa Rail and Ruddy Kingfisher. Among the soundscape indices we examined, NDSI, Acoustic Diversity and the Bioacoustic Index showed both diurnal patterns and differences among sites. Our results highlight the potential utility of remote acoustic monitoring practices that, in combination with other methods, can provide a holistic picture of biodiversity. We intend this project as an open resource, and wish to extend an invitation to researchers interested in scientific collaboration.

Introduction

When animals produce acoustic signals, during the breeding season or otherwise, they provide evidence of their identity and their presence at a particular time and place. These acoustic signals form the biotic component of the ‘soundscape’, which is the set of all observable sounds produced in an ecosystem (Pijanowski et al. 2011a). These sounds include not only birds, amphibians, insects, and mammals (biophony), but also anthropogenic noise such as cars, planes, machinery and people (anthropophony), as well as non-human and non-biological noises like wind, rain, and the sound of the sea (geophony; Farina 2014, p. 8). In many cases the soundscape changes in parallel with the landscape, for example if a forest is cut down and replaced by an agricultural or residential area. However, as a record of animal abundance and behavior, the soundscape may reveal subtler environmental changes, for example if a forest appears healthy but several frog and bird species have been lost.

Many species have built niches in acoustic space (frequency and time) over generations of competition and selection (Krause 1993). The extent to which these niches are shaped by convergent selection for signal efficiency in a noisy environment, or divergent selection into comfortably partitioned niches, remains a point of debate for many taxa (Sueur 2002; Tobias et al. 2014). Increasingly, animals must now also compete for acoustic space with humans (Dowling et al. 2011) and the species humans have introduced (Both and Grant 2012). This has the potential to disrupt the reproductive success of species that rely on acoustic signals to attract mates, defend resources, or coordinate behavior—particularly as signals may be altered to avoid overlap in ways that decrease their efficiency or effectiveness (Both and Grant 2012). Thus, an understanding of the acoustic environment and its ecological stressors is as critical as it is historically neglected (Schafer 1977; Lomolino et al. 2015).

As technological advances have led to the development of miniaturized recording and data storage equipment, the widespread collection of soundscape data for monitoring biodiversity has become increasingly feasible (Servick 2014). Many new software tools for processing and analyzing large acoustic datasets have been developed, including methods for the abstraction of animal signals into soundscape indices (Kasten et al. 2012), and more recently, methods for automated identification of bioacoustic signals (Digby et al. 2013). However, few studies to date have used these tools to examine patterns of biodiversity over a long duration and/or broad geographic scale (Lomolino et al. 2015).

There are many benefits associated with including a soundscape ecology approach to biodiversity monitoring. First, bioacoustic data provide a fine temporal scale for species presence and behavior, and can be preserved using archival methods to produce long-term datasets (e.g. Kasten et al. 2012). Second, because the recording approach does not require in situ identification by experts, a large number of field sites can be monitored simultaneously by a small research team. Further, passive recordings produce a dataset of species behavior and interactions in a complex sensory environment. However, practical limitations to soundscape ecology have also been identified (Digby et al. 2013), and caution is advised when interpreting several of the common soundscape indices currently in use (Fuller et al. 2015). One consistent challenge in soundscape ecology is the presence of wind and insect sounds, which may obscure and even deter other animal signals in a manner similar to inclement weather, particularly in late summer (Seki 2012; Hart et al. 2015).

The Ryūkyū archipelago is home to many unique endemic species, several of which are recognized as threatened, endangered or critically endangered (IUCN 2016). Of the islands in this archipelago, the main island of Okinawa (hereafter: “Okinawa-jima”) is the largest, and is home to a diverse assemblage of animals and plants. As the largest island, Okinawa-jima also has the highest rate of urbanization and the highest population with more than 1.3 million people (Japan Statistics Bureau 2017; Okinawa Prefectural Government 2017), leading to questions of how anthropogenic activity is impacting biodiversity across the island. The most intense human activity is observed from the south to the middle of the island, producing an urban–rural gradient along its north–south axis. Birds are key indicators of ecosystem health (Gregory and van Strien 2010) and when studied in combination with soundscape indices (Pijanowski et al. 2011b), allow for fine-scale investigation into the spatiotemporal dynamics of Okinawa-jima’s soundscape, with the potential to address a variety of questions in ecology and conservation as well as to reveal longer-term dynamic patterns of biodiversity across time and space in coming years.

Recently, we have established 24 long-term monitoring sites across habitats with different levels of human impact on Okinawa-jima, as part of the OKEON Churamori Project (Okinawa Environmental Observation Network; OKEON). OKEON (http://okeon.unit.oist.jp) is a network of citizens, curators, and scientists working together to monitor the ecosystems of Okinawa. The 24 sites are intended to generate long-term space–time data series to monitor the dynamics of Okinawa’s terrestrial environments over time. The program includes several monitoring techniques including camera traps, arthropod traps, weather and environmental data logging, and acoustic recorders monitoring continuously.

Here, we use data from five sites over the same one-month period in a preliminary study to explore both the potential of and challenges to the effective application of this technology in Okinawa. We take two approaches to analyzing acoustic data: (1) Calculating soundscape indices that reflect different components (e.g., composition, complexity) of acoustic diversity; and (2) Automated detection of five bird species representing multiple avian clades, levels of endemism and conservation status. We applied these approaches across four weeks during the late summer period, which combines many of the challenges of acoustic monitoring in Okinawa, in an effort to assess analysis tools for monitoring soundscapes across the Ryūkyūs. We tested our ability to detect individual bird species, and fit models describing temporal and spatial variation in the overall soundscape and its relationship to ecological and environmental predictors. We also used this approach to test for seasonal soundscape patterns over a longer period from October to February.

Methods

Recording sites

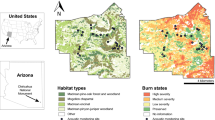

We have installed acoustic monitors at all 24 OKEON field sites on Okinawa-jima (Fig. 1), and have been collecting data at all sites since February 15, 2017, with pilot studies beginning earlier at a few sites. These field sites are distributed along an approximate North–South gradient at locations entailing different degrees of urbanization, disturbance, and species endemism. Permission for acoustic monitoring was obtained from local land owners, park officials, and community leaders as part of the OKEON project. Here we present data from a pilot study conducted in the period between July 30 and August 15, 2016. This period was chosen because it includes most of the adverse conditions for field operations and automated species detection that are experienced throughout the year on Okinawa-jima: in particular high temperatures, typhoons, and noise from cicadas. This pilot study focuses on five field sites, which reflect a variety of closed forest habitats across the subtropical island of Okinawa-jima. One site was located at the Yambaru Discovery Forest (hereafter “Manabi no Mori”) within the newly designated Yambaru National Park, an important region with many endemic flora and fauna (Itô et al. 2000). Three sites were located in parks of varying urban density: in Nago Central Park (hereafter “Nago”), Nakagusuku Park (hereafter “Nakagusuku”), and Sueyoshi Park in Naha city (hereafter “Sueyoshi”), the island’s largest city. One site was located in a forest in the suburbs outside Okinawa City (hereafter “Takiyamabaru”; see Fig. 1). To compare the efficacy of our methods across a longer timescale in what is arguably a less complex acoustic environment (i.e., without cicadas), we conducted additional recordings following the same recording protocols between October 19, 2016 and February 10, 2017, at Tamagusuku Youth and Children’s Center (hereafter “Tamagusuku”; see Fig. 1).

Fig. 1 Land-use features and locations of study sites. Land-use surrounding five focal sites arranged North–South on Okinawa-jima. Left panels represent the proportional land-use for each buffer radius from 25 to 1000 m (1 km). Land-use is grouped in six categories: forest, grasses, shrubs, agriculture, urban and other (see online supplement for details). Map at right describes land cover using the same color scale. Recording sites are marked according to their active dates

Acoustic monitoring

We used Song Meter recording devices (Model SM4, Wildlife Acoustics Inc., Concord, MA, USA), which made digital recordings in stereo .wav format from two omnidirectional microphones, saved to an SD card. We kept gain settings at their default parameters (+16 dB), and programmed a recording schedule to activate for 10 min at the beginning of every hour and half-hour (i.e., 10 min on, 20 min off), recording at a sampling rate of 24 kHz. We attached recorders to trees at each field site at approximately breast height (~1.3 m). Recording was conducted for approximately one month at each site, constrained by battery life, with recorders allowed to run on a regular schedule until their batteries ran out of power (D-cell 1.2 V NiMH, 9500 mAh; average depletion time on this schedule was 227.4 h). At the end of the study period, we recovered 1136.3 h of recordings, resulting in a total of 365 GB of data, which we have archived with the Okinawa Institute of Science and Technology’s SANGO high-performance computing center. Wind speed and rainfall values for each recording were approximated using data from the site’s nearest Japan Meteorological Agency weather station (http://www.jma.go.jp/; JMA 2016). To assess the replicability of our measurements, we include data from an additional field trial conducted on the OIST campus in April 2016 (see Online Supplement for details).
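As a rough consistency check on the schedule and data volume reported above, the following Python sketch computes the hours of audio captured per day and the uncompressed size of 1136.3 h of stereo audio at 24 kHz. It is an illustration only and not part of the original workflow; the 16-bit sample depth is an assumption, since the text states only the sampling rate and channel count, and the function names are hypothetical.

```python
# Back-of-envelope check of the recording schedule and data volume.
# ASSUMPTION: 16-bit (2-byte) PCM samples; the text gives only the
# sampling rate (24 kHz) and the channel count (stereo).
SAMPLE_RATE_HZ = 24_000
CHANNELS = 2
BYTES_PER_SAMPLE = 2  # assumed, not stated in the text

MINUTES_ON = 10       # recording time per cycle
CYCLE_MINUTES = 30    # one cycle starts every hour and half-hour


def recorded_hours_per_day() -> float:
    """Hours of audio captured per 24 h under the 10-min-on, 20-min-off schedule."""
    return 24 * MINUTES_ON / CYCLE_MINUTES


def wav_bytes(hours: float) -> float:
    """Uncompressed audio payload in bytes (file headers ignored)."""
    return hours * 3600 * SAMPLE_RATE_HZ * CHANNELS * BYTES_PER_SAMPLE


total_hours = 1136.3  # recovered recordings, from the text
total_bytes = wav_bytes(total_hours)
print(f"{recorded_hours_per_day():.1f} h of audio per day")
print(f"{total_bytes / 1e9:.1f} GB (decimal) or {total_bytes / 2**30:.1f} GiB (binary)")
```

Under these assumptions the schedule yields 8 h of audio per day, so an average depletion time of 227.4 h of recording corresponds to roughly four weeks in the field, and the computed volume is close to the reported 365 GB when expressed in binary units.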

Geographic analysis

Using GPS coordinates collected at each of our sites we created circular buffers around each site at 25 m intervals from 25 to 2000 m. We observed that a majority of change occurred up to 1000 m before leveling off and so have constrained our discussion and analysis up to 1000 m. Using the area within each circular buffer we calculated the proportion of land cover surrounding each site including building footprints, road surface area, and land cover. Polygons for building footprints and roads were generated using polyline data from a 2008 to 2009 survey obtained from the Geospatial Information Authority of Japan (http://www.gsi.go.jp). Land cover classification was carried out on a Jan 4, 2015 Landsat 8 Operational Land Imager image obtained from the US Geological Survey (Scene: LC81130422015004LGN00). For additional details on the classification see the online supplement. Land classes were defined as: Agriculture, dominated by sugarcane and a mix of fields at various phenologies; Shrub, medium to low growth coastal and disturbed vegetation; Forest, dense forest stands with closed canopy; Grass, short trimmed vegetation found in golf courses and airfields; Urban, a complex mix of man-made surface materials such as concrete and asphalt with limited vegetation; Other, remaining surfaces, bare earth, rocks, and sand (this class also includes unclassified pixels); Water, classified but not used in analysis (see Tables S1–S3 for more details).
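The buffer calculation described above can be sketched as follows. This is a minimal Python illustration under stated assumptions, not the GIS procedure actually used: pixels are represented by their centre coordinates in a projected (metre) coordinate system, the toy raster is invented, and the function name `land_cover_proportions` is hypothetical. The 30 m spacing mirrors the resolution of Landsat 8 OLI multispectral bands.

```python
import math
from collections import Counter


def land_cover_proportions(pixels, site_xy, radius_m, exclude=("Water",)):
    """Proportion of each land-cover class among pixel centres within radius_m.

    pixels: iterable of (x_m, y_m, class_name) in projected metre coordinates.
    Classes listed in `exclude` are dropped, as Water was in the analysis.
    """
    sx, sy = site_xy
    counts = Counter(
        cls
        for x, y, cls in pixels
        if cls not in exclude and math.hypot(x - sx, y - sy) <= radius_m
    )
    total = sum(counts.values())
    return {cls: n / total for cls, n in counts.items()} if total else {}


# Hypothetical toy raster: 30 m pixels, forest to the west, urban to the east.
pixels = [
    (col * 30.0, row * 30.0, "Forest" if col < 0 else "Urban")
    for row in range(-40, 41)
    for col in range(-40, 41)
]

radii = range(25, 1001, 25)  # buffer radii from 25 to 1000 m in 25 m steps
profile = {r: land_cover_proportions(pixels, (0.0, 0.0), r) for r in radii}
```

Each entry of `profile` corresponds to one buffer radius, which is the form of data summarized in the left panels of Fig. 1. With very small buffers only a handful of pixels contribute, so proportions at the shortest radii should be read with care.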

Soundscape indices

We used the packages soundecology (Villanueva-Rivera and Pijanowski 2016) and seewave (Sueur et al. 2008) in R Version 3.3.2 (R Development Core Team 2016) to estimate five soundscape indices for each recording. The Bioacoustic Index (BioA) is calculated as the area under the power spectrum curve across the range of frequencies typically occupied by bird species (2–8 kHz), and is intended to describe the proportion of this acoustic space that is occupied in a recording (Boelman et al. 2007). Acoustic Diversity (ADI) is calculated by dividing the frequency range into 10 bins of 1 kHz width each, and returning the Shannon index of the occupancy of these bins for a recording (Villanueva-Rivera et al. 2011). The Acoustic Evenness Index (AEI) also uses 1 kHz frequency bins, but returns the evenness (Gini index) of their occupancy. The Acoustic Complexity Index (ACI) measures the variability of sound intensity within a recording, which can be an accurate index of avian diversity, but is also easily misled by a small number of insects (Farina et al. 2011). Lastly, the Normalized Difference Soundscape Index (NDSI) provides a range of values comparing sound intensity between frequency ranges that correspond to biophony (2–12 kHz) and anthropophony (1–2 kHz), such that values approaching −1 represent dominant anthropogenic noise and values approaching +1 represent a soundscape dominated by biophony (Kasten et al. 2012). Taken together, these indices are intended to describe the soundscape in ecologically relevant ways (Gasc et al. 2016).
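To make the arithmetic behind three of these indices concrete, the sketch below gives textbook forms of the normalized difference, the Shannon index, and the Gini coefficient in Python. It is an illustration only: the analyses reported here used the R packages named above, whose implementations include further steps (for example, how band occupancy is derived from the spectrogram) that are not reproduced. The function names and input values are hypothetical.

```python
import math


def ndsi(biophony: float, anthropophony: float) -> float:
    """Normalized difference of two band intensities; ranges from -1 to +1."""
    return (biophony - anthropophony) / (biophony + anthropophony)


def shannon(occupancy):
    """Shannon index of frequency-bin occupancies (an ADI-style summary)."""
    total = sum(occupancy)
    props = [v / total for v in occupancy if v > 0]
    return -sum(p * math.log(p) for p in props)


def gini(occupancy):
    """Gini coefficient of frequency-bin occupancies (an AEI-style summary).

    0 means all bins are equally occupied; values near 1 mean that
    activity is concentrated in very few bins.
    """
    xs = sorted(occupancy)
    n, total = len(xs), sum(xs)
    weighted = sum(rank * x for rank, x in enumerate(xs, start=1))
    return 2 * weighted / (n * total) - (n + 1) / n


# Hypothetical occupancy of ten 1 kHz bins.
even_bins = [1.0] * 10           # sound spread evenly across all bins
single_bin = [0.0] * 9 + [1.0]   # sound confined to a single bin
```

With evenly occupied bins the Shannon index reaches its maximum of ln(10) and the Gini coefficient is 0, whereas a single occupied bin gives a Shannon index of 0 and a Gini coefficient of 0.9. This inverse behaviour is why ADI and AEI tend to move in opposite directions in the results.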

Automated recognition of bird vocalizations

We used Kaleidoscope Pro (version 4.1.0; Wildlife Acoustics Inc., Concord, MA, USA) to train software recognizers for five bird species. We selected species that are representative of the full range of vocal repertoires and population sizes from among those on Okinawa-jima, thus making differing contributions to the acoustic soundscape of Okinawa and ultimately to ecosystem functioning. These species included those common species that were widespread across the island (Hypsipetes amaurotis and Corvus macrorhynchos), potentially disturbance-sensitive forest species (Halcyon coromanda and Otus elegans), and the endangered Yambaru-endemic Okinawa Rail (Gallirallus okinawae).

Kaleidoscope Pro’s recognition algorithm follows a supervised clustering and recognition approach using Hidden Markov Models to detect vocalizations. Species identifications from recordings were verified by local experts and cross-checked with recordings accessioned in public databases (Xeno Canto and the Macaulay Library) in all cases. Our species identification approach followed four main steps:

1. We ran a preliminary clustering analysis in Kaleidoscope Pro on a subset of our data from one day at Manabi no Mori, which generally exhibits the highest species diversity (SRP-JR and NRF, pers. obs.). We then identified clusters of vocalizations and detection events by manual inspection of spectrograms.

2. We chose a roughly 50-h subset of recordings from our range of field sites for each species, enhanced mainly with recordings from individual sites that were considered to include a large number of recordings of the focal species from across a range of recording quality levels. For most species, we chose to use data from Manabi no Mori; although for H. amaurotis and C. macrorhynchos we chose data from Nakagusuku as this site included a greater number of high quality recordings for these species. Using this subset of recordings, we ran a clustering analysis in Kaleidoscope Pro with signal parameters set to 250–10000 Hz, 0.1–7.5 s duration and 0.35 s as the maximum inter-syllable gap, and cluster analysis parameters left as default (1.0 maximum distance from cluster center, FFT window 5.33 ms, 12 maximum states, 0.5 maximum distance to cluster center for building clusters and 500 as the maximum number of clusters). We then manually identified all vocalizations of the focal species in the collection of putative focal species detection events.

3. We used the manually-identified vocalizations to re-train the species-specific recognizer model in Kaleidoscope Pro using the same input data and parameters as in step 2. False positives were marked as noise to assist in training the hidden Markov chain model separators between different sounds. If necessary, steps 2–3 were repeated until a well-performing model output was produced.

4. After a well-performing model was obtained from step 3, we screened the full recording dataset for each site using our five species-specific recognizers, then manually inspected the results to calculate the proportion of false positives for each species per site (see Table 1).
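The final bookkeeping in step 4 can be sketched as follows. This Python fragment is an illustration under stated assumptions, not the procedure as run in Kaleidoscope Pro: it assumes each detection has already been manually labeled as a true or false positive, the record layout and function name are hypothetical, and the example records are invented.

```python
from collections import defaultdict


def false_positive_rates(detections):
    """Proportion of false positives per (species, site).

    detections: iterable of (species, site, is_true_positive) tuples,
    one per recognizer detection after manual inspection.
    """
    totals = defaultdict(int)
    false_pos = defaultdict(int)
    for species, site, is_true in detections:
        totals[(species, site)] += 1
        if not is_true:
            false_pos[(species, site)] += 1
    return {key: false_pos[key] / n for key, n in totals.items()}


# Hypothetical reviewed detections, for illustration only.
reviewed = [
    ("Otus elegans", "Nago", True),
    ("Otus elegans", "Nago", True),
    ("Otus elegans", "Nago", False),
    ("Otus elegans", "Nago", True),
]
rates = false_positive_rates(reviewed)
accuracy = {key: 1.0 - rate for key, rate in rates.items()}
```

Accuracy is taken here as one minus the false-positive proportion among detections, so it describes the precision of the recognizer and says nothing about vocalizations that were missed entirely.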

Table 1 Detection numbers and model accuracy for species detectors across five sites

Statistical analysis

We compared soundscape data at a fine scale (30 min blocks) using generalized least-squares (GLS) multiple regression models in the R programming environment (R Development Core Team 2016). We included a temporal auto-regression term (AR1: ~ Time|Site) as a covariance structure in all regression models to correct for time series pseudo-replication. To test general characteristics of soundscape time series, we used a model selection approach to choose from a set of all possible combinations of diurnal vs. static, flat vs. trending, and site-specific vs. site-independent regression models, using the Akaike Information Criterion (AIC) to assess model fit. We assessed the support for each model component by comparing the goodness of fit of a model that included the component to a model which had the component removed. To test for correlations between the number of species detections and land cover components, we calculated the sum of detections for each recording day and fit GLS models with temporal auto-regression terms as above. We used a similar approach to test for the effects of wind and rain on soundscape index values.
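The structure of the candidate model set can be sketched in Python as below. This is an illustration only: the models themselves were fitted as GLS regressions with an AR1 covariance structure in R, which is not reproduced here, and the component labels and function names are hypothetical. The sketch shows only how three binary choices produce eight candidates and how AIC ranks fitted models.

```python
from itertools import product

# The three model components described in the text, each with two options.
COMPONENTS = {
    "cycle": ("static", "diurnal"),
    "trend": ("flat", "trending"),
    "site": ("site-independent", "site-specific"),
}


def candidate_models():
    """Enumerate every combination of the three components (2 x 2 x 2 = 8)."""
    names = list(COMPONENTS)
    return [dict(zip(names, combo)) for combo in product(*COMPONENTS.values())]


def aic(log_likelihood: float, n_params: int) -> float:
    """Akaike Information Criterion; lower values indicate better support."""
    return 2 * n_params - 2 * log_likelihood


def best_by_aic(fits):
    """Return the label of the fit with the lowest AIC.

    fits: list of (label, log_likelihood, n_params) from fitted models.
    """
    return min(fits, key=lambda fit: aic(fit[1], fit[2]))[0]
```

Because AIC penalizes each additional parameter by 2, a more complex model is preferred only when it raises the log-likelihood by more than one unit per added parameter.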

Results

Soundscape indices

We generated soundscape indices for five sites during the period July 30–August 15, 2016, and found clear differences in the degree to which each index varied over time. Two out of five indices we measured—acoustic diversity (ADI) and the bioacoustic index (BioA)—showed significant non-zero trends within our initial study period. Both indices had significantly negative slopes (Table 2), indicating a trend in soundscape composition across the study period. Similarly, we found that regression models with a diurnal component were a significantly better fit to the Normalized Difference Soundscape Index (NDSI), ADI and BioA indices (Table 2), and indeed these indices exhibited higher values at night (Fig. 2). For several indices we observed daily peaks at dawn, around 5:00–7:00 for ADI and BioA (Fig. 3).

Fig. 2 Diurnal-cycle site comparisons for five soundscape indices. Violin plots represent range of observed values for the normalized difference soundscape index (NDSI; top panel); Acoustic Diversity index (ADI); acoustic complexity index (ACI); acoustic evenness index (AEI) and the Bioacoustic index (BioA) across the diurnal-cycle for our five study sites (arranged left–right in order of increasing proportional urban area on a 1 km² buffer scale, such that Takiyamabaru is the least urban site and Sueyoshi the most urban at this scale). Violins represent diurnal values (day time 6:00–18:00 inclusive; white violins) and nocturnal values (night time 19:00–5:00 inclusive; grey violins)

Fig. 3 Comparisons between sites for five soundscape indices across time. Spiral plots show absolute values of five soundscape indices: the normalized difference soundscape index (NDSI), left panels; acoustic diversity index (ADI); acoustic complexity index (ACI); acoustic evenness index (AEI) and the bioacoustic index (BioA, right panels). Individual boxes represent indices values (yellow = high values, purple = low values) at 30-min resolution across the study period (Jul. 30 2016–Aug. 15 2016). Twenty-four hour clock scales follow the first panel throughout. Data are shown for five sites on Okinawa-jima, arranged North–South: Manabi no Mori (top panels); Nago; Takiyamabaru; Nakagusuku and Sueyoshi (color figure online)

We observed clear differences between sites for each soundscape index, and regression models including separate intercepts for each site were a significantly better fit to our data for NDSI, ADI, and BioA than those without (Table 2). Some variation among sites appeared to follow Okinawa-jima’s urban–rural gradient (Table 2; Figs. 2, 3). NDSI was highest in recordings from Manabi no Mori, the site with the most forest cover, and lowest in recordings from the urban Sueyoshi Park (Fig. 3). This pattern was most apparent in recordings made at night (19:00–5:00; Fig. 2), but was also observable for recordings made during the day (6:00–18:00). However, no significant correlation between daily average NDSI and land cover was found (Table 2). In contrast, ADI was lowest on average in recordings from rural sites, and highest in recordings from urban sites (Fig. 2; Table 2). Conversely, acoustic evenness (AEI) was lowest in Sueyoshi, and BioA was highly variable regardless of site but showed a generally negative association with forest cover (Fig. 2; Table 2). Acoustic complexity (ACI) was largely similar in the most rural sites and Sueyoshi, our most urban site, but Nakagusuku and Nago displayed higher complexity than the other three sites (Fig. 2).

We were also able to identify periods during our study in which our soundscape indices were affected by inclement weather. Some sites were more variable than others across time, with Sueyoshi being least variable across temporal scales (i.e., both within and between days) and Manabi no Mori showing relatively little temporal variation, although there were a few bands of lower values of ADI and ACI, and higher AEI about midway through the study period. This duration corresponds to a period of bad weather on Okinawa-jima, with high winds and rain (i.e., geophony) dominating the soundscape. In support of this, we found that high wind gust velocity was associated with a significant decrease in both ADI and BioA, and an increase in AEI (Table 3). Likewise, ACI increased with higher daily rainfall. These results were paralleled in our half-hourly (30-min) time series analyses, as model fit improved following the addition of wind and rain components to models for ACI (LRT = 33.8, P < 0.001), ADI (LRT = 8.9, P < 0.05), and AEI (LRT = 6.6, P < 0.05).

We estimated NDSI values for a late-year monitoring period at our Tamagusuku field site. These estimates showed a general decline in biophony throughout the recording period. To examine changes in the timing of the dawn chorus, we tested the fit of seasonal models with a year-long period to hourly subsets of these data. We found that seasonal models were a better fit than flat or trending models for recordings at 5:30 and 6:00 am (Likelihood Ratio Test: 5.6, P < 0.05; LRT: 6.1, P < 0.05). Seasonal and trending models showed similar fit at 6:30 am (LRT: 1.8, P = 0.17), and no models showed significant improvement over an empty model for 7:00 am (LRT: 0.8, P = 0.36), which exhibited a consistently high NDSI value throughout the study period (see Fig. S1).
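For readers unfamiliar with likelihood ratio tests, the Python sketch below shows how an LRT statistic maps to a P value. It assumes that each comparison differs by a single parameter, so that the statistic is referred to a chi-square distribution with one degree of freedom; the degrees of freedom are not stated in the text, so this is an assumption, and the function name is hypothetical.

```python
import math


def lrt_p_value_df1(statistic: float) -> float:
    """Upper-tail probability of a chi-square(1) variable exceeding `statistic`.

    For one degree of freedom, P(X > x) = erfc(sqrt(x / 2)).
    ASSUMPTION: the compared models differ by exactly one parameter.
    """
    return math.erfc(math.sqrt(statistic / 2.0))


# LRT statistics reported in the text for the four dawn time slots.
for slot, stat in [("5:30", 5.6), ("6:00", 6.1), ("6:30", 1.8), ("7:00", 0.8)]:
    print(slot, round(lrt_p_value_df1(stat), 3))
```

Under this assumption the first two statistics fall below the 0.05 threshold and the last two fall well above it, in line with the conclusions reported. Small differences from the printed P values are to be expected, since the statistics are rounded to one decimal place.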

Automated species detection

Automated detection results revealed considerable differences in vocalization densities between species and across sites (Fig. 4). Species differed in their detection frequency (Table 1), with C. macrorhynchos being detected most often (total 19698 vocalizations across sites), followed by Hypsipetes amaurotis (13302 vocalizations), Halcyon coromanda (5288 vocalizations), O. elegans (4496 vocalizations) and finally G. okinawae (319 vocalizations). For all species with the exception of H. amaurotis, we observed a substantial decline in the number of detected vocalizations from north to south, with Manabi no Mori, Nago and Takiyamabaru displaying higher densities than Nakagusuku and Sueyoshi (see Fig. 4, Tables 1, 4). All species except H. amaurotis exhibited their lowest recorded detection frequencies at the two southernmost sites, Nakagusuku and Sueyoshi Park (see Fig. 1). Likewise, all species (with the exception of G. okinawae) exhibited their highest vocalization density at the Nago field site in northern Okinawa-jima. The Okinawa Rail (G. okinawae) is endemic to the Yambaru forest in northern Okinawa-jima, and we did not detect any vocalizations from this species outside of the Manabi no Mori field site.

Fig. 4 Comparisons across sites for the vocalization density of five bird species. Absolute numbers of detected vocalizations for five species of birds: the Brown-eared Bulbul (Hypsipetes amaurotis; left panels); the Large-billed Crow (Corvus macrorhynchos); the Ruddy Kingfisher (Halcyon coromanda); the Ryūkyū Scops Owl (Otus elegans) and the Okinawa Rail (Gallirallus okinawae; right panels). Bubble size represents absolute vocalization detections per hour (larger bubbles = more detection events) for each day across the study period (30th July 2016–15th August 2016). Twenty-four hour clock scales follow the first panel throughout. Data are shown for five sites on Okinawa-jima, arranged North–South: Manabi no Mori (top panels); Nago; Takiyamabaru; Nakagusuku and Sueyoshi

We observed significant site-specific effects for all species except Hypsipetes amaurotis and O. elegans (Table 4). The number of H. amaurotis detections differed little between sites, but was lowest in Takiyamabaru. In contrast, despite showing no significant site effect, O. elegans was entirely absent from Sueyoshi and Nakagusuku. We tested whether this geographic trend might be explained by the proportion of forest or urban land cover near each site. We found significant positive relationships between forest cover and daily detection rate for G. okinawae and Halcyon coromanda, indicating that they are more often detected in forested areas (Table 4). A similar relationship was uncovered for O. elegans but was not significantly supported by our data.

Our data also showed temporal patterns in species detections across the study period. Halcyon coromanda showed a significantly decreasing trend in daily detection rate (P < 0.001; std. B −0.21, B err. 0.15). For all other species, we found no evidence of a trend in detection rate over the study period. Whilst most species showed perceptible decreases in detection rate during a period of rough weather, no correlations were recovered between daily detection rate and either wind speed or rainfall (Table 4). Halcyon coromanda vocalized almost exclusively in the morning during our focal period, and Hypsipetes amaurotis began vocalizing one or two hours later across most days at Sueyoshi and Takiyamabaru than at the other sites. C. macrorhynchos vocalized throughout the day, typically persisting from sunrise until sunset. O. elegans was detected most often at night but occasionally during the day, and G. okinawae was detected sparsely throughout the day but only rarely between 10 pm and pre-dawn (Fig. 4).

Discussion

The study we present here addresses the challenge of monitoring biodiversity across space and time by using remote acoustic monitoring techniques to assess acoustic activity across an urban–rural gradient on Okinawa-jima in Japan. We found effects of land-use on several soundscape indices, as well as on species’ vocal activity. Furthermore, we observed significant variation across a diurnal cycle and among sites. Daytime anthropophony tended to be higher at urban and suburban sites, which we believe may reflect an effect of urban noise and the diurnal anthropogenic cycle, as human activity is often highest during daylight hours (Fuller et al. 2007; Joo et al. 2011; Gage and Axel 2014). We also identified an increase in species detections along an urban to rural gradient, with stronger impacts on the endangered Okinawa rail and other disturbance-sensitive forest species than on common generalist species.

Soundscape indices

The soundscape indices reported here displayed systematic variation across sites, particularly along an urban–rural gradient. The Normalized difference soundscape index (NDSI) has been shown to accurately reflect anthropogenic noise when values are low (Gage and Axel 2014), and avian species richness when values are high (Fuller et al. 2015). We found that, on average, our urban site in Sueyoshi park had higher values than our suburban sites in Nago and Nakagusuku. However, the typical baseline NDSI at Sueyoshi was the lowest of all sites, indicating both a weak biophony and a persistent and constant noise from the city that was easily observable in spectrograms (NRF pers. obs.). In contrast, Nago and Nakagusuku had high baseline NDSI values with frequent incidents of high anthropophony, likely due to passing trucks or aircraft. This suggests that in a dynamic acoustic environment, complex temporal models may be necessary to better disentangle the effects of temporary and persistent acoustic disturbance (Botteldooren et al. 2006).

Acoustic diversity (ADI), which describes the diversity of 1 kHz frequency bands in a recording (Villanueva-Rivera et al. 2011), showed a strongly negative association with forest land cover and a strongly positive one with urban land cover. Given that bird diversity is highest in the northern Yambaru forest, both in prior wildlife surveys (Itô et al. 2000; Biodiversity Center of Japan 2004) and in our species detections, we do not expect this ADI gradient to reflect species diversity. One explanation for this pattern is the constant broadband sound emitted by cicadas, which were recorded during most of the day throughout the study period. However, this pattern may also be explained by the prevalence of broadband anthropophony events in urban and suburban Okinawa. This result highlights the need for cross-validation of soundscape index values with estimates of local biodiversity, particularly birds and stridulating insects.

It is important to note some of the limitations inherent in drawing spatial conclusions from a small number of sites. Previous studies have shown that while soundscapes tend to be spatially auto-correlated (nearby areas sound similar), they can show important variation in sound amplitude, propagation, and diversity indices (Pekin et al. 2012; Rodriguez et al. 2014). Thus, further sampling is necessary to draw solid conclusions regarding the relationship between Okinawa’s soundscape and geography, and is indeed underway.

We identified several soundscape trends which were driven either solely or in part by weather conditions. The identified bands of lower acoustic diversity and complexity, and higher evenness roughly midway through our study correspond to a period of poor weather. During this time, high wind speed reduced acoustic diversity but increased evenness, whereas higher daily rainfall was associated with increased ADI and acoustic complexity (ACI). This seems intuitive, as it is widely accepted that geophony influences soundscape indices either directly through the impact of wind or rain on the soundscape (Farina 2014), or indirectly through the impact of geophony on the signaling behavior of sound-producing animal species (Davidson et al. 2017).

Automated species detection

Using an automated recognition approach, we were able to consistently detect vocalizations from all five focal species. The degree of accuracy varied for these detections between sites and species, with an average accuracy of 76.8% in locations where species were confirmed as present (Table 1). This accuracy level was generally sufficient to allow human confirmation of detection events. Furthermore, cluster assignment score cutoffs can be applied to enable conservative scoring of species presence or absence with minimal human interference. Ultimately, we were able to document a clear effect of geographic location—and potentially urbanization—on the focal species in our study. The two most common species in our study (Corvus macrorhynchos and Hypsipetes amaurotis; see Biodiversity Center of Japan 2004) were present at all five sites, and vocalization density of these species was least affected by our urban–rural gradient. We found that forest species (Halcyon coromanda and Otus elegans) showed a clear decline in vocalization density towards the more urban southern sites, suggesting greater sensitivity to disturbance. At the Yambaru forest site, Manabi no Mori, we were able to consistently detect the endangered Okinawa Rail, Gallirallus okinawae, whilst false positives for this species at other sites were minimal and exhibited poor clustering scores. Our finding that the detection rate of both G. okinawae and H. coromanda was affected by forest cover, with these culturally important species both being detected more frequently in forest sites, further highlights the value of Yambaru forest in supporting endemic, endangered and culturally significant biota (Itô et al. 2000).
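The idea of a conservative score cutoff can be sketched in Python as follows. This is an illustration only: the `Detection` record, the assumption that a higher score means a closer match to the species cluster, the cutoff of 0.8, the minimum of three detections, and all score values are hypothetical and are not taken from the software or settings used in this study.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    site: str
    species: str
    score: float  # hypothetical cluster-assignment score; higher = better match


def presence_by_site(detections, species, min_score, min_detections=3):
    """Score a species as present at a site only if enough detections
    meet the score cutoff; sites with only weak detections are absent."""
    counts = {}
    for d in detections:
        if d.species != species:
            continue
        counts.setdefault(d.site, 0)
        if d.score >= min_score:
            counts[d.site] += 1
    return {site: n >= min_detections for site, n in counts.items()}


# Made-up detections: strong scores at a forest site, weak ones elsewhere.
detections = [
    Detection("Manabi no Mori", "Gallirallus okinawae", 0.92),
    Detection("Manabi no Mori", "Gallirallus okinawae", 0.88),
    Detection("Manabi no Mori", "Gallirallus okinawae", 0.95),
    Detection("Sueyoshi", "Gallirallus okinawae", 0.31),
    Detection("Sueyoshi", "Gallirallus okinawae", 0.42),
]

presence = presence_by_site(detections, "Gallirallus okinawae", min_score=0.8)
```

Raising `min_score` or `min_detections` trades sensitivity for fewer false presences, so the cutoff would need to be tuned against manually verified detections.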

Despite being in close proximity to roads and being surrounded by a large proportion of urban land use, the Nago forest site appears to be a local refuge for several of our focal species. Our common focal species, Hypsipetes amaurotis and C. macrorhynchos, were highly vocal at this site, as were our disturbance-sensitive focal species, Halcyon coromanda and O. elegans. This may suggest that the forest near Nago is an important site for biodiversity despite its urban setting, and that it could potentially support some of Okinawa-jima’s endemic species, were their ranges to expand further southwards. The Nakagusuku field site displayed high values of the bioacoustic index (BioA) and ACI and low NDSI, and was a local hotspot for Hypsipetes amaurotis vocalizations, and to a lesser degree also Halcyon coromanda, despite the site’s southern location and mixed land-use cover. Along with Takiyamabaru, this site also exhibited a clear effect of diurnal cycle on soundscape indices, mainly for ADI and AEI. But unlike Takiyamabaru, at Nakagusuku we also observed high intensities of both anthropophony (mostly trucks, aircraft, and construction) and biophony (mostly cicadas and birds; SRP-JR and NRF, pers. obs.). This combination of intense biophony and anthropophony may also have contributed to the lower accuracy of our species detection models for Nago and Nakagusuku, particularly for C. macrorhynchos, H. coromanda and O. elegans (see Table 1).

Combining approaches

We identified several patterns in our soundscape indices that appear to be driven by stridulating insects. Further, we found a clear effect of weather conditions on soundscape indices and in some cases species detection. Lastly, we observed a ‘dawn chorus’ effect in several indices, which we attributed to bird species vocalizing in the morning.

We found that acoustic complexity (ACI) was highest at our suburban sites in Nago and Nakagusuku, likely due to the abundance and activity of cicadas (Graptopsaltria bimaculata, Platypleura kaempferi and P. kuroiwae) during the day and a repetitive orthopteran (Hexacentrus unicolor) during the night. Previous studies have found that ACI increases drastically in the presence of cicadas and orthopterans that produce a broadband signal (Farina et al. 2011; Fuller et al. 2015). BioA was also highest at night in Nago and Nakagusuku, likely due to the persistent broadband signaling of several orthopteran species, especially Hexacentrus unicolor. However, the degree of diurnal differentiation in soundscape index values (particularly NDSI) appeared to be greater at suburban sites, potentially due to the presence of vehicular traffic noises during the day (dark blue lines in Fig. 3).
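The sensitivity of ACI to repetitive broadband signals follows from how the index is commonly defined: within each frequency bin, the absolute differences between successive intensity values are summed and divided by the total intensity, and the results are added across bins. The Python sketch below implements that definition for a single temporal step. The function names and the toy spectrogram values are assumptions for illustration, and the sketch does not reproduce the settings used in this study.

```python
def aci_for_bin(intensities):
    """ACI of one frequency bin: summed absolute change between
    successive time frames, divided by total intensity."""
    total = sum(intensities)
    if total <= 0:
        return 0.0
    change = sum(abs(b - a) for a, b in zip(intensities, intensities[1:]))
    return change / total


def acoustic_complexity(spectrogram):
    """Sum of per-bin ACI values.

    `spectrogram` is a list of frequency bins, each a list of
    intensities over time (a single temporal step is assumed).
    """
    return sum(aci_for_bin(row) for row in spectrogram)


# Toy spectrograms with four frequency bins and four time frames.
steady_hum = [[1, 1, 1, 1]] * 4           # constant noise in every bin
pulsed_broadband = [[1, 3, 1, 3]] * 4     # rapid modulation in every bin
pulsed_narrowband = [[1, 3, 1, 3]] + [[0, 0, 0, 0]] * 3  # one bin only

aci_hum = acoustic_complexity(steady_hum)            # 0.0
aci_broad = acoustic_complexity(pulsed_broadband)    # 4 * 0.75 = 3.0
aci_narrow = acoustic_complexity(pulsed_narrowband)  # 0.75
```

Because the per-bin values are summed, an amplitude-modulated signal that spans many bins contributes far more than the same modulation confined to one bin, while constant noise contributes nothing.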

We also observed several notable temporal patterns that were concordant between our soundscape index and species detection results. Firstly, there was a general decrease in all species’ vocal activity during the period of inclement weather on Okinawa-jima. These low levels of vocalization detection across all species were concurrent with low soundscape values for NDSI and high values for ADI and ACI. This suggests an impact of inclement weather on species behavior, and also serves as a proof of concept by demonstrating the capability of both methods to detect it (Keast 1994). Secondly, we observed a general increase in diurnal species vocal activity during the “dawn chorus” at around 5:30 am. Three of our most abundant species (Hypsipetes amaurotis, C. macrorhynchos and Halcyon coromanda) began vocalizing around this time, and most detections of Halcyon coromanda were observed during the dawn chorus period (5:30–7:30). We found this effect to be correlated with several soundscape indices: some indication of a dawn chorus was visible for ACI and ADI, but it was most noticeable in peaks of the Bioacoustic Index, which were a good temporal match with the onset of Halcyon coromanda and Hypsipetes amaurotis vocalizations. Our further recording of soundscapes from the winter supports the detection of a later dawn chorus in October through February (see Fig. 5). Although the dawn chorus effect is well documented in studies of bioacoustics and soundscape ecology (Kacelnik and Krebs 1983; Wimmer et al. 2013; Towsey et al. 2014), future acoustic monitoring should explore the phenology of this event, and the role of seasonal, weather, and biotic interference in determining its timing (Farina et al. 2015; Hart et al. 2015).
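One simple way to compare the timing of species detections with a soundscape index is to bin detections by hour of day and correlate the hourly counts with the hourly index profile. The Python sketch below does this with a Pearson correlation. The choice of Pearson's r, the function names, the detection times and the index profile are all assumptions made for illustration and do not describe the analysis performed in this study.

```python
import math
from collections import Counter


def detections_per_hour(times):
    """Count detections in each hour of the day from 'HH:MM' strings."""
    counts = Counter(int(t.split(":")[0]) for t in times)
    return [counts.get(hour, 0) for hour in range(24)]


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("series must have equal length of at least 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / math.sqrt(var_x * var_y)


# Made-up detection times clustered around dawn, plus one at midday.
detection_times = ["05:35", "05:50", "06:10", "06:40", "07:05", "13:20"]
hourly_counts = detections_per_hour(detection_times)

# Made-up hourly index profile with a peak between 05:00 and 08:00.
hourly_index = [1.0] * 24
hourly_index[5], hourly_index[6], hourly_index[7] = 3.0, 3.5, 2.5

r = pearson(hourly_counts, hourly_index)
```

Hourly values from consecutive hours are not independent, so a correlation computed this way is best read as a descriptive measure of temporal match rather than a formal test.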

Fig. 5 Normalized difference soundscape index (NDSI) calculated for a recording at the Tamagusuku field site in southern Okinawa-jima. Higher values represent a greater proportion of biophony relative to anthropophony. Clear patterns visible in this figure are the “dawn chorus” of birds at 7:00 each morning, and the “evening chorus” of frogs and insects at night. The dawn chorus can be seen to change in time during the change from autumn to winter, as it typically correlates with nautical sunrise. In contrast, the evening chorus decreases in intensity as winter evenings become quieter.

Taken together, our results indicate a weather-related decline in both soundscape indices and species detections, a dawn chorus of individual species that is visible in our soundscape results, and saturation of several soundscape indices by insect activity for periods of our study. Overall, this is an encouraging sign that the soundscape approach succeeds in reflecting species’ behavioral phenology on Okinawa-jima (Farina et al. 2011; Davidson et al. 2017). Further, our findings suggest that future soundscape studies conducted across the summer season should identify periods of inclement weather and high cicada activity and treat them separately. However, poor weather conditions and broadband insect acoustic activity may be equivalent insofar as they both reduce the signal efficacy of soniferous species due to a ‘filling up’ of acoustic niche space (Hart et al. 2015). We find it encouraging that this issue appears to be more manageable when comparing soundscape indices across longer duration recordings, as was the case for our winter recording period.

Conclusions

Our results highlight the promise of remote acoustic monitoring of ecosystems on Okinawa-jima. Bioacoustic monitoring of populations and communities across an urban–rural gradient is a means to understand the impacts of urbanization on the avifauna of the Ryūkyū archipelago. This includes a clear potential both for measuring the acoustic components of biodiversity and anthropogenic noise (e.g. Botteldooren et al. 2006; Dowling et al. 2011), and for monitoring populations and vocal behavior of individual species (e.g. Kacelnik and Krebs 1983). Considering patterns in bird diversity across trophic levels, and incorporating detection of acoustic signals from other taxa such as frogs and insects, will provide further insight into community dynamics and ecosystem functioning in coming years (Boelman et al. 2007; Both and Grant 2012).

As acoustic monitoring projects increase in spatial and temporal scale, the scale of the questions we can address increases (Bush et al. 2017). However, the amount of data storage and processing time required by these projects also increases tremendously. The soundscape analysis and automated detection approaches we describe in this paper will help alleviate the “growing pains” of soundscape projects, as will technologies involving further application of machine learning and artificial intelligence (e.g., Xie et al. 2016). During the trial period that is the focus of this study, we collected a little more than 47 days of field recordings. However, in the six months since this paper was presented to the Ecological Society of Japan, we have collected an additional 4 years of field recordings encompassing a broader sample of both time and space. Hence, we wish to convey caution in interpreting broadly the correlations described in this study, but also optimism that the broader patterns of which they are a part can be characterized.

Much work remains to quantify and understand the functioning of Okinawa’s ecosystems, all of which are embedded to various degrees in a complex matrix of human activity. Furthermore, as for all ecosystems worldwide, high-resolution long-term monitoring is needed to track the impacts of continual global change (see Bush et al. 2017). Along with other OKEON data series, we intend this to be an open resource, and we invite researchers to contact us if they have scientific interest in the data. We hope that acoustic monitoring will contribute to a broader, more holistic view of the processes that structure and regulate the functioning of Okinawa-jima’s ecosystems (Sekercioglu 2006).

References

Biodiversity Center of Japan (2004) The National Survey on the Natural Environment, Report of the Distributional Survey of Japanese Birds

Boelman NT, Asner GP, Hart PJ, Martin RE (2007) Multi-trophic invasion resistance in Hawaii: bioacoustics, field surveys, and airborne remote sensing. Ecol Appl 17:2137–2144

Both C, Grant T (2012) Biological invasions and the acoustic niche: the effect of bullfrog calls on the acoustic signals of white-banded tree frogs. Biol Lett 8:714–716

Botteldooren D, De Coensel B, De Muer T (2006) The temporal structure of urban soundscapes. J Sound Vib 292:105–123

Bush A, Sollmann R, Wilting A, Bohmann K, Cole B, Balzter H, Martius C, Zlinszky A, Calvignac-Spencer S et al (2017) Connecting earth observation to high-throughput biodiversity data. Nat Ecol Evol 1:176

Davidson BM, Antonova G, Dlott H, Barber JR, Francis CD (2017) Natural and anthropogenic sounds reduce song performance: insights from two emberizid species. Behav Ecol 28:974

Digby A, Towsey M, Bell B, Teal P (2013) A practical comparison of manual, semi-automatic and automatic methods for acoustic monitoring. Methods Ecol Evol 4:675–683

Dowling JL, Luther DA, Marra PP (2011) Comparative effects of urban development and anthropogenic noise on bird songs. Behav Ecol 23:201–209

Farina A (2014) Soundscape ecology: principles, patterns, methods, and applications. Springer, New York

Farina A, Pieretti N, Piccioli L (2011) The soundscape methodology for long-term bird monitoring: a Mediterranean Europe case-study. Ecol Inform 6:354–363

Farina A, Ceraulo M, Bobryk C et al (2015) Spatial and temporal variation of bird dawn chorus and successive acoustic morning activity in a Mediterranean landscape. Bioacoustics 24:269–288

Fuller RA, Warren PH, Gaston KJ (2007) Daytime noise predicts nocturnal singing in urban robins. Biol Lett 3:368–370

Fuller S, Axel AC, Tucker D, Gage SH (2015) Connecting soundscape to landscape: which acoustic index best describes landscape configuration? Ecol Indic 58:207–215

Gage SH, Axel AC (2014) Visualization of temporal change in soundscape power of a Michigan lake habitat over a 4-year period. Ecol Inform 21:100–109

Gasc A, Francomano D, Dunning JB, Pijanowski BC (2016) Future directions for soundscape ecology: the importance of ornithological contributions. Auk 134:215–228

Gregory RD, van Strien A (2010) Wild bird indicators: using composite population trends of birds as measures of environmental health. Ornithol Sci 9:3–22

Hart PJ, Hall R, Ray W, Beck A, Zook J (2015) Cicadas impact bird communication in a noisy tropical rainforest. Behav Ecol 26:839–842

Itô Y, Miyagi K, Ota H (2000) Imminent extinction crisis among the endemic species of the forests of Yanbaru, Okinawa, Japan. Oryx 34:305–316

IUCN (2016) The IUCN Red List of Threatened Species. Version 2016-3. http://www.iucnredlist.org. Accessed 20 April 2017

Japan Meteorological Agency (2016) Table of hourly weather observations. http://www.jma.go.jp/en/amedas_h/map65.html. Accessed 6 Oct 2016

Japan Statistics Bureau (2017) Japan statistical yearbook. http://www.stat.go.jp/english/data/ Accessed 20 April 2017

Joo W, Gage SH, Kasten EP (2011) Analysis and interpretation of variability in soundscapes along an urban–rural gradient. Landsc Urban Plan 103:259–276

Kacelnik A, Krebs JR (1983) The dawn chorus in the Great Tit (Parus major): proximate and ultimate causes. Behaviour 83:287–308

Kasten EP, Gage SH, Fox J, Joo W (2012) The remote environmental assessment laboratory’s acoustic library: an archive for studying soundscape ecology. Ecol Inform 12:50–67

Keast A (1994) Temporal vocalization patterns in members of a eucalypt forest bird community: the effects of weather on song production. Emu 94:172–180

Krause BL (1993) The niche hypothesis. Soundsc Newsl 6:6–10

Lomolino MV, Pijanowski BC, Gasc A (2015) The silence of biogeography. J Biogeogr 42:1187–1196

Okinawa Prefectural Government (2017) 59th Okinawa statistical yearbook 2016. http://www.pref.okinawa.jp/toukeika/yearbook/yearbook_index.html. Accessed 27 April 2017

Pekin BK, Jung J, Villanueva-Rivera LJ, Pijanowski BC, Ahumada JA (2012) Modeling acoustic diversity using soundscape recordings and LIDAR-derived metrics of vertical forest structure in a neotropical rainforest. Landsc Ecol 27:1513–1522

Pijanowski BC, Farina A, Gage SH, Dumyahn SL, Krause BL (2011a) What is soundscape ecology? An introduction and overview of an emerging new science. Landsc Ecol 26:1213–1232

Pijanowski BC, Villanueva-Rivera LJ, Dumyahn SL, Farina A, Krause BL, Napoletano BM, Gage SH, Pieretti N (2011b) Soundscape ecology: the science of sound in the landscape. Bioscience 61:203–216

R Development Core Team (2016) R: a language and environment for statistical computing. R Foundation for Statistical Computing, Vienna. Version 3.3.3. https://www.R-project.org/

Rodriguez A, Gasc A, Pavoine S, Grandcolas P, Gaucher P, Sueur J (2014) Temporal and spatial variability of animal sound within a neotropical forest. Ecol Inform 21:133–143

Schafer R (1977) The soundscape: our sonic environment and the tuning of the world. Destiny Books, Rochester

Sekercioglu CH (2006) Increasing awareness of avian ecological function. Trends Ecol Evol 21:464–471

Seki S-I (2012) Use of automatic photo and sound recording systems for avian inventory collections of three uninhabited islands located in the Tokara Islands, Japan. Bird Res 8:35–48

Servick K (2014) Eavesdropping on ecosystems. Science 343:834–837

Sueur J (2002) Cicada acoustic communication: potential sound partitioning in a multispecies community from Mexico (Hemiptera: Cicadomorpha: Cicadidae). Biol J Linn Soc 75:379–394

Sueur J, Aubin T, Simonis C (2008) Seewave: a free modular tool for sound analysis and synthesis. Bioacoustics 18:213–226

Tobias JA, Planqué R, Cram DL, Seddon N (2014) Species interactions and the structure of complex communication networks. PNAS 111:1020–1025

Towsey M, Wimmer J, Williamson I, Roe P (2014) The use of acoustic indices to determine avian species richness in audio-recordings of the environment. Ecol Inform 21:110–119

Villanueva-Rivera LJ, Pijanowski BC (2016) Soundecology: soundscape ecology. R package version 1.3.2. https://CRAN.R-project.org/package=soundecology

Villanueva-Rivera LJ, Pijanowski BC, Doucette J, Pekin B (2011) A primer of acoustic analysis for landscape ecologists. Landsc Ecol 26:1233–1246

Wimmer J, Towsey M, Roe P, Williamson I (2013) Sampling environmental acoustic recordings to determine bird species richness. Ecol Appl 23:1419–1428

Xie J, Towsey M, Zhang J, Roe P (2016) Frog call classification: a survey. Artif Intell Rev. doi:10.1007/s10462-016-9529-z

Acknowledgements

We thank Kenji Takehara for his kind assistance throughout this project. Many individuals in the OKEON Churamori project contributed to data collection, site maintenance and community outreach; we especially thank Masako Ogasawara, Linda Ida, Shinji Iriyama, Toshihiro Kinjo, Izumi Maehira, Yasutaka Tamaki, Kozue Uekama, Ayumi Inoguchi, Shoko Suzuki, Masafumi Kuniyoshi, Mayuko Suwabe, and Chisa Oshiro. Our sincere thanks go to the landowners, museums, local governments and schools that host the OKEON Churamori project—especially Mori no iye Minmin, Nakagusuku Park, Okinawa Municipal Museum, Tamagusuku Youth and Children’s Center, and Yambaru Manabi no Mori—and to the people of Okinawa. Margaret Howell and members of OIST’s Biodiversity and Biocomplexity Unit contributed helpful comments and opinions that improved this paper. All authors were supported by subsidy funding to OIST.

About this article

Cite this article

Ross, S.R.P.J., Friedman, N.R., Dudley, K.L. et al. Listening to ecosystems: data-rich acoustic monitoring through landscape-scale sensor networks. Ecol Res 33, 135–147 (2018). https://doi.org/10.1007/s11284-017-1509-5

DOI: https://doi.org/10.1007/s11284-017-1509-5