Abstract

Pseudo-marginal Markov chain Monte Carlo methods for sampling from intractable distributions have gained recent interest and have been theoretically studied in considerable depth. Their main appeal is that they are exact, in the sense that they target marginally the correct invariant distribution. However, the pseudo-marginal Markov chain can exhibit poor mixing and slow convergence towards its target. As an alternative, a subtly different Markov chain can be simulated, where better mixing is possible but the exactness property is sacrificed. This is the noisy algorithm, initially conceptualised as Monte Carlo within Metropolis, which has also been studied but to a lesser extent. The present article provides a further characterisation of the noisy algorithm, with a focus on fundamental stability properties like positive recurrence and geometric ergodicity. Sufficient conditions for inheriting geometric ergodicity from a standard Metropolis–Hastings chain are given, as well as convergence of the invariant distribution towards the true target distribution.

1 Introduction

1.1 Intractable target densities and the pseudo-marginal algorithm

Suppose our aim is to simulate from an intractable probability distribution \(\pi \) for some random variable X, which takes values in a measurable space \(\left( \mathcal {X},\mathcal {B}(\mathcal {X})\right) \). In addition, let \(\pi \) have a density \(\pi (x)\) with respect to some reference measure \(\mu (dx)\), e.g. the counting or the Lebesgue measure. By intractable we mean that an analytical expression for the density \(\pi (x)\) is not available and so implementation of a Markov chain Monte Carlo (MCMC) method targeting \(\pi \) is not straightforward.

One possible solution to this problem is to target a different distribution on the extended space \(\left( \mathcal {X}\times \mathcal {W},\mathcal {B}(\mathcal {X})\times \mathcal {B}(\mathcal {W})\right) \), which admits \(\pi \) as a marginal distribution. The pseudo-marginal algorithm (Beaumont 2003; Andrieu and Roberts 2009) falls into this category, since it is a Metropolis–Hastings (MH) algorithm targeting a distribution \(\bar{\pi }_{N}\) associated with the random vector (X, W) defined on the product space \(\left( \mathcal {X}\times \mathcal {W},\mathcal {B}(\mathcal {X})\times \mathcal {B}(\mathcal {W})\right) \), where \(\mathcal {W}\subseteq {\mathbb {R}}^{+}_0:=[0,\infty )\). It is given by

$$\begin{aligned} \bar{\pi }_{N}(dx,dw) := \pi (dx)\,Q_{x,N}(dw)\,w, \end{aligned}$$(1)

where \(\left\{ Q_{x,N}\right\} _{(x,N)\in \mathcal {X}\times {\mathbb {N}}^{+}}\) is a family of probability distributions on \(\left( \mathcal {W},\mathcal {B}(\mathcal {W})\right) \) satisfying, for each \((x,N)\in \mathcal {X}\times {\mathbb {N}}^{+}\),

$$\begin{aligned} {\mathbb {E}}_{Q_{x,N}}\left[ W_{x,N}\right] = \int _{\mathcal {W}} w\,Q_{x,N}(dw) = 1. \end{aligned}$$(2)

Throughout this article, we restrict our attention to the case where for each \(x\in \mathcal {X}\), \(W_{x,N}\) is \(Q_{x,N}\)-a.s. strictly positive, for reasons that will become clear.

The random variables \(\left\{ W_{x,N}\right\} _{x,N}\) are commonly referred as the weights. Formalising this algorithm using (1) and (2) was introduced by Andrieu and Vihola (2015), and “exactness” follows immediately: \(\bar{\pi }\) admits \(\pi \) as a marginal. Given a proposal kernel \(q:\mathcal {X}\times \mathcal {B}(\mathcal {X})\rightarrow [0,1]\), the respective proposal of the pseudo-marginal is given by

and, consequently, the acceptance probability can be expressed as

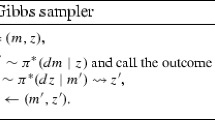

The pseudo-marginal algorithm defines a time-homogeneous Markov chain, with transition kernel \(\bar{P}_{N}\) on the measurable space \(\left( \mathcal {X}\times \mathcal {W},\mathcal {B}(\mathcal {X})\times \mathcal {B}(\mathcal {W})\right) \). A single draw from \(\bar{P}_{N}(x,w;\cdot ,\cdot )\) is presented in Algorithm 1.
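Algorithm 1 itself is not reproduced here, so the following is only a minimal Python sketch of one draw from \(\bar{P}_{N}(x,w;\cdot ,\cdot )\), assuming a symmetric proposal (so that the q terms cancel in (3)). The callbacks `log_pi`, `propose` and `sample_weight` are hypothetical placeholders for \(\log \pi \), q and \(Q_{y,N}\). The key feature is that the current weight w is an input and is reused on rejection.

```python
import math
import random
from typing import Callable, Tuple

# Hypothetical callback types (not from the article):
#   log_pi(x)                -> log target density, up to an additive constant
#   propose(x, rng)          -> Y ~ q(x, .), assumed symmetric so q cancels in (3)
#   sample_weight(y, N, rng) -> a strictly positive draw from Q_{y,N}

def pseudo_marginal_step(
    x: float,
    w: float,
    log_pi: Callable[[float], float],
    propose: Callable[[float, random.Random], float],
    sample_weight: Callable[[float, int, random.Random], float],
    N: int,
    rng: random.Random,
) -> Tuple[float, float]:
    """One draw from the pseudo-marginal kernel bar{P}_N(x, w; ., .)."""
    y = propose(x, rng)            # Y ~ q(x, .)
    u = sample_weight(y, N, rng)   # U ~ Q_{y,N}; the current weight w is NOT refreshed
    # log of pi(y) u / (pi(x) w), i.e. the log-ratio inside (3) for symmetric q
    log_ratio = log_pi(y) - log_pi(x) + math.log(u) - math.log(w)
    if rng.random() < math.exp(min(0.0, log_ratio)):
        return y, u                # accept: the proposed weight travels with the state
    return x, w                    # reject: keep both the state and its old weight
```

Because a rejected move returns the old pair (x, w), a single unusually large w can keep the chain at x for many iterations.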

Due to its exactness and straightforward implementation in many settings, the pseudo-marginal has gained recent interest and has been theoretically studied in some depth, see e.g. Andrieu and Roberts (2009), Andrieu and Vihola (2014, 2015), Doucet et al. (2015), Girolami et al. (2013), Maire et al. (2014) and Sherlock et al. (2015). These studies typically compare the pseudo-marginal Markov chain with a “marginal” Markov chain, arising in the case where all the weights are almost surely equal to 1, and (3) is then the standard Metropolis–Hastings acceptance probability associated with the target density \(\pi \) and the proposal q.

1.2 Examples of pseudo-marginal algorithms

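A common source of intractability for \(\pi \) occurs when a latent variable Z on \((\mathcal {Z},\mathcal {B}(\mathcal {Z}))\) is used to model observed data, as in hidden Markov models (HMMs) or mixture models. Although the density \(\pi (x)\) cannot be computed, it can be approximated via importance sampling, using an appropriate auxiliary distribution, say \(\nu _{x}\). Here, appropriate means \(\pi _{x}\ll \nu _{x}\), where \(\pi _{x}\) denotes the conditional distribution of Z given \(X=x\). Therefore, in this setting, the weights are given by

$$\begin{aligned} W_{x} := \frac{\pi _{x}(Z)}{\nu _{x}(Z)},\quad Z\sim \nu _{x}, \end{aligned}$$

which motivates the following generic form when using averages of unbiased estimators

$$\begin{aligned} W_{x,N} := \frac{1}{N}\sum _{k=1}^{N}W_{x}^{(k)},\quad \text {where}\;\left\{ W_{x}^{(k)}\right\} _{k=1}^{N}\;\text {are i.i.d., strictly positive, with}\;{\mathbb {E}}\left[ W_{x}^{(k)}\right] =1. \end{aligned}$$(4)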

It is clear that (4) describes only a special case of (2). Nevertheless, we will pay special attention to the former throughout the article. For similar settings to (4) see Andrieu and Roberts (2009).
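As a concrete, hedged instance of (4), the sketch below builds importance-sampling weights for an illustrative choice that is not taken from the article: \(\pi _{x}=\mathcal {N}(\cdot |x,1)\) and \(\nu _{x}=\mathcal {N}(\cdot |x,s^2)\) with \(s>1\), so that \(\pi _{x}\ll \nu _{x}\). Each single weight has mean one, is bounded by s, and `averaged_weight` returns their arithmetic average. Both function names are hypothetical.

```python
import math
import random

def is_weight(x: float, s: float, rng: random.Random) -> float:
    """One importance weight pi_x(Z) / nu_x(Z) with Z ~ nu_x.

    Illustrative assumption: pi_x = N(x, 1) and nu_x = N(x, s^2), s > 1.
    """
    z = rng.gauss(x, s)
    d2 = (z - x) ** 2
    log_pi_x = -0.5 * d2 - 0.5 * math.log(2 * math.pi)
    log_nu_x = -0.5 * d2 / s**2 - math.log(s) - 0.5 * math.log(2 * math.pi)
    return math.exp(log_pi_x - log_nu_x)   # unit mean under nu_x, bounded above by s

def averaged_weight(x: float, N: int, s: float, rng: random.Random) -> float:
    """W_{x,N} as in (4): the average of N i.i.d. unit-mean weights."""
    return sum(is_weight(x, s, rng) for _ in range(N)) / N
```

Averaging leaves the mean at one while dividing the variance by N, which is the mechanism behind the large-N results later in the article.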

Since (2) is more general, it allows \(W_{x,N}\) to be any random variable with expectation 1. Sequential Monte Carlo (SMC) methods involve the simulation of a system of particles and provide unbiased estimates of likelihoods associated with HMMs (see Del Moral 2004, Proposition 7.4.1 or Pitt et al. 2012), irrespective of the number of particles. Consider the model given by Fig. 1. The random variables \(\left\{ X_{t}\right\} _{t=0}^{T}\) form a time-homogeneous Markov chain with transition \(f_{\theta }(\cdot |x_{t-1})\) that depends on a set of parameters \(\theta \). The observed random variables \(\left\{ Y_{t}\right\} _{t=1}^{T}\) are conditionally independent given the unobserved \(\left\{ X_{t}\right\} _{t=1}^{T}\) and are distributed according to \(g_{\theta }(\cdot |x_{t})\), which may also depend on \(\theta \). The likelihood function for \(\theta \) is given by

$$\begin{aligned} l(\theta ;y_1,\ldots ,y_T) = {\mathbb {E}}_{f_\theta }\left[ \prod _{t=1}^{T}g_{\theta }(y_t|X_t)\right] , \end{aligned}$$

where \({\mathbb {E}}_{f_\theta }\) denotes expectation w.r.t. the \(\theta \)-dependent law of \(\{X_t\}_{t=1}^T\), and we assume for simplicity that the initial value \(X_0=x_0\) is known. If we denote by \(\hat{l}_N(\theta ;y_1,\ldots ,y_T)\) the unbiased SMC estimator of \(l(\theta ;y_1,\ldots ,y_T)\) based on N particles, we can then define

$$\begin{aligned} W_{\theta ,N} := \frac{\hat{l}_N(\theta ;y_1,\ldots ,y_T)}{l(\theta ;y_1,\ldots ,y_T)}, \end{aligned}$$

and (2) is satisfied but (4) is not. The resulting pseudo-marginal algorithm is developed and discussed in detail in Andrieu et al. (2010), where it and related algorithms are referred to as particle MCMC methods.
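The SMC estimator is not spelled out above, so here is a hedged sketch using one standard choice, the bootstrap particle filter with multinomial resampling at every step, whose likelihood estimate is unbiased. The callbacks `sample_transition` (for \(f_\theta \)) and `log_g` (for \(\log g_\theta \)) are hypothetical placeholders. The function returns the log of \(\hat{l}_N\); the estimate itself, not its log, is unbiased.

```python
import math
import random
from typing import Callable, Sequence

def bootstrap_log_likelihood(
    ys: Sequence[float],
    x0: float,
    sample_transition: Callable[[float, random.Random], float],
    log_g: Callable[[float, float], float],
    N: int,
    rng: random.Random,
) -> float:
    """log of the bootstrap particle filter estimate hat{l}_N(theta; y_1, ..., y_T)."""
    particles = [x0] * N   # X_0 = x_0 is assumed known
    log_l_hat = 0.0
    for y in ys:
        # propagate every particle through f_theta(. | x_{t-1})
        particles = [sample_transition(p, rng) for p in particles]
        log_w = [log_g(y, p) for p in particles]
        m = max(log_w)                          # shift for numerical stability
        w = [math.exp(lw - m) for lw in log_w]
        # incremental factor (1/N) * sum_i g_theta(y_t | x_t^i), on the log scale
        log_l_hat += m + math.log(sum(w) / N)
        # multinomial resampling proportional to the weights
        particles = rng.choices(particles, weights=w, k=N)
    return log_l_hat
```

When \(l(\theta ;y_1,\ldots ,y_T)\) is available, as in the example of Sect. 2.2, `math.exp(log_l_hat - log_l)` gives one draw of \(W_{\theta ,N}\).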

1.3 The noisy algorithm

Although the pseudo-marginal has the desirable property of exactness, it can suffer from “sticky” behaviour, exhibiting poor mixing and slow convergence towards the target distribution (Andrieu and Roberts 2009; Lee and Łatuszyński 2014). The cause is well known to be related to the value of the ratio between \(W_{y,N}\) and \(W_{x,N}\) at a particular iteration. Heuristically, when the value of the current weight (w in (3)) is large, proposed moves can have a low probability of acceptance. As a consequence, the resulting chain can get “stuck” and may remain at the same state for a considerable number of iterations.

In order to overcome this issue, a subtly different algorithm is used in some practical problems (see, e.g., McKinley et al. 2014). The basic idea is to refresh, independently of the past, the value of the current weight at every iteration. The ratio between \(W_{y,N}\) and \(W_{x,N}\) still plays an important role in this alternative algorithm, but refreshing \(W_{x,N}\) at every iteration can improve mixing and the rate of convergence.

This alternative algorithm is commonly known as Monte Carlo within Metropolis (MCWM), as in O’Neill et al. (2000), Beaumont (2003) or Andrieu and Roberts (2009), since typically the weights are Monte Carlo estimates as in (4). From this point onwards it will be referred to as the noisy MH algorithm, or simply the noisy algorithm, to emphasise that our main assumption is (2). Because \(W_{x,N}\) and \(W_{y,N}\) are sampled independently of previous iterations, the noisy algorithm also defines a time-homogeneous Markov chain with transition kernel \({\tilde{P}}_{N}\), but on the measurable space \((\mathcal {X},\mathcal {B}(\mathcal {X}))\). A single draw from \({\tilde{P}}_{N}(x,\cdot )\) is presented in Algorithm 2. This also explains why we restrict our attention to strictly positive weights: the algorithm is not well defined when both \(W_{y,N}\) and \(W_{x,N}\) are equal to 0.
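For comparison with the pseudo-marginal step, the following is a minimal sketch of one draw from \({\tilde{P}}_{N}(x,\cdot )\), again assuming a symmetric proposal and using the same hypothetical callbacks `log_pi`, `propose` and `sample_weight`. Both weights are drawn afresh at every call, so the state of the chain is x alone.

```python
import math
import random
from typing import Callable

def noisy_step(
    x: float,
    log_pi: Callable[[float], float],
    propose: Callable[[float, random.Random], float],
    sample_weight: Callable[[float, int, random.Random], float],
    N: int,
    rng: random.Random,
) -> float:
    """One draw from the noisy kernel tilde{P}_N(x, .), assuming symmetric q."""
    y = propose(x, rng)              # Y ~ q(x, .)
    w_x = sample_weight(x, N, rng)   # fresh W_{x,N}, independent of the past
    w_y = sample_weight(y, N, rng)   # fresh W_{y,N}
    log_ratio = log_pi(y) - log_pi(x) + math.log(w_y) - math.log(w_x)
    if rng.random() < math.exp(min(0.0, log_ratio)):
        return y                     # accept
    return x                         # reject: no weight is carried over
```

The only change from the pseudo-marginal step is that `w_x` is redrawn rather than passed in. This single change removes exactness but prevents one large weight from freezing the chain.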

Even though these algorithms differ only slightly, the corresponding chains have very different properties. In Algorithm 2, the value w is generated at every iteration, whereas in Algorithm 1 it is treated as an input. As a consequence, Algorithm 1 produces a chain on \(\left( \mathcal {X}\times \mathcal {W},\mathcal {B}(\mathcal {X})\times \mathcal {B}(\mathcal {W})\right) \), whereas Algorithm 2 produces a chain on \(\left( \mathcal {X},\mathcal {B}(\mathcal {X})\right) \). Moreover, unlike the pseudo-marginal chain, the noisy chain is in general neither \(\pi \)-invariant nor reversible. It may not even have an invariant distribution, as shown by some examples in Sect. 2.

From O’Neill et al. (2000) and Fernández-Villaverde and Rubio-Ramírez (2007), it is evident that the noisy algorithm was implemented even before the appearance of the pseudo-marginal, the latter initially conceptualised as Grouped Independence Metropolis–Hastings (GIMH) in Beaumont (2003). The theoretical properties of the noisy algorithm, however, have mainly been studied in tandem with the pseudo-marginal by Beaumont (2003), Andrieu and Roberts (2009) and, more recently, Alquier et al. (2014).

The noisy chain generated by Algorithm 2 can be seen as a perturbed version of an idealised Markov chain in which the weights \(\left\{ W_{x,N}\right\} _{x,N}\) are all equal to one. Perturbed Markov chains have been investigated in, e.g., Roberts et al. (1998), Breyer et al. (2001), Shardlow and Stuart (2000), Mitrophanov (2005) and Ferré et al. (2013). More recently, Pillai and Smith (2014) and Rudolf and Schweizer (2015) study such chains using the Wasserstein distance. We focus on the total variation distance, which is a particular case of the Wasserstein distance. The relationship between our work and these latter papers is pointed out in subsequent remarks.

1.4 Objectives of the article

The objectives of this article can be illustrated using a simple example. Let \(\mathcal {N}(\cdot | \mu , \sigma ^2)\) denote a univariate Gaussian distribution with mean \(\mu \) and variance \(\sigma ^2\) and \(\pi (\cdot )=\mathcal {N}(\cdot | 0, 1)\) be a standard normal distribution. Let the weights \(W_{x,N}\) be as in (4) with

where \(\log \mathcal {N}(\cdot | \mu , \sigma ^2)\) denotes a log-normal distribution with parameters \(\mu \) and \(\sigma ^2\). In addition, let the proposal q be a random walk given by \(q(x,\cdot )=\mathcal {N}\left( \cdot |x, 4 \right) \). For this example, Fig. 2 shows the estimated densities using the noisy chain for different values of N. It appears that the noisy chain has an invariant distribution and that, as N increases, it approaches the desired target \(\pi \). Our objectives here are to answer the following types of questions about the noisy algorithm in general:

1. Does an invariant distribution exist, at least for N large enough?

2. Does the noisy Markov chain behave like the marginal chain for sufficiently large N?

3. Does the invariant distribution, if it exists, converge to \(\pi \) as N increases?

We will see that the answer to the first two questions is negative in general. However, all three questions can be answered positively when the marginal chain is geometrically ergodic and the distributions of the weights satisfy additional assumptions.
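The example behind Fig. 2 can be explored with a short simulation. The sketch below is hedged: it assumes \(W_{x}^{(k)}\sim \log \mathcal {N}(\cdot |-\sigma ^2/2,\sigma ^2)\), so that each term has unit mean, but it treats \(\sigma \) as a free parameter because its value for Fig. 2 is not stated here. The helper names are hypothetical.

```python
import math
import random
import statistics
from typing import List, Tuple

def lognormal_average(N: int, sigma: float, rng: random.Random) -> float:
    """W_{x,N} as in (4) with W^{(k)} ~ logN(-sigma^2/2, sigma^2), so E[W^{(k)}] = 1.

    sigma is an illustrative free parameter, not a value taken from the article.
    """
    return sum(math.exp(rng.gauss(-0.5 * sigma**2, sigma)) for _ in range(N)) / N

def run_noisy_gaussian(N: int, n_iter: int, sigma: float = 1.0, seed: int = 0) -> List[float]:
    """Noisy chain for pi = N(0, 1) with random-walk proposal q(x, .) = N(x, 4)."""
    rng = random.Random(seed)
    x, chain = 0.0, []
    for _ in range(n_iter):
        y = rng.gauss(x, 2.0)                   # proposal standard deviation 2, variance 4
        w_x = lognormal_average(N, sigma, rng)  # refreshed at every iteration
        w_y = lognormal_average(N, sigma, rng)
        log_r = -0.5 * (y * y - x * x) + math.log(w_y) - math.log(w_x)
        if rng.random() < math.exp(min(0.0, log_r)):
            x = y
        chain.append(x)
    return chain

def summarise(chain: List[float]) -> Tuple[float, float]:
    """Sample mean and variance, to be compared with 0 and 1 under pi."""
    return statistics.fmean(chain), statistics.pvariance(chain)
```

For instance, `summarise(run_noisy_gaussian(N, 50_000))` for N in (1, 10, 100) gives a rough numerical analogue of Fig. 2. The sample variance should drift towards 1 as N grows, though nothing here replaces the density estimates in the figure.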

1.5 Marginal chains and geometric ergodicity

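In order to formalise our analysis, let P denote the Markov transition kernel of a standard MH chain on \(\left( \mathcal {X},\mathcal {B}(\mathcal {X})\right) \), targeting \(\pi \) with proposal q. We will refer to this chain and this algorithm using the term marginal (as in Andrieu and Roberts 2009; Andrieu and Vihola 2015). This is the idealised version of which the noisy chain and the corresponding algorithm are simple approximations. Therefore

$$\begin{aligned} P(x,dy) = \alpha (x,y)\,q(x,dy) + \rho (x)\,\delta _{x}(dy), \end{aligned}$$

where \(\alpha \) is the MH acceptance probability and \(\rho \) is the rejection probability, given by

$$\begin{aligned} \alpha (x,y) := \min \left\{ 1,\frac{\pi (y)\,q(y,x)}{\pi (x)\,q(x,y)}\right\} ,\qquad \rho (x) := 1-\int _{\mathcal {X}}\alpha (x,y)\,q(x,dy). \end{aligned}$$(5)

Similarly, for the transition kernel \({\tilde{P}}_{N}\) of the noisy chain, moves are proposed according to q but are accepted using \({\bar{\alpha }}_{N}\) (as in (3)) instead of \(\alpha \), once values for \(W_{x,N}\) and \(W_{y,N}\) have been sampled. Although the two acceptance probabilities coincide once the weights have been sampled, to distinguish the noisy process from the pseudo-marginal one we define

$$\begin{aligned} \tilde{\alpha }_{N}(x,y) := {\mathbb {E}}_{Q_{x,N}\otimes Q_{y,N}}\left[ \bar{\alpha }_{N}(x,W_{x,N};y,W_{y,N})\right] . \end{aligned}$$(6)

Here \(\tilde{\alpha }_{N}\) is the expectation of a randomised acceptance probability, which permits defining the transition kernel of the noisy chain by

$$\begin{aligned} {\tilde{P}}_{N}(x,dy) = \tilde{\alpha }_{N}(x,y)\,q(x,dy) + \tilde{\rho }_{N}(x)\,\delta _{x}(dy), \end{aligned}$$

where \(\tilde{\rho }_{N}\) is the noisy rejection probability given by

$$\begin{aligned} \tilde{\rho }_{N}(x) := 1-\int _{\mathcal {X}}\tilde{\alpha }_{N}(x,y)\,q(x,dy). \end{aligned}$$(7)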

As briefly noted before, the noisy kernel \({\tilde{P}}_{N}\) is just a perturbed version of P involving a ratio of weights in the noisy acceptance probability \(\tilde{\alpha }_{N}\). When such weights are identically one, i.e. \(Q_{x,N}(\{1\})=1\), the noisy chain reduces to the marginal chain, whereas the pseudo-marginal becomes the marginal chain with an extra component always equal to 1.
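Since \(\tilde{\alpha }_{N}\) in (6) is an expectation over the two weights, a plain Monte Carlo average approximates it. The sketch below assumes a symmetric proposal and reuses the hypothetical `log_pi`/`sample_weight` callback convention. With weights identically one it returns \(\alpha (x,y)\) exactly, illustrating that the noisy kernel then reduces to the marginal one.

```python
import math
import random
from typing import Callable

def noisy_acceptance(
    x: float,
    y: float,
    log_pi: Callable[[float], float],
    sample_weight: Callable[[float, int, random.Random], float],
    N: int,
    n_mc: int,
    rng: random.Random,
) -> float:
    """Monte Carlo estimate of tilde{alpha}_N(x, y) in (6), assuming symmetric q."""
    log_r = log_pi(y) - log_pi(x)   # log of pi(y)/pi(x); q cancels when symmetric
    total = 0.0
    for _ in range(n_mc):
        w_x = sample_weight(x, N, rng)
        w_y = sample_weight(y, N, rng)
        # bar{alpha}_N(x, w_x; y, w_y) from (3)
        total += math.exp(min(0.0, log_r + math.log(w_y) - math.log(w_x)))
    return total / n_mc
```

With \(\pi (x)=\pi (y)\) and noisy, homogeneous weights, the estimate falls strictly between 1/2 and 1, whereas \(\alpha (x,y)=1\). The randomised ratio of weights alone changes the acceptance behaviour.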

So far, the terms slow convergence and “sticky” behaviour have been used in a relatively vague sense. A powerful characterisation of the behaviour of a Markov chain is provided by geometric ergodicity, defined below. Geometrically ergodic Markov chains have a limiting invariant probability distribution, towards which they converge geometrically fast in total variation (Meyn and Tweedie 2009). For any Markov kernel \(K:\mathcal {X}\times \mathcal {B}(\mathcal {X})\rightarrow [0,1]\), let \(K^{n}\) be the n-step transition kernel, which is given by

$$\begin{aligned} K^{n}(x,A) := \int _{\mathcal {X}}K^{n-1}(x,dz)\,K(z,A),\quad n\ge 2,\qquad K^{1}:=K. \end{aligned}$$

Definition 1.1

(Geometric ergodicity) A \(\varphi \)-irreducible and aperiodic Markov chain \(\varvec{\Phi }:=(\varPhi _i)_{i\ge 0}\) on a measurable space \(\left( \mathcal {X},\mathcal {B}(\mathcal {X})\right) \), with transition kernel P and invariant distribution \(\pi \), is geometrically ergodic if there exist a finite function \(V\ge 1\) and constants \(\tau <1\), \(R<\infty \) such that

$$\begin{aligned} \left\Vert P^{n}(x,\cdot )-\pi (\cdot )\right\Vert _{TV} \le R\,V(x)\,\tau ^{n},\quad \text {for}\;x\in \mathcal {X},\;n\in {\mathbb {N}}. \end{aligned}$$(8)

Here, \(\Vert \cdot \Vert _{TV}\) denotes the total variation norm given by

where \(\mu \) is any signed measure.

Geometric ergodicity does not necessarily provide fast convergence in an absolute sense. For instance, if \(\tau \) in Definition 1.1 is extremely close to one, or R is very large, then the decay of the total variation distance, though geometric, is not particularly fast (see Roberts and Rosenthal 2004 for some examples).

Nevertheless, geometric ergodicity is a useful tool when analysing non-reversible Markov chains as will become apparent in the noisy chain case. Moreover, in practice one is often interested in estimating \({\mathbb {E}}_{\pi }\left[ f(X)\right] \) for some function \(f:\mathcal {X}\rightarrow {\mathbb {R}}\), which is done by using ergodic averages of the form

In this case, geometric ergodicity is a desirable property since it can guarantee the existence of a central limit theorem (CLT) for \(e_{n,m}(f)\); see Chan and Geyer (1994), and Roberts and Rosenthal (1997, 2004) for a more general review. It is also relevant to the construction of consistent estimators of the corresponding asymptotic variance in the CLT, as in Flegal and Jones (2010).
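As an illustration, the sketch below computes an ergodic average and a batch-means estimate of the CLT asymptotic variance, one of the consistent estimators studied in Flegal and Jones (2010). The display defining \(e_{n,m}(f)\) is missing here, so the convention used (discard the first m states, average f over the next n) is an assumption, and both helper names are hypothetical.

```python
import statistics
from typing import Callable, Sequence

def ergodic_average(chain: Sequence[float], f: Callable[[float], float], n: int, m: int) -> float:
    """Average of f over chain[m : m + n]; the first m states are discarded as burn-in.

    This indexing convention for e_{n,m}(f) is an assumption.
    """
    window = chain[m:m + n]
    if len(window) < n:
        raise ValueError("chain too short for the requested n and m")
    return statistics.fmean(f(x) for x in window)

def batch_means_variance(values: Sequence[float], n_batches: int) -> float:
    """Batch-means estimate of sigma_f^2 in the CLT sqrt(n)(e_n(f) - E_pi[f]) -> N(0, sigma_f^2)."""
    b = len(values) // n_batches                       # batch size
    means = [statistics.fmean(values[k * b:(k + 1) * b]) for k in range(n_batches)]
    return b * statistics.variance(means)              # b/(a-1) * sum (batch mean - grand mean)^2
```

For independent draws the estimate is close to the ordinary variance. For a slowly mixing chain it is inflated, reflecting the autocorrelation discussed above.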

As noted in Andrieu and Roberts (2009), if the weights \(\left\{ W_{x,N}\right\} _{x,N}\) are not essentially bounded then the pseudo-marginal chain cannot be geometrically ergodic; in such cases the “stickiness” may be more evident. In addition, under mild assumptions (in particular, that \(\bar{P}_N\) has a left spectral gap), it follows from Andrieu and Vihola (2015, Proposition 10) and Lee and Łatuszyński (2014) that a sufficient, but not necessary, condition for the pseudo-marginal chain to inherit geometric ergodicity from the marginal chain is that the weights are uniformly bounded. This imposes a tight restriction in many practical problems.

The analyses in Andrieu and Roberts (2009) and Alquier et al. (2014) mainly study the noisy algorithm in the case where the marginal Markov chain is uniformly ergodic, i.e. when it satisfies (8) with \(\sup _{x\in \mathcal {X}} V(x)<\infty \). However, there are many Metropolis–Hastings Markov chains for statistical estimation that cannot be uniformly ergodic, e.g. random walk Metropolis chains when \(\pi \) is not compactly supported. Our focus is therefore on inheritance of geometric ergodicity by the noisy chain, complementing existing results for the pseudo-marginal chain.

1.6 Outline of the paper

In Sect. 2, some simple examples are presented for which the noisy chain is positive recurrent, so it has an invariant probability distribution. This is perhaps the weakest stability property that one would expect a Monte Carlo Markov chain to have. However, other fairly surprising examples are presented for which the noisy Markov chain is transient even though the marginal and pseudo-marginal chains are geometrically ergodic. Section 3 is dedicated to the inheritance of geometric ergodicity from the marginal chain: two different sets of sufficient conditions are given and are further analysed in the context of the arithmetic averages given by (4). Geometric ergodicity, once established, guarantees the existence of an invariant distribution \(\tilde{\pi }_{N}\) for the noisy chain. Under the same sets of conditions, we show in Sect. 4 that \(\tilde{\pi }_{N}\) and \(\pi \) can be made arbitrarily close in total variation as N increases. Moreover, when the weights arise from an arithmetic average as in (4), explicit rates of convergence can in principle be obtained.

2 Motivating examples

2.1 Homogeneous weights with a random walk proposal

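Assume a log-concave target distribution \(\pi \) on the positive integers, whose density with respect to the counting measure is given by

$$\begin{aligned} \pi (m)\propto \exp \left\{ -h(m)\right\} ,\quad m\in {\mathbb {N}}^{+}, \end{aligned}$$

where \(h:{\mathbb {N}}^{+}\rightarrow {\mathbb {R}}\) is a convex function. In addition, let the proposal distribution be a symmetric random walk on the integers, i.e.

$$\begin{aligned} q(m,m-1)=q(m,m+1)=\frac{1}{2}. \end{aligned}$$(9)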

From Mengersen and Tweedie (1996), it can be seen that the marginal chain is geometrically ergodic.

Now, assume the distribution of the weights \(\left\{ W_{m,N}\right\} _{m,N}\) is homogeneous with respect to the state space, meaning

$$\begin{aligned} Q_{m,N}=Q_{N}\quad \text {for all}\;m\in {\mathbb {N}}^{+}. \end{aligned}$$(10)

In addition, assume \(W_{N}>0\ Q_{N}\)-a.s., then for \(m\ge 2\)

For this particular class of weights and using the fact that h is convex, the noisy chain is geometrically ergodic, implying the existence of an invariant probability distribution.

Proposition 2.1

Consider a log-concave target density on the positive integers and a proposal density as in (9). In addition, let the distribution of the weights be homogeneous as in (10). Then, the chain generated by the noisy kernel \({\tilde{P}}_{N}\) is geometrically ergodic.

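It is worth noting that the distribution of the weights, though homogeneous with respect to the state space, can be chosen arbitrarily, as long as the weights are positive. Homogeneity ensures that the distribution of the ratio of such weights is not concentrated near 0, owing to its symmetry around one: if \(W_N\) and \(W_N'\) are independent with distribution \(Q_N\), then for \(z>0\)

$$\begin{aligned} {\mathbb {P}}\left[ \frac{W_{N}}{W_{N}'}\le z\right] ={\mathbb {P}}\left[ \frac{W_{N}'}{W_{N}}\le z\right] ={\mathbb {P}}\left[ \frac{W_{N}}{W_{N}'}\ge \frac{1}{z}\right] . \end{aligned}$$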

In contrast, when the support of the distribution \(Q_{N}\) is unbounded, the corresponding pseudo-marginal chain cannot be geometrically ergodic.
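A small simulation makes Proposition 2.1 tangible. The sketch below runs the noisy chain on \({\mathbb {N}}^{+}\) with \(\pi (m)\propto \exp \{-h(m)\}\), the symmetric proposal (9) and homogeneous weights as in (10). The particular h and weight law are illustrative assumptions: in the test, h(m) = m/2 and a heavy-tailed, unbounded log-normal weight with unit mean, a case in which the pseudo-marginal chain could not be geometrically ergodic. The function name is hypothetical.

```python
import math
import random
from typing import Callable, List

def noisy_chain_integers(
    h: Callable[[int], float],
    sample_w: Callable[[random.Random], float],
    n_iter: int,
    m0: int = 1,
    seed: int = 0,
) -> List[int]:
    """Noisy chain on {1, 2, ...} targeting pi(m) ∝ exp(-h(m)), with proposal (9)
    and homogeneous weights (10): every state uses the same weight law Q_N."""
    rng = random.Random(seed)
    m, chain = m0, []
    for _ in range(n_iter):
        k = m + (1 if rng.random() < 0.5 else -1)   # symmetric +-1 random walk
        if k >= 1:                                  # pi(0) = 0: moves to 0 are always rejected
            w_cur, w_prop = sample_w(rng), sample_w(rng)
            log_r = h(m) - h(k) + math.log(w_prop) - math.log(w_cur)
            if rng.random() < math.exp(min(0.0, log_r)):
                m = k
        chain.append(m)
    return chain
```

Even with very noisy weights, the symmetry of the weight ratio leaves a net drift towards the mode, so the chain keeps returning to small states.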

2.2 Particle MCMC

More complex examples arise when using particle MCMC methods, for which noisy versions can also be performed. They may prove to be useful in some inference problems. Consider again the hidden Markov model given by Fig. 1. As before, set \(X_{0}=x_{0}\) and let

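Therefore, once a prior distribution for \(\theta \) is specified, \(p(\cdot )\) say, the aim is to conduct Bayesian inference on the posterior distribution

$$\begin{aligned} \pi (\theta |y_{1},\ldots ,y_{T})\propto p(\theta )\,l(\theta ;y_{1},\ldots ,y_{T}). \end{aligned}$$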

In this particular setting, the posterior distribution is tractable. This allows us to compare the results obtained from the exact and noisy versions, both relying on the SMC estimator \(\hat{l}_N(\theta ;y_1,\ldots ,y_T)\) of the likelihood. Using a uniform prior for the parameters and a random walk proposal, Fig. 3 shows the run and autocorrelation function (acf) for the autoregressive parameter a of the marginal chain. Similarly, Fig. 4 shows the corresponding run and acf for both the pseudo-marginal and the noisy chain when \(N=250\). Plots for the other parameters and different values of N can be found in Online Appendix 2. It is noticeable how the pseudo-marginal chain gets “stuck”, resulting in a lower acceptance rate than the marginal and noisy chains. In addition, the acf of the noisy chain seems to decay faster than that of the pseudo-marginal chain.

Last 20,000 iterations of the pseudo-marginal (top left) and noisy (bottom left) algorithms, for the autoregressive parameter a when \(N=250\). Estimated autocorrelation functions of the corresponding pseudo-marginal (top right) and noisy (bottom right) chains. The mean acceptance probabilities were 0.104 for the pseudo-marginal and 0.283 for the noisy chain

Finally, Figs. 5 and 6 show the estimated posterior densities for the parameters when \(N=250\) and \(N=750\), respectively. There, the trade-off between the pseudo-marginal and the noisy algorithm is noticeable. For lower values of N, the pseudo-marginal chain requires more iterations due to its slow mixing, whereas the noisy chain converges faster, but towards an unknown noisy invariant distribution. As N increases, the mixing of the pseudo-marginal chain improves and the noisy invariant distribution approaches the true posterior. Plots for other values of N can also be found in Online Appendix 2.

2.3 Transient noisy chain with homogeneous weights

In contrast with the example in Sect. 2.1, this one shows that the noisy algorithm can produce a transient chain even in simple settings. Let \(\pi \) be a geometric distribution on the positive integers, whose density with respect to the counting measure is given by

In addition, assume the proposal distribution is a simple random walk on the integers, i.e.

where \(\theta \in (0,1)\). Under these assumptions, the marginal chain is geometrically ergodic, see Proposition 5.1 in Appendix 1.

Consider \(N=1\) and as in Sect. 2.1, let the distribution of weights be homogeneous and given by

where Ber(s) denotes a Bernoulli random variable with parameter \(s\in (0,1).\) There exists a relationship between s, b and \(\varepsilon \) that guarantees the expectation of the weights is identically one. The following proposition, proven in Appendix 1 by taking \(\theta > 1/2\), shows that the resulting noisy chain can be transient for certain values of b, \(\varepsilon \) and \(\theta \).

Proposition 2.2

Consider a geometric target density as in (11) and a proposal density as in (12). In addition, let the weights when \(N=1\) be given by (13). Then, for some b, \(\varepsilon \) and \(\theta \) the chain generated by the noisy kernel \({\tilde{P}}_{N=1}\) is transient.

In contrast, since the weights are uniformly bounded by b, the pseudo-marginal chain inherits geometric ergodicity for any \(\theta \), b and \(\varepsilon \). The left plot in Fig. 7 shows an example. We will discuss the behaviour of this example as N increases in Sect. 3.4.

2.4 Transient noisy chain with non-homogeneous weights

One could argue that the transient behaviour of the previous example is related to the large value of \(\theta \) in the proposal distribution. However, as shown here, for any value of \(\theta \in (0,1)\) one can construct weights satisfying (2) for which the noisy chain is transient. With the same assumptions as in the example in Sect. 2.3, except that now the distribution of weights is not homogeneous but given by

the noisy chain will be transient for b large enough. The proof can be found in Appendix 1.

Proposition 2.3

Consider a geometric target density as in (11) and a proposal density as in (12). In addition, let the weights when \(N=1\) be given by (14). Then, for any \(\theta \in (0,1)\) there exists some \(b>1\) such that the chain generated by the noisy kernel \({\tilde{P}}_{N=1}\) is transient.

The reason for this becomes apparent when looking at the behaviour of the ratios of weights. Even though \(\varepsilon _{m}\rightarrow 0\) as \(m\rightarrow \infty \), the non-monotonic behaviour of the sequence implies

and

Hence, the ratio of the weights can become arbitrarily large or arbitrarily close to zero with non-negligible probability. This allows the algorithm to accept moves to the right more often, if m is large enough. Once again, the pseudo-marginal chain inherits geometric ergodicity from the marginal chain. See the central and right plots of Fig. 7 for two examples using different proposals. We will come back to this example in Sect. 3.4, where we look at the behaviour of the associated noisy chain as N increases.

Runs of the marginal, pseudo-marginal and noisy chains. Left plot shows example in Sect. 2.3, where \(\theta =0.75\), \(\varepsilon =2-\sqrt{3}\) and \(b=2\varepsilon \frac{\theta }{1-\theta }\). Central and right plots show example in Sect. 2.4, where \(\theta =0.5\) and \(\theta =0.25\) respectively, with \(\varepsilon _{m}=m^{-(3-m\ (\text {mod}\ 3))}\) and \(b=3+\left( \frac{1-\theta }{\theta }\right) ^{3}\)

3 Inheritance of ergodic properties

The inheritance of various ergodic properties of the marginal chain by pseudo-marginal Markov chains has been established using techniques that are powerful but suitable only for reversible Markov chains (see, e.g. Andrieu and Vihola 2015). Since the noisy Markov chains treated here can be non-reversible, a suitable tool for establishing geometric ergodicity is the use of Foster–Lyapunov functions, via geometric drift towards a small set.

Definition 3.1

(Small set) Let P be the transition kernel of a Markov chain \(\varvec{\Phi }\). A subset \(C\subseteq \mathcal {X}\) is small if there exist a positive integer \(n_{0}\), \(\varepsilon >0\) and a probability measure \(\nu (\cdot )\) on \(\left( \mathcal {X},\mathcal {B}(\mathcal {X})\right) \) such that the following minorisation condition holds

$$\begin{aligned} P^{n_{0}}(x,A)\ge \varepsilon \,\nu (A),\quad \text {for}\;x\in C,\;A\in \mathcal {B}(\mathcal {X}). \end{aligned}$$

The following theorem, which follows immediately from combining Roberts and Rosenthal (1997, Proposition 2.1) and Meyn and Tweedie (2009, Theorem 15.0.1), establishes the equivalence between geometric ergodicity and a geometric drift condition. For any kernel \(K:\mathcal {X}\times \mathcal {B}(\mathcal {X})\rightarrow [0,1]\), let

$$\begin{aligned} KV(x):=\int _{\mathcal {X}}V(y)\,K(x,dy). \end{aligned}$$

Theorem 3.1

Suppose that \(\varPhi \) is a \(\phi \)-irreducible and aperiodic Markov chain with transition kernel P and invariant distribution \(\pi \). Then, the following statements are equivalent:

(i) There exists a small set C, constants \(\lambda <1\) and \(b<\infty \), and a function \(V\ge 1\) finite for some \(x_0\in \mathcal {X}\) satisfying the geometric drift condition

$$\begin{aligned} PV(x) \le \lambda V(x)+b\mathbbm {1}_{\left\{ x\in C\right\} },\quad \text {for}\;x\in \mathcal {X}. \end{aligned}$$(16)

(ii) The chain is \(\pi \)-a.e. geometrically ergodic, meaning that for \(\pi \)-a.e. \(x\in \mathcal {X}\) it satisfies (8) for some \(V\ge 1\) (which can be taken as in (i)) and constants \(\tau <1\), \(R<\infty \).
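To see the drift condition (16) at work numerically, the sketch below evaluates \(PV(x)/V(x)\) for a random walk Metropolis chain targeting \(\mathcal {N}(0,1)\) with proposal \(\mathcal {N}(x,h^2)\) and \(V\propto \pi ^{-s}\), i.e. \(V(x)\propto \exp (s x^2/2)\). This target, proposal and V are illustrative choices, not the article's. The integral is approximated with a trapezoidal rule. The ratio exceeds one near the origin (where the small set C absorbs the constant b) and is well below one in the tails. These two numbers illustrate the condition; they are not a proof.

```python
import math

def drift_ratio(x: float, s: float = 0.5, h: float = 2.0,
                n_grid: int = 4001, width: float = 10.0) -> float:
    """Approximate PV(x)/V(x) for RWM on N(0, 1), q(x, .) = N(x, h^2), V = pi^{-s}."""
    du = 2 * width * h / (n_grid - 1)
    accept_part = 0.0   # integral of alpha(x, y) V(y)/V(x) q(x, dy)
    accept_prob = 0.0   # integral of alpha(x, y) q(x, dy)
    for i in range(n_grid):
        u = -width * h + i * du
        y = x + u
        weight = du * (0.5 if i in (0, n_grid - 1) else 1.0)   # trapezoid rule
        q = math.exp(-0.5 * (u / h) ** 2) / (h * math.sqrt(2 * math.pi))
        log_alpha = min(0.0, -0.5 * (y * y - x * x))           # log pi(y) - log pi(x), capped at 0
        log_v_ratio = 0.5 * s * (y * y - x * x)                 # log V(y) - log V(x)
        accept_part += weight * q * math.exp(log_alpha + log_v_ratio)
        accept_prob += weight * q * math.exp(log_alpha)
    rho = 1.0 - accept_prob                                     # rejection probability rho(x)
    return accept_part + rho
```

The exponent `log_alpha + log_v_ratio` never exceeds zero when \(s<1\), so the computation cannot overflow even for large |x|.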

From this point onwards, it is assumed that the marginal and noisy chains are \(\phi \)-irreducible and aperiodic. In addition, for many of the following results, it is required that

(P1): The marginal chain is geometrically ergodic, implying its kernel P satisfies the geometric drift condition in (16) for some constants \(\lambda <1\) and \(b<\infty \), some function \(V\ge 1\) and a small set \(C\subseteq \mathcal {X}\).

3.1 Conditions involving a negative moment

From the examples of the previous section, it is clear that the weights play a fundamental role in the behaviour of the noisy chain. The following theorem states that the noisy chain will inherit geometric ergodicity from the marginal under some conditions on the weights involving a strengthened version of the Law of Large Numbers and convergence of negative moments.

(W1): For any \(\delta >0\), the weights \(\left\{ W_{x,N}\right\} _{x,N}\) satisfy

$$\begin{aligned} \lim _{N\rightarrow \infty }\sup _{x\in \mathcal {X}}{\mathbb {P}}_{Q_{x,N}}\left[ \big \vert W_{x,N}-1\big \vert \ge \delta \right] = 0. \end{aligned}$$

(W2): The weights \(\left\{ W_{x,N}\right\} _{x,N}\) satisfy

$$\begin{aligned} \lim _{N\rightarrow \infty }\sup _{x\in \mathcal {X}}{\mathbb {E}}_{Q_{x,N}}\left[ W_{x,N}^{-1}\right] = 1. \end{aligned}$$
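For arithmetic averages as in (4), both conditions can be probed by simulation. The sketch below assumes log-normal terms \(W^{(k)}\sim \log \mathcal {N}(\cdot |-\sigma ^2/2,\sigma ^2)\), an illustrative choice. These weights are homogeneous in x, so the suprema in (W1) and (W2) are trivial, and the helper estimates \({\mathbb {P}}[|W_N-1|\ge \delta ]\) and \({\mathbb {E}}[W_N^{-1}]\) for a given N. The name `check_weights` is hypothetical.

```python
import math
import random
from typing import Tuple

def check_weights(N: int, sigma: float, delta: float, n_mc: int,
                  rng: random.Random) -> Tuple[float, float]:
    """Monte Carlo estimates of P[|W_N - 1| >= delta] (cf. W1) and E[1/W_N] (cf. W2)
    for W_N the average of N i.i.d. logN(-sigma^2/2, sigma^2) terms."""
    exceed, inv_sum = 0, 0.0
    for _ in range(n_mc):
        w = sum(math.exp(rng.gauss(-0.5 * sigma**2, sigma)) for _ in range(N)) / N
        exceed += abs(w - 1.0) >= delta
        inv_sum += 1.0 / w
    return exceed / n_mc, inv_sum / n_mc
```

For N = 1 and \(\sigma =1\) the negative moment equals \(e\approx 2.718\). Since \(1/\bar{w}\) is at most the average of the \(1/w_k\) (Jensen), \({\mathbb {E}}[W_N^{-1}]\) can only decrease from there, and the estimates approach 1 as N grows, as (W2) requires.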

Theorem 3.2

Assume (P1), (W1) and (W2). Then, there exists \(N_{0}\in {\mathbb {N}}^{+}\) such that for all \(N\ge N_{0}\), the noisy chain with transition kernel \({\tilde{P}}_{N}\) is geometrically ergodic.

The above result is obtained by controlling the dissimilarity of the marginal and noisy kernels. This is done by looking at the corresponding rejection and acceptance probabilities. The proofs of the following lemmas appear in Appendix 1.

Lemma 3.1

For any \(\delta >0\)

Lemma 3.2

Let \(\rho (x)\) and \(\tilde{\rho }_{N}(x)\) be the rejection probabilities as defined in (5) and (7) respectively. Then, for any \(\delta >0\)

Lemma 3.3

Let \(\alpha (x,y)\) and \(\tilde{\alpha }_{N}(x,y)\) be the acceptance probabilities as defined in (5) and (6) respectively. Then,

Notice that (W1) and (W2) allow control on the bounds in the above lemmas. While Lemma 3.2 provides a bound for the difference of the rejection probabilities, Lemma 3.3 gives one for the ratio of the acceptance probabilities. The proof of Theorem 3.2 is now presented.

Proof of Theorem 3.2

Since the marginal chain P is geometrically ergodic, it satisfies the geometric drift condition in (16) for some \(\lambda <1\), \(b<\infty \), some function \(V\ge 1\) and a small set \(C\subseteq \mathcal {X}\). Now, using the above lemmas

By (W1) and (W2), for any \(\varepsilon \), \(\delta >0\) there exists \(N_{0}\in {\mathbb {N}}^{+}\) such that

whenever \(N\ge N_{0}\), implying

Taking \(\delta =\frac{\varepsilon }{2}\) and \(\varepsilon \in \left( 0,\frac{1-\lambda }{1+\lambda }\right) \), the noisy chain \({\tilde{P}}_{N}\) also satisfies a geometric drift condition for the same function V and small set C, completing the proof. \(\square \)

Remark 3.1

In fact, (W1) and (W2) together guarantee for any \(\delta >0\)

which is the crucial assumption in Pillai and Smith (2014, Lemma 3.6) for obtaining a similar drift condition.

3.2 Conditions on the proposal distribution

In this subsection a different bound for the acceptance probabilities is provided, which allows dropping assumption (W2) but imposes a different one on the proposal q instead.

\((\mathbf{P1}^{*})\): (P1) holds and, for the same drift function V as in (P1), there exists \(K<\infty \) such that the proposal kernel q satisfies

$$\begin{aligned} qV(x) \le KV(x),\quad \text {for}\;x\in \mathcal {X}. \end{aligned}$$
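A simple case in which (P1*) can be checked by hand: with a Gaussian random walk \(q(x,\cdot )=\mathcal {N}(x,h^2)\) and the illustrative choice \(V(x)=e^{s|x|}\), the triangle inequality gives \(qV(x)\le V(x)\,{\mathbb {E}}[e^{s|U|}]\) with \(U\sim \mathcal {N}(0,h^2)\). Hence \(K=2e^{s^2h^2/2}\Phi (sh)\) works. The sketch below compares a numerical \(qV(x)/V(x)\) with this constant. Whether this V is a valid drift function for (P1) depends on the target and is not claimed here.

```python
import math

def qV_over_V(x: float, s: float, h: float, n_grid: int = 8001, width: float = 12.0) -> float:
    """Trapezoidal approximation of qV(x)/V(x) for q(x, .) = N(x, h^2), V(x) = exp(s|x|)."""
    du = 2 * width * h / (n_grid - 1)
    total = 0.0
    for i in range(n_grid):
        u = -width * h + i * du
        wt = du * (0.5 if i in (0, n_grid - 1) else 1.0)
        q = math.exp(-0.5 * (u / h) ** 2) / (h * math.sqrt(2 * math.pi))
        total += wt * q * math.exp(s * (abs(x + u) - abs(x)))   # V(x + u) / V(x)
    return total

def K_bound(s: float, h: float) -> float:
    """K = E[exp(s|U|)] = 2 exp(s^2 h^2 / 2) Phi(s h) for U ~ N(0, h^2)."""
    Phi = 0.5 * (1.0 + math.erf(s * h / math.sqrt(2.0)))
    return 2.0 * math.exp(0.5 * (s * h) ** 2) * Phi
```

The bound is attained at x = 0 and the ratio decreases towards \(e^{s^2h^2/2}\) as |x| grows, so a single finite K covers the whole state space.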

Theorem 3.3

Assume (P1*) and (W1). Then, there exists \(N_{0}\in {\mathbb {N}}^{+}\) such that for all \(N\ge N_{0}\), the noisy chain with transition kernel \({\tilde{P}}_{N}\) is geometrically ergodic.

In order to prove Theorem 3.3 the following lemma is required. Its proof can be found in Appendix 1. In contrast with Lemma 3.3, this lemma provides a bound for the additive difference of the noisy and marginal acceptance probabilities.

Lemma 3.4

Let \(\alpha (x,y)\) and \(\tilde{\alpha }_{N}(x,y)\) be the acceptance probabilities as defined in (5) and (6), respectively. Then, for any \(\eta >0\)

Proof of Theorem 3.3

Using Lemmas 3.2 and 3.4 with \(\eta =\delta \)

By (W1), there exists \(N_{0}\in {\mathbb {N}}^{+}\) such that

whenever \(N\ge N_{0}\). This implies

and using (P1*)

Taking \(\delta =\frac{\varepsilon }{2}\) and \(\varepsilon \in \left( 0,\frac{1-\lambda }{1+K}\right) \), the noisy chain \({\tilde{P}}_{N}\) also satisfies a geometric drift condition for the same function V and small set C, completing the proof. \(\square \)

Remark 3.2

By itself, (W1) implies for any \(\delta >0\)

but it needs to be paired with (P1*) to obtain the desired result. These assumptions are comparable to those in Pillai and Smith (2014, Lemma 3.6), taking f constant therein. Additionally, (W1) and (P1*) imply the required conditions on \(\mathcal {E}\) and \(\lambda \) in Rudolf and Schweizer (2015, Corollary 31), where a similar result is proved in terms of V-uniform ergodicity.

In general, assumption (P1*) may be difficult to verify as one must identify a particular function V, but it is easily satisfied when restricting to log-Lipschitz targets and when using a random walk proposal of the form

where \(\Vert \cdot \Vert \) denotes the Euclidean norm. To see this, the following assumption is required. It is a particular case of (P1) and is satisfied under some extra technical conditions (see, e.g., Roberts and Tweedie 1996).

- \((\mathbf{P1}^{**})\): \(\mathcal {X}\subseteq {\mathbb {R}}^{d}\). The target \(\pi \) is log-Lipschitz, meaning that for some \(L>0\) and all \(x,z\in \mathcal {X}\)

$$\begin{aligned} |\log \pi (z)-\log \pi (x)| \le L\Vert z-x\Vert . \end{aligned}$$

(P1) holds taking the drift function \(V=\pi ^{-s}\), for any \(s\in (0,1)\). The proposal q is a random walk as in (17) satisfying

$$\begin{aligned} \int _{{\mathbb {R}}^{d}}\exp \left\{ a\Vert u\Vert \right\} q(\Vert u\Vert )du < \infty , \end{aligned}$$

for some \(a>0\).

See Appendix 1 for a proof of the following proposition.

Proposition 3.1

Assume \((\mathrm{P}1^{**})\) and (W1). Then, (P1*) holds.
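The mechanism behind Proposition 3.1 can be checked numerically in one dimension. This sketch makes three assumptions:

- the target is the Laplace density π(x) = (L/2)exp(−L|x|), which is log-Lipschitz with constant L;
- the proposal is a Gaussian random walk with scale σ, which satisfies the exponential-moment condition in (P1**) for every a > 0;
- the drift function is V = π^{-s}.

The code computes qV(x)/V(x) by trapezoidal quadrature and compares it with K = E[exp(sL|U|)], U ~ N(0, σ²), whose closed form is 2exp(s²L²σ²/2)Φ(sLσ). The function names are illustrative. The sketch only checks the inequality qV ≤ KV in (P1*), not the drift condition (P1) itself.

```python
import math


def gaussian_pdf(u, sigma):
    return math.exp(-0.5 * (u / sigma) ** 2) / (sigma * math.sqrt(2.0 * math.pi))


def trapezoid(f, a, b, n=4000):
    """Composite trapezoidal rule on [a, b] with n sub-intervals."""
    h = (b - a) / n
    total = 0.5 * (f(a) + f(b))
    for i in range(1, n):
        total += f(a + i * h)
    return total * h


def drift_ratio(x, s, L, sigma):
    """qV(x) / V(x) for V = pi^{-s}, pi(x) = (L/2) exp(-L|x|), Gaussian random walk q.

    The normalising constant of pi cancels, leaving
    E[exp(s L (|x + U| - |x|))] with U ~ N(0, sigma^2).
    """
    def integrand(u):
        return math.exp(s * L * (abs(x + u) - abs(x))) * gaussian_pdf(u, sigma)

    return trapezoid(integrand, -12.0 * sigma, 12.0 * sigma)


def K_bound(s, L, sigma):
    """K = E[exp(t |U|)] = 2 exp(t^2 sigma^2 / 2) Phi(t sigma), with t = s L."""
    t = s * L
    Phi = 0.5 * (1.0 + math.erf(t * sigma / math.sqrt(2.0)))
    return 2.0 * math.exp(0.5 * (t * sigma) ** 2) * Phi


s, L, sigma = 0.5, 1.0, 1.0
K = K_bound(s, L, sigma)
grid = [0.5 * i for i in range(-20, 21)]
worst = max(drift_ratio(x, s, L, sigma) for x in grid)
print(f"K = {K:.5f}, largest qV/V on the grid = {worst:.5f}")
```

The ratio is largest at x = 0, where |x+u| − |x| = |u|, and there it equals K up to quadrature error. Far from the origin, the ratio approaches E[exp(sLU)] = exp(s²L²σ²/2).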

3.3 Conditions for arithmetic averages

In the particular setting where the weights are given by (4), sufficient conditions on them can be obtained to ensure that the noisy chain inherits geometric ergodicity. In the simple case where the weights are homogeneous with respect to the state space, (W1) is automatically satisfied. To attain (W2), a single weight must have a finite negative moment. See Appendix 1 for a proof of the following result.

Proposition 3.2

Assume weights as in (4). If \({\mathbb {E}}_{Q_x}\left[ W_x^{-1}\right] <\infty \) then

For homogeneous weights, (18) implies (W2). When the weights are not homogeneous, stronger conditions are needed for (W1) and (W2) to be satisfied. An appropriate first assumption is that the weights are uniformly integrable.

- (W3): The weights \(\left\{ W_{x}\right\} _{x}\) satisfy

$$\begin{aligned} \lim _{K\rightarrow \infty }\sup _{x\in \mathcal {X}}{\mathbb {E}}_{Q_{x}}\left[ W_{x}\mathbbm {1}_{\{W_{x}>K\}}\right] =0. \end{aligned}$$

The second condition imposes an additional assumption on the distribution of the weights \(\left\{ W_{x}\right\} _{x}\) near 0.

- (W4): There exist \(\gamma \in (0,1)\) and constants \(M<\infty \), \(\beta >0\) such that, for \(w\in (0,\gamma )\), the weights \(\left\{ W_{x}\right\} _{x}\) satisfy

$$\begin{aligned} \sup _{x\in \mathcal {X}} {\mathbb {P}}_{Q_x} \left[ W_x \le w \right] \le M w^{\beta }. \end{aligned}$$

These new conditions ensure (W1) and (W2) are satisfied.

Proposition 3.3

For weights as in (4),

- (i) (W3) implies (W1);
- (ii) (W1) and (W4) imply (W2).

The following corollary is an immediate consequence of the above proposition together with Theorems 3.2 and 3.3.

Corollary 3.1

Let the weights be as in (4). Assume (W3) and either

- (i) (P1) and (W4); or
- (ii) (P1*).

Then, there exists \(N_{0}\in {\mathbb {N}}^{+}\) such that for all \(N\ge N_{0}\), the noisy chain with transition kernel \({\tilde{P}}_{N}\) is geometrically ergodic.

The proof of Proposition 3.3 follows the statement of Lemma 3.5, whose proof can be found in Appendix 1. This lemma allows us to characterise the distribution of \(W_{x,N}\) near 0 assuming (W4) and also provides conditions for the existence and convergence of negative moments.

Lemma 3.5

Let \(\gamma \in (0,1)\) and \(p>0\).

- (i) Suppose Z is a positive random variable, and assume that for \(z\in (0,\gamma )\)

$$\begin{aligned} {\mathbb {P}}\left[ Z \le z \right] \le Mz^{\alpha },\quad \text {where}\;\alpha >p,M<\infty . \end{aligned}$$

Then,

$$\begin{aligned} {\mathbb {E}}\left[ Z^{-p} \right] \le \frac{1}{\gamma ^p} + pM\frac{\gamma ^{\alpha -p}}{\alpha -p}. \end{aligned}$$

- (ii) Suppose \(\left\{ Z_{i}\right\} _{i=1}^{N}\) is a collection of positive and independent random variables, and assume that for each \(i\in \left\{ 1,\ldots ,N\right\} \) and \(z\in (0,\gamma )\)

$$\begin{aligned} {\mathbb {P}}\left[ Z_{i} \le z \right] \le M_{i} z^{\alpha _{i}},\quad \text {where}\;\alpha _{i}>0,M_{i}<\infty . \end{aligned}$$

Then, for \(z\in (0,\gamma )\)

$$\begin{aligned} {\mathbb {P}}\left[ \sum _{i=1}^{N}Z_{i}\le z\right] \le \left( \prod _{i=1}^N M_i\right) z^{\sum _{i=1}^{N}\alpha _{i}}. \end{aligned}$$

- (iii) Let the weights be as in (4). If for some \(N_0\in {\mathbb {N}}^+\)

$$\begin{aligned} {\mathbb {E}}_{Q_{x,N_0}}\left[ W_{x,N_0}^{-p} \right] < \infty , \end{aligned}$$

then for any \(N\ge N_0\)

$$\begin{aligned} {\mathbb {E}}_{Q_{x,N+1}}\left[ W_{x,N+1}^{-p} \right] \le {\mathbb {E}}_{Q_{x,N}}\left[ W_{x,N}^{-p} \right] . \end{aligned}$$

- (iv) Assume (W1) and let \(g:{\mathbb {R}}^{+}\rightarrow {\mathbb {R}}\) be a function that is continuous at 1 and bounded on the interval \([\gamma ,\infty )\). Then

$$\begin{aligned} \lim _{N\rightarrow \infty }\sup _{x\in \mathcal {X}}{\mathbb {E}}_{Q_{x,N}}\left[ |g\left( W_{x,N}\right) -g\left( 1\right) |\mathbbm {1}_{W_{x,N}\ge \gamma }\right] = 0. \end{aligned}$$

Proof of Proposition 3.3

Part (i) is a consequence of Chandra (1989, Theorem 1). Assuming (W3), it implies

By Markov’s inequality

and the result follows.

To prove (ii), assume (W4). Then, by part (ii) of Lemma 3.5, for \(w\in (0,\gamma )\)

Take \(p>1\) and define \(N_0:=\lfloor \frac{p}{\beta } \rfloor +1\). Then, using part (i) of Lemma 3.5, if \(N\ge N_0\)

Hence, by Hölder’s inequality

and applying part (iii) of Lemma 3.5, for \(N\ge N_0\)

Therefore,

Since \(\gamma <1\) and by (W1)

implying

Now, for fixed \(\gamma \in (0,1)\) the function \(g(x)=x^{-1}\) is bounded and continuous on \([\gamma ,\infty )\), implying by part (iv) of Lemma 3.5

and by the triangle inequality

the result follows. \(\square \)
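The quantities in Lemma 3.5 and in the proof of Proposition 3.3 have closed forms in a simple hypothetical case. Take a single weight W_x ~ Exp(1). It has mean 1 and satisfies (W4) with β = M = 1, since P[W_x ≤ w] = 1 − e^{−w} ≤ w. The arithmetic average of N such weights is Gamma(N, 1/N), so E[W_{x,N}^{−p}] = N^p Γ(N − p)/Γ(N) for N > p. The sketch below also compares the bound of Lemma 3.5(i) with the exact negative moment of a variable satisfying P[Z ≤ z] = z^α on (0,1). All function names are illustrative.

```python
import math


def lemma35_bound(p, alpha, M, gamma):
    """Right-hand side of Lemma 3.5(i): gamma^{-p} + p M gamma^{alpha - p} / (alpha - p)."""
    if not (alpha > p > 0 and 0 < gamma < 1):
        raise ValueError("need alpha > p > 0 and gamma in (0, 1)")
    return gamma ** (-p) + p * M * gamma ** (alpha - p) / (alpha - p)


def neg_moment_power_law(p, alpha):
    """E[Z^{-p}] when P[Z <= z] = z^alpha on (0, 1), e.g. Z = U^{1/alpha} with U uniform."""
    return alpha / (alpha - p)


def neg_moment_average_exp(p, N):
    """E[W_N^{-p}] for W_N the average of N i.i.d. Exp(1) weights.

    W_N ~ Gamma(shape=N, scale=1/N), so E[W_N^{-p}] = N^p Gamma(N - p) / Gamma(N),
    which is finite exactly when N > p.
    """
    if N <= p:
        return math.inf
    return math.exp(p * math.log(N) + math.lgamma(N - p) - math.lgamma(N))


def n0_from_proof(p, beta):
    """N_0 = floor(p / beta) + 1, the threshold used in the proof of Proposition 3.3(ii)."""
    return math.floor(p / beta) + 1


# A single Exp(1) weight satisfies (W4) with beta = M = 1, because 1 - exp(-w) <= w.
p, beta = 1.5, 1.0
print("N_0 =", n0_from_proof(p, beta))
for N in range(1, 7):
    print(N, neg_moment_average_exp(p, N))  # inf for N = 1, then decreasing in N
print(lemma35_bound(0.5, 2.0, 1.0, 0.5), "vs exact", neg_moment_power_law(0.5, 2.0))
```

A single Exp(1) weight has E[W_x^{−1}] = ∞, yet the average of two such weights already has a finite first negative moment. This is why part (iii) of Lemma 3.5 only needs the moment to be finite at some N_0.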

3.4 Remarks on results

Equipped with these results, we return to the examples in Sects. 2.3 and 2.4. Even though the noisy chain can be transient in these examples, the behaviour is quite different when the weights are arithmetic averages of the form in (4). In both examples the weights are uniformly bounded by the constant b. They are therefore uniformly integrable and satisfy (W3), and hence (W1) by Proposition 3.3. Additionally, by Proposition 3.2, condition (W2) is satisfied for the example in Sect. 2.3. This is not the case for the example in Sect. 2.4, but there condition (P1*) is satisfied by taking \(V=\pi ^{-\frac{1}{2}}\). Therefore, applying Theorems 3.2 and 3.3 to the examples in Sects. 2.3 and 2.4, respectively, the corresponding chains go from being transient to geometrically ergodic as N increases.

Although conditions (W1) and (W2) guarantee that the noisy chain inherits geometric ergodicity, they are not necessary. Consider a modification of the example in Sect. 2.3, where the weights are given by

Again, the variables \(b_{m}\), \(\varepsilon _{m}\) and \(s_{m}\) are related so as to ensure that the expectation of the weights equals one. Let \(Bin\left( N,s\right) \) denote a binomial distribution with parameters \(N\in {\mathbb {N}}^{+}\) and \(s\in (0,1)\). Then, in the arithmetic average context, \(W_{m,N}\) becomes

For particular choices of the sequences \(\left\{ b_{m}\right\} _{m\in {\mathbb {N}}^{+}}\) and \(\left\{ \varepsilon _{m}\right\} _{m\in {\mathbb {N}}^{+}}\), the resulting noisy chain can be geometrically ergodic for all \(N\ge 1\), even though neither (W1) nor (W2) hold.

Proposition 3.4

Consider a geometric target density as in (11) and a proposal density as in (12). In addition, let the weights be as in (21) with \(b_{m}\rightarrow \infty \), \(\varepsilon _{m}\rightarrow 0\) as \(m\rightarrow \infty \) and

Then, the chain generated by the noisy kernel \({\tilde{P}}_{N}\) is geometrically ergodic for any \(N\in {\mathbb {N}}^{+}\).

Finally, in many of the previous examples, increasing the value of N seems to improve the ergodic properties of the noisy chain. However, geometric ergodicity is not always inherited, no matter how large N is taken. The following proposition gives an example similar to Proposition 3.4, but in which the ratio \(\frac{\varepsilon _{m-1}}{\varepsilon _{m}}\) does not converge as \(m\rightarrow \infty \).

Proposition 3.5

Consider a geometric target density as in (11) and a proposal density as in (12). In addition, let the weights be as in (21) with \(b_{m}=m\) and

Then, the chain generated by the noisy kernel \({\tilde{P}}_{N}\) is transient for any \(N\in {\mathbb {N}}^{+}\).

4 Convergence of the noisy invariant distribution

So far the only concern has been whether the noisy chain inherits the geometric ergodicity property from the marginal chain. As an immediate consequence, geometric ergodicity guarantees the existence of an invariant probability distribution \(\tilde{\pi }_{N}\) for \({\tilde{P}}_{N}\), provided N is large enough. In addition, using the same conditions from Sect. 3, we can characterise and in some cases quantify the convergence in total variation of \(\tilde{\pi }_{N}\) towards the desired target \(\pi \), as \(N\rightarrow \infty \).

4.1 Convergence in total variation

The following definition, taken from Roberts et al. (1998), characterises a class of kernels satisfying a geometric drift condition as in (16) for the same V, C, \(\lambda \) and b.

Definition 4.1

(Simultaneous geometric ergodicity) A class of Markov chain kernels \(\left\{ P_{k}\right\} _{k\in \mathcal {K}}\) is simultaneously geometrically ergodic if there exist a class of probability measures \(\left\{ \nu _{k}\right\} _{k\in \mathcal {K}}\), a measurable set \(C\subseteq \mathcal {X}\), a real-valued measurable function \(V\ge 1\), a positive integer \(n_{0}\) and positive constants \(\varepsilon \), \(\lambda \), b such that for each \(k\in \mathcal {K}\):

- (i) C is small for \(P_{k}\), with \(P_{k}^{n_{0}}(x,\cdot )\ge \varepsilon \nu _{k}(\cdot )\) for all \(x\in C\);
- (ii) the chain \(P_{k}\) satisfies the geometric drift condition in (16) with drift function V, small set C and constants \(\lambda \) and b.

Provided N is large, the noisy kernels \(\{ {\tilde{P}}_{N+k}\}_{k\ge 0}\) together with the marginal P will be simultaneously geometrically ergodic. This allows coupling arguments to be used to ensure that \(\tilde{\pi }_{N}\) and \(\pi \) get arbitrarily close in total variation. The main additional assumption is

- (P2): For some \(\varepsilon >0\), some probability measure \(\nu (\cdot )\) on \(\left( \mathcal {X},\mathcal {B}(\mathcal {X})\right) \) and some subset \(C\subseteq \mathcal {X}\), the marginal acceptance probability \(\alpha \) and the proposal kernel q satisfy

$$\begin{aligned} \alpha (x,y)q(x,dy) \ge \varepsilon \nu (dy), \quad \text {for}\;x\in C. \end{aligned}$$

Remark 4.1

(P2) ensures the marginal chain satisfies the minorisation condition in (15), purely attained by the sub-kernel \(\alpha (x,y)q(x,dy)\). This occurs under fairly mild assumptions (see, e.g., Roberts and Tweedie 1996, Theorem 2.2).

Theorem 4.1

Assume (P1), (P2), (W1) and (W2). Alternatively, assume (P1*), (P2) and (W1). Then,

- (i) there exists \(N_{0}\in {\mathbb {N}}^{+}\) such that the class of kernels \(\left\{ P,{\tilde{P}}_{N_{0}},{\tilde{P}}_{N_{0}+1},\ldots \right\} \) is simultaneously geometrically ergodic;
- (ii) for all \(x\in \mathcal {X}\), \(\lim _{N\rightarrow \infty }\Vert {\tilde{P}}_{N}(x,\cdot )-P(x,\cdot )\Vert _{TV}=0\);
- (iii) \(\lim _{N\rightarrow \infty }\Vert \tilde{\pi }_{N}(\cdot )-\pi (\cdot )\Vert _{TV}=0.\)

Part (iii) of the above theorem is mainly a consequence of Roberts et al. (1998, Theorem 9) when parts (i) and (ii) hold. Indeed, by the triangle inequality,

Provided \(N\ge N_{0}\), the first two terms in (22) can be made arbitrarily small by increasing n. Moreover, by simultaneous geometric ergodicity, the first term in (22) is controlled uniformly in N. Finally, using an inductive argument, part (ii) implies that for all \(x\in \mathcal {X}\) and all \(n\in {\mathbb {N}}^{+}\)

Proof of Theorem 4.1

From the proofs of Theorems 3.2 and 3.3, there exists \(N_{1}\in {\mathbb {N}}^{+}\) such that the class of kernels \(\left\{ P,{\tilde{P}}_{N_{1}},{\tilde{P}}_{N_{1}+1},\ldots \right\} \) satisfies condition (ii) in Definition 4.1 for the same function V, small set C and constants \(\lambda _{N_{1}},b_{N_{1}}\). Regarding (i), for any \(\delta \in (0,1)\)

Then, by Lemma 3.1

By (W1), there exists \(N_{2}\in {\mathbb {N}}^{+}\) such that for \(N\ge N_{2}\)

giving

Due to (P2),

Finally, take \(N_{0}=\max \left\{ N_{1},N_{2}\right\} \) implying (i).

To prove (ii) apply Lemma 3.2 and Lemma 3.4 to get

Finally, taking \(N\rightarrow \infty \) and by (W1)

The result follows since \(\eta \) and \(\delta \) can be taken arbitrarily small.

For (iii), see Theorem 9 in Roberts et al. (1998) for a detailed proof. \(\square \)

Remark 4.2

A Wasserstein distance variant of part (iii) in Theorem 4.1 has been proved in Rudolf and Schweizer (2015, Corollary 28), in which control of the difference between \(\tilde{\alpha }_N\) and \(\alpha \) is still required and can be obtained using (W1).

4.2 Rate of convergence

Let \((\tilde{\varPhi }_{n}^{N})_{n\ge 0}\) denote the noisy chain and \(\left( \varPhi _{n}\right) _{n\ge 0}\) the marginal chain, which move according to the kernels \({\tilde{P}}_{N}\) and P, respectively, and define \(c_{x}:=1-\Vert {\tilde{P}}_{N}(x,\cdot )-P(x,\cdot )\Vert _{TV}\). Using notions of maximal coupling for random variables defined on a Polish space (see Lindvall 2002 and Thorisson 2013), there exists a probability measure \(\nu _{x}(\cdot )\) such that

Let \(c:=\inf _{x\in \mathcal {X}}c_{x}\) and define a coupling as follows.

- If \(\tilde{\varPhi }_{n-1}^{N}=\varPhi _{n-1}=y\), with probability c draw \(\varPhi _{n}\sim \nu _{y}(\cdot )\) and set \(\tilde{\varPhi }_{n}^{N}=\varPhi _{n}\). Otherwise, draw independently \(\varPhi _{n}\sim R(y,\cdot )\) and \(\tilde{\varPhi }_{n}^{N}\sim \tilde{R}_{N}(y,\cdot )\), where

$$\begin{aligned} R(y,\cdot )&:= \left( 1-c\right) ^{-1}\left( P(y,\cdot )-c\nu _{y}(\cdot )\right) \quad \text {and}\nonumber \\ \tilde{R}_{N}(y,\cdot )&:= \left( 1-c\right) ^{-1}\left( {\tilde{P}}_{N}(y,\cdot )-c\nu _{y}(\cdot )\right) . \end{aligned}$$

- If \(\tilde{\varPhi }_{n-1}^{N}\ne \varPhi _{n-1}\), draw independently \(\varPhi _{n}\sim P(\varPhi _{n-1},\cdot )\) and \(\tilde{\varPhi }_{n}^{N}\sim {\tilde{P}}_{N}(\tilde{\varPhi }_{n-1}^{N},\cdot )\).

Since

and noting

an induction argument can be applied to obtain

Therefore, using the coupling inequality, the third term in (22) can be bounded by

On the other hand, by simultaneous geometric ergodicity and provided N is large enough, the noisy and marginal kernels each satisfy a geometric drift condition as in (16) with a common drift function \(V\ge 1\), small set C and constants \(\lambda ,b\). Therefore, by Theorem 3.1, there exist \(R>0\) and \(\tau <1\) such that

Explicit values for R and \(\tau \) can in principle be obtained, as in Rosenthal (1995) and Meyn and Tweedie (1994). For simplicity, assume \(\inf _{x\in \mathcal {X}}V(x)=1\). Then, combining (24) and (25) in (22), for all \(n\in {\mathbb {N}}^{+}\)

So, if an analytic expression in terms of N is available for the second term on the right-hand side of (26), it will be possible to obtain an explicit rate of convergence of \(\tilde{\pi }_N\) towards \(\pi \).
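The coupling construction can be simulated directly on a finite state space, where the maximal-coupling measure ν_x is the normalised overlap min{P(x,·), P̃_N(x,·)}/c_x. In this sketch the 3-state kernels P and Pt are arbitrary illustrative choices, not taken from the examples in the paper, that stand in for the marginal and noisy kernels. All function names are hypothetical. The sketch checks two elementary consequences of the construction. First, each coordinate has the correct marginal law. Second, chains started together still agree at time n with probability at least c^n, so they disagree with probability at most 1 − c^n ≤ n(1 − c).

```python
import random


def overlap(P, Pt, x):
    """Return c_x = sum_y min(P(x,y), Pt(x,y)) = 1 - TV distance, and nu_x.

    nu_x is the normalised overlap used by the maximal coupling; assumes c_x > 0.
    """
    m = [min(a, b) for a, b in zip(P[x], Pt[x])]
    c_x = sum(m)
    return c_x, [v / c_x for v in m]


def draw(weights, rng):
    """Sample an index with probability proportional to weights."""
    return rng.choices(range(len(weights)), weights=weights)[0]


def coupled_step(x, xt, P, Pt, c, rng):
    """One transition of the coupled pair (x moves under P, xt under Pt)."""
    if x != xt:
        # chains already apart: move independently, each from its own state
        return draw(P[x], rng), draw(Pt[xt], rng)
    _, nu = overlap(P, Pt, x)
    if rng.random() < c:
        y = draw(nu, rng)  # common move drawn from the overlap measure
        return y, y
    # residual kernels R(y,.) and R~(y,.); max(0, .) only absorbs rounding error
    R = [max(0.0, a - c * v) / (1.0 - c) for a, v in zip(P[x], nu)]
    Rt = [max(0.0, b - c * v) / (1.0 - c) for b, v in zip(Pt[x], nu)]
    return draw(R, rng), draw(Rt, rng)


def simulate(P, Pt, x0, n, reps, seed=0):
    """Run `reps` coupled pairs for n steps from x0.

    Returns c, the fraction of runs with Phi_n != Phi~_n, and the empirical
    laws of Phi_n and Phi~_n.
    """
    rng = random.Random(seed)
    k = len(P)
    c = min(overlap(P, Pt, x)[0] for x in range(k))
    mismatch, law, law_t = 0, [0] * k, [0] * k
    for _ in range(reps):
        x = xt = x0
        for _ in range(n):
            x, xt = coupled_step(x, xt, P, Pt, c, rng)
        mismatch += x != xt
        law[x] += 1
        law_t[xt] += 1
    return c, mismatch / reps, [v / reps for v in law], [v / reps for v in law_t]


def step_law(mu, K):
    """Exact one-step update mu -> mu K for a finite transition matrix K."""
    return [sum(mu[i] * K[i][j] for i in range(len(mu))) for j in range(len(K[0]))]


# Illustrative 3-state kernels standing in for P (marginal) and P~_N (noisy).
P = [[0.5, 0.5, 0.0], [0.25, 0.5, 0.25], [0.0, 0.5, 0.5]]
Pt = [[0.45, 0.55, 0.0], [0.3, 0.45, 0.25], [0.0, 0.55, 0.45]]
n = 5
c, miss, law, law_t = simulate(P, Pt, x0=0, n=n, reps=10_000)
print("c =", c, "mismatch rate =", miss, "1 - c^n =", 1 - c ** n, "n(1 - c) =", n * (1 - c))
```

Because c is an infimum over states, 1 − c equals the supremum over x of the one-step total variation distance. Any explicit bound on that supremum therefore carries over to the coupling estimate.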

Theorem 4.2

Assume (P1), (P2), (W1) and (W2). Alternatively, assume (P1*), (P2) and (W1). In addition, suppose

where \(r:{\mathbb {N}}^{+}\rightarrow {\mathbb {R}}^{+}\) and \(\lim _{N\rightarrow \infty }r(N)=+\infty \). Then, there exist \(D>0\) and \(N_{0}\in {\mathbb {N}}^{+}\) such that for all \(N\ge N_{0}\),

Proof

Let \(R>0,\tau \in (0,1)\) and \(r>0\). Pick r large enough, such that

then the convex function \(f:[1,\infty )\rightarrow {\mathbb {R}}^{+}\) where

is minimised at

Restricting the domain of f to the positive integers and due to convexity, it is then minimised at either

In any case

Finally take N large enough such that

and from (26)

obtaining the result. \(\square \)
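The choice of n in the proof above balances a geometric term against an accumulated one-step discrepancy. For illustration, the sketch below assumes that the function being minimised has the form f(n) = Rτ^n + n/r, where r plays the role of r(N). This explicit form is an assumption made here, and the constants R = 2 and τ = 0.9 are arbitrary. The code finds the stationary point of f, rounds it to the better neighbouring integer as the convexity argument allows, and checks the result against brute force. It also shows that the minimum behaves like a constant times log(r)/r.

```python
import math


def bound(n, R, tau, r):
    """Hypothetical form of the quantity minimised in the proof: R tau^n + n / r."""
    return R * tau ** n + n / r


def continuous_minimiser(R, tau, r):
    """Stationary point of the convex map n -> R tau^n + n / r, clipped to [1, inf)."""
    log_inv_tau = math.log(1.0 / tau)
    return max(1.0, math.log(r * R * log_inv_tau) / log_inv_tau)


def integer_minimiser(R, tau, r):
    """By convexity, the best positive integer is the floor or ceiling of the stationary point."""
    n_star = continuous_minimiser(R, tau, r)
    candidates = {max(1, math.floor(n_star)), math.ceil(n_star)}
    return min(candidates, key=lambda n: bound(n, R, tau, r))


R, tau = 2.0, 0.9  # arbitrary illustrative constants
for r in (1e3, 1e4, 1e5, 1e6):
    n = integer_minimiser(R, tau, r)
    best = bound(n, R, tau, r)
    # best / (log r / r) stays bounded as r grows
    print(f"r={r:.0e}  n={n}  bound={best:.3e}  ratio={best * r / math.log(r):.2f}")
```

At the stationary point, Rτ^n equals 1/(r log(1/τ)). The minimum is therefore dominated by the linear term n/r with n of order log(r), which is where the logarithmic factor comes from.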

Remark 4.3

A general result bounding the total variation between the law of a Markov chain and a perturbed version is presented in Rudolf and Schweizer (2015, Theorem 21). This is done using the connection between the V-norm distance and the Wasserstein distance introduced in Hairer and Mattingly (2011). With such a result, and considering the same assumptions in Theorem 4.2, one could in principle obtain an explicit value for D in (27).

Moreover, when the weights are expressed in terms of arithmetic averages as in (4), an explicit expression for r(N) can be obtained whenever there exists a uniformly bounded moment. This is a slightly stronger assumption than (W3).

- (W5): There exists \(k>0\) such that the weights \(\left\{ W_{x}\right\} _{x}\) satisfy

$$\begin{aligned} \sup _{x\in \mathcal {X}}{\mathbb {E}}_{Q_{x}}\left[ W_{x}^{1+k}\right] < \infty . \end{aligned}$$

Proposition 4.1

Assume (P1), (P2), (W4) and (W5). Alternatively, assume (P1*), (P2) and (W5). Then, there exist \(D_{k}>0\) and \(N_{0}\in {\mathbb {N}}^{+}\) such that for all \(N\ge N_{0}\),

If in addition (W5) holds for all \(k>0\), then for any \(\varepsilon \in (0,1)\) there will exist \(D_{\varepsilon }>0\) and \(N_{0}\in {\mathbb {N}}^{+}\) such that for all \(N\ge N_{0}\),

5 Discussion

In this article, fundamental stability properties of the noisy algorithm have been explored. The noisy Markov kernels considered are perturbed Metropolis–Hastings kernels defined by a collection of state-dependent distributions for non-negative weights all with expectation 1. The general results do not assume a specific form for these weights, which can be simple arithmetic averages or more complex random variables. The former may arise when unbiased importance sampling estimates of a target density are used, while the latter may arise when such densities are estimated unbiasedly using a particle filter.

Two different sets of sufficient conditions were provided under which the noisy chain inherits geometric ergodicity from the marginal chain. The first set consists of (W1) and (W2): a stronger version of the Law of Large Numbers for the weights and uniform convergence of the first negative moment, respectively. For the second set, (W1) is still required but (W2) can be replaced with (P1*), which imposes a condition on the proposal distribution. These conditions also imply simultaneous geometric ergodicity of a sequence of noisy Markov kernels together with the marginal Markov kernel. This in turn ensures that the noisy invariant distribution \(\tilde{\pi }_{N}\) converges to \(\pi \) in total variation as N increases. Moreover, an explicit bound on the rate of convergence of \(\tilde{\pi }_{N}\) to \(\pi \) is possible whenever an explicit bound (uniform in x) is available for the convergence of \({\tilde{P}}_{N}(x,\cdot )\) to \(P(x,\cdot )\).

When weights are arithmetic averages as in (4), specific conditions were given for inheriting geometric ergodicity from the corresponding marginal chain. The uniform integrability condition in (W3) ensures that (W1) is satisfied, whereas (W4) is essential for satisfying (W2). Regarding the noisy invariant distribution \(\tilde{\pi }_{N}\), (W5), which is slightly stronger than (W3), leads to an explicit bound on the rate of convergence of this distribution to \(\pi \).

The noisy algorithm remains undefined when the weights have positive probability of being zero. If both weights are zero, one could accept the move, reject the move, or keep sampling new weights until one of them is non-zero. Each of these choices leads to different behaviour.

As seen in the examples of Sect. 3.4, the behaviour of the ratio of the weights (at least in the tails of the target) plays an important role in the ergodic properties of the noisy chain. In this context, it seems plausible that geometrically ergodic noisy chains can be obtained even when the marginal chain is not geometrically ergodic, provided the ratio of the weights decays to zero sufficiently fast in the tails. Another interesting possibility, which may lead to future research, is to relax the requirement that the weights have expectation identically one.

References

Alquier, P., Friel, N., Everitt, R., Boland, A.: Noisy Monte Carlo: convergence of Markov chains with approximate transition kernels. Stat. Comput., 1–19 (2014). doi:10.1007/s11222-014-9521-x

Andrieu, C., Roberts, G.O.: The pseudo-marginal approach for efficient Monte Carlo computations. Ann. Stat. 37(2), 697–725 (2009). http://www.jstor.org/stable/30243645

Andrieu, C., Vihola, M.: Establishing some order amongst exact approximations of MCMCs (2014). arXiv preprint. arXiv:1404.6909

Andrieu, C., Vihola, M.: Convergence properties of pseudo-marginal Markov chain Monte Carlo algorithms. Ann. Appl. Probab. 25(2), 1030–1077 (2015). doi:10.1214/14-AAP1022

Andrieu, C., Doucet, A., Holenstein, R.: Particle Markov chain Monte Carlo methods. J. R. Stat. Soc. Ser. B (Stat. Methodol.) 72(3), 269–342 (2010). http://www.jstor.org/stable/40802151

Beaumont, M.A.: Estimation of population growth or decline in genetically monitored populations. Genetics 164(3), 1139–1160 (2003). http://www.genetics.org/content/164/3/1139.abstract. http://www.genetics.org/content/164/3/1139.full.pdf+html

Breyer, L., Roberts, G.O., Rosenthal, J.S.: A note on geometric ergodicity and floating-point roundoff error. Stat. Probab. Lett. 53(2), 123–127 (2001). doi:10.1016/S0167-7152(01)00054-2. http://www.sciencedirect.com/science/article/pii/S0167715201000542

Callaert, H., Keilson, J.: On exponential ergodicity and spectral structure for birth-death processes, II. Stoch. Process. Appl. 1(3), 217–235 (1973). doi:10.1016/0304-4149(73)90001-X. http://www.sciencedirect.com/science/article/pii/030441497390001X

Chan, K.S., Geyer, C.J.: Discussion: Markov chains for exploring posterior distributions. Ann. Stat. 22(4), 1747–1758 (1994). http://www.jstor.org/stable/2242481

Chandra, T.K.: Uniform Integrability in the Cesàro sense and the weak law of large numbers. Sankhyā Ser. A (1961–2002) 51(3), 309–317 (1989). http://www.jstor.org/stable/25050754

Del Moral, P.: Feynman–Kac formulae: genealogical and interacting particle systems with applications. In: Probability and Its Applications. Springer, New York (2004). http://books.google.co.uk/books?id=8LypfuG8ZLYC

Doucet, A., Pitt, M.K., Deligiannidis, G., Kohn, R.: Efficient implementation of Markov chain Monte Carlo when using an unbiased likelihood estimator. Biometrika (2015). doi:10.1093/biomet/asu075. http://biomet.oxfordjournals.org/content/early/2015/03/07/biomet.asu075.abstract, http://biomet.oxfordjournals.org/content/early/2015/03/07/biomet.asu075.full.pdf+html

Fernández-Villaverde, J., Rubio-Ramírez, J.F.: Estimating macroeconomic models: a likelihood approach. Rev. Econ. Stud. 74(4), 1059–1087 (2007). doi:10.1111/j.1467-937X.2007.00437.x. http://restud.oxfordjournals.org/content/74/4/1059.abstract, http://restud.oxfordjournals.org/content/74/4/1059.full.pdf+html

Ferré, D., Hervé, L., Ledoux, J.: Regular perturbation of V-geometrically ergodic Markov chains. J. Appl. Probab. 50(1), 184–194 (2013). doi:10.1239/jap/1363784432

Flegal, J.M., Jones, G.L.: Batch means and spectral variance estimators in Markov chain Monte Carlo. Ann. Stat. 38(2), 1034–1070 (2010). http://www.jstor.org/stable/25662268

Girolami, M., Lyne, A.M., Strathmann, H., Simpson, D., Atchade, Y.: Playing Russian roulette with intractable likelihoods (2013). arXiv preprint. arXiv:1306.4032

Hairer, M., Mattingly, J.C.: Yet another look at Harris’ ergodic theorem for Markov chains. In: Seminar on Stochastic Analysis, Random Fields and Applications VI, Springer, Basel, pp. 109–117 (2011)

Khuri, A., Casella, G.: The existence of the first negative moment revisited. Am. Stat. 56(1), 44–47 (2002). http://www.jstor.org/stable/3087326

Lee, A., Łatuszyński, K.: Variance bounding and geometric ergodicity of Markov chain Monte Carlo kernels for approximate Bayesian computation. Biometrika (2014). doi:10.1093/biomet/asu027. http://biomet.oxfordjournals.org/content/early/2014/08/05/biomet.asu027.abstract, http://biomet.oxfordjournals.org/content/early/2014/08/05/biomet.asu027.full.pdf+html

Lindvall, T.: Lectures on the Coupling Method. Dover Books on Mathematics Series. Dover Publications, Incorporated (2002). http://books.google.co.uk/books?id=GUwyU1ypd1wC

Maire, F., Douc, R., Olsson, J.: Comparison of asymptotic variances of inhomogeneous Markov chains with application to Markov chain Monte Carlo methods. Ann. Stat. 42(4), 1483–1510 (2014). doi:10.1214/14-AOS1209

McKinley, T.J., Ross, J.V., Deardon, R., Cook, A.R.: Simulation-based Bayesian inference for epidemic models. Comput. Stat. Data Anal. 71(0), 434–447 (2014). doi:10.1016/j.csda.2012.12.012. http://www.sciencedirect.com/science/article/pii/S016794731200446X

Mengersen, K.L., Tweedie, R.L.: Rates of convergence of the Hastings and Metropolis algorithms. Ann. Stat. 24(1), 101–121 (1996). http://www.jstor.org/stable/2242610

Meyn, S.P., Tweedie, R.L.: Computable bounds for geometric convergence rates of Markov chains. Ann. Appl. Probab. 4(4), 981–1011 (1994). http://www.jstor.org/stable/2245077

Meyn, S., Tweedie, R.L.: Markov Chains and Stochastic Stability, 2nd edn. Cambridge University Press, New York (2009)

Mitrophanov, A.Y.: Sensitivity and convergence of uniformly ergodic Markov chains. J. Appl. Probab. 42(4), 1003–1014 (2005). doi:10.1239/jap/1134587812

Norris, J.: Markov Chains. Cambridge Series in Statistical and Probabilistic Mathematics. Cambridge University Press (1999). https://books.google.co.uk/books?id=qM65VRmOJZAC

O’Neill, P.D., Balding, D.J., Becker, N.G., Eerola, M., Mollison, D.: Analyses of infectious disease data from household outbreaks by Markov chain Monte Carlo methods. J. R. Stat. Soc. Ser. C (Appl. Stat.) 49(4), 517–542 (2000). http://www.jstor.org/stable/2680786

Piegorsch, W.W., Casella, G.: The existence of the first negative moment. Am. Stat. 39(1), 60–62 (1985). http://www.jstor.org/stable/2683910

Pillai, N.S., Smith, A.: Ergodicity of approximate MCMC chains with applications to large data sets (2014). arXiv preprint. arXiv:1405.0182

Pitt, M.K., dos Santos Silva, R., Giordani, P., Kohn, R.: On some properties of Markov chain Monte Carlo simulation methods based on the particle filter. J. Econom. 171(2), 134–151 (2012). doi:10.1016/j.jeconom.2012.06.004. http://www.sciencedirect.com/science/article/pii/S0304407612001510

Roberts, G., Rosenthal, J.: Geometric ergodicity and hybrid Markov chains. Electron. Commun. Probab. 2(2), 13–25 (1997). doi:10.1214/ECP.v2-981. http://ecp.ejpecp.org/article/view/981

Roberts, G.O., Rosenthal, J.S.: General state space Markov chains and MCMC algorithms. Probab. Surv. 1, 20–71 (2004)

Roberts, G.O., Tweedie, R.L.: Geometric convergence and central limit theorems for multidimensional Hastings and Metropolis algorithms. Biometrika 83(1), 95–110 (1996). http://www.jstor.org/stable/2337435

Roberts, G.O., Rosenthal, J.S., Schwartz, P.O.: Convergence properties of perturbed Markov chains. J. Appl. Probab. 35(1), 1–11 (1998). http://www.jstor.org/stable/3215541

Rosenthal, J.S.: Minorization conditions and convergence rates for Markov chain Monte Carlo. J. Am. Stat. Assoc. 90(430), 558–566 (1995). http://www.jstor.org/stable/2291067

Rudolf, D., Schweizer, N.: Perturbation theory for Markov chains via Wasserstein distance (2015). arXiv preprint. arXiv:1503.04123

Shardlow, T., Stuart, A.M.: A perturbation theory for ergodic Markov chains and application to numerical approximations. SIAM J. Numer. Anal. 37(4), 1120–1137 (2000). doi:10.1137/S0036142998337235

Sherlock, C., Thiery, A.H., Roberts, G.O., Rosenthal, J.S.: On the efficiency of pseudo-marginal random walk Metropolis algorithms. Ann. Stat. 43(1), 238–275 (2015). doi:10.1214/14-AOS1278

Thorisson, H.: Coupling, stationarity, and regeneration. In: Probability and Its Applications. Springer, New York (2013). http://books.google.co.uk/books?id=187hnQEACAAJ

Acknowledgments

All authors would like to thank the EPSRC-funded Centre for Research in Statistical methodology (EP/D002060/1). The first author was also supported by Consejo Nacional de Ciencia y Tecnología. The third author’s research was also supported by the EPSRC Programme Grant i-like (EP/K014463/1).


Additional information

An erratum to this article is available at http://dx.doi.org/10.1007/s11222-017-9755-5.


Appendix 1: Proofs


1.1 On state-dependent random walks

The following proposition for state-dependent Markov chains on the positive integers will be useful in some of the proofs. See Norris (1999) for a proof of parts (i) and (ii); for part (iii), see Callaert and Keilson (1973), where it is proved in the context of birth-death processes.

Proposition 5.1

Suppose we have a random walk \(\varPhi \) on \({\mathbb {N}}^{+}\) with transition kernel P. Define for \(m\ge 1\)

with \(q_{1}=0,p_{1}\in (0,1]\) and \(p_{m},q_{m}>0,p_{m}+q_{m}\le 1\) for all \(m\ge 2\). The resulting chain is:

- (i) recurrent if and only if

$$\begin{aligned} \sum _{m=2}^{\infty }\prod _{i=2}^{m}\frac{q_{i}}{p_{i}} = \infty ; \end{aligned}$$

- (ii) positive recurrent if and only if

$$\begin{aligned} \sum _{m=2}^{\infty }\prod _{i=2}^{m}\frac{p_{i-1}}{q_{i}} < \infty ; \end{aligned}$$

- (iii) geometrically ergodic if

$$\begin{aligned} \lim _{m\rightarrow \infty }p_{m} < \lim _{m\rightarrow \infty }q_{m}. \end{aligned}$$

Remark 5.1

Notice that (iii) is not an if and only if statement and that it implies (ii). Additionally, if the chain is not state-dependent, (ii) implies (iii).
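The sketch below makes the criteria of Proposition 5.1 concrete by computing partial sums of the two series for user-supplied sequences p_m and q_m. It reads p_m and q_m as the probabilities of moving from m to m+1 and to m−1, respectively, which is the usual convention for such walks and is assumed here. The function name is illustrative. Partial sums can only suggest, not prove, convergence or divergence. The spatially homogeneous cases are chosen because their series are geometric or linear and can be summed by hand.

```python
def series_partial_sums(p, q, M):
    """Partial sums, up to m = M, of the two series in Proposition 5.1.

    p(m), q(m): probabilities of moving from m to m + 1 and to m - 1 (assumed
    convention); q(1) is never used. Returns (S_rec, S_pos), where
      S_rec = sum_{m=2}^{M} prod_{i=2}^{m} q_i / p_i      (criterion (i)),
      S_pos = sum_{m=2}^{M} prod_{i=2}^{m} p_{i-1} / q_i  (criterion (ii)).
    """
    s_rec = s_pos = 0.0
    prod_rec = prod_pos = 1.0
    for m in range(2, M + 1):
        prod_rec *= q(m) / p(m)
        prod_pos *= p(m - 1) / q(m)
        s_rec += prod_rec
        s_pos += prod_pos
    return s_rec, s_pos


cases = {
    "p=0.3, q=0.5": (lambda m: 0.3, lambda m: 0.5),
    "p=0.5, q=0.3": (lambda m: 0.5, lambda m: 0.3),
    "p=q=0.4": (lambda m: 0.4, lambda m: 0.4),
}
for name, (p, q) in cases.items():
    for M in (50, 200):
        print(name, M, series_partial_sums(p, q, M))
```

The three cases behave as follows:

- p = 0.3, q = 0.5: S_pos settles near 1.5 while S_rec explodes, so the chain is positive recurrent. It is also geometrically ergodic by (iii), as lim p_m < lim q_m.
- p = 0.5, q = 0.3: S_rec settles near 1.5, so the chain is transient.
- p = q = 0.4: both sums grow linearly, so the chain is recurrent but not positive recurrent.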

1.2 Section 2

Proof of Proposition 2.1

Since h is convex

implying

Define \(Z:=\frac{W_{N}^{(1)}}{W_{N}^{(2)}}\), and since \(\pi (m)\rightarrow 0\) it is true that

hence

If \(k=+\infty \), the limit in (29) clearly diverges; consequently, the noisy chain is geometrically ergodic according to Proposition 5.1. If \(k<\infty \), the noisy chain will be geometrically ergodic if

which can be translated to

or equivalently to

Now consider two cases. First, if \({\mathbb {P}}\left[ k^{-1}<Z<k\right] >0\), then it is clear that

which satisfies (30). Finally, if \({\mathbb {P}}\left[ k^{-1}<Z<k\right] =0\) then

implying from (28)

and leading to (30). \(\square \)

Proof of Proposition 2.2

For simplicity the subscript N is dropped. In this case,

and the condition \({\mathbb {E}}_{Q}\left[ W\right] =1\) implies

Let \(\theta \in \left( \frac{1}{1+2\varepsilon },1\right) \) and set

this implies \({\bar{\alpha }}(m,w;m-1,u)\equiv 1\) and

Therefore, for \(m\ge 2\),

Consequently, \({\tilde{P}}(m,\left\{ m-1\right\} )=1-\theta \) and

From Proposition 5.1, if

then the noisy chain will be transient. For this to happen, it is enough to pick \(\theta \) and s such that

Let \(s=\varepsilon \), then from (31) and (32)

and if \(\varepsilon \le 2-\sqrt{3}\) then

Hence, for \(\varepsilon \in \left( 0,2-\sqrt{3}\right) \) and setting \(s=\varepsilon \), \(\theta \) as in (33) and b as in (32), the resulting noisy chain is transient. \(\square \)
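The proofs in this section reduce questions about the noisy chain to Proposition 5.1 through the one-step probabilities \({\tilde{P}}(m,\left\{ m+1\right\} )\) and \({\tilde{P}}(m,\left\{ m-1\right\} )\). The following minimal Monte Carlo sketch estimates both for a noisy chain on \({\mathbb {N}}^{+}\). It assumes, for illustration only, a nearest-neighbour proposal that moves up with probability `p_up` (a hypothetical parameter), an unnormalised log-target `log_pi` and a weight sampler `sample_w`. As in the noisy algorithm, fresh weights are drawn at both the current and the proposed state, and the acceptance probability is averaged over them.

```python
import numpy as np


def noisy_birth_death_probs(m, log_pi, sample_w, p_up=0.5, n=100_000, seed=0):
    """Monte Carlo estimates of (P~(m,{m+1}), P~(m,{m-1})) for a noisy MH chain.

    Illustrative assumptions (not taken from the text):
      * proposal: m -> m+1 w.p. p_up, m -> m-1 w.p. 1 - p_up, with 0 < p_up < 1;
        a proposal to 0 lies outside N+ and is always rejected;
      * log_pi(m): log of an unnormalised target on N+;
      * sample_w(rng, size): i.i.d. positive weights, drawn afresh for both
        the current and the proposed state (the noisy scheme).
    """
    if not 0.0 < p_up < 1.0:
        raise ValueError("p_up must lie strictly between 0 and 1")
    rng = np.random.default_rng(seed)

    def move_prob(y, q_fwd, q_bwd):
        if y < 1:
            return 0.0
        # Deterministic part of the Metropolis-Hastings ratio (target x proposal).
        log_r = log_pi(y) - log_pi(m) + np.log(q_bwd) - np.log(q_fwd)
        w_y, w_m = sample_w(rng, n), sample_w(rng, n)
        ratio = np.exp(log_r) * w_y / w_m
        # Proposal probability times the weight-averaged acceptance probability.
        return q_fwd * float(np.mean(np.minimum(1.0, ratio)))

    up = move_prob(m + 1, p_up, 1.0 - p_up)
    down = move_prob(m - 1, 1.0 - p_up, p_up)
    return up, down
```

With constant unit weights the estimates reduce to the exact Metropolis–Hastings probabilities; for the target \(\pi (m)\propto 2^{-m}\) and `p_up=0.5` they equal 0.25 (up) and 0.5 (down) for every \(m\ge 2\). Estimates of this kind could then be inserted into truncated versions of the series in Proposition 5.1.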

Proof of Proposition 2.3

For simplicity the subscript N is dropped. In this case,

and the condition \({\mathbb {E}}_{Q_{m}}\left[ W_{m}\right] =1\) implies

Then, for m large enough

Define

Since \(s_{m}\rightarrow \frac{1}{b}\) as \(m\rightarrow \infty \),

and

Therefore, for any \(\delta >0\) there exists \(k_{0}\in {\mathbb {N}}^{+}\), such that whenever \(k\ge k_{0}+1\)

implying

Hence, for \(i\in \lbrace 0,1,2\rbrace \) and some \(C>0\)

Let \(a_{m}:=\prod _{j=2}^{m}c_{j}\). A sufficient condition for the series \(\sum _{m=2}^{\infty }a_{m}\) to converge, which by Proposition 5.1 implies that the chain is transient, is \(l_{2}<1\). This is the case for \(b\ge 3+\left( \frac{1-\theta }{\theta }\right) ^{3}\), since

Hence, the resulting noisy chain is transient if \(b\ge 3+\left( \frac{1-\theta }{\theta }\right) ^{3}\), for any \(\theta \in (0,1)\). \(\square \)

1.3 Section 3

Proof of Lemma 3.1

For any \(\delta >0\)

\(\square \)

Proof of Lemma 3.2

Using the inequality

and applying Markov’s inequality with \(\delta >0\),

Finally, using Lemma 3.1

\(\square \)

Proof of Lemma 3.3

For the first claim apply Jensen’s inequality and the fact that

hence

\(\square \)

Proof of Lemma 3.4

Using the inequality

Notice that

and the result follows by applying Lemma 3.1 with \(\delta =\frac{\eta }{1+\eta }\).

\(\square \)

Proof of Proposition 3.1

Taking \(V=\pi ^{-s}\), where \(0<s<\min \left\{ 1,\frac{a}{L}\right\} \),

Finally, using the transformation \(u=z-x\),

which implies (P1*). \(\square \)

Proof of Proposition 3.2

By properties of the arithmetic and harmonic means

which implies, by Jensen’s inequality,

Then, using Fatou’s lemma and the law of large numbers

hence

Finally, since

the expression in (34) becomes

\(\square \)

Proof of Lemma 3.5

The proof of (i) is motivated by Piegorsch and Casella (1985, Theorem 2.1) and Khuri and Casella (2002, Theorem 3); however, the existence of a density function is not assumed here. Since \(Z^{-p}\) is positive,

For part (ii), since the random variables \(\left\{ Z_{i}\right\} \) are positive, for any \(z>0\)

Therefore, for \(z\in (0,\gamma )\)

Part (iii) can be seen as a consequence of \(W_{x,N}\) and \(W_{x,N+1}\) being convex ordered and of \(g(x)=x^{-p}\) being a convex function for \(x>0\) and \(p\ge 0\) (see, e.g., Andrieu and Vihola 2014). We provide a self-contained proof by defining, for \(j\in \{1,\ldots ,N+1\}\),

and we have

and since the arithmetic mean is greater than or equal to the geometric mean

This implies for \(p>0\)

where Hölder’s inequality has been used, together with the fact that the random variables \(\left\{ S_{x,N}^{(j)} : j \in \{1,\ldots ,N+1\} \right\} \) are identically distributed according to \(Q_{x,N}\).
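The two elementary steps behind part (iii) can be checked numerically. The sketch below assumes, as an illustration rather than a statement of the general setting, that \(W_{x,N}\) is the mean of \(N\) independent positive weights with unit mean and that \(S_{x,N}^{(j)}\) is the mean of the \(N\) weights other than the \(j\)-th. Under these assumptions the \(S_{x,N}^{(j)}\) average back to \(W_{x,N+1}\), and their arithmetic mean dominates their geometric mean. The helper names and the Gamma weight law are hypothetical. The sketch also estimates \({\mathbb {E}}\left[ W_{x,N}^{-p}\right] \) by simulation, which part (iii) says should not increase with \(N\).

```python
import numpy as np


def leave_one_out_means(w):
    """Return S^(j), the average of all entries of w except the j-th, for each j."""
    w = np.asarray(w, dtype=float)
    return (w.sum() - w) / (w.size - 1)


def neg_moment(n_avg, p, sample_w, reps=200_000, seed=0):
    """Monte Carlo estimate of E[W_N^{-p}], where W_N is the mean of n_avg i.i.d. weights.

    `sample_w(rng, size)` is a hypothetical sampler of positive unit-mean weights.
    """
    rng = np.random.default_rng(seed)
    w_bar = sample_w(rng, (reps, n_avg)).mean(axis=1)
    return float(np.mean(w_bar ** (-p)))
```

For Gamma weights with shape 2 and scale 1/2 and \(p=1/2\), the exact values are \(\sqrt{2}\,\Gamma (3/2)\approx 1.253\) for \(N=1\) and \(\sqrt{6}\,\Gamma (11/2)/\Gamma (6)\approx 1.068\) for \(N=3\), consistent with the monotonicity in part (iii).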

For part (iv), let \(M_{\gamma }:=\sup _{y\in [\gamma ,\infty )}|g(y)|\). By continuity of g at \(y=1\), for any \(\varepsilon >0\) there exists \(\delta >0\) such that

Therefore, for fixed \(\varepsilon \) and by (W1)

which yields the result, since \(\varepsilon \) can be chosen arbitrarily small. \(\square \)

Proof of Proposition 3.4

First notice that if \(l<\infty \) then \(l\ge 1\). To see this, define

then for fixed \(\delta >0\), there exists \(M\in {\mathbb {N}}\) such that for \(m\ge M\)

Then, for \(m\ge M\)

and because \(\varepsilon _{m}\rightarrow 0\), it is clear that \(\left( l+\delta \right) ^{m}\rightarrow \infty \) as \(m\rightarrow \infty \). Therefore, \(l+\delta >1\), and since \(\delta \) can be taken arbitrarily small, \(l\ge 1\).

Now, for weights as in (21) and using a simple random walk proposal, the noisy acceptance probability can be expressed as

and

Since \(b_{m}\rightarrow \infty \), we have \(s_{m}\rightarrow 0\) as \(m\rightarrow \infty \); therefore, any term in (35) and (36) for which \(j+k\ne 0\) tends to zero as \(m\rightarrow \infty \). Hence,

and

implying,

If \(l=+\infty \), (37) tends to \(+\infty \), whereas if \(l<\infty \)

In any case, this implies

and since

the noisy chain is geometrically ergodic according to Proposition 5.1. \(\square \)

Proof of Proposition 3.5

Noting that

expressions in (35) and (36) become

and

Therefore,

implying there exists \(C\in {\mathbb {R}}^{+}\) such that for \(j=0,2\)

and

Then, for fixed \(\delta >0\) there exists \(k_{0}\in {\mathbb {N}}^{+}\) such that whenever \(k\ge k_{0}\)

and

Let

then for \(k\ge k_{0}+1\)

Take \(\delta \) small enough that \(\left( C+\delta \right) ^{2}\delta <1\); hence

Similarly, it can be proved that

thus

implying the noisy chain is transient according to Proposition 5.1. \(\square \)

1.4 Section 4

Proof of Proposition 4.1

From (23) and taking \(\delta <\frac{1}{2}\), \(\eta =\frac{\delta }{1-\delta }\)

Using Markov’s inequality

Now, let

then the convex function \(f:{\mathbb {R}}^{+}\rightarrow {\mathbb {R}}^{+}\) where

is minimised at

Then,

Applying Theorem 4.2 by taking

and noting \(\log \left( N^{1-\frac{2}{2+k}} \right) \le \log (N)\), the result is obtained.

For the second claim, for a given \(\varepsilon \in (0,1)\) take \(k_{\varepsilon }\ge 2\left( \varepsilon ^{-1}-1\right) \) and apply the first part. \(\square \)
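The choice of \(k_{\varepsilon }\) in the second claim is elementary arithmetic relating it to the exponent \(1-\frac{2}{2+k}\) that appears in the first part. Since \(2+k>0\) and \(\varepsilon >0\), each step below is an equivalence:

$$\begin{aligned} k\ge 2\left( \varepsilon ^{-1}-1\right) \iff 2+k\ge \frac{2}{\varepsilon } \iff \frac{2}{2+k}\le \varepsilon \iff 1-\frac{2}{2+k}\ge 1-\varepsilon , \end{aligned}$$

with equality throughout when \(k=2\left( \varepsilon ^{-1}-1\right) \). For instance, \(\varepsilon =\frac{1}{4}\) allows \(k_{\varepsilon }=6\), for which \(1-\frac{2}{2+k_{\varepsilon }}=\frac{3}{4}\).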

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Medina-Aguayo, F.J., Lee, A. & Roberts, G.O. Stability of noisy Metropolis–Hastings. Stat Comput 26, 1187–1211 (2016). https://doi.org/10.1007/s11222-015-9604-3
