Abstract

An Engineering Radiation Monitor (ERM) has been developed as a supplementary spacecraft subsystem for NASA’s Radiation Belt Storm Probes (RBSP) mission. The ERM will monitor total dose and deep dielectric charging at each RBSP spacecraft in real time. Configured to take the place of spacecraft balance mass, the ERM contains an array of eight dosimeters and two buried conductive plates. The dosimeters are mounted under covers of varying shielding thickness to obtain a dose-depth curve and characterize the electron and proton contributions to total dose. A 3-min readout cadence coupled with an initial sensitivity of ∼0.01 krad should enable dynamic measurements of dose rate throughout the 9-hr RBSP orbit. The dosimeters are Radiation-sensing Field Effect Transistors (RadFETs) and operate at zero bias to preserve their response even when powered off. The range of the RadFETs extends above 1000 krad to avoid saturation over the expected duration of the mission. Two large-area (∼10 cm²) charge monitor plates set behind covers of different thickness will measure the dynamic currents of weakly penetrating electrons that can be hazardous to sensitive electronic components within the spacecraft. The charge monitors can handle large events without saturating (∼3000 fA/cm²) and provide sufficient sensitivity (∼0.1 fA/cm²) to gauge quiescent conditions. High time-resolution (5 s) monitoring allows detection of rapid changes in flux and enables correlation of spacecraft anomalies with local space weather conditions. Although primarily intended as an engineering subsystem to monitor spacecraft radiation levels, real-time data from the ERM may also prove useful to a larger community.


1 Introduction

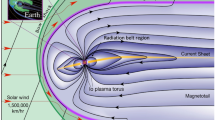

The RBSP spacecraft must operate in a high-radiation environment consisting primarily of energetic electrons and protons (Mauk et al. 2012, this issue). Most of the spacecraft instruments and subsystems have included significant amounts of shielding around their electronics and detectors to limit the total accumulated dose. The amount of shielding (depth) chosen was based on a conservative determination of the predicted total integrated dose for the mission lifetime using conventional models of the static particle flux (Sawyer and Vette 1976; Vette 1991). The predicted dose is typically obtained using Monte Carlo or ray-tracing radiation transport tools (Calvel et al. 2008). However, several aspects of the design process can be improved with the use of in-situ dosimetry. Real-time monitoring of the dynamic radiation dose and dose rate near the location of sensitive electronics can help eliminate inaccuracies associated with static particle environment models and the transport of radiation through simplified spacecraft shielding models. It also allows the direct measurement of space radiation at shielded depths internal to the spacecraft.

Recent studies by Bogorad et al. (2009) presented on-orbit particle measurements and compared them with the standard AE8 model (Ginet and O’Brien 2009); the results suggest a need to update the existing static AE8 model. Specifically, analytical estimates from the existing models predict long-term dose rates, averaged over six years, that are approximately 4 to 10 times higher than the on-orbit dose rates measured over the same period. This large discrepancy appears to be due primarily to the dynamics of the electron fluence from 1997 to 2003, but it could also stem from the use of simplified spacecraft geometry models or calculation methods.

An early design concept of the RBSP spacecraft included an extensive radiation monitoring package that was later descoped along with other proposed spacecraft subsystems (e.g., star trackers) in a necessary cost-cutting exercise. Late in the development cycle (after completion of the Spacecraft Critical Design Review), we proposed recovering some radiation monitoring capability as a balance mass experiment. Because the experiment was no longer considered a mission-critical subsystem (i.e., it was not part of the RBSP Program Level requirements), we were able to obtain approval from NASA to build a simple, inexpensive radiation monitor using a streamlined hardware development process with reduced documentation and parts screening requirements. As a result, we were able to develop and build the ERM on an accelerated schedule and deliver the qualified flight units to the spacecraft at the same time as other payload elements.

2 ERM Measurement Objectives

Because the ERM is a supplementary experiment, no RBSP Program Level (Level-1) requirements are levied on it, and its measurement objectives do not feed into the mission success criteria. Nevertheless, we established four challenging objectives for the ERM experiment: (1) Measure all relevant electrical parameters of the RadFET devices in flight to better understand their response to dose in a mixed-particle environment and to develop confidence in their long-term application to spacecraft dosimetry; (2) Characterize the actual on-orbit dose-depth curve using an array of dosimeters at different effective shielding depths and compare this result with predictions; (3) Profile the dose and dose rate of the RBSP orbit over a period of two years or longer; (4) Measure the internal charging conditions on each spacecraft, ranging from quiescent to the largest, worst-case storm, with sufficient sensitivity and time resolution to enable correlation of spacecraft anomalies with local space weather conditions.

A secondary objective of the experiment aims to take advantage of the unique opportunity on RBSP to cross-compare RadFET-based dosimetry data with data collected by the Aerospace dosimeter being flown as part of the Magnetic Electron Ion Spectrometer (MagEIS) (Blake et al. 2012, this issue). The Aerospace dosimeter actively measures the energy deposition of each particle passing through its internal solid state detector. A comparison of results may highlight measurement differences between these two very different technological approaches.

A detailed description of the RBSP mission design may be found in Mauk et al. (2012, this issue). In summary, the two spacecraft are in nearly identical orbits with a perigee altitude of ∼600 km, a geocentric apogee of 5.8 RE, and an inclination of 10°. The orbital period is ∼9 hr, and a slight difference in orbital apogees causes their radial spacing to vary between ∼100 km and ∼5 RE. These orbits allow RBSP to access all of the most critical regions of the radiation belts. The 2-yr nominal mission lifetime allows all local times to be studied.
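As a quick consistency check on these orbit numbers, Kepler's third law for a two-body orbit recovers the ∼9-hr period from the perigee and apogee alone. The sketch below assumes a mean Earth radius of 6371 km for converting RE and ignores Earth oblateness and other perturbations.

```python
import math

MU_EARTH_KM3_S2 = 398600.4418  # Earth's standard gravitational parameter (km^3/s^2)
R_EARTH_KM = 6371.0            # mean Earth radius (km), assumed for the RE conversion


def orbital_period_hours(perigee_alt_km: float, apogee_geocentric_re: float) -> float:
    """Two-body Keplerian period from perigee altitude and geocentric apogee."""
    r_perigee_km = R_EARTH_KM + perigee_alt_km       # geocentric perigee radius
    r_apogee_km = apogee_geocentric_re * R_EARTH_KM   # apogee is already geocentric
    a_km = 0.5 * (r_perigee_km + r_apogee_km)         # semi-major axis
    period_s = 2.0 * math.pi * math.sqrt(a_km**3 / MU_EARTH_KM3_S2)
    return period_s / 3600.0


if __name__ == "__main__":
    # Nominal RBSP values from the text: ~600 km perigee altitude, 5.8 RE apogee
    print(f"{orbital_period_hours(600.0, 5.8):.2f} hr")
```

With these inputs the period comes out very close to 9 hr, in line with the stated ∼9-hr orbit.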

3 ERM Description

Simplicity, ease of manufacture, and ease of integration and test were driving principles in the design of the ERM. The ERM is contained in a single box that fits on the aft deck of the spacecraft and mounts to an existing balance mass location (see Fig. 1). Because the balance mass plates bolt directly into the edge member of the spacecraft structure, a separate mounting bracket had to be bonded to the spacecraft deck so that both ends of the ERM housing could be properly secured. The ERM dissipates little power (∼0.25 W) and was permitted a generous footprint (17 cm × 17 cm), so no thermal gasket material is required. The box is completely covered with multi-layer insulation (MLI), and the overall profile was kept low (∼6 cm) to limit any intrusion into the field of view (FOV) of nearby thermal radiators. The box is not fitted with survival heaters, thermostats, or spacecraft temperature sensors and will simply follow the deck temperature. The mounting location near the edge of the deck ensures a clear FOV for the two charge monitors (circular depressions in the cover) and the dosimeter array (rectangular aperture with the thinnest absorber at the center). The small amount of absorption due to the MLI blanket over the aperture is significant only in comparison with the thinnest part of the cover and has been included in the response model.

Figure 2 provides internal and external views of the ERM. The rectangular aperture in the cover spans the dosimeter array and contains a varying-thickness absorber to characterize dose vs. depth. The circular depressions above the charge monitor plates provide two levels of shielding thickness to gauge deep dielectric charging currents over an extended range. The ERM is primarily sensitive to radiation entering through these defined apertures, because thick box walls limit exposure from other directions.

The ERM receives power and exchanges data over a single four-wire cable that connects to a test port on the Radiation Belt Storm Probes Ion Composition Experiment (RBSPICE) electronics (Lanzerotti et al. 2012, this issue). When the ERM was proposed, no power or data ports remained available on the spacecraft Integrated Electronics Module (IEM), so the ERM experiment would not have been possible without the foresight of the RBSPICE engineering team in including such a flexible interface in their design. Because the ERM connects to a primary instrument, these interfaces were carefully reviewed, and all parts associated with this interface were selected to meet the same reliability standards as other spacecraft components and were fully screened. As further protection, the RBSPICE test port contains a secondary power switch that can turn off the ERM in the event of a fault.

The RBSPICE instrument software collects ERM data and packetizes it for downlink. ERM data will be archived as an RBSPICE data product, but it will also be accessible via the spacecraft Mission Operations Center (MOC). Given the relatively low ERM data rate (∼16 bps), some portion of its data may also be made available through the special RBSP real-time space weather downlink.
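The ∼16 bps figure is consistent with a simple budget: one 1-s measurement slot per second across the 3-min cycle, each producing one 16-bit counter value. That mapping (one 16-bit word per slot, no packet headers) is an assumption made for illustration, not the documented RBSPICE packet format.

```python
CYCLE_S = 180          # 3-min measurement cycle
SLOT_S = 1             # each measurement occupies a 1-s slot
BITS_PER_SLOT = 16     # ASSUMPTION: one 16-bit V/F count per slot, no packet overhead
SECONDS_PER_DAY = 86_400


def data_rate_bps(cycle_s: int = CYCLE_S, slot_s: int = SLOT_S,
                  bits_per_slot: int = BITS_PER_SLOT) -> float:
    """Average raw data rate if every slot in the cycle yields one word."""
    slots_per_cycle = cycle_s // slot_s
    return slots_per_cycle * bits_per_slot / cycle_s


def daily_volume_bytes(rate_bps: float) -> float:
    """Raw data volume per day at a constant rate."""
    return rate_bps * SECONDS_PER_DAY / 8


rate = data_rate_bps()
print(f"{rate:.1f} bps, {daily_volume_bytes(rate) / 1000:.1f} kB/day")
```

Real packets would add headers and timing information, so the actual daily volume would be somewhat larger than this raw estimate.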

Table 1 gives a summary of ERM characteristics and performance. The dosimeter and charge monitor sensitivities exceed the targets assumed for basic engineering measurements, and the readout rates are considered sufficient to capture dynamic transients. In general, the ERM design approach could be scaled to suit other applications.

Dosimeter

An objective of the dosimeter is to characterize the dose-depth curve and compare the measured results with model predictions. For simplicity, an array of 7 RadFET dosimeters is mounted along a raised aluminum ‘bench’ that brings them close to the cover. The RadFETs are spaced ∼2 cm apart to form separate pixels, each of which sits beneath a different cover thickness (see Fig. 3). An 8th RadFET dosimeter mounted directly to the printed circuit board provides a representative dose for the more heavily shadowed electronics. Given how close the pixels are to each other and the penetrating nature of the radiation, the doses received by adjacent pixels overlap considerably. This result was expected and has been accounted for in the modeling effort. The varying-thickness cover (absorber) is thinnest at the center and thicker toward the ends in a v-configuration (see Table 2). The absorber material is primarily magnesium that has been precisely machined to form steps at each pixel boundary. The thickness required over the center pixel was too small to machine accurately, so that step was replaced with a piece of 40-micron-thick aluminum foil bonded to a framed cut-out.
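Because shielding scales to first order with areal density (mass per unit area) rather than with physical thickness, it can help to express the absorber steps in g/cm². The sketch below uses nominal handbook densities for aluminum and magnesium (assumed values) and treats the thickest 9.0-mm step as solid magnesium, which is an assumption; the actual step thicknesses are those listed in Table 2.

```python
# Nominal bulk densities in g/cm^3 (handbook values, assumed here).
DENSITY_G_CM3 = {"Al": 2.70, "Mg": 1.74}


def areal_density_g_cm2(thickness_mm: float, material: str) -> float:
    """Mass per unit area of a flat absorber: thickness (cm) times density."""
    return thickness_mm * 0.1 * DENSITY_G_CM3[material]


def aluminum_equivalent_mm(thickness_mm: float, material: str) -> float:
    """Aluminum thickness with the same areal density (first-order equivalence)."""
    return areal_density_g_cm2(thickness_mm, material) / (0.1 * DENSITY_G_CM3["Al"])


thin = areal_density_g_cm2(0.04, "Al")   # 40-micron Al foil over the center pixel
thick = areal_density_g_cm2(9.0, "Mg")   # thickest step, assumed to be Mg
print(f"{thin:.4f} g/cm^2 to {thick:.3f} g/cm^2 (ratio ~{thick / thin:.0f})")
print(f"9.0 mm Mg ~ {aluminum_equivalent_mm(9.0, 'Mg'):.1f} mm Al")
```

Areal density is only a first-order measure; the Geant4 transport described in the response modeling accounts for material-dependent scattering and secondary production that this comparison ignores.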

Exploded view of the ERM. The ERM is a single assembly consisting of an electronics board mounted in a thick aluminum frame with top and bottom covers. A cut-out in the top cover is fitted with a varying thickness absorber that spans an array of 7 RadFETs mounted on a raised aluminum substrate. Two isolated charge plates sit atop pedestals mounted to the bottom cover

Each dosimeter is an integrated circuit (IC), type RFT-300CC10G1, developed and manufactured by REM Oxford Ltd., that contains two RadFET sensors and an on-chip diode. The two RadFETs on each die are well matched and can be read out independently, with their results averaged to reduce uncertainty. The on-chip diode can be used to monitor the die temperature. Each die is mounted to a small printed circuit carrier board and covered with a low-Z epoxy (“glob top”). A 6-way edge connection permits surface-mount soldering or plugging into a commercial test socket (Kyocera 6207).

RadFETs are p-channel Metal Oxide Semiconductor Field Effect Transistors (p-MOSFETs) with a thickened gate oxide region. Radiation-induced charge in the insulating gate oxide (SiO2) can remain trapped for many years. This stored space charge shifts the device threshold voltage by an amount that grows with accumulated dose (Holmes-Siedle and Adams 2002). A thicker oxide increases sensitivity but reduces dynamic range. The ERM employs devices with an oxide thickness of 0.3 microns, which provides an acceptable balance between sensitivity and dynamic range.

Because RadFETs are IC devices, their sensitive micro-volume resembles that of most spacecraft electronic devices, so they “see” the same effects. The oxide is sensitive to all types of ionizing dose and provides a Linear Energy Transfer (LET) type response that is not overly affected by dose rate or particle species (Holmes-Siedle et al. 2007). The response to dose is most sensitive and linear if the gate is biased during irradiation, but an operational constraint of the ERM is that power might be removed at any time. It was therefore decided to operate the ERM RadFETs in zero bias mode (ZBM) so that they respond to dose in a predictable manner whether powered on or off. As a consequence, their response is nonlinear, and a careful calibration will be required to convert threshold shift to dose; however, a benefit of the lower sensitivity and sublinear curvature (“roll-off”) is a significantly extended dynamic range (>1000 krad) that could allow several years of operation on orbit before saturation (see Fig. 4). The shape of the ZBM curve may be approximated as a power law in which the voltage shift varies as dose raised to a power n, where n starts near unity and slowly falls with increasing dose. For the high total dose expected during the RBSP mission, n may fall to 0.5 or less. Characteristics of RadFETs other than threshold voltage also vary with dose, such as the device current-voltage transfer characteristic and a transient effect called “drift up”, which is related to bias-induced charging of traps at the oxide-silicon interface (Holmes-Siedle et al. 2007). The ERM electronics read out the RadFETs at multiple currents and at multiple time intervals to gauge these additional effects.
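Because the ZBM response is sublinear and its exponent n drifts with dose, a single linear factor cannot convert threshold shift to dose. One simple approach, sketched below as an assumption rather than the ERM ground-processing method, is to interpolate a monotonic calibration table piecewise as a power law (linear in log-log space), which also yields the local exponent n on each segment. The calibration table here is hypothetical; a real table would come from the planned ground calibration.

```python
import bisect
import math


def _segment(cal, shift_mv):
    """Index of the calibration segment bracketing shift_mv (clamped to the ends)."""
    shifts = [v for _, v in cal]
    i = bisect.bisect_right(shifts, shift_mv) - 1
    return max(0, min(i, len(cal) - 2))


def local_exponents(cal):
    """Power-law exponent n on each segment, from shift ~ dose**n."""
    return [math.log(v1 / v0) / math.log(d1 / d0)
            for (d0, v0), (d1, v1) in zip(cal, cal[1:])]


def dose_from_shift(shift_mv, cal):
    """Invert a threshold-voltage shift (mV) to dose (rad).

    cal is a list of (dose_rad, shift_mv) pairs, strictly increasing in both.
    Within a segment the curve is treated as a power law; outside the table
    the nearest end segment is extrapolated.
    """
    i = _segment(cal, shift_mv)
    (d0, v0), (d1, v1) = cal[i], cal[i + 1]
    n = math.log(v1 / v0) / math.log(d1 / d0)
    return d0 * (shift_mv / v0) ** (1.0 / n)


# HYPOTHETICAL calibration table generated from shift = 0.25 * dose**0.8
demo_cal = [(d, 0.25 * d**0.8) for d in (10.0, 100.0, 1e3, 1e4, 1e5, 1e6)]
print(local_exponents(demo_cal))                      # every segment has n close to 0.8
print(dose_from_shift(0.25 * 500.0**0.8, demo_cal))   # recovers approximately 500 rad
```

For a measured ZBM curve, n would decrease from segment to segment, and temperature and fade corrections would need to be applied to the shift before inversion.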

RadFET specification for shift in threshold voltage with dose of MeV photons (taken from the REM Oxford datasheet). The ERM uses the 0.3 micron oxide thickness device biased at 0 V (lower solid red trace). The response of an unbiased RadFET is less sensitive and nonlinear, but it extends the useful dynamic range by more than an order of magnitude and does not rely on continuous spacecraft power to maintain calibration

Other accepted consequences of operating in ZBM are a larger percentage scatter in responses and increased “fade”. Fade (also called room-temperature annealing) is the long-term loss of stored charge from the oxide as some charge traps in the oxide slowly empty. This effect potentially poses a calibration issue but may be less of a problem if the deck temperature runs cold. Most RadFET data for the RFT300 device have been collected under biased conditions, and it has only recently been realized that fade is more significant for an unbiased RadFET than for a biased one. As a result, good accuracy will require a new calibration curve captured at dose rates near expected mission values and at expected operating temperatures, which is a planned part of the ground calibration effort.

Charge Monitors

The charge monitors are designed to measure the flux of electrons that penetrate the cover and then stop in buried dielectrics, where they can build up potentially hazardous amounts of charge. The ERM has two independent charge monitors beneath aluminum covers of different thickness (1.0 mm and 3.8 mm) to provide crude spectrometry (>0.7 MeV and >2 MeV, respectively) and to extend the dynamic range of intensity measurements in case an unexpectedly large event saturates the more sensitive channel. The two identical charge plates are 38 mm in diameter and 2.5 mm thick. The plates are made of copper rather than aluminum because its higher density reduces the thickness needed to stop the electrons that penetrate the cover, which in turn minimizes exposure to unwanted background from the sides. The cover itself extends downward to surround the charge plates with a thick baffle that further reduces background from the sides (see Fig. 5). The grounded baffle also reduces susceptibility to Electro-Magnetic Interference (EMI), ensuring measurement capability down to the electronics noise limit.
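The quoted energy thresholds can be cross-checked against the empirical range-energy relation of Katz and Penfold for electrons in low-Z absorbers, R = 0.412 E^(1.265 − 0.0954 ln E) g/cm² for E ≤ 2.5 MeV and R = 0.530 E − 0.106 g/cm² above 2.5 MeV. This is a swapped-in textbook approximation, not the Geant4 transport used for the ERM design: it ignores straggling, oblique incidence, and the MLI, and it assumes a nominal aluminum density of 2.70 g/cm³.

```python
import math

AL_DENSITY_G_CM3 = 2.70   # nominal aluminum density (assumed)


def katz_penfold_range_g_cm2(energy_mev: float) -> float:
    """Empirical electron range (Katz and Penfold 1952) in g/cm^2 for low-Z absorbers."""
    if energy_mev <= 2.5:
        return 0.412 * energy_mev ** (1.265 - 0.0954 * math.log(energy_mev))
    return 0.530 * energy_mev - 0.106


def threshold_energy_mev(thickness_mm: float, density_g_cm3: float = AL_DENSITY_G_CM3,
                         lo: float = 0.01, hi: float = 20.0) -> float:
    """Electron energy whose range equals the absorber's areal density (bisection)."""
    target = thickness_mm * 0.1 * density_g_cm3
    for _ in range(100):
        mid = 0.5 * (lo + hi)
        if katz_penfold_range_g_cm2(mid) < target:
            lo = mid   # range too short: the threshold lies at higher energy
        else:
            hi = mid
    return 0.5 * (lo + hi)


for cover_mm in (1.0, 3.8):
    print(f"{cover_mm} mm Al: electrons above ~{threshold_energy_mev(cover_mm):.2f} MeV penetrate")
```

The two covers come out close to the quoted >0.7 MeV and >2 MeV thresholds, which supports treating them as crude integral energy channels.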

Cross-sectional view of ERM Charge Monitor plates. Two circular charge collection plates made of copper are mounted close to the top cover. The cover is thinned to two different thicknesses to extend the dynamic range and provide crude spectrometry. The cover extends downward to surround each charge plate to provide additional shielding from the sides

The charge plates sit atop stainless steel pedestals at their center to bring the charge plates closer to the top cover to maximize their view to space (solid angle ∼50 %) and provide the necessary structural support during launch (see Fig. 5). A thin insulating disc between each charge plate and its pedestal, along with an internal bushing inside the pedestal cavity, provides the necessary electrical isolation. The pedestals pass through cut-outs in the electronics board. A short curved wire connects each charge plate to its corresponding preamplifier located near the cut-out. The charge-plate region is kept clear of extraneous circuits and coatings to minimize parasitic leakage currents and couplings.

The charge monitor preamplifiers are configured as transimpedance (current-to-voltage) amplifiers with resistive feedback. Tying the charge plates to the virtual ground connection keeps the plates operating near zero volts potential and prevents them from charging up to high potentials (and possibly arcing). The preamplifiers convert the incoming electron flux to a voltage representing the instantaneous current. Subsequent filtering provides a time-averaged (∼0.5 s) full-scale range of 30 pA (∼3 pA/cm2), which exceeds the largest expected signal with 50 % margin.

Response Modeling

The RBSP spacecraft will be subject to a challenging high-energy proton and electron radiation environment. The Total Integrated Dose (TID) that any particular component on the spacecraft receives is a function of the thickness of the shielding around that component (and the same holds for radiation-induced charge buildup). To provide guidance on the amount of shielding necessary to limit the total dose to electronics and other sensitive components, a general analysis of the RBSP spacecraft was performed using models of the environment along with radiation transport calculations. These simulations assumed two simple shielding geometries, spherical and slab, which may not be representative of the response of a more complex geometry. The ERM aims to investigate the deviation from these simple cases and evaluate an actual flight configuration.

Using a version of the Geant4 (GEometry ANd Tracking) toolkit for Monte Carlo simulation of the passage of particles through matter (Agostinelli et al. 2003), a high-fidelity simulation of the ERM experiment was developed to predict the response of the RadFETs and charge collection plates to the expected environment. Details of the ERM mechanical configuration were imported into Geant4 by converting the Computer-Aided Design (CAD) files to Geometry Description Markup Language (GDML) using Fastrad (Beutier et al. 2003). The simulation results were then used to understand the directional response of the ERM and optimize the design of the cover.

For the dosimeter, the total mission-averaged proton and electron fluxes were calculated using the SPace ENVironment Information System (SPENVIS; e.g., Heynderickx et al. 2003) and used as input to the Geant4 simulations. These fluxes are predictions of the radiation environment in the ERM orbit for the full 2-year, 75-day primary mission. Particles reaching the sensitive volume of each RadFET were tracked, and the total energy deposited per second was converted to dose. The design was iterated, and a set of cover thicknesses was chosen such that measurements from the RadFET array span much of the dose-depth curve and provide enough well-spaced sample points to characterize its shape (see Fig. 6). With cover thicknesses ranging from 0.04 mm to 9.0 mm (see Table 2), the simulations predict a factor of ∼30 in total integrated dose between the thinnest and thickest shielded RadFETs.
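Converting the tallied energy deposition to dose is a direct application of the definition 1 Gy = 1 J/kg = 100 rad. The sketch below assumes a hypothetical sensitive-volume size, an approximate SiO₂ density of ∼2.2 g/cm³, and an illustrative deposition rate; the actual RadFET geometry and simulated deposition used in the ERM model are not given here. It then scales the per-second result to the 2-year, 75-day primary mission, ignoring leap days.

```python
MEV_TO_J = 1.602176634e-13            # exact, from the SI value of the elementary charge
RAD_PER_GY = 100.0
MISSION_S = (2 * 365 + 75) * 86_400   # 2 years + 75 days, ignoring leap days


def sensitive_mass_kg(area_um2: float, thickness_um: float, density_g_cm3: float) -> float:
    """Mass of a thin slab; 1 um^3 = 1e-12 cm^3."""
    volume_cm3 = area_um2 * thickness_um * 1e-12
    return volume_cm3 * density_g_cm3 * 1e-3   # g -> kg


def dose_rad(energy_mev: float, mass_kg: float) -> float:
    """Absorbed dose in rad from energy deposited (MeV) in a given mass."""
    return energy_mev * MEV_TO_J / mass_kg * RAD_PER_GY


# HYPOTHETICAL inputs: 300 um x 50 um gate, 0.3 um oxide, SiO2 ~2.2 g/cm^3,
# and an illustrative mean deposition of 1.0e-4 MeV per second.
m = sensitive_mass_kg(300 * 50, 0.3, 2.2)
rate_rad_s = dose_rad(1.0e-4, m)
print(f"{rate_rad_s:.3e} rad/s -> {rate_rad_s * MISSION_S / 1e3:.1f} krad over the mission")
```

In a Monte Carlo run the deposition per second would come from normalizing the tallied energy to the simulated source fluence, which is where most of the modeling effort lies.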

For the charge monitors, a simulation of the ERM response to the expected worst-case electron flux (Mauk 2011, private communication) showed that 3.8-cm diameter plates (as large as would practically fit within the ERM) were large enough to detect environmental signals but that, without further design effort, “background” electrons from other directions would dominate the overall response. Given this initial result, the ERM design was modified to move the charge plates much closer to the cover (a 67 % improvement in solid angle) and to increase the amount of shielding around the sides of the plates. With this modified design, the change in measured current from quiescent to worst case is estimated to be ∼20 pA for the plate beneath the thinner 1-mm cover and ∼10 pA for the plate behind the 3.8-mm cover.
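The full-scale range quoted for the preamplifiers can be related to the plate geometry and the worst-case estimates with simple arithmetic: the 38-mm plates have an area of about 11 cm², so 30 pA corresponds to roughly 3 pA/cm², and a 20-pA worst-case signal leaves a 1.5× (50 %) margin. The sketch counts only the circular front face of each plate, which is an assumption.

```python
import math

PLATE_DIAMETER_CM = 3.8   # 38-mm charge plates
FULL_SCALE_PA = 30.0      # time-averaged full-scale range
WORST_CASE_PA = {"1.0 mm cover": 20.0, "3.8 mm cover": 10.0}   # estimated changes


def plate_area_cm2(diameter_cm: float = PLATE_DIAMETER_CM) -> float:
    """Area of the circular front face only (edges ignored)."""
    return math.pi * (diameter_cm / 2.0) ** 2


area = plate_area_cm2()
print(f"plate area ~{area:.1f} cm^2, full scale ~{FULL_SCALE_PA / area:.2f} pA/cm^2")
for cover, worst in WORST_CASE_PA.items():
    print(f"{cover}: full scale / worst case = {FULL_SCALE_PA / worst:.1f}x")
```

The thicker-cover channel has twice the headroom, which is what allows it to serve as the backup if an unexpectedly large event saturates the more sensitive channel.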

Electronics

Figure 7 shows a functional block diagram of the ERM. The ERM electronics were designed for low power (<0.25 W) and simple operation, with a single operational mode and a fixed-format data interface. There is no internal software, and the measurement sequence is controlled by a Field Programmable Gate Array (FPGA). The ERM accepts +5 V power and generates all of its internal voltages from simple charge pumps with unregulated outputs. The critical measurement circuits rely on precision voltage references to maintain accuracy over varying conditions.

ERM functional block diagram. The ERM accepts +5 V power and generates all needed voltages internally. When powered, the ERM FPGA sequences through a 3-min repeating cycle of 1-s measurements consisting of staggered readings of 16 RadFETs, 2 charge monitors, calibration references, and general housekeeping parameters. The ERM outputs these data over a protected serial data interface

The RadFET readout circuit is designed to be responsive to the special features of a solid-state dosimeter based on charge trapping in a glassy material. All such materials exhibit side effects such as “fade” (slow alteration of trapping), drifts due to interfaces (in this case a Si/SiO2 interface), and sensitivity to temperature (Holmes-Siedle et al. 2007). The die temperature can be measured directly via the on-chip diode and used for characterization and correction. Precise timing of “reads” can reveal short-term drift effects, and long time-lapse measurements can help determine corrections for “fade”. The read process itself changes the field applied to the gate, which alters the sensitivity of charge traps in the oxide; this effect will be included in the characterization and calibration of the system.

Most of the time (∼98 %), each RadFET is maintained in an unbiased “irradiate” state in which its gate, drain, and body/source connections are shorted together using a single-pole, double-throw reed relay; a connection scheme consistent with zero bias mode operation where the gate oxide applied field is zero (see Fig. 4). During a measurement cycle, the selected RadFET source terminal is connected to a precision current source while its gate and drain terminals are connected to ground. The RadFET is held in this “read” state for a period of 3 seconds. During the first second, the voltage is measured at a reduced current (167 μA) to allow a two-point measurement of its I–V characteristic. This measurement is then followed by two successive 1-s measurements at a current of 500 μA, which lies much closer to the minimum temperature coefficient point for these devices. The purpose of the two consecutive measurements made at the same current is to characterize any “drift-up” in the device, an effect that also strongly depends on total dose. Since the charge buildup in the oxide during irradiation is very dependent on the gate oxide field, and because it is necessary to apply gate bias during the read cycle, the total amount of read time is held below 2 % so as to minimize the effects of “reads” on the overall calibrated response to dose.
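One way to use the 167-μA and 500-μA readings, offered here as an illustration rather than the ERM's actual analysis, is a two-point fit to the long-channel square law. With gate and drain grounded, the RadFET is diode-connected and therefore in saturation, so I = (β/2)(V − V_T)², where V is the magnitude of the source-to-gate voltage. Two points fix both V_T and β, and the difference between the two 500-μA readings gives a direct measure of drift-up. The square-law model (no channel-length modulation or mobility degradation) and the example device values are assumptions. The sketch also confirms that a 3-s read every 3 min keeps each device in the read state for under 2 % of the time.

```python
import math

READ_S = 3.0      # read-state duration per RadFET per cycle
CYCLE_S = 180.0   # 3-min cycle


def square_law_fit(i1_a: float, v1: float, i2_a: float, v2: float):
    """Fit I = (beta/2)(V - Vt)^2 through two (current, voltage) points.

    V is the magnitude of the source-to-gate voltage of the diode-connected
    p-MOSFET. Returns (Vt in volts, beta in A/V^2).
    """
    s1, s2 = math.sqrt(i1_a), math.sqrt(i2_a)
    k = (s2 - s1) / (v2 - v1)   # sqrt(beta / 2), in sqrt(A)/V
    vt = v1 - s1 / k            # extrapolate sqrt(I) to zero
    return vt, 2.0 * k * k


def drift_up_mv(v_first: float, v_second: float) -> float:
    """Change between the two consecutive 500-uA readings, in mV."""
    return (v_second - v_first) * 1e3


read_duty = READ_S / CYCLE_S   # fraction of time each RadFET spends in the read state

# HYPOTHETICAL device: Vt = 2.0 V, beta = 1.0e-3 A/V^2
v_167 = 2.0 + math.sqrt(2 * 167e-6 / 1.0e-3)
v_500 = 2.0 + math.sqrt(2 * 500e-6 / 1.0e-3)
print(square_law_fit(167e-6, v_167, 500e-6, v_500))   # approximately (2.0, 0.001)
print(f"read duty cycle = {read_duty:.2%}")
```

Tracking the fitted β alongside V_T over the mission would be one way to follow the dose-dependent change in the I–V transfer characteristic mentioned earlier.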

Although the reed relay associated with each RadFET is a commercial component (Pickering series 101), it was chosen for its compelling attributes: it is normally closed when unpowered, a condition that may occur at any time and for an indefinite period during spacecraft operations; it acts as an ideal switch, able to tolerate and multiplex voltages in excess of 30 V with no associated leakage current; and it requires very little power to activate (∼15 mW) and draws no power when idle. For ruggedness and reliability, the manufacturer pots these relays in a soft, low-outgassing material that reduces stress on the reed switch under repeated thermal cycling. An internal mu-metal magnetic screen within the potting shell satisfies the magnetic cleanliness requirement of the RBSP spacecraft. Each of the 16 RadFET devices is assigned a relay. Two more relays are assigned to a precision resistor (“RadFET simulator”) and a connection to ground to provide a regular internal calibration of the measurement system. A final relay allows selection between two different precision bias currents.

All voltages to be measured are fed through an analog multiplexer to a central voltage-to-frequency (V/F) converter (Analog Devices AD537), which acts as a low-power, high-resolution analog-to-digital converter (ADC). The AD537 output frequency, which is set for a nominal full-scale value of ∼100 kHz, drives a 16-bit counter inside the FPGA. The output of a 10-MHz crystal oscillator is divided down to provide a precise 512-ms gate period over which to measure the V/F output frequency. This approach provides nearly 16 bits of resolution in a low-noise measurement system that offers excellent differential non-linearity, an essential feature when looking for small changes in successive readings. The integral non-linearity of this type of system is generally worse in comparison to conventional successive-approximation type converters, but absolute measurement errors less than 0.1 % in either the total dose measurement or the charge monitor measurement are considered negligible. A V/F system may also be more subject to temperature drift than conventional ADCs that have built-in references, but regular measurements of the precision current source in combination with the precision resistor will enable ground correction of drift in both gain and offset that occur due to temperature and/or radiation effects encountered on orbit.
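The resolution claim follows from the stated numbers: a ∼100-kHz full-scale frequency counted over a 512-ms gate gives about 51,200 counts, or roughly 15.6 bits, which fits within the 16-bit counter. The second function sketches the kind of two-point ground correction described above, in which the grounded input fixes the offset and the precision-resistor reading fixes the gain. The reference voltage and count values are hypothetical, and the sketch assumes the reference voltage (precision current times precision resistance) is itself known.

```python
import math

VF_FULL_SCALE_HZ = 100e3   # nominal V/F full-scale output frequency
GATE_S = 0.512             # counting gate derived from the 10-MHz oscillator
CLOCK_HZ = 10e6

full_scale_counts = VF_FULL_SCALE_HZ * GATE_S   # counts accumulated at full scale
effective_bits = math.log2(full_scale_counts)
gate_divide_ratio = CLOCK_HZ * GATE_S           # oscillator cycles per gate period


def counts_to_volts(counts: int, ground_counts: int, ref_counts: int, ref_volts: float) -> float:
    """Two-point calibration: ground sets the offset, a known reference sets the gain."""
    volts_per_count = ref_volts / (ref_counts - ground_counts)
    return (counts - ground_counts) * volts_per_count


print(f"{full_scale_counts:.0f} counts, {effective_bits:.2f} bits, divide by {gate_divide_ratio:.0f}")
# HYPOTHETICAL cycle: ground reads 120 counts, a 2.000-V reference reads 20120 counts
print(counts_to_volts(15120, 120, 20120, 2.000))   # about 1.5 V
```

Because both calibration readings are taken within the same 3-min cycle as the measurements, slow gain and offset drifts from temperature or radiation largely cancel in this correction.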

The amount of current applied during the readout of the RadFETs was selected to be close to the minimum temperature coefficient point for these devices, but a residual temperature characteristic will likely remain, which may result in the need for further corrections on the ground to remove this systematic error. Fortunately, each RadFET IC is equipped with an on-chip diode that can be used to track changes in the die temperature. Each of the 8 die temperatures is reported regularly along with a global temperature measurement of the ERM box using a calibrated thermistor.

The two charge monitor outputs are read out much more frequently (once every 5 s) than the RadFETs, with these measurements interleaved between RadFET readings. The preamplifier for each charge monitor is a Burr-Brown OPA129 operational amplifier that uses dielectrically isolated FET inputs to achieve ultra-low input bias currents (<100 fA). Although this device is available only in commercial form, a test in our Co-60 irradiator showed that its performance meets our 30 krad requirement for electronics inside the shielded portion of the ERM enclosure. The V/F input is configured for positive voltages only, so a 25 mV dc offset was added to each charge monitor output to allow precision measurements near zero, since quiescent currents may be four orders of magnitude below worst-case levels. This offset also allows the charge monitors to report small negative currents, which indicate a signal dominated by the collection of protons, a situation that might occur during a solar proton event or passage through the inner belt of trapped protons.
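Recovering a plate current from a charge monitor reading then amounts to subtracting the offset and dividing by the transimpedance (feedback) resistance. The feedback value below is hypothetical (the text gives only the 30-pA full scale and the nominal 25-mV offset), as is the noise threshold used for labeling. The sign convention, positive for net electron collection and negative for proton-dominated signals, is assumed to match the interpretation given in the text.

```python
R_FEEDBACK_OHM = 1e11   # HYPOTHETICAL feedback resistance (30 pA -> 3 V)
OFFSET_V = 0.025        # nominal dc offset added to each output


def plate_current_fa(v_out: float, offset_v: float = OFFSET_V,
                     r_feedback: float = R_FEEDBACK_OHM) -> float:
    """Plate current in fA; positive = net electron collection (assumed convention)."""
    return (v_out - offset_v) / r_feedback * 1e15


def dominant_species(current_fa: float, noise_fa: float = 0.2) -> str:
    """Crude label; readings within the (illustrative) noise floor count as quiescent."""
    if current_fa > noise_fa:
        return "electron-dominated"
    if current_fa < -noise_fa:
        return "proton-dominated"
    return "quiescent"


for v in (0.026, 0.025, 0.024):
    i_fa = plate_current_fa(v)
    print(f"{v * 1e3:.1f} mV -> {i_fa:+.1f} fA ({dominant_species(i_fa)})")
```

In practice the measured offset of each channel (and any relay-state-dependent leakage) would replace the nominal 25-mV value.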

4 Ground Performance and Calibration

The engineering model unit of the ERM closely matches the two delivered flight units and will be used to perform detailed ground performance and calibration measurements at selected beam facilities. These results will be described elsewhere. Here only critical performance parameters are discussed and calibration experiments are described in general terms, with some results given that have been analyzed to date.

Dosimeter Testing and Calibration

All of the flight RadFET devices were selected from the same wafer lot, and their general characteristics match reasonably well; however, some spread in their response to dose is expected. To assess the amount of spread and establish an initial calibration, each ERM was given a small 100-rad exposure in a Co-60 chamber. The cover (with its integral absorber) was removed so that all of the devices would receive the same dose (see Fig. 8). The dose rate was kept low to simulate the rate expected on orbit. For this test, we used a 10-Curie source at a separation distance of 300 mm, which provided a dose rate of 2.08 × 10⁻² cGy(Si)/s.
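The exposure parameters imply a modest test duration. Taking 1 rad = 1 cGy, the sketch below estimates how long the 100-rad exposure lasts at the stated dose rate and how much dose accrues between successive 3-min readouts. It ignores source decay during the test and any setup time.

```python
DOSE_RATE_CGY_S = 2.08e-2   # Co-60 dose rate at 300 mm, cGy(Si)/s
TARGET_RAD = 100.0          # exposure dose (1 rad = 1 cGy)
READOUT_S = 180.0           # 3-min readout cadence

exposure_s = TARGET_RAD / DOSE_RATE_CGY_S
dose_per_readout_rad = DOSE_RATE_CGY_S * READOUT_S

print(f"exposure ~{exposure_s / 60:.0f} min, ~{dose_per_readout_rad:.2f} rad per readout")
```

A few rad per readout is small compared with the total exposure, so the readings trace the dose-response curve in fine steps.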

The initial matching in dose response between the RadFET devices was measured to be ±5 %, which is consistent with the manufacturer’s claims and is considered acceptable (see Fig. 9). The board-mounted RadFET is positioned slightly farther from the source and therefore received a lower dose. Because its readings are separated from the central cluster, they also show the slightly better matching achieved between devices on the same die. The initial measured response to dose (∼0.25 mV/rad) is about 50 % higher than was observed for bare RadFETs in the same chamber, which suggests that the mounted RadFETs see an enhancement in dose due to backscatter from nearby materials (e.g., the thick aluminum substrate and box walls). A Geant4 analysis of the chamber configuration confirmed this result and emphasizes the need to model the calibration setup to obtain accurate results.

ERM-B flight unit dosimeter calibration results. The first 32 readings (one every 3 minutes) were captured during the initial 100-rad exposure and show good linearity with a spread between RadFET die of ±5 % or less. The board-mounted RadFET (lower blue and green traces) received a slightly lower dose as it was slightly farther from the source. Subsequent readings, captured after 15 days and 29 days during which the RadFETs were maintained in the shorted condition while sitting on the spacecraft, show two steep downward steps that indicate significant “fade” over a period of weeks. Future tests are planned to evaluate this fade characteristic over temperature. Results for ERM-A are similar

Following the irradiation, the covers were reinstalled and the qualified units were integrated with the spacecraft. Periodic measurements of the RadFETs during spacecraft Integration and Test (I&T) revealed a fade rate much higher than is normally expected for these devices. A ∼20 % drop in the radiation-induced threshold voltage shift was observed after about a month, whereas data collected by the manufacturer using devices under bias show roughly an order of magnitude less. Later investigations by the manufacturer confirmed that the fade rate for unbiased devices is higher than for biased devices. It also appears that small differences in fade rate between devices may increase the overall uncertainty (note the increasing spread in Fig. 9). In general, fade may be less of a problem at colder temperatures (reduced annealing rate), and additional measurements of fade at selected operating temperatures are planned. Fade will, in general, reduce dose accuracy. Study of the present case indicates that the effect is predictable and that an appropriate correction can be included in the calibration to determine dose estimates. If fade remains a significant source of error, we can perform additional ground calibrations on the EM under the environmental conditions observed in flight.
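For a single exposure followed by storage, as in the I&T case here, a fade correction can be as simple as dividing the measured shift by the fraction retained at that elapsed time. The retained-fraction table below is hypothetical, loosely shaped after the ∼20 % loss over about a month; a real table would come from the planned temperature-controlled fade measurements. On orbit, dose accumulates continuously and fade depends on temperature, so a flight correction would need a more complete model than this sketch.

```python
import bisect

# HYPOTHETICAL retained-fraction curve: (days since exposure, fraction of shift remaining)
FADE_TABLE = [(0.0, 1.00), (15.0, 0.88), (29.0, 0.80)]


def retained_fraction(days: float, table=FADE_TABLE) -> float:
    """Linearly interpolate the retained fraction; hold the end values outside the table."""
    times = [t for t, _ in table]
    if days <= times[0]:
        return table[0][1]
    if days >= times[-1]:
        return table[-1][1]
    i = bisect.bisect_right(times, days) - 1
    (t0, f0), (t1, f1) = table[i], table[i + 1]
    return f0 + (f1 - f0) * (days - t0) / (t1 - t0)


def fade_corrected_shift_mv(measured_mv: float, days: float) -> float:
    """Estimate the pre-fade shift for a single exposure followed by storage."""
    return measured_mv / retained_fraction(days)


print(fade_corrected_shift_mv(20.0, 29.0))   # about 25 mV with the hypothetical table
```

Holding the last tabulated value beyond 29 days is a deliberate simplification; extrapolating fade beyond the measured span would require a physical model of the trap emptying.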

During spacecraft Thermal Vacuum (TV) testing, each ERM was cycled over a range of operating temperatures. This test provided an opportunity to evaluate the temperature dependence of the RadFET measurement scheme. Figure 10 shows an overlay of the temperature dependence for all 16 RadFET devices in ERM-B. The shape of the dependence varies somewhat from die to die, but devices on the same die match extremely well. In all cases, the variation is small and amounts to a systematic error of less than 20 rads over a 50 °C swing in temperature. At a given dose the characteristic is repeatable, which lends itself to further correction: a simple linear correction applied to each die was found to reduce this error by a factor of 5. Previous experience shows, however, that the device temperature coefficient (TC) increases with deposited dose (Holmes-Siedle et al. 2007), an increase linked to the formation of new interface states (Camanzi et al. 2001). This change will reduce dose accuracy; however, studies of the change in TC to date indicate that the effect is predictable, can be calibrated, and will be allowed for in dose estimates.

ERM-B RadFET temperature dependence. The RadFETs are read out using a precision current source set close to the minimum-temperature-coefficient operating point of these devices. The residual variation is less than ±5 mV over a 50 °C range, which corresponds to an error in dose of less than 20 rads. A simple linear correction reduces the variation to ±1 mV. The temperature characteristic may differ from die to die, but RadFETs on the same die match very well. The temperature sensitivity will increase with dose, but this change is predictable and will be allowed for in dose estimates
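The per-die linear temperature correction can be sketched as an ordinary least-squares fit of readout voltage against temperature, followed by subtraction of the fitted slope relative to a reference temperature. The function names, the 20 °C reference, and the use of millivolts are illustrative assumptions. Because the TC grows with dose, the slope would need to be refit, or modeled as a function of dose, as the mission progresses.

```python
def fit_line(temps_c, volts_mv):
    """Ordinary least-squares slope (mV/degC) and intercept (mV) of volts vs. temperature."""
    n = len(temps_c)
    if n < 2 or n != len(volts_mv):
        raise ValueError("need at least two paired (temperature, voltage) points")
    mean_t = sum(temps_c) / n
    mean_v = sum(volts_mv) / n
    sxx = sum((t - mean_t) ** 2 for t in temps_c)
    sxy = sum((t - mean_t) * (v - mean_v) for t, v in zip(temps_c, volts_mv))
    slope = sxy / sxx
    return slope, mean_v - slope * mean_t


def correct_to_reference(v_mv, temp_c, slope_mv_per_c, ref_temp_c=20.0):
    """Remove the fitted linear temperature term, referring the reading to ref_temp_c."""
    return v_mv - slope_mv_per_c * (temp_c - ref_temp_c)


if __name__ == "__main__":
    # Hypothetical TV-test points for one die: a 0.1 mV/degC slope plus a constant.
    temps = [-10.0, 0.0, 10.0, 20.0, 30.0, 40.0]
    volts = [0.1 * t + 3.0 for t in temps]
    slope, _ = fit_line(temps, volts)
    print([round(correct_to_reference(v, t, slope), 6) for v, t in zip(volts, temps)])
```

Fitting each die separately mirrors the observation that devices on the same die track each other closely while the shape differs between die.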

ERM Charge Monitor Testing and Calibration

The charge monitors have not yet been tested in an actual electron beam, which is planned as part of future ground tests on the EM, but their conversion gain was verified by injecting a signal through a very high-value resistor (1000 GΩ) and was found to be within the precision limit of the feedback resistor (∼1 %). With the injection resistor removed, the output reads close to the added offset (24.8 mV), which was chosen to be an order of magnitude larger than the maximum expected input offset voltage of the amplifier plus any stray leakage currents on the board. A guaranteed positive offset voltage allows precision measurements of quiescent currents near zero. Performance testing shows a strong correlation between the amount of stray leakage current and the internal relay state (i.e., which RadFET is selected). As a result, the 36 leakage current measurements within a measurement cycle tend to form "bands", since many of the relay states are similar (see Fig. 11). This effect appears stable and repeatable, which lends itself to a simple offset correction. Without any correction, the maximum difference between readings is less than ∼60 fA, or less than ∼0.3 % of the expected full-scale signal. With offset corrections applied, this source of systematic error is removed completely and the residual variation is reduced to the quantization limit of the ADC. During quiescent periods, additional sensitivity can be achieved at the expense of temporal resolution by averaging the 36 measurements within a cycle. In this case, the variation is reduced to less than ∼0.2 fA, which is 5 orders of magnitude below the expected full-scale signal and a full order of magnitude below quiescent levels, demonstrating excellent margin.

ERM-B flight unit charge monitor performance test. (Top panel) Measurements within a packet tend to form “bands” due to slight differences in offset related to the internal relay state. (Middle panel) These systematic offsets can be removed to demonstrate a noise floor consistent with the quantization limit of the ADC. (Bottom panel) Additional sensitivity can be achieved by averaging at the expense of temporal resolution
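The offset correction and averaging described above can be sketched as follows, assuming each of the 36 slots in a 3-min cycle always maps to the same relay state, so that its offset can be estimated from quiet cycles with no input current. Readings are assumed to be already converted to femtoamperes, and all helper names are hypothetical. The injection helper shows why a 1000 GΩ resistor suits this test: by Ohm's law, every millivolt across it drives 1 fA.

```python
from statistics import fmean

SAMPLES_PER_CYCLE = 36        # one charge-monitor reading every 5 s in a 3-min cycle
INJECTION_OHMS = 1000e9       # 1000 GOhm injection resistor used for gain checks


def injected_current_fa(volts: float) -> float:
    """Current (fA) driven through the injection resistor by a given voltage."""
    return volts / INJECTION_OHMS * 1e15


def slot_offsets(quiet_cycles):
    """Mean reading per slot over no-input cycles; one offset per relay state."""
    return [fmean(cycle[i] for cycle in quiet_cycles) for i in range(SAMPLES_PER_CYCLE)]


def correct_cycle(cycle, offsets):
    """Subtract the per-slot offset from each reading in one 36-sample cycle."""
    if len(cycle) != len(offsets):
        raise ValueError("cycle and offset lengths differ")
    return [x - o for x, o in zip(cycle, offsets)]


def cycle_average(cycle):
    """Average a corrected cycle: better sensitivity, 3-min instead of 5-s resolution."""
    return fmean(cycle)


if __name__ == "__main__":
    # Hypothetical banded offsets: alternating relay states leak 10 fA or 20 fA.
    offsets_true = [10.0 if i % 2 else 20.0 for i in range(SAMPLES_PER_CYCLE)]
    offsets = slot_offsets([offsets_true] * 3)
    signal = [o + 5.0 for o in offsets_true]      # a steady 5 fA input current
    print(cycle_average(correct_cycle(signal, offsets)))
```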

The output offsets of the charge monitors show some sensitivity to temperature, but the effect is small and likely to vary slowly on orbit, so it should not be confused with dynamic fluctuations. Figure 12 shows the temperature characteristic for each charge monitor, where the 36 measurements within each 3-min cycle were averaged to reduce the dispersion. The characteristic appears exponential, with an effective doubling of stray leakage currents about every 10 °C. Ignoring the very slight hysteresis, the characteristic is repeatable and could be corrected; however, the initial offset and the total change over the expected operating range are both relatively small (<100 fA) and can probably be neglected under most conditions.

ERM-B flight unit charge monitor temperature characteristic. As expected, stray leakage currents increase exponentially with temperature with an effective doubling about every 10 °C. Over the expected operating range, the effect is small and repeatable and the slight amount of hysteresis can be ignored
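The exponential characteristic can be written as I(T) = I_ref · 2^((T − T_ref)/10 °C). A minimal sketch of this model follows, with a helper that recovers the doubling interval from two measured points. The reference leakage and temperature are free parameters, not values from the ERM data. Over a 50 °C swing the model implies a factor of 2^5 = 32 in leakage, which is consistent with a small absolute change only if the leakage at the cold end is a few femtoamperes or less.

```python
import math

DOUBLING_C = 10.0  # effective doubling interval quoted in the text


def leakage_fa(temp_c: float, ref_leak_fa: float, ref_temp_c: float) -> float:
    """Stray leakage scaled by 2**((T - T_ref) / 10 degC)."""
    return ref_leak_fa * 2.0 ** ((temp_c - ref_temp_c) / DOUBLING_C)


def fit_doubling_interval(t1: float, i1: float, t2: float, i2: float) -> float:
    """Doubling interval (degC) implied by two (temperature, leakage) points."""
    return (t2 - t1) * math.log(2.0) / math.log(i2 / i1)


if __name__ == "__main__":
    print(leakage_fa(70.0, 1.0, 20.0))                    # 50 degC above reference
    print(fit_doubling_interval(20.0, 5.0, 30.0, 10.0))   # ~10 degC
```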

5 Sensor Operations

The operations plan for the ERM is simple because the ERM has only a single operating mode. The ERM can be powered and generating data whenever the RBSPICE instrument is on, in at least its low-voltage standby mode. The operations goal is to remain powered continuously as much as possible, but it is understood that there will be times when the ERM must be switched off to accommodate special RBSPICE operations or to support other instrument or spacecraft operations. The ERM dosimeter is designed to accumulate dose accurately even if powered off for indefinite periods and to report the total integrated mission dose correctly when power is again available (see Sect. 3). The charge monitors can provide real-time data only when the ERM is powered. The ERM has a short warm-up time and produces stable data within minutes following a long outage. Short outages have no effect on system performance.

The sensitivity of the RadFETs decreases with accumulated dose and will be highest at the start of the mission. The central RadFET (thinnest shielding) is expected to accumulate ∼1 krad/day, so the ERM experiment would benefit from being powered on as soon as possible after successful orbit insertion, minimizing the data gap since launch and reducing the uncertainty in the initial accumulated dose. RBSPICE commissioning activities are scheduled about 18 days into the mission to allow adequate time for outgassing before opening its cover, but an early turn-on of the RBSPICE power rails in low-voltage mode, with its cover still closed, will allow the ERM to be powered within the first two days. Pre-flight irradiation tests show that the fresh sensor array can resolve dose increments well under 100 rads (see Fig. 9), so changes in the threshold voltages before the RBSPICE cover is deployed will be especially meaningful.
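The value of an early turn-on can be checked with simple arithmetic. Assuming the ∼1 krad/day rate for the central RadFET holds near the start of the mission, and taking 100 rad as a conservative resolution (the text says "well under 100 rads"), the sketch below compares the dose accumulated before power-on at 2 versus 18 days and estimates how long the fresh sensor needs to register a resolvable change. These are order-of-magnitude estimates, not mission predictions.

```python
DOSE_RATE_RAD_PER_DAY = 1000.0   # ~1 krad/day, central (thinnest-shielded) RadFET
READOUTS_PER_DAY = 24 * 60 // 3  # one RadFET readout every 3 min
RESOLVABLE_RAD = 100.0           # conservative upper bound on resolvable increment

# Dose accumulated before the ERM is first powered.
dose_before_poweron_2d = 2 * DOSE_RATE_RAD_PER_DAY
dose_before_poweron_18d = 18 * DOSE_RATE_RAD_PER_DAY

# Dose deposited between consecutive 3-min readouts.
rad_per_readout = DOSE_RATE_RAD_PER_DAY / READOUTS_PER_DAY

# Time (hours) for the dose to grow by one resolvable increment.
hours_to_resolve = RESOLVABLE_RAD / DOSE_RATE_RAD_PER_DAY * 24

if __name__ == "__main__":
    print(READOUTS_PER_DAY, dose_before_poweron_2d, dose_before_poweron_18d)
    print(round(rad_per_readout, 2), round(hours_to_resolve, 2))
```

At roughly 2 rad per readout, a single 3-min step is below the quoted resolution, but a resolvable change accrues within about 2.4 hours or less, a fraction of the 9-hr orbit.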

6 Telemetry Data Products

The ERM produces a single telemetry packet that contains sensor measurements and housekeeping readings. These packets are assigned a unique RBSPICE application identifier and will be archived as an RBSPICE data product, but ERM data will also be readily accessible directly via the RBSP Mission Operations Center (MOC). Real-time displays of ERM data have been developed for use by spacecraft operations personnel, but most off-line analysis can ingest the daily Level-0 ERM data files produced by the MOC. These data consist of Payload Telemetry Packet (PTP) files as defined in the RBSP MOC to Science Operations Center (SOC) Interface Control Document (drawing 7417-9050).

Each Level-0 data file contains all of the raw binary ERM data generated during a Mission Elapsed Time (MET) day. A nominal day will contain 480 ERM data packets. Each packet consists of the MET timestamp followed by a sequence of 178 measurements broken into 9 groups. The first group contains mostly housekeeping measurements of the internally-generated voltages, total current, board temperature, and oscillator frequency. The next 8 groups are identical in structure and contain measurements corresponding to each of the 8 RadFET die along with calibration checks of the measurement system. The charge monitor readings are interleaved throughout the entire sequence to maintain a constant measurement cadence of one every 5 s.
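The packet counts above follow directly from the two cadences: a 3-min packet period gives 86 400 / 180 = 480 packets per day, and a 5-s charge-monitor cadence gives 180 / 5 = 36 charge-monitor readings per packet, matching the 36 measurements per cycle discussed in the charge monitor testing. The decoder below is a hypothetical illustration only: the 32-bit MET field and the 16-bit big-endian samples are assumptions, and the actual layout is defined by the MOC-to-SOC ICD (drawing 7417-9050).

```python
import struct

SECONDS_PER_DAY = 86_400
PACKET_PERIOD_S = 180          # one packet per 3-min measurement cycle
CM_PERIOD_S = 5                # charge-monitor cadence
MEASUREMENTS_PER_PACKET = 178

PACKETS_PER_DAY = SECONDS_PER_DAY // PACKET_PERIOD_S       # 480
CM_READINGS_PER_PACKET = PACKET_PERIOD_S // CM_PERIOD_S    # 36

# HYPOTHETICAL layout for illustration only: a 32-bit big-endian MET followed by
# 178 unsigned 16-bit big-endian samples. Not the flight format.
PACKET_FMT = f">I{MEASUREMENTS_PER_PACKET}H"
PACKET_SIZE = struct.calcsize(PACKET_FMT)


def decode_packet(raw: bytes):
    """Split one (hypothetically formatted) packet into MET and raw samples."""
    if len(raw) != PACKET_SIZE:
        raise ValueError(f"expected {PACKET_SIZE} bytes, got {len(raw)}")
    met, *samples = struct.unpack(PACKET_FMT, raw)
    return met, samples


if __name__ == "__main__":
    raw = struct.pack(PACKET_FMT, 12345, *range(MEASUREMENTS_PER_PACKET))
    met, samples = decode_packet(raw)
    print(PACKETS_PER_DAY, CM_READINGS_PER_PACKET, PACKET_SIZE, met, len(samples))
```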

Because the ERM is a supplementary experiment and not part of the RBSP Level-1 requirements, conversion of the raw engineering data into calibrated units and higher-level data products is planned only on an ad hoc basis. Possible products, as part of a participating scientist program or other funded effort, might include an orbit-by-orbit analysis of accumulated dose and deep dielectric charging for each spacecraft, a cross-comparison of ERM data with other real-time measurements of solar weather, and a general comparison of ERM results with models of the space environment such as AE8.

7 Conclusion

An Engineering Radiation Monitor (ERM) has been successfully built as a balance mass experiment for NASA’s Radiation Belt Storm Probes (RBSP) mission that will enable monitoring of total dose and deep dielectric charging at each spacecraft in real time. Each ERM contains an array of RadFET dosimeters with carefully-designed absorbers ranging in thickness from 50 microns to 9 mm. The dosimeters mimic the situation of electronics located at various depths in the spacecraft and the growing doses they receive from the space environment and will obtain a measured dose-depth curve specific to the RBSP orbit for comparison with model predictions. Its high initial sensitivity will enable profiling of RBSP’s path through the Earth’s trapped radiation belts, which could reveal details not included in current static models. Such data can be used by spacecraft designers when considering the degree of shielding and derating of sensors and electronics that is demanded by the actual environmental conditions in orbit. Two buried conductive plates set behind different thickness covers will measure deep dielectric charging conditions that can be potentially hazardous to sensitive electronic components within the spacecraft. The charge monitors are designed to handle extreme events without saturating and will enable correlation of spacecraft anomalies with local space weather conditions. Beyond its role as a spacecraft engineering subsystem, the ERM may also prove beneficial to the RBSP science investigations by helping to characterize the high-energy background omnipresent in their instruments.

References

S. Agostinelli et al., Nucl. Instrum. Methods A 506, 250 (2003)

T. Beutier et al., in Proc. 7th Eur. Conf. RADECS (2003), p. 181

B. Blake et al. (2012, this issue)

A. Bogorad et al., IEEE Trans. Nucl. Sci. 56, 3429 (2009)

P. Calvel et al., IEEE Trans. Nucl. Sci. 55, 3106 (2008)

B. Camanzi, A.G. Holmes-Siedle, A.K. McKemey, Nucl. Instrum. Methods A 457, 476 (2001)

G. Ginet, T. O’Brien, AE-9/AP-9 trapped radiation and plasma models requirements specification (2009)

D. Heynderickx et al., in Proc. 7th Eur. Conf. RADECS (2003), p. 643

A.G. Holmes-Siedle, L. Adams, A Handbook of Radiation Effects, 2nd edn. (Oxford University Press, Oxford, 2002)

A.G. Holmes-Siedle, F. Ravotti, M. Glaser, in IEEE Radiation Effects Data Workshop (2007). doi:10.1109/REDW.2007.4342539

J. Lanzerotti et al. (2012, this issue)

B.H. Mauk et al. (2011, private communication)

B.H. Mauk et al. (2012, this issue)

D.M. Sawyer, J.I. Vette, Natl. Sp. Sci. Data Cent., NSSDC/WDC-A-R&S 76-06 (1976)

J.I. Vette, Natl. Sp. Sci. Data Cent., NSSDC/WDC-A-R&S 91-24 (1991)

Acknowledgements

The authors would like to express their gratitude to the Living With a Star (LWS) Program, the RBSP Project Office, and the RBSPICE Instrument team for enabling the development of this experiment. The authors also wish to thank the ERM engineering team for their dedicated efforts in designing and building a successful ERM on a very short schedule.

Open Access

This article is distributed under the terms of the Creative Commons Attribution License which permits any use, distribution, and reproduction in any medium, provided the original author(s) and the source are credited.


Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 2.0 International License (https://creativecommons.org/licenses/by/2.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Goldsten, J.O., Maurer, R.H., Peplowski, P.N. et al. The Engineering Radiation Monitor for the Radiation Belt Storm Probes Mission. Space Sci Rev 179, 485–502 (2013). https://doi.org/10.1007/s11214-012-9917-x


DOI: https://doi.org/10.1007/s11214-012-9917-x