Abstract

A continuous-time nonlinear regression model with a Lévy-driven linear noise process is considered. Sufficient conditions for consistency and asymptotic normality of the Whittle estimator of the parameter of the noise spectral density are obtained.


1 Introduction

The paper focuses on an important aspect of the study of regression models with correlated observations: the estimation of functional characteristics of the random noise. In this problem the unknown parameter of the regression function becomes a nuisance parameter and complicates the analysis of the noise. To neutralise its presence, we first estimate this parameter and then build estimators of, say, the spectral density parameter of the stationary random noise from the residuals, that is, the differences between the values of the observed process and the fitted regression function.

So, in the first step we employ the least squares estimator (LSE) of the unknown parameter of the nonlinear regression, because of its relative simplicity. Asymptotic properties of the LSE in nonlinear regression models were studied by many authors. Numerous results on the subject can be found in the monographs by Ivanov and Leonenko (1989) and Ivanov (1997).

In the second step we use the residual periodogram to estimate the unknown parameter of the noise spectral density by means of the Whittle-type contrast process (Whittle 1951, 1953).

The results obtained to date on the Whittle minimum contrast estimator (MCE) form a developed theory that covers various mathematical models of stochastic processes and random fields. Some publications on the topic are Hannan (1970, 1973), Dunsmuir and Hannan (1976), Guyon (1982), Rosenblatt (1985), Fox and Taqqu (1986), Dahlhaus (1989), Heyde and Gay (1989, 1993), Giraitis and Surgailis (1990), Giraitis and Taqqu (1999), Gao et al. (2001), Gao (2004), Leonenko and Sakhno (2006), Bahamonde and Doukhan (2017), Ginovyan and Sahakyan (2017), Avram et al. (2010), Anh et al. (2004), Bai et al. (2016), Ginovyan et al. (2014), Giraitis et al. (2017).

Koul and Surgailis (2000) studied, for a linear regression model in a discrete-time setting, the asymptotic properties of the Whittle estimator of the spectral density parameters of strongly dependent random noise.

In the paper by Ivanov and Prykhod’ko (2016), sufficient conditions for consistency and asymptotic normality of the Whittle estimator of the spectral density parameter of Gaussian stationary random noise in a continuous-time nonlinear regression model were obtained using the residual periodogram. The current paper continues this research, extending it to the case of Lévy-driven linear random noise and to more general classes of regression functions, including trigonometric ones. We use the scheme of the proof for Gaussian noise (Ivanov and Prykhod’ko 2016) and some results of the papers (Avram et al. 2010; Anh et al. 2004). For linear random noise the proofs rely on essentially different types of limit theorems. In comparison with the Gaussian case, this leads to special conditions on the linear Lévy-driven random noise and to new consistency and asymptotic normality conditions.

In the present publication a continuous-time model is considered. However, the results obtained can also be used for discrete-time observations by means of statements like Theorem 3 of Alodat and Olenko (2017) or Lemma 1 of Leonenko and Taufer (2006).

2 Setting

Consider a regression model

$$\begin{aligned} X(t)=g(t,\,\alpha _0)+\varepsilon (t),\ t\ge 0, \qquad (1) \end{aligned}$$

where \(g{:}\;(-\gamma ,\,\infty )\times \mathcal {A}_\gamma \ \rightarrow \ \mathbb {R}\) is a continuous function, \(\mathcal {A}\subset \mathbb {R}^q\) is an open convex set, \(\mathcal {A}_\gamma =\bigcup \limits _{\Vert e\Vert \le 1}\left(\mathcal {A}+\gamma e\right)\), \(\gamma \) is some positive number, \(\alpha _0\in \mathcal {A}\) is a true value of unknown parameter, and \(\varepsilon \) is a random noise described below.

Remark 1

The assumption about the domain \((-\gamma ,\,\infty )\) of the function g in t is of a technical nature and does not affect possible applications. This assumption makes it possible to formulate the condition \(\mathbf{N }_2\), which is used in the proof of Lemma 7.

Throughout the paper \((\Omega ,\,\mathcal {F},\,\hbox {P})\) denotes a complete probability space.

A Lévy process L(t), \(t\ge 0\), is a stochastic process with independent and stationary increments, continuous in probability, with sample paths which are right-continuous with left limits (càdlàg), and with \(L(0)=0\). For a general treatment of Lévy processes we refer to Applebaum (2009) and Sato (1999).

Let \((a,\,b,\,\Pi )\) denote a characteristic triplet of the Lévy process L(t), \(t\ge 0\), that is for all \(t\ge 0\)

for all \(z\in \mathbb {R}\), where

where \(a\in \mathbb {R}\), \(b\ge 0\), and

The Lévy measure \(\Pi \) in (2) is a Radon measure on \(\mathbb {R}\backslash \{0\}\) such that \(\Pi (\{0\})=0\), and

It is known that L(t) has finite pth moment for \(p>0\) (\(\hbox {E}|L(t)|^p<\infty \)) if and only if

and L(t) has finite pth exponential moment for \(p>0\) (\(\hbox {E}\left[e^{pL(t)}\right]<\infty \)) if and only if

see, e.g., Sato (1999), Theorem 25.3.

If L(t), \(t\ge 0\), is a Lévy process with characteristics \((a,\,b,\,\Pi )\), then the process \(-L(t)\), \(t\ge 0\), is also a Lévy process with characteristics \((-a,\,b,\,\tilde{\Pi })\), where \(\tilde{\Pi }(A)=\Pi (-A)\) for each Borel set A, modifying it to be càdlàg (Anh et al. 2002).

We introduce a two-sided Lévy process L(t), \(t\in \mathbb {R}\), defined for \(t<0\) to be equal to an independent copy of \(-L(-t)\).

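Let \(\hat{a}\,:\,\mathbb {R}\rightarrow \mathbb {R}_+\) be a measurable function. We consider the Lévy-driven continuous-time linear (or moving average) stochastic process

$$\begin{aligned} \varepsilon (t)=\int \limits _{\mathbb {R}}\,\hat{a}(t-s)\,dL(s),\ t\in \mathbb {R}. \qquad (4) \end{aligned}$$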

For a causal process (4), \(\hat{a}(t)=0\) for \(t<0\).

In the sequel we assume that

Under the condition (5) and

the stochastic integral in (4) is well-defined in \(L_2(\Omega )\) in the sense of stochastic integration introduced in Rajput and Rosinski (1989).

The popular choices for the kernel in (4) are Gamma type kernels:

\(\hat{a}(t)=t^\alpha e^{-\lambda t}\mathbb {I}_{[0,\,\infty )}(t)\), \(\lambda >0\), \(\alpha >-\frac{1}{2}\);

\(\hat{a}(t)=e^{-\lambda t}\mathbb {I}_{[0,\,\infty )}(t)\), \(\lambda >0\) (Ornstein-Uhlenbeck process);

\(\hat{a}(t)=e^{-\lambda |t|}\), \(\lambda >0\) (well-balanced Ornstein-Uhlenbeck process).
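The restriction \(\alpha >-\frac{1}{2}\) in the Gamma type kernel is exactly what makes the kernel square integrable, since \(\int _0^\infty t^{2\alpha }e^{-2\lambda t}dt=\Gamma (2\alpha +1)/(2\lambda )^{2\alpha +1}\) is finite only for \(2\alpha +1>0\). The following minimal sketch, not part of the original argument, encodes the three kernels and compares a numerical \(L_2\) norm with this closed form. All function names and the parameter name `decay` (standing for \(\lambda \)) are hypothetical.

```python
import math


def gamma_kernel(t, alpha, decay):
    """Gamma type kernel t^alpha * exp(-decay * t) for t > 0, zero otherwise."""
    return t ** alpha * math.exp(-decay * t) if t > 0 else 0.0


def ou_kernel(t, decay):
    """Ornstein-Uhlenbeck kernel exp(-decay * t) for t >= 0, zero otherwise."""
    return math.exp(-decay * t) if t >= 0 else 0.0


def well_balanced_ou_kernel(t, decay):
    """Two-sided kernel exp(-decay * |t|)."""
    return math.exp(-decay * abs(t))


def gamma_kernel_l2_norm_sq(alpha, decay):
    """Closed form of int_0^inf t^(2*alpha) * exp(-2*decay*t) dt.

    The integral is finite only when 2*alpha + 1 > 0, i.e. alpha > -1/2.
    """
    if alpha <= -0.5:
        raise ValueError("kernel is not square integrable for alpha <= -1/2")
    return math.gamma(2 * alpha + 1) / (2 * decay) ** (2 * alpha + 1)


def midpoint_integral(func, lower, upper, n=200_000):
    """Composite midpoint rule on [lower, upper] with n cells."""
    h = (upper - lower) / n
    return h * sum(func(lower + (j + 0.5) * h) for j in range(n))


if __name__ == "__main__":
    alpha, decay = 0.5, 1.0
    numeric = midpoint_integral(
        lambda t: gamma_kernel(t, alpha, decay) ** 2, 0.0, 60.0
    )
    print(numeric, gamma_kernel_l2_norm_sq(alpha, decay))
```

With \(\alpha =0\) the closed form reduces to \(1/(2\lambda )\), the squared \(L_2\) norm of the Ornstein-Uhlenbeck kernel.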

\(\mathbf A _1\). The process \(\varepsilon \) in (1) is a measurable causal linear process of the form (4), where the two-sided Lévy process L is such that \(\hbox {E}L(1)=0\), and \(\hat{a}\in L_1(\mathbb {R})\cap L_2(\mathbb {R})\). Moreover, the Lévy measure \(\Pi \) of L(1) satisfies (3) for some \(p>0\).

From the condition \(\mathbf{A }_1\) it follows (Anh et al. 2002) that for any \(r\ge 1\)

In turn from (6) it can be seen that the stochastic process \(\varepsilon \) is stationary in a strict sense.
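To make the linear process concrete, the following sketch simulates it on a time grid. It rests on several assumptions that are not taken from the text: the process is read as \(\varepsilon (t)=\int \hat{a}(t-s)dL(s)\) with the Ornstein-Uhlenbeck kernel \(\hat{a}(t)=e^{-\lambda t}\), \(t\ge 0\); the driving process is a Brownian part plus a compound Poisson part with centred Gaussian jumps, so that \(\hbox {E}L(1)=0\); and the integral is replaced by a one-step recursion. All names are hypothetical. Under these assumptions the stationary variance is \(d_2/(2\lambda )\), where \(d_2\) is the variance of L(1).

```python
import math
import random


def levy_increments(n_steps, dt, gauss_var, jump_rate, jump_sd, rng):
    """Increments of L over a grid of width dt.

    L is a Brownian part with variance gauss_var per unit time plus a
    compound Poisson part with intensity jump_rate and N(0, jump_sd^2) jumps.
    """
    incs = [rng.gauss(0.0, math.sqrt(gauss_var * dt)) for _ in range(n_steps)]
    if jump_rate > 0:
        horizon = n_steps * dt
        t = rng.expovariate(jump_rate)  # first jump time
        while t < horizon:
            cell = min(int(t / dt), n_steps - 1)
            incs[cell] += rng.gauss(0.0, jump_sd)
            t += rng.expovariate(jump_rate)  # next inter-arrival time
    return incs


def ou_path(increments, dt, decay):
    """Recursion x_{k+1} = exp(-decay*dt) * x_k + dL_k, started at zero."""
    phi = math.exp(-decay * dt)
    x, path = 0.0, []
    for dl in increments:
        x = phi * x + dl
        path.append(x)
    return path


def sample_variance(xs):
    """Plain (biased) sample variance."""
    mean = sum(xs) / len(xs)
    return sum((x - mean) ** 2 for x in xs) / len(xs)


if __name__ == "__main__":
    rng = random.Random(2024)
    dt, decay = 0.05, 1.0
    incs = levy_increments(40_000, dt, gauss_var=0.5, jump_rate=1.0,
                           jump_sd=1.0, rng=rng)
    path = ou_path(incs, dt, decay)
    d2 = 0.5 + 1.0 * 1.0 ** 2  # variance of L(1) in this example
    print(sample_variance(path[200:]), d2 / (2 * decay))
```

The grid recursion carries a discretisation bias of relative order \(\lambda \,dt\), so the printed sample variance should only be expected to be close to, not equal to, \(d_2/(2\lambda )\).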

Denote by

the moment and cumulant functions correspondingly of order \(r,\ r\ge 1\), of the process \(\varepsilon \). Thus \(m_2(t_1,\,t_2)=B(t_1-t_2)\), where

is a covariance function of \(\varepsilon \), and the fourth moment function

The explicit expression for cumulants of the stochastic process \(\varepsilon \) can be obtained from (6) by direct calculations:

where \(d_r\) is the rth cumulant of the random variable L(1). In particular,

Under the condition \(\mathbf{A }_1\), the spectral densities of the stationary process \(\varepsilon \) of all orders exist and can be obtained from (8) as

where \(a\in L_2(\mathbb {R})\), \(a(\lambda )=\int \limits _{\mathbb {R}}\,\hat{a}(t)e^{-\mathrm {i}\lambda t}dt\), \(\lambda \in \mathbb {R}\), if complex-valued functions \(f_r\in L_1\left(\mathbb {R}^{r-1}\right)\), \(r>2\), see, e.g., Avram et al. (2010) for definitions of the spectral densities of higher order \(f_r,\ r\ge 3\).
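For the second-order case this relation between the kernel and the spectral density can be checked numerically. The sketch below assumes the convention \(f(\lambda )=\dfrac{d_2}{2\pi }|a(\lambda )|^2\) with \(a(\lambda )\) as defined above, and uses the Ornstein-Uhlenbeck kernel, for which \(a(\lambda )=(\lambda _0+\mathrm {i}\lambda )^{-1}\) and hence \(f(\lambda )=d_2/\bigl (2\pi (\lambda _0^2+\lambda ^2)\bigr )\). The decay rate is written `decay` (that is, \(\lambda _0\)) to keep it apart from the frequency; all function names are hypothetical.

```python
import cmath
import math


def ou_kernel(t, decay):
    """Ornstein-Uhlenbeck kernel exp(-decay * t) for t >= 0, zero otherwise."""
    return math.exp(-decay * t) if t >= 0 else 0.0


def causal_fourier_transform(kernel, freq, upper=60.0, n=200_000):
    """Midpoint approximation of a(freq) = int_0^upper kernel(t) e^{-i freq t} dt."""
    h = upper / n
    total = 0j
    for j in range(n):
        t = (j + 0.5) * h
        total += kernel(t) * cmath.exp(-1j * freq * t)
    return h * total


def spectral_density_from_transfer(a_value, d2):
    """Second-order spectral density d2 / (2*pi) * |a(freq)|^2 (assumed convention)."""
    return d2 * abs(a_value) ** 2 / (2 * math.pi)


def ou_spectral_density(freq, decay, d2):
    """Closed form d2 / (2*pi*(decay^2 + freq^2)) for the OU kernel."""
    return d2 / (2 * math.pi * (decay ** 2 + freq ** 2))


if __name__ == "__main__":
    decay, d2, freq = 1.5, 3.0, 2.0
    a_num = causal_fourier_transform(lambda t: ou_kernel(t, decay), freq)
    print(spectral_density_from_transfer(a_num, d2),
          ou_spectral_density(freq, decay, d2))
```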

For \(r=2\), we denote the spectral density of the second order by

- \(\mathbf A _2\). (i):

Spectral densities (9) of all orders \(f_r\in L_1(\mathbb {R}^{r-1})\), \(r\ge 2\);

- (ii):

\(a(\lambda )=a\left(\lambda ,\,\theta ^{(1)}\right)\), \(d_2=d_2\left(\theta ^{(2)}\right)\), \(\theta =\left(\theta ^{(1)},\,\theta ^{(2)}\right)\in \Theta _\tau \), \(\Theta _\tau =\bigcup \limits _{\Vert e\Vert < 1}(\Theta +\tau e)\), \(\tau >0\) is some number, \(\Theta \subset \mathbb {R}^m\) is a bounded open convex set, that is \(f(\lambda )=f(\lambda ,\,\theta )\), \(\theta \in \Theta _\tau \), and a true value of parameter \(\theta _0\in \Theta \);

- (iii):

\(f(\lambda ,\,\theta )>0\), \((\lambda ,\,\theta )\in \mathbb {R}\times \Theta ^c\).

In the condition \(\mathbf{A }_2\)(ii) above \(\theta ^{(1)}\) represents parameters of the kernel \(\hat{a}\) in (4), while \(\theta ^{(2)}\) represents parameters of Lévy process.

Remark 2

The last part of the condition \(\mathbf{A }_1\) is fully used in the proof of Lemma 5 and Theorem B.1 in “Appendix B”. The condition \(\mathbf{A }_2\)(i) is fully used only in the proof of Lemma 5. When we refer to these conditions in other places of the text we use them partially: see, for example, Lemma 3, where we need only the existence of \(f_4\).

Definition 1

The least squares estimator (LSE) of the parameter \(\alpha _0\in \mathcal {A}\) obtained from observations of the process \(\left\lbrace X(t),\ t\in [0,T]\right\rbrace \) is any random vector \(\widehat{\alpha }_T=(\widehat{\alpha }_{1T},\,\ldots ,\,\widehat{\alpha }_{qT})\in \mathcal {A}^c\) (\(\mathcal {A}^c\) is the closure of \(\mathcal {A}\)), such that

We consider the residual periodogram

and the Whittle contrast field

where \(w(\lambda ),\ \lambda \in \mathbb {R}\), is an even nonnegative bounded Lebesgue measurable function, for which the integral (10) is well-defined. The existence of integral (10) follows from the condition \(\mathbf{C }_4\) introduced below.

Definition 2

The minimum contrast estimator (MCE) of the unknown parameter \(\theta _0\in \Theta \) is any random vector \(\widehat{\theta }_T=\left(\widehat{\theta }_{1T},\ldots ,\widehat{\theta }_{mT}\right)\) such that

The minimum in Definition 2 is attained because the integral (10) is continuous in \(\theta \in \Theta ^c\), which follows from the condition \(\mathbf{C }_4\) introduced below.
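The two definitions can be illustrated by a small numerical sketch. It assumes the standard Whittle form, namely a periodogram \((2\pi T)^{-1}\left|\int _0^T r(t)e^{-\mathrm {i}\lambda t}dt\right|^2\) of the residuals \(r(t)\) and a contrast \(\int \bigl (\log f(\lambda ,\,\theta )+I_T(\lambda )/f(\lambda ,\,\theta )\bigr )w(\lambda )d\lambda \), both replaced by Riemann sums. The Ornstein-Uhlenbeck type density, the weight \(w(\lambda )=(1+\lambda ^2)^{-2}\), the grid search and all function names are assumptions of the sketch, not the method of the paper.

```python
import math


def periodogram(residuals, dt, lam):
    """Riemann-sum version of (2*pi*T)^{-1} |int_0^T r(t) e^{-i lam t} dt|^2."""
    big_t = len(residuals) * dt
    re = sum(r * math.cos(lam * k * dt) for k, r in enumerate(residuals)) * dt
    im = sum(r * math.sin(lam * k * dt) for k, r in enumerate(residuals)) * dt
    return (re * re + im * im) / (2 * math.pi * big_t)


def ou_density(lam, theta):
    """Illustrative spectral density 1 / (2*pi*(theta^2 + lam^2)), theta > 0."""
    return 1.0 / (2 * math.pi * (theta ** 2 + lam ** 2))


def rational_weight(lam):
    """Even, bounded, nonnegative weight; here w / f stays bounded."""
    return 1.0 / (1.0 + lam ** 2) ** 2


def whittle_contrast(theta, lams, pgram, density, weight):
    """Riemann sum of (log f + I_T / f) * w over a uniform frequency grid."""
    dlam = lams[1] - lams[0]
    total = 0.0
    for lam, i_val in zip(lams, pgram):
        f_val = density(lam, theta)
        total += (math.log(f_val) + i_val / f_val) * weight(lam)
    return total * dlam


def minimum_contrast_estimate(theta_grid, lams, pgram, density, weight):
    """Grid point of theta_grid minimising the Whittle contrast."""
    return min(
        theta_grid,
        key=lambda th: whittle_contrast(th, lams, pgram, density, weight),
    )


if __name__ == "__main__":
    lams = [-20.0 + 0.05 * k for k in range(801)]
    theta_grid = [0.5 + 0.1 * k for k in range(26)]
    # Idealised input: the periodogram is replaced by the true density.
    pgram = [ou_density(lam, 1.5) for lam in lams]
    print(minimum_contrast_estimate(theta_grid, lams, pgram,
                                    ou_density, rational_weight))
```

In the idealised run the periodogram is replaced by \(f(\lambda ,\,\theta _0)\) itself. Since \(\log x+c/x\) is minimised at \(x=c\), the contrast is then minimised at \(\theta _0\), which is the mechanism behind the consistency result of Sect. 3. With real data `pgram` would be computed by `periodogram` from the residuals \(X(t)-g(t,\,\widehat{\alpha }_T)\).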

3 Consistency of the minimum contrast estimator

Suppose the function \(g(t,\,\alpha )\) in (1) is continuously differentiable with respect to \(\alpha \in \mathcal {A}^c\) for any \(t\ge 0\), and its derivatives \(g_i(t,\,\alpha )=\dfrac{\partial }{\partial \alpha _i}g(t,\,\alpha )\), \(i=\overline{1,q}\), are locally integrable with respect to t. Let

and \(\underset{T\rightarrow \infty }{\liminf }\,T^{-\frac{1}{2}}d_{iT}(\alpha )>0\), \(i=\overline{1,q}\), \(\alpha \in \mathcal {A}\).

Set

We assume that the following conditions are satisfied.

- \(\mathbf{C }_1\).:

The LSE \(\widehat{\alpha }_T\) is a weakly consistent estimator of \(\alpha _0\in \mathcal {A}\) in the sense that

$$\begin{aligned} T^{-\frac{1}{2}}d_T(\alpha _0)\left(\widehat{\alpha }_T-\alpha _0\right)\ \overset{\hbox {P}}{\longrightarrow }\ 0,\ \text {as}\ T\rightarrow \infty . \end{aligned}$$

- \(\mathbf{C }_2\).:

There exists a constant \(c_0<\infty \) such that for any \(\alpha _0\in \mathcal {A}\) and \(T>T_0\), where \(c_0\) and \(T_0\) may depend on \(\alpha _0\),

$$\begin{aligned} \Phi _T(\alpha ,\,\alpha _0)\le c_0\Vert d_T(\alpha _0)\left(\alpha -\alpha _0\right)\Vert ^2,\ \alpha \in \mathcal {A}^c. \end{aligned}$$

The fulfillment of the conditions \(\mathbf{C }_1\) and \(\mathbf{C }_2\) is discussed in more detail in “Appendix A”. We also need three more conditions.

- \(\mathbf{C }_3\).:

\(f(\lambda ,\,\theta _1)\ne f(\lambda ,\,\theta _2)\) on a set of positive Lebesgue measure once \(\theta _1\ne \theta _2\), \(\theta _1,\theta _2\in \Theta ^c\).

- \(\mathbf{C }_4\).:

The functions \(w(\lambda )\log f(\lambda ,\,\theta )\), \(\dfrac{w(\lambda )}{f(\lambda ,\,\theta )}\) are continuous with respect to \(\theta \in \Theta ^c\) almost everywhere in \(\lambda \in \mathbb {R}\), and

- (i):

\(w(\lambda )\left|\log f(\lambda ,\,\theta )\right|\le Z_1(\lambda )\), \(\theta \in \Theta ^c\), almost everywhere in \(\lambda \in \mathbb {R}\), and \(Z_1(\cdot )\in L_1(\mathbb {R})\);

- (ii):

\(\sup \limits _{\lambda \in \mathbb {R},\,\theta \in \Theta ^c}\,\dfrac{w(\lambda )}{f(\lambda ,\,\theta )} =c_1<\infty \).

- \(\mathbf{C }_5\).:

There exists an even positive Lebesgue measurable function \(v(\lambda )\), \(\lambda \in \mathbb {R}\), such that

- (i):

\(\dfrac{v(\lambda )}{f(\lambda ,\,\theta )}\) is uniformly continuous in \((\lambda ,\,\theta )\in \mathbb {R}\times \Theta ^c\);

- (ii):

\(\sup \limits _{\lambda \in \mathbb {R}}\,\dfrac{w(\lambda )}{v(\lambda )}<\infty \).

Theorem 1

Under conditions \(\mathbf{A }_1, \mathbf{A }_2, \mathbf{C }_1\)–\(\mathbf{C }_5\), \(\widehat{\theta }_T\ \overset{\hbox {P}}{\longrightarrow }\ \theta _0\), as \(T\rightarrow \infty \).

To prove the theorem we need some additional assertions.

Lemma 1

Under condition \(\mathbf{A }_1\)

Proof

For any \(\rho >0\) by Chebyshev inequality and (7)

From \(\mathbf{A }_1\) it follows that \(I_2=O(T^{-1})\). Using expression (8) for cumulants of the process \(\varepsilon \) we get

where \(\left\Vert\hat{a}\right\Vert_2=\left(\int \limits _{\mathbb {R}}\,\hat{a}^2(u)du\right)^{\frac{1}{2}}\), that is \(I_1=O(T^{-1})\) as well. \(\square \)

Let

with \(u_k=-\left(u_1+\ldots +u_{k-1}\right)\), \(u_j\in \mathbb {R}\), \(j=\overline{1,k}\).

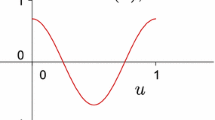

The functions \(\mathrm {F}_T^{(k)}\left(u_1,\ldots ,u_k\right)\), \(k\ge 3\), are multidimensional analogues of the Fejér kernel, for \(k=2\) we obtain the usual Fejér kernel.
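The role of the case \(k=2\) can be seen numerically. The sketch below assumes the usual continuous-time Fejér kernel in the normalisation \((2\pi T)^{-1}\left|\int _0^T e^{\mathrm {i}ut}dt\right|^2 =(2\pi T)^{-1}\bigl (\sin (Tu/2)/(u/2)\bigr )^2\), which has total mass one and concentrates near the origin as T grows. This approximate-identity behaviour is the property that Lemma 2 exploits. The function names and the integration grid are hypothetical.

```python
import math


def fejer_kernel(u, big_t):
    """(2*pi*T)^{-1} * (sin(T*u/2) / (u/2))^2, with the limit T/(2*pi) at u = 0."""
    if u == 0.0:
        return big_t / (2 * math.pi)
    return (math.sin(big_t * u / 2) / (u / 2)) ** 2 / (2 * math.pi * big_t)


def kernel_mass(big_t, half_width, n=400_000):
    """Midpoint-rule mass of the kernel on [-half_width, half_width]."""
    h = 2 * half_width / n
    return h * sum(
        fejer_kernel(-half_width + (j + 0.5) * h, big_t) for j in range(n)
    )


if __name__ == "__main__":
    print(kernel_mass(10.0, 200.0))   # close to 1: total mass
    print(kernel_mass(10.0, 0.5))     # mass near the origin for T = 10
    print(kernel_mass(100.0, 0.5))    # larger share near the origin for T = 100
```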

The next statement is based on the results by Bentkus (1972a, b), Bentkus and Rutkauskas (1973).

Lemma 2

Let function \(G\left(u_1,\,\ldots ,\,u_k\right)\), \(u_k=-\left(u_1+\ldots +u_{k-1}\right)\) be bounded and continuous at the point \(\left(u_1,\,\ldots ,\,u_{k-1}\right)=(0,\,\ldots ,\,0)\). Then

We set

and write the residual periodogram in the form

Let \(\varphi =\varphi (\lambda ,\,\theta )\), \((\lambda ,\,\theta )\in \mathbb {R}\times \Theta ^c\), be a weight function that is even and Lebesgue measurable in \(\lambda \) for each fixed \(\theta \). We have

Suppose

Then by the Plancherel identity and condition \(\mathbf{C }_2\)

Taking into account conditions \(\mathbf{A }_1, \mathbf{C }_1, \mathbf{C }_2\) and the result of Lemma 1 we obtain

On the other hand

and again, thanks to \(\mathbf{C }_1, \mathbf{C }_2\),

Lemma 3

Suppose conditions \(\mathbf{A }_1, \mathbf{A }_2\) are fulfilled and the weight function \(\varphi (\lambda ,\,\theta )\) introduced above satisfies (11). Then, as \(T\rightarrow \infty \),

Proof

The lemma is in fact an application of the reasoning of Lemma 2 in Anh et al. (2002) and Theorem 1 in Anh et al. (2004) to the linear process (4). It is sufficient to prove

Omitting parameters \(\theta _0\), \(\theta \) in some formulas below we derive

To apply Lemma 2 we have to show that the functions \(G_2(u)\), \(u\in \mathbb {R}\); \(G_4^{(1)}(\mathrm {u})\), \(G_4^{(2)}(\mathrm {u})\), \(\mathrm {u}=(u_1,\,u_2,\,u_3)\in \mathbb {R}^3\), are bounded and continuous at the origin.

Boundedness of \(G_2\) follows from (11). Thanks to (11)

On the other hand, by (9)

Integral \(I_4\) admits the same upper bound. So,

where \(\gamma _2=\dfrac{d_4}{d_2^2}>0\) is the excess of the distribution of L(1), and the functions \(G_2\), \(G_4^{(1)}\), \(G_4^{(2)}\) are bounded. The continuity of these functions at the origin also follows from the conditions of Lemma 3. \(\square \)

Corollary 1

If \(\varphi (\lambda ,\,\theta )=\dfrac{w(\lambda )}{f(\lambda ,\,\theta )}\), then under conditions \(\mathbf{A }_1, \mathbf{A }_2, \mathbf{C }_1, \mathbf{C }_2\) and \(\mathbf{C }_4\)

Consider the Whittle contrast function

with \(K(\theta _0,\,\theta )=0\) if and only if \(\theta =\theta _0\) due to \(\mathbf{C }_3\).

Lemma 4

If the conditions \(\mathbf{A }_1, \mathbf{A }_2, \mathbf{C }_1, \mathbf{C }_2, \mathbf{C }_4\) and \(\mathbf{C }_5\) are satisfied, then

Proof

Let \(\{\theta _j,\ j=\overline{1,N_{\delta }}\}\) be a \(\delta \)-net of the set \(\Theta ^c\). Then

and for any \(\rho >0\)

with

by Corollary 1. On the other hand,

By the condition \(\mathbf{C }_5\)(i)

where

Since by Lemma 3 and the condition \(\mathbf{C }_5\)(ii)

and the 2nd term under the probability sign in (14) can be made arbitrarily small by choosing \(\delta \), we get \(P_1\rightarrow 0\), as \(T\rightarrow \infty \), taking into account that the 3rd and the 4th terms converge to zero in probability, thanks to (12) and (13), if \(\varphi =\dfrac{w}{f}\). \(\square \)

Proof of Theorem 1

By Definition 2 for any \(\rho >0\)

when \(T\rightarrow \infty \) due to Lemma 4 and the property of the contrast function K. \(\square \)

4 Asymptotic normality of minimum contrast estimator

The first three conditions relate to properties of the regression function \(g(t,\,\alpha )\) and the LSE \(\widehat{\alpha }_T\). They are commented on in “Appendix B”.

- \({\mathbf{N }}_1\).:

The normed LSE \(d_T(\alpha _0)\left(\widehat{\alpha }_T-\alpha _0\right)\) is asymptotically, as \(T\rightarrow \infty \), normal \(N(0,\,\Sigma _{_{LSE}})\), \(\Sigma _{_{LSE}}=\left(\Sigma _{_{LSE}}^{ij}\right)_{i,j=1}^q\).

Let

- \(\mathbf N _2\).:

The function \(g(t,\,\alpha )\) is continuously differentiable with respect to \(t\ge 0\) for any \(\alpha \in \mathcal {A}^c\), and for any \(\alpha _0\in \mathcal {A}\) and \(T>T_0\) there exists a constant \(c_0'\) (\(T_0\) and \(c'_0\) may depend on \(\alpha _0\)) such that

$$\begin{aligned} \Phi _T'(\alpha ,\,\alpha _0) \le c_0'\Bigl \Vert d_T(\alpha _0)\left(\alpha -\alpha _0\right)\Bigr \Vert ^2,\ \alpha \in \mathcal {A}^c. \end{aligned}$$

Let

- \(\mathbf N _3\).:

The function \(g(t,\,\alpha )\) is twice continuously differentiable with respect to \(\alpha \in \mathcal {A}^c\) for any \(t\ge 0\), and for any \(R\ge 0\) and all sufficiently large T (\(T>T_0(R)\))

- (i):

\(d_{iT}^{-1}(\alpha _0)\sup \limits _{t\in [0,T],\,u\in v^c(R)}\, \left|g_i\left(t,\,\alpha _0+d_T^{-1}(\alpha _0)u\right)\right|\le c^i(R)T^{-\frac{1}{2}}\), \(i=\overline{1,q}\);

- (ii):

\(d_{il,T}^{-1}(\alpha _0)\sup \limits _{t\in [0,T],\,u\in v^c(R)}\, \left|g_{il}\left(t,\,\alpha _0+d_T^{-1}(\alpha _0)u\right)\right|\le c^{il}(R)T^{-\frac{1}{2}}\), \(i,l=\overline{1,q}\);

- (iii):

\(d_{iT}^{-1}(\alpha _0)d_{lT}^{-1}(\alpha _0) d_{il,T}(\alpha _0)\le \tilde{c}^{il}T^{-\frac{1}{2}}\), \(i,l=\overline{1,q}\),

with positive constants \(c^i\), \(c^{il}\), \(\tilde{c}^{il}\), possibly, depending on \(\alpha _0\).

We assume also that the function \(f(\lambda ,\,\theta )\) is twice differentiable with respect to \(\theta \in \Theta ^c\) for any \(\lambda \in \mathbb {R}\).

Set

and introduce the following conditions.

- \(\mathbf{N}_4\).:

- (i):

For any \(\theta \in \Theta ^c\) the functions \(\varphi _i(\lambda )=\dfrac{f_i(\lambda ,\,\theta )}{f^2(\lambda ,\,\theta )}w(\lambda )\), \(\lambda \in \mathbb {R}\), \(i=\overline{1,m}\), possess the following properties:

- (1):

\(\varphi _i\in L_\infty (\mathbb {R})\cap L_1(\mathbb {R})\);

- (2):

\(\overset{+\infty }{\underset{-\infty }{\hbox {Var}}}\,\varphi _i<\infty \);

- (3):

\(\underset{\eta \rightarrow 1}{\lim }\,\underset{\lambda \in \mathbb {R}}{\sup }\, \left|\varphi _i(\eta \lambda )-\varphi _i(\lambda )\right|=0\) ;

- (4):

\(\varphi _i\) are differentiable and \(\varphi '_i\) are uniformly continuous on \(\mathbb {R}\).

- (ii):

\(\dfrac{|f_i(\lambda ,\,\theta )|}{f(\lambda ,\,\theta )}w(\lambda ) \le Z_2(\lambda )\), \(\theta \in \Theta \), \(i=\overline{1,m}\), almost everywhere in \(\lambda \in \mathbb {R}\) and \(Z_2(\cdot )\in L_1(\mathbb {R})\).

- (iii):

The functions \(\dfrac{f_i(\lambda ,\,\theta ) f_j(\lambda ,\,\theta )}{f^2(\lambda ,\,\theta )}w(\lambda )\), \(\dfrac{f_{ij}(\lambda ,\,\theta )}{f(\lambda ,\,\theta )}w(\lambda )\) are continuous with respect to \(\theta \in \Theta ^c\) for each \(\lambda \in \mathbb {R}\) and

$$\begin{aligned} \dfrac{f_i^2(\lambda ,\,\theta )}{f^2(\lambda ,\,\theta )}w(\lambda ) +\dfrac{|f_{ij}(\lambda ,\,\theta )|}{f(\lambda ,\,\theta )}w(\lambda )\le a_{ij}(\lambda ),\ \lambda \in \mathbb {R},\ \theta \in \Theta ^c, \end{aligned}$$

where \(a_{ij}(\cdot )\in L_1(\mathbb {R})\), \(i,j=\overline{1,m}\).

- \(\mathbf N _5\).:

- (i):

\(\dfrac{f_i^2(\lambda ,\,\theta )}{f^3(\lambda ,\,\theta )}w(\lambda )\), \(\dfrac{f_{ij}(\lambda ,\,\theta )}{f^2(\lambda ,\,\theta )}w(\lambda )\), \(i,j=\overline{1,m}\), are bounded functions in \((\lambda ,\,\theta )\in \mathbb {R}\times \Theta ^c\);

- (ii):

There exists an even positive Lebesgue measurable function \(v(\lambda ),\ \lambda \in \mathbb {R}\), such that the functions \(\dfrac{f_i(\lambda ,\,\theta ) f_j(\lambda ,\,\theta )}{f^3(\lambda ,\,\theta )}v(\lambda )\), \(\dfrac{f_{ij}(\lambda ,\,\theta )}{f^2(\lambda ,\,\theta )}v(\lambda )\), \(i,j=\overline{1,m}\), are uniformly continuous in \((\lambda ,\,\theta )\in \mathbb {R}\times \Theta ^c\);

- (iii):

\(\underset{\lambda \in \mathbb {R}}{\sup }\,\dfrac{w(\lambda )}{v(\lambda )}<\infty \).

Conditions \(\mathbf N _5\)(iii) and \(\mathbf C _5\)(ii) look the same. However, the function v in them must satisfy different requirements, \(\mathbf N _5\)(ii) and \(\mathbf C _5\)(i) respectively, and therefore, generally speaking, the functions v in these two conditions can be different.

The next three matrices appear in the formulation of Theorem 2:

where \(\gamma _2=\dfrac{d_4}{d_2^2}>0\) is the excess of the random variable L(1), \(\nabla _\theta \) is a column vector-gradient, \(\nabla _\theta '\) is a row vector-gradient.
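As a worked example of the quantity \(\gamma _2\), take a driving process whose jump part is compound Poisson. This choice is an assumption made for illustration only. If L(1) is the sum of an independent centred Gaussian variable with variance \(\sigma _G^2\) and a compound Poisson variable with intensity \(\nu \) and \(N(0,\,s^2)\) jumps, then the rth cumulant of the jump part is \(\nu \hbox {E}J^r\), so that \(d_2=\sigma _G^2+\nu s^2\), \(d_4=3\nu s^4\), and \(\gamma _2=3\nu s^4/(\sigma _G^2+\nu s^2)^2\). The function and parameter names below are hypothetical.

```python
def cumulants_of_l1(gauss_var, jump_rate, jump_sd):
    """Return (d2, d4) for L(1) = Gaussian part + compound Poisson part.

    gauss_var : variance of the Gaussian component of L(1)
    jump_rate : Poisson intensity nu of the jumps
    jump_sd   : standard deviation s of the N(0, s^2) jump sizes
    """
    d2 = gauss_var + jump_rate * jump_sd ** 2  # nu * E[J^2] = nu * s^2
    d4 = 3.0 * jump_rate * jump_sd ** 4        # nu * E[J^4]; Gaussian part adds 0
    return d2, d4


def excess(gauss_var, jump_rate, jump_sd):
    """Excess gamma_2 = d4 / d2^2 of L(1)."""
    d2, d4 = cumulants_of_l1(gauss_var, jump_rate, jump_sd)
    return d4 / d2 ** 2


if __name__ == "__main__":
    print(excess(0.0, 2.0, 1.0))  # pure jump case: 3 / nu
    print(excess(1.0, 1.0, 1.0))  # a Gaussian component lowers the excess
```

In the pure jump case the excess equals \(3/\nu \), so rare jumps give a large \(\gamma _2\), while without jumps the fourth cumulant vanishes.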

\(\mathbf N _6\). Matrices \(W_1(\theta )\) and \(W_2(\theta )\) are positive definite for \(\theta \in \Theta \).

Theorem 2

Under conditions \(\mathbf{A }_1, \mathbf{A }_2, \mathbf{C }_1\)–\(\mathbf{C }_5\) and \(\mathbf{N }_1\)–\(\mathbf{N }_6\) the normed MCE \(T^{\frac{1}{2}}(\widehat{\theta }_T-\theta _0)\) is asymptotically, as \(T\rightarrow \infty \), normal with zero mean and covariance matrix

The proof of the theorem is preceded by several lemmas. The next statement is Theorem 5.1 of Avram et al. (2010), formulated in a form convenient to us.

Lemma 5

Let the stochastic process \(\varepsilon \) satisfy \(\mathbf A _1\), \(\mathbf A _2\), the spectral density \(f\in L_p(\mathbb {R})\), and a function \(b\in L_q(\mathbb {R})\cap L_1(\mathbb {R})\), where \(\dfrac{1}{p}+\dfrac{1}{q}=\dfrac{1}{2}\). Let

and

Then the central limit theorem holds:

where “\(\Rightarrow \)” means convergence in distribution,

where \(\gamma _2=\dfrac{d_4}{d_2^2}>0\) is the excess of the random variable L(1). In particular, the statement is true for \(p=2\) and \(q=\infty \).

An alternative form of Lemma 5 is given in Bai et al. (2016). We formulate their Theorem 2.1 in a form convenient to us.

Lemma 6

Let the stochastic process \(\varepsilon \) be such that \(\hbox {E}L(1)=0\), \(\hbox {E}L^4(1)<\infty \), and \(Q_T\) be as in (17). Assume that \(\hat{a}\in L_p(\mathbb {R})\cap L_2(\mathbb {R})\), \(\hat{b}\) is of the form (16) with even function \(b\in L_1(\mathbb {R})\) and \(\hat{b}\in L_q(\mathbb {R})\) with

then

where \(\sigma ^2\) is given in (18).

Remark 3

It is important to note that conditions of Lemma 5 are given in frequency domain, while Lemma 6 employs the time domain conditions.

Theorems similar to Lemmas 5 and 6 can be found in the paper by Giraitis et al. (2017), where the case of martingale differences was considered. An overview of analogous results for different types of processes is given in the paper by Ginovyan et al. (2014).

Set

Lemma 7

Suppose the conditions \(\mathbf{A }_1, \mathbf{A }_2, \mathbf{C }_2, \mathbf{N }_1\)–\(\mathbf{N }_3\) are fulfilled, \(\varphi (\lambda )\), \(\lambda \in \mathbb {R}\), is a bounded differentiable function satisfying the relation (3) of the condition \(\mathbf{N }_4\)(i), and moreover the derivative \(\varphi '(\lambda )\), \(\lambda \in \mathbb {R}\), is uniformly continuous on \(\mathbb {R}\). Then

Proof

Let \(B_\sigma \) be the set of all bounded entire functions on \(\mathbb {R}\) of exponential type \(0\le \sigma <\infty \) (see “Appendix C”), and let \(\delta >0\) be an arbitrarily small number. Then there exists a function \(\varphi _\sigma \in B_\sigma \), \(\sigma =\sigma (\delta )\), such that

Let \(T_n(\varphi _\sigma ;\,\lambda )=\sum \limits _{j=-n}^n\, c_j^{(n)}e^{\mathrm {i}j\frac{\sigma }{n}\lambda },\ n\ge 1\), be a sequence of the Levitan polynomials that corresponds to \(\varphi _\sigma \). For any \(\Lambda >0\) there exists \(n_0=n_0(\delta ,\,\Lambda )\) such that for \(n>n_0\)

Write

So, under the condition \(\mathbf{C }_2\), for any \(\rho >0\)

The probability \(P_4\rightarrow 0\), as \(T\rightarrow \infty \), and the probability \(P_3\) under the condition \(\mathbf{N }_1\) for sufficiently large T (we will write \(T>T_0\)) can be made less than a preassigned number by choosing \(\delta >0\) for a fixed \(\rho >0\).

Since the function \(\varphi _\sigma \in B_\sigma \) and the corresponding sequence of Levitan polynomials \(T_n\) are bounded by the same constant, we obtain

The integral in the term \(D_1\) can be majorized by an integral over \(\mathbb {R}\) and bounded as earlier. We have further

where \(\overline{s_T'(\lambda ,\,\widehat{\alpha }_T)} =\int \limits _0^T\, e^{-\mathrm {i}\lambda t}(g'(t,\,\alpha _0)-g'(t,\,\widehat{\alpha }_T))dt\).

Under the conditions of the lemma

Obviously,

\(\alpha ^*_T=\alpha _0+\eta \left(\widehat{\alpha }_T-\alpha _0\right)\), \(\eta \in (0,\,1)\), \(d_T(\alpha _0)\left(\alpha ^*_T-\alpha _0\right)= \eta d_T(\alpha _0)\left(\widehat{\alpha }_T-\alpha _0\right)\), and for any \(\rho >0\) and \(i=\overline{1,q}\)

By condition \(\mathbf N _3\)(i) for any \(R\ge 0\)

according to \(\mathbf{N }_1\) (or \(\mathbf{C }_1\)). On the other hand, by condition \(\mathbf{N }_1\) the value R can be chosen so that for \(T>T_0\) the probability \(P_6\) becomes less than a preassigned number.

So,

and, similarly, \(g(0,\,\widehat{\alpha }_T)- g(0,\,\alpha _0)\ \overset{\hbox {P}}{\longrightarrow }\ 0\), as \(T\rightarrow \infty \).

Moreover, for any \(\rho >0\)

and the second probability is equal to zero, if \(\Lambda >\frac{R}{\rho }\).

Thus for any fixed \(\rho >0\), similarly to the probability \(P_3\), the probability \(P_7=\hbox {P}\{D_2\ge \rho \}\) for \(T>T_0\) can be made less than a preassigned number by the choice of the value \(\Lambda \).

Consider

It means that

For \(j>0\) consider the value

\(\alpha _T^{*}=\alpha _0+\bar{\eta }\left(\widehat{\alpha }_T-\alpha _0\right)\), \(\bar{\eta }\in (0,\,1)\).

Note that for \(i=\overline{1,q}\)

by the condition \(\mathbf{N }_1\). Moreover,

since

It means that the sum \(S_{1T}\overset{\hbox {P}}{\longrightarrow }0\), as \(T\rightarrow \infty \).

For the general term \(S_{2T}^{ik}\) of the sum \(S_{2T}\) and any \(\rho >0\), \(R>0\),

Under condition

using assumptions \(\mathbf{N }_3\)(ii) and \(\mathbf{N }_3\)(iii) we get as in the estimation of the probability \(P_5\)

By Lemma 1

So, by condition \(\mathbf{N }_1\), \(P_8\rightarrow 0\), as \(T\rightarrow \infty \), that is \(S_{2T}\ \overset{\hbox {P}}{\longrightarrow }\ 0\), as \(T\rightarrow \infty \). For \(j\le 0\) the reasoning is similar, and

\(\square \)

Lemma 8

Let the function \(\varphi (\lambda ,\,\theta )w(\lambda )\) be continuous in \(\theta \in \Theta ^c\) for each fixed \(\lambda \in \mathbb {R}\) with

If \(\theta _T^{*}\overset{\hbox {P}}{\longrightarrow }\theta _0\), then

Proof

By the Lebesgue dominated convergence theorem the integral \(I(\theta )\), \(\theta \in \Theta ^c\), is a continuous function. The further argument is standard. For any \(\rho >0\) and \(\varepsilon =\dfrac{\rho }{2}\) we find \(\delta >0\) such that \(|I(\theta )-I(\theta _0)|<\varepsilon \) whenever \(\Vert \theta -\theta _0\Vert <\delta \). Then

where

due to the choice of \(\varepsilon \), and

\(\square \)

Lemma 9

If the conditions \(\mathbf{A }_1, \mathbf{C }_2\) are satisfied and \(\sup \limits _{\lambda \in \mathbb {R},\,\theta \in \Theta ^c}\, |\varphi (\lambda ,\,\theta )|=c(\varphi )<\infty \), then

Proof

These relations are similar to (12), (13), and can be obtained in the same way. \(\square \)

Lemma 10

Under conditions \(\mathbf{A }_1, \mathbf{A }_2\), let there exist an even positive Lebesgue measurable function \(v(\lambda )\), \(\lambda \in \mathbb {R}\), and a function \(\varphi (\lambda ,\,\theta )\), \((\lambda ,\,\theta )\in \mathbb {R}\times \Theta ^c\), even and Lebesgue measurable in \(\lambda \) for any fixed \(\theta \in \Theta ^c\), such that

- (i):

\(\varphi (\lambda ,\,\theta )v(\lambda )\) is uniformly continuous in \((\lambda ,\,\theta )\in \mathbb {R}\times \Theta ^c\);

- (ii):

\(\underset{\lambda \in \mathbb {R}}{\sup }\,\dfrac{w(\lambda )}{v(\lambda )}<\infty \);

- (iii):

\(\underset{\lambda \in \mathbb {R},\ \theta \in \Theta ^c}{\sup }\,|\varphi (\lambda ,\,\theta )|w(\lambda )<\infty \).

Suppose also that \(\theta _T^{*}\overset{\hbox {P}}{\longrightarrow }\theta _0\). Then, as \(T\rightarrow \infty \),

$$\begin{aligned} \int \limits _{\mathbb {R}}\,I_T^{\varepsilon }(\lambda )\varphi (\lambda ,\,\theta _T^{*})w(\lambda )d\lambda \ \overset{\hbox {P}}{\longrightarrow }\ \int \limits _{\mathbb {R}}\,f(\lambda ,\,\theta _0)\varphi (\lambda ,\,\theta _0)w(\lambda )d\lambda . \end{aligned}$$

Proof

We have

By Lemma 3 and the condition (iii)

On the other hand, for any \(r>0\) under the condition (i) there exists \(\delta =\delta (r)\) such that for \(\left\Vert\theta _T^{*}-\theta _0\right\Vert<\delta \)

and by the condition (ii)

The relations (19)–(21) prove the lemma. \(\square \)

Proof of Theorem 2

By definition of the MCE \(\widehat{\theta }_T\), formally using the Taylor formula, we get

Since there is no vector Taylor formula, (22) must be taken coordinatewise, that is each row of vector equality (22) depends on its own random vector \(\theta _T^{*}\), such that \(\Vert \theta _T^{*}-\theta _0\Vert \le \Vert \widehat{\theta }_T-\theta _0\Vert \). In turn, from (22) we have formally

Since the condition \(\mathbf{N }_4\) makes it possible to differentiate under the integral sign in (10), we have

Similarly

where the terms \(B_T^{(3)}\) and \(B_T^{(4)}\) contain values \(\hbox {Re}\{\varepsilon _T(\lambda )\overline{s_T(\lambda ,\widehat{\alpha }_T)}\}\) and \(|s_T(\lambda ,\widehat{\alpha }_T)|^2\), respectively.

Bearing in mind the 1st part of the condition \(\mathbf N _4\)(i), we take in Lemma 7 the functions

Then in the formula (23) \(A_T^{(2)}\ \overset{\hbox {P}}{\longrightarrow }\ 0\), as \(T\rightarrow \infty \).

Consider the term \(A_T^{(3)}=(a_{iT}^{(3)})_{i=1}^m\) in the sum (23),

where \(\varphi _i(\lambda )\) are as before. Under conditions \(\mathbf{C }_1, \mathbf{C }_2, \mathbf{N }_1\) and (1) of \(\mathbf{N }_4\)(i), \(A_T^{(3)}\ \overset{\hbox {P}}{\longrightarrow }\ 0\), as \(T\rightarrow \infty \), because

Examine the behaviour of the terms \(B_T^{(1)}\)–\(B_T^{(4)}\) in formula (24). Under conditions \(\mathbf{C }_1\) and \(\mathbf{N }_4\)(iii) we can use Lemma 8 with functions

to obtain the convergence

Under the condition \(\mathbf{N }_5\)(i) we can use Lemma 9 with functions

to obtain that

Under conditions \(\mathbf{C }_1\) and \(\mathbf{N }_5\)

if we take in Lemma 10 in conditions (i) and (iii)

So, under conditions \(\mathbf{C }_1, \mathbf{C }_2, \mathbf{N }_4\)(iii) and \(\mathbf{N }_5\)

because \(W_1(\theta _0)\) is the sum of the right hand sides of (25) and (26).

From the facts obtained, it follows that for the proof of Theorem 2 it is necessary to study an asymptotic behaviour of vector \(A_T^{(1)}\) from (23):

We will take

and write

Under conditions (1) and (2) of \(\mathbf{N }_4\)(i) (Bentkus 1972b; Ibragimov 1963) for any \(u\in \mathbb {R}^m\)

On the other hand

with

Thus we can apply Lemma 5 taking \(b(\lambda )=(2\pi )^{-1}\Psi (\lambda )\) in the formula (18) to obtain for any \(u\in \mathbb {R}^m\)

where

The relations (28) and (29) are equivalent to the convergence

From (27) and (30) it follows (15).

Remark 4

The conditions of Theorem 2 also imply that the conditions of Lemma 6 are fulfilled for the functions \(\hat{a}\) and \(\hat{b}\). Indeed, by condition \(\mathbf{A }_1\), \(\hat{a}\in L_1(\mathbb {R})\cap L_2(\mathbb {R})\) and we can take \(p=1\) in Lemma 6. On the other hand, if we regard \(b=(2\pi )^{-1}\Psi \) as the original of a Fourier transform, then from \(\mathbf{N }_4\)(i)1) we have \(b\in L_1(\mathbb {R})\cap L_2(\mathbb {R})\). Then, by the Plancherel theorem, \(\hat{b}\in L_2(\mathbb {R})\) and we can take \(q=2\) in Lemma 6. Thus

and conclusion of Lemma 6 is true.

5 Example: The motion of a pendulum in a turbulent fluid

First of all we review a number of results discussed in Parzen (1962), Anh et al. (2002), and Leonenko and Papić (2019), see also references therein.

We examine the stationary Lévy-driven continuous-time autoregressive process \(\varepsilon (t),\ t\in \mathbb {R}\), of order two (CAR(2) process) in the under-damped case (see Leonenko and Papić 2019 for details).

The motion of a pendulum is described by the equation

in which \(\varepsilon (t)\) is the displacement from its rest position, \(\alpha \) is a damping factor, \(\dfrac{2\pi }{\omega }\) is the damped period of the pendulum (see, e.g., Parzen 1962, pp. 111–113).
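As a numerical sanity check, the following sketch assumes that the equation of motion has the standard damped-oscillator form \(\ddot{\varepsilon }(t)+2\alpha \dot{\varepsilon }(t)+(\omega ^2+\alpha ^2)\varepsilon (t)=\dot{L}(t)\) (our reading of the displayed equation, which is not reproduced here) and that the corresponding impulse response is \(e^{-\alpha t}\sin (\omega t)/\omega \) for \(t\ge 0\). The function names `green` and `ode_residual` are hypothetical. The code verifies by finite differences that this impulse response solves the homogeneous equation for \(t>0\) and vanishes at multiples of half the damped period.

```python
import math

def green(t, alpha, omega):
    """Assumed impulse response exp(-alpha*t) * sin(omega*t) / omega for t >= 0."""
    if t < 0:
        return 0.0
    return math.exp(-alpha * t) * math.sin(omega * t) / omega

def ode_residual(t, alpha, omega, h=1e-3):
    """Finite-difference value of g'' + 2*alpha*g' + (alpha^2 + omega^2)*g at t > h."""
    g0 = green(t, alpha, omega)
    gp = green(t + h, alpha, omega)
    gm = green(t - h, alpha, omega)
    first = (gp - gm) / (2 * h)
    second = (gp - 2 * g0 + gm) / h**2
    return second + 2 * alpha * first + (alpha**2 + omega**2) * g0

alpha, omega = 0.3, 2.0
residuals = [abs(ode_residual(t, alpha, omega)) for t in (0.5, 1.0, 3.0, 7.0)]
# Zeros of the impulse response are spaced by half the damped period 2*pi/omega.
half_period = math.pi / omega
value_at_half_period = green(half_period, alpha, omega)
```

The residuals are zero only up to the discretization error of the central differences, so they should be compared with a tolerance rather than with exact zero.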

We consider the Green function solution of the equation (31), in which \(\dot{L}\) is the Lévy noise, i.e. the derivative of a Lévy process in the distribution sense (see Anh et al. 2002; Leonenko and Papić 2019 for details). The solution can be defined as the linear process

where the Green function

Assuming \(\hbox {E}L(1)=0\), \(d_2=\hbox {E}L^2(1)<\infty \), we obtain

The formula (33) for the covariance function of the process \(\varepsilon \) corresponds to the formula (2.12) in Leonenko and Papić (2019) for the correlation function

On the other hand for \(\hat{a}(t)\) given by (32)

Then the positive spectral density of the stationary process \(\varepsilon \) can be written as (compare with Parzen 1962)

It is convenient to rewrite (34) in the form

where \(\alpha =\theta _1\) is a damping factor, \(\beta =-\varkappa ^{(2)}(0)=d_2(\theta _2)=\theta _2\), \(\gamma =\omega =\theta _3\) is a damped cyclic frequency of the pendulum oscillations. Suppose that

The condition \(\mathbf{C }_3\) is fulfilled for spectral density (35).
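The following sketch illustrates the spectral density numerically. It assumes the form \(f(\lambda ,\theta )=\beta \bigl (2\pi \,[(\lambda ^2-\alpha ^2-\gamma ^2)^2+4\alpha ^2\lambda ^2]\bigr )^{-1}\), which is our reading of (35), and the convention that the variance of \(\varepsilon \) equals the integral of \(f\) over \(\mathbb {R}\). Under these assumptions the integral should equal \(\beta /\bigl (4\alpha (\alpha ^2+\gamma ^2)\bigr )\). The helper `simpson` and the cutoff value are illustrative choices, not part of the paper.

```python
import math

def spectral_density(lam, alpha, beta, gamma):
    """Assumed CAR(2) spectral density beta / (2*pi*s(lam))."""
    s = (lam**2 - alpha**2 - gamma**2) ** 2 + 4 * alpha**2 * lam**2
    return beta / (2 * math.pi * s)

def simpson(func, a, b, n):
    """Composite Simpson rule with an even number n of subintervals."""
    h = (b - a) / n
    total = func(a) + func(b)
    for k in range(1, n):
        total += (4 if k % 2 else 2) * func(a + k * h)
    return total * h / 3

alpha, beta, gamma = 0.5, 1.0, 2.0
cutoff = 200.0  # the neglected tail is of order beta / (3*pi*cutoff**3)

# f is even in lam, so integrate over [0, cutoff] and double.
numeric_variance = 2 * simpson(
    lambda lam: spectral_density(lam, alpha, beta, gamma), 0.0, cutoff, 200_000
)
closed_form_variance = beta / (4 * alpha * (alpha**2 + gamma**2))
```

The density decays like \(\lambda ^{-4}\), which is why a moderate cutoff already gives close agreement between the two values.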

Assume that

More precisely the value of a will be chosen below.

Obviously the functions \(w(\lambda )\log f(\lambda ,\,\theta )\), \(\frac{w(\lambda )}{f(\lambda ,\,\theta )}\) are continuous on \(\mathbb {R}\times \Theta ^c\). For any \(\Lambda >0\) the function \(\left|\log f(\lambda ,\,\theta )\right|\) is bounded on the set \([-\Lambda ,\,\Lambda ]\times \Theta ^c\). The number \(\Lambda \) can be chosen so that for \(\mathbb {R}\backslash [-\Lambda ,\,\Lambda ]\)

Thus the function \(Z_1(\lambda )\) in the condition \(\mathbf{C }_4\)(i) exists.

As for condition \(\mathbf{C }_4\)(ii), if \(a\ge 2\), then

As a function v in condition \(\mathbf{C }_5\) we take

Obviously, if \(a\ge b\), then \(\sup \limits _{\lambda \in \mathbb {R}}\,\frac{w(\lambda )}{v(\lambda )}<\infty \) (condition \(\mathbf{C }_5\)(ii)), and the function \(\frac{v(\lambda )}{f(\lambda ,\,\theta )}\) is uniformly continuous in \((\lambda ,\,\theta )\in \mathbb {R}\times \Theta ^c\), if \(b\ge 2\) (condition \(\mathbf{C }_5\)(i)).
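The role of the exponents can be seen in a small numerical sketch. It assumes weight functions of the hypothetical form \(w(\lambda )=(1+\lambda ^2)^{-a}\), \(v(\lambda )=(1+\lambda ^2)^{-b}\) and the spectral density \(f(\lambda ,\theta )=\beta /(2\pi s(\lambda ))\) with \(s(\lambda )=(\lambda ^2-\alpha ^2-\gamma ^2)^2+4\alpha ^2\lambda ^2\); both are assumptions consistent with the thresholds stated in the text, not quotations of the displayed formulas. The code only illustrates the tail behaviour of \(v/f\) and \(w/v\); it does not prove uniform continuity.

```python
import math

def f(lam, alpha, beta, gamma):
    """Assumed CAR(2) spectral density beta / (2*pi*s(lam))."""
    s = (lam**2 - alpha**2 - gamma**2) ** 2 + 4 * alpha**2 * lam**2
    return beta / (2 * math.pi * s)

def weight(lam, power):
    """Hypothetical weight (1 + lam^2)^(-power), used for both w and v."""
    return (1.0 + lam**2) ** (-power)

alpha, beta, gamma = 0.5, 1.0, 2.0
grid = [10.0**k for k in range(0, 5)]  # lam = 1, 10, ..., 10^4

def ratio_v_over_f(b):
    """Values of v(lam) / f(lam, theta) on the grid for exponent b."""
    return [weight(lam, b) / f(lam, alpha, beta, gamma) for lam in grid]

bounded = ratio_v_over_f(2.0)    # tends to the constant 2*pi/beta
vanishing = ratio_v_over_f(3.0)  # decays like lam^(-2)
growing = ratio_v_over_f(1.5)    # grows like lam, so b < 2 is not admissible
w_over_v = [weight(lam, 3.0) / weight(lam, 2.5) for lam in grid]  # a = 3 >= b = 2.5
```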

Further it will be helpful to use the notation \(s(\lambda )=\left(\lambda ^2-\alpha ^2-\gamma ^2\right)^2+4\alpha ^2\lambda ^2\). Then

To check the condition \(\mathbf{N }_4\)(i)1) consider the functions

Then the condition \(\mathbf{N }_4\)(i)1) is satisfied for \(\varphi _\alpha \) and \(\varphi _\gamma \) when \(a>\frac{3}{2}\), for \(\varphi _\beta \) when \(a>\frac{5}{2}\). The same values of a are sufficient also to meet the condition \(\mathbf{N }_4\)(i)2).

To verify \(\mathbf{N }_4\)(i)3) fix \(\theta \in \Theta ^c\) and denote by \(\varphi (\lambda )\), \(\lambda \in \mathbb {R}\), any of the continuous functions \(\varphi _\alpha (\lambda )\), \(\varphi _\beta (\lambda )\), \(\varphi _\gamma (\lambda )\), \(\lambda \in \mathbb {R}\). Suppose \(|1-\eta |<\delta <\frac{1}{2}\). Then

By the properties of the functions \(\varphi \) under assumption \(a>\frac{5}{2}\) for any \(\varepsilon >0\) there exists \(\Lambda =\Lambda (\varepsilon )>0\) such that for \(|\lambda |>\frac{2}{3}\Lambda \) we have \(|\varphi (\lambda )|<\frac{\varepsilon }{2}\). So, \(s_3\le \frac{\varepsilon }{2}\). We have also \(s_4\le \underset{|\lambda |>\frac{2}{3}\Lambda }{\sup }\,|\varphi (\lambda )|\le \frac{\varepsilon }{2}\). On the other hand,

and by the proper choice of \(\delta \)

and condition \(\mathbf{N }_4\)(i)3) is met.

Using (37) we get for any \(\theta \in \Theta ^c\), as \(\lambda \rightarrow \infty \),

Therefore for \(a>\frac{3}{2}\) these derivatives are uniformly continuous on \(\mathbb {R}\) (condition \(\mathbf{N }_4\)(i)4)). So, to satisfy condition \(\mathbf{N }_4\)(i) we can take a weight function \(w(\lambda )\) with \(a>\frac{5}{2}\).

The check of assumption \(\mathbf{N }_4\)(ii) is similar to the check of \(\mathbf{C }_4\)(i).

As \(\lambda \rightarrow \infty \), uniformly in \(\theta \in \Theta ^c\)

On the other hand, for any \(\Lambda >0\) the functions (38) are bounded on the sets \([-\Lambda ,\,\Lambda ]\times \Theta ^c\).

To check \(\mathbf{N }_4\)(iii) note first of all that the functions uniformly in \(\theta \in \Theta ^c\), as \(\lambda \rightarrow \infty \),

These functions are continuous on \(\mathbb {R}\times \Theta ^c\), as well as the functions

Moreover, uniformly in \(\theta \in \Theta ^c\), as \(\lambda \rightarrow \infty \),

Note that the functions (41) are continuous on \(\mathbb {R}\times \Theta ^c\) as well as functions (39) and (40). Therefore the condition \(\mathbf{N }_4\)(iii) is fulfilled.

Let us verify the condition \(\mathbf{N }_5\)(i). According to equation (39), uniformly in \(\theta \in \Theta ^c\), as \(\lambda \rightarrow \infty \),

Therefore the continuous in \((\lambda ,\theta )\in \mathbb {R}\times \Theta ^c\) functions (42) are bounded in \((\lambda ,\theta )\in \mathbb {R}\times \Theta ^c\), if \(a\ge 2\).

Using equations (40) and (41) we obtain uniformly in \(\theta \in \Theta ^c\), as \(\lambda \rightarrow \infty \),

So, continuous on \(\mathbb {R}\times \Theta ^c\) functions (43) are bounded in \((\lambda ,\theta )\in \mathbb {R}\times \Theta ^c\), if \(a\ge 1\).

To check \(\mathbf{N }_5\)(ii) consider the weight function

If \(a\ge b\), then function \(\frac{w(\lambda )}{v(\lambda )}\) is bounded on \(\mathbb {R}\) (condition \(\mathbf{N }_5\)(iii)). Using (42) we obtain uniformly in \(\theta \in \Theta ^c\), as \(\lambda \rightarrow \infty \),

In turn, similarly to (40) it follows uniformly in \(\theta \in \Theta ^c\), as \(\lambda \rightarrow \infty \),

The functions (44) and (45) will be uniformly continuous in \((\lambda ,\theta )\in \mathbb {R}\times \Theta ^c\), if they converge to zero, as \(\lambda \rightarrow \infty \), uniformly in \(\theta \in \Theta ^c\), that is if \(b>2\).

Similarly to (43) uniformly in \(\theta \in \Theta ^c\), as \(\lambda \rightarrow \infty \),

Thus the functions (44)–(46) are uniformly continuous in \((\lambda ,\theta )\in \mathbb {R}\times \Theta ^c\), if \(b>2\).

Proceeding to the verification of condition \(\mathbf{N }_6\), we note that for any \(x=\left(x_\alpha ,\,x_\beta ,\,x_\gamma \right)\ne 0\)

From equation (36) it is seen that the positive definiteness of the matrix \(W_1(\theta )\) follows from linear independence of the functions \(\lambda ^2+\alpha ^2+\gamma ^2\), \(s(\lambda )\), \(\lambda ^2-\alpha ^2-\gamma ^2\). Positive definiteness of the matrix \(W_2(\theta )\) is established similarly.
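The three functions named above can be checked numerically. The sketch below assumes \(f(\lambda ,\theta )=\beta /(2\pi s(\lambda ))\) with \(s(\lambda )=(\lambda ^2-\alpha ^2-\gamma ^2)^2+4\alpha ^2\lambda ^2\); under this assumption the partial derivatives of \(\log f\) in \(\alpha ,\beta ,\gamma \) are \(-4\alpha (\lambda ^2+\alpha ^2+\gamma ^2)/s(\lambda )\), \(1/\beta \) and \(4\gamma (\lambda ^2-\alpha ^2-\gamma ^2)/s(\lambda )\). The code compares these expressions with central differences and evaluates the gradient at three frequencies; a nonzero determinant of the resulting matrix means that no nonzero vector \(x\) annihilates the gradient at all three frequencies. All function names are hypothetical.

```python
import math

def s(lam, alpha, gamma):
    return (lam**2 - alpha**2 - gamma**2) ** 2 + 4 * alpha**2 * lam**2

def log_f(lam, theta):
    """Logarithm of the assumed spectral density beta / (2*pi*s(lam))."""
    alpha, beta, gamma = theta
    return math.log(beta) - math.log(2 * math.pi) - math.log(s(lam, alpha, gamma))

def grad_log_f(lam, theta):
    """Analytic gradient of log f in (alpha, beta, gamma)."""
    alpha, beta, gamma = theta
    c = alpha**2 + gamma**2
    sv = s(lam, alpha, gamma)
    return [-4 * alpha * (lam**2 + c) / sv, 1.0 / beta, 4 * gamma * (lam**2 - c) / sv]

def grad_fd(lam, theta, h=1e-6):
    """Central-difference gradient, used only as a check."""
    grads = []
    for i in range(3):
        up, dn = list(theta), list(theta)
        up[i] += h
        dn[i] -= h
        grads.append((log_f(lam, up) - log_f(lam, dn)) / (2 * h))
    return grads

def det3(m):
    """Determinant of a 3x3 matrix given as a list of rows."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

theta = (0.5, 1.0, 2.0)
lams = (0.0, 1.0, 3.0)
max_fd_error = max(
    abs(a - b) for lam in lams for a, b in zip(grad_log_f(lam, theta), grad_fd(lam, theta))
)
gradient_det = det3([grad_log_f(lam, theta) for lam in lams])
```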

In our example to satisfy the consistency conditions \(\mathbf{C }_4\) and \(\mathbf{C }_5\) the weight functions \(w(\lambda )\) and \(v(\lambda )\) should be chosen so that \(a\ge b>2\). On the other hand to satisfy the asymptotic normality conditions \(\mathbf{N }_4\) and \(\mathbf{N }_5\) the functions \(w(\lambda )\) and \(v(\lambda )\) should be such that \(a>\frac{5}{2}\) and \(a\ge b>2\).

The spectral density (35) has no singularity at zero, so that the functions \(v(\lambda )\) in the conditions \(\mathbf{C }_5\)(i) and \(\mathbf{N }_5\)(ii) could be chosen to be equal to \(w(\lambda )\), for example, with \(a=b=3\). However, we prefer to keep the function \(v(\lambda )\) in the text, since it is needed when the spectral density has a singularity at zero or elsewhere, see, e.g., Example 1 in Leonenko and Sakhno (2006), where a linear process driven by Brownian motion and the regression function \(g(t,\,\alpha )\equiv 0\) were studied. Specifically in the case of Riesz-Bessel spectral density

where \(\theta =\left(\theta _1,\,\theta _2,\,\theta _3\right)=(\alpha ,\,\beta ,\,\gamma )\in \Theta =(\underline{\alpha },\,\overline{\alpha }) \times (\underline{\beta },\,\overline{\beta })\times (\underline{\gamma },\,\overline{\gamma })\), \(\underline{\alpha }>0\), \(\overline{\alpha }<\frac{1}{2}\), \(\underline{\beta }>0\), \(\overline{\beta }<\infty \), \(\underline{\gamma }>\frac{1}{2}\), \(\overline{\gamma }<\infty \), and the parameter \(\alpha \) signifies the long range dependence, while the parameter \(\gamma \) indicates the second-order intermittency (Anh et al. 2004; Gao et al. 2001; Lim and Teo 2008), the weight functions have been chosen in the form

Unfortunately, our conditions do not cover so far the case of the general non-linear regression function and Lévy driven continuous-time strongly dependent linear random noise such as Riesz-Bessel motion.

References

Akhiezer NI (1965) Lectures on approximation theory. Nauka, Moscow (in Russian)

Alodat T, Olenko A (2017) Weak convergence of weighted additive functionals of long-range dependent fields. Theor Probab Math Stat 97:9–23

Anh VV, Heyde CC, Leonenko NN (2002) Dynamic models of long-memory processes driven by Lévy noise. J Appl Prob 39(4):730–747

Anh VV, Leonenko NN, Sakhno LM (2004) On a class of minimum contrast estimators for fractional stochastic processes and fields. J Statist Plan Inference 123:161–185

Applebaum D (2009) Lévy processes and stochastic calculus, vol 116. Cambridge studies in advanced mathematics. Cambridge University Press, Cambridge

Avram F, Leonenko N, Sakhno L (2010) On a Szegö type limit theorem, the Hölder–Young–Brascamp–Lieb inequality, and the asymptotic theory of integrals and quadratic forms of stationary fields. ESAIM: Probab Stat 14:210–225

Bahamonde N, Doukhan P (2017) Spectral estimation in the presence of missing data. Theor Probab Math Stat 95:59–79

Bai S, Ginovyan MS, Taqqu MS (2016) Limit theorems for quadratic forms of Lévy-driven continuous-time linear processes. Stoch Process Appl 126(4):1036–1065

Bentkus R (1972a) Asymptotic normality of an estimate of the spectral function. Liet Mat Rink 3(12):5–18

Bentkus R (1972b) On the error of the estimate of the spectral function of a stationary process. Liet Mat Rink 1(12):55–71

Bentkus R, Rutkauskas R (1973) On the asymptotics of the first two moments of second order spectral estimators. Liet Mat Rink 1(13):29–45

Dahlhaus R (1989) Efficient parameter estimation for self-similar processes. Ann Stat 17:1749–1766

Dunsmuir W, Hannan EJ (1976) Vector linear time series models. Adv Appl Probab 8:339–360

Fox R, Taqqu MS (1986) Large-sample properties of parameter estimates for strongly dependent stationary Gaussian time series. Ann Stat 2(14):517–532

Gao J (2004) Modelling long-range-dependent Gaussian processes with application in continuous-time financial models. J Appl Probab 41:467–485

Gao J, Anh V, Heyde CC, Tieng Q (2001) Parameter estimation of stochastic processes with long-range dependence and intermittency. J Time Ser Anal 22:517–535

Ginovyan MS, Sahakyan AA, Taqqu MS (2014) The trace problem for Toeplitz matrices and operators and its impact in probability. Probab Surv 11:393–440

Ginovyan MS, Sahakyan AA (2017) Robust estimation for continuous-time linear models with memory. Theor Probab Math Stat 95:81–98

Giraitis L, Surgailis D (1990) A central limit theorem for quadratic forms in strongly dependent linear variables and its application to asymptotic normality of Whittle estimate. Probab Theory Relat Fields 86:87–104

Giraitis L, Taniguchi M, Taqqu MS (2017) Asymptotic normality of quadratic forms of martingale differences. Stat Inference Stoch Process 20(3):315–327

Giraitis L, Taqqu MS (1999) Whittle estimator for finite-variance non-Gaussian time series with long memory. Ann Stat 1(27):178–203

Grenander U (1954) On the estimation of regression coefficients in the case of an autocorrelated disturbance. Ann Math Stat 25(2):252–272

Grenander U, Rosenblatt M (1984) Statistical analysis of stationary time series. Chelsea Publishing Company, New York

Guyon X (1982) Parameter estimation for a stationary process on a d-dimensional lattice. Biometrika 69:95–102

Hannan EJ (1970) Multiple time series. Springer, New York

Hannan EJ (1973) The asymptotic theory of linear time series models. J Appl Probab 10:130–145

Heyde C, Gay R (1989) On asymptotic quasi-likelihood stochastic process. Stoch Process Appl 31:223–236

Heyde C, Gay R (1993) Smoothed periodogram asymptotic and estimation for processes and fields with possible long-range dependence. Stoch Process Appl 45:169–182

Ibragimov IA (1963) On estimation of the spectral function of a stationary Gaussian process. Theory Probab Appl 8(4):366–401

Ibragimov IA, Rozanov YA (1978) Gaussian random processes. Springer, New York

Ivanov AV (1980) A solution of the problem of detecting hidden periodicities. Theory Probab Math Stat 20:51–68

Ivanov AV (1997) Asymptotic theory of nonlinear regression. Kluwer, Dordrecht

Ivanov AV (2010) Consistency of the least squares estimator of the amplitudes and angular frequencies of a sum of harmonic oscillations in models with long-range dependence. Theory Probab Math Statist 80:61–69

Ivanov AV, Leonenko NN (1989) Statistical analysis of random fields. Kluwer, Dordrecht

Ivanov AV, Leonenko NN (2004) Asymptotic theory of nonlinear regression with long-range dependence. Math Methods Stat 13(2):153–178

Ivanov AV, Leonenko NN (2007) Robust estimators in nonlinear regression model with long-range dependence. In: Pronzato L, Zhigljavsky A (eds) Optimal design and related areas in optimization and statistics. Springer, Berlin, pp 191–219

Ivanov AV, Leonenko NN (2008) Semiparametric analysis of long-range dependence in nonlinear regression. J Stat Plan Inference 138:1733–1753

Ivanov AV, Leonenko NN, Ruiz-Medina MD, Zhurakovsky BM (2015) Estimation of harmonic component in regression with cyclically dependent errors. Statistics 49:156–186

Ivanov AV, Orlovskyi IV (2018) Large deviations of regression parameter estimator in continuous-time models with sub-Gaussian noise. Mod Stoch Theory Appl 5(2):191–206

Ivanov OV, Prykhod’ko VV (2016) On the Whittle estimator of the parameter of spectral density of random noise in the nonlinear regression model. Ukr Math J 67(8):1183–1203

Jennrich RI (1969) Asymptotic properties of non-linear least squares estimators. Ann Math Stat 40:633–643

Koul HL, Surgailis D (2000) Asymptotic normality of the Whittle estimator in linear regression models with long memory errors. Stat Inference Stoch Process 3:129–147

Leonenko NN, Papić I (2019) Correlation properties of continuous-time autoregressive processes delayed by the inverse of the stable subordinator. Commun Stat: Theory Methods. https://doi.org/10.1080/03610926.2019.1612918

Leonenko NN, Sakhno LM (2006) On the Whittle estimator for some classes of continuous-parameter random processes and fields. Stat Probab Lett 76:781–795

Leonenko NN, Taufer E (2006) Weak convergence of functionals of stationary long memory processes to Rosenblatt-type distributions. J Stat Plan Inference 136:1220–1236

Lim SC, Teo LP (2008) Sample path properties of fractional Riesz–Bessel field of variable order. J Math Phys 49:013509

Malinvaud E (1970) The consistency of nonlinear regression. Ann Math Stat 41:953–969

Parzen E (1962) Stochastic processes. Holden-Day Inc, San Francisco

Rajput B, Rosinski J (1989) Spectral representations of infinitely divisible processes. Prob Theory Rel Fields 82:451–487

Rosenblatt MR (1985) Stationary sequences and random fields. Birkhäuser, Boston

Sato K (1999) Lévy processes and infinitely divisible distributions, vol 68. Cambridge studies in advanced mathematics. Cambridge University Press, Cambridge

Walker AM (1973) On the estimation of a harmonic component in a time series with stationary dependent residuals. Adv Appl Probab 5:217–241

Whittle P (1951) Hypothesis testing in time series. Hafner, New York

Whittle P (1953) Estimation and information in stationary time series. Ark Mat 2:423–434


Appendices

Appendix A: LSE consistency

Some results on consistency of the LSE \(\widehat{\alpha }_T\) in the observation model of the type (1) with stationary noise \(\varepsilon (t)\), \(t\in \mathbb {R}\), were obtained, for example, in Ivanov and Leonenko (1989, 2004, 2007, 2008), Ivanov (1980, 2010), Ivanov et al. (2015), to mention several of the relevant works. In this section we formulate a generalization of the Malinvaud theorem (Malinvaud 1970) on consistency of \(\widehat{\alpha }_T\) for the linear stochastic process (4) and consider an example of a nonlinear regression function \(g(t,\,\alpha )\) satisfying the conditions of this theorem and conditions \(\mathbf{C }_1\), \(\mathbf{C }_2\). Then we consider other ways in which \(\mathbf{C }_1\) and \(\mathbf{C }_2\) can be fulfilled.

Set

For any fixed \(\alpha _0\in \mathcal {A}\), the function \(\Psi _T(u_1,\,u_2)\) is defined on the set \(U_T(\alpha _0)\times U_T(\alpha _0)\), \(U_T(\alpha _0)=T^{-\frac{1}{2}}d_T(\alpha _0)\left(\mathcal {A}^c-\alpha _0\right)\).

Assume the following.

- (1) For any \(\varepsilon >0\) and \(R>0\) there exists \(\delta =\delta (\varepsilon ,\,R)\) such that

$$\begin{aligned} \sup \limits _{\begin{array}{c} u_1,u_2\in U_T(\alpha _0)\cap v^c(R)\\ \Vert u_1-u_2\Vert \le \delta \end{array}}\,T^{-1}\Psi _T(u_1,\,u_2)\le \varepsilon . \end{aligned}$$ (48)

- (2) For some \(R_0>0\) and any \(\rho \in (0,\,R_0)\) there exist numbers \(a=a(R_0)>0\) and \(b=b(\rho ,\,R_0)\) such that

$$\begin{aligned} \inf \limits _{u\in U_T(\alpha _0)\cap \left(v^c(R_0)\backslash v(\rho )\right)}\, T^{-1}\Psi _T(u,\,0)\ge b; \end{aligned}$$ (49)

$$\begin{aligned} \inf \limits _{u\in U_T(\alpha _0)\backslash v^c(R_0)}\, T^{-1}\Psi _T(u,\,0)\ge 4B(0)+a. \end{aligned}$$ (50)

It was proven in Lemma 1 that under condition \(\mathbf{A }_1\)

Lemma A.1

Under condition \(\mathbf{A }_1\),

Proof

By formula (7)

By condition \(\mathbf{A }_1\) and Fubini-Tonelli theorem

\(\left\Vert\hat{a}\right\Vert_1=\int \limits _{\mathbb {R}}\,|\hat{a}(t)|dt\).

On the other hand by formula (8)

For integral \(I_7^{(2)}\) we get the same bound. So, we obtain inequality (52) with

\(\square \)

Theorem A.1

If assumptions (1), (2), and \(\mathbf{A }_1\) are valid then for any \(\rho >0\)

Proof

The proof of this Malinvaud theorem generalization is similar to the proof of Theorem 3.2.1. in Ivanov and Leonenko (1989) and uses the relations (51) and (52).

Instead of \(\mathbf{C }_2\) consider the stronger condition.

\(\mathbf{C }_2'\). There exist positive constants \(c_0,\,c_1<\infty \) such that for any \(\alpha \in \mathcal {A}^c\) and \(T>T_0\)

We point out a sufficient condition for \(\mathbf{C }_2'\) to hold. Introduce a diagonal matrix

\(\mathbf{C }_2''\). (i) There exist positive constants \(\underline{c}_i,\ \overline{c}_i\), \(i=\overline{1,q}\), such that for \(T>T_0\) uniformly in \(\alpha \in \mathcal {A}\)

(ii) For some numbers \(c_0^*,\ c_1^*\) and \(T>T_0\),

Under condition \(\mathbf{C }_2''\) as it is easily seen one can take in \(\mathbf{C }_2'\)

The next example demonstrates the fulfillment of the condition \(\mathbf{C }_2'\) [compare with Ivanov and Orlovskyi (2018)].

Example A.1

Let

with \(\left<\alpha ,\,y(t)\right>=\sum \limits _{i=1}^q\,\alpha _iy_i(t)\), regressors \(y(t)=\Bigl (y_1(t),\,\ldots ,\,y_q(t)\Bigr )'\), \(t\ge 0\), take values in a compact set \(Y\subset \mathbb {R}^q\). Suppose

where J is a positive definite matrix, and the set \(\mathcal {A}\) in the model (1) is bounded. Set

Then for any \(\delta >0\) and \(T>T_0\)

and condition \(\mathbf{C }_2''\)(i) is fulfilled with the matrix \(s_T=T^{\frac{1}{2}}\mathbb {I}_q\), where \(\mathbb {I}_q\) is the identity matrix of order q, and \(\underline{c}_i=L^2\left(J_{ii}-\delta \right)\), \(\overline{c}_i=M^2\left(J_{ii}+\delta \right)\), \(i=\overline{1,q}\).

Let us check the condition \(\mathbf{C }_2''\)(ii). We have

Since \(\left( e^x-1\right) ^2\ge x^2\), \(x\ge 0\), and \(\left( e^x-1\right) ^2\ge e^{2x}x^2\), \(x<0\), we have

Thus

and for any \(\delta >0\) and \(T>T_0\)

where \(\lambda _{\min }(J)\) is the least eigenvalue of the matrix J.
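The two elementary inequalities used in this example, \((e^x-1)^2\ge x^2\) for \(x\ge 0\) and \((e^x-1)^2\ge e^{2x}x^2\) for \(x<0\), can be checked on a grid. The following is only a numerical illustration on the interval \([-10,\,10]\), with a hypothetical helper `lower_bound`; it is not a substitute for the analytic argument.

```python
import math

def lower_bound(x):
    """Right-hand side of the inequality: x^2 for x >= 0, exp(2x) * x^2 for x < 0."""
    return x * x if x >= 0 else math.exp(2 * x) * x * x

xs = [k / 100 for k in range(-1000, 1001)]
# math.expm1(x) evaluates exp(x) - 1 without cancellation near x = 0.
gaps = [math.expm1(x) ** 2 - lower_bound(x) for x in xs]
smallest_gap = min(gaps)
```

Equality holds only at \(x=0\), so the smallest gap on the grid is attained there.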

On the other hand,

\(\eta (t)\in (0,\,1)\), and

where \(\lambda _{\max }(J)\) is the maximal eigenvalue of the matrix J. It means that condition \(\mathbf{C }_2''\)(ii) is valid for matrix \(s_T=T^{\frac{1}{2}}\mathbb {I}_q\).

So the condition \(\mathbf{C }_2'\) is valid as well and in (53) one can choose for \(T>T_0\) some numbers

Inequalities (53) can be rewritten in the equivalent form

From the right hand side of (55) it follows (48). Similarly, from the left hand side of (55) taking \(\nu =0\) we obtain (49) for any \(R_0>0\) and it is possible to choose \(R_0>0\) satisfying (50).

In Example A.1, due to inequalities (54) with \(s_{iT}=T^{\frac{1}{2}}\), \(i=\overline{1,q}\), the set \(U_T(\alpha )\) is bounded uniformly in T, and it is not necessary to use condition (50). However, in the Malinvaud theorem we cannot ignore the parameter distinguishability condition (50) in the cases when the sets \(U_T(\alpha )\) expand to infinity as \(T\rightarrow \infty \) or the set \(\mathcal {A}\) is unbounded.

Needless to say, not all interesting classes of nonlinear regression functions satisfy consistency conditions of the Malinvaud or, say, Jennrich (1969) types. An important example of such a class is given by the trigonometric regression functions.

Example A.2

Let

\(\alpha =\left(\alpha _1,\,\alpha _2,\,\alpha _3, \ldots , \alpha _{3N-2},\,\alpha _{3N-1},\,\alpha _{3N}\right) =\left(A_1,\,B_1,\,\varphi _1,\,\ldots ,\,A_N,\,B_N,\,\varphi _N\right)\), \(0<\underline{\varphi }<\varphi _1<\ldots<\varphi _N<\overline{\varphi }<\infty \).

Under some conditions on the distinguishability of the angular frequencies \(\varphi =\left(\varphi _1,\,\ldots ,\,\varphi _N\right)\) (see Walker 1973; Ivanov 1980; Ivanov et al. 2015) it is possible to prove that at least

\(\widehat{\alpha }_T=\left(A_{1T},\,B_{1T},\,\varphi _{1T},\,\ldots ,\,A_{NT},\,B_{NT},\,\varphi _{NT}\right)\), \(\alpha _0=\left(A_1^0,\,B_1^0,\,\varphi _1^0,\,\ldots ,\,A_N^0,\,B_N^0,\,\varphi _N^0\right)\), \(\left(C_k^0\right)^2=\left(A_k^0\right)^2+\left(B_k^0\right)^2>0\), \(k=\overline{1,N}\).

The convergence in (57) can be a.s. In turn, from (57) it follows (see cited papers)

Note that

\(k=\overline{1,N}\).

From (58) and (59) we obtain the relation of condition \(\mathbf{C }_1\) for trigonometric regression:

To check the fulfillment of the condition \(\mathbf{C }_2\) for regression function (56) we get

\(k=\overline{1,N}\), and therefore

Using again the relation (59) we arrive at the inequality of the condition \(\mathbf{C }_2\)

with constant \(c_0\) depending on \(A_i^0\), \(B_i^0\), \(i=\overline{1,N}\).

The next lemma is the main part of the convergence (57) proof.

Lemma 11

Under condition \(\mathbf{A }_1\)

Proof

Since

then

By formula (7)

and

By formula (8)

that is

Obviously,

From inequalities (63)–(67) it follows

\(\square \)

The result of the lemma can be strengthened to a.s. convergence in (62). Note also that in the proof we did not use the condition \(\hat{a}\in L_1(\mathbb {R})\).

Appendix B: LSE asymptotic normality

Cumbersome sets of conditions on the behavior of the nonlinear regression function, used in the proofs of asymptotic normality of the LSE of the model parameter, can be found, for example, in Ivanov and Leonenko (1989), Ivanov (1997), Ivanov et al. (2015), and it does not make sense to list all of them here. We will comment only on the conditions associated with the proof of the CLT for one weighted integral of the linear process \(\varepsilon \) in the observation model (1).

Consider the family of the matrix-valued measures \(\mu _T (dx;\,\alpha )=\left(\mu _T^{jl}(dx;\,\alpha )\right)_{j,l=1}^q\), \(T>T_0\), \(\alpha \in \mathcal {A}\), with densities

where

- (1) Suppose that the weak convergence \(\mu _T\ \Rightarrow \ \mu \) as \(T\rightarrow \infty \) holds, where \(\mu _T\) is defined by (68) and \(\mu \) is a positive definite matrix measure.

This condition means that the elements \(\mu ^{jl}\) of the matrix-valued measure \(\mu \) are complex measures of bounded variation, the matrix \(\mu (A)\) is non-negative definite for any set \(A\in \mathcal {Z}\), with \(\mathcal {Z}\) denoting the \(\sigma \)-algebra of Lebesgue measurable subsets of \(\mathbb {R}\), and \(\mu (\mathbb {R})\) is a positive definite matrix (see, for example, Ibragimov and Rozanov 1978).

The following definition can be found in Grenander (1954), Grenander and Rosenblatt (1984), Ibragimov and Rozanov (1978) and Ivanov and Leonenko (1989).

Definition B.1

The positive-definite matrix-valued measure \(\mu (dx;\,\alpha )=\left(\mu ^{jl}(x;\,\alpha )\right)_{j,l=1}^q\) is said to be the spectral measure of regression function \(g(t,\,\alpha )\).

Practically the components \(\mu ^{jl}(x;\,\alpha )\) are determined from the relations

where it is supposed that the matrix function \(\bigl (R_{jl}(h;\,\alpha )\bigr )\) is continuous at \(h=0\).

Continuing Example A.2 with the trigonometric regression function (56) from “Appendix A”, we can state using (69) that the function \(g(t,\,\alpha )\) has a block-diagonal spectral measure \(\mu (d\lambda ;\,\alpha )\) (see e.g., Ivanov et al. 2015) with blocks

where

In (70) the measure \(\varkappa _k=\varkappa _k(d\lambda )\) and the signed measure \(\rho _k=\rho _k(d\lambda )\) are concentrated at the points \(\pm \varphi _k\), and \(\varkappa _k\bigl (\{ \pm \varphi _k\} \bigr )=\frac{1}{2}\), \(\rho _k\bigl (\{ \pm \varphi _k\} \bigr )=\pm \frac{1}{2}\).
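The atoms of mass \(\frac{1}{2}\) at \(\pm \varphi _k\) can be illustrated numerically for a single cosine regressor. The sketch assumes the usual Grenander-type normalization, in which the correlation function is the limit of \(d_T^{-2}\int _0^T\cos (\varphi (t+h))\cos (\varphi t)\,dt\) with \(d_T^2=\int _0^T\cos ^2(\varphi t)\,dt\); this is our reading of relations (69), which are not reproduced here. The Fourier transform of a measure with mass \(\frac{1}{2}\) at \(+\varphi \) and \(\frac{1}{2}\) at \(-\varphi \) is \(\cos (\varphi h)\), so for large \(T\) the normalized integral should be close to this value. The function names and the values of \(\varphi \) and \(T\) are illustrative.

```python
import math

def midpoint_integral(func, lower, upper, n):
    """Midpoint-rule approximation of the integral of func over [lower, upper]."""
    step = (upper - lower) / n
    return step * sum(func(lower + (k + 0.5) * step) for k in range(n))

def normalized_correlation(shift, phi, T, n=100_000):
    """Ratio of int_0^T cos(phi*(t+shift))*cos(phi*t) dt to int_0^T cos(phi*t)^2 dt."""
    cross = midpoint_integral(
        lambda t: math.cos(phi * (t + shift)) * math.cos(phi * t), 0.0, T, n
    )
    norm = midpoint_integral(lambda t: math.cos(phi * t) ** 2, 0.0, T, n)
    return cross / norm

phi, T = 1.7, 2000.0
shifts = (0.0, 0.4, 1.0, 2.5)
# Deviation from cos(phi*shift), the transform of masses 1/2 at +phi and -phi.
errors = [abs(normalized_correlation(h, phi, T) - math.cos(phi * h)) for h in shifts]
```

The deviation is of order \(1/(\varphi T)\), so it shrinks as the observation horizon grows.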

Returning to the general case let the parameter \(\alpha \in \mathcal {A}\) of regression function \(g(t,\,\alpha )\) be fixed. We will use the notation \(d_{iT}^{-1}(\alpha )g_i(t,\,\alpha )=b_{iT}(t,\,\alpha )\) and condition

- (2) \(\sup \limits _{t\in [0,\,T]}\,\left|b_{iT}(t,\,\alpha )\right|\le c_iT^{-\frac{1}{2}}\), \(i=\overline{1,q}\).

The next CLT is an important part of the proof of LSE \(\widehat{\alpha }_T\) asymptotic normality in the model (1) and fully uses condition \(\mathbf{A }_1\).

Theorem B.1

Under conditions \(\mathbf{A }_1\), (1) and (2) the vector

is asymptotically, as \(T\rightarrow \infty \), normal \(N(0,\Sigma )\),

Proof

For any \(z=\left(z_1,\,\ldots ,\,z_q\right)\in \mathbb {R}^q\) set

By condition (1)

\(\mu _z(d\lambda ;\,\alpha )=\sum \limits _{i,j=1}^q\,\mu ^{ij}(d\lambda ;\,\alpha )z_iz_j\).

To prove the theorem it is sufficient to show for any \(z\in \mathbb {R}^q\) and \(\nu \ge 1\), that

Use the Leonov–Shiryaev formula (see, e.g., Ivanov and Leonenko 1989). Let

Then

where \(\sum \limits _{A_r}\) denotes summation over all unordered partitions \(A_r=\left\{ \bigcup \limits _{p=1}^r\,I_p\right\} \) of the set I into sets \(I_1,\,\ldots ,\,I_r\) such that \(I=\bigcup \limits _{p=1}^r\,I_p\), \(I_i\cap I_j=\emptyset \), \(i\ne j\).

Since

then the application of formula (73) to (74) shows that to obtain (72) it is sufficient to prove

for all \(i=\overline{3,n}\). Taking into account the equality \(\hbox {E}\varepsilon (t)=0\), it follows from (75) that in (72) all the odd moments \(\hbox {E}\eta ^{2\nu +1}=0\). On the other hand, for the even moments \(\hbox {E}\eta ^{2\nu }\) we find that in (74), thanks to (73), only the terms corresponding to partitions of the set \(I=\{1,\,2,\,\ldots ,\,2\nu \}\) into pairs of indices remain nonzero, i.e. the “Gaussian part” with all \(l_p=2\). In (73) there are \((2\nu -1)!!\) such terms, and each of them is equal to \(\sigma ^{2\nu }(z)\).
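The count \((2\nu -1)!!\) of partitions of \(\{1,\,\ldots ,\,2\nu \}\) into pairs, which produces the Gaussian even moments \((2\nu -1)!!\,\sigma ^{2\nu }(z)\), can be confirmed by direct enumeration. The sketch below is a small combinatorial illustration with hypothetical function names; it enumerates the pair partitions for \(\nu =1,\ldots ,5\).

```python
def pair_partitions(items):
    """Yield all partitions of a list of even length into unordered pairs."""
    if not items:
        yield []
        return
    first, rest = items[0], items[1:]
    for k, partner in enumerate(rest):
        remaining = rest[:k] + rest[k + 1:]
        for tail in pair_partitions(remaining):
            yield [(first, partner)] + tail

def double_factorial_odd(nu):
    """(2*nu - 1)!! = 1 * 3 * ... * (2*nu - 1)."""
    result = 1
    for k in range(1, 2 * nu, 2):
        result *= k
    return result

counts = {
    nu: sum(1 for _ in pair_partitions(list(range(1, 2 * nu + 1))))
    for nu in range(1, 6)
}
```

Fixing the partner of the first index leaves \(2\nu -2\) indices to pair, which gives the recursion \(N(\nu )=(2\nu -1)N(\nu -1)\) behind the double factorial.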

Let us prove (75). We note that condition (2) implies

Then using formula (8) we have

To obtain (76) we have used \(\hat{a}\in L_1(\mathbb {R})\) only.

Using the theorem, just as in the works cited above (for definiteness, we turn our attention to Ivanov et al. 2015), it can be proved that, if a number of additional conditions on the regression function are satisfied, the normalized LSE \(d_T(\alpha _0)\left(\widehat{\alpha }_T-\alpha _0\right)\) is asymptotically normal \(N\left(0,\,\Sigma _{_{LSE}}\right)\), with

Note that, firstly, our conditions \(\mathbf{N }_3\), (1), (2) are included in the conditions for the LSE asymptotic normality of Ivanov et al. (2015), and, secondly, the trigonometric regression function (56) satisfies the conditions of Ivanov et al. (2015). Moreover, using (70) and (59) we conclude that for the trigonometric model the normalized LSE

is asymptotically normal \(N\left(0,\,\Sigma _{_{TRIG}}\right)\), where \(\Sigma _{_{TRIG}}\) is a block diagonal matrix with blocks

The matrix \(\Sigma _{_{TRIG}}\) is positive definite, if \(f\left(\varphi _k^0\right)>0\), \(k=\overline{1,N}\). However, this follows from our condition \(\mathbf{A }_2\)(iii).

Note also that condition \(\mathbf{N }_2\) is satisfied, for example, for the trigonometric regression function (56). Indeed, in this case

and similarly to (60)

which leads to the inequality of condition \(\mathbf{N }_2\) similar to (61), but with a different constant \(c_0'\)

Appendix C: Levitan polynomials

Some necessary facts of approximation theory adapted to the needs of this article are presented in this Appendix. All the definitions and results are taken from the book (Akhiezer 1965).

In complex analysis, an entire function F(z) is said to be of exponential type if for any complex z the inequality

holds true, where the numbers A and B do not depend on z. The infimum \(\sigma \) of the values of the constant B for which inequality (77) holds is called the exponential type of the function F(z) and can be determined by the formula

Denote by \(\mathcal {B}_\sigma \) the totality of all the entire functions F(z) of exponential type \(\le \sigma \) with property \(\underset{\lambda \in \mathbb {R}}{\sup }\,|F(\lambda )|<\infty \).

Let \(\mathcal {C}\) be the linear normed space of bounded continuous functions \(\varphi (\lambda )\), \(\lambda \in \mathbb {R}\), with norm \(\Vert \varphi \Vert =\underset{\lambda \in \mathbb {R}}{\sup }\,|\varphi (\lambda )|<\infty \). Consider further some set of functions \(\mathfrak {M}\subset \mathcal {C}\). For the function of interest \(\varphi \in \mathfrak {M}\) suppose that

and write

Let \(h(\lambda )\), \(\lambda \in \mathbb {R}\), be a uniformly continuous function. Denote by

the modulus of continuity of the function h. Obviously \(\omega (\delta ),\ \delta >0\), is a nondecreasing continuous function tending to zero, as \(\delta \rightarrow 0\).
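For intuition, the modulus of continuity can be approximated on a grid. The sketch below assumes the standard definition \(\omega (\delta )=\sup \{|h(\lambda _1)-h(\lambda _2)|:\ |\lambda _1-\lambda _2|\le \delta \}\) and uses \(h(\lambda )=\sin \lambda \), for which \(\omega (\delta )=2\sin (\delta /2)\) when \(0<\delta \le \pi \). The function name, the window \([-2\pi ,\,2\pi ]\) and the grid size are illustrative choices; the grid value is a lower approximation of the true supremum.

```python
import math

def modulus_of_continuity(h, delta, lo, hi, n=2000):
    """Grid approximation of sup |h(x) - h(y)| over |x - y| <= delta, x, y in [lo, hi]."""
    step = (hi - lo) / n
    values = [h(lo + k * step) for k in range(n + 1)]
    max_lag = int(delta / step + 1e-9)  # largest grid lag not exceeding delta
    best = 0.0
    for lag in range(1, max_lag + 1):
        for k in range(n + 1 - lag):
            best = max(best, abs(values[k + lag] - values[k]))
    return best

deltas = [0.1, 0.5, 1.0, 2.0]
approx = [modulus_of_continuity(math.sin, d, -2 * math.pi, 2 * math.pi) for d in deltas]
exact = [2 * math.sin(d / 2) for d in deltas]
```

The computed values increase with \(\delta \) and decrease to zero as \(\delta \rightarrow 0\), in line with the properties stated above.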

Let the set \(\mathfrak {M}\) introduced above consist of differentiable functions such that for \(\varphi \in \mathfrak {M}\) the derivatives \(\varphi '(\lambda )=h(\lambda ),\ \lambda \in \mathbb {R}\), are uniformly continuous on \(\mathbb {R}\). Then for a function \(\varphi \) satisfying property (78) there exists a function \(F_\sigma \in \mathcal {B}_\sigma \) such that (see Akhiezer 1965, p. 252)

Inequality (79) means that for the described function \(\varphi \) and any \(\delta >0\) there exist a number \(\sigma =\sigma (\delta )\) and a function \(F_\sigma \in \mathcal {B}_\sigma \) such that

As was proved by B.M. Levitan in the 1940s, for any function \(F\in \mathcal {B}_\sigma \) it is possible to build a sequence of trigonometric sums \(T_n(F;\,z),\ n\ge 1\), bounded on \(\mathbb {R}\) by the same constant as the function F, that converges to F(z) uniformly on any bounded part of the complex plane. In particular, for any compact set \(K\subset \mathbb {R}\)

Put \(s=\frac{\sigma }{n}\), \(n\in \mathbb {N}\); \(c_j^{(n)}=sE_s(js)\), \(j\in \overline{-n,n}\);

Then the sequence of the Levitan polynomials that corresponds to F can be written as

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Ivanov, A.V., Leonenko, N.N. & Orlovskyi, I.V. On the Whittle estimator for linear random noise spectral density parameter in continuous-time nonlinear regression models. Stat Inference Stoch Process 23, 129–169 (2020). https://doi.org/10.1007/s11203-019-09206-z
