Abstract

Using a case study of the Economics and Law Department of the Hungarian Academy of Sciences, we find that domestic rankings greatly distort researchers’ publication preferences. This finding runs counter to the importance of international journal rankings, such as the SCOPUS Scimago journal ranking or the Web of Science Impact Factor. We found inconsistencies between international and domestic standards, as well as differences among the eight committees of the academic department. Based on an exploratory empirical analysis of 4213 journals spanning eight scientific committees in social science areas (business, law, demography, sociology, regional studies, world economics, military science and political science), we argue that a key reason for Hungary’s declining international visibility is the strong and inconsistent bias toward domestic journals. We show that inconsistency in ranking fairness determines the behavior of researchers; this motivational effect is especially important in small countries, where local relevance often offsets the importance of mainstream international discourses. The digital transformation of science attenuates this problem: online repositories, indexing systems and online visibility have become key enablers of evidence-based assessments of publication performance.

Introduction

The debate on publication performance in the social sciences has been a heated topic among Hungarian scientists. Discussions focus on what generates real scientific discourse on relevant academic problems, as opposed to chasing impact factor (IF) scores or following mainstream scientometric bandwagons driven by Elsevier and other large publishers (Csaba et al. 2014; Braun 2010). These dilemmas extend to the bias inherent in the “core and periphery” divide in science (Demeter 2017a, b), reflected in a lack of attention paid to periphery countries in the social sciences (Demeter 2018). This neglect manifests itself as the so-called Matthew effect of scientific citations (Bonitz et al. 1997). Admittedly, some of these issues are local problems. However, as we argue in this paper, there are at least three areas in which these debates have international (INT) and conceptual relevance.

Firstly, these debates on scientometrics reflect a deep phenomenon affecting non-English-speaking countries with distinctive national research areas. In our opinion, this situation results from the transformation of science by Information and Communication Technologies (ICT). As a result of digitalization, databases, online tools, open-access journals and, as the case of Hungary illustrates, local repositories provide a vast amount of accessible data on actual publication performance. This elevates the importance of “evidence-based arguments” over “arguments of belief” about publication outputs. While Pajić (2014) found that Eastern European countries largely base their INT scientific production on national journals covered by the Web of Science (WoS) and SCOPUS, we found that Hungarian management and social science followed a different strategy (Table 1).

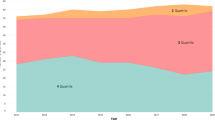

Pajić (2014) noted that Hungary maintains one of the highest H indices in the social sciences and a high rank in the Eastern European region. However, we noted a problem: a serious decrease in the total number of Hungarian publications outside the social sciences category, in the subject areas of business, economics and decision sciences. These data series, together with Pajić’s argument that the number of Hungarian publications indexed in SCOPUS stagnated after 2006 (Pajić 2014; Fig. 1), also highlight that Hungary has not followed the path of other Central and Eastern European countries in its social and management science publications; Hungarian public policy in these areas has chosen an alternative strategy, which might serve as a lesson to the international community.

Secondly, there is a need for specific scientific indicators for assessing the performance of Hungarian social science on the basis of the country’s history and the national path dependency of these academic fields. Partly because these scientific areas are embedded in the local and national environment, their questions are often relevant only to a smaller community and are not of interest to a wider global academic audience (Bastow et al. 2014). In the Eastern European context, this problem has been compounded, particularly in the 1990s, by the legacy of dictatorship and other oppressive political regimes, which dominated for a relatively long period (Kornai 1992). This environment made it virtually impossible for economics, law and sociology to operate as free, independent disciplines, especially compared with the natural and engineering sciences. Following this logic, the primary question is how to deal with the pressures of the “publish-or-perish” principle and how to assess real scientific impact and the fairness of performance assessment across disciplines. As a matter of science policy, should the Hungarian Academy of Sciences further encourage the proliferation of domestic (DOM) journals for local researchers? The number of Hungarian-language scientific journals grew from 184 to 298 between 2016 and 2018, an increase of roughly 60%. Pajić (2015) also found that the Scopus and Web of Science Journal Citation Reports (WoS JCR) ranking lists grew in volume by 30–40% and 40–50%, respectively, between 2007 and 2014. However, Hungarian data show that only 10% of the 298 Hungarian journals are listed in SCOPUS and only 3% in WoS. As a result, the growth of journals in Hungary is only “domestically recognized” and does not pass through Elsevier’s or Clarivate’s quality assurance.

Thirdly, connecting the two previous dilemmas, namely that (a) publication data are stored in databases in a transparent manner and can easily be compared across researchers, institutions and countries, and (b) there are different expectations for country-specific research issues, creating tension over how to set normative academic standards that motivate researchers to choose appropriate publication strategies, we argue that consistency and alignment with journal ranking best practices are essential. We focus on Hungary to illustrate that the ways in which scientific journals are assessed and ranked determine the behavior of researchers much more than the “politically correct” value of internationalization in science. Contrary to what Pajić (2015) found in his evaluation of the methodologies for assessing scientific papers in Eastern Europe (i.e., that a lower value is ascribed to national journals), we show that the practice of the Hungarian Academy of Sciences (HAS) is different. However, we found practical evidence confirming Pajić’s (2015) statement that authors boost their performance by publishing in lower-quality journals because of the pressure to publish in INT journals. Building on these thoughts, we center our investigation on the fairness and consistency of journal rankings in a unique environment in which both INT and special local repositories and assessment systems exist. Compared with practices in Western countries, this area has been less investigated, particularly in formerly communist countries (Templeton and Lewis 2015; Astaneh and Masoumi 2018).

We formulated our problem statement on the basis of these propositions, choosing scientific journals as our unit of analysis. In particular, we were interested in what the actual data reveal and how this information can be used to gain a deeper understanding of publication performance. Our assumption is that the consistency of journal rankings has strong implications for the behavior of researchers.

Literature review

There are several theories and concepts regarding what determines INT publication output. In the context of Central and Eastern European (CEE) countries, economic development (Vinkler 2008), the post-communist past (Kornai 1992) and being on the periphery of the social sciences (Demeter 2017a, b) are most often noted. This last issue has repeatedly been presented in the literature as a major challenge for authors as individuals (Schmoch and Schubert 2008), for institutions as competing scientific entities (Gumpenberger et al. 2016) and for regions seeking to disseminate or acquire knowledge in particular fields (Wang and Wang 2017). Stakeholder interests and objectives are also key factors in publication output (Shenhav 1986). In the social sciences, considerable emphasis is placed on local relevance because it drives access to R&D resources (Bastow et al. 2014). On the other hand, relevant local problems often do not fall within the mainstream areas of research discourse (Lauf 2005; Wiedemann and Meyen 2016). This situation results in an imbalanced flow of knowledge dissemination and absorption (Gerke and Evers 2006).

Digital disruption in the form of readily available repositories, online aggregators, indexing systems and research platforms has further widened the gap between journals that exploit new ICT innovations and business models and those that are lagging behind (Nederhof 2006; Teodorescu and Andrei 2011; Bunz 2006). Apart from the audited and centrally controlled main academic databases, such as Elsevier’s SCOPUS, which contains more than 30,000 journal titles, and Clarivate Analytics’ WoS, which contains 12,000 academic journals, there are crowdsourced and community-controlled visibility tools such as ResearchGate and Academia.edu, as well as other initiatives such as Harzing’s Publish or Perish and Google Scholar, for increasing publication outreach and visibility (Delgado and Repiso 2013). Several ethical questions naturally come into play, pertaining to academic standards, search bias, scoring algorithms, language preferences and predatory business models that take advantage of the publication pressure placed on authors and institutions (Astaneh and Masoumi 2018). Whatever resistance there may be to accepting new methods of publication dissemination and marketing, one thing is certain: as the digital ecosystem of science evolves, all stakeholders must assess the situation based on data and evidence from reliable and “accredited” sources (Demeter 2017a, b).

Countries on the periphery might choose to use INT repositories and indexing sites, or they can build their own local ones that cater to the publication policy and assessment needs of their local communities. Hungary chose the latter: the idea was to establish a comprehensive database collecting the scientific output of Hungarian researchers. As a result, the Repository of Hungarian Scientific Artefacts (RHSA) was established. The project was initiated in 2008 by the HAS, the Hungarian Accreditation Committee, the Rectors’ Conference, the Council of Hungarian Doctoral Schools and the National Foundation for Scientific Research (Makara and Seres 2013). The five founding organizations agreed that the repository needed to be a credible, auditable and authentic source of Hungarian research artifacts, including DOM and INT journal articles, books, patents, conference proceedings, educational materials and other such items. Over the years, the RHSA system has been strengthened institutionally by several legislative acts; its maintenance and operation have been delegated to the HAS. Its dedicated governance body consists of library and research experts who develop its strategy and report regularly to the president and the general council of the HAS (HAS Doctor of Science Requirements, www.mta.hu 2018). There are links and migration tools connecting the RHSA with SCOPUS and WoS. However, this cooperation is limited; the RHSA and its governance have in practice developed their own evaluation system (Csaba et al. 2014).

As publication performance, especially INT visibility, gains importance, countries are motivating their researchers to publish their work in journals that reach a wide audience while maintaining a high level of academic quality (Bastow et al. 2014). Because this performance is assessed as a mixture of local and INT publications, especially in small-country settings, the operation of these journals has become vitally important (Zdeněk 2018). The fairness of scientific journal rankings in particular has moved to the center of discussions in all academic disciplines and geographical regions (Templeton and Lewis 2015). Fairness in this context means an unbiased judgement of the stature of scientific journals that enables an impartial evaluation of research performance in a particular field of science, as well as a comparison of the new results and added value of individual fields of science with one another (Templeton and Lewis 2015). In Hungary, fairness in the social sciences is viewed with suspicion by a number of individual researchers and often by the evaluating institutions as well. Such resentment often results in institutional and scientific “chauvinism” in an attempt to protect fairness as a social construction of science (Soós and Schubert 2014).

We intend to contribute to the discourse of assessing the drivers of publications in social sciences by three propositions:

(a) Firstly, we place scientific journals at the center of our research as the unit of analysis and hypothesize that local and INT journal rankings determine publication behavior regardless of INT trends and pressures in small countries.

(b) Secondly, there is enough empirical evidence and factual data, in both INT (SCOPUS and WoS in particular) and DOM (e.g., RHSA) repositories, to move the discourse from the territory of “beliefs” to that of factual arguments and to lay the groundwork for “evidence-based publication policy” design.

(c) Thirdly, by analyzing empirical data on journal rankings and actual publication outputs, we show that journal valuation fairness and bias determine, with a high level of significance, the publication performance of Hungarian researchers in the social sciences.

We chose a case study as our research design and present the journal ranking data of the IX. Department of Economics and Law at the HAS.

Case study background

The research design was developed for the scientific journal assessment board of the IX. Economics and Law Department (ELD) of the HAS; this work was led by two leading members of the HAS. The lead author of this paper served as the chief analyst for the project and developed the case study design based on the experience gained during that process.

The ELD of the HAS was originally established for and by academics working in economics and law. Other scientific areas and disciplines were later added to the department. Presently, its scientific and doctoral committees grant “Doctor of Sciences (D.Sc.)” degrees in the following disciplines (HAS Doctor of Science Requirements, www.mta.hu 2018):

-

Business and Management Studies (BUS)

-

Demographic Studies (DEM)

-

Legal and Governmental Sciences (LAW)

-

Military Science (MIL)

-

Political Science (POL)

-

Regional Studies (REG)

-

Sociology (SOC)

-

World Economics and Development Studies (WED)

These committees play a pivotal role in setting scientific standards for doctoral education, establishing guidelines for academic promotion and laying down principles for scientific practices, both at research institutes and at universities. Doctoral schools that award Ph.D. degrees can only be directed by academics who possess D.Sc. degrees. Therefore, the role of the HAS is not only theoretical but also institutional.

To assess the publication performance of D.Sc. candidates, the committees have created journal baskets that categorize both DOM and INT journals. Adherence to these lists and categories is essential for reaching the score required by each committee. Although specific conditions vary among the eight disciplines, each committee requires regular publication and a large number of independent citations in both INT and Hungarian journals. There are several other requirements for granting the D.Sc. degree, but because our research focuses specifically on journals, these other criteria are not discussed in our analysis. One further element must be noted, because it plays an important role across all committees and essentially the entire department: beyond journals, monographs also carry substantial weight. The emphasis on books is especially strong in Legal and Governmental Sciences (LAW) and Military Science (MIL). Journal baskets are regularly re-evaluated and updated while attempting to maintain stability for long-range academic planning. Doctoral schools also use the lists for Ph.D. assessment, and universities use them for academic promotion. Therefore, the motivational power and influence of journal rankings determine career paths and how institutions and individuals build their long-term publication strategies.

To assign journals to categories, the committees used predefined quality requirements; determining journal baskets is a process of social construction in which the personal experience of senior committee members, their publication histories, the standing of the Hungarian language and the local importance of a journal’s focus naturally play a role. As far as quality requirements are concerned, the bylaws state that journals recognized by the HAS must be peer-reviewed, have an editorial board, be published regularly, adhere to the academic standards of the field and contain a list of references (HAS Doctoral Regulations 2014). The transparency and conclusiveness of this (sometimes rather heated) constructive dialogue are ensured by the committee bylaws, the oversight of the Academic Board of the IX. Department and the President Board of the entire Academy. A lengthy and time-consuming procedure ensures that the committees openly discuss, vote on and publish the so-called IX. Section list of accepted academic journals (HAS list) (HAS Doctor of Science Requirements, www.mta.hu 2018).

Source of data

To explore our research questions, we collected data on the journal rankings of the eight committees from two sources at the end of 2017. The first source was the actual journal list, with the available attributes of both DOM and INT journals. For INT journals, we recorded, where available, the INT databases in which they were listed, paying special attention to SCOPUS and WoS journals. For SCOPUS journals, we used the recently developed Scimago Journal Ranking (González-Pereira et al. 2010); for WoS journals, we used IF data. The second major source of data was the RHSA, from which we collected the number of publications uploaded for each HAS-ranked journal of the eight committees.

Table 2 summarizes the database we built for the analysis. The first set of fields identifies each journal by its ISSN and electronic ISSN, title and numerical code. The second category describes the audience of the journals, which are either DOM journals written in Hungarian (n = 197) or INT journals written in English (n = 4016). Finally, the third category contains data on the journals’ availability in different repositories.
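A record of the journal database summarized in Table 2 can be sketched as a small Python data class. This is a minimal illustration, not the authors’ actual schema: the class name `JournalRecord`, its field names, the helper `audience_counts` and the two example rows are hypothetical, and we assume each journal has at least one of an ISSN, an e-ISSN or a numerical code.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class JournalRecord:
    """One row of the journal dataset (hypothetical schema)."""
    code: str               # numerical code of the journal in the HAS list
    title: str
    issn: Optional[str]     # print ISSN, if any
    eissn: Optional[str]    # electronic ISSN, if any
    audience: str           # "DOM" (Hungarian-language) or "INT" (English-language)
    in_scopus: bool         # has a Scimago quartile
    in_wos: bool            # has a WoS Impact Factor

    def key(self) -> str:
        """Identifier for matching across sources: ISSN, then e-ISSN, then the HAS code."""
        return self.issn or self.eissn or self.code


def audience_counts(records: Iterable[JournalRecord]) -> Counter:
    """Number of journals per audience category."""
    return Counter(r.audience for r in records)


records = [
    JournalRecord("101", "Example DOM journal", "0000-0001", None, "DOM", False, False),
    JournalRecord("102", "Example INT journal", None, "1111-2222", "INT", True, True),
]
print(audience_counts(records))
```

Applied to the full dataset, `audience_counts` would simply reproduce the DOM and INT totals given above; the `key` fallback reflects the need to match journals across the HAS list, SCOPUS, WoS and the RHSA.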

We used SPSS Statistics for Windows (IBM Corp. Armonk, NY, Version 24.0. 2016) and Excel (Microsoft, Redmond, WA, Version 2016) modeling to test our three propositions.

Discussion of results

Journal rankings and publication output: what empirical data show in the IX. Department of the HAS

The scientific committees ranked different numbers of DOM and INT journals. Table 3 reveals that while the number of DOM journals varied between 30 (MIL) and 53 (SOC), the number of INT journals varied much more, from 56 (MIL) to 1896 (BUS). A Pearson’s r of 0.127 (p < 0.01) indicates that the differences between the committees are not random, although the association is weak.

The HAS journal ranking, which is the most important for academic promotion, is summarized in Table 4. Committees typically create four journal categories: category A includes the top-ranked journals and category D the lowest-ranked journals. Two committees (MIL and SOC), however, use only three categories, and another two (LAW and POL) do not assign category D to INT journals.

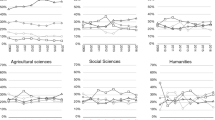

The highest ratio of A-ranked DOM journals is found in the Legal and Governmental Sciences Committee (LAW = 30%); the lowest is in the World Economics and Development Studies Committee (WED = 5.9%). In contrast, Business and Management Studies (BUS) classifies the highest ratio of journals into the lowest category (BUS = 36.7%), whereas the Regional Studies Committee (REG) places only 13.0% of its journals into category D. For INT journals, the proportional variation is much smaller: Political Science places the highest share of its journals (25%) into category A, while Sociology places 14.6% of its journals there. At the other end of the scale, Demographic Studies classifies 36.8% of its listed journals into category D, and the Regional Studies Committee places only 3.4% of its journals into this basket. Overall, the HAS journal ranking shows that, with the exception of Military Science, more than 50% of DOM journals are ranked into the lower half of the categories. Business and Management ranked a record high 70% of its DOM journals into categories C and D, effectively assessing most Hungarian academic journals as having lower scientific value than INT journals. The assessment of INT journals is similar: six committees classified more than 50% of their journals into categories C or D, and the remaining two fell just below this threshold (WED 48%, POL 49%). These ratios are slightly lower than in the DOM case.
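The category shares quoted above are within-committee percentages. A minimal sketch, assuming a committee’s journals are given as a list of HAS category labels; the function names and the toy data are hypothetical. For the three-category committees (MIL and SOC), the “lower half” would need a different cut-off than categories C and D.

```python
from collections import Counter
from typing import Dict, Sequence


def category_shares(categories: Sequence[str],
                    labels: Sequence[str] = ("A", "B", "C", "D")) -> Dict[str, float]:
    """Percentage of a committee's journals in each HAS category."""
    counts = Counter(categories)
    total = sum(counts[label] for label in labels)
    if total == 0:
        raise ValueError("no journals with a recognized category")
    return {label: 100.0 * counts[label] / total for label in labels}


def lower_half_share(categories: Sequence[str]) -> float:
    """Percentage of journals placed in categories C or D (four-category scheme)."""
    shares = category_shares(categories)
    return shares["C"] + shares["D"]


# Toy committee: 3 A, 0 B, 4 C and 3 D journals.
toy = ["A"] * 3 + ["C"] * 4 + ["D"] * 3
print(category_shares(toy))
print(lower_half_share(toy))
```

Running the same functions separately on the DOM and INT journals of each committee gives the kind of figures reported in Table 4.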

To describe publication performance, we used the RHSA repository, downloading the total number of publications recorded for all 4210 journals (data were missing for 3) on 31 January 2017. As Table 5 shows, the RHSA contains 98,324 papers in the ranked journals; 91% were published in Hungarian journals and only 9% in INT journals. This ratio is almost the reverse of the composition of the journal list (5% Hungarian and 95% INT): a DOM journal in the RHSA database contains, on average, 453.9 papers, whereas an INT journal contains only 2.2. Both distributions are right-skewed, the INT distribution 10 times more so than the DOM distribution. The large spread indicates that researchers have not published at all in a substantial number of journals: 2 DOM journals and 2675 INT journals, which together constitute about 64% of the 4210 HAS-ranked journals with available data.
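The per-journal averages and the share of journals without any RHSA publication follow directly from per-journal paper counts. A minimal sketch with hypothetical names and toy data, assuming the counts are grouped by audience label ("DOM" or "INT"):

```python
from statistics import mean
from typing import Dict, List


def audience_summary(papers_by_journal: Dict[str, List[int]]) -> Dict[str, object]:
    """Summarize RHSA paper counts per audience.

    papers_by_journal maps an audience label to a list with one paper count per journal.
    """
    total_papers = sum(sum(counts) for counts in papers_by_journal.values())
    total_journals = sum(len(counts) for counts in papers_by_journal.values())
    by_audience = {}
    for audience, counts in papers_by_journal.items():
        by_audience[audience] = {
            "paper_share": sum(counts) / total_papers,      # share of all papers
            "journal_share": len(counts) / total_journals,  # share of all journals
            "mean_papers": mean(counts),                    # average papers per journal
            "zero_journals": sum(1 for c in counts if c == 0),
        }
    # Share of all journals in which nobody has published, across audiences.
    zero_total = sum(s["zero_journals"] for s in by_audience.values())
    return {"by_audience": by_audience, "zero_share": zero_total / total_journals}


toy = {"DOM": [10, 0, 20], "INT": [0, 0, 1, 1]}
result = audience_summary(toy)
print(result["by_audience"]["DOM"]["mean_papers"], result["zero_share"])
```

Fed with the full RHSA download, the DOM and INT `mean_papers` values would correspond to the per-journal averages in Table 5, and `zero_share` to the fraction of ranked journals without any recorded paper.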

Table 6 shows how concentrated publication output is, especially in the BUS and LAW committees, where the number of listed journals is comparatively high. The MIL, DEM and REG committees show a more even distribution of publications across HAS-ranked journals, but among their SCOPUS- and IF-listed journals they show the same concentration as the other committees. Surprisingly, in the SOC committee 13% of the IF journals contained 80% of the committee’s WoS papers, the widest spread among all committees.
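One way to read these concentration figures is as the smallest share of journals that together hold a given share (here 80%) of a committee’s papers. The sketch below assumes this reading of Table 6; the function name and the toy counts are hypothetical.

```python
from typing import Sequence


def concentration_share(paper_counts: Sequence[int], coverage: float = 0.8) -> float:
    """Smallest fraction of journals that together hold at least `coverage` of all papers."""
    counts = sorted(paper_counts, reverse=True)  # most-published journals first
    total = sum(counts)
    if total == 0:
        raise ValueError("no papers recorded")
    target = coverage * total
    running = 0
    for k, c in enumerate(counts, start=1):
        running += c
        if running >= target:
            return k / len(counts)
    return 1.0


# Toy committee with 10 journals and 100 papers: the top 2 journals hold 80 papers.
print(concentration_share([50, 30, 10, 5, 5, 0, 0, 0, 0, 0]))
```

A small return value signals strong concentration; a larger value, as in the SOC case, means the papers are spread over more journals.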

The RHSA data thus reveal that researchers, under the ranking scheme of the HAS IX. Department, strongly prefer Hungarian journals, even though the number of available INT journals is 18 times larger. Moreover, the differences between committees (Table 7) are substantial rather than random. These data do not confirm the finding of Pajić (2015) that evaluation methodologies for scientific papers usually favor journals ranked within the top 10% or 30% of JCR or SCOPUS and accordingly assign lower value to DOM journals.

The largest number of INT papers was published in Business and Management committee journals (6063) and the smallest in Military Science committee journals (113). The Military Science committee also has the lowest number of DOM publications (8076); the highest was found in the Regional Studies committee.

The percentages in Table 7 describe the distribution of publications across HAS journal categories within each committee. These data show that, for the INT audience, Sociology committee journals contain the highest share of A and B category papers (73%) and Military Science committee journals the lowest (24%). For the DOM audience, however, the Military Science committee has the highest share of category A and B publications, and the Business and Management committee the lowest (27%). The share of publications in A and B categories in INT journals exceeded 50% in five committees (LAW, WED, POL, REG and SOC); in DOM journals, this share exceeded 50% in only two committees (MIL = 73% and LAW = 70%). The few researchers who publish in INT journals prefer A or B category journals in the LAW, WED, POL, REG and SOC committees, whereas the much larger group of researchers who publish in DOM journals place their papers in C and D category journals (DEM, BUS, WED, POL, REG, SOC).

These empirical data indicate a serious bias toward DOM publications and, beyond that, a significant inconsistency between HAS rankings and actual journal publication performance. In the next section, we assess the constructive fairness of the committees and show how this inconsistency contributes to the increase in domestic publication output.

Assessing constructive fairness: comparison of HAS, SCOPUS and WoS journal rankings

To assess ranking fairness, we chose two benchmarks and collected indicators for the HAS-ranked journals listed in Table 2. The first benchmark was the best Scimago quartile ranking of each journal (González-Pereira et al. 2010); the second was the WoS IF, where available. Of the 4213 journals, 2272 (54%) had a 2015 Scimago Q value and 1330 (32%) had a 2014 IF value. The RHSA recorded 13,718 publications in the SCOPUS-Scimago journals (14% of all publications) and 6650 in the WoS IF journals (7% of all publications). Both the small number of such journals and the small number of papers published in them highlight the need for the HAS ranking. This is especially true for Hungarian journals: we found only 2 with IF values and 13 with Q categories (Q2 = 1, Q3 = 2, Q4 = 10). Still, we argue that the more than 2200 SCOPUS and 1300 WoS journals serve as good proxies for assessing HAS ranking fairness. The key idea is that greater consistency among the three rankings (HAS, SCOPUS and WoS) indicates that the constructive scientific discourse in a particular committee is also consistent.

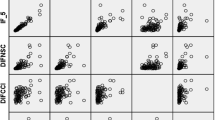

Cross-tabulating the SCOPUS and WoS values, we found that 814 of the 956 Q1 journals had IF values, and 322 of the 587 Q2 journals had IF scores. There were 140 journals with IF values in the Q3 category, and only 47 of the 275 Q4 journals had IF values. A correlation test between the two variables yielded a Spearman coefficient of 0.522 (p < 0.01); that is, the SCOPUS and IF rankings are moderately correlated.
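Spearman’s rho can be computed as the Pearson correlation of average ranks, which handles the many ties that arise when one variable is a quartile. A minimal sketch in plain Python with hypothetical function names and toy data; the sign of the coefficient depends on the coding (e.g., whether Q1 is coded as 1 or as 4). In practice, `scipy.stats.spearmanr` returns the same statistic together with a p-value.

```python
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Rank values from 1..n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of tied values starting at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # positions i..j are 0-based, ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman_rho(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rho as the Pearson correlation of average ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(x)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    return cov / (vx * vy) ** 0.5


# Toy data: quartiles coded so that Q1 = 4 and Q4 = 1, paired with impact factors.
quartile = [4, 4, 3, 2, 1]
impact_factor = [3.1, 2.5, 1.2, 0.9, 0.4]
print(spearman_rho(quartile, impact_factor))
```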

We ran correlation tests for each committee separately, because the WoS IF and SCOPUS quartile values of a journal are the same across HAS committees (i.e., all committees used the journal’s best Q value across the Scimago subject areas) and only the HAS ranking differs between committees. The results are summarized in Table 8. In five committees (SOC, POL, WED, BUS and LAW), Pearson’s chi-squared test indicated a significant (p < 0.05) association between HAS ranking and SCOPUS Q quartile categories. In each of these cases, Cramér’s V indicated a weak to moderate association (e.g., LAW = 0.201; POL = 0.334).
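For each committee, the test operates on the cross-tabulation of HAS categories against SCOPUS quartiles. A minimal sketch of Pearson’s chi-squared statistic and Cramér’s V, assuming a contingency table without empty rows or columns; the function name and the toy tables are hypothetical. The p-value requires the chi-squared distribution, which `scipy.stats.chi2_contingency` (with `correction=False`) reports alongside the statistic.

```python
from typing import List, Tuple


def chi2_cramers_v(table: List[List[int]]) -> Tuple[float, float]:
    """Pearson chi-squared statistic and Cramer's V for an r x c contingency table.

    Rows could be HAS categories and columns SCOPUS quartiles; every row and
    column total must be positive.
    """
    row_tot = [sum(row) for row in table]
    col_tot = [sum(col) for col in zip(*table)]
    n = sum(row_tot)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_tot[i] * col_tot[j] / n  # expected count under independence
            chi2 += (observed - expected) ** 2 / expected
    k = min(len(table), len(table[0])) - 1
    v = (chi2 / (n * k)) ** 0.5
    return chi2, v


# Perfect association versus complete independence in a 2 x 2 toy table.
print(chi2_cramers_v([[10, 0], [0, 10]]))
print(chi2_cramers_v([[5, 5], [5, 5]]))
```

Cramér’s V rescales the statistic to the range 0 to 1, which is what allows the committees’ association strengths to be compared despite their very different numbers of journals.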

In order to determine how each committee distorted its own ranking, we calculated a strengthening and weakening ratio for each HAS category.

Mathematically, we formalized these values as follows; the results of the computations are shown in Table 9, series (a)–(h).

-

Weakening indicator:

$$w_{j} = \frac{\sum\nolimits_{i = j + 1}^{5} a_{ij}}{N_{j}} \tag{1}$$

-

Strengthening indicator:

$$s_{j} = \frac{\sum\nolimits_{i = 1}^{j - 1} a_{ij}}{N_{j}} \tag{2}$$

where \(w_{j}\) is the weakening factor of the jth HAS category in the given committee; j runs from 1 to 4 (A = 1, B = 2, C = 3, D = 4); i takes five values, namely the four Q categories (1, 2, 3, 4) and the non-indexed category (5); \(s_{j}\) is the strengthening factor of the jth HAS category in the given committee; \(a_{ij}\) is the number of journals ranked in the ith SCOPUS and jth HAS category; and \(N_{j}\) is the total number of journals in the jth HAS category.
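Equations (1) and (2) can be computed directly from a committee’s cross-tabulation. A minimal sketch, assuming the table is stored as a 5 x 4 list of lists with rows i = Q1, Q2, Q3, Q4, non-indexed and columns j = A, B, C, D (Python indices start at 0, so index i stands for category i + 1); the function name and the toy table are hypothetical.

```python
from typing import List, Tuple


def weakening_strengthening(a: List[List[int]]) -> List[Tuple[float, float]]:
    """Return (w_j, s_j) for each HAS category j, following Eqs. (1) and (2).

    a[i][j] is the number of journals in SCOPUS category i (0..3 = Q1..Q4,
    4 = non-indexed) and HAS category j (0..3 = A..D).
    """
    n_rows, n_cols = len(a), len(a[0])
    result = []
    for j in range(n_cols):
        n_j = sum(a[i][j] for i in range(n_rows))  # N_j: all journals in HAS category j
        # Eq. (1): rows below the diagonal, i.e. 1-based i = j+1..5.
        w_j = sum(a[i][j] for i in range(j + 1, n_rows)) / n_j
        # Eq. (2): rows above the diagonal, i.e. 1-based i = 1..j-1.
        s_j = sum(a[i][j] for i in range(j)) / n_j
        result.append((w_j, s_j))
    return result


toy = [
    [5, 1, 0, 0],  # Q1
    [3, 4, 1, 0],  # Q2
    [1, 2, 3, 1],  # Q3
    [0, 1, 2, 2],  # Q4
    [1, 2, 4, 7],  # non-indexed
]
for label, (w, s) in zip("ABCD", weakening_strengthening(toy)):
    print(label, w, s)
```

Journals on the diagonal (where the HAS category matches the SCOPUS quartile) enter neither sum, so \(1 - w_{j} - s_{j}\) gives the share of category j on which both rankings agree.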

It is important to note that by comparing the weakening and strengthening factors of journals we do not judge the HAS categorization; we merely draw attention to its deviation from the two main indexing regimes. These strengthening and weakening ratios show how each committee over- or undervalues the SCOPUS Q quartile ranking when classifying journals into its A–D categories. The LAW and DEM committees tend to weaken SCOPUS rankings, which implies lower motivation for publishing in higher-value INT journals. The BUS and SOC committees, on the other hand, show a slight overvaluing tendency and provide more incentive for INT publications by assigning high HAS rankings to SCOPUS journals. Three committees (WED, POL and REG) appear well balanced: their HAS rankings largely agree with SCOPUS quartiles. Finally, the remaining committee (MIL) shows a particularly large discrepancy and inconsistency in terms of both its number of journals and its ranking practices. Each committee also ranks a relatively large number of non-indexed INT journals, and in two committees this number exceeds that of SCOPUS-ranked journals: LAW (965 non-SCOPUS and 417 SCOPUS) and MIL (71 non-SCOPUS and 15 SCOPUS).

Distortion of journal rankings by actual publications

Publication preferences also shape how scientists view the value of a journal. After all, how to combine higher- and lower-ranked journals, or when to publish in DOM or INT journals, is a strategic choice, and these decisions add up to a socially constructed judgement by the academic community. The responsibility of the HAS committees is pivotal here, because by ranking journals they fine-tune the incentives behind researchers’ actual publishing behavior. Researchers prefer to publish in DOM journals across all eight committees (Table 6). To adjust each committee’s journal ranks for the community’s publication behavior, we developed an index that weights the nominal rank of each journal by the proportion of the committee’s publications that appeared in that journal:

$${\text{HAS}}\_{\text{index}}_{i} = {\text{HAS}}\_{\text{value}}_{i} \cdot \frac{{\text{RHSA}}_{i}}{\sum\nolimits_{i = 1}^{n} {\text{RHSA}}_{i}}$$

$$Q\_{\text{index}}_{i} = Q\_{\text{value}}_{i} \cdot \frac{{\text{RHSA}}_{i}}{\sum\nolimits_{i = 1}^{n} {\text{RHSA}}_{i}}$$

$${\text{IF}}\_{\text{index}}_{i} = {\text{IF}}\_{\text{value}}_{i} \cdot \frac{{\text{RHSA}}_{i}}{\sum\nolimits_{i = 1}^{n} {\text{RHSA}}_{i}}$$

where \({\text{RHSA}}_{i}\) is the number of publications in the ith journal, \((\sum\nolimits_{i = 1}^{n} {{\text{RHSA}}_{i} } )\) is the total number of publications in the committee, \({\text{HAS}}\_{\text{value}}_{i}\) is the HAS category value of the ith journal, \({\text{HAS}}\_{\text{index}}_{i}\) is the HAS category value weighted by the publication preference of the ith journal, \(Q\_{\text{value}}_{i}\) is the SCOPUS Q category value of the ith journal, \(Q\_{\text{index}}_{i}\) is the Q category value weighted by the publication preference of the ith journal, \({\text{IF}}\_{\text{value}}_{i}\) is the IF of the ith journal, and \({\text{IF}}\_{\text{index}}_{i}\) is the IF value weighted by the publication preference of the ith journal.

If we sum these weighted journal rankings by committee, we obtain an index that is a unique attribute of each committee with regard to DOM and INT publication preferences. This index takes into account both the actual behavior of researchers (publication) and the committee's assessment of the journal (journal rank). According to this logic, a journal "loses" its rank if it provides easy access to scientists or if no one ever publishes in it.
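The per-journal weighting and its committee-level sum can be sketched in a few lines. This is a minimal illustration, assuming that journals are keyed by name, that journals without a value in the chosen scheme contribute 0, and that HAS categories are coded A = 4 down to D = 1; the function names, journal names and counts are hypothetical. Journal Z illustrates the second case mentioned above: an A-ranked journal in which nobody publishes adds nothing to the index.

```python
from typing import Dict, Mapping


def weighted_indices(publications: Mapping[str, int], values: Mapping[str, float]) -> Dict[str, float]:
    """Per-journal index: value_i * RHSA_i / sum(RHSA) within one committee.

    Journals missing from `values` (e.g. not ranked in the chosen scheme) count as 0.
    """
    total = sum(publications.values())
    if total == 0:
        raise ValueError("the committee has no publications")
    return {journal: values.get(journal, 0.0) * n / total for journal, n in publications.items()}


def committee_index(publications: Mapping[str, int], values: Mapping[str, float]) -> float:
    """Committee-level index: the sum of the per-journal weighted values."""
    return sum(weighted_indices(publications, values).values())


# Hypothetical committee (HAS scale assumed A = 4 down to D = 1).
pubs = {"Journal X": 30, "Journal Y": 10, "Journal Z": 0}
has_values = {"Journal X": 1, "Journal Y": 4, "Journal Z": 4}
print(committee_index(pubs, has_values))  # 1.75
```

Because the weights sum to 1, the committee index is simply a publication-weighted mean of the category values, so on the HAS scale it always lies between 1 and 4 when every journal is ranked.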

Another interpretation of our calculation logic is that a publication in an A-ranked HAS journal is worth four times as much as a publication in a D-ranked journal. For DOM journals, this index is highest in the LAW committee (3.04) and lowest in BUS (2.13) (Table 10). This gap indicates a major difference in evaluation fairness between the two committees: LAW is equivalent to a strong B HAS category, whereas BUS is closer to a C HAS category.
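The reading of the two committee indices can be made explicit with a tiny helper that maps an index back to the nearest HAS category. It is only an illustration, assuming the A = 4, B = 3, C = 2, D = 1 coding implied by the "four times" comparison; the function name is hypothetical, and nearest-integer rounding is our simplification of "equivalent to".

```python
# Assumed coding of HAS categories, consistent with "A is worth four times D".
HAS_CATEGORY_BY_VALUE = {4: "A", 3: "B", 2: "C", 1: "D"}


def nearest_has_category(index: float) -> str:
    """Map a committee index on the 1-4 scale to the closest HAS category letter."""
    clamped = min(max(index, 1.0), 4.0)  # keep the value inside the A-D range
    # round() sends exact .5 ties to the even integer (Python's round-half-to-even rule)
    return HAS_CATEGORY_BY_VALUE[round(clamped)]


print(nearest_has_category(3.04), nearest_has_category(2.13))  # B C
```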

One could naturally argue that it is more valuable for the academic community to conduct its scientific discourse in local journals, which, in legal studies, public administration, military science and other ELD disciplines, offer more relevance, visibility and academic impact. While accepting that in many social science areas local relevance outweighs compliance with INT bandwagons, we argue that journal quality and a critical mass of readership (indicated by citations) are crucial elements of ranking fairness. To support this argument, we collected the following data on the 298 DOM journals listed in ELD at the end of 2018:

- 109 (37%) published paper titles in English or another foreign language,
- 111 (37%) required abstracts in English or another foreign language,
- 42 (14%) included DOI identifiers,
- 22 (7%) had ethical guidelines or a codex,
- 13 (4%) had an archiving policy (as listed in Sherpa Romeo).

Furthermore, 162 of the 298 journals appeared only after a delay of more than 6 months (138 of them with a delay of more than a year), and only 136 were published on time or within 6 months. This means that less than half (46%) of the DOM academic journals appear regularly according to their schedule. The average delay of the last issue was 42 months. In addition, 20% of the journals were inactive, that is, they existed only as archived collections. Online presence is also an indicator of journal quality; 20% of the HAS-listed DOM journals had no online presence at all.
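As a quick consistency check, the percentages quoted above can be recomputed from the reported counts alone; no new data are involved, and the labels are shortened paraphrases of the items above. The on-time and delayed groups together account for all 298 journals.

```python
TOTAL_JOURNALS = 298  # DOM journals listed in ELD at the end of 2018

# Counts as reported in the text.
COUNTS = {
    "foreign-language titles": 109,
    "foreign-language abstracts": 111,
    "DOI identifiers": 42,
    "ethical guidelines or codex": 22,
    "archiving policy": 13,
    "on time or within 6 months": 136,
    "delayed by more than 6 months": 162,
}


def percent_shares(counts, total):
    """Round each count to a whole-number percentage of `total`."""
    return {label: round(100 * n / total) for label, n in counts.items()}


shares = percent_shares(COUNTS, TOTAL_JOURNALS)
for label, pct in shares.items():
    print(f"{label}: {pct}%")
```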

The domestic impact of local journals is also problematic. As shown in Table 5, Hungarian scientists in the social and management science areas of HAS publish about 10 times more papers in DOM journals than in INT ones. Table 11 summarizes a simple analysis of the extent to which Hungarian scientists read and cite articles in these journals. We divided the number of citations from the same journals in the same 2 years by the number of papers published in the journals to generate an "impact factor-like" ratio. As Table 11 shows, this ratio ranges from 0.487 (Military Science committee) to 0.254 (Demography committee). These findings show that the articles generate little academic discussion and have little impact on the Hungarian research community.
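The "impact factor-like" ratio is a simple quotient, sketched below under the assumption that citations and papers are counted over the same two-year window, as the text describes. The function name and the counts are hypothetical; a value below 1 means that, on average, a paper received less than one citation from the same journals in that window.

```python
def if_like_ratio(citations: int, papers: int) -> float:
    """Citations counted over a two-year window divided by papers published in that window."""
    if papers <= 0:
        raise ValueError("papers must be a positive count")
    return citations / papers


# Hypothetical committee: 400 papers in two years, cited 100 times in the same journals.
ratio = if_like_ratio(citations=100, papers=400)
print(ratio)  # 0.25
```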

For SCOPUS journals, we used an analogous scale (4 for the category value of Q1 down to 1 for Q4). Applying it to the same committees reveals the opposite difference in evaluation fairness: the BUS index is 2.98, while the LAW index is 0.62. These results show that, judged by where its researchers actually publish, the academic community of the former committee averages out at around a Q2 SCOPUS rating, while the latter does not even reach Q4.
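Why a committee index can fall below the Q4 value of 1 is easiest to see in a sketch. It assumes, as the LAW result of 0.62 implies but the text does not state explicitly, that publications in journals without a SCOPUS quartile are counted with a value of 0; a weighted mean of values between 1 and 4 could not otherwise drop below 1. The function name and committee data are hypothetical.

```python
from typing import Iterable, Optional, Tuple

# Quartile coding from the text: Q1 = 4 down to Q4 = 1.
# Assumption: journals without a SCOPUS quartile contribute 0.
Q_SCALE = {"Q1": 4, "Q2": 3, "Q3": 2, "Q4": 1}


def scopus_committee_index(publications: Iterable[Tuple[Optional[str], int]]) -> float:
    """Publication-weighted mean quartile value; items are (quartile or None, number of papers)."""
    items = list(publications)  # materialize so the data can be traversed twice
    total = sum(n for _, n in items)
    if total == 0:
        raise ValueError("the committee has no publications")
    return sum(Q_SCALE.get(q, 0) * n for q, n in items) / total


# Hypothetical committee: most papers appear in non-indexed journals.
example = [("Q1", 5), ("Q4", 5), (None, 90)]
print(scopus_committee_index(example))  # 0.25
```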

For IF equivalence there is no such reference scale, because IF is a ratio-scale variable. In general, the normalized IF value of a committee corresponds to that of a journal with a similar IF. Using the IF values of INT journals, the dominance of BUS (3.14) is striking compared with the committees that are practically invisible in terms of IF scores; Table 9 shows the results of our computation. In our opinion, these data demonstrate a critical inhomogeneity in journal evaluation fairness within the IX. Department, which is visualized in Figs. 1, 2 and 3.

Conclusions

We have argued that, in a country with specific research strategies and a unique language environment, journal evaluations noticeably influence publication output and performance. Other documented factors, such as path dependency, the national relevance of scientific fields and historical heritage, also affect publication output. By analyzing the journal rankings in the Economic and Legal Department of the HAS and the RHAS, we presented such a case and illustrated how evidence-based assessment of scientific performance can inform heated debates on beliefs, normative expectations and the actual situation. The availability of online databases and repositories has improved the transparency of scientific publications, even in countries with niche languages. The use of such repositories is essential for public policy decisions on scientific publishing.

We have shown that, despite the staggering speed of globalization in the scientific fields of the ELD committees (business, demography, law, sociology, political science, regional studies, world economics and military science), over 90% of publication output has been visible only in Hungarian journals; the remaining 10% is almost exclusively placed in non-indexed journals of questionable quality and visibility.

Our data have shown that this performance history is strongly reaffirmed, and also driven, by inconsistent journal evaluation practices in the IX. Department of the HAS. First, the ranking values appear to be strongly biased toward the small number of Hungarian journals compared with the large number of INT journals. Academic committees approach the weakening and strengthening of SCOPUS Scimago rankings differently: Legal Studies and Military Science rank more non-indexed INT journals than SCOPUS-ranked journals, which is likely responsible for the largest deviation from INT trends. Web of Science journals and publications with IF are very rare as a result of the position of the HAS Departmental Committee, which declared some years ago that IF is not a required condition for academic promotion in the social sciences. Our empirical data reveal statistically significant differences in committee journal rankings and show that publication volume in journals correlates with language preferences. We also noted a strong bias toward journals ranked highly by the HAS rather than by SCOPUS or WoS.

We have also developed a publication value index of journals for each scientific committee. Under each of three schemes (HAS, SCOPUS and WoS), this index weights a journal's category by the share of the committee's papers published in that journal. Ranking the committees' publication performance by this index yields different results depending on the audience (DOM vs. INT) and the scheme chosen. Legal Studies and Military Science lead when the Hungarian (HAS) scheme is applied; Business Studies, on the other hand, ranks highest when the SCOPUS and WoS schemes are selected.

The bias toward non-indexed DOM journals in the social sciences makes it difficult to evaluate differences fairly and contributes substantially to the decline in international visibility and in the prestige of Hungarian publications.

Our results have been discussed by several committees since we started to publish these data. In 2017, a much more thorough process was initiated to create more consistent journal baskets and to ensure evidence-based discussion of how to construct fair journal rankings. All in all, harmonizing publication practices with lists of INT journals is a necessity; in our opinion, the careful implementation of a consistent and fair journal ranking scheme will motivate researchers of the IX. Department to increase their number of publications.

References

Astaneh, B., & Masoumi, S. (2018). From paper to practice; indexing systems and ethical standards. Science and Engineering Ethics, 24(2), 647–654.

Bastow, S., Dunleavy, P., & Tinkler, J. (2014). The impact of social sciences: How academics and their research make a difference. Los Angeles: SAGE.

Bonitz, M., Bruckner, E., & Scharnhorst, A. (1997). Characteristics and impact of the Matthew effect for countries. Scientometrics, 40(3), 407–422.

Braun, T. (2010). Új mutatószámok a tudományos folyóiratok értékelésére—valóban indokolt-e az Impaktfaktor egyeduralma? Magyar Tudomány, 171(11), 215–220.

Bunz, U. (2006). Publish or perish: A limited author analysis of ICA and NCA journals. Journal of Communication, 55(4), 703–720. https://doi.org/10.1111/j.1460-2466.2005.tb03018.x.

Csaba, L., Szentes, T., & Zalai, E. (2014). Tudományos-e a tudományosmérés? Megjegyzések a tudománymetria, az Impakt Faktor és az MTMT használatához. Magyar Tudomány, 174(12), 442–466.

Delgado, E., & Repiso, R. (2013). The impact of scientific journals of communication: Comparing Google Scholar Metrics, Web of Science and Scopus. Comunicar, 41(21), 45–52.

Demeter, M. (2017a). Author productivity index: Without distortions. Science and Engineering Ethics. https://doi.org/10.1007/s11948-017-9954-7.

Demeter, M. (2017b). The core-periphery problem in communication research: A network analysis of leading publication. Publishing Research Quarterly, 33(4), 402–420.

Demeter, M. (2018). Nobody notices it? Qualitative inequalities of leading publication in communication and media research. International Journal of Communication, 12, 1–18.

Gerke, S., & Evers, H.-D. (2006). Globalizing local knowledge: Social science research on Southeast Asia, 1970–2000. Journal of Social Issues in Southeast Asia, 21(1), 1–21.

González-Pereira, B., Guerrero-Bote, V. P., & Moya-Anegón, F. (2010). A new approach to the metric of journals’ scientific prestige: The SJR indicator. Journal of Informetrics, 4(3), 379–391.

Gumpenberger, C., Sorz, J., Wieland, M., & Gorraiz, J. (2016). Humanities and social sciences in the bibliometric spotlight—Research output analysis at the University of Vienna and considerations for increasing visibility. Research Evaluation, 25(3), 271–278.

HAS Doctor of Science Requirements (in Hungarian)—www.mta.hu. (05 August 2018). Source: http://mta.hu/doktori-tanacs/a-ix-osztaly-doktori-kovetelmenyrendszere-105380.

HAS Doctoral Regulations, 17/2014. (in Hungarian) (HAS Assembly 06/05/2014). Source: http://mta.hu/data/dokumentumok/doktori_tanacs/IX.%20Osztaly/Doktori_Ugyrend_IX_Osztaly.pdf. Accessed 14 Aug 2017.

Kornai, J. (1992). The Socialist system: The political economy of Communism. Oxford: Oxford University Press.

Lauf, E. (2005). National diversity of major international journals in the field of communication. Journal of Communication, 55(1), 139–151.

Makara, G. B., & Seres, J. (2013). A Magyar Tudományos Művek Tára (MTMT) és az MTMT2. Tudományos és műszaki tájékoztatás, 60(4), 191–195.

Nederhof, A. J. (2006). Bibliometric monitoring of research performance in the social sciences and the humanities: A review. Scientometrics, 66(1), 81–100.

Pajić, D. (2014). Globalization of the social sciences in Eastern Europe: Genuine breakthrough or a slippery slope of the research evaluation practice? Scientometrics, 102(3), 2131–2150.

Pajić, D. (2015). On the stability of citation-based journal rankings. Journal of Informetrics, 9(4), 990–1006.

Schmoch, U., & Schubert, T. (2008). Are international co-publications an indicator for quality of scientific research? Scientometrics, 74(3), 361–377.

Shenhav, Y. A. (1986). Dependency and compliance in academic research infrastructures. Sociological Perspectives, 28(1), 29–51.

Soós, S., & Schubert, A. (2014). PTB-folyóiratlista MTMT-alapú osztályozása kutatásértékelési eljárásokhoz, A tudományos folyóiratok kutatásértékelési célú osztályozási gyakorlatának korszerűsítése az MTMT adattartalmának felhasználásával. MTA KIK Tudománypolitikai és Tudományelemzési Osztály. Budapest. Source: http://www.mtakszi.iif.hu/docs/jelentesek/TTO_jelentes_MTMT2_D6.pdf. Accessed 14 Aug 2017.

Templeton, G. F., & Lewis, B. R. (2015). Fairness in the institutional valuation of business journals. MIS Quarterly, 39(3), 523–539.

Teodorescu, D., & Andrei, T. (2011). The growth of international collaboration in East European scholarly communities: A bibliometric analysis of journal articles published between 1989 and 2009. Scientometrics, 89(2), 711–722.

Vinkler, P. (2008). Correlation between the structure of scientific research, scientometric indicators and GDP in EU and non-EU countries. Scientometrics, 74(2), 237–254.

Wang, L., & Wang, X. (2017). Who sets up the bridge? Tracking scientific collaborations between China and the European Union. Research Evaluation, 26(2), 124–131.

Wiedemann, T., & Meyen, M. (2016). Internationalization through Americanization: The expansion of the International Communication Association’s leadership to the world. International Journal of Communication, 10, 1489–1509.

Zdeněk, R. (2018). Editorial board self-publishing rates in Czech economic journals. Science and Engineering Ethics, 24(2), 669–682.

Acknowledgements

Open access funding provided by University of Miskolc (ME).

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Sasvári, P., Nemeslaki, A. & Duma, L. Exploring the influence of scientific journal ranking on publication performance in the Hungarian social sciences: the case of law and economics. Scientometrics 119, 595–616 (2019). https://doi.org/10.1007/s11192-019-03081-4

DOI: https://doi.org/10.1007/s11192-019-03081-4