As a communications system, the network of journals that play a paramount role in the exchange of scientific and technical information is little understood…

Garfield (1972, p. 471)

Abstract

Journals were central to Eugene Garfield’s research interests. Among other things, journals are considered as units of analysis for bibliographic databases such as the Web of Science and Scopus. In addition to providing a basis for disciplinary classifications of journals, journal citation patterns span networks across boundaries to variable extents. Using betweenness centrality (BC) and diversity, we elaborate on the question of how to distinguish and rank journals in terms of interdisciplinarity. Interdisciplinarity, however, is difficult to operationalize in the absence of an operational definition of disciplines; the diversity of a unit of analysis is sample-dependent. BC can be considered as a measure of multi-disciplinarity. Diversity of co-citation in a citing document has been considered as an indicator of knowledge integration, but an author can also generate trans-disciplinary—that is, non-disciplined—variation by citing sources from other disciplines. Diversity in the bibliographic coupling among citing documents can analogously be considered as diffusion or differentiation of knowledge across disciplines. Because the citation networks in the cited direction reflect both structure and variation, diversity in this direction is perhaps the best available measure of interdisciplinarity at the journal level. Furthermore, diversity is based on a summation and can therefore be decomposed; differences among (sub)sets can be tested for statistical significance. In the appendix, a general-purpose routine for measuring diversity in networks is provided.

Introduction

The journal network and its role in collecting and communicating advances in science continues to be a source of debate and challenge to understanding. The network illustrates Herbert Simon’s (1962) argument that systems are shaped in hierarchies in order to deal with complexity (Boyack et al. 2014). The journal structures provide order and improve the efficiency of the search for new information. Eugene Garfield enhanced this role by creating additional categories for the evaluation of journals (Bensman 2007).

Although citations are paper-specific (Waltman and van Eck 2012), Garfield (1972) constructed the Science Citation Index (SCI) and its derivatives (such as the Social Science Citation Index) at the journal level (Garfield 1971). By aggregating citations at that level, one obtains a systems view of the disciplines as they are linked to the subjects covered by the respective journals (Narin et al. 1972). The Institute of Scientific Information (ISI) developed a journal classification system—the so-called “Web-of-Science subject categories” (WC)—that is often used in scientometric evaluations. Three decades later, however, Pudovkin and Garfield (2002, p. 1113) stated that journals had been assigned to these categories by “subjective and heuristic methods” that did not sufficiently appreciate, or perhaps allow for the visibility of, the relatedness of journals across boundaries (Leydesdorff and Bornmann 2016). As boundaries are drawn to enhance efficiency, new developments, especially those that bring together disparate ideas in original ways (Uzzi et al. 2013), can be disadvantaged by a scheme that relies on incremental additions to the conventional subject categories (Rafols et al. 2012).

The categorization of research in terms of disciplines has often been commented upon in the history of science. For example, Bernal (1939, p. 78) noted that: “From their very nature there must be a certain amount of overlapping….” In 1972, the Organization for Economic Cooperation and Development (OECD 1972) proposed a systematization of the distinctions between multi-, pluri-, inter-, and trans-disciplinarity as categories for research and higher education (Klein 2010; Stokols et al. 2003). “Multidisciplinary” is used for juxtaposing disciplinary/professional perspectives, which retain separate voices; “interdisciplinary” integrates disciplines; and “transdisciplinary” synthesizes disciplines into larger frameworks (Gibbons et al. 1994). We adopt these definitions in this study.

We return to a question which Leydesdorff and Rafols (2011) raised, but did not answer conclusively at the time, namely, how to distinguish and if possible rank journals in terms of their “interdisciplinarity” in such a way as to identify where creative combinations are indicated. In that previous study we used the 8707 journals included in the Journal Citation Reports (JCR) 2008, and explored a number of measures of interdisciplinarity and diversity, also detailed in Wagner et al. (2011). In this study, we build on the statistical decomposition of the JCR 2015 data (11,365 journals) using VOSviewer (Leydesdorff et al. 2017). A statistical decomposition does not have to be semantically meaningful (Rafols and Leydesdorff 2009). The advantage of this approach, however, is that the two problems—of decomposition and interdisciplinarity—can be separated analytically. Furthermore, we exploit advances made in recent years:

1. At the time, we did not sufficiently distinguish between cosine-normalization of the data as more or less standard in the scientometric tradition (Ahlgren et al. 2003; Salton and McGill 1983) and the use of graph-analytical measures such as betweenness centrality that presume binary networks. The distance measures in the two topologies, however, are very different: graph-analytically, one can distinguish shortest paths in the network of relations; but the vector space is spanned in terms of correlations—that is, including non-relations. Proximity can be expressed in this topology, for example, as a cosine value; and distance accordingly as (1-cosine).

2. Betweenness centrality—the relative number of times that a node is part of the shortest distance (“geodesic”) between other nodes in a network—is an obvious candidate for the measurement of interdisciplinarity; it scored as best for this purpose in the previous comparison. Leydesdorff and Rafols (2011, p. 93) noted that weighted betweenness could be further explored using the citation values of the links as the weights; but at that time, this concept was still under development (Brandes 2001; Newman 2004) and not yet implemented for larger-sized matrices. In the meantime, Brandes (2008) comprehensively discussed betweenness and the measure for valued networks was implemented, for example, in the software package visone (available at http://visone.info).

3. Diversity measures have been further developed into “true” diversity measures by Zhang et al. (2016); “true” diversity can be scaled at the ratio level so that one can consider percentages in increase or decrease of diversity (Jost 2006; Rousseau et al. 2017, in preparation). Furthermore, Cassi et al. (2014) proposed that diversity can be decomposed into within-group and between-group diversity. Using a general approximation method for distributions, these authors have developed benchmarks for institutional interdisciplinarity (see also the further elaboration in Cassi et al. 2017; cf. Chiu and Chao 2014). However, we shall argue that one can decompose diversity in terms of the cell values of (\(p_{i} p_{j} d_{ij}\)) because it is a summation. Differences among aggregates of these values can be tested for statistical significance using ANOVA with Bonferroni correction ex post (e.g., the Tukey test).

4. The availability of virtually unlimited memory resources using a 64-bit operating system and the further development of software for network analysis (Gephi, ORA, Pajek, UCInet, visone, VOSviewer, etc.) enable us to address questions that were previously out of reach.

Interdisciplinarity

Interdisciplinarity has remained a fluid concept fulfilling various functions at the interfaces between political and scientific discourses (Wagner et al. 2011). Funding agencies and policy makers call for interdisciplinarity from a normative perspective based upon their expectation that boundary-spanning produces creative outputs and can contribute to solving practical problems. For example, in 2015 Nature devoted a special issue to interdisciplinarity, stating in the editorial that scientists and social scientists “must work together … to solve grand challenges facing society—energy, water, climate, food, health.” On this occasion, van Noorden (2015) collected a number of indicators of interdisciplinarity showing a mixed, albeit optimistic picture; interdisciplinary research, by some measures, has been on the rise since the 1980s. According to this study, interdisciplinary research would have long-term impact (> 10 years) more frequently than disciplinary research (p. 306). Asian countries were shown to publish interdisciplinary papers more frequently than western countries (p. 307).

On the basis of a topic model, Nichols (2014, p. 747) concluded that 89% of the portfolio of the Directorate for Social, Behavioral, and Economic Sciences (SBE) of the US National Science Foundation is “comprised of IDR (interdisciplinary research)—with 55% of the portfolio identified as having external interdisciplinarity and 34% of the portfolio comprised of awards with internal interdisciplinarity. When dollar amounts are taken into account, 93% of this portfolio is comprised of IDR (…).” Although this result may be partly an effect of the methods used (Leydesdorff and Nerghes 2017), these impressive percentages show, in our opinion, the responsiveness of (social) scientists to calls for interdisciplinarity by funding agencies.

Is this commitment also reflected in the output of research? Do scientists relabel their research for the purpose of obtaining funds (Mutz et al. 2015)? On the output side, the journal literature can be considered as a selection environment at the global level. However, the journal literature has recently witnessed important changes in its orientation toward “interdisciplinarity.” Using a new business model, PLOS ONE was introduced in 2006 with the objective to cover research from all fields of science without disciplinary criteria. “PLOS ONE only verifies whether experiments and data analysis were conducted rigorously, and leaves it to the scientific community to ascertain importance, post publication, through debate and comment” (https://en.wikipedia.org/wiki/PLOS_ONE; MacCallum 2006, 2011). Although this model is multi-disciplinary, it creates room for the evolution of new standards at the edges of existing disciplines.

It remains difficult to define interdisciplinarity, when disciplines cannot be demarcated clearly. Most if not all of science is a process of seeking diverse inputs in order to create innovative insights. Labels as to whether the results of research are classified as “chemistry” or “physics” are added afterwards. Yet, such classifications structure expectations, behavior, and action. A physicist hired in a medical faculty, for example, has to fulfill a different range of expectations and thus faces another range of options than his/her colleague in a physics department.

The journal literature is mostly structured in terms of specialties because its main function has been to control quality, particularly in the case of specialized contributions. The launch of a new journal and its incorporation into the quality control system of the relevant neighboring journals and databases (including the bibliometric ones) provide practicing scientists with new options. The emergence of a new specialty is often associated with the clustering of journals supporting new developments at the field level (e.g., Leydesdorff and Goldstone 2014; van den Besselaar and Leydesdorff 1996). However, the demarcation of disciplines in terms of journals has remained a major problem. As noted, one uses WCs in scientometrics as a proxy, but this generates error (Leydesdorff 2006).

Interdisciplinarity can also be considered as a variable; neither journals nor departments are mono-disciplinary. The system is operational and therefore in flux. But how could one measure the interdisciplinarity of a journal, a department, or even an individual scholar “while a storm is raging at sea” (Neurath 1932/1933)? Is physical chemistry more or less ‘interdisciplinary’ than biochemistry? Or are both parts of chemistry? Does it matter when a laboratory for biochemistry is relabeled as molecular biology, and thereafter classified as biology?

When interviewed, for example, physicists who attended the emergence of nanotechnology and nanoscience during the 1990s considered the nano domain as just another domain in physics, whereas material scientists experienced this same development as revolutionary (Wagner et al. 2015). A new set of research questions became possible and new journals emerged at relevant interfaces, while existing journals changed their orientations; for example, in terms of what is admissible as a contribution. The material scientists considered nanotechnology and nanoscience as a new discipline, while the physicists did not. As Klein (2010) states: “It is two disciplines, one might say, divided by a common subject” (p. 79).

Unlike hierarchical classifications, a network representation of the relations among disciplines and specialties provides room for operational definitions of interdisciplinarity and measurement. Dense areas in the networks can overlap into areas that are less dense; new densities can emerge in the less dense areas because of recursive interactions; densities at interfaces can be approached from different angles, and then other characteristics may prevail in the perception and hence categorization.

In this study, these larger questions about the (inter)disciplinary dynamics of science are reduced to the seemingly trivial question of the measurement of interdisciplinarity of scholarly journals in a specific year. Can we sharpen the instrument so that an operational definition and measurement of interdisciplinarity become feasible? Using the aggregated journal–journal citation network 2015 based on JCR data, we test two measures which have been suggested for measuring interdisciplinarity. By moving from the top level of “all of science” (11k+ journals) to ten broad fields and then to lower levels of specialties, we hope to be able to say more about the quality of the instruments as well as about the problems of measuring interdisciplinarity. In other words, we entertain two research questions: one substantive, about measuring the interdisciplinarity of journals at different levels, and one methodological, about problems with this measurement.

The measurement instruments

The focus will be on two measures of interdisciplinarity: betweenness centrality and diversity.

Betweenness centrality

Betweenness centrality (Brandes 2001, 2008; Freeman 1978/1979) and its derivatives such as “structural holes” (Burt 2001) are readily available in software packages as measures for brokering roles between clusters. A high betweenness measure at the node level indicates that the node has a higher than average likelihood of being on the shortest path from one node to another. This position may enable the agent at the node to control the flow between other vertices (Brandes 2008, p. 137). Investigators with high betweenness are, for example, better positioned to relay (or withhold) information between research groups (Freeman 1977; Abbasi et al. 2012). They are advantaged in terms of search.

Algorithms for variants of betweenness centrality were notably implemented in the software package visone. Among these variants is the possibility to use weighted networks (Freeman et al. 1991). The combination of betweenness centrality with the disparity notion in diversity studies—to be discussed below—is also the subject of ongoing research on Q- or Gefura measures (Flom et al. 2004; Rousseau and Zhang 2008; Guns and Rousseau 2015). However, this further extension is not studied here.

Diversity

Following a series of empirical studies by Alan Porter and his colleagues (Porter et al. 2006, 2007, 2008; Porter and Rafols 2009), on the one hand, and Stirling’s (2007) mathematical elaboration, on the other, Rafols and Meyer (2010) distinguished three aspects of interdisciplinarity: (1) variety, (2) balance, and (3) disparity. Variety can be measured, for example, as Shannon entropy. The participating disciplines in specific instances of interdisciplinarity can be assessed in terms of their balance: a balanced participation can be associated with interdisciplinarity, whereas an unbalanced one suggests a different relationship (Nijssen et al. 1998). In the extreme case, the one discipline is enrolled by the other in a service relationship.

On the basis of animations of newly emerging journal structures, Leydesdorff and Schank (2008) showed that interdisciplinary developments occur often at specific interfaces between disciplines, but are initially presented as—and believed to be—interdisciplinary. From this perspective, interdisciplinarity can be associated with the idea of a pre-paradigmatic phase in the development of disciplines and specialties (van den Daele et al. 1979). New and initially interdisciplinary developments may crystallize into new disciplinary structures (van den Besselaar and Leydesdorff 1996) or they may dissipate as the core disciplines absorb the new concepts.

The measurement of “disparity” provides us with an ecological perspective: a collaboration between authors in biology and chemistry, for example, can be considered as less interdisciplinary in terms of disparity than one between authors in chemistry and anthropology. The cognitive distance between the latter two disciplines, one a natural science and the other a social science, is much larger than that between two neighboring fields in the natural sciences (Boschma 2005). The disparity thus reflects a next-order structure in terms of ecological distances and niches among journal sets.

Disparity and variety can be combined in the noted measures of diversity (Rao 1982; Stirling 2007) as follows:

\[ \Delta = \sum_{i,j\,(i \ne j)} p_{i} p_{j} d_{ij} \qquad (1) \]

In this formula, i and j represent different categories; \(p_{i}\) represents the relative frequency or probability of category i, and \(d_{ij}\) the distance between i and j. The distance, for example, can be the geodesic (that is, shortest path) in a network or (1-cosine) in a vector space by using the cosine as a proximity measure (Ahlgren et al. 2003; Jaffe 1989; Salton and McGill 1983). The multiplication of the measures of distance and relative occupation has led to the characterization of this measure as “quadratic entropy” (e.g., Izsák and Papp 1995). Stirling (2007) suggests developing further heuristics by weighting the two components; for example, by adding exponents. However, one then obtains a parameter space which is infinite (Ricotta and Szeidl 2006).
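The diversity measure described above can be sketched in a few lines of Python. This is a minimal illustration, assuming that the sum in Eq. 1 runs over all ordered pairs with i ≠ j; the function name `rao_stirling` and the three-category example data are hypothetical and not taken from the JCR.

```python
from typing import Sequence


def rao_stirling(p: Sequence[float], d: Sequence[Sequence[float]]) -> float:
    """Sum p_i * p_j * d_ij over all ordered pairs (i, j) with i != j."""
    n = len(p)
    return sum(
        p[i] * p[j] * d[i][j]
        for i in range(n)
        for j in range(n)
        if i != j
    )


# Hypothetical counts of references to three categories.
counts = [6, 3, 1]
total = sum(counts)
p = [c / total for c in counts]  # relative frequencies: 0.6, 0.3, 0.1

# Hypothetical symmetric distance matrix, e.g. (1 - cosine) values.
d = [
    [0.0, 0.2, 0.9],
    [0.2, 0.0, 0.8],
    [0.9, 0.8, 0.0],
]

delta = rao_stirling(p, d)

# With all distances set to one, only the variety term remains.
ones = [[0.0 if i == j else 1.0 for j in range(3)] for i in range(3)]
variety = rao_stirling(p, ones)
```

Setting all off-diagonal distances to one reduces the measure to the variety term alone, which makes the separate contribution of disparity visible.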

The first part of Eq. 1, that is, the measure of variety \(\sum_{i,j\,(i \ne j)} p_{i} p_{j}\), is also known as the Gini–Simpson diversity measure in biology or the Herfindahl–Hirschman index in economics (Leydesdorff 2015). Note that this term is measured at the level of a vector. Using a citation matrix, two different distance matrices can be constructed among the citing and cited vectors, respectively. In the citing dimension, Rao–Stirling diversity has been considered as a measure of integration in interdisciplinary research (Porter and Rafols 2009; Rousseau et al. 2017, in preparation; Wagner et al. 2011, p. 16). Variety and disparity are combined and integrated in a citing paper by the citing author(s). In the cited dimension, one measures diversity (in terms of variety and disparity) in the structures from which one cites. The structures operate as selection environments. Rousseau et al. (2017, in preparation) suggest that diversity in the cited dimension should be considered as diffusion: diffusion can be interdisciplinary to various extents.

Zhang et al. (2016) further developed Δ into \(^{2}D^{3}\) as a “true” diversity measure; true diversity has the advantage that the measure is scaled so that a 20% higher value of \(^{2}D^{3}\) indicates 20% more diversity (Jost 2006). Conveniently, the two measures relate monotonically as follows (Zhang et al. 2016, p. 1260, Eq. 6):

\[ {}^{2}D^{3} = \frac{1}{1 - \Delta} \qquad (2) \]

True diversity varies from one to infinity when Δ varies between zero and one. Note that these diversity measures do not include “balance” as the third element distinguished in the definition of interdisciplinarity by Rafols and Meyer (2010). One can envisage adding a third probability distribution (\(p_{k}\)) to Eq. 1 as a representation of the disciplinary contributions (Rafols 2014). Alternatively, “balance” can be operationalized using, for example, the Gini-index.
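The conversion between the two measures can be illustrated with a short sketch. It assumes the relation \(^{2}D^{3} = 1/(1 - \Delta)\), which is consistent with the range stated above (one to infinity for Δ between zero and one); the function name `true_diversity` is a hypothetical label.

```python
def true_diversity(delta: float) -> float:
    """Convert Rao-Stirling diversity (0 <= delta < 1) into true diversity."""
    if not 0.0 <= delta < 1.0:
        raise ValueError("delta must lie in the interval [0, 1)")
    return 1.0 / (1.0 - delta)


# Equal steps in delta do not correspond to equal ratios of true diversity.
low = true_diversity(0.0)    # no diversity
mid = true_diversity(0.5)
high = true_diversity(0.75)
ratio = high / mid           # how many times more diverse 'high' is than 'mid'
```

Because true diversity is scaled at the ratio level, `ratio` can be read as "twice as diverse", whereas the corresponding difference in Δ (0.25) has no such interpretation.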

As noted, Cassi et al. (2014) developed a methodology for the decomposition of diversity into within-group and between-group diversity (see also the further elaboration in Cassi et al. 2017; cf. Chiu and Chao 2014). In our opinion, Eqs. 1 and 2 are valid for each subset since the operation is a straightforward summation. Consequently, one can decompose diversity in terms of the cell values (\(p_{i} p_{j} d_{ij}\)). Differences among aggregated subsets can be tested using ANOVA with Bonferroni correction ex post (e.g., the Tukey test). For the exploration of this decomposition, we use the WoS Category Library and Information Science (86 journals in the JCR 2015) instead of the set of 62 journals categorized by Leydesdorff et al. (2017) into a single group on statistical grounds. The results are then easier to follow.
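The summation argument can be made concrete with a small sketch. It is not the method of Cassi et al. (2014) and it includes no significance test; it only shows that the cell values \(p_{i} p_{j} d_{ij}\) can be aggregated over arbitrary subsets of cells and still add up to Δ. The grouping, the function names, and the data are hypothetical.

```python
from typing import Dict, Sequence, Tuple

Cell = Tuple[int, int]


def cell_values(p: Sequence[float], d: Sequence[Sequence[float]]) -> Dict[Cell, float]:
    """Return p_i * p_j * d_ij for every ordered pair (i, j) with i != j."""
    n = len(p)
    return {
        (i, j): p[i] * p[j] * d[i][j]
        for i in range(n)
        for j in range(n)
        if i != j
    }


def split_by_group(cells: Dict[Cell, float], group: Sequence[str]) -> Tuple[float, float]:
    """Aggregate cell values into within-group and between-group sums."""
    within = sum(v for (i, j), v in cells.items() if group[i] == group[j])
    between = sum(v for (i, j), v in cells.items() if group[i] != group[j])
    return within, between


# Hypothetical example: four categories assigned to two groups.
p = [0.4, 0.3, 0.2, 0.1]
d = [
    [0.0, 0.1, 0.7, 0.8],
    [0.1, 0.0, 0.6, 0.9],
    [0.7, 0.6, 0.0, 0.2],
    [0.8, 0.9, 0.2, 0.0],
]
group = ["A", "A", "B", "B"]

cells = cell_values(p, d)
within, between = split_by_group(cells, group)
total = sum(cells.values())  # equals Delta for the full set
```

In this toy case most of the diversity is generated between the two groups, because the within-group distances are small.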

Units of analysis

In addition to the various aspects of interdisciplinarity that can be distinguished, the choice of the system of reference will make a difference. Interdisciplinarity can be attributed to departments, journals, œuvres, emerging disciplines, etc. In science studies, it is customary to distinguish between the socially organized group level and the level of intellectually organized fields of science (Whitley 1984). The interdisciplinarity of a group (e.g., a department) can be important from the perspective of team science. The dynamics of interdisciplinarity at the field level are relatively autonomous (“self-organizing”; van den Daele and Weingart 1975).

For example, the interdisciplinary development of nano-technology in the 1990s required contributions from chemistry (e.g., advanced ceramics), applied physics, and materials sciences. A group in a chemistry faculty will be positioned for the challenge of participating in this new development differently from a group in physics. The group dynamics, in other words, can be different among groups and from the field dynamics. New fields of science may develop at the global level, whereas groups are localized. One can also consider the fields as the selection environments for groups or, more generally, individual or institutional agency. Selection mechanisms can reflexively be anticipated.

Furthermore, one can attribute interdisciplinarity as a variable to units of analysis such as authors, groups, texts at the nodes of networks, or second-order units of analysis such as links. (Factor loadings, for example, are attributes of variables.) One can expect different dynamics at the first-order or second-order level. Whereas interdisciplinarity can be a political or managerial objective in the case of first-order units (e.g., groups), interdisciplinarity attributed at the level of second-order units (e.g., fields) is largely beyond the control of decision makers or individual scientists. Second-order units can be rearranged and thus develop resilience against external steering.

Note that these distinctions are analytical: journals, for example, are organized in terms of their production process, but can be self-organizing in terms of their content to variable extents. The interdisciplinarity of a journal or a department is also determined by the sample and the level of granularity in the analysis. A journal, for example, may appear interdisciplinary in the context of a large set of journals, but when this set is decomposed, the interdisciplinarity may be lost since the borders are drawn differently. For example, important ties to other domains may be cut by decomposition. In sum, one unavoidably entertains a model when measuring “interdisciplinarity;” and by using this model, the concept is (re)constructed.

Methods

Data

We use the directed (asymmetrical and valued) 1-mode matrix among the 11,365 journals listed in the Science and Social Sciences Citation Index in 2015. Table 1 provides descriptive statistics of the largest component of 11,359 journals. (Six journals are not connected.)Footnote 1

In the first round of the decomposition, ten clusters were distinguished. These are listed in Table 2. At http://www.leydesdorff.net/jcr15/scope/index.htm the reader will find a hierarchical decomposition in terms of maps of science.

We pursue the analysis for the complete set (n = 11,359) and for the first cluster (n = 3274). Within this latter subset, 62 journals are classified as Library and Information Science (LIS) in the second round of decomposition. We use the LIS set as an example at the (next-lower) specialty level.Footnote 2

Statistics

As noted, we focus in this study on betweenness centrality and diversity as two main candidates for measuring interdisciplinarity in journal citation networks. The betweenness centrality (BC) of a vertex k is equal to the proportion of all the geodesics between pairs (\(g_{ij}\)) of vertices that include this vertex (\(g_{ijk}\); e.g., de Nooy et al. 2011, p. 151). The BC for a vertex k can formally be written as follows:

\[ BC(k) = \sum_{i} \sum_{j} \frac{g_{ijk}}{g_{ij}}, \quad i \ne j \ne k \qquad (3) \]

Freeman (1977) introduced several variants of this betweenness measure when proposing it. In their study of centrality in valued graphs, Freeman et al. (1991) further elaborated flow centrality, which includes all the independent paths contributing to BC in addition to the geodesics. In the meantime, a number of software programs for network analysis have adopted Brandes’ (2008) algorithm for valued graphs.Footnote 3 We use Pajek and UCInet for non-valued graphs and visone for analyzing valued ones.Footnote 4
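For the binary (non-valued) case, the definition of BC given above can be sketched as follows. The code uses breadth-first search with the accumulation scheme described by Brandes (2001); it is an illustrative sketch, not the implementation in Pajek, UCInet, or visone, and it does not cover valued graphs. The scores are left unnormalized and are summed over ordered pairs; the toy network with a broker node "X" is hypothetical.

```python
from collections import deque
from typing import Dict, Hashable, List


def betweenness(adj: Dict[Hashable, List[Hashable]]) -> Dict[Hashable, float]:
    """Unnormalized betweenness for an unweighted graph given as adjacency lists.

    Every node must appear as a key. Scores are summed over ordered pairs (i, j).
    """
    bc = {v: 0.0 for v in adj}
    for s in adj:
        order = []                          # nodes in order of non-decreasing distance
        preds = {v: [] for v in adj}        # predecessors on geodesics from s
        sigma = {v: 0 for v in adj}         # number of geodesics from s
        dist = {v: -1 for v in adj}
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if dist[w] < 0:             # first visit
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:  # v precedes w on a geodesic
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        dep = {v: 0.0 for v in adj}         # dependency of s on each node
        for w in reversed(order):
            for v in preds[w]:
                dep[v] += sigma[v] / sigma[w] * (1.0 + dep[w])
            if w != s:
                bc[w] += dep[w]
    return bc


# Hypothetical network: two small clusters bridged by a single node "X".
adj = {
    "A1": ["A2", "X"],
    "A2": ["A1", "X"],
    "X": ["A1", "A2", "B1", "B2"],
    "B1": ["B2", "X"],
    "B2": ["B1", "X"],
}
scores = betweenness(adj)
```

In this toy network only "X" lies on geodesics between other nodes: it bridges the four pairs that connect the two clusters, counted in both directions.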

While BC can be computed on an asymmetrical matrix, Rao–Stirling diversity and \(^{2}D^{3}\) are evaluated along vectors in either the cited or citing direction of a citation matrix. Both the proportions and the distances have to be taken in the one direction or the other. One thus obtains two different—but most likely correlated—measures. The proportions are straightforward relative to the sum of the references given by the journal (citing) or the citations received (cited).Footnote 5 The distance measure, however, provides us with another parameter choice.

In line with the reasoning about BC, one could consider using geodesics as a measure of distance. However, the average geodesic in the network under study is 2.5 with a standard deviation of 0.6 (Table 1). In other words, the variation in the geodesic distances is small: most of them are 2 or 3.Footnote 6 The choice of another distance measure—or equivalently (1-proximity)—provides us with a plethora of options. We chose (1-cosine) as the distance measure because Euclidean distances did not work in our previous project (Leydesdorff and Rafols 2011). The cosine has been used as a proximity measure in technology studies by Jaffe (1989); Ahlgren et al. (2003) suggested using the cosine (Salton and McGill 1983) as an alternative to the Pearson correlation in bibliometrics.
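The (1-cosine) distance can be sketched as follows. The helper names and the example vectors are hypothetical; treating an all-zero vector as having a cosine of zero with every other vector is an assumption of this sketch, not something specified in the text.

```python
import math
from typing import Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity between two equally long vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0  # assumption: an empty citation profile is similar to nothing
    return dot / (norm_u * norm_v)


def cosine_distance(u: Sequence[float], v: Sequence[float]) -> float:
    """Distance as (1 - cosine)."""
    return 1.0 - cosine(u, v)


# Hypothetical citation profiles of three journals over the same three sources.
journal_a = [3, 0, 4]
journal_b = [0, 5, 0]   # shares no source with journal_a
journal_c = [6, 0, 8]   # same profile as journal_a at twice the volume

d_ab = cosine_distance(journal_a, journal_b)
d_ac = cosine_distance(journal_a, journal_c)
```

Two journals without any shared source are at the maximal distance of one, whereas proportional profiles are at a distance of zero regardless of volume.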

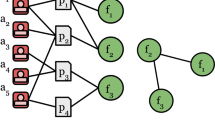

The computation of diversity is computationally intensive because of the permutation of the i and j parameters along the vector in each case. A routine for generating diversity values on the basis of a Pajek file is provided in the Appendix. Note that \(p_{i}\) and \(p_{j}\) along a vector can both be larger than zero, but the cosine between the vectors i and j in the same direction may be zero. For example, if a journal n is cited by two marginal journals (i = 5 and j = 6 in Fig. 1), the co-occurrence in the vertical direction is larger than zero; but in the horizontal direction the cosine value can be zero and the distance therefore one. The cosine values between marginal journals may thus boost diversity as measured here. (Given the skew in scientometric distributions, one can expect relative marginality to prevail in any delineated domain.)
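As a hedged numerical illustration of this effect, suppose that a journal is cited in equal shares by only two marginal journals, so that \(p_{5} = p_{6} = 0.5\), and that the cosine between these two journals is zero, so that \(d_{56} = 1\). The shares and cosine values are hypothetical; the sum is assumed to run over both ordered pairs, and true diversity is assumed to follow from Δ as \(1/(1 - \Delta)\):

\[ \Delta = 2 \times p_{5}\, p_{6}\, d_{56} = 2 \times 0.5 \times 0.5 \times 1 = 0.5, \qquad {}^{2}D^{3} = \frac{1}{1 - 0.5} = 2. \]

If the same two journals were instead similar, with a cosine of 0.8 and hence \(d_{56} = 0.2\), one would obtain \(\Delta = 0.1\) and \(^{2}D^{3} \approx 1.11\). The zero cosine between the marginal journals thus raises the diversity score considerably, although the variety component is identical in both cases.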

Results

The full set of 11,365 journals in JCR 2015

Table 3 lists the top-25 journals when ranked for betweenness, valued betweenness, and diversity measured as \(^{2}D^{3}\) in both the citing and cited directions. Not surprisingly, PLOS ONE ranks highest on BC in both the binary and the valued case. The Pearson correlation for the two rankings across the file is larger than .99 (Table 4), but differences at the top of the list are sometimes considerable. PLOS ONE and, for example, Psychol Bull gain in score when BC is based on values, but Nature and Science lose.

Interestingly, Scientometrics ranks 11th on BC in the binary case, but only in the 15th place using valued BC. Typically, this journal cites and is cited by journals in other fields incidentally and unsystematically in addition to more dense citation in its own intellectual environment. Citations to and from Psychol Bull in contrast are more specific. Annu Rev Psychol and Psychol Rev show the same pattern as Psychol Bull of increasing BC when valued.

Most of the journals with high BC values are multi-disciplinary journals. In accordance with its definition, BC measures the extent to which the distance between otherwise potentially distant clusters is bridged. Note that some journals in the social sciences score high on BC, among which is Scientometrics. In our opinion, Scientometrics can be considered as a specialist journal with a specific disciplinary orientation. As noted, however, its citation patterns and being cited patterns span across different disciplines because a variety of disciplines can be the subject of study and indicators are used in other fields. In other words, BC does not teach us about the nature of the knowledge production process, but about patterns of integration and diffusion across disciplinary boundaries (Rousseau et al. 2017, in preparation).

Table 4 shows that BC and \(^{2}D^{3}\) measure different things. Diversity in the citing direction is not correlated to BC. In the cited direction the rank-order correlation is still substantial. This correlation can be explained as follows: the disparity factor (\(d_{ij}\)) indicates the distances that have to be bridged between different domains. The (multi-disciplinary) structure of science is reflected in both this distance and BC. However, variety [\(\sum_{i,j\,(i \ne j)} p_{i} p_{j}\)]—as the second component of diversity—is based on a different principle. In the citing dimension, particularly, one may cite across disciplinary boundaries (“trans-disciplinarily”; Gibbons et al. 1994) and generate variety. This source of variation is also reflected in the cited dimension, since the cited can be considered as the archive of a time-series of citing relations. Not incidentally, therefore, we find journals in the right-most column of Table 3 from the periphery, or with a specific national background that may be problem- or sector-oriented (e.g., agriculture). Leydesdorff and Bihui (2005) found such a non-disciplinary orientation in the case of Chinese journals that are institutionally based.

The Journal of the Chinese Institute of Engineering (with \(^{2}D^{3}\) = 20.13 at the top of this list), for example, was cited in 2015 in articles published in 56 journals, but it cites from 230 journals. It can therefore be considered a net importer of knowledge (Yan et al. 2013). Figure 2 shows this environment of 230 journals as a map based on aggregated citation relations. Using BC as the values for the nodes, Fig. 2a first shows the structure of the journals as a map. In Fig. 2b, diversity in the citing direction is used as the parameter for the node sizes. This brings engineering journals more to the fore than physics journals. The J Chin Inst Eng itself is not visible in Fig. 2a, but is most pronounced in Fig. 2b. Note that there is otherwise nothing special about this journal: its 2-year Journal Impact Factor (JIF) is .246 and the 5-year JIF is .259.

Journals in the social sciences

The largest subset of journals distinguished in the decomposition (Table 2) is a group of 3274 journals in the social sciences. We pursue the analysis for this subset in order to see whether the patterns found above can be considered general. In the next section, we zoom in further on the subset of journals classified as LIS within this set.

Table 5 shows that Soc Sci Med is ranked highest in terms of BC in both the valued and non-valued analysis. This journal was ranked in the third position in the full set—after PLOS ONE and PNAS. The rank of Scientometrics has now decreased from the 11th to the 21st position using non-valued BC and from the 15th to the 31st position using valued BC. A large proportion of its betweenness is in connecting social science disciplines with the natural and medical sciences. These relations across disciplinary divides are cut by the decomposition (Table 6).

The pattern described above for the full set is also found in this subset. Two factors explain 78.6% of the variance in the four variables: BC versus the variation factor in citing diversity (Table 7). The Pearson correlations between BC (binary and valued) and \(^{2}D^{3}\) citing are .010 and .008, respectively. Note the negative sign of \(^{2}D^{3}\) citing on factor 1 in Table 7. The two mechanisms are thus orthogonal.

The journals in the right-most column of Table 6 are recognizably trans-disciplinary or, in other words, reaching out across boundaries. On the cited side, the pronounced position of sociology journals is noteworthy. Major sociology journals such as Am J Sociol, Brit J Sociol, and Am Sociol Rev figure in this top list as they did in Table 3, but other sociology journals such as Soc Sci Inform also rank high on this list (#9), while this journal was ranked only at position 1363 in the total set.

In Fig. 3, we try to capture the differences visually. Figure 3a first provides a map of these 3274 journals. In Fig. 3b, the node sizes are proportional to the BC scores of the journals. One can see a shift to the applied side. For example, the Am Rev Econ comes to the foreground in the left-most cluster (pink) in Fig. 3a, while this most-pronounced position is assumed by Appl Econ and World Dev in Fig. 3b. Similarly, J Pers Soc Psychology, the flagship of this field, is overshadowed by Psych Bull in the top-right cluster (turquoise) of Fig. 3b. This journal is also read outside the specialty. The J Bus Ethics is most pronounced in terms of BC values among the business and management journals in the light-blue cluster at the top left.

In Fig. 3c, the node sizes are proportional to the diversity scores (citing). The picture teaches us that highly diverse journals are spread across the disciplines as variation. All disciplines have portfolios of journals of which some are more diverse than others.

Library and information sciences (LIS)

We first pursued the analysis using the 62 journals that were classified as LIS, but for reasons of presentation, here we use the results of the analysis based on citations among the 86 journals classified in terms of SC as LIS in the JCR 2015. Otherwise, the discussion about the differences between the two samples would lead us away from the objectives of this study (cf. Leydesdorff et al. 2017).

In Table 8, Scientometrics is the journal with the highest BC in both analyses. JASIST follows at only the 12th position, while one would expect the latter journal to be more integrative among the different subjects studied in LIS. In terms of knowledge integration indicated as diversity in the citing dimension, JASIST assumes the third position and Scientometrics trails in 45th position. In the cited dimension, the diversity of Scientometrics is ranked 70 (among 86). Thus, the journal is cited in this environment much more specifically than in the larger context of all the journals included in the JCR, where it assumed the 339th and 6246th position among 11,359 observations, respectively. In the latter case the quantile values are 97.0 and 45.0, respectively, versus 47.7 and 7.0 in the smaller set of LIS journals (Table 10).
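The relation between rank positions and quantile values can be illustrated with a short worked calculation. The formula below (the percentage of observations ranked below a given position) is an assumption that is not stated in the text; it reproduces the quantile values 97.0, 45.0, and 47.7 from the ranks 339 and 6246 (among 11,359) and 45 (among 86) quoted above. The function name is hypothetical.

```python
def quantile_from_rank(rank, n):
    """Percentage of the n observations ranked below `rank` (rank 1 = top).

    Assumed formula: 100 * (1 - rank / n), rounded to one decimal.
    """
    return round(100.0 * (1.0 - rank / n), 1)


full_set_first = quantile_from_rank(339, 11359)    # full JCR set
full_set_second = quantile_from_rank(6246, 11359)  # full JCR set
lis_citing = quantile_from_rank(45, 86)            # LIS set, citing
```

Expressing positions as quantiles makes rankings in sets of very different sizes (11,359 versus 86 journals) comparable.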

In this much smaller set, the diversity in the citing dimension is significantly correlated with BC (Table 9). In other words, citing behavior is more specific at the specialty level. The socio-cognitive structure of the field guides the variation. Table 10 shows the values of diversity in the case of Scientometrics at the three levels. Diversity is larger in the citing than in the cited dimension at the level of the full set. Restricting the set to the social sciences removes more citations in the citing than in the cited dimension. As a consequence, diversity is larger in the cited than in the citing dimension at this level. Being at the edge of the LIS set, the journal cites more than it is cited by other journals in this set.

In sum, diversity is dependent on the delineations of the sample in which it is measured.

Decomposition of the diversity

In the next step, we decompose the LIS set of 86 journals (Table 11). Three journals (Econtent, Restauror, and Z Bibl Bibl) are not part of the large component, and are therefore not included in this decomposition. Using VOSviewer, six groups are distinguished, of which one contains only a single journal (Soc Sci Inform). Figure 4 shows this map. Mean diversity values with standard errors for the five groups decomposed as sub-matrices are provided in Fig. 5.

Based on the post hoc Tukey test, two homogenous groups are distinguished in the citing dimension: library science and bibliometrics with relatively high citing scores, on the one side, and the other three with significantly lower scores, on the other. However, the distinction is not significant. In the cited direction, the entire set is statistically homogenous.

Within the subsets, however, the diversity scores are based on sub-matrices with corresponding cosine values. Table 12 provides the diversity values when all cell values are normalized in terms of the grand matrix. The difference between the total diversity and the sum of the within-group diversities is then by definition equal to the between-group diversity. Using the Tukey test with this design, between-group diversity is significantly different from diversity in all the subsets with the exception of citing diversity in the subset of 19 journals labeled as “information science.” Both cited and citing, “information science” and “library science” are considered as homogenous with between-group diversity. The other three specialisms are considered as significantly different.
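Because diversity is a sum over pairs, the split into within-group and between-group parts can be sketched in a few lines. The example below is a simplified illustration for a single distribution rather than for the cells of the journal-journal matrix used in the study; the function name, the group labels, and all numbers are hypothetical.

```python
def decompose_diversity(p, d, groups):
    """Split sum_{i != j} p_i p_j d_ij into within- and between-group parts.

    p      : proportions, normalized over the whole set (the "grand" total)
    d      : matrix of pairwise distances
    groups : groups[i] is the group label of category i
    """
    n = len(p)
    total = 0.0
    within = {}
    for i in range(n):
        for j in range(n):
            if i == j:
                continue
            term = p[i] * p[j] * d[i][j]
            total += term
            if groups[i] == groups[j]:
                within[groups[i]] = within.get(groups[i], 0.0) + term
    # Between-group diversity is what remains after removing all
    # within-group terms from the total.
    between = total - sum(within.values())
    return total, within, between


p = [0.4, 0.3, 0.2, 0.1]
groups = ["a", "a", "b", "b"]
d = [[0.0, 0.1, 0.8, 0.8],
     [0.1, 0.0, 0.8, 0.8],
     [0.8, 0.8, 0.0, 0.2],
     [0.8, 0.8, 0.2, 0.0]]

total, within, between = decompose_diversity(p, d, groups)
```

In this toy case most of the diversity is generated between the two groups, because the distances across groups are larger than those within them.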

Note that the total diversity is generated in a matrix of 82 times 81 or 6642 cells, whereas the within-group diversity is generated in subsets which add up to 1648 cells (24.8%; the cell count is itemized in the Notes). In other words, diversity is concentrated in these groupings since they generate in 24.8% of the cells (100–53.3 =) 46.7% and (100–58.1 =) 41.9% of the total diversity in the cited and citing directions, respectively.
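The cell counts and shares in this paragraph can be checked with a short calculation. The group sizes are taken from the Notes; the between-group percentages (53.3 and 58.1) are the values quoted above. Variable names are illustrative.

```python
# Sizes of the five groups of LIS journals (diagonal cells are excluded).
group_sizes = [32, 19, 14, 10, 7]

n_journals = sum(group_sizes)                            # 82
total_cells = n_journals * (n_journals - 1)              # 82 x 81
within_cells = sum(k * (k - 1) for k in group_sizes)     # cells inside groups
within_cell_share = round(100.0 * within_cells / total_cells, 1)

# Share of total diversity generated within the groups, given the
# between-group shares reported in the text.
within_diversity_cited = round(100.0 - 53.3, 1)
within_diversity_citing = round(100.0 - 58.1, 1)
```

About a quarter of the cells thus account for more than 40% of the diversity in both directions.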

Diffusion and integration

Bibliographic coupling among diverse sources by a citing unit has been considered as integration (Wagner et al. 2011), whereas co-citation can be considered conversely as diffusion (Rousseau et al. 2017, in preparation). Using the concepts of integration and diffusion of knowledge for citing and cited diversity, however, one can directly draw diffusion and integration networks by extracting the k = 1 neighbourhoods; for example in Pajek. Figure 6a, b provide these networks for the journal Scientometrics in the LIS set (JCR 2015) as an example: 38 journals constitute the diffusion network (Fig. 6a) and 51 the knowledge integration network (Fig. 6b).
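Extracting the k = 1 neighbourhoods can be sketched without dedicated network software. The example assumes that citations are available as a mapping from (citing, cited) pairs to counts; the function name and the toy data are hypothetical and do not reproduce the Pajek procedure used for Fig. 6.

```python
def ego_networks(citations, focal):
    """Return the k = 1 diffusion and integration neighbourhoods of `focal`.

    citations : dict {(citing_journal, cited_journal): number_of_citations}
    diffusion   = journals citing the focal journal (cited direction)
    integration = journals cited by the focal journal (citing direction)
    """
    diffusion = sorted({citing for (citing, cited), n in citations.items()
                        if cited == focal and citing != focal and n > 0})
    integration = sorted({cited for (citing, cited), n in citations.items()
                          if citing == focal and cited != focal and n > 0})
    return diffusion, integration


# Hypothetical toy data; journal self-citations are ignored.
toy_citations = {
    ("focal", "A"): 5,
    ("A", "focal"): 2,
    ("B", "focal"): 3,
    ("C", "A"): 7,
    ("focal", "focal"): 9,
}

diffusion, integration = ego_networks(toy_citations, "focal")
```

Here journals A and B form the diffusion network of the focal journal, while only A belongs to its integration network.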

For example, Fig. 6b shows that the Journal of the American Society for Information Science and Technology plays a central role in the knowledge integration network. Articles in this journal are cited in Scientometrics; but only the Journal of the Association for Information Science and Technology (the current name of the same journal) is visible in the diffusion network (Fig. 6a). Note that this analysis was pursued within the LIS set of 86 journals. Other journals outside the LIS field (e.g., Research Policy) are also important in the citation environment of Scientometrics.

Conclusions and discussion

Using journals as units of analysis, we addressed the question of whether interdisciplinarity can be measured in terms of betweenness centrality or diversity as indicators. We tried to pursue these ideas in considerable detail. It seems to us that the problem of measuring interdisciplinarity, however, remains unsolved because of the fluidity of the term “interdisciplinarity.” The very concept means different things in policy discourse and in science studies. From a scientometric perspective, interdisciplinarity is difficult to define if there is no operational definition of the disciplines. The latter problem has remained unsolved in bibliometrics.

Bibliometricians often use the WoS Subject Categories as a proxy for disciplines, but these categories are pragmatic (e.g., Pudovkin and Garfield 2002; Leydesdorff and Bornmann 2016; Rafols and Leydesdorff 2009). In this study, we build on the statistical decomposition of the JCR data using VOSviewer (Leydesdorff et al. 2017). The advantage of this approach is that the two problems—of decomposition and interdisciplinarity—are separated.

Our main conclusions are:

- The analysis at different levels of aggregation teaches us that BC can be considered as a measure of multi-disciplinarity more than interdisciplinarity. Valued BC improves on binary BC because citation networks are valued; marginal links should not be considered equal to central ones.

- Diversity in the citing dimension is very different (and statistically independent) from BC: it can also indicate non- or trans-disciplinarity. In local and applicational contexts, for example, the disciplinary origin of knowledge contributions may be irrelevant. In specialist contexts, however, citing diversity is coupled to the intellectual structures in the set(s) under study.

- Diversity in the cited dimension may come closest to an understanding of interdisciplinarity as a trade-off between structural selection and stochastic variation.

- Despite the absence of “balance,” the third element in Rafols and Meyer’s (2010) definition of interdisciplinarity, Rao-Stirling “diversity” is often used as an indicator of interdisciplinarity; but it remains only an indicator of diversity.

- The bibliographic coupling by citation of diverse contributions in a citing article has been considered as knowledge integration (Wagner et al. 2011; Rousseau et al. 2017). Analogously, but with the opposite direction in the arrows, diversity in co-citation can be considered as diffusion across domains. Using an example, we have demonstrated how these concepts can be elaborated into integration and diffusion networks.

- The sigma (Σ) in the formula (Eq. 2) makes it possible to distinguish between within-group and between-group diversity. In this respect, the diversity measure is as flexible as Shannon entropy measures (Theil 1972). Differences in diversity can be tested for statistical significance using Bonferroni correction ex post. Homogenous and non-homogenous (sub)sets can thus be distinguished.

In other words, the problems of measurement could be solved to the extent that a general routine for generating diversity scores from networks is provided (see the Appendix). However, the interpretation of diversity as interdisciplinarity remains the problem. Diversity is very sensitive to the delineation of the sample; but is this also the case for interdisciplinarity? Is interdisciplinarity an intrinsic characteristic or can it only be defined (as more or less interdisciplinarity) in relation to a distribution?

We focused on journals in this study, but our arguments are not journal-specific. Some units of analysis, such as universities, are almost by definition multi-disciplinary or non-disciplinary. Non-disciplinarity can also be called “trans-disciplinary” (Gibbons et al. 1994). However, the semantic proliferation of Greek and Latin prepositions (meta-disciplinary, epi-disciplinary, etc.) does not solve the problem of the operationalization of disciplinarity and then also interdisciplinarity.

In summary, we conclude that multi-disciplinarity is a clear concept that can be operationalized. Knowledge integration and diffusion refer to diversity, but not necessarily to interdisciplinarity. Diversity can flexibly be measured, but the score is dependent on the system of reference. We submit that a conceptualization in terms of variation and selection may prove more fruitful. For example, one can easily understand that variation is generated when different sources are cited, but to consider this variation as interdisciplinary knowledge integration is at best metaphorical.

Given this state of the art, policy analysts seeking measures to assess interdisciplinarity can be advised to specify first the relevant contexts, such as journal sets, comparable departments, etc. Networks in these environments can be evaluated in terms of BC and diversity. The routine provided in the Appendix may serve for the latter purpose and network analysis programs can be used for measuring BC. (When the network can be measured at the interval scale, one is advised to use valued BC.) The arguments provided in this study may be helpful for the interpretation of the results; for example, by specifying methodological limitations.
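The difference between binary and valued BC can be illustrated with a small, self-contained sketch based on the shortest-path algorithm of Brandes (2001). It is not the implementation used in Pajek or UCInet. A loud assumption: in the valued case, the length of a link is taken to be the inverse of the number of citations, so that strong links are short; other transformations are possible. The function name and the toy network are hypothetical.

```python
import heapq
from collections import defaultdict


def betweenness(nodes, edges, valued=False):
    """Unnormalized betweenness centrality in a directed network.

    edges  : dict {(source, target): citation_count}
    valued : False -> every link has length 1 (binarized matrix)
             True  -> length = 1 / citation_count (assumption, see lead-in)
    """
    adj = defaultdict(list)
    for (u, v), count in edges.items():
        if count > 0:
            adj[u].append((v, 1.0 / count if valued else 1.0))

    bc = dict.fromkeys(nodes, 0.0)
    eps = 1e-12  # tolerance for comparing path lengths
    for s in nodes:
        dist = {s: 0.0}
        sigma = defaultdict(float)  # number of shortest paths from s
        sigma[s] = 1.0
        preds = defaultdict(list)   # predecessors on shortest paths
        order, done = [], set()
        heap = [(0.0, s)]
        while heap:                 # Dijkstra with shortest-path counting
            d, v = heapq.heappop(heap)
            if v in done:
                continue
            done.add(v)
            order.append(v)
            for nxt, length in adj[v]:
                nd = d + length
                if nxt not in dist or nd < dist[nxt] - eps:
                    dist[nxt] = nd
                    sigma[nxt] = sigma[v]
                    preds[nxt] = [v]
                    heapq.heappush(heap, (nd, nxt))
                elif abs(nd - dist[nxt]) <= eps:
                    sigma[nxt] += sigma[v]
                    preds[nxt].append(v)
        delta = defaultdict(float)  # accumulate dependencies backwards
        for w in reversed(order):
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1.0 + delta[w])
            if w != s:
                bc[w] += delta[w]
    return bc


# Toy network: A and C are strongly linked through B, weakly linked directly.
toy_nodes = ["A", "B", "C"]
toy_edges = {("A", "B"): 10, ("B", "A"): 10,
             ("B", "C"): 10, ("C", "B"): 10,
             ("A", "C"): 1, ("C", "A"): 1}

bc_binary = betweenness(toy_nodes, toy_edges, valued=False)
bc_valued = betweenness(toy_nodes, toy_edges, valued=True)
```

In the binarized network every pair is directly connected, so no node lies between others. In the valued network the marginal direct link between A and C is bypassed via B, which therefore obtains a non-zero betweenness score.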

Notes

1. Avian Res, EDN, Neuroforum, Austrian Hist Yearb, Curric Matters, and Policy Rev.

2. For the delineation of the LIS set, see the appendix of Leydesdorff et al. (2017, pp. 1611f).

3. Non-valued BC can be obtained by first binarizing the matrix.

4. UCInet also offers a number of options such as “attribute weighted betweenness centrality,” but the results are sometimes very similar to ordinary BC.

5. The “total citations” provided by the JCR can be considerably larger than the citations included in the matrix. Probably, citations by other sources such as the Arts and Humanities Citation Index are also included. We correct for this by recounting the total citations (and total references) in each set.

6. A transformation to a measure between zero and one could be (1 − 1/N) leading to a distance of ½ in the case of a distance of two and 0.67 in the case of three.

7. (32 × 31) + (19 × 18) + (14 × 13) + (10 × 9) + (7 × 6) = 1648 cells.

References

Abbasi, A., Hossain, L., & Leydesdorff, L. (2012). Betweenness centrality as a driver of preferential attachment in the evolution of research collaboration networks. Journal of Informetrics, 6(3), 403–412.

Ahlgren, P., Jarneving, B., & Rousseau, R. (2003). Requirements for a cocitation similarity measure, with special reference to Pearson’s correlation coefficient. Journal of the American Society for Information Science and Technology, 54(6), 550–560.

Bensman, S. J. (2007). Garfield and the impact factor. Annual Review of Information Science and Technology, 41(1), 93–155.

Bernal, J. D. (1939). The social function of science. London: Routledge & Kegan Paul, Ltd.

Boschma, R. (2005). Proximity and innovation: a critical assessment. Regional Studies, 39(1), 61–74.

Boyack, K. W., Patek, M., Ungar, L. H., Yoon, P., & Klavans, R. (2014). Classification of individual articles from all of science by research level. Journal of Informetrics, 8(1), 1–12.

Brandes, U. (2001). A faster algorithm for betweenness centrality. Journal of Mathematical Sociology, 25(2), 163–177.

Brandes, U. (2008). On variants of shortest-path betweenness centrality and their generic computation. Social Networks, 30(2), 136–145.

Burt, R. S. (2001). Structural holes versus network closure as social capital. In N. Lin, K. Cook, & R. S. Burt (Eds.), Social capital: Theory and research (pp. 31–56). New Brunswick NJ: Transaction Publishers.

Cassi, L., Champeimont, R., Mescheba, W., & de Turckheim, É. (2017). Analysing institutions interdisciplinarity by extensive use of Rao–Stirling diversity index. PLoS ONE, 12(1), e0170296.

Cassi, L., Mescheba, W., & De Turckheim, E. (2014). How to evaluate the degree of interdisciplinarity of an institution? Scientometrics, 101(3), 1871–1895.

Chiu, C.-H., & Chao, A. (2014). Distance-based functional diversity measures and their decomposition: A framework based on hill numbers. PLoS ONE, 9(7), e100014.

de Nooy, W., Mrvar, A., & Batagelj, V. (2011). Exploratory social network analysis with Pajek (2nd ed.). New York, NY: Cambridge University Press.

Flom, P. L., Friedman, S. R., Strauss, S., & Neaigus, A. (2004). A new measure of linkage between two sub-networks. Connections, 26(1), 62–70.

Freeman, L. C. (1977). A set of measures of centrality based on betweenness. Sociometry, 40(1), 35–41.

Freeman, L. C. (1978/1979). Centrality in social networks: Conceptual clarification. Social Networks, 1, 215–239.

Freeman, L. C., Borgatti, S. P., & White, D. R. (1991). Centrality in valued graphs: A measure of betweenness based on network flow. Social Networks, 13(2), 141–154.

Garfield, E. (1971). The mystery of the transposed journal lists—wherein Bradford’s law of scattering is generalized according to Garfield’s law of concentration. Current Contents, 3(33), 5–6.

Garfield, E. (1972). Citation analysis as a tool in journal evaluation. Science, 178(4060), 471–479.

Gibbons, M., Limoges, C., Nowotny, H., Schwartzman, S., Scott, P., & Trow, M. (1994). The new production of knowledge: the dynamics of science and research in contemporary societies. London: Sage.

Guns, R., & Rousseau, R. (2015). Unnormalized and normalized forms of gefura measures in directed and undirected networks. Frontiers of Information Technology & Electronic Engineering, 16(4), 311–320.

Izsák, J., & Papp, L. (1995). Application of the quadratic entropy indices for diversity studies of drosophilid assemblages. Environmental and Ecological Statistics, 2(3), 213–224.

Jaffe, A. B. (1989). Characterizing the “technological position” of firms, with application to quantifying technological opportunity and research spillovers. Research Policy, 18(2), 87–97.

Jost, L. (2006). Entropy and diversity. Oikos, 113(2), 363–375.

Klein, J. T. (2010). Typologies of Interdisciplinarity. In R. Frodeman, J. T. Klein, & R. Pacheco (Eds.), The Oxford handbook of interdisciplinarity (pp. 21–34). Oxford: Oxford University Press.

Leydesdorff, L. (2006). Can scientific journals be classified in terms of aggregated journal-journal citation relations using the journal citation reports? Journal of the American Society for Information Science and Technology, 57(5), 601–613.

Leydesdorff, L. (2015). Can technology life-cycles be indicated by diversity in patent classifications? The crucial role of variety. Scientometrics, 105(3), 1441–1451. doi:10.1007/s11192-015-1639-x.

Leydesdorff, L., & Bihui, J. (2005). Mapping the Chinese Science Citation Database in terms of aggregated journal-journal citation relations. Journal of the American Society for Information Science and Technology, 56(14), 1469–1479. doi:10.1002/asi.20209.

Leydesdorff, L., & Bornmann, L. (2016). The operationalization of “Fields” as WoS subject categories (WCs) in evaluative bibliometrics: The cases of “Library and Information Science” and “Science & Technology Studies”. Journal of the Association for Information Science and Technology, 67(3), 707–714.

Leydesdorff, L., Bornmann, L., & Wagner, C. S. (2017). Generating clustered journal maps: An automated system for hierarchical classification. Scientometrics, 110(3), 1601–1614. doi:10.1007/s11192-016-2226-5.

Leydesdorff, L., & Goldstone, R. L. (2014). Interdisciplinarity at the Journal and Specialty Level: The changing knowledge bases of the journal Cognitive Science. Journal of the Association for Information Science and Technology, 65(1), 164–177.

Leydesdorff, L., & Nerghes, A. (2017). Co-word maps and topic modeling: A comparison from a user’s perspective. Journal of the Association for Information Science and Technology, 68(4), 1024–1035. doi:10.1002/asi.23740.

Leydesdorff, L., & Rafols, I. (2011). Indicators of the interdisciplinarity of journals: Diversity, centrality, and citations. Journal of Informetrics, 5(1), 87–100.

Leydesdorff, L., & Schank, T. (2008). Dynamic animations of journal maps: Indicators of structural change and interdisciplinary developments. Journal of the American Society for Information Science and Technology, 59(11), 1810–1818.

MacCallum, C. J. (2006). ONE for all: The next step for PLoS. PLoS Biology, 4(11), e401.

MacCallum, C. J. (2011). Why ONE is more than 5 [Editorial]. PLoS Biology, 9(12), 2457.

Mutz, R., Bornmann, L., & Daniel, H.-D. (2015). Cross-disciplinary research: What configurations of fields of science are found in grant proposals today? Research Evaluation, 24(1), 30–36.

Narin, F., Carpenter, M., & Berlt, N. C. (1972). Interrelationships of scientific journals. Journal of the American Society for Information Science, 23, 323–331.

Neurath, O. (1932/1933). Protokollsätze. Erkenntnis, 3, 204–214.

Newman, M. E. (2004). Analysis of weighted networks. Physical Review E, 70(5), 056131.

Nichols, L. G. (2014). A topic model approach to measuring interdisciplinarity at the National Science Foundation. Scientometrics, 100(3), 741–754.

Nijssen, D., Rousseau, R., & Van Hecke, P. (1998). The Lorenz curve: A graphical representation of evenness. Coenoses, 13(1), 33–38.

OECD (1972). Interdisciplinarity: Problems of Teaching and Research. In L. Apostel, G. Berger, A. Briggs & G. Michaud (Eds.). Paris: OECD/Centre for Educational Research and Innovation.

Porter, A. L., Cohen, A. S., David Roessner, J., & Perreault, M. (2007). Measuring researcher interdisciplinarity. Scientometrics, 72(1), 117–147.

Porter, A. L., & Rafols, I. (2009). Is science becoming more interdisciplinary? Measuring and mapping six research fields over time. Scientometrics, 81(3), 719–745.

Porter, A. L., Roessner, J. D., Cohen, A. S., & Perreault, M. (2006). Interdisciplinary research: meaning, metrics and nurture. Research Evaluation, 15(3), 187–195.

Porter, A. L., Roessner, D. J., & Heberger, A. E. (2008). How interdisciplinary is a given body of research? Research Evaluation, 17(4), 273–282.

Pudovkin, A. I., & Garfield, E. (2002). Algorithmic procedure for finding semantically related journals. Journal of the American Society for Information Science and Technology, 53(13), 1113–1119.

Rafols, I. (2014). Knowledge integration and diffusion: Measures and mapping of diversity and coherence. In Y. Ding, R. Rousseau, & D. Wolfram (Eds.), Measuring scholarly impact (pp. 169–190). Cham, Heidelberg: Springer.

Rafols, I., & Leydesdorff, L. (2009). Content-based and algorithmic classifications of journals: Perspectives on the dynamics of scientific communication and indexer effects. Journal of the American Society for Information Science and Technology, 60(9), 1823–1835.

Rafols, I., Leydesdorff, L., O’Hare, A., Nightingale, P., & Stirling, A. (2012). How journal rankings can suppress interdisciplinary research: A comparison between innovation studies and business management. Research Policy, 41(7), 1262–1282.

Rafols, I., & Meyer, M. (2010). Diversity and network coherence as indicators of interdisciplinarity: Case studies in bionanoscience. Scientometrics, 82(2), 263–287.

Rao, C. R. (1982). Diversity: Its measurement, decomposition, apportionment and analysis. Sankhyā: The Indian Journal of Statistics, Series A, 44(1), 1–22.

Ricotta, C., & Szeidl, L. (2006). Towards a unifying approach to diversity measures: Bridging the gap between the Shannon entropy and Rao's quadratic index. Theoretical Population Biology, 70(3), 237–243.

Rousseau, R., & Zhang, L. (2008). Betweenness centrality and Q-measures in directed valued networks. Scientometrics, 75(3), 575–590.

Rousseau, R., Zhang, L., & Hu, X. (2017, in preparation). Knowledge integration. In W. Glänzel, H. Moed, U. Schmoch, & M. Thelwall (Eds.), Springer handbook of science and technology indicators. Berlin: Springer.

Salton, G., & McGill, M. J. (1983). Introduction to modern information retrieval. Auckland: McGraw-Hill.

Simon, H. A. (1962). The architecture of complexity. Proceedings of the American Philosophical Society, 106(6), 467–482.

Stirling, A. (2007). A general framework for analysing diversity in science, technology and society. Journal of the Royal Society, Interface, 4(15), 707–719.

Stokols, D., Fuqua, J., Gress, J., Harvey, R., Phillips, K., Baezconde-Garbanati, L., et al. (2003). Evaluating transdisciplinary science. Nicotine & Tobacco Research, 5, S21–S39.

Theil, H. (1972). Statistical decomposition analysis. Amsterdam/London: North-Holland.

Uzzi, B., Mukherjee, S., Stringer, M., & Jones, B. (2013). Atypical combinations and scientific impact. Science, 342(6157), 468–472.

Van den Besselaar, P., & Leydesdorff, L. (1996). Mapping change in scientific specialties: A scientometric reconstruction of the development of artificial intelligence. Journal of the American Society for Information Science, 47(6), 415–436.

Van den Daele, W., Krohn, W., & Weingart, P. (Eds.). (1979). Geplante Forschung: Vergleichende Studien über den Einfluss politischer Programme auf die Wissenschaftsentwicklung. Frankfurt a.M.: Suhrkamp.

van den Daele, W., & Weingart, P. (1975). Resistenz und Rezeptivität der Wissenschaft–zu den Entstehungsbedingungen neuer Disziplinen durch wissenschaftliche und politische Steuerung. Zeitschrift fuer Soziologie, 4(2), 146–164.

van Noorden, R. (2015). Interdisciplinary research by the numbers: an analysis reveals the extent and impact of research that bridges disciplines. Nature, 525(7569), 306–308.

Wagner, C. S., Horlings, E., Whetsell, T. A., Mattsson, P., & Nordqvist, K. (2015). Do Nobel laureates create prize-winning networks? An analysis of collaborative research in physiology or medicine. PLoS ONE, 10(7), e0134164.

Wagner, C. S., Roessner, J. D., Bobb, K., Klein, J. T., Boyack, K. W., Keyton, J., et al. (2011). Approaches to understanding and measuring interdisciplinary scientific research (IDR): A review of the literature. Journal of Informetrics, 5(1), 14–26.

Waltman, L., & van Eck, N. J. (2012). A new methodology for constructing a publication-level classification system of science. Journal of the American Society for Information Science and Technology, 63(12), 2378–2392.

Whitley, R. D. (1984). The intellectual and social organization of the sciences. Oxford: Oxford University Press.

Yan, E., Ding, Y., Cronin, B., & Leydesdorff, L. (2013). A bird’s-eye view of scientific trading: Dependency relations among fields of science. Journal of Informetrics, 7(2), 249–264.

Zhang, L., Rousseau, R., & Glänzel, W. (2016). Diversity of references as an indicator for interdisciplinarity of journals: Taking similarity between subject fields into account. Journal of the Association for Information Science and Technology, 67(5), 1257–1265. doi:10.1002/asi.23487.

Acknowledgements

We thank Wouter de Nooy for advice and are grateful to Thomson Reuters for JCR data.

Appendix: A routine for the measurement of diversity in networks

The routine net2rao.exe (available at http://www.leydesdorff.net/software/diversity/net2rao.exe) reads a network in the Pajek format (.net) and generates the files rao1.dbf and rao2.dbf. Rao1.dbf contains diversity values for each of the rows (named here “cited”) and each of the columns (named “citing”). Rao2.dbf is needed for the computation of cell values (see below).

The input file is preferentially saved by Pajek so that the format is consistent. Use the standard edge-format. The user is first prompted for the name of this .net-file. The output contains the values of both Rao-Stirling diversity and so-called “true” diversity (labels: “Zhang_ting” in the citing direction and “Zhang_ted” in the cited one; see Zhang et al. 2016; cf. Jost 2006).

By changing the default “No” into “Yes,” one can make the program write two files, labeled res_ting and res_ted, containing detailed information for each pass. These files can be used for detailed decompositions. However, the files may grow rapidly in size (> 1 GB). All files are overwritten in later runs; one is advised to save them under other names or in other folders.
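For readers unfamiliar with the input format, the general layout of a Pajek .net file can be sketched as follows. This is only an illustration of the commonly used vertices-plus-arcs list layout; the text above recommends saving the file from Pajek itself, and whether net2rao.exe accepts a hand-written file such as this one has not been verified here. The function name and the toy network are hypothetical.

```python
def to_pajek(labels, arcs):
    """Serialize a small directed, valued network in a Pajek-style layout.

    labels : list of vertex names
    arcs   : dict {(source_label, target_label): weight}
    """
    # Pajek numbers vertices consecutively, starting from 1.
    index = {name: i + 1 for i, name in enumerate(labels)}
    lines = [f"*Vertices {len(labels)}"]
    lines += [f'{index[name]} "{name}"' for name in labels]
    lines.append("*Arcs")
    for (source, target), weight in arcs.items():
        lines.append(f"{index[source]} {index[target]} {weight}")
    return "\n".join(lines) + "\n"


# Hypothetical two-journal network with a single valued citation link.
toy_net = to_pajek(["A", "B"], {("A", "B"): 3})
```

The resulting text could be written to a file with the .net extension and opened in Pajek, from where it can be saved again in the program's own format.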

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Leydesdorff, L., Wagner, C.S. & Bornmann, L. Betweenness and diversity in journal citation networks as measures of interdisciplinarity—A tribute to Eugene Garfield. Scientometrics 114, 567–592 (2018). https://doi.org/10.1007/s11192-017-2528-2

DOI: https://doi.org/10.1007/s11192-017-2528-2