Abstract

Bioscientific advances raise numerous new ethical dilemmas. Neuroscience research opens possibilities of tracing and even modifying human brain processes, such as decision-making, revenge, or pain control. Social media and science popularization challenge the boundaries between truth, fiction, and deliberate misinformation, calling for critical thinking (CT). Biology teachers often feel ill-equipped to organize student debates that address sensitive issues, opinions, and emotions in classrooms. Recent brain research confirms that opinions cannot be understood as solely objective and logical and are strongly influenced by the form of empathy. Emotional empathy engages strongly with salient aspects but blinds to others’ reactions while cognitive empathy allows perspective and independent CT. In order to address the complex socioscientific issues (SSIs) that recent neuroscience raises, cognitive empathy is a significant skill rarely developed in schools. We will focus on the processes of opinion building and argue that learners first need a good understanding of methods and techniques to discuss potential uses and other people’s possible emotional reactions. Subsequently, in order to develop cognitive empathy, students are asked to describe opposed emotional reactions as dilemmas by considering alternative viewpoints and values. Using a design-based-research paradigm, we propose a new learning design method for independent critical opinion building based on the development of cognitive empathy. We discuss an example design to illustrate the generativity of the method. The collected data suggest that students developed decentering competency and scientific methods literacy. Generalizability of the design principles to enhance other CT designs is discussed.


1 Introduction

Socioscientific issues (SSIs) raised by the rapid progress and potential applications of life sciences and technology in areas such as genetics, medicine, and neuroscience challenge students and future citizens with new moral dilemmas. For example, results from recent neuroscience research have attracted considerable attention in the media, with popularized information often claiming that neuroimaging can be used to decipher various human mental processes and possibly modify them. Insights into brain functioning seem to challenge the classical boundaries of psychology, biology, philosophy, and popularized science that students are confronted with. They raise intense and complex SSIs for which there is no large body of ethical or educational reflection (Illes and Racine 2005). There are serious issues and some controversy surrounding the confusion of brain activity with mental processes or states of mind (Lundegård and Hamza 2014) and the emotive power of brain scans; for example, Check (2005) and McCabe and Castel (2008) show that neuroimages can have much greater convincing power than the methods and the scientific data they produce warrant. Ali et al. (2014) call this phenomenon neuroenchantment. Proper interpretation of the neuroimaging data frequently presented in popularized science is a key epistemological and ethical challenge (Illes and Racine 2005) that schools do not generally address, leaving future citizens unprepared to face these new issues. Students need to be better equipped with reasonable thinking for deciding what to believe or do: critical thinking (CT).

What citizens know of science is currently shaped mainly by out-of-school sources such as traditional and social media (Fenichel and Schweingruber 2010). Developing CT in students is an important educational goal in many curricula, e.g., the CIIP (2011) in Switzerland. However, the PISA study shows that there is room for improvement (Schleicher 2019). While the internet offers access to invaluable information, the propagation of “fake news” has become a worrying issue (Brossard and Scheufele 2013; Rider and Peters 2018; Vosoughi et al. 2018). Additionally, Bavel and Pereira (2018) argue that our increased access to information has isolated us in ideological bubbles where we mostly encounter information that reflects our own opinions and values. The overwhelming amount of information available on social media paradoxically does not help understand other opinions; rather, it hinders CT and especially perspective-taking (Jiménez-Aleixandre and Puig 2012; Rowe et al. 2015; Willingham 2008).

Adding to these difficulties regarding CT, neuroscience research has been criticized because of distortions introduced through sensationalist popularization. We adopt a neutral stance towards results published under the label of neuroscience or presented as “brain research.” Education must navigate between naïve adhesion to anything published under the label of neuroscience or popularized as “brain research” and rejection of all neuroscience research because of these sensationalist flaws in its popularization. This study is an attempt to address this challenge and propose a new perspective for helping students develop some difficult aspects of CT that might enhance many classical learning designs. Self-centered or group-centered emotions often hinder CT (Ennis 1987; Facione 1990). Sadler and Zeidler (2005) also show that emotive informal reasoning is directed towards real people or fictitious characters. Imagining people’s emotional and moral reactions in these different situations without being overwhelmed by one’s own empathetic emotional reactions is a major difficulty in CT education. While the most basic form of empathy focuses on the emotional aspects of a situation, it blinds us to others (Bloom 2017a) and hinders decentering. The more advanced cognitive form of empathy (Klimecki and Singer 2013) enables decentering and reasonable assessment of moral dilemmas. This article proposes an approach for developing CT that draws not only on rational reasoning but also on understanding others’ emotional reactions (cognitive empathy) to develop the perspective that is needed: thinking independently, challenging one’s own personal or collective interest, and overcoming egocentric values (Jiménez-Aleixandre and Puig 2012). Consequently, developing this decentering aspect of CT in students is a central aim of this contribution. 
In addition, we argue that a proper understanding of methods is also necessary to discuss the potential and limits of research findings, especially in popularized neuroscience. Thus, methodological knowledge is a preliminary and necessary step towards understanding the social and human implications of such scientific results. Therefore, developing scientific methods literacy is a foundational goal of this contribution.

We will develop this new contribution to CT teaching in five steps:

(i) In Section 2, we will discuss theories that can guide the crafting of learning designs for developing selected CT skills and lead to an original conceptualization focused on decentering when discussing popularized neuroscience. We start by reviewing CT in education and its various definitions and discuss the challenges of its implementation and several approaches. We show through recent literature that attempting to ignore emotions while debating opinions does not reduce their effects on CT. Starting from this, we will discuss the importance of decentering from one’s own values and social belonging in CT and the essential role of empathy in this process. We develop the idea that helping students to discover and understand the scientific methods used in neuroscience research is foundational to imagining its limits and potential as well as others’ moral and emotional reactions. We will argue that focusing the discussion of the SSIs raised on empathetic discussion of these different reactions can enhance decentering skills. We finish by summarizing the design approach.

(ii) In Section 3, we map the theory developed in Section 2 onto educational design principles. We first explain the conjecture mapping technique that we used (exemplified in Section 4). We then define learning goals, i.e., the expected effects (EEs), and finish by elaborating design principles in the form of educational design conjectures for decentering CT skills.

(iii) In Section 4, we present, analyze and discuss an example learning design. Learning design as an activity can be defined as design for learning, i.e., “the act of devising new practices, plans of activity, resources and tools aimed at achieving particular educational aims in a given situation” (Mor and Craft 2012, p. 86). In this study, the learning design is part of the outcome, i.e., a reproducible design. We start by presenting an abstract model based on Sandoval and Bell’s (2004) conjecture map, a design method developed for design-based research that allows the identification of key elements of a learning design in a way suitable for research and practice. The presented design was developed in 10 iterations over 15 years in higher secondary biology classes (equivalent to high school) in Geneva, Switzerland. We then present the design of the 2018/2019 implementation.

(iv) In Section 5, we present some empirical results based on quali-quantitative data from student-produced artifacts from the 2018/2019 cohort. We also present findings from an end-of-semester survey.

(v) Section 6 summarizes and discusses the main findings, discusses their implications and limitations, and outlines further perspectives.

We formulate two research questions at the end of the theory sections that we summarize as follows: (1) How can a conceptualization that focuses on decentering and methods literacy be implemented through an operational learning design and what are its main design elements? (2) Does an implementation of this learning design help students improve the selected CT skills?

2 Theoretical Framework

2.1 Critical Thinking in Education

In education, calls to develop critical thinking (CT) in students are frequent. This crucial skill, necessary for citizens to participate in a plural and democratic society, is often lacking among students according to PISA results (Schleicher 2019). Science education curricula usually include CT as a learning goal. The official curriculum for Swiss-French secondary schools (CIIP 2011) states that “In a society deeply modified by scientific and technological progress, it is important that every citizen masters basic skills in order to understand the consequences of choices made by the community, to take part in social debate on such subjects and to grasp the main issues. In the ever-faster evolution of the world, it is necessary to develop in students a conceptual, coherent, logical and structured thinking, with a flexible mind and a capacity to deliver adequate productions and act according to reasoned choices” (our translation) but then focuses on rational thinking: “The purpose of science is to establish a principle of rationality for the confrontation of ideas and theories with the facts observed in the learner’s world” (CIIP 2011, our translation). Official educational guidelines often focus on the reason-based aspect of CT, but the emotional aspects of CT are also recognized in some official educational programs. For example, the CIIP (2011) mentions the learning goal “reflexive approach and critical thinking,” which consists in the “ability to develop a reflexive approach and critical stance to put into perspective facts and information, as well as one’s own actions…” The descriptors include “evaluating the shares of reason and affectivity in one’s approach; verifying the accuracy of the facts and putting them into perspective” (our translation).

One of the most widely cited definitions of CT, by Robert Ennis, introduces the concept as “reasonable reflective thinking, that is focused on deciding what to believe or do” (1987, p. 6). Ennis proposes a list of twelve dispositions and sixteen abilities that characterize the ideal critical thinker. This list and its items “can be considered as guidelines or goals for curriculum planning, as ‘necessary conditions’ for the exercise of critical thinking, or as a checklist for empirical research” (Jiménez-Aleixandre and Puig 2012, p. 1002). Facione (1990), in a statement of expert consensus, states, “We understand critical thinking to be purposeful, self-regulatory judgment which results in interpretation, analysis, evaluation, and inference, as well as explanation of the evidential, conceptual, methodological, criteriological, or contextual considerations upon which that judgment is based. […] The ideal critical thinker is habitually inquisitive, well-informed, trustful of reason, open-minded, flexible, fair-minded in evaluation, honest in facing personal biases, prudent in making judgments, willing to reconsider, […] It combines developing CT skills with nurturing those dispositions which consistently yield useful insights and which are the basis of a rational and democratic society” (p. 3).

In both texts, the focus is on reasonable thinking, and emotions are only referenced implicitly. For example, Facione’s definition mentions “personal biases,” and the only mention of emotion in the main text is negative: “to judge the extent to which one’s thinking is influenced by deficiencies in one’s knowledge, or by stereotypes, prejudices, emotions or any other factors which constrain one’s objectivity or rationality” (Facione 1990, p. 10). CT seems to shun emotions. As in philosophy and argumentation, emotions are considered out of place in good reasoning (Bowell 2018), and no form of empathy is explicitly taken into account, except within “personal biases.”

A set of Ennis’s CT abilities are related to scientific information literacy: the ability to discuss the limits and potential of scientific information based on a good understanding of the methods and foundations of its elaboration. From a science education point of view, Hounsell and McCune (2002) propose the ability “to access and evaluate bioscience information from a variety of sources and to communicate the principles both orally and in writing [...] in a way that is well organized, topical and recognizes the limits of current hypotheses” (Hounsell and McCune 2002, p. 7, quoting QAA 2002). We draw from this definition that science does not produce truths but tentative, empirically based knowledge that must be understood within the limits of the conceptual framework and hypotheses that determine the methods that produced this knowledge.

It is also important to define what CT does not mean in this context: it does not imply negative thinking or an obsessive search for faults and flaws in scientific results. CT should not be conflated with a systematic criticism of science, which in some cases has become so strong as to create defiance towards science and scientific methods. CT does not mean discussing only bad examples and exaggerated claims or inferences. Angermuller (2018) warns, “research critically interrogating truth and reality may serve propagandists of post-truth and their ideological agenda” (p. 2). Furthermore, CT should not mean observance of a teacher’s personal critical views. CT must focus on skills that allow students to reasonably evaluate knowledge on the basis of available evidence and requires recognizing but decentering from personal biases and understanding scientific methods well enough to evaluate the potential and limits of research.

One classical approach in classrooms is argumentation and debating beliefs and opinions (Bowell 2018; Dawson and Venville 2010; Dawson and Carson 2018; Duschl and Osborne 2002; Jiménez-Aleixandre et al. 2000; Jonassen and Kim 2010; Legg 2018). Additionally, learning progressions organizing skills into different stages have been well discussed (Berland and McNeill 2010; Plummer and Krajcik 2010). Osborne (2010) writes that much is understood about how to organize groups for learning and how the norms of social interaction can be supported and taught. For example, Buchs et al. (2004) show that debate is most efficient as a learning activity when it is very specifically organized to favor epistemic rather than relational elaboration of conflict. This requires ignoring emotions (and implicitly any form of empathy) to focus on rational discussion. Constructive controversy has been demonstrated to be very efficient at identifying the best group answer on a specific question (Johnson and Johnson 2009), but focuses—remarkably well—on keeping the debate rational and does encourage decentering through role exchange; however, in our view, it is not specifically focused on handling the emotions and empathetic reactions that some very sensitive issues can raise, as Bowell (2018) shows.

Teachers who attempt to organize classroom debates or argumentation often encounter great difficulty in doing so (Osborne 2010; Simonneaux 2003). They often feel ill-trained and worried about handling the emotional reactions and value conflicts that arise during discussions and arguments about SSIs. Ultimately, they frequently refrain from debates (Osborne et al. 2013) or confine themselves to the apparently safe boundaries of rationality. How student groups can be supported to produce elaborated, critical discourse is unclear according to Osborne (2010). An unusual approach was proposed by Cook et al. (2017). They describe it well in their title: “Neutralizing misinformation through inoculation: Exposing misleading argumentation techniques reduces their influence.” This immunological metaphor of exposing students to possible biases and manipulations in advance as a strategy for developing CT skills contrasts with approaches where students are protected from and cautioned against such information, which is in turn dismissed. We consider here how to face the educational challenge and address the difficult new SSIs raised by scientific advances—notably in neuroscience.

While this article is not about conceptual change, which is the subject of abundant research, including Clark and Linn (2013); diSessa (2002); Duit et al. (2008); Ohlsson (2013); Posner et al. (1982); Potvin (2013); Strike and Posner (1982); and Vosniadou (1994), it is worth noting that conceptual change also cannot be fully understood without considering the effects of beliefs—especially on some subjects such as evolution (Clément and Quessada 2013; Sinatra et al. 2003; Potvin 2013). Tracy Bowell (2018) insists that against deeply held beliefs, rational argument cannot suffice: “Although critical thinking pedagogy does often emphasize the need for a properly critical thinker to be willing (and able) to hold up their own beliefs to critical analysis and scrutiny, and be prepared to modify or relinquish them in the face of appropriate evidence, it has been recognized that the type of critical thinking instruction usually offered at the first-year level in universities frequently does not lead to these outcomes for learners” (p. 172).

Discussing SSIs engages opinions. Roget’s Thesaurus defines opinions as views or judgments formed about something, not necessarily based on fact or knowledge. For Astolfi (2008), opinion “is not of the same nature as knowledge. The essential question is then no longer to decide between the points of view expressed as to who is right and who is wrong. It is to access the underlying reasons that justify the points of view involved” (p. 153, our translation). Among others, Legg (2018) discusses how difficult—even for professional thinkers—forming a well-built opinion is. We will not address this thorny philosophical question here but discuss how to develop decentering skills with 18- to 19-year-old high school biology students discovering recent popularized research. The central point in this article is not about deciding which opinion is correct or socially acceptable in the specific social and cultural environment of students or even which opinion the current state of scientific knowledge supports. Jiménez-Aleixandre and Puig (2012) highlight the importance of thinking not only reasonably but also independently. This text discusses putting into perspective the rational reasons with emotional and empathetic reactions that justify one’s own opinions through understanding that others might have other underlying reasons and emotional and empathetic reactions leading to different opinions, calling for decentering skills.

It would seem natural to discuss opinions. However, discussing students’ opinions in the multicultural classrooms of today could hurt personal, cultural, or religious sensitivities and can be counterproductive (Bowell 2018). Research has shown that many forms of debate, e.g., debate-to-win (Fisher et al. 2018), can unintentionally modify participants’ opinions (Simonneaux and Simonneaux 2005). Abundant social psychology research has shown, for example, that holding one point of view in a debate modifies the arguer’s opinion (Festinger 1957; Aronson et al. 2013). Cognitive dissonance reduction has long been identified as an obstacle to accepting new ideas (Festinger 1957). Indeed, debating well-established opinions with students or even inexperienced scholars can easily lead to the entrenchment of personal opinions (Bavel and Pereira 2018; Legg 2018). This raises serious ethical questions: some learning designs might influence the opinions of students or might even become manipulative, unconsciously leading students to observance of the teacher’s personal outrage or opinion. Creating fair, respectful, and productive opinion debates in the classroom setting is difficult. The emotional reactions of teachers and students can get out of hand. Biology teachers are sometimes afraid of students’ reactions when discussing socially loaded topics such as the mechanisms of evolution (Clément and Quessada 2013), possibly confusing the well-established explanatory power of evolutionary scientific models with beliefs and opinions students might have. In Switzerland, biology curricula require students to be able to use these scientific models to explain observed phenomena and predict, for example, the consequences for a species of variations in the environment but not to adhere to any specific belief.

For many, a focus on rational and independent thinking should restrict the role emotions play in the opinion building process. Jiménez-Aleixandre and Puig (2012) mention, “Although we think that it is desirable for students (and people) to integrate care and empathy in their reasoning, we would contemplate purely or mainly emotive reasoning as less strong than rational reasoning” (p. 1011). This concern about the threat of emotion-only reasoning could be understood by some readers to imply that rational thinking processes alone should guide independent opinion building to allow decentered thinking and that empathy should not be encouraged. It does not appear realistic to expect this of 19-year-old students, and we will discuss below how ignoring emotions in opinion building processes might in fact increase their influence.

Rider and Peters (2018) discuss free thinking, and Legg (2018) stresses how social media could lead users to avoid encountering any viewpoints or arguments that contradict their own, discussing how professional thinkers and writers seek better opinions by confronting others’ opinions. In her final line, she encourages readers to “[listen] well to those with contrary opinions—even those who promote them most aggressively—since, in the epistemic as opposed to the political space, as ever, ‘the [only] solution to poor opinions is more opinions’” (p. 56). She suggests seeking further information before behaving as if one has certainty as a way to overcome the arrogant assumed certainty that is a dismaying feature of our current regime. We fully agree with the need to take into account differing and contrary opinions: a good capacity for decentering is indeed central to CT, but how this can be achieved is a challenge that cannot be tackled without taking into account emotions and dealing with different forms of empathy.

With young students in particular, social belonging and emotions cannot be ignored. Bowell (2018) shows in an example that “students’ deeply held beliefs […] had been formed in the environments of their families and their communities. […] By recognizing and acknowledging the emotional weight of the students’ deeply held beliefs about climate change and their suspicion toward scientists and the evidence they produce, the teacher found a way to disrupt those beliefs” (p. 183). For 19-year-old students, asking for rational debate while ignoring emotions might be quite problematic for some SSIs. Since CT can be challenged by emotionally overwhelming reactions, without developing skills to decenter students from their own emotional and empathetic responses, many educational designs based on debate might not develop their full potential.

In summary, educational strategies for rational debate have substantial potential to promote science and CT and are often used in schools where CT is pursued; however, it appears, as PISA results show (Schleicher 2019), that there is still room for improvement. New learning designs specifically aimed at balancing reason and emotional reactions may contribute to increasing CT skills. Such designs should probably include learning to deal with the different forms of empathy that will be discussed below and could be implemented before setting up debates or possibly even before students develop their own opinions about the new SSIs raised by the abundance of neuroscience research.

2.2 Emotions and Decentering in Critical Thinking

Recent research adds evidence to what psychologists and some philosophers have long argued, namely, that opinion building and moral decisions cannot be understood solely as cold, objective, and logical (Young and Koenigs 2007; Decety and Cowell 2014; Narvaez and Vaydich 2008; Goldstein 2018) and that rational-only approaches cannot suffice to guide educational interventions on SSIs (Bowell 2018). According to Sander and Scherer (2009, pp. 189–195), emotion is a process that is fast, focused on a specific event, and triggers an emotional response. It involves five components: expression (facial, vocal or postural), motivation (orientation and tendency for action), bodily reaction (physical manifestations that accompany or precede the emotion), feeling (how the emotion is consciously experienced), and cognitive evaluation (interpretations that make sense of emotions and induce them). These interpretations differ across people, moments, individual memories, values, and social belongings, implying complex relationships among emotions, values, and “reason” and indicating how much emotional responses to the same situations can vary according to personal, cultural, and social characteristics. Emotions affect attention to and the salience of specific aspects of a situation (Sander and Scherer 2009) and can lead to focusing only on some aspects of the triggering situation and ignoring others. For example, negative emotions narrow the attentional focus and one’s ability to take others’ emotions, such as pain, into account (Qiao-Tasserit et al. 2018). Positive emotions (Fredrickson 2004; Rowe et al. 2007) can broaden people’s attention and thinking, but negative emotions tend to reduce judgment errors and result in more effective interpersonal strategies (Forgas 2013; Gruber et al. 2011).

The role played by emotions in opinion building has often been considered detrimental (Facione 1990; Ennis 1987). However, Tracy Bowell (2018) argues for “ways in which emotion and reason work together to form, scrutinise and revise deeply held beliefs” (p. 170). Sadler and Zeidler (2005) insist on “the pervasive influence emotions have on how students frame and respond to ethical issues” (p. 115), and there appears to be agreement that opinion building cannot be understood as only objective and logical. Adding empirical support to Sadler and Zeidler’s (2005) position, Young and Koenigs (2007) use fMRI data to show that emotions not only are engaged during moral cognition but are in fact critical for human morality and opinion building. Confirming in-group biases identified by social psychologists, neuroscience research suggests that thinking about the mind of another person is done with reference to one’s own mental characteristics (Jenkins et al. 2008) and can therefore interfere with and thwart decentering attempts. Vollberg and Cikara (2018) showed that in-group bias can unknowingly influence emotions and opinions in favor of the priorities and interests of the group. We see this new evidence as convergent with the discussion by Sadler and Zeidler (2005) of the interactions between informal (rationalistic, emotive, and intuitive) reasoning patterns that occur when students think about SSIs.

We have seen that both Ennis (1987) and Facione (1990) support the importance of decentering from one’s own point of view, emotions, and values in order to be able to take into account other, potentially conflicting perspectives. De Vecchi (2006) also differentiates levels of CT, with the highest level being “Debating one’s own work as well as that of others in a cooperative manner. Positively discussing objections from others and taking them into account” (p. 180, our translation). Jiménez-Aleixandre and Puig (2012) emphasize thinking independently, challenging one’s own personal or collective interests and overcoming egocentric values. Piaget (1950) used the term décentration (often translated as decentering) to describe the progressive ability of a child to move from his or her “necessarily deforming and egocentric viewpoint” to a more objective elaboration of “the real connections” between things (pp. 107–108, our translation). This move implies disengaging the object from one’s immediate action to locate it in a system of relations between things corresponding to a system of operations that the subject could apply to them from all possible viewpoints. The capacity for “putting oneself in another’s shoes” and envisioning the complex potential intentions and mental states of others, also referred to as the theory of mind or cognitive empathy, begins developing in young children around the age of 2 and appears to be unique to humans and a few other animals (Call and Tomasello 2008; Seyfarth and Cheney 2013).

This particularly highlights the relevance of decentering to independent opinion building processes in our multicultural, connected world, where sensationalism, speed, and immediacy challenge one’s capacity to put into perspective one’s own opinion or emotional reactions. The SSIs raised by neuroscience research include sensitive issues such as claims in popularized media about deciphering various human mental processes (e.g., the placebo effect (Wager et al. 2004), face identification from neuron activity measurements (Chang and Tsao 2017), and vengeance control (Klimecki et al. 2018)) and possibly modifying them (e.g., activating brain areas to control pain (deCharms et al. 2005)). Such claims could elicit strongly differing moral views across the diversity of social and religious belongings or personal values and monistic or dualistic views about the mind. Helping students to think independently from their moral perspective about such issues calls for teaching designs specially geared towards developing decentering skills, not just requiring them.

The process of forming an independent opinion about a given SSI should therefore include two dimensions: (1) awareness that one’s point of view and emotional reaction towards a situation are not necessarily the only ones; (2) the capacity to understand and take into account other possible emotional reactions than one’s own without necessarily adhering to them.

Jiménez-Aleixandre and Puig (2012), as they highlight the importance of thinking not only reasonably but also independently, point out that CT should include the challenge of argument from authority (traditional authority of position (Peters 2015)) and the capacity to criticize discourses that contribute to the reproduction of asymmetrical relations of power. They distinguish four main components of CT:

1. The ability “to evaluate knowledge on the basis of available evidence [...]”

2. The display of critical “dispositions, such as seeking reasons for one’s own or others’ claims [...]”

3. The “capacity of a person to develop independent opinions [...] as opposed to relying on the views of others (e.g., family, peers, teachers, media)”

4. “the capacity to analyze and criticize discourse that justifies inequalities and asymmetrical relations of power.” (p. 1002)

For these authors, while the first two components belong to argumentation, the other two have to do with social emancipation and citizenship. This socially decentered dimension of CT highlights the importance of the skills this project focuses on: “the competence to develop both independent opinions and the ability to reflect about the world around oneself and participate in it. It is related to the evaluation of scientific evidence [...], to the analysis of the reliability of experts, to identifying prejudices [...] and to distinguishing reports from advertising or propaganda. Thinking critically [...] could involve challenging one’s own personal or collective interest and overcoming egocentric values” (p. 1012).

We will refer to decentering as the ability to put one’s first emotional reactions in perspective and take into account different, contradictory values and emotional reactions other people (with different values, social contexts, and beliefs) might have in a given situation—real or imagined.

2.3 Empathy as a Skill for Decentering in Critical Thinking?

Singer and Klimecki (2014) write that perspective-taking ability is the foundation for understanding that people may have views that differ from our own and that moral decisions strongly imply empathic response systems. Empathy is “a psychological construct regulated by both cognitive and affective components, producing emotional understanding” (Shamay-Tsoory et al. 2009, p. 617). Empathy is often considered a positive, benevolent emotional reaction, but some forms of empathy can hinder decentering. Bloom (2017a, b) highlights the ambiguous role of emotional empathy in moral reasoning: he argues that empathy is fraught with biases, including biases towards attractive people and for those who look like us or share our ethnic or national background. Additionally, it connects us to particular individuals, real or imagined, but is insensitive to others, however numerous they may be (Bloom 2017a). He compares empathy to a searchlight: it focuses on one aspect of the situation and the emotions it causes but leaves in darkness the other emotional reactions that people with different values or in different situations might have; therefore, some forms of empathy do not facilitate perspective-taking. Klimecki and Singer (2013) distinguish two empathetic response systems. The first response type, emotional empathy, focuses the attention of subjects through the emotions the situation evokes but blinds them to other people’s reactions and leads to self-oriented behavior. A second type of response, cognitive empathy (which we consider to be similar to Sadler and Zeidler’s emotive reasoning), helps one understand the emotional reactions and perspectives of those with different values or from different cultures and is a critical decentering skill. For Shamay-Tsoory et al. (2009), emotional empathy is developed early in infants and acts as a simulation system (I feel what you feel) involving mainly emotion recognition and emotional contagion.
Cognitive empathy develops later and relies on “more complex cognitive functions,” such as the “mentalizing” or “perspective-taking” system: the ability to understand another person’s perspective and to feel concerned for what the other feels without necessarily sharing the same feelings. The first form of empathy is problematic (Bloom 2017a), because sharing the negative emotions of others can paradoxically lead to withdrawal from the negative experience and self-oriented behavior. Cognitive empathy allows for a more distant and balanced appraisal of a situation: it results in positive feelings of care and concern and promotes prosocial motivation. It also helps one understand the emotional reactions of others who have different values and social belongings, which is necessary for decentering in CT.

We have seen that opinion building cannot be considered a cold and rational process and that many biases prevent individuals from understanding others’ emotional reactions, which hinders independent thinking in CT. Some forms of empathy, also called perspective-taking, theory of mind, empathy, or sympathy, might mitigate this problem; therefore, we will discuss their implications for thinking about SSIs. Sadler and Zeidler (2005) show that empathy “has allowed the students to identify with the characters in the SSI scenarios and allow for multiple perspective-taking” (p. 115). Furthermore, they describe how emotive reactions can help students imagine others’ reactions and describe informal reasoning as involving empathy, a moral emotion characterized by “a sense of care toward the individuals who might be affected by the decisions made” (p. 121). This informal emotive reasoning is rational and rooted in emotion and differs from rationalistic reasoning. The authors insist that emotive patterns can be directed towards real people or fictitious characters. We assume that empathy (emotive reactions) directed at real or imagined people could be used in education to help students develop a decentered perspective. Complex decisions involving contradictory moral principles strongly imply empathy (Sadler and Zeidler 2005). While Sadler and Zeidler (2005) mention the importance of emotive informal thinking, this skill is not generally addressed when designing education about SSIs.

Shamay-Tsoory et al. (2009) suggest that emotional and cognitive empathy rely on “distinct neuronal substrates.” Singer and Klimecki (2014) also show that the plasticity of these systems allows cognitive empathy to be trained to some degree in a few sessions. Overall, these neuroscientific results suggest that cognitive and emotional systems are complex and concurrent and might well be separate within the brain. While measures of activity, from which empathy is inferred in ways the scientific community recognizes, cannot be considered from a philosophical point of view as proof, it is scientific evidence that is worth considering for learning design. This could imply that cognitive empathy can be activated and trained without necessarily activating emotional empathy. Educational designs that develop cognitive empathy and decentering might help students to “think independently, challenging [their] own personal or collective interest and overcoming egocentric values” while reducing the pitfalls of “emotions […] which constrain one’s objectivity or rationality” (Facione 1990, p. 12). This is the challenge this research focuses on. Cognitive empathy, so crucial for decentering, is not generally developed in schools. Debate-based learning designs that do not distinguish between emotional and cognitive empathy might not realize their full potential because of previous emotionally biased opinions. This could explain some of the difficulties felt by many about purely or mainly emotive reasoning and the limits of intuitive reasoning (Jiménez-Aleixandre and Puig 2012). The conceptualization we develop here suggests pursuing a new approach for developing decentering competency: developing cognitive empathy for the emotional reactions of others while refraining from emotional empathy in the process of building independent opinions.

2.4 Understanding Science Methods to Develop CT

Methods are at the core of research paradigms (Kuhn 1962) and determine a good part of the potential and limits of scientific research (Lilensten 2018). Therefore, some understanding of research techniques and methods is required to assess the scope (including the limits, implications, and potential uses) of research results (Hoskins et al. 2007). Facione (1990) also insists on the necessity of a proper domain-specific understanding of methods. One implication the experts draw from their analysis of CT skills is this: “While CT skills themselves transcend specific subjects or disciplines, exercising them successfully in certain contexts demands domain-specific knowledge, some of which may concern specific methods and techniques used to make reasonable judgments in those specific contexts” (p. 7).

Methods and their limits are often ignored by teachers (e.g., Waight and Abd-El-Khalick 2011; Kampourakis et al. 2014). Didactic transposition (DT) theory (Chevallard 1991) investigates how knowledge that teachers are required to teach is transformed during the process of selection into curricula and adaptation to teacher values and classroom requirements. The methods that produce research results are generally not thoroughly discussed with students. The large body of research on DT shows that to be easily teachable, exercisable, and assessable, classroom knowledge generally becomes definitive and is often reduced to assertive conclusions (Lombard & Weiss 2018).

Understanding the limits of neuroscience research results, especially neuroimaging results, is a particular challenge. A proper understanding of the methods used is needed to understand the limits of such research and develop a critical perspective to overcome neuroenchantment (Ali et al. 2014). There is a risk that activities might be understood as objects and essential concepts and that inferences of the engagement of a specific cognitive process from brain activation observed during a task might be overinterpreted (Nenciovici et al. 2019). While research articles are required to discuss the limits of their claims, proper interpretation of the neuroimaging data commonly found in popularized science is a critical challenge (Illes and Racine 2005), and students are not often presented with primary literature. Rather, they encounter transposed versions where claims and simplified interpretations are typically presented as definitive without discussion of the limits that the methods imply. Indeed, there are many issues with the emotive power of brain scans; for example, Check (2005) and McCabe and Castel (2008) show that neuroimages can have much more convincing power than the methods and the scientific data they produce warrant, leaving future citizens unprepared to face new issues as they arise. We will refer to this solid understanding of the methods required to assess the limits and potential uses of research as scientific method literacy.

Since methods are generally absent or insufficiently represented in the popularized science that students are confronted with (Hoskins et al. 2007), this has an important implication: in order to discuss SSIs, it is necessary to refer to the original article to obtain a proper understanding of the potential uses and limits of the research. Having secondary or high school students use primary literature with some help has been shown to be possible and, in fact, beneficial for a good understanding of science (Yarden et al. 2009; Falk et al. 2008; Hoskins et al. 2007; Lombard 2011).

From this literature, we draw the need for what we call scientific methods literacy, in this context defined as the ability to understand scientific techniques and methods sufficiently to imagine potential uses and limits. This will generally imply some access to primary literature.

2.5 Educational Design for Decentering CT Skills

Let us recall that we aim to propose and discuss a new learning design to develop a selection of students’ skills for CT about SSIs in neuroscience. More precisely, we aim to foster independent opinion building. The aims of this article are (1) to translate the new conceptualization emerging from the theoretical framework into an instructional design that develops the selected CT skills in higher secondary biology classes, (2) to describe this design, and (3) to analyze and discuss the results produced by this design in its final iterative refinement. Our literature review identified two crucial skills that learners should develop to improve their CT: (i) decentering skills: the ability to decenter from one’s first emotional reactions and take into account different, contradictory values and emotional reactions; (ii) certain scientific methods literacy skills: specifically defined here as the ability to understand scientific techniques and methods sufficiently to imagine potential uses and limits. Not discussed in this article but also relevant are other scientific information literacy skills, i.e., the ability to select and understand scientific articles and to produce text according to typical scientific practice. Below, we shall briefly outline the overall design approach, the learning goals, and the main guiding principles that can be used to generate specific learning designs such as the one presented in Section 4.

Learning is a process that can be guided and encouraged but not imposed. “One of the ways that teaching can take place is through shaping the landscape across which students walk. It involves the setting in place of epistemic, material and social structures that guide, but do not determine, what students do” (Goodyear 2015, p. 34). In that view, the materials and resources presented do not automatically map to learning gains; rather, the cognitive activities learners effectively practice determine the learning. Accordingly, the epistemic, material, and social structures (practical activities and productions) must be designed to encourage these cognitive activities. Goodyear (2015, p. 33) explains that “The essence of this view of teaching portrays design as having an indirect effect on student learning activity, working through the specification of worthwhile tasks (epistemic structures), the recommendation of appropriate tools, artefacts and other physical resources (structures of place), and recommendation of divisions of labor, etc. (social structures).”

Thinking of teachers as designers offers methods for dealing with complex issues, reframing problems, and working with students “to test and expand the understanding of the problem. Reframing the problem, for example by seeing the problem as a symptom of some larger problem, is a classic design move” (Goodyear 2015, p. 35). Successive iterations of the design in this project led to the new conceptualization of CT about popularized neuroscience presented here. “Typically, design-based research imports researchers’ ideas into a specific educational setting and researchers then work in partnership with teachers (the local inhabitants) to develop, test and refine successive iterations of an intervention” (Goodyear 2015, p. 41). Design is not a one-way process by which theory is applied to practice; Schön (1983) has shown that in the development of expertise, theory is informed by practice as much as practice is informed by theory, in a continuous process. This study is design-based research (DBR), a research paradigm that was developed as a way to carry out formative research for testing and refining educational designs based on theoretical principles derived from prior research (Barab 2006; Brown 1992; Collins et al. 2004; Sandoval and Bell 2004). In DBR, iterations of the design produce conclusions—including an enrichment of the theoretical framework and derived design rules—that lead to the optimization of the design and are fed into the next iteration. “Design-based research progresses through cycles of theoretical analysis, conjectures, design, implementation, analysis and evaluation which feed into adjusting the theory and deriving practical artefacts” (Mor and Mogilevsky 2013, p. 3). Analyzing the data from each design cycle led to reframing the problem and clarifying and focusing the education goals, which raised new research questions that in turn led to obtaining data more relevant to these renewed questions in the next iteration.

According to Collins et al. (2004), DBR is focused on the design and assessment of critical design elements. It is particularly well suited for exploratory research on learning environments with many variables that cannot be controlled individually—which rules out experimental or pseudoexperimental paradigms. Instead, design researchers try to optimize as much of the design as possible and to observe carefully how the different design elements are operating. As a qualitative approach, DBR is well suited to the creation of new theories (Miles et al. 2014). This choice is also ethically justified, since this is not a short experimental intervention but a semester-long course in which tightly controlled conditions might not offer the best learning conditions: in DBR, the design is iteratively adapted and offers to students the benefit of the best available design the research can provide at any time (Brown 1992). Better, more relevant data from each iteration were used to extract design principles and optimize the design offered to students the following year. DBR is similar to action research (Greenwood and Levin 1998) in the tightly interwoven student, teacher, and researcher implication and the feeding of information back to the community. In DBR, however, the design itself is the object of research and provides valuable insight into learning processes. Compared with other research paradigms, DBR is less about comparison with other published designs than about producing better questions, developing workable designs, and proposing design rules.

From this multiyear DBR approach emerged (i) the new conceptualization on which this article is based, (ii) the identification of educational goals focused on decentering skills and scientific methods literacy, (iii) the design principles presented in Section 3, and (iv) the methods for obtaining and discussing data relevant to these goals presented in Section 4.

3 From Theory to Design Conjectures

The method we used to guide the design of this educational module is strongly inspired by Sandoval and Bell’s (2004) conjecture maps. We have explained elsewhere this method and how we used it to help teachers in training to create, implement, and reflect on their educational designs (Lombard, Schneider & Weiss 2018). Central in this approach is the role of embodied conjectures. These are “design conjectures about how to support learning in a specific context, that are themselves based on theoretical conjectures of how learning occurs in particular domains” (Sandoval and Bell 2004, p. 215). In our model, conjectures (CJs) are implemented as design elements (DEs), which are specific items (generally activities that can be enacted) introduced into the design to produce educational effects, called expected effects (EEs), such as understanding and perspective-taking. These outcomes, being abilities or competencies (EEs here), are not directly measurable (Miles et al. 2014), and we therefore look for performed, observable activities that reflect them. EEs are therefore assessed through observable effects (OEs), such as student productions, observations, or other traces in which relevant indicators can be measured. The codebook used for the research is available in Appendix Table 1. In the proof-of-concept design, a simplified version was used by the teacher for assessment; the OEs used to measure the EEs are described in Section 4.2. The DEs describe and assess the effects of the critical design elements specifically introduced to implement the CJs. They imply that a basic workable learning design is available, e.g., analyzing articles in the category information processing models described by Joyce et al. (2000), and that teachers have the skills to implement this classical design.
To summarize, conjecture maps explicitly state how conjectures (CJs), i.e., contextualized theoretical constructs, will be implemented with design elements (DEs), what the expected educational effects (EEs) are, and how these can be measured with observable effects (OEs) by teachers and researchers. Researchers and teachers use the same data but analyze them differently for different purposes. Teachers use OEs to measure student progression for formative assessment (Brookhart et al. 2008), for diagnostic assessment (Mottier Lopez 2015), to inform student guidance, or for student certification. Researchers in this project used these data to assess the efficiency of the design, i.e., to discuss the relevance of the OEs as measures of the EEs and the efficiency of the DEs in producing the EEs and to possibly question the CJs.

Educational strategies aiming to develop perspective-taking should be specifically designed to help students imagine and understand emotional and moral reactions to new research that are different from their own. Based on our theoretical discussion, the precise learning goals we aim to develop are scientific methods literacy and decentering competency. To compose the conjecture map (Sandoval and Bell 2004), we decompose these into four operationalized key skills, the expected effects (EEs):

- Scientific information literacy: the ability to find, select, and use scientific text.
  - EE1: identify the typical, structural elements of a scientific article (the ones often missing in a popularized article), such as the methods and references sections, and communicate these elements accurately and concisely, orally and in writing.
  - EE1 is part of the design but is not analyzed in this article.
- Scientific method literacy: the ability to understand how the research was carried out.
  - EE2: understand the techniques and methods presented in the scientific articles in order to assess the limits of scientific claims and identify several plausible possible uses of the techniques and methods introduced in the article.
- Decentering competency: the ability to take some distance from one’s own emotional reactions to moral issues and to imagine and/or take into account other possible moral principles.
  - EE3: imagine different moral reactions to the possible uses of the techniques and methods presented in the article under study.
  - EE4: realize that one’s own reactions are not unique and consider other moral principles to assess each potential use without expressing one’s opinion.

The main point here is helping students realize that their own opinions are influenced by an ensemble of personal values and social belongings that are not absolute and can be put into perspective in order to develop decentering skills for CT. Values can be loosely defined here as what grounds a person’s judgments about what is good or bad and desirable or undesirable.

To inform the design of a learning environment to develop these educational goals, we summarize the theory discussed into a set of CJs. In other words, the educational design process is to be guided by several design hypotheses that we call CJs (Sandoval and Bell 2004). Each is explained below:

- CJ1: Reading and analyzing scientific articles helps students improve the structure and content of their own scientific texts. Learners have to search the primary literature for specific knowledge, such as methods, and are guided to recognize and become familiar with the structure of scientific articles (Falk et al. 2008; Hoskins et al. 2007) and to elaborate their analysis in an imposed structure. Practiced repeatedly with constructive feedback, this is expected to improve their scientific literacy (Hand and Prain 2001).

- CJ2: Sufficient understanding of the techniques and methods is needed to imagine the potential uses and limits of the research the students study. We have seen that methods are often ignored in science teaching. Let us consider a recent paper presenting a method for producing images of the faces seen by a subject based on measurements of the neuronal activity of 200 brain neurons (in macaques) during facial visualization (Chang and Tsao 2017). Potentially, images of what a macaque (and probably a person) is seeing, remembering, and imagining could be produced on a computer screen with this neuroscience technique. Potential uses of this technology that raise important SSIs could include eventually being able to identify a criminal suspect’s face by recreating an accurate image of the face through neuronal analysis of the victim’s brain (a sort of direct, brain-to-paper police sketch). A good understanding of the research methods used and their limits is needed to assess the plausibility of this potential use.

- CJ3: An array of potential uses of the scientific techniques studied can set the stage for cognitive empathy. Let us recall that emotional-only empathy and biases might narrow the attentional focus and prevent students from taking into account other possible emotional reactions by people with different values, from different social groups, etc. Additionally, debating opinions can unwittingly modify students’ opinions and could trigger personal, cultural, or religious sensitivities in the multicultural classrooms of today. This leads us to restrain students from stating their opinion. To encourage decentering and cognitive empathy, the theoretical discussion presented leads us to propose discussing potential new situations in which students can imagine what different people (with different values, from different cultures, etc.) could potentially use this new technique to do. In an abstract discussion of SSIs, it might be difficult to evoke others’ emotional reactions, since cognitive empathy is a process that requires imagining people’s reactions. It follows that SSIs should be contextualized in situations that the students can relate to and in which they can imagine others and their reactions.

- CJ4: Framing SSIs as evoking different emotional reactions and expressing them in terms of conflicting values without mentioning one’s own opinion can develop decentering skills. Students should be encouraged to imagine possible uses, even some that might seem unacceptable to them, in order to explore possible reactions from people with different values and from different cultures and to use cognitive empathy in order to learn how to decenter when encountering a thorny and difficult SSI. Learners are encouraged to restrain their emotional empathy but to foster cognitive empathy, which is central to decentering. As an example, neuroimaging can be used to measure pain experience (Wager et al. 2004). The technique (the specific use of fMRI found in the methods) has many potential uses: to compare the effectiveness of and improve pain treatment, to detect fraudulent or simulated illness for insurance purposes, even to compare the pain induced by different torture treatments, etc. These situations can help students imagine the emotional reactions of other people. Refraining from expressing personal opinions could ultimately help to put them into perspective and discover the moral reasons that might cause rejection or adoption of this particular use. These can be expressed as dilemmas.

From the operational formulation of scientific literacy and decentering competency learning goals as four key skills, expressed here as EEs, and the theoretical design constructs, expressed as CJs (CJ1–4), we formulate the following research subquestions:

- RQ1: How can this conceptualization (the CJs and EEs) be implemented into an operational learning design, and what would be the main DEs? More precisely:
  1. How can activities that develop scientific methods literacy skills (learning goal EE2) be designed?
  2. How can activities that develop decentering abilities (learning goals EE3 and EE4) be designed?
- RQ2: Does the learning design help students improve the selected CT skills? This RQ2 is also divided into two subquestions:
  1. What evidence can be found that the design improves scientific methods literacy skills in students?
  2. What evidence can be found that the design improves decentering abilities in students?

4 From Design to a Proof-of-Principle Implementation

Our global research approach, DBR, has already been described in Section 2.5. Here, we describe the context and the method used to collect and analyze qualitative student data from a proof-of-principle semester course. The module was designed and implemented in a higher secondary biology class in Geneva, Switzerland, by one of the authors (see Footnote 1) beginning in 2003. It was conducted over a period of 15 years with a total of ten different cohorts of students and refined after each implementation. The module we discuss was first implemented in autumn 2002–2003 and improved through 10 iterations until 2018–2019. In this contribution, we present and discuss the latest version of the design.

Over the course of this study, deep societal transformations, including the emergence of social media and the political turmoil caused by fake news or “alternative facts,” resulted in a shift in the goals of the design and implementation. Additionally, theoretical input from research on science epistemology and CT led to a clearer conceptualization and a better focus of the design, which is intrinsic to the DBR paradigm. Over a decade and a half, this project moved from an initial focus on discovering recent bioscience research that would be relevant for future citizens to a second, that is, discussing the nature of science. This led us to consider scientific methods literacy, which is needed to properly understand and put into perspective research findings. Furthermore, an explicit focus on developing and strengthening CT skills emerged—at a time when awareness of CT was gaining in importance. The classes also focused more specifically on neuroscience research, as it was gaining media coverage. Students’ difficulty in formulating independent opinions about complex and new SSIs that raised emotional reactions became more apparent. This eventually led us to explore various designs that encourage learners to put into perspective their own opinions when discussing SSIs and that develop decentering skills. The theoretical input from empathy research (Singer and Klimecki 2014) led to a focus on cognitive empathy. Taking into account Shamay-Tsoory et al. (2009) led to the exploration of possible design elements specifically geared towards practicing cognitive empathy to take emotions into account without reinforcing emotional biases and emotional empathy. Attempts to manage this while avoiding the pitfalls of opinion debate led to the focus on identifying dilemmas in the learning design principles and the proof-of-principle design (2018/2019 implementation) presented here.

4.1 Population, Data Collection, and Analysis

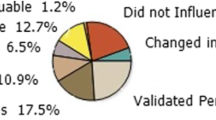

The data sources are student-produced artifacts—written papers from 2 to 3 home assignments and a written exam—and responses from an individual online anonymous survey administered at the end of the semester to assess students’ perceptions of their CT skills, specifically, decentering and scientific methods literacy.

In the Geneva higher secondary curriculum, students choose at the age of 16 one optional class (OC) composed of 4 semester-long modules (2 periods weekly). Students cannot choose their OC within their major, so students in this study have a strong background neither in biology nor in science generally. This study took place in the third module (ages 18–19). Classes included 13 to 24 students. Other modules with other teachers treated human influence on the environment and climate change, neurobiology, and microbiology. Data on student progression were collected from the cohort (13 students) of the autumn 2018–2019 semester. Four papers were analyzed: two to three written assignments handed in during the semester (3–8 pages, graded) and the final exam, each analyzing a different recent article about neuroscience. One student did not hand in all the assignments, so her data were omitted, leaving a cohort of 12 students whose data are presented in Fig. 3. All 13 completed the survey.

The third assignment was not mandatory for students who obtained full marks on assignments 1 and 2, so only 7 students handed in the third assignment. We analyzed the results of assignments 1 and 2 and the final exam. All 13 students gave permission for their anonymized papers to be analyzed for research purposes.

Data analysis was performed using mixed quali-quantitative methods (Miles et al. 2014).

To answer the second research subquestion, we present and compare the students’ first paper (completed at the very beginning of the semester) with their second paper. We then compare, by the same method, paper 2 with paper 3, when available, or the final exam. The EEs were observed, coded on a 3-point scale, and analyzed using five indicators of decentering and perspective-taking skills: the identification of scientific methods and techniques (EE2), the quantity of moral dilemmas presented, the diversity of values presented, the quality of moral dilemmas presented (EE3), and the student’s decentered communication (EE4). The codebook is available in Appendix Table 1. Double coding of the first and last papers was applied until a 78% intercoder agreement was reached, and single coding was then applied for the other papers. Effect sizes (Cohen’s d) were computed between the first and last papers.
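The two statistics mentioned above can be illustrated with a minimal sketch. It assumes that intercoder agreement is simple percent agreement and that Cohen’s d uses the pooled standard deviation of the two sets of scores; the text does not state which variants were used. The function names and the scores in the usage note are hypothetical and are not data from this study.

```python
from math import sqrt
from statistics import mean, stdev


def percent_agreement(coder_a, coder_b):
    """Share of items to which two coders gave the same score (0.0 to 1.0)."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must score the same non-empty set of items")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return matches / len(coder_a)


def cohens_d(first_scores, last_scores):
    """Cohen's d between two sets of scores, using the pooled standard deviation.

    Assumption: the pooled-SD variant is used; a paired-samples variant
    would give a different value for the same data.
    """
    n1, n2 = len(first_scores), len(last_scores)
    pooled_var = (
        (n1 - 1) * stdev(first_scores) ** 2 + (n2 - 1) * stdev(last_scores) ** 2
    ) / (n1 + n2 - 2)
    return (mean(last_scores) - mean(first_scores)) / sqrt(pooled_var)
```

For example, with hypothetical scores on the 3-point scale, `percent_agreement([1, 2, 3, 3], [1, 2, 2, 3])` gives 0.75, and `cohens_d([1, 1, 2, 2], [2, 2, 3, 3])` gives a positive value, indicating higher scores on the last paper.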

The end-of-semester survey included open questions about students’ perception of their progression (comparing their first and last assignment); their approach towards scientific articles and popularized science; what they learned about the relations of science and society, about opinion building, and about refraining from giving their opinion; what they learned as they built moral dilemmas; what they learned about using cognitive empathy to approach SSIs and about distinguishing emotional and cognitive empathy; the design itself, its structure, the resources, and what they considered efficient; and if the learning was worth the effort. Many of the questions were used to improve the design over the years (DBR); however, a selection of responses relevant to this research will be presented and discussed (see Footnote 2).

We shall now present and discuss the proof-of-principle learning design that was then implemented in a class.

4.2 The Proof-of-Principle Learning Design

The first research question, RQ1, is a design question. It asks how a learning design that favors the development of scientific literacy and decentering competency can be implemented. The criteria for success are whether a reusable design can be defined, implemented, and evaluated. Below, we will present the key DEs implementing our theoretical CJs that could be used to attain the learning goals (EEs). The second research question (see Section 5) regards evaluating the effects in an implementation.

Using the CJ mapping design method described in Section 3, we will now present the sample learning design as a detailed conjecture map connecting the theory to DEs, learning goals, and effects (Fig. 1). Each CJ is connected to one or more DEs that in turn lead to EEs. EEs (learning outcomes) can be shared and observed through OEs, e.g., student-produced artifacts such as texts or papers produced during assignments. The latter two can be used by teachers to support the teaching process and by researchers to evaluate the design.

CJ1 on scientific literacy was implemented as DE1.

- DE1: Students write an individual paper according to a specific structure: an introduction; the techniques and methods used in the student-studied research; a list of their potential uses; and a table listing, for each use, the reasons why oneself or others might favor it in the form of opposing values (moral dilemmas). This DE is necessary to achieve EE1 (students identify the typical, structural elements of a scientific article, and communicate these elements). Three OEs (OE1, OE2, OE3) can be used to assess students’ scientific method literacy. In this study, OE2 and OE3 were scored between 1 (lowest) and 3 (highest) using the codebook in Appendix Table 1. OE1 (text structure) was not evaluated.

CJ2 (Sufficient understanding of the techniques and methods is needed to imagine the potential uses and limits of the student-studied research) is implemented with DE2 and DE3. First, students must learn about the method and then imagine possible uses of the research as well as different people’s emotional and moral reactions:

- DE2: Students read a popularized article, try to identify the methods, write a section in an individual paper, and refer to the original article if the information in the popularized article is not sufficient. The EEs are EE1, as above, and EE2 (Students understand the techniques and methods presented in the scientific articles in order to imagine the potential uses and limits of scientific claims). Students must grasp the essence of the methods to produce an explanation of the methods that can be used to imagine possible uses. Learners realize that scientific claims are limited by methods and that popularized articles generally do not clearly explain the methods or discuss their limits. OE1 (text structure and elements) and OE2 (summary of methods) are used as observables.

- DE3: Find or imagine a list of potential uses of the new methods and techniques (even some that might be offensive to oneself or to other people) and write a section in an individual paper. DE3 supports EE2 and EE3 (Students imagine different moral reactions towards the possible uses of the techniques and methods presented in the article under study). OE4 (table of dilemmas) includes several potential uses realistically linked to the methods and was scored between 1 (lowest) and 3 (highest) using the codebook in Appendix Table 1.

Decentering competency is the perspective-taking ability to take some distance from one’s own emotional reactions to moral issues and to imagine and/or take into account other possible moral positions. It relies on two CJs: CJ3 and CJ4. CJ3 (an array of potential uses of the scientific techniques studied can set the scene for cognitive empathy) is also implemented as DE3 (imagine uses of techniques and methods) and leads to the following expected and observable effects: EE3 (same as above), OE4 (table of dilemmas includes a diversity of moral values), and OE5 (moral dilemmas involve truly opposing contradictory values). The OEs are scored from 1 (lowest) to 3 (highest) using the codebook in Appendix Table 1. CJ4 focuses on decentering (framing SSIs as evoking different emotional reactions and expressing them in terms of conflicting values without mentioning one’s own opinion can develop decentering skills).

- DE4: Students must create a table with at least two opposing values or moral principles on each line, e.g., “improvement of well-being” vs. “natural course of illness” or “knowledge progress” vs. “religious values considering early embryos as human life.” Alternatively, students could be asked to present the conflicting emotional reactions that other people might have according to their different values and social contexts. DE4 supports EE4: students realize that their own reactions are not unique and are capable of considering other values to assess each potential use without expressing their own opinion (decentering). The related OEs are OE5 (moral dilemmas involve truly opposing contradictory values) and OE6 (text uses decentered expression, no personal opinion, and balanced mention of other values), which are scored between 1 (lowest) and 3 (highest) using the codebook in Appendix Table 1.
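The links between CJs, DEs, EEs, and OEs described in this section can be summarized as a small lookup structure. The sketch below is one possible encoding, read from the prose above rather than from Fig. 1; the dictionary layout and the helper name `observables_for` are illustrative assumptions, and DE3 is given both OE4 and OE5 because CJ3 lists both for it.

```python
# Conjectures (CJs) and the design elements (DEs) that implement them,
# as described in the text of this section.
CONJECTURE_TO_DES = {
    "CJ1": ["DE1"],
    "CJ2": ["DE2", "DE3"],
    "CJ3": ["DE3"],
    "CJ4": ["DE4"],
}

# For each DE: the expected effects (EEs) it supports and the
# observable effects (OEs) through which they can be assessed.
DESIGN_ELEMENTS = {
    "DE1": {"effects": ["EE1"], "observables": ["OE1", "OE2", "OE3"]},
    "DE2": {"effects": ["EE1", "EE2"], "observables": ["OE1", "OE2"]},
    "DE3": {"effects": ["EE2", "EE3"], "observables": ["OE4", "OE5"]},
    "DE4": {"effects": ["EE4"], "observables": ["OE5", "OE6"]},
}


def observables_for(conjecture):
    """Return the sorted OEs reachable from one conjecture through its DEs."""
    found = set()
    for de in CONJECTURE_TO_DES[conjecture]:
        found.update(DESIGN_ELEMENTS[de]["observables"])
    return sorted(found)
```

With this encoding, `observables_for("CJ4")` returns `["OE5", "OE6"]`, which matches the observables named for DE4.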

4.3 Implementation of a Proof-of-Principle Learning Design

This abstract learning design was implemented in a classical information processing learning model (Joyce et al. 2000). The resulting learning design for the 2018/2019 class can be summarized in three phases, through which students produce (i) a description of methods (OE2), (ii) a list of potential uses (OE3), and (iii) a list of dilemmas (OE3, OE4) with opposing values (OE5) that uses decentered expression (OE6). A summary of the learning design that was implemented and studied is illustrated in Fig. 2.

For each of the three assignments, students were first given a popularized article on recent neuroscience research to read and were helped in class to understand the methods by identifying them in the original article from the primary literature (the student-studied research) in journals such as Nature, Science, and PNAS (DE1, DE2). Then, they were asked to use this understanding of the methods to elaborate a list of potential uses of these methods/techniques and discuss their plausibility, afterward creating a table relating each potential use to at least one moral dilemma between opposing moral principles. They had to produce (at home) a written text guided by a teacher-imposed structure:

1. Introduction
2. Methods and techniques: identify and describe the scientific methods and techniques used to obtain the results presented.
3. Potential uses: identify or imagine potential uses of these techniques and methods and evaluate their plausibility.
4. Moral dilemma: identify the moral dilemmas resulting from each of the potential uses and formulate them in terms of dilemmas (tensions between moral principles).

Students analyzed in detail three scientific articles for the written assignments. These artifacts were assessed and marked. The articles were as follows: (1) Tourbe (2004); original article: Wager et al. (2004). (2) Servan-Schreiber (2007); original article: Singer et al. (2004). (3) Peyrières (2008); original article: McClure et al. (2004). Another five articles were discussed only in the classroom, and the final exam was the fourth artifact. The exam was based on (4) Campus (2018); original article: Klimecki et al. (2018). For this class, the moral principles included benevolence, autonomy, equality, respect for life, pursuit of knowledge, and freedom of trade. They were empirically selected for their heuristic value, as the secondary students in this biology course did not have a strong background in philosophy, and the decentering goal required awareness of moral differences but not a very fine classification. Of course, other learning designs could use a different list tailored to the background of the students and goals of the curriculum. Students were required to produce a table that linked each potential use to a pair (or more) of conflicting reactions and moral values (a moral dilemma).
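The table of dilemmas required of students can be pictured as a list of rows, each linking one potential use to two or more conflicting moral principles. The following sketch is only an illustration of that structure: the class name `DilemmaRow`, the function `row_problems`, and the example use in the usage note are hypothetical, while the six principles are those listed above for this class.

```python
from dataclasses import dataclass

# The six moral principles listed for this class in the text above.
MORAL_PRINCIPLES = {
    "benevolence",
    "autonomy",
    "equality",
    "respect for life",
    "pursuit of knowledge",
    "freedom of trade",
}


@dataclass(frozen=True)
class DilemmaRow:
    potential_use: str  # one potential use of the methods or techniques
    values: tuple       # names of the conflicting moral principles


def row_problems(row):
    """List the reasons why a row does not yet express a moral dilemma."""
    problems = []
    unknown = [v for v in row.values if v not in MORAL_PRINCIPLES]
    if unknown:
        problems.append("unknown principles: " + ", ".join(unknown))
    if len(set(row.values)) < 2:
        problems.append("a dilemma needs at least two distinct values")
    return problems
```

For instance, a hypothetical row such as `DilemmaRow("assessing pain in patients who cannot communicate", ("benevolence", "autonomy"))` yields an empty list of problems, whereas a row naming a single principle is flagged as not yet being a dilemma.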

Over the course of the semester, feedback and assessment, at first focused mainly on scientific methods literacy, were progressively widened in scope to include potential uses and finally perspective-taking ability. In this proof-of-principle design, these assignments were graded on the OEs described above, with what amounted to a simplified version of the rubric used for this research (see Appendix Table 1), and returned with written formative feedback highlighting specifically which items needed to be improved. Marks were improvement-weighted: progress was encouraged by a bonus on the next assignment when the items marked as wanting were improved on. This was inspired by knowledge improvement research (Scardamalia and Bereiter 2006) and was introduced as a strong incentive for students to improve. Through this iterative process, students were expected to gradually improve the selected skills and the texts produced. A final exam assessed the students’ skills acquired over the whole semester.