Abstract

Purpose

The Oxford Knee Score (OKS) is a validated 12-item measure of knee replacement outcomes. An algorithm to estimate EQ-5D utilities from OKS would facilitate cost-utility analysis on studies analyses using OKS but not generic health state preference measures. We estimate mapping (or cross-walking) models that predict EQ-5D utilities and/or responses based on OKS. We also compare different model specifications and assess whether different datasets yield different mapping algorithms.

Methods

Models were estimated using data from the Knee Arthroplasty Trial and the UK Patient Reported Outcome Measures dataset, giving a combined estimation dataset of 134,269 questionnaires from 81,213 knee replacement patients and an internal validation dataset of 45,213 questionnaires from 27,397 patients. The best model was externally validated on registry data (10,002 observations from 4,505 patients) from the South West London Elective Orthopaedic Centre. Eight models of the relationship between OKS and EQ-5D were evaluated, including ordinary least squares, generalized linear models, two-part models, three-part models and response mapping.

Results

A multinomial response mapping model using OKS responses to predict EQ-5D response levels had best prediction accuracy, with two-part and three-part models also performing well. In the external validation sample, this model had a mean squared error of 0.033 and a mean absolute error of 0.129. Relative model performance, coefficients and predictions differed slightly but significantly between the two estimation datasets.

Conclusions

The resulting response mapping algorithm can be used to predict EQ-5D utilities and responses from OKS responses. Response mapping appears to perform particularly well in large datasets.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

Introduction

Although condition-specific health-related quality-of-life (HRQoL) measures may be more sensitive and [1, 2] sufficient to assess efficacy, comparing incremental cost-effectiveness between conditions requires generic measures, such as the EQ-5D [3–5], that give health state preferences or utilities [1, 2]. Utilities are needed for calculation of quality-adjusted life-years (QALYs) and are scaled such that one equals perfect health, zero indicates death and negative values represent health states worse than death.

EQ-5D, the most widely used utility scale [6], includes five questions on mobility, self-care, pain, usual activities and anxiety/depression, each with three response levels [3–5]. Utility valuations for all 243 EQ-5D health states are based on time-trade-off valuations by 3,395 members of the UK general public [3, 4]. Alternative tariffs have been developed for other countries [7].

There is growing interest in algorithms that map one HRQoL measure onto another, thereby enabling estimation of QALYs from trials that include condition-specific measures but not utility instruments [8–10]. Most mapping studies use regression methods to directly predict utilities from responses or scores on condition-specific measures [8, 9]. However, response mapping models predicting patients’ responses to a multiattribute utility measure provide an alternative approach [9, 11–15]. Response mapping may provide better utility predictions as well as giving richer insights into the relationship between the two instruments, predicting the domains most affected by disease or treatment and calculating utilities for any tariff [11].

The Oxford Knee Score (OKS) is a widely used HRQoL measure that was developed and validated for the assessment of outcomes following knee replacement in comparative trials and cohort studies [16–18]. OKS is also increasingly used to assess eligibility for primary [19] or revision surgery [20], although it was not designed or validated for this purpose. It is also administered routinely to assess the performance of hospitals or surgeons in the UK and New Zealand Patient Reported Outcome Measures (PROMs) initiatives [21–24]. OKS includes 12 questions on knee symptoms and function, each with five levels. Scores on each question, which range from 4 (no problems) to 0 (severe problems), are summed without weighting to produce total scores ranging from 0 to 48 [18].

Since its development in 1998, OKS has been used in many large trials and cohort studies assessing the long-term durability of knee components that did not include utility measures [17]. A mapping algorithm predicting utilities from OKS would enable long-term data from these studies to inform cost-utility analyses.

This study estimates mapping models to predict utilities and/or responses to the three-level EQ-5D questionnaire based on responses and scores on the OKS. We also compare the performance of different mapping models and assess whether different datasets yield different mapping algorithms.

Methods

Data

Estimation datasets

Data from the Knee Arthroplasty Trial (KAT) and the UK PROMs initiative were combined to provide a large, diverse sample of knee replacement patients on which to develop a robust mapping algorithm and to test whether mapping models are sensitive to the dataset used. Following best practice [10] and to avoid over-fitting during model selection, 25 % of patients were allocated to the internal validation sample using computer-generated random numbers. All questionnaires from these patients were excluded from estimation models and were instead used to assess the prediction accuracy of each model and select the final model specification. The estimation sample and internal validation sample were then combined to estimate the final model, which was externally validated on a third dataset.

KAT comprised a randomized trial comparing different types of knee prosthesis, in which 2,352 patients underwent total knee replacement in the UK between 1999 and 2003. Patients completed OKS and EQ-5D pre-operatively, three and 12 months after knee replacement and annually thereafter for 8–11 years to date. All questionnaires received by 4 May 2011 that had complete responses to OKS and EQ-5D were included in mapping analyses, giving an estimation dataset of 12,961 questionnaires from 1,690 patients.

Within PROMs, all patients undergoing knee replacement in England are sent OKS and EQ-5D questionnaires pre-operatively and 6 months afterwards [22, 23]. We analyzed PROMs data on admissions for knee replacement up to 31 December 2010 that included 162,066 questionnaires with complete OKS and EQ-5D data from 106,320 patients. All questionnaires with complete data on EQ-5D and OKS were included in mapping analyses regardless of whether pre- and post-operative data were linked. This provided an estimation sample of 121,308 observations from 79,523 patients.

External validation dataset

The external validity of the best mapping algorithm was tested using a dataset from the Elective Orthopaedics Centre (EOC) that was not made available to the authors until after the final model was selected. This comprised a large observational cohort of patients undergoing hip or knee replacement at an NHS treatment centre serving four NHS trusts in South-West London from January 2004 onwards [25]. Patients completed EQ-5D and OKS pre-operatively and 6, 12 and/or 24 months afterwards. Although recruitment is ongoing, our analysis included only patients undergoing primary or revision knee replacement before 31 March 2009 to avoid overlap with PROMs. After excluding patients with incomplete data on OKS and/or EQ-5D, this external validation dataset included 10,002 observations from 4,505 patients.

Statistical methods

Model estimation

We first estimated direct utility mapping models by regressing responses to individual OKS questions directly onto EQ-5D utility using four functional forms:

-

Ordinary least squares (OLS) regression.

-

Generalized linear models (GLM) with log link or gamma family predicting EQ-5D disutility (where disutility = 1 − utility), which allow for the skewed distribution of utility values and prevent prediction of utilities >1.

-

Fractional logistic models, which constrain predictions to lie within the range determined by the EQ-5D tariff (1 to −0.594 [4]). These were implemented by using GLM with binomial family and logit link to predict utility0–1, where utility0–1 = (utility + 0.594)/1.594.

Two-part models were used to allow for the 9.6 % (17,184/179,482) of observations reporting perfect health (utility of one) on EQ-5D. For such models, the first part comprised a logistic regression model estimated on the entire estimation sample to predict which patients had perfect health, while the second part comprised an OLS model predicting EQ-5D utilities for those patients with utility <1.

We also developed and evaluated three-part models since 45.9 % (48,318/105,235) of pre-operative questionnaires indicated severe problems on ≥1 EQ-5D domain and therefore had substantially lower utility due to the N3 term in the EQ-5D tariff [4]. The first part of this model comprised multinomial logistic regression (mlogit) to predict whether patients had perfect health, severe problems on ≥1 EQ-5D domain or only mild–moderate problems. The second and third parts comprised OLS models to predict EQ-5D utility for the subset of patients with severe problems on ≥1 EQ-5D domain and for those with only mild–moderate problems, respectively.

We also used response mapping to predict the response level that patients selected for each of the five EQ-5D domains. These were estimated by fitting a separate mlogit or ordinal logistic regression (ologit) model for each EQ-5D domain, as described previously [11].

The explanatory variables for all models comprised 48 dummy variables indicating whether or not patients had a particular response level on each OKS question; response level 4 (no problems) comprised the comparison group. However, all models were also evaluated using two alternative sets of explanatory variables: 12 OKS question scores (rankings from 0 to 4); and total OKS (measured from 0 to 48 [18] based on unweighted summation of question scores). We also investigated whether adding sex into the best performing model improved prediction accuracy; to ensure that the mapping algorithm can be applied to all datasets, no other patient characteristics were added.

All models were estimated in Stata version 11 (StataCorp, College Station, TX). For all models, the cluster option within Stata was used to adjust standard errors to allow for clustering of observations within patients. Standard errors from two-part, three-part and response mapping models were also adjusted using seemingly unrelated regression to allow for correlations between EQ-5D domains [26].

Assessing model performance

Predicted EQ-5D utilities were estimated for each mapping model. Predictions from direct mapping models were estimated using the predict post-estimation command, with direct back-transformations applied to predictions from GLM and fractional logit models. For OLS models, any utilities predicted to be >1 were set to one. For two-part models, the expected utility for each patient was estimated as

where U equals the predicted utility conditional on imperfect health and Pr(Utility = 1) the predicted probability of having perfect health.

Similarly, for three-part models,

where Pr(N3) indicates the probability of having severe problems on ≥1 domain, U N3 the predicted utility conditional on this and U mild−moderate the predicted utility conditional on mild–moderate problems.

For response mapping models, the highest probability method (assuming that patients have the EQ-5D response level for which the predicted probability from multinomial/ordinal logistic regression is highest) has been shown to give biased predictions, and, in particular, underestimates the probability that patients will have severe problems [11, 14]. Instead, we generated predictions from response mapping models using the expected value method [14]. This is equivalent to the Monte Carlo method [11] given a large number of repeated Monte Carlo draws [14].

Models were selected based on the mean squared error (MSE) in the combined internal validation sample, where MSE equals the mean of squared differences between observed and predicted EQ-5D utility. Mean absolute error (MAE, the mean of absolute differences between observed and predicted EQ-5D utility) was also calculated.

Two further analyses assessed whether different datasets produced significantly different mapping models. Firstly, mapping models were estimated on the combined estimation dataset with a full set of interaction terms capturing the effect of data coming from KAT rather than PROMs on the coefficients for each OKS response. Secondly, each mapping model was re-estimated separately using each dataset to assess whether the model giving best predictions differed between datasets.

Results

Exploratory data analysis

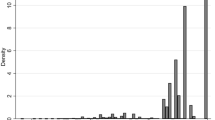

Across both KAT and PROMs, patients had poor pre-operative HRQoL, with mean OKS of 18.6 (SD: 7.9; range: 0, 48; Table 1) and mean utility of 0.39 (SD: 0.32, range: −0.594, 1), which is lower than those reported for many forms of cancer or cardiovascular disease [27]. In particular, 87.5 % (92,124/105,235) of patients had problems with mobility, usual activities and pain pre-operatively. HRQoL improved substantially following knee replacement to a mean OKS of 33.8 (SD: 10.2; range: 0, 48) and mean utility of 0.70 (SD: 0.27; range: −0.594, 1). Like some previous mapping datasets [10], post-operative EQ-5D utilities followed a trimodal distribution (Fig. 1).

Total OKS was highly correlated with EQ-5D utility (R 2 = 0.61; p < 0.001). All OKS items showed significant Spearman’s rank correlations with all EQ-5D domains (p < 0.0001), and all OKS and EQ-5D questions loaded strongly onto a single component explaining 40 % of the variance in pre-operative scores and 54 % post-operatively. Plotting mean utility against OKS question ranking suggested that response levels were approximately linear with respect to utility for all OKS items other than pain (which showed a larger drop in utility between levels 0 and 1 than between other levels) and washing/drying, walking, shopping and going downstairs (which showed a much smaller drop between levels 0 and 1).

Comparison of mapping model specifications

Eight mapping functions were evaluated using the combined estimation dataset (Table 2), which comprised 134,269 observations of 81,213 patients drawn from both the KAT and PROMs datasets.

Across all functional forms, models using dummies indicating responses to OKS questions as explanatory variables produced better predictions than those using question or total scores (data not shown). However, all models using OKS responses as explanatory variables showed some logical inconsistencies in coefficient values that contradicted the implicit ordering whereby OKS response level 4 is unambiguously best, followed by level 3, 2, 1 and then 0.

Based on MSE, the primary measure of prediction accuracy, a response mapping algorithm using mlogit gave best predictions (MSE: 0.0356; Table 2), followed by the three-part model (MSE: 0.0358). However, the three-part model had lower MAE than mlogit (0.1338 vs 0.1341). The ologit response mapping (MSE: 0.0359), two-part model (MSE: 0.0360) and OLS (MSE: 0.0363) also performed reasonably well. However, fractional logit and GLM models gave relatively poor predictions (MSE: 0.0367–0.0397) and systematically underestimated utilities by an average of 0.00063–0.0025. The mlogit model also overestimated utilities for those with utility <0.5 by less than any other model (mean residual: 0.160, vs 0.162–0.170) but underestimated utilities for patients with utility ≥0.5 by a larger amount than any model other than ologit or GLM with gamma link (mean residual: −0.078, vs −0.075 to −0.076).

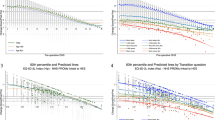

Impact of dataset on results

The relative performance of different model specifications differed between datasets (Fig. 2). When models were estimated using KAT data, a two-part model performed best in the KAT internal validation sample (MSE = 0.0331). However, mlogit performed best (MSE = 0.0356) among the models estimated on PROMs. Models estimated using the PROMs dataset gave more accurate predictions than those fitted on KAT for both pre-operative and post-operative observations. Models fitted on the PROMs or combined datasets also had up to 20 % fewer OKS items with counter-intuitive signs or rankings and converged more easily than those estimated using KAT.

Mean squared error (MSE) for models fitted on each dataset. Models were tested in their respective internal validation samples: for example, the performance of models estimated using KAT was tested on 25 % of the KAT sample. *Levels 0/1 (or levels 3/4) were merged for one or more OKS item in logistic regression models as problems with perfect prediction or non-symmetric/highly singular variance matrices arose with the first-part model when all response levels were included

We then fitted the best five models to the combined dataset with a full set of interaction terms capturing the effect of dataset on coefficient values. This suggested that 15–27 % of coefficients differed significantly between datasets (p < 0.05). Notably, all coefficients for the work OKS item were significantly higher and those for washing/drying were significantly lower for KAT than PROMs unless questionnaires more than 6 months post-operation were excluded, probably due to the effect of ageing and retirement during the longer KAT follow-up.

However, cross-validation prediction accuracy was good, with a two-part model fitted using KAT data having an MSE of 0.0361 in the PROMs validation sample and the mlogit PROMs model having an MSE of 0.0338 in KAT. The predictions from models estimated on the two datasets were also very similar: the mean absolute difference in predictions between the two-part model estimated on KAT data and the mlogit PROMs model was 0.031 (p < 0.0001), while the difference in predicted change from baseline at 3–6 months was 0.0042 (p = 0.003). Both models accurately predicted the mean utility in the other dataset: the mlogit PROMs model predicted the mean utility in KAT to be 0.665, vs an observed value of 0.654, while the two-part KAT model predicted the mean PROMs utility to be 0.496 vs an observed value of 0.503. Coefficient values and choice of model also differed between pre- and post-operative observations, although models of pre-operative utilities predicted post-operative utilities well (MSE: 0.0277) and vice versa (MSE: 0.0446).

Performance of best model

Although the relationship between OKS and EQ-5D differed significantly between datasets and following knee replacement, the magnitude of such differences was very small. Furthermore, mapping models fitted on a heterogeneous population including both baseline and post-treatment observations and those from trials and routine clinical practice are likely to be useful for most practical applications. The mlogit response mapping model fitted on the combined estimation dataset was therefore selected as the best model since it gave the lowest MSE overall for both pre- and post-operative observations.

Adding patient sex into this model reduced MSE by only 0.0000073, to 0.03554. The final model (Table 3; Appendix 1: Electronic supplementary material) therefore included only OKS responses and was fitted on the entire KAT and PROMs dataset (including the internal validation sample).

The final model accurately predicted EQ-5D utility in the combined KAT/PROMs sample (MSE: 0.0355; MAE: 0.134) and the external EOC sample (MSE: 0.0330; MAE: 0.129). Within EOC, 18 % of predictions were within 0.05 and 42 % within 0.10 of the observed utility value; predicted and observed utilities were strongly correlated (R 2: 0.69; Fig. 3a). The predicted proportions of patients with different response levels on each domain were very similar, but were significantly different from the observed proportions (p < 0.0001, based on chi-squared test in Microsoft Excel 2003): for example, the model predicted that 26 % of EOC questionnaires indicated some anxiety and depression, compared with the 28.5 % (2,848/10,002) observed. The model also accurately predicted mean utility (observed: 0.607; predicted: 0.597) in EOC. Like most mapping models [10], ceiling and floor effects produced heteroskedastic residuals, causing our model to slightly underestimate utilities for patients with high EQ-5D utility and overestimate utility for patients with low utility (Fig. 3b). Predicted utilities also had a smaller range (−0.29 to 0.95 vs −0.594 to 1) and standard deviation (0.26 vs 0.32) than observed values.

Performance of the best model in the external validation sample. a Scatter plot showing correlation between observed and predicted EQ-5D utility. b Scatter plot showing correlation between residual (predicted minus observed EQ-5D utility) and observed EQ-5D utility. c Mean squared error by OKS. d Mean squared error by observed EQ-5D

For all models, prediction accuracy was better for post-operative observations (Fig. 2) and observations with high utility (Fig. 3d) and markedly worse for patients with OKS between 11 and 20 than for those with better or worse knee function (Fig. 3c). Within the KAT baseline sample, predictions were also less accurate (MSE: 0.043) for those with poor pre-operative general health (ASA grade 3–4) and those with arthritis in other joints. The final model also accurately predicted change in utility following knee replacement (MSE: 0.0656; MAE: 0.192).

Discussion

We have developed a mapping algorithm that accurately predicts EQ-5D utility based on OKS responses; model performance was similar to previous mapping models, which have obtained MAEs between 0.0011 and 0.19 [8]. The mapping model shown in Table 3 can be used to predict responses to the EQ-5D questionnaire and EQ-5D utilities in situations where only OKS has been administered. In particular, this will facilitate cost-utility analyses of the numerous trials and registries that used OKS but no utility measure. Excel and Stata code developed to estimate predictions are available at http://www.herc.ox.ac.uk/downloads, and methods to estimate standard errors around predictions from the variance–covariance matrices of response mapping models (Appendix 1) are under development. The models described require patient-level data on OKS responses, although a simpler model for secondary data is available at http://www.herc.ox.ac.uk/downloads. However, mapping is no substitute for including a utility measure in future studies and does not overcome the limitations of either instrument [10].

In addition to producing more accurate predictions in this study, response mapping models naturally deal with non-Gaussian utility distributions and mirror the way utilities are calculated. Furthermore, while direct mapping models must be developed for specific tariffs, response mapping algorithms can be applied to any three-level EQ-5D tariff available now or in the future [11]. Although prediction accuracy varied with tariff, our algorithm gave accurate predictions of utilities in the external validation sample using the EQ-5D tariffs for Spain, Germany, Netherlands, Denmark, Japan, Zimbabwe and USA (MSE ≤ 0.055). Response mapping also gives richer insights into the relationship between the two instruments, for instance predicting the proportion of patients with different response levels on each domain. However, such models appear to perform much better when estimated on very large datasets. The three-part model specification we developed to deal with the N3 term in the UK EQ-5D tariff also performed very well; this specification may be particularly useful for other mapping applications where severe problems on EQ-5D domains are common.

Our dataset is (to our knowledge) the largest sample used for mapping analyses to date and covers the full range of EQ-5D and OKS scores. In particular, our large sample size appears to have overcome previously cited difficulties with mapping between Oxford Hip Score and EQ-5D, such as lack of overlap between pre- and post-operative scores and poor prediction of anxiety and depression [28]. Although the model performed well overall, predictions were less accurate for patients with OKS between 11 and 20, which appears to be due to uncertainty about which 54.3 % of such patients have severe problems on ≥1 domain, since MSE did not vary markedly with OKS when observed and predicted utilities were recalculated without the N3 term. The N3 term may also explain the general finding of higher accuracy for healthier patients [8]. However, the performance of our mapping algorithm in populations dissimilar to ours (e.g. patients with early arthritis) or for studies using non-English language questionnaires is unknown.

Although OKS includes questions directly relating to mobility, self-care, usual activities and pain, no OKS questions directly ask about psychological symptoms or strongly predict responses to the EQ-5D anxiety/depression question (mlogit pseudo-R 2: 0.14 for anxiety/depression, vs 0.36–0.55 for other domains). Nonetheless, we found that OKS predicts anxiety/depression responses reasonably accurately, probably as pain and poor knee function explain much of the anxiety/depression observed in this population. Nonetheless, any mapping algorithm between OKS and EQ-5D is likely to perform poorly in subgroups of patients who have psychological conditions that unrelated to their knee problems. Our mapping algorithm was also less accurate in patients with comorbidities or arthritis in other joints, probably due to OKS’ focus on knee problems.

Models using dummies indicating OKS response level as the explanatory variable gave better predictions than those modelling total or question scores. This demonstrates the advantages of modelling response levels for each question whenever the estimation dataset is large enough to estimate coefficients reliably. Regression analyses also indicate that some items (e.g. pain or impact on work) have more effect on utility than others (Table 3). OKS total score was nonetheless a strong predictor of EQ-5D, suggesting that the OKS scoring system (which assigns equal weight to all questions and assumes levels are equally spaced given the wording of questions and response levels) is a good measure of HRQoL.

However, coefficients for some OKS response levels had counter-intuitive signs or rankings (Table 3): for example, the coefficients showing the effect of being unable to walk at all without severe pain (0.35) or being able to walk only around the house (0.86) on having level 2 mobility were lower than the coefficient for walking 5–15 min (0.99). Such inconsistencies were less common in mapping models fitted on the larger PROMs dataset than on KAT, although 57 % of OKS items were inconsistent in the final model (Table 3). Similar inconsistencies have been observed previously [8, 11, 29]. These inconsistencies could cause the mapping algorithm to predict that a patient’s utility had fallen when their OKS profile was unambiguously improved. In principle, items could be omitted or levels merged to give a fully consistent mapping algorithm with higher face validity: particularly as the specific inconsistencies observed appeared to vary between datasets, suggesting that many such inconsistencies occurred by chance. However, we feel that it is more appropriate to use the mapping model giving highest prediction accuracy in the validation sample regardless of inconsistencies, rather than applying ad hoc methods that could give many different “consistent” algorithms. Furthermore, we found that omitting/merging OKS levels reduced prediction accuracy, suggesting that inconsistencies may reflect patients’ interpretation of the questions or genuine opposition between items.

Model choice and coefficient values were sensitive to the dataset used to estimate mapping models. However, while the predictions and coefficients differed significantly between datasets, such differences are unlikely to be large enough to affect the results of an economic evaluation: particularly as differences in change from baseline were smaller than those for absolute values. Longer post-operative follow-up in KAT may explain many of these differences, although differences could also arise from slight differences in methods of questionnaire administration/wording or secular trends between operation dates (1999–2003 for KAT and 2009–2010 for PROMs). Other explanations, such as differing patient characteristics or questionnaire translations, are unlikely in this case as cohorts were similar and all questionnaires were completed in English. Although the relationship between OKS and EQ-5D appears to differ slightly between pre- and post-operative observations, using different mapping algorithms for different timepoints could bias cost-effectiveness estimates. Furthermore, adding observations from trial data with long follow-up (KAT) to those from routine data (PROMs) is likely to increase the range of applications to which mapping algorithms can be applied.

Nonetheless, differences between datasets highlight the importance of external validation. Selecting models based on performance in an internal validation dataset not used in model estimation helps prevent over-fitting, while external validation provides a more rigorous test of predictive accuracy by assessing performance in a separate, independently collected dataset that was not used for model estimation or selection [10, 30]. Our model gave accurate predictions in both internal and external validation datasets, demonstrating that it is likely to perform well in other comparable populations.

Abbreviations

- EOC:

-

Elective Orthopaedics Centre [dataset]

- GLM:

-

Generalized linear model

- HRQoL:

-

Health-related quality of life

- KAT:

-

Knee Arthroplasty Trial

- MAE:

-

Mean absolute error

- MSE:

-

Mean squared error

- OLS:

-

Ordinary least squares

- OKS:

-

Oxford Knee Score

- PROMs:

-

Patient Reported Outcome Measures [initiative]

- QALY:

-

Quality-adjusted life-year

References

Kind, P. (2001). Measuring quality of life in evaluating clinical interventions: An overview. Annals of Medicine, 33(5), 323–327.

Brazier, J., & Dixon, S. (1995). The use of condition specific outcome measures in economic appraisal. Health Economics, 4(4), 255–264.

Williams, A. (1995). The measurement and valuation of health: A chronicle. The University of York discussion paper 136.

Dolan, P. (1997). Modeling valuations for EuroQol health states. Medical Care, 35(11), 1095–1108.

Szende, A., & Williams, A. (2004). Measuring self-reported population health: An international perspective based on EQ-5D. Rotterdam, Netherlands: EuroQol group.

Rasanen, P., Roine, E., Sintonen, H., Semberg-Konttinen, V., Ryynanen, O. P., & Roine, R. (2006). Use of quality-adjusted life years for the estimation of effectiveness of health care: A systematic literature review. International Journal of Technology Assessment in Health Care, 22(2), 235–241.

Szende, A., Oppe, M., & Devlin, N. (2007). EQ-5D value sets: Inventory, comparative review and user guide. Dordrecht, Netherlands: Springer.

Brazier, J. E., Yang, Y., Tsuchiya, A., & Rowen, D. L. (2010). A review of studies mapping (or cross walking) non-preference based measures of health to generic preference-based measures. European Journal of Health Economics, 11(2), 215–225.

Mortimer, D., & Segal, L. (2008). Comparing the incomparable? A systematic review of competing techniques for converting descriptive measures of health status into QALY-weights. Medical Decision Making, 28(1), 66–89.

Longworth, L., Rowen, D. (2011). NICE DSU technical support document 10: The use of mapping methods to estimate health state utility values. Report by the decision support unit. Sheffield: Decision Support Unit, ScHARR, University of Sheffield. http://www.nicedsu.org.uk/TSD%2010%20mapping%20FINAL_forthcoming.pdf. Accessed 23 August 2011.

Gray, A. M., Rivero-Arias, O., & Clarke, P. M. (2006). Estimating the association between SF-12 responses and EQ-5D utility values by response mapping. Medical Decision Making, 26(1), 18–29.

Rivero-Arias, O., Ouellet, M., Gray, A., Wolstenholme, J., Rothwell, P. M., & Luengo-Fernandez, R. (2010). Mapping the modified Rankin scale (mRS) measurement into the generic EuroQol (EQ-5D) health outcome. Medical Decision Making, 30(3), 341–354.

Tsuchiya A., Brazier J., McColl E., Parkin D. (2002). Deriving preference-based single indices from non-preference based condition-specific instruments: Converting AQLQ into EQ5D indices. Sheffield Health Economics Group discussion paper series 02/1.

Le, Q. A., & Doctor, J. N. (2011). Probabilistic mapping of descriptive health status responses onto health state utilities using bayesian networks: An empirical analysis converting SF-12 into EQ-5D utility index in a national US sample. Medical Care, 49(5), 451–460.

McKenzie, L., & van der Pol, M. (2009). Mapping the EORTC QLQ C-30 onto the EQ-5D instrument: The potential to estimate QALYs without generic preference data. Value in Health, 12(1), 167–171.

Dawson, J., Fitzpatrick, R., Murray, D., & Carr, A. (1998). Questionnaire on the perceptions of patients about total knee replacement. Journal of Bone and Joint Surgery. British Volume, 80(1), 63–69.

Isis Outcomes. (2011). The Oxford Knee Score.

Murray, D. W., Fitzpatrick, R., Rogers, K., Pandit, H., Beard, D. J., Carr, A. J., et al. (2007). The use of the Oxford hip and knee scores. Journal of Bone and Joint Surgery. British Volume, 89(8), 1010–1014.

Dakin H. A., Gray A., Murray D., Fitzpatrick R. (2012). Rationing of total knee replacement: Cost-effectiveness evidence from a pragmatic randomised controlled trial. BMJ Open, 2(1), e000332.

Reddy, K. I., Johnston, L. R., Wang, W., & Abboud, R. J. (2011). Does the Oxford Knee Score complement, concur, or contradict the American Knee Society Score? Journal of Arthroplasty, 26(5), 714–720.

Devlin, N. J., Parkin, D., & Browne, J. (2010). Patient-reported outcome measures in the NHS: New methods for analysing and reporting EQ-5D data. Health Economics, 19(8), 886–905.

Hospital Episode Statistics. (2011). Provisional monthly patient reported outcome measures (PROMs) in England: A guide to PROMs methodology. http://www.hesonline.nhs.uk/Ease/servlet/ContentServer?siteID=1937&categoryID=1583. Accessed 23 August 2011.

Hospital Episode Statistics. (2010). Provisional monthly patient reported outcome measures (PROMs) in England. April 2009–April 2010: Pre- and postoperative data: Experimental statistics.

Rothwell, A. G., Hooper, G. J., Hobbs, A., & Frampton, C. M. (2010). An analysis of the Oxford hip and knee scores and their relationship to early joint revision in the New Zealand Joint Registry. Journal of Bone and Joint Surgery. British Volume, 92(3), 413–418.

Hadfield S. G., Alazzawi S., Bardakos N. V., Field R. E. (2011). Monitoring clinical and patient reported outcomes for hip and knee replacement surgery, and their application to improving practice. Presented at the Health Services Research Network (HSRN) and Service Delivery and Organisation (SDO) Network joint annual conference, 7th–8th June 2011, Liverpool UK.

StataCorp. (2009). Suest—seemingly unrelated estimation. Stata Base Reference Manual: Release 11 (pp. 1800–1818). College Station, TX: StataCorp LP.

Tengs, T. O., & Wallace, A. (2000). One thousand health-related quality-of-life estimates. Medical Care, 38(6), 583–637.

Oppe, M., Devlin, N., & Black, N. (2011). Comparison of the underlying constructs of the EQ-5D and Oxford Hip Score: Implications for mapping. Value in Health, 14(6), 884–891.

Lundberg, L., Johannesson, M., Isacson, D. G., & Borgquist, L. (1999). The relationship between health-state utilities and the SF-12 in a general population. Medical Decision Making, 19(2), 128–140.

Eriksson L., Johansson E., Kettaneh-Wold N., Trygg J., Wikström C., Wold S. (2006). Appendix I: Model derivation, interpretation and validation. Multi- and megavariate data analysis Part 1: Basic principles and applications (2nd ed., pp. 372–378). Umea, Sweden: MKS Umetrics AB.

Acknowledgments

We would like to thank all KAT investigators and participants for their role in collecting KAT data: particularly Graeme Maclennan and colleagues at University of Aberdeen. KAT was funded by the National Institute for Health Research Health Technology Assessment (NIHR HTA) programme (project number 95/10/01) and will be published in full as an HTA report. PROMs data were reused with the permission of The Health and Social Care Information Centre, copyright 2011, with all rights reserved. We would also like to thank Gaye Hadfield and Richard Field for supplying the EOC data used for external validation; collection of limb arthroplasty data at the EOC is funded by The Orthopaedic Research and Education Foundation. The Health Economics Research Centre and the Oxford Musculoskeletal Biomedical Research unit obtain support from NIHR. The views and opinions expressed therein are those of the authors and do not necessarily reflect those of the HTA programme, NIHR, NHS, Information Centre or the Department of Health.

Open Access

This article is distributed under the terms of the Creative Commons Attribution License which permits any use, distribution, and reproduction in any medium, provided the original author(s) and the source are credited.

Author information

Authors and Affiliations

Corresponding author

Electronic supplementary material

Below is the link to the electronic supplementary material.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 2.0 International License (https://creativecommons.org/licenses/by/2.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Dakin, H., Gray, A. & Murray, D. Mapping analyses to estimate EQ-5D utilities and responses based on Oxford Knee Score. Qual Life Res 22, 683–694 (2013). https://doi.org/10.1007/s11136-012-0189-4

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s11136-012-0189-4