Abstract

Differences in response-scale formats constitute a major challenge for ex-post harmonisation of survey data. Linear stretching of original response options onto a common range of values remains a popular response to format differences. Unlike its more sophisticated alternatives, simple stretching is readily applicable without requiring assumptions regarding scale length or access to auxiliary information. The transformation only accounts for response scale length, ignoring all other aspects of measurement quality, which makes the equivalence of harmonised survey variables questionable. This paper examines the inherent limitations of linear stretching through a case study of the measurements of trust in the European Parliament by the Eurobarometer and the European Social Survey: 8 timewise corresponding survey waves in 14 European countries (2004–2018). Our analysis demonstrates that the linear stretch approach to harmonising question items with different underlying response scale formats does not make the results of the two surveys equivalent. Despite harmonisation, response scale effects are retained in the distributions of output variables.

1 Introduction

European cross-national comparative surveys have proliferated over the last few decades and displayed substantial improvements in methodological quality. The most notable progress came through establishing the European Social Survey (ESS 2002), which explicitly emphasised the standardisation of the survey process to boost comparability (Fitzgerald and Jowell 2010). The ESS joined already well-established comparative surveys, such as the Eurobarometer (EB, since 1974), the European Values Study (EVS, since 1981) or the International Social Survey Programme (ISSP, since 1985), together with other new high-quality projects, e.g., the European Quality of Life Survey (EQLS, since 2003), the Survey of Health, Ageing and Retirement in Europe (SHARE, since 2004). The assortment of independent cross-national survey projects opened new promising avenues for inquiry regarding comparisons of survey results and outcomes across different projects. Existing cross-project comparisons tend to focus on methodological issues, e.g., nonresponse (Groves and Peytcheva 2008; Peytcheva and Groves 2009), data quality (Slomczynski et al. 2017), or survey documentation standards (Kołczyńska and Schoene 2018). On the other hand, in empirical studies, the prevailing norm is for data from a particular project to be analysed separately from other projects, whose results would only sometimes feature in the introduction or the discussion section as descriptive reference points (e.g., Heath et al. 2009). Such an approach restricts the potential benefits of abundant cross-national comparative projects. Combining data from different survey projects might make it feasible to integrate information mitigating time-series or country-coverage lapses in any one project (Kołczyńska 2020; Singh 2020).

The general potential of cross-project data integration has long been recognised—not only regarding the European surveys (Dubrow and Tomescu-Dubrow 2016). Recent advances in survey-data recycling engender great hopes for attempting an integration of viewpoints established by different projects; this regards, in particular, ex-post data harmonisation projects, which employ a wide array of procedures that integrate diverse data sets into one meta-base containing a uniform set of variables. Ex-post harmonisation (henceforth, harmonisation) aims to merge data from different survey projects into a unified analysis-ready dataset, even if the original data were not intended for recycling (Granda et al. 2010). While complete cross-project data harmonisation is typically only possible for socio-demographic variables (Hoffmeyer-Zlotnik and Wolf 2003), it remains partially possible for narrow and specific issues, e.g., corruption (Wysmułek 2019), democratic values and protest behaviours (Słomczyński et al. 2016), European integration (Jabkowski and Cichocki 2022), general social trust (Bekkers et al. 2018), health lifestyles (VanHeuvelen and VanHeuvelen 2021) or national identities and religion (Bechert et al. 2020). In contrast to socio-demographic characteristics, cross-project harmonisation of substantive survey questions faces acute measurement problems. Even when surveys include the same topics, their questionnaire items tend to differ significantly, impacting their implied measurement quality. Therefore, any such contrasts lead to reasonable suspicions regarding the equivalence of harmonised results.

Any harmonisation has to contend with many significant contrasts between survey projects in terms of measurement and representation errors (Kołczyńska 2022). Our analysis focuses on the challenges to harmonisation resulting from the differences in the length of the underlying response scale. Based on a comparison of country-level means from two different survey projects (one using a 2-point and the other an 11-point scale), we take issue with the procedure of linear stretching, which remains common in harmonisations of response scales with different underlying lengths (e.g., Slomczynski et al. 2017; Bechert et al. 2020; Huijsmans et al. 2019; Bekkers et al. 2018; Klassen 2018). We argue that stretching different response scales into a common range of harmonised values may give a semblance of comparability; yet, the resulting mean aggregates retain the response scale effect, which proves especially prominent when harmonising scales of markedly different lengths. Furthermore, our analysis considers the impact of a “don’t know” answer as an explicit response option. This impact, rarely taken into account in ex-post harmonisation projects, proves markedly weaker than that of the response scale length, but it still constitutes a confounding influence on the cross-project comparability of survey results.

Our analysis is based on a case study contrasting the estimates of trust in the European Parliament (EP trust, hereafter) by two leading European survey projects: the EB and the ESS. The topic of EP trust has received extensive analytical coverage based on survey results of both the EB (e.g., Anderson 1998; Rohrschneider 2002; Harteveld et al. 2013; Torcal and Christmann 2019) and the ESS (e.g., Muñoz et al. 2011; Dotti Sani and B. 2016; Schoene 2019; Grönlund and Setälä 2007). It is also always present in any Standard EB as well as the core module of the ESS, with both projects maintaining the same structure of their relevant question item over the last two decades. Both projects employ similar question wording, yet they espouse diametrically opposed approaches to response-scale structure. EB questionnaires elicit dichotomous responses (“Tend to trust” vs. “Tend not to trust”), with an additional “Do not know” option (DK) explicitly offered to the respondent (note that the EB does not record refusals). In turn, the ESS records responses on an 11-point ordinal scale ranging from 0 “No trust at all” to 10 “Complete trust”; two nonresponse options (“Don’t know” answer and “Refusal”) are not explicitly shown to the respondent, but the interviewer could record them regardless. We examine the challenges involved in harmonising data regarding EP trust from the ESS and the EB by comparing their country-level averages at comparable time points.

2 Research problem

Cross-project comparisons of survey results are methodologically interesting in their own right; however, understanding them proves crucial for all secondary data users attempting to use harmonisation procedures to mitigate the impact of time-series or country-coverage gaps within individual projects. Notably, the lapses in coverage prove vexing within the major European cross-national surveys. For instance, the EB only covers current member states and candidate countries of the European Union. Hence, it does not provide data on such countries as Norway or Switzerland and stopped conducting surveys in the United Kingdom after Brexit. On the other hand, analyses based on the ESS, which aspires to more comprehensive Europe-wide coverage, face the constant problem of intermittent participation: thirty-eight countries participated in at least one ESS round, but only seventeen in all nine. However, even if no single European survey project provides exhaustive coverage of European countries over time, the overall abundance of different projects means that most countries have been covered most of the time. Considering five major European projects (EB, ESS, EVS, EQLS, and the ISSP), only five micro-states and principalities have not appeared in any of their surveys in the period 2000–2020: Andorra, Liechtenstein, Monaco, San Marino, and the Vatican; four other developing countries have been covered fewer than five times (Albania, Belarus, Bosnia and Herzegovina, Moldova). On average, the other thirty-eight European countries were covered by 22 individual country-level surveys belonging to one of the five projects (Jabkowski and Kołczyńska 2020b).

The EB and ESS have an excellent potential for complementation, especially given the coverage lapses of the ESS. However, the differences in response scale format between the two projects constitute a major challenge for data harmonisation, which boils down to two principal problems: (1) the length of the question scale and (2) the availability of an explicit “don’t know” answer. These contrasts constitute a major cause for concern for secondary users of harmonised data (Table 1).

The overall length of the response scale is known to have a meaningful effect on response styles (Baumgartner and Steenkamp 2001; Van Vaerenbergh and Thomas 2012). The general consensus seems to be that offering respondents few response options does not provide enough space for reflecting their true opinions; therefore, longer scales supposedly lead to better measurements (Lundmark et al. 2015). However, when faced with very long response scales, respondents tend to decrease the cognitive effort involved in expressing their opinions as scale points by choosing the middle response category (Kieruj and Moors 2010; Kieruj 2012); additionally, on multi-item scales, respondents tend to avoid the extremes (Weijters et al. 2013, 2010; De Langhe et al. 2011; Cabooter et al. 2016). On the other hand, when respondents are pushed to provide answers on a dichotomous scale, the tendency towards acquiescence will affect the result, especially when their knowledge about the subject of interest is low (Krosnick and Presser 2010), as “agreeing” answers are usually construed as socially acceptable (Krosnick 1991). Thus, the typical monotonic rescaling of values onto a common range employs a simple linear stretching algorithm (de Jonge et al. 2017) and does not mitigate the effects of underlying scale lengths, as it assumes false equivalence between points on different response scales to which respondents react differently. Consequently, simple linear stretching tends to inflate harmonised means when transforming shorter into longer scales (de Jonge et al. 2014; Batz et al. 2016; Dawes 2008).

Regarding the cognitive efforts of the respondent induced by questions concerning issues they do not know much about, another meaningful effect on response styles is the availability of an explicit “don’t know” option (DK). When faced with the sudden necessity to come up with an opinion, respondents are known to engage in various satisficing behaviours, with the “no opinion” option being chief among them (Krosnick et al. 2002). DK answers are known to be more likely with questions requiring high levels of cognitive effort, while “Refusals” tend to occur more in sensitive questions (Shoemaker et al. 2002). Notably, political questions typically elicit more DKs (Laurison 2015). Furthermore, including DK as a valid response along with other response categories increases its incidence (Bishop et al. 1986; Schuman and Presser 1996). Additionally, given that multinomial rating scales require more cognitive effort than dichotomous scales (Krosnick et al. 2005), when “no opinion” options are not explicitly offered, the respondent may engage in other satisficing tactics, including answering at random (Krosnick 1991), blanket preference for “agree” (Krosnick et al. 1996), or a tendency to opt for the neutral answer on the response rating scale (Alwin and Krosnick 1991).

3 Data

Both the EB and the ESS belong to the most prominent comparative survey projects in Europe. The European Commission conducts the EB biannually to monitor public opinion in EU member states and occasionally in candidate countries (Nissen 2014). The project relies on multistage samples with random route procedures to select households and the closest-birthday rule for selecting respondents within households. National samples include approximately 1,000 respondents, with 500 respondents in smaller countries. Target populations in the Eurobarometer include the “population of the respective nationalities of the European Union Member States and other EU nationals, resident in each of the 28 Member States and aged 15 years and over” (EB 2018). The EB does not publish detailed survey documentation, e.g., it does not provide information on response rates or other fieldwork outcomes.

In turn, the ESS is an academically driven survey project of European societies conducted biennially since 2002. Despite aiming to cover the entire European continent, participation is skewed towards EU countries. Interviews are conducted face-to-face with “persons aged 15 and over resident within private households, regardless of their nationality, citizenship, language or legal status” (ESS 2018a). The ESS only allows probability samples and explicitly prohibits quota samples and substitutions of non-responding or non-contacted units. After discounting for design effects, all countries must achieve a minimum effective sample size of 1,500 respondents (or 800 in countries with populations of less than 2 million). The ESS makes exhaustive survey documentation readily available.

Our analysis is based on survey data from 8 up-to-date rounds of the ESS (2/2004; 3/2006; 4/2008; 5/2010; 6/2012; 7/2014; 8/2016; 9/2018) and 8 timewise corresponding spring waves of the EB (62.0/2004; 66.1/2006; 70.1/2008; 74.2/2010; 78.1/2012; 82.3/2014; 86.2/2016; 90.3/2018). Country coverage has been restricted to the 14 EU member states participating in both the EB and all ESS rounds. ESS 1 (2002) was left out along with its analogous EB wave, as it was only in 2004 that the major eastern enlargement of the EU occurred, which changed the status of Estonia, Hungary, Poland, and Slovenia from candidate countries to member states. In total, the study encompasses 112 country surveys within each survey project, with the EB dataset including 119,783 respondents and the ESS dataset 215,746. The EB waves are usually conducted in a short span of no more than two weeks, while the ESS tends to have its fieldwork execution spread out over multiple months; hence, their fieldwork dates are not identical. Nevertheless, the timing differences are small enough to allow for an assumption that both surveys were measuring the same underlying state of public opinion in each of the included countries.

4 Data harmonisation procedures

Our analysis employs the widely-used procedure of linear stretching source data onto a common range (de Jonge et al. 2017), also known as the “per cent of the maximum possible score achievable” (Cohen et al. 1999). Simple linear stretching only takes into account the number of response options, disregarding the potential effects of question-wording, item difficulty, differences in the labelling of response scale points, distances between response points, differences in the distribution shapes or the explicit availability of item-nonresponse options (Kolen et al. 2004). Given the multiplicity of potential problems, the resulting biases in the harmonised data cannot be easily enumerated and identified (Singh 2021). Notwithstanding its drawbacks, simple linear stretching remains the sole feasible harmonisation option when at least one of the underlying response scales is dichotomous. For instance, both the observed score equating (Singh 2020; Kolen and Brennan 2004) and the reference distribution method (de Jonge 2017) aim to increase comparability by comparing response distributions; however, they do not apply to dichotomous variables, as the number of comparable response distributions for a 2-point scale is infinite (de Jonge et al. 2014).

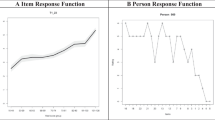

Simple linear stretching treats the extremes of underlying response scales as equivalent endpoints of a unidirectional common range, with all remaining response options mapped onto this range. Thus, regardless of the original labelling of response scales, responses are assigned consecutive natural numbers, from i = 1 to the maximum equal to the number of response options, and then the harmonised scores are calculated as \((i - 1)/(\text{maximum} - 1)\). For the dichotomous EB scale, “Tend not to trust” is mapped onto 0 and “Tend to trust” onto 1, with the “Don’t know” answer treated as item-nonresponse. For the 11-point ESS scale, the variable is treated as quasi-continuous with 11 equidistant response options ranging from “No trust at all” – 0, to “Complete trust” – 1, with the “Don’t know” and “Refusal” options treated as item-nonresponse.

An anonymous reviewer of the first draft of this paper aptly pointed out that simple linear stretching could be improved by employing an alternative approach to the transformation of the underlying variable scores. In this modified procedure, a variant of which has been implemented in the Survey Data Recycling project (Slomczynski et al. 2016: 56–57), the response options are first mapped onto a sequence from i = 1 to the maximum equal to the number of response options by increments of 1, but then their harmonised scores are calculated as \((i - 0.5)/\text{maximum}\). In other words, modified linear stretching cuts the [0;1] interval into as many equidistant segments as there are response options and then assigns each original score to the middle of its segment, under the assumption that true scores within each segment are distributed symmetrically around its middle value. The 2-point scale would yield values of 0.25 and 0.75 instead of the original 0 and 1; the 11-point scale would be mapped onto a sequence from approximately 0.05 to 0.95 instead of the original 0 to 1. The two approaches to linear stretching are visualised in Fig. 1.
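The two transformations can be written as a pair of one-line functions. The following is a minimal Python sketch rather than the code used in the analysis; the function names `simple_stretch` and `modified_stretch` are illustrative, and responses are assumed to be already recoded to positions 1 to the number of options.

```python
def simple_stretch(i, n_options):
    """Simple linear stretching: (i - 1) / (n_options - 1)."""
    return (i - 1) / (n_options - 1)


def modified_stretch(i, n_options):
    """Modified stretching: midpoint of the i-th of n_options equal segments of [0, 1]."""
    return (i - 0.5) / n_options


# Dichotomous EB-style scale: position 1 = "Tend not to trust", 2 = "Tend to trust".
eb_simple = [simple_stretch(i, 2) for i in (1, 2)]
eb_modified = [modified_stretch(i, 2) for i in (1, 2)]

# 11-point ESS-style scale: original codes 0..10 are assumed recoded to positions 1..11.
ess_simple = [simple_stretch(i, 11) for i in range(1, 12)]
ess_modified = [modified_stretch(i, 11) for i in range(1, 12)]
```

Under these definitions the modified endpoints of the 11-point scale are 0.5/11 and 10.5/11, i.e., roughly 0.045 and 0.955.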

Linear stretching of a 2-point scale towards an 11-point scale represents a somewhat extreme case, exposing the problems inherent in the approach, which may not seem as apparent when the differences in the number of response options between harmonised variables are smaller. Dichotomous responses represent not specific points on the true construct continuum but rather amalgamations of various construct intensities. Thus, the simple transformation of EB’s “Tend to trust” into the outermost response on the 11-point scale of the ESS seems counterintuitive, as it pushes respondents with different levels of trust to the harmonised endpoint, while actual survey respondents tend to avoid extreme options on multi-item scales (e.g., Weijters et al. 2010). Many among those choosing EB’s “Tend to trust” option are likely only trusting enough not to go for the polar opposite of “Tend not to trust”. The modified approach would move the harmonised values away from the extremes of the [0, 1] range. The displacement is likely largest for dichotomous variables, as it alleviates the effect of pushing respondents to extremes they would likely not choose on multinomial rating scales.
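The amalgamation argument can be illustrated with a toy calculation. The Python sketch below uses a purely hypothetical distribution of answers on an 11-point scale (not survey data) and assumes, for illustration only, that every respondent at 5 or above would choose “Tend to trust” on a dichotomous item; the variable names and the cut point are assumptions.

```python
# Hypothetical shares of answers 0..10 on an 11-point trust scale (not survey data).
shares = [0.05, 0.03, 0.06, 0.09, 0.10, 0.27, 0.12, 0.12, 0.10, 0.03, 0.03]

# Assumption: on a forced two-option item, answers of 5 or more become "Tend to trust".
CUT = 5

# Harmonised mean of the 11-point item under simple stretching (code / 10).
mean_11pt = sum(share * code / 10 for code, share in enumerate(shares))

# Harmonised mean of the dichotomised item: "Tend to trust" is mapped onto 1,
# so the mean equals the share of respondents at or above the cut.
mean_2pt = sum(share for code, share in enumerate(shares) if code >= CUT)

# Positive gap: the same hypothetical opinions yield a higher harmonised mean
# once they are collapsed into two options and stretched to the endpoints.
gap = mean_2pt - mean_11pt
```

With a different cut point or distribution the gap changes in size and can change sign, so the sketch only shows the mechanism, not the magnitude reported for the EB and the ESS.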

Our analyses implement both approaches; however, the main thrust of our argument falls on the commonly used simple linear stretch. Hence, in the interest of clarity, the results presented in the main body of the paper relate to the simple approach, but the same procedures were conducted using the modified approach, with their results included in the online supplementary materials. These materials will be referenced in our discussion at the point when we compare the modified alternative to the simple linear stretching. Since the results yielded by the two approaches are only subtly different, we found that attempting to report them both in the paper would be confusing.

5 Analytical approach

In order to investigate the impact of response-scale effects on between-project incomparability of harmonised values, we built multilevel regression models with the harmonised means of EP trust (\(\overline{EP trust}\)) as the dependent variable for each country-level survey. Introducing the second level of analysis, the values of \(\overline{EP trust}\) are nested within countries and years. To ensure the results of our analyses are robust to model design, we fit three models with different specifications. The models include project name (\(PROJECT\)) as a factor indicating the type of response scale used; they also include the fraction of item-nonresponse (\(DK\_INR\)) obtained by the two projects as an indicator of satisficing behaviours and a proxy of measurement quality. Furthermore, as a proxy for sample quality, we incorporate the internal criterion of representativeness (Kohler 2007), i.e., the absolute sample bias (\(Abs\_bias\)). This measure is useful for secondary data users as it does not require design weights (Jabkowski et al. 2021), which are not available in the EB (Jabkowski and Kołczyńska 2020a), and generally not available in survey datasets (Zieliński et al. 2018).
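As a minimal sketch of how the survey-level inputs could be derived from individual responses, the Python function below returns the harmonised mean (simple stretching, valid answers only) and the item-nonresponse fraction for one country survey. The function name `survey_summary` and the coding of nonresponse as `None` are assumptions made for illustration, not the projects' own conventions.

```python
def survey_summary(responses, n_options):
    """Return (harmonised mean, item-nonresponse fraction) for one country survey.

    `responses` holds scale positions 1..n_options, with None standing for
    "Don't know" or "Refusal" (an assumed coding).
    """
    valid = [r for r in responses if r is not None]
    nonresponse_fraction = 1 - len(valid) / len(responses)
    # Simple linear stretching of each valid answer onto [0, 1].
    stretched = [(r - 1) / (n_options - 1) for r in valid]
    return sum(stretched) / len(stretched), nonresponse_fraction


# Toy EB-style sample: 2 = "Tend to trust", 1 = "Tend not to trust", None = DK.
toy_sample = [2, 2, 1, None, 2, 1, None, 2, 1, 2]
mean_trust, dk_share = survey_summary(toy_sample, n_options=2)
```

The sketch ignores weighting and does not compute the absolute sample bias, which requires additional household information.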

First, we ran a multilevel cross-classified random-intercept and fixed-slopes Model 1, with surveys nested in countries and years. For surveys in country i, year t, and project p:

where \(\beta_{0}\) is the grand intercept, \(\gamma_{i}\) represents between-countries random intercepts, \(\gamma_{k}\) represents between-years random intercepts, the \(\beta\) terms are coefficients on the independent variables, and \(\varepsilon_{itp}\) is the residual.
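Written out with the symbols defined above, Model 1 can be sketched as follows. The numbering of the \(\beta\) coefficients and the placement of subscripts are assumptions; \(DK\) stands for the item-nonresponse fraction \(DK\_INR\), and the year intercept keeps the symbol \(\gamma_{k}\) even though years are indexed by t.

\[
\overline{EP trust}_{itp} = \beta_{0} + \gamma_{i} + \gamma_{k} + \beta_{1} PROJECT_{p} + \beta_{2} DK_{itp} + \beta_{3} Abs\_bias_{itp} + \varepsilon_{itp}
\]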

Model 2 (with random-intercept and fixed-slopes) adds the interaction term to the regression in order to verify whether the \(PROJECT\) moderates the impact of \(DK\) and \(Abs\_bias\) on the survey-level mean values of EP trust, which is the standard procedure for verifying the existence of moderating effect of any of the variables in the regression analysis (Aguinis 2004; Jose 2013). Thus, for surveys in country i, year t, and project p:

where \(\beta_{0}\), \(\gamma_{i}\), \(\gamma_{k}\), \(\beta\), and \(\varepsilon_{itp}\) stand as above.
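Under the same notational assumptions (coefficient numbering and subscript placement are not taken from the source), Model 2 can be sketched as the main-effects specification extended by the two interaction terms with \(PROJECT\):

\[
\overline{EP trust}_{itp} = \beta_{0} + \gamma_{i} + \gamma_{k} + \beta_{1} PROJECT_{p} + \beta_{2} DK_{itp} + \beta_{3} Abs\_bias_{itp} + \beta_{4} \left( PROJECT_{p} \times DK_{itp} \right) + \beta_{5} \left( PROJECT_{p} \times Abs\_bias_{itp} \right) + \varepsilon_{itp}
\]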

Finally, Model 3 extends Model 2 by allowing the slopes to vary between countries (i.e., we assumed the effects of \(DK\) and \(Abs\_bias\) on \(\overline{EP trust}\) may vary across countries). The specification of Model 3 is as follows:

where \(\beta_{0}\), \(\gamma_{i}\), \(\gamma_{k}\), \(\beta\), and \(\varepsilon_{itp}\) stand as previously, \(\gamma_{2i}\) represents random slopes between countries for \(DK\), and \(\gamma_{3i}\) for \(Abs\_bias\). Additionally, we assumed \(\gamma_{2i} \sim N\left( {0;\theta_{2i} } \right)\) and \(\gamma_{3i} \sim N\left( {0;\theta_{3i} } \right)\).
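A sketch of Model 3 consistent with these definitions adds the country-specific deviations \(\gamma_{2i}\) and \(\gamma_{3i}\) to the fixed slopes of \(DK\) and \(Abs\_bias\). As before, the numbering of the fixed coefficients is an assumption rather than the authors' notation:

\[
\overline{EP trust}_{itp} = \beta_{0} + \gamma_{i} + \gamma_{k} + \beta_{1} PROJECT_{p} + \left( \beta_{2} + \gamma_{2i} \right) DK_{itp} + \left( \beta_{3} + \gamma_{3i} \right) Abs\_bias_{itp} + \beta_{4} \left( PROJECT_{p} \times DK_{itp} \right) + \beta_{5} \left( PROJECT_{p} \times Abs\_bias_{itp} \right) + \varepsilon_{itp}
\]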

The analysis was conducted in R (R Core Team 2022), using the following packages: tidyverse (Wickham et al. 2019), lme4 (Bates et al. 2015), and sjPlot (Lüdecke 2021).

6 Results

The harmonised assessments of EP trust differ substantially between the EB and ESS; a visual comparison is presented in Fig. 2. Every panel presents three distinct pieces of information: (1) circles in the lower deck represent the share of DKs in each respective survey of the EB and the ESS; (2) squares represent the percentage shares of the discrete options offered by the 11-point ESS scale (percentages are calculated for valid indications only, i.e., excluding DKs as item-missing); (3) EB and ESS trend-lines based on the harmonised country-level means for the 2004–2018 time-series (also calculated for valid scale indications). The results in the figure rely on the simple linear stretching procedure; for comparison with the modified approach, please consult the online supplementary materials.

When the rescaled country-year mean values of EP trust are juxtaposed, the EB (underlying 2-point scale) yields systematically different country-level mean aggregates than the ESS (underlying 11-point scale). Those patterns are apparent in the two trend lines presented on each country panel in Fig. 2. The absolute differences between rescaled means obtained in the EB and the ESS are significantly larger than zero, as confirmed by a one-tailed t-test (the absolute difference is equal to .167 (t = 20.39; p < .001)). For the modified linear stretch, the resulting differences between rescaled means prove smaller but remain significant (the absolute difference is equal to .10 (t = 23.20; p < .001)). Hence, the simple linear stretching of different scales onto a common range does not make survey-specific averages mutually interchangeable, and neither does the modified approach despite the decrease in the absolute mean differences. Furthermore, the modified approach increases the similarity of time-series trends between the two survey projects. Crucially, in the simple approach, as presented in Fig. 2, the overall tendency for EB averages to be higher than those of the ESS is reversed in two apparent outlier cases of Spain and the United Kingdom. The modified approach, as visualised in the online supplementary materials, seems to make the trendlines for these two countries conform better to the overall pattern observed in all other countries included in the comparison.
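The test statistic for such a comparison can be sketched in a few lines of Python using only the standard library. The country-year means below are hypothetical values chosen for illustration, and the helper `one_sample_t` is an assumed name; the sketch computes the t statistic for the absolute differences against zero and leaves the one-tailed p-value to a t distribution with n − 1 degrees of freedom.

```python
import math
import statistics


def one_sample_t(values, mu0=0.0):
    """t statistic for H0: mean(values) == mu0, with n - 1 degrees of freedom."""
    n = len(values)
    standard_error = statistics.stdev(values) / math.sqrt(n)
    return (statistics.mean(values) - mu0) / standard_error


# Hypothetical harmonised means for four country-years (illustrative values only).
eb_means = [0.62, 0.55, 0.70, 0.48]
ess_means = [0.45, 0.44, 0.52, 0.50]

# Absolute differences between the paired EB and ESS means.
abs_diffs = [abs(eb - ess) for eb, ess in zip(eb_means, ess_means)]
t_stat = one_sample_t(abs_diffs)
```

The same helper applies to signed paired differences, such as the EB minus ESS shares of DK answers, by passing those differences instead of `abs_diffs`.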

On the other hand, both simple and modified linear stretching ignore the explicit availability of the no-opinion option, so their results are identical in this respect. The EB records DKs more often, which is apparent when looking at the relative size and fill transparency of the circles within each country panel in Fig. 2. The differences are statistically significant, as confirmed by a one-tailed paired t-test (the mean difference between the EB and ESS country-year surveys is equal to .063 (t = 12.95, p < .001)). Thus, in line with theoretical expectations, the explicit offering of DK among the valid response options results in a higher share of respondents choosing it.

To estimate the response-scale effect on harmonised trust assessments, we built three multilevel regression models, which also incorporate item nonresponse (i.e., the fraction of DK answers) and absolute sample bias among the covariates and factors of the mean value of EP trust. Table 2 provides regression estimates (Est.) alongside standard errors (SE). The results presented rely on simple linear stretching; for those of the modified approach, please consult the online supplementary materials.

Model 1 tests whether the dichotomous response option in the EB results in higher values of harmonised means of EP trust than those obtained based on the 11-point ESS response scale. It controls for the share of DK and the size of \(Abs\_bias\), as well as for sample-level averages being nested within countries and waves. The regression parameter for the project is significantly below zero, i.e., harmonised EB country-level means are notably higher than the ESS country-level means (on average, the ESS means are 15 pp. lower). Note that the results of model 1 echo our descriptive findings presented in Fig. 2.

As the EB and ESS have different approaches to presenting DK options, and both projects utilised different sampling and fieldwork procedures, the share of DK answers and the size of \(Abs\_bias\) may have different effects on the harmonised country-level means within each project. Hence, even if Model 1 does not provide evidence for the significant impact of DK and \(Abs\_bias\) on harmonised country-level mean values, this may stem from the moderating effect of the projects’ characteristics on both relationships. Model 2 partly supports this presumption: the ESS moderates the effect of DK on country-level harmonised means observed in the EB; however, the effect of \(Abs\_bias\) is similar for both projects (the latter is not statistically significant regardless of whether we consider EB or ESS data). Considering the impact of the fraction of DK on the harmonised means of EP trust in the EB, the statistically significant beta-coefficient equal to .335 means that the higher the share of DK answers, the higher the mean EP trust observed in each country survey; for the ESS, the relationship is reversed. Figure 3 presents the moderating effect of the project on the relationship between the fraction of DK and the mean value of EP trust.

Note that when we included the moderating effect of the project on the impact of DK on average EP trust, we reduced the beta coefficient for the project (from − .15 in model 1 to − .07 in model 2). Still, the harmonised country-level mean values of EP trust are significantly higher when considering EB results (the project's effect remains significant).

Finally, model 3 allows the slopes for DK and \(Abs\_bias\) to differ between countries in order to check whether the effects of both variables are the same in every country. The regression coefficients remained practically the same; thus, the findings based on model 2 remain valid. However, the random-effects analysis demonstrates that countries differ in the size of the impact of DK and \(Abs\_bias\) on country-level averages of EP trust; nevertheless, they remain similar when we consider the direction of the impact. In the EB, except for the UK, the effect of DK and \(Abs\_bias\) is uniformly positive (for the UK, negative). When we included the moderating effect of the ESS on DK impact on country-level means, the effect of DK is negative (except in the UK, where it is positive). As the ESS does not moderate the effect of \(Abs\_bias\), we can conclude that cross-country variation in the direction of the impact of \(Abs\_bias\) on country-level averages remains the same as in the EB.

The same models have also been estimated for the modified approach to linear stretching (for details, consult the online supplementary materials). Despite the descriptive differences between the two approaches in country-level means, using the modified approach does not change the significance of estimated effects.

7 Discussion

Our case study of EP trust focuses on two extremely different scale formats (2-point and 11-point). The extreme difference magnifies the negative consequences of linear stretching, demonstrating that the harmonised results cannot be treated as equivalent without accounting for the response scale format. While such challenges to equivalence may seem lesser when harmonising variables of similar underlying scale lengths, linear stretching would also be problematic due to unrealistic assumptions regarding equidistance and equivalence of response options and the identical shapes of their empirical distributions. Dealing with 2-point scales only amplifies those inherent problems of stretching, which are not specific, however, to this particular scale format. The linear stretching onto a common range might provide a semblance of comparability, yet the EB and the ESS yield systematically different results after harmonisation. Hence, even in the relatively straightforward case of trust in the European Parliament, there seems to be no obvious way of using harmonisation to mitigate their respective coverage lapses by substituting missing information with data from other timewise corresponding country-level measurements.

In principle, the response-scale effects could be incorporated into analyses alongside other measurement characteristics whenever retained as meta-data by the producers of harmonised datasets. Regarding response scale characteristics, harmonisation projects usually provide ready access to information on the length of response scales alongside other characteristics of the underlying questionnaire items (Kołczyńska and Slomczynski 2018; Mauk 2020). These typically include methodological controls for harmonised variables, such as the presentation of response options in ascending or descending order, the number of source items used to create the target variable, the between-project differences in question-item conceptualisations, and the quality of survey documentation (Slomczynski and Tomescu-Dubrow 2018; Granda et al. 2010). On the other hand, the number of meta-characteristics within any general harmonisation, i.e., data recycling without specific research questions, must remain focused on encoding such characteristics in common demand due to obvious resource constraints. The catalogue of all potentially useful characteristics is too large for any general harmonisation. The type of the “no-opinion” response appears to be one of such less common characteristics; the degree of cognitive burden induced by a question item constitutes another example of this category. Including such more idiosyncratic variables and meta-characteristics typically means, however, that before becoming users, researchers have to become secondary data producers.

Even if all relevant meta-characteristics of underlying response scales are made available, there is no straightforward way for the secondary data users to incorporate them into analyses of harmonised data (Slomczynski et al. 2021). As our analysis demonstrates, the response scale effects are significant but not uniform across all countries and time points. These effects seem to be sensitive to the social and political context. In the case of trust in the European Parliament, far more is known about the topic than could be feasibly incorporated into a harmonised database. Some domain-specific information could be retained by specific harmonisation. For instance, a quasi-arbitrary weighting factor in a harmonisation scheme could represent the fact that survey questions regarding the abstract notion of parliamentary trust at the European level put a high cognitive burden on most respondents who may lack the relevant skills and knowledge to formulate informed judgments (Anderson 1998). Furthermore, many more variables could be targeted for harmonisation, given that trust in the European Parliament is known to be associated with a variety of other factors, e.g., support for EU membership (Gabel 2003), overall quality of national institutions (Rohrschneider 2002), general social trust (Dellmuth and Tallberg 2020) or life satisfaction (Listhaug and Ringdal 2008). However, there seem to be clear limits of size and complexity, restricting the amount of information that could be meaningfully incorporated even in a highly specific harmonisation.

No matter how exhaustive the inventory of harmonised meta-data, there seems to be no viable way to escape the reality that surveys are conducted in specific socio-political contexts. While such contextual embedding may have minimal impact on the measurement of socio-demographic characteristics, questions regarding substantive issues such as opinions or attitudes are likely to be significantly influenced by such contextual idiosyncrasies. For instance, the cases of Spain and the United Kingdom show that no analysis of trust in the European Parliament can abstract from the context of their political realities. In the UK, the debate over Brexit, i.e., the ultimate abandonment of EU membership, proved among the most polarising political topics of the decade (Hobolt et al. 2021). Unlike in most other EU member states, this translated into substantial and continuous public sphere attention devoted to European institutions (North et al. 2021). When it comes to Spain, at the time of the major shift in the EP-trust averages reported by the EB, the country was enduring a prolonged and devastating economic downturn, which was widely perceived in the context of the broader crisis of the Euro (the European common currency). While not as extreme as in the case of Greece, the Spanish position vis-à-vis the European institutions grew antagonistic, shattering the previously prevailing pro-European consensus (Real-Dato and Sojka 2020). The polarisation of opinion can also be observed in the percentage share of the most negative answer on the ESS scale, yet due to the overall dominance of the neutral option on the 11-point scale, this polarising shift does not register strongly in the ESS mean. On the other hand, the effects of underlying public opinion polarisation seem to translate into substantial shifts registered on a 2-point scale. While Spain and the UK present the most extreme cases of this pattern within the 14 countries under consideration, similar effects appear in France, Ireland, and Slovenia.

Concerning the effects of polarisation, the modified approach to linear stretching shows some promise for the harmonisation of dichotomous and multinomial response scales, as lessening scale polarisation makes the country-level averages more similar. However, despite some apparent advantages, the modified approach is not necessarily superior to simple linear stretching. Although it would constitute a targeted improvement for dichotomous variables, this approach has a potentially critical flaw from the point of view of harmonisation: it does away with a common variable range. As the variability of 2-point scales is constrained to [0.25, 0.75] and that of 11-point scales to [0.05, 0.95], the harmonised trust average for any category of EB respondents could not exceed 79% of the maximum potential average of the ESS. Hence, as minimums and maximums of variables become dependent upon their original response scales, harmonisation can no longer be construed as mapping onto a common range.
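
The ranges quoted above can be reproduced with a short calculation. The sketch below assumes that the modified approach places each of the k ordered response options at the midpoint of its equal-width interval on [0, 1], i.e. at (2r - 1)/(2k) for rank r. This formula and the function name `midpoint_stretch` are illustrative assumptions, chosen because they yield the stated bounds; they are not taken from the replication files.

```python
from fractions import Fraction


def midpoint_stretch(rank: int, k: int) -> Fraction:
    """Place rank r (1..k) at the midpoint of the r-th of k equal intervals on [0, 1].

    Assumed form of the modified stretching: (2r - 1) / (2k).
    """
    if not 1 <= rank <= k:
        raise ValueError("rank must lie between 1 and k")
    return Fraction(2 * rank - 1, 2 * k)


# Lowest and highest attainable values for the 2-point (EB) and 11-point (ESS) scales.
bounds = {k: (midpoint_stretch(1, k), midpoint_stretch(k, k)) for k in (2, 11)}
for k, (low, high) in bounds.items():
    print(f"{k}-point scale: [{float(low):.2f}, {float(high):.2f}]")

# Ceiling of any EB average relative to the ceiling of any ESS average.
ceiling_ratio = bounds[2][1] / bounds[11][1]
print(f"EB ceiling as a share of ESS ceiling: {float(ceiling_ratio):.0%}")
```

Under this assumption the exact bounds are [1/4, 3/4] and [1/22, 21/22], which round to the intervals given in the text, and the ceiling ratio is 11/14, or about 79%.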

8 Conclusion

The popularity of linear stretching likely stems primarily from its ready applicability as well as conceptual and computational simplicity. Other ex-post data harmonisation methods, such as those based on reference distribution, scale interval or multiple imputations with calibration samples, all face meaningful applicability restrictions. For instance, they are not well suited for all types of response scales and prove especially thorny when combining dichotomous and polychotomous variables; furthermore, they require auxiliary information not usually made available in the datasets or published documentation (de Jonge et al. 2014; Kolen et al. 2004). Thus, these alternative methods usually prove impractical for secondary data users, especially those engaged in large-scale analyses (Jabkowski et al. 2021). Notwithstanding its practical applicability, simple linear stretching rests on the questionable assumptions that (1) the distances between the response options are equal, (2) the question wording and labelling of the response options are irrelevant to the analysis, and (3) the response distributions have an identical shape. Consequently, linear stretching relies only on the number of response options of the primary scale, and the transformation ignores all additional scale-specific characteristics. Thus, such harmonisation usually fails to yield comparable mean scores (Batz et al. 2016).
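
To make the dependence on scale length concrete, the sketch below writes simple linear stretching as y = (x - min) / (max - min) and applies it to two made-up response distributions. The numeric codings (0/1 for the dichotomous item, 0 to 10 for the 11-point item), the function names and the counts are illustrative assumptions, not survey results.

```python
def linear_stretch(value: float, low: float, high: float) -> float:
    """Map a response code from [low, high] onto the common range [0, 1]."""
    if high <= low:
        raise ValueError("high must be greater than low")
    return (value - low) / (high - low)


def stretched_mean(counts: dict, low: float, high: float) -> float:
    """Mean of the stretched variable, given a {code: frequency} table."""
    total = sum(counts.values())
    return sum(linear_stretch(code, low, high) * n for code, n in counts.items()) / total


# Hypothetical codings: 0 = "Tend not to trust", 1 = "Tend to trust";
# 0 = "No trust at all" ... 10 = "Complete trust".
eb_values = [linear_stretch(code, 0, 1) for code in (0, 1)]
ess_values = [linear_stretch(code, 0, 10) for code in range(11)]

# Made-up frequency tables, for illustration only.
eb_counts = {0: 40, 1: 60}
ess_counts = {0: 10, 5: 80, 10: 10}

eb_mean = stretched_mean(eb_counts, 0, 1)
ess_mean = stretched_mean(ess_counts, 0, 10)
print(eb_values, ess_values)
print(eb_mean, ess_mean)
```

The only inputs to the transformation are the endpoints of the original scale. For a dichotomous item the stretched mean is simply the share of respondents choosing the upper option, whatever the wording or labelling, whereas an 11-point item dominated by its midpoint stays close to 0.5.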

Comparability of mean scores constitutes a crucial challenge for using harmonised data in substantive analyses of survey results. Without systematically accounting for the differences in measurement quality, data harmonisations based on simple linear stretching show limited utility for substantive cross-project comparisons or dealing with gaps in the geographical coverage of some survey projects. Stretching creates a semblance of cross-project equivalence by mapping the underlying response values onto a common range while ignoring the differences in their measurement quality. Ignoring differences does not neutralise them. They are retained in the distributions of variables, which is expressed in the significant differences in mean scores. Although the differences in measurement quality could be included in harmonisation projects and incorporated into modelling by secondary data users, our analysis suggests no straightforward way of achieving that goal.

Our analysis indicates that harmonisation based on simple linear stretching involves inherent and irredeemable flaws. This general conclusion is admittedly based on a narrow case study of EB and ESS measurements of trust in the European Parliament, which identified systematic cross-project differences in country-level estimates over time. We attribute these differences to contrastive satisficing behaviours elicited by their divergent response-scale formats, i.e., EB’s dichotomous scale (“Tend to trust” vs. “Tend not to trust”) with an explicit DK option and ESS’s 11-point ordinal scale (from “No trust at all” to “Complete trust”) without an explicit DK option. Our study attested two major cross-project contrasts. Firstly, we found that the EB registers a significantly higher incidence of DK answers, commonly treated as item-nonresponse in both survey projects. Secondly, when it comes to valid indications, after simple linear stretching of original responses onto a common range, the EB tends to register higher levels of trust than the ESS. Even though the contrastive patterns in the results of the two survey projects can be attributed to their different response scale formats, these effects can be moderated by the socio-political contexts in which surveys are conducted in particular countries.

Research data and replication files

Replication materials and the integrated datasets are available at https://osf.io/7mwz2/ to facilitate research openness, transparency, and reproducibility.

References

Aguinis, H.: Regression Analysis for Categorical Moderators. Guilford Press, New York (2004)

Alwin, D.F., Krosnick, J.A.: The reliability of survey attitude measurement: the influence of question and respondent attributes. Sociol. Methods Res. 20(1), 139–181 (1991). https://doi.org/10.1177/0049124191020001005

Anderson, C.J.: When in doubt, use proxies: attitudes toward domestic politics and support for European integration. Comp. Political Stud. 31(5), 569–601 (1998). https://doi.org/10.1177/0010414098031005002

Bates, D., Mächler, M., Bolker, B., Walker, S.: Fitting linear mixed-effects models using lme4. J. Stat. Softw. 67(1), 1–48 (2015). https://doi.org/10.18637/jss.v067.i01

Batz, C., Parrigon, S., Tay, L.: The impact of scale transformations on national subjective well-being scores. Soc. Indic. Res. 129(1), 13–27 (2016). https://doi.org/10.1007/s11205-015-1088-1

Baumgartner, H., Steenkamp, J.B.E.M.: Response styles in marketing research: a cross-national investigation. J. Mark. Res. 38(2), 143–156 (2001). https://doi.org/10.1509/jmkr.38.2.143.18840

Bechert, I., May, A., Quandt, M., Werhan, K.: ONBound—old and new boundaries: national identities and religion. Customized dataset. In: GESIS Data Archive, C. (ed.) (2020)

Bekkers, R., van der Meer, T., Uslaner, E., Wu, Z., de Wit, A., de Blok, L.: Harmonized trust database. (2018)

Bishop, G.F., Tuchfarber, A.J., Oldendick, R.W.: Opinions on fictitious issues: the pressure to answer survey questions. Public Opin. Q. 50(2), 240–250 (1986). https://doi.org/10.1086/268978

Cabooter, E., Weijters, B., Geuens, M., Vermeir, I.: Scale format effects on response option interpretation and use. J. Bus. Res. 69(7), 2574–2584 (2016). https://doi.org/10.1016/j.jbusres.2015.10.138

Cohen, P., Cohen, J., Aiken, L.S., West, S.G.: The problem of units and the circumstance for POMP. Multivar. Behav. Res. 34(3), 315–346 (1999). https://doi.org/10.1207/S15327906MBR3403_2

Dawes, J.: Do data characteristics change according to the number of scale points used? An experiment using 5-point, 7-point and 10-point scales. Int. J. Mark. Res. 50(1), 61–104 (2008). https://doi.org/10.1177/147078530805000106

de Jonge, T.: Methods to increase the comparability in cross-national surveys, highlight on the scale interval method and the reference distribution method. In: Brulé, G., Maggino, F. (eds.) Metrics of Subjective Well-Being: Limits and Improvements, pp. 237–262. Springer International Publishing, Cham (2017)

de Jonge, T., Veenhoven, R., Arends, L.: Homogenizing responses to different survey questions on the same topic: proposal of a scale homogenization method using a reference distribution. Soc. Indic. Res. 117(1), 275–300 (2014). https://doi.org/10.1007/s11205-013-0335-6

de Jonge, T., Veenhoven, R., Kalmijn, W.: Diversity in Survey Questions on the Same Topic: Techniques for Improving Comparability, vol. 68. Springer, Cham (2017)

De Langhe, B., Puntoni, S., Fernandes, D., Van Osselaer, S.M.J.: The anchor contraction effect in international marketing research. J. Mark. Res. 48(2), 366–380 (2011). https://doi.org/10.1509/jmkr.48.2.366

Dellmuth, L.M., Tallberg, J.: Why national and international legitimacy beliefs are linked: Social trust as an antecedent factor. Rev. Int. Organ. 15(2), 311–337 (2020). https://doi.org/10.1007/s11558-018-9339-y

Dotti Sani, G.M., Beatrice, M.: Increasingly unequal? The economic crisis, social inequalities and trust in the European Parliament in 20 European countries. Eur. J. Political Res. 55(2), 246–264 (2016). https://doi.org/10.1111/1475-6765.12126

Dubrow, J.K., Tomescu-Dubrow, I.: The rise of cross-national survey data harmonization in the social sciences: emergence of an interdisciplinary methodological field. Qual. Quant. 50(4), 1449–1467 (2016)

EB: Standard Eurobarometer 90.3. European Commission (2018)

EB: Eurobarometer 90.3 Questionnaire. (2019)

ESS: ESS-4 2008 Documentation Report. Edition 5.5. Bergen, European Social Survey Data Archive, NSD–Norwegian Centre for Research Data for ESS ERIC. (2018a)

ESS: ESS Round 9 Source Questionnaire. ESS ERIC Headquarters c/o City, University of London (2018b)

ESS: European Social Survey Round 1: ESS-1 2002 Documentation Report. Edition 6.2. European Social Survey Data Archive/Norwegian Social Science Data Services (2002)

Fitzgerald, R., Jowell, R.: Measurement equivalence in comparative surveys: the European Social Survey (ESS)—from design to implementation and beyond. In: Harkness, J.A., Braun, M., Edwards, B., Johnson, T.P., Lyberg, L., Mohler, P.P., Pennell, B.-E., Smith, T.W. (eds.) Wiley Series in Survey Methodology. Survey Methods in Multinational, Multiregional, and Multicultural Contexts, pp. 485–495. John Wiley & Sons, New York (2010)

Gabel, M.: Public support for the European Parliament. JCMS J. Common Mark. Stud. 41(2), 289–308 (2003). https://doi.org/10.1111/1468-5965.00423

Granda, P., Wolf, C., Hadorn, R.: Harmonizing survey data. In: Survey Methods in Multinational, Multiregional, and Multicultural Contexts, pp. 315–332. John Wiley & Sons, New Jersey (2010)

Grönlund, K., Setälä, M.: Political trust, satisfaction and voter turnout. Comp. Eur. Politics 5(4), 400–422 (2007). https://doi.org/10.1057/palgrave.cep.6110113

Groves, R.M., Peytcheva, E.: The impact of nonresponse rates on nonresponse bias: a meta-analysis. Public Opin. Q. 72(2), 167–189 (2008)

Harteveld, E., van der Meer, T., de Vries, C.E.: In Europe we trust? Exploring three logics of trust in the European Union. Eur. Union Politics 14(4), 542–565 (2013)

Heath, A., Martin, J., Spreckelsen, T.: Cross-national comparability of survey attitude measures. Int. J. Public Opin. Res. 21(3), 293–315 (2009)

Hobolt, S.B., Leeper, T.J., Tilley, J.: Divided by the vote: affective polarization in the wake of the Brexit referendum. Br. J. Political Sci. 51(4), 1476–1493 (2021). https://doi.org/10.1017/S0007123420000125

Hoffmeyer-Zlotnik, J.H., Wolf, C.: Comparing demographic and socio-economic variables across nations. In: Hoffmeyer-Zlotnik, J.H., Wolf, C. (eds.) Advances in Cross-national Comparison, pp. 389–406. Kluwer, New York (2003)

Huijsmans, T., Rijken, A., Gaidyte, T.: Harmonised PolPart Dataset. (2019)

Jabkowski, P., Kołczyńska, M.: Sampling and fieldwork practices in Europe: analysis of methodological documentation from 1,537 surveys in five cross-national projects, 1981–2017. Methodol. Eur. J. Res. Methods for the Behav. Sci. 16(3), 186–207 (2020a). https://doi.org/10.5964/meth.2795

Jabkowski, P., Cichocki, P., Kołczyńska, M.: Multi-project assessments of sample quality in cross-national surveys: the role of weights in applying external and internal measures of sample bias. J. Surv. Stat. Methodol. (2021). https://doi.org/10.1093/jssam/smab027

Jabkowski, P., Cichocki, P.: Reflecting Europeanisation: cumulative meta-data of cross-country surveys as a tool for monitoring European public opinion trends. (2022)

Jabkowski, P., Kołczyńska, M.: Supplementary materials to: Sampling and fieldwork practices in Europe: Analysis of methodological documentation from 1,537 surveys in five cross-national projects, 1981–2017 (2020b). https://doi.org/10.23668/psycharchives.3461

Jose, P.E.: Doing Statistical Mediation and Moderation. Guilford Press, New York (2013)

Kieruj, N.D.: Question Format and Response Style Behavior in Attitude Research. Uitgeverij BOXPress, Amsterdam (2012)

Kieruj, N.D., Moors, G.: Variations in response style behavior by response scale format in attitude research. Int. J. Public Opin. Res. 22(3), 320–342 (2010). https://doi.org/10.1093/ijpor/edq001

Klassen, A.: Human Understanding Measured Across National (HUMAN) Surveys: Codebook for Country-Year Data. (2018)

Kohler, U.: Surveys from inside: an assessment of unit nonresponse bias with internal criteria. Surv. Res. Methods 1(2), 55–67 (2007)

Kołczyńska, M.: Democratic values, education, and political trust. Int. J. Comp. Sociol. 61(1), 3–26 (2020). https://doi.org/10.1177/0020715220909881

Kołczyńska, M.: Combining multiple survey sources: a reproducible workflow and toolbox for survey data harmonization. Methodol. Innov. 15(1), 62–72 (2022). https://doi.org/10.1177/20597991221077923

Kołczyńska, M., Schoene, M.: Survey data harmonization and the quality of data documentation in cross-national surveys. Adv. Comp. Surv. Methods Multinatl Multiregional Multicult. Contexts (3MC) 12, 963–984 (2018)

Kołczyńska, M., Slomczynski, K.M.: Item metadata as controls for ex post harmonization of international survey projects. In: Advances in Comparative Survey Methods, pp. 1011–1033. (2018)

Kolen, M.J., Brennan, R.L.: Test Equating, Scaling, and Linking. Springer, New York (2004)

Kolen, M.J., Brennan, R.L., Kolen, M.J.: Test Equating, Scaling, and Linking: Methods and Practices. Springer, New York (2004)

Krosnick, J.A.: Response strategies for coping with the cognitive demands of attitude measures in surveys. Appl. Cogn. Psychol. 5(3), 213–236 (1991)

Krosnick, J.A., Narayan, S., Smith, W.R.: Satisficing in surveys: initial evidence. N. Dir. Eval. 1996(70), 29–44 (1996). https://doi.org/10.1002/ev.1033

Krosnick, J.A., Holbrook, A.L., Berent, M.K., Carson, R.T., Michael Hanemann, W., Kopp, R.J., Cameron Mitchell, R., Presser, S., Ruud, P.A., Kerry Smith, V., Moody, W.R.: The impact of “no opinion” response options on data quality: non-attitude reduction or an invitation to satisfice? Public Opin. Q. 66(3), 371–403 (2002)

Krosnick, J.A., Judd, C.M., Wittenbrink, B.: The measurement of attitudes. In: Albarracin, D., Johnson, B.T., Zanna, M.P. (eds.) The Handbook of Attitudes, pp. 21–78. Lawrence Erlbaum, Mahwah (2005)

Krosnick, J.A., Presser, S.: Questionnaire design. In: Wright, J.D., Marsden, P.V. (eds.) Handbook of Survey Research, pp. 263–313. Emerald Group Publishing (2010)

Laurison, D.: The willingness to state an opinion: inequality, don’t know responses, and political participation. Sociol. Forum 30(4), 925–948 (2015). https://doi.org/10.1111/socf.12202

Listhaug, O., Ringdal, K.: Trust in political institutions: the Nordic countries compared with Europe. In: Ervasti, H., Fridberg, T., Hjerm, M., Ringdal, K. (eds.) Nordic Social Attitudes in a European Perspective, pp. 131–151. Edward Elgar Publishing Inc, Northampton (2008)

Lüdecke, D.: sjPlot: Data visualization for statistics in social science. R package version 2.8.10. (2021)

Lundmark, S., Gilljam, M., Dahlberg, S.: Measuring generalized trust: an examination of question wording and the number of scale points. Public Opin. Q. 80(1), 26–43 (2015). https://doi.org/10.1093/poq/nfv042

Mauk, M.: Electoral integrity matters: how electoral process conditions the relationship between political losing and political trust. Qual. Quant. (2020). https://doi.org/10.1007/s11135-020-01050-1

Muñoz, J., Torcal, M., Bonet, E.: Institutional trust and multilevel government in the European Union: Congruence or compensation? Eur. Union Politics 12(4), 551–574 (2011). https://doi.org/10.1177/1465116511419250

Nissen, S.: The Eurobarometer and the process of European integration. Qual. Quant. 48(2), 713–727 (2014). https://doi.org/10.1007/s11135-012-9797-x

North, S., Piwek, L., Joinson, A.: Battle for Britain: analyzing events as drivers of political tribalism in Twitter discussions of Brexit. Policy Internet 13(2), 185–208 (2021). https://doi.org/10.1002/poi3.247

Peytcheva, E., Groves, R.M.: Using variation in response rates of demographic subgroups as evidence of nonresponse bias in survey estimates. J. Off. Stat. 25(2), 193 (2009)

R Core Team: R: A Language and Environment for Statistical Computing. (2022)

Real-Dato, J., Sojka, A.: The rise of (Faulty) euroscepticism? The impact of a decade of crises in Spain. South Eur. Soc. Politics (2020). https://doi.org/10.1080/13608746.2020.1771876

Rohrschneider, R.: The democracy deficit and mass support for an EU-wide government. Am. J. Political Sci. 46(2), 463–475 (2002). https://doi.org/10.2307/3088389

Schoene, M.: European disintegration? Euroscepticism and Europe’s rural/urban divide. European Politics and Society 20(3), 348–364 (2019). https://doi.org/10.1080/23745118.2018.1542768

Schuman, H., Presser, S.: Questions and Answers in Attitude Surveys: Experiments on Question Form, Wording, and Context. Sage, Washington (1996)

Shoemaker, P.J., Eichholz, M., Skewes, E.A.: Item nonresponse: distinguishing between don’t know and refuse. Int. J. Public Opin. Res. 14(2), 193–201 (2002)

Singh, R.K.: The sum and its parts: the benefits of combining data from different surveys. GESIS Blog: Adventures Ex-Post Harmon. Frank. Creat. (2020). https://doi.org/10.34879/gesisblog.2020.22

Singh, R.K.: (Not) by any stretch of the imagination: A cautionary tale about linear stretching. GESIS Blog: Adventures Ex-Post Harmon. Frank. Creat. (2021). https://doi.org/10.34879/gesisblog.2021.30

Słomczyński, K.M., Tomescu-Dubrow, I., Jenkins, J.C.: Democratic Values and Protest Behavior: Harmonization of Data from International Survey Projects. IFiS Publishers, San Francisco (2016)

Slomczynski, K.M., Powalko, P., Krauze, T.: Non-unique records in international survey projects: the need for extending data quality control. Surv. Res. Methods 11(1), 1–16 (2017)

Slomczynski, K.M., Tomescu-Dubrow, I., Wysmulek, I.: Survey data quality in analyzing harmonized indicators of protest behavior: a survey data recycling approach. Am. Behav. Sci. (2021). https://doi.org/10.1177/00027642211021623

Slomczynski, K.M., Tomescu-Dubrow, I.: Basic principles of survey data recycling. In: Advances in Comparative Survey Methods, pp. 937–962. (2018)

Torcal, M., Christmann, P.: Congruence, national context and trust in European institutions. J. Eur. Public Policy 26(12), 1779–1798 (2019). https://doi.org/10.1080/13501763.2018.1551922

Van Vaerenbergh, Y., Thomas, T.D.: Response styles in survey research: a literature review of antecedents, consequences, and remedies. Int. J. Public Opin. Res. 25(2), 195–217 (2012). https://doi.org/10.1093/ijpor/eds021

VanHeuvelen, T., VanHeuvelen, J.S.: Between-country inequalities in health lifestyles. Int. J. Comp. Sociol. 62(3), 203–223 (2021). https://doi.org/10.1177/00207152211041385

Weijters, B., Cabooter, E., Schillewaert, N.: The effect of rating scale format on response styles: the number of response categories and response category labels. Int. J. Res. Mark. 27(3), 236–247 (2010). https://doi.org/10.1016/j.ijresmar.2010.02.004

Weijters, B., Geuens, M., Baumgartner, H.: The effect of familiarity with the response category labels on item response to Likert scales. J. Consum. Res. 40(2), 368–381 (2013). https://doi.org/10.1086/670394

Wickham, H., Averick, M., Bryan, J., Chang, W., McGowan, L.D.A., François, R., Grolemund, G., Hayes, A., Henry, L., Hester, J.: Welcome to the tidyverse. J. Open Source Softw. 4(43), 1686 (2019)

Wysmułek, I.: Using public opinion surveys to evaluate corruption in Europe: trends in the corruption items of 21 international survey projects, 1989–2017. Qual. Quant. 53(5), 2589–2610 (2019). https://doi.org/10.1007/s11135-019-00873-x

Zieliński, M.W., Powałko, P., Kołczyńska, M.: The past, present, and future of statistical weights in international survey projects. In: Advances in Comparative Survey Methods, pp. 1035–1052. (2018)

Funding

This work was supported by a grant awarded by the National Science Centre, Poland: [Grant Number 2018/31/B/HS6/00403].

Author information

Contributions

All authors equally contributed to the study conception, design, analysis and writing. All authors read and approved the final manuscript.

Ethics declarations

Competing interests

The authors have no relevant financial or non-financial interests to disclose.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Cichocki, P., Jabkowski, P. Response scale overstretch: linear stretching of response scales does not ensure cross-project equivalence in harmonised data. Qual Quant 57, 3729–3745 (2023). https://doi.org/10.1007/s11135-022-01523-5

DOI: https://doi.org/10.1007/s11135-022-01523-5