Abstract

The field of artificial neural networks is expected to benefit strongly from recent developments in quantum computing. In particular, quantum machine learning, a class of quantum algorithms that exploit qubits to create trainable neural networks, will provide more power to solve problems such as pattern recognition, clustering and machine learning in general. The building block of feed-forward neural networks consists of one layer of neurons connected to an output neuron that is activated according to an arbitrary activation function. The corresponding learning algorithm goes under the name of Rosenblatt perceptron. Quantum perceptrons with specific activation functions are known, but a general method to realize arbitrary activation functions on a quantum computer is still lacking. Here, we fill this gap with a quantum algorithm capable of approximating any analytic activation function to any given order of its power series. Unlike previous proposals providing irreversible measurement-based and simplified activation functions, here we show how to approximate any analytic function to any required accuracy without the need to measure the states encoding the information. Thanks to the generality of this construction, any feed-forward neural network may acquire the universal approximation properties according to Hornik’s theorem. Our results recast the science of artificial neural networks in the architecture of gate-model quantum computers.

1 Introduction

A quantum neural network encodes a neural network in the qubits of a quantum processor. In the conventional approach, biologically inspired artificial neurons are implemented in software as mathematical rate neurons. For instance, the Rosenblatt perceptron (1957) [1] is the simplest artificial neural network, consisting of an input layer of N neurons and one output neuron with a step activation function. Multilayer perceptrons [2] are universal function approximators, provided they are based on squashing functions. The latter are monotonic functions which compress real values into a normalized interval, acting as activation functions [3].

In principle, a quantum computer is suitable for performing the tensor calculations typical of neural network algorithms [4, 5]. Indeed, the qubits can be arranged in circuits acting as layers of the quantum analogue of a neural network. If equipped with common activation functions such as the sigmoid and the hyperbolic tangent, they should be able to process deep learning algorithms such as those used for problems of classification, clustering and decision making. As qubits are destroyed at the measurement event, in the sense that they are turned into classical bits, implementing an activation function in a quantum neural network poses challenges requiring a subtle approach. Indeed, the natural aim is to preserve as much as possible the information encoded in the qubits while taking advantage of each computation at the same time. The goal therefore consists in delaying the measurement action until the end of the computational flow, after having processed the information through neurons with a suitable activation function.

Within the field of quantum machine learning (QML) [6, 7], if one neglects the implementation of quantum neural networks on adiabatic quantum computers [8], there are essentially two kinds of proposals for quantum neural networks on a gate-model quantum computer. The first consists of defining a quantum neural network as a variational quantum circuit composed of parameterized gates, where nonlinearity is introduced by measurement operations [9,10,11]. Such quantum neural networks are empirically evaluated heuristic models of QML not grounded on mathematical theorems [12]. Furthermore, this type of model, based on variational quantum algorithms, suffers from an exponentially vanishing gradient problem, the so-called barren plateau problem [13], which requires some mitigation techniques [14, 15]. Quite differently, the second approach seeks to implement a truly quantum algorithm for neural network computations and to really fulfill the approximation requirements of Hornik’s theorem [3, 16], perhaps at the cost of a larger circuit depth. Such an approach pertains to semi-classical [17, 18] or fully quantum [19, 20] models whose nonlinear activation function is again computed via measurement operations.

Furthermore, quantum neural network proposals can be classified with respect to the encoding method of input data. Since a qubit consists of a superposition of the states 0 and 1, a few encoding options can be distinguished by the relation between the number of qubits and the maximum encoding capability. The first is the 1-to-1 option, by which each and every input neuron of the network corresponds to one qubit [19,20,21,22,23]. The most straightforward implementation consists in storing the information as a string of bits assigned to classical basis states of the quantum state space. A similar 1-to-1 method consists in storing a superposition of binary data as a series of bit strings in a multi-qubit state. Such quantum neural networks are based on the concept of the quantum associative memory [24, 25]. Another 1-to-1 option is given by the quron (quantum neuron) [26]. A quron is a qubit whose 0 and 1 states stand for the resting and active neural firing state, respectively [26].

Alternatively, another encoding option consists in storing the information as coefficients of a superposition of quantum states [27,28,29,30,31,32]. The encoding efficiency becomes exponential, as an n-qubit state is an element of a \(2^n\)-dimensional vector space. To exemplify, treating a real image classification problem of a few megabits with a quantum neural network is currently not viable with the 1-to-1 option [33]. Instead, the n-to-\(2^n\) choice allows a megabit image to be encoded in a state using only \(\sim 20\) qubits.
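The qubit counts of the two encoding options can be compared with a short calculation. The following is a minimal sketch, assuming that a "megabit" image corresponds to \(10^6\) values and that the n-to-\(2^n\) option needs the smallest n with \(2^n\) not less than the number of values; the function names are hypothetical.

```python
def qubits_one_to_one(num_values: int) -> int:
    """1-to-1 encoding: one qubit per input value."""
    return num_values


def qubits_amplitude(num_values: int) -> int:
    """n-to-2^n encoding: smallest n such that 2**n >= num_values."""
    return max(1, (num_values - 1).bit_length())


image_values = 10**6  # assumption: a megabit image taken as 10^6 values
print(qubits_one_to_one(image_values))
print(qubits_amplitude(image_values))
```

The second count grows only logarithmically with the size of the input, which is the exponential advantage referred to above.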

However, encoding the inputs as coefficients of a superposition of quantum states requires an algorithm for generic quantum state preparation [34,35,36] or, alternatively, feeding quantum data directly to the network [37]. For instance, quantum encoding methods such as the flexible representation of quantum images (FRQI) [38] have been proposed. Generally, preparing an arbitrary n-qubit quantum state requires a number of quantum gates that scales exponentially in n. Nonetheless, in the long run, an n-to-\(2^n\) encoding guarantees a better applicability to real problems than the 1-to-1 options. Moreover, such an encoding method satisfies the requirements of Hornik’s theorem that guarantee the universal function approximation capability [16]. Despite some relatively heavy constraints, such as the digital encoding and the fact that the activation function involves irreversible measurements, examples in this direction have been reported [28, 30, 31]. Instead, differently from both the above proposals and from quantum annealing based algorithms applied to neural networks [8], we develop a fully reversible algorithm.

In a novel alternative approach, we define here an n-to-\(2^n\) encoding model that involves inputs, weights and bias in the interval \(\left[ -1,1\right] \subset {\mathbb {R}}\). The model exploits the architecture of gate-model quantum computers to implement any analytic activation function to arbitrary accuracy using only reversible operations. The algorithm consists in iterating the computation of all the powers of the inner product up to d-th order, where d is a given overhead of qubits with respect to the n used for the encoding. Consequently, the approximation of the most common activation functions can be computed by rebuilding their Taylor series truncated at the d-th order.
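The effect of truncating the power series at order d can be illustrated classically. The sketch below is only an illustration under stated assumptions: it uses the standard Maclaurin coefficients of the hyperbolic tangent up to order 7 and a hypothetical helper `truncated_series`; it does not reproduce the quantum circuit.

```python
import math

# Standard Maclaurin coefficients of tanh(z) for powers z^0 ... z^7.
TANH_COEFFS = [0.0, 1.0, 0.0, -1.0 / 3.0, 0.0, 2.0 / 15.0, 0.0, -17.0 / 315.0]


def truncated_series(coeffs, z, d):
    """Evaluate the polynomial sum_{k=0}^{d} coeffs[k] * z**k."""
    return sum(c * z**k for k, c in enumerate(coeffs[: d + 1]))


z = 0.5
for d in (1, 3, 5, 7):
    approx = truncated_series(TANH_COEFFS, z, d)
    # Print the order, the truncated value and the absolute error.
    print(d, approx, abs(approx - math.tanh(z)))
```

The error decreases as d grows for arguments well inside the convergence radius of the series, which is why the argument of the activation function is kept in a bounded interval.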

The algorithm is implemented in the Qiskit environment [39] to build a one-layer perceptron with 4 input neurons and different activation functions generated by power expansion, namely the hyperbolic tangent, sigmoid, sine and swish functions, truncated up to the 10th order. Already at the third order, which corresponds to the least number of qubits required for a nonlinear function, a satisfactory approximation of the activation function is achieved.

This work is organized as follows: in Sect. 2, the definitions and the general strategy are summarized; in Sect. 3, the quantum circuits for the computation of the power terms and then of the polynomial series are obtained; in Sect. 4, the algorithm for approximating analytic activation functions is outlined, while in Sect. 5 the computation of the amplitude is shown. Section 6 concerns the estimation of the perceptron output. The final section is devoted to the conclusions.

2 Definitions and general strategy

In order to define our quantum version of the perceptron with continuous parameters and arbitrary analytic activation function, let us consider a one-layer perceptron. The latter represents the fundamental unit of a feed-forward neural network. A one-layer perceptron is composed of \(N_{in}\) input neurons and one output neuron equipped with an activation function \(f:{\mathbb {R}}\rightarrow I\) where I is a compact set. The output neuron computes the inner product between the vector of the input values \(\vec x = \left( x_0, x_1, \dots , x_{N_{in}-1}\right) \in {\mathbb {R}}^{N_{in}}\) and the vector of the weights \(\vec w = \left( w_0, w_1, \dots , w_{N_{in}-1}\right) \in {\mathbb {R}}^{N_{in}}\) plus a bias value \(b\in {\mathbb {R}}\). This scalar value is taken as the argument of the activation function. The real output value \(y\in I\) of the perceptron is defined as \(y \equiv f\left( \vec w \cdot \vec x + b\right) \), as in Fig. 1a.
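For reference, the classical quantity that the quantum circuit is meant to approximate can be written in a few lines. This is a minimal sketch in which the function name `perceptron`, the choice of the hyperbolic tangent as f and the numerical values are illustrative assumptions.

```python
import math


def perceptron(x, w, b, f=math.tanh):
    """Classical one-layer perceptron output y = f(w . x + b)."""
    if len(x) != len(w):
        raise ValueError("x and w must have the same length")
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return f(z)


# Illustrative values with N_in = 4 inputs.
x = [0.5, -0.25, 1.0, 0.0]
w = [0.2, 0.4, -0.6, 0.8]
b = 0.1
print(perceptron(x, w, b))
```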

Here, we develop a quantum circuit that computes an approximation of y. The algorithm starts by calculating the inner product \(\vec w\cdot \vec x\) plus the bias value b. Next, it evaluates the output y by calculating an approximation of the activation function f. On a quantum computer, a measurement operation apparently represents the most straightforward implementation of a nonlinear activation function, as done for instance in Ref. [28] to solve a binary classification problem on a quantum perceptron. Such an approach, however, cannot be generalized to build a multi-layered qubit-based feed-forward neural network.

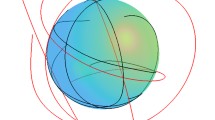

Fig. 1 Graphical representation of a one-layer perceptron and its qubit-based version. A one-layer perceptron architecture (a) is composed of an input layer of \(N_{in}\) neurons connected to the single output neuron. It is characterized by an input vector \(\vec x\), a weight vector \(\vec w\) and a bias b. In the classical version, the activation function takes as argument the value \(z=\vec w \cdot \vec x + b\) and returns the perceptron output y. The quantum version (b) follows the same architecture, but the computation consists of a sequence of transformations of an n-qubit quantum state initialized with the coefficients of the input vector \(\vec x\) as probability amplitudes. The quantum states at each step are represented by a qsphere (a graphical representation of a multi-qubit quantum state). In a qsphere, each point is a different coefficient of the superposition quantum state. Generally, the coefficients are complex; in a qsphere, the moduli of the coefficients are proportional to the radius of the points, while the phases are represented by the color. The blue box shows the starting quantum state with the inputs stored as probability amplitudes. The green box shows the quantum state with the weights and the bias. In the first red box, one qubit is added at each step in order to store the power terms of z up to d (\(d=3\) in the figure). In the last red box, the output of the perceptron is given by a series of rotations which compose a polynomial \(P^d(z)\) (\(d=3\) in the figure).

First of all, measurement operations break the quantum algorithm and impose initialization of the qubits layer by layer, thus preventing a single quantum run of a multi-layer neural network. Secondly, other activation functions, besides that implied by the measurement operations, are more suitable to solve generic problems of machine learning.

We avoid both of these shortcomings with a new quantum algorithm, which is based on two theorems as detailed below. The quantum algorithm is composed of two steps (Fig. 1b). First, the powers of \(\vec w \cdot \vec x + b\) are stored as amplitudes of a multi-qubit quantum state. Next, the chosen activation function is approximated by building its polynomial series expansion through rotations of the quantum state. The rotation angles are determined by the coefficients of the polynomial series of the chosen activation function. They can be explicitly computed by our quantum algorithm.

Let us first summarize the notation used throughout the text. Let \({\mathcal {H}}\) stand for the 2-dimensional Hilbert space associated with one qubit. Then, the \(2^n\)-dimensional Hilbert space associated with a register q of n qubits is written as \({\mathcal {H}}^{\otimes n}_q\equiv {\mathcal {H}}_{q_{n-1}}\otimes {\mathcal {H}}_{q_{n-2}}\otimes \ldots \otimes {\mathcal {H}}_{q_{0}}\). If we denote by \(\{\left| 0\right\rangle , \left| 1\right\rangle \}\) the computational basis in \({\mathcal {H}}\), then the computational basis in \({\mathcal {H}}^{\otimes n}_q\) reads \(\{\left| s_{n-1}s_{n-2}\ldots s_0\right\rangle ,\;s_k\in \{0,1\}\,,\; k=0,1,\ldots ,n-1\}\). An element \(\left| s_{n-1}s_{n-2}\ldots s_0\right\rangle \) of this computational basis can be alternatively written as \(\left| i\right\rangle \) where \(i\in \{0,1,\ldots ,2^n-1\}\) is the decimal integer number that corresponds to the bit string \(s_{n-1}s_{n-2}\ldots s_0\). In particular, if \(N=2^n\), then \(\left| N-1\right\rangle \equiv \left| 2^n-1\right\rangle \equiv \left| 11\ldots 1\right\rangle \equiv \left| 1\right\rangle ^{\otimes n}\). In this notation, the number of qubits of a register is indicated with a lowercase letter, such as n and d, while the dimension of the associated Hilbert space is indicated by the corresponding uppercase letter, such as \(N=2^n\) and \(D=2^d\).

The expression \(U_q^{\otimes n}=U_{q_{n-1}}\otimes U_{q_{n-2}}\otimes \cdots \otimes U_{q_{0}}\) represents a separable unitary transformation constructed with one-qubit transformations \(U_{q_j}\) acting on each qubit of the register q. A non-separable unitary multi-qubit transformation is usually written as \(U_q\) and, in some cases, simply U. Two registers a and q, with d and n qubits, respectively, can be combined into a single register supporting the \(DN\)-dimensional Hilbert space \({\mathcal {H}}_a^{\otimes d}\otimes {\mathcal {H}}_q^{\otimes n}\) with computational basis \(\{\left| i\right\rangle _a\left| j\right\rangle _q,\,i=0,\ldots ,D-1,\,j=0,\ldots ,N-1\}\). For brevity, we will use the compact notation \(O_q\) for \(\mathbbm {1}_a\otimes O_q\) and \(O_a\) for \(O_a\otimes \mathbbm {1}_q\) for operators O acting on only one of the two registers. In particular, we write

\[\left| i\right\rangle \left\langle i\right| _q\equiv \mathbbm {1}_a\otimes \left| i\right\rangle \left\langle i\right| \]

for the \(D\)-dimensional projection onto the state \(\left| i\right\rangle \) of the q register.

Particular cases of unitary operators implementable on a circuit-model quantum computer are the controlled gates. Let \(C_{q_i}U_{q_j}\) represent a controlled-U transformation: the operator U is applied on the qubit \(q_j\) (called target qubit) if the qubit \(q_i\) (called control qubit) is in the state \(\left| 1\right\rangle \). The transformation \({\bar{C}}_{q_i}U_{q_j}\) is a controlled transformation where the gate U is applied on the qubit \(q_j\) if \(q_i\) is in the state \(\left| 0\right\rangle \). Therefore, \({\bar{C}}_{q_i}U_{q_j}=X_{q_i}C_{q_i}U_{q_j}X_{q_i}\). More generally, a d-controlled operator is denoted by \(C_{a}^{d}U_{q_j}\), where a is the set of control qubits and \(q_j\) is the target.
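The identity \({\bar{C}}_{q_i}U_{q_j}=X_{q_i}C_{q_i}U_{q_j}X_{q_i}\) can be checked numerically on two qubits. The sketch below is an illustration under stated assumptions: matrices are written in the basis \(\left| q_i q_j\right\rangle \) with the control as the left factor, U is taken to be a standard \(R_y\) rotation with an arbitrary angle, and all helper names are hypothetical.

```python
import math


def matmul(a, b):
    """Product of two square matrices given as nested lists."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def kron(a, b):
    """Kronecker product of two square matrices."""
    return [[a[i][j] * b[k][l] for j in range(len(a)) for l in range(len(b))]
            for i in range(len(a)) for k in range(len(b))]


def block_diag(a, b):
    """Block-diagonal 4x4 matrix diag(a, b) built from two 2x2 blocks."""
    zero = [0.0, 0.0]
    return [a[0] + zero, a[1] + zero, zero + b[0], zero + b[1]]


def ry(theta):
    """Standard single-qubit rotation about the y-axis."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    return [[c, -s], [s, c]]


I2 = [[1.0, 0.0], [0.0, 1.0]]
X = [[0.0, 1.0], [1.0, 0.0]]
U = ry(0.7)  # arbitrary illustrative angle

controlled = block_diag(I2, U)       # U acts when the control is |1>
anti_controlled = block_diag(U, I2)  # U acts when the control is |0>
x_on_control = kron(X, I2)           # X on the control qubit only

sandwich = matmul(x_on_control, matmul(controlled, x_on_control))
max_diff = max(abs(sandwich[i][j] - anti_controlled[i][j])
               for i in range(4) for j in range(4))
print(max_diff)
```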

In the following, two qubit registers q and a, of n and d qubits, respectively, are assumed to be assigned.

3 Computation of the polynomial series

As stated above, our aim is to build an \((n+d)\)-qubit quantum state containing the Taylor expansion of f(z) to order d, where \(z= \vec w \cdot \vec x + b\) up to a normalization factor. The number n of required qubits, in addition to d, is determined by the dimension of the input vector. We first need to encode the powers (\(1,z,z^2,\dots ,z^d\)) in the \((n+d)\) qubits. The following Lemma provides the starting point:

Lemma 1

Given two vectors \(\vec x, \vec w\in [-1,1]^{N_{in}}\) and a number \(b\in [-1,1]\), and given a register of n qubits such that \(N=2^n\ge N_{in}+3\), then there exists a quantum circuit realizing a unitary transformation \(U_z(\vec x, \vec w, b)\) such that

\[\left\langle N-1\right| U_z(\vec x, \vec w, b)\left| 0\right\rangle =\frac{\vec w\cdot \vec x+b}{N_{in}+1}\qquad (1)\]

where \(\left| 0\right\rangle \equiv \left| 0\right\rangle ^{\otimes n}\) and \(\left| N-1\right\rangle \equiv \left| 1\right\rangle ^{\otimes n}\).

In Lemma 1, an \(n\)-qubit unitary operator \(U_z\) is defined by the requirement that Eq. 1 holds, where \(b\in [-1,1]\), \(\vec x=\left( x_0,\dots ,x_{N_{in}-1}\right) \) and \(\vec w=\left( w_0,\dots ,w_{N_{in}-1}\right) \), with \( N_{in}\le 2^n-3\) and \( x_i, w_i\in [-1,1]\). The existence of infinitely many such operators is obvious from the purely mathematical point of view. The problem is to provide an explicit realization in terms of realistic quantum gates.

Proof

Let us define two vectors in \({\mathbb {R}}^{N}\): \(\vec v_x=\left( \vec x, 1, A_x, 0 \right) \) and \(\vec v_{w,b}=\left( \vec w, b, 0, A_{w,b} \right) \) where \( N\equiv 2^n\). In such vectors, \( N-N_{in}-3\) coefficients are always null, while the values \( A_x\) and \( A_{w,b}\) are constants chosen such that \(\vec v_x\cdot \vec v_x= \vec v_{w,b}\cdot \vec v_{w,b} = N_{in} + 1\).

It then follows that \(\vec v_{w,b}^T\vec v_x=\vec w \cdot \vec x +b\in [-N_{in}-1, N_{in}+1]\). We now define two n-qubit quantum states \(\left| \psi _x\right\rangle \) and \(\left| \psi _{w,b}\right\rangle \) as follows

\[\left| \psi _x\right\rangle =\frac{1}{\sqrt{N_{in}+1}}\sum _{i=0}^{N-1}(\vec v_x)_i\left| i\right\rangle ,\qquad \left| \psi _{w,b}\right\rangle =\frac{1}{\sqrt{N_{in}+1}}\sum _{i=0}^{N-1}(\vec v_{w,b})_i\left| i\right\rangle \]

Then, by construction

\[\left\langle \psi _{w,b}\vert \psi _x\right\rangle =\frac{\vec w\cdot \vec x+b}{N_{in}+1}\]

The initialization algorithm mentioned above allows us to consider unitary transformations \(U_x={\mathcal {U}}\left( \vec v_{x}\right) \) and \(U_{w,b}=X^{\otimes n}{\mathcal {U}}^{\dag }\left( \vec v_{w,b}\right) \), where X stands for the quantum NOT gate, such that \(U_x\left| 0\right\rangle =\left| \psi _x\right\rangle \) and \( U_{w,b}\left| \psi _{w,b}\right\rangle =\left| 1\right\rangle ^{\otimes n}=\left| N-1\right\rangle \). It follows that

\[\left\langle N-1\right| U_{w,b}U_x\left| 0\right\rangle =\left\langle \psi _{w,b}\vert \psi _x\right\rangle =\frac{\vec w\cdot \vec x+b}{N_{in}+1}\]

Comparing with Eq. 1, we see that \(U_z(\vec x, \vec w, b)=U_{w,b}U_x=X^{\otimes n}{\mathcal {U}}^{\dag }\left( \vec v_{w,b}\right) {\mathcal {U}}\left( \vec v_{x}\right) \). \(\square \)
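The construction used in the proof can be checked classically. The following is a minimal sketch, assuming that the \(N-N_{in}-3\) null coefficients are appended at the end of both vectors (their position is not fixed by the text above) and that the normalized inner product is the one entering Eq. 1; the names `padded_vectors` and `dot` and the numerical values are hypothetical.

```python
import math


def dot(u, v):
    """Euclidean inner product of two equal-length sequences."""
    return sum(ui * vi for ui, vi in zip(u, v))


def padded_vectors(x, w, b, n):
    """Build v_x = (x, 1, A_x, 0, ...) and v_wb = (w, b, 0, A_wb, ...) in R^(2^n)."""
    n_in = len(x)
    size = 2**n
    if len(w) != n_in or size < n_in + 3:
        raise ValueError("need len(w) == len(x) and 2**n >= N_in + 3")
    # Constants chosen so that both vectors have squared norm N_in + 1.
    a_x = math.sqrt(max(0.0, n_in - dot(x, x)))
    a_wb = math.sqrt(max(0.0, n_in + 1 - dot(w, w) - b * b))
    pad = [0.0] * (size - n_in - 3)  # assumption: zeros placed at the end
    v_x = list(x) + [1.0, a_x, 0.0] + pad
    v_wb = list(w) + [b, 0.0, a_wb] + pad
    return v_x, v_wb


x = [0.5, -0.25, 1.0, 0.0]
w = [0.2, 0.4, -0.6, 0.8]
b = 0.1
v_x, v_wb = padded_vectors(x, w, b, n=3)

# Overlap of the two normalized states, i.e. (w . x + b) / (N_in + 1).
z = dot(v_wb, v_x) / (len(x) + 1)
print(dot(v_x, v_x), dot(v_wb, v_wb), z)
```

Since the entries \(A_x\) and \(A_{w,b}\) sit at different positions, they only fix the norms and never contribute to the inner product.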

Fig. 2 Fundamental gate composition of the transformations \(U_{w,b}\) and \(U_{x}\). The transformations \({\mathcal {U}}\left( \vec v_x\right) \) and \({\mathcal {U}}\left( \vec v_{w,b}\right) \) encode, respectively, the coefficients of the vectors \(\vec v_{x}\) and \(\vec v_{w,b}\) in a superposition quantum state. They are composed of the inverse of the operators \(U_i\), where \(i=1,\dots ,n\), and of \(Ph_3\), which introduces the phases of the probability amplitudes. The operators \(U_3\) (c), \(U_2\) (d) and \(U_1\) (e) are shown as compositions of multi-controlled rotations \(R_y\), which are equal to a composition of gates \(R_y\) and Controlled-Not [40]. The transformation \(\scriptstyle Ph^{\dag }_3\) (a) introduces the phases of the amplitudes while \(Ph_3\) (b) removes them. The details of the arbitrary quantum state preparation circuit are described in Supplementary Note 1.

Since the amplitudes of the states \(\left| \psi _x\right\rangle \) and \(\left| \psi _{w,b}\right\rangle \) are real, the phases are either 0 or \(\pi \) and it is no longer necessary to apply a series of multi-controlled \(R_Z\) gates to set them. A single diagonal transformation suffices, with either 1 or \(-1\) on the diagonal. For this purpose, hypergraph states prove effective [41]. Thanks to such states, a small number of Z, CZ and multi-controlled Z gates are needed to achieve the transformation \({\mathcal {U}}\left( \vec v\right) \). The transformations which introduce the phases of the amplitudes of an n-qubit quantum state are summarized by an operator called \(Ph^{\dag }_n\) in Fig. 2. More details about the strategy adopted for quantum-state initialization are reported in Supplementary Note 1 and Supplementary Note 2.

There are many alternatives to the states \(\left| \psi _x\right\rangle \) and \(\left| \psi _{w,b}\right\rangle \) which give the same inner product \(\left\langle \psi _{w,b}\vert \psi _x\right\rangle =z\). Defining the two vectors

then the transformations \({\mathcal {U}}\left( \vec v_x\right) \) and \({\mathcal {U}}\left( \vec v_{w,b}\right) \) applied on the state \(\left| 0\right\rangle ^{\otimes n}\) return two states, \(\left| \psi _x\right\rangle \) and \(\left| \psi _{w,b}\right\rangle \), respectively, such that \(\left\langle \psi _{w,b}\vert \psi _x\right\rangle =z\). The reason for the choice shown above lies in the phases to be added. Since the values \(A_{w,b}\) and \(A_x\) do not appear in the inner product, their phases are not relevant. Therefore, such states \(\left| \psi _x\right\rangle \) and \(\left| \psi _{w,b}\right\rangle \) make unnecessary an \((n-1)\)-controlled Z gate to adjust the phases of the amplitudes associated with \(\left| 0\right\rangle \) and \(\left| N-1\right\rangle \). In Fig. 2, the composition of the transformations \(U_x\) (Fig. 2a) and \(U_{w,b}\) (Fig. 2b) is shown in the case of a one-layer perceptron with \(N_{in}=4\) input neurons. Since \(n = \log _2N\) and \(N\ge N_{in}+3\), given \(N_{in}\) input neurons the minimum number of required qubits is \(n={\lceil }\log _2(N_{in}+3){\rceil }\). Therefore, with \(N_{in}=4\), \(n=3\) qubits are required to store z in a quantum state.
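The qubit count \(n={\lceil }\log _2(N_{in}+3){\rceil }\) can be evaluated with integer arithmetic only. The following is a minimal sketch; the function name `min_qubits` is hypothetical.

```python
def min_qubits(n_in: int) -> int:
    """Smallest n such that 2**n >= n_in + 3, i.e. ceil(log2(n_in + 3))."""
    if n_in < 1:
        raise ValueError("at least one input neuron is required")
    # (m - 1).bit_length() equals ceil(log2(m)) for any integer m >= 2.
    return (n_in + 2).bit_length()


for n_in in (1, 4, 5, 6, 13):
    print(n_in, min_qubits(n_in))
```

Using `bit_length` avoids floating-point rounding at exact powers of two, such as \(N_{in}=5\) where \(N_{in}+3=8\).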

The variable z generalizes in two respects the inner product of Ref. [28] where inputs and weights only take binary values \(\{-1,1\}\) and no bias is involved.

The transformation \(U_z(\vec x, \vec w, b)\) is a key building block of the quantum perceptron algorithm. Indeed, in our quantum circuit such transformation is iterated several times over the Hilbert space enlarged to \({\mathcal {H}}^{\otimes d}_a\otimes {\mathcal {H}}_q^{\otimes n}\) by the addition of another register a of d qubits. The existence of such a quantum circuit is guaranteed by the following theorem that provides its explicit construction:

Theorem 1

Let z be the real value in the interval \(\left[ -1,1\right] \) assumed by \(\left( \vec w \cdot \vec x + b\right) /\left( N_{in}+1\right) \), where \(\vec x, \vec w\in [-1,1]^{N_{in}}\) and \(b\in [-1,1]\). Let q and a be two registers of n and d qubits, respectively, with \(N=2^n\ge N_{in}+3\). Then, there exists a quantum circuit which transforms the two registers from the initial state \(\left| 0\right\rangle _a\left| 0\right\rangle _q\) to an \((n+d)\)-qubit entangled state \(|\psi ^d_{z}\rangle \) of the form

where

where \({\varvec{0}}\) is the null element of the Hilbert space and

where \(\left| z\right\rangle \) is not to be understood as a quantum state but as an element of the space \({\mathcal {H}}_a\). The circuit is expressed by \(S_VX^{\otimes n}_q\) (Fig. 3a) where X is the quantum NOT gate and

with

Proof

The thesis of the theorem is the existence of a transformation which, acting on two qubit registers q and a with n and d qubits, respectively, returns a state \(\left| \psi ^d_z\right\rangle \in {\mathcal {H}}_a^{\otimes d}\otimes {\mathcal {H}}_q^{\otimes n}\) as defined in Eq. 5. The demonstration consists of the construction of such a circuit. For this purpose, let us define the d states \(\left| \psi _z^m\right\rangle \in {\mathcal {H}}_{a_{m-1}}\otimes {\mathcal {H}}_{a_{m-2}}\otimes \cdots \otimes {\mathcal {H}}_{a_{0}}\otimes {\mathcal {H}}_q^{\otimes n}\), where \(m=0,\dots ,d-1\)

where \(\left| {N-1}\right\rangle \left\langle {N-1}\right| _q|\psi _z^m\rangle _{\perp }=0\;\forall \, m\).

From this definition, it follows that the states \(\left| \psi _z^m\right\rangle \) are \((n+m)\)-qubit states and that \(\left| \psi _z^0\right\rangle \equiv \left| N-1\right\rangle _q\) is an n-qubit state.

The proof of the theorem is therefore reduced to demonstrating the existence of a sequence of transformations \(V_m\), where \(m=0,\dots ,d-1\), such that \( V_m\left| 0\right\rangle _{a_m}\left| \psi _z^m\right\rangle =\left| \psi _z^{m+1}\right\rangle \) where \(a_m\) is the m-th qubit in the register a. Therefore, \(V_m\) is a unitary transformation defined over the space \({\mathcal {H}}_{a_m}\otimes {\mathcal {H}}_{a_{m-1}}\otimes \cdots \otimes {\mathcal {H}}_{a_{0}}\otimes {\mathcal {H}}_q^{\otimes n}\).

Let us consider the following ansatz for the transformation \(V_m\):

whose graphical representation is given in Fig. 3a.

Let us apply \( V_m\), as defined in Eq. 7, on the state \(\left| 0\right\rangle _{a_m}\left| \psi _z^m\right\rangle \).

The transformation \( C_{a_m}U_z(\vec x, \vec w, b)_q C_{a_m}X^{\otimes n}_q\) consists in the application of \( U_z(\vec x, \vec w, b)X^{\otimes n}\) on the qubits q controlled by the qubit \(a_m\). This means that the transformation acts only on \(\left| \psi _z^m\right\rangle _{\parallel }\), so, focusing only on its subspace, the result is

Therefore, the transformation \( V_m\) applied on \(\left| 0\right\rangle _{a_m}\left| \psi _z^m\right\rangle \) returns the following state

To demonstrate that the state just obtained is \(\left| \psi _z^{m+1}\right\rangle \), the projection over the state \(\left| N-1\right\rangle _q\) must return \(\frac{1}{\sqrt{2^{m+1}}}\left| z\right\rangle ^{\otimes (m+1)}\left| N-1\right\rangle _q\), as follows from the definition of the states \(\left| \psi _z^{m}\right\rangle \). Let us apply the projection \(\left| N-1\right\rangle \left\langle N-1\right| _q\) on the resulting state in Eq. 10. Since \(\left| N-1\right\rangle \left\langle N-1\right| _q\left| \psi _z^m\right\rangle _{\perp }=0\) by definition, and \(\left\langle N-1\right| U_z(\vec x, \vec w, b)\left| 0\right\rangle ^{\otimes n}_q=z\) by Lemma 1, the result of the projection \(\left| N-1\right\rangle \left\langle N-1\right| _q\) is the following.

Having demonstrated that \( V_m\left| 0\right\rangle _{a_m}\left| \psi _z^m\right\rangle =\left| \psi _z^{m+1}\right\rangle \), the proof of the existence of the transformation which returns \(\left| \psi _z^d\right\rangle \) if applied on \(\left| 0\right\rangle _a^{\otimes d}\left| 0\right\rangle _q^{\otimes n}\) proceeds by recursion. Indeed, by applying \( V_{d-1}\cdots V_1 V_0\) to the state \(\left| 0\right\rangle _a^{\otimes d}\left| \psi _z^0\right\rangle =\left| 0\right\rangle _a^{\otimes d}\left| N-1\right\rangle _q\) the resulting state will be \(\left| \psi _z^d\right\rangle \). \(\square \)

To summarize, the quantum circuit of the quantum perceptron algorithm starts by expressing the unitary operator which initializes the q and a registers from the state \(\left| 0\right\rangle _a\left| 0\right\rangle _q\) to the state \(\left| \psi ^d_{z}\right\rangle \). Such a unitary operator is expressed by \(S_V X^{\otimes n}\) where \(S_V\) is the subroutine of the quantum circuit which achieves the goal of the first step of the quantum perceptron algorithm, i.e., to encode the powers of z up to d in a quantum state as from the following Corollary.

Corollary 3.1

The state \(|\psi _z^d\rangle \) stores as probability amplitudes all the powers \(z^k\), for \(k=0,1,\ldots ,d\), up to a trivial factor. Indeed Eq. 5 in Theorem 1 implies

The first step of the quantum perceptron algorithm consists of the storage of all powers of \(z \equiv \left( \vec w \cdot \vec x + b\right) /\left( N_{in}+1\right) \) up to d in an \((n+d)\)-qubit state. The proof of Theorem 1 implies that the first step of the algorithm is the quantum circuit shown in Fig. 3a, consisting of a subroutine composed of a Pauli gate X applied on each qubit in the register q and a transformation \(S_V=V_{d-1}\cdots V_0\). Indeed, from Corollary 3.1, the state \(\left| \psi _z^d\right\rangle =S_V X_q^{\otimes n}\left| 0\right\rangle _a^{\otimes d}\left| 0\right\rangle _q^{\otimes n}\) stores as probability amplitudes all the powers of z up to d, up to a factor \(2^{-d/2}\). The proof of Corollary 3.1 is straightforward, as follows.

Proof

As shown above, the state \(\left| \psi _z^d\right\rangle \) can be written as \(\left| \psi _z^d\right\rangle =\left| \psi _z^d\right\rangle _{\perp }+\left| \psi _z^d\right\rangle _{\parallel }\) where \(\left| N-1\right\rangle \left\langle N-1\right| _q\left| \psi _z^d\right\rangle _{\perp }=0\); therefore, \({}_q\left\langle N-1\right| {}_a\left\langle 2^k-1\vert \psi _z^d\right\rangle = {}_q\left\langle N-1\right| {}_a\left\langle 2^k-1\vert \psi _z^d\right\rangle _{\parallel }\).

Since \(\left| \psi _z^d\right\rangle _{\parallel }=\frac{1}{\sqrt{2^d}}\left| z\right\rangle _a^{\otimes d}\left| N-1\right\rangle _q\) then

Let us rewrite \(\left| 2^k-1\right\rangle \) in a binary form \(\left| s_{d-1}s_{d-2}\cdots s_0\right\rangle \) where \(s_{j}=1\) from \(j=0\) to \(j=k-1\) and 0 otherwise.

The latter holds because, \(\forall j=0,\dots ,d-1\),

Therefore, \(_q\left\langle N-1\right| _a\left\langle 2^k-1\vert \psi _z^d\right\rangle =2^{-d/2}z^k\). \(\square \)
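The statement of the Corollary can be verified numerically on the register a alone. The sketch below assumes \(\left| z\right\rangle =\left| 0\right\rangle +z\left| 1\right\rangle \) (unnormalized), which is inferred here from the relation \(\left\langle 2^k-1\vert z\right\rangle ^{\otimes d}=z^k\) rather than quoted from a definition; the function names are hypothetical.

```python
def z_register(z, d):
    """Amplitudes of (|0> + z|1>) tensored d times, indexed by the integer of s_{d-1}...s_0."""
    amps = [1.0]
    for _ in range(d):
        # Add one more significant qubit: bit 0 keeps the amplitudes, bit 1 multiplies by z.
        amps = amps + [z * a for a in amps]
    return amps


def power_amplitude(z, d, k):
    """Amplitude of |2**k - 1> including the overall factor 2**(-d/2)."""
    return 2 ** (-d / 2) * z_register(z, d)[2**k - 1]


z, d = -0.1, 3
for k in range(d + 1):
    # Each pair should agree: stored amplitude versus 2^(-d/2) * z^k.
    print(k, power_amplitude(z, d, k), 2 ** (-d / 2) * z**k)
```

The amplitude at a generic index equals z raised to the number of ones in its bit string; the indices \(2^k-1\) are simply a convenient choice with exactly k ones.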

The next step of the algorithm consists in transforming the state \(|\psi _z^d\rangle \) so as to achieve a special recursively defined d-degree polynomial in z. Such step is identifiable with the subroutine \(S_U\) of the quantum perceptron circuit, see Fig. 3a.

By Eq. 5 in Theorem 1, there must exist a unitary operator \(S_U\) which acts as the identity on \({\mathcal {H}}_q\) and returns, when applied to \(\left| z\right\rangle ^{\otimes d}_a\), a new state which stores the polynomial. In fact, the following holds:

Theorem 2

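Let \(\{f_k,\, k = 1,\ldots ,d\}\) be the family of polynomials in z defined by the following recursive law

\[f_k(z)=f_{k-1}(z)\cos \vartheta _{k-1}-z^k\sin \vartheta _{k-1}\]

with \(f_0(z) = 1\) and \(\vartheta _k\in \left[ -\frac{\pi }{2},\frac{\pi }{2}\right] \) for any \(k = 0,\ldots ,d-1\).

Then, there exists a family \(\{U_k,\,k = 1,\ldots ,d\}\) of unitary operators such that

\[{}_a\left\langle 0\right| U_k\left| z\right\rangle _a^{\otimes d}=f_k(z)\]

These unitary operators are, in turn, defined by the recursive law

\[U_k=C_{a_0}X_{a_k}\bar{C}_{a_k}R_y(-2\vartheta _{k-1})_{a_0}U_{k-1}\qquad (18)\]

with \(U_0=\mathbbm {1}\).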
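The recursive law \(f_k(z)=f_{k-1}(z)\cos \vartheta _{k-1}-z^k\sin \vartheta _{k-1}\) with \(f_0(z)=1\) can be unrolled into explicit polynomial coefficients. The sketch below is a classical illustration only; the function names and the angle values are hypothetical, and no claim is made here about how the angles are chosen for a given activation function.

```python
import math


def f_recursive(z, thetas):
    """Evaluate f_d(z) by iterating the recursive law, with thetas = [theta_0, ..., theta_{d-1}]."""
    f = 1.0  # f_0(z) = 1
    for k, theta in enumerate(thetas, start=1):
        f = f * math.cos(theta) - z**k * math.sin(theta)
    return f


def polynomial_coefficients(thetas):
    """Coefficients c_0, ..., c_d of f_d(z) = sum_k c_k z**k."""
    coeffs = [1.0]
    for theta in thetas:
        # Every existing coefficient picks up cos(theta); the new top power gets -sin(theta).
        coeffs = [c * math.cos(theta) for c in coeffs] + [-math.sin(theta)]
    return coeffs


thetas = [0.3, -0.7, 1.1]  # arbitrary illustrative angles in [-pi/2, pi/2]
coeffs = polynomial_coefficients(thetas)
z = -0.1
print(f_recursive(z, thetas), sum(c * z**k for k, c in enumerate(coeffs)))
```

Unrolling shows that the coefficient of \(z^k\) for \(k\ge 1\) is \(-\sin \vartheta _{k-1}\prod _{j=k}^{d-1}\cos \vartheta _j\), while the constant term is \(\prod _{j=0}^{d-1}\cos \vartheta _j\), so the d angles fix the polynomial up to an overall scale.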

The subroutine \(S_U\) shown in Fig. 3a corresponds to \(U_d\). The proof of Theorem 2 follows.

Proof

The proof of the theorem follows two steps, namely the statement for the first term of the polynomial and an inductive step as follows. The first step consists of demonstrating that

with \(U_1=C_{a_0}X_{a_1}\bar{C}_{a_1}R_y(-2\vartheta _{0})_{a_0}\) as defined in Eq. 18. In the second step, instead, the proof proceeds for \(U_k\,\forall k=1,2,\dots ,d\) recursively.

It aims to prove \(_a\left\langle 0\right| U_k\left| z\right\rangle _a^{\otimes d}=f_{k}(z)\) assuming that \(_a\left\langle 0\right| U_{k-1}\left| z\right\rangle _a^{\otimes d}=f_{k-1}(z)\), where

as defined in Eq. 18. Let us preliminarily consider the states \(U_k\left| z\right\rangle ^{\otimes d}_a\), where \(d\ge k\ge 1\). The case \(k=0\) corresponds to the state \(\left| z\right\rangle ^{\otimes d}_a\) itself. Next, let us focus on the subspace of \({\mathcal {H}}^{\otimes d}_a\) defined as \({\mathcal {H}}_{\{0,1\}}=\{\left| 0\right\rangle ^{\otimes d},\left| 0\right\rangle ^{\otimes (d-1)}\left| 1\right\rangle \}\). The operator which projects the elements of \({\mathcal {H}}^{\otimes d}_a\) onto the subspace \({\mathcal {H}}_{\{0,1\}}\) is \({\mathcal {P}}_{\{0,1\}}=\left| 0\right\rangle \left\langle 0\right| _a+\left| 1\right\rangle \left\langle 1\right| _a\).

Let us now move to the first step of the demonstration. The first operation consists of applying \(U_1\) to the state \(\left| z\right\rangle ^{\otimes d}_a\). Because of the definition of the state \(\left| z\right\rangle ^{\otimes d}_a\), it follows that \(\left\langle 2^i-1\vert z\right\rangle ^{\otimes d}_a=z^i\) where \(i=0,\dots ,d\) (Corollary 3.1), therefore, the projection over \({\mathcal {H}}_{\{0,1\}}\) of the state \(\left| z\right\rangle ^{\otimes d}_a\) is

The operator \(\bar{C}_{a_1}R_y(-2\vartheta _0)_{a_0}=X_{a_1}C_{a_1}R_y(-2\vartheta _0)_{a_0}X_{a_1}\) rotates the qubit \(a_0\), along the y-axis of the Bloch sphere of angle \(-2\vartheta _0\), only if the qubit \(a_1\) is in \(\left| 0\right\rangle \), therefore, such operator acts on the subspace \({\mathcal {H}}_{\{0,1\}}\).

The projection on such subspace of the state \(\bar{C}_{a_1}R_y(-2\vartheta _0)_{a_0}\left| z\right\rangle ^{\otimes d}_a\) is

Since \(C_{a_0}X_{a_1}\) is a controlled-NOT gate which acts only if the qubit \(a_0\) is in the state \(\left| 1\right\rangle \), then

which completes the first step of this demonstration. Let us now demonstrate the recursive step. Here, the only assumption is \(_a\left\langle 0\right| U_{k-1}\left| z\right\rangle ^{\otimes d}_a=f_{k-1}(z)\), therefore, differently from the previous step where the projection of \(\left| z\right\rangle ^{\otimes d}_a\) on the subspace \({\mathcal {H}}_{\{0,1\}}\) was known, here the projection of \(U_{k-1}\left| z\right\rangle ^{\otimes d}_a\) is equal to

where \(B_{k-1}\) is an unknown real value. Let us apply \(C_{a_0}X_{a_k}\bar{C}_{a_k}R_y(-2\vartheta _{k-1})_{a_0}\) on the state \(U_{k-1}\left| z\right\rangle ^{\otimes d}_a\) so as to obtain the state \(U_{k}\left| z\right\rangle ^{\otimes d}_a\). From Eq. 19:

To prove the theorem, \(B_{k-1}\) must be equal to \(z^k\) since \(f_{k}(z)=f_{k-1}(z)\cos {\vartheta _{k-1}}-z^k\sin {\vartheta _{k-1}}\).

The second step of the proof thus reduces to proving that \(B_{k-1}=z^k\) \(\forall k=1,\dots ,d\). The case \(k=1\), that is \(B_0=z\), is already established because \(\left\langle 2^i-1\vert z\right\rangle ^{\otimes d}_a=z^i\), as said above. Let us now prove the case \(k=2\), that is \(B_1=z^2\), while for \(k>2\) the proof will proceed recursively.

The state \(\left| 2^i-1\right\rangle \) is a state of the computational basis of \({\mathcal {H}}^{\otimes d}_a\). Written in binary form, it is equal to \(\left| s_{d-1}s_{d-2}\cdots s_0\right\rangle \) where \(s_{j}=1\) from \(j=0\) to \(j=i-1\) and 0 otherwise.

As said before, the operator \(\bar{C}_{a_1}R_y(-2\vartheta _0)_{a_0}\) acts only on the state \(\left| s_{d-1}s_{d-2}\cdots s_0\right\rangle \) where \(s_{1}=0\), therefore, it does not act on the states \(\left| 2^i-1\right\rangle \) \(\forall i>1\). Instead, the operator \(C_{a_0}X_{a_1}\) acts on the state \(\left| s_{d-1}s_{d-2}\cdots s_0\right\rangle \) where \(s_{0}=1\) and it applies a NOT operation on the bit \(s_1\). That means the states \(\left| 2^i-1\right\rangle \) become \(\left| 2^i-1-2\right\rangle \) \(\forall i>1\). Therefore, since \(\left\langle 2^i-1\vert z\right\rangle =z^i\), thanks to \(U_{1}(\vartheta _{0})=C_{a_0}X_{a_1}\bar{C}_{a_1}R_y(-2\vartheta _{0})_{a_0}\) then \(\left\langle 2^i-1-2\right| U_{1}\left| z\right\rangle ^{\otimes d}_a=z^i\) \(\forall i> 1\). In particular, taking the value of i such that \(\left| 2^i-1-2\right\rangle =\left| 0\cdots 01\right\rangle \), that is \(i=2\), \(\left\langle 0\cdots 01\right| U_{1}\left| z\right\rangle ^{\otimes d}_a=z^2\) and, therefore, \(B_1=z^2\). Let us proceed recursively for \(k>1\) assuming that

The state \(\left| \scriptstyle {2^i-1-\sum _{h=1}^{k-1}2^{h}}\right\rangle \) written in a binary form is \(\left| s_{d-1}s_{d-2}\cdots s_0\right\rangle \) where \(s_{j}=1\) from \(j=k\) to \(j=i-1\) and for \(j=0\) while is 0 otherwise. In particular, for \(i=k\), \(\left| \scriptstyle {2^i-1-\sum _{h=1}^{k-1}2^{h}}\right\rangle =\left| 0\cdots 01\right\rangle \) and therefore \(_a\left\langle 1\right| U_{k-1}\left| z\right\rangle ^{\otimes d}_a=z^k\) which means \(B_{k-1}=z^k\). The recursive procedure consists of proving that \(\left\langle \scriptstyle {2^i-1-\sum _{h=1}^{k}2^{h}}\right| U_{k}\left| z\right\rangle ^{\otimes d}_a=z^i\) starting from the assumption in Eq. 20. Let us start from the state \(U_{k-1}\left| z\right\rangle ^{\otimes d}_a\) and let us apply on it the transformation \(C_{a_0}X_{a_k}\bar{C}_{a_k}R_y(-2\vartheta _{k-1})_{a_0}\). The transformation \(\bar{C}_{a_k}R_y(-2\vartheta _{k-1})_{a_0}\) acts only on the state \(\left| s_{d-1}s_{d-2}\cdots s_0\right\rangle \) where \(s_{k}=0\), therefore, it does not act on the states \(\left| \scriptstyle {2^i-1-\sum _{h=1}^{k-1}2^{h}}\right\rangle \) \(\forall i>k\). Instead \(C_{a_0}X_{a_k}\) is a bit-flip transformation which acts on the state \(\left| s_{d-1}s_{d-2}\cdots s_0\right\rangle \) only if \(s_{0}=1\) and it applies a NOT operation on the bit \(s_k\), therefore

That means

for \(d>i>k\) and, in particular, for \(i=k+1\), \(_a\left\langle 1\right| U_{k}\left| z\right\rangle ^{\otimes d}_a=z^{k+1}\), therefore \(B_{k}=z^{k+1}\). This final result proves that \(B_{k}=z^{k+1}\) \(\forall k=0,\dots ,d-1\), therefore

The second step is therefore concluded and thus the proof of the theorem. \(\square \)
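The index bookkeeping of the recursive step can be checked classically. The following is a minimal sketch that tracks only the label of the computational-basis state \(\left| 2^i-1\right\rangle \) through the controlled-NOT parts of \(U_1,\dots ,U_k\); it does not simulate amplitudes or rotations. The function names `track_basis_index` and `expected_index` are hypothetical and introduced only for illustration.

```python
def track_basis_index(i: int, k_max: int) -> int:
    """Follow the label of |2^i - 1> through the CNOT parts of U_1, ..., U_{k_max}.

    Assumes i > k_max, so that at step k the bit s_k is still 1 and the
    rotation conditioned on a_k being |0> leaves this basis state untouched.
    """
    if not i > k_max >= 0:
        raise ValueError("requires i > k_max >= 0")
    idx = (1 << i) - 1  # binary 0...01...1 with i ones
    for k in range(1, k_max + 1):
        # Bit k is set, so the anti-controlled rotation does not act here.
        assert (idx >> k) & 1 == 1
        # CNOT with control a_0 (bit 0) and target a_k (bit k).
        if idx & 1:
            idx ^= 1 << k
    return idx


def expected_index(i: int, k: int) -> int:
    """Closed form used in the proof: 2^i - 1 - sum_{h=1}^{k} 2^h."""
    return (1 << i) - 1 - sum(1 << h for h in range(1, k + 1))


if __name__ == "__main__":
    for k in range(0, 6):
        # For i = k + 1 the label collapses to |0...01>, which is why B_k = z^(k+1).
        print(k, track_basis_index(k + 1, k))
```

For \(i=k+1\) the tracked label is always 1, which is the statement that the amplitude \(z^{k+1}\) ends up on \(\left| 0\cdots 01\right\rangle \) after \(U_k\).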

In Fig. 3a, \(S_U\) is the subroutine which achieves the second step of the perceptron algorithm, the composition of the polynomial expansion in z, and it is equal to \(U_d(\vec \vartheta _{d-1})\).

Fig. 3 Quantum circuit of a qubit-based one-layer perceptron in two different cases. (a) The quantum circuit returns the state \(|\psi ^d_{f(z)}\rangle \), which encodes the value \(f_d(z)\) as stated by Theorems 1 and 2. In a more general context, \(f_d(z)\) is the output value of a neuron in a hidden layer of a deep neural network, as no measurement is required when the information is sent to the next layer. The subroutines \(S_V\) and \(S_U\) are described in the upper boxes, where the general case of \(S_V\) is shown, and in the lower box, respectively. The lower box shows the particular case \(d=9\), the maximum order of the polynomial expansion used for approximating the activation functions tanh, sigmoid and sine. (b) The complete quantum circuit of a qubit-based one-layer perceptron. In this case, by measuring all the qubits and averaging over many repetitions of the circuit, it is possible to estimate the output \(y_q\) of the one-layer perceptron

4 Approximation of analytical activation functions

The transformation \(S_U\otimes X^{\otimes n}\), with \(S_U=U_d\), applied to \(|\psi ^d_{z}\rangle \) returns a state in which the probability amplitude associated with \(\left| 0\right\rangle _a\left| 0\right\rangle _q\) is equal to \(2^{-d/2}f_d(z)\). Let us denote this final quantum state of \(n+d\) qubits as \(|\psi _{f(z)}^d\rangle \). Equation 16 defines \(f_d(z)\) as a d-degree polynomial with coefficients depending on d angles \(\vartheta _k,\,k=0,\ldots ,d-1\). From Theorem 2, there follows a Corollary which shows how to set these angles in order to approximate an arbitrary analytical activation function f(z) by \(f_d(z)\).

Corollary 4.1

Let f be a real analytic function over a compact interval I. If \(f_d\) is the top member of the family of polynomials defined in Theorem 2 (Eq. 16) then the angles \(\vartheta _k,\,k=0,\ldots ,d-1\) can be chosen in such a way that \(f_d(z)\) coincides with the d-order Taylor expansion of f(z) around \(z=0\), up to a constant factor \(C_d\) which depends on f and on the order d as

where \(a_k=\frac{1}{k!}f^{(k)}(0)\) is the first nonzero coefficient of the expansion and \(f^{(k)}\) the k-order derivative of f.

Proof

Let us denote with \( T_d(z)\) the truncated polynomial series expansion of an analytical function f at the order d expressed by \( T_d(z)=\sum _{i=0}^{d}a_i z^i\).

Since f is an analytical function, there exists k, with \(0\le k\le d\) and \(a_i=0\ \forall i< k\), such that, factoring out \(a_k\), \(T_d(z)\) becomes

Let us consider the value \(f_d(z)\), defined by the Eq. 16. If \( \cos {\vartheta _i}\ne 0\) \(\forall i=k,\dots ,d-1\) and \( \vartheta _i=-\frac{\pi }{2}\) otherwise, then, factorizing any \( \cos {\vartheta _i}\ne 0\), it can be expressed as

where \(\scriptstyle A_{ik}=\prod _{j=k}^{i-1}\cos {\vartheta _j}\). Let us choose the angles \( \vartheta _i\) in order to satisfy the equality

Therefore, equating term by term in powers of z, the resulting equation for \(i\ge k\) is

Since the values \(A_{ik}\) depend on the angles \(\vartheta _k,\dots ,\vartheta _{i-1}\), the angles \(\vartheta _i\) in turn depend on them. This means that the angles must be computed in order, from \(\vartheta _k\) to \(\vartheta _{d-1}\). With this definition of the angles \(\vartheta _i\), where \(i=0,\dots ,d-1\), Eq. 25 is satisfied. Therefore, \(f_d(z)\) is equal to the series expansion of f(z) at the order d up to a constant factor \(C_d\)

Its value is constant while z changes, and it depends on the coefficients \(a_i\) of the Taylor expansion of the function f where \(i=k,\dots ,d\). \(\square \)
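The ordered computation of the angles can be sketched numerically. The example below is a minimal illustration under stated assumptions, not the authors' implementation: it takes the recursion \(f_{k}(z)=f_{k-1}(z)\cos {\vartheta _{k-1}}-z^k\sin {\vartheta _{k-1}}\) with \(f_0(z)=1\), restricts to the case \(a_0\ne 0\) (that is, \(k=0\)), and adopts the convention \(T_d(z)=C\,f_d(z)\) for the constant factor, since the defining equation of \(C_d\) is not reproduced in this text. The function names are hypothetical.

```python
import math


def angles_from_taylor(coeffs):
    """Angles theta_0..theta_{d-1} making f_d proportional to sum_i coeffs[i] * z**i.

    Assumes coeffs[0] != 0. Returns (thetas, scale) with T_d(z) = scale * f_d(z);
    this sign and scale convention is an assumption of the sketch.
    """
    a0 = coeffs[0]
    if a0 == 0:
        raise ValueError("this sketch only covers the case a_0 != 0")
    thetas = []
    cos_prod = 1.0  # running product cos(theta_0) * ... * cos(theta_{i-2})
    for a_i in coeffs[1:]:
        # Each angle depends on the previously computed ones through cos_prod.
        theta = math.atan(-(a_i / a0) * cos_prod)
        thetas.append(theta)
        cos_prod *= math.cos(theta)
    return thetas, a0 / cos_prod


def f_d(z, thetas):
    """Evaluate f_d(z) by the recursion f_k = f_{k-1} cos(t_{k-1}) - z^k sin(t_{k-1})."""
    value = 1.0  # f_0(z) = 1
    for k, theta in enumerate(thetas, start=1):
        value = value * math.cos(theta) - z ** k * math.sin(theta)
    return value


if __name__ == "__main__":
    # Taylor coefficients of the logistic sigmoid around 0, up to order 3.
    sigmoid_coeffs = [0.5, 0.25, 0.0, -1.0 / 48.0]
    thetas, scale = angles_from_taylor(sigmoid_coeffs)
    z = 0.3
    taylor = sum(a * z ** i for i, a in enumerate(sigmoid_coeffs))
    print(scale * f_d(z, thetas), taylor)
```

Because `math.atan` returns angles in \((-\pi /2,\pi /2)\), every cosine is strictly positive and the rescaling factor is well defined in this case.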

5 Computation of the amplitude

To summarize, the quantum circuit defined so far employs \(n+d\) qubits and performs two transformations: the first sends the state \(\left| 0\right\rangle _a^{\otimes d}\left| 0\right\rangle _q^{\otimes n}\) into \(|\psi _z^d\rangle \), as a consequence of Theorem 1, while the second is \( S_U\otimes X^{\otimes n}\), which returns a state having \(2^{-d/2}f_d(z)\) as the probability amplitude of the state \(\left| 0\right\rangle _a\left| 0\right\rangle _q\). An important property of this quantum circuit, shown in Fig. 3a, is that it encodes the value \(f_d(z)\) (up to the constant \(2^{-d/2}\)), which is nonlinear with respect to the input values \(\vec x\), in a quantum state (the state \(|\psi ^d_{f(z)}\rangle \)). Indeed, in a generic context, the quantum circuit in Fig. 3a can be integrated in a circuit for a multi-layer qubit-based neural network, where the value \(f_d(z)\) corresponds to the output value of a hidden neuron. Because the activation function is applied without a destructive measurement, it is possible to build a deep qubit-based neural network: each new layer receives quantum states, just like the state prepared to enter the network at the first layer. As a last result, here we explicitly show how to operate the last layer of the network, by focusing on the case of a one-layer perceptron.

While the circuit in Fig. 3a returns a state \(|\psi ^d_{f(z)}\rangle \) which has the value \(2^{-d/2}f_d(z)\) encoded as a probability amplitude, the circuit in Fig. 3b makes it possible to estimate that amplitude. It implements a qubit-based version of a one-layer perceptron. Any quantum algorithm ends by extracting information from the quantum state of the qubits by measurement operations. Measuring the qubits of the circuit of Fig. 3a only gives an estimate of the probability of observing a given quantum state, and the probability alone does not determine the corresponding amplitude. Indeed, the probability is the squared modulus of the amplitude; therefore, it does not preserve the information about the phase factor (the sign for real values) of the amplitude. To recover the sign, a straightforward method consists in defining a quantum circuit which returns a quantum state with the value \(\frac{1}{2}(1+2^{-d/2}f_d(z))\) stored as a probability amplitude, a task performed by the circuit in Fig. 3b.

This quantum circuit operates on a register l of a single qubit, in addition to the registers q and a, and it returns the probability \(\frac{1}{4}|1+2^{-d/2}f_d(z)|^2\) of observing the state \(\left| 0\right\rangle _l\left| 0\right\rangle _a\left| 0\right\rangle _q\). Let us now focus on this circuit, devoted to the estimation of the perceptron output. Let us consider an n-qubit state \(\left| \psi \right\rangle \) with real amplitudes and a unitary operator U such that \( U\left| 0\right\rangle ^{\otimes n}=\left| \psi \right\rangle \). In order to estimate the amplitude \(\left\langle 0\vert \psi \right\rangle \), where \(\left| 0\right\rangle \equiv \left| 0\right\rangle ^{\otimes n}\), a three-step algorithm can be defined. The algorithm uses \(n+1\) qubits: n qubits, labeled q, store \(\left| \psi \right\rangle \), and one additional qubit is labeled l. The three steps consist of a Hadamard gate on l, the transformation U applied to the qubits of the register q and controlled by l, and another Hadamard gate on l. Indeed, starting from the state \(\left| 0\right\rangle _l\left| 0\right\rangle _q^{\otimes n}\), after the first Hadamard gate the \((n+1)\)-qubit state becomes \(\frac{1}{\sqrt{2}}\left( \left| 0\right\rangle +\left| 1\right\rangle \right) \left| 0\right\rangle ^{\otimes n}\).

With the controlled-U transformation, the state becomes \(\frac{1}{\sqrt{2}}\left( \left| 0\right\rangle \left| 0\right\rangle ^{\otimes n}+\left| 1\right\rangle \left| \psi \right\rangle \right) \) and, with the last Hadamard gate, \(\frac{1}{2}\left[ \left| 0\right\rangle \left( \left| 0\right\rangle ^{\otimes n}+\left| \psi \right\rangle \right) +\left| 1\right\rangle \left( \left| 0\right\rangle ^{\otimes n}-\left| \psi \right\rangle \right) \right] \). After a measurement of the \(n+1\) qubits, the probability of measuring the state \(\left| 0\right\rangle _l\left| 0\right\rangle _q^{\otimes n}\) is \( P_{0}=\frac{1}{4}\left| 1+\left\langle 0\vert \psi \right\rangle \right| ^2\). Once \(P_0\) has been estimated, the amplitude \(\left\langle 0\vert \psi \right\rangle \) is obtained by inverting the formula: \(\left\langle 0\vert \psi \right\rangle =2\sqrt{P_0}-1\). The squared modulus is invertible in this case because the amplitude is real and \(\left| \left\langle 0\vert \psi \right\rangle \right| \le 1\), so that \(1+\left\langle 0\vert \psi \right\rangle \ge 0\). Let us apply this method of amplitude estimation to the quantum perceptron. The quantum circuit described in the previous sections and shown in Fig. 3a, applied to the two qubit registers q and a, each initialized in the state \(\left| 0\right\rangle \), returns an \((n+d)\)-qubit state \(\scriptstyle \left| \psi ^d_{f(z)}\right\rangle \). The circuit can be summarized as a series of X gates applied to the qubits in the register q, the subroutine \(S_V\) applied to the two registers, followed by \(S_U\) applied to the register a and another series of X gates applied to the qubits in the register q. The circuit thus reads \( X^{\otimes n}S_US_V X^{\otimes n}\left| 0\right\rangle ^{\otimes d}\left| 0\right\rangle ^{\otimes n}=\scriptstyle \left| \psi ^d_{f(z)}\right\rangle \).
Therefore, to estimate \({_q}\!\left\langle 0\right| {_a}\!\left\langle 0\right| \scriptstyle \left| \psi ^d_{f(z)}\right\rangle =2^{-d/2}f_d(z)\), let us apply the amplitude estimation algorithm described above, where the transformation U is \( X^{\otimes n}S_US_V X^{\otimes n}\) and, in turn, the state \(\left| \psi \right\rangle \) is the state \(|\psi ^d_{f(z)}\rangle \). Therefore, after a measurement of all the qubits, the probability of obtaining the state \(\left| 0\right\rangle _l\left| 0\right\rangle _a^{\otimes d}\left| 0\right\rangle _q^{\otimes n}\) is \(P_0=\frac{1}{4}|1+2^{-d/2}f_d(z)|^2\).

From the estimation of \(P_0\), it is possible to compute an estimation of \(2^{-d/2}f_d(z)\). Summarizing, the circuit which estimates the amplitude \(2^{-d/2}f_d(z)\) is \(H_lC_l(X^{\otimes n}S_US_VX^{\otimes n})H_l\), with a measurement of each qubit in the registers q, a and l. Notice that this quantum circuit differs slightly from the circuit in Fig. 3b, where the subroutine \(S_V\) is not controlled by l. Indeed \(S_V\) is built such that \( S_V\left| 0\right\rangle ^{\otimes d}\left| 0\right\rangle ^{\otimes n}=\left| 0\right\rangle ^{\otimes d}\left| 0\right\rangle ^{\otimes n}\). Therefore, the circuits \(H_lC_l(X^{\otimes n}S_US_VX^{\otimes n})H_l\) and \(H_lC_l(X^{\otimes n}S_U)S_V C_l(X^{\otimes n})H_l\) achieve the same purpose of building a state with \(P_0\) as the probability of obtaining the state \(\left| 0\right\rangle _l\left| 0\right\rangle _a^{\otimes d}\left| 0\right\rangle _q^{\otimes n}\) after a measurement of each qubit.

Let us remark that Theorems 1 and 2 imply that a quantum state with \(2^{-d/2}f_d(z)\) as superposition coefficient does exist and, since any quantum state \(\left| \psi \right\rangle \) is normalized, \(|2^{-d/2}f_d(z)|\le 1\). As a result, given \(P_0=\frac{1}{4}|1+2^{-d/2}f_d(z)|^2\), it is possible to invert the equation to find \(f_d(z)\) once the probability \(P_0\) is known.
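The three-step scheme (Hadamard on l, controlled-U, Hadamard on l) and the inversion \(\left\langle 0\vert \psi \right\rangle =2\sqrt{P_0}-1\) can be illustrated with a small state-vector calculation. This is a sketch in plain Python that works directly with the real amplitudes of \(\left| \psi \right\rangle \) rather than with an explicit circuit; the function names are hypothetical.

```python
import math


def hadamard_test_branches(psi):
    """Amplitudes after H_l, controlled-U and H_l, where U|0...0> = |psi>.

    psi is a list of real amplitudes. Returns the two branches of the final
    state, conditioned on the ancilla l being |0> and |1> respectively:
    (|0...0> + |psi>)/2 and (|0...0> - |psi>)/2.
    """
    zero = [1.0] + [0.0] * (len(psi) - 1)
    branch_l0 = [0.5 * (z0 + p) for z0, p in zip(zero, psi)]
    branch_l1 = [0.5 * (z0 - p) for z0, p in zip(zero, psi)]
    return branch_l0, branch_l1


def p_zero(psi):
    """Probability of reading |0>_l |0...0>_q."""
    branch_l0, _ = hadamard_test_branches(psi)
    return branch_l0[0] ** 2


def amplitude_from_p0(p0):
    """Invert P_0 = |1 + <0|psi>|^2 / 4 for a real amplitude in [-1, 1]."""
    return 2.0 * math.sqrt(p0) - 1.0


if __name__ == "__main__":
    psi = [-0.6, 0.8, 0.0, 0.0]  # normalized two-qubit state, negative <0|psi>
    p0 = p_zero(psi)
    print(p0, amplitude_from_p0(p0))
```

The example uses a negative amplitude on purpose: the sign, which a direct measurement of \(\left| \psi \right\rangle \) would lose, is recovered from \(P_0\).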

Therefore, the quantum perceptron algorithm consists of an estimation of the probability \(P_0\), obtained from a number S of measurements of all the qubits. The error on the estimation of \(P_0\) depends on the number of samples S. The resulting output of the qubit-based perceptron is written

where P is the estimation of \(P_0\) and \(C_d\) is defined in Eq. 22. Hence \(y_q\) provides the estimation of the value \(f_d(z)\), which is the polynomial expansion of the activation function f at the order d. Once the estimation of \(P_0\) is obtained by a quantum computation, the value \(y_q\) is derived by a classical computation. The estimation of \(P_0\) is given by \(P=m/S\), where S is the total number of measurements of \(\left| {0}\right\rangle \left\langle {0}\right| _l\otimes \left| {0}\right\rangle \left\langle {0}\right| _a\otimes \left| {0}\right\rangle \left\langle {0}\right| _q\) and m is the number of those measurements which return 1 as a result. A second way to estimate \(y_q\) is given by the quantum amplitude estimation algorithm [42]. Briefly, let us consider a transformation \({\mathcal {A}}\) such that \({\mathcal {A}}\left| 0\right\rangle =\left| \Psi \right\rangle =\sqrt{1-a}\left| \psi _0\right\rangle +\sqrt{a}\left| \psi _1\right\rangle \), where \(\left| \psi _0\right\rangle \) and \(\left| \psi _1\right\rangle \) are n-qubit states and \(a\in \left[ 0,1\right] \). With an additional register of m qubits (not to be confused with the number of successes above), the quantum amplitude estimation algorithm computes a value \(\tilde{a}\) such that at most \(\left| \tilde{a}-a\right| \sim {\mathcal {O}}(M^{-1})\), where \(M=2^m\). To apply it to the case under consideration, namely \(X^{\otimes n}S_US_V X^{\otimes n}\left| 0\right\rangle ^{\otimes d}\left| 0\right\rangle ^{\otimes n}=|\psi _{f(z)}^d\rangle \), one may take \({\mathcal {A}}=X^{\otimes n}S_US_V X^{\otimes n}\), \(\left| \Psi \right\rangle =|\psi _{f(z)}^d\rangle \), the latter being a state over \(n+d\) qubits, and \(\sqrt{a}=2^{-d/2}f_d(z)\), so that the output of the perceptron results as

where \(\gamma \) is the shift value used to make the function \(f_d(z)\in \left[ 0,1\right] \) within \(z\in \left[ -1,1\right] \).

6 Discussion

The results derived above make it possible to implement a multilayered perceptron of arbitrary size, in terms of neurons per layer as well as number of layers. To test it, one may restrict the quantum algorithm to a one-layer perceptron. The tensorial calculus to obtain both z and \(f_d(z)\) has to be performed by a quantum computer and eventually ends with a probability estimation. The estimation P of the probability, and accordingly \(y_q\), is subject to two distinct kinds of errors: one depending on the quantum hardware and a random error due to the statistics of m, which is hardware independent.

In the following discussions, the error is analyzed as a function of the required qubits (n and d) and the number of samples S. The case of a qubit-based one-layer perceptron with \(N_{in}=4\) is then explored in order to verify the capability of the algorithm to approximate f(z). Because of the significant number of quantum gates involved, a quantum simulator has been used.

6.1 Error estimation and analysis

From Eq. 29, \(y_q\) depends on P, the estimation of the probability that a measurement of \(\left| {0}\right\rangle \left\langle {0}\right| _l\otimes \left| {0}\right\rangle \left\langle {0}\right| _a\otimes \left| {0}\right\rangle \left\langle {0}\right| _q\) returns 1 as a result. The estimation P is subject to a random error. Indeed \(P=m/S\), where the number of successes m is a random variable that follows the binomial distribution \(\Pr (m)=\binom{S}{m}P_0^{m}(1-P_0)^{S-m}\)

Therefore, \(Var[m]=SP_0(1-P_0) \le \tfrac{1}{4} S\) and \(Var[P]=P_0(1-P_0)/S \le \tfrac{1}{4S}\). Then, Eq. 29 implies that

where \(\sigma _{y_q}=(Var[y_q])^{1/2}\). This means that S must grow at least as \(2^d\) in order to keep \(\sigma _{y_q}\) constant in d. Since \(C_d\) also increases with d, S should grow even faster. The error \(\sigma _{y_q}\) is clearly hardware independent. The implementation on a quantum device, rather than a simulator, requires further analysis of the hardware-dependent errors.
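The scaling of the number of samples with d can be made concrete with a first-order error propagation. The sketch below assumes that the perceptron output has the form \(y_q=2^{d/2}C_d(2\sqrt{P}-1)\), which is inferred from the inversion of \(P_0\) and from the factor \(2^{d/2}C_d\) mentioned in the text; the exact expressions of Eqs. 29 and 31 are not reproduced here, so this is an illustration rather than the paper's bound. The function names and the parameter `c_d` are hypothetical.

```python
import math


def sigma_yq(p0: float, shots: int, d: int, c_d: float = 1.0) -> float:
    """First-order standard deviation of y_q = 2^(d/2) * c_d * (2*sqrt(P) - 1).

    Uses Var[P] = p0 * (1 - p0) / shots and dy/dP = 2^(d/2) * c_d / sqrt(P),
    which gives sigma = 2^(d/2) * c_d * sqrt((1 - p0) / shots).
    """
    if not 0.0 < p0 <= 1.0 or shots <= 0:
        raise ValueError("need 0 < p0 <= 1 and shots > 0")
    return 2.0 ** (d / 2.0) * c_d * math.sqrt((1.0 - p0) / shots)


def shots_for_sigma(target: float, p0: float, d: int, c_d: float = 1.0) -> int:
    """Smallest number of shots for which sigma_yq does not exceed target."""
    return math.ceil((2.0 ** d) * c_d ** 2 * (1.0 - p0) / target ** 2)


if __name__ == "__main__":
    # Raising d by 2 while multiplying the shots by 4 keeps sigma unchanged
    # (for fixed p0 and c_d), e.g. d = 3 with 2**16 shots and d = 5 with 2**18.
    print(sigma_yq(0.25, 2 ** 16, 3), sigma_yq(0.25, 2 ** 18, 5))
```

With `c_d` held fixed the required number of shots grows as \(2^d\); any growth of \(C_d\) with d enters quadratically on top of that.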

Multi-qubit operations on a quantum device are subject to two kinds of errors [43] [44], due to the limited coherence times [45] of the qubits and to the physical implementation of the gates [46]. Therefore, the number of gates (the circuit depth) has a double influence on the error: a large number of gates requires a longer circuit run time, which is limited by the coherence times of the qubits; moreover, each gate introduces an error due to its fidelity with respect to the ideal gate. The evaluation of the number of gates gives an estimate of the hardware-dependent error. The number of gates of the proposed qubit-based perceptron algorithm is \(\sim 330\) for \(d=1\) and it increases by \(\sim 400\) when d increases by 1, in other words almost linearly with d. Instead, the number of gates depends exponentially on n [34]. Depending on the size of the problem and the maturity of the technology used to implement the physical qubits, a real application would require logical qubits, or at least quantum error correction embedded within the algorithm. These prerequisites are especially necessary if the quantum amplitude estimation algorithm is applied to estimate \(y_q\) as in Eq. 30. In that algorithm the depth of the circuit increases as \({\mathcal {O}}(MNd)\) instead of the \({\mathcal {O}}(Nd)\) of the vanilla method of Eq. 29, but the error on the estimation of \(y_q\) decreases as \(M^{-1}\). Indeed, since at most \(\left| \tilde{a}-a\right| \sim {\mathcal {O}}(M^{-1})\) and the error on the average of \(\tilde{a}\) computed with S shots is at most \(\frac{1}{\sqrt{S}M}\), from the error propagation of Eq. 30 one has

where \(M=2^m\). Therefore, the error can be reduced exponentially by increasing m, the number of additional qubits of the quantum amplitude estimation algorithm. A further analysis of the impact of the noise of a quantum hardware on the output of the perceptron is present in the Supplementary Note 3, where the results of the calculations obtained with a simulated noise model are presented.

Fig. 4 Enlarged view of the output of a quantum one-layer perceptron with different analytical activation functions. The output of the classical perceptron y (gray lines) as a function of \({\bar{z}}\) is computed with four different target activation functions f: a hyperbolic tangent tanh(z) (a), a logistic function sigmoid(z) (b), a sine function \(\sin (z)\) (c) and the swish function \(z\cdot sigmoid(z)\) (d). The estimation of the quantum perceptron output \(y_q\) approximates y at the polynomial expansion order \(d=3\) (cyan line) computed with \(S=2^{16}\) samples, \(d=5\) (blue line) with \(S=2^{18}\), \(d=7\) (violet line) with \(S=2^{20}\) and \(d=9\) (red line) with \(S=2^{22}\) in the cases (a), (b) and (c), while for the swish function the same colors correspond to one extra order (\(d+1\)) with the same number of samples. In the lower right graphs, \(R_c\) and \(R_q\) are plotted as functions of \({\bar{z}}\) for different orders d. The gray regions delimit the interval of \({\bar{z}}\), rescaled by the factor k, which is the relevant region for the function to behave as a monotone activation function

6.2 Implementation of a quantum one-layer perceptron with \(N_{in}=4\)

In order to show a practical example, we now consider the implementation of a quantum one-layer perceptron with 4 input neurons. With \(N_{in}=4\), z is computed with \(n=3\) qubits. To test the algorithm, the analytic activation functions f to be approximated are the hyperbolic tangent, the sigmoid, the sine and the swish function [47], respectively (Fig. 2). To estimate the perceptron output \(y_q\), \(n+d+1\) qubits are required, where d is the order of approximation of the activation function f. The output \(y_q\) is computed following Eq. 29, where the probability P is estimated with the measurement of S copies of the quantum circuit in Fig. 3b. To evaluate the effectiveness of the algorithm in reconstructing the chosen activation function f, the output \(y_q\) is compared with f(z) at different values of the inputs \(x_i\), the weights \(w_i\) and the bias b, where \(i=0,1,2,3\). We extend the evaluation on different activation functions also to different orders d, in order to exhaustively check the algorithm.

The weight vector is set to \(\vec w=(1,1,1,1)\) and the bias to \(b=0\). The input vector \(\vec x\) varies as \(\vec x = {\bar{z}}(1,1,1,1)\) with \({\bar{z}}\in [-1,1]\). Since, when plotted as a function of \({\bar{z}}\), the activation functions do not differ significantly from their linear approximation, we make the approximation capability manifest by considering the rescaled functions f(kz), with \(k=4\) for the sigmoid and the sine function, \(k=3\) for the swish function and \(k=2\) for the hyperbolic tangent. In Fig. 4 the different activation functions f are plotted versus \({\bar{z}} = \frac{5}{4}z\in [-1,1]\). The rescaling factor, employed here only to better show the effectiveness of the algorithm, can be set arbitrarily through the computation of the angles \(\vartheta \), according to Theorem 2, by simply considering the Taylor expansion of f(kz). The cases of random weight vectors and biases are reported in the Supplementary Figures.

The quantum perceptron algorithm has been developed in Python using the open-source quantum computing framework Qiskit. The quantum circuit was run on the local quantum simulator qasm_simulator available in the Qiskit framework. Using a simulator, the only error on the estimated value \(y_q\) is \(\sigma _{y_q}\). To keep \(\sigma _{y_q}\) constant, the number of samples S must increase with d to compensate for the factor \(2^{d/2}C_d\) in Eq. 31. Figure 4 was obtained with the starting choice \(S=2^{16}\) and \(d=3\).

To evaluate how well \(y_q\) approximates the d-order Taylor expansion of a given activation function, let us define \(R_q=y-\tilde{y}_q\), where \(y=f(z)\) and \(\tilde{y}_q\) is its polynomial fit. The values \(R_q\) are compared with \(R_c=y-T_d\), where \(T_d\) is the Taylor expansion of order d. For \(k=1\), the full Taylor series of the activation functions under study converge in the interval \(\left[ -1,1\right] \) of \({\bar{z}}\). However, only in the case of the sine function does the convergence hold over all of \({\mathbb {R}}\). As a consequence, for \(f(z) = \sin (4z)\), \(R_c\) goes to 0 for any value of \({\bar{z}}\) when d increases. For the other functions in Fig. 4 the convergence radius of the polynomial series is finite and it depends on k. In the case of \(\tanh (2z)\), for instance, the convergence radius is less than 1 and \(R_c\) does not decrease with d for \({\bar{z}}\) large enough. Therefore, it is more representative to compare \(R_q\) with \(R_c\) rather than with the activation function itself.
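The behavior of the classical residual \(R_c=y-T_d\) for the sine function can be reproduced with a few lines of code. This is a minimal sketch that evaluates the truncated Taylor series of \(\sin (kz)\) directly; the function names and the sample point are hypothetical choices for illustration, and the rescaling between z and \({\bar{z}}\) used in Fig. 4 is not applied.

```python
import math


def sin_taylor(x: float, d: int) -> float:
    """Taylor polynomial of sin(x) around 0, truncated at order d."""
    return sum(
        (-1) ** (j // 2) * x ** j / math.factorial(j)
        for j in range(1, d + 1, 2)  # only odd powers contribute
    )


def residual_sin(z: float, k: float, d: int) -> float:
    """R_c = f(z) - T_d(z) for the rescaled function f(z) = sin(k z)."""
    return math.sin(k * z) - sin_taylor(k * z, d)


if __name__ == "__main__":
    # The series of sin converges everywhere, so the residual shrinks with d
    # even at k = 4, although low orders are poor far from the origin.
    for d in (3, 5, 7, 9, 21):
        print(d, residual_sin(0.8, 4.0, d))
```

For a function with a finite convergence radius, such as \(\tanh (2z)\), the analogous residual would not shrink outside that radius, which is why \(R_q\) is compared with \(R_c\) rather than with f itself.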

To quantify the difference between \(R_q\) and \(R_c\), let us compute the mean square error (MSE) at different orders d in the gray region of \({\bar{z}}\) in Fig. 4. The value of MSE between \(R_q\) and \(R_c\) is almost always of the order \(10^{-5}\) for \(d=3\) (4 for the swish) and \(10^{-4}\) when d increases. For the hyperbolic tangent, the MSE is of the order \(10^{-3}\) when \(d=7\) and 9.

The difference between \(\tanh \) and the other functions is due to the trend of \(C_d\) with d. Indeed \(C_d\) increases with d; therefore, it is not sufficient to double S when d increases by 1, because \(C_d\) becomes relevant. The effect is more evident if the rescaling factor k is high. Indeed, for the examined functions, with \(k=1\) the contribution of \(C_d\) to the error of \(y_q\) is not relevant even for a high value of d, because \(C_d\) increases polynomially with d if \(k=1\). At higher values of k, \(C_d\) increases exponentially with d and it gives a relevant contribution to the error.

7 Conclusions

An \(n-to-2^n\) quantum perceptron approach is developed with the aim of implementing a general and flexible quantum activation function, capable of reproducing any standard classical activation function on a gate-model quantum computer. The approach defines a truly quantum Rosenblatt perceptron, scalable to multi-layered quantum perceptrons, since no measurement is needed to implement nonlinearities in the algorithm. To conclude, our quantum perceptron algorithm provides the missing method to create arbitrary activation functions on a quantum computer, by approximating any analytic activation function to any given order of its power series, with continuous values as input, weights and biases. Unlike previous proposals, we have shown how to approximate any analytic function to arbitrary accuracy, without the need to measure the states encoding the information. By construction, the algorithm bridges quantum neural networks implemented on quantum computers with the requirements to enable universal approximation according to Hornik’s theorem. Our results pave the way toward mathematically grounded quantum machine learning based on quantum neural networks.

Data availability

The data that support the findings of this study are available from the corresponding author upon reasonable request.

References

Rosenblatt, F.: The perceptron, a perceiving and recognizing automaton Project Para (Cornell Aeronautical Laboratory, 1957)

Suter, B.W.: The multilayer perceptron as an approximation to a Bayes optimal discriminant function. IEEE Trans. Neural Netw. 1, 291 (1990)

Hornik, K.: Approximation capabilities of multilayer feed-forward networks. Neural Netw. 4, 251–257 (1991)

Preskill, J.: Quantum computing in the NISQ era and beyond. Quantum 2, 79 (2018)

Aaronson, S.: Read the fine print. Nat. Phys. 11, 291–293 (2015)

Prati, E.: Quantum neuromorphic hardware for quantum artificial intelligence. In Journal of Physics: Conference Series, vol. 880

Biamonte, J., et al.: Quantum machine learning. Nature 549, 195 (2017)

Rocutto, L., Destri, C., Prati, E.: Quantum semantic learning by reverse annealing of an adiabatic quantum computer. Advanced Quantum Technologies 2000133 (2020)

Farhi, E., Neven, H.: Classification with quantum neural networks on near term processors. arXiv preprint arXiv:1802.06002 (2018)

Beer, K., et al.: Training deep quantum neural networks. Nat. Commun. 11, 1–6 (2020)

Benedetti, M., Lloyd, E., Sack, S., Fiorentini, M.: Parameterized quantum circuits as machine learning models. Quantum Sci. Technol. 4, 043001 (2019)

Broughton, M. et al.: Tensorflow quantum: A software framework for quantum machine learning. arXiv preprint arXiv:2003.02989 (2020)

McClean, J.R., Boixo, S., Smelyanskiy, V.N., Babbush, R., Neven, H.: Barren plateaus in quantum neural network training landscapes. Nat. Commun. 9, 1–6 (2018)

Grant, E., Wossnig, L., Ostaszewski, M., Benedetti, M.: An initialization strategy for addressing barren plateaus in parametrized quantum circuits. Quantum 3, 214 (2019)

Cerezo, M., Sone, A., Volkoff, T., Cincio, L., Coles, P.J.: Cost-function-dependent barren plateaus in shallow quantum neural networks. Nat. Commun. 12, 1791 (2021)

Hornik, K., Stinchcombe, M., White, H., et al.: Multilayer feed-forward networks are universal approximators. Neural Netw. 2, 359–366 (1989)

Daskin, A.: A simple quantum neural net with a periodic activation function. In 2018 IEEE International Conference on Systems, Man, and Cybernetics (SMC), 2887–2891 (IEEE, 2018)

Torrontegui, E., García-Ripoll, J.J.: Unitary quantum perceptron as efficient universal approximator. EPL (Europhys. Lett.) 125, 30004 (2019)

Cao, Y., Guerreschi, G.G., Aspuru-Guzik, A.: Quantum neuron: an elementary building block for machine learning on quantum computers. arXiv preprint arXiv:1711.11240 (2017)

Hu, W.: Towards a real quantum neuron. Nat. Sci. 10, 99–109 (2018)

da Silva, A.J., de Oliveira, W.R., Ludermir, T.B.: Weightless neural network parameters and architecture selection in a quantum computer. Neurocomputing 183, 13–22 (2016)

Matsui, N., Nishimura, H., Isokawa, T.: Qubit neural network: Its performance and applications. In Complex-Valued Neural Networks: Utilizing High-Dimensional Parameters, 325–351 (IGI Global, 2009)

da Silva, A.J., Ludermir, T.B., de Oliveira, W.R.: Quantum perceptron over a field and neural network architecture selection in a quantum computer. Neural Netw. 76, 55–64 (2016)

Ventura, D., Martinez, T.: Quantum associative memory. Inf. Sci. 124, 273–296 (2000)

da Silva, A.J., de Oliveira, R.L.F.: Neural networks architecture evaluation in a quantum computer. In 2017 Brazilian Conference on Intelligent Systems (BRACIS), 163–168 (IEEE, 2017)

Schuld, M., Sinayskiy, I., Petruccione, F.: The quest for a quantum neural network. Quantum Inf. Process. 13, 2567–2586 (2014)

Shao, C.: A quantum model for multilayer perceptron. arXiv preprint arXiv:1808.10561 (2018)

Tacchino, F., Macchiavello, C., Gerace, D., Bajoni, D.: An artificial neuron implemented on an actual quantum processor. npj Quantum Information 5, 26 (2019)

Kamruzzaman, A., Alhwaiti, Y., Leider, A., Tappert, C.C.: Quantum deep learning neural networks. In Future of Information and Communication Conference, 299–311 (Springer, 2019)

Tacchino, F., et al.: Quantum implementation of an artificial feed-forward neural network. Quantum Sci. Technol. (2020)

Maronese, M., Prati, E.: A continuous Rosenblatt quantum perceptron. Int. J. Quantum Inform. 19, 2140002 (2021)

Agliardi, G., Prati, E.: Optimal tuning of quantum generative adversarial networks for multivariate distribution loading. Quantum Rep. 4, 75–105 (2022)

Pritt, M., Chern, G.: Satellite image classification with deep learning. In 2017 IEEE Applied Imagery Pattern Recognition Workshop (AIPR), 1–7 (IEEE, 2017)

Shende, V.V., Bullock, S.S., Markov, I.L.: Synthesis of quantum-logic circuits. IEEE Trans. Comput. Aided Des. Integr. Circuits Syst. 25, 1000–1010 (2006)

Kuzmin, V.V., Silvi, P.: Variational quantum state preparation via quantum data buses. Quantum 4, 290 (2020)

Lazzarin, M., Galli, D.E., Prati, E.: Multi-class quantum classifiers with tensor network circuits for quantum phase recognition. Phys. Lett. A 128056 (2022)

Romero, J., Olson, J.P., Aspuru-Guzik, A.: Quantum autoencoders for efficient compression of quantum data. Quantum Sci. Technol. 2, 045001 (2017)

Le, P.Q., Dong, F., Hirota, K.: A flexible representation of quantum images for polynomial preparation, image compression, and processing operations. Quantum Inf. Process. 10, 63–84 (2011)

Wille, R., Van Meter, R., Naveh, Y.: IBM’s Qiskit tool chain: Working with and developing for real quantum computers. In 2019 Design, Automation & Test in Europe Conference & Exhibition (DATE), 1234–1240 (IEEE, 2019)

Mottonen, M., Vartiainen, J.J.: Decompositions of general quantum gates. arXiv preprint arXiv:cs/0504100 (2005)

Rossi, M., Huber, M., Bruß, D., Macchiavello, C.: Quantum hypergraph states. New J. Phys. 15, 113022 (2013)

Brassard, G., Hoyer, P., Mosca, M., Tapp, A.: Quantum amplitude amplification and estimation. Contemp. Math. 305, 53–74 (2002)

Cross, A.W., Bishop, L.S., Sheldon, S., Nation, P.D., Gambetta, J.M.: Validating quantum computers using randomized model circuits. Phys. Rev. A 100, 032328 (2019)

Tannu, S.S., Qureshi, M.K.: Not all qubits are created equal: a case for variability-aware policies for NISQ-era quantum computers. In Proceedings of the Twenty-Fourth International Conference on Architectural Support for Programming Languages and Operating Systems, 987–999 (2019)

Bouchiat, V., Vion, D., Joyez, P., Esteve, D., Devoret, M.: Quantum coherence with a single Cooper pair. Phys. Scr. 1998, 165 (1998)

Girvin, S.M.: Circuit QED: superconducting qubits coupled to microwave photons. Quantum Mach.: Measure. Control Eng. Quantum Syst. 113, 2 (2011)

Ramachandran, P., Zoph, B., Le, Q.V.: Searching for activation functions. arXiv preprint arXiv:1710.05941 (2017)

Ethics declarations

Conflict of interest

Marco Maronese and Enrico Prati declare that they are authors of an Italian patent pending entitled: “Method and system for performing quantum calculations in a quantum neural network and for implementing quantum neural networks” Nr. 102021000033071 of 30/12/2021. Claudio Destri declares that there are no competing interests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Maronese, M., Destri, C. & Prati, E. Quantum activation functions for quantum neural networks. Quantum Inf Process 21, 128 (2022). https://doi.org/10.1007/s11128-022-03466-0

DOI: https://doi.org/10.1007/s11128-022-03466-0