Abstract

In this work, we introduce and investigate a class of matrix-free regularization techniques for discrete linear ill-posed problems based on the approximate computation of a special matrix-function. In order to produce a regularized solution, the proposed strategy employs a regular approximation of the Heavyside step function computed into a small Krylov subspace. This particular feature allows our proposal to be independent from the structure of the underlying matrix. If on the one hand, the use of the Heavyside step function prevents the amplification of the noise by suitably filtering the responsible components of the spectrum of the discretization matrix, on the other hand, it permits the correct reconstruction of the signal inverting the remaining part of the spectrum. Numerical tests on a gallery of standard benchmark problems are included to prove the efficacy of our approach even for problems affected by a high level of noise.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

Object of this work is the numerical solution of the problem

obtained from the discretization of an ill-posed problem in the Hadamard sense [1], in which the right-hand side gn is the sum of the true signal \(\bar {\mathbf {g}}_{n}\) and of a noise vector ε with ∥ε∥ = δ.

A vast source of problems of this type is indeed the class of inverse problems, such as the numerical solution of Fredholm integral equations of the first kind with nondegenerate kernels, deblurring problems for images, and, more generally, the application of linear models for the reconstruction of corrupted signals.

The usual techniques for the solution of linear systems cannot be applied directly to (1) since their straightforward application would end up in the recovery of a non-physical/noisy corrupted solution [2]. A vast amount of ad-hoc techniques have been proposed in the literature to overcome this problem and they are broadly referred to as regularization techniques. In particular, we cite two strategies related to our proposal: regularizing preconditioning and hybrid methods.

Regularizing preconditioners have been developed to accelerate the convergence of iterative methods likes Landweber or CGLS, without spoiling the computed solution avoiding to amplify the high frequencies, where the noise lives. Many proposals define the preconditioner in a trigonometric matrix algebra [2,3,4,5,6], that can be applied only to shift-invariant operators. Since the preconditioner should preserve the structure of the coefficient matrix An,m (see [7]), we follow the more general approach to define it in a Krylov subspace as suggested in [8, 9].

The combination of a direct solution of the original problem in a small size Krylov subspace is usually referred as a hybrid method. In more detail, hybrid methods combine the iterative construction of the Krylov subspace with the exact solution of the Tikhonov problem projected into the computed Krylov subspace [10,11,12,13]. The small size of the Krylov space allows to efficiently solve the projected Tikhonov problem.

In this work, tackling problem (1) through the normal equation

we propose and investigate a class of regularization techniques based on a Matrix-Function approach. In detail, a regularized approximation of the solution of problem (1) is obtained as

where fα(z) is a suitable regularization of the inverse function applied to \(A_{m,n}^{T}A_{m,n}\), i.e., \(f_{\alpha }(A_{m,n}^{T}A_{m,n})A_{m,n}^{T}A_{m,n} \approx I \) and, at the same time, \(f_{\alpha }(A_{m,n}^{T}A_{m,n})\) does not propagate the noise ε presents in gn.

The matrix function fα is defined having the property

where hα(λ) is a differentiable approximation of the Heavyside step function in α, i.e.,

In this way, we are able to filter the spectrum of the inverse of \(A_{m,n}^{T}A_{m,n}\) clustering to zero the eigenvalues smaller than α and inverting the remaining ones. As a result, the eigencomponents of the eigenvalues clustered to zero are neglected in the approximate solution and the propagation of the noise due to the ill-conditioning is avoided.

In this paper, we consider a smooth approximation of the Heavyside step function (5) previously proposed in [14, 15] for the analysis of electronic structures. Moreover, in order to obtain a fast matrix function evaluation, we consider a Krylov subspace method of polynomial type based on either the Arnoldi or the Lanczos decomposition, according to the properties of the matrix Am,n. Hence, we obtain a hybrid method combining the approximate Heavyside step function with the iterative regularization due to the iterative computation of the Krylov subspace. Our proposal is independent of the particular structure of An,m and it allows to work in a matrix-free framework simply requiring only the matrix-vector product operation.

The parameter α will be fixed according to the noise level assumed to be known, while the computation of the Krylov basis is stopped according to the combination of the discrepancy principle with a new criterion based on the stagnation of the norm of the residual. The several numerical results presented include different kinds of inverse problems proving that the proposed framework is general and robust. To enhance the reproducibility of the presented results, the codes of the new algorithms are publicly available (see https://github.com/Cirdans-Home/IRfun).

The paper is organized as follows. In Section 2 we discuss the construction of the approximation (3) connecting it to the classic works in regularization [1]. In Section 3 we define our proposal for the actual computation of our matrix-function regularization and we provide two suitable stopping criteria. In Section 4 we apply the proposed procedures to several test problems proving the goodness of our proposal. Finally, Section 5 is devoted to some final comments.

2 Motivations and literature review

2.1 Filter-based regularization methods

In this section we briefly summarize the framework of filter-based regularization methods. The notation and results are entirely borrowed from [1] and the interested reader can find there further details. Let us consider a compact linear operator \(A:{\mathscr{X}} \rightarrow {\mathscr{Y}}\) between Hilbert spaces \({\mathscr{X}}\), \({\mathscr{Y}}\) and denote with \(A^{*} : {\mathscr{Y}} \rightarrow {\mathscr{X}}\) the adjoint operator of A. We denote by A‡ the Moore-Penrose inverse of A, and by D(A‡) its domain.

We are interested in an ill-posed (in the Hadamard-sense) linear operator equation of the form

The following results hold:

Theorem 1

Let g ∈ D(A‡). Then, Ax = g has a unique best-approximate solution, which is given by

We have, moreover, that

Theorem 2

Let g ∈ D(A‡); \(x \in \mathcal {X}\) is a least-square solution of Ax = g if and only if the normal equation

holds.

From the above results it follows that x‡ is the solution of (7), i.e.,

When considering problem (6) where only an approximation gδ of g is available and δ is the noise level, due to the unboundedness of A‡, the solution A‡gδ is not a good approximation of x‡ = A‡g (we suppose g to be attainable). This follows by the following simple observation: consider the singular value expansion (s.v.e.) of A \((\sigma _{i}; v_{i}, u_{i})_{i \in \mathbb {N} }\), then if g ∈ D(A‡), we have

which clearly shows that errors in < g,ui > are propagated with a factor of 1/σi.

In practice, problem (6) is approximated by a family of neighboring well-posed problems [1].

Definition 1

By regularization operator for A‡ we call any family of operators

with the following properties:

-

1.

\(R_{\alpha }:{\mathscr{Y}}\rightarrow {\mathscr{X}}\) is bounded for every α;

-

2.

For every g ∈ D(A‡) there exists a rule choice \(\alpha : \mathbb {R}_{+} \times {\mathscr{Y}} \rightarrow (0,\alpha _{0}) \subset \mathbb {R}\), α = α(δ,gδ), such that

$$ \lim \sup_{\delta \rightarrow 0} \{ \alpha(\delta, g^{\delta}): g^{\delta} \in \mathscr{Y}, \|g-g^{\delta} \|\leq \delta \}=0, $$and

$$ \lim \sup_{\delta \rightarrow 0}=\{\|R_{\alpha(\delta,g^{\delta})}g^{\delta} -A^{\dagger}g \|: g^{\delta} \in \mathscr{Y}, \|g-g^{\delta}\|\leq \delta \}=0. $$

Thus, a regularization method consists of a regularization operator and a parameter choice rule which is convergent in the sense that, if the regularization parameter is chosen according to that rule, then the regularized solutions converge as the noisy level tends to zero. Moreover, we have

Proposition 1

Let, for all α > 0, Rα be a continuous operator. Then, the family {Rα} is a regularization for A‡ if

We consider here a class of linear regularization methods based on spectral theory for selfadjoint compact linear operators. The basic idea is the following one: let {Eλ} be a spectral family for A∗A. The best-approximate solution x‡ = A‡g can be characterized as

When the above integral does not exist due to the fact that 1/λ has a pole in 0, then the problem Ax = g is ill-posed, and the idea is now to replace 1/λ by a parameter dependent family of functions fα(λ), i.e.,

i.e., we are considering

as regularization operator for A‡.

The following result gives sufficient conditions for Rα as in (11), to be a regularization operator for A‡:

Theorem 3

Let, for all α > 0 and an ε > 0, \(f_{\alpha } : [0,\|A\|^{2}+\varepsilon ) \rightarrow \mathbb {R}\) fulfills the following assumptions: fα is piece-wise continuous, and there exists a C > 0 such that

and

for all λ ∈ (0,∥A∥2]. Then, for all g ∈ D(A‡)

If y∉D(A‡), then \(\lim _{\alpha \to 0}\|f_{\alpha }(A^{*}A)A^{*}g\|=+\infty \).

2.2 Tikhonov regularization and Heavyside step function

A special choice for fα which fulfills the assumptions of Theorem 3 is

In this case we have

Formula (12), if on the one hand, clearly shows the regularization capabilities for this choice of fα(λ) since errors in < gδ,ui > are not propagated with factors 1/σi but with factors \(\sigma _{i}/({\sigma _{i}^{2}}+\alpha )\), on the other hand, it shows the limitation of choosing a uniform shift of all the singular values: this choice is responsible of an over-damping of the largest singular values.

Our proposal synthesizes and moves from the above observation. Let us consider (12) for a generic fα, we have

In order to provide a regularized solution \(x_{\alpha }^{\delta }\), using (8), the function fα should be s.t.

i.e., it should be

from which we recover (5). Observe that the function

clearly satisfies the hypothesis of Theorem 3, and hence

is a regularizing operator for A‡.

Remark 1

We remark in passing [1], that when the operator A is already selfadjoint positive semidefinite, one will not use Rα := fα(A∗A)A∗, but apply the theory of regularization methods for equations with selfadjoint operators, where Rα would be just fα(A).

Moreover, from now on, as it is customary, we will suppose that an approximation An,m of A in finite dimensional subspaces is available, i.e., that we have a discretization of the regularization operator [1]. In the following part we focus then on the construction of a regularization approach of hybrid type in which the regularization is effected by both the parameters defining the function fα(λ) and the choice of the projection subspace (see, e.g., [11,12,13]).

2.3 Regularizing preconditioners

In this section, for the sake of simplicity, we will suppose that An ≡ An,n is a symmetric positive definite matrix. Then, using Remark 1, instead of solving problem in (2), we can suppose to solve

During the last twenty years, intense research has been devoted the computation of an approximate solution of (15) by coupling the classic preconditioned Krylov iterative methods with preconditioners Pn able to prevent the propagation of the noisy corrupted components contained in gn, i.e.,

where Pn is a regularizing preconditioner [2,3,4, 16]. These can be heuristically intended as preconditioners Pn with a dual role; on the one hand they have to be able to speed up the convergence in the well-conditioned space of the spectra of An, and, simultaneously, on the other, they need to slow down the restoration of the most corrupted components of gn.

Just to fix ideas in the right order, we stress that this is not the only class of preconditioners used in regularization problems, indeed there exist ample casuistry in which the objective is the same one of classic preconditioning, i.e., accelerating the solution of the underlying linear system and this happens, for example, when looking for the solution of the linear systems arising in the Tikhonov regularization, consider, e.g., [5, 6].

The class of regularizing matrix algebras preconditionersPn is a well studied class of regularizing preconditioners and can be described in a very general setting (see [3, Definition 3.1]).

Such definition is built up mimicking the procedure that is usually followed to produce regularizing preconditioner for matrices An of Toeplitz type, i.e.,

for \(k \in \mathbb {Z}\) and κ a function in \(\mathbb {L}^{1}\) with one (or more) root(s). In this case the \(P_{n}^{-1}\) preconditioners are nothing more than matrices generated from a family of bounded functions approximating the unbounded function 1/κ. Specifically, if they are selected from an algebra \({\mathscr{M}}_{n}\) of matrices simultaneously diagonalized by an orthogonal transform Un [17, 18], i.e.,

then the construction of Pn can be achieved by applying a suitable filter fα to the diagonal term in the Schur decomposition of some suitably chosen matrix \(\overline {M}_{n} \in {\mathscr{M}}_{n}\) (e.g., the projection of An) . This is indeed nothing more than the computation of a filtering matrix-function fα(λ) on the particular \(\overline {M}\) chosen, since what is then built is simply

To this class of preconditioners belong all the combinations that can be built taking \({\mathscr{M}}_{n}\) as a trigonometric algebra and fα a suitable filtering function in the sense of [3, Definition 3.1]. The steps needed in this approach include a careful selection of the algebra (17) in which the problem is projected, and the selection of an appropriate filter function; both the choices are strictly connected with the structure of the sequence of matrices {An}n, and severely effect the quality of the restored solution. An analogous observation holds for all those regularizing structured preconditioners [7, 19], in which the preconditioner shares the same structure of the underlying matrix.

As a matter of fact, in many applications, it is not possible to retrace a useful structure in An in order to devise a proper matrix algebra \({\mathscr{M}}_{n}\).

The approach we propose in this work, synthesizing from the above presented techniques and from [8, 9], aims to be applied independently from the particular structure of An avoiding the necessity of devising an opportune matrix algebra \({\mathscr{M}}_{n}\). In particular, it allows to work with the matrices {An}n in a matrix-free framework, i.e., gathering information from the matrices just focusing on the matrix-vector product operation (Krylov-type techniques).

2.4 The matrix-function technique

To give a precise idea of the general framework of our approach, if An is symmetric and positive definite, we have

where α is a suitable threshold parameter and \(f:\mathbb {R}_{+}\rightarrow \mathbb {R}\).

Since the eigencomponents related to the {j : λj ≤ α} are those responsible for the propagation of the noise contained in gn—while the {j : λj > α} are the ones for which it is possible to reconstruct the signal without incurring in a noise propagation phenomenon—we devise the use of a fα(λ) such that

for hα(λ) the Heavyside step function in α as in (5). Accordingly to this choice, we are setting in (19)

To build such matrix function we need a suitable regular approximation of the Heavyside step function. This is a problem that has been addressed in a completely different setting for the analysis of electronic structures in quantum chemistry and solid state physics [14, 15]; we use hence the approximation

Since we want to avoid any occurrences of the computation of \(A_{n}^{-1}\mathbf {x}\) in this context, for computing fα(An)gn, we decide to recur to a Krylov subspace method of polynomial type based on either the Lanczos decomposition, if An are symmetric matrices, or the Arnoldi decomposition, if the An are nonsymmetric.

2.5 Fixing the parameters

As for all regularization methods, we need a suitable way of fixing the various parameters defining the method. In our case, we have to discuss the choice of the α, β for fα(λ).

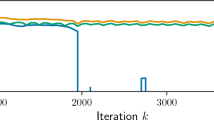

From Fig. 1, the effects of the two parameters are clear. The value of α regulates which part of the spectrum we are filtering, and the β how sharp is the threshold process. Of course, the choice of α and β should depend on the noise level and on the decay speed of the eigenvalues/singular values of An.

A reasonable heuristic for this choice is represented by a value of α that is slightly smaller than the level of noise and by a value of β that is such that 1/β << α, in order to avoid the instances represented by the dashed lines in Fig. 1, where some small eigenvalues end up being magnified in the inversion procedure.

Theorem 4

Given the regularization problem in (1) and the regularizing function fα(An) in (21), when \(\delta \rightarrow 0\), for the parameter choice α ∝ δ and 1/β < α, we find that

-

1.

If An,m = An, then \(f_{\alpha }(A_{n}) \mathbf {g}_{n} \rightarrow \bar {\mathbf {g}}_{n}\),

-

2.

otherwise, \(f_{\alpha }(A_{n,m}^{T} A_{n,m}) A_{n,m}^{T} \mathbf {g}_{n}\) converges to the least-square solution of (1).

Proof

We simply need to observe that, by (20) and (5), \(f_{\alpha }(\lambda ) \rightarrow \lambda ^{-1}\) when \(\delta \rightarrow 0\) for the parameter choice α ∝ δ and 1/β < α. □

3 Computing the matrix function

For the sake of readability, from this section on, we fix the dimension of the problem n. We will write A instead An, \(\overline {\mathbf {x}}\) instead \(\overline {\mathbf {x}}_{n}\) and g instead gn.

The core of our approach is the computation fα(A)g and for this task, we will exploit Krylov subspace methods. This section is devoted to a careful review of these techniques.

We have seen in (18) that for diagonalizable matrices, computing fα(A)g amounts to the computation of the function fα on the eigenvalues of the matrix A. This procedure can be defined also in a more general setting [20], for an arbitrary matrix A and filter function f analytic inside a closed contour Γ enclosing the spectrum of A: the matrix function-vector product f(A)g can be defined as

An efficient class of algorithms for computing (22) is represented by projection methods. Let Vk be an orthogonal matrix whose columns \(\mathbf {v}_{1},\dots , \mathbf {v}_{k}\) span an arbitrary subspace, then we can approximate f(A)g on that subspace as

In this work we are interested in projection methods such that the matrix Vk spans the k th Krylov subspace

In the case of generic square matrix the Arnoldi procedure with modified Gram-Schmidt builds an orthonormal basis Vk of \({\mathscr{K}}_{k}(A,\mathbf {g})\) satisfying the so-called Arnoldi relation

where Hk = (hi,j)i,j is an upper Hessenberg matrix of size k × k. Finally, the approximation in (23) is computed as

In Algorithm 1 we give a synthetic presentation for the matrix-function-times-vector computation which encompasses a reorthogonalization method.

When the matrix A is symmetric, Algorithm 1 can be greatly simplified by using the Lanczos procedure to generate the basis of the Krylov subspace \({\mathscr{K}}_{k}(A,\mathbf {g})\). By this procedure we build an orthonormal basis Vk of \({\mathscr{K}}_{k}(A,\mathbf {g})\) satisfying a modified version of the Arnoldi relation (24)

where Tk = diag(β,α,β) is a symmetric tridiagonal matrix of size k × k. Finally, the approximation in (23) is computed as

As for the nonsymmetric case, also the Lanczos algorithm can suffer from a loss of orthogonality in the computed vectors, thus the Algorithm 2 includes also a full reorthogonalization step.

As a matter of fact, we will use both Algorithm 1 and 2 in an iterative fashion and we need to provide a stopping rule to completely specify our proposal. In the next Section 3.1 we discuss and clarify this issue.

We conclude this section by highlighting a connection between our proposal and the standard approach with hybrid Krylov method. Specifically, we stress that under some hypotheses on the Krylov subspace the methods produce the same iterates.

Proposition 2

If \(V_{k} f_{\alpha }({V_{k}^{T}} A V_{k}) {V_{k}^{T}} \mathbf {e}_{1} \equiv V_{k} ({V_{k}^{T}} A V_{k})^{-1} {V_{k}^{T}} \mathbf {e}_{1}\) then

-

fk coincides with the k th iteration of the CG Algorithm, if A is SPD, and Algorithm 2 is used;

-

fk coincides with the k th iteration of the CGLS Algorithm, if fα is computed on ATA, and Algorithm 2 is used;

-

fk coincides with the k th iteration of the FOM Algorithm, if fα is computed on A, and Algorithm 1 is used.

Proof

All the properties follow straightforwardly from the standard construction of the CG, and CGLS Algorithm with the Lanczos orthogonalization procedure, and of FOM, for the Arnoldi orthogonalization procedure. We refer to [21] for the construction of the relative algorithms. □

3.1 The stopping criterion

A well-known stopping criterion for regularization is represented by the discrepancy principle. If we consider problem (1) and we denote by fk an approximation of the solution \(\overline {\mathbf {x}}\) obtained in an iterative fashion, the basic idea behind this criterion is to stop the iteration of the chosen method as soon as the norm of the residual rk = g − Afk is sufficiently small, typically of the same size of δ, i.e., the norm of the perturbation ε in the right-hand side of (1). In our setting, \(\mathbf {f}_{k}=V_{k} f_{\alpha }({V_{k}^{T}} A V_{k}) {V_{k}^{T}} \mathbf {g}\), and we can easily monitor the residuals

The discrepancy principle, based on this quantities, reads as

where η ≥ 1 and we call NoiseLevel the relative noise level ∥ε∥/∥g∥≡ δ/∥g∥. Observe now that, since fα does not coincide, in general, with the function 1/λ, the quantity ∥rk∥ is not guaranteed to converge to 0 as k increases, as it happens in the linear system solution framework. In general, it will stabilize when the dimension of the Krylov space increases: when \(k \rightarrow n\), fk will be an increasingly better approximation of f(A)g and hence \(\| \mathbf {g} - AV_{k} f_{\alpha }({V_{k}^{T}} A V_{k}) {V_{k}^{T}} \mathbf {g} \|\) will converge to the quantity ∥g − Af(A)g∥≠ 0 since f(A)≠A− 1.

In order to improve the stopping capabilities of our regularized reconstruction algorithm, we need to add a further stopping criterion. With this in mind, we consider the sequence of the residuals {ck := |∥rk∥−∥rk− 1∥|}k which is such that

and thus goes to zero as k increases. We select as a stopping criterion the following:

Heuristically this choice is supported by the fact that, due to the noise presence, it is not possible to discern among reconstructions giving rise to residuals which differ for a quantity of the same order of the noise level. Observe, moreover, that from (28), it is clear that the stopping residual (29) will be satisfied. Finally, we point out that performing the stopping check using the form in (29) is very cheap in space and time once the norm of residuals has been computed.

We stress again that the method we are using generates a solution that is in the Krylov subspace \({\mathscr{K}}_{k}(A,\mathbf {b})\), or either \({\mathscr{K}}_{k}(A^{T}A,A^{T}\mathbf {b})\), for the fixed choice of the parameter α made in Section 2.5. Using analogous techniques to the one in [11,12,13], it is possible to devise a suitable hybrid choice that selects adaptively an optimal threshold α within the given Krylov space of order k, i.e., we could connect the choice of k and α in an adaptive way.

In the following Algorithm 3 we present the full pseudo-code for our proposal in the case in which the Arnoldi procedure is used; the case for the Lanczos based procedure is obtained similarly from Algorithm 2.

4 Numerical experiments

All the numerical experiments are performed on a Linux machine with an Intel®; Xeon®; Platinum 8176 CPU, 2.10 GHz, with 84 Gb of RAM. The code is written and executed in Matlab 9.3.0.713579 (R2017b). The regularization routines used for comparison, and the test problems are generated with the packages AIR Tools II [22] and IR Tools [23]. The algorithm presented here, together with the example files generating the examples, is available as the IRfun MATLAB function on https://github.com/Cirdans-Home/IRfun.

In order to study the effectiveness of our stopping criteria, we organize the tests comparing the best achievable PSNR within maximum allotted iterations with the results obtained employing the stopping criteria discussed in Section 3.1. Moreover, some testing on the stability of the algorithm with respect to the choice of the optimization parameter α in fα(x) is investigated here.

The methods we used for comparison are the standard Krylov methods for regularization implemented in IR Tools [23], i.e., the CGLS, Preconditioned CGLS [4], Range Restricted GMRES (RR-GMRES), the hybrid GMRES, GMRES with ℓ1 penalty [24], and hybrid LSQR method [25]. Moreover, we consider also the solution with the Tikhonov regularization method in which we solve the auxiliary linear system with the CGLS method and compute the optimal parameter λ by means of the L-curve criterion using of the SVD of the matrix A. We report, moreover, the computational time for every method. To conclude, let us stress the fact that our proposal is a linear method exploiting the information from Kyrlov subspaces: for this reason, in the comparisons, we restrict ourselves only to the linear methods mentioned above not taking into account nonlinear techniques.

4.1 Deblurring problems: f α(A) for A symmetric

In all the examples in this section, the parameters α, and β for the matrix function regularization are selected as α = δ × 10− 1, and β = 109. We perform a fixed number of iterations (100) for each method, independently from the fact that a stopping criterion is satisfied or not; for all the comparison methods the discrepancy principle is used to pick the k at which the iterations are halted, while for our proposal we use the stopping criteria described in Section 3.1, i.e., the minimun between the two that satisfy the (27) and (29). The parameter λ for the Tikhonov method is set by using the SVD/L-curve criterion and the CGLS method is used to obtain the solution of the resulting linear system. We report in every table, both, the number of iterations and the achieved PSNR: the ones in brackets are relative to the best-reconstructed solution while the others, correspond to the results obtained by applying the stopping criteria. For the Tikhonov-CGLS method the two quantities coincide since the regularization is obtained by the shift λ, and the linear system is then solved to the highest accuracy. For the preconditioned CGLS we use the algebra preconditioner \(P_{(i)}(A):= {\mathscr{L}}_{A^{2^{i}}}^{\frac {1}{2^{i-1}}} {\mathscr{L}}_{A}^{-1}\) introduced by the authors in [4], where \({\mathscr{L}}_{A}\) is the projection of the matrix A on the space sdF of matrices simultaneously diagonalized by the two-level Fourier transform

Specifically, we always test the various preconditioners obtained for i = 1,…,8, and take the one giving the best results. Observe that in this way we always consider also the classic optimal and super-optimal preconditioners (see [4, 17]).

Satellite

The first example is the ‘satellite' image (Fig. 2) with a mild, medium, and severe Gaussian blur generated by the PRblurgauss() function. Since the images terminate with a zero boundary, we have used the zero Dirichlet boundary conditions to assemble the matrix A. To the right-hand side we add Gaussian noise of level

by means of the function PRnoise(b,‘gauss',delta).

For the sake of completeness, for this particular problem set, we compare our approach using as preconditioner for the CGLS method an approximate inverse Toeplitz preconditioner proposed in [19]. To have a fair comparison with the CGLS and the PCGLS methods we apply the fftPrec() from the Regularization Tools [26] package to the CGLS method (the associate threshold for fftPrec() has been set using the generalized cross validation method). In the following, this method will be denoted as fftPCGLS.

The results are collected in Tables 1, if reorthogonalization is used for the Lanczos method, and in Table 2 without reorthogonalization.

An example of the reconstructed ‘satellite' by the various algorithms is instead given in Fig. 3.

The first observation we can make on these cases is that the stopping criteria work efficiently on all the test cases. As a matter of fact, the PSNR for the best-reconstructed signal, and the one obtained from the stopping are comparable. For high levels of noise (δ ≥ 2e − 1) and severe blur level the combination of the matrix function routine and the stopping criteria deliver better results than the comparison methods in the majority of the cases. In particular, in the comparison with the hybrid Krylov methods, we should mention the fact that, in some cases, the latter achieves slightly better results in terms of combination of PSNR/stopping results but at the cost of having a higher execution time. Generally, we can observe that the timings of our proposal are smaller than the one needed for solving the problem with the Tikhonov approach and are comparable with the ones for the other Krylov-type methods. There is some improvement on the timings when no reorthogonalization is used at the cost of a slightly minor performance in terms of PSNR.

We are also interested in investigating the sensitiveness of the proposed approach with respect to the regularization parameters α and β of Section 2.5 in terms of achieved PSNR. In Fig. 4 we report the PSNR of the best reconstruction in 100 iterations obtained by the matrix function iterative regularization with the function fα(A), and varying the parameter α around the selected value in Section 2.5, i.e., α = δ × 10− 1.

Sensibility with respect to the choice of α and β for the matrix-function regularization algorithm based on the Lanczos procedure with reorthogonalization for the ‘satellite' test problem. The sensibility with respect to β is evaluated for the value of α selected for the experiments. a mild. b medium. c severe

We repeat the test for all the blur levels in Fig. 2 and the noise levels δ in (30). We report, moreover, the same stability test for β. What we observe is that the selected parameters lay, always, in a flat zone of the graph. This implies that even if we slightly vary them the resulting restoration quality is unchanged.

4.2 Space variant problems: f α(A)

We consider also the regularization of problems whose solution is not spatially invariant, namely we consider the first-kind Fredholm integral equation from Phillips [27]. This problem defines the function

and tries to recover it by means of the kernel K(s,t) = ϕ(s − t), with right-hand side \(g(s) = (6-|s|)(1+.5\cos \limits (s\pi /3)) + 9/(2\pi )\sin \limits (|s|\pi /3)\) on the interval [− 6,6]. The second problem of this class we decide to investigate is the discretization of 1D gravity surveying model problem [28] in which a mass distribution \(f(t) = \sin \limits (\pi t) + 0.5\sin \limits (2\pi t)\), is located at depth d = 0.25, while the vertical component of the gravity field g(s) is measured at the surface. The resulting problem is again a first-kind Fredholm integral equation, this time with kernel \(K(s,t) = d(d^{2} + (s-t)^{2})^{-\frac {3}{2}}\), in which the discretization of the source g(t) is obtained as g = Ax, where A is computed by means of a mid-point quadrature rule on the interval [0,1]. We perturb again the right-hand side by the noise in (30), and give the solution in Table 3.

The “–” reported for the RR-GMRES algorithm in Table 3b occurs when the algorithm generates a Hessenberg matrix that is numerically singular, and thus halts without giving back a result.

In Fig. 5 we report the PSNR of the best reconstruction in 100 iteration obtained by the matrix function iterative regularization with the function fα(A), and varying the parameter α around the selected value in Section 2.5, i.e., α = δ × 10− 1, from which we observe again that the selected parameter is located in a flat zone, i.e., small variations do not alter the resulting PSNR for the best reconstruction.

4.3 Tomography problem: \(f(A_{n,m}^{T}A_{n,m})\) for A n,m rectangular

Tomography problems are imaging problems in which the image has to be reconstructed from some of its sections obtained through the use of a penetrating wave, and it literally means a “slice view”. In general, we could expect to have to solve a problem of the form

Using the least-square interpretation of problem (31), we can then compute our regularized solution as

for the fα given in (21) by means of the matrix function algorithm based on the Lanczos orthogonalization process.

Parallel tomography

we consider the “line model” for creating a 2D X-ray tomography test problem with an N × N pixel domain, using p parallel rays for each angle 𝜃 generated by the paralleltomo routine from the AIRTOLSII package. To the right-hand side we add Gaussian noise of level δ as in (30). In this example the maximum number of iterations is fixed at 800. The results are collected in Table 4. The relaxation parameters for the Kaczmarz, the random Kaczmarz, Cimmino, and Landweber methods are set with the training routine train_relaxpar(A,b,x_true,@method,20), while the threshold parameter τ is computed with train_dpme(A,bl,x_true, @method,'DP',NoiseLevel,10,20,options).

On this set of test problems, we observe different behaviors for the considered methods allowing us to observe a couple of interesting facts. Firstly, we observe that for higher levels of noise the discrepancy principle combined with the Kaczmarz, random Kaczmarz, Landweber and Cimmino methods do not work well, i.e., the iteration at which it stops the method is very far from the one for which the best PSNR is achieved even though the discrepancy principle gives better results when combined with the standard CGLS algorithm.

Secondly, it is interesting to compare the best achievable reconstructions obtained by CGLS and by our proposal: the best obtained PSNR is the same in the two cases. This is due to the fact that the ill-conditioning of the matrices An,m obtained for this test problem is the result of very large singular values and not to the presence of decaying ones. This behavior is in accordance with Theorem 4, i.e., the function fα(x) is not “cutting out” the singular values with relatively small magnitude since they are not present, hence the solution computed by our method coincides with the one provided by the CGLS method. Even if on this dataset our proposal does not compare favorably with CGLS in terms of execution time, the results here obtained suggest its typical use-case, i.e., our proposal should be employed for problems exhibiting a fast decay of the smallest singular value to zero.

5 Conclusion and future perspectives

In this work we have introduced a hybrid Krylov method for the regularization of discrete inverse problems through the usage of matrix functions computed with Krylov methods. This construction generalizes the approach used for structured regularizing preconditioners to cases in which the opportune structure is not easily devised. The theoretical justification of the given approach is based on spectral filtering framework for the inverse, or the pseudo-inverse, of an ill-posed operator. We have discussed a heuristic for the stopping criterion and for the selection of the parameters of the filter that does not require further computations to be made on the system matrix, needing only the knowledge of the norm of the additive noise, and we plan to extend to a fully adaptive and automatic choice of the parameters in the style of [11,12,13].

The numerical results show that the proposed methods behave consistently throughout different applications, and are able to deal with cases in which the noise level is high (> 20%). The comparison with the standard Krylov-type methods is promising in terms of achieved PSNR and timings.

Moreover, the proposed methods behave better than both the Tikhonov method and the fixed point methods (Kaczmarz, Cimmino, Landweber) with trained parameters in several test cases.

Matter of future investigations is the usage of rational Krylov methods for the computation of the various matrix functions and the possible exploitation of different filtering functions within the same framework.

References

Engl, H.W., Hanke, M., Neubauer, A.: Regularization of inverse problems, vol. 375. Springer Science & Business Media (1996)

Hanke, M., Nagy, J., Plemmons, R.: Preconditioned iterative regularization for ill-posed problems. In: Numerical linear algebra (Kent, OH, 1992), pp 141–163. de Gruyter, Berlin (1993)

Estatico, C.: A classification scheme for regularizing preconditioners, with application to Toeplitz systems. Linear Algebra Appl. 397, 107–131 (2005). https://doi.org/10.1016/j.laa.2004.10.006

Cipolla, S., Di Fiore, C., Durastante, F., Zellini, P.: Regularizing properties of a class of matrices including the optimal and the superoptimal preconditioners. Numer. Linear Algebra Appl. 26(2). https://doi.org/10.1002/nla.2225, https://www.scopus.com/inward/record.uri?eid=2-s2.0-85058701148&doi=10.10022fnla.2225&partnerID=40&md5=ba11b64a73f577ff5405c1f3e696d885 (2019)

Donatelli, M., Hanke, M.: Fast nonstationary preconditioned iterative methods for ill-posed problems, with application to image deblurring. Inverse Probl. 29(9), 095008, 16 (2013). https://doi.org/10.1088/0266-5611/29/9/095008

Buccini, A.: Regularizing preconditioners by non-stationary iterated Tikhonov with general penalty term. Appl. Numer. Math. 116, 64–81 (2017). https://doi.org/10.1016/j.apnum.2016.07.009

Dell’Acqua, P., Donatelli, M., Estatico, C., Mazza, M.: Structure preserving preconditioners for image deblurring. J. Sci. Comput. 72(1), 147–171 (2017). https://doi.org/10.1007/s10915-016-0350-2

Brezinski, C., Novati, P., Redivo-Zaglia, M.: A rational Arnoldi approach for ill-conditioned linear systems. J. Comput. Appl. Math. 236(8), 2063–2077 (2012). https://doi.org/10.1016/j.cam.2011.09.032

Novati, P., Redivo-Zaglia, M., Russo, M.R.: Preconditioning linear systems via matrix function evaluation. Appl. Numer. Math. 62(12), 1804–1818 (2012). https://doi.org/10.1016/j.apnum.2012.07.001

O’Leary, D.P., Simmons, J.A.: A bidiagonalization-regularization procedure for large scale discretizations of ill-posed problems. SIAM J. Sci. Stat. Comput. 2(4), 474–489 (1981). https://doi.org/10.1137/0902037

Lewis, B., Reichel, L.: Arnoldi-Tikhonov regularization methods. J. Comput. Appl. Math. 226(1), 92–102 (2009). https://doi.org/10.1016/j.cam.2008.05.003

Gazzola, S., Novati, P.: Automatic parameter setting for Arnoldi-Tikhonov methods. J. Comput. Appl. Math. 256, 180–195 (2014). https://doi.org/10.1016/j.cam.2013.07.023

Gazzola, S., Novati, P., Russo, M.R.: On Krylov projection methods and Tikhonov regularization. Electron. Trans. Numer. Anal. 44, 83–123 (2015)

Benzi, M., Boito, P., Razouk, N.: Decay properties of spectral projectors with applications to electronic structure. SIAM Rev. 55(1), 3–64 (2013). https://doi.org/10.1137/100814019

Liang, W., Saravanan, C., Shao, Y., Baer, R., Bell, A.T., Head-Gordon, M.: Improved fermi operator expansion methods for fast electronic structure calculations. J. Chem. Phys. 119(8), 4117–4125 (2003)

Hanke, M.: Conjugate gradient type methods for ill-posed problems. Pitman Research Notes in Mathematics Series. Longman Scientific & Technical, Harlow, vol. 327 (1995)

Di Benedetto, F., Serra Capizzano, S.: A unifying approach to abstract matrix algebra preconditioning. Numer. Math. 82(1), 57–90 (1999). https://doi.org/10.1007/s002110050411

Cipolla, S., Di Fiore, C., Tudisco, F., Zellini, P.: Adaptive matrix algebras in unconstrained minimization. Linear Algebra Appl. 471, 544–568 (2015). https://doi.org/10.1016/j.laa.2015.01.010

Hanke, M., Nagy, J.: Inverse Toeplitz preconditioners for ill-posed problems. Linear Algebra Appl. 284(1-3), 137–156 (1998). https://doi.org/10.1016/S0024-3795(98)10046-0. ILAS Symposium on Fast Algorithms for Control, Signals and Image Processing (Winnipeg, MB, 1997)

Higham, N.J.: Functions of matrices. Society for Industrial and Applied Mathematics (SIAM), Philadelphia. https://doi.org/10.1137/1.9780898717778. Theory and computation (2008)

Saad, Y.: Iterative methods for sparse linear systems, 2nd edn. Society for Industrial and Applied Mathematics, Philadelphia (2003). https://doi.org/10.1137/1.9780898718003

Hansen, P.C., Jørgensen, J.S.: AIR Tools II: algebraic iterative reconstruction methods, improved implementation. Numer. Algorithm. 79(1), 107–137 (2018). https://doi.org/10.1007/s11075-017-0430-x

Gazzola, S., Hansen, P.C., Nagy, J.G.: IR Tools: a MATLAB package of iterative regularization methods and large-scale test problems. Numer. Algorithms. https://doi.org/10.1007/s11075-018-0570-7 (2018)

Calvetti, D., Lewis, B., Reichel, L.: GMRES, L-curves, and discrete ill-posed problems. BIT 42(1), 44–65 (2002). https://doi.org/10.1023/A:1021918118380

Chung, J., Nagy, J.G., O’Leary, D.P.: A weighted-GCV method for Lanczos-hybrid regularization. Electron. Trans. Numer. Anal. 28, 149–167 (2007/08)

Hansen, P.C.: Regularization Tools version 4.0 for Matlab 7.3. Numer. Algorithm. 46(2), 189–194 (2007). https://doi.org/10.1007/s11075-007-9136-9

Phillips, D.L.: A technique for the numerical solution of certain integral equations of the first kind. J. Assoc. Comput. Mach. 9, 84–97 (1962). https://doi.org/10.1145/321105.321114

Wing, G.M.: A primer on integral equations of the first kind. Society for Industrial and Applied Mathematics (SIAM), Philadelphia. https://doi.org/10.1137/1.9781611971675. The problem of deconvolution and unfolding, With the assistance of John D. Zahrt (1991)

Funding

Open Access funding provided by Università degli Studi di Padova within the CRUI-CARE Agreement. This work has been partially funded by the 2019 GNCS-INDAM Project “Tecniche innovative e parallele per sistemi lineari e non lineari di grandi dimensioni, funzioni ed equazioni matriciali ed applicazioni”.

Author information

Authors and Affiliations

Corresponding author

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Cipolla, S., Donatelli, M. & Durastante, F. Regularization of inverse problems by an approximate matrix-function technique. Numer Algor 88, 1275–1308 (2021). https://doi.org/10.1007/s11075-021-01076-y

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s11075-021-01076-y