Abstract

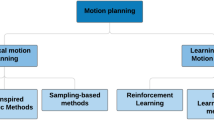

Rigid-joint manipulators are limited in their movement and degrees of freedom (DOF), whereas continuum robots have a continuous backbone that allows free movement with many DOF. Continuum robots move by bending along a section, taking inspiration from biological manipulators such as tentacles and trunks. This paper presents forward kinematics and velocity kinematics models of a planar continuum robot, together with the application of reinforcement learning (RL) as a control algorithm. We adopt the planar constant curvature representation for forward kinematic modeling, chosen for its straightforward implementation and because RL-based control of planar continuum robots remains a gap in the literature. Control is achieved with Deep Deterministic Policy Gradient (DDPG), an RL algorithm suited to learning control in continuous action spaces. In simulation, the planar continuum robot was observed to move autonomously from any initial point to any desired goal point within its task space. Based on these results, we recommend future directions for research on continuum robot control, specifically in the application of RL algorithms. One potential area of focus is the integration of sensory feedback, such as vision or force sensing, to improve the robot's ability to navigate complex environments. Exploring other RL algorithms, such as Proximal Policy Optimization (PPO) or Trust Region Policy Optimization (TRPO), could also lead to further advances in the field. Overall, this paper demonstrates the potential of RL-based control for continuum robots and highlights the importance of continued research in this area.
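The abstract names a planar constant curvature forward kinematics model and a velocity kinematics model without spelling them out. The sketch below shows one standard way to compute both for a chain of planar constant-curvature sections: a single section of length L and curvature κ bends through θ = κL, and its tip lies at x = (1 − cos θ)/κ, y = sin θ/κ in the section's base frame. Several choices here are assumptions for illustration, not details taken from the paper: the curvatures κ_i are treated as the configuration variables with fixed section lengths, each section leaves its base along +y and positive curvature bends toward +x, and the Jacobian is approximated numerically by central differences rather than derived analytically. The function names (`section_pose`, `forward_kinematics`, `jacobian`, `tip_velocity`) and the three-section example values are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Pose = Tuple[float, float, float]  # (x, y, phi): tip position and heading angle


def section_pose(kappa: float, length: float) -> Pose:
    """Tip pose of one constant-curvature section in that section's base frame.

    Assumed convention: the section leaves its base along local +y and
    positive curvature bends it toward local +x.
    """
    theta = kappa * length  # bending angle of the arc
    if kappa == 0.0:
        return 0.0, length, 0.0  # straight segment: limit of the arc as kappa -> 0
    # 1 - cos(theta) is written as 2*sin^2(theta/2) to avoid cancellation for small theta.
    dx = 2.0 * math.sin(theta / 2.0) ** 2 / kappa
    dy = math.sin(theta) / kappa
    return dx, dy, theta


def forward_kinematics(kappas: Sequence[float], lengths: Sequence[float]) -> Pose:
    """Chain planar constant-curvature sections from the robot base to the tip."""
    x, y, phi = 0.0, 0.0, 0.0
    for kappa, length in zip(kappas, lengths):
        dx, dy, dphi = section_pose(kappa, length)
        # Rotate the local displacement by the accumulated heading phi
        # (measured from +y toward +x), then add it to the running tip position.
        x += dx * math.cos(phi) + dy * math.sin(phi)
        y += -dx * math.sin(phi) + dy * math.cos(phi)
        phi += dphi
    return x, y, phi


def jacobian(kappas: Sequence[float], lengths: Sequence[float],
             eps: float = 1e-6) -> List[List[float]]:
    """3 x n Jacobian d(x, y, phi)/d(kappa_i), approximated by central differences."""
    n = len(kappas)
    jac = [[0.0] * n for _ in range(3)]
    for i in range(n):
        plus, minus = list(kappas), list(kappas)
        plus[i] += eps
        minus[i] -= eps
        f_plus = forward_kinematics(plus, lengths)
        f_minus = forward_kinematics(minus, lengths)
        for row in range(3):
            jac[row][i] = (f_plus[row] - f_minus[row]) / (2.0 * eps)
    return jac


def tip_velocity(kappas: Sequence[float], lengths: Sequence[float],
                 kappa_dots: Sequence[float]) -> Pose:
    """Velocity kinematics: (x_dot, y_dot, phi_dot) = J(kappa) @ kappa_dot."""
    jac = jacobian(kappas, lengths)
    return tuple(sum(j * qd for j, qd in zip(row, kappa_dots)) for row in jac)


if __name__ == "__main__":
    # Hypothetical three-section robot, 0.1 m per section (illustrative values only).
    lengths = [0.1, 0.1, 0.1]
    kappas = [2.0, -1.0, 3.0]  # curvatures in 1/m
    print(forward_kinematics(kappas, lengths))
    print(tip_velocity(kappas, lengths, [0.5, 0.0, -0.5]))
```

The half-angle form of 1 − cos θ keeps the straight-backbone limit numerically well behaved, which matters when a learned controller drives curvatures through zero. An analytic Jacobian would be cheaper than finite differences inside a training loop; the numerical version is used here only because it follows directly from the forward model.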

Acknowledgements

This work was supported by the statutory grant No. 0211/SBAD/0121.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Kargin, T.C., Kołota, J. A Reinforcement Learning Approach for Continuum Robot Control. J Intell Robot Syst 109, 77 (2023). https://doi.org/10.1007/s10846-023-02003-0

DOI: https://doi.org/10.1007/s10846-023-02003-0