Abstract

Design education has traditionally been deemed a face-to-face endeavor, causing online learning to be disregarded as a viable teaching option. Nonetheless, the recent impact of COVID-19 pressured design schools to rapidly migrate online, impelling many educators to utilize this unfamiliar and largely dismissed methodology. The problems exposed by this sudden shift point to a significant gap in research. Accordingly, this study proposes a set of guidelines targeting design knowledge-building, based on an in-depth look at student experience during an online design course. Data were collected through a 63-item course efficiency survey (n = 59) and a series of semi-structured focus group interviews (n = 16) with the enrolled students. The following overarching themes emerged through iterative thematic analysis of the interview data: (1) flexibility and handling stress, (2) managing self-pacing issues, (3) formal conversation platform, and (4) content variety and access options. The themes were interpreted in relation to the survey findings and the broader research on learning. The proposed guidelines emphasize initially clear goals and objectives, pacing flexibility with progress guidance, content and communication variety, sense of presence and peer exposure, and individualized feedback. It is expected that the guidelines will be helpful in building, conducting, and evaluating future online design knowledge-building experiences.

Introduction

The research on online design learning spans 30 years, with the term virtual design studio being coined in the early 1990s (Wojtowicz, 1995) and the groundwork on online design learning being laid at the very beginning of the twenty-first century (Broadfoot & Bennett, 2003; Kvan, 2001; Maher & Simoff, 1999). However, unlike the exponentially growing literature on online learning, the number of studies on online design learning has been lagging. This may be partly due to the assumption that design learning is first and foremost a face-to-face endeavor with a master-apprentice dynamic rooted in the mimetic learning practices of the past (Billett, 2014; Efland, 1990; MacDonald, 2004), with some studies describing examples from as early as Ancient Greece (Efland, 1990; Green & Bonollo, 2003). Furthermore, previous research draws attention to the difficulties associated with the adoption and integration of technology by students (Akar et al., 2004), as well as by instructors (Pektas, 2007). Today, online design learning research covers a range of issues, including distance collaboration (Akar et al., 2004; Bohemia et al., 2009a, 2009b; Rodriguez et al., 2018), blended learning solutions (Hill, 2017; Masdeu & Fuses, 2017; Pektas, 2012, 2015), comparison with physical studios (Gogu & Kumar, 2021; Saghafi et al., 2012), online sense of presence (Lotz et al., 2015; Jones et al., 2021), virtual worlds (Dadakoglu & Ozsoy, 2020; Grove & Steventon, 2008), social media (Fleischmann, 2014; Guler, 2015; Schadewitz & Zamenopoulos, 2009), and the use of virtual reality and augmented reality (Maher et al., 2012; Nisha, 2019). Nevertheless, the existing literature is limited in number and scope, falling short of covering the breadth of problems that online design education faces (Cho & Cho, 2019; Fleischmann, 2020a; Jones et al., 2021; Lotz et al., 2018).

The very recent impact of COVID-19 pressured all higher education institutions, including design schools, to rapidly migrate to online learning (Dignan, 2020; Sahu, 2020), impelling many design educators to utilize a methodology they previously dismissed. There have been online design learning applications during the pandemic that showcased success (Ahmad et al., 2020; Fleischmann, 2020b; Marshalsey & Sclater, 2020). For the most part, however, the problems exposed by this sudden shift were addressed via improvised or temporary solutions based on casual observations, occasional student interactions, or interpretations of teaching evaluations. With the rapidly widening adoption of online design learning, there is a possibility of perpetuating negative misconceptions caused by sudden and unforeseen difficulties (Hodges et al., 2020), as the negative experiences of the educators might influence their outlook and attitude, which are key in the acceptance of the developing learning theory and pedagogy (Allen & Seaman, 2008; Harasim, 2017).

Based on the rapid evolution of the web, corresponding technologies, modes of interaction, as well as their educational implementations (Almeida, 2017), online learning was already expected to occupy an exponentially increasing percentage of higher education curricula prior to the pandemic (Dignan, 2020; Lau & Ross, 2020; Rahim, 2020). Other research predicted that the advancing technology and expanding user interest to adopt and interact online should justify such an expansion for design learning as well (Guler, 2012; Oblinger & Oblinger, 2005). However, the current reality of a slow-growing base of research on online design learning depicts a stark contrast and points to a significant need for robust scientific output. Considering the prevalent tendency of design education to align itself with the changes in industry and society (Hall, 2016; Sireesha, 2018), the need for students to develop online collaboration and interaction competencies (Fleischmann, 2020a), as well as the high cost of physical design studio learning (Daalhuizen & Schoormans, 2018; Richburg, 2013) and the paradigm shift brought in by COVID-19, design education will surely transform moving forward. This is a strong indication that there is a need for further research on online design learning, especially with regard to curriculum design and structuring.

Theoretical background

Studio learning has been widely regarded as the signature delivery method in design education (Crowther, 2013), a method that typically involves a continuous dialogue between the instructor and the student, through which solutions for a given design problem are incrementally developed (Kurt, 2009; McClean, 2009; Schön, 1987). However, many studies identify another set of courses in design curricula, in addition to the design studio, often referred to as support, theory, lecture, or non-studio courses. Demirbas and Demirkan (2007, p. 346) identify four different course categories based on their specific focus on theory and practice: fundamental courses with a theory focus, technology-based courses with a balanced focus, artistic courses with a practice focus, and design studios as the fourth category. The existing online design learning literature has an overwhelming emphasis on studio learning, and non-studio courses are very rarely the subject of online design learning research, even though the credit and content standards established by leading educational accreditation organizations repeatedly underline their significance (Council for Interior Design Accreditation [CIDA], 2018; National Architectural Accrediting Board [NAAB], 2020; National Association of Schools of Art and Design [NASAD], 2020). This suggests a significant gap for research on non-studio courses, especially within the context of online learning.

Even though design education inherently follows a constructivist epistemology (Crowther, 2013), which involves the construction of knowledge as a byproduct of conversation and collaboration, design learning outside of the studio often borrows, at least partially, from objectivist and cognitivist epistemologies, which fundamentally rely on the assumption of an external truth dictated by the instructor and assimilated by the mostly passive learner (Bates & Poole, 2003; Harasim, 2017). This approach often sparks criticism. It was previously claimed that students tend to perceive non-studio learning as a mere academic obstacle, often staying passive and disengaged (Allen, 1997). Typical non-studio teaching practices were described as front-loading skills and competencies in a way that contradicted the more non-sequential nature of design learning, displaying weak ties to the projects running in parallel in the design studio (Gelernter, 1988; Rodriguez Bernal, 2017). Another common criticism has been the reliance on the ability of and the effort required from the instructor to impart the knowledge, which was believed to impede the consistency of learner experience. Online learning environments can address the passive nature of the learner and the lack of social interactions, as well as the consistency issues, by providing a platform that inherently cultivates interaction and collaboration, an epistemology defined as collaborativism or the Online Collaborative Learning Theory by Harasim (2017).

Knowledge building, as defined by Bereiter and Scardamalia (2003), is a constructive approach to learning that involves a collective and deliberate contribution to the “creation” of knowledge by the members of a learning community. Knowledge building has been associated with design research (Gray, 2020), as a concept that suggests “in preparation for the design process”. Demirkan (2016) argues that knowledge-building covers “all” learning activities including but not limited to learning-by-doing endeavors such as those encountered in studio learning. Knowledge-building features a very strong community component; the interactions with community members contribute to the transformation, advancement, and consumption of the knowledge by members (Scardamalia & Bereiter, 2014). When considered in relation to the collaborativist theory outlined by Harasim (2017), the emphasis on strong community interaction is revealed to be a common ground, which was also identified among the success factors of online knowledge-building by Swan et al. (2000, p. 379): transparent interface, robust instructor interaction, and valued and dynamic discussion. Accordingly, in the context of this study, knowledge building is defined as the constructive learning process shaped by community interactions and collaboration that leads to the formation of a knowledge base in service of design problem solving.

In light of this exposition, it can be said that there is a growing need for new research on online design learning in the post-COVID context, especially regarding non-studio courses. Moreover, knowledge-building as a learning approach provides a substantial framework for structuring and conducting online non-studio design courses. Accordingly, this research aims to address the following research question: “what are the underlying themes that define students’ online learning experiences and engagement in non-studio courses that focus on knowledge building in online design education?” Based on the findings revealed by the analysis of empirical evidence in conjunction with the broader learning research, a series of guidelines is proposed to provide a foundation to build, conduct, and evaluate future online design knowledge-building experiences.

Methodology

This study investigates the online learning experience of design students enrolled in a third-year, 3-credit Interior Architecture course that took place during the summer semesters of 2019, 2020, and 2021. The Canvas learning management system was utilized to deliver the course content, administer tests, collect assignments, and provide an interaction platform. Each installment ran for eight consecutive weeks, equivalent to 6 class hours per week. The course featured materiality content in support of design studio courses, covering significant issues related to history, specification, application, sustainability, health, and safety. The content is associated with 20 of the 118 accreditation criteria stated in the accreditation guidebook published by the Council for Interior Design Accreditation (CIDA, 2018). According to the classification described in Demirbas and Demirkan (2007), this course can be classified as technology-based, with a combined focus on both theoretical and practical knowledge. Over the three years, the course enrollment was between 20 and 27 students (24.3 on avg.), of ages 19–26 (20.4 on avg.), and on average 19.2% male to 80.8% female. The way the course was delivered stayed strictly the same through the three-year period during which data were collected. During this period, no students dropped out of or failed to complete the course.

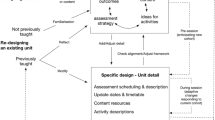

Course structure

The course featured four distinct learning and evaluation components: theoretical knowledge, analysis essays, material board design, and participation. These were intended to give students an opportunity to experience various modes of learning and gain credit through a variety of assignments. The theoretical knowledge component comprised 30% of the final grade and was delivered through 12 separate modules. The content involved a wide selection of text, images, and videos, and the assessment method involved quizzes, with the number of questions varying from 5 to 10 based on module content length (7.5 on average per module). The spatial analysis and professional roundtable components comprised 30% of the final grade. Students were expected to form inquiries and author essays on the analysis of the image and video content provided, building on the foundation acquired through the modules. The material board design assignment comprised 30% of the final grade. Students were expected to creatively solve a design problem that required them to utilize the entirety of the knowledge gathered during the course to design a complex material scheme for a commercial space, considering the associated corporate identity while addressing related sustainability, health, and safety concerns. This final assignment is in line with the research of Allen (1997), Armstrong (1997), and Bridges (2007), which suggests that students learn technical skills and incorporate them more readily into the design process when the skills are acquired on an as-needed basis. Students were expected to seek written feedback from the course instructor twice, provided through email. Participation in discussion boards comprised 10% of the final grade. Each student was expected to post 10 comments; 1 grade point was received per comment, and a word limit of 150 was expected to be met. The comments were posted in discussion boards that were tied to theoretical knowledge modules, analysis assignments, and the final material board assignment. Excluding the instructor’s comments, on average 301.3 comments were posted during each installment of the course, and students typically went over the minimum of 10 posts, contributing 12.4 posts on average per individual.
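The grade weighting described above can be sketched as a small calculation. This is an illustrative sketch only, not the instructor's grading tool: the component names, the 0 to 1 score scale, and the cap of participation credit at 10 points are assumptions made for the example.

```python
# Component weights (percent of final grade) as stated in the course structure.
WEIGHTS = {
    "theoretical_knowledge": 30,
    "analysis_essays": 30,
    "material_board": 30,
    "participation": 10,
}


def participation_points(comments_posted, max_points=10):
    """One grade point per qualifying comment.

    Assumption: credit is capped at the 10 points allotted to participation.
    """
    return min(comments_posted, max_points)


def final_grade(scores):
    """Weighted sum of component scores.

    Assumption: `scores` maps each component name to a fraction in [0, 1].
    """
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


# Hypothetical student: full marks everywhere, 12 comments posted.
hypothetical = {
    "theoretical_knowledge": 1.0,
    "analysis_essays": 1.0,
    "material_board": 1.0,
    "participation": participation_points(12) / 10,
}
grade = final_grade(hypothetical)
```

Under these assumptions, posting more than the expected 10 comments does not raise the participation score, which matches the description of 10 as a minimum that students typically exceeded.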

There was no formal integration of social media platforms outlined in the syllabus; however, the students did set up a GroupMe group and communicated with their peers via this network. The instructor did not participate in this chat group.

Data collection

The data collection for this study involved two separate processes: a 63-item course efficiency survey conducted at the end of each course installment (n = 59, 81% response rate), which provided a general overview of student opinion in relation to various aspects of the learning, and three separate semi-structured focus group interviews conducted with 16 students, which provided a detailed picture of the learning experience (Creswell, 2007; Krueger & Casey, 2015). The 63-item course efficiency survey utilized a 5-point semantic differential scale system of response (Finstuen, 1977; Osgood et al., 1957), instead of the typical Likert scale. The semantic differential scale enabled identifying bipolar concepts that focused on the connotative meaning associated with positive and negative sentiments closely related to the question at hand, positively affecting respondent engagement, as opposed to a vague and persistent agree/disagree scale (Rocereto et al., 2011). Previous research indicates extreme responses and additional bias with Likert scale usage; the bias, even though still present, is more controlled in semantic differential scale use (Friborg et al., 2006; Wirtz & Lee, 2003).

The questionnaire was initially administered (n = 19, 95% response rate) at the completion of the first installment of the course to gather data on student opinion, which was utilized to inform the development of the focus group interview roadmap (Marshall & Rossman, 2011; Morgan, 1997). The course efficiency survey was further administered to the next two cohorts, following the 2020 and 2021 installments of the course, reaching a total of 59 students over three years. The questionnaire responses were then collated, and the mean, median, and standard deviation values for each item were calculated (Appendix 1).
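The per-item summary described above can be sketched as follows. This is a minimal illustration rather than the authors' analysis script: the item labels and ratings are hypothetical, and the use of the sample standard deviation is an assumption, since the text does not state which form was calculated.

```python
from statistics import mean, median, stdev


def summarize_items(responses):
    """Return mean, median, and standard deviation for each survey item.

    `responses` maps an item label to a list of ratings on the 5-point scale.
    Assumption: sample standard deviation (n - 1 denominator).
    """
    return {
        item: {
            "mean": mean(ratings),
            "median": median(ratings),
            "sd": stdev(ratings),
        }
        for item, ratings in responses.items()
    }


# Hypothetical ratings for illustration only; not data from the study.
example_responses = {
    "item_A": [4, 5, 3, 4, 4],
    "item_B": [5, 4, 4, 3, 5],
}
summary = summarize_items(example_responses)
```

Collating the three cohorts before summarizing, as the text describes, would amount to concatenating each item's ratings across installments before calling the function.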

While the focus group interview roadmap acted as a guide for the semi-structured interviews, the emergent nature of the focus group interview was exploited fully through various phrasing and probes (Creswell, 2007), largely based on the talking points identified in the roadmap (Appendix 2). The initial responses to the course efficiency survey were also used to stimulate conversation during interviews, reveal general tendencies, and request further elaboration or clarification of participant responses.

The semi-structured focus group interviews were conducted during the Fall 2019 semester, following the completion of the first installment of the course. Participation in the focus group interviews was voluntary among enrolled students. The 16 students who participated in the focus group interviews were divided into three groups of 5–6 students, and each interview session followed the same roadmap. Each focus group session was conducted face-to-face with all participants physically present, taking between 1.5 and 2 h to complete. The entirety of the focus group interviews was recorded as audio and video. The multiple focus groups enabled a more robust identification of themes (Krueger, 2014). Notes of the interviewer, along with non-verbal behavior indicators such as intonation, laughter, and disinterest, as well as interruptions and group agreement indicators, were added to transcript data to form connections with the semi-structured framework (Marshall & Rossman, 2011).

Analysis and discussion

The three separate focus group interview sessions yielded over 6 h of recordings, which were later orthographically transcribed into approximately 55,000 words of text. Transcriptions were interpreted in accordance with the thematic analysis method outlined by Braun and Clarke (2006, 2012), an iterative approach in which initial code labels are organized into meaningful categories, which are in turn used to reveal the themes that are prominent in the data. Following the initial labeling process, 203 explicit labels were collated into 53 inclusive labels indicating prominent patterns in the data, from which 17 preliminary categories emerged; these were further grouped, revealing 4 overarching themes. The four overarching themes that emerged from the focus group analysis are as follows: (1) flexibility and handling stress, (2) managing self-pacing issues, (3) formal conversation platform, and (4) content variety and access options.

Each overarching theme and its sub-themes are analyzed in the following sub-sections and discussed in relation to the broader online learning research. Each analysis is accompanied by relevant quotes from the focus group interviews that best exemplify the interpretation of the theme and capture the general sentiment. Quotes are verbatim remarks of students, whose initials have been randomized to maintain anonymity. The data yielded by the 63-item course efficiency survey were utilized to assess whether the themes revealed via the focus group data analysis were parallel with or contradictory to the anonymous survey results of the larger group, across three installments of the course over three years.

This study features two important limitations. The first limitation is the number of responses to the survey. Since the yearly enrollment in the course was limited to between 20 and 27, only 59 students participated in the survey over the course of three years. For this reason, survey data are only used to substantiate the thematic analysis. The second limitation pertains to the content of the subject course. Even though the subject course in this research has a balanced distribution of different types of content, assignments, and interactions, it is not a quintessential representation of all knowledge-building courses in the design curriculum. Further research looking at different design courses might be used to expand and refine the proposed guidelines.

Flexibility and handling stress

Flexible learning has been defined as offering a set of options relating to modes of learning, communication, and evaluation to enable students to customize and personalize their experience (Goode et al., 2007). Previous research claimed that a perception of flexibility and convenience in online courses provided more control and attracted learners (Lee & Choi, 2011; Mödritscher, 2006). In today’s emerging knowledge-based society, self-learning and self-development are increasingly important, and research supports that online courses are highly suitable platforms to develop these skills (Salas, 2010). The design profession is highly responsive to technological and social changes; therefore, it can be claimed that self-learning skills will play an increasingly crucial role in building successful design careers.

Academic workload and financial problems were identified as two prominent stressors for college students (Sesay, 2019), with higher levels of stress being inversely correlated with learner success (Struthers et al., 2000). The relationship between flexibility and stress emerged as a significant sub-theme during the analysis. Participating students expressed an appreciation for the flexibility attained with the soft deadlines, while the delivery method was still perceived as efficient. Survey results support this tendency (Table 1, Q15). A significant number of the students were either working a full-time job or dealing with a personal/family matter or health issues at various points during the course. Students communicated repeatedly that flexibility helped them manage their own time and impede the build-up of stress, which was apparent in the survey results as well (Table 1, Q52). It should be noted that flexibility also aids the course adaptation period, which can further mitigate the build-up of stress (Schafer, 2012). Students also expressed an appreciation for asynchronous alternatives to various activities, as blocking time created issues and stress. MU’s following comment is one example among several –

MU – “…with our Zoom [meeting] and everything, like, I was lucky that I was able to talk to my boss and like, like hey for these two or three hours, like I'm going to be away from my desk… I was so stressed out…”

The relationship between flexibility and motivation emerged as another significant sub-theme according to the analysis. Existing research suggests that flexibility correlates with student motivation (Ferrer-Caja & Weiss, 2000); conversely, a rigid and controlling learning environment causes students to lose initiative and can deteriorate learning efficacy (Amabile, 1996; Utman, 1997). Luskin and Hirsen’s (2010) research associated students’ perception of control with increased feelings of satisfaction, enjoyment, and confidence. Learning efficacy was also claimed to be positively correlated with learner control and responsibility (Kay, 2001), and correspondingly, design students should make better use of flexibility and an elevated sense of control considering the constructivist nature of design learning. Survey data reflect the perception of a more uniform distribution of workload during the course, a total workload that is in line with other similar courses, and a tendency to rate motivation as high (Table 1, Q14, Q04, Q26). Some participating students related flexibility to the motivation cycles they experienced and claimed that flexibility helped them adapt their schedule and set their pacing in accordance with their psychological state. OS’s following comment was one example –

OS – “There were some weeks I took a little bit longer to do things and some weeks I was feeling more productive and I would knock out like five modules at once. It just really gave me the freedom to do it at my own learning pace.”

Higher motivation and lower stress levels can also contribute to knowledge retention (Snyder, 2003). Participating students claimed that being able to take the time to understand the material was very useful and that soft deadlines helped with knowledge retention. Parallel to the analysis, survey results also indicate an expectancy of high knowledge retention (Table 1, Q11). SC’s following comment encapsulates this –

SC – “I feel like it made retaining [knowledge] easier because I, if there was a deadline, I feel like I would have to rush to, like learn it and understand it, whereas when I can take my time with it and there's something that I was struggling with I didn't understand, I could sit there and work it out…”

Managing self-pacing issues

According to the thematic analysis, managing self-pacing issues emerged as another overarching theme and many of the participating students related the issue to flexibility, contrary to the survey input which indicates a positive perception of flexibility among the students (Table 2, Q28, Q10). Successful self-pacing as it relates to self-regulated learning has been tied to a conscious effort in understanding, planning, and monitoring course tasks and the ability to devise appropriate strategies to follow (Corno & Mandinach, 1983; Ridley et al., 1992). Self-directed learning demands management, monitoring, and motivation as core qualities (Fisher et al., 2001; Garrison, 1997), and accordingly, some students show great skill in self-regulated learning whereas others need support in varying forms. The analysis revealed that participating students were aware of the challenges self-pacing presented and the possibility to easily overwhelm oneself from the get-go. DC’s following comment summarizes the problem –

DC – “I feel like if you tried to do it all at once, if you didn't make yourself a schedule to go through, it's pretty easy to, like overwhelm yourself with the amount of work or [fall] really behind and then be overwhelmed at the end.”

Existing research suggests that setting clear educational goals, guidelines, and schedules is one of the key elements of a successful online learning environment (Rollag, 2010; Schroeder-Moreno, 2010; Zimmerman, 2008), as they help students devise a personal motivation strategy, stay on track, and prevent burnout (Roper, 2007). Therefore, it can be claimed that a simple schedule with suggested deadlines should be useful. Considering the visual tendencies of design students (Guler & Atalayer, 2016; Mostafa & Mostafa, 2010), a visual schedule might prove more useful. The survey results indicate that students understood the course objectives and expectations and had a clear trajectory from the get-go (Table 2, Q22, Q23); however, the analysis of the interview data revealed that these were not necessarily sufficient for organizing a self-pacing strategy. For time management issues, students proposed and largely agreed that a well laid-out schedule would alleviate some self-pacing problems. ED’s following comment is one among several on the need for a well laid-out schedule –

ED – “Hey, it's a good time to work on your material boards or where [the instructor] would say, I think that you should get this module done at least by now… I think if people were to have issues with time management that might be good for them to see like a laid out schedule in advance.”

Previous research associates clear subdivision of module content with increased student motivation and completion rates (Bonk et al., 2002; Pomales-García & Liu, 2006). Survey results indicate a general satisfaction with the organization and the size of the module content (Table 2, Q27). In parallel, participating students indicated that the module content being divided into easily consumable chunks helped with their self-pacing. BP’s following comment reflects the general student outlook –

BP – “I think the pages inside the module has really helped me because I know I'd be like, okay, I have like 30 more minutes before I have to go do something else and so I'd be like, okay, I can probably read like three of these pages before I go versus, like if it was all in one giant page I had to keep scrolling.”

Tello (2007) and Croxton (2014) claimed that student-instructor interactions directly influenced student satisfaction and persistence. Other research also revealed that direct modes of instruction, instructor immediacy, and social presence correlated with satisfaction, motivation, and course outcomes (Ondrey, 2017; Schutt et al., 2009). The survey responses indicate that students found the advice on course progress useful for self-pacing (Table 2, Q29). Furthermore, the analysis of the focus group data substantiates this as students claimed that receiving intermittent personal emails helped them pick up the pace. SC’s following comment encapsulates the general student view –

SC – “But I know [the instructor] sent me an e-mail [the instructor was] like, hey, I think you're falling behind a little bit. I'm like, oh, yeah I am, so I had to like force myself like to do a lot all at once and I was better about, like self-regulating.”

Formal conversation platform

The importance of the community setting in online design learning has been emphasized in early literature (Broadfoot & Bennett, 2003), as well as in later studies (Lotz et al., 2015); it is also a core quality of knowledge-building (Bereiter & Scardamalia, 2003). Numerous studies relate a higher sense of social presence to improved learning interactions, critical thinking skills, and overall satisfaction with a course (Garrison et al., 2010; Gunawardena & Zittle, 1997; Wei & Chen, 2012; Weinel et al., 2011). However, establishing social presence requires student participation, and reluctant students might need various incentives. Peterson (2001) suggests that turning participation into assignments might create a perception of responsibility, and Shea et al. (2000) suggest that assigning a greater percentage of grade points to discussion helps with student learning and satisfaction. On the other hand, Bates (2015, as cited in Harasim, 2017, p. 126) argued that awarding grades for participation diminished the intrinsic value of contribution and emphasized extrinsic motivations (see also Ryan & Deci, 2000). For this study, participation was incentivized with grade points based on the number of words and contributive merit during the course. According to the survey data, students expressed a sense of community, even though not very firmly (Table 3, Q40). One sub-theme that emerged during the analysis was the clear assessment of peer contributions. DC’s comment below is one example of students’ appreciation for clear participation assessment guidelines –

DC – “Yeah, I think it's nice to have the parameters to at least have like a base level for people who may not have otherwise written as much, you know, maybe it makes them go back and research something or read through something again…”

Research suggests that online learners are more susceptible to the feeling of isolation due to the overt physical separation from peers and the instructor (Lee & Choi, 2011; Willging & Johnson, 2009). The correlation between the perception of being part of a learning community and course satisfaction and completion rates underlines the significance of online interactions. The sustained interaction between the peers and the instructor is key to engaging students with the language, vocabulary, and activities associated with the target discipline (Harasim, 2017). It is especially important for design learning, as proficiency in using professional terminology plays a significant role in career success. Participating students expressed that they perceived the peer interaction on the learning platform as a contributing factor to their learning experience, and a parallel claim can be made based on survey data (Table 3, Q43). Analysis of interview data indicates that students acknowledged that they were learning more efficiently as a communicating group compared to their self-research efforts. MS’s comment below encapsulates the perception of peer interactions –

MS – “I actually had to do a little bit of research to, like say for the spatial analysis I had to look up, like brick possibilities with translating it to the floor or something. I learned things that I shouldn't, I wouldn’t have learned if I only did that for a research paper and then I got some things wrong and people corrected me and [the instructor] corrected me and then I learned and so now I know like some things that I wouldn't have known if I only had to like research brick by myself…”

The advantages of asynchronous participation were another sub-theme that participants repeatedly brought up during the focus group interviews. Numerous studies suggest that, compared to their face-to-face counterparts, asynchronous online interactions result in more thoughtful and in-depth conversation. Hew and Cheung (2013) argue that writing responses gives learners more time to process information. Other researchers claim that writing is associated with higher-order and deeper learning (Garrison et al., 1999; Thomas, 2002). Martin (2001) states that in written communication, such as in discussion boards, subjects strive to compensate for missing communication traits by being more descriptive and transparent. In addition, learner discussions can be built around images and videos, elevating the quality of peer contributions (De Choudhury & Sundaram, 2011; Shirky, 2009). Moreover, an asynchronous system can provide moderators more flexibility and control over events (Guler, 2015; Poynter, 2010). Lastly, in an online platform there is no peer competition for opportunities to talk (Althaus, 1997), and as a result, every voice can be heard equally. According to the survey, students expressed comfort with sharing ideas; however, their belief in the contributions of their participation was somewhat limited (Table 3, Q44, Q47, Q49). The analysis of the focus group interviews reveals many parallels between student experience and the existing research. NS’s comment below shows one example of participants’ views on asynchronous participation –

NS – “I think like I was able to go more in depth because I had more time to think about it and I think people, like just in general, people post a lot more than they would have said like in a classroom environment.”

Fotaris et al. (2015), Fleischmann (2014), Guler (2015), and Pektas (2015) elaborate on how social media use can strengthen the sense of community and enhance peer exposure, interaction, dialogue and exchange. However, research on social media trends suggests a shift in perception: social media is increasingly seen as an extension of daily life and one's circle of friends and is associated with privacy and social life, and users tend to separate it from educational platforms (Dahlstrom et al., 2015). The survey data indicate a similar trend among the participating students (Table 3, Q60). Furthermore, there is some serious criticism associated with the current functionality and use of social media. Research suggests that social media content revolves around short bursts of attention, which hinder the deep and critical thought needed for education (Carr, 2010 as cited in Harasim, 2017, p. 139). Although it indicates a positive impact on design learning, research has also outlined the limitations of social media (Schnabel & Ham, 2012), including incompatibility with design content as well as noise and clutter created by posts. Another significant problem appears to be the limited means to organize and access the heap of information built through an educational process (Guler, 2015). Budge (2013) argued that social media primarily serves an augmentative role. More recent data from Marshalsey and Sclater (2020) also indicate a low density of use for social media channels dedicated to personal use compared to the official learning or conferencing platforms. Parallel to the survey data, a general negative outlook on social media as an educational platform was apparent in the focus group analysis. Even though students utilized social media services, specifically GroupMe, to communicate with peers along with the course platform Canvas, these services were repeatedly deemed less formal, less organized, and oftentimes distracting.
The comments below from MU and NX encapsulate this claim –

MU – “And then we found ways to communicate with each other, like with our GroupMe and stuff to, like figure out what was successful and what wasn't successful.”

NX – “GroupMe is easy to use, it's very casual but it's not good for organization because it's all chronological… AO (interjects) – casual stuff in there, it was just, it was a lot sift through…”

Another important sub-theme revealed during the analysis was the ability to track peer progress. Research suggests that developing a sense of learning community has a significant impact on student motivation, completion rates, and course satisfaction (Pigliapoco & Bogliolo, 2008); however, in relatively isolated online learning environments, sustaining peer interaction and observation has been found to be difficult (Lee & Choi, 2011; Willging & Johnson, 2009). In relation to sustaining a sense of community, the ability to track progress impacts learner experience (Chiu et al., 2015), and this impact is found to be largely positive (Hsiao et al., 2012; Jin, 2017). The survey data depict a positive perception of the ability to track peer progress (Table 3, Q48). During the interviews, students expressed that discussion boards helped them track peer progress and comparatively assess where they should be at any given time. NI’s comment below summarizes the students’ outlook –

NI – “…The discussions, those kind of, they helped just remind you to kind of stay on track… it was just kind of a good reminder because whenever like something popular for, like oh a new entry in the discussion, I was like, oh, yeah, I should probably, you know…”

Content variety and access options

In accordance with the processes and methods involved in design learning, such as prominent reliance on visual information (Guler & Atalayer, 2016; Mostafa & Mostafa, 2010) and drafting being identified as a key skill (Goldschmidt, 2014; Verstijnen et al., 1998), students are highly aware of their learning preferences. Accordingly, two dimensions of perceptual modality were commonly mentioned by the participating students during the focus group interviews: the visual–verbal dimension formulated by Reinert (1976), and the aural–kinesthetic dimension (as it relates to drafting) outlined in the VARK model (Fleming & Baume, 2006; Fleming & Mills, 1992). KX’s following comment was one example among many recorded during the interviews –

KX – “So yeah, so I'm a very auditory learner. I learn best by actually being in lectures and having someone speak to me, I do miss that dialogue between the student and the teacher and having this back-and-forth conversation. So, I did have to shift a little bit into like reading mode.”

The influential study by Felder (1996) emphasizes the importance of instruction method compatibility with learning style variety. In relation to learning styles and strategies, choice has been identified as one major contributor to learner success (Deci & Ryan, 2000), and content aligning with student preferences was found to be easily understandable and motivating (Fleming & Baume, 2006; Dunn et al., 2002; Dunn et al., 1981). Even though providing content aligning with learner preferences has been shown to be beneficial, critical research argues that outcome and performance benefits do not justify the financial and time costs (Coffield et al., 2004; Papanagnou et al., 2016; Pashler et al., 2009; Reiner & Willingham, 2010). Kraemer et al. (2014) suggest that students somehow convert the provided information into content that is aligned with their learning preferences. Another critical study claims that excessive choice can be confusing, distracting, and mentally taxing for the learner (Van Merriënboer & Sluijsmans, 2009). The analysis of the focus group data indicates that students benefited from and appreciated the different types of content provided. It should be noted that the scientific accuracy of, and exact alignment with, students’ learning preference claims were disregarded. The survey data support this finding (Table 4, Q16, Q19, Q20). MU’s comment below about the material board assignment is one example that encapsulates students’ perception –

MU – “So like I am an application learner of like, if I like I can read something all day, but if I can't apply it to something that I'm not going to retain it… taking that material [sample] and put it into my board and knowing that it fit on my board for a specific reason was very efficient for me and it made me think about.”

Critiques, as a type of feedback, are a foundational component of design education (Guler, 2015), crucial for grounding the knowledge base and relating it to the design problems students face (Green & Bonollo, 2003). Design students are familiar with the benefits and shortcomings of critiques, which ultimately shape their educational expectations. On the other hand, research has associated feedback with a reduced sense of autonomy (Ryan & Deci, 2000) and suggested that it should be carefully regulated. The analysis found that students appreciated the individualized critiques they received for their design assignment. According to the survey data, the amount of feedback was perceived as moderate; however, its quality was perceived as high, supporting the previous finding (Table 4, Q37, Q38). Furthermore, some participating students stressed that the feedback was especially beneficial when it was open to interpretation and they had to think about what was being said. FE’s following comment captures this claim –

FE – “[The critiques] were nice because it was something that I had to interpret rather than like if [the instructor] would have been there with me I would have been like okay, this is what [the instructor] wants, he thinks I should move forward with.”

One of the major disadvantages of synchronous systems has been identified as the excessive leftover data heap and difficulties in backtracking (Chi & Lieberman, 2011). In online design learning, where freedom from time and space limitations can be attained, the ease of archival and backtracking affects student performance positively (Guler, 2015). Allowing for a variety of ways to access the course material and providing a clear hierarchy were also deemed helpful strategies to support learning (Zapalska & Brozik, 2006). The analysis of the focus group data shows parallels to the findings in previous research: a strategy involving clear subdivision and hierarchy of module content coupled with images and videos as markers may contribute to the successful backtracking of content. The survey results also corroborate ease of access (Table 4, Q55). ED’s following comment summarizes the advantages of easy backtracking –

ED – “I mean all of the different modules that [were provided], it was easy to follow along with because it was very, like subcategorized… if I went through [the quiz] and I missed one question, I kind of knew which one that was. I could go back and be like, okay I remember, it was in this section and it was underneath this subcategory. So I could go back and kind of review it a little better.”

Another important sub-theme that emerged from the analysis was offline access. Students mentioned how a printable/offline version of the content would have made learning easier, especially in situations where access to the internet is limited. This notion bears increased significance, as COVID-19 revealed the importance of a sustained internet connection for online learning once the diverse situations students are subject to became apparent (Nugroho et al., 2020; Netolicky, 2020; Rahim, 2020). FO’s following comment describes one of several ways students dealt with a lack of internet connection –

FO – “…like the national parks especially had like zero Wi-Fi and service. And so unless I was at a building or a hotel I couldn't access the information just because it was you know through the internet. So, typically what I would do is the night before I would screenshot things so that I actually could access them.”

Proposed guideline

Guidelines can provide a concise basis for establishing useful teaching methodology, and numerous studies set guidelines for various aspects of online learning (Rahim, 2020; Weerasinghe et al., 2009; vd Westhuizen, 2016; Yuan & Kim, 2014). Findings revealed by the thematic analysis of the focus group data were transformed into a 7-item guideline for setting up efficient design knowledge-building in online environments (Table 5). To ground the claims further, each guideline item is also accompanied by statements from relevant research. These statements provide further information that can be used to better implement each guideline item.

Implications and conclusions

This research investigated a group of design students’ experiences pertaining to an online design knowledge-building process that featured modules for theoretical knowledge base building, analysis modules for forming queries based on the newly acquired theoretical foundation, and a creative problem solving assignment that called for the utilization of this knowledge to design a material scheme, requiring students to express spatial design intent as well as address related sustainability, health, and safety concerns. Communication was built into all facets of the learning process and participation efforts impacted the final grade. The data for the study were collected through a 63-item course efficiency survey (n = 59) and a series of semi-structured focus group interviews (n = 16) with the enrolled students. The following overarching themes emerged through iterative thematic analysis of the interview data: (1) flexibility and handling stress, (2) managing self-pacing issues, (3) formal conversation platform, (4) content variety and access options. The themes were interpreted in relation to the survey findings and the broader research on learning. A 7-item guideline was proposed to inform the development of improved online design knowledge-building experiences.

A number of the findings reaffirm and ground, within the design education context, knowledge previously established by the broader online learning research; other findings highlight methods and approaches specific to design education. The proposed guidelines underline the need for initially clear goals and objectives, pacing flexibility with progress guidance, content and communication variety, sense of presence and peer exposure, and individualized feedback. It is expected that this guideline will be part of a foundation that will become relevant with the very possible surge in published research on online design learning within the post-COVID context, and will also be helpful in building, conducting, and evaluating future online design knowledge-building experiences.

Future research involving courses featuring different online design knowledge-building setups with larger survey participation can be conducted to refine the proposed guideline. Even though the course presented in this research encapsulates numerous dimensions of design knowledge-building, some context-specific needs such as peer evaluation or group projects require further research. A future iteration of the guideline should also consider emerging technologies such as machine-learning driven adaptive learning processes, and natural language processing based automated response systems or evaluation techniques. By changing learning dynamics, such emerging technologies will necessitate the expansion and refinement of the proposed guideline.

References

Akar, E., Öztürk, E., Tuncer, B., & Wiethoff, M. (2004). Evaluation of a collaborative virtual learning environment. Education + Training, 46(6/7), 343–352. https://doi.org/10.1108/00400910410555259

Allen, E. (1997). Second studio: A model for technical teaching. Journal of Architectural Education, 51(2), 92–95.

Allen, I. E., & Seaman, J. (2008). NASULGC–Sloan national commission on online learning benchmarking study: Preliminary findings. The Sloan Consortium. Retrieved November 26, 2009, from www.sloan-c.org/publications/survey/pdf/entering_mainstream.pdf

Almeida, F. (2017). Concept and dimensions of Web 4.0. International Journal of Computers and Technology, 16(7), 7040–7046. https://doi.org/10.24297/ijct.v16i7.6446

Althaus, S. L. (1997). Computer-mediated communication in the university classroom: An experiment with online discussions. Communication Education, 46(3), 158–174. https://doi.org/10.1080/03634529709379088

Amabile, T. M. (1996). Creativity in context. Westview.

Armstrong, D. E. (1997). Teaching for transfer: Fostering transmission of knowledge between classroom and studio. Retrieved November 23, 2020, from https://www.acsa-arch.org/proceedings/Annual%20Meeting%20Proceedings/ACSA.AM.97/ACSA.AM.97.16.pdf

Bates, T. (2015). Teaching in a digital age. BC Open Textbooks. Retrieved November 23, 2020, from www.tonybates.ca/2011/03/28/irrodl-on-connectivism/

Bereiter, C., & Scardamalia, M. (2003). Learning to work creatively with knowledge. In E. De Corte, L. Verschaffel, N. Entwistle, & J. van Merriënboer (Eds.), Unravelling basic components and dimensions of powerful learning environments. EARLI Advances in Learning and Instruction Series.

Billett, S. (2014). Mimetic learning at work: Learning in the circumstances of practice. Springer.

Bohemia, E., Harman, K., & McDowell, L. (2009a). Intersections: The utility of an Assessment for Learning discourse for Design educators. Art, Design & Communication in Higher Education, 8(2), 123–134.

Bohemia, E., Smith, N., Harman, K., Duncan, T., Turnock, C., & Hwang, S. G. (2009b). Distributed collaboration between industry and university partners in HE. In International association of societies of design research 2009 conference, 18–22 October 2009, Seoul, Korea.

Bonk, C. J., Olson, T. M., Wisher, R. A., & Orvis, K. L. (2002). Learning from focus groups: An examination of blended learning. International Journal of E-Learning & Distance Education/revue Internationale du e-Learning et la Formation à Distance, 17(3), 97–118.

Braun, V., & Clarke, V. (2006). Using thematic analysis in psychology. Qualitative Research in Psychology, 3(2), 77–101.

Braun, V., & Clarke, V. (2012). Thematic analysis. In H. Cooper (Ed.), APA handbook of research methods in psychology, vol. 2. Research designs (Vol. 2, pp. 57–71). American Psychological Association.

Bridges, A. (2007). Problem-based learning in architectural education. In Proceedings of CIB (international council for building) 24th w78 conference (pp. 755–762). Retrieved November 10, 2020, from https://strathprints.strath.ac.uk/6150/6/strathprints006150.pdf

Broadfoot, O., & Bennett, R. (2003). Design studios: Online? Comparing traditional face-to-face Design Studio education with modern internet-based design studios. Apple University consortium academic and developers conference proceedings 2003 (pp. 9–21).

Carr, N. (2010). The shallows: What the internet is doing to our brains. W.W. Norton & Company.

Chi, P., & Lieberman, H. (2011). Raconteur: Integrating authored and real-time social media. In Proceedings of the SIGCHI conference on human factors in computing systems eCHI'11 (pp. 3165–3168). https://doi.org/10.1145/1978942.1979411

Chiu, P. S., Wu, T. T., Huang, Y. M., & Ho, H. L. (2015). The effect of peer’s progress on learning achievement in e-Learning: A social facilitation perspective. In Ubiquitous computing application and wireless sensor (pp. 537–542). Springer.

Cho, J. Y., & Cho, M. H. (2019). Students’ use of social media in collaborative design: A case study of an advanced interior design studio. Cognition, Technology & Work, 22, 901–916. https://doi.org/10.1007/s10111-019-00597-w

Coffield, F., Moseley, D., Hall, E., & Ecclestone, K. (2004). Should we be using learning styles: What research has to say to practice. Learning and Skills Research Centre.

Corno, L., & Mandinach, E. B. (1983). The role of cognitive engagement in classroom learning and motivation. Educational Psychologist, 18(2), 88–108.

Council of Interior Design Accreditation [CIDA]. (2018). Professional standards. Retrieved November 23, 2020, from https://www.accredit-id.org/professional-standards

Crowther, P. (2013). Understanding the signature pedagogy of the design studio and the opportunities for its technological enhancement. Journal of Learning Design, 6(3), 18–28.

Croxton, R. A. (2014). The role of interactivity in student satisfaction and persistence in online learning. Journal of Online Learning and Teaching, 10(2), 314–325.

Creswell, J. W. (2007). Qualitative inquiry & research design. Sage.

Daalhuizen, J., & Schoormans, J. (2018). Pioneering online design teaching in a MOOC format: Tools for facilitating experiential learning. International Journal of Design, 12(2), 1–14.

Dahlstrom, E., Brooks, D. C., Grajek, S., & Reeves, J. (2015). ECAR study of students and information technology. Retrieved November 10, 2020, from https://www.ferris.edu/it/central-office/pdfs-docs/StudentandInformationTechnology2014.pdf

De Choudhury, M., & Sundaram, H. (2011). Why do we converse on social media? An analysis of intrinsic and extrinsic network factors. In Proceedings of the 3rd ACM SIGMM international workshop on social media (pp. 53–58). https://doi.org/10.1145/2072609.2072625

Deci, E. L., & Ryan, R. M. (2000). The ‘what’ and ‘why’ of goal pursuits: Human needs and the self-determination of behavior. Psychological Inquiry, 11(4), 227–268.

Demirbas, O. O., & Demirkan, H. (2007). Learning styles of design students and the relationship of academic performance and gender in design education. Learning and Instruction, 17(3), 345–359.

Demirkan, H. (2016). An inquiry into the learning-style and knowledge-building preferences of interior architecture students. Design Studies, 44, 28–51.

Dignan, L. (2020, November 10). Online learning gets its moment due to COVID-19 pandemic: Here’s how education will change. ZDNet. https://www.zdnet.com/article/online-learning-gets-its-moment-due-to-covid-19-pandemic-heres-how-education-will-change/

Dunn, R., Beaudry, J., & Klavas, A. (2002). Survey of research on learning styles. California Journal of Science, 2(2), 75–98.

Dunn, R., DeBello, T., Brennan, P., Krimsky, J., & Murrain, P. (1981). Learning style researchers define differences differently. Educational Leadership, 38(5), 372–375.

Efland, A. (1990). A history of art education: Intellectual and social currents in teaching the visual arts. Teacher’s College Press.

Felder, R. M. (1996). Matters of style. ASEE Prism, 6(4), 18–23.

Ferrer-Caja, E., & Weiss, M. R. (2000). Predictors of intrinsic motivation among adolescent students in physical education. Research Quarterly for Exercise and Sport, 71(3), 267–279.

Finstuen, K. (1977). Use of Osgood’s semantic differential. Psychological Reports, 41(3), 1219–1222.

Fisher, M., King, J., & Tague, G. (2001). Development of a self-directed learning readiness scale for nursing education. Nurse Education Today, 21(7), 516–525. https://doi.org/10.1054/nedt.2001.0589

Fleischmann, K. (2014). Collaboration through Flickr & Skype: Can web 2.0 technology substitute the traditional design studio in higher design education? Contemporary Educational Technology, 5(1), 39–52.

Fleischmann, K. (2020a). Hands-on versus virtual: Reshaping the design classroom with blended learning. Arts and Humanities in Higher Education. https://doi.org/10.1177/1474022220906393

Fleischmann, K. (2020b). The online pandemic in design courses: Design higher education in digital isolation. In L. Naumovska (Ed.), The Impact of COVID19 on the international education system (pp. 1–16). Proud Pen. https://doi.org/10.51432/978-1-8381524-0-6_1

Fleming, N. D., & Baume, D. (2006). Learning styles again: VARKing up the right tree. Educational Development, 7(4), 4–7.

Fleming, N. D., & Mills, C. (1992). Not another inventory, rather a catalyst for reflection. To Improve the Academy, 246(11), 137–155.

Friborg, O., Martinussen, M., & Rosenvinge, J. H. (2006). Likert-based vs. semantic differential-based scorings of positive psychological constructs: A psychometric comparison of two versions of a scale measuring resilience. Personality and Individual Differences, 40(5), 873–884.

Garrison, D. R. (1997). Self-directed learning: Toward a comprehensive model. Adult Education Quarterly, 48(1), 18–33.

Garrison, D. R., Anderson, T., & Archer, W. (1999). Critical inquiry in a text-based environment: Computer conferencing in higher education. The Internet and Higher Education, 2(2–3), 87–105. https://doi.org/10.1016/S1096-7516(00)00016-6

Garrison, D. R., Cleveland-Innes, M., & Fung, T. S. (2010). Exploring causal relationships among teaching, cognitive and social presence: Student perceptions of the community of inquiry framework. The Internet and Higher Education, 13(1–2), 31–36.

Gelernter, M. (1988). Reconciling lectures and studios. Journal of Architectural Education, 41(2), 46–52.

Gogu, C. V., & Kumar, J. (2021). Social connectedness in online versus face-to-face design education: A comparative study in India. In Design for tomorrow—Volume 2 (pp. 407–416). Springer.

Goldschmidt, G. (2014). Modeling the role of sketching in design idea generation. In A. Chakrabarti, & L. Blessing (Eds.), An anthology of theories and models of design (pp. 433–450). Springer. https://doi.org/10.1007/978-1-4471-6338-1_21

Goode, S., Willis, R., Wolf, J., & Harris, A. (2007). Enhancing IS education with flexible teaching and learning. Journal of Information Systems Education, 18(3), 297–302.

Gray, C. M. (2020). Markers of Quality in Design Precedent. International Journal of Designs for Learning, 11(3), 1–12. https://doi.org/10.14434/ijdl.v11i3.31193

Green, L. N., & Bonollo, E. (2003). Studio-based teaching: History and advantages in the teaching of design. World Transactions on Engineering and Technology Education, 2(2), 269–272.

Grove, P. W., & Steventon, G. J. (2008). Exploring community safety in a virtual community: Using Second Life to enhance structured creative learning. In Learning in virtual environments international conference (pp. 154–171). Open University. Retrieved from http://www2.open.ac.uk/relive08/documents/ReLIVE08_conference_proceedings_Lo.pdf

Guler, K. (2012). Digital natives and the digitalization of interior design studio. Ubiquitous Learning: An International Journal, 4(1), 91–98.

Guler, K. (2015). Social media based learning in the design studio: A comparative study. Computers & Education, 87, 192–203. https://doi.org/10.1016/j.compedu.2015.06.004

Guler, K., & Atalayer, F. (2016). Assessing visual skill development in basic design education. Art, Design & Communication in Higher Education, 15(1), 71–88. https://doi.org/10.1386/adch.12.1.71_1

Gunawardena, C. N., & Zittle, F. J. (1997). Social presence as a predictor of satisfaction within a computer-mediated conferencing environment. American Journal of Distance Education, 11(3), 8–26. https://doi.org/10.1080/08923649709526970

Hall, P. A. (2016). Re-integrating design education: Lessons from history. Retrieved November 23, 2020, from https://ualresearchonline.arts.ac.uk/id/eprint/10356/1/287%2BHall.pdf

Harasim, L. (2017). Learning theory and online technologies (2nd ed.). Routledge.

Hew, K., & Cheung, W. (2013). Audio-based versus text-based asynchronous online discussion: Two case studies. Instructional Science: An International Journal of the Learning Sciences, 41(2), 365–380.

Hill, G. A. (2017). The ‘Tutorless Design Studio’: A radical experiment in blended learning. Journal of Problem Based Learning in Higher Education, 5(1), 111–125.

Hodges, C., Moore, S., Lockee, B., Trust, T., & Bond, A. (2020). The difference between emergency remote teaching and online learning. Educause Review. Retrieved November 20, 2020, from https://er.educause.edu/articles/2020/3/the-difference-between-emergency-remote-teaching-and-online-learning

Hsiao, I. H., Guerra, J., Parra, D., Bakalov, F., König-Ries, B., & Brusilovsky, P. (2012). Comparative social visualization for personalized e-learning. In Proceedings of the international working conference on advanced visual interfaces (pp. 303–307). Retrieved September 23, 2020, from http://columbus.exp.sis.pitt.edu/jguerra/files/p303-hsiao.pdf

Hussman, P. R., & O’Loughlin, V. D. (2006). Another nail in the coffin for learning styles? Disparities among undergraduate anatomy students’ study strategies, class performance, and reported VARK learning styles. Anatomical Sciences Education, 12(1), 6–19. https://doi.org/10.1002/ase.1777

Jin, S. H. (2017). Using visualization to motivate student participation in collaborative online learning environments. Journal of Educational Technology & Society, 20(2), 51–62.

Jones, D., Lotz, N., & Holden, G. (2021). A longitudinal study of virtual design studio (VDS) use in STEM distance design education. International Journal of Technology and Design Education, 31(4), 839–865.

Kay, J. (2001). Learner control. User Modeling and User-Adapted Interaction, 11, 111–127.

Kraemer, D. J., Hamilton, R. H., Messing, S. B., DeSantis, J. H., & Thompson-Schill, S. L. (2014). Cognitive style, cortical stimulation, and the conversion hypothesis. Frontiers in Human Neuroscience, 8, 151–159. https://doi.org/10.3389/fnhum.2014.00015

Krueger, R. A. (2014). Focus groups: A practical guide for applied research. Sage.

Krueger, R. A., & Casey, M. A. (2015). Focus groups: A practical guide for applied research. Sage.

Kurt, S. (2009). An analytic study on the traditional studio environments and the use of constructivist studio in the architectural design education. Procedia Social and Behavioral Sciences, 11, 401–408.

Kvan, T. (2001). The pedagogy of virtual design studios. Automation in Construction, 10(3), 345–353. https://doi.org/10.1016/S0926-5805(00)00051-0

Lau, J., & Ross, J. (2020). Universities brace for lasting impact of coronavirus outbreak. Times Higher Education. Retrieved November 11, 2020, from https://www.timeshighereducation.com/news/universities-brace-lasting-impact-coronavirus-outbreak

Lee, Y., & Choi, J. (2011). A review of online course dropout research: Implications for practice and future research. Educational Technology Research and Development, 59(5), 593–618.

Lotz, N., Jones, D., & Holden, G. (2015). Social engagement in online design pedagogies. In R. Vande Zande, E. Bohemia, & I. Digranes (Eds.), Proceedings of the 3rd international conference for design education researchers (pp. 1645–1668). Aalto University.

Luskin, B., & Hirsen, J. (2010). Media psychology controls the mouse that roars. In K. E. Rudestam, & J. Schoenholtz-Read (Eds.), Handbook of online learning. Sage.

MacDonald, S. (2004). The history and philosophy of art education. Lutterworth Press.

Maher, M. L., & Simoff, S. J. (1999). Variations on the virtual design studio. In Proceedings of fourth international workshop on CSCW in design (pp. 159–165).

Maher, M. L., Simoff, S. J., & Cicognani, A. (2012). Understanding virtual design studios. Springer.

Marshall, C., & Rossman, G. (2011). Designing qualitative research (5th ed.). Sage.

Marshalsey, L., & Sclater, M. (2020). Together but apart: Creating and supporting online learning communities in an era of distributed studio education. International Journal of Art & Design Education, 39(4), 826–840. https://doi.org/10.1111/jade.12331

Martin, J. R. (2001). Language, register and genre: Analysing English in a global context. Routledge.

Masdeu, M., & Fuses, J. (2017). Reconceptualizing the design studio in architectural education: Distance learning and blended learning as transformation factors. Archnet IJAR, 11(2), 6–23. https://doi.org/10.26687/archnet-ijar.v11i2.1156

McClean, D. (2009). Embedding learner independence in architecture education: Reconsidering design studio pedagogy (Unpublished doctoral dissertation). Robert Gordon University, Aberdeen, UK.

Mödritscher, F. (2006). E-learning theories in practice: A comparison of three methods. Journal of Universal Science and Technology of Learning, 28(1), 3–18.

Morgan, D. L. (1997). The focus group guidebook (Vol. 1). Sage.

Mostafa, M., & Mostafa, H. (2010). How do architects think? Learning styles and architectural education. ArchNet-IJAR: International Journal of Architectural Research, 4(2/3), 310–317.

National Architectural Accrediting Board [NAAB]. (2020). Conditions for accreditation, 2020 edition.

National Association of Schools of Art and Design [NASAD]. (2020). NASAD handbook 2020–2021.

Netolicky, D. M. (2020). School leadership during a pandemic: Navigating tensions. Journal of Professional Capital and Community. https://doi.org/10.1108/JPCC-05-2020-0017

Nisha, B. (2019). The pedagogic value of learning design with virtual reality. Educational Psychology, 39(10), 1233–1254.

Nugroho, W., Malinda, E. R., Rani, M. J. M., & Sebelas, B. (2020). An exploratory study on implementation of online learning by students during the COVID-19 pandemic. Pancaran Pendidikan FKIP Universitas Jember, 9(2), 25–38.

Oblinger, D., & Oblinger, J. (2005). Is it age or IT: First steps toward understanding the net generation. Educating the Net Generation, 2(1–2), 20.

Ondrey, Z. L. (2017). The relationship between teaching presence and student satisfaction in online learning (Unpublished doctoral dissertation). Wilkes University, Wilkes-Barre, PA.

Osgood, C. E., Suci, G., & Tannenbaum, P. (1957). The measurement of meaning. University of Illinois Press.

Papanagnou, D., Serrano, A., Barkley, K., Chandra, S., Governatori, N., Piela, N., Wanner, G. K., & Shin, R. (2016). Does tailoring instructional style to a medical student’s self-perceived learning style improve performance when teaching intravenous catheter placement? A randomized controlled study. BMC Medical Education, 16(1), 2051–2058.

Pashler, H., McDaniel, M., Rohrer, D., & Bjork, R. (2009). Learning styles: Concepts and evidence. Psychological Science in the Public Interest, 9(3), 105–119.

Pektas, S. T. (2007). A structured analysis of CAAD education. Open House International, 32(2), 46–54.

Pektas, S. T. (2012). The blended design studio: An appraisal of new delivery modes in design education. Procedia - Social and Behavioral Sciences, 51, 692–697. https://doi.org/10.1016/j.sbspro.2012.08.226

Pektas, Ş. T. (2015). The virtual design studio on the cloud: A blended and distributed approach for technology-mediated design education. Architectural Science Review, 58(3), 255–265. https://doi.org/10.1080/00038628.2015.1034085

Peterson, R. M. (2001). Course participation: An active learning approach employing student documentation. Journal of Marketing Education, 23(3), 187–194.

Pigliapoco, E., & Bogliolo, A. (2008). The effects of psychological sense of community in online and face-to-face academic courses. International Journal of Emerging Technologies in Learning, 3(4), 60–69.

Pomales-García, C., & Liu, Y. (2006). Web-based distance learning technology: The impacts of web module length and format. American Journal of Distance Education, 20(3), 163–179. https://doi.org/10.1207/s15389286ajde2003_4

Poynter, R. (2010). The handbook of online and social media research: Tools and techniques for market researchers. John Wiley & Sons.

Rahim, A. F. A. (2020). Guidelines for online assessment in emergency remote teaching during the COVID-19 pandemic. Education in Medicine Journal, 12(2), 59–68. https://doi.org/10.21315/eimj2020.12.2.6

Reiner, C., & Willingham, D. (2010). The myth of learning styles. Change: The Magazine of Higher Learning, 42(5), 32–35.

Reinert, H. (1976). One picture is worth a thousand words? Not necessarily! The Modern Language Journal, 60(4), 160–168. https://doi.org/10.2307/326308

Richburg, J. E. (2013). Online learning as a tool for enhancing design education (Doctoral dissertation, Kent State University).

Ridley, D. S., Schutz, P. A., Glanz, R. S., & Weinstein, C. E. (1992). Self-regulated learning: The interactive influence of metacognitive awareness and goal-setting. Journal of Experimental Education, 60(4), 293–306. https://doi.org/10.1080/00220973.1992.9943867

Rocereto, J. F., Puzakova, M., Anderson, R. E., & Kwak, H. (2011). The role of response formats on extreme response style: A case of Likert-type vs. semantic differential scales. In M. Sarstedt, M. Schwaiger, & C. R. Taylor (Eds.), Measurement and research methods in international marketing (Advances in International Marketing, Vol. 22) (pp. 53–71). Emerald Group Publishing Limited. https://doi.org/10.1108/S1474-7979(2011)0000022006

Rodriguez, C., Hudson, R., & Niblock, C. (2018). Collaborative learning in architectural education: Benefits of combining conventional studio, virtual design studio and live projects. British Journal of Educational Technology, 49(3), 337–353.

Rodriguez Bernal, C. M. (2017). Student-centred strategies to integrate theoretical knowledge into project development within architectural technology lecture-based modules. Architectural Engineering and Design Management, 13(3), 223–242. https://doi.org/10.1080/17452007.2016.1230535

Rollag, K. (2010). Teaching business cases online through discussion boards: Strategies and best practices. Journal of Management Education, 34(4), 499–526.

Roper, A. R. (2007). How students develop online learning skills. Educause Quarterly, 30(1), 62–65.

Ryan, R. M., & Deci, E. L. (2000). Self-determination theory and the facilitation of intrinsic motivation, social development, and well-being. American Psychologist, 55(1), 68–78.

Saghafi, M., Franz, J., & Crowther, P. (2012). Perceptions of physical versus virtual design studio education. Archnet-IJAR, 6(1), 6–22.

Sahu, P. (2020). Closure of universities due to coronavirus disease 2019 (COVID-19): Impact on education and mental health of students and academic staff. Cureus. Retrieved November 11, 2020, from https://www.cureus.com/articles/30110-closure-of-universities-due-to-coronavirus-disease-2019-covid-19-impact-on-education-and-mental-health-of-students-and-academic-staff. https://doi.org/10.7759/cureus.7541

Salas, G. (2010). Teacher candidates’ self-directed learning readinesses (Unpublished master's thesis). Anadolu University, Turkey.

Scardamalia, M., & Bereiter, C. (2014). Knowledge building and knowledge creation: Theory, pedagogy, and technology. Cambridge Handbook of the Learning Sciences, 2, 397–417.

Schadewitz, N., & Zamenopoulos, T. (2009). Towards an online design studio: A study of social networking in design distance learning. In International Association of Societies of Design Research (IASDR) Conference 2009, Seoul.

Schafer, C. L. (2012). Online learning and the process of change: The experiences of faculty and students at a two-year college (Unpublished doctoral dissertation). University of St. Thomas, St. Paul, MN.

Schnabel, M. A., & Ham, J. J. (2012). Virtual design studio within a social network. Journal of Information Technology in Construction, 17, 397–415.

Schön, D. A. (1987). Educating the reflective practitioner: Toward a new design for teaching and learning in the professions. Jossey-Bass.

Schroeder-Moreno, M. S. (2010). Enhancing active and interactive learning online-lessons learned from an online introductory agroecology course. NACTA Journal, 54(1), 21–30.

Schutt, M., Allen, B. S., & Laumakis, M. A. (2009). The effects of instructor immediacy behaviors in online learning environments. Quarterly Review of Distance Education, 10(2), 135–148.

Sesay, M. G. (2019). Perceived stress in college students: Prevalence, sources, and stress reduction activities (Unpublished doctoral dissertation). California Baptist University, Riverside, CA.

Shea, P., Fredericksen, E., Pickett, A., Pelz, W., & Swan, K. (2000). Measures of learning effectiveness in the SUNY Learning Network. Retrieved November 11, 2020, from https://urresearch.rochester.edu/institutionalPublicationPublicView.action?institutionalItemId=2491

Shirky, C. (2009). Here comes everybody: The power of organizing without organizations. Penguin Press.

Sireesha, N. L. (2018). The effects of technology on architectural education. International Research Journal of Architecture and Planning, 3(1), 22–28.

Snyder, K. D. (2003). Ropes, poles, and space: Active learning in business education. Active Learning in Higher Education, 4(2), 159–167. https://doi.org/10.1177/1469787403004002004

Struthers, C. W., Perry, R. P., & Menec, V. H. (2000). An examination of the relation among academic stress, coping, motivation, and performance in college. Research in Higher Education, 41(5), 581–592.

Swan, K., Shea, P., Fredericksen, E., Pickett, A., Pelz, W., & Maher, G. (2000). Building knowledge building communities: Consistency, contact and communication in the virtual classroom. Journal of Educational Computing Research, 23(4), 359–383.

Tello, S. F. (2007). An analysis of student persistence in online education. International Journal of Information and Communication Technology Education (IJICTE), 3(3), 47–62. https://doi.org/10.4018/jicte.2007070105

Thomas, M. J. W. (2002). Learning within incoherent structures: The space of online discussion forums. Journal of Computer Assisted Learning, 18(3), 351–366. https://doi.org/10.1046/j.0266-4909.2002.03800.x

Thurmond, V. A., Wambach, K., Connors, H. R., & Frey, B. B. (2002). Evaluation of student satisfaction: Determining the impact of a web-based environment by controlling for student characteristics. The American Journal of Distance Education, 16(3), 169–189.

Utman, C. H. (1997). Performance effects of motivational state: A meta-analysis. Personality and Social Psychology Review, 1(2), 170–182. https://doi.org/10.1207/s15327957pspr0102_4

Van Merriënboer, J. J., & Sluijsmans, D. M. (2009). Toward a synthesis of cognitive load theory, four-component instructional design, and self-directed learning. Educational Psychology Review, 21(1), 55–66.

vd Westhuizen, D. (2016). Guidelines for online assessment for educators. Commonwealth of Learning. https://doi.org/10.13140/RG.2.2.31196.39040

Verstijnen, I. M., van Leeuwen, C., Goldschmidt, G., Hamel, R., & Hennessey, J. M. (1998). Creative discovery in imagery and perception: Combining is relatively easy, restructuring takes a sketch. Acta Psychologica, 99(2), 177–200. https://doi.org/10.1016/S0001-6918(98)00010-9

Weerasinghe, T. A., Ramberg, R., & Hewagamage, K. P. (2009). Guidelines to design online learning environments. Retrieved November 11, 2020, from https://www.researchgate.net/profile/Thushani_Weerasinghe/publication/257923909_Guidelines_to_Design_Successful_Online_Learning_Environments/links/5b0441e60f7e9be94bdba0ac/Guidelines-to-Design-Successful-Online-Learning-Environments.pdf

Wei, C. W., & Chen, N. S. (2012). A model for social presence in online classrooms. Educational Technology Research and Development, 60(3), 529–545. https://doi.org/10.1007/s11423-012-9234-9

Weinel, M., Bannert, M., Zumbach, J., Hoppe, H. U., & Malzahn, N. (2011). A closer look on social presence as a causing factor in computer-mediated collaboration. Computers in Human Behavior, 27(1), 513–521. https://doi.org/10.1016/j.chb.2010.09.020

Willging, P. A., & Johnson, S. D. (2009). Factors that influence students’ decision to dropout of online courses. Journal of Asynchronous Learning Networks, 13(3), 115–127.

Wirtz, J., & Lee, M. C. (2003). An examination of the quality and context-specific applicability of commonly used customer satisfaction measures. Journal of Service Research, 5(4), 345–355.

Wojtowicz, J. (1995). Virtual design studio. Hong Kong University Press.

Young, M. R. (2005). The motivational effects of the classroom environment in facilitating self-regulated learning. Journal of Marketing Education, 27(1), 25–40.

Yuan, J., & Kim, C. (2014). Guidelines for facilitating the development of learning communities in online courses. Journal of Computer Assisted Learning, 30(3), 220–232.

Zapalska, A., & Brozik, D. (2006). Learning styles and online education. Campus-Wide Information Systems, 23(5), 325–335.

Zimmerman, B. J. (2008). Investigating self-regulation and motivation: Historical background, methodological developments, and future prospects. American Educational Research Journal, 45(1), 166–183.

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendices

Appendix 1: Course efficiency survey

| Q. no. | Question | Low keyword | High keyword | Mean | Mdn | Std. dev. |
|---|---|---|---|---|---|---|
| 1 | How would you rate your overall learning experience? | Frustrating | Satisfactory | 4.30 | 4 | 0.80 |
| 2 | How would you define your perception of the online course delivery method? | Tedious | Exciting | 3.79 | 4 | 0.95 |
| 3 | How would you rate the overall workload for this course? | Light | Demanding | 2.88 | 3 | 0.88 |
| 4 | How would you rate your overall motivation for learning during this course? | Low | High | 3.52 | 4 | 0.89 |
| 5 | How would you define your outlook towards the concepts regarding technology and the internet? | Hesitant | Fluent | 4.21 | 4 | 0.81 |
| 6 | How would you rate your ability of finding and learning information online, by yourself? | Low | High | 4.18 | 5 | 1.06 |
| 7 | How would you rate your tendency towards communicating/interacting with individuals online? | Reluctant | Eager | 3.12 | 3 | 1.15 |
| 8 | How would you rate your overall sense of engagement throughout this course? | Limited | Substantial | 3.73 | 4 | 0.75 |
| 9 | Have your expectations of the course been met? | Fell Short | Exceeded | 4.36 | 4 | 0.48 |