Abstract

Does political polarization lead to dysfunctional behavior? To study this question, we investigate the attitudes of supporters of Donald Trump and of Hillary Clinton towards each other and how these attitudes affect spiteful behavior. We find that both Trump and Clinton supporters display less positive attitudes towards supporters of the opposing candidate than towards supporters of their own candidate. More importantly, we show that significantly more wealth is destroyed if the opponent is an opposing voter. This effect is mainly driven by Clinton voters. This provides the first experimental evidence that political polarization leads to destructive behavior.

1 Introduction

Political polarization is a widespread global phenomenon (Carothers and Donohue 2019), with consequences of great interest to the public and to social scientists (Bail et al. 2018; Dixit and Weibull 2007). The US is a particularly useful setting for studying polarization and its consequences because polarization there has increased in recent years (Boxell et al. 2020). Recent studies show that the polarization of the Democratic and Republican Parties is increasing and is higher than at any other time since the Civil War (Hare and Poole 2014). A recent survey by the Pew Research Center also demonstrates that partisan division has increased in recent years, and not only at the party level (Pew Research Center 2017a). The Pew Research Center (2017a) shows that in 1994 more than one-third of self-identified Republicans were more liberal than the median Democrat, compared to just 5% in 2017. The same trend can be seen for Democrats. The study also illustrates that “Republicans and Democrats both say their friend networks are predominantly made up of people who are like-minded politically” (Pew Research Center 2017a), and most Democrats and Republicans state that they have few or no friends in the opposing party.

Partisanship not only shapes views (Bartels 2002) and influences political behavior (Campbell et al. 1980; Green et al. 2002) but also affects decisions about where to live (Bishop and Cushing 2008), what to buy (Nunberg 2007), and how to name one’s children (Oliver et al. 2016). Political polarization also has negative consequences. For example, it has been shown that political polarization corrodes civility in public discourse (Sunstein 2018), erodes public faith in institutions (Fomina 2019), and nourishes discontent with political parties (Carothers and Donohue 2019). Yet it is an open question whether and how political polarization affects individual decision-making, particularly destructive behavior.

Our research examines whether, and to what degree, political polarization “spills over” into non-political, especially destructive, behavior. To examine this question, we recruit from the population of American online workers without revealing our interest in partisan spillovers. We then compare individual choices made outside the voting context as a function of the (revealed) partisan preference of the co-player. Our main question is whether partisanship produces spiteful decisions among the population at large outside of political contexts.

We therefore measure the attitudes of supporters of Donald Trump and of supporters of Hillary Clinton towards coinciding voters (who voted for the same candidate) and opposing voters (who voted for the other candidate). More importantly, we measure whether participants are willing to harm their counterparts by reducing their payoff. We do so using a design with no trade-off between one’s own and the other’s payoff, that is, a setting of pure spite. To the best of our knowledge, we are the first to study spite in a political context and, more importantly, the first to study the “spill over” of partisan preferences into destructive behavior.

Previous research has already examined whether partisanship affects non-political behavior. For example, Fowler and Kam (2007) demonstrate that partisanship influences decisions in dictator games. They show ingroup favoritism among co-partisans in the sense that dictators share more money with co-partisan recipients than with non-partisans. In a similar vein, Carlin and Love (2013) investigate the effect of partisanship on trust behavior and show that partisanship biases trust in favor of co-partisans.Footnote 1 While there is a trade-off between one’s own payoff and the other’s payoff in dictator and trust games, we use a game in which the decider gains no monetary benefit from destroying the other’s payoff. Thus, we look at a systematically different situation. Although Fowler and Kam (2007) show that partisanship influences decisions in dictator games, this behavior appears to benefit the ingroup rather than to harm the outgroup. Moreover, the dictator game does not allow a distinction between positive and negative discrimination. We use a design that identifies the dysfunctional aspect of behavior. The difference between a dictator game and our game is thus comparable to the difference between withholding help and directly harming others. Hence, unlike Fowler and Kam (2007) and Carlin and Love (2013), the main goal of our paper is to study dysfunctional behavior directly.

Investigating the effect of partisanship on non-political behavior is also closely linked to the literature on the ingroup–outgroup bias.Footnote 2 This literature investigates whether group affiliation (either induced or existing) leads to discriminatory behavior towards ingroup and/or outgroup-members.Footnote 3 One of the main findings in this strand of literature is that people discriminate positively towards the ingroup (ingroup favoritism). However, hostile discrimination against the outgroup (outgroup-hate) is rarely found.Footnote 4

One exception using natural groups is Weisel and Böhm (2015), who show that findings of outgroup-hate are actually more a form of help avoidance than a form of direct harm to the outgroup. Similarly, Abbink and Harris (2019) study intergroup conflict among artificial and naturally occurring groups and find outgroup discrimination only among the naturally occurring groups. Moreover, Heap and Zizzo (2009) and Zizzo (2010) show negative discrimination in artificially induced groups and in cases where the perception of common fate is missing. Further, Brewer (2017) reviews the literature on ingroup-love and outgroup-hate and points out that most discrimination is positive rather than negative. Balliet et al. (2014) apply meta-analytic techniques to cooperative decision-making studies of intergroup conflict and find support for ingroup favoritism but little evidence for outgroup-hate. Most recently, Dimant (2020) provides evidence that ingroup-love and outgroup-hate function differently and are not necessarily two sides of the same coin. Specifically, Dimant (2020) finds, in a setting of political polarization, that ingroup-love occurs in the perceptual domain, whereas outgroup-hate occurs in the behavioral domain.

In the economic literature, Chen and Li (2009) show that ingroup-bias also extends to economic decision-making.Footnote 5 More specifically, they show that being matched with an ingroup-member results in greater charity concerns and lower envy.Footnote 6 The most recent advances are made by Kranton and Sanders (2017) and Kranton et al. (2018). Kranton and Sanders (2017) show that people exhibit groupy and non-groupy preferences. More specifically, they use a minimal-group paradigm and political affiliation to investigate whether a person’s social preferences change depending on the type of matched partner. They show that 40% of participants exhibit no bias, i.e., they do not change their social preferences, while 60% switch from one social-preference classification to another (e.g., from selfish to inequality-averse). Interestingly, their results indicate that ingroup-bias might result in a higher likelihood of being classified as dominance-seeking. Our work can thus be seen as complementary to Kranton and Sanders (2017) and provides even stronger support for the importance of partisanship for changes in social preferences. While the literature so far has mainly provided compelling evidence for ingroup-bias, no study has focused specifically on the destructive side of this bias. Unlike the previous literature, we focus particularly on negative discrimination in the form of direct harm.

While the question at hand has not been answered so far, there has been some research on antisocial behavior. A prevalent theme in economic decision-making is the study of prosocial behavior. It has been demonstrated that people have prosocial preferences,Footnote 7 behave as conditional cooperators,Footnote 8 and are willing to sacrifice their own payoff for the benefit of another person.Footnote 9 More recently, however, the dark side of economic decision-making has come into focus. This literature shows that a significant fraction of people neither maximize their payoff nor behave prosocially, but instead punish antisocially (Herrmann et al. 2008), burn others’ money,Footnote 10 behave spitefully,Footnote 11 and are even willing to pay to behave antisocially.Footnote 12 This literature has also established that even children display destructive behavior (Fehr et al. 2013), that observability might induce antisocial behavior (Bolton et al. 2018), and that social proximity matters for social preferences (Dimant and Hyndman 2019). Further, Dimant (2019) shows that antisocial behavior is more contagious than prosocial behavior and that social proximity amplifies this effect. Similarly, Bauer et al. (2018) provide evidence of social contagion of ethnic hostility, and Zizzo and Fleming (2011) show that social pressure is correlated with money-burning behavior. Most relevant for our paper in the context of antisocial behavior are the studies by Dimant (2020) and Gangadharan et al. (2019). Dimant (2020) focuses on political polarization (in the form of hate and love for Donald J. Trump) and finds outgroup-hate in a take-or-give dictator game. Gangadharan et al. (2019) study the effect of social identity (via university residential arrangements) on antisocial behavior and reveal that sharing the same social identity mitigates antisocial behavior. We contribute to this literature by focusing explicitly on the effect of political polarization on antisocial behavior.

In line with the previous literature on destructive behavior, we find that some participants are willing to destroy the resources of coinciding voters. More importantly, however, we show that, in the aggregate, participants behave significantly more spitefully towards their voting counterparts (i.e., opposing voters): they increase the probability of destroying an opposing voter’s payoff by almost 15% relative to the probability of destroying a fellow voter’s payoff. This constitutes our main finding. Yet it is important to understand whether this effect holds throughout the sample or is driven by a subgroup. Of particular interest is the behavior of the rather distinct subgroups of Clinton and Trump voters. We find that Trump voters, who generally exhibit higher levels of spite, do not behave more spitefully towards opposing voters: there is no statistically significant effect of their opponents’ partisanship on their choices. By contrast, Clinton voters do behave more spitefully towards opposing voters: they increase the probability of destroying a Trump voter’s payoff by about 34% relative to the probability of destroying a fellow Clinton voter’s payoff. Thus, our main result is driven primarily by the behavior of Clinton voters. We also see that participants significantly dislike opposing voters. The difference in behavior between Clinton and Trump voters seems to arise from an asymmetry in the intensity of the ingroup–outgroup bias: Clinton voters express strong antipathy toward Trump supporters, whereas Trump voters have a weaker aversion towards Clinton voters. Thus, we offer three main results:

1. Participants report having more negative attitudes towards opposing voters.
2. Participants behave significantly more spitefully towards their voting counterparts, which is mostly driven by Clinton voters.
3. Clinton and Trump voters differ substantially in their attitudes and behavior towards opposing voters.

Altogether, we provide the first evidence of spiteful behavior due to political affiliation and the first empirical support for the destructive effects of political division.

2 Design

In the following, we present the recruitment, the procedure of the experiment, and the measures of interest.Footnote 13 We conducted the experiment in five waves: before the 58th US presidential election in late November 2016, after the inauguration of the president-elect in late January 2017, before the midterms in late October 2018, after the midterms in early November 2018, and after the 59th US presidential election in early January 2021.Footnote 14

2.1 Recruitment

Participants were recruited via Amazon’s Mechanical Turk (MTurk), an online labor market frequently used by social scientists for conducting experiments.Footnote 15 Workers on MTurk can choose from human intelligence tasks (HITs) and are paid by the requester after performing the task. These tasks are typically simple and quick (e.g., answering surveys, transcribing data, or classifying images) (Horton et al. 2011; Paolacci et al. 2010). More importantly, MTurk samples tend to be more representative of the US population than typical student samples, as they are usually more diverse in age, ethnicity, education, and geographical location (Buhrmester et al. 2011; Paolacci et al. 2010). Further, results obtained on MTurk are similar to those obtained via traditional methods (Buhrmester et al. 2011; Paolacci et al. 2010). For example, Arechar et al. (2018) show that even interactive experiments can be conducted reliably online and that behavioral patterns observed in the lab can be replicated in an online experiment with an MTurk sample.

In addition to the more diverse sample, an online design has several advantages for our experiment. First, participants’ anonymity can be sufficiently ensured, as we only have participants’ MTurk-IDs, which might yield more reliable results concerning antisocial behavior. Second, reciprocity concerns can be minimized, as participants have no way of meeting the other participants or of figuring out who was assigned as their partner (which might be possible in a laboratory setting and might bias behavior in a more prosocial direction). Third, peer effects can be excluded for the same reason. Fourth, participants might be more willing to reveal their true political identity.

To ensure a high-quality sample (i.e., participants who understand the task and pay attention), we impose several eligibility criteria. We restrict recruitment to US-based individuals with an approval rate of 97% or higher.Footnote 16 Further, we restrict recruitment to individuals with 500 or more approved HITs. In addition, individuals have to pass an attention check and comprehension questions to take part.
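The screening rules above can be expressed as a simple filter. This is a minimal sketch under stated assumptions: the `Worker` record and its field names are hypothetical (they are not MTurk API fields), and in practice the location, approval-rate, and approved-HIT criteria would typically be set as qualification requirements on the HIT, while the attention and comprehension checks take place inside the survey.

```python
from dataclasses import dataclass


@dataclass
class Worker:
    # Hypothetical record; field names are illustrative, not MTurk API fields.
    worker_id: str
    country: str
    approval_rate: float  # percentage of approved HITs, 0-100
    approved_hits: int
    passed_attention_check: bool
    passed_comprehension: bool


MIN_APPROVAL_RATE = 97.0  # "97% or higher"
MIN_APPROVED_HITS = 500   # "500 or more approved HITs"


def is_eligible(w: Worker) -> bool:
    """Apply all recruitment criteria; every one must hold."""
    return (
        w.country == "US"
        and w.approval_rate >= MIN_APPROVAL_RATE
        and w.approved_hits >= MIN_APPROVED_HITS
        and w.passed_attention_check
        and w.passed_comprehension
    )


if __name__ == "__main__":
    pool = [
        Worker("A1", "US", 98.5, 1200, True, True),
        Worker("A2", "US", 96.9, 5000, True, True),  # approval rate too low
        Worker("A3", "US", 99.0, 499, True, True),   # too few approved HITs
        Worker("A4", "CA", 99.0, 800, True, True),   # not US-based
        Worker("A5", "US", 99.0, 800, True, False),  # failed comprehension
    ]
    print([w.worker_id for w in pool if is_eligible(w)])  # ['A1']
```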

2.2 Procedure

Participants willing to take part in the study were directed to the online survey tool Qualtrics, where they were asked about their vote in the 58th US presidential election. Only participants who indicated a vote for either Donald Trump or Hillary Clinton were directed to the consent form.Footnote 17 Undecided or independent voters were excluded from participation. After reading the consent form, all remaining participants answered socio-demographic questions (gender, age, income, education).Footnote 18

Thereafter, participants were presented with the experimental manipulation, which consisted of having them interact with either a coinciding voter or an opposing voter.

Participants were told that at the beginning of the experiment, Trump voters had been assigned the group color red and Clinton voters the color blue. The manipulation across participants consisted of telling them which color their matched opponent had (red or blue).Footnote 19

To test comprehension and attentiveness, we asked participants about the elements that appeared on their screen, as some recent studies indicate the use of bots on MTurk. These simple questionnaire elements covered the choices, the payoffs, and information about the co-player, i.e., whether the matched opponent had been assigned the color blue, red, or green (green was a filler). Inattentive participants, participants who did not comprehend the task, and potential bots were filtered out, as we are only interested in participants with a basic comprehension of the task. Failing to answer these questions correctly thus led to exclusion from the experiment and from payment.

One potential concern is that asking, as part of the basic comprehension test, which color the opponent had been assigned might make the manipulation salient.Footnote 20 There are several responses to this concern. First, it is crucial for this study that participants have a basic comprehension of the task and the situation. Second, there were several comprehension questions, which should diffuse the focus on this particular question. Third, even if the question increased the salience of the opponent’s political position, this would merely make the setting more realistic: real-world actors often display their political attitudes and views very saliently, for example through yard signs, political bumper stickers, or MAGA hats.Footnote 21

Overall, we have a 2 (Own Vote \(\in \{\)Clinton; Trump\(\}\)) \(\times\) 2 (Opponent’s Vote \(\in \{\)Clinton; Trump\(\}\)) design.
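The 2 × 2 structure and the resulting in-group indicator can be sketched as follows. The equal-probability assignment of the opponent’s vote is an assumption (assignment probabilities are not stated here), and the function and field names are hypothetical. The neutral baseline of the fifth wave, in which no opponent information was shown, lies outside this 2 × 2 and is not modeled.

```python
import random
from itertools import product

VOTES = ("Clinton", "Trump")
# Group colors as described to participants.
COLOR = {"Trump": "red", "Clinton": "blue"}

# The four cells: (own vote, opponent's vote).
CELLS = list(product(VOTES, VOTES))


def assign_opponent(own_vote: str, rng: random.Random) -> dict:
    """Draw the opponent's vote (assumed 50/50) and derive the treatment cell."""
    if own_vote not in VOTES:
        raise ValueError("only Clinton and Trump voters are eligible")
    opponent_vote = rng.choice(VOTES)
    return {
        "own_vote": own_vote,
        "opponent_vote": opponent_vote,
        "opponent_color": COLOR[opponent_vote],  # what the participant is told
        "ingroup": own_vote == opponent_vote,    # coinciding vs opposing voter
    }


if __name__ == "__main__":
    rng = random.Random(1)
    print(CELLS)
    print(assign_opponent("Clinton", rng))
```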

After participants answered the control questions, we measured their spiteful behavior towards their co-player (either a coinciding or an opposing voter), as explained in greater detail in Sect. 2.3. After completing the incentivized tasks, participants answered a set of post-experimental questions.

To measure participants’ attitudes towards opposing voters, we used an adjusted version of the social distance questionnaire (Crandall 1991) and the feeling thermometer (Weisberg 1980). We also elicited participants’ general tendency towards spite using a questionnaire (Marcus et al. 2014).Footnote 22

At the end of the experiment, one of the spite decisions was randomly selected to be payoff-relevant, to ensure incentive compatibility.Footnote 23 Participants were informed that they would be paid within one week after the payoff was determined, with the payoff depending on their own and their opponent’s decisions.Footnote 24 A graphical representation of the procedure can be seen in Fig. 16 in the supplementary material.

2.3 Own spite measure

Our main measure aims to mimic basic market interactions in which spite has been observed. More specifically, it reflects a simplified and condensed version of a second-price auction, in which one player can reduce the opponent’s payoff by increasing their own bid (see Kimbrough and Reiss 2012, for such a situation). The measure consists of three allocation decisions over money, shown in Table 1. We call this our own spite measure. Similar to the SVO Slider measure of Murphy et al. (2011), participants decided three times among nine possible allocations. Participants were told that either their decision or their opponent’s decision would be implemented, depending on a computerized random draw.
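The payment rule (one of the three decisions is drawn to be payoff-relevant, and a random draw decides whether the participant’s or the opponent’s choice is implemented) can be sketched as below. Assumptions: the allocation values are hypothetical placeholders since Table 1 is not reproduced here, the draw between the two players’ choices is taken to be 50/50, and all names are illustrative. Only the 0.2-cent point value comes from the text.

```python
import random

CENTS_PER_POINT = 0.2  # point value stated in the text

# Hypothetical allocation sets: (points to decider, points to other).
# The decider's points are constant; the other's points fall from 60 to 40.
HYPOTHETICAL_SETS = [[(50, 60 - 2.5 * k) for k in range(9)] for _ in range(3)]


def realized_points(own_choices, opp_choices, allocation_sets, rng):
    """Return (participant_points, opponent_points) for one matched pair.

    own_choices / opp_choices hold the index (0-8) chosen in each decision.
    """
    decision = rng.randrange(len(allocation_sets))  # payoff-relevant decision
    options = allocation_sets[decision]
    if rng.random() < 0.5:  # assumed 50/50: participant's choice implemented
        mine, theirs = options[own_choices[decision]]
    else:  # opponent's choice implemented; the participant is the "other"
        theirs, mine = options[opp_choices[decision]]
    return mine, theirs


def to_cents(points):
    """Convert points to US cents."""
    return points * CENTS_PER_POINT


if __name__ == "__main__":
    rng = random.Random(42)
    mine, theirs = realized_points([0, 0, 0], [8, 8, 8], HYPOTHETICAL_SETS, rng)
    print(mine, theirs, to_cents(mine), to_cents(theirs))
```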

In all sets, the allocation with the highest payoff for the other player also maximizes one’s own payoff. Any deviation from this allocation reduces the other player’s payoff and never increases one’s own. In contrast to a standard dictator game, where there is a trade-off between one’s own payoff and the opponent’s, participants in this game who do not choose the Pareto-efficient outcome do so in order to harm the other player. Any deviation from the Pareto-efficient outcome therefore resembles spiteful behavior in a market setting and can be interpreted as spite or joy-of-destruction.Footnote 25

In our measure, the spite score is the amount taken away relative to the maximum amount that could be taken away. The amount taken away can range between 0 and 60 points (the latter meaning that the opponent’s payoff is reduced as far as possible in all three decisions), so the spite score ranges between 0 and 1.Footnote 26 As spite is arguably rather rare, we designed the task such that the threshold for behaving spitefully is low. In particular, we chose low stakes (each point in the allocations represents 0.2 cents)Footnote 27 so that spiteful behavior is more readily detected. This way, we potentially increase the chance of finding spiteful behavior, and any null findings carry greater weight.
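The spite score can be computed from the three choices as below. This sketch uses hypothetical allocation sets (the actual values are in Table 1, not reproduced here) in which up to 20 points can be taken away per decision, so the maximum of 60 points matches the text. In each set, the Pareto-efficient allocation is taken to be the one giving the other player the most points, since by construction it also maximizes the decider’s own payoff.

```python
# Hypothetical allocation sets: (points to decider, points to other).
HYPOTHETICAL_SETS = [[(50, 60 - 2.5 * k) for k in range(9)] for _ in range(3)]


def points_taken(choice, options):
    """Points destroyed relative to the Pareto-efficient (max-to-other) allocation."""
    best_for_other = max(other for _, other in options)
    return best_for_other - options[choice][1]


def spite_score(choices, allocation_sets):
    """Points taken away divided by the maximum that could be taken away (0 to 1)."""
    taken = sum(points_taken(c, opts) for c, opts in zip(choices, allocation_sets))
    max_taken = sum(
        max(o for _, o in opts) - min(o for _, o in opts) for opts in allocation_sets
    )
    return taken / max_taken


def is_spiteful(choices, allocation_sets):
    """Any deviation from the Pareto-efficient allocation counts as spite."""
    return spite_score(choices, allocation_sets) > 0


print(spite_score([0, 4, 8], HYPOTHETICAL_SETS))  # 0.5 (0 + 10 + 20 of 60 points)
```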

2.4 Measures of attitudes

To measure the participant’s attitudes towards their co-player, we utilized the social distance questionnaire and the feeling thermometer.

2.4.1 Social distance questionnaire

The social distance questionnaire is designed “to measure social rejection and willingness to interact with an individual member of a social group” (Robinson et al. 1999, p. 341 ff). In our experiment, the respective social groups were Trump voters and Clinton voters. The questionnaire elicits agreement with seven items on a scale from one to seven: participants rate how strongly they agree with statements about a person. For example, participants were asked how strongly they agree with the following statements about a Trump voter: “This appears to be a likable person” or “I would like this person to move into my neighborhood.” The social distance score is the mean of the seven item responses.Footnote 28 Higher scores indicate feeling closer to the individual member of the respective social group.
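As a minimal sketch, the scoring rule just described (the mean of seven responses on a 1 to 7 scale, with higher values meaning closer) can be written as below. It assumes all items are already coded in the same direction; whether any item was reverse-coded is not stated here, so no recoding is shown.

```python
from statistics import mean

N_ITEMS = 7
SCALE_MIN, SCALE_MAX = 1, 7


def social_distance_score(responses):
    """Mean agreement across the seven items; higher = closer to the group member."""
    if len(responses) != N_ITEMS:
        raise ValueError(f"expected {N_ITEMS} item responses, got {len(responses)}")
    if any(not SCALE_MIN <= r <= SCALE_MAX for r in responses):
        raise ValueError("responses must lie on the 1-7 scale")
    return mean(responses)


print(social_distance_score([6, 5, 7, 6, 5, 6, 7]))  # 6
```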

2.4.2 Feeling thermometer

The feeling thermometer is commonly used in polling (e.g., the American National Election Studies), in political science (Greene 1999; Kaid et al. 1992; Miller and Wlezien 1993), and in medicine (Patrick et al. 1994; Jacobson et al. 1992; Schünemann et al. 2003). It asks participants to indicate how warm they feel towards a specific group or person. We asked participants to indicate their feelings towards Clinton voters, Trump voters, Republicans in general, and Democrats in general on a scale from 0 to 10. Participants were told that if they had a positive feeling towards a group or felt favorably towards it, they should give it a score between 5 and 10, depending on their feeling; if they felt negatively, a score between 0 and 5; and if they had no feeling either way, a score of 5.
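The response instructions above map ratings to a direction of feeling, and the comparisons of attitudes rest on the difference between ratings of one’s own side and of the other side. A sketch of both, with hypothetical function names; treating exactly 5 as "no feeling" follows the instructions to participants.

```python
def thermometer_direction(score: float) -> str:
    """Classify a 0-10 rating following the instructions given to participants."""
    if not 0 <= score <= 10:
        raise ValueError("ratings lie between 0 and 10")
    if score > 5:
        return "positive"
    if score < 5:
        return "negative"
    return "neutral"  # 5 = no feeling either way


def warmth_gap(toward_own_side: float, toward_other_side: float) -> float:
    """Ingroup minus outgroup warmth; positive values mean the own side is rated warmer."""
    return toward_own_side - toward_other_side


print(thermometer_direction(8), thermometer_direction(5), thermometer_direction(2))
print(warmth_gap(8, 2))  # 6
```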

2.5 Framework

To help the reader place our results, we present a simple framework based on a model of antisocial behavior by Gangadharan et al. (2019). The monetary payoff of participant i is denoted by \(\pi _i(a)\) for each choice a. In addition, we assume that participant i has a concern for the monetary payoff of the opponent, \(\pi _j(a)\). Following Gangadharan et al. (2019), we assume this concern to be the difference between the own monetary payoff and the monetary payoff of the opponent, weighted by the spite factor \(\alpha \in [-1,1]\). The utility function of participant i, therefore, is given by:

\[ U_i(a) = u\big(\pi _i(a)\big) + \alpha \,\big[\pi _i(a) - \pi _j(a)\big], \]

where u(x) denotes the utility from monetary payoff. For values of \(\alpha \in (0,1]\) we classify the preferences of the participant as spiteful, and for values of \(\alpha \in [-1,0)\) we classify the preferences of the participant as altruistic. To account for the political identity of the opponent, we assume that the regard for the opponent’s gain is shifted by \(\mu\). Thus, the utility function of participant i, influenced by political identity, is given by:

\[ U_i(a) = u\big(\pi _i(a)\big) + \alpha \,\big[\pi _i(a) - \pi _j(a)\big] - \mu \,\pi _j(a). \]

Let \(\mu =0\) if participants share the same political identity as their opponent, and \(\mu >0\) otherwise.

We can easily see that the utility of i is increasing in \(\pi _j(a)\) if they are altruistic (\(\alpha \in [-1,0)\)) and decreasing in \(\pi _j(a)\) if they are spiteful (\(\alpha \in (0,1]\)). More importantly, for a given \(\alpha\), a positive \(\mu\) makes utility fall more steeply (or rise less steeply) in \(\pi _j(a)\), i.e., when participants do not share the same political identity as their opponent. Thus, we hypothesize that participants behave more spitefully if they are paired with an opposing voter than with a coinciding voter, i.e., political identity influences antisocial behavior.
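To illustrate the hypothesis, the sketch below computes the predicted choice in one allocation set. It assumes linear money utility, u(x) = x, and the specification U_i(a) = u(π_i(a)) + α[π_i(a) - π_j(a)] - μ π_j(a), i.e., the regard for the opponent’s gain shifted down by μ. Both this functional form and the allocation values are illustrative assumptions, not the authors’ estimates.

```python
# Hypothetical allocation set: (own points, opponent points).
# Own points are constant; opponent points fall from 60 to 40.
OPTIONS = [(50, 60 - 2.5 * k) for k in range(9)]


def utility(own, other, alpha, mu=0.0, u=lambda x: x):
    """u(own) + alpha * (own - other) - mu * other (assumed functional form)."""
    return u(own) + alpha * (own - other) - mu * other


def predicted_choice(options, alpha, mu=0.0):
    """Index of the utility-maximizing allocation (first one on ties)."""
    return max(range(len(options)), key=lambda k: utility(*options[k], alpha, mu))


# A mildly altruistic participant facing a coinciding voter (mu = 0)
print(predicted_choice(OPTIONS, alpha=-0.2))          # 0: Pareto-efficient
# The same participant facing an opposing voter with mu = 0.5
print(predicted_choice(OPTIONS, alpha=-0.2, mu=0.5))  # 8: maximal destruction
```

Because the decider’s own payoff is constant within this set, only the sign of α + μ determines the choice, so the linear sketch produces corner choices only; interior spite scores would need elements not modeled here.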

3 Results

3.1 Participants

A total of 3535 participants (1888 females, 1647 males, 2180 Clinton voters, 1355 Trump voters) finished the survey.Footnote 29

As with most experimental studies, our sample does not perfectly represent the American population. However, in the Online Appendix we show in detail that our sample closely resembles the patterns of the general population and reflects the attitudes of Clinton and Trump voters in general rather reliably.

3.2 Spite behavior

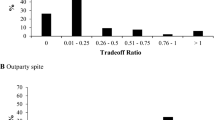

Fig. 1 Results of the spite score. The top panel depicts how spitefully participants behave towards their partners. The left three bars show the spite behavior of Trump voters, while the right three bars show that of Clinton voters. Red bars denote spite behavior towards outgroup-members (i.e., opposing voters) and blue bars denote spite behavior towards ingroup-members (i.e., coinciding voters), averaged over all five waves. Green bars denote spite behavior in the baseline treatment, collected only in the fifth wave, in which no information about the opponent was provided. Tie fighters depict 95% confidence intervals. P values are calculated using t-tests. The bottom panel depicts the distribution of the spite score by opponent. The red distribution denotes spite behavior towards ingroup-members, while the blue distribution denotes spite behavior towards outgroup-members. (Color figure online)

To see how participants are behaviorally influenced by their opponent, we examine the spite score in this section. The spite score is a proportion between 0 and 1 representing how many points were taken away from the opponent relative to the maximum possible amount. For example, a spite score of 0.4 means that 40% of the payoff that could have been destroyed was destroyed. The distribution of the spite score is shown in the bottom panel of Fig. 1, and the top panel of Fig. 1 depicts aggregated spite behavior by opponent and vote. Spite behavior towards ingroup-members (i.e., coinciding voters) is substantial: on average, 41% of participants behave spitefully towards an ingroup-member, and overall participants exhibit a spite score of 0.19 towards ingroup-members. This level of spite is not uncommon in the literature. For example, Levine (1998) demonstrates that 20% of subjects might be considered spiteful. Sadrieh and Schröder (2017) find that about 37% of participants were willing to harm passive players. Further, Kimbrough and Reiss (2012) find that 34% of choices are spiteful and about 15% are maximally spiteful, and Abbink and Sadrieh (2009) and Abbink and Herrmann (2011) find destruction rates between 9 and 40%.

To analyze spite behavior more formally, we use a zero-inflated beta-regression. In the Online Appendix we discuss in detail why we use this estimation approach and how it is estimated. Note that all our results are also robust to more common estimation methods such as OLS and Tobit regressions, as discussed in the Online Appendix. Essentially, the zero-inflated beta-regression models spite in two parts: first, whether a participant decided to be spiteful or not (using a logistic regression); and second, conditional on deciding to be spiteful, how spiteful the participant decided to be (using a beta regression).
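The two-part structure can be made concrete through the log-likelihood contribution of one observation. The sketch below is a standard zero-inflated beta density in the mean-precision parameterization, written in plain Python; the single `outgroup` covariate, the logit links, the toy data, and all names are illustrative assumptions, and the authors’ exact specification is in their Online Appendix. Because the beta part is defined only on the open interval (0, 1), scores of exactly 1 (full destruction) would need an extra component or a transformation, which is not shown.

```python
import math


def expit(z):
    """Logistic link."""
    return 1.0 / (1.0 + math.exp(-z))


def beta_logpdf(y, a, b):
    """Log density of Beta(a, b) at y in (0, 1)."""
    return (math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
            + (a - 1) * math.log(y) + (b - 1) * math.log1p(-y))


def zib_loglik(y, p_spite, mean, precision):
    """P(y > 0) = p_spite; given y > 0, y ~ Beta(mean*phi, (1-mean)*phi)."""
    if y == 0:
        return math.log1p(-p_spite)  # part 1: chose not to be spiteful
    if not 0 < y < 1:
        raise ValueError("the beta part needs 0 < y < 1")
    a, b = mean * precision, (1 - mean) * precision
    return math.log(p_spite) + beta_logpdf(y, a, b)  # part 1 times part 2


def total_loglik(params, data):
    """Sum over (spite_score, outgroup) pairs; params = (g0, g1, b0, b1, log_phi)."""
    g0, g1, b0, b1, log_phi = params
    total = 0.0
    for y, outgroup in data:
        p = expit(g0 + g1 * outgroup)  # logistic part: whether spiteful
        m = expit(b0 + b1 * outgroup)  # beta part: how spiteful, given spite
        total += zib_loglik(y, p, m, math.exp(log_phi))
    return total


# Hypothetical toy data, for illustration only.
toy = [(0.0, 0), (0.25, 0), (0.0, 1), (0.5, 1), (0.75, 1)]
print(total_loglik((0.0, 0.5, -0.5, 0.2, 1.0), toy))
```

Maximizing `total_loglik` numerically would give parameter estimates; since the two parts share no parameters in this sketch, the logistic and beta pieces could also be fitted separately.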

In the aggregate (pooling over Clinton and Trump voters), Table 2 shows that the probability that a participant behaves spitefully towards an opposing voter (47%) is significantly higher than the probability that a participant behaves spitefully towards a fellow voter (41%). Specifically, the probability of spiteful behavior increases by about 15% (in relative terms) if a participant interacts with an opposing voter. However, the intensity of spite, conditional on being spiteful, does not increase significantly if the deciding participant interacts with an outgroup-member rather than an ingroup-member. Further, spiteful behavior is indistinguishable between ingroup-members and neutral opponents.Footnote 30 Thus, we find evidence of negative discrimination.Footnote 31

Result B1 Participants are significantly more likely to behave spitefully towards an opposing voter compared to a coinciding voter.

The key insight is thus that, in a non-political situation, participants are more likely to behave spitefully towards people who voted differently, which shows that partisanship spills over into the non-political realm. However, this result is driven mainly by the behavior of Clinton voters.

The disaggregated results can be found in Table 2.Footnote 32 The probability that a Clinton voter behaves spitefully towards a Trump voter is significantly higher than the probability that a Clinton voter behaves spitefully towards a fellow Clinton voter. Specifically, Clinton voters’ probability of spiteful behavior rises significantly from 35% towards ingroup-members to 47% towards outgroup-members, i.e., a relative increase of 34%. Conditional on behaving spitefully, the probability that a Clinton voter behaves fully spitefully (taking away all points) towards a coinciding voter does not differ significantly from the corresponding probability towards a Trump voter. Clinton voters also do not behave more spitefully (neither in their decision to behave spitefully nor in their conditional behavior) towards a neutral baseline opponent. Thus, Clinton voters display more spiteful behavior towards outgroup-members, which is consistent with negative discrimination.
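The relative increases quoted in this section follow from the reported probabilities by simple arithmetic. Using the rounded percentages gives the figures below; the underlying unrounded estimates may differ slightly.

```python
def relative_change(reference: float, comparison: float) -> float:
    """Relative change of `comparison` with respect to `reference`."""
    return (comparison - reference) / reference


# Pooled: towards fellow voters 41% vs towards opposing voters 47%
print(f"{relative_change(0.41, 0.47):.1%}")  # 14.6%, i.e. "almost 15%"
# Clinton voters: towards Clinton voters 35% vs towards Trump voters 47%
print(f"{relative_change(0.35, 0.47):.1%}")  # 34.3%
```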

Concerning Trump voters, they are significantly more likely to behave spitefully towards a coinciding voter than Clinton voters are towards a coinciding voter. However, Trump voters show no significant change in their spiteful behavior towards Clinton voters (neither in their decision to behave spitefully nor in their conditional behavior). Trump voters also do not behave more spitefully (neither in their decision to behave spitefully nor in their conditional behavior) towards a neutral baseline opponent. Thus, while Trump voters generally behave more spitefully, they do not differentiate between ingroup-members, outgroup-members, and neutral opponents.

Result B1 is thus driven mainly by the antisocial behavior of Clinton voters. When paired with an outgroup-member rather than an ingroup-member, Clinton voters engaged in relatively more dysfunctional behavior than Trump voters. Thus, political polarization leads to more dysfunctional behavior, but only among Clinton voters.

Result B2 Clinton voters are more likely to behave spitefully towards Trump voters compared to fellow Clinton voters. Trump voters, on the other hand, do not differentiate in their behavior between Clinton and Trump voters.

3.3 Attitudes

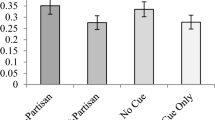

Fig. 2 Attitudes and opponent. The figure shows the attitudes towards opponents. The left panel shows how close participants report feeling towards their ingroup-members and outgroup-members; the right panel shows how warm participants report feeling towards them. The left two columns show the attitudes towards ingroup-members (i.e., coinciding voters), while the right two columns show the attitudes towards outgroup-members (i.e., opposing voters). Red bars denote the attitudes of Trump voters, while blue bars denote the attitudes of Clinton voters. Tie fighters denote 95% confidence intervals. P values are calculated using t-tests. (Color figure online)

To better understand the reasoning underlying the observed behavior, we examine how attitudes are related to spiteful behavior. For that purpose, we investigate how the spite score is related to the spite questionnaire, the feeling of closeness, and the feeling of warmth. The results of these estimations are reported in Table 3. The probability of behaving spitefully and of behaving maximally spitefully (conditional on behaving spitefully) increases with the score on the spite questionnaire. Conversely, both probabilities decrease with the closeness and the warmth participants feel towards their opponent. Thus, the kinder participants' attitude towards their opponent, the less spiteful their behavior.

Result A0 Feeling of warmth, feeling of closeness, and the spite questionnaire measure are significantly correlated with spite behavior.

Next, we analyze the attitudes of Trump and Clinton voters towards coinciding and opposing voters, using the social distance questionnaire (Fig. 2a) and the feeling thermometer (Fig. 2b).Footnote 33 On average, ingroup-members are considered much closer than outgroup-members (t(3220) = 72.4, \(p \le 0.001\)). Specifically, we find a significant and substantial gap in participants’ attitudes towards fellow partisans and opposing voters: a 2.24-point difference on a 7-point scale, or a relative decrease in closeness of 44%. Likewise, participants feel much warmer towards ingroup-members than towards outgroup-members (t(3220) = 102.8, \(p \le 0.001\)). This again represents a significant and substantial difference (5.14 points on a 10-point scale, or a relative decrease in warmth of 77%) and provides further evidence of substantial polarization.Footnote 34
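The two statistics in this paragraph can be illustrated with a short sketch. It assumes that each participant rated both an ingroup- and an outgroup-member (the notes mention repeated measures of attitudes), so that a paired t-test with \(n - 1\) degrees of freedom applies; t(3220) would then correspond to 3,221 participants. It also assumes that the "relative decrease" is the gap divided by the ingroup mean. The function names and ratings below are hypothetical and are not the study's data or code.

```python
import math
import statistics


def paired_t(ingroup, outgroup):
    """Paired t-test on within-participant differences.

    t = mean(d) / (sd(d) / sqrt(n)) with n - 1 degrees of freedom,
    where d is each participant's ingroup rating minus outgroup rating.
    """
    if len(ingroup) != len(outgroup):
        raise ValueError("each participant needs one ingroup and one outgroup rating")
    diffs = [a - b for a, b in zip(ingroup, outgroup)]
    n = len(diffs)
    t = statistics.mean(diffs) / (statistics.stdev(diffs) / math.sqrt(n))
    return t, n - 1


def relative_decrease(ingroup_mean, outgroup_mean):
    """Gap expressed as a share of the ingroup mean (assumed definition)."""
    return (ingroup_mean - outgroup_mean) / ingroup_mean


# Hypothetical closeness ratings on a 1-7 scale; NOT data from the study.
ingroup = [6, 5, 6, 4, 5]
outgroup = [3, 3, 2, 3, 4]
t, df = paired_t(ingroup, outgroup)
rel = relative_decrease(statistics.mean(ingroup), statistics.mean(outgroup))
print(f"t({df}) = {t:.2f}, relative decrease = {rel:.0%}")
```

Under the assumed definition, the reported figures would imply ingroup means of roughly 2.24 / 0.44 ≈ 5.1 (closeness) and 5.14 / 0.77 ≈ 6.7 (warmth). Because the percentages are rounded, these are approximations rather than reported values.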

Result A1 Attitudes towards outgroup-members are substantially worse compared to the attitudes towards ingroup-members.

Similar to the antisocial behavior, the difference in social distance and in feeling of warmth between ingroup-members and outgroup-members is bigger for Clinton voters than for Trump voters (t(3219) = −13.7, \(p \le 0.001\); t(3219) = 16.7, \(p \le 0.001\), respectively). This is, in particular, due to Clinton voters’ attitudes towards outgroup-members, as Clinton and Trump voters do not differ significantly in their attitudes towards ingroup-members. Specifically, Clinton voters express almost 17% and 15% more antipathy toward Trump voters than the reverse in the measures of social distance and feeling of warmth, respectively.
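One way to read the comparison above is as a two-sample test on each participant's gap (ingroup rating minus outgroup rating), comparing Clinton voters with Trump voters. A Student's t-test with pooled variance has \(n_1 + n_2 - 2\) degrees of freedom, which would match t(3219) for 3,221 participants; this is an inference from the reported degrees of freedom, not a statement of the authors' procedure. The sketch below uses hypothetical gaps and names. The sign of t depends on which group is entered first and on how each scale is coded.

```python
import math
import statistics


def pooled_t(x, y):
    """Student's two-sample t-test with pooled variance; df = n_x + n_y - 2."""
    nx, ny = len(x), len(y)
    df = nx + ny - 2
    pooled_var = ((nx - 1) * statistics.variance(x) + (ny - 1) * statistics.variance(y)) / df
    se = math.sqrt(pooled_var * (1 / nx + 1 / ny))
    return (statistics.mean(x) - statistics.mean(y)) / se, df


# Hypothetical per-participant gaps (ingroup rating minus outgroup rating);
# NOT data from the study.
clinton_gaps = [3, 4, 2, 3, 4]
trump_gaps = [1, 2, 2, 1]
t, df = pooled_t(clinton_gaps, trump_gaps)
print(f"t({df}) = {t:.2f}")
```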

Result A2 Clinton voters show more negative attitudes towards their outgroup-members than Trump voters do.

In conclusion, we find similar patterns in attitudes and in spite behavior. Outgroup-members are considered more distant and less warm, and participants behave more spitefully towards their outgroup-members. Clinton voters behave more spitefully towards their outgroup-members than towards their ingroup-members, while Trump voters are indifferent. A similar observation holds for attitudes: Clinton and Trump voters have comparable attitudes towards ingroup-members, but Clinton voters have significantly worse attitudes towards their outgroup-members than Trump voters do.Footnote 35

3.4 Wave effects

As mentioned in the design section, we conducted the experiment in five waves: in late November 2016 (before the 58th US presidential election), late January 2017 (after the inauguration), late October 2018 (before the midterms), early November 2018 (after the midterms), and early January 2021 (after the 59th US presidential election).

Figure 3 shows the changes in spite behavior and attitudes between the waves. From Fig. 3, we can see that there are some minor changes over time, which, however, do not follow a clear pattern and are not substantial. One of the bigger changes is the spiteful behavior of Trump voters towards Trump voters from before the election in 2016 to early January 2021. Here we see a rather substantial increase (which, however, is not significantly different from zero). One possible explanation for this increase is the storming of the US Capitol, also in early January 2021, which might have been very salient at the time of the experiment and sparked particularly negative attitudes of Trump voters towards fellow Trump voters. Figure 6 in Electronic supplementary material shows all pairwise comparisons between waves in spite behavior and attitudes. From Fig. 6, we see that for spite, no change is significantly different from zero. A similar result is obtained for social distance, with the exception that Trump voters' attitudes towards Clinton voters became slightly more positive from before the 58th US presidential election to after the 59th US presidential election, a change of just 0.36 points on a 7-point scale. For the feeling of warmth, we see some significant changes over time, which are mostly due to the relatively negative attitudes of Trump voters towards Clinton voters before the 58th US presidential election. The biggest change is observed from before the 58th US presidential election to after the 59th US presidential election. However, even this change (0.81 points on a 10-point scale) is relatively small.
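To make the scope of the pairwise comparisons concrete: five waves yield ten wave pairs. Assuming one comparison per pair for each of the four subgroups (Clinton or Trump voters facing an ingroup- or outgroup-member) and each of the three measures shown in Fig. 3, this gives 120 comparisons. This is a sketch under that assumption; the labels and the wave means in the example are hypothetical, not values from the study.

```python
from itertools import combinations

# Wave labels (month of data collection, as listed in the text).
WAVES = ["2016-11", "2017-01", "2018-10", "2018-11", "2021-01"]
SUBGROUPS = ["Clinton->Clinton", "Clinton->Trump", "Trump->Trump", "Trump->Clinton"]
MEASURES = ["spite", "social distance", "warmth"]


def pairwise_changes(wave_means):
    """Change in one measure from each earlier wave to each later wave."""
    # combinations() keeps the chronological order of WAVES within each pair.
    return {
        (early, late): wave_means[late] - wave_means[early]
        for early, late in combinations(WAVES, 2)
    }


n_pairs = len(list(combinations(WAVES, 2)))
n_comparisons = n_pairs * len(SUBGROUPS) * len(MEASURES)
print(n_pairs, n_comparisons)  # 10 120

# Hypothetical wave means for one subgroup and measure; NOT data from the study.
example = pairwise_changes(
    {"2016-11": 0.30, "2017-01": 0.28, "2018-10": 0.31, "2018-11": 0.29, "2021-01": 0.36}
)
```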

We can conclude that for each subgroup and each of the relevant measures, there are no systematic or relevant changes. Thus, behavior and preferences seem to be rather stable over this 4-year period.Footnote 36

Attitudes and spite over time. The figure depicts the spite behavior and attitudes over time (i.e., for each of the five waves). The panel on top represents the reported feeling of warmth. The panel in the middle represents the reported social distance. The panel on the bottom depicts the spite behavior. Black dotted lines show the average behavior/attitudes for each measure. Blue lines denote the behavior and attitudes of Clinton voters, while red lines represent the behavior and attitudes of Trump voters. Solid lines depict the behavior and attitudes towards ingroup-members (i.e., coinciding voters), while dashed lines depict the behavior and attitudes towards outgroup-members (i.e., opposing voters). The five waves were conducted in late November 2016 (before the 58th US presidential election), late January 2017 (after the inauguration), late October 2018 (before the midterms), early November 2018 (after the midterms), and early January 2021 (after the 59th US presidential election). (Color figure online)

4 Discussion

This paper investigates whether partisanship—understood as the self-identified party affiliation—leads to dysfunctional behavior. In particular, we study which attitudes and, more importantly, which behavior voters show towards voters casting the same or the opposite vote. To the best of our knowledge, we are the first to study spite in a political context. For this purpose, we collected decisions of self-reported Clinton and Trump voters five times over a period of 4 years.

Most importantly, we were able to show that dysfunctional behavior, understood as the destruction of wealth, is significantly more likely if an opposing voter (outgroup) is affected than if a coinciding voter (ingroup) is affected. This effect is mainly driven by Clinton voters, who are significantly more likely to behave spitefully towards Trump voters than towards fellow Clinton voters. The effect is not found for Trump voters: while Trump voters generally exhibit more spiteful behavior, they do not differentiate between Trump voters and Clinton voters.

These effects are supported by the attitudes of the voters: attitudes towards opposing voters are substantially and significantly more negative than attitudes towards coinciding voters. This effect is significantly stronger for Clinton voters. Further, the timing of the experiment does not substantially change attitudes, and it has no significant effect on dysfunctional behavior.

Several aspects of the results are worth elaborating on.

First, it is worth pointing out that we are not the first to find substantial polarization in the US (see Pew Research Center 2017a), but we are the first to show that this polarization leads to significantly increased destructive behavior (at least for Clinton voters). This is the main point and the main contribution of the paper. We show that even in a low-key situation, like an online experiment, people are more likely to behave spitefully if matched with opposing voters. Hence, it seems plausible that in more salient situations, where partisanship is easier to detect and of more importance (e.g., collaborative work), the effect would be even stronger. More importantly, this indicates that increasing polarization leads to increased social and economic costs.

Second, it is interesting that the timing of the experiment hardly influenced attitudes and behavior. During this period, Donald Trump unexpectedly won the presidential election in 2016 and lost it in 2020. However, these events do not seem to spill over substantially into attitudes and behavior. This indicates that the destructive consequences of polarization are persistent and might be hard to eradicate.

Third, the differences between Clinton voters and Trump voters are worth elaborating on. On the one hand, it is not too surprising that Trump voters did not differentiate between ingroup-members and outgroup-members, because this is perfectly in line with most papers on outgroup-bias, which show that outgroup-bias rarely leads to purely hostile behavior (Brewer 1999, 2017; Balliet et al. 2014). It is also not too surprising that attitudes towards opposing voters are negative, as this has also been shown in other papers (Tajfel 1970; Fowler and Kam 2007; Weisel and Böhm 2015).

However, it is puzzling that Clinton voters have significantly less positive attitudes towards their outgroup-members than Trump voters do. More importantly, Clinton voters behave relatively more spitefully towards outgroup-members than towards ingroup-members, a pattern not found for Trump voters. This result is particularly interesting because it shows not merely a group identity effect (as our aggregate results are driven by Clinton voters only) but an effect of political identity. The asymmetry in destructive behavior between Clinton and Trump voters suggests that political identity functions differently from plain group identity.

One possible explanation for the asymmetry in behavior is that Clinton voters consider Trump voters morally wrong for supporting Donald Trump. In that case, Mummendey and Wenzel (1999) argue theoretically that “inferior” groups are more likely to experience discrimination and hostility. Similarly, Brewer (1999) argues that negative discrimination might be present if participants are fighting for political power. Further support is provided by Parker and Janoff-Bulman (2013), who show that morality-based groups lead to less positive emotions. More importantly, Weisel and Böhm (2015) demonstrate a significant increase in help avoidance if the group difference is morality-based: “When given the chance to benefit a strong-enmity outgroup, and even more so a morality-based outgroup, many group members decline to do so” (Weisel and Böhm 2015, p. 118). In Online Appendix, we discuss differences in ascribed morality between Clinton and Trump voters in our experiment. We find that Clinton voters consider Trump voters substantially less moral than vice versa. In parallel, polls reveal that a majority of Democrats said they felt angry going into the 2018 midterm elections, while only 30 percent of Republicans said the same.Footnote 37 This indicates that the group difference might be morality-based and consequently drive hostile behavior.

Another possible explanation for the heterogeneous effects between Clinton and Trump voters is that Clinton voters expect Trump voters to behave more spitefully in general and therefore retaliate preemptively. Trump voters, on the other hand, are generally more prone to spiteful behavior, independent of the opponent. Clinton voters would thus increase their spiteful behavior to match that of Trump voters, which results in a heterogeneous effect.

However, these explanations are only conjectures and it might be valuable for future research to take a closer look at the justifications and motivations of Clinton and Trump supporters to engage in hostile behavior.

While we believe the results to be robust, some possible limitations should be noted. First, our experiment might be prone to experimenter demand effects, as the opponent’s political orientation is made salient. While this salience is essential for the treatment to work, it might reveal the experiment’s purpose and thus lead participants to shift their behavior. To obtain a bound on a possible demand effect, we conducted a demand-effect treatment as suggested in de Quidt et al. (2018), reported in detail in Online Appendix. Inducing demand does not change behavior in our setting.Footnote 38 Thus, while a demand effect cannot be excluded, inducing one does not substantially alter the behavior of participants. Another limitation of our experiment is the non-representativeness of our sample. While our sample is much more representative of the US population than typical student samples, it is still not representative of the US population, as discussed in detail in supplementary material. Such selection might bias our results and reduce the generalizability of our findings. In Online Appendix, we address this issue by reweighting our estimations to make our sample artificially representative. While our results remain robust, we cannot exclude the possibility that our findings would differ in a representative sample. A related concern is that sampling Trump and Clinton voters via MTurk might be problematic if, for example, Trump voters on MTurk differ from Trump voters in the general population. Reassuringly, Huff and Tingley (2015) show that MTurkers behave similarly to the general population with regard to their voting behavior and suggest that MTurk is a valuable source for studying voters. Further, we find striking similarities in demographics and voting patterns between our sample and nationally representative samples, as discussed in supplementary material.
Nevertheless, we caution the reader that we cannot exclude the possibility that, for example, Clinton voters on MTurk are particularly spiteful compared to Clinton voters in general. It is also worth pointing out that we made our treatment rather salient by providing participants with the political identity of their opponents. While this was essential for the experiment, it might reduce generalizability: partisan affiliation would most likely be less salient in the vast majority of human interactions. Thus, our experiment might not speak to everyday situations but primarily to environments where political identity is salient (such as around elections, rallies, etc.). A further concern is possible spillover between the auction experiment (reported in Mill and Morgan (2020)) and the main task of this experiment (the spite task). Conducting an auction prior to the spite task might have increased spiteful behavior, as participants might have been put into a competitive frame. Behavioral spillovers are discussed in detail in Dolan and Galizzi (2015). In particular, Cason and Gangadhara (2012) and Savikhin and Sheremeta (2012) find spillover effects between competitive and cooperative games. However, both of our games are “competitive”, which most likely reduces the spillover effect. Further, behavior in the SVO-task reported in Online Appendix is very similar to behavior reported in other studies (see also Footnote 19), which indicates that behavior has not been influenced substantially. More importantly, while such a spillover effect might shift overall behavior towards more spite, it is identical between the treatments and arguably affects Clinton and Trump voters to the same extent.Footnote 39 Thus, while the absolute level of spite might have been affected by the auction, the auction is unlikely to account for our heterogeneous results.

Given the growing polarization in the US, it is essential to understand its further-reaching consequences. This question is particularly relevant with regard to destructive behavior, as such behavior might slow societal progress, threaten democratic values, and potentially curtail economic growth. Overall, the central message of this paper is that political polarization might boost destructive behavior: increasing polarization not only manifests itself in differences in attitudes but also results in destructive behavior. Future research should aim to find out how to combat such destructive behavior and how to mitigate political division.

Notes

For example, Halevy et al. (2008) study whether discrimination is driven by ingroup favoritism or outgroup-hate and show that it is driven predominantly by ingroup favoritism. Similarly, Greenwald and Pettigrew (2014) argue that ingroup favoritism is a more significant basis for discrimination than outgroup-hate, and Weisel and Zultan (2016) show that in conflict games ingroup-love (positive discrimination) is the main motive driving behavior. Further, Weisel (2015) shows that harming the outgroup is correlated with avoiding helping the outgroup. Ingroup favoritism has also been found in strategic games (Charness et al. 2007), in altruistic punishment behavior (Bernhard et al. 2006), and in the context of markets (Filippin and Guala 2013; Li et al. 2011).

The seminal work of Chen and Li (2009) can be considered part of the emerging field of identity economics (see Akerlof and Kranton 2000). However, other studies prior to Chen and Li (2009) have also shown the relevance of ingroup–outgroup bias in economics. For example, Goette et al. (2006) and Ahmed (2007) present experimental evidence of positive discrimination in economic games. Further, Tan and Bolle (2007) show that intergroup competition can promote intragroup cooperation, and Cookson (2000) shows that a “We”-framing in public-good experiments can increase cooperation.

This paper is part of a bigger research project in the field of partisanship and economics. The companion paper (Mill and Morgan 2020) focuses on auction behavior; it builds on the same approach as this paper (using partisanship) and uses the same participants, but focuses solely on testing auction theory. Participants made the auction decisions in Mill and Morgan (2020) before the spite measure was introduced, and no feedback was given in between. We do not combine both papers for three reasons: (1) the papers are aimed at different audiences, (2) combining them would make the paper too long, and, most importantly, (3) the combined paper would lose its focus, as the two papers address very different questions.

As there are no relevant differences between the waves for either measure (behavioral or attitudinal), we postpone the detailed discussion of wave differences and all related questions to Online Appendix. In the main part of the paper, we mostly pool the data over all waves and present the aggregate results.

Participants’ location is verified through their IP addresses. Requesters can review the work done by MTurkers and decide to approve or reject the work. Approved work is paid as indicated in the contract, and rejected work is not paid. Hence, higher approval rates of workers indicate a higher quality of work.

As the experiment was conducted in five waves, a concern might be that participants are not able to correctly recall their vote in the 58th US presidential election, as more than 4 years passed for some participants between the 58th presidential election and their participation in our experiment. In fact, recall bias is of considerable concern for many social sciences. For example, Himmelweit et al. (1978) show that there is considerable vote-recall-bias between elections. However, recent evidence suggests that vote-recall-bias is very small with regard to presidential elections. For example, Rivers and Lauderdale (2016) show that 95% of surveyed panelists (who were interviewed immediately after the US presidential election in 2012 and matched to their voter files) correctly recalled their vote 4 years later. Similarly, Reny et al. (2019) find, in line with Wright (1993) and Rivers and Lauderdale (2016), that only about 1% of participants misreport their vote in presidential elections. Further, evidence suggests that vote-recall-bias is driven mainly by forgetfulness and not by measurement bias (van Elsas et al. 2013). Thus, vote-recall-bias should not present a major concern in our study, as it would most likely affect only very few participants, and it would only serve to diminish our effects. Further, our results hold even if we focus on the first two waves only, where vote-recall-bias is very unlikely. In conclusion, we believe that vote-recall-bias does not present a threat to our identification.
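The claim that recall errors "would only serve to diminish our effects" can be made concrete with a simple attenuation sketch. It assumes, for illustration only, that the true outgroup-minus-ingroup gap is the same for everyone and that a misreported vote swaps which opponent counts as ingroup and which as outgroup, so the misreporter's gap enters with the opposite sign. The observed gap is then \((1 - 2m)\) times the true gap for a misreport rate \(m\). The rates used below, 1% (Reny et al. 2019) and 5% (the complement of the 95% correct-recall rate in Rivers and Lauderdale 2016), are taken from the text; the function name is hypothetical.

```python
def observed_gap(true_gap, misreport_rate):
    """Average outgroup-minus-ingroup spite gap when some votes are misreported.

    Illustrative assumptions: the true gap is the same for everyone, and a
    misreported vote swaps which opponent counts as ingroup vs. outgroup,
    so that participant's gap enters with the opposite sign.
    """
    return (1 - misreport_rate) * true_gap + misreport_rate * (-true_gap)


for rate in (0.01, 0.05):
    # Under the assumptions above, the attenuation factor is 1 - 2 * rate.
    print(rate, observed_gap(1.0, rate))
```

Under these assumptions, misreporting shrinks the measured gap towards zero but cannot reverse its sign as long as fewer than half of the participants misreport.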

After answering the demographic questions, the participants first took part in an auction experiment without feedback, as reported in Mill and Morgan (2020).

Using the word “opponent” to refer to the co-player might be considered problematic, and “co-player” might have been a better choice. However, we believe that using the word “opponent” did not affect the results substantially, as the behavior of our participants in the SVO-task (reported in Online Appendix) is very similar to behavior reported in other studies. Specifically, if we calculate the SVO-Measure from the SVO-task (Murphy et al. 2011), we obtain a distribution very similar to the one reported by Murphy et al. (2011). Of particular relevance is the paper by Höglinger and Wehrli (2017), who also administer the SVO-Measure to MTurkers. While our sample differs slightly from that of Höglinger and Wehrli (2017) (due to our restricted eligibility and control questions), we estimate 51% of participants to be prosocial and 49% to be proself in the baseline treatment, compared to 59% prosocials and 41% proselfs in Höglinger and Wehrli (2017). Thus, while our participants exhibit slightly more egoistic behavior, we find patterns very similar to the literature. Another piece of evidence suggesting that the word “opponent” did not influence spite behavior is an experimental manipulation we conducted as part of the extension experiment reported in Online Appendix, in which the co-player was referred to either as “opponent” or as “co-player”. We find no significant change in behavior using the word “opponent” instead of “co-player”. This null effect is found for Clinton and Trump voters alike.
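For readers unfamiliar with the SVO-Measure, the slider measure of Murphy et al. (2011) summarizes a participant's allocations as an angle, \(\arctan\big((\bar{A}_o - 50)/(\bar{A}_s - 50)\big)\), where \(\bar{A}_s\) and \(\bar{A}_o\) are the mean allocations to self and to the other. The category cut-offs below are those commonly used with this measure and should be checked against the original before reuse. The binary split into prosocials and proselfs (altruists pooled with prosocials, competitors with individualists) is an assumption, as are all function names.

```python
import math


def svo_angle(mean_self, mean_other):
    """SVO angle in degrees from mean allocations to self and to other."""
    # atan2 equals arctan((other - 50) / (self - 50)) whenever mean_self > 50.
    return math.degrees(math.atan2(mean_other - 50, mean_self - 50))


def svo_type(angle):
    """Four-way classification using the commonly cited slider-measure cut-offs."""
    if angle > 57.15:
        return "altruistic"
    if angle > 22.45:
        return "prosocial"
    if angle > -12.04:
        return "individualistic"
    return "competitive"


def prosocial_or_proself(angle):
    # Assumed dichotomy: altruists count as prosocial, competitors as proself.
    return "prosocial" if angle > 22.45 else "proself"


# Hypothetical mean allocations (self, other); NOT data from the study.
for alloc in [(85, 85), (100, 50), (85, 15)]:
    a = svo_angle(*alloc)
    print(alloc, round(a, 2), svo_type(a), prosocial_or_proself(a))
```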

A possible concern of the salient manipulation is a demand effect. To estimate the bounds of a possible demand effect in our setting, we applied the method suggested by de Quidt et al. (2018). The experiment and the corresponding results are discussed in greater detail in Online Appendix. Most notably, we find that inducing positive as well as negative demand on spiteful behavior does not change behavior significantly.

The motivation of people engaging in such behavior in real life is manifold, and some motives might raise concerns for the research question if participants could endogenously choose how strongly to signal their attitude. However, the strength of the signal was kept constant across treatments, as participants were merely informed about the voting decision of their opponent.

The spite questionnaire is explained and analyzed in more detail in Online Appendix.

Hence, only one randomly selected problem was payoff relevant, which is arguably the only incentive-compatible mechanism (for a detailed argument, see Azrieli et al. 2015).
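The pay-one-randomly-selected-problem rule described above can be sketched in a few lines. This sketch draws one decision uniformly at random and pays only that one. The text states only that the bonus payments were automated in R, so the function name, the seeded generator, and the point values here are hypothetical illustrations and not the authors' implementation.

```python
import random


def pay_one_random_decision(points_by_decision, rng):
    """Random incentive system: draw one decision uniformly; only it is paid.

    points_by_decision: points the participant would earn in each decision
    (hypothetical input). Returns (index of drawn decision, points paid).
    """
    index = rng.randrange(len(points_by_decision))
    return index, points_by_decision[index]


# Hypothetical points for three decisions; seeded generator for reproducibility.
rng = random.Random(2016)
drawn, paid = pay_one_random_decision([15, 60, 100], rng)
print(f"decision {drawn} is paid: {paid} points")
```

Because each decision is equally likely to count, a participant cannot hedge across decisions, which is the intuition behind the incentive-compatibility argument cited above.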

After finishing the collection, we matched the participants according to the instructions and paid them their bonus. The bonus payment was automated and implemented via AMS and R.

Note that the spite behavior in the first row is also consistent with inequality aversion (see Kimbrough and Reiss 2012). The second row could potentially also be driven by inequality aversion; however, the corresponding parameters would exceed the typically observed inequality-aversion parameters (\(\alpha >1\)), and thus the second row is better characterized by a preference for spite. The third row can only be explained by spite.
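The logic of this note can be illustrated with the two-player Fehr–Schmidt inequality-aversion model, \(U_i = x_i - \alpha \max(x_j - x_i, 0) - \beta \max(x_i - x_j, 0)\), which we use here as a stand-in for "inequality aversion". The sketch computes the smallest \(\alpha\) at which a player would pay to reduce the other's payoff. The three payoff pairs are hypothetical and only mimic the structure of the note: a cheap reduction of a large disadvantage, a costly reduction of a small disadvantage (threshold above 1), and a costly move that creates an advantage, which no \(\alpha\) can rationalize. They are not the rows of the actual spite task.

```python
def fehr_schmidt(own, other, alpha, beta):
    """Two-player Fehr-Schmidt (1999) inequality-aversion utility."""
    return own - alpha * max(other - own, 0) - beta * max(own - other, 0)


def min_alpha_to_destroy(keep, destroy, beta=0.0):
    """Smallest alpha >= 0 at which the costly 'destroy' option is weakly preferred.

    keep and destroy are (own payoff, other's payoff) pairs. Returns None when
    no alpha >= 0 makes destroying weakly preferred, i.e. when inequality
    aversion cannot rationalize the choice.
    """
    (k_own, k_other), (d_own, d_other) = keep, destroy
    # U(destroy) - U(keep) = base + alpha * slope, which is linear in alpha.
    base = (d_own - k_own) - beta * (max(d_own - d_other, 0) - max(k_own - k_other, 0))
    slope = max(k_other - k_own, 0) - max(d_other - d_own, 0)
    if slope > 0:
        return max(0.0, -base / slope)
    return 0.0 if base >= 0 else None


# Hypothetical (own, other) payoffs; NOT the rows of the actual spite task.
rows = {
    "mild": ((10, 20), (9, 9)),        # small cost, removes a large disadvantage
    "costly": ((10, 12), (7, 7)),      # large cost, removes a small disadvantage
    "pure spite": ((10, 10), (9, 5)),  # creates an advantage at a cost
}
for name, (keep, destroy) in rows.items():
    print(name, min_alpha_to_destroy(keep, destroy))
```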

Note that using only the third decision as the main measure of spite leads to the same (and even stronger) results as presented below.

Hence, the payoff for this task ranged between 3 and 20 cents, so this experiment can be seen as a low-stakes experiment. Note that Kirchkamp and Mill (2019) use the same measure (in a very different context and with a student sample in the lab) while paying 6 euro-cents per point (thus, the payoff for this task ranged between 90 and 600 euro-cents). The distribution of choices in Kirchkamp and Mill (2019) is very similar to the patterns observed in this paper, indicating that stake size does not affect behavior substantially. Moreover, Forsythe et al. (1994) and Carpenter et al. (2005) provide compelling evidence that mean allocations in dictator games with low stakes do not differ from allocations in dictator games with high stakes. Additionally, Camerer and Hogarth (1999) survey the experimental economics literature and show that behavior is affected mainly by whether tasks are incentivized at all. Thus, by using low stakes, we do not distort the results but rather nudge behavior in the direction of spite to obtain sufficient variance in the data.
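The stake comparison in this note can be checked with simple arithmetic. The per-point rate of the present study is not stated here. Assuming that both studies use the same point range (the note says the same measure is used), Kirchkamp and Mill's 90 to 600 euro-cents at 6 euro-cents per point correspond to 15 to 100 points. The 3 to 20 cents reported here then imply 1/5 cent per point at both ends of the range, a nominal per-point ratio of 30 that ignores the exchange rate. The variable names are illustrative.

```python
from fractions import Fraction

# Kirchkamp and Mill (2019): 6 euro-cents per point, task payoff 90 to 600 euro-cents.
KM_CENTS_PER_POINT = 6
km_payoff_range = (90, 600)
points_range = tuple(Fraction(c, KM_CENTS_PER_POINT) for c in km_payoff_range)

# This study: task payoff 3 to 20 cents. Assuming the same point range, the
# implied rate must be identical at both ends for the figures to be consistent.
this_payoff_range = (3, 20)
implied_rates = tuple(Fraction(c) / p for c, p in zip(this_payoff_range, points_range))

# Nominal ratio of per-point stakes (ignores the euro/dollar exchange rate).
stake_ratio = KM_CENTS_PER_POINT / implied_rates[0]
print(points_range, implied_rates, stake_ratio)
```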

To see whether participants paid sufficient attention during our study and whether participants’ indicated vote for either Donald Trump or Hillary Clinton was sincere we discuss coherence in Online Appendix.

We conducted an additional experiment as part of the fifth wave in early January 2021 to obtain the behavior towards neutral members (\(N=314\)). This experiment was identical to the main experiment described in Sect. 2 with the exception that we did not provide participants with any information concerning their partner. Thus, we obtained data on the baseline spite behavior of Clinton and Trump voters.

Our results are split into two categories: behavioral and attitudinal. Thus, the first result is labeled B1 as we focus first on the behavioral results and discuss the attitudinal results thereafter.

To deal with potential selection effects between Clinton and Trump voters, we reestimate all regressions using propensity score matching in Online Appendix. All results prevail.

We discuss attitudes towards Democrats and Republicans in general and compare them to other studies in Online Appendix.

Similar results are obtained using linear regressions on the differences in attitudes and mixed-effects regressions on the repeated measures of attitudes. The results for the social distance measure are reported in Table 15 in supplementary material. The result for the feeling of warmth measure is reported in Table 14 in supplementary material.

In Online Appendix we further investigate how the attitudes mediate the decision to behave spitefully in a path analysis.

Therefore, we pooled the data in the main parts of the paper. In Online Appendix, however, we account for wave-effects and present the results for each wave separately.

This lack of a demand effect is not uncommon. Mummolo and Peterson (2018), for example, show that even financial incentives to respond in line with researcher expectations fail to consistently induce demand effects.

A discussion of potential heterogeneous effects can be found in supplementary material where we show that Clinton and Trump voters react similarly to a demand treatment and where we show that Clinton and Trump voters behave similarly with regard to more prosocial options.

References

Abbink, K., & Dogan, G. (2019). How to choose your victim. Games and Economic Behavior, 113, 482–496.

Abbink, K., & Harris, D. (2019). In-group favouritism and out-group discrimination in naturally occurring groups. PLoS ONE, 14(9), e0221616.

Abbink, K., & Herrmann, B. (2011). The moral costs of nastiness. Economic Inquiry, 49(2), 631–633.

Abbink, K., & Sadrieh, A. (2009). The pleasure of being nasty. Economics Letters, 105(3), 306–308.

Ahmed, A. M. (2007). Group identity, social distance and intergroup bias. Journal of Economic Psychology, 28(3), 324–337.

Akerlof, G. A., & Kranton, R. E. (2000). Economics and identity. The Quarterly Journal of Economics, 115(3), 715–753.

Alcantara, C., Uhrmacher, K., & Guskin, E. (2016). Clinton and Trump’s demographic tug of war. The Washington Post. https://www.washingtonpost.com/graphics/politics/2016-election/the-demographic-groups-fueling-the-election/

Arechar, A. A., Gächter, S., & Molleman, L. (2018). Conducting interactive experiments online. Experimental Economics, 21(1), 99–131.

Azrieli, Y., Chambers, C. P., & Healy, P. J. (2015). Incentives in experiments: A theoretical analysis. Mimeo.

Bail, C. A., Argyle, L. P., Brown, T. W., Bumpus, J. P., Chen, H., Hunzaker, M. B. F., Lee, J., Mann, M., Merhout, F., & Volfovsky, A. (2018). Exposure to opposing views on social media can increase political polarization. Proceedings of the National Academy of Sciences, 115(37), 9216–9221.

Balliet, D., Wu, J., & De Dreu, C. K. W. (2014). Ingroup favoritism in cooperation: A meta-analysis. Psychological Bulletin, 140(6), 1556–1581.

Bartels, L. M. (2002). Beyond the running tally: Partisan bias in political perceptions. Political Behavior, 24(2), 117–150.

Bartling, B., & Netzer, N. (2016). An externality-robust auction: Theory and experimental evidence. Games and Economic Behavior, 97, 186–204.

Bauer, M., Cahlíková, J., Chytilová, J., & Želinský, T. (2018). Social contagion of ethnic hostility. Proceedings of the National Academy of Sciences, 115(19), 4881–4886.

Bernhard, H., Fehr, E., & Fischbacher, U. (2006). Group affiliation and altruistic norm enforcement. American Economic Review, 96(2), 217–221.

Bishop, B., & Cushing, R. G. (2008). The big sort: Why the clustering of like-minded America is tearing us apart. Houghton Mifflin Harcourt.

Böhm, R., Rusch, H., & Baron, J. (2020). The psychology of intergroup conflict: A review of theories and measures. Journal of Economic Behavior and Organization, 178, 947–962.

Bolton, G., Dimant, E., & Schmidt, U. (2018). When a nudge backfires. Using observation with social and economic incentives to promote pro-social behavior. PPE working papers 0017, Philosophy, Politics and Economics, University of Pennsylvania.

Boxell, L., Gentzkow, M., & Shapiro, J. M. (2020). Cross-country trends in affective polarization. Working paper 26669, National Bureau of Economic Research.

Brewer, M. B. (1999). The psychology of prejudice: Ingroup love and outgroup hate? Journal of Social Issues, 55(3), 429–444.

Brewer, M. B. (2017). Intergroup discrimination: Ingroup love or outgroup hate? In C. G. Sibley & F. K. Barlow (Eds.), The Cambridge handbook of the psychology of prejudice (pp. 90–110). Cambridge University Press.

Buhrmester, M., Kwang, T., & Gosling, S. D. (2011). Amazon’s Mechanical Turk. Perspectives on Psychological Science, 6(1), 3–5.

Camerer, C. F., & Hogarth, R. M. (1999). The effects of financial incentives in experiments: A review and capital-labor-production framework. Journal of Risk and Uncertainty, 19(1), 7–42.

Campbell, A., Converse, P. E., Miller, W. E., & Stokes, D. E. (1980). The American Voter. University of Chicago Press.

Carlin, R. E., & Love, G. J. (2013). The politics of interpersonal trust and reciprocity: An experimental approach. Political Behavior, 35(1), 43–63.

Carothers, T., & Donohue, A. O. (Eds.). (2019). Democracies divided. Brookings Institution Press.

Carpenter, J., Verhoogen, E., & Burks, S. (2005). The effect of stakes in distribution experiments. Economics Letters, 86(3), 393–398.

Cason, T. N., & Gangadharan, L. (2012). Cooperation spillovers and price competition in experimental markets. Economic Inquiry, 51(3), 1715–1730.

Charness, G., Rigotti, L., & Rustichini, A. (2007). Individual behavior and group membership. American Economic Review, 97(4), 1340–1352.

Chen, R., & Chen, Y. (2011). The potential of social identity for equilibrium selection. American Economic Review, 101(6), 2562–2589.

Chen, Y., Harper, F. M., Konstan, J., & Li, S. X. (2010). Social comparisons and contributions to online communities: A field experiment on MovieLens. American Economic Review, 100(4), 1358–1398.

Chen, Y., & Li, S. X. (2009). Group identity and social preferences. American Economic Review, 99(1), 431–457.

Chen, Y., Li, S. X., Liu, T. X., & Shih, M. (2014). Which hat to wear? Impact of natural identities on coordination and cooperation. Games and Economic Behavior, 84, 58–86.

Cookson, R. (2000). Framing effects in public goods experiments. Experimental Economics, 3(1), 55–79.

Crandall, C. S. (1991). Multiple stigma and AIDS: Illness stigma and attitudes toward homosexuals and IV drug users in AIDS-related stigmatization. Journal of Community and Applied Social Psychology, 1, 165–172.

De Dreu, C. K. W. (2010). Social value orientation moderates ingroup love but not outgroup hate in competitive intergroup conflict. Group Processes and Intergroup Relations, 13(6), 701–713.

de Quidt, J., Haushofer, J., & Roth, C. (2018). Measuring and bounding experimenter demand. American Economic Review, 108(11), 3266–3302.

Difallah, D., Filatova, E., & Ipeirotis, P. (2018). Demographics and dynamics of Mechanical Turk workers. In Proceedings of the eleventh ACM international conference on web search and data mining. ACM.

Dimant, E. (2019). Contagion of pro- and anti-social behavior among peers and the role of social proximity. Journal of Economic Psychology, 73, 66–88.

Dimant, E. (2020). Hate trumps love: The impact of political polarization on social preferences.

Dimant, E., & Hyndman, K. (2019). Becoming friends or foes? How competitive environments shape altruistic preferences.

Dixit, A. K., & Weibull, J. W. (2007). Political polarization. Proceedings of the National Academy of Sciences, 104(18), 7351–7356.

Dolan, P., & Galizzi, M. M. (2015). Like ripples on a pond: Behavioral spillovers and their implications for research and policy. Journal of Economic Psychology, 47, 1–16.

Elgers, P. T., Haltiner, J. R., & Hawthorne, W. H. (1979). Beta regression tendencies: Statistical and real causes. The Journal of Finance, 34(1), 261–263.

Federal Bureau of Investigation, United States Department of Justice. (2016). Uniform crime reporting program data: County-level detailed arrest and offense data, 2014. https://doi.org/10.3886/ICPSR36399.v2

Fehr, E., & Gächter, S. (2002). Altruistic punishment in humans. Nature, 415(6868), 137–140.

Fehr, E., Glätzle-Rützler, D., & Sutter, M. (2013). The development of egalitarianism, altruism, spite and parochialism in childhood and adolescence. European Economic Review, 64, 369–383.

Fehr, E., Hoff, K., & Kshetramade, M. (2008). Spite and development. American Economic Review, 98(2), 494–499.

Filippin, A., & Guala, F. (2013). Costless discrimination and unequal achievements in an experimental tournament. Experimental Economics, 16(3), 285–305.

Fischbacher, U., & Gächter, S. (2010). Social preferences, beliefs, and the dynamics of free riding in public goods experiments. American Economic Review, 100(1), 541–556.

Fischbacher, U., Gächter, S., & Fehr, E. (2001). Are people conditionally cooperative? Evidence from a public goods experiment. Economics Letters, 71(3), 397–404.

Fomina, J. (2019). Of “Patriots” and citizens: Asymmetric populist polarization in Poland (pp. 126–150). Brookings Institution Press.

Forsythe, R., Horowitz, J. L., Savin, N., & Sefton, M. (1994). Fairness in simple bargaining experiments. Games and Economic Behavior, 6(3), 347–369.

Fowler, J. H., & Kam, C. D. (2007). Beyond the self: Social identity, altruism, and political participation. Journal of Politics, 69(3), 813–827.

Gangadharan, L., Grossman, P. J., Molle, M. K., & Vecci, J. (2019). Impact of social identity and inequality on antisocial behaviour. European Economic Review, 119, 199–215.

Glassman, B., & United States Census Bureau. (2016). Selected economic characteristics by state: 2014 and 2015. https://www.census.gov/data/tables/2016/demo/income-poverty/glassman-acs.html

Goette, L., Huffman, D., & Meier, S. (2006). The impact of group membership on cooperation and norm enforcement: Evidence using random assignment to real social groups. American Economic Review, 96(2), 212–216.

Green, D., Palmquist, B., & Schickler, E. (2002). Partisan hearts and minds: Political parties and the social identities of voters. Yale University Press.

Greene, S. (1999). Understanding party identification: A social identity approach. Political Psychology, 20(2), 393–403.

Greenwald, A. G., & Pettigrew, T. F. (2014). With malice toward none and charity for some: Ingroup favoritism enables discrimination. American Psychologist, 69(7), 669–684.

Grün, B., Kosmidis, I., & Zeileis, A. (2012). Extended beta regression in R: Shaken, stirred, mixed, and partitioned. Journal of Statistical Software, 48(11), 1–25.

Halevy, N., Bornstein, G., & Sagiv, L. (2008). “In-group love” and “out-group hate” as motives for individual participation in intergroup conflict. Psychological Science, 19(4), 405–411.

Hare, C., & Poole, K. T. (2014). The polarization of contemporary American politics. Polity, 46(3), 411–429.

Hargreaves Heap, S. P., & Zizzo, D. J. (2009). The value of groups. American Economic Review, 99(1), 295–323.

Herrmann, B., & Thöni, C. (2009). Measuring conditional cooperation: A replication study in Russia. Experimental Economics, 12(1), 87–92.

Herrmann, B., Thöni, C., & Gächter, S. (2008). Antisocial punishment across societies. Science, 319(5868), 1362–1367.

Hewstone, M., Rubin, M., & Willis, H. (2002). Intergroup bias. Annual Review of Psychology, 53(1), 575–604.

Himmelweit, H. T., Biberian, M. J., & Stockdale, J. (1978). Memory for past vote: Implications of a study of bias in recall. British Journal of Political Science, 8(3), 365–375.

Höglinger, M., & Wehrli, S. (2017). Measuring social preferences on Amazon Mechanical Turk. In B. Jann & W. Przepiorka (Eds.), Social dilemmas, institutions, and the evolution of cooperation. De Gruyter.

Horton, J. J., Rand, D. G., & Zeckhauser, R. J. (2011). The online laboratory: Conducting experiments in a real labor market. Experimental Economics, 14(3), 399–425.

Huff, C., & Tingley, D. (2015). Who are these people? Evaluating the demographic characteristics and political preferences of MTurk survey respondents. Research and Politics, 2(3), 205316801560464.

Hyatt, C., Campbell, W. K., Lynam, D. R., & Miller, J. D. (2018). Dr. Jekyll or Mr. Hyde? President Donald Trump’s personality profile as perceived from different political viewpoints. Collabra: Psychology, 4(1).

Iyengar, S., & Westwood, S. J. (2015). Fear and loathing across party lines: New evidence on group polarization. American Journal of Political Science, 59(3), 690–707.

Jacobson, J. J., Maxson, B. B., Mays, K., & Kowalski, C. J. (1992). A utility analysis of dental implants. The International Journal of Oral and Maxillofacial Implants, 7(3), 381–388.

Jönsson, M. T., & Thor, G. (2012). Estimating coextinction risks from epidemic tree death: Affiliate lichen communities among diseased host tree populations of Fraxinus excelsior. PLoS ONE, 7(9), 1–10.

Jordan, J. J., Hoffman, M., Bloom, P., & Rand, D. G. (2016). Third-party punishment as a costly signal of trustworthiness. Nature, 530(7591), 473–476.

Jordan, J. J., Sommers, R., Bloom, P., & Rand, D. G. (2017). Why do we hate hypocrites? Evidence for a theory of false signaling. Psychological Science.

Kaid, L. L., Leland, C. M., & Whitney, S. (1992). The impact of televised political ads: Evoking viewer responses in the 1988 presidential campaign. Southern Communication Journal, 57(4), 285–295.

Kimbrough, E. O., & Reiss, J. P. (2012). Measuring the distribution of spitefulness. PLoS ONE, 7(8), 1–8.

Kirchkamp, O., & Mill, W. (2019). Spite vs. risk: Explaining overbidding. A theoretical and experimental investigation. CESifo working paper no. 7631.

Kirk, A., & Patrick, S. (2016). US election: How age, race and education are deciding factors in the race for president. The Telegraph. http://www.telegraph.co.uk/news/0/us-election-how-age-race-and-education-are-deciding-factors-in-t/

Kocher, M., Cherry, T., Kroll, S., Netzer, R. J., & Sutter, M. (2008). Conditional cooperation on three continents. Economics Letters, 101(3), 175–178.

Kranton, R., Pease, M., Sanders, S., & Huette, S. (2018). Groupy and not groupy behavior: Deconstructing bias in social preferences. Technical report, Mimeo.

Kranton, R. E., & Sanders, S. G. (2017). Groupy versus non-groupy social preferences: Personality, region, and political party. American Economic Review, 107(5), 65–69.

Laliberté, E., Adair, E. C., & Hobbie, S. E. (2012). Estimating litter decomposition rate in single-pool models using nonlinear beta regression. PLoS ONE, 7(9), 1–16.

Levine, D. K. (1998). Modeling altruism and spitefulness in experiments. Review of Economic Dynamics, 1(3), 593–622.

Li, S. X., Dogan, K., & Haruvy, E. (2011). Group identity in markets. International Journal of Industrial Organization, 29(1), 104–115. Special Issue: Experiments in Industrial Organization.

Mao, A., Dworkin, L., Suri, S., & Watts, D. J. (2017). Resilient cooperators stabilize long-run cooperation in the finitely repeated Prisoner’s dilemma. Nature Communications, 8.

Marcus, D. K., Zeigler-Hill, V., Mercer, S. H., & Norris, A. L. (2014). The psychology of spite and the measurement of spitefulness. Psychological Assessment, 26(2), 563–574.

Margolis, M. F., & Sances, M. W. (2016). Partisan differences in nonpartisan activity: The case of charitable giving. Political Behavior, 1–26.

Mill, W., & Morgan, J. (2020). Competition between friends and foes. CRC TR 224 discussion paper series, University of Bonn and University of Mannheim, Germany.

Miller, A. H., & Wlezien, C. (1993). The social group dynamics of partisan evaluations. Electoral Studies, 12(1), 5–22.

Mummendey, A., & Schreiber, H.-J. (1984). “Different” just means “better”: Some obvious and some hidden pathways to in-group favouritism. British Journal of Social Psychology, 23(4), 363–367.

Mummendey, A., & Wenzel, M. (1999). Social discrimination and tolerance in intergroup relations: Reactions to intergroup difference. Personality and Social Psychology Review, 3(2), 158–174.

Mummolo, J., & Peterson, E. (2018). Demand effects in survey experiments: An empirical assessment. American Political Science Review, 113(2), 517–529.

Murphy, R. O., Ackerman, K. A., & Handgraaf, M. J. J. (2011). Measuring social value orientation. Judgment and Decision Making, 6(8), 771–781.

Nunberg, G. (2007). Talking right: How conservatives turned liberalism into a tax-raising, latte-drinking, Sushi-eating, Volvo-driving, New York Times-reading, body-piercing, Hollywood-loving, Left-wing Freak Show. PublicAffairs.

Oliver, J. E., Wood, T., & Bass, A. (2016). Liberellas versus Konservatives: Social status, ideology, and birth names in the United States. Political Behavior, 38(1), 55–81.

Ospina, R., & Ferrari, S. L. (2012). A general class of zero-or-one inflated beta regression models. Computational Statistics and Data Analysis, 56(6), 1609–1623.

Paolacci, G., Chandler, J., & Ipeirotis, P. G. (2010). Running experiments on Amazon Mechanical Turk. Judgment and Decision Making, 5(5), 411–419.

Parker, M. T., & Janoff-Bulman, R. (2013). Lessons from morality-based social identity: The power of outgroup “hate”, not just ingroup “love.” Social Justice Research, 26(1), 81–96.

Parrillo, V. N., & Donoghue, C. (2005). Updating the Bogardus social distance studies: A new national survey. The Social Science Journal, 42(2), 257–271.

Patrick, D. L., Starks, H. E., Cain, K. C., Uhlmann, R. F., & Pearlman, R. A. (1994). Measuring preferences for health states worse than death. Medical Decision Making, 14(1), 9–18.

Pew Research Center (2014). Pew Research Center 2014 U.S. religious landscape study. http://www.pewforum.org/datasets/pew-research-center-2014-u-s-religious-landscape-study/

Pew Research Center (2016). Behind Trump’s victory: Divisions by race, gender, education. Mimeo. https://www.pewresearch.org/fact-tank/2016/11/09/behind-trumps-victory-divisions-by-race-gender-education/

Pew Research Center (2017a). The partisan divide on political values grows even wider. Mimeo. http://www.people-press.org/2017/10/05/the-partisan-divide-on-political-values-grows-even-wider/

Pew Research Center (2017b). Partisans differ widely in views of police officers, college professors. Mimeo. http://www.people-press.org/2017/09/13/partisans-differ-widely-in-views-of-police-officers-college-professors/

Prediger, S., Vollan, B., & Herrmann, B. (2014). Resource scarcity and antisocial behavior. Journal of Public Economics, 119(C), 1–9.

Rand, D. G., Peysakhovich, A., Kraft-Todd, G. T., Newman, G. E., Wurzbacher, O., Nowak, M. A., & Greene, J. D. (2014). Social heuristics shape intuitive cooperation. Nature Communications, 5, 3677.

Reny, T. T., Collingwood, L., & Valenzuela, A. A. (2019). Vote switching in the 2016 election: How racial and immigration attitudes, not economics, explain shifts in white voting. Public Opinion Quarterly, 83(1), 91–113.