Abstract

Context

Automatic classification of mobile application users’ feedback has been studied in different areas of software engineering. However, supervised classification requires a large amount of manually labeled data, and whenever new classes or new platforms are introduced, new labeled data and models are required. Pre-trained neural Language Models (PLMs) have been successful in the Natural Language Processing field. However, their applicability to app review classification has not been explored.

Objective

We evaluate PLMs for issue classification from app reviews in multiple settings and compare them with existing models.

Method

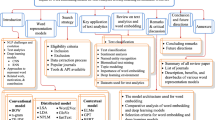

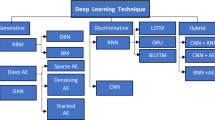

We set up different studies to evaluate the performance and time efficiency of PLMs compared with Prior approaches on six datasets, covering binary vs. multi-class, zero-shot, multi-task, and multi-resource settings. In addition, we train and study domain-specific (Custom) PLMs by incorporating app reviews into pre-training. We report micro- and macro-averaged Precision, Recall, and F1 scores, as well as the time required for training and prediction.
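Micro- and macro-averaging can rank models differently, especially on imbalanced issue categories. The sketch below is a minimal illustration for single-label, multi-class data, assuming macro F1 is the unweighted mean of per-class F1 (one common convention); it is not the authors' evaluation code, and the function name and labels are hypothetical.

```python
from collections import Counter


def micro_macro_prf(y_true, y_pred):
    """Return micro- and macro-averaged (precision, recall, F1) for single-label data."""
    labels = sorted(set(y_true) | set(y_pred))
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted class p, but the prediction was wrong
            fn[t] += 1  # true class t was missed

    def prf(tp_, fp_, fn_):
        prec = tp_ / (tp_ + fp_) if tp_ + fp_ else 0.0
        rec = tp_ / (tp_ + fn_) if tp_ + fn_ else 0.0
        f1 = 2 * prec * rec / (prec + rec) if prec + rec else 0.0
        return prec, rec, f1

    # Micro: pool TP/FP/FN over all classes, then compute the metrics once.
    micro = prf(sum(tp.values()), sum(fp.values()), sum(fn.values()))
    # Macro: compute the metrics per class, then take the unweighted mean.
    per_class = [prf(tp[c], fp[c], fn[c]) for c in labels]
    macro = tuple(sum(m[i] for m in per_class) / len(per_class) for i in range(3))
    return micro, macro


# Hypothetical toy labels, for illustration only.
y_true = ["bug", "bug", "feature", "praise"]
y_pred = ["bug", "feature", "feature", "bug"]
micro, macro = micro_macro_prf(y_true, y_pred)
print("micro P/R/F1:", micro)  # (0.5, 0.5, 0.5)
print("macro P/R/F1:", macro)
```

For single-label data, micro precision, recall, and F1 all reduce to accuracy, while the macro scores are pulled down by the rare `praise` class that is never predicted correctly.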

Results

Our results show that PLMs can classify app issues with higher scores than the Prior approaches, except in the multi-resource setting. On the largest dataset, PLMs improve the micro- and macro-averaged F1-scores by 13 and 8 points, respectively, compared with the Prior approaches. Domain-specific PLMs achieve the highest scores in all settings with less prediction time, and they benefit from pre-training on a larger number of app reviews. On the largest dataset, using the large domain-specific PLMs, we obtain micro- and macro-averaged F1-scores of 98 and 92 (4.5 to 8.3 points higher than general pre-trained models), an F1-score of 71 in the zero-shot setting, and F1-scores of 93 and 92 in the multi-task and multi-resource settings, respectively.

Conclusion

Although prior approaches achieve high scores in some settings, PLMs are the only models that can work well in the zero-shot setting. When trained on the app review dataset, the Custom PLMs have higher performance and lower prediction times.

Notes

Kaggle, a subsidiary of Google, is an online platform for data scientists and machine learning practitioners: https://www.kaggle.com

Note that the counts across all categories sum to more than the total number of reviews, because some reviews belong to multiple categories. We follow the steps in Guzman et al. (2015) to calculate the evaluation metrics for this dataset.
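A toy example makes the overcounting concrete. The reviews and category names below are hypothetical, and the snippet only illustrates the counting; it does not reproduce the metric procedure of Guzman et al. (2015).

```python
from collections import Counter

# Hypothetical multi-label reviews: each review carries a set of categories.
reviews = [
    {"text": "App crashes when I upload a photo", "labels": {"bug"}},
    {"text": "Love it, but please add dark mode", "labels": {"praise", "feature"}},
    {"text": "Crashes a lot, also needs an export button", "labels": {"bug", "feature"}},
]

# Each review contributes one count to every category it belongs to.
category_counts = Counter(label for r in reviews for label in r["labels"])
total_reviews = len(reviews)
sum_of_category_counts = sum(category_counts.values())

print(dict(category_counts))                  # per-category counts
print(total_reviews, sum_of_category_counts)  # 3 5
```

Three reviews yield five category memberships, so per-category totals cannot simply be added up to recover the dataset size.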

Stanford Question Answering Dataset: https://rajpurkar.github.io/SQuAD-explorer/explore/1.1/dev/

The Wiki-texts dataset is open sourced at https://dumps.wikimedia.org/.

In this setting, we only use readily available PLMs and do not fine-tune other PLMs on Multi-NLI data, due to the high computational cost.
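The note above suggests that the zero-shot setting relies on PLMs fine-tuned on Multi-NLI, in the spirit of the entailment approach of Yin et al. (2019): each candidate class is phrased as a hypothesis, and the class whose hypothesis is most entailed by the review wins. The sketch below shows only that label-selection logic. The scorer type, the hypothesis template, and the label names are hypothetical assumptions, and the word-overlap scorer is a stand-in so the example runs without a model; it is not an NLI model.

```python
from typing import Callable, Dict, Sequence, Tuple

# Hypothetical scorer type: returns P(entailment) of a hypothesis given a premise.
# In a real setup this would wrap an NLI model (e.g., one fine-tuned on Multi-NLI).
EntailmentScorer = Callable[[str, str], float]


def zero_shot_classify(
    review: str,
    labels: Sequence[str],
    entail_prob: EntailmentScorer,
    template: str = "This review is about {}.",  # hypothetical hypothesis template
) -> Tuple[str, Dict[str, float]]:
    """Return the label whose hypothesis is most entailed by the review, plus all scores."""
    # Each candidate label becomes a natural-language hypothesis; no labeled
    # training data for these classes is needed.
    scores = {label: entail_prob(review, template.format(label)) for label in labels}
    best = max(scores, key=scores.get)
    return best, scores


def toy_overlap_scorer(premise: str, hypothesis: str) -> float:
    """Stand-in for an NLI model: fraction of hypothesis words found in the premise.
    This is NOT entailment; it only lets the example run without a model."""
    premise_words = set(premise.lower().split())
    hypothesis_words = set(hypothesis.lower().rstrip(".").split())
    return len(premise_words & hypothesis_words) / len(hypothesis_words)


review = "the app crashes after the update, this bug is annoying"
label, scores = zero_shot_classify(review, ["bug", "feature request", "praise"], toy_overlap_scorer)
print(label)  # bug
```

Because the classes are supplied only at prediction time, new issue categories can be tried without retraining, which is what distinguishes the zero-shot setting from the supervised ones.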

Here, users can be developers, practitioners, or researchers who want to use the studied models to classify issues related to mobile apps from user feedback.

References

Adhikari A, Ram A, Tang R, et al (2019) Docbert: Bert for document classification. arXiv preprint arXiv:1904.08398

Al-Hawari A, Najadat H, Shatnawi R (2021) Classification of application reviews into software maintenance tasks using data mining techniques. Softw Qual J 29:667–703

Ali M, Joorabchi ME, Mesbah A (2017) Same app, different app stores: A comparative study. In: 2017 IEEE/ACM 4th international conference on mobile software engineering and systems (MOBILESoft), pp 79–90

Allamanis M, Brockschmidt M, Khademi M (2018) Learning to represent programs with graphs. In: ICLR

Al-Subaihin AA, Sarro F, Black S et al (2021) App store effects on software engineering practices. IEEE Trans Softw Eng 47(2):300–319

Aralikatte R, Sridhara G, Gantayat N, et al (2018) Fault in your stars: An analysis of android app reviews. In: Proceedings of the ACM India joint international conference on data science and management of data. Association for Computing Machinery, New York, NY, USA, CoDS-COMAD ’18, pp 57–66

Araujo AF, Gôlo MP, Marcacini RM (2022) Opinion mining for app reviews: an analysis of textual representation and predictive models. Autom Softw Eng 29(1):1–30

Araujo A, Golo M, Viana B, et al (2020) From bag-of-words to pre-trained neural language models: Improving automatic classification of app reviews for requirements engineering. In: Anais do XVII Encontro Nacional de Inteligência Artificial e Computacional, SBC. pp 378–389

Aslam N, Ramay WY, Xia K et al (2020) Convolutional neural network based classification of app reviews. IEEE Access 8:185619–185628

Bakiu E, Guzman E (2017) Which feature is unusable? detecting usability and user experience issues from user reviews. In: 2017 IEEE 25th International requirements engineering conference workshops (REW). pp 182–187

Bataa E, Wu J (2019) An investigation of transfer learning-based sentiment analysis in japanese. arXiv preprint arXiv:1905.09642

Bavota G, Linares-Vásquez M, Bernal-Cárdenas CE et al (2015) The impact of api change- and fault-proneness on the user ratings of android apps. IEEE Trans Softw Eng 41(4):384–407

Beltagy I, Lo K, Cohan A (2019) Scibert: A pretrained language model for scientific text. In: Proceedings of the 2019 conference on empirical methods in natural language processing and the 9th international joint conference on natural language processing (EMNLP-IJCNLP). Association for Computational Linguistics, Hong Kong, pp 3615–3620

Besmer AR, Watson J, Banks MS (2020) Investigating user perceptions of mobile app privacy: An analysis of user-submitted app reviews. Int J Inf Secur Priv (IJISP) 14(4):74–91

Biswas E, Karabulut ME, Pollock L, et al (2020) Achieving reliable sentiment analysis in the software engineering domain using bert. In: 2020 IEEE International conference on software maintenance and evolution (ICSME). pp 162–173

Blei DM, Ng AY, Jordan MI (2003) Latent dirichlet allocation. J Mach Learn Res 3(Jan):993–1022

Cao Y, Fard FH (2021) Pre-trained neural language models for automatic mobile app user feedback answer generation. In: 2021 36th IEEE/ACM international conference on automated software engineering workshops (ASEW). pp 120–125

Chalkidis I, Fergadiotis M, Malakasiotis P, et al (2020) Legal-bert: The muppets straight out of law school. In: Findings of the association for computational linguistics: EMNLP 2020. Association for Computational Linguistics, Online, pp 2898–2904

Chang WC, Yu HF, Zhong K, et al (2019) X-bert: extreme multi-label text classification with using bidirectional encoder representations from transformers. arXiv preprint arXiv:1905.02331

Chen F, Fard FH, Lo D, et al (2022) On the transferability of pre-trained language models for low-resource programming languages. In: 2022 IEEE/ACM 30th international conference on program comprehension (ICPC). pp 401–412

Chen N, Lin J, Hoi SCH, et al (2014) Ar-miner: Mining informative reviews for developers from mobile app marketplace. In: Proceedings of the 36th international conference on software engineering. Association for Computing Machinery, New York, NY, USA, ICSE 2014, pp 767–778

Cimasa A, Corazza A, Coviello C, et al (2019) Word embeddings for comment coherence. In: 2019 45th Euromicro conference on software engineering and advanced applications (SEAA). pp 244–251

Ciurumelea A, Schaufelbühl A, Panichella S, et al (2017) Analyzing reviews and code of mobile apps for better release planning. In: 2017 IEEE 24th International conference on software analysis, evolution and reengineering (SANER). pp 91–102

Clinchant S, Jung KW, Nikoulina V (2019) On the use of bert for neural machine translation. arXiv preprint arXiv:1909.12744

Dabrowski J, Letier E, Perini A, et al (2019) Finding and analyzing app reviews related to specific features: A research preview. In: International working conference on requirements engineering: foundation for software quality, Springer, pp 183–189

Deocadez R, Harrison R, Rodriguez D (2017) Preliminary study on applying semi-supervised learning to app store analysis. In: Proceedings of the 21st international conference on evaluation and assessment in software engineering. Association for Computing Machinery, New York, NY, USA, EASE’17, pp 320–323

Devlin J, Chang MW, Lee K, et al (2019) Bert: Pre-training of deep bidirectional transformers for language understanding. In: Proceedings of the 2019 conference of the north american chapter of the association for computational linguistics: human language technologies, Volume 1 (Long and Short Papers). Association for Computational Linguistics, Minneapolis, Minnesota, pp 4171–4186

Dhinakaran VT, Pulle R, Ajmeri N, et al (2018) App review analysis via active learning: Reducing supervision effort without compromising classification accuracy. In: 2018 IEEE 26th International requirements engineering conference (RE), pp 170–181

Di Sorbo A, Panichella S, Alexandru CV, et al (2016) What would users change in my app? summarizing app reviews for recommending software changes. In: Proceedings of the 2016 24th ACM SIGSOFT international symposium on foundations of software engineering. Association for Computing Machinery, New York, NY, USA, FSE 2016, pp 499–510

Edunov S, Baevski A, Auli M (2019) Pre-trained language model representations for language generation. In: Proceedings of the 2019 conference of the north american chapter of the association for computational linguistics: human language technologies, Volume 1 (Long and Short Papers). Association for Computational Linguistics, Minneapolis, Minnesota, pp 4052–4059

Feng Z, Guo D, Tang D, et al (2020) Codebert: A pre-trained model for programming and natural languages. In: Findings of the association for computational linguistics: EMNLP 2020. Association for Computational Linguistics, Online, pp 1536–1547

Finkelstein A, Harman M, Jia Y, et al (2014) App store analysis: Mining app stores for relationships between customer, business and technical characteristics. RN 14(10):24

Forman G, Scholz M (2010) Apples-to-apples in cross-validation studies: Pitfalls in classifier performance measurement. SIGKDD Explor Newsl 12(1):49–57

Fu B, Lin J, Li L, et al (2013) Why people hate your app: Making sense of user feedback in a mobile app store. In: Proceedings of the 19th ACM SIGKDD international conference on knowledge discovery and data mining. Association for Computing Machinery, New York, NY, USA, KDD ’13, pp 1276–1284

Gao C, Zeng J, Lyu MR, et al (2018) Online app review analysis for identifying emerging issues. In: Proceedings of the 40th international conference on software engineering. Association for Computing Machinery, New York, NY, USA, ICSE ’18, pp 48–58

Grano G, Ciurumelea A, Panichella S, et al (2018) Exploring the integration of user feedback in automated testing of android applications. In: 2018 IEEE 25th International conference on software analysis, evolution and reengineering (SANER). pp 72–83

Gu X, Kim S (2015) What parts of your apps are loved by users? (t). In: 2015 30th IEEE/ACM International conference on automated software engineering (ASE). pp 760–770

Guo B, Ouyang Y, Guo T et al (2019) Enhancing mobile app user understanding and marketing with heterogeneous crowdsourced data: A review. IEEE Access 7:68557–68571

Guo D, Ren S, Lu S, et al (2021) Graphcodebert: Pre-training code representations with data flow. In: Proceedings of the 2021 international conference on learning representations (ICLR)

Guo H, Singh MP (2020) Caspar: Extracting and synthesizing user stories of problems from app reviews. In: 2020 IEEE/ACM 42nd international conference on software engineering (ICSE). pp 628–640

Guzman E, Alkadhi R, Seyff N (2017) An exploratory study of twitter messages about software applications. Requir Eng 22(3):387–412

Guzman E, Alkadhi R, Seyff N (2016) A needle in a haystack: What do twitter users say about software? In: 2016 IEEE 24th international requirements engineering conference (RE), pp 96–105

Guzman E, El-Haliby M, Bruegge B (2015) Ensemble methods for app review classification: An approach for software evolution (n). In: 2015 30th IEEE/ACM International conference on automated software engineering (ASE). pp 771–776

Guzman E, Ibrahim M, Glinz M (2017b) A little bird told me: Mining tweets for requirements and software evolution. In: 2017 IEEE 25th International requirements engineering conference (RE). pp 11–20

Guzman E, Maalej W (2014) How do users like this feature? a fine grained sentiment analysis of app reviews. In: 2014 IEEE 22nd International Requirements Engineering Conference (RE). pp 153–162

Hadi MA, Fard FH (2020) Aobtm: Adaptive online biterm topic modeling for version sensitive short-texts analysis. In: 2020 IEEE International conference on software maintenance and evolution (ICSME). pp 593–604

Hadi MA, Yusuf INB, Thung F, et al (2022) On the effectiveness of pretrained models for api learning. In: 2022 IEEE/ACM 30th International conference on program comprehension (ICPC). pp 309–320

Haering M, Stanik C, Maalej W (2021) Automatically matching bug reports with related app reviews. In: 2021 IEEE/ACM 43rd international conference on software engineering (ICSE). pp 970–981

Hakala K, Pyysalo S (2019) Biomedical named entity recognition with multilingual bert. In: Proceedings of the 5th workshop on BioNLP open shared tasks. pp 56–61

Harkous H, Peddinti ST, Khandelwal R, et al (2022) Hark: A deep learning system for navigating privacy feedback at scale. In: 2022 IEEE Symposium on Security and Privacy (SP)

He D, Hong K, Cheng Y et al (2019) Detecting promotion attacks in the app market using neural networks. IEEE Wirel Commun 26(4):110–116

He H, Ma Y (2013) Imbalanced learning: foundations, algorithms, and applications.

Hemmatian F, Sohrabi MK (2019) A survey on classification techniques for opinion mining and sentiment analysis. Artif Intell Rev 52(3):1495–1545

Henao PR, Fischbach J, Spies D, et al (2021) Transfer learning for mining feature requests and bug reports from tweets and app store reviews. In: 2021 IEEE 29th International Requirements Engineering Conference Workshops (REW). pp 80–86

Hochreiter S, Schmidhuber J (1997) Long short-term memory. Neural Comput 9(8):1735–1780

Howard J, Ruder S (2018) Universal language model fine-tuning for text classification. In: Proceedings of the 56th annual meeting of the association for computational linguistics (Volume 1: Long Papers). Association for Computational Linguistics, Melbourne, Australia, pp 328–339

Huang Q, Xia X, Lo D et al (2020) Automating intention mining. IEEE Trans Softw Eng 46(10):1098–1119

Imamura K, Sumita E (2019) Recycling a pre-trained bert encoder for neural machine translation. In: Proceedings of the 3rd Workshop on Neural Generation and Translation, pp 23–31

Islam R, Islam R, Mazumder T (2010) Mobile application and its global impact. Int J Eng Technol 10(6):72–78

James G, Witten D, Hastie T et al (2013) An introduction to statistical learning, vol 112. Springer

Jha N, Mahmoud A (2019) Mining non-functional requirements from app store reviews. Empir Softw Eng 24(6):3659–3695

Johann T, Stanik C, Alizadeh B. AM, et al (2017) Safe: A simple approach for feature extraction from app descriptions and app reviews. In: 2017 IEEE 25th International requirements engineering conference (RE), pp 21–30

Joorabchi ME, Mesbah A, Kruchten P (2013) Real challenges in mobile app development. In: 2013 ACM / IEEE International symposium on empirical software engineering and measurement, pp 15–24

Ju Y, Zhao F, Chen S, et al (2019) Technical report on conversational question answering. arXiv preprint arXiv:1909.10772

Karimi A, Rossi L, Prati A (2021) Adversarial training for aspect-based sentiment analysis with bert. In: 2020 25th International conference on pattern recognition (ICPR), pp 8797–8803

Karmakar A, Robbes R (2021) What do pre-trained code models know about code? In: 2021 36th IEEE/ACM International conference on automated software engineering (ASE), pp 1332–1336

Kaur A, Kaur K (2022) Systematic literature review of mobile application development and testing effort estimation. J King Saud Univ Comput Inf Sci 34(2):1–15

Lan Z, Chen M, Goodman S, et al (2019) Albert: A lite bert for self-supervised learning of language representations. arXiv preprint arXiv:1909.11942

Li X, Bing L, Zhang W, et al (2019) Exploiting BERT for end-to-end aspect-based sentiment analysis. In: Proceedings of the 5th workshop on noisy user-generated text (W-NUT 2019). Association for Computational Linguistics, Hong Kong, China, pp 34–41

Li L, Ma R, Guo Q, et al (2020) Bert-attack: Adversarial attack against bert using bert. In: Proceedings of the 2020 conference on empirical methods in natural language processing (EMNLP). Association for Computational Linguistics, Online, pp 6193–6202

Liu Y, Lapata M (2019) Text summarization with pretrained encoders. arXiv preprint arXiv:1908.08345

Liu Y, Ott M, Goyal N, et al (2019) Roberta: A robustly optimized bert pretraining approach. arXiv preprint arXiv:1907.11692

Liu L, Ren X, Shang J, et al (2018) Efficient contextualized representation: Language model pruning for sequence labeling. In: Proceedings of the 2018 conference on empirical methods in natural language processing. Association for Computational Linguistics, Brussels, Belgium, pp 1215–1225

Liu W, Zhang G, Chen J, et al (2015) A measurement-based study on application popularity in android and ios app stores. In: Proceedings of the 2015 workshop on mobile big data. Association for Computing Machinery, New York, NY, USA, Mobidata ’15, pp 13–18

Lu M, Liang P (2017) Automatic classification of non-functional requirements from augmented app user reviews. In: Proceedings of the 21st international conference on evaluation and assessment in software engineering. Association for Computing Machinery, New York, NY, USA, EASE’17, pp 344–353

Luong K, Hadi M, Thung F, et al (2022) Arseek: Identifying api resource using code and discussion on stack overflow. In: 2022 IEEE/ACM 30th International conference on program comprehension (ICPC), pp 331–342

Maalej W, Kurtanović Z, Nabil H et al (2016) On the automatic classification of app reviews. Requir Eng 21(3):311–331

Maalej W, Nabil H (2015) Bug report, feature request, or simply praise? on automatically classifying app reviews. In: 2015 IEEE 23rd International requirements engineering conference (RE). pp 116–125

Martin W, Sarro F, Jia Y et al (2017) A survey of app store analysis for software engineering. IEEE Trans Softw Eng 43(9):817–847

Mekala RR, Irfan A, Groen EC, et al (2021) Classifying user requirements from online feedback in small dataset environments using deep learning. In: 2021 IEEE 29th International requirements engineering conference (RE), pp 139–149

Messaoud MB, Jenhani I, Jemaa NB et al (2019) A multi-label active learning approach for mobile app user review classification. In: International conference on knowledge science, engineering and management. Springer, pp 805–816

Mikolov T, Chen K, Corrado G, et al (2013) Efficient estimation of word representations in vector space. In: ICLR Workshop

Mondal AS, Zhu Y, Bhagat KK, et al (2022) Analysing user reviews of interactive educational apps: a sentiment analysis approach. Interact Learn Environ 1–18

Nayebi M, Cho H, Ruhe G (2018) App store mining is not enough for app improvement. Empir Softw Eng 23(5):2764–2794

Nigam K, Lafferty J, McCallum A (1999) Using maximum entropy for text classification. In: IJCAI-99 workshop on machine learning for information filtering, Stockholm, Sweden, pp 61–67

Novielli N, Girardi D, Lanubile F (2018) A benchmark study on sentiment analysis for software engineering research. In: 2018 IEEE/ACM 15th International conference on mining software repositories (MSR), pp 364–375

Palomba F, Linares-Vásquez M, Bavota G, et al (2015) User reviews matter! tracking crowdsourced reviews to support evolution of successful apps. In: 2015 IEEE International conference on software maintenance and evolution (ICSME), pp 291–300

Palomba F, Salza P, Ciurumelea A, et al (2017) Recommending and localizing change requests for mobile apps based on user reviews. In: 2017 IEEE/ACM 39th International conference on software engineering (ICSE), pp 106–117

Panichella S, Di Sorbo A, Guzman E, et al (2015) How can i improve my app? classifying user reviews for software maintenance and evolution. In: 2015 IEEE International conference on software maintenance and evolution (ICSME), pp 281–290

Pennington J, Socher R, Manning CD (2014) Glove: Global vectors for word representation. In: Proceedings of the 2014 conference on empirical methods in natural language processing (EMNLP), pp 1532–1543

Peters ME, Ammar W, Bhagavatula C, et al (2017) Semi-supervised sequence tagging with bidirectional language models. In: Proceedings of the 55th annual meeting of the association for computational linguistics (Volume 1: Long Papers). Association for Computational Linguistics, Vancouver, Canada, pp 1756–1765

Peters ME, Neumann M, Iyyer M, et al (2018) Deep contextualized word representations. In: Proceedings of the 2018 conference of the North American chapter of the association for computational linguistics: human language technologies, Volume 1 (Long Papers). Association for Computational Linguistics, New Orleans, Louisiana, pp 2227–2237

Qiao Z, Wang A, Abrahams A, et al (2020) Deep learning-based user feedback classification in mobile app reviews. In: Proceedings of the 2020 Pre-ICIS sigdsa symposium

Qiu X, Sun T, Xu Y et al (2020) Pre-trained models for natural language processing: A survey. Sci China Technol Sci 63(10):1872–1897

Rajpurkar P, Zhang J, Lopyrev K, et al (2016) SQuAD: 100,000+ questions for machine comprehension of text. In: Proceedings of the 2016 conference on empirical methods in natural language processing. Association for Computational Linguistics, Austin, Texas, pp 2383–2392

Reddy S, Chen D, Manning CD (2019) Coqa: A conversational question answering challenge. Trans Assoc Comput Linguist 7:249–266

Reimers N, Schiller B, Beck T, et al (2019) Classification and clustering of arguments with contextualized word embeddings. In: Proceedings of the 57th annual meeting of the association for computational linguistics. Association for Computational Linguistics, Florence, Italy, pp 567–578

Ren Y, Zhang Y, Zhang M, et al (2016) Improving twitter sentiment classification using topic-enriched multi-prototype word embeddings. In: Thirtieth AAAI conference on artificial intelligence

Rietzler A, Stabinger S, Opitz P, et al (2019) Adapt or get left behind: Domain adaptation through bert language model finetuning for aspect-target sentiment classification. arXiv preprint arXiv:1908.11860

Robbes R, Janes A (2019) Leveraging small software engineering data sets with pre-trained neural networks. In: 2019 IEEE/ACM 41st International conference on software engineering: new ideas and emerging results (ICSE-NIER). IEEE, pp 29–32

Ruder S, Plank B (2017) Learning to select data for transfer learning with Bayesian optimization. In: Proceedings of the 2017 conference on empirical methods in natural language processing. Association for Computational Linguistics, Copenhagen, Denmark, pp 372–382

Rustam F, Mehmood A, Ahmad M et al (2020) Classification of shopify app user reviews using novel multi text features. IEEE Access 8:30234–30244

Santiago Walser R, De Jong A, Remus U (2022) The good, the bad, and the missing: Topic modeling analysis of user feedback on digital wellbeing features. In: Proceedings of the 55th Hawaii International Conference on System Sciences

Sarro F, Al-Subaihin AA, Harman M, et al (2015) Feature lifecycles as they spread, migrate, remain, and die in app stores. In: 2015 IEEE 23rd International requirements engineering conference (RE), pp 76–85

Scalabrino S, Bavota G, Russo B et al (2019) Listening to the crowd for the release planning of mobile apps. IEEE Trans Softw Eng 45(1):68–86

Shah FA, Sirts K, Pfahl D (2018) Simple app review classification with only lexical features. In: ICSOFT, pp 146–153

Shen V, Yu TJ, Thebaut S, et al (1985) Identifying error-prone software-an empirical study. IEEE Trans Softw Eng SE-11(4):317–324

Silva CC, Galster M, Gilson F (2021) Topic modeling in software engineering research. Empir Softw Eng 26(6):1–62

Stanik C, Haering M, Maalej W (2019) Classifying multilingual user feedback using traditional machine learning and deep learning. In: 2019 IEEE 27th International requirements engineering conference workshops (REW), pp 220–226

Subedi IM, Singh M, Ramasamy V, et al (2021) Application of back-translation: A transfer learning approach to identify ambiguous software requirements. In: Proceedings of the 2021 ACM Southeast Conference. Association for Computing Machinery, New York, NY, USA, ACM SE ’21, pp 130–137

Sulistya A, Prana GAA, Sharma A et al (2020) Sieve: Helping developers sift wheat from chaff via cross-platform analysis. Empir Softw Eng 25(1):996–1030

Sun C, Huang L, Qiu X (2019) Utilizing BERT for aspect-based sentiment analysis via constructing auxiliary sentence. In: Proceedings of the 2019 conference of the North American chapter of the association for computational linguistics: human language technologies, Volume 1 (Long and Short Papers). Association for Computational Linguistics, Minneapolis, Minnesota, pp 380–385

Svyatkovskiy A, Deng SK, Fu S et al (2020) IntelliCode Compose: Code Generation Using Transformer. Association for Computing Machinery, New York, NY, USA, pp 1433–1443

Tang AK (2019) A systematic literature review and analysis on mobile apps in m-commerce: Implications for future research. Electron Commer Res Appl 37:100885

Tu M, Huang K, Wang G, et al (2020) Select, answer and explain: Interpretable multi-hop reading comprehension over multiple documents. In: Proceedings of the AAAI conference on artificial intelligence, pp 9073–9080

Van Nguyen T, Nguyen AT, Phan HD, et al (2017) Combining word2vec with revised vector space model for better code retrieval. In: 2017 IEEE/ACM 39th International conference on software engineering companion (ICSE-C). IEEE, pp 183–185

Vaswani A, Shazeer N, Parmar N, et al (2017) Attention is all you need. Advances in neural information processing systems 30

Villarroel L, Bavota G, Russo B, et al (2016) Release planning of mobile apps based on user reviews. In: 2016 IEEE/ACM 38th International Conference on Software Engineering (ICSE). pp 14–24

Von der Mosel J, Trautsch A, Herbold S (2022) On the validity of pre-trained transformers for natural language processing in the software engineering domain. IEEE Trans Softw Eng 1

Wada S, Takeda T, Manabe S, et al (2020) Pre-training technique to localize medical bert and enhance biomedical bert

Wallace E, Feng S, Kandpal N, et al (2019) Universal adversarial triggers for attacking and analyzing NLP. In: Proceedings of the 2019 conference on empirical methods in natural language processing and the 9th international joint conference on natural language processing (EMNLP-IJCNLP). Association for Computational Linguistics, Hong Kong, China, pp 2153–2162

Wang J, Wen R, Wu C et al (2020) Analyzing and Detecting Adversarial Spam on a Large-Scale Online APP Review System. Association for Computing Machinery, New York, NY, USA, pp 409–417

Wang C, Wang T, Liang P, et al (2019) Augmenting app review with app changelogs: An approach for app review classification. In: SEKE, pp 398–512

Wang C, Zhang F, Liang P, et al (2018) Can app changelogs improve requirements classification from app reviews? an exploratory study. In: Proceedings of the 12th ACM/IEEE International symposium on empirical software engineering and measurement. Association for Computing Machinery, New York, NY, USA, ESEM ’18

Wan Y, Zhao W, Zhang H, et al (2022) What do they capture? a structural analysis of pre-trained language models for source code. In: Proceedings of the 44th international conference on software engineering. Association for Computing Machinery, New York, NY, USA, ICSE ’22, pp 2377–2388

Wardhana JA, Sibaroni Y et al (2021) Aspect level sentiment analysis on zoom cloud meetings app review using lda. Jurnal RESTI (Rekayasa Sistem Dan Teknologi Informasi) 5(4):631–638

Wolf T, Debut L, Sanh V, et al (2019) Huggingface’s transformers: State-of-the-art natural language processing. arXiv preprint arXiv:1910.03771

Wu X, Zhang T, Zang L, et al (2019) Mask and infill: Applying masked language model for sentiment transfer. In: Proceedings of the 28th international joint conference on artificial intelligence, IJCAI-19. International joint conferences on artificial intelligence organization, pp 5271–5277

Xu H, Liu B, Shu L, et al (2019) BERT post-training for review reading comprehension and aspect-based sentiment analysis. In: Proceedings of the 2019 conference of the north american chapter of the association for computational linguistics: human language technologies, Volume 1 (Long and Short Papers). Association for Computational Linguistics, Minneapolis, Minnesota, pp 2324–2335

Yang X, Macdonald C, Ounis I (2018) Using word embeddings in twitter election classification. Inf Retrieval J 21(2):183–207

Yang Z, Dai Z, Yang Y, et al (2019) Xlnet: Generalized autoregressive pretraining for language understanding. Advances in neural information processing systems 32

Yang T, Gao C, Zang J, et al (2021) Tour: Dynamic topic and sentiment analysis of user reviews for assisting app release. In: Companion Proceedings of the Web Conference 2021. Association for Computing Machinery, New York, NY, USA, WWW ’21, pp 708–712

Yang Z, Qi P, Zhang S, et al (2018b) HotpotQA: A dataset for diverse, explainable multi-hop question answering. In: Proceedings of the 2018 conference on empirical methods in natural language processing. Association for Computational Linguistics, Brussels, Belgium, pp 2369–2380

Yang C, Xu B, Khan JY, et al (2022) Aspect-based api review classification: How far can pre-trained transformer model go. In: 2022 IEEE International Conference on Software Analysis, Evolution and Reengineering (SANER). IEEE Computer Society

Yatani K, Novati M, Trusty A, et al (2011) Review spotlight: A user interface for summarizing user-generated reviews using adjective-noun word pairs. In: Proceedings of the SIGCHI conference on human factors in computing systems. Association for Computing Machinery, New York, NY, USA, CHI ’11, pp 1541–1550

Yin W, Hay J, Roth D (2019) Benchmarking zero-shot text classification: Datasets, evaluation and entailment approach. In: Proceedings of the 2019 conference on empirical methods in natural language processing and the 9th international joint conference on natural language processing (EMNLP-IJCNLP). Association for Computational Linguistics, Hong Kong, China, pp 3914–3923

Zhang X, Wei F, Zhou M (2019) HIBERT: Document level pre-training of hierarchical bidirectional transformers for document summarization. In: Proceedings of the 57th annual meeting of the association for computational linguistics. Association for Computational Linguistics, Florence, Italy, pp 5059–5069

Zhang T, Xu B, Thung F, et al (2020) Sentiment analysis for software engineering: How far can pre-trained transformer models go? In: 2020 IEEE International conference on software maintenance and evolution (ICSME), pp 70–80

Zhang Z, Yang J, Zhao H (2021) Retrospective reader for machine reading comprehension. In: Proceedings of the AAAI conference on artificial intelligence, pp 14506–14514

Zhao L, Zhao A (2019) Sentiment analysis based requirement evolution prediction. Futur Internet 11(2):52

Zhao W, Guan Z, Chen L et al (2017) Weakly-supervised deep embedding for product review sentiment analysis. IEEE Trans Knowl Data Eng 30(1):185–197

Zhong M, Liu P, Chen Y, et al (2020) Extractive summarization as text matching. In: Proceedings of the 58th annual meeting of the association for computational linguistics. Association for Computational Linguistics, online, pp 6197–6208

Zhou Y, Su Y, Chen T et al (2021) User review-based change file localization for mobile applications. IEEE Trans Softw Eng 47(12):2755–2770

Acknowledgements

This research is supported by a grant from the Natural Sciences and Engineering Research Council of Canada RGPIN-2019-05175.

Additional information

Communicated by: Raula Gaikovina Kula, Jin Guo, Neil Ernst.

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

This article belongs to the Topical Collection: Special Issue on Registered Reports

Appendix

Prediction times of the Prior approaches and PLMs for app issue classification (RQ1). Each model is shown as a colored line. The trend in prediction times across datasets is similar for all models, which may be related to the characteristics and size of each dataset. Among all PLMs, XLNET has the highest and ALBERT the lowest prediction time

Prediction times of the models in the binary classification setting (RQ3). XLNET is not shown in the plot because its prediction times are much higher than those of the other models. The Custom ALBERT-based models have the lowest prediction times for the \(D_4\), \(D_5\), and \(D_7\) datasets. RoBERTa-based models have higher prediction times than ALBERT-based ones

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Hadi, M.A., Fard, F.H. Evaluating pre-trained models for user feedback analysis in software engineering: a study on classification of app-reviews. Empir Software Eng 28, 88 (2023). https://doi.org/10.1007/s10664-023-10314-x

DOI: https://doi.org/10.1007/s10664-023-10314-x